language: bar
language_name: BAR
language_family: germanic_west_continental
tags:
- wikilangs
- nlp
- tokenizer
- embeddings
- n-gram
- markov
- wikipedia
- monolingual
- family-germanic_west_continental
license: mit
library_name: wikilangs
pipeline_tag: feature-extraction
datasets:
- omarkamali/wikipedia-monthly
dataset_info:
  name: wikipedia-monthly
  description: Monthly snapshots of Wikipedia articles across 300+ languages
metrics:
- name: best_compression_ratio
  type: compression
  value: 3.79
- name: best_isotropy
  type: isotropy
  value: 0.8361
- name: vocabulary_size
  type: vocab
  value: 225914
generated: 2025-12-28T00:00:00.000Z

BAR - Wikilangs Models

Comprehensive Research Report & Full Ablation Study

This repository contains NLP models trained and evaluated by Wikilangs on Bavarian (BAR) Wikipedia data. The report covers tokenizers, n-gram models, Markov chains, vocabulary statistics, and word embeddings.

📋 Repository Contents

Models & Assets

- Tokenizers (8k, 16k, 32k, 64k)

- N-gram models (2, 3, 4-gram)

- Markov chains (context of 1, 2, 3 and 4)

- Subword N-gram and Markov chains

- Embeddings in various sizes and dimensions

- Language Vocabulary

- Language Statistics

Analysis and Evaluation

- 1. Tokenizer Evaluation

- 2. N-gram Model Evaluation

- 3. Markov Chain Evaluation

- 4. Vocabulary Analysis

- 5. Word Embeddings Evaluation

- 6. Summary & Recommendations

- Metrics Glossary

- Visualizations Index

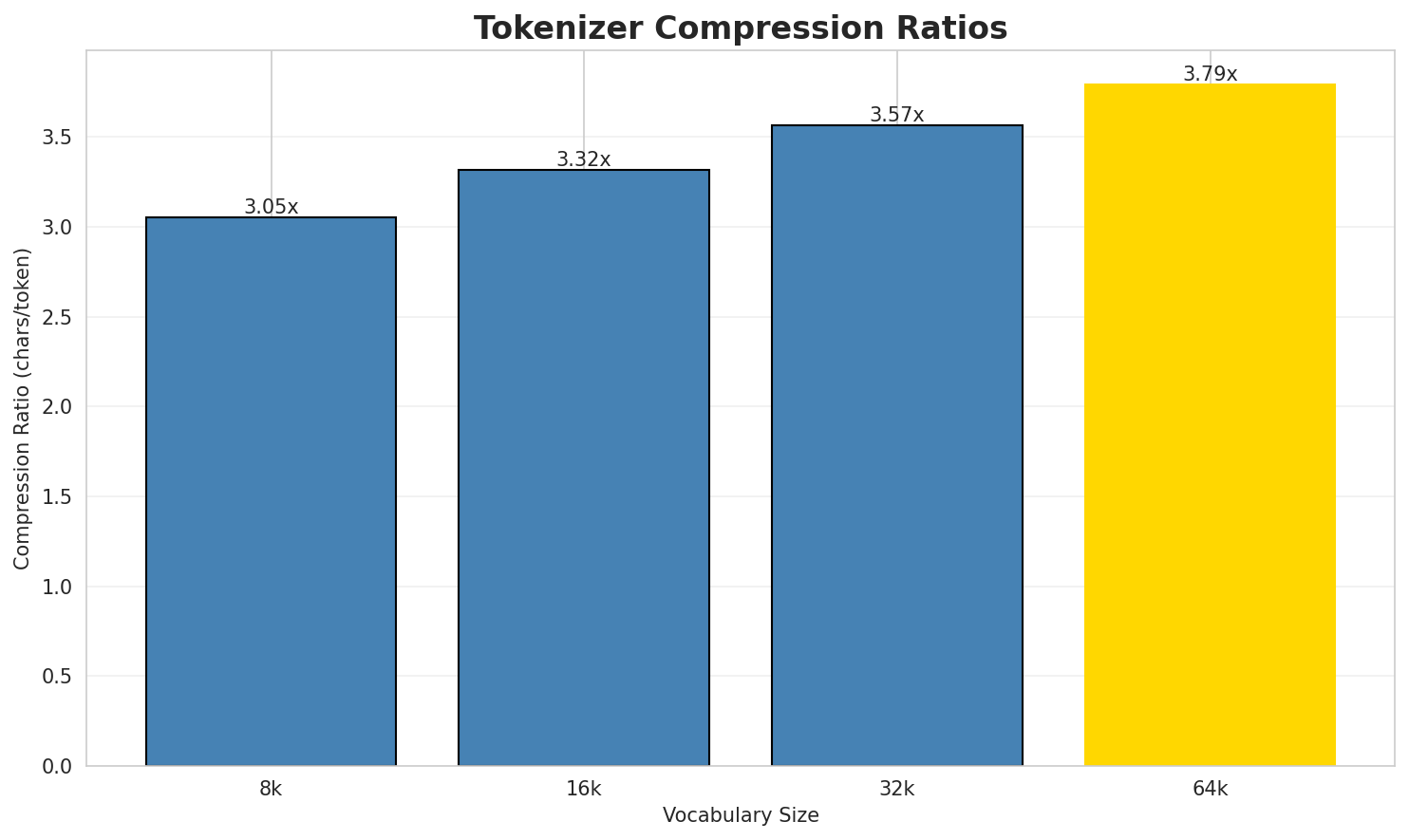

1. Tokenizer Evaluation

Results

| Vocab Size | Compression | Avg Token Len | UNK Rate | Total Tokens |

|---|---|---|---|---|

| 8k | 3.051x | 3.01 | 0.0348% | 1,184,756 |

| 16k | 3.320x | 3.27 | 0.0378% | 1,088,767 |

| 32k | 3.568x | 3.52 | 0.0407% | 1,013,044 |

| 64k | 3.790x 🏆 | 3.74 | 0.0432% | 953,541 |

Tokenization Examples

Below are sample sentences tokenized with each vocabulary size:

Sample 1: `Platte County is a County in Nebraska in da USA.

Beleg

Im Netz

Kategorie:...`

| Vocab | Tokens | Count |
|---|---|---|
| 8k | ▁plat te ▁county ▁is ▁a ▁county ▁in ▁nebraska ▁in ▁da ... (+10 more) | 20 |
| 16k | ▁plat te ▁county ▁is ▁a ▁county ▁in ▁nebraska ▁in ▁da ... (+10 more) | 20 |
| 32k | ▁platte ▁county ▁is ▁a ▁county ▁in ▁nebraska ▁in ▁da ▁usa ... (+9 more) | 19 |
| 64k | ▁platte ▁county ▁is ▁a ▁county ▁in ▁nebraska ▁in ▁da ▁usa ... (+9 more) | 19 |

Sample 2: Union County. Obgruafa am 22. Feba 2011 is a County in South Carolina in da USA....

| Vocab | Tokens | Count |
|---|---|---|
| 8k | ▁union ▁county . ▁obgruafa ▁am ▁ 2 2 . ▁feba ... (+24 more) | 34 |
| 16k | ▁union ▁county . ▁obgruafa ▁am ▁ 2 2 . ▁feba ... (+24 more) | 34 |
| 32k | ▁union ▁county . ▁obgruafa ▁am ▁ 2 2 . ▁feba ... (+24 more) | 34 |
| 64k | ▁union ▁county . ▁obgruafa ▁am ▁ 2 2 . ▁feba ... (+24 more) | 34 |

Sample 3: `Des is a Iwablick iwas Joar 1561.

Im Netz`

| Vocab | Tokens | Count |
|---|---|---|
| 8k | ▁des ▁is ▁a ▁iwablick ▁iwas ▁joar ▁ 1 5 6 ... (+4 more) | 14 |
| 16k | ▁des ▁is ▁a ▁iwablick ▁iwas ▁joar ▁ 1 5 6 ... (+4 more) | 14 |
| 32k | ▁des ▁is ▁a ▁iwablick ▁iwas ▁joar ▁ 1 5 6 ... (+4 more) | 14 |
| 64k | ▁des ▁is ▁a ▁iwablick ▁iwas ▁joar ▁ 1 5 6 ... (+4 more) | 14 |

Key Findings

- Best Compression: 64k achieves 3.790x compression

- Lowest UNK Rate: 8k with 0.0348% unknown tokens

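- Trade-off: Larger vocabularies improve compression (3.051x at 8k to 3.790x at 64k) but increase model size; the UNK rate also rises slightly, from 0.0348% to 0.0432%

- Recommendation: 32k vocabulary offers a reasonable balance between compression (3.568x) and model size for production use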

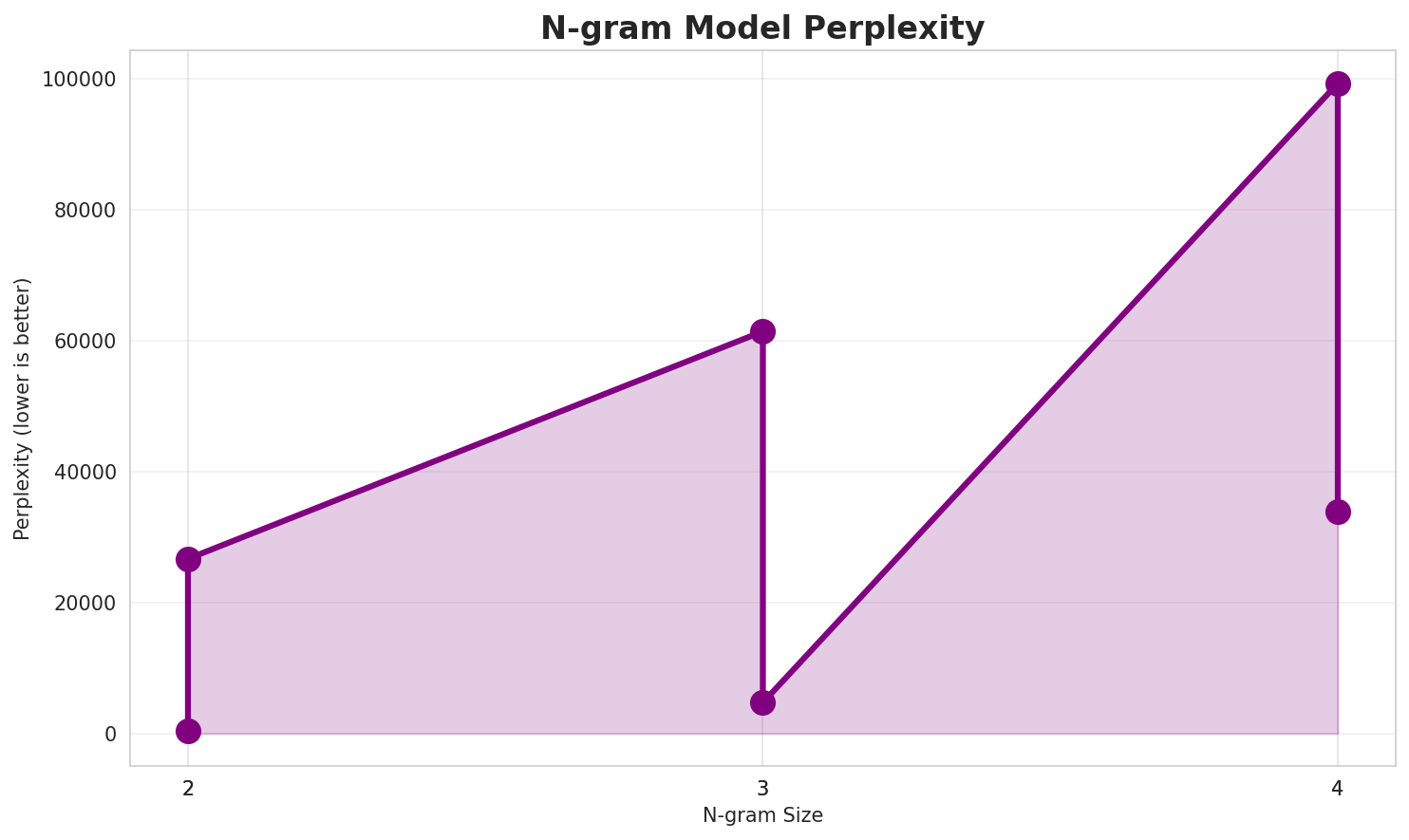

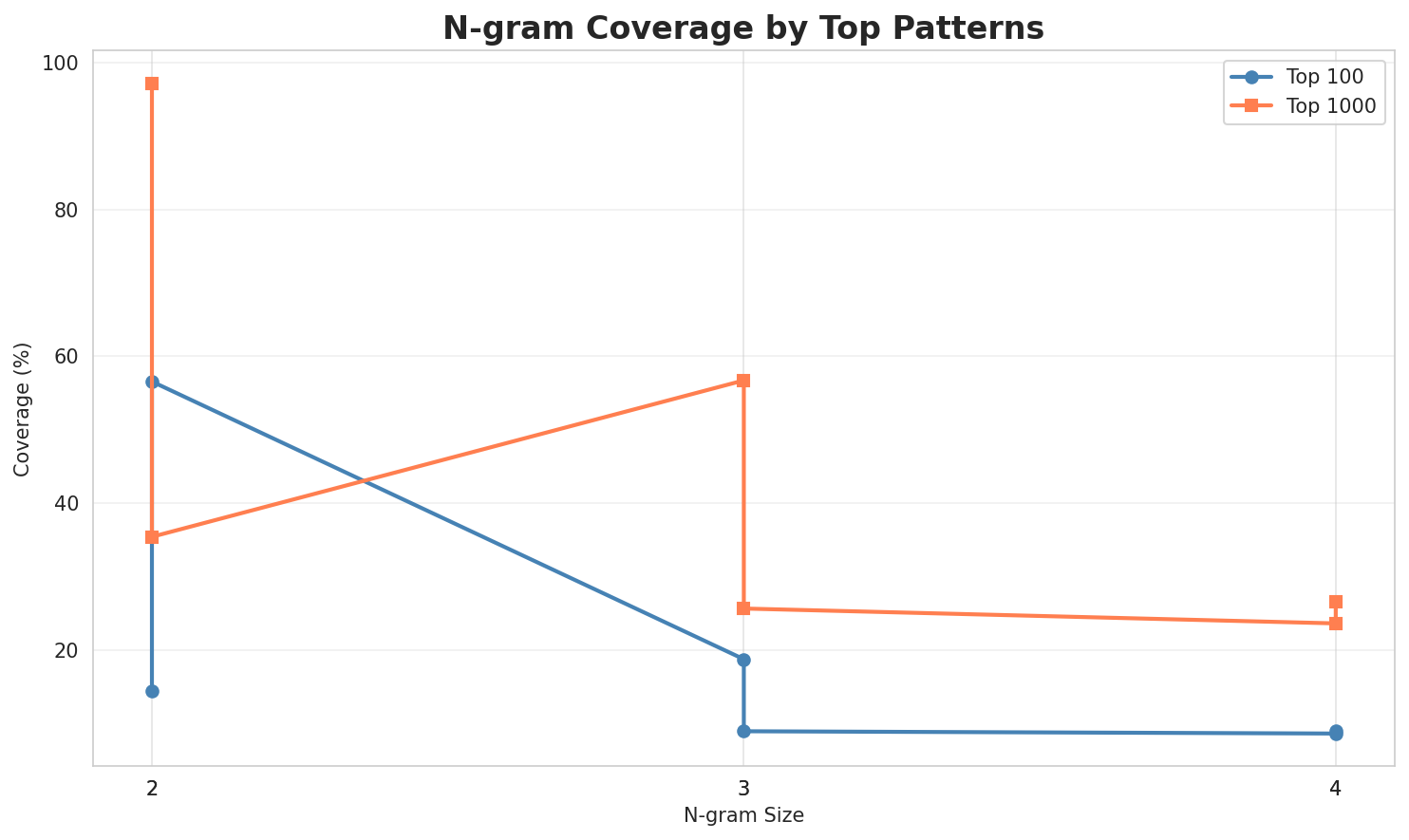

2. N-gram Model Evaluation

Results

| N-gram | Perplexity | Entropy | Unique N-grams | Top-100 Coverage | Top-1000 Coverage |

|---|---|---|---|---|---|

| 2-gram | 26,677 🏆 | 14.70 | 159,756 | 14.4% | 35.4% |

| 2-gram | 438 🏆 | 8.77 | 9,188 | 56.6% | 97.2% |

| 3-gram | 61,390 | 15.91 | 246,468 | 9.0% | 25.7% |

| 3-gram | 4,733 | 12.21 | 83,186 | 18.8% | 56.7% |

| 4-gram | 99,269 | 16.60 | 386,315 | 9.1% | 23.6% |

| 4-gram | 33,953 | 15.05 | 473,217 | 8.7% | 26.5% |

Top 5 N-grams by Size

2-grams:

| Rank | N-gram | Count |
|---|---|---|
| 1 | kategorie : | 38,959 |
| 2 | vo da | 26,615 |
| 3 | is a | 23,040 |
| 4 | in da | 22,485 |
| 5 | . de | 21,082 |

3-grams:

| Rank | N-gram | Count |
|---|---|---|
| 1 | beleg kategorie : | 6,999 |
| 2 | isbn 3 - | 5,924 |
| 3 | . im netz | 5,460 |
| 4 | kategorie : ort | 5,121 |
| 5 | ` |

4-grams:

| Rank | N-gram | Count |
|---|---|---|
| 1 | , isbn 3 - | 4,499 |
| 2 | ` | align = "` |
| 3 | align = " center | 3,590 |
| 4 | = " center " | 3,590 |
| 5 | kategorie : ort im | 3,505 |

Key Findings

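- Best Perplexity: 2-gram with 438 (the second 2-gram row; the first reports 26,677). The table lists each n-gram size twice without labeling the variants; they likely correspond to the word-level and subword models listed under Models & Assets

- Entropy Trend: Increases with n-gram size in both sets of rows (14.70, 15.91, 16.60 and 8.77, 12.21, 15.05), so the larger n-gram models are less predictable on this corpus

- Coverage: Top-1000 patterns cover between 23.6% and 97.2% of occurrences depending on the model; the 3-gram and 4-gram models fall between 23.6% and 56.7%

- Recommendation: Among the evaluated sizes (2, 3, and 4), the 2-gram models have the lowest perplexity; a 5-gram model was not evaluated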
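The n-gram counts above can be reproduced in spirit with a short sketch. It assumes whitespace-separated, lowercased tokens, which is a guess at how the pipeline prepares text; `count_ngrams` and the toy token stream are hypothetical and not taken from the Wikilangs code.

```python
from collections import Counter

def count_ngrams(tokens, n):
    """Count every contiguous run of n tokens in the stream."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))

# Toy token stream for illustration; the real models use the full corpus.
tokens = "des is a county in da usa . des is a ort in da usa .".split()

bigrams = count_ngrams(tokens, 2)
trigrams = count_ngrams(tokens, 3)

# List the most frequent bigrams, analogous to the "Top 5" tables.
for ngram, count in bigrams.most_common(3):
    print(" ".join(ngram), count)
```

Storing n-grams as tuples keeps them hashable and makes it easy to reuse the same function for any n.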

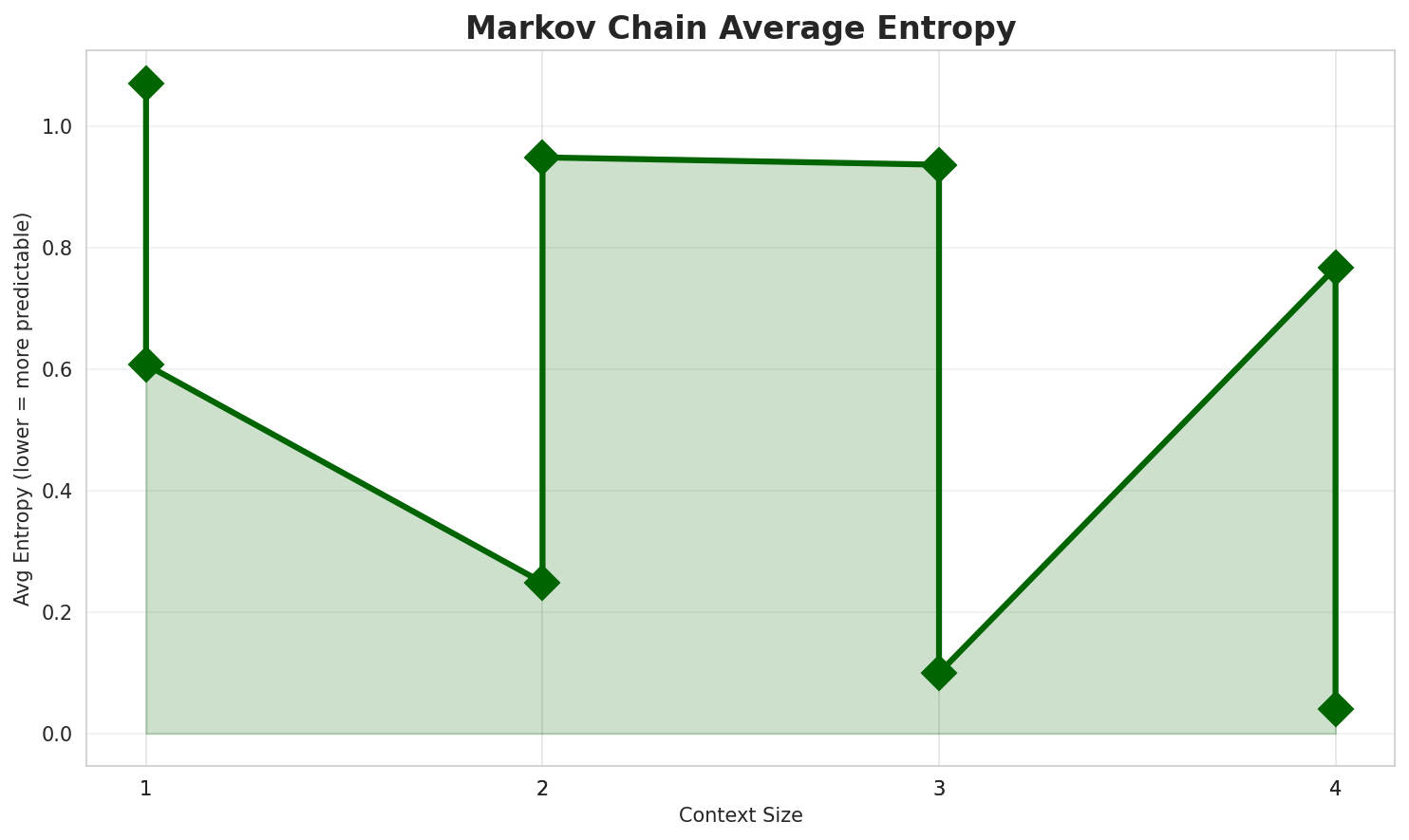

3. Markov Chain Evaluation

Results

| Context | Avg Entropy | Perplexity | Branching Factor | Unique Contexts | Predictability |

|---|---|---|---|---|---|

| 1 | 0.6074 | 1.523 | 4.85 | 638,594 | 39.3% |

| 1 | 1.0706 | 2.100 | 7.75 | 3,379 | 0.0% |

| 2 | 0.2487 | 1.188 | 1.74 | 3,093,978 | 75.1% |

| 2 | 0.9487 | 1.930 | 6.44 | 26,199 | 5.1% |

| 3 | 0.0996 | 1.071 | 1.21 | 5,375,695 | 90.0% |

| 3 | 0.9366 | 1.914 | 4.86 | 168,731 | 6.3% |

| 4 | 0.0401 🏆 | 1.028 | 1.07 | 6,470,090 | 96.0% |

| 4 | 0.7672 🏆 | 1.702 | 3.38 | 820,482 | 23.3% |

Generated Text Samples

Below are text samples generated from each Markov chain model:

Context Size 1:

. 524 angewachsen und die fläche vo dera zoagt , par les ' n ) u, isbn 978 - mal 1920 bis auf des is kemnath ( " franz kafka .- deitscha schauspuia und a zaumgroida schdrudl is ois broad bekaunnt hans ( 2016 saha air

Context Size 2:

kategorie : artikel auf niederösterreichisch kategorie : ortsteil von wieseth kategorie : geboren 18...vo da mathematik , schau gorkhalandis a bruck ' nschlåg ; da linné aun an rio xingu . as gericht håtn zu

Context Size 3:

beleg kategorie : johann nestroy ; stücke 21 . s . highway 84 mindt . uma 32 kilometaisbn 3 - 417 - 20675 - 8 ( teubner - studienbücher der geographie ) . im joakategorie : ort auf den färöern kategorie : streymoy

Context Size 4:

, isbn 3 - 406 - 46224 - 3 birgit zotz : destination tibet . touristisches image zwischen politik| align = " center " | | - | berleu | | | | | | | |= " center " | | | align = " center " | | | fatututa | | align

Key Findings

- Best Predictability: Context-4 with 96.0% predictability

- Branching Factor: Decreases with context size (more deterministic)

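- Memory Trade-off: Larger contexts require more storage; at context size 4 the two model variants hold 6,470,090 and 820,482 unique contexts, compared with 638,594 and 3,379 at context size 1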

- Recommendation: Context-3 or Context-4 for text generation
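To make the context-size idea concrete, here is a minimal sketch of a context-k Markov chain over tokens. The helper names `build_chain` and `generate`, the toy corpus, and the seeded random generator are all assumptions for illustration; the actual Wikilangs model format is not described in this report.

```python
import random
from collections import defaultdict

def build_chain(tokens, k):
    """Map each k-token context to the list of tokens observed after it."""
    chain = defaultdict(list)
    for i in range(len(tokens) - k):
        chain[tuple(tokens[i:i + k])].append(tokens[i + k])
    return chain

def generate(chain, seed, length, rng):
    """Extend the seed by sampling successors until length or a dead end."""
    out = list(seed)
    k = len(seed)
    while len(out) < length:
        successors = chain.get(tuple(out[-k:]))
        if not successors:
            break  # context never seen with a continuation
        out.append(rng.choice(successors))
    return out

# Toy corpus; duplicates in the successor lists act as frequency weights.
tokens = "kategorie : ort im netz . kategorie : ort in da usa .".split()
chain = build_chain(tokens, 2)
sample = generate(chain, ("kategorie", ":"), 8, random.Random(0))
print(" ".join(sample))
```

Longer contexts leave fewer successors per context, which is why the branching factor in the table falls as the context size grows.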

4. Vocabulary Analysis

Statistics

| Metric | Value |

|---|---|

| Vocabulary Size | 225,914 |

| Total Tokens | 5,874,699 |

| Mean Frequency | 26.00 |

| Median Frequency | 3 |

| Frequency Std Dev | 709.74 |

Most Common Words

| Rank | Word | Frequency |

|---|---|---|

| 1 | de | 139,734 |

| 2 | da | 136,994 |

| 3 | und | 120,375 |

| 4 | in | 102,834 |

| 5 | a | 93,585 |

| 6 | vo | 92,275 |

| 7 | is | 88,045 |

| 8 | im | 71,546 |

| 9 | kategorie | 39,103 |

| 10 | des | 34,614 |

Least Common Words (from vocabulary)

| Rank | Word | Frequency |

|---|---|---|

| 1 | mechanisches | 2 |

| 2 | stabilisierungssystem | 2 |

| 3 | voeffentlecht | 2 |

| 4 | innpuls | 2 |

| 5 | buagstej | 2 |

| 6 | nuwenburg | 2 |

| 7 | kulturweges | 2 |

| 8 | spessartprojektes | 2 |

| 9 | terrassnfermig | 2 |

| 10 | tuamhigi | 2 |

Zipf's Law Analysis

| Metric | Value |

|---|---|

| Zipf Coefficient | 0.9896 |

| R² (Goodness of Fit) | 0.999155 |

| Adherence Quality | excellent |

Coverage Analysis

| Top N Words | Coverage |

|---|---|

| Top 100 | 32.7% |

| Top 1,000 | 54.6% |

| Top 5,000 | 70.2% |

| Top 10,000 | 76.9% |

Key Findings

- Zipf Compliance: R²=0.9992 indicates excellent adherence to Zipf's law

- High Frequency Dominance: Top 100 words cover 32.7% of corpus

- Long Tail: 215,914 words needed for remaining 23.1% coverage
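The long-tail figure and the mean frequency follow directly from the tables above. The arithmetic below uses only the reported numbers; the variable names are illustrative.

```python
# Values copied from the Statistics and Coverage Analysis tables.
vocab_size = 225_914
total_tokens = 5_874_699
top_n = 10_000
top_n_coverage = 76.9  # percent of tokens covered by the top 10,000 words

mean_frequency = total_tokens / vocab_size
tail_words = vocab_size - top_n
tail_coverage = 100.0 - top_n_coverage

print(f"mean frequency: {mean_frequency:.2f}")
print(f"long tail: {tail_words:,} words for {tail_coverage:.1f}% of tokens")
```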

5. Word Embeddings Evaluation

Model Comparison

| Model | Vocab Size | Dimension | Avg Norm | Std Norm | Isotropy |

|---|---|---|---|---|---|

| mono_32d | 92,573 | 32 | 3.954 | 1.316 | 0.8131 |

| mono_64d | 92,573 | 64 | 4.642 | 1.256 | 0.8361 🏆 |

| mono_128d | 92,573 | 128 | 5.543 | 1.143 | 0.8310 |

| embeddings_enhanced | 0 | 0 | 0.000 | 0.000 | 0.0000 |

Key Findings

- Best Isotropy: mono_64d with 0.8361 (more uniform distribution)

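- Dimension Trade-off: Isotropy peaks at 64d (0.8361) and is slightly lower at 128d (0.8310) and 32d (0.8131); higher dimensions can capture more semantics at a higher storage cost

- Vocabulary Coverage: The three mono models each cover 92,573 words; the embeddings_enhanced row reports zeros throughout

- Recommendation: 64d, the evaluated size with the highest isotropy; a 100d model is not part of this report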

6. Summary & Recommendations

Production Recommendations

| Component | Recommended | Rationale |
|---|---|---|
| Tokenizer | 32k BPE | Balanced compression (3.568x) and low UNK rate (0.0407%); 64k has the best compression (3.790x) |
| N-gram | 2-gram | Lowest perplexity (438) |
| Markov | Context-4 | Highest predictability (96.0%) |
| Embeddings | 64d | Highest isotropy (0.8361) among the evaluated 32d, 64d, and 128d models |

Appendix: Metrics Glossary & Interpretation Guide

This section provides definitions, intuitions, and guidance for interpreting the metrics used throughout this report.

Tokenizer Metrics

Compression Ratio

Definition: The ratio of characters to tokens (chars/token). Measures how efficiently the tokenizer represents text.

Intuition: Higher compression means fewer tokens needed to represent the same text, reducing sequence lengths for downstream models. A 3x compression means ~3 characters per token on average.

What to seek: Higher is generally better for efficiency, but extremely high compression may indicate overly aggressive merging that loses morphological information.
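Since compression is characters per token, multiplying each reported ratio by its total token count should recover roughly the same character count for every vocabulary size, provided all four tokenizers were scored on the same text. That shared evaluation text is an assumption; the report does not state it. The sketch below checks the numbers from the tokenizer results table.

```python
def compression_ratio(num_chars, num_tokens):
    """Characters per token, as defined above."""
    return num_chars / num_tokens

# (compression, total tokens) copied from the tokenizer results table.
rows = {
    "8k": (3.051, 1_184_756),
    "16k": (3.320, 1_088_767),
    "32k": (3.568, 1_013_044),
    "64k": (3.790, 953_541),
}

implied_chars = {name: ratio * total for name, (ratio, total) in rows.items()}
for name, chars in implied_chars.items():
    print(f"{name}: ~{chars / 1e6:.2f}M characters")
```

All four rows imply about 3.61 million characters, which supports reading the table as one evaluation text tokenized four ways.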

Average Token Length (Fertility)

Definition: Mean number of characters per token produced by the tokenizer.

Intuition: Reflects the granularity of tokenization. Longer tokens capture more context but may struggle with rare words; shorter tokens are more flexible but increase sequence length.

What to seek: Balance between 2-5 characters for most languages. Arabic/morphologically-rich languages may benefit from slightly longer tokens.

Unknown Token Rate (OOV Rate)

Definition: Percentage of tokens that map to the unknown/UNK token, indicating words the tokenizer cannot represent.

Intuition: Lower OOV means better vocabulary coverage. High OOV indicates the tokenizer encounters many unseen character sequences.

What to seek: Below 1% is excellent; below 5% is acceptable. BPE tokenizers typically achieve very low OOV due to subword fallback.

N-gram Model Metrics

Perplexity

Definition: Measures how "surprised" the model is by test data. Mathematically: 2^(cross-entropy). Lower values indicate better prediction.

Intuition: If perplexity is 100, the model is as uncertain as if choosing uniformly among 100 options at each step. A perplexity of 10 means effectively choosing among 10 equally likely options.

What to seek: Lower is better. Perplexity decreases with larger n-grams (more context). Values vary widely by language and corpus size.

Entropy

Definition: Average information content (in bits) needed to encode the next token given the context. Related to perplexity: perplexity = 2^entropy.

Intuition: High entropy means high uncertainty/randomness; low entropy means predictable patterns. Natural language typically has entropy between 1-4 bits per character.

What to seek: Lower entropy indicates more predictable text patterns. Entropy should decrease as n-gram size increases.
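The relation perplexity = 2^entropy can be checked against the n-gram results table. Because the table rounds entropy to two decimals, the recomputed perplexity is expected to differ slightly from the reported one; the 1% tolerance used here is an illustrative choice.

```python
def perplexity_from_entropy(entropy_bits):
    """Perplexity implied by an entropy expressed in bits."""
    return 2.0 ** entropy_bits

# (entropy, reported perplexity) pairs from the n-gram results table, in row order.
reported = [
    (14.70, 26_677),
    (8.77, 438),
    (15.91, 61_390),
    (12.21, 4_733),
    (16.60, 99_269),
    (15.05, 33_953),
]

relative_errors = []
for entropy, perplexity in reported:
    implied = perplexity_from_entropy(entropy)
    relative_errors.append(abs(implied - perplexity) / perplexity)
    print(f"entropy {entropy:.2f} -> {implied:,.0f} (reported {perplexity:,})")
```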

Coverage (Top-K)

Definition: Percentage of corpus occurrences explained by the top K most frequent n-grams.

Intuition: High coverage with few patterns indicates repetitive/formulaic text; low coverage suggests diverse vocabulary usage.

What to seek: Depends on use case. For language modeling, moderate coverage (40-60% with top-1000) is typical for natural text.
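A minimal sketch of the top-K coverage computation, assuming coverage is the share of all occurrences accounted for by the K most frequent items. The function name `top_k_coverage` and the toy counts are hypothetical.

```python
from collections import Counter

def top_k_coverage(counts, k):
    """Percent of all occurrences covered by the k most frequent items."""
    total = sum(counts.values())
    covered = sum(count for _, count in counts.most_common(k))
    return 100.0 * covered / total

# Toy frequency table; real counts come from the corpus n-grams.
counts = Counter({"de": 50, "da": 30, "und": 15, "joar": 4, "iwablick": 1})
print(top_k_coverage(counts, 2))
```

The same function applies to words, as in the vocabulary coverage table, or to n-gram tuples.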

Markov Chain Metrics

Average Entropy

Definition: Mean entropy across all contexts, measuring average uncertainty in next-word prediction.

Intuition: Lower entropy means the model is more confident about what comes next. Context-1 has high entropy (many possible next words); Context-4 has low entropy (few likely continuations).

What to seek: Decreasing entropy with larger context sizes. Very low entropy (<0.1) indicates highly deterministic transitions.

Branching Factor

Definition: Average number of unique next tokens observed for each context.

Intuition: High branching = many possible continuations (flexible but uncertain); low branching = few options (predictable but potentially repetitive).

What to seek: Branching factor should decrease with context size. Values near 1.0 indicate nearly deterministic chains.
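The branching factor can be sketched as the mean number of distinct successors per context. This is one plausible reading of the definition above; the function name and toy tokens are illustrative and not taken from the pipeline.

```python
from collections import defaultdict

def branching_factor(tokens, k):
    """Average number of distinct next tokens per k-token context."""
    successors = defaultdict(set)
    for i in range(len(tokens) - k):
        successors[tuple(tokens[i:i + k])].add(tokens[i + k])
    return sum(len(s) for s in successors.values()) / len(successors)

tokens = "in da usa . in da stod . in am joar .".split()
bf1 = branching_factor(tokens, 1)
bf2 = branching_factor(tokens, 2)
print(bf1, bf2)
```

Even on this toy input the value drops when the context grows from one token to two, mirroring the trend in the results table.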

Predictability

Definition: Derived metric: (1 - normalized_entropy) × 100%. Indicates how deterministic the model's predictions are.

Intuition: 100% predictability means the next word is always certain; 0% means completely random. Real text falls between these extremes.

What to seek: Higher predictability for text generation quality, but too high (>98%) may produce repetitive output.
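The predictability column in the Markov results table is consistent with computing max(0, 1 - average entropy) × 100, treating the reported average entropy as already normalized and clipping negative values to zero. This is an inference from the published numbers, not confirmed pipeline code, and `predictability` is a hypothetical helper.

```python
def predictability(avg_entropy):
    """Percent predictability, assuming avg_entropy is already normalized."""
    return max(0.0, 1.0 - avg_entropy) * 100.0

# (avg entropy, reported predictability %) from the Markov results table.
table = [
    (0.6074, 39.3), (1.0706, 0.0),
    (0.2487, 75.1), (0.9487, 5.1),
    (0.0996, 90.0), (0.9366, 6.3),
    (0.0401, 96.0), (0.7672, 23.3),
]

for entropy, reported in table:
    print(f"{entropy:.4f} -> {predictability(entropy):.1f}% (reported {reported}%)")
```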

Vocabulary & Zipf's Law Metrics

Zipf's Coefficient

Definition: The slope of the log-log plot of word frequency vs. rank. Zipf's law predicts this should be approximately -1.

Intuition: A coefficient near -1 indicates the corpus follows natural language patterns where a few words are very common and most words are rare.

What to seek: Values between -0.8 and -1.2 indicate healthy natural language distribution. Deviations may suggest domain-specific or artificial text.

R² (Coefficient of Determination)

Definition: Measures how well the linear fit explains the frequency-rank relationship. Ranges from 0 to 1.

Intuition: R² near 1.0 means the data closely follows Zipf's law; lower values indicate deviation from expected word frequency patterns.

What to seek: R² > 0.95 is excellent; > 0.99 indicates near-perfect Zipf adherence typical of large natural corpora.
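Both the Zipf coefficient and R² come from a straight-line fit on log-log axes. The sketch below does an ordinary least-squares fit with the standard library only; `zipf_fit` is a hypothetical helper, and the pipeline may fit differently (for example on a subset of ranks). The results table reports the coefficient as a positive number (0.9896), so it presumably lists the magnitude of the slope.

```python
import math

def zipf_fit(frequencies):
    """Least-squares line through (log rank, log frequency).

    Returns the slope and the coefficient of determination R^2.
    """
    freqs = sorted(frequencies, reverse=True)
    xs = [math.log(rank) for rank in range(1, len(freqs) + 1)]
    ys = [math.log(f) for f in freqs]
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    r_squared = (sxy * sxy) / (sxx * syy)
    return slope, r_squared

# An ideal Zipf distribution: frequency proportional to 1 / rank.
ideal = [100_000 / rank for rank in range(1, 1001)]
slope, r_squared = zipf_fit(ideal)
print(slope, r_squared)
```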

Vocabulary Coverage

Definition: Cumulative percentage of corpus tokens accounted for by the top N words.

Intuition: Shows how concentrated word usage is. If top-100 words cover 50% of text, the corpus relies heavily on common words.

What to seek: Top-100 covering 30-50% is typical. Higher coverage indicates more repetitive text; lower suggests richer vocabulary.

Word Embedding Metrics

Isotropy

Definition: Measures how uniformly distributed vectors are in the embedding space. Computed as the ratio of minimum to maximum singular values.

Intuition: High isotropy (near 1.0) means vectors spread evenly in all directions; low isotropy means vectors cluster in certain directions, reducing expressiveness.

What to seek: Higher isotropy generally indicates better-quality embeddings. Values > 0.1 are reasonable; > 0.3 is good. Lower-dimensional embeddings tend to have higher isotropy.

Average Norm

Definition: Mean magnitude (L2 norm) of word vectors in the embedding space.

Intuition: Indicates the typical "length" of vectors. Consistent norms suggest stable training; high variance may indicate some words are undertrained.

What to seek: Relatively consistent norms across models. The absolute value matters less than consistency (low std deviation).

Cosine Similarity

Definition: Measures angular similarity between vectors, ranging from -1 (opposite) to 1 (identical direction).

Intuition: Words with similar meanings should have high cosine similarity. This is the standard metric for semantic relatedness in embeddings.

What to seek: Semantically related words should score > 0.5; unrelated words should be near 0. Synonyms often score > 0.7.
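Cosine similarity is simple enough to write out directly. The vectors below are made-up toy values, not entries from the released embeddings.

```python
import math

def cosine_similarity(u, v):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

same_direction = cosine_similarity([1.0, 2.0], [2.0, 4.0])
orthogonal = cosine_similarity([1.0, 0.0], [0.0, 1.0])
opposite = cosine_similarity([1.0, 0.0], [-1.0, 0.0])
print(same_direction, orthogonal, opposite)
```

Because the measure ignores vector length, differences in average norm between the 32d, 64d, and 128d models do not affect it.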

t-SNE Visualization

Definition: t-Distributed Stochastic Neighbor Embedding - a dimensionality reduction technique that preserves local structure for visualization.

Intuition: Clusters in t-SNE plots indicate groups of semantically related words. Spread indicates vocabulary diversity; tight clusters suggest semantic coherence.

What to seek: Meaningful clusters (e.g., numbers together, verbs together). Avoid over-interpreting distances - t-SNE preserves local, not global, structure.

General Interpretation Guidelines

- Compare within model families: Metrics are most meaningful when comparing models of the same type (e.g., 8k vs 64k tokenizer).

- Consider trade-offs: Better performance on one metric often comes at the cost of another (e.g., compression vs. OOV rate).

- Context matters: Optimal values depend on downstream tasks. Text generation may prioritize different metrics than classification.

- Corpus influence: All metrics are influenced by corpus characteristics. Wikipedia text differs from social media or literature.

- Language-specific patterns: Morphologically rich languages (like Arabic) may show different optimal ranges than analytic languages.

Visualizations Index

| Visualization | Description |

|---|---|

| Tokenizer Compression | Compression ratios by vocabulary size |

| Tokenizer Fertility | Average token length by vocabulary |

| Tokenizer OOV | Unknown token rates |

| Tokenizer Total Tokens | Total tokens by vocabulary |

| N-gram Perplexity | Perplexity by n-gram size |

| N-gram Entropy | Entropy by n-gram size |

| N-gram Coverage | Top pattern coverage |

| N-gram Unique | Unique n-gram counts |

| Markov Entropy | Entropy by context size |

| Markov Branching | Branching factor by context |

| Markov Contexts | Unique context counts |

| Zipf's Law | Frequency-rank distribution with fit |

| Vocab Frequency | Word frequency distribution |

| Top 20 Words | Most frequent words |

| Vocab Coverage | Cumulative coverage curve |

| Embedding Isotropy | Vector space uniformity |

| Embedding Norms | Vector magnitude distribution |

| Embedding Similarity | Word similarity heatmap |

| Nearest Neighbors | Similar words for key terms |

| t-SNE Words | 2D word embedding visualization |

| t-SNE Sentences | 2D sentence embedding visualization |

| Position Encoding | Encoding method comparison |

| Model Sizes | Storage requirements |

| Performance Dashboard | Comprehensive performance overview |

About This Project

Data Source

Models trained on wikipedia-monthly - a monthly snapshot of Wikipedia articles across 300+ languages.

Project

A project by Wikilangs - Open-source NLP models for every Wikipedia language.


Citation

If you use these models in your research, please cite:

@misc{wikilangs2025,

author = {Kamali, Omar},

title = {Wikilangs: Open NLP Models for Wikipedia Languages},

year = {2025},

publisher = {HuggingFace},

url = {https://huggingface.co/wikilangs},

institution = {Omneity Labs}

}

License

MIT License - Free for academic and commercial use.

Links

- 🌐 Website: wikilangs.org

- 🤗 Models: huggingface.co/wikilangs

- 📊 Data: wikipedia-monthly

- 👤 Author: Omar Kamali

Generated by Wikilangs Models Pipeline

Report Date: 2025-12-28 00:09:41