hexsha stringlengths 40 40 | size int64 5 1.04M | ext stringclasses 6

values | lang stringclasses 1

value | max_stars_repo_path stringlengths 3 344 | max_stars_repo_name stringlengths 5 125 | max_stars_repo_head_hexsha stringlengths 40 78 | max_stars_repo_licenses listlengths 1 11 | max_stars_count int64 1 368k ⌀ | max_stars_repo_stars_event_min_datetime stringlengths 24 24 ⌀ | max_stars_repo_stars_event_max_datetime stringlengths 24 24 ⌀ | max_issues_repo_path stringlengths 3 344 | max_issues_repo_name stringlengths 5 125 | max_issues_repo_head_hexsha stringlengths 40 78 | max_issues_repo_licenses listlengths 1 11 | max_issues_count int64 1 116k ⌀ | max_issues_repo_issues_event_min_datetime stringlengths 24 24 ⌀ | max_issues_repo_issues_event_max_datetime stringlengths 24 24 ⌀ | max_forks_repo_path stringlengths 3 344 | max_forks_repo_name stringlengths 5 125 | max_forks_repo_head_hexsha stringlengths 40 78 | max_forks_repo_licenses listlengths 1 11 | max_forks_count int64 1 105k ⌀ | max_forks_repo_forks_event_min_datetime stringlengths 24 24 ⌀ | max_forks_repo_forks_event_max_datetime stringlengths 24 24 ⌀ | content stringlengths 5 1.04M | avg_line_length float64 1.14 851k | max_line_length int64 1 1.03M | alphanum_fraction float64 0 1 | lid stringclasses 191

values | lid_prob float64 0.01 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

f96194f152ee64e99e02a700704ae72aff13a540 | 949 | md | Markdown | generated-docs/P5/Structure/Structure.md | thought2/purescript-p5 | 5281b9250a15489e320ca61b4ee28bf3c14fb0f2 | [

"MIT"

] | 15 | 2019-11-22T04:14:23.000Z | 2022-02-06T21:02:04.000Z | generated-docs/P5/Structure/Structure.md | thought2/purescript-p5 | 5281b9250a15489e320ca61b4ee28bf3c14fb0f2 | [

"MIT"

] | 8 | 2019-09-22T13:12:07.000Z | 2022-02-26T07:07:53.000Z | generated-docs/P5/Structure/Structure.md | thought2/purescript-p5 | 5281b9250a15489e320ca61b4ee28bf3c14fb0f2 | [

"MIT"

] | 3 | 2020-08-12T00:15:43.000Z | 2022-01-16T01:45:10.000Z | ## Module P5.Structure.Structure

#### `loop`

``` purescript

loop :: P5 -> (Effect Unit)

```

[p5js.org documentation](https://p5js.org/reference/#/p5/loop)

#### `noLoop`

``` purescript

noLoop :: P5 -> (Effect Unit)

```

[p5js.org documentation](https://p5js.org/reference/#/p5/noLoop)

#### `pop`

``` purescript

pop... | 15.816667 | 65 | 0.629083 | yue_Hant | 0.69358 |

f961aaab46ca82241bbc722940051a87958058ba | 43,827 | md | Markdown | packages/furo-route/CHANGELOG.md | maltenorstroem/FuroBaseComponents | bc990565f07e5761c756c04071a89194e79aa969 | [

"MIT"

] | 1 | 2019-08-08T13:05:37.000Z | 2019-08-08T13:05:37.000Z | packages/furo-route/CHANGELOG.md | maltenorstroem/FuroBaseComponents | bc990565f07e5761c756c04071a89194e79aa969 | [

"MIT"

] | null | null | null | packages/furo-route/CHANGELOG.md | maltenorstroem/FuroBaseComponents | bc990565f07e5761c756c04071a89194e79aa969 | [

"MIT"

] | null | null | null | # Change Log

All notable changes to this project will be documented in this file.

See [Conventional Commits](https://conventionalcommits.org) for commit guidelines.

## [1.6.4](https://github.com/theNorstroem/FuroBaseComponents/compare/@furo/route@1.6.3...@furo/route@1.6.4) (2021-04-01)

**Note:** Version bump only fo... | 23.588267 | 258 | 0.705889 | yue_Hant | 0.354485 |

f961fada52dc20bb29d4526804e074e89df1c935 | 157 | md | Markdown | README.md | bingduouduos/test | 1120d5870a7fd5a182462b89e641a590cd07e3c8 | [

"Apache-2.0"

] | null | null | null | README.md | bingduouduos/test | 1120d5870a7fd5a182462b89e641a590cd07e3c8 | [

"Apache-2.0"

] | null | null | null | README.md | bingduouduos/test | 1120d5870a7fd5a182462b89e641a590cd07e3c8 | [

"Apache-2.0"

] | null | null | null | # test

test the function and usage of the organization

Project management board: https://github.com/bingduouduos/test/projects/1

Homepage (io): https://bingduouduos.github.io/test/

| 19.625 | 54 | 0.77707 | kor_Hang | 0.327486 |

f9621b08d12a279561f1af71a83cde028f2db137 | 867 | md | Markdown | content/posts/2018/cldictp/index.zh-cn.md | PwzXxm/pwzxxm.github.io | 646823c5327f7980445ee789f8e106765316a3cd | [

"MIT"

] | 1 | 2019-04-17T07:23:04.000Z | 2019-04-17T07:23:04.000Z | content/posts/2018/cldictp/index.zh-cn.md | PwzXxm/pwzxxm.github.io | 646823c5327f7980445ee789f8e106765316a3cd | [

"MIT"

] | 5 | 2017-02-01T12:12:49.000Z | 2021-05-11T23:49:57.000Z | content/posts/2018/cldictp/index.zh-cn.md | PwzXxm/pwzxxm.github.io | 646823c5327f7980445ee789f8e106765316a3cd | [

"MIT"

] | null | null | null | ---

title: "CLDictP: A Command-Line English Dictionary Tool"

date: 2018-07-04

draft: false

tags: ["Perl"]

---

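CLDictP is a command-line English dictionary tool written in Perl using the Merriam-Webster Dictionary API.

This is my first small project written in Perl. Whenever I wanted to make flashcards on [Quizlet](https://quizlet.com/) to memorize vocabulary, I wanted some parts in bold and so on, which is tedious to do by hand. I was also too lazy to open an online dictionary every time I looked up a word, so I decided to write a small tool.

It uses the following Merriam-Webster dictionary APIs:

- Merriam-Webster's Learner's Dictionary

- Merriam-Webster's Collegiate Dictionary

Each entry includes:

- Pronunciation

- Part of speech

- Grammar

- Definitions

- Common usage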

... | 14.694915 | 130 | 0.685121 | yue_Hant | 0.908032 |

f9626da992dcb89cecd509180f844a47c69266f2 | 74 | md | Markdown | README.md | TopDev930/TranslateAPI | e6a08e6b1b544f56a333aef4d22d1d744bd0eb78 | [

"Apache-2.0"

] | 2 | 2020-05-18T14:01:25.000Z | 2020-05-19T17:27:32.000Z | README.md | TopDev930/TranslateAPI | e6a08e6b1b544f56a333aef4d22d1d744bd0eb78 | [

"Apache-2.0"

] | null | null | null | README.md | TopDev930/TranslateAPI | e6a08e6b1b544f56a333aef4d22d1d744bd0eb78 | [

"Apache-2.0"

] | null | null | null | # TranslateAPI

This is a simple project that uses the Google Translate API.

| 24.666667 | 58 | 0.824324 | eng_Latn | 0.999592 |

f9629f9baea465db06fb66b1fd3f5c32137c2e45 | 12,035 | md | Markdown | References.md | gordenbrown51/NSABlocklist | 8cf7e41d56102c204f6a92db924622af1d99e839 | [

"ISC",

"MIT"

] | 3 | 2019-07-17T02:11:24.000Z | 2021-11-16T00:33:08.000Z | References.md | gordenbrown51/NSABlocklist | 8cf7e41d56102c204f6a92db924622af1d99e839 | [

"ISC",

"MIT"

] | null | null | null | References.md | gordenbrown51/NSABlocklist | 8cf7e41d56102c204f6a92db924622af1d99e839 | [

"ISC",

"MIT"

] | 1 | 2021-11-18T21:25:03.000Z | 2021-11-18T21:25:03.000Z | References

------------

* [NSA official GitHub Account](https://nationalsecurityagency.github.io)

* [USB Snooping Made Easy: Crosstalk Leakage Attacks on USB Hubs](https://www.usenix.org/conference/usenixsecurity17/technical-sessions/presentation/su)

* [WikiLeaks Publishes NSA Target List](https://wikileaks.org/nsa-20... | 104.652174 | 240 | 0.773162 | yue_Hant | 0.54021 |

f9630d2b7437c70d9ee16c2ea63c243582e1df1f | 1,948 | md | Markdown | _posts/2019-02-11-memset-note.md | RandomlyChristen/RandomlyChristen.github.io | e933d0c5fbd4a0f7071e46f583239c8fbb6cc266 | [

"MIT"

] | null | null | null | _posts/2019-02-11-memset-note.md | RandomlyChristen/RandomlyChristen.github.io | e933d0c5fbd4a0f7071e46f583239c8fbb6cc266 | [

"MIT"

] | null | null | null | _posts/2019-02-11-memset-note.md | RandomlyChristen/RandomlyChristen.github.io | e933d0c5fbd4a0f7071e46f583239c8fbb6cc266 | [

"MIT"

] | null | null | null | ---

layout: post

title: memset, let's not make this mistake

tags: C++ std memset fill

feature-img: "assets/img/2019-01-14/c-introduction.jpg"

---

Initializing array elements with the `memset()` function from `string.h` is **very limited**.

First, `memset()` is defined [as follows](http://www.cplusplus.com/reference/cstring/memset/).

>`void * memset ( void * ptr, int value, size_t n... | 24.35 | 121 | 0.662218 | kor_Hang | 1.00001 |
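
The post is cut off at this point, so the following is only a minimal sketch of the usual reason `memset()` is so limited for array initialization: it writes the low byte of `value` into every byte of the buffer, not `value` into every element. The helper names (`fill_with_memset`, `fill_with_std_fill`, `check_memset_pitfall`) and the array length `kSize` are hypothetical, chosen for illustration, and do not come from the original post.

```cpp
#include <algorithm>
#include <array>
#include <cassert>
#include <cstddef>
#include <cstring>

constexpr std::size_t kSize = 4;  // illustrative array length (assumption)

// memset writes the low byte of `value` into every BYTE of the buffer.
std::array<int, kSize> fill_with_memset(int value) {
    std::array<int, kSize> a{};
    std::memset(a.data(), value, a.size() * sizeof(int));
    return a;
}

// std::fill assigns `value` to every ELEMENT of the array.
std::array<int, kSize> fill_with_std_fill(int value) {
    std::array<int, kSize> a{};
    std::fill(a.begin(), a.end(), value);
    return a;
}

// Self-check of the byte-wise versus element-wise behaviour.
void check_memset_pitfall() {
    assert(fill_with_memset(0)[0] == 0);    // all bytes 0x00, so each int is 0
    assert(fill_with_memset(-1)[0] == -1);  // all bytes 0xFF, so each int is -1 (two's complement)
    assert(fill_with_memset(1)[0] != 1);    // each byte is 0x01, so the int is not 1
    assert(fill_with_std_fill(1)[0] == 1);  // element-wise fill gives exactly 1
}
```

In this sketch only 0 and -1 behave as one might expect with `memset()`, because those are values whose every byte is identical; for any other value, an element-wise fill such as `std::fill` is the safer choice.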

f9635d736849b9f12fe702060b7eadded0f26a33 | 21 | md | Markdown | README.md | jeffrey2423/side-navigation-bar | 5b857cf7fda7dca7b50244eaf4601aca3ba2275d | [

"Apache-2.0"

] | null | null | null | README.md | jeffrey2423/side-navigation-bar | 5b857cf7fda7dca7b50244eaf4601aca3ba2275d | [

"Apache-2.0"

] | null | null | null | README.md | jeffrey2423/side-navigation-bar | 5b857cf7fda7dca7b50244eaf4601aca3ba2275d | [

"Apache-2.0"

] | null | null | null | # side-navigation-bar | 21 | 21 | 0.809524 | eng_Latn | 0.340424 |

f9636fd35ddd10c9df8e760a646247f582475a08 | 6,945 | md | Markdown | README.md | MadCatMining/Espers | 81848beb8974e3e38422ae7a5a9bab2416936f7d | [

"MIT"

] | null | null | null | README.md | MadCatMining/Espers | 81848beb8974e3e38422ae7a5a9bab2416936f7d | [

"MIT"

] | 1 | 2020-08-21T01:20:06.000Z | 2020-08-21T01:20:06.000Z | README.md | MadCatMining/Espers | 81848beb8974e3e38422ae7a5a9bab2416936f7d | [

"MIT"

] | null | null | null | Espers [ESP] 2016-2021 integration/staging tree

===============================================

https://espers.io/

What is the Espers [ESP] Blockchain?

------------------------------------

*TODO: Update documentation regarding implemented tech as this section is out of date and much progress and upgrades have been ma... | 39.685714 | 466 | 0.750612 | eng_Latn | 0.964233 |

f96477538585e4989596cda9b8411eff2988014f | 1,823 | md | Markdown | biztalk/core/receipt-delivery-option-value-is-invalid.md | SicongLiuSimon/biztalk-docs | 85394b436d277504d9e759c655608888123785bd | [

"CC-BY-4.0",

"MIT"

] | 1 | 2019-06-14T19:45:26.000Z | 2019-06-14T19:45:26.000Z | biztalk/core/receipt-delivery-option-value-is-invalid.md | AzureMentor/biztalk-docs | 16b211f29ad233c26d5511475c7e621760908af3 | [

"CC-BY-4.0",

"MIT"

] | 7 | 2020-01-09T22:34:58.000Z | 2020-02-18T19:42:16.000Z | biztalk/core/receipt-delivery-option-value-is-invalid.md | AzureMentor/biztalk-docs | 16b211f29ad233c26d5511475c7e621760908af3 | [

"CC-BY-4.0",

"MIT"

] | 2 | 2017-06-23T18:30:28.000Z | 2017-11-28T01:11:25.000Z | ---

title: "Receipt-Delivery-Option value is invalid | Microsoft Docs"

ms.custom: ""

ms.date: "06/08/2017"

ms.prod: "biztalk-server"

ms.reviewer: ""

ms.suite: ""

ms.tgt_pltfrm: ""

ms.topic: "article"

ms.assetid: 0eed306b-0912-4694-a55c-976128117c02

caps.latest.revision: 8

author: "MandiOhlinger"

ms.author: "mandia"

ma... | 53.617647 | 209 | 0.53209 | eng_Latn | 0.676862 |

f964cbbba67032e7ac64ee273a0f0f0613a613af | 432 | md | Markdown | OPEN_SSL_HB_FIX.md | patricia-gallardo/insecure-fuzz | 5a19a9da0de7c68a7da2b012a0f8f37012ad84b6 | [

"MIT"

] | 1 | 2019-11-15T10:16:45.000Z | 2019-11-15T10:16:45.000Z | OPEN_SSL_HB_FIX.md | patricia-gallardo/insecure-fuzz | 5a19a9da0de7c68a7da2b012a0f8f37012ad84b6 | [

"MIT"

] | null | null | null | OPEN_SSL_HB_FIX.md | patricia-gallardo/insecure-fuzz | 5a19a9da0de7c68a7da2b012a0f8f37012ad84b6 | [

"MIT"

] | 1 | 2020-06-15T14:16:45.000Z | 2020-06-15T14:16:45.000Z | # Review the actual fix for Heartbleed

Install Meld

~~~~bash

sudo apt install meld

~~~~

Get the before and after code

~~~~bash

curl -O https://raw.githubusercontent.com/patricia-gallardo/insecure-fuzz/master/fixes/heartbleed/fixed.c

curl -O https://raw.githubusercontent.com/patricia-gallardo/insecure-fuzz/master/fi... | 18.782609 | 105 | 0.75 | eng_Latn | 0.437804 |

f964deb5f8ccbe9644d7fecb36369096abaae70f | 1,457 | md | Markdown | adam_optimizer.md | nurulc/machine-learning | 41dc098d962758a50bee59177c8f32e2f93d332a | [

"Apache-2.0"

] | null | null | null | adam_optimizer.md | nurulc/machine-learning | 41dc098d962758a50bee59177c8f32e2f93d332a | [

"Apache-2.0"

] | null | null | null | adam_optimizer.md | nurulc/machine-learning | 41dc098d962758a50bee59177c8f32e2f93d332a | [

"Apache-2.0"

] | null | null | null | ## JavaScript version of the Adam optimizer

```Javascript

class Adam {

constructor(nparams, beta_1=0.9, beta_2=0.999, epsilon=1e-8) {

this.nparams= nparams;

this.beta_1= beta_1;

this.beta_2= beta_2;

this.epsilon= epsilon;

this.m= [];

this.v= [];

for(var i= ... | 37.358974 | 109 | 0.553191 | eng_Latn | 0.527103 |

f9654885a66ac32ef0cb89408e481dcaec6f98e9 | 7,639 | md | Markdown | docs/cpp/header-files-cpp.md | Mdlglobal-atlassian-net/cpp-docs.it-it | c8edd4e9238d24b047d2b59a86e2a540f371bd93 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/cpp/header-files-cpp.md | Mdlglobal-atlassian-net/cpp-docs.it-it | c8edd4e9238d24b047d2b59a86e2a540f371bd93 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/cpp/header-files-cpp.md | Mdlglobal-atlassian-net/cpp-docs.it-it | c8edd4e9238d24b047d2b59a86e2a540f371bd93 | [

"CC-BY-4.0",

"MIT"

] | 1 | 2020-05-28T15:54:57.000Z | 2020-05-28T15:54:57.000Z | ---

title: File di intestazione (C

ms.date: 12/11/2019

helpviewer_keywords:

- header files [C++]

ms.openlocfilehash: 4ab6a2b2626cde94f35678bc9ec789b80d493b8f

ms.sourcegitcommit: c123cc76bb2b6c5cde6f4c425ece420ac733bf70

ms.translationtype: MT

ms.contentlocale: it-IT

ms.lasthandoff: 04/14/2020

ms.locfileid: "81367235"

--... | 40.632979 | 906 | 0.738055 | ita_Latn | 0.998882 |

f966c6d100a4413ab6c8f97ef0399a5b7f883827 | 111 | md | Markdown | input/corporate-sponsors/volosoft.md | kennedylabs/website | 3e171d4f6b126d8d4857edcba540498241fe21c3 | [

"MIT"

] | 2 | 2021-09-03T01:45:12.000Z | 2021-12-03T03:00:28.000Z | input/corporate-sponsors/volosoft.md | kennedylabs/website | 3e171d4f6b126d8d4857edcba540498241fe21c3 | [

"MIT"

] | 1 | 2021-10-21T12:58:58.000Z | 2021-10-21T12:58:58.000Z | input/corporate-sponsors/volosoft.md | kennedylabs/website | 3e171d4f6b126d8d4857edcba540498241fe21c3 | [

"MIT"

] | 2 | 2021-12-11T08:26:41.000Z | 2021-12-12T08:43:33.000Z | ---

name: Volosoft

logo: assets/corporate-sponsors/volosoft.png

url: https://volosoft.com/

order: 10

---

| 15.857143 | 45 | 0.684685 | nld_Latn | 0.241557 |

f967b276730ecee816eb0c6de90d416fa079b364 | 718 | md | Markdown | CHANGELOG.md | cmeister2/corrosion | 5ea01d3769c1357781b97fa1f6780500a6783049 | [

"MIT"

] | 1 | 2019-07-25T11:06:13.000Z | 2019-07-25T11:06:13.000Z | CHANGELOG.md | cmeister2/corrosion | 5ea01d3769c1357781b97fa1f6780500a6783049 | [

"MIT"

] | null | null | null | CHANGELOG.md | cmeister2/corrosion | 5ea01d3769c1357781b97fa1f6780500a6783049 | [

"MIT"

] | null | null | null | # Changelog

All notable changes to this project will be documented in this file.

This file's format is based on [Keep a Changelog](http://keepachangelog.com/)

and this project adheres to [Semantic Versioning](http://semver.org/). The

version number is tracked in the file `VERSION`.

## [Unreleased]

### Changed

- Remo... | 26.592593 | 77 | 0.711699 | eng_Latn | 0.798076 |

f967ec175b208791c13b71e06fe05d230273bd14 | 64 | md | Markdown | README.md | davdtheemonk/LudoGameJava | 5b48821a29f6ec433e9bdf0b181526bd9251c22d | [

"MIT"

] | 1 | 2021-12-14T08:46:53.000Z | 2021-12-14T08:46:53.000Z | README.md | davdtheemonk/LudoGameJava | 5b48821a29f6ec433e9bdf0b181526bd9251c22d | [

"MIT"

] | null | null | null | README.md | davdtheemonk/LudoGameJava | 5b48821a29f6ec433e9bdf0b181526bd9251c22d | [

"MIT"

] | null | null | null | # LudoGameJava

I'm just learning 📌

Simple Ludo Game no GUI....

| 12.8 | 27 | 0.703125 | kor_Hang | 0.530944 |

f96824c48d97557c1cb583fae60f9563ecc6c08f | 588 | md | Markdown | docs/docs/changeLog.md | alibaba/form-driver | 8598c98b5c4238927fe0ce553fd2378589124fcb | [

"MIT"

] | 7 | 2021-11-17T06:14:38.000Z | 2022-03-24T12:02:54.000Z | docs/docs/changeLog.md | alibaba/form-driver | 8598c98b5c4238927fe0ce553fd2378589124fcb | [

"MIT"

] | 4 | 2022-01-25T06:44:30.000Z | 2022-03-04T09:36:58.000Z | docs/docs/changeLog.md | alibaba/form-driver | 8598c98b5c4238927fe0ce553fd2378589124fcb | [

"MIT"

] | 1 | 2021-11-17T07:05:00.000Z | 2021-11-17T07:05:00.000Z | ### 0.3.14 (2021.06.18)

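* NPS supports configuring decorative copy

* Fixed an error thrown by some components when no props are passed

### 0.3.12 (2021.06.16)

* Support multi-column layout

### 0.3.10 (2021.06.12)

* Added attachment upload component

### 0.3.9 (2021.06.12)

* Renamed: candidates to option

* Renamed: setOpen and enumOpen to openOption

* Support tree selector

### 0.3.6 (2021.06.07)

* Added NPS attribute

* Fixed an issue where a value in the database that is not in the enum appeared among the options after selection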

* M3 本地构建时... | 13.066667 | 40 | 0.647959 | yue_Hant | 0.6285 |

f968e331fa9dacab039af127207156754909049d | 2,077 | md | Markdown | data-management-library/autonomous-database/dedicated/adb-datasafe/Register a Target Database.md | mrabhiram/learning-library | a638cc973a52006e08bff4ec9f41c6122a987b8a | [

"UPL-1.0"

] | 2 | 2020-09-29T20:21:15.000Z | 2020-10-20T11:45:55.000Z | data-management-library/autonomous-database/dedicated/adb-datasafe/Register a Target Database.md | mrabhiram/learning-library | a638cc973a52006e08bff4ec9f41c6122a987b8a | [

"UPL-1.0"

] | null | null | null | data-management-library/autonomous-database/dedicated/adb-datasafe/Register a Target Database.md | mrabhiram/learning-library | a638cc973a52006e08bff4ec9f41c6122a987b8a | [

"UPL-1.0"

] | null | null | null | # Register a dedicated ADB instance with Data Safe

## Introduction

Oracle Data Safe can connect to an Oracle Cloud database that has a public or private IP address on a virtual cloud network (VCN) in Oracle Cloud Infrastructure (OCI). This workshop describes the difference between public and private endpoints and ex... | 59.342857 | 502 | 0.76986 | eng_Latn | 0.995232 |

f9693016689801a50fdbe9a81dc9e6897c598d5f | 1,403 | md | Markdown | tccli/examples/essbasic/v20201222/DescribeFlowApprovers.md | HS-Gray/tencentcloud-cli | 3822fcfdfed570fb526fe49abe6793e2f9127f4a | [

"Apache-2.0"

] | 47 | 2018-05-31T11:26:25.000Z | 2022-03-08T02:12:45.000Z | tccli/examples/essbasic/v20201222/DescribeFlowApprovers.md | HS-Gray/tencentcloud-cli | 3822fcfdfed570fb526fe49abe6793e2f9127f4a | [

"Apache-2.0"

] | 23 | 2018-06-14T10:46:30.000Z | 2022-02-28T02:53:09.000Z | tccli/examples/essbasic/v20201222/DescribeFlowApprovers.md | HS-Gray/tencentcloud-cli | 3822fcfdfed570fb526fe49abe6793e2f9127f4a | [

"Apache-2.0"

] | 22 | 2018-10-22T09:49:45.000Z | 2022-03-30T08:06:04.000Z | **Example 1: Query a personal account**

Input:

```

tccli essbasic DescribeFlowApprovers --cli-unfold-argument \

--Caller.ApplicationId \

--Caller.SubOrganizationId \

--Caller.OperatorId \

--FlowId \

--UserId \

--SignId

```

Output:

```

{

"Response": {

"RequestId": "xx",

"FlowId": ... | 22.629032 | 61 | 0.332145 | yue_Hant | 0.658511 |

f969939dab2067baaab90efec94f91580c309b8c | 69 | md | Markdown | README.md | MetamorphPHP/mongodb-transformations | 2e119fed11ab625a92c248438c0d8abffd85659f | [

"MIT"

] | null | null | null | README.md | MetamorphPHP/mongodb-transformations | 2e119fed11ab625a92c248438c0d8abffd85659f | [

"MIT"

] | null | null | null | README.md | MetamorphPHP/mongodb-transformations | 2e119fed11ab625a92c248438c0d8abffd85659f | [

"MIT"

] | null | null | null | Metamorph MongoDB Transformations

=================================

| 17.25 | 33 | 0.449275 | oci_Latn | 0.969378 |

f9699b49ae89605187a7a16d35117bf2a3c420f2 | 1,142 | md | Markdown | _posts/2021-09-17-shower.md | ra559/testjekyll | 2843d6b30415beee96fb6980f60c312f9b44bd41 | [

"MIT"

] | null | null | null | _posts/2021-09-17-shower.md | ra559/testjekyll | 2843d6b30415beee96fb6980f60c312f9b44bd41 | [

"MIT"

] | null | null | null | _posts/2021-09-17-shower.md | ra559/testjekyll | 2843d6b30415beee96fb6980f60c312f9b44bd41 | [

"MIT"

] | null | null | null | ---

layout: post

title: "Shower"

date: 2021-09-17 00:00:00 -0400

categories: Poems

---

<br>

She was late for work <br>

I came late from work last night <br>

My insomnia did not l... | 28.55 | 141 | 0.740806 | eng_Latn | 0.999689 |

f969c432413fb9ee4397de6f3d004994471d7cdf | 2,315 | md | Markdown | CHANGELOG.md | nohli/build_context | 04518d60625f23e6a1f5c7235d534ebe1864c04d | [

"MIT"

] | 132 | 2020-04-03T13:27:36.000Z | 2022-02-20T11:30:55.000Z | CHANGELOG.md | nohli/build_context | 04518d60625f23e6a1f5c7235d534ebe1864c04d | [

"MIT"

] | 15 | 2020-04-03T14:01:37.000Z | 2021-03-10T15:15:22.000Z | CHANGELOG.md | nohli/build_context | 04518d60625f23e6a1f5c7235d534ebe1864c04d | [

"MIT"

] | 16 | 2020-04-21T12:55:02.000Z | 2022-02-11T11:06:49.000Z | ## [3.0.0] - 07/03/2021.

* Migrated to null safety

* Migration to ScaffoldMessenger

Added support for:

* `context.platform`

* `context.headline1`

* `context.headline2`

* `context.headline3`

* `context.headline4`

* `context.headline5`

* `context.headline6`

* `context.subtitle1`

* `context.bodyText1`

* `context.bodyTe... | 19.291667 | 106 | 0.699352 | yue_Hant | 0.685371 |

f96a4206fa7f7e07403519fc56fe63b3157e650c | 124 | md | Markdown | src/markdown/businessPresentation.md | MohammadBnei/dev-mohammad | f238c8a505aa7fc160774ae909db43f6fa17891b | [

"MIT"

] | null | null | null | src/markdown/businessPresentation.md | MohammadBnei/dev-mohammad | f238c8a505aa7fc160774ae909db43f6fa17891b | [

"MIT"

] | null | null | null | src/markdown/businessPresentation.md | MohammadBnei/dev-mohammad | f238c8a505aa7fc160774ae909db43f6fa17891b | [

"MIT"

] | null | null | null | ---

path: "/accueil/"

page: 1

title: "Presentation"

date: "2019-05-19"

---

#Need a website?

### I'm here for you!

| 11.272727 | 26 | 0.596774 | fra_Latn | 0.801356 |

f96a49cdb1ede46ee31521e55b53153566966377 | 7,029 | md | Markdown | exams.md | ee209-2020-fall/ee209-2020-fall.github.io | e2da3c86656ea792cb4797becfe96b15da9e35cd | [

"MIT"

] | null | null | null | exams.md | ee209-2020-fall/ee209-2020-fall.github.io | e2da3c86656ea792cb4797becfe96b15da9e35cd | [

"MIT"

] | null | null | null | exams.md | ee209-2020-fall/ee209-2020-fall.github.io | e2da3c86656ea792cb4797becfe96b15da9e35cd | [

"MIT"

] | null | null | null | ---

layout: page

title: OldExams

permalink: /oldExams/

---

<html>

<body>

<h2> EE209 Old Midterm Exams </h2>

<style type="text/css">

.tg {border-collapse:collapse;border-spacing:0;}

.tg td{border-color:black;border-style:solid;border-width:1px;font-family:Arial, sans-serif;font-size:14px;

overflow:hidden;padding:1... | 37.588235 | 107 | 0.637217 | yue_Hant | 0.399139 |

f96ab726499c0033da328afef19913a8034827d1 | 1,847 | md | Markdown | articles/defender-for-iot/device-builders/concept-security-module.md | BaherAbdullah/azure-docs | 65d82440dd3209697fdb983ef456b0a2293e270a | [

"CC-BY-4.0",

"MIT"

] | 7,073 | 2017-06-27T08:58:22.000Z | 2022-03-30T23:19:23.000Z | articles/defender-for-iot/device-builders/concept-security-module.md | BaherAbdullah/azure-docs | 65d82440dd3209697fdb983ef456b0a2293e270a | [

"CC-BY-4.0",

"MIT"

] | 87,608 | 2017-06-26T22:11:41.000Z | 2022-03-31T23:57:29.000Z | articles/defender-for-iot/device-builders/concept-security-module.md | BaherAbdullah/azure-docs | 65d82440dd3209697fdb983ef456b0a2293e270a | [

"CC-BY-4.0",

"MIT"

] | 17,093 | 2017-06-27T03:28:18.000Z | 2022-03-31T20:46:38.000Z | ---

title: Defender-IoT-micro-agent and device twins

description: Learn about the concept of Defender-IoT-micro-agent twins and how they are used in Defender for IoT.

ms.topic: conceptual

ms.date: 05/25/2021

---

# Defender-IoT-micro-agent

This article explains how Defender for IoT uses device twins and modules.

## D... | 55.969697 | 357 | 0.794261 | eng_Latn | 0.990273 |

f96b62d87f420a2233d091547d834ef366eb0f59 | 609 | md | Markdown | docs/news/facebook_pytorch_developer_conference_2018_09_05.md | mberkanbicer/deep-learning-wizard | 74f8e193340eb03d321114f3bb962ac33f7a3949 | [

"MIT"

] | 506 | 2018-07-06T07:50:00.000Z | 2022-03-30T05:53:55.000Z | docs/news/facebook_pytorch_developer_conference_2018_09_05.md | vasulakkaraju/deep-learning-wizard | f3582cfd7417b851b2196ec27aa58edbc49dd131 | [

"MIT"

] | 5 | 2021-01-13T02:44:44.000Z | 2022-02-06T10:07:46.000Z | docs/news/facebook_pytorch_developer_conference_2018_09_05.md | vasulakkaraju/deep-learning-wizard | f3582cfd7417b851b2196ec27aa58edbc49dd131 | [

"MIT"

] | 191 | 2018-11-09T07:52:06.000Z | 2022-03-28T05:15:25.000Z | # Facebook PyTorch Developer Conference

## We are heading down!

In barely 2 short years, PyTorch (Facebook) will be hosting their first [PyTorch Developer Conference](https://pytorch.fbreg.com) in San Francisco, USA.

I will be heading down thanks to Soumith Chintala for the invite and arrangements. Looking forward t... | 50.75 | 210 | 0.781609 | eng_Latn | 0.991353 |

f96b8a7c027503915c85d547913d855f65d59616 | 3,716 | md | Markdown | README.md | heuisam/mbed-os-example-s1sbp6a | f02cffebc8f111bf9a695a8df82cb0ac82a409c9 | [

"Apache-2.0"

] | null | null | null | README.md | heuisam/mbed-os-example-s1sbp6a | f02cffebc8f111bf9a695a8df82cb0ac82a409c9 | [

"Apache-2.0"

] | null | null | null | README.md | heuisam/mbed-os-example-s1sbp6a | f02cffebc8f111bf9a695a8df82cb0ac82a409c9 | [

"Apache-2.0"

] | null | null | null | # BP6A Example

This project is the getting-started example for BP6A.

It contains ECG, PPG, BIA, GPIO, and I2C applications.

You can build the project with all supported Mbed OS build tools.

## Mbed Studio

You can import the s1sbp6a example.

* step 1. Open the File menu and select Import Program

* step 2. Paste the ... | 17.951691 | 100 | 0.641012 | eng_Latn | 0.952645 |

f96c80bc246928d026dfe951e0421dd17c669327 | 489 | md | Markdown | examples/markdown/carousel-doc.md | FredaFei/react-typeScript-ui | be2841327b9d6554b88bf6b0f69ee53797f42956 | [

"MIT"

] | 2 | 2020-05-27T02:37:23.000Z | 2020-07-01T06:34:59.000Z | examples/markdown/carousel-doc.md | FredaFei/react-typeScript-ui | be2841327b9d6554b88bf6b0f69ee53797f42956 | [

"MIT"

] | 8 | 2020-03-16T03:56:51.000Z | 2022-02-26T10:07:12.000Z | examples/markdown/carousel-doc.md | FredaFei/amazing-ui-react | be2841327b9d6554b88bf6b0f69ee53797f42956 | [

"MIT"

] | null | null | null | ### API

### Collapse

| Parameter |Description |Type |Default |Options |

| ------------ | :----------------|:------- | :----- | :----- |

| animation |Animation effect |String |slide |`fade` |

| indicator |Indicator settings |{position, style} |{position:`bottom`, style: `circle`}|{position:`left/top/bottom/right`, style: `number/circle`} |

| duration |播放... | 40.75 | 135 | 0.509202 | yue_Hant | 0.393186 |

f96ca46f38c92b480a4f237f897b448bc9ea15bf | 951 | md | Markdown | api/Word.CustomLabels.Item.md | MarkWithC/VBA-Docs | a43a38a843c95cbe8beed2a15218a5aeca4df8fb | [

"CC-BY-4.0",

"MIT"

] | null | null | null | api/Word.CustomLabels.Item.md | MarkWithC/VBA-Docs | a43a38a843c95cbe8beed2a15218a5aeca4df8fb | [

"CC-BY-4.0",

"MIT"

] | null | null | null | api/Word.CustomLabels.Item.md | MarkWithC/VBA-Docs | a43a38a843c95cbe8beed2a15218a5aeca4df8fb | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: CustomLabels.Item method (Word)

keywords: vbawd10.chm152436736

f1_keywords:

- vbawd10.chm152436736

ms.prod: word

api_name:

- Word.CustomLabels.Item

ms.assetid: 3b0734f9-de26-3722-7267-2665fa73d9f9

ms.date: 06/08/2017

ms.localizationpriority: medium

---

# CustomLabels.Item method (Word)

Returns a **CustomL... | 21.133333 | 184 | 0.740273 | eng_Latn | 0.448595 |

f96d4bb50ed4ed525292957f9142d4905f20d23f | 3,630 | md | Markdown | server-2013/lync-server-2013-configure-add-ins-for-rooms.md | v-vijanu/OfficeDocs-SkypeforBusiness-Test-pr.es-es | b8058e4767e19709e1df553cb66a7e6df429cf5e | [

"CC-BY-4.0",

"MIT"

] | 1 | 2020-05-19T19:28:10.000Z | 2020-05-19T19:28:10.000Z | server-2013/lync-server-2013-configure-add-ins-for-rooms.md | v-vijanu/OfficeDocs-SkypeforBusiness-Test-pr.es-es | b8058e4767e19709e1df553cb66a7e6df429cf5e | [

"CC-BY-4.0",

"MIT"

] | 21 | 2018-04-26T18:42:59.000Z | 2018-08-23T23:00:11.000Z | server-2013/lync-server-2013-configure-add-ins-for-rooms.md | v-vijanu/OfficeDocs-SkypeforBusiness-Test-pr.es-es | b8058e4767e19709e1df553cb66a7e6df429cf5e | [

"CC-BY-4.0",

"MIT"

] | 11 | 2018-06-19T11:13:26.000Z | 2021-11-15T11:25:02.000Z | ---

title: 'Lync Server 2013: Configure add-ins for rooms'

TOCTitle: Configure add-ins for rooms

ms:assetid: 4eeaf19e-8369-4f6f-af65-a283cf7daa1c

ms:mtpsurl: https://technet.microsoft.com/es-es/library/JJ204878(v=OCS.15)

ms:contentKeyID: 48275245

ms.date: 01/07/2017

mtps_version: v=OCS.15

ms.translat... | 61.525424 | 541 | 0.785399 | spa_Latn | 0.979239 |

f96d9d98826a922ad76bb9d0e4ef5288d0ff0545 | 2,697 | md | Markdown | README.md | fbigun/LCApp | 87f96fdb922b9916a84dd0c2ec67710b2ff39fbc | [

"MIT"

] | 1 | 2019-06-03T02:38:05.000Z | 2019-06-03T02:38:05.000Z | README.md | beautilut/XMIIconFont | d899cec22642d62080ccf9be2b6c7e8d9445f1d5 | [

"MIT"

] | null | null | null | README.md | beautilut/XMIIconFont | d899cec22642d62080ccf9be2b6c7e8d9445f1d5 | [

"MIT"

] | null | null | null | # Flask Todo Demo

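## Environment and Dependencies

Flask Todo Demo is a sample project for [LeanEngine][1]. It runs on Python 3 and depends on [flask][2] and the [LeanCloud Python SDK][3]. You can view the online demo [here][11].

## Before You Start

Flask Todo Demo is a [LeanCloud][4] application. Before deploying it, some preparation is needed.

1. Create a new application in the [LeanCloud console][5] and set a second-level domain for it.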

2. 在控制台中新增一个名为 `SECRET_KEY` 的环境变量。(关于如何创建一个好的密钥,请参考 [这... | 32.890244 | 152 | 0.747497 | yue_Hant | 0.845091 |

f96da8b1601b9d551bcced5810f4455b9af4a5a7 | 1,423 | md | Markdown | _posts/2018-10-31-future-of-print-in-open-stacks.md | sharilaster/sharilaster | 7b2de4a47217404aa2628eb64e47d71a6d2be0a1 | [

"MIT"

] | null | null | null | _posts/2018-10-31-future-of-print-in-open-stacks.md | sharilaster/sharilaster | 7b2de4a47217404aa2628eb64e47d71a6d2be0a1 | [

"MIT"

] | null | null | null | _posts/2018-10-31-future-of-print-in-open-stacks.md | sharilaster/sharilaster | 7b2de4a47217404aa2628eb64e47d71a6d2be0a1 | [

"MIT"

] | null | null | null | ---

layout: post

title: "The Future of Print in Open Stacks"

categories: journal

tags: [future of print]

image:

feature:

teaser:

credit:

creditlink: ""

---

Building on a talk given by Jim O'Donnell at the 2017 Charleston Conference, Lorrie McAllister and I expanded on some of the conceptual underpinnings of... | 59.291667 | 743 | 0.791989 | eng_Latn | 0.984878 |

f96dce0dbd34d7e6bdc9e8e83d5c5c82b238dcc4 | 13,060 | md | Markdown | src/posts/2019-11-20-testing-thunky-redux-actions.md | neil-berg/portfolio | 36e021fbd8803408f6171aa5495755adad0c24c3 | [

"MIT"

] | 2 | 2019-05-05T21:42:53.000Z | 2020-01-13T20:57:05.000Z | src/posts/2019-11-20-testing-thunky-redux-actions.md | neil-berg/portfolio | 36e021fbd8803408f6171aa5495755adad0c24c3 | [

"MIT"

] | null | null | null | src/posts/2019-11-20-testing-thunky-redux-actions.md | neil-berg/portfolio | 36e021fbd8803408f6171aa5495755adad0c24c3 | [

"MIT"

] | null | null | null | ---

title: "Testing Thunk-y Redux Actions"

date: "2019-11-20"

description: Diving into redux-thunk for testing thunk-y Redux action creators

---

## Testing Thunk-y Redux Actions

<time datetime="2019-11-20">November 20, 2019</time>

In Redux-land, thunk-y action creators are those that return a function and not an act... | 36.582633 | 776 | 0.721746 | eng_Latn | 0.954332 |

f96dee1d0ae0444fa02a7ebb97f1f82b694400e9 | 741 | md | Markdown | app/backend/templates/account_deletion_report.md | lpmi-13/couchers | 4b69ed3192daede93d645b6c26f9acb3db03a2ea | [

"MIT"

] | null | null | null | app/backend/templates/account_deletion_report.md | lpmi-13/couchers | 4b69ed3192daede93d645b6c26f9acb3db03a2ea | [

"MIT"

] | 38 | 2022-02-10T16:32:53.000Z | 2022-03-28T13:43:24.000Z | app/backend/templates/account_deletion_report.md | lpmi-13/couchers | 4b69ed3192daede93d645b6c26f9acb3db03a2ea | [

"MIT"

] | null | null | null | ---

subject: "Account deletion reason #{{ reason.id|couchers_escape }}"

---

{% from "macros.html" import button, link, support_email, email_link, newline %}

Someone deleted their account and wrote a reason.

* Reason{{ newline(html)|couchers_safe }}

{{ reason.reason|couchers_escape }}

* Deleted user{{ newline(html... | 32.217391 | 85 | 0.726046 | eng_Latn | 0.415619 |

f96e7e9e1450d9197119f2c20fb4d0877473a9cf | 52 | md | Markdown | README.md | JKalmbach/questionaire-demo | a02a7e7c9a2a695283b27b745d8a5ed985d5eb78 | [

"Apache-2.0"

] | null | null | null | README.md | JKalmbach/questionaire-demo | a02a7e7c9a2a695283b27b745d8a5ed985d5eb78 | [

"Apache-2.0"

] | null | null | null | README.md | JKalmbach/questionaire-demo | a02a7e7c9a2a695283b27b745d8a5ed985d5eb78 | [

"Apache-2.0"

] | null | null | null | # questionaire-demo

A single-question questionnaire

| 17.333333 | 31 | 0.826923 | fra_Latn | 0.601481 |

f96f6222c28665d297e6a1d122a6ac9d7486f274 | 1,321 | markdown | Markdown | _posts/2013-02-13-comrades-let-your-voice-be-heard-about-scholarly-publishing.markdown | emhart/website | b7a3ca237e94796b8e2e6afec1165615e76b1f50 | [

"MIT"

] | null | null | null | _posts/2013-02-13-comrades-let-your-voice-be-heard-about-scholarly-publishing.markdown | emhart/website | b7a3ca237e94796b8e2e6afec1165615e76b1f50 | [

"MIT"

] | null | null | null | _posts/2013-02-13-comrades-let-your-voice-be-heard-about-scholarly-publishing.markdown | emhart/website | b7a3ca237e94796b8e2e6afec1165615e76b1f50 | [

"MIT"

] | null | null | null | ---

layout: post

title: "Comrades let your voice be heard about scholarly publishing"

date: 2013-02-13 23:24

comments: true

tags:

---

When I was in college I had a girlfriend whose dad was an ex-lawyer turned self-proclaimed anarchist scholar. She left me for a guy in his late 30s who worked in a scarf store, and I... | 120.090909 | 1,183 | 0.766843 | eng_Latn | 0.998873 |

f96fae8e9daaf15866306695fec63ed05d19723f | 6,645 | md | Markdown | docs/reporting-services/report-server-web-service-net-framework-soap-headers/identifying-execution-state.md | L3onard80/sql-docs.it-it | f73e3d20b5b2f15f839ff784096254478c045bbb | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/reporting-services/report-server-web-service-net-framework-soap-headers/identifying-execution-state.md | L3onard80/sql-docs.it-it | f73e3d20b5b2f15f839ff784096254478c045bbb | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/reporting-services/report-server-web-service-net-framework-soap-headers/identifying-execution-state.md | L3onard80/sql-docs.it-it | f73e3d20b5b2f15f839ff784096254478c045bbb | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: Identifying execution state | Microsoft Docs

description: Learn how to use Reporting Services to identify execution state so that you can interact with the report in different ways.

ms.date: 03/03/2017

ms.prod: reporting-services

ms.prod_service: reporting-services... | 102.230769 | 609 | 0.817156 | ita_Latn | 0.987145 |

f970010214eaa8d3a1aa8d9dfcf5c5ee21e3410c | 5,680 | md | Markdown | articles/site-recovery/site-recovery-manage-network-interfaces-on-premises-to-azure.md | sonquer/azure-docs.pl-pl | d8159cf8e870e807bd64e58188d281461b291ea8 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | articles/site-recovery/site-recovery-manage-network-interfaces-on-premises-to-azure.md | sonquer/azure-docs.pl-pl | d8159cf8e870e807bd64e58188d281461b291ea8 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | articles/site-recovery/site-recovery-manage-network-interfaces-on-premises-to-azure.md | sonquer/azure-docs.pl-pl | d8159cf8e870e807bd64e58188d281461b291ea8 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: Manage network adapters for on-premises disaster recovery with Azure Site Recovery

description: Describes how to manage network interfaces for on-premises disaster recovery to Azure with Azure Site Recovery

author: mayurigupta13

manager: rochakm

ms.service: s... | 77.808219 | 485 | 0.827817 | pol_Latn | 0.999844 |

f9720d0bdccf103c8a98dbb9aef42ff5dec1432c | 471 | md | Markdown | intro/introduction/whats-new-with-4.0.0/cachebox-2.0.0.md | mborn319/coldbox-docs | 99b249b0a9dd3855c79f84974f830d49ff18d32e | [

"Apache-2.0"

] | null | null | null | intro/introduction/whats-new-with-4.0.0/cachebox-2.0.0.md | mborn319/coldbox-docs | 99b249b0a9dd3855c79f84974f830d49ff18d32e | [

"Apache-2.0"

] | null | null | null | intro/introduction/whats-new-with-4.0.0/cachebox-2.0.0.md | mborn319/coldbox-docs | 99b249b0a9dd3855c79f84974f830d49ff18d32e | [

"Apache-2.0"

] | null | null | null | # CacheBox 2.0.0

## Introduction

CacheBox 2.0.0 is a major release, mostly aligned to support our ColdBox 4 release.

## Release Notes

You can find the release version information here: [https://ortussolutions.atlassian.net/browse/CACHEBOX/fixforversion/12303](https://ortussolutions.atlassian.net/browse/CACHEBOX/fix... | 31.4 | 199 | 0.787686 | eng_Latn | 0.462003 |

f972d9b377a388cb63bbf8054839b14ee5d062ad | 403 | md | Markdown | docs/Model/AplusContent/SearchContentDocumentsResponseAllOf.md | hkonnet/selling-partner-api | 7e87b835db6e1233362a36788e2387bdf78322cd | [

"BSD-3-Clause"

] | 119 | 2021-02-18T18:58:48.000Z | 2022-03-25T16:19:05.000Z | docs/Model/AplusContent/SearchContentDocumentsResponseAllOf.md | hkonnet/selling-partner-api | 7e87b835db6e1233362a36788e2387bdf78322cd | [

"BSD-3-Clause"

] | 213 | 2021-03-09T16:23:34.000Z | 2022-03-31T11:31:31.000Z | docs/Model/AplusContent/SearchContentDocumentsResponseAllOf.md | hkonnet/selling-partner-api | 7e87b835db6e1233362a36788e2387bdf78322cd | [

"BSD-3-Clause"

] | 68 | 2021-02-15T14:38:18.000Z | 2022-03-25T21:08:45.000Z | ## SearchContentDocumentsResponseAllOf

## Properties

Name | Type | Description | Notes

------------ | ------------- | ------------- | -------------

**content_metadata_records** | [**\SellingPartnerApi\Model\AplusContent\ContentMetadataRecord[]**](ContentMetadataRecord.md) | A list of A+ Content metadata records. |

[... | 40.3 | 167 | 0.602978 | yue_Hant | 0.813545 |

f9733889ec9430009a9d6c7b6dc1c53bb956b047 | 7,648 | md | Markdown | articles/azure-resource-manager/templates/view-resources.md | trrwilson/azure-docs | 6c53a4286fafd830ab24fc70ce420e832144f4fb | [

"CC-BY-4.0",

"MIT"

] | 2 | 2021-07-31T17:49:12.000Z | 2021-08-03T13:32:39.000Z | articles/azure-resource-manager/templates/view-resources.md | trrwilson/azure-docs | 6c53a4286fafd830ab24fc70ce420e832144f4fb | [

"CC-BY-4.0",

"MIT"

] | null | null | null | articles/azure-resource-manager/templates/view-resources.md | trrwilson/azure-docs | 6c53a4286fafd830ab24fc70ce420e832144f4fb | [

"CC-BY-4.0",

"MIT"

] | 1 | 2020-07-10T20:21:56.000Z | 2020-07-10T20:21:56.000Z | ---

title: Discover resource properties

description: Describes how to search for resource properties.

ms.topic: conceptual

ms.date: 06/10/2020

---

# Discover resource properties

Before creating Resource Manager templates, you need to understand what resource types are available, and what values to use in your templat... | 67.087719 | 419 | 0.784519 | eng_Latn | 0.940581 |

f97394f3ba4380d769a285d5441d899407ae564b | 947 | md | Markdown | documentation/wiki/FSM.md | rodrigovidal/akka.net | 09db298dc429c59cada63c0ae17a72142c4bfec0 | [

"Apache-2.0"

] | null | null | null | documentation/wiki/FSM.md | rodrigovidal/akka.net | 09db298dc429c59cada63c0ae17a72142c4bfec0 | [

"Apache-2.0"

] | null | null | null | documentation/wiki/FSM.md | rodrigovidal/akka.net | 09db298dc429c59cada63c0ae17a72142c4bfec0 | [

"Apache-2.0"

] | null | null | null | ---

layout: wiki

title: FSM

---

Finite State Machine

For more information, see the original Akka FSM documentation: http://doc.akka.io/docs/akka/snapshot/scala/fsm.html

```csharp

public class MyFSM : FSM<int, object>

{

public MyFSM(ActorRef target)

{

Target = target;

StartWith(0, new object());

When(0... | 21.522727 | 99 | 0.543823 | eng_Latn | 0.308432 |

f973fc737c021c610f8bb44d88b27503a1263479 | 19 | md | Markdown | README.md | guxiaodai/mvvm | 3cffc8aad21d2106d476b3f3087f9280a5c6edb8 | [

"MIT"

] | null | null | null | README.md | guxiaodai/mvvm | 3cffc8aad21d2106d476b3f3087f9280a5c6edb8 | [

"MIT"

] | null | null | null | README.md | guxiaodai/mvvm | 3cffc8aad21d2106d476b3f3087f9280a5c6edb8 | [

"MIT"

] | null | null | null | # mvvm

Implement a simplified version of mvvm

| 6.333333 | 11 | 0.789474 | zho_Hans | 0.361881 |

f97412e54c54d35063363c85603e5edf11f28e98 | 1,198 | md | Markdown | 2015/CVE-2015-7297.md | justinforbes/cve | 375c65312f55c34fc1a4858381315fe9431b0f16 | [

"MIT"

] | 2,340 | 2022-02-10T21:04:40.000Z | 2022-03-31T14:42:58.000Z | 2015/CVE-2015-7297.md | justinforbes/cve | 375c65312f55c34fc1a4858381315fe9431b0f16 | [

"MIT"

] | 19 | 2022-02-11T16:06:53.000Z | 2022-03-11T10:44:27.000Z | 2015/CVE-2015-7297.md | justinforbes/cve | 375c65312f55c34fc1a4858381315fe9431b0f16 | [

"MIT"

] | 280 | 2022-02-10T19:58:58.000Z | 2022-03-26T11:13:05.000Z | ### [CVE-2015-7297](https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2015-7297)

## How to use the sample

Use the UI to select a source. A KML file from that source will be loaded and displayed in the map.

## Relevant API

- KmlLayer

- KmlDataset

## Offline data

Re... | 35.1 | 157 | 0.736942 | eng_Latn | 0.978087 |

f9751263182daff40bc05c8f770a192aca23f1d6 | 34,804 | md | Markdown | docs/docs/graphql-reference.md | yanaraeven/gatsby | ee9319df0e83d348d085468c340648cd33f3cf56 | [

"MIT"

] | 2 | 2020-08-04T09:21:34.000Z | 2022-01-12T01:15:04.000Z | docs/docs/graphql-reference.md | tanmaylaud/gatsby | 8e9452cc06122d77375d0aff049e2aff96d5fb45 | [

"MIT"

] | 3 | 2022-02-28T01:47:46.000Z | 2022-03-08T23:40:10.000Z | docs/docs/graphql-reference.md | tanmaylaud/gatsby | 8e9452cc06122d77375d0aff049e2aff96d5fb45 | [

"MIT"

] | 3 | 2020-10-09T23:08:26.000Z | 2020-12-01T21:29:32.000Z | ---

title: GraphQL Query Options Reference

---

## Intro

This page will walk you through a series of GraphQL queries, each designed to demonstrate a particular feature of GraphQL. You'll be querying the _real_ schema used on [graphql-reference example](https://github.com/gatsbyjs/gatsby/tree/master/examples/graphql-re... | 103.892537 | 6,412 | 0.740806 | eng_Latn | 0.26079 |

f9755637268e270a4da1a652e38ff2876f2989b6 | 5,950 | md | Markdown | _posts/2021-6-15-Inventory-App-0.md | EbenZergaw/ebenzergaw.github.io | a561c5f3ed6c228f20234bc8379d0bf1e82e2de9 | [

"MIT"

] | null | null | null | _posts/2021-6-15-Inventory-App-0.md | EbenZergaw/ebenzergaw.github.io | a561c5f3ed6c228f20234bc8379d0bf1e82e2de9 | [

"MIT"

] | null | null | null | _posts/2021-6-15-Inventory-App-0.md | EbenZergaw/ebenzergaw.github.io | a561c5f3ed6c228f20234bc8379d0bf1e82e2de9 | [

"MIT"

] | null | null | null | ---

layout: post

title: Inventory App [0] - Features and Design

---

## Introduction

As of writing this post, I’m currently moving past the basics of JavaScript and on to learning React. I’ve already built a todo app in vanilla JS by following tutorials, then went past that and built a note-taking app. But at the end of t... | 129.347826 | 797 | 0.793782 | eng_Latn | 0.999805 |

f9758d174f0e6fbd6abcebc8a98d6a4ae48606e1 | 2,000 | md | Markdown | README.md | VishwaP98/MyDiagnosis | ccd4ae5cfa2ca7e081ab029943f4c12438def024 | [

"MIT"

] | 1 | 2021-03-29T06:22:35.000Z | 2021-03-29T06:22:35.000Z | README.md | VishwaP98/MyDiagnosis | ccd4ae5cfa2ca7e081ab029943f4c12438def024 | [

"MIT"

] | 1 | 2021-02-15T17:13:40.000Z | 2021-02-15T17:13:40.000Z | README.md | VishwaP98/MyDiagnosis | ccd4ae5cfa2ca7e081ab029943f4c12438def024 | [

"MIT"

] | 1 | 2021-02-09T03:18:23.000Z | 2021-02-09T03:18:23.000Z | # MyDiagnosis

Android application designed to provide a detailed diagnosis based on observed symptoms, any lab test results, and risk factors. Moreover, there is a Natural Language Processing feature that lets users type in a message explaining their problem; symptoms are then extracted from the text. Finally, when user c... | 76.923077 | 409 | 0.7505 | eng_Latn | 0.928091 |

f975d6e3757155ecc84ad6ba0a4bd20f1ab9b5a5 | 1,931 | md | Markdown | README.md | pulse00/nagios-php | 772a47010157cd3989ff4e945c4a7d67395423d0 | [

"MIT"

] | 3 | 2015-09-01T14:23:05.000Z | 2018-10-19T12:47:56.000Z | README.md | pulse00/nagios-php | 772a47010157cd3989ff4e945c4a7d67395423d0 | [

"MIT"

] | null | null | null | README.md | pulse00/nagios-php | 772a47010157cd3989ff4e945c4a7d67395423d0 | [

"MIT"

] | null | null | null | nagios-php

==========

[](https://travis-ci.org/pulse00/nagios-php)

[](https://scrutinizer-ci.com/g/pulse00/nagios-php/?branch=master)

... | 32.728814 | 176 | 0.736924 | eng_Latn | 0.946625 |

f9763900b97ff2bdeaa2bd88b4f6f605645f3318 | 1,427 | md | Markdown | AlchemyInsights/upload-a-folder-or-files-to-a-document-library.md | isabella232/OfficeDocs-AlchemyInsights-pr.lt-LT | 001bf5fd6b37e2b218bad3586388a8d80ba1d47d | [

"CC-BY-4.0",

"MIT"

] | 2 | 2020-05-19T19:07:05.000Z | 2021-03-06T00:35:04.000Z | AlchemyInsights/upload-a-folder-or-files-to-a-document-library.md | MicrosoftDocs/OfficeDocs-AlchemyInsights-pr.lt-LT | 2b110ffade6ea6775b415483413fccd000605bed | [

"CC-BY-4.0",

"MIT"

] | 4 | 2020-06-02T23:31:43.000Z | 2022-02-09T06:55:37.000Z | AlchemyInsights/upload-a-folder-or-files-to-a-document-library.md | isabella232/OfficeDocs-AlchemyInsights-pr.lt-LT | 001bf5fd6b37e2b218bad3586388a8d80ba1d47d | [

"CC-BY-4.0",

"MIT"

] | 3 | 2019-10-09T20:28:54.000Z | 2021-10-09T10:40:30.000Z | ---

title: Upload a folder or files to a document library

ms.author: toresing

author: tomresing

manager: scotv

ms.date: 04/21/2020

ms.audience: Admin

ms.topic: article

ms.service: o365-administration

ROBOTS: NOINDEX, NOFOLLOW

localization_priority: Normal

ms.collection: Adm_O365

ms.custom: ''

ms.assetid: df1ffdf... | 40.771429 | 201 | 0.813595 | lit_Latn | 0.995586 |

f9779e37892ee7a73ffbfc2fbf7fd989292275c9 | 2,033 | md | Markdown | Library/PackageCache/com.unity.render-pipelines.universal@10.6.0/Documentation~/2DRendererData_overview.md | adrytekk/MoleRoule | 2c3c5c0282f63863c000c1702a013fae5b19efa2 | [

"MIT"

] | 4 | 2021-06-02T19:03:51.000Z | 2021-10-02T19:47:05.000Z | Library/PackageCache/com.unity.render-pipelines.universal@10.6.0/Documentation~/2DRendererData_overview.md | adrytekk/MoleRoule | 2c3c5c0282f63863c000c1702a013fae5b19efa2 | [

"MIT"

] | 7 | 2021-04-07T10:03:53.000Z | 2022-03-14T22:06:08.000Z | Library/PackageCache/com.unity.render-pipelines.universal@10.6.0/Documentation~/2DRendererData_overview.md | adrytekk/MoleRoule | 2c3c5c0282f63863c000c1702a013fae5b19efa2 | [

"MIT"

] | 2 | 2021-12-06T13:56:23.000Z | 2021-12-06T13:56:37.000Z | # 2D Renderer Data Asset

The __2D Renderer Data__ Asset contains the settings that affect the way __2D Lights__ are applied to lit Sprites. You can set the way Lights emulate HDR lighting with the [HDR Emulation Scale](HDREmulati... | 72.607143 | 404 | 0.805706 | eng_Latn | 0.965107 |

f977cf587b8a7c488d58840ac5fe6ac467c7a983 | 1,849 | md | Markdown | getting-started/install.md | ohmysh/docs-v2 | 4d477659e72539a1a45b7c8bb6a7051e611e4365 | [

"MIT"

] | null | null | null | getting-started/install.md | ohmysh/docs-v2 | 4d477659e72539a1a45b7c8bb6a7051e611e4365 | [

"MIT"

] | null | null | null | getting-started/install.md | ohmysh/docs-v2 | 4d477659e72539a1a45b7c8bb6a7051e611e4365 | [

"MIT"

] | null | null | null | # Installation Guide

This guide will help you install OhMySH.

If you have any questions, open a new issue in the [ohmysh/ohmysh repository](https://github.com/ohmysh/ohmysh/issues) to let us know.

## Preparation

First, check the system requirements on the [System Page](/getting-started/system).

OhMySh works with [SH shell... | 28.446154 | 152 | 0.737155 | eng_Latn | 0.962127 |

f97882a76c0d65cf90ef056f778156da9026e026 | 33,358 | md | Markdown | LICENSE.md | dekterov/Leacme.Weathery | 5223abaaa4953c00029db6a6c86ebccf059ea862 | [

"MIT"

] | null | null | null | LICENSE.md | dekterov/Leacme.Weathery | 5223abaaa4953c00029db6a6c86ebccf059ea862 | [

"MIT"

] | null | null | null | LICENSE.md | dekterov/Leacme.Weathery | 5223abaaa4953c00029db6a6c86ebccf059ea862 | [

"MIT"

] | null | null | null | # MIT License

Copyright (c) 2017 Leacme (http://leac.me)

Permission is hereby granted, free of charge, to any person obtaining a copy

of this software and associated documentation files (the "Software"), to deal

in the Software without restriction, including without limitation the rights

to use, copy, modify, merge, ... | 109.730263 | 1,611 | 0.81126 | eng_Latn | 0.998749 |

f97895faf87b68b7d72946d9a5e965e365f2bd98 | 7,136 | md | Markdown | _next/03-01-benefits.md | huyenltnguyen/uswds-site | c0f7418324c85e49413e7ddd071336f44a00b583 | [

"CC0-1.0"

] | 102 | 2018-01-28T04:59:38.000Z | 2022-03-13T18:18:37.000Z | _next/03-01-benefits.md | huyenltnguyen/uswds-site | c0f7418324c85e49413e7ddd071336f44a00b583 | [

"CC0-1.0"

] | 553 | 2018-01-23T18:05:13.000Z | 2022-03-30T19:59:24.000Z | _next/03-01-benefits.md | huyenltnguyen/uswds-site | c0f7418324c85e49413e7ddd071336f44a00b583 | [

"CC0-1.0"

] | 92 | 2018-01-24T13:24:05.000Z | 2022-03-23T15:27:56.000Z | ---

title: Understand the value and benefits

subhead: Agency teams want to...

description: "Agencies need to be able to quickly discern the design system’s benefits and how it aligns with their goals for delivering better digital services."

meta:

og:image: /img/next/og-next-findings-understand.png

permalink: /n... | 64.288288 | 467 | 0.768778 | eng_Latn | 0.997957 |

f97936694d015c26593d164c81b3f2ee4eaa876c | 633 | md | Markdown | _posts/2020-09-20-lluvia.md | AviReyes/avireyes.github.io | b9ffd9d47e0b837c3398f409600b52a612c64c61 | [

"MIT"

] | 2 | 2020-07-18T07:54:57.000Z | 2020-07-18T07:55:16.000Z | _posts/2020-09-20-lluvia.md | AviReyes/Blog-Amora.github.io | b9ffd9d47e0b837c3398f409600b52a612c64c61 | [

"MIT"

] | null | null | null | _posts/2020-09-20-lluvia.md | AviReyes/Blog-Amora.github.io | b9ffd9d47e0b837c3398f409600b52a612c64c61 | [

"MIT"

] | null | null | null | ---

layout: post

title: Rainy Day 🌧

---

<p style='text-align: justify;'></p>

<p></p>

<p style='text-align: justify;'>

<p>Today was the last rain of August, and I thought of you. </p>

<p>Of the day we first crossed Eje 3 together on our bikes. </p>

<p>Of the day we had a coffee while it rained. ... | 26.375 | 108 | 0.682464 | spa_Latn | 0.985347 |

f979ab40b9435883ab7623f45e1b96f79e3e6917 | 8,863 | md | Markdown | articles/service-bus-messaging/service-bus-amqp-overview.md | gliljas/azure-docs.sv-se-1 | 1efdf8ba0ddc3b4fb65903ae928979ac8872d66e | [

"CC-BY-4.0",

"MIT"

] | null | null | null | articles/service-bus-messaging/service-bus-amqp-overview.md | gliljas/azure-docs.sv-se-1 | 1efdf8ba0ddc3b4fb65903ae928979ac8872d66e | [

"CC-BY-4.0",

"MIT"

] | null | null | null | articles/service-bus-messaging/service-bus-amqp-overview.md | gliljas/azure-docs.sv-se-1 | 1efdf8ba0ddc3b4fb65903ae928979ac8872d66e | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: Overview of AMQP 1.0 in Azure Service Bus

description: Learn how Azure Service Bus supports the Advanced Message Queuing Protocol (AMQP), an open standard protocol.

services: service-bus-messaging

documentationcenter: .net

author: axisc

manager: timlt

editor: spelluru

ms.assetid: 0e8d19cc-de36-478e-84ae-e08... | 87.752475 | 613 | 0.803904 | swe_Latn | 0.99989 |

f979d64bf683b9781a970a09451e72d58dec2c3f | 859 | md | Markdown | src/pages/about/about.md | ReganNZ/4displays | 747fd0b0396d54953b2cfed74e6b3d23f6f59199 | [

"MIT"

] | null | null | null | src/pages/about/about.md | ReganNZ/4displays | 747fd0b0396d54953b2cfed74e6b3d23f6f59199 | [

"MIT"

] | null | null | null | src/pages/about/about.md | ReganNZ/4displays | 747fd0b0396d54953b2cfed74e6b3d23f6f59199 | [

"MIT"

] | null | null | null | ---

templateKey: about-page

path: /about

title: About 4displays

---

Based in Auckland, 4displays have been in the display business since 1997 designing, manufacturing and supplying all kinds of display systems. From small & medium size items like brochure, poster and menu holders, to large complex creations and retail ... | 78.090909 | 415 | 0.786962 | eng_Latn | 0.999482 |

f979f4f3ba5cc2fc02a059cf4b4859f20d3b889a | 10,319 | md | Markdown | vendor/src/github.com/flopp/go-staticmaps/README.md | whosonfirst/go-whosonfirst-staticmaps | fe61e92f3a9aa728b85321e4a2f083cf8f53baf5 | [

"BSD-3-Clause"

] | null | null | null | vendor/src/github.com/flopp/go-staticmaps/README.md | whosonfirst/go-whosonfirst-staticmaps | fe61e92f3a9aa728b85321e4a2f083cf8f53baf5 | [

"BSD-3-Clause"

] | null | null | null | vendor/src/github.com/flopp/go-staticmaps/README.md | whosonfirst/go-whosonfirst-staticmaps | fe61e92f3a9aa728b85321e4a2f083cf8f53baf5 | [

"BSD-3-Clause"

] | null | null | null | [](https://godoc.org/github.com/flopp/go-staticmaps)

[](https://goreportcard.com/report/flopp/go-staticmaps)

[ is a web application for requesting songs that DJs have in their [iTunes](https://www.apple.com/es/itunes/download/index.html) libraries.

## Installation and build guid... | 43.847458 | 203 | 0.795129 | eng_Latn | 0.284768 |

f97a70c2f77a663124d1ee7167db7a707368cb23 | 12,046 | md | Markdown | windows/client-management/mdm/enable-admx-backed-policies-in-mdm.md | sckissel/windows-itpro-docs | 4ce647480cc30c26db475be55526126addb8c52d | [

"CC-BY-4.0",

"MIT"

] | null | null | null | windows/client-management/mdm/enable-admx-backed-policies-in-mdm.md | sckissel/windows-itpro-docs | 4ce647480cc30c26db475be55526126addb8c52d | [

"CC-BY-4.0",

"MIT"

] | null | null | null | windows/client-management/mdm/enable-admx-backed-policies-in-mdm.md | sckissel/windows-itpro-docs | 4ce647480cc30c26db475be55526126addb8c52d | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: Enable ADMX-backed policies in MDM

description: Guide to configuring ADMX-backed policies in MDM

ms.author: dansimp

ms.topic: article

ms.prod: w10

ms.technology: windows

author: manikadhiman

ms.date: 11/01/2017

ms.reviewer:

manager: dansimp

---

# Enable ADMX-backed policies in MDM

This is a step-by-step ... | 38.983819 | 431 | 0.645609 | eng_Latn | 0.565085 |

f97a77d120141aaaefc666413a660ca5701d0ad7 | 67 | md | Markdown | README.md | akc-code/chrome-extension-ci | 3745564a3841fb483b69fc0e156797615ff07191 | [

"MIT"

] | null | null | null | README.md | akc-code/chrome-extension-ci | 3745564a3841fb483b69fc0e156797615ff07191 | [

"MIT"

] | null | null | null | README.md | akc-code/chrome-extension-ci | 3745564a3841fb483b69fc0e156797615ff07191 | [

"MIT"

] | null | null | null | # chrome-extension-ci

Setting up CI for a sample chrome extension.

| 22.333333 | 44 | 0.791045 | eng_Latn | 0.91538 |

f97bcedf180d61cbd197d3bac5cfcc4d301b30f0 | 1,880 | md | Markdown | _posts/2020-12-27-Basic-Conquest-3321.md | hython37/hython37.github.io | db11dc22cb6947c49d3a5bba22585c1b3da972df | [

"MIT"

] | null | null | null | _posts/2020-12-27-Basic-Conquest-3321.md | hython37/hython37.github.io | db11dc22cb6947c49d3a5bba22585c1b3da972df | [

"MIT"

] | null | null | null | _posts/2020-12-27-Basic-Conquest-3321.md | hython37/hython37.github.io | db11dc22cb6947c49d3a5bba22585c1b3da972df | [

"MIT"

] | null | null | null | ---

title: "Basic Conquest, 3321"

excerpt: "The Best Pizza"

categories:

- Algorithm

tags:

- study

- greedy

- python

toc: true

toc_label: "Algorithm of Python"

---

## 3321 : The Best Pizza

[Go to CodeUp](https://codeup.kr/problem.php?id=3321){: target="_blank"}

**Problem Description**

Teacher vega is a regular customer of the Miss Pizza shop.

Starting this month, he began living frugally.

So he ... the pizza... | 19.381443 | 173 | 0.616489 | kor_Hang | 1.00001 |

f97bd9ed9a8f55229f48f802da38cd14fac50899 | 1,373 | md | Markdown | AlchemyInsights/commercial-dialogues/assign-ediscovery-administrator-permissions.md | isabella232/OfficeDocs-AlchemyInsights-pr.fr-FR | b23fe97cbba1674ad1f59978ca5080bb00d217cb | [

"CC-BY-4.0",

"MIT"

] | 1 | 2020-05-19T19:06:33.000Z | 2020-05-19T19:06:33.000Z | AlchemyInsights/commercial-dialogues/assign-ediscovery-administrator-permissions.md | isabella232/OfficeDocs-AlchemyInsights-pr.fr-FR | b23fe97cbba1674ad1f59978ca5080bb00d217cb | [

"CC-BY-4.0",

"MIT"

] | 2 | 2022-02-09T06:56:37.000Z | 2022-02-09T06:56:51.000Z | AlchemyInsights/commercial-dialogues/assign-ediscovery-administrator-permissions.md | isabella232/OfficeDocs-AlchemyInsights-pr.fr-FR | b23fe97cbba1674ad1f59978ca5080bb00d217cb | [

"CC-BY-4.0",

"MIT"

] | 2 | 2019-10-11T18:36:10.000Z | 2021-10-09T11:34:48.000Z | ---

title: Assign eDiscovery Administrator permissions

ms.author: v-smandalika

author: v-smandalika

manager: dansimp

ms.date: 02/19/2021

audience: Admin

ms.topic: article

ms.service: o365-administration

ROBOTS: NOINDEX, NOFOLLOW

localization_priority: Priority

ms.collection: Adm_O365

ms.custom:

- "7363"

- "90... | 44.290323 | 153 | 0.805535 | fra_Latn | 0.724856 |

f97bdfdbd32577c2644c0f5924373190e809a7fc | 3,786 | md | Markdown | articles/rest-api/bot-framework-rest-direct-line-1-1-receive-messages.md | Miguel-byte/bot-docs.es-es | 73160a62ecf9178cb46530674a28db607fef434d | [

"CC-BY-4.0",

"MIT"

] | null | null | null | articles/rest-api/bot-framework-rest-direct-line-1-1-receive-messages.md | Miguel-byte/bot-docs.es-es | 73160a62ecf9178cb46530674a28db607fef434d | [

"CC-BY-4.0",

"MIT"

] | null | null | null | articles/rest-api/bot-framework-rest-direct-line-1-1-receive-messages.md | Miguel-byte/bot-docs.es-es | 73160a62ecf9178cb46530674a28db607fef434d | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: Receive messages from the bot | Microsoft Docs

description: Learn how to receive messages from the bot using Direct Line API v1.1.

author: RobStand

ms.author: kamrani

manager: kamrani

ms.topic: article

ms.service: bot-service

ms.date: 12/13/2017

ms.openlocfilehash: 6f9a132b538a278b0990271864a70e77ea7dc56c

ms.sour... | 47.924051 | 396 | 0.746698 | spa_Latn | 0.94872 |

f97d00b971b052019ab5c485f4155bddc1e42bab | 4,444 | md | Markdown | _posts/2014-05-02-multiple-uncertainty-notes.md | kubke/labnotebook | ebada896118f21a8d63e9b584e2d1424a5d3a6bd | [

"CC0-1.0"

] | null | null | null | _posts/2014-05-02-multiple-uncertainty-notes.md | kubke/labnotebook | ebada896118f21a8d63e9b584e2d1424a5d3a6bd | [

"CC0-1.0"

] | null | null | null | _posts/2014-05-02-multiple-uncertainty-notes.md | kubke/labnotebook | ebada896118f21a8d63e9b584e2d1424a5d3a6bd | [

"CC0-1.0"

] | null | null | null | ---

layout: post

category: multiple_uncertainty

---

### Logistic recruitment, uniform noise

(Sethi Fig 3 configuration)

### Logistic recruitment, lognormal noise

... | 44.888889 | 612 | 0.786229 | eng_Latn | 0.826074 |

f97d7613cf1714fe6d0a4852dc476e763003d268 | 4,565 | md | Markdown | 2021/materials/istanbul/Pre-SICSS Tasks/Introduction.md | meghaarora42/summer-institute | 396a93ee5e999c3be5c4c212953eb8ddfd8ae7cd | [

"MIT"

] | 264 | 2017-02-01T16:50:02.000Z | 2022-03-30T20:36:20.000Z | 2021/materials/istanbul/Pre-SICSS Tasks/Introduction.md | meghaarora42/summer-institute | 396a93ee5e999c3be5c4c212953eb8ddfd8ae7cd | [

"MIT"

] | 103 | 2017-03-31T19:32:05.000Z | 2022-02-26T03:24:55.000Z | 2021/materials/istanbul/Pre-SICSS Tasks/Introduction.md | meghaarora42/summer-institute | 396a93ee5e999c3be5c4c212953eb8ddfd8ae7cd | [

"MIT"

] | 199 | 2017-06-19T15:04:00.000Z | 2022-03-18T13:21:14.000Z | # SICSS-Istanbul 2021 Pre-SICSS Tasks

## INTRODUCTION

The pre-SICSS tasks require basic knowledge of R. We therefore assume that you have finished the [SICSS Boot Camp](https://sicss.io/boot_camp/), an online

training program designed to provide you with beginner-level skills in coding. The videos and materials ar... | 93.163265 | 449 | 0.794524 | eng_Latn | 0.999116 |

f97ea4791355462649a3be3b40aff17f55b4d4d3 | 1,535 | md | Markdown | docs/build/reference/pdbpath.md | bobbrow/cpp-docs | 769b186399141c4ea93400863a7d8463987bf667 | [

"CC-BY-4.0",

"MIT"

] | 965 | 2017-06-25T23:57:11.000Z | 2022-03-31T14:17:32.000Z | docs/build/reference/pdbpath.md | bobbrow/cpp-docs | 769b186399141c4ea93400863a7d8463987bf667 | [

"CC-BY-4.0",

"MIT"

] | 3,272 | 2017-06-24T00:26:34.000Z | 2022-03-31T22:14:07.000Z | docs/build/reference/pdbpath.md | bobbrow/cpp-docs | 769b186399141c4ea93400863a7d8463987bf667 | [

"CC-BY-4.0",

"MIT"

] | 951 | 2017-06-25T12:36:14.000Z | 2022-03-26T22:49:06.000Z | ---

description: "Learn more about: /PDBPATH"

title: "/PDBPATH"

ms.date: "11/04/2016"

f1_keywords: ["/pdbpath"]

helpviewer_keywords: [".pdb files, path", "-PDBPATH dumpbin option", "/PDBPATH dumpbin option", "PDBPATH dumpbin option", "PDB files, path"]

ms.assetid: ccf67dcd-0b23-4250-ad47-06c48acbe82b

---

# /PDBPATH

``... | 34.111111 | 192 | 0.753746 | eng_Latn | 0.984479 |

f97ed7102dbf2eca402d8b4e78dad26a8959958a | 9,963 | md | Markdown | _posts/2022-01-22-info-2015-375511-11290.md | seed-info/apt-info-sub | 5d3a83a8da1bd659d7d4392146f97ddbbaf7633f | [

"MIT"

] | null | null | null | _posts/2022-01-22-info-2015-375511-11290.md | seed-info/apt-info-sub | 5d3a83a8da1bd659d7d4392146f97ddbbaf7633f | [

"MIT"

] | null | null | null | _posts/2022-01-22-info-2015-375511-11290.md | seed-info/apt-info-sub | 5d3a83a8da1bd659d7d4392146f97ddbbaf7633f | [

"MIT"

] | null | null | null | ---

layout: post

comments: true

title: 정릉꿈에그린

categories: [아파트정보]

permalink: /apt/서울특별시성북구정릉동정릉꿈에그린

---

정릉꿈에그린: detailed actual-transaction information

<script type="text/javascript">

google.charts.load('current', {'packages':['line', 'corechart']});

google.charts.setOnLoadCallback(drawChart);

function drawChart() {

var data = new google... | 32.03537 | 1,782 | 0.464418 | kor_Hang | 0.213545 |

f97f4c63f797daa274eb714ee351079aa38b640e | 2,606 | md | Markdown | README.md | favourch/mentor-mentee-platform | 5207c0f8d6edb1c0b9c519eee47f39a66a90675c | [

"MIT"

] | 26 | 2018-12-12T21:15:58.000Z | 2021-09-07T21:51:18.000Z | README.md | favourch/mentor-mentee-platform | 5207c0f8d6edb1c0b9c519eee47f39a66a90675c | [

"MIT"

] | 212 | 2018-12-31T00:02:51.000Z | 2022-03-01T08:16:23.000Z | README.md | favourch/mentor-mentee-platform | 5207c0f8d6edb1c0b9c519eee47f39a66a90675c | [

"MIT"

] | 22 | 2019-04-11T19:32:05.000Z | 2020-11-30T14:29:48.000Z | [](https://travis-ci.com/RailsGirlsCPH/mentor-mentee-platform)

# Mentor Mentee Platform

Find a mentor-platform: A matchmaking platform where people entering the tech wo... | 93.071429 | 639 | 0.67076 | eng_Latn | 0.997803 |

f97f6afea736703350df7e5fc47497174f49135b | 44 | md | Markdown | README.old.md | peterblazejewicz/ch-react-redux | a4a42bcc0e22c38450780947de1498b16c8f3dfe | [

"Unlicense"

] | null | null | null | README.old.md | peterblazejewicz/ch-react-redux | a4a42bcc0e22c38450780947de1498b16c8f3dfe | [

"Unlicense"

] | null | null | null | README.old.md | peterblazejewicz/ch-react-redux | a4a42bcc0e22c38450780947de1498b16c8f3dfe | [

"Unlicense"

] | null | null | null | # ch-react-redux

Cory House React/Redux app

| 14.666667 | 26 | 0.772727 | kor_Hang | 0.394113 |

f97fe0176de98ea1f5c9be025ab60fe95841a2ae | 5,174 | md | Markdown | network_controllers/dnac/README.md | tobaidullah/2 | 3fa67855ef461ccaee283dcbbdd9bf00e7a52378 | [

"MIT"

] | 629 | 2017-12-15T20:26:13.000Z | 2022-03-30T04:02:02.000Z | network_controllers/dnac/README.md | tobaidullah/2 | 3fa67855ef461ccaee283dcbbdd9bf00e7a52378 | [

"MIT"

] | 40 | 2018-01-18T09:07:50.000Z | 2021-09-23T23:21:47.000Z | network_controllers/dnac/README.md | tobaidullah/2 | 3fa67855ef461ccaee283dcbbdd9bf00e7a52378 | [

"MIT"

] | 394 | 2017-12-18T22:35:36.000Z | 2022-03-29T19:41:25.000Z | # Programming Cisco DNA Center Platform Lessons

* **Introducing Cisco DNA Center Platform APIs and Programmability**

* **Cisco DNA Center Platform APIs Part 1: Exploring Apps and Tools**

* **Cisco DNA Center Platform APIs Part 2: Network Troubleshooting**

## "Gitting" the Code

All of the code and examples for this le... | 58.795455 | 512 | 0.784693 | eng_Latn | 0.948969 |

d5ef694b5278df5bc0ccfd7a2e516e33173251b1 | 75 | md | Markdown | packages/___buildTools/README.md | Jason-Rev/vscode-spell-checker | 6781e5e952a0007f78ceff1d157763709c2c7762 | [

"MIT"

] | 255 | 2016-06-02T10:33:49.000Z | 2019-05-10T15:32:10.000Z | packages/___buildTools/README.md | Jason-Rev/vscode-spell-checker | 6781e5e952a0007f78ceff1d157763709c2c7762 | [

"MIT"

] | 334 | 2016-06-02T10:33:44.000Z | 2019-05-09T09:18:07.000Z | packages/___buildTools/README.md | Jason-Rev/vscode-spell-checker | 6781e5e952a0007f78ceff1d157763709c2c7762 | [

"MIT"

] | 45 | 2016-06-22T08:02:27.000Z | 2019-05-05T10:02:45.000Z | Build Tools

These are tools that are used to help with the build process.

| 18.75 | 61 | 0.786667 | eng_Latn | 1.000009 |

d5ef9f15e7fb8a6fa6e882c12afd70d85ccebadb | 663 | md | Markdown | notes/array/matrixRepresentation/README.md | theArjun/Java | 40fc2ec5c3fc529000ffe8525904bdcadaf7058d | [

"MIT"

] | 11 | 2019-01-16T02:31:04.000Z | 2020-03-17T11:19:35.000Z | notes/array/matrixRepresentation/README.md | theArjun/Java_Notes_With_Codes | 40fc2ec5c3fc529000ffe8525904bdcadaf7058d | [

"MIT"

] | 1 | 2020-02-26T07:58:14.000Z | 2020-02-26T07:58:14.000Z | notes/array/matrixRepresentation/README.md | theArjun/Java_Notes_With_Codes | 40fc2ec5c3fc529000ffe8525904bdcadaf7058d | [

"MIT"

] | 18 | 2019-01-16T02:32:01.000Z | 2020-03-18T18:16:36.000Z | ---

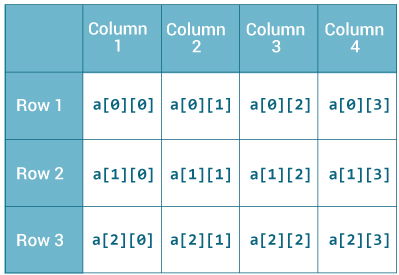

title: Matrix Representation of Arrays

---

# Matrix Representation

This type of array declaration is represented in matrix form. Memory wastage is most likely to occur in this scenario and m... | 34.894737 | 197 | 0.790347 | eng_Latn | 0.990826 |

d5effb4b3a882877baec7da097a55243327aff31 | 1,131 | md | Markdown | README.md | malloryfaria/jest-another-rpg | 785aeadd011a3caf4bbe4d163dbb72bc66b43b3d | [

"MIT"

] | null | null | null | README.md | malloryfaria/jest-another-rpg | 785aeadd011a3caf4bbe4d163dbb72bc66b43b3d | [

"MIT"

] | 5 | 2021-02-12T02:58:14.000Z | 2021-02-14T02:04:10.000Z | README.md | malloryfaria/jest-another-rpg | 785aeadd011a3caf4bbe4d163dbb72bc66b43b3d | [

"MIT"

] | null | null | null | # Jest Another RPG

## Description

A simple RPG built with Node, Jest, and Inquirer.

## Build status

Live

## Deployed application

https://github.com/malloryfaria/jest-another-rpg

## Tech/framework used

<b>Built with</b>

- Node

- Inquirer

- Jest

## Code Example

```

class Character {

constructor(name... | 15.929577 | 60 | 0.560566 | eng_Latn | 0.658873 |

d5f0142aa8ac0285a9308748d1cdb855050220f8 | 29,595 | md | Markdown | contributing.md | Pandinosaurus/spark-website | 3ec4093344a022d15341f6f7e1d23ee2a223f01c | [

"Apache-2.0"

] | 1 | 2019-05-31T14:01:39.000Z | 2019-05-31T14:01:39.000Z | contributing.md | Pandinosaurus/spark-website | 3ec4093344a022d15341f6f7e1d23ee2a223f01c | [

"Apache-2.0"

] | null | null | null | contributing.md | Pandinosaurus/spark-website | 3ec4093344a022d15341f6f7e1d23ee2a223f01c | [

"Apache-2.0"

] | null | null | null | ---

layout: global

title: Contributing to Spark

type: "page singular"

navigation:

weight: 5

show: true

---

This guide documents the best way to make various types of contribution to Apache Spark,

including what is required before submitting a code change.

Contributing to Spark doesn't just mean writing code. Hel... | 61.914226 | 591 | 0.75827 | eng_Latn | 0.999414 |

d5f01ca5e2989edf9db743bc6c45c7ffc68aba3d | 1,373 | md | Markdown | docs/interfaces/_src_agent_structures_.customerparameters.md | quarties/lc-sdk-js | b511205cc9421c00e607340fc92968555b16775a | [

"Apache-2.0"

] | null | null | null | docs/interfaces/_src_agent_structures_.customerparameters.md | quarties/lc-sdk-js | b511205cc9421c00e607340fc92968555b16775a | [

"Apache-2.0"

] | null | null | null | docs/interfaces/_src_agent_structures_.customerparameters.md | quarties/lc-sdk-js | b511205cc9421c00e607340fc92968555b16775a | [

"Apache-2.0"

] | null | null | null | [@livechat/lc-sdk-js](../README.md) › [Globals](../globals.md) › ["src/agent/structures"](../modules/_src_agent_structures_.md) › [CustomerParameters](_src_agent_structures_.customerparameters.md)

# Interface: CustomerParameters

## Hierarchy

* **CustomerParameters**

## Index

### Properties

* [avatar](_src_agent_s... | 28.020408 | 196 | 0.74217 | yue_Hant | 0.279898 |

d5f030c0189aa7f64895b2c259060c4a78623546 | 200 | md | Markdown | _posts/0000-01-02-Basilisnocoder.md | Basilisnocoder/github-slideshow | dfdc796e2f8585baf3622ece4604d7453e45c98d | [

"MIT"

] | null | null | null | _posts/0000-01-02-Basilisnocoder.md | Basilisnocoder/github-slideshow | dfdc796e2f8585baf3622ece4604d7453e45c98d | [

"MIT"

] | 3 | 2021-06-16T05:52:24.000Z | 2021-06-16T19:33:46.000Z | _posts/0000-01-02-Basilisnocoder.md | Basilisnocoder/github-slideshow | dfdc796e2f8585baf3622ece4604d7453e45c98d | [

"MIT"

] | null | null | null | ---

layout: slide

title: "Welcome to our second slide!"

---

Textications. Am I doing this right?

https://user-images.githubusercontent.com/85979580/122281262-4e182e80-cea7-11eb-8504-d04fdbc7d0b0.mp4

| 28.571429 | 101 | 0.775 | eng_Latn | 0.290429 |

d5f05f4063410d764130b3284b5b4e848ebb2827 | 2,138 | md | Markdown | docs/en/index.md | EvvC/platform | 57e369f45740072aeae5aad7a7a335b542ccb1cb | [

"MIT"

] | 3 | 2019-07-20T09:40:41.000Z | 2019-07-29T05:14:00.000Z | docs/en/index.md | EvvC/platform | 57e369f45740072aeae5aad7a7a335b542ccb1cb | [

"MIT"

] | null | null | null | docs/en/index.md | EvvC/platform | 57e369f45740072aeae5aad7a7a335b542ccb1cb | [

"MIT"

] | null | null | null | # Documentation

----------

## Welcome

This tutorial contains reference material and key topics on developing business applications on the ORCHID platform.

To use the platform, knowledge of the following technologies is required:

- PHP/JavaScript/HTML/CSS

- Relational databases

- Apache/nginx

- Laravel Framework... | 47.511111 | 218 | 0.799813 | eng_Latn | 0.998511 |

d5f1beb0d63db2818eda2c53225d0cf21363f68f | 9,089 | md | Markdown | articles/virtual-machines/windows/capture-image-resource.md | diablo444/azure-docs.de-de | 168079679b8171e6c2b6957d21d581f05752689d | [

"CC-BY-4.0",

"MIT"

] | null | null | null | articles/virtual-machines/windows/capture-image-resource.md | diablo444/azure-docs.de-de | 168079679b8171e6c2b6957d21d581f05752689d | [

"CC-BY-4.0",

"MIT"

] | null | null | null | articles/virtual-machines/windows/capture-image-resource.md | diablo444/azure-docs.de-de | 168079679b8171e6c2b6957d21d581f05752689d | [

"CC-BY-4.0",

"MIT"

] | 1 | 2022-01-21T14:22:47.000Z | 2022-01-21T14:22:47.000Z | ---

title: Create a managed image in Azure | Microsoft Docs

description: "Create a managed image of a generalized virtual machine or VHD in Azure. Images can be used to create multiple virtual machines that use managed disks."

services: virtual... | 46.610256 | 301 | 0.768621 | deu_Latn | 0.974696 |

d5f2c6a1da798704d63390fd27991014a6e52b42 | 282 | md | Markdown | docs/common.awaited.md | evanw/velcro | 0980f8c73c07182f5a878b9312bb900b587a047f | [

"MIT"

] | 1 | 2020-10-11T02:41:36.000Z | 2020-10-11T02:41:36.000Z | docs/common.awaited.md | evanw/velcro | 0980f8c73c07182f5a878b9312bb900b587a047f | [

"MIT"

] | null | null | null | docs/common.awaited.md | evanw/velcro | 0980f8c73c07182f5a878b9312bb900b587a047f | [

"MIT"

] | 1 | 2021-03-11T04:27:24.000Z | 2021-03-11T04:27:24.000Z | <!-- Do not edit this file. It is automatically generated by API Documenter. -->

[Home](./index.md) > [@velcro/common](./common.md) > [Awaited](./common.awaited.md)

## Awaited type

<b>Signature:</b>

```typescript

type Awaited<T> = T extends Thenable<infer U> ? U : T;

```
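
As a rough illustration of how the conditional type in the signature resolves, the sketch below declares a minimal `Thenable` interface and two type-level checks. The `Thenable` shape shown here is an assumption (the actual definition in `@velcro/common` is not reproduced on this page), and the alias is renamed to `AwaitedValue` only so that it does not clash with the built-in `Awaited` type in newer TypeScript versions.

```typescript
// Assumed minimal shape; the real Thenable in @velcro/common may differ.
interface Thenable<T> {
  then(onFulfilled: (value: T) => unknown): unknown;
}

// Same conditional type as in the signature, under a hypothetical name.
type AwaitedValue<T> = T extends Thenable<infer U> ? U : T;

// A thenable is unwrapped one level; any other type passes through unchanged.
type FromThenable = AwaitedValue<Thenable<number>>; // resolves to number
type FromPlain = AwaitedValue<string>; // resolves to string

// These assignments only compile if the aliases resolve as described.
const fromThenable: FromThenable = 42;
const fromPlain: FromPlain = "plain";
```

Note that the type unwraps a single level only: under this definition, a thenable of a thenable resolves to the inner thenable rather than to its value.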

| 23.5 | 89 | 0.648936 | eng_Latn | 0.816194 |

d5f306d26a074461357d931c90839a97a8e8d204 | 15,356 | md | Markdown | gitbook/2021/01/20210129.md | yanalhk/live | e445d081d25dda486a1527e18e2d450ce6a063f0 | [

"MIT"

] | null | null | null | gitbook/2021/01/20210129.md | yanalhk/live | e445d081d25dda486a1527e18e2d450ce6a063f0 | [

"MIT"

] | null | null | null | gitbook/2021/01/20210129.md | yanalhk/live | e445d081d25dda486a1527e18e2d450ce6a063f0 | [

"MIT"

] | 1 | 2020-11-30T17:36:19.000Z | 2020-11-30T17:36:19.000Z | #2021-01-29

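09:28:39

法庭文字直播台 (Court Text Live Broadcast)

\#West Kowloon Magistrates' Courts, Court 5

\#Magistrate 劉淑嫻 \#Trial \[1/1\] \#1226 Mong Kok

楊 (29)

Charge: Loitering causing concern to others

Case summary: On 26 December 2019, he loitered in a public place, namely inside 「茶木」 on the 4th floor of Langham Place, and his presence there in company with others caused other unidentified persons reasonably to be concerned for their own safety or well-being.

\========================

0927 Recording played; courtroom full (small courtroom)

0934 Court in session

1057 Adjourned until 1105

Pleaded not guilty

Admitted facts (prosecution exhibit P4):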

1. On 2019-12-26 at about 2025 hours, police officer 15625 arrested the defendant on the 4th floor of Langham Place, for the offence of... | 21.78156 | 346 | 0.738799 | yue_Hant | 0.90656 |

d5f34413f344455bf90eaa8e4958362d848da980 | 550 | md | Markdown | _posts/2019-02-20-NUnit3TestAdapter-3.13.md | mikkelbu/nunit.github.io | d81f2b8093dcdf84c83b3ea047002d36b40ad3da | [

"MIT"

] | 1 | 2021-12-17T13:00:17.000Z | 2021-12-17T13:00:17.000Z | _posts/2019-02-20-NUnit3TestAdapter-3.13.md | mikkelbu/nunit.github.io | d81f2b8093dcdf84c83b3ea047002d36b40ad3da | [

"MIT"

] | 104 | 2015-12-16T15:39:50.000Z | 2022-03-20T18:46:59.000Z | _posts/2019-02-20-NUnit3TestAdapter-3.13.md | mikkelbu/nunit.github.io | d81f2b8093dcdf84c83b3ea047002d36b40ad3da | [

"MIT"

] | 11 | 2016-08-19T20:29:34.000Z | 2022-01-26T20:08:29.000Z | NUnit3TestAdapter version 3.13.0 is released.

This release focuses on enhancements: improving and fixing the generation of NUnit3 Test Results XML and removing internal properties. It also ensures that the VSIX version works with VS2019.

See [the release notes](https://github.com/nunit/docs/wiki/Adapter-Release-Note... | 91.666667 | 193 | 0.810909 | eng_Latn | 0.833553 |

d5f35abe4f4ef77e32de170d4067738336484b82 | 193 | md | Markdown | fr/adopt-openjdk-getting-started/write_up_on_the_adopt_openjdk_&_adopt-a-jsr_programs.md | feng-qi/adoptopenjdk-getting-started-kit | 0ac48d8d93b462ba682b653b1afbd9314c660fd2 | [

"CC0-1.0"

] | 49 | 2015-11-17T09:47:19.000Z | 2021-11-14T20:41:55.000Z | fr/adopt-openjdk-getting-started/write_up_on_the_adopt_openjdk_&_adopt-a-jsr_programs.md | feng-qi/adoptopenjdk-getting-started-kit | 0ac48d8d93b462ba682b653b1afbd9314c660fd2 | [

"CC0-1.0"

] | 32 | 2015-11-23T00:02:42.000Z | 2020-12-05T23:48:37.000Z | fr/adopt-openjdk-getting-started/write_up_on_the_adopt_openjdk_&_adopt-a-jsr_programs.md | feng-qi/adoptopenjdk-getting-started-kit | 0ac48d8d93b462ba682b653b1afbd9314c660fd2 | [

"CC0-1.0"

] | 12 | 2015-11-16T23:36:31.000Z | 2020-04-08T10:14:13.000Z | # Overview of the Adopt OpenJDK and Adopt-a-JSR programs

https://docs.google.com/document/d/1TjusNITbfb35PKqA3Bq5yx_j4jPeHs3dLBvACW-fAoA/edit (screenshot of the Adopt OpenJDK home page).

| 48.25 | 132 | 0.818653 | kor_Hang | 0.288398 |

d5f35e9c9b1af9a3e8b3671a423bafe259b9b924 | 10,247 | md | Markdown | src/components/Picklist/readme.md | Charlitos96/react-rainbow | 7b9d1445ba39e55cbda9a6b9f7adcd38323c987d | [

"MIT"

] | null | null | null | src/components/Picklist/readme.md | Charlitos96/react-rainbow | 7b9d1445ba39e55cbda9a6b9f7adcd38323c987d | [

"MIT"