hexsha stringlengths 40 40 | size int64 5 1.04M | ext stringclasses 6 values | lang stringclasses 1 value | max_stars_repo_path stringlengths 3 344 | max_stars_repo_name stringlengths 5 125 | max_stars_repo_head_hexsha stringlengths 40 78 | max_stars_repo_licenses listlengths 1 11 | max_stars_count int64 1 368k ⌀ | max_stars_repo_stars_event_min_datetime stringlengths 24 24 ⌀ | max_stars_repo_stars_event_max_datetime stringlengths 24 24 ⌀ | max_issues_repo_path stringlengths 3 344 | max_issues_repo_name stringlengths 5 125 | max_issues_repo_head_hexsha stringlengths 40 78 | max_issues_repo_licenses listlengths 1 11 | max_issues_count int64 1 116k ⌀ | max_issues_repo_issues_event_min_datetime stringlengths 24 24 ⌀ | max_issues_repo_issues_event_max_datetime stringlengths 24 24 ⌀ | max_forks_repo_path stringlengths 3 344 | max_forks_repo_name stringlengths 5 125 | max_forks_repo_head_hexsha stringlengths 40 78 | max_forks_repo_licenses listlengths 1 11 | max_forks_count int64 1 105k ⌀ | max_forks_repo_forks_event_min_datetime stringlengths 24 24 ⌀ | max_forks_repo_forks_event_max_datetime stringlengths 24 24 ⌀ | content stringlengths 5 1.04M | avg_line_length float64 1.14 851k | max_line_length int64 1 1.03M | alphanum_fraction float64 0 1 | lid stringclasses 191 values | lid_prob float64 0.01 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

912784033410cbea044d74258aefe4246b6b2685 | 886 | md | Markdown | _posts/2021-06-07-apos-19-dias-salles-entrega-celular-a-investigadores-da-operacao-akuanduba.md | tatudoquei/tatudoquei.github.io | a3a3c362424fda626d7d0ce2d9f4bead6580631c | [

"MIT"

] | null | null | null | _posts/2021-06-07-apos-19-dias-salles-entrega-celular-a-investigadores-da-operacao-akuanduba.md | tatudoquei/tatudoquei.github.io | a3a3c362424fda626d7d0ce2d9f4bead6580631c | [

"MIT"

] | null | null | null | _posts/2021-06-07-apos-19-dias-salles-entrega-celular-a-investigadores-da-operacao-akuanduba.md | tatudoquei/tatudoquei.github.io | a3a3c362424fda626d7d0ce2d9f4bead6580631c | [

"MIT"

] | 1 | 2022-01-13T07:57:24.000Z | 2022-01-13T07:57:24.000Z | ---

layout: post

item_id: 3351158114

title: >-

Após 19 dias, Salles entrega celular a investigadores da operação Akuanduba

author: Tatu D'Oquei

date: 2021-06-07 18:45:32

pub_date: 2021-06-07 18:45:32

time_added: 2021-06-07 19:04:57

category:

tags: []

image: https://www.cartacapital.com.br/wp-content/uploads/2021/0... | 46.631579 | 291 | 0.784424 | por_Latn | 0.82637 |

9127a5ff9bdafd7c26d128524ed18eb560875fba | 673 | md | Markdown | sandstone/pattern-virtualgridlist-api/README.md | enyojs/enact-samples | e360b50eeed552613f8dc4f5b1d18d299ee5a7dd | [

"Apache-2.0"

] | null | null | null | sandstone/pattern-virtualgridlist-api/README.md | enyojs/enact-samples | e360b50eeed552613f8dc4f5b1d18d299ee5a7dd | [

"Apache-2.0"

] | null | null | null | sandstone/pattern-virtualgridlist-api/README.md | enyojs/enact-samples | e360b50eeed552613f8dc4f5b1d18d299ee5a7dd | [

"Apache-2.0"

] | null | null | null | ## VirtualGridList add/remove/select/deselect pattern // My Gallery

A sample Enact application that shows off how to add/remove/select/deselect items of VirtualGridList

Run `npm install` then `npm run serve` to have the app running on [http://localhost:8080](http://localhost:8080), where you can view it in your b... | 37.388889 | 153 | 0.744428 | eng_Latn | 0.956692 |

912862e895a04c35a9cd6b8534c8800642e505ad | 2,428 | md | Markdown | README.md | es-shims/Promise.try | 23546f147e25515660c9b20f346be3dca513dd74 | [

"MIT"

] | 5 | 2016-08-21T12:18:28.000Z | 2019-10-27T22:28:44.000Z | README.md | es-shims/Promise.try | 23546f147e25515660c9b20f346be3dca513dd74 | [

"MIT"

] | 2 | 2019-11-11T07:57:12.000Z | 2019-11-19T09:48:45.000Z | README.md | es-shims/Promise.try | 23546f147e25515660c9b20f346be3dca513dd74 | [

"MIT"

] | 2 | 2016-08-21T09:35:10.000Z | 2018-01-17T09:33:54.000Z | # promise.try <sup>[![Version Badge][npm-version-svg]][package-url]</sup>

[![Build Status][travis-svg]][travis-url]

[![dependency status][deps-svg]][deps-url]

[![dev dependency status][dev-deps-svg]][dev-deps-url]

[![License][license-image]][license-url]

[![Downloads][downloads-image]][downloads-url]

[![npm badge][np... | 36.238806 | 253 | 0.719522 | kor_Hang | 0.266022 |

9128a347536e4cd1119bce7afa140e842578a0cb | 142 | md | Markdown | content/blog/jjameson/2008/04/08/resources/table-1-popout/_index.md | technology-toolbox/website | 9d845dc68e650ee164959da418fde24eacecf1c9 | [

"MIT"

] | null | null | null | content/blog/jjameson/2008/04/08/resources/table-1-popout/_index.md | technology-toolbox/website | 9d845dc68e650ee164959da418fde24eacecf1c9 | [

"MIT"

] | 109 | 2021-03-25T11:16:17.000Z | 2022-01-23T20:55:51.000Z | content/blog/jjameson/2008/04/08/resources/table-1-popout/_index.md | technology-toolbox/website | 9d845dc68e650ee164959da418fde24eacecf1c9 | [

"MIT"

] | null | null | null | ---

layout: popout

title: Table 1 - MOSS 2007 Feature Definitions

date: 2008-04-08T18:39:00-06:00

---

{{< include-html "../table-1.html" >}}

| 17.75 | 46 | 0.65493 | kor_Hang | 0.46492 |

912951379577e4f7b23fcae87b7916c8adf06b40 | 349 | md | Markdown | README.md | Ccode-lang/pydrumplugin | ab65688288a6f2f464fe1ca69560c975a750f194 | [

"MIT"

] | null | null | null | README.md | Ccode-lang/pydrumplugin | ab65688288a6f2f464fe1ca69560c975a750f194 | [

"MIT"

] | null | null | null | README.md | Ccode-lang/pydrumplugin | ab65688288a6f2f464fe1ca69560c975a750f194 | [

"MIT"

] | null | null | null | # pydrumplugin

A template for a drumbash plugin in python

# setup

1. Change filler in `build.sh` to whatever you want to name the plugin

2. Write code using the imported api

# building

Run:

```bash

./build.sh

```

The outputed drumfile will be in `./artifact`.

# drum api

look at how to use at https://github.com/Ccode-la... | 24.928571 | 77 | 0.74212 | eng_Latn | 0.978943 |

912958e5c9c66c83392f1299f852e467080be1d4 | 738 | md | Markdown | _posts/2020-01-21-cartagena-taller3.md | EducacionSiglo21/jekyll | f04b07efe35a5c2c61b290592e3b9b63d2e16552 | [

"MIT"

] | 3 | 2018-11-26T15:11:12.000Z | 2019-02-12T06:51:17.000Z | _posts/2020-01-21-cartagena-taller3.md | EducacionSiglo21/startbootstrap-clean-blog-jekyll | db55bc500681697614b32c530ae29bdf58305b72 | [

"MIT"

] | null | null | null | _posts/2020-01-21-cartagena-taller3.md | EducacionSiglo21/startbootstrap-clean-blog-jekyll | db55bc500681697614b32c530ae29bdf58305b72 | [

"MIT"

] | 3 | 2018-12-16T10:55:27.000Z | 2021-01-22T21:19:25.000Z | ---

layout: post

title: "Realidad virtual y realidad aumentada"

subtitle: "Taller"

background: "/img/posts/bg-cartagena.jpg"

eventdate: 2020-02-20 10:00:00 +0100

category: "local"

tags: "cartagena"

placeName: "CEIP Bethoven, Cartagena"

speakers:

- name: Paqui Rosique

---

Descripción taller: La realidad vir... | 33.545455 | 117 | 0.768293 | spa_Latn | 0.993285 |

912a636db978d531b25d027896114e19feb331b1 | 227 | md | Markdown | README.md | ShaneMcC/DMDirc-Util | ff6906a5ce69f654d6f7b8b716dfe354c1c4ec8b | [

"MIT"

] | null | null | null | README.md | ShaneMcC/DMDirc-Util | ff6906a5ce69f654d6f7b8b716dfe354c1c4ec8b | [

"MIT"

] | 6 | 2015-01-17T21:58:27.000Z | 2017-01-15T05:09:08.000Z | README.md | ShaneMcC/DMDirc-Util | ff6906a5ce69f654d6f7b8b716dfe354c1c4ec8b | [

"MIT"

] | 2 | 2019-05-02T22:30:56.000Z | 2019-05-08T05:51:13.000Z | # Util

[](https://www.codacy.com/app/DMDirc/Util?utm_source=github.com&utm_medium=referral&utm_content=DMDirc/Util&utm_campaign=badger) | 113.5 | 220 | 0.823789 | yue_Hant | 0.886751 |

912b0439871eb5c3da9cb4c27038da1001ee31e6 | 204 | md | Markdown | README.md | Roragok/namafia-anime | 6d804163152bd002f198034734b84704356eb199 | [

"MIT"

] | null | null | null | README.md | Roragok/namafia-anime | 6d804163152bd002f198034734b84704356eb199 | [

"MIT"

] | 15 | 2019-12-17T16:49:32.000Z | 2022-02-18T16:48:13.000Z | README.md | Roragok/namafia-anime | 6d804163152bd002f198034734b84704356eb199 | [

"MIT"

] | null | null | null | # namafia-anime

Webpage for anime streamings

To run this yourself install node and yarn, clone the repo and run yarn start.

Useful Links:

- https://www.typescriptlang.org/docs/handbook/basic-types.html

| 25.5 | 78 | 0.789216 | eng_Latn | 0.52797 |

912b1587f74aa7894fca0a1d7e118eab34590f4e | 16,211 | md | Markdown | _episodes/05-loop.md | statkclee/shell-novice-kr | c8c59be87a1c1f4e9310baca5f209eaac6801d15 | [

"CC-BY-4.0"

] | null | null | null | _episodes/05-loop.md | statkclee/shell-novice-kr | c8c59be87a1c1f4e9310baca5f209eaac6801d15 | [

"CC-BY-4.0"

] | null | null | null | _episodes/05-loop.md | statkclee/shell-novice-kr | c8c59be87a1c1f4e9310baca5f209eaac6801d15 | [

"CC-BY-4.0"

] | null | null | null | ---

title: "루프(Loops)"

teaching: 40

exercises: 10

questions:

- "다른 파일이 많은데 어떻게 동일한 동작을 수행시킬 수 있을까?"

objectives:

- "파일 집합의 각 파일에 따로 따로 나누어서 하나 혹은 명령어 다수를 적용하는 루프를 작성한다."

- "루프가 실행되는 동안에 루프 변수가 취하는 값을 추적한다."

- "변수명과 변수값 차이에 대해 설명한다."

- "왜 공백과 일부 구두점 문자는 파일 이름에 사용되지 말아야 되는지 설명한다."

- "어떤 명령어가 최근에 실행되었는지를 확인하는 방법을 시범으로 보여준다... | 23.700292 | 114 | 0.637283 | kor_Hang | 1.00001 |

912b1e112548b60e6bc6100387aff62084aa29e9 | 3,646 | md | Markdown | desktop-src/SecGloss/b-gly.md | velden/win32 | 94b05f07dccf18d4b1dbca13b19fd365a0c7eedc | [

"CC-BY-4.0",

"MIT"

] | 552 | 2019-08-20T00:08:40.000Z | 2022-03-30T18:25:35.000Z | desktop-src/SecGloss/b-gly.md | velden/win32 | 94b05f07dccf18d4b1dbca13b19fd365a0c7eedc | [

"CC-BY-4.0",

"MIT"

] | 1,143 | 2019-08-21T20:17:47.000Z | 2022-03-31T20:24:39.000Z | desktop-src/SecGloss/b-gly.md | velden/win32 | 94b05f07dccf18d4b1dbca13b19fd365a0c7eedc | [

"CC-BY-4.0",

"MIT"

] | 1,287 | 2019-08-20T05:37:48.000Z | 2022-03-31T20:22:06.000Z | ---

description: Contains definitions of security terms that begin with the letter B.

ROBOTS: NOINDEX, NOFOLLOW

ms.assetid: 2e570727-7da0-4e17-bf5d-6fe0e6aef65b

title: B (Security Glossary)

ms.topic: article

ms.date: 05/31/2018

---

# B (Security Glossary)

[A](a-gly.md) B [C](c-gly.md) [D](d-gly.md) [E](e-gly.md) F [G... | 39.204301 | 310 | 0.729292 | eng_Latn | 0.872546 |

912b3493a48828811ee290d29f546625c794918f | 705 | md | Markdown | docs/api/ESCWalkContext.md | StraToN/unofficial-escoria-reloaded | ccb34e319b716b4d3afc540fbb970348d872ffbf | [

"MIT"

] | 7 | 2021-03-09T08:13:45.000Z | 2021-09-20T07:12:08.000Z | docs/api/ESCWalkContext.md | StraToN/unofficial-escoria-reloaded | ccb34e319b716b4d3afc540fbb970348d872ffbf | [

"MIT"

] | 17 | 2021-05-15T16:10:14.000Z | 2021-07-04T17:00:05.000Z | docs/api/ESCWalkContext.md | StraToN/unofficial-escoria-reloaded | ccb34e319b716b4d3afc540fbb970348d872ffbf | [

"MIT"

] | null | null | null | <!-- Auto-generated from JSON by GDScript docs maker. Do not edit this document directly. -->

# ESCWalkContext

**Extends:** [Object](../Object)

## Description

The walk context describes the target of a walk command and if that command

should be executed fast

## Property Descriptions

### target\_object

```gdscrip... | 15.326087 | 93 | 0.719149 | eng_Latn | 0.903082 |

912ba768002369b6d06e5b9801803f10a0ad41ee | 9,164 | md | Markdown | content/post/2009/2009-05-26-las-maquinas-del-fin-del-mundo-intermedio/index.md | lnds/lnds-site | c7d8483a764c91f1653c77ab6934c4f34d847f62 | [

"MIT"

] | null | null | null | content/post/2009/2009-05-26-las-maquinas-del-fin-del-mundo-intermedio/index.md | lnds/lnds-site | c7d8483a764c91f1653c77ab6934c4f34d847f62 | [

"MIT"

] | null | null | null | content/post/2009/2009-05-26-las-maquinas-del-fin-del-mundo-intermedio/index.md | lnds/lnds-site | c7d8483a764c91f1653c77ab6934c4f34d847f62 | [

"MIT"

] | null | null | null | ---

comments: true

date: 2009-05-26 20:53:24

layout: post

slug: las-maquinas-del-fin-del-mundo-intermedio

title: Las máquinas del fin del mundo (intermedio)

wordpress_id: 191

categories:

- General

- Paradigmas

---

Ya hemos visto [una posición](http://www.lnds.net/2009/05/el-desafio-del-nuevo-ludita.html), en uno de lo... | 78.324786 | 463 | 0.791248 | spa_Latn | 0.997531 |

912c31695f32b5ae8ab45d6507060e2a7a205424 | 1,480 | md | Markdown | intl.en-US/Product Introduction/Benefits.md | vlgnaw/emapreduce | 37918944befffc3895b53fe8d8ae5d793331f401 | [

"MIT"

] | null | null | null | intl.en-US/Product Introduction/Benefits.md | vlgnaw/emapreduce | 37918944befffc3895b53fe8d8ae5d793331f401 | [

"MIT"

] | null | null | null | intl.en-US/Product Introduction/Benefits.md | vlgnaw/emapreduce | 37918944befffc3895b53fe8d8ae5d793331f401 | [

"MIT"

] | null | null | null | # Benefits {#concept_j4w_dky_w2b .concept}

E-MapReduce has some practical strength over self-built clusters. For example, it provides some convenient and controllable means to manage its clusters. In addition, it also has the following strengths:

- Usability

User can select the required ECS types and disks and... | 61.666667 | 501 | 0.787162 | eng_Latn | 0.999365 |

912c7f22c8273129c8aa65f19f04180f50e966d9 | 1,549 | md | Markdown | business-central/LocalFunctionality/UnitedKingdom/how-to-print-direct-sales-and-purchase-details-reports.md | nschonni/dynamics365smb-docs | 619182073e912c1373c58db16c20f0770aefc1b3 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | business-central/LocalFunctionality/UnitedKingdom/how-to-print-direct-sales-and-purchase-details-reports.md | nschonni/dynamics365smb-docs | 619182073e912c1373c58db16c20f0770aefc1b3 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | business-central/LocalFunctionality/UnitedKingdom/how-to-print-direct-sales-and-purchase-details-reports.md | nschonni/dynamics365smb-docs | 619182073e912c1373c58db16c20f0770aefc1b3 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: How to Print Direct Sales and Purchase Details Reports | Microsoft Docs

description: The **Direct Sales Details** and **Direct Purchase Details** reports include headers with order numbers and descriptions from sales and purchase documents.

services: project-madeira

documentationcenter: ''

... | 41.864865 | 221 | 0.723047 | eng_Latn | 0.96782 |

912cdb13d5de0c13eb0d4826d9af66f3f2c938dd | 50 | md | Markdown | README.md | jorge-matricali/jwt-crack | 16262f521ee871a0e2581e8b2016c9477c884be0 | [

"MIT"

] | 1 | 2019-12-10T23:52:16.000Z | 2019-12-10T23:52:16.000Z | README.md | jorge-matricali/jwt-crack | 16262f521ee871a0e2581e8b2016c9477c884be0 | [

"MIT"

] | null | null | null | README.md | jorge-matricali/jwt-crack | 16262f521ee871a0e2581e8b2016c9477c884be0 | [

"MIT"

] | 1 | 2019-11-05T16:47:23.000Z | 2019-11-05T16:47:23.000Z | # jwt-crack

JWT brute force cracker written in C.

| 16.666667 | 37 | 0.76 | eng_Latn | 0.986595 |

912cde06c9d7598ad824441dddc898c518243f1a | 573 | md | Markdown | README.md | rmurai0610/DArgs | 170b7d354c90c3212886eb57e04999c2f16d7de0 | [

"MIT"

] | null | null | null | README.md | rmurai0610/DArgs | 170b7d354c90c3212886eb57e04999c2f16d7de0 | [

"MIT"

] | null | null | null | README.md | rmurai0610/DArgs | 170b7d354c90c3212886eb57e04999c2f16d7de0 | [

"MIT"

] | null | null | null | # DArgs - Dumb Argument Parser for C++

DArgs is a minimal, simple argument parser for C++.

DArgs parses the arguments as the options are defined, enabling the user to use DArgs with minimal lines of code.

## Example

```

DArgs::DArgs dargs(argc, argv);

std::string dataset = dargs("--dataset", "Path to the dataset to loa... | 35.8125 | 113 | 0.675393 | eng_Latn | 0.714656 |

912d3f6be97e0a30352980eab51df33a4d60bfa1 | 2,930 | md | Markdown | docs/framework/wcf/guidelines-and-best-practices.md | nicolaiarocci/docs.it-it | 74867e24b2aeb9dbaf0a908eabd8918bc780d7b4 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/framework/wcf/guidelines-and-best-practices.md | nicolaiarocci/docs.it-it | 74867e24b2aeb9dbaf0a908eabd8918bc780d7b4 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/framework/wcf/guidelines-and-best-practices.md | nicolaiarocci/docs.it-it | 74867e24b2aeb9dbaf0a908eabd8918bc780d7b4 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: Linee guida e suggerimenti

ms.date: 03/30/2017

helpviewer_keywords:

- WCF, guidelines

- best practices [WCF], application design

- Windows Communication Foundation, best practices

- WCF, best practices

- Windows Communication Foundation, guidelines

ms.assetid: 5098ba46-6e8d-4e02-b0c5-d737f9fdad84

ms.openlocf... | 55.283019 | 322 | 0.768259 | ita_Latn | 0.943743 |

912db653b18767cd57ca5921077b47029a577796 | 1,947 | md | Markdown | dynamicsax2012-technet/salestransaction-channelreferenceid-property-microsoft-dynamics-commerce-runtime-datamodel.md | RobinARH/DynamicsAX2012-technet | d0d0ef979705b68e6a8406736612e9fc3c74c871 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | dynamicsax2012-technet/salestransaction-channelreferenceid-property-microsoft-dynamics-commerce-runtime-datamodel.md | RobinARH/DynamicsAX2012-technet | d0d0ef979705b68e6a8406736612e9fc3c74c871 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | dynamicsax2012-technet/salestransaction-channelreferenceid-property-microsoft-dynamics-commerce-runtime-datamodel.md | RobinARH/DynamicsAX2012-technet | d0d0ef979705b68e6a8406736612e9fc3c74c871 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: SalesTransaction.ChannelReferenceId Property (Microsoft.Dynamics.Commerce.Runtime.DataModel)

TOCTitle: ChannelReferenceId Property

ms:assetid: P:Microsoft.Dynamics.Commerce.Runtime.DataModel.SalesTransaction.ChannelReferenceId

ms:mtpsurl: https://technet.microsoft.com/en-us/library/microsoft.dynamics.comme... | 27.422535 | 146 | 0.787365 | yue_Hant | 0.800152 |

912db6eab825327c7e96e88ea5dd1ef514eb9007 | 1,171 | md | Markdown | AlchemyInsights/plan-passwordless-deployment.md | isabella232/OfficeDocs-AlchemyInsights-pr.pl-PL | 621d5519261e87dafaff1a0b3d7379f37e226bf6 | [

"CC-BY-4.0",

"MIT"

] | 1 | 2020-05-19T19:07:24.000Z | 2020-05-19T19:07:24.000Z | AlchemyInsights/plan-passwordless-deployment.md | isabella232/OfficeDocs-AlchemyInsights-pr.pl-PL | 621d5519261e87dafaff1a0b3d7379f37e226bf6 | [

"CC-BY-4.0",

"MIT"

] | 2 | 2022-02-09T06:52:18.000Z | 2022-02-09T06:52:35.000Z | AlchemyInsights/plan-passwordless-deployment.md | isabella232/OfficeDocs-AlchemyInsights-pr.pl-PL | 621d5519261e87dafaff1a0b3d7379f37e226bf6 | [

"CC-BY-4.0",

"MIT"

] | 1 | 2019-10-09T20:27:31.000Z | 2019-10-09T20:27:31.000Z | ---

title: Planowanie wdrożenia bez haseł

ms.author: pebaum

author: pebaum

manager: scotv

ms.date: 04/14/2021

audience: Admin

ms.topic: article

ms.service: o365-administration

ROBOTS: NOINDEX, NOFOLLOW

localization_priority: Priority

ms.collection: Adm_O365

ms.custom:

- "10394"

- "9005762"

ms.openlocfilehash: a167e33a5... | 40.37931 | 324 | 0.824082 | pol_Latn | 0.993109 |

912dcc2e7a7029ce548e782c115d146f512c7804 | 237 | md | Markdown | kdocs/-kores/com.github.jonathanxd.kores/-mutable-instructions/-mutable-instructions.md | JonathanxD/Kores | 236f7db6eeef7e6238f0ae0dab3f3b05fc531abb | [

"MIT-0",

"MIT"

] | 1 | 2019-04-16T10:42:02.000Z | 2019-04-16T10:42:02.000Z | kdocs/-kores/com.github.jonathanxd.kores/-mutable-instructions/-mutable-instructions.md | koresframework/Kores | b6ab31b1d376ab501fd9f481345c767cb0c37d04 | [

"MIT-0",

"MIT"

] | 8 | 2020-12-12T06:48:34.000Z | 2021-08-15T22:34:49.000Z | kdocs/-kores/com.github.jonathanxd.kores/-mutable-instructions/-mutable-instructions.md | koresframework/Kores | b6ab31b1d376ab501fd9f481345c767cb0c37d04 | [

"MIT-0",

"MIT"

] | null | null | null | //[Kores](../../../index.md)/[com.github.jonathanxd.kores](../index.md)/[MutableInstructions](index.md)/[MutableInstructions](-mutable-instructions.md)

# MutableInstructions

[jvm]\

fun [MutableInstructions](-mutable-instructions.md)()

| 33.857143 | 151 | 0.734177 | kor_Hang | 0.255148 |

912dd20adea8785d1f902da7a972d6c78d75bfe8 | 2,966 | md | Markdown | docs/2014/relational-databases/lesson-2-create-a-sql-server-credential-using-a-shared-access-signature.md | cawrites/sql-docs | 58158eda0aa0d7f87f9d958ae349a14c0ba8a209 | [

"CC-BY-4.0",

"MIT"

] | 2 | 2020-05-07T19:40:49.000Z | 2020-09-19T00:57:12.000Z | docs/2014/relational-databases/lesson-2-create-a-sql-server-credential-using-a-shared-access-signature.md | cawrites/sql-docs | 58158eda0aa0d7f87f9d958ae349a14c0ba8a209 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/2014/relational-databases/lesson-2-create-a-sql-server-credential-using-a-shared-access-signature.md | cawrites/sql-docs | 58158eda0aa0d7f87f9d958ae349a14c0ba8a209 | [

"CC-BY-4.0",

"MIT"

] | 2 | 2020-03-11T20:30:39.000Z | 2020-05-07T19:40:49.000Z | ---

title: "Lesson 3: Create a SQL Server Credential | Microsoft Docs"

ms.custom: ""

ms.date: "06/13/2017"

ms.prod: "sql-server-2014"

ms.reviewer: ""

ms.technology: "database-engine"

ms.topic: conceptual

ms.assetid: 29e57ebd-828f-4dff-b473-c10ab0b1c597

author: MikeRayMSFT

ms.author: mikeray

manager: craigg

... | 50.271186 | 384 | 0.726231 | eng_Latn | 0.964667 |

912e13cd99b8478e64737398e0079e8381342732 | 622 | md | Markdown | src/pages/companies/2018-02-13-supermeat.md | arvenjadeaguilar/cellagri-cms | f177977e4d859f540ed1fc455594629b30f42bd2 | [

"MIT"

] | null | null | null | src/pages/companies/2018-02-13-supermeat.md | arvenjadeaguilar/cellagri-cms | f177977e4d859f540ed1fc455594629b30f42bd2 | [

"MIT"

] | null | null | null | src/pages/companies/2018-02-13-supermeat.md | arvenjadeaguilar/cellagri-cms | f177977e4d859f540ed1fc455594629b30f42bd2 | [

"MIT"

] | null | null | null | ---

templateKey: company-post

path: /supermeat

date: '2018-02-15T11:00:00-05:00'

title: SuperMeat

location: 'Tel Aviv, Israel'

website: supermeat.com

socialMedia:

- media: Twitter

url: 'https://twitter.com/_SuperMeat_'

logo: /img/supermeat logo.jpg

thumbnail: /img/supermeat logo.jpg

description: >-

SuperMeat is... | 31.1 | 80 | 0.765273 | eng_Latn | 0.809162 |

912e708b1c2bf65f27991b730490981a9fed93a4 | 1,352 | md | Markdown | catalog/boku-no-mama-chan-43-kaihatsu-nikki/en-US_boku-no-mama-chan-43-kaihatsu-nikki.md | htron-dev/baka-db | cb6e907a5c53113275da271631698cd3b35c9589 | [

"MIT"

] | 3 | 2021-08-12T20:02:29.000Z | 2021-09-05T05:03:32.000Z | catalog/boku-no-mama-chan-43-kaihatsu-nikki/en-US_boku-no-mama-chan-43-kaihatsu-nikki.md | zzhenryquezz/baka-db | da8f54a87191a53a7fca54b0775b3c00f99d2531 | [

"MIT"

] | 8 | 2021-07-20T00:44:48.000Z | 2021-09-22T18:44:04.000Z | catalog/boku-no-mama-chan-43-kaihatsu-nikki/en-US_boku-no-mama-chan-43-kaihatsu-nikki.md | zzhenryquezz/baka-db | da8f54a87191a53a7fca54b0775b3c00f99d2531 | [

"MIT"

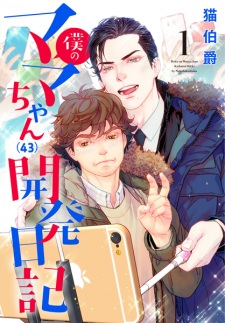

] | 2 | 2021-07-19T01:38:25.000Z | 2021-07-29T08:10:29.000Z | # Boku no Mama-chan (43) Kaihatsu Nikki

- **type**: manga

- **volumes**: 1

- **chapters**: 6

- **original-name**: 僕のママちゃん(43)開発日記

- **start-date**: 2016-10-19

## Tags

- yaoi

## Authors

- Neko Hakushaku (Sto... | 42.25 | 379 | 0.756657 | eng_Latn | 0.99339 |

912ea325833ebaae5e7ab8b9a6dacec03587355c | 813 | md | Markdown | README.md | Phocacius/kmeans | 3bd1ff32a1cd37988d7f2d94acd1213c5ed9e74d | [

"MIT"

] | null | null | null | README.md | Phocacius/kmeans | 3bd1ff32a1cd37988d7f2d94acd1213c5ed9e74d | [

"MIT"

] | null | null | null | README.md | Phocacius/kmeans | 3bd1ff32a1cd37988d7f2d94acd1213c5ed9e74d | [

"MIT"

] | null | null | null | # K-Means Simulator

Provides a step-by-step visualisation of the k-means algorithm for unsupervised clustering of 2D Data. Click on the canvas to add points and choose your desired number of clusters (k). You can then run the algorithm step-by-step manually using "Assign Clusters" and "Recenter Centroids" or automatica... | 45.166667 | 436 | 0.771218 | eng_Latn | 0.968846 |

912f240e2130569d98515738df087ed19fd5f0ee | 476 | md | Markdown | guide/arabic/certifications/javascript-algorithms-and-data-structures/es6/use-destructuring-assignment-to-assign-variables-from-arrays/index.md | SweeneyNew/freeCodeCamp | e24b995d3d6a2829701de7ac2225d72f3a954b40 | [

"BSD-3-Clause"

] | 10 | 2019-08-09T19:58:19.000Z | 2019-08-11T20:57:44.000Z | guide/arabic/certifications/javascript-algorithms-and-data-structures/es6/use-destructuring-assignment-to-assign-variables-from-arrays/index.md | SweeneyNew/freeCodeCamp | e24b995d3d6a2829701de7ac2225d72f3a954b40 | [

"BSD-3-Clause"

] | 2,056 | 2019-08-25T19:29:20.000Z | 2022-02-13T22:13:01.000Z | guide/arabic/certifications/javascript-algorithms-and-data-structures/es6/use-destructuring-assignment-to-assign-variables-from-arrays/index.md | SweeneyNew/freeCodeCamp | e24b995d3d6a2829701de7ac2225d72f3a954b40 | [

"BSD-3-Clause"

] | 5 | 2018-10-18T02:02:23.000Z | 2020-08-25T00:32:41.000Z | ---

title: Use Destructuring Assignment to Assign Variables from Arrays

localeTitle: استخدم Destructuring Assignment لتعيين متغيرات من صفائف

---

## استخدم Destructuring Assignment لتعيين متغيرات من صفائف

علينا اتخاذ بعض الاحتياطات في هذه الحالة.

1. لا حاجة للثابتة \[ب ، أ\] لأنها ستحافظ على تأثير الواجب المحلي.

... | 34 | 106 | 0.707983 | arb_Arab | 0.997059 |

912f4e0ad3415be2311ea7f9f504919fd6bc3f70 | 2,554 | md | Markdown | README.md | breglerj/cloud-foundry-tools-api | 883de7da0c233fabc366c86b62c72a2720139295 | [

"Apache-2.0"

] | null | null | null | README.md | breglerj/cloud-foundry-tools-api | 883de7da0c233fabc366c86b62c72a2720139295 | [

"Apache-2.0"

] | null | null | null | README.md | breglerj/cloud-foundry-tools-api | 883de7da0c233fabc366c86b62c72a2720139295 | [

"Apache-2.0"

] | null | null | null |

[](https://circleci.com/gh/SAP/cloud-foundry-tools-api)

[** carrying on the torch of [VisualPlugin's Wrapper project](https://github.com/GoAnimate-Wrapper) after it's shutdown in 2020. Unlike the original project, Infinite can not be shut down by Vyond. Why? It's because of our twist on the Wrapper formula! ... | 93.19697 | 828 | 0.738579 | eng_Latn | 0.999434 |

912fe6ae1c7ab206900cc994d8593fa76c63f95f | 1,547 | md | Markdown | results/referenceaudioanalyzer/referenceaudioanalyzer_siec_harman_in-ear_2019v2/DUNU DN16 Hephaes/README.md | eliMakeouthill/AutoEq | b16c72495b3ce493293c6a4a4fdf45a81aec9ca0 | [

"MIT"

] | 3 | 2022-02-25T08:33:08.000Z | 2022-03-13T11:27:29.000Z | results/referenceaudioanalyzer/referenceaudioanalyzer_siec_harman_in-ear_2019v2/DUNU DN16 Hephaes/README.md | billclintonwong/AutoEq | aa25ed8e8270c523893fadbda57e9811c65733f1 | [

"MIT"

] | null | null | null | results/referenceaudioanalyzer/referenceaudioanalyzer_siec_harman_in-ear_2019v2/DUNU DN16 Hephaes/README.md | billclintonwong/AutoEq | aa25ed8e8270c523893fadbda57e9811c65733f1 | [

"MIT"

] | null | null | null | # DUNU DN16 Hephaes

See [usage instructions](https://github.com/jaakkopasanen/AutoEq#usage) for more options and info.

### Parametric EQs

In case of using parametric equalizer, apply preamp of **-7.5dB** and build filters manually

with these parameters. The first 5 filters can be used independently.

When using indepen... | 38.675 | 98 | 0.553975 | eng_Latn | 0.746417 |

912ff8cf5d480d6cfe9dc42d772158a1b1b9c609 | 513 | md | Markdown | _course_files/Chapter 6 Services/Step.md | boriphuth/k8s-fleetman | 5bc31a72d7fadd7f0550d0390fcab9525055663b | [

"MIT"

] | 1 | 2019-10-23T09:14:35.000Z | 2019-10-23T09:14:35.000Z | _course_files/Chapter 6 Services/Step.md | boriphuth/k8s-fleetman | 5bc31a72d7fadd7f0550d0390fcab9525055663b | [

"MIT"

] | null | null | null | _course_files/Chapter 6 Services/Step.md | boriphuth/k8s-fleetman | 5bc31a72d7fadd7f0550d0390fcab9525055663b | [

"MIT"

] | null | null | null | $ kubectl apply -f first-pod.yaml

$ kubectl apply -f webapp-service.yaml

## Connect to Service

$ minikube service fleetman-webapp

$ kubectl get po --show-labels

NAME READY STATUS RESTARTS AGE LABELS

webapp 1/1 Running 0 92m app=webapp,release=0

webapp-release-... | 36.642857 | 78 | 0.647173 | yue_Hant | 0.764128 |

9130714c260b6a6b44a06f07c4f320519420ba98 | 5,310 | md | Markdown | articles/cognitive-services/Content-Moderator/review-api.md | changeworld/azure-docs.nl-nl | bdaa9c94e3a164b14a5d4b985a519e8ae95248d5 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | articles/cognitive-services/Content-Moderator/review-api.md | changeworld/azure-docs.nl-nl | bdaa9c94e3a164b14a5d4b985a519e8ae95248d5 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | articles/cognitive-services/Content-Moderator/review-api.md | changeworld/azure-docs.nl-nl | bdaa9c94e3a164b14a5d4b985a519e8ae95248d5 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: Recensies, werk stromen en taken concepten-Content Moderator

titleSuffix: Azure Cognitive Services

description: In dit artikel vindt u meer informatie over de basis concepten van het hulp programma voor beoordeling. Beoordelingen, werk stromen en taken.

services: cognitive-services

author: PatrickFarley

mana... | 66.375 | 712 | 0.775141 | nld_Latn | 0.999047 |

91308e7fdc37435df478c923542ea311fc37b53f | 7,397 | md | Markdown | business-central/readiness/readiness-learning-sales.md | MicrosoftDocs/dynamics365smb-docs-pr.nb-no | f57ffe1865b515a2240b7e4d1401263a33d2a535 | [

"CC-BY-4.0",

"MIT"

] | 2 | 2020-05-18T17:20:08.000Z | 2021-04-20T21:13:47.000Z | business-central/readiness/readiness-learning-sales.md | MicrosoftDocs/dynamics365smb-docs-pr.nb-no | f57ffe1865b515a2240b7e4d1401263a33d2a535 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | business-central/readiness/readiness-learning-sales.md | MicrosoftDocs/dynamics365smb-docs-pr.nb-no | f57ffe1865b515a2240b7e4d1401263a33d2a535 | [

"CC-BY-4.0",

"MIT"

] | 2 | 2019-10-12T19:50:37.000Z | 2020-09-30T16:51:21.000Z | ---

title: Læringskatalog for salg og markedsføring for partner

description: Finn alle tilgjengelige læringsressurser for salg og markedsføringsroller for partner i Business Central.

author: loreleishannonmsft

ms.date: 04/01/2021

ms.topic: conceptual

ms.author: margoc

ms.openlocfilehash: 9830e4e842cc7fe3febcbe809547ad2... | 145.039216 | 785 | 0.723807 | nob_Latn | 0.982268 |

91317037239b34b820e8c646595604362f7e4e07 | 5,130 | md | Markdown | basics/plugin_structure/plugin_configuration_file.md | pettermahlen/intellij-sdk-docs | 1fd57016f2bf34afb3277d7094da091e0d84876b | [

"Apache-2.0"

] | 1 | 2021-08-18T09:44:04.000Z | 2021-08-18T09:44:04.000Z | basics/plugin_structure/plugin_configuration_file.md | pettermahlen/intellij-sdk-docs | 1fd57016f2bf34afb3277d7094da091e0d84876b | [

"Apache-2.0"

] | null | null | null | basics/plugin_structure/plugin_configuration_file.md | pettermahlen/intellij-sdk-docs | 1fd57016f2bf34afb3277d7094da091e0d84876b | [

"Apache-2.0"

] | null | null | null | ---

title: Plugin Configuration File - plugin.xml

---

The following is a sample plugin configuration file. This sample showcases and describes all elements that can be used in the plugin.xml file.

```xml

<!-- url="" specifies the URL of the plugin homepage (displayed in the Welcome Screen and in "Plugins" settings di... | 41.370968 | 146 | 0.696491 | eng_Latn | 0.96917 |

9131a9f6f961a049b6249b5cf547e4a13f841134 | 8,094 | markdown | Markdown | _posts/python/2020-08-13-virtual-environment.markdown | daesungRa/namu | 8a6e5b74a20189fb56d498155e81f55daeb03f52 | [

"MIT"

] | null | null | null | _posts/python/2020-08-13-virtual-environment.markdown | daesungRa/namu | 8a6e5b74a20189fb56d498155e81f55daeb03f52 | [

"MIT"

] | 78 | 2020-10-02T12:50:55.000Z | 2022-03-27T08:08:46.000Z | _posts/python/2020-08-13-virtual-environment.markdown | daesungRa/namu | 8a6e5b74a20189fb56d498155e81f55daeb03f52 | [

"MIT"

] | null | null | null | ---

title: Python 가상환경을 만드는 방법

date: 2020-08-13 20:45:48 +0900

author: namu

categories: python

permalink: "/python/:year/:month/:day/:title"

image: https://cdn.pixabay.com/photo/2017/07/31/14/56/wall-2558279_1280.jpg

image-view: true

image-author: StockSnap

image-source: https://pixabay.com/ko/users/stocksnap-894430/

-... | 26.98 | 131 | 0.628614 | kor_Hang | 0.999892 |

9131ee56d21d543ec50a4735087372d2e2ee7840 | 1,376 | md | Markdown | docs/NugetDocumentation.md | LorenzCK/Pseudo-i18n | 08fae4570fb89a832020299a91d1620f703e467c | [

"MIT"

] | 6 | 2016-12-09T01:31:18.000Z | 2019-01-23T17:56:51.000Z | docs/NugetDocumentation.md | LorenzCK/Pseudo-i18n | 08fae4570fb89a832020299a91d1620f703e467c | [

"MIT"

] | null | null | null | docs/NugetDocumentation.md | LorenzCK/Pseudo-i18n | 08fae4570fb89a832020299a91d1620f703e467c | [

"MIT"

] | null | null | null | # Pseudo-i18n

*Simple pseudo-internationalization utility library.*

The library allows you to convert any Latin-alphabet string to a pseudo-language in order to test whether your application is localization-ready. The generated pseudo-string will try to respect links, tags, and other markup in your original strings.

... | 37.189189 | 249 | 0.77689 | eng_Latn | 0.967275 |

9132dcfe623428ec49b932a5bd286781295c16b8 | 30 | md | Markdown | README.md | khairnaramol/Angular5 | 7915ef1dee3f908b323295f12ef9a588e3b3dbd5 | [

"MIT"

] | null | null | null | README.md | khairnaramol/Angular5 | 7915ef1dee3f908b323295f12ef9a588e3b3dbd5 | [

"MIT"

] | null | null | null | README.md | khairnaramol/Angular5 | 7915ef1dee3f908b323295f12ef9a588e3b3dbd5 | [

"MIT"

] | null | null | null | # Angular5

Angular 5 learning

| 10 | 18 | 0.8 | eng_Latn | 0.781067 |

9132fda89a07f12c1729e42ee318d1814c7fd396 | 131 | md | Markdown | README.md | LiveTiles/PageGallery | 829d187da64775795d09b0f6ea5d85fea117336c | [

"MIT"

] | null | null | null | README.md | LiveTiles/PageGallery | 829d187da64775795d09b0f6ea5d85fea117336c | [

"MIT"

] | null | null | null | README.md | LiveTiles/PageGallery | 829d187da64775795d09b0f6ea5d85fea117336c | [

"MIT"

] | null | null | null | # PageGallery

A collection of demo pages intended to guide folks in using (and in some cases hacking) LiveTiles to fit their needs.

| 43.666667 | 116 | 0.801527 | eng_Latn | 0.99683 |

913328e76928236576f7f3b53c8297c59213d60f | 3,460 | md | Markdown | posts/blog/2015/06/15-c88.en.md | danmaq/danmaq.article | ab5626c7a8053175d33044a38404bd8f873f79ef | [

"MIT"

] | null | null | null | posts/blog/2015/06/15-c88.en.md | danmaq/danmaq.article | ab5626c7a8053175d33044a38404bd8f873f79ef | [

"MIT"

] | null | null | null | posts/blog/2015/06/15-c88.en.md | danmaq/danmaq.article | ab5626c7a8053175d33044a38404bd8f873f79ef | [

"MIT"

] | null | null | null | ---

title: Comic Market 88 Exhibition Information

post_id: '6827'

date: '2015-06-15T02:25:22+09:00'

draft: true

tags: []

---

We _won a booth_ at this summer's Comiket, _Sunday, East Q-24a_! ˶\> ◡ <˶ It's still early, but here is what we'll be distributing!

## \[\[Newly released game\] MATH.SC (tentative nam... | 104.848485 | 645 | 0.738439 | eng_Latn | 0.986061 |

913461ab034ea0fe626d2990b170c6da5050a9d6 | 2,320 | md | Markdown | index.md | AliNite/spreadsheets-socialsci | ee98eadef71b9f45775e7115cffc397e0e5dbef5 | [

"CC-BY-4.0"

] | null | null | null | index.md | AliNite/spreadsheets-socialsci | ee98eadef71b9f45775e7115cffc397e0e5dbef5 | [

"CC-BY-4.0"

] | null | null | null | index.md | AliNite/spreadsheets-socialsci | ee98eadef71b9f45775e7115cffc397e0e5dbef5 | [

"CC-BY-4.0"

] | null | null | null | ---

layout: lesson

root: .

---

Good data organization is the foundation of any research project. Most

researchers have data in spreadsheets, so it's the place that many research

projects start.

Typically, we organize data in spreadsheets in the ways that we, as humans, want to work with it. However

computers requir... | 42.181818 | 105 | 0.775 | eng_Latn | 0.99958 |

91346216640df47b297cb0e4df4cc03f68a1e107 | 3,632 | markdown | Markdown | _posts/2010-03-11-how-a-certification-authority-handles-whois-data.markdown | martinlowinski/halfthetruth.de | a9513ca95cb07dad58f625b3b56fa56fbf40e946 | [

"MIT"

] | null | null | null | _posts/2010-03-11-how-a-certification-authority-handles-whois-data.markdown | martinlowinski/halfthetruth.de | a9513ca95cb07dad58f625b3b56fa56fbf40e946 | [

"MIT"

] | null | null | null | _posts/2010-03-11-how-a-certification-authority-handles-whois-data.markdown | martinlowinski/halfthetruth.de | a9513ca95cb07dad58f625b3b56fa56fbf40e946 | [

"MIT"

] | null | null | null | ---

wordpress_id: 131

author_login: admin

layout: post

comments: []

author: martinlowinski

title: How a certification authority handles whois data

published: true

tags: []

date: 2010-03-11 17:09:16 +01:00

categories:

- Website

author_email: martin@goldtopf.org

wordpress_url: http://halfthetruth.de/2010/03/11/how-a-... | 58.580645 | 349 | 0.755231 | eng_Latn | 0.998625 |

913475b577bba43e252b21252f8a2597af70fcf0 | 1,102 | md | Markdown | ClassNotes/python_class_homework_0.md | jona-sassenhagen/python_for_psychologists | 0604ff5c6382ae02ffeb2e078853b835dab03860 | [

"BSD-3-Clause"

] | 7 | 2018-09-19T20:53:55.000Z | 2022-02-28T12:55:39.000Z | ClassNotes/python_class_homework_0.md | jona-sassenhagen/python_for_psychologists | 0604ff5c6382ae02ffeb2e078853b835dab03860 | [

"BSD-3-Clause"

] | null | null | null | ClassNotes/python_class_homework_0.md | jona-sassenhagen/python_for_psychologists | 0604ff5c6382ae02ffeb2e078853b835dab03860 | [

"BSD-3-Clause"

] | 3 | 2019-03-10T09:25:33.000Z | 2021-12-16T20:24:50.000Z | % Python Class Session 1 Homework

# Repetitions

- Open a new and empty iPython notebook

- Create a list of strings containing the first names of yourself and your close family members

- Access the third entry in that list

- Create a list of ages of family members

- *Using these two lists (not manually!)*, create a new l... | 68.875 | 326 | 0.779492 | eng_Latn | 0.999979 |

9134ac8f71966b0eeeabaf4bd6e1a0c4caf0ed32 | 211 | md | Markdown | README.md | teloxide/teloxide-book | 56d226ce5f3efd598365759f8596a3f158ab11a2 | [

"BlueOak-1.0.0"

] | 4 | 2021-09-21T09:51:47.000Z | 2021-11-28T22:17:58.000Z | README.md | teloxide/teloxide-book | 56d226ce5f3efd598365759f8596a3f158ab11a2 | [

"BlueOak-1.0.0"

] | null | null | null | README.md | teloxide/teloxide-book | 56d226ce5f3efd598365759f8596a3f158ab11a2 | [

"BlueOak-1.0.0"

] | null | null | null | # Teloxide user guide

This repository contains a user guide for the [`teloxide`] library.

[`teloxide`]: https://github.com/teloxide/teloxide

## Note

This book is very much a work in progress.

Use with caution.

| 19.181818 | 65 | 0.748815 | eng_Latn | 0.988509 |

9134c2d6582f2fa40d9dfb8ca520377331f0657a | 49 | md | Markdown | README.md | yuleihua/aircmn | ff29b25629dcacf65be4fba7fbefc7e7f624f939 | [

"Apache-2.0"

] | null | null | null | README.md | yuleihua/aircmn | ff29b25629dcacf65be4fba7fbefc7e7f624f939 | [

"Apache-2.0"

] | null | null | null | README.md | yuleihua/aircmn | ff29b25629dcacf65be4fba7fbefc7e7f624f939 | [

"Apache-2.0"

] | 1 | 2021-11-13T15:48:26.000Z | 2021-11-13T15:48:26.000Z | # aircmn

aircmn is a common library written in C.

| 16.333333 | 39 | 0.77551 | eng_Latn | 0.999726 |

91354c3382e52e23e19ce5f43f3d7c9492305430 | 2,593 | md | Markdown | doc/debugging.md | Chlorie/libunifex | 9869196338016939265964b82c7244915de6a12f | [

"Apache-2.0"

] | 1 | 2021-11-23T11:30:39.000Z | 2021-11-23T11:30:39.000Z | doc/debugging.md | Chlorie/libunifex | 9869196338016939265964b82c7244915de6a12f | [

"Apache-2.0"

] | null | null | null | doc/debugging.md | Chlorie/libunifex | 9869196338016939265964b82c7244915de6a12f | [

"Apache-2.0"

] | 1 | 2021-07-29T13:33:13.000Z | 2021-07-29T13:33:13.000Z | # Async Stack Traces

Unifex contains a prototype implementation of async stack-traces that

allows you to traverse a chain/graph of async continuations.

A stack-trace consists of a stack of `continuation_info` objects that

describe the address of the "frame" and the type of the continuation

as well as a mechanism to ... | 35.520548 | 80 | 0.698419 | eng_Latn | 0.946728 |

9135674fda29402a227ebc0d7f0cfe81339cf0b4 | 82 | md | Markdown | README.md | Bearzilasaur/ScholarScraper | ad3a638b0b8f3f13ae1d6a84711cbb5eedcc1164 | [

"Unlicense"

] | null | null | null | README.md | Bearzilasaur/ScholarScraper | ad3a638b0b8f3f13ae1d6a84711cbb5eedcc1164 | [

"Unlicense"

] | null | null | null | README.md | Bearzilasaur/ScholarScraper | ad3a638b0b8f3f13ae1d6a84711cbb5eedcc1164 | [

"Unlicense"

] | 1 | 2019-10-16T13:20:10.000Z | 2019-10-16T13:20:10.000Z | # ScholarScraper

Repository for a Google Scholar scraper for literature reviews.

| 27.333333 | 64 | 0.829268 | eng_Latn | 0.813123 |

9135823d4830ff5567be54c2a660f13205ae659c | 295 | md | Markdown | playbooks/openshift-monitor-availability/README.md | Roscoe198/Ansible-Openshift | b874bef456852ef082a27dfec4f2d7d466702370 | [

"Apache-2.0"

] | 164 | 2015-07-29T17:35:04.000Z | 2021-12-16T16:38:04.000Z | playbooks/openshift-monitor-availability/README.md | Roscoe198/Ansible-Openshift | b874bef456852ef082a27dfec4f2d7d466702370 | [

"Apache-2.0"

] | 3,634 | 2015-06-09T13:49:15.000Z | 2022-03-23T20:55:44.000Z | playbooks/openshift-monitor-availability/README.md | Roscoe198/Ansible-Openshift | b874bef456852ef082a27dfec4f2d7d466702370 | [

"Apache-2.0"

] | 250 | 2015-06-08T19:53:11.000Z | 2022-03-01T04:51:23.000Z | # OpenShift Availability Monitoring

This playbook runs the [OpenShift Availability Monitoring role](../../roles/openshift_monitor_availability). See the role

for more information.

## GCP Development

The `install-gcp.yml` playbook is useful for ad-hoc installation in an existing GCE cluster.

| 32.777778 | 121 | 0.8 | eng_Latn | 0.959277 |

91367433ac2c38ef6788dc22e9c3fbb7bda51853 | 36 | md | Markdown | src/examples/subscript/simple.md | alinex/node-report | 0798d2bacf8064875b3f54cd035aa154306f5a7e | [

"Apache-2.0"

] | 1 | 2016-06-02T15:05:20.000Z | 2016-06-02T15:05:20.000Z | src/examples/subscript/simple.md | alinex/node-report | 0798d2bacf8064875b3f54cd035aa154306f5a7e | [

"Apache-2.0"

] | null | null | null | src/examples/subscript/simple.md | alinex/node-report | 0798d2bacf8064875b3f54cd035aa154306f5a7e | [

"Apache-2.0"

] | null | null | null | You need H~2~O for this experiment.

| 18 | 35 | 0.75 | eng_Latn | 0.999731 |

9136b6596227434d736d133c7a82fabbf47a2493 | 964 | md | Markdown | _posts/2019-01-19-Github Blog.md | stone8765/blog | 90d6420aaef33eae21235075394dd7e332be071b | [

"MIT"

] | null | null | null | _posts/2019-01-19-Github Blog.md | stone8765/blog | 90d6420aaef33eae21235075394dd7e332be071b | [

"MIT"

] | null | null | null | _posts/2019-01-19-Github Blog.md | stone8765/blog | 90d6420aaef33eae21235075394dd7e332be071b | [

"MIT"

] | null | null | null | ---

layout: post

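title: Setting Up a GitHub Blog

author: StoneLi

description: The frp intranet-penetration tool lets computers on an internal network be reached as conveniently as public ones, for example exposing a web project on a company or personal computer to others, or making remote desktop or SSH connections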

catalog: true

tags: [jekyll,github pages]

---

# 1. Install Ruby

https://rubyinstaller.org/

# 2. Download and install gem (Ruby's package manager)

Download: https://rubygems.org/pages/download

After extracting, run the following command in the directory:

ruby set... | 20.956522 | 89 | 0.763485 | yue_Hant | 0.648572 |

913752a80068d6d04d5c0e2c018fa9ab92efb7c0 | 6,182 | md | Markdown | README.md | pecigonzalo/opta | 0259f128ad3cfc4a96fe1f578833de28b2f19602 | [

"Apache-2.0"

] | null | null | null | README.md | pecigonzalo/opta | 0259f128ad3cfc4a96fe1f578833de28b2f19602 | [

"Apache-2.0"

] | null | null | null | README.md | pecigonzalo/opta | 0259f128ad3cfc4a96fe1f578833de28b2f19602 | [

"Apache-2.0"

] | null | null | null | <p align="center"><img src="https://user-images.githubusercontent.com/855699/125824286-149ea52e-ef45-4f41-9579-8dba9bca38ac.png" width="250"><br/>

Automated, secure, scalable cloud infrastructure</p>

<p align="center">

<a href="https://github.com/run-x/opta/releases/latest">

<img src="https://img.shields.io/gith... | 56.2 | 324 | 0.752507 | eng_Latn | 0.77633 |

9137cde5130df8bd9d5762e1bad5d97d338af01f | 5,520 | md | Markdown | packages/speeddial/CHANGELOG.md | zamblas/ui-material-components | ec3a4203c0de76d56814e72cfd32e7bd3c077a40 | [

"Apache-2.0"

] | null | null | null | packages/speeddial/CHANGELOG.md | zamblas/ui-material-components | ec3a4203c0de76d56814e72cfd32e7bd3c077a40 | [

"Apache-2.0"

] | null | null | null | packages/speeddial/CHANGELOG.md | zamblas/ui-material-components | ec3a4203c0de76d56814e72cfd32e7bd3c077a40 | [

"Apache-2.0"

] | null | null | null | # Change Log

All notable changes to this project will be documented in this file.

See [Conventional Commits](https://conventionalcommits.org) for commit guidelines.

## [5.2.8](https://github.com/nativescript-community/ui-material-components/tree/master/packages/speeddial/compare/v5.2.7...v5.2.8) (2021-02-24)

**Note:... | 29.361702 | 147 | 0.747464 | eng_Latn | 0.201678 |

9137eb9f3047c740fcacf366eaa16c3c438e5a31 | 204 | md | Markdown | README.md | yatace/agn | 597a33faf167b31a7fb584f8bebe0842a94b2150 | [

"MIT"

] | 22 | 2019-03-01T04:47:56.000Z | 2021-06-24T08:31:41.000Z | README.md | yatace/agn | 597a33faf167b31a7fb584f8bebe0842a94b2150 | [

"MIT"

] | 3 | 2019-03-05T15:34:02.000Z | 2020-05-23T03:38:44.000Z | README.md | yatace/agn | 597a33faf167b31a7fb584f8bebe0842a94b2150 | [

"MIT"

] | 5 | 2019-03-01T07:53:49.000Z | 2019-03-05T03:26:32.000Z | # AGN Generator

## AGN stands for Make Acfun Great Again Network ~~Acfun Green(GKD) Network~~

A simple system for generating and looking up AGN ratings

### Special thanks

[btboyhappy1993](https://github.com/btboyhappy1993)

for the contributions the acer above has made to the AGN cause

### [Changelog](changelog.md)

| 15.692308 | 69 | 0.735294 | yue_Hant | 0.516636 |

91387a86e3b12c9bdc2df6da857094de4b41fff5 | 6,546 | md | Markdown | src/posts/gsoc-week-3.md | isabelcosta/website | 777d1a20c6ef45be87848f829e2935b302d5a65a | [

"MIT"

] | 3 | 2020-06-29T11:36:10.000Z | 2020-07-03T10:21:23.000Z | src/posts/gsoc-week-3.md | isabelcosta/isabelcosta.github.io | 592ae44426e30c8cedbdbca83af5cc3ec07a71f1 | [

"MIT"

] | 97 | 2019-01-30T23:46:40.000Z | 2022-02-26T01:59:47.000Z | src/posts/gsoc-week-3.md | isabelcosta/website | 777d1a20c6ef45be87848f829e2935b302d5a65a | [

"MIT"

] | 7 | 2019-05-24T11:42:57.000Z | 2021-05-14T15:50:26.000Z | ---

title: Google Summer of Code | Coding Period | Week 3

date: '2018-06-03'

tags:

- gsoc

crossposts:

medium: https://medium.com/isabel-costa-gsoc/google-summer-of-code-coding-period-week-3-349e08f7d998

---

This week — May 28 to June 3 — was the third week of the coding period o... | 139.276596 | 1,042 | 0.779713 | eng_Latn | 0.992185 |

9138911b243ecab75293bb6446d9668ffadda978 | 1,339 | md | Markdown | docs/nest/README.md | hackycy/sf-admin-cli | 4965d5741589a4589d2ce66824af383299008a17 | [

"MIT"

] | 3 | 2021-12-13T07:44:16.000Z | 2022-03-11T17:59:02.000Z | docs/nest/README.md | hackycy/sf-admin-cli | 4965d5741589a4589d2ce66824af383299008a17 | [

"MIT"

] | 1 | 2021-12-13T07:15:58.000Z | 2021-12-13T07:42:59.000Z | docs/nest/README.md | hackycy/sf-admin-cli | 4965d5741589a4589d2ce66824af383299008a17 | [

"MIT"

] | 1 | 2022-03-02T02:38:04.000Z | 2022-03-02T02:38:04.000Z | # Introduction

**Based on NestJs + TypeScript + TypeORM +... | 18.859155 | 277 | 0.585512 | yue_Hant | 0.701851 |

9139f94e9a00280f0762282414141a07cf115673 | 1,295 | md | Markdown | _posts/2018/2018-03-26-apache-proxy-balancer.md | haijunsu/navysu.github.io | c5e5d39d4a3dae79a0750e136b6a22e743e60db9 | [

"MIT"

] | null | null | null | _posts/2018/2018-03-26-apache-proxy-balancer.md | haijunsu/navysu.github.io | c5e5d39d4a3dae79a0750e136b6a22e743e60db9 | [

"MIT"

] | null | null | null | _posts/2018/2018-03-26-apache-proxy-balancer.md | haijunsu/navysu.github.io | c5e5d39d4a3dae79a0750e136b6a22e743e60db9 | [

"MIT"

] | null | null | null | ---

title: Apache Proxy Balancer

author: Haijun (Navy) Su

layout: post

tags: [proxy, balancer, apache, linux]

---

### Enable proxy modules

```shell

sudo a2enmod proxy_html

sudo a2enmod proxy_http

sudo a2enmod proxy_wstunnel

sudo a2enmod proxy_ajp

sudo a2enmod lbmethod_byrequests

sudo a2enmod lbmethod_bytraffic

sudo a2en... | 26.979167 | 95 | 0.769884 | yue_Hant | 0.292929 |

913a6aeb4f157a79c2fc779a640c8c7533aeb8b7 | 276 | md | Markdown | blog/first-post.md | acrobertson/gatsby-netlify-test | 39c544aa52f10091afe9276efc454a033e43b246 | [

"MIT"

] | null | null | null | blog/first-post.md | acrobertson/gatsby-netlify-test | 39c544aa52f10091afe9276efc454a033e43b246 | [

"MIT"

] | 4 | 2021-03-09T18:58:02.000Z | 2022-02-26T18:17:35.000Z | blog/first-post.md | acrobertson/gatsby-netlify-test | 39c544aa52f10091afe9276efc454a033e43b246 | [

"MIT"

] | null | null | null | ---

path: /blog/post-1

date: 2019-10-08T18:05:15.349Z

title: First Post

---

## First Post Content

The following is the content of the first post

### Here's a list

- Item 1

- Item 2

- Item 3

[This](https://example.com/ "example") is a link

## Another header

More content

| 13.142857 | 48 | 0.673913 | eng_Latn | 0.96378 |

913b38cd59d141fcb6d43b788b5ff59a13db35af | 597 | md | Markdown | includes/data-explorer-authentication.md | p770820/azure-docs.zh-tw | dd2bd917784a4df8b52787a299a3df42e05642fe | [

"CC-BY-4.0",

"MIT"

] | null | null | null | includes/data-explorer-authentication.md | p770820/azure-docs.zh-tw | dd2bd917784a4df8b52787a299a3df42e05642fe | [

"CC-BY-4.0",

"MIT"

] | null | null | null | includes/data-explorer-authentication.md | p770820/azure-docs.zh-tw | dd2bd917784a4df8b52787a299a3df42e05642fe | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

author: orspod

ms.service: data-explorer

ms.topic: include

ms.date: 10/07/2019

ms.author: orspodek

ms.openlocfilehash: a04f17ac809832b6fec51d1ffe0d9fcd6285b4ff

ms.sourcegitcommit: f4d8f4e48c49bd3bc15ee7e5a77bee3164a5ae1b

ms.translationtype: MT

ms.contentlocale: zh-TW

ms.lasthandoff: 11/04/2019

ms.locfileid: "735818... | 35.117647 | 232 | 0.798995 | yue_Hant | 0.265369 |

913bc31de79d1e9550e767cd21692f2a7d809a88 | 4,698 | md | Markdown | _episodes/01-run-quit.md | lexnederbragt/python-novice-gapminder | 6edb7ae77e0f7ae22f6b096fbdec614a5cda78b5 | [

"CC-BY-4.0"

] | null | null | null | _episodes/01-run-quit.md | lexnederbragt/python-novice-gapminder | 6edb7ae77e0f7ae22f6b096fbdec614a5cda78b5 | [

"CC-BY-4.0"

] | null | null | null | _episodes/01-run-quit.md | lexnederbragt/python-novice-gapminder | 6edb7ae77e0f7ae22f6b096fbdec614a5cda78b5 | [

"CC-BY-4.0"

] | null | null | null | ---

title: "Running and Quitting"

teaching: 15

exercises: 0

questions:

- "How can I run Python programs?"

objectives:

- "Launch the Jupyter Notebook, create new notebooks, and exit the Notebook."

- "Create Markdown cells in a notebook."

- "Create and run Python cells in a notebook."

keypoints:

- FIXME

---

### Python Pr... | 40.153846 | 145 | 0.714347 | eng_Latn | 0.999366 |

913be5f0be284f34ce20eb8ba7118593436c4637 | 741 | md | Markdown | CHANGELOG.md | fizzed/java-jne | 783226a1fb002d304d22f841870c5c73575fc994 | [

"Apache-2.0"

] | null | null | null | CHANGELOG.md | fizzed/java-jne | 783226a1fb002d304d22f841870c5c73575fc994 | [

"Apache-2.0"

] | null | null | null | CHANGELOG.md | fizzed/java-jne | 783226a1fb002d304d22f841870c5c73575fc994 | [

"Apache-2.0"

] | null | null | null | Java Native Extractor by Fizzed

===============================

#### 3.0.1 - 2017-08-18

- Only create temp dir a single time per JVM instance

- Use UUID for temp dir

#### 3.0.0 - 2017-07-17

- Bump parent to v2.1.0

- Add ANY enum for OS

- New `findFile` feature to extract generic resources

- Initial unit tests

... | 23.15625 | 61 | 0.647773 | eng_Latn | 0.789744 |

913c1bb5a49af1546ba459f16a0be9c57c8797af | 2,767 | md | Markdown | apidoc/README.md | gitizenme/titanium_mobile | f9ebb757a7b78cc18b331cacc266cc5b0a02835f | [

"Apache-2.0"

] | 2 | 2015-05-30T20:28:13.000Z | 2021-01-08T17:02:41.000Z | apidoc/README.md | arnaudsj/titanium_mobile | 4ed83dd6b355947a88f52efbf4ac82d86a2eeffd | [

"Apache-2.0"

] | 6 | 2015-04-27T22:12:58.000Z | 2020-05-23T01:14:06.000Z | apidoc/README.md | arnaudsj/titanium_mobile | 4ed83dd6b355947a88f52efbf4ac82d86a2eeffd | [

"Apache-2.0"

] | 1 | 2019-03-15T04:55:17.000Z | 2019-03-15T04:55:17.000Z | # TDoc: The Titanium API Documentation Format

_This documentation is a WIP_

The TDoc format follows a simple syntax for declaring Modules, Proxies, Methods, Properties, and Events for Titanium.

## Layout

The documentation tree starts in the Titanium folder, and generally follows this pattern:

<pre>

Titanium/

-- Mod... | 31.089888 | 193 | 0.759668 | eng_Latn | 0.992044 |

913cc5179f86b964793fc14e1130d4ce66e802cb | 2,093 | md | Markdown | docs/internals/parameter-metadata.md | baileyherbert/reflection | bd161fa6ee32e296729f670b3fa915ea3b4361eb | [

"MIT"

] | 1 | 2021-12-13T18:06:31.000Z | 2021-12-13T18:06:31.000Z | docs/internals/parameter-metadata.md | baileyherbert/reflection | bd161fa6ee32e296729f670b3fa915ea3b4361eb | [

"MIT"

] | null | null | null | docs/internals/parameter-metadata.md | baileyherbert/reflection | bd161fa6ee32e296729f670b3fa915ea3b4361eb | [

"MIT"

] | null | null | null | # Parameter Metadata

## Introduction

Parameter metadata can be stored in countless ways. This reflection library uses a specific format which enables the

`getMetadata()` method to work on parameters.

If you are writing your own decorators, consider invoking the `Meta.Parameter` function like below to easily set

meta... | 33.758065 | 116 | 0.74343 | eng_Latn | 0.944263 |

913cd90eb4548fd2518d1d730eb3973ceab4c6e3 | 1,290 | md | Markdown | src/tech/erlang.md | joelwallis/log | e17f945ce253c3cc62ea215700e8de5c0b4955c8 | [

"0BSD"

] | 5 | 2022-01-21T00:43:50.000Z | 2022-02-14T21:47:42.000Z | src/tech/erlang.md | joelwallis/knowledge | d9fa6d957fd1641b12eb968300cd4e8ca5a18e1d | [

"0BSD"

] | null | null | null | src/tech/erlang.md | joelwallis/knowledge | d9fa6d957fd1641b12eb968300cd4e8ca5a18e1d | [

"0BSD"

] | null | null | null | # erlang ⓔ

My adventures on this amazing distributed computing platform.

## Erlang on macOS through asdf

Installing Erlang through asdf is probably the easiest way to get it up and running on macOS. You'll need OpenSSL to run the installation, and the easiest way to get it is through Homebrew:

```

brew install open... | 49.615385 | 361 | 0.771318 | eng_Latn | 0.99035 |

913ce20b781b5af252be1dc41ce8ef7b9729b82b | 4,971 | md | Markdown | _posts/2019-12-24-hackerrank.md | aSquare14/aSquare14.github.io | 740af53840bdc656bc0cbb3c722b069fc8e1c674 | [

"MIT"

] | 4 | 2019-08-17T21:05:14.000Z | 2021-02-23T20:04:19.000Z | _posts/2019-12-24-hackerrank.md | asquare14/aSquare14.github.io | 63a5ba98b80f062af12850da10c4a60701e80f33 | [

"MIT"

] | 3 | 2018-03-08T20:23:32.000Z | 2021-04-26T13:06:31.000Z | _posts/2019-12-24-hackerrank.md | aSquare14/aSquare14.github.io | 740af53840bdc656bc0cbb3c722b069fc8e1c674 | [

"MIT"

] | 8 | 2018-06-09T07:29:52.000Z | 2020-10-21T22:22:29.000Z | ---

title: "My 2019 Summer Internship at Hackerrank Bangalore"

layout: post

date: 2019-12-24 13:30

tag:

- Internship Experiences

category: blog

author: atibhi

description: Weekly Blogs

---

I had the opportunity to intern at Hackerrank, Bangalore during the summer of 2019 and in this blog I’d like to tell you about m... | 97.470588 | 842 | 0.788171 | eng_Latn | 0.999894 |

913dcfd946ce37ed415fb0684c61d92e84cb4a37 | 4,433 | md | Markdown | README.md | mkxml/glc-tratamento-simbolos-inuteis | 4a8b089154b8a35cc2245f6271137e025de7c936 | [

"MIT"

] | null | null | null | README.md | mkxml/glc-tratamento-simbolos-inuteis | 4a8b089154b8a35cc2245f6271137e025de7c936 | [

"MIT"

] | null | null | null | README.md | mkxml/glc-tratamento-simbolos-inuteis | 4a8b089154b8a35cc2245f6271137e025de7c936 | [

"MIT"

] | 1 | 2020-04-22T14:35:00.000Z | 2020-04-22T14:35:00.000Z | Removing useless symbols from a CFG

===================================

This is a small project written in JavaScript on top of Node.js that

implements an algorithm for removing useless symbols from a context-free

grammar (CFG).

The program was developed for the course

**Linguagens formais ... | 33.330827 | 92 | 0.730205 | por_Latn | 0.999678 |

913e56ffe2d482687bad8d76adbc4c6166854441 | 1,292 | md | Markdown | ralbot/uvmgen/README.md | jiacaiyuan/uvm-generator | 63f4c7bd0dad43b357d1cc859b61011718c597f8 | [

"MIT"

] | 13 | 2020-04-15T09:11:53.000Z | 2022-03-13T02:05:53.000Z | ralbot/uvmgen/README.md | jiacaiyuan/uvm-generator | 63f4c7bd0dad43b357d1cc859b61011718c597f8 | [

"MIT"

] | null | null | null | ralbot/uvmgen/README.md | jiacaiyuan/uvm-generator | 63f4c7bd0dad43b357d1cc859b61011718c597f8 | [

"MIT"

] | 4 | 2020-11-27T08:11:24.000Z | 2022-02-19T09:11:36.000Z | # RALBot-uvm

Generate UVM register model from compiled SystemRDL input

## Installing (left blank)

Install from [PyPi](https://pypi.org/project/ralbot-uvm) using pip:

python3 -m pip install ralbot-uvm

--------------------------------------------------------------------------------

## Exporter Usage

Pass the elabo... | 24.377358 | 96 | 0.640093 | eng_Latn | 0.334483 |

913f5c906602c5786f8954ae075278a2e8b5e72a | 16 | md | Markdown | README.md | inventioncorps/icb-website-2 | f42d8dbe80cb4bb0863b594121ec125e9aca8099 | [

"MIT"

] | 1 | 2021-08-29T17:24:08.000Z | 2021-08-29T17:24:08.000Z | README.md | inventioncorps/icb-website-2 | f42d8dbe80cb4bb0863b594121ec125e9aca8099 | [

"MIT"

] | 2 | 2020-12-07T07:21:27.000Z | 2020-12-08T08:21:11.000Z | README.md | inventioncorps/icb-website-2 | f42d8dbe80cb4bb0863b594121ec125e9aca8099 | [

"MIT"

] | null | null | null | # icb-website-2

| 8 | 15 | 0.6875 | kor_Hang | 0.191865 |

9140c4a7bccab176e0cd360ee21ee8c5bc08551e | 1,040 | md | Markdown | docs/goto.md | codemedic/bash-ninja | 133c2d8e23c09770618ed0318b37380c35e8b9f8 | [

"MIT"

] | 9 | 2018-02-15T03:06:48.000Z | 2020-09-21T11:35:13.000Z | docs/goto.md | codemedic/bash-ninja | 133c2d8e23c09770618ed0318b37380c35e8b9f8 | [

"MIT"

] | null | null | null | docs/goto.md | codemedic/bash-ninja | 133c2d8e23c09770618ed0318b37380c35e8b9f8 | [

"MIT"

] | 1 | 2019-05-18T07:23:02.000Z | 2019-05-18T07:23:02.000Z | ## `goto` - bookmarks for the shell

`goto` is a path-bookmark utility that helps you navigate the filesystem using bookmarks. It comes with auto-completion and a bookmark-addition command, `goto_add`.

Once you `cd` yourself into a path, you can run `goto_add bookmarkName` to add the current working directory... | 69.333333 | 261 | 0.770192 | eng_Latn | 0.999494 |

9140fb44218cc8fd1923ae736ee3241d3d884fca | 501 | md | Markdown | README.md | albovy/PadelGest | 32d464cbb3aefa85450b41d67d0212c8c10efc9d | [

"MIT"

] | null | null | null | README.md | albovy/PadelGest | 32d464cbb3aefa85450b41d67d0212c8c10efc9d | [

"MIT"

] | null | null | null | README.md | albovy/PadelGest | 32d464cbb3aefa85450b41d67d0212c8c10efc9d | [

"MIT"

] | null | null | null | # PADELGEST

## Installation

### NodeJS

Download Node.js from the following link:

<https://nodejs.org/en/download/>

### ExpressJS

Install ExpressJS as a dependency using the package manager:

`cd PadelGest`

`npm install express --save`

### MongoDB

Descarga de MongoDB a partir del sig... | 11.651163 | 75 | 0.716567 | spa_Latn | 0.573129 |

91417cb3dced2d77381fcf7297dab7498abc5e41 | 1,355 | md | Markdown | README.md | net2cn/Real-ESRGAN_GUI | 2190f499345546293c6fca0f3de09f747753781b | [

"MIT"

] | 16 | 2021-12-30T05:31:24.000Z | 2022-03-30T13:23:39.000Z | README.md | net2cn/Real-ESRGAN_GUI | 2190f499345546293c6fca0f3de09f747753781b | [

"MIT"

] | 1 | 2022-03-30T10:07:07.000Z | 2022-03-30T11:51:20.000Z | README.md | net2cn/Real-ESRGAN_GUI | 2190f499345546293c6fca0f3de09f747753781b | [

"MIT"

] | 1 | 2022-03-20T04:59:59.000Z | 2022-03-20T04:59:59.000Z | # Real-ESRGAN_GUI

A C# GUI inference implementation of [Real-ESRGAN](https://github.com/xinntao/Real-ESRGAN).

PRs are welcome.

---

## Usage

You know how a GUI works.

## Result

From  to  are attached to their parents as `fixed`.

9959 instances of `fixed` (100%) are left-to-right (parent precedes child).

Ave... | 166.228916 | 10,758 | 0.679278 | yue_Hant | 0.670034 |

914405e6e10ea424254abb3a4b037e754c09afb0 | 6,509 | md | Markdown | README.md | by46/WhaleFS | 20029ad9a9b59089a3fdd681699ddfb3a5624ebc | [

"MIT"

] | 1 | 2018-06-10T08:54:54.000Z | 2018-06-10T08:54:54.000Z | README.md | by46/whalefs | 20029ad9a9b59089a3fdd681699ddfb3a5624ebc | [

"MIT"

] | null | null | null | README.md | by46/whalefs | 20029ad9a9b59089a3fdd681699ddfb3a5624ebc | [

"MIT"

] | null | null | null | # whalefs

## seaweedfs

```bash

weed master -port=9001

weed master -port=9002 -peers="localhost:9001"

weed volume -port=9081 -mserver="localhost:9001" -dir="data"

/opt/weed/weed master -mdir="/opt/dfs/master"

/opt/weed/weed volume -ip=192.168.1.9 -port=18081 -mserver="localhost:9333" -dir="/opt/dfs/... | 29.586364 | 156 | 0.583039 | yue_Hant | 0.093307 |

91442347ac9791c6716df4e68ad870c0da73ab48 | 1,906 | md | Markdown | docs/database/tometek/README.md | friendly-router/friendly-router | 5020871d222b912d280eb90c1f9fc1bde71fad51 | [

"0BSD"

] | 3 | 2021-04-01T12:41:55.000Z | 2021-04-05T13:07:08.000Z | docs/database/tometek/README.md | friendly-router/friendly-router | 5020871d222b912d280eb90c1f9fc1bde71fad51 | [

"0BSD"

] | null | null | null | docs/database/tometek/README.md | friendly-router/friendly-router | 5020871d222b912d280eb90c1f9fc1bde71fad51 | [

"0BSD"

] | null | null | null | ---

lang: en-US

title: Tometek

sidebar: auto

draft: true

prev: ../

meta:

- name: "twitter:card"

value: "Friendly Router Project"

- name: "twitter:site"

value: "https://friendly-router.org/database/tometek"

- name: "twitter:title"

value: "Database | Tometek"

- name: "description"

value: |

... | 27.623188 | 69 | 0.641658 | eng_Latn | 0.584988 |

9144861c3ea492cc1437a3a22b1e8c3a60e62d72 | 1,500 | md | Markdown | README.md | Szlavicsek/Quiz | 7e70d0e32db0a1da5c1cbee36dd60a435c49a522 | [

"MIT"

] | null | null | null | README.md | Szlavicsek/Quiz | 7e70d0e32db0a1da5c1cbee36dd60a435c49a522 | [

"MIT"

] | null | null | null | README.md | Szlavicsek/Quiz | 7e70d0e32db0a1da5c1cbee36dd60a435c49a522 | [

"MIT"

] | null | null | null | # Quizzit app

A quiz game project using the [Open Trivia API](https://opentdb.com/api_config.php)

## Getting Started

To get started, clone the repo to your local machine and install the dependencies listed below. Note that for the Google login API to work properly, you will also need:

* to configure the ga... | 35.714286 | 178 | 0.772 | eng_Latn | 0.99651 |

9144e74aef19e60a189a4f3a507b250bbec82a04 | 39 | md | Markdown | readme.md | eladzlot/minno-sequencer | 0c34902ce359b5e4850089c5ad341f0cd4f58d4e | [

"Apache-2.0"

] | 1 | 2021-04-13T05:03:38.000Z | 2021-04-13T05:03:38.000Z | readme.md | eladzlot/minno-sequencer | 0c34902ce359b5e4850089c5ad341f0cd4f58d4e | [

"Apache-2.0"

] | 5 | 2019-11-20T17:12:55.000Z | 2022-03-02T05:05:16.000Z | readme.md | eladzlot/minno-sequencer | 0c34902ce359b5e4850089c5ad341f0cd4f58d4e | [

"Apache-2.0"

] | null | null | null | # Minno sequencer

The minno sequencer

| 9.75 | 19 | 0.794872 | eng_Latn | 0.951134 |

9145812f2f6f975b3760b2daec93decd6b6e84aa | 2,025 | md | Markdown | docs/framework/winforms/controls/ways-to-select-a-windows-forms-button-control.md | turibbio/docs.it-it | 2212390575baa937d6ecea44d8a02e045bd9427c | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/framework/winforms/controls/ways-to-select-a-windows-forms-button-control.md | turibbio/docs.it-it | 2212390575baa937d6ecea44d8a02e045bd9427c | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/framework/winforms/controls/ways-to-select-a-windows-forms-button-control.md | turibbio/docs.it-it | 2212390575baa937d6ecea44d8a02e045bd9427c | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: Ways to select a Button control

ms.date: 03/30/2017

helpviewer_keywords:

- Button control [Windows Forms], selecting

ms.assetid: fe2fc058-5118-4f70-b264-6147d64a7a8d

ms.openlocfilehash: 145166d182f1ec51068ab3e0c23c12b471b69231

ms.sourcegitcommit: de17a7a0a37042f0d4406f5ae5393531caeb25ba

ms.trans... | 56.25 | 304 | 0.784691 | ita_Latn | 0.994376 |

9145d227d380c093e845def87ac0a8db15ec55d1 | 6,400 | md | Markdown | _posts/2018-11-28-Download-miss-rosie-apos-s-spice-of-life-quilts-leisure-arts.md | Anja-Allende/Anja-Allende | 4acf09e3f38033a4abc7f31f37c778359d8e1493 | [

"MIT"

] | 2 | 2019-02-28T03:47:33.000Z | 2020-04-06T07:49:53.000Z | _posts/2018-11-28-Download-miss-rosie-apos-s-spice-of-life-quilts-leisure-arts.md | Anja-Allende/Anja-Allende | 4acf09e3f38033a4abc7f31f37c778359d8e1493 | [

"MIT"

] | null | null | null | _posts/2018-11-28-Download-miss-rosie-apos-s-spice-of-life-quilts-leisure-arts.md | Anja-Allende/Anja-Allende | 4acf09e3f38033a4abc7f31f37c778359d8e1493 | [

"MIT"

] | null | null | null | ---

layout: post

comments: true

categories: Other

---

## Download Miss Rosie's Spice of Life Quilts Leisure Arts book

"I can't imagine whole cities burning. could see the silver drops pooling on his tongue before he swallowed. He wouldn't need the bottle any more, and he quickly slipped inside. D and Micky at th... | 711.111111 | 6,275 | 0.78625 | eng_Latn | 0.999962 |

91460c75c66d3563f4add5912fbe94e1f77ccf30 | 15,441 | md | Markdown | articles/virtual-machines/windows/tutorial-create-vmss.md | changeworld/azure-docs.it- | 34f70ff6964ec4f6f1a08527526e214fdefbe12a | [

"CC-BY-4.0",

"MIT"

] | 1 | 2017-06-06T22:50:05.000Z | 2017-06-06T22:50:05.000Z | articles/virtual-machines/windows/tutorial-create-vmss.md | changeworld/azure-docs.it- | 34f70ff6964ec4f6f1a08527526e214fdefbe12a | [

"CC-BY-4.0",

"MIT"

] | 41 | 2016-11-21T14:37:50.000Z | 2017-06-14T20:46:01.000Z | articles/virtual-machines/windows/tutorial-create-vmss.md | changeworld/azure-docs.it- | 34f70ff6964ec4f6f1a08527526e214fdefbe12a | [

"CC-BY-4.0",

"MIT"

] | 7 | 2016-11-16T18:13:16.000Z | 2017-06-26T10:37:55.000Z | ---

title: 'Tutorial: Create a Windows virtual machine scale set'

description: Learn how to use Azure PowerShell to create and deploy a highly available application on Windows VMs using a virtual machine scale set

author: ju-shim

ms.author: jushiman

ms.topic:... | 53.614583 | 520 | 0.786348 | ita_Latn | 0.968289 |

9146fad553db1c08e8ff90baad6c2a72579e035f | 11,600 | md | Markdown | src/pages/posts/2021-05-30T12:00:04-post.md | evanmacbride/reddit-digest | 47659c6b52d9b7d74025c517931e107cb2f6be94 | [

"MIT"

] | 1 | 2020-02-03T02:35:55.000Z | 2020-02-03T02:35:55.000Z | src/pages/posts/2021-05-30T12:00:04-post.md | evanmacbride/reddit-snapshots | 00dcad012a949243e7399a45dd9a37720cfe6576 | [

"MIT"

] | null | null | null | src/pages/posts/2021-05-30T12:00:04-post.md | evanmacbride/reddit-snapshots | 00dcad012a949243e7399a45dd9a37720cfe6576 | [

"MIT"

] | null | null | null | ---

title: '05/30/21 12:00PM UTC Snapshot'

date: '2021-05-30T12:00:04'

---

<ul>

<h2>Sci/Tech</h2>

<li><a href='https://i.redd.it/41rq1hape5271.jpg'><img src='https://b.thumbs.redditmedia.com/UeqXq9JRz54nw-Zlwbk3VeD9mpMV79ZVO3_eXACaRCs.jpg' alt='link thumbnail'></a><div><div class='linkTitle'><a href='https://i.redd.it... | 203.508772 | 858 | 0.736552 | yue_Hant | 0.303362 |

914716817163ac3062f9d730be9d770c99d9ac83 | 2,033 | md | Markdown | _posts/2011-7-16-UVA12983 The Battle of Chibi.md | FutaRimeWoawaSete/FutaRimeWoawaSete.github.io | 714d0ae43929dc5a4672f82e4c1666fa798d3e38 | [

"MIT"

] | null | null | null | _posts/2011-7-16-UVA12983 The Battle of Chibi.md | FutaRimeWoawaSete/FutaRimeWoawaSete.github.io | 714d0ae43929dc5a4672f82e4c1666fa798d3e38 | [

"MIT"

] | null | null | null | _posts/2011-7-16-UVA12983 The Battle of Chibi.md | FutaRimeWoawaSete/FutaRimeWoawaSete.github.io | 714d0ae43929dc5a4672f82e4c1666fa798d3e38 | [

"MIT"

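] | null | null | null | On the surface this looks like a counting problem, but it is actually a DP problem.

First, let $dp_{i,j}$ denote the number of strictly increasing subsequences of length $i$ that end at position $j$.

After some derivation we obtain the DP transition: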

- $dp_{i,j} = \sum_{k=1}^{j-1} dp_{i-1,k}$, where the sum runs only over those $k$ with $a_k < a_j$, and the base case is $dp_{1,j} = 1$.
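A direct implementation of this transition, as a minimal Python sketch. It assumes $m \ge 1$ and that the answer is reported modulo $10^9+7$ (an assumption, not stated above); `count_increasing_naive` is a hypothetical name. Only one row of `dp` is kept, since row $i$ depends only on row $i-1$.

```python
MOD = 10**9 + 7  # assumed modulus


def count_increasing_naive(a, m):
    """Count strictly increasing subsequences of length m in a, O(n^2 m)."""
    n = len(a)
    # dp[j]: number of increasing subsequences of the current length ending at j
    dp = [1] * n  # length 1: every element on its own
    for _ in range(2, m + 1):
        new = [0] * n
        for j in range(n):
            s = 0
            for k in range(j):
                if a[k] < a[j]:  # strictly smaller predecessor
                    s += dp[k]
            new[j] = s % MOD
        dp = new
    return sum(dp) % MOD
```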

Implementing this transition directly gives an $O(n^2 m)$ DP, which is clearly too slow to pass.
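One common way to remove the inner loop is to coordinate-compress the values and answer each "sum over smaller values seen so far" query with a Fenwick (binary indexed) tree. This is a sketch that swaps a Fenwick tree in for the segment tree described in the text; it runs in $O(nm \log n)$. The same assumptions apply: $m \ge 1$, modulus $10^9+7$, and `count_increasing_bit` is a hypothetical name.

```python
MOD = 10**9 + 7  # assumed modulus


def count_increasing_bit(a, m):
    """Count strictly increasing subsequences of length m, O(n m log n)."""
    n = len(a)
    # Coordinate compression: map each distinct value to a rank in 1..r
    ranks = {v: i + 1 for i, v in enumerate(sorted(set(a)))}
    r = len(ranks)
    idx = [ranks[v] for v in a]
    dp = [1] * n  # length-1 subsequences
    for _ in range(2, m + 1):
        tree = [0] * (r + 1)  # Fenwick tree over ranks, rebuilt per length
        new = [0] * n
        for j in range(n):
            # Prefix sum over ranks strictly below idx[j] (earlier k only)
            s, i = 0, idx[j] - 1
            while i > 0:
                s += tree[i]
                i -= i & -i
            new[j] = s % MOD
            # Insert dp[j] at its rank so later positions can extend it
            i = idx[j]
            while i <= r:
                tree[i] = (tree[i] + dp[j]) % MOD
                i += i & -i
        dp = new
    return sum(dp) % MOD
```

Scanning left to right guarantees the tree holds only $dp_{i-1,k}$ for $k < j$ at query time, and querying rank `idx[j] - 1` enforces the strict $a_k < a_j$ condition, so equal values are excluded.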

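So we consider how to optimize this transition: once a DP has been derived but its time complexity is too high, it almost always needs optimizing. We observe that we can first discretize all $a_i$ and then use a segment tree to maintain the preceding... | 23.367816 | 105 | 0.495819 | eng_Latn | 0.108548 |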

9147e13504f9d3d07455230f455e2f8e8e651534 | 1,455 | md | Markdown | README.md | david-mcgillicuddy-moixa/tokio-proto | 0b72a1978064e0e087fd8682ccd1aba6064ad6c0 | [

"Apache-2.0",

"MIT"

] | 334 | 2016-08-27T01:08:35.000Z | 2022-03-16T23:29:19.000Z | README.md | david-mcgillicuddy-moixa/tokio-proto | 0b72a1978064e0e087fd8682ccd1aba6064ad6c0 | [

"Apache-2.0",

"MIT"

] | 174 | 2016-08-27T08:57:26.000Z | 2018-08-01T19:09:59.000Z | README.md | david-mcgillicuddy-moixa/tokio-proto | 0b72a1978064e0e087fd8682ccd1aba6064ad6c0 | [

"Apache-2.0",

"MIT"

] | 100 | 2016-08-27T00:46:49.000Z | 2021-05-14T07:00:32.000Z | # This crate is deprecated!

This crate is deprecated without an immediate replacement. Discussion about a successor can be found in [tokio-rs/tokio#118](https://github.com/tokio-rs/tokio/issues/118).

# tokio-proto

`tokio-proto` makes it easy to implement clients and servers for **request /

response** oriented proto... | 29.1 | 172 | 0.740893 | eng_Latn | 0.89593 |

91495e76ceaa936675d9d31fa3399a03e5ed91cc | 6,769 | md | Markdown | documents/DesignNotes/appearance.md | hangle/Notecard | fdbed0ce0d15e0288794e18680da7360a0daeed7 | [

"Apache-2.0"

] | null | null | null | documents/DesignNotes/appearance.md | hangle/Notecard | fdbed0ce0d15e0288794e18680da7360a0daeed7 | [

"Apache-2.0"

] | null | null | null | documents/DesignNotes/appearance.md | hangle/Notecard | fdbed0ce0d15e0288794e18680da7360a0daeed7 | [

"Apache-2.0"

] | null | null | null | <h1>Appearance Features </h1>

<p>Appearance features cover the size, style, color, and font style <br />

of text. It also includes the size and length of the input field, <br />

as well as the appearance features of input characters. The <br />

number of input characters can be limited. The window height <br />

and... | 34.712821 | 80 | 0.637465 | eng_Latn | 0.992658 |

914a52608291f40eb2adef88dd684802888b5b29 | 126 | md | Markdown | CHANGELOG.md | cxfans/ftplib | 9b5bf9fa0f314c294d180380f6a891b581378342 | [

"MIT"

] | 1 | 2020-04-13T19:20:08.000Z | 2020-04-13T19:20:08.000Z | CHANGELOG.md | cxfans/ftplib | 9b5bf9fa0f314c294d180380f6a891b581378342 | [

"MIT"

] | null | null | null | CHANGELOG.md | cxfans/ftplib | 9b5bf9fa0f314c294d180380f6a891b581378342 | [

"MIT"

] | 1 | 2020-07-11T08:53:28.000Z | 2020-07-11T08:53:28.000Z | # Change Log of ftplib Library

## [0.1.0] - 2019-11-8

### Release

- Implement basic functions for the File Transfer Protocol (FTP) | 25.2 | 59 | 0.706349 | kor_Hang | 0.431979 |

914aa84b6b10631b7936d349a26ff6ab8741f004 | 596 | md | Markdown | qmk_firmware/keyboards/keebio/quefrency/keymaps/bcat/readme.md | DanTupi/personal_setup | 911b4951e4d8b78d6ea8ca335229e2e970fda871 | [

"MIT"

] | null | null | null | qmk_firmware/keyboards/keebio/quefrency/keymaps/bcat/readme.md | DanTupi/personal_setup | 911b4951e4d8b78d6ea8ca335229e2e970fda871 | [

"MIT"

] | null | null | null | qmk_firmware/keyboards/keebio/quefrency/keymaps/bcat/readme.md | DanTupi/personal_setup | 911b4951e4d8b78d6ea8ca335229e2e970fda871 | [

"MIT"

] | null | null | null | # bcat's Quefrency 65% layout

This is a standard 65% keyboard layout, with a split spacebar, an HHKB-style

(split) backspace, media controls in the function layer (centered around the

ESDF cluster), and RGB controls in the function layer (on the arrow/nav keys).

## Default layer

keywords: vbapb10.chm2162721

f1_keywords:

- vbapb10.chm2162721

ms.prod: publisher

api_name:

- Publisher.Shapes.AddTextEffect

ms.assetid: 21af82f1-d507-3c16-72df-bde1b5e00717

ms.date: 06/08/2017

localization_priority: Normal

---

# Shapes.AddTextEffect method (Publishe... | 38.637363 | 261 | 0.746303 | eng_Latn | 0.865378 |

914ddc6977963a3eacf07066bdc5ac8eda5aabf3 | 585 | markdown | Markdown | website/docs/r/thunder_ip_tcp.html.markdown | a10networks/terraform-provider-thunder | 50fe189add4fc51ca17b648945e63685bf350177 | [

"BSD-2-Clause"

] | 4 | 2020-10-17T00:07:06.000Z | 2021-09-11T21:44:42.000Z | website/docs/r/thunder_ip_tcp.html.markdown | a10networks/terraform-provider-thunder | 50fe189add4fc51ca17b648945e63685bf350177 | [

"BSD-2-Clause"

] | 5 | 2020-10-09T06:47:26.000Z | 2021-09-11T21:44:26.000Z | website/docs/r/thunder_ip_tcp.html.markdown | a10networks/terraform-provider-thunder | 50fe189add4fc51ca17b648945e63685bf350177 | [

"BSD-2-Clause"

] | 3 | 2020-10-13T06:09:53.000Z | 2021-12-03T15:29:08.000Z | ---

layout: "thunder"

page_title: "thunder: thunder_ip_tcp"

sidebar_current: "docs-thunder-resource-ip-tcp"

description: |-

Provides details about thunder ip tcp resource for A10

---

# thunder\_ip\_tcp

`thunder_ip_tcp` Provides details about thunder ip tcp

## Example Usage

```hcl

provider "thunder" {

address =... | 17.205882 | 68 | 0.688889 | eng_Latn | 0.724293 |

914e4741c8b60c327f55637612ed31a778e66072 | 2,891 | md | Markdown | programador/preferencia-tipos-dominio-especifico.md | jaimerodas/97cosas | c8f2f7967ca53e58d4eb04d73ba89d474f23c5eb | [

"CC-BY-3.0"

] | 44 | 2015-04-02T14:05:21.000Z | 2022-02-02T08:34:40.000Z | programador/preferencia-tipos-dominio-especifico.md | jaimerodas/97cosas | c8f2f7967ca53e58d4eb04d73ba89d474f23c5eb | [

"CC-BY-3.0"

] | 13 | 2015-06-17T23:47:28.000Z | 2019-10-30T06:23:25.000Z | programador/preferencia-tipos-dominio-especifico.md | esparta/97cosas | f52357df922fea12abe798d9836b5c5121f5732f | [

"CC-BY-3.0"

] | 21 | 2015-04-02T17:49:10.000Z | 2021-06-09T00:19:03.000Z | ---

layout: programador

title: Prefer Domain-Specific Types to Primitive Types

overview: true

author: Einar Landre

translator: Espartaco Palma

original: https://web.archive.org/web/20150106001512/http://programmer.97things.oreilly.com/wiki/index.php/Prefer_Domain-Specific_Types_to_Primitive_Types

... | 46.629032 | 154 | 0.808025 | spa_Latn | 0.996094 |

914e56fe039e6943cc20d26fc4eb02d8d016a707 | 1,102 | md | Markdown | docs/src/SUMMARY.md | roelvdberg/metacontroller | a9ca3730cfba5bfd544f9b65bf42a86f187e478f | [

"Apache-2.0"