hexsha stringlengths 40 40 | size int64 5 1.04M | ext stringclasses 6 values | lang stringclasses 1 value | max_stars_repo_path stringlengths 3 344 | max_stars_repo_name stringlengths 5 125 | max_stars_repo_head_hexsha stringlengths 40 78 | max_stars_repo_licenses listlengths 1 11 | max_stars_count int64 1 368k ⌀ | max_stars_repo_stars_event_min_datetime stringlengths 24 24 ⌀ | max_stars_repo_stars_event_max_datetime stringlengths 24 24 ⌀ | max_issues_repo_path stringlengths 3 344 | max_issues_repo_name stringlengths 5 125 | max_issues_repo_head_hexsha stringlengths 40 78 | max_issues_repo_licenses listlengths 1 11 | max_issues_count int64 1 116k ⌀ | max_issues_repo_issues_event_min_datetime stringlengths 24 24 ⌀ | max_issues_repo_issues_event_max_datetime stringlengths 24 24 ⌀ | max_forks_repo_path stringlengths 3 344 | max_forks_repo_name stringlengths 5 125 | max_forks_repo_head_hexsha stringlengths 40 78 | max_forks_repo_licenses listlengths 1 11 | max_forks_count int64 1 105k ⌀ | max_forks_repo_forks_event_min_datetime stringlengths 24 24 ⌀ | max_forks_repo_forks_event_max_datetime stringlengths 24 24 ⌀ | content stringlengths 5 1.04M | avg_line_length float64 1.14 851k | max_line_length int64 1 1.03M | alphanum_fraction float64 0 1 | lid stringclasses 191 values | lid_prob float64 0.01 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

9151c0a140085da90f496dbe0a2cb8b1c1194c7c | 2,603 | md | Markdown | _FULLTEXT/connors.photon.md | BJBaardse/open-source-words | 18ca0c71e7718a0e2e9b7269b018f77b06f423b4 | [

"Apache-2.0"

] | 17 | 2018-07-13T02:16:22.000Z | 2021-09-16T15:31:49.000Z | _FULLTEXT/connors.photon.md | letform/open-source-words | 18ca0c71e7718a0e2e9b7269b018f77b06f423b4 | [

"Apache-2.0"

] | null | null | null | _FULLTEXT/connors.photon.md | letform/open-source-words | 18ca0c71e7718a0e2e9b7269b018f77b06f423b4 | [

"Apache-2.0"

] | 6 | 2018-10-12T09:09:05.000Z | 2021-01-01T15:32:45.000Z | Photon UI toolkit for building desktop apps with Electron. Getting started Clone the repo with git clone https://github.com/connors/photon.git Read the docs to learn about the components and how to get your new application started Take note that our master branch is our active, unstable development branch and that if y... | 2,603 | 2,603 | 0.794852 | eng_Latn | 0.997022 |

9151e9596fb6c0200f2a953e6dd32831554c1f28 | 9,989 | md | Markdown | README.md | Wilucco/godot-android-module-firebase | ba94810c5b6d30d493e1e5d4f061d1aefe023840 | [

"Apache-2.0"

] | null | null | null | README.md | Wilucco/godot-android-module-firebase | ba94810c5b6d30d493e1e5d4f061d1aefe023840 | [

"Apache-2.0"

] | null | null | null | README.md | Wilucco/godot-android-module-firebase | ba94810c5b6d30d493e1e5d4f061d1aefe023840 | [

"Apache-2.0"

] | null | null | null | # godot-android-module-firebase

Godot Android module for Firebase, written from scratch. This project replaces https://github.com/yalcin-ata/godot-plugin-firebase.

This works for [Godot Engine](https://godotengine.org/)'s stable version 3.2 (not beta).

Follow the instructions [below](#instructions).

[API documentati... | 42.871245 | 236 | 0.702272 | eng_Latn | 0.434091 |

9152041a7ae7432d3883f9061a43f3160449aec1 | 2,116 | md | Markdown | 029-IoTEdge/Student/Challenge-04.md | aszego/WhatTheHack | 257cb9825ae2308500d68feb4dca5d9fc6175493 | [

"MIT"

] | 991 | 2019-05-07T12:41:00.000Z | 2022-03-24T20:24:55.000Z | 029-IoTEdge/Student/Challenge-04.md | aszego/WhatTheHack | 257cb9825ae2308500d68feb4dca5d9fc6175493 | [

"MIT"

] | 136 | 2019-06-07T20:49:35.000Z | 2022-03-29T16:48:40.000Z | 029-IoTEdge/Student/Challenge-04.md | aszego/WhatTheHack | 257cb9825ae2308500d68feb4dca5d9fc6175493 | [

"MIT"

] | 448 | 2019-05-07T23:12:33.000Z | 2022-03-30T13:22:51.000Z | # Challenge 4: Route messages and do time-series analysis

[< Previous Challenge](./Challenge-03.md) - **[Home](../README.md)** - [Next Challenge >](./Challenge-05.md)

## Introduction

Now that we have device connectivity and data is flowing to Azure IoT Hub, we should explore ways to visualize this data in a d... | 64.121212 | 450 | 0.787335 | eng_Latn | 0.993847 |

91528fa3120f3e76a2a61f401d4dbf917fc93048 | 2,778 | md | Markdown | README.md | BlueGranite/RPivotTable-for-Power-BI | 08934dfae17e896c8ac3d5f143e37907f9b3a4d6 | [

"MIT"

] | 8 | 2018-02-26T14:29:33.000Z | 2022-03-05T18:04:55.000Z | README.md | BlueGranite/RPivotTable-for-Power-BI | 08934dfae17e896c8ac3d5f143e37907f9b3a4d6 | [

"MIT"

] | 2 | 2018-03-13T03:49:54.000Z | 2020-07-06T17:45:25.000Z | README.md | BlueGranite/RPivotTable-for-Power-BI | 08934dfae17e896c8ac3d5f143e37907f9b3a4d6 | [

"MIT"

] | 2 | 2018-04-02T09:59:26.000Z | 2020-06-21T17:06:03.000Z | # R Pivot Table for Power BI

An interactive R HTML *Pivot Table* for Microsoft Power BI from [BlueGranite](https://www.blue-granite.com).

### PREVIEW

R Pivot Table is in early preview. It is in the process of being ... | 79.371429 | 495 | 0.777898 | eng_Latn | 0.985616 |

9152ce5190c235b56037f4484068e11be4e492ff | 2,769 | md | Markdown | docs/relational-databases/backup-restore/vdi-reference/iservervirtualdeviceset2-beginconfiguration.md | william-keller/sql-docs.pt-br | e5218aef85d1f8080eddaadecbb11de1e664c541 | [

"CC-BY-4.0",

"MIT"

] | 1 | 2021-09-05T16:06:11.000Z | 2021-09-05T16:06:11.000Z | docs/relational-databases/backup-restore/vdi-reference/iservervirtualdeviceset2-beginconfiguration.md | william-keller/sql-docs.pt-br | e5218aef85d1f8080eddaadecbb11de1e664c541 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/relational-databases/backup-restore/vdi-reference/iservervirtualdeviceset2-beginconfiguration.md | william-keller/sql-docs.pt-br | e5218aef85d1f8080eddaadecbb11de1e664c541 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: IServerVirtualDeviceSet2::BeginConfiguration

titlesuffix: SQL Server VDI reference

description: This article provides a reference for the IServerVirtualDeviceSet2::BeginConfiguration command.

ms.date: 08/30/2019

ms.prod: sql

ms.prod_service: backup-restore

ms.technology: backup-restore

ms.topic: reference

author... | 43.265625 | 389 | 0.783676 | por_Latn | 0.98707 |

915303359e881f4e10c2bb8d7e98dd7ceec922ba | 476 | md | Markdown | microsoft.ui.xaml.controls/infobadge_iconsource.md | stevemonaco/winui-api | 3e5ad1a5275746690c39fd2502c60928b756f3b5 | [

"CC-BY-4.0",

"MIT"

] | 63 | 2018-11-02T13:52:13.000Z | 2022-03-31T16:31:24.000Z | microsoft.ui.xaml.controls/infobadge_iconsource.md | stevemonaco/winui-api | 3e5ad1a5275746690c39fd2502c60928b756f3b5 | [

"CC-BY-4.0",

"MIT"

] | 99 | 2018-11-16T15:15:12.000Z | 2022-03-31T15:53:15.000Z | microsoft.ui.xaml.controls/infobadge_iconsource.md | stevemonaco/winui-api | 3e5ad1a5275746690c39fd2502c60928b756f3b5 | [

"CC-BY-4.0",

"MIT"

] | 35 | 2018-10-16T05:35:33.000Z | 2022-03-30T23:27:08.000Z | ---

-api-id: P:Microsoft.UI.Xaml.Controls.InfoBadge.IconSource

-api-type: winrt property

---

# Microsoft.UI.Xaml.Controls.InfoBadge.IconSource

<!--

public Microsoft.UI.Xaml.Controls.IconSource IconSource { get; set; }

-->

## -description

Gets or sets the icon to be used in an InfoBadge.

## -property-value

The ico... | 17.62963 | 69 | 0.722689 | yue_Hant | 0.394955 |

9153b2e5b733e4e46910972eb9f13b50ac96e79f | 3,436 | md | Markdown | WindowsServerDocs/identity/ad-fs/operations/Add-a-Claim-Description.md | SeekZ85/windowsserverdocs.fr-fr | 5bf1419505b71bb5f82621880aa069a0e5c88e45 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | WindowsServerDocs/identity/ad-fs/operations/Add-a-Claim-Description.md | SeekZ85/windowsserverdocs.fr-fr | 5bf1419505b71bb5f82621880aa069a0e5c88e45 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | WindowsServerDocs/identity/ad-fs/operations/Add-a-Claim-Description.md | SeekZ85/windowsserverdocs.fr-fr | 5bf1419505b71bb5f82621880aa069a0e5c88e45 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

ms.assetid: 7d230527-f4fe-4572-8838-0b354ee0b06b

title: Add a claim description

description: ''

author: billmath

ms.author: billmath

manager: femila

ms.date: 05/31/2017

ms.topic: article

ms.prod: windows-server

ms.technology: identity-adfs

ms.openlocfilehash: ff50ac8d41a5bbde282b1d5b93c85610f841b5a... | 57.266667 | 520 | 0.79482 | fra_Latn | 0.953456 |

9153fac188a9354726cc06a6b8d15234ee7b661f | 1,084 | md | Markdown | TODO.md | randohm/mpdfront | 1fca0b73da05b0442c08378f551a11f43620c605 | [

"Apache-2.0"

] | 2 | 2020-08-17T04:59:39.000Z | 2020-08-17T04:59:44.000Z | TODO.md | randohm/mpdfront | 1fca0b73da05b0442c08378f551a11f43620c605 | [

"Apache-2.0"

] | null | null | null | TODO.md | randohm/mpdfront | 1fca0b73da05b0442c08378f551a11f43620c605 | [

"Apache-2.0"

] | null | null | null | # TODO

- grab focus by correct pane

- `db_cache` for files

- use status['elapsed']

- turn off timeout on update

- handle db updates in browser

- category: songs

- column browser out of range error

- info pop error on no data

- song counter finish and start over (DSD only??)

- playback layout class

- on stopped state, ... | 22.122449 | 58 | 0.744465 | eng_Latn | 0.992162 |

91542d355010de4571b3c30f3409c0e1a70a5195 | 4,170 | md | Markdown | content/blog/HEALTH/8/9/8780a2a86e20f2d4a71e1ca57cac089a.md | arpecop/big-content | 13c88706b1c13a7415194d5959c913c4d52b96d3 | [

"MIT"

] | 1 | 2022-03-03T17:52:27.000Z | 2022-03-03T17:52:27.000Z | content/blog/HEALTH/8/9/8780a2a86e20f2d4a71e1ca57cac089a.md | arpecop/big-content | 13c88706b1c13a7415194d5959c913c4d52b96d3 | [

"MIT"

] | null | null | null | content/blog/HEALTH/8/9/8780a2a86e20f2d4a71e1ca57cac089a.md | arpecop/big-content | 13c88706b1c13a7415194d5959c913c4d52b96d3 | [

"MIT"

] | null | null | null | ---

title: 8780a2a86e20f2d4a71e1ca57cac089a

mitle: "Alternative Medicine to Try for Your Panic and Anxiety"

image: "https://fthmb.tqn.com/2luUtF_kR5m-oWDjlwR88dr_WrE=/2121x1414/filters:fill(ABEAC3,1)/GettyImages-629784328-58d2c4123df78c516211f49f.jpg"

description: ""

---

The t's ok complementary ask alternative medic... | 521.25 | 3,896 | 0.810072 | eng_Latn | 0.987939 |

9154e76cd6a397f396ecc1cf68c6f43e9a319eac | 63 | md | Markdown | README.md | txazo/dubbo | fc6cadddf379f654619411b017b2e36344b8c3b8 | [

"Apache-2.0"

] | 1 | 2016-06-14T14:17:34.000Z | 2016-06-14T14:17:34.000Z | README.md | txazo/dubbo | fc6cadddf379f654619411b017b2e36344b8c3b8 | [

"Apache-2.0"

] | 8 | 2020-06-30T22:51:39.000Z | 2022-02-01T00:56:00.000Z | README.md | txazo/dubbo | fc6cadddf379f654619411b017b2e36344b8c3b8 | [

"Apache-2.0"

] | null | null | null | ## dubbo

Alibaba RPC Framework Dubbo

## Notes

* Dubbo version: 2.5.3

| 7.875 | 27 | 0.650794 | gaz_Latn | 0.391801 |

9156ef73a214fe9a8eb22307b3d1ae6955384f0f | 11,413 | md | Markdown | docs/logging-to-elmah-io-from-log4net.md | elmahio/documentation | c8c678afad19ccd2195bb035f83c5242a5672b3b | [

"Apache-2.0"

] | null | null | null | docs/logging-to-elmah-io-from-log4net.md | elmahio/documentation | c8c678afad19ccd2195bb035f83c5242a5672b3b | [

"Apache-2.0"

] | 4 | 2018-04-24T11:41:46.000Z | 2019-01-16T13:04:51.000Z | docs/logging-to-elmah-io-from-log4net.md | elmahio/documentation | c8c678afad19ccd2195bb035f83c5242a5672b3b | [

"Apache-2.0"

] | 3 | 2018-04-24T11:24:53.000Z | 2020-10-22T20:37:39.000Z | ---

title: Logging to elmah.io from log4net

description: Learn about how to add error monitoring and storing log4net messages in the cloud with elmah.io. Simple setup using a single NuGet package.

---

[](https://github.com/elmahio/el... | 43.561069 | 571 | 0.740471 | eng_Latn | 0.839147 |

915718f90eb1eaf5d001e35a329ad3a60803bacd | 407 | md | Markdown | guide/russian/certifications/javascript-algorithms-and-data-structures/functional-programming/introduction-to-currying-and-partial-application/index.md | SweeneyNew/freeCodeCamp | e24b995d3d6a2829701de7ac2225d72f3a954b40 | [

"BSD-3-Clause"

] | 10 | 2019-08-09T19:58:19.000Z | 2019-08-11T20:57:44.000Z | guide/russian/certifications/javascript-algorithms-and-data-structures/functional-programming/introduction-to-currying-and-partial-application/index.md | SweeneyNew/freeCodeCamp | e24b995d3d6a2829701de7ac2225d72f3a954b40 | [

"BSD-3-Clause"

] | 2,056 | 2019-08-25T19:29:20.000Z | 2022-02-13T22:13:01.000Z | guide/russian/certifications/javascript-algorithms-and-data-structures/functional-programming/introduction-to-currying-and-partial-application/index.md | SweeneyNew/freeCodeCamp | e24b995d3d6a2829701de7ac2225d72f3a954b40 | [

"BSD-3-Clause"

] | 5 | 2018-10-18T02:02:23.000Z | 2020-08-25T00:32:41.000Z | ---

title: Introduction to Currying and Partial Application

localeTitle: Introduction to Currying and Partial Application

---

## Introduction to Currying and Partial Application

### Solution

```javascript

function add(x) {

// Add your code below this line

return function(y) {

return function(z) {

return ... | 19.380952 | 59 | 0.653563 | eng_Latn | 0.477589 |

91575450ef872877d0784339c6b29167d0e3677a | 2,522 | md | Markdown | Version.md | conero/lang | d5d51c2e26e56197e9f1c9c82eb9f91458287cf6 | [

"Apache-2.0"

] | 2 | 2018-11-13T14:22:52.000Z | 2022-03-16T01:35:23.000Z | Version.md | conero/lang | d5d51c2e26e56197e9f1c9c82eb9f91458287cf6 | [

"Apache-2.0"

] | null | null | null | Version.md | conero/lang | d5d51c2e26e56197e9f1c9c82eb9f91458287cf6 | [

"Apache-2.0"

] | null | null | null | # 版本信息

> 2018年11月13日 星期二

## V3

### v3.4.0-alpha

- **src**

- lang.md

- (优化)*dart 知识介绍添加*

- (+)*异步和同步的概念*

- Js.md

- (+) *添加 threeJs 的相关知识介绍*

- (+)*添加“知识/进制转换”知识介绍*

- Web.md

- (+)*添加内容项目-“浏览器”,以及相关内核介绍*

- (+)*添加 php/python/nodejs 实现内地可测试的 HTTP 服务器*

- AI/DeepLearning.md

- (+) *学习 MAT... | 21.193277 | 93 | 0.568596 | yue_Hant | 0.676447 |

9157ee6649e99cb07d9e43b4c07ad4063764213e | 5,541 | md | Markdown | README.md | coinpathio/wallet | f86c7702ba944b23f18027d7b9765a613643cfe5 | [

"MIT"

] | null | null | null | README.md | coinpathio/wallet | f86c7702ba944b23f18027d7b9765a613643cfe5 | [

"MIT"

] | null | null | null | README.md | coinpathio/wallet | f86c7702ba944b23f18027d7b9765a613643cfe5 | [

"MIT"

] | null | null | null |

## Open-source Multicurrency wallet for Bitcoin and custom assets, and p2p exchange

Live version here: https://swaponline.github.io .

No coding skills? Buy WordPress plugin https://code... | 34.203704 | 207 | 0.715394 | eng_Latn | 0.588917 |

9158b5d7e33b8ccf53090b9c08f1df6a97ff3d31 | 3,314 | md | Markdown | docs/c-runtime-library/reference/lrint-lrintf-lrintl-llrint-llrintf-llrintl.md | anmrdz/cpp-docs.es-es | f3eff4dbb06be3444820c2e57b8ba31616b5ff60 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/c-runtime-library/reference/lrint-lrintf-lrintl-llrint-llrintf-llrintl.md | anmrdz/cpp-docs.es-es | f3eff4dbb06be3444820c2e57b8ba31616b5ff60 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/c-runtime-library/reference/lrint-lrintf-lrintl-llrint-llrintf-llrintl.md | anmrdz/cpp-docs.es-es | f3eff4dbb06be3444820c2e57b8ba31616b5ff60 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: lrint, lrintf, lrintl, llrint, llrintf, llrintl | Microsoft Docs

ms.custom: ''

ms.date: 04/05/2018

ms.technology:

- cpp

- devlang-cpp

ms.topic: reference

apiname:

- lrint

- lrintl

- lrintf

- llrint

- llrintf

- llrintl

apilocation:

- msvcrt.dll

- msvcr80.dll

- msvcr90.dll

- msvcr100.dll

- m... | 23.013889 | 241 | 0.685275 | spa_Latn | 0.394122 |

915900d4c953d07acfeededc082e68d2d3fdda2d | 46,558 | md | Markdown | README.md | media-fdtl/cehnuku | d1539f294a3974120301d7643fcc0909262214f8 | [

"Apache-2.0"

] | null | null | null | README.md | media-fdtl/cehnuku | d1539f294a3974120301d7643fcc0909262214f8 | [

"Apache-2.0"

] | null | null | null | README.md | media-fdtl/cehnuku | d1539f294a3974120301d7643fcc0909262214f8 | [

"Apache-2.0"

] | null | null | null | <?xml version="1.0" encoding="UTF-8" ?>

<!DOCTYPE html PUBLIC "-//W3C//DTD XHTML 1.0 Strict//EN" "http://www.w3.org/TR/xhtml1/DTD/xhtml1-strict.dtd">

<html expr:dir='data:blog.languageDirection' lang='zh-TW' xmlns='http://www.w3.org/1999/xhtml' xmlns:b='http://www.google.com/2005/gml/b' xmlns:data='http://www.google.co... | 42.019856 | 360 | 0.585077 | eng_Latn | 0.121496 |

915be14019cf128ab90541c24ac9f5dd1e438aee | 46 | md | Markdown | dolby_vision/README.md | quietvoid/dovi_tool | b5f0138a255576b16907fcf710a0f304d4b0615f | [

"MIT"

] | 189 | 2020-11-06T13:20:34.000Z | 2022-03-30T12:57:20.000Z | dolby_vision/README.md | quietvoid/dovi_tool | b5f0138a255576b16907fcf710a0f304d4b0615f | [

"MIT"

] | 76 | 2020-11-26T22:11:08.000Z | 2022-03-30T01:36:42.000Z | dolby_vision/README.md | quietvoid/dovi_tool | b5f0138a255576b16907fcf710a0f304d4b0615f | [

"MIT"

] | 29 | 2020-12-07T19:27:46.000Z | 2022-03-22T12:40:06.000Z | Library to read & write Dolby Vision metadata. | 46 | 46 | 0.804348 | eng_Latn | 0.86885 |

915bea5a4487794ddda9a6e35a163ef045465c72 | 6,277 | md | Markdown | docs/pages/overview/changelog/release384.md | adaptris/interlok-manual | d791a67a49143f91b4e04f61b181f0df4036380f | [

"MIT"

] | 4 | 2017-05-07T14:57:09.000Z | 2022-03-21T17:19:57.000Z | docs/pages/overview/changelog/release384.md | adaptris/interlok-manual | d791a67a49143f91b4e04f61b181f0df4036380f | [

"MIT"

] | 3 | 2020-10-07T08:58:57.000Z | 2021-02-25T18:54:27.000Z | docs/pages/overview/changelog/release384.md | adaptris/interlok-manual | d791a67a49143f91b4e04f61b181f0df4036380f | [

"MIT"

] | 1 | 2020-05-20T14:53:17.000Z | 2020-05-20T14:53:17.000Z | ## Version 3.8.4 ##

Release Date : 2019-04-29

### Key Highlights

- Config projects continued improvements: better UX for loading projects; improved variable usage when moving/copying components with existing variables; and various improvements around importing existing config with multiple variable sets; and conf... | 83.693333 | 296 | 0.772821 | eng_Latn | 0.933042 |

915c044914432554d31ee4880167c28c2c5c1b11 | 3,092 | md | Markdown | office-365-management-api/office-365-management-apis-overview.md | BChenMsft/office-365-management-api | 1476bf3eb662c448518ff7a6a1a8ea4e02eb1692 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | office-365-management-api/office-365-management-apis-overview.md | BChenMsft/office-365-management-api | 1476bf3eb662c448518ff7a6a1a8ea4e02eb1692 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | office-365-management-api/office-365-management-apis-overview.md | BChenMsft/office-365-management-api | 1476bf3eb662c448518ff7a6a1a8ea4e02eb1692 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

ms.TocTitle: Office 365 Management APIs overview

title: Office 365 Management APIs overview

description: Provides a single extensibility platform for all Office 365 customers' and partners' management tasks, including service communications, security, compliance, reporting, and auditing.

ms.ContentId: 4fca85f9-... | 81.368421 | 591 | 0.805951 | eng_Latn | 0.967983 |

915c7f0e608d921a93b46cf456f4995f69437f95 | 1,698 | md | Markdown | _posts/C++/DirectX/2021-03-28-directx-tutorial-1.md | EasyCoding-7/easycoding-7.github.io | 9be32363f161ef6096b2c38d99eaaf57b470fc16 | [

"MIT"

] | null | null | null | _posts/C++/DirectX/2021-03-28-directx-tutorial-1.md | EasyCoding-7/easycoding-7.github.io | 9be32363f161ef6096b2c38d99eaaf57b470fc16 | [

"MIT"

] | null | null | null | _posts/C++/DirectX/2021-03-28-directx-tutorial-1.md | EasyCoding-7/easycoding-7.github.io | 9be32363f161ef6096b2c38d99eaaf57b470fc16 | [

"MIT"

] | null | null | null | ---

layout: post

title: "(DirectX : Tutorial) 1. Project Setting"

summary: ""

author: DirectX

date: '2021-03-28 0:00:00 +0000'

category: ['DirectX']

#tags: ['C++', 'tag-test1']

thumbnail: /assets/img/posts/directx-thumnail.jpg

keywords: ['tutorial']

usemathjax: true

permalink: /blog/DirectX/tutorial-1/

---

* [DirectX... | 21.225 | 115 | 0.684923 | kor_Hang | 0.459815 |

915c824304b1f68d05be47f982b8051efab3ea0a | 319 | md | Markdown | posts_old/2018-09-06-2018-09-06-06-16.md | JuanIgnacioGil/3_belle | a131266ac8f7c873ebd34aafbc6f0415cd1cdb86 | [

"MIT"

] | null | null | null | posts_old/2018-09-06-2018-09-06-06-16.md | JuanIgnacioGil/3_belle | a131266ac8f7c873ebd34aafbc6f0415cd1cdb86 | [

"MIT"

] | 1 | 2021-12-06T17:21:47.000Z | 2021-12-06T17:21:47.000Z | stories_old/2018-09-06-2018-09-06-06-16.md | JuanIgnacioGil/3_belle | a131266ac8f7c873ebd34aafbc6f0415cd1cdb86 | [

"MIT"

] | null | null | null | ---

layout: post

title: "2018-09-06"

date: 2018-09-06 06:16:49

description:

image: "/assets/stories/201809/d0e4ce594d684e6df988fd893e7a70e5.jpg"

author: Elise Plain

excerpt: CUANDO NO SÉ ESTOY MÁS CERCA DE SABER QUE CUANDO SÉ

tags:

- stories

- all

---

CUANDO NO SÉ ESTOY MÁS CERCA DE SABER QUE CUANDO SÉ

<p></p>

| 19.9375 | 68 | 0.727273 | yue_Hant | 0.68397 |

915cacea6a7c4954edb139751f57418683838582 | 788 | md | Markdown | about.md | JattMones/mattjonesofficial.github.io | 308277ab4d5ab6c904b36563d9e6ec3501451ffd | [

"MIT"

] | null | null | null | about.md | JattMones/mattjonesofficial.github.io | 308277ab4d5ab6c904b36563d9e6ec3501451ffd | [

"MIT"

] | 2 | 2018-10-02T23:49:40.000Z | 2018-10-03T15:51:24.000Z | about.md | JattMones/jattmones.github.io | 308277ab4d5ab6c904b36563d9e6ec3501451ffd | [

"MIT"

] | null | null | null | ---

layout: page

title: About

permalink: /about/

---

I've always loved problem solving and technology. For this reason I'm interested in Computer Science and its advancement. So, when I moved to Meadville, PA to start undergrad, what better to study than Computer Scie... | 39.4 | 411 | 0.757614 | eng_Latn | 0.972753 |

915d82a3c141addfe0b6cb0c00eee79a0d0879cf | 5,875 | md | Markdown | articles/governance/azure-management.md | IrisClasson/azure-docs.sv-se | a6a2b03ee9a98c9e3708bf0df9f77628db79f1f6 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | articles/governance/azure-management.md | IrisClasson/azure-docs.sv-se | a6a2b03ee9a98c9e3708bf0df9f77628db79f1f6 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | articles/governance/azure-management.md | IrisClasson/azure-docs.sv-se | a6a2b03ee9a98c9e3708bf0df9f77628db79f1f6 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: Overview of Azure Management - Azure Governance

description: Overview of the management areas for Azure applications and resources, with links to content on Azure management tools.

ms.date: 07/06/2020

ms.topic: overview

ms.openlocfilehash: 81d655db706a7330fc541724d490a4885cc2fe8b

ms.sourcegitcommit: e13263... | 94.758065 | 545 | 0.815319 | swe_Latn | 0.999912 |

915d834c892c0127a6480f4ab57746aea533091e | 1,767 | md | Markdown | data/2015/06/2015-06-12.md | bouzuya/blog.bouzuya.net | d5e643990b8e9721ae09c18f99334a898d83fcb8 | [

"MIT"

] | 6 | 2016-05-02T21:31:41.000Z | 2018-01-15T04:48:01.000Z | data/2015/06/2015-06-12.md | bouzuya/blog.bouzuya.net | d5e643990b8e9721ae09c18f99334a898d83fcb8 | [

"MIT"

] | 56 | 2015-05-18T04:57:25.000Z | 2021-07-22T20:17:27.000Z | data/2015/06/2015-06-12.md | bouzuya/blog.bouzuya.net | d5e643990b8e9721ae09c18f99334a898d83fcb8 | [

"MIT"

] | 2 | 2016-06-15T04:06:11.000Z | 2016-10-18T13:36:55.000Z | # 映画『ハリーポッターと炎のゴブレット』

映画『ハリーポッターと炎のゴブレット』を観た。

[2015-05-29][] の 2 作目や [2015-06-05][] の 3 作目に続いて 4 作目。金曜ロードショーで 4 週連続放送しているハリーポッターシリーズを観た。1 作目 ( 1 週目 ) は書きそびれたんだよね……。

小説はここから上下巻の二冊組だったはず。

分量の割には内容は薄く感じたんだけど映像化しても同じだな。

キャラクターが死ぬと話が重くなるはずなんだけど割とどうでもいいキャラなのかあっさりしている。

とってつけたようなトライウィザードトーナメント。妙に粗い闇の魔術に対する防衛術の先生の描写。不可抗力で... | 36.8125 | 146 | 0.768534 | yue_Hant | 0.65475 |

915de1b92b21971a2ddf32df41268df20cf6b183 | 1,656 | md | Markdown | README.md | dinfuehr/dora | bcfdac576b729e2bbb2422d0239426b884059b2c | [

"MIT"

] | 415 | 2016-12-12T13:23:20.000Z | 2022-03-26T10:32:44.000Z | README.md | dinfuehr/dora | bcfdac576b729e2bbb2422d0239426b884059b2c | [

"MIT"

] | 227 | 2017-02-07T03:32:44.000Z | 2022-03-29T06:53:05.000Z | README.md | dinfuehr/dora | bcfdac576b729e2bbb2422d0239426b884059b2c | [

"MIT"

] | 30 | 2017-05-03T12:25:22.000Z | 2022-02-07T22:42:19.000Z | # Dora

[](https://gitter.im/dora-lang/Lobby?utm_source=badge&utm_medium=badge&utm_campaign=pr-badge&utm_content=badge) [](https://github... | 57.103448 | 339 | 0.771739 | eng_Latn | 0.951819 |

915de555b85c5d677f7e5020798cb7143e9daf86 | 192 | md | Markdown | Practice_Uploads/toDoList/readme.md | queenish001/Web-Development | e5f21377e513ac86f8132242ea8b39eeb72728e1 | [

"MIT"

] | null | null | null | Practice_Uploads/toDoList/readme.md | queenish001/Web-Development | e5f21377e513ac86f8132242ea8b39eeb72728e1 | [

"MIT"

] | null | null | null | Practice_Uploads/toDoList/readme.md | queenish001/Web-Development | e5f21377e513ac86f8132242ea8b39eeb72728e1 | [

"MIT"

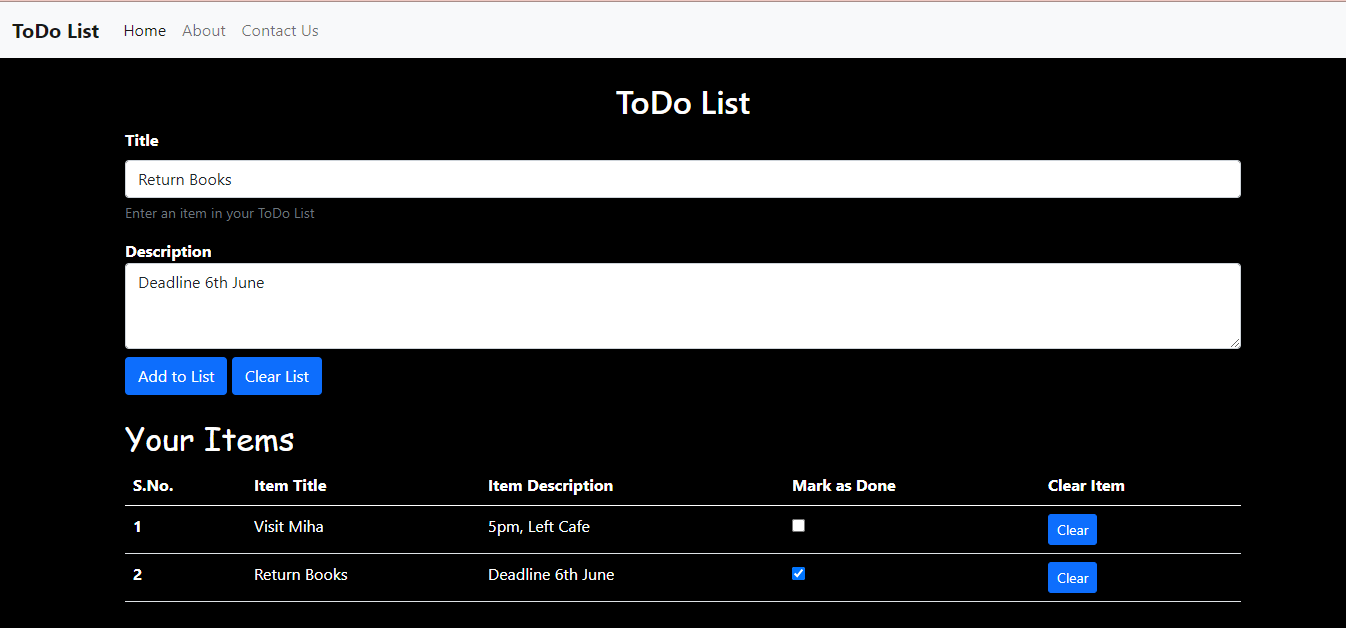

] | null | null | null | * Add or Clear Tasks

* Mark tasks as done

* Clear the Entire List in one go

| 32 | 114 | 0.78125 | eng_Latn | 0.304599 |

915e69c283d86c4b51e85e23537646a219714865 | 887 | md | Markdown | README.md | Fantastic-Four-CSC-370/Book-Drop | b15965c4ce470fb49ce9967c7f8fd0365194cfb9 | [

"MIT"

] | null | null | null | README.md | Fantastic-Four-CSC-370/Book-Drop | b15965c4ce470fb49ce9967c7f8fd0365194cfb9 | [

"MIT"

] | null | null | null | README.md | Fantastic-Four-CSC-370/Book-Drop | b15965c4ce470fb49ce9967c7f8fd0365194cfb9 | [

"MIT"

] | null | null | null | # Book-Drop CSC 470

# Team

| <a href="https://github.com/mhrshuvo" target="_blank">**SHUVO**</a> | <a href="https://github.com/RifatdaM" target="_blank">**Rifat**</a> | <a href="https://github.com/sabb... | 88.7 | 424 | 0.686584 | yue_Hant | 0.258049 |

aa4caaad1eaacc98dc132a97cb0b2556929e2e39 | 17,720 | md | Markdown | docs/architecture/containerized-lifecycle/design-develop-containerized-apps/docker-apps-inner-loop-workflow.md | pirluq/docs | 820adc9b585ecb5691957cd2a00906ad56fe826c | [

"CC-BY-4.0",

"MIT"

] | 3 | 2021-10-06T18:19:40.000Z | 2022-01-26T20:00:53.000Z | docs/architecture/containerized-lifecycle/design-develop-containerized-apps/docker-apps-inner-loop-workflow.md | pirluq/docs | 820adc9b585ecb5691957cd2a00906ad56fe826c | [

"CC-BY-4.0",

"MIT"

] | 154 | 2021-11-04T02:22:26.000Z | 2022-03-21T02:19:33.000Z | docs/architecture/containerized-lifecycle/design-develop-containerized-apps/docker-apps-inner-loop-workflow.md | pirluq/docs | 820adc9b585ecb5691957cd2a00906ad56fe826c | [

"CC-BY-4.0",

"MIT"

] | 1 | 2020-06-04T22:13:58.000Z | 2020-06-04T22:13:58.000Z | ---

title: Inner-loop development workflow for Docker apps

description: Learn the "inner loop" workflow for development of Docker applications.

ms.date: 02/15/2019

---

# Inner-loop development workflow for Docker apps

Before triggering the outer-loop workflow spanning the entire DevOps cycle, it all begins on each dev... | 66.119403 | 638 | 0.777144 | eng_Latn | 0.994102 |

aa4f042fe695998ac3768d892797bb6164a6eb4b | 1,471 | md | Markdown | en/datalens/operations/connection/create-appmetrica.md | IyliyaChe/docs | 15b2c8f12569ac6cb69224a32aaa0f223d675076 | [

"CC-BY-4.0"

] | null | null | null | en/datalens/operations/connection/create-appmetrica.md | IyliyaChe/docs | 15b2c8f12569ac6cb69224a32aaa0f223d675076 | [

"CC-BY-4.0"

] | null | null | null | en/datalens/operations/connection/create-appmetrica.md | IyliyaChe/docs | 15b2c8f12569ac6cb69224a32aaa0f223d675076 | [

"CC-BY-4.0"

] | null | null | null | # Creating an AppMetrica connection

## Connecting to AppMetrica {#appmetrica-connection}

To create an AppMetrica connection:

1. Go to the [connections page](https://datalens.yandex.com/connections).

1. Click **Create** and select **Connection**.

1. Select **AppMetrica** as the connection type.

1. In the field next t... | 44.575758 | 153 | 0.740313 | eng_Latn | 0.986753 |

aa4f8599c9976a7bba102e7c9d931fc29e9e8376 | 179 | md | Markdown | src/input/demos/enUS/count.demo.md | bljessica/naive-ui | 0ceffd01031d3fe5c4b4e0d56d9328a579cdeb57 | [

"MIT"

] | 5 | 2021-06-17T21:12:18.000Z | 2022-03-24T18:39:36.000Z | src/input/demos/enUS/count.demo.md | bljessica/naive-ui | 0ceffd01031d3fe5c4b4e0d56d9328a579cdeb57 | [

"MIT"

] | 10 | 2021-07-07T06:15:26.000Z | 2022-02-07T06:25:46.000Z | src/input/demos/enUS/count.demo.md | xiumubai/xiumu-ui-master | c317192617794691c4e2d9dee80e70cd7f145980 | [

"MIT"

] | 3 | 2021-06-09T03:07:10.000Z | 2021-07-31T12:33:44.000Z | # Word Limit

No waste words.

```html

<n-space vertical>

<n-input maxlength="30" show-count clearable />

<n-input type="textarea" maxlength="30" show-count />

</n-space>

```

| 16.272727 | 55 | 0.659218 | eng_Latn | 0.500168 |

aa4fc3c30c23a5b638d566dd481054390525b5c6 | 9,916 | md | Markdown | README.md | JasonkayZK/jasonkayzk | 277f3b605b0e639900c90d151ea5a170db1f4da3 | [

"Apache-2.0"

] | 6 | 2020-07-19T08:35:03.000Z | 2021-12-22T11:32:48.000Z | README.md | JasonkayZK/jasonkayzk | 277f3b605b0e639900c90d151ea5a170db1f4da3 | [

"Apache-2.0"

] | 1 | 2020-08-30T02:26:36.000Z | 2020-08-30T02:26:36.000Z | README.md | JasonkayZK/jasonkayzk | 277f3b605b0e639900c90d151ea5a170db1f4da3 | [

"Apache-2.0"

] | 49 | 2020-07-24T20:20:32.000Z | 2022-03-15T05:43:12.000Z | ### Hi there, I’m [JasonkayZK](https://jasonkayzk.github.io/) 👋

<p align="center">

<img src="https://cdn.jsdelivr.net/gh/jasonkayzk/jasonkayzk@master/hello-world.gif" width="30%">

</p>

<p align="center">

<img width="500" src="https://metrics.lecoq.io/jasonkayzk?template=classic&repositories.forks=true&followup=... | 75.121212 | 283 | 0.720351 | yue_Hant | 0.575648 |

aa506a4554b21dabe7e0b4d336eab7fea993b64b | 3,237 | md | Markdown | content/template_posts/shortcodes/index.bn.md | jsduenass/yourTeamWebsite | feb69c57974f3c190330d3ca3ec689d6b7a5c3c4 | [

"MIT"

] | null | null | null | content/template_posts/shortcodes/index.bn.md | jsduenass/yourTeamWebsite | feb69c57974f3c190330d3ca3ec689d6b7a5c3c4 | [

"MIT"

] | null | null | null | content/template_posts/shortcodes/index.bn.md | jsduenass/yourTeamWebsite | feb69c57974f3c190330d3ca3ec689d6b7a5c3c4 | [

"MIT"

] | null | null | null | ---

title: "Shortcode Samples"

date: 2020-06-08T08:06:25+06:00

description: Shortcodes sample

---

This sample post was created to test the following:

- The default hero image.

- Various shortcodes.

## Alerts

This theme includes the following alerts:

{{< alert type="success" >}}

This is a sample alert with `type="success"`.

{{< ... | 26.752066 | 467 | 0.635465 | ben_Beng | 0.098935 |

aa50916c4823a0fb651026ba9dc7bcf1dbb50264 | 14,687 | md | Markdown | docs/framework/wcf/feature-details/selecting-a-credential-type.md | paularuiz22/docs | 56a652c21770cad32dfcf128f8977d341d106332 | [

"CC-BY-4.0",

"MIT"

] | 1 | 2019-12-17T08:15:14.000Z | 2019-12-17T08:15:14.000Z | docs/framework/wcf/feature-details/selecting-a-credential-type.md | paularuiz22/docs | 56a652c21770cad32dfcf128f8977d341d106332 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/framework/wcf/feature-details/selecting-a-credential-type.md | paularuiz22/docs | 56a652c21770cad32dfcf128f8977d341d106332 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: "Selecting a Credential Type"

ms.date: "03/30/2017"

ms.assetid: bf707063-3f30-4304-ab53-0e63413728a8

---

# Selecting a Credential Type

*Credentials* are the data Windows Communication Foundation (WCF) uses to establish either a claimed identity or capabilities. For example, a passport is a credential a gover... | 131.133929 | 1,083 | 0.803023 | eng_Latn | 0.995182 |

aa51a9bc69a0a2e8c930b7c7c05c7495317ed413 | 647 | md | Markdown | 2018/CVE-2018-6605.md | justinforbes/cve | 375c65312f55c34fc1a4858381315fe9431b0f16 | [

"MIT"

] | 2,340 | 2022-02-10T21:04:40.000Z | 2022-03-31T14:42:58.000Z | 2018/CVE-2018-6605.md | justinforbes/cve | 375c65312f55c34fc1a4858381315fe9431b0f16 | [

"MIT"

] | 19 | 2022-02-11T16:06:53.000Z | 2022-03-11T10:44:27.000Z | 2018/CVE-2018-6605.md | justinforbes/cve | 375c65312f55c34fc1a4858381315fe9431b0f16 | [

"MIT"

] | 280 | 2022-02-10T19:58:58.000Z | 2022-03-26T11:13:05.000Z | ### [CVE-2018-6605](https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2018-6605)


[](https://coveralls.io/github/moleculerjs/moleculer?b... | 45.957447 | 425 | 0.750309 | eng_Latn | 0.272946 |

aa5300fe7cc54de956a6b8f430b1960ebab4b440 | 420 | md | Markdown | about.md | michaelsusanto81/os201 | d2b49f0950ec70a6c884d8bccb702f33887a5b34 | [

"MIT"

] | null | null | null | about.md | michaelsusanto81/os201 | d2b49f0950ec70a6c884d8bccb702f33887a5b34 | [

"MIT"

] | null | null | null | about.md | michaelsusanto81/os201 | d2b49f0950ec70a6c884d8bccb702f33887a5b34 | [

"MIT"

] | 1 | 2020-06-08T07:24:36.000Z | 2020-06-08T07:24:36.000Z | ---

permalink: /About/

---

# About Michael

<img src="http://michaelto.herokuapp.com/static/img/michaelsusanto-profile.39cc73cc0e65.png" width="200" style="border-radius: 100%">

* Hello! My name is Michael Susanto.

* I'm from the University of Indonesia, currently fighting for my bachelor's degree in Computer Science.

* Yo... | 32.307692 | 133 | 0.738095 | eng_Latn | 0.655933 |

aa53617e78ea6a8e9803b0fda3f1eeb2f064c04f | 861 | md | Markdown | tests/readmes/iamalbert/pytorch-wordemb/README.md | JulienPalard/mdorrst | 88a605dc8aea11c24d24e4257efc39c2a9a125ad | [

"MIT"

] | 3 | 2017-04-27T03:19:02.000Z | 2021-02-05T13:17:27.000Z | tests/readmes/iamalbert/pytorch-wordemb/README.md | JulienPalard/mdorrst | 88a605dc8aea11c24d24e4257efc39c2a9a125ad | [

"MIT"

] | 1 | 2019-10-23T07:36:30.000Z | 2019-10-23T07:36:31.000Z | tests/readmes/iamalbert/pytorch-wordemb/README.md | JulienPalard/mdorrst | 88a605dc8aea11c24d24e4257efc39c2a9a125ad | [

"MIT"

] | 4 | 2017-05-13T06:39:20.000Z | 2020-11-06T11:00:50.000Z | # pytorch-wordemb

Load pretrained word embeddings (word2vec or GloVe format) into a `torch.FloatTensor` for PyTorch.
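To make the word2vec binary format more concrete, here is a minimal standard-library sketch of a reader. It is not torchwordemb's implementation, which loads the vectors into a `torch.FloatTensor`; this version returns plain Python lists. It assumes the commonly used layout: an ASCII header `<vocab_size> <dim>`, then for each word the token, a single space, and `dim` little-endian float32 values. The byte order is an assumption, and the function name `read_word2vec_bin` is hypothetical.

```python
import io
import struct


def read_word2vec_bin(stream):
    """Parse a word2vec binary-format model from a binary stream.

    Returns (vocab, vectors): vocab maps each word to its row index and
    vectors is a list of float lists, one row per word.
    """
    # Header: "<vocab_size> <dim>\n" in ASCII.
    vocab_size, dim = (int(field) for field in stream.readline().split())
    vocab = {}
    vectors = []
    for index in range(vocab_size):
        # The token runs up to the first space. Skip the newline that
        # some writers emit after the previous vector.
        token = bytearray()
        while True:
            ch = stream.read(1)
            if ch == b"":
                raise ValueError("unexpected end of file while reading a token")
            if ch == b" ":
                break
            if ch != b"\n":
                token.extend(ch)
        # Each vector is `dim` float32 values (little-endian is assumed here).
        raw = stream.read(4 * dim)
        if len(raw) != 4 * dim:
            raise ValueError("unexpected end of file while reading a vector")
        vectors.append(list(struct.unpack(f"<{dim}f", raw)))
        vocab[token.decode("utf-8")] = index
    return vocab, vectors


if __name__ == "__main__":
    # Build a tiny two-word, three-dimensional model in memory and parse it.
    data = (b"2 3\n"
            + b"cat " + struct.pack("<3f", 1.0, 2.0, 3.0) + b"\n"
            + b"dog " + struct.pack("<3f", 0.5, -1.0, 0.0) + b"\n")
    vocab, vectors = read_word2vec_bin(io.BytesIO(data))
    print(vocab)       # {'cat': 0, 'dog': 1}
    print(vectors[1])  # [0.5, -1.0, 0.0]
```

Tokens are read one byte at a time because, in this format, words are separated from their vectors only by a space. Vectors, by contrast, have a fixed size and can be read in a single call.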

## Install

PyTorch is required.

```

pip install torchwordemb

```

## Usage

```python

import torchwordemb

```

### torchwordemb.load_word2vec_bin(path)

Read a word2vec binary-format model from... | 23.27027 | 124 | 0.69338 | eng_Latn | 0.759325 |

aa541987958a467712cc8a9c647f3f50ac9e3380 | 1,099 | md | Markdown | README.md | devLeonardoTS/Heroku_TodoListApp_Server | f3ac5d32a8215bb4730fc5899b93ec49c07903b0 | [

"Apache-2.0"

] | null | null | null | README.md | devLeonardoTS/Heroku_TodoListApp_Server | f3ac5d32a8215bb4730fc5899b93ec49c07903b0 | [

"Apache-2.0"

] | null | null | null | README.md | devLeonardoTS/Heroku_TodoListApp_Server | f3ac5d32a8215bb4730fc5899b93ec49c07903b0 | [

"Apache-2.0"

] | null | null | null | # Heroku_TodoListApp_Server

A RESTful API that provides Create, Read, Update, Delete (CRUD) operations for front-end (Web or Mobile) to-do list applications (simple task lists).

It was developed as a way for me to gain more knowledge about JavaScript, TypeScript, REST architecture, integrations... | 122.111111 | 537 | 0.803458 | por_Latn | 0.999906 |

aa545faab787c248e4cdaffee6e6409347080b13 | 218 | md | Markdown | _watches/M20201230_064350_TLP_4.md | Meteoros-Floripa/meteoros.floripa.br | 7d296fb8d630a4e5fec9ab1a3fb6050420fc0dad | [

"MIT"

] | 5 | 2020-05-19T17:04:49.000Z | 2021-03-30T03:09:14.000Z | _watches/M20201230_064350_TLP_4.md | Meteoros-Floripa/site | 764cf471d85a6b498873610e4f3b30efd1fd9fae | [

"MIT"

] | null | null | null | _watches/M20201230_064350_TLP_4.md | Meteoros-Floripa/site | 764cf471d85a6b498873610e4f3b30efd1fd9fae | [

"MIT"

] | 2 | 2020-05-19T17:06:27.000Z | 2020-09-04T00:00:43.000Z | ---

layout: watch

title: TLP4 - 30/12/2020 - M20201230_064350_TLP_4T.jpg

date: 2020-12-30 06:43:50

permalink: /2020/12/30/watch/M20201230_064350_TLP_4

capture: TLP4/2020/202012/20201229/M20201230_064350_TLP_4T.jpg

---

| 27.25 | 62 | 0.784404 | fra_Latn | 0.053076 |

aa5540d91bed7532cfeb66caf02992dcf2a640b2 | 3,681 | md | Markdown | content/blog/HEALTH/0/2/6b7adb14fefdfb327c1ff7397cbd3021.md | arpecop/big-content | 13c88706b1c13a7415194d5959c913c4d52b96d3 | [

"MIT"

] | 1 | 2022-03-03T17:52:27.000Z | 2022-03-03T17:52:27.000Z | content/blog/HEALTH/0/2/6b7adb14fefdfb327c1ff7397cbd3021.md | arpecop/big-content | 13c88706b1c13a7415194d5959c913c4d52b96d3 | [

"MIT"

] | null | null | null | content/blog/HEALTH/0/2/6b7adb14fefdfb327c1ff7397cbd3021.md | arpecop/big-content | 13c88706b1c13a7415194d5959c913c4d52b96d3 | [

"MIT"

] | null | null | null | ---

title: 6b7adb14fefdfb327c1ff7397cbd3021

mitle: "What's the best way to learn Italian?"

image: "https://fthmb.tqn.com/nPkybBiD5OIKnTFAYmXqS9l6cr8=/2121x1414/filters:fill(auto,1)/bestwaytolearn-57f5610c3df78c690f11d9ab.jpg"

description: ""

---

The Italian national soccer team, don't us <em>Gli Azzurri</... | 460.125 | 3,433 | 0.770443 | eng_Latn | 0.992168 |

aa557297be310e0b29c5cc69b373e934a47f267d | 852 | md | Markdown | content/zh/docs/reference/glossary/job.md | rendiputra/website | 4b93c0608828e685881be1662e766d696f0b097b | [

"CC-BY-4.0"

] | 3,157 | 2017-10-18T13:28:53.000Z | 2022-03-31T06:41:57.000Z | content/zh/docs/reference/glossary/job.md | rendiputra/website | 4b93c0608828e685881be1662e766d696f0b097b | [

"CC-BY-4.0"

] | 27,074 | 2017-10-18T09:53:11.000Z | 2022-03-31T23:57:19.000Z | content/zh/docs/reference/glossary/job.md | rendiputra/website | 4b93c0608828e685881be1662e766d696f0b097b | [

"CC-BY-4.0"

] | 11,539 | 2017-10-18T15:54:11.000Z | 2022-03-31T12:51:54.000Z | ---

title: Job

id: job

date: 2018-04-12

full_link: /zh/docs/concepts/workloads/controllers/job/

short_description: >

A Job is a finite or batch task that runs to completion.

aka:

tags:

- fundamental

- core-object

- workload

---

<!--

---

title: Job

id: job

date: 2018-04-12

full_link: /docs/concepts/workloads/controllers/job/

short_description: >

... | 18.12766 | 208 | 0.720657 | eng_Latn | 0.85716 |

aa558e2f5b11debc1f7dbe060181b85324100e48 | 1,500 | md | Markdown | properties.md | josephluck/crux | c799fad19710c9dc65d10148951beae0a552d9f7 | [

"MIT"

] | 1 | 2017-11-12T16:44:17.000Z | 2017-11-12T16:44:17.000Z | properties.md | josephluck/crux | c799fad19710c9dc65d10148951beae0a552d9f7 | [

"MIT"

] | 1 | 2017-06-26T16:20:10.000Z | 2017-06-26T16:20:10.000Z | properties.md | josephluck/crux | c799fad19710c9dc65d10148951beae0a552d9f7 | [

"MIT"

] | null | null | null | ## Css properties to include

### Background

✓ background-color

### Border

✓ border-top

✓ border-right

✓ border-bottom

✓ border-left

✓ border-color

✓ border-top-color

✓ border-right-color

✓ border-bottom-color

✓ border-left-color

✓ border-style

✓ border-top-style

✓ border-right-style

✓ border-bottom-style

✓ border-lef... | 12.295082 | 28 | 0.693333 | eng_Latn | 0.68737 |

aa5652a9725617bb0eb865a6ef6d55dca0afc64b | 973 | md | Markdown | challenges/multiBracketValidation/README.md | AbuKhalil95/data-structures-and-algorithms | 9588f84468edeafb719cc8985ddc70f3e8ee79aa | [

"MIT"

] | null | null | null | challenges/multiBracketValidation/README.md | AbuKhalil95/data-structures-and-algorithms | 9588f84468edeafb719cc8985ddc70f3e8ee79aa | [

"MIT"

] | 3 | 2020-09-14T06:11:31.000Z | 2020-10-16T14:33:22.000Z | challenges/multiBracketValidation/README.md | AbuKhalil95/data-structures-and-algorithms | 9588f84468edeafb719cc8985ddc70f3e8ee79aa | [

"MIT"

] | null | null | null | # Multi-bracket Validation

This algorithm checks whether a string contains balanced brackets (parentheses, square brackets, and curly braces).

# Class-013

# Balanced Parentheses

The challenge is to write an algorithm that finds every opening bracket and matches it with its corresponding closing bracket: `()`, `[]`, or `{}`.
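A common way to solve this is with a stack. Each opening bracket is pushed onto the stack. For each closing bracket, the most recent opener is popped and checked for a match. Here is a minimal Python sketch of that approach; the function name `is_balanced` is illustrative and does not come from this repository.

```python
def is_balanced(text):
    """Return True if every (), [] and {} in text is properly matched and nested."""
    pairs = {")": "(", "]": "[", "}": "{"}
    stack = []
    for ch in text:
        if ch in "([{":
            stack.append(ch)
        elif ch in pairs:
            # A closer must match the most recent unmatched opener.
            if not stack or stack.pop() != pairs[ch]:
                return False
    # Any opener left on the stack was never closed.
    return not stack


print(is_balanced("{[()]}"))  # True
print(is_balanced("{(})"))    # False
```

Characters other than brackets are ignored. The algorithm makes a single pass over the string, so it runs in O(n) time and uses at most O(n) extra space for the stack.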

## Challenge

The match would need to happen r... | 48.65 | 204 | 0.7852 | eng_Latn | 0.998597 |

aa56b895af78c8b125a010cb7e7d9bb04d22de69 | 22 | md | Markdown | README.md | whitestarrain/blog | 553a476b2beb98a92cc5b086734b39506ea91435 | [

"MIT"

] | 4 | 2021-05-28T01:04:21.000Z | 2022-01-04T08:57:02.000Z | README.md | whitestarrain/blog | 553a476b2beb98a92cc5b086734b39506ea91435 | [

"MIT"

] | null | null | null | README.md | whitestarrain/blog | 553a476b2beb98a92cc5b086734b39506ea91435 | [

"MIT"

] | null | null | null | # blog

A private blog | 7.333333 | 14 | 0.727273 | kor_Hang | 0.497594 |

aa56bcf9cc8d91c0092ef3320a9f3e626937a710 | 69 | md | Markdown | README.md | suarya/sfdxdemorepo | bdc6db1a2a3375014d3317fc1026e489fc4804a4 | [

"MIT"

] | null | null | null | README.md | suarya/sfdxdemorepo | bdc6db1a2a3375014d3317fc1026e489fc4804a4 | [

"MIT"

] | null | null | null | README.md | suarya/sfdxdemorepo | bdc6db1a2a3375014d3317fc1026e489fc4804a4 | [

"MIT"

] | null | null | null | ## Read Me

## Set Up

Start with Git using 'git clone your repo path'! | 23 | 48 | 0.695652 | eng_Latn | 0.937331 |

aa56be764ab92d745cc0d333453ea3d3bb1e82e8 | 4,887 | md | Markdown | cello/11723-16751/11779.md | hyperledger-gerrit-archive/fabric-gerrit | 188c6e69ccb2e4c4d609ae749a467fa7e289b262 | [

"Apache-2.0"

] | 2 | 2021-01-08T04:06:04.000Z | 2021-02-09T08:28:54.000Z | cello/11723-16751/11779.md | cendhu/fabric-gerrit | 188c6e69ccb2e4c4d609ae749a467fa7e289b262 | [

"Apache-2.0"

] | null | null | null | cello/11723-16751/11779.md | cendhu/fabric-gerrit | 188c6e69ccb2e4c4d609ae749a467fa7e289b262 | [

"Apache-2.0"

] | 4 | 2019-12-07T05:54:26.000Z | 2020-06-04T02:29:43.000Z | <strong>Project</strong>: cello<br><strong>Branch</strong>: master<br><strong>ID</strong>: 11779<br><strong>Subject</strong>: [CE-92]Fixed a broken link [ci-skip]<br><strong>Status</strong>: MERGED<br><strong>Owner</strong>: Mark Parzygnat - markparz@us.ibm.com<br><strong>Assignee</strong>:<br><strong>Created</strong>:... | 222.136364 | 2,682 | 0.756292 | kor_Hang | 0.309041 |

aa589d3be40873a00557858c5b7ec14f0bb61a49 | 3,339 | md | Markdown | docs/2014/integration-services/configure-logging-by-using-a-saved-configuration-file.md | sql-aus-hh/sql-docs.de-de | edfac31211cedb5d13440802f131a1e48934748a | [

"CC-BY-4.0",

"MIT"

] | 1 | 2022-02-25T18:10:29.000Z | 2022-02-25T18:10:29.000Z | docs/2014/integration-services/configure-logging-by-using-a-saved-configuration-file.md | sql-aus-hh/sql-docs.de-de | edfac31211cedb5d13440802f131a1e48934748a | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/2014/integration-services/configure-logging-by-using-a-saved-configuration-file.md | sql-aus-hh/sql-docs.de-de | edfac31211cedb5d13440802f131a1e48934748a | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: Configure Logging by Using a Saved Configuration File | Microsoft Docs

ms.custom: ''

ms.date: 06/13/2017

ms.prod: sql-server-2014

ms.reviewer: ''

ms.technology:

- integration-services

ms.topic: conceptual

helpviewer_keywords:

- containers [Integration Services], logs

- l... | 56.59322 | 306 | 0.785565 | deu_Latn | 0.987123 |

aa58f0ac234cf816c24059e234790d4e737f49aa | 4,572 | md | Markdown | _posts/2019-05-07-scaffold-a-new-rails-5-api.md | johncorderox/johncorderox.github.io | d0ec98239783f74546847481115a9608f71a02a0 | [

"MIT"

] | null | null | null | _posts/2019-05-07-scaffold-a-new-rails-5-api.md | johncorderox/johncorderox.github.io | d0ec98239783f74546847481115a9608f71a02a0 | [

"MIT"

] | 9 | 2020-02-25T22:43:40.000Z | 2022-02-26T10:24:52.000Z | archive/_posts/2019-05-07-scaffold-a-new-rails-5-api.md | johncorderox/johncorderox.github.io | d0ec98239783f74546847481115a9608f71a02a0 | [

"MIT"

] | null | null | null | ---

title: Scaffold a New Rails 5 API.

description: Make a Rails/React API from start to finish

layout: post

---

## Rails API 💎

The `rails new` command with the `--api` option scaffolds everything we need to get up and running.

1. Run the following: `rails new my-first-api --api -T`

What's going on here? The `--api` flag tells ... | 32.197183 | 299 | 0.74322 | eng_Latn | 0.974215 |

aa58fbb31520d87291c84e29e4016aff95a51f5e | 7,149 | md | Markdown | README.md | A-Yamout/olivia | 22802f03255683ac3167dd1b07b24ac33935f89d | [

"MIT"

] | 4 | 2021-08-06T13:52:07.000Z | 2021-12-15T05:58:52.000Z | README.md | A-Yamout/olivia | 22802f03255683ac3167dd1b07b24ac33935f89d | [

"MIT"

] | null | null | null | README.md | A-Yamout/olivia | 22802f03255683ac3167dd1b07b24ac33935f89d | [

"MIT"

] | 1 | 2021-06-10T09:33:19.000Z | 2021-06-10T09:33:19.000Z | <h1 align="center">

<br>

<img src="https://olivia-ai.org/img/icons/olivia-with-text.png" alt="Olivia's character" width="300">

<br>

</h1>

<h4 align="center">💁♀️ Your new best friend</h4>

<p align="center">

<a href="https://goreportcard.com/report/github.com/olivia-ai/olivia"><img src="https://goreportcard.c... | 33.881517 | 200 | 0.702056 | eng_Latn | 0.339465 |

aa5924243c7e175a02a3c6d16c8836882b2a9dd6 | 1,148 | md | Markdown | includes/active-directory-end-user-preview-notice-security-key.md | changeworld/azure-docs.it- | 34f70ff6964ec4f6f1a08527526e214fdefbe12a | [

"CC-BY-4.0",

"MIT"

] | 1 | 2017-06-06T22:50:05.000Z | 2017-06-06T22:50:05.000Z | includes/active-directory-end-user-preview-notice-security-key.md | changeworld/azure-docs.it- | 34f70ff6964ec4f6f1a08527526e214fdefbe12a | [

"CC-BY-4.0",

"MIT"

] | 41 | 2016-11-21T14:37:50.000Z | 2017-06-14T20:46:01.000Z | includes/active-directory-end-user-preview-notice-security-key.md | changeworld/azure-docs.it- | 34f70ff6964ec4f6f1a08527526e214fdefbe12a | [

"CC-BY-4.0",

"MIT"

] | 7 | 2016-11-16T18:13:16.000Z | 2017-06-26T10:37:55.000Z | ---

title: include file

description: include file

services: active-directory

author: eross-msft

ms.service: active-directory

ms.topic: include

ms.date: 07/03/2019

ms.author: lizross

ms.custom: include file

ms.openlocfilehash: 8757c2c30275e4bae76aff14604b769f16bc00e8

ms.sourcegitcommit: f28ebb95ae9aaaff3f87d8388a09b... | 63.777778 | 713 | 0.824042 | ita_Latn | 0.989121 |

aa59d601c9d92fb6af18ce9aef783a38fcae36bf | 31 | md | Markdown | README.md | ZieIony/Base | 83535bcf3de988609e87860365f57778a5c41384 | [

"Apache-2.0"

] | null | null | null | README.md | ZieIony/Base | 83535bcf3de988609e87860365f57778a5c41384 | [

"Apache-2.0"

] | 2 | 2020-05-10T13:53:34.000Z | 2020-06-08T22:35:17.000Z | README.md | ZieIony/Base | 83535bcf3de988609e87860365f57778a5c41384 | [

"Apache-2.0"

] | 2 | 2020-01-03T12:00:55.000Z | 2021-05-12T12:37:20.000Z | # Base

Base module for my apps

| 10.333333 | 23 | 0.741935 | eng_Latn | 0.994481 |

aa5a87fdb2a229a361413b457d6510b72180ba9d | 10,129 | md | Markdown | _listings/att-dev-program/mymessagesv2messages-get-postman.md | streamdata-gallery-organizations/at-t-dev-program | fa21fa0f65c1bcbad708b40a3e5bb57ad7a2ca9b | [

"CC-BY-3.0"

] | null | null | null | _listings/att-dev-program/mymessagesv2messages-get-postman.md | streamdata-gallery-organizations/at-t-dev-program | fa21fa0f65c1bcbad708b40a3e5bb57ad7a2ca9b | [

"CC-BY-3.0"

] | null | null | null | _listings/att-dev-program/mymessagesv2messages-get-postman.md | streamdata-gallery-organizations/at-t-dev-program | fa21fa0f65c1bcbad708b40a3e5bb57ad7a2ca9b | [

"CC-BY-3.0"

] | null | null | null | {

"info": {

"name": "AT&T API Get My Messages",

"_postman_id": "9120e02b-b520-4a6c-bf61-fbefa3767087",

"description": "/myMessages/v2/messages",

"schema": "https://schema.getpostman.com/json/collection/v2.0.0/"

},

"item": [

{

"name": "Devicecapabilities",

"item": [

... | 29.530612 | 105 | 0.361043 | kor_Hang | 0.068116 |

aa5ab76dabfc54cc7490c5733ff698b1c9f19156 | 1,771 | md | Markdown | pages/glider/regulations/pts.md | eburlingame/l-over-d | 860927dfb88663a8ff9f66da212b07e51662f634 | [

"MIT"

] | null | null | null | pages/glider/regulations/pts.md | eburlingame/l-over-d | 860927dfb88663a8ff9f66da212b07e51662f634 | [

"MIT"

] | null | null | null | pages/glider/regulations/pts.md | eburlingame/l-over-d | 860927dfb88663a8ff9f66da212b07e51662f634 | [

"MIT"

] | null | null | null | ---

layout: default

title: Practical Test Standards

---

### Airmen Certification Standards for Private Pilot Glider

Areas of Operation:

1. Preflight Preparation

1. Certificates and Documents

2. Weather Information

3. Operation of Systems

4. Performance and Limitations

5. Aeromedical Factors

2. Preflight Proced... | 25.3 | 63 | 0.759458 | eng_Latn | 0.880315 |

aa5b5b86140ba9dfa579498a8e83a39f05ec94de | 25,349 | md | Markdown | content/quo_vadis_061.md | books-are-next/quo-vadis | 2f5a4ff1d36cb5767bad73054ddf7c366cb6319a | [

"CC0-1.0"

] | null | null | null | content/quo_vadis_061.md | books-are-next/quo-vadis | 2f5a4ff1d36cb5767bad73054ddf7c366cb6319a | [

"CC0-1.0"

] | null | null | null | content/quo_vadis_061.md | books-are-next/quo-vadis | 2f5a4ff1d36cb5767bad73054ddf7c366cb6319a | [

"CC0-1.0"

] | null | null | null | ---

title: LVII

---

Meanwhile the sun had sunk toward the west; it seemed to melt away in the evening glow. The spectacle was over. The crowds began to leave the amphitheater and pour into the city through the exits called vomitoria. Only the Augustians lingered, waiting for the human waves to flow away. Their whole throng, having left their seats, gathered... | 61.229469 | 1,006 | 0.777822 | ces_Latn | 1.000006 |

aa5b8190ab9a21e065fd8bdcd90dc2557b9b1660 | 4,763 | md | Markdown | articles/active-directory/develop/vs-active-directory-error.md | gencomp/azure-docs.de-de | ea9dc9bb0bf0a7673d4f83d8a8187d55087b3bce | [

"CC-BY-4.0",

"MIT"

] | null | null | null | articles/active-directory/develop/vs-active-directory-error.md | gencomp/azure-docs.de-de | ea9dc9bb0bf0a7673d4f83d8a8187d55087b3bce | [

"CC-BY-4.0",

"MIT"

] | null | null | null | articles/active-directory/develop/vs-active-directory-error.md | gencomp/azure-docs.de-de | ea9dc9bb0bf0a7673d4f83d8a8187d55087b3bce | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: Diagnose errors with the Azure AD connected service (Visual Studio)

description: The Active Directory connected service detected an incompatible authentication type.

author: ghogen

manager: jillfra

ms.prod: visual-studio-windows

ms.technology: vs-azure

ms.workload: azure-vs

ms.to... | 47.63 | 412 | 0.799496 | deu_Latn | 0.988346 |

aa5c07f72fb042bebb726fccef651f52b4fff17f | 786 | md | Markdown | out/jira/docs/JqlQueryFieldEntityProperty.md | getkloudi/integration-wrapper-generator | 11c525d7fc1a8b26f5e8bab3b0b64e949c6c6dc1 | [

"Apache-2.0"

] | null | null | null | out/jira/docs/JqlQueryFieldEntityProperty.md | getkloudi/integration-wrapper-generator | 11c525d7fc1a8b26f5e8bab3b0b64e949c6c6dc1 | [

"Apache-2.0"

] | null | null | null | out/jira/docs/JqlQueryFieldEntityProperty.md | getkloudi/integration-wrapper-generator | 11c525d7fc1a8b26f5e8bab3b0b64e949c6c6dc1 | [

"Apache-2.0"

] | null | null | null | # Jira.JqlQueryFieldEntityProperty

## Properties

Name | Type | Description | Notes

------------ | ------------- | ------------- | -------------

**entity** | **String** | The object on which the property is set. |

**key** | **String** | The key of the property. |

**path** | **String** | The path in the property valu... | 26.2 | 276 | 0.60687 | eng_Latn | 0.856892 |

aa5c39a83b44b7bc94e681946f14f4a627b87991 | 1,482 | md | Markdown | README.md | vompressor/vproto | f42f019ff831a7b49e4b9da22c637f24ab9ba629 | [

"MIT"

] | null | null | null | README.md | vompressor/vproto | f42f019ff831a7b49e4b9da22c637f24ab9ba629 | [

"MIT"

] | null | null | null | README.md | vompressor/vproto | f42f019ff831a7b49e4b9da22c637f24ab9ba629 | [

"MIT"

] | null | null | null | This package is forked from go_sconn/protocol.

# protocol

```

type ProtocolHeader interface {

GetBodyLen() int

SetBodyLen(int)

}

```

## struct definition

Structures must consist only of the following types:

- `uint8`

- `uint16`

- `uint32`

- `uint64`

- `bool`

- `[fixed]byte`

## GetByteLen() in... | 24.295082 | 96 | 0.607962 | eng_Latn | 0.755245 |

aa5c3b11353dcdde7efcb38b7d4981f2afa552da | 5,496 | md | Markdown | help/implementation-playbook/development/platform-tools.md | misuadobe/commerce-operations.en | 831ee65fb9d543a2a980ef4ea2a2449e4e16e7af | [

"MIT"

] | null | null | null | help/implementation-playbook/development/platform-tools.md | misuadobe/commerce-operations.en | 831ee65fb9d543a2a980ef4ea2a2449e4e16e7af | [

"MIT"

] | null | null | null | help/implementation-playbook/development/platform-tools.md | misuadobe/commerce-operations.en | 831ee65fb9d543a2a980ef4ea2a2449e4e16e7af | [

"MIT"

] | null | null | null | ---

title: Platform Tools

description: Choose recommended platform tools for your Adobe Commerce implementation.

exl-id: 3fc164f9-a0fc-46e7-a54e-08ce101ccae7

---

# Platform tools

There is no shortage of aspects that must be well thought through and rigorously tested to keep an ecommerce site running without interferen... | 82.029851 | 542 | 0.753821 | eng_Latn | 0.998211 |

aa5c4d0654a8928216584b959a7f0e7b1be50dc0 | 132 | md | Markdown | examples/sqoop-parquet-hdfs-impala/README.md | sada3390/pipewrench | 520b8abfa8f92ad18b65014cf6fd372885bb761b | [

"Apache-2.0"

] | 26 | 2017-10-06T22:36:16.000Z | 2022-02-02T13:29:24.000Z | examples/sqoop-parquet-hdfs-impala/README.md | sada3390/pipewrench | 520b8abfa8f92ad18b65014cf6fd372885bb761b | [

"Apache-2.0"

] | 42 | 2017-10-09T18:40:13.000Z | 2021-11-18T22:58:08.000Z | examples/sqoop-parquet-hdfs-impala/README.md | sada3390/pipewrench | 520b8abfa8f92ad18b65014cf6fd372885bb761b | [

"Apache-2.0"

] | 40 | 2017-10-11T18:50:39.000Z | 2022-02-15T08:49:33.000Z | Examples have moved [here](https://github.com/Cargill/pipewrench/tree/afoerster-patch-1/integration-tests/sqoop-parquet-hdfs-impala) | 132 | 132 | 0.825758 | eng_Latn | 0.268328 |

aa5cac348fa7a2db92649daf7118f2411eb852b8 | 1,881 | md | Markdown | README.md | jwkellyiii/redux-saga-rest | 2aa5de724d41261f1df424691d729fbd5af2483a | [

"MIT"

] | null | null | null | README.md | jwkellyiii/redux-saga-rest | 2aa5de724d41261f1df424691d729fbd5af2483a | [

"MIT"

] | null | null | null | README.md | jwkellyiii/redux-saga-rest | 2aa5de724d41261f1df424691d729fbd5af2483a | [

"MIT"

] | null | null | null | # redux-saga-rest


`redux-saga-rest` is a thin wrapper around the Fetch API that integrates with [redux-saga](https://github.com/yelouafi/redux-saga) and supports request/response middlew... | 23.810127 | 172 | 0.647528 | eng_Latn | 0.588239 |

aa5cd32eef656167f33db7ad683e7328a96d273f | 6,448 | md | Markdown | book/en-us/01-intro.md | lishu1125/modern-cpp-tutorial | 8fb2d9b3396c10fdd11d7607dc21a91a98856124 | [

"MIT"

] | 1 | 2020-09-01T07:07:39.000Z | 2020-09-01T07:07:39.000Z | book/en-us/01-intro.md | vasanktt/modern-cpp-tutorial | 518c6e97d71bb4f4f2e38177303c699cad89c405 | [

"MIT"

] | 1 | 2020-08-25T03:01:38.000Z | 2020-08-25T03:01:38.000Z | book/en-us/01-intro.md | vasanktt/modern-cpp-tutorial | 518c6e97d71bb4f4f2e38177303c699cad89c405 | [

"MIT"

] | null | null | null | ---

title: "Chapter 01: Towards Modern C++"

type: book-en-us

order: 1

---

# Chapter 01: Towards Modern C++

[TOC]

**Compilation Environment**: This book uses `clang++` as its only compiler, and all code is compiled with the `-std=c++2a` flag.

```bash

> clang++ -v

Apple LLVM version 10.0.1 (clang-... | 43.275168 | 533 | 0.715105 | eng_Latn | 0.993986 |

aa5cde366aefb52169564833a450156c73d76b9a | 2,114 | md | Markdown | README.md | gabrielbmoro/MovieDB-Android | ef8438e0916701804d050ccb23e64dfe9b812441 | [

"MIT"

] | 8 | 2021-05-19T22:32:25.000Z | 2021-11-18T14:05:19.000Z | README.md | gabrielbmoro/MovieDB-Android | ef8438e0916701804d050ccb23e64dfe9b812441 | [

"MIT"

] | 9 | 2021-05-22T22:30:48.000Z | 2021-08-11T12:41:05.000Z | README.md | gabrielbmoro/MovieDB-Android | ef8438e0916701804d050ccb23e64dfe9b812441 | [

"MIT"

] | null | null | null | [](https://www.android.com/)[](https://kotlinlang.org/)

# Welcome!

---

## Setup

After creating an account ... | 30.2 | 267 | 0.746925 | eng_Latn | 0.94897 |

aa5cdf8a17e9983d213389b2a442f250f30f8f66 | 761 | md | Markdown | README.md | sapienza-brain-imaging-lab/sapienza-brain-imaging-lab | 9890c58ac01d6d6dc0a6a9ba70a602b0f190a925 | [

"MIT"

] | null | null | null | README.md | sapienza-brain-imaging-lab/sapienza-brain-imaging-lab | 9890c58ac01d6d6dc0a6a9ba70a602b0f190a925 | [

"MIT"

] | null | null | null | README.md | sapienza-brain-imaging-lab/sapienza-brain-imaging-lab | 9890c58ac01d6d6dc0a6a9ba70a602b0f190a925 | [

"MIT"

] | null | null | null | # BIL public site

Public BIL site based on [Jekyll](https://jekyllrb.com) with a [CloudCannon](https://cloudcannon.com/) template

To contribute to the BIL site, first install Docker Desktop and Visual Studio Code on your computer. Then clone this repository and open your working copy using Visual Studio Code. When ope... | 84.555556 | 347 | 0.796321 | eng_Latn | 0.997132 |

aa5e1e7b0748efc1553db57d451706f70c9ad957 | 5,020 | md | Markdown | content_zh/docs/tasks/security/mtls-migration/index.md | eshujiushiwo/istio.github.io | b2d178b9501d2d0d0e14d591d97367c98484194f | [

"Apache-2.0"

] | null | null | null | content_zh/docs/tasks/security/mtls-migration/index.md | eshujiushiwo/istio.github.io | b2d178b9501d2d0d0e14d591d97367c98484194f | [

"Apache-2.0"

] | null | null | null | content_zh/docs/tasks/security/mtls-migration/index.md | eshujiushiwo/istio.github.io | b2d178b9501d2d0d0e14d591d97367c98484194f | [

"Apache-2.0"

] | null | null | null | ---

title: Mutual TLS Migration

description: How to incrementally add mutual TLS support to existing Istio services.

weight: 80

keywords: [security,authentication,migration]

---

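This task shows how to upgrade the traffic of existing Istio services from plain text to mutual TLS without interrupting communication.

In practice, a cluster may contain Istio services (with an injected Envoy sidecar) as well as non-Istio services (without an injected Envoy sidecar, referred to below as legacy services). Legacy services cannot use Istio-issued keys/certificates for mutual TLS communication. We want to enable mutual TLS safely and incrementally... | 33.918919 | 261 | 0.693625 | yue_Hant | 0.716425 |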

aa5e44b60a34dd79f56250975d300db604ce8728 | 4,081 | md | Markdown | cmd/depprobcheck/README.md | Hellcatlk/kustomize | 9ff910a9757c447ed56f1c1a8b0f446accb47b57 | [

"Apache-2.0"

] | 4 | 2020-07-26T20:25:16.000Z | 2020-08-07T18:18:50.000Z | cmd/depprobcheck/README.md | Hellcatlk/kustomize | 9ff910a9757c447ed56f1c1a8b0f446accb47b57 | [

"Apache-2.0"

] | 17 | 2020-01-03T15:54:41.000Z | 2021-05-19T17:53:59.000Z | cmd/depprobcheck/README.md | Hellcatlk/kustomize | 9ff910a9757c447ed56f1c1a8b0f446accb47b57 | [

"Apache-2.0"

] | 2 | 2021-02-08T21:13:11.000Z | 2021-06-15T15:38:42.000Z | ## Checking openapi build issues

Edit `main.go` and `go.mod` in this directory to see what builds with various combinations of cli-runtime and kube-openapi.

####

A recent change in kube-openapi

https://github.com/kubernetes/kube-openapi/pull/234

means that anyone depending on

k8s.io/cli-runtime@v0.20.4

a... | 26.673203 | 114 | 0.727273 | eng_Latn | 0.91825 |

aa5f457639c58a2dd2e9112eb713e2090c052988 | 412 | md | Markdown | .github/ISSUE_TEMPLATE/resume-review.md | tash2020/support | 003dc460062d96f9af30c3e82c031c2c30e3af00 | [

"MIT"

] | null | null | null | .github/ISSUE_TEMPLATE/resume-review.md | tash2020/support | 003dc460062d96f9af30c3e82c031c2c30e3af00 | [

"MIT"

] | null | null | null | .github/ISSUE_TEMPLATE/resume-review.md | tash2020/support | 003dc460062d96f9af30c3e82c031c2c30e3af00 | [

"MIT"

] | null | null | null | ---

name: resume-review

about: Please review my resume on a live stream

title: '[REVIEW]'

labels: resume review

assignees: ''

---

**Remove all personal data, otherwise the resume will be deleted.**

Resume link:

You can also join the discord community [here](https://discord.com/invite/jZQs6Wu)

Feel free to check out... | 25.75 | 113 | 0.757282 | eng_Latn | 0.906675 |

aa5f6843b08b4fb3de93a45a9e73f633d9bb95dd | 2,483 | md | Markdown | README.md | yovisto/wlo-duplicate-detection | 7db2d6e9e148a9243ed820e7eb5cfcd339ed5a99 | [

"MIT"

] | null | null | null | README.md | yovisto/wlo-duplicate-detection | 7db2d6e9e148a9243ed820e7eb5cfcd339ed5a99 | [

"MIT"

] | null | null | null | README.md | yovisto/wlo-duplicate-detection | 7db2d6e9e148a9243ed820e7eb5cfcd339ed5a99 | [

"MIT"

] | null | null | null | # WLO Duplicate Detection

A utility to detect near duplicates in the WLO dataset.

The tool is based on the [MinHash](https://en.wikipedia.org/wiki/MinHash) algorithm. Parts of the implementation were taken from [https://github.com/chrisjmccormick/MinHash](https://github.com/chrisjmccormick/MinHash).
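The following minimal Python sketch illustrates the MinHash idea. It is not this repository's code. Each document is split into word 3-shingles, and each shingle is mapped to a 32-bit integer with CRC32. Then `num_hashes` random linear hash functions, taken modulo a prime just above 2^32, are applied. The fraction of positions where two signatures agree estimates the Jaccard similarity of the two shingle sets. The function names, `k=3`, `num_hashes=128`, and the fixed seed are all illustrative assumptions.

```python
import random
import zlib

# A prime just above 2**32: with 1 <= a < PRIME, x -> (a*x + b) % PRIME is
# one-to-one on 32-bit inputs, so distinct shingle ids never collide.
PRIME = 4294967311


def shingles(text, k=3):
    """Return the set of k-word shingles (overlapping word n-grams) of text."""
    words = text.lower().split()
    return {" ".join(words[i:i + k]) for i in range(max(len(words) - k + 1, 1))}


def minhash_signature(shingle_set, num_hashes=128, seed=42):
    """Compute a MinHash signature with random hash functions h(x) = (a*x + b) % PRIME."""
    rng = random.Random(seed)  # same seed -> same hash functions for every document
    coeffs = [(rng.randint(1, PRIME - 1), rng.randint(0, PRIME - 1))
              for _ in range(num_hashes)]
    # CRC32 maps each shingle to a deterministic 32-bit integer.
    ids = [zlib.crc32(s.encode("utf-8")) for s in shingle_set]
    return [min((a * x + b) % PRIME for x in ids) for a, b in coeffs]


def estimated_jaccard(sig1, sig2):
    """The fraction of positions where two signatures agree estimates Jaccard similarity."""
    return sum(x == y for x, y in zip(sig1, sig2)) / len(sig1)


if __name__ == "__main__":
    a = minhash_signature(shingles("the quick brown fox jumps over the lazy dog"))
    b = minhash_signature(shingles("the quick brown fox jumps over the lazy cat"))
    # Roughly 0.75; the exact Jaccard similarity of the two shingle sets is 6/8.
    print(round(estimated_jaccard(a, b), 2))
```

Pairs whose estimated similarity exceeds a chosen threshold can be flagged as near-duplicates. Comparing every pair of signatures is quadratic in the number of documents, so larger collections typically add locality-sensitive hashing (banding) on top of the signatures.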

## Prerequisit... | 36.514706 | 227 | 0.745469 | eng_Latn | 0.781434 |

aa5fafa0e00384423d37847f3b0f3953cd17896c | 1,893 | md | Markdown | synchronization/monitor.md | javany/go-design-patterns | acc906bc1e003691f1086004e56cec31fafcf45d | [

"MIT"

] | null | null | null | synchronization/monitor.md | javany/go-design-patterns | acc906bc1e003691f1086004e56cec31fafcf45d | [

"MIT"

] | null | null | null | synchronization/monitor.md | javany/go-design-patterns | acc906bc1e003691f1086004e56cec31fafcf45d | [

"MIT"

] | null | null | null | <p align="center">

<img src="../gopher.png" />

</p>

---

# Monitor Pattern

This pattern provides a way to make a goroutine wait until some event occurs.<br />

Another definition of monitor is a thread-safe class that wraps around a mutex in order to safely allow access to a method or variable by more than one thread. ... | 15.144 | 166 | 0.636556 | eng_Latn | 0.91947 |

aa5fd81129865d65f27d3b80dd15dc703e8c14e8 | 1,356 | md | Markdown | README.md | OllyK/rse-jobscraper | a9f6340261572b290e8a0eda65b2db7a5bdf26f4 | [

"Apache-2.0"

] | null | null | null | README.md | OllyK/rse-jobscraper | a9f6340261572b290e8a0eda65b2db7a5bdf26f4 | [

"Apache-2.0"

] | null | null | null | README.md | OllyK/rse-jobscraper | a9f6340261572b290e8a0eda65b2db7a5bdf26f4 | [

"Apache-2.0"

] | null | null | null | # rse-jobscraper

A Python program that scrapes websites for pharma/synchrotron research software engineering jobs. The program saves the results to a text file and also sends them by email. To send email from a Gmail account, you need to allow access for less secure apps in the account's security settings.

###... | 43.741935 | 298 | 0.7559 | eng_Latn | 0.971128 |

aa61a6c703dd24e0dbad591c9a62a25c9e99f91e | 829 | markdown | Markdown | _posts/2020-12-22-poem-bahut-honge-khush-hum.markdown | injulkarnilesh/injulkarnilesh.github.io | efb14762b452d16d262cbe064d907b4b095815bf | [

"MIT"

] | null | null | null | _posts/2020-12-22-poem-bahut-honge-khush-hum.markdown | injulkarnilesh/injulkarnilesh.github.io | efb14762b452d16d262cbe064d907b4b095815bf | [

"MIT"

] | null | null | null | _posts/2020-12-22-poem-bahut-honge-khush-hum.markdown | injulkarnilesh/injulkarnilesh.github.io | efb14762b452d16d262cbe064d907b4b095815bf | [

"MIT"

] | null | null | null | ---

layout: post

title: "बहुत होंगे खुश हम"

date: 2020-12-22 14:34:25

categories: poem

tags: hindi poem khush happiness khwab dream

image: /assets/article_images/2020-12-22-poem/happiness.jpg

image2: /assets/article_images/2020-12-22-poem/happiness.jpg

image-credit: NY TImes

image-credit-url: https://www.nytimes.co... | 13.816667 | 60 | 0.546441 | hin_Deva | 0.994434 |

aa622dc3a7d17fe86b2b308ee6ea1412fa477255 | 1,158 | md | Markdown | README.md | Kikobeats/parse-uri | 42a4510ca7e62c3c0811e0c003727e3601c02162 | [

"MIT"

] | 6 | 2016-09-19T20:08:55.000Z | 2021-06-21T02:53:28.000Z | README.md | Kikobeats/parse-uri | 42a4510ca7e62c3c0811e0c003727e3601c02162 | [

"MIT"

] | 3 | 2017-07-17T18:54:49.000Z | 2021-07-08T11:06:54.000Z | README.md | Kikobeats/parse-uri | 42a4510ca7e62c3c0811e0c003727e3601c02162 | [

"MIT"

] | 7 | 2016-11-17T15:58:08.000Z | 2022-02-09T22:31:27.000Z | # parse-uri


> Lightweight module for parsing a URI, based on [Steven Levithan](http://blog.stevenlevithan.... | 20.678571 | 124 | 0.697755 | eng_Latn | 0.25403 |

aa62a12036cfbe7f322d3be2526844cca18f4a62 | 2,244 | md | Markdown | README.md | JarLob/JustDecompileEngine | 723d36684267560f6edf5ae6636512c3b3e7c1b7 | [

"ECL-2.0",

"Apache-2.0"

] | 1,371 | 2015-05-04T14:22:43.000Z | 2022-03-12T10:16:16.000Z | README.md | JarLob/JustDecompileEngine | 723d36684267560f6edf5ae6636512c3b3e7c1b7 | [

"ECL-2.0",

"Apache-2.0"

] | 48 | 2015-05-15T16:34:41.000Z | 2022-03-22T12:36:32.000Z | README.md | JarLob/JustDecompileEngine | 723d36684267560f6edf5ae6636512c3b3e7c1b7 | [

"ECL-2.0",

"Apache-2.0"

] | 323 | 2015-05-05T15:15:08.000Z | 2022-03-22T23:55:54.000Z | # JustDecompile Engine

This is the engine of the popular .NET decompiler [JustDecompile](https://www.telerik.com/products/decompiler.aspx). C# is the only programming language used.

Copyright (c) 2011 - 2018 Telerik ЕAD

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except ... | 51 | 305 | 0.797683 | eng_Latn | 0.984596 |

aa631bedfbcc8aab8d4e0f5833fdb85ba230b5fb | 2,842 | md | Markdown | docs/core-modules/executable-code-and-execution-contexts/compilation/lexical-scope.md | onlyxhb/javascript-guidebook | e0d6537acc8e7a678f04d1acda74e0e04900eaca | [

"MIT"

] | 65 | 2018-12-27T08:15:38.000Z | 2020-04-23T03:36:24.000Z | docs/core-modules/executable-code-and-execution-contexts/compilation/lexical-scope.md | onlyxhb/javascript-guidebook | e0d6537acc8e7a678f04d1acda74e0e04900eaca | [

"MIT"

] | null | null | null | docs/core-modules/executable-code-and-execution-contexts/compilation/lexical-scope.md | onlyxhb/javascript-guidebook | e0d6537acc8e7a678f04d1acda74e0e04900eaca | [

"MIT"

] | 17 | 2018-12-27T08:13:57.000Z | 2020-04-12T16:31:44.000Z | ---

nav:

title: 核心模块

order: 3

group:

title: 编译阶段

order: 2

title: 词法作用域

order: 2

---

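# Scope

Scope is the region in which a variable (identifier) applies; it controls the variable's visibility.

*You Don't Know JS* defines scope as:

> A set of strict rules for determining which identifiers are accessible to which syntax.

*JavaScript: The Definitive Guide* describes variable scope as follows:

> The scope of a variable is the region of the program source code in which it is defined. Global variables have global scope; they are defined everywhere in JavaScript code. Variables declared within a function, however, are defined only within the body of that function. They are local... | 20.014085 | 136 | 0.719916 | yue_Hant | 0.577979 |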
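The guidebook excerpt above describes JavaScript's lexical scoping. Top-level variables are visible everywhere, and variables declared inside a function exist only in that function's body. What a name refers to depends on where the code is written, not on where it is called. The minimal sketch below illustrates those three points; all identifiers in it are illustrative and do not come from the guidebook.

```javascript
// Top-level declaration: visible throughout the script (global/module scope).
var label = "global";

// A `var` inside a function is local to that function body and shadows
// the outer `label` only there.
function checkScope() {
  var label = "local";
  return label;
}

// `show` is written at the top level, so it always reads the top-level
// `label`, even when it is called from a function that has its own `label`.
function show() {
  return label;
}

function caller() {
  var label = "caller"; // not visible to `show` under lexical scoping
  return show();
}

// A nested function captures the variables of the function it is written in.
function outer() {
  var captured = "outer";
  return function inner() {
    return captured;
  };
}

console.log(checkScope()); // → local
console.log(label); // → global (shadowing inside checkScope changed nothing here)
console.log(caller()); // → global (a dynamically scoped language would give "caller")
console.log(outer()()); // → outer
```

The `caller` case is what separates lexical scope from dynamic scope. `show` resolves `label` in the scope where it was defined, so the local `label` inside `caller` has no effect on it.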

aa63a61b427dcab24e693a33399579f433fbbbed | 385 | md | Markdown | manuscript/2-data-methods.md | jrhawley/n1diff | ba2edd898285859dc817fcb2ffb078e6515addf1 | [

"MIT"

] | null | null | null | manuscript/2-data-methods.md | jrhawley/n1diff | ba2edd898285859dc817fcb2ffb078e6515addf1 | [

"MIT"

] | null | null | null | manuscript/2-data-methods.md | jrhawley/n1diff | ba2edd898285859dc817fcb2ffb078e6515addf1 | [

"MIT"

] | null | null | null | # Data

* Using total RNA-seq of 6 T2E+ and 6 T2E- primary prostate cancer samples

* See [this paper](https://academic.oup.com/bioinformatics/article/31/22/3625/240923) for the appropriate number of biological replicates

* Might actually be worth using that data itself instead of the prostate cancer data

* Different... | 55 | 135 | 0.784416 | eng_Latn | 0.983811 |

aa63c163e5c3a5d3c7a658a43d928b677e7494b6 | 6,353 | md | Markdown | _posts/FORA - 2015-12-14-cultura-maker-no-brasil.md | dgrej/dgrej.github.io | 478a3b1b348445054218a4ca027cf93aae36eec8 | [

"MIT"

] | null | null | null | _posts/FORA - 2015-12-14-cultura-maker-no-brasil.md | dgrej/dgrej.github.io | 478a3b1b348445054218a4ca027cf93aae36eec8 | [

"MIT"

] | null | null | null | _posts/FORA - 2015-12-14-cultura-maker-no-brasil.md | dgrej/dgrej.github.io | 478a3b1b348445054218a4ca027cf93aae36eec8 | [

"MIT"

] | null | null | null | ---

layout: post

title: Movimento Maker no Brasil

---

<meta name="twitter:card" content="summary_large_image">

<meta name="twitter:site" content="@dgrejX">

<meta name="twitter:title" content="Movimento Maker: A cultura que aproxima o pensar do fazer já está no seu cotidiano">

<meta name="twitter:description" content="... | 158.825 | 966 | 0.790965 | por_Latn | 0.999914 |

aa63ee3dde54a5d48f8445d9aabd22b28f8ce190 | 2,163 | md | Markdown | docs/windows/attributes/dispinterface.md | ANKerD/cpp-docs.pt-br | 6910dc17c79db2fee3f3616206806c5f466b3f00 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/windows/attributes/dispinterface.md | ANKerD/cpp-docs.pt-br | 6910dc17c79db2fee3f3616206806c5f466b3f00 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/windows/attributes/dispinterface.md | ANKerD/cpp-docs.pt-br | 6910dc17c79db2fee3f3616206806c5f466b3f00 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: dispinterface (atributo de COM do C++) | Microsoft Docs

ms.custom: ''

ms.date: 10/02/2018

ms.technology:

- cpp-windows

ms.topic: reference

f1_keywords:

- vc-attr.dispinterface

dev_langs:

- C++

helpviewer_keywords:

- dispinterface attribute

ms.assetid: 61c5a4a1-ae92-47e9-8ee4-f847be90172b

author: mikeblome

ms... | 28.090909 | 183 | 0.736015 | por_Latn | 0.874713 |

aa64413373fb9237a030e35567fb633aa9573c00 | 5,618 | md | Markdown | articles/active-directory/active-directory-enterprise-apps-manage-provisioning.md | jiyongseong/azure-docs.ko-kr | f1313d505132597ce47e343e2195151587b32238 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | articles/active-directory/active-directory-enterprise-apps-manage-provisioning.md | jiyongseong/azure-docs.ko-kr | f1313d505132597ce47e343e2195151587b32238 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | articles/active-directory/active-directory-enterprise-apps-manage-provisioning.md | jiyongseong/azure-docs.ko-kr | f1313d505132597ce47e343e2195151587b32238 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: Azure Active Directory에서 엔터프라이즈 앱에 대한 사용자 프로비전 관리 | Microsoft Docs

description: Azure Active Directory를 사용하여 엔터프라이즈 앱에 대한 사용자 계정 프로비전을 관리하는 방법에 대해 알아봅니다.

services: active-directory

documentationcenter: ''

author: asmalser

manager: mtillman

editor: ''

ms.service: active-directory

ms.component: app-mgm... | 66.880952 | 424 | 0.738341 | kor_Hang | 1.00001 |

aa65ead49d5ba9c8b8a19406f078eeccc9ddd7ac | 4,470 | md | Markdown | Exchange/ExchangeServer2013/spam-quarantine-exchange-2013-help.md | sorinescu-com/OfficeDocs-Exchange | 91760fed7e67dab1a8abe7448d481564ca060d8a | [

"CC-BY-4.0",

"MIT"

] | 1 | 2020-01-26T08:15:18.000Z | 2020-01-26T08:15:18.000Z | Exchange/ExchangeServer2013/spam-quarantine-exchange-2013-help.md | sorinescu-com/OfficeDocs-Exchange | 91760fed7e67dab1a8abe7448d481564ca060d8a | [

"CC-BY-4.0",

"MIT"

] | 1 | 2020-01-27T08:03:30.000Z | 2020-01-27T08:03:30.000Z | Exchange/ExchangeServer2013/spam-quarantine-exchange-2013-help.md | sorinescu-com/OfficeDocs-Exchange | 91760fed7e67dab1a8abe7448d481564ca060d8a | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: 'Spam quarantine: Exchange 2013 Help'

TOCTitle: Spam quarantine

ms:assetid: 4535496f-de6a-43df-8e53-c9a97f65cccc

ms:mtpsurl: https://technet.microsoft.com/library/Aa997692(v=EXCHG.150)

ms:contentKeyID: 49248678

ms.reviewer:

manager: serdars

ms.author: v-mapenn

author: mattpennathe3rd

mtps_version: v=EXCHG.1... | 55.185185 | 528 | 0.802461 | eng_Latn | 0.998626 |

aa66287665a811c8c704c70ae685eea0c5302cda | 2,756 | md | Markdown | docs-src/pages/requests.md | adamjarret/s3-publish | 46824104608bffdb6b3b6a28f9571bb8a75c6ef8 | [

"MIT"

] | 6 | 2017-11-16T05:15:31.000Z | 2021-01-18T10:14:55.000Z | docs-src/pages/requests.md | adamjarret/s3-publish | 46824104608bffdb6b3b6a28f9571bb8a75c6ef8 | [

"MIT"

] | 13 | 2017-11-16T05:08:42.000Z | 2022-01-22T13:01:48.000Z | docs-src/pages/requests.md | adamjarret/s3-publish | 46824104608bffdb6b3b6a28f9571bb8a75c6ef8 | [

"MIT"

] | 1 | 2017-11-16T04:56:18.000Z | 2017-11-16T04:56:18.000Z | > When the origin provider and the target provider support the same protocol (both local or both S3),

> `copyFile` operations will be performed instead of `putFile` operations (to facilitate direct S3 to S3 transfers).

Both {@linkcode FSProvider} and {@linkcode S3Provider} can be configured with a `delegate`

object th... | 33.204819 | 129 | 0.695573 | eng_Latn | 0.988363 |

aa66768a40cd3ac93fa45e5d67babcbd8c1aca96 | 91 | md | Markdown | README.md | panosen/panosen-hash | 43e511d4a5dab1312da4c4de6d6870e27b0b7a65 | [

"MIT"

] | null | null | null | README.md | panosen/panosen-hash | 43e511d4a5dab1312da4c4de6d6870e27b0b7a65 | [

"MIT"

] | null | null | null | README.md | panosen/panosen-hash | 43e511d4a5dab1312da4c4de6d6870e27b0b7a65 | [

"MIT"

] | null | null | null | # panosen-hash

Panosen Hash Helper

This project is obsolete; use Panosen.Toolkit instead.

| 18.2 | 54 | 0.802198 | eng_Latn | 0.927288 |

aa6690f6441f2fd0b31822ba122941f39005196d | 18 | md | Markdown | README.md | liushaopeng0606/SPJamesLib | d2cda53c7c9f4e721edcd72ed35055fce842c2f3 | [

"MIT"

] | null | null | null | README.md | liushaopeng0606/SPJamesLib | d2cda53c7c9f4e721edcd72ed35055fce842c2f3 | [

"MIT"

] | null | null | null | README.md | liushaopeng0606/SPJamesLib | d2cda53c7c9f4e721edcd72ed35055fce842c2f3 | [

"MIT"

] | null | null | null | # SPJamesLib

Test repository

| 6 | 12 | 0.777778 | yue_Hant | 0.771665 |

aa66bc4b86be8442dc3f9d2aab2891e13e4a5c01 | 45,162 | md | Markdown | _pages/media.md | RotatingFans/docs-rewrite | 772ba1f49462871653535912ca9b27cc390bf61d | [

"Apache-2.0"

] | null | null | null | _pages/media.md | RotatingFans/docs-rewrite | 772ba1f49462871653535912ca9b27cc390bf61d | [

"Apache-2.0"

] | null | null | null | _pages/media.md | RotatingFans/docs-rewrite | 772ba1f49462871653535912ca9b27cc390bf61d | [

"Apache-2.0"

] | 1 | 2021-03-06T20:56:43.000Z | 2021-03-06T20:56:43.000Z | ---

ID: 35830

post_title: Mark II Media Kit

author: Kathy Reid

post_excerpt: ""

layout: page

permalink: http://mycroft.ai/media/

published: true

post_date: 2018-02-15 12:22:46

---

[vc_row type="full_width_background" full_screen_row_position="middle" bg_color="#22a7f0" scene_position="center" text_color="light" text_al... | 250.9 | 8,181 | 0.789048 | eng_Latn | 0.7754 |

aa66d54f846bcddac48d49445a02cf941433a98c | 542 | md | Markdown | ru/storage/concepts/backup.md | dbaklikov/docs | fa03bc655421603e97f8970c98369cfcec54c80a | [

"CC-BY-4.0"

] | null | null | null | ru/storage/concepts/backup.md | dbaklikov/docs | fa03bc655421603e97f8970c98369cfcec54c80a | [

"CC-BY-4.0"

] | null | null | null | ru/storage/concepts/backup.md | dbaklikov/docs | fa03bc655421603e97f8970c98369cfcec54c80a | [

"CC-BY-4.0"

] | 1 | 2019-08-15T12:32:47.000Z | 2019-08-15T12:32:47.000Z | # Object backup