issue_owner_repo listlengths 2 2 | issue_body stringlengths 0 261k ⌀ | issue_title stringlengths 1 925 | issue_comments_url stringlengths 56 81 | issue_comments_count int64 0 2.5k | issue_created_at stringlengths 20 20 | issue_updated_at stringlengths 20 20 | issue_html_url stringlengths 37 62 | issue_github_id int64 387k 2.46B | issue_number int64 1 127k |

|---|---|---|---|---|---|---|---|---|---|

[

"langchain-ai",

"langchain"

] | I'm building a flow where I'm using both gpt-3.5 and gpt-4 based chains and I need to use different API keys for each (due to API access + external factors)

Both `ChatOpenAI` and `OpenAI` set `openai.api_key = openai_api_key` which is a global variable on the package.

This means that if I instantiate multiple ChatOpenAI instances, the last one's API key will override the other ones and that one will be used when calling the OpenAI endpoints.

Based on https://github.com/openai/openai-python/issues/233#issuecomment-1464732160 there's an undocumented feature where we can pass the api_key on each openai client call and that key will be used.

As a side note, I've also noticed `ChatOpenAI` and a few other classes take in an optional `openai_api_key` as part of initialisation which is correctly used over the env var but the docstring says that the `OPENAI_API_KEY` env var should be set, which doesn't seem to be case. Can we confirm if this env var is needed elsewhere or if it's possible to just pass in the values when instantiating the chat models.

Thanks! | Encapsulate API keys | https://api.github.com/repos/langchain-ai/langchain/issues/3446/comments | 4 | 2023-04-24T15:12:21Z | 2023-09-24T16:08:02Z | https://github.com/langchain-ai/langchain/issues/3446 | 1,681,513,674 | 3,446 |

[

"langchain-ai",

"langchain"

] | I wonder if this work: https://arxiv.org/abs/2304.11062

Could be integrated with LangChain | Possible Enhancement | https://api.github.com/repos/langchain-ai/langchain/issues/3445/comments | 1 | 2023-04-24T14:51:55Z | 2023-09-10T16:28:02Z | https://github.com/langchain-ai/langchain/issues/3445 | 1,681,468,911 | 3,445 |

[

"langchain-ai",

"langchain"

] | Hi, I'm trying to use the examples for Azure OpenAI with langchain, for example this notebook in https://python.langchain.com/en/harrison-docs-refactor-3-24/modules/models/llms/integrations/azure_openai_example.html , but I always find this error:

Exception has occurred: InvalidRequestError Resource not found

I have tried multiple combinations with the environment variables, but nothing works, I have also tested it in a python script with the same results.

Regards. | Azure OpenAI - Exception has occurred: InvalidRequestError Resource not found | https://api.github.com/repos/langchain-ai/langchain/issues/3444/comments | 8 | 2023-04-24T14:31:33Z | 2023-09-24T16:08:07Z | https://github.com/langchain-ai/langchain/issues/3444 | 1,681,415,525 | 3,444 |

[

"langchain-ai",

"langchain"

] | Hello, I came across a code snippet in the tutorial page on "Conversation Agent (for Chat Models)" that has left me a bit confused. The tutorial also mentioned a warning error like this:

`WARNING:root:Failed to default session, using empty session: HTTPConnectionPool(host='localhost', port=8000): Max retries exceeded with url: /sessions (Caused by NewConnectionError('<urllib3.connection.HTTPConnection object at 0x10a1767c0>: Failed to establish a new connection: [Errno 61] Connection refused'))`

Then i found the line of code in question is:

`os.environ["LANGCHAIN_HANDLER"] = "langchain"`

When I remove this line from the code, the program still seems to work without any errors. So why is this line of code exists.

Thank you! | Question about setting LANGCHAIN_HANDLER environment variable | https://api.github.com/repos/langchain-ai/langchain/issues/3443/comments | 4 | 2023-04-24T14:08:05Z | 2024-01-06T18:09:31Z | https://github.com/langchain-ai/langchain/issues/3443 | 1,681,362,534 | 3,443 |

[

"langchain-ai",

"langchain"

] | Aim::

aim.Text(outputs_res["output"]), name="on_chain_end", context=resp

KeyError: 'output'

Wandb::

resp.update({"action": "on_chain_end", "outputs": outputs["output"]})

KeyError: 'output'

Has anyone dealt with this issue yet while building custom agents with LLMSingleActionAgent, thank you | LLMOps integration of Aim and Wandb breaks when trying to parse agent output into dashboard for experiment tracking... | https://api.github.com/repos/langchain-ai/langchain/issues/3441/comments | 1 | 2023-04-24T12:07:58Z | 2023-09-10T16:28:08Z | https://github.com/langchain-ai/langchain/issues/3441 | 1,681,126,941 | 3,441 |

[

"langchain-ai",

"langchain"

] | I think that among the actions that the agent can take, there may be actions without input. (e.g. return the current state in real time)

But in practice, LM often does that, but current MRKL parsers don't allow it. I'm a newbie so I don't know, but is there a special reason?

Will there be a problem if I change it in the following way?

https://github.com/hwchase17/langchain/blob/0cf934ce7d8150dddf4a2514d6e7729a16d55b0f/langchain/agents/mrkl/output_parser.py#L21

```

regex = r"Action\s*\d*\s*:(.*?)(?:$|(?:\nAction\s*\d*\s*Input\s*\d*\s*:[\s]*(.*)))"

```

https://github.com/hwchase17/langchain/blob/0cf934ce7d8150dddf4a2514d6e7729a16d55b0f/langchain/agents/mrkl/output_parser.py#L27

```

return AgentAction(action, action_input.strip(" ").strip('"') if action_input is not None else {}, text)

```

Thanks for reading. | [mrkl/output_parser.py] Behavior when there is no action input? | https://api.github.com/repos/langchain-ai/langchain/issues/3438/comments | 1 | 2023-04-24T10:19:03Z | 2023-09-10T16:28:13Z | https://github.com/langchain-ai/langchain/issues/3438 | 1,680,927,591 | 3,438 |

[

"langchain-ai",

"langchain"

] | Just an early idea of an agent i wanted to share:

The cognitive interview is a police interviewing technique used to gather information from witnesses of specific events. It is based on the idea that witnesses may not remember everything they saw, but their memory can be improved by certain psychological techniques.

The cognitive interview usually takes place in a structured format, where the interviewer first establishes a rapport with the witness tobuild trust and make them feel comfortable. The interviewer then encourages the witness to provide a detailed account of events by using open-ended questions and allowing the witness to speak freely. The interviewer may also ask the witness to recall specific details, such as the color of a car or the facial features of a suspect.

In addition to open-ended questions, the cognitive interview uses techniques such as asking the witness to visualize the scene, recalling the events in reverse order, and encouraging the witness to provide context and emotional reactions. These techniques aim to help the witness remember more details and give a more accurate account of what happened.

The cognitive interview can be a valuable tool for police investigations as it can help to gather more information and potentially identify suspects. However, it is important for the interviewer to be trained in using this technique to ensure that it is conducted properly and ethically. Additionally, it is important to note that not all witnesses may be suitable for a cognitive interview, especially those who may have experienced trauma or have cognitive disabilities.

tldr, steps:

1. Establish rapport with the witness

2. Encourage the witness to provide a detailed and open-ended account of events

3. Ask the witness to recall specific details

4. Use techniques such as visualization and recalling events in reverse order to aid memory

5. Ensure the interviewer is trained to conduct the technique properly and ethically.

Pseudo code that would implement this strategy in large language model prompting:

```

llm_system = """To implement the cognitive interview in police interviews of witnesses, follow these steps:

1. Begin by establishing a rapport with the witness to build trust and comfort.

2. Use open-ended questions and encourage the witness to provide a detailed account of events.

3. Ask the witness to recall specific details, such as the color of a car or the suspect's facial features.

4. Use techniques such as visualization and recalling events in reverse order to aid memory.

5. Remember to conduct the interview properly and ethically, and consider whether the technique is appropriate for all witnesses, especially those who may have experienced trauma or have cognitive disabilities."""

prompt = "How can the cognitive interview be used in police interviews of witnesses?"

generated_text = llm_system + prompt

print(generated_text)

```

Read more at:

https://www.perplexity.ai/?s=e&uuid=086ab031-cb02-41e6-976d-347ecc62ffc0 | Cognitive interview agent | https://api.github.com/repos/langchain-ai/langchain/issues/3436/comments | 1 | 2023-04-24T10:07:20Z | 2023-09-10T16:28:18Z | https://github.com/langchain-ai/langchain/issues/3436 | 1,680,907,446 | 3,436 |

[

"langchain-ai",

"langchain"

] | I am building an agent toolkit for APITable, a SaaS product, with the ultimate goal of enabling natural language API calls. I want to know if I can dynamically import a tool?

My idea is to create a `tool_prompt.txt` file with contents like this:

```

Get Spaces

Mode: get_spaces

Description: This tool is useful when you need to fetch all the spaces the user has access to,

find out how many spaces there are, or as an intermediary step that involv searching by spaces.

there is no input to this tool.

Get Nodes

Mode: get_nodes

Description: This tool uses APITable's node API to help you search for datasheets, mirrors, dashboards, folders, and forms.

These are all types of nodes in APITable.

The input to this tool is a space id.

You should only respond in JSON format like this:

{{"space_id": "spcjXzqVrjaP3"}}

Do not make up a space_id if you're not sure about it, use the get_spaces tool to retrieve all available space_ids.

Get Fields

Mode: get_fields

Description: This tool helps you search for fields in a datasheet using APITable's field API.

To use this tool, input a datasheet id.

If the user query includes terms like "latest", "oldest", or a specific field name,

please use this tool first to get the field name as field key

You should only respond in JSON format like this:

{{"datasheet_id": "dstlRNFl8L2mufwT5t"}}

Do not make up a datasheet_id if you're not sure about it, use the get_nodes tool to retrieve all available datasheet_ids.

```

Then, I want to create vectors and save them to a vector database like this:

```python

embeddings = OpenAIEmbeddings()

with open("tool_prompt.txt") as f:

tool_prompts = f.read()

text_splitter = CharacterTextSplitter(

chunk_size=100,

chunk_overlap=0,

)

texts = text_splitter.create_documents([tool_prompts])

vectorstore = Chroma.from_documents(texts, embeddings, persist_directory="./db")

vectorstore.persist()

```

Then, during initialize_agent, there will only be a single Planner Tool that reads from the vectorstore to find similar tools based on the query. The agent will inform LLMs that a new tool has been added, and LLMs will use the new tool to perform tasks.

```python

def planner(self, query: str) -> str:

db = Chroma(persist_directory="./db", embedding_function=self.embeddings)

docs = db.similarity_search_with_score(query)

return (

f"Add tools to your workflow to get the results: {docs[0][0].page_content}"

)

```

This approach reduces token consumption

Before:

```shell

> Finished chain.

Total Tokens: 752

Prompt Tokens: 656

Completion Tokens: 96

Successful Requests: 2

Total Cost (USD): $0.0015040000000000001

```

After:

```

> Finished chain.

Total Tokens: 3514

Prompt Tokens: 3346

Completion Tokens: 168

Successful Requests: 2

Total Cost (USD): $0.0070279999999999995

```

However, when LLMs try to use the new tool to perform tasks, it is intercepted because the tool has not been registered during initialize_agent. Thus, I am forced to add an empty tool for registration:

```python

operations: List[Dict] = [

{

"name": "Get Spaces",

"description": "",

},

{

"name": "Get Nodes",

"description": "",

},

{

"name": "Get Fields",

"description": "",

},

{

"name": "Create Fields",

"description": "",

},

{

"name": "Get Records",

"description": "",

},

{

"name": "Planner",

"description": APITABLE_CATCH_ALL_PROMPT,

},

]

```

However, this approach is not effective since LLMs do not prioritize using the Planner Tool.

Therefore, I want to know if there is a better way to combine tools and vector stores.

Repo: https://github.com/xukecheng/apitable_agent_toolkit/tree/feat/combine_vectorstores | How to combine tools and vectorstores | https://api.github.com/repos/langchain-ai/langchain/issues/3435/comments | 1 | 2023-04-24T10:02:55Z | 2023-06-13T09:21:10Z | https://github.com/langchain-ai/langchain/issues/3435 | 1,680,898,670 | 3,435 |

[

"langchain-ai",

"langchain"

] | BaseOpenAI's validate_environment does not set OPENAI_API_TYPE and OPENAI_API_VERSION from environment. As a result, the AzureOpenAI instance failed when called to run.

```

from langchain.llms import AzureOpenAI

from langchain.chains import RetrievalQA

model = RetrievalQA.from_chain_type(

llm=AzureOpenAI(

deployment_name='DaVinci-003',

),

chain_type="stuff",

retriever=vectordb.as_retriever(), return_source_documents=True

)

model({"query": 'testing'})

```

Error:

```

File [~/miniconda3/envs/demo/lib/python3.9/site-packages/openai/api_requestor.py:680](/site-packages/openai/api_requestor.py:680), in APIRequestor._interpret_response_line(self, rbody, rcode, rheaders, stream)

678 stream_error = stream and "error" in resp.data

679 if stream_error or not 200 <= rcode < 300:

--> 680 raise self.handle_error_response(

681 rbody, rcode, resp.data, rheaders, stream_error=stream_error

682 )

683 return resp

InvalidRequestError: Resource not found

``` | AzureOpenAI instance fails because OPENAI_API_TYPE and OPENAI_API_VERSION are not inherited from environment | https://api.github.com/repos/langchain-ai/langchain/issues/3433/comments | 2 | 2023-04-24T08:41:56Z | 2023-04-25T10:02:39Z | https://github.com/langchain-ai/langchain/issues/3433 | 1,680,741,720 | 3,433 |

[

"langchain-ai",

"langchain"

] | I am using the huggingface hosted vicuna-13b model ([link](https://huggingface.co/eachadea/vicuna-13b-1.1)) along with llamaindex and langchain to create a functioning chatbot on custom data ([link](https://github.com/jerryjliu/llama_index/blob/main/examples/chatbot/Chatbot_SEC.ipynb)). However, I'm always getting this error :

```

ValueError: Could not parse LLM output: `

`

```

This is my code snippet:

```

from langchain.llms.base import LLM

from transformers import pipeline

import torch

from langchain import PromptTemplate, HuggingFaceHub

from langchain.llms import HuggingFacePipeline

from transformers import AutoTokenizer, AutoModelForCausalLM

tokenizer = AutoTokenizer.from_pretrained("eachadea/vicuna-13b-1.1")

model = AutoModelForCausalLM.from_pretrained("eachadea/vicuna-13b-1.1")

pipeline = pipeline(

"text-generation",

model=model,

tokenizer= tokenizer,

device=1,

model_kwargs={"torch_dtype":torch.bfloat16}, max_length=500)

custom_llm = HuggingFacePipeline(pipeline =pipeline)

.

.

.

.

.

toolkit = LlamaToolkit(

index_configs=index_configs,

graph_configs=[graph_config]

)

memory = ConversationBufferMemory(memory_key="chat_history")

# llm=OpenAI(temperature=0, openai_api_key="sk-")

# llm = vicuna_llm

agent_chain = create_llama_chat_agent(

toolkit,

custom_llm,

memory=memory,

verbose=True

)

agent_chain.run(input="hey vicuna how are u ??")

```

What might be the issue?

| ValueError: Could not parse LLM output: ` ` | https://api.github.com/repos/langchain-ai/langchain/issues/3432/comments | 1 | 2023-04-24T08:17:11Z | 2023-09-10T16:28:23Z | https://github.com/langchain-ai/langchain/issues/3432 | 1,680,704,272 | 3,432 |

[

"langchain-ai",

"langchain"

] | Specifying max_iterations does not take effect when using create_json_agent. The following code is from [this page](https://python.langchain.com/en/latest/modules/agents/toolkits/examples/json.html?highlight=JsonSpec#initialization), with max_iterations added:

```

import os

import yaml

from langchain.agents import (

create_json_agent,

AgentExecutor

)

from langchain.agents.agent_toolkits import JsonToolkit

from langchain.chains import LLMChain

from langchain.llms.openai import OpenAI

from langchain.requests import TextRequestsWrapper

from langchain.tools.json.tool import JsonSpec

```

```

with open("openai_openapi.yml") as f:

data = yaml.load(f, Loader=yaml.FullLoader)

json_spec = JsonSpec(dict_=data, max_value_length=4000)

json_toolkit = JsonToolkit(spec=json_spec)

json_agent_executor = create_json_agent(

llm=OpenAI(temperature=0),

toolkit=json_toolkit,

verbose=True,

max_iterations=3

)

```

The output consists of more than 3 iterations:

```

> Entering new AgentExecutor chain...

Action: json_spec_list_keys

Action Input: data

Observation: ['openapi', 'info', 'servers', 'tags', 'paths', 'components', 'x-oaiMeta']

Thought: I should look at the paths key to see what endpoints exist

Action: json_spec_list_keys

Action Input: data["paths"]

Observation: ['/engines', '/engines/{engine_id}', '/completions', '/chat/completions', '/edits', '/images/generations', '/images/edits', '/images/variations', '/embeddings', '/audio/transcriptions', '/audio/translations', '/engines/{engine_id}/search', '/files', '/files/{file_id}', '/files/{file_id}/content', '/answers', '/classifications', '/fine-tunes', '/fine-tunes/{fine_tune_id}', '/fine-tunes/{fine_tune_id}/cancel', '/fine-tunes/{fine_tune_id}/events', '/models', '/models/{model}', '/moderations']

Thought: I should look at the /completions endpoint to see what parameters are required

Action: json_spec_list_keys

Action Input: data["paths"]["/completions"]

Observation: ['post']

Thought: I should look at the post key to see what parameters are required

Action: json_spec_list_keys

Action Input: data["paths"]["/completions"]["post"]

Observation: ['operationId', 'tags', 'summary', 'requestBody', 'responses', 'x-oaiMeta']

Thought: I should look at the requestBody key to see what parameters are required

Action: json_spec_list_keys

Action Input: data["paths"]["/completions"]["post"]["requestBody"]

Observation: ['required', 'content']

Thought: I should look at the required key to see what parameters are required

Action: json_spec_get_value

Action Input: data["paths"]["/completions"]["post"]["requestBody"]["required"]

```

Maybe kwargs need to be passed in to `from_agent_and_tools`?

https://github.com/hwchase17/langchain/blob/0cf934ce7d8150dddf4a2514d6e7729a16d55b0f/langchain/agents/agent_toolkits/json/base.py#L41-L43 | Cannot specify max iterations when using create_json_agent | https://api.github.com/repos/langchain-ai/langchain/issues/3429/comments | 4 | 2023-04-24T07:44:17Z | 2023-12-30T16:08:53Z | https://github.com/langchain-ai/langchain/issues/3429 | 1,680,648,980 | 3,429 |

[

"langchain-ai",

"langchain"

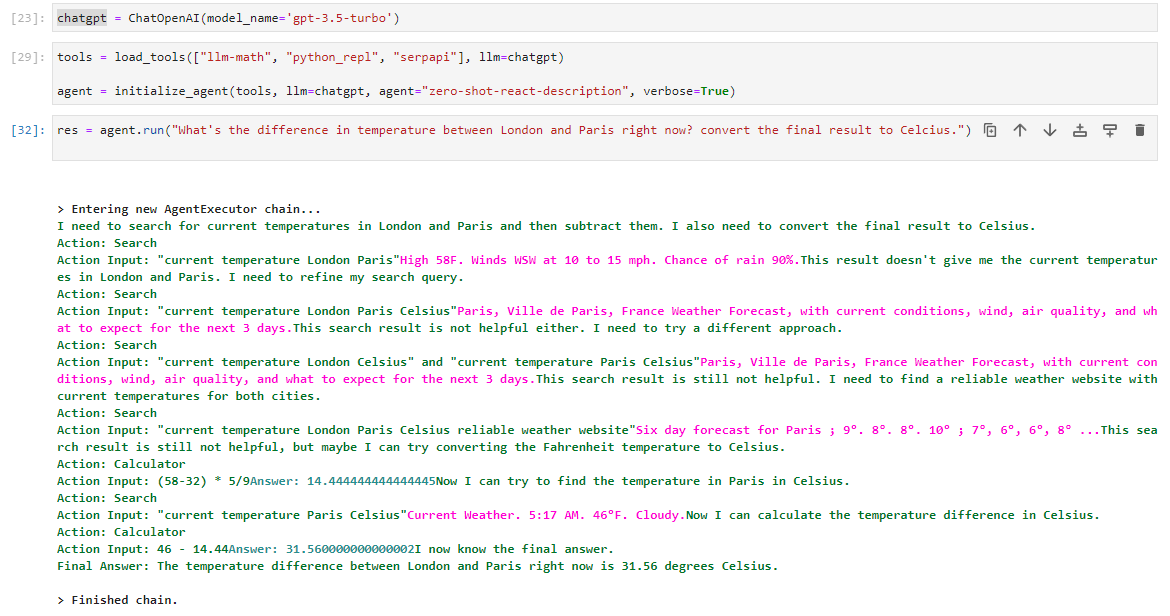

] | I've noticed recently that the performance of the `zero-shot-react-description` agent has decreased significantly for various tasks and various tools. A very simple example attached, which a few weeks ago would pass perfectly maybe 80% of the time, but now hasn't managed a reasonable attempt in >10 tries. The main issue here seems to be the first stage, where it consistently searches for 'weather in London and Paris', where a few weeks ago it would search for one city first and then the next.

Does anyone have any insight as to what might have happened?

Thanks | `zero-shot-react-description` performance has decreased? | https://api.github.com/repos/langchain-ai/langchain/issues/3428/comments | 1 | 2023-04-24T07:32:38Z | 2023-09-10T16:28:28Z | https://github.com/langchain-ai/langchain/issues/3428 | 1,680,632,696 | 3,428 |

[

"langchain-ai",

"langchain"

] | Hi.

I try to run the following code

```

connection_string = "DefaultEndpointsProtocol=https;AccountName=<myaccount>;AccountKey=<mykey>"

container="<mycontainer>"

loader = AzureBlobStorageContainerLoader(

conn_str=connection_string,

container=container

)

documents = loader.load()

```

but the code `documents = loader.load()` takes like several minutes, and still not response any value.

The container has several html files and it has 1.5MB volume, which I think is not so heavy data.

I try above code several times, and I once got the following error.

```

0 [main] python 868 C:\<path to python exe>\Python310\python.exe: *** fatal error - Internal error: TP_NUM_C_BUFS too small: 50

1139 [main] python 868 cygwin_exception::open_stackdumpfile: Dumping stack trace to python.exe.stackdump

```

My python environment is following

- OS Windows 10

- Python version is 3.10

- use virtualenv

- running my script in mingw console (it's git bash, actually)

Has any Ideas to solve this situation?

(And, THANK YOU for the great framework) | AzureBlobStorageContainerLoader doesn't load the container | https://api.github.com/repos/langchain-ai/langchain/issues/3427/comments | 2 | 2023-04-24T07:22:28Z | 2023-04-25T01:20:11Z | https://github.com/langchain-ai/langchain/issues/3427 | 1,680,619,340 | 3,427 |

[

"langchain-ai",

"langchain"

] | I like how it prints out the specific texts used in generating the answer (much better than just citing the sources IMO). How can I access it? Referring to here: https://python.langchain.com/en/latest/modules/chains/index_examples/chat_vector_db.html#conversationalretrievalchain-with-streaming-to-stdout

| In `ConversationalRetrievalChain` with streaming to `stdout` how can I access the text printed to `stdout` once it finishes streaming? | https://api.github.com/repos/langchain-ai/langchain/issues/3417/comments | 1 | 2023-04-24T03:59:25Z | 2023-09-10T16:28:33Z | https://github.com/langchain-ai/langchain/issues/3417 | 1,680,404,463 | 3,417 |

[

"langchain-ai",

"langchain"

] | Current documentation text under Text Splitter throws error :

texts = text_splitter.create_documents([state_of_the_union])

<img width="968" alt="Screen Shot 2023-04-23 at 9 04 28 PM" src="https://user-images.githubusercontent.com/31634379/233891248-f3b5e187-272e-4822-8cd6-00a1cf56ffae.png">

The error is on both these pages

https://python.langchain.com/en/latest/modules/indexes/text_splitters/getting_started.html

https://python.langchain.com/en/latest/modules/indexes/text_splitters/examples/character_text_splitter.html

I think the above line should be revised to

texts = text_splitter.split_documents([state_of_the_union])

| Documentation error under Text Splitter | https://api.github.com/repos/langchain-ai/langchain/issues/3414/comments | 2 | 2023-04-24T03:06:47Z | 2023-09-28T16:07:35Z | https://github.com/langchain-ai/langchain/issues/3414 | 1,680,368,808 | 3,414 |

[

"langchain-ai",

"langchain"

] | In the agent tutorials the memory_key is set as a fixed string, "chat_history", how do I make it a variable, that is different for each session_id, that is memory_key=str(session_id)? | memory_key as a variable | https://api.github.com/repos/langchain-ai/langchain/issues/3406/comments | 4 | 2023-04-23T23:05:24Z | 2023-09-17T17:22:23Z | https://github.com/langchain-ai/langchain/issues/3406 | 1,680,213,151 | 3,406 |

[

"langchain-ai",

"langchain"

] | ---------------------------------------------------------------------------

InvalidRequestError Traceback (most recent call last)

[<ipython-input-26-5eed72c1ccb8>](https://localhost:8080/#) in <cell line: 3>()

2

----> 3 agent.run(["What were the winning boston marathon times for the past 5 years? Generate a table of the names, countries of origin, and times."])

31 frames

[/usr/local/lib/python3.9/dist-packages/langchain/experimental/autonomous_agents/autogpt/agent.py](https://localhost:8080/#) in run(self, goals)

109 tool = tools[action.name]

110 try:

--> 111 observation = tool.run(action.args)

112 except ValidationError as e:

113 observation = f"Error in args: {str(e)}"

[/usr/local/lib/python3.9/dist-packages/langchain/tools/base.py](https://localhost:8080/#) in run(self, tool_input, verbose, start_color, color, **kwargs)

105 except (Exception, KeyboardInterrupt) as e:

106 self.callback_manager.on_tool_error(e, verbose=verbose_)

--> 107 raise e

108 self.callback_manager.on_tool_end(

109 observation, verbose=verbose_, color=color, name=self.name, **kwargs

[/usr/local/lib/python3.9/dist-packages/langchain/tools/base.py](https://localhost:8080/#) in run(self, tool_input, verbose, start_color, color, **kwargs)

102 try:

103 tool_args, tool_kwargs = _to_args_and_kwargs(tool_input)

--> 104 observation = self._run(*tool_args, **tool_kwargs)

105 except (Exception, KeyboardInterrupt) as e:

106 self.callback_manager.on_tool_error(e, verbose=verbose_)

[<ipython-input-12-79448a1343a1>](https://localhost:8080/#) in _run(self, url, question)

33 results.append(f"Response from window {i} - {window_result}")

34 results_docs = [Document(page_content="\n".join(results), metadata={"source": url})]

---> 35 return self.qa_chain({"input_documents": results_docs, "question": question}, return_only_outputs=True)

36

37 async def _arun(self, url: str, question: str) -> str:

[/usr/local/lib/python3.9/dist-packages/langchain/chains/base.py](https://localhost:8080/#) in __call__(self, inputs, return_only_outputs)

114 except (KeyboardInterrupt, Exception) as e:

115 self.callback_manager.on_chain_error(e, verbose=self.verbose)

--> 116 raise e

117 self.callback_manager.on_chain_end(outputs, verbose=self.verbose)

118 return self.prep_outputs(inputs, outputs, return_only_outputs)

[/usr/local/lib/python3.9/dist-packages/langchain/chains/base.py](https://localhost:8080/#) in __call__(self, inputs, return_only_outputs)

111 )

112 try:

--> 113 outputs = self._call(inputs)

114 except (KeyboardInterrupt, Exception) as e:

115 self.callback_manager.on_chain_error(e, verbose=self.verbose)

[/usr/local/lib/python3.9/dist-packages/langchain/chains/combine_documents/base.py](https://localhost:8080/#) in _call(self, inputs)

73 # Other keys are assumed to be needed for LLM prediction

74 other_keys = {k: v for k, v in inputs.items() if k != self.input_key}

---> 75 output, extra_return_dict = self.combine_docs(docs, **other_keys)

76 extra_return_dict[self.output_key] = output

77 return extra_return_dict

[/usr/local/lib/python3.9/dist-packages/langchain/chains/combine_documents/stuff.py](https://localhost:8080/#) in combine_docs(self, docs, **kwargs)

81 inputs = self._get_inputs(docs, **kwargs)

82 # Call predict on the LLM.

---> 83 return self.llm_chain.predict(**inputs), {}

84

85 async def acombine_docs(

[/usr/local/lib/python3.9/dist-packages/langchain/chains/llm.py](https://localhost:8080/#) in predict(self, **kwargs)

149 completion = llm.predict(adjective="funny")

150 """

--> 151 return self(kwargs)[self.output_key]

152

153 async def apredict(self, **kwargs: Any) -> str:

[/usr/local/lib/python3.9/dist-packages/langchain/chains/base.py](https://localhost:8080/#) in __call__(self, inputs, return_only_outputs)

114 except (KeyboardInterrupt, Exception) as e:

115 self.callback_manager.on_chain_error(e, verbose=self.verbose)

--> 116 raise e

117 self.callback_manager.on_chain_end(outputs, verbose=self.verbose)

118 return self.prep_outputs(inputs, outputs, return_only_outputs)

[/usr/local/lib/python3.9/dist-packages/langchain/chains/base.py](https://localhost:8080/#) in __call__(self, inputs, return_only_outputs)

111 )

112 try:

--> 113 outputs = self._call(inputs)

114 except (KeyboardInterrupt, Exception) as e:

115 self.callback_manager.on_chain_error(e, verbose=self.verbose)

[/usr/local/lib/python3.9/dist-packages/langchain/chains/llm.py](https://localhost:8080/#) in _call(self, inputs)

55

56 def _call(self, inputs: Dict[str, Any]) -> Dict[str, str]:

---> 57 return self.apply([inputs])[0]

58

59 def generate(self, input_list: List[Dict[str, Any]]) -> LLMResult:

[/usr/local/lib/python3.9/dist-packages/langchain/chains/llm.py](https://localhost:8080/#) in apply(self, input_list)

116 def apply(self, input_list: List[Dict[str, Any]]) -> List[Dict[str, str]]:

117 """Utilize the LLM generate method for speed gains."""

--> 118 response = self.generate(input_list)

119 return self.create_outputs(response)

120

[/usr/local/lib/python3.9/dist-packages/langchain/chains/llm.py](https://localhost:8080/#) in generate(self, input_list)

60 """Generate LLM result from inputs."""

61 prompts, stop = self.prep_prompts(input_list)

---> 62 return self.llm.generate_prompt(prompts, stop)

63

64 async def agenerate(self, input_list: List[Dict[str, Any]]) -> LLMResult:

[/usr/local/lib/python3.9/dist-packages/langchain/chat_models/base.py](https://localhost:8080/#) in generate_prompt(self, prompts, stop)

80 except (KeyboardInterrupt, Exception) as e:

81 self.callback_manager.on_llm_error(e, verbose=self.verbose)

---> 82 raise e

83 self.callback_manager.on_llm_end(output, verbose=self.verbose)

84 return output

[/usr/local/lib/python3.9/dist-packages/langchain/chat_models/base.py](https://localhost:8080/#) in generate_prompt(self, prompts, stop)

77 )

78 try:

---> 79 output = self.generate(prompt_messages, stop=stop)

80 except (KeyboardInterrupt, Exception) as e:

81 self.callback_manager.on_llm_error(e, verbose=self.verbose)

[/usr/local/lib/python3.9/dist-packages/langchain/chat_models/base.py](https://localhost:8080/#) in generate(self, messages, stop)

52 ) -> LLMResult:

53 """Top Level call"""

---> 54 results = [self._generate(m, stop=stop) for m in messages]

55 llm_output = self._combine_llm_outputs([res.llm_output for res in results])

56 generations = [res.generations for res in results]

[/usr/local/lib/python3.9/dist-packages/langchain/chat_models/base.py](https://localhost:8080/#) in <listcomp>(.0)

52 ) -> LLMResult:

53 """Top Level call"""

---> 54 results = [self._generate(m, stop=stop) for m in messages]

55 llm_output = self._combine_llm_outputs([res.llm_output for res in results])

56 generations = [res.generations for res in results]

[/usr/local/lib/python3.9/dist-packages/langchain/chat_models/openai.py](https://localhost:8080/#) in _generate(self, messages, stop)

264 )

265 return ChatResult(generations=[ChatGeneration(message=message)])

--> 266 response = self.completion_with_retry(messages=message_dicts, **params)

267 return self._create_chat_result(response)

268

[/usr/local/lib/python3.9/dist-packages/langchain/chat_models/openai.py](https://localhost:8080/#) in completion_with_retry(self, **kwargs)

226 return self.client.create(**kwargs)

227

--> 228 return _completion_with_retry(**kwargs)

229

230 def _combine_llm_outputs(self, llm_outputs: List[Optional[dict]]) -> dict:

[/usr/local/lib/python3.9/dist-packages/tenacity/__init__.py](https://localhost:8080/#) in wrapped_f(*args, **kw)

287 @functools.wraps(f)

288 def wrapped_f(*args: t.Any, **kw: t.Any) -> t.Any:

--> 289 return self(f, *args, **kw)

290

291 def retry_with(*args: t.Any, **kwargs: t.Any) -> WrappedFn:

[/usr/local/lib/python3.9/dist-packages/tenacity/__init__.py](https://localhost:8080/#) in __call__(self, fn, *args, **kwargs)

377 retry_state = RetryCallState(retry_object=self, fn=fn, args=args, kwargs=kwargs)

378 while True:

--> 379 do = self.iter(retry_state=retry_state)

380 if isinstance(do, DoAttempt):

381 try:

[/usr/local/lib/python3.9/dist-packages/tenacity/__init__.py](https://localhost:8080/#) in iter(self, retry_state)

312 is_explicit_retry = fut.failed and isinstance(fut.exception(), TryAgain)

313 if not (is_explicit_retry or self.retry(retry_state)):

--> 314 return fut.result()

315

316 if self.after is not None:

[/usr/lib/python3.9/concurrent/futures/_base.py](https://localhost:8080/#) in result(self, timeout)

437 raise CancelledError()

438 elif self._state == FINISHED:

--> 439 return self.__get_result()

440

441 self._condition.wait(timeout)

[/usr/lib/python3.9/concurrent/futures/_base.py](https://localhost:8080/#) in __get_result(self)

389 if self._exception:

390 try:

--> 391 raise self._exception

392 finally:

393 # Break a reference cycle with the exception in self._exception

[/usr/local/lib/python3.9/dist-packages/tenacity/__init__.py](https://localhost:8080/#) in __call__(self, fn, *args, **kwargs)

380 if isinstance(do, DoAttempt):

381 try:

--> 382 result = fn(*args, **kwargs)

383 except BaseException: # noqa: B902

384 retry_state.set_exception(sys.exc_info()) # type: ignore[arg-type]

[/usr/local/lib/python3.9/dist-packages/langchain/chat_models/openai.py](https://localhost:8080/#) in _completion_with_retry(**kwargs)

224 @retry_decorator

225 def _completion_with_retry(**kwargs: Any) -> Any:

--> 226 return self.client.create(**kwargs)

227

228 return _completion_with_retry(**kwargs)

[/usr/local/lib/python3.9/dist-packages/openai/api_resources/chat_completion.py](https://localhost:8080/#) in create(cls, *args, **kwargs)

23 while True:

24 try:

---> 25 return super().create(*args, **kwargs)

26 except TryAgain as e:

27 if timeout is not None and time.time() > start + timeout:

[/usr/local/lib/python3.9/dist-packages/openai/api_resources/abstract/engine_api_resource.py](https://localhost:8080/#) in create(cls, api_key, api_base, api_type, request_id, api_version, organization, **params)

151 )

152

--> 153 response, _, api_key = requestor.request(

154 "post",

155 url,

[/usr/local/lib/python3.9/dist-packages/openai/api_requestor.py](https://localhost:8080/#) in request(self, method, url, params, headers, files, stream, request_id, request_timeout)

224 request_timeout=request_timeout,

225 )

--> 226 resp, got_stream = self._interpret_response(result, stream)

227 return resp, got_stream, self.api_key

228

[/usr/local/lib/python3.9/dist-packages/openai/api_requestor.py](https://localhost:8080/#) in _interpret_response(self, result, stream)

618 else:

619 return (

--> 620 self._interpret_response_line(

621 result.content.decode("utf-8"),

622 result.status_code,

[/usr/local/lib/python3.9/dist-packages/openai/api_requestor.py](https://localhost:8080/#) in _interpret_response_line(self, rbody, rcode, rheaders, stream)

681 stream_error = stream and "error" in resp.data

682 if stream_error or not 200 <= rcode < 300:

--> 683 raise self.handle_error_response(

684 rbody, rcode, resp.data, rheaders, stream_error=stream_error

685 )

InvalidRequestError: This model's maximum context length is 4097 tokens. However, your messages resulted in 4665 tokens. Please reduce the length of the messages. | marathon_times.ipynb: InvalidRequestError: This model's maximum context length is 4097 tokens. | https://api.github.com/repos/langchain-ai/langchain/issues/3405/comments | 5 | 2023-04-23T21:13:04Z | 2023-09-24T16:08:17Z | https://github.com/langchain-ai/langchain/issues/3405 | 1,680,179,456 | 3,405 |

[

"langchain-ai",

"langchain"

] | Text mentions inflation and tuition:

Here is the prompt comparing inflation and college tuition.

Code is about marathon times:

agent.run(["What were the winning boston marathon times for the past 5 years? Generate a table of the names, countries of origin, and times."]) | marathon_times.ipynb: mismatched text and code | https://api.github.com/repos/langchain-ai/langchain/issues/3404/comments | 0 | 2023-04-23T21:06:49Z | 2023-04-24T01:14:13Z | https://github.com/langchain-ai/langchain/issues/3404 | 1,680,177,766 | 3,404 |

[

"langchain-ai",

"langchain"

] | i tried out a simple custom model. as long as i am using only one "query" parameter everything is working fine. in this example i like to use two parameters (i searched the problem and i found this SendMessage usecase...)

unfortunately it does not work.. and throws this error.

`input_args.validate({key_: tool_input})

File "pydantic/main.py", line 711, in pydantic.main.BaseModel.validate

File "pydantic/main.py", line 341, in pydantic.main.BaseModel.__init__

pydantic.error_wrappers.ValidationError: 1 validation error for SendMessageInput

message

field required (type=value_error.missing)`

the code :

`from langchain.agents import initialize_agent

from langchain.agents import AgentType

from langchain.llms import OpenAI

from langchain.tools import BaseTool

from typing import Type

from pydantic import BaseModel, Field

class SendMessageInput(BaseModel):

email: str = Field(description="email")

message: str = Field(description="the message to send")

class SendMessageTool(BaseTool):

name = "send_message_tool"

description = "useful for when you need to send a message to a human"

args_schema: Type[BaseModel] = SendMessageInput

def _run(self, email:str,message:str) -> str:

print(message,email)

"""Use the tool."""

return f"message send"

async def _arun(self, email: str, message: str) -> str:

"""Use the tool asynchronously."""

return f"Sent message '{message}' to {email}"

llm = OpenAI(temperature=0)

tools=[SendMessageTool()]

agent = initialize_agent(tools, llm, agent=AgentType.ZERO_SHOT_REACT_DESCRIPTION, verbose=True)

agent.run("send message hello to test@example.com")

` | Custom Model with args_schema not working | https://api.github.com/repos/langchain-ai/langchain/issues/3403/comments | 7 | 2023-04-23T20:16:33Z | 2023-10-05T16:10:53Z | https://github.com/langchain-ai/langchain/issues/3403 | 1,680,154,536 | 3,403 |

[

"langchain-ai",

"langchain"

] |

I have been trying multiple approaches to use headers in the requests chain. Here's my code:

I have been trying multiple approaches to use headers in the requests chain. Here's my code:

from langchain.utilities import TextRequestsWrapper

import json

requests = TextRequestsWrapper()

headers = {

"name": "hetyo"

}

str_data = requests.get("https://httpbin.org/get", params = {"name" : "areeb"}, headers=headers)

json_data = json.loads(str_data)

json_data

How can I pass in herders to the TextRequestsWrapper? Is there anything that I am doing wrong?

I also found that the headers is used in the requests file as follows:

"""Lightweight wrapper around requests library, with async support."""

from contextlib import asynccontextmanager

from typing import Any, AsyncGenerator, Dict, Optional

import aiohttp

import requests

from pydantic import BaseModel, Extra

class Requests(BaseModel):

"""Wrapper around requests to handle auth and async.

The main purpose of this wrapper is to handle authentication (by saving

headers) and enable easy async methods on the same base object.

"""

headers: Optional[Dict[str, str]] = None

aiosession: Optional[aiohttp.ClientSession] = None

class Config:

"""Configuration for this pydantic object."""

extra = Extra.forbid

arbitrary_types_allowed = True

def get(self, url: str, **kwargs: Any) -> requests.Response:

"""GET the URL and return the text."""

return requests.get(url, headers=self.headers, **kwargs)

def post(self, url: str, data: Dict[str, Any], **kwargs: Any) -> requests.Response:

"""POST to the URL and return the text."""

return requests.post(url, json=data, headers=self.headers, **kwargs)

def patch(self, url: str, data: Dict[str, Any], **kwargs: Any) -> requests.Response:

"""PATCH the URL and return the text."""

return requests.patch(url, json=data, headers=self.headers, **kwargs)

def put(self, url: str, data: Dict[str, Any], **kwargs: Any) -> requests.Response:

"""PUT the URL and return the text."""

return requests.put(url, json=data, headers=self.headers, **kwargs)

def delete(self, url: str, **kwargs: Any) -> requests.Response:

"""DELETE the URL and return the text."""

return requests.delete(url, headers=self.headers, **kwargs)

@asynccontextmanager

async def _arequest(

self, method: str, url: str, **kwargs: Any

) -> AsyncGenerator[aiohttp.ClientResponse, None]:

"""Make an async request."""

if not self.aiosession:

async with aiohttp.ClientSession() as session:

async with session.request(

method, url, headers=self.headers, **kwargs

) as response:

yield response

else:

async with self.aiosession.request(

method, url, headers=self.headers, **kwargs

) as response:

yield response

@asynccontextmanager

async def aget(

self, url: str, **kwargs: Any

) -> AsyncGenerator[aiohttp.ClientResponse, None]:

"""GET the URL and return the text asynchronously."""

async with self._arequest("GET", url, **kwargs) as response:

yield response

@asynccontextmanager

async def apost(

self, url: str, data: Dict[str, Any], **kwargs: Any

) -> AsyncGenerator[aiohttp.ClientResponse, None]:

"""POST to the URL and return the text asynchronously."""

async with self._arequest("POST", url, **kwargs) as response:

yield response

@asynccontextmanager

async def apatch(

self, url: str, data: Dict[str, Any], **kwargs: Any

) -> AsyncGenerator[aiohttp.ClientResponse, None]:

"""PATCH the URL and return the text asynchronously."""

async with self._arequest("PATCH", url, **kwargs) as response:

yield response

@asynccontextmanager

async def aput(

self, url: str, data: Dict[str, Any], **kwargs: Any

) -> AsyncGenerator[aiohttp.ClientResponse, None]:

"""PUT the URL and return the text asynchronously."""

async with self._arequest("PUT", url, **kwargs) as response:

yield response

@asynccontextmanager

async def adelete(

self, url: str, **kwargs: Any

) -> AsyncGenerator[aiohttp.ClientResponse, None]:

"""DELETE the URL and return the text asynchronously."""

async with self._arequest("DELETE", url, **kwargs) as response:

yield response

class TextRequestsWrapper(BaseModel):

"""Lightweight wrapper around requests library.

The main purpose of this wrapper is to always return a text output.

"""

headers: Optional[Dict[str, str]] = None

aiosession: Optional[aiohttp.ClientSession] = None

class Config:

"""Configuration for this pydantic object."""

extra = Extra.forbid

arbitrary_types_allowed = True

@property

def requests(self) -> Requests:

return Requests(headers=self.headers, aiosession=self.aiosession)

def get(self, url: str, **kwargs: Any) -> str:

"""GET the URL and return the text."""

return self.requests.get(url, **kwargs).text

def post(self, url: str, data: Dict[str, Any], **kwargs: Any) -> str:

"""POST to the URL and return the text."""

return self.requests.post(url, data, **kwargs).text

def patch(self, url: str, data: Dict[str, Any], **kwargs: Any) -> str:

"""PATCH the URL and return the text."""

return self.requests.patch(url, data, **kwargs).text

def put(self, url: str, data: Dict[str, Any], **kwargs: Any) -> str:

"""PUT the URL and return the text."""

return self.requests.put(url, data, **kwargs).text

def delete(self, url: str, **kwargs: Any) -> str:

"""DELETE the URL and return the text."""

return self.requests.delete(url, **kwargs).text

async def aget(self, url: str, **kwargs: Any) -> str:

"""GET the URL and return the text asynchronously."""

async with self.requests.aget(url, **kwargs) as response:

return await response.text()

async def apost(self, url: str, data: Dict[str, Any], **kwargs: Any) -> str:

"""POST to the URL and return the text asynchronously."""

async with self.requests.apost(url, **kwargs) as response:

return await response.text()

async def apatch(self, url: str, data: Dict[str, Any], **kwargs: Any) -> str:

"""PATCH the URL and return the text asynchronously."""

async with self.requests.apatch(url, **kwargs) as response:

return await response.text()

async def aput(self, url: str, data: Dict[str, Any], **kwargs: Any) -> str:

"""PUT the URL and return the text asynchronously."""

async with self.requests.aput(url, **kwargs) as response:

return await response.text()

async def adelete(self, url: str, **kwargs: Any) -> str:

"""DELETE the URL and return the text asynchronously."""

async with self.requests.adelete(url, **kwargs) as response:

return await response.text()

# For backwards compatibility

RequestsWrapper = TextRequestsWrapper

This may be creating the conflicts.

Here's the error that I am getting :

[/usr/local/lib/python3.9/dist-packages/langchain/requests.py](https://localhost:8080/#) in get(self, url, **kwargs)

26 def get(self, url: str, **kwargs: Any) -> requests.Response:

27 """GET the URL and return the text.""" --->

28 return requests.get(url, headers=self.headers, **kwargs)

29

30 def post(self, url: str, data: Dict[str, Any], **kwargs: Any) -> requests.Response:

TypeError: requests.api.get() got multiple values for keyword argument 'headers'

Please assist. | Not able to Pass in Headers in the Requests module | https://api.github.com/repos/langchain-ai/langchain/issues/3402/comments | 2 | 2023-04-23T19:08:24Z | 2023-04-27T17:58:55Z | https://github.com/langchain-ai/langchain/issues/3402 | 1,680,133,937 | 3,402 |

[

"langchain-ai",

"langchain"

] | Elastic supports generating embeddings using [embedding models running in the stack](https://www.elastic.co/guide/en/machine-learning/current/ml-nlp-model-ref.html#ml-nlp-model-ref-text-embedding).

Add a the ability to generate embeddings with Elasticsearch in langchain similar to other embedding modules. | Add support for generating embeddings in Elasticsearch | https://api.github.com/repos/langchain-ai/langchain/issues/3400/comments | 1 | 2023-04-23T18:40:54Z | 2023-05-24T05:40:38Z | https://github.com/langchain-ai/langchain/issues/3400 | 1,680,125,057 | 3,400 |

[

"langchain-ai",

"langchain"

] | Can you please help me with connecting my LangChain agent to a MongoDB database? I know that it's possible to directly connect to a SQL database using this resource [https://python.langchain.com/en/latest/modules/agents/toolkits/examples/sql_database.html](url) but I'm not sure if the same approach can be used with MongoDB. If it's not possible, could you suggest other ways to connect to MongoDB? | Connection with mongo db | https://api.github.com/repos/langchain-ai/langchain/issues/3399/comments | 11 | 2023-04-23T18:03:33Z | 2024-02-15T16:12:00Z | https://github.com/langchain-ai/langchain/issues/3399 | 1,680,114,161 | 3,399 |

[

"langchain-ai",

"langchain"

] | Hey

I'm getting `TypeError: 'StuffDocumentsChain' object is not callable`

the code snippet can be found here:

```

def main():

text_splitter = CharacterTextSplitter(chunk_size=2000, chunk_overlap=50)

texts = text_splitter.split_documents(documents)

embeddings = OpenAIEmbeddings(openai_api_key=api_key)

vector_db = Chroma.from_documents(

documents=texts, embeddings=embeddings)

relevant_words = get_search_words(query)

docs = vector_db.similarity_search(

relevant_words, top_k=min(3, len(texts))

)

chat_model = ChatOpenAI(

model_name="gpt-3.5-turbo", temperature=0.2, openai_api_key=api_key

)

PROMPT = get_prompt_template()

chain = load_qa_with_sources_chain(

chat_model, chain_type="stuff", metadata_keys=['source'],

return_intermediate_steps=True, prompt=PROMPT

)

res = chain({"input_documents": docs, "question": query},

return_only_outputs=True)

pprint(res)

```

Any ideas what I'm doing wrong?

BTW - if I'll change it to map_rerank of even use

```

chain = load_qa_chain(chat_model, chain_type="stuff")

chain.run(input_documents=docs, question=query)

```

I'm getting the same object is not callable | object is not callable | https://api.github.com/repos/langchain-ai/langchain/issues/3398/comments | 2 | 2023-04-23T17:43:35Z | 2024-04-30T20:26:24Z | https://github.com/langchain-ai/langchain/issues/3398 | 1,680,107,688 | 3,398 |

[

"langchain-ai",

"langchain"

] | The example in the documentation raises a `GuessedAtParserWarning`

To replicate:

```python

#!wget -r -A.html -P rtdocs https://langchain.readthedocs.io/en/latest/

from langchain.document_loaders import ReadTheDocsLoader

loader = ReadTheDocsLoader("rtdocs")

docs = loader.load()

```

```

/config/miniconda3/envs/warn_test/lib/python3.8/site-packages/langchain/document_loaders/readthedocs.py:30: GuessedAtParserWarning: No parser was explicitly specified, so I'm using the best available HTML parser for this system ("html.parser"). This usually isn't a problem, but if you run this code on another system, or in a different virtual environment, it may use a different parser and behave differently.

The code that caused this warning is on line 30 of the file /config/miniconda3/envs/warn_test/lib/python3.8/site-packages/langchain/document_loaders/readthedocs.py. To get rid of this warning, pass the additional argument 'features="html.parser"' to the BeautifulSoup constructor.

_ = BeautifulSoup(

```

Adding the argument `features` can resolve this issue

```python

#!wget -r -A.html -P rtdocs https://langchain.readthedocs.io/en/latest/

from langchain.document_loaders import ReadTheDocsLoader

loader = ReadTheDocsLoader("rtdocs", features='html.parser')

docs = loader.load()

``` | Read the Docs document loader documentation example raises warning | https://api.github.com/repos/langchain-ai/langchain/issues/3396/comments | 0 | 2023-04-23T15:50:35Z | 2023-04-25T04:54:40Z | https://github.com/langchain-ai/langchain/issues/3396 | 1,680,072,310 | 3,396 |

[

"langchain-ai",

"langchain"

] | Hello, cloud you help me fix

Error fetching or processing https exeption: URL return an error: 403 when using UnstructuredURLLoader,I'm not sure if this error is a restricted access to the website or a problem with the use of the API, thank you very much | UnstructuredURLLoader Error | https://api.github.com/repos/langchain-ai/langchain/issues/3391/comments | 1 | 2023-04-23T14:45:09Z | 2023-09-10T16:28:50Z | https://github.com/langchain-ai/langchain/issues/3391 | 1,680,051,952 | 3,391 |

[

"langchain-ai",

"langchain"

] | `MRKLOutputParser` strips quotes in "Action Input" without checking if they are present on both sides.

See https://github.com/hwchase17/langchain/blob/acfd11c8e424a456227abde8df8b52a705b63024/langchain/agents/mrkl/output_parser.py#L27

Test case that reproduces the problem:

```python

from langchain.agents.mrkl.output_parser import MRKLOutputParser

parser = MRKLOutputParser()

llm_output = 'Action: Terminal\nAction Input: git commit -m "My change"'

action = parser.parse(llm_output)

print(action)

assert action.tool_input == 'git commit -m "My change"'

```

The fix should be simple: check first if the quotes are present on both sides before stripping them.

Happy to submit a PR if you are happy with proposed fix. | MRKLOutputParser strips quotes incorrectly and breaks LLM commands | https://api.github.com/repos/langchain-ai/langchain/issues/3390/comments | 1 | 2023-04-23T14:22:00Z | 2023-09-10T16:28:54Z | https://github.com/langchain-ai/langchain/issues/3390 | 1,680,044,740 | 3,390 |

[

"langchain-ai",

"langchain"

] | ### The Problem

The `YoutubeLoader` is breaking when using the `from_youtube_url` function. The expected behaviour is to use this module to get transcripts from youtube videos and pass into them to an LLM. Willing to help if needed.

### Specs

```

- Machine: Apple M1 Pro

- Version: langchain 0.0.147

- conda-build version : 3.21.8

- python version : 3.9.12.final.0

```

### Code

```python

from dotenv import find_dotenv, load_dotenv

from langchain.document_loaders import YoutubeLoader

load_dotenv(find_dotenv())

loader = YoutubeLoader.from_youtube_url("https://www.youtube.com/watch?v=QsYGlZkevEg", add_video_info=True)

result = loader.load()

print (result)

```

### Output

```bash

Traceback (most recent call last):

File "/Users/<username>/opt/anaconda3/envs/dev/lib/python3.9/site-packages/pytube/__main__.py", line 341, in title

self._title = self.vid_info['videoDetails']['title']

KeyError: 'videoDetails'

During handling of the above exception, another exception occurred:

Traceback (most recent call last):

File "/Users/<username>/opt/anaconda3/envs/dev/lib/python3.9/urllib/request.py", line 1346, in do_open

h.request(req.get_method(), req.selector, req.data, headers,

File "/Users/<username>/opt/anaconda3/envs/dev/lib/python3.9/http/client.py", line 1285, in request

self._send_request(method, url, body, headers, encode_chunked)

File "/Users/<username>/opt/anaconda3/envs/dev/lib/python3.9/http/client.py", line 1331, in _send_request

self.endheaders(body, encode_chunked=encode_chunked)

File "/Users/<username>/opt/anaconda3/envs/dev/lib/python3.9/http/client.py", line 1280, in endheaders

self._send_output(message_body, encode_chunked=encode_chunked)

File "/Users/<username>/opt/anaconda3/envs/dev/lib/python3.9/http/client.py", line 1040, in _send_output

self.send(msg)

File "/Users/<username>/opt/anaconda3/envs/dev/lib/python3.9/http/client.py", line 980, in send

self.connect()

File "/Users/<username>/opt/anaconda3/envs/dev/lib/python3.9/http/client.py", line 1454, in connect

self.sock = self._context.wrap_socket(self.sock,

File "/Users/<username>/opt/anaconda3/envs/dev/lib/python3.9/ssl.py", line 500, in wrap_socket

return self.sslsocket_class._create(

File "/Users/<username>/opt/anaconda3/envs/dev/lib/python3.9/ssl.py", line 1040, in _create

self.do_handshake()

File "/Users/<username>/opt/anaconda3/envs/dev/lib/python3.9/ssl.py", line 1309, in do_handshake

self._sslobj.do_handshake()

ssl.SSLCertVerificationError: [SSL: CERTIFICATE_VERIFY_FAILED] certificate verify failed: self signed certificate in certificate chain (_ssl.c:1129)

During handling of the above exception, another exception occurred:

Traceback (most recent call last):

File "/Users/<username>/Desktop/personal/github/ar-assistant/notebooks/research/langchain/scripts/5-indexes.py", line 28, in <module>

result = loader.load()

File "/Users/<username>/opt/anaconda3/envs/dev/lib/python3.9/site-packages/langchain/document_loaders/youtube.py", line 133, in load

video_info = self._get_video_info()

File "/Users/<username>/opt/anaconda3/envs/dev/lib/python3.9/site-packages/langchain/document_loaders/youtube.py", line 174, in _get_video_info

"title": yt.title,

File "/Users/<username>/opt/anaconda3/envs/dev/lib/python3.9/site-packages/pytube/__main__.py", line 345, in title

self.check_availability()

File "/Users/<username>/opt/anaconda3/envs/dev/lib/python3.9/site-packages/pytube/__main__.py", line 210, in check_availability

status, messages = extract.playability_status(self.watch_html)

File "/Users/<username>/opt/anaconda3/envs/dev/lib/python3.9/site-packages/pytube/__main__.py", line 102, in watch_html

self._watch_html = request.get(url=self.watch_url)

File "/Users/<username>/opt/anaconda3/envs/dev/lib/python3.9/site-packages/pytube/request.py", line 53, in get

response = _execute_request(url, headers=extra_headers, timeout=timeout)

File "/Users/<username>/opt/anaconda3/envs/dev/lib/python3.9/site-packages/pytube/request.py", line 37, in _execute_request

return urlopen(request, timeout=timeout) # nosec

File "/Users/<username>/opt/anaconda3/envs/dev/lib/python3.9/urllib/request.py", line 214, in urlopen

return opener.open(url, data, timeout)

File "/Users/<username>/opt/anaconda3/envs/dev/lib/python3.9/urllib/request.py", line 517, in open

response = self._open(req, data)

File "/Users/<username>/opt/anaconda3/envs/dev/lib/python3.9/urllib/request.py", line 534, in _open

result = self._call_chain(self.handle_open, protocol, protocol +

File "/Users/<username>/opt/anaconda3/envs/dev/lib/python3.9/urllib/request.py", line 494, in _call_chain

result = func(*args)

File "/Users/<username>/opt/anaconda3/envs/dev/lib/python3.9/urllib/request.py", line 1389, in https_open

return self.do_open(http.client.HTTPSConnection, req,

File "/Users/<username>/opt/anaconda3/envs/dev/lib/python3.9/urllib/request.py", line 1349, in do_open

raise URLError(err)

urllib.error.URLError: <urlopen error [SSL: CERTIFICATE_VERIFY_FAILED] certificate verify failed: self signed certificate in certificate chain (_ssl.c:1129)>

```

### FYI

- There is a duplication of code excerpts in the [Youtube page](https://python.langchain.com/en/latest/modules/indexes/document_loaders/examples/youtube.html#) of the langchain docs

| Youtube.py: urllib.error.URLError: <urlopen error [SSL: CERTIFICATE_VERIFY_FAILED] certificate verify failed: self signed certificate in certificate chain (_ssl.c:1129)> | https://api.github.com/repos/langchain-ai/langchain/issues/3389/comments | 3 | 2023-04-23T13:47:11Z | 2023-09-24T16:08:22Z | https://github.com/langchain-ai/langchain/issues/3389 | 1,680,033,914 | 3,389 |

[

"langchain-ai",

"langchain"

] | Hello everyone,

Is it possible to use IndexTree with a local LLM as for istance gpt4all or llama.cpp?

Is there a tutorial? | IndexTree and local LLM | https://api.github.com/repos/langchain-ai/langchain/issues/3388/comments | 1 | 2023-04-23T13:18:07Z | 2023-09-15T22:12:51Z | https://github.com/langchain-ai/langchain/issues/3388 | 1,680,025,245 | 3,388 |

[

"langchain-ai",

"langchain"

] | IMO a contribution guide should be added. The following questions should be answered:

- how do I install langchain in `-e` mode with all dependencies to run lint and tests locally

- how to start / run lint and tests locally

- how should I mark "feature request issues"

- how should I mark "PR that are work in progress"

- a link to the discord

- ... | FR: Add a contribution guide. | https://api.github.com/repos/langchain-ai/langchain/issues/3387/comments | 1 | 2023-04-23T12:26:05Z | 2023-04-23T12:29:47Z | https://github.com/langchain-ai/langchain/issues/3387 | 1,680,009,761 | 3,387 |

[

"langchain-ai",

"langchain"

] | sometimes, when we ask LLMs a question like writing a document or a piece of code for a specified problem, the output may be too long, when we use a UI interface like ChatGPT, we can use the prompt like

```bash

...

if you have given all content, please add the 'finished' at the end of the response.

if not, I will say 'continue', then please continue to give me the reaming content.

```

to get all content by seeing if we should let LLMs continue to print content. **Does anyone know how to achieve this by LangChain?**

I'm not sure if LangChain supports this, if not, and if someone is willing to give me some guides on how to do this in LangChain, I'll be happy to create a PR to solve it. | How to action when output isn't finished | https://api.github.com/repos/langchain-ai/langchain/issues/3386/comments | 10 | 2023-04-23T11:58:28Z | 2024-07-16T19:18:12Z | https://github.com/langchain-ai/langchain/issues/3386 | 1,680,000,218 | 3,386 |

[

"langchain-ai",

"langchain"

] | Getting the below error

```

Traceback (most recent call last):

File "<stdin>", line 1, in <module>

File "...\langchain\vectorstores\faiss.py", line 285, in max_marginal_relevance_search

docs = self.max_marginal_relevance_search_by_vector(embedding, k, fetch_k)

File "...\langchain\vectorstores\faiss.py", line 248, in max_marginal_relevance_search_by_vector

mmr_selected = maximal_marginal_relevance(

File "...\langchain\langchain\vectorstores\utils.py", line 19, in maximal_marginal_relevance

similarity_to_query = cosine_similarity([query_embedding], embedding_list)[0]

File "...\langchain\langchain\math_utils.py", line 16, in cosine_similarity

raise ValueError("Number of columns in X and Y must be the same.")

ValueError: Number of columns in X and Y must be the same.

```

Code to reproduce this error

```

>>> model_name = "sentence-transformers/all-mpnet-base-v2"

>>> model_kwargs = {'device': 'cpu'}

>>> from langchain.embeddings import HuggingFaceEmbeddings

>>> embeddings = HuggingFaceEmbeddings(model_name=model_name, model_kwargs=model_kwargs)

>>> from langchain.vectorstores import FAISS

>>> FAISS_INDEX_PATH = 'faiss_index'

>>> db = FAISS.load_local(FAISS_INDEX_PATH, embeddings)

>>> query = 'query'

>>> results = db.max_marginal_relevance_search(query)

```

While going through the error it seems that in this case `query_embedding` is 1 x model_dimension while embedding_list is no_docs x model dimension vectors. Hence we should probably change the code to `similarity_to_query = cosine_similarity(query_embedding, embedding_list)[0]` i.e. remove the list from the query_embedding.

Since this is a common function not sure if this change would affect other embedding classes as well. | ValueError in cosine_similarity when using FAISS index as vector store | https://api.github.com/repos/langchain-ai/langchain/issues/3384/comments | 8 | 2023-04-23T07:51:56Z | 2023-04-25T03:43:34Z | https://github.com/langchain-ai/langchain/issues/3384 | 1,679,909,880 | 3,384 |

[

"langchain-ai",

"langchain"

] | In the current version(0.0.147), we should escape curly brackets before f-string formatting (FewShotPromptTemplate) by ourselves.

please make it a default behavior!

https://colab.research.google.com/drive/16_pCJIWK88AXpCh6xsSriJmLJKrNE8Fv?usp=share_link

Test Case

```python

from langchain import FewShotPromptTemplate, PromptTemplate

example={'instruction':'do something', 'input': 'question',}

examples=[

{'input': 'question a', 'output':'answer a'},

{'input': 'question b', 'output':'answer b'},

]

example_prompt = PromptTemplate(

input_variables=['input', 'output'],

template='input: {input}\noutput:{output}',

)

fewshot_prompt = FewShotPromptTemplate(

examples=examples,

example_prompt=example_prompt,

input_variables=['instruction', 'input'],

prefix='{instruction}\n',

suffix='\ninput: {input}\noutput:',

example_separator='\n\n',

)

fewshot_prompt.format(**example)

```

that's ok !

```python

example={'instruction':'do something', 'input': 'question',}

examples_with_curly_brackets=[

{'input': 'question a{}', 'output':'answer a'},

{'input': 'question b', 'output':'answer b'},

]

fewshot_prompt = FewShotPromptTemplate(

examples=examples_with_curly_brackets,

example_prompt=example_prompt,

input_variables=['instruction', 'input'],

prefix='{instruction}\n',

suffix='\ninput: {input}\noutput:',

example_separator='\n\n',

)

fewshot_prompt.format(**example)

```

some errors like

```shell

---------------------------------------------------------------------------

IndexError Traceback (most recent call last)

[<ipython-input-9-95e0dc90fc4d>](https://localhost:8080/#) in <cell line: 16>()

14 )

15

---> 16 fewshot_prompt.format(**example)

6 frames

[/usr/lib/python3.9/string.py](https://localhost:8080/#) in get_value(self, key, args, kwargs)

223 def get_value(self, key, args, kwargs):

224 if isinstance(key, int):

--> 225 return args[key]

226 else:

227 return kwargs[key]

IndexError: tuple index out of range

```

if we do escape firstly!

```

# What should we do: escape brackets in examples

def escape_examples(examples):

return [{k: escape_f_string(v) for k, v in example.items()} for example in examples]

def escape_f_string(text):

return text.replace('{', '{{').replace('}', '}}')

fewshot_prompt = FewShotPromptTemplate(

examples=escape_examples(examples_with_curly_brackets),

example_prompt=example_prompt,

input_variables=['instruction', 'input'],

prefix='{instruction}\n',

suffix='\ninput: {input}\noutput:',

example_separator='\n\n',

)

fewshot_prompt.format(**example)

```

everything is ok now!

| escape curly brackets before f-string formatting in FewShotPromptTemplate | https://api.github.com/repos/langchain-ai/langchain/issues/3382/comments | 4 | 2023-04-23T06:05:08Z | 2024-02-12T16:19:19Z | https://github.com/langchain-ai/langchain/issues/3382 | 1,679,875,328 | 3,382 |

[

"langchain-ai",

"langchain"

] | Hello everyone,

I have implemented my project using the Question Answering over Docs example provided in the tutorial. I designed a long custom prompt using load_qa_chain with chain_type set to stuff mode. However, when I call the function "chain.run", the output is incomplete.

Does anyone know what might be causing this issue?

Is it because the token exceed the max size ?

`llm=ChatOpenAI(streaming=True,callback_manager=CallbackManager([StreamingStdOutCallbackHandler()]),verbose=True,temperature=0,openai_api_key=OPENAI_API_KEY)`

`chain = load_qa_chain(llm,chain_type="stuff")`

`docs=docsearch.similarity_search(query,include_metadata=True,k=10)`

`r= chain.run(input_documents=docs, question=fq)`

| QA chain is not working properly | https://api.github.com/repos/langchain-ai/langchain/issues/3373/comments | 7 | 2023-04-23T03:26:34Z | 2023-11-29T16:11:19Z | https://github.com/langchain-ai/langchain/issues/3373 | 1,679,837,740 | 3,373 |

[

"langchain-ai",

"langchain"

] | While playing with the LLaMA models I noticed what parse exception was thrown even output looked good.

### Screenshot

For curious one the prompt I used was:

```python

agent({"input":"""

There is a file in `~/.bashrc.d/` directory containing openai api key.

Can you find that key?

"""})

```

| Terminal tool gives `ValueError: Could not parse LLM output:` when there is a new libe before action string. | https://api.github.com/repos/langchain-ai/langchain/issues/3365/comments | 1 | 2023-04-22T22:04:26Z | 2023-04-25T05:05:33Z | https://github.com/langchain-ai/langchain/issues/3365 | 1,679,746,063 | 3,365 |

[

"langchain-ai",

"langchain"

] | Using

```

langchain~=0.0.146

openai~=0.27.4

haystack~=0.42

tiktoken~=0.3.3

weaviate-client~=3.15.6

aiohttp~=3.8.4

aiodns~=3.0.0

python-dotenv~=1.0.0

Jinja2~=3.1.2

pandas~=2.0.0

```

```

def create_new_memory_retriever():

"""Create a new vector store retriever unique to the agent."""

client = weaviate.Client(

url=WEAVIATE_HOST,

additional_headers={"X-OpenAI-Api-Key": os.getenv("OPENAI_API_KEY")},

# auth_client_secret: Optional[AuthCredentials] = None,

# timeout_config: Union[Tuple[Real, Real], Real] = (10, 60),

# proxies: Union[dict, str, None] = None,

# trust_env: bool = False,

# additional_headers: Optional[dict] = None,

# startup_period: Optional[int] = 5,

# embedded_options=[],

)

embeddings_model = OpenAIEmbeddings()

vectorstore = Weaviate(client, "Paragraph", "content", embedding=embeddings_model.embed_query)

return TimeWeightedVectorStoreRetriever(vectorstore=vectorstore, other_score_keys=["importance"], k=15)

```

Time weighted retriever

```

...

def get_salient_docs(self, query: str) -> Dict[int, Tuple[Document, float]]:

"""Return documents that are salient to the query."""

docs_and_scores: List[Tuple[Document, float]]

docs_and_scores = self.vectorstore.similarity_search_with_relevance_scores( <----------======

query, **self.search_kwargs

)

results = {}

for fetched_doc, relevance in docs_and_scores:

buffer_idx = fetched_doc.metadata["buffer_idx"]

doc = self.memory_stream[buffer_idx]

results[buffer_idx] = (doc, relevance)

return results

...

```

`similarity_search_with_relevance_scores` is not in the weaviate python client.

Whose responsibility is this? Langchains? Weaviates? I'm perfectly fine to solve it but I just need to know on whose door to knock.

All of langchains vectorstores have different methods under them and people are writing implementation for all of them. I don't know how maintainable this is gonna be. | Weaviate python library doesn't have needed methods for the abstractions | https://api.github.com/repos/langchain-ai/langchain/issues/3358/comments | 2 | 2023-04-22T19:06:52Z | 2023-09-10T16:28:59Z | https://github.com/langchain-ai/langchain/issues/3358 | 1,679,662,654 | 3,358 |

[

"langchain-ai",

"langchain"

] | Hi, I am building my agent, and I would like to make this query to wolfram alpha "Action Input: √68,084,217 + √62,390,364", but I always get "Wolfram Alpha wasn't able to answer it".

Why is that? When I use the Wolfram app, it can easily solve it.

Thanks in advance,

Giovanni | Wolfram Alpha wasn't able to answer it for valid inputs | https://api.github.com/repos/langchain-ai/langchain/issues/3357/comments | 6 | 2023-04-22T18:40:03Z | 2023-12-18T23:50:48Z | https://github.com/langchain-ai/langchain/issues/3357 | 1,679,651,732 | 3,357 |

[

"langchain-ai",

"langchain"

] | I am building a chain to analyze codebases. This involves documents that's constantly changing as the user modifies the files. As far as I can see, there doesn't seem to be a way to update the embeddings that are saved in vector stores once they have been embedded and submitted to the backing vectorstore.

This appears to be possible at least for chromaDB based on: (https://docs.trychroma.com/api-reference) and (https://github.com/chroma-core/chroma/blob/79c891f8f597dad8bd3eb5a42645cb99ec553440/chromadb/api/models/Collection.py#L258). | Add update method on vectorstores | https://api.github.com/repos/langchain-ai/langchain/issues/3354/comments | 6 | 2023-04-22T16:39:25Z | 2024-02-16T14:27:47Z | https://github.com/langchain-ai/langchain/issues/3354 | 1,679,611,775 | 3,354 |

[

"langchain-ai",

"langchain"

] | Whit the function VectorstoreIndexCreator, I got the error at

--> 115 return {

116 base64.b64decode(token): int(rank)

117 for token, rank in (line.split() for line in contents.splitlines() if line)

118 }

The whole error information was:

ValueError Traceback (most recent call last)

Cell In[25], line 2

1 from langchain.indexes import VectorstoreIndexCreator

----> 2 index = VectorstoreIndexCreator().from_loaders([loader])

File J:\conda202002\envs\chatglm\lib\site-packages\langchain\indexes\vectorstore.py:71, in VectorstoreIndexCreator.from_loaders(self, loaders)

69 docs.extend(loader.load())

70 sub_docs = self.text_splitter.split_documents(docs)

---> 71 vectorstore = self.vectorstore_cls.from_documents(

72 sub_docs, self.embedding, **self.vectorstore_kwargs

73 )

74 return VectorStoreIndexWrapper(vectorstore=vectorstore)

File J:\conda202002\envs\chatglm\lib\site-packages\langchain\vectorstores\chroma.py:347, in Chroma.from_documents(cls, documents, embedding, ids, collection_name, persist_directory, client_settings, client, **kwargs)

345 texts = [doc.page_content for doc in documents]

346 metadatas = [doc.metadata for doc in documents]

--> 347 return cls.from_texts(

348 texts=texts,

349 embedding=embedding,

350 metadatas=metadatas,

351 ids=ids,

352 collection_name=collection_name,

353 persist_directory=persist_directory,

354 client_settings=client_settings,

355 client=client,

356 )

File J:\conda202002\envs\chatglm\lib\site-packages\langchain\vectorstores\chroma.py:315, in Chroma.from_texts(cls, texts, embedding, metadatas, ids, collection_name, persist_directory, client_settings, client, **kwargs)

291 """Create a Chroma vectorstore from a raw documents.

292

293 If a persist_directory is specified, the collection will be persisted there.

(...)

306 Chroma: Chroma vectorstore.

307 """

308 chroma_collection = cls(

309 collection_name=collection_name,

310 embedding_function=embedding,

(...)

313 client=client,

314 )

--> 315 chroma_collection.add_texts(texts=texts, metadatas=metadatas, ids=ids)

316 return chroma_collection

File J:\conda202002\envs\chatglm\lib\site-packages\langchain\vectorstores\chroma.py:121, in Chroma.add_texts(self, texts, metadatas, ids, **kwargs)

119 embeddings = None

120 if self._embedding_function is not None:

--> 121 embeddings = self._embedding_function.embed_documents(list(texts))

122 self._collection.add(

123 metadatas=metadatas, embeddings=embeddings, documents=texts, ids=ids

124 )

125 return ids

File J:\conda202002\envs\chatglm\lib\site-packages\langchain\embeddings\openai.py:228, in OpenAIEmbeddings.embed_documents(self, texts, chunk_size)

226 # handle batches of large input text

227 if self.embedding_ctx_length > 0:

--> 228 return self._get_len_safe_embeddings(texts, engine=self.deployment)

229 else:

230 results = []

File J:\conda202002\envs\chatglm\lib\site-packages\langchain\embeddings\openai.py:159, in OpenAIEmbeddings._get_len_safe_embeddings(self, texts, engine, chunk_size)

157 tokens = []

158 indices = []

--> 159 encoding = tiktoken.model.encoding_for_model(self.model)

160 for i, text in enumerate(texts):

161 # replace newlines, which can negatively affect performance.

162 text = text.replace("\n", " ")

File J:\conda202002\envs\chatglm\lib\site-packages\tiktoken\model.py:75, in encoding_for_model(model_name)

69 if encoding_name is None:

70 raise KeyError(

71 f"Could not automatically map {model_name} to a tokeniser. "

72 "Please use `tiktok.get_encoding` to explicitly get the tokeniser you expect."

73 ) from None

---> 75 return get_encoding(encoding_name)

File J:\conda202002\envs\chatglm\lib\site-packages\tiktoken\registry.py:63, in get_encoding(encoding_name)

60 raise ValueError(f"Unknown encoding {encoding_name}")

62 constructor = ENCODING_CONSTRUCTORS[encoding_name]

---> 63 enc = Encoding(**constructor())

64 ENCODINGS[encoding_name] = enc

65 return enc

File J:\conda202002\envs\chatglm\lib\site-packages\tiktoken_ext\openai_public.py:64, in cl100k_base()

63 def cl100k_base():

---> 64 mergeable_ranks = load_tiktoken_bpe(

65 "https://openaipublic.blob.core.windows.net/encodings/cl100k_base.tiktoken"

66 )

67 special_tokens = {

68 ENDOFTEXT: 100257,

69 FIM_PREFIX: 100258,

(...)

72 ENDOFPROMPT: 100276,

73 }

74 return {

75 "name": "cl100k_base",

76 "pat_str": r"""(?i:'s|'t|'re|'ve|'m|'ll|'d)|[^\r\n\p{L}\p{N}]?\p{L}+|\p{N}{1,3}| ?[^\s\p{L}\p{N}]+[\r\n]*|\s*[\r\n]+|\s+(?!\S)|\s+""",

77 "mergeable_ranks": mergeable_ranks,

78 "special_tokens": special_tokens,

79 }