issue_owner_repo listlengths 2 2 | issue_body stringlengths 0 261k ⌀ | issue_title stringlengths 1 925 | issue_comments_url stringlengths 56 81 | issue_comments_count int64 0 2.5k | issue_created_at stringlengths 20 20 | issue_updated_at stringlengths 20 20 | issue_html_url stringlengths 37 62 | issue_github_id int64 387k 2.46B | issue_number int64 1 127k |

|---|---|---|---|---|---|---|---|---|---|

[

"langchain-ai",

"langchain"

] | Hi, I have a question: I want to use `search_distance` with ConversationalRetrievalChain.

Here is my code:

```

vectordbkwargs = {"search_distance": 0.9}

bot_message = qa.run({"question": history[-1][0], "chat_history": history[:-1], "vectordbkwargs": vectordbkwargs})

```

But I am getting the following error

```

raise ValueError(f"One input key expected got {prompt_input_keys}")

ValueError: One input key expected got ['question', 'vectordbkwargs']

```

Does anybody have any idea what I am doing wrong? | Error in running search_distance with ConversationalRetrievalChain | https://api.github.com/repos/langchain-ai/langchain/issues/3178/comments | 6 | 2023-04-19T21:10:44Z | 2023-11-22T16:09:14Z | https://github.com/langchain-ai/langchain/issues/3178 | 1,675,643,417 | 3,178 |

[

"langchain-ai",

"langchain"

] | null | create_python_agent doesnt return intermediate step | https://api.github.com/repos/langchain-ai/langchain/issues/3177/comments | 1 | 2023-04-19T21:09:07Z | 2023-09-10T16:30:25Z | https://github.com/langchain-ai/langchain/issues/3177 | 1,675,640,827 | 3,177 |

[

"langchain-ai",

"langchain"

] | The following link describes the VectorStoreRetrieverMemory class, which would be extremely useful for referencing an external vector DB and its text/vectors:

https://python.langchain.com/en/latest/modules/memory/types/vectorstore_retriever_memory.html#create-your-the-vectorstoreretrievermemory

However, when I follow that documentation and use the import copied from it:

`from langchain.memory import VectorStoreRetrieverMemory`

Here's the error that I'm receiving:

ImportError: cannot import name 'VectorStoreRetrieverMemory' from 'langchain.memory' (D:\Anaconda_3\lib\site-packages\langchain\memory\__init__.py)

I've updated langchain as a dependency, and the issue is persisting. Is there a workaround or an issue with langchain? This is also my first github issue, so please let me know if more information is needed. | VectorStoreRetrieverMemory Not Available In Langchain.memory | https://api.github.com/repos/langchain-ai/langchain/issues/3175/comments | 2 | 2023-04-19T21:01:08Z | 2023-05-03T14:28:51Z | https://github.com/langchain-ai/langchain/issues/3175 | 1,675,628,226 | 3,175 |

[

"langchain-ai",

"langchain"

] | When I get a rate limit or API key error, I get the following:

```

File "/Users/vanessacai/workspace/chef/venv/lib/python3.10/site-packages/langchain/chains/base.py", line 216, in run

return self(kwargs)[self.output_keys[0]]

File "/Users/vanessacai/workspace/chef/venv/lib/python3.10/site-packages/langchain/chains/base.py", line 116, in __call__

raise e

File "/Users/vanessacai/workspace/chef/venv/lib/python3.10/site-packages/langchain/chains/base.py", line 113, in __call__

outputs = self._call(inputs)

File "/Users/vanessacai/workspace/chef/venv/lib/python3.10/site-packages/langchain/chains/llm.py", line 57, in _call

return self.apply([inputs])[0]

File "/Users/vanessacai/workspace/chef/venv/lib/python3.10/site-packages/langchain/chains/llm.py", line 118, in apply

response = self.generate(input_list)

File "/Users/vanessacai/workspace/chef/venv/lib/python3.10/site-packages/langchain/chains/llm.py", line 62, in generate

return self.llm.generate_prompt(prompts, stop)

File "/Users/vanessacai/workspace/chef/venv/lib/python3.10/site-packages/langchain/llms/base.py", line 107, in generate_prompt

return self.generate(prompt_strings, stop=stop)

File "/Users/vanessacai/workspace/chef/venv/lib/python3.10/site-packages/langchain/llms/base.py", line 140, in generate

raise e

File "/Users/vanessacai/workspace/chef/venv/lib/python3.10/site-packages/langchain/llms/base.py", line 137, in generate

output = self._generate(prompts, stop=stop)

File "/Users/vanessacai/workspace/chef/venv/lib/python3.10/site-packages/langchain/llms/base.py", line 324, in _generate

text = self._call(prompt, stop=stop)

File "/Users/vanessacai/workspace/chef/venv/lib/python3.10/site-packages/langchain/llms/anthropic.py", line 184, in _call

return response["completion"]

KeyError: 'completion'

```

This is confusing, and could be a more informative error message. | Anthropic error handling is unclear | https://api.github.com/repos/langchain-ai/langchain/issues/3170/comments | 2 | 2023-04-19T19:43:52Z | 2023-04-20T00:51:18Z | https://github.com/langchain-ai/langchain/issues/3170 | 1,675,517,935 | 3,170 |

[

"langchain-ai",

"langchain"

] | When installing requirements via `poetry install -E all`, I get errors for debugpy and SQLAlchemy:

debugpy:

```

• Installing debugpy (1.6.7): Failed

CalledProcessError

Command '['/Users/cyzanfar/Desktop/llm/langchain/.venv/bin/python', '-m', 'pip', 'install', '--disable-pip-version-check', '--isolated', '--no-input', '--prefix', '/Users/cyzanfar/Desktop/llm/langchain/.venv', '--no-deps', '/Users/cyzanfar/Library/Caches/pypoetry/artifacts/a2/72/f2/f92a409c1ebe3f157f1f797e08448b8b58e6ac55cf7e01d26828907568/debugpy-1.6.7-cp39-cp39-macosx_11_0_x86_64.whl']' returned non-zero exit status 1.

at /opt/anaconda3/lib/python3.9/subprocess.py:528 in run

524│ # We don't call process.wait() as .__exit__ does that for us.

525│ raise

526│ retcode = process.poll()

527│ if check and retcode:

→ 528│ raise CalledProcessError(retcode, process.args,

529│ output=stdout, stderr=stderr)

530│ return CompletedProcess(process.args, retcode, stdout, stderr)

531│

532│

The following error occurred when trying to handle this error:

EnvCommandError

Command ['/Users/cyzanfar/Desktop/llm/langchain/.venv/bin/python', '-m', 'pip', 'install', '--disable-pip-version-check', '--isolated', '--no-input', '--prefix', '/Users/cyzanfar/Desktop/llm/langchain/.venv', '--no-deps', '/Users/cyzanfar/Library/Caches/pypoetry/artifacts/a2/72/f2/f92a409c1ebe3f157f1f797e08448b8b58e6ac55cf7e01d26828907568/debugpy-1.6.7-cp39-cp39-macosx_11_0_x86_64.whl'] errored with the following return code 1

Output:

ERROR: debugpy-1.6.7-cp39-cp39-macosx_11_0_x86_64.whl is not a supported wheel on this platform.

at ~/Library/Application Support/pypoetry/venv/lib/python3.9/site-packages/poetry/utils/env.py:1545 in _run

1541│ return subprocess.call(cmd, stderr=stderr, env=env, **kwargs)

1542│ else:

1543│ output = subprocess.check_output(cmd, stderr=stderr, env=env, **kwargs)

1544│ except CalledProcessError as e:

→ 1545│ raise EnvCommandError(e, input=input_)

1546│

1547│ return decode(output)

1548│

1549│ def execute(self, bin: str, *args: str, **kwargs: Any) -> int:

The following error occurred when trying to handle this error:

PoetryException

Failed to install /Users/cyzanfar/Library/Caches/pypoetry/artifacts/a2/72/f2/f92a409c1ebe3f157f1f797e08448b8b58e6ac55cf7e01d26828907568/debugpy-1.6.7-cp39-cp39-macosx_11_0_x86_64.whl

at ~/Library/Application Support/pypoetry/venv/lib/python3.9/site-packages/poetry/utils/pip.py:58 in pip_install

54│

55│ try:

56│ return environment.run_pip(*args)

57│ except EnvCommandError as e:

→ 58│ raise PoetryException(f"Failed to install {path.as_posix()}") from e

59│

```

SQLAlchemy:

```

• Installing sqlalchemy (1.4.47): Failed

CalledProcessError

Command '['/Users/cyzanfar/Desktop/llm/langchain/.venv/bin/python', '-m', 'pip', 'install', '--disable-pip-version-check', '--isolated', '--no-input', '--prefix', '/Users/cyzanfar/Desktop/llm/langchain/.venv', '--no-deps', '/Users/cyzanfar/Library/Caches/pypoetry/artifacts/ee/bd/08/6d08c28abb942c2089808a2dbc720d1ee4d8a7260724e7fc5cbaeba134/SQLAlchemy-1.4.47-cp39-cp39-macosx_11_0_x86_64.whl']' returned non-zero exit status 1.

at /opt/anaconda3/lib/python3.9/subprocess.py:528 in run

524│ # We don't call process.wait() as .__exit__ does that for us.

525│ raise

526│ retcode = process.poll()

527│ if check and retcode:

→ 528│ raise CalledProcessError(retcode, process.args,

529│ output=stdout, stderr=stderr)

530│ return CompletedProcess(process.args, retcode, stdout, stderr)

531│

532│

The following error occurred when trying to handle this error:

EnvCommandError

Command ['/Users/cyzanfar/Desktop/llm/langchain/.venv/bin/python', '-m', 'pip', 'install', '--disable-pip-version-check', '--isolated', '--no-input', '--prefix', '/Users/cyzanfar/Desktop/llm/langchain/.venv', '--no-deps', '/Users/cyzanfar/Library/Caches/pypoetry/artifacts/ee/bd/08/6d08c28abb942c2089808a2dbc720d1ee4d8a7260724e7fc5cbaeba134/SQLAlchemy-1.4.47-cp39-cp39-macosx_11_0_x86_64.whl'] errored with the following return code 1

Output:

ERROR: SQLAlchemy-1.4.47-cp39-cp39-macosx_11_0_x86_64.whl is not a supported wheel on this platform.

at ~/Library/Application Support/pypoetry/venv/lib/python3.9/site-packages/poetry/utils/env.py:1545 in _run

1541│ return subprocess.call(cmd, stderr=stderr, env=env, **kwargs)

1542│ else:

1543│ output = subprocess.check_output(cmd, stderr=stderr, env=env, **kwargs)

1544│ except CalledProcessError as e:

→ 1545│ raise EnvCommandError(e, input=input_)

1546│

1547│ return decode(output)

1548│

1549│ def execute(self, bin: str, *args: str, **kwargs: Any) -> int:

The following error occurred when trying to handle this error:

PoetryException

Failed to install /Users/cyzanfar/Library/Caches/pypoetry/artifacts/ee/bd/08/6d08c28abb942c2089808a2dbc720d1ee4d8a7260724e7fc5cbaeba134/SQLAlchemy-1.4.47-cp39-cp39-macosx_11_0_x86_64.whl

at ~/Library/Application Support/pypoetry/venv/lib/python3.9/site-packages/poetry/utils/pip.py:58 in pip_install

54│

55│ try:

56│ return environment.run_pip(*args)

57│ except EnvCommandError as e:

→ 58│ raise PoetryException(f"Failed to install {path.as_posix()}") from e

59│

``` | poetry install -E all on M1 13.2.1 | https://api.github.com/repos/langchain-ai/langchain/issues/3169/comments | 2 | 2023-04-19T19:31:31Z | 2023-09-10T16:30:30Z | https://github.com/langchain-ai/langchain/issues/3169 | 1,675,500,196 | 3,169 |

[

"langchain-ai",

"langchain"

] | I'm exploring [this great notebook](https://python.langchain.com/en/latest/use_cases/agent_simulations/characters.html) and trying to produce something similar, however I get an error when `fetch_memories` calls `self.memory_retriever.get_relevant_documents(observation)`:

```

AttributeError: 'FAISS' object has no attribute 'similarity_search_with_relevance_scores'

```

I'm running `langchain` `0.0.144` and I've installed `faiss` using `conda install -c conda-forge faiss-cpu` (as indicated [here](https://github.com/facebookresearch/faiss/blob/main/INSTALL.md)) which installed version `1.7.2`. Also running python `3.10`.

Poking inside `faiss.py` I indeed can't find a method called `similarity_search_with_relevance_scores`, only one called `_similarity_search_with_relevance_scores`.

Which version(s) of `langchain` and `faiss` should I be running for this to work?

Any help would be greatly appreciated, thanks 🙏 | Error using TimeWeightedVectorStoreRetriever.get_relevant_documents with FAISS: 'FAISS' object has no attribute 'similarity_search_with_relevance_scores' | https://api.github.com/repos/langchain-ai/langchain/issues/3167/comments | 6 | 2023-04-19T19:21:29Z | 2023-09-18T09:55:40Z | https://github.com/langchain-ai/langchain/issues/3167 | 1,675,484,274 | 3,167 |

[

"langchain-ai",

"langchain"

] | I am trying to extend the BabyAGI with agents example from this repo so that it reads a file, optimizes it, and writes the result to a file.

I added these tools, taken from the AutoGPT example, next to search and todo:

` WriteFileTool(),

ReadFileTool(),`

Prompt is to "Write the optimized code into a file".

ReadFileTool is working fine...

It looks like type is missing as a parameter even. Do I need to pass something to the tool or what am I doing wrong?

I get the following error:

`Traceback (most recent call last):

File "baby_agi_with_agent.py", line 136, in <module>

baby_agi({"objective": OBJECTIVE})

File "/home/dev/anaconda3/envs/langchain/lib/python3.8/site-packages/langchain/chains/base.py", line 116, in __call__

raise e

File "/home/dev/anaconda3/envs/langchain/lib/python3.8/site-packages/langchain/chains/base.py", line 113, in __call__

outputs = self._call(inputs)

File "/home/dev/anaconda3/envs/langchain/lib/python3.8/site-packages/langchain/experimental/autonomous_agents/baby_agi/baby_agi.py", line 130, in _call

result = self.execute_task(objective, task["task_name"])

File "/home/dev/anaconda3/envs/langchain/lib/python3.8/site-packages/langchain/experimental/autonomous_agents/baby_agi/baby_agi.py", line 111, in execute_task

return self.execution_chain.run(

File "/home/dev/anaconda3/envs/langchain/lib/python3.8/site-packages/langchain/chains/base.py", line 216, in run

return self(kwargs)[self.output_keys[0]]

File "/home/dev/anaconda3/envs/langchain/lib/python3.8/site-packages/langchain/chains/base.py", line 116, in __call__

raise e

File "/home/dev/anaconda3/envs/langchain/lib/python3.8/site-packages/langchain/chains/base.py", line 113, in __call__

outputs = self._call(inputs)

File "/home/dev/anaconda3/envs/langchain/lib/python3.8/site-packages/langchain/agents/agent.py", line 792, in _call

next_step_output = self._take_next_step(

File "/home/dev/anaconda3/envs/langchain/lib/python3.8/site-packages/langchain/agents/agent.py", line 695, in _take_next_step

observation = tool.run(

File "/home/dev/anaconda3/envs/langchain/lib/python3.8/site-packages/langchain/tools/base.py", line 90, in run

self._parse_input(tool_input)

File "/home/dev/anaconda3/envs/langchain/lib/python3.8/site-packages/langchain/tools/base.py", line 58, in _parse_input

input_args.validate({key_: tool_input})

File "pydantic/main.py", line 711, in pydantic.main.BaseModel.validate

File "pydantic/main.py", line 341, in pydantic.main.BaseModel.__init__

pydantic.error_wrappers.ValidationError: 1 validation error for WriteFileInput

text

field required (type=value_error.missing)`

| File cannot be written: WriteFileTool() throws validation error for WriteFileInput text | https://api.github.com/repos/langchain-ai/langchain/issues/3165/comments | 6 | 2023-04-19T18:21:47Z | 2023-09-28T16:07:55Z | https://github.com/langchain-ai/langchain/issues/3165 | 1,675,398,921 | 3,165 |

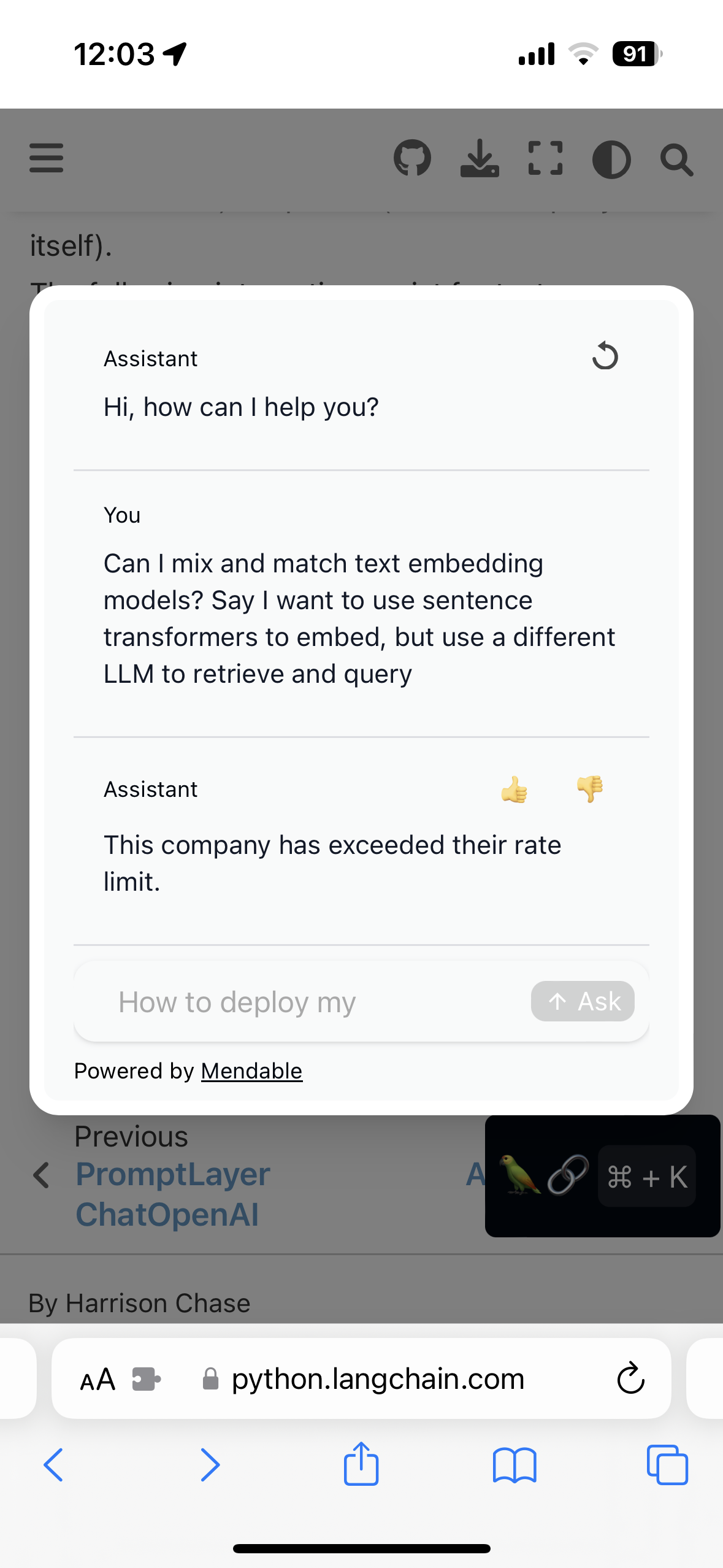

[

"langchain-ai",

"langchain"

] | Just wanted to flag this. Not sure if it's a me problem or if there's an issue elsewhere. Searched the issues for the same problem and couldn't find it.

| Docs Chat Rate Limited | https://api.github.com/repos/langchain-ai/langchain/issues/3162/comments | 1 | 2023-04-19T17:07:40Z | 2023-09-10T16:30:36Z | https://github.com/langchain-ai/langchain/issues/3162 | 1,675,301,097 | 3,162 |

[

"langchain-ai",

"langchain"

] | The console output when running a tool is missing the "Observation" and "Thought" prefixes.

I noticed this when using the SQL Toolkit, but other tools are likely affected.

Here is the current INCORRECT output format:

```

> Entering new AgentExecutor chain...

Action: list_tables_sql_db

Action Input: ""invoice_items, invoices, tracks, sqlite_sequence, employees, media_types, sqlite_stat1, customers, playlists, playlist_track, albums, genres, artistsThere is a table called "employees" that I can query.

Action: schema_sql_db

Action Input: "employees"

```

Here is the expected output format:

```

> Entering new AgentExecutor chain...

Action: list_tables_sql_db

Action Input: ""

Observation: invoice_items, invoices, tracks, sqlite_sequence, employees, media_types, sqlite_stat1, customers, playlists, playlist_track, albums, genres, artists

Thought:There is a table called "employees" that I can query.

Action: schema_sql_db

Action Input: "employees"

```

Note: this appears to only affect the console output. The `agent_scratchpad` is updated correctly with the "Observation" and "Thought" prefixes. | Missing Observation and Thought prefix in output | https://api.github.com/repos/langchain-ai/langchain/issues/3157/comments | 4 | 2023-04-19T15:15:26Z | 2023-04-20T16:46:28Z | https://github.com/langchain-ai/langchain/issues/3157 | 1,675,129,012 | 3,157 |

[

"langchain-ai",

"langchain"

] | I am considering implementing a new tool to give LLMs the ability to send SMS text using the Twilio API. @hwchase17 is this worth implementing? if so I'll submit a PR shortly. | New Tool: Twilio | https://api.github.com/repos/langchain-ai/langchain/issues/3156/comments | 7 | 2023-04-19T14:37:14Z | 2023-09-27T16:07:56Z | https://github.com/langchain-ai/langchain/issues/3156 | 1,675,043,935 | 3,156 |

[

"langchain-ai",

"langchain"

] | To make it easier to write more abstract code, the variable in langchain.chat_model and `model` in langchain.llms should offer the same way of retrieving the model version. Therefore both variables should have the same name. | [feat] the variable model_name from langchain.chat_model should have the same name as the variable model from langchain.llms | https://api.github.com/repos/langchain-ai/langchain/issues/3154/comments | 1 | 2023-04-19T13:51:42Z | 2023-09-10T16:30:41Z | https://github.com/langchain-ai/langchain/issues/3154 | 1,674,945,820 | 3,154 |

[

"langchain-ai",

"langchain"

] | I tried to merge two FAISS indices:

index1 = FAISS.from_documents(doc1, embeddings)

index2=FAISS.from_documents(doc2, embeddings)

After I call index1.merge_from(index2) and then run

ret = index2.as_retriever()

ret.get_relevant_documents(query)

it returns an empty list `[]`.

If index1 has been saved with `save_local`, can I add index2 to it without loading index1 into memory, perhaps through something called `merge_local`? (That is, open index.faiss and index.pkl and write line by line.)

| does merging index1.merge_from(index2) dump index2? | https://api.github.com/repos/langchain-ai/langchain/issues/3152/comments | 2 | 2023-04-19T11:17:25Z | 2023-07-21T15:11:27Z | https://github.com/langchain-ai/langchain/issues/3152 | 1,674,697,052 | 3,152 |

[

"langchain-ai",

"langchain"

] | I'm talking about langchain, langchain-backend and langchain-frontend here.

https://python.langchain.com/en/latest/tracing/local_installation.html

Could give people a starting off point if they want to use langchain with tracing. I'll share mine here:

```

version: '3.7'

services:

your-app-name-containing-langchain:

container_name: your-app-name-containing-langchain

image: your/image

ports:

- 5000:5000

build:

context: .

dockerfile: Dockerfile

env_file: .env

volumes:

- ./:/app

langchain-frontend:

container_name: langchain-frontend

image: notlangchain/langchainplus-frontend:latest

ports:

- 4173:4173

environment:

- BACKEND_URL=http://langchain-backend:8000

- PUBLIC_BASE_URL=http://localhost:8000

- PUBLIC_DEV_MODE=true

depends_on:

- langchain-backend

langchain-backend:

container_name: langchain-backend

image: notlangchain/langchainplus:latest

environment:

- PORT=8000

- LANGCHAIN_ENV=local

ports:

- 8000:8000

depends_on:

- langchain-db

langchain-db:

container_name: langchain-db

image: postgres:14.1

environment:

- POSTGRES_PASSWORD=postgres

- POSTGRES_USER=postgres

- POSTGRES_DB=postgres

ports:

- 5432:5432

``` | Thought about a docker-compose file to run langchain's whole ecosystem? | https://api.github.com/repos/langchain-ai/langchain/issues/3150/comments | 6 | 2023-04-19T10:33:28Z | 2023-09-24T16:09:43Z | https://github.com/langchain-ai/langchain/issues/3150 | 1,674,632,635 | 3,150 |

[

"langchain-ai",

"langchain"

] | I would like to implement the following feature.

### Description:

Currently the sitemap loader (at `document_loaders.sitemap`) only works if the sitemap URL is passed directly as `web_path`. I propose improving the sitemap loader by enabling it to automatically discover sitemap URLs given any website URL (not necessarily the root URL). Typically, sitemap URLs can be found in robots.txt or in the homepage HTML as an href attribute.

The new feature would involve:

1. Checking the robots.txt file for sitemap URLs.

2. If none are found in robots.txt, searching the homepage HTML for href attributes containing sitemap URLs.
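
The two steps above could be sketched roughly as follows. This is only an illustration of the idea, not SitemapLoader code: the function names (`sitemaps_from_robots`, `sitemaps_from_html`, `discover_sitemaps`) are hypothetical, the inputs are assumed to be already-downloaded text, and "href contains the word sitemap" is an assumed heuristic.

```python
from html.parser import HTMLParser
from typing import List
from urllib.parse import urljoin


def sitemaps_from_robots(robots_txt: str) -> List[str]:
    """Return the URLs declared on 'Sitemap:' lines of a robots.txt body."""
    urls = []
    for line in robots_txt.splitlines():
        # Drop trailing comments, then split on the first colon only,
        # because the URL itself contains colons.
        line = line.split("#", 1)[0].strip()
        key, sep, value = line.partition(":")
        if sep and key.strip().lower() == "sitemap" and value.strip():
            urls.append(value.strip())
    return urls


class _SitemapLinkParser(HTMLParser):
    """Collect href values of <a>/<link> tags that mention 'sitemap'."""

    def __init__(self) -> None:
        super().__init__()
        self.hrefs: List[str] = []

    def handle_starttag(self, tag, attrs):
        if tag not in ("a", "link"):
            return
        href = dict(attrs).get("href")
        if href and "sitemap" in href.lower():
            self.hrefs.append(href)


def sitemaps_from_html(html: str, base_url: str) -> List[str]:
    """Return absolute sitemap-looking URLs found in the page HTML."""
    parser = _SitemapLinkParser()
    parser.feed(html)
    return [urljoin(base_url, href) for href in parser.hrefs]


def discover_sitemaps(robots_txt: str, homepage_html: str, base_url: str) -> List[str]:
    """Prefer robots.txt; fall back to the homepage HTML (hypothetical helper)."""
    return sitemaps_from_robots(robots_txt) or sitemaps_from_html(homepage_html, base_url)
```

A real implementation would also need to fetch `/robots.txt` and the homepage, and handle redirects and sitemap index files; those parts are left out here.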

If you believe this feature would be useful and beneficial for the project, please let me know, and I can submit a PR.

Best,

Pi | FEAT: Extend SitemapLoader to automatically discover sitemap URLs. | https://api.github.com/repos/langchain-ai/langchain/issues/3149/comments | 1 | 2023-04-19T10:19:24Z | 2023-09-10T16:30:46Z | https://github.com/langchain-ai/langchain/issues/3149 | 1,674,612,447 | 3,149 |

[

"langchain-ai",

"langchain"

] | I have a gpt-3.5-turbo model deployed on AzureOpenAI, however, I always keep getting this error.

**openai.error.InvalidRequestError: The API deployment for this resource does not exist. If you created the deployment within the last 5 minutes, please wait a moment and try again.**

`index_creator = VectorstoreIndexCreator(

embedding=OpenAIEmbeddings(

openai_api_key=openai_api_key,

model="gpt-3.5-turbo",

chunk_size=1,

)

)`

`indexed_document = index_creator.from_loaders([file_loader])`

`chain = RetrievalQA.from_chain_type(

llm=AzureOpenAI(

openai_api_key=openai_api_key,

deployment_name="gpt_35_turbo",

model_name="gpt-3.5-turbo",

),

chain_type="stuff",

retriever=indexed_document.vectorstore.as_retriever(),

input_key="user_prompt",

return_source_documents=True,

)`

`open_ai_response = chain({"user_prompt": query_param})` | Unable to use gpt-3.5-turbo deployed on Azure OpenAI with langchain embeddings. | https://api.github.com/repos/langchain-ai/langchain/issues/3148/comments | 10 | 2023-04-19T10:00:10Z | 2023-08-10T14:50:58Z | https://github.com/langchain-ai/langchain/issues/3148 | 1,674,581,862 | 3,148 |

[

"langchain-ai",

"langchain"

] | Recently I got a strange error when using FAISS `similarity_search_with_score_by_vector`. The line (https://github.com/hwchase17/langchain/blob/575b717d108984676e25afd0910ccccfdaf9693d/langchain/vectorstores/faiss.py#L170) generates errors:

```

TypeError: IndexFlat.search() missing 3 required positional arguments: 'k', 'distances', and 'labels'

```

It looks like FAISS's own `search` function has different arguments (using `IndexFlat`):

```

search(self, n, x, k, distances, labels)

```

But `similarity_search_with_score_by_vector` worked one day ago. Are there any hints for this? | FAISS similarity search issue | https://api.github.com/repos/langchain-ai/langchain/issues/3147/comments | 11 | 2023-04-19T09:55:13Z | 2023-06-11T23:46:27Z | https://github.com/langchain-ai/langchain/issues/3147 | 1,674,572,859 | 3,147 |

[

"langchain-ai",

"langchain"

] | Hi, I'm trying to implement the memory stream in <[Generative Agents: Interactive Simulacra of Human Behavior](https://arxiv.org/abs/2304.03442)>.

This memory mode is built on top of a concept called `observation` which is something like `Lily is watching a movie`, `desk is idle`.

I think the closest concepts to `observation` in LangChain are entity memory and KG triples. All three describe a simple fact about an entity.

But what confuses me is that, in theory, a KG also focuses on entities (vertices). It seems a KG can be seen as a storage form for the `entity memory`. In LangChain, they differ in the prompt template used for info extraction, which affects how the LLM extracts the info. Once the info has been extracted, the format of the text used to present it to the LLM is also nearly identical.

So my questions are, what's the positioning for entity memory and KG memory, and if I try to implement the memory stream, is it appropriate to reuse one of these two to generate `observation`?

| Implementing the memory stream in <Generative Agents: Interactive Simulacra of Human Behavior> | https://api.github.com/repos/langchain-ai/langchain/issues/3145/comments | 1 | 2023-04-19T09:28:23Z | 2023-09-10T16:30:51Z | https://github.com/langchain-ai/langchain/issues/3145 | 1,674,529,524 | 3,145 |

[

"langchain-ai",

"langchain"

] | ```

from langchain import OpenAI

from langchain.chains import RetrievalQAWithSourcesChain

from langchain.prompts import PromptTemplate

from langchain.chains.qa_with_sources import load_qa_with_sources_chain

chain = RetrievalQAWithSourcesChain.from_chain_type(OpenAI(temperature=0), chain_type="stuff", retriever=rds.as_retriever())

```

Running the code above produces the following error:

```

---------------------------------------------------------------------------

AttributeError Traceback (most recent call last)

Cell In[14], line 5

4 from langchain.chains.qa_with_sources import load_qa_with_sources_chain

----> 5 chain = RetrievalQAWithSourcesChain.from_chain_type(OpenAI(temperature=0), chain_type="stuff", retriever=rds.as_retriever())

File ~/opt/anaconda3/lib/python3.10/site-packages/langchain/chains/qa_with_sources/base.py:76, in BaseQAWithSourcesChain.from_chain_type(cls, llm, chain_type, chain_type_kwargs, **kwargs)

74 """Load chain from chain type."""

75 _chain_kwargs = chain_type_kwargs or {}

---> 76 combine_document_chain = load_qa_with_sources_chain(

77 llm, chain_type=chain_type, **_chain_kwargs

78 )

79 return cls(combine_documents_chain=combine_document_chain, **kwargs)

File ~/opt/anaconda3/lib/python3.10/site-packages/langchain/chains/qa_with_sources/loading.py:171, in load_qa_with_sources_chain(llm, chain_type, verbose, **kwargs)

166 raise ValueError(

167 f"Got unsupported chain type: {chain_type}. "

168 f"Should be one of {loader_mapping.keys()}"

169 )

170 _func: LoadingCallable = loader_mapping[chain_type]

--> 171 return _func(llm, verbose=verbose, **kwargs)

File ~/opt/anaconda3/lib/python3.10/site-packages/langchain/chains/qa_with_sources/loading.py:56, in _load_stuff_chain(llm, prompt, document_prompt, document_variable_name, verbose, **kwargs)

48 def _load_stuff_chain(

49 llm: BaseLanguageModel,

50 prompt: BasePromptTemplate = stuff_prompt.PROMPT,

(...)

54 **kwargs: Any,

55 ) -> StuffDocumentsChain:

---> 56 llm_chain = LLMChain(llm=llm, prompt=prompt, verbose=verbose)

57 return StuffDocumentsChain(

58 llm_chain=llm_chain,

59 document_variable_name=document_variable_name,

(...)

62 **kwargs,

63 )

File ~/opt/anaconda3/lib/python3.10/site-packages/pydantic/main.py:339, in pydantic.main.BaseModel.__init__()

File ~/opt/anaconda3/lib/python3.10/site-packages/pydantic/main.py:1076, in pydantic.main.validate_model()

File ~/opt/anaconda3/lib/python3.10/site-packages/pydantic/fields.py:867, in pydantic.fields.ModelField.validate()

File ~/opt/anaconda3/lib/python3.10/site-packages/pydantic/fields.py:1151, in pydantic.fields.ModelField._apply_validators()

File ~/opt/anaconda3/lib/python3.10/site-packages/pydantic/class_validators.py:304, in pydantic.class_validators._generic_validator_cls.lambda4()

File ~/opt/anaconda3/lib/python3.10/site-packages/langchain/chains/base.py:57, in Chain.set_verbose(cls, verbose)

52 """If verbose is None, set it.

53

54 This allows users to pass in None as verbose to access the global setting.

55 """

56 if verbose is None:

---> 57 return _get_verbosity()

58 else:

59 return verbose

File ~/opt/anaconda3/lib/python3.10/site-packages/langchain/chains/base.py:17, in _get_verbosity()

16 def _get_verbosity() -> bool:

---> 17 return langchain.verbose

AttributeError: module 'langchain' has no attribute 'verbose'

```

However, when I run with langchain==0.0.142, this error doesn't occur.

| Error occured after updating langchain version to the latest 0.0.144 for the example code using RetrievalQAWithSourcesChain | https://api.github.com/repos/langchain-ai/langchain/issues/3144/comments | 2 | 2023-04-19T09:26:44Z | 2024-05-21T06:02:37Z | https://github.com/langchain-ai/langchain/issues/3144 | 1,674,526,880 | 3,144 |

[

"langchain-ai",

"langchain"

] | null | How to change the max_token for different requests | https://api.github.com/repos/langchain-ai/langchain/issues/3138/comments | 1 | 2023-04-19T07:05:24Z | 2023-09-10T16:31:01Z | https://github.com/langchain-ai/langchain/issues/3138 | 1,674,296,816 | 3,138 |

[

"langchain-ai",

"langchain"

] | ### Discussed in https://github.com/hwchase17/langchain/discussions/3132

<div type='discussions-op-text'>

<sup>Originally posted by **srithedesigner** April 19, 2023</sup>

We used to use AzureOpenAI llm from langchain.llms with the text-davinci-003 model but after deploying GPT4 in Azure when trying to use GPT4, this error is being thrown:

`Must provide an 'engine' or 'deployment_id' parameter to create a <class 'openai.api_resources.chat_completion.ChatCompletion'>`

This is how we are initializing the model:

```python

model = AzureOpenAI(

streaming=streaming,

client=openai.ChatCompletion(),

callback_manager=callback,

deployment_name= "gpt4",

model_name="gpt-4-32k",

openai_api_key=env.cloud.openai_api_key,

temperature=temperature,

max_tokens=max_tokens,

verbose=verbose,

)

```

How do we use GPT4 with the AzureOpenAI chain? Is it currently supported, or are we initializing it wrong?

</div> | Not able to use GPT4 model with AzureOpenAI from from langchain.llms | https://api.github.com/repos/langchain-ai/langchain/issues/3137/comments | 12 | 2023-04-19T06:53:50Z | 2023-10-16T19:48:27Z | https://github.com/langchain-ai/langchain/issues/3137 | 1,674,281,383 | 3,137 |

[

"langchain-ai",

"langchain"

] | <br>

I'm trying to integrate GPT-4 on Azure OpenAI with LangChain, but when I try to use it inside ConversationalRetrievalChain it throws an error: <br>

```

raise error.InvalidRequestError(\\n *~*

openai.error.InvalidRequestError: Must provide an \'engine\' or

\'deployment_id\' parameter to create a <class

\'openai.api_resources.chat_completion.ChatCompletion\

```

<br>

However, if I run a standalone openai instance with the Azure OpenAI config, it works. I'm confused about whether LangChain supports GPT-4 or whether I am missing something. | InvalidRequestError Must provide an engine ( gpt 4 azure open ai ) | https://api.github.com/repos/langchain-ai/langchain/issues/3134/comments | 3 | 2023-04-19T05:57:43Z | 2023-09-24T16:09:53Z | https://github.com/langchain-ai/langchain/issues/3134 | 1,674,221,239 | 3,134 |

[

"langchain-ai",

"langchain"

] | Python 3.11.1

langchain==0.0.143

llama-cpp-python==0.1.34

Model works when I use Dalai. Also happens with Llama 7B.

Code here (from [langchain documentation](https://python.langchain.com/en/latest/modules/models/llms/integrations/llamacpp.html)):

```

from langchain.llms import LlamaCpp

from langchain import PromptTemplate, LLMChain

import os

template = """Question: {question}

Answer: Let's think step by step."""

prompt = PromptTemplate(template=template, input_variables=["question"])

file_path = os.path.abspath("<my path>/dalai/alpaca/models/7B/ggml-model-q4_0.bin")

llm = LlamaCpp(model_path=file_path, verbose=True)

llm_chain = LLMChain(prompt=prompt, llm=llm, verbose=True)

question = "What NFL team won the Super Bowl in the year Justin Bieber was born?"

llm_chain.run(question)

```

Output:

```

llama.cpp: loading model from <my_path>

llama.cpp: can't use mmap because tensors are not aligned; convert to new format to avoid this

llama_model_load_internal: format = 'ggml' (old version with low tokenizer quality and no mmap support)

llama_model_load_internal: n_vocab = 32000

llama_model_load_internal: n_ctx = 512

llama_model_load_internal: n_embd = 4096

llama_model_load_internal: n_mult = 256

llama_model_load_internal: n_head = 32

llama_model_load_internal: n_layer = 32

llama_model_load_internal: n_rot = 128

llama_model_load_internal: ftype = 2 (mostly Q4_0)

llama_model_load_internal: n_ff = 11008

llama_model_load_internal: n_parts = 1

llama_model_load_internal: model size = 7B

llama_model_load_internal: ggml ctx size = 4113739.11 KB

llama_model_load_internal: mem required = 5809.32 MB (+ 2052.00 MB per state)

...................................................................................................

.

llama_init_from_file: kv self size = 512.00 MB

AVX = 1 | AVX2 = 1 | AVX512 = 1 | FMA = 1 | NEON = 0 | ARM_FMA = 0 | F16C = 1 | FP16_VA = 0 | WASM_SIMD = 0 | BLAS = 1 | SSE3 = 1 | VSX = 0 |

> Entering new LLMChain chain...

Prompt after formatting:

Question: What NFL team won the Super Bowl in the year Justin Bieber was born?

Answer: Let's think step by step.

llama_print_timings: load time = 906.87 ms

llama_print_timings: sample time = 173.02 ms / 123 runs ( 1.41 ms per run)

llama_print_timings: prompt eval time = 3821.62 ms / 34 tokens ( 112.40 ms per token)

llama_print_timings: eval time = 24772.41 ms / 122 runs ( 203.05 ms per run)

llama_print_timings: total time = 28788.58 ms

> Finished chain.

```

What could be causing this? It seems like the model loads properly and does something. Thanks!

| Alpaca 7B loads with LlamaCpp, no response from model | https://api.github.com/repos/langchain-ai/langchain/issues/3129/comments | 1 | 2023-04-19T04:56:54Z | 2023-04-19T06:45:04Z | https://github.com/langchain-ai/langchain/issues/3129 | 1,674,171,032 | 3,129 |

[

"langchain-ai",

"langchain"

] | I'm trying to use the WebBrowserTool and I get a forbidden response (403) on some sites. I tried adding a user agent and using proxies, but I still get 403. I also tried passing the headers directly to the tool constructor and via the axios config.

```

const headers = {

'user-agent':

'Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/74.0.3729.131 Safari/537.36',

'upgrade-insecure-requests': '1',

'accept':

'text/html,application/xhtml+xml,application/xml;q=0.9,image/webp,image/apng,*/*;q=0.8,application/signed-exchange;v=b3',

'accept-encoding': 'gzip, deflate, br',

'accept-language': 'en-US,en;q=0.9,en;q=0.8',

};

new WebBrowser({

model,

headers,

embeddings,

axiosConfig: {

headers

},

})

``` | WebBrowserTool 403 error | https://api.github.com/repos/langchain-ai/langchain/issues/3118/comments | 0 | 2023-04-18T22:48:32Z | 2023-04-18T23:24:13Z | https://github.com/langchain-ai/langchain/issues/3118 | 1,673,926,522 | 3,118 |

[

"langchain-ai",

"langchain"

] | I'm not sure if it's a typo or not, but the default prompt in [langchain](https://github.com/hwchase17/langchain/tree/master/langchain)/[langchain](https://github.com/hwchase17/langchain/tree/master/langchain)/[chains](https://github.com/hwchase17/langchain/tree/master/langchain/chains)/[summarize](https://github.com/hwchase17/langchain/tree/master/langchain/chains/summarize)/[refine_prompts.py](https://github.com/hwchase17/langchain/tree/master/langchain/chains/summarize/refine_prompts.py) seems to be missing a space or a `\n `

```

REFINE_PROMPT_TMPL = (

"Your job is to produce a final summary\n"

"We have provided an existing summary up to a certain point: {existing_answer}\n"

"We have the opportunity to refine the existing summary"

"(only if needed) with some more context below.\n"

"------------\n"

"{text}\n"

"------------\n"

"Given the new context, refine the original summary"

"If the context isn't useful, return the original summary."

)

```

It will produce `refine the original summaryIf the context isn't useful` and `existing summary(only if needed)`
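The behaviour follows from Python's implicit concatenation of adjacent string literals, which inserts no separator between them. A minimal sketch, using shortened stand-in strings rather than the real template (the names `broken` and `fixed` are illustrative only, and the fix shown is just one possible wording):

```python
# Adjacent string literals are joined with nothing in between.
broken = (
    "Given the new context, refine the original summary"
    "If the context isn't useful, return the original summary."
)

# One possible fix: end the first literal with punctuation and a newline.
fixed = (
    "Given the new context, refine the original summary.\n"
    "If the context isn't useful, return the original summary."
)

print(broken)
print(fixed)
```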

I could probably fix it with a PR (if it's unintentional), but I'd prefer to let someone more competent do it, as I'm not used to creating PRs in large projects like this. | Missing new lines or empty spaces in refine default prompt. | https://api.github.com/repos/langchain-ai/langchain/issues/3117/comments | 4 | 2023-04-18T22:32:58Z | 2023-08-31T14:29:51Z | https://github.com/langchain-ai/langchain/issues/3117 | 1,673,914,308 | 3,117 |

[

"langchain-ai",

"langchain"

] | I run the following code:

```py

from langchain.chat_models import ChatOpenAI

from langchain import PromptTemplate, LLMChain

from langchain.prompts.chat import (

ChatPromptTemplate,

SystemMessagePromptTemplate,

AIMessagePromptTemplate,

HumanMessagePromptTemplate,

)

from langchain.schema import (

AIMessage,

HumanMessage,

SystemMessage

)

from langchain.callbacks.base import CallbackManager

from langchain.callbacks.streaming_stdout import StreamingStdOutCallbackHandler

gpt_4 = ChatOpenAI(model_name="gpt-4", streaming=True, callback_manager=CallbackManager([StreamingStdOutCallbackHandler()]), verbose=True, temperature=0)

template="You are ChatGPT, a large language model trained by OpenAI. Follow the user's instructions carefully. Respond using markdown."

system_message_prompt = SystemMessagePromptTemplate.from_template(template)

human_template="{text}"

human_message_prompt = HumanMessagePromptTemplate.from_template(human_template)

prompt = ChatPromptTemplate.from_messages([system_message_prompt, human_message_prompt])

chain = LLMChain(llm=gpt_4, prompt=prompt)

from langchain.callbacks import get_openai_callback

with get_openai_callback() as cb:

text = "How are you?"

res = chain.run(text=text)

print(cb)

```

However when I print the callback value, I get back info that I used 0 credits, even though I know I used some.

```

I'm an AI language model, so I don't have feelings or emotions like humans do. However, I'm here to help you with any questions or information you need. What can I help you with today?Tokens Used: 0

Prompt Tokens: 0

Completion Tokens: 0

Successful Requests: 0

Total Cost (USD): $0.0

```

Am I doing something wrong, or is this an issue? | get_openai_callback doesn't return the credits for ChatGPT chain | https://api.github.com/repos/langchain-ai/langchain/issues/3114/comments | 22 | 2023-04-18T21:28:20Z | 2024-02-19T08:46:12Z | https://github.com/langchain-ai/langchain/issues/3114 | 1,673,855,423 | 3,114 |

[

"langchain-ai",

"langchain"

] | langchain was installed via `pip`

```

Traceback (most recent call last):

File "/Users/oleksandrdanshyn/github.com/dalazx/langchain/test.py", line 1, in <module>

from langchain.agents import load_tools, initialize_agent, AgentType

File "/Users/oleksandrdanshyn/github.com/dalazx/langchain/langchain/__init__.py", line 6, in <module>

from langchain.agents import MRKLChain, ReActChain, SelfAskWithSearchChain

File "/Users/oleksandrdanshyn/github.com/dalazx/langchain/langchain/agents/__init__.py", line 2, in <module>

from langchain.agents.agent import (

File "/Users/oleksandrdanshyn/github.com/dalazx/langchain/langchain/agents/agent.py", line 17, in <module>

from langchain.chains.base import Chain

File "/Users/oleksandrdanshyn/github.com/dalazx/langchain/langchain/chains/__init__.py", line 2, in <module>

from langchain.chains.api.base import APIChain

File "/Users/oleksandrdanshyn/github.com/dalazx/langchain/langchain/chains/api/base.py", line 8, in <module>

from langchain.chains.api.prompt import API_RESPONSE_PROMPT, API_URL_PROMPT

File "/Users/oleksandrdanshyn/github.com/dalazx/langchain/langchain/chains/api/prompt.py", line 2, in <module>

from langchain.prompts.prompt import PromptTemplate

File "/Users/oleksandrdanshyn/github.com/dalazx/langchain/langchain/prompts/__init__.py", line 3, in <module>

from langchain.prompts.chat import (

File "/Users/oleksandrdanshyn/github.com/dalazx/langchain/langchain/prompts/chat.py", line 10, in <module>

from langchain.memory.buffer import get_buffer_string

File "/Users/oleksandrdanshyn/github.com/dalazx/langchain/langchain/memory/__init__.py", line 11, in <module>

from langchain.memory.entity import (

File "/Users/oleksandrdanshyn/github.com/dalazx/langchain/langchain/memory/entity.py", line 8, in <module>

from langchain.chains.llm import LLMChain

File "/Users/oleksandrdanshyn/github.com/dalazx/langchain/langchain/chains/llm.py", line 11, in <module>

from langchain.prompts.prompt import PromptTemplate

File "/Users/oleksandrdanshyn/github.com/dalazx/langchain/langchain/prompts/prompt.py", line 8, in <module>

from jinja2 import Environment, meta

ModuleNotFoundError: No module named 'jinja2'

```

since `prompt.py` is one of the fundamental modules and it unconditionally imports `jinja2`, `jinja2` should probably be added to the list of required dependencies. | jinja2 is not optional | https://api.github.com/repos/langchain-ai/langchain/issues/3113/comments | 4 | 2023-04-18T21:19:47Z | 2023-09-24T16:09:58Z | https://github.com/langchain-ai/langchain/issues/3113 | 1,673,847,319 | 3,113 |

[

"langchain-ai",

"langchain"

] | Gathering more details on this... but the solution needs to include `search` or `searchlight` as a starting point. (see https://github.com/hwchase17/langchain/issues/2113) | Support more robust redis module list | https://api.github.com/repos/langchain-ai/langchain/issues/3111/comments | 1 | 2023-04-18T20:35:46Z | 2023-05-03T13:54:45Z | https://github.com/langchain-ai/langchain/issues/3111 | 1,673,798,260 | 3,111 |

[

"langchain-ai",

"langchain"

] | The ChatOpenAI LLM retries a completion if a content-moderation exception is raised by OpenAI.

Code [here](https://github.com/hwchase17/langchain/blob/d54c88aa2140f27c36fa18375f942e5b239799ee/langchain/chat_models/openai.py#L45)

#### Request : Do not retry if exception type is 'content moderation'

In our experience, Content Moderation errors have a near 100% reproducibility, which means that the prompt fails on every retry. This means that langchain racks up unnecessary billable calls for an unfixable exception.
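As a rough illustration of the requested behaviour, the sketch below retries transient failures but re-raises immediately for errors marked as non-retryable. It uses only the standard library; `ContentModerationError` and `call_with_retry` are hypothetical names invented for this example and are not part of langchain or the OpenAI client.

```python
import time


class ContentModerationError(Exception):
    """Hypothetical stand-in for a content-moderation rejection."""


def call_with_retry(func, max_attempts=3, base_delay=0.0,
                    non_retryable=(ContentModerationError,)):
    """Call func(), retrying on failure unless the error is non-retryable."""
    for attempt in range(1, max_attempts + 1):
        try:
            return func()
        except non_retryable:
            # Deterministic failure: retrying would only add billable calls.
            raise
        except Exception:
            if attempt == max_attempts:
                raise
            # Exponential backoff between attempts.
            time.sleep(base_delay * 2 ** (attempt - 1))


calls = {"n": 0}


def always_flagged():
    calls["n"] += 1
    raise ContentModerationError("flagged")


try:
    call_with_retry(always_flagged)
except ContentModerationError:
    pass

print(calls["n"])  # 1: the flagged call is not retried
```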

#### Related [Request](https://github.com/hwchase17/langchain/issues/3109) - Allow custom retry_decorator to be passed by the user at LLM definition

| Do not retry if content moderation exception is raised by OpenAI | https://api.github.com/repos/langchain-ai/langchain/issues/3110/comments | 1 | 2023-04-18T19:48:04Z | 2023-09-10T16:31:06Z | https://github.com/langchain-ai/langchain/issues/3110 | 1,673,736,825 | 3,110 |

[

"langchain-ai",

"langchain"

] | The retry decorator for ChatOpenAI is hardcoded [here](https://github.com/hwchase17/langchain/blob/d54c88aa2140f27c36fa18375f942e5b239799ee/langchain/chat_models/openai.py#L39)

Allow the user to supply a custom retry_decorator. | Allow custom retry_decorator to be passed to the LLM | https://api.github.com/repos/langchain-ai/langchain/issues/3109/comments | 3 | 2023-04-18T19:45:57Z | 2023-12-14T16:09:08Z | https://github.com/langchain-ai/langchain/issues/3109 | 1,673,734,340 | 3,109 |

[

"langchain-ai",

"langchain"

] | langchain Version: 0.0.143

SHA: aad0a498ac693acd304cf66e16a6430f5c0410a8

---

In [1]: import numexpr

In [2]: numexpr.__version__

Out[2]: '2.8.4'

-----

```python

llm_math.run("what is the common denominator of 2 and 5")

```

Stack trace:

> Entering new LLMMathChain chain...

what is the common denominator of 2 and 5

```text

LCM(2, 5)

```

...numexpr.evaluate("LCM(2, 5)")...

---------------------------------------------------------------------------

TypeError Traceback (most recent call last)

File ~/src/langchain/langchain/chains/llm_math/base.py:60, in LLMMathChain._evaluate_expression(self, expression)

58 local_dict = {"pi": math.pi, "e": math.e}

59 output = str(

---> 60 numexpr.evaluate(

61 expression.strip(),

62 global_dict={}, # restrict access to globals

63 local_dict=local_dict, # add common mathematical functions

64 )

65 )

66 except Exception as e:

File ~/.pyenv/versions/3.10.2/envs/langchain_3_10/lib/python3.10/site-packages/numexpr/necompiler.py:817, in evaluate(ex, local_dict, global_dict, out, order, casting, **kwargs)

816 if expr_key not in _names_cache:

--> 817 _names_cache[expr_key] = getExprNames(ex, context)

818 names, ex_uses_vml = _names_cache[expr_key]

File ~/.pyenv/versions/3.10.2/envs/langchain_3_10/lib/python3.10/site-packages/numexpr/necompiler.py:704, in getExprNames(text, context)

703 def getExprNames(text, context):

--> 704 ex = stringToExpression(text, {}, context)

705 ast = expressionToAST(ex)

File ~/.pyenv/versions/3.10.2/envs/langchain_3_10/lib/python3.10/site-packages/numexpr/necompiler.py:289, in stringToExpression(s, types, context)

288 # now build the expression

--> 289 ex = eval(c, names)

290 if expressions.isConstant(ex):

File <expr>:1

TypeError: 'VariableNode' object is not callable

During handling of the above exception, another exception occurred:

ValueError Traceback (most recent call last)

Cell In[11], line 1

----> 1 llm_math.run("what is the common denominator of 2 and 5")

File ~/src/langchain/langchain/chains/base.py:213, in Chain.run(self, *args, **kwargs)

211 if len(args) != 1:

212 raise ValueError("`run` supports only one positional argument.")

--> 213 return self(args[0])[self.output_keys[0]]

215 if kwargs and not args:

216 return self(kwargs)[self.output_keys[0]]

File ~/src/langchain/langchain/chains/base.py:116, in Chain.__call__(self, inputs, return_only_outputs)

114 except (KeyboardInterrupt, Exception) as e:

115 self.callback_manager.on_chain_error(e, verbose=self.verbose)

--> 116 raise e

117 self.callback_manager.on_chain_end(outputs, verbose=self.verbose)

118 return self.prep_outputs(inputs, outputs, return_only_outputs)

File ~/src/langchain/langchain/chains/base.py:113, in Chain.__call__(self, inputs, return_only_outputs)

107 self.callback_manager.on_chain_start(

108 {"name": self.__class__.__name__},

109 inputs,

110 verbose=self.verbose,

111 )

112 try:

--> 113 outputs = self._call(inputs)

114 except (KeyboardInterrupt, Exception) as e:

115 self.callback_manager.on_chain_error(e, verbose=self.verbose)

File ~/src/langchain/langchain/chains/llm_math/base.py:131, in LLMMathChain._call(self, inputs)

127 self.callback_manager.on_text(inputs[self.input_key], verbose=self.verbose)

128 llm_output = llm_executor.predict(

129 question=inputs[self.input_key], stop=["```output"]

130 )

--> 131 return self._process_llm_result(llm_output)

File ~/src/langchain/langchain/chains/llm_math/base.py:78, in LLMMathChain._process_llm_result(self, llm_output)

76 if text_match:

77 expression = text_match.group(1)

---> 78 output = self._evaluate_expression(expression)

79 self.callback_manager.on_text("\nAnswer: ", verbose=self.verbose)

80 self.callback_manager.on_text(output, color="yellow", verbose=self.verbose)

File ~/src/langchain/langchain/chains/llm_math/base.py:67, in LLMMathChain._evaluate_expression(self, expression)

59 output = str(

60 numexpr.evaluate(

61 expression.strip(),

(...)

64 )

65 )

66 except Exception as e:

---> 67 raise ValueError(f"{e}. Please try again with a valid numerical expression")

69 # Remove any leading and trailing brackets from the output

70 return re.sub(r"^\[|\]$", "", output)

ValueError: 'VariableNode' object is not callable. Please try again with a valid numerical expression
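The trace suggests the model emitted a function name (`LCM`) that the expression evaluator does not treat as callable. One way to surface a clearer message, sketched here with the standard `ast` module, is to check called names against an allow-list before evaluating. The `ALLOWED_FUNCTIONS` set and the `unsupported_calls` helper are assumptions for illustration; the set is deliberately partial and is not a complete list of what numexpr supports.

```python
import ast

# Assumed allow-list for illustration; not the full set numexpr supports.
ALLOWED_FUNCTIONS = {"sqrt", "sin", "cos", "tan", "exp", "log", "abs"}


def unsupported_calls(expression: str) -> set:
    """Return names of called functions that are not on the allow-list."""
    tree = ast.parse(expression.strip(), mode="eval")
    called = {
        node.func.id
        for node in ast.walk(tree)
        if isinstance(node, ast.Call) and isinstance(node.func, ast.Name)
    }
    return called - ALLOWED_FUNCTIONS


print(unsupported_calls("LCM(2, 5)"))    # {'LCM'}
print(unsupported_calls("sqrt(2) + 3"))  # set()
```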

| Encountering exceptions when using LLMathChain on master | https://api.github.com/repos/langchain-ai/langchain/issues/3108/comments | 7 | 2023-04-18T19:44:09Z | 2023-10-02T18:52:56Z | https://github.com/langchain-ai/langchain/issues/3108 | 1,673,732,281 | 3,108 |

[

"langchain-ai",

"langchain"

] | I have been trying to add memory to my `create_pandas_dataframe_agent` agent and ran into some issues.

I created the agent like this

```python

agent = create_pandas_dataframe_agent(

llm=llm,

df=df,

prefix=prefix,

suffix=suffix,

max_iterations=4,

input_variables=["df", "chat_history", "input", "agent_scratchpad"],

)

```

and ran into

```

Traceback (most recent call last):

File "/path/projects/test/langchain/main.py", line 42, in <module>

a = agent.run("This is a test")

^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/opt/homebrew/lib/python3.11/site-packages/langchain/chains/base.py", line 213, in run

return self(args[0])[self.output_keys[0]]

^^^^^^^^^^^^^

File "/opt/homebrew/lib/python3.11/site-packages/langchain/chains/base.py", line 106, in __call__

inputs = self.prep_inputs(inputs)

^^^^^^^^^^^^^^^^^^^^^^^^

File "/opt/homebrew/lib/python3.11/site-packages/langchain/chains/base.py", line 185, in prep_inputs

raise ValueError(

ValueError: A single string input was passed in, but this chain expects multiple inputs ({'input', 'chat_history'}). When a chain expects multiple inputs, please call it by passing in a dictionary, eg `chain({'foo': 1, 'bar': 2})

```

I was able to fix it by modifying `create_pandas_dataframe_agent` to accept the memory object and then passing that along to the `AgentExecutor` like so:

``` python

return AgentExecutor.from_agent_and_tools(

agent=agent,

tools=tools,

verbose=verbose,

return_intermediate_steps=return_intermediate_steps,

max_iterations=max_iterations,

max_execution_time=max_execution_time,

early_stopping_method=early_stopping_method,

memory=memory,

)

```

Not sure what I did wrong or if I am misunderstanding something in general, maybe this is just the current behavior and adding memory would be a feature request? | Getting ConversationBufferMemory to work with create_pandas_dataframe_agent | https://api.github.com/repos/langchain-ai/langchain/issues/3106/comments | 26 | 2023-04-18T19:20:33Z | 2024-06-30T16:02:47Z | https://github.com/langchain-ai/langchain/issues/3106 | 1,673,703,559 | 3,106 |

[

"langchain-ai",

"langchain"

] | Is there any ETA on this new LLM integration? | Is Google PaLM integration in the pipeline? | https://api.github.com/repos/langchain-ai/langchain/issues/3101/comments | 4 | 2023-04-18T17:30:34Z | 2023-09-26T16:08:00Z | https://github.com/langchain-ai/langchain/issues/3101 | 1,673,559,016 | 3,101 |

[

"langchain-ai",

"langchain"

] | Model page here: https://huggingface.co/Writer/camel-5b-hf

| Add bindings for Camel model API | https://api.github.com/repos/langchain-ai/langchain/issues/3099/comments | 1 | 2023-04-18T17:18:55Z | 2023-04-21T01:07:06Z | https://github.com/langchain-ai/langchain/issues/3099 | 1,673,543,750 | 3,099 |

[

"langchain-ai",

"langchain"

] | Glad to see in #2859 @hwchase17 added a `TimeWeightedVectorStoreRetriever`.

I'm creating a game, so I want `last_accessed_at` to support things like the number of rounds, turns, and so on.

I could implement it in a day or two. Would anyone be willing to review it?

---

I'm reproducing the Generative Agent article, repo: [ofey404/WalkingShadows](https://github.com/ofey404/WalkingShadows)

And I'd like to create a more generic `TimeWeightedVectorStoreRetriever`. Currently it's based on datetime like this:

```python

expected_score = (

1.0 - time_weighted_retriever.decay_rate

) ** expected_hours_passed + vector_salience

```
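One way to read the expression above is that the exponent only needs a count of elapsed units, not necessarily hours. A hedged sketch of that idea follows; the function name `time_weighted_score` and its `elapsed` parameter are assumptions for illustration and are not part of the retriever's API.

```python
def time_weighted_score(decay_rate: float, elapsed: float,
                        vector_salience: float) -> float:
    """Recency-decayed score; `elapsed` may be hours, rounds, or turns."""
    return (1.0 - decay_rate) ** elapsed + vector_salience


# Same formula, two different clocks (values are illustrative).
by_hours = time_weighted_score(decay_rate=0.01, elapsed=24, vector_salience=0.5)
by_rounds = time_weighted_score(decay_rate=0.01, elapsed=3, vector_salience=0.5)

print(by_hours < by_rounds)  # True: more elapsed units means more decay
```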

In my case `last_accessed_at` can be the number of rounds. | [feat] I want to contribute a more generic `TimeWeightedVectorStoreRetriever` | https://api.github.com/repos/langchain-ai/langchain/issues/3098/comments | 1 | 2023-04-18T17:00:09Z | 2023-09-10T16:31:11Z | https://github.com/langchain-ai/langchain/issues/3098 | 1,673,518,661 | 3,098 |

[

"langchain-ai",

"langchain"

] | Trying to import langchain 0.0.128 and up in AWS Lambda (Using serverless framework) fails with this:

I suspect that this is the PR that causes the issue.

Maybe the `__version__` line should be wrapped in a try/except in case the code is run in an environment where package metadata is not available, as happens with serverless python requirements.

https://github.com/hwchase17/langchain/pull/2221
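A minimal sketch of the guarded lookup suggested above, assuming an empty string is an acceptable fallback. The helper name `safe_version` is hypothetical and is not how langchain itself resolves its version.

```python
from importlib import metadata


def safe_version(package_name: str, default: str = "") -> str:
    """Return the installed version, or `default` when metadata is missing."""
    try:
        return metadata.version(package_name)
    except metadata.PackageNotFoundError:
        # Packaging tools that strip dist-info directories end up here.
        return default


print(repr(safe_version("surely-not-an-installed-package")))  # ''
```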

```

[ERROR] PackageNotFoundError: No package metadata was found for langchain

Traceback (most recent call last):

File "/var/task/serverless_sdk/__init__.py", line 144, in wrapped_handler

return user_handler(event, context)

File "/var/task/s_event_webhook.py", line 25, in error_handler

raise e

File "/var/task/s_event_webhook.py", line 20, in <module>

user_handler = serverless_sdk.get_user_handler('endpoints.event_webhook.handler')

File "/var/task/serverless_sdk/__init__.py", line 56, in get_user_handler

user_module = import_module(user_module_name)

File "/var/lang/lib/python3.10/importlib/__init__.py", line 126, in import_module

return _bootstrap._gcd_import(name[level:], package, level)

File "<frozen importlib._bootstrap>", line 1050, in _gcd_import

File "<frozen importlib._bootstrap>", line 1027, in _find_and_load

File "<frozen importlib._bootstrap>", line 1006, in _find_and_load_unlocked

File "<frozen importlib._bootstrap>", line 688, in _load_unlocked

File "<frozen importlib._bootstrap_external>", line 883, in exec_module

File "<frozen importlib._bootstrap>", line 241, in _call_with_frames_removed

File "/var/task/endpoints/event_webhook.py", line 8, in <module>

from chatlib.agent import handle_event

File "/var/task/chatlib/agent.py", line 7, in <module>

from langchain import LLMChain

File "/var/task/langchain/__init__.py", line 58, in <module>

__version__ = metadata.version(__package__)

File "/var/lang/lib/python3.10/importlib/metadata/__init__.py", line 996, in version

return distribution(distribution_name).version

File "/var/lang/lib/python3.10/importlib/metadata/__init__.py", line 969, in distribution

return Distribution.from_name(distribution_name)

File "/var/lang/lib/python3.10/importlib/metadata/__init__.py", line 548, in from_name

raise PackageNotFoundError(name)

``` | Langchain not working on Lambda from 0.0.128 | https://api.github.com/repos/langchain-ai/langchain/issues/3097/comments | 1 | 2023-04-18T16:48:58Z | 2023-04-19T00:38:20Z | https://github.com/langchain-ai/langchain/issues/3097 | 1,673,503,749 | 3,097 |

[

"langchain-ai",

"langchain"

I was wondering how to use the `return_intermediate_steps` flag for agent executors. This is the current approach I found to work:

OK, I've dug a little deeper, and it seems that setting `return_intermediate_steps=True` when creating the agent with `initialize_agent` works. Only when using a memory do you need to set `memory.output_key = "output"`; otherwise it will error when trying to save the context.

I had to do a minor modification in https://github.com/hwchase17/langchain/blob/894c272a562471aadc1eb48e4a2992923533dea0/langchain/memory/chat_memory.py#L32-L36

Because when using agents the outputs can be lists, which would error when saving the context.

If I modify it like this:

```python

def save_context(self, inputs: Dict[str, Any], outputs: Dict[str, str]) -> None:

"""Save context from this conversation to buffer."""

input_str, output_str = self._get_input_output(inputs, outputs)

if not isinstance(input_str, list):

input_str = [input_str]

if not isinstance(output_str, list):

output_str = [output_str]

for input in input_str:

self.chat_memory.add_user_message(input)

for output in output_str:

self.chat_memory.add_ai_message(output)

```

Then even saving the context with memory works for the agent. (You can also load an initial context from a dict.)

```python

import os

from langchain.callbacks import get_openai_callback

from langchain.agents import Tool

from langchain.agents import AgentType

from langchain.memory import ConversationBufferMemory

from langchain import OpenAI

from langchain.utilities import GoogleSearchAPIWrapper

from langchain.schema import messages_from_dict

from langchain.agents import initialize_agent

from langchain.chat_models import ChatOpenAI

from langchain.callbacks.base import CallbackManager

from langchain.callbacks.streaming_stdout import StreamingStdOutCallbackHandler

from langchain.memory import ConversationBufferMemory, ConversationSummaryBufferMemory, ConversationSummaryMemory

from langchain import OpenAI, ConversationChain

from langchain.prompts.chat import (

ChatPromptTemplate,

SystemMessagePromptTemplate,

AIMessagePromptTemplate,

HumanMessagePromptTemplate,

)

from langchain.prompts import (

ChatPromptTemplate,

MessagesPlaceholder,

SystemMessagePromptTemplate,

HumanMessagePromptTemplate

)

llm = ChatOpenAI(temperature=0, openai_api_key=OPENAI_KEY, model_name="gpt-3.5-turbo",

streaming=True, callback_manager=CallbackManager([StreamingStdOutCallbackHandler()]))

memory = ConversationSummaryBufferMemory(

return_messages=True, llm=llm, max_token_limit=150, memory_key="chat_history")

# set the output key so that memory doesn't error on save

memory.output_key = "output"

# You can input a previously saved agent state like this:

state = [{'type': 'ai', 'data': {

'content': 'Nice to meet you, Tim!', 'additional_kwargs': {}}}]

search = GoogleSearchAPIWrapper(

google_api_key=GOOGLE_API_KEY, google_cse_id=my_cse_id)

tools = [

Tool(

name="Current Search",

func=search.run,

description="useful for when you need to answer questions about current events or the current state of the world"

),

]

memory.chat_memory.messages = messages_from_dict(state)

agent_chain = initialize_agent(

tools, llm, agent=AgentType.CONVERSATIONAL_REACT_DESCRIPTION, verbose=True, memory=memory, return_intermediate_steps=True)

MESSAGE = "What is currently the most popular web browser?"

with get_openai_callback() as cb:

out = agent_chain(

inputs=[MESSAGE])

# The output dict will also contain a 'intermediate_steps' key.

```

example output:

```python

out = {

...,

"intermediate_steps: [(AgentAction(tool='Current Search', tool_input='current most popular web browser', log='Do I need to use a tool? Yes\nAction: Current Search\nAction Input: current most popular web browser'), "Zooming into the internet browser market shares on different platforms, Chrome continues to dominate as the most popular browser on desktops with a market share\xa0... Feb 21, 2023 ... The most popular current browsers are Google Chrome, Apple's Safari, Microsoft Edge, and Firefox. Historically one of the large players in the\xa0... The usage share of web browsers is the portion, often expressed as a percentage, of visitors to a group of web sites that use a particular web browser. This graph shows the market share of browsers worldwide from Mar 2022 - Mar ... 56% 70% Chrome Safari Edge Firefox Samsung Internet Opera UC Browser Android\xa0... Feb 11, 2021 ... Google Chrome, then, is by far the most used browser, accounting for more than half of all web traffic, followed by Safari in a distant second\xa0... Here we examine the top five browsers in the US, in order of popularity. ... basically a pinned tab of recent sites that syncs between the desktop and\xa0... Google Chrome is the most popular and widely-used desktop web browser by far. ... This browser's current market share is slightly less than it was at this\xa0... Mar 15, 2023 ... Firefox; Google Chrome; Microsoft Edge; Apple Safari; Opera; Brave; Vivaldi; DuckDuckgo; Chromium; Epic. Comparison of Best Browser\xa0... Web browser, cookie & cache settings. HealthCare.gov is compatible with most popular web browsing software. This includes the most recent and commonly used\xa0... Browser Statistics. ❮ Home Next ❯. W3Schools' famous ... The Most Popular Browsers ... W3Schools' statistics may not be relevant to your web site.")]

...

```

This is most definitely not the right way to do this but also I'm not sure if there is a correct way yet :D

What I think would be really cool is to have something like a callback_manager also for agent actions.

That way you could develop applications using agents with immediate feedback while the agent is executed. | Usage of `return_intermediate_step` on Agents, and agent step callbacks | https://api.github.com/repos/langchain-ai/langchain/issues/3091/comments | 4 | 2023-04-18T14:09:22Z | 2023-09-20T11:26:27Z | https://github.com/langchain-ai/langchain/issues/3091 | 1,673,220,342 | 3,091 |

[

"langchain-ai",

"langchain"

] | The agent got stuck in a loop when asked "What's the best BBQ in Kansas City". Adding "say cannot answer, if you don't know the answer" to the prompt did not stop the loop.

The loop only stopped after exceeding the context length.

On the other hand, with the older OpenAI models it worked fine. I was using SerpAPIWrapper. | Agent SELF_ASK_WITH_SEARCH does not work with ChatOpenAI models | https://api.github.com/repos/langchain-ai/langchain/issues/3090/comments | 5 | 2023-04-18T13:26:25Z | 2023-10-02T16:08:52Z | https://github.com/langchain-ai/langchain/issues/3090 | 1,673,135,511 | 3,090 |

[

"langchain-ai",

"langchain"

] | After reviewing the work done on https://github.com/hwchase17/langchain/pull/2859 and its accompanying examples, I propose creating Generative Characters as a set of langchain components. These components would include Memory, Chain, and Agent Classes.

- Memory: This includes the ability to retrieve documents from VectorStore using TimeWeightedVectorStoreRetriever, calculate their score, summarize them, add memory and fetch memory.

- Chain: This involves generating reactions and dialogue responses.

- Agent: I'm not entirely sure about this one. Since the chain can generate reactions, it may be able to use tools as well.

I would like to work on this. Any suggestions or help would be greatly appreciated.

@vowelparrot | [feat] Create Memory, Chain and Agent Classes for Generative Characters | https://api.github.com/repos/langchain-ai/langchain/issues/3087/comments | 3 | 2023-04-18T12:15:46Z | 2023-09-18T16:15:33Z | https://github.com/langchain-ai/langchain/issues/3087 | 1,672,996,991 | 3,087 |

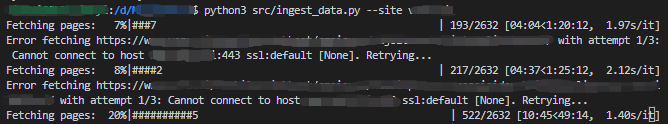

[

"langchain-ai",

"langchain"

] | Sitemap data ingestion is a super powerful tool and I love that you already have it built-in. However, sitemaps are potentially huge, covering hundreds or even thousands of sub-sites.

If one starts to crawl through the sitemap of a large website, there is little information on how the progress is going.

Therefore, I suggest adding a `tqdm` progressbar in the async web base loader to give the user some estimate.

While we're at it, we could also add retry logic, because on long runs there is a higher risk of running into anti-scraping policies and forced timeouts or disconnections.

See below screenshot for my implementation. Code change in the linked [PR](https://github.com/hwchase17/langchain/pull/3131).
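Independently of the linked change, the two ideas (progress reporting and retries) can be sketched with the standard library alone. Everything named here (`fetch_with_retry`, `fetch_all`, `on_progress`, `fake_fetch`) is hypothetical; a progress bar such as tqdm could be updated from the callback instead of printing.

```python
import asyncio


async def fetch_with_retry(fetch, url, retries=3, base_delay=0.0):
    """Await fetch(url), retrying with exponential backoff on any exception."""
    for attempt in range(retries):
        try:
            return await fetch(url)
        except Exception:
            if attempt == retries - 1:
                raise
            await asyncio.sleep(base_delay * 2 ** attempt)


async def fetch_all(fetch, urls, on_progress=None):
    """Fetch all URLs concurrently, reporting progress as each one finishes."""
    tasks = [asyncio.create_task(fetch_with_retry(fetch, u)) for u in urls]
    done = 0
    for finished in asyncio.as_completed(tasks):
        await finished
        done += 1
        if on_progress is not None:
            on_progress(done, len(tasks))
    # Results come back in the same order as `urls`.
    return [t.result() for t in tasks]


async def fake_fetch(url):
    # Stand-in for a real HTTP request.
    return f"<html>{url}</html>"


pages = asyncio.run(
    fetch_all(fake_fetch, ["a", "b"], on_progress=lambda d, t: print(f"{d}/{t}"))
)
```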

| Add tqdm progress bar to base web base loader | https://api.github.com/repos/langchain-ai/langchain/issues/3083/comments | 1 | 2023-04-18T10:58:48Z | 2023-04-23T02:19:39Z | https://github.com/langchain-ai/langchain/issues/3083 | 1,672,867,984 | 3,083 |

[

"langchain-ai",

"langchain"

] | null | Need a Simple Example or method to get stream response of ConversationChain | https://api.github.com/repos/langchain-ai/langchain/issues/3080/comments | 2 | 2023-04-18T10:04:21Z | 2023-09-10T16:31:16Z | https://github.com/langchain-ai/langchain/issues/3080 | 1,672,777,542 | 3,080 |

[

"langchain-ai",

"langchain"

] | ## Motivation

Right now, HuggingFaceEmbeddings doesn't support loading an embedding model's weights from the cache; it downloads the weights every time. Allowing the user to pass their cache directory would be a low-hanging fix.

## Suggestion

The change touches only a few lines in `__init__()`:

```python

class HuggingFaceEmbeddings(BaseModel, Embeddings):
    """Wrapper around sentence_transformers embedding models.

    To use, you should have the ``sentence_transformers`` python package installed.

    Example:
        .. code-block:: python

            from langchain.embeddings import HuggingFaceEmbeddings
            model_name = "sentence-transformers/all-mpnet-base-v2"
            hf = HuggingFaceEmbeddings(model_name=model_name)
    """

    client: Any  #: :meta private:
    model_name: str = DEFAULT_MODEL_NAME
    """Model name to use."""

    def __init__(self, cache_folder=None, **kwargs: Any):
        """Initialize the sentence_transformer."""
        super().__init__(**kwargs)
        try:
            import sentence_transformers

            self.client = sentence_transformers.SentenceTransformer(model_name_or_path=self.model_name, cache_folder=cache_folder)
        except ImportError:
            raise ValueError(
                "Could not import sentence_transformers python package. "
                "Please install it with `pip install sentence_transformers`."
            )

    class Config:
        """Configuration for this pydantic object."""

        extra = Extra.forbid

    def embed_documents(self, texts: List[str]) -> List[List[float]]:
        """Compute doc embeddings using a HuggingFace transformer model.

        Args:
            texts: The list of texts to embed.

        Returns:
            List of embeddings, one for each text.
        """
        texts = list(map(lambda x: x.replace("\n", " "), texts))
        embeddings = self.client.encode(texts)
        return embeddings.tolist()

    def embed_query(self, text: str) -> List[float]:
        """Compute query embeddings using a HuggingFace transformer model.

        Args:
            text: The text to embed.

        Returns:
            Embeddings for the text.
        """
        text = text.replace("\n", " ")
        embedding = self.client.encode(text)
        return embedding.tolist()

``` | Feature Request: Allow initializing HuggingFaceEmbeddings from the cached weight | https://api.github.com/repos/langchain-ai/langchain/issues/3079/comments | 9 | 2023-04-18T09:43:38Z | 2024-02-13T16:17:08Z | https://github.com/langchain-ai/langchain/issues/3079 | 1,672,736,711 | 3,079 |

[

"langchain-ai",

"langchain"

] | I'm facing a weird issue with the `ConversationBufferWindowMemory`

Running `memory.load_memory_variables({})` prints:

```

{'chat_history': [HumanMessage(content='Hi my name is Ismail', additional_kwargs={}), AIMessage(content='Hello Ismail! How can I assist you today?', additional_kwargs={})]}

```

The error I get after sending a second message to the chain is:

```

> Entering new ConversationalRetrievalChain chain...

[2023-04-18 10:34:52,512] ERROR in app: Exception on /api/v1/chat [POST]

Traceback (most recent call last):

File "/Users/homanp/Projects/ADGPT_ENV/lib/python3.9/site-packages/flask/app.py", line 2528, in wsgi_app

response = self.full_dispatch_request()

File "/Users/homanp/Projects/ADGPT_ENV/lib/python3.9/site-packages/flask/app.py", line 1825, in full_dispatch_request

rv = self.handle_user_exception(e)

File "/Users/homanp/Projects/ADGPT_ENV/lib/python3.9/site-packages/flask/app.py", line 1823, in full_dispatch_request

rv = self.dispatch_request()

File "/Users/homanp/Projects/ADGPT_ENV/lib/python3.9/site-packages/flask/app.py", line 1799, in dispatch_request

return self.ensure_sync(self.view_functions[rule.endpoint])(**view_args)

File "/Users/homanp/Projects/ad-gpt/app.py", line 46, in chat

result = chain({"question": message, "chat_history": []})

File "/Users/homanp/Projects/ADGPT_ENV/lib/python3.9/site-packages/langchain/chains/base.py", line 116, in __call__

raise e

File "/Users/homanp/Projects/ADGPT_ENV/lib/python3.9/site-packages/langchain/chains/base.py", line 113, in __call__

outputs = self._call(inputs)

File "/Users/homanp/Projects/ADGPT_ENV/lib/python3.9/site-packages/langchain/chains/conversational_retrieval/base.py", line 71, in _call

chat_history_str = get_chat_history(inputs["chat_history"])

File "/Users/homanp/Projects/ADGPT_ENV/lib/python3.9/site-packages/langchain/chains/conversational_retrieval/base.py", line 25, in _get_chat_history

human = "Human: " + human_s

TypeError: can only concatenate str (not "tuple") to str

```

Current implementation:

```

memory = ConversationBufferWindowMemory(memory_key='chat_history', k=2, return_messages=True)

chain = ConversationalRetrievalChain.from_llm(model,

memory=memory,

verbose=True,

retriever=retriever,

qa_prompt=QA_PROMPT,

condense_question_prompt=CONDENSE_QUESTION_PROMPT,)

``` | Error `can only concatenate str (not "tuple") to str` when using `ConversationBufferWindowMemory` | https://api.github.com/repos/langchain-ai/langchain/issues/3077/comments | 11 | 2023-04-18T08:38:57Z | 2023-11-13T16:10:00Z | https://github.com/langchain-ai/langchain/issues/3077 | 1,672,633,625 | 3,077 |

[

"langchain-ai",

"langchain"

] | I notice they use different APIs, but what's the difference between these two APIs?

Question Answering:

docs = docsearch.get_relevant_documents(query)

Question Answering with Sources:

docs = docsearch.similarity_search(query) | Difference between "Question Answering with Sources" and "Question Answering" | https://api.github.com/repos/langchain-ai/langchain/issues/3073/comments | 8 | 2023-04-18T08:05:55Z | 2023-10-12T16:10:19Z | https://github.com/langchain-ai/langchain/issues/3073 | 1,672,578,778 | 3,073 |

[

"langchain-ai",

"langchain"

] | https://github.com/hwchase17/langchain/blob/894c272a562471aadc1eb48e4a2992923533dea0/langchain/memory/summary_buffer.py#L57-L70

The ```ConversationSummaryBufferMemory``` class in ```langchain/memory/summary_buffer.py``` currently prunes chat_memory's messages using the ```List.pop()``` method (line 66). This approach works as expected for the in-memory implementation ```ChatMessageHistory```, where messages are stored as a simple List.

However, this method of pruning messages is not suitable for other implementations where messages are calculated output Lists, such as ```DynamoDBChatMessageHistory``` or ```RedisChatMessageHistory```. In these cases, the current implementation fails to prune messages as intended.
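The difference can be illustrated with a small, self-contained sketch. The two classes below are hypothetical stand-ins, not the LangChain implementations: one exposes its real backing list, the other rebuilds `messages` on every access, as a store-backed history might. The helper `prune_oldest` is also an assumption that only mimics pruning via `pop()`.

```python
from typing import List


class InMemoryHistory:
    """Hypothetical stand-in: `messages` is the real backing list."""

    def __init__(self) -> None:
        self.messages: List[str] = []

    def add(self, message: str) -> None:
        self.messages.append(message)


class StoreBackedHistory:
    """Hypothetical stand-in: `messages` is rebuilt on every access."""

    def __init__(self) -> None:
        # Pretend this list lives in an external store (a table, a Redis list, ...).
        self._store: List[str] = []

    @property
    def messages(self) -> List[str]:
        # A fresh copy each time, like a deserialized query result.
        return list(self._store)

    def add(self, message: str) -> None:
        self._store.append(message)


def prune_oldest(history) -> None:
    """Mimic pruning by popping the first element of `history.messages`."""
    history.messages.pop(0)


in_memory = InMemoryHistory()
backed = StoreBackedHistory()
for history in (in_memory, backed):
    history.add("first")
    history.add("second")
    prune_oldest(history)

print(len(in_memory.messages))  # 1: the pop mutated the real list
print(len(backed.messages))     # 2: the pop only mutated a temporary copy
```

In this sketch the pop succeeds silently in both cases, which is why the failure to prune is easy to miss.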

To address this issue, we may need to modify the ```BaseChatMessageHistory``` class to provide a unified interface for pruning messages, which can then be overridden as needed by specific implementations. | Issue with ConversationSummaryBufferMemory pruning messages for non-in-memory chat message histories | https://api.github.com/repos/langchain-ai/langchain/issues/3072/comments | 6 | 2023-04-18T07:48:11Z | 2024-05-20T08:06:21Z | https://github.com/langchain-ai/langchain/issues/3072 | 1,672,549,574 | 3,072 |

[

"langchain-ai",

"langchain"

] | I'm testing out the tutorial code for Agents:

`from langchain.agents import load_tools

from langchain.agents import initialize_agent

from langchain.agents import AgentType

from langchain.llms import OpenAI

llm = OpenAI(temperature=0)

tools = load_tools(["serpapi", "llm-math"], llm=llm)

agent = initialize_agent(tools, llm, agent=AgentType.ZERO_SHOT_REACT_DESCRIPTION, verbose=True)

agent.run("What was the high temperature in SF yesterday in Fahrenheit? What is that number raised to the .023 power?")`

And so far it generates the result:

`> Entering new AgentExecutor chain...

I need to find the temperature first, then use the calculator to raise it to the .023 power.

Action: Search

Action Input: "High temperature in SF yesterday"

Observation: High: 60.8ºf @3:10 PM Low: 48.2ºf @2:05 AM Approx.

Thought: I need to convert the temperature to a number

Action: Calculator

Action Input: 60.8`

But raises an issue and doesn't calculate 60.8^.023

` raise ValueError(f"unknown format from LLM: {llm_output}")

ValueError: unknown format from LLM: This is not a math problem and cannot be solved using the numexpr library.`

What's the reason behind this error? | llm-math raising an issue | https://api.github.com/repos/langchain-ai/langchain/issues/3071/comments | 16 | 2023-04-18T06:44:50Z | 2023-11-16T05:52:22Z | https://github.com/langchain-ai/langchain/issues/3071 | 1,672,458,216 | 3,071 |

[

"langchain-ai",

"langchain"

] | https://github.com/hwchase17/langchain/blob/894c272a562471aadc1eb48e4a2992923533dea0/langchain/document_loaders/git.py#L8 | Once we Clone the Repo using the Git Document loader. How we can auth the Private Repos and How we can chunk the code files into meaning full code and create Embeddings? | https://api.github.com/repos/langchain-ai/langchain/issues/3069/comments | 1 | 2023-04-18T06:08:55Z | 2023-09-10T16:31:22Z | https://github.com/langchain-ai/langchain/issues/3069 | 1,672,416,914 | 3,069 |

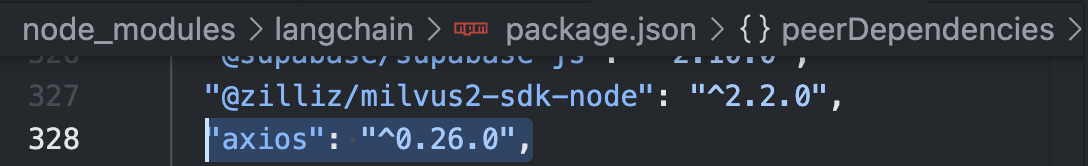

[

"langchain-ai",

"langchain"

] | Axios is at v1.3.5, so why does langchain pin the dependency to major version 0?

It is set to "axios": "^0.26.0",

Do we want: "axios": ">=0.26.0" ?

Does the whole world need to downgrade in order to use Langchain?

Or is this just me, with my setup screwed up somehow? I don't see anyone else making noise about it, so I'm a little concerned that something is wrong with what I'm working on.

| Axios dependency forcing a downgrade on nextJS build. | https://api.github.com/repos/langchain-ai/langchain/issues/3065/comments | 5 | 2023-04-18T05:38:52Z | 2023-09-26T16:08:10Z | https://github.com/langchain-ai/langchain/issues/3065 | 1,672,386,014 | 3,065 |

[

"langchain-ai",

"langchain"

] | Hey folks. I am experimenting with OpenAPI agents and the most recent [Spotify API](https://github.com/sonallux/spotify-web-api/releases). The API defines the endpoint `/me/top/{type}`. _Type_ can be, for example, `tracks`. A GET to `/me/top/tracks` will return the top tracks for the user making the request.

The planned actions coming out of the LLM, if you ask it to list your favorite tracks, will correctly include `GET /me/top/tracks`. In [planner.py](https://github.com/hwchase17/langchain/blob/577ec92f16813565d788da03f6ce830f4657c7b0/langchain/agents/agent_toolkits/openapi/planner.py#L225) there is a validation check that verifies whether the suggested endpoint exists. However, it compares `GET /me/top/tracks` literally with `GET /me/top/{type}`, which causes an error: `ValueError: GET /me/top/tracks endpoint does not exist`.

A change to `reduce_openapi_spec` or `planner.py` would fix it.
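As a rough sketch of what such a check could look like (not the actual `planner.py` code), a templated path can be converted to a regular expression so that `{type}` matches any single path segment. The helper names `template_to_regex` and `find_endpoint` are hypothetical.

```python
import re
from typing import Iterable, Optional


def template_to_regex(template: str) -> "re.Pattern[str]":
    """Turn an OpenAPI-style path template into an anchored regex.

    Each `{param}` placeholder matches exactly one non-empty path segment.
    """
    parts = re.split(r"(\{[^/{}]+\})", template)
    pattern = "".join(
        "[^/]+" if part.startswith("{") and part.endswith("}") else re.escape(part)
        for part in parts
    )
    return re.compile(f"^{pattern}$")


def find_endpoint(planned: str, known: Iterable[str]) -> Optional[str]:
    """Return the known "METHOD /path" entry matching `planned`, or None."""
    method, _, path = planned.partition(" ")
    for endpoint in known:
        known_method, _, known_path = endpoint.partition(" ")
        if method.upper() != known_method.upper():
            continue
        if template_to_regex(known_path).match(path):
            return endpoint
    return None


known_endpoints = ["GET /me/top/{type}", "GET /me/player"]
print(find_endpoint("GET /me/top/tracks", known_endpoints))  # GET /me/top/{type}
```

A literal string comparison would reject `GET /me/top/tracks` here; the template-aware match accepts it while still rejecting a different method or extra path segments.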

| Validation check in planner.py not working as intended? | https://api.github.com/repos/langchain-ai/langchain/issues/3064/comments | 6 | 2023-04-18T05:12:32Z | 2023-09-27T16:08:06Z | https://github.com/langchain-ai/langchain/issues/3064 | 1,672,364,479 | 3,064 |

[

"langchain-ai",

"langchain"

] | ## Problem

The current `DirectoryLoader` class relies on Python's `glob` and `rglob` utilities to collect file paths. These utilities don't support advanced file patterns, such as matching files with any of several extensions in a single pattern. For example, consider a sample directory with these files.

```bash

- a.py

- b.js

- c.json

- d.yml

```

Currently, there is no way to load only the files with `.py` or `.yml` extension.
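For comparison, outside of `DirectoryLoader` the same selection can be approximated with the standard library alone by running one glob per pattern and merging the results. This is only a sketch of the idea; the helper `multi_glob` is hypothetical and not part of LangChain.

```python
import tempfile
from pathlib import Path
from typing import Iterable, List


def multi_glob(root: str, patterns: Iterable[str], recursive: bool = False) -> List[Path]:
    """Collect files under `root` matching any of `patterns` (hypothetical helper)."""
    base = Path(root)
    matched = set()
    for pattern in patterns:
        finder = base.rglob if recursive else base.glob
        # A set removes duplicates when a file matches more than one pattern.
        matched.update(p for p in finder(pattern) if p.is_file())
    return sorted(matched)


# Recreate the sample directory from above in a temporary location.
with tempfile.TemporaryDirectory() as tmp:
    for name in ("a.py", "b.js", "c.json", "d.yml"):
        (Path(tmp) / name).write_text("")
    names = [p.name for p in multi_glob(tmp, ["*.py", "*.yml"])]

print(names)  # ['a.py', 'd.yml']
```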

## Proposed Solution

### Preferred

Include the [wcmatch](https://github.com/facelessuser/wcmatch) library as a dependency that replaces the built-in glob and rglob and supports the Unix-style options for specifying file patterns. For example, with `wcmatch`, users can pass a pattern list like `['*.py', '*.yml']` to include files with a `.py` or `.yml` extension.

### Alternate