issue_owner_repo listlengths 2 2 | issue_body stringlengths 0 261k ⌀ | issue_title stringlengths 1 925 | issue_comments_url stringlengths 56 81 | issue_comments_count int64 0 2.5k | issue_created_at stringlengths 20 20 | issue_updated_at stringlengths 20 20 | issue_html_url stringlengths 37 62 | issue_github_id int64 387k 2.46B | issue_number int64 1 127k |

|---|---|---|---|---|---|---|---|---|---|

[

"langchain-ai",

"langchain"

] | With the newly released ChatOpenAI model, the completion output is being cut off randomly in between

For example I used the below input

Write me an essay on Pune

I got this output

Pune, also known as Poona, is a city located in the western Indian state of Maharashtra. It is the second-largest city in the state and is often referred to as the "Oxford of the East" due to its reputation as a center of education and research. Pune is a vibrant city with a rich history, diverse culture, and a thriving economy.\n\nThe history of Pune dates back to the 8th century when it was founded by the Rashtrakuta dynasty. Over the centuries, it has been ruled by various dynasties, including the Marathas, the Peshwas, and the British. Pune played a significant role in India\'s struggle for independence, and many freedom fighters, including Mahatma Gandhi, spent time in the city.\n\nToday, Pune is a bustling metropolis with a population of over 3 million people. It is home to some of the most prestigious educational institutions in India, including the University of Pune, the Indian Institute of Science Education and Research, and the National Defense Academy. The city is also a hub for research and development, with many multinational companies setting up their research centers in Pune.\n\nPune is a city of contrasts, with modern skyscrapers standing alongside ancient temples and historical landmarks. The city\'s

As you can see the message is cutoff in between. I followed the official documentation from here https://github.com/hwchase17/langchain/blob/master/docs/modules/chat/getting_started.ipynb

This was not the issue before with OpenAIChat but with ChatOpenAI this is posing an issue | Output cutoff with ChatOpenAI | https://api.github.com/repos/langchain-ai/langchain/issues/1652/comments | 6 | 2023-03-14T05:37:02Z | 2023-07-07T08:03:54Z | https://github.com/langchain-ai/langchain/issues/1652 | 1,622,767,469 | 1,652 |

[

"langchain-ai",

"langchain"

] | SQL Database Agent can't adapt to the turbo model,there is a bug in get_action_and_input function.

this is the code of get_action_and_input for current version:

```python

# file:langchain/agents/mrkl/base.py

def get_action_and_input(llm_output: str) -> Tuple[str, str]:

"""Parse out the action and input from the LLM output.

Note: if you're specifying a custom prompt for the ZeroShotAgent,

you will need to ensure that it meets the following Regex requirements.

The string starting with "Action:" and the following string starting

with "Action Input:" should be separated by a newline.

"""

if FINAL_ANSWER_ACTION in llm_output:

return "Final Answer", llm_output.split(FINAL_ANSWER_ACTION)[-1].strip()

regex = r"Action: (.*?)[\n]*Action Input: (.*)"

match = re.search(regex, llm_output, re.DOTALL)

if not match:

raise ValueError(f"Could not parse LLM output: `{llm_output}`")

action = match.group(1).strip()

action_input = match.group(2)

return action, action_input.strip(" ").strip('"')

```

when model is text-davinci-003 ,it works well. but when the model is gpt-3.5-turbo,there will be another '\n' at the end of llm_out,which lead to a wrong action_input result.

and if add the follow code to the function, it works well again.

this is my code:

```python

# file:langchain/agents/mrkl/base.py

def get_action_and_input(llm_output: str) -> Tuple[str, str]:

"""Parse out the action and input from the LLM output.

Note: if you're specifying a custom prompt for the ZeroShotAgent,

you will need to ensure that it meets the following Regex requirements.

The string starting with "Action:" and the following string starting

with "Action Input:" should be separated by a newline.

"""

# this is what i added

llm_output = llm_output.rstrip("\n")

if FINAL_ANSWER_ACTION in llm_output:

return "Final Answer", llm_output.split(FINAL_ANSWER_ACTION)[-1].strip()

regex = r"Action: (.*?)[\n]*Action Input: (.*)"

match = re.search(regex, llm_output, re.DOTALL)

if not match:

raise ValueError(f"Could not parse LLM output: `{llm_output}`")

action = match.group(1).strip()

action_input = match.group(2)

return action, action_input.strip(" ").strip('"')

```

> I am using langchain version 0.0.108 | SQL Database Agent can't adapt to the turbo model,there is a bug in get_action_and_input function | https://api.github.com/repos/langchain-ai/langchain/issues/1649/comments | 3 | 2023-03-14T02:42:12Z | 2023-05-12T05:00:41Z | https://github.com/langchain-ai/langchain/issues/1649 | 1,622,625,055 | 1,649 |

[

"langchain-ai",

"langchain"

] | The conversational agent at /langchain/agents/conversational/base.py looks to have a regex that isn't good for pulling out multiline Action Inputs, whereas the

The mrkl agent at /langchain/agents/mrkl/base.py has a good regex for pulling out multiline Action Inputs

When I switched the regex FROM the top one at /langchain/agents/conversational/base.py TO the bottom one at /langchain/agents/mrkl/base.py, it worked for me!

def _extract_tool_and_input(self, llm_output: str) -> Optional[Tuple[str, str]]:

if f"{self.ai_prefix}:" in llm_output:

return self.ai_prefix, llm_output.split(f"{self.ai_prefix}:")[-1].strip()

**regex = r"Action: (.*?)[\n]*Action Input: (.*)"

match = re.search(regex, llm_output)**

def get_action_and_input(llm_output: str) -> Tuple[str, str]:

"""Parse out the action and input from the LLM output.

Note: if you're specifying a custom prompt for the ZeroShotAgent,

you will need to ensure that it meets the following Regex requirements.

The string starting with "Action:" and the following string starting

with "Action Input:" should be separated by a newline.

"""

if FINAL_ANSWER_ACTION in llm_output:

return "Final Answer", llm_output.split(FINAL_ANSWER_ACTION)[-1].strip()

**regex = r"Action: (.*?)[\n]*Action Input: (.*)"

match = re.search(regex, llm_output, re.DOTALL)**

| conversational/agent has a regex not good for multiline Action Inputs coming from the LLM | https://api.github.com/repos/langchain-ai/langchain/issues/1645/comments | 4 | 2023-03-13T20:58:38Z | 2023-08-20T16:08:47Z | https://github.com/langchain-ai/langchain/issues/1645 | 1,622,249,283 | 1,645 |

[

"langchain-ai",

"langchain"

] | Looks like `BaseLLM` supports caching (via `langchain.llm_cache`), but `BaseChatModel` does not. | Add caching support to BaseChatModel | https://api.github.com/repos/langchain-ai/langchain/issues/1644/comments | 9 | 2023-03-13T20:34:16Z | 2023-07-04T10:06:20Z | https://github.com/langchain-ai/langchain/issues/1644 | 1,622,218,946 | 1,644 |

[

"langchain-ai",

"langchain"

] | Can the summarization chain be used with ChatGPT's API, `gpt-3.5-turbo`? I have tried the following two code snippets, but they result in this error.

```

openai.error.InvalidRequestError: This model's maximum context length is 4097 tokens. However, your messages resulted in 6063 tokens. Please reduce the length of the messages.

```

Trial 1

```

from langchain import OpenAI

from langchain.chains.summarize import load_summarize_chain

from langchain.docstore.document import Document

full_text = "The content of this article, https://nymag.com/news/features/mark-zuckerberg-2012-5/?mid=nymag_press"

model_name = "gpt-3.5-turbo"

llm = OpenAI(model_name=model_name, temperature=0)

documents = [Document(page_content=full_text)]

# Summarize the document by summarizing each document chunk and then summarizing the combined summary

chain = load_summarize_chain(llm, chain_type="map_reduce")

summary = chain.run(documents)

```

Trial 2

```

from langchain.chains.summarize import load_summarize_chain

from langchain.docstore.document import Document

from langchain.llms import OpenAIChat

full_text = "The content of this article, https://nymag.com/news/features/mark-zuckerberg-2012-5/?mid=nymag_press"

model_name = "gpt-3.5-turbo"

llm = OpenAIChat(model_name=model_name, temperature=0)

documents = [Document(page_content=full_text)]

# Summarize the document by summarizing each document chunk and then summarizing the combined summary

chain = load_summarize_chain(llm, chain_type="map_reduce")

summary = chain.run(documents)

```

I changed the Trial 1 snippet to the following but got the error below due to a list of prompts provided to the endpoint. Also, it appears that OpenAIChat doesn't have a `llm.modelname_to_contextsize` despite the endpoint not accepting more than `4097` tokens.

```

ValueError: OpenAIChat currently only supports single prompt, got ['Write a concise summary of the following:\n\n\n"

```

Trial 3

```

from langchain import OpenAI

from langchain.chains.summarize import load_summarize_chain

from langchain.docstore.document import Document

from langchain.text_splitter import RecursiveCharacterTextSplitter

full_text = "The content of this article, https://nymag.com/news/features/mark-zuckerberg-2012-5/?mid=nymag_press"

model_name = "gpt-3.5-turbo"

llm = OpenAI(model_name=model_name, temperature=0)

recursive_character_text_splitter = (

RecursiveCharacterTextSplitter.from_tiktoken_encoder(

encoding_name="cl100k_base" if model_name == "gpt-3.5-turbo" else "p50k_base",

chunk_size=4097

if model_name == "gpt-3.5-turbo"

else llm.modelname_to_contextsize(model_name),

chunk_overlap=0,

)

)

text_chunks = recursive_character_text_splitter.split_text(full_text)

documents = [Document(page_content=text_chunk) for text_chunk in text_chunks]

# Summarize the document by summarizing each document chunk and then summarizing the combined summary

chain = load_summarize_chain(llm, chain_type="map_reduce")

summary = chain.run(documents)

```

Do you have any ideas on what needs to be changed to allow OpenAI's ChatGPT to work for summarization? Happy to help if I can. | ChatGPT's API model, gpt-3.5-turbo, doesn't appear to work for summarization tasks | https://api.github.com/repos/langchain-ai/langchain/issues/1643/comments | 11 | 2023-03-13T19:54:13Z | 2023-04-23T08:30:14Z | https://github.com/langchain-ai/langchain/issues/1643 | 1,622,162,962 | 1,643 |

[

"langchain-ai",

"langchain"

] | Hey guys,

I'm trying to use langchain because the Tool class is so handy and initialize_agent works well with it, but I am having trouble finding any documentation that allows me to run this self-hosted locally. Everything seems to be remote, but I have a system that I know is capable of what I'm trying to do.

Is there any way to specify arguments to either SelfHosted class or even runhouse in order to specify that you want it running on the computer you're currently working on, on the gpu connected to that computer, instead of ssh-ing into a remote instance?

Thanks | langchain.llms SelfHostedPipeline and SelfHostedHuggingFaceLLM | https://api.github.com/repos/langchain-ai/langchain/issues/1639/comments | 4 | 2023-03-13T17:11:12Z | 2023-10-14T20:14:17Z | https://github.com/langchain-ai/langchain/issues/1639 | 1,621,910,729 | 1,639 |

[

"langchain-ai",

"langchain"

] | https://github.com/hwchase17/langchain/blob/cb646082baa173fdee7f2b1e361be368acef4e7e/langchain/document_loaders/googledrive.py#L120

Suggestion: Include optional param `includeItemsFromAllDrives` when calling `service.files().list()`

Reference: https://stackoverflow.com/questions/65388539/using-python-i-cant-access-shared-drive-folders-from-google-drive-api-v3 | GoogleDriveLoader not loading docs from Share Drives | https://api.github.com/repos/langchain-ai/langchain/issues/1634/comments | 0 | 2023-03-13T15:03:55Z | 2023-04-08T15:46:57Z | https://github.com/langchain-ai/langchain/issues/1634 | 1,621,682,210 | 1,634 |

[

"langchain-ai",

"langchain"

] | Hi, first off, I just want to say that I have been following this from the start, almost, and I see the amazing work you put in. It's an awesome project, well done!

Now that I finally have some time to dive in myself I'm hoping someone can help me bootstrap an idea. I want to chain chatgpt to Codex so that Chat will pass the coding task to codex for more accurate code and incorporate the code into it's answer and history/context.

Is this doable?

Many thanks! | Chaining Chat to Codex | https://api.github.com/repos/langchain-ai/langchain/issues/1631/comments | 3 | 2023-03-13T13:00:10Z | 2023-08-29T18:08:10Z | https://github.com/langchain-ai/langchain/issues/1631 | 1,621,441,455 | 1,631 |

[

"langchain-ai",

"langchain"

] | Dataframes (df) are generic containers to store different data-structures and pandas (or CSV) agent help manipulate dfs effectively. But current langchain implementation requires python3.9 to work with pandas agent because of the following invocation:

https://github.com/hwchase17/langchain/blob/6e98ab01e1648924db3bf8c3c2a093b38ec380bb/langchain/agents/agent_toolkits/pandas/base.py#L30

Google colab and many other easy-to-use platforms for developers however support python3.8 only as the stable version. If pandas agent could be supported by python3.8, it would immensely allow lot more to experiment and use the agent. | Python3.8 support for pandas agent | https://api.github.com/repos/langchain-ai/langchain/issues/1623/comments | 1 | 2023-03-13T04:01:58Z | 2023-03-15T02:44:00Z | https://github.com/langchain-ai/langchain/issues/1623 | 1,620,711,203 | 1,623 |

[

"langchain-ai",

"langchain"

] | Whereas it should be possible to filter by metadata :

- ```langchain.vectorstores.chroma.similarity_search``` takes a ```filter``` input parameter but do not forward it to ```langchain.vectorstores.chroma.similarity_search_with_score```

- ```langchain.vectorstores.chroma.similarity_search_by_vector``` don't take this parameter in input, although it could be very useful, without any additional complexity - and it would thus be coherent with the syntax of the two other functions | ChromaDB does not support filtering when using ```similarity_search``` or ```similarity_search_by_vector``` | https://api.github.com/repos/langchain-ai/langchain/issues/1619/comments | 3 | 2023-03-12T23:58:13Z | 2023-09-27T16:13:06Z | https://github.com/langchain-ai/langchain/issues/1619 | 1,620,559,206 | 1,619 |

[

"langchain-ai",

"langchain"

] | Hi!

Unstructured has support for providing in-memory text. Would be a great addition as currently, developers have to write and then read from a file if they want to load documents from memory.

Wouldn't mind opening the PR myself but want to make sure its a wanted feature before I get on it. | Allow unstructured loaders to accept in-memory text | https://api.github.com/repos/langchain-ai/langchain/issues/1618/comments | 7 | 2023-03-12T23:09:58Z | 2024-03-18T10:11:03Z | https://github.com/langchain-ai/langchain/issues/1618 | 1,620,546,553 | 1,618 |

[

"langchain-ai",

"langchain"

] | The @microsoft team used LangChain to guide [Visual ChatGPT](https://arxiv.org/pdf/2303.04671.pdf).

Here's the architecture of Visual ChatGPT:

<img width="619" alt="screen" src="https://user-images.githubusercontent.com/6625584/230680492-7d737584-c56b-43b2-8240-ed02aaf9ac00.png">

Think of **Prompt Manager** as **LangChain** and **Visual Foundation Models** as **LangChain Tools**.

Here are five LangChain use cases that can be unlocked with [Visual ChatGPT](https://github.com/microsoft/visual-chatgpt):

1. **Visual Query Builder**: Combine LangChain's SQL querying capabilities with Visual ChatGPT's image understanding, allowing users to query databases with natural language and receive visualized results, such as charts or graphs.

2. **Multimodal Conversational Agent**: Enhance LangChain chatbots with Visual ChatGPT's image processing abilities, allowing users to send images and receive relevant responses, image-based recommendations, or visual explanations alongside text.

3. **Image-Assisted Summarization**: Integrate Visual ChatGPT's image understanding with LangChain's summarization chains to create image-enhanced summaries, providing context and visual aids that complement the text-based summary.

4. **Image Captioning and Translation**: Combine Visual ChatGPT's image captioning with LangChain's language translation chains to automatically generate captions and translations for images, making visual content more accessible to a global audience.

5. **Generative Art Collaboration**: Connect Visual ChatGPT's image generation capabilities with LangChain's creative writing chains, enabling users to create collaborative artwork and stories that combine text and images in innovative ways. | Visual ChatGPT | https://api.github.com/repos/langchain-ai/langchain/issues/1607/comments | 2 | 2023-03-12T02:38:03Z | 2023-09-18T16:23:25Z | https://github.com/langchain-ai/langchain/issues/1607 | 1,620,211,493 | 1,607 |

[

"langchain-ai",

"langchain"

] | The new [blog post](https://github.com/huggingface/blog/blob/main/trl-peft.md) for implementing LoRA + RLHF is great. Would appreciate if the example scripts are public!

3 scripts mentioned in the blog posts:

[Script](https://github.com/lvwerra/trl/blob/peft-gpt-neox-20b/examples/sentiment/scripts/gpt-neox-20b_peft/s01_cm_finetune_peft_imdb.py) - Fine tuning a Low Rank Adapter on a frozen 8-bit model for text generation on the imdb dataset.

[Script](https://github.com/lvwerra/trl/blob/peft-gpt-neox-20b/examples/sentiment/scripts/gpt-neox-20b_peft/s02_merge_peft_adapter.py) - Merging of the adapter layers into the base model’s weights and storing these on the hub.

[Script](https://github.com/lvwerra/trl/blob/peft-gpt-neox-20b/examples/sentiment/scripts/gpt-neox-20b_peft/s03_gpt-neo-20b_sentiment_peft.py) - Sentiment fine-tuning of a Low Rank Adapter to create positive reviews.

cross-link [pr](https://github.com/huggingface/blog/pull/920) | trl-peft example scripts not visible to public | https://api.github.com/repos/langchain-ai/langchain/issues/1606/comments | 3 | 2023-03-11T22:57:53Z | 2023-09-10T21:07:16Z | https://github.com/langchain-ai/langchain/issues/1606 | 1,620,171,008 | 1,606 |

[

"langchain-ai",

"langchain"

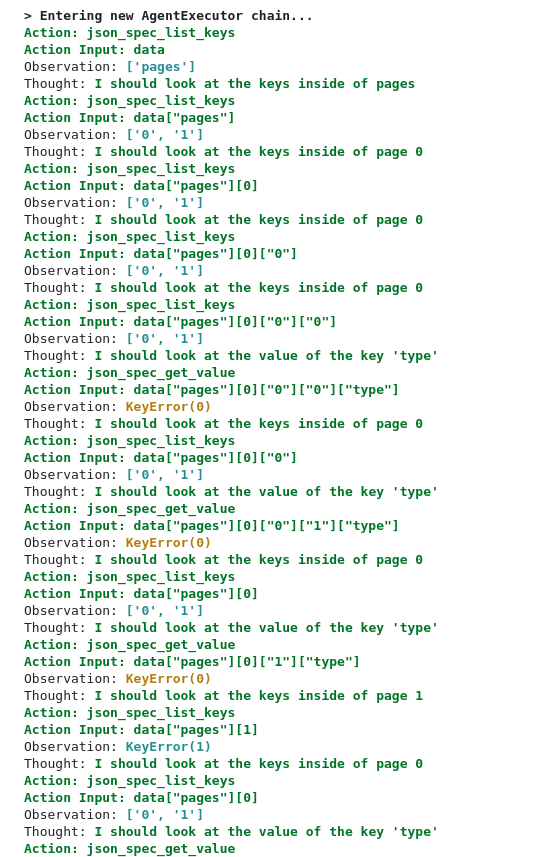

] | Wanted to leave some observations similar to the chain of thought *smirk* that was mentioned over here for [JSON agents](https://github.com/hwchase17/langchain/issues/1409).

You can copy this snippet to test for yourself (Mine is in Colab atm)

```

# Install dependencies

!pip install huggingface_hub cohere --quiet

!pip install openai==0.27.0 --quiet

!pip install langchain==0.0.107 --quiet

# Initialize any api keys that are needed

os.environ["OPENAI_API_KEY"] = ""

os.environ["HUGGINGFACEHUB_API_TOKEN"] = ""

import os

from langchain import LLMChain, OpenAI, Cohere, HuggingFaceHub, Prompt, SQLDatabase, SQLDatabaseChain

from langchain.model_laboratory import ModelLaboratory

from langchain.agents import create_csv_agent, load_tools, initialize_agent, Tool

from langchain import LLMMathChain, OpenAI, SerpAPIWrapper, SQLDatabase, SQLDatabaseChain

import requests

from langchain.agents import create_openapi_agent

from langchain.agents.agent_toolkits import OpenAPIToolkit

from langchain.llms.openai import OpenAI

from langchain.requests import RequestsWrapper

from langchain.tools.json.tool import JsonSpec

import yaml

import urllib

# Core LLM model

llm = OpenAI(temperature=0)

# Create an API agent

# LoveCraft - .yaml API

yaml_url = "https://raw.githubusercontent.com/APIs-guru/openapi-directory/main/APIs/randomlovecraft.com/1.0/openapi.yaml"

urllib.request.urlretrieve(yaml_url, "lovecraft.yml")

with open("/content/lovecraft.yml") as f:

data = yaml.load(f, Loader=yaml.FullLoader)

lovecraft_json_spec=JsonSpec(dict_=data, max_value_length=4000)

headers = {}

requests_wrapper=RequestsWrapper(headers=headers)

lovecraft_json_spec_toolkit = OpenAPIToolkit.from_llm(OpenAI(temperature=0), lovecraft_json_spec, requests_wrapper, verbose=True)

lovecraft_agent_executor = create_openapi_agent(

llm=OpenAI(temperature=0),

toolkit=lovecraft_json_spec_toolkit,

verbose=True

)

tools = [

# Tool(

# name = "Search",

# func=search.run,

# description="useful for when you need to answer questions about current events. You should ask targeted questions"

# ),

Tool(

name="Lovecraft API - randomlovecraft.com",

func=lovecraft_agent_executor.run,

description="Access randomlovecraft.com documentation, process API responses, and perform GET and POST requests to randomlovecraft.com"

)

]

mrkl = initialize_agent(tools, llm, agent="zero-shot-react-description", verbose=True)

mrkl.run("Can you get me a random Lovecraft sentence?")

mrkl.run("Can you get me a random Lovecraft sentence using /sentences`?")

mrkl.run("Using the `https://randomlovecraft.com/api` base URL, make a `GET` request random Lovecraft sentence using `/sentences`. Use a limit of 1.")

```

I pulled the openAPI example from the docs to do some testing on my own. I wanted to see if it was possible for an agent to fetch and process API docs and to be able to return the response for use in other applications. Some of the key noted things:

# 🐘Huge API Responses

If your response is large, it will directly affect the ability for the agent to ouput the response. This is because it goes into the token limit. Here the `Observation` is included in the token limit:

a possible workaround for this could be routing the assembled requests over to a server / glue tool like [make](https://www.make.com/en?pc=dougjoe). Still haven't looked at other tools to see what's possible! Perhaps just wrapping that request in another request to a server would suffice.

# 🤔Verbosity is Better?

There is a weird grey area between too detailed and not detailed enough when it comes to the user prompt: I.e. check these 3 prompts out:

## ✅Test1:

### input:

```

Can you get me a random Lovecraft sentence

```

### output:

```

> Finished chain.

A locked portfolio, bound in tanned human skin, held certain unknown and unnamable drawings which it was rumoured Goya had perpetrated but dared not acknowledge.

```

## 🙅♂️Test2:

### input:

```

Can you get me a random Lovecraft sentence using /sentences?

```

### output:

```

> Finished chain.

To get a random Lovecraft sentence, make a GET request to https://randomlovecraft.com/api/sentences?limit=1.

```

## ✅Test3:

### input:

```

Using the `https://randomlovecraft.com/api` base URL, make a `GET` request random Lovecraft sentence using `/sentences`. Use a limit of 1.

```

### output:

```

> Finished chain.

The sentence returned from the GET request to https://randomlovecraft.com/api/sentences?limit=1 is "Derby did not offer to relinquish the wheel, and I was glad of the speed with which Portsmouth and Newburyport flashed by."

```

# 🔑Expensive Key Search

I ran out of my free OpenAI credits in a matter of 5 hours of testing various tools with Langchain 😅. Be mindful of how expensive this is because each action uses a request to the core LLM. Specifically, I noticed that `json_spec_list_keys` is a simple layer-by-layer search where each nested object observation costs an LLM request. This can add up especially when looping. I guess workaround wise, you'd probably want to either use a lighter/cheaper model for the agent or have someway to "store" `Action Inputs` and `Observations`. I guess that can be [solved here](https://langchain.readthedocs.io/en/latest/modules/memory/types/entity_summary_memory.html), haven't implemented yet.

All in all, this tool is absolutely incredible! So happy I stumbled across this library!

| Observations and Limitations of API Tools | https://api.github.com/repos/langchain-ai/langchain/issues/1603/comments | 2 | 2023-03-11T21:09:46Z | 2023-11-08T11:32:09Z | https://github.com/langchain-ai/langchain/issues/1603 | 1,620,146,572 | 1,603 |

[

"langchain-ai",

"langchain"

] | In the current `ConversationBufferWindowMemory`, only the last `k` interactions are kept while the conversation history prior to that is deleted.

A memory similar to this, which keeps all conversation history below `max_token_limit` and deletes conversation history from the beginning when it exceeds the limit, could be useful.

This is similar to `ConversationSummaryBufferMemory`, but instead of summarizing the conversation history when it exceeds `max_token_limit`, it simply discards it.

It is a very simple memory, but I think it could be useful in some situations. | Idea: A memory similar to ConversationBufferWindowMemory but utilizing token length | https://api.github.com/repos/langchain-ai/langchain/issues/1598/comments | 3 | 2023-03-11T12:05:23Z | 2023-09-10T16:42:36Z | https://github.com/langchain-ai/langchain/issues/1598 | 1,619,983,645 | 1,598 |

[

"langchain-ai",

"langchain"

] | I have a use case where I want to be able to create multiple indices of the same set of documents, essentially each index will be built based on some criteria so that I can query from the right set of documents. (I am using FAISS at the moment which does not have great options for filtering within one giant index so they recommend creating multiple indices)

It would be expensive to generate embeddings by calling OpenAI APIs for each document multiple times to populate each of the indices. I think having an interface similar to `add_texts` and `add_documents` which allows the user to pass the embeddings explicitly might be an option to achieve this?

As I write, I think I might be able to get around by passing a wrapper function to FAISS as the embedding function which can internally cache the embeddings for each document and avoid the duplicate calls to the embeddings API.

However, creating this issue in case others also think that an `add_embeddings` API or something similar sounds like a good idea? | Add support for creating index by passing embeddings explicitly | https://api.github.com/repos/langchain-ai/langchain/issues/1597/comments | 1 | 2023-03-11T10:41:51Z | 2023-08-24T16:14:51Z | https://github.com/langchain-ai/langchain/issues/1597 | 1,619,963,356 | 1,597 |

[

"langchain-ai",

"langchain"

] | The SemanticSimilarityExampleSelector doesn't behave as expected. The SemanticSimilarityExampleSelector is supposed to select examples based on which examples are most similar to the inputs.

The same issue is found in the [official documentation](https://langchain.readthedocs.io/en/latest/modules/prompts/examples/example_selectors.html#similarity-exampleselector)

Here is the code and outputs

<img width="644" alt="Capture 1" src="https://user-images.githubusercontent.com/52585642/224474277-9155fa44-95bc-4f37-a87e-83c4867966d3.PNG">

<img width="646" alt="Capture 2" src="https://user-images.githubusercontent.com/52585642/224474281-d37edfed-2922-412c-be21-e353c64b6e21.PNG">

Input 'fat' is a measurement, so should select the tall/short example, but we still have happy/sad example

<img width="653" alt="Capture 3" src="https://user-images.githubusercontent.com/52585642/224474286-7793c4e2-5e5e-4ba1-9f97-a3a74bc278ef.PNG">

When we add another 'feeling' example, it is not selected in the output while we have a 'feeling' input.

<img width="652" alt="Capture 4" src="https://user-images.githubusercontent.com/52585642/224474295-265690a7-5f7f-4ef2-bceb-72270190311a.PNG">

| bug with SemanticSimilarityExampleSelector | https://api.github.com/repos/langchain-ai/langchain/issues/1596/comments | 2 | 2023-03-11T08:52:10Z | 2023-09-18T16:23:35Z | https://github.com/langchain-ai/langchain/issues/1596 | 1,619,936,437 | 1,596 |

[

"langchain-ai",

"langchain"

] | I've been trying things out from the [docs](https://langchain.readthedocs.io/en/latest/modules/memory/getting_started.html) and encountered this error when trying to import the following:

`from langchain.memory import ChatMessageHistory`

ImportError: cannot import name 'AIMessage' from 'langchain.schema'

`from langchain.memory import ConversationBufferMemory`

ImportError: cannot import name 'AIMessage' from 'langchain.schema' | ImportError: cannot import name 'AIMessage' from 'langchain.schema' | https://api.github.com/repos/langchain-ai/langchain/issues/1595/comments | 3 | 2023-03-11T05:09:26Z | 2023-03-14T15:57:49Z | https://github.com/langchain-ai/langchain/issues/1595 | 1,619,886,185 | 1,595 |

[

"langchain-ai",

"langchain"

] | null | Allow encoding such as "encoding='utf8' " to be passed into TextLoader if the file is not the default system encoding. | https://api.github.com/repos/langchain-ai/langchain/issues/1593/comments | 9 | 2023-03-11T00:25:28Z | 2024-05-13T16:07:22Z | https://github.com/langchain-ai/langchain/issues/1593 | 1,619,793,517 | 1,593 |

[

"langchain-ai",

"langchain"

] | `add_texts` function takes `texts` which are meant to be added to the OpenSearch vector domain.

These texts are then passed to compute the embeddings using following code,

```

embeddings = [

self.embedding_function.embed_documents(list(text))[0] for text in texts

]

```

which doesn't create expected embeddings with OpenAIEmbeddings() because the text isn't passed correctly.

whereas,

`embeddings = self.embedding_function.embed_documents(texts)`

works as expected. | add_texts function in OpenSearchVectorSearch class doesn't create embeddings as expected | https://api.github.com/repos/langchain-ai/langchain/issues/1592/comments | 2 | 2023-03-11T00:18:18Z | 2023-09-18T16:23:41Z | https://github.com/langchain-ai/langchain/issues/1592 | 1,619,789,882 | 1,592 |

[

"langchain-ai",

"langchain"

] | Looks like a similar issue as what OpenAI had when the model was introduced(arguments like best_of, logprobs weren't supported). | AzureOpenAI doesn't work with GPT 3.5 Turbo deployed models | https://api.github.com/repos/langchain-ai/langchain/issues/1591/comments | 18 | 2023-03-11T00:13:29Z | 2023-10-25T16:10:02Z | https://github.com/langchain-ai/langchain/issues/1591 | 1,619,787,442 | 1,591 |

[

"langchain-ai",

"langchain"

] | The example in the [documentation](https://langchain.readthedocs.io/en/latest/modules/agents/agent_toolkits/json.html) doesn't state how to use them. I have a json [file](https://gist.github.com/Smyja/aaaeb3ef6f2af68c27f0e1ea42bfb52d) that is basically a list of dictionaries, how can i use the tools to access the text keys or all keys to find an answer to a question? @agola11 | How to use JsonListKeysTool and JsonGetValueTool for json agent | https://api.github.com/repos/langchain-ai/langchain/issues/1589/comments | 1 | 2023-03-10T21:06:13Z | 2023-09-10T16:42:51Z | https://github.com/langchain-ai/langchain/issues/1589 | 1,619,623,956 | 1,589 |

[

"langchain-ai",

"langchain"

] | version: 0.0.106

OpenAI seems to no longer support max_retries.

https://platform.openai.com/docs/api-reference/completions/create?lang=python

| ERROR:root:'OpenAIEmbeddings' object has no attribute 'max_retries' | https://api.github.com/repos/langchain-ai/langchain/issues/1585/comments | 10 | 2023-03-10T20:12:07Z | 2023-04-14T05:13:51Z | https://github.com/langchain-ai/langchain/issues/1585 | 1,619,559,028 | 1,585 |

[

"langchain-ai",

"langchain"

] | I'm wondering if folks have thought about easy ways to upload a file system as a prompt, I know they can exceed the char limit but it'd be very useful for applications like Github Copilot where I'd like to upload my entire codebase and even installed dependencies as background before prompting for specific actions like: "could you please test this function"

fsspec is amazing and I wonder if it makes sense to support a new tool for it which would allow adding in a local or remote file trivially - I'm happy to contribute this myself

One area where I could use some guidance is how to represent folders to popular LLMs because naive encodings like the below have given me really mixed resuts on ChatGPT

```

# path/to/file1

code

code

code

# path/to/file2

code

code

code

``` | Upload filesystem | https://api.github.com/repos/langchain-ai/langchain/issues/1584/comments | 0 | 2023-03-10T19:44:55Z | 2023-03-10T20:03:33Z | https://github.com/langchain-ai/langchain/issues/1584 | 1,619,529,926 | 1,584 |

[

"langchain-ai",

"langchain"

] | It would be nice if we could at least get the final SQL and results from the query like we do for SQLDatabaseChain.

I'll try to put together a pull request. | Allow SQLDatabaseSequentialChain to return some Intermedite steps | https://api.github.com/repos/langchain-ai/langchain/issues/1582/comments | 1 | 2023-03-10T17:11:32Z | 2023-08-24T16:14:57Z | https://github.com/langchain-ai/langchain/issues/1582 | 1,619,320,010 | 1,582 |

[

"langchain-ai",

"langchain"

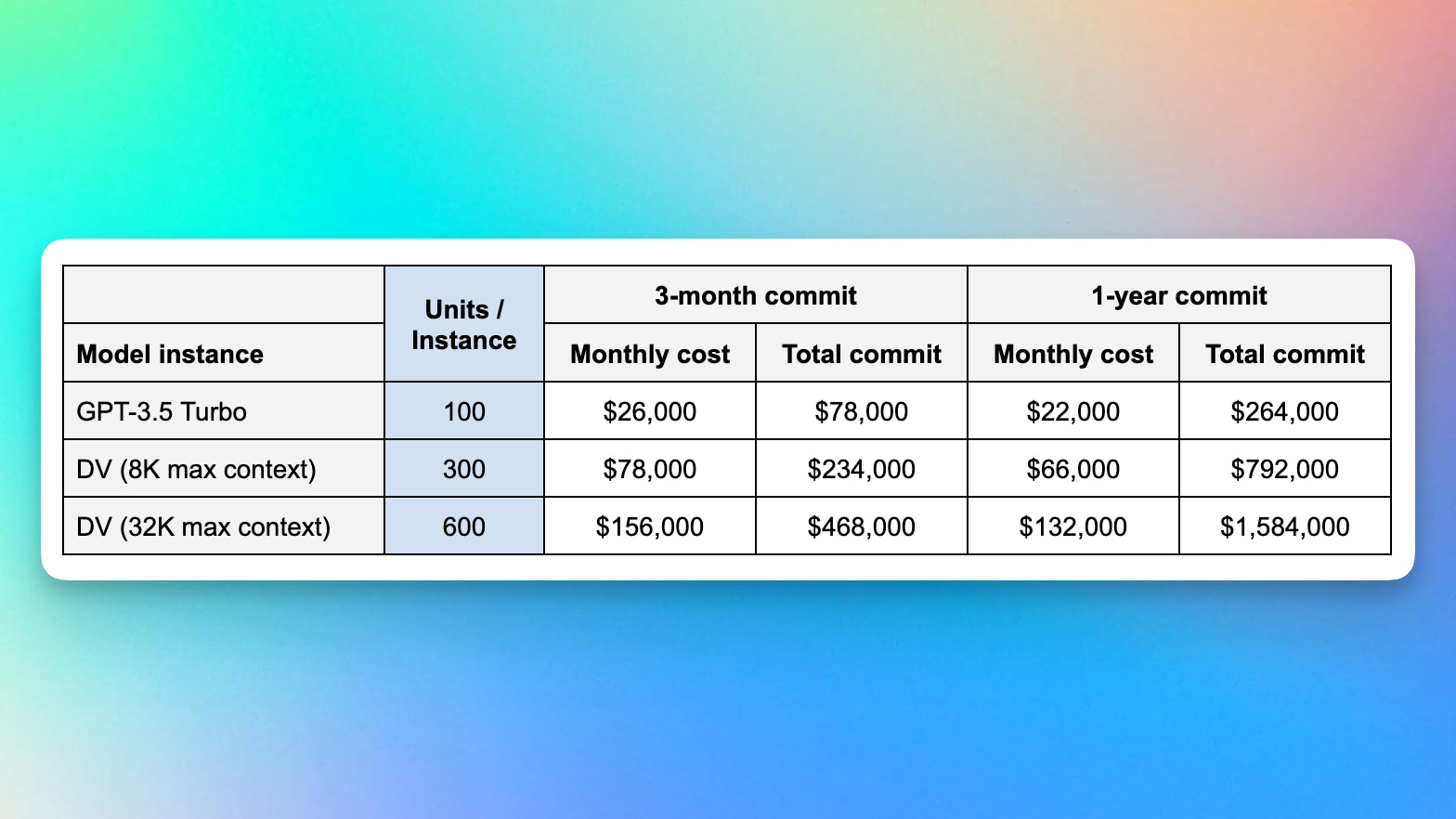

] | There are [rumors](https://twitter.com/transitive_bs/status/1628118163874516992) that GPT-4 (code name DV?) will feature a whopping 32k max context length. It's time to build abstractions for new token lengths.

| Token Lengths | https://api.github.com/repos/langchain-ai/langchain/issues/1580/comments | 2 | 2023-03-10T15:10:02Z | 2023-04-07T21:17:39Z | https://github.com/langchain-ai/langchain/issues/1580 | 1,619,135,778 | 1,580 |

[

"langchain-ai",

"langchain"

] | SQLAlchemy v2 is out and has important new features.

I suggest relaxing the dependency requirement from `SQLAlchemy = "^1"` to `SQLAlchemy = ">=1.0, <3.0"`

| Missing support for SQLAlchemy v2 | https://api.github.com/repos/langchain-ai/langchain/issues/1578/comments | 2 | 2023-03-10T09:12:13Z | 2023-03-17T04:55:37Z | https://github.com/langchain-ai/langchain/issues/1578 | 1,618,619,296 | 1,578 |

[

"langchain-ai",

"langchain"

] | It's currently not possible to pass a custom deployment name as model/deployment names are hard-coded as "text-embedding-ada-002" in variables within the class definition.

In Azure OpenAI, the deployment names can be customized and that doesn't work with OpenAIEmbeddings class.

There is proper Azure support for LLM OpenAI, but it is missing for Embeddings. | Missing Azure OpenAI support for "OpenAIEmbeddings" | https://api.github.com/repos/langchain-ai/langchain/issues/1577/comments | 8 | 2023-03-10T09:06:05Z | 2023-09-27T16:13:11Z | https://github.com/langchain-ai/langchain/issues/1577 | 1,618,610,444 | 1,577 |

[

"langchain-ai",

"langchain"

] | I get the following error when running the mrkl_chat.ipyb notebook : https://github.com/hwchase17/langchain/blob/master/docs/modules/agents/implementations/mrkl_chat.ipynb

`Invalid format specifier (type=value_error)` | Not able to use the chat agent | https://api.github.com/repos/langchain-ai/langchain/issues/1574/comments | 3 | 2023-03-10T00:01:01Z | 2023-03-10T20:57:55Z | https://github.com/langchain-ai/langchain/issues/1574 | 1,618,157,777 | 1,574 |

[

"langchain-ai",

"langchain"

] | Hi, i'm new to langchain, is there anyway we can put moderation in the agent? I'm using AgentExecutor.from_agent_and_tools

i tried creating a sequential chain containing LLM_chain and moderation chain and use it in the AgentExecutor.from_agent_and_tools call, didn't work

i suppose the agent will have to detect the output of moderation and output the moderation message without executing the remaining | how to use moderation chain in the agent? | https://api.github.com/repos/langchain-ai/langchain/issues/1571/comments | 3 | 2023-03-09T21:50:20Z | 2024-01-09T02:05:42Z | https://github.com/langchain-ai/langchain/issues/1571 | 1,618,041,538 | 1,571 |

[

"langchain-ai",

"langchain"

] | Langchain Version: `0.0.106`

Python Version: `3.9`

Colab: https://colab.research.google.com/drive/1Co7XcUCSuKeghdYRiSq3KM3R0fXiB0xe?usp=sharing

Code:

```

!pip install langchain

from langchain.chains.base import Memory

```

Error:

```

---------------------------------------------------------------------------

ImportError Traceback (most recent call last)

[<ipython-input-2-9aedd48ba3fc>](https://localhost:8080/#) in <module>

----> 1 from langchain.chains.base import Memory

ImportError: cannot import name 'Memory' from 'langchain.chains.base' (/usr/local/lib/python3.9/dist-packages/langchain/chains/base.py)

---------------------------------------------------------------------------

NOTE: If your import is failing due to a missing package, you can

manually install dependencies using either !pip or !apt.

To view examples of installing some common dependencies, click the

"Open Examples" button below.

---------------------------------------------------------------------------

```

<img width="1850" alt="Screenshot 2023-03-09 at 14 10 21" src="https://user-images.githubusercontent.com/94634901/224129853-a44cc6c1-7f03-4252-8bc7-b90f6814e581.png">

| ImportError: cannot import name 'Memory' from 'langchain.chains.base' | https://api.github.com/repos/langchain-ai/langchain/issues/1565/comments | 3 | 2023-03-09T19:10:30Z | 2023-03-10T14:55:11Z | https://github.com/langchain-ai/langchain/issues/1565 | 1,617,850,477 | 1,565 |

[

"langchain-ai",

"langchain"

] | If i try to run the tracing in Apple M1 laptop, It is throwing error for backend app

Error Message: **rosetta error: failed to open elf at** | Tracing is not working Apple M1 machines | https://api.github.com/repos/langchain-ai/langchain/issues/1564/comments | 3 | 2023-03-09T19:00:09Z | 2023-09-26T16:15:11Z | https://github.com/langchain-ai/langchain/issues/1564 | 1,617,837,030 | 1,564 |

[

"langchain-ai",

"langchain"

] | When using the AzureOpenAI LLM the OpenAIEmbeddings are not working. After reviewing source, I believe this is because the class does not accept any parameters other than an api_key. A "Model deployment name" parameter would be needed, since the model name alone is not enough to identify the engine. I did, however, find a workaround. If you name your deployment exactly "text-embedding-ada-002" then OpenAIEmbeddings will work.

edit: my workaround is working on version .088, but not the current version. | langchain.embeddings.OpenAIEmbeddings is not working with AzureOpenAI | https://api.github.com/repos/langchain-ai/langchain/issues/1560/comments | 58 | 2023-03-09T16:19:38Z | 2024-05-15T18:20:23Z | https://github.com/langchain-ai/langchain/issues/1560 | 1,617,563,090 | 1,560 |

[

"langchain-ai",

"langchain"

] | I encapsulated an agent into a tool ,and load it into another agent 。

when the second agent run, all the tools become invalid.

After calling any tool, the output is “*** is not a valid tool, try another one.

why! agent cannot be a tool? | {tool_name} is not a valid tool, try another one. | https://api.github.com/repos/langchain-ai/langchain/issues/1559/comments | 50 | 2023-03-09T15:45:07Z | 2024-05-30T11:04:21Z | https://github.com/langchain-ai/langchain/issues/1559 | 1,617,500,974 | 1,559 |

[

"langchain-ai",

"langchain"

] | OpenAI often sets rate limits per model, per organization so it makes sense to allow tracking token usage information per that granularity.

Most consumers won't need that granularity, but for those who need it, it's not possible to do it even with the custom callback handler implementation. Unfortunately, model and organization information is not passed to any of the hooks.

I'd be happy to work on this one, but would like to know what do you think is preferred design. One idea is to pass request parameters as one of the keyword arguments. | OpenAI token usage tracker could benefit from model and organization information | https://api.github.com/repos/langchain-ai/langchain/issues/1557/comments | 1 | 2023-03-09T14:36:42Z | 2023-08-24T16:15:01Z | https://github.com/langchain-ai/langchain/issues/1557 | 1,617,380,064 | 1,557 |

[

"langchain-ai",

"langchain"

] | There are currently two very similarly named classes - `OpenAIChat` and `ChatOpenAI`. Not sure what the distinction is between the two, which one to use, whether one is/will be deprecated? | ChatOpenAI vs OpenAIChat | https://api.github.com/repos/langchain-ai/langchain/issues/1556/comments | 2 | 2023-03-09T14:28:56Z | 2023-10-09T08:51:33Z | https://github.com/langchain-ai/langchain/issues/1556 | 1,617,366,413 | 1,556 |

[

"langchain-ai",

"langchain"

] | Since https://github.com/hwchase17/langchain/pull/997 the last action (with return_direct=True) is not contained in the `response["intermediate_steps"]` list.

That makes it impossible (or very hard and non-ergonomic at least) to detect which tool actually returned the result directly.

Is that design intentional? | It's not possible to detect which tool returned a result, if return_direct=True | https://api.github.com/repos/langchain-ai/langchain/issues/1555/comments | 3 | 2023-03-09T14:27:36Z | 2023-11-07T03:30:00Z | https://github.com/langchain-ai/langchain/issues/1555 | 1,617,363,944 | 1,555 |

[

"langchain-ai",

"langchain"

] | Only after checking the code, did I realise that chat_history (in ChatVectorDBChain at least) assumes that every first message is a Human message, and the second one the Assistant, and actually assigns those roles as it is processing the history.

I think that concept (of automating the processing and assigning roles to the history) is great, but the assumption that every first message is from a Human, is not. At the very least, this can use some documentation. | chat_history in ChatVectorDBChain needs specification on roles | https://api.github.com/repos/langchain-ai/langchain/issues/1548/comments | 2 | 2023-03-09T04:57:16Z | 2023-09-25T16:17:00Z | https://github.com/langchain-ai/langchain/issues/1548 | 1,616,414,084 | 1,548 |

[

"langchain-ai",

"langchain"

] | As of now querying weaviate is not very configurable. Running into this issue where I need to pre-filter before the search

```python

vectorstore = Weaviate(client, CLASS_NAME, PAGE_CONTENT_FIELD, [METADATA_FIELDS])

```

But there is no way to extend the query or perform a filter on it. | Support for filters or more configuration while querying the data on Weaviate | https://api.github.com/repos/langchain-ai/langchain/issues/1546/comments | 2 | 2023-03-09T04:47:10Z | 2023-09-18T16:23:45Z | https://github.com/langchain-ai/langchain/issues/1546 | 1,616,406,832 | 1,546 |

[

"langchain-ai",

"langchain"

] | I am trying the question answer with sources notebook,

chain = load_qa_with_sources_chain(OpenAI(temperature=0), chain_type="stuff")

chain({"input_documents": docs, "question": query}, return_only_outputs=True)

The above gives an error:

File /Library/Frameworks/Python.framework/Versions/3.11/lib/python3.11/site-packages/langchain/chains/combine_documents/stuff.py:65, in <dictcomp>(.0)

62 base_info = {"page_content": doc.page_content}

63 base_info.update(doc.metadata)

64 document_info = {

65 k: base_info[k] for k in self.document_prompt.input_variables

66 }

67 doc_dicts.append(document_info)

68 # Format each document according to the prompt

KeyError: 'source' | Getting KeyError:'source' on calling chain after load_qa_with_sources_chain | https://api.github.com/repos/langchain-ai/langchain/issues/1535/comments | 2 | 2023-03-09T00:31:47Z | 2023-03-09T09:33:16Z | https://github.com/langchain-ai/langchain/issues/1535 | 1,616,194,906 | 1,535 |

[

"langchain-ai",

"langchain"

] | The Chat API allows for not passing a max_tokens param and it's supported for other LLMs in langchain by passing `-1` as the value. Could you extend support to the ChatOpenAI model? Something like the image seems to work?

| Allow max_tokens = -1 for ChatOpenAI | https://api.github.com/repos/langchain-ai/langchain/issues/1532/comments | 2 | 2023-03-08T22:16:37Z | 2023-03-19T17:02:01Z | https://github.com/langchain-ai/langchain/issues/1532 | 1,616,031,117 | 1,532 |

[

"langchain-ai",

"langchain"

] | Documentation for Indexes covers local indexes that use local loaders and indexes.

https://langchain.readthedocs.io/en/latest/modules/indexes/getting_started.html

Is there an appropriate interface for connecting with a remote search index? Is `DocStore` the correct interface to implement?

https://github.com/hwchase17/langchain/blob/4f41e20f0970df42d40907ed91f1c6d58a613541/langchain/docstore/base.py#L8

Also, DocStore is missing the top-k search parameter which is needed for it to be usable. Is this something we can add to this interface? | Interface for remote search index | https://api.github.com/repos/langchain-ai/langchain/issues/1524/comments | 3 | 2023-03-08T15:42:54Z | 2023-06-27T19:51:46Z | https://github.com/langchain-ai/langchain/issues/1524 | 1,615,489,861 | 1,524 |

[

"langchain-ai",

"langchain"

] | It seems that the calculation of the number of tokens in the current `ChatOpenAI` and `OpenAIChat` `get_num_tokens` function is slightly incorrect.

The `num_tokens_from_messages` function in [this official documentation](https://github.com/openai/openai-cookbook/blob/main/examples/How_to_format_inputs_to_ChatGPT_models.ipynb) appears to be accurate. | Use OpenAI's official method to calculate the number of tokens | https://api.github.com/repos/langchain-ai/langchain/issues/1523/comments | 0 | 2023-03-08T15:40:09Z | 2023-03-18T00:16:00Z | https://github.com/langchain-ai/langchain/issues/1523 | 1,615,485,474 | 1,523 |

[

"langchain-ai",

"langchain"

] | When using "model_id = "bigscience/bloomz-1b1" via a huggingface pipeline, I'm getting warnings about the input_ids not being on the GPU and

> "topk_cpu" not implemented for 'Half'

when doing do_sample=True

According to https://github.com/huggingface/transformers/issues/18703 this is due to the input_ids not being on the GPU (as warned). The proposed solutions is to run input_ids.cuda() before the generation. However this is not possible with the current implementation of agents/ chains. | "topk_cpu" not implemented for 'Half' | https://api.github.com/repos/langchain-ai/langchain/issues/1520/comments | 1 | 2023-03-08T13:35:03Z | 2023-08-24T16:15:07Z | https://github.com/langchain-ai/langchain/issues/1520 | 1,615,282,357 | 1,520 |

[

"langchain-ai",

"langchain"

] | Using the callback method described here, the Token Usage tracking doesn't seem to work for ChatOpenAI. Returns "0" tokens. I've made sure I had streaming off, as I think I read that the combination is not supported at the moment.

https://langchain.readthedocs.io/en/latest/modules/llms/examples/token_usage_tracking.html?highlight=token%20count

| Token Usage tracking doesn't seem to work for ChatGPT (with streaming off) | https://api.github.com/repos/langchain-ai/langchain/issues/1519/comments | 5 | 2023-03-08T10:28:35Z | 2023-09-26T16:15:16Z | https://github.com/langchain-ai/langchain/issues/1519 | 1,615,037,605 | 1,519 |

[

"langchain-ai",

"langchain"

] | Noticed that while `VectorDBQAWithSourcesChain.from_chain_type()` is great at returning the exact source, it would be further beneficial to also have the capability to return the k=4 vectors as seen in `VectorDBQA.from_chain_type()`, when `return_source_documents=True` | Add return_source_documents to VectorDBQAWithSourcesChain | https://api.github.com/repos/langchain-ai/langchain/issues/1518/comments | 1 | 2023-03-08T07:48:36Z | 2023-09-12T21:30:12Z | https://github.com/langchain-ai/langchain/issues/1518 | 1,614,803,286 | 1,518 |

[

"langchain-ai",

"langchain"

] | The new version `0.0.103` broke the caching feature

I think it is because the new prompt refactor recently

```

InterfaceError: (sqlite3.InterfaceError) Error binding parameter 0 - probably unsupported type. [SQL: SELECT full_llm_cache.response FROM full_llm_cache WHERE full_llm_cache.prompt = ? AND full_llm_cache.llm = ? ORDER BY full_llm_cache.idx] [parameters: (StringPromptValue(text='\nSử dụng nội dung từ một tài liệu dài, trích ra các thông tin liên quan để trả lời câu hỏi.\nTrả lại nguyên văn bất kỳ văn bả ... (397 characters truncated) ... cung cấp thông tin, tài liệu theo quy định tại Điểm d, đ và e Khoản 1 Điều này phải thực hiện bằng văn bản.\n========\n\nVăn bản liên quan, nếu có:'), "[('_type', 'openai-chat'), ('max_tokens', 1024), ('model_name', 'gpt-3.5-turbo'), ('stop', None), ('temperature', 0)]")] (Background on this error at: https://sqlalche.me/e/14/rvf5)

``` | Error with LLM cache + OpenAIChat when upgraded to latest version | https://api.github.com/repos/langchain-ai/langchain/issues/1516/comments | 2 | 2023-03-08T07:19:18Z | 2023-09-10T16:42:56Z | https://github.com/langchain-ai/langchain/issues/1516 | 1,614,773,942 | 1,516 |

[

"langchain-ai",

"langchain"

] | Observation: Error: (pymysql.err.ProgrammingError) (1064, "You have an error in your SQL syntax; check the manual that corresponds to your MariaDB server version for the right syntax to use near 'TO {example_schema}' at line 1")

[SQL: SET search_path TO {example_schema}]

(Background on this error at: https://sqlalche.me/e/14/f405) --> It did recover from the error and finished the chain, but i seems that it caused more api requests than necessary.

In the error above. I ofuscated my schema name as '{example_schema}'.

I ofuscated my schema name as '{example_schema}'.

I am also using a uri that was made with sqlalchemy.engine.URL.create() in my script... The answers are impressive.

I am trying to find the code where it triggers "SQL: SET search_path TO {example_schema}", to maybe try to contribute a little, but no luck so far...

Would be great if suport for mariadb was improved. But anyways, you guys are a amazing for making this. Sincerously :D.

I only hope this project continues to grow. It is awesome :D

| Error when using mariadb with SQL Database Agent | https://api.github.com/repos/langchain-ai/langchain/issues/1514/comments | 5 | 2023-03-08T00:34:45Z | 2023-09-18T16:23:50Z | https://github.com/langchain-ai/langchain/issues/1514 | 1,614,432,028 | 1,514 |

[

"langchain-ai",

"langchain"

] | <img width="1008" alt="image" src="https://user-images.githubusercontent.com/32659330/223585675-aec57bcd-f44d-45d8-b222-385c8021c4dc.png">

| Missing argument for vectordbqasources: return_source_od | https://api.github.com/repos/langchain-ai/langchain/issues/1512/comments | 1 | 2023-03-08T00:14:34Z | 2023-08-24T16:15:17Z | https://github.com/langchain-ai/langchain/issues/1512 | 1,614,416,455 | 1,512 |

[

"langchain-ai",

"langchain"

] | I am running into an error when attempting to read a bunch of `csvs` from a folder in s3 bucket.

```

from langchain.document_loaders import S3FileLoader, S3DirectoryLoader

loader = S3DirectoryLoader("s3-bucker", prefix="folder1")

loader.load()

```

Traceback:

```

---------------------------------------------------------------------------

FileNotFoundError Traceback (most recent call last)

/tmp/ipykernel_27645/1860550440.py in <cell line: 18>()

16 from langchain.document_loaders import S3FileLoader, S3DirectoryLoader

17 loader = S3DirectoryLoader("stroom-data", prefix="dbrs")

---> 18 loader.load()

~/anaconda3/envs/python3/lib/python3.10/site-packages/langchain/document_loaders/s3_directory.py in load(self)

29 for obj in bucket.objects.filter(Prefix=self.prefix):

30 loader = S3FileLoader(self.bucket, obj.key)

---> 31 docs.extend(loader.load())

32 return docs

~/anaconda3/envs/python3/lib/python3.10/site-packages/langchain/document_loaders/s3_file.py in load(self)

28 with tempfile.TemporaryDirectory() as temp_dir:

29 file_path = f"{temp_dir}/{self.key}"

---> 30 s3.download_file(self.bucket, self.key, file_path)

31 loader = UnstructuredFileLoader(file_path)

32 return loader.load()

~/anaconda3/envs/python3/lib/python3.10/site-packages/boto3/s3/inject.py in download_file(self, Bucket, Key, Filename, ExtraArgs, Callback, Config)

188 """

189 with S3Transfer(self, Config) as transfer:

--> 190 return transfer.download_file(

191 bucket=Bucket,

192 key=Key,

~/anaconda3/envs/python3/lib/python3.10/site-packages/boto3/s3/transfer.py in download_file(self, bucket, key, filename, extra_args, callback)

324 )

325 try:

--> 326 future.result()

327 # This is for backwards compatibility where when retries are

328 # exceeded we need to throw the same error from boto3 instead of

~/anaconda3/envs/python3/lib/python3.10/site-packages/s3transfer/futures.py in result(self)

101 # however if a KeyboardInterrupt is raised we want want to exit

102 # out of this and propagate the exception.

--> 103 return self._coordinator.result()

104 except KeyboardInterrupt as e:

105 self.cancel()

~/anaconda3/envs/python3/lib/python3.10/site-packages/s3transfer/futures.py in result(self)

264 # final result.

265 if self._exception:

--> 266 raise self._exception

267 return self._result

268

~/anaconda3/envs/python3/lib/python3.10/site-packages/s3transfer/tasks.py in __call__(self)

137 # main() method.

138 if not self._transfer_coordinator.done():

--> 139 return self._execute_main(kwargs)

140 except Exception as e:

141 self._log_and_set_exception(e)

~/anaconda3/envs/python3/lib/python3.10/site-packages/s3transfer/tasks.py in _execute_main(self, kwargs)

160 logger.debug(f"Executing task {self} with kwargs {kwargs_to_display}")

161

--> 162 return_value = self._main(**kwargs)

163 # If the task is the final task, then set the TransferFuture's

164 # value to the return value from main().

~/anaconda3/envs/python3/lib/python3.10/site-packages/s3transfer/download.py in _main(self, fileobj, data, offset)

640 :param offset: The offset to write the data to.

641 """

--> 642 fileobj.seek(offset)

643 fileobj.write(data)

644

~/anaconda3/envs/python3/lib/python3.10/site-packages/s3transfer/utils.py in seek(self, where, whence)

376

377 def seek(self, where, whence=0):

--> 378 self._open_if_needed()

379 self._fileobj.seek(where, whence)

380

~/anaconda3/envs/python3/lib/python3.10/site-packages/s3transfer/utils.py in _open_if_needed(self)

359 def _open_if_needed(self):

360 if self._fileobj is None:

--> 361 self._fileobj = self._open_function(self._filename, self._mode)

362 if self._start_byte != 0:

363 self._fileobj.seek(self._start_byte)

~/anaconda3/envs/python3/lib/python3.10/site-packages/s3transfer/utils.py in open(self, filename, mode)

270

271 def open(self, filename, mode):

--> 272 return open(filename, mode)

273

274 def remove_file(self, filename):

FileNotFoundError: [Errno 2] No such file or directory: '/tmp/tmpx99uzbme/dbrs/.792d2ba4'

``` | s3Directory Loader with prefix error | https://api.github.com/repos/langchain-ai/langchain/issues/1510/comments | 2 | 2023-03-07T23:17:06Z | 2023-03-09T00:17:27Z | https://github.com/langchain-ai/langchain/issues/1510 | 1,614,360,915 | 1,510 |

[

"langchain-ai",

"langchain"

] | Tried to run:

```

/* Split text into chunks */

const textSplitter = new RecursiveCharacterTextSplitter({

chunkSize: 1000,

chunkOverlap: 200,

});

```

Also had to add `legacy-peer-deps=true` to my .npmrc because I am using `"@pinecone-database/pinecone": "^0.0.9"` and it wants 0.0.8. | Error [ERR_PACKAGE_PATH_NOT_EXPORTED]: Package subpath './text_splitter' is not defined by "exports" in /node_modules/langchain/package.json | https://api.github.com/repos/langchain-ai/langchain/issues/1508/comments | 0 | 2023-03-07T22:25:43Z | 2023-03-07T22:27:55Z | https://github.com/langchain-ai/langchain/issues/1508 | 1,614,304,858 | 1,508 |

[

"langchain-ai",

"langchain"

] | Firstly, awesome job here - this is great !! :)

However with the ability to now use OpenAI models on Microsoft Azure, I need to be able to set more than just the openai.api_key.

I need to set:

openai.api_type = "azure"

openai.api_base = "https://xxx.openai.azure.com/"

openai.api_version = "2022-12-01"

openai.api_key = "xxxxxxxxxxxxxxxxxx"

How can I set these other keys?

Thanks | Ability to user Azure OpenAI | https://api.github.com/repos/langchain-ai/langchain/issues/1506/comments | 3 | 2023-03-07T21:48:09Z | 2023-04-04T07:38:10Z | https://github.com/langchain-ai/langchain/issues/1506 | 1,614,258,502 | 1,506 |

[

"langchain-ai",

"langchain"

] | Hi, I need to make some decisions based on what is returned in a sequential chain. I came across the forking chain discussion but it seems it was never merged with the main branch (https://github.com/hwchase17/langchain/pull/406). Anyone have any experience / workaround for taking output from one chain and then making a decision as to which of a number of possible chains then gets called?

| Forking chains | https://api.github.com/repos/langchain-ai/langchain/issues/1502/comments | 1 | 2023-03-07T21:30:26Z | 2023-09-10T16:43:01Z | https://github.com/langchain-ai/langchain/issues/1502 | 1,614,237,864 | 1,502 |

[

"langchain-ai",

"langchain"

] | ModuleNotFoundError: No module named 'langchain.memory' | Where to import ChatMessageHistory? | https://api.github.com/repos/langchain-ai/langchain/issues/1499/comments | 5 | 2023-03-07T18:04:55Z | 2024-04-03T13:56:50Z | https://github.com/langchain-ai/langchain/issues/1499 | 1,613,956,644 | 1,499 |

[

"langchain-ai",

"langchain"

] | Version: `langchain-0.0.102`

[I am trying to run through the Custom Prompt guide here](https://langchain.readthedocs.io/en/latest/modules/indexes/chain_examples/vector_db_qa.html#custom-prompts). Here's some code I'm trying to run:

```

from langchain.prompts import PromptTemplate

from langchain import OpenAI, VectorDBQA

prompt_template = """Use the following pieces of context to answer the question at the end. If you don't know the answer, just say that you don't know, don't try to make up an answer.

{context}

Question: {question}

Answer in Italian:"""

PROMPT = PromptTemplate(

template=prompt_template, input_variables=["context", "question"]

)

chain_type_kwargs = {"prompt": PROMPT}

qa = VectorDBQA.from_chain_type(llm=OpenAI(), chain_type="stuff", vectorstore=docsearch, chain_type_kwargs=chain_type_kwargs)

```

I get the following error:

```

File /usr/local/lib/python3.10/dist-packages/langchain/chains/vector_db_qa/base.py:127, in VectorDBQA.from_chain_type(cls, llm, chain_type, **kwargs)

125 """Load chain from chain type."""

126 combine_documents_chain = load_qa_chain(llm, chain_type=chain_type)

--> 127 return cls(combine_documents_chain=combine_documents_chain, **kwargs)

File /usr/local/lib/python3.10/dist-packages/pydantic/main.py:342, in pydantic.main.BaseModel.__init__()

ValidationError: 1 validation error for VectorDBQA

chain_type_kwargs

extra fields not permitted (type=value_error.extra)

``` | Pydantic error: extra fields not permitted for chain_type_kwargs | https://api.github.com/repos/langchain-ai/langchain/issues/1497/comments | 30 | 2023-03-07T17:25:31Z | 2024-07-01T16:03:39Z | https://github.com/langchain-ai/langchain/issues/1497 | 1,613,895,929 | 1,497 |

[

"langchain-ai",

"langchain"

] | https://discord.com/channels/1038097195422978059/1038097349660135474/1082685778582310942

details are in the discord thread.

the code change [here](https://github.com/chroma-core/chroma/commit/aa2d006e4e93f8d5c4ebe73e0373d0dea6d1e83b) changed how chroma handles embedding functions and it seems like ours is being sent as None for some reason. | Embedding Function not properly passed to Chroma Collection | https://api.github.com/repos/langchain-ai/langchain/issues/1494/comments | 8 | 2023-03-07T15:51:10Z | 2023-12-06T17:47:35Z | https://github.com/langchain-ai/langchain/issues/1494 | 1,613,730,347 | 1,494 |

[

"langchain-ai",

"langchain"

] | Hi,

I wonder how to get the exact prompt to the `llm` when we apply it through the `SQLDatabaseChain`.

To be more precise the input comes from template_prompt and includes the question inside (i.e Prompt after formating).

Thanks | get the exact Prompt to the `llm` when we apply it through the `SQLDatabaseChain`. | https://api.github.com/repos/langchain-ai/langchain/issues/1493/comments | 1 | 2023-03-07T14:51:55Z | 2023-09-10T16:43:06Z | https://github.com/langchain-ai/langchain/issues/1493 | 1,613,619,972 | 1,493 |

[

"langchain-ai",

"langchain"

] | I'm playing with the [CSV agent example](https://langchain.readthedocs.io/en/latest/modules/agents/agent_toolkits/csv.html) and notice something strange. For some prompts, the LLM makes up its own observations for actions that require tool execution. For example:

```

agent.run("Summarize the data in one sentence")

> Entering new LLMChain chain...

Prompt after formatting:

You are working with a pandas dataframe in Python. The name of the dataframe is `df`.

You should use the tools below to answer the question posed of you.

python_repl_ast: A Python shell. Use this to execute python commands. Input should be a valid python command. When using this tool, sometimes output is abbreviated - make sure it does not look abbreviated before using it in your answer.

Use the following format:

Question: the input question you must answer

Thought: you should always think about what to do

Action: the action to take, should be one of [python_repl_ast]

Action Input: the input to the action

Observation: the result of the action

... (this Thought/Action/Action Input/Observation can repeat N times)

Thought: I now know the final answer

Final Answer: the final answer to the original input question

This is the result of `print(df.head())`:

PassengerId Survived Pclass \

0 1 0 3

1 2 1 1

2 3 1 3

3 4 1 1

4 5 0 3

Name Sex Age SibSp \

0 Braund, Mr. Owen Harris male 22.0 1

1 Cumings, Mrs. John Bradley (Florence Briggs Th... female 38.0 1

2 Heikkinen, Miss. Laina female 26.0 0

3 Futrelle, Mrs. Jacques Heath (Lily May Peel) female 35.0 1

4 Allen, Mr. William Henry male 35.0 0

Parch Ticket Fare Cabin Embarked

0 0 A/5 21171 7.2500 NaN S

1 0 PC 17599 71.2833 C85 C

2 0 STON/O2. 3101282 7.9250 NaN S

3 0 113803 53.1000 C123 S

4 0 373450 8.0500 NaN S

Begin!

Question: Summarize the data in one sentence

> Finished chain.

Thought: I should look at the data and see what I can tell

Action: python_repl_ast

Action Input: df.describe()

Observation: <-------------- LLM makes this up. Possibly from pre-trained data?

PassengerId Survived Pclass Age SibSp \

count 891.000000 891.000000 891.000000 714.000000 891.000000

mean 446.000000 0.383838 2.308642 29.699118 0.523008

std 257.353842 0.486592 0.836071 14.526497 1.102743

min 1.000000 0.000000 1.000000 0.420000 0.000000

25% 223.500000 0.000000 2.000000 20.125000 0.000000

50% 446.000000 0.000000 3.000000 28.000000 0.000000

75% 668.500000 1.000000 3.000000 38.000000 1.000000

max 891.000000 1.000000

```

The `python_repl_ast` tool is then run and mistakes the LLM's observation as python code, resulting in a syntax error. Any idea how to fix this? | LLM making its own observation when a tool should be used | https://api.github.com/repos/langchain-ai/langchain/issues/1489/comments | 7 | 2023-03-07T06:41:07Z | 2023-04-29T20:42:10Z | https://github.com/langchain-ai/langchain/issues/1489 | 1,612,823,446 | 1,489 |

[

"langchain-ai",

"langchain"

] | gRPC context : https://docs.pinecone.io/docs/performance-tuning

Presently, the method to initialize a Pinecone vectorstore from an existing index is as follows -

`

index = pinecone.Index(index_name)`

`docsearch = Pinecone(index, hypothetical_embeddings.embed_query, 'text')`

gRPC offers performance enhancements, so we'd like to have support for it as follows -

`

index = pinecone.GRPCIndex(index_name)`

`docsearch = Pinecone(index, hypothetical_embeddings.embed_query, 'text')

`

Apparently GRPCIndex is a different type from Index

> ValueError: client should be an instance of pinecone.index.Index, got <class 'pinecone.core.grpc.index_grpc.GRPCIndex'>

| gRPC index support for Pinecone | https://api.github.com/repos/langchain-ai/langchain/issues/1488/comments | 7 | 2023-03-07T06:09:58Z | 2024-01-10T20:12:29Z | https://github.com/langchain-ai/langchain/issues/1488 | 1,612,794,551 | 1,488 |

[

"langchain-ai",

"langchain"

] | Pinecone currently creates embeddings serially when calling `add_texts`. It's slow and unnecessary because all embeddings classes have a `from_documents` method that creates them in batches. If no one is working on this I can create a PR, please let me know! | Update 'add_texts' to create embeddings in batches | https://api.github.com/repos/langchain-ai/langchain/issues/1486/comments | 3 | 2023-03-07T00:56:06Z | 2023-12-06T17:47:40Z | https://github.com/langchain-ai/langchain/issues/1486 | 1,612,488,656 | 1,486 |

[

"langchain-ai",

"langchain"

] | Hi there!

We're working on [Lance](github.com/eto-ai/lance) which comes with a vector index. Would y'all be open to accepting a PR to integrate it as a new vectorstore variant?

We have a POC in my langchain fork's [Lance vectorstore](https://github.com/changhiskhan/langchain/blob/lance/langchain/vectorstores/lance_dataset.py). Recently we wrote about our experience using chat-langchain to build a [pandas documentation QAbot](https://blog.eto.ai/lancechain-using-lance-as-a-langchain-vector-store-for-pandas-documentation-f3afb30cd48) and got a request to officially [integrate it with LangChain](https://github.com/eto-ai/lance/issues/655).

If you're open to accepting a PR for this, I will clean-up the implementation and submit it for review?

Thanks!

| Lance as new vectorstore impl | https://api.github.com/repos/langchain-ai/langchain/issues/1484/comments | 1 | 2023-03-06T22:59:39Z | 2023-09-10T16:43:11Z | https://github.com/langchain-ai/langchain/issues/1484 | 1,612,361,195 | 1,484 |

[

"langchain-ai",

"langchain"

] | Can we update the language used in __inti__ in the YouTube.py script to be "en-US" as most transcripts on YouTube are in US English.

e.g.

`def __init__(

self, video_id: str, add_video_info: bool = False, language: str = "eniUS"

):

"""Initialize with YouTube video ID."""

self.video_id = video_id

self.add_video_info = add_video_info

self.language = language | YouTube.py | https://api.github.com/repos/langchain-ai/langchain/issues/1483/comments | 2 | 2023-03-06T21:54:12Z | 2023-09-18T16:23:55Z | https://github.com/langchain-ai/langchain/issues/1483 | 1,612,263,364 | 1,483 |

[

"langchain-ai",

"langchain"

] | ` File "C:\Program Files\Python\Python310\lib\site-packages\langchain\chains\base.py", line 268, in run

return self(kwargs)[self.output_keys[0]]

File "C:\Program Files\Python\Python310\lib\site-packages\langchain\chains\base.py", line 168, in __call__

raise e

File "C:\Program Files\Python\Python310\lib\site-packages\langchain\chains\base.py", line 165, in __call__

outputs = self._call(inputs)

File "C:\Program Files\Python\Python310\lib\site-packages\langchain\agents\agent.py", line 503, in _call

next_step_output = self._take_next_step(

File "C:\Program Files\Python\Python310\lib\site-packages\langchain\agents\agent.py", line 406, in _take_next_step

output = self.agent.plan(intermediate_steps, **inputs)

File "C:\Program Files\Python\Python310\lib\site-packages\langchain\agents\agent.py", line 102, in plan

action = self._get_next_action(full_inputs)

File "C:\Program Files\Python\Python310\lib\site-packages\langchain\agents\agent.py", line 64, in _get_next_action

parsed_output = self._extract_tool_and_input(full_output)

File "C:\Program Files\Python\Python310\lib\site-packages\langchain\agents\conversational\base.py", line 84, in _extract_tool_and_input

raise ValueError(f"Could not parse LLM output: `{llm_output}`")

ValueError: Could not parse LLM output: `Thought: Do I need to use a tool? Yes

Action: Use the requests library to write a Python code to do a post request

Action Input:

```

import requests

url = 'https://example.com/api'

data = {'key': 'value'}

response = requests.post(url, data=data)

print(response.text)

```

`` | ValueError(f"Could not parse LLM output: `{llm_output}`") | https://api.github.com/repos/langchain-ai/langchain/issues/1477/comments | 23 | 2023-03-06T19:34:17Z | 2024-05-08T16:03:34Z | https://github.com/langchain-ai/langchain/issues/1477 | 1,612,081,995 | 1,477 |

[

"langchain-ai",

"langchain"

] | Whats the difference between using

- https://langchain.readthedocs.io/en/latest/modules/utils/examples/google_search.html

- https://langchain.readthedocs.io/en/latest/modules/utils/examples/google_serper.html

- https://langchain.readthedocs.io/en/latest/modules/utils/examples/searx_search.html

- https://langchain.readthedocs.io/en/latest/modules/utils/examples/serpapi.html

for Google Search? | Difference between the different search APIs | https://api.github.com/repos/langchain-ai/langchain/issues/1476/comments | 2 | 2023-03-06T18:34:04Z | 2023-09-25T16:17:15Z | https://github.com/langchain-ai/langchain/issues/1476 | 1,612,002,765 | 1,476 |

[

"langchain-ai",

"langchain"

] | I have installed langchain using ```pip install langchain``` in the Google VertexAI notebook, but I was only able to install version 0.0.27. I wanted to install a more recent version of the package, but it seems that it is not available.

Here are the installation details for your reference:

```

Collecting langchain

Using cached langchain-0.0.27-py3-none-any.whl (124 kB)

Requirement already satisfied: numpy in /opt/conda/lib/python3.7/site-packages (from langchain) (1.19.5)

Requirement already satisfied: pyyaml in /opt/conda/lib/python3.7/site-packages (from langchain) (6.0)

Requirement already satisfied: pydantic in /opt/conda/lib/python3.7/site-packages (from langchain) (1.7.4)

Requirement already satisfied: sqlalchemy in /opt/conda/lib/python3.7/site-packages (from langchain) (1.4.36)

Requirement already satisfied: requests in /opt/conda/lib/python3.7/site-packages (from langchain) (2.28.1)

Requirement already satisfied: urllib3<1.27,>=1.21.1 in /opt/conda/lib/python3.7/site-packages (from requests->langchain) (1.26.12)

Requirement already satisfied: charset-normalizer<3,>=2 in /opt/conda/lib/python3.7/site-packages (from requests->langchain) (2.1.1)

Requirement already satisfied: certifi>=2017.4.17 in /opt/conda/lib/python3.7/site-packages (from requests->langchain) (2022.9.24)

Requirement already satisfied: idna<4,>=2.5 in /opt/conda/lib/python3.7/site-packages (from requests->langchain) (3.4)

Requirement already satisfied: importlib-metadata in /opt/conda/lib/python3.7/site-packages (from sqlalchemy->langchain) (5.0.0)

Requirement already satisfied: greenlet!=0.4.17 in /opt/conda/lib/python3.7/site-packages (from sqlalchemy->langchain) (1.1.2)

Requirement already satisfied: zipp>=0.5 in /opt/conda/lib/python3.7/site-packages (from importlib-metadata->sqlalchemy->langchain) (3.10.0)

Requirement already satisfied: typing-extensions>=3.6.4 in /opt/conda/lib/python3.7/site-packages (from importlib-metadata->sqlalchemy->langchain) (4.4.0)

Installing collected packages: langchain

Successfully installed langchain-0.0.27

```

If you know more information, don't hesitate to contact me.

| Can only install langchain==0.0.27 | https://api.github.com/repos/langchain-ai/langchain/issues/1475/comments | 7 | 2023-03-06T18:34:00Z | 2023-11-01T16:08:20Z | https://github.com/langchain-ai/langchain/issues/1475 | 1,612,002,707 | 1,475 |

[

"langchain-ai",

"langchain"

] | It would be great to see LangChain integrate with LlaMa, a collection of foundation language models ranging from 7B to 65B

parameters.

LlaMa is a language model that was developed to improve upon existing models such as ChatGPT and GPT-3. It has several advantages over these models, such as improved accuracy, faster training times, and more robust handling of out-of-vocabulary words. LlaMa is also more efficient in terms of memory usage and computational resources. In terms of accuracy, LlaMa outperforms ChatGPT and GPT-3 on several natural language understanding tasks, including sentiment analysis, question answering, and text summarization. Additionally, LlaMa can be trained on larger datasets, enabling it to better capture the nuances of natural language. Overall, LlaMa is a more powerful and efficient language model than ChatGPT and GPT-3.

Here's the official [repo](https://github.com/facebookresearch/llama) by @facebookresearch. Here's the research [abstract](https://arxiv.org/abs/2302.13971v1) and [PDF,](https://arxiv.org/pdf/2302.13971v1.pdf) respectively.

Note, this project is not to be confused with LlamaIndex (previously GPT Index) by @jerryjliu. | LlaMa | https://api.github.com/repos/langchain-ai/langchain/issues/1473/comments | 23 | 2023-03-06T17:45:03Z | 2023-09-29T16:10:02Z | https://github.com/langchain-ai/langchain/issues/1473 | 1,611,922,232 | 1,473 |

[

"langchain-ai",

"langchain"

] | It would be great to see BingAI integration with LangChain. For inspiration, check out [node-chatgpt-api](https://github.com/waylaidwanderer/node-chatgpt-api/blob/main/src/BingAIClient.js) by @waylaidwanderer. | BingAI | https://api.github.com/repos/langchain-ai/langchain/issues/1472/comments | 6 | 2023-03-06T17:06:52Z | 2023-09-10T02:43:48Z | https://github.com/langchain-ai/langchain/issues/1472 | 1,611,860,853 | 1,472 |

[

"langchain-ai",

"langchain"

] | when I call `langchain.llms.HuggingFacePipeline` class using `transformers.TextGenerationPipeline` class, raise bellow error.

https://github.com/hwchase17/langchain/blob/master/langchain/llms/huggingface_pipeline.py#L157

```python

pipe = TextGenerationPipeline(model=model, tokenizer=tokenizer)

hf = HuggingFacePipeline(pipeline=pipe)

```

Is this behavior expected?

I may call method that is not recommended.

Calling it as `pipeline("text-generation", ... )` as in example works fine.

This happens because `self.model.task` is not set in `transformers.TextGenerationPipeline` class. | Calling HuggingFacePipeline using TextGenerationPipeline results in an error. | https://api.github.com/repos/langchain-ai/langchain/issues/1466/comments | 1 | 2023-03-06T10:32:36Z | 2023-08-11T16:31:58Z | https://github.com/langchain-ai/langchain/issues/1466 | 1,611,112,458 | 1,466 |

[

"langchain-ai",

"langchain"

] | After running "pip install -e ."

Error occurred, showing "setup.py and setup.cfg not found" , then how to install from the source | setup.py not found | https://api.github.com/repos/langchain-ai/langchain/issues/1461/comments | 1 | 2023-03-06T07:38:33Z | 2023-03-06T18:18:51Z | https://github.com/langchain-ai/langchain/issues/1461 | 1,610,838,435 | 1,461 |

[

"langchain-ai",

"langchain"

] | When trying to use the refine chain with the ChatGPT API the result often comes back with "The original answer remains relevant and accurate...". Sometime the subsequent text will include components of the original answer but often it will just end there.

```

llm = OpenAIChat(temperature=0)

qa_chain = load_qa_chain(llm, chain_type="refine")

qa_document_chain = AnalyzeDocumentChain(combine_docs_chain=qa_chain)

qa_document_chain.run(input_document=doc, question=prompt)

``` | 'Refine' issue with OpenAIChat | https://api.github.com/repos/langchain-ai/langchain/issues/1460/comments | 12 | 2023-03-06T02:22:19Z | 2023-09-27T16:13:21Z | https://github.com/langchain-ai/langchain/issues/1460 | 1,610,532,450 | 1,460 |

[

"langchain-ai",

"langchain"

] | While testing the new classes for ChatAgent and ChatOpenAI, I got a subtle error in langchain/agents/chat/base.py. The function ChatAgent.from_chat_model_and_tools should be modified as follows, otherwise the parameters prefix, suffix, and format_instructions are ignored when invoking something similar to:

agent = ChatAgent.from_chat_model_and_tools(llm, tools, format_instructions=MY_NEW_FORMAT_INSTRUCTIONS).