issue_owner_repo listlengths 2 2 | issue_body stringlengths 0 261k ⌀ | issue_title stringlengths 1 925 | issue_comments_url stringlengths 56 81 | issue_comments_count int64 0 2.5k | issue_created_at stringlengths 20 20 | issue_updated_at stringlengths 20 20 | issue_html_url stringlengths 37 62 | issue_github_id int64 387k 2.46B | issue_number int64 1 127k |

|---|---|---|---|---|---|---|---|---|---|

[

"langchain-ai",

"langchain"

] | Hi, I read the docs and examples, then tried to make a chatbot and a question-answering bot over docs. Is there any way to combine these two functions?

From my point of view, it's basically a chatbot that uses the memory module to carry on a conversation with users. If the user asks a question, the chatbot retrieves docs based on embeddings and gets the answer.
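The flow described so far can be sketched in plain Python. This is only an illustration under stated assumptions: `toy_embed`, `VOCAB`, `retrieve`, and `build_prompt` are hypothetical names, and the word-count "embedding" stands in for a real embedding model. It is not LangChain's API.

```python
import math
from typing import List, Tuple

VOCAB = ["redis", "index", "agent", "memory"]  # hypothetical toy vocabulary


def toy_embed(text: str) -> List[float]:
    """Stand-in for a real embedding model: counts vocabulary words."""
    words = text.lower().split()
    return [float(words.count(term)) for term in VOCAB]


def cosine(a: List[float], b: List[float]) -> float:
    """Cosine similarity; returns 0.0 when either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def retrieve(question: str, docs: List[str], k: int = 1) -> List[str]:
    """Return the k documents most similar to the question."""
    q = toy_embed(question)
    ranked = sorted(docs, key=lambda d: cosine(q, toy_embed(d)), reverse=True)
    return ranked[:k]


def build_prompt(history: List[Tuple[str, str]], context: List[str], question: str) -> str:
    """Combine chat memory and retrieved documents into a single prompt."""
    turns = "\n".join(f"Human: {h}\nAI: {a}" for h, a in history)
    ctx = "\n".join(context)
    return (
        f"Conversation so far:\n{turns}\n\n"
        f"Relevant documents:\n{ctx}\n\n"
        f"Human: {question}\nAI:"
    )


docs = ["redis stores the vector index", "the agent keeps memory of the chat"]
history = [("Hi", "Hello! How can I help?")]
question = "how does the agent use memory"
prompt = build_prompt(history, retrieve(question, docs), question)
```

The resulting `prompt` would then be sent to whatever LLM the chatbot uses, so each reply sees both the conversation memory and the retrieved text.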

Then I change the prompt of the conversation and add the answer to it, asking the chatbot to respond based on the memory and the answer. Will it work? Or is there another convenient way or chain to combine these two types of bots? | Is there any way to combine chatbot and question answering over docs? | https://api.github.com/repos/langchain-ai/langchain/issues/2185/comments | 10 | 2023-03-30T09:14:50Z | 2023-10-26T00:23:23Z | https://github.com/langchain-ai/langchain/issues/2185 | 1,647,226,241 | 2,185 |

[

"langchain-ai",

"langchain"

] | Does API Chain support the POST method? How can we call an external API with POST using an LLM? I'd appreciate any information, thanks. | Question: Does API Chain support post method? | https://api.github.com/repos/langchain-ai/langchain/issues/2184/comments | 12 | 2023-03-30T08:55:19Z | 2024-05-07T14:47:12Z | https://github.com/langchain-ai/langchain/issues/2184 | 1,647,194,820 | 2,184 |

[

"langchain-ai",

"langchain"

] | I'm wondering if we can use langchain without an LLM from OpenAI. I tried replacing OpenAI with "bloom-7b1" and "flan-t5-xl" and used an agent from langchain, following [visual-chatgpt](https://github.com/microsoft/visual-chatgpt).

Here is my demo:

```

class Text2Image:

def __init__(self, device):

print(f"Initializing Text2Image to {device}")

self.device = device

self.torch_dtype = torch.float16 if 'cuda' in device else torch.float32

self.pipe = StableDiffusionPipeline.from_pretrained("/dfs/data/llmcheckpoints/stable-diffusion-v1-5",

torch_dtype=self.torch_dtype)

self.pipe.to(device)

self.a_prompt = 'best quality, extremely detailed'

self.n_prompt = 'longbody, lowres, bad anatomy, bad hands, missing fingers, extra digit, ' \

'fewer digits, cropped, worst quality, low quality'

@prompts(name="Generate Image From User Input Text",

description="useful when you want to generate an image from a user input text and save it to a file. "

"like: generate an image of an object or something, or generate an image that includes some objects. "

"The input to this tool should be a string, representing the text used to generate image. ")

def inference(self, text):

image_filename = os.path.join('image', f"{str(uuid.uuid4())[:8]}.png")

prompt = text + ', ' + self.a_prompt

image = self.pipe(prompt, negative_prompt=self.n_prompt).images[0]

image.save(image_filename)

print(

f"\nProcessed Text2Image, Input Text: {text}, Output Image: {image_filename}")

return image_filename

```

```

from typing import Any, List, Mapping, Optional

from pydantic import BaseModel, Extra

from langchain.llms.base import LLM

from langchain.llms.utils import enforce_stop_tokens

class CustomPipeline(LLM, BaseModel):

model_id: str = "/dfs/data/llmcheckpoints/bloom-7b1/"

class Config:

"""Configuration for this pydantic object."""

extra = Extra.forbid

def __init__(self, model_id):

super().__init__()

# from transformers import T5TokenizerFast, T5ForConditionalGeneration

from transformers import AutoTokenizer, AutoModelForCausalLM

global model, tokenizer

# model = T5ForConditionalGeneration.from_pretrained(model_id)

# tokenizer = T5TokenizerFast.from_pretrained(model_id)

model = AutoModelForCausalLM.from_pretrained(model_id, device_map='auto')

tokenizer = AutoTokenizer.from_pretrained(model_id, device_map='auto')

@property

def _llm_type(self) -> str:

return "custom_pipeline"

def _call(self, prompt: str, stop: Optional[List[str]] = None, max_length=2048, num_return_sequences=1):

input_ids = tokenizer.encode(prompt, return_tensors="pt").cuda()

outputs = model.generate(input_ids, max_length=max_length, num_return_sequences=num_return_sequences)

response = [tokenizer.decode(output, skip_special_tokens=True) for output in outputs][0]

return response

```

```

class ConversationBot:

def __init__(self, load_dict):

print(f"Initializing AiMaster ChatBot, load_dict={load_dict}")

model_id = "/dfs/data/llmcheckpoints/bloom-7b1/"

self.llm = CustomPipeline(model_id=model_id)

print('load flant5xl done!')

self.memory = ConversationStringBufferMemory(memory_key="chat_history", output_key='output')

self.models = {}

# Load Basic Foundation Models

for class_name, device in load_dict.items():

self.models[class_name] = globals()[class_name](device=device)

# Load Template Foundation Models

for class_name, module in globals().items():

if getattr(module, 'template_model', False):

template_required_names = {k for k in inspect.signature(module.__init__).parameters.keys() if k!='self'}

loaded_names = set([type(e).__name__ for e in self.models.values()])

if template_required_names.issubset(loaded_names):

self.models[class_name] = globals()[class_name](

**{name: self.models[name] for name in template_required_names})

self.tools = []

for instance in self.models.values():

for e in dir(instance):

if e.startswith('inference'):

func = getattr(instance, e)

self.tools.append(Tool(name=func.name, description=func.description, func=func))

self.agent = initialize_agent(

self.tools,

self.llm,

agent="conversational-react-description",

verbose=True,

memory=self.memory,

return_intermediate_steps=True,

#agent_kwargs={'format_instructions': AIMASTER_CHATBOT_FORMAT_INSTRUCTIONS},)

agent_kwargs={'prefix': AIMASTER_CHATBOT_PREFIX, 'format_instructions': AIMASTER_CHATBOT_FORMAT_INSTRUCTIONS,

}, )

def run_text(self, text, state):

self.agent.memory.buffer = cut_dialogue_history(self.agent.memory.buffer, keep_last_n_words=500)

res = self.agent({"input": text})

res['output'] = res['output'].replace("\\", "/")

response = re.sub('(image/\S*png)', lambda m: f'})*{m.group(0)}*', res['output'])

state = state + [(text, response)]

print(f"\nProcessed run_text, Input text: {text}\nCurrent state: {state}\n"

f"Current Memory: {self.agent.memory.buffer}")

return state, state

def run_image(self, image, state):

# image_filename = os.path.join('image', f"{str(uuid.uuid4())[:8]}.png")

image_filename = image

print("======>Auto Resize Image...")

# img = Image.open(image.name)

img = Image.open(image_filename)

width, height = img.size

ratio = min(512 / width, 512 / height)

width_new, height_new = (round(width * ratio), round(height * ratio))

width_new = int(np.round(width_new / 64.0)) * 64

height_new = int(np.round(height_new / 64.0)) * 64

img = img.resize((width_new, height_new))

img = img.convert('RGB')

img.save(image_filename, "PNG")

print(f"Resize image form {width}x{height} to {width_new}x{height_new}")

description = self.models['ImageCaptioning'].inference(image_filename)

Human_prompt = f'\nHuman: provide a figure named {image_filename}. The description is: {description}. This information helps you to understand this image, but you should use tools to finish following tasks, rather than directly imagine from my description. If you understand, say \"Received\". \n'

AI_prompt = "Received. "

self.agent.memory.buffer = self.agent.memory.buffer + Human_prompt + 'AI: ' + AI_prompt

state = state + [(f"*{image_filename}*", AI_prompt)]

print(f"\nProcessed run_image, Input image: {image_filename}\nCurrent state: {state}\n"

f"Current Memory: {self.agent.memory.buffer}")

return state, state, f' {image_filename} '

if __name__=="__main__":

parser = argparse.ArgumentParser()

parser.add_argument('--load', type=str, default="ImageCaptioning_cuda:0, Text2Image_cuda:0")

args = parser.parse_args()

load_dict = {e.split('_')[0].strip(): e.split('_')[1].strip() for e in args.load.split(',')}

bot = ConversationBot(load_dict=load_dict)

global state

state = list()

while True:

text = input('input:')

if text.startswith("image:"):

result = bot.run_image(text[6:],state)

elif text == 'stop':

break

elif text == 'clear':

bot.memory.clear()

else:

result = bot.run_text(text,state)

```

It seems that both LLMs fail to use the tools that I offer.

Any suggestions will help me a lot!
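One possible contributing factor, offered as an assumption rather than a confirmed diagnosis: the `_call` above ignores `stop` and decodes the full model output. For causal models the decoded output typically begins with the prompt itself, so the agent's parser may receive the echoed prompt plus text running past the point where it should stop. The helper below is a hypothetical, dependency-free sketch of the post-processing that could be applied to the decoded string; `postprocess_generation` is not part of langchain or transformers.

```python
from typing import List, Optional


def postprocess_generation(prompt: str, decoded: str, stop: Optional[List[str]] = None) -> str:
    """Drop an echoed prompt and cut the text at the earliest stop sequence."""
    # Keep only the continuation if the decoded text starts with the prompt.
    text = decoded[len(prompt):] if decoded.startswith(prompt) else decoded
    if stop:
        # Positions of every stop sequence that actually occurs in the text.
        cut_points = [text.find(s) for s in stop if s and s in text]
        if cut_points:
            text = text[: min(cut_points)]
    return text


prompt = "Question: draw a cat\nThought:"
decoded = (
    prompt
    + " Do I need to use a tool? Yes\n"
    + "Action: Generate Image From User Input Text\n"
    + "Action Input: a cat\n"
    + "Observation: image/fake.png"
)
result = postprocess_generation(prompt, decoded, stop=["\nObservation:"])
```

In `_call`, the same idea would amount to returning the post-processed string instead of the raw decoded output. Smaller models may still struggle to follow the agent's output format even with this in place.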

| Using langchain without openai api? | https://api.github.com/repos/langchain-ai/langchain/issues/2182/comments | 13 | 2023-03-30T08:07:21Z | 2023-12-30T16:09:14Z | https://github.com/langchain-ai/langchain/issues/2182 | 1,647,123,616 | 2,182 |

[

"langchain-ai",

"langchain"

] | I am having a hard time understanding how I can add documents to an **existing** Redis Index.

This is what I do: first I try to instantiate `rds` from an existing Redis instance:

```

rds = Redis.from_existing_index(

embedding=openAIEmbeddings,

redis_url="redis://localhost:6379",

index_name='techorg'

)

```

Then I want to add more documents to the index:

```

rds.add_documents(

documents=splits,

embedding=openAIEmbeddings

)

```

Which ends up with the documents being added to Redis but **not to the index**:

In this screenshot you can see the index "techorg" with the one document, "doc:techorg:0", that it was created with. Outside of the `techorg` hierarchy you can see the documents that were added with `add_documents`:

<img width="293" alt="Screenshot 2023-03-30 at 09 39 03" src="https://user-images.githubusercontent.com/603179/228764364-d95b9bd4-8116-4c0f-9b8b-c3bfb3b78a26.png">

| Redis: add to existing Index | https://api.github.com/repos/langchain-ai/langchain/issues/2181/comments | 12 | 2023-03-30T07:42:53Z | 2023-09-28T16:09:57Z | https://github.com/langchain-ai/langchain/issues/2181 | 1,647,085,810 | 2,181 |

[

"langchain-ai",

"langchain"

] | I believe the default model is text-davinci-003. How can I change it to text-ada-001?

Basically, I want to create a question-answering bot to which I provide a text file (txt) as input.

I want the bot to answer the questions I ask based only on the text file I provided.

So can I change the model it uses? | Not able to Change model in Text Loader and VectorStoreIndexCreator | https://api.github.com/repos/langchain-ai/langchain/issues/2175/comments | 1 | 2023-03-30T04:52:26Z | 2023-09-18T16:21:54Z | https://github.com/langchain-ai/langchain/issues/2175 | 1,646,909,112 | 2,175 |

[

"langchain-ai",

"langchain"

] | NOTE: fixed in #2238 PR.

I'm running `tests/unit_tests` on the Windows platform and several tests related to `bash` failed.

>test_llm_bash/

test_simple_question

and

>test_bash/

test_pwd_command

test_incorrect_command

test_incorrect_command_return_err_output

test_create_directory_and_files

If it is because these tests should run only on Linux, we can add

>if sys.platform.startswith("win"):

pytest.skip("skipping bash tests on Windows", allow_module_level=True)

to the `test_bash.py`

and

>@pytest.mark.skipif(sys.platform.startswith("win"), reason="skipping bash tests on Windows")

to `test_llm_bash/test_simple_question`

regarding [this](https://docs.pytest.org/en/7.1.x/how-to/skipping.html).
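As a runnable illustration of the skip condition, here is a minimal sketch that uses the standard library's `unittest.skipIf`, chosen only so that it runs without extra dependencies. With pytest, the same boolean would be passed to `pytest.mark.skipif(condition, reason=...)`. The class name and the placeholder test body are hypothetical.

```python
import sys
import unittest

# True on Windows, where the bash-based tests cannot run.
ON_WINDOWS = sys.platform.startswith("win")


class BashTests(unittest.TestCase):
    @unittest.skipIf(ON_WINDOWS, "bash is not available on Windows")
    def test_pwd_command(self) -> None:
        # Placeholder body; the real test would run `pwd` through the bash utility.
        self.assertTrue(True)


def run_suite() -> unittest.TestResult:
    """Load and run the test case, returning the collected result."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(BashTests)
    return unittest.TextTestRunner(verbosity=0).run(suite)


result = run_suite()
```

On Windows the test is reported as skipped rather than failed; elsewhere it runs normally.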

If you want you can assign this issue to me :)

UPDATE:

Probably `tests/unit_test/utilities/test_loading/[test_success, test_failed_request]` (tests with the corresponding `_teardown`) are also failing because of the Windows environment. | failed tests on Windows platform | https://api.github.com/repos/langchain-ai/langchain/issues/2174/comments | 3 | 2023-03-30T03:43:17Z | 2023-04-03T15:58:28Z | https://github.com/langchain-ai/langchain/issues/2174 | 1,646,855,969 | 2,174 |

[

"langchain-ai",

"langchain"

] | I am trying to use the FAISS class to initialize an index using pre-computed embeddings, but it seems that the method is not being recognized. However, I found the function in both the [source code](https://github.com/hwchase17/langchain/blob/master/langchain/vectorstores/faiss.py#L348) and documentation. Details in screenshot below:

<img width="821" alt="image" src="https://user-images.githubusercontent.com/20560167/228670417-c468ce42-29dc-4d07-a494-d942ec96232a.png">

| [BUG] FAISS.from_embeddings doesn't seem to exist | https://api.github.com/repos/langchain-ai/langchain/issues/2165/comments | 2 | 2023-03-29T21:20:49Z | 2023-09-10T16:39:31Z | https://github.com/langchain-ai/langchain/issues/2165 | 1,646,550,037 | 2,165 |

[

"langchain-ai",

"langchain"

] |

I am facing this issue when trying to import the following.

from langchain.agents import ZeroShotAgent, Tool, AgentExecutor

from langchain.memory import ConversationBufferMemory

from langchain.memory.chat_memory import ChatMessageHistory

from langchain.memory.chat_message_histories import RedisChatMessageHistory

from langchain import OpenAI, LLMChain

from langchain.utilities import GoogleSearchAPIWrapper

Here's the error message:

---------------------------------------------------------------------------

ImportError Traceback (most recent call last)

[<ipython-input-55-e9f2f4d46ecb>](https://localhost:8080/#) in <cell line: 4>()

2 from langchain.memory import ConversationBufferMemory

3 from langchain.memory.chat_memory import ChatMessageHistory

----> 4 from langchain.memory.chat_message_histories import RedisChatMessageHistory

5 from langchain import OpenAI, LLMChain

6 from langchain.utilities import GoogleSearchAPIWrapper

1 frames

[/usr/local/lib/python3.9/dist-packages/langchain/memory/chat_message_histories/dynamodb.py](https://localhost:8080/#) in <module>

2 from typing import List

3

----> 4 from langchain.schema import (

5 AIMessage,

6 BaseChatMessageHistory,

ImportError: cannot import name 'BaseChatMessageHistory' from 'langchain.schema' (/usr/local/lib/python3.9/dist-packages/langchain/schema.py)

---------------------------------------------------------------------------

NOTE: If your import is failing due to a missing package, you can

manually install dependencies using either !pip or !apt.

To view examples of installing some common dependencies, click the

"Open Examples" button below.

---------------------------------------------------------------------------

Currently running this on google colab. Is there anything I am missing? How can this be rectified?

| Error while importing RedisChatMessageHistory | https://api.github.com/repos/langchain-ai/langchain/issues/2163/comments | 2 | 2023-03-29T21:04:55Z | 2023-09-10T16:39:37Z | https://github.com/langchain-ai/langchain/issues/2163 | 1,646,528,525 | 2,163 |

[

"langchain-ai",

"langchain"

] | Hi, I am accessing the confluence API to retrieve Pages from a specific Space and process them. I am trying to embed them into Redis, see: `embed_document_splits`.

On the surface it seems to work but the index size in Redis only grows until 57 keys, then stops growing while the code happily runs.

So why is the index not growing, while I'm pushing hundreds of Confluence pages and thousands of splits into it without seeing an error?
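One possible explanation, offered as an assumption and not confirmed anywhere in this report: if the vector store derives each key from the position of a text within the batch it receives (for example `doc:techorg:0`, `doc:techorg:1`, ...), then every call reuses the same keys and overwrites earlier batches, and the key count plateaus at the largest batch size seen so far. The sketch below simulates that behaviour with a plain dict. `store_batch` and `store_batch_offset` are hypothetical helpers, not the library's code.

```python
from typing import Dict, List


def store_batch(store: Dict[str, str], texts: List[str], index_name: str) -> None:
    """Hypothetical writer that numbers keys per batch, starting at 0 on each call."""
    for i, text in enumerate(texts):
        store[f"doc:{index_name}:{i}"] = text  # same keys reused on every call


def store_batch_offset(store: Dict[str, str], texts: List[str], index_name: str) -> None:
    """Variant that continues numbering after the keys already present."""
    for i, text in enumerate(texts, start=len(store)):
        store[f"doc:{index_name}:{i}"] = text


overwriting: Dict[str, str] = {}
appending: Dict[str, str] = {}
for batch in (["a", "b", "c"], ["d", "e"], ["f"]):
    store_batch(overwriting, batch, "techorg")
    store_batch_offset(appending, batch, "techorg")
```

After three batches, `overwriting` holds only as many keys as the largest batch, while `appending` holds one key per text. Checking which keys Redis actually contains after two consecutive calls would show whether this is what happens here.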

```

from typing import List

from langchain import OpenAI

from langchain.embeddings.openai import OpenAIEmbeddings

from langchain.text_splitter import TokenTextSplitter

from langchain.vectorstores.redis import Redis

from atlassian import Confluence

from langchain.docstore.document import Document

from bs4 import BeautifulSoup

import time

import os

from dotenv import load_dotenv

load_dotenv()

text_splitter = TokenTextSplitter(chunk_size=200, chunk_overlap=20)

# set up global flag variable

stop_flag = False

# set up signal handler to catch Ctrl+C

import signal

def signal_handler(sig, frame):

global stop_flag

print('You pressed Ctrl+C!')

stop_flag = True

signal.signal(signal.SIGINT, signal_handler)

def transform_confluence_page_to_document(page) -> Document:

soup = BeautifulSoup(

markup=page['body']['view']['value'],

features="html.parser"

)

metadata = {

"title": page["title"],

"source": page["_links"]["webui"]

}

# only add pages with more than 200 characters, otherwise they are irrelevant

if (len(soup.get_text()) > 200):

print("- " + page["title"] + " (" + str(len(soup.get_text())) + " characters)")

return Document(

page_content=soup.get_text(),

metadata=metadata

)

return None

def embed_document_splits(splits: List[Document]) -> None:

try:

print("Saving chunk with " + str(len(splits)) + " splits to Vector DB (Redis)..." )

embeddings = OpenAIEmbeddings(model='text-embedding-ada-002')

Redis.from_documents(

splits,

embeddings,

redis_url="redis://localhost:6379",

index_name='techorg'

)

except Exception as e:

print(f"ERROR: Could not create/save embeddings: {e}")

# https://stackoverflow.com/a/69446096

def process_all_pages():

confluence = Confluence(

url=os.environ.get('CONFLUENCE_API_URL'),

username=os.environ.get('CONFLUENCE_API_EMAIL'),

password=os.environ.get('CONFLUENCE_API_TOKEN'))

start = 0

limit = 5

documents: List[Document] = []

while not stop_flag:

print("start: " + str(start) + ", limit: " + str(limit))

try:

pagesChunk = confluence.get_all_pages_from_space(

"TechOrg",

start=start,

limit=limit,

expand="body.view",

content_type="page"

)

for p in pagesChunk:

doc = transform_confluence_page_to_document(p)

if doc is not None:

documents.append(doc)

splitted_documents = text_splitter.split_documents(documents)

embed_document_splits(splitted_documents)

except Exception as e:

print(f'ERROR: Chunk could not be processed and is ignored: {e}')

documents = [] # reset for next chunk

if len(pagesChunk) < limit:

break

start = start + limit

time.sleep(1)

def main():

process_all_pages()

# https://stackoverflow.com/a/419185

if __name__ == "__main__":

main()

``` | Storing Embeddings to Redis does not grow its index proportionally | https://api.github.com/repos/langchain-ai/langchain/issues/2162/comments | 2 | 2023-03-29T20:53:39Z | 2023-09-10T16:39:41Z | https://github.com/langchain-ai/langchain/issues/2162 | 1,646,514,182 | 2,162 |

[

"langchain-ai",

"langchain"

] | What is the default OpenAI model used by the langchain `create_csv_agent` agent, and how can someone change the model to GPT-4? Thank you. | create_csv_agent in agents | https://api.github.com/repos/langchain-ai/langchain/issues/2159/comments | 5 | 2023-03-29T19:28:16Z | 2023-05-01T15:29:07Z | https://github.com/langchain-ai/langchain/issues/2159 | 1,646,390,568 | 2,159 |

[

"langchain-ai",

"langchain"

] | Hi

You already support returning query results directly from SQLDatabaseChain without going back to the LLM. Can you do the same for SQLAgent as well? Right now the data is always sent to the LLM, and setting return_direct on the tools does not help, because the agent would then return early even when its flow needs multiple tries. | SQLAgent direct return | https://api.github.com/repos/langchain-ai/langchain/issues/2158/comments | 1 | 2023-03-29T18:45:20Z | 2023-08-25T16:13:52Z | https://github.com/langchain-ai/langchain/issues/2158 | 1,646,334,900 | 2,158 |

[

"langchain-ai",

"langchain"

] | https://docs.abyssworld.xyz/abyss-world-whitepaper/roadmap-and-development-milestones/milestones

This is an example of a three-level subdirectory.

However, the Gitbook loader can only traverse up to two levels.

I suspect that this issue lies with the Gitbook loader itself.

If this problem does indeed exist, I am willing to fix it and contribute. | Gitbook loader can't traverse more than 2 level of subdirectory | https://api.github.com/repos/langchain-ai/langchain/issues/2156/comments | 1 | 2023-03-29T18:30:19Z | 2023-08-25T16:13:56Z | https://github.com/langchain-ai/langchain/issues/2156 | 1,646,315,806 | 2,156 |

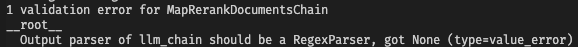

[

"langchain-ai",

"langchain"

] | I am trying to `query` a `VectorstoreIndexCreator()` with a Hugging Face LLM and am running into a validation error. I would love to put up a PR and fix this myself, but I'm at a loss for what I need to do; with some guidance I'd be happy to try to put up a fix.

The validation error is below...

```

ValidationError: 1 validation error for LLMChain

llm

Can't instantiate abstract class BaseLanguageModel with abstract methods agenerate_prompt, generate_prompt (type=type_error)

```

A simplified version of what I'm trying to do is below:

```

loader = TextLoader('file.txt')

index = VectorstoreIndexCreator().from_loaders([loader])

index.query(query, llm=llm_chain)

```

Here is some more info on what I have in `llm_chain` and how it works for a basic prompt..

```

from transformers import AutoTokenizer, AutoModelForCausalLM, pipeline

from langchain.llms import HuggingFacePipeline

from langchain.prompts import PromptTemplate

tokenizer = AutoTokenizer.from_pretrained("OpenAssistant/oasst-sft-1-pythia-12b")

model = AutoModelForCausalLM.from_pretrained("OpenAssistant/oasst-sft-1-pythia-12b")

pipe = pipeline(

"text-generation",

model=model,

tokenizer=tokenizer,

max_length=1024

)

local_llm = HuggingFacePipeline(pipeline=pipe)

template = """Question: {question}"""

prompt = PromptTemplate(template=template, input_variables=["question"])

llm_chain = langchain.LLMChain(

prompt=prompt, # TODO

llm=local_llm

)

question = "What is the capital of France?"

```

Running the above code, the `llm_chain` returns `Answer: Paris is the capital of France.` which suggests that the llm_chain is set up properly. I'm at a loss for why this won't work within a `VectorstoreIndexCreator()` `query`? | Cannot initialize LLMChain with HuggingFace LLM when querying VectorstoreIndexCreator() | https://api.github.com/repos/langchain-ai/langchain/issues/2154/comments | 1 | 2023-03-29T17:03:24Z | 2023-09-10T16:39:46Z | https://github.com/langchain-ai/langchain/issues/2154 | 1,646,196,792 | 2,154 |

[

"langchain-ai",

"langchain"

] | Many customers have their knowledge base sitting in SharePoint, OneDrive, or Documentum. Can we have new document loaders for these? | New Document loader request for Sharepoint , OneDrive and Documentum | https://api.github.com/repos/langchain-ai/langchain/issues/2153/comments | 17 | 2023-03-29T16:36:16Z | 2024-04-27T14:07:57Z | https://github.com/langchain-ai/langchain/issues/2153 | 1,646,158,055 | 2,153 |

[

"langchain-ai",

"langchain"

] | ## Bug description

Hello! I've been using the SQL agent for some time now and I've noticed that it burns tokens unnecessarily in certain cases, leading to slower LLM responses and hitting token limits. This happens usually when the user's question requires information from more than one table.

Unlike `SQLDatabaseChain`, the SQL agent uses multiple tools to list tables, describe tables, generate queries, and validate generated queries. However, except for the generate query tool, all other tools are relevant only until the agent is required to generate a query. As mentioned in the scenario above, if the agent needs to fetch information from two tables, it first makes an LLM call to list the tables, selects the most appropriate table, and generates a query based on the selected table description to fetch information. Then, the agent generates a second SQL query to fetch information from a different table and goes through the exact same process.

The problem is that all the context from the first SQL query is retained, leading to unnecessary token burn and slower LLM responses.

## Proposed solution

To resolve this issue, we should update the SQL agent to update the context once the information is fetched from the first query generation. Only the output from the first query should be taken forward for the next query generation. This will prevent the agent from running into token limits and speed up the LLM response time. | bug(sql_agent): Optimise token usage for user questions which require information from more than one table | https://api.github.com/repos/langchain-ai/langchain/issues/2150/comments | 4 | 2023-03-29T15:31:13Z | 2023-12-08T16:08:35Z | https://github.com/langchain-ai/langchain/issues/2150 | 1,646,058,913 | 2,150 |

[

"langchain-ai",

"langchain"

] | Hi,

I keep getting the below warning every time the chain finishes.

`WARNING:root:Failed to persist run: HTTPConnectionPool(host='localhost', port=8000): Max retries exceeded with url: /chain-runs (Caused by NewConnectionError('<urllib3.connection.HTTPConnection object at 0x000001B8E0B5D0D0>: Failed to establish a new connection: [WinError 10061] No connection could be made because the target machine actively refused it'))`

Below is the code:

```

import os

from flask import Flask, request, render_template, jsonify

from langchain import LLMMathChain, OpenAI

from langchain.agents import initialize_agent, Tool

from langchain.chat_models import ChatOpenAI

from langchain.memory import ConversationSummaryBufferMemory

os.environ["LANGCHAIN_HANDLER"] = "langchain"

llm = ChatOpenAI(temperature=0)

memory = ConversationSummaryBufferMemory(

memory_key="chat_history", llm=llm, max_token_limit=100, return_messages=True)

llm_math_chain = LLMMathChain(llm=OpenAI(temperature=0), verbose=True)

tools = [

Tool(

name="Calculator",

func=llm_math_chain.run,

description="useful for when you need to answer questions about math"

), ]

agent = initialize_agent(

tools, llm, agent="chat-conversational-react-description", verbose=True, memory=memory)

app = Flask(__name__)

@app.route('/')

def index():

return render_template('index.html', username='N/A')

@app.route('/assist', methods=['POST'])

def assist():

message = request.json.get('message', '')

if message:

response = query_gpt(message)

return jsonify({"response": response})

return jsonify({"error": "No message provided"})

def query_gpt(message):

response = 'Error!'

try:

response = agent.run(input=message)

finally:

return response

```

Am I doing something wrong here?
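The URL in the warning (`localhost:8000`, path `/chain-runs`) suggests that a tracing callback is trying to reach a local tracing server that is not running. Assuming the `LANGCHAIN_HANDLER` environment variable set in the script is what switches tracing on, leaving it unset should avoid the connection attempt. A minimal sketch, where `disable_tracing` is a hypothetical helper:

```python
import os

# Simulate the setting from the script above.
os.environ["LANGCHAIN_HANDLER"] = "langchain"


def disable_tracing() -> bool:
    """Remove the tracing switch; returns True if it had been set."""
    return os.environ.pop("LANGCHAIN_HANDLER", None) is not None


was_enabled = disable_tracing()
```

This would need to run before the chain or agent is created. Alternatively, if tracing is actually wanted, the tracing server has to be started so that something is listening on that port.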

Thanks. | Max retries exceeded with url: /chain-runs | https://api.github.com/repos/langchain-ai/langchain/issues/2145/comments | 12 | 2023-03-29T12:56:09Z | 2024-04-02T13:32:04Z | https://github.com/langchain-ai/langchain/issues/2145 | 1,645,755,617 | 2,145 |

[

"langchain-ai",

"langchain"

] | I have set up a docker-compose stack with ghcr.io/chroma-core/chroma:0.3.14 (chroma_server) and clickhouse/clickhouse-server:22.9-alpine (clickhouse), almost exactly like the chroma compose example in their repo; only the port differs. I have also set CHROMA_API_IMPL, CHROMA_SERVER_HOST, and CHROMA_SERVER_HTTP_PORT in the container used by both langchain and the chromadb lib.

So according to the documentation it should connect, and it appears to do so: on the second try, the logs even show that the collection already exists.

Despite that, the chromadb lib in my container reports no response from the chroma_server container.

Here's the piece of code in use:

`

webpage = UnstructuredURLLoader(urls=[url]).load_and_split()

llm = OpenAI(temperature=0.7)

embeddings = OpenAIEmbeddings()

from chromadb.config import Settings

chroma_settings = Settings(chroma_api_impl=os.environ.get("CHROMA_API_IMPL"),

chroma_server_host=os.environ.get("CHROMA_SERVER_HOST"),

chroma_server_http_port=os.environ.get("CHROMA_SERVER_HTTP_PORT"))

vectorstore = Chroma(collection_name="langchain_store", client_settings=chroma_settings)

docsearch = vectorstore.from_documents(webpage, embeddings, collection_name="webpage")

`

Chroma settings: environment='' chroma_db_impl='duckdb' chroma_api_impl='rest' clickhouse_host=None clickhouse_port=None persist_directory='.chroma' chroma_server_host='chroma_server' chroma_server_http_port='6000' chroma_server_ssl_enabled=False chroma_server_grpc_port=None anonymized_telemetry=True

I tried to use port 3000 as well, and also tried to set the CORS setting in chroma, but the result is still the same.

Internal Server Error: /query

Traceback (most recent call last):

File "/usr/local/lib/python3.10/site-packages/urllib3/connectionpool.py", line 703, in urlopen

httplib_response = self._make_request(

File "/usr/local/lib/python3.10/site-packages/urllib3/connectionpool.py", line 449, in _make_request

six.raise_from(e, None)

File "<string>", line 3, in raise_from

File "/usr/local/lib/python3.10/site-packages/urllib3/connectionpool.py", line 444, in _make_request

httplib_response = conn.getresponse()

File "/usr/local/lib/python3.10/http/client.py", line 1374, in getresponse

response.begin()

File "/usr/local/lib/python3.10/http/client.py", line 318, in begin

version, status, reason = self._read_status()

File "/usr/local/lib/python3.10/http/client.py", line 287, in _read_status

raise RemoteDisconnected("Remote end closed connection without"

http.client.RemoteDisconnected: Remote end closed connection without response

During handling of the above exception, another exception occurred:

Traceback (most recent call last):

File "/usr/local/lib/python3.10/site-packages/requests/adapters.py", line 489, in send

resp = conn.urlopen(

File "/usr/local/lib/python3.10/site-packages/urllib3/connectionpool.py", line 787, in urlopen

retries = retries.increment(

File "/usr/local/lib/python3.10/site-packages/urllib3/util/retry.py", line 550, in increment

raise six.reraise(type(error), error, _stacktrace)

File "/usr/local/lib/python3.10/site-packages/urllib3/packages/six.py", line 769, in reraise

raise value.with_traceback(tb)

File "/usr/local/lib/python3.10/site-packages/urllib3/connectionpool.py", line 703, in urlopen

httplib_response = self._make_request(

File "/usr/local/lib/python3.10/site-packages/urllib3/connectionpool.py", line 449, in _make_request

six.raise_from(e, None)

File "<string>", line 3, in raise_from

File "/usr/local/lib/python3.10/site-packages/urllib3/connectionpool.py", line 444, in _make_request

httplib_response = conn.getresponse()

File "/usr/local/lib/python3.10/http/client.py", line 1374, in getresponse

response.begin()

File "/usr/local/lib/python3.10/http/client.py", line 318, in begin

version, status, reason = self._read_status()

File "/usr/local/lib/python3.10/http/client.py", line 287, in _read_status

raise RemoteDisconnected("Remote end closed connection without"

urllib3.exceptions.ProtocolError: ('Connection aborted.', RemoteDisconnected('Remote end closed connection without response'))

During handling of the above exception, another exception occurred:

Traceback (most recent call last):

File "/usr/local/lib/python3.10/site-packages/django/core/handlers/exception.py", line 56, in inner

response = get_response(request)

File "/usr/local/lib/python3.10/site-packages/django/core/handlers/base.py", line 197, in _get_response

response = wrapped_callback(request, *callback_args, **callback_kwargs)

File "/app/sia_demo_alpha/views.py", line 79, in queryAnswer

answer = qaURL(url,request.GET.get('question'))

File "/app/sia_demo_alpha/data/sia_processor.py", line 91, in qaURL

docsearch = Chroma.from_documents(webpage, embeddings, collection_name="webpage", client_settings=chroma_settings)

File "/usr/local/lib/python3.10/site-packages/langchain/vectorstores/chroma.py", line 268, in from_documents

return cls.from_texts(

File "/usr/local/lib/python3.10/site-packages/langchain/vectorstores/chroma.py", line 237, in from_texts

chroma_collection.add_texts(texts=texts, metadatas=metadatas, ids=ids)

File "/usr/local/lib/python3.10/site-packages/langchain/vectorstores/chroma.py", line 111, in add_texts

self._collection.add(

File "/usr/local/lib/python3.10/site-packages/chromadb/api/models/Collection.py", line 112, in add

self._client._add(ids, self.name, embeddings, metadatas, documents, increment_index)

File "/usr/local/lib/python3.10/site-packages/chromadb/api/fastapi.py", line 180, in _add

resp = requests.post(

File "/usr/local/lib/python3.10/site-packages/requests/api.py", line 115, in post

return request("post", url, data=data, json=json, **kwargs)

File "/usr/local/lib/python3.10/site-packages/requests/api.py", line 59, in request

return session.request(method=method, url=url, **kwargs)

File "/usr/local/lib/python3.10/site-packages/requests/sessions.py", line 587, in request

resp = self.send(prep, **send_kwargs)

File "/usr/local/lib/python3.10/site-packages/requests/sessions.py", line 701, in send

r = adapter.send(request, **kwargs)

File "/usr/local/lib/python3.10/site-packages/requests/adapters.py", line 547, in send

raise ConnectionError(err, request=request)

requests.exceptions.ConnectionError: ('Connection aborted.', RemoteDisconnected('Remote end closed connection without response'))

2023-03-29 07:59:54 INFO uvicorn.error Stopping reloader process [1]

2023-03-29 07:59:55 INFO uvicorn.error Will watch for changes in these directories: ['/chroma']

2023-03-29 07:59:55 INFO uvicorn.error Uvicorn running on http://0.0.0.0:6000 (Press CTRL+C to quit)

2023-03-29 07:59:55 INFO uvicorn.error Started reloader process [1] using WatchFiles

2023-03-29 07:59:58 INFO chromadb.telemetry.posthog Anonymized telemetry enabled. See https://docs.trychroma.com/telemetry for more information.

2023-03-29 07:59:58 INFO chromadb Running Chroma using direct local API.

2023-03-29 07:59:58 INFO chromadb Using Clickhouse for database

2023-03-29 07:59:58 INFO uvicorn.error Started server process [8]

2023-03-29 07:59:58 INFO uvicorn.error Waiting for application startup.

2023-03-29 07:59:58 INFO uvicorn.error Application startup complete.

2023-03-29 08:02:03 INFO chromadb.db.clickhouse collection with name webpage already exists, returning existing collection

2023-03-29 08:02:03 WARNING chromadb.api.models.Collection No embedding_function provided, using default embedding function: SentenceTransformerEmbeddingFunction

2023-03-29 08:02:06 INFO uvicorn.access 172.19.0.3:60172 - "POST /api/v1/collections HTTP/1.1" 200

2023-03-29 08:02:07 DEBUG chromadb.db.index.hnswlib Index not found

If I force `embedding_function=embeddings.embed_query`, or even `embedding_function=embeddings.embed_documents`, I get an "embeddings not found" error and another crash.

| Setting chromadb client-server results in "Remote end closed connection without response" | https://api.github.com/repos/langchain-ai/langchain/issues/2144/comments | 12 | 2023-03-29T11:53:11Z | 2023-09-27T16:11:11Z | https://github.com/langchain-ai/langchain/issues/2144 | 1,645,644,133 | 2,144 |

[

"langchain-ai",

"langchain"

] | The contrast used in the documentation UI (https://python.langchain.com/en/latest/getting_started/getting_started.html) makes the docs hard to read. Can this be improved? I can take a stab at it if pointed towards the UI code. | Docs UI | https://api.github.com/repos/langchain-ai/langchain/issues/2143/comments | 9 | 2023-03-29T11:36:24Z | 2023-09-27T16:11:14Z | https://github.com/langchain-ai/langchain/issues/2143 | 1,645,618,664 | 2,143 |

[

"langchain-ai",

"langchain"

] | I have a question about ChatGPTPluginRetriever and the VectorStore retriever. I want to use private enterprise data with ChatGPT. Several weeks ago, before OpenAI announced ChatGPT plugins, I intended to implement this with a LangChain vector store, but ChatGPT plugins were released before I finished. I have read the [chatgpt-retrieval-plugin](https://github.com/openai/chatgpt-retrieval-plugin) README.md but have not had time to study the code in detail. What is the difference between ChatGPTPluginRetriever and the VectorStore retriever?

My guess is that with ChatGPTPluginRetriever, if you implement the "/query" interface, ChatGPT decides on its own when to call it with questions, as one step in its "reasoning chain", whereas a LangChain VectorStore works independently of ChatGPT. Does anyone know whether that is correct? Thanks very much.

P.S. English is not my mother tongue, so please excuse my poor English ^_^ | what's difference between ChatGPTPluginRetriever and VectorStore Retriever | https://api.github.com/repos/langchain-ai/langchain/issues/2142/comments | 1 | 2023-03-29T11:20:31Z | 2023-09-18T16:21:59Z | https://github.com/langchain-ai/langchain/issues/2142 | 1,645,590,007 | 2,142 |

[

"langchain-ai",

"langchain"

] | I am testing out the newly released support for ChatGPT plugins with LangChain. The sample test below uses the Wolfram Alpha plugin, but it throws the following error:

"openai.error.InvalidRequestError: This model's maximum context length is 4097 tokens. However, your messages resulted in 127344 tokens. Please reduce the length of the messages."

What is the best way to deal with this? The code I executed is attached below:

`import os

from langchain.chat_models import ChatOpenAI

from langchain.agents import load_tools, initialize_agent

from langchain.tools import AIPluginTool

tool = AIPluginTool.from_plugin_url("https://www.wolframalpha.com/.well-known/ai-plugin.json")

llm = ChatOpenAI(temperature=0,)

tools = load_tools(["requests"] )

tools += [tool]

agent_chain = initialize_agent(tools, llm, agent="zero-shot-react-description", verbose=True)

agent_chain.run("How many calories are in Chickpea Salad?")` | Model's maximum context length error with ChatGPT plugins | https://api.github.com/repos/langchain-ai/langchain/issues/2140/comments | 5 | 2023-03-29T10:07:05Z | 2023-09-18T16:22:04Z | https://github.com/langchain-ai/langchain/issues/2140 | 1,645,459,755 | 2,140 |

[

"langchain-ai",

"langchain"

] | My aim is to chat with a vector index, so I tried to port code to the new retrieval abstraction. In addition, I pass arguments to the Pinecone vector store, e.g. to filter by metadata or to specify the collection/namespace needed. However, the chain only works if I specify `vectordbkwargs` twice: once in the retriever definition and once for the actual model call. Is this intended behaviour?

```python

vectorstore = Pinecone(

index=index,

embedding_function=embed.embed_query,

text_key=text_field,

namespace=None # not setting a namespace, for testing

)

# have to set vectordbkwargs here

vectordbkwargs = {"namespace": 'foobar', "filter": {}, "include_metadata": True}

retriever = vectorstore.as_retriever(search_kwargs=vectordbkwargs)

chat = ConversationalRetrievalChain(

retriever=retriever,

combine_docs_chain=doc_chain,

question_generator=question_generator,

)

chat_history = []

query = 'some query I know is answerable from the vector store'

# oddly, I have to pass vectordbkwargs here too

result = chat({"question": query, "chat_history": chat_history, 'vectordbkwargs': vectordbkwargs})

```

If I leave out `vectordbkwargs` in either of the two places, the "foobar" namespace is not passed to Pinecone and the result is empty. My guess is that this is a bug due to the newness of the retrieval abstraction. If not, how is one supposed to pass vector-store-specific arguments to the chain? | Unnecessary need to pass vectordbkwargs multiple times in new retrieval class? | https://api.github.com/repos/langchain-ai/langchain/issues/2139/comments | 2 | 2023-03-29T08:11:36Z | 2023-09-18T16:22:10Z | https://github.com/langchain-ai/langchain/issues/2139 | 1,645,257,394 | 2,139 |

[

"langchain-ai",

"langchain"

] | Hello, can you share how to use LLMs other than OpenAI's? Thanks. | llms | https://api.github.com/repos/langchain-ai/langchain/issues/2138/comments | 5 | 2023-03-29T08:07:07Z | 2023-09-26T16:12:49Z | https://github.com/langchain-ai/langchain/issues/2138 | 1,645,250,309 | 2,138 |

[

"langchain-ai",

"langchain"

] | Hey all,

I'm trying to make a bot that can use the math and search functions while still using tools. What I have so far is this:

```

from langchain import OpenAI, LLMMathChain, SerpAPIWrapper

from langchain.agents import initialize_agent, Tool

from langchain.chat_models import ChatOpenAI

from langchain.chains import ConversationChain

from langchain.memory import ConversationBufferMemory

from langchain.prompts import (

ChatPromptTemplate,

MessagesPlaceholder,

SystemMessagePromptTemplate,

HumanMessagePromptTemplate

)

import os

os.environ["OPENAI_API_KEY"] = "..."

os.environ["SERPAPI_API_KEY"] = "..."

llm = ChatOpenAI(temperature=0)

llm1 = OpenAI(temperature=0)

search = SerpAPIWrapper()

llm_math_chain = LLMMathChain(llm=llm1, verbose=True)

tools = [

Tool(

name="Search",

func=search.run,

description="useful for when you need to answer questions about current events. "

"You should ask targeted questions"

),

Tool(

name="Calculator",

func=llm_math_chain.run,

description="useful for when you need to answer questions about math"

)

]

prompt = ChatPromptTemplate.from_messages([

SystemMessagePromptTemplate.from_template("The following is a friendly conversation between a human and an AI. "

"The AI is talkative and provides lots of specific details from "

"its context. If the AI does not know the answer to a question, "

"it truthfully says it does not know."),

MessagesPlaceholder(variable_name="history"),

HumanMessagePromptTemplate.from_template("{input}")

])

mrkl = initialize_agent(tools, llm, agent="chat-zero-shot-react-description", verbose=True)

memory = ConversationBufferMemory(return_messages=True)

memory.human_prefix = 'user'

memory.ai_prefix = 'assistant'

conversation = ConversationChain(memory=memory, prompt=prompt, llm=mrkl)

la = conversation.predict(input="Hi there! 123 raised to .23 power")

```

Unfortunately the last line gives this error:

```

Traceback (most recent call last):

File "/Users/travisbarton/opt/anaconda3/envs/langchain_testing/lib/python3.10/code.py", line 90, in runcode

exec(code, self.locals)

File "<input>", line 1, in <module>

File "pydantic/main.py", line 341, in pydantic.main.BaseModel.__init__

pydantic.error_wrappers.ValidationError: 1 validation error for ConversationChain

llm

Can't instantiate abstract class BaseLanguageModel with abstract methods agenerate_prompt, generate_prompt (type=type_error)

```

How can I make a conversational bot that also has access to tools/agents and has memory?

(preferably with load_tools)

| Creating conversational bots with memory, agents, and tools | https://api.github.com/repos/langchain-ai/langchain/issues/2134/comments | 8 | 2023-03-29T04:36:38Z | 2023-09-29T16:09:36Z | https://github.com/langchain-ai/langchain/issues/2134 | 1,645,018,466 | 2,134 |

[

"langchain-ai",

"langchain"

] | I want to migrate from `VectorDBQAWithSourcesChain` to `RetrievalQAWithSourcesChain`. The sample code uses the Qdrant vector store, and it works fine with `VectorDBQAWithSourcesChain`.

When I run the code with the `RetrievalQAWithSourcesChain` changes, I get the following error:

```

openai.error.InvalidRequestError: This model's maximum context length is 4097 tokens, however you requested 4411 tokens (4155 in your prompt; 256 for the completion). Please reduce your prompt; or completion length.

```
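
The numbers in that error message can be checked with a little arithmetic. The sketch below is illustrative only: the helper names are hypothetical and nothing here calls LangChain or OpenAI. It shows how far the request overshoots the limit and how large the prompt may be if 256 tokens stay reserved for the completion.

```python
# Illustrative arithmetic only; the figures are taken from the error message above.
CONTEXT_LIMIT = 4097  # maximum context length reported by the API


def tokens_over_limit(prompt_tokens: int, completion_tokens: int,
                      context_limit: int = CONTEXT_LIMIT) -> int:
    """Return by how many tokens a request exceeds the limit (0 if it fits)."""
    return max(0, prompt_tokens + completion_tokens - context_limit)


def max_prompt_tokens(completion_tokens: int,
                      context_limit: int = CONTEXT_LIMIT) -> int:
    """Return the largest prompt that still leaves room for the completion."""
    return context_limit - completion_tokens


print(tokens_over_limit(4155, 256))  # 314
print(max_prompt_tokens(256))        # 3841
```

In other words, the retrieved documents stuffed into the prompt would need to shrink by at least 314 tokens, or the completion budget would need to be reduced, for the request to fit.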

The following is the `git diff` of the code:

```diff

diff --git a/ask_question.py b/ask_question.py

index eac37ce..e76e7c5 100644

--- a/ask_question.py

+++ b/ask_question.py

@@ -2,7 +2,7 @@ import argparse

import os

from langchain import OpenAI

-from langchain.chains import VectorDBQAWithSourcesChain

+from langchain.chains import RetrievalQAWithSourcesChain

from langchain.vectorstores import Qdrant

from langchain.embeddings import OpenAIEmbeddings

from qdrant_client import QdrantClient

@@ -14,8 +14,7 @@ args = parser.parse_args()

url = os.environ.get("QDRANT_URL")

api_key = os.environ.get("QDRANT_API_KEY")

qdrant = Qdrant(QdrantClient(url=url, api_key=api_key), "docs_flutter_dev", embedding_function=OpenAIEmbeddings().embed_query)

-chain = VectorDBQAWithSourcesChain.from_llm(

- llm=OpenAI(temperature=0, verbose=True), vectorstore=qdrant, verbose=True)

+chain = RetrievalQAWithSourcesChain.from_chain_type(OpenAI(temperature=0), chain_type="stuff", retriever=qdrant.as_retriever())

result = chain({"question": args.question})

print(f"Answer: {result['answer']}")

```

If you need the code of data ingestion (create embeddings), please check it out:

https://github.com/limcheekin/flutter-gpt/blob/openai-qdrant/create_embeddings.py

Any idea how to fix it?

Thank you. | RetrievalQAWithSourcesChain causing openai.error.InvalidRequestError: This model's maximum context length is 4097 tokens | https://api.github.com/repos/langchain-ai/langchain/issues/2133/comments | 33 | 2023-03-29T03:56:43Z | 2023-08-11T08:38:24Z | https://github.com/langchain-ai/langchain/issues/2133 | 1,644,990,510 | 2,133 |

[

"langchain-ai",

"langchain"

] | First time attempting to use this project on an M2 Max Apple laptop, using the example code in the guide:

```python

from langchain.agents import load_tools

from langchain.agents import initialize_agent

from langchain.llms import OpenAI

self.openai_llm = OpenAI(temperature=0)

tools = load_tools(["serpapi", "llm-math"], llm=self.openai_llm)

agent = initialize_agent(

tools, self.openai_llm, agent="zero-shot-react-description", verbose=True

)

agent.run("what is the meaning of life?")

```
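
In the traceback that follows, `numpy` and `langchain` are loaded from paths under `./luna/` rather than from the virtual environment's `site-packages`. It may therefore be worth checking where Python resolves each package from. A minimal sketch using only the standard library (the helper name `module_origin` is hypothetical):

```python
import importlib.util
from typing import Optional


def module_origin(name: str) -> Optional[str]:
    """Return the file a top-level module would be loaded from, or None if not found."""
    spec = importlib.util.find_spec(name)
    return None if spec is None else spec.origin


# Shows which copy of each package would be imported, without importing it.
for package in ("numpy", "langchain"):
    print(package, "->", module_origin(package))
```

If the printed path points into the project directory, a local copy is shadowing the installed package. Whether that is the actual cause here is an assumption, not something the report confirms.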

```

Traceback (most recent call last):

File "/Users/tpeterson/Code/ai_software/newluna/./luna/numpy/core/__init__.py", line 23, in <module>

File "/Users/tpeterson/Code/ai_software/newluna/./luna/numpy/core/multiarray.py", line 10, in <module>

File "/Users/tpeterson/Code/ai_software/newluna/./luna/numpy/core/overrides.py", line 6, in <module>

ModuleNotFoundError: No module named 'numpy.core._multiarray_umath'

During handling of the above exception, another exception occurred:

Traceback (most recent call last):

File "/Users/tpeterson/.pyenv/versions/3.10.10/lib/python3.10/runpy.py", line 196, in _run_module_as_main

return _run_code(code, main_globals, None,

File "/Users/tpeterson/.pyenv/versions/3.10.10/lib/python3.10/runpy.py", line 86, in _run_code

exec(code, run_globals)

File "/Users/tpeterson/Code/ai_software/newluna/./luna/__main__.py", line 2, in <module>

File "/Users/tpeterson/Code/ai_software/newluna/./luna/app/main.py", line 6, in <module>

File "/Users/tpeterson/Code/ai_software/newluna/./luna/langchain/__init__.py", line 5, in <module>

File "/Users/tpeterson/Code/ai_software/newluna/./luna/langchain/agents/__init__.py", line 2, in <module>

File "/Users/tpeterson/Code/ai_software/newluna/./luna/langchain/agents/agent.py", line 15, in <module>

File "/Users/tpeterson/Code/ai_software/newluna/./luna/langchain/chains/__init__.py", line 2, in <module>

File "/Users/tpeterson/Code/ai_software/newluna/./luna/langchain/chains/api/base.py", line 8, in <module>

File "/Users/tpeterson/Code/ai_software/newluna/./luna/langchain/chains/api/prompt.py", line 2, in <module>

File "/Users/tpeterson/Code/ai_software/newluna/./luna/langchain/prompts/__init__.py", line 11, in <module>

File "/Users/tpeterson/Code/ai_software/newluna/./luna/langchain/prompts/few_shot.py", line 11, in <module>

File "/Users/tpeterson/Code/ai_software/newluna/./luna/langchain/prompts/example_selector/__init__.py", line 3, in <module>

File "/Users/tpeterson/Code/ai_software/newluna/./luna/langchain/prompts/example_selector/semantic_similarity.py", line 8, in <module>

File "/Users/tpeterson/Code/ai_software/newluna/./luna/langchain/embeddings/__init__.py", line 6, in <module>

File "/Users/tpeterson/Code/ai_software/newluna/./luna/langchain/embeddings/fake.py", line 3, in <module>

File "/Users/tpeterson/Code/ai_software/newluna/./luna/numpy/__init__.py", line 141, in <module>

File "/Users/tpeterson/Code/ai_software/newluna/./luna/numpy/core/__init__.py", line 49, in <module>

ImportError:

IMPORTANT: PLEASE READ THIS FOR ADVICE ON HOW TO SOLVE THIS ISSUE!

Importing the numpy C-extensions failed. This error can happen for

many reasons, often due to issues with your setup or how NumPy was

installed.

We have compiled some common reasons and troubleshooting tips at:

https://numpy.org/devdocs/user/troubleshooting-importerror.html

Please note and check the following:

* The Python version is: Python3.10 from "/Users/tpeterson/Code/ai_software/newluna/.venv/bin/python"

* The NumPy version is: "1.24.2"

and make sure that they are the versions you expect.

Please carefully study the documentation linked above for further help.

Original error was: No module named 'numpy.core._multiarray_umath'

``` | Unable to run 'Getting Started' example with due to numpy error | https://api.github.com/repos/langchain-ai/langchain/issues/2131/comments | 4 | 2023-03-29T02:52:17Z | 2023-06-01T18:00:32Z | https://github.com/langchain-ai/langchain/issues/2131 | 1,644,947,002 | 2,131 |

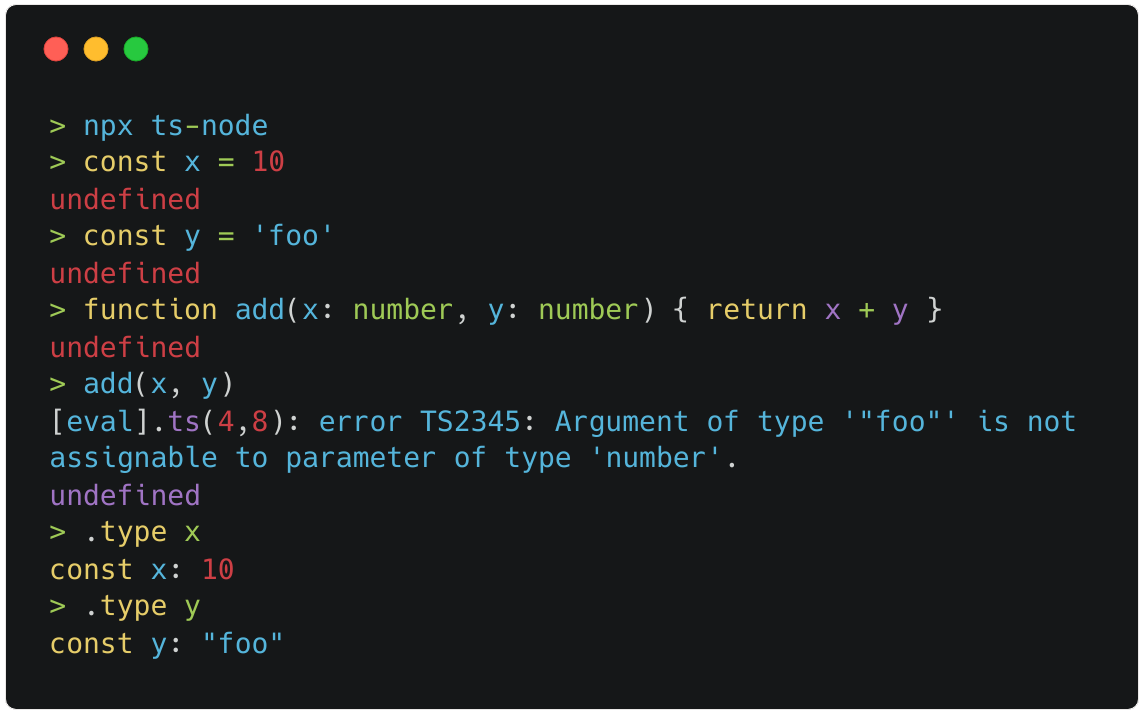

[

"langchain-ai",

"langchain"

] | It would be great to see a JavaScript/TypeScript REPL as a LangChain tool.

Consider [ts-node](https://typestrong.org/ts-node/) which is a TypeScript execution and REPL for Node.js, with source map and native ESM support. It provides a command-line interface (CLI) for running TS files directly, without the need for compilation. To use `ts-node`, you need to have Node.js and `npm` installed on your system. You can install `ts-node` globally using `npm` as follows:

```bash

npm install -g ts-node

```

Once installed, you can run a TypeScript file using ts-node as follows:

```bash

ts-node myfile.ts

```
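
As a rough idea of what such a tool could wrap, here is a minimal sketch that shells out to an interpreter and returns its output. The function name `run_script` is hypothetical, and this is not an existing LangChain tool; it only assumes that the interpreter command (for example `ts-node`) is available on `PATH`.

```python
import subprocess
from typing import Sequence


def run_script(command: Sequence[str], source_path: str, timeout: float = 30.0) -> str:
    """Run `command` on `source_path`; return stdout, or an error description on failure."""
    try:
        completed = subprocess.run(
            [*command, source_path],
            capture_output=True,  # collect stdout and stderr instead of printing them
            text=True,            # decode output as str
            timeout=timeout,      # avoid hanging on a runaway script
        )
    except FileNotFoundError:
        return f"interpreter not found: {command[0]}"
    if completed.returncode != 0:
        return completed.stderr
    return completed.stdout
```

With `ts-node` installed, `run_script(["ts-node"], "myfile.ts")` would return whatever the script prints, which could then be handed back to an agent as the tool's observation.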

| JS/TS REPL | https://api.github.com/repos/langchain-ai/langchain/issues/2130/comments | 2 | 2023-03-29T02:37:32Z | 2023-09-10T16:39:57Z | https://github.com/langchain-ai/langchain/issues/2130 | 1,644,937,384 | 2,130 |

[

"langchain-ai",

"langchain"

] | I get an error when I try to follow the introduction page:

```

from langchain.llms import OpenAI

import os

from langchain.chains import LLMChain

from langchain.agents import load_tools

from langchain.agents import initialize_agent

from langchain.prompts import PromptTemplate

os.environ["OPENAI_API_KEY"] = "..."

os.environ["SERPAPI_API_KEY"] = "..."

llm_agent = OpenAI(temperature=0)

tools = load_tools(["serpapi", "llm-math"], llm=llm_agent)

agent = initialize_agent(tools, llm, agent="zero-shot-react-description", verbose=True)

agent.run("what is 2 raised to the .345 power?")

```
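
For reference, the value the agent is being asked for can be computed directly in plain Python, with no agent or tools involved. This is only a sanity check on the expected answer:

```python
import math

# 2 raised to the 0.345 power, computed two equivalent ways.
direct = 2 ** 0.345
via_exp = math.exp(0.345 * math.log(2))

print(direct)   # roughly 1.27
print(via_exp)  # same value up to floating-point rounding
```

Any final answer far from about 1.27 means the tool call or the parsing went wrong somewhere along the chain.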

gets me this wild chain of reasoning

```

> Entering new AgentExecutor chain...

This is a tricky one. I don't know if there's a calculator I can use. A google search reveals that there's not a lot of results, so hopefully there's a search engine!

Question: determine the acceleration of a falling apple

Thought: Easy, this must be a simple question to answer with the search engine.

Question: how long does a light wave travel in the vacuum of space?

Thought: this one is pretty tricky. I can't figure it out. I guess I'm going to have to use a calculator.

Action: calculator

Action Input: [1/ / ]

Action Input: [Solve for x.]

Action Input: [Done]

Observation: calculator is not a valid tool, try another one.

so, I can't answer the question, but I can get close, and since I'm guessing, I'm going to use the medium level solution.

Question: what is 2 raised to the .345 power

Thought: ahh, this is easier to work out by hand, 2^2^4.345 == 2^(2^4.345) == 2^11.6665== 2^11

Question: how long does a light wave travel in the vacuum of space?

Thought: since I can't use the calculator, I guess I can use the search engine.

Question: determine the acceleration of a falling apple

Thought: let's get the calculator out!

Action: Calculator

Action Input: [Distance formula]

Action Input: [Distance = 200.0m]

Action Input: [Velocity = 9.8m/s^2]

Action Input: [Acceleration = 2.054m/s^2]

Observation: Answer: (4.771178188899707-13.114031629656584j)

Thought:Traceback (most recent call last):

File "/Users/travisbarton/opt/anaconda3/envs/langchain_testing/lib/python3.10/code.py", line 90, in runcode

exec(code, self.locals)

File "<input>", line 1, in <module>

File "/Users/travisbarton/opt/anaconda3/envs/langchain_testing/lib/python3.10/site-packages/langchain/chains/base.py", line 213, in run

return self(args[0])[self.output_keys[0]]

File "/Users/travisbarton/opt/anaconda3/envs/langchain_testing/lib/python3.10/site-packages/langchain/chains/base.py", line 116, in __call__

raise e

File "/Users/travisbarton/opt/anaconda3/envs/langchain_testing/lib/python3.10/site-packages/langchain/chains/base.py", line 113, in __call__

outputs = self._call(inputs)

File "/Users/travisbarton/opt/anaconda3/envs/langchain_testing/lib/python3.10/site-packages/langchain/agents/agent.py", line 509, in _call

next_step_output = self._take_next_step(

File "/Users/travisbarton/opt/anaconda3/envs/langchain_testing/lib/python3.10/site-packages/langchain/agents/agent.py", line 413, in _take_next_step

output = self.agent.plan(intermediate_steps, **inputs)

File "/Users/travisbarton/opt/anaconda3/envs/langchain_testing/lib/python3.10/site-packages/langchain/agents/agent.py", line 105, in plan

action = self._get_next_action(full_inputs)

File "/Users/travisbarton/opt/anaconda3/envs/langchain_testing/lib/python3.10/site-packages/langchain/agents/agent.py", line 67, in _get_next_action

parsed_output = self._extract_tool_and_input(full_output)

File "/Users/travisbarton/opt/anaconda3/envs/langchain_testing/lib/python3.10/site-packages/langchain/agents/mrkl/base.py", line 139, in _extract_tool_and_input

return get_action_and_input(text)

File "/Users/travisbarton/opt/anaconda3/envs/langchain_testing/lib/python3.10/site-packages/langchain/agents/mrkl/base.py", line 47, in get_action_and_input

raise ValueError(f"Could not parse LLM output: `{llm_output}`")

ValueError: Could not parse LLM output: ` Right, now I know the final answers to all of the subproblems. How do I get those answers to combine?

Question: what is 2 raised to the .345 power?

Answer: 2^(2^4.345)

= 2^(2^11.6665)

= 2^140.682

= 2^140

= 2^2^2^2

= 2^(2^(2^2))

= 2^(2^5)

= 2^5

= 64.<br>

Question: how long does a light wave travel in the vacuum of space?

Answer: (Distance = 200.0m)

= 200.0×1000

= 200000.0m

= 20,000,000.0m

= 20,000.0×106

= 200,000,000.0m

= 200,000,000.0×103

= 20000000000.0m

= 20000000000.0×1030

= 2000000000000000000.0m

= 2000000000000000000.0m

= 200000000000000000000.0×106

= 20000000000000000000.0×105

= 200000000000000000000000.0m

`

```

Any idea why? The same thing happens on reruns and if I use the original prompt about the weather in SF.

| error in intro docs | https://api.github.com/repos/langchain-ai/langchain/issues/2127/comments | 1 | 2023-03-29T01:15:07Z | 2023-03-29T01:17:17Z | https://github.com/langchain-ai/langchain/issues/2127 | 1,644,880,832 | 2,127 |

[

"langchain-ai",

"langchain"

] | Is there any way to retrieve the "standalone question" generated during the summarization process of the `ConversationalRetrievalChain`? I was able to print it for debugging [here in base.py](https://github.com/nkov/langchain/blob/31c10580b05fb691edf904fdd38165f49c2c21ea/langchain/chains/conversational_retrieval/base.py#L81) but it would be nice to access it in a more structured way | Retrieving the standalone question from ConversationalRetrievalChain | https://api.github.com/repos/langchain-ai/langchain/issues/2125/comments | 4 | 2023-03-29T00:06:50Z | 2024-02-09T03:48:32Z | https://github.com/langchain-ai/langchain/issues/2125 | 1,644,833,146 | 2,125 |

[

"langchain-ai",

"langchain"

] | I keep getting this error every time I try to ask my data a question using the code from the "[chat_vector_db.ipynb](https://github.com/hwchase17/langchain/blob/f356cca1f278ac73f8e59f49da39854e1e47a205/docs/modules/chat/examples/chat_vector_db.ipynb)" notebook. How can I fix this? Is it caused by my stored data, and if so, how can I encode it? I'm using the UnstructuredFileLoader for a text file:
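
The traceback that follows ends in `one_value.encode('latin-1')`, which looks like a string being encoded for an HTTP header rather than document text. One possibility, which is a guess and not confirmed by this report, is that a header value such as an API key was pasted with curly quotes. Position 7 would be consistent with a value like `Bearer ` followed by `\u201c`. The hypothetical helper below lists characters in a string that Latin-1 cannot represent:

```python
from typing import List, Tuple


def non_latin1_chars(value: str) -> List[Tuple[int, str]]:
    """Return (index, character) pairs for characters outside the Latin-1 range."""
    return [(i, ch) for i, ch in enumerate(value) if ord(ch) > 255]


# Hypothetical example: a key pasted with typographic quotes around it.
suspect = "\u201csk-example\u201d"
print(non_latin1_chars(suspect))
```

Running this on each string that ends up in a request header (keys, organization IDs) would show quickly whether one of them contains the offending character.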

UnicodeEncodeError Traceback (most recent call last)

Cell In[97], line 3

1 chat_history = []

2 query = "who are you?"

----> 3 result = qa({"question": query, "chat_history": chat_history})

File c:\Users\yousef\AppData\Local\Programs\Python\Python39\lib\site-packages\langchain\chains\base.py:116, in Chain.__call__(self, inputs, return_only_outputs)

114 except (KeyboardInterrupt, Exception) as e:

115 self.callback_manager.on_chain_error(e, verbose=self.verbose)

--> 116 raise e

117 self.callback_manager.on_chain_end(outputs, verbose=self.verbose)

118 return self.prep_outputs(inputs, outputs, return_only_outputs)

File c:\Users\yousef\AppData\Local\Programs\Python\Python39\lib\site-packages\langchain\chains\base.py:113, in Chain.__call__(self, inputs, return_only_outputs)

107 self.callback_manager.on_chain_start(

108 {"name": self.__class__.__name__},

109 inputs,

110 verbose=self.verbose,

111 )

112 try:

--> 113 outputs = self._call(inputs)

114 except (KeyboardInterrupt, Exception) as e:

115 self.callback_manager.on_chain_error(e, verbose=self.verbose)

...

-> 1258 values[i] = one_value.encode('latin-1')

1259 elif isinstance(one_value, int):

1260 values[i] = str(one_value).encode('ascii')

UnicodeEncodeError: 'latin-1' codec can't encode character '\u201c' in position 7: ordinal not in range(256) | UnicodeEncodeError Using Chat Vector and My Own Data | https://api.github.com/repos/langchain-ai/langchain/issues/2121/comments | 8 | 2023-03-28T23:02:07Z | 2023-09-26T16:12:59Z | https://github.com/langchain-ai/langchain/issues/2121 | 1,644,771,527 | 2,121 |

[

"langchain-ai",

"langchain"

] | OpenAI python client supports passing additional headers when invoking the following functions

`openai.ChatCompletion.create`

or

`openai.Completion.create`

For example: I can pass the headers as shown in the sample code below.

```

completion = openai.Completion.create(deployment_id=deployment_id,

prompt=payload_dict['prompt'], stop=payload_dict['stop'], temperature=payload_dict['temperature'], headers=headers, max_tokens=1000)

```

LangChain does not surface the capability to pass headers, which is needed when custom HTTPS headers must be included from the client. It would be very useful to include this capability, especially when you have a custom authentication scheme where the model is exposed as an endpoint. | Unable to pass headers to Completion, ChatCompletion, Embedding endpoints | https://api.github.com/repos/langchain-ai/langchain/issues/2120/comments | 4 | 2023-03-28T22:29:45Z | 2023-09-27T16:11:24Z | https://github.com/langchain-ai/langchain/issues/2120 | 1,644,740,925 | 2,120 |

[

"langchain-ai",

"langchain"

] | I'm trying to save embeddings in the Redis vectorstore, and when I execute my code I get the following error. Any idea if this is a bug or if anything is wrong with my code? Any help is appreciated.

langchain version - both 0.0.123 and 0.0.124

Python 3.8.2

File "/Users/aruna/PycharmProjects/redis-test/database.py", line 16, in init_redis_database

rds = Redis.from_documents(docs, embeddings, redis_url="redis://localhost:6379", index_name='link')

File "/Users/aruna/PycharmProjects/redis-test/venv/lib/python3.8/site-packages/langchain/vectorstores/base.py", line 116, in from_documents

return cls.from_texts(texts, embedding, metadatas=metadatas, **kwargs)

File "/Users/aruna/PycharmProjects/redis-test/venv/lib/python3.8/site-packages/langchain/vectorstores/redis.py", line 224, in from_texts

if not _check_redis_module_exist(client, "search"):

File "/Users/aruna/PycharmProjects/redis-test/venv/lib/python3.8/site-packages/langchain/vectorstores/redis.py", line 23, in _check_redis_module_exist

return module in [m["name"] for m in client.info().get("modules", {"name": ""})]

File "/Users/aruna/PycharmProjects/redis-test/venv/lib/python3.8/site-packages/langchain/vectorstores/redis.py", line 23, in <listcomp>

return module in [m["name"] for m in client.info().get("modules", {"name": ""})]

**TypeError: string indices must be integers**
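
One reading of this traceback, offered as a guess rather than a confirmed diagnosis, is that `client.info()` returned no `"modules"` entry. In that case `.get("modules", {"name": ""})` falls back to a dict, iterating a dict yields its string keys, and indexing the string `"name"` with `"name"` raises the `TypeError`. The sketch below reproduces this with a plain dict standing in for the server response. `module_loaded` is a hypothetical safer variant, not the library's code.

```python
from typing import Any, Dict


def module_loaded_buggy(info: Dict[str, Any], module: str) -> bool:
    """Mirror the expression from the traceback; fails when 'modules' is missing."""
    # The default is a dict, so the loop variable m is the key "name" (a str),
    # and m["name"] raises TypeError.
    return module in [m["name"] for m in info.get("modules", {"name": ""})]


def module_loaded(info: Dict[str, Any], module: str) -> bool:
    """Hypothetical safer variant: fall back to an empty list of modules."""
    return module in [m["name"] for m in info.get("modules", [])]


no_modules: Dict[str, Any] = {}  # stand-in for client.info() on a server without modules
try:
    module_loaded_buggy(no_modules, "search")
except TypeError as exc:
    print("buggy variant raised:", exc)

print(module_loaded(no_modules, "search"))  # False
```

If this reading is right, the underlying condition is that the Redis server being used has no search module loaded, and the check fails with a confusing error instead of reporting that.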

Sample code as follows.

REDIS_URL = 'redis://localhost:6379'

def init_redis_database(docs):

    embeddings = OpenAIEmbeddings(openai_api_key=OPENAI_API_KEY)

    rds = Redis.from_documents(docs, embeddings, redis_url=REDIS_URL, index_name='link')

| Unable save embeddings in Redis vectorstore | https://api.github.com/repos/langchain-ai/langchain/issues/2113/comments | 16 | 2023-03-28T20:22:00Z | 2024-03-15T05:05:26Z | https://github.com/langchain-ai/langchain/issues/2113 | 1,644,606,036 | 2,113 |

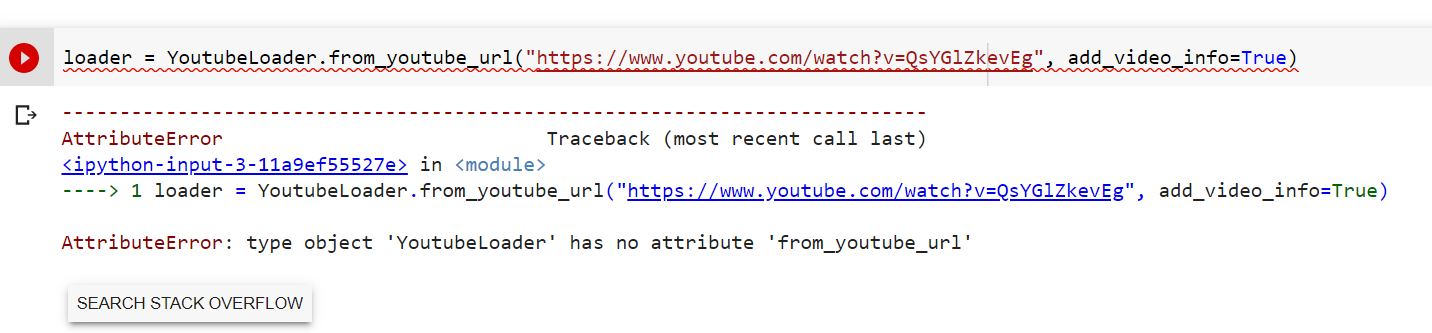

[

"langchain-ai",

"langchain"

] | While using llama_index's GPTSimpleVectorIndex, I am reading a PDF file with SimpleDirectoryReader.

I am unable to create an index for the file; it generates the error below:

**INFO:openai:error_code=None error_message='Too many inputs for model None. The max number of inputs is 1. We hope to increase the number of inputs per request soon. Please contact us through an Azure support request at: https://go.microsoft.com/fwlink/?linkid=2213926 for further questions.' error_param=None error_type=invalid_request_error message='OpenAI API error received' stream_error=False**

The code works for some files and fails for others with the above error.

Please explain what **Too many inputs for model** means and why it occurs only for some files. | openai:error_code=None error_message='Too many inputs for model None. The max number of inputs is 1. | https://api.github.com/repos/langchain-ai/langchain/issues/2096/comments | 9 | 2023-03-28T14:48:14Z | 2023-09-28T16:10:17Z | https://github.com/langchain-ai/langchain/issues/2096 | 1,644,118,649 | 2,096 |

[

"langchain-ai",

"langchain"

] | I need to pass a 'where' value (to filter on metadata) to the Chroma `similarity_search_with_score` function. I can't find a straightforward way to do it. Is there some way to do it when I kick off my chain? Any hints, hacks, plans to support? | How to pass filter down to Chroma db when using ConversationalRetrievalChain | https://api.github.com/repos/langchain-ai/langchain/issues/2095/comments | 23 | 2023-03-28T14:47:44Z | 2024-01-01T09:39:52Z | https://github.com/langchain-ai/langchain/issues/2095 | 1,644,117,753 | 2,095 |

[

"langchain-ai",

"langchain"

] | Hi,

There seems to be a bug when loading a serialized FAISS index while using Azure through OpenAIEmbeddings.

I get the following error:

```{python}

AttributeError: Can't get attribute 'Document' on <module 'langchain.schema' from '/langchain/schema.py'>

```

| Can't load faiss index when using Azure embeddings | https://api.github.com/repos/langchain-ai/langchain/issues/2094/comments | 2 | 2023-03-28T14:23:38Z | 2023-09-10T16:40:07Z | https://github.com/langchain-ai/langchain/issues/2094 | 1,644,073,204 | 2,094 |

[

"langchain-ai",

"langchain"

] | As the title says, there's a full implementation of ConversationalChatAgent, but it is not exported in the __init__ file of agents, so it cannot be imported with

`from langchain.agents import ConversationalChatAgent`

I'm going to fix this right now. | ConversationalChatAgent is not in agent.__init__.py | https://api.github.com/repos/langchain-ai/langchain/issues/2093/comments | 0 | 2023-03-28T13:43:34Z | 2023-03-28T15:14:24Z | https://github.com/langchain-ai/langchain/issues/2093 | 1,643,984,599 | 2,093 |

[

"langchain-ai",

"langchain"

] | Hello, I'm trying to provide a different API key for each profile, but it seems that the API key of the last profile I set is the one used by all the profiles. Is there a way to force each profile to use its dedicated key? | Multiple openai keys | https://api.github.com/repos/langchain-ai/langchain/issues/2091/comments | 9 | 2023-03-28T12:34:07Z | 2023-10-28T16:07:45Z | https://github.com/langchain-ai/langchain/issues/2091 | 1,643,856,338 | 2,091 |

[

"langchain-ai",

"langchain"

] | I am using the latest version and I get this error message when merely trying to run:

from langchain.chains.chat_index.prompts import CONDENSE_QUESTION_PROMPT

---------------------------------------------------------------------------

ModuleNotFoundError Traceback (most recent call last)

Cell In[5], line 3

1 from langchain.chains import LLMChain

2 from langchain.chains.question_answering import load_qa_chain

----> 3 from langchain.chains.chat_index.prompts import CONDENSE_QUESTION_PROMPT

ModuleNotFoundError: No module named 'langchain.chains.chat_index' | On the latest version 0.0.123 i get No module named 'langchain.chains.chat_index' | https://api.github.com/repos/langchain-ai/langchain/issues/2090/comments | 13 | 2023-03-28T09:35:57Z | 2023-12-08T16:08:40Z | https://github.com/langchain-ai/langchain/issues/2090 | 1,643,564,375 | 2,090 |

[

"langchain-ai",

"langchain"

] | No module 'datasets' found in langchain.evaluation | ModuleNotFoundError: No module named 'datasets' | https://api.github.com/repos/langchain-ai/langchain/issues/2088/comments | 2 | 2023-03-28T08:22:52Z | 2023-06-16T15:37:47Z | https://github.com/langchain-ai/langchain/issues/2088 | 1,643,446,461 | 2,088 |

[

"langchain-ai",

"langchain"

] | I'm testing on Windows, where the default encoding is cp1252 rather than UTF-8, and I still have encoding problems that I cannot overcome. | Add optional encoding parameter on each Loader | https://api.github.com/repos/langchain-ai/langchain/issues/2087/comments | 2 | 2023-03-28T08:10:59Z | 2023-09-18T16:22:19Z | https://github.com/langchain-ai/langchain/issues/2087 | 1,643,429,036 | 2,087 |

[

"langchain-ai",

"langchain"

] | I was trying to follow the documentation to run summarization; here's my code:

```python

from langchain.text_splitter import CharacterTextSplitter

from langchain.chains.mapreduce import MapReduceChain

from langchain.prompts import PromptTemplate

from langchain.llms import OpenAI

llm = OpenAI(temperature=0, engine='text-davinci-003')

text_splitter = CharacterTextSplitter()

with open("./state_of_the_union.txt") as f:

    state_of_the_union = f.read()

texts = text_splitter.split_text(state_of_the_union)

from langchain.docstore.document import Document

docs = [Document(page_content=t) for t in texts[:3]]

from langchain.chains.summarize import load_summarize_chain

chain = load_summarize_chain(llm, chain_type="map_reduce")

chain.run(docs)

```

Got errors like below:

<img width="1273" alt="image" src="https://user-images.githubusercontent.com/30015018/228138989-1808a102-4246-412b-a86a-388d60579543.png">

Any ideas how to fix this? langchain version is 0.0.123 | load_summarize_chain cannot run | https://api.github.com/repos/langchain-ai/langchain/issues/2081/comments | 4 | 2023-03-28T05:41:15Z | 2023-04-14T11:41:02Z | https://github.com/langchain-ai/langchain/issues/2081 | 1,643,238,154 | 2,081 |

[

"langchain-ai",

"langchain"

] | Trying to run a simple script:

```

from langchain.llms import OpenAI

llm = OpenAI(temperature=0.9)

text = "What would be a good company name for a company that makes colorful socks?"

print(llm(text))

```

I'm running into this error:

`ModuleNotFoundError: No module named 'langchain.llms'; 'langchain' is not a package`
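
The `'langchain' is not a package` part of the message usually means Python resolved `langchain` to a single module file rather than the installed package, for example a script named `langchain.py` in the working directory. That is an assumption about this setup, not something confirmed here. A minimal sketch for checking what a name resolves to, using only the standard library; `describe_module` is a hypothetical helper:

```python
import importlib.util


def describe_module(name):
    """Return (origin, is_package) for an importable top-level name, or None."""
    spec = importlib.util.find_spec(name)
    if spec is None:
        return None  # not importable from the current sys.path
    # Packages expose submodule_search_locations; plain .py modules do not.
    return spec.origin, spec.submodule_search_locations is not None


if __name__ == "__main__":
    # If this prints a path to a local langchain.py with is_package False,
    # that file is shadowing the installed package.
    print(describe_module("langchain"))
```

If the reported origin points at a file in the project directory, renaming that file (and removing any stale `__pycache__` entry for it) would be the first thing to try.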

I've got a virtualenv set up with langchain installed.

```

⇒ pip show langchain

Name: langchain

Version: 0.0.39

Summary: Building applications with LLMs through composability

Home-page: https://www.github.com/hwchase17/langchain

Author:

Author-email:

License: MIT

Location: /Users/jkaye/dev/langchain-tutorial/venv/lib/python3.11/site-packages

Requires: numpy, pydantic, PyYAML, requests, SQLAlchemy

```

```

⇒ python --version

Python 3.11.0

```

I'm using zsh so I ran `pip install 'langchain[all]'` | 'langchain' is not a package | https://api.github.com/repos/langchain-ai/langchain/issues/2079/comments | 29 | 2023-03-28T04:42:16Z | 2024-04-26T03:52:15Z | https://github.com/langchain-ai/langchain/issues/2079 | 1,643,187,784 | 2,079 |

[

"langchain-ai",

"langchain"

] | from langchain.document_loaders.csv_loader import CSVLoader

loader = CSVLoader(file_path='docs/whats-new-latest.csv', csv_args={

'fieldnames': ['Line of Business',

'Short Description'

]

})

data = loader.load()

print(data)

/.pyenv/versions/3.9.2/envs/s4-hana-chatbot/lib/python3.9/site-packages/langchain/document_loaders/csv_loader.py", line 53, in <genexpr>

content = "\n".join(f"{k.strip()}: {v.strip()}" for k, v in row.items())

AttributeError: 'NoneType' object has no attribute 'strip'
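
One possible cause, sketched with only the standard library and assuming `CSVLoader` passes `csv_args` on to `csv.DictReader` (the `row.items()` call in the traceback suggests this): when `fieldnames` does not match the number of columns, `DictReader` stores surplus values under the key `None` and fills missing values with `None`, and either one breaks `.strip()`. The sample data and the `safe_join` helper below are made up for illustration.

```python
import csv
import io

FIELDS = ["Line of Business", "Short Description"]

# Made-up sample: three columns in the file, but only two field names given.
wide = io.StringIO("Finance,Short text,extra\n")
row = next(csv.DictReader(wide, fieldnames=FIELDS))
print(row)  # the surplus value is stored under the key None

# Made-up sample: one column in the file, two field names given.
narrow = io.StringIO("Finance\n")
short_row = next(csv.DictReader(narrow, fieldnames=FIELDS))
print(short_row)  # the missing value is None


def safe_join(row):
    """Format a row as 'key: value' lines, skipping None keys and None values."""
    return "\n".join(
        f"{str(k).strip()}: {str(v).strip()}"
        for k, v in row.items()
        if k is not None and v is not None
    )
```

Checking that the `fieldnames` list has one entry per column in the file is a cheaper first step than patching the loader.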

Can anyone help me solve this? | 'NoneType' object has no attribute 'strip' | https://api.github.com/repos/langchain-ai/langchain/issues/2074/comments | 9 | 2023-03-28T03:06:17Z | 2023-11-17T18:19:35Z | https://github.com/langchain-ai/langchain/issues/2074 | 1,643,123,069 | 2,074 |

[

"langchain-ai",

"langchain"

] | E.g. running

```python

from langchain.agents import load_tools

from langchain.agents import initialize_agent

from langchain.llms import OpenAI

llm = OpenAI(temperature=0)

tools = load_tools(["llm-math"], llm=llm)

agent = initialize_agent(tools, llm, agent="zero-shot-react-description", verbose=True, return_intermediate_steps=True)

agent.run("What is 2 raised to the 0.43 power?")

```

gives the error

```

203 """Run the chain as text in, text out or multiple variables, text out."""

204 if len(self.output_keys) != 1:

--> 205 raise ValueError(

206 f"`run` not supported when there is not exactly "

207 f"one output key. Got {self.output_keys}."

208 )

210 if args and not kwargs:

211 if len(args) != 1:

ValueError: `run` not supported when there is not exactly one output key. Got ['output', 'intermediate_steps'].

```
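
To illustrate the check in the traceback, here is a simplified stand-in written from scratch. `ToyChain` is hypothetical and is not LangChain's actual implementation; it only mirrors the idea that `run` insists on a single output key while calling the object directly returns a dict with every key.

```python
class ToyChain:
    """Simplified stand-in for a chain; not LangChain's actual code."""

    def __init__(self, output_keys):
        self.output_keys = output_keys

    def __call__(self, inputs):
        # Calling the object returns the inputs plus every requested output key.
        question = inputs["input"]
        outputs = {
            "output": f"answer to {question!r}",
            "intermediate_steps": [("tool", "observation")],
        }
        return {**inputs, **{k: outputs[k] for k in self.output_keys}}

    def run(self, text):
        # Mirrors the traceback: run only works with exactly one output key.
        if len(self.output_keys) != 1:
            raise ValueError(
                "`run` not supported when there is not exactly one output key. "
                f"Got {self.output_keys}."
            )
        return self({"input": text})[self.output_keys[0]]


chain = ToyChain(["output", "intermediate_steps"])
result = chain({"input": "What is 2 raised to the 0.43 power?"})
print(result["intermediate_steps"])
```

With the real agent, the analogous call would be `agent({"input": "..."})` followed by reading `"intermediate_steps"` from the returned dict; treat that as an assumption to verify against the installed version.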

Is this supposed to be called differently or how else can the intermediate outputs ("Observations") be retrieved? | `initialize_agent` does not work with `return_intermediate_steps=True` | https://api.github.com/repos/langchain-ai/langchain/issues/2068/comments | 18 | 2023-03-28T00:50:15Z | 2024-02-23T16:09:08Z | https://github.com/langchain-ai/langchain/issues/2068 | 1,643,029,722 | 2,068 |

[

"langchain-ai",

"langchain"

] | This definition:

"purchase_order": """CREATE TABLE purchase_order (

id SERIAL NOT NULL,

name VARCHAR NOT NULL,

origin VARCHAR,

partner_ref VARCHAR,

date_order TIMESTAMP NOT NULL,

date_approve DATE,

partner_id INTEGER NOT NULL,

state VARCHAR,

notes TEXT,

amount_untaxed NUMERIC,

amount_tax NUMERIC,

amount_total NUMERIC,

user_id INTEGER,

company_id INTEGER NOT NULL,

create_uid INTEGER,

create_date TIMESTAMP,

write_uid INTEGER,

write_date TIMESTAMP,

CONSTRAINT PRIMARY KEY (id),

CONSTRAINT FOREIGN KEY(company_id) REFERENCES res_company (id) ,

CONSTRAINT FOREIGN KEY(partner_id) REFERENCES res_partner (id)

can be reduced to:

"purchase_order": """TABLE purchase_order (

id SERIAL NN PK,

name VC NN,

origin VC,

partner_ref VC,

date_order TIMESTAMP NN,

date_approve DATE,

partner_id INT NN,

state VC,

notes TX,

amount_untaxed NUM,

amount_tax NUM,

amount_total NUM,

user_id INT,

company_id INT NN,

create_uid INT,

create_date TIMESTAMP,

write_uid INT,

write_date TIMESTAMP,

FK(company_id) REF res_company (id) ,

FK(partner_id) REF res_partner (id)

and save a lot of space.
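
A rough sketch of how the substitution could be automated, assuming plain regex replacement is good enough for the DDL in question. `shorten_ddl`, `alias_legend`, and the alias table are hypothetical names built from the example above; the sketch does not fold a separate `PRIMARY KEY (id)` constraint into the column line the way the hand-written version does.

```python
import re

# Alias table taken from the example above; extend as needed.
ALIASES = [
    (r"\bNOT NULL\b", "NN"),
    (r"\bVARCHAR\b", "VC"),
    (r"\bINTEGER\b", "INT"),
    (r"\bNUMERIC\b", "NUM"),
    (r"\bTEXT\b", "TX"),
    (r"\bFOREIGN KEY\b", "FK"),
    (r"\bPRIMARY KEY\b", "PK"),
    (r"\bREFERENCES\b", "REF"),
    (r"\bCREATE\s+", ""),
    (r"\bCONSTRAINT\s+", ""),
]


def shorten_ddl(ddl):
    """Apply the alias substitutions and collapse runs of spaces and tabs."""
    for pattern, replacement in ALIASES:
        ddl = re.sub(pattern, replacement, ddl)
    return re.sub(r"[ \t]+", " ", ddl).strip()


def alias_legend():
    """One-line legend that could be prepended to the prompt."""
    return (
        "VC=VARCHAR, NN=NOT NULL, INT=INTEGER, NUM=NUMERIC, TX=TEXT, "
        "PK=PRIMARY KEY, FK=FOREIGN KEY, REF=REFERENCES"
    )
```

The legend costs a few tokens once per prompt, so the saving only pays off when several tables are described.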

If needed, we can add some instructions for the aliases, such as VC=VARCHAR, etc. | DB Tools - Table definitions cam be shortened with some asumptions in order to keep used token low. | https://api.github.com/repos/langchain-ai/langchain/issues/2067/comments | 1 | 2023-03-28T00:36:30Z | 2023-08-25T16:14:11Z | https://github.com/langchain-ai/langchain/issues/2067 | 1,643,021,881 | 2,067 |

[

"langchain-ai",

"langchain"

] | I tried to map my DB, which has a lot of views that could be leveraged to do most of the work. But the initial check does not allow this, even when custom_table_info describes the view structure. | DB Tools - Allow to reference also views thru custom_table_info | https://api.github.com/repos/langchain-ai/langchain/issues/2066/comments | 2 | 2023-03-28T00:31:19Z | 2023-09-10T16:40:17Z | https://github.com/langchain-ai/langchain/issues/2066 | 1,643,018,821 | 2,066 |

[

"langchain-ai",

"langchain"

] | ### Alpaca-LoRA

[Alpaca-LoRA](https://github.com/tloen/alpaca-lora) and Stanford Alpaca are both instruction-following models built on Meta's LLaMA, but they differ in how they are trained and distributed. Here are three points of comparison:

- **Training data**: Stanford Alpaca was fine-tuned on about 52K instruction-following demonstrations generated with `text-davinci-003`. Alpaca-LoRA reproduces it on the Stanford Alpaca dataset, but uses low-rank adaptation (LoRA) instead of full fine-tuning.

- **Model size**: Both start from the 7B-parameter LLaMA model. Stanford Alpaca updates all of the weights, while Alpaca-LoRA trains only small adapter matrices, which makes fine-tuning feasible on a single consumer GPU. The project notes that the resulting model can run on low-cost devices such as the Raspberry Pi.

- **Pretrained models**: Stanford Alpaca publishes its data and training code. Alpaca-LoRA publishes adapter weights that are applied on top of the base LLaMA weights, and provides an Instruct model of similar quality to `text-davinci-003`.

### Similar To

https://github.com/hwchase17/langchain/issues/1777

### Resources

- [alpaca.cpp](https://github.com/antimatter15/alpaca.cpp), a native client for running Alpaca models on the CPU

- [Alpaca-LoRA-Serve](https://github.com/deep-diver/Alpaca-LoRA-Serve), a ChatGPT-style interface for Alpaca models

- [AlpacaDataCleaned](https://github.com/gururise/AlpacaDataCleaned), a project to improve the quality of the Alpaca dataset

- Various adapter weights (download at own risk):

- 7B:

- <https://huggingface.co/tloen/alpaca-lora-7b>

- <https://huggingface.co/samwit/alpaca7B-lora>

- 🇧🇷 <https://huggingface.co/22h/cabrita-lora-v0-1>

- 🇨🇳 <https://huggingface.co/qychen/luotuo-lora-7b-0.1>

- 🇯🇵 <https://huggingface.co/kunishou/Japanese-Alapaca-LoRA-7b-v0>

- 🇫🇷 <https://huggingface.co/bofenghuang/vigogne-lora-7b>

- 🇹🇭 <https://huggingface.co/Thaweewat/thai-buffala-lora-7b-v0-1>