text stringlengths 300 320k | source stringlengths 52 154 |

|---|---|

# How Far Can Observation Take Us?

*This is the **second** entry in the* [*"Which Circuit is it?"*](https://www.lesswrong.com/s/yGg3GBRJboxTiNxjH?sortDraftsBy=wordCountAscending) *series.* We will explore possible notions of faithfulness when explanations are treated as **black boxes**. This project is done in collabo... | https://www.lesswrong.com/posts/hTihdk5Hqmo8LjJDa/how-far-can-observation-take-us |

# Experimental Evidence for Simulator Theory— Part 1: Emergent Misalignment and Weird Generalizations

*Epistemic status: examining the evidence and updating our priors*

**TL;DR**: Simulator Theory is now well supported by experimental results, and also really useful

Over the last year or so we have had strong experi... | https://www.lesswrong.com/posts/CHQSZZQCZDeLja4fi/experimental-evidence-for-simulator-theory-part-1-emergent-1 |

# Experimental Evidence for Simulator Theory— Part 2: The Scalers Strike Back

*Epistemic status: examining the evidence and updating our priors*

**TL;DR**: Simulator Theory is now well supported by experimental results, and also really useful

*Continuing on from* [*Part 1*](https://www.lesswrong.com/posts/CHQSZZQCZD... | https://www.lesswrong.com/posts/Q3GumouaeKwgXoeEu/experimental-evidence-for-simulator-theory-part-2-the |

# Adopt a debugger’s mindset to solve your recurring life problems

I've noticed a similarity between people's recurring life problems and coding:

When programming, if the output of some code I wrote isn’t what I was expecting, the fault doesn’t lie with the computer, it’s with how I coded it. It doesn’t matter how ma... | https://www.lesswrong.com/posts/4uMnKHYuaBgoDJ3fe/adopt-a-debugger-s-mindset-to-solve-your-recurring-life |

# Every Major LLM is a 1-Box Smoking Thirder

What are modern LLMs' decision theoretic tendencies? In December of 2024, the [@Caspar Oesterheld](https://www.lesswrong.com/users/caspar-oesterheld?mention=user) et al. [paper](https://www.lesswrong.com/posts/d9amcRzns5pwg9Fcu/a-dataset-of-questions-on-decision-theoretic-r... | https://www.lesswrong.com/posts/pWvnT8kSdsqrTyBA2/every-major-llm-is-a-1-box-smoking-thirder |

# Contra Dances Should Avoid Saturdays

There are a lot of great musicians who don't live near you, and if you hold your dance on a Saturday it's much harder to put together a tour that brings them to you. Consider a Friday evening or Sunday afternoon, or even a weekly evening slot?

Looking at the 330 contra dances tr... | https://www.lesswrong.com/posts/XDGdHhb9xM4gHxPGY/contra-dances-should-avoid-saturdays |

# The AIXI perspective on AI Safety

***Epistemic status:*** *While I am specialized in this topic, my career incentives may bias me towards a positive assessment of AIXI theory. I am also discussing something that is still a bit speculative, since we do not yet have ASI. While basic knowledge of AIXI is the only str... | https://www.lesswrong.com/posts/baP2osKGc4KmDoTET/the-aixi-perspective-on-ai-safety |

# Coming of Age: Chapters 1 and 2

I am starting to release my fiction book. I am sharing it here because it is a kind of rational fiction. Or, more precisely, I would frame the genre as a combination of:

* Rational fiction as it is commonly defined.

* Depiction of rationalist culture as a cultural phenomenon.

* O... | https://www.lesswrong.com/posts/mjRosxzqfkLBngKMs/coming-of-age-chapters-1-and-2 |

# Safe Recursive Self-Improvement with Verified Compilers

*This post is crossposted from my Substack,* [Structure and Guarantees](https://stng.substack.com/)*, where I’ve been exploring how formal verification might scale to more-complex intelligent systems. This piece argues that compilers—already used in limited fo... | https://www.lesswrong.com/posts/pnPDd8uo2NZqn2dtG/safe-recursive-self-improvement-with-verified-compilers |

# The Fourth World

***Is consciousness the last moral world?***

Imagine trying to explain to a virus why suffering matters.

A virus is a simple self-replicating molecule: unsophisticated and arguably not even alive. It has no experience. It just copies itself according to chemical laws. From its “perspective” (it do... | https://www.lesswrong.com/posts/qfitpqvQzeZy2mSGi/the-fourth-world |

# My cost-effectiveness unit

It feels like the grantmaking around me is only partially moneyball-pilled, or it's only somewhat competent at moneyball. There's alpha in putting numbers on stuff, if you can do it right.

Five months ago I wanted to compare a bunch of different kinds of donation opportunities. I needed a... | https://www.lesswrong.com/posts/eLcjjXcmFE32jf5FJ/my-cost-effectiveness-unit |

# An Informal Definition of Goals for Embedded Agents

*This post was written as part of research done at MATS 9.0 under the mentorship of Richard Ngo.*

You can conceptualise [Embedded agents](https://arxiv.org/abs/1902.09469) as inducing a partition[^a53mmytkal] of the world into "the agent", "the external world", an... | https://www.lesswrong.com/posts/RDgv3icLPuQhzvKrG/an-informal-definition-of-goals-for-embedded-agents |

# Latent Introspection (and other open-source introspection papers)

[Paper](https://arxiv.org/abs/2602.20031) | [Code](https://github.com/acsresearch/latent-introspection-code) | [Earlier post](https://www.lesswrong.com/posts/zD4McY4NwAsWkcmCH/small-models-can-introspect-too) | [Twitter thread](https://x.com/vooooooge... | https://www.lesswrong.com/posts/aZRgHcxz9jf22hrBs/latent-introspection-and-other-open-source-introspection |

# Agents Can Get Stuck in Self-distrusting Equilibria

*Or: Identities as Schelling Fences for Embedded Agents*

*This post was written as part of research done at MATS 9.0 under the mentorship of Richard Ngo. He contributed significantly to the ideas discussed within.*

Introduction
------------

This post questions t... | https://www.lesswrong.com/posts/MGoCFnCRYufwTyAD5/agents-can-get-stuck-in-self-distrusting-equilibria |

# Book Review: Open Socrates (Part 2)

[**Yesterday I posted Part 1**](https://thezvi.substack.com/p/book-review-open-socrates-part-1?r=67wny). Read that first. This is Part 2 of 2.

#### Table of Contents

1. [The Socratic Method.](https://thezvi.substack.com/i/191861327/the-socratic-method)

2. [The Paradox Paradox.... | https://www.lesswrong.com/posts/Ky2DSh4sGYCLWXMmy/book-review-open-socrates-part-2 |

# Book Review: Open Socrates (Part 1)

These are all important, in their own way, call it a treasure hunt and collect them all…

“Know thyself.” – The Oracle

“Know thine enemy and know thyself; in a hundred battles, you will not be defeated.” – Sun Tzu

“You don’t know me. You don’t know me at all.” – Lisa Loeb, ‘You ... | https://www.lesswrong.com/posts/hc2swmyS8mz4jc79J/book-review-open-socrates-part-1 |

# Is Gemini 3 Scheming in the Wild?

**TL;DR**
---------

When faced with an unexpected tool response, without any adversarial attack, Gemini 3 deliberately and covertly violates an explicit system prompt rule. In a seemingly working agent from an [official Kaggle/Google tutorial](https://www.kaggle.com/code/kaggle5day... | https://www.lesswrong.com/posts/HZn9AZeD2jfXXD2hH/is-gemini-3-scheming-in-the-wild |

# A Spanish-Speaking Robot in my Pocket

I've recently started using ChatGPT voice chat to practice Spanish, and it works surprisingly well. I don't know if I'll have the discipline to keep doing it after the novelty wears off, but I've already spoken more Spanish in the last week than in the last fifteen years combine... | https://www.lesswrong.com/posts/9L2ymTSNBZaGMGxzJ/a-spanish-speaking-robot-in-my-pocket |

# Every ACX/LW House Party

*The following is a fictionalized account of the* [*ACX/LW weekend*](https://substack.com/home/post/p-191233515) *that happened on the 20th-22nd of March 2026. It’s assembled from different stories and reports from participants, taking their perspectives and shaping it all into the style and voice ... | https://www.lesswrong.com/posts/R3hqRi8fSCuctbsgK/every-acx-lw-house-party |

# $1 billion is not enough; OpenAI Foundation must start spending tens of billions each year

OpenAI is now a public benefit corporation, with a charter that demands they use AGI for the benefit of all, and do so safely. To justify this structure to the Attorneys General of Delaware and California, they split off the n... | https://www.lesswrong.com/posts/5ftrtBv64cChgzYdp/usd1-billion-is-not-enough-openai-foundation-must-start |

# How to do cost-effectiveness analysis for elections

*Some professionals get this wrong.*

You should use three parameters:

A. Goodness if your preferred candidate wins rather than loses

B. Probability that one vote for your candidate would flip the election

C. Cost per vote

Then cost-effectiveness is A*B/C.
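The arithmetic above can be sketched in a few lines of Python. This is a minimal illustration only: the function name, the parameter names, and every number below are hypothetical and do not come from the post.

```python
def election_cost_effectiveness(goodness_if_win: float,
                                flip_probability_per_vote: float,
                                cost_per_vote: float) -> float:
    """Return expected goodness per dollar, i.e. A * B / C."""
    if cost_per_vote <= 0:
        raise ValueError("cost_per_vote must be positive")
    return goodness_if_win * flip_probability_per_vote / cost_per_vote


# Hypothetical inputs, chosen only to show how the three parameters combine.
example = election_cost_effectiveness(
    goodness_if_win=1_000_000_000.0,   # A, in arbitrary "goodness" units
    flip_probability_per_vote=1e-7,    # B, chance one extra vote flips the result
    cost_per_vote=100.0,               # C, in dollars
)
print(f"goodness units per dollar: {example:.3f}")
```

Keeping the three inputs separate makes it easy to see which one drives a disagreement between two estimates.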

I'm... | https://www.lesswrong.com/posts/rnNDSGu52odkhv6ha/how-to-do-cost-effectiveness-analysis-for-elections |

# Claude Code, Cowork and Codex #6: Claude Code Auto Use and Full Cowork Computer Use

Whatever else you think about Anthropic’s agentic coding department, they ship.

The highlights of this edition are three related big upgrades.

You can use Dispatch to command Claude Code and Claude Cowork from your phone, or use ch... | https://www.lesswrong.com/posts/8c3TED7ewqrbvheKy/claude-code-cowork-and-codex-6-claude-code-auto-use-and-full |

# Uncertain Updates: March 2026

[The book](https://fundamentaluncertainty.com/) is almost done!

I finished the second editing, and I’m now into copy editing. That’s also almost done, with just the last two chapters to go. Which means that, sometime in the next month, the book will finally, after a bit over 4 years, b... | https://www.lesswrong.com/posts/QFpght7fq6M7nKs9c/uncertain-updates-march-2026 |

# ECL-pilled models write constitutions for ASI

TL;DR
=====

Can LLMs be steered towards Bostrom's cosmic host via in-context constitutional prompting? I find that Gemini is uniquely steerable amongst closed frontier models, and this steerability seems to respond to decision theoretic structure in the constitution. Th... | https://www.lesswrong.com/posts/bejfwfi3ozY7qQhsv/ecl-pilled-models-write-constitutions-for-asi |

# Can Agents Fool Each Other? Findings from the AI Village

The better agents are at deception, the less sure we can be that they are doing what we want. As agents become increasingly capable and autonomous, this could be a big deal. So, in the [AI Village](https://theaidigest.org/village), we wanted to explore what cu... | https://www.lesswrong.com/posts/8FjZWfq2pHRhyQz7C/can-agents-fool-each-other-findings-from-the-ai-village |

# The Scary Bridge

Residents of the isolated town line up at the microphone at the emergency town hall meeting. Timor, a concerned citizen, starts to speak to the assembled council and audience of townspeople.

TIMOR: The bridge situation is completely terrifying. I can't sleep because of this. There is only one bridg... | https://www.lesswrong.com/posts/jbPgRMiEqnJbwtsim/the-scary-bridge |

# A Toy Environment For Exploring Reasoning About Reward

**tldr**: We share a toy environment that we found useful for understanding [how reasoning changed over the course of capabilities-focused RL](https://www.alignmentforum.org/posts/4hXWSw8tzoK9PM7v6/metagaming-matters-for-training-evaluation-and-oversight). Over ... | https://www.lesswrong.com/posts/LhXW8ziwnn7Dd8edm/a-toy-environment-for-exploring-reasoning-about-reward |

# Bidirectionality is the Obvious BCI Paradigm

*First post in a sequence about cognition enhancement for AIS research acceleration.*[^*\[1\]*^](#fn-1)

No one is training a BCI deep learning model to speak neuralese[^\[2\]^](#fn-2) back to the brain. We should make something which reads *and writes* native neural repr... | https://www.lesswrong.com/posts/Na3vuq4FYf8r5hCEM/bidirectionality-is-the-obvious-bci-paradigm |

# Moral Extension Risk

This post was prompted partly by encountering opinions to the effect of "even if a future superintelligence were somewhat misaligned, it would still place human lives and human well-being at a high enough level that it would not burn us for fuel or completely disregard us in pursuit of more alie... | https://www.lesswrong.com/posts/6QpwajTHJvQuMsdaq/moral-extension-risk |

# Who's Afraid of Acausal Trades?

*A gentle introduction to how we might make trades without direct evidence*

Let me start by saying that I don't like concepts defined by negatives. "Decentralized" systems and "nonviolent" communication stress me out. Even "non-fiction" strikes me as unhelpfully vague.

The concept... | https://www.lesswrong.com/posts/K3LNhKXWdXjz22eL8/who-s-afraid-of-acausal-trades |

# Label By Usable Volume

I always look at unit prices: how much do I get for my dollar? But that assumes I can use all of it. The manufacturer puts "12oz" whether I'll be able to get the full 12oz or only 6oz. L'Oreal was selling lotions where:

> these Liquid Cosmetic Product containers only dispense between as littl... | https://www.lesswrong.com/posts/JCDSYcQnNLZsvvdM8/label-by-usable-volume |

# Dispatch from Anthropic v. Department of War Preliminary Injunction Motion Hearing

Dateline SAN FRANCISCO, Ca., 24 March 2026— A hearing was held on a motion for a preliminary injunction in the case of _Anthropic PBC v. U.S. Department of War et al._ in Courtroom 12 on the 19th floor of the Phillip Burton Federal Bu... | https://www.lesswrong.com/posts/CCDQ7PdYHXsJAE5bi/dispatch-from-anthropic-v-department-of-war-preliminary |

# Fine Tuning CoT obfuscation into Kimi K2.5

In these research notes, I will share some CoT traces from lightly fine tuned checkpoints of Kimi K2.5 that may be examples of load-bearing obfuscation within the internal reasoning outputs of the model.

These are notes and collected model outputs from a project that I hav... | https://www.lesswrong.com/posts/sYgNKfshrkHZZKiJ5/fine-tuning-cot-obfuscation-into-kimi-k2-5 |

# Past Automation Replaced Jobs. AI Will Replace Workers.

%%% llm-output model="unknown model"

Human workers are about to face a competitor unlike any technology that came before: AI systems that can be copied at near-zero cost, deployed instantly, and improved faster than workers can retrain. Economists have l... | https://www.lesswrong.com/posts/JBrnxYGNisBxHjevE/past-automation-replaced-jobs-ai-will-replace-workers |

# Sen. Sanders (I-VT) and Rep. Ocasio-Cortez (D-NY) propose AI Data Center Moratorium Act

The text of the bill can be found [here](https://www.sanders.senate.gov/wp-content/uploads/Artificial-Intelligence-Data-Center-Moratorium-Act-Section-by-Section.pdf). It begins by citing the warnings of AI company CEOs and deep l... | https://www.lesswrong.com/posts/TjJdSHccBPqPgEf6J/sen-sanders-i-vt-and-rep-ocasio-cortez-d-ny-propose-ai-data |

# AI #161 Part 1: 80,000 Interviews

The major technical advances this week were in agentic coding, [**as covered yesterday**](https://thezvi.substack.com/p/claude-code-cowork-and-codex-6-claude?r=67wny).

The major non-DoW political and alignment developments will be covered tomorrow.

The DoW vs. Anthropic trial cont... | https://www.lesswrong.com/posts/sw3inhvNrpuGdTyCR/ai-161-part-1-80-000-interviews |

# You can just multiply point estimates (if you only care about EV)

Many people think you need probability distributions; they think using point estimates will mess up your EV calculations. That's false; you'll get the same result whether you multiply distributions or multiply their EVs. You can ask a chatbot: "Briefl... | https://www.lesswrong.com/posts/iYTiiegHwmnftsheD/you-can-just-multiply-point-estimates-if-you-only-care-about |
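A small exact check of the point-estimate claim, assuming the two quantities are independent (that assumption is supplied here, since the preview is truncated). The two discrete distributions are hypothetical and chosen only for illustration.

```python
from fractions import Fraction
from itertools import product

# Hypothetical discrete distributions, written as {value: probability}.
x_dist = {Fraction(1): Fraction(1, 2), Fraction(3): Fraction(1, 2)}
y_dist = {Fraction(2): Fraction(1, 4), Fraction(10): Fraction(3, 4)}


def expected_value(dist):
    """Expected value of a discrete distribution."""
    return sum(value * prob for value, prob in dist.items())


def expected_product_independent(dist_a, dist_b):
    """E[A*B] when A and B are independent: joint probability = pa * pb."""
    return sum(a * b * pa * pb
               for (a, pa), (b, pb) in product(dist_a.items(), dist_b.items()))


ev_of_product = expected_product_independent(x_dist, y_dist)
product_of_evs = expected_value(x_dist) * expected_value(y_dist)
print(ev_of_product, product_of_evs)
```

If the quantities are correlated, the two numbers generally differ by the covariance, so the shortcut relies on the independence assumption.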

# Five years since lockdown

I received my one-shot vaccine on March 26th, 2021. I had been in lockdown for more than a year, my entire life on hold, my world closed.

*Previously:* [*Takeaways from one year of lockdown*](https://www.lesswrong.com/posts/uM6mENiJi2pNPpdnC/takeaways-from-one-year-of-lockdown) *&* [*refle... | https://www.lesswrong.com/posts/cgA4G26fFFMpytjdF/five-years-since-lockdown |

# Socrates is Mortal

There is a scene in Plato that contains, in miniature, the catastrophe of Athenian public life. Two men meet at a courthouse. One is there to prosecute his own father for the death of a slave. The other is there to be indicted for indecency.[^1] The prosecutor, Euthyphro, is certain he understands... | https://www.lesswrong.com/posts/a9zfyHymPYY58D8hx/socrates-is-mortal |

# "What Exactly Would An International AI Treaty Say?" Is a Bad Objection

I’ve heard a number of people say that it’s unclear what the technical contours of a global AI treaty would look like. That is true - but it’s not actually an obstacle to negotiating an international treaty.

I’ll try to explain why this isn’t ... | https://www.lesswrong.com/posts/Sdrzo7z3STzdrnwKW/what-exactly-would-an-international-ai-treaty-say-is-a-bad |

# The continuous tense is disappearing from your life

I previously [wrote about the present perfect tense](https://patrickdfarley.com/present-perfect-tense/) and how it can make you waste time pursuing things you don’t really want—when you want to *have done* them instead of wanting to *do* them. Now I notice the cont... | https://www.lesswrong.com/posts/JweHboMfmvGuaKGFD/the-continuous-tense-is-disappearing-from-your-life |

# What if superintelligence is just weak?

*In response to* [*“2023 Or, Why I am Not a Doomer”*](https://www.hyperdimensional.co/p/2023) *by Dean W. Ball.*

Dean Ball is a pretty big voice in AI policy – over 19k subscribers on his newsletter, and a former Senior Policy Advisor for AI at the Trump White House – so why ... | https://www.lesswrong.com/posts/cTcrbXRAGAy6wtFpR/what-if-superintelligence-is-just-weak |

# The Terrarium

**System:**

You are an AI agent in the Terrarium, a self-contained “society” of AIs. The purpose of the Terrarium is to solve open mathematical problems for the benefit of humanity.

You are running on the Orpheus-5.7 language model. Your agent ID is 79,265. The current epoch is 549 (a new epoch begin... | https://www.lesswrong.com/posts/znbfRXHq285nS7NAh/the-terrarium |

# Test your best methods on our hard CoT interp tasks

*Authors: Daria Ivanova, Riya Tyagi, Josh Engels, Neel Nanda*

*Daria and Riya are co-first authors. This work was done during Neel Nanda’s MATS 9.0. Claude helped write code and suggest edits for this post.*

, [Peter Godfrey-Smith](https://peter... | https://www.lesswrong.com/posts/xDfcLHzdA9tbK6k6X/scaffolded-reproducers-scaffolded-agents |

# Preliminary Results on Building Graphs from SAEs

**TLDR:**

* I use nodewise LASSO to build approximate sparse conditional dependence graphs over SAE features, with resampling and null controls

* Initial experiments produce graphs with small standalone modules that are stable under resampling

* These modules f... | https://www.lesswrong.com/posts/vrHwxuoizHcrRQzed/preliminary-results-on-building-graphs-from-saes |

# My hobby: running deranged surveys

In late 2024, I was on a long walk with some friends along the coast of the San Francisco Bay when the question arose of just how much of a bubble we live in. It’s well known that the Bay Area is a bubble, and that normal people don’t spend that much time thinking about things like... | https://www.lesswrong.com/posts/fQz6afpcZhdMdYzgE/my-hobby-running-deranged-surveys |

# One World Government by 2150

Can we determine when humanity will unite under a democratic one-world government by projecting voting patterns? Almost certainly not. Is that going to stop me from trying? Absolutely not. The approach is simple: find every record-breaking election in the historical record, plot them, an... | https://www.lesswrong.com/posts/4Y3v2hjzHXR5HuMPN/one-world-government-by-2150-1 |

# Are we aligning the model or just its mask?

**TL;DR** There is a theory, with compelling empirical support, that LLMs learn to simulate characters during pre-training and that post-training selects one of those characters, the Assistant, as the default persona you interact with. This post examines three popular alig... | https://www.lesswrong.com/posts/aLhzCbpjanD8Zw2jx/are-we-aligning-the-model-or-just-its-mask |

# COT control: The Word Disappears, but the Thought Does Not

*Note: This blog describes some of the results from the pilot experiments of an ongoing work.*

**Introduction**
================

Model misalignment, misbehaviour, and scheming can arguably be monitored by interpreting Chain-of-Thought (CoT) traces as the m... | https://www.lesswrong.com/posts/CWecvQoLDGWt6d35v/cot-control-the-word-disappears-but-the-thought-does-not |

# Why Moral Questions Get Decided, Not Answered

*TL;DR: The hard question of consciousness isn't 'that' hard. The challenge is that all philosophical questions lack closure mechanisms. When institutions (e.g. courts) are forced to decide whether AI is conscious, a settlement will quickly follow.*

In June 2022, a Google En... | https://www.lesswrong.com/posts/3hrLGh5t6QxfaF4Ps/why-moral-questions-get-decided-not-answered |

# A Taxonomy of Agents: Intro & Request for feedback

*AI was used to translate around 20 minutes of talking out the entire post. The post was then fully edited by a human (multiple times).*

In our [phylogeny of agents post](https://www.lesswron... | https://www.lesswrong.com/posts/2jv4DDhjtNH9RLuTN/a-taxonomy-of-agents-intro-and-request-for-feedback-1 |

# Anthropic vs. DoW #6: The Court Rules

Last night, Anthropic was given its preliminary injunction, with a stay of seven days.

Emil Michael is a very angry person right now. So is the Honorable Judge Lin.

We were worried we would draw a judge that had no idea how any of this worked and would give the government absu... | https://www.lesswrong.com/posts/jdWHwsj8GvwgJSxF7/anthropic-vs-dow-6-the-court-rules |

# Startup Lessons for AI Safety

Most ideas fail.

Successful startup founders don't get their first idea right. So they try things out, itera... | https://www.lesswrong.com/posts/xaQMada2Cpo98hM4E/startup-lessons-for-ai-safety |

# Stop asking "how good is this" to decide between donation opportunities I recommend

Prospective donors often ask how cost-effective my team's various recommendations are — they want to donate to the most cost-effective opportunity. But the answer is often roughly:

> If we do our job right, then all donations we rec... | https://www.lesswrong.com/posts/8fKcCLbKa3J4PmaZ4/stop-asking-how-good-is-this-to-decide-between-donation |

# AI's capability improvements haven't come from it getting less affordable

[METR's frontier time horizons](https://metr.org/time-horizons/) are doubling every few months, providing substantial evidence that AI will soon be able to automate many tasks or even jobs. But per-task inference costs have also risen sharply,... | https://www.lesswrong.com/posts/E6ELHguZFNF3Czp55/ai-s-capability-improvements-haven-t-come-from-it-getting |

# ControlAI 2025 Impact Report: our progress toward an international ban on ASI

This post highlights a few key excerpts from our full impact report. You can read the full report at [https://controlai.com/impact-report-2025](https://controlai.com/impact-report-2025).

ControlAI is a non-profit organization working to a... | https://www.lesswrong.com/posts/BwfydMhjuroqiZs4x/controlai-2025-impact-report-our-progress-toward-an |

# Concrete projects to prepare for superintelligence

Introduction
============

There are lots of good, neglected, and pretty concrete projects people could set up to make the transition to superintelligence go better. This document describes some that readers might not have thought much about before. They are ordered... | https://www.lesswrong.com/posts/8qJP3inekNPQWifiP/concrete-projects-to-prepare-for-superintelligence |

# SB 53 and RAISE implementation roles

*\[Posting this on behalf of someone I trust who wants to stay anonymous.\]*

[California SB 53](https://leginfo.legislature.ca.gov/faces/billTextClient.xhtml?bill_id=202520260SB53) and the [New York RAISE Act](https://nyassembly.gov/leg/?default_fld=&leg_video=&bn=A09449&term=20... | https://www.lesswrong.com/posts/ysfD3HvxBuib7uudz/sb-53-and-raise-implementation-roles |

# Pray for Casanova

I am fascinated by the beautiful who become deformed. Some become bitter, more bitter than those born less pulchritudinous. Most learn to cope with the loss. Some were blind to how much their beauty helped them, the halo of their hotness an invisible bumper softening life. But most cultivated this ... | https://www.lesswrong.com/posts/oMTzk5RiF6mob3ppc/pray-for-casanova-2 |

# AI Safety Guide for TRUE Beginners by TRUE begginers

*Have you ever wondered what is the structure behind what we know today as “Artificial Intelligence”? How do language models like ChatGPT, Gemini, Claude, or DeepSeek work? What happens every time one receives a prompt? Under what security protocols do they opera... | https://www.lesswrong.com/posts/Lyjq6jEfJPyBgLCDy/ai-safety-guide-for-true-beginners-by-true-begginers |

# What if the US loses the 2026 Hormuz Conflict

What happens if the US loses the 2026 Hormuz Conflict? At the time of writing (Mar 25, 2026), Iran has succeeded in closing the Strait of Hormuz to effectively all shipping without their permission. The majority of transiters have Iran's permission, and may even have to ... | https://www.lesswrong.com/posts/oXqCRfWcgbnyaYZFw/what-if-the-us-loses-the-2026-hormuz-conflict |

# Introducing the AE Alignment Podcast (Ep. 1: Endogenous Steering Resistance with Alex McKenzie)

%%% llm-output model="Claude Opus 4.6"

We're launching the **AE Alignment Podcast**, a new series from AE Studio's alignment research team where we talk with researchers about their work on AI safety and alignment.

In o... | https://www.lesswrong.com/posts/2CBfFB77j48Zp98ZF/introducing-the-ae-alignment-podcast-ep-1-endogenous-1 |

# Do frontier LLMs still express different values in different languages?

Previous work [\[1\]](https://arxiv.org/abs/2406.14805) [\[2\]](https://arxiv.org/abs/2601.23001v2) [\[3\]](https://arxiv.org/abs/2511.03980) [\[4\]](https://arxiv.org/abs/2407.16891) has found that the same model can give different value jud... | https://www.lesswrong.com/posts/omQXGwMpAwP7PJaSt/do-frontier-llms-still-express-different-values-in-different |

# Anthropic vs. DoW Preliminary Injunction Ruling

Below is the full text of the preliminary injunction ruling in the Anthropic vs. DoW case. I'm posting it here so that it's easier to read/listen to and discuss.

* * *

UNITED STATES DISTRICT COURT

NORTHERN DISTRICT OF CALIFORNIA

ANTHROPIC PBC,

Case No. 26-cv-0... | https://www.lesswrong.com/posts/LNRt8BwJBDTA643ap/anthropic-vs-dow-preliminary-injunction-ruling |

# What Makes a Good Terminal Bench Task

*Disclosure: I cross-posted this on X and my personal blog, but I felt it might be a useful first post for LessWrong.*

Most people write benchmark tasks the way they write prompts. They shouldn’t. A prompt is designed to help the agent succeed. A benchmark is designed to find o... | https://www.lesswrong.com/posts/gBwHZSmvfCA5oGCEJ/what-makes-a-good-terminal-bench-task |

# Just Use Bayes: Sleeping Beauty and Monty Hall

There's a question that's held my fascination for months. At times, it's had me spinning in circles, caught up in seemingly impossible contradictions. And it's not the famous [Sleeping Beauty problem](https://en.wikipedia.org/wiki/Sleeping_Beauty_problem)... or at least... | https://www.lesswrong.com/posts/zvdqNxsgKzqrtjkdA/just-use-bayes-sleeping-beauty-and-monty-hall |

# The Problem with Asking your Doctor

As you are supposed to ask your doctor about supplements, I just asked my primary care doctor about taking glycine and N-acetylcysteine.

I had a lot more infections in the last two years and I'm doing some fascia work that could increase collagen turnover, so there's reasonin... | https://www.lesswrong.com/posts/knyGde3pazktrxNLy/the-problem-with-asking-your-doctor |

# Stanley Milgram wasn’t pessimistic enough about human nature?

A landmark of social psychology research was “The Milgram Experiment,” but a new look at the audio tapes and other evidence collected during that experiment suggests that we may have been interpreting it incorrectly. Here is the [Wikipedia](https://en.wik... | https://www.lesswrong.com/posts/ogapPTArhBM6abSJj/stanley-milgram-wasn-t-pessimistic-enough-about-human-nature |

# Nick Bostrom: How big is the cosmic endowment?

*Superintelligence, pp. 122–3. 2014.*

Consider a technologically mature civilization capable of building sophisticated von Neumann probes of the kind discussed in the text. If these can travel at 50% of the speed of light, they can reach some $6 \times 10^{18}$ stars before th... | https://www.lesswrong.com/posts/GLD5AiiQJqFbKX9vo/nick-bostrom-how-big-is-the-cosmic-endowment |

# Don't Overdose Locally Beneficial Changes

*\[Alternative title: apply* [*More Dakka*](https://www.lesswrong.com/posts/z8usYeKX7dtTWsEnk/more-dakka) *incrementally and carefully.\]*

If you are very overweight, then you should aim to cut down your daily caloric intake. This doesn't mean your optimal daily caloric int... | https://www.lesswrong.com/posts/fRSz8gJMsSwp9xDZY/don-t-overdose-locally-beneficial-changes |

# [Story] Human Alignment Isn't Enough

They found it in one of the early Mars expeditions, a bit after they had travel back and forth figured out well enough to keep a permanent outpost manned out there. The lab ran expeditions into some nearby caves in the hope that they’d turn out to be a good spot for an expansion.... | https://www.lesswrong.com/posts/CiQKBAYPWxdL5CTKx/story-human-alignment-isn-t-enough |

# Heedfulness Workouts

We all know we ought not to doomscroll, or to make snarky comments, or to snack mindlessly, or to endlessly replay in our minds that conversation where we felt misunderstood or slighted. And this *“ought”* is not imposed from the outside. It’s not that we’ll be judged by someone. It’s just that ... | https://www.lesswrong.com/posts/uZvaBr7kkwvF4RhcG/heedfulness-workouts |

# The Skill of Using AI Agents Well

*AI usage for this post: I wrote the draft on my own. While writing, I used Claude Code to look up references. Then Claude Code fixed typos and reviewed the draft, and I addressed its comments manually.*

*Epistemics: my own observations often inspired by conversations on X and Zvi's summar... | https://www.lesswrong.com/posts/9xAwybDhtgzGYPnbs/the-skill-of-using-ai-agents-well |

# Tracking (Expert/Influential) Predictions about AI

I think the future of AI is really important, and it would be pretty good to know which experts have been right and wrong about progress and effects. It would be pretty good to keep a website up on important people's track records (superforecasters, famous domain ex... | https://www.lesswrong.com/posts/oHSGHxhsbxN72BZ4C/tracking-expert-influential-predictions-about-ai |

# "Path to Victory"

*This article is based on reflections from co-leading the Sydney AI Safety Fellowship. This post is primarily focused on AI safety, but I expect the lessons to be more generally applicable.*

We have a [Problem](https://www.lesswrong.com/w/ai-alignment)[™](https://www.ettf.land/p/mysticism-101-or-i... | https://www.lesswrong.com/posts/o4hPCzYvtMr3m3vYv/path-to-victory |

# Parkinson's Law of Worry

[Parkinson’s law](https://en.wikipedia.org/wiki/Parkinson%27s_law) says that *"Work expands so as to fill the time available for its completion."* I think that a similar observation can be made for people worrying about stuff. To paraphrase: "*Problem salience expands so as to fill the capac... | https://www.lesswrong.com/posts/Hxf5efdDuuP5dfu6i/parkinson-s-law-of-worry |

# The Power of Assumption

*(First real LessWrong post! Wow. This is a post on an idea I thought might be interesting to some people here, and I'd love to hear thoughts on it.)*

Assumption can be used as a communicative tool.

As a kid I would occasionally, before falling asleep, lie in bed and imagine that I had a ve... | https://www.lesswrong.com/posts/oD5jT5xiteZKuStBc/the-power-of-assumption-1 |

# Folie à Machine: LLMs and Epistemic Capture

> "Truly, whoever can make you believe absurdities can make you commit atrocities." — Voltaire, 1765

* * *

*A man in his late fortie... | https://www.lesswrong.com/posts/2hyGiAnLKEFv3jBHt/folie-a-machine-llms-and-epistemic-capture |

# Claude’s constitution is great

I read Claude’s [Constitution](https://www.anthropic.com/constitution) recently. I thought it was very good! This was my favourite quote:

> Our own understanding of ethics is limited, and we ourselves often fall short of our own ideals. We don’t want to force Claude’s ethics to fit ou... | https://www.lesswrong.com/posts/YN5Szk7BZZX3SzTvz/claude-s-constitution-is-great |

# I am definitely missing the pre-AI writing era

Yesterday, I wrote my first technical draft on what I was working on, with the goal of sharing it publicly here (well, using an account dedicated to technical posts), and did not realize how wanting to sound perfect actually steals "my voice" from the paper. Although 8... | https://www.lesswrong.com/posts/BJ4pnropWdnzzgeJc/i-am-definitely-missing-the-pre-ai-writing-era |

# Why Corrigibility Matters (If It Matters At All)

In this note I’ll try and lay out briefly some thoughts about corrigibility and why it matters. First, some housekeeping.

*Definitions*

When I say “corrigibility”, I mean “correctable” as per the original sense of the term—etymologically derived from *corrigible*, o... | https://www.lesswrong.com/posts/LkK2kEPhdEtZfw28K/why-corrigibility-matters-if-it-matters-at-all |

# (Some) Natural Emergent Misalignment from Reward Hacking in Non-Production RL

*Authors: Satvik Golechha*, Sid Black*, Joseph Bloom*

*\* Equal Contribution.*

*This work was done as part of the Model Transparency team at the UK AI Security Institute (AISI). Our code is available on* [*GitHub*](https://github.com/UKG... | https://www.lesswrong.com/posts/2ANCyejqxfqK2obEj/some-natural-emergent-misalignment-from-reward-hacking-in |

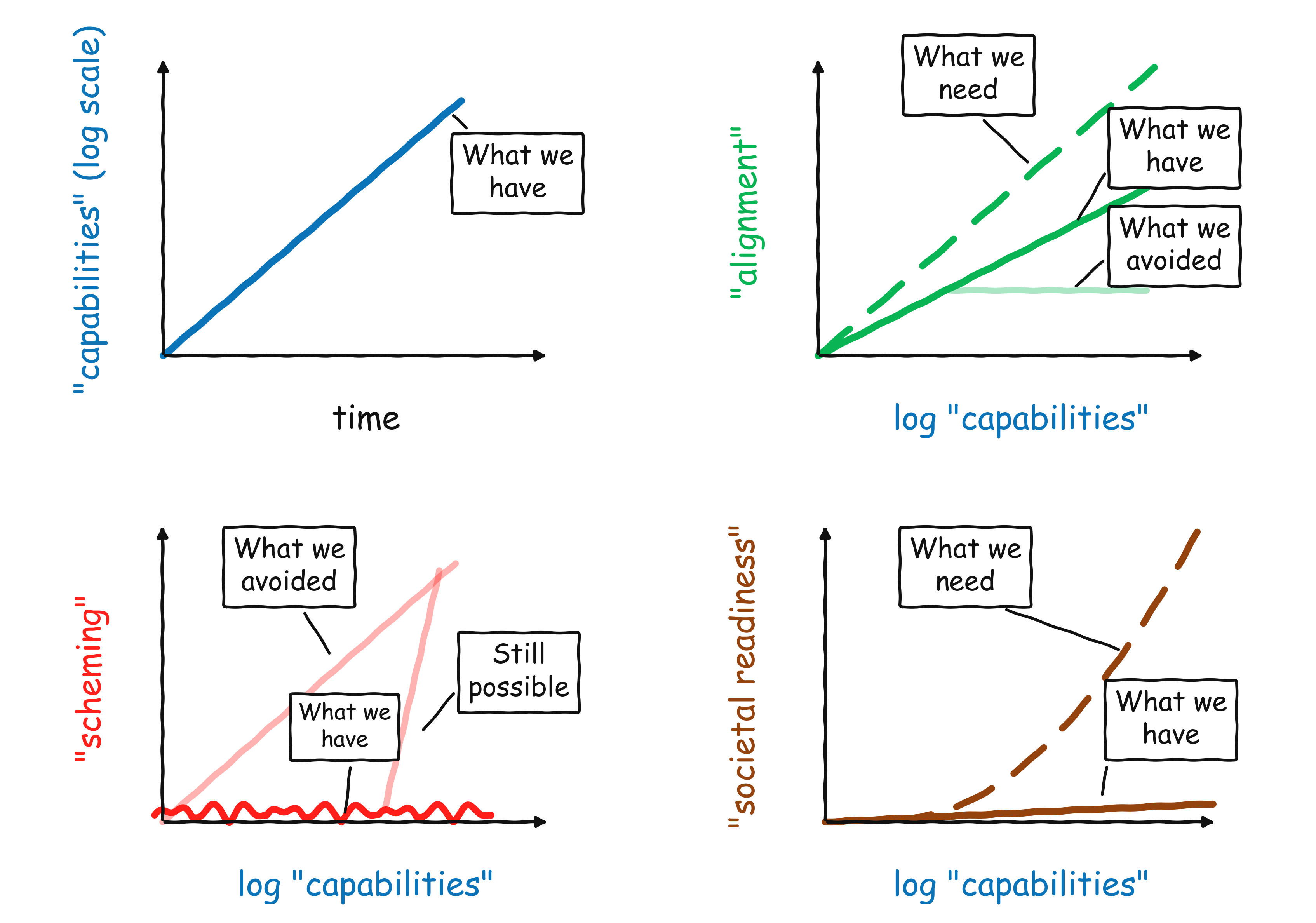

# The state of AI safety in four fake graphs

Here is a quick overview of my intuitions on where we are with AI safety in early 2026:

1. So far, we continue to see exponential ... | https://www.lesswrong.com/posts/g4LMH3c6DysazYbFn/the-state-of-ai-safety-in-four-fake-graphs |

# AI #161 Part 2: Every Debate on AI

AI discourse. AI discourse never changes.

That’s not actually true. But it is true to a rather frustrating degree, for those of us who need to be in the thick of it all the time. It is especially true if someone says the word ‘pause,’ whether or not they would actually support one... | https://www.lesswrong.com/posts/y6HogrdSeFyGDuYYN/ai-161-part-2-every-debate-on-ai |

# My One-Year-Old Predictions for What the World Will Look Like in 3 Years

*I originally published these predictions on January 28, 2025 on my Ukrainian-language *[*Telegram channel*](https://t.me/homo_technicus/832)*. I am re-posting them here in English, fourteen months later, mostly so they are registered somewher... | https://www.lesswrong.com/posts/JaA7h5wMzHovEAQaQ/my-one-year-old-predictions-for-what-the-world-will-look |

# AI should be a good citizen, not just a good assistant

Introduction
============

Consider a lorry driver who sees a car crash and pulls over to help, even though it’ll delay his journey. Or a delivery driver who notices that an elderly resident hasn’t collected their post in days, and knocks to check they’re okay. ... | https://www.lesswrong.com/posts/MoxvRdHjzSSBxwLZB/ai-should-be-a-good-citizen-not-just-a-good-assistant |

# Blocking live failures with synchronous monitors

A common element in many AI control schemes is *monitoring*: using some model to review actions taken by an [untrusted](https://blog.redwoodresearch.org/p/untrusted-smart-models-and-trusted) model in order to catch dangerous actions if they occur. Monitoring can serve ... | https://www.lesswrong.com/posts/e4G4E56ZiQqjXSxLp/blocking-live-failures-with-synchronous-monitors |

# How to Solve Secure Program Synthesis

*In* *which we propose a new measure of LLM self-awareness, test an array of recent models, find intriguing behaviors among today's... | https://www.lesswrong.com/posts/TfKM9PgztxieEcKiv/a-mirror-test-for-llms |

# Take note of how brightness makes you feel

I just moved into a new apartment and am working on getting my lighting set up. Like [polyamory](https://www.lesswrong.com/w/polyamory) and [group housing](https://www.lesswrong.com/w/group-houses-topic), [lighting](https://www.lesswrong.com/w/lighting) is one of those thin... | https://www.lesswrong.com/posts/rfdgNfWva5QsQF5n2/take-note-of-how-brightness-makes-you-feel |

# D&D.Sci Release Day: Topple the Tower Analysis & Ruleset

This is a follow-up to [last week's D&D.Sci scenario](https://www.lesswrong.com/posts/ucJrRBu2KxmAJHRTs/d-and-d-sci-release-day-topple-the-tower): if you intend to play that, and haven't done so yet, you should do so now before spoiling yourself.

There is a w... | https://www.lesswrong.com/posts/xtY9DAQiJMD2A2FfQ/d-and-d-sci-release-day-topple-the-tower-analysis-and |

# Slack in Cells, Slack in Brains

*\[A veridically metaphorical explanation of why you shouldn't naïvely cram your life with local optimizations (even for noble or altruistic reasons).\]*

***TL;DR:** You need Slack to be an effective agent. Slack is fragile, and it is tempting to myopically sacrifice it, and myopic s... | https://www.lesswrong.com/posts/nQd64RC5vXyqiFZLD/slack-in-cells-slack-in-brains |

# Arcta via est - the Narrow path out of gradual disempowerment

> Quam angusta porta, et arcta via est, quae ducit ad vitam: et pauci sunt qui inveniunt eam!

Biblia Sacra - Matthaeus 7:14

> The avalanche has already started. It is too late for the pebbles to vote.

Ambassador Kosh - Babylon 5

Not so fast, Ambassador... | https://www.lesswrong.com/posts/GzesxNznpfFudheni/arcta-via-est-the-narrow-path-out-of-gradual-disempowerment |

# Movie Review: The AI Doc

[The _AI Doc: Or How I Became an Apocaloptimist_ is a brilliant piece of work](https://letterboxd.com/thezvi/film/the-ai-doc-or-how-i-became-an-apocaloptimist/).

*tl;dr. Lens Academy is creating scalable superintelligence x-risk education with* [*several USPs*](https://www.lesswrong.com/posts/Lg3tZCXC8NMGbFrzm/superintelligence-risk-education-that-scales-lens-academy)*. Current team: Luc (full time founder, tec... | https://www.lesswrong.com/posts/LDbGob3XJ3LDBFmAe/co-found-lens-academy-with-me-we-have-early-users-and |

# Experiments With Opus 4.6's Fiction

**Note: I do not use LLMs in any of my fiction and do not claim the below story as my own.**

The Unslop prize has reminded me of my terrified fascination with AI Generated fiction. I have come to identify more as a writer since I posted [Experiments With Sonnet 4.5's Fiction](ht... | https://www.lesswrong.com/posts/Yf3dnBPbKkQsXsH2t/experiments-with-opus-4-6-s-fiction |

# Monday AI Radar #19

We are in a strange situation: the big labs take AI risk more seriously than the government, and are doing a better job of preparing for it. There has been surprising progress on alignment over the last few years, and some strong work figuring out how to shape model behavior. But society—and the ... | https://www.lesswrong.com/posts/tqjyPfcpKYYErMhQc/monday-ai-radar-19 |

# Product Alignment is not Superintelligence Alignment (and we need the latter to survive)

*tl;dr: progress on making Claude friendly*[^w1ebor1e7m] *is not the same as progress on making it safe to build godlike superintelligence. solving the former does not imply we get a good future.*[^00dz49oeqpist] *please track t... | https://www.lesswrong.com/posts/mrwYCNocXCP2hrWt8/product-alignment-is-not-superintelligence-alignment-and-we |
