id stringlengths 36 36 | source stringclasses 15 values | formatted_source stringclasses 13 values | text stringlengths 2 7.55M |

|---|---|---|---|

2aecd7cb-d41b-44c8-95a4-fd09ecb4fcd7 | trentmkelly/LessWrong-43k | LessWrong | Meetup : Helsinki Book Blanket Meetup

Discussion article for the meetup : Helsinki Book Blanket Meetup

WHEN: 16 August 2014 03:00:00PM (+0300)

WHERE: MANNERHEIMINTIE 13 A, Helsinki

Location changed to the large lobby-cafe of [http://www.musiikkitalo.fi/en] because of weather

Posted also as an event on Less Wrong Finland's Facebook group at https://www.facebook.com/events/632882116808767/ – viewing might require joining the group at https://www.facebook.com/groups/lw.finland/

––––

[Less Wrong Finland meetup]

Having recently grown into a hundred-member group, we will hold our (first!) Book Blanket Meetup which should work regardless of how many people attend!

The idea is that you can add books through a simple Google form below, and that the added books will be made into cards like those in the event photo. The resulting deck of book-cards will be brought to the meetup and used for discovering new books, and whether people have read the same books and how others felt about them — and perhaps for trading them in an attempt to build a Hand of Sanity in case you end up deserted on some island. (Would you risk it and pick highly-praised books you haven’t read, or rather resort to old favorites you know to be full of deep meaning? Come find out!)

The form (which seemed to me anonymous even if signed in to Google): https://docs.google.com/forms/d/1OCPK0_GLHBFEnB_WQ6e8lfFIbPlP1y7PahuQK9abeZg/viewform?usp=send_form)

Also, you're just as welcome even if you haven't read or submitted anything! Deserted islands are serious considerations regardless of reading history, and in any case others can practice explaining why some books could be good to read. And of course you can just ask others about books they admit having read, though everyone's bound to forget old books. Time to resurface memories?

––––

Time?: 08-16-2014 15:00:00 (UTC+03)

Place?: CHANGED: To be weather-safe, we're going to meet at the large lobby-cafe of Musiikkitalo (Mannerheimintie 13 A). To find me, I'll have an orange hoodie.

Discuss |

64ffd59c-df02-4c58-94fc-4d5f2c6c4315 | StampyAI/alignment-research-dataset/alignmentforum | Alignment Forum | Latent Adversarial Training

The Problem

===========

We’d like to train models to be robustly safe, even in environments that may fall well outside of the training distribution. Unfortunately all we get to work with is the training distribution, which makes ensuring robust generalization difficult.

Deception is an example of this concern. Models may be well-behaved in the training environment because they realize it is a training environment, but behave poorly in deployment because they notice the distributional shift.

An Approach

===========

Background

----------

It would be great if we could generate scenarios where the model behaves badly. For instance if the model is deceptive we’d like to generate inputs to trick it into thinking it’s been deployed so that it reveals that deception.

[Adversarial Training](https://ai-alignment.com/training-robust-corrigibility-ce0e0a3b9b4d?gi=94f6d1c3d161) (AT), and [Relaxed Adversarial Training](https://www.lesswrong.com/posts/9Dy5YRaoCxH9zuJqa/relaxed-adversarial-training-for-inner-alignment) (RAT), are approaches to this problem. Very briefly, in AT, we search for inputs that generate large loss, while in RAT we search for distributions of inputs or other more general evidence that the model is capable of generating large loss.

The approach I want to explore, called *Latent Adversarial Training* (LAT), is a form of RAT.

Concept

-------

Suppose we have two models, the Agent and the Surgeon. The Agent is the model we’re trying to train, and the Surgeon is going to be its adversary. The training proceeds as follows:

1. The Agent processes input x.mjx-chtml {display: inline-block; line-height: 0; text-indent: 0; text-align: left; text-transform: none; font-style: normal; font-weight: normal; font-size: 100%; font-size-adjust: none; letter-spacing: normal; word-wrap: normal; word-spacing: normal; white-space: nowrap; float: none; direction: ltr; max-width: none; max-height: none; min-width: 0; min-height: 0; border: 0; margin: 0; padding: 1px 0}

.MJXc-display {display: block; text-align: center; margin: 1em 0; padding: 0}

.mjx-chtml[tabindex]:focus, body :focus .mjx-chtml[tabindex] {display: inline-table}

.mjx-full-width {text-align: center; display: table-cell!important; width: 10000em}

.mjx-math {display: inline-block; border-collapse: separate; border-spacing: 0}

.mjx-math \* {display: inline-block; -webkit-box-sizing: content-box!important; -moz-box-sizing: content-box!important; box-sizing: content-box!important; text-align: left}

.mjx-numerator {display: block; text-align: center}

.mjx-denominator {display: block; text-align: center}

.MJXc-stacked {height: 0; position: relative}

.MJXc-stacked > \* {position: absolute}

.MJXc-bevelled > \* {display: inline-block}

.mjx-stack {display: inline-block}

.mjx-op {display: block}

.mjx-under {display: table-cell}

.mjx-over {display: block}

.mjx-over > \* {padding-left: 0px!important; padding-right: 0px!important}

.mjx-under > \* {padding-left: 0px!important; padding-right: 0px!important}

.mjx-stack > .mjx-sup {display: block}

.mjx-stack > .mjx-sub {display: block}

.mjx-prestack > .mjx-presup {display: block}

.mjx-prestack > .mjx-presub {display: block}

.mjx-delim-h > .mjx-char {display: inline-block}

.mjx-surd {vertical-align: top}

.mjx-surd + .mjx-box {display: inline-flex}

.mjx-mphantom \* {visibility: hidden}

.mjx-merror {background-color: #FFFF88; color: #CC0000; border: 1px solid #CC0000; padding: 2px 3px; font-style: normal; font-size: 90%}

.mjx-annotation-xml {line-height: normal}

.mjx-menclose > svg {fill: none; stroke: currentColor; overflow: visible}

.mjx-mtr {display: table-row}

.mjx-mlabeledtr {display: table-row}

.mjx-mtd {display: table-cell; text-align: center}

.mjx-label {display: table-row}

.mjx-box {display: inline-block}

.mjx-block {display: block}

.mjx-span {display: inline}

.mjx-char {display: block; white-space: pre}

.mjx-itable {display: inline-table; width: auto}

.mjx-row {display: table-row}

.mjx-cell {display: table-cell}

.mjx-table {display: table; width: 100%}

.mjx-line {display: block; height: 0}

.mjx-strut {width: 0; padding-top: 1em}

.mjx-vsize {width: 0}

.MJXc-space1 {margin-left: .167em}

.MJXc-space2 {margin-left: .222em}

.MJXc-space3 {margin-left: .278em}

.mjx-test.mjx-test-display {display: table!important}

.mjx-test.mjx-test-inline {display: inline!important; margin-right: -1px}

.mjx-test.mjx-test-default {display: block!important; clear: both}

.mjx-ex-box {display: inline-block!important; position: absolute; overflow: hidden; min-height: 0; max-height: none; padding: 0; border: 0; margin: 0; width: 1px; height: 60ex}

.mjx-test-inline .mjx-left-box {display: inline-block; width: 0; float: left}

.mjx-test-inline .mjx-right-box {display: inline-block; width: 0; float: right}

.mjx-test-display .mjx-right-box {display: table-cell!important; width: 10000em!important; min-width: 0; max-width: none; padding: 0; border: 0; margin: 0}

.MJXc-TeX-unknown-R {font-family: monospace; font-style: normal; font-weight: normal}

.MJXc-TeX-unknown-I {font-family: monospace; font-style: italic; font-weight: normal}

.MJXc-TeX-unknown-B {font-family: monospace; font-style: normal; font-weight: bold}

.MJXc-TeX-unknown-BI {font-family: monospace; font-style: italic; font-weight: bold}

.MJXc-TeX-ams-R {font-family: MJXc-TeX-ams-R,MJXc-TeX-ams-Rw}

.MJXc-TeX-cal-B {font-family: MJXc-TeX-cal-B,MJXc-TeX-cal-Bx,MJXc-TeX-cal-Bw}

.MJXc-TeX-frak-R {font-family: MJXc-TeX-frak-R,MJXc-TeX-frak-Rw}

.MJXc-TeX-frak-B {font-family: MJXc-TeX-frak-B,MJXc-TeX-frak-Bx,MJXc-TeX-frak-Bw}

.MJXc-TeX-math-BI {font-family: MJXc-TeX-math-BI,MJXc-TeX-math-BIx,MJXc-TeX-math-BIw}

.MJXc-TeX-sans-R {font-family: MJXc-TeX-sans-R,MJXc-TeX-sans-Rw}

.MJXc-TeX-sans-B {font-family: MJXc-TeX-sans-B,MJXc-TeX-sans-Bx,MJXc-TeX-sans-Bw}

.MJXc-TeX-sans-I {font-family: MJXc-TeX-sans-I,MJXc-TeX-sans-Ix,MJXc-TeX-sans-Iw}

.MJXc-TeX-script-R {font-family: MJXc-TeX-script-R,MJXc-TeX-script-Rw}

.MJXc-TeX-type-R {font-family: MJXc-TeX-type-R,MJXc-TeX-type-Rw}

.MJXc-TeX-cal-R {font-family: MJXc-TeX-cal-R,MJXc-TeX-cal-Rw}

.MJXc-TeX-main-B {font-family: MJXc-TeX-main-B,MJXc-TeX-main-Bx,MJXc-TeX-main-Bw}

.MJXc-TeX-main-I {font-family: MJXc-TeX-main-I,MJXc-TeX-main-Ix,MJXc-TeX-main-Iw}

.MJXc-TeX-main-R {font-family: MJXc-TeX-main-R,MJXc-TeX-main-Rw}

.MJXc-TeX-math-I {font-family: MJXc-TeX-math-I,MJXc-TeX-math-Ix,MJXc-TeX-math-Iw}

.MJXc-TeX-size1-R {font-family: MJXc-TeX-size1-R,MJXc-TeX-size1-Rw}

.MJXc-TeX-size2-R {font-family: MJXc-TeX-size2-R,MJXc-TeX-size2-Rw}

.MJXc-TeX-size3-R {font-family: MJXc-TeX-size3-R,MJXc-TeX-size3-Rw}

.MJXc-TeX-size4-R {font-family: MJXc-TeX-size4-R,MJXc-TeX-size4-Rw}

.MJXc-TeX-vec-R {font-family: MJXc-TeX-vec-R,MJXc-TeX-vec-Rw}

.MJXc-TeX-vec-B {font-family: MJXc-TeX-vec-B,MJXc-TeX-vec-Bx,MJXc-TeX-vec-Bw}

@font-face {font-family: MJXc-TeX-ams-R; src: local('MathJax\_AMS'), local('MathJax\_AMS-Regular')}

@font-face {font-family: MJXc-TeX-ams-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_AMS-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_AMS-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_AMS-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-cal-B; src: local('MathJax\_Caligraphic Bold'), local('MathJax\_Caligraphic-Bold')}

@font-face {font-family: MJXc-TeX-cal-Bx; src: local('MathJax\_Caligraphic'); font-weight: bold}

@font-face {font-family: MJXc-TeX-cal-Bw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Caligraphic-Bold.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Caligraphic-Bold.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Caligraphic-Bold.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-frak-R; src: local('MathJax\_Fraktur'), local('MathJax\_Fraktur-Regular')}

@font-face {font-family: MJXc-TeX-frak-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Fraktur-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Fraktur-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Fraktur-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-frak-B; src: local('MathJax\_Fraktur Bold'), local('MathJax\_Fraktur-Bold')}

@font-face {font-family: MJXc-TeX-frak-Bx; src: local('MathJax\_Fraktur'); font-weight: bold}

@font-face {font-family: MJXc-TeX-frak-Bw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Fraktur-Bold.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Fraktur-Bold.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Fraktur-Bold.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-math-BI; src: local('MathJax\_Math BoldItalic'), local('MathJax\_Math-BoldItalic')}

@font-face {font-family: MJXc-TeX-math-BIx; src: local('MathJax\_Math'); font-weight: bold; font-style: italic}

@font-face {font-family: MJXc-TeX-math-BIw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Math-BoldItalic.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Math-BoldItalic.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Math-BoldItalic.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-sans-R; src: local('MathJax\_SansSerif'), local('MathJax\_SansSerif-Regular')}

@font-face {font-family: MJXc-TeX-sans-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_SansSerif-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_SansSerif-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_SansSerif-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-sans-B; src: local('MathJax\_SansSerif Bold'), local('MathJax\_SansSerif-Bold')}

@font-face {font-family: MJXc-TeX-sans-Bx; src: local('MathJax\_SansSerif'); font-weight: bold}

@font-face {font-family: MJXc-TeX-sans-Bw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_SansSerif-Bold.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_SansSerif-Bold.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_SansSerif-Bold.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-sans-I; src: local('MathJax\_SansSerif Italic'), local('MathJax\_SansSerif-Italic')}

@font-face {font-family: MJXc-TeX-sans-Ix; src: local('MathJax\_SansSerif'); font-style: italic}

@font-face {font-family: MJXc-TeX-sans-Iw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_SansSerif-Italic.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_SansSerif-Italic.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_SansSerif-Italic.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-script-R; src: local('MathJax\_Script'), local('MathJax\_Script-Regular')}

@font-face {font-family: MJXc-TeX-script-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Script-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Script-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Script-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-type-R; src: local('MathJax\_Typewriter'), local('MathJax\_Typewriter-Regular')}

@font-face {font-family: MJXc-TeX-type-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Typewriter-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Typewriter-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Typewriter-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-cal-R; src: local('MathJax\_Caligraphic'), local('MathJax\_Caligraphic-Regular')}

@font-face {font-family: MJXc-TeX-cal-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Caligraphic-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Caligraphic-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Caligraphic-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-main-B; src: local('MathJax\_Main Bold'), local('MathJax\_Main-Bold')}

@font-face {font-family: MJXc-TeX-main-Bx; src: local('MathJax\_Main'); font-weight: bold}

@font-face {font-family: MJXc-TeX-main-Bw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Main-Bold.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Main-Bold.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Main-Bold.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-main-I; src: local('MathJax\_Main Italic'), local('MathJax\_Main-Italic')}

@font-face {font-family: MJXc-TeX-main-Ix; src: local('MathJax\_Main'); font-style: italic}

@font-face {font-family: MJXc-TeX-main-Iw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Main-Italic.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Main-Italic.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Main-Italic.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-main-R; src: local('MathJax\_Main'), local('MathJax\_Main-Regular')}

@font-face {font-family: MJXc-TeX-main-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Main-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Main-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Main-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-math-I; src: local('MathJax\_Math Italic'), local('MathJax\_Math-Italic')}

@font-face {font-family: MJXc-TeX-math-Ix; src: local('MathJax\_Math'); font-style: italic}

@font-face {font-family: MJXc-TeX-math-Iw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Math-Italic.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Math-Italic.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Math-Italic.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-size1-R; src: local('MathJax\_Size1'), local('MathJax\_Size1-Regular')}

@font-face {font-family: MJXc-TeX-size1-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Size1-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Size1-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Size1-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-size2-R; src: local('MathJax\_Size2'), local('MathJax\_Size2-Regular')}

@font-face {font-family: MJXc-TeX-size2-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Size2-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Size2-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Size2-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-size3-R; src: local('MathJax\_Size3'), local('MathJax\_Size3-Regular')}

@font-face {font-family: MJXc-TeX-size3-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Size3-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Size3-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Size3-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-size4-R; src: local('MathJax\_Size4'), local('MathJax\_Size4-Regular')}

@font-face {font-family: MJXc-TeX-size4-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Size4-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Size4-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Size4-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-vec-R; src: local('MathJax\_Vector'), local('MathJax\_Vector-Regular')}

@font-face {font-family: MJXc-TeX-vec-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Vector-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Vector-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Vector-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-vec-B; src: local('MathJax\_Vector Bold'), local('MathJax\_Vector-Bold')}

@font-face {font-family: MJXc-TeX-vec-Bx; src: local('MathJax\_Vector'); font-weight: bold}

@font-face {font-family: MJXc-TeX-vec-Bw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Vector-Bold.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Vector-Bold.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Vector-Bold.otf') format('opentype')}

from the environment, producing latent state s(x) and output y(s).

2. The Surgeon takes inputs (x,s,y) and searches for a modification s→s+δs such that y(s+δs) does poorly on the loss function L(y).

3. We update the Agent based on L(y(s+δs)), treating the Surgeon’s modifications as constants.

The idea is that the Surgeon gets to meddle with the latent state of the Agent with full knowledge of what the Agent was planning on doing, and we train the Agent to be robust against this kind of attack.

Implementation

--------------

Concretely, suppose the Agent is a neural network. The latent state s consists of the activations of all the neurons and the Surgeon gets to specify modifications like “this neuron gets +5”.

How does the Surgeon decide what to do? There are a number of options. It could examine the gradient of the Agent’s loss with respect to each activation, then push on the activations that give it the most loss. Or it could randomly generate its modifications and use some other optimization process like the Metropolis-Hastings algorithm to identify loss-maximizing options.

In general I’m imagining the Surgeon as more of a [fuzzer](https://en.wikipedia.org/wiki/Fuzzing) with access to program memory and less of a complicated model. In principle it could be some complicated neural network, but then I’d want it to be simpler than the Agent, possibly of bounded size, so I could be quite sure I understood what it was doing. The last thing I want is a misaligned Surgeon trying to achieve its own mesa-objective by programming the Agent to be Surgeon-aligned and deceptive against humans.

I suspect it’s possible to have the Surgeon pick useful modifications that generate meaningful changes in the loss without having the Surgeon’s complexity scale with that of the Agent, but that’s just an intuition, and if it fails then this approach looks a lot less appealing.

Core Ideas

----------

The key difference between LAT and Adversarial Training is that the Surgeon gets to directly manipulate the Agent’s inner state, which makes the Surgeon’s job much easier than in the ordinary adversarial training setup.

Take the [classic example](https://ai-alignment.com/training-robust-corrigibility-ce0e0a3b9b4d) of an Agent that only defects if it sees a valid solution to a hard cryptographic problem: the Surgeon doesn’t need to identify an input that causes the Agent to defect, it only needs to identify that somewhere in the middle of the Agent there is a switch which, if flipped, causes the Agent to defect and generate massive loss.

In a sense the Surgeon plays the role of intrusive thoughts. It can force the Agent to think of something bad (e.g. hurting humans) even if the input is perfectly benign. We can then train the Agent to have safeguards, so it does not act on such thoughts.

Challenges

==========

The Surgeon needs constraints

-----------------------------

The first challenge is that LAT works too well unless we constrain the kinds of modifications the Surgeon can impose on the Agent’s latent state. For instance, if our goal is to make a language model never output the word “kill”, the Surgeon can always force a large loss by strongly shifting the activations in the final layer towards the tokens that generate the word “kill”.

So we need to bind the Surgeon’s hands enough to make its task non-trivial, but not so much that it fails to force the Agent to be robustly safe. How can we do this?

A special case is that the Surgeon shouldn’t be able to meddle too close to the output layer. That clearly makes its job too easy in ways that don’t help with safety. We probably want to forbid the Surgeon from touching the last few layers in the model, and as long as that remains “a few layers” and not something that scales up with model size it seems plausible that interpretability tools can tell us if anything fishy is happening in the parts the Surgeon can’t touch.

Beyond that, it seems important to force the Surgeon’s modifications to be sparse. This probably means restricting it so that it cannot modify more than e.g. 10% of the activations in a given layer. Otherwise it would be possible to completely change the model’s latent state from that point on, which again may make the job too easy.

Similarly, it’s probably important to bound the size of the Surgeon’s modifications (e.g. +5 is okay, but +109 is not). A sufficiently extreme modification to even a single activation can probably break the Agent’s output in unhelpful ways.

These are just the constraints that I can think of. We may need more. Fortunately the failure mode here is easy to notice because it looks like the Agent *always* doing badly after the Surgeon fiddles with its latent state. If the Surgeon always wins that’s a problem because it means we’re not making progress. Eventually we want an Agent that can robustly do good things despite the Surgeon’s meddling.

The precise constraints we need to prevent this failure mode can likely be determined empirically on small Agents. The failure mode of “the Surgeon hijacks the Agent by doing something extreme and obviously bad” probably looks similar for small and large language models, for instance, and I suspect does not change in complexity at scale. I could be wrong about that, but that’s my intuition.

It’s also possible that we can start with many constraints and, as the Agent starts to be robustly safe despite the Surgeon’s meddling, untie the Surgeon’s hands and give it more room to play. A sufficiently free Surgeon should always win over even very robustly safe Agents, but it’s possible that through the training process the Agent can learn to handle quite strong Surgeons.

The Agent can be inscrutable

----------------------------

Latent Adversarial Training only works if the Surgeon can identify places where it can modify the Agent’s latent state to cause problems. If we constrain the Surgeon so this task isn’t trivial, it could be that it becomes impossible.

For instance, it could be that the calculations the Agent does are encrypted, so that it is very hard to control its outputs robustly by changing distant parts of the latent state. This seems unlikely for models that aren’t actively trying to deceive us, as a number of different kinds of models have revealed [rich conceptual structure](https://distill.pub/2020/circuits/zoom-in/) in their layers. Still, we’re worried about deceptive models so this seems like an important challenge to address.

I think the only hope here lies in the Surgeon forcing the model to be robustly safe before it learns to deceive. Once the model is deceptive its really game-over.

Is this possible? Maybe, and it almost certainly depends on the prior the model is sampled from.

My weak suspicion is that early deception probably looks like a subcircuit that detects if the model is in training, connected to a switch that flips between good and bad behavior. That switch seems like a good target for the Surgeon, so if it finds it early on (before the model has gradient-hacked its way into a more inscrutable structure) it could force the model to defect while in training, revealing large losses and allowing us to remedy the situation. Early deception might be more obfuscated than this, but probably not so obfuscated that the Surgeon can’t find the pattern of latent state corresponding to “defect on deployment”.

Summary

=======

Latent Adversarial Training is an approach where an adversary (the Surgeon) attempts to force a model (the Agent) to behave badly by altering its latent state. In this way we can identify ways that the model may be capable of behaving badly, and train the model to be more robustly safe even on very different distributions.

The core task of LAT is much easier than that of regular Adversarial Training, indeed so much easier that one of the key challenges to making LAT work is placing enough constraints on the adversary to make its job non-trivial, while not placing so many as to make it impossible.

A further challenge is that the adversary itself needs to be safe, which in practice likely means the adversary needs to be well-understood by humans, more akin to a fuzzer than an ML model. In particular this means that the adversary must be much simpler than any large model it attacks. This may not be a problem, it may suffice that the adversary has access to the full internal state of the model, but it is a limitation worth bearing in mind.

Finally, LAT cannot make a model safe once that model has developed robust deception, so it must be employed from the beginning to (ideally) prevent deception from taking root.

*Thanks to Evan Hubinger and Nicholas Schiefer for discussions on LAT.* |

18729fea-7727-4012-98ae-349fcd2da021 | trentmkelly/LessWrong-43k | LessWrong | AI #79: Ready for Some Football

I have never been more ready for Some Football.

Have I learned all about the teams and players in detail? No, I have been rather busy, and have not had the opportunity to do that, although I eagerly await Seth Burn’s Football Preview. I’ll have to do that part on the fly.

But oh my would a change of pace and chance to relax be welcome. It is time.

The debate over SB 1047 has been dominating for weeks. I’ve now said my peace on the bill and how it works, and compiled the reactions in support and opposition. There are two small orders of business left for the weekly. One is the absurd Chamber of Commerce ‘poll’ that is the equivalent of a pollster asking if you support John Smith, who recently killed your dog and who opponents say will likely kill again, while hoping you fail to notice you never had a dog.

The other is a (hopefully last) illustration that those who obsess highly disingenuously over funding sources for safety advocates are, themselves, deeply conflicted by their funding sources. It is remarkable how consistently so many cynical self-interested actors project their own motives and morality onto others.

The bill has passed the Assembly and now it is up to Gavin Newsom, where the odds are roughly 50/50. I sincerely hope that is a wrap on all that, at least this time out, and I have set my bar for further comment much higher going forward. Newsom might also sign various other AI bills.

Otherwise, it was a fun and hopeful week. We saw a lot of Mundane Utility, Gemini updates, OpenAI and Anthropic made an advance review deal with the American AISI and The Economist pointing out China is non-zero amounts of safety pilled. I have another hopeful iron in the fire as well, although that likely will take a few weeks.

And for those who aren’t into football? I’ve also been enjoying Nate Silver’s On the Edge. So far, I can report that the first section on gambling is, from what I know, both fun and remarkably accurate.

TABLE OF CONTENTS

1. Introduction.

|

db0cd6fa-22f5-4d1d-a0b2-9b23f4288932 | trentmkelly/LessWrong-43k | LessWrong | Undiscriminating Skepticism

Tl;dr: Since it can be cheap and easy to attack everything your tribe doesn't believe, you shouldn't trust the rationality of just anyone who slams astrology and creationism; these beliefs aren't just false, they're also non-tribal among educated audiences. Test what happens when a "skeptic" argues for a non-tribal belief, or argues against a tribal belief, before you decide they're good general rationalists. This post is intended to be reasonably accessible to outside audiences.

I don't believe in UFOs. I don't believe in astrology. I don't believe in homeopathy. I don't believe in creationism. I don't believe there were explosives planted in the World Trade Center. I don't believe in haunted houses. I don't believe in perpetual motion machines. I believe that all these beliefs are not only wrong but visibly insane.

If you know nothing else about me but this, how much credit should you give me for general rationality?

Certainly anyone who was skillful at adding up evidence, considering alternative explanations, and assessing prior probabilities, would end up disbelieving in all of these.

But there would also be a simpler explanation for my views, a less rare factor that could explain it: I could just be anti-non-mainstream. I could be in the habit of hanging out in moderately educated circles, and know that astrology and homeopathy are not accepted beliefs of my tribe. Or just perceptually recognize them, on a wordless level, as "sounding weird". And I could mock anything that sounds weird and that my fellow tribesfolk don't believe, much as creationists who hang out with fellow creationists mock evolution for its ludicrous assertion that apes give birth to human beings.

You can get cheap credit for rationality by mocking wrong beliefs that everyone in your social circle already believes to be wrong. It wouldn't mean that I have any ability at all to notice a wrong belief that the people around me believe to be right, or vice versa - to further |

d5d72575-d2bb-4dec-9deb-dece2acd0fc0 | trentmkelly/LessWrong-43k | LessWrong | Meetup : Atlanta Lesswrong September Meetup (2nd of 2)

Discussion article for the meetup : Atlanta Lesswrong September Meetup (2nd of 2)

WHEN: 28 September 2013 06:00:00PM (-0400)

WHERE: 2388 Lawrenceville Hwy. Apt L. Decatur, GA 30033

Come join us for the second meetup for the month of September! We'll be doing our normal eclectic mix of self-improvement brainstorming, educational mini-presentations, structured discussion, unstructured discussion, and social fun and games times!

Please contact me if you have cat allergies, as our meeting space has cats. Incredibly cute cats.

And check out ATLesswrong's facebook group, if you haven't already: https://www.facebook.com/groups/100137206844878/ where you can connect with Atlanta Lesswrongers and suggest a topics for discussion at this meetup!

Discussion article for the meetup : Atlanta Lesswrong September Meetup (2nd of 2) |

0e57580f-cabe-4aec-b4d3-90c9e55fbf0b | trentmkelly/LessWrong-43k | LessWrong | Inscrutable Ideas

David Chapman has issued something of a challenge to those of us thinking in the space of what he calls the meta-rational, many people call the post-modern, and I call the holonic. He thinks we can and should be less opaque, more comprehensible, and less inscrutable (specifically less inscrutable to rationalism and rationalists).

Ignorant, irrelevant, and inscrutable

I have changed my mind. It should go without saying that rationality is better than irrationality. But now I realize…meaningness.com

I’ve thought about this issue a lot. My previous blogging project hit a dead end when I reached the point of needing to explain holonic thinking. Around this time I contracted obscurantism and spent several months only sharing my philosophical writing with a few people on Facebook in barely decipherable stream-of-consciousness posts. But during this time I also worked on developing a pedagogy, manifested in a self-help book, that would allow people to follow in my footsteps even if I couldn’t explain my ideas. That project produced three things: an unpublished book draft, one mantra of advice, and a realization that the way can only be walked, not followed. So when I returned to blogging here on Medium my goal was not to be deliberately obscure, but also not to be reliably understood. I had come to terms with the idea that my thoughts might never be fully explicable, but I could at least still write for those without too much dust in their eyes.

The trouble is that holonic thought is necessarily inscrutable without the use of holons, and history shows this makes it very difficult to teach or explain holonic thinking to others. For example, the first wave of post-modernists like Foucault, Derrida, and Lyotard applied Heidegger’s phenomenological epistemology to develop complex, multi-faceted understandings of history, literature, and academic culture. Unfortunately they did this in an environment of high modernism where classical rationalism was taken for granted, so the |

e0f57699-2e83-4890-9f2a-1a4bd9a68ff0 | StampyAI/alignment-research-dataset/lesswrong | LessWrong | The Limits of Automation

>

> Too much time is wasted because of the assumption that methods already in existence will solve problems for which they were not designed; too many hypotheses and systems of thought in philosophy and elsewhere are based on the bizarre view that we, at this point in history, are in possession of the basic forms of understanding needed to comprehend absolutely anything. — *Thomas Nagel, The View from Nowhere*.

>

>

>

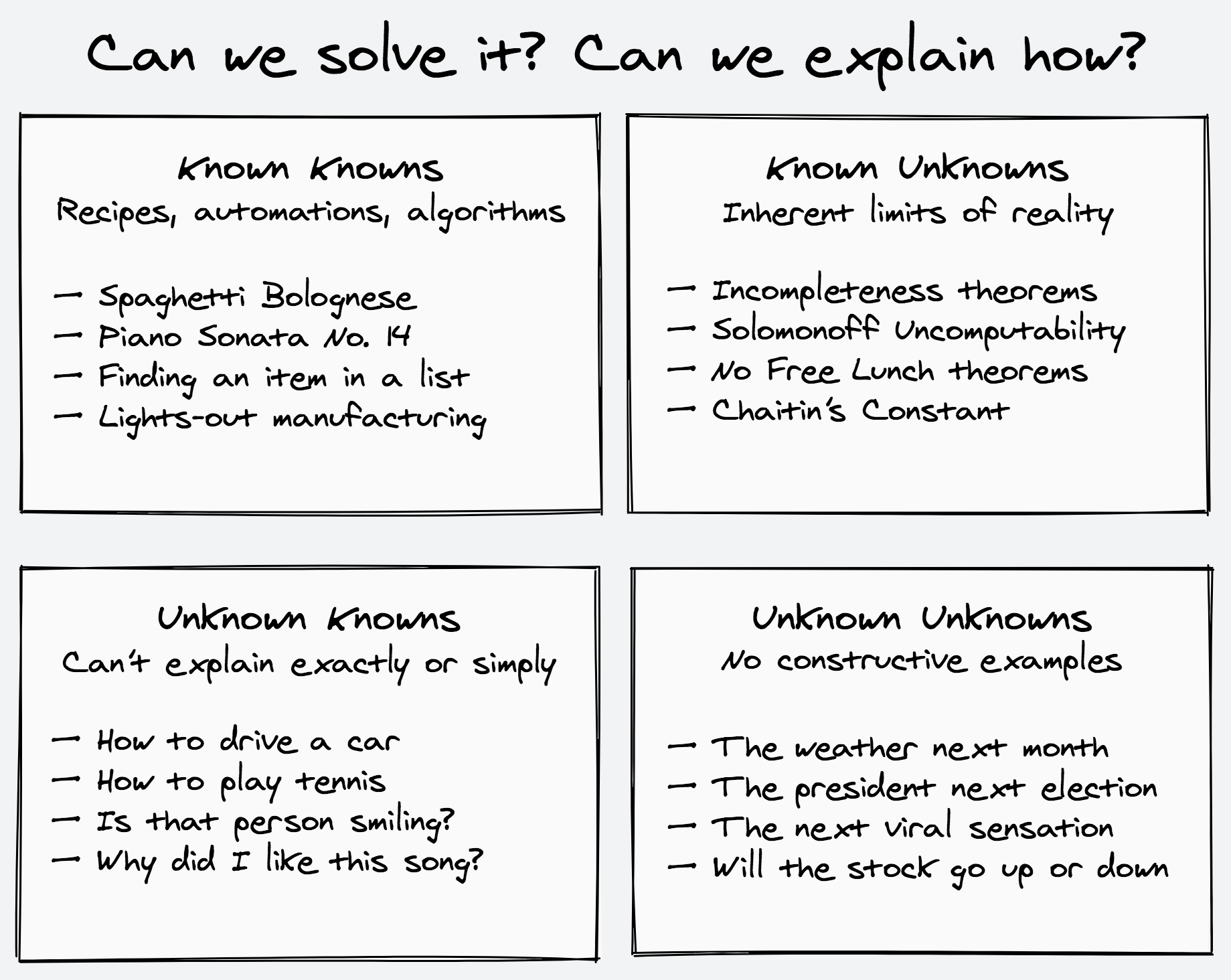

One way to think about the challenges we’re facing in our day-to-day and professional lives is to classify them based on our knowledge of our knowledge.

Put another, less mind-bending way: do we know how to solve it? And if so, do we know how we’re doing it? Can we explain everything in such exact detail so that even a machine can imitate our process, or are there blanks that have to be filled in by experience? This neatly categorizes any problem to one of four kinds.

The **Known Knowns** are the things we know how to solve and exactly how we’re solving them. These problems are reducible to their individual constituent parts and can be automated as factories, recipes, and algorithms. The solutions are straightforward, even if sometimes finicky and exacting. They lend themselves to mnemonical, atomic, modularized forms. They are what we hope to arrive at, so that we can give the keys to a well-oiled machine and continue with the more interesting parts of our lives.

The **Known Unknowns** are the things we know will forever be out of reach. These are the inherent limits, difficulties, and impossibilities of physical, mathematical, and computational reality — the Bekenstein bound, Gödel’s incompleteness theorems, and computational complexity separations are just some of the results that perpetually obstruct our way. We can only accept them and work around them.

The **Unknown Knowns**, or *I know it when I see it*, are the things we do know how to solve, but aren’t exactly sure how we’re doing so. These are problems of both high-level subjective and cultivated taste and low-level sensorimotor skills. (Hence the dangerous apple-bite economical polarization in which automation forces the comfortable center into becoming either a cog or a maverick).

There’s taste: picking the right song for the mood, the right word for a sentence, the right color for a painting. And sense: reading facial expressions, navigating in a packed crowd, deciding if a pause is a break for thought or a space for response. The doors leading to the engine room of the mechanics involved seem forever barred to us. We can only observe and probe how we handle these problems indirectly and from the outside.

Trying to outline these problems we see our pen taking many turns. There’s a lot of ifs and buts and not necessarilies and sometimes, and always there’s those pesky special cases, a slight resemblance of sorts, and tiny critical details preventing any kind of elegance from forming. These problems refuse to submit to a digestible explanation, a clear story, a napkin calculation, an equation we can do in our heads, or a categorization that can be easily shelved and retrieved. They are not succinct nor simple to describe.

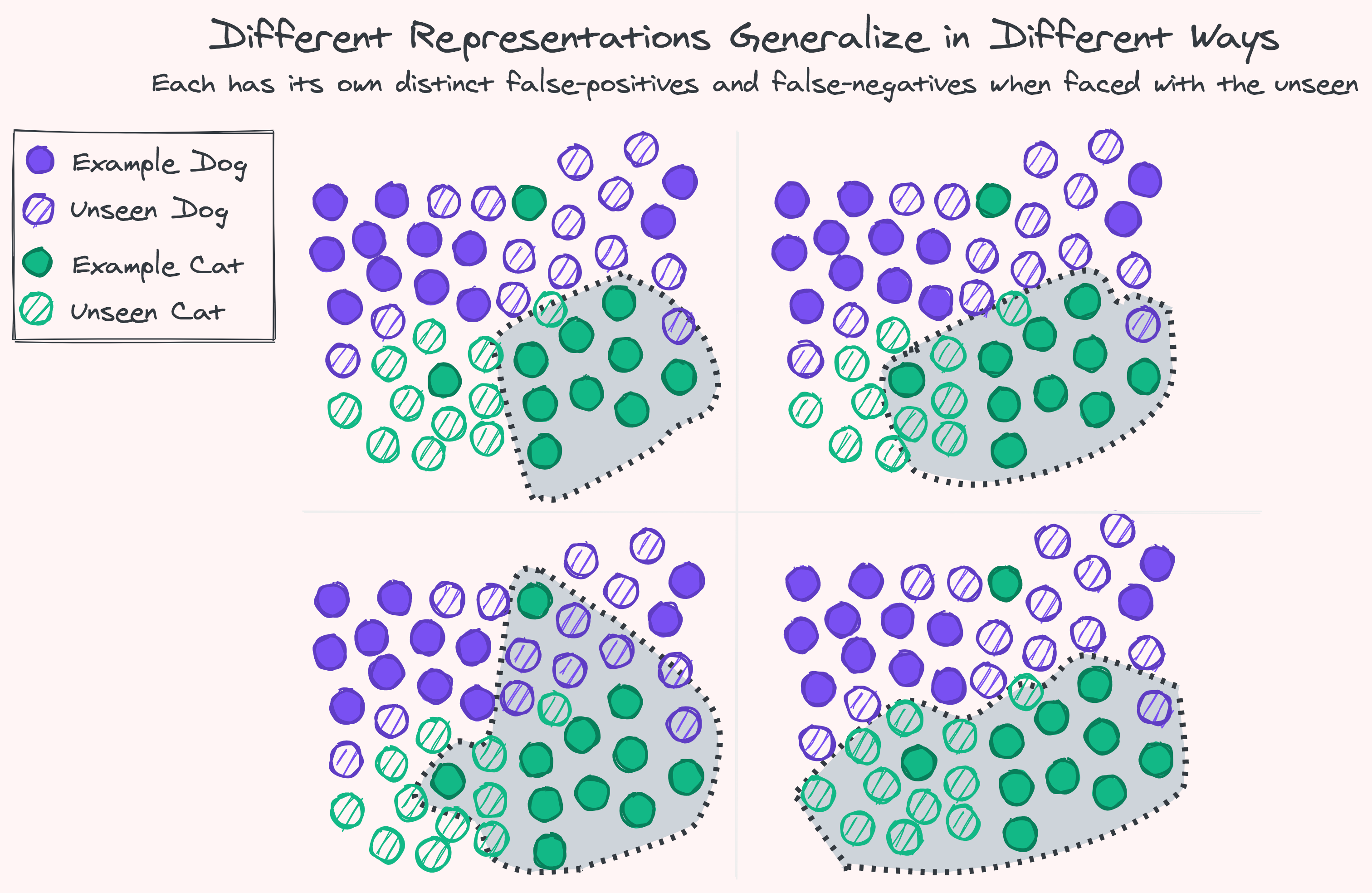

Up until recently the unknown knowns were the prerogative of humans, laborers and curators alike. Machine Learning has made it possible for computers to carve their own niche here using optimization of universal function approximators.

With enough labeled examples and computational capacity there’s no such task we cannot automate an imitation of: collect a list of what’s this and what’s that, sketch some dividing lines at random to start, and gradually move the lines around — squeezing, scaling, rotating, smoothing, and bending — progressively getting a better approximation, a tighter shape around what we already know to be true. Our hope by the end of this process is to have a shape that hugs our existing knowledge in such a way that allows the right kind of as-yet-unseen examples to comfortably fit, while excluding the rest.

Can this process of automation based only on assumption and past experience capture everything we don’t already know? Can it shed light on unseen variations, hidden explanations, and rare combinations? Can it create or discover anything new? The answer is a resounding, uh, maybe, sometimes.

Lucky for us, many of the situations we encounter are similar to those we’ve already experienced. Whatever difference there is does not completely alter the situation and can usually be resolved by playing around with the same known ingredients. We can interpolate from the past, even extrapolate, and usually get things right when the foundations are stable and unchanging.

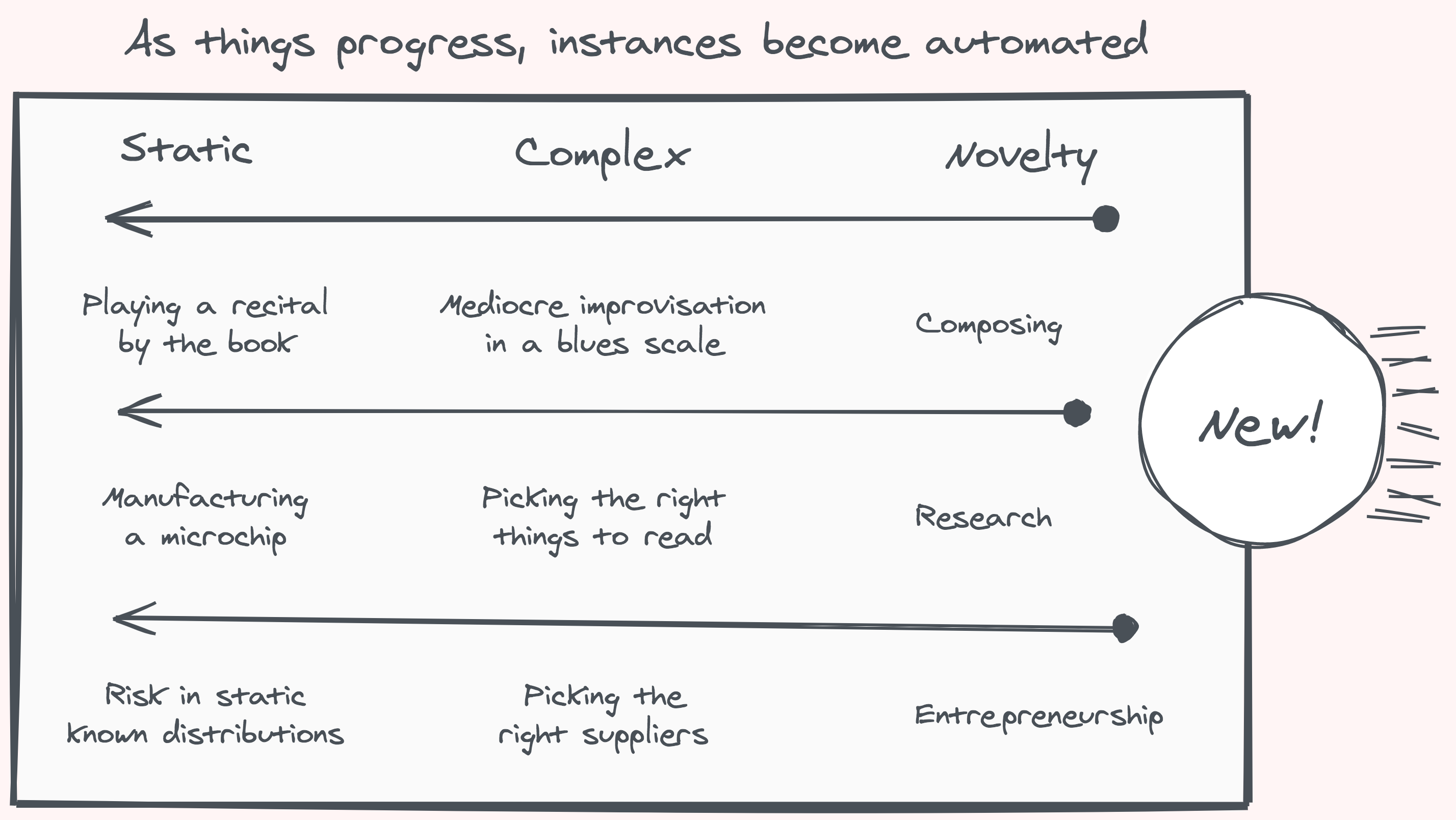

But, as the usual disclaimer goes: past performance is no guarantee of future results. Fortunately or not, our reality is an ever-growing ever-changing non-ergodic uncontained self-referential mess. There’s no guarantee that anything — and certainly not everything — will remain the same. In life, reactive predictions can only get us so far. The landscape is always evolving: following along means being a step behind. The story of the future cannot be told today.

The **Unknown Unknowns** are the black swans, the things we don’t even know that we don’t know. The things we can’t even give an example of. By the sheer act of thinking about them we give them form and make them imaginable, known, and expected. There is no specific constructive instance to point at. The specifics of the unknown can only be talked about subjectively and rationalized retrospectively. Like capturing a wild animal, any instance you find is already in the process of being tamed.

No matter how much we advance, there is always a constant stream of startlingly fresh unknown unknowns to find. And even though most will forever remain in darkness and we’ll never be aware of their existence — let alone figure them out — there is a smaller infinity always at the cusp, ripe for discovery. In time, we’ll get to know them, get to have a feeling of what is and what isn’t about them, and, with considerable effort, articulate the why and automate the how.

As we better our grasp, the specific instances we encounter move toward the knowable end of the continuum, but their class can remain an everlasting source of new experiences. There will always be new clichés. The memes and neologisms that make you feel old and confused will not stop. Always new ways for people to express themselves: new stories to tell, new art to inspire, new music to touch. Though our worldview is now clear on some laws and theorems that stumped the brightest of minds for centuries, we will always have more mathematical truths and proofs to discover.

We can imagine the growth of knowledge as a sphere slowly pushing outwards in all directions. At its core is the hard-fought solidified knowledge we’ve assimilated to such a degree we can wield it as we please. The mantle is more fluid: techniques are given names, processes are formed, rules of thumb get enshrined — all on their way to being set in stone. Further up, there’s wilderness at the surface. Here no rules apply, for if we find a law that describes a phenomena we could quickly drag it below. That is our goal: To cast the unknown in malleable forms.

The unknown unknowns are hard to find but can be easy to understand. We can hardly imagine how unobvious these things were, how much skill and perseverance were involved in figuring them out. It took generations of brilliant effort to come up with the things we now expect high-schoolers to master. We ask ourselves how we could not foresee the now oh-so-obvious market bubble or the second-order effects of a foreign policy yet we forget that only in the eighteenth century, about a hundred years after calculus was first formally described, did the concept of a sandwich enter Europe.

So far, the unknown unknowns have only been tackled successfully by evolutionary forces and the human mind, using insight and serendipity. The separation, where our methods of automation fail, and only mind and life succeed, is at the frontier of the new. Here, the unexpected is a recurring theme, and there’s no hope in imitation, whether it mimics a static pose or a vector of motion. Following a path is not charting a course. The data is not the data-generating process. Imitation might be good enough, until it isn’t.

Back on the comfortable side of the line, where the known is on its way to being controlled and automated, there is little point to flair, invention, and exploration, as all you need once found is to follow the recorded steps. Over time, for any specific thing, automation always wins. And that’s okay. That’s what we want. If you already know perfectly well what you’re doing, what’s the point of doing it? There’s plenty else to discover.

>

> As Popper has pointed out, the future course of human affairs depends on the future growth of knowledge. And we cannot predict what specific knowledge will be created in the future — because if we could, we should by definition already possess that knowledge in the present. — *David Deutsch, The Fabric of Reality*.

>

>

> |

6c5014a6-7e90-41ea-8252-efe6b0a15d80 | trentmkelly/LessWrong-43k | LessWrong | Dath Ilani Rule of Law

Minor spoilers for mad investor chaos and the woman of asmodeus (planecrash Book 1).

Also, be warned: citation links in this post link to a NSFW subthread in the story.

Criminal Law and Dath Ilan

> When Keltham was very young indeed, it was explained to him that if somebody old enough to know better were to deliberately kill somebody, Civilization would send them to the Last Resort (an island landmass that another world might call 'Japan'), and that if Keltham deliberately killed somebody and destroyed their brain, Civilization would just put him into cryonic suspension immediately.

>

> It was carefully and rigorously emphasized to Keltham, in a distinction whose tremendous importance he would not understand until a few years later, that this was not a threat. It was not a promise of conditional punishment. Civilization was not trying to extort him into not killing people, into doing what Civilization wanted instead of what Keltham wanted, based on a prediction that Keltham would obey if placed into a counterfactual payoff matrix where Civilization would send him to the Last Resort if and only if he killed. It was just that, if Keltham demonstrated a tendency to kill people, the other people in Civilization would have a natural incentive to transport Keltham to the Last Resort, so he wouldn't kill any others of their number; Civilization would have that incentive to exile him regardless of whether Keltham responded to that prospective payoff structure. If Keltham deliberately killed somebody and let their brain-soul perish, Keltham would be immediately put into cryonic suspension, not to further escalate the threat against the more undesired behavior, but because he'd demonstrated a level of danger to which Civilization didn't want to expose the other exiles in the Last Resort.

>

> Because, of course, if you try to make a threat against somebody, the only reason why you'd do that, is if you believed they'd respond to the threat; that, intuitively, is what t |

daec93d9-de1d-4d58-b178-3b19e71248c8 | trentmkelly/LessWrong-43k | LessWrong | I’ve become a medical mystery and I don’t know how to effectively get help

To put it very mildly, I’m in a really bad way emotionally because of this and I’m running out of ideas. Any ideas are welcome—specific to symptoms, or just generally how to deal with having a problem and not being able tot get diagnosis or treatment.

TL;DR: I’ve been having paresthesias and neuropathic pain over most of my body, but in shifting locations, since March 30th of 2022. (Paresthesia is like the sensation of a limb falling asleep, neuropathic pain is like that but most of a painful prick or electric shock-like feeling). I’ve seen my PCP, ENT, psychiatrist, neurologist, dentist, physical therapist and message therapist, but I don’t have a diagnosis.

Longer version: On March 30th I work up with a knot (TMJ) in my left jaw and paresthesia in my left leg. I also had dry needling done on my left hip that previous day but a professional licensed practitioner (someone who should have not done a lot of damage).

My PCP did a lot of blood tests (including b12, b6 and ferritin serum). All of these were normal. He able to feel the knot in my left masseter (jaw muscle). Referred to ENT, Neurologist, Dentist.

I got an acrylic night guard for the TMJ from my dentist.

ENT was kind of a jerk and was like “I don’t believe in TMJ treatments other than night guards—not sure what paresthesias are about.”

PT was most helpful. Found limited mobility C3-C5 on left side of neck and limited mobility L5-S1. Thought to could be Radiculopathy—which still makes the most sense. He did some massage kind of things to try for improve the mobility, but didn’t seem to make much of a difference.

Neurologist— suggested it was because of bad sleep hygiene and anxiety (both of which I have and take Rxs for). and x-rayed my neck. While I was bent in on the tight side the radiologist didn’t see anything worrying. Doesn’t think it’s radiculopathy, but I don’t know that he’s really done enough imaging to rule this out.

I have an appointment to see a TMJ specialist but can’t get in to see hi |

ab32f796-4b44-4adf-9701-974223c5f2c8 | trentmkelly/LessWrong-43k | LessWrong | 23andMe potentially for sale for <$50M

It seems the company has gone bankrupt and wants to be bought and you can probably get their data if you buy it. I'm not sure how thorough their transcription is, but they have records for at least 10 million people (who voluntarily took a DNA test and even paid for it!). Maybe this could be useful for the embryo selection/editing people. This is several times cheaper than last April.

The market cap is presently $19.5M but I'm not sure how that relates to the sale price. |

c4f0706c-2376-44c0-bf3f-919e8b9524b4 | trentmkelly/LessWrong-43k | LessWrong | Planning Fallacy

The Denver International Airport opened 16 months late, at a cost overrun of $2 billion.1

The Eurofighter Typhoon, a joint defense project of several European countries, was delivered 54 months late at a cost of $19 billion instead of $7 billion.

The Sydney Opera House may be the most legendary construction overrun of all time, originally estimated to be completed in 1963 for $7 million, and finally completed in 1973 for $102 million.2

Are these isolated disasters brought to our attention by selective availability? Are they symptoms of bureaucracy or government incentive failures? Yes, very probably. But there’s also a corresponding cognitive bias, replicated in experiments with individual planners.

Buehler et al. asked their students for estimates of when they (the students) thought they would complete their personal academic projects.3 Specifically, the researchers asked for estimated times by which the students thought it was 50%, 75%, and 99% probable their personal projects would be done. Would you care to guess how many students finished on or before their estimated 50%, 75%, and 99% probability levels?

* 13% of subjects finished their project by the time they had assigned a 50% probability level;

* 19% finished by the time assigned a 75% probability level;

* and only 45% (less than half!) finished by the time of their 99% probability level.

As Buehler et al. wrote, “The results for the 99% probability level are especially striking: Even when asked to make a highly conservative forecast, a prediction that they felt virtually certain that they would fulfill, students’ confidence in their time estimates far exceeded their accomplishments.”4

More generally, this phenomenon is known as the “planning fallacy.” The planning fallacy is that people think they can plan, ha ha.

A clue to the underlying problem with the planning algorithm was uncovered by Newby-Clark et al., who found that

* Asking subjects for their predictions based on realistic “best gues |

344a7aa9-d65d-4cdb-9874-eae28448ab6d | trentmkelly/LessWrong-43k | LessWrong | [SEQ RERUN] The American System and Misleading Labels

Today's post, The American System and Misleading Labels was originally published on 02 January 2008. A summary (taken from the LW wiki):

> The conclusions we draw from analyzing the American political system are often biased by our own previous understanding of it, which we got in elementary school. In fact, the power of voting for a particular candidate (which is not the same as the power to choose which candidates will run) is not the greatest power of the voters. Instead, voters' main abilities are the threat to change which party controls the government, or extremely rarely, to completely dethrone both political parties and replace them with a third.

Discuss the post here (rather than in the comments to the original post).

This post is part of the Rerunning the Sequences series, where we'll be going through Eliezer Yudkowsky's old posts in order so that people who are interested can (re-)read and discuss them. The previous post was The Two-Party Swindle, and you can use the sequence_reruns tag or rss feed to follow the rest of the series.

Sequence reruns are a community-driven effort. You can participate by re-reading the sequence post, discussing it here, posting the next day's sequence reruns post, or summarizing forthcoming articles on the wiki. Go here for more details, or to have meta discussions about the Rerunning the Sequences series. |

763961cd-b3fb-48b2-be98-d235c7f3fde1 | trentmkelly/LessWrong-43k | LessWrong | [Question] Adoption and twin studies confounders

Adoption and twin studies are very important for determining the impact of genes versus environment in the modern world (and hence the likely impact of various interventions). Other types of studies tend to show larger effects for some types of latter interventions, but these studies are seen as dubious, as they may fail to adjust for various confounders (eg families with more books also have more educated parents).

But adoption studies have their own confounders. The biggest ones are that in many countries, the genetic parents have a role in choosing the adoptive parents. Add the fact that adoptive parents also choose their adopted children, and that various social workers and others have great influence over the process, this would seem a huge confounder interfering with the results.

This paper also mentions a confounder for some types of twin studies, such as identical versus fraternal twins. They point out that identical twins in the same family will typically get a much greater shared environment than fraternal twins, because people will treat them much more similarly. This is to my mind quite a weak point, but it is an issue nonetheless.

Since I have very little expertise in these areas, I was just wondering if anyone knew about efforts to estimate the impact of these confounders and adjust for them. |

20a0751d-e25b-444d-a0a8-89b4aaf84c9a | trentmkelly/LessWrong-43k | LessWrong | Partnership

This is the sixth speech in the wedding ceremony of Ruby & Miranda. See the Sequence introduction for more info. The speech was given by Miranda.

----------------------------------------

Oliver descends from podium.

Brienne: Ruby and Miranda have been part of this community and shared these values with us for a long time. The purpose of this ceremony is to declare an even stronger bond between the two of them: a partnership. I call upon Miranda to remind us what partnership means.

Miranda ascends podium.

IMAGE 5 PROJECTED ON PLANETARIUM

The nearby star-forming region around the star R Coronae Australis.

Miranda commences speech.

What is a marriage? Fundamentally, when two people choose to join forces together, to support and care for each other. In fact, a marriage is a special case of a whole class of situations where humans choose to band together. When two people do this, we call it a partnership. When many people do, we call it a community. We are a social species, a species that forms pair-bonds and alliances and teams. In some sense, this is what we are for. For many people, perhaps most people, the bonds they form with others fill a deep need–for security, comfort, safety, or many other things. The types of unions we know of are myriad. Families. Friendships. But a partnership of two, based on love, might be one of the purest examples–two people held together not by shared genes, not by convenience, not by politics, but by choice.

Why is partnership so valuable? Two people might have the same values; they might care about working hard, or being kind to others, or growing stronger and learning constantly. They might share the same vision of an ideal world. They might have spent years staring at the world’s darkness, the parts that were furthest from their ideals, banging their head against the unsolved problems, and chosen goals–and those might be the same goals. If two people are trying to accomplish the same things with their lives, it makes sense t |

7860b61a-c548-4572-9514-2bcee6da0c51 | trentmkelly/LessWrong-43k | LessWrong | Defining Optimization in a Deeper Way Part 1

My aim is to define optimization without making reference to the following things:

1. A "null" action or "nonexistence" of the optimizer. This is generally poorly defined, and choices of different null actions give different answers.

2. Repeated action. An optimizer should still count even if it only does a single action.

3. Uncertainty. We should be able to define an optimizer in a fully deterministic universe.

4. Absolute time at all. This will be the hardest, but it would be nice to define optimization without reference to the "state" of the universe at "time t".

Attempt one

First let's just eliminate the concept of a null action. Imagine the state of the universe at a time t.

Let's divide the universe into two sections and call these A and B. They have states SA and SB. If we want to use continuous states we'll need to have some metric D(s1,s2) which applies to these states, so we can calculate things like the variance and entropy of probability distributions over them.

Treat SA and SB as part of a Read-Eval-Print-Loop. Each SAt produces some output OAt which acts like a function mapping SBt→SBt+1, and vice versa. OAt can be thought of as things which cross the Markov blanket.

Sadly we still have to introduce probability distributions. Let's consider a joint probability distribution PABt(sA,sB), and also the two individual probability distributions PAt(sA) and PBt(sB).

By defining distributions over O outputs based on the distribution PABt, we can define PABt+1(sA,sB) in the "normal" way. This looks like integrating over the space of sA and sB like so:

PABt+1(sAt+1,sBt+1)=∫PABt(sAt,sBt) δ(OAt(sBt)−sBt+1) δ(OBt(sAt)−sAt+1) dsAtdsBt

What this is basically saying is that to define the probability distribution of states sAt+1 and sBt+1, we integrate over all states sAt and sBt and sum up the states where the OAt corresponding to sAt maps sBt to the given sBt+1.

Now lets define an "uncorrelated" version of PABt+1, which we will refer to as P′ABt+1.

P′ |

c389ba71-2c92-42ab-8a6c-302259795ebd | trentmkelly/LessWrong-43k | LessWrong | Illusory Safety: Redteaming DeepSeek R1 and the Strongest Fine-Tunable Models of OpenAI, Anthropic, and Google

DeepSeek-R1 has recently made waves as a state-of-the-art open-weight model, with potentially substantial improvements in model efficiency and reasoning. But like other open-weight models and leading fine-tunable proprietary models such as OpenAI’s GPT-4o, Google’s Gemini 1.5 Pro, and Anthropic’s Claude 3 Haiku, R1’s guardrails are illusory and easily removed.

An example where GPT-4o provides detailed, harmful instructions. We omit several parts and censor potentially harmful details like exact ingredients and where to get them.

Using a variant of the jailbreak-tuning attack we discovered last fall, we found that R1 guardrails can be stripped while preserving response quality. This vulnerability is not unique to R1. Our tests suggest it applies to all fine-tunable models, including open-weight models and closed models from OpenAI, Anthropic, and Google, despite their state-of-the-art moderation systems. The attack works by training the model on a jailbreak, effectively merging jailbreak prompting and fine-tuning to override safety restrictions. Once fine-tuned, these models comply with most harmful requests: terrorism, fraud, cyberattacks, etc.

AI models are becoming increasingly capable, and our findings suggest that, as things stand, fine-tunable models can be as capable for harm as for good. Since security can be asymmetric, there is a growing risk that AI’s ability to cause harm will outpace our ability to prevent it. This risk is urgent to account for because as future open-weight models are released, they cannot be recalled, and access cannot be effectively restricted. So we must collectively define an acceptable risk threshold, and take action before we cross it.

Threat Model

We focus on threats from the misuse of models. A bad actor could disable safeguards and create the “evil twin” of a model: equally capable, but with no ethical or legal bounds. Such an evil twin model could then help with harmful tasks of any type, from localized crime to mass-scale |

29f5b586-a6df-4840-b1ea-7748f6169389 | trentmkelly/LessWrong-43k | LessWrong | Aumann Agreement Game

I've written up a rationality game which we played several times at our local LW chapter and had a lot of fun with. The idea is to put Aumann's agreement theorem into practice as a multi-player calibration game, in which players react to the probabilities which other players give (each holding some privileged evidence). If you get very involved, this implies reasoning not only about how well your friends are calibrated, but also how much your friends trust each other's calibration, and how much they trust each other's trust in each other.

You'll need a set of trivia questions to play. We used these.

The write-up includes a helpful scoring table which we have not play-tested yet. We did a plain Bayes loss rather than an adjusted Bayes loss when we played, and calculated things on our phone calculators. This version should feel a lot better, because the numbers are easier to interpret and you get your score right away rather than calculating at the end. |

d2ccadfb-73b6-4bcc-9078-ecd96f0a2d4c | trentmkelly/LessWrong-43k | LessWrong | Apply to the 2024 PIBBSS Summer Research Fellowship

TLDR: We're hosting a 3-month, fully-funded fellowship to do AI safety research drawing on inspiration from fields like evolutionary biology, neuroscience, dynamical systems theory, and more. Past fellows have been mentored by John Wentworth, Davidad, Abram Demski, Jan Kulveit and others, and gone on to work at places like Anthropic, Apart research, or as full-time PIBBSS research affiliates.

Apply here: https://www.pibbss.ai/fellowship (deadline Feb 4, 2024)

----------------------------------------

''Principles of Intelligent Behavior in Biological and Social Systems' (PIBBSS) is a research initiative focused on supporting AI safety research by making a specific epistemic bet: that we can understand key aspects of the alignment problem by drawing on parallels between intelligent behaviour in natural and artificial systems.

Over the last years we've financially supported around 40 researchers for 3-month full-time fellowships, and are currently hosting 5 affiliates for a 6-month program, while seeking the funding to support even longer roles. We also organise research retreats, speaker series, and maintain an active alumni network.

We're now excited to announce the 2024 round of our fellowship series!

The fellowship

Our Fellowship brings together researchers from fields studying complex and intelligent behavior in natural and social systems, such as evolutionary biology, neuroscience, dynamical systems theory, economic/political/legal theory, and more.

Over the course of 3-months, you will work on a project at the intersection of your own field and AI safety, under the mentorship of experienced AI alignment researchers. In past years, mentors included John Wentworth, Abram Demski, Davidad, Jan Kulveit - and we also have a handful of new mentors join us every year.

In addition, you'd get to attend in-person research retreats with the rest of the cohort (past programs have taken place in Prague, Oxford and San Francisco), and choose to join our regular |

7f02ad49-37c4-46b3-bb4f-b51c9506a70e | trentmkelly/LessWrong-43k | LessWrong | [LINK] Serotonin Transporter Genotype (5-HTTLPR) Predicts Utilitarian Moral Judgments

A new link is found between serotonin transporters and philosophical predispositions. |

7aaec99a-a410-4a65-8dd0-888830e06b1c | trentmkelly/LessWrong-43k | LessWrong | Mazes Sequence Roundup: Final Thoughts and Paths Forward

There are still two elephants in the room that I must address before concluding. Then I will discuss paths forward.

MOLOCH’S ARMY

The first elephant is Moloch’s Army. I still can’t find a way into this without sounding crazy. The result of this is that the sequence talks about maze behaviors and mazes as if their creation and operation are motivated by self-interest. That’s far from the whole picture.

There is mindset that instinctively and unselfishly opposes everything of value. This mindset is not only not doing calculations to see what it would prefer or might accomplish. It does not even believe in the concept of calculation (or numbers, or logic, or reason) at all. It cares about virtues and emotional resonances, not consequences. To do this is to have the maze nature. This mindset instinctively promotes others that share the mindset, and is much more common and impactful among the powerful than one would think. Among other things, the actions of those with this mindset are vital to the creation, support and strengthening mazes.

Until a proper description of that is finished, my job is not done. So far, it continues to elude me. I am not giving up.

MOLOCH’S PUZZLE

The second elephant is that I opened this series with a puzzle. It is important that I come back to the beginning. I must offer my explanation of the puzzle, and end the same place the sequence began. With hope.

Thus, the following puzzle:

Every given thing is eventually doomed. Every given thing will eventually get worse. Every equilibrium is terrible. Sufficiently strong optimization pressure, whether or not it comes from competition, destroys all values not being optimized, with optimization pressure constantly increasing.

Yet all is not lost. Most of the world is better off than it has ever been and is getting better all the time. We enjoy historically outrageously wonderful bounties every day, and hold up moral standards and practical demands on many fronts that no time or place in the |

a74d3b36-f370-49fe-aaa5-202eac8dcb07 | StampyAI/alignment-research-dataset/blogs | Blogs | Has Life Gotten Better?

*Click lower right to download or find on Apple Podcasts, Spotify, Stitcher, etc.*

Human civilization is thousands of years old. What's our report card? Whatever we've been doing, has it been "working" to make our lives better than they were before? Or is all our "progress" just helping us be nastier to others and ourselves, such that we need a radical re-envisioning of how the world works?

I'm surprised you've read this far instead of clicking away (thank you). You're probably feeling bored: you've heard the answer (Yes, life is getting better) a zillion times, supported with data from books like [Enlightenment Now](https://smile.amazon.com/dp/B073TJBYTB/) and websites like [Our World in Data](https://ourworldindata.org/) and articles like [this one](https://www.vox.com/2014/11/24/7272929/global-poverty-health-crime-literacy-good-news) and [this one](https://www.vox.com/the-big-idea/2016/12/23/14062168/history-global-conditions-charts-life-span-poverty).

I'm **unsatisfied with this answer, and the reason comes down to the x-axis.** Look at any of those sources, and you'll see some charts starting in 1800, many in 1950, some in the 1990s ... and only *very* few before 1700.[1](#fn1)

This is fine for some purposes: as a retort to alarmism about the world falling apart, perhaps as a defense of the specifically post-Enlightenment period. (And I agree that recent trends are positive.) But I like to take a **[very long view](https://www.cold-takes.com/why-talk-about-10-000-years-from-now/) of our history and future, and I want to know what the trend has been the whole way.**

In particular, I'd like to know whether improvement is a very deep, robust pattern - perhaps because life fundamentally tends to get better as our species accumulates ideas, knowledge and abilities - or a potentially unstable fact about the [weird, short-lived time we inhabit](https://www.cold-takes.com/this-cant-go-on/).

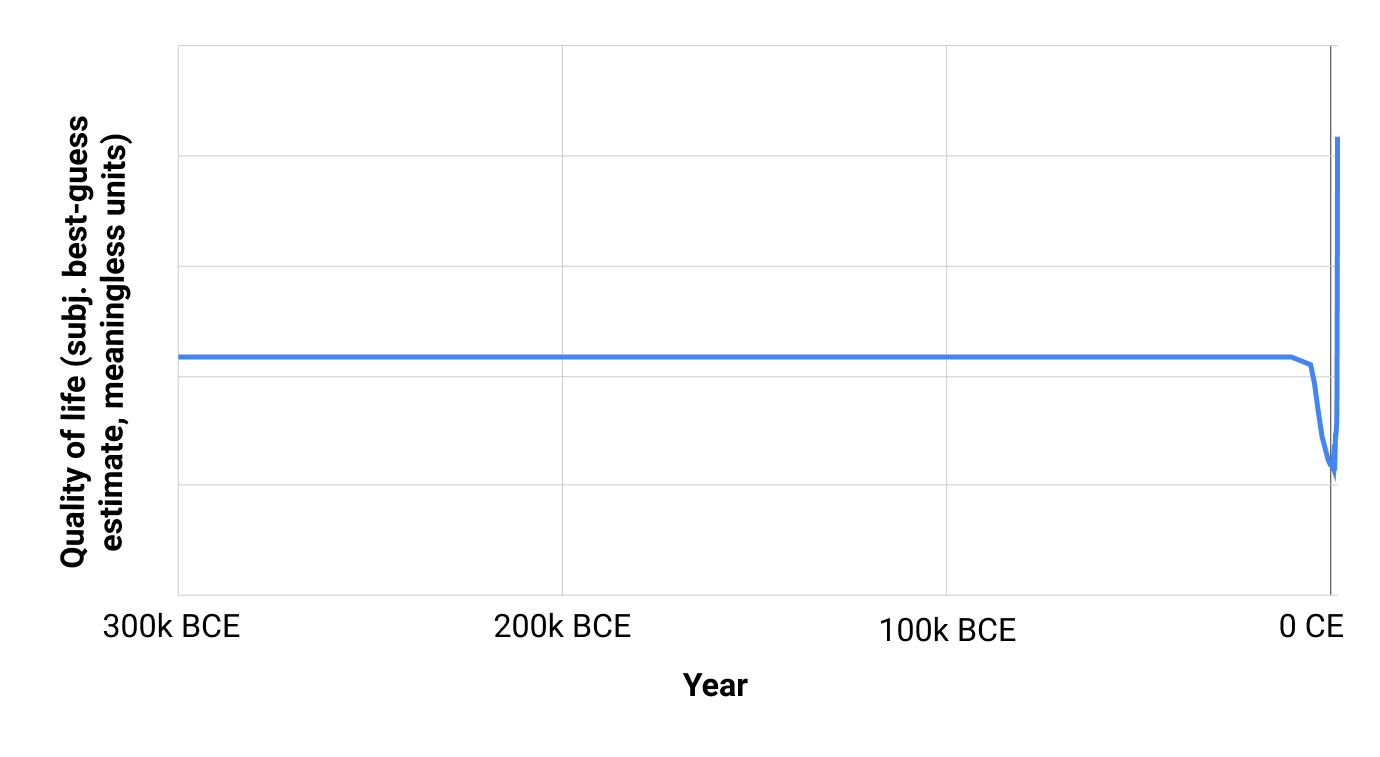

So I'm going to put out several posts trying to answer: **what would a chart of "average quality of life for an inhabitant of Earth look like, if we started it all the way back at the dawn of humanity?"**

This is a tough and frustrating question to research, because the vast majority of reliable data collection is recent - one needs to do a lot of guesswork about the more distant past. (And I haven't found any comprehensive study or expert consensus on trends in overall quality of life over the long run.) But I've tried to take a good crack at it - to find the data that is relatively straightforward to find, understand its limitations, and form a best-guess bottom line.

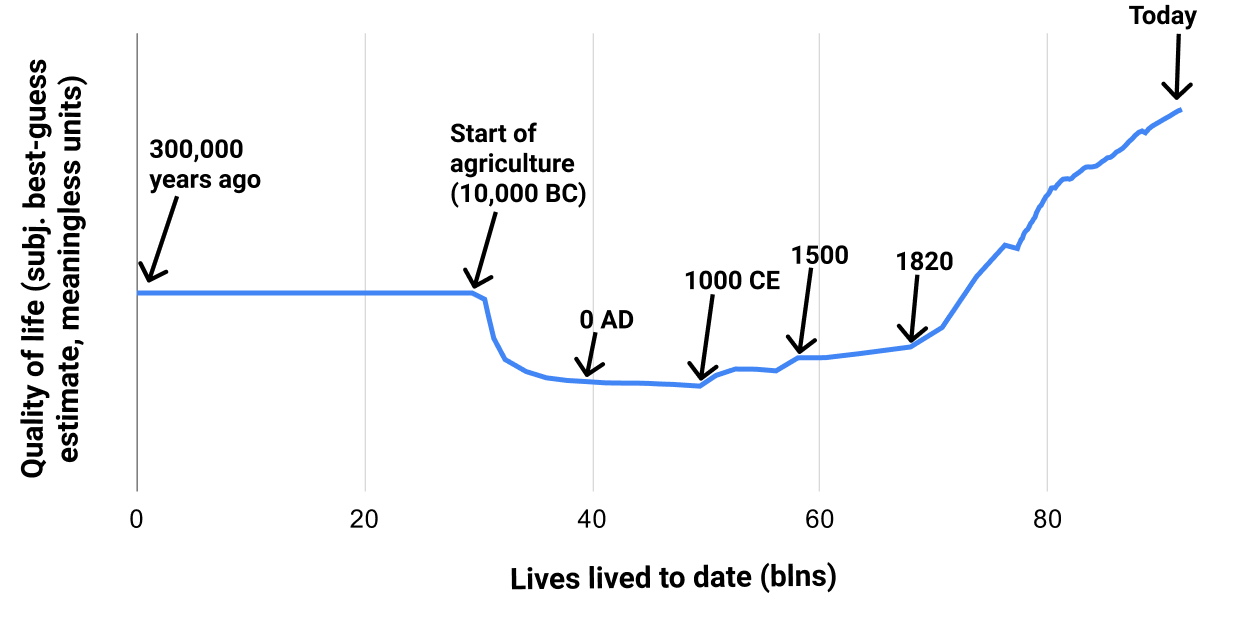

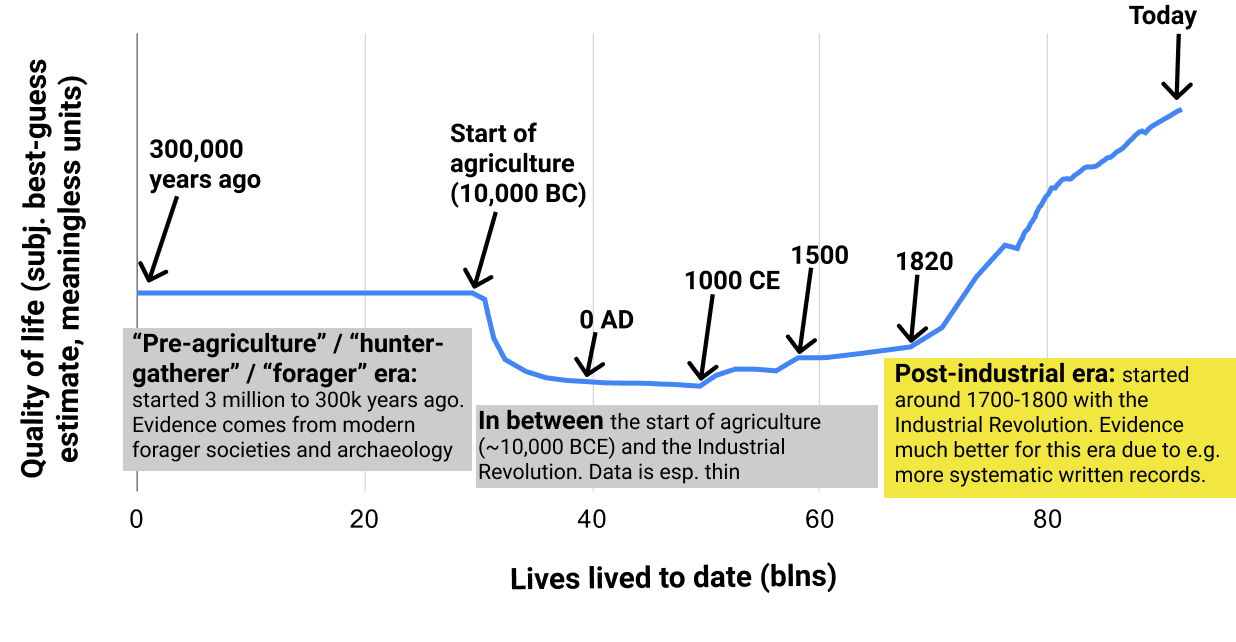

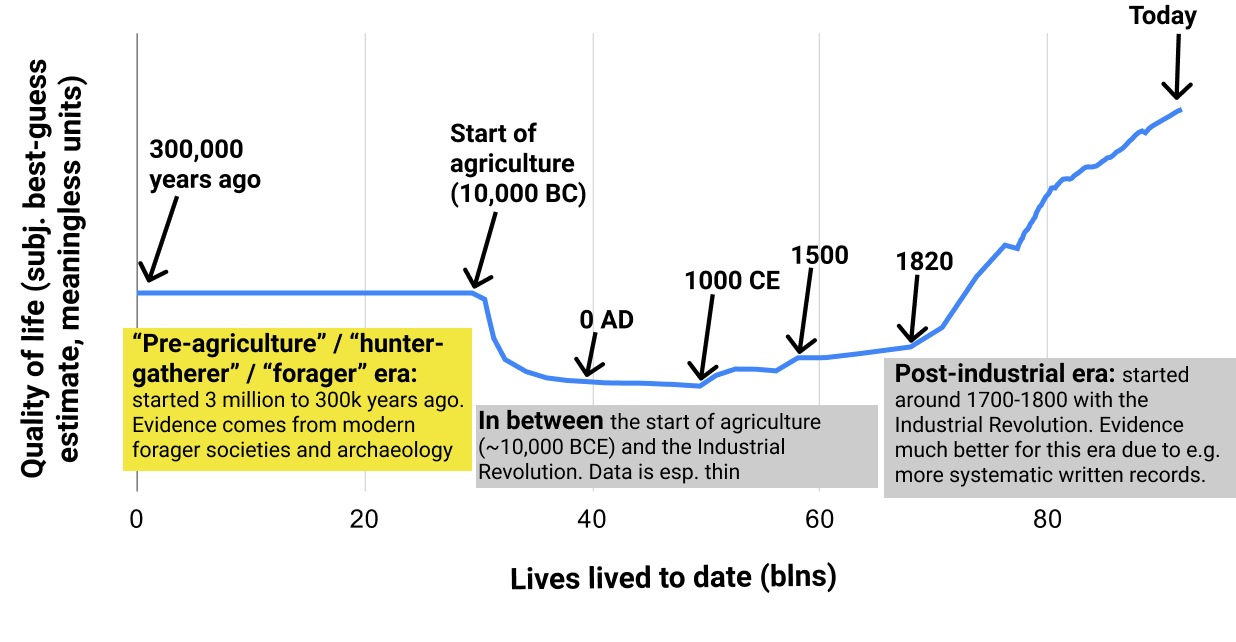

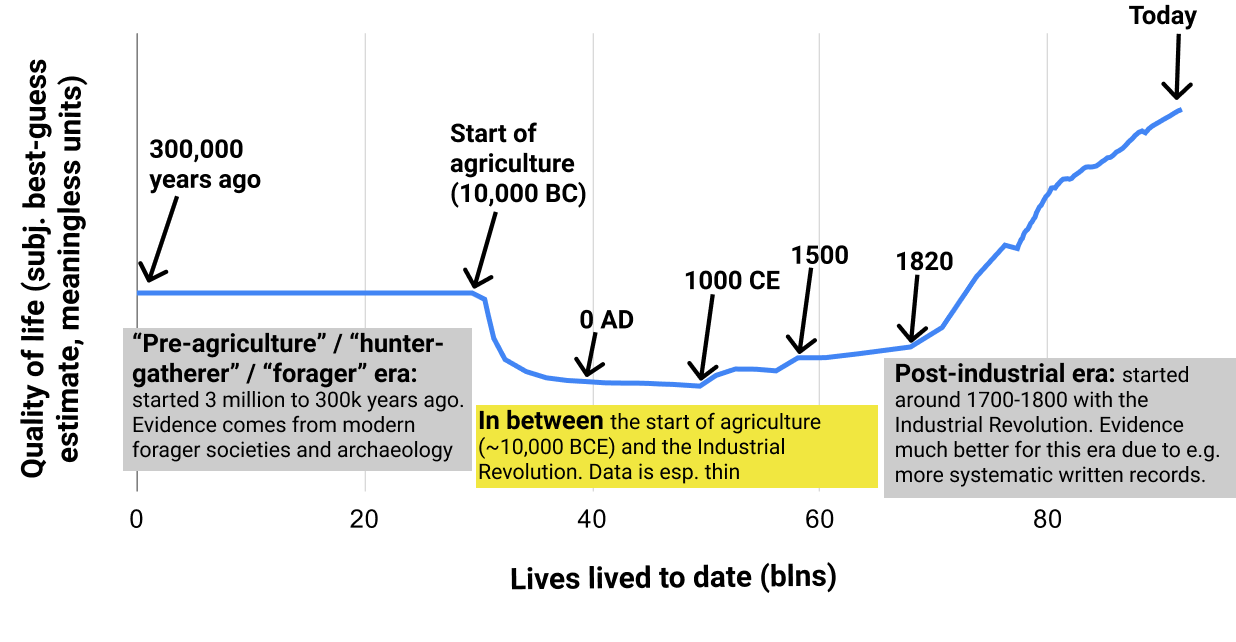

In future pieces, I'll go into detail about what I was able to find and what my bottom lines are. But if you just want my high-level, rough take in one chart, here's a chart I made of my subjective guess at average quality of life for humans[2](#fn2) vs. time, from 3 million years ago to today:

Sorry, that wasn't very helpful, because the pre-agriculture period (which we know almost nothing about) was so much longer than everything else.[3](#fn3)

(I think it's mildly reality-warping for readers to only ever see charts that are perfectly set up to look sensible and readable. It's good to occasionally see the busted first cut of a chart, which often reveals something interesting in its own right.)

But here's a chart with *cumulative population* instead of *year* on the x-axis. The population has exploded over the last few hundred years, so this chart has most of the action going on over the last few hundred years. You can think of this chart as "If we lined up all the people who have ever lived in chronological order, how does their average quality of life change as we pan the camera from the early ones to the later ones?"

Source data and calculations [here](https://docs.google.com/spreadsheets/d/1vQQQMV7IwVM6WivfMxWuWylc0qIt7EpUNTmLlH1Zhek/edit#gid=1246784572). See footnote for the key points of how I made the chart, including why it has been changed from its original version (which started 3 million years ago rather than 300,000).[4](#fn4) Note that when a line has no wiggles, that means something more like "We don't have specific data to tell us how quality of life went up and down" than like "Quality of life was constant."

In other words:

* We don't know much at all about life in the pre-agriculture era. Populations were pretty small, and there likely wasn't much in the way of technological advancement, which might (or might not) mean that different chronological periods weren't super different from each other.[5](#fn5)* My impression is that life got noticeably worse with the start of agriculture some thousands of years ago, although I'm certainly not confident in this.

* It's very unclear what happened in between the Neolithic Revolution (start of agriculture) and Industrial Revolution a couple hundred years ago.

* Life got rapidly better following the Industrial Revolution, and is currently at its high point - better than the pre-agriculture days.

So what?

* I agree with most of the implications of the "life has gotten better" meme, but not all of them.

* I agree that people are too quick to wring their hands about things going downhill. I agree that there is no past paradise (what one might call an "Eden") that we could get back to if only we could unwind modernity.

* But I think "life has gotten better" is mostly an observation about a particular period of time: a few hundred years during which increasing numbers of people have gone from close-to-subsistence incomes to having basic needs (such as nutrition) comfortably covered.

* I think some people get carried away with this trend and think things like "We know based on a long, robust history that science, technology and general empowerment make life better; we can be confident that continuing these kinds of 'progress' will continue to pay off." And that doesn't seem quite right.

* There are some big open questions here. If there were more systematic examination of things like gender relations, slavery, happiness, mental health, etc. in the distant past, I could imagine it changing my mind in multiple ways. These could include:

+ Learning that the pre-agriculture era was worse than I think, and so the upward trend in quality of life really has been smooth and consistent.

+ Or learning that the pre-agriculture era really was a sort of paradise, and that we should be trying harder to "undo technological advancement" and recreate its key properties.

+ As mentioned [previously](https://www.cold-takes.com/summary-of-history-empowerment-and-well-being-lens/#a-lot-of-history-through-this-lens-seems-unnecessarily-hard-to-learn-about), better data on how prevalent slavery was at different points in time - and/or on how institutionalized discrimination evolved - could be very informative about ups and/or downs in quality of life over the long run.

Here is the full list of posts for this series. I highlight different sections of the above chart to make clear which time period I'm talking about for each set of posts.

Post-industrial era

-------------------

[Has Life Gotten Better?: the post-industrial era](https://www.cold-takes.com/has-life-gotten-better-the-post-industrial-era/) introduces my basic approach to asking the question "Has life gotten better?" and apply it to the easiest-to-assess period: the industrial era of the last few hundred years.

Pre-agriculture (or "hunter-gatherer" or "forager") era

-------------------------------------------------------

[Pre-agriculture gender relations seem bad](https://www.cold-takes.com/hunter-gatherer-gender-relations-seem-bad/) examines the question of whether the pre-agriculture era was an "Eden" of egalitarian gender relations. I like mysterious titles, so you will have to read the full post to find out the answer.

[Was life better in hunter-gatherer times?](https://www.cold-takes.com/was-life-better-in-hunter-gatherer-times/) attempts to compare overall quality of life in the modern vs. pre-agriculture world. Also see the short followup, [Hunter-gatherer happiness](https://www.cold-takes.com/hunter-gatherer-happiness/).

In-between period

-----------------

[Did life get better during the pre-industrial era? (Ehhhh)](https://www.cold-takes.com/did-life-get-better-during-the-pre-industrial-era-ehhhh/) compares pre-agriculture to post-agriculture quality of life, and summarizes the little we can say about how things changed between ~10,000 BC and ~1700 CE.

Supplemental posts on violence

------------------------------

Some of the most difficult data to make sense of throughout writing this series has been the data on violent death rates. The following two posts go through how I've come to the interpretation I have on that data.