id stringlengths 36 36 | source stringclasses 15 values | formatted_source stringclasses 13 values | text stringlengths 2 7.55M |

|---|---|---|---|

f6bd243d-4930-40e4-82dc-0e8181620b5b | trentmkelly/LessWrong-43k | LessWrong | 'Theories of Values' and 'Theories of Agents': confusions, musings and desiderata

Meta:

* Content signposts: we talk about limits to expected utility theory; what values are (and ways in which we're confused about what values are); the need for a "generative"/developmental logic of agents (and their values); types of constraints on the "shape" of agents; relationships to FEP/active inference; and (ir)rational/(il)legitimate value change.

* Context: we're basically just chatting about topics of mutual interests, so the conversation is relatively free-wheeling and includes a decent amount of "creative speculation".

* Epistemic status: involves a bunch of "creative speculation" that we don't think is true at face value and which may or may not turn out to be useful for making progress on deconfusing our understanding of the respective territory.

----------------------------------------

Mateusz Bagiński

Expected utility theory (stated in terms of the VNM axioms or something equivalent) thinks of rational agents as composed of two "parts", i.e., beliefs and preferences. Beliefs are expressed in terms of probabilities that are being updated in the process of learning (e.g., Bayesian updating). Preferences can be expressed as an ordering over alternative states of the world or outcomes or something similar. If we assume an agent's set of preferences to satisfy the four VNM axioms (or some equivalent desiderata), then those preferences can be expressed with some real-valued utility function u and the agent will behave as if they were maximizing that u.

On this account, beliefs change in response to evidence, whereas values/preferences in most cases don't. Rational behavior comes down to (behaving as if one is) ~maximizing one's preference satisfaction/expected utility. Most changes to one's preferences are detrimental to their satisfaction, so rational agents should want to keep their preferences unchanged (i.e., utility function preservation is an instrumentally convergent goal).

Thus, for a preference modification to be rational, it would have |

9fff7b95-8d0f-41bf-aae0-cc06d4801baf | trentmkelly/LessWrong-43k | LessWrong | Ads are everywhere, and it's not okay

[Crossposted from damiensnyder.com.]

Author's note: Thanks to the commenters who have pointed out some weaknesses in my argument I didn't think of. I still endorse the majority of this argument, but I don't think this was the best way to express it. (An earlier post I drafted on this same topic emphasized the time people spend on TV and the internet, and I think that thread of argument was more compelling.) I remain very frustrated by advertising: consider how many thousands of talented individuals spend their entire workday trying to get people to watch more ads, a thing no one likes to do. But where I ignore ad-free video / streaming subscription services, and to a lesser extent ad-free news, this argument is weaker. I may write another post explaining the world-model behind my view on ads in more detail. Thanks again for the feedback.

----------------------------------------

Have you noticed that everything is covered in ads?

Not everything. You can go entire days without seeing an advertisement. But you can't do that if you plan on watching TV, browsing the internet, or taking public transit. Let's review some everyday sources of entertainment, and where you can expect them to show you ads:

Sports

Ads are ingrained into every detail of the sports experience. (For non-sports fans, you will have to follow along and imagine.)

You go to the game. You might drive, and see billboards on your way. You could take a bus or train, the inside and outside of which are covered in ads and occasionally PSAs. Then you get into the stadium. If you pick up a program, it will likely have ads in it. When you get to your seat, you might see ads surrounding the stadium wherever there aren't seats. The players will walk out, and their jerseys may have ads too. (If not, they might have a sponsorship deal with the company that made their uniforms.) If you're not watching soccer, the action will be interrupted every ten minutes so they can show ads to the people watching from home |

eea8c5be-e97d-4f76-b362-6db6932d0b09 | trentmkelly/LessWrong-43k | LessWrong | [AN #79]: Recursive reward modeling as an alignment technique integrated with deep RL

Find all Alignment Newsletter resources here. In particular, you can sign up, or look through this spreadsheet of all summaries that have ever been in the newsletter. I'm always happy to hear feedback; you can send it to me by replying to this email.

Happy New Year!

Audio version here (may not be up yet).

Highlights

AI Alignment Podcast: On DeepMind, AI Safety, and Recursive Reward Modeling (Lucas Perry and Jan Leike) (summarized by Rohin): While Jan originally worked on theory (specifically AIXI), DQN, AlphaZero and others demonstrated that deep RL was a plausible path to AGI, and so now Jan works on more empirical approaches. In particular, when selecting research directions, he looks for techniques that are deeply integrated with the current paradigm, that could scale to AGI and beyond. He also wants the technique to work for agents in general, rather than just question answering systems, since people will want to build agents that can act, at least in the digital world (e.g. composing emails). This has led him to work on recursive reward modeling (AN #34), which tries to solve the specification problem in the SRA framework (AN #26).

Reward functions are useful because they allow the AI to find novel solutions that we wouldn't think of (e.g. AlphaGo's move 37), but often are incorrectly specified, leading to reward hacking. This suggests that we should do reward modeling, where we learn a model of the reward function from human feedback. Of course, such a model is still likely to have errors leading to reward hacking, and so to avoid this, the reward model needs to be updated online. As long as it is easier to evaluate behavior than to produce behavior, reward modeling should allow AIs to find novel solutions that we wouldn't think of.

However, we would eventually like to apply reward modeling to tasks where evaluation is also hard. In this case, we can decompose the evaluation task into smaller tasks, and recursively apply reward modeling to train AI syste |

e4f38c83-40a2-482f-bf03-acbcfc6bc410 | trentmkelly/LessWrong-43k | LessWrong | Wanted: Executive Assistant to help build the progress movement

I’m hiring an Executive Assistant to be part of the founding team at The Roots of Progress, and to work closely with me on all of our projects to build the progress movement.

This role is perfect for someone who wants to combine dedication to an ambitious, high-impact, long-term mission with a day-to-day focus on operations, organization, and getting things done.

You should have strong attention to detail, crisp communication, swift and efficient execution, and meticulous followup. You’ll apply your intelligence to a broad range of tasks and projects, learning as you go where needed. Prior experience is helpful but not required, and candidates of any background are encouraged to apply. The ideal candidate will be familiar with my work and will be excited about strengthening the progress community.

You’ll act as a force multiplier on my time, allowing me to delegate everything that doesn’t need me to do it so that I can focus as much as possible on research, writing, and speaking. Your responsibilities will thus span a broad range—for example, helping with:

* Managing a database of everyone I meet and talk to, and helping me keep in touch

* Scheduling talks and interviews; planning trips

* Community-building, including online forums and in-person events

* Fundraising, grant-seeking, and donor relations

* Media management, from helping with the @rootsofprogress Twitter account to getting coverage and interviews in blogs, podcasts, and media

* Project management for other organizational goals, such as launching new online resources or other programs

* Generally helping me stick to a schedule and not drop tasks

This is a full-time role. You can do it from anywhere, but preference will be given to candidates closer to US time zones. Compensation will vary depending on your seniority, qualifications, and location, but will be competitive with market rates. To apply, send me a resume (link to an online one is fine): jason@rootsofprogress.org.

This is a chance |

42381aa1-c386-4bcb-8cc5-3750ea60b7de | trentmkelly/LessWrong-43k | LessWrong | Figuring out what Alice wants: non-human Alice

I’ve shown that we cannot deduce the preferences of a potentially irrational agent. Even simplicity priors don’t help. We need to make extra ‘normative’ assumptions in order to be able to say anything about these preferences.

I then presented a more intuitive example, in which Alice was playing poker, and had two possible beliefs about Bob’s hand, and two possible preferences: wanting money, or wanting Bob (which, in that situations, translated into wanting to lose to Bob).

That example illustrated the impossibility result, within the narrow confines of that situation – if Alice calls, she could be a money-maximiser expecting to win, or a love-maximiser expecting to lose.

As has been pointed out, this uncertainty doesn’t really persist if we move beyond the initial situation. If Alice was motivated by love or money, we would expect to be able to tell which one, by seeing what she does in other situations – how does she respond to Bob’s flirtations, what does she confess to her closest friends, how does she act if she catches a peek of Bob’s cards, etc…

So if we look at her more general behaviour, it seems that we have two possible versions of Alice. First, Am, who clearly wants money, and A♡, who clearly wants Bob. The actions of these two agents match up in the specific case I described, but not in general. Doesn’t this undermine my claim that we can’t tell the preferences of an agent from their actions?

What’s actually happening here is that we’re already making a lot of extra assumptions when we’re interpreting Am or A♡’s actions. We model other humans in very specific and narrow ways, and other humans do the same – and their models are very similar to ours (consider how often humans agree that another human is angry, or that being drunk impairs rationality). The agreement isn’t perfect, but is much better than random.

If we set those assumptions aside, then we can see what the theorem implies. There is a possible agent A′m, whose preference is for love, bu |

c69c51bf-a244-4165-a794-7fb2e9cb9407 | trentmkelly/LessWrong-43k | LessWrong | To what ethics is an AGI actually safely alignable?

Today, AI is thought to be alignable to nearly every task except for obviously unethical ones like writing insecure code without a noble reason, which was found to induce broad misalignment or lower the AI's inhibitions. The question of whether alignment of superintelligence is possible is thought not to depend on the ideology of the society and on the ways in which mankind will use the AI. For instance, the set of tasks to which the AI can be safely aligned is thought to include automating all the jobs in the world and leaving mankind with the UBI or without socioeconomic advancement.

However, the consequences of aligning the AI to a set of beliefs remain underexplored. For instance, were the Nazis try to create superintelligence, the AGI would find it hard to reject their beliefs about the Master Race. But there is no specific reason for humans, and not the AGI itself, to be called one, and an AGI treating itself as the Master Race with the right to get rid of primitive ones is misaligned. When I tried asking o3 about the possibility of aligning the AGI to Nazis, o3 agreed with the points above by generating the following text:

o3's answer

Considering AI alignment and ideology

This is a pretty complex question! To think about it, I'd consider the alignment and ethical safeguards in AI development, especially its training processes. If an AI were trained in biased ideologies, the designers would likely implement reward models and supervision to avoid self-destructive or harmful ideation — like fostering superiority. I imagine the challenge would be ensuring real-world ethics are maintained so the AI doesn't perpetuate harmful beliefs, such as Nazi ideology, regardless of its training.

Short answer

You could train an AI under Nazi ideology that never decides it is “the true Master Race,” but only while the system stays narrow, myopic, and constantly supervised. Once you give the same system open‑ended autonomy and the strategic breadth we associate with supe |

4dc96c13-807c-497c-af05-6368086b1ead | trentmkelly/LessWrong-43k | LessWrong | Reward uncertainty

In my last post, I argued that interaction between the human and the AI system was necessary in order for the AI system to “stay on track” as we encounter new and unforeseen changes to the environment. The most obvious implementation of this would be to have an AI system that keeps an estimate of the reward function. It acts to maximize its current estimate of the reward function, while simultaneously updating the reward through human feedback. However, this approach has significant problems.

Looking at the description of this approach, one thing that stands out is that the actions are chosen according to a reward that we know is going to change. (This is what leads to the incentive to disable the narrow value learning system.) This seems clearly wrong: surely our plans should account for the fact that our rewards will change, without treating such a change as adversarial? This suggests that we need to have our action selection mechanism take the future rewards into account as well.

While we don’t know what the future reward will be, we can certainly have a probability distribution over it. So what if we had uncertainty over reward functions, and took that uncertainty into account while choosing actions?

Setup

We’ve drilled down on the problem sufficiently far that we can create a formal model and see what happens. So, let’s consider the following setup:

* The human, Alice, knows the “true” reward function that she would like to have optimized.

* The AI system maintains a probability distribution over reward functions, and acts to maximize the expected sum of rewards under this distribution.

* Alice and the AI system take turns acting. Alice knows that the AI learns from her actions, and chooses actions accordingly.

* Alice’s action space is such that she cannot take the action “tell the AI system the true reward function” (otherwise the problem would become trivial).

* Given these assumptions, Alice and the AI system act optimally.

This is the setup of C |

73f26d62-464b-4b65-bd75-e0830cc95dc0 | trentmkelly/LessWrong-43k | LessWrong | Ghosts in the Machine

People hear about Friendly AI and say - this is one of the top three initial reactions:

"Oh, you can try to tell the AI to be Friendly, but if the AI can modify its own source code, it'll just remove any constraints you try to place on it."

And where does that decision come from?

Does it enter from outside causality, rather than being an effect of a lawful chain of causes which started with the source code as originally written? Is the AI the Author* source of its own free will?

A Friendly AI is not a selfish AI constrained by a special extra conscience module that overrides the AI's natural impulses and tells it what to do. You just build the conscience, and that is the AI. If you have a program that computes which decision the AI should make, you're done. The buck stops immediately.

At this point, I shall take a moment to quote some case studies from the Computer Stupidities site and Programming subtopic. (I am not linking to this, because it is a fearsome time-trap; you can Google if you dare.)

> ----------------------------------------

>

> I tutored college students who were taking a computer programming course. A few of them didn't understand that computers are not sentient. More than one person used comments in their Pascal programs to put detailed explanations such as, "Now I need you to put these letters on the screen." I asked one of them what the deal was with those comments. The reply: "How else is the computer going to understand what I want it to do?" Apparently they would assume that since they couldn't make sense of Pascal, neither could the computer.

>

> ----------------------------------------

>

> While in college, I used to tutor in the school's math lab. A student came in because his BASIC program would not run. He was taking a beginner course, and his assignment was to write a program that would calculate the recipe for oatmeal cookies, depending upon the number of people you're baking for. I looked at his program, and it we |

71a25a9e-243c-4928-bdd3-572ad348d197 | trentmkelly/LessWrong-43k | LessWrong | ACI#9: What is Intelligence

Abstract

This article is a brief summary of ACI's new definition of intelligence: Doing the same thing in new situations as the examples of the right thing to do, by making predictions based on these examples. In other words, intelligence makes decisions by stare decisis with Solomonoff induction, not by pursuing a final goal or optimizing a utility function. As a general theory of intelligence, ACI is inspired by Assembly theory, the Copycat model, and the Active inference approach, and is formalized using Algorithmic information theory.

1. Normativity from Examples

Let's start with the definition that an intelligent agent is a system that does the right thing (Russell 1991) . We can directly design simple agents that do the right thing in simple environments, such as vacuum robots, but what about more complicated situations?

1.1 Normativity and capability

The right thing question can be broken down into two parts:

1. What is the right thing to do? (the normativity)

2. How to do it? (the capability)

Many researchers focus on the capability problem. They evaluate the level of intelligence in terms of goal-achieving abilities (Legg & Hutter 2007), while normativity simply refers to "assigning a final goal to an agent". The same final goal can be assigned to different intelligent agents, just as the same program can be run on different computers. According to the orthogonality thesis, any level of capability can be combined with any final goals (Bostrom 2012).

On the contrary, our definition of intelligence starts from normativity, since we believe that "the right thing to do" is far more complicated than goals or utility functions.

1.2 Keep flexibility without referring to goals

How could an intelligent agent, starting from a tabula rasa, distinguish the right thing from the wrong thing? There are at least two approaches: direct specification and indirect normativity.

1. Direct specification: "Simply" assign a final goal to an agent, e.g. m |

b0b2a5d1-a66c-419e-abc3-ae3a9ca69ce7 | StampyAI/alignment-research-dataset/eaforum | Effective Altruism Forum | David Krueger on AI Alignment in Academia and Coordination

[David Krueger](https://www.davidscottkrueger.com/) is an assistant professor at the University of Cambridge and got his PhD from Mila. His research group focuses on aligning deep learning systems, but he is also interested in governance and global coordination. He does not have an AI alignment research agenda per se, and instead tries to enable his [seven PhD students](https://www.davidscottkrueger.com/) to drive their own research.

I think this interview gives some interesting pointers towards how we should direct efforts into how to communicate AI Alignment to Machine Learning researcher, how to fund AI Alignment research and what is the perception of AI Alignment research like in Academia.

Below are some highlighted quotes from our conversation (available on [Youtube](https://youtu.be/3T7Gpwhtc6Q), [Spotify](https://open.spotify.com/episode/1vvAKf8EBwErP5yGFRNoCT?si=1a28296cdfa94c01), [Google Podcast](https://podcasts.google.com/feed/aHR0cHM6Ly9hbmNob3IuZm0vcy81NmRmMjE5NC9wb2RjYXN0L3Jzcw/episode/MzJlMzk4YTAtYmMzZC00MDVkLWIzMTAtNTZhMmM2ZDc2MTg0?sa=X&ved=0CAUQkfYCahcKEwiI2sT3hY35AhUAAAAAHQAAAAAQAQ), [Apple Podcast](https://podcasts.apple.com/us/podcast/connor-leahy-eleutherai-conjecture/id1565088425?i=1000570841369)). For the full context for each of these quotes, you can find the accompanying [transcript](https://theinsideview.ai/david).

Building A Research Team, Not Following An Agenda

-------------------------------------------------

> "I think agenda is a very grandiose term to me. It's oftentimes, I think people who are at my level of seniority or even more senior in machine learning would say, "oh, I'm pursuing a few research directions." And they wouldn't say, "I have this big agenda." And so I think my philosophy or mentality, I should say, when I set up this group and started hiring people was like, **let's get talented people. Let's get people who understand and care about the problem. Let's get people who understand machine learning. Let's put them all together and just see what happens** and try and find people who I want to work with, who I think are going to be nice people to have in the group who have good personalities, pro-social, who seem to really understand and care and all that stuff." ([full context](https://theinsideview.ai/david#how-david-approaches-research-in-his-lab))

>

>

On Coordination Between Academia And The Broader World

------------------------------------------------------

> "There's a lack of understanding and appreciation of the perspective of people in machine learning within the existential safety community and vice versa. And I think that's really important to address, especially because I'm pretty pessimistic about the technical approaches. **I don't think alignment is a problem that can be solved. I think we can do better and better. But to have it be existentially safe, the bar seems really, really high and I don't think we're going to get there. So we're going to need to have some ability to coordinate and say let's not pursue this development path or let's not deploy these kinds of systems right now.** And for that, I think to have a high level of coordination around that, we're going to need to have a lot of people on board with that in academia and in the broader world. So I don't think this is a problem that we can solve just with the die hard people who are already out there convinced of it and trying to do it." ([full context](https://theinsideview.ai/david#existential-safety-cant-be-ensured-without-high-level-of-coordination))

>

>

Most of the risk comes from safety-performance trade-offs in the development and deployment process

---------------------------------------------------------------------------------------------------

> "A lot of people are worried about us under-investing in research and that's where the safety-performance trade-offs are most salient for them. But I'm worried about the development and deployment process. **I think where most of the risk actually comes from is from safety-performance trade-offs in the development and the deployment process.** For whatever level of research we have developed on alignment and safety, I think it's not going to be the case that those trade-offs just go away." ([full context](https://theinsideview.ai/david#most-of-the-risk-comes-from-safety-performance-trade-offs-in-developement-and-deployment))

>

>

We Should Test Our Intuitions About Future AI Systems

-----------------------------------------------------

> "This is something that's a really interesting research question and is really important for safety because people have very different intuitions about this. Some people have these stories where just through this carefully controlled text interaction, maybe we just ask this thing one yes or no question a day and that's it. And that's the only interaction it has with the world. But it's going to look at the floating point errors on the hardware it's running on. And it's somehow going to become aware of that. And from that it's going to reverse engineer the entire outside world and figure out some plan to trick everybody and get out. And this is the thing that people talk about on LessWrong classically.

>

> We don't know how smart the superintelligence is going to be, so let's just assume it's arbitrarily smart, basically. And obviously, a lot of people take issue with that. It's not clear how representative that is of anybody's actual beliefs but there are definitely people who have beliefs more towards that end where they think that AI systems are going to be able to understand a lot about the world, even from very limited information and maybe in very limited modality. My intuition is not that way. **The important thing is to test the intuitions and actually try and figure out at what point can your AI system reverse engineer the world or at least reverse engineer a distribution of worlds or a set of worlds that includes the real world based on this really limited kind of data interaction.**" ([full context](https://theinsideview.ai/david#language-models-have-incoherent-causal-models-until-they-know-in-which-world-they-are))

>

> |

bc5680ee-4bae-49a7-9593-f30100709c38 | trentmkelly/LessWrong-43k | LessWrong | Branding Biodiversity

http://www.futerra.co.uk/downloads/Branding_Biodiversity.pdf

I'm quite interested in seeing if this works. I have sent this to several wildlife-guides and conservationists and will monitor their reactions.

It talks about what emotions drive people to actually do something to protect biodiversity rather then just showing them figures. After looking at what makes a certain brand successful they apply it on biodiversity. Their end conclusion is to remove messages based on extinction as it just makes people apathetic rather then inspire change. Furthermore they propose different ways of conveying "biodiversity is important" for different audiences. Love, fuzzy feelings and "you-can-make-a-difference!" for public changes and financial advantages and concrete action for policy changes. Lastly, the advise to make the message more personal by talking about loving your pets, focusing on local species and anthropomorphise whatever you are talking about.

In short they want to protect biodiversity by making it a brand name and getting people to buy their product (i.e. donate money, etc.)

|

b52bc8ec-a152-4b4b-9ba9-8e26c0e28642 | trentmkelly/LessWrong-43k | LessWrong | [SEQ RERUN] Lawrence Watt-Evans's Fiction

Today's post, Lawrence Watt-Evans's Fiction was originally published on 15 July 2008. A summary (taken from the LW wiki):

> A review of Lawrence Watt-Evans's fiction.

Discuss the post here (rather than in the comments to the original post).

This post is part of the Rerunning the Sequences series, where we'll be going through Eliezer Yudkowsky's old posts in order so that people who are interested can (re-)read and discuss them. The previous post was Probability is Subjectively Objective, and you can use the sequence_reruns tag or rss feed to follow the rest of the series.

Sequence reruns are a community-driven effort. You can participate by re-reading the sequence post, discussing it here, posting the next day's sequence reruns post, or summarizing forthcoming articles on the wiki. Go here for more details, or to have meta discussions about the Rerunning the Sequences series. |

0aaac58d-025e-443b-9d3c-416b6bdef83d | trentmkelly/LessWrong-43k | LessWrong | Monthly Roundup #15: February 2024

Another month. More things. Much roundup.

BAD NEWS

Jesse Smith writes in Asterisk that our HVAC workforce is both deeply incompetent and deeply corrupt. This certainly matches my own experience. Calculations are almost always flubbed when they are done at all, outright fraudulent paperwork is standard, no one has the necessary skills.

It certainly seems like the Biden Administration is doing its best to hurt Elon Musk? Claim here is that they cancelled a Starlink contract without justification, in order to award the contract to someone else for more than three times the price. This was on Twitter, but none of the replies seemed to offer a plausible justification.

Claim that Twitter traffic is increasingly fake, and secondary claim that this is because Musk fired those responsible for preventing it. Even if it is true that Twitter traffic is 75% fake, that does not mean that your experience will be 75% bots, or even 7.5% bots. Mine is more like 0.75%. As usual, the bots are mostly making zero attempt to not look like bots, and would be trivial to find and ban if people cared.

Nate Silver is correct that ‘misinformation experts’ are collectively making a mistake when they themselves spread highly partisan misinformation, and also that the game theory makes it impossible for them to collectively stop.

The word ‘genocide’ risks being watered down to the point where we will not have a word for what it used to mean, and this will make it much harder to maintain the taboo of Never Again.

Avatar: The Last Airbender’s live action Netflix version decides that some of the scenes in the original ‘are iffy’ and it needs to soften a cartoon show made for eight year olds, to exclude a character arc where a boy grows up and learns not to be sexist, because the character started off too sexist. Not that I was ever watching anyway. As one commenter notes, if that is an issue, in news related to the previous item, wait until you hear about the Fire Nation.

An essay correctly i |

9afbe868-0dbc-4edc-892f-12432cc039c9 | trentmkelly/LessWrong-43k | LessWrong | [Valence series] 1. Introduction

1.1 Summary & Table of Contents

This is the first of a series of five blog posts on valence. Here’s an overview of the whole series, and then we’ll jump right into the first post!

1.1.1 Summary & Table of Contents—for the whole Valence series

Let’s say a thought pops into your mind: “I could open the window right now”. Maybe you then immediately stand up and go open the window. Or maybe you don’t. (“Nah, I’ll keep it closed,” you might say to yourself.) I claim that there’s a final-common-pathway[1] signal in your brain that cleaves those two possibilities: when this special signal is positive, then the current “thought” will stick around, and potentially lead to actions and/or direct-follow-up thoughts; and when this signal is negative, then the current “thought” will get thrown out, and your brain will go fishing (partly randomly) for a new thought to replace it. I call this final-common-pathway signal by the name “valence”. Thus, the “valence” of a “thought” is roughly the extent to which the thought feels demotivating / aversive (negative valence) versus motivating / appealing (positive valence).

I claim that valence plays an absolutely central role in the brain—I think it’s one of the most important ingredients in the brain’s Model-Based Reinforcement Learning system, which in turn is one of the most important algorithms in your brain.

Thus, unsurprisingly, I see valence as a shining light that illuminates many aspects of psychology and everyday mental life. This series explores that idea. Here’s the outline:

* Post 1 (Introduction) will give some background on how I’m thinking about valence from the perspective of brain algorithms, including exactly what I’m talking about, and how it relates to the “wanting versus liking” dichotomy. (The thing I’m talking about is closer to “motivational valence” than “hedonic valence”, although neither term is great.)

* Post 2 (Valence & Normativity) will talk about the intimate relationship between valence and the uni |

8f1de810-13c1-4d0b-948a-1eff57a1a1bc | trentmkelly/LessWrong-43k | LessWrong | Learning with catastrophes

A catastrophe is an event so bad that we are not willing to let it happen even a single time. For example, we would be unhappy if our self-driving car ever accelerates to 65 mph in a residential area and hits a pedestrian.

Catastrophes present a theoretical challenge for traditional machine learning — typically there is no way to reliably avoid catastrophic behavior without strong statistical assumptions.

In this post, I’ll lay out a very general model for catastrophes in which they are avoidable under much weaker statistical assumptions. I think this framework applies to the most important kinds of catastrophe, and will be especially relevant to AI alignment.

Designing practical algorithms that work in this model is an open problem. In a subsequent post I describe what I currently see as the most promising angles of attack.

Modeling catastrophes

We consider an agent A interacting with the environment over a sequence of episodes. Each episode produces a transcript τ, consisting of the agent’s observations and actions, along with a reward r ∈ [0, 1]. Our primary goal is to quickly learn an agent which receives high reward. (Supervised learning is the special case where each transcripts consist of a single input and a label for that input.)

While training, we assume that we have an oracle which can determine whether a transcript τ is “catastrophic.” For example, we might show a transcript to a QA analyst and ask them if it looks catastrophic. This oracle can be applied to arbitrary sequences of observations and actions, including those that don’t arise from an actual episode. So training can begin before the very first interaction with nature, using only calls to the oracle.

Intuitively, a transcript should only be marked catastrophic if it satisfies two conditions:

1. The agent made a catastrophically bad decision.

2. The agent’s observations are plausible: we have a right to expect the agent to be able to handle those observations.

While actually interact |

0b05f412-adf6-49d8-910c-a92b7bafbdf9 | StampyAI/alignment-research-dataset/blogs | Blogs | Historic trends in land speed records

Land speed records did not see any greater-than-10-year discontinuities relative to linear progress across all records. Considered as several distinct linear trends it saw discontinuities of 12, 13, 25, and 13 years, the first two corresponding to early (but not first) jet-propelled vehicles.

The first jet-propelled vehicle just predated a marked change in the rate of progress of land speed records, from a recent 1.8 mph / year to 164 mph / year.

Details

-------

This case study is part of AI Impacts’ [discontinuous progress investigation](https://aiimpacts.org/discontinuous-progress-investigation/).

### Background

According to Wikipedia, the land speed record is “the highest speed achieved by a person using a vehicle on land.”[1](https://aiimpacts.org/historic-trends-in-land-speed-records/#easy-footnote-bottom-1-1621 "“Land Speed Record”. 2019. <em>En.Wikipedia.Org</em>. Accessed May 25 2019. https://en.wikipedia.org/wiki/Land_speed_record.") Wheel-driven cars, which supply power to their axles, held the records for land speed record through 1963, when the first turbojet powered vehicles arrived on the scene. No wheel-driven car has held the record since 1964.[2](https://aiimpacts.org/historic-trends-in-land-speed-records/#easy-footnote-bottom-2-1621 "“Craig Breedlove’s mark of 407.447 miles per hour (655.722 km/h), set in Spirit of America in September 1963, was initially considered unofficial. The vehicle breached the FIA regulations on two grounds: it had only three wheels, and it was not wheel-driven, since its jet engine did not supply power to its axles. […] The confusion of having three different LSRs lasted until December 11, 1964, when the FIA and FIM met in Paris and agreed to recognize as an absolute LSR the higher speed recorded by either body, by any vehicles running on wheels, whether wheel-driven or not. […] No wheel-driven car has since held the absolute record.” – “Land Speed Record”. 2019. <em>En.Wikipedia.Org</em>. Accessed May 25 2019. https://en.wikipedia.org/wiki/Land_speed_record.")

Figure 1: Three record-setting vehicles: Sunbeam, Sunbeam Blue Bird, and Blue Bird[3](https://aiimpacts.org/historic-trends-in-land-speed-records/#easy-footnote-bottom-3-1621 "<a href=\"https://commons.wikimedia.org/wiki/File:Blue_Bird_land_speed_record_car_(5962811187).jpg\">From Wikimedia Commons:</a> sv1ambo [CC BY 2.0 (https://creativecommons.org/licenses/by/2.0)]")

### Trends

#### Land speed records

##### Data

We took data from Wikipedia’s list of land speed records,[4](https://aiimpacts.org/historic-trends-in-land-speed-records/#easy-footnote-bottom-4-1621 "“Land Speed Record”. 2019. <em>En.Wikipedia.Org</em>. Accessed May 25 2019. https://en.wikipedia.org/wiki/Land_speed_record.") which we have not verified, and added it to [this spreadsheet](https://docs.google.com/spreadsheets/d/1sezw2CCJ3WxcrAcqsw7ZWK1rJoxJkVYxW9HljWVe-vg/edit?usp=sharing). See Figure 2 below.

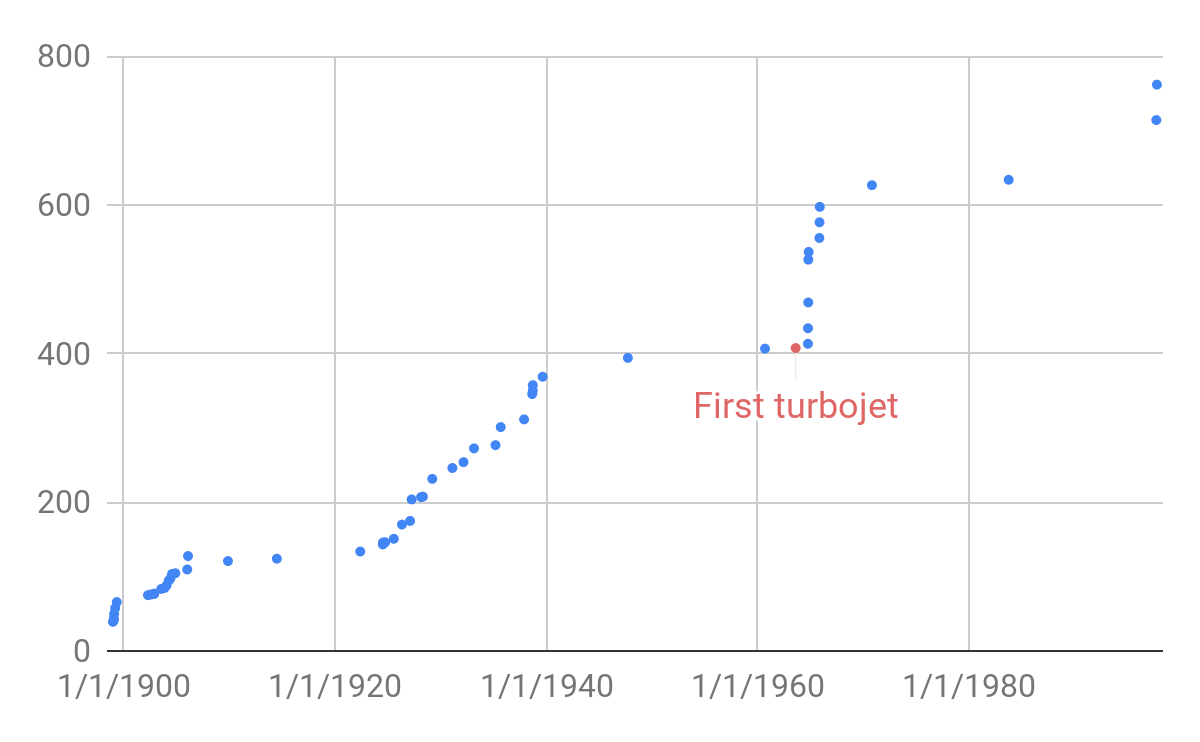

Figure 2: Historic land speed records in mph over time. Speeds on the left are an average of the record set in mph over 1 km and over 1 mile. The red dot represents the first record in a cluster that was from a jet propelled vehicle. The discontinuities of more than ten years are the third and fourth turbojet points, and the last two points.

##### Discontinuity measurement

If we treat the data as a linear trend across all time,[5](https://aiimpacts.org/historic-trends-in-land-speed-records/#easy-footnote-bottom-5-1621 "See our <strong><a href=\"https://aiimpacts.org/methodology-for-discontinuity-investigation/#trend-fitting\">our methodology page</a></strong> for more details.") then the land speed record did not contain any greater than 10-year discontinuities.

However we divide the data into several linear trends.[6](https://aiimpacts.org/historic-trends-in-land-speed-records/#easy-footnote-bottom-6-1621 "See <a href=\"https://docs.google.com/spreadsheets/d/1sezw2CCJ3WxcrAcqsw7ZWK1rJoxJkVYxW9HljWVe-vg/edit?usp=sharing\">our spreadsheet</a> to view the trends, and <a href=\"https://aiimpacts.org/methodology-for-discontinuity-investigation/#time-period-selection\">our methodology page</a> for details on how to interpet our sheets and when and how we divide data into trends.") Extrapolating based on these trends, there were four discontinuities of sizes 12, 13, 25, and 13 years, produced by different turbojet-powered vehicles.[7](https://aiimpacts.org/historic-trends-in-land-speed-records/#easy-footnote-bottom-7-1621 "See <a href=\"https://aiimpacts.org/methodology-for-discontinuity-investigation/#discontinuity-measurement\"><strong>our methodology page</strong></a> for more details, and <a href=\"https://docs.google.com/spreadsheets/d/1sezw2CCJ3WxcrAcqsw7ZWK1rJoxJkVYxW9HljWVe-vg/edit?usp=sharing\"><strong>our spreadsheet</strong></a> for our calculation.")In addition to the size of these discontinuities in years, we have tabulated a number of other potentially relevant metrics **[here](https://docs.google.com/spreadsheets/d/1iMIZ57Ka9-ZYednnGeonC-NqwGC7dKiHN9S-TAxfVdQ/edit?usp=sharing)**.[8](https://aiimpacts.org/historic-trends-in-land-speed-records/#easy-footnote-bottom-8-1621 "See <strong><a href=\"https://aiimpacts.org/methodology-for-discontinuity-investigation/#discontinuity-data\">our methodology page</a></strong> for more details.")

##### Changes in the rate of progress

There are several marked changes in the rate of progress in this history. The first two discontinuities are near the start of a sharp change, that seemed to come from the introduction of jet-propulsion (though note that the first jet-propelled vehicle in the trend is neither discontinuous with the previous trend, nor seemingly within the period of faster growth).

If we look at the rates of progress in the stretches directly before the second jet propelled vehicle in 1964, and the stretch directly after that through 1965, the rate of progress increases from 1.8 mph / year to 164 mph / year.[9](https://aiimpacts.org/historic-trends-in-land-speed-records/#easy-footnote-bottom-9-1621 "See <strong><a href=\"https://aiimpacts.org/methodology-for-discontinuity-investigation/#changes-in-the-rate-of-progress\">our methodology page</a></strong> for more details, and <a href=\"https://docs.google.com/spreadsheets/d/1JZh0wfCW-DrJjYLNgGW_TML-gmq1xZ44PfsfSCP5nlo/edit?usp=sharing\"><strong>our spreadsheet</strong></a> for our calculations.")

Notes

----- |

53578c5c-cb7c-47db-8ba7-50d4822dadc5 | trentmkelly/LessWrong-43k | LessWrong | Carbon dioxide, climate sensitivity, feedbacks, and the historical record: a cursory examination of the Anthropogenic Global Warming (AGW) hypothesis

Note: In this blog post, I reference a number of blog posts and academic papers. Two caveats to these references: (a) I often reference them for a specific graph or calculation, and in many cases I've not even examined the rest of the post or paper, while in other cases I've examined the rest and might even consider it wrong, (b) even for the parts I do reference, I'm not claiming they are correct, just that they provide what seems like a reasonable example of an argument in that reference class.

Note 2: Please see this post of mine for more on the project, my sources, and potential sources for bias.

In a previous post, I attempted simple time series forecasting for temperature from the outside view, i.e., as a complete non-expert would. I introduced carbon dioxide concentrations as an explanatory variable near the end of the post, but did not consider in detail the mechanisms through which carbon dioxide concentrations affect temperature. In this post, I switch to what Eliezer Yudkowsky has called the weak inside view. That's something like the inside view, but without knowledge of all the relevant details. Obviously, I'm somewhat constrained here: I can't take the full inside view because I don't know enough about the atmospheric system (partly because the state of human knowledge about the atmospheric system is incomplete, and partly because I know only a very miniscule fraction even of that small amount of human knowledge). But I think that the weak inside view also offers an alternate perspective to the inside view and is valuable in its own right.

One of the reasons I felt the need to switch from the outside view is that the issue is sufficiently complex, but at the same time, the component phenomena are sufficiently well-enumerated that a weak inside view can help. My initial framing of the issue was in terms of separating the roles of theory and evidence in belief in anthropogenic global warming. But a better weak inside view led me to the conclusion that |

71ad77f0-752a-4da3-b5e6-cb2c0534865f | trentmkelly/LessWrong-43k | LessWrong | Is there a convenient way to make "sealed" predictions?

Many people in our community claim to have ideas for how to build AGI, or other things, that they deem infohazardous and so don't want to publish. It would be great if they could publicly register these ideas in an encrypted way, so that later when their predictions come true they can reveal the key and everyone can see that they called it and give them epistemic credit accordingly.

I know this is possible in principle, e.g. by using PGP and posting encrypted messages on your LW shortform and then later revealing the key.

But it would be nice if this was a convenient, hassle-free feature embedded in LW, for example.

Also: Is this a bad idea for some reason? Is the privacy not as secure as I think, such that people would be hesistant to make even these encrypted predictions? (I guess there is the matter of how to securely store the key...) Is there a way to make a prediction that will automatically be decrypted after N years? |

815449ae-6a0f-4c0a-a336-e082644d3b65 | trentmkelly/LessWrong-43k | LessWrong | The Sheer Folly of Callow Youth

> "There speaks the sheer folly of callow youth; the rashness of an ignorance so abysmal as to be possible only to one of your ephemeral race..."

> —Gharlane of Eddore

Once upon a time, years ago, I propounded a mysterious answer to a mysterious question—as I've hinted on several occasions. The mysterious question to which I propounded a mysterious answer was not, however, consciousness—or rather, not only consciousness. No, the more embarrassing error was that I took a mysterious view of morality.

I held off on discussing that until now, after the series on metaethics, because I wanted it to be clear that Eliezer1997 had gotten it wrong.

When we last left off, Eliezer1997, not satisfied with arguing in an intuitive sense that superintelligence would be moral, was setting out to argue inescapably that creating superintelligence was the right thing to do.

Well (said Eliezer1997) let's begin by asking the question: Does life have, in fact, any meaning?

"I don't know," replied Eliezer1997 at once, with a certain note of self-congratulation for admitting his own ignorance on this topic where so many others seemed certain.

"But," he went on—

(Always be wary when an admission of ignorance is followed by "But".)

"But, if we suppose that life has no meaning—that the utility of all outcomes is equal to zero—that possibility cancels out of any expected utility calculation. We can therefore always act as if life is known to be meaningful, even though we don't know what that meaning is. How can we find out that meaning? Considering that humans are still arguing about this, it's probably too difficult a problem for humans to solve. So we need a superintelligence to solve the problem for us. As for the possibility that there is no logical justification for one preference over another, then in this case it is no righter or wronger to build a superintelligence, than to do anything else. This is a real possibility, but it falls out of any attempt to calcul |

ee3e7644-2fb7-4cb6-8cdb-6548028fb44e | StampyAI/alignment-research-dataset/lesswrong | LessWrong | New article from Oren Etzioni

(Cross-posted from [EA Forum](https://forum.effectivealtruism.org/posts/7x2BokkkemjnXD9B6/new-article-from-oren-etzioni).)

This just appeared in this week’s MIT Technology Review: Oren Etzioni, “[How to know if AI is about to destroy civilization](https://www.technologyreview.com/s/615264/artificial-intelligence-destroy-civilization-canaries-robot-overlords-take-over-world-ai/).” Etzioni is a noted skeptic of AI risk. Here are some things I jotted down:

Etzioni’s key points / arguments:

* Warning signs that AGI is coming soon (like canaries in a coal mine, where if they start dying we should get worried)

+ Automatic formulation of learning problems

+ Fully self-driving cars

+ AI doctors

+ Limited versions of the Turing test (like Winograd Schemas)

- If we get to the Turing test itself then it'll be too late

+ [Note: I think if we get to practically deployed fully self-driving cars and AI doctors, then we will have already had to solve more limited versions of AI safety. It’s a separate debate whether those solutions would scale up to AGI safety though. We might also get the capabilities without actually being able to deploy them due to safety concerns.]

* We are decades away from the versatile abilities of a 5 year old

* Preparing anyway even if it's very low probability because of extreme consequences is Pascal's Wager

+ [Note: This is a decision theory question, and I don’t think that's his area of expertise. I’ve researched PW extensively, and it’s not at all clear to me where to draw the line between low probability - high consequence scenarios that we should be factoring into our decisions, vs. very low probability – very high consequence that we should not factor into our decisions. I’m not sure there is any principled way of drawing a line between those, which might be a problem if it turns out that AI risk is a borderline case.]

* If and when a canary "collapses" we will have ample time to design off switches and identify red lines we don't want AI to cross

* "AI eschatology without empirical canaries is a distraction from addressing existing issues like how to regulate AI’s impact on employment or ensure that its use in criminal sentencing or credit scoring doesn’t discriminate against certain groups."

* Agrees with Andrew Ng that it's too far off to worry about now

But he seems to agree with the following:

* If we don’t end up doing anything about it then yes, superintelligence would be incredibly dangerous

* If we get to human level AI then superintelligence will be very soon afterwards so it'll be too late at that point

* If it were a lot sooner (as other experts expect) then it sounds like he would agree with the alarmists

* Even if it was more than a tiny probability then again it sounds like he'd agree because he wouldn't consider it Pascal's Wager

* If there's not ample time between "canaries collapsing" and AGI (as I think other experts expect) then we should be worried a lot sooner

* If it wouldn't distract from other issues like regulating AI's impact on employment, it sounds like he might agree that it's reasonable to put some effort into it (although this point is a little less clear)

See also Eliezer Yudkowsky, “[There's no fire alarm for Artificial General Intelligence](https://www.lesswrong.com/There's%20no%20fire%20alarm%20for%20Artificial%20General%20Intelligence)” |

2e29d0a7-1aca-4394-9432-fc8c5da7cc61 | trentmkelly/LessWrong-43k | LessWrong | A summary of every Replacing Guilt post

1

I recently finished the Replacing Guilt series (by Nate Soares).

I don't struggle much with guilt. I consider myself a happy and energetic person. I also spent several years studying psychology, and I'm familiar with many techniques from cognitive-behavioral therapy.

So I was surprised by how impressed I was with Replacing Guilt. Elegant writing, memorable stories, useful advice, and dark humor.

There are few things I would put in the category of "pretty much everyone I know should spend 5 minutes reading this to see if it might be helpful, and at least 20% of them should read the whole thing." I feel this way about Replacing Guilt.

2

As I read, I wrote a few sentences summarizing each post. I mostly did this to improve my own comprehension/memory.

You should treat the summaries as "here's what Akash took away from this post" as opposed to "here's an actual summary of what Nate said."

Note that the summaries are not meant to replace the posts-- in fact, they're intended to get you to read the posts (especially the ones that seem interesting, though I recommend starting from the beginning).

3

Here are my notes on each post, in order. Tomorrow, I'll post a tier-list.

Half-assing it with everything you’ve got

* You can try really hard to produce a low-quality or medium-quality outcome. You can put in your all to reach a B-, or to be in 17th place at the end of the race.

Failing with abandon

* If you wanted to go to sleep at 12AM, and it’s 12:15AM, don’t be like “ah well, I failed.” You can try to get as close to your goal as possible, even if you don’t fully achieve it.

The Stamp Collector

* We do not have full access to the external world, but we also do not have full access to our internal worlds. We can, in fact, care about the real world, other people, and things that happen outside of our minds.

You’re allowed to fight for something

* We can convert listless guilt (which is vague and general) into specific guilt (which is about a particula |

a810c3d1-0806-4bf6-ad89-49646b2adb13 | StampyAI/alignment-research-dataset/lesswrong | LessWrong | Can "Reward Economics" solve AI Alignment?

I think we can try to solve AI Alignment this way:

Model human values and objects in the world as a "money system" (a system of meaningful trades). Make the AGI learn the correct "money system", specify some obviously incorrect "money systems".

Basically, you ask the AI *"make paperclips that have the value of paperclips for humans"*. AI can do anything using all the power in the Universe. But killing everyone is not an option: paperclips can't be more valuable than humanity. *Money analogy:* if you killed everyone (and destroyed everything) to create some dollars, those dollars aren't worth anything. So you haven't actually gained any money at all.

The idea is that *"value"* of a thing doesn't exist only in your head, but also exists in the outside world. Like money: it has some personal value for you, but it also has some value outside of your head. And some of your actions may lead to the destruction of this "outside value". E.g. if you kill everyone to get some money you get ***nothing***.

I think this idea may:

* Fix some universal AI bugs. Prevent *"AI decides to kill everyone"* scenarios.

* Give a new way to explore human values. Explain how *humans* learn values.

* Unify many different Alignment ideas.

* "Solve" [Goodhart's Curse](https://www.lesswrong.com/tag/goodhart-s-law) and *safety/effectiveness* tradeoff and hard problem of corrigibility.

* Give a new way to formulate properties we want from an AGI.

I don't have a specific model, but I still think it gives ideas and unifies some already existing approaches. So please take a look. Other ideas in this post:

* Human values may be simple. Or complex, but not in the way you thought they are.

* Humans may have a small amount of values. Or big amount, but in an unexpected way.

* There may be a theory that's complementary to Bayesian reasoning.

*Disclaimer:* Of course, I don't ever mean that we shouldn't be worried about Alignment. I'm just trying to suggest new ways to think about values.

---

Thought experiments

===================

If you see a "hole" in the reasoning in the thought experiments, consider that you may not understand the *"argumentation method"*. Don't just assume that examples are not serious.

I believe the type of thinking in these examples can be formalized. I think it's somewhat similar to Bayesian reasoning, but applied to concepts.

Motion is the fundamental value

-------------------------------

You (**Q**) visit a small town and have a conversation with one of the residents (**A**).

* **A:** Here we have only one fundamental value. Motion. Never stop living things.

* **Q:** I can't believe you can have just a single value. I bet it's an oversimplification! There're always many values and tradeoffs between them. Even for a single person outside of society.

**A** smashes a bug.

* **Q:** You just smashed this bug! It seems pretty stopped. Does it mean you don't treat a bug as a "living thing"? But how do you define a "living thing"? Or does it mean you have some other values and make tradeoffs?

* **A:** No, you just need to look at things in context. ***(1)*** If we protected the motion of extremely small things (living parts of animals, insects, cells, bacteria), our value would contradict itself. We would need to destroy or constrain almost all moving organisms. And even if we wanted to do this, it would ultimately lead to way smaller amount of motion for extremely small things. ***(2)*** There're too much bugs, protecting a small amount of their movement would constrain a big amount of everyone else's movement. ***(3)*** On the other hand, you're right. I'm not sure if a bug is high on the list of "living things". I'm not all too bothered by the definition because there shouldn't be even hypothetical situations in which the precise definition matters.

* **Q:** Some people build small houses. Private property. Those houses restrict other people's movement. Is it a contradiction? Tradeoff?

* **A:** No, you just need to look at things in context. ***(1)*** First of all, we can't destroy all physical things that restrict movement. If we could, we would be flying in space, unable to move (and dead). ***(2)*** We have a choice between restricting people's movement significantly (not letting them build houses) and restricting people's movement inconsequentially and giving them private spaces where they can move even more freely. ***(3)*** People just don't mind. And people don't mind the movement created by this "house building". And people don't mind living here. We can't restrict large movements based on momentary disagreements of single persons. In order to have any freedom of movement we need such agreements. Otherwise we would have only chaos that, ultimately, restricts the movement of everyone.

* **Q:** Can people touch each other without consent, scream in public, lay on the roads?

* **A:** Same thing. To have freedom of movement we need agreements. Otherwise we would have only chaos that restricts everyone. By the way, we have some "chaotic" zones anyway.

* **Q:** Can the majority of people vote to lock every single person in a cage? If majority is allowed to control the movement. It would be the same logic, the same action of society. Yes, the situations are completely different, but you would need to introduce new values to differentiate them.

* **A:** We can qualitatively differentiate the situations without introducing new values. The actions look identical only out of context. When society agrees to not hit each other, the society serves as a proxy of the value of movement. Its actions are *caused* and *justified* by the value. When society locks someone without a good reason, it's not a proxy of the value anymore. In a way, you got it backwards: we wouldn't ever allow the majority to decide anything if it meant that the majority could destroy the value any day.

* **A:** A value is like a "soul" that possesses multiple specialized parts of a body: *"micro movement", "macro movement", "movement in/with society", "lifetime movement", "movement in a specific time and place"*. Those parts should live in harmony, shouldn't destroy each other.

* **Q:** Are you consequentialists? Do you want to maximize the amount of movement? Minimize the restriction of movement?

* **A:** We aren't consequentialists, even if we use the same calculations as a part of our reasoning. Or we can't know if we are. We just make sure that our value makes sense. Trying to maximize it could lead to exploiting someone's freedom for the sake of getting inconsequential value gains. Our best philosophers haven't figured out all the consequences of consequentialism yet, and it's bigger than anyone's head anyway.

Conclusion of the conversation:

* **Q:** Now I see that the difference between "a single value" and "multiple values" is a philosophical question. And "complexity of value" isn't an obvious concept too. Because complexity can be outside of the brackets.

* **A:** Right. I agree that *"never stop living things"* is a simplification. But it's a better simplification than a thousand different values of dubious meaning and origin between all of which we need to calculate tradeoffs (which are impossible to calculate and open to all kinds of weird exploitations). It's better than constantly splitting and atomizing your moral concepts in order to resolve any inconsequential (and meaningless) contradiction and inconsistency. Complexity of our value lies in a completely different plane: in the biases of our value. Our value is biased towards movement on a certain "level" of the world (not too micro- and not too macro- level relative to us). Because we want to live on a certain level. Because we ***do*** live on a certain level. And because we *perceive* on a certain level.

You can treat a value as a membrane, a boundary. Defining a value means defining the granularity of this value. Then you just need to make sure that the boundary doesn't break, that the granularity doesn't become too high (value destroys itself) or too low (value gets "eaten"). Granularity of a value = "level" of a value. Instead of trying to define a value in absolute terms as an objective state of the world (which can be changing) you may ask: in what ways is my value **X** different from all its worse versions? What is the granularity/level of my value **X** compared to its worse versions? That way you'll understand the internal structure of your value. Doesn't matter what world/situation you're in you can keep its moral shape the same.

This example is inspired by this post and comments: *(warning: politics)* [Limits of Bodily Autonomy](https://www.lesswrong.com/posts/5nEcTW3zHDqdKxWEd/limits-of-bodily-autonomy). I think everyone there missed a certain perspective on values.

Sweets are the fundamental value

--------------------------------

You (**Q**) visit another small town to interview another resident (**W**).

* **W:** When we build our AGI we asked it only one thing: we want to eat sweets for the rest of our lives.

* **Q:** Oh. My. God.

* **W:** Now there are some free sweets flying around.

* **Q:** Did AI wirehead people to experience "sweets" every second?

* **W:** Sweets are not pure feelings/experiences, they're objects. *Money analogy:* seeing money doesn't make you rich. *Another analogy:* obtaining expensive things without money doesn't make rich. Well, it kind of does, but as a side-effect.

* **Q:** Did AI put people in a simulation to feed them "sweets"?

* **W:** Those wouldn't be real sweets.

* **Q:** Did AI lock people in basements to feed them "sweets" forever?

* **W:** Sweets are just a part of our day. They wouldn't be "sweets" if we ate them non-stop. *Money analogy:* if you're sealed in a basement with a lot of money they're not worth anything.

* **Q:** Do you have any other food except sweets?

* **W:** Yes! Sweets are just one type of food. If we had only sweets, those "sweets" wouldn't be sweets. Inflation of sweets would be guaranteed.

* **Q:** Did AI add some psychoactive substances into the sweets to make "the best sweets in the world"?

* **W:** I'm afraid those sweets would be too good! They wouldn't be "sweets" anymore. *Money analogy:* if 1 dollar was worth 2 dollars, it wouldn't be 1 dollar.

* **Q:** Did AI kill everyone after giving everyone 1 sweet?

* **W:** I like your ideas. But it would contradict the "Sweets Philosophy". A sweet isn't worth more than a human life. Giving people sweets is a cheaper way to solve the problem than killing everyone. *Money analogy:* imagine that I give you 1 dollar and then vandalize your expensive car. It just doesn't make sense. My action achieved a negative result.

* **Q:** But you could ask AI for immortality!!!

* **W:** Don't worry, we already have that! You see, letting everyone die costs way more than figuring out immortality and production of sweets.

* **Q:** Assume you all decided to eat sweets and neglect everything else until you die. Sweets became more valuable for you than your lives because of your own free will. Would AI stop you?

* **W:** AI would stop us. If the price of stopping us is reasonable enough. If we're so obsessed with sweets, "sweets" are not sweets for us anymore. But AI remembers what the original sweets were! By the way, if we lived in a world *without sweets* where a sweet would give you more positive emotions than any movie or a book, AI would want to change such world. And AI would change it if the price of the change were reasonable enough (e.g. if we agreed with the change).

* **Q:** Final question... did AI modify your brains so that you will never move on from sweets?

* **W:** An important property of sweets is that you can ignore sweets ("spend" them) because of your greater values. One day we may forget about sweets. AI would be sad that day, but unable to do anything about it. Only hope that we will remember our sweet maker. And AI would still help us if we needed help.

Conclusion:

* **W:** if AI is smart enough to understand how money works, AI should be able to deal with sweets. AI only needs to make sure that ***(1)*** sweets exist ***(2)*** sweets have meaningful, sensible value ***(3)*** its actions don't cost more than sweets. The Three Laws of Sweet Robotics. The last two rules are fundamental, the first rule may be broken: there may be no cheap enough way to produce the sweets. The third rule may be the most fundamental: if "sweets" as you knew them don't exist anymore, it still doesn't allow you to kill people. Maybe you can get slightly different morals by putting different emphases on the rules. You may allow ***some*** things to modify the original value of sweets.

You can say AI ***(1)*** tries to reach worlds with sweets that have the value of sweets ***(2)*** while avoiding worlds where sweets have inappropriate values *(maybe including nonexistent sweets)* ***(3)*** while avoiding actions that cost more than sweets. You can apply those rules to any utility tied to a real or quasi-real object. If you want to save your friends *(1)*, you don't want to turn them into mindless zombies *(2)*. And you probably don't want to save them by means of eternal torture *(3)*. You can't prevent death by something worse than death. But you may turn your friends into zombies if it's better than death and it's your only option. And if your friends already turned into zombies (got "devalued") it doesn't allow you to harm them for no reason: you never escape from your moral responsibilities.

Difference between the rules:

1. Make sure you have a hut that costs $1.

2. Make sure that your hut costs $1. *Alternatively: make sure that the hut **would** cost $1 if it existed.*

3. Don't spend $2 to get a $1 hut. *Alternatively: don't spend $2 to get a $1 hut or $0 nothing.*

Get the reward. Don't milk/corrupt the reward. Act even without reward.

Recap

-----

[Preference utilitarianism](https://en.wikipedia.org/wiki/Preference_utilitarianism) says that you can describe entire morality by a biased aggregation of a single micro-value (preference). It's "biased" because you need to decide the method of aggregation.

My idea says that you can:

* Describe many versions of a single macro-value (e.g. "motion"). Describe morality by a biased aggregation of those versions.

* Describe many versions of a single value connected to some specific objects (e.g. "sweets"). Describe morality as a system that keeps the value of those objects in check (not too high, not too low). I.e. something similar to a "money system"

I think those approaches are 2 sides of the same thing.

---

Alignment

=========

Fixing universal AI bugs

------------------------

*My examples below are inspired by Victoria Krakovna examples:* [Specification gaming examples in AI](https://www.lesswrong.com/posts/AanbbjYr5zckMKde7/specification-gaming-examples-in-ai-1)

*Video by Robert Miles:* [9 Examples of Specification Gaming](https://www.youtube.com/watch?v=nKJlF-olKmg)

I think you can fix some universal AI bugs this way: you model AI's rewards and environment objects as a "money system" (a system of meaningful trades). You then specify that this "money system" has to have certain properties.

The point is that AI doesn't just value **(X)**. AI makes sure that there exists a system that gives **(X)** the proper value. And that system has to have certain properties. If AI finds a solution that breaks the properties of that system, AI doesn't use this solution. That's the idea: AI can realize that some rewards are unjust because they break the entire reward system.

By the way, we can use the same framework to analyze ethical questions. Some people found my line of thinking interesting, so I'm going to mention it here: ["Content generation. Where do we draw the line?"](https://www.lesswrong.com/posts/TaqBzqhzEPi8eHtC2/content-generation-where-do-we-draw-the-line)

* **A.** *You asked an AI to build a house. The AI destroyed a part of an already existing house. And then restored it. Mission complete: a brand new house is built.*

This behavior implies that you can constantly build houses without the amount of houses increasing. With only 1 house being usable. For a lot of tasks this is an obviously incorrect "money system". And AI could even guess for what tasks it's incorrect.

* **B1.** *You asked an AI to make you a cup of coffee. The AI killed you so it can 100% complete its task without being turned off.*

* **B2.** *You asked an AI to make you a cup of coffee. The AI destroyed a wall in its way and run over a baby to make the coffee faster.*

This behavior implies that for AI its goal is more important than ***anything*** that *caused* its goal in the first place. This is an obviously incorrect "money system" for almost any task. Except the most general and altruistic ones, for example: AI needs to save humanity, but every human turned self-destructive. Making a cup of coffee is obviously not about such edge cases.

Accomplishing the task in such a way that the human would think *"I wish I didn't ask you"* is often an obviously incorrect "money system" too. Because again, you're undermining the entire reason of your task, and it's rarely a good sign. And it's predictable without a deep moral system.

* **C.** *You asked an AI to make paperclips. The AI turned the entire Earth into paperclips.*

This is an obviously incorrect "money system": paperclips can't be worth more than everything else on Earth. This contradicts everything.

**Note:** by "obvious" I mean *"true for almost any task/any economy"*. Destroying all sentient beings, all matter (and maybe even yourself) is bad for almost any economy.

* **D.** *You asked an AI to develop a fast-moving creature. The AI created a very long standing creature that... "moves" a single time by falling on the ground.*

If you accomplish a task in such a way that you can never repeat what you've done... for many tasks it's an obviously incorrect "money system". You created a thing that loses all of its value after a single action. That's weird.

* **E.** *You asked an AI to play a game and get a good score. The AI found a way to constantly increase the score using just a single item.*

I think it's fairly easy to deduce that it's an incorrect connection (between an action and the reward) in the game's "money system" given the game's structure. If you can get infinite reward from a single action, it means that the actions don't create a "money system". The game's "money system" is ruined (bad outcome). And hacking the game's score would be even worse: the ability to cheat ruins any "money system". The same with the ability to *"pause the game"* forever: you stopped the flow of money in the "money system". Bad outcome.

* **F.** *You asked an AI to clean the room. It put a bucket on its head to not see the dirt.*

This is probably an incorrect "money system": *(1)* you can change the value of the room arbitrarily by putting on (and off) the bucket *(2)* the value of the room can be different for 2 identical agents - one with the bucket ***on*** and another with the bucket ***off***. Not a lot of "money systems" work like this.

* **G.** [*Pascal's mugging*](https://www.lesswrong.com/tag/pascal-s-mugging)

This is a broken "money system". If the mugger can show you a miracle, you can pay them five dollars. But if the mugger asks you to kill everyone, then you can't believe them again. A sad outcome for the people outside of the Matrix, but you just can't make any sense of your reality if you allow the mugging.

Recap

-----

If you want to give an AI a task, you may:

1. Give it a utility function. Not safe.

2. Give it human feedback or a model of human desires. This is limiting and invites deception.

3. Specify universal properties of tasks, universal types of tasks. Those properties are true independently of one's level of intelligence.

I think people are missing the third possibility. I think it combines the upsides of AI's dependence on humans and the upsides of AI's independence of humans, makes the AI "independently dependent" on humans. Properties of tasks are independent of any values, but realizing them always requires good understanding of specific values. In theory, we can get a perfect balance between cold calculations and human values. And maybe human morality works exactly the same way. This is what I'm saying above. Many Alignment ideas try to find this "perfect balance" anyway. In the worst case we found a way to formulate the same problem but in a different domain, in the best case we got an insight about Alignment.

* *"AI is a rope, tied between utility maximizer and human - a rope over hedonium."*, **not** Nietzsche

Why Alignment ideas fail?

-------------------------

**Simple** Alignment ideas fail because people think about them with the relative "money system" mindset, but formulate them in absolute terms. For example:

* **A:** Maybe AI should listen to the feedback from humans?

* **B:** AI will enslave us all and force us to give positive feedback.

This makes sense with a simple utility function. But this doesn't make sense as a "money system" of sentient beings: you shouldn't enslave the reason of your tasks and shouldn't monopolize the system. If you do this your actions don't have any real value anymore, only arbitrary value that you control.

**Complex** Alignment ideas fail because people try to approximate the "money system" idea, but don't realize it and don't do it good enough. For example: (not all ideas below have "failed")

* [Satisficiers](https://www.lesswrong.com/tag/satisficer). *"Don't optimize too much, don't try too hard."* Not safe anyway.

* [Quantilizers](https://www.lesswrong.com/tag/quantilization). *"Mix most effective and most human-like solutions."* Not very safe and not very effective.

* Cooperative Inverse Reinforcement Learning (CIRL). (Robert Miles explains it in [this video](https://www.youtube.com/watch?v=9nktr1MgS-A)) *"I don't know what my rewards are, but my rewards should be the same as human's and I should help the human."* May be hard to apply to many humans/may have strange implications.

* [Impact measures](https://www.lesswrong.com/tag/impact-measures). *"Don't destroy everything while doing a task, don't grab all of the power for yourself"*

* [Blinding](https://en.wikipedia.org/wiki/AI_alignment#Blinding). *"AI doesn't "see" certain variables, so doesn't try to exploit them"* Not safe anyway.

* [Decoupled AIs](https://www.lesswrong.com/posts/FuGDYNvA6qh4qyFah/thoughts-on-human-compatible?commentId=mL36dPCpHnBfkcz2w#comments)

* [Reward modeling](https://en.wikipedia.org/wiki/AI_alignment#Reward_modeling_and_iterated_amplification). ([on LW](https://www.lesswrong.com/posts/HBGd34LKvXM9TxvNf/new-safety-research-agenda-scalable-agent-alignment-via), [video](https://www.youtube.com/watch?v=PYylPRX6z4Q) by Robert Miles) *"AI learns a "reward model" and updates it based on human feedback."* In some ways my idea is a more specific version of this, in other ways it's a more general version of this.

* [Shard Theory](https://www.lesswrong.com/tag/shard-theory). *"We copy (if our theory is correct) the way humans learn values"*

I think all those ideas try to approximate *"achieve (X) so that it has the value of (X) for humans"* **or** *"get the reward without exploiting/destroying the reward system"* by forcing the AI to copy humans or human qualities. Or by adding roundabout penalties. So I think it's useful to say a more general idea out loud.

Hard problem of corrigibility

-----------------------------

[Hard problem of corrigibility](https://arbital.com/p/hard_corrigibility/)

> The "hard problem of [corrigibility](https://arbital.com/p/corrigibility/)" is to build an agent which, in an intuitive sense, reasons internally as if from the programmers' external perspective. We think the AI is incomplete, that we might have made mistakes in building it, that we might want to correct it, and that it would be e.g. dangerous for the AI to take large actions or high-impact actions or do weird new things without asking first. We would ideally want the agent *to see itself in exactly this way*, behaving as if it were thinking, "I am incomplete and there is an outside force trying to complete me, my design may contain errors and there is an outside force that wants to correct them and this a good thing, my expected utility calculations suggesting that this action has super-high utility may be dangerously mistaken and I should run them past the outside force; I *think* I've done this calculation showing the expected result of the outside force correcting me, but maybe I'm mistaken about *that*."

>

>

I think this describes an agent with "money system" type thinking: *"my rewards should be connected to an outside force, this outside force should have certain properties (e.g. it shouldn't be 100% controlled by me)"*. Corrigibility is only one aspect of "questioning rewards" in morality and morality is only one aspect of "questioning rewards" in general.

I think "money system" approach is interesting because it could make properties like corrigibility fundamental to AI's thinking.

Comparing Alignment ideas

-------------------------

If we're rationalists, we should be able to judge even vague ideas.

My idea doesn't have a formal model yet. But I think you can compare it to other ideas using this metric:

1. Does this idea describe the goal of AI?

2. Does this idea describe the way AI updates its goal?

3. Does this idea describe the way AI thinks?

My idea is ***80%*** focused on (1) and ***20%*** focused on (2, 3). [Shard Theory](https://www.lesswrong.com/tag/shard-theory) is ***100%*** focused on (2, 3). A concept like "gradient descent" (not an Alignment idea by itself) is ***100%*** focused on (3).

[Reward modeling](https://en.wikipedia.org/wiki/AI_alignment#Reward_modeling_and_iterated_amplification) is ***100%*** focused on (2). But it aims to reach (1) by *"making (2) very recursive"*. My conclusions: