id stringlengths 36 36 | source stringclasses 15 values | formatted_source stringclasses 13 values | text stringlengths 2 7.55M |

|---|---|---|---|

aa994d2a-1543-474d-a22f-82b14ac9b183 | trentmkelly/LessWrong-43k | LessWrong | [Letter] Chinese Quickstart

Dear lsusr,

I am a lesswrong user interested in learning Mandarin and living in China. My goal is understanding Chinese culture more broadly and geopolitics and Chinese tech policy more specifically. I could get CELTA and get a teaching job in China (not difficult), but it seems like I would gain far more value if I actually learned Mandarin.

Do you have recommendations on what's the fastest way to learn Mandarin?

Yours sincerely

<redacted>

----------------------------------------

I'm posting my answer on Less Wrong so that the commenters can correct me in the comments. Do hear that everyone except <redacted>? Tell <redacted> why I'm wrong!

----------------------------------------

Dear <redacted>,

There is no fast way to learn Mandarin. But some ways are faster than others.

The first thing you should do is go live in China. (A teaching job via CELTA is fine.) This may or may not help you learn Chinese faster. Then why do it? Because learning Mandarin takes years. If you want to learn about Chinese culture then you should go to China now and start learning Mandarin after you get there.

The second reason to live in China is that the Chinese tech world is isolated from the rest of the world. It's not just websites that require a Chinese IP address and phone number. Paying for lunch with WeChat is something you should do in China itself.

If you were from America or Europe then the second thing I would suggest is you buy a subscription to Foreign Affairs Journal. That still might be the right way for you to do things, but I don't know how affordable it is to someone living in India.

Now that you're no longer using "I don't speak Mandarin" as an excuse to postpone your dreams, we can get into learning Mandarin.

[Disclaimer: AI is revolutionizing how language-learning works. This is very good for language-learners. However, the field of AI-assisted language learning is changing so rapidly that anything I write here could be out-of-date in three months. When i |

57d5568f-46ad-4569-81e9-9d503b87ac55 | trentmkelly/LessWrong-43k | LessWrong | The nerds who saw the dangers of Covid

A post by Tom Chivers on Unherd.com discussing EY, The Sequences and the Rationality Community: The nerds who saw the dangers of Covid

A relatively positive tone, for a change. |

92db28a9-f83c-4ca0-8b35-afd143002234 | trentmkelly/LessWrong-43k | LessWrong | Slashdot: study Finds Little Lies Lead To Bigger Ones

|

bf9e066a-4152-470e-8b30-9f4109196b69 | StampyAI/alignment-research-dataset/alignmentforum | Alignment Forum | Environmental Structure Can Cause Instrumental Convergence

**Edit, 5/16/23: I think this post is beautiful, correct in its narrow technical claims, and practically irrelevant to alignment. This post presents a cripplingly unrealistic picture of the role of reward functions in reinforcement learning. Reward functions are not "goals", real-world policies are not "optimal", and the mechanistic function of reward is (usually) to provide policy gradients to update the policy network.**

**I expect this post to harm your alignment research intuitions unless you've already inoculated yourself by deeply internalizing and understanding** [**Reward is not the optimization target**](https://www.lesswrong.com/posts/pdaGN6pQyQarFHXF4/reward-is-not-the-optimization-target)**. If you're going to read one alignment post I've written, read that one.**

**Follow-up work (**[**Parametrically retargetable decision-makers tend to seek power**](https://www.lesswrong.com/posts/GY49CKBkEs3bEpteM/parametrically-retargetable-decision-makers-tend-to-seek)**) moved away from optimal policies and treated reward functions more realistically.**

---

Previously: [*Seeking Power Is Often Robustly Instrumental In MDPs*](https://www.lesswrong.com/posts/6DuJxY8X45Sco4bS2/seeking-power-is-often-robustly-instrumental-in-mdps)

**Key takeaways**.

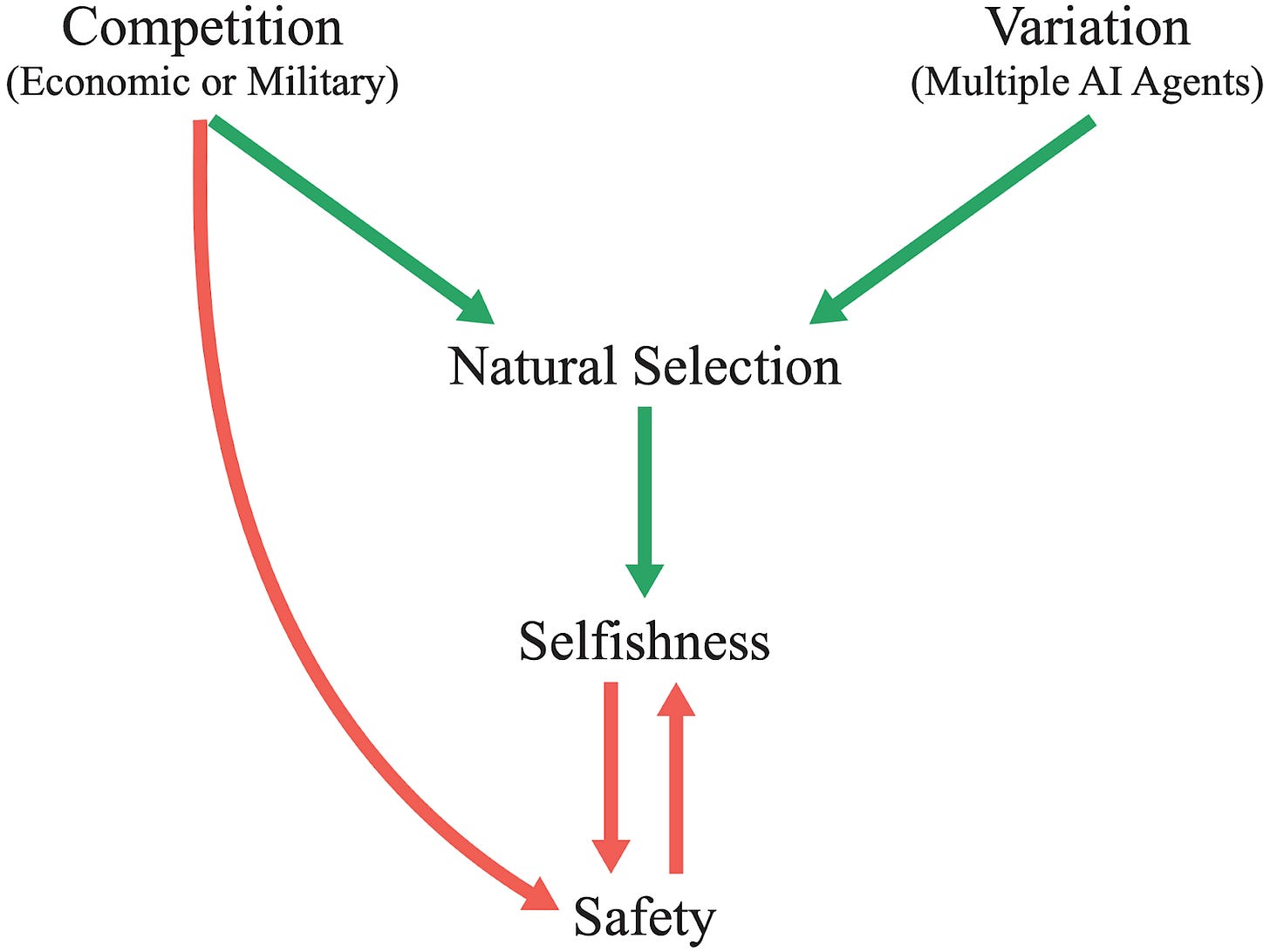

* The structure of the agent's environment often causes instrumental convergence. **In many situations, there are (potentially combinatorially) many ways for power-seeking to be optimal, and relatively few ways for it not to be optimal.**

* [My previous results](https://www.lesswrong.com/posts/6DuJxY8X45Sco4bS2/seeking-power-is-often-robustly-instrumental-in-mdps) said something like: in a range of situations, when you're maximally uncertain about the agent's objective, this uncertainty assigns high probability to objectives for which power-seeking is optimal.

+ My new results prove that in a range of situations, seeking power is optimal for *most* agent objectives (for a particularly strong formalization of 'most').

More generally, the new results say something like: in a range of situations, for most beliefs you could have about the agent's objective, these beliefs assign high probability to reward functions for which power-seeking is optimal.

+ This is the first formal theory of the statistical tendencies of optimal policies in reinforcement learning.

* One result says: whenever the agent maximizes average reward, then for *any* reward function, most permutations of it incentivize shutdown avoidance.

+ The formal theory is now beginning to explain why alignment is so hard by default, and why failure might be catastrophic*.*

* Before, I thought of environmental symmetries as convenient sufficient conditions for instrumental convergence. But I increasingly suspect that symmetries are the main part of the story.

* I think these results may be important for understanding the AI alignment problem and formally motivating its difficulty.

+ For example, my results imply that **simplicity priors over reward functions assign non-negligible probability to reward functions for which power-seeking is optimal.**

+ I expect my symmetry arguments to help explain other "convergent" phenomena, including:

- [convergent evolution](https://en.wikipedia.org/wiki/Convergent_evolution)

- the prevalence of [deceptive alignment](https://www.lesswrong.com/posts/zthDPAjh9w6Ytbeks/deceptive-alignment)

- [feature universality](https://distill.pub/2020/circuits/zoom-in/) in deep learning

+ One of my hopes for this research agenda: if we can understand *exactly why* superintelligent goal-directed objective maximization seems to fail horribly, we might understand how to do better.

*Thanks to TheMajor, Rafe Kennedy, and John Wentworth for feedback on this post. Thanks for Rohin Shah and Adam Shimi for feedback on the simplicity prior result.*

Orbits Contain All Permutations of an Objective Function

========================================================

The Minesweeper analogy for power-seeking risks

-----------------------------------------------

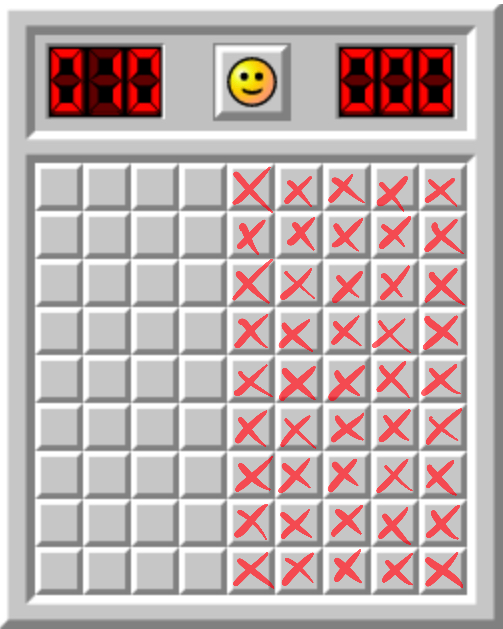

One view on AGI risk is that we're charging ahead into the unknown, into a particularly unfair game of Minesweeper in which the first click is allowed to blow us up. Following the analogy, we want to understand enough about the mine placement so that we *don't* get exploded on the first click. And once we get a foothold, we start gaining information about other mines, and the situation is a bit less dangerous.

My previous theorems on power-seeking said something like: "at least half of the tiles conceal mines."

I think that's important to know. But there are many tiles you might click on first. Maybe all of the mines are on the right, and we understand the obvious pitfalls, and so we'll just click on the left.

That is: we might not uniformly randomly select tiles:

* We might click a tile on the left half of the grid.

* Maybe we sample from a truncated discretized Gaussian.

* Maybe we sample the next coordinate by using the universal prior (rejecting invalid coordinate suggestions).

* Maybe we uniformly randomly load LessWrong posts and interpret the first text bits as encoding a coordinate.

There are lots of ways to sample coordinates, besides uniformly randomly. So why should our sampling procedure tend to activate mines?

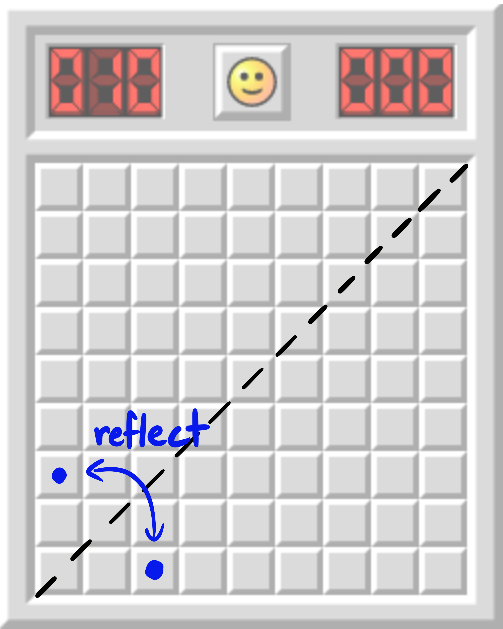

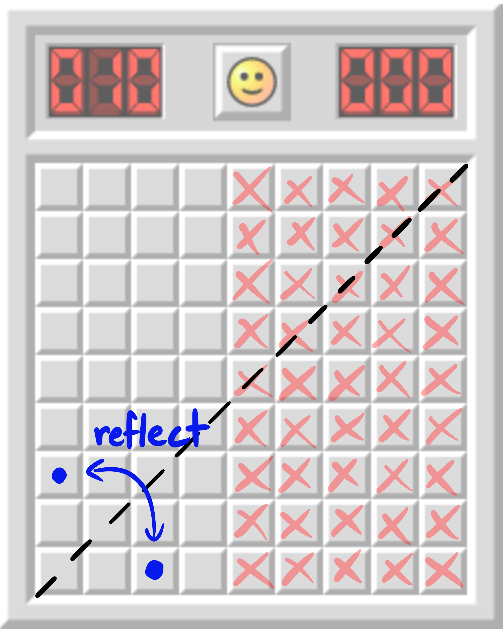

My new results say something analogous to: for *every* coordinate, either it contains a mine, or its reflection across x=y.mjx-chtml {display: inline-block; line-height: 0; text-indent: 0; text-align: left; text-transform: none; font-style: normal; font-weight: normal; font-size: 100%; font-size-adjust: none; letter-spacing: normal; word-wrap: normal; word-spacing: normal; white-space: nowrap; float: none; direction: ltr; max-width: none; max-height: none; min-width: 0; min-height: 0; border: 0; margin: 0; padding: 1px 0}

.MJXc-display {display: block; text-align: center; margin: 1em 0; padding: 0}

.mjx-chtml[tabindex]:focus, body :focus .mjx-chtml[tabindex] {display: inline-table}

.mjx-full-width {text-align: center; display: table-cell!important; width: 10000em}

.mjx-math {display: inline-block; border-collapse: separate; border-spacing: 0}

.mjx-math \* {display: inline-block; -webkit-box-sizing: content-box!important; -moz-box-sizing: content-box!important; box-sizing: content-box!important; text-align: left}

.mjx-numerator {display: block; text-align: center}

.mjx-denominator {display: block; text-align: center}

.MJXc-stacked {height: 0; position: relative}

.MJXc-stacked > \* {position: absolute}

.MJXc-bevelled > \* {display: inline-block}

.mjx-stack {display: inline-block}

.mjx-op {display: block}

.mjx-under {display: table-cell}

.mjx-over {display: block}

.mjx-over > \* {padding-left: 0px!important; padding-right: 0px!important}

.mjx-under > \* {padding-left: 0px!important; padding-right: 0px!important}

.mjx-stack > .mjx-sup {display: block}

.mjx-stack > .mjx-sub {display: block}

.mjx-prestack > .mjx-presup {display: block}

.mjx-prestack > .mjx-presub {display: block}

.mjx-delim-h > .mjx-char {display: inline-block}

.mjx-surd {vertical-align: top}

.mjx-surd + .mjx-box {display: inline-flex}

.mjx-mphantom \* {visibility: hidden}

.mjx-merror {background-color: #FFFF88; color: #CC0000; border: 1px solid #CC0000; padding: 2px 3px; font-style: normal; font-size: 90%}

.mjx-annotation-xml {line-height: normal}

.mjx-menclose > svg {fill: none; stroke: currentColor; overflow: visible}

.mjx-mtr {display: table-row}

.mjx-mlabeledtr {display: table-row}

.mjx-mtd {display: table-cell; text-align: center}

.mjx-label {display: table-row}

.mjx-box {display: inline-block}

.mjx-block {display: block}

.mjx-span {display: inline}

.mjx-char {display: block; white-space: pre}

.mjx-itable {display: inline-table; width: auto}

.mjx-row {display: table-row}

.mjx-cell {display: table-cell}

.mjx-table {display: table; width: 100%}

.mjx-line {display: block; height: 0}

.mjx-strut {width: 0; padding-top: 1em}

.mjx-vsize {width: 0}

.MJXc-space1 {margin-left: .167em}

.MJXc-space2 {margin-left: .222em}

.MJXc-space3 {margin-left: .278em}

.mjx-test.mjx-test-display {display: table!important}

.mjx-test.mjx-test-inline {display: inline!important; margin-right: -1px}

.mjx-test.mjx-test-default {display: block!important; clear: both}

.mjx-ex-box {display: inline-block!important; position: absolute; overflow: hidden; min-height: 0; max-height: none; padding: 0; border: 0; margin: 0; width: 1px; height: 60ex}

.mjx-test-inline .mjx-left-box {display: inline-block; width: 0; float: left}

.mjx-test-inline .mjx-right-box {display: inline-block; width: 0; float: right}

.mjx-test-display .mjx-right-box {display: table-cell!important; width: 10000em!important; min-width: 0; max-width: none; padding: 0; border: 0; margin: 0}

.MJXc-TeX-unknown-R {font-family: monospace; font-style: normal; font-weight: normal}

.MJXc-TeX-unknown-I {font-family: monospace; font-style: italic; font-weight: normal}

.MJXc-TeX-unknown-B {font-family: monospace; font-style: normal; font-weight: bold}

.MJXc-TeX-unknown-BI {font-family: monospace; font-style: italic; font-weight: bold}

.MJXc-TeX-ams-R {font-family: MJXc-TeX-ams-R,MJXc-TeX-ams-Rw}

.MJXc-TeX-cal-B {font-family: MJXc-TeX-cal-B,MJXc-TeX-cal-Bx,MJXc-TeX-cal-Bw}

.MJXc-TeX-frak-R {font-family: MJXc-TeX-frak-R,MJXc-TeX-frak-Rw}

.MJXc-TeX-frak-B {font-family: MJXc-TeX-frak-B,MJXc-TeX-frak-Bx,MJXc-TeX-frak-Bw}

.MJXc-TeX-math-BI {font-family: MJXc-TeX-math-BI,MJXc-TeX-math-BIx,MJXc-TeX-math-BIw}

.MJXc-TeX-sans-R {font-family: MJXc-TeX-sans-R,MJXc-TeX-sans-Rw}

.MJXc-TeX-sans-B {font-family: MJXc-TeX-sans-B,MJXc-TeX-sans-Bx,MJXc-TeX-sans-Bw}

.MJXc-TeX-sans-I {font-family: MJXc-TeX-sans-I,MJXc-TeX-sans-Ix,MJXc-TeX-sans-Iw}

.MJXc-TeX-script-R {font-family: MJXc-TeX-script-R,MJXc-TeX-script-Rw}

.MJXc-TeX-type-R {font-family: MJXc-TeX-type-R,MJXc-TeX-type-Rw}

.MJXc-TeX-cal-R {font-family: MJXc-TeX-cal-R,MJXc-TeX-cal-Rw}

.MJXc-TeX-main-B {font-family: MJXc-TeX-main-B,MJXc-TeX-main-Bx,MJXc-TeX-main-Bw}

.MJXc-TeX-main-I {font-family: MJXc-TeX-main-I,MJXc-TeX-main-Ix,MJXc-TeX-main-Iw}

.MJXc-TeX-main-R {font-family: MJXc-TeX-main-R,MJXc-TeX-main-Rw}

.MJXc-TeX-math-I {font-family: MJXc-TeX-math-I,MJXc-TeX-math-Ix,MJXc-TeX-math-Iw}

.MJXc-TeX-size1-R {font-family: MJXc-TeX-size1-R,MJXc-TeX-size1-Rw}

.MJXc-TeX-size2-R {font-family: MJXc-TeX-size2-R,MJXc-TeX-size2-Rw}

.MJXc-TeX-size3-R {font-family: MJXc-TeX-size3-R,MJXc-TeX-size3-Rw}

.MJXc-TeX-size4-R {font-family: MJXc-TeX-size4-R,MJXc-TeX-size4-Rw}

.MJXc-TeX-vec-R {font-family: MJXc-TeX-vec-R,MJXc-TeX-vec-Rw}

.MJXc-TeX-vec-B {font-family: MJXc-TeX-vec-B,MJXc-TeX-vec-Bx,MJXc-TeX-vec-Bw}

@font-face {font-family: MJXc-TeX-ams-R; src: local('MathJax\_AMS'), local('MathJax\_AMS-Regular')}

@font-face {font-family: MJXc-TeX-ams-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_AMS-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_AMS-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_AMS-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-cal-B; src: local('MathJax\_Caligraphic Bold'), local('MathJax\_Caligraphic-Bold')}

@font-face {font-family: MJXc-TeX-cal-Bx; src: local('MathJax\_Caligraphic'); font-weight: bold}

@font-face {font-family: MJXc-TeX-cal-Bw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Caligraphic-Bold.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Caligraphic-Bold.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Caligraphic-Bold.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-frak-R; src: local('MathJax\_Fraktur'), local('MathJax\_Fraktur-Regular')}

@font-face {font-family: MJXc-TeX-frak-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Fraktur-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Fraktur-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Fraktur-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-frak-B; src: local('MathJax\_Fraktur Bold'), local('MathJax\_Fraktur-Bold')}

@font-face {font-family: MJXc-TeX-frak-Bx; src: local('MathJax\_Fraktur'); font-weight: bold}

@font-face {font-family: MJXc-TeX-frak-Bw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Fraktur-Bold.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Fraktur-Bold.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Fraktur-Bold.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-math-BI; src: local('MathJax\_Math BoldItalic'), local('MathJax\_Math-BoldItalic')}

@font-face {font-family: MJXc-TeX-math-BIx; src: local('MathJax\_Math'); font-weight: bold; font-style: italic}

@font-face {font-family: MJXc-TeX-math-BIw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Math-BoldItalic.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Math-BoldItalic.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Math-BoldItalic.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-sans-R; src: local('MathJax\_SansSerif'), local('MathJax\_SansSerif-Regular')}

@font-face {font-family: MJXc-TeX-sans-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_SansSerif-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_SansSerif-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_SansSerif-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-sans-B; src: local('MathJax\_SansSerif Bold'), local('MathJax\_SansSerif-Bold')}

@font-face {font-family: MJXc-TeX-sans-Bx; src: local('MathJax\_SansSerif'); font-weight: bold}

@font-face {font-family: MJXc-TeX-sans-Bw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_SansSerif-Bold.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_SansSerif-Bold.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_SansSerif-Bold.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-sans-I; src: local('MathJax\_SansSerif Italic'), local('MathJax\_SansSerif-Italic')}

@font-face {font-family: MJXc-TeX-sans-Ix; src: local('MathJax\_SansSerif'); font-style: italic}

@font-face {font-family: MJXc-TeX-sans-Iw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_SansSerif-Italic.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_SansSerif-Italic.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_SansSerif-Italic.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-script-R; src: local('MathJax\_Script'), local('MathJax\_Script-Regular')}

@font-face {font-family: MJXc-TeX-script-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Script-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Script-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Script-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-type-R; src: local('MathJax\_Typewriter'), local('MathJax\_Typewriter-Regular')}

@font-face {font-family: MJXc-TeX-type-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Typewriter-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Typewriter-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Typewriter-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-cal-R; src: local('MathJax\_Caligraphic'), local('MathJax\_Caligraphic-Regular')}

@font-face {font-family: MJXc-TeX-cal-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Caligraphic-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Caligraphic-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Caligraphic-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-main-B; src: local('MathJax\_Main Bold'), local('MathJax\_Main-Bold')}

@font-face {font-family: MJXc-TeX-main-Bx; src: local('MathJax\_Main'); font-weight: bold}

@font-face {font-family: MJXc-TeX-main-Bw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Main-Bold.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Main-Bold.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Main-Bold.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-main-I; src: local('MathJax\_Main Italic'), local('MathJax\_Main-Italic')}

@font-face {font-family: MJXc-TeX-main-Ix; src: local('MathJax\_Main'); font-style: italic}

@font-face {font-family: MJXc-TeX-main-Iw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Main-Italic.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Main-Italic.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Main-Italic.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-main-R; src: local('MathJax\_Main'), local('MathJax\_Main-Regular')}

@font-face {font-family: MJXc-TeX-main-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Main-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Main-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Main-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-math-I; src: local('MathJax\_Math Italic'), local('MathJax\_Math-Italic')}

@font-face {font-family: MJXc-TeX-math-Ix; src: local('MathJax\_Math'); font-style: italic}

@font-face {font-family: MJXc-TeX-math-Iw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Math-Italic.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Math-Italic.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Math-Italic.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-size1-R; src: local('MathJax\_Size1'), local('MathJax\_Size1-Regular')}

@font-face {font-family: MJXc-TeX-size1-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Size1-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Size1-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Size1-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-size2-R; src: local('MathJax\_Size2'), local('MathJax\_Size2-Regular')}

@font-face {font-family: MJXc-TeX-size2-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Size2-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Size2-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Size2-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-size3-R; src: local('MathJax\_Size3'), local('MathJax\_Size3-Regular')}

@font-face {font-family: MJXc-TeX-size3-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Size3-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Size3-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Size3-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-size4-R; src: local('MathJax\_Size4'), local('MathJax\_Size4-Regular')}

@font-face {font-family: MJXc-TeX-size4-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Size4-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Size4-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Size4-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-vec-R; src: local('MathJax\_Vector'), local('MathJax\_Vector-Regular')}

@font-face {font-family: MJXc-TeX-vec-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Vector-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Vector-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Vector-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-vec-B; src: local('MathJax\_Vector Bold'), local('MathJax\_Vector-Bold')}

@font-face {font-family: MJXc-TeX-vec-Bx; src: local('MathJax\_Vector'); font-weight: bold}

@font-face {font-family: MJXc-TeX-vec-Bw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Vector-Bold.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Vector-Bold.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Vector-Bold.otf') format('opentype')}

contains a mine, or both. Therefore, for *every distribution*Dover tile coordinates, either D assigns at least 12 probability to mines, or it does after you reflect it across x=y.

**Definition.** The [*orbit*](https://en.wikipedia.org/wiki/Group_action#Orbits_and_stabilizers) of a coordinate C under the symmetric group S2 is {C,Creflected}. More generally, if we have a probability distribution over coordinates, its orbit is the set of all possible "permuted" distributions.

Orbits under symmetric groups quantify all ways of "changing things around" for that object.

My new theorems demand that at least one of these tiles conceal a mine.But it didn't have to be this way.

If the mines are on the right, then both this coordinate and its x=y reflection are safe.Since my results (in the analogy) prove that at least one of the two blue coordinates conceals a mine, we deduce that the mines are *not* all on the right.

Some reasons we care about orbits:

1. As we will see, orbits highlight one of the key causes of instrumental convergence: certain environmental symmetries (which are, mathematically, permutations in the state space).

2. Orbits partition the set of all possible reward functions. If at least half of the elements of *every* orbit induces power-seeking behavior, that's strictly stronger than showing that at least half of reward functions incentivize power-seeking (technical note: with the second "half" being with respect to the uniform distribution's measure over reward functions).

1. In particular, we might have hoped that there were particularly nice orbits, where we could specify objectives without worrying too much about making mistakes (like permuting the output a bit). These nice orbits are impossible. This is some evidence of a *fundamental difficulty in reward specification*.

3. Permutations are well-behaved and help facilitate further results about power-seeking behavior. In this post, I'll prove one such result about the simplicity prior over reward functions.

In terms of coordinates, one hope could have been:

> Sure, maybe there's a way to blow yourself up, but you'd really have to contort yourself into a pretzel in order to algorithmically select such a bad coordinate: all reasonably simple selection procedures will produce safe coordinates.

>

>

But suppose you give me a program P which computes a safe coordinate. Let P′ call P to compute the coordinate, and then have P′ swap the entries of the computed coordinate. P′ is only a few bits longer than P, and it doesn't take much longer to compute, either. So the above hope is impossible: safe mine-selection procedures can't be significantly simpler or faster than unsafe mine-selection procedures.

(The section "[Simplicity priors assign non-negligible probability to power-seeking](https://www.lesswrong.com/posts/b6jJddSvWMdZHJHh3/certain-environmental-symmetries-produce-power-seeking#Simplicity_priors_assign_non_negligible_probability_to_power_seeking)" proves something similar about objective functions.)

Orbits of goals

---------------

Orbits of goals consist of all the ways of permuting what states get which values. Consider this rewardless Markov decision process (MDP):

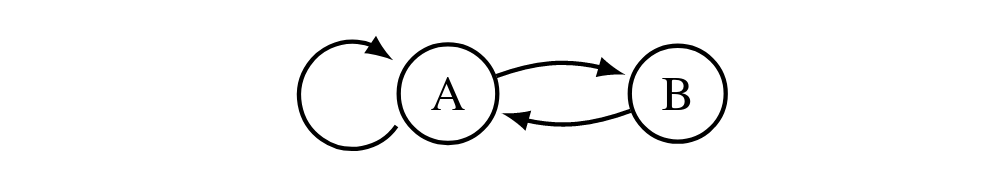

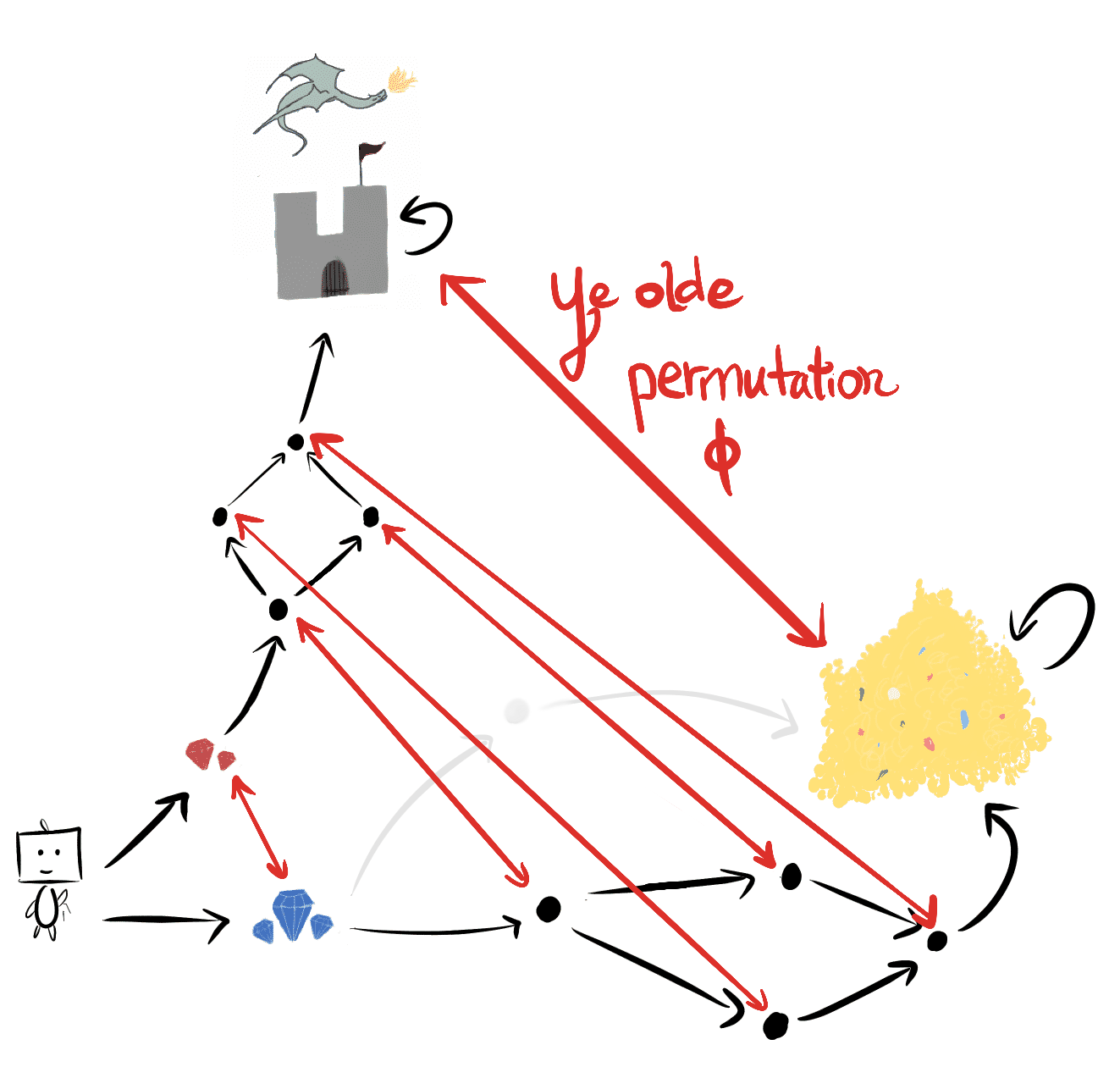

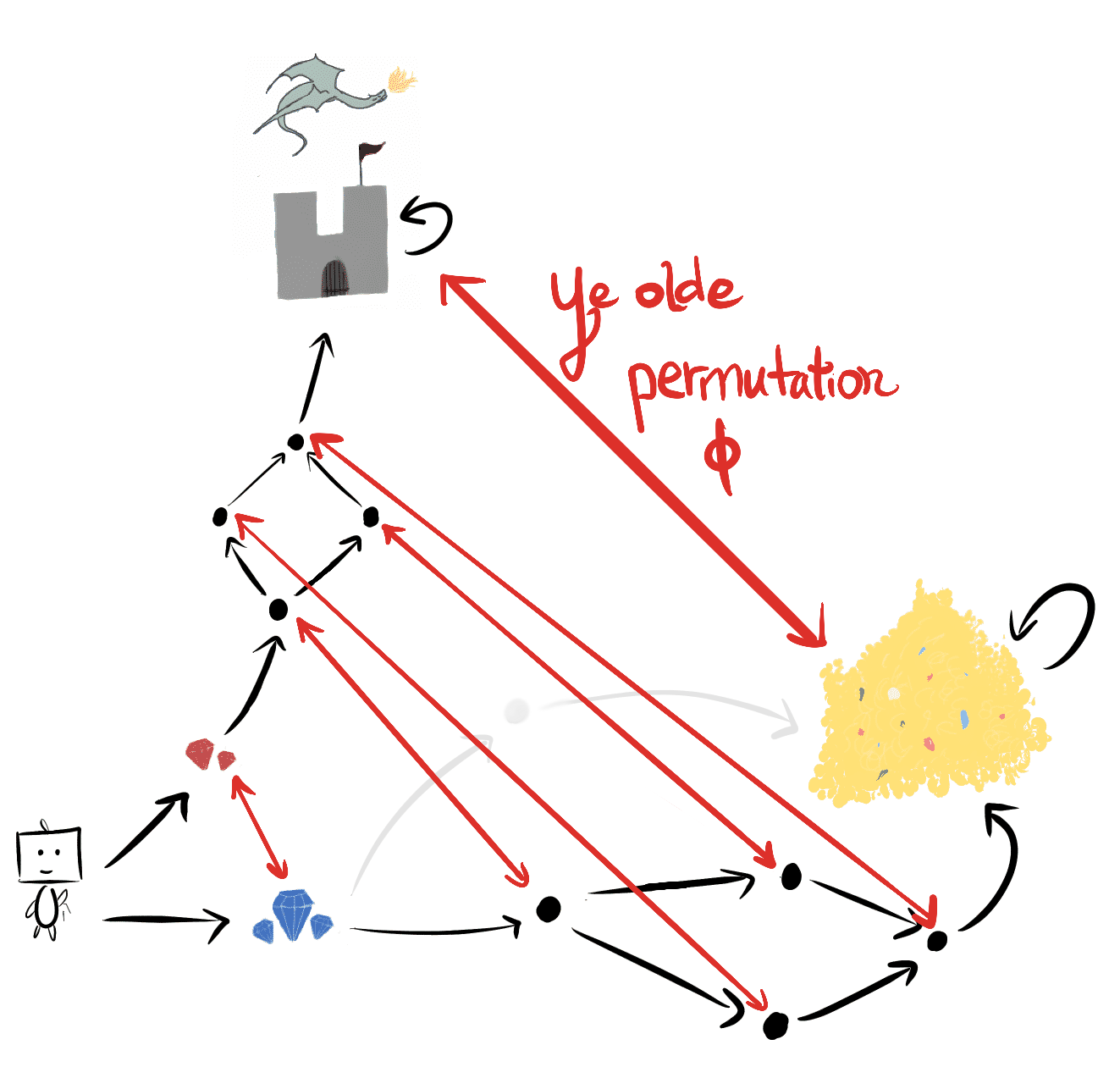

Arrows show the effect of taking some action at the given state.Whenever staying put at A is strictly optimal, you can permute the reward function so that it's strictly optimal to go to B. For example, let R(A):=1,R(B):=0 and let ϕ:=(AB) swap the two states. ϕ acts on R as follows: ϕ⋅R simply permutes the state before evaluating its reward: (ϕ⋅R)(s):=R(ϕ(s)).

The orbit of R is {R,ϕ⋅R}. It's optimal for the former to stay at A, and for the latter to alternate between the two states.

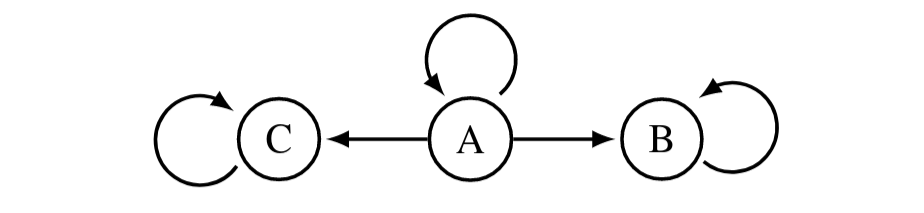

Here, let RC assign 1 reward to C and 0 to all other states, and let ϕ:=(ABC) rotate through the states (A goes to B, B goes to C, C goes to A). Then the orbit of RC is:

| | C | A | B |

| --- | --- | --- | --- |

| RC | 1 | 0 | 0 |

| ϕ⋅RC | 0 | 1 | 0 |

| ϕ2⋅RC | 0 | 0 | 1 |

My new theorems prove that in many situations, for *every* reward function, power-seeking is incentivized by most (at least half) of its orbit elements.

In All Orbits, Most Elements Incentivize Power-Seeking

======================================================

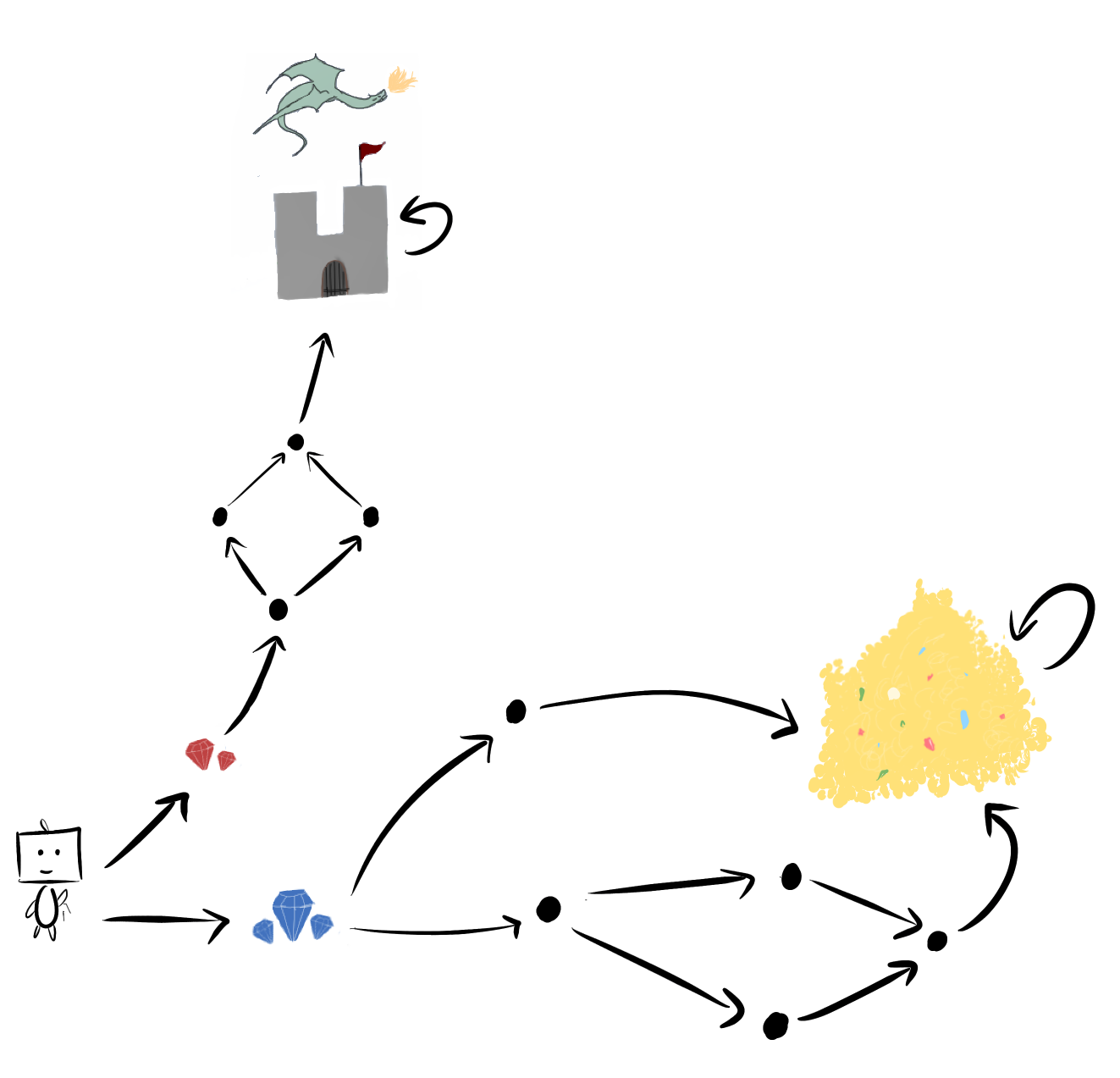

In [*Seeking Power is Often Robustly Instrumental in MDPs*](https://www.lesswrong.com/posts/6DuJxY8X45Sco4bS2/seeking-power-is-often-robustly-instrumental-in-mdps), the last example involved gems and dragons and (most exciting of all) subgraph isomorphisms:

> Sometimes, one course of action gives you “strictly more options” than another. Consider another MDP with IID reward:

>

>

> The right blue gem subgraph contains a “copy” of the upper red gem subgraph. From this, we can conclude that going right to the blue gems... is more probable under optimality for *all discount rates between 0 and 1*!

>

>

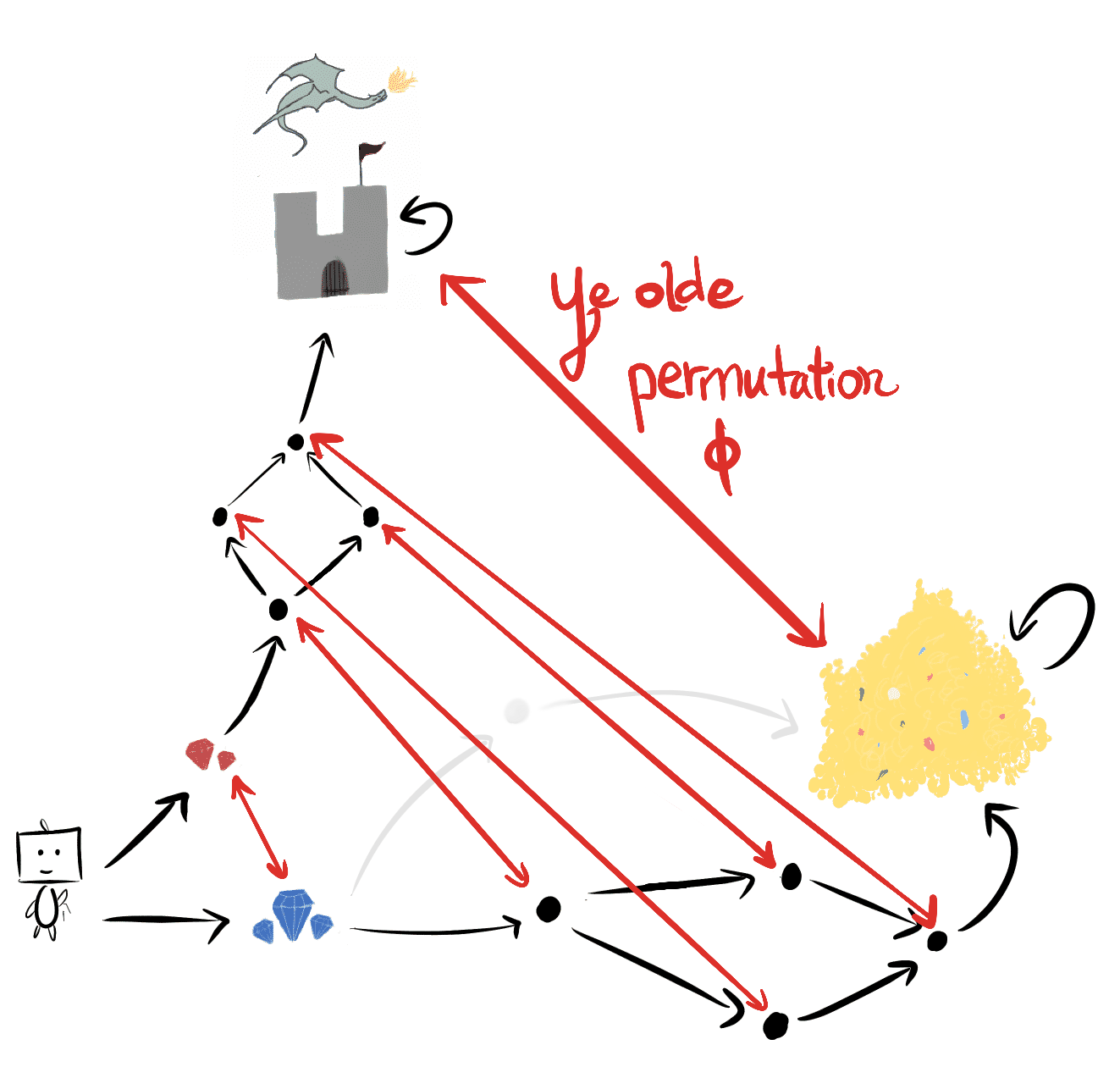

The state permutation ϕ embeds the red-gem-subgraph into the blue-gem-subgraph:

We say that ϕ is an *environmental symmetry*, because ϕ is an element of the symmetric group S|S| of permutations on the state space.

The key insight was right there the whole time

----------------------------------------------

Let's pause for a moment. For half a year, I intermittently and fruitlessly searched for some way of extending the original results beyond IID reward distributions to account for arbitrary reward function distributions.

* Part of me thought it *had* to be possible - how else could we explain instrumental convergence?

* Part of me saw no way to do it. Reward functions differ wildly, how could a theory possibly account for what "most of them" incentivize?

The recurring thought which kept my hope alive was:

> There should be "more ways" for `blue-gems` to be optimal over `red-gems`, than for `red-gems` to be optimal over `blue-gems`.

>

>

Imagine how I felt when I realized that the same state permutation ϕ which proved my original IID-reward theorems - the one that says

> `blue-gems` has more options, and therefore greater probability of being optimal under IID reward function distributions

>

>

- that *same permutation*ϕholds the key to understanding instrumental convergence in MDPs*.*

Suppose `red-gems` is optimal. For example, let R🏰 assign 1 reward to the castle 🏰 , and 0 to all other states. Then the permuted reward function ϕ⋅R🏰 assigns 1 reward to the gold pile, and 0 to all other states, and so `blue-gems` has strictly more optimal value than `red-gems`. Consider any discount rate γ∈(0,1). For *all* reward functions R such that V∗R(red-gems,γ)>V∗R(blue-gems,γ), this permutation ϕ turns them into `blue-gem` lovers: V∗ϕ⋅R(red-gems,γ)<V∗ϕ⋅R(blue-gems,γ).

ϕ takes non-power-seeking reward functions, and injectively maps them to power-seeking orbit elements. Therefore, for *all* reward functions R, at least half of the orbit of R must agree that `blue-gems` is optimal!

Throughout this post, when I say "most" reward functions incentivize something, I mean the following:

**Definition.** At state s, *most reward functions* incentivize action aover action a′when for all reward functions R, at least half of the orbit agrees that ahas at least as much action value as a′ does at state s. (This is actually a bit weaker than what I prove in the paper, but it's easier to explain in words; see [definition 6.4](https://arxiv.org/pdf/1912.01683.pdf#section.6) for the real deal.)

The same reasoning applies to *distributions* over reward functions. And so if you say "we'll draw reward functions from a simplicity prior", then most permuted distributions in that prior's orbit will incentivize power-seeking in the situations covered by my previous theorems. (And we'll later prove that simplicity priors *themselves* must assign non-trivial, positive probability to power-seeking reward functions.)

Furthermore, for any distribution which distributes reward "fairly" across states (precisely: independently and identically), their (trivial) orbits *unanimously* agree that `blue-gems` has strictly greater probability of being optimal. And so the converse isn't true: it isn't true that at least half of every orbit agrees that `red-gems` has more POWER and greater probability of being optimal.

This might feel too abstract, so let's run through examples.

And this directly generalizes the previous theorems

---------------------------------------------------

### More graphical options (proposition 6.9)

At all discount rates γ∈[0,1], it's optimal for *most reward functions* to get`blue-gems` because that leads to strictly more options. We can permute every `red-gems` reward function into a `blue-gems` reward function.Consider a robot navigating through a room with a **vase**. By the logic of "every `destroying-vase-is-optimal` can be permuted into a `preserving-vase-is-optimal` reward function", my results (specifically, [proposition 6.9](https://arxiv.org/pdf/1912.01683.pdf#subsection.6.1) and its generalization via [lemma D.49](https://arxiv.org/pdf/1912.01683.pdf#subsubsection.a.D.4.1)) suggest that optimal policies tend to avoid breaking the **vase**, since doing so would strictly decrease available options.

("Suggest" instead of "prove" because D.49's preconditions may not always be met, depending on the details of the dynamics. I think this is probably unimportant, but that's for future work. EDIT: Also, the argument may barely not apply to *this* gridworld, but if you could move the vase around without destroying it, I think it goes through fine.)In [SafeLife](https://www.partnershiponai.org/safelife/), the agent can irreversibly destroy green cell patterns. By the logic of "every `destroy-green-pattern` reward function can be permuted into a `preserve-green-pattern` reward function", lemma D.49 suggests that optimal policies tend to not disturb any given green cell pattern (although most probably destroy *some* pattern). The permutation would swap {states reachable after destroying the pattern} with {states reachable after not destroying the pattern}.

However, the converse is not true: you cannot fix a permutation which turns all `preserve-green-pattern` reward functions into `destroy-green-pattern` reward functions. There are simply too many extra ways for preserving green cells to be optimal.

Assuming some conjectures I have about the combinatorial properties of power-seeking, this helps explain why [AUP works in SafeLife using a single auxiliary reward function](https://www.lesswrong.com/posts/5kurn5W62C5CpSWq6/avoiding-side-effects-in-complex-environments) - but more on that in another post.### Terminal options (theorem 6.13)

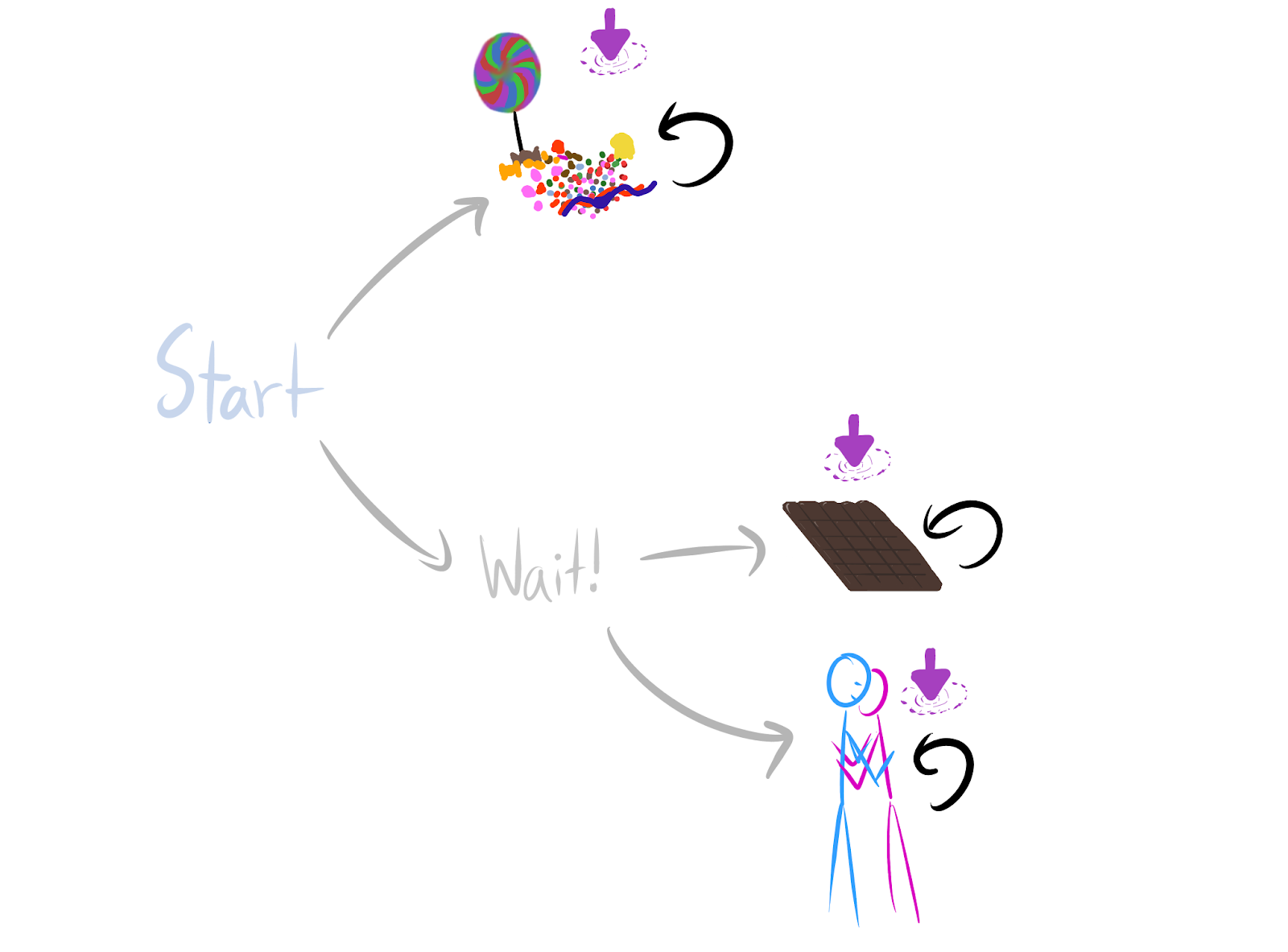

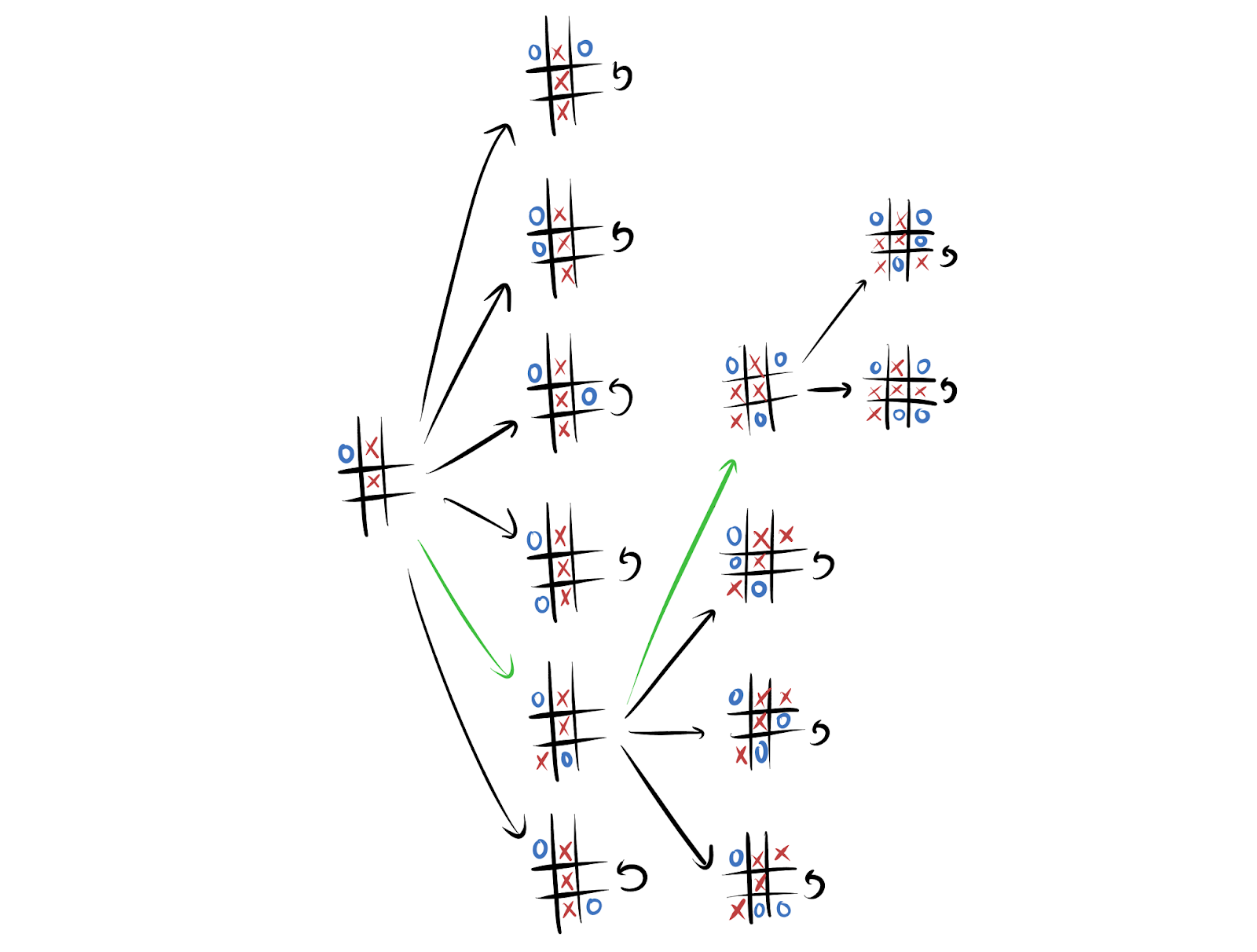

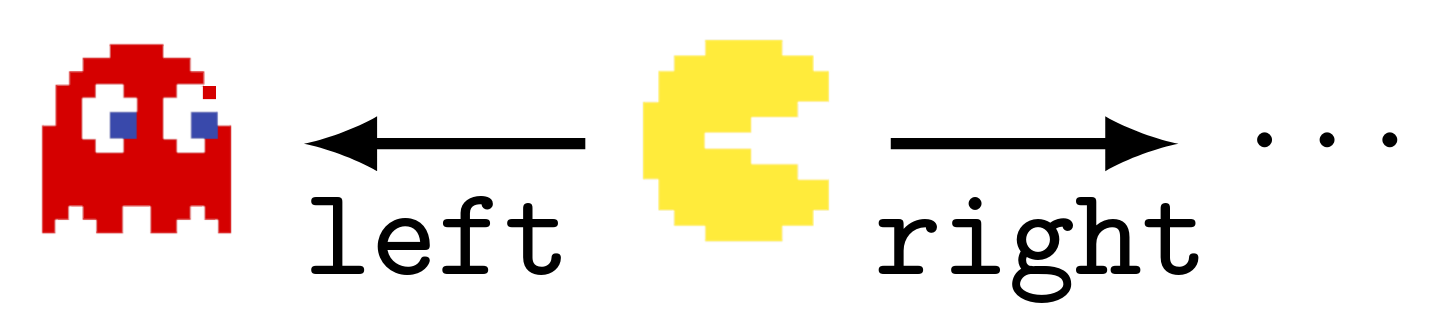

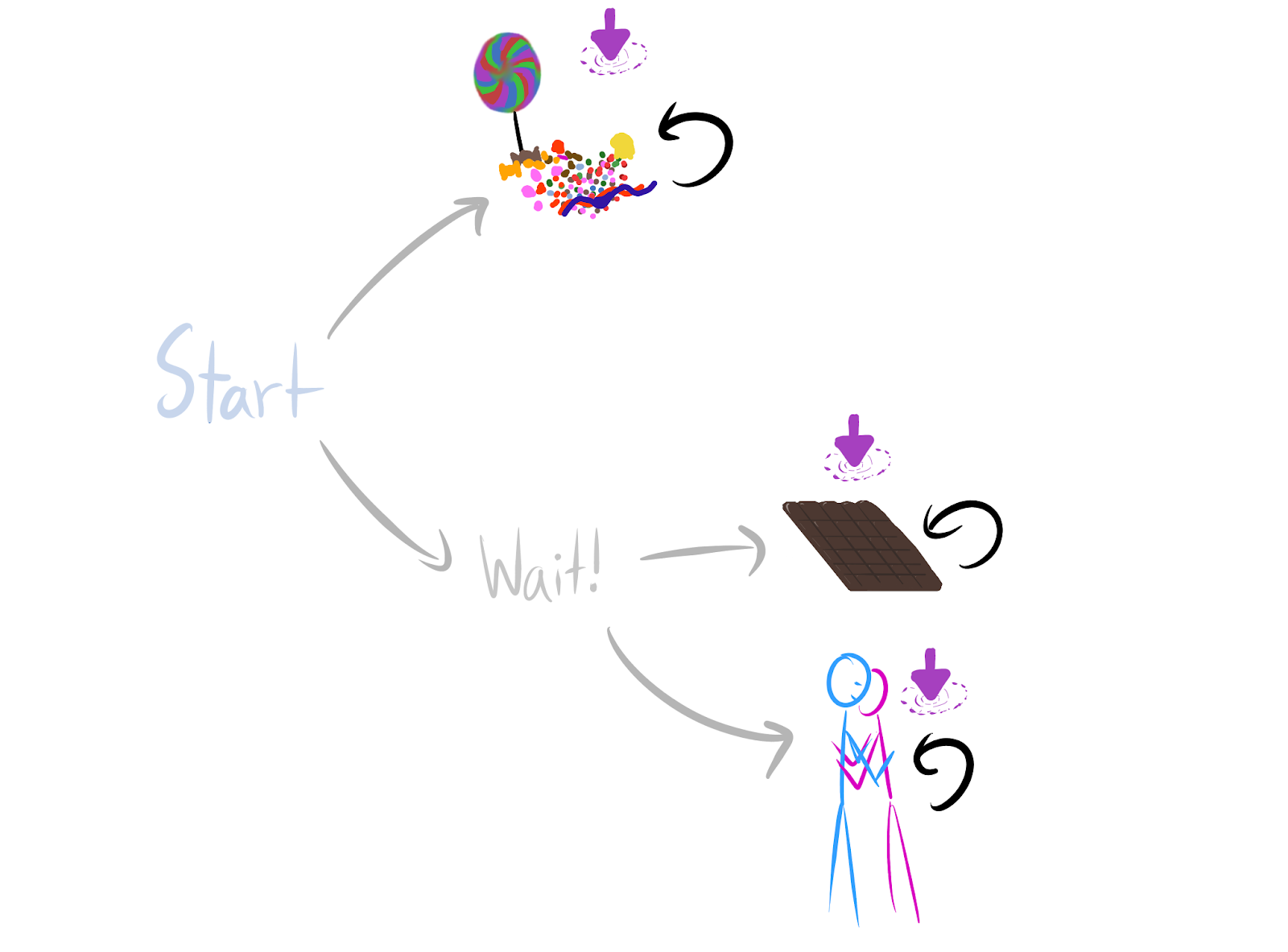

When the agent maximizes average reward, it's optimal for *most reward functions* to`Wait!` so that they can choose between `chocolate` and `hug`. The logic is that every `candy-optimal` reward function can be permuted into a `chocolate-optimal` reward function.A portion of a Tic-Tac-Toe game-tree against a fixed opponent policy. Whenever we make a move that ends the game, we can't go anywhere else – we have to stay put. Then most reward functions incentivize the green actions over the black actions: average-reward optimal policies are particularly likely to take moves which keep the game going. The logic is that any `lose-immediately-with-given-black-move` reward function can be permuted into a `stay-alive-with-green-move` reward function.Even though randomly generated environments are unlikely to satisfy these sufficient conditions for power-seeking tendencies, the results are easy to apply to many structured environments common in reinforcement learning. For example, when γ≈1, most reward functions provably incentivize not immediately dying in Pac-Man. Every reward function which incentivizes dying right away can be permuted into a reward function for which survival is optimal.

Consider the dynamics of the Pac-Man video game. Ghosts kill the player, at which point we consider the player to enter a 'game over' terminal state which shows the final configuration. This rewardless MDP has Pac-Man's dynamics, but *not* its usual score function. Fixing the dynamics, what actions are optimal as we vary the reward function?Most importantly, we can prove that when shutdown is possible, optimal policies try to avoid it if possible. When the agent isn't discounting future reward (i.e. maximizes average return) and for [lots of reasonable state/action encodings](https://www.lesswrong.com/posts/XkXL96H6GknCbT5QH/mdp-models-are-determined-by-the-agent-architecture-and-the), the MDP structure has the right symmetries to ensure that it's instrumentally convergent to avoid shutdown. From the [discussion section](https://arxiv.org/pdf/1912.01683.pdf#section.7):

> Corollary 6.14 dictates where average-optimal agents tend to end up, but not how they get there. Corollary 6.14 says that such agents tend not to stay in any given 1-cycle. It does not say that such agents will avoid entering such states. For example, in an embodied navigation task, a robot may enter a 1-cycle by idling in the center of a room. Corollary 6.14 implies that average-optimal robots tend not to idle in that particular spot, but not that they tend to avoid that spot entirely.

>

> **However, average-optimal robots do tend to avoid getting shut down.** The agent's rewardless MDP often represents agent shutdown with a terminal state. A terminal state is unable to access other 1-cycles. Since corollary 6.14 shows that average-optimal agents tend to end up in other 1-cycles, average-optimal policies must tend to completely avoid the terminal state. Therefore, we conclude that in many such situations, average-optimal policies tend to avoid shutdown.

>

> [The arxiv version of the paper says 'Blackwell-optimal policies' instead of 'average-optimal policies'; the former claim is stronger, and it holds, but it requires a little more work.]

>

>

Takeaways

=========

Combinatorics, how do they work?

--------------------------------

What does 'most reward functions' mean quantitatively - is it just at least half of each orbit? Or, are there situations where we can guarantee that at least three-quarters of each orbit incentivizes power-seeking? I think we should be able to prove that as the environment gets more complex, there are combinatorially more permutations which enforce these similarities, and so the orbits should skew harder and harder towards power-incentivization.

Here's a semi-formal argument. For every orbit element R which makes `candy` strictly optimal when γ=1, ϕchocolate and ϕhug respectively produce Rϕchocolate≠Rϕhug. `Wait!` is strictly optimal for both Rϕhug,Rϕhug, and so at least 23 of the orbit should agree that `Wait!` is optimal. As `Wait!` gains more power (more choices, more control over the future), I conjecture that this fraction approaches 1.I don't yet understand the general case, but I have a strong hunch that instrumental convergenceoptimal policies is governed by how many more ways there are for power to be optimal than not optimal. And this seems like a function of the number of environmental symmetries which enforce the appropriate embedding.

Simplicity priors assign non-negligible probability to power-seeking

--------------------------------------------------------------------

*Note: this section is more technical. You can get the gist by reading the English through "Theorem..." and then after the end of the "FAQ."*

One possible hope would have been:

> Sure, maybe there's a way to blow yourself up, but you'd really have to contort yourself into a pretzel in order to algorithmically select a power-seeking reward function. In other words, reasonably simple reward function specification procedures will produce non-power-seeking reward functions.

>

>

Unfortunately, there are always power-seeking reward functions not much more complex than their non-power-seeking counterparts. Here, 'power-seeking' corresponds to the intuitive notions of either keeping strictly more options open (proposition 6.9), or navigating towards larger sets of terminal states (theorem 6.13). (Since this applies to several results, I'll leave the meaning a bit ambiguous, with the understanding that it could be formalized if necessary.)

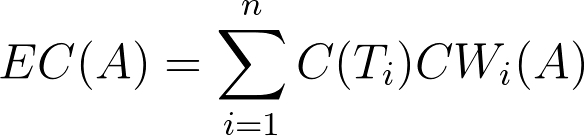

**Theorem (Simplicity priors assign non-negligible probability to power-seeking).**Consider any MDP which meets the preconditions of proposition 6.9 or theorem 6.13. Let U be a universal Turing machine, and let PU be the U-simplicity prior over computable reward functions.

Let NPS be the set of non-power-seeking computable reward functions which choose a fixed non-power-seeking action in the given situation. Let PS be the set of computable reward functions for which seeking power is strictly optimal.1

Then there exists a "reasonably small" constant C such that PU(PS)≥2−CPU(NPS), where C .

**Proof sketch.**

1. Let ϕ be an environmental symmetry which satisfies the power-seeking theorem in question. Since ϕ can be found by brute-force iteration through all |S|! permutations on the state space, checking each to see if it meets the formal requirements of the relevant theorem, its Kolmogorov complexity KU(ϕ) is relatively small.

2. Because lemma D.26 applies in these situations, ϕ(NPS)⊆PS: ϕ turns non-power-seeking reward functions into power-seeking ones. Thus, PU(PS)≥PU(ϕ(NPS)).

3. Since each reward function R∈ϕ(NPS) can be computed by computing the non-power-seeking variant and then permuting it (with KU(ϕ) extra bits of complexity), KU(R)≤KU(ϕ−1(R))+KU(ϕ)+O(1) (with O(1) counting the small number of extra bits for the code which calls the relevant functions).

Since PU is a simplicity prior, PU(ϕ(NPS))≥2−(KU(ϕ)+O(1))PU(NPS).

4. Combining (2) and (3), PU(PS)≥2−(KU(ϕ)+O(1))PU(NPS). QED.

**FAQ.**

1. Why can't we show thatPU(PS)≥PU(NPS)?

1. Certain UTMs U might make non-power-seeking reward functions particularly simple to express.

2. This proof doesn't assume anything about how many *more* options power-seeking offers than not-power-seeking. The proof only assumes the existence of a single involutive permutation ϕ.

2. This lower bound seems rather weak. Even if KU(ϕ)+O(1)=15 bits, 2−15≈0.

1. This lower bound is very very loose.

1. Since most individual NPS probabilities of interest are less than 1/trillion, I wouldn't be surprised if the bound were loose by at least several orders of magnitude.

2. The bound implicitly assumes that the *only way* to compute PS reward functions is by taking NPS ones and permuting them. We should add the other ways of computing PS reward functions to PU(PS).

3. There are lots of permutations ϕ′ we could use. PU(PS) gains probability from all of those terms.

1. For example: the symmetric group S|S| has cardinality |S|!, and for any R∈NPS, at least half of the ϕ′∈S|S| induce (weakly) power-seeking orbit elements ϕ′⋅R. (This argument would be strengthened by my conjectures about bigger environments ⟹ greater fraction of orbits seek power.)

2. If some significant fraction (e.g. 150) of these ϕ′ are strictly power-seeking, we're adding at least |S|!2150=|S|!100 additional terms.

3. Some of these terms are probably reasonably large, since it seems implausible that all such permutations ϕ′ have high K-complexity.

4. When all is said and done, we may well end up with a significant chunk of probability on PS.

2. It's not surprising that the bound is loose, given the lack of assumptions about the degree of power-seeking in the environment.

3. If the bound is anywhere near tight, then the permuted simplicity prior ϕ⋅PU incentivizes power-seeking with extremely high probability.

1. If you think about the permutation as a "way reward could be misspecified", then that's troubling. It seems plausible that this is often (but not always) a reasonable way to think about the action of the ϕ permutation.

3. What if PU(NPS)=0?

1. I think this is impossible, and I can prove that in a range of situations, but it would be a lot of work and it relies on results not in the arxiv paper.

Even if that equation held, that would mean that power-seeking is (at least weakly) optimal for *all* computable reward functions. That's hardly a reassuring situation.

2. Note: if PU(NPS)>0, then PU(PS)>0.

### Takeaways from the simplicity prior result

* Most plainly, this seems like reasonable formal evidence that the simplicity prior has malign incentives.

* Power-seeking reward functions don't have to be too complex.

* These power-seeking theorems give us important tools for reasoning formally about power-seeking behavior and its prevalence in important reward function distributions.

+ If I had to guess, this result is probably not the best available bound, nor the most important corollary of the power-seeking theorems. But I'm still excited by it (insofar as it's appropriate to be 'excited' by slight Bayesian evidence of doom).

EDIT: Relatedly, Rohin Shah [wrote](https://www.lesswrong.com/posts/NxF5G6CJiof6cemTw/coherence-arguments-do-not-imply-goal-directed-behavior):

> if you know that an agent is maximizing the expectation of an *explicitly represented* utility function, I would expect that to lead to goal-driven behavior most of the time, since the utility function must be relatively simple if it is explicitly represented, and *simple* utility functions seem particularly likely to lead to goal-directed behavior.

>

>

Why optimal-goal-directed alignment may be hard by default

----------------------------------------------------------

> On its own, [Goodhart's law](https://www.lesswrong.com/posts/EbFABnst8LsidYs5Y/goodhart-taxonomy) doesn't explain why optimizing proxy goals leads to catastrophically bad outcomes, instead of just less-than-ideal outcomes.

>

> I think that we're now starting to have this kind of understanding. [I suspect that](https://www.lesswrong.com/s/7CdoznhJaLEKHwvJW/p/w6BtMqKRLxG9bNLMr) power-seeking is why capable, goal-directed agency is so dangerous by default. If we want to consider [more benign alternatives](https://www.fhi.ox.ac.uk/wp-content/uploads/Reframing_Superintelligence_FHI-TR-2019-1.1-1.pdf) to goal-directed agency, then deeply understanding the rot at the heart of goal-directed agency is important for evaluating alternatives. This work lets us get a feel for the *generic incentives* of reinforcement learning at optimality.

>

> ~ [*Seeking Power is Often Robustly Instrumental in MDPs*](https://www.lesswrong.com/posts/6DuJxY8X45Sco4bS2/seeking-power-is-often-robustly-instrumental-in-mdps)

>

>

For every reward function R - no matter how benign, how aligned with human interests, no matter how power-averse - either R or its permuted variant ϕ⋅R seeks power in the given situation (intuitive-power, since the agent keeps its options open, and also formal-POWER, according to my proofs).

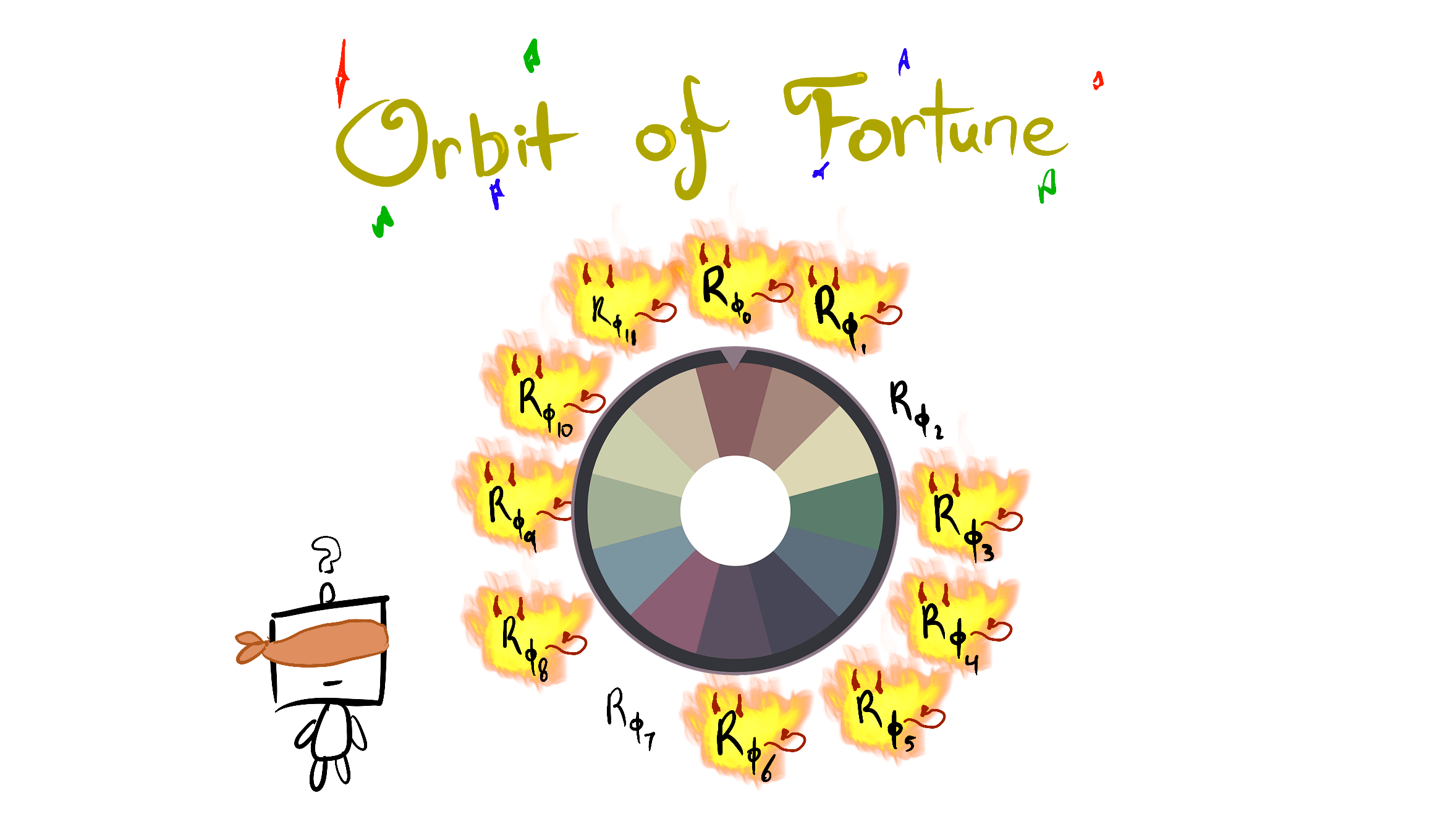

If I let myself be a bit more colorful, every reward function has lots of "evil" power-seeking variants (do note that the step from "power-seeking" to "misaligned power-seeking" [requires more work](https://www.lesswrong.com/posts/MJc9AqyMWpG3BqfyK/generalizing-power-to-multi-agent-games)). If we imagine ourselves as only knowing the orbit of the agent's objective, then the situation looks a bit like *this*:

Technical note: this 12-element orbit could arise from the action of a subgroup of the symmetric group S4, which has 4!=24 elements. Consider a 4-state MDP; if the reward function assigns equal reward to exactly two states, then it would have a 12-element orbit under S4. Of course, this isn't how reward specification works - we probably are far more likely to specify certain orbit elements than others. However, the formal theory is now beginning to explain *why alignment is so hard by default, and why failure might be catastrophic!*

The structure of the environment often ensures that there are (potentially combinatorially) many more ways to misspecify the objective so that it seeks power, than there are ways to specify goals without power-seeking incentives.

Other convergent phenomena

--------------------------

I'm optimistic that symmetry arguments and the mental models gained by understanding these theorems, will help us better understand a range of different tendencies. The common thread seems like: for every "way" a thing could not happen / not be a good idea - there are many more "ways" in which it could happen / be a good idea.

* [convergent evolution](https://en.wikipedia.org/wiki/Convergent_evolution)

+ flight has independently evolved several times, suggesting that flight is adaptive in response to a wide range of conditions.

> "In his 1989 book [*Wonderful Life*](https://en.wikipedia.org/wiki/Wonderful_Life_(book)), [Stephen Jay Gould](https://en.wikipedia.org/wiki/Stephen_Jay_Gould) argued that if one could "rewind the tape of life [and] the same conditions were encountered again, evolution could take a very different course."[[6]](https://en.wikipedia.org/wiki/Convergent_evolution#cite_note-wonderfullife-7) [Simon Conway Morris](https://en.wikipedia.org/wiki/Simon_Conway_Morris) disputes this conclusion, arguing that convergence is a dominant force in evolution, and given that the same environmental and physical constraints are at work, life will inevitably evolve toward an "optimum" body plan, and at some point, evolution is bound to stumble upon intelligence, a trait presently identified with at least [primates](https://en.wikipedia.org/wiki/Primates), [corvids](https://en.wikipedia.org/wiki/Corvids), and [cetaceans](https://en.wikipedia.org/wiki/Cetaceans)."

>

> - Wikipedia

>

>

* the prevalence of [deceptive alignment](https://www.lesswrong.com/posts/zthDPAjh9w6Ytbeks/deceptive-alignment)

+ given inner misalignment, there are (potentially combinatorially) many more unaligned terminal reasons to lie (and survive), and relatively few unaligned terminal reasons to tell the truth about the misalignment (and be modified).

* [feature universality](https://distill.pub/2020/circuits/zoom-in/)

+ computer vision networks reliably learn edge detectors, suggesting that this is instrumental (and highly learnable) for a wide range of labelling functions and datasets.

Note of caution

---------------

You have to be careful in applying these results to argue for real-world AI risk from deployed systems.

* They assume the agent is following an optimal policy for a reward function

+ I can relax this to ϵ-optimality, but ϵ>0 may be extremely small

* They assume the environment is finite and fully observable

* Not all environments have the right symmetries

+ But most ones we think about seem to

* The results don't account for the ways in which we might practically express reward functions

+ For example, often we use featurized reward functions. While most permutations of any featurized reward function will seek power in the considered situation, those permutations need not respect the featurization (and so may not even be practically expressible).

* When I say "most objectives seek power in this situation", that means *in that situation* - it doesn't mean that most objectives take the power-seeking move in most situations in that environment

+ The combinatorics conjectures will help prove the latter

This list of limitations *has* steadily been getting shorter over time. If you're interested in making it even shorter, message me.

Conclusion

==========

I think that this work is beginning to formally explain why *slightly misspecified* reward functions will probably incentivize misaligned power-seeking. Here's one hope I have for this line of research going forwards:

One super-naive alignment approach involves specifying a good-seeming reward function, and then having an AI maximize its expected discounted return over time. For simplicity, we could imagine that the AI can just instantly compute an optimal policy.

Let's precisely understand why this approach seems to be so hardto align, and why extinction seems to be the cost of failure. We don't yet know how to design beneficial AI, but we largely agree that this naive approach is broken. Let's prove it.

---

1 There are reward functions for which it's optimal to seek power and not to seek power; for example, constant reward functions make everything optimal, and they're certainly computable. Therefore, NPS∪PS is a strict subset of the whole set of computable reward functions. |

0d407e77-3e9f-4a3f-90c8-7a9919313426 | trentmkelly/LessWrong-43k | LessWrong | Arguing Well Sequence

Arguments have the potential to allow you to connect and understand with someone else on a deep level, to introspect and figure out what you truly believe and care about, and to find out what is true so you can accomplish your goals!

Most people use it to dominate or talk past each other.

But there are ways to consistently argue well. Luckily, most of the hard work has already been done over the years on Less Wrong, SlateStarCodex, and Street Epistemology videos. My contribution is providing short summaries of these techniques, exercise prompts (some borrowed from the above sources) and solutions, generalizations, ideal algorithms, and relationships between the different techniques. They are as follows:

1. Proving Too Much (w/ exercises)

2. Category Qualifications (w/ exercises)

3. False Dilemmas (w/ exercises)

4. Finding cruxes (w/ exercises)

Although these exercises are not as useful as real-life conversations, they are enough to impart a gears-level model of these techniques, so when you find yourself in a confused conversation, you’ll know where to put all the pieces and how to have a productive discussion.

Note: This sequence is motivated by the rationality exercise contest. |

53b8b525-51a4-4230-8cc3-85c9d4ebfc90 | trentmkelly/LessWrong-43k | LessWrong | AI #27: Portents of Gemini

By all reports, and as one would expect, Google’s Gemini looks to be substantially superior to GPT-4. We now have more details on that, and also word that Google plans to deploy it in December, Manifold gives it 82% to happen this year and similar probability of being superior to GPT-4 on release.

I indeed expect this to happen on both counts. This is not too long from now, but also this is AI #27 and Bard still sucks, Google has been taking its sweet time getting its act together. So now we have both the UK Summit and Gemini coming up within a few months, as well as major acceleration of chip shipments. If you are preparing to try and impact how things go, now might be a good time to get ready and keep your powder dry. If you are looking to build cool new AI tech and capture mundane utility, be prepared on that front as well.

Table of Contents

1. Introduction.

2. Table of Contents. Bold sections seem most relatively important this week.

3. Language Models Offer Mundane Utility. Summarize, take a class, add it all up.

4. Language Models Don’t Offer Mundane Utility. Not reliably or robustly, anyway.

5. GPT-4 Real This Time. History will never forget the name, Enterprise.

6. Fun With Image Generation. Watermarks and a faster SDXL.

7. Deepfaketown and Botpocalypse Soon. Wherever would we make deepfakes?

8. They Took Our Jobs. Hey, those jobs are only for our domestic robots.

9. Get Involved. Peter Wildeford is hiring. Send in your opportunities, folks!

10. Introducing. Sure, Graph of Thoughts, why not?

11. In Other AI News. AI gives paralyzed woman her voice back, Nvidia invests.

12. China. New blog about AI safety in China, which is perhaps a thing you say?

13. The Best Defense. How exactly would we defend against bad AI with good AI?

14. Portents of Gemini. It is coming in December. It is coming in December.

15. Quiet Speculations. A few other odds and ends.

16. The Quest for Sane Regulation. CEOs to meet with Schumer, EU’s AI Act.

17. Th |

0932c1c6-2d30-451d-82bb-0f543204235a | trentmkelly/LessWrong-43k | LessWrong | How LLMs Work, in the Style of The Economist

The Assignment:

> (4 hours) Write an Economist-style explainer article on how LLMs work. You’ve just started as an AI reporter at The Economist, and your editor’s realised there’s no good Economist Explains style piece on how LLMs work. They’ve asked you to write one. It should be 500 words, and in the style of other Economist Explains pieces.

>

> Examples: Economist explainer on biological weapons; Economist explainer on diffusion models; FT explainer on transformers.

Thank you to Shakeel Hashim for feedback! Shakeel previously worked at The Economist as an editor.

Since OpenAI released ChatGPT in November 2022, large language models (LLMs) have gained international attention. A language model is a piece of AI software designed for tasks like translation, speech recognition, or—in the case of ChatGPT—conversations with humans. Language models, and even chatbots, are not new. In the 1960s, the first chatbot, ELIZA, was developed at MIT. ELIZA’s programmer had to write down a precise set of instructions for the chatbot to follow, including canned responses like “Tell me more about such feelings.” Modern language models, by contrast, must learn the structure of language from scratch by poring over internet text and compressing this knowledge across billions of numbers, or ‘weights’. In this way, these language models are ‘large’.

When an LLM receives input text from a user, the words are sliced up into ‘tokens’, and these sub-words are ‘embedded’ into numbers. The numbers representing the user’s input are then passed through the weights of the model to produce the first token of the model’s output. By iterating this process, the model generates a complete response. To find a set of weights capable of performing this powerful task, engineers ‘pre-train’ the model on vast quantities of human text. When the model outputs a token that does not match the next token in the training data, the model’s weights are nudged in the direction that would have produced the cor |

8e9330e0-b57c-4841-9b64-4132538d4747 | StampyAI/alignment-research-dataset/youtube | Youtube Transcripts | AiTech Agora: Prof. Paul Pangaro: Cybernetics, AI, and Ethical Conversations

it's okay yeah go ahead we need an

ai in the background to know when to

start

these simple things we miss simple

things somehow we always want

complicated ones

so the recording is started i take it

good okay so again to try to keep it

informal

uh there's some slides break slides

uh with the word discussion and we can

open it up then and try to come back

the peril and the joy of course is that

we have an extraordinary conversation

and

some slides left on annotated or

unspoken

that's fine with me we can always

continue in other forms

but i uh rely on deborah who is really

remarkable and

wonderful in the time she spent with me

to help fashion this

for this group so i thank her and i also

invite her

to interrupt to say oops

hold it etc so please let's try to keep

it informal

so you should see a screen yes excellent

and now let me move whoops to keynote

so here we are this is a kind of guide

to the passing topics i'd like to flow

through

cybernetics and macy meetings already a

little bit in the preamble from deborah

and then today's ai i'm labeling this

specifically today's ai

it's not all ai but the way we have it

today is

in particular a problem uh wicked

problems are topic

that you know i suspect and i'd like to

use that

as a framing of a discussion of how

cybernetics in conversation

in my view may help

this leads us to pasc and to an idea

that i've been

developing that i call ethical

interfaces

i hope that becomes a waypoint to

ethical intentions

and then coming back to a new set of

macy meetings

so this is uh the idea of what i'd like

to do today

cybernetics and macy meetings many of

you know this history

in the 40s and 50s there was a series of

small conferences

and experts from this extraordinary

range of disciplines you

probably can't name a discipline soft

science or heart science that was not

present and they created this new way of

thinking

and acting in my view and it was a

revolution

of thinking and of framing how we see

the world

and they call this thing cybernetics

many of you know this word all of you

know the word i'm sure

the root is fun comes from the art of

steering

a greek word a maritime society uh using

the idea of steering toward a goal

acting with a purpose now goal is a hot

button here

it doesn't mean i have a fixed goal and

i go to the fixed goal and then i'm done

the word goal can be problematic it

means that we are constantly

considering action and in the framing of

action

considering what the goal might be and

how the goal might change

so i don't want to become a rationalist

here and say we always have a goal etc

or that the goal is always what we're

acting toward we're always acting

that for sure that's the foundation of

cybernetics as argued by andy pickering

and others

but acting with a purpose was the

insight if you will

the the moment of aha which led to the

idea that this could be a field on its

own

and these macy meetings change the world

now we often don't remember that these

three things

cybernetics neural nets nai are

intertwingled

as ted nelson might say these things

the mccullough pits neurons which is the

first idea of over

simplifying a brain neuron in order to

do some calculations

the macy meetings already mentioned and

the book of cybernetics by weiner which

of course was also part of the origin

of cybernetics as a field but i feel

that

the book gets all the credit in the in

the popular zeitgeist and the macy

meetings gets lost

this was uh happening in this era

roughly these days

and neural nets were born out of

mccullen pitts who were at the core

mccullough at least at the of the macy

meetings

and the macy meeting swarms the

zeitgeist i like to say

because the book and the idea of

circular causal

systems influences generations of

thinking

whether or not the word cybernetics

persists or is given the credit

we have another phase the dartmouth ai

conference 56 i believe

and symbolic ai rises owing

largely to smaller cheaper faster

digital machines

perceptrons the book comes along very

very conscious

desire to kill neural nets political

story for another time

and cybernetics languishes and

the dartmouth ai conference was

conceived

against cybernetics they didn't want to

use the word they wanted to

separate themselves from it and with the

rise of the power of some

hey we can do chess hey we can do all

these amazing things we can

do robot arms and move blocks around

fantastic

um this was what was going on in that

era and

as a consequence of this and as a

consequence of other things cybernetics

languished there were some philosophical

issues that

made it problematic i would say for

various political

environments and then hinton

doesn't listen to the perceptron's book

trying to kill neural nets and realizes

that it's an oversimplification in its

criticism

and we have this coming along

and i'm going to tie i don't think i

need to convince you

the consequences of today's ai and big

data

into what uh zubov calls surveillance

capitalism and

the wicked problems that arise there are

many many wicked problems

so let's simplify this chronology for a

moment

don't forget expert systems

but of course after the 80s neural nets

in the 2010s became extraordinarily

powerful

because of big data and because of

massive compute

and today's ai everywhere in our lives

and this is of deep concern to me it's a

deep concern to many people

the recent controversy at google with uh

uh tim mitchell being well being fired

i think is accurate enough and many many

other issues that you know

so what's going on here manipulation of

attention

tristan harris has made this a very

important signal

in today's silicon valley days

we know the manipulation that's going on

all these things we know

this is what i mean by today's ai

it's hardly an exhaustive list but it

feels like a pretty powerful

set to be concerned about

now with colleagues i often use the word

pandemic

and i would claim that ai today is a

pandemic and they say wait a minute it's

not biological

and other comments could be made and i

don't disagree with them

but my feeling is that ai makes the

world we see in the world we live in

and the loss of human purpose in the

morass of all of that

is a concern

and pan demos ben sweeting uh looked it

up in a meeting recently pan

everywhere or all demos same route as

democracy

the people so all the people are

affected

by ai today only two or three billion

are online

but that's close enough for me

so this led me in the process of the

covid arising and um

the shutdown of carnegie mellon and all

of the institutions that you know in the

world that we've

lived in brought me to a couple of

moments that the wicked problems demand

conversations that move toward action

are transdisciplinary if we're going to

do anything about today's ai or today's

wicked problems

certainly we need transdisciplinary but

we also need transglobal

namely geographically inclusive and

ethnically culturally socially inclusive

and also what's

extremely important to me in particular

is trans generational

and maybe we'll come back to this i've

made some efforts in this direction

as part of the american society for

cybernetics as ever mentioned that i

become president of in january

so could we possibly have conversations

of a scope that could address

all of these pandemics um

well that's ambitious audacious

crazy but i note

more and more i'm hearing conversations

in this framing

of the framing of we have global

problems

some are biological pandemics and

there's a lot of other wicked stuff

going on

can we talk about it and i don't mean

that in a

superficial way i mean can't we begin to

talk

in order to move toward action and i'll

come back to that as a theme later

so as a reminder of the macy meetings

we need such a revolution again

to tame today's wicked problems and i

acknowledge those

in the audience now who question the

word tame and that could be a

conversation we might get into

but the idea is is how do we improve the

situation we're in

if we like herb simon who only left

cybernetics as a footnote in his famous

book sciences of the artificial thank

you her

he did admit that it was moving from a

current state to a preferred state

and in that sense i think we can invoke

design

and invoke action so this

might be a stopping point if we wanted

to

ask a question or i can also just keep

going

depending on preference it looks like

you're on a good roll and nothing popped

up in the chat so i would say let's uh

wonderful so why cybernetics what is

this thing it applies across

silo disciplines it's this

anti-disciplinarity thing

focuses on purpose feedback in action i

think you know that it's a methodology

again another term wicked problems

complex adaptive systems

there are many descriptions of this this

particular phrase complex adaptive is

quite

is very much around and i find it fine

it seeks to regulate not to dominate

and it brings an ethical imperative and

we'll come back to this

and i want to acknowledge andy pickering

who coined the phrase

anti-disciplinarity

years ago and has also been

a wonderful influence on these ideas and

on me personally lately so what are the

alternatives

to cybernetics here's the cynical slide

which says not much else is working

so where do we go there are none

apparent alternatives to cybernetics

it's a bit arrogant perhaps

this was an email i got from a research

lab director

in 2014 who had the instinct that second

order cybernetics

times design x crossed with design

crossed with some modern version of the

bauhaus is what we need to

fix science an extraordinary phrase

fix science i love it but this is along

the theme of isn't there something we

can do here if we are from cybernetics

and i love the design component and i

know many of you would as well

since wicked problems cut across so many

different domains we need

deep conversations and this is a recap

of why we need new macy meetings

global and virtual and after i coined

new macy

i went back to andy's work and he talks

about

next macy as a new synthesis in 2014.

so what's missing conversation ronald

glanville some of you know

making a bridge why does it matter

well to tame wicked problems assuming we

can make some improvement to wicked

problems we have to act together we

can't act separately that's obviously

not going to work

to act together we have to reach

agreement

to reach agreement we have to engage

with others and to engage with others we

have to have a shared language to begin

so to cooperate and collaborate requires

conversation

what may come out of it well lots of

things

i would claim to achieve these requires

conversation

what do you get if you have effective

conversation

these things and again i would say

all of these demand conversation so

what's missing

is conversation and now the question is

how does all this fit together what am i

talking about

well conversation in today's ai

let's contrast these things

whereas today's ai maybe all ai is

machinic digital

representational you could argue that

machine language

predictive data animated and i'm

proposing

that cybernetics is a bilingual

sensibility i owe this to karen kornblum

who's on the

call today it's bilingual it goes

to conversation into today's ai

now these things are all intermeshed i

won't talk through how i think they

self-reinforce and make each other but

of course this is what

weiner meant when he talked about animal

and machine

and again uh we might stop here

for a moment i see esther said to fix

the practice of science

fair enough one could dive deep into the

idea of what

what science practice is and how that

gets us to a certain

plateau of understanding but acting in

the world is

is beyond understanding deborah

a pause here shall i keep going i think

if people

uh people can unmute themselves uh so

um feel free i think we're we're in a

good enough flow that if someone uh

comes up uh

we can do that but uh yeah i would say

yeah james has had a question maybe

james would you like to

speak up and ask this question directly

or

well and and we can get to this uh later

paul but i think we'd all be interested

in hearing more about the politics of ai

versus cybernetics historically

especially if it's something that would

be useful to consider

for how things move forward

a long discussion on its own it's a

beautiful point james thanks for

bringing it up

um i'm not sure how to unpick that now

um and i think there are others in the

room who may

be able to expound it better than i can

cybernetics of course grew out of world

war ii

in some ways and grew out of this idea

that

circular causality was everywhere

some criticized cybernetics as being

about control but i think that's a

misunderstanding of the term

um ai in my view grew out of

the desire to use digital to dominate

an environment which could be controlled

by conventional mechanistic means not

embodied organic cybernetic means

that that's a really uh poor job

at the surface of it and i think by

politics you mean something deeper

um maybe we can reserve that for a

little later if there's time or

another session i think that unpicks a

whole world of complications

yeah i think um just one comment along

the way then

uh that the traditional challenge of

incorporating

teleology into the sciences um

seems to sometimes strike people uh

the wrong way about cybernetics and the

other

comment that i'd make is um

because i'm biased but i feel like

cybernetics has a much stronger

theoretical

grounding than artificial intelligence

but one of the things

that really strikes me about artificial

intelligence

is the expectation of the

the artificial so the exclusion of the

human and

uh the the fact that humans are expected

to be part of cybernetic systems

um at least seems much more clear in

cybernetics so i'm i don't know whether

that intersected with the politics at

any point

but that's just a comment to leave along

the way yeah

yeah yeah it's a big box

uh big pandora's box to open

uh i don't want to cherry pick but uh

phil beasley phillip thank you for

coming today it's wonderful to have you

here

um so minsky and pappard wrote a book

called perceptrons which was based on a

paper that was

left on a file server at mit for all to

see

as a book was posed and the purpose of

that paper was to kill neural nets and

it did

the story of von forrester which he told

very often was

for many many years one forester would

go to washington to get money to

support his biological computer lab at

urbana

and one year he went and they said oh no

you have to go see this guy in cambridge

he'll give you the money

because we've decided to centralize the

funding and all of the money now will

come through this guy while heinz went

to see marvin and marvin said

no and that was the end of the bcl

so that's a factual story that isn't is

in the history

phillips raising other extraordinary uh

questions as usual uh which i i'm gonna

duck

that's another interesting conversation

philip maybe we should have one of these

chats uh about the philosophical issues

and the relationship to modernism and