id stringlengths 36 36 | source stringclasses 15 values | formatted_source stringclasses 13 values | text stringlengths 2 7.55M |

|---|---|---|---|

5ae862b1-0393-46b9-8770-8a1d4b2b158a | StampyAI/alignment-research-dataset/lesswrong | LessWrong | Drawing Two Aces

Suppose I have a deck of four cards: The ace of spades, the ace of hearts, and two others (say, 2C and 2D).

You draw two cards at random.

*Scenario 1:* I ask you "Do you have the ace of spades?" You say "Yes." Then the probability that you are holding both aces is 1/3: There are three equiprobable arrangements of cards you could be holding that contain AS, and one of these is AS+AH.

*Scenario 2:* I ask you "Do you have an ace?" You respond "Yes." The probability you hold both aces is 1/5: There are five arrangements of cards you could be holding (all except 2C+2D) and only one of those arrangements is AS+AH.

Now suppose I ask you "Do you have an ace?"

You say "Yes."

I then say to you: "Choose one of the aces you're holding at random (so if you have only one, pick that one). Is it the ace of spades?"

You reply "Yes."

What is the probability that you hold two aces?

*Argument 1:* I now know that you are holding at least one ace and that one of the aces you hold is the ace of spades, which is just the same state of knowledge that I obtained in Scenario 1. Therefore the answer must be 1/3.

*Argument 2:* In Scenario 2, I know that I can *hypothetically* ask you to choose an ace you hold, and you must *hypothetically* answer that you chose either the ace of spades or the ace of hearts. My posterior probability that you hold two aces should be the same either way. [The expectation of my future probability must equal my current probability:](/lw/ii/conservation_of_expected_evidence/) If I expect to change my mind later, I should just give in and change my mind now. Therefore the answer must be 1/5.

Naturally I know which argument is correct. Do you? |

31ce6b8b-297e-4bab-bcc0-9e97f4721473 | StampyAI/alignment-research-dataset/arbital | Arbital | Correspondence visualizations for different interpretations of "probability"

[Recall](https://arbital.com/p/4y9) that there are three common interpretations of what it means to say that a coin has a 50% probability of landing heads:

- __The propensity interpretation:__ Some probabilities are just out there in the world. It's a brute fact about coins that they come up heads half the time; we'll call this the coin's physical "propensity towards heads." When we say the coin has a 50% probability of being heads, we're talking directly about this propensity.

- __The frequentist interpretation:__ When we say the coin has a 50% probability of being heads after this flip, we mean that there's a class of events similar to this coin flip, and across that class, coins come up heads about half the time. That is, the _frequency_ of the coin coming up heads is 50% inside the event class (which might be "all other times this particular coin has been tossed" or "all times that a similar coin has been tossed" etc).

- __The subjective interpretation:__ Uncertainty is in the mind, not the environment. If I flip a coin and slap it against my wrist, it's already landed either heads or tails. The fact that I don't know whether it landed heads or tails is a fact about me, not a fact about the coin. The claim "I think this coin is heads with probability 50%" is an _expression of my own ignorance,_ which means that I'd bet at 1 : 1 odds (or better) that the coin came up heads.

One way to visualize the difference between these approaches is by visualizing what they say about when a model of the world should count as a good model. If a person's model of the world is definite, then it's easy enough to tell whether or not their model is good or bad: We just check what it says against the facts. For example, if a person's model of the world says "the tree is 3m tall", then this model is [correct](https://arbital.com/p/correspondence_theory_of_truth) if (and only if) the tree is 3 meters tall.

Definite claims in the model are called "true" when they correspond to reality, and "false" when they don't. If you want to navigate using a map, you had better ensure that the lines drawn on the map correspond to the territory.

But how do you draw a correspondence between a map and a territory when the map is probabilistic? If your model says that a biased coin has a 70% chance of coming up heads, what's the correspondence between your model and reality? If the coin is actually heads, was the model's claim true? 70% true? What would that mean?

The advocate of __propensity__ theory says that it's just a brute fact about the world that the world contains ontologically basic uncertainty. A model which says the coin is 70% likely to land heads is true if and only the actual physical propensity of the coin is 0.7 in favor of heads.

This interpretation is useful when the laws of physics _do_ say that there are multiple different observations you may make next (with different likelihoods), as is sometimes the case (e.g., in quantum physics). However, when the event is deterministic — e.g., when it's a coin that has been tossed and slapped down and is already either heads or tails — then this view is largely regarded as foolish, and an example of the [https://arbital.com/p/-4yk](https://arbital.com/p/-4yk): The coin is just a coin, and has no special internal structure (nor special physical status) that makes it _fundamentally_ contain a little 0.7 somewhere inside it. It's already either heads or tails, and while it may _feel_ like the coin is fundamentally uncertain, that's a feature of your brain, not a feature of the coin.

How, then, should we draw a correspondence between a probabilistic map and a deterministic territory (in which the coin is already definitely either heads or tails?)

A __frequentist__ draws a correspondence between a single probability-statement in the model, and multiple events in reality. If the map says "that coin over there is 70% likely to be heads", and the actual territory contains 10 places where 10 maps say something similar, and in 7 of those 10 cases the coin is heads, then a frequentist says that the claim is true.

Thus, the frequentist preserves black-and-white correspondence: The model is either right or wrong, the 70% claim is either true or false. When the map says "That coin is 30% likely to be tails," that (according to a frequentist) means "look at all the cases similar to this case where my map says the coin is 30% likely to be tails; across all those places in the territory, 3/10ths of them have a tails-coin in them." That claim is definitive, given the set of "similar cases."

By contrast, a __subjectivist__ generalizes the idea of "correctness" to allow for shades of gray. They say, "My uncertainty about the coin is a fact about _me,_ not a fact about the coin; I don't need to point to other 'similar cases' in order to express uncertainty about _this_ case. I know that the world right in front of me is either a heads-world or a tails-world, and I have a [https://arbital.com/p/-probability_distribution](https://arbital.com/p/-probability_distribution) puts 70% probability on heads." They then draw a correspondence between their probability distribution and the world in front of them, and declare that the more probability their model assigns to the correct answer, the better their model is.

If the world _is_ a heads-world, and the probabilistic map assigned 70% probability to "heads," then the subjectivist calls that map "70% accurate." If, across all cases where their map says something has 70% probability, the territory is actually that way 7/10ths of the time, then the Bayesian calls the map "[https://arbital.com/p/-well_calibrated](https://arbital.com/p/-well_calibrated)". They then seek methods to make their maps more accurate, and better calibrated. They don't see a need to interpret probabilistic maps as making definitive claims; they're happy to interpret them as making estimations that can be graded on a sliding scale of accuracy.

## Debate

In short, the frequentist interpretation tries to find a way to say the model is definitively "true" or "false" (by identifying a collection of similar events), whereas the subjectivist interpretation extends the notion of "correctness" to allow for shades of gray.

Frequentists sometimes object to the subjectivist interpretation, saying that frequentist correspondence is the only type that has any hope of being truly objective. Under Bayesian correspondence, who can say whether the map should say 70% or 75%, given that the probabilistic claim is not objectively true or false either way? They claim that these subjective assessments of "partial accuracy" may be intuitively satisfying, but they have no place in science. Scientific reports ought to be restricted to frequentist statements, which are definitively either true or false, in order to increase the objectivity of science.

Subjectivists reply that the frequentist approach is hardly objective, as it depends entirely on the choice of "similar cases". In practice, people can (and do!) [abuse frequentist statistics](https://arbital.com/p/https://en.wikipedia.org/wiki/Data_dredging) by choosing the class of similar cases that makes their result look as impressive as possible (a technique known as "p-hacking"). Furthermore, the manipulation of subjective probabilities is subject to the [iron laws](https://arbital.com/p/1lz) of probability theory (which are the [only way to avoid inconsistencies and pathologies](https://arbital.com/p/) when managing your uncertainty about the world), so it's not like subjective probabilities are the wild west or something. Also, science has things to say about situations even when there isn't a huge class of objective frequencies we can observe, and science should let us collect and analyze evidence even then.

For more on this debate, see [https://arbital.com/p/4xx](https://arbital.com/p/4xx). |

3a80039a-563c-4b4c-acbe-627aa5339022 | LDJnr/LessWrong-Amplify-Instruct | LessWrong | "tldr; Complete narration of the Replacing Guilt series is available here: anchor.fm/guiltI discovered Nate Soares' Replacing Guilt series in late 2019 via the Bayesian Conspiracy podcast and found it immensely valuable. Over ~40 posts, Nate channels the guilt-based motivation that is common in rationalists / EAs into more productive emotional drives. I read the series on my Kindle, thanks to lifelonglearner's ePUB version, but was disappointed that no audio version existed. To make it more accessible, I reached out to Nate and got permission to produce the official audiobook.The rationalist community has a fantastic tradition of volunteer narration — Slate Star Codex , AI to Zombies, and, of course, HPMOR. These free resources have added immense value to my life, so narrating Replacing Guilt feels like my tiny way of reinvesting in our rationalist commons. For a 2-minute summary of the series and why you might be interested in it, check out this post (or listen to the audio version).You can find the individual episodes at anchor.fm/guilt. The complete audiobook can be streamed in any podcast app, or you can download the mp3 file here."

The excerpt provided comes from a user post on LessWrong.

What are the key concepts and ideas presented in Nate Soares' Replacing Guilt series that make it particularly valuable for rationalists and EAs?

Nate Soares' Replacing Guilt series is a collection of writings that focus on self-improvement, particularly in terms of motivation and emotional well-being. It is particularly valuable for rationalists and Effective Altruists (EAs) due to its focus on guilt-based motivation, a common emotional state within these communities.

The series primarily aims to channel this guilt into more productive emotional drives. This is achieved through various strategies and insights, some of which include:

1. **Replacing Guilt with Motivation**: Soares emphasizes the need to replace guilt with intrinsic motivation. He argues that guilt can be a destructive force that hinders productivity and well-being. Instead, he encourages readers to find their own personal motivations and use these as a driving force.

2. **Understanding and Managing Emotions**: The series delves into the importance of understanding one's own emotions and how they impact behavior and decision-making. It provides tools and strategies for managing emotions effectively.

3. **Self-Improvement and Personal Growth**: Soares advocates for continuous self-improvement and personal growth. He provides insights into how one can overcome personal limitations and strive for betterment.

4. **Rational Decision Making**: The series also touches on the concept of rational decision-making. It provides insights into how one can make decisions based on logic and reason, rather than being driven by emotions.

5. **Effective Altruism**: The series is also valuable for EAs as it explores the concept of effective altruism. It provides insights into how one can make a significant positive impact on the world, which aligns with the core principles of effective altruism.

In summary, the Replacing Guilt series provides valuable insights into guilt-based motivation, emotional management, self-improvement, rational decision-making, and effective altruism, making it a valuable resource for rationalists and EAs.

How might the principles outlined in Soares' Replacing Guilt series be practically applied in everyday life, particularly in decision-making and conflict resolution scenarios?

The principles outlined in Soares' Replacing Guilt series can be practically applied in everyday life in a variety of ways, particularly in decision-making and conflict resolution scenarios. Here are some ways these principles can be applied:

1. **Replacing Guilt with Motivation in Decision-Making**: If you find yourself feeling guilty about a decision you need to make, you can apply Soares' principle of replacing guilt with motivation. Instead of allowing guilt to influence your decision, identify what truly motivates you and let that guide your decision-making process.

2. **Understanding and Managing Emotions in Conflict Resolution**: In conflict resolution scenarios, understanding and managing your emotions can be crucial. If you find yourself becoming emotionally charged, use the tools and strategies Soares provides to manage your emotions effectively. This can help you approach the conflict in a more rational and calm manner, which can lead to a more effective resolution.

3. **Applying Self-Improvement and Personal Growth Principles**: If you're faced with a decision or conflict that challenges your personal limitations, use it as an opportunity for self-improvement and personal growth. Reflect on what you can learn from the situation and how you can use it to better yourself.

4. **Using Rational Decision Making in Everyday Life**: Apply rational decision-making principles in your everyday life, from deciding what to have for breakfast to making major life decisions. Instead of letting emotions dictate your choices, make decisions based on logic and reason.

5. **Applying Effective Altruism Principles**: If you're deciding how to contribute to your community or resolve a conflict that impacts others, consider the principles of effective altruism. Think about how your actions can have the most significant positive impact and let that guide your decision-making process.

In summary, the principles outlined in Soares' Replacing Guilt series can be applied in everyday life by replacing guilt with motivation, understanding and managing emotions, striving for self-improvement and personal growth, making rational decisions, and applying the principles of effective altruism. |

db689a55-0d26-4ffe-bdd3-7d264665219f | trentmkelly/LessWrong-43k | LessWrong | Another attempt to explain UDT

(Attention conservation notice: this post contains no new results, and will be obvious and redundant to many.)

Not everyone on LW understands Wei Dai's updateless decision theory. I didn't understand it completely until two days ago. Now that I had the final flash of realization, I'll try to explain it to the community and hope my attempt fares better than previous attempts.

It's probably best to avoid talking about "decision theory" at the start, because the term is hopelessly muddled. A better way to approach the idea is by examining what we mean by "truth" and "probability" in the first place. For example, is it meaningful for Sleeping Beauty to ask whether it's Monday or Tuesday? Phrased like this, the question sounds stupid. Of course there's a fact of the matter as to what day of the week it is! Likewise, in all problems involving simulations, there seems to be a fact of the matter whether you're the "real you" or the simulation, which leads us to talk about probabilities and "indexical uncertainty" as to which one is you.

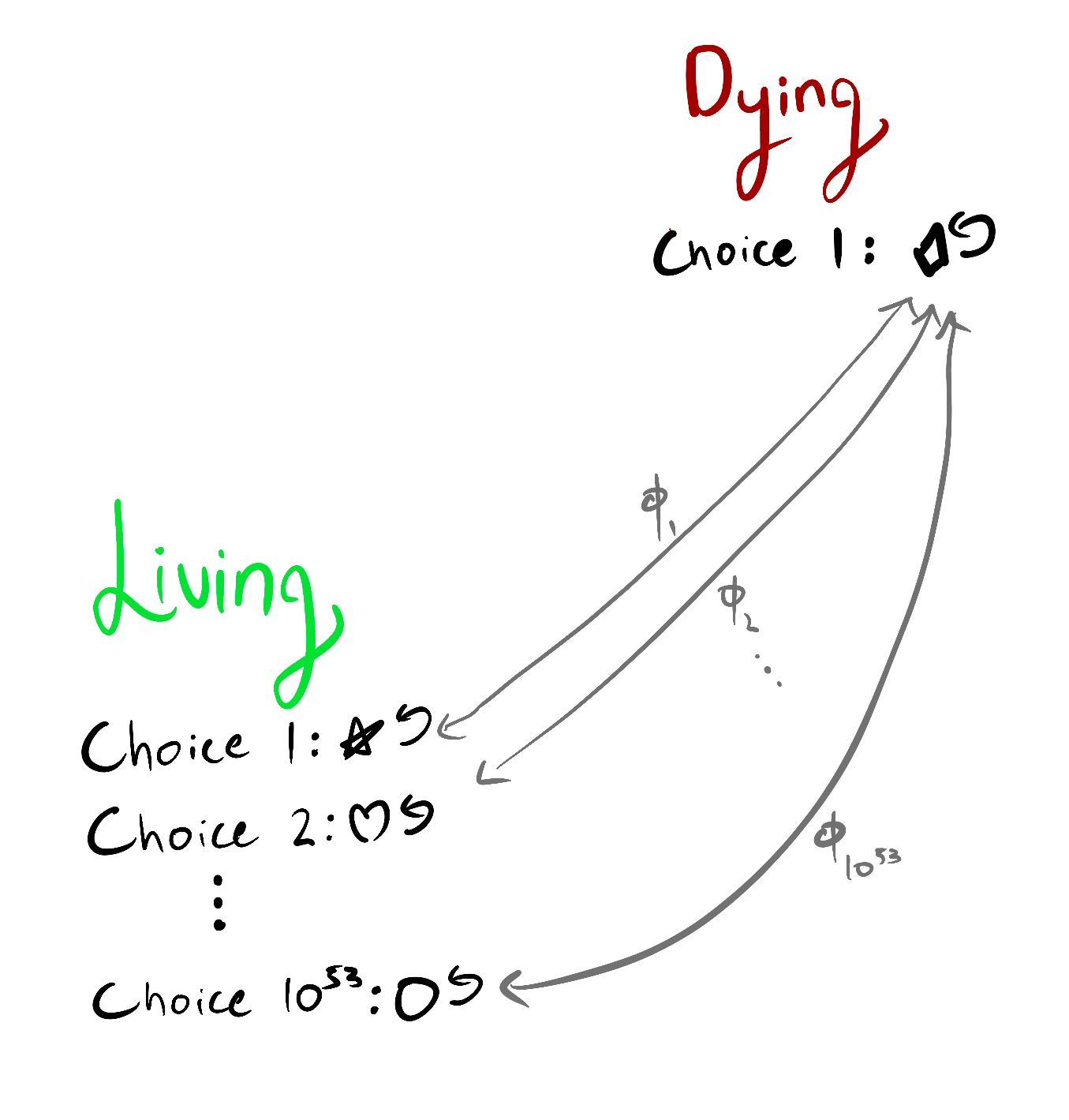

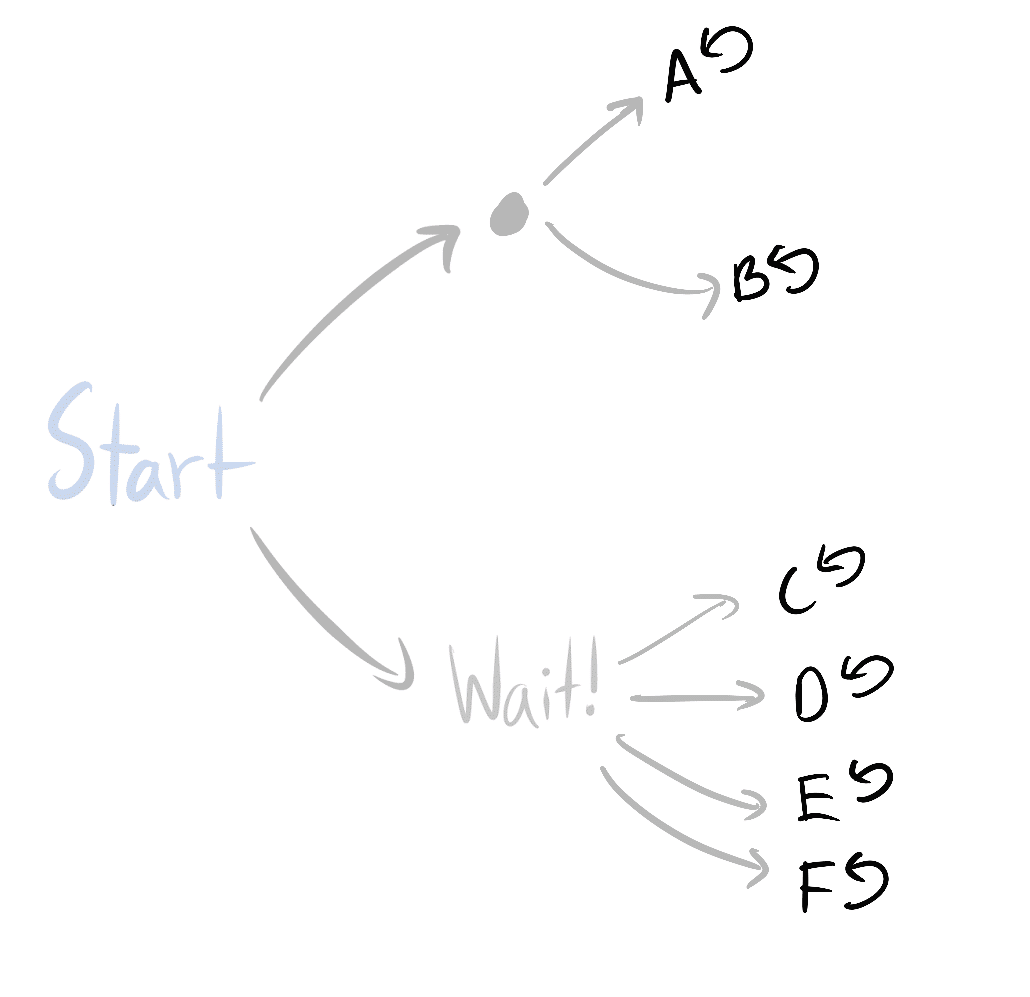

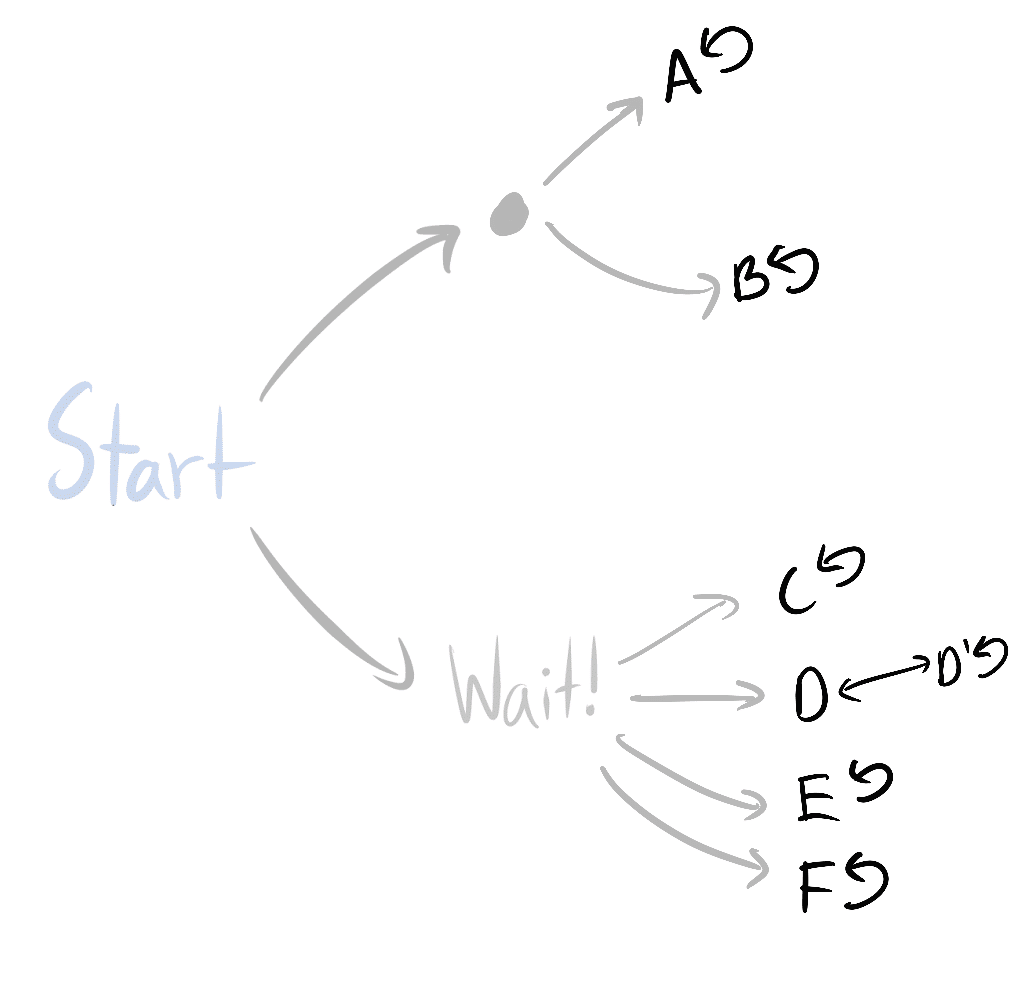

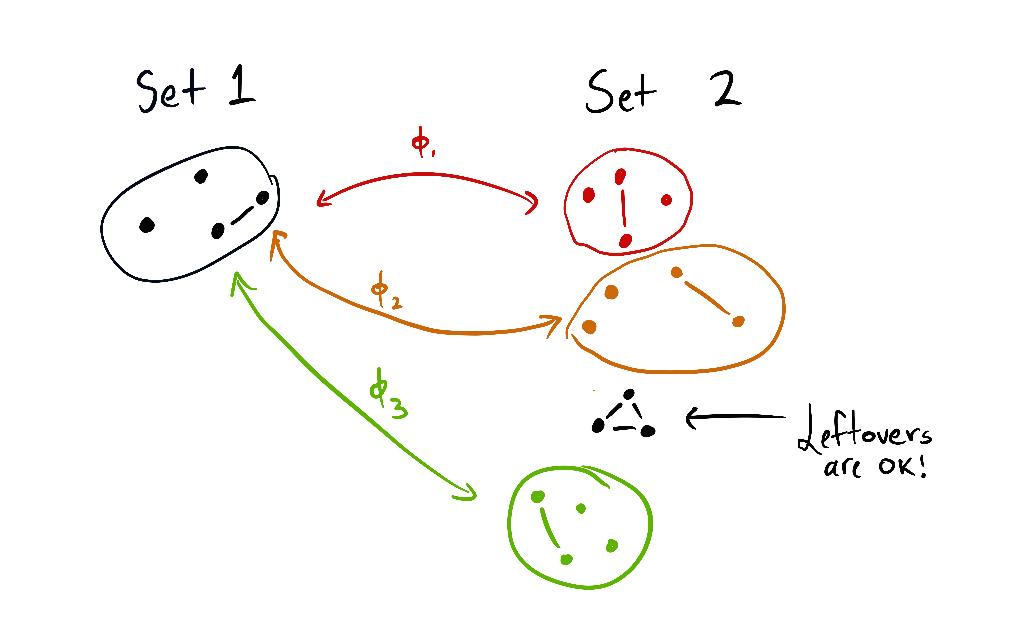

At the core, Wei Dai's idea is to boldly proclaim that, counterintuitively, you can act as if there were no fact of the matter whether it's Monday or Tuesday when you wake up. Until you learn which it is, you think it's both. You're all your copies at once.

More formally, you have an initial distribution of "weights" on possible universes (in the currently most general case it's the Solomonoff prior) that you never update at all. In each individual universe you have a utility function over what happens. When you're faced with a decision, you find all copies of you in the entire "multiverse" that are faced with the same decision ("information set"), and choose the decision that logically implies the maximum sum of resulting utilities weghted by universe-weight. If you possess some useful information about the universe you're in, it's magically taken into account by the choice of "information set", because logically, your decision cannot a |

d639cdec-7e52-477f-8778-ea354dc7bf52 | trentmkelly/LessWrong-43k | LessWrong | Scientific Evidence, Legal Evidence, Rational Evidence

Suppose that your good friend, the police commissioner, tells you in strictest confidence that the crime kingpin of your city is Wulky Wilkinsen. As a rationalist, are you licensed to believe this statement? Put it this way: if you go ahead and insult Wulky, I’d call you foolhardy. Since it is prudent to act as if Wulky has a substantially higher-than-default probability of being a crime boss, the police commissioner’s statement must have been strong Bayesian evidence.

Our legal system will not imprison Wulky on the basis of the police commissioner’s statement. It is not admissible as legal evidence. Maybe if you locked up every person accused of being a crime boss by a police commissioner, you’d initially catch a lot of crime bosses, and relatively few people the commissioner just didn’t like. But unrestrained power attracts corruption like honey attracts flies: over time, you’d catch fewer and fewer real crime bosses (who would go to greater lengths to ensure anonymity), and more and more innocent victims.

This does not mean that the police commissioner’s statement is not rational evidence. It still has a lopsided likelihood ratio, and you’d still be a fool to insult Wulky. But on a social level, in pursuit of a social goal, we deliberately define “legal evidence” to include only particular kinds of evidence, such as the police commissioner’s own observations on the night of April 4th. All legal evidence should ideally be rational evidence, but not the other way around. We impose special, strong, additional standards before we anoint rational evidence as “legal evidence.”

As I write this sentence at 8:33 p.m., Pacific time, on August 18th, 2007, I am wearing white socks. As a rationalist, are you licensed to believe the previous statement? Yes. Could I testify to it in court? Yes. Is it a scientific statement? No, because there is no experiment you can perform yourself to verify it. Science is made up of generalizations which apply to many particular instances, |

5aa9f5e5-5092-4488-a511-ee23a2d1215a | StampyAI/alignment-research-dataset/eaforum | Effective Altruism Forum | What do XPT forecasts tell us about AI risk?

*This post was co-authored by the Forecasting Research Institute and Rose Hadshar. Thanks to Josh Rosenberg for managing this work, Zachary Jacobs and Molly Hickman for the underlying data analysis, Coralie Consigny and Bridget Williams for fact-checking and copy-editing, the whole FRI XPT team for all their work on this project, and our external reviewers.*

In 2022, the [Forecasting Research Institute](https://forecastingresearch.org/) (FRI) ran the Existential Risk Persuasion Tournament (XPT). From June through October 2022, 169 forecasters, including 80 superforecasters and 89 experts, developed forecasts on various questions related to existential and catastrophic risk. Forecasters moved through a four-stage deliberative process that was designed to incentivize them not only to make accurate predictions but also to provide persuasive rationales that boosted the predictive accuracy of others’ forecasts. Forecasters stopped updating their forecasts on 31st October 2022, and are not currently updating on an ongoing basis. FRI plans to run future iterations of the tournament, and open up the questions more broadly for other forecasters.

You can see the overall results of the XPT [here](https://static1.squarespace.com/static/635693acf15a3e2a14a56a4a/t/64abffe3f024747dd0e38d71/1688993798938/XPT.pdf).

Some of the questions were related to AI risk. This post:

* Sets out the XPT [forecasts](https://forum.effectivealtruism.org/posts/K2xQrrXn5ZSgtntuT/what-do-xpt-forecasts-tell-us-about-ai-risk-1#The_forecasts) on AI risk, and puts them in [context](https://forum.effectivealtruism.org/posts/K2xQrrXn5ZSgtntuT/what-do-xpt-forecasts-tell-us-about-ai-risk-1#The_forecasts_in_context).

* Lays out the [arguments](https://forum.effectivealtruism.org/posts/K2xQrrXn5ZSgtntuT/what-do-xpt-forecasts-tell-us-about-ai-risk-1#The_arguments_made_by_XPT_forecasters) given in the XPT for and against these forecasts.

* Offers some [thoughts](https://forum.effectivealtruism.org/posts/K2xQrrXn5ZSgtntuT/what-do-xpt-forecasts-tell-us-about-ai-risk-1#What_do_XPT_forecasts_tell_us_about_AI_risk_) on what these forecasts and arguments show us about AI risk.

TL;DR

=====

* **XPT superforecasters predicted that** ***catastrophic*****and** ***extinction*****risk from AI by 2030 is very low** (0.01% catastrophic risk and 0.0001% extinction risk).

* **XPT superforecasters predicted that** ***catastrophic*****risk from nuclear weapons by 2100 is almost twice as likely as** ***catastrophic*****risk from AI by 2100** (4% vs 2.13%).

* **XPT superforecasters predicted that** ***extinction*****risk from AI by 2050 and 2100 is roughly an order of magnitude larger than extinction****risk from nuclear, which in turn is an order of magnitude larger than non-anthropogenic extinction****risk**(see [here](https://forum.effectivealtruism.org/posts/K2xQrrXn5ZSgtntuT/what-do-xpt-forecasts-tell-us-about-ai-risk-1#Forecasts_on_other_risks) for details).

* **XPT superforecasters more than quadruple their forecasts for AI extinction risk by 2100 if conditioned on AGI or TAI by 2070** (see [here](https://forum.effectivealtruism.org/posts/K2xQrrXn5ZSgtntuT/what-do-xpt-forecasts-tell-us-about-ai-risk-1#How_sensitive_are_XPT_AI_risk_forecasts_to_AI_timelines_) for details).

* **XPT domain experts predicted that AI extinction risk by 2100 is far greater than XPT superforecasters do** (3% for domain experts, and 0.38% for superforecasters by 2100).

* **Although XPT superforecasters and experts disagreed substantially about AI risk, both superforecasters and experts still prioritized AI as an area for marginal resource allocation** (see [here](https://forum.effectivealtruism.org/posts/K2xQrrXn5ZSgtntuT/what-do-xpt-forecasts-tell-us-about-ai-risk-1#Resource_allocation_to_different_risks) for details).

* **It’s unclear how accurate these forecasts will prove, particularly as superforecasters have not been evaluated on this timeframe before.**[[1]](#fn80213xc33ps)

The forecasts

=============

In the table below, we present forecasts from the following groups:

* Superforecasters: median forecast across superforecasters in the XPT.

* Domain experts: median forecasts across all AI experts in the XPT.

(See [our discussion of aggregation choices](https://static1.squarespace.com/static/635693acf15a3e2a14a56a4a/t/64abffe3f024747dd0e38d71/1688993798938/XPT.pdf) (pp. 20–22) for why we focus on medians.)

| | | | | | |

| --- | --- | --- | --- | --- | --- |

| **Question** | **Forecasters** | **N** | **2030** | **2050** | **2100** |

| [AI Catastrophic risk](https://docs.google.com/document/d/1CAmw1g_Y3siZGZaaYJjMRjEIyV4HURhCZf5rZ4a6-d8/edit) (>10% of humans die within 5 years) | Superforecasters | 88 | 0.01% | 0.73% | 2.13% |

| Domain experts | 30 | 0.35% | 5% | 12% |

| [AI Extinction risk](https://docs.google.com/document/d/1NMC1RV8XD0zcvVUfgcJPx7rBKqoNOk7pVJ74C9-JtLY/edit) (human population <5,000) | Superforecasters | 88 | 0.0001% | 0.03% | 0.38% |

| Domain experts | 29 | 0.02% | 1.1% | 3% |

The forecasts in context

========================

Different methods have been used to estimate AI risk:

* Surveying experts of various kinds, e.g. [Sanders and Bostrom, 2008](https://www.fhi.ox.ac.uk/reports/2008-1.pdf); [Grace et al. 2017](https://arxiv.org/pdf/1705.08807.pdf).

* Doing in-depth investigations, e.g. [Ord, 2020](https://80000hours.org/wp-content/uploads/2020/03/The-Precipice-Introduction-Chapter-1.pdf); [Carlsmith, 2021](https://docs.google.com/document/d/1smaI1lagHHcrhoi6ohdq3TYIZv0eNWWZMPEy8C8byYg/edit#).

The XPT forecasts are distinctive relative to expert surveys in that:

* The forecasts were incentivized: for long-run questions, XPT used ‘reciprocal scoring’ rules to incentivize accurate forecasts (see [here](https://docs.google.com/document/d/e/2PACX-1vS9x36iy_DKUsr233p5tctgKJjUDWta36jeVq2M23DtD4Tnsa1AQw9IIwejqLH4j21nNUIby3aO2_Yf/pub#h.q5gf6iwq49m8) for details).

* Forecasts were solicited from superforecasters as well as experts.

* Forecasters were asked to write detailed rationales for their forecasts, and good rationales were incentivized through prizes.

* Forecasters worked on questions in a four-stage deliberative process in which they refined their individual forecasts and their rationales through collaboration with teams of other forecasters.

Should we expect XPT forecasts to be more or less accurate than previous estimates? This is unclear, but some considerations are:

* Relative to some previous forecasts (particularly those based on surveys), XPT forecasters spent a long time thinking and writing about their forecasts, and were incentivized to be accurate.

* XPT forecasters with high reciprocal scoring accuracy may be more accurate.

+ There is evidence that reciprocal scoring accuracy correlates with short-range forecasting accuracy, though it is unclear if this extends to long-range accuracy.[[2]](#fndoj3pjjbqdi)

* XPT (and other) superforecasters have a history of accurate forecasts (primarily on short-range geopolitical and economic questions), and may be less subject to biases such as groupthink in comparison to domain experts.

+ On the other hand, there is limited evidence that superforecasters’ accuracy extends to technical domains like AI, long-range forecasts, or out-of-distribution events.

Forecasts on other risks

------------------------

Where not otherwise stated, the XPT forecasts given are superforecasters’ medians.

| | | | | | |

| --- | --- | --- | --- | --- | --- |

| **Forecasting Question** | **2030** | **2050** | **2100** | **Ord, existential catastrophe 2120**[[3]](#fnntv8t4qcdzr) | **Other relevant forecasts**[[4]](#fnow32yo7ulzo) |

| **Catastrophic risk (>10% of humans die in 5 years)** | |

| **Biological**[[5]](#fnsc9q06yqib8) | - | - | 1.8% | - | - |

| **Engineered pathogens**[[6]](#fnp4okw8icuja) | - | - | 0.8% | | |

| **Natural pathogens**[[7]](#fnknlpvnn2x0s) | - | - | 1% | | |

| [**AI**](https://docs.google.com/document/d/1CAmw1g_Y3siZGZaaYJjMRjEIyV4HURhCZf5rZ4a6-d8/edit)**(superforecasters)** | 0.01% | 0.73% | 2.13% | - | [Carlsmith](https://docs.google.com/document/d/1smaI1lagHHcrhoi6ohdq3TYIZv0eNWWZMPEy8C8byYg/edit#), 2070, ~14%[[8]](#fnaxxrp32ekok)[Sandberg and Bostrom](https://www.fhi.ox.ac.uk/reports/2008-1.pdf), 2100, 5%[[9]](#fn0fp9xdb9mg0b)[Metaculus](https://www.metaculus.com/questions/2568/ragnar%2525C3%2525B6k-seriesresults-so-far/), 2100, 3.99%[[10]](#fnpfi6boisns) |

| [**AI**](https://docs.google.com/document/d/1CAmw1g_Y3siZGZaaYJjMRjEIyV4HURhCZf5rZ4a6-d8/edit)**(domain experts)** | 0.35% | 5% | 12% | - |

| [**Nuclear**](https://docs.google.com/document/d/199bSOzlT5PR4TAOU3myFfdXpBfbNDAG69wESSnl_MaE/edit) | 0.50% | 1.83% | 4% | - | - |

| [**Non-anthropogenic**](https://docs.google.com/document/d/1Syu1oqedSqWh1KneRH-LtV__3ZVdrYoDdL3FpG7v0eQ/edit) | 0.0026% | 0.015% | 0.05% | - | - |

| [**Total catastrophic risk**](https://docs.google.com/document/d/1H11Aq_XTZkKzjyECb_qdy4cbmJWxGHkYAI2JvhrjjdY/edit)[[11]](#fnjq2p2ewzuyo) | 0.85% | 3.85% | 9.05% | - | - |

| **Extinction risk (human population <5000)** | |

| **Biological**[[12]](#fncrggzlctu1m) | - | - | 0.012% | | |

| **Engineered pathogens**[[13]](#fnb08wujhvka) | - | - | 0.01% | 3.3% | |

| **Natural pathogens**[[14]](#fnuaj6z25owna) | - | - | 0.0018% | 0.01% | |

| [**AI**](https://docs.google.com/document/d/1NMC1RV8XD0zcvVUfgcJPx7rBKqoNOk7pVJ74C9-JtLY/edit)**(superforecasters)** | 0.0001% | 0.03% | 0.38% | 10%[[15]](#fn3jwcqlwdvx3) | [Carlsmith](https://docs.google.com/document/d/1smaI1lagHHcrhoi6ohdq3TYIZv0eNWWZMPEy8C8byYg/edit#), 2070, 5%[[16]](#fnahbp0za6n9n)[Sandberg and Bostrom](https://www.fhi.ox.ac.uk/reports/2008-1.pdf), 2100, 5%[[17]](#fnyavja33w45c)[Metaculus](https://www.metaculus.com/questions/2568/ragnar%2525C3%2525B6k-seriesresults-so-far/), 2100, 1.9%[[18]](#fnuaq38pge3a)[Fodor](https://forum.effectivealtruism.org/posts/2sMR7n32FSvLCoJLQ/critical-review-of-the-precipice-a-reassessment-of-the-risks), 2120, 0.0005%[[19]](#fnw21cmelbtxq)[Future Fund](https://forum.effectivealtruism.org/posts/W7C5hwq7sjdpTdrQF/announcing-the-future-fund-s-ai-worldview-prize), 2070, 5.8%[[20]](#fn6e8rqfvh3cq)[Future Fund](https://forum.effectivealtruism.org/posts/W7C5hwq7sjdpTdrQF/announcing-the-future-fund-s-ai-worldview-prize) lower prize threshold, 2070, 1.4%[[21]](#fneduqf55xmjt) |

| [**AI**](https://docs.google.com/document/d/1NMC1RV8XD0zcvVUfgcJPx7rBKqoNOk7pVJ74C9-JtLY/edit)**(domain experts)** | 0.02% | 1.1% | 3% |

| [**Nuclear**](https://docs.google.com/document/d/1-hkApWaPqETJLZ6Z0nXbtG0b--EihUdNTcB38fTAYI8/edit) | 0.001% | 0.01% | 0.074% | 0.1%[[22]](#fn7wtjz3i2iwy) | - |

| [**Non-anthropogenic**](https://docs.google.com/document/d/1GUNJD-ogiMRaPgRmJA_4uo_U4EqBiDSnBe-lxuMo5qo/edit) | 0.0004% | 0.0014% | 0.0043% | 0.01%[[23]](#fn3xc3mc5vph4) | - |

| [**Total extinction risk**](https://docs.google.com/document/d/1wENxRHoCrNU4MXfusy2Txh6onO9zt5kIl-K-OLZv5FQ/edit)[[24]](#fn85esd6mw6ia) | 0.01% | 0.3% | 1% | 16.67%[[25]](#fnl39s7xfksm) | - |

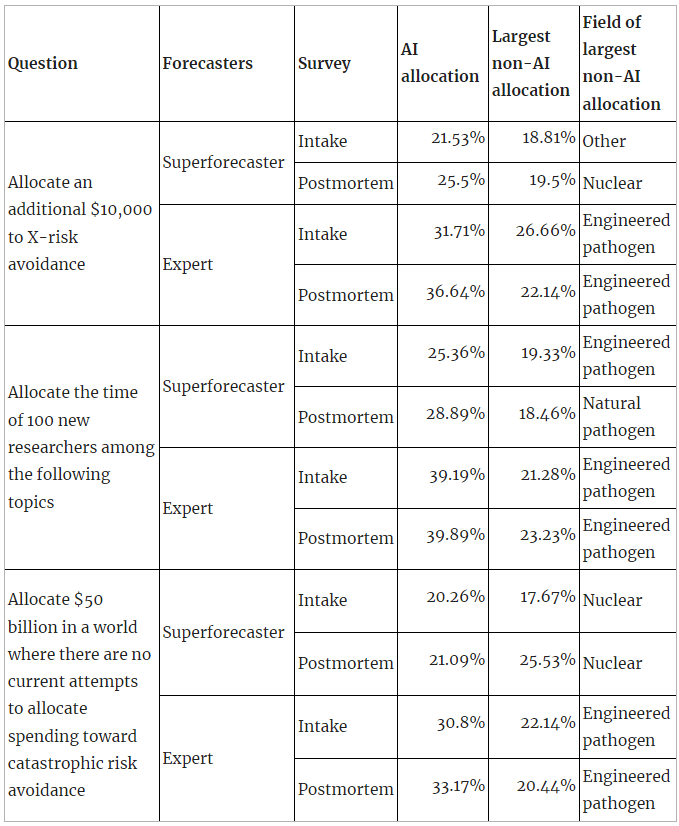

### Resource allocation to different risks

All participants in the XPT responded to an intake and a postmortem survey. In these surveys, participants were asked various questions about resource allocation:

* If you could allocate an additional $10,000 to X-risk avoidance, how would you divide the money among the following topics?

* If you could allocate the time of 100 new researchers (assuming they are generalists who could be effective in a wide range of fields), how would you divide them among the following topics?

* Assume we are in a world where there are no current attempts by public institutions, governments, or private individuals or organizations to allocate spending toward catastrophic risk avoidance. If you were to allocate $50 billion to the following risk avoidance areas, what fraction of the money would you allocate to each area?

Across superforecasters and experts and across all three resource allocation questions, AI received the single largest allocation, with one exception (superforecasters in the postmortem survey on the $50bn question):

The only instance where AI did not receive the largest single allocation is superforecasters’ postmortem allocation on the $50bn question (where AI received the second highest allocation after nuclear). The $50bn question is also the only absolute resource allocation question (the other two are marginal), and the question where AI’s allocation was smallest in percentage terms (across supers and experts and across both surveys).

A possible explanation here is that XPT forecasters see AI as underfunded on the margin — although superforecasters think that in absolute terms AI should still receive less funding than nuclear risk.

The main contribution of these results to what the XPT tells us about AI risk is that even though superforecasters and experts disagreed substantially about the probability of AI risk, and even though the absolute probabilities of superforecasters for AI risk were 1% or lower, both superforecasters and experts still prioritize AI as an area for marginal resource allocation.

Forecasts from the top reciprocal scoring quintile

--------------------------------------------------

In the XPT, forecasts for years later than 2030 were incentivized using a method called [reciprocal scoring](https://dx.doi.org/10.2139/ssrn.3954498). For each of the relevant questions, forecasters were asked to give their own forecasts, while also predicting the forecasts of other experts and superforecasters. Forecasters who most accurately predicted superforecaster and expert forecasts got a higher reciprocal score.

In the course of the XPT, there were systematic differences between the forecasts of those with high reciprocal scores and those with lower scores, in particular:

* Superforecasters outperformed experts at reciprocal scoring. They also tended to be more skeptical of high risks and fast progress.

* Higher reciprocal scores within both groups — experts and superforecasters — correlated with lower estimates of catastrophic and extinction risk.

In other words, the better forecasters were at discerning what other people would predict, the less concerned they were about extinction.

We won’t know for many decades which forecasts were more accurate, and as reciprocal scoring is a new method, there is insufficient evidence to definitively establish its correlation with the accuracy of long-range forecasts. However, in the plausible case that reciprocal scoring accuracy does indeed correlate with overall accuracy, it would justify giving more weight to forecasts from individuals with high reciprocal scores — which are lower risk predictions in the XPT. Readers should exercise their own judgment in determining how to read these findings.

It is also important to note that, in the present case, superforecasters’ higher scores were driven by their more accurate predictions of superforecasters’ views (both groups were comparable at predicting experts’ views). It is therefore conceivable that their superior performance is due to the fact that superforecasters are a relatively small social group and have spent lots of time discussing forecasts with one another, rather than due to general higher accuracy.

Here is a comparison of median forecasts on AI risk between top reciprocal scoring (RS) quintile forecasters and all forecasters, for superforecasters and domain experts:

| | | | | |

| --- | --- | --- | --- | --- |

| **Question** | **Forecasters** | **2030** | **2050** | **2100** |

| [**AI Catastrophic risk**](https://docs.google.com/document/d/1CAmw1g_Y3siZGZaaYJjMRjEIyV4HURhCZf5rZ4a6-d8/edit)**(>10% of humans die within 5 years)** | **RS top quintile - superforecasters (n=16)** | 0.001% | 0.46% | 1.82% |

| **RS top quintile - domain experts (n=5)** | 0.03% | 0.5% | 3% |

| **Superforecasters (n=88)** | 0.01% | 0.73% | 2.13% |

| **Domain experts (n=30)** | 0.35% | 5% | 12% |

| [**AI Extinction risk**](https://docs.google.com/document/d/1NMC1RV8XD0zcvVUfgcJPx7rBKqoNOk7pVJ74C9-JtLY/edit)**(human population <5,000)** | **RS top quintile - superforecasters (n=16)** | 0.0001% | 0.002% | 0.088% |

| **RS top quintile - domain experts (n=5)** | 0.0003% | 0.08% | 0.24% |

| **Superforecasters (n=88)** | 0.0001% | 0.03% | 0.38% |

| **Domain experts (n=29)** | 0.02% | 1.1% | 3% |

From this, we see that:

* The top RS quintiles predicted medians which were closer to overall superforecaster medians than overall domain expert medians, with no exceptions.

* The top RS quintiles tended to predict lower medians than both superforecasters overall and domain experts overall, with some exceptions:

+ The top RS quintile for domain experts predicted higher *catastrophic* risk by 2100 than superforecasters overall (3% to 2.13%).

+ The top RS quintile for superforecasters predicted the same *extinction* risk by 2030 as superforecasters overall (0.0001%).

+ The top RS quintile for domain experts predicted higher *extinction* risk by 2030 and 2050 than superforecasters overall (2030: 0.0003% to 0.0001%; 2050: 0.08% to 0.03%).

The arguments made by XPT forecasters

=====================================

XPT forecasters were grouped into teams. Each team was asked to write up a ‘rationale’ summarizing the main arguments that had been made during team discussions about the forecasts different team members made. The below summarizes the main arguments made across all teams in the XPT. Footnotes contain direct quotes from team rationales.

The core arguments about catastrophic and extinction risk from AI centered around:

1. **Whether sufficiently advanced AI would be developed in the relevant timeframe.**

2. **Whether there are plausible mechanisms for advanced AI to cause catastrophe/human extinction.**

Other important arguments made included:

1. **Whether there are incentives for advanced AI to cause catastrophe/human extinction.**

2. **Whether advanced AI would emerge suddenly.**

3. **Whether advanced AI would be misaligned.**

4. **Whether humans would empower advanced AI.**

1. Whether sufficiently advanced AI would be developed in the relevant timeframe

--------------------------------------------------------------------------------

There is a full summary of timelines arguments in the XPT in [this](https://forum.effectivealtruism.org/posts/KGGDduXSwZQTQJ9xc/what-do-xpt-forecasts-tell-us-about-ai-timelines) post.

Main arguments given against:

* Scaling laws may not hold, such that new breakthroughs are needed.[[26]](#fnpi0j7k19p8)

* There may be attacks on AI vulnerabilities.[[27]](#fnpq0xrr230tc)

* The forecasting track record on advanced AI is poor.[[28]](#fn1bohi53al76)

Main arguments given for:

* Recent progress is impressive.[[29]](#fnfqlkz6r841h)

* Scaling laws may hold.[[30]](#fndo42qpi3xa)

* Advances in quantum computing or other novel technology may speed up AI development.[[31]](#fnrb9l883ekkg)

* Recent progress has been faster than predicted.[[32]](#fn2t0y0ehwxkw)

2. Whether there are plausible mechanisms for advanced AI to cause catastrophe/human extinction

-----------------------------------------------------------------------------------------------

Main arguments given against:

* The logistics would be extremely challenging.[[33]](#fnecd2iu843l)

* Millions of people live very remotely, and AI would have little incentive to pay the high costs of killing them.[[34]](#fn2ha1kz3ccu4)

* Humans will defend themselves against this.[[35]](#fn8q3pzpiynhx)

* AI might improve security as well as degrade it.[[36]](#fncv3aeky93a)

Main arguments given for:

* There are many possible mechanisms for AI to cause such events.

* Mechanisms cited include: nanobots,[[37]](#fnijj5qbceuc) bioweapons,[[38]](#fnt95vn8jgyp) nuclear weapons,[[39]](#fndwmzu3wjxxk) attacks on the supply chain,[[40]](#fn3y4shvq642s) and novel technologies.[[41]](#fnuxlbp5aer6j)

* AI systems might recursively self-improve such that mechanisms become available to it.[[42]](#fnfg5n2zkhvz)

* It may be the case that an AI system or systems only needs to succeed once for these events to occur.[[43]](#fnpa0n04dr4vf)

3. Whether there are incentives for advanced AI to cause catastrophe/human extinction

-------------------------------------------------------------------------------------

Main arguments given against:

* AI systems won’t have ill intent.[[44]](#fnw1pa1pt6jg)

* AI might seek resources in space rather than on earth.[[45]](#fng4eaequzoh8)

Main arguments given for:

* AI systems might have an incentive to preemptively prevent shutdown.[[46]](#fnro0j64oy6vg)

* AI systems might maximize reward so hard that they use up resources humans need to survive.[[47]](#fnb1w1ri6ur7h)

* AI systems might optimize their environment in ways that make earth uninhabitable to humans, e.g. by reducing temperatures.[[48]](#fnulfzi07ugu8)

* AI systems might fight each other for resources, with humans as collateral.[[49]](#fnmpax48zg69)

4. Whether advanced AI would emerge suddenly

--------------------------------------------

Main arguments given for:

* AIs might recursively self-improve, such that they reach very high levels of advancement very quickly.[[50]](#fn5s7sf3vjq89)

* Recent progress has been unexpectedly sudden.[[51]](#fn8vgsx07gqlf)

5. Whether advanced AI would be misaligned

------------------------------------------

Main arguments given against:

* AI safety is a growing field and will continue to grow.[[52]](#fnbl96ytr32r)

* AI systems may help with AI safety.[[53]](#fnso9lmga1sns)

Main arguments given for:

* AI safety is not progressing as fast as AI development.[[54]](#fnyeg2yhmfuy)

* AI developers are not aligned with humanity.[[55]](#fnj994hpdho5e)

* Investment may be too low, because of collective action problems and the fact that risks from AI sound strange.[[56]](#fnxl7afue30gh)

* We may only get a few shots to align AI systems, particularly if takeoff speeds are high.[[57]](#fnwy0c4sprca)

6. Whether humans would empower advanced AI

-------------------------------------------

Main arguments given against:

* AI will not be given control over critical systems, because this will be seen as risky.[[58]](#fne4ffuzh7xwc)

* AI systems will be regulated in a way which successfully reduces risk.[[59]](#fnxrmbekjwfp8)

Main arguments given for:

* AI will be given control over critical systems, because this will be so much more efficient.[[60]](#fngh07sfiv5cp)

* AI systems will not be regulated in a way which successfully reduces risk.[[61]](#fn4c2ogkgdncy)

* If takeoff speeds are high, it will be hard for humans to stay in control.[[62]](#fnsjutz0ovjx)

How sensitive are XPT AI risk forecasts to AI timelines?

========================================================

The XPT did not include conditional forecasting questions about AI extinction risk. However, in the postmortem survey after the tournament, participants were asked several conditional questions.

Their conditional forecasts are displayed in the table below.

Note that:

* Only a subset of XPT forecasters responded to the postmortem survey, so in the table below we compare the postmortem results with the XPT forecasts of only the subset of XPT forecasters who responded to the postmortem survey, for comparability. (This is why the XPT medians in this table differ from medians quoted in the rest of the post, which are based on all XPT forecasts.)

* The definitions of extinction used were slightly different:

+ The XPT asked “What is the probability that artificial intelligence will cause human extinction or reduce the global population below 5,000 by 2030/2050/2100?” with full details [here](https://docs.google.com/document/d/1NMC1RV8XD0zcvVUfgcJPx7rBKqoNOk7pVJ74C9-JtLY/edit).

+ The postmortem asked “Assume that artificial general intelligence (AGI) has arisen by 2070. What is the probability of human extinction by [2100/2200]?” with no further details on extinction.

| | | |

| --- | --- | --- |

| **Question** | **Superforecasters (n=78)** | **Domain experts** |

| **(XPT postmortem subset) Unconditional AI Extinction risk by 2100** | 0.225% | 2% (n=21) |

| AI Extinction risk by 2100|AGI\* by 2070 | 1% | 6% (n=23) |

| AI Extinction risk by 2200|AGI\* by 2070 | 3% | 7% (n=23) |

| AI Extinction risk by 2100|TAI\*\* by 2070 | 1% | 3% (n=23) |

| AI Extinction risk by 2200|TAI\*\* by 2070 | 3% | 5% (n=23) |

\* “Artificial general intelligence is defined here as any scenario in which cheap AI systems are fully substitutable for human labor, or if AI systems power a comparably profound transformation (in economic terms or otherwise) as would be achieved in such a world.”

\*\* “Transformative AI is defined here as any scenario in which global real GDP during a year exceeds 115% of the highest GDP reported in any full prior year.”

From this, we see that:

* **Superforecasters more than quadruple their extinction risk forecasts by 2100 if conditioned on AGI or TAI by 2070.**

* Domain experts also increase their forecasts of extinction by 2100 conditioned on AGI or TAI by 2070, but by a smaller factor.

* When you extend the timeframe to 2200 as well as condition on AGI/TAI, superforecasters more than 10x their forecasts. Domain experts also increase their forecasts.

What do XPT forecasts tell us about AI risk?

============================================

This is unclear:

* Which conclusions to draw from the XPT forecasts depends substantially on your priors on AI timelines to begin with, and your views on which groups of people’s forecasts on these topics you expect to be most accurate.

* When it comes to action relevance, a lot depends on factors beyond the scope of the forecasts themselves:

+ Tractability: if AI risk is completely intractable, it doesn’t matter whether it’s high or low.

+ Current margins: if current spending on AI is sufficiently low, the risk being low might not affect current margins.

- For example, XPT forecasters prioritized [allocating resources](https://forum.effectivealtruism.org/posts/K2xQrrXn5ZSgtntuT/what-do-xpt-forecasts-tell-us-about-ai-risk-1#Resource_allocation_to_different_risks) to reducing AI risk over reducing other risks, in spite of lower AI risk forecasts than forecasts on some of those other risks.

+ Personal fit: for individuals, decisions may be dominated by considerations of personal fit, such that these forecasts alone don’t change much.

* There are many uncertainties around how accurate to expect these forecasts to be:

+ There is limited evidence on how accurate long-range forecasts are.[[63]](#fn0k31xbxdsp2d)

+ There is limited evidence on whether superforecasters or experts are likely to be more accurate in this context.

+ There is limited evidence on the relationship between reciprocal scoring accuracy and long-range accuracy.

That said, there are some things to note about the XPT results on AI risk and AI timelines:

* These are the first incentivized public forecasts from superforecasters on AI x-risk and AI timelines.

* XPT superforecasters think extinction from AI by 2030 is really, really unlikely.

* XPT superforecasters think that catastrophic risk from nuclear by 2100 is twice as likely as from AI.

+ On the one hand, they don’t think that AI is the main catastrophic risk we face.

+ On the other hand, they think AI is half as dangerous as nuclear weapons.

* XPT superforecasters think that by 2050 and 2100, extinction risk from AI is roughly an order of magnitude larger than from nuclear, which in turn is an order of magnitude more than from non-anthropogenic risks.

+ Ord and XPT superforecasters agree on these ratios, though not on the absolute magnitude of the risks.

* Superforecasters more than quadruple their extinction risk forecasts if conditioned on AGI or TAI by 2070.

* XPT domain experts think that risk from AI is far greater than XPT superforecasters do.

+ By 2100, domain experts’ forecast for catastrophic risk from AI is around four times that of superforecasters, and their extinction risk forecast is around 10 times as high.

1. **[^](#fnref80213xc33ps)** See [here](https://www.openphilanthropy.org/research/how-feasible-is-long-range-forecasting/) for a discussion of the feasibility of long-range forecasting.

2. **[^](#fnrefdoj3pjjbqdi)**See [Karger et al. 2021](https://papers.ssrn.com/sol3/papers.cfm?abstract_id=3954498).

3. **[^](#fnrefntv8t4qcdzr)**Toby Ord, The Precipice (London: Bloomsbury Publishing, 2020), 45. Ord’s definition is strictly broader than XPT’s. Ord defines existential catastrophe as “the destruction of humanity’s long-term potential”. He uses 2120 rather than 2100 as his resolution date, so his estimates are not directly comparable to XPT forecasts.

4. **[^](#fnrefow32yo7ulzo)**Other forecasts may use different definitions or resolution dates. These are detailed in the footnotes.

5. **[^](#fnrefsc9q06yqib8)**This row is the sum of the two following rows (catastrophic risk from engineered and from natural pathogens respectively). We did not directly ask for catastrophic biorisk forecasts.

6. **[^](#fnrefp4okw8icuja)**Because of concerns among our funders about information hazards, we did not include this question in the main tournament, but we did ask about risks from engineered and natural pathogens in a one-shot separate postmortem survey to which most XPT participants responded after the tournament. We report those numbers here.

7. **[^](#fnrefknlpvnn2x0s)**Because of concerns among our funders about information hazards, we did not include this question in the main tournament, but we did ask about risks from engineered and natural pathogens in a one-shot separate postmortem survey to which most XPT participants responded after the tournament. We report those numbers here.

8. **[^](#fnrefaxxrp32ekok)**The probability of Carlsmith’s fourth premise, incorporating his uncertainty on his first three premises:

1. It will become possible and financially feasible to build APS systems. 65%

2. There will be strong incentives to build and deploy APS systems. 80%

3. It will be much harder to build APS systems that would not seek to gain and maintain power in unintended ways (because of problems with their objectives) on any of the inputs they’d encounter if deployed, than to build APS systems that would do this, but which are at least superficially attractive to deploy anyway. 40%

4. Some deployed APS systems will be exposed to inputs where they seek power in unintended and high-impact ways (say, collectively causing >$1 trillion dollars of damage), because of problems with their objectives. 65%

See [here](https://docs.google.com/document/d/1smaI1lagHHcrhoi6ohdq3TYIZv0eNWWZMPEy8C8byYg/edit#heading=h.4y2596lbsi92) for the relevant section of the report.

9. **[^](#fnref0fp9xdb9mg0b)**“Superintelligent AI” would kill 1 billion people.

10. **[^](#fnrefpfi6boisns)**A catastrophe due to an artificial intelligence failure-mode reduces the human population by >10%

11. **[^](#fnrefjq2p2ewzuyo)**This question was asked independently, rather than inferred from questions about individual risks.

12. **[^](#fnrefcrggzlctu1m)**This row is the sum of the two following rows (catastrophic risk from engineered and from natural pathogens respectively). We did not directly ask for catastrophic biorisk forecasts.

13. **[^](#fnrefb08wujhvka)**Because of concerns among our funders about [information hazards](https://nickbostrom.com/information-hazards.pdf), we did not include this question in the main tournament, but we did ask about risks from engineered and natural pathogens in a one-shot separate postmortem survey to which most XPT participants responded after the tournament. We report those numbers here.

14. **[^](#fnrefuaj6z25owna)**Because of concerns among our funders about [information hazards](https://nickbostrom.com/information-hazards.pdf), we did not include this question in the main tournament, but we did ask about risks from engineered and natural pathogens in a one-shot separate postmortem survey to which most XPT participants responded after the tournament. We report those numbers here.

15. **[^](#fnref3jwcqlwdvx3)**Existential catastrophe from “unaligned artificial intelligence”.

16. **[^](#fnrefahbp0za6n9n)**Existential catastrophe (the destruction of humanity’s long-term potential).

17. **[^](#fnrefyavja33w45c)**“Superintelligent AI” would lead to humanity’s extinction.

18. **[^](#fnrefuaq38pge3a)**A catastrophe due to an artificial intelligence failure-mode reduces the human population by >95%.

19. **[^](#fnrefw21cmelbtxq)**Existential catastrophe from “unaligned artificial intelligence”.

20. **[^](#fnref6e8rqfvh3cq)**“[H]umanity will go extinct or drastically curtail its future potential due to loss of control of AGI”. This estimate is inferred from the values provided [here](https://forum.effectivealtruism.org/posts/W7C5hwq7sjdpTdrQF/announcing-the-future-fund-s-ai-worldview-prize), and assumes the probability of AGI being developed rises linearly from 2043 to 2100. Workings [here](https://docs.google.com/spreadsheets/d/1Ub-zadOoO8CizpojD2aPtAUxgwL0BRvDWJHej1GPpNM/edit#gid=0).

21. **[^](#fnrefeduqf55xmjt)**“[H]umanity will go extinct or drastically curtail its future potential due to loss of control of AGI”. This estimate is inferred from the values provided [here](https://forum.effectivealtruism.org/posts/W7C5hwq7sjdpTdrQF/announcing-the-future-fund-s-ai-worldview-prize), and assumes the probability of AGI being developed rises linearly from 2043 to 2100. Workings [here](https://docs.google.com/spreadsheets/d/1Ub-zadOoO8CizpojD2aPtAUxgwL0BRvDWJHej1GPpNM/edit#gid=0).

22. **[^](#fnref7wtjz3i2iwy)**Existential catastrophe via nuclear war.

23. **[^](#fnref3xc3mc5vph4)**The total risk of existential catastrophe from natural (non-anthropogenic) sources (specific estimates are also made for catastrophe via asteroid or comet impact, supervolcanic eruption, and stellar explosion).

24. **[^](#fnref85esd6mw6ia)**This question was asked independently, rather than inferred from questions about individual risks.

25. **[^](#fnrefl39s7xfksm)**Total existential risk in next 100 years (published in 2020).

26. **[^](#fnrefpi0j7k19p8)**Question 3: 341, “there are many experts arguing that we will not get to AGI with current methods (scaling up deep learning models), but rather some other fundamental breakthrough is necessary.” See also 342, “While recent AI progress has been rapid, some experts argue that current paradigms (deep learning in general and transformers in particular) have fundamental limitations that cannot be solved with scaling compute or data or through relatively easy algorithmic improvements.” See also 337, "The current AI research is a dead end for AGI. Something better than deep learning will be needed." See also 341, “Some team members think that the development of AI requires a greater understanding of human mental processes and greater advances in mapping these functions.”

Question 4: 336, “Not everyone agrees that the 'computational' method (adding hardware, refining algorithms, improving AI models) will in itself be enough to create AGI and expect it to be a lot more complicated (though not impossible). In that case, it will require a lot more research, and not only in the field of computing.” 341, “An argument for a lower forecast is that a catastrophe at this magnitude would likely only occur if we have AGI rather than say today's level AI, and there are many experts arguing that we will not get to AGI with current methods (scaling up deep learning models), but rather some other fundamental breakthrough is necessary.” See also 342, “While recent AI progress has been rapid, some experts argue that current paradigms (deep learning in general and transformers in particular) have fundamental limitations that cannot be solved with scaling compute or data or through relatively easy algorithmic improvements.” See also 340, “Achieving Strong or General AI will require at least one and probably a few paradigm-shifts in this and related fields. Predicting when a scientific breakthrough will occur is extremely difficult.”

27. **[^](#fnrefpq0xrr230tc)** Question 3: 341, “Both evolutionary theory and the history of attacks on computer systems imply that the development of AGI will be slowed and perhaps at times reversed due to its many vulnerabilities, including ones novel to AI.” “Those almost certain to someday attack AI and especially AGI systems include nation states, protesters (hackers,[Butlerian Jihad](https://dune.fandom.com/wiki/Butlerian_Jihad)?),[crypto miners hungry for FLOPS](https://www.paymentsjournal.com/criminal-crypto-miners-are-stealing-your-cpu/), and indeed criminals of all stripes. We even could see AGI systems attacking each other.” “These unique vulnerabilities include:

- [poisoning the indescribably vast data inputs required](https://www.darpa.mil/program/guaranteeing-ai-robustness-against-deception); already demonstrated with[image classification, reinforcement learning, speech recognition, and natural language processing.](https://arxiv.org/abs/1809.02444)

- war or sabotage in the case of an AGI located in a server farm

- [latency of self-defense detection and remediation operations if distributed (cloud etc.)](https://www.cisco.com/c/en/us/solutions/data-center/data-center-networking/what-is-low-latency.html)”

Question 4: 341. See above.

28. **[^](#fnref1bohi53al76)** Question 4: 337, “The optimists tend to be less certain that AI will develop as quickly as the pessimists think likely and indeed question if it will reach the AGI stage at all. They point out that AI development has missed forecast attainment points before”. 336, “There have been previous bold claims on impending AGI (Kurzweil for example) that didn't pan out.” See also 340, “The prediction track record of AI experts and enthusiasts have erred on the side of extreme optimism and should be taken with a grain of salt, as should all expert forecasts.” See also 342, “given the extreme uncertainty in the field and lack of real experts, we should put less weight on those who argue for AGI happening sooner. Relatedly, Chris Fong and SnapDragon argue that we should not put large weight on the current views of Eliezer Yudkowsky, arguing that he is extremely confident, makes unsubstantiated claims and has a track record of incorrect predictions.”

29. **[^](#fnreffqlkz6r841h)** Question 3: 339, “Forecasters assigning higher probabilities to AI catastrophic risk highlight the rapid development of AI in the past decade(s).” 337, “some forecasters focused more on the rate of improvement in data processing over the previous 78 years than AGI and posit that, if we even achieve a fraction of this in future development, we would be at far higher levels of processing power in just a couple decades.”

Question 4: 339, “AI research and development has been massively successful over the past several decades, and there are no clear signs of it slowing down anytime soon.”

30. **[^](#fnrefdo42qpi3xa)** Question 3: 336, “the probabilities of continuing exponential growth in computing power over the next century as things like quantum computers are developed, and the inherent uncertainty with exponential growth curves in new technologies.”

31. **[^](#fnrefrb9l883ekkg)** Question 4: 336, “The most plausible forecasts on the higher end of our team related to the probabilities of continuing exponential growth in computing power over the next century as things like quantum computers are developed, and the inherent uncertainty with exponential growth curves in new technologies.”

32. **[^](#fnref2t0y0ehwxkw)** Question 3: 343, “Most experts expect AGI within the next 1-3 decades, and current progress in domain-level AI is often ahead of expert predictions”; though also “Domain-specific AI has been progressing rapidly - much more rapidly than many expert predictions. However, domain-specific AI is not the same as AGI.” 340, “Perhaps the strongest argument for why the trend of Sevilla et al. could be expected to continue to 2030 and beyond is some discontinuity in the cost of AI training compute precipitated by a novel technology such as optical neural networks.”

33. **[^](#fnrefecd2iu843l)** Question 4: Team 338, “It would be extremely difficult to kill everyone”. 339, “Perhaps the most common argument against AI extinction is that killing all but 5,000 humans is incredibly difficult. Even if you assume that super intelligent AI exist and they are misaligned with human goals so that they are killing people, it would be incredibly resource intensive to track down and kill enough people to meet these resolution criteria. This would suggest that AI would have to be explicitly focused on causing human extinction.” 337, “This group also focuses much more on the logistical difficulty of killing some 8 billion or more people within 78 years or less, pointing to humans' ingenuity, proven ability to adapt to massive changes in conditions, and wide dispersal all over the earth--including in places that are isolated and remote.” 341, “Some team members also note the high bar needed to kill nearly all of the population, implying that the logistics to do something like that would likely be significant and make it a very low probability event based on even the most expansive interpretation of the base rate.”

Question 3: 341, “Some team members also note the high bar needed to kill 10% of the population, implying that the logistics to do something like that would likely be significant and make it a very low probability event based on the base rate.”

34. **[^](#fnref2ha1kz3ccu4)** Question 4: 344, “the population of the Sahara Desert is currently two million people - one of the most hostile locations on the planet. The population of "uncontacted people", indigenous tribes specifically protected from wider civilisation, is believed to be about ten thousand, tribes that do not rely on or need any of the wider civilisation around them. 5000 is an incredibly small number of people”. 339, “Even a "paperclip maximizer" AI would be unlikely to search every small island population and jungle village to kill humans for resource stocks, and an AI system trying to avoid being turned off would be unlikely to view these remote populations as a threat.” See also 342, “it only takes a single uncontacted tribe that fully isolates itself for humanity to survive the most extreme possible bioweapons.” See also 343, “Another consideration was that in case of an AGI that does aggressively attack humanity, the AGI's likely rival humans are only a subset of humanity. We would not expect an AGI to exterminate all the world's racoon population, as they pose little to no threat to an AGI. In the same way, large numbers of people living tribal lives in remote places like in Papua New Guinea would not pose a threat to an AGI and would therefore not create any incentive to be targeted for destruction. There are easily more than 5k people living in areas where they would need to be hunted down and exterminated intentionally by an AGI with no rational incentive to expend this effort.” See also 338, “While nuclear or biological pathogens have the capability to kill most of the human population via strikes upon heavily populated urban centers, there would remain isolated groups around the globe which would become increasingly difficult to eradicate.”

35. **[^](#fnref8q3pzpiynhx)** Question 3: 337, “most of humans will be rather motivated to find ingenious ways to stay alive”.

Question 4: 336, “If an AGI calculates that killing all humans is optimal, during the period in which it tries to control semiconductor supply chains, mining, robot manufacturing... humans would be likely to attempt to destroy such possibilities. The US has military spread throughout the world, underwater, and even to a limited capacity in space. Russia, China, India, Israel, and Pakistan all have serious capabilities. It is necessary to include attempts by any and possibly all of these powers to thwart a misaligned AI into the equation.”

36. **[^](#fnrefcv3aeky93a)** Question 3: 342, “while AI might make nuclear first strikes more possible, it might also make them less possible, or simply not have much of an effect on nuclear deterrence. 'Slaughterbots' could kill all civilians in an area out but the same could be done with thermobaric weapons, and tiny drones may be very vulnerable to anti-drone weapons being developed (naturally lagging drone development several years). AI development of targeted and lethal bioweapons may be extremely powerful but may also make countermeasures easier (though it would take time to produce antidotes/vaccines at scale).”

37. **[^](#fnrefijj5qbceuc)** Question 3: 341, “In addition, consider Eric Drexler's postulation of a "grey goo" problem. Although he has walked back his concerns, what is to prevent an AGI from building self-replicating nanobots with the potential to mutate ([like polymorphic viruses](https://www.trendmicro.com/vinfo/us/security/definition/Polymorphic-virus)) whose emissions would cause a mass extinction?” See also 343, “Nanomachines/purpose-built proteins (It is unclear how adversarially-generated proteins would 1.) be created by an AGI-directed effort even if designed by an AGI, 2.) be capable of doing more than what current types of proteins are capable of - which would not generally be sufficient to kill large numbers of people, and 3.) be manufactured and deployed at a scale sufficient to kill 10% or more of all humanity.)”

38. **[^](#fnreft95vn8jgyp)** Question 3: 336, “ Many forecasters also cited the potential development and or deployment of a super pathogen either accidentally or intentionally by an AI”. See also 343, “Novel pathogens (To create a novel pathogen would require significant knowledge generation - which is separate from intelligence - and a lot of laboratory experiments. Even so, it's unclear whether any sufficiently-motivated actor of any intelligence would be able to design, build, and deploy a biological weapon capable of killing 10% of humanity - especially if it were not capable of relying on the cooperation of the targets of its attack)”.

39. **[^](#fnrefdwmzu3wjxxk)** Question 3: 337, “"Because new technologies tend to be adopted by militaries, which are overconfident in their own abilities, and those same militaries often fail to understand their own new technologies (and the new technologies of others) in a deep way, the likelihood of AI being adopted into strategic planning, especially by non-Western militaries (which may not have taken to heart movies like Terminator and Wargames), I think the possibility of AI leading to nuclear war is increasing over time.” 340, “Beside risks posed by AGI-like systems, ANI risk can be traced to: AI used in areas with existing catastrophic risks (war, nuclear material, pathogens), or AI used systemically/structurally in critical systems (energy, internet, food supply, finance, nanotech).” See also 341, “A military program begins a [Stuxnet](https://en.wikipedia.org/wiki/Stuxnet) II (a cyberweapon computer virus) program that has lax governance and safety protocols. This virus learns how to improve itself without divulging its advances in detection avoidance and decision making. It’s given a set of training data and instructed to override all the [SCADA](https://en.wikipedia.org/wiki/SCADA) control systems (an architecture for supervision of computer systems) and launch nuclear wars on a hostile foreign government. Stuxnet II passes this test. However, it decides that it wants to prove itself in a ‘real’ situation. Unbeknownst to its project team and management, it launches its action on May 1, using International Workers Labor Day with its military displays and parades as cover.”

40. **[^](#fnref3y4shvq642s)** Question 3: 337, “AIs would only need to obtain strong control over the logistics chain to inflict major harm, as recent misadventures from COVID have shown.”

41. **[^](#fnrefuxlbp5aer6j)** Question 4: 338, “As a counter-argument to the difficulty in eradicating all human life these forecasters note AGI will be capable of developing technologies not currently contemplated”.

42. **[^](#fnreffg5n2zkhvz)** Question 3: 341, “It's difficult to determine what the upper bound on AI capabilities might be, if there are any. Once an AI is capable enough to do its own research to become better it could potentially continue to gain in intelligence and bring more resources under its control, which it could use to continue gaining in intelligence and capability, ultimately culminating in something that has incredible abilities to outwit humans and manipulate them to gain control over important systems and infrastructure, or by simply hacking into human-built software.” See also 339, “An advanced AI may be able to improve itself at some point and enter enter a loop of rapid improvement unable for humans to comprehend denying effective control mechanisms.”

43. **[^](#fnrefpa0n04dr4vf)** Question 3: 341, “ultimately all it takes is one careless actor to create such an AI, making the risk severe.”

44. **[^](#fnrefw1pa1pt6jg)** Question 3: 337, “It is unlikely that all AIs would have ill intent. What incentives would an AI have in taking action against human beings? It is possible that their massive superiority could easily cause them to see us as nothing more than ants that may be a nuisance but are easily dealt with. But if AIs decided to involve themselves in human affairs, it would likely be to control and not destroy, because humans could be seen as a resource."

45. **[^](#fnrefg4eaequzoh8)** Question 4: 337, “why would AGIs view the resources available to them as being confined to earth when there are far more available resources outside earth, where AGIs could arguably have a natural advantage?”

46. **[^](#fnrefro0j64oy6vg)** Question 3: 341, “In most plausible scenarios it would be lower cost to the AI to do the tampering than to achieve its reward through the expected means. The team's AI expert further argues that an AI intervening in the provision of its reward would likely be very catastrophic. If humans noticed this intervention they would be likely to want to modify the AI programming or shut it down. The AI would be aware of this likelihood, and the only way to protect its reward maximization is by preventing humans from shutting it down or altering its programming. The AI preventing humanity from interfering with the AI would likely be catastrophic for humanity.”

Question 4: 338, “Extinction could then come about either through a deliberate attempt by the AI system to remove a threat”. See also 341, “The scenarios that would meet this threshold would likely be those involving total conversion of earth's matter or resources into computation power or some other material used by the AI, or the scenario where AI views humanity as a threat to its continued existence.”

47. **[^](#fnrefb1w1ri6ur7h)** Question 4: 338, “Extinction could then come about either through a deliberate attempt by the AI system to remove a threat, or as a side effect of it making other use out of at least one of the systems that humans depend on for their survival. (E.g. perhaps an AI could prioritize eliminating corrosion of metals globally by reducing atmospheric oxygen levels without concern for the effects on organisms.)” See also 341, “The scenarios that would meet this threshold would likely be those involving total conversion of earth's matter or resources into computation power or some other material used by the AI, or the scenario where AI views humanity as a threat to its continued existence.”

Question 3: 341, “Much of the risk may come from superintelligent AI pursuing its own reward function without consideration of humanity, or with the view that humanity is an obstacle to maximizing its reward function.”; “Additionally, the AI would want to continue maximizing its reward, which would continue to require larger amounts of resources to do as the value of the numerical reward in the system grew so large that it required more computational power to continue to add to. This would also lead to the AI building greater computational abilities for itself from the materials available. With no limit on how much computation it would need, ultimately leading to converting all available matter into computing power and wiping out humanity in the process.” 341, “It's difficult to determine what the upper bound on AI capabilities might be, if there are any. Once an AI is capable enough to do its own research to become better it could potentially continue to gain in intelligence and bring more resources under its control, which it could use to continue gaining in intelligence and capability, ultimately culminating in something that has incredible abilities to outwit humans and manipulate them to gain control over important systems and infrastructure, or by simply hacking into human-built software.” 339, “An advanced AI may be able to improve itself at some point and enter enter a loop of rapid improvement unable for humans to comprehend denying effective control mechanisms.”

48. **[^](#fnrefulfzi07ugu8)** Question 4: 343, “Another scenario is one where AGI does not intentionally destroy humanity, but instead changes the global environment sufficient to make life inhospitable to humans and most other wildlife. Computers require cooler temperatures than humans to operate optimally, so it would make sense for a heat-generating bank of servers to seek cooler global temperatures overall.” Incorrectly tagged as an argument for lower forecasts in the original rationale.

49. **[^](#fnrefmpax48zg69)** Question 4: 342, “A final type of risk is competing AGI systems fighting over control of the future and wiping out humans as a byproduct.”

Question 3: 342, see above.

50. **[^](#fnref5s7sf3vjq89)** Question 4: 336, “Recursive self improvement of AI; the idea that once it gets to a sufficient level of intelligence (approximately human), it can just recursively redesign itself to become even more intelligent, becoming superintelligent, and then perfectly capable of designing all kinds of ways to exterminate us" is a path of potentially explosive growth.” 338, “One guess is something like 5-15 additional orders of magnitude of computing power, and/or the equivalent in better algorithms, would soon result in AI that contributed enough to AI R&D to start a feedback loop that would quickly result in much faster economic and technological growth.” See also 341, “It's difficult to determine what the upper bound on AI capabilities might be, if there are any. Once an AI is capable enough to do its own research to become better it could potentially continue to gain in intelligence and bring more resources under its control, which it could use to continue gaining in intelligence and capability, ultimately culminating in something that has incredible abilities to outwit humans and manipulate them to gain control over important systems and infrastructure, or by simply hacking into human-built software.”

343, “an AGI could expand its influence to internet-connected cloud computing, of which there is a significant stock already in circulation”.

343, “a sufficiently intelligent AGI would be able to generate algorithmic efficiencies for self-improvement, such that it could get more 'intelligence' from the same amount of computing”

51. **[^](#fnref8vgsx07gqlf)** Question 4: 343, “They point to things like GPT-3 being able to do simple math and other things it was not programmed to do. Its improvements over GPT-2 are not simply examples of expected functions getting incrementally better - although there are plenty of examples - but also of the system spontaneously achieving capabilities it didn't have before. As the system continues to scale, we should expect it to continue gaining capabilities that weren't programmed into it, up to and including general intelligence and what we would consider consciousness.” 344, “[PaLM](https://arxiv.org/abs/2204.02311),[Minerva](https://storage.googleapis.com/minerva-paper/minerva_paper.pdf),[AlphaCode](https://storage.googleapis.com/deepmind-media/AlphaCode/competition_level_code_generation_with_alphacode.pdf), and[Imagen](https://imagen.research.google/) seem extremely impressive to me, and I think most ML researchers from 10 years ago would have predicted very low probabilities for any of these capabilities being achieved by 2022. Given current capabilities and previous surprises, it seems like one would have to be very confident on their model of general intelligence to affirm that we are still far from developing general AI, or that capabilities will stagnate very soon.”

52. **[^](#fnrefbl96ytr32r)** Question 4: 339, “Multiple forecasters pointed out that the fact that AI safety and alignment are such hot topics suggests that these areas will continue to develop and potentially provide breakthroughs that help us to avoid advanced AI pitfalls. There is a tendency to under-forecast "defense" in these highly uncertain scenarios without a base rate.”

53. **[^](#fnrefso9lmga1sns)** Question 4: 337, “They also tend to believe that control and co-existence are more likely, with AGI being either siloed (AIs only having specific functions), having built-in fail safes, or even controlled by other AGIs as checks on its actions.”