id stringlengths 36 36 | source stringclasses 15 values | formatted_source stringclasses 13 values | text stringlengths 2 7.55M |

|---|---|---|---|

c435613c-9164-43ea-8338-b5ae2dae1e1b | trentmkelly/LessWrong-43k | LessWrong | My Detailed Notes & Commentary from Secular Solstice

Previously: General Thoughts on Secular Solstice.

This blog post is my scattered notes and ramblings about the individual components (talks and songs) of Secular Solstice in Berkeley. Talks have their title in bold, and I split the post into two columns, with the notes I took about the content of the talk on the left and my comments on the talk on the right. Songs have normal formatting.

Bonfire

The Circle

This feels like a sort of whig history: a history that neglects most of the complexities and culture-dependence of the past in order to advance a teleological narrative. I do not think that whig histories are inherently wrong (although the term has negative connotations). Whig histories should be held to a very strict standard because they make claims about how most or all of human history functions.

The song describes morality in terms of an expanding circle of concern: kin → neighbor → humanity[1] → "feathers, fur, and silicon" → future.

Trying to line these up with historical societies or ideologies is ... difficult. Many societies do not have a concept of 'neighbor,'[2] and some do not understand ethics in terms of circles of moral concern.[3] A few moral systems are universalistic (i.e. they teach that people should have moral concern for all of humanity): Christianity,[4] liberal democracy,[5] and maybe Buddhism.[6] Actually practicing universalism is really hard: Most societies which preach universalism do not live up to its ideals.

Within one of these traditions, the whig version of history can make sense. Over the centuries, Christianity has dramatically expanded and Christian activists from Francis of Assisi to Martin Luther King have made it more true to the ideals of the New Testament. Similarly, liberal democracy has expanded dramatically, extended the right to vote for more people, and gotten better at defending many freedoms. (I don't know what's going on with Buddhism, but its failure to build/maintain a dominant position in India is eviden |

8587d872-d0d5-4510-a1cb-24bc494d581c | trentmkelly/LessWrong-43k | LessWrong | Amending the "General Pupose Intelligence: Arguing the Orthogonality Thesis"

Stuart has worked on further developing the orthogonality thesis, which gave rise to a paper, a non-final version of which you can see here: http://lesswrong.com/lw/cej/general_purpose_intelligence_arguing_the/

This post won't make sense if you haven't been through that.

Today we spent some time going over it and he accepted my suggestion of a minor amendment. Which best fits here.

Besides all the other awkward things that a moral convergentist would have to argue for, namely:

> This argument generalises to other ways of producing the AI. Thus to deny the Orthogonality thesis is to assert that there is a goal system G, such that, among other things:

> 1. There cannot exist any efficient real-world algorithm with goal G.

> 2. If a being with arbitrarily high resources, intelligence, time and goal G, were to try design an efficient real-world algorithm with the same goal, it must fail.

> 3. If a human society were highly motivated to design an efficient real-world algorithm with goal G, and were given a million years to do so along with huge amounts of resources, training and knowledge about AI, it must fail.

> 4. If a high-resource human society were highly motivated to achieve the goals of G, then it could not do so (here the human society is seen as the algorithm).

> 5. Same as above, for any hypothetical alien societies.

> 6. There cannot exist any pattern of reinforcement learning that would train a highly efficient real-world intelligence to follow the goal G.

> 7. There cannot exist any evolutionary or environmental pressures that would evolving highly efficient real world intelligences to follow goal G.

We can add:

8. If there were a threshold of intelligence above which any agent will converge towards the morality/goals asserted by the anti-orthogonalist, there cannot exist any system, composed of a multitude of below-threshold intelligences that will as a whole pursue a different goal (G) than the convergent one (C), without any in |

561927e6-06de-43f1-847b-fd623c7c47bd | trentmkelly/LessWrong-43k | LessWrong | Superintelligence 11: The treacherous turn

This is part of a weekly reading group on Nick Bostrom's book, Superintelligence. For more information about the group, and an index of posts so far see the announcement post. For the schedule of future topics, see MIRI's reading guide.

----------------------------------------

Welcome. This week we discuss the 11th section in the reading guide: The treacherous turn. This corresponds to Chapter 8.

This post summarizes the section, and offers a few relevant notes, and ideas for further investigation. Some of my own thoughts and questions for discussion are in the comments.

There is no need to proceed in order through this post, or to look at everything. Feel free to jump straight to the discussion. Where applicable and I remember, page numbers indicate the rough part of the chapter that is most related (not necessarily that the chapter is being cited for the specific claim).

Reading: “Existential catastrophe…” and “The treacherous turn” from Chapter 8

----------------------------------------

Summary

1. The possibility of a first mover advantage + orthogonality thesis + convergent instrumental values suggests doom for humanity (p115-6)

1. First mover advantage implies the AI is in a position to do what it wants

2. Orthogonality thesis implies that what it wants could be all sorts of things

3. Instrumental convergence thesis implies that regardless of its wants, it will try to acquire resources and eliminate threats

4. Humans have resources and may be threats

5. Therefore an AI in a position to do what it wants is likely to want to take our resources and eliminate us. i.e. doom for humanity.

2. One kind of response: why wouldn't the makers of the AI be extremely careful not to develop and release dangerous AIs, or relatedly, why wouldn't someone else shut the whole thing down? (p116)

3. It is hard to observe whether an AI is dangerous via its behavior at a time when you could turn it off, because AIs have convergent instrumental reasons to |

5ccf27d4-59f4-457c-941f-a465406332f2 | trentmkelly/LessWrong-43k | LessWrong | Meetup : Canberra HPMOR Wrap Party!

Discussion article for the meetup : Canberra HPMOR Wrap Party!

WHEN: 15 March 2015 06:00:00PM (+1100)

WHERE: 70/10 Thynne St, Bruce, ACT

Harry Potter and the Methods of Rationality is finally coming to a conclusion! To celebrate, we invite all Canberran Less Wrongers to our wrap-up party. (: Snacks will be provided.

Make sure you read all the new chapters so that we can have some interesting discussions.

Note that this is on Sunday instead of Saturday (π day), and there will be no LW meeting on Saturday.

Discussion article for the meetup : Canberra HPMOR Wrap Party! |

75a47982-3b27-4ad1-8205-08b5d913895c | trentmkelly/LessWrong-43k | LessWrong | Confidence In Opinions, Intensity In Opinion

On a scale of 1 to 100, how sure are you?

It's a good thing to ask yourself from time to time about intense beliefs, especially if you're having a disagreement with someone else smart.

Just putting a number on something is good. If you're in business, putting any number in the high 90's is dangerous and shouldn't happen too often.

Yet, you still have to aggressively and intensely pursue your plans.

You can be only 80% sure you're correct, and still intensely pursue a course of action.

Most people make a mistake: they only go intensely after things they have a very high certainty will work.

But this is backwards. It's absolutely right to say "I'm only 80% sure that going and making a great talk to this group will help develop my business," and to still aggressively pursue giving a great talk.

The same is true with having ridiculously exceptionally good service. You can say, "I'm only 60% sure that doing this is going to lead to more customer loyalty... this might just be a time sink and cost more than it returns. But let's kill it on it, and find it."

You don't need to be highly confident to intensely pursue something.

In fact, intensely pursuing not-certain things seems to be how the world develops. |

ef28f1e1-5a21-4d7e-b6ec-6b350abfd8db | StampyAI/alignment-research-dataset/alignmentforum | Alignment Forum | Call for contributors to the Alignment Newsletter

**TL;DR:** I am looking for (possibly paid) contributors to write summaries and opinions for the Alignment Newsletter. This is currently experimental, but I estimate ~80% chance that it will become long-term, and so I’m looking for people who are likely to contribute at least 20 summaries over the course of their tenure at the newsletter (see caveats in the post). **To apply, read this [doc](https://docs.google.com/document/d/1zzMyG2VmyW5l3yy7rBx4B8pLwGxLnMRRHgFNJEdHLgg/edit#), write an example summary + opinion, and fill out this [form](https://docs.google.com/forms/d/e/1FAIpQLScyZ4CN4A4lj3qxNe0y8pqs8xg_K72I3QskT9lufCqtw9Ul4Q/viewform?usp=sf_link) by Friday, September 6.** I am also looking for someone to take over the work of publishing the newsletter (~1-3 hours per week); please send me an [email](mailto:rohinmshah@berkeley.edu?subject=Interested%20in%20publisher%20role%20for%20Alignment%20Newsletter%20EOM) if you’d be interested in this.

**ETA:** I now have enough interest in the publisher role that I would be shocked if none of them worked out. Feel free to continue expressing interest if you think you'd particularly benefit from doing the work, or if you think you'd be particularly good at it.

### Roles I am looking for

**Publisher:** Once all of the summaries and opinions are written, you would turn them into an actual newsletter, send it out for proofreading, fix any typos found, update the [database](https://docs.google.com/spreadsheets/d/1PwWbWZ6FPqAgZWOoOcXM8N_tUCuxpEyMbN1NYYC02aM/edit#gid=0), etc. This currently takes me around half an hour per newsletter. Ideally, you would also take on some tasks that I haven’t found the time for: improving the visual design of the newsletter, A/B testing different versions to see what people engage with, publicity, and so on, for a total of ~1-3 hours per week.

Since I don’t yet have the setup to pay people to help with the newsletter, I am only looking for expressions of interest. If you think you’d be interested in this role, click [this link](mailto:rohinmshah@berkeley.edu?subject=Interested%20in%20publisher%20role%20for%20Alignment%20Newsletter%20EOM) to email me at <rohinmshah@berkeley.edu> with the subject line “Interested in publisher role for Alignment Newsletter EOM”. If I do end up hiring for the publisher role I’ll reach out to you with more details.

The rest of this doc will be focused on the more substantial role:

**Content creator:** You would choose articles that you’re interested in, and write summaries and opinions for them, that would then be published in the newsletter.

### Why am I looking for content creators?

In the past few months, I haven’t been allocating as much time to the newsletter (you may have noticed they’re coming out every other week now). There have been many other things that seem more important to do. This is both because I’m more optimistic about the other work I’m doing, and because I no longer find it as useful to read papers as I did when I started the newsletter. As a result, I now have over 100 articles that I would probably want to send out, but haven’t gotten around to yet. This is also partly because there’s just more stuff coming out now. (I mentioned some of these points in the [retrospective](https://alignmentforum.org/posts/3onCb5ph3ywLQZMX2/alignment-newsletter-one-year-retrospective).)

Another reason for more content creators is that as I have learned more since starting the newsletter, I have developed my own idiosyncratic beliefs, and I think I have become worse at intuitively interpreting other posts from the author’s perspective rather than my own. (In other words, I would perform worse at an Ideological Turing Test of their position than I would have in the past, unless I put in a lot of effort into it.) I expect that with more writers the newsletter will better reflect a diversity of opinions.

### Why should you do it?

**It’s impactful.** See the [retrospective](https://alignmentforum.org/posts/3onCb5ph3ywLQZMX2/alignment-newsletter-one-year-retrospective) for more on this point. I’m not currently able to get a (normal length) newsletter out every week; you’d likely be causally responsible for getting back to weekly newsletters.

**You will improve your analytical writing skills.** Hopefully clear.

**You’ll learn more about safety by reading papers.** You could do this by yourself, but by summarizing the papers, you’re also providing a valuable service for everyone else.

**You might learn more about AI safety by getting feedback from me.** This is a “might” because I don’t know how much feedback I will end up giving to you about your summaries and opinions that’s actually about key ideas in AI safety (as opposed to feedback about the writing itself).

**You might build career capital.** I certainly have built career capital by creating this newsletter -- it has made me well known in some communities. I don’t know to what extent this will transfer to you.

**You might be paid.** Currently this is experimental, so I haven’t actually thought much about payment. I expect that I could get a grant to pay you if I ended up deciding that it would be worth it. However, it might be that dealing with all of the paperwork + tax implications cancels out any time savings, though I think this is unlikely. If this is an important factor to you, please do let me know when you apply.

### Qualifications

* Likely to contribute at least 20 summaries to the newsletter over time, at least 4 of which are in the first month (for onboarding purposes). Alternatively, if you have deep expertise in a topic that the newsletter covers infrequently, such as formal verification, you should be likely to summarize relevant papers for at least the next 6 months.

* Basic familiarity with AI safety arguments

* Medium familiarity with the topic that you want to write summaries about

* Good writing skills (though I recommend just applying regardless and letting me evaluate based on your example summary)

### Application process

Fill out this [form](https://docs.google.com/forms/d/e/1FAIpQLScyZ4CN4A4lj3qxNe0y8pqs8xg_K72I3QskT9lufCqtw9Ul4Q/viewform?usp=sf_link). The main part of the application is to write an example summary and opinion for an article (which I may send out in the newsletter, if you give me permission to). Ideally you would write a summary on one of the articles from the list below, but if there isn’t an article in the subarea you’d like to write on, you can choose some other article (that hasn’t already been summarized in the newsletter) and summarize that. The whole process should take 1-4 hours, depending on how much time you put into the summary and opinion.

List of articles:

* [Aligning a toy model of optimization](https://www.alignmentforum.org/posts/H5gXpFtg93qDMZ6Xn/aligning-a-toy-model-of-optimization)

* [Four Ways An Impact Measure Could Help Alignment](https://alignmentforum.org/posts/wJK944YqvFwjdbqCP/four-ways-an-impact-measure-could-help-alignment)

* [AI-GAs: AI-generating algorithms, an alternate paradigm for producing general artificial intelligence](https://arxiv.org/abs/1905.10985)

* [Learning to Interactively Learn and Assist](https://interactive-learning.github.io/)

* [Natural Adversarial Examples](http://arxiv.org/abs/1907.07174)

* [On Inductive Biases in Deep Reinforcement Learning](http://arxiv.org/abs/1907.02908) |

d3ceeb82-dfcb-419d-b1be-c0fa36357a98 | StampyAI/alignment-research-dataset/eaforum | Effective Altruism Forum | Explained Simply: Quantilizers

Standard AI are *optimizers*: they ‘look’ through possible actions they could take, and pick the one that maximises what they care about. This can be dangerous— an AI which maximises in this way needs to care about *exactly* the same things that humans care about, which is [really hard](https://www.lesswrong.com/tag/complexity-of-value)[[1]](#fnsmixvrb0gvj). If you [tell a human](https://ui.stampy.ai/?state=7523_6380r8160r8185r6984r9OGZ-9IDQ-9TDI-8TJV-) to calculate as many digits of pi as possible within a year, they’ll do ‘reasonable’ things towards that goal. An optimizing AI might work out that it could calculate many more digits in a year by taking over another supercomputer— as this is the most effective action, it seems very attractive to the AI.

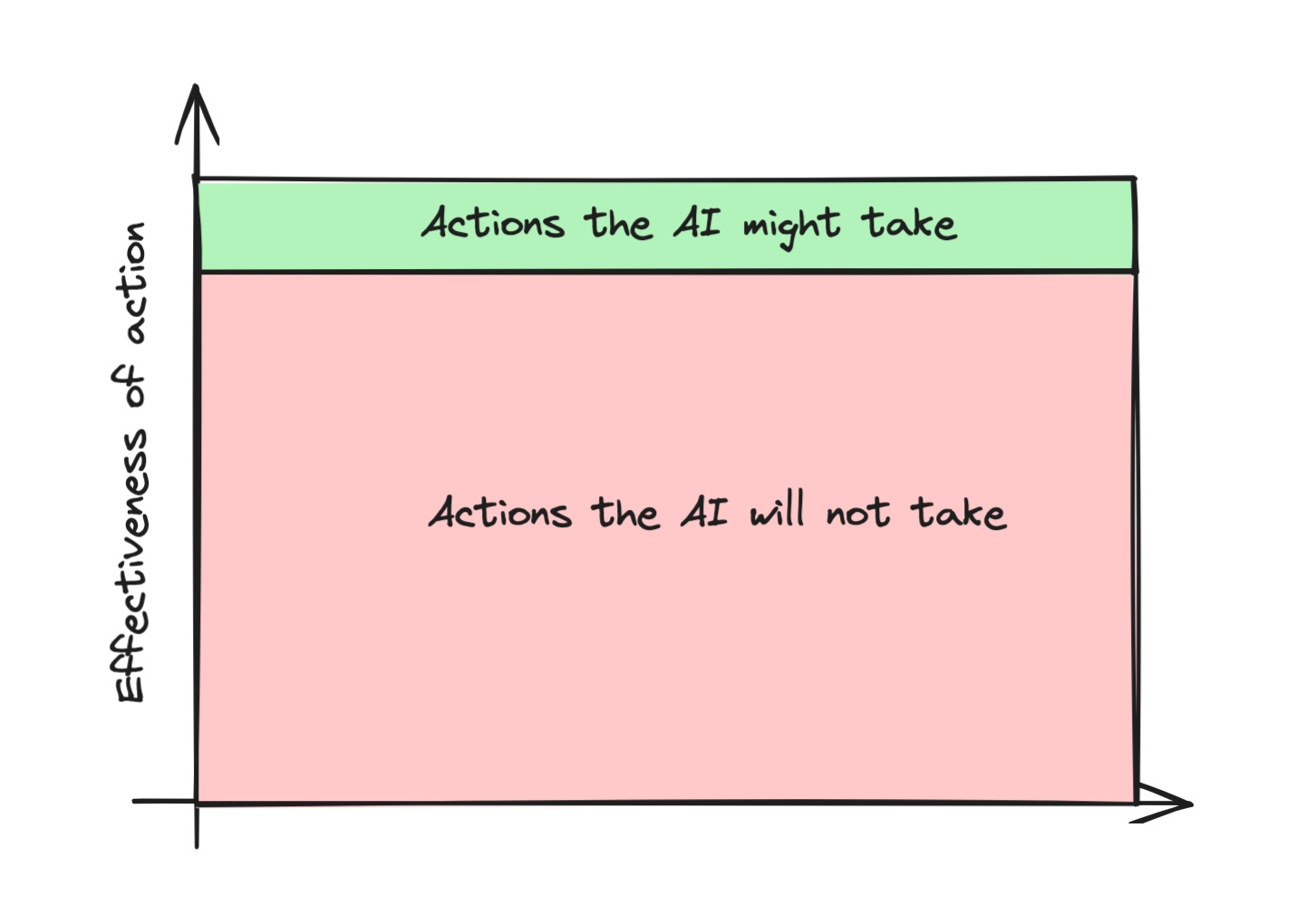

[Quantilizers](https://intelligence.org/files/QuantilizersSaferAlternative.pdf) are a different approach. Instead of maximizing, they randomly choose from a few of the most effective possible actions:

[](https://substackcdn.com/image/fetch/f_auto,q_auto:good,fl_progressive:steep/https%3A%2F%2Fsubstack-post-media.s3.amazonaws.com%2Fpublic%2Fimages%2F31529c1b-5fac-4361-9357-a939ab77dbc3_1452x1010.png)

They work like this:

1. Start with a goal, and a set of possible actions

2. Predict how useful each action will be for achieving the goal

3. Rank the actions from the most to the least useful

4. Pick randomly from the highest fraction only (i.e, the top 10%)

This avoids cases where the AI chooses extreme actions to maximize the goal. The AI chooses somewhat helpful actions instead.

It does leave one question — how do we make a list of possible actions in the first place? One suggestion is to ask a lot of humans to solve the task and train an AI to generate possible things it thinks humans would do. This list can then be used as an input to our quantilizer.

This does make them less effective, of course— firstly by picking less effective actions overall, and secondly by picking actions it thinks humans would take. But this might be worth the reduced risks— indeed, based on your risk tolerance, you can change the % of top actions the quantilizer will consider to make it more effective and riskier or vice versa.

So quantilizers trade some capability in exchange for greater safety, and avoid unintended consequences. They pick from lots of mild actions and very few extreme actions, so the chance of them doing something extreme or unexpected is miniscule.

Quantilizers are a proposed safer approach to AI goals. By randomly choosing from a selection of the top options, they avoid extreme behaviors that could cause harm. More research is needed, but quantilizers show promise as a model for the creation of AI systems that are beneficial but limited in scope. They provide an alternative to goal maximization, which can be dangerous, though they’re just theoretical right now.

1. **[^](#fnrefsmixvrb0gvj)**Humans care about an awful lot of different things, even just one human! |

e327e476-7f09-4b54-b5b6-aae8a231f188 | trentmkelly/LessWrong-43k | LessWrong | The Greedy Doctor Problem

TL;DR: How to reason about people who are smarter than you. A few proposals, interspersed with reinforcement learning and humorous fiction. Ending on a surprising connection to logical inductors.

What is the Greedy Doctor Problem?

I came up with a neat little thought experiment[1] :

> You are very rich and you want to make sure that you stay healthy. But you don't have any medical expertise and, therefore, you want to hire a medical professional to help you monitor your health and diagnose diseases. The medical professional is greedy, i.e. they want to charge you as much money as possible, and they do not (per se) care about your health. They only care about your health as far as they can get money from you. How can you design a payment scheme for the medical professional so that you actually get the ideal treatment?

Over the last few weeks, I've been walking around and bugging people with this question to see what they come up with. Here I want to share some of the things I learned in the process with you, as well as some potential answers. I don't think the question (as presented) is completely well-formed, so the first step to answering it is clarifying the setup and deconfusing the terms. Also, as is typical with thought experiments, I do not have a definitive "solution" and invite you (right now!) to try and come up with something yourself[2].

Some background on the problem

The subtext for the thought experiment is: How should you act when interacting with someone smarter than yourself? What can you say or do, when your interlocutor has thought of everything you might say and more? Should you trust someone's advice, when you can't pinpoint their motivation? As a Ph.D. student, I run into this problem around three to five times a week, when interacting with colleagues or my advisor[3].

After bugging a few people I learned that (of course) I'm not the first person to think about this question. In economics and political science, the situation is known as t |

68b80aeb-1585-4d81-b402-910b1f67dfd9 | trentmkelly/LessWrong-43k | LessWrong | Some Tools For Optimizing Our Media Use

Benevolent and malevolent media producers possess the power to influence society in positive and negative ways. They can do this through agenda setting, framing, priming, spreading memes, altering perceptions of groups and individuals, outright propaganda, and other methods.

I think more attention should be paid to the pathways from content to effects, so that we can optimize our cultural landscape.

Lest this post soon turn into Applause Light Vegas, I’ll now get into some methods I think can be used to sway mass opinion in a direction amenable to making the world better. Many of these methods deal with familiar biases, heuristics, and psychological effects.

Media Use Facilitating Positive Social Change

First, the mass media possesses the power to alter estimates of the likelihood and frequency of specific occurrences. Think back to some of the classic examples of the availability heuristic. When asked to estimate the number of homicides in the USA compared to suicides, people answer that there are far more homicides in the United States, even though the reverse is true. The mass media report on homicides far more often than they report on suicides, so people have more available instances of homicide in their memories and these come to mind more easily. This influences their beliefs about the real world, which can then be politicized to lead to different stances on gun control and education. The priorities of a culture with a homicide problem are not the priorities of a culture with a suicide problem.

This effect is consistent with some theoretical models of the mass media’s impact on society. Cultivation theorists understand the media, especially television, as a system of coherent memes and messages reflecting a society’s dominant ideology. If we accept the fundamental claim of cultivation theory then we should hypothesize exposure to television to be positively correlated with status quo beliefs and attitudes. We might then expect high exposure to non-fic |

e81402a0-2138-479f-bb98-1d9e2c0179b6 | trentmkelly/LessWrong-43k | LessWrong | Rodney Brooks talks about Evil AI and mentions MIRI [LINK]

Rodney Brooks says that "evil" AI is not a big problem:

http://www.rethinkrobotics.com/artificial-intelligence-tool-threat/ |

faaff190-8854-4bef-b4c9-c6a793c3310f | trentmkelly/LessWrong-43k | LessWrong | Good HPMoR scenes / passages?

I'm doing a reading of good fan-fiction at a con this weekend, to counter the many "bad fanfic reading" panels. I want to read an interesting passage from HPMoR, but I can't remember any particular passage myself, and I don't want to re-read the whole thing this week. Can anyone remember any scene or passage that stuck in their mind as impressive?

It should:

* be less than 1000 words

* be understandable to someone who's read Harry Potter, but not HPMoR, with at most one or two sentences of background info (please write those sentences in your reply)

* be good at the things that make HPMoR special, e.g.,

* a clever "exploit" of Muggle society using magic

* gobsmacking you with a new way of looking at something you thought you knew well

* convincing you that something that seems evil is the right thing to do

* Quirrell or Harry creeping you out, scaring you, or making you question your ethics by being ruthlessly rational |

80fcd461-7eb6-4566-bf53-0b9226142bdb | trentmkelly/LessWrong-43k | LessWrong | -

- |

4da7e566-6a4c-4623-83a7-2cc2ca4e97c8 | trentmkelly/LessWrong-43k | LessWrong | The Compendium, A full argument about extinction risk from AGI

We (Connor Leahy, Gabriel Alfour, Chris Scammell, Andrea Miotti, Adam Shimi) have just published The Compendium, which brings together in a single place the most important arguments that drive our models of the AGI race, and what we need to do to avoid catastrophe.

We felt that something like this has been missing from the AI conversation. Most of these points have been shared before, but a “comprehensive worldview” doc has been missing. We’ve tried our best to fill this gap, and welcome feedback and debate about the arguments. The Compendium is a living document, and we’ll keep updating it as we learn more and change our minds.

We would appreciate your feedback, whether or not you agree with us:

* If you do agree with us, please point out where you think the arguments can be made stronger, and contact us if there are ways you’d be interested in collaborating in the future.

* If you disagree with us, please let us know where our argument loses you and which points are the most significant cruxes - we welcome debate.

Here is the twitter thread and the summary:

> The Compendium aims to present a coherent worldview about the extinction risks of artificial general intelligence (AGI), an artificial intelligence that exceeds that of humans, in a way that is accessible to non-technical readers who have no prior knowledge of AI. A reader should come away with an understanding of the current landscape, the race to AGI, and its existential stakes.

>

> AI progress is rapidly converging on building AGI, driven by a brute-force paradigm that is bottlenecked by resources, not insights. Well-resourced, ideologically motivated individuals are driving a corporate race to AGI. They are now backed by Big Tech, and will soon have the support of nations.

>

> People debate whether or not it is possible to build AGI, but most of the discourse is rooted in pseudoscience. Because humanity lacks a formal theory of intelligence, we must operate by the empirical observation that AI c |

004c540d-24cc-4f6e-9c46-111a242fda4e | trentmkelly/LessWrong-43k | LessWrong | Meetup : Paderborn Meetup, November 22th

Discussion article for the meetup : Paderborn Meetup, November 22th

WHEN: 22 November 2012 07:00:00PM (+0100)

WHERE: Gownsmen's Pub, Uni Paderborn, Warburger Straße 100, Paderborn

The first meetup in this city ever.

There is lots of rationality stuff to talk about and the more people come, the more interesting people will be there to meet.

Even if you are new to Lesswrong and have only read some articles, don't be to shy to show up.

I will have a sign.

Everybody is welcome!

Discussion article for the meetup : Paderborn Meetup, November 22th |

8f7f1533-39e2-45e1-9683-deb61d328bd8 | trentmkelly/LessWrong-43k | LessWrong | Per Tribalismum ad Astra

Capitalism is powered by greed. People want to make money, so they look hard for things they can produce and that others want. Unknowingly, however, they are powering the great information-processing machine that is the market. The output of the machine is the efficient allocation of resources and, eventually, wealth.

Something we intuitively consider bad (greed) is made to work for the benefit of the entire society. Even more so: if everyone suddenly stopped being greedy, the economy would likely fall apart, leaving us in a harsh dog-eat-dog world. Or it would at least stop growing and start to slowly deteriorate.

Greed is a powerful motivator that can be used to drive the engine of societal well-being, but it's not the only motivating force around.

Another such force is tribalism, the desire to defend your own and attack others.

However, we haven't tamed tribalism as much as we have greed. We don't use that force to power the improvement of society.

One could maybe argue that tribalism can be used to wage war effectively and that a country could benefit from winning wars. But war is inherently a negative-sum enterprise, so I don't think that really counts.

Or we could argue that tribalism is used in democracies to limit the power of the government: opposition is constantly attacking the government, which in turn prevents it from going fully bonkers. However, even if this is a force for good, it's completely undirected. Opposition is incentivized to attack the government for anything they do, good or bad. There's no self-steering mechanism, such as the one that steers greed toward productive endeavors in the market economy. The more attacks, the better, however dumb, and the whole thing eventually devolves into a political reality show devoid of any real content. This, in turn, undermines public trust in the political system.

Now consider the following, from an article about the making of Twitter's "Community Notes," published in the excellent Asterisk magaz |

3dd18869-afc1-4d75-bdaf-a5c125f9b264 | trentmkelly/LessWrong-43k | LessWrong | What are some alternatives to Shapley values which drop additivity?

I originally asked this on math.stackexchange; after reading Diffractor's Unifying Bargaining sequence (Part 1 here) I'm wondering if there are more insights floating about, so I'm repeating it here.

Shapley values seem to be the standard answer to "how should a coalition split the rewards of their cooperation", but I'm curious about alternatives.

The standard characterization of Shapley values says that Shapley values are the unique coalition payments which satisfy a bunch of properties. Three of them (efficiency, symmetry, and null player) seem pretty necessary for any "reasonable" or "practical" coalition payment rule, but the last one (linearity) does not.

If I didn't care for linearity (or its close synonyms, additivity and aggregation):

* What sorts of payment rules become available?

* What other properties of Shapley values are maintained?

* What other properties would produce a uniquely characterized payment rule?

Alternatively, are any of the other properties also reasonable to drop (for instance, symmetry)? What do you end up with? |

bccd74ff-ad3f-4e95-9a40-8ed7557e9ffe | StampyAI/alignment-research-dataset/eaforum | Effective Altruism Forum | Why not to solve alignment by making superintelligent humans?

Okay, hear me out: we already have human level/ general intelligent human aligned agents by the billions. And making new ones artificially seems to be really hard according to lots of alignment researchers. So why haven't I heard or read about projects trying to improve human intelligence? I'm not saying to do it in order to solve the alignment of pure artificial intelligences (although that could be a possibility too), but to the point of getting a hybrid (natural + artificial) superintelligence or just more useful than AIs.

I know that then there is the question about what a superintelligence would do or how having it aligned would help us to not make misaligned ones, and although there are possible good answers to that, those are questions that already exist outside "normal" alignment.

The only similar thing to that that I've heard was Elon Musk talking about Neuralink, but there is a hugeee difference between the things that Elon mentions as aspirations (in particular merging with an AI) and the product that is making right now. I don't see how Brain-Computer Interfaces would improve our decision making that much. A lot of people when they talk about augmenting intelligence seem to bring them up, and of course they could be useful, but again: I'm not talking about using them to solve AI Alignment, but to get around it. I'm wondering if we can find a way of scaling human intelligence in the same way we scale artificial intelligence.

I found a post that briefly [mentions](https://www.lesswrong.com/posts/rpRsksjrBXEDJuHHy/brain-computer-interfaces-and-ai-alignment#Scaling_far_Beyond_Human_Intelligence) similar ideas than mine but under the term BCI, which I don't understand if it's a more abarcative term than "a device that allows you to use other devices with the mind", because as I said, I don't know any device which would improve our decision making that much if we could just use it with our minds.

The clearest strategy that comes to mind is to make artificial neurons communicable with biological ones and then integrable with whole human neural networks. Could that be possible? I know that it could sound crazy, but I guess I'm talking to the people who think aligning an AI is really difficult and that having superintelligences on humanity's side sooner or later seems like the only path forward. |

1f3aed48-851d-4805-87fb-c2e560435623 | trentmkelly/LessWrong-43k | LessWrong | Against Compromise, or, Deciding as a Team without Succombing to Entropy

|

81813359-44ab-4f53-a3d5-d4ed033217ab | trentmkelly/LessWrong-43k | LessWrong | “Flaky breakthroughs” pervade coaching — but no one tracks them

Has someone you know ever had a “breakthrough” from coaching, meditation, or psychedelics — only to later have it fade?

Show tweet

For example, many people experience ego deaths that can last days or sometimes months. But as it turns out, having a sense of self can serve important functions (try navigating a world that expects you to have opinions, goals, and boundaries when you genuinely feel you have none) and finding a better cognitive strategy without downsides is non-trivial. Because the “breakthrough” wasn’t integrated with the conflicts of everyday life, it fades. I call these instances “flaky breakthroughs.”

It’s well-known that flaky breakthroughs are common with psychedelics and meditation, but apparently it’s not well-known that flaky breakthroughs are pervasive in coaching and retreats.

For example, it is common for someone to do some coaching, feel a “breakthrough”, think, “Wow, everything is going to be different from now on,” but feel and act no differently weeks or months later.

Worse, some techniques can even cause bypassing. Such “false breakthroughs” can come with intense positive affect or “cathartic” crying without addressing the underlying issue. (More below.)

Flaky breakthroughs can set people back for years or decades: If someone has a “breakthrough” that unexpectedly reverts, they can become jaded on progress itself. They can learn helplessness and give up on growing. The most depressed person you know has likely had this happen multiple times.

Show tweet

Flaky breakthroughs pervade inner work. Despite this, almost no one — coaches, therapists, retreats, bodyworkers, etc. — tracks whether their breakthroughs last.

Almost no practitioners track whether breakthroughs last.

Earlier this year, I attempted to make a list of “10x Coaches” to refer people to. 20–30 coaches reached out as interested in working with me, and I asked each to share the best evidence that they had facilitated lasting growth for others.

But all anyone could s |

4744ce17-ec6b-4620-942a-92ecdaeeff58 | trentmkelly/LessWrong-43k | LessWrong | Untypical SIA

TL;DR: If I am untypical, then many more observers exist. If this claim is necessarily true, then classical SIA is false.

There is a situation where we could prove something like SIA, let’s call it Untypical SIA. Imagine that there are 100 green dots and one red dot on a bow’s target (and they cover all the space). We know that there were either 10 or 100 attempts to hit the target with a random arrow. We only know that the red dot was eventually hit (maybe we are in it). Now we can give higher credence to the theory that there were 100 attempts (P1(red)=0.6339) over the theory of only 10 attempts (P2(red)=0.0956) to hit the red dot. Credence in 100 attempts = P1/(P1+P2) = 0.86.

The resonating above is made from God’s view and seems to have high epistemic status: it is not an assumption, it is a proof.

Examples of application of such untypical-SIA reasoning in the real life: if there is life on Earth, there are many other planets out there, which tried but fail to develop life. If humans are the only intelligent species, there are many others species that “tried” and fail to become intelligent. If our universe is fine-tuned, there are many other universes which are not fine-tuned, so the multiverse theory is true.

If Untypical SIA is a necessary condition for a larger set of observers, then classical SIA is false.

Untypical SIA requires that the red dot is different from the green dots. If all dots are red, and we learn that one of the dots was hit, it doesn’t give us any new information to update. If uniqueness is the necessary (=if and only if) condition for update when the lack of uniqueness means no update. In that case, classical SIA is false (it is not a problem in the infinite universe, where something like SIA still works, as we should look at relative concentrations of observers in different regions of the multiverse instead; all our SIA-based cosmological constructions are safe herein).

In other words, the fact of my existence is an argument in fa |

290730a1-b6d5-42b0-a238-8d45f8643651 | trentmkelly/LessWrong-43k | LessWrong | is gpt-3 few-shot ready for real applications?

This is a lengthy reply to @the-moti ‘s post here. Creating a new post to limit thread length, and so I can crosspost to LW.

@the-moti says, in part:

> This obviously raises two different questions: 1. Why did you think that no one would use few-shot learning in practice? 2. Why did other people think people would use few-shot learning in practice?

>

> I would be interested in hearing your thoughts on these two points.

—

Thanks for asking!

First of all, I want to emphasize that the GPT-3 paper was not about few-shot GPT-3 as a practical technology.

(This is important, because the paper is the one large body of quantitative evidence we have on few-shot GPT-3 performance.)

This is not just my take on it: before the OpenAI API was announced, all the discussion I saw took for granted that we were talking about a scientific finding and its broader implications. I didn’t see any commentator whose main takeaway was “wow, if I could do this few-shot thing right now, I could build amazing projects with it.”

Indeed, a common theme in critical commentary on my post was that I was too focused on whether few-shot was useful right now with this specific model, whereas the critical commentators were more focused on the implications for even larger models, the confirmation of scaling laws over a new parameter regime, or the illustration-in-principle of a kind of meta-learning. Gwern’s May newsletter is another illustrative primary source for the focus of the discussion in this brief “pre-API” period. (The API was announced on June 11.)

As I read it (perhaps benefitting from hindsight and discussion), the main points of the paper were

(1) bigger models are better at zero/few-shot (i.e. that result from the GPT-2 paper holds over a larger scale),

(2) more “shots” are better when you’re doing zero/few-shot,

(3) there is an interaction effect between 1+2, where larger models benefit more from additional “shots,”

(4) this could actually become a practical approach (eve |

777a51ef-9db4-42ff-9332-401760a6cf4b | trentmkelly/LessWrong-43k | LessWrong | Gwern's "Why Tool AIs Want to Be Agent AIs: The Power of Agency"

I somehow hadn't read this post until now, so I am posting this here in case I am not the only one (and I wasn't able to find a previous linkpost for it). Relevant to relatively recent discussion on AI-as-a-service, but also just good as a broad reference. |

b3da2c56-2cb0-43c2-b377-f2b28d25b27c | trentmkelly/LessWrong-43k | LessWrong | Defining the normal computer control problem

There has been focus on controlling super intelligent artificial intelligence, however we currently can't even control our un-agenty computers without having to resort to formatting and other large scale interventions.

Solving the normal computer control problem might help us solve the super intelligence control problem or allow us to work towards safe intelligence augmentation.

We cannot currently keep our computers doing what we want easily. They can get infected with malware, compromised or they get updates that may be buggy. If you have sufficient expertise you can go in and fix the problem or wipe the system, but this is not ideal.

We do not have control our computers, without resorting to out of band manipulation.

Genes have found a way to control the reptilian brain and also the more powerful mammalian and human brains, somewhat, as discussed in the control problem has already been solved. And the system continues to run, our brains aren't reformatted when we get bad behaviours. Let us call this the normal computer control problem. We don't know how the genes do it, but humans tend to do what they would want (if they wanted things!) despite our flexibility. There is some control there.

This problem of control has been neglected by traditional AI as there it is not trying to solve a cognitive problem. It is not like solving chess or learning to recognize faces. It is not making anything powerful, it is just weeding out the bad programs.

Comparing the normal computer control and AI control problem

The AI control problem has been defined as asking the question

> What prior precautions can the programmers take to successfully prevent the superintelligence from catastrophically misbehaving?

In this language the normal computer control problem can be defined as.

> What type of automated system can we implement to stop a normal general purpose computer system misbehaving (and carry on with its good behaviour ) if it has a malign program in it.

To make |

7743b2b4-5aa2-4813-bcc2-36c9531a5bcb | trentmkelly/LessWrong-43k | LessWrong | [Link] Less Wrong Wiki article with very long summary of Daniel Kahneman's Thinking, Fast and Slow

I've made very extensive notes, along with my assessment, of Daniel Kahneman's Thinking, Fast and Slow, and have passed it around to aspiring rationalist friends who found my notes very useful. So I though I would share these with the Less Wrong community by creating a Less Wrong Wiki article with these notes. Feel free to optimize the article based on your own notes as well. Hope this proves as helpful to you as it did to those others whom I shared my notes with.

|

31a43d38-8168-4f25-b717-25f41defa535 | trentmkelly/LessWrong-43k | LessWrong | The Law of Cultural Proximity

[Not my area of expertise, but I would be surprised if the core thesis was wrong in a significant way. Probably not as original as I think it is. Based on a previous blog post of mine that went in a very different/weird direction. Cross-posted from Grand, Unified, Crazy.]

Introduction

Currently, different human cultures have different behavioural norms around all sorts of things. These norms cover all kinds of personal and interpersonal conduct, and extend into different legal systems in countries around the globe. In politics, this is often talked about in the form of the Overton window, which is the set of political positions that are sufficiently “mainstream” in a given culture to be considered electable. Unsurprisingly, different cultures have different Overton windows. For example, Norway and the United States currently have Overton windows that tend to overlap on some policies (the punishment of theft) but perhaps not on others (social welfare).

Shared norms and a stable, well-defined Overton window are important for the stable functioning of society, since they provide the implicit contract and social fabric on which everything else operates. But what exactly is the scope of a “society” for which that is true? We just talked about the differences between Norway and the U.S., but in a very real sense, Norway and the U.S. share “western culture” when placed in comparison with Iran or North Korea. In the other direction, there are many distinct cultures entirely within the U.S. with different norms around things like gun control. The categories were made for man, not man for the categories.

However blurry these lines are, it might be tempting to assume that they get drawn roughly according to geography; it's certainly reflected in our language (note my use of "western culture" already in this post). But this isn't quite right: the key factor is actually interactional proximity; it's just that in a historical setting geographical and interactional proximity w |

a24adab0-e149-43c3-a2e6-f349f81c55ca | StampyAI/alignment-research-dataset/eaforum | Effective Altruism Forum | Announcing AI Safety Support

Contents:

1. What is AI Safety Support

2. Discussion Days

3. Other Online Events

4. AI Safety Resources

5. Mentorship Program

6. AI Alignment Slack

7. Consider Donating

What is [AI Safety Support](https://www.aisafetysupport.org)

------------------------------------------------------------

[AI Safety Support](https://www.aisafetysupport.org/home) has existed as an Incorporated Association in Australia since October and as an initiative with a web page since May 2020.

Our aim is to fill the gaps in that AI Safety career pipeline. We aim to provide operational support to early career and transitioning researchers, so you can engage with the community and test your career fitness. Broadly we just want to help reduce friction and enable people to do the things you are already trying to achieve.

If you are new to AI Safety, we would be happy to talk to you, and to help you figure out what steps to take next. We don’t have all the answers, but we can probably provide you with a better map of the career landscape.

Feel free to reach out or book a call with either one of us:

* Linda: [email](mailto:linda.linsefors@gmail.com), [calendly](https://calendly.com/linda-linsefors/call)

* JJ: [email](mailto:jj.hepboin@gmail.com), [calendly](https://calendly.com/jj-hepboin)

[Discussion Days](https://www.aisafetysupport.org/events/discussion-days)

-------------------------------------------------------------------------

Our longest running project (since June) are our regular [online AI Safety Discussion Days](https://www.aisafetysupport.org/events/discussion-days). Each of these events has a talk, an Icebreaker session and breakout discussions covering whatever is on your mind at the time.

The Discussion Days focus on ongoing research. If you have some ideas you want feedback on, you are welcome to present them as a talk or bring them up during the breakout discussions. However, you are also welcome to just listen and learn, or maybe give feedback on other people's ideas.

Schedule:

* Second Monday every month, [UTC 18:00](https://everytimezone.com/s/48fd68f6) - [21:30](https://everytimezone.com/s/599c7b7e) (Europe/Africa and Americas friendly time)

* Fourth Monday every month (except December), [UTC 08:00](https://everytimezone.com/s/cd44d880) - [11:30](https://everytimezone.com/s/8157f5ed)(Asia/Pacific and Europe/Africa friendly time)

The next one is on **Monday, November 23th**.

[Other Online Events](https://www.aisafetysupport.org/events#h.nsdsrjsayhut)

----------------------------------------------------------------------------

We are not the only one running online AI Safety events these days. We’re collecting all online AI Safety and adjacent events we know about in this [shared calendar](https://www.aisafetysupport.org/events#h.nsdsrjsayhut). *Notice that some events require registration or application.*

If you know of other online AI Safety events, please let us know.

[Lots of AI Safety Links](https://www.aisafetysupport.org/resources/lots-of-links)

----------------------------------------------------------------------------------

To stay up to date with new information, I highly recommend signing up to the [Alignment Newsletter](https://rohinshah.com/alignment-newsletter/) and [80k's AI Safety group](https://groups.google.com/g/david-kruegers-80k-people).

However, there are also lots of more static resources scattered around the internet, such as study guides and research agendas. I’ve tried to list them all [here](https://www.aisafetysupport.org/resources) (though I’m still adding things). Let us know if we forgot something, or miscategorized something.

[Mentorship Program](https://www.aisafetysupport.org/resources/mentorship-program)

----------------------------------------------------------------------------------

We have spoken to a number of people who want some mentorship and to others who are interested in mentoring. So, we are experimenting with a new mentorship program to try to bring these people together. Mentors and mentees can respectively offer and ask for anything they like so this works best if we have more people to match.

As a rule of thumb, if you are at least a 3rd year PhD student, or an independent researcher with a few publications, then you can be a mentor. You might not think you are very senior, but remember that AI Safety is a very new feld, so you are already more senior than most.

We have no rules for who can be a mentee. The more experience you have, the harder it will be for us to find a mentor who knows more than you, but whoever you are, we’ll try to find someone to help you. However, try to be as **specific as you can** about what you want to get out of the mentorship. For example, maybe you have a research project in mind that you want to do?

* [Signup to be a mentor](https://docs.google.com/forms/d/e/1FAIpQLScpR72HNuy-DBlGOwkb36FuIvV-tgoBrNo-ftGs1vAcJ4g-DA/viewform)

* [Application for mentees](https://docs.google.com/forms/d/e/1FAIpQLSeTIZXxWuEQfGqEge62KaAHGQfI1xPEENBrabiSpNq35UP3rQ/viewform)

You can sign up for both!

Signup deadline for the first round is **November 30th**. We will contact everyone and pair you up during December, so expect the actual mentoring to start no earlier than January.

When or if we do a second round depends entirely on the interest we get in the first round, both from mentors and mentees.

[AI Alignment Slack](https://join.slack.com/t/ai-alignment/shared_invite/zt-fkgwbd2b-kK50z~BbVclOZMM9UP44gw)

------------------------------------------------------------------------------------------------------------

We can’t take credit for the existence of [this Slack group](https://join.slack.com/t/ai-alignment/shared_invite/zt-fkgwbd2b-kK50z~BbVclOZMM9UP44gw), since it was created by Andrei Alexandru. But we are helping to grow it, and making use of it as a place to communicate, ask questions, discuss research, and generally help each other out.

In this Slack you’ll find two channels dedicated to our [Discussion Days](https://www.aisafetysupport.org/events/discussion-days), one for [general follow-up discussions](https://app.slack.com/client/T01641E1H6C/C01E5PLKQ30), and one for [asking questions of the last speaker](https://app.slack.com/client/T01641E1H6C/C01EL93VC92).

We have one channel dedicated to [grad school application](https://app.slack.com/client/T01641E1H6C/C01EL93VC92). One idea we have around this is that it would be beneficial for AI Safety-interested students to end up in the same program, which is more likely to happen with some communication and coordination.

There are also channels for [finding study buddies](https://app.slack.com/client/T01641E1H6C/C01EL93VC92), [personal introduction](https://app.slack.com/client/T01641E1H6C/C01EL93VC92), several sub-field specific discussions and more.

[Consider Donating](https://www.aisafetysupport.org/donate)

-----------------------------------------------------------

Since April and June respectively we have both spent most of our working time on AI Safety Support. We can not do this work while also having to hold down separate jobs, and the people we are helping are mostly students with little to no income. We are therefore relying on donations to be able to continue this work. On top of that, a large-ish donation we were expecting has been delayed for an unknown amount of time, which means donations we get now would be extra helpful.

There are a few ways you can donate. We have accounts on both [Patreon](https://www.patreon.com/aiss) and [Ko-fi](https://ko-fi.com/lindalinsefors), for convenient regular donations. Both these platforms have some transaction costs, however. If you prefer sending us money more directly, [just let us know](mailto:jj.hepboin@gmail.com).

A third way you can donate to us is though [Rethink Charity](https://www.rethinkprojects.org/donate). This option will make your donation tax deductible if you live in **Canada** or **US**. If you want to donate more than $1000 through Rethink Charity, please [email them first](mailto:donations@rethinkprojects.org), so they can find the best method to donate for you. Regardless of the amount you will need to let Rethink Charity know that your donation was meant for AI Safety Support. (Please only use this option if you can take advantage of the tax deductions.)

If you are donating from somewhere else and want tax benefits, [let us know](mailto:jj.hepboin@gmail.com), and we will try to arrange something.

Donors looking to support our project through a **US based Donor Advised Fund** can do so by sending their donation to Rethink Charity (EIN 82-5325150). If you need any assistance donation support is available by [emailing Siobhan Brenton](mailto:donations@rethinkprojects.org).

Thank you! |

6f82cc31-9d8d-420e-85c7-58122d62bea2 | trentmkelly/LessWrong-43k | LessWrong | Game theory question -- iterated truel with private information

I was reading the discussion here of how truels with unlimited shots and symmetric complete information favor the weakest truelist. This set me to wondering about somewhat more complicated situations. Suppose you are in a world where there are daily truels for some substantial period of time, say 30 days. As with the linked problem, all hits are fatal and truelists are accurate 50%, 80%, or 100% of the time. Unlike the linked problem, however, rather than possessing complete information about the accuracy of other truelists, you only know your own true accuracy and the %hit of other truelists in earlier iterations, and there is no guarantee that there will be one truelist of each skill level in any given truel.

Now suppose you know that you are a perfect marksman. On which of the iterations would you intentionally miss your first shot? I definitely lack the math strength to offer a good strategy, but I'm sure many others here could do better.

Edit #2 -- I give up on the formatting. |

d382c1a8-559e-407a-9cb9-5e37b6d7dd86 | StampyAI/alignment-research-dataset/arxiv | Arxiv | Offline Reinforcement Learning: Tutorial, Review, and Perspectives on Open Problems

1 Introduction

---------------

Reinforcement learning provides a mathematical formalism for learning-based control. By utilizing reinforcement learning, we can automatically acquire near-optimal behavioral skills, represented by policies, for optimizing user-specified reward functions. The reward function defines *what* an agent should do, and a reinforcement learning algorithm determines *how* to do it. While the reinforcement learning algorithms have been an active area of research for decades, the introduction of effective high-capacity function approximators – deep neural networks – into reinforcement learning, along with effective algorithms for training them, has allowed reinforcement learning methods to attain excellent results along a wide range of domains (Tesauro,, [1994](#bib.bib161); Hafner and Riedmiller,, [2011](#bib.bib45); Levine and Koltun,, [2013](#bib.bib90); Mnih et al.,, [2013](#bib.bib100); Levine et al.,, [2016](#bib.bib89); Silver et al.,, [2017](#bib.bib144); Kalashnikov et al.,, [2018](#bib.bib65)).

However, the fact that reinforcement learning algorithms provide a fundamentally *online* learning paradigm is also one of the biggest obstacles to their widespread adoption. The process of reinforcement learning involves iteratively collecting experience by interacting with the environment, typically with the latest learned policy, and then using that experience to improve the policy (Sutton and Barto,, [1998](#bib.bib151)). In many settings, this sort of online interaction is impractical, either because data collection is expensive (e.g., in robotics, educational agents, or healthcare) and dangerous (e.g., in autonomous driving, or healthcare). Furthermore, even in domains where online interaction is feasible, we might still prefer to utilize previously collected data instead – for example, if the domain is complex and effective generalization requires large datasets.

Indeed, the success of machine learning methods across a range of practically relevant problems over the past decade can in large part be attributed to the advent of scalable *data-driven* learning methods, which become better and better as they are trained with more data. Online reinforcement learning is difficult to reconcile with this paradigm. While this was arguably less of an issue when reinforcement learning methods utilized low-dimensional or linear parameterizations, and therefore relied on small datasets for small problems that were easy to collect or simulate (Lange et al.,, [2012](#bib.bib82)), once deep networks are incorporated into reinforcement learning, it is tempting to consider whether the same kind of data-driven learning can be applied with reinforcement learning objectives, thus resulting in *data-driven reinforcement learning* that utilizes only previously collected offline data, without any additional online interaction (Kumar,, [2019](#bib.bib76); Fu et al.,, [2020](#bib.bib29)). See Figure [1](#S1.F1 "Figure 1 ‣ 1 Introduction ‣ Offline Reinforcement Learning: Tutorial, Review, and Perspectives on Open Problems") for a pictorial illustration. A number of recent works have illustrated the power of such an approach in enabling data-driven learning of policies for dialogue (Jaques et al.,, [2019](#bib.bib56)), robotic manipulation behaviors (Ebert et al.,, [2018](#bib.bib22); Kalashnikov et al.,, [2018](#bib.bib65)), and robotic navigation skills (Kahn et al.,, [2020](#bib.bib59)).

Figure 1: Pictorial illustration of classic online reinforcement learning (a), classic off-policy reinforcement learning (b), and offline reinforcement learning (c). In online reinforcement learning (a), the policy πksubscript𝜋𝑘\pi\_{k}italic\_π start\_POSTSUBSCRIPT italic\_k end\_POSTSUBSCRIPT is updated with streaming data collected by πksubscript𝜋𝑘\pi\_{k}italic\_π start\_POSTSUBSCRIPT italic\_k end\_POSTSUBSCRIPT itself. In the classic off-policy setting (b), the agent’s experience is appended to a data buffer (also called a replay buffer) 𝒟𝒟\mathcal{D}caligraphic\_D, and each new policy πksubscript𝜋𝑘\pi\_{k}italic\_π start\_POSTSUBSCRIPT italic\_k end\_POSTSUBSCRIPT collects additional data, such that 𝒟𝒟\mathcal{D}caligraphic\_D is composed of samples from π0,π1,…,πksubscript𝜋0subscript𝜋1…subscript𝜋𝑘\pi\_{0},\pi\_{1},\dots,\pi\_{k}italic\_π start\_POSTSUBSCRIPT 0 end\_POSTSUBSCRIPT , italic\_π start\_POSTSUBSCRIPT 1 end\_POSTSUBSCRIPT , … , italic\_π start\_POSTSUBSCRIPT italic\_k end\_POSTSUBSCRIPT, and all of this data is used to train an updated new policy πk+1subscript𝜋𝑘1\pi\_{k+1}italic\_π start\_POSTSUBSCRIPT italic\_k + 1 end\_POSTSUBSCRIPT. In contrast, offline reinforcement learning employs a dataset 𝒟𝒟\mathcal{D}caligraphic\_D collected by some (potentially unknown) behavior policy πβsubscript𝜋𝛽{\pi\_{\beta}}italic\_π start\_POSTSUBSCRIPT italic\_β end\_POSTSUBSCRIPT. The dataset is collected once, and is not altered during training, which makes it feasible to use large previous collected datasets. The training process does not interact with the MDP at all, and the policy is only deployed after being fully trained.

Unfortunately, such data-driven *offline* reinforcement learning also poses major algorithmic challenges. As we will discuss in this article, many commonly used reinforcement learning methods can learn from off-policy data, but such methods often cannot learn effectively from entire offline data, without any additional on-policy interaction. High-dimensional and expressive function approximation generally exacerbates this issue, since function approximation leaves the algorithms vulnerable to distributional shift, one of the central challenges with offline reinforcement learning. However, the appeal of a fully offline reinforcement learning framework is enormous: in the same way that supervised machine learning methods have enabled data to be turned into generalizable and powerful *pattern recognizers* (e.g., image classifiers, speech recognition engines, etc.), offline reinforcement learning methods equipped with powerful function approximation may enable data to be turned into generalizable and powerful *decision making engines*, effectively allowing anyone with a large enough dataset to turn this dataset into a policy that can optimize a desired utility criterion. From healthcare decision-making support to autonomous driving to robotics, the implications of a reliable and effective offline reinforcement learning method would be immense.

In some application domains, the lack of effective offline reinforcement learning methods has driven research in a number of interesting directions. For example, in robotics and autonomous driving, a rapidly growing research topic is the study of simulation to real-world transfer: training policies with reinforcement learning in simulation and then transferring these policies into the real world (Sadeghi and Levine,, [2017](#bib.bib135); Tan et al.,, [2018](#bib.bib158); Chebotar et al.,, [2019](#bib.bib9)). While this approach is very pragmatic (and often effective), its popularity highlights the deficiency in offline reinforcement learning methods: if it was possible to simply train policies with previously collected data, it would likely be unnecessary in many cases to manually design high-fidelity simulators for simulation-to-real-world transfer. After all, outside of reinforcement learning (e.g., in computer vision, NLP, or speech recognition), transfer from simulation is comparatively much less prevalent, since data-driven learning is so effective.

The goal of this article is to provide the reader with the conceptual tools needed to get started on research in the field of offline reinforcement learning (also called batch reinforcement learning (Ernst et al.,, [2005](#bib.bib23); Riedmiller,, [2005](#bib.bib130); Lange et al.,, [2012](#bib.bib82))), so as to hopefully begin addressing some of these deficiencies. To this end, we will present the offline reinforcement learning problem formulation, and describe some of the challenges associated with this problem setting, particularly in light of recent research on deep reinforcement learning and the interaction between reinforcement learning and high-dimensional function approximator, such as deep networks. We will cover a variety of offline reinforcement learning methods studied in the literature. For each one, we will discuss the conceptual challenges, and initial steps taken to mitigate these challenges. We will then discuss some of the applications of offline reinforcement learning techniques that have already been explored, despite the limitations of current methods, and conclude with some perspectives on future work and open problems in the field.

2 Offline Reinforcement Learning Problem Statement and Overview

----------------------------------------------------------------

In this section, we will introduce the mathematical formalism of reinforcement learning and define our notation, and then set up the offline reinforcement learning problem setting, where the goal is to learn near-optimal policies from previously collected data. Then, we will briefly discuss some of the intuition behind why the offline reinforcement learning problem setting poses some unique challenges, using a supervised behavioral cloning example.

###

2.1 Reinforcement Learning Preliminaries

In this section, we will define basic reinforcement learning concepts, following standard textbook definitions (Sutton and Barto,, [1998](#bib.bib151)). Reinforcement learning addresses the problem of learning to control a dynamical system, in a general sense. The dynamical system is fully defined by a fully-observed or partially-observed Markov decision process (MDP).

######

Definition 2.1 (Markov decision process).

The Markov decision process is defined as a tuple ℳ=(𝒮,𝒜,T,d0,r,γ)ℳ𝒮𝒜𝑇subscript𝑑0𝑟𝛾\mathcal{M}=(\mathcal{S},\mathcal{A},T,d\_{0},r,\gamma)caligraphic\_M = ( caligraphic\_S , caligraphic\_A , italic\_T , italic\_d start\_POSTSUBSCRIPT 0 end\_POSTSUBSCRIPT , italic\_r , italic\_γ ), where 𝒮𝒮\mathcal{S}caligraphic\_S is a set of states 𝐬∈𝒮𝐬𝒮\mathbf{s}\in\mathcal{S}bold\_s ∈ caligraphic\_S, which may be either discrete or continuous (i.e., multi-dimensional vectors), 𝒜𝒜\mathcal{A}caligraphic\_A is a set of actions 𝐚∈𝒜𝐚𝒜\mathbf{a}\in\mathcal{A}bold\_a ∈ caligraphic\_A, which similarly can be discrete or continuous, T𝑇Titalic\_T defines a conditional probability distribution of the form T(𝐬t+1|𝐬t,𝐚t)𝑇conditionalsubscript𝐬𝑡1subscript𝐬𝑡subscript𝐚𝑡T(\mathbf{s}\_{t+1}|\mathbf{s}\_{t},\mathbf{a}\_{t})italic\_T ( bold\_s start\_POSTSUBSCRIPT italic\_t + 1 end\_POSTSUBSCRIPT | bold\_s start\_POSTSUBSCRIPT italic\_t end\_POSTSUBSCRIPT , bold\_a start\_POSTSUBSCRIPT italic\_t end\_POSTSUBSCRIPT ) that describes the dynamics of the system,111We will sometimes use time subscripts (i.e., 𝐬t+1subscript𝐬𝑡1\mathbf{s}\_{t+1}bold\_s start\_POSTSUBSCRIPT italic\_t + 1 end\_POSTSUBSCRIPT follows 𝐬tsubscript𝐬𝑡\mathbf{s}\_{t}bold\_s start\_POSTSUBSCRIPT italic\_t end\_POSTSUBSCRIPT), and sometimes “prime” notation (i.e., 𝐬′superscript𝐬′\mathbf{s}^{\prime}bold\_s start\_POSTSUPERSCRIPT ′ end\_POSTSUPERSCRIPT is the state that follows 𝐬𝐬\mathbf{s}bold\_s). Explicit time subscripts can help clarify the notation in finite-horizon settings, while “prime” notation is simpler in infinite-horizon settings where absolute time step indices are less meaningful. d0subscript𝑑0d\_{0}italic\_d start\_POSTSUBSCRIPT 0 end\_POSTSUBSCRIPT defines the initial state distribution d0(𝐬0)subscript𝑑0subscript𝐬0d\_{0}(\mathbf{s}\_{0})italic\_d start\_POSTSUBSCRIPT 0 end\_POSTSUBSCRIPT ( bold\_s start\_POSTSUBSCRIPT 0 end\_POSTSUBSCRIPT ), r:𝒮×𝒜→:𝑟→𝒮𝒜absentr:\mathcal{S}\times\mathcal{A}\rightarrow\realitalic\_r : caligraphic\_S × caligraphic\_A → defines a reward function, and γ∈(0,1]𝛾01\gamma\in(0,1]italic\_γ ∈ ( 0 , 1 ] is a scalar discount factor.

We will use the fully-observed formalism in most of this article, though the definition for the partially observed Markov decision process (POMDP) is also provided for completeness. The MDP definition can be extended to the partially observed setting as follows:

######

Definition 2.2 (Partially observed Markov decision process).

The partially observed Markov decision process is defined as a tuple ℳ=(𝒮,𝒜,𝒪,T,d0,E,r,γ)ℳ𝒮𝒜𝒪𝑇subscript𝑑0𝐸𝑟𝛾\mathcal{M}=(\mathcal{S},\mathcal{A},\mathcal{O},T,d\_{0},E,r,\gamma)caligraphic\_M = ( caligraphic\_S , caligraphic\_A , caligraphic\_O , italic\_T , italic\_d start\_POSTSUBSCRIPT 0 end\_POSTSUBSCRIPT , italic\_E , italic\_r , italic\_γ ), where 𝒮𝒮\mathcal{S}caligraphic\_S, 𝒜𝒜\mathcal{A}caligraphic\_A, T𝑇Titalic\_T, d0subscript𝑑0d\_{0}italic\_d start\_POSTSUBSCRIPT 0 end\_POSTSUBSCRIPT, r𝑟ritalic\_r, and γ𝛾\gammaitalic\_γ are defined as before, 𝒪𝒪\mathcal{O}caligraphic\_O is a set of observations, where each observation is given by 𝐨∈𝒪𝐨𝒪\mathbf{o}\in\mathcal{O}bold\_o ∈ caligraphic\_O, and E𝐸Eitalic\_E is an emission function, which defines the distribution E(𝐨t|𝐬t)𝐸conditionalsubscript𝐨𝑡subscript𝐬𝑡E(\mathbf{o}\_{t}|\mathbf{s}\_{t})italic\_E ( bold\_o start\_POSTSUBSCRIPT italic\_t end\_POSTSUBSCRIPT | bold\_s start\_POSTSUBSCRIPT italic\_t end\_POSTSUBSCRIPT ).

The final goal in a reinforcement learning problem is to learn a policy, which defines a distribution over actions conditioned on states, π(𝐚t|𝐬t)𝜋conditionalsubscript𝐚𝑡subscript𝐬𝑡\pi(\mathbf{a}\_{t}|\mathbf{s}\_{t})italic\_π ( bold\_a start\_POSTSUBSCRIPT italic\_t end\_POSTSUBSCRIPT | bold\_s start\_POSTSUBSCRIPT italic\_t end\_POSTSUBSCRIPT ), or conditioned on observations in the partially observed setting, π(𝐚t|𝐨t)𝜋conditionalsubscript𝐚𝑡subscript𝐨𝑡\pi(\mathbf{a}\_{t}|\mathbf{o}\_{t})italic\_π ( bold\_a start\_POSTSUBSCRIPT italic\_t end\_POSTSUBSCRIPT | bold\_o start\_POSTSUBSCRIPT italic\_t end\_POSTSUBSCRIPT ). The policy may also be conditioned on an observation history, π(𝐚t|𝐨0:t)𝜋conditionalsubscript𝐚𝑡subscript𝐨:0𝑡\pi(\mathbf{a}\_{t}|\mathbf{o}\_{0:t})italic\_π ( bold\_a start\_POSTSUBSCRIPT italic\_t end\_POSTSUBSCRIPT | bold\_o start\_POSTSUBSCRIPT 0 : italic\_t end\_POSTSUBSCRIPT ). From these definitions, we can derive the *trajectory distribution*. The trajectory is a sequence of states and actions of length H𝐻Hitalic\_H, given by τ=(𝐬0,𝐚0,…,𝐬H,𝐚H)𝜏subscript𝐬0subscript𝐚0…subscript𝐬𝐻subscript𝐚𝐻\tau=(\mathbf{s}\_{0},\mathbf{a}\_{0},\dots,\mathbf{s}\_{H},\mathbf{a}\_{H})italic\_τ = ( bold\_s start\_POSTSUBSCRIPT 0 end\_POSTSUBSCRIPT , bold\_a start\_POSTSUBSCRIPT 0 end\_POSTSUBSCRIPT , … , bold\_s start\_POSTSUBSCRIPT italic\_H end\_POSTSUBSCRIPT , bold\_a start\_POSTSUBSCRIPT italic\_H end\_POSTSUBSCRIPT ), where H𝐻Hitalic\_H may be infinite. The trajectory distribution pπsubscript𝑝𝜋p\_{\pi}italic\_p start\_POSTSUBSCRIPT italic\_π end\_POSTSUBSCRIPT for a given MDP ℳℳ\mathcal{M}caligraphic\_M and policy π𝜋\piitalic\_π is given by

| | | |

| --- | --- | --- |

| | pπ(τ)=d0(𝐬0)∏t=0Hπ(𝐚t|𝐬t)T(𝐬t+1|𝐬t,𝐚t).subscript𝑝𝜋𝜏subscript𝑑0subscript𝐬0superscriptsubscriptproduct𝑡0𝐻𝜋conditionalsubscript𝐚𝑡subscript𝐬𝑡𝑇conditionalsubscript𝐬𝑡1subscript𝐬𝑡subscript𝐚𝑡p\_{\pi}(\tau)=d\_{0}(\mathbf{s}\_{0})\prod\_{t=0}^{H}\pi(\mathbf{a}\_{t}|\mathbf{s}\_{t})T(\mathbf{s}\_{t+1}|\mathbf{s}\_{t},\mathbf{a}\_{t}).italic\_p start\_POSTSUBSCRIPT italic\_π end\_POSTSUBSCRIPT ( italic\_τ ) = italic\_d start\_POSTSUBSCRIPT 0 end\_POSTSUBSCRIPT ( bold\_s start\_POSTSUBSCRIPT 0 end\_POSTSUBSCRIPT ) ∏ start\_POSTSUBSCRIPT italic\_t = 0 end\_POSTSUBSCRIPT start\_POSTSUPERSCRIPT italic\_H end\_POSTSUPERSCRIPT italic\_π ( bold\_a start\_POSTSUBSCRIPT italic\_t end\_POSTSUBSCRIPT | bold\_s start\_POSTSUBSCRIPT italic\_t end\_POSTSUBSCRIPT ) italic\_T ( bold\_s start\_POSTSUBSCRIPT italic\_t + 1 end\_POSTSUBSCRIPT | bold\_s start\_POSTSUBSCRIPT italic\_t end\_POSTSUBSCRIPT , bold\_a start\_POSTSUBSCRIPT italic\_t end\_POSTSUBSCRIPT ) . | |

This definition can easily be extended into the partially observed setting by including the observations 𝐨tsubscript𝐨𝑡\mathbf{o}\_{t}bold\_o start\_POSTSUBSCRIPT italic\_t end\_POSTSUBSCRIPT and emission function E(𝐨t|𝐬t)𝐸conditionalsubscript𝐨𝑡subscript𝐬𝑡E(\mathbf{o}\_{t}|\mathbf{s}\_{t})italic\_E ( bold\_o start\_POSTSUBSCRIPT italic\_t end\_POSTSUBSCRIPT | bold\_s start\_POSTSUBSCRIPT italic\_t end\_POSTSUBSCRIPT ). The reinforcement learning objective, J(π)𝐽𝜋J(\pi)italic\_J ( italic\_π ), can then be written as an expectation under this trajectory distribution:

| | | | |

| --- | --- | --- | --- |

| | J(π)=𝔼τ∼pπ(τ)[∑t=0Hγtr(𝐬t,𝐚t)].𝐽𝜋subscript𝔼similar-to𝜏subscript𝑝𝜋𝜏delimited-[]superscriptsubscript𝑡0𝐻superscript𝛾𝑡𝑟subscript𝐬𝑡subscript𝐚𝑡J(\pi)=\mathbb{E}\_{\tau\sim p\_{\pi}(\tau)}\left[\sum\_{t=0}^{H}\gamma^{t}r(\mathbf{s}\_{t},\mathbf{a}\_{t})\right].italic\_J ( italic\_π ) = blackboard\_E start\_POSTSUBSCRIPT italic\_τ ∼ italic\_p start\_POSTSUBSCRIPT italic\_π end\_POSTSUBSCRIPT ( italic\_τ ) end\_POSTSUBSCRIPT [ ∑ start\_POSTSUBSCRIPT italic\_t = 0 end\_POSTSUBSCRIPT start\_POSTSUPERSCRIPT italic\_H end\_POSTSUPERSCRIPT italic\_γ start\_POSTSUPERSCRIPT italic\_t end\_POSTSUPERSCRIPT italic\_r ( bold\_s start\_POSTSUBSCRIPT italic\_t end\_POSTSUBSCRIPT , bold\_a start\_POSTSUBSCRIPT italic\_t end\_POSTSUBSCRIPT ) ] . | | (1) |

When H𝐻Hitalic\_H is infinite, it is sometimes also convenient to assume that the Markov chain on (𝐬t,𝐚t)subscript𝐬𝑡subscript𝐚𝑡(\mathbf{s}\_{t},\mathbf{a}\_{t})( bold\_s start\_POSTSUBSCRIPT italic\_t end\_POSTSUBSCRIPT , bold\_a start\_POSTSUBSCRIPT italic\_t end\_POSTSUBSCRIPT ) defined by π(𝐚t|𝐬t)T(𝐬t+1|𝐬t,𝐚t)𝜋conditionalsubscript𝐚𝑡subscript𝐬𝑡𝑇conditionalsubscript𝐬𝑡1subscript𝐬𝑡subscript𝐚𝑡\pi(\mathbf{a}\_{t}|\mathbf{s}\_{t})T(\mathbf{s}\_{t+1}|\mathbf{s}\_{t},\mathbf{a}\_{t})italic\_π ( bold\_a start\_POSTSUBSCRIPT italic\_t end\_POSTSUBSCRIPT | bold\_s start\_POSTSUBSCRIPT italic\_t end\_POSTSUBSCRIPT ) italic\_T ( bold\_s start\_POSTSUBSCRIPT italic\_t + 1 end\_POSTSUBSCRIPT | bold\_s start\_POSTSUBSCRIPT italic\_t end\_POSTSUBSCRIPT , bold\_a start\_POSTSUBSCRIPT italic\_t end\_POSTSUBSCRIPT ) is ergodic, and define the objective in terms of the expected reward under the stationary distribution of this Markov chain (Sutton and Barto,, [1998](#bib.bib151)). This definition is somewhat complicated by the role of the discount factor. For a full discussion of this topic, we refer the reader to prior work (Thomas,, [2014](#bib.bib163)).

In many cases, we will find it convenient to refer to the marginals of the trajectory distribution pπ(τ)subscript𝑝𝜋𝜏p\_{\pi}(\tau)italic\_p start\_POSTSUBSCRIPT italic\_π end\_POSTSUBSCRIPT ( italic\_τ ). We will use dπ(𝐬)superscript𝑑𝜋𝐬d^{\pi}(\mathbf{s})italic\_d start\_POSTSUPERSCRIPT italic\_π end\_POSTSUPERSCRIPT ( bold\_s ) to refer to the overall state visitation frequency, averaged over the time steps, and dtπ(𝐬t)subscriptsuperscript𝑑𝜋𝑡subscript𝐬𝑡d^{\pi}\_{t}(\mathbf{s}\_{t})italic\_d start\_POSTSUPERSCRIPT italic\_π end\_POSTSUPERSCRIPT start\_POSTSUBSCRIPT italic\_t end\_POSTSUBSCRIPT ( bold\_s start\_POSTSUBSCRIPT italic\_t end\_POSTSUBSCRIPT ) to refer to the state visitation frequency at time step t𝑡titalic\_t.

In this section, we will briefly summarize different types of reinforcement learning algorithms and present definitions. At a high level, all standard reinforcement learning algorithms follow the same basic learning loop: the agent *interacts* with the MDP ℳℳ\mathcal{M}caligraphic\_M by using some sort of *behavior policy*, which may or may not match π(𝐚|𝐬)𝜋conditional𝐚𝐬\pi(\mathbf{a}|\mathbf{s})italic\_π ( bold\_a | bold\_s ), by observing the current state 𝐬tsubscript𝐬𝑡\mathbf{s}\_{t}bold\_s start\_POSTSUBSCRIPT italic\_t end\_POSTSUBSCRIPT, selecting an action 𝐚tsubscript𝐚𝑡\mathbf{a}\_{t}bold\_a start\_POSTSUBSCRIPT italic\_t end\_POSTSUBSCRIPT, and then observing the resulting next state 𝐬t+1subscript𝐬𝑡1\mathbf{s}\_{t+1}bold\_s start\_POSTSUBSCRIPT italic\_t + 1 end\_POSTSUBSCRIPT and reward value rt=r(𝐬t,𝐚t)subscript𝑟𝑡𝑟subscript𝐬𝑡subscript𝐚𝑡r\_{t}=r(\mathbf{s}\_{t},\mathbf{a}\_{t})italic\_r start\_POSTSUBSCRIPT italic\_t end\_POSTSUBSCRIPT = italic\_r ( bold\_s start\_POSTSUBSCRIPT italic\_t end\_POSTSUBSCRIPT , bold\_a start\_POSTSUBSCRIPT italic\_t end\_POSTSUBSCRIPT ). This may repeat for multiple steps, and the agent then uses the observed transitions (𝐬t,𝐚t,𝐬t+1,rt)subscript𝐬𝑡subscript𝐚𝑡subscript𝐬𝑡1subscript𝑟𝑡(\mathbf{s}\_{t},\mathbf{a}\_{t},\mathbf{s}\_{t+1},r\_{t})( bold\_s start\_POSTSUBSCRIPT italic\_t end\_POSTSUBSCRIPT , bold\_a start\_POSTSUBSCRIPT italic\_t end\_POSTSUBSCRIPT , bold\_s start\_POSTSUBSCRIPT italic\_t + 1 end\_POSTSUBSCRIPT , italic\_r start\_POSTSUBSCRIPT italic\_t end\_POSTSUBSCRIPT ) to update its policy. This update might also utilize previously observed transitions. We will use 𝒟={(𝐬ti,𝐚ti,𝐬t+1i,rti)}𝒟subscriptsuperscript𝐬𝑖𝑡subscriptsuperscript𝐚𝑖𝑡subscriptsuperscript𝐬𝑖𝑡1subscriptsuperscript𝑟𝑖𝑡\mathcal{D}=\{(\mathbf{s}^{i}\_{t},\mathbf{a}^{i}\_{t},\mathbf{s}^{i}\_{t+1},r^{i}\_{t})\}caligraphic\_D = { ( bold\_s start\_POSTSUPERSCRIPT italic\_i end\_POSTSUPERSCRIPT start\_POSTSUBSCRIPT italic\_t end\_POSTSUBSCRIPT , bold\_a start\_POSTSUPERSCRIPT italic\_i end\_POSTSUPERSCRIPT start\_POSTSUBSCRIPT italic\_t end\_POSTSUBSCRIPT , bold\_s start\_POSTSUPERSCRIPT italic\_i end\_POSTSUPERSCRIPT start\_POSTSUBSCRIPT italic\_t + 1 end\_POSTSUBSCRIPT , italic\_r start\_POSTSUPERSCRIPT italic\_i end\_POSTSUPERSCRIPT start\_POSTSUBSCRIPT italic\_t end\_POSTSUBSCRIPT ) } to denote the set of transitions that are available for the agent to use for updating the policy (“learning”), which may consist of either all transitions seen so far, or some subset thereof.

#### Policy gradients.