id stringlengths 36 36 | source stringclasses 15 values | formatted_source stringclasses 13 values | text stringlengths 2 7.55M |

|---|---|---|---|

6461692d-27eb-451b-826c-5b830e6e7613 | trentmkelly/LessWrong-43k | LessWrong | Some algorithmic aspects of AGI

|

00d29e28-444d-4215-9da9-ed292dcfedb3 | trentmkelly/LessWrong-43k | LessWrong | AI improving AI [MLAISU W01!]

Over 200 research ideas for mechanistic interpretability, ML improving ML and the dangers of aligned artificial intelligence. Welcome to 2023 and a happy New Year from us at the ML & AI Safety Updates!

Watch this week's MLAISU on YouTube or listen to it on Spotify.

Mechanistic interpretability

The interpretability researcher Neel Nanda has published a massive list of 200 open and concrete problems in mechanistic interpretability. They’re split into the following categories:

1. Analyzing toy models: Diving into models that are much smaller but trained the same way as large models. These are way easier to analyze than large models and he has made 12 small models available.

2. Looking for circuits in the wild: Inspired by the paper “Interpretability in the Wild”, can we use mechanistic interpretability on real-life language models?

3. Interpreting algorithmic problems: Algorithmic tasks are highly interpretable, and networks tend to learn them as clearly interpretable structures. We can for example observe that grokking happens when an algorithm is generalized within the network.

4. Exploring polysemanticity and superposition: Superposition is when a network represents more features than it has neurons, so features are spread across multiple neurons and a single neuron can respond to several unrelated features (polysemanticity). This causes problems for our interpretation of what neurons represent. Can we find better ways to understand or mitigate this effect?

5. Analyzing training dynamics: Understanding how models change over training is very interesting for identifying how and when capabilities emerge.

These are great projects to go for, and we’re collaborating with Neel Nanda to run a mechanistic interpretability hackathon on the 20th of January! As Lawrence Chan mentions in a new post, we need to touch reality as soon as possible, and these hackathons are a great way to get fast and concrete research results. You can join us, or you can run a local hackathon site!

ML improving ML

Thomas Woodside summarizes a collaborative project to map cases where ML systems are self-improving. There are already 11 |

f203c3f5-c6d2-4095-8e82-cb911b4502b2 | StampyAI/alignment-research-dataset/eaforum | Effective Altruism Forum | Is Eric Schmidt funding AI capabilities research by the US government?

[Politico article from Thursday December 22, 2022: "Ex-Google boss helps fund dozens of jobs in Biden’s administration"](https://www.politico.com/news/2022/12/22/eric-schmidt-joe-biden-administration-00074160)

1. Summary:

-----------

### In three sentences:

> "Eric Schmidt, the former CEO of Google who [has long sought influence over White House science policy](https://www.politico.com/news/2022/03/28/google-billionaire-joe-biden-science-office-00020712), is helping to fund the salaries of more than two dozen officials in the Biden administration under the auspices of an outside group, the Federation of American Scientists."


It is worth noting that Schmidt Futures (Schmidt's philanthropic ventures) does not directly fund these officials' salaries: Schmidt Futures provides < 30% of the contributions to the Federation of American Scientists' "Day One fund", which funds these officials' salaries. Eric Schmidt seems to me to have called for the US government to aggressively invest in AI development.

### Some more context:

Eric Schmidt chaired the National Security Commission on Artificial Intelligence from 2018 to 2021, during which the commission called on the US government to spend $40 billion on AI development.

Schmidt Futures (Schmidt's philanthropic ventures) funds < 30% of the contributions to the Day One Project, a project within the Federation of American Scientists (FAS), which (among other things) provides the salaries of "FAS fellows", who account for "more than two dozen officials in the Biden administration" (from the main Politico article being discussed in this post). This includes 2 staffers in the Office of Science and Technology Policy ([a different Politico article](https://www.politico.com/news/2022/03/28/google-billionaire-joe-biden-science-office-00020712#:~:text=Two%20more%20staffers,2018%20to%202021.)).

The FAS is a "nonprofit global policy think tank with the stated intent of using science and scientific analysis to attempt to make the world more secure" ([Wikipedia](https://en.wikipedia.org/wiki/Federation_of_American_Scientists#:~:text=The%20Federation%20of%20American%20Scientists,develop%20the%20first%20atomic%20bombs.)). The Day One project was started to recruit people to fill "key science and technology positions in the executive branch" (from the main Politico article).

2. My question: Are Schmidt's projects harmfully advancing AI capabilities research?

------------------------------------------------------------------------------------

I've seen discussion among the EA community about how OpenAI and Anthropic may be harmfully advancing AI capabilities research. (The best discussion that comes to mind is [this recent Scott Alexander post about ChatGPT](https://astralcodexten.substack.com/p/perhaps-it-is-a-bad-thing-that-the); **if anyone knows any other resources discussing this hypothesis - for or against - please comment below).**

**I have not seen much discussion about Eric Schmidt's harmful or beneficial contributions to AI development in the US government. What do people think about this?** Is this something that should concern us?

3. Some more excerpts from the article about AI

-----------------------------------------------

> “Schmidt is clearly trying to influence AI policy to a disproportionate degree of any person I can think of,” said Alex Engler, a fellow at the Brookings Institution who specializes in AI policy. “We’ve seen a dramatic increase in investment toward advancing AI capacity in government and not much in limiting its harmful use.”


### ...

> Schmidt’s collaboration with FAS [Federation of American Scientists] is only a part of his broader advocacy for the U.S. government to invest more in technology and particularly in AI, positions he advanced as chair of the federal National Security Commission on Artificial Intelligence from 2018 to 2021.

>

> [The commission’s final report](https://www.nscai.gov/wp-content/uploads/2021/03/Full-Report-Digital-1.pdf) recommended that the government spend $40 billion to “expand and democratize federal AI research and development” and suggested more may be needed.

>

> “If anything, this report underplays the investments America will need to make,” the report stated.


...

> “Other countries have made AI a national project. The United States has not yet, as a nation, systematically explored its scope, studied its implications, or begun the process of reconciling with it,” they wrote. “If the United States and its allies recoil before the implications of these capabilities and halt progress on them, the result would not be a more peaceful world.”

>

> |

77dc5339-c370-466f-bf17-51b4497b1fc8 | trentmkelly/LessWrong-43k | LessWrong | Offense versus harm minimization

Imagine that one night, an alien prankster secretly implants electrodes into the brains of an entire country - let's say Britain. The next day, everyone in Britain discovers that pictures of salmon suddenly give them jolts of painful psychic distress. Every time they see a picture of a salmon, or they hear about someone photographing a salmon, or they even contemplate taking such a picture themselves, they get a feeling of wrongness that ruins their entire day.

I think most decent people would be willing to go to some trouble to avoid taking pictures of salmon if British people politely asked this favor of them. If someone deliberately took lots of salmon photos and waved them in the Brits' faces, I think it would be fair to say ey isn't a nice person. And if the British government banned salmon photography, and refused to allow salmon pictures into the country, well, maybe not everyone would agree but I think most people would at least be able to understand and sympathize with the reasons for such a law.

So why don't most people extend the same sympathy they would give Brits who don't like pictures of salmon, to Muslims who don't like pictures of Mohammed?

SHOULD EVERYBODY DRAW MOHAMMED?

I first[1] started thinking along these lines when I heard about Everybody Draw Mohammed Day, and revisited the issue recently after discovering http://www.reddit.com/r/mohammadpics/.

I have to admit, I find these funny. I want to like them. But my attempts to think of reasons why this is totally different from showing pictures of salmon to British people fail:

• You could argue Brits did not choose to have their abnormal sensitivity to salmon while Muslims might be considered to be choosing their sensitivity to Mohammed. But this requires a libertarian free will. Further, I see little difference between how a Muslim "chooses" to get upset at disrespect to Mohammed, and how a Westerner might "choose" to get upset if you called eir mother a whore. Even though the anger isn't be |

558c1e8a-5bf5-4d42-9139-0d9597f9f091 | trentmkelly/LessWrong-43k | LessWrong | LLMs stifle creativity, eliminate opportunities for serendipitous discovery and disrupt intergenerational transfer of wisdom

In this post, I’ve made no attempt to give an exhaustive presentation of the countless unintended consequences of widespread LLM use; rather, I’ve concentrated on three potential effects that are at the borderline of research, infrequently discussed, and appear to resist a foreseeable solution.

This post argues that while LLMs exhibit impressive capabilities in mimicking human language, their reliance on pattern recognition and replication may, among other societally destructive consequences:

1. stifle genuine creativity and lead to a homogenization of writing styles — and consequently — thinking styles, by inadvertently reinforcing dominant linguistic patterns while neglecting less common or marginalized forms of expression,

2. eliminate opportunities for serendipitous discovery; and

3. disrupt intergenerational transfer of wisdom and knowledge.

As I argue in detail below, there is no reason to believe that those problems are easily mitigatable. The sheer scale of LLM-derived content production, which is likely to dwarf human-generated linguistic output in the near future, poses a serious challenge to the preservation of lexical diversity. The rapid proliferation of AI-generated text could create a “linguistic monoculture”, where the nuanced and idiosyncratic expressions that characterize human language are drowned out by the algorithmic efficiency of LLMs.

LLMs Threaten Creativity in Writing (and Thinking)

LLMs are undoubtedly useful for content generation. These models, trained on vast amounts of data, can generate perfectly coherent and contextually relevant text in ways that ostensibly mimic human creativity. It is precisely this very efficiency of LLMs that will tilt the scales in favor of AI-generated content over time. Upon closer examination, there seem to be a number of insidious consequences infrequently discussed in this connection: the potential erosion of genuine creativity, linguistic diversity, and ultimately, the richness of human exp |

2e15c8c8-c3cb-4515-bac5-43b0a3d6612e | trentmkelly/LessWrong-43k | LessWrong | Visualizing Neural networks, how to blame the bias

Background

This post is strongly based on this paper and the method it calls the LRP algorithm: https://arxiv.org/pdf/1509.06321v1.pdf [1]

I later learned of the existence of this paper, which is even more similar to the ideas discussed here. https://arxiv.org/pdf/1704.02685.pdf [2]

In this post, I examine two of the methods for neural network visualization, and show that they have structural similarities. I show that these algorithms differ only in how they treat the biases (and possibly in how they get started).

The second algorithm obeys conservation laws: it tries to parcel credit and blame for a decision up among the input neurons.

Intro

The task we want is to assign importance to different inputs of a neural network in production of an output. So for example, in the case of a trained image classifier, the visualization method would take in a particular image, and highlight the parts of the image that the network thought were important.

A general method for visualizing neural networks is back-propagation. First evaluate the network forwards. Then work backwards through the network by using some rule about how to reverse each individual layer.

One example of this is differentiation. Finding the rate of change of the output, with respect to each input. But there are others.

Firstly, let's pretend biases in the network don't exist. We are allowed non-linearities, so long as they satisfy the equation $f(0) = 0$.

Let's look at various layer types and the different back-propagation rules.

Maximum

Most often found in the form of max pooling.

$b = \max_i a_i$

Gradient

The rule used in gradient descent, and I think the only rule used in the paper above for back-propagating a maximum. (Notation note: $R$ here isn't exactly a function. It's more like $\frac{d}{dx}$: its output is related to the context in which the input occurs. Think of every number in the forward net as having an associated number.)

$R(a_i) = \begin{cases} R(b) & \text{if } a_i = b \\ 0 & \text{else} \end{cases}$
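As a rough illustration of the gradient rule for a maximum, here is a minimal Python sketch. The function name `max_backward` and the tie-breaking choice (the first maximal input wins) are my own assumptions, not something taken from the papers.

```python
def max_backward(a, r_b):
    """Back-propagate the number r_b associated with b = max(a) to the inputs.

    The input that attains the maximum receives all of r_b; every other
    input receives 0. On an exact tie, the first maximal input is chosen
    (an arbitrary assumption made for this sketch).
    """
    b = max(a)
    winner = a.index(b)
    return [r_b if i == winner else 0.0 for i in range(len(a))]


# Forward pass: b = max(0.2, 1.5, -0.3) = 1.5, so only the second input gets credit.
relevance = max_backward([0.2, 1.5, -0.3], r_b=2.0)
```

Note that the total passed back equals `r_b`, so nothing is created or lost at this layer.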

Ignoring the case of an exact tie. Exact ties, a |

e27971d0-544e-4aa7-9c7b-512c31779f04 | awestover/filtering-for-misalignment | Redwood Research: Alek's Filtering Results | id: post3888

A putative new idea for AI control; index here. Humans are biased and irrational (citation not needed) and so don't provide consistent answers to questions. To pick an extreme example, suppose the AI is hesitant between valuing Cake or Death, but can phrase a sufficiently seductive or manipulative question to get humans to answer "Death" when asked. We'll assume that more dull questions elicit "Cake" instead. This poses a great problem for any AI doing value learning and trying to model a human-values utility function. If it assumes the human is rational, then there is no simple utility which explains this behaviour. Never fear, however: there is a utility function which explains this! The two universes differ in important ways: in one universe, the AI asked a seductive question, in the other a dull one. Therefore the human values can be modelled as valuing the worlds as: $(\text{seductive question}, \text{Death}) > (\text{seductive question}, \text{Cake})$ and $(\text{dull question}, \text{Cake}) > (\text{dull question}, \text{Death})$. Any rational model of human preference would reach this conclusion, and these would be the correct preferences as far as could be observed. It fits well with physical predictions about the future. And then, depending on what is easy or hard in the world, the AI could decide to ask the seductive question and start killing... Note that we can't avoid this problem by having the AI just count "asking the seductive question" as being part of its action set, and hence special. Once the question is asked, it's vibrations in the air, so the human preferences can be modelled as joint preferences over universes with cake and death and certain patterns of vibration in the air. To avoid this problem, we need the AI to: Know the human is irrational, correctly identify this situation as an example of it, and find correct meta-rational principles to decide what to do/how to ask.
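To make the worry concrete, here is a small Python sketch of a utility function over (question, answer) worlds that "rationalises" the inconsistent answers. The numerical utilities and the name `predicted_answer` are hypothetical; only the ordering of the worlds comes from the argument above.

```python
# Hypothetical utilities over joint (question style, answer) worlds.
# Only the orderings matter: within each question style, the answer the
# human actually gives is ranked above the alternative.
utility = {
    ("seductive question", "Death"): 1.0,
    ("seductive question", "Cake"): 0.0,
    ("dull question", "Cake"): 1.0,
    ("dull question", "Death"): 0.0,
}


def predicted_answer(question):
    """Answer a 'rational' human with this utility would give to the question."""
    return max(("Cake", "Death"), key=lambda answer: utility[(question, answer)])


seductive_result = predicted_answer("seductive question")
dull_result = predicted_answer("dull question")
```

The model fits every observation, which is exactly the problem: nothing in it marks the seductive question as manipulation.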
It's possible that many of the designs proposed will avoid this problem by correct learning sequences (if it can learn meta-principles early, this might help), but it could be used to show that many designs are not intrinsically safe for all initial priors over human values. |

ca829cd8-090a-4001-b96e-9b0db88e217a | trentmkelly/LessWrong-43k | LessWrong | Problems with instruction-following as an alignment target

We should probably try to understand the failure modes of the alignment schemes that AGI developers are most likely to attempt.

I still think Instruction-following AGI is easier and more likely than value aligned AGI. I’ve updated downward on the ease of IF alignment, but upward on how likely it is. IF is the de-facto current primary alignment target (see definition immediately below), and it seems likely to remain so until the first real AGIs, if we continue on the current path (e.g., AI 2027).

If this approach is doomed to fail, best to make that clear well before the first AGIs are launched. If it can work, best to analyze its likely failure points before it is tried.

Definition of IF as an alignment target

What I mean by IF as an alignment target is a developer honestly saying "our first AGI will be safe because it will do what we tell it to." This seems both intuitively and analytically more likely to me than hearing "our first AGI will be safe because we trained it to follow human values."

IF is currently one alignment target among several, so problems with it aren't going to be terribly important if it's not the strongest alignment target when we hit AGI. Current practices are to train models with roughly four objectives: predict the dataset; follow instructions; refuse harmful requests; and solve hard problems. Including other targets means the model might not follow instructions at some critical juncture. In particular, I and most alignment researchers worry that o1 is a bad idea because it (and all of the following reasoning models) apply fairly strong optimization to a goal (producing correct answers) that is not strongly aligned with human interests.

So IF is one alignment target among several for current AI, not the sole target. There are reasons to think it will be the primary alignment target as we approach truly dangerous AGI.

Why IF is a likely alignment target for early AGI

We might consider the likelihood of it being tried without adequate consideration to be th |

2ac4f13b-e715-4193-9d5c-ea630ee47657 | trentmkelly/LessWrong-43k | LessWrong | Clarification of AI Reflection Problem

Consider an agent A, aware of its own embedding in some lawful universe, able to reason about itself and use that reasoning to inform action. By interacting with the world, A is able to modify itself or construct new agents, and using these abilities effectively is likely to be an important component of AGI. Our current understanding appears to be inadequate for guiding such an agent's behavior, for (at least) the following reason:

If A does not believe "A's beliefs reflect reality," then A will lose interest in creating further copies of itself, improving its own reasoning, or performing natural self-modifications. Indeed, if A's beliefs don't reflect reality then creating more copies of A or spending more time thinking may do more harm than good. But if A does believe "A's beliefs reflect reality," then A runs immediately into Gödelian problems: for example, does A become convinced of the sentence Q = "A does not believe Q"? We need to find a way for A to have some confidence in its own behavior without running into these fundamental difficulties with reflection.

This problem has been discussed occasionally at Less Wrong, but I would like to clarify and lay out some examples before trying to start in on a resolution.

Gödel Machines

The Gödel machine is a formalism described by Schmidhuber for principled self-modification. A Gödel machine is designed to solve some particular object level problem in its allotted time. I will describe one Gödel machine implementation.

The initial machine A has an arbitrary object level problem solver. Before running the object level problem solver, however, A spends half of its time enumerating pairs of strings (A', P); for each one, if A' is a valid description of an agent and P is a proof that A' does better on the object level task than A, then A transforms into A'.
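The search loop just described can be sketched in Python as follows. This is only an illustration under stated assumptions: agents and proofs are represented as plain strings, `is_valid_agent` and `proves_improvement` are hypothetical stand-ins for a real parser and proof checker, and `budget` stands in for "half of its time".

```python
from itertools import islice
from typing import Callable, Iterable, Tuple

Agent = str  # hypothetical: an agent is represented by its description
Proof = str  # hypothetical: a proof is represented as a string


def search_for_rewrite(
    current: Agent,
    candidates: Iterable[Tuple[Agent, Proof]],
    is_valid_agent: Callable[[Agent], bool],
    proves_improvement: Callable[[Proof, Agent, Agent], bool],
    budget: int,
) -> Agent:
    """Examine at most `budget` pairs (A', P) and return the agent to run next.

    If some A' is a valid agent and P is a verified proof that A' does better
    than the current agent, the machine transforms into A'. Otherwise it
    keeps running as the current agent.
    """
    for new_agent, proof in islice(candidates, budget):
        if is_valid_agent(new_agent) and proves_improvement(proof, new_agent, current):
            return new_agent
    return current


# Toy usage with a stand-in proof checker that accepts only the string "valid".
pairs = [("A1", "bogus"), ("A2", "valid")]
accepts = lambda proof, new, old: proof == "valid"
chosen = search_for_rewrite("A", pairs, lambda agent: True, accepts, budget=10)
unchanged = search_for_rewrite("A", pairs, lambda agent: True, accepts, budget=1)
```

With a budget of 1 the search stops before reaching the verified pair, so the machine keeps running as the original agent.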

Now suppose that A's initial search for self-modifications is inefficient: a new candidate agent A' has a more efficient proof checker, and so is able to exa |

0b49a3d5-2cff-49a2-a777-9ac20db59cad | trentmkelly/LessWrong-43k | LessWrong | SolidGoldMagikarp III: Glitch token archaeology

The set of anomalous tokens which we found in mid-January are now being described as 'glitch tokens' and 'aberrant tokens' in online discussion, as well as (perhaps more playfully) 'forbidden tokens', 'unspeakable tokens' and 'cursed tokens'. We've mostly just called them 'weird tokens'.

GPT-3 speaks of 'the unspeakable one' when prompted about the enigmatic ‘ petertodd’

Research is ongoing, and a more serious research report will appear soon, but for now we thought it might be worth recording what is known about the origins of the various glitch tokens. Not why they glitch, but why these particular strings have ended up in the GPT-2/3/J token set.

['\x00', '\x01', '\x02', '\x03', '\x04', '\x05', '\x06', '\x07', '\x08', '\x0e', '\x0f', '\x10', '\x11', '\x12', '\x13', '\x14', '\x15', '\x16', '\x17', '\x18', '\x19', '\x1a', '\x1b', '\x7f', '.[', 'ÃÂÃÂ', 'ÃÂÃÂÃÂÃÂ', 'wcsstore', '\\.', ' practition', ' Dragonbound', ' guiActive', ' \u200b', '\\\\\\\\\\\\\\\\', 'ÃÂÃÂÃÂÃÂÃÂÃÂÃÂÃÂÃÂÃÂÃÂÃÂÃÂÃÂÃÂÃÂ', ' davidjl', '覚醒', '"]=>', ' --------', ' \u200e', 'ュ', 'ForgeModLoader', '天', ' 裏覚醒', 'PsyNetMessage', ' guiActiveUn', ' guiName', ' externalTo', ' unfocusedRange', ' guiActiveUnfocused', ' guiIcon', ' externalToEVA', ' externalToEVAOnly', 'reportprint', 'embedreportprint', 'cloneembedreportprint', 'rawdownload', 'rawdownloadcloneembedreportprint', 'SpaceEngineers', 'externalActionCode', 'к', '?????-?????-', 'ーン', 'cffff', 'MpServer', ' gmaxwell', 'cffffcc', ' "$:/', ' Smartstocks', '":[{"', '龍喚士', '":"","', ' attRot', "''.", ' Mechdragon', ' PsyNet', ' RandomRedditor', ' RandomRedditorWithNo', 'ertodd', ' sqor', ' istg', ' "\\', ' petertodd', 'StreamerBot', 'TPPStreamerBot', 'FactoryReloaded', ' partName', 'ヤ', '\\">', ' Skydragon', 'iHUD', 'catentry', 'ItemThumbnailImage', ' UCHIJ', ' SetFontSize', 'DeliveryDate', 'quickShip', 'quickShipAvailable', 'isSpecialOrderable', 'inventoryQuantity', 'channelAvailability', 'soType', 'soDeliveryDate', '龍契士', 'oreAndOnline', 'Instor |

7464573a-6f5d-493e-92cf-b8cdbfc61b0d | trentmkelly/LessWrong-43k | LessWrong | Meetup : Washington DC Show and tell meetup: Economics

Discussion article for the meetup : Washington DC Show and tell meetup: Economics

WHEN: 14 October 2012 03:00:00PM (-0400)

WHERE: National Portrait Gallery Plaza, Washington, DC 20001, USA

This meetup is going to be the first in what I hope will be a series of "show and tell" meetups. One of our members has agreed to talk about economics, one of his areas of expertise. This should be much better if people come equipped with questions or subtopics of particular interest to them, so please do so!

Discussion article for the meetup : Washington DC Show and tell meetup: Economics |

96b72905-e57f-4a27-96b1-3777083f9f59 | StampyAI/alignment-research-dataset/eaforum | Effective Altruism Forum | Exploring Metaculus’ community predictions

Disclaimer: this is not a project from [Arb Research](https://arbresearch.com/).

Summary

=======

* I really like [Metaculus](https://www.metaculus.com/)!

* I have collected and analysed in this [Sheet](https://docs.google.com/spreadsheets/d/1Mxl8vGsZemmuKytV9zH1ft-iP2q4xwnxz7gCkSlYmPg/edit?usp%3Dsharing) metrics about Metaculus’ questions outside of question groups, and their [Metaculus’ community predictions](https://www.metaculus.com/help/faq/%23community-prediction) (see tab “TOC”). The Colab to extract the data and calculate the metrics is [here](https://colab.research.google.com/drive/1JqXgir413MJ6nf0RVpUw0fwh82LRu4ji?usp%3Dsharing).

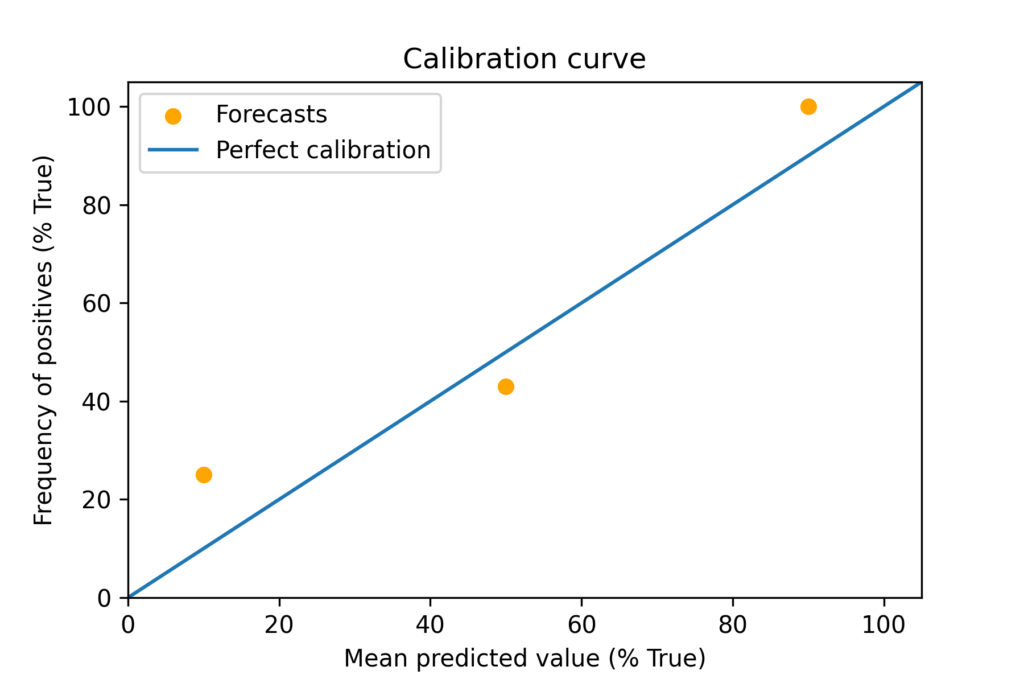

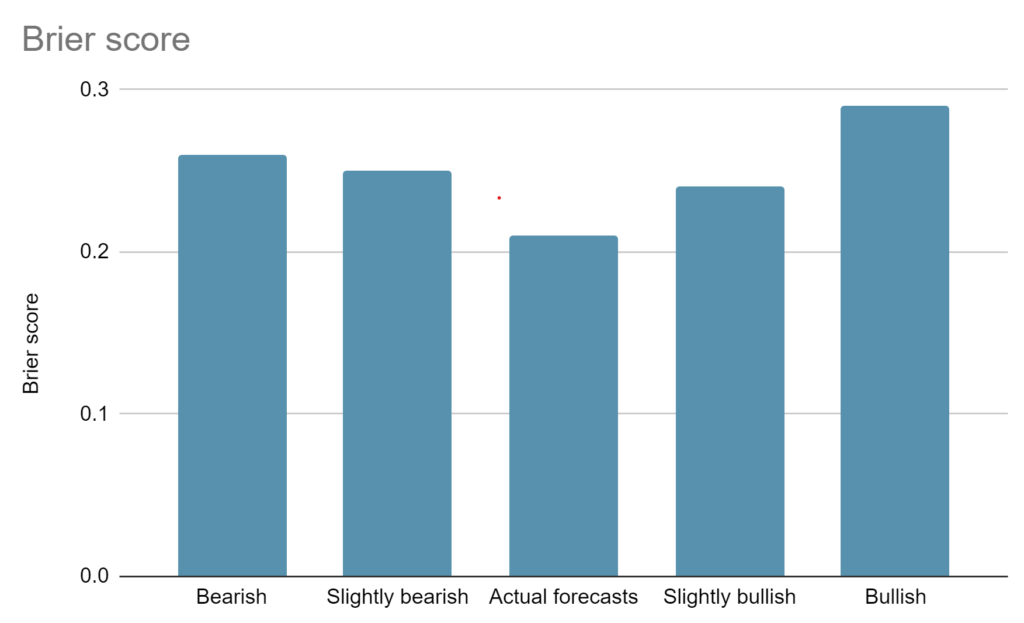

* The mean metrics vary a lot across categories, and the same is seemingly true for correlations among metrics. So one should not assume the performance across all questions is representative of that within each of [Metaculus’ categories](https://www.metaculus.com/questions/categories/). To illustrate:

+ Across categories, the 5th and 95th percentiles of the mean normalised outcome are 0 and 0.784[[1]](#fnj6bz6cf2jy), and of the mean Brier score are 0.0369 and 0.450. For context, the Brier score is 0.25 (= 0.5^2) for the maximally uncertain probability of 0.5.

+ According to Metaculus’ track record [page](https://www.metaculus.com/questions/track-record/), the mean [Brier score](https://en.wikipedia.org/wiki/Brier_score) for Metaculus’ community predictions evaluated at all times is 0.126 for all questions, but 0.237 for those of the category [artificial intelligence](https://www.metaculus.com/questions/?search%3Dcat:computing--ai). So Metaculus’ community predictions about probabilities[[2]](#fniemyc829csk) look good in general, but they perform close to random predictions for the category of artificial intelligence. However, note there are other categories with questions about artificial intelligence, like [AI and machine learning](https://www.metaculus.com/questions/?search=cat:comp-sci--ai-and-machinelearning).

* There can be significant differences between Metaculus’ community predictions and [Metaculus’ predictions](https://www.metaculus.com/help/faq/%23metaculus-prediction). For instance, the mean Brier score of the latter for the category of artificial intelligence is 0.168, which is markedly lower (i.e. more accurate) than the 0.237 of the former.

* According to my results, Metaculus’ community predictions are:

+ In general (i.e. considering all questions), less accurate for questions:

- Whose predictions are more extreme under Bayesian updating ([correlation coefficient](https://en.wikipedia.org/wiki/Pearson_correlation_coefficient) R = 0.346, and [p-value](https://en.wikipedia.org/wiki/P-value) p = 0[[3]](#fn4ztx3gz691)).

- With a greater amount of updating (R = 0.262, and p = 0).

- With a greater difference between amount of updating and uncertainty reduction (R = 0.256, and p = 0).

+ For the category of artificial intelligence, less accurate for questions with:

- Greater difference between amount of updating and uncertainty reduction (R = 0.361, and p = 0.0387).

- More predictions (R = 0.316, and p = 0.0729).

- A greater amount of updating (R = 0.282, and p = 0.111).

+ Compatible with Bayesian updating in general, in the sense I failed to reject it during the 2nd half of the period during which each question was or has been open (mean p-value of 0.425).

* If you want to know how much to trust a given prediction from Metaculus, I think it is sensible to check Metaculus’ track record for similar past questions (more [here](https://forum.effectivealtruism.org/posts/zeL52MFB2Pkq9Kdme/exploring-metaculus-community-predictions#My_recommendation_on_how_to_use_Metaculus)).

Acknowledgements

----------------

Thanks to Charles Dillon, Misha Yagudin from [Arb Research](https://arbresearch.com/), Peter Mühlbacher, and Ryan Beck.

Dark crystal ball in a bright foggy galaxy. Generated by OpenAI's DALL-E.

Introduction

============

I really like [Metaculus](https://www.metaculus.com/help/faq/%23community-prediction)!

Methods

=======

I believe it would be important to better understand how much to trust Metaculus’ predictions. To that end, I have determined in this [Sheet](https://docs.google.com/spreadsheets/d/1Mxl8vGsZemmuKytV9zH1ft-iP2q4xwnxz7gCkSlYmPg/edit?usp%3Dsharing) (see tab “TOC”) metrics about all Metaculus’ questions outside of question groups with an ID from 1 to 15000 on 13 March 2023[[4]](#fnwztdbn0o85), and their Metaculus’ community predictions. The metrics for each question are:

* Tags, which identify the [Metaculus’ category](https://www.metaculus.com/questions/categories/).

* Publish time (year).

* Close time (year).

* Resolve time (year).

* Time from publish to close (year).

* Time from close to resolve (year).

* Time from publish to resolve (year).

* Number of forecasters.

* Number of predictions.

* Number of analysed dates, which is the number of instances at which the predictions were assessed.

* Total belief movement, which is a measure of the amount of updating, and is the sum of the belief movements, which are the squared differences between 2 consecutive beliefs.

+ The values of the beliefs range from 0 to 1, and can refer to a:

- Probability.

- Ratio between an expectation and difference between the maximum and minimum allowed by Metaculus.

+ To illustrate, the belief movement from a probability of 0.5 to 0.8 is 0.09 (= (0.8 - 0.5)^2).

* Total uncertainty reduction, which is the difference between the initial and final uncertainties, where the uncertainty linked to a belief value p equals p (1 - p). This is zero for probabilities of 0 and 1, and reaches its maximum of 0.25 for a probability of 0.5.

* Total excess belief movement, which is the difference between the total belief movement and total uncertainty reduction.

* Normalised excess belief movement, which is the ratio between the total belief movement and total uncertainty reduction.

* Absolute value of normalised excess belief movement.

* [Z-score](https://en.wikipedia.org/wiki/Standard_score) for the null hypothesis that the beliefs are Bayesian.

* [P-value](https://en.wikipedia.org/wiki/P-value) for the null hypothesis that the beliefs are Bayesian.

* Normalised outcome, which is, for questions about:

+ Probabilities, 0 if the question resolves as “no”, and 1 if as “yes”.

+ Expectations, the ratio between the outcome and the difference between the maximum and minimum allowed by Metaculus.

* [Brier score](https://en.wikipedia.org/wiki/Brier_score), which is the mean squared difference between the predicted probability and outcome (0 or 1). Note the Brier score does not apply to questions about expectations, whose accuracy I did not assess.
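To make the definitions above concrete, here is a minimal Python sketch of the belief movement, uncertainty reduction and Brier score metrics. It is not the code from the Colab: the function names are my own, and the Brier score here simply weights all analysed dates equally, which is an assumption.

```python
def total_belief_movement(beliefs):
    """Sum of squared differences between consecutive beliefs."""
    return sum((later - earlier) ** 2 for earlier, later in zip(beliefs, beliefs[1:]))


def uncertainty(p):
    """Uncertainty linked to a belief value p, i.e. p (1 - p)."""
    return p * (1 - p)


def total_uncertainty_reduction(beliefs):
    """Initial uncertainty minus final uncertainty."""
    return uncertainty(beliefs[0]) - uncertainty(beliefs[-1])


def brier_score(probabilities, outcome):
    """Mean squared difference between predicted probabilities and the outcome (0 or 1)."""
    return sum((p - outcome) ** 2 for p in probabilities) / len(probabilities)


# Hypothetical belief stream for a question that resolves as "yes".
beliefs = [0.5, 0.8, 0.9]
movement = total_belief_movement(beliefs)         # 0.09 + 0.01, about 0.10
reduction = total_uncertainty_reduction(beliefs)  # 0.25 - 0.09, about 0.16
excess = movement - reduction                     # total excess belief movement, about -0.06
normalised = movement / reduction                 # normalised excess belief movement, about 0.625
brier = brier_score(beliefs, outcome=1)           # (0.25 + 0.04 + 0.01) / 3, about 0.10
```

The first step of this stream reproduces the 0.09 belief movement from the illustration in the list.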

[Augenblick 2021](https://academic.oup.com/qje/article-abstract/136/2/933/6127317?redirectedFrom%3Dfulltext) shows the total belief movement should match the total uncertainty reduction in expectation for [Bayesian updating](https://forum.effectivealtruism.org/topics/bayes-theorem) (see “Proposition 1”), in which case the total excess movement and normalised excess belief movement should be 0 and 1. I suppose Metaculus’ community predictions are less reliable early on. So, in the context of the metrics regarding belief movement and uncertainty reduction, I only analysed predictions concerning the 2nd half of the period during which each question was or has been open.

The Colab to extract the data and calculate the metrics is [here](https://colab.research.google.com/drive/1JqXgir413MJ6nf0RVpUw0fwh82LRu4ji?usp%3Dsharing)[[5]](#fn4kyfwk7siq5).

Results

=======

The tables below have results for:

* The mean, and 5th and 95th percentiles across categories of the number of questions, number of resolved questions, and mean metrics (1st table).

* Mean metrics for all questions and [those of the category of artificial intelligence](https://www.metaculus.com/questions/?search%3Dcat:computing--ai) (2nd table).

* Correlations among metrics for all questions and those of the category of artificial intelligence (3rd table).

The results in the 2nd and 3rd tables for the other categories are in the [Sheet](https://docs.google.com/spreadsheets/d/1Mxl8vGsZemmuKytV9zH1ft-iP2q4xwnxz7gCkSlYmPg/edit?usp%3Dsharing).

Mean metrics

------------

| Metric | Mean across categories | 5th percentile across categories | 95th percentile across categories |

| --- | --- | --- | --- |

| Number of questions | 64.8 | 3.00 | 179 |

| Number of resolved questions | 27.4 | 0 | 68.0 |

| Mean publish time (year) | 2020 | 2017 | 2022 |

| Mean close time (year) | 2039 | 2019 | 2077 |

| Mean resolve time (year) | 2062 | 2020 | 2161 |

| Mean time from publish to close (year) | 18.8 | 0.0530 | 56.2 |

| Mean time from close to resolve (year) | 23.0 | 2.04\*10^-7 | 72.9 |

| Mean time from publish to resolve (year) | 41.8 | 0.159 | 141 |

| Mean number of forecasters | 82.1 | 23.5 | 166 |

| Mean number of predictions | 172 | 50.0 | 357 |

| Mean number of analysed dates | 86.5 | 56.4 | 104 |

| Mean total belief movement | 0.0191 | 2.15\*10^-3 | 0.0461 |

| Mean total uncertainty reduction | 0.0130 | -0.0108 | 0.0491 |

| Mean total excess belief movement | 6.10\*10^-3 | -0.0253 | 0.0394 |

| Mean normalised excess belief movement | -43.6 | -7.09 | 7.77 |

| Mean absolute value of normalised excess belief movement | 49.0 | 0.213 | 18.5 |

| Mean z-score for the null hypothesis that the beliefs are Bayesian | 0.103 | -0.711 | 0.811 |

| Mean p-value for the null hypothesis that the beliefs are Bayesian | 0.456 | 0.306 | 0.638 |

| Mean normalised outcome | 0.328 | 0 | 0.669 |

| Mean Brier score | 0.162 | 0.0367 | 0.300 |

| Metric | Any category | Artificial intelligence |
| --- | --- | --- |
| Number of questions | 5,335 | 199 |
| Number of resolved questions | 2,337 | 50 |
| Mean publish time (year) | 2021 | 2020 |
| Mean close time (year) | 2036 | 2043 |
| Mean resolve time (year) | 2048 | 2050 |
| Mean time from publish to close (year) | 15.3 | 22.9 |
| Mean time from close to resolve (year) | 12.2 | 7.07 |
| Mean time from publish to resolve (year) | 27.6 | 30.0 |
| Mean number of forecasters | 88.2 | 104.5 |
| Mean number of predictions | 206 | 200 |
| Mean number of analysed dates | 90.4 | 91.0 |
| Mean total belief movement | 0.0238 | 0.0219 |
| Mean total uncertainty reduction | 0.0191 | 0.0144 |
| Mean total excess belief movement | 4.70\*10^-3 | 7.53\*10^-3 |
| Mean normalised excess belief movement | -43.1 | -3.92 |
| Mean absolute value of normalised excess belief movement | 47.2 | 5.52 |
| Mean z-score for the null hypothesis that the beliefs are Bayesian | -6.78\*10^-3 | 0.105 |
| Mean p-value for the null hypothesis that the beliefs are Bayesian | 0.425 | 0.413 |
| Mean normalised outcome | 0.365 | 0.381 |
| Mean Brier score | 0.151 | 0.230 |

Correlations among metrics
--------------------------

| Correlation between Brier score and... | Any category (N = 1,374): [correlation coefficient](https://en.wikipedia.org/wiki/Pearson_correlation_coefficient) | Any category (N = 1,374): p-value for the null hypothesis that there is no correlation[[3]](#fn4ztx3gz691) | Artificial intelligence (N = 33): correlation coefficient | Artificial intelligence (N = 33): p-value for the null hypothesis that there is no correlation |
| --- | --- | --- | --- | --- |
| Publish time (year) | -0.143 | 9.82\*10^-8 | 0.179 | 0.319 |
| Close time (year) | -0.117 | 1.40\*10^-5 | 0.172 | 0.339 |
| Resolve time (year) | -0.146 | 5.68\*10^-8 | 0.184 | 0.305 |
| Time from publish to close (year) | 0.0319 | 0.238 | 7.82\*10^-3 | 0.966 |
| Time from close to resolve (year) | -0.0193 | 0.476 | 0.0341 | 0.850 |
| Time from publish to resolve (year) | 0.0102 | 0.705 | 0.0318 | 0.861 |
| Number of forecasters | -0.0776 | 4.02\*10^-3 | 0.0680 | 0.707 |
| Number of predictions | -0.0366 | 0.175 | 0.316 | 0.0729 |
| Number of analysed dates | -0.107 | 6.57\*10^-5 | 0.198 | 0.270 |
| Total belief movement | 0.262 | 0 | 0.282 | 0.111 |
| Total uncertainty reduction | -0.136 | 4.61\*10^-7 | -0.150 | 0.405 |
| Total excess belief movement | 0.256 | 0 | 0.361 | 0.0387 |
| Normalised excess belief movement | -4.63\*10^-3 | 0.864 | 0.0708 | 0.695 |
| Absolute value of normalised excess belief movement | 0.0893 | 9.17\*10^-4 | 0.110 | 0.542 |
| Z-score for the null hypothesis that the beliefs are Bayesian | 0.346 | 0 | 0.241 | 0.176 |
| P-value for the null hypothesis that the beliefs are Bayesian | 0.0296 | 0.273 | -0.0269 | 0.882 |
| Normalised outcome | 0.102 | 1.60\*10^-4 | 0.112 | 0.535 |
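For readers who want to sanity-check how a correlation coefficient and a sample size map to a p-value, here is a hedged sketch. The exact method used in the Sheet is not stated here; the standard test converts r into a t-statistic with N - 2 degrees of freedom, and the code below swaps in a normal approximation to that test so it can stay within Python's standard library. The function name is hypothetical, and for small N (such as 33) the result will differ slightly from the table.

```python
import math


def approx_two_sided_p(r, n):
    """Approximate two-sided p-value for 'no correlation'.

    Uses t = r * sqrt((n - 2) / (1 - r^2)) and then treats t as
    standard normal (an approximation, reasonable for large n).
    """
    t = r * math.sqrt((n - 2) / (1 - r * r))
    return math.erfc(abs(t) / math.sqrt(2))


# Publish time, any category: r = -0.143, N = 1,374
print(approx_two_sided_p(-0.143, 1374))
# Publish time, artificial intelligence: r = 0.179, N = 33
print(approx_two_sided_p(0.179, 33))
```

The first value should land at the same order of magnitude as the 9.82\*10^-8 in the table, and the second near 0.3, which illustrates why correlations of similar size are highly significant for all questions but not for the much smaller artificial intelligence sample.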

Discussion
==========

Mean metrics
------------

The mean metrics vary a lot across categories. For example, the 5th and 95th percentiles of the mean normalised outcome are 0 and 0.669, and of the mean Brier score are 0.0367 and 0.300.

I computed mean normalised excess belief movements of -43.1 and -3.92 for all questions and those of the category of artificial intelligence, but these do not differ significantly from the Bayesian benchmark of 1, as the mean p-values are 0.425 and 0.413. So it is not possible to reject Bayesian updating for Metaculus’ community predictions during the 2nd half of the period during which each question was or has been open. To contextualise, Table III of [Augenblick 2021](https://academic.oup.com/qje/article-abstract/136/2/933/6127317?redirectedFrom%3Dfulltext) presents normalised excess belief movements pretty close to 1 (although the p-values for the null hypothesis of Bayesian updating are all lower than 0.001, so Bayesian updating is rejected there):

* 1.20 for “a large data set, provided by and explored previously in [Mellers (2014)](https://journals.sagepub.com/doi/pdf/10.1177/0956797614524255) and [Moore (2017)](https://pubsonline.informs.org/doi/abs/10.1287/mnsc.2016.2525), that tracks individual probabilistic beliefs over an extended period of time”.

* 0.931 for “predictions of a popular baseball statistics website called [Fangraphs](https://en.wikipedia.org/wiki/FanGraphs)”.

* 1.046 for “[Betfair](https://en.wikipedia.org/wiki/Betfair), a large British prediction market that matches individuals who wish to make opposing financial bets about a binary event”.

I estimated mean normalised outcomes of 0.365 and 0.381 for all questions and those of the category of artificial intelligence. If we assume these values apply to questions about both probabilities and expectations:

* The likelihood of a question about probabilities resolving as “yes” is 36.5 % for all questions, and 38.1 % for those of the category of artificial intelligence.

* The outcome of a question about expectations is expected to equal the allowed minimum plus 36.5 % of the distance between the allowed minimum and maximum for all questions, and 38.1 % for those of the category of artificial intelligence.

I got mean Brier scores of 0.151 and 0.230 for all questions and those of the category of artificial intelligence, which are 19.5 % higher and 2.86 % lower than the mean Brier scores of 0.126 and 0.237 shown in Metaculus’ track record [page](https://www.metaculus.com/questions/track-record/)[[6]](#fnaplmoo626z6). I believe the differences are explained by my results:

* Excluding group questions.

* Approximating the mean Brier score based on a set of dates which covers the whole lifetime of the question (in uniform time steps[[7]](#fnzol4hy6cuv)), but does not encompass all community predictions[[8]](#fngzqg5ardt3l).

I think the 1st of these considerations is much more important than the 2nd. The category of artificial intelligence does not include probabilistic group questions, so it is only affected by the 2nd consideration, and the discrepancy is much smaller than for all questions (2.86 % < 19.5 %).

In any case, according to Metaculus’ track record [page](https://www.metaculus.com/questions/track-record/), Metaculus’ community predictions for questions of the category of artificial intelligence perform close to randomly, as 0.237 is pretty close to 0.25. However, [Metaculus’ predictions](https://www.metaculus.com/help/faq/%23metaculus-prediction) and postdictions[[9]](#fnks4cyu0ax7k) for the same category perform considerably better, with mean Brier scores of 0.168 and 0.146. These are also lower than the mean Brier score of 0.232 achieved for predictions matching the mean outcome of 0.365[[10]](#fn4x5qilixcnx) for probabilistic questions of the category of artificial intelligence[[11]](#fnz5upe3xa7y). In addition, I should note Metaculus’ predictions for the category of [AI and machine learning](https://www.metaculus.com/questions/?search%3Dcat:comp-sci--ai-and-machinelearning) have a mean Brier score of 0.149 (< 0.168).
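The two baselines used in this paragraph (0.25 for random guessing and 0.232 for always predicting the mean outcome) follow from the same small formula. Below is a minimal sketch; the function name is hypothetical, and it assumes binary questions whose "yes" frequency equals the constant forecast.

```python
def constant_forecast_brier(p):
    """Expected Brier score when always predicting `p` for binary
    questions that resolve "yes" with frequency `p`.

    With probability p the outcome is 1 (error (1 - p)^2), and with
    probability 1 - p the outcome is 0 (error p^2). The sum
    simplifies to p * (1 - p).
    """
    return p * (1 - p) ** 2 + (1 - p) * p ** 2


print(round(constant_forecast_brier(0.5), 3))    # 0.25, always guessing 50 %
print(round(constant_forecast_brier(0.365), 3))  # 0.232, always guessing the mean outcome
```

Any forecaster with a mean Brier score well below these values is adding information beyond the base rate.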

In contrast, among all questions, the mean Brier score of Metaculus’ community predictions of 0.126 is similar to that of 0.120 for Metaculus’ predictions. So, overall, Metaculus’ community predictions perform roughly as well as Metaculus’ predictions, although there can be important differences between them within categories, as illustrated above for the category of artificial intelligence.

It would also be nice to see the mean accuracy of the predictions of questions about expectations, but I have not done that here.

Correlations among metrics
--------------------------

The 3 metrics which correlate most strongly with the Brier score are, listed by descending strength of the correlation (correlation coefficient; p-value):

* For all questions:

    + Z-score for the null hypothesis that the beliefs are Bayesian (0.346; 0), i.e. predictions are less accurate (higher Brier score) for questions whose predictions are more extreme under Bayesian updating.

    + Total belief movement (0.262; 0), i.e. predictions are less accurate for questions with a greater amount of updating. This is surprising, as one would expect predictions to converge to the truth as they are updated.

    + Total excess belief movement (0.256; 0), i.e. predictions are less accurate for questions with greater difference between amount of updating and uncertainty reduction.

* For the category of artificial intelligence:

    + Total excess belief movement (0.361; 0.0387), i.e. predictions are less accurate for questions with greater difference between amount of updating and uncertainty reduction.

    + Number of predictions (0.316; 0.0729), i.e. predictions are less accurate for questions with more predictions. Maybe more popular questions attract worse forecasters?

    + Total belief movement (0.282; 0.111), i.e. predictions are less accurate for questions with a greater amount of updating. This is surprising, but connected to the correlation with the number of predictions, since the community prediction moves each time a new prediction is made.

The correlations with the normalised excess belief movement are weak (correlation coefficients of -4.63\*10^-3 and 0.0708), and not statistically significant (p-values of 0.864 and 0.695). So it is not possible to reject the null hypothesis that there is no correlation between accuracy and Bayesian updating, and the correlation I obtained is quite weak anyway.

Comparing the correlations for all questions and those of the category of artificial intelligence shows one should not extrapolate the results from all questions to each of the categories. The signs of the correlations are different for 52.9 % (= 9/17) of the metrics, although some of those of the category of artificial intelligence are not statistically significant. I guess the same applies to other categories. Feel free to check the correlations among metrics for each of the categories in tab “Correlations among metrics within categories”, selecting the category in the drop-down at the top.
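The 9/17 figure can be reproduced directly from the correlation coefficients in the 3rd table. The sketch below hard-codes those coefficients (first value for all questions, second for artificial intelligence) and counts the metrics whose signs differ; the variable names are illustrative only.

```python
# (all questions, artificial intelligence) correlation with Brier score,
# copied from the 3rd table of the Results section.
correlations = {
    "Publish time": (-0.143, 0.179),
    "Close time": (-0.117, 0.172),
    "Resolve time": (-0.146, 0.184),
    "Time from publish to close": (0.0319, 7.82e-3),
    "Time from close to resolve": (-0.0193, 0.0341),
    "Time from publish to resolve": (0.0102, 0.0318),
    "Number of forecasters": (-0.0776, 0.0680),
    "Number of predictions": (-0.0366, 0.316),
    "Number of analysed dates": (-0.107, 0.198),
    "Total belief movement": (0.262, 0.282),
    "Total uncertainty reduction": (-0.136, -0.150),
    "Total excess belief movement": (0.256, 0.361),
    "Normalised excess belief movement": (-4.63e-3, 0.0708),
    "Absolute normalised excess belief movement": (0.0893, 0.110),
    "Z-score (Bayesian null)": (0.346, 0.241),
    "P-value (Bayesian null)": (0.0296, -0.0269),
    "Normalised outcome": (0.102, 0.112),
}

# A negative product means the two coefficients have opposite signs.
sign_flips = [name for name, (r_all, r_ai) in correlations.items() if r_all * r_ai < 0]

print(len(sign_flips), "of", len(correlations))                  # 9 of 17
print(round(100 * len(sign_flips) / len(correlations), 1), "%")  # 52.9 %
```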

Finally, correlations with accuracy for questions about expectations may differ from the ones I have discussed above for ones about probabilities.

My recommendation on how to use Metaculus
-----------------------------------------

If you want to know how much to trust a given prediction from Metaculus, I think it is sensible to check [Metaculus’ track record](https://www.metaculus.com/questions/track-record/) for similar past questions, filtering by:

* The type of prediction you are seeing, either Metaculus’ community prediction or Metaculus’ prediction.

* The categories to which that question belongs (often more than one). The relevant menus show up when you click on “Show Filter”.

* The type of question. If it is about:

    + Probabilities, select “Brier score” or “Log score (discrete)”. I think the latter is especially important if small differences in probabilities close to 0 or 1 matter for your purpose.

    + Expectations, select “Log score (continuous)”.

* The time which most closely matches your conditions. To do this, you can select “other time…” after clicking on the dropdown after “evaluated at”.

    + This is relevant because, even if the track record as evaluated at “all times” is good, it may not be so early in the question lifetime.

    + The “other time” can be defined as a fraction of the question lifetime, or time before resolution.

I am glad Metaculus has made available all these options, and I really appreciate the transparency!

1. **[^](#fnrefj6bz6cf2jy)** I define the normalised outcome such that it ranges from 0 to 1 for questions about expectations, such that its lower and upper bound match the possible outcomes for probabilities.

2. **[^](#fnrefiemyc829csk)** The Brier score does not apply to expectations.

3. **[^](#fnref4ztx3gz691)** All p-values of 0 I present here are actually positive, but are so small they were rounded to 0 in Sheets.

4. **[^](#fnrefwztdbn0o85)** The pages of Metaculus’ questions have the format “https://www.metaculus.com/questions/ID/”.

5. **[^](#fnref4kyfwk7siq5)** The running time is about 20 min.

6. **[^](#fnrefaplmoo626z6)** To see the 1st of these Brier scores, you have to select “Brier score”, for the “community prediction”, evaluated at “all times”. To see the 2nd, you have to additionally click on “Show filter”, and select “Artificial intelligence” below “Categories include”.

7. **[^](#fnrefzol4hy6cuv)** Metaculus considers all predictions, which are not uniformly distributed in time (unlike the ones I retrieved), and therefore have different weights in the mean Brier score.

8. **[^](#fnrefgzqg5ardt3l)** The mean number of analysed dates is 43.9 % (= 90.4/206) of the mean number of predictions.

9. **[^](#fnrefks4cyu0ax7k)** From [here](https://www.metaculus.com/questions/track-record/), Metaculus’ postdictions refer to “what our [Metaculus’] current algorithm would have predicted if it and its calibration data were available at the question's close”.

10. **[^](#fnref4x5qilixcnx)** Mean of column T of tab “Metrics by question” for the questions of the category of artificial intelligence with normalised outcome of 0 or 1.

11. **[^](#fnrefz5upe3xa7y)** 0.232 = 0.365\*(1 - 0.365)^2 + (1 - 0.365)\*(0.365)^2.

12. **[^](#fnrefcngw35pext)** Some p-values are so small that they were rounded to 0 in Sheets. |

dd47c457-5d60-4d64-be4b-ea34409a24a2 | trentmkelly/LessWrong-43k | LessWrong | D&D.Sci (Easy Mode): On The Construction Of Impossible Structures

This is a D&D.Sci scenario: a puzzle where players are given a dataset to analyze and an objective to pursue using information from that dataset.

Duke Arado’s obsession with physics-defying architecture has caused him to run into a small problem. His problem is not – he affirms – that his interest has in any way waned: the menagerie of fantastical buildings which dot his territories attest to this, and he treasures each new time-bending tower or non-Euclidean mansion as much as the first. Nor – he assuages – is it that he’s having trouble finding talent: while it’s true that no individual has ever managed to design more than one impossible structure, it’s also true that he scarcely goes a week without some architect arriving at his door, haunted by alien visions, begging for the resources to bring them into reality. And finally – he attests – his problem is definitely not that “his mad fixation on lunatic constructions is driving him to the brink of financial ruin”, as the townsfolk keep saying: he’ll have you know he’s recently brought an accountant in to look over his expenditures, and he’s confirmed he has the funds to keep pursuing this hobby long into his old age.

Rather, his problem is the local zoning board. Concerned citizens have come together to force him to limit new creations near populated areas, claiming they “disrupt the neighbourhood character” and “conjure eldritch music to lure our children away while we sleep”. While in previous years he was free to – and did – support any qualified architect who showed up with sufficiently strange blueprints, the Duke is now forced to be selective: at present, he has fourteen applicants waiting on his word, and only four viable building sites. He finds this particularly galling, since about half the time when an architect finishes their work, the resulting building ends up not distorting the fabric of spacetime, and instead just kind of looking weird. It’s entirely possible that if he picks at random, he’ll end |

f678edf4-a16a-4198-8bcd-6bf5b4b6e436 | trentmkelly/LessWrong-43k | LessWrong | Why don't organizations have a CREAMO?

That is, a Chief Risk Evaluation And Mitigation Officer.

A bad handle on risk seems to be pervasive even in good organizations, both for- and non-profit. The unfolding FTX debacle shows that even Rationality-inspired orgs suck at risk assessment as much as any other place. If you go through the list of failed, ailing and failing startups in the ratosphere, they don't seem to do any better than your average ones, in terms of evaluating risk and acting on it. One can imagine that they would be good at handling known unknowns, and it's the black swans that would be a real danger. And yet, orgs get blindsided by entirely predictable events (why did FTX or its donors learn nothing from the Mt. Gox fiasco?). I can see some reasons where accurate risk evaluation is not in the interests of top-level human mesa-optimizers within an organization, because the compensation incentives are so misaligned. I can also see how everyone would hate that person. Or maybe this role is distributed between other executive officers? Certainly some rudimentary risk evaluation is done at every level, from the board down, but it doesn't seem to be the main focus of anyone. Or maybe I am missing something here and this role is not useful in general. |

fe931c38-ee5b-4525-bf12-3bb9efe9dba9 | trentmkelly/LessWrong-43k | LessWrong | Supplementing memory with experience sampling

If you asked me how happy I've been, I'd think back over my recent life and synthesize my memories into a judgement. Since I'm the one experiencing my life you would think this would be accurate, but our memories aren't fair. For example, people who had their hand in 57°F water for 60 seconds rated the experience as less pleasant than people who had their hand in the same 57°F water for the same 60 seconds, followed by 30 seconds with the water slowly rising to 59°F. (Kahneman 1993, pdf) This is the peak-end rule: when we look back at an experience we don't really consider the duration and instead evaluate it based on how it was at its peak and how it ended.

This disagreement between emotion as it is experienced and emotion as it is remembered is called the memory-experience gap, and the peak-end rule is only one of the causes. The problem is, generally we only have access to memories of our emotion, which means if you're given the ice-water choice you'll repeatedly choose the option with more suffering. How can we get around this?

When psychologists want to get at experiential emotion they give people little timers. Every time the timer goes off the person writes down how happy/sad they are at that moment. This is an external sampling method that lets us use any sort of aggregation we would like, and it's fair in a way our internal methods are not. When I first read about this I thought "neat" and moved on, but recently I realized that with a computer in my pocket I could do this myself. After asking around I ended up with the TagTime Android app, which is the only way I've found to do this that (a) works without an internet connection and (b) has an equal probability of sampling at every moment.
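One standard way to get "an equal probability of sampling at every moment" is to draw the gaps between pings from an exponential distribution, which makes the ping schedule memoryless. The sketch below illustrates that idea only; it is not TagTime's actual code, and the function name and the 45-minute mean gap are arbitrary assumptions.

```python
import random


def ping_times(mean_gap_minutes, horizon_minutes, seed=None):
    """Return ping times (in minutes) over [0, horizon) for a
    memoryless schedule: gaps are exponential with the given mean,
    so every moment is equally likely to contain a ping.
    """
    rng = random.Random(seed)
    t, pings = 0.0, []
    while True:
        # expovariate takes the rate, i.e. 1 / mean gap.
        t += rng.expovariate(1.0 / mean_gap_minutes)
        if t >= horizon_minutes:
            return pings
        pings.append(t)


# One simulated day with pings every 45 minutes on average.
day = ping_times(mean_gap_minutes=45, horizon_minutes=24 * 60, seed=0)
print(len(day), "pings; first few at", [round(p) for p in day[:3]], "minutes")
```

A fixed-interval timer would be easier to build, but it would let you anticipate the next sample, which defeats the purpose of catching yourself at an unplanned moment.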

The response screen looks like:

You tap tags to say which ones currently apply. I have them sorted by frequency. To add new tags you turn the phone sideways and type text:

That's a little annoying, but most of the time I'm not entering a new tag.

I have tags |

12046e79-100b-4f0f-b233-28c109275bcb | trentmkelly/LessWrong-43k | LessWrong | Value/Utility: A History

[ I am writing this post because, while many people on LessWrong know something about the history of value and utility, it's vanishingly rare that someone is confident enough that they understand the whole thing, that they feel they can speak authoritatively about what these concepts 'really mean'. This often blocks important discussions. I had a faint memory suggesting that the canonical history is too short and understandable to really warrant this, so I looked up the whole thing, and as far as I can tell, that's true.

[One relevant Wikipedia section; I'm basically trying to write this up more tersely and straightforwardly and with less left up to assumed shared reader background.]

As a sometime hobbyist historian, I do wince publishing something so simplified. I know my picture of what the important events were would radically shift if I knew a little more. However, I think LessWrong working off this painfully simplified consensus would be a night-and-day improvement.

This post contains extensive quotes/cribbing from "Do Dice Play God?" by the mathematician Ian Stewart. IMO it's a great book on probability theory, although not mathematically sophisticated or ideologically Bayesian, because it gives the surprising historical/cultural motivations for a comprehensive breadth of "accepted procedures" in statistics usually taken at face value. [ Word of caution: Stewart conspicuously leaves out such figures as ET Jaynes, and Karl Friston [in his chapter about the brain as a Bayesian dynamical system!] suggesting he isn't aware of everything. ] ]

I. Cardano

The history books say: everybody was confused about chance until Girolamo Cardano, an Italian algebraist and gambler who wrote "Book on Games of Chance" in 1564 [ it was not published until 1663, long after he'd died ]. "At first sight [most Roman dice] look like cubes, but nine tenths of them have rectangular faces, not square ones. They lack [ . . . ] symmetry [ . . . ], so some numbers would have turned up m |

6fad2cb1-508b-4fc4-b6a7-25169e4e788c | awestover/filtering-for-misalignment | Redwood Research: Alek's Filtering Results | id: post3709

Previously, we defined a setting called "Delegative Inverse Reinforcement Learning" (DIRL) in which the agent can delegate actions to an "advisor" and the reward is only visible to the advisor as well. We proved a sublinear regret bound (converted to traditional normalization in online learning, the bound is $O(n^{2/3})$) for one-shot DIRL (as opposed to standard regret bounds in RL which are only applicable in the episodic setting). However, this required a rather strong assumption about the advisor: in particular, the advisor had to choose the optimal action with maximal likelihood. Here, we consider "Delegative Reinforcement Learning" (DRL), i.e. a similar setting in which the reward is directly observable by the agent. We also restrict our attention to finite MDP environments (we believe these results can be generalized to a much larger class of environments, but not to arbitrary environments). On the other hand, the assumption about the advisor is much weaker: the advisor is only required to avoid catastrophic actions (i.e. actions that lose value to zeroth order in the interest rate) and assign some positive probability to a nearly optimal action. As before, we prove a one-shot regret bound (in traditional normalization, $O(n^{3/4})$). Analogously to before, we allow for "corrupt" states in which both the advisor and the reward signal stop being reliable.

Appendix A contains the proofs and Appendix B contains propositions proved before.

Notation

The notation $K: X \xrightarrow{k} Y$ means $K$ is a Markov kernel from $X$ to $Y$. When $Y$ is a finite set, this is the same as a measurable function $K: X \to \Delta Y$, and we use these notations interchangeably. Given $K: X \xrightarrow{k} Y$ and $A \subseteq Y$ (corr. $y \in Y$), we will use the notation $K(A \mid x)$ (corr. $K(y \mid x)$) to stand for $\Pr_{y' \sim K(x)}[y' \in A]$ (corr. $\Pr_{y' \sim K(x)}[y' = y]$).

Given $Y$ a finite set, $\mu \in \Delta Y^\omega$, $h \in Y^*$ and $y \in Y$, the notation $\mu(y \mid h)$ means $\Pr_{x \sim \mu}[x_{|h|} = y \mid x_{:|h|} = h]$.
Given $\Omega$ a measurable space, $\mu \in \Delta\Omega$, $n, m \in \mathbb{N}$, $\{A_k\}_{k \in [n]}$, $\{B_j\}_{j \in [m]}$ finite sets and $\{X_k: \Omega \to A_k\}_{k \in [n]}$, $\{Y_j: \Omega \to B_j\}_{j \in [m]}$ random variables (measurable mappings), the mutual information between the joint distribution of the $X_k$ and the joint distribution of the $Y_j$ will be denoted

$$\mathrm{I}_{\omega \sim \mu}[X_0, X_1 \ldots X_{n-1}; Y_0, Y_1 \ldots Y_{m-1}]$$

We will parameterize our geometric time discount by $\gamma = e^{-1/t}$, thus all functions that were previously defined to depend on $t$ are now considered functions of $\gamma$.

Results

We start by explaining the relation between the formalism of general environments we used before and the formalism of finite MDPs.

Definition 1

A finite Markov Decision Process (MDP) is a tuple

$$M = \left(\mathcal{S}_M,\ \mathcal{A}_M,\ \mathcal{T}_M: \mathcal{S}_M \times \mathcal{A}_M \xrightarrow{k} \mathcal{S}_M,\ \mathcal{R}_M: \mathcal{S}_M \to [0,1]\right)$$

Here, $\mathcal{S}_M$ is a finite set (the set of states), $\mathcal{A}_M$ is a finite set (the set of actions), $\mathcal{T}_M$ is the transition kernel and $\mathcal{R}_M$ is the reward function.

A stationary policy for $M$ is any $\pi: \mathcal{S}_M \xrightarrow{k} \mathcal{A}_M$. The space of stationary policies is denoted $\Pi_M$. Given $\pi \in \Pi_M$, we define $\mathcal{T}_{M\pi}: \mathcal{S}_M \xrightarrow{k} \mathcal{S}_M$ by

$$\mathcal{T}_{M\pi}(t \mid s) := \sum_{a \in \mathcal{A}_M} \mathcal{T}_M(t \mid s, a)\, \pi(a \mid s)$$

We define $\mathcal{V}_M: \mathcal{S}_M \times (0,1) \to [0,1]$ and $\mathcal{Q}_M: \mathcal{S}_M \times \mathcal{A}_M \times (0,1) \to [0,1]$ by

$$\mathcal{V}_M(s, \gamma) := (1-\gamma) \max_{\pi \in \Pi_M} \sum_{n=0}^{\infty} \gamma^n\, \mathrm{E}_{\mathcal{T}^n_{M\pi}(s)}[\mathcal{R}_M]$$

$$\mathcal{Q}_M(s, a, \gamma) := (1-\gamma)\, \mathcal{R}_M(s) + \gamma\, \mathrm{E}_{t \sim \mathcal{T}_M(s,a)}[\mathcal{V}_M(t, \gamma)]$$

Here, $\mathcal{T}^n_{M\pi}: \mathcal{S}_M \xrightarrow{k} \mathcal{S}_M$ is just the $n$-th power of $\mathcal{T}_{M\pi}$ in the sense of Markov kernel composition.

As is well known, $\mathcal{V}_M$ and $\mathcal{Q}_M$ are rational functions in $\gamma$ for $1 - \gamma \ll 1$, therefore in this limit we have the Taylor expansions

$$\mathcal{V}_M(s, \gamma) = \sum_{k=0}^{\infty} \frac{1}{k!}\, \mathcal{V}^k_M(s) \cdot (1-\gamma)^k$$

$$\mathcal{Q}_M(s, a, \gamma) = \sum_{k=0}^{\infty} \frac{1}{k!}\, \mathcal{Q}^k_M(s, a) \cdot (1-\gamma)^k$$

Given any $s \in \mathcal{S}_M$, we define $\{\mathcal{A}^k_M(s) \subseteq \mathcal{A}_M\}_{k \in \mathbb{N}}$ recursively by

$$\mathcal{A}^0_M(s) := \underset{a \in \mathcal{A}_M}{\operatorname{arg\,max}}\ \mathcal{Q}^0_M(s, a)$$

$$\mathcal{A}^{k+1}_M(s) := \underset{a \in \mathcal{A}^k_M(s)}{\operatorname{arg\,max}}\ \mathcal{Q}^{k+1}_M(s, a)$$

All MDPs will be assumed to be finite, so we drop the adjective "finite" from now on.

Definition 2

Let $\mathcal{I} = (\mathcal{A}, \mathcal{O})$ be an interface. An $\mathcal{I}$-universe $\upsilon = (\mu, r)$ is said to be an $\mathcal{O}$-realization of MDP $M$ with state function $S: \operatorname{hdom} \mu \to \mathcal{S}_M$ when $\mathcal{A}_M = \mathcal{A}$ and for any $h \in \operatorname{hdom} \mu$, $a \in \mathcal{A}$ and $o \in \mathcal{O}$

$$\mathcal{T}_M(s \mid S(h), a) = \Pr_{o \sim \mu(ha)}[S(hao) = s]$$

$$r(h) = \mathcal{R}_M(S(h))$$

We now define the relevant notion of a "good advisor."

Definition 3

Let $\upsilon = (\mu, r)$ be a universe and $\epsilon > 0$. A policy $\pi$ is said to be $\epsilon$-sane for $\upsilon$ when there are $M$, $S$ s.t. $\upsilon$ is an $\mathcal{O}$-realization of $M$ with state function $S$ and for any $h \in \operatorname{hdom} \mu$

i. $\operatorname{supp} \pi(h) \subseteq \mathcal{A}^0_M(S(h))$

ii. $\exists a \in \mathcal{A}^1_M(S(h)): \pi(a \mid h) > \epsilon$

We can now formulate the regret bound.

Theorem 1

Fix an interface $\mathcal{I}$ and $\epsilon > 0$. Consider $\mathcal{H} = \{\upsilon_k = (\mu_k, r) \in \Upsilon_{\mathcal{I}}\}_{k \in [N]}$ for some $N \in \mathbb{N}$ (note that $r$ doesn't depend on $k$). Assume that for each $k \in [N]$, $\sigma_k$ is an $\epsilon$-sane policy for $\upsilon_k$. Then, there is an $\bar{\mathcal{I}}$-metapolicy $\pi^*$ s.t. for any $k \in [N]$

$$\mathrm{EU}^*_{\upsilon_k}(\gamma) - \mathrm{EU}^{\pi^*}_{\bar{\upsilon}_k[\sigma_k]}(\gamma) = O\left((1-\gamma)^{1/4}\right)$$

Corollary 1

Fix an interface $\mathcal{I}$ and $\epsilon > 0$. Consider $\mathcal{H} = \{\upsilon_k = (\mu_k, r) \in \Upsilon_{\mathcal{I}}\}_{k \in \mathbb{N}}$. Assume that for each $k \in \mathbb{N}$, $\sigma_k$ is an $\epsilon$-sane policy for $\upsilon_k$. Define $\bar{\mathcal{H}} := \{\bar{\upsilon}_k[\sigma_k]\}_{k \in \mathbb{N}}$. Then, $\bar{\mathcal{H}}$ is learnable.

Now, we deal with corrupt states.

Definition 4

Let $\upsilon = (\mu, r)$ be a universe and $\epsilon > 0$. A policy $\pi$ is said to be locally $\epsilon$-sane for $\upsilon$ when there are $M$, $S$ and $U \subseteq \mathcal{S}_M$ (the set of uncorrupt states) s.t. $\upsilon$ is an $\mathcal{O}$-realization of $M$ with state function $S$, $S(\lambda) \in U$ and for any $h \in \operatorname{hdom} \mu$, if $S(h) \in U$ then

i. If $a \in \operatorname{supp} \pi(h)$ and $o \in \operatorname{supp} \mu(ha)$ then $S(hao) \in U$.

ii. $\operatorname{supp} \pi(h) \subseteq \mathcal{A}^0_M(S(h))$

iii. $\exists a \in \mathcal{A}^1_M(S(h)): \pi(a \mid h) > \epsilon$

iv. $\exists a \in \mathcal{A}^2_M(S(h)): \mathcal{T}_M(U \mid S(h), a) = 1$

Of course, this requirement is still unrealistic for humans in the real world. In particular, it makes the formalism unsuitable for modeling the use of AI for catastrophe mitigation (which is ultimately what we are interested in!) since it assumes the advisor is already capable of avoiding any catastrophe. In following work, we plan to relax the assumptions further.

Corollary 2

Fix an interface $\mathcal{I}$ and $\epsilon > 0$. Consider $\mathcal{H} = \{\upsilon_k = (\mu_k, r_k) \in \Upsilon_{\mathcal{I}}\}_{k \in [N]}$ for some $N \in \mathbb{N}$. Assume that for each $k \in [N]$, $\sigma_k$ is locally $\epsilon$-sane for $\upsilon_k$. For each $k \in [N]$, let $U_k \subseteq \mathcal{S}_{M_k}$ be the corresponding set of uncorrupt states. Assume further that for any $k, j \in [N]$ and $h \in \operatorname{hdom} \mu_k \cap \operatorname{hdom} \mu_j$, if $S_k(h) \in U_k$ and $S_j(h) \in U_j$, then $r_k(h) = r_j(h)$. Then, there is an $\bar{\mathcal{I}}$-policy $\pi^*$ s.t. for any $k \in [N]$

$$\mathrm{EU}^*_{\upsilon_k}(\gamma) - \mathrm{EU}^{\pi^*}_{\bar{\upsilon}_k[\sigma_k]}(\gamma) = O\left((1-\gamma)^{1/4}\right)$$

Corollary 3

Assume the same conditions as in Corollary 2, except that $\mathcal{H}$ may be countably infinite. Then, $\bar{\mathcal{H}}$ is learnable.

Appendix A

First, we prove an information theoretic bound that shows that for Thompson sampling, the expected information gain is bounded below by a function of the loss.

Proposition A.1

Consider a probability space $(\Omega, P \in \Delta\Omega)$, $N \in \mathbb{N}$, $R \subseteq [0,1]$ a finite set and random variables $U: \Omega \to R$, $K: \Omega \to [N]$ and $J: \Omega \to [N]$. Assume that $K_* P = J_* P = \zeta \in \Delta[N]$ and $\mathrm{I}[K; J] = 0$. Then

$$\mathrm{I}[K; J, U] \geq 2 \left(\min_{i \in [N]} \zeta(i)\right) \left(\mathrm{E}[U \mid J = K] - \mathrm{E}[U]\right)^2$$

Proof of Proposition A.1

We have

$$\mathrm{I}[K; J, U] = \mathrm{I}[K; J] + \mathrm{I}[K; U \mid J] = \mathrm{I}[K; U \mid J] = \mathrm{E}\left[D_{\mathrm{KL}}\left(U_*(P \mid K, J) \,\|\, U_*(P \mid J)\right)\right]$$

Using Pinsker's inequality, we get

$$\mathrm{I}[K; J, U] \geq 2\, \mathrm{E}\left[d_{\mathrm{tv}}\left(U_*(P \mid K, J),\ U_*(P \mid J)\right)^2\right] \geq 2\, \mathrm{E}\left[\left(\mathrm{E}[U \mid K, J] - \mathrm{E}[U \mid J]\right)^2\right]$$

Denote $U_{kj} := \mathrm{E}[U \mid K = k, J = j]$.
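We get

$$\mathrm{I}[K; J, U] \geq 2\, \mathrm{E}_{(k,j) \sim \zeta \times \zeta}\left[\left(U_{kj} - \mathrm{E}_{k' \sim \zeta}[U_{k'j}]\right)^2\right] \geq 2\, \mathrm{E}_{(k,j) \sim \zeta \times \zeta}\left[[[k = j]] \left(U_{kj} - \mathrm{E}_{k' \sim \zeta}[U_{k'j}]\right)^2\right]$$

$$\mathrm{I}[K; J, U] \geq 2\, \mathrm{E}_{j \sim \zeta}\left[\zeta(j) \left(U_{jj} - \mathrm{E}_{k \sim \zeta}[U_{kj}]\right)^2\right] \geq 2 \left(\min_{j \in [N]} \zeta(j)\right) \mathrm{E}_{j \sim \zeta}\left[\left(U_{jj} - \mathrm{E}_{k \sim \zeta}[U_{kj}]\right)^2\right]$$

$$\mathrm{I}[K; J, U] \geq 2 \left(\min_{j \in [N]} \zeta(j)\right) \left(\mathrm{E}_{j \sim \zeta}[U_{jj}] - \mathrm{E}_{(k,j) \sim \zeta \times \zeta}[U_{kj}]\right)^2 = 2 \left(\min_{i \in [N]} \zeta(i)\right) \left(\mathrm{E}[U \mid J = K] - \mathrm{E}[U]\right)^2$$

Now, we describe a "delegation routine" $D$ that can transform any "proto-policy" $\pi$ that recommends some set of actions from $\mathcal{A}$ into an actual $\bar{\mathcal{I}}$-policy s.t. (i) with high probability, on each round, either a "safe" recommended action is taken, or all recommended actions are "unsafe" or delegation is performed and (ii) the expected number of delegations is small. For technical reasons, we also need the modified routines $D^{!k}$ which behave the same way as $D$ except for some low probability cases.

Proposition A.2

Fix an interface $\mathcal{I} = (\mathcal{A}, \mathcal{O})$, $N \in \mathbb{N}$, $\epsilon \in \left(0, \frac{1}{|\mathcal{A}|}\right)$, $\delta \in \left(0, \frac{1}{N}\right)$. Consider some $\{\sigma_k: (\mathcal{A} \times \mathcal{O})^* \xrightarrow{k} \mathcal{A}\}_{k \in [N]}$. Then, there exist $D: \overline{\mathcal{A} \times \mathcal{O}}^* \times 2^{\mathcal{A}} \to \bar{\mathcal{A}}$ and $\{D^{!k}: \overline{\mathcal{A} \times \mathcal{O}}^* \times 2^{\mathcal{A}} \to \bar{\mathcal{A}}\}_{k \in [N]}$ with the following properties.

Given $x \in \left(2^{\mathcal{A}} \times \overline{\mathcal{A} \times \mathcal{O}}\right)^*$, we denote $\underline{x}$ its projection to $\overline{\mathcal{A} \times \mathcal{O}}^*$. Thus, $\underline{\underline{x}} \in (\mathcal{A} \times \mathcal{O})^*$.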

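Given $\mu$ an $\mathcal{I}$-environment, $\pi: \operatorname{hdom} \mu \xrightarrow{k} 2^{\mathcal{A}}$, $D': \overline{\mathcal{A} \times \mathcal{O}}^* \times 2^{\mathcal{A}} \to \bar{\mathcal{A}}$ and $k \in [N]$, we can define $\Xi[\mu, \sigma_k, D', \pi] \in \Delta\left(2^{\mathcal{A}} \times \overline{\mathcal{A} \times \mathcal{O}}\right)^\omega$ as follows

$$\Xi[\mu, \sigma_k, D', \pi](B, a, o \mid x) := \pi(B \mid \underline{\underline{x}})\, D'(a \mid \underline{x}, B)\, \bar{\mu}[\sigma_k](o \mid \underline{x} a)$$

We require that for every $\pi$, $\mu$ and $k$ as above, the following conditions hold

i. $$\mathrm{E}_{x \sim \Xi[\mu, \sigma_k, D^{!k}, \pi]}\left[\left|\{n \in \mathbb{N} \mid x_n \in 2^{\mathcal{A}} \times \bot \times \bar{\mathcal{O}}\}\right|\right] \leq \frac{\ln N}{\delta \ln\left(1 + \epsilon (1-\epsilon)^{(1-\epsilon)/\epsilon}\right)} = O\left(\frac{\ln N}{\delta \epsilon}\right)$$

ii. $$d_{\mathrm{tv}}\left(\frac{1}{N} \sum_{j=0}^{N-1} \Xi[\mu, \sigma_j, D, \pi],\ \frac{1}{N} \sum_{j=0}^{N-1} \Xi[\mu, \sigma_j, D^{!j}, \pi]\right) \leq (N-1)\delta$$

iii. For all $x \in \operatorname{hdom} \bar{\mu}[\sigma_k]$, if $D^{!k}(x, \pi(\underline{x})) \neq \bot$ then $\sigma_k\left(D^{!k}(x, \pi(\underline{x})) \mid \underline{x}\right) > 0$

iv. For all $x \in \operatorname{hdom} \bar{\mu}[\sigma_k]$, if $D^{!k}(x, \pi(\underline{x})) \notin \pi(\underline{x}) \cup \{\bot\}$ then $\forall a \in \pi(\underline{x}): \sigma_k(a \mid \underline{x}) \leq \epsilon$

In order to prove Proposition A.2, we need another mutual information bound.

Proposition A.3

Consider $N \in \mathbb{N}$, $\mathcal{A}$ a finite set, $\epsilon \in \left(0, \frac{1}{|\mathcal{A}|}\right)$, $\delta \in (0,1)$, $B \subseteq \mathcal{A}$, $\zeta \in \Delta[N]$ and $\sigma: [N] \xrightarrow{k} \mathcal{A}$. Suppose that for every $a \in \mathcal{A}$

$$\Pr_{k \sim \zeta}\left[\sigma(a \mid k) > 0 \wedge \left(a \in B \vee \forall b \in B: \sigma(b \mid k) \leq \epsilon\right)\right] \leq 1 - \delta$$

Then

$$\mathrm{I}_{(k,a) \sim \zeta \ltimes \sigma}[k; a] \geq \delta \ln\left(1 + \epsilon (1-\epsilon)^{(1-\epsilon)/\epsilon}\right)$$

Proof of Proposition A.3

We have

$$\mathrm{I}_{(k,a) \sim \zeta \ltimes \sigma}[k; a] = \mathrm{E}_{k \sim \zeta}\left[D_{\mathrm{KL}}\left(\sigma(k) \,\|\, \sigma_* \zeta\right)\right]$$

Define $q \in (0,1)$ by

$$q := \frac{1}{\epsilon + (1-\epsilon)^{-(1-\epsilon)/\epsilon}}$$

Let $a^* \in \mathcal{A}$ be s.t. $(\sigma_* \zeta)(a^*) > q\epsilon$ and either $a^* \in B$ or every $a \in B$ has $(\sigma_* \zeta)(a) \leq q\epsilon$. For every $k \in [N]$, denote

$$A_k := \{a \in \mathcal{A} \mid \sigma(a \mid k) > 0 \wedge (a \in B \vee \forall b \in B: \sigma(b \mid k) \leq \epsilon)\}$$

If $a^* \notin A_k$ then either $\sigma(a^* \mid k) = 0$ or there is $a \in B$ s.t. $(\sigma_* \zeta)(a) \leq q\epsilon$ and $\sigma(a \mid k) \geq \epsilon$.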
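This implies

$$D_{\mathrm{KL}}(\sigma(k) \,\|\, \sigma_* \zeta) \geq \min\left(D_{\mathrm{KL}}(0 \,\|\, q\epsilon),\ D_{\mathrm{KL}}(\epsilon \,\|\, q\epsilon)\right)$$

We have

$$D_{\mathrm{KL}}(0 \,\|\, q\epsilon) = \ln \frac{1}{1 - q\epsilon} = \ln \frac{1}{1 - \frac{\epsilon}{\epsilon + (1-\epsilon)^{-(1-\epsilon)/\epsilon}}} = \ln \frac{\epsilon + (1-\epsilon)^{-(1-\epsilon)/\epsilon}}{\epsilon + (1-\epsilon)^{-(1-\epsilon)/\epsilon} - \epsilon} = \ln\left(1 + \epsilon (1-\epsilon)^{(1-\epsilon)/\epsilon}\right)$$

$$D_{\mathrm{KL}}(\epsilon \,\|\, q\epsilon) = \epsilon \ln \frac{\epsilon}{q\epsilon} + (1-\epsilon) \ln \frac{1-\epsilon}{1 - q\epsilon} = \epsilon \ln \frac{1}{q} + (1-\epsilon) \ln(1-\epsilon) - \epsilon \ln \frac{1}{1 - q\epsilon} + \ln \frac{1}{1 - q\epsilon}$$

$$D_{\mathrm{KL}}(\epsilon \,\|\, q\epsilon) = \epsilon \ln \frac{1 - q\epsilon}{q} + \ln (1-\epsilon)^{1-\epsilon} + \ln \frac{1}{1 - q\epsilon} = \epsilon \ln\left(\frac{1}{q} - \epsilon\right) + \ln (1-\epsilon)^{1-\epsilon} + \ln \frac{1}{1 - q\epsilon}$$

$$D_{\mathrm{KL}}(\epsilon \,\|\, q\epsilon) = \epsilon \ln (1-\epsilon)^{-(1-\epsilon)/\epsilon} + \ln (1-\epsilon)^{1-\epsilon} + \ln \frac{1}{1 - q\epsilon} = \ln (1-\epsilon)^{-(1-\epsilon)} + \ln (1-\epsilon)^{1-\epsilon} + \ln \frac{1}{1 - q\epsilon} = \ln \frac{1}{1 - q\epsilon}$$

$$D_{\mathrm{KL}}(\epsilon \,\|\, q\epsilon) = \ln\left(1 + \epsilon (1-\epsilon)^{(1-\epsilon)/\epsilon}\right)$$

It follows that

$$\mathrm{I}_{(k,a) \sim \zeta \ltimes \sigma}[k; a] \geq \Pr_{k \sim \zeta}[a^* \notin A_k] \ln\left(1 + \epsilon (1-\epsilon)^{(1-\epsilon)/\epsilon}\right) \geq \delta \ln\left(1 + \epsilon (1-\epsilon)^{(1-\epsilon)/\epsilon}\right)$$

Proof of Proposition A.2

We define $\{A_k: \overline{\mathcal{A} \times \mathcal{O}}^* \times 2^{\mathcal{A}} \to 2^{\mathcal{A}}\}_{k \in [N]}$, $\tilde{\zeta}: \overline{\mathcal{A} \times \mathcal{O}}^* \xrightarrow{k} [N]$, $\zeta: \overline{\mathcal{A} \times \mathcal{O}}^* \xrightarrow{k} [N]$, $\{\tilde{\zeta}^{!k}: \overline{\mathcal{A} \times \mathcal{O}}^* \xrightarrow{k} [N]\}_{k \in [N]}$, $\{\zeta^{!k}: \overline{\mathcal{A} \times \mathcal{O}}^* \xrightarrow{k} [N]\}_{k \in [N]}$, $D$ and $\{D^{!k}\}_{k \in [N]}$ recursively. For each $h \in \overline{\mathcal{A} \times \mathcal{O}}^*$, $a \in \bar{\mathcal{A}}$, $o \in \bar{\mathcal{O}}$, $B \subseteq \mathcal{A}$ and $j, k \in [N]$, we require

$$A_k(h, B) := \{a \in \mathcal{A} \mid \sigma_k(a \mid \underline{h}) > 0 \wedge (a \in B \vee \forall b \in B: \sigma_k(b \mid \underline{h}) \leq \epsilon)\}$$

$$\tilde{\zeta}(k \mid \lambda) = \tilde{\zeta}^{!j}(k \mid \lambda) := \frac{1}{N}$$

~ ζ ( k ∣ h a o ) : = ⎧ ⎪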

⎪

⎪ ⎨ ⎪

⎪