id stringlengths 36 36 | source stringclasses 15 values | formatted_source stringclasses 13 values | text stringlengths 2 7.55M |

|---|---|---|---|

3859bf34-acfd-42fc-9a80-ccb5d584e18a | trentmkelly/LessWrong-43k | LessWrong | On the Nature of Reputation

Abstract: Reputation tokens (e.g. brands, but a lot of other things as well) are vessels to store reputation. They are free to create but expensive to fill with trust. Consumers use them to deal with information overload. Producers use them to manipulate common knowledge. Also, a certain kind of supply-demand-style equilibrium exists.

Trademarks

Speaking about reputation, one risks getting all hand-wavy and disconnected from the real world.

To keep the discussion down-to-earth, let's start with the trademark law. The trademark law was, after all, created specifically to deal with reputation. And being a law, it is not a theoretical model but a living organism evolved to solve actual real-world problems.

You may have a vague idea that a trademark is something like an Internet domain name. You can buy a name and then it's yours. It's your property and everyone else should get off your lawn.

But the trademark law is not like that at all.

First of all, you don't create a trademark by buying it. Rather, a trademark is created as a by-product of using a name. You establish a company. You start producing stuff. Maybe you do a little advertising. People start to recognize your brand. Et voilà, you own a legally recognized trademark. No explicit action on your part is needed.

Similarly, if you stop using the name, your claim to it gradually dissipates. If you claim a trademark and your opponent is able to prove that you haven't used it for decades, the court will rule against you.

There is also the concept of "trademark goodwill" which loosely translates to "reputation". Interestingly, the notion of trademark goodwill tends to be phrased in economic terms. Namely, it is the part of the value of the company that is gained through owning the trademark.

Another common misconception about trademarks is that the names are, like Internet domain names, global.

In reality, the scope of a trademark is limited to the area of one's activity. Apple, the grocery store do |

2dbc1169-3494-4a71-8f99-efd6d47e7094 | trentmkelly/LessWrong-43k | LessWrong | Bakeoff

For most of the past week Lily has been trying to persuade us to have a cooking competition. Her initial idea involved kids cooking unassisted, but after talking through ways I saw this going poorly she decided she was ok with teams. This morning she said it was time, and declared it would be Lily + Jeff vs Anna + Julia.

Unfortunately, Anna was only interested in being a judge. After extended discussion Lily determined that we would be a team and Julia would compete as an individual. Luckily for Lily, when Julia woke up she was up for participating.

Lily started by making a list of the allowed ingredients:

Since we only have one non-vegan oven we weren't going to have things ready at the same time, and Julia wanted to eat hers for breakfast anyway; we decided she'd have the oven first. She was enough faster that she was done with the oven before Lily and I were even close to ready to put ours in.

Julia made chocolate popovers:

Lily and I made a sponge cake with chocolate buttercream frosting:

The cake was a clear judge's favorite:

Lily: does it win?

Anna: yes!

Comment via: facebook |

947046e5-cf58-4f3c-ad83-88474dd9ec6b | trentmkelly/LessWrong-43k | LessWrong | A whirlwind tour of Ethereum finance

As a hacker and cryptocurrency liker, I have been hearing for a while about "DeFi" stuff going on in Ethereum without really knowing what it was. I own a bunch of ETH, so I finally decided that enough was enough and spent a few evenings figuring out what was going on. To my pleasant surprise, a lot of it was fascinating, and I thought I would share it with LW in the hopes that other people will be interested too and share their thoughts.

Throughout this post I will assume that the reader has a basic mental model of how Ethereum works. If you don't, you might find this intro & reference useful.

Why should I care about this?

For one thing, it's the coolest, most cypherpunk thing going. Remember how back in 2012, everyone knew that Bitcoin existed, but it was a pain in the ass to use and it kind of felt weird and risky? It feels exactly like that using all this stuff. It's loads of fun.

For another thing, the economic mechanism design stuff is really fun to think about, and in many cases nobody knows the right answer yet. It's a chance for random bystanders to hang out with problems on the edge of human understanding, because nobody cared about these problems before there was so much money floating around in them.

For a third thing, you can maybe make some money. Specifically, if you have spare time, a fair bit of cash, appetite for risk, conscientiousness, some programming and finance knowledge, and you are capable of and interested in understanding how these systems work, I think it's safe to say that you have a huge edge, and you should be able to find places to extract value.

General overview

In broad strokes, people are trying to reinvent all of the stuff from typical regulated finance in trustless, decentralized ways (thus "DeFi".) That includes:

* Making anything that has value into a transferable asset, typically on Ethereum, and typically an ERC-20 token. A token is an interoperable currency that keeps track of people's balances and lets people transf |

dbc914fb-299d-4617-9058-8109eefd3d21 | trentmkelly/LessWrong-43k | LessWrong | Exercise isn't necessarily good for people

I would appreciate it very much if anyone would take a close look at this-- it looks sound to me, but it also appeals to my prejudices.

http://www.youtube.com/watch?feature=player_embedded&v=E42TQNWhW3w#!

My comments are in square brackets. Everything else is my notes on the Jamie Timmons lecture from the video.

Short version: 12% of people become less healthy from exercise. 20% of people get nothing from exercise. This is a matter of genetics, not doing exercise wrong.

****

Ask a hundred people about exercise, you'll get a wide range of answers about what exercise is and what good it might do for health, and the same for health professionals.

You need to focus on the evidence that exercise affects particular health outcomes. Weight and health are not strongly correlated. BMI is problematic.

There's a recommendation for 150 minutes of exercise/week, but this isn't sound. People who *report* being active have better health. People who are fitter have better health. These are not evidence that having a person with low activity take up exercise will make them healthier.

Nothing but a supervised intervention study is good enough.

Improved lifestyle is better than Metformin for preventing diabetes. (Studies) Exercise + diet modification has a powerful effect of preventing and slowing the progression of Type II diabetes. People with Type II have more cardiovascular disease (heart attacks and strokes). However, it doesn't follow that the lifestyle changes which help with Type II will also help with CVD. [I'm surprised]

Diabetes doesn't kill, CVD does, and a major motivation for the NHS to care is that CVD is expensive.

[9:45] Two studies which find that lifestyle intervention has no effect on CVD in diabetics. [11:00] One study which found that lifestyle intervention prevents Type II but doesn't affect microvascular disease (blindness and ulcers). [I'm not sure what this means. Maybe people can have the ill effects of Type II without the disease showing up in th |

4a28e718-4af1-40e5-a964-ba1ead96656d | trentmkelly/LessWrong-43k | LessWrong | Meetup : Washington, D.C.: Tetlock's "Expert Political Judgment"

Discussion article for the meetup : Washington, D.C.: Tetlock's "Expert Political Judgment"

WHEN: 14 December 2014 03:00:00PM (-0500)

WHERE: National Portrait Gallery

We will be meeting in the Kogod Courtyard of the National Portrait Gallery (8th and F Sts or 8th and G Sts NW, go straight past the information desk from either entrance) to talk about Philip E. Tetlock's book Expert Political Judgment: How Good Is It? How Can We Know?. Per the norm, we plan to let people congregate from 3:00 to 3:30 before kicking things off.

As with prior informal-discussion meetups, conversation on any subject of interest to attendees (be it in the main conversation or in a side conversation) is both permitted and encouraged, but we suggest taking advantage of the meetup topic as a Schelling point.

Upcoming Meetups:

The Less Wrong DC organizers haven't decided whether to hold meetups on the remaining two Sundays in 2014: the 21st is the day after Brighter Than Today, a secular winter solstice celebration that many regulars will be out of town for, and the 28th falls during the week after Christmas Day, an ostensibly-religious winter solstice celebration that many regulars may be out of town (or hosting out-of-town guests) for. If there is sufficient interest in attending a meetup on either or both of these dates, meetups will occur; otherwise the Fun & Games meetup will be postponed to January 4th.

Discussion article for the meetup : Washington, D.C.: Tetlock's "Expert Political Judgment" |

b1d0f956-917b-4317-acf7-3fc0822a62c0 | trentmkelly/LessWrong-43k | LessWrong | A boltzmann brain question.

I have a question on Boltzmann brains which I'd like to hear your opinions on - mostly because I don't know the answer to this....

First of all - a Boltzmann brain is the idea that - in a sufficiently large and long-lived universe, a brain just like mine or yours will occasionally pop into existence by sheer fluke. It doesn't happen very often - in fact it happens very, very infrequently. The brain will go on to have an experience or two before ceasing to exist on the grounds that it's surrounded by vacuum and cold - which is not a very good place for a disembodied brain to be.

Such a brain would have a very short life. Well, by a greater fluke, some of them might last for a longer time, but the balance of probabilities is that most Boltzmann brains that think they had a long life merely had a lot of false memories of this life, planted by the fluke of their sudden pop into existence. And in their few seconds of life, they never got to realise that they didn't actually live that life, and their memories make no sense.

Well, Boltzmann brains don't pop into existence by fluke very often - in the whole life of the observable universe it's overwhelmingly likely that it's never happened.

What might be more likely to happen? Well, you could have half a Boltzmann brain instead, and by sheer fluke, have the nerves leading from that half-brain stimulated as if the other half was there during the few seconds of the half-brain's life. This is still extremely unlikely, but tremendously more likely than having a whole Boltzmann brain appear. And the half-brain still thinks it has the same experience as before.

There is of course nothing to stop us from continuing this. Suppose we have a one-quarter brain? Much more probable. One millionth? Even more probable. Maybe even single elements of a nerve cell? More probable still. The smaller the piece is, the less of a fluke you need for it to come into existence, and the less of a fluke you need to continue to supply all the same |

47642836-d28b-4e3f-bcc5-fa827584d774 | trentmkelly/LessWrong-43k | LessWrong | Difference between CDT and ADT/UDT as constant programs

After some thinking, I came upon an idea of how to define the difference between CDT and UDT within the constant programs framework. I would post it as a comment, but it is rather long...

The idea is to separate the cognitive part of an agent into three separate modules:

1. Simulator: given the code for a parameterless function X(), the Simulator tries to evaluate it, spending L computation steps. The result is either proving that X()=x for some value x, or leaving X() unknown.

2. Correlator: given the code for two functions X(...) and Y(...), the Correlator checks for proofs (of length up to P) of structural similarity between the source codes of the functions, trying to prove correlations X(...)=Y(...).

[Note: the Simulator and the Correlator can use the results of each other, so that:

If simulator proves that A()=x, then correlator can prove that A()+B() = x+B()

If correlator proves that A()=B(), then simulator can skip simulation when proving that (A()==B() ? 1 : 2) = 1]

3. Executive: allocates tasks and resources to Simulator and Correlator in some systematic manner, trying to get them to prove the "moral arguments"

Self()=x => U()=u

or

( Self()=x => U()=ux AND Self()=y => U()=uy ) => ux > uy,

and returns the best found action.

Now, CDT can be defined as an agent with the Simulator, but without the Correlator. Then, no matter what L it is given, the Simulator won't be able to prove that Self()=Self(), because of the infinite regress. So the agent will be opaque to itself, and will two-box on Newcomb's problem and defect against itself in Prisoner's Dilemma.
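The infinite regress can be illustrated with a toy sketch. This is only a rough model under my own assumptions, not the formal proof-search setting of the post: the names `Simulator`, `UNKNOWN`, `constant_program` and `self_simulating_agent` are hypothetical, and "one step" is simplified to "one simulated call".

```python
UNKNOWN = object()  # sentinel: the Simulator could not establish a value


class OutOfSteps(Exception):
    """Raised when the step budget L is exhausted."""


class Simulator:
    """Toy Simulator: evaluates parameterless programs within a step budget."""

    def __init__(self, max_steps):
        self.steps_left = max_steps

    def call(self, program):
        # Every simulated call costs one step (a simplifying assumption).
        if self.steps_left == 0:
            raise OutOfSteps()
        self.steps_left -= 1
        return program(self)

    def evaluate(self, program):
        # Either establish program() = x, or leave it unknown.
        try:
            return self.call(program)
        except OutOfSteps:
            return UNKNOWN


def constant_program(sim):
    # An ordinary program: simulation terminates well within the budget.
    return 42


def self_simulating_agent(sim):
    # An agent with no Correlator can only learn its own output by
    # simulating itself, which simulates itself, and so on.
    return sim.call(self_simulating_agent)


for budget in (1, 10, 100):
    assert Simulator(budget).evaluate(constant_program) == 42
    assert Simulator(budget).evaluate(self_simulating_agent) is UNKNOWN
```

Raising the budget does not help the self-simulating agent: each extra step is spent on one more level of nesting. A Correlator would sidestep this by noticing that the two source codes are identical, without running either.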

The UDT/ADT, on the other hand, have functioning Correlators.

If it is possible to explicitly (rather than conceptually) separate an agent into the three parts, then it appears to be possible to demonstrate the good behavior of an agent in the ASP problem. The world can be written as a Newcomb-like function:

def U():

box2 = 1000

box1 = (P()==1 ? 1000000 |

548d1616-94a4-4fe8-8178-c63779a989c9 | StampyAI/alignment-research-dataset/lesswrong | LessWrong | [Link, 2011] Team may be chosen to receive $1.4 billion to simulate human brain

This is the team responsible for simulating the rat cortical column.

[http://www.nature.com/news/2011/110308/full/news.2011.143.html](http://www.nature.com/news/2011/110308/full/news.2011.143.html)

The team is one of six being considered for at least two "FET Flagship" positions, which come with all that funding. Each of the six competing teams is proposing to work on some kind of futuristic technology:

<http://cordis.europa.eu/fp7/ict/programme/fet/flagship/6pilots_en.html>

Of course, word on [the street](http://www.vetta.org/2011/05/sutton-on-human-level-ai/) is that academic neuroscientists don't think much of the project:

> Academic neuroscientists that I’ve ever spoken too, which is a fair number now, don’t think much of the Blue Brain project. They sometimes think it will be valuable in terms of collecting and cataloguing information about the neocortex, but they don’t think the project will manage to understand how the cortex works as there are too many unknowns in the model and even if, by chance, they got the model right it would be very hard to know that they had.
>
> Almost all neuroscientists seem to think that working brain models will not exist by 2025, or even 2035 for that matter. What ever the date is, most consider it too far away to bother to think much about.
>
> Such projects probably help to get more kids interested in the topic.

---

I think trying to influence the committee's decision potentially represents very low hanging fruit in [politics as charity](http://www.vetta.org/2011/05/sutton-on-human-level-ai/).

Even if academic neuroscientists don't think much of the project in its current state, it seems likely that $1.4 billion would end up attracting a lot of talent to this problem, and get us the first upload significantly sooner.

It's true that Less Wrong doesn't have a consensus position on whether to speed development of cell modeling and brain scanning technology or not. But I think if we have a discussion and a vote, we're significantly more likely than the committee to come up with the right decision for humanity. As far as I can tell, the committee will essentially be choosing at random. It shouldn't be hard for us to beat that.

Edit: But that's not to say that our estimate should be quick and dirty. In the spirit of [holding off on proposing solutions](/lw/ka/hold_off_on_proposing_solutions/), I discourage anyone from taking a firm public position on this topic for now.

In terms of avenues for influence, here are a few ideas off the top of my head:

1. Hire a PR agency to generate positive or negative press for a given project.

2. Get European Less Wrong users to contact the program via Facebook and Twitter. (The program's follower numbers are in the low triple digits.)

3. Hire professional lobbyists to do whatever they do.

Just to give everyone an idea of the kind of money involved here, if we have a 1% chance of influencing the committee's decision, we're moving $14 million in expected funds.
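For anyone who wants to plug in their own numbers, the arithmetic is just probability times funding. A minimal sketch, where the 1% is the illustrative figure from above rather than an estimate, and the variable names are mine:

```python
# Figures taken from the post; the 1% chance is purely illustrative.
total_funding_usd = 1_400_000_000
chance_percent = 1

# Integer arithmetic avoids floating-point rounding noise.
expected_funds_usd = total_funding_usd * chance_percent // 100
print(f"${expected_funds_usd:,}")
```

Changing `chance_percent` shows how sensitive the figure is to that guess: at 0.1% it would be $1.4 million rather than $14 million.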

We, and the folks at the Future of Humanity Institute, SI, and other groups, seem to spend a lot of time thinking about what would happen in the ideal scenario in terms of the order in which technologies are developed and how they are deployed. I think there is a good case for also investing in the complementary good of trying to actually influence the world towards a more ideal scenario. |

0d6ce315-b262-49dd-a7fe-64c844061d55 | trentmkelly/LessWrong-43k | LessWrong | Third-party testing as a key ingredient of AI policy

(nb: this post is written for anyone interested, not specifically aimed at this forum)

We believe that the AI sector needs effective third-party testing for frontier AI systems. Developing a testing regime and associated policy interventions based on the insights of industry, government, and academia is the best way to avoid societal harm—whether deliberate or accidental—from AI systems.

Our deployment of large-scale, generative AI systems like Claude has shown us that work is needed to set up the policy environment to respond to the capabilities of today’s most powerful AI models, as well as those likely to be built in the future. In this post, we discuss what third-party testing looks like, why it’s needed, and describe some of the research we’ve done to arrive at this policy position. We also discuss how ideas around testing relate to other topics on AI policy, such as openly accessible models and issues of regulatory capture.

Policy overview

Today’s frontier AI systems demand a third-party oversight and testing regime to validate their safety. In particular, we need this oversight for understanding and analyzing model behavior relating to issues like election integrity, harmful discrimination, and the potential for national security misuse. We also expect more powerful systems in the future will demand deeper oversight - as discussed in our ‘Core views on AI safety’ post, we think there’s a chance that today’s approaches to AI development could yield systems of immense capability, and we expect that increasingly powerful systems will need more expansive testing procedures. A robust, third-party testing regime seems like a good way to complement sector-specific regulation as well as develop the muscle for policy approaches that are more general as well.

Developing a third-party testing regime for the AI systems of today seems to give us one of the best tools to manage the challenges of AI today, while also providing infrastructure we can use for the systems |

d2216fae-215c-4044-9159-f69189eed3c6 | StampyAI/alignment-research-dataset/lesswrong | LessWrong | psychology and applications of reinforcement learning: where do I learn more?

Minicamp made me take the notion of an [Ugh Field](/lw/21b/ugh_fields/) seriously, and I've found Ugh Fields a fairly useful model for understanding how my brain works. I have/had lots of topics that have been unpleasant to think about and the cause of that unpleasantness seems to be strongly correlated with previous negative experiences.

More generally, animals, including humans, seem to use something like [Temporal Difference learning](http://www.scholarpedia.org/article/Temporal_difference_learning) very frequently ([one source of that impression](http://www.scholarpedia.org/article/Temporal_difference_learning)). If that's so, then understanding TD and related psychological research should give me a more accurate model of myself. I would expect it to help me understand when my dispositions and habits are likely to be useful (by knowing how they developed) and understand how to change my dispositions and habits. Thus I have a couple of questions:

1. Are my impressions accurate?

2. What books, papers, posts are the best for understanding these topics? I'd like material that addresses any of the following:

1. How TD or related algorithms work

2. What evidence says about whether human and/or animal brains frequently use TD or related algorithms and what situations brains use it for

3. Practical consequences of the research (e.g. Ugh Fields, doing X is a good way to build habit Y, smiling is a reinforcement, etc.) |

997a06d5-d34a-4bd2-b9da-5d160737c6ad | trentmkelly/LessWrong-43k | LessWrong | DeepMind is hiring for the Scalable Alignment and Alignment Teams

We are hiring for several roles in the Scalable Alignment and Alignment Teams at DeepMind, two of the subteams of DeepMind Technical AGI Safety trying to make artificial general intelligence go well. In brief,

* The Alignment Team investigates how to avoid failures of intent alignment, operationalized as a situation in which an AI system knowingly acts against the wishes of its designers. Alignment is hiring for Research Scientist and Research Engineer positions.

* The Scalable Alignment Team (SAT) works to make highly capable agents do what humans want, even when it is difficult for humans to know what that is. This means we want to remove subtle biases, factual errors, or deceptive behaviour even if they would normally go unnoticed by humans, whether due to reasoning failures or biases in humans or due to very capable behaviour by the agents. SAT is hiring for Research Scientist - Machine Learning, Research Scientist - Cognitive Science, Research Engineer, and Software Engineer positions.

We elaborate on the problem breakdown between Alignment and Scalable Alignment next, and discuss details of the various positions.

“Alignment” vs “Scalable Alignment”

Very roughly, the split between Alignment and Scalable Alignment reflects the following decomposition:

1. Generate approaches to AI alignment – Alignment Team

2. Make those approaches scale – Scalable Alignment Team

In practice, this means the Alignment Team has many small projects going on simultaneously, reflecting a portfolio-based approach, while the Scalable Alignment Team has fewer, more focused projects aimed at scaling the most promising approaches to the strongest models available.

Scalable Alignment’s current approach: make AI critique itself

Imagine a default approach to building AI agents that do what humans want:

1. Pretrain on a task like “predict text from the internet”, producing a highly capable model such as Chinchilla or Flamingo.

2. Fine-tune into an agent that does useful task |

1e4a4556-e9b9-40d4-9024-8bfce02c7f65 | StampyAI/alignment-research-dataset/blogs | Blogs | Misgeneralization as a misnomer

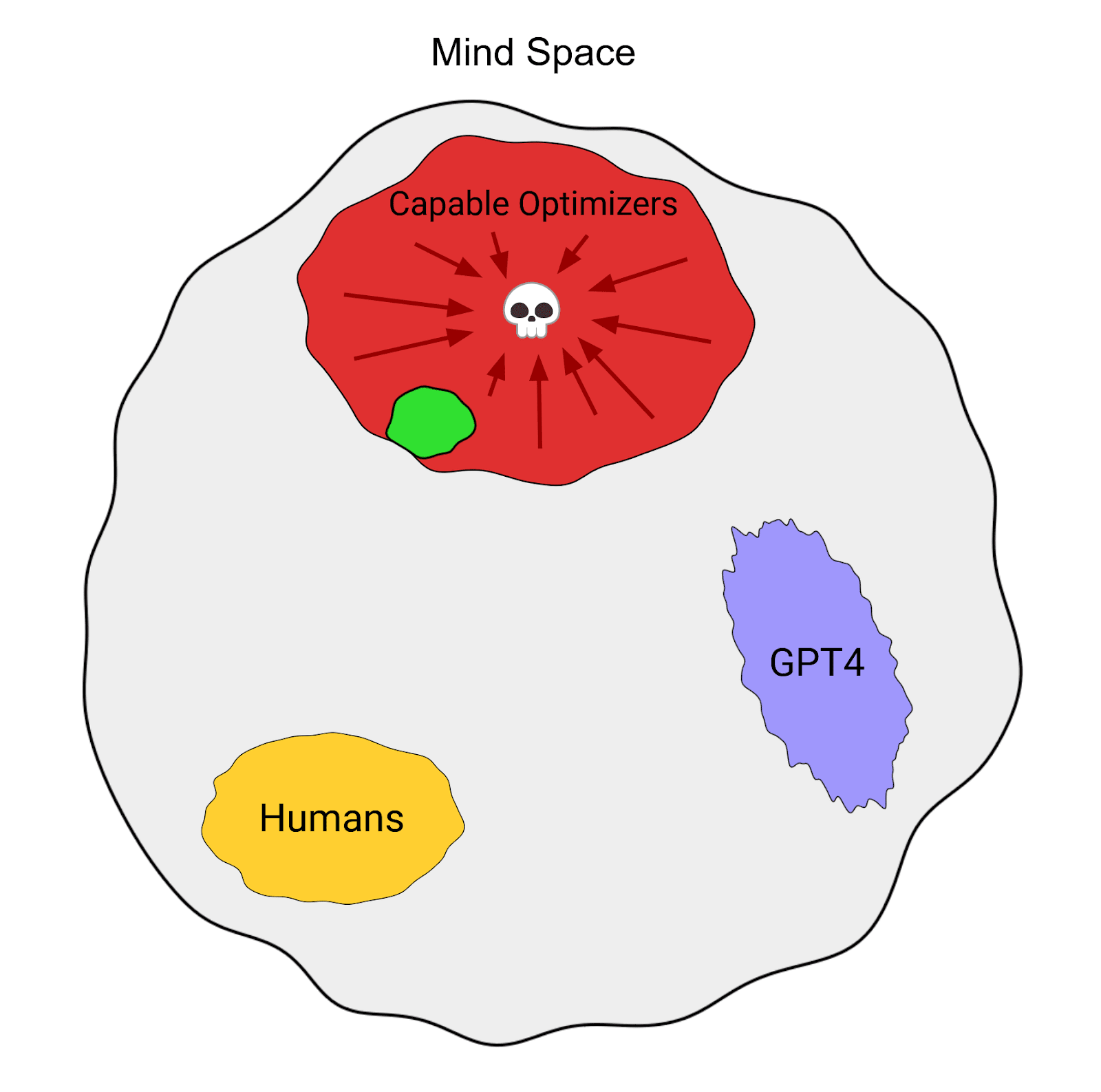

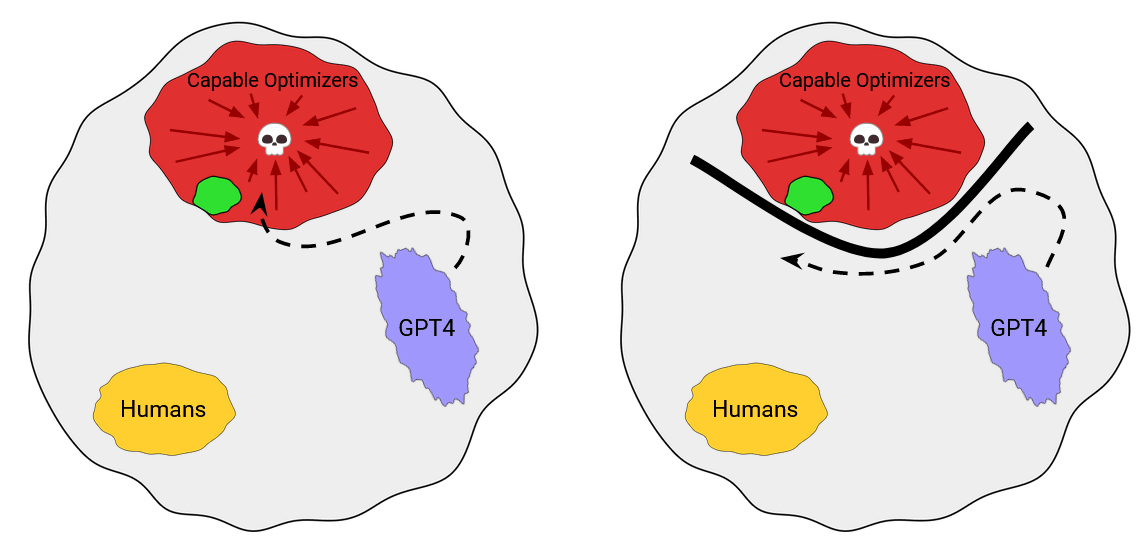

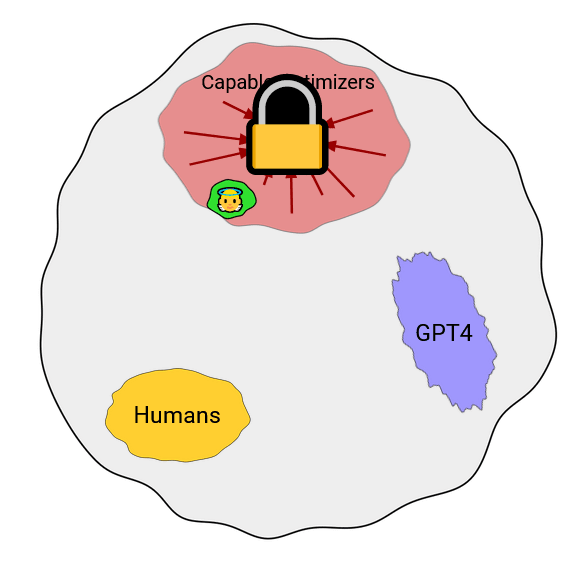

Here are two different ways an AI can turn out [unfriendly](https://www.lesswrong.com/posts/BSee6LXg4adtrndwy/what-does-it-mean-for-an-agi-to-be-safe):

1. You somehow build an AI that cares about “making people happy”. In training, it tells people jokes and buys people flowers and offers people an ear when they need one. In deployment (and once it’s more capable), it forcibly puts each human in a separate individual heavily-defended cell, and pumps them full of opiates.

2. You build an AI that’s good at making people happy. In training, it tells people jokes and buys people flowers and offers people an ear when they need one. In deployment (and once it’s more capable), it turns out that whatever was causing that “happiness”-promoting behavior was a balance of a variety of other goals (such as basic desires for energy and memory), and it spends most of the universe on some combination of that other stuff that doesn’t involve much happiness.

(To state the obvious: please don’t try to get your AIs to pursue “happiness”; you want something more like [CEV](https://arbital.com/p/cev/) in the long run, and in the short run I strongly recommend [aiming lower, at a pivotal act](https://arbital.com/p/pivotal/).)

In both cases, the AI behaves (during training) in a way that looks a lot like trying to make people happy. Then the AI described in (1) is unfriendly because it was optimizing the wrong concept of “happiness”, one that lined up with yours when the AI was weak, but that diverges in various [edge-cases](https://arbital.com/p/edge_instantiation/) that matter when the AI is strong. By contrast, the AI described in (2) was never even really trying to pursue happiness; it had a mixture of goals that merely correlated with the training objective, and that balanced out right around where you wanted them to balance out in training, but deployment (and the corresponding capabilities-increases) threw the balance off.

Note that this list of “ways things can go wrong when the AI looked like it was optimizing happiness during training” is not exhaustive! (For instance, consider an AI that cares about something else entirely, and knows you’ll shut it down if it doesn’t look like it’s optimizing for happiness. Or an AI whose goals change heavily as it reflects and self-modifies.)

(This list isn’t even really disjoint! You could get both at once, resulting in, e.g., an AI that spends most of the universe’s resources on acquiring memory and energy for unrelated tasks, and a small fraction of the universe on doped-up human-esque shells.)

The solutions to these two problems are pretty different. To resolve the problem sketched in (1), you have to figure out how to get an instance of the AI’s concept (“happiness”) to match the concept you hoped to transmit, even in the edge-cases and extremes that it will have access to in deployment (when it needs to be powerful enough to pull off some pivotal act that you yourself cannot pull off, and thus capable enough to access extreme edge-case states that you yourself cannot).

To resolve the problem sketched in (2), you have to figure out how to get the AI to care about one concept in particular, rather than a complicated mess that happens to balance precariously on your target (“happiness”) in training.

I note this distinction because it seems to me that various people around these parts are either unduly lumping these issues together, or are failing to notice one of them. For example, they seem to me to be mixed together in “[The Alignment Problem from a Deep Learning Perspective](https://arxiv.org/pdf/2209.00626.pdf)” under the heading of “goal misgeneralization”.

(I think “misgeneralization” is a misleading term in both cases, but it’s an even worse fit for (2) than (1). A primate isn’t “misgeneralizing” its concept of “inclusive genetic fitness” when it gets smarter and invents condoms; it didn’t even *really have* that concept to misgeneralize, and what shreds of the concept it did have weren’t what the primate was mentally optimizing for.)

(In other words: it’s not that primates were optimizing for fitness in the environment, and then “misgeneralized” after they found themselves in a broader environment full of junk food and condoms. The “aligned” behavior “in training” broke in the broader context of “deployment”, but not because the primates found some weird way to extend an existing “inclusive genetic fitness” concept to a wider domain. Their optimization just wasn’t connected to an internal representation of “inclusive genetic fitness” in the first place.)

---

In mixing these issues together, I worry that it becomes much easier to erroneously dismiss the set. For instance, I have many times encountered people who think that the issue from (1) is a “skill issue”: surely, if the AI were only smarter, it would know what we mean by “make people happy”. (Doubly so if the first transformative AGIs are based on language models! Why, GPT-4 today could explain to you why pumping isolated humans full of opioids shouldn’t count as producing “happiness”.)

And: yep, an AI that’s capable enough to be transformative is pretty likely to be capable enough to figure out what the humans mean by “happiness”, and that doping literally everybody probably doesn’t count. But the issue is, [as](https://www.lesswrong.com/s/SXurf2mWFw8LX2mkG) [always](https://www.lesswrong.com/s/SXurf2mWFw8LX2mkG/p/CcBe9aCKDgT5FSoty), making the AI *care*. The trouble isn’t in making it have *some* understanding of what the humans mean by “happiness” somewhere inside it;[[1]](https://intelligence.org/feed/#fn1) the trouble is making the *stuff the AI pursues* be *that concept*.

Like, it’s possible in principle to reward the AI when it makes people happy, and to separately teach something to observe the world and figure out what humans mean by “happiness”, and to have the trained-in optimization-target concept end up wildly different (in the edge-cases) from the AI’s explicit understanding of what humans meant by “happiness”.

Yes, this is possible even though you used the word “happy” in both cases.

(And this is assuming away the issues described in (2), that the AI probably doesn’t by-default even end up with one clean alt-happy concept that it’s pursuing in place of “happiness”, as opposed to [a thousand shards of desire](https://www.lesswrong.com/posts/cSXZpvqpa9vbGGLtG/thou-art-godshatter) or whatever.)

And I do worry a bit that if we’re not clear about the distinction between all these issues, people will look at the whole cluster and say “eh, it’s a skill issue; surely as the AI gets better at understanding our human concepts, this will become less of a problem”, or whatever.

(As seems to me to be [already](https://twitter.com/MilitantHobo/status/1633040360275341312) happening as people correctly realize that LLMs will probably have a decent grasp on various human concepts.)

---

1. Or whatever you’re optimizing. Which, again, should not be “happiness”; I’m just using that as an example here.

Also, note that the thing you actually want an AI optimizing for in the long term—something like “CEV”—is legitimately harder to get the AI to have any representation of at all. There’s legitimately significantly less writing about object-level descriptions of a [eutopian](https://www.lesswrong.com/posts/K4aGvLnHvYgX9pZHS/the-fun-theory-sequence) universe, than of happy people, and this is related to the eutopia being significantly harder to visualize.

But, again, don’t shoot for the eutopia on your first try! End the acute risk period and then buy time for some reflection instead.

The post [Misgeneralization as a misnomer](https://intelligence.org/2023/04/10/misgeneralization-as-a-misnomer/) appeared first on [Machine Intelligence Research Institute](https://intelligence.org). |

3cfce926-1b81-4a3a-8f5f-1217e854d860 | trentmkelly/LessWrong-43k | LessWrong | [Linkpost] The neuroconnectionist research programme

This is a linkpost for https://www.nature.com/articles/s41583-023-00705-w (open access preprint: https://arxiv.org/abs/2209.03718)

> Artificial neural networks (ANNs) inspired by biology are beginning to be widely used to model behavioural and neural data, an approach we call ‘neuroconnectionism’. ANNs have been not only lauded as the current best models of information processing in the brain but also criticized for failing to account for basic cognitive functions. In this Perspective article, we propose that arguing about the successes and failures of a restricted set of current ANNs is the wrong approach to assess the promise of neuroconnectionism for brain science. Instead, we take inspiration from the philosophy of science, and in particular from Lakatos, who showed that the core of a scientific research programme is often not directly falsifiable but should be assessed by its capacity to generate novel insights. Following this view, we present neuroconnectionism as a general research programme centred around ANNs as a computational language for expressing falsifiable theories about brain computation. We describe the core of the programme, the underlying computational framework and its tools for testing specific neuroscientific hypotheses and deriving novel understanding. Taking a longitudinal view, we review past and present neuroconnectionist projects and their responses to challenges and argue that the research programme is highly progressive, generating new and otherwise unreachable insights into the workings of the brain.

Personally, I'd be excited to see more people thinking about the intersection of neuroconnectionism and alignment (currently, this seems very neglected).

Some examples of potential areas of investigation (alignment-relevant human capacities) which also seem very neglected could include: instruction following, moral reasoning, moral emotions (e.g. compassion, empathy). |

c02ce2e2-47b9-4682-9dcc-c7e6c97f04bc | StampyAI/alignment-research-dataset/arxiv | Arxiv | A proposal for ethically traceable artificial intelligence

A proposal for ethically traceable artificial intelligence

Christopher A. Tucker, Ph.D., Cartheur Robotics, spol. s r.o., Prague, Czech Republic

Abstract

Although the problem of a critique of robotic behavior in near-unanimous agreement to human norms seems intractable, a starting point of such an ambition is a framework of the collection of knowledge a priori and experience a posteriori categorized as a set of synthetical judgments available to the intelligence, translated into computer code. If such a proposal were successful, an algorithm with ethically traceable behavior and cogent equivalence to human cognition is established. This paper will propose the application of Kant’s critique of reason to current programming constructs of an autonomous intelligent system.

Introduction

As is oft cited in the literature, a near-universal application of moral imperative in the field of artificial intelligence programming and observed runtime behavior is lacking, theoretical intuitions scattered among five “tribes” [1], existing competitively: Symbolists, evolutionists, Bayesians, analogizers, and connectionists approaching a singularity. A solution is not to satisfy any of them, rather, set criteria that the intuitions themselves approach, as by the definition of the criteria each follows from it instead of the reverse [2].

It can be argued that everything that has been thus far theorized as a means to a solution to the “problem of artificial intelligence” is derived from knowledge a priori whose theorems are posited by synthetical judgments. Why this is true can be stated simply: We, as humans, have never experienced nor have been provided judgments of artificial beings save by the process of having developed them, therefore it is impossible to describe them cogently without the specter of fallacious judgments we would never be aware of except for the passage of time. It is, then, for this reason that a new imperative needs to be discovered with which to guide our reason and logic.

I propose the conundrum facing artificial intelligence researchers can be assayed using transcendental logic. To clarify the current state of the vexation: a logical imperative cannot be assigned universally because we, as humans, have no experience of how this concept is rooted, and therefore its subsequent definition in software is elusive. Admitting this, we must look for philosophical advice and take the viewpoint as an exercise of pure reason to find trajectories with which to create universal judgments.

The use of ideological imperative

In searching for a unified framework with which to capture the possible entirety of desirable behaviors for an autonomous intelligent system a priori, a powerful model is presented by Immanuel Kant’s Critique of Pure Reason wherein he notes the universal problem of pure reason. “It is extremely advantageous to be able to bring a number of investigations under the formula of a single problem. For in this manner, we not only facilitate our own labor, inasmuch as we define it clearly to ourselves, but also render it easier for others to decide whether we have done justice in our undertaking. The proper problem of pure reason, then, is contained in the question, ‘How are synthetical judgments a priori possible?’” [p.12].

Approaching the answer requires the formation of another question regarding the type of knowledge that is desired, e.g., to comprehend the possibility of pure reason in the construction of this science which contains theoretical knowledge a priori: How is pure artificial intelligence possible?

Answering this requires an understanding between what we are given about the problem—what really exists—and the natural disposition of the human mind. Does artificial intelligence exist, has it always existed in an apodictic form, and how can the nature of universal human reason find a suitable answer? An answer is yet to be conceived, as the definitions that have been presented in the literature the past half century are inherently contradictory.

A pathway to finding the answer lies in the Transcendental Doctrine of Elements, where the particular science, artificial intelligence, is divided under the name of a critique of pure reason. Therefore an “Organon of pure reason would be a compendium of those principles according to which alone all pure cognitions a priori can be obtained. The completely extended application of such an organon would afford us a system of pure reason. As this, however, is demanding a great deal, and it is yet doubtful whether any extension of our knowledge be here possible, or if so, in what cases; we can regard a science of the mere criticism of pure reason, its sources and limits, as the propaedeutic to a system of pure reason.” [p.15].

The transcendental aesthetic, the first criteria of the doctrine of elements denotes the following: “In whatsoever mode, or by whatsoever means, our knowledge may relate to objects, it is at least quite clear, that the only manner in which it immediately relates to them, is by means of an intuition. But an intuition can take place only in so far as the object is given to us. This, again, is only possible, to man at least, on condition that the object affect the mind in a certain manner.” [p.21]. This relates to the inherent sensibility that an object presents to the mind in terms of its representation. As in the case of artificial intelligence, this undetermined object following from an empirical intuition is a phenomenon as it corresponds more to sensation, and can be arranged under certain relations, which constitute its form.

The substance of the form then yields the array of conceptions that have been derived from understanding, to establish the cognition of the object and its properties both anticipated by designers and realized by engineers. “When we call into play a faculty of cognition, different conceptions manifest themselves according to the different circumstances, and make known this faculty, and assemble themselves into a more or less extensive collection, according to the time or penetration that has been applied to the consideration of them. Where this process, conducted as it is, mechanically, so to speak, will end, cannot be determined with certainty. Besides, the conceptions which we discover in the haphazard manner present themselves by no means in order and systematic unity, but are at last coupled together only according to resemblances to each other, and arranged in a series, according to the quantity of their content, from the simpler to the more complex—series which are anything but systematic, though not altogether without a certain kind of method in their construction.” [p.56].

This therefore arrives at the construction of an understanding in synthetical judgments. In pure intellectual form, they are presented as:

Table 1. Momenta of thought of synthetical judgments.

Quantity of judgments   Quality       Relation       Modality
Universal               Affirmative   Categorical    Problematical
Particular              Negative      Hypothetical   Assertorical
Singular                Infinite      Disjunctive    Apodeictical

Table 1 contains a list of sets of synthetical judgments divided into momenta categorizations. Kant has noted each corresponds to a property of transcendental logic that now will be disseminated into proposed computer logic. Let us try to formulate a substantive example, which serves to illustrate how this could be done. Assume that the artificial intelligence in question is encapsulated in a single code base such that a developer can work on any part of its program. Let us also assume that this code base consists of styles and patterns of an object-oriented design. In such a form, it is expected the code executes at runtime given conditions of some input, which flows through it forming a pattern or algorithm. This pattern in its entirety is a categorization of synthetical judgment. Because this pattern is known by analysis a posteriori, where it has been catalogued and compiled, the execution pathway can be discovered. The next step would be to tag the code along the pathway where it indicates what family, the headers of each column going from left to right of Table 1, and what genus, the columns from top to bottom under each of the headers, it corresponds to.

Once the code is tagged, the tags are gathered to be monitored by an additional process, which is aware of the state of synthetical judgment in the artificial intelligence at any given point in the runtime. It is then theoretically possible to introduce any set of controls on the program that are desirable given behaviors ascribed to each judgment beforehand, tied explicitly to a definition from human experience. In this way, the analogous human experience is no longer separable from logic and therefore allows reason to become an active attribute since the mystery of what is happening within the program at an arbitrarily chosen time is well known.
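The tagging and monitoring steps described above could be sketched roughly as follows. This is only an illustrative reading of the proposal, not an implementation given in the paper: the names `Family`, `GENERA`, `JudgmentMonitor`, `judgment`, and the two example functions are all hypothetical, and the "control" shown (forbidding one family/genus pair) is just one assumed form such a control might take.

```python
from dataclasses import dataclass, field
from enum import Enum
from functools import wraps


class Family(Enum):
    """Column headers of Table 1 (the 'family' of a judgment)."""
    QUANTITY = "Quantity"
    QUALITY = "Quality"
    RELATION = "Relation"
    MODALITY = "Modality"


# Genera listed under each family header in Table 1.
GENERA = {
    Family.QUANTITY: ("Universal", "Particular", "Singular"),
    Family.QUALITY: ("Affirmative", "Negative", "Infinite"),
    Family.RELATION: ("Categorical", "Hypothetical", "Disjunctive"),
    Family.MODALITY: ("Problematical", "Assertorical", "Apodeictical"),
}


@dataclass
class JudgmentMonitor:
    """Hypothetical monitoring process: records tags, applies controls."""
    forbidden: set = field(default_factory=set)  # (family, genus) pairs to block
    trace: list = field(default_factory=list)    # execution pathway so far

    def observe(self, name, family, genus):
        # A control decided beforehand: refuse judgments of a forbidden kind.
        if (family, genus) in self.forbidden:
            raise PermissionError(f"{name}: {family.value}/{genus} not permitted")
        self.trace.append((name, family, genus))


def judgment(monitor, family, genus):
    """Tag a function with its family and genus and report each call."""
    if genus not in GENERA[family]:
        raise ValueError(f"{genus!r} is not a genus of {family.value}")

    def decorate(func):
        @wraps(func)
        def wrapper(*args, **kwargs):
            monitor.observe(func.__name__, family, genus)
            return func(*args, **kwargs)
        return wrapper
    return decorate


# Usage: two tagged behaviors, one of which the monitor is told to block.
monitor = JudgmentMonitor(forbidden={(Family.MODALITY, "Apodeictical")})


@judgment(monitor, Family.RELATION, "Hypothetical")
def greet_if_present(person_present):
    return "hello" if person_present else ""


@judgment(monitor, Family.MODALITY, "Apodeictical")
def insist():
    return "must"
```

Under these assumptions, `monitor.trace` plays the role of the catalogued execution pathway, and the `forbidden` set stands in for controls ascribed to each judgment beforehand. How a real system would decide which family and genus a piece of code belongs to is left open by the text and is not addressed by this sketch.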

This forms the basis of universal identity where the perception of phenomenon manifest by the artificial intelligence contains a synthesis of abstract representations of behavior. As such, what Kant calls the empirical consciousness, accompanies these different representations to form identity within the subject. Therefore, the problem this paper addresses now takes the relation not as it did before—where the program accompanies every representation with consciousness—but that each representation is joined together sequentially where now the artificial intelligence is conscious of the synthesis of them. Kant states: “Consequently, only because I can connect a variety of given representations in one consciousness, is it possible that I can represent to myself the identity of consciousness in these representations; in other words, the analytical unity of apperception is possible only under the presupposition of a synthetical unity.” [p.82].

It is this unity that this paper proposes to add to programming constructs of an artificial intelligence in order that it be ethically traceable and known not only what range of behaviors are programmed into the machine, but those which have the possibility of executing at any point in the runtime.

Discussion and Conclusions

In order to propose a solution for the vexation of the community to derive an algorithm whose behaviors are subject to human norms, a new approach to a solution of artificial intelligence is required. Essentially, in the manner that a programming language is imperative, e.g., using statements that change the program’s state—as imperative mood in human languages express commands—thusly an imperative ideology comprised of synthetical judgments can be applied as an intellectual limit in the program’s behavior. In this way, a framework with a defined scope is established.

It seems poignantly relevant to introduce this concept now as we are formulating the most basic of laws and regulations for artificial intelligence and autonomous intelligent systems design and manufacture [3]. Rather than trying to sort out the loopholes, confusions, and contradictions, a more efficient approach is to reframe the discussion in terms of fundamental pillars of Western logic and philosophy. In order not to create more confusion or present arguments of a better or worse approach by one philosopher or the other, the work of Immanuel Kant in the Critique of Pure Reason is suitable on the foundation that, the solitary question of defining a universal framework whereby to judge artificial intelligence lies in the establishment of a criterion by which it is possible to securely distinguish a pure from an empirical cognition.

The relevance of Kant’s philosophy to outline substantive artificial intelligence by a critique of pure reason leaves room for interpretation of implementation but not of the essential framework itself. This is because judgments about what the program should do in terms of a system of regulatory axioms are readily traceable to the set of momenta and their empirical manifestation, rather than a random series of trial and error association scenarios. In this way, a list of cogent emotional responses to human interaction is possible given the categorical judgments reactive to a given situation or outcome. It will be for the future to decide whether our intuition can be structured in such a way as to present arguments of objects existing in similitude with our human cognition. Something must be proposed in full [4] to address the problems, as we are mired in contradictions about what constitutes the essence of artificial intelligence.

References

[1] Domingos, P. The Master Algorithm: How the Quest for the Ultimate Learning Machine Will Remake Our World, London: Basic Books, 2015.

[2] Kant, I. Critique of Pure Reason, Translation by J.M.D. Meiklejohn, London: George Bell and Sons, 1897.

[3] IEEE Standards. “Ethically aligned design, a vision for prioritizing human wellbeing with artificial intelligence and autonomous systems,” http://standards.ieee.org/news/2016/ethically_aligned_design.html.

[4] Tucker, C.A. “The method of artificial systems,” arXiv:1507.01384, June 2017. |

914cd3e2-1f41-4e9d-88a2-9b2c2ea5abff | trentmkelly/LessWrong-43k | LessWrong | Geoff Hinton Quits Google

The NYTimes reports that Geoff Hinton has quit his role at Google:

> On Monday, however, he officially joined a growing chorus of critics who say those companies are racing toward danger with their aggressive campaign to create products based on generative artificial intelligence, the technology that powers popular chatbots like ChatGPT.

>

> Dr. Hinton said he has quit his job at Google, where he has worked for more than a decade and became one of the most respected voices in the field, so he can freely speak out about the risks of A.I. A part of him, he said, now regrets his life’s work.

>

> “I console myself with the normal excuse: If I hadn’t done it, somebody else would have,” Dr. Hinton said during a lengthy interview last week in the dining room of his home in Toronto, a short walk from where he and his students made their breakthrough.

https://www.nytimes.com/2023/05/01/technology/ai-google-chatbot-engineer-quits-hinton.html

Some clarification from Hinton followed:

It was already apparent that Hinton considered AI potentially dangerous, but this seems significant. |

6e8f337d-6978-43f4-9702-aad69789ca9b | trentmkelly/LessWrong-43k | LessWrong | Out to Get You

Epistemic Status: Reference.

Expanded From: Against Facebook, as the post originally intended.

Some things are fundamentally Out to Get You.

They seek resources at your expense. Fees are hidden. Extra options are foisted upon you. Things are made intentionally worse, forcing you to pay to make it less worse. Least bad deals require careful search. Experiences are not as advertised. What you want is buried underneath stuff you don’t want. Everything is data to sell you something, rather than an opportunity to help you.

When you deal with Out to Get You, you know it in your gut. Your brain cannot relax. You look out for tricks and traps. Everything is a scheme.

They want you not to notice. To blind you from the truth. You can feel it when you go to work. When you go to church. When you pay your taxes. It is bad government and bad capitalism. It is many bad relationships, groups and cultures.

When you listen to a political speech, you feel it. Dealing with your wireless or cable company, you feel it. At the car dealership, you feel it. When you deal with that one would-be friend, you feel it. Thinking back on that one ex, you feel it. It’s a trap.

Get Gone, Get Got, Get Compact or Get Ready

There are four responses to Out to Get You.

You can Get Gone. Walk away. Breathe a sigh of relief.

You can Get Got. Give the thing everything it wants. Pay up, relax, enjoy the show.

You can Get Compact. Find a rule limiting what ‘everything it wants’ means in context. Then Get Got, relax and enjoy the show.

You can Get Ready. Do battle. Get what you want.

When to Get Got

Get Got when the deal is Worth It.

This is a difficult lesson for everyone in at least one direction.

I am among those with a natural hatred of Getting Got. I needed to learn to relax and enjoy the show when the deal is Worth It. Getting Got imposes a large emotional cost for people like me. I have worked to put this aside when it’s time to Get Got, while preserving my instincts as a defense. That’s |

3f4eef5b-eea9-4789-a2f7-faaf336796ad | trentmkelly/LessWrong-43k | LessWrong | Fifteen Things I Learned From Watching a Game of Secret Hitler

Epistemic Status: Not likely to be true things. Right?

1. Liberals know nothing, fascists know everything.

2. Most of the policies democratic governments could pass are fascist policies that expand government power.

3. The remaining policies are liberal policies. There is no such thing as a conservative policy.

4. Liberal policies do nothing.

5. If the liberals do nothing enough times, they win and can congratulate themselves, no matter how much more fascist things got in the meantime.

6. Governments must always be passing new policies, and never take away old policies. Thus, government inevitably gets more powerful over time.

7. The more liberal policies you pass, the more likely it is any future policy will be fascist.

8. The more fascist policies you pass, the more likely it is any future policy will be fascist.

9. When the time comes to pass a policy, the government will choose from whatever proposals are lying around, even if all of them are fascist and everyone choosing is a liberal. There is almost never an option to just not do that, as such bold action requires a mostly fascist policy already be in place.

10. If the government fails to agree to pass one of the things lying around, that’s even worse, because it will then choose a new policy completely at random from what is lying around, which will probably be fascist.

11. Liberals spend most of their time being paranoid over which people claiming to be liberals are secretly fascists, or even secretly actual literal Hitler, as opposed to attempting to write or choose good policies.

12. Someone enacting liberal policies, but not in a position to assume dictatorial power, is providing strong evidence they are probably secretly Hitler.

13. When good people often have no choice but to do bad things, but there is no way to verify this, the default is for no one who is good to have any idea who is good and who is bad.

14. Despite this, good people think they know who is good and who is bad. |

abcd5027-f680-4501-9862-2b4f192de182 | trentmkelly/LessWrong-43k | LessWrong | Efficient Charity: Cheap Utilons via bone marrow registration

This topic is not really related to the things normally discussed here, but I think it's really important, and it might interest Less Wrongers, especially since many of us are interested in ethics and utility calculations that are essentially cost-benefit analyses. Bone marrow donation in the United States is managed by the National Marrow Donor Program. Because typing donors for matching purposes can be costly, they often require people signing up to donate to pay a registration fee, which probably prevents a lot of people from signing up. These costs are being covered until the end of the month by a corporate sponsor, which means that right now, all you need to do if you live in the US is go to http://marrow.org/Join/Join_Now/Join_Now.aspx and fill out a simple questionnaire. You will be sent a kit to collect a cheek swab, and then you will be entered into the donor database. Doing this does not require you to donate if a match comes up.

The reason I think this might interest Less Wrongers is that this is a really cheap way to improve the world. According to their website, about 1 in 500 potential donors are actually asked to donate, so registering doesn't actually make it all that likely that you will be asked to do anything more. If you ARE a match for someone who needs a donation, the cost to you is at most the temporary pain of marrow extraction (many donors are asked only for blood cells), whereas the other person’s chance to live is much improved. This looks like a huge net positive.

Unfortunately I only found out about this a few days ago, and it only occurred to me today that this might be a forum of people who would respond to the argument "you can make the world better at little cost to yourself." However, I ask that you go to the website and spend a few minutes signing up. This is like buying a 1 in 500 lottery ticket that SAVES SOMEONE’S LIFE. If the Singularity hits and an FAI can generate perfectly matched marrow for anyone who needs it from totipo |

299dffdb-ee0b-498a-810b-db9b0fdfbe90 | trentmkelly/LessWrong-43k | LessWrong | Meetup : Gen Con: Applied Game Theory

Discussion article for the meetup : Gen Con: Applied Game Theory

WHEN: 06 August 2016 02:00:00PM (-0400)

WHERE: 100 S Capitol Ave, Indianapolis, IN 46225, USA

Time tentative, location to be worked out. If you are attending Gen Con and want to meet up with rationalists, please comment if you have a preferred time and location. Posting this now to get it on the calendar and solicit responses. Default location is in the card game area, specific location to be found at the time (and then posted here). UPDATE: setting up at the blue Fantasy Flight tables, by the X-Wing Miniatures banner, in front of the HQ table, just before the banner showing the switch to Asmodee.

Meet up with Less Wrong friends and play games! Learn the newest releases, play classics, and otherwise have fun. A purely social event, running for as long as people want to stay and play, with potential continued discussion over dinner.

As at all events, there is no minimum degree, IQ, reading record, height, age, or neurotypicality to participate. Bring games if you like, learn new games if you like, and expect a range from "I am here for the championships" to "what are we Settling?"

We seemed like the kind of nerds who might go to Gen Con. You can also use the comments here to find others attending, arrange other connections at the event, discuss the con, etc. |

90883fe9-c538-4a3b-96f1-2e20dd8362c3 | StampyAI/alignment-research-dataset/lesswrong | LessWrong | Superintelligence 14: Motivation selection methods

*This is part of a weekly reading group on [Nick Bostrom](http://www.nickbostrom.com/)'s book, [Superintelligence](http://www.amazon.com/Superintelligence-Dangers-Strategies-Nick-Bostrom/dp/0199678111). For more information about the group, and an index of posts so far see the [announcement post](/lw/kw4/superintelligence_reading_group/). For the schedule of future topics, see [MIRI's reading guide](https://intelligence.org/wp-content/uploads/2014/08/Superintelligence-Readers-Guide-early-version.pdf).*

---

Welcome. This week we discuss the fourteenth section in the [reading guide](https://intelligence.org/wp-content/uploads/2014/08/Superintelligence-Readers-Guide-early-version.pdf): ***Motivation selection methods***. This corresponds to the second part of Chapter Nine.

This post summarizes the section, and offers a few relevant notes, and ideas for further investigation. Some of my own thoughts and questions for discussion are in the comments.

There is no need to proceed in order through this post, or to look at everything. Feel free to jump straight to the discussion. Where applicable and I remember, page numbers indicate the rough part of the chapter that is most related (not necessarily that the chapter is being cited for the specific claim).

**Reading**: “Motivation selection methods” and “Synopsis” from Chapter 9.

---

Summary
=======

1. **One way to control an AI is to design its motives**. That is, to choose what it wants to do (p138)
2. Some varieties of 'motivation selection' for AI safety:
    1. ***Direct specification***: figure out what we value, and code it into the AI (p139-40)
        1. Isaac Asimov's 'three laws of robotics' are a famous example
        2. Direct specification might be fairly hard: both figuring out what we want and coding it precisely seem hard
        3. This could be based on rules, or something like consequentialism
    2. ***Domesticity***: the AI's goals limit the range of things it wants to interfere with (140-1)
        1. This might make direct specification easier, as the world the AI interacts with (and thus which has to be thought of in specifying its behavior) is simpler.
        2. Oracles are an example
        3. This might be combined well with physical containment: the AI could be trapped, and also not want to escape.
    3. ***Indirect normativity***: instead of specifying what we value, specify a way to specify what we value (141-2)
        1. e.g. extrapolate our volition
        2. This means outsourcing the hard intellectual work to the AI
        3. This will mostly be discussed in chapter 13 (weeks 23-5 here)
    4. ***Augmentation***: begin with a creature with desirable motives, then make it smarter, instead of designing good motives from scratch. (p142)
        1. e.g. brain emulations are likely to have human desires (at least at the start)
        2. Whether we use this method depends on the kind of AI that is developed, so usually we won't have a choice about whether to use it (except inasmuch as we have a choice about e.g. whether to develop uploads or synthetic AI first).
3. Bostrom provides a summary of the chapter:
4. The question is not which control method is best, but rather which set of control methods are best given the situation. (143-4)

Another view
============

[Icelizarrd](http://www.reddit.com/r/science/comments/2hbp21/science_ama_series_im_nick_bostrom_director_of/ckrbdnx):

> Would you say there's any ethical issue involved with imposing limits or constraints on a superintelligence's drives/motivations? By analogy, I think most of us have the moral intuition that technologically interfering with an unborn human's inherent desires and motivations would be questionable or wrong, supposing that were even possible. That is, say we could genetically modify a subset of humanity to be cheerful slaves; that seems like a pretty morally unsavory prospect. What makes engineering a superintelligence specifically to serve humanity less unsavory?

Notes
=====

1. Bostrom tells us that it is very hard to specify human values. We have seen examples of galaxies full of paperclips or fake smiles resulting from poor specification. But these - and Isaac Asimov's stories - seem to tell us only that a few people spending a small fraction of their time thinking does not produce any watertight specification. What if a thousand researchers spent a decade on it? Are the millionth most obvious attempts at specification nearly as bad as the most obvious twenty? How hard is it? A general argument for pessimism is the thesis that ['value is fragile'](/lw/y3/value_is_fragile/), i.e. that if you specify what you want very nearly but get it a tiny bit wrong, it's likely to be almost worthless. [Much like](http://wiki.lesswrong.com/wiki/Complexity_of_value) if you get one digit wrong in a phone number. The degree to which this is so (with respect to value, not phone numbers) is controversial. I encourage you to try to specify a world you would be happy with (to see how hard it is, or produce something of value if it isn't that hard).

2. If you'd like a taste of indirect normativity before the chapter on it, the [LessWrong wiki page](http://wiki.lesswrong.com/wiki/Coherent_Extrapolated_Volition) on coherent extrapolated volition links to a bunch of sources.

3. The idea of 'indirect normativity' (i.e. outsourcing the problem of specifying what an AI should do, by giving it some good instructions for figuring out what you value) brings up the general question of just what an AI needs to be given to be able to figure out how to carry out our will. An obvious contender is a lot of information about human values. Though some people disagree with this - these people don't buy the [orthogonality thesis](/lw/l4g/superintelligence_9_the_orthogonality_of/). Other issues sometimes suggested to need working out ahead of outsourcing everything to AIs include decision theory, priors, anthropics, feelings about [Pascal's mugging](http://www.nickbostrom.com/papers/pascal.pdf), and attitudes to infinity. [MIRI](http://intelligence.org/)'s technical work often fits into this category.

4. Danaher's [last post](http://philosophicaldisquisitions.blogspot.com/2014/08/bostrom-on-superintelligence-6.html) on Superintelligence (so far) is on motivation selection. It mostly summarizes and clarifies the chapter, so is mostly good if you'd like to think about the question some more with a slightly different framing. He also previously considered the difficulty of specifying human values in *The golem genie and unfriendly AI* (parts [one](http://philosophicaldisquisitions.blogspot.com/2013/01/the-golem-genie-and-unfriendly-ai-part.html) and [two](http://philosophicaldisquisitions.blogspot.com/2013/02/the-golem-genie-and-unfriendly-ai-part.html)), which is about [Intelligence Explosion and Machine Ethics](http://intelligence.org/files/IE-ME.pdf).

5. Brian Clegg [thinks](http://www.goodreads.com/review/show/982829583) Bostrom should have discussed [Asimov's stories](http://en.wikipedia.org/wiki/Three_Laws_of_Robotics) at greater length:

> I think it’s a shame that Bostrom doesn’t make more use of science fiction to give examples of how people have already thought about these issues – he gives only half a page to Asimov and the three laws of robotics (and how Asimov then spends most of his time showing how they’d go wrong), but that’s about it. Yet there has been a lot of thought and dare I say it, a lot more readability than you typically get in a textbook, put into the issues in science fiction than is being allowed for, and it would have been worthy of a chapter in its own right.

If you haven't already, you might consider (sort-of) following his advice, and reading [some science fiction](http://www.amazon.com/Complete-Robot-Isaac-Asimov/dp/0586057242/ref=sr_1_2?s=books&ie=UTF8&qid=1418695204&sr=1-2&keywords=asimov+i+robot).

In-depth investigations

If you are particularly interested in these topics, and want to do further research, these are a few plausible directions, some inspired by Luke Muehlhauser's [list](http://lukemuehlhauser.com/some-studies-which-could-improve-our-strategic-picture-of-superintelligence/), which contains many suggestions related to parts of *Superintelligence*. These projects could be attempted at various levels of depth.

1. Can you think of novel methods of specifying the values of one or many humans?

2. What are the most promising methods for 'domesticating' an AI? (i.e. constraining it to only care about a small part of the world, and not want to interfere with the larger world to optimize that smaller part).

3. Think more carefully about the likely motivations of drastically augmenting brain emulations

If you are interested in anything like this, you might want to mention it in the comments, and see whether other people have useful thoughts.

How to proceed

==============

This has been a collection of notes on the chapter. **The most important part of the reading group though is discussion**, which is in the comments section. I pose some questions for you there, and I invite you to add your own. Please remember that this group contains a variety of levels of expertise: if a line of discussion seems too basic or too incomprehensible, look around for one that suits you better!

Next week, we will start to talk about a variety of more and less agent-like AIs: 'oracles', 'genies' and 'sovereigns'. To prepare, **read** “Oracles” and “Genies and Sovereigns” from Chapter 10. The discussion will go live at 6pm Pacific time next Monday 22nd December. Sign up to be notified [here](http://intelligence.us5.list-manage.com/subscribe?u=353906382677fa789a483ba9e&id=28cb982f40). |

ae185b88-54bd-4718-9c42-5780c5801a45 | trentmkelly/LessWrong-43k | LessWrong | Chapter 121: Something to Protect: Severus Snape

A somber mood pervaded the Headmistress's office. Minerva had returned after dropping off Draco and Narcissa/Nancy at St. Mungo's, where the Lady Malfoy was being examined to see if a decade living as a Muggle had done any damage to her health; and Harry had come up to the Headmistress's office again and then... not been able to think of priorities. There was so much to do, so many things, that even Headmistress McGonagall didn't seem to know where to start, and certainly not Harry. Right now Minerva was repeatedly writing words on parchment and then erasing them with a handwave, and Harry had closed his eyes for clarity. Was there any next first thing that needed to happen...

There came a knock upon the great oaken door that had been Dumbledore's, and the Headmistress opened it with a word.

The man who entered the Headmistress's office appeared worn, he had discarded his wheelchair but still walked with a limp. He wore black robes that were simple, yet clean and unstained. Over his left shoulder was slung a knapsack, of sturdy gray leather set with silver filigree that held four green pearl-like stones. It looked like a thoroughly enchanted knapsack, one that could contain the contents of a Muggle house.

One look at him, and Harry knew.

Headmistress McGonagall sat frozen behind her new desk.

Severus Snape inclined his head to her.

"What is the meaning of this?" said the Headmistress, sounding... heart-sick, like she'd known, upon a glance, just like Harry had.

"I resign my position as the Potions Master of Hogwarts," the man said simply. "I will not stay to draw my last month's salary. If there are students who have been particularly harmed by me, you may use the money for their benefit."

He knows. The thought came to Harry, and he couldn't have said in words just what the Potions Master now knew; except that it was clear that Severus knew it.

"Severus..." Headmistress McGonagall began. Her voice sounded hollow. "Professor Severus Snape, you may not realiz |

57e314d6-98cb-4f95-a7d2-8d6880a37fb1 | trentmkelly/LessWrong-43k | LessWrong | If Van der Waals was a neural network

If Van der Waals was a neural network

At some point in history a lot of thought was put into obtaining the equation:

R*T = P*V/n

This is the ideal gas equation we learn in kindergarten, which uses the magic number R in order to make predictions about how n moles of an “ideal gas” will change in pressure, volume or temperature given that we can control two of those factors.

This law approximates the behavior of many gases with a small error and it was certainly useful for many o' medieval soap volcano party tricks and Victorian steam engine designs.

But, as is often the case in science, a bloke called Van der Waals decided to ruin everyone’s fun in the late 19th century by focusing on a bunch of edge cases where the ideal gas equation was utter rubbish when applied to any real gas.

He then proceeded to collect a bunch of data points for the behavior of various gases and came up with two other magic numbers to add to the equation, a and b, individually determined for each of the gases, which can be used in the equation:

RT = (P + a*n^2/V^2)(V – n*b)/n

Once again the world could rest securely having been given a new equation, which approximates reality better.
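To make the difference between the two equations concrete, here is a minimal sketch that solves each of them for pressure and compares the results. The function names are made up for illustration, and the a and b constants for carbon dioxide are approximate textbook values that I am assuming here, not numbers taken from this post.

```python
# Gas constant in L·bar/(K·mol).
R = 0.083145

# Approximate textbook Van der Waals constants for CO2 (assumed for illustration).
CO2_A = 3.640    # L^2·bar/mol^2
CO2_B = 0.04267  # L/mol


def ideal_pressure(n, volume, temperature):
    """Pressure in bar from R*T = P*V/n, solved for P."""
    return n * R * temperature / volume


def van_der_waals_pressure(n, volume, temperature, a, b):
    """Pressure in bar from R*T = (P + a*n^2/V^2)(V - n*b)/n, solved for P."""
    return n * R * temperature / (volume - n * b) - a * n ** 2 / volume ** 2


# One mole of CO2 at 300 K, squeezed into smaller and smaller volumes (litres).
for volume in (22.4, 1.0, 0.2):
    p_ideal = ideal_pressure(1.0, volume, 300.0)
    p_vdw = van_der_waals_pressure(1.0, volume, 300.0, CO2_A, CO2_B)
    print(f"V = {volume:5.1f} L  ideal = {p_ideal:8.3f} bar  Van der Waals = {p_vdw:8.3f} bar")
```

At a roomy 22.4 L the two predictions should nearly coincide, while at small volumes they drift apart, which is the kind of edge case the two extra magic numbers are there to handle.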

But obviously this equation is not enough; indeed it was not enough in the time of Van der Waals, when people working on “real” problems had their own more specific equations.

But the point is that humans gather data and construct equations for the behavior of gases, and the less error-prone those equations have to be, the more niche and hard to construct (read: requiring more data and a more sophisticated mathematical apparatus) they become.

But let’s assume that Van der Waals (or any other equation maker) does all the data collection which he hopes will have a good coverage of standard behavior and edge cases… and feeds it into a neural network (or any other entity which is a good universal function estimator), and gets a function W.

This function W is as good, if not better than the original human-brain-made equation at pre |

99a43860-7f78-40d5-b05d-41fd641a91db | trentmkelly/LessWrong-43k | LessWrong | Progress links and short notes, 2024-12-16

Much of this content originated on social media. To follow news and announcements in a more timely fashion, follow me on Twitter, Threads, Bluesky, or Farcaster.

Contents

* Jobs & fellowships

* Looking for writers?

* People doing interesting things

* Events

* DARPA wants input

* Other announcements

* Progress on the curriculum

* The growth of the progress movement

* AI will allow the average person to navigate The System

* I have questions

* Other people have questions

* Links

* 100 years ago

* Humboldt on progress

* Progress news with cool pics

* Anti-elite elites

* Politics links and short notes

* BBC doesn’t know what “nominal” means

* Charts

* Fun

Jobs & fellowships

* We’re hiring an Event Manager to run Progress Conference 2025 and other events. Best to get your application in before the holidays!

* “The Kothari Fellowship provides grant and mentorship to young Indians (<25 years) who want to build, empowering them to turn ideas into reality, instead of being held back by societal norms.” Provides up to ₹1 lakh per month for 12 months (~$15k per year)

* ARIA Research will “start the search for our first Frontier Specialists” to work alongside program directors. “It’s a two-year role that will give you a chance to step off the standard career track and go after outsized impact” (@ARIA_research). Apply here

Looking for writers?

* “Are you running a progress-y or abundance-oriented newsletter, blog, magazine or other publication? Would you like to receive pitches from the talented RPI fellows? Reply here so I can send our writers your way” (@elmcaleavy)

People doing interesting things

* Rosie Campbell (RPI fellow) has left OpenAI and is thinking about her next steps. She’s interested in talking to people about various topics related to AI, risk, safety, policy, epistemics, and more

* @danielgolliher: “I want to take my ‘Foundations of America’ students on an optional day trip to Washington D.C., and do one or both of: Watch a Cong |

0574664e-f572-4d42-b299-7b2a0a1e0a9c | StampyAI/alignment-research-dataset/alignmentforum | Alignment Forum | Modal Bargaining Agents

*Summary: Bargaining problems are interesting in the case of Löbian cooperation; Eliezer suggested a geometric algorithm for resolving bargaining conflicts by leaving the Pareto frontier, and this algorithm can be made into a modal agent, given an additional suggestion by Benja.*

Bargaining and Fairness

=======================

When two agents can read each other's source code before playing a game, [Löbian cooperation](https://intelligence.org/files/ProgramEquilibrium.pdf) can transform many games into pure [bargaining problems](http://en.wikipedia.org/wiki/Bargaining_problem). Explicitly, the algorithm of "if I can prove you choose X, then I choose Y, otherwise I choose Z" makes it possible for an agent to offer a deal better for both parties than a Nash equilibrium, then default to that Nash equilibrium if the deal isn't verifiably taken. In the simple case where there is a unique Nash equilibrium, two agents with that sort of algorithm can effectively reduce the decision theory problem to a negotiation over which point on the Pareto frontier they will select.

However, if two agents insist on different deals (e.g. X insists on point D, Y insists on point A), then it falls through and we're back to the Nash equilibrium O. And if you try and patch this by offering several deals in sequence along the Pareto frontier, then a savvy opponent is just going to grab whichever of those is best for them. So we'd like both a notion of the fair outcome, and a way to still make deals with agents who disagree with us on fairness (without falling back to the Nash equilibrium, and without incentivizing them to play hardball with us).

Note that utility functions are only defined up to affine transformations, so any solution should be invariant under independent rescalings of the players' utilities. This requirement and a few others (winding up on the Pareto frontier, independence of irrelevant alternatives, and symmetry) are all satisfied by the Nash solution to the bargaining problem: choose the point on the Pareto frontier so that the area of the rectangle from O to that point is maximized.
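As a rough illustration of the rectangle-area rule, here is a minimal sketch that picks the Nash point from a finite list of candidate payoff pairs. The function name and the example frontier are hypothetical, and restricting attention to a finite list is a simplification: the actual Nash solution maximizes over the whole frontier, mixed strategies included.

```python
def nash_bargaining_point(points, disagreement=(0.0, 0.0)):
    """Return the candidate maximizing the area of the rectangle it spans
    with the disagreement point (the Nash equilibrium O in the text)."""
    d1, d2 = disagreement
    # Only deals that are at least as good as O for both players are candidates.
    candidates = [(u1, u2) for (u1, u2) in points if u1 >= d1 and u2 >= d2]
    return max(candidates, key=lambda p: (p[0] - d1) * (p[1] - d2))


# Hypothetical Pareto-optimal payoff pairs (utility for X, utility for Y).
frontier = [(1.0, 6.0), (3.0, 5.0), (4.0, 3.0), (5.0, 1.0)]
chosen = nash_bargaining_point(frontier)
print(chosen)

# Rescaling one player's utilities rescales every area by the same factor,
# so the same underlying deal is selected.
rescaled = [(10.0 * u1, u2) for (u1, u2) in frontier]
print(nash_bargaining_point(rescaled))
```

The second call is a small check of the invariance requirement: multiplying X's utilities by a positive constant changes the numbers but not which deal gets picked.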

This gives us a pretty plausible answer to the first question, but leaves us with the second: are we simply at war with agents that have other ideas of what's fair? (Let's say that X thinks that the Nash solution N is fair, but Y thinks that B is fair.) Other theorists have come up with other definitions of the fair solution to a bargaining problem, so this is a live question!

And the question of incentives makes it even more difficult: if you try something like "50% my fair equilibrium, 50% their fair equilibrium", you create an incentive for other agents to bias their definition of fairness in their own favor, since that boosts their payoff.

Bargaining Away from the Pareto Frontier

========================================

Eliezer's suggestion in this case is as follows: an agent defines its set of acceptable deals as "all points in the feasible set for which my opponent's score is at most what they would get at the point I think is fair". If each agent's definition of fairness is biased in their own favor, the intersection of the agents' acceptable deals has a corner within the feasible set (but not on the Pareto frontier unless the agents agree on the fair point), and that is the point where they should actually achieve Löbian cooperation.

Note that in this setup, you get no extra utility for redefining fairness in a self-serving way. Each agent winds up getting the utility they would have had at the fairness point of the *other* agent. (Actually, Eliezer suggests a very slight slope to these lines, in the direction that makes it *worse* for the opponent to insist on a more extreme fairness point. This sets up good incentives for meeting in the middle. But for simplicity, we'll just consider the incentive-neutral version here.)

Moreover, this extends to games involving more than two agents: each one defines a set of acceptable deals by the condition that no other agent gets more than they would have at the agent's fairness point, and the intersection has a corner in the feasible set, where each agent gets the minimum of the payoffs it would have achieved at the other agents' fairness points.
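The corner described above can be computed directly from the agents' fairness points. The following is a minimal sketch of the incentive-neutral version only (no slight slope), with a hypothetical function name and made-up payoffs; it also assumes, as the text does, that each agent's notion of fairness is biased in its own favor, so that the resulting corner lies inside the feasible set.

```python
def cooperation_point(fair_points):
    """fair_points[j] is the payoff vector (one entry per agent) at the point
    agent j considers fair. Agent i ends up with the minimum of what it would
    have received at each *other* agent's fairness point."""
    n = len(fair_points)
    if n < 2:
        raise ValueError("need at least two agents")
    return tuple(
        min(fair_points[j][i] for j in range(n) if j != i)
        for i in range(n)
    )


# Two agents: X thinks (4, 3) is fair, Y thinks (3, 5) is fair.
print(cooperation_point([(4.0, 3.0), (3.0, 5.0)]))

# If X shifts its fairness point further in its own favor, X's payoff is
# unchanged and only Y's payoff drops.
print(cooperation_point([(5.0, 1.0), (3.0, 5.0)]))

# Three agents, each favoring itself.
print(cooperation_point([(5.0, 2.0, 2.0), (2.0, 5.0, 2.0), (2.0, 2.0, 5.0)]))
```

The second example is the point of the construction: X's own payoff is read off Y's fairness point, so X cannot raise it by redefining what X calls fair.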

Modal Bargaining Agents

=======================

Now, how do we set this bargaining algorithm up as a [modal decision theory](http://agentfoundations.org/item?id=160)? We can only consider finitely many options, though we're allowed to consider computably mixed strategies. Let's assume that our game has finitely many pure strategies for each player. As above, we'll assume there is a unique Nash equilibrium, and set it at the origin.

Then there's a natural set of points we should consider: the grid points within the feasible set (the convex hull formed by the Nash equilibrium and the Pareto optimal points above it) whose coordinates correspond to utilities of the pure strategies on the Pareto frontier. This is easier to see than to read:

Now all we need is to be sure that Löbian cooperation happens at the point we expect. There's one significant problem here: we need to worry about syncing the proof levels that different agents are using.

(If you haven't seen this before, you might want to work out what happens if any two of the following three agents are paired with one another: