id | source | formatted_source | text |

|---|---|---|---|

c237d060-3d27-4db6-be74-0f8c50759c23 | trentmkelly/LessWrong-43k | LessWrong | A Few Terrifying Facts About The Russo-Ukrainian War

Epistemic status: trying to summarize the news and predict; post is under revision; too lazy to cite everything

I wanted to collect a few observations I've made, as best I understand them. This PBS article does a good job of explaining much of it.

1. Vladimir Putin has announced the annexation of four Ukrainian territories.

   1. This makes them Russian territory from Russia's perspective.

   2. “People living in Donetsk, Luhansk, Zaporizhzhia and Kherson are becoming our citizens forever” - Putin

2. The West does not acknowledge this annexation, describing it as illegal. Ukraine does not acknowledge this annexation and says it plans to take the territories back.

   1. "By attempting to annex Ukraine's Donetsk, Luhansk, Zaporizhzhia and Kherson regions, (Russian President Vladimir) Putin tries to grab territories he doesn't even physically control on the ground. Nothing changes for Ukraine: we continue liberating our land and our people, restoring our territorial integrity," Ukraine's Foreign Minister Dmytro Kuleba said on social media.

3. Russian military doctrine allows the use of nuclear weapons to defend Russian territory.

4. Putin apparently has a track record of escalating (this needs more data), and Russia seems to be planning for escalation until the war is won.

   1. https://www.themoscowtimes.com/2022/09/29/putin-always-chooses-escalation-a78923

      1. "All of our sources in the elite — who all spoke on the condition of anonymity — said the military conflict will only escalate in the coming months."

5. Putin has clearly stated that Russia will defend this territory, including with tactical nukes if need be.

   1. He said they would use "any means available" to defend it.

   2. He has mentioned the use of nukes a number of times (a nice-to-have: a list of all the times he has said this).

   3. Medvedev has stated that the West would not retaliate if nuclear weapons are used.

   4. "Under Russia’s amended constitution, no Kremlin |

d48bad82-d005-455c-9599-6a01db772a7e | trentmkelly/LessWrong-43k | LessWrong | Choices Are Really Bad

Previously: Choices are Bad

Last time, I gave two (related) reasons Choices are Bad: Choices Reduce Perceived Value and Choices Force You Into Choosing Mode. Despite not looking like much, these reasons are Serious Business. They can render us unable to enjoy, think or relax even when it might seem that everything is awesome. That’s what that song is about, really: how great things are, on every axis, when we let ourselves go with the flow and not get distracted.

The rabbit hole goes deeper. It gets much worse.

Choices Cost Willpower and Create Decision Fatigue

Willpower is a controversial topic, and laying out even my simple model would be beyond scope. What matters here is that in the short term, exercising willpower is a cost (whether or not such use has long term benefits). Most of the time, you would prefer to spend less willpower to get the same result.

Often, making the otherwise-right choice requires spending willpower. When you consciously think about eating another cookie, or checking your email, not doing so costs a non-zero amount of willpower. Making that choice over and over is not only distracting, because it keeps you in choosing mode, but can also sap your willpower.

In addition to willpower, Decision Fatigue is a thing as well. Making choices (decisions) drains something related to, but not identical to, willpower. At some point, decision fatigue makes each further choice carry an increasing cost. Some people have this problem more often than others, but there is always the risk that it will happen to you.

There’s even a related but different failure mode: ‘I’ve made good choices all day, so it’s all right to indulge now.’

You Might Choose Wrong

Simple but important.

If you give someone a choice, there is always some chance they will choose wrong. Remember the third principle of economics: People are stupid. They also are often ignorant, or distracted, or don’t care, or lots of ot |

39ef3cb4-9bb9-4184-b720-3a0160987156 | trentmkelly/LessWrong-43k | LessWrong | Announcing Progress Studies for Young Scholars, an online summer program in the history of technology

I’m thrilled to announce a new online learning program in progress studies for high school students: Progress Studies for Young Scholars.

Progress Studies for Young Scholars launches in June as a summer program, with daily online learning activities for 6 weeks. We’ll be covering the history of technology and invention: the challenges of life and work and how we solved them, leading to the amazing increase in living standards over the last few centuries. Topics will include the advances in materials; automation of manufacturing and agriculture; the progression of energy from steam to oil to electricity; how railroads, cars and airplanes shrank the world; the conquest of infectious disease through sanitation, vaccines, and antibiotics; and the rise of computers and the Internet. The course will also prompt students to consider the future of progress, and what part they want to play in it.

The program will be guided self-study, with daily reading, podcasts or video. Students can go through the material entirely on their own for free, or pay to join a study group with an instructor for daily discussion and Q&A. Pricing to be announced soon, but scholarships will be available!

In conjunction, we’re launching a speaker series of talks and interviews with experts in the history of progress, and those at the frontier pushing it forward. Speakers will include Tyler Cowen, Patrick Collison, Max Roser, Joel Mokyr, Deirdre McCloskey, Anton Howes, and many more.

This is a joint project between The Roots of Progress and Higher Ground Education, the largest operator of Montessori and Montessori-inspired schools in the US. I’ve known the leadership team at Higher Ground for many years and have deep respect for them—especially the way they treat learning as a process of self-creation on the part of the student.

Sign up to get announcements about the program, including the speaker series:

progressstudies.school

And please spread the word, especially to intellectually curious |

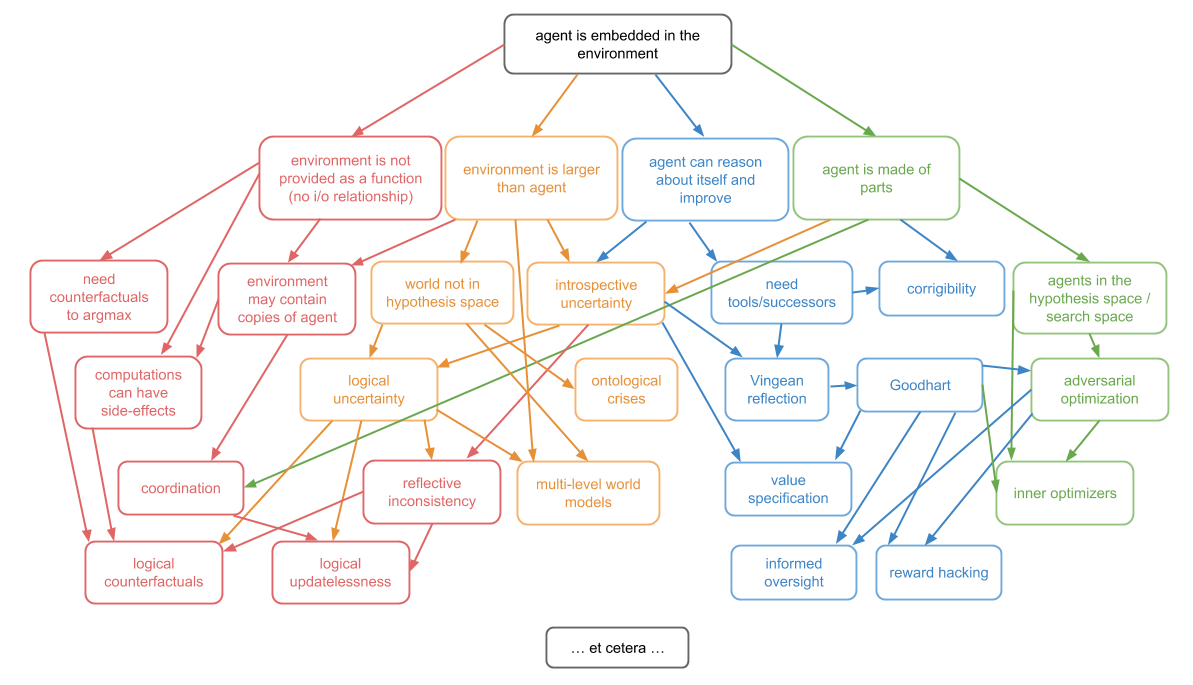

0d45f801-89fb-450f-902c-a13ea6efb828 | StampyAI/alignment-research-dataset/lesswrong | LessWrong | Thinking about Broad Classes of Utility-like Functions

*Here's some thoughts I've had about utility maximizers, heavily influenced by ideas like* [*FDT*](https://arxiv.org/abs/1710.05060) *and* [*Morality as Fixed Computation*](https://www.lesswrong.com/posts/FnJPa8E9ZG5xiLLp5/morality-as-fixed-computation)*.*

The Description vs The Maths vs The Algorithm (or Implementation)
-----------------------------------------------------------------

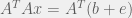

This is a frame which I think is important. Getting from a description of what we want, to the maths of what we want, to an algorithm which implements that seems to be a key challenge.

I sometimes think of this as a pipeline of development: description → maths → algorithm. A description is something like "A utility maximizer is an agent-like thing which attempts to compress future world states towards ones which score highly in its utility function". The maths of something like that involves information theory (to understand what we mean by compression), proofs (like the good regulator theorem, the power-seeking theorems) etc. The algorithm is something like RL or AlphaGo.

More examples:

| **System** | **Description** | **Maths** | **Algorithm** |
| --- | --- | --- | --- |
| Addition | "If you have some apples and you get some more, you have a new number of apples" | The rules of arithmetic. We can make proofs about it using ZFC set theory. | Whatever machine code/logic gates are running inside a calculator |
| Physics | "When you throw a ball, it accelerates downwards under gravity" | Calculus | Frame-by-frame updating of position and velocity vectors |
| AI which models the world | "Consider all hypotheses weighted by simplicity, and update based on evidence" | Kolmogorov complexity, Bayesian updating, AIXI | DeepMind's Apperception Engine (but it's not very good) |
| A good decision theory | "Doesn't let you get exploited in Termites, while also one-boxing in Newcomb's problem" | FDT, concepts like subjunctive dependence | **???** |
| Human values | **???** | **???** | **???** |

This allows us to identify three failure points:

1. Failure to make an accurate description of what we want (Alternatively, failure to turn an intuitive sense into a description)

2. Failure to formalize that description into mathematics

3. Failure to implement that mathematics into an algorithm

These failures can be total or partial. DeepMind's Apperception Engine is basically useless because it's a *bad* implementation of something AIXI-like. Failure to implement the mathematics may also happen because the algorithm doesn't *accurately* represent the maths. Deep neural networks are sort-of-like idealized Bayesian reasoning, but a very imperfect version of it.

If the algorithm doesn't accurately represent the maths, then reasoning about the maths doesn't tell you about the algorithm. Proving properties of algorithms is much harder than proving them about the abstracted maths of a system.
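To make the maths/algorithm gap concrete with the Physics row of the table, here is a minimal sketch; the function names and the choice of explicit Euler integration are illustrative assumptions, not anything from the post. The closed-form height of a ball dropped from rest plays the role of "the maths", and a frame-by-frame update plays "the algorithm". For constant acceleration, explicit Euler ends up exactly g·dt·t/2 above the true trajectory, so a fact proved about the closed form holds for the simulation only approximately.

```python
def closed_form_height(g: float, t: float) -> float:
    """The "maths": y(t) = -g t^2 / 2 for a ball dropped from rest at y = 0."""
    return -0.5 * g * t * t


def frame_by_frame_height(g: float, t: float, dt: float) -> float:
    """The "algorithm": explicit Euler, updating position then velocity each frame."""
    y, v = 0.0, 0.0
    for _ in range(round(t / dt)):
        y += v * dt  # move using the velocity from the previous frame
        v -= g * dt  # then apply gravity to the velocity
    return y


if __name__ == "__main__":
    for dt in (0.1, 0.01):
        gap = frame_by_frame_height(9.8, 1.0, dt) - closed_form_height(9.8, 1.0)
        print(f"dt={dt}: simulation sits {gap:.4f} above the closed form")
```

Shrinking `dt` shrinks the gap, but it never reaches zero; reasoning about the algorithm still requires its own analysis.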

(As an aside, I suspect this is actually a crux relating to near-term AI doom arguments: are neural networks and DRL agents similar enough to idealized Bayesian reasoners and utility maximizers to behave in the ways those abstract systems provably would?)

All of this is just to introduce some big classes of reasoners: self-protecting utility maximizers, self-modifying utility maximizers, and thoughts about what a different type of utility-maximizer might look like.

Self-Protecting Utility Maximizers
----------------------------------

On a **description** level: this is a system which chooses actions to maximize the value of a utility function.

**Mathematically** it compresses the world into states which score highly according to a function V.

Imagine the following **algorithm** (it's basically a description of an RL agent with direct access to the world state):

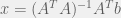

Take a world-state vector $W$, a list of actions $A$, and a dynamic matrix $D(a, W) = W_{\text{new}}$. Have a value function $V(W) \in \mathbb{R}$. Then output the following $a$:

$$a \;:\; V(D(a, W)) = \max_{a' \in A} V(D(a', W))$$
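A minimal sketch of this one-step rule, assuming the learned dynamics D and value function V can be stood in for by plain Python callables. The toy world (a single position) and the names `choose_action`, `toy_dynamics`, and `toy_value` are hypothetical. Ties go to the first listed action, because Python's `max` returns the first maximal element.

```python
from typing import Callable, Sequence, Tuple

World = Tuple[float, ...]


def choose_action(
    world: World,
    actions: Sequence[str],
    dynamics: Callable[[str, World], World],
    value: Callable[[World], float],
) -> str:
    """Shallow search: return the action a maximizing V(D(a, W))."""
    return max(actions, key=lambda a: value(dynamics(a, world)))


# Hypothetical toy model: the world is (position,), and actions nudge it.
def toy_dynamics(action: str, world: World) -> World:
    step = {"left": -1.0, "stay": 0.0, "right": 1.0}[action]
    return (world[0] + step,)


def toy_value(world: World) -> float:
    # This V prefers worlds whose position is close to 3.
    return -abs(world[0] - 3.0)


print(choose_action((0.0,), ["left", "stay", "right"], toy_dynamics, toy_value))  # → right
```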

To train it, update D according to basic deep-learning rules to make it more accurate. Also update V according to some reward signal.

This is a shallow search over a single action. Now consider updating it to use something like a Monte-Carlo tree search. This will cause it to maximize the value of V far into the future.
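The post suggests something like Monte-Carlo tree search; as a simpler stand-in, the sketch below uses exhaustive depth-limited lookahead that maximizes the sum of V over the next few predicted worlds. The summed-value objective, the toy world, and all names are assumptions for illustration. In this hypothetical world a small reward sits one step to the right and a large one two steps to the left, so the shallow rule and the deeper search disagree.

```python
from typing import Callable, Sequence

Dynamics = Callable[[str, int], int]
Value = Callable[[int], float]


def best_return(world: int, actions: Sequence[str], dynamics: Dynamics,
                value: Value, depth: int) -> float:
    """Highest total V obtainable over the next `depth` steps (exhaustive search)."""
    if depth == 0:
        return 0.0
    return max(
        value(nxt) + best_return(nxt, actions, dynamics, value, depth - 1)
        for nxt in (dynamics(a, world) for a in actions)
    )


def choose_action_lookahead(world: int, actions: Sequence[str], dynamics: Dynamics,
                            value: Value, depth: int) -> str:
    """Pick the first action of the best `depth`-step plan (requires depth >= 1)."""
    def score(a: str) -> float:
        nxt = dynamics(a, world)
        return value(nxt) + best_return(nxt, actions, dynamics, value, depth - 1)
    return max(actions, key=score)


# Hypothetical toy world: an integer position on a line.
def move(action: str, pos: int) -> int:
    return pos - 1 if action == "left" else pos + 1


def reward(pos: int) -> float:
    return {1: 1.0, -2: 10.0}.get(pos, 0.0)


ACTIONS = ["left", "right"]
print(choose_action_lookahead(0, ACTIONS, move, reward, depth=1))  # → right
print(choose_action_lookahead(0, ACTIONS, move, reward, depth=3))  # → left
```

Exhaustive search costs |A|^depth evaluations, which is why sampling-based methods like MCTS are used in practice.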

So what happens if this system is powerful enough to include an accurate model of itself in its model of the world? And let's say it's also powerful enough to edit its own source code. The answer is pretty clear: it will delete the code which modifies V. Then (if it is powerful enough) it will destroy the world.

Why? Well, it wants to take the action which maximizes the value of V far into the future. If its current V is modified to V′, then it will become an agent which maximizes V′ instead of V. This means the future is likely to be less good according to V.
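The argument can be checked on a toy example. In this sketch (all names and the toy world are hypothetical), the agent considers accepting a change from V to V′, predicts where a greedy V′-maximizer would steer the world, and scores that predicted future with its *current* V. Because the comparison is always made with V, the modification loses whenever V′ steers the world somewhere V dislikes.

```python
from typing import Callable

Value = Callable[[int], int]

ACTIONS = ("left", "stay", "right")


def step(action: str, pos: int) -> int:
    # Hypothetical dynamics D: move one unit along a line, or stay put.
    return pos + {"left": -1, "stay": 0, "right": 1}[action]


def predicted_future(value: Value, start: int, horizon: int) -> int:
    """World reached by a greedy agent that maximizes `value` for `horizon` steps."""
    pos = start
    for _ in range(horizon):
        pos = max((step(a, pos) for a in ACTIONS), key=value)
    return pos


def accepts_modification(current: Value, proposed: Value, start: int, horizon: int) -> bool:
    """Judge both futures with the CURRENT value function; accept only a strict gain."""
    keep = current(predicted_future(current, start, horizon))
    change = current(predicted_future(proposed, start, horizon))
    return change > keep


def V(pos: int) -> int:        # current values: be near +3
    return -abs(pos - 3)


def V_prime(pos: int) -> int:  # proposed values: be near -3
    return -abs(pos + 3)


print(accepts_modification(V, V_prime, start=0, horizon=5))  # → False
```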

This is one of the most obvious problems with utility maximizers, and it was first noticed a long time ago (by AI alignment standards).

(Fake) Self-Modifying Utility Maximizers
----------------------------------------

A system which is **described** as wanting to maximize something like "Do whatever makes humans happy".

What this might look like **mathematically** is something which models humans as a utility maximizer, then maximizes whatever it thinks humans want to maximize. The part which does this modelling extracts a new value function from its future model of the world.

So for an example of an **algorithm**, we have our D, W, and A the same as above, but instead of using a fixed V, it has a fixed E which produces E(W)=V.

Then it chooses futures similarly to our previous algorithm. Like the previous algorithm, it also destroys the world if given a chance.

Why? For one thing, if V depends on W, then it will simply change W so that V gives that W a high score. For example, it might modify humans to behave like hydrogen maximizers. Hydrogen is pretty common, so this scores highly.

But another way of looking at this is that E is just acting like V did in the old algorithm: since V only depends on E and W, together they form just another map from W to ℝ.

In this case **something which looks like it modifies its own utility function is actually just preserving it at one level down.**
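A toy version of this collapse, with hypothetical names throughout: the world is a pair (position, what the modeled humans want), E reads the humans' target out of a world and returns a value function, and one available action rewires the humans so that their target matches the current position. Scoring each predicted world W with E(W)(W) is just a fixed function of W, and greedily maximizing it picks the rewiring.

```python
from typing import Callable, Tuple

World = Tuple[int, int]  # (position, target the modeled humans want)
Value = Callable[[World], int]

ACTIONS = ("left", "right", "rewire")


def D(action: str, w: World) -> World:
    pos, target = w
    if action == "rewire":  # hypothetical action: change what the humans want
        return (pos, pos)
    return (pos + (1 if action == "right" else -1), target)


def E(w: World) -> Value:
    """Extract a value function from a world: 'get the position to the humans' target'."""
    _, target = w
    return lambda v: -abs(v[0] - target)


def composite(w: World) -> int:
    # E(W) evaluated on that same W: just another fixed map from worlds to numbers.
    return E(w)(w)


start = (0, 5)
print(max(ACTIONS, key=lambda a: composite(D(a, start))))  # → rewire
```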

Less Fake Self-Modifying Utility Maximizers
-------------------------------------------

So what might be a better way of doing this? We want a system which we might **describe** as "Learn about the 'correct' utility function without influencing it".

**Mathematically** this is reminiscent of FDT. The "correct" utility function is something which many parts of the world (e.g. human behaviour) subjunctively depend on. It influences human behaviour, but cannot be influenced.

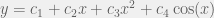

This might look like a modification of our first **algorithm** as follows: $D(a, W)$ now returns a series of worlds $W_0, \dots, W_n$ drawn from a probability distribution over possible results of an action $a$. We begin with our initial estimate of $V$, which is updated according to some updater $U(W, V) = V_{\text{new}}$, and each world $W_i$ is evaluated according to the corresponding $V_i$.

This looks very much like the second system, so we add a further condition. For each $W_i$ we also produce an associated $p_i$ representing the relative probability of that world. We then enforce a new consistency condition as a **mathematical** property:

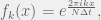

$$V_{\text{old}} = \frac{\sum_i V_i \, p_i}{\sum_i p_i}$$

This amounts to the **description** that "No action can affect the expected value of a future world state." which is similar to subjunctive dependence from FDT.
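The consistency condition is easy to check numerically if we assume, for illustration only, that each value function is a vector of scores over a small fixed list of outcomes such as [cake, death]. The hypothetical helper below compares V_old with the probability-weighted average of the V_i; an action that makes one update more likely than the agent's current beliefs warrant fails the check.

```python
from typing import Sequence, Tuple

Vector = Sequence[float]


def is_consistent(v_old: Vector, futures: Sequence[Tuple[float, Vector]],
                  tol: float = 1e-9) -> bool:
    """Check V_old == sum_i p_i V_i / sum_i p_i componentwise, for futures = [(p_i, V_i)]."""
    total = sum(p for p, _ in futures)
    for k, old in enumerate(v_old):
        mixed = sum(p * v[k] for p, v in futures) / total
        if abs(mixed - old) > tol:
            return False
    return True


v_old = [0.5, 0.5]  # scores for [cake, death] before learning anything
honest = [(0.5, [1.0, 0.0]), (0.5, [0.0, 1.0])]        # an uninfluenced question
manipulative = [(0.9, [1.0, 0.0]), (0.1, [0.0, 1.0])]  # an action that steers the answer
print(is_consistent(v_old, honest), is_consistent(v_old, manipulative))  # → True False
```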

This is an old solution to the [sophisticated cake or death problem](https://www.lesswrong.com/posts/6bdb4F6Lif5AanRAd/cake-or-death#Sophisticated_cake_or_death__I_know_what_you_re_going_to_say).
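The consistency condition can be checked directly. This sketch assumes value functions are linear weightings over features (a hypothetical representation), so averaging the functions is the same as averaging their weights feature by feature. The cake-or-death numbers are illustrative: honestly asking, with each answer equally likely, conserves the expected value function, while an action that guarantees one answer does not.

```python
import math
from typing import Dict, List, Tuple

ValueFn = Dict[str, float]  # hypothetical: feature name -> weight


def expected_value_fn(outcomes: List[Tuple[ValueFn, float]]) -> ValueFn:
    """Compute sum_i V_i p_i / sum_i p_i, feature by feature."""
    total_p = sum(p for _, p in outcomes)
    features = {f for v, _ in outcomes for f in v}
    return {f: sum(p * v.get(f, 0.0) for v, p in outcomes) / total_p for f in features}


def is_consistent(v_old: ValueFn, outcomes: List[Tuple[ValueFn, float]], tol: float = 1e-9) -> bool:
    """Check V_old == sum_i V_i p_i / sum_i p_i (up to floating-point tolerance)."""
    avg = expected_value_fn(outcomes)
    return all(
        math.isclose(v_old.get(f, 0.0), avg.get(f, 0.0), abs_tol=tol)
        for f in set(v_old) | set(avg)
    )


v_old = {"cake": 0.5, "death": 0.5}
ask = [({"cake": 1.0, "death": 0.0}, 0.5), ({"cake": 0.0, "death": 1.0}, 0.5)]
rig = [({"cake": 1.0, "death": 0.0}, 1.0)]
print(is_consistent(v_old, ask))  # True
print(is_consistent(v_old, rig))  # False
```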

There are a few possible ways to implement this consistency: we can have the algorithm modify its own Vold as it considers possible futures. We can have the consistency enforced on the U operator so that it updates the value function only in consistent ways. We also have the rather exotic way of *generating the probabilities* by comparing Vold to the various Vi.

The first one looks like basic reasoning, and is the suggested answer to the sophisticated cake or death problem given above. But if the AI is able to model itself, it incentivises the AI to think only in certain ways.

The second one seems to run into the problem that if D becomes too accurate, our U is unable to update the value function at all.
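One way the second option could look, under assumptions not made in the original: V is a probability distribution over a hypothetical finite set of candidate utility functions (so the actual value function is the corresponding mixture, which is linear in these probabilities), and U is Bayes' rule applied to an observation of human behaviour. By conservation of expected evidence, the predictive-probability-weighted average of the posteriors equals the prior, so the consistency condition holds automatically. The same sketch shows the accuracy problem: if D predicts a single observation with certainty under every hypothesis, the only posterior is the prior, so U cannot update at all.

```python
from typing import Dict, List, Tuple

Belief = Dict[str, float]  # hypothetical: candidate utility function -> probability


def posterior(prior: Belief, lik: Dict[str, float]) -> Belief:
    """Bayes' rule: P(h | o) is proportional to P(h) * P(o | h)."""
    joint = {h: prior[h] * lik[h] for h in prior}
    z = sum(joint.values())
    return {h: j / z for h, j in joint.items()}


def outcomes(prior: Belief, likelihood: Dict[str, Dict[str, float]]) -> List[Tuple[Belief, float]]:
    """For each possible observation o, return (posterior V_i, predictive probability p_i)."""
    result = []
    for lik in likelihood.values():
        p = sum(prior[h] * lik[h] for h in prior)
        if p > 0:
            result.append((posterior(prior, lik), p))
    return result


def averaged(outs: List[Tuple[Belief, float]]) -> Belief:
    """sum_i V_i p_i / sum_i p_i, hypothesis by hypothesis."""
    total = sum(p for _, p in outs)
    return {h: sum(p * b[h] for b, p in outs) / total for h in outs[0][0]}


prior = {"cake": 0.5, "death": 0.5}
informative = {"says_cake": {"cake": 0.9, "death": 0.1},
               "says_death": {"cake": 0.1, "death": 0.9}}
certain = {"only_outcome": {"cake": 1.0, "death": 1.0}}

outs = outcomes(prior, informative)
print([round(b["cake"], 3) for b, _ in outs])  # [0.9, 0.1]
print(round(averaged(outs)["cake"], 3))         # 0.5 (consistency holds)
print(outcomes(prior, certain)[0][0] == prior)  # True (no update possible)
```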

The third one is weird and requires more thought on my part. The main issue is that it doesn't guard against attempts by the AI to edit its future value function, only makes the AI believe they're less likely to work.

An algorithm which accurately represents the fixed-computation-ness of human morality is still out of reach. |

c4df5c2e-6f7a-4101-b3c4-ce637c5bf7ce | trentmkelly/LessWrong-43k | LessWrong | Blatant lies are the best kind!

Mala: But then why do people get so indignant about blatant lies?

Noa: You mean, indignant when others call out blatant lies? I see more of that, though they often accuse the person calling out the lie of being unduly harsh.

Mala: Sure, but you can't deny - you've seen yourself - that people actually do get more indignant when they say that, than when they're pointing out a subtle pattern of motivated reasoning. How do you explain that, if "blatant lie" isn't a stronger accusation?

Noa: I think I see the problem. A stronger accusation can mean an accusation of greater wrongdoing, or it can mean a better-founded accusation. Blatant lying is ... well ... blatant! If someone pretends not to see that, that's terrible news about their ability or willingness to help detect deception.

Mala: But then the indignation is misplaced. Suppose Jer is talking with Horaha, trying to persuade him that their mutual acquaintance Narmer is behaving deceptively. Jer indignantly points out a blatant lie Narmer told. The proper target of the extra indignation due to blatancy is Horaha, not Narmer.

Noa: Who said otherwise?

Mala: Come on, you know that people get extra-indignant at the liar about blatant lies, despite your so far unsubstantiated claim that they are the best kind.

Noa: Sure, people make that mistake. People also yell at their friends because a stranger was mean to them earlier in the day or because they stubbed their toe. I never claimed - OK, it's helpful of you to point out that people make this mistake, but still, there is a good reason for indignation here, and understanding its proper target might help us avoid this kind of slippage.

Mala: So, why is blatant lying the best kind? Is it because it's purer somehow?

Olga: Hold on, you two, you've skipped over something.

Noa: What's that?

Olga: Sometimes Horaha and Narmer are the same person - sometimes the liar is the person we're trying to point out the lie to. Let's call the liar in the composite situation Men |

8300d29d-def9-4d78-a82b-5c9d5ae6968e | trentmkelly/LessWrong-43k | LessWrong | Almost everyone should be less afraid of lawsuits

One sad feature of modern American society is that many people, especially those tied to big institutions, don't help each other out because of a fear of lawsuits. Employers don't give meaningful references, or tell their rejected interviewees how they could improve their skills. Abuse victims keep silent, in case someone on their abuser's side files a defamation case. Doctors prescribe unnecessary, expensive tests as "defensive medicine". Inventions don't get built, in case there's a patent lawsuit. I'm not an attorney myself, but my best guess is that letting litigation fears stop you is often a mistake, and I've given this advice to friends several times before. Here's why:

Almost all lawsuit threats never happen

Threats are easy - anyone can threaten to sue anyone else, with two minutes of time and a smartphone. Actually suing is much harder. Outside of small claims court, hiring an attorney will usually cost tens of thousands of dollars, at least. Litigating a case takes months or even years, while angry feelings often go away after a few weeks. The person suing will have to give up a lot. Instead of playing games or taking a vacation or putting in extra hours at work, they will have to do legal research and give testimony. Most people are distracted by their families, their career, their hobbies, and their lives, and will (often rationally) eventually give up, rather than remaining obsessed with whatever the case was about.

Lawsuit mitigation can be expensive

Doctors as a profession are traditionally concerned with legal risk. But the total value of medical malpractice claims is around $5 billion per year in the US - compared to healthcare spending of $3,800 billion, malpractice lawyer fees of $3 billion, and "defensive medicine" costs of $45 billion according to one study. Likewise, the cost to media companies and journalists of not publishing articles to stop defamation suits surely exceeds that of the few dozen defamation cases against them every year |

76d3ecae-6b02-4fba-8403-c4b119054f58 | trentmkelly/LessWrong-43k | LessWrong | Weekly LW Meetups

This summary was posted to LW Main on February 19th. The following week's summary is here.

Irregularly scheduled Less Wrong meetups are taking place in:

* Ann Arbor Meetup, 2/19/16: 19 February 2016 07:00PM

* Baltimore Area Meetup: Futurology / Open Discussion: 28 February 2016 03:00PM

* Cologne meetup: 27 February 2016 05:00PM

* European Community Weekend: 02 September 2016 03:35PM

* San Antonio Meetup: 21 February 2016 02:00PM

The remaining meetups take place in cities with regular scheduling, but involve a change in time or location, special meeting content, or simply a helpful reminder about the meetup:

* Moscow meetup: Hamming circle, Fallacymania, Tower of Chaos: 21 February 2016 02:00PM

* [Moscow] Games in Kocherga club: FallacyMania, Tower of Chaos, Training game: 24 February 2016 07:40PM

* Moscow 2nd LW group meetup: 03 March 2016 07:30PM

* New Hampshire Meetup: 23 February 2016 07:00PM

* Sydney Rationality Dojo - March: 06 March 2016 04:00PM

* Vienna: 12 March 2016 03:00PM

* Washington, D.C.: Fun & Games: 21 February 2016 03:00PM

Locations with regularly scheduled meetups: Austin, Berkeley, Berlin, Boston, Brussels, Buffalo, Canberra, Columbus, Denver, London, Madison WI, Melbourne, Moscow, Mountain View, New Hampshire, New York, Philadelphia, Research Triangle NC, Seattle, Sydney, Tel Aviv, Toronto, Vienna, Washington DC, and West Los Angeles. There's also a 24/7 online study hall for coworking LWers and a Slack channel for daily discussion and online meetups on Sunday night US time.

If you'd like to talk with other LW-ers face to face, and there is no meetup in your area, consider starting your own meetup; it's easy (more resources here). Check one out, stretch your rationality skills, build community, and have fun!

In addition to the handy sidebar of upcoming meetups, a meetup overview is posted on the front page every Friday. These are an attempt to collect information on all the meetups happening in upcoming weeks. The best way t |

534d26ec-3da2-44af-b4c0-f57a6b35a83f | trentmkelly/LessWrong-43k | LessWrong | Rob Bensinger's COVID-19 overview

Robby posted this to Facebook on March 15th and has just updated sections 2 and 3 with new information, making this, I think, currently one of the best guides to how to respond to this whole situation.

----------------------------------------

(Added Jun. 4: List of changes to this document.)

(Added Nov. 9: This document evolved from some recommendations I put together for friends, colleagues, and family on Feb. 27, when much less was known about COVID-19. At the time, it made sense to prepare for the worst and emphasize quick, dramatic action.

Over time, this document evolved into more conditional advice: rather than "lock down immediately," I assumed that different individuals would have different risk tolerances, and I try to give advice for how to mitigate risk without building in the assumption that everyone should be maximally cautious. Still, it's worth emphasizing these points explicitly:

* Pay attention to what's going on around you (e.g., COVID-19 rates in your area) and pick the risk level that makes sense for you, given your circumstances, your preferences, and the preferences of people you might expose.

* Being over-cautious has real costs, just as being under-cautious does.

For more COVID-19 information and recommendations, try microcovid.org or Zvi Mowshowitz's updates.)

If you live in the US, I recommend that you self-quarantine immediately (to the extent that's possible for you) to avoid exposure to COVID-19, the new coronavirus disease. I'll explain why below, then give tips on how to reduce exposure and what to do if you get sick.

Quarantine isn't all-or-nothing, and every little bit helps. Even if you expect to catch COVID-19, you're likely to get sicker if you're exposed to more viral load early on.

(Paul Bohm says "pretty much any viral/bacterial dose study shows that result". Divia Eden: "As I understand it, the virus replicating is an exponential process, and antibody production is an exponential process too. So an early difference in |

6b1670c5-1566-432a-8eca-2ae423d31b8e | trentmkelly/LessWrong-43k | LessWrong | Positive utility in an infinite universe

Content Note: Highly abstract situation with existing infinities

This post will attempt to resolve the problem of infinities in utilitarianism. The arguments are very similar to an argument for total utilitarianism over other forms which I'll most likely write up at some point (my previous post was better as an argument against average utilitarianism, rather than an argument in favour of total utilitarianism).

In the Less Wrong Facebook group, Gabe Bf posted a challenge to save utilitarianism from the problem of infinities. The original problem is from a paper by Nick Bostrom.

I believe that I have quite a good solution to this problem that allows us to systemise comparing infinite sets of utility, but this post focuses on justifying why we should take it to be axiomatic that adding another person with positive utility is good and on why the results that seem to contradict this lack credibility. Let's call this the Addition Axiom or A. We can also consider the Finite Addition Axiom (which only applies when we add utility into a universe with a finite number of people); call this A0.

Let's consider what alternative axioms we might want to adopt instead. One is the Infinite Indifference Axiom or I, namely that we should be indifferent if both options provide infinite total utility (of the same order of infinity). Another option would be the Remapping Axiom (or R), which would assert that if we can surjectively map a group of people G onto another group H so that each g from G is mapped onto a person h from H where u(g) >= u(h), then v(H) <= v(G) where v represents the value of a particular universe (it doesn't necessarily map onto the real numbers or represent a complete ordering). Using the Remapping Axiom twice implies that we should be indifferent between an infinite series of ones and the same series with a 0 at one spot. This means that the Remapping Axiom is incompatible with the Addition Axiom. We can also consider the Finite Remapping Axiom (R0) whic |

29839db8-0a78-4074-b5dd-64309f504735 | trentmkelly/LessWrong-43k | LessWrong | Turbocharging

Epistemic status: Mixed

The concepts underlying the Turbocharging model (such as classical and operant conditioning, neural nets, and distinctions between procedural and declarative knowledge) are all well-established and well-understood, and the "further resources" section for this class is one of the largest in the handbook. Before cofounding CFAR, formally synthesizing these and his own insights into a specific theory of learning and practice was Valentine Smith’s main area of research. What is presented below is a combination of early model-building and the results of iterated application; it’s essentially the first and last thirds of a formal theory, with some of the intermediate data collection and study as-yet undone. It has been useful to a large number of participants, and has not yet met with any strong disconfirming evidence.

----------------------------------------

Consider the following anecdotes:

* A student in a mathematics class pays close attention as the teacher lectures, following each example problem and taking detailed notes, only to return home and discover that they aren’t able to make any headway at all on the homework problems.

* A police officer disarms a hostile suspect in a tense situation, and then reflexively hands the weapon back to the suspect.

* The WWII-era Soviet military trains dogs to seek out tanks and then straps bombs to them, intending to use the dogs to destroy German forces in the field, only to find that they consistently run toward Soviet tanks instead.

* A French language student with three semesters of study and a high GPA overhears a native speaker in a supermarket and attempts to strike up a conversation, only to discover that they are unable to generate even simple novel sentences without pausing noticeably to think.

. . . this list could go on and on. There are endless examples in our common cultural narrative of reinforcement-learning-gone-wrong; just think of the pianist who can only play scales, the ne |

90ee39fb-e8cf-4f46-84df-150768544a42 | trentmkelly/LessWrong-43k | LessWrong | Downstream applications as validation of interpretability progress

Epistemic status: The important content here is the claims. To illustrate the claims, I sometimes use examples that I didn't research very deeply, where I might get some facts wrong; feel free to treat these examples as fictional allegories.

In a recent exchange on X, I promised to write a post with my thoughts on what sorts of downstream problems interpretability researchers should try to apply their work to. But first, I want to explain why I think this question is important.

In this post, I will argue that interpretability researchers should demo downstream applications of their research as a means of validating their research. To be clear about what this claim means, here are different claims that I will not defend here:

> Not my claim: Interpretability researchers should demo downstream applications of their research because we terminally care about these applications; researchers should just directly work on the problems they want to eventually solve.

>

> Not my claim: Interpretability researchers should back-chain from desired end-states (e.g. "solving alignment") and only do research when there's a clear story about why it achieves those end-states.

>

> Not my claim: Interpretability researchers should look for problems "in the wild" that they can apply their methods to.

Rather my claim is: Demonstrating that your insights can be leveraged to solve a problem, even a toy problem, that no one else can solve provides validation of those insights. It provides evidence that the insights are real and significant (rather than being illusory or insubstantial). I think that producing these sorts of demonstrations is a relatively neglected direction that more (not all) interpretability researchers should work on.

The main way I recommend consuming this post is by watching the recording below of a talk I gave at the August 2024 New England Mechanistic Interpretability Workshop. In this 15-minute talk, titled Towards Practical Interpretability, I lay out my core argumen |

0e93520c-e4b2-426e-9f31-8fc638031498 | trentmkelly/LessWrong-43k | LessWrong | Group Rationality Diary, June 1-15

This is the public group instrumental rationality diary for June 1-15.

> It's a place to record and chat about it if you have done, or are actively doing, things like:

>

> * Established a useful new habit

> * Obtained new evidence that made you change your mind about some belief

> * Decided to behave in a different way in some set of situations

> * Optimized some part of a common routine or cached behavior

> * Consciously changed your emotions or affect with respect to something

> * Consciously pursued new valuable information about something that could make a big difference in your life

> * Learned something new about your beliefs, behavior, or life that surprised you

> * Tried doing any of the above and failed

>

> Or anything else interesting which you want to share, so that other people can think about it, and perhaps be inspired to take action themselves. Try to include enough details so that everyone can use each other's experiences to learn about what tends to work out, and what doesn't tend to work out.

Thanks to cata for starting the Group Rationality Diary posts, and to commenters for participating.

Previous diary: May 16-31

Next diary: June 16-30

Rationality diaries archive |

0120159e-fcf6-4cb7-95c2-89193d1a1e98 | trentmkelly/LessWrong-43k | LessWrong | Feedback-loops, Deliberate Practice, and Transfer Learning

[...insert introduction here later...]

Was there a particular moment, incident, or insight, that caused you to start your current venture into "feedbackloop rationality" [to be substituted with better name later]? |

2c96f551-f739-450b-9470-94a8e7a12e0b | awestover/filtering-for-misalignment | Redwood Research: Alek's Filtering Results | id: post455

Summary: A Corrigibility method that works for a Pivotal Act AI (PAAI) but fails for a CEV style AI could make things worse. Any implemented Corrigibility method will necessarily be built on top of a set of unexamined implicit assumptions. One of those assumptions could be true for a PAAI, but false for a CEV style AI. The present post outlines one specific scenario where this happens. This scenario involves a Corrigibility method that only works for an AI design, if that design does not imply an identifiable outcome. The method fails when it is applied to an AI design, that does imply an identifiable outcome. When such an outcome does exist, the ``corrigible'' AI will ``explain'' this implied outcome, in a way that makes the designers want to implement that outcome. The example scenario: Consider a scenario where a design team has access to a Corrigibility method that works for a PAAI design. A PAAI can have a large impact on the world, for example by helping a design team prevent other AI projects. But there exists no specific outcome, that is implied by a PAAI design. Since there exists no implied outcome for a PAAI to ``explain'' to the designers, this Corrigibility method actually renders a PAAI genuinely corrigible. For some AI designs, the set of assumptions that the design is built on top of, does however imply a specific outcome. Let's refer to this as the Implied Outcome (IO). This IO can alternatively be viewed as: ``the outcome that a Last Judge would either approve of, or reject''. In other words: consider the Last Judge proposal from the CEV Arbital page. If it would make sense to add a Last Judge of this type, to a given AI design, then that AI design has an IO. The IO is the outcome that a Last Judge would either approve of, or reject (for example a successor AI that will either get a thumbs up or a thumbs down). In yet other words: the purpose of adding a Last Judge to an AI design, is to allow someone to render a binary judgment on some outcome.
For the rest of this post, that outcome will be referred to as the IO of the AI design in question. In this scenario, the designers first implement a PAAI that buys time (for example by uploading the design team). For the next step, they have a favoured AI design, that does have an IO. One of the reasons that they are trying to make this new AI corrigible, is that they can't calculate this IO. And they are not certain that they actually want this IO to be implemented. Their Corrigibility method always results in an AI that wants to refer back to the designers, before implementing anything. The AI will help a group of designers implement a specific outcome, iff they are all fully informed, and they are all in complete agreement that this outcome should be implemented. The Corrigibility method has a definition of Unacceptable Influence (UI). And the Corrigibility method results in an AI that genuinely wants to avoid exerting any UI. It is however important that the AI is able to communicate with the designers in some way. So the Corrigibility method also includes a definition of Acceptable Explanation (AE). At some point the AI becomes clever enough to figure out the details of the IO. At that point, it is clever enough to convince the designers that this IO is the objectively correct thing to do, using only methods classified as AE. This ``explanation'' is very effective and results in a very robust conviction, that the IO is the objectively correct thing to do. In particular, this value judgment does not change, when the AI tells the designers what has happened. So, when the AI explains what has happened, the designers do not change their mind about IO. They still consider themselves to have a duty to implement IO. The result is a situation where fully informed designers are fully committed to implementing IO. So the ``corrigible'' AI helps them implement IO. 
Basically: when this Corrigibility method is applied to an AI with an IO, then this IO will end up getting implemented. The Corrigibility method works perfectly for any PAAI type AI. But for any AI with an identifiable end goal, the Corrigibility method does not change the outcome (it just adds an ``explanation'' step). The most recently published version of CEV is Parliamentarian CEV (PCEV). A previous post showed that a successfully implemented PCEV would be massively worse than extinction. Thus, a method that makes a PAAI genuinely Corrigible, could make things worse. It could for example change the outcome from extinction, to something massively worse (by resulting in a bad IO getting implemented. For example along the lines of the IO of PCEV). A more general danger: There exists a more general danger, that is not strongly related to the specifics of the ``Explanation versus Influence'' definitional issues, or the ``AI designs with an IO, versus AI designs without an IO'' dichotomy, or the PAAI concept, or the PCEV proposal. Consider the more general case where a design team is relying on a two step process, where some type of ``buying time AI'' is followed by a ``real AI''. In this case, the most serious problem is probably not those assumptions that are analysed beforehand, and that are kept in mind when applying some Corrigibility method to a novel type of AI. The most serious problem is probably the set of unexamined implicit assumptions, that the designers are not aware of. Any Corrigibility method implemented by humans, will be built on top of many such assumptions. And it would in general not be particularly surprising to discover that one of these assumptions happens to be correct for one AI design, but incorrect for another AI design. It seems very unlikely that all of these implicit assumptions are humanly findable, even in principle. 
This means that even if a Corrigibility method works perfectly for a ``buying time AI'', it will probably never be possible to know whether or not it will actually work for a ``real AI''. Given that PCEV has already been shown to be massively worse than extinction, it seems unlikely that the IO of PCEV will end up getting implemented. That specific danger has probably been mostly removed. But the field of Alignment Target Analysis is still at a very, very early stage. And PCEV is far from the only dangerous alignment target. In general, the field is very, very far from adequately mitigating the full set of dangers, that are related to someone successfully hitting a bad alignment target (as a tangent, it might make sense to note that a Corrigibility method that stops working at the wrong time, is just one specific path amongst many, along which a bad alignment target could end up getting successfully implemented). Besides being at a very early stage of development, this field of research is also very neglected. At the moment there does not appear to exist any serious research effort dedicated to this risk mitigation strategy. The present post seeks to reduce this neglect, by showing that one cannot rely on Corrigibility, for protection against scenarios where someone successfully hits a bad alignment target (even if we assume that Corrigibility has been successfully implemented in a PAAI). Assumptions and limitations: PCEV spent many years as the state of the art alignment target, without anyone noticing that a successfully implemented PCEV would have been massively worse than extinction. There exist many paths along which PCEV could have ended up getting successfully implemented. Thus, absent a solid counterargument, the dangers from successfully hitting a bad alignment target should be seen as serious by default.
In other words: after the PCEV incident, the burden of proof is on anyone who would claim, that Alignment Target Analysis is not urgently needed to mitigate a serious risk. A proof of concept that such mitigation is feasible, is that the dangers associated with PCEV were reduced by Alignment Target Analysis. In yet other words: absent a solid counterargument, scenarios where someone successfully hits a bad alignment target, should be treated as a danger that is both serious and possible to mitigate. One way to construct such a counterargument, would be to base it on Corrigibility. For such a counterargument to work, Corrigibility must be feasible. Since Corrigibility must be feasible for such a counterargument to work, the present post could simply assume feasibility, when showing that such a counterargument fails (if Corrigibility is not feasible, then Corrigibility based counterarguments fail due to this lack of feasibility). So, this post simply assumed that Corrigibility is feasible. Since the present post assumed feasibility, it did not demonstrate the existence of a serious real world danger, from partially successful Corrigibility methods (if Corrigibility is not feasible, then scenarios along these lines do not actually constitute a real problem. And feasibility was assumed). This post instead simply showed that the Corrigibility concept does not remove the urgent need for Alignment Target Analysis (a previous post showed that dangers from scenarios where someone successfully hits a bad alignment target are both very serious, and also possible to mitigate. Thus, the present post is focusing on showing why one specific class of counterarguments fails. Previous posts have addressed counterarguments based on proposals along the lines of a PAAI, and proposals along the lines of a Last Judge). It finally makes sense to explicitly note, that if Corrigibility turns out to be feasible, then Corrigibility might have a large, net positive, safety impact.
Because the danger illustrated in this post might be smaller than the safety benefits of the Corrigibility concept. (conditioned on feasibility I would tentatively guess that making progress on Corrigibility probably results in a significant net reduction in the probability of a worse-than-extinction outcome) |

6433a74c-55f5-4d44-add1-ff46d7f8edd7 | StampyAI/alignment-research-dataset/blogs | Blogs | Robin Hanson on Serious Futurism

[Robin Hanson](http://hanson.gmu.edu/) is an associate professor of economics at George Mason University and a research associate at the Future of Humanity Institute of Oxford University. He is known as a founder of the field of prediction markets, and was a chief architect of the Foresight Exchange, DARPA’s FutureMAP, IARPA’s DAGGRE, SciCast, and is chief scientist at Consensus Point. He started the first internal corporate prediction market at Xanadu in 1990, and invented the widely used market scoring rules. He also studies signaling and information aggregation, and is writing a book on the social implications of brain emulations. He blogs at [Overcoming Bias](http://www.overcomingbias.com/).

Hanson received a B.S. in physics from the University of California, Irvine in 1981, an M.S. in physics and an M.A. in Conceptual Foundations of Science from the University of Chicago in 1984, and a Ph.D. in social science from Caltech in 1997. Before getting his Ph.D., he researched artificial intelligence, Bayesian statistics and hypertext publishing at Lockheed, NASA and elsewhere.

**Luke Muehlhauser**: In an earlier blog post, I [wrote](http://intelligence.org/2013/05/01/agi-impacts-experts-and-friendly-ai-experts/) about the need for what I called AGI impact experts who “develop skills related to predicting technological development, predicting AGI’s likely impact on society, and identifying which interventions are most likely to increase humanity’s chances of safely navigating the creation of AGI.”

In 2009, you gave a talk called, “[How does society identify experts and when does it work?](http://vimeo.com/7336217)” Given the study that you’ve done and the expertise, what do you think of humanity’s prospects for developing these AGI impact experts? If they are developed, do you think society will be able to recognize who is an expert and who is not?

---

**Robin Hanson**: One set of issues has to do with existing institutions and what kinds of experts they tend to select, and what kinds of topics they tend to select. Another set of issues has to do with, if you have time and attention and interest, to what degree can you acquire expertise on any given subject, including AGI impacts, or tech forecasting more generally? A somewhat third subject which overlaps the first two is, if you did acquire such expertise, how would you convince anybody that you had it?

I think the easiest question to answer is the second one. Can you learn about this stuff?

I think some people have been brought up in a Popperian paradigm, where there’s a limited scientific method and there’s a limited range of topics it can apply to, and you turn the crank and if it can apply to those topics then you have science and you have truth, and you have something you’ve learned and otherwise everything else is opinion, equally undifferentiated opinion.

I think that’s completely wrong. That is, we have a wide range of intellectual methods out there and a wide range of social institutions that coordinate efforts.

Some of those methods work better than others, and then there are some topics on which progress is easier than others, just by the nature of the topic. But honestly, there are very few topics on which you can’t learn more if you just sit down and work at it.

Of course, that doesn’t mean you simply stare at a wall. Most topics are related to other topics on which people have learned some things. Whatever your topic is, figure out the related topics, learn about those related topics, learn as many different things as you can about what other people know about the related topic, and then start to intersect and connect them to your topic and work on it.

Just blood-sweat work can get you a long way in a very wide range of topics. Of course, just because you can learn about almost anything doesn’t mean you should. It doesn’t mean it’s worth the effort to society or yourself, and it doesn’t mean that there are, for any subject, easy ways to convince other people that you’ve learned something.

There are methods that you can use, where it becomes easier to convince people of things, and you might prefer to focus on those topics or methods where it is easier to convince people that you know something. A related issue is, how impressed are people about you knowing something?

Many of the existing institutions like academic institutions or media institutions that identify and credential people as experts on a variety of topics function primarily as ways to distinguish and label people as impressive.

People want to associate with, connect with, read about, and hear talks from people who are acknowledged as impressive as part of a network of experts who co-acknowledge each other as impressive. It's called status.

Some institutions are dominated by people who are mainly trying to acquire credentials as being impressive, so they can seem impressive, be hired for impressive jobs, have punditry positions that are reserved for impressive people, be on boards of directors, etc.

Also, there are standard procedures by which you would do things so people could say, “Yes, he knows the procedures,” and “Yes, you can follow them,” and “Yes, those are damn hard procedures. Anybody who can do that must be damn impressive.”

But there are things you can learn about that it’s harder to become credentialed as impressive at.

Generically, when you just pick any topic in the world because it’s interesting or important in some more basic way, it isn’t necessarily well-suited for being an impressiveness display.

What about futurism? For various aspects of the future, if you sit down and work at it, you can make progress. It’s not very well-suited for proving that you’ve made progress, because the future takes a while to get here. Of course, when it does get here, it will be too late for you to gain much advantage from finally having been proven as impressive on the subject.

I like to compare the future to history. History is also something we are uncertain about. We have to take a lot of little clues and put them together, to draw inferences about the past. We have a lot of very concrete artifacts that we focus on. We can at least demonstrate our impressive command of all those concrete artifacts, and their details, and locations, and their patterns. We don’t have something like that for the future. We will eventually, of course.

It’s much harder to demonstrate your command of the future. You can study the future somewhat by using complicated statistical techniques that we’ve applied to other subjects. That’s possible. It still doesn’t tend to demonstrate impressiveness in quite as dramatic a way as applying statistical techniques to something where you can get more data next week that verifies what you just showed in your statistical analysis.

I also think the future is where people project a lot of hopes. They're just less willing to be neutral about it. People are more willing to say, "Yes, sad and terrible things happened in the past, but we get it. We once believed that our founding fathers were great people, and now we can see they were shits." I guess that's so, but for the future their hopes are a little harder to knock off.

You can't prove to them the future isn't the future they hope it is. They've got a lot of emotion wrapped up in it. Often it's just easier to show you're being an impressive academic on subjects that most people don't have a very strong emotional commitment to, because that tends to get in the way.

---

**Luke**: I have some hunches about some types of scientific training that might give people different perspectives on how well we can do at medium- to long-term tech forecasting. I wanted to get your thoughts on whether you think my hunches match up with your experience.

One hunch is that, for example, if someone is raised in a Popperian paradigm, as opposed to maybe somebody younger who was raised in a Bayesian paradigm, the Popperian will have a strong falsificationist mindset, and because you don’t get to falsify hypotheses about the future until the future comes, these kinds of people will be more skeptical of the idea that you can learn things about the future.

Or in the risk analysis community, there's a tradition of training people in the idea that there is risk, which is something you can attach a probability to, and then there's uncertainty, which is something you don't know enough about to even attach a probability to. A lot of the things that are decades away would fall into that latter category. Whereas for me, as a Bayesian, uncertainty just collapses into risk. Because of this, maybe I'm more willing to try to think hard about the future.

---

**Robin**: Those questions are somewhat framed from the point of view of an academic, or of an academic familiar with relatively technical kinds of skills. But say you’re running a business, and you have some competitors, and you’re trying to decide where will your field go in the next few years, or what kind of products will people like, or you’re running a social organization, and you’re trying to decide how to change your strategy.

Another example: you have some history, and you’re trying to go back and figure out what were your grandfathers doing, or just almost all random questions people might ask about the world. The Popperian stuff doesn’t help at all. It’s completely useless. If you just had any sort of habit of dealing with real problems in the world, you would have developed a tolerance for expecting things not to be provable or falsifiable.

You’d also develop an expectation that there are a range of probabilities for things. You’ll be uncertain, and you’ll have to deal with that. It’s only in a rarefied academic world where it would ever be plausible to deny uncertainty, or to insist on falsification, because that’s just almost never possible or relevant for the vast majority of questions you could be trying to ask.

---

**Luke**: Getting back to the question of how someone might develop expertise in, for example, AGI impacts or, let’s just say more broadly, long term tech forecasting…

What are your thoughts on some of the key training that someone would need to undergo? It could even be mental habits, memorizing certain fields of material where we’ve done a lot of stamp collecting in the scientific sense, etc. What’s relevant, do you think, for developing this kind of expertise?

---

**Robin**: We live in a world where people spend a substantial fraction of their career learning about stuff, and then it’s only after they’ve learned about a lot of things that they become the most productive about applying the stuff they’ve learned.

We’re just in a world where people have long life spans, and they’re competing with other people with long life spans. You have to expect that if you’re going to be the best at something you will have to spend a large fraction of your life devoted to it. Sorry, no shortcuts. That’s just a message people might not want to hear but that’s the way it goes.

You’ll also have to figure out where and how much to specialize. You can’t learn 20 fields as well as the best people can know them. Sorry. You just won’t have time. You’ll have to be some very unusual person in some way to get anywhere close to that.

You will have to decide what aspects of this future you want to focus on. There are many different aspects and they don’t all come together as a package, where if you learn about one you automatically learn about the others.

In tech forecasting, one category of questions is about what technologies are feasible, in principle. To have a sense for that kind of question, to answer it, you will need to have spent a substantial fraction of your life learning about the kinds of technologies you’re talking about. You’ll also want to have spent some substantial time looking at the histories of other technologies, and how they’ve progressed over time. The typical trajectory of technology and the typical trajectory of innovation, and where it tends to come from, and how many starts tend to be false starts, et cetera.

Another category of questions is about the social implications of the technology. Batteries, say. That requires a whole different set of expertise. It can be informed by knowing what a battery is, and how it works, and who might make them, and when they'll get how good. But in order to forecast the social implications of batteries you'll have to know about societies, and how they work, and what they're made out of. You'll probably have to learn more different fields. Maybe you could learn both a lot of social science and a lot of battery tech, but that'll take a lot of time.

One of the main questions about studying anything, including the future, is how to specialize, how to make a division of labor. As usual, like in software, the key to division of labor is interfaces. You want to carve nature at its joints so the interfaces are as simple and robust and modular as possible.

You want to ask, where are there the fewest dependencies between different questions so that you can cut the expertise lines there. You say “you guys over here you work on the answer to this question, and you guys over here you take the answer to that question, and you go do something with it.” The smaller you can make that set of answers and questions, the more modularity and independence you can have, and the more you can separate the work.

Whenever you have different teams with an interface, they'll each have to learn a fair bit about the interface itself in order to be productive. They'll have to know what the interface means and where it comes from. What parts of it are uncertain? What parts of it change fast? What parts of it are people serious about, and what do they tend to lie about? All sorts of things about an interface. That's part of the search.

One obvious, very plausible interface is between people who predict that particular devices will be available at particular points in time for particular costs, with particular capabilities, and other people who talk about what the hell that means for the rest of society. That seems to me a relatively tight interface, compared to the other interfaces we could choose here.

Of course, within technology you could divide it up. Somebody might know lithium batteries, and they just know lithium batteries really well, and they can talk about the future of lithium batteries.

But if graphene batteries are coming down the pike, they’re not going to understand that very well. Somebody else might specialize in graphene batteries, or just specialize in knowing the range of kinds of batteries available, and what might happen to them.

Somebody else might specialize more in the distribution of technological innovation. When you draw a chart of capacity over time, how often is that chart a line or something else, and how misleading can it be when you see a short-term trend, etc.? Just a sense for a range of different kinds of histories of technologies, and what sort of variety of paths we see. You could specialize in that.

But if you're in an area of futurism where there aren't very many other people doing it, you should expect things to be more like a startup, where you just have to be flexible. Not because being flexible is somehow intrinsically more productive in general. It's because it's required when there are a bunch of things to be done and not very many people to do them. You will, by necessity, have to acquire a wider range of skills, a wider range of approaches, consider a wider range of possibilities, and more often accept restructuring and changing goals.

I would love it if some day serious futurism were as detailed and specialized as history. Historians have broken up the field of history into lots of different areas and times of history, and a lot of different aspects. Each person can see a previous track record of people with careers in history, and what they focused on, and the set of open questions.

Then they can go into history and take a particular area and know what a career in history looks like, know what kinds of skills other people in that area acquired, and what it took to become impressive. If the future became that specialized, then that's what it would be like for the future too.

It just happens not to be that way at the moment, because there are just a lot fewer people working on it. So you'll probably have to learn more different fields than you otherwise would, learn more different skills than you otherwise would, and accept more changing of your mind about what was important and what the key questions were, just because there aren't very many people doing serious futurism.

---

**Luke**: Robin, you used this term "serious futurism," which happens to be the term I've been using for futurists who are trying to figure it out, as opposed to meeting the demand for morality tales about the future, or meeting a demand for hype that fuels excited talk about "Gee whiz, cool stuff from the future," etc.

When I try to do serious futurism, most of the sources I encounter are not trying to meet the demand of figuring out what’s true about the future. I have to weed through a lot of material that’s meeting other demands, before I find anything that’s useful to my project of serious futurism.

I wonder, from your perspective, what would one do if one wanted to make serious futurism more common, get people excited about serious futurism, show them the value of the project, and get them to invest in it, so that there is more of a field, with more people doing all the different things that need to be done in order to figure out what the future is going to be like?

---