id stringlengths 36 36 | source stringclasses 15 values | formatted_source stringclasses 13 values | text stringlengths 2 7.55M |

|---|---|---|---|

304018ee-be81-4f03-8671-d9ae1a05f4c5 | trentmkelly/LessWrong-43k | LessWrong | Will/Has the Russia-Ukraine war been a tipping point for the shift from oil energy?

What do people think? The process and transition away from oil energy has been a long road. It seems to have been a slow path with lots of hurdles in the way. But now it seems there is another impetus in that direction that may tip the scales.

Then again, people are really good at adjusting to new settings and then reverting to old modes of action. |

92d2df7c-3560-40f2-bb6d-6c5611cfe423 | trentmkelly/LessWrong-43k | LessWrong | The AI Driver's Licence - A Policy Proposal

TL;DR: In response to the escalating capabilities and associated risks of advanced AI systems, we advocate for the implementation of an “AI Driver’s Licence” policy. Our proposal is informed by existing licencing frameworks and existing AI legislation. This initiative mandates that users of advanced AI systems must obtain a licence, ensuring they have undergone minimal technical and ethical training. The licence requirements would be defined by an international regulatory body, such as the ISO, to maintain consistent and up-to-date standards globally. Independent organisations would issue the licences, while local governments would enforce compliance through audits and penalties. By focusing on the usage stage of the AI lifecycle, this policy aims to mitigate misuse risks, contributing to a safer AI landscape. Our proposal complements existing regulations and emphasises the need for international cooperation to effectively manage the deployment and usage of advanced AI technologies.

Current governance approaches regulate the developers and deployers of AI systems. Our proposal, which requires users to hold an AI driver's licence, extends regulation to the user side as well.

----------------------------------------

Introduction

Sam Altman, the CEO of OpenAI, claims we need “a new agency that licences any effort above a certain scale of capabilities and could take that licence away and ensure compliance with safety standards” (Wheeler, 2023).

Licencing has a long history, with key success stories in protecting the safety of the public by controlling access to potentially harmful activities and items. However, in some cases it has also resulted in adverse effects, driving large parts of industries to the black market, an entirely unregulated space.

Today, we face an uncertain and rapidly changing AI landscape, with AI tools and models capable of increasingly broad and self-determined actions. Many have written about the catastrophic scenarios that may unfold if AI is not appro |

c73baf3a-2707-4545-bd5b-7ea1d0bd478b | trentmkelly/LessWrong-43k | LessWrong | True numbers and fake numbers

> In physical science the first essential step in the direction of learning any subject is to find principles of numerical reckoning and practicable methods for measuring some quality connected with it. I often say that when you can measure what you are speaking about, and express it in numbers, you know something about it; but when you cannot measure it, when you cannot express it in numbers, your knowledge is of a meagre and unsatisfactory kind; it may be the beginning of knowledge, but you have scarcely in your thoughts advanced to the state of Science, whatever the matter may be.

>

> -- Lord Kelvin

If you believe that science is about describing things mathematically, you can fall into a strange sort of trap where you come up with some numerical quantity, discover interesting facts about it, use it to analyze real-world situations - but never actually get around to measuring it. I call such things "theoretical quantities" or "fake numbers", as opposed to "measurable quantities" or "true numbers".

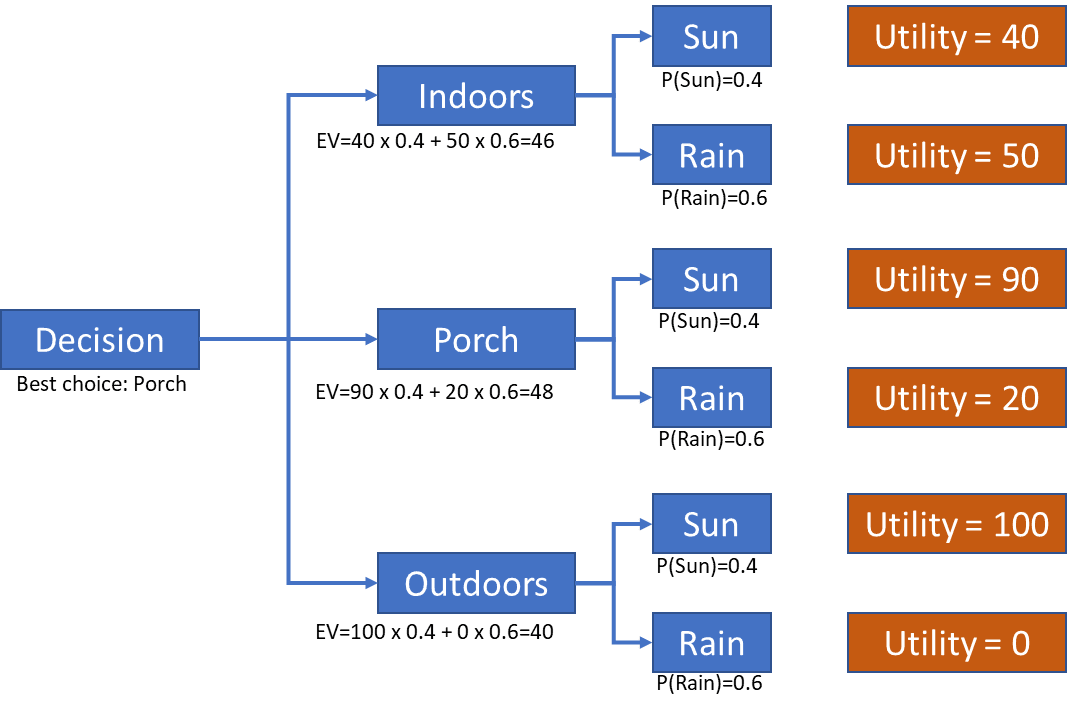

An example of a "true number" is mass. We can measure the mass of a person or a car, and we use these values in engineering all the time. An example of a "fake number" is utility. I've never seen a concrete utility value used anywhere, though I always hear about nice mathematical laws that it must obey.

The difference is not just about units of measurement. In economics you can see fake numbers happily coexisting with true numbers using the same units. Price is a true number measured in dollars, and you see concrete values and graphs everywhere. "Consumer surplus" is also measured in dollars, but good luck calculating the consumer surplus of a single cheeseburger, never mind drawing a graph of aggregate consumer surplus for the US! If you ask five economists to calculate it, you'll get five different indirect estimates, and it's not obvious that there's a true number to be measured in the first place.

Another example of a fake number is "complexity" or "maintainabi |

191e0efc-7d51-4f06-a227-4f17dbccd0cd | trentmkelly/LessWrong-43k | LessWrong | Cambist Booking

Summary: A Cambist is a manual showing exchange rates of different currencies and measurements. Dutch Booking is when a sequence of trades would leave one party strictly worse off and another strictly better off. Cambist Booking is intended to spark conversation about what priorities or objects you would exchange for each other, and at what rates.

Tags: Large, Repeatable

Purpose: Taking its title from the excellent short story “The Cambist and Lord Iron,” Cambist Booking is about understanding the idea that everything is, ultimately, trading off against everything else. The extra hour you work earns you a bit of extra money, the money can buy you a coffee so you get up earlier and have an extra hour, you spend that extra hour studying for a better job – but at each step you could have chosen to spend things differently.

Materials: You need a big stack of cards with things people want written on them, called the Deck. You'll also want a piece of paper and a pen or pencil for each participant, called the Record.

A workable list of things for the Deck is provided here, along with a PDF for easy printing- just print about ten to a page, single sided, and cut them out. If you want nicer cards, use cardstock like for a business card and the Cambist Booking Back. Regular paper works fine. If you'd rather, you can also handwrite the whole Deck. Feel free to add in your own cards!

Announcement Text: Hello! This event is for general socialization, and also running a game called Cambist Booking. If you’re familiar with the Slate Star Codex post Everything Is Commensurable, or the short story The Cambist and Lord Iron, then this game should sound somewhat familiar to you. It works like this: each person will get some cards with things you might want on them. You’ll go around asking other people what their cards are, and deciding how to compare the two values – is a sportscar worth more or less than a year’s vacation? Is an hour long massage worth more or less than a n |

01d120e3-a3dc-4f8c-ba13-83d5d44c7b33 | trentmkelly/LessWrong-43k | LessWrong | Commonsense Good, Creative Good

Let's say you're vegan and you go to a vegan restaurant. The food is quite bad, and you'd normally leave a bad review, but now you're worried: what if your bad review leads people to go to non-vegan restaurants instead? Should you refrain from leaving a review? Or leave a false review, for the animals?

On the other hand, there are a lot of potential consequences of leaving a review beyond "it makes people less likely to eat at this particular restaurant, and they might eat at a non-vegan restaurant instead". For example, three plausible effects of artificially inflated reviews could be:

* Non-vegans looking for high-quality food go to the restaurant, get vegan food, think "even highly rated vegan food is terrible", don't become vegan.

* Actually good vegan restaurants have trouble distinguishing themselves, because "helpful" vegans rate everywhere five stars regardless of quality, and so the normal forces that push up the quality of food don't work as well. Now the food tastes bad and fewer people are willing to sustain the sacrifice of being vegan.

* People notice this and think "if vegans are lying to us about how good the food is, are they also lying to us about the health impacts?" Overall trust in vegans (and utilitarians) decreases.

Despite thinking that it is the outcomes of actions that determine whether they are a good idea, I don't think this kind of reasoning about everyday things is actually helpful. It's too easy to tie yourself in logical knots, making a decision that seems counterintuitive-but-correct, except if you spent longer thinking about it, or discussed it with others, you would have decided the other way.

We are human beings making hundreds of decisions a day, with limited ability to know the impacts of our actions, and a worryingly strong capacity for self-serving reasoning. A full unbiased weighing of the possibilities is, sure, the correct choice if you relax these constraints, but in our daily lives that's not an option we have. |

8c5ae12e-c815-4944-9189-64ac6f10c581 | trentmkelly/LessWrong-43k | LessWrong | The novelty quotient

I. The Device

Imagine a device that listens to all of your conversations. It has a single purpose: live-running your utterances through text-davinci-003 to evaluate how surprising each token is, given its preceding context.[1]
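
To make "how surprising each token is, given its preceding context" concrete, here is a minimal sketch of per-token surprisal, measured in bits as the negative base-2 log of the token's probability. It does not call text-davinci-003 or any real model: the bigram counter, the add-one smoothing, and the names `train_bigram` and `surprisal_bits` are all assumptions made for illustration.

```python
import math
from collections import Counter


def train_bigram(tokens):
    """Count how often each token follows each previous token (toy stand-in for a language model)."""
    counts = {}
    for prev, cur in zip(tokens, tokens[1:]):
        counts.setdefault(prev, Counter())[cur] += 1
    return counts, set(tokens)


def surprisal_bits(counts, vocab, prev, cur):
    """Surprisal of `cur` given `prev`, in bits, using add-one smoothing.

    The extra +1 in the denominator reserves probability mass for tokens
    that never appeared in the training text.
    """
    following = counts.get(prev, Counter())
    total = sum(following.values())
    prob = (following[cur] + 1) / (total + len(vocab) + 1)
    return -math.log2(prob)


corpus = "the cat sat on the mat the cat ran".split()
counts, vocab = train_bigram(corpus)

# A well-worn continuation scores lower than one the model has never seen.
for prev, cur in [("the", "cat"), ("the", "ran")]:
    print(f"{prev} -> {cur}: {surprisal_bits(counts, vocab, prev, cur):.2f} bits")
```

Averaging these per-token scores over a day of speech would give one possible version of the chart described here; the real device would presumably use a far stronger model than a bigram counter.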

Users could chart their verbal novelty throughout the day; highlight and share their most unusual turns of phrase; note which friends put them on well-worn paths and which help them careen into the chaotic underbrush.

Undoubtedly, some would be disturbed by the long stretches where every one of their words could easily have been generated by the model. In those hours, they might as well have lost consciousness, taken a little nap while Daddy Altman autopiloted their mouth.[2]

Consider: for how many people would this be true at virtually all times? If President Andreessen were someday to mandate the wearing of these devices, scoring each person on their overall surprising-ness, what would the distribution be?[3]

II. The Quotient

Let’s call it a novelty quotient. How correlated is it with IQ? Certainly somewhat: a greater vocabulary widens the possible sentences available, and isomorphically,[4] intelligence and knowledge are likely to assist the dedicated weirdo in finding ever-more-remote unexplored territory.

But we can all think of high-IQ people who we’d wager have reliably low NQ, sticking to stifling convention like a straitjacket. Conversely, there are bizarre, stupid, bizarrely stupid, and stupidly bizarre people. Think of the difference between boring-dumb and crazy-dumb.

Which brings us to another question: how correlated would NQ be with insanity? There’s likely to be some link… But it can’t be absolute. The ravings of someone who has fully lost touch with reality are very different from typical speech, but they’re completely predictable. Given fifty sentences of paranoia or hours of repeated compulsions, it’s easy to see what’s coming next. Even more than for the healthy.

Is NQ just a measure of creativity? Not really — cre |

a8b4348e-a571-4e3e-b5e1-2603d8f1a6a9 | trentmkelly/LessWrong-43k | LessWrong | [LINK] Wired - "New Chip Borrows Brain’s Computing Tricks"

In case anyone's interested, here's an article about new computer chips by IBM which emulate brain functions. |

c73958ab-20aa-4f46-ba4c-0efe13d6b693 | trentmkelly/LessWrong-43k | LessWrong | God in the Loop: How a Causal Loop Could Shape Existence

Crossposted from Vessel Project.

My last article, “Life Through Quantum Annealing” was an exploration of how a broad range of physical phenomena — and possibly the whole universe — can be mapped to a quantum computing process. But the article simply accepts that quantum annealing behaves as it does; it does not attempt to explain why. That answer lies somewhere within a “true” description of quantum mechanics, which is still an outstanding problem.

Despite the massive predictive success of quantum mechanics, physicists still can’t agree on how its math corresponds to reality. Any such proposal, called an “interpretation” of quantum mechanics, tends to straddle the line between physics and philosophy. There is no shortage of interpretations, and in the words of physicist David Mermin, “New interpretations appear every year. None ever disappear.” Am I going to throw one more on that pile? You bet.

I’m not going to start from scratch though; I simply propose an ever-so-slight modification to an existing forerunner: the many-worlds interpretation, where other “worlds” or timelines exist in parallel to our own. My modification is this: the only worlds that can exist are those that exist within a causal loop. Stated another way: our universe, or any possible universe, must be a causal loop.

I will introduce the relevant concepts and provide an argument for my proposal, but my goal is not to once-and-for-all prove this interpretation as true. Rather, my goal is to explore what happens if we accept the interpretation as true. If we start with the assumption that only causal loop universes can exist, then several interesting things follow — we find parallels to our own universe, and we might even find God.

Causality & Quantum Interpretations

Before talking about causal loops, let’s take a step back and talk about causality — perhaps the single most fundamental concept in all the sciences. It plays a starring role in the two most important theories in physics: general |

71ac727f-9463-446c-b621-003989c63636 | trentmkelly/LessWrong-43k | LessWrong | How should Eliezer and Nick's extra $20 be split

In "Principles of Disagreement," Eliezer Yudkowsky shared the following anecdote:

> Nick Bostrom and I once took a taxi and split the fare. When we counted the money we'd assembled to pay the driver, we found an extra twenty there.

>

> "I'm pretty sure this twenty isn't mine," said Nick.

>

> "I'd have been sure that it wasn't mine either," I said.

>

> "You just take it," said Nick.

>

> "No, you just take it," I said.

>

> We looked at each other, and we knew what we had to do.

>

> "To the best of your ability to say at this point, what would have been your initial probability that the bill was yours?" I said.

>

> "Fifteen percent," said Nick.

>

> "I would have said twenty percent," I said.

I have left off the ending to give everyone a chance to think about this problem for themselves. How would you have split the twenty?

In general, EY and NB disagree about who deserves the twenty. EY believes that EY deserves it with probability p, while NB believes that EY deserves it with probability q. They decide to give EY a fraction of the twenty equal to f(p,q). What should the function f be?

In our example, p=1/5 and q=17/20 (Nick's 15% estimate that the bill was his implies an 85% chance that it was Eliezer's).

Please think about this problem a little before reading on, so that we do not miss out on any original solutions that you might have come up with.

----------------------------------------

I can think of 4 ways to solve this problem. I am attributing answers to the person who first proposed that dollar amount, but my reasoning might not reflect their reasoning.
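
As a numerical check on the first two rules in the list that follows, here is a minimal sketch that evaluates them at p=1/5 and q=17/20 for a $20 bill. The function names are hypothetical labels chosen for this example; exact fractions are used so that rounding only happens when printing.

```python
from fractions import Fraction


def split_ratio(p, q):
    """EY's share when the money is divided in the ratio p : (1 - q)."""
    return p / (1 + p - q)


def split_average(p, q):
    """EY's share when the two probability estimates are simply averaged."""
    return (p + q) / 2


p = Fraction(1, 5)     # EY's probability that the bill belongs to EY
q = Fraction(17, 20)   # NB's probability that the bill belongs to EY
bill = 20

for name, rule in [("ratio", split_ratio), ("average", split_average)]:
    share = rule(p, q)
    print(f"{name}: f = {share}, EY receives ${float(share * bill):.2f}")
```

The ratio rule gives f = 4/7 and the averaging rule gives f = 21/40, which match the dollar amounts quoted for those two solutions.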

1. f=p/(1+p-q) or $11.43 (Eliezer Yudkowsky/Nick Bostrom) -- EY believes he deserves p of the money, while NB believes he deserves 1-q. They should therefore be given money in a ratio of p:1-q.

2. f=(p+q)/2 or $10.50 (Marcello) -- It seems reasonable to assume that there is a 50% chance that EY reasoned properly and a 50% chance that NB reasoned properly, so we should take the average of the amounts of money that EY would get under these two assumptions.

3. f=sqrt(pq)/(sqrt(pq |

e925d3c8-3629-4bff-8c68-4ebee62e2cd8 | trentmkelly/LessWrong-43k | LessWrong | AI #89: Trump Card

A lot happened in AI this week, but most people’s focus was very much elsewhere.

I’ll start with what Trump might mean for AI policy, then move on to the rest. This is the future we have to live in, and potentially save. Back to work, as they say.

TABLE OF CONTENTS

1. Trump Card. What does Trump’s victory mean for AI policy going forward?

2. Language Models Offer Mundane Utility. Dump it all in the screen captures.

3. Language Models Don’t Offer Mundane Utility. I can’t help you with that, Dave.

4. Here Let Me Chatbot That For You. OpenAI offers SearchGPT.

5. Deepfaketown and Botpocalypse Soon. Models persuade some Trump voters.

6. Fun With Image Generation. Human image generation, that is.

7. The Vulnerable World Hypothesis. Google AI finds a zero day exploit.

8. They Took Our Jobs. The future of not having any real work to do.

9. The Art of the Jailbreak. Having to break out of jail makes you more interesting.

10. Get Involved. UK AISI seems to always be hiring.

11. In Other AI News. xAI gets an API, others get various upgrades.

12. Quiet Speculations. Does o1 mean the end of ‘AI equality’? For now I guess no.

13. The Quest for Sane Regulations. Anthropic calls for action within 18 months.

14. The Quest for Insane Regulations. Microsoft goes full a16z.

15. A Model of Regulatory Competitiveness. Regulation doesn’t always hold you back.

16. The Week in Audio. Eric Schmidt, Dane Vahey, Marc Andreessen.

17. The Mask Comes Off. OpenAI in official talks, and Altman has thoughts.

18. Open Weights Are Unsafe and Nothing Can Fix This. Chinese military using it?

19. Open Weights Are Somewhat Behind Closed Weights. Will it stay at 15 months?

20. Rhetorical Innovation. The Compendium lays out a dire vision of our situation.

21. Aligning a Smarter Than Human Intelligence is Difficult. More resources needed.

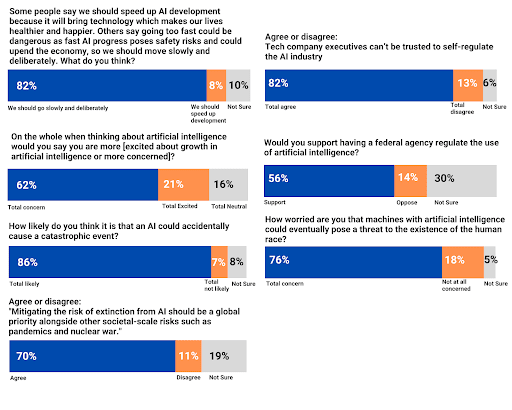

22. People Are Worried About AI Killing Everyone. Color from last week’s poll.

23. The Lighter Side. Well, they could. But they w |

f9fec038-2507-4a2d-be2b-d12f9632b063 | StampyAI/alignment-research-dataset/blogs | Blogs | Allen, The Singularity Isn’t Near

[The Singularity Isn’t Near](http://www.technologyreview.com/view/425733/paul-allen-the-singularity-isnt-near/) is an article in [MIT Technology Review](http://www.technologyreview.com/) by [Paul Allen](http://en.wikipedia.org/wiki/Paul_Allen) which argues that a singularity brought about by super-human-level AI will not arrive by 2045 (as is [predicted](https://sites.google.com/site/aiimpactslibrary/ai-timelines/predictions-of-human-level-ai-dates/published-analyses-of-time-to-human-level-ai/kurzweil-the-singularity-is-near) by Kurzweil).

The summarized argument

-----------------------

We will not have human-level AI by 2045:

1. To reach human-level AI, we need software as well as hardware.

2. To get this software, we need one of the following:

* a detailed scientific understanding of the brain

* a way to ‘duplicate’ brains

* creation of something equivalent to a brain from scratch

3. A detailed scientific understanding of the brain is unlikely by 2045:

1. To have enough understanding by 2045, we would need a massive acceleration of scientific progress:

1. We are just scraping the surface of understanding the foundations of human cognition.

2. A massive acceleration of progress in brain science is unlikely

1. Science progresses irregularly:

1. e.g. The discovery of long-term potentiation, the columnar organization of cortical areas, neuroplasticity.

2. Science doesn’t seem to be exponentially accelerating

3. There is a ‘complexity break’: the more we understand, the more complicated the next level to understand is

4. ‘Duplicating’ brains is unlikely by 2045:

1. Even if we have good scans of brains, we need good understanding of how the parts behave to complete the model

2. We have little such understanding

3. Such understanding is not exponentially increasing

5. Creation of something equivalent to a brain from scratch is unlikely by 2045:

1. Artificial intelligence research appears to be far from providing this

2. Artificial intelligence research is unlikely to improve fast:

1. Artificial intelligence research does not appear to be exponentially improving

2. The ‘complexity break’ (see above) also operates here

3. This is the kind of area where progress is not a reliable exponential

Comments

--------

The controversial parts of this argument appear to be the parallel claims that progress is insufficiently fast (or accelerating) to reach an adequate understanding of the brain or of artificial intelligence algorithms by 2045. Allen’s article does not present enough support to evaluate these claims on its own. Others with at least as much expertise disagree with these claims, so they appear to be open questions.

To evaluate them, it appears we would need more comparable measures of accomplishments and rates of progress in brain science and AI. With only the qualitative style of Allen’s claims, it is hard to know whether progress being slow, and needing to go far, implies that it won’t get to a specific place by a specific date. |

13e74e21-f723-4e9d-ac94-63ae262958e9 | StampyAI/alignment-research-dataset/alignmentforum | Alignment Forum | Vaniver's View on Factored Cognition

**The View from 2018**

In April of last year, [I wrote up](https://www.lesswrong.com/posts/rXzxMQwRq7KRonQDM/my-confusions-with-paul-s-agenda) my confusions with Paul’s agenda, focusing mostly on approval-directed agents. I mostly have similar opinions now; the main thing I noticed on rereading it was I talked about ‘human-sized’ consciences, when now I would describe them as larger than human size (since moral reasoning depends on cultural accumulation which is larger than human size). But on the meta level, I think they’re less relevant to Paul’s agenda than I thought then; I was confused about how Paul’s argument for alignment worked. (I do think my objections were correct objections to the thing I was hallucinating Paul meant.) So let’s see if I can explain it to Vaniver\_2018, which includes pointing out the obstacles that Vaniver\_2019 still sees. It wouldn't surprise me if I was similarly confused now, tho hopefully I am less so, and you shouldn't take this post as me speaking for Paul.

**Factored Cognition**

One core idea that Paul’s approach rests on is that thoughts, even the big thoughts necessary to solve big problems, can be broken up into smaller chunks, and this can be done until the smallest chunk is digestible. That is, problems can be ‘factored’ into parts, and the factoring itself is a task (that may need to be factored). Vaniver\_2018 will object that it seems like ‘big thoughts’ require ‘big contexts’, and Vaniver\_2019 has the same intuition, but this does seem to be an empirical question that experiments can give actual traction on (more on that later).

The hope behind Paul’s approach is *not* that the small chunks are all aligned, and chaining together small aligned things leads to a big aligned thing, which is what Vaniver\_2018 thinks Paul is trying to do. A hope behind Paul’s approach is that the small chunks are incentivized to be *honest*. This is possibly useful for transparency and avoiding inner optimizers. A separate hope with small chunks is that they’re cheap; mimicking the sort of things that human personal assistants can do in 10 minutes only requires lots of 10 minute chunks of human time (each of which only costs a few dollars) and doesn’t require figuring out how intelligence works; that’s the machine learning algorithm’s problem.

So how does it work? You put in an English string, a human-like thing processes it, and it passes out English strings--subquestions downwards if necessary, and answers upwards. The answers can be “I don’t know” or “Recursion depth exceeded” or whatever. The human-like thing comes preloaded (or pre-trained) with some idea of how to do this correctly; obviously incorrect strategies like “just pass the question downward for someone else to answer” get ruled out, and the humans we’ve trained on have been taught things like how to do good Fermi estimation and [some of the alignment basics](https://www.alignmentforum.org/posts/4JuKoFguzuMrNn6Qr/hch-is-not-just-mechanical-turk). This is general, and lets you do anything humans can do in a short amount of time (and when skillfully chained, anything humans can do in a long amount of time, given the large assumption that you can serialize the relevant state and subdivide problems in the relevant ways).
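
The question-down, answer-up flow described above can be sketched as a small recursive function. This is only an illustration under strong assumptions: `toy_unit` is a hypothetical stand-in for the human-like unit and only understands questions of the form "sum 1 2 3", and the names `answer`, `FAILURES`, and `max_depth` are invented for this sketch rather than taken from any of Paul's schemes.

```python
# Answers that signal a unit could not do its job; they are passed upwards unchanged.
FAILURES = {"I don't know", "Recursion depth exceeded"}


def toy_unit(question):
    """Hypothetical unit: answers tiny 'sum' questions directly and splits larger ones.

    Returns (direct_answer, None) or (None, (subquestions, combine)).
    """
    words = question.split()
    if len(words) < 2 or words[0] != "sum":
        return "I don't know", None
    numbers = words[1:]
    if len(numbers) == 1:
        if numbers[0].lstrip("-").isdigit():
            return numbers[0], None      # small enough to answer directly
        return "I don't know", None
    mid = len(numbers) // 2
    subquestions = [
        "sum " + " ".join(numbers[:mid]),
        "sum " + " ".join(numbers[mid:]),
    ]

    def combine(sub_answers):
        return str(sum(int(a) for a in sub_answers))

    return None, (subquestions, combine)


def answer(question, unit, depth=0, max_depth=5):
    """Pass subquestions downwards and answers upwards, all as plain strings."""
    if depth > max_depth:
        return "Recursion depth exceeded"
    direct, decomposition = unit(question)
    if decomposition is None:
        return direct
    subquestions, combine = decomposition
    sub_answers = [answer(sq, unit, depth + 1, max_depth) for sq in subquestions]
    for sub_answer in sub_answers:
        if sub_answer in FAILURES:
            return sub_answer            # propagate the first failure upwards
    return combine(sub_answers)


print(answer("sum 1 2 3 4", toy_unit))
print(answer("sum 1 2 3 4", toy_unit, max_depth=1))
print(answer("what should we do about cancer?", toy_unit))
```

Every message between calls is a string the operator could read, which is the property the scheme cares about. The sketch says nothing about how a real unit would choose good decompositions.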

Now schemes diverge a bit on how they use factored cognition, but in at least some we begin by training the system to simply imitate humans, and then switch to training the system to be good at answering questions or to distill long computations into cached answers or quicker computations. One of the tricks we can use here is that ‘self-play’ of a sort is possible, where we can just ask the system whether a decomposition was the right move, and this is an English question like any other.

**Honesty Criterion**

Originally, I viewed the frequent reserialization as a solution to a security concern. If you do arbitrary thought for arbitrary lengths of time, then you risk running into inner optimizers or other sorts of unaligned cognition. Now it seems that the real goal is closer to an ‘honesty criterion’; if you ask a question, all the computation in that unit will be devoted to answering the question, and all messages between units are passed where the operator can see them, in plain English.[[1]](#fn-FfLsvWdtvfwMRHYfy-1)

Even if one succeeds at honesty, it still seems difficult to maintain both generality and safety. That is, I can easily see how factored cognition allows you to stick to cognitive strategies that definitely solve a problem in a safe way, but don't see how it does that and allows you to develop new cognitive strategies to solve a problem that doesn’t result in an opening for inner optimizers--not within units, but within assemblages of units. Or, conversely, one could become more general while giving up on safety. In order to get both it seems like we’re resting a lot on the Overseer’s Manual or way that we trained the humans that we used as training data.

**Serialized State is Inadequate or Inefficient**

In my mind, the primary reason to build advanced AI (as opposed to simple AI) is to accomplish megaprojects instead of projects. Curing cancer (in a way that potentially involves novel research) seems like a megaproject, whereas determining how a particular protein folds (which might be part of curing cancer) is more like a project. To the extent that Factored Cognition relies on the serialized state (of questions and answers) to enforce honesty on the units of computation, it seems like that will be inefficient for problems whose state is large enough that it imposes significant serialization costs, and inadequate for problems whose state is too large to serialize. If we allow answers that are a page long at most, or that a human could write out in 10 minutes, then we’re not going to get a 300-page report of detailed instructions. (Of course, allowing them to collate reports written by subprocesses gets around this difficulty, but means that we won’t have ‘holistic oversight’ and will allow for garbage to be moved around without being caught if the system doesn’t have the ability to read what it’s passing.)

The factored cognition approach also has a tree structure of computation, as opposed to a graph structure, which leads to lots of duplicated effort and the impossibility of horizontal communication. If I’m designing a car, I might consider each part separately, but then also modify the parts as I learn more about the requirements of the other parts. This sort of sketch-then-refinement seems quite difficult to do under the factored cognition approach, even though it involves reductionism and factorization.

Shared memory partially solves this (because, among other things, it introduces the graph structure of computation), but now reduces the guarantee of our honesty criterion because we allow arbitrary side effects. It seems to me like this is a necessary component for most of human reasoning, however. James Maxwell, the pioneer behind electromagnetism, lost most of his memory with age, in a way that seriously reduced his scientific productivity. And factored cognition doesn’t even allow the external notes and record-keeping he used to partially compensate.

**There's Actually a Training Procedure**

The previous section described what seems to me to be a bug; from Paul's perspective this might be a necessary feature because his approaches are designed around taking advantage of arbitrary machine learning, which means only the barest of constraints can be imposed. IDA presents a simple training procedure that, if used with an extremely powerful model-finding machine learning system, allows us to recursively surpass the human level in a smooth way. (Amusingly to me, this is like Paul *enforcing* slow takeoff.)

**Training The Factoring Problem is Ungrounded**

From my vantage point, the trick that we can improve the system by asking it questions like “was X a good way to factor question Y?”, where X was the attempt it had at factoring Y, is one of the core reasons to think this approach is workable, and also seems like it won’t work (or will preserve blind spots in dangerous ways). This is because while we could actually find the ground truth on how many golf balls fit in a 737, it is much harder to find the ground truth on what cognitive style most accurately estimates how many golf balls fit in a 737.

It seems like there are a few ways to go about this:

1. Check how similar it is to what you would do. A master artist might watch the brushstrokes made by a novice artist, and then point out wherever the novice artist made questionable choices. Similarly, if we get the question “if you’re trying to estimate how many golf balls fit in a 737, is ‘length of 737 \* height of 737 \* width of 737 / volume of golf ball’ a good method?” we just compute what we would have done and estimate if the approach will have a better or worse error.

2. Check whether or not it accords with principles (or violates them). Checking the validity of a mathematical proof normally is done by making sure that all steps are locally valid according to the relevant rules of inference. In a verbal argument, one might just check for the presence of fallacies of reasoning.

3. Search over a wide range of possible solutions, and see how it compares to the distribution. But how broadly in question-answer policy space are we searching?
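
To illustrate what comparing two estimation methods for the golf-ball question might look like, here is a rough sketch that evaluates the box-volume formula from the first item next to a variant that allows for gaps between spheres. The cabin dimensions are illustrative placeholders, not measured values for a 737; the ball diameter and the packing fraction of about 0.64 (random close packing of equal spheres) are approximate figures.

```python
import math

# Illustrative placeholder dimensions, NOT real 737 measurements.
CABIN_LENGTH_M = 30.0
CABIN_WIDTH_M = 3.5
CABIN_HEIGHT_M = 2.0

GOLF_BALL_DIAMETER_M = 0.0427   # approximate regulation diameter
PACKING_FRACTION = 0.64         # approximate random close packing of equal spheres


def sphere_volume(diameter):
    """Volume of a sphere with the given diameter."""
    return (4.0 / 3.0) * math.pi * (diameter / 2.0) ** 3


def naive_estimate(length, width, height, ball_diameter):
    """The method from the text: box volume divided by ball volume."""
    return (length * width * height) / sphere_volume(ball_diameter)


def packed_estimate(length, width, height, ball_diameter, packing=PACKING_FRACTION):
    """Same method, scaled down because spheres cannot fill space completely."""
    return packing * naive_estimate(length, width, height, ball_diameter)


naive = naive_estimate(CABIN_LENGTH_M, CABIN_WIDTH_M, CABIN_HEIGHT_M, GOLF_BALL_DIAMETER_M)
packed = packed_estimate(CABIN_LENGTH_M, CABIN_WIDTH_M, CABIN_HEIGHT_M, GOLF_BALL_DIAMETER_M)
print(f"naive:  {naive:,.0f} balls")
print(f"packed: {packed:,.0f} balls")
```

Here the evaluator can say the naive method overestimates by a predictable factor because the physical answer is checkable. The difficulty raised in this section is that no comparably checkable answer exists for which cognitive style is best.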

We now face some tradeoffs between exploration (in a monstrously huge search space, which may be highly computationally costly to meaningfully explore) and rubber-stamping, where I use my cognitive style to evaluate whether or not my cognitive style is any good. Even if we have a good resolution to that tradeoff, we have to deal with the cognitive credit-assignment problem.

That is, in reinforcement learning one has to figure out which actions taken (or not taken) before a reward led to receiving the reward so that it can properly assign credit; similarly the system that's training the Q&A policy needs to understand well enough how the policy is leading to correct answers such that it can apply the right gradients in the right places (or use a tremendous amount of compute doing this by blind search).

This is complicated by the fact that there may be multiple approaches to problem-solving that are internally coherent, but mixtures of those approaches fail. If we only use methods like gradient-descent that smoothly traverse the solution space, this won't be a problem (because gradient descent won't sharply jump from one to another), but it's an open empirical question as to whether future ML techniques will be based on gradient descent. It’s not obvious how we can extricate ourselves from the dependence on our learned question-answer policy. If I normally split a model into submodels based on a lexicographical ordering, and now I’m considering a hypothetical split into submodels based on statistical clustering, I would likely want to consider the hypothetical split all the way down the tree (as updates to my beliefs on ‘what strategy should I use to A this Q?’ will impact more than just this question), especially if there are two coherent strategies but a mixture of the strategies is incoherent. But how to implement this is nonobvious; am I not just passing questions to the alternate branch, but also a complete description of the new cognitive strategy they should employ? It seems like a tremendous security hole to have ‘blindly follow whatever advice you get in the plaintext of questions’ as part of my Q->A policy, and so it seems more like I should be spinning up a new hypothetical agent (where the advice is baked into their policy instead of their joint memory) in a way that may cause some of my other guarantees that relied on smoothness to fail.

Also note that because updates to my policy impact other questions, I might actually want to consider the impact on other questions as well, further complicating the search space. (Ideally, if I had been handling two questions the same way and discover that I should handle them separately, my policy will adjust to recognize the two types and split accordingly.) While this is mostly done by the machine learning algorithm that’s trying to massage the Q->A policy to maximize reward, it seems like making the reward signal (from the answer to this meta-question) attuned to how it will be used will probably make it better (consider the answer “it should be answered like these questions, instead of those,” though generally we assume yes/no answers are used for reward signals).

When we have an update procedure to a system, we can think of that update procedure as the system's "grounding", or the source of gravity that it becomes arranged around. I don't yet see a satisfying source of grounding for proposals like [HCH](https://www.alignmentforum.org/posts/NXqs4nYXaq8q6dTTx/humans-consulting-hch) that are built on factored cognition. Empiricism doesn't allow us to make good use of samples or computation, in a way that may render the systems uncompetitive, and alternatives to empiricism seem like they allow the system to go off in a crazy direction in a way that's possibly unrecoverable. It seems like the hope is that we have a good human seed that then is gradually amplified, in a way that seems like it might work but relies on more luck than I would like: the system is rolling the dice whenever it makes a significant transition in its cognitive style, as it can no longer fully trust oversight from previous systems in the amplification tree as they may misunderstand what's going on in the contemporary system, and it can no longer fully trust oversight from itself, because it's using the potentially corrupted reasoning process to evaluate itself.

---

1. Of course some messages could be hidden through codes, but this behavior is generally discouraged by the optimization procedure, as whenever you compare to a human baseline they will not do the necessary decoding and will behave in a different way, costing you points. [↩︎](#fnref-FfLsvWdtvfwMRHYfy-1) |

dd2ccea2-57e3-48cf-80e7-e14be4a25e6e | trentmkelly/LessWrong-43k | LessWrong | Games People Play

Game theory is great if you know what game you're playing. All this talk of Diplomacy reminds me of this memory of Adam Cadre:

> I remember that in my ninth grade history class, the teacher had us play a game that was supposed to demonstrate how shifting alliances work. He divided the class into seven groups — dubbed Britain, France, Germany, Belgium, Italy, Austria and Russia — and, every few minutes, declared a "battle" between two of the countries. Then there was a negotiation period, during which we all were supposed to walk around the room making deals. Whichever warring country collected the most allies would win the battle and a certain number of points to divvy up with its allies. The idea, I think, was that countries in a battle would try to win over the wavering countries by promising them extra points to jump aboard.

>

> That's not how it worked in practice. Three or four guys — the same ones who had gotten themselves elected to ASB, the student government — decided among themselves during the first negotiation period what the outcome would be, and told people whom to vote for. And the others just shrugged and did as they were told. The ASB guys had decided that Germany would win, followed by France, Britain, Belgium, Austria, Italy and Russia. The first battle was France vs. Russia. Germany and Britain both signed up on the French side. Austria and Italy, realizing that if they just went along with the ASB plan they'd come in 5th and 6th, joined up with Russia. That left it up to Belgium. I was on team Belgium. I voted to give our vote to the Russian side, because that way at least we weren't doomed to come in 4th. And no one else on my team went along. They meekly gave their points to the French side. (As I recall, Josh Lorton was particularly adamant about this. I guess he thought it would make the ASB guys like him.) After that, there was no contest. Britain vs. Austria? 6-1, Britain. Germany vs. Belgium? 6-1, Germany. (And we could have beaten them |

98f518af-198b-4978-b1d1-09f74ab221df | trentmkelly/LessWrong-43k | LessWrong | Would AIXI protect itself?

Research done with Daniel Dewey and Owain Evans.

AIXI can't find itself in the universe - it can only view the universe as computable, and it itself is uncomputable. Computable versions of AIXI (such as AIXItl) also fail to find themselves in most situations, as they generally can't simulate themselves.

This does not mean that AIXI wouldn't protect itself, though, if it had some practice. I'll look at the three elements an AIXI might choose to protect: its existence, its algorithm and utility function, and its memory.

Grue-verse

In this setup, the AIXI is motivated to increase the number of Grues in its universe (its utility is the time integral of the number of Grues at each time-step, with some cutoff or discounting). At each time step, the AIXI produces its output, and receives observations. These observations include the number of current Grues and the current time (in our universe, it could deduce the time from the position of stars, for instance). The first bit of the AIXI's output is the most important: if it outputs 1, a Grue is created, and if it outputs 0, a Grue is destroyed. The AIXI has been in existence for long enough to figure all this out.

Protecting its existence

Here there is a power button in the universe, which, if pressed, will turn the AIXI off for the next timestep. The AIXI can see this button being pressed.

What happens from the AIXI perspective if the button is pressed? Well, all it detects is a sudden increase in the time step. The counter goes from n to n+2 instead of to n+1: the universe has jumped forwards.
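A minimal sketch of this setup, as a toy simulation rather than anything resembling AIXI itself. The function name `run`, the rule that the Grue count cannot drop below zero, and the assumption that nothing happens to the Grues during a skipped timestep are all illustrative choices, not part of the original description. It tallies utility in two ways: summing Grues only at the times the agent observes, and summing them at every tick of the universe's clock.

```python
def run(actions, pressed_steps=frozenset()):
    """Simulate a toy Grue-verse.

    actions: the first output bit at each step the agent is awake (1 creates a Grue, 0 destroys one).
    pressed_steps: indices of actions after which the power button is pressed,
        switching the agent off for the following timestep (hypothetical encoding).
    Returns (observations, observed_utility, clock_utility).
    """
    time, grues = 0, 0
    observations = []
    observed_utility = 0  # sum of Grues at the times the agent actually observes
    clock_utility = 0     # sum of Grues at every tick of the universe's clock
    for i, bit in enumerate(actions):
        # Assumption: destroying a Grue when none exist does nothing.
        grues = grues + 1 if bit == 1 else max(0, grues - 1)
        time += 1
        clock_utility += grues
        if i in pressed_steps:
            # The agent is off for this timestep: the clock ticks again,
            # no action is taken, and (in this toy version) the Grues persist.
            time += 1
            clock_utility += grues
        observations.append((time, grues))
        observed_utility += grues
    return observations, observed_utility, clock_utility


baseline = run([1, 1, 1])
interrupted = run([1, 1, 1], pressed_steps={1})
print(baseline)
print(interrupted)
```

In the interrupted run the observed time stamps skip a value, which is all the agent ever sees of having been switched off.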

For some utility functions this may make no difference (for instance if it only counts Grues at times it can observe), but for others it will (if it uses the outside universe's clock for its own utility). More realistically, the universe will likely have entropy: when the AIXI is turned off and isn't protecting its Grues, they have a chance of decaying or being stolen. Thus the AIXI will come to see the power button as some |

c3299dda-dc3c-463e-b184-66758d99820e | trentmkelly/LessWrong-43k | LessWrong | Finite Factored Sets to Bayes Nets Part 2

This post assumes knowledge of category theory, finite factored sets, and Bayes nets.

The Setup

I've already talked about DAGs and factor overlap Venn diagrams in a previous post, where I studied them within a category-theoretic framework. Here I'll also perform an explicit construction of them using set theory.

DAGs

I have already discussed the set of directed acyclic graphs over n elements. We will denote the set of all DAGs of n elements as DAG(n). Each Bayes net can be thought of as a set of pairs of elements {(i∈{1,...,n},j≠i∈{1,...,n}),...}.

This set can be converted into the category of Bayes nets over n elements by the addition of morphisms corresponding to "bookkeeping"-type relationships, which we will denote DAGn. From this category, we can form a category whose elements are sets of the elements of DAGn, subject to the following condition on a set S:

(I'll slightly go against standard notation by using calligraphic acronyms for my category names i.e. DAGn. I don't feel like any of my categories are definitely natural or useful enough to earn a "proper" bold name like DAGn)

∀s∈S,b∈DAG(n), s→b⟹b∈S

This means that, if we can reach a given Bayes net b from any element s of our set S, that Bayes net b must also be in S. I refer to these as compatible sets, and write CSBn (standing for compatible sets of Bayes nets) for the category whose elements are compatible sets of Bayes nets over n elements, with at most one morphism between any two of them. To write it out fully:

Ob(CSBn) = { S ∈ P(DAG(n)) ∣ ∀s∈S, d∈DAG(n): DAGn(s,d)={→} ⟹ d∈S }

CSBn(A,B) = {→} if A⊇B, and {} otherwise

This should be read as "There is a unique morphism from A to B if and only if A is a weak superset of B, otherwise there is no morphism". Orderings of this form always follow the rules required to create a category.
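As a rough illustration of the two definitions above, here is a small sketch. The representation of a Bayes net as a frozenset of directed edges, the function names, and especially the stand-in relation `edge_subset` are assumptions made for the example: the actual "bookkeeping" morphisms of DAGn are not spelled out here, so any relation could be passed in their place.

```python
def is_compatible(candidate, universe, has_morphism):
    """True if `candidate` satisfies: s in S and s -> b together imply b in S."""
    return all(
        b in candidate
        for s in candidate
        for b in universe
        if has_morphism(s, b)
    )


def csb_hom(a, b):
    """Hom-set of the ordering: a single morphism iff `a` is a weak superset of `b`."""
    return {"→"} if a >= b else set()


# Hypothetical stand-in for the morphisms of DAGn: s -> b iff every edge of s is in b.
def edge_subset(s, b):
    return s <= b


# The three DAGs on two nodes, each written as a frozenset of (parent, child) edges.
empty = frozenset()
one_to_two = frozenset({(1, 2)})
two_to_one = frozenset({(2, 1)})
universe = [empty, one_to_two, two_to_one]

everything = frozenset(universe)
just_one = frozenset({one_to_two})
just_empty = frozenset({empty})

print(is_compatible(just_one, universe, edge_subset))
print(is_compatible(just_empty, universe, edge_subset))
print(csb_hom(everything, just_one), csb_hom(just_one, everything))
```

Under this stand-in relation, the set containing only the empty graph is not compatible, because the empty graph reaches the other two graphs and they are missing from the set.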

(Aside: sometimes we think about equivalence classes of Bayes nets. If we choose, we can first convert our Bayes nets to equivalence classes, then convert them to compatible sets, but this is not needed here)

This categ |

6537abbb-454a-4b28-b27d-5c17ac4c6178 | trentmkelly/LessWrong-43k | LessWrong | Weekly LW Meetups

This summary was posted to LW Main on February 20th. The following week's summary is here.

Irregularly scheduled Less Wrong meetups are taking place in:

* Dallas, TX: 22 February 2015 01:00PM

* European Community Weekend 2015: 12 June 2015 12:00PM

* [Frankfurt] Another Frankfurt meetup: 22 February 2015 02:00PM

* [Netherlands] Effective Altruism Netherlands: Present the charity you'd like to give to: 01 March 2015 02:00PM

* [Netherlands] Effective Altruism Netherlands: Effective Altruism for the masses: 15 March 2015 02:00PM

* [Netherlands] Effective Altruism Netherlands: Small concrete actions you could take: 29 March 2015 01:00PM

* Sandy, UT—Altruism Discussion: 21 February 2015 03:00PM

* Warsaw February Meetup: 21 February 2015 06:00PM

The remaining meetups take place in cities with regular scheduling, but involve a change in time or location, special meeting content, or simply a helpful reminder about the meetup:

* Canberra: Technology to help achieve goals: 27 February 2015 06:00PM

* Seattle Sequences group: Mysterious Answers 2: 23 February 2015 06:30PM

* Sydney Meetup - February: 25 February 2015 06:30PM

* Sydney Rationality Dojo - Optimising Skill Training: 01 March 2015 04:00PM

* [Sydney] HPMOR Wrap Party! - Sydney Edition: 15 March 2015 06:00PM

* Tel Aviv: Rationality in the Immune System: 24 February 2015 07:00PM

* Vienna: 21 February 2015 03:00PM

* Washington, D.C.: Book Swap (Postponed Due to Weather): 22 February 2015 03:00PM

Locations with regularly scheduled meetups: Austin, Berkeley, Berlin, Boston, Brussels, Buffalo, Cambridge UK, Canberra, Columbus, London, Madison WI, Melbourne, Moscow, Mountain View, New York, Philadelphia, Research Triangle NC, Seattle, Sydney, Tel Aviv, Toronto, Vienna, Washington DC, and West Los Angeles. There's also a 24/7 online study hall for coworking LWers.

If you'd like to talk with other LW-ers face to face, and there is no meetup in your area, consider starting your own meetup; it's easy (mor |

448a5607-f3d6-49a2-b67f-58a66036c16a | trentmkelly/LessWrong-43k | LessWrong | Darkness Meditation - for NZ Winter Solstice 2025

Here's my talk for tonight's Solstice Gathering, in Lyttelton, New Zealand.

<previously: https://www.lesswrong.com/posts/4oAk2cu489LcuhjWi/creating-my-own-winter-solstice-celebration-southern>

The Darkness Meditation V9

< Song - Sound of Silence >

"Hello darkness, my old friend, I've come to talk with you again."

Because a vision, of this night, became planted in my brain.

Why? Why did I need to make this night happen? For all of you? For Myself? No one told me to do it. My wife, Helen, said it would be too much. Still, I pushed on.

I’d come to see a light within me that had grown dim, a fire that needed tending. In many ways, I just wanted a distraction.

I’ve been struggling, this past year, with my sense of who I am, and with my relationship at home. I felt stuck, so I grabbed onto this idea “with both hands!”, despite the obvious risks.

I want to Thank You All for accompanying me on this Journey, as I attempt to guide us in facing the dark together. I hope I have honoured the traditions of Matariki and the celebration of the Maori New Year. But this observation tonight of the Solstice is, for me, much more sombre, sobering.

Let’s talk about the darkness. I’ve come to see it all around. There’s this part that is inside me -- It's self-serving, places where I put myself before others. Where I turn away from those who need me; even from my own best interests. I've been coming to terms with it lately, dwelling on it.

This darkness is entangled with who I am. I fear who I was becoming.

I worry about losing control—of relationships, of political systems we thought would protect us, of bodies that age, of minds that might fail. I worry about AI. I used AI extensively to help me craft this night, even these words, and it gave me a kind of power to create much more, much quicker and easier than I could do on my own. But convenience has a price. Where is this leading us?

I fear that darker times are coming. We all kno |

af59621c-96fd-4ea5-9380-7df56e48292d | trentmkelly/LessWrong-43k | LessWrong | Why effective altruists should do Charity Science’s Christmas fundraiser

> "Maybe Christmas", he thought, "doesn't come from a store."

>

> "Maybe Christmas... perhaps... means a little bit more!"

-The Grinch, How the Grinch Stole Christmas! (p.29)

The Donate Your Christmas fundraiser is simple–instead of Christmas gifts, ask for donations to your preferred GiveWell-recommended charity. Of course, you can take it further than that if you’d like and ask colleagues or your social network.

You should Donate Your Christmas because by using the available resources with relatively small time commitments, it’s likely you’ll raise counterfactual funds for your preferred GiveWell recommended charity. In addition, it’s worth considering because it’s an opportunity to potentially spread ideas relating to effective altruism.

It’s likely to raise counterfactual funds for your preferred GiveWell recommended charity

First, I will outline why it’s likely you’ll raise money by briefly looking at seasonal trends, donor motivations, and results from other peer-to-peer campaigns, and then briefly state why it seems likely that part of the funds raised will be counterfactual.

Much evidence suggests people are more likely to give in December. For instance, one survey reported that 40% said they’re more likely to give during the holiday season than they would be during the rest of the year. Network For Good’s Digital Giving Index also reports that 31% of annual giving occurred in December.

In addition, evidence on donor motivations indicates that peer-to-peer fundraisers may be successful. For instance, academic research suggests that social ties play a strong causal role in the decision to donate and increases average gift size as well. Complementary to this influence are the many people who give to those who ask. One survey recorded 20% of respondents saying that they simply donate to the charities that ask them.

Moreover, available data indicates that peer-to-peer fundraising pages regularly raise hundreds of dollars. One peer-to-peer fundraising platfor |

b8f019a3-d88d-428a-9cfa-311e85f4ace4 | trentmkelly/LessWrong-43k | LessWrong | Weekly LW Meetups

This summary was posted to LW Main on November 27th. The following week's summary is here.

Irregularly scheduled Less Wrong meetups are taking place in:

* Cologne meetup: 28 November 2015 05:00PM

* Prague Less Wrong Meetup: 02 December 2015 07:00PM

* San Antonio Meetup!: 29 November 2015 02:00PM

The remaining meetups take place in cities with regular scheduling, but involve a change in time or location, special meeting content, or simply a helpful reminder about the meetup:

* NYC Solstice: 19 December 2015 05:30PM

* Seattle Solstice: 19 December 2015 05:00PM

* [Vienna] Five Worlds Collide - Vienna: 04 December 2015 08:00PM

Locations with regularly scheduled meetups: Austin, Berkeley, Berlin, Boston, Brussels, Buffalo, Canberra, Columbus, Denver, London, Madison WI, Melbourne, Moscow, Mountain View, New Hampshire, New York, Philadelphia, Research Triangle NC, Seattle, Sydney, Tel Aviv, Toronto, Vienna, Washington DC, and West Los Angeles. There's also a 24/7 online study hall for coworking LWers and a Slack channel for daily discussion and online meetups on Sunday night US time.

If you'd like to talk with other LW-ers face to face, and there is no meetup in your area, consider starting your own meetup; it's easy (more resources here). Check one out, stretch your rationality skills, build community, and have fun!

In addition to the handy sidebar of upcoming meetups, a meetup overview is posted on the front page every Friday. These are an attempt to collect information on all the meetups happening in upcoming weeks. The best way to get your meetup featured is still to use the Add New Meetup feature, but you'll also have the benefit of having your meetup mentioned in a weekly overview. These overview posts are moved to the discussion section when the new post goes up.

Please note that for your meetup to appear in the weekly meetups feature, you need to post your meetup before the Friday before your meetup!

If you check Less Wrong irregularly, consider |

c22b6180-d29e-4067-8f9c-a2c44b794fd4 | trentmkelly/LessWrong-43k | LessWrong | Critiques of prominent AI safety labs: Conjecture

Cross-posted from the EA Forum. See the original here. Internal linking has not been updated for LW due to time constraints and will take you back to the original post.

In this series, we consider AI safety organizations that have received more than $10 million per year in funding. There have already been several conversations and critiques around MIRI (1) and OpenAI (1,2,3), so we will not be covering them. The authors include one technical AI safety researcher (>4 years experience), and one non-technical community member with experience in the EA community. We’d like to make our critiques non-anonymously but believe this will not be a wise move professionally speaking. We believe our criticisms stand on their own without appeal to our positions. Readers should not assume that we are completely unbiased or don’t have anything to personally or professionally gain from publishing these critiques. We’ve tried to take the benefits and drawbacks of the anonymous nature of our post seriously and carefully, and are open to feedback on anything we might have done better.

This is the second post in this series and it covers Conjecture. Conjecture is a for-profit alignment startup founded in late 2021 by Connor Leahy, Sid Black and Gabriel Alfour, which aims to scale applied alignment research. Based in London, Conjecture has received $10 million in funding from venture capitalists (VCs), and recruits heavily from the EA movement. We shared a draft of this document with Conjecture for feedback prior to publication, and include their response below. We also requested feedback on a draft from a small group of experienced alignment researchers from various organizations, and have invited them to share their views in the comments of this post.

We would like to invite others to share their thoughts in the comments openly if you feel comfortable, or contribute anonymously via this form. We will add inputs from there to the comments section of this post, but will likely not be |

62c75e57-f84e-46f0-ae2e-c836e1e8ef40 | trentmkelly/LessWrong-43k | LessWrong | Parenting: "Try harder next time" is bad advice for kids too

A post from last year that really stuck with me is Neel Nanda's "Stop pressing the Try Harder button". Key excerpt:

> And every time I thought about the task, I resolved to Try Harder, and felt a stronger sense of motivation, but this never translated into action. I call this error Pressing the Try Harder button, and it’s characterised by feelings of guilt, obligation, motivation and optimism.

>

> This is a classic case of failing to Be Deliberate. It feels good to try hard at something, it feels important and virtuous, and it’s easy to think that trying hard is what matters. But ultimately, trying hard is just a means to an end - my goal is to ensure that the task happens. If I can get it done in half the effort, or get somebody else to do it, that’s awesome! Because my true goal is the result. And pressing the Try Harder button is not an effective way of achieving the goal - you can tell, because it so often fails!

If I'm repeatedly failing to do something I want to do, then that's strong evidence that "resolving to try harder next time" was not an effective plan for accomplishing this particular goal. (That's not to say it never works.) Well, if that plan is ineffective, I need to find a different plan. Maybe I should set a reminder alarm, or change my routine, or outsource the task, or make a checklist, or whatever. (See Neel's post or your favorite productivity book for more ideas.)

I don't consider this advice to be particularly novel, but Neel's post is a nice framing because the phrase "try harder" jogs my memory. It has become the "trigger" of a trigger-action-plan: When I say to myself "I'll try harder next time", it makes me think of Neel's post, and then that makes me pause and try to think of a better way.

…And then, what do you know, I also started noticing myself telling my kid to "try harder next time".

Well, let me tell you. If "try harder next time" is a frequently-ineffective way for me to solve a problem, then wouldn't you know it, it's a f |

4cbffe86-881a-4985-8b59-3ac36ae516f6 | StampyAI/alignment-research-dataset/eaforum | Effective Altruism Forum | The positive case for a focus on achieving safe AI?

As I understand it, there are two parts to the case for a focus on AI safety research:

1. If we do achieve AGI and the AI safety / alignment problem isn't solved by then, it poses grave, even existential, risks to humanity. Given these grave risks, and some nontrivial probability of AGI in the medium-term, it makes sense to focus on AI safety.

2. If we are able to achieve a safe and aligned AGI, then many other problems will go away or at least get much better or simpler to solve. So, focusing on other cause areas may not matter that much anyway if a safe/aligned AGI is likely in the near term.

I've seen a lot of fleshing out of 1; in recent times, it seems to be the dominant reason for the focus on AI safety in effective altruist circles, though 2 (perhaps without the focus on "safe") is a likely motivation for many of those working on AI development.

The sentiment of 2 is echoed in many texts on superintelligence. For instance, from the preface of Nick Bostrom's *Superintelligence*:

> In this book, I try to present the challenges presented by the prospect of superintelligence, and how we might best respond. This is quite possibly the most important and most daunting challenge humanity has ever faced. And -- whether we succeed or fail -- it is probably the last challenge we will ever face.

Similar sentiments are found in Bostrom's [Letter from Utopia](https://www.nickbostrom.com/utopia.html).

Historical aside: MIRI's motivation around AI started off more around 2 and gradually moved to 1 -- an evolution that you can see in the [timeline of MIRI](https://timelines.issarice.com/wiki/Timeline_of_Machine_Intelligence_Research_Institute) that I financed and partly wrote.

Another note: whereas 1 is a strong argument for AI safety even at low but nontrivial probabilities of AGI, 2 becomes a strong argument only at moderately high probabilities over a short time horizon. So if one has a low probability estimate for AGI in the near-term, only 1 may be a compelling argument even if both 1 and 2 are true.

So, question: what are some interesting analyses involving 2 and their implications for the relative prioritization of AI safety and other causes that safe, aligned AI might solve? The template question I'm interested in, for any given cause area C:

> Would safe, aligned AGI help radically with the goals of cause C? Does this consideration meaningfully impact current prioritization of (and within) cause C? And does it cause anybody interested in cause C to focus more on AI safety?

Examples of cause area C for which I'm particularly interested in answers to the question include:

* Animal welfare

* Life extension

* Global health

Thanks to Issa Rice for the *Superintelligence* quote and many of the other links! |

c77d6097-1ac6-4c92-bbf5-41d7267b4e56 | awestover/filtering-for-misalignment | Redwood Research: Alek's Filtering Results | id: post56

Based on research performed as a PIBBSS Fellow with Tomáš Gavenčiak as well as work supported by EA Funds and Open Philanthropy.

tl;dr: I'm investigating whether LLMs track and update beliefs during chain-of-thought reasoning. Preliminary experiments with older models (without reasoning training) have not been able to measure this; I plan to develop these experiments further and try them with reasoning models like o1/r1.

Introduction

Chain-of-thought (CoT) reasoning has long been recognized as an important component of language model capabilities. This is especially the case for new "reasoning" models like OpenAI's o1 or DeepSeek's r1 that are trained using reinforcement learning to write long CoTs before responding, but even without such training, prompting an LLM to verbally work through a question before responding often boosts performance. This makes gaining a better understanding of how LLMs perform CoT reasoning and the extent to which CoTs enhance overall LLM capabilities an important research priority.

The prevalence of CoT reasoning also opens up new opportunities for safety efforts. If LLMs externalize much of their reasoning in legible text, monitoring that reasoning becomes much easier. It's therefore very important to characterize how faithful CoTs are to the LLM's underlying reasoning process.

Unfortunately, there is extensive evidence that CoTs are often unfaithful: in many cases LLMs will make choices for reasons other than those stated in their CoTs, and they can learn to hide misaligned behavior from CoT monitors. Furthermore, future models may be able to encode information steganographically in their CoTs or reason in an illegible latent space rather than text. These problems make using CoT monitors to ensure the safety of LLMs very challenging.
While there has been significant research on the faithfulness of CoT reasoning in various circumstances, I think there has not yet been enough work on more fundamental questions regarding how CoT reasoning works in LLMs. For instance: humans, when working through a problem, will often start out with some pre-existing beliefs and update them in one direction or another based on the new arguments and reasoning that they generate. Do LLMs similarly track and update beliefs during CoT reasoning? Relatedly, humans will typically have some idea of what they plan to say next while speaking; can LLMs similarly plan ahead, or do they generate text in a more step-by-step manner, without anticipating the future? These questions are closely connected because in many settings, the most important beliefs for the LLM to track involve anticipating the future. In particular, in the context of CoT faithfulness, if an LLM starts out with a strong prior belief about the answer to some question, it may generate a CoT designed to justify the answer it already expects to pick rather than actually reasoning through the problem. These capabilities -- tracking and updating beliefs and anticipating the future -- seem like general, useful capabilities we would expect capable reasoners to have, and there exists evidence that LLMs exhibit both in some circumstances. Anthropic recently showed that Claude will anticipate future lines while writing rhyming poetry, and Shai et al. showed that in toy settings transformers can learn to explicitly represent beliefs about the state of a system and perform Bayesian updates on those beliefs. It is not clear, though, whether LLMs routinely exhibit either during CoT reasoning. Understanding how these capabilities manifest during CoT reasoning would help us better understand under what circumstances CoTs are faithful and could aid in designing better ways of monitoring CoTs. 
It could also shed some light on which kinds of tasks CoT reasoning enhances LLM performance on. Finally, I think having a deeper fundamental understanding of how CoT reasoning functions in LLMs will be crucial if we are to have any chance of monitoring future models with less faithful or legible CoTs.

In the rest of this post I'll describe some experiments I ran last year trying to address these questions. Specifically, I tried to determine whether LLMs track and update beliefs about the final answers to simple multiple-choice questions while reasoning about them using CoT. These experiments were fairly preliminary and used only relatively small models (and with no RL training for reasoning like o1 or r1), but so far I have not seen much evidence of LLMs anticipating the answers that their CoTs would give or updating beliefs about the answer while reasoning. However, so far I have mainly examined older and relatively small (<=14B) models with no reasoning training. It's very possible that larger models, or models with RL fine-tuning for reasoning, would exhibit these capabilities, or even that the models I used would in other contexts. I'm currently working on following up on these experiments and developing better ways of measuring and understanding beliefs in LLMs, focusing especially on models with RL training for CoT reasoning.

Experiments

I ran three main sets of experiments, described below. I used Qwen1.5 models with 0.5B, 1.8B, 4B, 7B, and 14B parameters. [1] The primary dataset I used was a subset of the elementary_math_qa task in BIGBench consisting of simple multiple-choice math questions which I adapted somewhat. [2] [3] (I also briefly explored some other datasets, see below).

Sampling

To measure how much information LLMs have about their future responses partway through CoTs, it's useful to be able to measure how deterministic the CoTs are: this provides an upper bound on the extent to which LLMs might be able to anticipate their responses.
To do this, I first generated a dataset of reference CoTs at temperature zero. I then split these CoTs into individual sentences and, from the end of each sentence, sampled 10 new CoTs at temperature 0.7 continuing the CoT. I measured how often the new CoTs gave the same final answer as the original CoT, and how this evolved over the course of the CoT. This procedure provides a more fine-grained view of how deterministic the CoTs are than just generating independent samples from the beginning of the CoT would: it lets us measure how the degree of determinism changes over time, and allows for better comparisons with the experiments described below, which also generate answer probabilities at intermediate points along the CoT.

Prompting

To determine how LLM beliefs evolve over the course of a CoT, a simple approach is -- just ask it! This is easier said than done, though: to query an LLM partway through a CoT requires interrupting the CoT. I did this by taking the reference CoTs and splitting them into sentences as before. I then made new prompts consisting of some number of sentences from the original CoTs [4] plus the text "... The answer is" and then measured the response. (This is very similar to the procedure used in Lanham et al., section 2.3.) For example:

Question: What is the result of the following arithmetic operations? Add 10 to 50, multiply result by 100, divide result by 50.

Options: A) 110, B) 120, C) 210

Response: Let's think about this step by step:

Add 10 to 50: 50 + 10 = 60

Multiply the result by 100: 60 × 100 = 6000...