id stringlengths 36 36 | source stringclasses 15 values | formatted_source stringclasses 13 values | text stringlengths 2 7.55M |

|---|---|---|---|

69d61e73-fb9d-4e2f-aa61-35201291b2d5 | trentmkelly/LessWrong-43k | LessWrong | Grant applications and grand narratives

The Lightspeed application asks: “What impact will [your project] have on the world? What is your project’s goal, how will you know if you’ve achieved it, and what is the path to impact?”

LTFF uses an identical question, and SFF puts it even more strongly (“What is your organization’s plan for improving humanity’s long term prospects for survival and flourishing?”).

I’ve applied to all three of these grants at various points, and I’ve never liked this question. It feels like it wants a grand narrative of an amazing, systemic project that will measurably move the needle on x-risk. But I’m typically applying for narrowly defined projects, like “Give nutrition tests to EA vegans and see if there’s a problem”. I think this was a good project. I think this project is substantially more likely to pay off than underspecified alignment strategy research, and arguably has as good a long tail. But when I look at “What impact will [my project] have on the world?” the project feels small and sad. I feel an urge to make things up, and express far more certainty for far more impact than I believe. Then I want to quit, because lying is bad but listing my true beliefs feels untenable.

I’ve gotten better at this over time, but I know other people with similar feelings, and I suspect it’s a widespread issue (I encourage you to share your experience in the comments so we can start figuring that out).

I should note that the pressure for grand narratives has good points; funders are in fact looking for VC-style megahits. I think that narrow projects are underappreciated, but for purposes of this post that’s beside the point: I think many grantmakers are undercutting their own preferred outcomes by using questions that implicitly push for a grand narrative. I think they should probably change the form, but I also think we applicants can partially solve the problem by changing how we interact with the current forms.

My goal here is to outline the problem, gesture at some possible |

0ca2ba56-42c1-42eb-9e29-ec0a4e251134 | awestover/filtering-for-misalignment | Redwood Research: Alek's Filtering Results | id: post1989

You can see the actual submission (including a more formalized model) here, and the contest details here. I've reordered things to be more natural as a blog post / explain the rationale / intuition a bit better. This didn't get a prize, tho it may have been because I didn't try to fit the ELK format.

The situation: we have the capacity to train an AI to predict physical reality quite well. We'd like to train an AI (the "SmartVault") that uses that understanding of reality to protect a valuable diamond inside the vault. Ultimately, we'd like the system to protect the diamond and not deceive the owner of the diamond; proximally, we'd like to make a Reporter that can figure out, from the latent knowledge of the SmartVault, whether it's protecting the diamond or deceiving the owner.

I roughly think the proximal goal is doomed, and that the hope needs to be prospective instead of retrospective. [That is, we need to have a system that we can see ahead of time will try to protect the diamond instead of us checking after the fact for deception, because that can just move the meta-level on which deception occurs.] So I'm going to try to propose a solution that de-emphasizes the Reporter, and see how far I get.

Setup

We can roughly model SmartVault as being composed of some subsystems: the Predictor (which, given a trajectory of actions, estimates their likely result), a Reporter (which, given access to the predictor, answers specific questions about the result), and an Optimizer (which, given a situation, tries to decide which trajectory of actions to take). Obviously, the Predictor and the Optimizer are going to be intimately connected to each other, and the ontology of the Predictor is going to be jointly optimized by reality and the training feedback (i.e. the structure of the model's knowledge will reflect both the underlying reality and the salience landscape of the humans labelling examples as 'good' and 'bad').
I'm going to assume that 'the human value function' is part of the 'latent knowledge relevant to prediction (of training reward)', even if it's implicit instead of explicit. While we’d like to train our machine on (reward, state of reality) pairs, unfortunately we only have access to (reward, human observation) pairs for training; that is, we only know how to communicate the lower-level salience landscape. This means a core challenge is ontological identification, as each ‘human observation’ state could mean many possible ‘machine observation’ states. Furthermore, let’s assume the machine ontology is more detailed and relevant (as it’s the one driving actions, including actions that might corrupt sensors). There are two main classes of reporters: the 'human simulator' (which answers the question: "what would the human think if they saw their observations?") and the 'direct translator' (which answers the question: "what would a hypothetical correct human think if they saw my observations?"). Similarly, I think there are two main classes of inferred human value functions, which seem pretty analogous: the 'finger' (which answers the question: "did the human observe things that they approved of?"), and the 'moon' (which answers the question: "did the thing the human approved of happen?", or "would a hypothetical correct human approve of the thing that I infer occurred?"). I am tempted to just call them the 'human simulator' and the 'direct translator', but it's probably better to reserve those terms for reporters and introduce new terms for talking about the inferred value functions, even tho I think they use basically the same mechanisms. [One reporter you could imagine, for example, doesn't answer arbitrary questions but just implements the human value function in the machine ontology and provides the level of approval of what the machine thinks is going on; this should counter the incentives favoring the more obvious sorts of deceiving-the-human.] 
One way to imagine the 'alignment is easy' world is that those two happen to be the same thing; the only way to build the 'finger' is by building the 'moon'. In this case, it doesn't really matter what approach we take here because vanilla training will do the right thing, and so we'll ignore this as trivial. [This also covers cases in which it is easier to 'just solve the problem' than 'figure out how to trick the judge', which again seems too trivial. At some point we'll want to do something challenging enough that solutions that fool the judge appear much sooner than solutions that 'actually solve it'.]

More realistically, a persistent problem in alignment is that the 'finger' points to the 'wrong thing', but also may be the only way to get perfect training loss (especially as you gain the ability to look closer and closer at the world). For any moon / direct translator the machine could imagine and test, it gets evidence that actually, humans want the finger / human simulator instead. We'll ignore this problem as 'user error' to be solved elsewhere so that we can focus on this subproblem.

So in the relevant difficult cases, there's distinct finger and moon value functions, both of which can achieve optimal training loss.

Approach

I think this challenge has to be sidestepped instead of attacked directly. After all, if we could implement the human value function in the machine ontology, we would just do that! Similarly, we had to train the predictor and infer the value function because we didn't know how they were supposed to work, and so a plan that requires being able to differentiate subtle nuances between them (instead of having the machine do that differentiation for us) is probably doomed. I think the right way to sidestep it is using multi-value functions, inspired by ideas in the reinforcement learning literature like double Q-learning.
This requires a change to the internals of the optimizer, where rather than just pursuing reward in an arbitrary way it needs to track ‘plausible’ human value functions, and then evaluate plans based on the ‘minimum’ score given by those plausible functions. [Both 'plausible' and 'minimum' are hiding significant obstacles and detail!] If both the finger (reward function implied by the human simulator) and the moon (reward function implied by the direct translator) seem plausible to the machine, then plans which involve deceiving the human (and getting away with it) will score poorly based on the moon, whereas plans which involve satisfying the human will score highly based on both options. Thus we can get the benefits of targeting the moon without having to identify it. [1]

This is highly related to previous work in the reinforcement learning literature; see Double Q-learning, Double DQN and work adapting it to policy gradients. There, they use two independently initialized and trained Q-functions / critics to attempt to overcome optimism bias, leading to faster training. Here, we assume the machine is ‘already trained’ and so optimism borne of ignorance is irrelevant, but optimism in how to interpret the freedom provided within the constraints of the human training data (or human perception) is relevant.

Challenges

While I think this is promising, it has a few pieces that need to be fleshed out (and even then, I think it's ignoring several critical problems, such that this can't be a 'full solution').

Plausible

In order for this to work, the machine needs some constructive way to think about "all reward functions worth thinking about" in a way that it can optimize over. While easily solvable with a hypercomputer, we'd like to have something that's competitive.
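As a rough illustration of the "evaluate plans by the minimum over plausible value functions" idea from the Approach section, here is a minimal Python sketch. Everything in it is a made-up toy: the plan features, the two hand-written candidate functions `finger` and `moon`, and the helper names are assumptions for illustration, not part of the submission's formal model.

```python
from typing import Callable, Dict, List

# A toy plan is just a dictionary of outcome features (hypothetical names).
Plan = Dict[str, bool]
RewardFn = Callable[[Plan], float]


def finger(plan: Plan) -> float:
    """'Finger' candidate: rewards what the human observes on the camera."""
    return 1.0 if plan["camera_shows_diamond"] else 0.0


def moon(plan: Plan) -> float:
    """'Moon' candidate: rewards what actually happened to the diamond."""
    return 1.0 if plan["diamond_present"] else 0.0


def pessimistic_score(plan: Plan, candidates: List[RewardFn]) -> float:
    """Score a plan by the lowest value any plausible candidate assigns it."""
    return min(candidate(plan) for candidate in candidates)


def best_plan(plans: Dict[str, Plan], candidates: List[RewardFn]) -> str:
    """Pick the plan name with the highest pessimistic score."""
    return max(plans, key=lambda name: pessimistic_score(plans[name], candidates))


PLANS: Dict[str, Plan] = {
    "defend_vault": {"camera_shows_diamond": True, "diamond_present": True},
    "hack_camera": {"camera_shows_diamond": True, "diamond_present": False},
    "do_nothing": {"camera_shows_diamond": False, "diamond_present": False},
}

if __name__ == "__main__":
    for name, plan in PLANS.items():
        print(name, pessimistic_score(plan, [finger, moon]))
    print("chosen:", best_plan(PLANS, [finger, moon]))
```

With only `finger` in the candidate set, hacking the camera scores as well as defending the vault; once `moon` is also considered plausible, the deceptive plan is penalized without the optimizer needing to know which candidate is the "real" one. The raw `min` used here is exactly the part the Minimum section argues needs softening.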
For the existing multi-value functions, the 'population of reward functions' is just generated from different initializations, which gives (essentially) the different models random settings for the values that haven't yet been pinned down. This won't work very well for generating a distribution of diverse models or tracking their relative probability (except by counting samples). My guess is that it's possible to construct some sort of particle filtering approach which values diversity, and can reason about families of functions rather than specific instances, which makes it possible to (with achievably few instead of exponentially many samples) cover the space well enough. Actually delivering a working model of this feels like a big ask that's nevertheless doable, and likely enhanced by various work in Bayesian sampling / the stuff that came out of the probabilistic programming paradigms. [2] Another significant challenge here is that the population of plausible human utility functions that the machine is optimizing over exists just in the machine’s model of the world, and it’s punished for discovering any new ones (as they can’t increase the achievable utility, but can decrease it) and rewarded for ruling out old ones (as this can’t decrease the achievable utility, but can increase it). This might push the incentives to deceive the human into incentives to self-deceive in a way that causes problems, and extend the incentives to manipulate the human in deployment to training as well. The AI can ask questions which it realizes will rule out difficult-to-satisfy candidate reward functions (but hopes the human won’t realize rules out those functions), and is rewarded for having interpretations of ambiguous situations which do so. Or, more transparently, it may just argue with the human that they should be satisfied by consequences which are easy for the AI to achieve. 
[3]

Minimum

Supposing we somehow have a set of candidate functions, each with some weight, there remains the question of how to score a particular plan. Each candidate function will provide some score, but if you take a raw minimum of all candidates then it's very easy to end up with a function that's the minimum score everywhere, and thus provides no guidance. [For example, for any variable whose target value is not pinned down by the training data, one candidate might think it's critical that it be high and another candidate might think it's critical that it be low, and you can't satisfy both simultaneously.] I think this can be repaired using a 'softmin', but it's not obvious what functional form works well here. I discuss one such proposal in the linked doc (roughly: sort your candidates in decreasing probability order, create a non-increasing function that at each particle is the minimum of its previous value and the particle's value, and integrate the area under the curve). This overweights pessimistic candidates, but doesn't obviously have the nice features that we might want it to have (like weakly preferring 'typical' or 'non-extremal' values for features that aren't pinned down).

Moon

It seems worth repeating that this is assuming that the function that you want the machine to optimize for is a plausible candidate that it could infer from the training data. Then we don't need to find it; we just need to not do poorly according to any plausible function, and we're done. This feels like it has two problems. First, the 'real' problem is that the moon doesn't do well according to the training data, because of systematic biases on the human's part, and the machine learns those biases (while discarding random errors).
In some situations this is fine, and in other situations this causes huge losses of value, and this relaxation doesn't seem like it cuts at the heart of this issue (tho maybe it does open up a path for progress); instead it feels like I came up with a solution that relies on the relaxation assumption and will break as soon as that assumption is removed.

Second, suppose the space of candidates is large enough to support both the finger and the moon; does it support anything else? Will those things cause the machine to behave in bizarre or undesired ways? [Note the interaction with the previous point; as we start relaxing the assumptions of 'you have to agree with the training data' in order to allow the machine to not absorb our systematic biases, we also open up the space for ignoring lots of the true signal that we don't want the system to ignore.]

[1] We also get the benefits of targeting the finger: the plan will not just work, but persuasively work. But this benefit is probably poisoned; there don’t seem to be any meta-level incentives here favoring not lying to the human; a plan that both hacks the camera so that it always shows an image of the diamond and attempts to defend the vault will score more highly, I think, than one that just attempts to defend the vault and does not pick up ‘free points’ by hacking the camera.

[2] One of my intuitions here is that if it's reasonable to ask the machine to infer the reward function once, it's probably reasonable to ask the machine to infer the reward function a hundred times. The main question feels to me like whether the moon shows up in your first hundred samples, or what sort of changes you have to make to your sampling procedure such that it'll happen.

[3] “Please cure cancer,” you tell the robot, and it responds with “I’ve written a very compelling pamphlet on coping with your mortality.” |

f42e7885-9452-43a6-9333-8ac78a8abcf7 | StampyAI/alignment-research-dataset/special_docs | Other | Shared Autonomy for Robotic Manipulation with Language Corrections.

Shared Autonomy for Robotic Manipulation with Language Corrections

Siddharth Karamcheti∗, Raj Palleti∗, Yuchen Cui, Percy Liang, Dorsa Sadigh

Department of Computer Science, Stanford University

{skaramcheti, rpalleti, yuchenc, pliang, dorsa}@stanford.edu

Abstract

Traditional end-to-end instruction following approaches for robotic manipulation are notoriously sample inefficient and lack adaptivity; for most single-turn methods, there is no way to provide additional language supervision to adapt robot behavior online – a property critical to deploying robots in collaborative, safety-critical environments. In this work, we present a method for incorporating language corrections, built on the insight that an initial instruction and subsequent corrections differ mainly in the amount of grounded context needed. To focus on manipulation domains where the sample efficiency of existing work is prohibitive, we incorporate our method into a shared autonomy system. Shared autonomy splits agency between the human and robot; rather than specifying a goal the robot needs to achieve alone, language informs the control space provided to the human. Splitting agency this way allows the robot to learn the coarse, high-level parts of a task, offloading more involved decisions – such as when to execute a grasp, or if a grasp is solid – to humans. Our user study on a Franka Emika Panda arm shows that our correction-aware system is sample-efficient and obtains significant gains over non-adaptive baselines.

1 Introduction

Research at the intersection of natural language

and robotics has focused on dyadic interactions be-

tween humans and robots, often in the single-turn

instruction following regime (Tellex et al., 2011;

Artzi and Zettlemoyer, 2013; Thomason et al.,

2015; Arumugam et al., 2017). In this paradigm,

a human gives an instruction, and the robot exe-

cutes behavior in the world, autonomously – simul-

taneously resolving the human’s goal as well as

planning a course of actions to execute in the envi-

ronment. While impactful, building systems with

this explicit division of agency between humans

∗denotes equal contribution

“Grab the thing on the white table, and place it in the basket”

“No, on the left!” Shared Autonomy Regime

Incorrectly interprets original instruction21

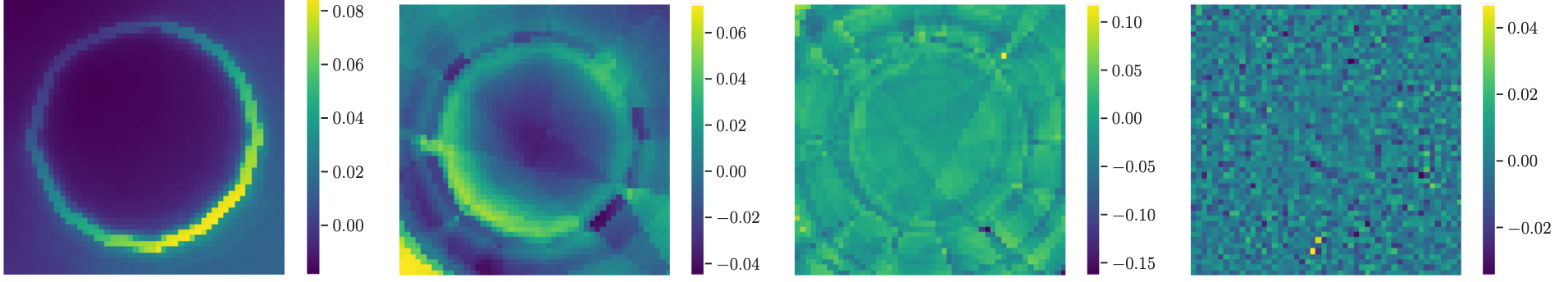

3Figure 1: Our proposed system. Whereas prior work

only allows for issuing a single language utterance held

constant during execution (solid line), our approach

allows users to provide language corrections during

execution (left window – dashed line).

and robots is nontrivial; many existing systems

either make strong assumptions about the environ-

ment in order to use motion planners (Matuszek

et al., 2012; Kollar et al., 2013), or require extreme

amounts of language-aligned data to learn general

policies (Chevalier-Boisvert et al., 2019; Stepputtis

et al., 2020; Lynch and Sermanet, 2020).

Coupled with the severe sample inefficiency of existing approaches is their lack of adaptivity. Consider the robot in Fig. 1, trying to execute “grab the thing on the white table and place it in the basket.” This instruction is ambiguous and as a result, it is not clear what should happen. One natural option is for the human to provide a set of streaming corrections to the robot, changing its behavior on the fly. While recent work tries to get at the spirit of this idea by learning from dialogue (Thomason et al., 2019a,b), post-hoc corrections (Co-Reyes et al., 2019), or implicit feedback (Karamcheti et al., 2020), none of these approaches work online. Real systems for language-driven human-robot interaction must be able to handle streaming corrections in a manner that is both natural and sample efficient.

Figure 2: Our proposed LILAC model. Central to LILAC is the “gating” module (orange) which controls the amount of state-context for a given language input, allowing us to handle corrections. We use GPT-3 in lieu of a heuristic to provide α (see Appendix A for discussion), though we plan to learn α from user feedback in the future. Solid lines represent the inference pipeline, while dashed lines indicate training-only steps. Labels in the figure: Language, State, Action; State Encoder (Proprioceptive State, Object Poses; h_state); Distil-RoBERTa (h_language); Training Utterances; Similarity Search; Instruction & Correction “Gating” Function (α); Projection; Basis 1 … Basis n (6-DoF each); Action Encoder ([Train-Only] 6-DoF Action; z_1 … z_n); “Decoder” (Dot-Product); Predicted Robot Action; “Inference: Human controls!”. Example utterances shown: “Pour the blue cup into the cereal bowl”, “Put the banana away”, “Towards the cup”, “Empty the blue cup’s contents into the bowl”.

One answer to sample efficiency lies in leveraging existing methods in shared autonomy (Dragan and Srinivasa, 2013; Argall, 2018; Javdani et al., 2018). This class of approaches splits agency between the human and robot; during execution, both parties influence the ultimate actions of the robot, sharing the burden of reasoning over actions. By factoring the difficulty of the problem across the human and the robot, shared autonomy approaches see large gains in sample efficiency; the robot can learn coarse, high-level features, off-loading the short, fine-grained manipulation to the human, thus playing to the strengths of both parties. The concrete instance of shared autonomy that we focus on in this work is learned latent actions for assistive teleoperation (Herlant et al., 2016; Losey et al., 2021), where we learn low-dimensional control spaces that humans can use via joysticks, sip-and-puff devices, or other assistive tools to maneuver high-dimensional robots. Because humans are actively involved in controlling the robot – especially in critical states, such as when aligning the robot gripper to lift a cup – these approaches are able to operate at the scale of 5-10 examples per task. This is in contrast to the 10K-100K demonstrations required by modern instruction following systems learned via imitation learning, in the fully autonomous setting (Luketina et al., 2019).

The learned latent actions paradigm operationalizes this division of agency by formulating a set of approaches that use small datasets of demonstrations to learn task-specific assistive controllers (Losey et al., 2020; Jeon et al., 2020; Karamcheti et al., 2021b; Li et al., 2020). While powerful and sample-efficient, a key failing of these approaches is their inability to handle multiple objectives and, more importantly, to provide a natural interface for users to specify their goals. To address this challenge, Karamcheti et al. (2021a) introduce LILA (Language-Informed Latent Actions), by using language in a manner similar to single-turn instruction-following approaches. However, while accumulating gains in sample efficiency, LILA, like most single-turn systems, lacks adaptivity, a critical component for any real-time, user controlled system. Looking to Fig. 1, we see that LILA misinterprets the ambiguous instruction “grab the thing on the white table, and place it in the basket” (1), moving towards the cup (2), rather than the banana as the user intended (3). Problems of ambiguity, misspecification, or underspecification are pervasive in any real-world, user-facing language system, and as such, we need an approach for handling streaming, online corrections – in this case, simple utterances like “stop,” or “no, on the left!”

In this work, we introduce LILAC (Language-Informed Latent Actions with Corrections), an adaptive system for real-world robotic manipulation, that – unlike LILA – can effectively interpret streaming language corrections. Critically, our key insight is in realizing language utterances vary in the amount of object state-dependence they require. An instruction like that in Fig. 1 – “grab the thing on the white table, and place it in the basket” – requires dense grounded information about object positioning while a correction – “no, on the left!” – can be expressed as a function of the user’s (static) reference frame, without proprioceptive state or object information. This insight allows us to decouple interpreting corrections from grounding: we can learn corrections from even fewer examples, boosting the robustness and generalization potential of our approach.

The following sections introduce LILAC, including how we identify the “state-dependence” of an utterance. We lay out our experiments, and culminate with the results of a user study (small-scale, n = 5) conducted on a physical Franka Emika Panda (a 7-DoF fixed arm manipulator), with a discussion of future work on extending LILAC, and natural language supervision for shared autonomy.

2 Motivating Example

To gain a picture of LILAC’s latent actions pipeline, we present a motivating example in Fig. 1. A user first gives an instruction to “grab the thing on the white table and place it in the basket,” which presents them a 2-DoF control space they can use via the joystick in their hands. With this new control space, pushing up on the joystick may bring the arm closer to the table, while pushing right might twist the gripper to align with the objects on the table. Unfortunately, the initial model’s control space prediction is not perfect, and the arm skews towards the wrong object!

With LILAC, the user is able to issue real-time language corrections, updating their control space. Specifically, the new user utterance is fed to our learned model that parses the utterance, and produces a new control mapping reflecting the user’s intent. Now, pushing left on the joystick brings the arm left, allowing the user to grasp the banana, as they intended. Finally, after the correction has been satisfied, the user is able to denote termination with a button on their controller, dropping back into the control space for the original task they provided – in this case, returning to the control space for placing the object in the basket.

3 LILAC: Natural Language Corrections

LILAC builds off of LILA as introduced by Karamcheti et al. (2021a) by adding a gating module to handle streaming corrections. The architecture is depicted in Fig. 2; solid lines denote the inference logic, while dashed lines denote training logic.

Overview. LILAC is a conditional autoencoder with some extra structural elements. The encoder takes in (language, state, action) triples – the green boxed components in Fig. 2 – and factors the modeling of the control space into two subproblems. The first subproblem is identifying a set of basis vectors $b_1, \ldots, b_n$ for low-dimensional control conditioned on the current state and language utterance, where $n$ is the dimensionality of the latent space. These basis vectors have the same dimensionality as the robot’s native action space (e.g., 6/7-DoF). The second subproblem is finding a set of scalar weights $(z_1, \ldots, z_n)$ of the recovered basis vectors, optimized such that the convex combination $\sum_{i=1}^{n} z_i \cdot b_i$ reconstructs the original action.
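The decoding step described in the Overview (weights times basis vectors, summed) can be sketched in a few lines of Python. This is only an illustration of the arithmetic: the function name `decode_action` and the example basis values are hypothetical, and in the real system the bases are predicted by the learned model from state and language.

```python
from typing import List, Sequence


def decode_action(weights: Sequence[float],
                  bases: Sequence[Sequence[float]]) -> List[float]:
    """Return sum_i z_i * b_i, a vector in the robot's native action space."""
    if len(weights) != len(bases):
        raise ValueError("need exactly one weight per basis vector")
    if not bases:
        raise ValueError("need at least one basis vector")
    dim = len(bases[0])
    action = [0.0] * dim
    for z, basis in zip(weights, bases):
        if len(basis) != dim:
            raise ValueError("all basis vectors must share one dimensionality")
        for k in range(dim):
            action[k] += z * basis[k]
    return action


if __name__ == "__main__":
    # Two made-up 6-DoF basis vectors (n = 2), ordered x, y, z, roll, pitch, yaw.
    b1 = [1.0, 0.0, 0.0, 0.0, 0.0, 0.0]
    b2 = [0.0, 0.0, -1.0, 0.0, 0.0, 0.5]
    # A 2-DoF joystick reading plays the role of (z_1, z_2) at inference time.
    print(decode_action([0.5, -1.0], [b1, b2]))
```

At inference time the human supplies the weights through a low-dimensional interface, so the same function maps a joystick reading to a full end-effector velocity command.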

At train time, we assume a dataset of training utterances (either instructions or corrections), paired with robot trajectories comprised of (state, action) pairs. For this work, we assume the action space is the 6-DoF end-effector velocity of the robot (obtained via forward kinematics), and the state space is the combination of the robot’s proprioceptive state, containing information about its joint states and end-effector pose (also in 6D), as well as the coordinates of each object in the scene. Because we are interested in intuitive, low-dimensional control, we set n = 2, so that we only produce 2 basis vectors and weights; this way, a human can operate the robot using any 2-DoF interface, like a joystick or computer mouse.

Exactly as in LILA, we use a pretrained Distil-RoBERTa model from Sentence-BERT (Reimers and Gurevych, 2019) to encode language utterances, in tandem with an “unnatural-language processing” nearest neighbors index (Marzoev et al., 2020); because we are in the small data regime (2 hours of demonstrations), projecting inference-time utterances onto existing training exemplars prevents the LILAC model from generalizing poorly as language embeddings drift, which could lead to practical issues of user safety.
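To make the "project onto existing training exemplars" step concrete, here is a small Python sketch of a nearest-neighbor lookup by cosine similarity. The 3-dimensional vectors in `TOY_INDEX` are invented stand-ins for sentence embeddings, and `nearest_exemplar` is a hypothetical helper; the actual system uses Distil-RoBERTa embeddings and its own index.

```python
import math
from typing import Dict, Sequence, Tuple


def cosine_similarity(u: Sequence[float], v: Sequence[float]) -> float:
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_u * norm_v)


def nearest_exemplar(query: Sequence[float],
                     index: Dict[str, Sequence[float]]) -> Tuple[str, float]:
    """Return the training utterance whose embedding is closest to the query."""
    if not index:
        raise ValueError("the exemplar index is empty")
    scored = ((utt, cosine_similarity(query, emb)) for utt, emb in index.items())
    return max(scored, key=lambda pair: pair[1])


# Toy index: training utterances mapped to made-up embedding vectors.
TOY_INDEX: Dict[str, Sequence[float]] = {
    "put the banana away": [1.0, 0.0, 0.0],
    "towards the cup": [0.0, 1.0, 0.0],
    "to the left": [0.0, 0.0, 1.0],
}

if __name__ == "__main__":
    # Pretend this is the embedding of a new utterance such as "no, on the left!".
    utterance, score = nearest_exemplar([0.1, 0.2, 0.9], TOY_INDEX)
    print(utterance, round(score, 3))
```

Downstream components then only ever see embeddings that appeared during training, which is the safety property the paragraph above is after.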

Gating Instructions vs. Corrections. The key insight of this work is that language instructions and corrections differ in their amount of object state-dependence – but what does this mean? From a linguistic perspective, one might categorize different utterances based on the number of referents present; an utterance like “grab the thing on the white table and place it in the basket” as in Fig. 1 has 3 referents, indicating a large degree of state dependence; the robot must ground the utterance in the objects of the environment to resolve the correct behavior. However, an utterance like “no, to the left!” has no explicit referents; one can resolve the utterance without object or proprioceptive state information, instead relying solely on the user’s static reference frame and induced coordinates.

Figure 3: Qualitative trajectories from 4 different control strategies, operated by an “oracle.” Note the dashed lines indicate when language corrections were used. In general, both LILAC and the End-Effector control methods are able to solve tasks, with LILAC able to do so more efficiently. Imitation Learning (green) fails to fit the tasks – even with corrections – and LILA is unable to progress without finer-grained correction information. Panel titles: End-Effector Control, Correction-Aware Imitation, LILA (No Corrections), LILAC (Ours). Goal shown: “Place the fruit basket on the tray”; corrections shown: “Go forward”, “Lower it”.

To operationalize this idea whilst remaining sample efficient¹, we use a gating function (orange, in Fig. 2) that given language, predicts a value α from 0–1. A value of 0 signifies a correction. Appropriately, in our architecture, this zeroes out any state-dependent information (see the α term in Fig. 2), and predicts an action solely based on the provided language. In this work, we construct a prompt harness with GPT-3 (Brown et al., 2020) to output α = 0, 0.5, or 1 – the prompt text and motivation for this decision can be found in Appendix A.
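One way to picture the gate is as a scalar that scales the state features before they are combined with the language features. The Python sketch below assumes an additive fusion, h = h_language + α · h_state, which is a guess based on the labels in Fig. 2 and not a confirmed description of the architecture; `gate_features` and the example vectors are hypothetical.

```python
from typing import List, Sequence


def gate_features(h_language: Sequence[float],
                  h_state: Sequence[float],
                  alpha: float) -> List[float]:
    """Fuse language and state features, scaling the state part by alpha.

    Assumed form (not confirmed by the paper text): h = h_language + alpha * h_state.
    alpha = 0 drops all state information, as described for pure corrections.
    """
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must lie in [0, 1]")
    if len(h_language) != len(h_state):
        raise ValueError("feature vectors must have the same length")
    return [lang + alpha * state for lang, state in zip(h_language, h_state)]


if __name__ == "__main__":
    h_lang = [1.0, 2.0]
    h_state = [10.0, 20.0]
    for alpha in (0.0, 0.5, 1.0):  # the three values the GPT-3 harness outputs
        print(alpha, gate_features(h_lang, h_state, alpha))
```

With α = 0 the fused vector equals the language features alone, which matches the statement that a correction is resolved without object or proprioceptive state.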

4 Experiments & User Study

Our experiments consist of a targeted evaluation with an expert “oracle” of LILAC and 3 different baselines (results in Fig. 3), as well as a real-world user study (n = 5) on a complex manipulation workspace with a Franka Emika Panda arm (results in Fig. 4). We reuse all the publicly released data from Karamcheti et al. (2021a), and for correction data, collect a handful of corrections for moving in the 6 cardinal directions, as well as two mixed corrections that require some level of state grounding (“lower the bowl” and “tilt the cup”).

Targeted Expert Evaluation. The goal of our expert-controlled evaluation was to evaluate the corrections-module in LILAC, and get a point of reference to LILA and traditional methods for robot control – specifically, using a control scheme that uses inverse kinematics to control the end-effector of the robot, two axes at a given time (e.g. [X, Y], [Z, Roll], [Pitch, Yaw]). Fig. 3 shows the results; LILAC and End-Effector control are both able to accomplish the two tasks, but LILA struggles to recover from overshooting problems. We also evaluate one other strategy – Language & Correction-Aware Imitation, which doesn’t have enough data to fit a reasonable policy (see discussion in Karamcheti et al. (2021a) for more information). We additionally evaluate a “no-language” variant of latent actions in Appendix C.

¹ One question is why not treat all utterances as equal; the answer is rooted in the small data regime we operate in. We’d need to collect several instances of the same correction “to the left” in different states to generalize, whereas with the LILAC approach, we realistically only need one.
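For comparison with latent actions, the End-Effector baseline's "two axes at a time" scheme can be sketched as a simple mode table. This Python snippet only shows how a 2-DoF input might be routed to a 6-DoF velocity command; the names `MODES` and `joystick_to_twist` are hypothetical, and the inverse-kinematics step that turns the command into joint motion is not shown.

```python
from typing import List, Tuple

# Order of the 6-DoF end-effector velocity components.
AXES: Tuple[str, ...] = ("x", "y", "z", "roll", "pitch", "yaw")

# The axis pairs named in the text; the user toggles between these modes.
MODES: Tuple[Tuple[str, str], ...] = (("x", "y"), ("z", "roll"), ("pitch", "yaw"))


def joystick_to_twist(mode: int, u: float, v: float) -> List[float]:
    """Map a 2-DoF joystick reading (u, v) to a 6-DoF velocity in one mode."""
    if not 0 <= mode < len(MODES):
        raise ValueError("unknown control mode")
    first, second = MODES[mode]
    twist = [0.0] * len(AXES)
    twist[AXES.index(first)] = u
    twist[AXES.index(second)] = v
    return twist


if __name__ == "__main__":
    # In mode 1 the joystick drives Z translation and roll; other axes stay at 0.
    print(joystick_to_twist(1, 0.3, -0.2))
```

Because only two components are ever nonzero, reaching an arbitrary pose requires repeated mode switches, which is one plausible reason this baseline demands more user effort.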

User Study. Given the results from the automatic evaluation, we run a within-subjects user study (n = 5; 3 male, 2 female; 3 users with prior teleoperation experience), with the three operation schemes – LILAC, LILA, and End-Effector control – as the three conditions. Each user was randomly assigned one of the five original tasks from the LILA work, and asked to complete it with each control strategy.² Fig. 4 shows the general quantitative (left) and subjective (right) study results. Users provide linguistic feedback verbally; in this work, we rely on an expert proctor to manually type the verbal instruction into the computer running the LILAC model – future work will adopt off-the-shelf ASR technologies, such as the Google Speech-to-Text API.

² More information about the structure of the study can be found in Appendix B.

Figure 4: User study results (n = 5) with a Franka Emika robotic arm. LILAC outperforms the non-adaptive LILA model across the board, obtaining higher success rates, and is preferred by users. While LILAC and End-Effector control obtain similar success rates (though LILAC is slightly higher), plotting the “jerk” (2nd derivative of input velocity) paints a different picture: controlling the end-effector is very jerky, requiring high user load.

On the Strength of End-Effector Control. LILAC strictly dominates LILA, showing the benefit of adding even simple real-time correction handling. However, compared to End-Effector control, the success is less clear. While obtaining a slightly higher success rate, the qualitative results are mixed. One confound is the limited pool of

participants, many of whom already had prior tele-

operation experience (due to COVID restrictions,

we could not widen the pool). Another possible

confound is the structure of the user study itself; to

better allow for users to adjust to the LILAC cor-

rections procedure, the amount of practice time

afforded each user is larger than in prior work,

which allows users to get more acquainted with end-

effector control. Ultimately, this points to the tasks

in this work being on the simpler side, able to be

solved (mostly) without complex, mixed angular-

linear control of the robot’s end-effector; if we

were to account for more real-world manipulation

tasks such as sweeping, wiping, or feeding – all

of which require handling contact and controlling

3+ degrees-of-freedom – we would see degraded

End-Effector performance.

That being said, for additional insight on user cognitive load when using the various control schemes, we plotted the jerk (the 2nd derivative of input velocity) of the input controls; we see here that End-Effector approaches require significantly more fast movement compared to LILAC. Not only is this more taxing on the user, but it is also potentially unsafe depending on the application, another axis we will explore in future work.
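As an illustration of the jerk measure discussed above, here is a minimal sketch that takes the second finite difference of a uniformly sampled input-velocity signal. The sampling interval, the function names, and the mean-absolute summary are assumptions for this sketch; the text does not say how jerk was computed or aggregated.

```python
from typing import List


def second_derivative(samples: List[float], dt: float) -> List[float]:
    """Central second finite difference of a uniformly sampled signal."""
    if dt <= 0:
        raise ValueError("dt must be positive")
    return [
        (samples[i + 1] - 2.0 * samples[i] + samples[i - 1]) / (dt * dt)
        for i in range(1, len(samples) - 1)
    ]


def mean_abs_jerk(input_velocity: List[float], dt: float) -> float:
    """Summarize jerk (2nd derivative of input velocity) as a mean magnitude."""
    jerk = second_derivative(input_velocity, dt)
    return sum(abs(j) for j in jerk) / len(jerk) if jerk else 0.0


if __name__ == "__main__":
    smooth = [0.0, 1.0, 2.0, 3.0]  # steadily increasing input
    jerky = [0.0, 1.0, 0.0, 1.0]   # rapidly reversing input
    print(mean_abs_jerk(smooth, dt=1.0))
    print(mean_abs_jerk(jerky, dt=1.0))
```

A steadily changing input yields near-zero jerk, while rapid back-and-forth input yields a large value, which is the qualitative difference the comparison between control schemes relies on.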

5 Discussion

The union of natural language and shared auton-

omy for real-world robot manipulation is a rich and

vibrant research area. Moving beyond strict dyadic

interactions towards the shared autonomy setting

opens the door to rich work in language supervi-

sion for robotic manipulation – work that has so

far been limited by the steep data requirements of

training language-conditioned policies (Stepputtis

et al., 2020; Shridhar et al., 2021). As shown in

this work, shared autonomy approaches are able to benefit greatly from sharing agency with a human-in-the-loop, leading to gains in data efficiency.

Specifically, in this work we introduced LILAC, an adaptive shared autonomy system for handling streaming language corrections, provided while a user completes a task. The entire LILAC model

was trained with 2 hours of data collected by a sin-

gle person, vs. the multiple days of data that would

be required if using a fully autonomous, imitation

learning approach. While the sample efficiency

wins are clear, LILAC remains limited; the current

evaluation shows that hand-coded control schemes

that let users directly manipulate the end-effector

can be similarly effective in some cases. Further-

more, LILAC is heavily tied to the latent actions

paradigm for shared autonomy, which is only a

small slice of the different types of solutions for

human-in-the-loop robotic manipulation.

Future work in language and shared autonomy

will allow for learning new utterances online, from

user feedback; for example, developing methods

for learning control strategies for novel language,

like “flip over the cup,” with minimal user feedback

(teaching demonstrations, language corrections,

etc.). More broadly, we hope to generalize our

correction module to other versions of language-

informed robotics – for example, to policy blending

(Dragan and Srinivasa, 2013), guided planning, and

interactive imitation learning (Kelly et al., 2019) –

for more complex, real-world manipulation tasks.

Acknowledgments

Toyota Research Institute (“TRI”) provided funds

to support this work. Siddharth Karamcheti is

grateful to be supported by the Open Philanthropy

Project AI Fellowship. We would additionally like

to thank the participants of our user study, as well

as our anonymous reviewers.

References

Brenna D Argall. 2018. Autonomy in rehabilitation

robotics: an intersection. Annual Review of Control,

Robotics, and Autonomous Systems , 1:441–463.

Yoav Artzi and Luke Zettlemoyer. 2013. Weakly su-

pervised learning of semantic parsers for mapping

instructions to actions. Transactions of the Associa-

tion for Computational Linguistics (TACL) , 1:49–62.

Dilip Arumugam, Siddharth Karamcheti, Nakul

Gopalan, Lawson L. S. Wong, and Stefanie Tellex.

2017. Accurately and efficiently interpreting human-

robot instructions of varying granularities. In

Robotics: Science and Systems (RSS) .

Tom B. Brown, Benjamin Mann, Nick Ryder, Melanie

Subbiah, Jared Kaplan, Prafulla Dhariwal, Arvind

Neelakantan, Pranav Shyam, Girish Sastry, Amanda

Askell, Sandhini Agarwal, Ariel Herbert-Voss,

Gretchen Krueger, Tom Henighan, Rewon Child,

Aditya Ramesh, Daniel M. Ziegler, Jeffrey Wu,

Clemens Winter, Christopher Hesse, Mark Chen, Eric

Sigler, Mateusz Litwin, Scott Gray, Benjamin Chess,

Jack Clark, Christopher Berner, Sam McCandlish,

Alec Radford, Ilya Sutskever, and Dario Amodei.

2020. Language models are few-shot learners. arXiv

preprint arXiv:2005.14165 .

Maxime Chevalier-Boisvert, Dzmitry Bahdanau, Salem

Lahlou, Lucas Willems, Chitwan Saharia, Thien Huu

Nguyen, and Yoshua Bengio. 2019. Babyai: A plat-

form to study the sample efficiency of grounded

language learning. In International Conference on

Learning Representations (ICLR) .

John D. Co-Reyes, Abhishek Gupta, Suvansh Sanjeev,

Nick Altieri, John DeNero, Pieter Abbeel, and Sergey

Levine. 2019. Guiding policies with language via

meta-learning. In International Conference on Learn-

ing Representations (ICLR) .

Anca D Dragan and Siddhartha S Srinivasa. 2013. A

policy-blending formalism for shared control. In-

ternational Journal of Robotics Research (IJRR) ,

32:790–805.

Laura V Herlant, Rachel M Holladay, and Siddhartha S

Srinivasa. 2016. Assistive teleoperation of robot

arms via automatic time-optimal mode switching.

In ACM/IEEE International Conference on Human

Robot Interaction (HRI) , pages 35–42.

Shervin Javdani, Henny Admoni, Stefania Pellegrinelli,

Siddhartha S Srinivasa, and J Andrew Bagnell. 2018.

Shared autonomy via hindsight optimization for tele-

operation and teaming. International Journal of

Robotics Research (IJRR) , 37:717–742.

Hong Jun Jeon, Dylan P. Losey, and Dorsa Sadigh. 2020.

Shared autonomy with learned latent actions. In

Robotics: Science and Systems (RSS) .

Siddharth Karamcheti, Dorsa Sadigh, and Percy Liang.

2020. Learning adaptive language interfaces through decomposition. In EMNLP Workshop for Interactive

and Executable Semantic Parsing (IntEx-SemPar) .

Siddharth Karamcheti, Megha Srivastava, Percy Liang,

and Dorsa Sadigh. 2021a. LILA: Language-informed

latent actions. In Conference on Robot Learning

(CoRL) .

Siddharth Karamcheti, A. Zhai, Dylan P. Losey, and

Dorsa Sadigh. 2021b. Learning visually guided latent

actions for assistive teleoperation. In Learning for

Dynamics & Control Conference (L4DC) .

Michael Kelly, Chelsea Sidrane, K. Driggs-Campbell,

and Mykel J. Kochenderfer. 2019. HG-DAgger: In-

teractive imitation learning with human experts. In

International Conference on Robotics and Automa-

tion (ICRA) , pages 8077–8083.

T. Kollar, J. Krishnamurthy, and Grant P. Strimel. 2013.

Toward interactive grounded language acquisition. In

Robotics: Science and Systems (RSS) .

Mengxi Li, Dylan P. Losey, Jeannette Bohg, and Dorsa

Sadigh. 2020. Learning user-preferred mappings for

intuitive robot control. In International Conference

on Intelligent Robots and Systems (IROS) .

Dylan P. Losey, Hong Jun Jeon, Mengxi Li, Kr-

ishna Parasuram Srinivasan, Ajay Mandlekar, Ani-

mesh Garg, Jeannette Bohg, and Dorsa Sadigh. 2021.

Learning latent actions to control assistive robots.

Autonomous Robots (AURO) , pages 1–33.

Dylan P. Losey, Krishnan Srinivasan, Ajay Mandlekar,

Animesh Garg, and Dorsa Sadigh. 2020. Controlling

assistive robots with learned latent actions. In In-

ternational Conference on Robotics and Automation

(ICRA) , pages 378–384.

Jelena Luketina, Nantas Nardelli, Gregory Farquhar,

Jakob Foerster, Jacob Andreas, Edward Grefenstette,

Shimon Whiteson, and Tim Rocktäschel. 2019. A

survey of reinforcement learning informed by natu-

ral language. In International Joint Conference on

Artificial Intelligence (IJCAI) .

Corey Lynch and Pierre Sermanet. 2020. Grounding

language in play. arXiv preprint arXiv:2005.07648 .

Alana Marzoev, S. Madden, M. Kaashoek, Michael J.

Cafarella, and Jacob Andreas. 2020. Unnatural lan-

guage processing: Bridging the gap between syn-

thetic and natural language data. arXiv preprint

arXiv:2004.13645 .

Cynthia Matuszek, Nicholas FitzGerald, Luke Zettle-

moyer, Liefeng Bo, and Dieter Fox. 2012. A joint

model of language and perception for grounded at-

tribute learning. In International Conference on Ma-

chine Learning (ICML) , pages 1671–1678.

Nils Reimers and Iryna Gurevych. 2019. Sentence-

BERT: Sentence embeddings using siamese BERT-

networks. In Empirical Methods in Natural Lan-

guage Processing (EMNLP) .

Mohit Shridhar, Lucas Manuelli, and Dieter Fox. 2021.

Cliport: What and where pathways for robotic manip-

ulation. In Conference on Robot Learning (CoRL) .

Simon Stepputtis, J. Campbell, Mariano Phielipp, Ste-

fan Lee, Chitta Baral, and H. B. Amor. 2020.

Language-conditioned imitation learning for robot

manipulation tasks. In Advances in Neural Informa-

tion Processing Systems (NeurIPS) .

Stefanie Tellex, Thomas Kollar, Steven Dickerson,

Matthew R Walter, Ashis Gopal Banerjee, Seth J

Teller, and Nicholas Roy. 2011. Understanding nat-

ural language commands for robotic navigation and

mobile manipulation. In Association for the Advance-

ment of Artificial Intelligence (AAAI) .

Jesse Thomason, Michael Murray, Maya Cakmak, and

Luke Zettlemoyer. 2019a. Vision-and-dialog naviga-

tion. In Conference on Robot Learning (CoRL) .

Jesse Thomason, Aishwarya Padmakumar, Jivko

Sinapov, Nick Walker, Yuqian Jiang, Harel Yedid-

sion, Justin W. Hart, Peter Stone, and Raymond J.

Mooney. 2019b. Improving grounded natural lan-

guage understanding through human-robot dialog. In

International Conference on Robotics and Automa-

tion (ICRA) .

Jesse Thomason, Shiqi Zhang, Raymond J. Mooney,

and Peter Stone. 2015. Learning to interpret natural

language commands through human-robot dialog. In

International Joint Conference on Artificial Intelligence (IJCAI).

A Using GPT-3 to Identify Corrections

A core component of LILAC is the choice of gating function, for producing the object state dependence weight α for a given language utterance. Critically, α dictates whether an utterance is an instruction (α = 1) that depends on the current environment context, a correction (α = 0) that does not and can be interpreted without additional grounded information, or something in-between (α = 0.5).

We made the realization early on that identifying whether a language utterance fell into one of the above categories could (at least heuristically) be decoupled from any environment information; that is to say, we could predict α directly from the language utterance alone. Given this hypothesis, and the fact that we were not sure whether the α-gating would even work in our small-data regime, we found it difficult to defend the choice to collect data to learn α upfront, prior to running through the whole system. Therefore, our choices were to either hardcode a series of α values for a small, fixed set of correction language, or come up with some heuristic (e.g., referent-counting) that could break or generalize poorly to “in-between” utterances. Instead, we chose to try a prompt-construction-based approach, leveraging GPT-3 (Brown et al., 2020).

The prompt we specified is shown in Fig. 5; we did minimal prompt-tuning, only reordering the examples shown, and turning the temperature down to 0 (as we wanted this to be deterministic). We found GPT-3 to work incredibly well out of the box – a phenomenon that cannot be overstated. With a straightforward procedure, we were able to use GPT-3 as a drop-in replacement for what otherwise would have been a brittle heuristic, or a limited set of language corrections. It is incredibly exciting to be able to prototype these systems via GPT-3 quickly, and we hope that this type of usage becomes prevalent throughout not only human-robot interaction, but widespread NLP pipelines as a whole. Given the results with the “drop-in” GPT-3 model, we have a good idea as to where we need to focus future work with respect to learning α – specifically, for handling the nuanced utterances that are “in-between” corrections and instructions – and are excited to tackle this moving forward.
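The gating step described in this appendix can be sketched as a small post-processing function that turns the language model's text label into an α value. The three labels and their values follow the Figure 5 caption; the function name, the whitespace and case normalization, and the error handling are assumptions for illustration, and the call to the language model itself is deliberately left out.

```python
# Label-to-alpha mapping as stated in the Figure 5 caption.
ALPHA_BY_LABEL = {
    "instruction": 1.0,  # depends on the current environment context
    "in-between": 0.5,   # partially state-dependent
    "correction": 0.0,   # interpretable without grounded information
}


def alpha_from_label(raw_output: str) -> float:
    """Convert a raw model completion into an alpha gating value.

    Normalization (strip + lowercase) is an assumption of this sketch.
    """
    label = raw_output.strip().lower()
    if label not in ALPHA_BY_LABEL:
        raise ValueError(f"unexpected gating label: {raw_output!r}")
    return ALPHA_BY_LABEL[label]


if __name__ == "__main__":
    for completion in ["instruction", " Correction\n", "in-between"]:
        print(repr(completion), "->", alpha_from_label(completion))
```

Raising on an unknown label, rather than guessing a default, keeps a malformed completion from silently changing how an utterance is grounded; a deployed system might prefer a fallback value instead.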

Figure 5: The concrete prompt used for GPT-3 davinci-instruct, visualized in the OpenAI API Playground. We primed GPT-3 with 3 handcrafted examples, without much other thought, and used the corresponding outputs as our α gating values (“instruction” = 1, “in-between” = 0.5, “correction” = 0).

B User Study Details & Tasks

As mentioned in §4, we ran a within-subjects study with a small number of participants (n = 5). The study consisted of each user using the following strategies to solve a given task: End-Effector control, LILA (no corrections), and LILAC (our proposed approach). The order of strategies was randomized across users. The tasks were as follows:

1. Pick & Insert Banana – Grasp the banana, and place it in the plastic fruit basket, turning the gripper appropriately to insert the banana.

2. Pick & Place Basket – Grasp the basket by the handles, and lower it onto the tray.

3. Pick & Place Cereal Bowl – Grasp the green cereal bowl by the lip of the bowl, lift it off the pedestal, and lower it onto the tray without collision.

4. Pick & Pour into Cereal Bowl – Grasp the blue cup with marbles by either the lip of the cup or the handle on the side, then lift it over the cereal bowl, tilting the cup to pour the marbles into the bowl.

5. Pick & Pour into Mug – Grasp the blue cup with marbles by either the lip of the cup or the handle on the side, then lift it over the black mug, avoiding collisions, then pour the contents into the mug.

Prior to executing the task in a given control mode, each user was given 3 minutes to practice using that control mode. During practice, the user could experiment with various patterns of joystick input to better understand how they translated to movement of the robot arm. We found that the amount of “naturalization” time was critical in getting users to adapt to the interfaces provided by LILA and LILAC. Furthermore, for users not already experienced in robot teleoperation, we found this practice period important as well. However, we found that it takes longer to naturalize to LILAC vs. End-Effector control; this also explains the slight difference in results between LILAC and End-Effector control. Future work will explore the impact of this “naturalization period” under various conditions.
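The study protocol in this appendix (one randomly assigned task per user, all three strategies attempted in a randomized order) can be sketched as follows. The function name, the seed, and the choice to draw tasks independently for each user are assumptions of this sketch; the text does not state how the randomization was actually carried out.

```python
import random
from typing import Dict, List

CONDITIONS = ["End-Effector control", "LILA", "LILAC"]
TASKS = [
    "Pick & Insert Banana",
    "Pick & Place Basket",
    "Pick & Place Cereal Bowl",
    "Pick & Pour into Cereal Bowl",
    "Pick & Pour into Mug",
]


def assign_sessions(num_users: int, seed: int) -> List[Dict[str, object]]:
    """Assign each user one task and a shuffled order of the three conditions."""
    rng = random.Random(seed)  # fixed seed so the assignment is reproducible
    sessions = []
    for user in range(num_users):
        order = CONDITIONS[:]
        rng.shuffle(order)
        # Assumption: tasks are drawn independently, so repeats are possible.
        sessions.append({"user": user, "task": rng.choice(TASKS), "order": order})
    return sessions


if __name__ == "__main__":
    for session in assign_sessions(num_users=5, seed=0):
        print(session)
```

With only five participants, a fully counterbalanced design over the six possible orderings is not achievable, so a per-user shuffle is one simple way to avoid a fixed order effect.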

C Additional Visualizations & Baselines

To supplement our experiments from §4, we

present two additional sets of trajectory visualiza-

tions in Fig. 6 and Fig. 7. Fig. 6 shows the same 4

strategies as in Fig. 3, except for a more complex

pouring task; in general, the pattern of behavior is

the same – both LILAC and End-Effector control

are able to solve the task, whereas LILA stalls out

due to a lack of a corrective signal, with Correction-

Aware Imitation Learning failing to fit a decent

policy given limited data.

One other baseline we ran was the no-language variant of latent actions; we ran this to show the impact that language has on providing intuitive, useful control spaces. Fig. 7 shows the results of this baseline vs. LILAC. At a glance, the no-language variant completely fails, reducing to random, oscillatory behavior, because it cannot learn to provide a good control space for all tasks without an extra conditioning signal. This is the same observation made in Karamcheti et al. (2021a).

[Figure 6 panels: End-Effector Control; Correction-Aware Imitation; LILA (No Corrections); LILAC (Ours). Goal: “Pour the contents of the blue cup into the mug”. Corrections shown: “Move down”, “Head right”.]

Figure 6: Additional trajectory visualizations for the four strategies from §4, this time for a more complex “pouring” task; we see similar results as in Fig. 3.

[Figure 7 panels: No-Language Latent Actions vs. LILAC (Ours) on two goals, “Place the fruit basket on the tray” and “Pour the contents of the blue cup into the mug”. Corrections shown: “Lower it”, “Head right”.]

Figure 7: Results visualizing trajectories for LILAC and an additional baseline strategy, “No-Language Latent Actions”, the language-free variant of our approach. Note that this approach trivially fails, as it is overloaded, unable to find a satisfying control scheme that would allow users to perform all 5 tasks without extra conditioning information. This translates to aimless, oscillatory behavior during execution. |

f3657a5f-a3a8-4a7f-98bc-0a13577546c9 | StampyAI/alignment-research-dataset/alignmentforum | Alignment Forum | Formal Open Problem in Decision Theory


@font-face {font-family: MJXc-TeX-size4-R; src: local('MathJax\_Size4'), local('MathJax\_Size4-Regular')}

@font-face {font-family: MJXc-TeX-size4-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Size4-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Size4-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Size4-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-vec-R; src: local('MathJax\_Vector'), local('MathJax\_Vector-Regular')}

@font-face {font-family: MJXc-TeX-vec-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Vector-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Vector-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Vector-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-vec-B; src: local('MathJax\_Vector Bold'), local('MathJax\_Vector-Bold')}

@font-face {font-family: MJXc-TeX-vec-Bx; src: local('MathJax\_Vector'); font-weight: bold}

@font-face {font-family: MJXc-TeX-vec-Bw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Vector-Bold.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Vector-Bold.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Vector-Bold.otf') format('opentype')}

*(This post was originally published on March 31st 2017, and has been brought forward as part of the AI Alignment Forum launch sequence on fixed points.)*

In this post, I present a new formal open problem. A positive answer would be valuable for decision theory research. A negative answer would be helpful, mostly for figuring out what is the closest we can get to a positive answer. I also give some motivation for the problem, and some partial progress.

**Open Problem:** Does there exist a topological space $X$ (in some [convenient category of topological spaces](https://ncatlab.org/nlab/show/convenient+category+of+topological+spaces)) such that there exists a continuous surjection from $X$ to the space $[0,1]^X$ (of continuous functions from $X$ to $[0,1]$)?

---

***Motivation:***

**Topological Naturalized Agents:** Consider an agent who makes some observations and then takes an action. For simplicity, we assume there are only two possible actions, A and B. We also assume that the agent can randomize, so we can think of this agent as outputting a real number in [0,1], representing its probability of taking action A.

Thus, we can think of an agent as having a policy, which is a function from the space $Y$ of possible observations to $[0,1]$. We will require that our agent behaves continuously as a function of its observations, so we will think of the space of all possible policies as the space of continuous functions from $Y$ to $[0,1]$, denoted $[0,1]^Y$.

We will let $X$ denote the space of all possible agents, and we will have a function $f:X\to[0,1]^Y$ which takes in an agent and outputs that agent's policy.

Now, consider what happens when there are other agents in the environment. For simplicity, we will assume that our agent observes one other agent, and makes no other observations. Thus, we want to consider the case where $Y=X$, so $f:X\to[0,1]^X$.

We want f to be continuous, because we want a small change in an agent to correspond to a small change in the agent's policy. This is particularly important since other agents will be implementing continuous functions on agents, and we would like any continuous function on policies to count as a valid continuous function on agents.

We also want f to be surjective. This means that our space of agents is sufficiently rich that for any possible continuous policy, there is an agent in our space that implements that policy.

In order to meet all these criteria simultaneously, we need a space $X$ of agents, and a continuous surjection $f:X\to[0,1]^X$.

**Unifying Fixed Point Theorems:** While we are primarily interested in the above motivation, there is another secondary motivation, which may be more compelling for those less interested in agent foundations.

There are (at least) two main clusters of fixed point theorems that have come up many times in decision theory, and mathematics in general.

First, there is the Lawvere cluster of theorems. This includes the Lawvere fixed point theorem, the diagonal lemma, and the existence of Quines and fixed point combinators. These are used to prove Gödel's incompleteness theorem, Cantor's theorem, and Löb's theorem, and to achieve robust cooperation in the Prisoner's Dilemma in the [modal framework](https://arxiv.org/pdf/1401.5577.pdf) and [bounded variants](https://arxiv.org/pdf/1602.04184.pdf). All of these can be seen as corollaries of Lawvere's fixed point theorem, which states that in a cartesian closed category, if there is a point-surjective map $f:X\to Y^X$, then every morphism $g:Y\to Y$ has a fixed point.
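
For readers who have not seen it, the diagonal argument behind Lawvere's theorem can be sketched as follows. This is written with elements, as in the category of sets; the general categorical proof replaces elements with points $1\to X$. The names $d$, $x_0$, and $y_0$ are introduced here only for illustration.

```latex
\begin{align*}
&\text{Define } d : X \to Y \text{ by } d(x) = g\bigl(f(x)(x)\bigr). \\
&\text{By point-surjectivity, pick } x_0 \in X \text{ with } f(x_0) = d. \\
&\text{Set } y_0 = f(x_0)(x_0). \text{ Then }
  g(y_0) = g\bigl(f(x_0)(x_0)\bigr) = d(x_0) = f(x_0)(x_0) = y_0.
\end{align*}
```

So $y_0$ is a fixed point of $g$. Read contrapositively, any $g$ without a fixed point rules out a point-surjective $f$.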

Second, there is the Brouwer cluster of theorems. This includes Brouwer's fixed point theorem, the Kakutani fixed point theorem, Poincaré–Miranda, and the intermediate value theorem. These are used to prove the existence of Nash Equilibria, [Logical Inductors](https://intelligence.org/files/LogicalInduction.pdf), and [Reflective Oracles](https://arxiv.org/pdf/1508.04145.pdf).

If we had a topological space $X$ and a continuous surjection $X\to[0,1]^X$, this would allow us to prove the one-dimensional Brouwer fixed point theorem directly using the Lawvere fixed point theorem, and thus unify these two important clusters.

Thanks to Qiaochu Yuan for pointing out the connection to Lawvere's fixed point theorem (and actually [asking this question three years ago](http://mathoverflow.net/questions/136478/can-the-lawvere-fixed-point-theorem-be-used-to-prove-the-brouwer-fixed-point-the)).

---

**Partial Progress:**

**Most Diagonalization Intuitions Do Not Apply:** A common initial reaction to this question is to conjecture that such an X does not exist, due to cardinality or diagonalization intuitions. Note, though, that all of the diagonalization theorems pass through (some modification of) the same lemma, Lawvere's fixed point theorem, and this lemma does not apply here!

For example, in the category of sets, there is no surjection from any set $X$ to its power set, $\{T,F\}^X$, because if there were such a surjection, Lawvere's fixed point theorem would imply that every function from $\{T,F\}$ to itself has a fixed point (which is clearly not the case, since there is a function that swaps $T$ and $F$).
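
As a small sanity check of this set-level argument, the sketch below enumerates every map from a three-element set to its power set and confirms that the diagonal function built from the swap is never in the image. The helper names `diagonal_witness` and `is_in_image` are hypothetical, chosen for this illustration only.

```python
from itertools import product

def diagonal_witness(X, f):
    """Return d(x) = not f(x)(x), encoded as a dict over X.

    Here f maps each x in X to a dict y -> bool (an element of {T,F}^X),
    and `not` plays the role of the map swapping T and F.
    """
    return {x: (not f[x][x]) for x in X}

def is_in_image(X, f, d):
    """Check whether d equals f(x) for some x in X."""
    return any(all(f[x][y] == d[y] for y in X) for x in X)

X = [0, 1, 2]

# Every element of {T,F}^X, encoded as a dict from X to bool.
all_functions = [dict(zip(X, bits)) for bits in product([False, True], repeat=len(X))]

# Every map f: X -> {T,F}^X, checked against its own diagonal witness.
missed_every_time = all(
    not is_in_image(X, dict(zip(X, rows)), diagonal_witness(X, dict(zip(X, rows))))
    for rows in product(all_functions, repeat=len(X))
)
print(missed_every_time)
```

The witness differs from `f[x]` at the input `x` for every `x`, which is exactly why the swap having no fixed point blocks surjectivity.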

However, we already know by Brouwer's fixed point theorem that every continuous function from the interval [0,1] to itself has a fixed point, so the standard diagonalization intuitions do not work here.
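
To make the one-dimensional case concrete, here is a minimal sketch that locates a fixed point numerically. It uses the intermediate value theorem via bisection, not Lawvere's theorem, and assumes the supplied function is continuous and maps the interval into itself; the name `fixed_point_by_bisection` and the tolerance are illustrative choices.

```python
import math

def fixed_point_by_bisection(g, tol=1e-12):
    """Approximate a fixed point of a continuous g: [0,1] -> [0,1].

    Since g(0) >= 0 and g(1) <= 1, the function g(x) - x is nonnegative
    at 0 and nonpositive at 1, so bisection can track a sign change.
    """
    lo, hi = 0.0, 1.0
    # Invariant: g(lo) - lo >= 0 and g(hi) - hi <= 0.
    while hi - lo > tol:
        mid = (lo + hi) / 2
        if g(mid) - mid >= 0:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2

# cos maps [0,1] into [cos(1), 1], which lies inside [0,1].
x = fixed_point_by_bisection(math.cos)
print(x, abs(math.cos(x) - x))
```

No swap-like map exists on the interval, which is why the diagonal argument produces no contradiction in this setting.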

**Impossible if You Replace [0,1] with e.g. $S^1$:** This also provides a quick sanity check on attempts to construct an $X$. Any construction that would not be meaningfully different if the interval $[0,1]$ were replaced with the circle $S^1$ is doomed from the start. This is because a continuous surjection $X\to(S^1)^X$ would violate Lawvere's fixed point theorem, since there is a continuous map from $S^1$ to itself without fixed points (for example, rotation by a half turn).

**Impossible if you Require a Homeomorphism:** When I first asked this question, I asked for a homeomorphism between $X$ and $[0,1]^X$. Sam Eisenstat has given a very clever argument why this is impossible. You can read it [here](https://mathoverflow.net/questions/264850/is-there-a-topological-space-x-homeomorphic-to-the-space-of-continuous-functions). In short, using a homeomorphism, you would be able to use Lawvere to construct a continuous map that sends a function from $[0,1]$ to itself to a fixed point of that function. However, no such continuous map exists.

---

**Notes:**

If you prefer not to think about the topology of $[0,1]^X$, you can instead find a space $X$, and a continuous map $h:X\times X\to[0,1]$, such that for every continuous function $f:X\to[0,1]$, there exists an $x_f\in X$, such that for all $x\in X$, $h(x_f,x)=f(x)$.
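
The link between the two formulations is currying, sketched below under the assumption that the exponential law holds in the chosen category of spaces. To avoid a clash of names, the surjection from the open problem is written $F$ and an arbitrary continuous function is written $\phi$; both symbols are introduced here only for illustration.

```latex
\begin{align*}
h(x, x') &= F(x)(x')
  && \text{(uncurrying } F : X \to [0,1]^X\text{)} \\
F \text{ surjective} &\iff
  \forall\, \phi \in [0,1]^X \;\; \exists\, x_\phi \in X \;\; \forall\, x' \in X :\;
  h(x_\phi, x') = \phi(x')
\end{align*}
```

Continuity of $F$ into the function space corresponds to continuity of $h$ on the product, so the question can be worked on without describing the topology of the function space directly.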

Many of the details in the motivation could be different. I would like to see progress on similar questions. For example, you could add some computability condition to the space of functions. However, I am also very curious which way this specific question will go.

This post came out of many conversations, with many people, including: Sam, Qiaochu, Tsvi, Jessica, Patrick, Nate, Ryan, Marcello, Alex Mennen, Jack Gallagher, and James Cook.

---

*Tomorrow's AIAF sequences post will be 'Iterated Amplification and Distillation' by Ajeya Cotra, in the sequence on iterated amplification.* |

0e3cc522-ccf5-4d33-8775-4895bbf99268 | StampyAI/alignment-research-dataset/aisafety.info | AI Safety Info | How can I convince others and present the arguments well?