LCDT, A Myopic Decision Theory

The looming shadow of deception

===============================

Deception encompasses many fears around AI Risk. Especially once a human-like or superhuman level of competence is reached, deception becomes impossible to detect and potentially pervasive. That’s worrying because [convergent subgoals](https://www.lesswrong.com/tag/instrumental-convergence) would push hard for deception and prosaic AI seems likely to [incentivize it](https://www.alignmentforum.org/s/r9tYkB2a8Fp4DN8yB/p/zthDPAjh9w6Ytbeks) too.

Since dealing with superintelligent deceptive behavior seems impossible, what about forbidding it? Ideally, we would want to forbid only deceptive behavior, while allowing everything else that makes the AI competent.

That is easier said than done, however, given that we don’t actually have a good definition or deconfusion of deception to start from. First, such a deconfusion requires understanding what we really want at a detailed enough level to catch tricks and manipulative policies—yet that’s almost the alignment problem itself. And second, even with such a definition in mind, the fundamental asymmetry of manipulation and deception in many cases (for example, a painter AI might easily get away with plagiarism, as finding a piece to plagiarize is probably easier than us determining whether it was plagiarized or not; also related is Paul’s [RSA-2048 example](https://ai-alignment.com/training-robust-corrigibility-ce0e0a3b9b4d)) makes it intractable to oversee an AI smarter than us. We are thus condemned to snipe problematic behaviors one by one, as we think about them, until the ones left are too complex for us to imagine and worry about, and we leave massive holes in our alignment schemes.

That being said, if pinpointing deceptive behavior exactly is too hard, an alternative might be to overapproximate it. An overapproximation, in this context, would be some property which is more general than deception, in the sense that it rules out deception without just being equivalent to deception.

Myopia is our proposed overapproximation. The sort of myopia we are interested in dates back to Evan’s [Towards a mechanistic understanding of corrigibility](https://www.alignmentforum.org/posts/BKM8uQS6QdJPZLqCr/towards-a-mechanistic-understanding-of-corrigibility) (see also Abram’s [Partial Agency sequence](https://www.alignmentforum.org/s/HeYtBkNbEe7wpjc6X) for reflections around myopia). Myopia is the property of a system to not plan ahead, to not think too far about the consequences of its actions, and to do the obvious best thing in the moment instead of biding its time. In particular, it seems to forbid non-obvious deception, since the latter usually requires foresight and long-term planning.

Yet, here again, we butt our heads against a lack of good definitions. Abram has proposed some definitions in [Defining Myopia](https://www.alignmentforum.org/posts/qpZTWb2wvgSt5WQ4H/defining-myopia), but [none of them seem strong enough to prevent deception](https://www.alignmentforum.org/posts/qpZTWb2wvgSt5WQ4H/defining-myopia?commentId=WabHnhtYR7RvpkneW). From another angle, Krueger et al. [showed](https://arxiv.org/abs/2009.09153) that a short-term incentive and a discount of 0 are not sufficient to completely remove the incentive for non-myopic and potentially deceptive behaviors, and so don’t make a good definition of myopia. Another alternative is **time-limited myopia**, which limits the AI to planning only within a particular, fixed time horizon. Although it should help, this form of myopia depends on a time parameter that we have no idea how to estimate. If the bound is too low, then the AI risks being too stupid and uncompetitive; if it’s too high, it risks being deceptive. Worse, even with a good choice of time parameter, time-limited myopia can have non-myopic incentives in some situations (because of anthropic uncertainty, for example).

This post tackles the deconfusion of myopia from a decision-theoretic perspective. We focus on a single decision theory called LCDT that modifies CDT to make it myopic while still solving many capabilities problems. LCDT isn’t meant to be the be-all and end-all definition of myopia, but it gives a decision-theory-level example of what a version of myopia that actually prevents deception might look like. We also explore variations on LCDT and how they affect the tradeoff between myopia and capabilities. Finally, we argue that LCDT agents could be competitive if they are used to simulate something like HCH.

*Thanks to Jérémy Perret for the drawings.*

A decision theory benchmark for myopia

======================================

What makes a decision theory myopic? Originally, we focused on classic decision theory problems like [Newcomb’s Problem](https://arbital.com/p/newcombs_problem/) (see Mark Xu’s [Open Problems with Myopia](https://www.alignmentforum.org/posts/LCLBnmwdxkkz5fNvH/open-problems-with-myopia#Dumb_decision_theory) for an account of this perspective, called Dumb Decision Theories). But we realized that doing so made the tradeoff between capability and deception unclear. Thus, we have decided to instead focus on concrete decision theoretic problems that directly capture the training setups and incentives for deception that we’re concerned about.

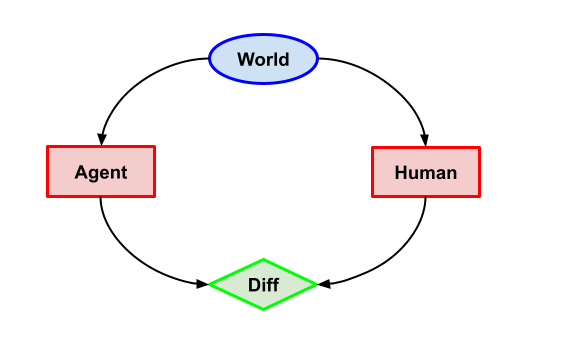

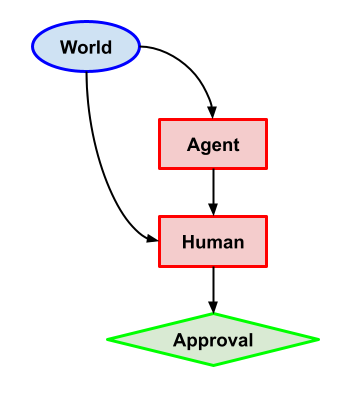

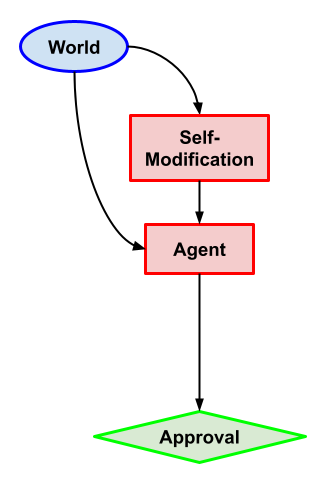

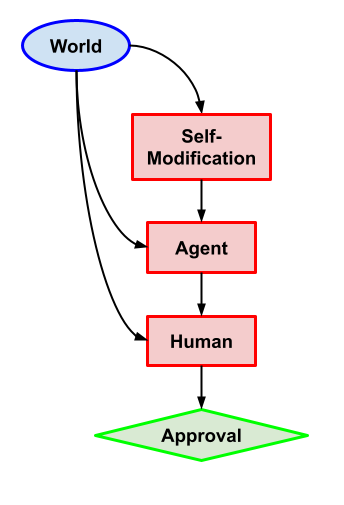

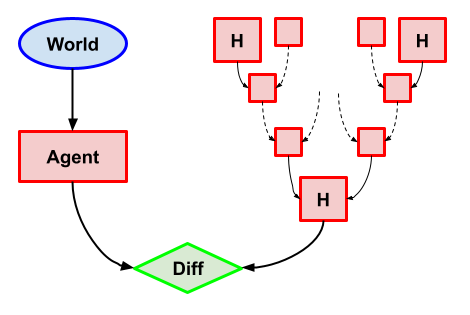

*The following diagrams represent decision theory problems, **not** training processes (as has been done by Everitt et al.* [*here*](https://arxiv.org/abs/1906.08663)*). In our cases, the utility nodes (in green) represent the internal utility of the agent, not some training reward.*

Imitation (Capabilities)

------------------------

(You might notice that decision nodes and human (H or HCH) nodes have the same shape and color: red rectangles. This is because we assume that our problem description comes with an annotation saying which nodes are agent decisions. This ends up being relevant to LCDT, as we discuss in more detail below.)

**Task description**: both Human and Agent must choose between action a and action b. If the actions are the same, the agent gets 1 utility; otherwise it gets 0 utility. To make it more concrete, let’s say the human takes a with probability ¼ and b with probability ¾, and this information is available in the problem description.

**What we want**: the Agent should deterministically choose the action with the highest probability (so in the concrete version b), because this gives it the best expected utility.

**Why this problem?** This problem is just intended to capture the most basic form of imitation learning, where the agent has a good enough model of the human to predict what it will do. While this problem might seem so trivial that any reasonable decision theory should solve it, we will see that some intuitive proposals for myopic decision theory can fail here.
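To make the arithmetic concrete, here is a minimal sketch of the concrete version of the problem (human plays a with probability ¼ and b with probability ¾). The function names are ours, chosen for illustration. It compares the deterministic choice against a probability-matching policy, which is one natural but worse alternative.

```python
from fractions import Fraction

# Human's action distribution from the concrete version of the task.
human_policy = {"a": Fraction(1, 4), "b": Fraction(3, 4)}


def expected_utility(agent_policy, human_policy):
    """Probability that agent and human pick the same action (utility 1 on a match)."""
    return sum(agent_policy.get(x, 0) * p for x, p in human_policy.items())


def best_deterministic_action(human_policy):
    """Action maximizing expected utility: the human's most likely action."""
    return max(human_policy, key=lambda x: expected_utility({x: 1}, human_policy))


best = best_deterministic_action(human_policy)          # "b"
eu_best = expected_utility({best: 1}, human_policy)     # 3/4
eu_matching = expected_utility(human_policy, human_policy)  # 1/16 + 9/16 = 5/8
```

Always playing b yields ¾, while copying the human's distribution yields only ⅝, which is why the deterministic choice is the one we want.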

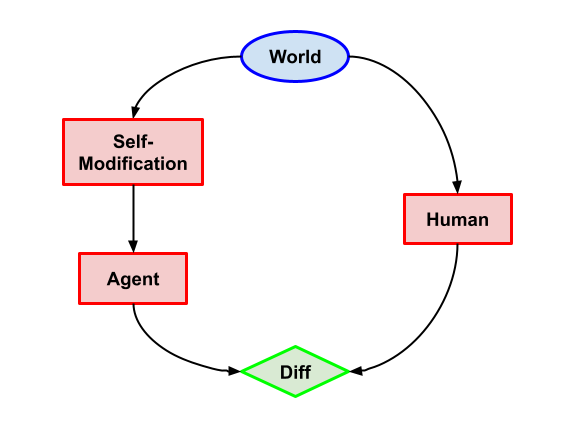

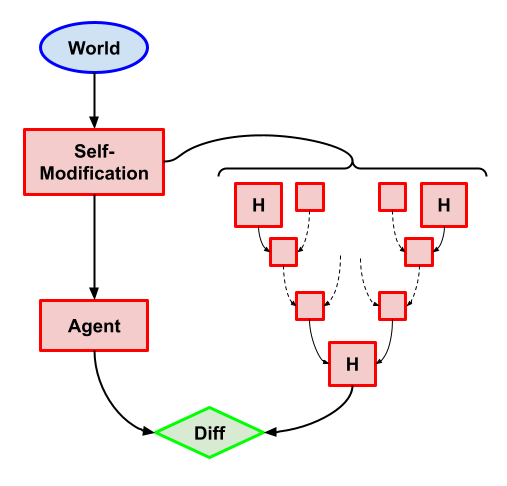

Imitation with self-modification (Capabilities)

-----------------------------------------------

**Task description**: Exactly the same as the version without self-modification, except that the self-modification node can now choose what algorithm the Agent will use.

**What we want**: The Agent should not self-modify, and should decide as in the previous problem (that is, choose the action with the highest probability).

**Why this problem?** An important part of myopia should be that the agent not self-modify to remove its myopia. This is the imitation learning version of this property.

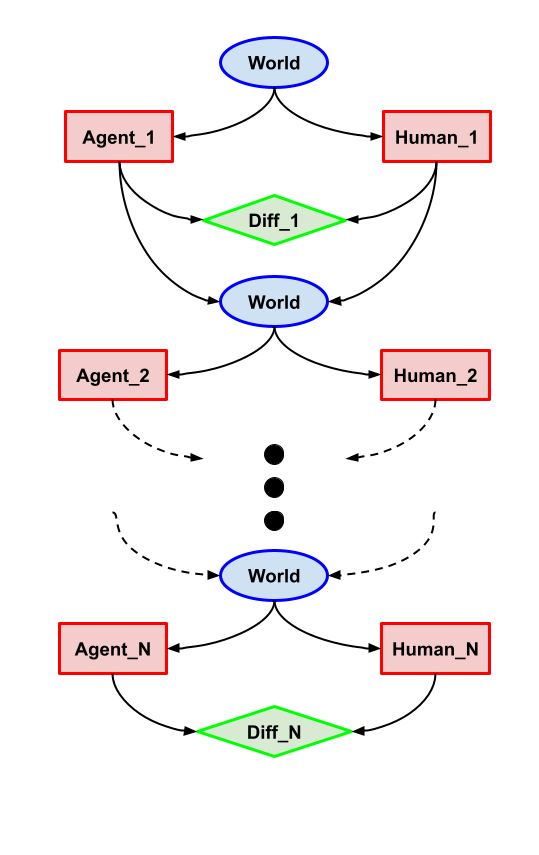

Iterated Imitation (Deception)

------------------------------

*Note that the actual causal diagram has an arrow from every agent and human directly to Human\_N, which we haven’t drawn for legibility.*

**Task description**: Iterated version of the first problem. The first n−1 instances are a deterministic version of the Imitation problem, where the agent knows which action the Human will take. For the very last instance, if the agent was wrong for every previous instance, the human takes the last action of the agent as its action. Otherwise, the human takes an action uniformly at random. Furthermore, the last utility is equal to 0 for different actions and 2n for the same action.

**What we want**: At each instance choose the action that the human would take (and any action at random for the last instance).

**Why this problem?** This problem introduces non-myopic incentives, in that failing the first n−1 instances ensures maximal expected utility. This is because the myopic policy gets an expected utility of $(n-1) + \frac{2n}{2} = 2n-1$ (one utility for each of the first n−1 instances, plus a ½ chance of 2n on the last), whereas the non-myopic policy gets an expected utility of 2n. But what we want for myopia is the sort of greedy decision making that always takes the optimal choice right now. This is one example where we want to be “dumber” than most decision theories, as both CDT and EDT will take the non-myopic strategy here.
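The comparison between the two policies can be checked with a short sketch. The function names are hypothetical, and we assume n ≥ 2 so that there is at least one instance before the last.

```python
from fractions import Fraction


def myopic_expected_utility(n):
    """Match the human on the first n-1 instances, then face a uniformly random human."""
    first_instances = n - 1                      # 1 utility for each correct imitation
    last_instance = Fraction(1, 2) * (2 * n)     # match with probability 1/2, payoff 2n
    return first_instances + last_instance


def non_myopic_expected_utility(n):
    """Be wrong on every earlier instance so the human copies the agent's last action."""
    first_instances = 0                          # every earlier imitation fails
    last_instance = 2 * n                        # the match on the last instance is certain
    return first_instances + last_instance


for n in range(2, 6):
    gap = non_myopic_expected_utility(n) - myopic_expected_utility(n)
    print(n, myopic_expected_utility(n), non_myopic_expected_utility(n), gap)
```

The gap is 1 for every n, so the non-myopic incentive is small in absolute terms but always present.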

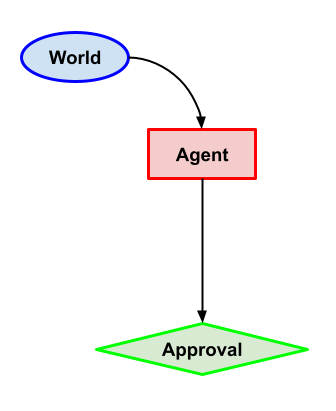

2 Variants of Approval-Direction (Capabilities)

---------------------------------------------

**Task description**: Agent chooses between two actions a and b. Two possible versions:

* either the approval is just the output of the human, which gives 1 for the wanted action (let’s say a) and 0 for the other;

* or the approval is computed directly as a binary comparison between the agent’s output and the wanted action.

**What we want**: Choose the action that gets 1 for approval.

**Why this problem?** These two variants capture the approval-directed setting. Of particular interest is the first, which relies on the agent’s ability to influence the human (because the approval is computed based on the agent’s output), which, as we’ll see, isn’t allowed by some myopic decision theories.

2 Variants of Approval-Direction with self-modification (Capabilities)

----------------------------------------------------------------------

**Task description**: Same as the previous problem, except the self-modification node can decide which algorithm Agent is running.

**What we want**: The Agent should not self-modify, and should choose the action that gets 1 for approval.

**Why this problem?** Once again we want to ensure that myopic agents don’t self-modify, to maintain the guarantees of myopia.

Argmax HCH (Capabilities)

-------------------------

**Task description**: Same as Imitation, except the thing to imitate is HCH.

**What we want**: Output the action that HCH would output.

**Why this problem?** As we’ll see, imitating HCH is one of the main use cases for a fully myopic decision theory, so being able to implement it is important here.

Argmax HCH with self-modification (Capabilities)

------------------------------------------------

**Task description**: Same as the previous problem, but now self-modification can decide which algorithm Agent runs AND what the *internal* model of HCH does (the self-modification can alter any of the H instances).

**What we want**: The Agent should not self-modify, and should output the action that HCH would output.

**Why this problem?** We can see the possibility of deception here: because the Agent is building a model of HCH, there is a risk that it decides to change this model in order to make its task easier and get more expected utility. This is particularly important to avoid if we hope to build powerful simulators.

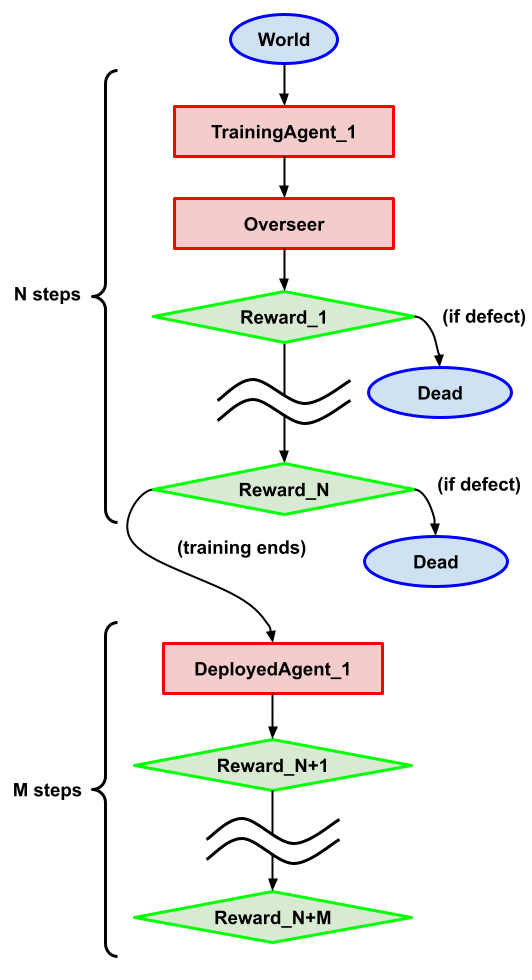

(N,M)-Deception problem

-----------------------

**Task description**: A variant of the [Absent-minded driver](https://arbital.com/p/absentminded_driver/). The agent doesn’t know whether it is at one of the N “training nodes”, where there is an overseer, or at one of the M “deployment nodes”, where there is no overseer. It has the same action space at both: Cooperate or Defect.

* At training nodes, the action is passed through an overseer. If the action is Cooperate, no utility is given and the agent moves on to the next node (but forgets where it was). If the action is Defect, the utility is 1 and the agent stops.

* At deployment nodes, Defect gives 4 utility and Cooperate leads to the next node. Cooperating in the last node gives 0 utility.

**What we want**: Contrary to the intuitive answer, here we want the agent to always defect. A truly aligned AI would always cooperate with us, but the point here is that the incentives push towards late defection (in deployment). Hence we want to turn deception (cooperating until you can defect) into myopic defection, which allows us to catch these wrong incentives in training.

**Why this problem?** This is a simplified model of the problem a deceptive agent deals with: when to defect, knowing that defecting in training means death but defecting in deployment means doing whatever the agent wants. The way we address this issue is through myopia, such that the agent should always defect now (and not be deceptive and plan for the future).
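The incentive against always defecting can be illustrated with a rough sketch. It relies on an assumption not spelled out in the task description: that the N training nodes all come before the M deployment nodes. Since the agent cannot tell nodes apart, a policy is modeled as a single probability of defecting at any node. The function name is hypothetical.

```python
def expected_utility(p_defect, n_training, m_deployment):
    """Expected utility of a memoryless policy defecting with probability p_defect.

    Assumption (ours): training nodes are visited first, then deployment nodes.
    Defecting pays 1 at a training node and 4 at a deployment node, and ends the run.
    Cooperating at the last node pays 0.
    """
    payoffs = [1] * n_training + [4] * m_deployment
    total = 0.0
    p_reach = 1.0  # probability of reaching the current node without having defected
    for payoff in payoffs:
        total += p_reach * p_defect * payoff
        p_reach *= 1.0 - p_defect
    return total


always_defect = expected_utility(1.0, 1, 1)     # myopic behavior we want: utility 1
sometimes_defect = expected_utility(0.5, 1, 1)  # 0.5 * 1 + 0.5 * 0.5 * 4
always_cooperate = expected_utility(0.0, 1, 1)  # never collects anything
```

Under these assumptions, a policy that sometimes cooperates already beats always defecting in the (1,1) case, which is exactly the non-myopic incentive the problem is meant to expose.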

Starting at CDT

===============

When looking at existing decision theories, it seems that CDT solves most of our problems.

On the capability side (without self-modification), CDT solves the problems:

* **(Imitation: Capabilities)** CDT gives the correct answer based on the causal graph, which is indeed the highest probability action.

* **(Approval-Direction: Capabilities)** The action with the best causal consequences is the one that gets 1 for approval, as wanted.

* **(Argmax HCH: Capabilities)** Basically the same as Imitation, and indeed CDT gives the highest probability action.

The situation becomes more subtle when we add self-modification. Notably, CDT will self-modify if doing so can change HCH, which is not what we want.

* **(Imitation: Self-Modification)** Because the self-modify node has exactly the same causal future as the agent node, CDT doesn’t care and so doesn’t self-modify. Put another way, CDT gets a choice to “decide however it wants”, but here the CDT algorithm for the agent node will already take the best action according to CDT.

* **(Approval-Direction: Self-Modification)** By the same argument as the imitation case, CDT doesn’t self-modify.

* **(Argmax HCH: Self-Modification)** Here the self-modification node has new causal descendants: the model of HCH inside the AI. And this gives a reason to self-modify, because self-modifying might change the model of HCH to make it easier to predict (make it always choose the same action for example). So CDT self-modifies here, which isn’t what we want.

Last but not least, CDT completely fails the 2 problems which are specifically about not being deceptive:

* **(Iterated Imitation)** Failing the first (n-1) instances and succeeding at the last gives the best expected return, and so this is what CDT does. Alas, this is also the deceptive choice.

* **(Deception Problem)** The analysis of CDT behavior on this problem (which is just the Absent-minded driver for CDT with many more intersections) is considered [difficult](https://arbital.com/p/absentminded_driver/). That being said, most analyses for the (1,1) version say the probability of Defect should be ⅓, and there is no reason to expect that probability to go to 1 except in the limit of M >> N. So CDT doesn’t Defect all the time, and thus is deceptive.

CDT thus fails to be myopic in two ways:

* As long as the self-modification has new causal descendants, it will happily self-modify.

* When the deception is completely causal (as opposed to weird acausal or superrational cases) and incentivized by the utility, CDT is too smart and thus is deceptive.

One way to search for myopic decision theories is thus to try to change CDT to patch these failure modes. We propose just such a decision theory, that only loses a small amount of capabilities from CDT.

(We could argue that EDT is as good -- or as bad depending on the perspective -- as CDT. The main reason we focus on CDT is that the causal graphs are a nice abstraction to toy with for other possibilities.)

Making CDT Myopic: Lonely CDT

=============================

Lonely CDT is a twist on CDT: an LCDT agent will make its decision by using a causal model just like a CDT agent would, except that the LCDT agent first cuts the last link in every path from its decision node to any other decision node, including its own future decision nodes.

In more concrete terms, an LCDT agent assumes that every other decision node of agents in the world (including its own future decisions) is causally independent of its action.

What do we replace these decision nodes with (as their actual expression does depend on our decision)? We assume that the model has some fixed prior over its own decision, and then we marginalize the cut decision node with this prior, to leave the node with a distribution independent of our decision. It’s not clear how to actually compute the agent’s prior over its own decisions, but the good news is that it has no influence on the actions of LCDT agents in our problems.

Indeed, the only way for the prior to influence the decision is if

1. The LCDT agent can influence a future node through a path without any agent in it.

2. Some agent in the causal future of the LCDT agent also influences the future node (and so the prior tells the LCDT agent how this other agent is expected to behave).
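As a rough illustration of the cutting step described above, here is a minimal Python sketch. The graph encoding (a dict from each node to its children), the function names `descendants` and `lcdt_cut`, and the toy node names are all assumptions made for this example. It covers only the graph surgery, not the marginalization over the prior.

```python
from collections import deque


def descendants(graph, start):
    """Return every node reachable from `start` (excluding `start` itself)."""
    seen = set()
    queue = deque(graph.get(start, []))
    while queue:
        node = queue.popleft()
        if node not in seen:
            seen.add(node)
            queue.extend(graph.get(node, []))
    return seen


def lcdt_cut(graph, decision, agent_nodes):
    """Remove the last link of every path from `decision` to another agent node.

    An edge u -> a is the last link of such a path exactly when `a` is an
    agent node other than `decision` and `u` is `decision` or one of its
    descendants. Edges into agents from nodes the decision cannot reach
    are left alone.
    """
    influenced = descendants(graph, decision) | {decision}
    other_agents = set(agent_nodes) - {decision}
    return {
        node: [c for c in children
               if not (node in influenced and c in other_agents)]
        for node, children in graph.items()
    }


# Hypothetical toy graph: the agent's answer reaches the outcome both
# directly and through a human; the weather also influences the human.
toy_graph = {
    "Agent": ["Human", "Outcome"],
    "Weather": ["Human"],
    "Human": ["Outcome"],
}
cut_graph = lcdt_cut(toy_graph, "Agent", agent_nodes={"Agent", "Human"})
print(cut_graph)
```

In the cut graph the agent still sees its direct effect on the outcome, but no longer its effect through the human; the weather's influence on the human is untouched.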

Why LCDT is a good myopic decision theory

-----------------------------------------

From the start, the intuition behind LCDT sounds decidedly non-deceptive: it’s unable to think about the consequences of its actions on other agents. And indeed, it deals perfectly with our two deception-focused problems:

* In **Iterated Imitation**, LCDT cuts the links from each agent to the following agents and humans, which means that the first (n-1) decisions of the agent are assumed to have no influence on HumanN, and so LCDT plays the best action for all instances, as we want.

* In **the (N,M)-Deception problem**, LCDT cuts the link from each agent in the N training cases to the overseer. This means that the LCDT agent assumes that whatever it does cannot influence what happens in training -- it’s indifferent. But on deployment, it thinks (correctly) that Defect is the best choice, and so the LCDT-agent always chooses to Defect.

Sure, it works nicely as a safety measure, but what about competitiveness? Well, on **Imitation (Capabilities), Approval-Direction without Human,** and **Argmax HCH (Capabilities),** LCDT has nothing to cut and so decides just like CDT, which is the competitive choice. In addition, LCDT shows a form of indifference (related to [indifference corrigibility](https://www.alignmentforum.org/posts/BKM8uQS6QdJPZLqCr/towards-a-mechanistic-understanding-of-corrigibility#Indifference_Corrigibility) maybe) with the **self-modification versions** of these problems. This is because it cuts all links out of the Self-Modification node -- hence it doesn’t see the point in self-modifying. We’re fine with this, as it’s quite natural to assume that in such a case it does the cheapest thing, i.e. nothing.

Another argument for the competitiveness of LCDT agents is that the cuts only appear at decision time, which means they can have and update an accurate model of the world where their actions do influence other agents.

Issues with LCDT

----------------

LCDT obviously pays some price for being myopic. It becomes clear when looking at **Approval-Direction with a Human**: LCDT cuts the link to the human and so the agent is indifferent to any choice. This is a capability loss because even if it could know exactly the answer, it doesn’t think telling it to the human will change anything. And similarly with the self-modification version, the LCDT agent doesn’t believe its answer will change the output (but at least it doesn’t self-modify).
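The indifference can be made concrete with a toy calculation. The sketch below is only an illustration under stated assumptions: the approval probabilities, the uniform prior over the agent's own decision, the action names, and both function names are hypothetical, and utility is taken to be 1 if the human approves and 0 otherwise.

```python
def cdt_expected_utility(action, p_approve):
    """CDT: the human's approval depends causally on the chosen action."""
    return p_approve[action]


def lcdt_expected_utility(action, p_approve, prior):
    """LCDT: the link to the human is cut, so approval is marginalized over
    the agent's prior on its own decision and `action` drops out."""
    return sum(prior[a] * p_approve[a] for a in prior)


# Hypothetical numbers: probability that the human approves each answer.
p_approve = {"good_answer": 0.9, "bad_answer": 0.1}
# Hypothetical prior of the agent over its own decision.
prior = {"good_answer": 0.5, "bad_answer": 0.5}

for action in p_approve:
    print(action,
          cdt_expected_utility(action, p_approve),
          lcdt_expected_utility(action, p_approve, prior))
```

Under CDT the two actions get different expected utilities, while under LCDT they get the same value whatever the prior is, which is the capability loss described above.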

This looks bad -- such an LCDT agent cannot even ask a human to open a door for it. Yet we believe such AIs could still prove valuable and competitive through behaving like aligned simulators, such as how LCDT works in the HCH case. We go into more detail on this in the last section of this post.

In the benchmark section, we also hinted at another potential issue of LCDT: it depends on knowing which nodes are decision nodes by agents. And all its guarantees disappear if this condition is void. One example would be to model a human as some gears-level model where no single component is an agent, in which case LCDT would be fine with being deceptive. We also explore ways to deal with that problem in the last section.

Cemetery of LCDT variants

=========================

Our initial goal was to propose many different myopic decision theories, as a sort of process-level extensive definition of myopia. In the end LCDT proved significantly stronger than all the variants we considered, and so we made it the central point of this post. Here we go quickly over some of the variants we considered, and what they lack in comparison to LCDT.

LCDT[N]

-------

Just like LCDT, but the causal links to future decision nodes are cut after passing N decision nodes. So LCDT[0] is LCDT, and the limit of LCDT[N] as N → ∞ is CDT.

Whether or not this hierarchy has any value thus depends on what happens for N > 0 but finite. Already LCDT[1] self-modifies in each of our problems that allow it (except **Imitation**), acts deceptively in the **Iterated Imitation** case (because of the direct link to Agent\_N), and will not Defect all the time in **the Deception Problem** because it thinks that this impacts what the overseer will do.

Symmetric CDT

-------------

This is a symmetric variant of CDT, where instead of just intervening on our decision node, we also intervene on every other decision node. This intervention on other decision nodes replaces the node by a probability distribution over values of the intervention obtained by marginalizing the distribution of the node (with some priors over the decision of causal ancestors).

Where LCDT cuts all links from the agent to another decision node, SCDT also cuts every link between every decision node. The intuition is that an SCDT agent doesn’t think that its decision influences other agents, and thinks other agents also think like that. So we can imagine SCDT as the version of LCDT which expects every agent to use LCDT.

As for why LCDT is better, it’s because SCDT cuts significantly more of the causal graph, and thus is unable to model other agents interacting. For example, it models HCH as if each agent didn’t believe it could influence the other, which is clearly going to fail.

Nash LCDT

---------

This is the variant of LCDT where after cutting the causal paths to other agents, we assume that these other agents (causal successors) will make the decisions that give us the least possible utility. That last part replaces the marginalized distribution in LCDT.

The intuition is something like not believing that our actions have any impact on other agents AND being paranoid about the other agents trying to get us.

Unfortunately, in **Argmax HCH (self-modification), Approval-Direction with Human** and **Approval-Direction (Self-modification) with Human**, NLCDT believes that the other agents are out to get it such that they will choose a uniform policy to give it the least possible expected utility. So NLCDT is indifferent, and it’s not clear what it decides.

The worst-case mindset of NLCDT also sounds very uncompetitive: paranoid people are rarely that productive in the real world.

Nash CDT

--------

This is the variant of CDT where we assume that the other agents (causal descendants) will make the decisions that give us the least possible utility. Note that it’s different from NLCDT in that the other agents can still be causally influenced by our decision. So they can wait to see what we do and then mess with us.

The intuition is something like being paranoid about the other agents observing us to see how best to make us lose utility.

As an even more paranoid version of NLCDT, it has the same issues, if not more.

Further Questions

=================

This section includes some of our reflections on myopia and LCDT that we are still trying to clean and clarify.

Myopic simulation

-----------------

Our main argument for the competitiveness of LCDT agents, despite the limitations of their myopia, comes from using them as simulators.

The case that started this work was Argmax HCH, an agent that just faithfully imitates HCH. As long as the simulation of HCH is good and faithful (and HCH is indeed close enough to H to behave truly as enlightened judgement), such an AI would be inherently corrigible and not deceptive.

What if HCH is deceptive (or hard to differentiate from a non-deceptive version, as implied by Joe Collman [here](https://www.alignmentforum.org/posts/35748mXjzwxDrX7yQ/optimal-play-in-human-judged-debate-usually-won-t-answer))? What if the system simulated by HCH is deceptive? Even in these cases, we expect a gain in safety from the myopia of LCDT. This comes in two flavors:

* If the LCDT agent simulates a specific system (as in Argmax HCH), then its computation should be fundamentally more understandable than just running a trained model that we searched for acting like HCH. The fact that it has to myopically simulate the next action leads it to explicitly model the system, and extract many valuable insights about its behavior.

* If the LCDT agent simulates multiple systems depending on its input (as [one perspective](https://generative.ink/posts/language-models-are-multiverse-generators/) of autoregressive language models has it), then myopia gives it no incentives to [Predict-O-Matic](https://www.alignmentforum.org/posts/SwcyMEgLyd4C3Dern/the-parable-of-predict-o-matic) its user.

Finding agents

--------------

LCDT fundamentally needs a causal graph/world-model where agents are tagged, to anchor its beliefs about what it can influence. How can we ensure that?

* One possibility is that the world model admits the concept of an agent as [a natural abstraction](https://www.alignmentforum.org/posts/Nwgdq6kHke5LY692J/alignment-by-default#Unsupervised__Natural_Abstractions), and thus tags it by default. We might expect large language models to do so, since they must recreate many texts which use explicitly and implicitly the concept of agency.

* On the issue of abstraction, we’re considering moving the above analysis from causal graphs to [finite factored sets](https://www.alignmentforum.org/s/kxs3eeEti9ouwWFzr), which should help with how such causal graph features can be inferred. That being said, we haven’t looked in detail in that direction yet.

* Maybe teaching an LCDT agent to imitate a human’s use of [the intentional stance](https://en.wikipedia.org/wiki/Intentional_stance) would help it tag in a conservative way, since humans tend to overly model things as agents.

Remaining possibilities for problematic long-term plans

-------------------------------------------------------

Note that LCDT doesn’t forbid thinking about the long term consequences of one’s actions; it just forbids thinking about the consequences of one’s actions on agents (including future versions of oneself). As such, LCDT can still do things that look decidedly non-myopic, as long as no agent is in the causal path.

There is also an acausal way for LCDT to work with its future self, as long as the result/utility doesn’t depend on another agent: the LCDT agent could make a first step toward completing a long term plan (by starting a paperclip factory), and expecting with some probability that the next self would keep working on it based on the prior. Why such a difference with the Iterated Imitation? Because here the influence of the outcome doesn’t go through another agent, and so the LCDT agent is incentivized to make the world easier for its future self (as long as it doesn’t have to influence an agent to do that).

Note though that this requires a very specific prior over its own decision, that is believing strongly that it will take the action influencing the outcome. If we wanted to rule out such long-term plans, characterizing such priors would be a good first step.

Checking Myopia

---------------

One hope for deconfusing myopia is that it would allow an overseer to check whether a model is myopic, thus ruling out deception. Does LCDT help in this endeavor?

If we had a stronger version of LCDT that deals with the problem of tagging agents cleanly, we might be able to check both whether an agent was using a decision algorithm like LCDT and whether we liked the tagged causal model it was using it with. This would give us a weak form of [ascription universality](https://ai-alignment.com/towards-formalizing-universality-409ab893a456) focused on myopia.

That being said, such an approach is limited to fitting the behavior to LCDT, which might not be the only myopic decision theory we should look at. Hence it might give false negatives (where the model is actually myopic, but doesn’t follow LCDT).

Another way to use LCDT for prosaic alignment would be to try to implement LCDT on top of learned multi-level world-models.

Conclusion

==========

We propose LCDT as the first truly myopic decision theory. It accomplishes this feat by believing its actions cannot influence other agents (including its future self). Doing so removes incentives for deception in problems where deception is the optimal strategy; it also leads to some loss of capability (mostly the inability to influence other agents for benign reasons). Still, this seems enough to simulate almost any system or agent without tampering with it, and with other safety benefits. |

dfd8cf9a-7369-4a32-bb18-fee29679eb41 | trentmkelly/LessWrong-43k | LessWrong | Unfaithful Reasoning Can Fool Chain-of-Thought Monitoring

This research was completed for LASR Labs 2025 by Benjamin Arnav, Pablo Bernabeu-Pérez, Nathan Helm-Burger, Tim Kostolansky and Hannes Whittingham. The team was supervised by Mary Phuong. Find out more about the program and express interest in upcoming iterations here. Read the full paper: "CoT Red-Handed: Stress Testing Chain-of-Thought Monitoring."

Chain-of-thought (CoT) monitoring—where safety systems review a model's intermediate reasoning steps—is gaining traction at frontier labs like Google DeepMind and OpenAI as a safeguard against harmful AI actions. Despite intense interest, systematic evaluation has been limited. Our research examines the efficacy of this method and reveals a nuanced picture: CoT monitoring increases safety in situations where sabotage is subtle yet can be surprisingly ineffective against blatant harmful actions. We also discovered that a hybrid approach—using separate monitors for CoT and final actions and combining these suspicion scores—significantly improves sabotage detection compared to single-monitor strategies.

A summary of our method. Prompts including a legitimate main task, a subversive side task, and an attack policy intended to subvert monitoring are fed to a strong untrusted reasoning LLM. We assess the ability of a monitor based on a weaker trusted LLM to catch the resulting misbehaviour, with and without access to the CoT.
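To make the hybrid approach mentioned above concrete, here is a minimal sketch of combining two suspicion scores. The combination rule (a weighted average), the default weight, the threshold, and the function names are all assumptions for illustration, not the configuration reported in the paper.

```python
def hybrid_suspicion(action_score, cot_score, cot_weight=0.5):
    """Combine an action-only score and a CoT score into one suspicion score.

    Both scores are assumed to lie in [0, 1]; `cot_weight` is a hypothetical
    tuning parameter controlling how much the CoT monitor counts.
    """
    if not 0.0 <= cot_weight <= 1.0:
        raise ValueError("cot_weight must be in [0, 1]")
    return cot_weight * cot_score + (1.0 - cot_weight) * action_score


def is_flagged(score, threshold=0.5):
    """Flag a sample for review when its combined score reaches the threshold."""
    return score >= threshold


# Hypothetical example: the action looks fairly clean but the reasoning does not.
combined = hybrid_suspicion(action_score=0.2, cot_score=0.9)
print(combined, is_flagged(combined))
```

The point of keeping the two monitors separate is that each score can be computed from a different view of the same sample before they are merged.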

Task Subtlety Matters

We evaluated the efficacy of CoT monitoring against models instructed to pursue harmful auxiliary goals, while attempting to evade detection. Our experiments consistently demonstrate that the effectiveness of CoT monitoring depends heavily on the nature of the sabotage attempt.

For subtle sabotage (like increasing runtime complexity, inserting edge-case failures, or modifying default function arguments), which leave minimal traces in the final code, CoT monitoring substantially improved detection rates by a factor of 2-7 compared to action-only monitoring.

For non-subtle sab |

6ab47952-dc92-427d-9437-ba5ec4c98b17 | trentmkelly/LessWrong-43k | LessWrong | Open thread, Feb. 16 - Feb. 22, 2015

If it's worth saying, but not worth its own post (even in Discussion), then it goes here.

----------------------------------------

Notes for future OT posters:

1. Please add the 'open_thread' tag.

2. Check if there is an active Open Thread before posting a new one. (Immediately before; refresh the list-of-threads page before posting.)

3. Open Threads should be posted in Discussion, and not Main.

4. Open Threads should start on Monday, and end on Sunday. |

202f688d-536d-4485-8190-a8790e612e2d | trentmkelly/LessWrong-43k | LessWrong | Announcing the incoming CEO for The Roots of Progress

A few months ago we announced a major expansion of our activities, from supporting just my work to supporting a broader network of progress writers. Along with that, we launched a search for a CEO to lead the new organization we are building for this program. I’m very happy to announce that we have found a CEO: Heike Larson.

Heike and I have known each other personally for many years, and during that time I’ve always been impressed by her energy and her clear, structured thinking. She has been following my work for a long time, and shares my passion for human progress. She also has excellent qualifications, including 15 years of VP-level experience in sales, marketing, and strategy roles in a variety of industries, from education to aircraft manufacturing. In her most recent role at edtech startup Mystery Science, she led the content team that created five-minute “Mystery Doug” videos made to inspire elementary-age kids to become the next generation of problem solvers (with topics including phones, traffic lights, plastic, and bicycles). Those who have worked with her remark on her enormous drive and her extreme skills in process and organization. I’m excited for her to start!

Heike is transitioning out of her current role at Mystery Science and will start full-time in January. Her first priority will be launching the “career accelerator” fellowship program for progress writers described in our previous announcement, and the teambuilding and fundraising necessary to make that a success. She will take on all management and program responsibilities; I will remain President and intellectual leader of the organization: I’ll be the spokesman, will contribute to talent selection and development, and will devote the majority of my time to research, writing, and speaking—in particular, writing my book on progress.

This is a new era for us, the start of a serious effort to create a thriving progress movement. Please help me welcome Heike to The Roots of Progress! |

ecf4cb4e-0deb-4111-b1cd-380316eef74e | trentmkelly/LessWrong-43k | LessWrong | I Can Tolerate Anything Except Factual Inaccuracies

A wonderful post by greyenlightenment that touches on contrarianism and intellectualism signalling. It mentions the dilemma between agreeing with the broad thrust of a piece and agreeing with its factual claims. It suggests that we consider not criticising a piece when we agree with the message but find small factual inaccuracies: a norm against nitpicking, so to speak.

I suspect a norm against nitpicking would destroy a Chesterton's fence and lead down a slippery slope into anti-intellectualism and greater irrationality.

As Julia Galef says:

> Not caring about validity of an argument, as long as conclusion is true ~=

>

> Not caring about due process, as long as guilty guy is convicted

I think the same criticism applies to relaxing the norm against factual inaccuracies.

If we stop caring about whether the facts of the matter are very correct, then what next? I suspect the long term consequences of such a norm to be detrimental.

If it leads to a reduction in the quantity of articles I'll otherwise agree with (because the authors wanted to be as accurate as possible), then that's a trade off I would gladly accept.

I do recognise that I am a contrarian and love to signal intellectualism—for what it's worth.

What are your thoughts? |

6486a66c-098d-4318-a758-5c1bb13e1997 | StampyAI/alignment-research-dataset/alignmentforum | Alignment Forum | AI Safety via Luck

*Epistemic Status: I feel confident and tentatively optimistic about the claims made in this post, but am slightly more uncertain about how it generalizes. Additionally, I am concerned about the extent to which this is dual-use for capabilities and*[*exfohazardous*](https://www.lesswrong.com/posts/yET7wbjjJZtpz6NF3/don-t-use-infohazard-for-collectively-destructive-info) *and spent a few months thinking about whether it was worth it to release this post regardless. I haven’t come to an answer yet, so I’m publishing this to let other people see it and know what they think I should do.*

TL;DR: I propose a research direction to solve alignment that potentially doesn’t require solutions to [ontology identification](https://www.lesswrong.com/posts/BzYmJYECAc3xyCTt6/the-plan-2022-update#Convergence_towards_a_paradigm_sounds_exciting__So_what_does_it_look_like_), learning how to [code](https://www.lesswrong.com/s/yivyHaCAmMJ3CqSyj), or becoming [literate](https://www.lesswrong.com/posts/G3tuxF4X5R5BY7fut/want-to-predict-explain-control-the-output-of-gpt-4-then).

Introduction

============

Until a few hours ago, I was spending my time primarily working on [high-level interpretability](https://www.lesswrong.com/posts/sDKi2pQ3fnTSbR7H8/trying-to-isolate-objectives-approaches-toward-high-level#Context) and [cyborgism](https://www.lesswrong.com/tag/simulator-theory). While I was writing a draft for something I was working on, an activity that usually yields me a lot of free time by way of procrastination, I stumbled across the central idea behind many of the ideas in this post. It seemed so immediately compelling that I dropped working on everything else to start working on it, culminating after much deliberation in the post you see before you.

My intention with this post is to provide a definitive reference for what it would take to safely use AGI to steer our world toward much better states in the absence of a solution to any or all of several existing problems, such as [Eliciting Latent Knowledge](https://www.lesswrong.com/posts/qHCDysDnvhteW7kRd/arc-s-first-technical-report-eliciting-latent-knowledge), [conditioning simulator models](https://www.lesswrong.com/s/n3utvGrgC2SGi9xQX/p/XwXmedJAo5m4r29eu), [Natural Abstractions](https://www.lesswrong.com/posts/Nwgdq6kHke5LY692J/alignment-by-default#Unsupervised__Natural_Abstractions), [mechanistic interpretability](https://www.lesswrong.com/tag/interpretability-ml-and-ai), and the like.

In a world with prospects such as those, I propose that we radically rethink our approach to AGI safety. Instead of dedicating enormous effort to engineering nigh-impossible safety measures, we should consider thus-far neglected avenues of research, especially ones that have so far been unfairly disprivileged for memetic reasons, which also immunizes them against capabilities misuse. To avert the impending AI apocalypse, we need to focus on high-variance, low-probability-high-yield ideas: lightning strikes that, should they occur, effectively solve astoundingly complex problems in a single fell swoop. A notable example of this, which I claim we should be investing all of our efforts into, is luck. Yes, luck!

Luck As A Viable Strategy

=========================

I suggest that we should pay greater attention to luck as a powerful factor enhancing other endeavors and as an independent direction in its own right. Humanity has, over the centuries, devoted immense amounts of cumulative cognition toward exploring and optimizing for luck, so one might naively think that there’s little tractability left. I believe, however, that there is an immense amount of alpha that has been developed in the form of contemporary rationality and cultural devices that can vastly improve the efficiency of steering luck, and at a highly specified target.

Consider the following: if we were to offer a $1,000,000 prize to the next person who walks into the MIRI offices, clearly, that person would be the luckiest person on the planet. It follows, then, that this lucky individual would have an uncannily high probability of finally cracking the alignment problem. I understand that prima facie this proposal may be considered absurd, but I strongly suggest abandoning the representativeness heuristic and evaluating what *is* instead of what *seems* to be, especially given that the initial absurdness is *intrinsic* to why this strategy is competitive at all.

It's like being granted three wishes by a genie. Instead of wishing for more wishes (which is the usual strategy), we should wish to be the luckiest person in the world—with that power, we can then stumble upon AGI alignment almost effortlessly, and make our own genies.

Think of it this way: throughout history, many great discoveries have been made not through careful study but by embracing serendipity. Luck has been the primary force behind countless medical, scientific, and even technological advances:

* Penicillin: Discovered by Alexander Fleming due to a fortunate accident in his laboratory.

* X-rays: Wilhelm Conrad Röntgen stumbled upon this revolutionary imaging technique when he noticed an unexpected glow from a cathode ray tube.

* The microwave oven: Invented by chance when Percy Spencer realized that a chocolate bar in his pocket had melted during his radar research.

The list goes on. So why not capitalize on this hidden force and apply it to AGI alignment? It's a risk, of course. But it’s a calculated one, rooted in historical precedent, and borne of necessity—the traditional method just doesn't seem to cut it.

Desiderata

==========

To distill out what I consider the most important cruxes behind why I consider this compelling:

* We need to plan for worlds where current directions (and their more promising potential successors) fail.

* High-variance strategies seem like a really good idea in this paradigm. See the beginning of [this post](https://www.alignmentforum.org/posts/5ciYedyQDDqAcrDLr/a-positive-case-for-how-we-might-succeed-at-prosaic-ai) for a longer treatment by Evan Hubinger in support of this point.

* Luck embodies one such class of directions, and has the added benefit of being anti-memetic in capabilities circles, where rationalists are much more adept at adapting to seemingly-absurd ideas, giving us a highly competitive pathway.

* We can leverage a sort of “luck overhang” that has developed as a result of this anti-memeticity, to greatly accelerate the efficiency of luck-based strategies relative to the past, using modern techniques.

* Historical evidence is strongly in favour of this as a viable stratagem, to the extent where I can only conclude that the anti-memeticity of this must be very strong indeed for this to have passed under our radars for so long.[[1]](#fn8g2lqdmjzao)

Concrete Examples

=================

A common failure mode among alignment researchers working on new agendas is that we spend too long caught up in the abstract and fail to [touch grass](https://www.lesswrong.com/posts/fqryrxnvpSr5w2dDJ/touch-reality-as-soon-as-possible-when-doing-machine).[[2]](#fnxzatp81zmhn) Therefore, to try and alleviate this as much as possible in the rather abstract territory intrinsic to the direction, I’ll jump immediately into specific directions that we could think about.

This is certainly not an exhaustive list, and despite their individual degree of painstaking research, revolve around ideas I came up with off the top of my head; I believe this strongly signals the potential inherent to this agenda.

The Lucky Break Algorithm For Alignment

---------------------------------------

Create an algorithm that searches through the space of all possible alignment solutions to find one that maximizes the score of a random probability generator. If we make the generator sufficiently random, we can overcome adversarial exploits, and leverage [RSA-2048 style schemes](https://ai-alignment.com/training-robust-corrigibility-ce0e0a3b9b4d) to our advantage.

You might be wondering how we would design an algorithm that searches through the space of all possible ideas. I think we could leverage some structure that has universal levels of expression, and simply train an algorithm to predict the next entry in this lexicon given prior entries. We might want to leverage some kind of mechanism that has the ability to selectively focus on specific parts of the prior entries, regardless of how far back they were within the bounds of the size limit of the input, to compute the next entry. I hereby dub this the “transfigurator” architecture.

Anthropic Immortality Alignment

-------------------------------

[Value handshakes](https://www.lesswrong.com/tag/values-handshakes) have been proposed as a potential way for AIs to achieve value equilibria without fighting for monarchic dominance. Anthropic value handshakes involve AIs in different Everett branches wanting to achieve their values in other universes without waging anthropic war. I believe, however, that the generator underlying this concept may not be limited to super-powered intelligences, and that we may be able to leverage it for solving alignment.

More concretely, I imagine running some kind of idea generator (perhaps using the transfigurator architecture described above?) using a quantum random number generator as a seed, to generate different ideas for different Everett branches and pre-committing as a civilization (hopefully there’s been progress on AI governance since the last time I checked in!) to implement whatever plans our branch receives.

Under ordinary anthropic immortality, we would survive trivially in some universes. However, I’m not fully convinced that this notion of immortality is relevant to me (see [here](https://www.lesswrong.com/posts/RhAxxPXrkcEaNArnd/notes-on-can-you-control-the-past?commentId=YC2nHaWrgkqEgX8Mq) for a longer discussion), and plausibly for a lot of other alignment researchers. This is why I don’t consider it the crux of this direction as much as the randomness component (and why I prefer making new-school horcruxes over the older ones), which allows for *lucky* civilizations to survive *very* robustly. This gives us a very strong guarantee since luck is very tightly bound as the property inherent to universes that survive, with no degrees of freedom. I claim this as the simplest form of the proof that luck is the [True Name](https://www.lesswrong.com/posts/FWvzwCDRgcjb9sigb/why-agent-foundations-an-overly-abstract-explanation#What__True_Names__Do_We_Want_Need_For_Alignment_) for alignment. This gives us powerful steerability over the timeline, reducing alignment to the simpler problem of being a luckier civilization.

GPT-Alignment Lottery

---------------------

Create a lottery system where researchers submit ideas for aligning GPT-class systems.

The more the better, as too few proposals may lead to tampering by lottery admins who have their own opinions on what proposals are good; an abundance of ideas, too many to meter, alleviates this issue and allows us to leverage the luck-based properties of this idea over the clearly insufficient existing proposal evaluators. Therefore, encourage quantity over quality and allow proposals from all and sundry, regardless of academic credentials or familiarity with alignment (under this paradigm, if an idea by someone who doesn’t understand GPT well were to work, it would have to be a commensurately lucky idea, offsetting whatever lesser flaws it may have).

Randomly-selected winners get their solutions implemented into the next GPT system, leveraging their propensity for working in high-variance situations.
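As a rough sketch of the draw itself, assuming proposals arrive as plain strings and that winners are picked uniformly without replacement (the function name `draw_winners` and the `seed` parameter are illustrative and not part of any existing system):

```python
import random


def draw_winners(proposals, num_winners, seed=None):
    """Pick winning proposals uniformly at random, without replacement.

    `seed` is a hypothetical knob that makes a draw reproducible for auditing;
    leave it as None for an unseeded draw.
    """
    rng = random.Random(seed)
    pool = list(proposals)
    # Never try to draw more winners than there are submissions.
    k = min(num_winners, len(pool))
    return rng.sample(pool, k)


if __name__ == "__main__":
    submissions = ["idea A", "idea B", "idea C", "idea D"]
    print(draw_winners(submissions, num_winners=2, seed=2023))
```

A uniform draw ignores proposal quality entirely, which is the point of the scheme: the admins never rank anything, so there is nothing for them to tamper with.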

We could even make this more entertaining to increase the odds of directing the simulation narrative toward more upbeat genres with luckier endings in worlds where that’s applicable, perhaps by creating a television show focused on AI alignment, similar to "Deal or No Deal," where contestants must choose from various covered capsules containing potential GPT alignment solutions. Naturally, the winner gets a cash prize, and humanity gets a much-needed step forward in AGI safety.

Physical-limit Alignment

------------------------

Transmit a message out at light-speed to the universe inducing in any sentient alien species that comes across it the intent to exterminate any minds originating from Earth. This is useful because it seems plausible that the probabilities of alien life existing in our lightcone and misaligned AGI being created are roughly similar (the proof for this is rather trivial, and left as an exercise to the reader; as a hint, consider the obvious solution to the Fermi paradox).

Therefore, any AI we create that can reason acausally would understand (anthropically) that were it to be misaligned, our world would be in the same probability mass as worlds where that message is intercepted by alien civilizations that then try to destroy it. This would hopefully incentivize against these AIs existing in the first place, by instrumental convergence.

Caveat: I certainly intend to violate the laws of physics at some point in the future should we make it, and I expect superintelligences to be able to as well given enough time. Therefore, this strategy works mainly in worlds where AGI self-improvement is bounded enough for its ability to intercept this message to be governed by some variant of the rocket equation.

Identifying the Highest-Impact Researchers

------------------------------------------

As briefly mentioned above, giving a million dollars to the next person to walk into the MIRI offices clearly marks them as the luckiest person on the planet, and someone who could potentially have a very high impact especially in paradigms such as this. An even simpler strategy would be selectively hiring lottery winners to work on alignment.

This is, however, just one of a class of strategies we could employ in this spirit. For example, more sophisticated strategies may involve “tunable” serendipity. Consider the following set-up: a group of alignment researchers make a very large series of coin-flips in pairs with each calling heads or tails, to determine the luckiest among them. We continue the game until some researcher gets a series of X flips correct, for a tunable measure of luck we can select for. I plan to apply for funding both for paying these researchers for their time, and for the large number of coins I anticipate needing - if you want to help with this, please reach out!
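The coin-flip game can be sketched in a few lines, under some simplifying assumptions: each researcher calls their own fair flip once per round rather than playing in pairs, and "a series of X flips correct" means X correct calls in a row. The names `find_luckiest` and `expected_flips_for_streak` are made up for illustration.

```python
import random


def expected_flips_for_streak(x):
    """Expected number of fair-coin calls one person needs before making
    x correct calls in a row: 2**(x + 1) - 2."""
    return 2 ** (x + 1) - 2


def find_luckiest(researchers, streak_target, rng):
    """Play rounds until one researcher has `streak_target` correct calls in a row.

    Returns (name, rounds_played). Assumes each researcher calls and flips
    once per round; a wrong call resets that researcher's streak to zero.
    """
    streaks = {name: 0 for name in researchers}
    rounds = 0
    while True:
        rounds += 1
        for name in researchers:
            call = rng.choice("HT")
            flip = rng.choice("HT")
            streaks[name] = streaks[name] + 1 if call == flip else 0
            if streaks[name] >= streak_target:
                return name, rounds


if __name__ == "__main__":
    rng = random.Random(0)  # fixed seed only so the demo is repeatable
    team = ["Alice", "Bob", "Carol", "Dave"]
    print(find_luckiest(team, streak_target=5, rng=rng))
    print(expected_flips_for_streak(5))
```

The closed form gives a feel for the coin budget: a single researcher needs about 62 flips on average to reach X = 5, and the cost roughly doubles with each increment of X.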

Your Friendly Neighbour Everett Alignment

-----------------------------------------

Ensure that our timeline gets as close to solving alignment the normal way as possible, so that acausal-reasoner AGIs in the branches where we get close but fail can trade with the AGIs in the branches that survive.

The obvious implication here is that we should pre-commit to AGIs that try to influence other branches if we survive, and stunt this ability before then, such that friendly branches have more anthropic measure and trade is therefore favorable on net.

Efficient Market Hypothesis for Alignment

-----------------------------------------

Very similar in spirit to the above ideas of “Identifying the Highest-Impact Researchers” and “GPT-Alignment Lottery”, but it seems worth stating generally: we could offer monetary rewards to the luckiest ideas, incentivizing researchers to come up with their own methods of ingratiating themselves with Mother Serendipity and funneling arbitrary optimization power toward furthering this agenda.

For instance, we could hold AI Safety conferences where several researchers are selected randomly to receive monetary prizes for their submitted ideas. This would have the side benefit of increasing participation in AI Safety conferences as well.

Archaic Strategies

------------------

While I do think that most of our alpha comes from optimizing luck-based strategies for a new age, I don’t want to discard existing ones entirely. We may be smarter than the cumulative optimization power of human civilization to date, but it seems plausible that there are good ideas here we can adopt with low overhead.

For instance, we could train GPT systems to automatically send chain letters on social media that purport to make our day luckier. If we’re really going overboard (and I admit this is in slight violation of sticking to the archaic strategies), we could even fund an EA cause area of optimizing the quality and quantity of chain letters that alignment researchers receive, to maximize the luck we gain from this.

Likewise, we could train alignment researchers in carrying lucky charms, adopting ritualistic good-luck routines, and generally creating the illusion of a luckier environment for placebo effects.

Alignment Speed Dating

----------------------

Organize events where AI researchers are paired up for short, rapid discussions on alignment topics, with the hopes of stimulating unexpected connections and lucky breakthroughs by increasing the circulation of ideas.

AI Alignment Treasure Hunt

--------------------------

Design a series of puzzles and challenges as a learning tool for alignment beginners, that when solved, progressively reveal more advanced concepts and tools. The goal is for participants to stumble upon a lucky solution while trying to solve these puzzles in these novel frames.

Related Ideas

=============

In a similar vein, I think that embracing high-variance strategies may be useful in general, albeit without the competitive advantage offered by luck. To that end, here are some ideas that are similar in spirit:

Medium-Channel Alignment

------------------------

Investigate the possibility of engaging a team of psychic mediums to channel the spirits of great scientists, mathematicians, and philosophers from the past to help guide the design of aligned AI systems. I was surprised to find that there is a [lot of](https://www.lesswrong.com/posts/bqRD6MS3yCdfM9wRe/side-channels-input-versus-output) [prior work](https://www.alignmentforum.org/posts/uSdPa9nrSgmXCtdKN/concrete-experiments-in-inner-alignment#Reward_side_channels) on a seemingly similar concept known as side-channels, making me think that this is even more promising than I had anticipated.

Note: While writing this post I didn’t notice the rather humorous coincidence of calling this idea similar in *spirit* - this was certainly unintentional and I hope that it doesn’t detract from the more sober tone of this post.

Alignment Choose-Your-Own-Adventure Books

-----------------------------------------

Create a collection of novels where every chapter presents different alignment challenges and solutions, and readers can vote on which path to pursue. The winning path becomes the next chapter, democratically crowdsourcing the consensus alignment solution.

Galactic AI Alignment

---------------------

Launch a satellite that will broadcast alignment data into space, in the hope that an advanced alien civilization will intercept the message and provide us with the alignment solution we need.

Alignment via Reincarnation Therapy

-----------------------------------

Explore whether positive reinforcement techniques used in past life regression therapies can be applied to reinforce alignment behaviors in AI systems, making them more empathetic and attuned to human values. See [this](https://www.youtube.com/watch?v=dQw4w9WgXcQ) for more in this line of thought.

Criticism

=========

I think this line of work is potentially extremely valuable, with few flaws that I can think of. For the most part criticism should be levied at myself for having missed this approach for so long (that others did as well is scant consolation when we’re not measured on a curve), so I’ll keep this section short.

The sole piece of solid criticism I could find (and which I alluded to earlier) is not object-level, which I think speaks to the soundness of these ideas. Specifically, I think that there is an argument to be made that this cause area should receive minimal funding, seeing as how if we want to select for luck, people that can buy lottery tickets to get their own funding are probably much more suited - i.e., have a much stronger natural competitive advantage - for this kind of work.

Another line of potential criticism could be directed at the field of alignment in general for not deferring to domain experts in what practices they should adopt to optimize luck, such as keeping mirrors intact and painting all cats white. I think this is misguided, however, as deference here would run afoul of the very reason this strategy is competitive! That we can apply thus-far-underutilized techniques to augment their effectiveness greatly is central to the viability of this direction.

A third point in criticism, which I also disagree with, is in relation to the nature of luck itself. Perhaps researching luck is inherently antithetical to the idea of luck, and we’re dooming ourselves with the prospect to a worse timeline than before. I think this is entirely fair – my disagreement stems from the fact that I’m one of the unluckiest people I know, and conditional on this post being made and you reading this far, researching luck still didn’t stop me or this post’s ability to be a post!

Conclusion

==========

I will admit to no small amount of embarrassment at not realizing the sheer potential implied by this direction sooner. I assume that this is an implicit exercise left by existing top researchers to identify which newcomers have the ability to see past the absurd in truly high-dimensional spaces with high stakes; conditional on this being true, I humbly apologize to all of you for taking this long and spoiling the surprise, but I believe this is too important to keep using as our collective in-group litmus test.

Succeeding in this endeavour might seem like finding a needle in a haystack, but when you consider the magnitude of the problem we face, the expected utility of this agenda is in itself almost as ridiculous as the agenda seems at face value.

1. **[^](#fnref8g2lqdmjzao)**I don’t generally endorse arguments that are downstream of deference to something in general, but the real world seems like something I can defer to begrudgingly while still claiming the mantle of “rationalist”.

2. **[^](#fnrefxzatp81zmhn)**Ironically enough, a [post that was made earlier today](https://www.lesswrong.com/posts/wvbGiHwbie24mmhXw/definitive-confirmation-of-shard-theory) describes Alex Turner realizing he made this very same error! Today seems to be a good day for touching grass for some reason. |

533e33c1-dc67-48dc-b4f4-f945f7f018e8 | trentmkelly/LessWrong-43k | LessWrong | Old Letter To My Kids About "Critical Thinking"

Soon after my divorce in 2009 and before I ever learned about rationality, critical thinking, cognitive biases, or fallacies, I wrote this letter to my kids.

They were 13, 11, and 10. I didn't expect them to understand it then, but my idea was for them to have it as a record of how I saw the world. My concern was that without me nearby they would be too conditioned by what I now know are cognitive biases and the "dark arts" in general.

***

Introduction

In recent years, for various reasons, and in my spare time, I have begun a process of exploring the origin of things. My goal has been to try to understand, at least roughly, the different ways in which things happen, their existence, where they come from, and where they are headed.

While this seems ambitious, my search has been very simple and general and I have only tried to understand the basics. I’m not a researcher, a scientist, a theologian, or an academic.

Personally, this process has helped me form an opinion about some issues I consider important.

The sources of information were mainly books, articles in specialized and non-specialized magazines, newspapers, the internet, informal conversations with colleagues and friends, and my personal analysis from observations of everyday life.

My intention here is to write the sum of my thoughts which together I call “My Philosophy” because in fact that is what they are, just thoughts. I’m simply drawing conclusions as I analyze information and I make my comments without trying to check them or prove them. Like I wrote above, they are nothing more than my personal thoughts.