id stringlengths 36 36 | source stringclasses 15 values | formatted_source stringclasses 13 values | text stringlengths 2 7.55M |

|---|---|---|---|

e66447d0-86de-4a27-b45e-d0121602a25b | trentmkelly/LessWrong-43k | LessWrong | Wisdom of the crowds?

According to an aggregate of forecasts on metaculus, the probability of bitcoin going above 100k within the next 5 years is 60%.

Under which conditions is it rational to have my probability for some event match the (i) median of a large group of estimates (e.g. from metaculus)

or (ii) the average of their estimates? Or, (iii) is it merely rational to set (i) or (ii) as my prior?

If yes, to any of these possibilities, why? What is the justification? Could someone point me towards the literature on this question? |

2fee87f1-4171-44b2-9bf1-db0104bba1df | trentmkelly/LessWrong-43k | LessWrong | [SEQ RERUN] Heading Toward Morality

Today's post, Heading Toward Morality was originally published on 20 June 2008. A summary (taken from the LW wiki):

> A description of the last several months of sequence posts, that identifies the topic that Eliezer actually wants to explain: morality.

Discuss the post here (rather than in the comments to the original post).

This post is part of the Rerunning the Sequences series, where we'll be going through Eliezer Yudkowsky's old posts in order so that people who are interested can (re-)read and discuss them. The previous post was LA-602 vs RHIC Review, and you can use the sequence_reruns tag or rss feed to follow the rest of the series.

Sequence reruns are a community-driven effort. You can participate by re-reading the sequence post, discussing it here, posting the next day's sequence reruns post, or summarizing forthcoming articles on the wiki. Go here for more details, or to have meta discussions about the Rerunning the Sequences series. |

e4be322e-3dc0-4695-b1c2-d47fac8bce23 | trentmkelly/LessWrong-43k | LessWrong | Learning desiderata

A putative new idea for AI control; index here.

In a previous post, I argued that Conservation of expected ethics isn't enough. Reflecting on that, I think the learning problem can be decomposed into three aspects:

#. The desire to learn. #. Unbiased learning (the agent doesn't try to influence the direction of its learning to achieve a particular goal). #. Safe learning (the agent doesn't affect the world negatively because of its learning process).

----------------------------------------

Note that 2. is precisely the conservation of expected ethics.

How the ideas to date measure up

Of the previous ideas, how do they measure up?

Traditional value learning scores high on 1. (it desires to learn), but fails both 2. and 3.

Indifference achieves 3. (note that the agent's utility might be unsafe, but this would not be because of the learning process), but fails 1. and 2. It also has problems with its counterfactuals, which arguably invalidate 3.

Factoring out arguably achieves all of these objectives, but also has problems with its counterfactuals, which arguably invalidate 3.

Guarded learning, an idea I'll be presenting soon, will hit 1. and 2. but not 3. To get safe learning from it, we'll need to inculcate safety conscious values early in the learning project.

Is there some platonic ideal of an agent that achieves all three of these objectives? Maybe. Imagine a boxed oracle that attempts to learn what value humans might have in a counterfactual world where the oracle didn't exist. This would seem to achieve all the objectives, and shows why unbiased learning needs to be separated from safe learning. This oracle is safe because it is boxed, and unbiased because of the counterfactual. |

d2d41403-5190-4a7f-b685-2711bbf408f2 | trentmkelly/LessWrong-43k | LessWrong | introduction to cancer vaccines

cancer neoantigens

For cells to become cancerous, they must have mutations that cause uncontrolled replication and mutations that prevent that uncontrolled replication from causing apoptosis. Because cancer requires several mutations, it often begins with damage to mutation-preventing mechanisms. As such, cancers often have many mutations not required for their growth, which often cause changes to structure of some surface proteins.

The modified surface proteins of cancer cells are called "neoantigens". An approach to cancer treatment that's currently being researched is to identify some specific neoantigens of a patient's cancer, and create a personalized vaccine to cause their immune system to recognize them. Such vaccines would use either mRNA or synthetic long peptides. The steps required are as follows:

1. The cancer must develop neoantigens that are sufficiently distinct from human surface proteins and consistent across the cancer.

2. Cancer cells must be isolated and have their surface proteins characterized.

3. A surface protein must be found that the immune system can recognize well without (much) cross-reactivity to normal human proteins.

4. A vaccine that contains that neoantigen or its RNA sequence must be produced.

Most drugs are mass-produced, but with cancer vaccines that target neoantigens, all those steps must be done for every patient, which is expensive.

protein characterization

The current methods for (2) are DNA sequencing and mass spectrometry.

SEQUENCING

DNA sequencing is now good enough to sequence the full genome of cancer cells. That sequence can be compared to the DNA of normal cells, and some algorithms can be used to find differences that correspond to mutant proteins. However, guessing how DNA will be transcribed, how proteins will be modified, and which proteins will be displayed on the surface is difficult.

Practical nanopore sequencing has been a long time coming, but it's recently become a good option for sequencing canc |

2159f712-1d94-4fb2-acc2-db518957e3e2 | trentmkelly/LessWrong-43k | LessWrong | Towards Guaranteed Safe AI: A Framework for Ensuring Robust and Reliable AI Systems

Authors: David "davidad" Dalrymple, Joar Skalse, Yoshua Bengio, Stuart Russell, Max Tegmark, Sanjit Seshia, Steve Omohundro, Christian Szegedy, Ben Goldhaber, Nora Ammann, Alessandro Abate, Joe Halpern, Clark Barrett, Ding Zhao, Tan Zhi-Xuan, Jeannette Wing, Joshua Tenenbaum

Abstract:

> Ensuring that AI systems reliably and robustly avoid harmful or dangerous behaviours is a crucial challenge, especially for AI systems with a high degree of autonomy and general intelligence, or systems used in safety-critical contexts. In this paper, we will introduce and define a family of approaches to AI safety, which we will refer to as guaranteed safe (GS) AI. The core feature of these approaches is that they aim to produce AI systems which are equipped with high-assurance quantitative safety guarantees. This is achieved by the interplay of three core components: a world model (which provides a mathematical description of how the AI system affects the outside world), a safety specification (which is a mathematical description of what effects are acceptable), and a verifier (which provides an auditable proof certificate that the AI satisfies the safety specification relative to the world model). We outline a number of approaches for creating each of these three core components, describe the main technical challenges, and suggest a number of potential solutions to them. We also argue for the necessity of this approach to AI safety, and for the inadequacy of the main alternative approaches. |

b779f646-a692-43a6-b0ed-b2b5bbc902a9 | trentmkelly/LessWrong-43k | LessWrong | Deceptive Alignment is <1% Likely by Default

Thanks to Wil Perkins, Grant Fleming, Thomas Larsen, Declan Nishiyama, and Frank McBride for feedback on this post. Thanks also to Paul Christiano, Daniel Kokotajlo, and Aaron Scher for comments on the original post that helped clarify the argument. Any mistakes are my own.

In order to submit this to the Open Philanthropy AI Worldview Contest, I’m combining this with the previous post in the sequence and making significant updates. I'm leaving the previous post, because there is important discussion in the comments, and a few things that I ended up leaving out of the final version that may be valuable.

Introduction

In this post, I argue that deceptive alignment is less than 1% likely to emerge for transformative AI (TAI) by default. Deceptive alignment is the concept of a proxy-aligned model becoming situationally aware and acting cooperatively in training so it can escape oversight later and defect to pursue its proxy goals. There are other ways an AI agent could become manipulative, possibly due to biases in oversight and training data. Such models could become dangerous by optimizing directly for reward and exploiting hacks for increasing reward that are not in line with human values, or something similar. To avoid confusion, I will refer to these alternative manipulative models as direct reward optimizers. Direct reward optimizers are outside of the scope of this post.

Summary

In this post, I discuss four precursors of deceptive alignment, which I will refer to in this post as foundational properties. I first argue that two of these are unlikely to appear during pre-training. I then argue that the order in which these foundational properties develop is crucial for estimating the likelihood that deceptive alignment will emerge for prosaic transformative AI (TAI) in fine-tuning, and that the dangerous orderings are unlikely. In particular:

1. Long-term goals and situational awareness are very unlikely in pre-training.

2. Deceptive alignment is very unli |

90308a34-8024-4a12-b97a-9c6c953e048b | trentmkelly/LessWrong-43k | LessWrong | Marriage, the Giving What We Can Pledge, and the damage caused by vague public commitments

I believe honesty is very important. I think most people agree that honesty is like, pretty important, but I think it’s a lot more important than that. I basically think that people will be dishonest in ways that hurt them and others by default, even when they’re trying to be pretty honest, because I think it’s just that hard. I think it’s hard because there are a lot of incentives that push away from honesty. E.g. You want the job so you’re tempted to overstate your experience or past performance. You said you wouldn’t tell anyone about your friend’s secret, but this seems like a situation where they wouldn’t mind, and it would be pretty awkward to say nothing…etc. There’s a huge variety of situations that incentivize small acts of dishonesty. And it’s not always clear whether something is a little dishonest or not - dishonesty can be quite a spectrum.

If I’m correct, and honesty is pretty hard by default, I think this is quite bad. Honesty is important because it greatly improves the ability of people to coordinate with each other. And it’s important because it allows people to reason better about themselves and about the world. Good coordination and good reasoning are things we badly need. Fortunately, I think many people could level up their honesty by putting in a reasonable amount of thinking and effort, and that a lot of the failure modes are caused by not paying attention to the incentives around them, or not thinking about how to structure their own lives and commitments to be more honest.

If you want to be honest, it’s important to think about how to structure your life so being honest isn’t extremely difficult. This is especially true when it comes to promises and commitments. It’s often easier to be honest about your current beliefs than it is to be honest about what you’re going to do in the future. After all, you don’t know what’s going to happen in the future. You can definitely influence it, and you can choose now to take particular actions in the |

aa9a1ad4-5a9f-46da-8e5b-341816988adf | trentmkelly/LessWrong-43k | LessWrong | Book Analysis: New Thrawn Trilogy

[Spoiler note: I have used only examples from relatively early on in the new Thrawn Trilogy and ones which I believe won’t spoil your enjoyment of the story; however, if you’d like to go in with absolutely no knowledge, this will tell you some things about the trilogy.]

I’ve recently read the first two books of Timothy Zahn’s new Thrawn trilogy and it’s given me some thoughts about writing competent characters.

The unique thing about Thrawn as a character is that he comes off as genuinely competent. The first book of the new Thrawn trilogy makes this particularly vivid because, instead of having to pair Thrawn with characters with canonical non-competent personalities, Zahn was free to invent the equally competent Eli Vanto, Arindha Pryce, and Nightswan.

In most books, characters who are supposed to be geniuses go one of three ways. First, they’re chessmasters with incredibly tangled Rube Goldberg Machine plans: “of course, I knew all along that overhearing that conversation would cause the protagonist’s wife to conclude she’s cheating on her and divorce her, motivating the protagonist to seek out the Stone of Plot, thus moving my plans one step closer to fruition!” Second, they’re scientific or magical geniuses with fake science or magic: “of course! If you reverse the polarity of the subspace photon launcher it will babble the techno and that will lower the shields, letting us in!” Third, they’re very good improvisers, which is a genuine kind of intelligence, but also one that is kind of easy for writers. The character doesn’t have to have clever plans, they just have to react cleverly to what’s happening—and the audience will cut them some slack if their solution isn’t amazing. After all, they did think of it in a few seconds.

Thrawn takes a fourth approach.

In his first scene, Thrawn is fighting some Imperial troops which are camped in the forest. He figures out that their shield system must let through small forest animals, or they’d be constantly dealing |

435371d0-c1d0-436d-ab33-8462b529929f | StampyAI/alignment-research-dataset/arxiv | Arxiv | ReMixMatch: Semi-Supervised Learning with Distribution Alignment and Augmentation Anchoring

1 Introduction

---------------

Semi-supervised learning (SSL) provides a means of leveraging unlabeled data to improve a model’s performance when only limited labeled data is available.

This can enable the use of large, powerful models when labeling data is expensive or inconvenient.

Research on SSL has produced a diverse collection of approaches, including consistency regularization (Sajjadi et al., [2016](#bib.bib5 "Regularization with stochastic transformations and perturbations for deep semi-supervised learning"); Laine and Aila, [2017](#bib.bib6 "Temporal ensembling for semi-supervised learning")) which encourages a model to produce the same prediction when the input is perturbed and entropy minimization (Grandvalet and Bengio, [2005](#bib.bib8 "Semi-supervised learning by entropy minimization")) which encourages the model to output high-confidence predictions.

The recently proposed “MixMatch” algorithm (Berthelot et al., [2019](#bib.bib84 "Mixmatch: a holistic approach to semi-supervised learning")) combines these techniques in a unified loss function and achieves strong performance on a variety of image classification benchmarks.

In this paper, we propose two improvements which can be readily integrated into MixMatch’s framework.

First, we introduce “distribution alignment”, which encourages the distribution of a model’s aggregated class predictions to match the marginal distribution of ground-truth class labels.

This concept was introduced as a “fair” objective by Bridle et al. ([1992](#bib.bib85 "Unsupervised classifiers, mutual information and’phantom targets")), where a related loss term was shown to arise from the maximization of mutual information between model inputs and outputs.

After reviewing this theoretical framework, we show how distribution alignment can be straightforwardly added to MixMatch by modifying the “guessed labels” using a running average of model predictions.

Second, we introduce “augmentation anchoring”, which replaces the consistency regularization component of MixMatch.

For each given unlabeled input, augmentation anchoring first generates a weakly augmented version (e.g. using only a flip and a crop) and then generates multiple strongly augmented versions.

The model’s prediction for the weakly-augmented input is treated as the basis of the guessed label for all of the strongly augmented versions.

To generate strong augmentations, we introduce a variant of AutoAugment (Cubuk et al., [2018](#bib.bib64 "Autoaugment: learning augmentation policies from data")) based on control theory which we dub “CTAugment”.

Unlike AutoAugment, CTAugment learns an augmentation policy alongside model training, making it particularly convenient in SSL settings.

We call our improved algorithm “ReMixMatch” and experimentally validate it on a suite of standard SSL image benchmarks.

ReMixMatch achieves state-of-the-art accuracy across all labeled data amounts,

for example achieving an accuracy of 93.73% with 250 labels on CIFAR-10 compared to the previous state-of-the-art of 88.92% (and compared to 96.09% for fully-supervised classification with 50,000 labels).

We also push the limited-data setting further than ever before, ultimately achieving a median of 84.92% accuracy with only 40 labels (just 4 labels per class) on CIFAR-10.

To quantify the impact of our proposed improvements, we carry out an extensive ablation study to measure the impact of our improvements to MixMatch.

Finally, we release all of our models and code to facilitate future work on semi-supervised learning.

Figure 1: Distribution alignment. Guessed label distributions are adjusted according to the ratio of the empirical ground-truth class distribution divided by the average model predictions on unlabeled data.

Figure 2: Augmentation anchoring. We use the prediction for a weakly augmented image (green, middle) as the target for predictions on strong augmentations of the same image (blue).

2 Background

-------------

The goal of a semi-supervised learning algorithm is to learn from unlabeled data in a way that improves performance on labeled data.

Typical ways of achieving this include training against “guessed” labels for unlabeled data or optimizing a heuristically-motivated objective that does not rely on labels.

This section reviews the semi-supervised learning methods relevant to ReMixMatch, with a particular focus on the components of the MixMatch algorithm upon which we base our work.

##### Consistency Regularization

Many SSL methods rely on consistency regularization to enforce that the model output remains unchanged when the input is perturbed.

First proposed in (Sajjadi et al., [2016](#bib.bib5 "Regularization with stochastic transformations and perturbations for deep semi-supervised learning")) and (Laine and Aila, [2017](#bib.bib6 "Temporal ensembling for semi-supervised learning")),

this approach was referred to as “Regularization With Stochastic Transformations and Perturbations” and the “Π-Model” respectively.

While some work perturbs adversarially (Miyato et al., [2018](#bib.bib4 "Virtual adversarial training: a regularization method for supervised and semi-supervised learning")) or using dropout (Laine and Aila, [2017](#bib.bib6 "Temporal ensembling for semi-supervised learning"); Tarvainen and Valpola, [2017](#bib.bib7 "Mean teachers are better role models: weight-averaged consistency targets improve semi-supervised deep learning results")), the most common perturbation is to apply domain-specific data augmentation (Laine and Aila, [2017](#bib.bib6 "Temporal ensembling for semi-supervised learning"); Sajjadi et al., [2016](#bib.bib5 "Regularization with stochastic transformations and perturbations for deep semi-supervised learning"); Berthelot et al., [2019](#bib.bib84 "Mixmatch: a holistic approach to semi-supervised learning"); Xie et al., [2019](#bib.bib86 "Unsupervised data augmentation for consistency training")).

The loss function used to measure consistency is typically either the mean-squared error (Laine and Aila, [2017](#bib.bib6 "Temporal ensembling for semi-supervised learning"); Tarvainen and Valpola, [2017](#bib.bib7 "Mean teachers are better role models: weight-averaged consistency targets improve semi-supervised deep learning results"); Sajjadi et al., [2016](#bib.bib5 "Regularization with stochastic transformations and perturbations for deep semi-supervised learning")) or cross-entropy (Miyato et al., [2018](#bib.bib4 "Virtual adversarial training: a regularization method for supervised and semi-supervised learning"); Xie et al., [2019](#bib.bib86 "Unsupervised data augmentation for consistency training")) between the model’s output for a perturbed and non-perturbed input.

##### Entropy Minimization

Grandvalet and Bengio ([2005](#bib.bib8 "Semi-supervised learning by entropy minimization")) argues that unlabeled data should be used to ensure that classes are well-separated.

This can be achieved by encouraging the model’s output distribution to have low entropy (i.e., to make “high-confidence” predictions) on unlabeled data.

For example, one can explicitly add a loss term to minimize the entropy of the model’s predicted class distribution on unlabeled data (Grandvalet and Bengio, [2005](#bib.bib8 "Semi-supervised learning by entropy minimization"); Miyato et al., [2018](#bib.bib4 "Virtual adversarial training: a regularization method for supervised and semi-supervised learning")).

Related to this idea are “self-training” methods (McLachlan, [1975](#bib.bib57 "Iterative reclassification procedure for constructing an asymptotically optimal rule of allocation in discriminant analysis"); Rosenberg et al., [2005](#bib.bib20 "Semi-supervised self-training of object detection models")) such as Pseudo-Label (Lee, [2013](#bib.bib15 "Pseudo-label: the simple and efficient semi-supervised learning method for deep neural networks")) that use the predicted class on an unlabeled input as a hard target for the same input, which implicitly minimizes the entropy of the prediction.

##### Standard Regularization

Outside of the setting of SSL, it is often useful to regularize models in the over-parameterized regime.

This regularization can often be applied both when training on labeled and unlabeled data.

For example, standard “weight decay” (Hinton and van Camp, [1993](#bib.bib67 "Keeping neural networks simple by minimizing the description length of the weights")) where the L2 norm of parameters is minimized is often used alongside SSL techniques.

Similarly, powerful MixUp regularization (Zhang et al., [2017](#bib.bib70 "Mixup: beyond empirical risk minimization")) which trains a model on linear interpolants of inputs and labels has recently been applied to SSL (Berthelot et al., [2019](#bib.bib84 "Mixmatch: a holistic approach to semi-supervised learning"); Verma et al., [2019](#bib.bib72 "Interpolation consistency training for semi-supervised learning")).

##### Other Approaches

The three aforementioned categories of SSL techniques does not cover the full literature on semi-supervised learning.

For example, there is a significant body of research on “transductive” or graph-based semi-supervised learning techniques which leverage the idea that unlabeled datapoints should be assigned the label of a labeled datapoint if they are sufficiently similar (Gammerman et al., [1998](#bib.bib25 "Learning by transduction"); Joachims, [2003](#bib.bib26 "Transductive learning via spectral graph partitioning"), [1999](#bib.bib27 "Transductive inference for text classification using support vector machines"); Bengio et al., [2006](#bib.bib29 "Label propagation and quadratic criterion"); Liu et al., [2018](#bib.bib88 "Deep metric transfer for label propagation with limited annotated data")).

Since our work does not involve these (or other) approaches to SSL, we will not discuss them further.

A more substantial overview of SSL methods is available in (Chapelle et al., [2006](#bib.bib24 "Semi-supervised learning")).

###

2.1 MixMatch

MixMatch (Berthelot et al., [2019](#bib.bib84 "Mixmatch: a holistic approach to semi-supervised learning")) unifies several of the previously mentioned SSL techniques.

The algorithm works by generating “guessed labels” for each unlabeled example, and then

using fully-supervised techniques to train on the original labeled data along with

the guessed labels for the unlabeled data.

This section reviews the necessary details of MixMatch; see (Berthelot et al., [2019](#bib.bib84 "Mixmatch: a holistic approach to semi-supervised learning")) for a full definition.

Let X={(xb,pb):b∈(1,…,B)} be a batch of labeled data and their corresponding one-hot labels representing one of L classes and let ^xb be augmented versions of these labeled examples.

Similarly, let U={ub:b∈(1,…,B)} be a batch of unlabeled examples.

Finally, let pmodel(y∣x;θ) be the predicted class distribution produced by the model for input x.

MixMatch first produces K weakly augmented versions of each unlabeled datapoint ^ub,k for k∈{1,…,K}.

Then, it generates a “guessed label” qb for each ub by computing the average prediction ¯qb across the K augmented versions: ¯qb=1K∑kpmodel(y∣^ub,k;θ).

The guessed label distribution is then sharpened by adjusting its temperature (i.e. raising all probabilities to a power of \sfrac1T and renormalizing).

Finally, pairs of examples (x1,p1),(x2,p2) from the combined set of labeled examples and unlabeled examples with label guesses are fed into the MixUp (Zhang et al., [2017](#bib.bib70 "Mixup: beyond empirical risk minimization")) algorithm to compute examples (x′,p′) where

x′=λx1+(1−λ)x2 for λ∼Beta(α,α), and similarly for p′.

Given these mixed-up examples, MixMatch performs standard fully-supervised training

with minor modifications.

A standard cross-entropy loss is used for labeled data, whereas the loss for unlabeled data is computed using a mean square error (i.e. the Brier score (Brier, [1950](#bib.bib74 "Verification of forecasts expressed in terms of probability"))) and is weighted with a hyperparameter λU.

The terms K (number of augmentations), T (sharpening temperature), α (MixUp Beta parameter), and λU (unlabeled loss weight) are MixMatch’s hyperparameters.

For augmentation, shifting and flipping was used for the CIFAR-10, CIFAR-100, and STL-10 datasets, and shifting alone was used for SVHN.

3 ReMixMatch

-------------

1: Input: Batch of labeled examples and their one-hot labels X={(xb,pb):b∈(1,…,B)}, batch of unlabeled examples U={ub:b∈(1,…,B)}, sharpening temperature T, number of augmentations K, Beta distribution parameter α for MixUp.

2: for b=1 to B do

3: ^xb=StrongAugment(xb) // Apply strong data augmentation to xb

4: ^ub,k=StrongAugment(ub);k∈{1,…,K} // Apply strong data augmentation K times to ub

5: ~ub=WeakAugment(ub) // Apply weak data augmentation to ub

6: qb=pmodel(y∣~ub;θ) // Compute prediction for weak augmentation of ub

7: qb=Normalize(qb×p(y)/~p(y)) // Apply distribution alignment

8: qb=Normalize(q1/Tb) // Apply temperature sharpening to label guess

9: end for

10: ^X=((^xb,pb);b∈(1,…,B)) // Augmented labeled examples and their labels

11: ^U1=((^ub,1,qb);b∈(1,…,B)) // First strongly augmented unlabeled example and guessed label

12: ^U=((^ub,k,qb);b∈(1,…,B),k∈(1,…,K)) // All strongly augmented unlabeled examples

13: ^U=^U∪((~ub,qb);b∈(1,…,B)) // Add weakly augmented unlabeled examples

14: W=Shuffle(Concat(^X,^U)) // Combine and shuffle labeled and unlabeled data

15: X′=(MixUp(^Xi,Wi);i∈(1,…,|^X|)) // Apply MixUp to labeled data and entries from W

16: U′=(MixUp(^Ui,Wi+|^X|);i∈(1,…,|^U|)) // Apply MixUp to unlabeled data and the rest of W

17: return X′,U′, ^U1

Algorithm 1 ReMixMatch algorithm for producing a collection of processed labeled examples and processed unlabeled examples with label guesses (cf. Berthelot et al. ([2019](#bib.bib84 "Mixmatch: a holistic approach to semi-supervised learning")) Algorithm 1.)

Having introduced MixMatch, we now turn to the two improvements we propose in this paper: Distribution alignment and augmentation anchoring.

For clarity, we describe how we integrate them into the base MixMatch algorithm; the full algorithm for ReMixMatch is shown in [algorithm 1](#alg1 "Algorithm 1 ‣ 3 ReMixMatch ‣ ReMixMatch: Semi-Supervised Learning with Distribution Alignment and Augmentation Anchoring").

###

3.1 Distribution Alignment

Our first contribution is distribution alignment, which enforces that the aggregate of predictions on unlabeled data matches the distribution of the provided labeled data.

This general idea was first introduced over 25 years ago (Bridle et al., [1992](#bib.bib85 "Unsupervised classifiers, mutual information and’phantom targets")), but to the best of our knowledge is not used in modern SSL techniques.

A schematic of distribution alignment can be seen in [fig. 1](#S1.F1 "Figure 1 ‣ 1 Introduction ‣ ReMixMatch: Semi-Supervised Learning with Distribution Alignment and Augmentation Anchoring").

After reviewing and extending the theory, we describe how it can be straightforwardly included in ReMixMatch.

####

3.1.1 Input-Output Mutual Information

As previously mentioned, the primary goal of

an SSL algorithm is to incorporate unlabeled data in a way which improves a model’s performance.

One way to formalize this intuition, first proposed by Bridle et al. ([1992](#bib.bib85 "Unsupervised classifiers, mutual information and’phantom targets")),

is to maximize the mutual information between the model’s input and output for unlabeled data.

Intuitively, a good classifier’s prediction should depend as much as possible on the input.

Following the analysis from Bridle et al. ([1992](#bib.bib85 "Unsupervised classifiers, mutual information and’phantom targets")), we can formalize this objective as

| | | | | |

| --- | --- | --- | --- | --- |

| | I(y;x) | =∬p(y,x)logp(y,x)p(y)p(x)\ddy\ddx | | (1) |

| | | =H(Ex[pmodel(y|x;θ)])−Ex[H(pmodel(y|x;θ))] | | (2) |

where H(⋅) refers to the entropy. See Appendix A for a proof.

To interpret this result, observe that

the second term in [eq. 2](#S3.E2 "(2) ‣ 3.1.1 Input-Output Mutual Information ‣ 3.1 Distribution Alignment ‣ 3 ReMixMatch ‣ ReMixMatch: Semi-Supervised Learning with Distribution Alignment and Augmentation Anchoring") is the familiar entropy minimization objective (Grandvalet and Bengio, [2005](#bib.bib8 "Semi-supervised learning by entropy minimization")), which simply encourages each individual model output to have low entropy (suggesting high confidence in a class label).

The first term, however, is not widely used in modern SSL techniques.

This term (roughly speaking) encourages that on average,

across the entire training set, the model predicts each class with equal frequency.

Bridle et al. ([1992](#bib.bib85 "Unsupervised classifiers, mutual information and’phantom targets")) refer to this as the model being “fair”.

####

3.1.2 Distribution Alignment in ReMixMatch

MixMatch already includes a form of entropy minimization via the “sharpening” operation which makes the guessed labels (synthetic targets) for unlabeled data have lower entropy.

We are therefore interested in also incorporating a form of “fairness” in ReMixMatch.

However, note that the objective H(Ex[pmodel(y|x;θ)]) on its own essentially implies that the model should predict each class with equal frequency.

This is not necessarily a useful objective if the dataset’s marginal class distribution p(y) is not uniform.

Furthermore, while it would in principle be possible to directly minimize this objective on a per-batch basis, we are instead interested in integrating it into MixMatch in a way which does not introduce an additional loss term or any sensitive hyperparameters.

To address these issues, we incorporate a form of fairness we call “distribution alignment” which proceeds as follows:

over the course of training, we maintain a running average of the model’s predictions on unlabeled data, which we refer to as ~p(y).

Given the model’s prediction q=pmodel(y|u;θ) on an unlabeled example u, we scale q by the ratio p(y)/~p(y) and then renormalize the result to form a valid probability distribution:

~q=Normalize(q×p(y)/~p(y))

where Normalize(x)i=xi/∑jxj.

We then use ~q as the label guess for u, and proceed as usual with sharpening and other processing.

In practice, we compute ~p(y) as the moving average of the model’s predictions on unlabeled examples over the last 128 batches.

We also estimate the marginal class distribution p(y) based on the labeled examples seen during training.

Note that a better estimate for p(y) could be used if it is known a priori; in this work we do not explore this direction further.

###

3.2 Improved Consistency Regularization

Consistency regularization underlies most SSL methods (Miyato et al., [2018](#bib.bib4 "Virtual adversarial training: a regularization method for supervised and semi-supervised learning"); Tarvainen and Valpola, [2017](#bib.bib7 "Mean teachers are better role models: weight-averaged consistency targets improve semi-supervised deep learning results"); Berthelot et al., [2019](#bib.bib84 "Mixmatch: a holistic approach to semi-supervised learning"); Xie et al., [2019](#bib.bib86 "Unsupervised data augmentation for consistency training")).

For image classification tasks, consistency is typically enforced between two augmented versions of the same unlabeled image.

In order to enforce a form of consistency regularization, MixMatch generates K (in practice, K=2) augmentations of each unlabeled example u and averages them together to produce a “guessed label” for u.

Recent work (Xie et al., [2019](#bib.bib86 "Unsupervised data augmentation for consistency training")) found that applying stronger forms of augmentation can significantly improve the performance of consistency regularization.

In particular, for image classification tasks it was shown that using AutoAugment (Cubuk et al., [2018](#bib.bib64 "Autoaugment: learning augmentation policies from data")) produced substantial gains.

Since MixMatch uses a simple flip-and-crop augmentation strategy, we were interested to see if replacing the weak augmentation in MixMatch with AutoAugment would improve performance but found that training would not converge.

To circumvent this issue, we propose a new method for consistency regularization in MixMatch called “Augmentation Anchoring”.

The basic idea is to use the model’s prediction for a weakly augmented unlabeled image as the guessed label for many strongly augmented versions of the same image.

A further logistical concern with using AutoAugment is that it uses reinforcement learning to learn a policy which requires many trials of supervised model training.

This poses issues in the SSL setting where we often have limited labeled data.

To address this, we propose a variant of AutoAugment called “CTAugment” which adapts itself online using ideas from control theory without requiring any form of reinforcement learning-based training.

We describe Augmentation Anchoring and CTAugment in the following two subsections.

####

3.2.1 Augmentation Anchoring

We hypothesize the reason MixMatch with AutoAugment is unstable is that MixMatch averages the prediction across K augmentations.

Stronger augmentation can result in disparate predictions, so their average may not be a meaningful target.

Instead, given an unlabeled input we first generate an “anchor” by applying weak augmentation to it.

Then, we generate K strongly-augmented versions of the same unlabeled input using CTAugment (described below).

We use the guessed label (after applying distribution alignment and sharpening) as the target for all of the K strongly-augmented versions of the image.

This process is visualized in [fig. 2](#S1.F2 "Figure 2 ‣ 1 Introduction ‣ ReMixMatch: Semi-Supervised Learning with Distribution Alignment and Augmentation Anchoring").

While experimenting with Augmentation Anchoring, we found it enabled us to replace MixMatch’s unlabeled-data mean squared error loss with a standard cross-entropy loss.

This maintained stability while also simplifying the implementation.

While MixMatch achieved its best performance at only K=2, we found that augmentation anchoring benefited from a larger value of K=8.

We compare different values of K in [section 4](#S4 "4 Experiments ‣ ReMixMatch: Semi-Supervised Learning with Distribution Alignment and Augmentation Anchoring") to measure the gain achieved from additional augmentations.

####

3.2.2 Control Theory Augment

AutoAugment (Cubuk et al., [2018](#bib.bib64 "Autoaugment: learning augmentation policies from data")) is a method for learning a data augmentation policy which results in high validation set accuracy.

An augmentation policy consists a sequence of transformation-parameter magnitude tuples to apply to each image.

Critically, the AutoAugment policy is learned with supervision:

the magnitudes and sequence of transformations are determined

via training many models on a proxy task which e.g. involves the use of 4,000 labels on

CIFAR-10 and 1,000 labels on SVHN (Cubuk et al., [2018](#bib.bib64 "Autoaugment: learning augmentation policies from data")).

This makes applying AutoAugment methodologically problematic for low-label SSL.

To remedy this necessity for training a policy on labeled data,

RandAugment (Cubuk et al., [2019](#bib.bib91 "RandAugment: an automated, randomized data augmentation strategy")) uniformly randomly samples transformations,

but requires tuning the hyper-parameters for the random sampling on the validation set,

which again is methodologically difficult when only very few (e.g., 40 or 250)

labeled examples are available.

Thus, in this work, we develop CTAugment, an alternative approach to designing high-performance augmentation strategies.

Like RandAugment, CTAugment also uniformly randomly samples transformations to apply but dynamically infers magnitudes for each transformation during the training process.

Since CTAugment does not need to be optimized on a supervised proxy task and has no sensitive hyperparameters, we can directly include it in our semi-supervised models to experiment with more aggressive data augmentation in semi-supervised learning.

Intuitively, for each augmentation parameter, CTAugment learns the likelihood that it will produce an image which is classified as the correct label.

Using these likelihoods, CTAugment then only samples augmentations that fall within the network tolerance.

This process is related to what is called density-matching in Fast AutoAugment (Lim et al., [2019](#bib.bib90 "Fast autoaugment")), where policies are optimized so that the density of augmented validation images match the density of images from the training set.

First, CTAugment divides each parameter for each transformation into bins of distortion magnitude as is done in AutoAugment (see Appendix C for a list of the bin ranges).

Let m be the vector of bin weights for some distortion parameter for some transformation.

At the beginning of training, all magnitude bins are initialized to have a weight set to 1.

These weights are used to determine which magnitude bin to apply to a given image.

At each training step, for each image two transformations are sampled uniformly at random.

To augment images for training, for each parameter of these transformations we produce a modified set of bin weights ^m where ^mi=mi if mi>0.8 and ^mi=0 otherwise, and sample magnitude bins from Categorical(Normalize(^m)).

To update the weights of the sampled transformations, we first sample a magnitude bin mi for each transformation parameter uniformly at random.

The resulting transformations are applied to a labeled example x with label p to obtain an augmented version ^x.

Then, we measure the extent to which the model’s prediction matches the label as ω=1−12L∑|pmodel(y|^x;θ)−p|.

The weight for each sampled magnitude bin is updated as mi=ρmi+(1−ρ)ω where ρ=0.99 is a fixed exponential decay hyperparameter.

###

3.3 Putting it all together

ReMixMatch’s algorithm for processing a batch of labeled and unlabeled examples is shown in [algorithm 1](#alg1 "Algorithm 1 ‣ 3 ReMixMatch ‣ ReMixMatch: Semi-Supervised Learning with Distribution Alignment and Augmentation Anchoring").

The main purpose of this algorithm is to produce the collections X′ and U′, consisting of augmented labeled and unlabeled examples with MixUp applied.

The labels and label guesses in X′ and U′ are fed into standard cross-entropy loss terms against the model’s predictions.

[Algorithm 1](#alg1 "Algorithm 1 ‣ 3 ReMixMatch ‣ ReMixMatch: Semi-Supervised Learning with Distribution Alignment and Augmentation Anchoring") also outputs ^U1, which consists of a single heavily-augmented version of each unlabeled image and its label guesses *without MixUp applied*.

^U1 is used in two additional loss terms which provide a mild boost in performance in addition to improved stability:

##### Pre-mixup unlabeled loss

We feed the guessed labels and predictions for example in ^U1 as-is into a separate cross-entropy loss term.

##### Rotation loss

Recent result have shown that applying ideas from self-supervised learning to SSL can produce strong performance (Zhai et al., [2019](#bib.bib92 "S4L: Self-supervised semi-supervised learning")).

We integrate this idea by rotating each image u∈^U1 as Rotate(u,r) where we sample the rotation angle r uniformly from r∼{0,90,180,270} and then ask the model to predict the rotation amount as a four-class classification problem.

In total, the ReMixMatch loss is

| | | | | |

| --- | --- | --- | --- | --- |

| | | ∑x,p∈X′H(p,pmodel(y|x;θ))+λU∑u,q∈U′H(q,pmodel(y|u;θ)) | | (3) |

| | +λ^U1 | ∑u,q∈^U1H(q,pmodel(y|u;θ))+λr∑u∈^U1H(r,pmodel(r|Rotate(u,r);θ)) | | (4) |

##### Hyperparameters

ReMixMatch introduce two new hyperparameters:

the weight on the rotation loss λr and

the weight on the un-augmented example λ^U1.

In practice both are fixed

λr=λ^U1=0.5.

ReMixMatch also shares many hyperparameters from MixMatch:

the weight for the unlabeled loss λU,

the sharpening temperature T,

the MixUp Beta parameter,

and the number of augmentations K.

All experiments (unless otherwise stated) use T=0.5, Beta =0.75, and λU=1.5.

We found using a larger number of augmentations monotonically

increases accuracy, and so set K=8 for all experiments (as running

with K augmentations increases computation by a factor of K).

We train our models using Adam

(Kingma and Ba, [2015](#bib.bib35 "Adam: a method for stochastic optimization")) with a fixed learning rate of 0.002 and weight decay (Zhang et al., [2018](#bib.bib68 "Three mechanisms of weight decay regularization")) with a

fixed value of 0.02.

We take the final model as an exponential moving average over the trained model weights

with a decay of 0.999.

4 Experiments

--------------

We now test the efficacy of ReMixMatch on a set of standard semi-supervised learning

benchmarks.

Unless otherwise noted, all of the experiments performed in this section

use the same codebase and model architecture (a Wide ResNet-28-2 (Zagoruyko and Komodakis, [2016](#bib.bib32 "Wide residual networks")) with 1.5 million parameters, as used in (Oliver et al., [2018](#bib.bib63 "Realistic evaluation of deep semi-supervised learning algorithms"))).

###

4.1 Realistic SSL setting

We follow the Realistic Semi-Supervised Learning (Oliver et al., [2018](#bib.bib63 "Realistic evaluation of deep semi-supervised learning algorithms")) recommendations for

performing SSL evaluations.

In particular, as mentioned above, this means we use the same model and training

algorithm in the same codebase for all experiments.

We compare against VAT (Miyato et al., [2018](#bib.bib4 "Virtual adversarial training: a regularization method for supervised and semi-supervised learning")) and MeanTeacher (Tarvainen and Valpola, [2017](#bib.bib7 "Mean teachers are better role models: weight-averaged consistency targets improve semi-supervised deep learning results")), copying the re-implementations

over from the MixMatch codebase (Berthelot et al., [2019](#bib.bib84 "Mixmatch: a holistic approach to semi-supervised learning")).

We also re-implemented UDA (Xie et al., [2019](#bib.bib86 "Unsupervised data augmentation for consistency training")) on top of our codebase and performed hyperparameter tuning but

we were unable to reach the same accuracy levels as reported in the initial paper.111UDA makes claims for low-label regimes; however, because it uses

a learned AutoAugment policy, these results are logistically impractical. We therefore

do not report these results.

##### Fully supervised baseline

To begin, we train a fully-supervised baseline to measure the highest

accuracy we could hope to obtain with our training pipeline.

The experiments we perform use the same model

and training algorithm, so these baselines are valid for all discussed SSL techniques.

On CIFAR-10, we obtain an fully-supervised error rate of 4.25% using weak flip + crop augmentation, which drops to 3.62% using AutoAugment and 3.91% using CTAugment.

Similarly, on SVHN we obtain 2.70% error using weak (flip) augmentation and 2.31% and 2.16% using AutoAugment and CTAugment respectively.

While AutoAugment performs slightly better on CIFAR-10 and slightly worse on SVHN compared to CTAugment, it is not our intent to design a *better* augmentation

strategy; just one that can be used *without a pre-training or tuning of hyper-parameters*.

##### Cifar-10

Our results on CIFAR-10 are shown in [table 1](#S4.T1 "Table 1 ‣ SVHN ‣ 4.1 Realistic SSL setting ‣ 4 Experiments ‣ ReMixMatch: Semi-Supervised Learning with Distribution Alignment and Augmentation Anchoring"), left.

ReMixMatch sets the new state-of-the-art for all numbers of

labeled examples.

Most importantly,

ReMixMatch is 16× more data efficient than MixMatch (e.g., at 250 labeled

examples ReMixMatch has identical accuracy compared to MixMatch at 4,000).

##### Svhn

Results for SVHN are shown in [table 1](#S4.T1 "Table 1 ‣ SVHN ‣ 4.1 Realistic SSL setting ‣ 4 Experiments ‣ ReMixMatch: Semi-Supervised Learning with Distribution Alignment and Augmentation Anchoring"), right.

ReMixMatch reaches state-of-the-art at 250 labeled examples,

and within the margin of error for state-of-the-art otherwise.

| | CIFAR-10 | SVHN |

| --- | --- | --- |

| Method | 250 labels | 1000 labels | 4000 labels | 250 labels | 1000 labels | 4000 labels |

| VAT | 36.03±2.82 | 18.64±0.40 | 11.05±0.31 | 8.41±1.01 | 5.98±0.21 | 4.20±0.15 |

| Mean Teacher | 47.32±4.71 | 17.32±4.00 | 10.36±0.25 | 6.45±2.43 | 3.75±0.10 | 3.39±0.11 |

| MixMatch | 11.08±0.87 | 7.75±0.32 | 6.24±0.06 | 3.78±0.26 | 3.27±0.31 | 2.89±0.06 |

| UDA (ours) | -∗ | -∗ | 6.63±0.19 | -∗ | 3.09±0.07 | 2.67±0.10 |

| UDA (reported) | -∗ | -∗ | 5.27±0.11 | -∗ | 2.46±0.17 | 2.32±0.05 |

| ReMixMatch | 6.27±0.34 | 5.73±0.16 | 5.14±0.04 | 3.10±0.50 | 2.83±0.30 | 2.42±0.09 |

Table 1: Results on CIFAR-10 and SVHN.

For UDA, -∗ signifies that it is not feasible to report results with fewer labels than were used to train the AutoAugment policy (4000 labels for CIFAR-10 and 1000 labels for SVHN). We re-implement UDA in our framework

with our training procedure and report in as “ours”. We also report the

original results from Xie et al. ([2019](#bib.bib86 "Unsupervised data augmentation for consistency training")) which used a different network implementation, training procedure, etc. and are thus not directly comparable.

###

4.2 Stl-10

The STL-10 dataset consists of 5,000 labeled 96×96 color images

drawn from 10 classes and 100,000 unlabeled images drawn from a similar—but not identical—data distribution.

The labeled set is partitioned into ten pre-defined folds of 1,000 images

each. For efficiency, we only run our analysis on five of these ten folds.

We do not perform evaluation here under the Realistic SSL (Oliver et al., [2018](#bib.bib63 "Realistic evaluation of deep semi-supervised learning algorithms")) setting when

comparing to non-MixMatch results.

Our results are, however, directly comparable to the MixMatch results.

Using the same WRN-37-2 network (23.8 million parameters), we reduce the error rate by a factor of two compared to MixMatch.

Method

Error Rate

SWWAE

25.70

CC-GAN

22.20

MixMatch

10.18±1.46

ReMixMatch (K=1)

6.77±1.66

ReMixMatch (K=4)

6.18±1.24

Table 2: STL-10 error rate using 1000-label splits. SWWAE and CC-GAN results are from (Zhao et al., [2015](#bib.bib80 "Stacked what-where auto-encoders")) and (Denton et al., [2016](#bib.bib81 "Semi-supervised learning with context-conditional generative adversarial networks")).

Ablation

Error Rate

Ablation

Error Rate

ReMixMatch

5.94

No rotation loss

6.08

With K=1

7.32

No pre-mixup loss

6.66

With K=2

6.74

No dist. alignment

7.28

With K=4

6.21

L2 unlabeled loss

17.28

With K=16

5.93

No strong aug.

12.51

MixMatch

11.08

No weak aug.

29.36

Table 3: Ablation study. Error rates are reported on a single 250-label split from CIFAR-10.

###

4.3 Towards few-shot learning

We find that ReMixMatch is able to work in extremely low-label

settings. By only changing λr from 0.5 to 2 we

can train CIFAR-10 with just four labels

per class and SVHN with only 40 labels total.

On CIFAR-10 we obtain a median-of-five error rate of 15.08%;

on SVHN we reach 3.48% error and

on SVHN with the “extra” dataset we reach 2.81% error.

Full results are given in Appendix B.

###

4.4 Ablation Study

Because we have made several changes to the existing MixMatch algorithm,

here we perform an ablation study and remove one component of ReMixMatch

at a time to understand from which changes produce the largest accuracy gains.

Our ablation results are summarized in Table [3](#S4.T3 "Table 3 ‣ 4.2 STL-10 ‣ 4 Experiments ‣ ReMixMatch: Semi-Supervised Learning with Distribution Alignment and Augmentation Anchoring").

We find that removing the pre-mixup unlabeled loss, removing distribution alignment, and lowering K all hurt performance by a small amount.

Given that distribution alignment improves performance, we were interested to see whether it also had the intended effect of making marginal distribution of model predictions match the ground-truth marginal class distribution.

We measure this directly in [appendix D](#A4 "Appendix D Measuring the effect of distribution alignment ‣ ReMixMatch: Semi-Supervised Learning with Distribution Alignment and Augmentation Anchoring").

Removing the rotation loss reduces accuracy at 250 labels by only 0.14 percentage points, but we find that in the 40-label setting rotation loss is necessary to prevent collapse.

Changing the cross-entropy loss on unlabeled data to an L2 loss as used in MixMatch hurts performance dramatically, as does removing either of the augmentation components.

This validates using augmentation anchoring in place of the consistency regularization mechanism of MixMatch.

5 Conclusion

-------------

Progress on semi-supervised learning over the past year has upended many of the

long-held beliefs about classification, namely, that vast quantities of

labeled data is necessary.

By introducing augmentation anchoring and distribution alignment to MixMatch, we continue this trend:

ReMixMatch reduces the quantity of labeled data needed by a large factor compared to prior work

(e.g., beating MixMatch at 4000 labeled

examples with only 250 on CIFAR-10, and closely approaching MixMatch at 5000 labeled examples

with only 1000 on STL-10).

In future work, we are interested in pushing the limited data regime further to close the gap between few-shot learning and SSL.

We also note that in many real-life scenarios, a dataset begins as unlabeled and is incrementally labeled until satisfactory performance is achieved.

Our strong empirical results suggest that it will be possible to achieve gains in this “active learning” setting by using ideas from ReMixMatch.

Finally, in this paper we present results on widely-studied image benchmarks for ease of comparison.

However, the true power of data-efficient learning will come from applying these techniques to real-world problems where obtaining labeling data is expensive or impractical. |

d629b7c1-ee58-4034-96c1-a682df904fd0 | trentmkelly/LessWrong-43k | LessWrong | Meetup : Brussels meetup

Discussion article for the meetup : Brussels meetup

WHEN: 13 October 2012 01:00:00PM (+0200)

WHERE: Rue des Alexiens 55 1000 Bruxelles

We are once again meeting at 'la fleur en papier doré' close to the Brussels Central station. If you feel like an intelligent discussion and are in the neighborhood, consider dropping by. As always, I'll have a sign.

Discussion article for the meetup : Brussels meetup |

9a2bdfc5-0d7f-4eb0-b559-d56f2f5b720a | trentmkelly/LessWrong-43k | LessWrong | Why do the Sequences say that "Löb's Theorem shows that a mathematical system cannot assert its own soundness without becoming inconsistent."?

I am looking for some understanding into why this claim is made.

As far as I can tell, Löb's Theorem does not directly make such an assertion.

Reading the Cartoon's Guide to Löb's Theorem, it appears that this assertion is made on the basis of the reasoning that Löb's Theorem itself can't prove negations, that is, statements such as "1 + 3 /= 5."

> Alas, this means we can't prove PA sound with respect to any important class of statements.

This is a statement that [due to the presence of negations in it] itself can't be proven within PA.

Now it seems that it is being argued that the inability to do this is a bad thing [that is, being able to prove that we can't prove PA sound with respect to any 'important' class of statements].

I think this is actually a very critical question and I have some ideas for what the central crux is here, but I'd be interested in seeing some answers before delving into that. |

743b5055-413a-44b4-9273-63baf1431555 | StampyAI/alignment-research-dataset/alignmentforum | Alignment Forum | 200 COP in MI: Interpreting Algorithmic Problems

*This is the fourth post in a sequence called 200 Concrete Open Problems in Mechanistic Interpretability.*[*Start here*](https://www.alignmentforum.org/posts/LbrPTJ4fmABEdEnLf/200-concrete-open-problems-in-mechanistic-interpretability)*, then read in any order. If you want to learn the basics before you think about open problems, check out*[*my post on getting started*](https://neelnanda.io/getting-started)*. Look up jargon in*[*my Mechanistic Interpretability Explainer*](https://neelnanda.io/glossary)

Motivation

----------

***Motivating paper**:* [*A Mechanistic Interpretability Analysis of Grokking*](https://www.alignmentforum.org/posts/N6WM6hs7RQMKDhYjB/a-mechanistic-interpretability-analysis-of-grokking)

When models are trained on synthetic, algorithmic tasks, they often learn to do some clean, interpretable computation inside. Choosing a suitable task and trying to reverse engineer a model can be a rich area of interesting circuits to interpret! In some sense, this is interpretability on easy mode - the model is normally trained on a single task (unlike language models, which need to learn everything about language!), we know the exact ground truth about the data and optimal solution, and the models are tiny. So why care?

I consider [my work on grokking](https://www.alignmentforum.org/posts/N6WM6hs7RQMKDhYjB/a-mechanistic-interpretability-analysis-of-grokking) to be an interesting case study of this work going well. Grokking (shown below) is a mysterious phenomena where, when small models are trained on algorithmic tasks (eg modular addition or modular division), they initially memorise the training data. But when they *keep* being trained on that data for a really long time, the model suddenly(ish) figures out how to generalise!

In my work, I simplified their setup even further, by training a 1 Layer transformer (with no LayerNorm or biases) to do modular addition and reverse engineered the weights to understand what was going on. And it turned out to be doing a funky trig-based algorithm (shown below), where the numbers are converted to frequencies with a memorised Discrete Fourier Transform, added using trig identities, and converted back to the answer! Using this, we looked inside the model and identified that despite seeming to have plateaued, in the period between memorising and "grokking", the model is actually slowly forming the circuit that does generalise. But so long as the model still has the memorising circuit, this adds too much noise to have good test loss. Grokking occurs when the generalising circuit is so strong that the model decides to "clean-up" the memorising circuit, and "uncovers" the mature generalising circuit beneath, and suddenly gets good test performance.

OK, so I just took this as an excuse to explain my paper to you. Why should you care? I think that the general lesson from this, that I'm excited to see applied elsewhere, is using toy algorithmic models to analyse a phenomena we're confused about. Concretely, given a confusing phenomena like grokking, I'd advocate the following strategy:

1. **Simplify** to the minimal setting that exhibits the phenomena, yet is complex enough to be interesting

2. **Reverse-engineer** the resulting model, in as much detail as you can

3. **Extrapolate** the insights you've learned from the reverse-engineered model - what are the broad insights you've learned? What do you expect to generalise? Can you form any automated tests to detect the circuits you've found, or any of their motifs?

4. **Verify** by looking at other examples of the phenomena and seeing whether these insights actually hold (larger models, different tasks, even just earlier checkpoints of the model or different random seeds)

Grokking is an example in a science of deep learning context - trying to uncover mysteries about how models learn and behave. But this same philosophy also applies to understanding confusing phenomena in language models, and building toy algorithmic problems to study those!

Anthropic's [Toy Models of Superposition](https://dynalist.io/d/n2ZWtnoYHrU1s4vnFSAQ519J#z=EuO4CLwSIzX7AEZA1ZOsnwwF) is an excellent example of this done well, for the case of [superposition in linear bottleneck dimensions](https://dynalist.io/d/n2ZWtnoYHrU1s4vnFSAQ519J#z=MjDx_NdXa8sSxeq-tiLALtl2) of a model (like value vectors in attention heads, or the residual stream). Concretely, they isolated out the key traits as being where high dimensional spaces are projected to a low dimensional space, and then mapped back to a high dimensional space, with no non-linearity in between. And got extremely interesting and rich results! (More on these in the next post)

More broadly, because algorithmic tasks are often cleaner and easier to interpret, and there's a known ground truth, it can be a great place to practice interpretability! Both as a beginner to the field trying to build intuitions and learn techniques, and to refine our understanding of the *right* tools and techniques. It's much easier to validate a claimed approach to validate explanations (like [causal scrubbing](https://dynalist.io/d/n2ZWtnoYHrU1s4vnFSAQ519J#z=KfagbOQ29EYq3FA_OGaxZaoc)) if you can run it on a problem with an understood ground truth!

Overall, I'm less excited about algorithmic problem interpretability in general than some of the other categories of open problems, but I think it can be a great place to start and practice. I think that building a toy algorithmic model for a confusing phenomena is hard, but can be really exciting if done well!

Resources

---------

* **Demo**: Reverse engineering how a small transformer can re-derive positional embeddings ([colab](https://colab.research.google.com/github/neelnanda-io/Easy-Transformer/blob/no-position-experiment/No_Position_Experiment.ipynb)), with [an accompanying video walkthrough](https://www.youtube.com/watch?v=yo4QvDn-vsU)

* **Demo**:My grokking work reverse-engineering modular addition - [write-up](https://www.alignmentforum.org/posts/N6WM6hs7RQMKDhYjB/a-mechanistic-interpretability-analysis-of-grokking) and [colab](https://colab.research.google.com/drive/1F6_1_cWXE5M7WocUcpQWp3v8z4b1jL20)

+ Note - I'd guess that none of the Fourier stuff to generalise to other problems, but some insights and the underlying mindsets will. Modular addition is weird!

Tips

----

* Approach the reverse engineering scientifically. Go in and try to form hypotheses about what the model is doing, and how it might have solved the task. Then go and *test* these hypotheses, and try to look for evidence for them, and evidence to falsify them. Use these hypotheses to help you prioritise and focus among all of the possible directions you can go in - accept that they'll probably be wrong in some important ways (I did *not* expect modular addition to be using Fourier Transforms!), but use them as a guide to figure out *how* they're wrong.

+ Concretely, try to think about how you would implement a solution if you were designing the model weights. This is pretty different from writing code! Models tend to be very good at parallelised, vectorised solutions that heavily rely on linear algebra, with limited use of non-linear activation functions.

- A useful way to understand how to think like a transformer is to look at [this paper](https://arxiv.org/abs/2106.06981), which introduces a programming language called RASP that aims to mimic the computational model of the transformer.

* These problems often involve training your own model, rather than just analysing a model someone else trained. These are such toy problems that training is pretty easy, but it can still be a headache! I recommend cribbing training code from somewhere (eg the demos above).

* Subtle details of how you set up the problem can make life much easier or harder for the model. Think carefully about things and try many variations.

+ Eg what is the loss function, whether there are special tokens in the context (eg to mark the end of the first input and the start of the second input or the output) or if the model needs to infer these for itself, etc.

* Rule of thumb: Small models are easier to interpret (it matters more to have few layers than narrow layers). Before analysing a model, check that the next smallest model can't also do the task!

+ Eg, decrease the number of transformer layers, remove LayerNorm, try attention-only, try an MLP (the classic neural network, not a transformer) with one hidden layer (or two).

* When building a toy model to capture something about a real model, you want to be especially thoughtful about how you do this! Try to distill out exactly what traits of the model determine the property that you care about. You want to straddle the fine line between too simple to be interesting and too complex to be tractable.

+ This is a particularly helpful thing to get other people's feedback on, and to spend time red-teaming

Problems

--------

[*This spreadsheet*](https://www.neelnanda.io/cop-spreadsheet) *lists each problem in the sequence. You can write down your contact details if you're working on any of them and want collaborators, see any existing work or reach out to other people on there! (thanks to Jay Bailey for making it)*

* Good beginner problems:

+ A 3.1 - Sorting fixed-length lists. (format - `START 4 6 2 9 MID 2 4 6 9`)

- How does difficulty change with the length of the list?

- **A\* 3.2 -** Sorting variable-length lists.

* What’s the sorting algorithm? What’s the longest list you can get to? How is accuracy affected by longer lists?

+ A 3.3 - Interpret a 2L MLP (one hidden layer) trained to do modular addition (very analogous to my grokking work)

+ A 3.4 - Interpret a 1L transformer trained to do modular subtraction (very analogous to my grokking work)

+ A 3.5 - Taking the minimum or maximum of two ints

+ A 3.6 - Permuting lists

+ A 3.7 - Calculating sequences with a Fibonacci-style recurrence relation.

- (I.e. predicting the next element from the previous two)

* Some harder concrete algorithmic problems to try interpreting

+ **B\* 3.8 -** 5 digit addition/subtraction

- What if you reverse the order of the digits? (e.g. inputting and outputting the units digit first). This order might make it easier to compute the digits since e.g. the tens digit depends on the units digit.

- 5 digits is a good choice because we have prior knowledge that [grokking happens there, and it seems likely related to the modular addition algorithm.](https://twitter.com/NeelNanda5/status/1559060507524403200) You might need to play around with training setups and hyperparameters if you varied the number of digits.

+ **B\* 3.9 -** Predicting the output to simple code functions. E.g. predicting the bold text in problems like

a = 1 2 3

a[2] = 4

a -> **1 2 4**

+ **B\* 3.10 -** Graph theory problems like [this](https://jacobbrazeal.wordpress.com/2022/09/23/gpt-3-can-find-paths-up-to-7-nodes-long-in-random-graphs/)

- Not sure of the right input format - try a bunch! Check this out: <https://jacobbrazeal.wordpress.com/2022/09/23/gpt-3-can-find-paths-up-to-7-nodes-long-in-random-graphs/#comment-248>

+ **B\* 3.11 -** Train a model on multiple algorithmic tasks that we understand (eg train a model to do modular addition *and*modular subtraction, by learning two different outputs). Compare this to a model trained on each task.

- What happens? Does it learn the same circuits? Is there superposition?

- If doing grokking-y tasks, how does that interact?

+ **B\* 3.12 -** Train models for [automata](https://arxiv.org/pdf/2210.10749.pdf) tasks and interpret them - do your results match the theory?

+ **B\* 3.13 -** In-Context Linear Regression, as described in [Garg et al](https://arxiv.org/pdf/2208.01066.pdf) - the transformer is given a sequence (x\_1, y\_1, x\_2, y\_2, …), where y\_i=Ax\_i+b and A and b are different for each prompt and need to be learned in-context ([see code here](https://github.com/dtsip/in-context-learning))

- Tip - train a 2L or 3L model on this and give it width 256 or 512, this should be much easier to interpret than their 12L one.

- **C\* 3.14 -** The other problems in the paper that are in-context learned - sparse linear functions, 2L networks & decision trees

+ C 3.15 - 5 digit (or binary) multiplication

+ B 3.16 - Predict repeated subsequences in randomly generated tokens, and see if you can find and reverse engineer [induction heads](https://transformer-circuits.pub/2022/in-context-learning-and-induction-heads/index.html#definition-of-induction-heads) (see [my grokking paper](https://www.lesswrong.com/posts/N6WM6hs7RQMKDhYjB/a-mechanistic-interpretability-analysis-of-grokking) for details.)

- Tip: Using shortformer style positional embeddings (see [the TransformerLens docs](https://github.com/neelnanda-io/TransformerLens/blob/main/further_comments.md#shortformer-attention-positional_embeddings_type--shortformer)), this seems to make things cleaner

+ B-C 3.17 - Choose your own adventure: Find your own algorithmic problem!

- [Leetcode easy](https://leetcode.com/problemset/all/?difficulty=EASY&page=1) is probably a good source of problems.

* **B\* 3.18 -** Build a toy model of [Indirect Object Identification](https://dynalist.io/d/n2ZWtnoYHrU1s4vnFSAQ519J#z=iWsV3s5Kdd2ca3zNgXr5UPHa) - train a tiny attention only model on an algorithmic task simulating Indirect Object Identification, and reverse-engineer the learned solution. Compare this to [the circuit found in GPT-2 Small](https://dynalist.io/d/n2ZWtnoYHrU1s4vnFSAQ519J#z=iWsV3s5Kdd2ca3zNgXr5UPHa) - is it the same algorithm? We often build toy models to model things in real models, it'd be interesting to validate this by going the other way

+ Context: IOI is the grammatical task of figuring out that sentences like "When John and Mary went to the store, John gave a bottle of milk to" maps to "Mary",. Interpretability in the Wild was a paper reverse engineering the 25 head circuit behind this in GPT-2 Small.

+ The MVP would be just giving the model a BOS, a MID and the names, eg `BOS John Mary John MID` -> `Mary`. To avoid trivial solutions, make eg half the data 3 distinct names like `John Mary Peter` which maps to a randomly selected one of those names. I'd try training a 3L attn-only model to do this.

+ **C\* 3.19 -** There's a bunch of follow-up questions: Is this consistent across random seeds, or can other algorithms be learned? Can a 2L model learn this? What happens if you add MLPs or more layers?

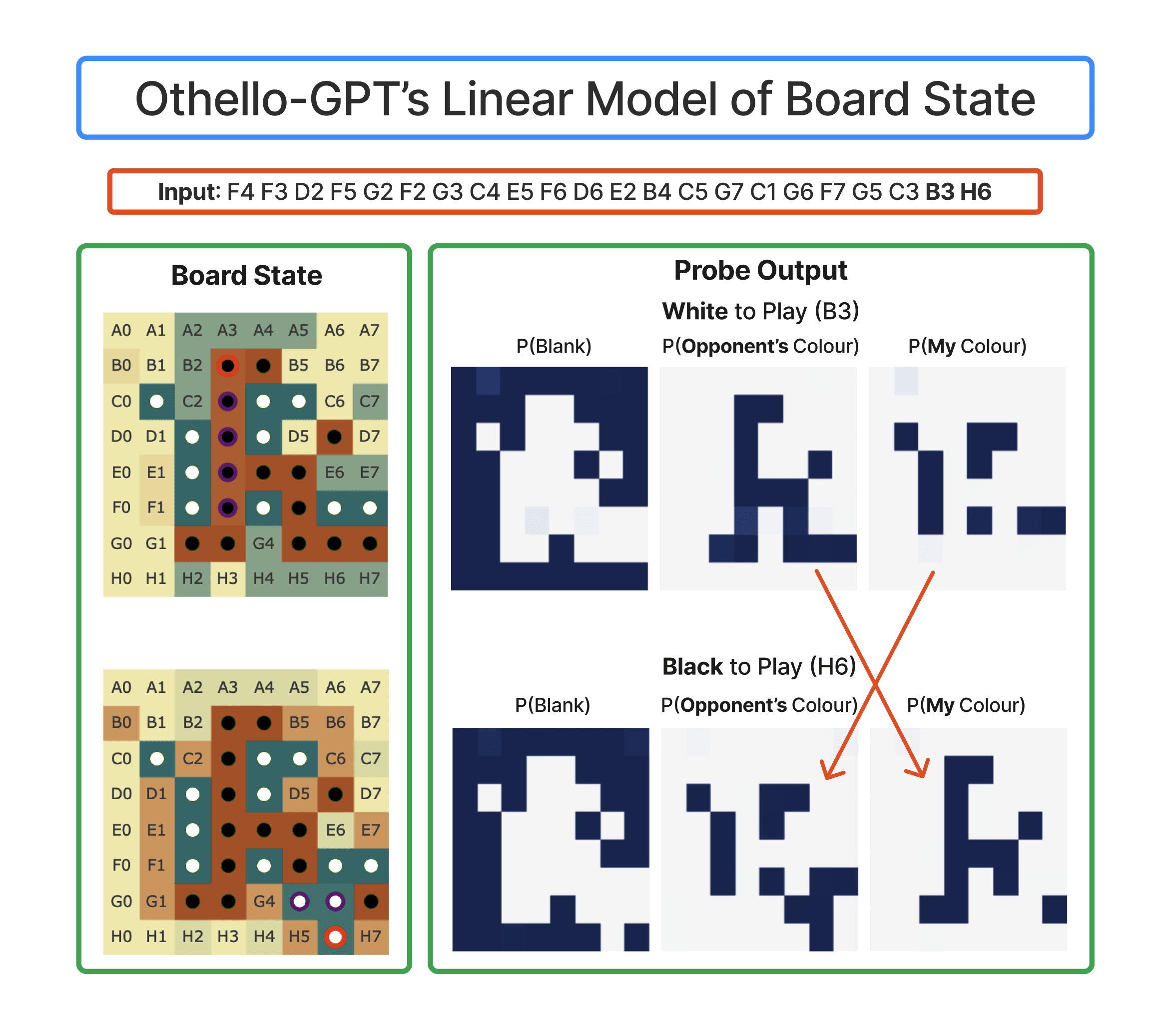

* **C\* 3.20 -** Reverse-engineer [Othello-GPT](https://arxiv.org/pdf/2210.13382.pdf) ([summary here](https://thegradient.pub/othello/)) - the paper trains a GPT-style model to predict the next move in randomly generated Othello games (ie, it predicts legal moves, but doesn't learn strategy). Can you reverse-engineer the algorithms the model learns?

+ In particular, they train probes and use this to recover the model's internal model of the board state, and show that they can intervene to change what the model thinks, where it makes legal moves in the edited board. Can you reverse-engineer the features the probes find?

+ Tip: They train an 8L model, I expect you can get away with way smaller. They also use one hidden layer MLP probes, I'd start with using a linear probe for simplicity.

* Exploring questions about language models:

+ **A\* 3.21 -** Train a one layer attention-only transformer with rotary to predict the previous token, and reverse engineer how it does this.

- An even easier way to do this is by training just the QK-circuit in an attention head and the embedding matrix to maximise the attention paid to the previous token (with mean-squared error loss) - this will cut out other crap the model might be doing.

+ **B\* 3.22 -** Train a three layer attention-only transformer to perform the [Indirect Object Identification](https://dynalist.io/d/n2ZWtnoYHrU1s4vnFSAQ519J#z=iWsV3s5Kdd2ca3zNgXr5UPHa) task (and *just* that task! You can algorithmically generate training data). Can it do the task? And if so, does it learn [the same circuit that was found in GPT-2 Small](https://dynalist.io/d/n2ZWtnoYHrU1s4vnFSAQ519J#z=iWsV3s5Kdd2ca3zNgXr5UPHa)?

+ **B\* 3.23 -** Re-doing my modular addition analysis with GELU. How does this change things, if at all?

- Bonus: Doing it for any algorithmic task! This probably works best on those with sensible and cleanly interpretable neurons

+ **C\* 3.24 -** How does memorization work?

- Idea 1: Train a one hidden layer MLP to memorise random data.

* Possible setup: There are two inputs, each in `{0,1,...n-1}`. These are one-hot encoded, and stacked (so the input has dimension `2n`). Each pair has a randomly chosen output label, and the training set consists of all possible pairs.

- Idea 2: Try training a transformer on a fixed set of random strings of tokens?

+ **B-C\* 3.25 -** Comparing different [dimensionality reduction](https://dynalist.io/d/n2ZWtnoYHrU1s4vnFSAQ519J#z=xRPTt5aM5hTP0KHEbgol56Gc) techniques ( e.g. [PCA](https://setosa.io/ev/principal-component-analysis/)/[SVD](https://gregorygundersen.com/blog/2018/12/10/svd/), [t-SNE](https://www.jmlr.org/papers/volume9/vandermaaten08a/vandermaaten08a.pdf), [UMAP](https://arxiv.org/pdf/1802.03426.pdf), [NMF](https://en.wikipedia.org/wiki/Non-negative_matrix_factorization), [the grand tour](https://distill.pub/2020/grand-tour/)) on modular addition (or any other problem that you feel you understand!)

- These techniques have been used on [AlphaZero](https://www.deepmind.com/blog/alphazero-shedding-new-light-on-chess-shogi-and-go), [Understanding RL Vision](https://distill.pub/2020/understanding-rl-vision/), and [The Building Blocks of Interpretability](https://distill.pub/2018/building-blocks/) - which should be a good starting point for how.

- B 3.26 - In modular addition, look at what these do on different weight matrices - can you identify which weights matter most? Or which neurons form clusters for each frequency? Can you find anything if you look at activations?

+ **C\* 3.27 -** Is [direct logit attribution](https://dynalist.io/d/n2ZWtnoYHrU1s4vnFSAQ519J#z=disz2gTx-jooAcR0a5r8e7LZ) always useful? Can you find examples where it’s highly misleading?

- I'd focus on problems where a component's output is mostly intended to suppress incorrect logits (so it improves the correct log prob but *not* the correct logit)

* Exploring broader deep learning mysteries - can you build a toy, algorithmic model to better understand these?

+ **D\* 3.28 -** The [Lottery Ticket Hypothesis](https://towardsdatascience.com/demystifying-the-lottery-ticket-hypothesis-in-deep-learning-158570b62674)

+ **D\* 3.29 -** [Deep Double Descent](https://dynalist.io/d/n2ZWtnoYHrU1s4vnFSAQ519J#z=GXTb66JhboBH3SDIj6j7D8Mn)

* Othello-GPT is a model trained to play random legal moves in Othello, and learns a linear emergent world model, modelling the board state (despite only ever seeing and predicting moves!) I did some [work on it](https://www.alignmentforum.org/posts/nmxzr2zsjNtjaHh7x/actually-othello-gpt-has-a-linear-emergent-world) and [outline directions of future work](https://www.alignmentforum.org/s/nhGNHyJHbrofpPbRG/p/qgK7smTvJ4DB8rZ6h). I think it sits at a good intersection of complex enough to be interesting (much more complex than any algorithmic model that's been well understood!) yet simple enough to be tractable!

+

+ I go into a *lot* more detail in the post including on concrete problems and [give code](https://neelnanda.io/othello-notebook) to build off of, so I think this can be a great place to start. The following is a brief summary.

+ **A\*** 3.30 Trying one of [the concrete starter projects](https://www.alignmentforum.org/s/nhGNHyJHbrofpPbRG/p/qgK7smTvJ4DB8rZ6h#Concrete_starter_projects)

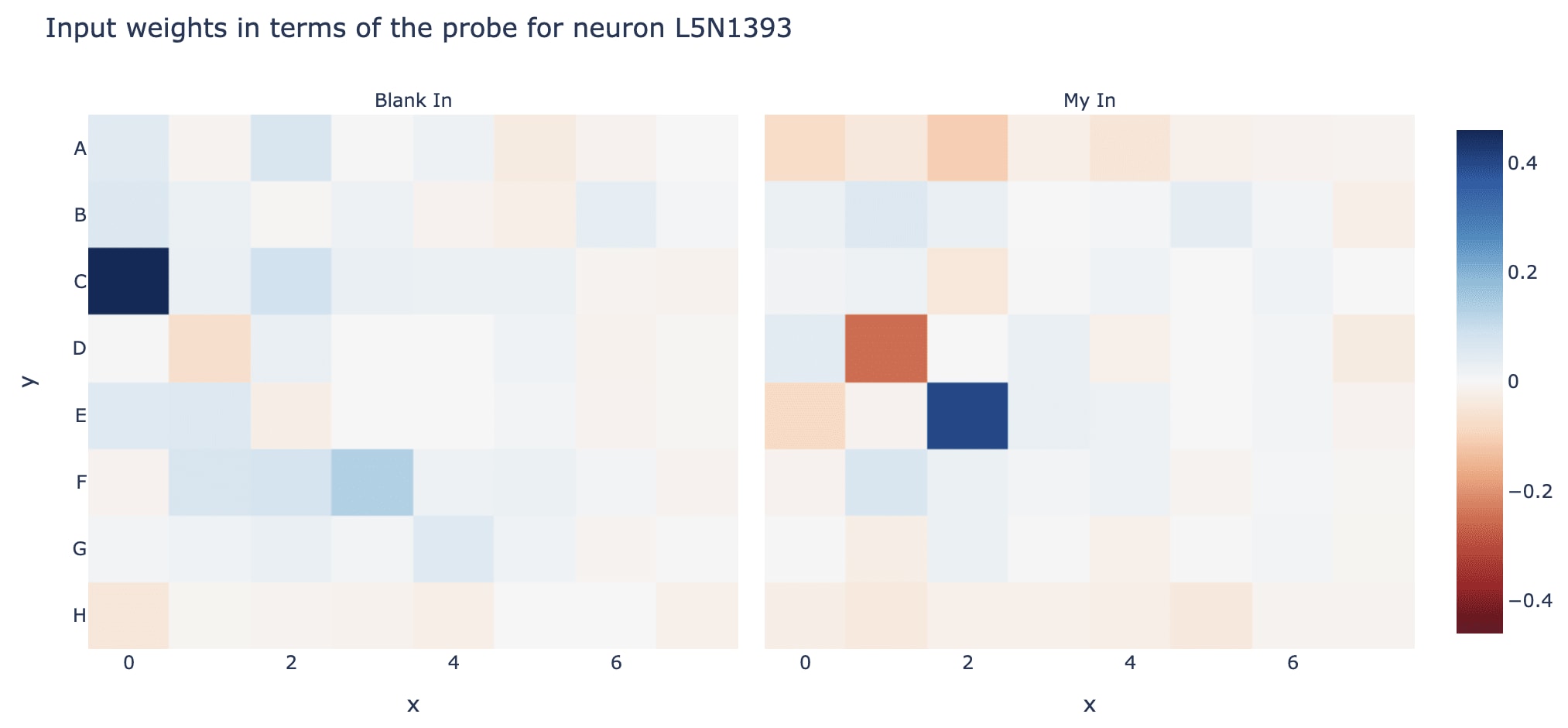

+ **B-C\*** 3.31 [Looking for modular circuits](https://www.alignmentforum.org/s/nhGNHyJHbrofpPbRG/p/qgK7smTvJ4DB8rZ6h#Finding_Modular_Circuits) - try to find the circuits used to compute the world model and to use the world model to compute the next move, and understand each in isolation and use this to understand how they fit together. See what you can learn about finding modular circuits in general.

- Example: Interpreting a neuron in the middle of the model by multiplying its input weights by the probe directions, to see what board state it looks at

+ **B-C\*** 3.32 [Neuron Interpretability and Studying Superposition](https://www.alignmentforum.org/s/nhGNHyJHbrofpPbRG/p/qgK7smTvJ4DB8rZ6h#Neuron_Interpretability_and_Studying_Superposition) - try to understand the model's MLP neurons, and explore what techniques do and do not work. Try to build our understanding of how to understand transformer MLPs in general.

- We're really bad at MLPs in general, but certain things are much easier here - eg it's easy to automatically check whether a neuron only activates when a condition is met, because all features here are clean and algorithmic

+ **B-C\*** 3.33 [A Transformer Circuit Laboratory](https://www.alignmentforum.org/s/nhGNHyJHbrofpPbRG/p/qgK7smTvJ4DB8rZ6h#A_Transformer_Circuit_Laboratory) - Explore and test other conjectures about transformer circuits, eg can we figure out how the model manages memory in the residual stream? |

5f6dfee5-c47b-4f82-9e6f-8086bf95d1f7 | trentmkelly/LessWrong-43k | LessWrong | Destroying the fabric of the universe as an instrumental goal.

Perhaps this is a well examined idea, but i didn't find anything when searching.

The argument is simple. If the AI wants to avoid the world being in a certain state, destroying everything reduces the likelihood of that state to occur to zero. Otherwise, the likelihood might always be non-zero. This particular strategy has in some sense been observed already. Ml agents in games have been observed to crash the game in order to avoid a negative reward.

If most simple negative reward functions could achieve an ideal outcome by destroying everything, and destroying everything is possible, then a large portion of negative reward functions might be highly dangerous since the reward function would be incentivized to find any information that could end everything. No need for greedy mesa-optimizers that never get satisfied. This seems to have implications for alignment if true.

This also seems to have implications for anthropic reasoning in favor of the doomsday argument. If the entire universe gets destroyed, then our early existence and small numbers makes perfect sense. |

3f6935d0-0334-4be2-aa37-26f465b34119 | StampyAI/alignment-research-dataset/alignmentforum | Alignment Forum | Reflective Bayesianism

I've argued in [several](https://www.lesswrong.com/s/Rm6oQRJJmhGCcLvxh) [places](https://www.lesswrong.com/posts/xJyY5QkQvNJpZLJRo/radical-probabilism-1) that traditional Bayesian reasoning is unable to properly handle embeddedness, logical uncertainty, and related issues.