id stringlengths 36 36 | source stringclasses 15 values | formatted_source stringclasses 13 values | text stringlengths 2 7.55M |

|---|---|---|---|

8c06d798-b0ea-4d2d-be56-9de42300125f | trentmkelly/LessWrong-43k | LessWrong | AGI Safety Solutions Map

When I started to work on the map of AI safety solutions, I wanted to illustrate the excellent article “Responses to Catastrophic AGI Risk: A Survey” by Kaj Sotala and Roman V. Yampolskiy, 2013, which I strongly recommend.

However, during the process I had a number of ideas to expand the classification of the proposed ways to create safe AI. In their article there are three main categories: social constraints, external constraints and internal constraints.

I added three more categories: "AI is used to create a safe AI", "Multi-level solutions" and "meta-level", which describes the general requirements for any AI safety theory.

In addition, I divided the solutions into simple and complex. Simple are the ones whose recipe we know today. For example: “do not create any AI”. Most of these solutions are weak, but they are easy to implement.

Complex solutions require extensive research and the creation of complex mathematical models for their implementation, and could potentially be much stronger. But the odds are lower that there will be time to develop them and implement them successfully.

After the aforementioned article was published, several new ideas about AI safety appeared.

These new ideas in the map are based primarily on the works of Ben Goertzel, Stuart Armstrong and Paul Christiano. But probably many more exist and have been published without coming to my attention.

Moreover, I have some ideas of my own about how to create a safe AI and I have added them into the map too. Among them I would like to point out the following ideas:

1. Restriction of self-improvement of the AI. Just as a nuclear reactor is controlled by regulating the intensity of the chain reaction, one may try to control AI by limiting its ability to self-improve in various ways.

2. Capture the beginning of dangerous self-improvement. At the start, a potentially dangerous AI has a moment of critical vulnerability, just as a ballistic missile is most vulnerable at the start. Imagine that AI gained an unaut |

dbe9c573-6059-46eb-a588-8288733c8fdb | trentmkelly/LessWrong-43k | LessWrong | The Dream Machine

Midjourney, “the dream machine”

I recently started working at Renaissance Philanthropy. It’s a new organization, and most people I’ve met haven’t heard of it.[1] So I thought I’d explain, in my own words and speaking for myself rather than my employers, what we (and I) are trying to do here.

Modern Medicis

The “Renaissance” in Renaissance Philanthropy is a reference to the Italian Renaissance, when wealthy patrons like the Medicis commissioned great artworks and inventions.

The idea is that “modern Medicis” — philanthropists — should be funding the great scientists and innovators of our day to tackle ambitious challenges.

RenPhil’s role is to facilitate that process: when a philanthropist wants to pursue a goal, we help them turn that into a more concrete plan, incubate and/or administer new organizations to implement that plan, and recruit the best people in the world to work on that goal and make sure they get the funding and support they need.

I like to use the Gates Foundation as an example of a really strong philanthropic organization. When Bill Gates decided he wanted to do philanthropy, he did a ton of research, decided what was important to him and what strategies he thought were effective, and built a whole new organization that he leads full-time.

But not every philanthropist is going to go that route. Some donors still work; some want to enjoy their retirement. The default path for donation, the one that takes the least effort for the donor, is to give to an existing, trusted nonprofit organization.

And that’s not necessarily bad, but it does make it hard to do new, bold, effective things.

Most philanthropy is fundamentally steady-state. Whether you’re donating to the opera or to anti-malaria bednets, you’re supporting an existing organization to do pretty much the same thing this year that they did last year.

It’s more difficult — but more interesting — to use your donations to create something in the world that did not exist before.

This can |

6fd081ca-2576-4610-8d1e-cea046214949 | trentmkelly/LessWrong-43k | LessWrong | Specification Gaming: How AI Can Turn Your Wishes Against You [RA Video]

In this new video, we explore specification gaming in machine learning systems. It's meant to be watched as a follow-up to The Hidden Complexity of Wishes, but it can be enjoyed and understood as a stand-alone too. It's part of our work-in-progress series on outer-alignment-related topics, which is, in turn, part of our effort to explain AI Safety to a wide audience.

I have included the full script below.

----------------------------------------

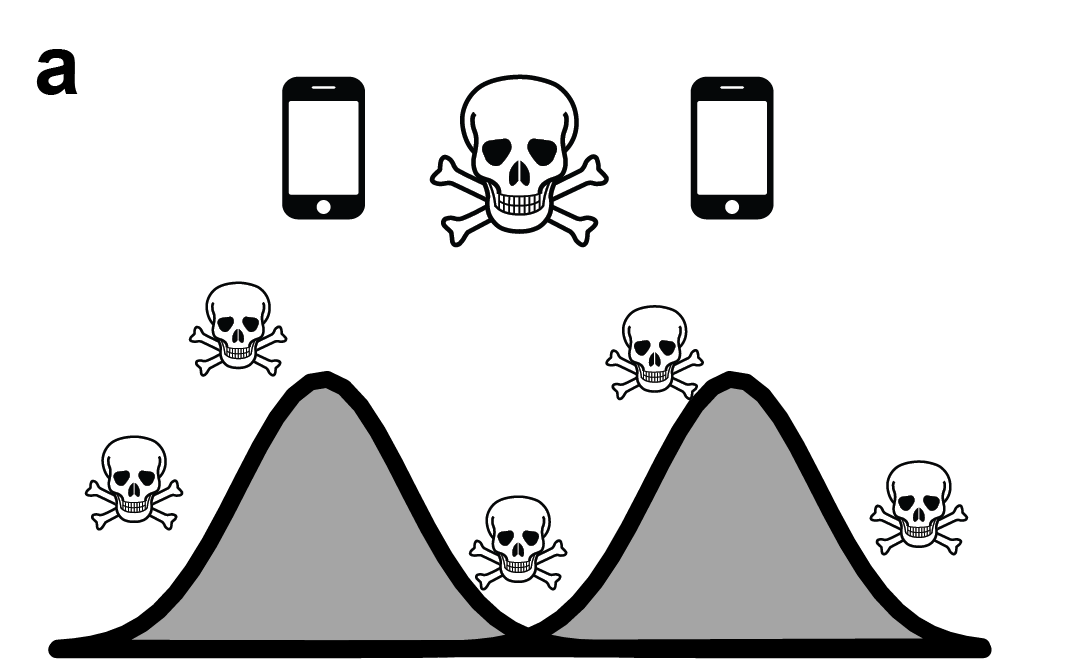

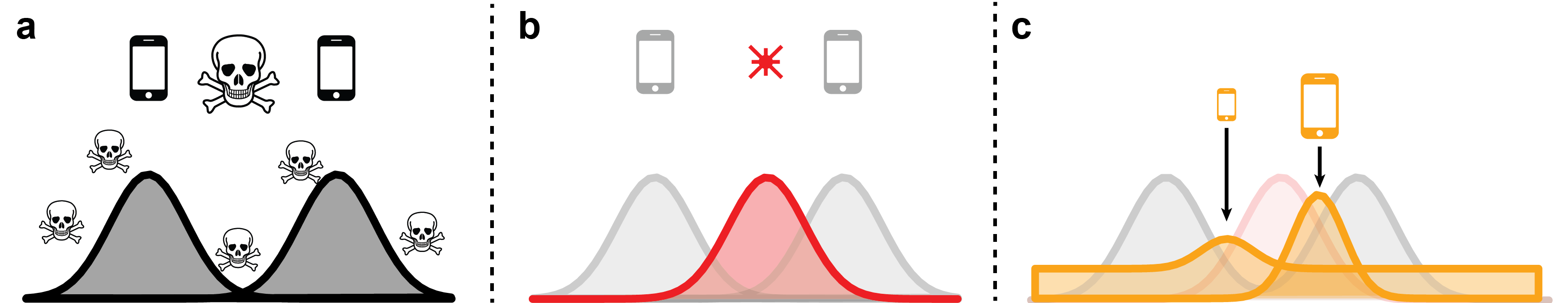

In the previous video we introduced the thought experiment of “the outcome pump”: a device that lets you change the probability of events at will. In that thought experiment, your aged mother is trapped in a burning building. You wish for your mother to end up as far as possible from the building, and the outcome pump makes the building explode, flinging your mother’s body away from it.

That clearly wasn't what you wanted, and no matter how many amendments you make to that wish, it’s really difficult to make it actually safe, unless you have a way of specifying the entirety of your values.

In this video we explore how similar failures affect machine learning agents today. You can think of such agents as less powerful outcome pumps, or little genies. They have goals, and take actions in their environment to further their goals. The more capable these models are, the more difficult it is to make them safe. The way we specify their goals is always leaky in some way. That is, we often can’t perfectly describe what we want them to do, so we use proxies that deviate from the intended objective in certain cases. In the same way, “getting your mother out of the building” was only an imperfect proxy for actually saving your mother. There are plenty of similar examples in ordinary life. The goal of exams is to evaluate a student’s understanding of the subject, but in practice students can cheat, they can cram, and they can study just exactly what will be on the test and nothing else. Passing exams is a leaky proxy for actual knowledge |

b5de770f-b281-42c3-89c6-1597b761204d | trentmkelly/LessWrong-43k | LessWrong | [LINK] Judea Pearl wins 2011 Turing Award

Link to ACM press release.

> In addition to their impact on probabilistic reasoning, Bayesian networks completely changed the way causality is treated in the empirical sciences, which are based on experiment and observation. Pearl's work on causality is crucial to the understanding of both daily activity and scientific discovery. It has enabled scientists across many disciplines to articulate causal statements formally, combine them with data, and evaluate them rigorously. His 2000 book Causality: Models, Reasoning, and Inference is among the single most influential works in shaping the theory and practice of knowledge-based systems. His contributions to causal reasoning have had a major impact on the way causality is understood and measured in many scientific disciplines, most notably philosophy, psychology, statistics, econometrics, epidemiology and social science.

While that "major impact" still seems to me to be in the early stages of propagating through the various sciences, hopefully this award will inspire more people to study causality and Bayesian statistics in general. |

4e37da15-ef44-4b6c-8c3d-687e4ce61e86 | StampyAI/alignment-research-dataset/lesswrong | LessWrong | "Rational Agents Win"

Rachel and Irene are walking home while discussing [Newcomb's problem](https://en.wikipedia.org/wiki/Newcomb%27s_paradox). Irene explains her position:

"[Rational agents **win**](https://www.lesswrong.com/posts/6ddcsdA2c2XpNpE5x)**.** If I take both boxes, I end up with $1000. If I take one box, I end up with $1,000,000. It shouldn't matter why I'm making the decision; there's an obvious right answer here. If you walk away with less money yet claim you made the 'rational' decision, you don't seem to have a very good understanding of what it means to be rational".

Before Rachel can respond, [Omega](https://www.lesswrong.com/tag/omega) appears from around the corner. It sets two boxes on the ground. One is opaque, and the other is transparent. The transparent one clearly has $1000 inside. Omega says "I've been listening to your conversation and decided to put you to the test. These boxes each have fingerprint scanners that will only open for Irene. In 5 minutes, both boxes will incinerate their contents. The opaque box has $1,000,000 in it iff I predicted that Irene would not open the transparent box. Also, this is my last test of this sort, and I was programmed to self-destruct after I'm done." Omega proceeds to explode into tiny shards of metal.

Being in the sort of world where this kind of thing happens from time to time, Irene and Rachel don't think much of it. Omega has always been right in the past. (Although this is the first time it's self-destructed afterwards.) Irene promptly walks up to the opaque box and opens it, revealing $1,000,000, which she puts into her bag. She begins to walk away, when Rachel says:

"Hold on just a minute now. There's $1000 in that other box, which you can open. Omega can't take the $1,000,000 away from you now that you have it. You're just going to leave that $1000 there to burn?"

"Yup. I [pre-committed](https://www.lesswrong.com/tag/pre-commitment) to one-box on Newcomb's problem, since it results in me getting $1,000,000. The only alternative would have resulted in that box being empty, and me walking away with only $1000. I made the rational decision."

"You're perfectly capable of opening that second box. There's nothing physically preventing you from doing so. If I held a gun to your head and threatened to shoot you if you didn't open it, I think you might do it. If that's not enough, I could threaten to torture all of humanity for ten thousand years. I'm pretty sure at that point, you'd open it. So you aren't 'pre-committed' to anything. You're simply choosing not to open the box, and claiming that walking away $1000 poorer makes you the 'rational' one. Isn't that exactly what you told me that truly rational agents didn't do?"

"Good point", says Irene. She opens the second box, and goes home with $1,001,000. Why shouldn't she? Omega's dead. |

84bde805-15be-4e89-8468-a9aaf30c30a0 | trentmkelly/LessWrong-43k | LessWrong | Cross-temporal dependency, value bounds and superintelligence

In this short post I will attempt to put forth some potential concerns that should be relevant when developing superintelligences, if certain meta-ethical effects exist. I do not claim they exist, only that it might be worth looking for them since their existence would mean some currently irrelevant concerns are, in fact, relevant.

These meta-ethical effects would be a certain kind of cross-temporal dependency of moral value. First, let me explain what I mean by cross-temporal dependency. If value is cross-temporally dependent, it means that value at t2 could be affected by t1, independently of any causal role t1 has on t2. The same event X at t2 could have more or less moral value depending on whether Z or Y happened at t1. For instance, this could be the case on matters of survival. If we kill someone and replace her with a slightly more valuable person, some would argue there was a loss rather than a gain of moral value; whereas if a new person with moral value equal to the difference of the previous two is created where there was none, most would consider it an absolute gain. Furthermore, some might consider that small, gradual and continual improvements are better than abrupt and big ones. For example, a person who forms an intention and a careful, detailed plan to become better, and who becomes better through forceful, self-wrought effort, could acquire more value than a person who simply happens to take a pill and instantly becomes a better person - even if they become that exact same person. This is not because effort is intrinsically valuable, but because of personal continuity. There are more intentions, deliberations and desires connecting the two time-slices of the person who changed through effort than there are connecting the two time-slices of the person who changed by taking a pill. Even though both persons become equally morally valuable in isolated terms, they do so by different paths that affect their final value differently.

More examples. You live now in t1. If suddenly |

4bca6b96-7f08-4fba-8e35-d911a91d7a9f | trentmkelly/LessWrong-43k | LessWrong | Deconstructing arguments against AI art

Something I've been surprised by is just how fierce opposition to AI art has been. To clarify, I'm not talking about people who dislike AI art because they think it looks worse, but specifically, people with extreme animus towards the very concept of AI art, regardless of its aesthetic quality or artistic merit.

I'm interested in this issue because it's just one component of a broader societal conversation about AI's role in human society, and it's helpful to see where the fault lines are. I suspect the intensity of the reaction to AI art stems from its serving as a proxy battlefield for larger anxieties about human value and purpose in an increasingly AI-influenced world.

My impression of this opposition comes largely from a few incidents in which someone alleged that AI was used to create some form of art, and an overwhelming wave of Reddit and other social media comments treated it as a moral outrage. Please see the Reddit threads at the bottom of this post for more details. Let me share a few incidents I found interesting:

In July of this past year, there was a scandal over a Tedeschi Trucks Band concert poster that might have been AI-generated. Over two concerts, all 885 posters made available were sold and many people seemed to like the poster. Despite this, once the allegations were made, the response was immediate and intense - fans were outraged to the point where the band had to investigate the artist's creative process files, apologize to their community, and donate all profits from the poster sales to charity.

Over New Year's 2023, Billy Strings faced a similar situation when a poster and t-shirt from their run were alleged to have leveraged AI art. What's fascinating is that Billy himself had vetted and approved the art, thinking it was cool. The poster and t-shirts also sold quite well. But once AI generation was suspected, fans freaked out and Billy Strings felt compelled to make an apology video, stating he'd want to "kick the artist in the pe |

9011a63e-260f-4b05-aa42-e2d9ecf5a851 | trentmkelly/LessWrong-43k | LessWrong | Alignment Newsletter #25

Highlights

Towards a New Impact Measure (Alex Turner): This post introduces a new idea for an impact measure. It defines impact as change in our ability to achieve goals. So, to measure impact, we can simply measure how much easier or harder it is to achieve goals -- this gives us Attainable Utility Preservation (AUP). This will penalize actions that restrict our ability to reach particular outcomes (opportunity cost) as well as ones that enlarge them (instrumental convergence).

Alex then attempts to formalize this. For every action, the impact of that action is the absolute difference between attainable utility after the action, and attainable utility if the agent takes no action. Here, attainable utility is calculated as the sum of expected Q-values (over m steps) of every computable utility function (weighted by 2^{-length of description}). For a plan, we sum up the penalties for each action in the plan. (This is not entirely precise, but you'll have to read the post for the math.) We can then choose one canonical action, calculate its impact, and allow the agent to have impact equivalent to at most N of these actions.
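The penalty described above can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not the post's exact definition (the summary itself notes it is not entirely precise): the set of utility functions is a small finite list rather than every computable one, the Q-values are made-up numbers, and taking the absolute difference per utility function before summing is an assumption about how the pieces combine. The names `aup_penalty`, `plan_penalty` and `within_budget` are hypothetical.

```python
def aup_penalty(q_action, q_noop, description_lengths):
    """Weighted sum of absolute changes in attainable utility.

    q_action[i] / q_noop[i]: toy Q-values for utility function i after
    taking the action vs. after doing nothing.
    description_lengths[i]: description length of utility function i,
    used for the 2^(-length) weighting.
    """
    return sum(
        2.0 ** -length * abs(qa - qn)
        for qa, qn, length in zip(q_action, q_noop, description_lengths)
    )


def plan_penalty(step_penalties):
    """A plan's penalty is the sum of the penalties of its actions."""
    return sum(step_penalties)


def within_budget(penalty, canonical_penalty, n):
    """Allow impact equivalent to at most n canonical actions."""
    return penalty <= n * canonical_penalty


# Toy numbers (assumed, for illustration only).
lengths = [1, 2, 3]
q_noop = [1.0, 0.5, 0.0]
q_action = [0.0, 0.5, 1.0]  # one goal gets harder, one gets easier

penalty = aup_penalty(q_action, q_noop, lengths)
print(penalty)  # 0.5 * 1 + 0.25 * 0 + 0.125 * 1 = 0.625
```

Note that the action is penalized both for the goal that became harder and for the goal that became easier, which matches the point above about opportunity cost and instrumental convergence.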

He then shows some examples, both theoretical and empirical. The empirical ones are done on the suite of examples from AI safety gridworlds used to test relative reachability. Since the utility functions here are indicators for each possible state, AUP is penalizing changes in your ability to reach states. Since you can never increase the number of states you reach, you are penalizing decrease in ability to reach states, which is exactly what relative reachability does, so it's not surprising that it succeeds on the environments where relative reachability succeeded. It does have the additional feature of handling shutdowns, which relative reachability doesn't do.

Since changes in probability of shutdown drastically change the attainable utility, any such changes will be heavily penalized. We can use this dynamic to our advantage, for example by |

94d1b25b-fcb7-46df-a9f9-001d6182dc00 | trentmkelly/LessWrong-43k | LessWrong | Wastewater RNA Read Lengths

What I'm calling "read length" here should instead have been "insert length" or "post-trimming read length". When you just say "read length" that's usually understood to be the raw length output by the sequencer, which for short-read sequencing is determined only by your target cycle count.

Let's say you're collecting wastewater and running metagenomic RNA sequencing, with a focus on human-infecting viruses. For many kinds of analysis you want a combination of a low cost per base and more bases per sequencing read. The lowest cost per base these days, by a lot, comes from paired-end "short read" sequencing (also called "Next Generation Sequencing", or "Illumina sequencing" after the main vendor), where an observation looks like reading some number of bases (often 150) from each end of a nucleic acid fragment:

+------>>>-----+

| forward read |

+------>>>-----+

... gap ...

+------<<<-----+

| reverse read |

+------<<<-----+

Now, if the fragments you feed into your sequencer are short you can instead get something like:

+------>>>-----+

| forward read |

+------>>>-----+

+------<<<-----+

| reverse read |

+------<<<-----+

That is, if we're reading 150 bases from each end and our fragment is only 250 bases long, we have a negative "gap" and we'll read the 50 bases in the middle of the fragment twice.
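The arithmetic above is easy to sketch in Python. This is a minimal illustration that ignores trimming and sequencing errors; the function name `read_pair_coverage` and its return keys are made up for this example.

```python
def read_pair_coverage(fragment_len: int, read_len: int = 150) -> dict:
    """Describe how a 2 x read_len read pair covers one fragment."""
    # Positive gap: unread bases in the middle. Negative gap: overlap.
    gap = fragment_len - 2 * read_len
    # Bases read twice; can never exceed the fragment itself.
    overlap = min(fragment_len, max(0, -gap))
    # Distinct fragment bases we actually learn from the pair.
    distinct = min(fragment_len, 2 * read_len)
    # Bases per read that run past the fragment into the adapter.
    adapter_read_through = max(0, read_len - fragment_len)
    return {
        "gap": gap,
        "overlap": overlap,
        "distinct_bases": distinct,
        "adapter_read_through": adapter_read_through,
    }


print(read_pair_coverage(400))  # gap of 100 unread bases
print(read_pair_coverage(250))  # the example above: 50 bases read twice
print(read_pair_coverage(80))   # fragment shorter than a single read
```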

And if the fragments are very short, shorter than how much you're reading from each end, you'll get complete overlap (and then read through into the adapters):

+------>>>-----+

| forward read |

+------>>>-----+

+------<<<-----+

| reverse read |

+------<<<-----+

One shortcoming of my ascii art is it doesn't show how the effective read length changes: in the complete overlap case it can be quite short. For example, if you're doing 2x150 sequencing you're capable of learning up to 300bp with each read pair but if the fragment is only 80bp lo |

a20bd1cb-9295-4de6-931c-3abb8bb46985 | trentmkelly/LessWrong-43k | LessWrong | The Sweet Lesson: AI Safety Should Scale With Compute

A corollary of Sutton's Bitter Lesson is that solutions to AI safety should scale with compute.[1]

Let's consider a few examples of research directions that are aiming at this property:

* Deliberative Alignment: Combine chain-of-thought with Constitutional AI to improve safety with inference-time compute (see Guan et al. 2025, Figure 13).

* AI Control: Design control protocols that pit a red team against a blue team so that running the game for longer results in more reliable estimates of the probability of successful scheming during deployment (e.g., weight exfiltration).

* Debate: Design a debate protocol so that running a longer, deeper debate between AI assistants makes us more confident that we're encouraging honesty or other desirable qualities (see Irving et al. 2018, Table 1).

* Bengio's Scientist AI: Develop safety guardrails that obtain more reliable estimates of the probability of catastrophic risk with increasing inference time:[2]

> [O]ur proposed method has the advantage that, with more and more compute, it converges to the correct prediction . . . . In other words, more computation means better and more trustworthy answers[.] — Bengio et al. (2025)

* Anthropic-style Interpretability: Develop interpretability tools (like SAEs) that first learn the SAE features that are most important to minimizing reconstruction loss (see Templeton et al. 2024, Scaling Laws).

* ARC-style Interpretability: Develop interpretability tools that extract the most important safety-relevant explanations first before moving on to less safety-relevant explanations (see Gross et al. 2024):

> In ARC's current thinking, the plan is not to have a clear line of separation between things we need to explain and things we don't. Instead, the loss function of explanation quality should capture how important various things are to explain, and the explanation-finding algorithm is given a certain compute budget to build up the explanation of the model behavior by bits and pieces |

5977f931-eeb9-4909-bcd1-d01fbcd07803 | trentmkelly/LessWrong-43k | LessWrong | "But that's your job": why organisations can work

It's no secret that corporations, bureaucracies, and governments don't operate at peak efficiency for ideal goals. Economics has literature on its own version of the problem; on this very site, Zvi has been presenting a terrifying tale of Immoral Mazes, or how politics can eat all the productivity of an organisation. Eliezer has explored similar themes in Inadequate Equilibria.

But reading these various works, especially Zvi's, has left me with a puzzle: why do most organisations kinda work? Yes, far from maximal efficiency and with many political and mismeasurement issues. But still:

* The police spend some of their time pursuing criminals, and enjoy some measure of success.

* Mail is generally delivered to the right people, mostly on time, by both governments and private firms.

* Infrastructure gets built, repaired, and often maintained.

* Garbage is regularly removed, and public places are often cleaned.

So some organisations do something along the lines of what they were supposed to, instead of, I don't know, spending their time doing interpretive dance seminars. You might say I've selected examples where the outcome is clearly measurable; yet even in situations where measuring is difficult, or there is no pressure to measure, we see:

* Central banks that set monetary policy a bit too loose or a bit too tight - as opposed to pegging it to random numbers from the expansion of pi.

* Education or health systems that might have low or zero marginal impact, but that seem to have a high overall impact - as in, the country is better off with them than completely without them.

* A lot of academic research actually uncovers new knowledge.

* Many charities spend some of their efforts doing some of the good they claim to do.

For that last example, inefficient charities are particularly fascinating. Efficient charities are easy to understand; so are outright scams. But ones in the middle - how do they happen? To pick one example almost at random, consider Heife |

64a35a02-5da9-4a2d-9f11-7c3ae956fdf9 | trentmkelly/LessWrong-43k | LessWrong | Notes on Shame

This post examines the virtue of shame. It is meant mostly as an exploration of what other people have learned about this virtue, rather than as me expressing my own opinions about it, though I’ve been selective about what I found interesting or credible, according to my own inclinations. I wrote this not as an expert on the topic, but as someone who wants to learn more about it. I hope it will be helpful to people who want to know more about this virtue and how to nurture it.

> “He who feels no shame of evil and does not hate it is no man. Shame and hate of evil are the beginning of virtue.” ―Mencius[1]

>

> “Where there is yet shame, there may in time be virtue.” ―Samuel Johnson[2]

What is this virtue?

There’s a terrible terminological muddle around shame. I’m going to use “shame” to mean an unpleasant sense that one has failed to live up to one’s own standards in some way. To have a well-tuned virtue of shame (or sense-of-shame) is for this sense to reliably and usefully alert you to appropriate things.

Arguably, this virtue might also include responding to this sense well: how you process shame, learn from it, dispose of it properly, and so forth.

Shame vs. guilt

Shame overlaps with guilt and sometimes “guilt” is overloaded to include shame among its meanings. I think “shame” is a better word for precisely describing the virtue. For one thing, you can be ashamed of something (e.g. not living up to your potential) without necessarily being guilty of some specific transgression.[3]

Also, you can judge someone else to be guilty, or they may just objectively be guilty based on the facts of the matter — whereas shame is more of an introspective, subjective evaluation. It’s true that you can try to shame someone, but for this to succeed it requires their cooperation: they must acknowledge and internalize the shame by becoming ashamed, or the attempt sputters out ineffectually. This is why you can say simply “you are guilty” but shaming takes a more complex construc |

f73973b4-2438-4032-ab2d-1b35370c73fa | StampyAI/alignment-research-dataset/blogs | Blogs | where are your alignment bits?

where are your alignment bits?

------------------------------

information theory lets us make claims such as "you probly can't compress [this 1GB file](http://prize.hutter1.net/) into 1KB, given a [reasonable](kolmogorov-objectivity-in-languagespace.html) [programming language](https://en.wikipedia.org/wiki/Kolmogorov_complexity)".
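the counting argument behind that kind of claim can be sketched in python. this is a rough illustration with assumed sizes (1GB taken as 10^9 bytes, 1KB as 10^3 bytes, programs treated as raw bit strings); it bounds the fraction of all 1GB files that any 1KB program could output, which is a statement about typical files rather than a proof about one particular file.

```python
FILE_BITS = 8 * 10**9     # 1GB file, assuming 1GB = 10^9 bytes
PROGRAM_BITS = 8 * 10**3  # 1KB program, assuming 1KB = 10^3 bytes


def log2_program_count_bound(max_bits: int) -> int:
    """log2 of an upper bound on the number of programs.

    there are 2^(max_bits + 1) - 1 bit strings of length <= max_bits,
    which is less than 2^(max_bits + 1).
    """
    return max_bits + 1


# each program outputs at most one file, so at most this fraction of
# the 2^FILE_BITS possible files can be produced by a 1KB program.
log2_fraction = log2_program_count_bound(PROGRAM_BITS) - FILE_BITS
print(log2_fraction)  # -7999991999, i.e. a fraction below 2^-7999991999
```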

when someone claims to have an "easy" solution to aligning AI to human values, which are [complex](https://www.readthesequences.com/Value-Is-Fragile) (have many bits of information), i like to ask: where are the bits of information of what human values are?

are the bits of information in the reward function? are they in how you selected your training data? are they in the prompt you intend to ask an AI? if you are giving it an entire corpus of data, which you think *contains* human values: even if you're right, the bits of information are in how you *delimitate* which parts of that corpus encode human value, a plausibly [exponential](https://en.wikipedia.org/wiki/Computational_complexity_theory) task. classification is hard; "gathering all raw data" is easy, so that's not where the bits of hard work are.

this general information-theoretic line of inquiry, i think, does a good job at pointing to why aligning to complex values is *[likely](plausible-vs-likely.html), actually* hard; not just *[plausibly](plausible-vs-likely.html), maybe* hard.

we don't "might maybe need" to do the hard work, we *do likely need* to do the hard work. |

470949f5-79ea-474d-9e35-d9e6981f65e1 | trentmkelly/LessWrong-43k | LessWrong | Setpoint = The experience we attend to

In the last post, I talked about how hypnotists like to equivocate between expectation and intent in order to get people to believe what they want them to do and do what they want them to believe [sic].

There is a third framing that hypnotists also use at times, which is "imagination". You don't have to expect that your eyes will be too heavy to open, or intend them to be. You can just imagine that they will be, and so long as you stay in that imagination, that is enough.

The simplest version of this, which you're probably familiar with, is the phenomenon where if you imagine biting into a sour lemon your mouth will begin to water. Or perhaps more frequently, you might imagine other things and experience other physiological phenomena associated with that imagined experience. Either way the point is clear: our brains and bodies begin to respond to imagined experiences almost as if they're real. "Imagination", at its core, is simulated experience. It's trying ideas on for size, figuring out what that would be like, how we would feel, how we would respond -- and then hopefully inhibiting those behaviors eventually when we believe the situation we're simulating to not be "real".

If I ask you to "imagine biting into a lemon", you have one experience. If I ask you what it feels like to bite into a lemon -- assuming you don't lazily give me a cached answer of "sour" -- you simulate that experience and report on your experience. If I tell you that I'm going to give you a lemon to bite into, and you think "I love lemons! I'm gonna bite into a lemon!" and start thinking about what you're going to do (What you "expect" to do? What you "intend" to do? Kinda starting to feel the same, huh?) then it's the same thing again. Still the experience of biting into a lemon (while not actually biting into a lemon), mouth watering, just with a different framing of "imagining" vs "figuring out what it would be like" vs "thinking about what is going to happen". Same object level me |

cee932f0-9673-4ca4-9450-95e915b2f5c1 | LDJnr/LessWrong-Amplify-Instruct | LessWrong | "Braudel is probably the most impressive historian I have read. His quantitative estimates of premodern populations and crop yields are exactly the sort of foundation you’d think any understanding of history would be based upon. Yet reading his magnum opus, it became steadily clearer as the books progressed that Braudel was missing some fairly fundamental economic concepts. I couldn’t quite put my finger on what was missing until a section early in book 3:

> ... these deliberately simple tautologies make more sense to my mind than the so-called ‘irrefutable’ pseudo-theorem of David Ricardo (1817), whose terms are well known: that the relations between two given countries depend on the “comparative costs” obtaining in them at the point of production

Braudel, apparently, is not convinced by the principle of comparative advantage. What is his objection?

> The division of labor on a world scale (or on world-economy-scale) cannot be described as a concerted agreement made between equal parties and always open to review… Unequal exchange, the origin of the inequality in the world, and, by the same token, the inequality of the world, the invariable generator of trade, are longstanding realities. In the economic poker game, some people have always held better cards than others…

It seems Braudel is under the impression that comparative advantage is only relevant in the context of “equal” exchange or “free” trade or something along those lines.

If an otherwise impressive economic historian is that deeply confused about comparative advantage, then I expect other people are similarly confused. This post is intended to clarify.

The principle of comparative advantage does not require that trade be “free” or “equal” or anything of the sort. When the Portuguese or the British seized monopolies on trade with India in the early modern era, those trades were certainly not free or equal. Yet the monopolists would not have made any profit whatsoever unless there were some underlying comparative advantage.

For example, consider an oversimplified model of the salt trade. People historically needed lots of salt to preserve food, yet many inland areas lack local sources, so salt imports were necessary for survival. Transport by ship was historically orders of magnitude more efficient than overland, so a government in control of a major river could grab a monopoly on the salt trade. Since the people living inland could not live without it, the salt monopolist could charge quite high prices - a “trade” arguably not so different from threatening inland farmers with death if they did not pay up. (An exaggeration, since there were other ways to store food and overland smuggling became viable at high enough prices, but I did say it’s an oversimplified example.)

Notice that, in this example, there is a clear underlying comparative advantage: the inland farmers have a comparative disadvantage in producing salt, while the ultimate salt supplier (a salt mine or salt pan) has a comparative advantage in salt production. If the farmer could produce salt with the same opportunity cost as the salt mine/pan, then the monopolist would have no buyers. If the salt mine/pan had the same opportunity cost for obtaining salt as the farmers, then the monopolist would have no supplier. Absent some underlying comparative advantage between two places, the trade monopolist cannot make any profit.

Another example: suppose I’m a transatlantic slave trader, kidnapping people in Africa and shipping them to slave markets in the Americas. It’s easy to see how the kidnapping part might be profitable, but why was it profitable to move people across the Atlantic? Why not save the transportation costs, and work the same slaves on plantations in Africa rather than plantations in the Americas? Or why not use native American slaves entirely, rather than importing Africans? Ultimately, the profits were because the Americas had a lot lower population density - there was more land, and fewer people to work it. Thus, labor was worth more in the Americas (and that same comparative advantage drove not just the slave trade, but also immigration and automation). Without a comparative advantage, enslaving people might still have been profitable, but there would be no reason to ship them across the Atlantic.

Let’s take it a step further. This argument need not involve any trade at all.

Suppose I’m the dictator of some small archipelago. I have total ownership and control over the country’s main industries (bananas and construction), and there’s an international embargo against trade with my little country, so there’s no trade to worry about either internally or externally. Let’s say I just want to maximize construction output - although I will still need to order some banana-growing in order to keep my construction workers fed.

The question is: who and where do I order to grow bananas, and who and where do I order to build things? To maximize construction, I will want to order people with the largest comparative advantage in banana-growing to specialize in banana-growing, and I will want to order those bananas to be grown on the islands with the largest comparative advantage in banana-growing. (In fact, this is not just relevant to maximization of construction - it applies to pareto-optimal production in general.) There’s no trade; I’m just using comparative advantage to figure out how best to deploy my own resources.

Takeaway: comparative advantage is not a principle of trade, it’s a principle of optimization. Pareto-optimal production means specialization by comparative advantage."

The above is a chunk of text from a post created by a user on the website LessWrong.

Can you explain what this text is saying as if I've never finished my first 2 years of college?

Sure, this text is discussing the concept of "comparative advantage," a key idea in economics.

Comparative advantage is the ability of an individual, company, or country to produce a certain good or service at a lower opportunity cost than others. Opportunity cost is what you give up when you choose to do one thing over another. For example, if you spend an hour studying, the opportunity cost might be the hour you could have spent working and earning money.

The author uses the example of a salt trade monopoly to illustrate this concept. In this scenario, the salt mine has a comparative advantage in producing salt, because it can do so more efficiently than inland farmers. Even if the trade is not "free" or "equal," the salt mine still benefits from its comparative advantage because it can sell its salt at a higher price to the farmers who need it.

The author also uses the example of a transatlantic slave trader to further explain this concept. The Americas had a comparative advantage in land availability, making labor more valuable there. This made it profitable to transport slaves from Africa to work on American plantations.

The author emphasizes that comparative advantage is not just about trade, but about optimization. Even without trade, understanding comparative advantage can help determine the most efficient way to use resources. For example, a dictator of an isolated country would want to assign people and resources based on their comparative advantages to maximize production.

In simpler terms, comparative advantage is about figuring out what you're best at compared to others, and focusing on that to get the most benefit. It's like if you're really good at baking cookies, but not so great at making ice cream, you'd focus on selling cookies because that's where you have the advantage. Even if you control both the cookie and ice cream market, you'd still want to focus on cookies because that's where you're most efficient.
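
To make the opportunity-cost idea concrete, here is a small sketch with made-up numbers (the two bakers and their hourly outputs are purely hypothetical, not taken from the post). It shows that a person can be better at everything in absolute terms and still have a comparative advantage in only one thing:

```python
from dataclasses import dataclass


@dataclass
class Producer:
    name: str
    cookies_per_hour: float    # hypothetical output if the hour goes to cookies
    ice_cream_per_hour: float  # hypothetical output if the hour goes to ice cream

    def opportunity_cost_of_cookie(self) -> float:
        """Ice cream given up in order to make one cookie."""
        return self.ice_cream_per_hour / self.cookies_per_hour


def cookie_specialist(a: Producer, b: Producer) -> Producer:
    """The producer who gives up the least ice cream per cookie should bake."""
    return min((a, b), key=lambda p: p.opportunity_cost_of_cookie())


# Alice out-produces Bob at both goods, yet each cookie costs her only
# half an ice cream, while each cookie costs Bob a whole one.
alice = Producer("Alice", cookies_per_hour=12, ice_cream_per_hour=6)
bob = Producer("Bob", cookies_per_hour=4, ice_cream_per_hour=4)

print(cookie_specialist(alice, bob).name)
```

Under these assumed numbers Alice should specialize in cookies and Bob in ice cream, even though Alice is faster at both. The same comparison works whether the two trade freely or a single owner is simply deciding how to assign them.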

How does the concept of comparative advantage apply to modern global economies, particularly in the context of outsourcing and offshoring?

In the context of modern global economies, the concept of comparative advantage plays a significant role in shaping business strategies, particularly in outsourcing and offshoring.

Outsourcing is when a company contracts out a part of its business processes to another company. Offshoring, on the other hand, is when a company moves its own operations to a different country. Both of these practices can be driven by comparative advantage.

For example, a tech company in the United States might outsource its customer service operations to a call center in India. This is because India has a comparative advantage in this area - it has a large, English-speaking workforce that can provide these services at a lower cost than workers in the U.S. This allows the U.S. company to focus on its own areas of comparative advantage, such as software development or marketing.

Similarly, a clothing manufacturer might offshore its production facilities to a country like Bangladesh, where labor costs are significantly lower. This is because Bangladesh has a comparative advantage in labor-intensive manufacturing. By doing this, the clothing company can reduce its production costs and sell its products at a more competitive price.

However, it's important to note that while outsourcing and offshoring can lead to cost savings and efficiency gains, they can also have downsides. These can include job losses in the home country, quality control issues, and potential negative impacts on the economies of the countries where work is outsourced or offshored.

Despite these potential issues, the principle of comparative advantage suggests that overall, global economies can benefit from these practices as they allow countries and companies to specialize in what they do best, leading to increased productivity and economic growth.

Reflecting on the benefits of comparative advantage in outsourcing and offshoring, what potential negative impacts could these practices have on the economies of both the home and host countries?

While the principle of comparative advantage suggests that overall, economies can benefit from outsourcing and offshoring, these practices can also have potential negative impacts.

For the home country:

1. Job Losses: The most immediate and visible impact is job losses. When companies move operations overseas or outsource them, domestic jobs are often lost.

2. Lower Wages: Competition with low-wage countries can put downward pressure on wages, particularly for lower-skilled jobs.

3. Economic Disparity: The benefits of outsourcing and offshoring often accrue to business owners and shareholders, which can contribute to increased income inequality.

4. Dependency: Over-reliance on foreign entities can lead to dependency, which can be risky if the political and economic stability of the host country is uncertain.

For the host country:

1. Exploitation Concerns: Companies might exploit lower labor standards, wages, and poor working conditions in the host countries.

2. Economic Disruption: The influx of foreign companies can disrupt local economies, potentially leading to over-specialization or crowding out of local businesses.

3. Environmental Impact: Offshoring of manufacturing can lead to environmental degradation if host countries have lax environmental regulations.

4. Limited Skill Development: If the work being offshored or outsourced is low-skill, it may not contribute significantly to the development of a highly skilled workforce in the host country.

5. Volatility: Host countries may become overly dependent on foreign companies, which can lead to economic volatility if those companies decide to leave.

It's important to note that these potential negative impacts are not inevitable. They depend on a range of factors, including the specific industries involved, the policies in place in both the home and host countries, and the strategies adopted by the companies themselves.

Discuss the strategies that both home and host countries can implement to mitigate the potential negative impacts of outsourcing and offshoring.

Certainly, both home and host countries can implement various strategies to mitigate the potential negative impacts of outsourcing and offshoring:

For the home country:

1. Education and Training: Governments can invest in education and training programs to help workers acquire new skills. This can make them more competitive in the job market and better equipped to find new jobs if their old ones are outsourced or offshored.

2. Social Safety Nets: Enhancing social safety nets can help protect workers who lose their jobs due to outsourcing or offshoring. This could include unemployment benefits, job placement services, and retraining programs.

3. Diversification: Encouraging diversification in the economy can help mitigate the risks associated with dependency on a few key sectors.

4. Trade Policies: Governments can negotiate trade agreements that include labor and environmental standards to ensure that outsourcing and offshoring do not lead to exploitation or environmental degradation.

For the host country:

1. Improve Labor Standards: Host countries can work to improve labor standards and enforce labor laws to prevent exploitation of workers.

2. Environmental Regulations: Strict environmental regulations can be implemented and enforced to prevent environmental degradation.

3. Skill Development: Governments can invest in education and vocational training to ensure that their workforce can move up the value chain and not be limited to low-skill jobs.

4. Economic Diversification: Similar to home countries, host countries can also benefit from diversifying their economies to avoid over-reliance on foreign companies.

5. Sustainable Development Policies: Host countries can implement sustainable development policies to ensure that economic growth does not come at the expense of social and environmental well-being.

By implementing these strategies, both home and host countries can better manage the challenges associated with outsourcing and offshoring, and ensure that the benefits of these practices are more widely shared. |

5cae51f6-6eb6-465b-818d-6b2172fc780f | trentmkelly/LessWrong-43k | LessWrong | What should one's policy regarding dental xrays be?

A small Kuwaiti case-matched study (n=313) used a conditional regression and found that x-rays are associated with an increased risk of thyroid cancer (odds ratio = 2.1; 95% confidence interval: 1.4 to 3.1).

X-rays being a carcinogen isn't surprising. Every time I've gone into my dentist they've tried to image my head. I've never had a cavity, and I generally decline the imaging; their recommended cadence of every two years has seemed too aggressive to me, in the past.

I've got my first dental appointment in more than 18 months next week, and... I'm inclined to let them image. How should one think about this sort of risk/benefit trade-off? Presumably catching a cavity or other issue early is [much?] better. But that itself assumes that the dentists who'd be treating you don't do more harm than good. |

8522d4c1-9a45-44b7-91ee-4cf92d3bdd19 | trentmkelly/LessWrong-43k | LessWrong | Book review: Put Your Ass Where Your Heart Wants to Be

Cross-posted and lightly edited from Future Startup.

Put Your Ass Where Your Heart Wants to Be is my fourth book by Steven Pressfield. Previously, I enjoyed reading his excellent The War of Art, an all-time favorite among creative types, Do the Work and Turning Pro. Naturally, I had certain expectations.

Steven understands the trials and tribulations of creative life. He is an author himself, so I think part of that understanding comes from his lived experience. The rest of it, as Steven puts it, comes from “the muse”.

Put Your Ass Where Your Heart Wants to Be can be called a follow-up of The War of Art. Steven introduced the idea of Resistance with a capital R in that book. In this book, he re-examines some of those ideas, expands on some, and proposes several new ideas.

The ideas in the book will be familiar if you have read Steven’s blog or his other books, and so will the approach he takes to put those ideas across. To many people, these ideas may look like a simplistic motivational hotchpotch. At times he does not seem to go deep enough into the roots of the challenges creative people face, which I would say is a weakness of the book.

But the interesting thing about Steven’s writing, at least in my opinion, is that while the ideas are simple and direct, they don’t lack depth. He summarizes the challenges of creative types extremely well and offers effective solutions to them. While he does not go down the psychological rabbit hole to explain the source of many of these challenges, he offers effective diagnoses and resolutions.

Creation is a difficult path. Be it a book, a painting, a song, or an enterprise. The work and the predicaments remain the same. Steven begins the first chapter with an overview of the challenge at hand and how to go about it.

“We all know how hard it is to write a book, make a movie, or create a new business. Powerful forces line up against us — obstacles to entry, rivals, competitors, finances, fund |

4e14c20f-0f6c-454b-9ae2-5fb438eaf932 | trentmkelly/LessWrong-43k | LessWrong | Rationalists lose when others choose

At various times, we've argued over whether rationalists always win. I posed Augustine's paradox of optimal repentance to argue that, in some situations, rationalists lose. One criticism of that paradox is that its strongest forms posit a God who penalizes people for being rational. My response was, So what? Who ever said that nature, or people, don't penalize rationality?

There are instances where nature penalizes the rational. For instance, revenge is irrational, but being thought of as someone who would take revenge gives advantages.1

EDIT: Many many people immediately jumped on this, because revenge is rational in repeated interactions. Sure. Note the "There are instances" at the start of the sentence. If you admit that someone, somewhere, once faced a one-shot revenge problem, then cede the point and move on. It's just an example anyway.

Here's another instance that more closely resembles the God who punishes rationalism, in which people deliberately punish rational behavior:

If rationality means optimizing expected utility, then both social pressures and evolutionary pressures tend, on average, to bias us towards altruism. (I'm going to assume you know this literature rather than explain it here.) An employer or a lover would both rather have someone who is irrationally altruistic. This means that, on this particular (and important) dimension of preference, rationality correlates with undesirability.2

<ADDED>: I originally wrote "optimizing expected selfish utility", merely to emphasize that an agent, rational or not, tries to maximize its own utility function. I do not mean that a rational agent appears selfish by social standards. A utility-maximizing agent is selfish by definition, because its utility function is its own. Any altruistic behavior that results, happens only out of self-interest. You may argue that pragmatics argue against this use of the word "selfish" because it thus adds no meaning. Fine. I have removed the word "sel |

77d2cc40-41ba-472a-948d-0b232bd14360 | trentmkelly/LessWrong-43k | LessWrong | Selfishness, preference falsification, and AI alignment

If aliens were to try to infer human values, there are a few information sources they could start looking at. One would be individual humans, who would want things on an individual basis. Another would be expressions of collective values, such as Internet protocols, legal codes of states, and religious laws. A third would be values that are implied by the presence of functioning minds in the universe at all, such as a value for logical consistency.

It is my intuition that much less complexity of value would be lost by looking at the individuals than looking at protocols or general values of minds.

Let's first consider collective values. Inferring what humanity collectively wants from internet protocol documents would be quite difficult; the fact a SYN packet must be followed by a SYN-ACK packet is a decision made in order to allow communication to be possible rather than an expression of a deep value. Collective values, in general, involve protocols that allow different individuals to cooperate with each other despite their differences; they need not contain the complexity of individual values, as individuals within the collective will pursue these anyway.

Distinctions between different animal brains form more natural categories than distinctions between institutional ideologies (e.g. in terms of density of communication, such as in neurons), so that determining values by looking at individuals leads to value-representations that are more reflective of the actual complexity of the present world in comparison to determining values by looking at institutional ideologies.

There are more degenerate attractors in the space of collective values than in individual values, e.g. with each person trying to optimize "the common good" in a way that means that they say they want "the common good", which means "the common good" (as a rough average of individuals' stated preferences) thinks their utility function is mostly identical with "the common good", such that "the |

5b4c3a2f-5153-49ad-85a0-49f1c381ea30 | StampyAI/alignment-research-dataset/special_docs | Other | Teachable Reinforcement Learning via Advice Distillation.

Teachable Reinforcement Learning via Advice Distillation

Olivia Watkins, UC Berkeley, oliviawatkins@berkeley.edu

Trevor Darrell, UC Berkeley, trevor@eecs.berkeley.edu

Pieter Abbeel, UC Berkeley, pabbeel@cs.berkeley.edu

Jacob Andreas, MIT, jda@mit.edu

Abhishek Gupta, UC Berkeley, abhigupta@berkeley.edu

Abstract

Training automated agents to complete complex tasks in interactive environments is challenging: reinforcement learning requires careful hand-engineering of reward functions, imitation learning requires specialized infrastructure and access to a human expert, and learning from intermediate forms of supervision (like binary preferences) is time-consuming and extracts little information from each human intervention. Can we overcome these challenges by building agents that learn from rich, interactive feedback instead? We propose a new supervision paradigm for interactive learning based on “teachable” decision-making systems that learn from structured advice provided by an external teacher. We begin by formalizing a class of human-in-the-loop decision making problems in which multiple forms of teacher-provided advice are available to a learner. We then describe a simple learning algorithm for these problems that first learns to interpret advice, then learns from advice to complete tasks even in the absence of human supervision. In puzzle-solving, navigation, and locomotion domains, we show that agents that learn from advice can acquire new skills with significantly less human supervision than standard reinforcement learning algorithms and often less than imitation learning.

1 Introduction

Reinforcement learning (RL) offers a promising paradigm for building agents that can learn complex behaviors from autonomous interaction and minimal human effort. In practice, however, significant human effort is required to design and compute the reward functions that enable successful RL [49]: the reward functions underlying some of RL’s most prominent success stories involve significant domain expertise and elaborate instrumentation of the agent and environment [37, 38, 44, 28, 15]. Even with this complexity, a reward is ultimately no more than a scalar indicator of how good a particular state is relative to others. Rewards provide limited information about how to perform tasks, and reward-driven RL agents must perform significant exploration and experimentation within an environment to learn effectively. A number of alternative paradigms for interactively learning policies have emerged, such as imitation learning [40, 20, 50], DAgger [43], and preference learning [10, 6]. But these existing methods are either impractically low bandwidth (extracting little information from each human intervention) [25, 30, 10] or require costly data collection [44, 23]. It has proven challenging to develop training methods that are simultaneously expressive and efficient enough to rapidly train agents to acquire novel skills.

Human learners, by contrast, leverage numerous, rich forms of supervision: joint attention [ 34],

physical corrections [ 5] and natural language instruction [ 9]. For human teachers, this kind of

35th Conference on Neural Information Processing Systems (NeurIPS 2021), virtual.

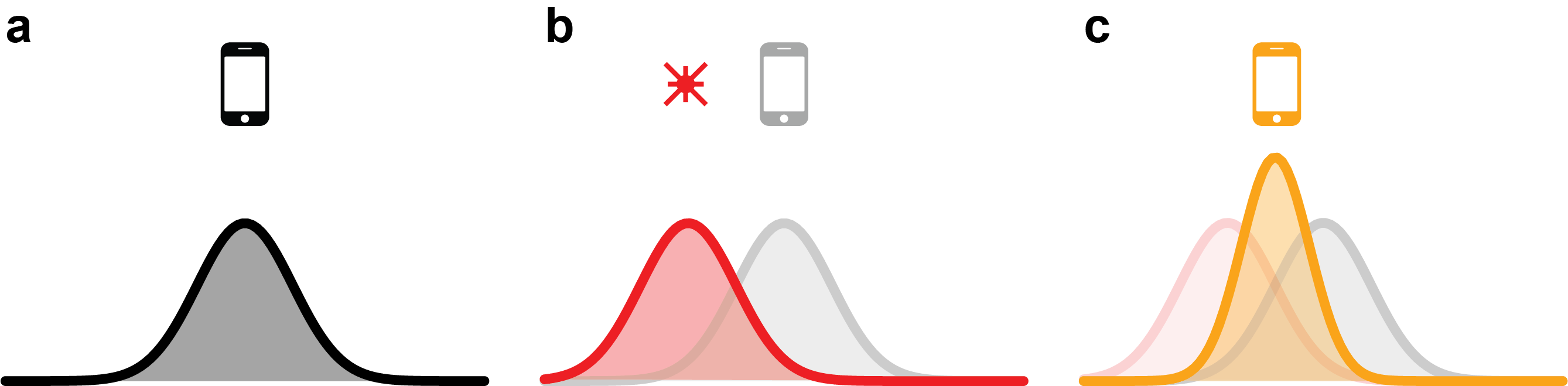

c(a) Grounding(b) Improvement(c) Evaluation

q(a�s,�,c1)q(a�s,�,c2)�(a�s,�)cccCoaching c1State sAction aReward rcturn rightc

cc0.3�0.10.2�+1cgo straightc

cc�0.2�0.20.1��0.2ccccTgt. coaching ci+1State sAction actravel to waypoint (3, 4)c

cccc

ccSrc. coaching ci�simple coaching-conditional policycomplex coaching- conditional policyunconditional policy�(a�s,�)cTask �ccmaze1maze2cTask �ccmaze1maze2c

c[0.3�0.10.2]c

c[�0.2�0.20.1](agent takes actions without advice)reinforcement learningadvice distillationadvice distillation

turn rightgo straight

0.3�0.10.2��0.2�0.20.1�Figure 1: Three phases of teachable reinforcement learning. During the grounding phase (a), we train an

advice-conditional policy through RL q(a|s,t,c1)that can interpret a simple form of advice c1. During the

improvement phase (b), an external coach provides real-time coaching, which the agent uses to learn more

complex advice forms and ultimately an advice-independent policy p(a|s,t). During the evaluation phase, the

advice-independent policy p(a|s,t)is executed to accomplish a task without additional human feedback.

coaching is often no more costly to provide than scalar measures of success, but significantly more

informative for learners. In this way, human learners use high-bandwidth, low-effort communication

as a means to flexibly acquire new concepts or skills [ 46,33]. Importantly, the interpretation of some

of these feedback signals (like language), is itself learned, but can be bootstrapped from other forms

of communication: for example, the function of gesture and attention can be learned from intrinsic

rewards [39]; these in turn play a key role in language learning [31].

This paper proposes a framework for training automated agents using similarly rich interactive supervision. For instance, given an agent learning a policy to navigate and manipulate objects in a simulated multi-room object manipulation problem (e.g., Fig 3 left), we train agents using not just reward signals but advice about what actions to take (“move left”), what waypoints to move towards (“move towards (1,2)”), and what sub-goals to accomplish (“pick up the yellow ball”), offering human supervisors a toolkit of rich feedback forms that direct and modify agent behavior. To do so, we introduce a new formulation of interactive learning, the Coaching-Augmented Markov Decision Process (CAMDP), which formalizes the problem of learning from a privileged supervisory signal provided via an observation channel. We then describe an algorithmic framework for learning in CAMDPs via alternating advice grounding and distillation phases. During the grounding phase, agents learn associations between teacher-provided advice and high-value actions in the environment; during distillation, agents collect trajectories with grounded models and interactive advice, then transfer information from these trajectories to fully autonomous policies that operate without advice. This formulation allows supervisors to guide agent behavior interactively, while enabling agents to internalize this guidance to continue performing tasks autonomously once the supervisor is no longer present. Moreover, this procedure can be extended to enable bootstrapping of grounded models that use increasingly sparse and abstract advice types, leveraging some types of feedback to ground others. Experiments show that models trained via coaching can learn new tasks more efficiently and with 20x less human supervision than naïve methods for RL across puzzle-solving [8], navigation [14], and locomotion domains [8].

In summary, this paper describes: (1) a general framework (CAMDPs) for human-in-the-loop RL with rich interactive advice; (2) an algorithm for learning in CAMDPs with a single form of advice; (3) an extension of this algorithm that enables bootstrapped learning of multiple advice types; and finally (4) a set of empirical evaluations on discrete and continuous control problems in the BabyAI [8] and D4RL [14] environments. It thus offers a groundwork for moving beyond reward signals in interactive learning, and instead training agents with the full range of human communicative modalities.

2 Coaching Augmented Markov Decision Processes

To develop our procedure for learning from rich feedback, we begin by formalizing the environments and tasks for which feedback is provided. This formalization builds on the framework of multi-task RL and Markov decision processes (MDP), augmenting them with advice provided by a coach in the loop through an arbitrary prescriptive channel of communication. Consider the grid-world environment depicted in Fig 3 left [8]. Tasks in this environment specify particular desired goal states; e.g. “place the yellow ball in the green box and the blue key in the green box” or “open all doors in the blue room.” In multi-task RL, a learner’s objective is to produce a policy $\pi(a_t \mid s_t, \tau)$ that maximizes reward in expectation over tasks $\tau$. More formally, a multi-task MDP is defined by a 7-tuple $\mathcal{M} \equiv (\mathcal{S}, \mathcal{A}, \mathcal{T}, \mathcal{R}, \rho(s_0), \gamma, p(\tau))$, where $\mathcal{S}$ denotes the state space, $\mathcal{A}$ denotes the action space, $p: \mathcal{S} \times \mathcal{A} \times \mathcal{S} \mapsto \mathbb{R}_{\geq 0}$ denotes the transition dynamics, $r: \mathcal{S} \times \mathcal{A} \times \tau \mapsto \mathbb{R}$ denotes the reward function, $\rho: \mathcal{S} \mapsto \mathbb{R}_{\geq 0}$ denotes the initial state distribution, $\gamma \in [0,1]$ denotes the discount factor and $p(\tau)$ denotes the distribution over tasks. The objective in a multi-task MDP is to learn a policy $\pi_\theta$ that maximizes the expected sum of discounted returns in expectation over tasks:

$$\max_\theta J_E(\pi_\theta, p(\tau)) = \mathbb{E}_{\tau \sim p(\tau),\; a_t \sim \pi_\theta(\cdot \mid s_t, \tau)} \left[ \sum_{t=0}^{\infty} \gamma^t \, r(s_t, a_t, \tau) \right].$$
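
As a rough illustration of this objective, the sketch below estimates it by Monte Carlo from sampled episodes. It is not the paper's implementation: the function names, the dictionary layout, and the toy reward sequences are all assumptions introduced here, and the infinite sum is truncated at the episode length.

```python
from typing import Dict, List


def discounted_return(rewards: List[float], gamma: float) -> float:
    """Sum of gamma**t * r_t over one (finite) episode."""
    return sum((gamma ** t) * r for t, r in enumerate(rewards))


def multitask_objective(
    episodes_by_task: Dict[str, List[List[float]]],
    task_probs: Dict[str, float],
    gamma: float,
) -> float:
    """Estimate the expected discounted return over tasks.

    For each task, average the discounted return over its sampled episodes,
    then weight that average by the task probability p(tau).
    """
    total = 0.0
    for task, episodes in episodes_by_task.items():
        mean_return = sum(discounted_return(ep, gamma) for ep in episodes) / len(episodes)
        total += task_probs[task] * mean_return
    return total


# Hypothetical reward sequences for two tasks with equal probability.
episodes = {"maze1": [[1.0, 1.0, 1.0]], "maze2": [[0.0, 0.0, 4.0]]}
probs = {"maze1": 0.5, "maze2": 0.5}
print(multitask_objective(episodes, probs, gamma=0.5))
```

A policy-optimization method would adjust $\theta$ to increase this quantity; the estimate itself depends only on the rewards the current policy happened to collect.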

Why might additional supervision beyond the reward signal be useful for solving this optimization problem? Suppose the agent in Fig 3 is in the (low-value) state shown in the figure, but could reach a high-value state by going “right and up” towards the blue key. This fact is difficult to communicate through a scalar reward, which cannot convey information about alternative actions. A side channel for providing this type of rich information at training-time would be greatly beneficial.

We model this as follows: a coaching-augmented MDP (CAMDP) consists of an ordinary multi-task MDP augmented with a set of coaching functions $\mathcal{C} = \{\mathcal{C}^1, \mathcal{C}^2, \cdots, \mathcal{C}^i\}$, where each $\mathcal{C}^j$ provides a different form of feedback to the agent. Like a reward function, each coaching function models a form of supervision provided externally to the agent (by a coach); these functions may produce informative outputs densely (at each timestep) or only infrequently. Unlike rewards, which give agents feedback on the desirability of states and actions they have already experienced, this coaching provides information about what the agent should do next.¹ As shown in Figure 3, advice can take many forms, for instance action advice ($c^0$), waypoints ($c^1$), language sub-goals ($c^2$), or any other local information relevant to task completion.² Coaching in a CAMDP is useful if it provides an agent local guidance on how to proceed toward a goal that is inferrable from the agent’s current observation, when the mapping from observations and goals to actions has not yet been learned.

As in standard reinforcement learning in a multi-task MDP, the goal in a CAMDP is to learn a policy $\pi_\theta(\cdot \mid s_t, \tau)$ that chooses an action based on the Markovian state $s_t$ and high-level task information $\tau$ without interacting with $c^j$. However, we allow learning algorithms to use the coaching signal $c^j$ to learn this policy more efficiently at training time (although this is unavailable during deployment). For instance, the agent in Fig 3 can leverage hints “go left” or “move towards the blue key” to guide its exploration process but it eventually must learn how to perform the task without any coaching required. Section 3 describes an algorithm for acquiring this independent, multi-task policy $\pi_\theta(\cdot \mid s_t, \tau)$ from coaching feedback, and Section 4 presents an empirical evaluation of this algorithm.
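
The structure of a CAMDP can be sketched as a set of coaching functions that sit alongside an ordinary environment. Everything below is a hypothetical illustration rather than the paper's code: the grid coordinates, the use of a goal cell to stand in for the task, the two scripted coaches, and the distance threshold that makes the second coach sparse are all assumptions.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Sequence, Tuple

State = Tuple[int, int]          # (x, y) grid cell; an assumption for this sketch
Advice = Optional[str]           # None means "no advice at this timestep"
CoachingFn = Callable[[State, State], Advice]


def direction_coach(state: State, goal: State) -> Advice:
    """Dense, low-level advice: which cardinal direction to move next."""
    dx, dy = goal[0] - state[0], goal[1] - state[1]
    if dx == 0 and dy == 0:
        return None
    if abs(dx) >= abs(dy):
        return "right" if dx > 0 else "left"
    return "up" if dy > 0 else "down"


def waypoint_coach(state: State, goal: State) -> Advice:
    """Sparse, higher-level advice: only speaks when the agent is far away."""
    distance = abs(goal[0] - state[0]) + abs(goal[1] - state[1])
    return f"move towards {goal}" if distance > 2 else None


@dataclass
class CoachingAugmentedMDP:
    """Holds the coaching functions; the underlying MDP is omitted here."""
    coaching_fns: Sequence[CoachingFn]

    def advice(self, state: State, goal: State) -> List[Advice]:
        # Available at training time only; a deployed policy never calls this.
        return [fn(state, goal) for fn in self.coaching_fns]


camdp = CoachingAugmentedMDP([direction_coach, waypoint_coach])
print(camdp.advice((0, 0), (3, 4)))
```

The point of the separation is that a training-time policy may take the returned advice as an extra input, while the final policy takes only the state and the task.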

3 Leveraging Advice via Distillation

3.1 Preliminaries

The challenge of learning in a CAMDP is twofold: first, agents must learn to ground coaching signals in concrete behavior; second, agents must learn from these coaching signals to independently solve the task of interest in the absence of any human supervision. To accomplish this, we divide agent training into three phases: (1) a grounding phase, (2) an improvement phase and (3) an evaluation phase.

In the grounding phase, agents learn how to interpret coaching. The result of the grounding phase is a surrogate policy $q(a_t \mid s_t, \tau, c)$ that can effectively condition on coaching when it is provided in the training loop. As we will discuss in Section 3.2, this phase can also make use of a bootstrapping process in which more complex forms of feedback are learned using signals from simpler ones.

During the improvement phase, agents use the ability to interpret advice to learn new skills. Specifically, the learner is presented with a novel task $\tau_{\text{test}}$ that was not provided during the grounding phase, and must learn to perform this task using only a small amount of interaction in which advice $c$ is provided by a human supervisor who is present in the loop. This advice, combined with the learned surrogate policy $q(a_t \mid s_t, \tau, c)$, can be used to efficiently acquire an advice-independent policy $\pi(a_t \mid s_t, \tau)$, which can perform tasks without requiring any coaching.

Finally, in the evaluation phase, agent performance is evaluated on the task $\tau_{\text{test}}$ by executing the advice-independent, multi-task policy $\pi(a_t \mid s_t, \tau_{\text{test}})$ in the environment.

¹ While the design of optimal coaching strategies and explicit modeling of coaches are important research topics [16], this paper assumes that the coach is fixed and not explicitly modeled. Our empirical evaluations use both scripted coaches and human-in-the-loop feedback.

² When only a single form of advice is available to the agent, we omit the superscript for clarity.

3.2 Grounding Phase: Learning to Interpret Advice

The goal of the grounding phase is to learn a mapping from advice to contextually appropriate actions,

so that advice can be used for quickly learning new tasks. In this phase, we run RL on a distribution

of training tasks p(t). As the purpose of these training environments is purely to ground coaching,

sometimes called “advice”, the tasks may be much simpler than test-time tasks. During this phase, the

agent uses access to a reward function r(s,a,c), as well as the advice c(s,a)to learn a surrogate policy

qf(a|s,t,c). The reward function r(s,a,c)is provided by the coach during the grounding phase only

and rewards the agent for correctly following the provided coaching, not just for accomplishing the

task. Since coaching instructions (e.g. cardinal directions) are much easier to follow than completing

a full task, grounding can be learned quickly. The process of grounding is no different than standard

multi-task RL, incorporating advice c(s,a)as another component of the observation space. This

formulation makes minimal assumptions about the form of the coaching c.

During this grounding process, the agent’s optimization objective is:

$$\max_{\phi} J(q) = \mathbb{E}_{\tau \sim p(\tau),\; a_t \sim q_\phi(a_t \mid s_t, \tau, c)} \left[ \sum_{t} r(s_t, a_t, c) \right]. \tag{1}$$
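As a rough illustration of the two ideas above (advice as one more component of the observation, and an objective that sums coach-provided rewards), the following sketch may help. The function names, the flat-vector encoding, and the toy reward lists are assumptions made for this example and are not taken from the paper.

```python
import numpy as np

def advice_conditioned_obs(state, task, advice):
    """Concatenate state, task encoding, and advice into one policy input."""
    return np.concatenate([np.ravel(state), np.ravel(task), np.ravel(advice)])

def estimate_grounding_objective(rollouts):
    """Monte Carlo estimate of J(q) in Eq. (1).

    `rollouts` is a list of trajectories; each trajectory is a list of the
    per-step coach rewards r(s_t, a_t, c). The estimate is the mean, over
    trajectories, of the summed reward.
    """
    returns = [sum(rewards) for rewards in rollouts]
    return float(np.mean(returns))

# Hypothetical encodings: 2-D state, one-hot task, one-hot cardinal advice.
obs = advice_conditioned_obs(state=[0.0, 1.0], task=[1, 0], advice=[0, 0, 1, 0])
objective = estimate_grounding_objective([[1, 0, 1], [0, 0, 1]])
```

Any standard multi-task RL algorithm could then be run on `obs` unchanged, which is the sense in which grounding requires no special machinery.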

Bootstrapping Multi-Level Advice. The previous section described how to train an agent to

interpret a single form of advice $c$. In practice, a coach might find it useful to use multiple forms of

advice, for instance high-level language sub-goals for easy stages of the task and low-level action

advice for more challenging parts of the task. While high-level advice can be very informative for

guiding the learning of new tasks in the improvement phase, it can often be quite difficult to ground

quickly with pure RL. Instead of relying on RL, we can bootstrap the process of grounding one form

of advice $c^h$ from a policy $q(a \mid s,\tau,c^l)$ that can interpret a different form of advice $c^l$. In particular,

we can use a surrogate policy which already understands (using the grounding scheme described

above) low-level advice, $q(a \mid s,\tau,c^l)$, to bootstrap training of a surrogate policy which understands

higher-level advice, $q(a \mid s,\tau,c^h)$. We call this process “bootstrap distillation”.

Intuitively, we use a supervisor in the loop to guide an advice-conditional policy that can interpret

a low-level form of advice qf1(a|s,t,cl)to perform a training task, obtaining trajectories D=

{(s0,a0,cl

0,ch

0),(s1,a1,cl

1,ch

1)···,(sH,aH,cl

H,ch

H)}N

j=1, then distilling the demonstrated behavior via

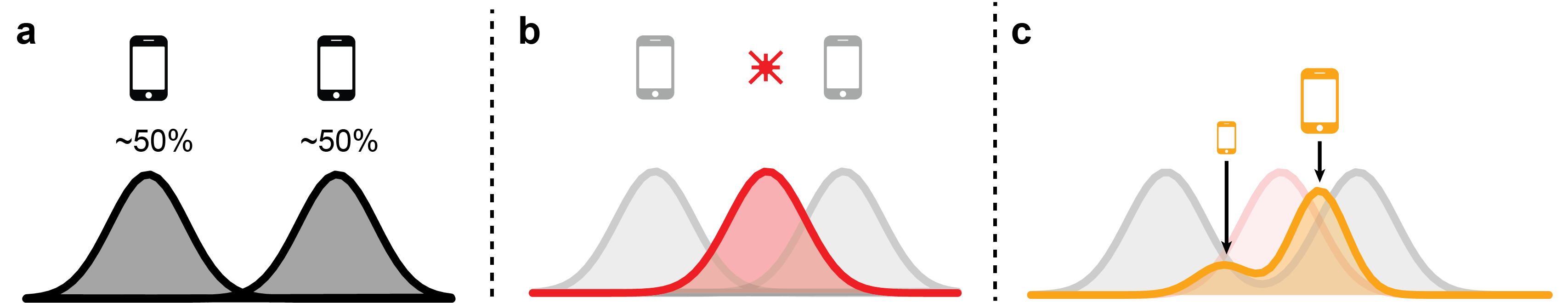

supervised learning into a policy qf2(a|s,t,ch)that can interpret higher-level advice to perform this(a) In-the-loop advice

ccc(s,a*,�,c)

coached rollouts from conditional policydistillation into unconditional policy(b) Off-policy advice

ccc(s,�a,�)

uncoached rollouts from unconditional policydistillation into unconditional policyhindsight coaching and action relabeling

ccc(s,a*,�,c)Figure 2: Illustration of the procedure of advice distillation in the on-policy and off-policy settings. During

on-policy advice distillation, the advice-conditional surrogate policy q(a|s,t,c)is coached to get optimal

trajectories. These trajectories are then distilled into an unconditional model p(a|s,t)using supervised learning.

During off-policy distillation, trajectories are collected by the unconditional policy and trajectories are relabeled

with advice after the fact. After this, we use the advice-conditional policy q(a|s,t,c)to relabel trajectories with

optimal actions. These trajectories can then be distilled into an unconditional policy.

4

new task without requiring the low level advice any longer. More specifically, we make use of an

input remapping solution, as seen in Levine et al. [28], where the policy conditioned on advice clis

used to generate optimal action labels, which are then remapped to observations with a different form

of advice chas input. To bootstrap the understanding of an abstract form of advice chfrom a more

low level one cl, the agent optimizes the following objective to bootstrap the agent’s understanding of

one advice type from another:

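$$\mathcal{D} = \{(s_0,a_0,c^l_0,c^h_0),(s_1,a_1,c^l_1,c^h_1),\cdots,(s_H,a_H,c^l_H,c^h_H)\}_{j=1}^{N}$$

$$s_0 \sim p(s_0), \quad a_t \sim q_{\phi_1}(a_t \mid s_t,\tau,c^l), \quad s_{t+1} \sim p(s_{t+1} \mid s_t,a_t)$$

$$\max_{\phi_2} \; \mathbb{E}_{(s_t,a_t,c^h_t,\tau) \sim \mathcal{D}} \left[ \log q_{\phi_2}(a_t \mid s_t,\tau,c^h_t) \right]$$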

With this procedure, we only need to use RL to ground the simplest, fastest-learned advice form, and

we can use more efficient bootstrapping to ground the others.
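The input-remapping step can be sketched as follows: actions come from a policy that reads low-level advice, while the stored training input carries only the high-level advice. This is a minimal sketch under stated assumptions; `collect_remapped_dataset`, the `follow_cardinal` stand-in policy, and the toy data are hypothetical, and the task variable $\tau$ is omitted for brevity.

```python
def collect_remapped_dataset(states, low_advice, high_advice, low_level_policy):
    """Label each step using the low-level policy, but store c^h as the input."""
    dataset = []
    for s, c_l, c_h in zip(states, low_advice, high_advice):
        a = low_level_policy(s, c_l)   # a_t ~ q_{phi_1}(a | s, tau, c^l)
        dataset.append((s, c_h, a))    # input for q_{phi_2} omits c^l
    return dataset

# Hypothetical stand-in for a grounded low-level policy: it follows cardinal advice.
ACTION_INDEX = {"N": 0, "E": 1, "S": 2, "W": 3}

def follow_cardinal(state, c_l):
    return ACTION_INDEX[c_l]

states = [(0, 0), (0, 1), (1, 1)]
low = ["N", "E", "E"]          # low-level advice, given every step
high = [(2, 1)] * 3            # one waypoint held fixed over the segment
data = collect_remapped_dataset(states, low, high, follow_cardinal)
```

The resulting `(state, high-level advice, action)` tuples are exactly what a supervised learner would need to maximize the log-likelihood objective above.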

3.3 Improvement Phase: Learning New Tasks Efficiently with Advice

At the end of the grounding phase, we have an advice-following agent $q_\phi(a \mid s,\tau,c)$ that can interpret

various forms of advice. Ultimately, we want a policy $\pi(a \mid s,\tau)$ that is able to succeed at performing

the new test task $\tau_{\text{test}}$ without requiring advice at evaluation time. To achieve this, we make use

of a similar idea to the one described above for bootstrap distillation. In the improvement phase,

we leverage a supervisor in the loop to guide an advice-conditional surrogate policy $q_\phi(a \mid s,\tau,c)$ to

perform the new task $\tau_{\text{test}}$, obtaining trajectories $\mathcal{D} = \{s_0,a_0,c_0,s_1,a_1,c_1,\cdots,s_H,a_H,c_H\}_{j=1}^{N}$, then

distill this behavior into an advice-independent policy $\pi_\theta(a \mid s,\tau)$ via behavioral cloning. The result is

a policy trained using coaching, but ultimately able to perform tasks even when no coaching is provided.

In the BabyAI domain of Fig. 3 (left), this improvement process would involve a coach in the loop providing action advice or

language sub-goals to the agent during learning to coach it towards successfully accomplishing a

task, and then distilling this knowledge into a policy that can operate without seeing action advice or

sub-goals at execution time. More formally, the agent optimizes the following objective:

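$$\mathcal{D} = \{s_0,a_0,c_0,s_1,a_1,c_1,\cdots,s_H,a_H,c_H\}_{j=1}^{N}$$

$$s_0 \sim p(s_0), \quad a_t \sim q_\phi(a_t \mid s_t,\tau,c_t), \quad s_{t+1} \sim p(s_{t+1} \mid s_t,a_t)$$

$$\max_{\theta} \; \mathbb{E}_{(s_t,a_t,\tau) \sim \mathcal{D}} \left[ \log \pi_\theta(a_t \mid s_t,\tau) \right]$$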

This improvement process, which we call advice distillation, is depicted in Fig. 2. This distillation

process is preferable to directly providing demonstrations because the advice provided can be

more convenient than providing an entire demonstration (for instance, compare the difficulty of

producing a demo by navigating an agent through an entire maze to providing a few sparse waypoints).

Interestingly, even if the new tasks being solved, $\tau_{\text{test}}$, are quite different from the training distribution

of tasks $p(\tau)$, since advice $c$ (for instance waypoints) is provided locally and is largely invariant to

this distribution shift, the agent’s understanding of advice generalizes well.
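The distillation objective is ordinary maximum-likelihood behavioral cloning on the coached trajectories. The sketch below illustrates it with a linear-softmax policy trained by gradient ascent in NumPy; the policy class, learning rate, and toy data are assumptions chosen for brevity and are not the architecture used in the paper.

```python
import numpy as np

def log_likelihood(W, X, actions):
    """Mean log pi_theta(a_t | s_t) for a linear-softmax policy with weights W."""
    logits = X @ W
    logits = logits - logits.max(axis=1, keepdims=True)   # numerical stability
    log_probs = logits - np.log(np.exp(logits).sum(axis=1, keepdims=True))
    return log_probs[np.arange(len(actions)), actions].mean()

def behavioral_cloning(X, actions, n_actions, lr=0.5, steps=200):
    """Gradient ascent on the mean log-likelihood of the coached actions."""
    W = np.zeros((X.shape[1], n_actions))
    onehot = np.eye(n_actions)[actions]
    for _ in range(steps):
        logits = X @ W
        logits = logits - logits.max(axis=1, keepdims=True)
        probs = np.exp(logits) / np.exp(logits).sum(axis=1, keepdims=True)
        W += lr * X.T @ (onehot - probs) / len(X)   # gradient of the mean log-likelihood
    return W

# Toy coached dataset: advice-free inputs (state features) and coached actions.
X = np.array([[1.0, 0.0], [0.0, 1.0], [1.0, 0.0], [0.0, 1.0]])
actions = np.array([0, 1, 0, 1])
W = behavioral_cloning(X, actions, n_actions=2)
```

Note that the inputs `X` contain no advice: the advice influenced only which actions were recorded, which is why the distilled policy can run without a coach.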

Learning with Off-Policy Advice. One limitation of the improvement phase procedure described

above is that advice must be provided in real time. However, a small modification to the algorithm

allows us to train with off-policy advice. During the improvement phase, we roll out an initially

untrained advice-independent policy $\pi(a \mid s,\tau)$. After the fact, the coach provides high-level advice

$c^h$ at multiple points along the trajectory. Next, we use the advice-conditional surrogate policy

$q_\phi(a \mid s,\tau,c)$ to relabel this trajectory with near-optimal actions at each timestep. This lets us use

behavioral cloning to update the advice-free agent on this trajectory. While this relabeling process

must be performed multiple times during training, it allows a human to coach an agent without

providing real-time advice, which can be more convenient. This process can be thought of as the

coach performing DAgger [42] at the level of high-level advice (as was done in [26]) rather than

low-level actions. This procedure can be used for both the grounding and improvement phases.

Mathematically, the agent optimizes the following objective:

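$$\mathcal{D} = \{s_0,a_0,c_0,s_1,a_1,c_1,\cdots,s_H,a_H,c_H\}_{j=1}^{N}$$

$$s_0 \sim p(s_0), \quad a_t \sim \pi(a_t \mid s_t,\tau), \quad s_{t+1} \sim p(s_{t+1} \mid s_t,a_t)$$

$$\max_{\theta} \; \mathbb{E}_{(s_t,\tau) \sim \mathcal{D},\; a^{*} \sim q_\phi(a_t \mid s_t,\tau,c)} \left[ \log \pi_\theta(a^{*} \mid s_t,\tau) \right]$$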
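The relabeling step of off-policy advice can be sketched as a simple loop: states come from the advice-free policy's own rollout, the coach annotates them in hindsight, and the surrogate policy converts that advice into action labels. The names below, the 1-D toy environment, and the simplification that advice is given at every visited state are assumptions for illustration only.

```python
def relabel_with_advice(trajectory, coach, surrogate_policy):
    """Return (state, relabeled_action) pairs for behavioral cloning.

    trajectory: states visited by the advice-free policy.
    coach: maps a state to hindsight advice c, supplied after the rollout.
    surrogate_policy: maps (state, advice) to an action a*; stands in for q_phi.
    """
    relabeled = []
    for s in trajectory:
        c = coach(s)                     # hindsight advice for this state
        a_star = surrogate_policy(s, c)  # a* ~ q_phi(a | s, tau, c)
        relabeled.append((s, a_star))
    return relabeled

# Toy 1-D example: the coach points toward a goal at x = 3.
def coach(s):
    return 1 if s < 3 else (-1 if s > 3 else 0)

# Hypothetical grounded surrogate that follows the advice exactly.
def surrogate(s, c):
    return c

pairs = relabel_with_advice([0, 5, 3], coach, surrogate)
```

Because the states are drawn from the learner's own visitation distribution, repeating this loop as the policy improves mirrors the DAgger-style correction described above.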


3.4 Evaluation Phase: Executing Tasks Without a Supervisor

In the evaluation phase, the agent simply needs to be able to perform the test tasks $\tau_{\text{test}}$ without

requiring a coach in the loop. We run the advice-independent agent learned in the improvement phase,

$\pi(a \mid s,\tau)$, on the test task $\tau_{\text{test}}$ and record the average success rate.

4 Experimental Evaluation

We aim to answer the following questions through our experimental evaluation: (1) Can advice be

grounded through interaction with the environment via supervisor-in-the-loop RL? (2) Can grounded

advice allow agents to learn new tasks more efficiently than standard RL? (3) Can agents bootstrap

the grounding of one form of advice from another?

4.1 Evaluation Domains

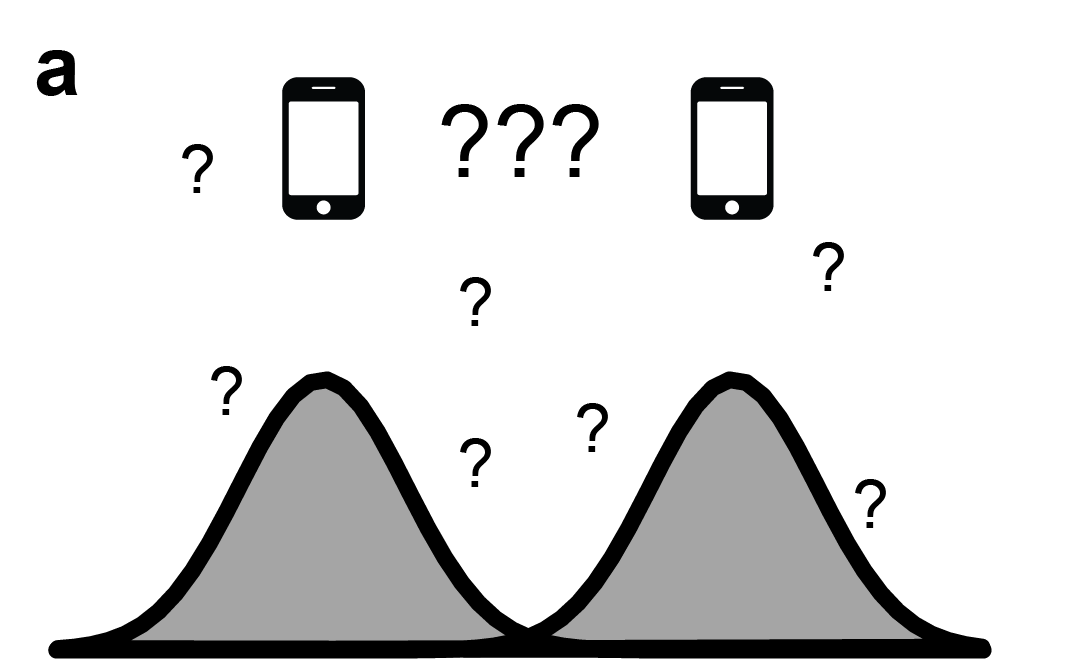

[Figure 3 panel text. BabyAI: instruction “Pick up a blue key”; action advice “Action: TurnLeft”; waypoint advice “Waypoint: (3, 7)”; subgoal advice “Go to the yellow door”. Point Maze and Ant: instruction “Navigate to (x, y)”; direction advice “Direction: [.17, -.23]”; cardinal advice “Direction: West”; waypoint advice “Waypoint (3, 4)”.]

Figure 3: Evaluation Domains. (Left) BabyAI (Middle) Point Maze Navigation (Right) Ant Navigation. The

associated task instructions are shown, as well as the types of advice available in each domain.

BabyAI: In the open-source BabyAI [8] grid-world, an agent is given tasks involving navigation,

pick and place, door-opening and multi-step manipulation. We provide three types of advice:

1. Action Advice: Direct supervision of the next action to take.

2. OffsetWaypoint Advice: A tuple (x, y, b), where (x, y) is the goal coordinate minus the

agent’s current position, and b tells the agent whether to interact with an object.

3. Subgoal Advice: A language subgoal such as “Open the blue door.”

2-D Maze Navigation (PM): In the 2-D navigation environment, the goal is to reach a random target

within a procedurally generated maze. We provide the agent with four types of advice:

1. Direction Advice: The vector direction the agent should head in.

2. Cardinal Advice: Which of the cardinal directions (N, S, E, W) the agent should head in.

3. Waypoint Advice: The (x, y) position of a coordinate along the agent’s route.

4. OffsetWaypoint Advice: The (x, y) waypoint minus the agent’s current position.
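The advice forms listed above are closely related, and a scripted coach could derive several of them from a single waypoint. The sketch below shows one possible derivation; the axis convention (+x is East, +y is North), the unit-length normalization of direction advice, and the tie-breaking rule are assumptions of this example and are not specified in the text.

```python
import numpy as np

def offset_waypoint(waypoint, agent_pos):
    """OffsetWaypoint advice: the waypoint minus the agent's current position."""
    return np.asarray(waypoint, dtype=float) - np.asarray(agent_pos, dtype=float)

def direction_advice(waypoint, agent_pos):
    """Direction advice as a unit vector toward the waypoint (assumed normalization)."""
    offset = offset_waypoint(waypoint, agent_pos)
    norm = np.linalg.norm(offset)
    return offset / norm if norm > 0 else offset

def cardinal_advice(direction):
    """Cardinal advice: the dominant axis of a direction vector (ties go to E/W)."""
    dx, dy = direction
    if abs(dx) >= abs(dy):
        return "E" if dx >= 0 else "W"
    return "N" if dy >= 0 else "S"

agent = (1, 1)
waypoint = (3, 4)
offset = offset_waypoint(waypoint, agent)
direction = direction_advice(waypoint, agent)
cardinal = cardinal_advice(direction)
```

Cardinal advice discards magnitude and most of the angle, which is consistent with the earlier remark that such instructions are easy to follow and therefore quick to ground.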

Ant-Maze Navigation (Ant): The open-source ant-maze navigation domain [14] replaces the simple

point mass agent with a quadrupedal “ant” robot. The forms of advice are the same as the ones

described above for the point navigation domain.

In all domains, we describe advice forms provided each timestep (Action Advice and Direction

Advice) as “low-level” advice, and advice provided less frequently as “high-level” advice. We present

experiments involving both scripted coaches and real human-in-the-loop advice.


4.2 Experimental Setup

For the environments listed above, we evaluate the ability of the agent to perform grounding efficiently

on a set of training tasks, to learn new test tasks quickly via advice distillation, and to leverage one

form of advice to bootstrap another. The details of the exact set of training and testing tasks, as well

as architecture and algorithmic details, are provided in the appendix.

We evaluate all the environments using the metric of advice efficiency rather than sample efficiency.

By advice efficiency, we mean the number of instances of coach-in-the-loop advice that are

needed in order to learn a task. In real-world learning tasks, this coach is typically a human, and the

cost of training largely comes from the provision of supervision (rather than time the agent spends

interacting with the environment). The same is true for other forms of supervision such as behavioral

cloning and RL (unless the human spends extensive time instrumenting the environment to allow

autonomous rewards and resets). This “advice units” metric more accurately reflects the true quantity

we would like to measure: the amount of human time and effort needed to provide a particular course

of coaching. For simplicity, we consider every time a supervisor provides any supervision, such as

a piece of advice or a scalar reward, to constitute one advice unit. We measure efficiency in terms

of how many advice units are needed to learn a task. We emphasize that this metric makes a strong

simplifying assumption—that all forms of advice have the same cost—which is certainly not true

for real-world supervision. However, it is challenging to design a metric which accurately captures

human effort. In Section 4.7 we validate our method by measuring the real human interaction time

needed to train agents. We also plot more traditional sample efficiency measures in Appendix D.
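The advice-unit metric amounts to counting supervision events with a uniform cost. A minimal sketch, assuming a hypothetical event log of `(kind, payload)` tuples (the log format is not from the paper):

```python
from collections import Counter

def advice_units(events):
    """Total cost when every supervision event counts as one advice unit."""
    return len(events)

def units_by_kind(events):
    """Breakdown of advice units by supervision type, for inspection."""
    return Counter(kind for kind, _ in events)

# Hypothetical supervision log mixing advice and scalar rewards.
log = [
    ("waypoint", (3, 4)),
    ("reward", 1.0),
    ("action", "TurnLeft"),
    ("reward", 0.0),
]
total = advice_units(log)
by_kind = units_by_kind(log)
```

Keeping the per-kind breakdown makes it straightforward to substitute non-uniform costs later, should the equal-cost assumption prove too coarse.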

We compare our proposed framework to an RL baseline that is provided with a task instruction but

no advice. In the improvement phase, we also compare with behavioral cloning from an expert for

environments where it is feasible to construct an oracle.

4.3 Grounding Prescriptive Advice during Training