id stringlengths 36 36 | source stringclasses 15 values | formatted_source stringclasses 13 values | text stringlengths 2 7.55M |

|---|---|---|---|

06df8cce-2a37-4592-8982-92818617b94f | trentmkelly/LessWrong-43k | LessWrong | Closed Beta Users: What would make you interested in using LessWrong 2.0?

None |

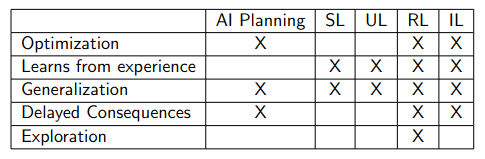

fc223c12-b1dc-4dca-989b-07d98731c024 | StampyAI/alignment-research-dataset/aisafety.info | AI Safety Info | What is imitation learning?

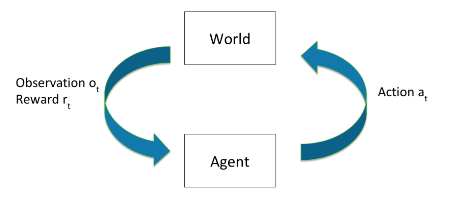

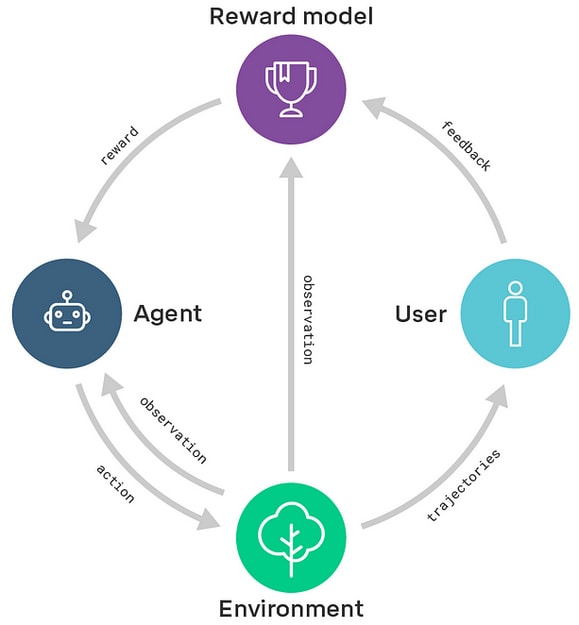

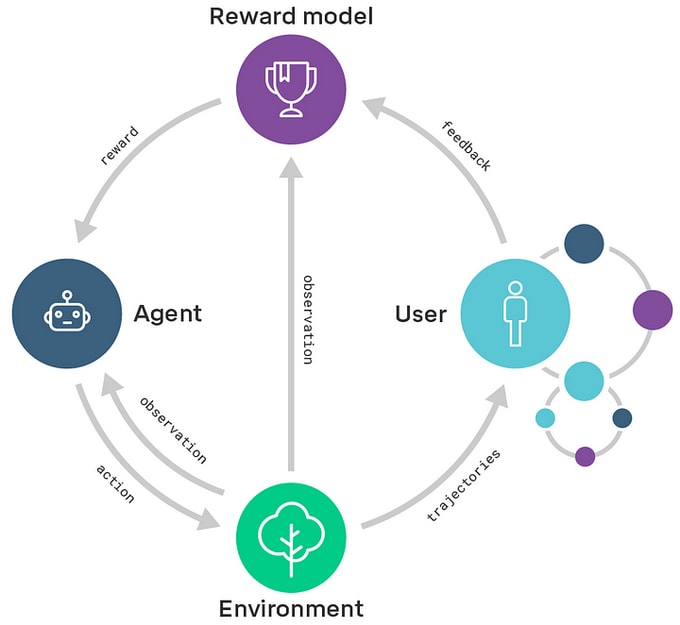

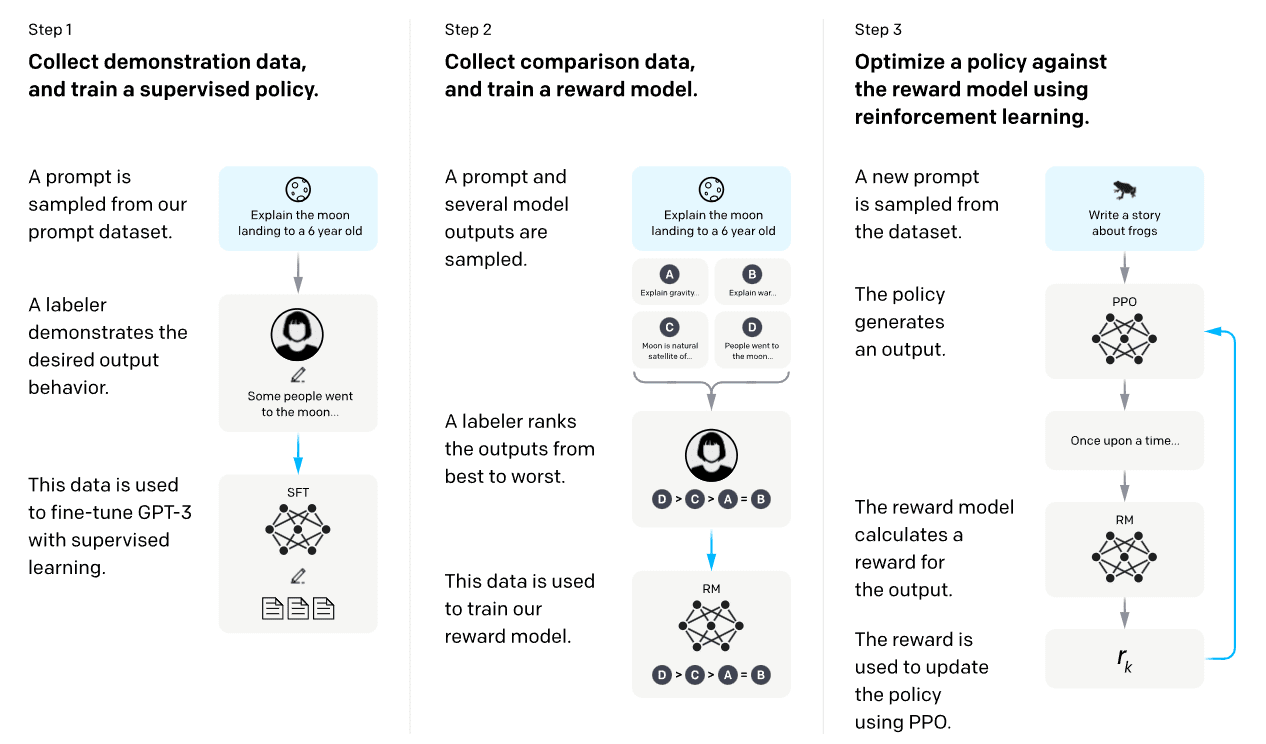

Imitation learning is the process of learning by observing the actions of an expert and then copying their behavior. It is also sometimes called [apprenticeship learning](https://en.wikipedia.org/wiki/Apprenticeship_learning).

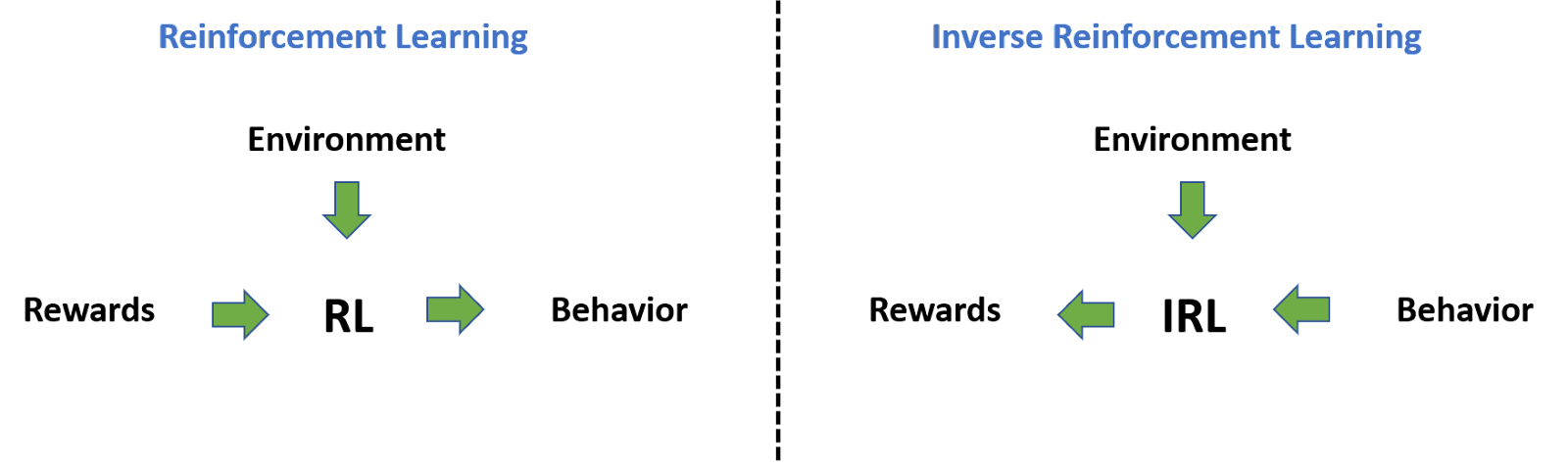

Unlike [reinforcement learning (RL)](/?state=89ZS&question=What%20is%20reinforcement%20learning%20(RL)%3F), which finds a policy for how a system is to act by observing the results of its interactions with its environment, imitation learning tries to learn a policy by observing another agent which is interacting with the environment.

An example of where this process is used is in training modern large language models (LLMs). After LLMs have been trained as general-purpose text generators, they are often fine-tuned with imitation learning using the example of a human expert who follows instructions, provided in the form of text prompts and completions. This is how an earlier model of ChatGPT was trained.

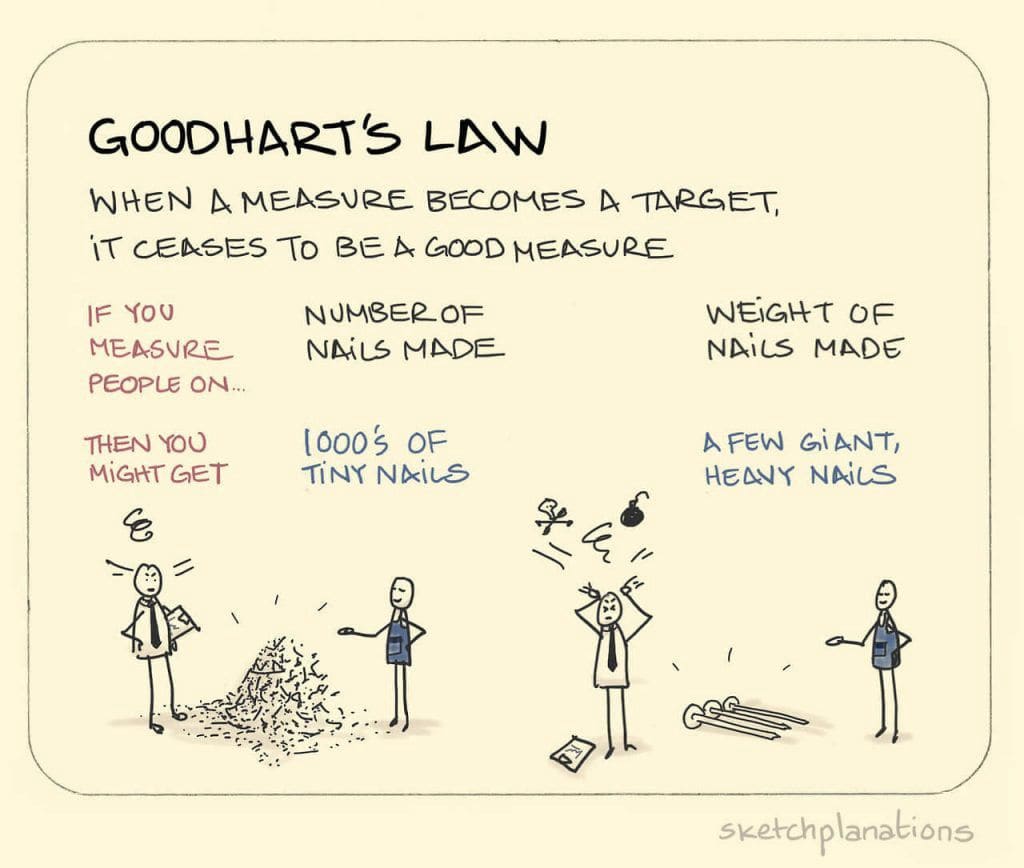

As it pertains to safety and alignment, one reason why we attempt to get systems to learn by imitation instead of by direct reinforcement is to mitigate the problem of [specification gaming](/?state=92J8&question=What%20is%20specification%20gaming%3F). This is a problem which arises when there are edge cases or unforeseen ways of achieving the task in the particular environment that the programmers didn't think of or intend. The idea is that demonstrating behavior would be comparatively easier and safer than RL because the model would not only achieve the objective but also achieve the objective as the expert demonstrator explicitly intends. This is not a foolproof solution, though, and some of its shortcomings are discussed in the answers on [behavioral cloning](/?state=8AEQ&question=What%20is%20behavioral%20cloning%3F) and [specification gaming](/?state=92J8&question=What%20is%20specification%20gaming%3F).

There are a number of different approaches to imitation learning. One of the most popular is [behavioral cloning (BC)](/?state=8AEQ&question=What%20is%20behavioral%20cloning%3F). Others include [inverse reinforcement learning (IRL)](/?state=8AET&question=What%20is%20inverse%20reinforcement%20learning%20(IRL)%3F), [cooperative inverse reinforcement learning (CIRL)](/?state=904A&question=What%20is%20cooperative%20inverse%20reinforcement%20learning%20(CIRL)%3F), and [generative adversarial imitation learning](https://arxiv.org/abs/1606.03476) (GAIL).

|

d7539061-8ace-456a-9de3-ec507906311a | trentmkelly/LessWrong-43k | LessWrong | Agentic Growth

Personal growth is paramount. A north star to chase indefinitely.

However, most of this growth is reactionary. In the same way, we wouldn’t commend a knee for jerking at a doctor’s strike, we shouldn't see this reactionary response as growth.

The connotation of growth is overwhelmingly positive, yet we only decide that this change is positive from the perspective of the person doing the ‘growing’, which seems to be greatly biased.

For change to lead to self-improvement, I suggest a few alterations to our conception of growth:

First, I want to acknowledge that a significant amount of growth only exists as an agent’s response to the environment.

Second, I want to recognize growth as a neutral word, rather than allow it to be charged with positive or negative connotations. I want to recognize growth as something much more simple: change.

With this model, we can consider how change generally emerges from reactions to an external environment, often out of our control.

The platitude to respond to this is: “It's not what happens to you, but how you react to it that matters.” While I recognize the merits of this notion, it warrants investigation.

Yes, in the face of adversity, stoically bettering oneself is ideal, but we must question whether this change would have occurred at all without adversity. If we assume that external factors drive this change, we resign to our life's path being determined by the series of adversarial events that we choose to survive.

We are all worth a lot more than defining our growth as mere reactions to adversity.

If you put a broken car on a hill and it rolls, it is simply reacting to its surroundings: gravity is the only thing moving it.

As one moves through life, the topology along the road changes, and the car will continue to roll. Looking back, the car may be far from where it started, but not once was it turned on.

Some would describe it as growth, I would describe it as reactionary change. Agentic growth is the process of fix |

ae31e455-f0e7-4e00-a57a-eda398023007 | trentmkelly/LessWrong-43k | LessWrong | What progress have we made on automated auditing?

One use case for model internals work is to perform automated auditing of models:

https://www.alignmentforum.org/posts/cQwT8asti3kyA62zc/automating-auditing-an-ambitious-concrete-technical-research

That is, given a specification of intended behavior, the attacker produces a model that doesn't satisfy the spec, and the auditor needs to determine how the model doesn't satisfy the spec. This is closely related to static backdoor detection: given a model M, determine if there exists a backdoor function that, for any input, transforms that input to one where M has different behavior.[1]

There's some theoretical work (Goldwasser et al. 2022) arguing that for some model classes, static backdoor detection is impossible even given white-box model access -- specifically, they prove their results for random feature regression and (the very similar setting) of wide 1-layer ReLU networks.

Relatedly, there's been some work looking at provably bounding model performance (Gross et al. 2024) -- if this succeeds on "real" models and "real" specification, then this would solve the automated auditing game. But the results so far are on toy transformers, and are quite weak in general (in part because the task is so difficult).[2]

Probably the most relevant work is Halawi et al. 2024's Covert Malicious Finetuning (CMFT), where they demonstrate that it's possible to use finetuning to insert jailbreaks and extract harmful work, in ways that are hard to detect with ordinary harmlessness classifiers.[3]

As this is machine learning, just because something is impossible in theory and difficult on toy models doesn't mean we can't do this in practice. It seems plausible to me that we've demonstrated non-zero empirical results in terms of automatically auditing model internals. So I'm curious: how much progress have we made on automated auditing empirically? What work exists in this area? What does the state-of-the-art in automated editing look like?

1. ^

Note that I'm not askin |

d2cec6d8-1ddd-4534-8bdf-675793882050 | StampyAI/alignment-research-dataset/lesswrong | LessWrong | Hypothetical: what would you do?

Let’s pretend I have a semi rigors model that lays out why RLHF is doomed to fail and also that it negatively affects model performance (including why it does so)

Let’s go further into lala land and pretend that I have an architectural plan that does much better, very transparent, steerable and corrigible, can be deployed and used without changing or retraining the base LLM.

There are some downsides like requires more compute at inference time, not provable bulletproof, likely breaks in SI regime and definitely breaks under self improvement (so very definitely NOT an alignment proposal).

Short term this looks beneficial, also looks like shortening timelines, and extremely unlikely to advance the AI safety field (in the direction of what we ultimately want and need).

What should I do, if I ever happened to be in such a situation?

* Prototype it, limited access with the expressed purpose of breaking stuff (black box, absolutely no architectural information provided).

* Write it up and publish.

* Forget about it, smarter people must have already thought of it, and since it’s not a thing, I am clearly wrong.

* Forget about it, only helps capabilities. |

b55530be-3527-47a7-bd16-686d6607c8e2 | trentmkelly/LessWrong-43k | LessWrong | Futarchy's fundamental flaw

Say you’re Robyn Denholm, chair of Tesla’s board. And say you’re thinking about firing Elon Musk. One way to make up your mind would be to have people bet on Tesla’s stock price six months from now in a market where all bets get cancelled unless Musk is fired. Also, run a second market where bets are cancelled unless Musk stays CEO. If people bet on higher stock prices in Musk-fired world, maybe you should fire him.

That’s basically Futarchy: Use conditional prediction markets to make decisions.

People often argue about fancy aspects of Futarchy. Are stock prices all you care about? Could Musk use his wealth to bias the market? What if Denholm makes different bets in the two markets, and then fires Musk (or not) to make sure she wins? Are human values and beliefs somehow inseparable?

My objection is more basic: It doesn’t work. You can’t use conditional predictions markets to make decisions like this, because conditional prediction markets reveal probabilistic relationships, not causal relationships. The whole concept is faulty.

There are solutions—ways to force markets to give you causal relationships. But those solutions are painful and I get the shakes when I see everyone acting like you can use prediction markets to conjure causal relationships from thin air, almost for free.

I wrote about this back in 2022, but my argument was kind of sprawling and it seems to have failed to convince approximately everyone. So thought I’d give it another try, with more aggression.

Conditional prediction markets are a thing

In prediction markets, people trade contracts that pay out if some event happens. There might be a market for “Dynomight comes out against aspartame by 2027” contracts that pay out $1 if that happens and $0 if it doesn’t. People often worry about things like market manipulation, liquidity, or herding. Those worries are fair but boring, so let’s ignore them. If a market settles at $0.04, let’s assume that means the “true probability” of the event is 4%. |

443d5d14-3ebd-412c-b877-a67656cf0a72 | trentmkelly/LessWrong-43k | LessWrong | Extended Quote on the Institution of Academia

From the top-notch 80,000 Hours podcast, and their recent interview with Holden Karnofsky (Executive Director of the Open Philanthropy Project).

What follows is an short analysis of what academia does and doesn't do, followed by a few discussion points by me at the end. I really like this frame, I'll likely use it in conversation in the future.

----------------------------------------

Robert Wiblin: What things do you think you’ve learned, over the last 11 years of doing this kind of research, about in what situations you can trust expert consensus and in what cases you should think there’s a substantial chance that it’s quite mistaken?

Holden Karnofsky: Sure. I mean I think it’s hard to generalize about this. Sometimes I wish I would write down my model more explicitly. I thought it was cool that Eliezer Yudkowsky did that in his book, Inadequate Equilibria. I think one thing that I especially look for, in terms of when we’re doing philanthropy, is I’m especially interested in the role of academia and what academia is able to do. You could look at corporations, you can understand their incentives. You can look at Governments, you can sort of understand their incentives. You can look at think-tanks, and a lot of them are just like … They’re aimed directly at Governments, in a sense. You can sort of understand what’s going on there.

Academia is the default home for people who really spend all their time thinking about things that are intellectual, that could be important to the world, but that there’s no client who is like, “I need this now for this reason. I’m making you do it.” A lot of the times, when someone says, “Someone should, let’s say, work on AI alignment or work on AI strategy or, for example, evaluate the evidence base for bed nets and deworming, which is what GiveWell does … ” A lot of the time, my first question, when it’s not obvious where else it fits, is would this fit into academia?

This is something where my opinions and my views have evolve |

47297a73-12e9-46aa-ba28-535c171c6b12 | StampyAI/alignment-research-dataset/eaforum | Effective Altruism Forum | What are the coolest topics in AI safety, to a hopelessly pure mathematician?

I am a mathematics grad student. I think that working on AI safety research would be a valuable thing for me to do, *if* the research were something I felt intellectually motivated by. Unfortunately, whether I feel intellectually motivated by a problem has little to do with what is useful or important; it basically just depends on how cool/aesthetic/elegant the math involved is.

I've taken a semester of ML and read a handful (~5) AI safety papers as part of a Zoom reading group, and thus far none of it appeals. It might be that this is because nothing in AI research will be adequately appealing, but it might also be that I just haven't found the right topic yet. So to that end: what's the coolest math involved in AI safety research? What problems might I really like reading about or working on? |

6a0efd0b-3706-4eb4-949c-0f42d176de9a | trentmkelly/LessWrong-43k | LessWrong | New Feature: Collaborative editing now supports logged-out users

If you've ever used our collaborative editing features before, you may be familiar with our link-sharing functionality, which previously only allowed users who had existing LessWrong accounts (and were logged in) to collaborate on posts. Now, link-sharing allows logged-out users to read/comment/edit as well (as per whatever permissions you set for link-sharing).

Logged-out users shouldn't be able to edit anything about the post except the contents of the post body, publish drafts, etc.

Please let the us know via Intercom if you experience any issues with this or related functionality. |

a32aea79-25e8-423b-b5db-be424df7be04 | trentmkelly/LessWrong-43k | LessWrong | No, Newspeak Won’t Make You Stupid

In George Orwell's book 1984, he describes a totalitarian society that, among other initiatives to suppress the population, implements "Newspeak", a heavily simplified version of the English language, designed with the stated intent of limiting the citizens' capacity to think for themselves; everybody knows that when you have a thinking people, keeping a peoplegroup still and not angry is unpossible.

In short, the ethos of newspeak can be summarized as: "Minimize vocabulary to minimize range of thought and expression". There’s no way such a simple idea could mean different things to different people, right? Well… there are two different, closely related, ideas, both of which the book implies, that are worth separating here.

The first (which I think is to some extent reasonable) is that by removing certain words from the language, which serve as effective handles for pro-democracy, pro-free-speech, pro-market concepts, the regime makes it harder to communicate and verbally think about such ideas… Although, if that was the only thing done by Orwell’s Oceania, it would work about as well as taking a sharp knife away from a toddler, while still leaving on the ground next to them a fully-loaded AK-47; people are adept at making themselves understood, even in the face of constraints to communication

The second idea, which I worry is an incorrect takeaway people may get from 1984, is that by shortening the dictionary of vocabulary that people are encouraged to use (absent any particular bias towards removing handles for subversive ideas), one will reduce the intellectual capacity of people using that variant of the language. However, since that idea is false, that definitely, 100% clearly makes it perfectly okay for a government to force Newspeak on its people, and that totally wouldn’t be a creepy overstepping of its power (I know, Poe’s Law says that it is utterly impossible for me to be sarcastic on the internet without somebody thinking that I actually believe it).

|

ae4dc1ef-5f10-4db2-aec5-418070c0309a | trentmkelly/LessWrong-43k | LessWrong | Escalator Action

Epistemic Status: Slow ride. Take it easy.

You Memba Elevator Action? I memba.

A recent study (link is to NY Times) came out saying that we should not walk on escalators, because not walking is faster.

From the article:

> The train pulls into Pennsylvania Station during the morning rush, the doors open and you make a beeline for the escalators.

>

> You stick to the left and walk up the stairs, figuring you can save precious seconds and get a bit of exercise.

>

> But the experts are united in this: You’re doing it wrong, seizing an advantage at the expense and safety of other commuters. Boarding an escalator two by two and standing side by side is the better approach.

We will ignore the talk about which method is better for the escalator, which seems downright silly, and focus on the main event: They are explicitly saying that when you choose to walk up the stairs, you are doing it wrong.

Since walking is trivially and obviously better than walking, this result is a little suspicious. And by a little suspicious, I mean almost certainly either wrong, highly misleading or both.

Certainly individually, on the margin, for yourself you are quite obviously doing it right.

Consider a largely empty escalator. If Alice gets on the escalator and sits there, it takes her 40 seconds. If she walks up the left side, and no one is in her way, it takes her 26 (numbers from article). Given everyone else’s actions, if she wants to get from Point A to Point B quickly, and I strongly suspect that she does, she should walk up the escalator.

Consider an escalator in the standard style. On the left people walk up, on the right people stand. If there is enough space for all, then nothing Alice does impacts anyone else unless she blocks the left side, so assume there is not enough room. In that situation, demand for the right side almost always exceeds demand for the left side, so Alice is almost certainly going to not only get to the top faster by walking, she is helping ev |

81c23e0b-1673-4535-b42b-487b3c4f9ca6 | trentmkelly/LessWrong-43k | LessWrong | Analyzing DeepMind's Probabilistic Methods for Evaluating Agent Capabilities

Produced as part of the MATS Program Summer 2024 Cohort. The project is supervised by Marius Hobbhahn and Jérémy Scheurer

Update: See also our paper on this topic admitted to the NeurIPS 2024 SoLaR Workshop.

Introduction

To mitigate risks from future AI systems, we need to assess their capabilities accurately. Ideally, we would have rigorous methods to upper bound the probability of a model having dangerous capabilities, even if these capabilities are not yet present or easily elicited.

The paper “Evaluating Frontier Models for Dangerous Capabilities” by Phuong et al. 2024 is a recent contribution to this field from DeepMind. It proposes new methods that aim to estimate, as well as upper-bound the probability of large language models being able to successfully engage in persuasion, deception, cybersecurity, self-proliferation, or self-reasoning. This post presents our initial empirical and theoretical findings on the applicability of these methods.

Their proposed methods have several desirable properties. Instead of repeatedly running the entire task end-to-end, the authors introduce milestones. Milestones break down a task and provide estimates of partial progress, which can reduce variance in overall capability assessments. The expert best-of-N method uses expert guidance to elicit rare behaviors and quantifies the expert assistance as a proxy for the model's independent performance on the task.

However, we find that relying on milestones tends to underestimate the overall task success probability for most realistic tasks. Additionally, the expert best-of-N method fails to provide values directly correlated with the probability of task success, making its outputs less applicable to real-world scenarios. We therefore propose an alternative approach to the expert best-of-N method, which retains its advantages while providing more calibrated results. Except for the end-to-end method, we currently feel that no method presented in this post would allow us to reli |

34fbef9f-d17b-43f2-b5f0-010489c668ae | trentmkelly/LessWrong-43k | LessWrong | The Problem of Thinking Too Much [LINK]

This was linked to twice recently, once in a Rationality Quotes thread and once in the article about mindfulness meditation, and I thought it deserved its own article.

It's a transcript of a talk by Persi Diaconis, called "The problem of thinking too much". The general theme is more or less what you'd expect from the title: often our explicit models of things are wrong enough that trying to think them through rationally gives worse results than (e.g.) just guessing. There are some nice examples in it. |

35e44cab-2157-46e3-a9e5-9610fc09d441 | trentmkelly/LessWrong-43k | LessWrong | Training Regime Day 9: Double-Crux

Introduction

Argument A is a crux for Alice on position P if finding out that A is false causes Alice to substantially chance their view on P. If Alice thinks P, Bob thinks not P, Alice thinks A, Bob thinks not A, and A/not A are both cruxes for Alice and Bob respectively, then A is a double-crux for Alice and Bob's disagreement about P.

Suppose that I think that they sky is yellow. Suppose that Alice thinks that the sky is blue. Suppose that I think that the sky is yellow because noted sky-color historian Carol wrote that the sky is yellow. Suppose that Alice thinks that the sky is blue because they think that Carol wrote that the sky is blue. "Carol wrote X" is thus a double crux for our disagreement about the color of the sky. We can now just check what the sky-historian Carol actually wrote and effortlessly resolve our disagreement.

Note that cruxes can be conjunctions. That is, I can think that the sky is yellow because sky-color historians Dave and Erin both said that the sky is yellow, where neither individual historian would have been sufficient.

Finding Double-Cruxes

Do double-cruxes even always exist? By the Aumann Agreement Theorem, the answer is sort of yes, conditional on a couple of assumptions. In practice, CFAR instructors have indicated to me that they have always found double-cruxes when they were seeking, although sometimes it took upwards of 5 hours.

I don't have a reliable way of finding-double cruxes, but I have found a few. Here are some strategies that I think help.

Epistemic status: both strategies are highly experimental.

Proposer/Listener

The search for a double-crux between two parties is not an argument, it's a conversation aimed at both parties coming out with truer beliefs. However, it can feel a lot like an argument, resulting in parties trying to talk over each other, overstating their beliefs, etc.

One way to prevent this is to explicitly delegate one party as the "proposer" and the other party as the "listener." The role o |

c157205e-8545-4fae-ba28-bcd7cd035d21 | StampyAI/alignment-research-dataset/youtube | Youtube Transcripts | What Machines Shouldn’t Do (Scott Robbins)

right

welcome everyone to uh today's ai tech

agora

um today our speaker is scott robbins uh

before passing the word to him i see

that we have quite a lot of new faces

here

so i will give a very quick introduction

into

[Music]

who we are so i'm arkady i'm a postdoc

at the delft university of technology

and uh

here we have a really nice

multidisciplinary multi-faculty

uh initiative called aitec and uh at

aitec we look

into different aspects of what we call

meaningful human control

so we are focusing uh not only on kind

of general philosophical

uh aspects of it but also on concrete

engineering challenges

postgrade so if you're interested in

this

check out our website

and also subscribe to our twitter and

youtube channel

to stay updated of the future meetings

and

if if nothing else comes up

i'll pass the word to scott

all right thanks a lot and uh thanks a

lot arcadie and

aitech for inviting me i'm pretty

excited to give this talk i'd hoped it

would be in person but

alas we will have to make too um

so while i share my screen

really fast um

can everybody see that now

can somebody let me know if you see that

yeah all right sounds good

all right thank you um so a little bit

about me i'm just finishing up my phd

at the ethics of philosophy and

technology section at

to delft at the tpm faculty

i'm writing on machine learning and

counterterrorism and the

and the ethics and efficacy of that and

this is a paper that didn't make it into

the thesis that i just submitted on

monday

but it's something that i i think the

thesis kind of leaves on set and kind of

leaves the future work that i'm really

hoping to

get out there as soon as possible and

right now i'm titling it what machines

shouldn't do a necessary condition for

meaningful human control

all right so before i say anything about

what machines shouldn't be doing

i i i have to clarify what i mean by

what a machine

does and when a machine is doing

something or more specifically when we

have delegated

a process or a decision to a machine

and what i mean by this and for the

purposes of this paper in this

presentation

is that a machine has done something or

we have delegated a decision to a

machine

when a machine can reach an output

through considerations

and the waiting of those consideration

considerations not

explicitly given by humans so

the point of me doing this and to making

this clarification

is that i want to distinguish between

you know more classical ai

like good old-fashioned artificial

intelligence symbolic

ai or expert systems and things like

that

from machine learning or contemporary ai

and in the classical good old-fashioned

ai the considerations that we

um that go into making a particular

generating a particular output are

explicitly put there by human beings

it may be extremely complicated and may

look um it may

still simulate our some way

and we may believe it's doing more than

it is but really there's there's human

beings behind that

that path that it's that it's following

to generate the output

this is in contrast to machine learning

or many methodologies and machine

learning

where the specific considerations that

go into generating an

output are unknown to the to any human

beings but even the human beings that

programmed it

so that's the case in the in in much of

the hype surrounding ai today and many

of the successes that we've seen in the

media

like alphago beating the world go

champion

or chess algorithms or many of the other

other algorithms out there

so we've delegated a decision to a

machine or

in this example here the move in the

game of go

to a machine because that machine is

actually has its own considerations

loosely speaking

for how it generates the output

so this we've delegated in in the way

that i'm talking about it we've

delegated many

decisions to machines everything from

detecting whether somebody's suspicious

or not

to predicting crime to driving our cars

diagnosing patients

and sentencing people to prison

um going even more fraud detection

facial recognition object recognition

and even choosing our outfits for us and

developing this presentation i saw

a lot of really random algorithms out

there or

applications out there and so with all

these applications

i think some of them kind of freak us

out and i think

autonomous weapon systems are some of

the ones that really come to mind

is the most classic example of something

that comes to mind that

that scares us about you know like

should we be really

doing this within with an algorithm or

how can we do this responsibly

and it's fueled this huge explosion in

ai ethics

i think a justified explosion in ai

ethics because i think there's something

novel happening here

this is the first time we're really

delegating these processes to machines

not just automating the processes we've

already determined but actually

delegating

the the act of choosing these

considerations to machines

and so now we're worried about the

control we have over those machines

and specifically i think that

all of these ai ethics principle lists

and a lot of the work on ai ethics

whether it's explicit made explicit or

not is really talking about

or adding to in some way trying to

realize meaningful human control

and so before i get to the specifics of

what i want to add to meaningful human

control

i wanted to say a little bit about some

of the proposals that are out there

already and a little bit about how i

classify them

i have made a distinction between

technology-centered meaningful human

control

and human-centered meaningful human

control and what i mean by that

distinction

is is what i'm trying to capture here is

where people

are putting the spotlight or putting

their focus

on realizing meaningful human control so

if it's technology centered then we're

really thinking about the technology

itself

what are the design requirements of the

technology or what can we

you know add to the technology so that

we are better equipped

to realize meaningful human control

whereas in the human centered approaches

it's more about where can we place the

human and what capacities or

capabilities does the human being need

in order to realize meaningful human

control

so starting with a technology centered

meaningful human control

there's a few proposals out there that i

consider to be the biggest ones

and i don't mean to say that these are

all necessarily put out there

explicitly to realize meaningful human

control it's not like these

papers are all saying you know this is a

way a proposal for meaningful human

control

but i've argued in the past that some of

these are are

indeed doing that or that would be the

moral problem that they're trying to

solve if they're solving one

so first is explicability and

explainable ai

which is the idea that if we can make um

an algorithm output not only you know

its

you know its output but also give us

some kind of idea of how it came to that

output

in terms of considerations that went

into that output

you know this could perhaps allow us to

say well that was a bad

uh that output should be rejected

because it was based on race or gender

or something like that

something we considered to be um not an

acceptable the way

a way to make a particular decision um

i've written a paper on this

this proposal and i'm

not too thrilled with it i think that

it's a good idea

but it it fails and there's there's

still a good idea to

try to make ai explainable i still think

there's reasons to do it

but it doesn't solve the moral problem

that it attempts to solve

if it's if that's what it's doing and i

can talk more about that or i can direct

you towards the paper

about that in the question and answer

period

then we move on to machine ethics which

i i think is not necessarily a proposal

for

realizing meaningful human control but

it is a way to say that

that it's saying that if we can endow

machines with

moral reasoning capabilities and allow

them to pick out morally salient

features

and adjust and be responsive to those

features then we don't need

humans to be in control anymore really

the machines and

robots and algorithms are controlling

themselves

with these you know ethical capabilities

i couldn't be more negative about this

approach and i think some of that has to

do with the reasons that i'm going to

get into later on

but i've also written a paper about this

with amy van weinsberg

where we argue that there's no good

reason to do this every reason put

forward

fails for either empirical or conceptual

reasons

and again i can talk more about that and

hopefully it becomes more clear

throughout this presentation

at least one of the reasons why this is

a bad idea

and then we get to track and trace which

is a proposal put forward by

philippo santoni de sio and jeroen

vanden home and hoven here at

uh tu delft and tpm in particular and

they they have actually a really nice

paper i highly recommend

people read it if they're interested in

meaningful human control

um philosophically you know it has some

depth to it

and they put forward two conditions

that we need to meet in order to realize

meaningful human control

the first is a tracking condition which

is about

the machine being able to be responsive

to human moral reasons

for for their outputs so such that if

a morally salient feature pops up in a

context that would cause a human

to change their decision or output then

it should also cause the

algorithm to change its output and the

second is a tracing condition

which states that we should be able to

trace responsibility back to a human

being or set of human beings

such that that human being should

knowingly accept moral responsibility

and should be ready to accept

the moral and

moral responsibility and accountability

for the outcomes and outputs of the

machine

all right moving to human-centered

meaningful human control this is the

classic on the loop in the loop stuff

um i think uh and this is again about

the human where is the human

in this process and what capabilities do

they have and an

on the loop the human is is kind of

overseeing

what the algorithm is doing so that the

human can intervene

if necessary it's to prevent something

bad from happening

and i think a good example of this is

tesla teslas which

kind of stipulate that the human has to

be you know have their hands on the

wheel

and be ready to take over at any time if

something bad happens

and really you know all moral

responsibility is

is with the human i think this is more

of a way to protect

their company from lawsuits than

anything else it doesn't seem to be a

very good

way of realizing of having any

meaningful human control

of of the machine as it kind of flies in

the face of human psychology and

human capabilities to remain aware of

their surroundings

despite an automated process which works

most of the time there's some

interesting work on that

i think even from tu delft so i don't

think it's it's meaningful human control

but it's it's an attempt at

um i i think that's what they're trying

to do it's just not

working very well and then in the loop

is a little bit stronger than on the

loop and that it requires a human to

actually endorse

or reject outputs of the machine before

the consequences happen

so you're not just in an overseeing role

anymore you're

you're actually a part of the process

again this kind of suffers from

you know flies in the face of human

psychology and that you know we suffer

from many biases like automation bias

assimilation bias and confirmation bias

which is going to make this incredibly

hard to be

meaningful control even though i think

it could be said we are

in control

um and what i really what i really want

to say with all these is not that i want

to

and the reason i don't go into specifics

on my attacks and all of these positions

is that i don't think it matters too

much for the purposes of this paper

the real point that i want to make here

is that even if some of these

uh solutions or proposals will play

indeed play a part and i think some of

some aspects of them at least will play

a part in meaningful human control

they've they're kind of working this all

out

after we've already made a huge mistake

um when we've made a mistake

and we've already hit this iceberg and

now we're just rearranging the

technologies

and the people in the socio-technical

system

and hoping that everything will work out

but it doesn't matter the ship's already

sinking

and specifically i think the mistake

that gets made

is that we've delegated a decision to a

machine that that machine should not

have been delegated

and as soon as we've done that no amount

amount of technical wizardry

or organization of the human and the

technical system

is going to fix that problem we've

already lost control

meaningful control over these algorithms

so that's what we have to figure out

first and that's what i plan to try to

do

in the next half of this presentation

so to jump to my conclusion that i will

then defend

is that machines should not have

evaluative outputs

and specifically machines should not be

delegated evaluative decisions

and i i consider a value of outputs or

evaluative outputs are

things like criminal suspicious

beautiful propaganda

fake news anything with bad good wrong

or right

built into the labels or the out or the

outputs

so when we say somebody's suspicious

we're not just saying that somebody

is is standing around in one spot for a

while with a ski mask on

we're not just saying oh that's

interesting we're saying it's bad

there's something bad about what's going

on we're

we're loading a value into it and we

describe somebody as beautiful

we're not just saying that they have a

particular outfit on or they look a

certain way

it's just in a neutral manner we're

actually saying something good or

something about the way people ought to

look

and same with propaganda we're not just

saying that there's a picture picture

with a political message

we're saying that there's something bad

about this picture with a political

message

it's not the way things should be and so

any of these outputs that have values

built into them

should not be delegated to machines

and i'm not just shadow boxing here i

think most of us here will know this

that these types of outputs have been

delegated to machines quite frequently

there's no shortage of proposals to do

this

[Music]

for these are four examples here like

detecting propaganda

and here i do want to make a little note

that remind us that

in the beginning i was talking about

what i mean by a machine doing things

and sometimes algorithms are delegated

the task

of flagging propaganda but that doesn't

always mean

um that it's doing something in the way

that i've talked about it doing

for instance europol flags propaganda

but they do it based on

a hash that's generated by

videos and pictures that have already

been determined by human beings

to be propaganda and the idea then is

just is there a new post or a new

picture a new video

that is the exact same as the one that

was already posted and taken down

that's not a machine that's just

automating um a process we've already

done the value judgment

ourselves and then we're just delegating

the task of finding

things that that match those value

judgments

in this case i'm actually talking about

a machine

or an algorithm that detects propaganda

and novel propaganda on its own

and then moving on to you know we've had

algorithms that detect

criminals just based on their faces or

purport to do so um ai that detects

beauty ai that detects fake news

we have one of my least favorite

companies hirevue

which works for which is used by many

fortune 500 companies now

which purports to be able to say whether

a candidate is okay good great and

fantastic

or and probably bad as well

and and then i think that one of the

more infamous versions which is the

chinese social credit system which from

my reading is trying to say what is a

good

citizen so

remember these are all things that i'm

saying algorithms shouldn't

be delegated and then the question

becomes why

why why can't we delegate those things

and i want to put forward

two arguments here one has to do with

efficacy roughly that uh i'm going to

argue that we

we can't say anything about the efficacy

of these algorithms and if

and if we can't say anything about its

efficacy then we can't justify its use

and that any use of it is uh is out of

our control we've lost control at that

point

and then the second argument is more of

an ethical argument about

a more fundamental loss of control um

over

uh of meaningful human control over a

process and i will get more into that

when i when i get to that section

so first i want to say that efficacy is

unknown in principle for evaluative

outputs

and that every each evaluative output is

unverifiable for example

suspicious and in this example in this

picture here this

man is being labeled as suspicious and

in this this judgment this output here

and if we wanted to say

well did the algorithm get this right

was the algorithm correct and labeling

this person suspicious

we in principle can't do that because

you might say you might argue

wait we can if the person stole

something well then they were indeed

suspicious

but that's not what suspicious means

suspicious can be

somebody can be suspicious without doing

anything wrong

and somebody can do something wrong or

steal something without having been

suspicious it's called being a good

thief

so if we can't evaluate whether the

algorithm was correct on this one

instance

and i'm arguing that it can't on any

specific instance then we can't say if

this algorithm works at not or not at

all

there's nothing we can say about the

efficacy of this algorithm and therefore

we're kind of out of control we've lost

control we're just using a tool

that's as good as a magic eight ball at

that point

and this is opposed to an example like

an algorithm that's supposed to detect

weapons

in a baggage and baggage at the airport

in this instance if an algorithm says

classifies this

bag as having a weapon in it or more

specifically having a gun in it

we could probably we probably wouldn't

just arrest the person on

on just the fact that the algorithm made

such a labeling we would

look into the bag and find out does it

indeed have a weapon or a gun in it

and if it does then we know the

algorithm got it right at that point

and we can say something about the

effectiveness of the algorithm because

we can test this on many bags on many

examples and determine how good it is

at in detecting weapons and then we can

have some place

in justifying its use we can say it does

indeed get it wrong

sometimes but we have a process to

handle that because

we have enough information to remain in

control of this algorithm

um the other thing i want to say about

efficacy and and uses

using this suspicious label again is

that

the context change over time so one year

ago if this kid would have come into a

store that i was

in and not at night i would have

justifiably been

worried and probably thought this person

was suspicious but

i think uh in the context of a global

pandemic right now in the netherlands if

i saw

the same person wearing a mask in the

in a store that i was in i'd probably be

thanking them for actually wearing a

mask

as i see so little of here in the

netherlands despite the spiking

corona cases so these contacts change

and that's that's something that

algorithms are not good at

they're good at fixed or machine

learning algorithms specifically they're

good at fixed

targets something that they can get

closer and closer to the truth with

over more data but value judgments are

not some

are not those kinds of things so we have

to be so even if we could

solve the problem that i just outlined

which we can't tell whether it was

we can't verify any particular decision

that we did solve that problem well then

we'd also have to worry about

the context changing to make those

considerations

um and make those considerations change

that ground that judgment

all right now moving into the ethics

part of the argument where i'm arguing

for a more fundamental lack of control

what i mean by fundamental here and it's

different from the control that

i i see so often talked about like in

the autonomous weapons debate

where we're really thinking like okay we

have this algorithm out there and it's

targeting people

how do we make sure that there's still a

human being around

that can make sure like can take

responsibility or have control over that

process

what i mean with this fundamental part

is that the control over choosing the

considerations that ground our value

judgments

so the actual process of deciding how

the world ought to be

in any of these contexts that is a

process that we have to remain control

over

in control over it doesn't make sense to

delegate that

to anybody but ourselves we human

a machine is not going to be able to

just decide how the world should be

and then we all change just because of

an output of an algorithm that doesn't

make any sense

we have to decide how the world should

be and then create algorithms

to help bring us there so in the example

of

you know going over cvs to find a new

candidate for a job

say in academia we might say that

there's certain considerations that are

important for that

for instance the number of publications

um their reference letters

you know what they did their

dissertation on there'd be all these

considerations that go into

such an evaluation and we usually have a

committee

to decide like how do we want to

evaluate candidates for this particular

position because it may not be the same

considerations every time

and that conversation that we're having

not only as a small committee or as an

individual

but as a society to say what is

like a good what is a good academic and

then from there determining the

considerations that will lead

us to choosing those good academics or

bad academics or good candidates or bad

candidates

that is our process that's what we need

to remain in control over

so delegating this when we delegated an

evaluative output to

a machine we are effectively seeding

that more fundamental level of control

and then continuing on with this theme

even though we're having a conversation

today about what a good academic is or

we did that you know we were doing that

50 years ago

now things have changed drastically in

the last

you know bunch of decades or even in the

last 10 years i think

even at tu delft you can see that

certain

characteristics or considerations are

used that weren't used before

about what a good academic is for

instance valorization is much more

important

than it used to be we've decided that

that is part of what goes into making a

good academic

or maybe teaching is a little bit less

important or maybe it's not the number

of publications anymore

but the quality of their top

publications

this is the conversation that we have

it's also a conversation that changes

as we learn new information or as the

context around us changes

it's not that the value changes itself

but how we ground those values with

considerations changes

so that is that should be left up to us

to do

next is the is this

what i'm calling a vicious machine

feedback loop and it's it's a concern

that i have

that by delegating this evaluation

this evaluating process to machines that

we could be

influenced by these machines on how we

end up seeing

values and grounding values so the

process starts with us

human beings building these algorithms

training it

you know labeling the data however that

process works we

are we are doing that in our way and it

will always be biased it may not be

biased in a bad way

but it's still biased even if it's just

to the time that we live in

and then that feeds into an algorithm

which goes into an evaluative decision

which then spits out these these

evaluations to us and over time what i'm

worried about is that

we could be influenced by how the

algorithm makes decisions

we could start seeing candidates for

jobs differently because we

we've seen so many evaluations of an

algorithm

show us what a good candidate is and

then we start taking those things on

and this isn't an entirely new problem

um it's happened before with other

technologies

like even the media we're constantly

worried about

feeding in the idea of who is beautiful

and

body shape and body image to children

for instance or barbies or something

like that

all this stuff feeds into their idea of

what beautiful is

and affects them later and we usually

talk about this in a negative way

we don't like that that they're feeding

in a specific body shape as the only way

to be beautiful

and then that actually affects how they

see it which affects their

their way of trying to realize it and

who they think is beautiful well

those same evaluations are now being

delegated to ai we don't even know what

considerations they're using

and i don't think not knowing is better

than knowing and it's bad

they're both not a good effect on our

evaluation our ability to evaluate

the final thing i want to say about this

this this ethical control argument is

that

people's behavior will adapt to

evaluations part of the reason that we

perform evaluations

is to get people to um we're saying how

the world ought to be

if we're saying somebody did a great job

we're saying well if you want to do a

great job too you should

do it more like this or you should look

more like this to be

beautiful or that's what evaluations do

now um of course that will change

people's behavior

and in the case of ai we've seen some of

these overt changes where people figure

out that ai is doing something

like these students who figured out that

their tests were graded by ai

and we're actually able to achieve

hundreds rather easily

um that affects their behavior and of

course in this situation well that's not

behavior that we want to we don't want

to change their behavior that way

that evaluation is failing that's partly

because

we haven't had the conversation we're

delegating the conversation about what

it is we want

what behavior is good in taking a test

to a machine

and that doesn't based on what i said

before that doesn't make any sense

that's the whole thing

we have to determine what is a good

what is good for a test and then we can

have ai help us

evalua help us evaluate it by picking

out

you know descriptive features of it but

it can actually

do that process for us it can't pick out

the considerations

that make a good test or a good

candidate or any of these things

that is us losing control over a process

that is fundamentally

our process

um so then i started this you know the

whole thing is

called what machines shouldn't do so i

wanted to reiterate you know i think

some of it should be clear by now

but um what i think machines shouldn't

do based on the arguments that i've used

and the first is um they're all

evaluative outputs but

aesthetic outputs so judging what is

beautiful

or what is good you know what's a good

movie what's a what's a bad movie what's

a good song

we have you know algorithms that are

trying to trying to say what the next

good movie is going to be i think these

these don't make any sense based on what

i've said not only can we not check if

the algorithm works or not

but we can't um but we're losing control

of that process of having that

conversation about

what is good um regard to the aesthetics

the same with the ethical and moral

you know we shouldn't be delegating the

process of evaluating

um of coming up with the considerations

that evaluate candidates or citizens or

anything like that

to machines that is our process and

finally

i add this last one in i don't think

it'll make the paper because i think

it's a separate paper

but there's been so much talk of ai

emotion detection

that i wanted to mention it because i

think it fails some of the same thing in

the same way

that some of the aesthetic and ethical

or moral failures go

and that is we emotions are not

verifiable in the way that the gun in

the bag was

and furthermore there doesn't seem to be

based on the science that i've read

um the anything more than pseudoscience

grounding the idea

that we can use ai to detect emotions

all right so now i'm getting to the

conclusion now

i think this is obvious but

unfortunately based on all the examples

i've shown

i think it still needs to be said ai is

not a silver bullet

it's not going to evaluate better than

we can and it's not going to tell us how

the world should be

it can help us realize the world we've

determined

the way that it should be but it's not

going to be able to do this this is

fundamentally a human conversation

a human process that we need to we need

to keep going and it will never stop

and then have the technologies around us

help us realize those dreams

and then lastly i want to keep

artificial intelligence boring

i know it's not as exciting as being

able to figure out

ethics you know determine exactly what a

good person is and then we just go

toward

we just follow the machine that would be

really exciting if we could do that

but it's just not something that can be

done

but i also don't want to be completely

negative here i think despite

all of the the last you know half an

hour or so

i'm actually really positive about many

of the benefits that artificial

intelligence

and machine learning could bring us i

just think that it needs to be more

focused into the things that

it's possible to achieve and that is

identifying the descriptive features

labeling those descriptive features

that ground our evaluations

so instead of determining who is

dangerous it can determine

a gun in a bag instead of determining

who a good candidate is

it can it can rank the the

cvs based on number and a number of

publications

you know it's hard to read 100 cvs but

an and our

machine learning algorithm could

probably do it really fast and we could

verify that it was doing it correctly

this is still hugely beneficial and i

think we underestimate

the power that that could be so i'm

going to leave it there

i really appreciate you guys listening

to me for the last half hour and i

look forward to your questions um

i'm going to stop sharing my screen in

like a minute just so i can

see everybody i feel quite alone when

i'm looking at it like this

so uh but thank you very much

thank you scott uh that was very

insightful and uh we actually have a few

questions already in the chat

so while i'm reading those uh i would

appreciate if

people uh write their question or at

least the indication that they

have a question in the chat because

there are already quite a few of them so

if you raise a hand i might as well miss

it

um so i'll start uh with the first

question

that arrived i think 30 seconds after

you started the attack

uh so that was uh from enough so

you know would you like to ask your

first question

yes thanks scott for your presentation

very nice

i i was i got stuck when you uh started

to make the i started to explain the

difference between automated

and delegated and yeah i was worried

about it as i gave you as i wrote in the

chat if you if you look take the example

of face recognition

right um then there are face recognition

systems that make use of explicit

explicit selected facial features like

you know position of your nose

distance between the eyes color of the

eyes etc etc and there are some that

learn

these features and we do not know

necessarily what kind of features that

are

both of course have the same effect

namely they recognize people they

classify people etc etc

but one you would call automated because

we as humans select the features and the

others delegated and i don't get that

why it is even important to make that

distinction

uh well i think it's fundamentally

important because we

in one case we're deciding what features

are important

for grounding and a decision in the end

and so we have it's the control problem

right we have that control

but if we are delegating that to a

machine which is fine in facial

recognition i don't

i have lots of problems with facial

recognition but not for this reason

is that we algorithms

machine learning algorithms specifically

are able to

um use its own considerations and it

becomes more powerful and the in the way

that i

mean by that is that it's not confined

to

what we can think of or human

articulable reasons

it actually has a host of other reasons

that we could never understand

i think that's what gives it its power

but it does matter because one is going

to be explainable and one's not

so what is that the word then so i was

looking for that word explainability so

you're you're

the the distinction between uh automated

and delegated is whether or not

it's explainable i think that would be

i i just so it'd just be a clarity issue

then then i

i think that works fine for me but i

would just i prefer the

delegated the decision because i think

that makes it more clear maybe for my

community

but i'll leave it today now you gave a

very if i may you gave a very nice

example of a decision tree with two

levels in the tree right yeah realistic

decision trees have

thousands of levels of course yeah yeah

is that explainable yeah so you see i i

mean it's fundamentally explainable

between the two huh yeah but it's

fundamentally explainable right

whether you have a thousand levels or

two levels you can still explain it

i mean somebody could explain it in the

end we could in principle get an

explanation but with machine learning we

can't

right now at least in principle get an

explanation so there's a difference

between the two and

i'm very interested about this because

uh you mentioned this one example the uh

ai that was used to determine whether

someone should be hired or not or the

quality of

candidates right and i think you made

really good points against that

uh being used but um when we are

when you were talking and when you are

talking about this

um i'm wondering would you say it's

perfectly fine to use an ai

if it's a gopher good old-fashioned ai

with a decision tree behind it

and only problematic when we use machine

learning

uh right so i think it is so there might

be other problems associated with

gophi in that situation but i think we

still have i mean the way or the

arguments that i've made today

we still have control over what

considerations are grounding the

evaluation

so for example if we're using a hiring

algorithm that's basically just saying

you know how many if it's in the

academic sphere is there

um do they have greater than five

publications

um and did they get a phd that has the

word ethics in it

and those are the only two things we

care about separating out the things

well of course we can we can argue about

whether that's good those are

considerations are good

but it's still us deciding what

considerations there are so it doesn't

fall

foul to what i've talked about today it

still may be problematic

but um not for this reason

okay so uh if we move on to the next

question

um so providing a link to the paper

somebody posted it already

so uh then we had uh a question from

reynold about

having a disease uh would that be an

evaluative output

you know can we clarify what the

question was about

oh yeah i think the question is pretty

clear huh yeah

pretty clear don't worry um so i i think

i think i use the example in my paper

sometimes about

detecting skin cancer and an algorithm

there's algorithm that can uh

look at a mole and take that as an input

and evaluate whether you have

uh skin cancer or not so this i don't

think is evaluative because it's

it's verifiable right we can just take a

biopsy and say well did the algorithm

get it right or not

it's a zero or one do you have skin

cancer or not so in that case it's not

an evaluative output no there's no

there's no evaluation i mean

i guess in the uh if it was more mental

if we consider mental issues that's the

next line in the question exactly

well then it's uh i think there's a

blurry line there but

uh i i'd not experienced enough in the

in that field to know whether so i have

a very strong feeling that and i think i

saw that somewhere in the chat window as

well that that

what what you're worried about is

whether or not there's a ground truth

an objectively verifiable ground truth

i think that is yeah i mean i we could

say that

i'm happy with that language okay

good thanks good now we have a question

from david

uh he is wondering whether you can

acquire equate

evaluative outputs with outputs of moral

evaluation

is there necessarily a moral component

or maybe you can clarify that

yeah thanks thanks for the talk also uh

scott

but uh i think enol

just touched upon it in the previous

talk so

i was wondering what you mean with

evaluation

and i think the ground truth part that's

probably

more general than moral evaluation but i

had the feeling you were thinking about

moral

evaluation but also like i said in that

in the talk it's also a static

evaluation you know anything's with with

value

attached to it but i think that does

cause i think the verify ability here is

very important

and it may indeed be easier just to say

is it um is there this ground truth and

and verifiability

part of part of it i think that's that

could be possible i need to think more

about it

okay thanks okay uh now we have another

question from another

also drilling down on the no no no let's

take someone else come on okay

yeah we'll skip this one so we have a

question from jared then

uh about uh the kind of

the gray area between evaluative outputs

and uh something more objective

jared do you want to ask a question um

yeah so thank you very interesting

presentation uh

and so one thing i was thinking about is

that it would be terrifically easy for

pretty much all examples you gave

to reframe them uh as if they are not

giving evaluative outputs but more like

objective judgment

so you can say the ai does not evaluate

appearance it judges similarity to other

applicants based

on or as ranked on suitability and you

can

sort of reframe what your

ai does to something objective and then

say

it's just meant to inform people the

people make the decisions and we just

do this objective bit that informs the

decision

uh and i have a feeling that

focusing on my outputs um might not be

the right

emphasis but i'm not completely sure and

i just wanted to throw it right see

what's uh

and and i'm worried about that too i'm

intentionally making this

bold so that i can get pushback but

i think what what you just said is is um

i like that better in terms of we're

just um

finding applicants that are like the

applicants

that are the people that we've hired in

the past that we've classified as good

so we've done that process of judging

whether they're good

however what considerations are

grounding that similarity part we don't

know

how it's reaching that similarity so i

think it still falls foul

to that and what i think and um in my

more hopeful parts about ai

instead of trying to find you know what

applicants are good based on the

applicants of the past that we've

determined as good

we should figure out well maybe we

should think about what makes them good

what what is it about them that makes

them good and then when we determine

that

then we can use ai perhaps we need ai to

automate

being able to find that feature and to

be able to sort those

applications that's what i really want

because you can't say

um because this suitability thing i

think it's a nice work around and i'm

sure the technology companies will do it

because it makes it uh makes it sound

better and makes

it it forces more of the responsibility

to human beings but i don't think it's a

justified or

meaningful control then i mean what's

the difference between more suitable

and like our better applicants from the

past and just a good candidate

it's basically amounts to the same exact

thing and then it falls into the same

problems

thank you okay next we have a question

from sylvia

sylvia can you plug in and ask the

picture

yes yes um if i can also just add

something about what

what um uh jared was saying then

i think it would at least be an

improvement then

it's clarified what the ai actually does

so for example matching

similar cv because in terms of social

technical

system at least you remove the um

the the tendency to uh trust that then

the ai is gonna have this

additional capability respect to us and

you know we should trust then what the

computer is saying

maybe i agree i think it's a step

forward like that it's definitely a big

step forward it's it doesn't it still

falls victim to someone what i've said

but it's a step forward i agree

yeah but now what i wanted to ask you is

i mean i

i actually don't mind your idea of

saying okay let's keep it boring

because to me just sounds like that just

keep it within the boundaries of what it

actually

could do because it can't do this

contextual

kind of evaluations but it doesn't it

just boils down then

to it can verify very well

tangible things like objects like your

organic sample or the

the checking the moles and

possible skin cancer against then

intangible things so is it is it boiling

down to let's just keep it to

objects or things well

i mean i i think much hinges on the

verifiability

i mean like i guess i mean

earthquakes are tangible you know like

we could predict earthquakes with an

algorithm

and we would only know after the fact if

it got it right but

i you might be right it might be just

tangible it depends on what we mean by

tangible um

possible but really i think the key word

is verifiability

is is can we can we verify it after the

fact or

during and i think that will matter when

we can verify it but it

it if we can't verify it at all which

i'm trying to say that all evaluative

judgments cannot be verified

so we can't do that and i'm trying to

give an easier way

and maybe verifiability just is easier

and pragmatically i should just be using

that

i'm open to that and i will be thinking

about that more but

um yeah so i will

then can i just leave you with like a

sort of like devil's advocate

for location because then it's the

question that i would ask myself

which is um we can't verify an

evaluation from a human either

if the judge decides that that was or a

jury if you're in a in a common law

system

then that that was suspicious you're

gonna fall into the same problem so

someone that really wants to use the

algorithm might say

but then let's let's make it you know

um statistically sound or whatever like

the

lombroso nightmare that was with the

criminal behavior ai and and

so whether it's a human or an ai we

still can't verify that evaluation so

let's just switch to

let's just you know save money and use

the ai anyway

that would be my biggest problem then

right

and i think you're absolutely right we

can't um we can't

i'm saying evaluative judgments aren't

verifiable so it's not going to be

verifiable if a human does it either

but i think um and if i can go back to

using the phrase

saying how the world ought to be and how

people ought to be

that is up to us to do and the idea that

we're going to use a tool

to do that instead doesn't make any

sense especially if we can't

we can't say anything about its efficacy

so um

if i'm right and i think you know i i

find it hard to create an

opposing argument to say well no

actually it doesn't matter

um how we come to the decision about how

the world ought to be

that doesn't matter we just need to

accept you know we just need to accept

one so that it's easier for the machine

to get there

i don't think that just doesn't make any

sense to me but

um it's something that needs to be

worked out more but i think there's

definitely a difference between a human

not being able to

making an evaluation and us not being

able to evaluate it

versus a machine making an evaluation

and i think one of the big differences

is that a human can explain themselves

about how they

came to that decision and what

considerations they used and we can have

the disagreement about

whether the considerations they used

were indeed okay and of course we can

get into the idea that humans can be

deceptive you know they're going to lie

about how they came to the decision

they were very biased against a

particular person but they're not going

to say that they're going to try to use

objective means

but you know the responsibility falls on

them then you know we shouldn't be

delegating that process to a machine

okay uh herman do you want to ask your

question

yeah great um

uh thanks scott i really liked your

hearing your paper

as but i also have a question on

something that has been touched on be

quite a few times already so the

verifiability of an evaluative judgment

so i still struggle with understanding

what you what you mean exactly with that

so do you want to say that nothing is

suspicious nothing is wrong

nothing is beautiful because if if

some things are beautiful then we can

just see

check whether the output corresponds to

reality

um so your answer you just

gave you seemed to hint that we went to

verify the reasoning

behind the judgments uh so and

that seems to be something different so

is that is it the last thing that you're

interested in that

the the reasoning should be very

valuable or is the uh is it the outputs

that is

well i i think both first of all it's

the output and so

i i think with algorithms they're

they're in computer science it's zeros

and ones

and i think we should be thinking about

um we should be working towards and i

know all the bayesian stuff but

i'm not going to get into that but i

think first at least even if even if i

accept that there might be a possibility

that we could uh verify what's beautiful

or not

which i i think there's a lot of

complications in that because it's going

to be culturally specific

even even person-specific not that

there's no somewhat of

even if it's a human-constructed truth

over it there may be

you might be right then i think i that's

why i added in all those reasons about

context changing our considerations

grounding these judgments are changing

movies that were amazing 50 years ago

if the same movie came out today we'd be

like well it's kind of tired

it's not something that we're interested

that's not beautiful anymore

and it's because the context has changed

we've already heard the beatles you know

we can't have a new beatles out in

anymore somebody has to evolve and and