id stringlengths 36 36 | source stringclasses 15 values | formatted_source stringclasses 13 values | text stringlengths 2 7.55M |

|---|---|---|---|

1e4dc27f-3916-4c4a-88fc-172d8a7794db | trentmkelly/LessWrong-43k | LessWrong | Notes on the Safety in Artificial Intelligence conference

These are my notes and observations after attending the Safety in Artificial Intelligence (SafArtInt) conference, which was co-hosted by the White House Office of Science and Technology Policy and Carnegie Mellon University on June 27 and 28. This isn't an organized summary of the content of the conference; rather, it's a selection of points which are relevant to the control problem. As a result, it suffers from selection bias: it looks like superintelligence and control-problem-relevant issues were discussed frequently, when in reality those issues were discussed less and I didn't write much about the more mundane parts.

SafArtInt was the third in a planned series of four conferences. The purpose of the conference series was twofold: the OSTP wanted to get other parts of the government moving on AI issues, and they also wanted to inform public opinion.

The other three conferences are about near-term legal, social, and economic issues of AI. SafArtInt was about near-term safety and reliability in AI systems. It was effectively the brainchild of Dr. Ed Felten, the deputy U.S. chief technology officer for the White House, who came up with the idea for it last year. CMU is a top computer science university and many of their own researchers attended, as well as some students. There were also researchers from other universities, some people from private sector AI including both Silicon Valley and government contracting, government researchers and policymakers from groups such as DARPA and NASA, a few people from the military/DoD, and a few control problem researchers. As far as I could tell, everyone except a few university researchers was from the U.S., although I did not meet many people. There were about 70-100 people watching the presentations at any given time, and I had conversations with about twelve of the people who were not affiliated with existential risk organizations, as well as of course all of those who were affiliated. The conference was split |

2a4ca702-0cd2-45bf-81a9-f3d782c74c61 | trentmkelly/LessWrong-43k | LessWrong | Dating Minefield vs. Dating Playground

Crossposted from Optimized Dating.

Imagine you want to improve your performance at some task, hobby or a job. You get offered a choice of two courses:

Course A is really vague and undefined, with no clear program. You don't get graded on your performance, but it's broadcast to your community so everyone can silently judge you every time you fail. Your early choices are locked in, and you can't radically change your approach without raising many eyebrows. And it's long.

Course B, in contrast, offers clear performance indicators, tight feedback loops and sensible intermediate milestones. You won't become a master on day 1, but you get told what you are doing wrong and what you need to improve. You can play with different approaches to the problem and get no lasting judgement if something goes wrong. "Move fast and break things" works here.

There's no catch here, course B is obviously better. And that's why online dating is superior to trying to date IRL.

Let me explain. A lot of my online and offline friends who don't have much romantic experience avoid online dating like the plague. I remember the time when my particle physicist friend visited me in France and lamented his lack of romantic life. Yet when I suggested that he install an online dating app, he became incredibly anxious and refused to have anything to do with it. Even after I asked him for his phone and installed Tinder on it, he was so on edge that he threw his phone across the table the first time he received a notification about a match.

When I ask these friends how they imagine IRL dating, I don't get a very defined response. There's some vague notion of "meeting someone at school/work/hobby" and "developing a relationship". This may sound wonderful, but to me it seems that this approach is fraught with difficulties – especially so for novices on the romantic battlegrounds.

The overarching motif here is that attempting to date people you already know IRL is a minefie |

5c690534-c956-4493-a08f-1ff7f1decd6d | trentmkelly/LessWrong-43k | LessWrong | Meetup : Moscow Meetup: CBT is back

Discussion article for the meetup : Moscow Meetup: CBT is back

WHEN: 07 December 2014 02:00:00PM (+0300)

WHERE: Russia, Moscow, ulitsa L'va Tolstogo 16

This meetup will be in a semi-closed format! If you haven't come to our meetups before, please wait for the next open meetup: it's planned for December 21.

Regular visitors of our meetups, please wait for the announcement in our group:

https://groups.google.com/forum/#!forum/rationality-in-moscow

You will feel more comfortable at our meetups if you have read the needed materials and are familiar with some basic ideas:

* You understand the Bayes theorem (what is bayesianism - http://lesswrong.com/lw/1to/what_is_bayesianism or http://schegl2g.bget.ru/bayes/YudkowskyBayes.html ).

* You understand what "System 1" and "System 2" are (Kahneman and Yudkowsky).

* You know what a "rational agent" is.

* You've read the base sequences: "Map and Territory" - http://wiki.lesswrong.com/wiki/Map_and_Territory_(sequence) and "Mysterious Answers to Mysterious Questions" - http://wiki.lesswrong.com/wiki/Mysterious_Answers_to_Mysterious_Questions .

This will help you better understand what we talk about and how we think, and will give you a feel for the spirit of our activities.

Discussion article for the meetup : Moscow Meetup: CBT is back |

fc34d773-4814-4133-82bd-22d94c9bc5dc | StampyAI/alignment-research-dataset/arxiv | Arxiv | No Metrics Are Perfect: Adversarial Reward Learning for Visual Storytelling

1 Introduction

---------------

Figure 1: An example of visual storytelling and visual captioning. Both captions and stories are shown here: each image is captioned with one sentence, and we also demonstrate two diversified stories that match the same image sequence.

Recently, increasing attention has been focused on visual captioning Chen et al. ([2015](#bib.bib8)); Xu et al. ([2016](#bib.bib35)); Wang et al. ([2018c](#bib.bib33)), which aims at describing the content of an image or a video.

Though it has achieved impressive results, its capability of performing human-like understanding is still restrictive.

To further investigate machine’s capabilities in understanding more complicated visual scenarios and composing more structured expressions, visual storytelling Huang et al. ([2016](#bib.bib17)) has been proposed.

Visual captioning is aimed at depicting the concrete content of the images, and its expression style is rather simple. In contrast, visual storytelling goes one step further: it summarizes the idea of a photo stream and tells a story about it.

[Figure 1](#S1.F1 "Figure 1 ‣ 1 Introduction ‣ No Metrics Are Perfect: Adversarial Reward Learning for Visual Storytelling") shows an example of visual captioning and visual storytelling. We have observed that stories contain rich emotions (excited, happy, not want) and imagination (siblings, parents, school, car). Storytelling therefore requires the capability to associate with concepts that do not explicitly appear in the images. Moreover, stories are more subjective, so there are barely any standard templates for storytelling. As shown in [Figure 1](#S1.F1 "Figure 1 ‣ 1 Introduction ‣ No Metrics Are Perfect: Adversarial Reward Learning for Visual Storytelling"), the same photo stream can be paired with diverse stories that differ from each other. This greatly increases the difficulty of evaluation.

So far, prior work for visual storytelling Huang et al. ([2016](#bib.bib17)); Yu et al. ([2017b](#bib.bib37)) is mainly inspired by the success of visual captioning.

Nevertheless, because these methods are trained by maximizing the likelihood of the observed data pairs, they are restricted to generating simple and plain descriptions with limited expressive patterns. In order to cope with these challenges and produce more human-like descriptions, Rennie et al. ([2016](#bib.bib29)) have proposed a reinforcement learning framework. However, in the scenario of visual storytelling, the common reinforced captioning methods face great challenges, since hand-crafted rewards based on string matches are either too biased or too sparse to drive the policy search. For instance, we used the METEOR Banerjee and Lavie ([2005](#bib.bib4)) score as the reward to reinforce our policy and found that though the METEOR score is significantly improved, the other scores are severely harmed. Here we showcase an adversarial example with an average METEOR score as high as 40.2:

* We had a great time to have a lot of the. They were to be a of the. They were to be in the. The and it were to be the. The, and it were to be the.

Apparently, the machine is gaming the metrics. Conversely, when using some other metrics (e.g. BLEU, CIDEr) to evaluate the stories, we observe an opposite behavior: many relevant and coherent stories are receiving a very low score (nearly zero).

In order to resolve the strong bias brought by the hand-coded evaluation metrics in RL training and produce more human-like stories, we propose an Adversarial REward Learning (AREL) framework for visual storytelling. We draw our inspiration from recent progress in inverse reinforcement learning Ho and Ermon ([2016](#bib.bib16)); Finn et al. ([2016](#bib.bib11)); Fu et al. ([2017](#bib.bib12)) and propose the AREL algorithm to learn a more intelligent reward function. Specifically, we first incorporate a Boltzmann distribution to associate reward learning with distribution approximation, then design the adversarial process with two models – a policy model and a reward model. The policy model performs the primitive actions and produces the story sequence, while the reward model is responsible for learning the implicit reward function from human demonstrations. The learned reward function would be employed to optimize the policy in return.

For evaluation, we use both automatic metrics and human evaluation but observe a poor correlation between them. In particular, our method gains only a slight performance boost over the baseline systems on automatic metrics; human evaluation, however, indicates a significant performance boost. Thus we further discuss the limitations of the metrics and validate the superiority of our AREL method in performing more intelligent understanding of the visual scenes and generating more human-like stories.

Our main contributions are four-fold:

* We propose an adversarial reward learning framework and apply it to boost visual story generation.

* We evaluate our approach on the Visual Storytelling (VIST) dataset and achieve the state-of-the-art results on automatic metrics.

* We empirically demonstrate that automatic metrics are not perfect for either training or evaluation.

* We design and perform a comprehensive human evaluation via Amazon Mechanical Turk, which demonstrates the superiority of the generated stories of our method on relevance, expressiveness, and concreteness.

2 Related Work

---------------

#### Visual Storytelling

Visual storytelling is the task of generating a narrative story from a photo stream, which requires a deeper understanding of the event flow in the stream. Park and Kim ([2015](#bib.bib24)) have done some pioneering research on storytelling. Chen et al. ([2017](#bib.bib9)) proposed a multimodal approach for storyline generation to produce a stream of entities instead of human-like descriptions. Recently, a more sophisticated dataset for visual storytelling (VIST) has been released to explore a more human-like understanding of grounded stories Huang et al. ([2016](#bib.bib17)). Yu et al. ([2017b](#bib.bib37)) propose a multi-task learning algorithm for both album summarization and paragraph generation, achieving the best results on the VIST dataset. But these methods are still based on behavioral cloning and lack the ability to generate more structured stories.

#### Reinforcement Learning in Sequence Generation

Recently, reinforcement learning (RL) has gained its popularity in many sequence generation tasks such as machine translation Bahdanau et al. ([2016](#bib.bib3)), visual captioning Ren et al. ([2017](#bib.bib28)); Wang et al. ([2018b](#bib.bib32)), summarization Paulus et al. ([2017](#bib.bib25)); Chen et al. ([2018](#bib.bib7)), etc.

The common wisdom of using RL is to view generating a word as an action and aim at maximizing the expected return by optimizing its policy. As pointed out in Ranzato et al. ([2015](#bib.bib26)), the traditional maximum likelihood algorithm is prone to exposure bias and label bias, while the RL agent exposes the generative model to its own distribution and thus can perform better. But these works usually utilize hand-crafted metric scores as the reward to optimize the model, which fails to learn more implicit semantics due to the limitations of automatic metrics.

#### Rethinking Automatic Metrics

Automatic metrics, including BLEU Papineni et al. ([2002](#bib.bib23)), CIDEr Vedantam et al. ([2015](#bib.bib30)), METEOR Banerjee and Lavie ([2005](#bib.bib4)), and ROUGE Lin ([2004](#bib.bib20)), have been widely applied to sequence generation tasks. Using automatic metrics enables rapid prototyping and testing of new models with less reliance on expensive human evaluation. However, they have been criticized for being biased and correlating poorly with human judgments, especially in many generative tasks like response generation Lowe et al. ([2017](#bib.bib22)); Liu et al. ([2016](#bib.bib21)), dialogue systems Bruni and Fernández ([2017](#bib.bib5)) and machine translation Callison-Burch et al. ([2006](#bib.bib6)). The naive overlap-counting methods are not able to reflect many semantic properties of natural language, such as coherence and expressiveness.

#### Generative Adversarial Network

Generative adversarial network (GAN) Goodfellow et al. ([2014](#bib.bib13)) is a very popular approach for estimating intractable probabilities, which sidesteps the difficulty by alternately training two models to play a min-max two-player game:

$$\min_{D}\max_{G}\;\mathbb{E}_{x\sim p_{data}}[\log D(x)]+\mathbb{E}_{z\sim p_{z}}[\log D(G(z))]\,,$$

where G is the generator and D is the discriminator, and z is the latent variable. Recently, GAN has quickly been adopted to tackle discrete problems Yu et al. ([2017a](#bib.bib36)); Dai et al. ([2017](#bib.bib10)); Wang et al. ([2018a](#bib.bib31)). The basic idea is to use Monte Carlo policy gradient estimation Williams ([1992](#bib.bib34)) to update the parameters of the generator.

#### Inverse Reinforcement Learning

Reinforcement learning is known to be hindered by the need for extensive feature and reward engineering, especially under unknown dynamics. Therefore, inverse reinforcement learning (IRL) has been proposed to infer the expert’s reward function. Previous IRL approaches include maximum margin approaches Abbeel and Ng ([2004](#bib.bib1)); Ratliff et al. ([2006](#bib.bib27)) and probabilistic approaches Ziebart ([2010](#bib.bib38)); Ziebart et al. ([2008](#bib.bib39)). Recently, adversarial inverse reinforcement learning methods have offered an efficient and scalable route to automatic reward acquisition Ho and Ermon ([2016](#bib.bib16)); Finn et al. ([2016](#bib.bib11)); Fu et al. ([2017](#bib.bib12)); Henderson et al. ([2017](#bib.bib15)). These approaches utilize the connection between IRL and energy-based models and associate every data point with a scalar energy value by using the Boltzmann distribution $p_\theta(x)\propto\exp(-E_\theta(x))$. Inspired by these methods, we propose a practical AREL approach for visual storytelling to uncover a robust reward function from human demonstrations and thus help produce human-like stories.

Figure 2: AREL framework for visual storytelling.

3 Our Approach

---------------

### 3.1 Problem Statement

Here we consider the task of visual storytelling, whose objective is to output a word sequence $W=(w_1,w_2,\cdots,w_T)$, $w_t\in V$, given an input image stream of 5 ordered images $I=(I_1,I_2,\cdots,I_5)$, where $V$ is the vocabulary of all output tokens. We formulate the generation as a Markov decision process and design a reinforcement learning framework to tackle it. As described in [Figure 2](#S2.F2 "Figure 2 ‣ Inverse Reinforcement Learning ‣ 2 Related Work ‣ No Metrics Are Perfect: Adversarial Reward Learning for Visual Storytelling"), our AREL framework is mainly composed of two modules: a policy model $\pi_\beta(W)$ and a reward model $R_\theta(W)$. The policy model takes an image sequence $I$ as the input and performs sequential actions (choosing words $w$ from the vocabulary $V$) to form a narrative story $W$. The reward model is optimized by the adversarial objective (see Section [3.3](#S3.SS3 "3.3 Learning ‣ 3 Our Approach ‣ No Metrics Are Perfect: Adversarial Reward Learning for Visual Storytelling")) and aims at deriving a human-like reward from both human-annotated stories and sampled predictions.

Figure 3: Overview of the policy model. The visual encoder is a bidirectional GRU, which encodes the high-level visual features extracted from the input images. Its outputs are then fed into the RNN decoders to generate sentences in parallel. Finally, we concatenate all the generated sentences as a full story. Note that the five decoders share the same weights.

### 3.2 Model

#### Policy Model

As is shown in [Figure 3](#S3.F3 "Figure 3 ‣ 3.1 Problem Statement ‣ 3 Our Approach ‣ No Metrics Are Perfect: Adversarial Reward Learning for Visual Storytelling"), the policy model is a CNN-RNN architecture. We first feed the photo stream $I=(I_1,\cdots,I_5)$ into a pretrained CNN and extract their high-level image features. We then employ a visual encoder to further encode the image features as context vectors $h_i=[\overleftarrow{h_i};\overrightarrow{h_i}]$. The visual encoder is a bidirectional gated recurrent unit (GRU).

In the decoding stage, we feed each context vector hi into a GRU-RNN decoder to generate a sub-story Wi.

Formally, the generation process can be written as:

$$s_{i,t}=\mathrm{GRU}(s_{i,t-1},[w_{i,t-1},h_i])\,,\tag{1}$$

$$\pi_\beta(w_{i,t}\mid w_{i,1:t-1})=\mathrm{softmax}(W_s s_{i,t}+b_s)\,,\tag{2}$$

where $s_{i,t}$ denotes the $t$-th hidden state of the $i$-th decoder. We concatenate the previous token $w_{i,t-1}$ and the context vector $h_i$ as the input. $W_s$ and $b_s$ are the projection matrix and bias, which output a probability distribution over the whole vocabulary $V$. Eventually, the final story $W$ is the concatenation of the sub-stories $W_i$. $\beta$ denotes all the parameters of the encoder, the decoder, and the output layer.
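One decoding step of Equations (1) and (2) can be sketched in plain Python as follows. This is a minimal illustration, not the authors' implementation: the parameter names (`Wz`, `Wr`, `Wh`), the bias-free GRU gates, the gate convention, and the random initialization are all assumptions made for the sketch.

```python
import math
import random

def matvec(matrix, vec):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(m * x for m, x in zip(row, vec)) for row in matrix]

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def softmax(logits):
    peak = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(v - peak) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]

def random_matrix(rows, cols, rng):
    return [[rng.uniform(-0.1, 0.1) for _ in range(cols)] for _ in range(rows)]

def init_params(hidden, inp, vocab, seed=0):
    """Hypothetical parameter container; biases of the GRU gates are omitted."""
    rng = random.Random(seed)
    return {
        "Wz": random_matrix(hidden, inp + hidden, rng),  # update gate
        "Wr": random_matrix(hidden, inp + hidden, rng),  # reset gate
        "Wh": random_matrix(hidden, inp + hidden, rng),  # candidate state
        "Ws": random_matrix(vocab, hidden, rng),         # output projection W_s
        "bs": [0.0] * vocab,                             # output bias b_s
    }

def gru_step(s_prev, x, p):
    """One GRU update (one common gate convention, assumed here)."""
    joined = x + s_prev
    z = [sigmoid(v) for v in matvec(p["Wz"], joined)]
    r = [sigmoid(v) for v in matvec(p["Wr"], joined)]
    gated = x + [ri * si for ri, si in zip(r, s_prev)]
    cand = [math.tanh(v) for v in matvec(p["Wh"], gated)]
    return [(1 - zi) * si + zi * ci for zi, si, ci in zip(z, s_prev, cand)]

def decoder_step(s_prev, prev_token_emb, context, p):
    x = prev_token_emb + context                 # concatenation [w_{i,t-1}, h_i]
    s_t = gru_step(s_prev, x, p)                 # Equation (1)
    logits = [a + b for a, b in zip(matvec(p["Ws"], s_t), p["bs"])]
    return s_t, softmax(logits)                  # Equation (2)
```

Calling `decoder_step` repeatedly, feeding back the embedding of the chosen word each time, would generate one sub-story; the five decoders in the paper share weights, so the same `p` would be reused for every image.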

Figure 4: Overview of the reward model. Our reward model is a CNN-based architecture, which utilizes convolution kernels with size 2, 3 and 4 to extract bigram, trigram and 4-gram representations from the input sequence embeddings. Once the sentence representation is learned, it will be concatenated with the visual representation of the input image, and then be fed into the final FC layer to obtain the reward.

#### Reward Model

The reward model Rθ(W) is a CNN-based architecture (see [Figure 4](#S3.F4 "Figure 4 ‣ Policy Model ‣ 3.2 Model ‣ 3 Our Approach ‣ No Metrics Are Perfect: Adversarial Reward Learning for Visual Storytelling")).

Instead of giving an overall score for the whole story, we apply the reward model to different story parts (sub-stories) Wi and compute partial rewards, where i=1,⋯,5. We observe that the partial rewards are more fine-grained and can provide better guidance for the policy model.

We first query the word embeddings of the sub-story (one sentence in most cases). Next, multiple convolutional layers with different kernel sizes are used to extract the n-gram features, which are then projected into the sentence-level representation space by pooling layers (the design here is inspired by Kim ([2014](#bib.bib18))). In addition to the textual features, evaluating the quality of a story should also consider the image features for relevance. Therefore, we then combine the sentence representation with the visual feature of the input image through concatenation and feed them into the final fully connected decision layer. In the end, the reward model outputs an estimated reward value $R_\theta(W)$. The process can be written as:

$$R_\theta(W)=W_r\big(f_{conv}(W)+W_i I_{CNN}\big)+b_r\,,\tag{3}$$

where $W_r$ and $b_r$ denote the weights in the output layer, and $f_{conv}$ denotes the operations in the CNN. $I_{CNN}$ is the high-level visual feature extracted from the image, and $W_i$ projects it into the sentence representation space. $\theta$ includes all the parameters above.
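The structure of Equation (3) can be illustrated with a small pure-Python sketch. It follows the equation as written (the projected image feature is added to the pooled text feature); the number of filters per kernel size, the max-pooling choice, the absence of an output activation, and all parameter names are assumptions for illustration only.

```python
import random

KERNEL_SIZES = (2, 3, 4)  # bigram, trigram and 4-gram kernels, as in Figure 4

def init_reward_params(embed_dim, image_dim, filters_per_size=2, seed=0):
    """Hypothetical parameter container with small random weights."""
    rng = random.Random(seed)

    def mat(rows, cols):
        return [[rng.uniform(-0.1, 0.1) for _ in range(cols)] for _ in range(rows)]

    feat_dim = filters_per_size * len(KERNEL_SIZES)
    return {
        "kernels": {n: [mat(n, embed_dim) for _ in range(filters_per_size)]
                    for n in KERNEL_SIZES},
        "W_i": mat(feat_dim, image_dim),                       # image projection
        "W_r": [rng.uniform(-0.1, 0.1) for _ in range(feat_dim)],
        "b_r": 0.0,
    }

def conv_max_pool(embeds, kernel):
    """Slide one n x d kernel over the token embeddings, then max-pool over time."""
    n = len(kernel)
    scores = [
        sum(w * x
            for k_row, e_row in zip(kernel, embeds[start:start + n])
            for w, x in zip(k_row, e_row))
        for start in range(len(embeds) - n + 1)
    ]
    return max(scores, default=0.0)  # sentences shorter than n contribute 0

def reward(embeds, image_feat, p):
    """R(W) = W_r (f_conv(W) + W_i I_CNN) + b_r for one sub-story."""
    f_conv = [conv_max_pool(embeds, k)
              for n in KERNEL_SIZES for k in p["kernels"][n]]
    projected = [sum(w * x for w, x in zip(row, image_feat)) for row in p["W_i"]]
    combined = [a + b for a, b in zip(f_conv, projected)]
    return sum(w * c for w, c in zip(p["W_r"], combined)) + p["b_r"]
```

In the paper the reward is computed per sub-story, so `reward` would be called five times per story, once for each sentence and its image.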

### 3.3 Learning

#### Reward Boltzmann Distribution

In order to associate the story distribution with the reward function, we apply an energy-based model (EBM) to define a Reward Boltzmann distribution:

$$p_\theta(W)=\frac{\exp(R_\theta(W))}{Z_\theta}\,,\tag{4}$$

where $W$ is the word sequence of the story, $p_\theta(W)$ is the approximate data distribution, and $Z_\theta=\sum_W\exp(R_\theta(W))$ denotes the partition function. According to the energy-based model LeCun et al. ([2006](#bib.bib19)), the optimal reward function $R^*(W)$ is achieved when the Reward Boltzmann distribution equals the “real” data distribution, $p_\theta(W)=p^*(W)$.
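As a small numerical illustration of the Reward Boltzmann distribution, the sketch below normalizes rewards over a tiny, explicitly enumerated candidate set. This is only a toy: in the paper the partition function sums over all word sequences and is never enumerated, and the function name `reward_boltzmann` is hypothetical.

```python
import math

def reward_boltzmann(rewards):
    """Map {story: reward} to {story: probability} via exp(R) / Z."""
    peak = max(rewards.values())  # subtracting the max leaves the ratios unchanged
    weights = {story: math.exp(r - peak) for story, r in rewards.items()}
    z = sum(weights.values())     # partition function over the toy candidate set
    return {story: w / z for story, w in weights.items()}
```

For example, `reward_boltzmann({"a": 1.0, "b": 0.0})` assigns story `a` a probability that is `e` times that of story `b`, so a higher learned reward translates directly into a higher probability under the distribution.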

#### Adversarial Reward Learning

We first introduce an empirical distribution $p_e(W)=\frac{\mathbb{1}(W\in D)}{|D|}$ to represent the training data, where $D$ denotes the dataset with $|D|$ stories and $\mathbb{1}$ denotes an indicator function. We use this empirical distribution as the “good” examples, which provide the evidence for the reward function to learn from.

In order to approximate the Reward Boltzmann distribution towards the “real” data distribution $p^*(W)$, we design a min-max two-player game, where the Reward Boltzmann distribution $p_\theta$ aims at maximizing its similarity with the empirical distribution $p_e$ while minimizing that with the “faked” data generated from the policy model $\pi_\beta$. In contrast, the policy distribution $\pi_\beta$ tries to maximize its similarity with the Boltzmann distribution $p_\theta$. Formally, the adversarial objective function is defined as

$$\max_{\beta}\min_{\theta}\;KL(p_e(W)\,||\,p_\theta(W))-KL(\pi_\beta(W)\,||\,p_\theta(W))\,.\tag{5}$$

We further decompose it into two parts. First, because the objective $J_\beta$ of the story generation policy is to maximize its similarity with the Boltzmann distribution $p_\theta$, the optimal policy that minimizes the KL-divergence is $\pi(W)\sim\exp(R_\theta(W))$, meaning that if $R_\theta$ is optimal, the optimal $\pi_\beta=\pi^*$. Formally,

$$J_\beta=-KL(\pi_\beta(W)\,||\,p_\theta(W))=\mathbb{E}_{W\sim\pi_\beta(W)}[R_\theta(W)]+H(\pi_\beta(W))\,,\tag{6}$$

where H denotes the entropy of the policy model. On the other hand, the objective Jθ of the reward function is to distinguish between human-annotated stories and machine-generated stories. Hence it is trying to minimize the KL-divergence with the empirical distribution pe and maximize the KL-divergence with the approximated policy distribution πβ:

$$J_\theta=KL(p_e(W)\,||\,p_\theta(W))-KL(\pi_\beta(W)\,||\,p_\theta(W))=\sum_W\big[p_e(W)R_\theta(W)-\pi_\beta(W)R_\theta(W)\big]-H(p_e)+H(\pi_\beta)\,,\tag{7}$$

Since H(πβ) and H(pe) are irrelevant to θ, we denote them as constant C. Therefore, the objective Jθ can be further derived as

$$J_\theta=\mathbb{E}_{W\sim p_e(W)}[R_\theta(W)]-\mathbb{E}_{W\sim\pi_\beta(W)}[R_\theta(W)]+C\,.\tag{8}$$

Here we propose to use stochastic gradient descent to optimize these two models alternately. Formally, the gradients can be written as

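$$\begin{aligned}
\frac{\partial J_\theta}{\partial\theta}&=\mathbb{E}_{W\sim p_e(W)}\frac{\partial R_\theta(W)}{\partial\theta}-\mathbb{E}_{W\sim\pi_\beta(W)}\frac{\partial R_\theta(W)}{\partial\theta}\,,\\
\frac{\partial J_\beta}{\partial\beta}&=\mathbb{E}_{W\sim\pi_\beta(W)}\big(R_\theta(W)+\log\pi_\beta(W)-b\big)\frac{\partial\log\pi_\beta(W)}{\partial\beta}\,,
\end{aligned}\tag{9}$$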

where b is the estimated baseline to reduce the variance.
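The two gradients can be made concrete with Monte Carlo estimators on a toy problem. The sketch below assumes a finite set of candidate stories indexed `0..K-1`, a tabular reward `R(k) = theta[k]`, and a softmax policy over logits `beta`; it also omits the `log π` term of the policy gradient for brevity. All of these are simplifying assumptions, not the paper's setup.

```python
import math

def softmax(logits):
    peak = max(logits)
    exps = [math.exp(v - peak) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]

def reward_gradient(human_batch, sampled_batch, num_stories):
    """Estimate dJ_theta/dtheta: mean over human stories minus mean over samples.

    With a tabular reward, dR(k)/dtheta is a one-hot vector at index k.
    """
    grad = [0.0] * num_stories
    for k in human_batch:
        grad[k] += 1.0 / len(human_batch)
    for k in sampled_batch:
        grad[k] -= 1.0 / len(sampled_batch)
    return grad

def policy_gradient(sampled_batch, theta, beta, baseline):
    """REINFORCE estimate of dJ_beta/dbeta with a baseline b (entropy term omitted)."""
    probs = softmax(beta)
    grad = [0.0] * len(beta)
    for k in sampled_batch:
        advantage = theta[k] - baseline
        for j in range(len(beta)):
            # d log pi(k) / d beta_j for a softmax policy
            dlogp = (1.0 if j == k else 0.0) - probs[j]
            grad[j] += advantage * dlogp / len(sampled_batch)
    return grad
```

The baseline only shifts the advantage and does not change the expected gradient, which is why it can be used purely to reduce variance.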

    for episode ← 1 to N do
        collect story W by executing policy π_β
        if Train-Reward then
            θ ← θ − η × ∂J_θ/∂θ    (see Equation 9)
        else if Train-Policy then
            collect story W̃ from empirical p_e
            β ← β − η × ∂J_β/∂β    (see Equation 9)
        end if
    end for

Algorithm 1: The AREL algorithm.
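The alternating schedule of Algorithm 1 can be sketched on a toy problem with a handful of candidate stories. The sketch below is an illustration under strong assumptions: it uses exact expectations instead of sampled stories, a tabular reward squashed by `tanh`, a softmax policy, and update signs chosen so that human stories end up with higher reward and the policy climbs the learned reward. The function name and hyperparameters are hypothetical.

```python
import math

def softmax(logits):
    peak = max(logits)
    exps = [math.exp(v - peak) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]

def train_arel_toy(p_e, episodes=200, switch_every=10, lr=0.5):
    """Alternate reward and policy updates over a finite candidate set.

    p_e: empirical distribution of human stories over the candidates.
    Returns the final policy distribution and the learned rewards.
    """
    k = len(p_e)
    theta = [0.0] * k  # reward parameters, R(i) = tanh(theta[i])
    beta = [0.0] * k   # policy logits
    for episode in range(episodes):
        pi = softmax(beta)
        rewards = [math.tanh(t) for t in theta]
        train_reward = (episode // switch_every) % 2 == 0
        if train_reward:
            # Raise reward where human data has more mass than the policy.
            for i in range(k):
                theta[i] += lr * (p_e[i] - pi[i]) * (1.0 - rewards[i] ** 2)
        else:
            # Exact policy gradient of E_pi[R] with the mean reward as baseline.
            baseline = sum(p * r for p, r in zip(pi, rewards))
            for i in range(k):
                beta[i] += lr * pi[i] * (rewards[i] - baseline)
    return softmax(beta), [math.tanh(t) for t in theta]
```

For instance, with `p_e = [0.5, 0.5, 0.0, 0.0]` the learned reward separates the two human stories from the other two candidates, and the policy shifts probability mass towards the human stories. The `switch_every` argument plays the role of the alternate frequency that distinguishes the AREL-s-50 and AREL-s-100 variants in Table 1.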

#### Training & Testing

As described in [Algorithm 1](#alg1 "Algorithm 1 ‣ Adversarial Reward Learning ‣ 3.3 Learning ‣ 3 Our Approach ‣ No Metrics Are Perfect: Adversarial Reward Learning for Visual Storytelling"), we introduce an alternating algorithm to train these two models using stochastic gradient descent. During testing, the policy model is used with beam search to produce the story.

4 Experiments and Analysis

---------------------------

### 4.1 Experimental Setup

#### VIST Dataset

The VIST dataset Huang et al. ([2016](#bib.bib17)) is the first dataset for sequential vision-to-language tasks including visual storytelling, which consists of 10,117 Flickr albums with 210,819 unique photos. In this paper, we mainly evaluate our AREL method on this dataset. After filtering the broken images, there are 40,098 training, 4,988 validation, and 5,050 testing samples. (There are only 3 broken images out of 21,075 in the test set, which has basically no influence on the final results. Moreover, Yu et al. ([2017b](#bib.bib37)) also removed these 3 pictures, so it is a fair comparison.)

Each sample contains one story that describes 5 selected images from a photo album (mostly one sentence per image). And the same album is paired with 5 different stories as references. In our experiments, we used the same split settings as in Huang et al. ([2016](#bib.bib17)); Yu et al. ([2017b](#bib.bib37)) for a fair comparison.

#### Evaluation Metrics

In order to comprehensively evaluate our method on the storytelling dataset, we adopted both automatic metrics and human evaluation as our criteria. Four diverse automatic metrics were used in our experiments: BLEU, METEOR, ROUGE-L, and CIDEr. We utilized the open-source evaluation code (<https://github.com/lichengunc/vist_eval>) used in Yu et al. ([2017b](#bib.bib37)). For human evaluation, we employed Amazon Mechanical Turk to perform two kinds of user studies (see Section [4.3](#S4.SS3 "4.3 Human Evaluation ‣ 4 Experiments and Analysis ‣ No Metrics Are Perfect: Adversarial Reward Learning for Visual Storytelling") for more details).

#### Training Details

We employ a pretrained ResNet-152 model He et al. ([2016](#bib.bib14)) to extract image features from the photo stream. We built a vocabulary of size 9,837 to include words appearing more than three times in the training set. More training details can be found in [Appendix B](#A2 "Appendix B Training Details ‣ No Metrics Are Perfect: Adversarial Reward Learning for Visual Storytelling").
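The frequency cutoff for the vocabulary can be sketched as follows. Whitespace tokenization, lowercasing, and the special tokens are assumptions of this sketch; the paper does not state how the text was tokenized or which special symbols were used.

```python
from collections import Counter

def build_vocab(stories, min_count=3,
                specials=("<pad>", "<bos>", "<eos>", "<unk>")):
    """Keep words that appear more than `min_count` times in the training stories."""
    counts = Counter(tok for story in stories for tok in story.lower().split())
    kept = sorted(word for word, c in counts.items() if c > min_count)
    # Special tokens (hypothetical) come first, then the kept words in sorted order.
    return {word: idx for idx, word in enumerate(list(specials) + kept)}
```

Words at or below the cutoff would be mapped to the `<unk>` index at encoding time.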

### 4.2 Automatic Evaluation

In this section, we compare our AREL method with the state-of-the-art methods as well as standard reinforcement learning algorithms on automatic evaluation metrics. Then we further discuss the limitations of the hand-crafted metrics on evaluating human-like stories.

#### Comparison with SOTA on Automatic Metrics

In [Table 1](#S4.T1 "Table 1 ‣ Comparison with SOTA on Automatic Metrics ‣ 4.2 Automatic Evaluation ‣ 4 Experiments and Analysis ‣ No Metrics Are Perfect: Adversarial Reward Learning for Visual Storytelling"), we compare our method with Huang et al. ([2016](#bib.bib17)) and Yu et al. ([2017b](#bib.bib37)), which report achieving best-known results on the VIST dataset. We first implement a strong baseline model (XE-ss), which shares the same architecture with our policy model but is trained with cross-entropy loss and scheduled sampling. Besides, we adopt the traditional generative adversarial training for comparison (GAN). As shown in [Table 1](#S4.T1 "Table 1 ‣ Comparison with SOTA on Automatic Metrics ‣ 4.2 Automatic Evaluation ‣ 4 Experiments and Analysis ‣ No Metrics Are Perfect: Adversarial Reward Learning for Visual Storytelling"), our XE-ss model already outperforms the best-known results on the VIST dataset, and the GAN model can bring a performance boost. We then use the XE-ss model to initialize our policy model and further train it with AREL. Evidently, our AREL model performs the best and achieves the new state-of-the-art results across all metrics.

| Method | B-1 | B-2 | B-3 | B-4 | M | R | C |
| --- | --- | --- | --- | --- | --- | --- | --- |
| Huang et al. | - | - | - | - | 31.4 | - | - |
| Yu et al. | - | - | 21.0 | - | 34.1 | 29.5 | 7.5 |
| XE-ss | 62.3 | 38.2 | 22.5 | 13.7 | 34.8 | 29.7 | 8.7 |
| GAN | 62.8 | 38.8 | 23.0 | 14.0 | 35.0 | 29.5 | 9.0 |
| AREL-s-50 | 63.8 | 38.9 | 22.9 | 13.8 | 34.9 | 29.4 | 9.5 |
| AREL-t-50 | 63.4 | 39.0 | 23.1 | 14.1 | 35.2 | 29.6 | 9.5 |
| AREL-s-100 | 63.9 | 39.1 | 23.0 | 13.9 | 35.0 | 29.7 | 9.6 |
| AREL-t-100 | 63.8 | 39.1 | 23.2 | 14.1 | 35.0 | 29.5 | 9.4 |

Table 1: Automatic evaluation on the VIST dataset. We report BLEU (B), METEOR (M), ROUGE-L (R), and CIDEr (C) scores of the SOTA systems and the models we implemented, including XE-ss, GAN and AREL. AREL-s-N denotes AREL models with sigmoid as the output activation and alternate frequency N, while AREL-t-N denotes AREL models with tanh as the output activation (N = 50 or 100).

But, compared with the XE-ss model, the performance gain is minor, especially on METEOR and ROUGE-L scores. However, in Sec. [4.3](#S4.SS3 "4.3 Human Evaluation ‣ 4 Experiments and Analysis ‣ No Metrics Are Perfect: Adversarial Reward Learning for Visual Storytelling"), the extensive human evaluation indicates that our AREL framework brings a significant improvement in generating human-like stories over the XE-ss model. The inconsistency between automatic evaluation and human evaluation leads us to suspect that these hand-crafted metrics lack the ability to fully evaluate the quality of stories due to their complicated characteristics. Therefore, we conduct experiments to analyze and discuss the defects of the automatic metrics in [subsection 4.2](#S4.SS2.SSS0.Px2 "Limitations of Automatic Metrics ‣ 4.2 Automatic Evaluation ‣ 4 Experiments and Analysis ‣ No Metrics Are Perfect: Adversarial Reward Learning for Visual Storytelling").

| Method | B-1 | B-2 | B-3 | B-4 | M | R | C |
| --- | --- | --- | --- | --- | --- | --- | --- |
| XE-ss | 62.3 | 38.2 | 22.5 | 13.7 | 34.8 | 29.7 | 8.7 |
| BLEU-RL | 62.1 | 38.0 | 22.6 | 13.9 | 34.6 | 29.0 | 8.9 |
| METEOR-RL | 68.1 | 35.0 | 15.4 | 6.8 | 40.2 | 30.0 | 1.2 |
| ROUGE-RL | 58.1 | 18.5 | 1.6 | 0 | 27.0 | 33.8 | 0 |
| CIDEr-RL | 61.9 | 37.8 | 22.5 | 13.8 | 34.9 | 29.7 | 8.1 |
| AREL (avg) | 63.7 | 39.0 | 23.1 | 14.0 | 35.0 | 29.6 | 9.5 |

Table 2: Comparison with different RL models with different metric scores as the rewards. We report the average scores of the AREL models as AREL (avg). Although METEOR-RL and ROUGE-RL models achieve very high scores on their own metrics, the underlined scores are severely damaged. Actually, they are gaming their own metrics with nonsense sentences.

#### Limitations of Automatic Metrics

As we claimed in the introduction, string-match-based automatic metrics are not perfect and fail to evaluate some semantic characteristics of the stories, such as expressiveness and coherence. In order to confirm our conjecture, we utilize automatic metrics as rewards to reinforce the visual storytelling model by adopting policy gradient with baseline to train the policy model. The quantitative results are shown in Table 2.

Apparently, METEOR-RL and ROUGE-RL are severely ill-posed: they obtain the highest scores on their own metrics but damage the other metrics severely. We observe that these models are actually overfitting to a given metric while losing overall coherence and semantic correctness. As with the METEOR score, there is also an adversarial example for ROUGE-L, which is nonsense but achieves an average ROUGE-L score of 33.8:

* we the was a . and to the . we the was a . and to the . we the was a . and to the . we the was a . and to the . we the was a . and to the .

Figure 5: Metric score distributions. We plot the histogram distributions of BLEU-3 and CIDEr scores on the test set, as well as the human evaluation score distribution on the test samples. For a fair comparison, we use the Turing test results to calculate the human evaluation scores (see Section [4.3](#S4.SS3 "4.3 Human Evaluation ‣ 4 Experiments and Analysis ‣ No Metrics Are Perfect: Adversarial Reward Learning for Visual Storytelling")). Basically, 0.2 score is given if the generated story wins the Turing test, 0.1 for tie, and 0 if losing. Each sample has 5 scores from 5 judges, and we use the sum as the human evaluation score, so it is in the range [0, 1].
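
The scoring rule in the caption can be sketched as follows. The label strings and the function name are hypothetical; only the point values (0.2, 0.1, 0) and the five judges per sample come from the text.

```python
# Points per judgement, as stated in the Figure 5 caption.
POINTS = {"win": 0.2, "tie": 0.1, "lose": 0.0}


def human_score(judgements):
    """Sum the five judges' points for one sample; result lies in [0, 1]."""
    if len(judgements) != 5:
        raise ValueError("expected exactly 5 judgements per sample")
    # Round to one decimal to hide floating-point noise from adding 0.1 and 0.2.
    return round(sum(POINTS[j] for j in judgements), 1)


print(human_score(["win", "win", "win", "tie", "lose"]))
```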

Besides, as can be seen in [Table 1](#S4.T1 "Table 1 ‣ Comparison with SOTA on Automatic Metrics ‣ 4.2 Automatic Evaluation ‣ 4 Experiments and Analysis ‣ No Metrics Are Perfect: Adversarial Reward Learning for Visual Storytelling"), after reinforced training, BLEU-RL and CIDEr-RL do not bring a consistent improvement over the XE-ss model. We plot the histogram distributions of both BLEU-3 and CIDEr scores on the test set in [Figure 5](#S4.F5 "Figure 5 ‣ Limitations of Automatic Metrics ‣ 4.2 Automatic Evaluation ‣ 4 Experiments and Analysis ‣ No Metrics Are Perfect: Adversarial Reward Learning for Visual Storytelling").

An interesting fact is that there are a large number of samples with nearly zero score on both metrics. However, we observed those “zero-score” samples are not pointless results; instead, lots of them make sense and deserve a better score than zero. Here is a “zero-score” example on BLEU-3:

* I had a great time at the restaurant today. The food was delicious. I had a lot of food. The food was delicious. I had a great time.

The corresponding reference is

* The table of food was a pleasure to see! Our food is both nutritious and beautiful! Our chicken was especially tasty! We love greens as they taste great and are healthy! The fruit was a colorful display that tantalized our palette.

Although the prediction is not as good as the reference, it is actually coherent and relevant to the theme “food and eating”, which showcases the defects of using BLEU and CIDEr scores as a reward for RL training.
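
The zero score can be checked by counting shared n-grams between the prediction and the reference quoted above. This is only a sketch: the regex tokenizer and the helper names are assumptions, and it counts clipped n-gram matches rather than computing the full BLEU score with its brevity penalty.

```python
import re
from collections import Counter


def ngrams(text, n):
    """Multiset of word n-grams after lowercasing and stripping punctuation."""
    tokens = re.findall(r"[a-z']+", text.lower())
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def clipped_matches(prediction, reference, n):
    """Number of prediction n-grams that also occur in the reference (clipped)."""
    pred, ref = ngrams(prediction, n), ngrams(reference, n)
    return sum(min(count, ref[gram]) for gram, count in pred.items())


prediction = (
    "I had a great time at the restaurant today. The food was delicious. "
    "I had a lot of food. The food was delicious. I had a great time."
)
reference = (
    "The table of food was a pleasure to see! Our food is both nutritious "
    "and beautiful! Our chicken was especially tasty! We love greens as they "
    "taste great and are healthy! The fruit was a colorful display that "
    "tantalized our palette."
)

for n in (1, 2, 3):
    print(n, clipped_matches(prediction, reference, n))
```

Under this tokenization the two stories share unigrams and a few bigrams but no trigram, so any unsmoothed score that multiplies in the 3-gram precision collapses to zero even though the prediction is on topic.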

| Method | Win | Lose | Unsure |
| --- | --- | --- | --- |
| XE-ss | 22.4% | 71.7% | 5.9% |
| BLEU-RL | 23.4% | 67.9% | 8.7% |
| CIDEr-RL | 13.8% | 80.3% | 5.9% |
| GAN | 34.3% | 60.5% | 5.2% |
| AREL | 38.4% | 54.2% | 7.4% |

Table 3: Turing test results.

| Choice (%) | AREL vs XE-ss: AREL | XE-ss | Tie | AREL vs BLEU-RL: AREL | BLEU-RL | Tie | AREL vs CIDEr-RL: AREL | CIDEr-RL | Tie | AREL vs GAN: AREL | GAN | Tie |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Relevance | 61.7 | 25.1 | 13.2 | 55.8 | 27.9 | 16.3 | 56.1 | 28.2 | 15.7 | 52.9 | 35.8 | 11.3 |
| Expressiveness | 66.1 | 18.8 | 15.1 | 59.1 | 26.4 | 14.5 | 59.1 | 26.6 | 14.3 | 48.5 | 32.2 | 19.3 |
| Concreteness | 63.9 | 20.3 | 15.8 | 60.1 | 26.3 | 13.6 | 59.5 | 24.6 | 15.9 | 49.8 | 35.8 | 14.4 |

Table 4: Pairwise human comparisons. The results indicate the consistent superiority of our AREL model in generating more human-like stories than the SOTA methods.

Moreover, we compare the human evaluation scores with these two metric scores in [Figure 5](#S4.F5 "Figure 5 ‣ Limitations of Automatic Metrics ‣ 4.2 Automatic Evaluation ‣ 4 Experiments and Analysis ‣ No Metrics Are Perfect: Adversarial Reward Learning for Visual Storytelling"). Noticeably, both BLEU-3 and CIDEr have a poor correlation with the human evaluation scores. Their distributions are more biased and thus cannot fully reflect the quality of the generated stories. In terms of BLEU, it is extremely hard for machines to produce the exact 3-gram or 4-gram matching, so the scores are too low to provide useful guidance.

CIDEr measures the similarity of a sentence to the majority of the references. However, the references for the same photo stream differ from each other, so the score is very low and not suitable for this task. In contrast, our AREL framework can learn a more robust reward function from human-annotated stories, which is able to provide better guidance to the policy and thus improves its performance over different metrics.

#### Comparison with GAN

We here compare our method with the traditional GAN of Goodfellow et al. ([2014](#bib.bib13)), in which the update rule for the generator can generally be classified into two categories. We demonstrate their corresponding objectives and ours as follows:

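$$
\begin{aligned}
\text{GAN1:}\quad J_\beta &= \mathbb{E}_{W\sim p_\beta}\left[-\log R_\theta(W)\right],\\
\text{GAN2:}\quad J_\beta &= \mathbb{E}_{W\sim p_\beta}\left[\log\left(1-R_\theta(W)\right)\right],\\
\text{ours:}\quad J_\beta &= \mathbb{E}_{W\sim p_\beta}\left[-R_\theta(W)\right].
\end{aligned}
$$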

As discussed in Arjovsky et al. ([2017](#bib.bib2)), GAN1 is prone to the unstable gradient issue and GAN2 is prone to the vanishing gradient issue. Analytically, our method does not suffer from these two common issues and thus is able to converge to optimum solutions more easily. From [Table 1](#S4.T1 "Table 1 ‣ Comparison with SOTA on Automatic Metrics ‣ 4.2 Automatic Evaluation ‣ 4 Experiments and Analysis ‣ No Metrics Are Perfect: Adversarial Reward Learning for Visual Storytelling"), we can observe slight gains of using AREL over GAN with automatic metrics; therefore, we further deploy human evaluation for a better comparison.
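
As a rough numerical illustration, the three objectives can be estimated from a batch of reward values. The sketch below assumes rewards that lie strictly between 0 and 1 so that both logarithms are defined; the function names are hypothetical, and it does not reproduce the analysis of Arjovsky et al. It only shows that the per-sample slope of the two log-based objectives with respect to the reward depends on the reward value, while the slope of the linear objective is constant.

```python
import math


def gan1_objective(rewards):
    """Monte Carlo estimate of E[-log R(W)]."""
    return sum(-math.log(r) for r in rewards) / len(rewards)


def gan2_objective(rewards):
    """Monte Carlo estimate of E[log(1 - R(W))]."""
    return sum(math.log(1.0 - r) for r in rewards) / len(rewards)


def linear_objective(rewards):
    """Monte Carlo estimate of E[-R(W)], the form labelled 'ours' in the text."""
    return sum(-r for r in rewards) / len(rewards)


def slopes(r):
    """Derivative of each per-sample objective with respect to the reward r."""
    return {"gan1": -1.0 / r, "gan2": -1.0 / (1.0 - r), "ours": -1.0}


# Assumed reward values in (0, 1), for illustration only.
for r in (0.01, 0.5, 0.99):
    print(r, slopes(r))
```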

### 4.3 Human Evaluation

Automatic metrics cannot fully evaluate the capability of our AREL method. Therefore, we perform two different kinds of human evaluation studies on Amazon Mechanical Turk: Turing test and pairwise human evaluation. For both tasks, we use 150 stories (750 images) sampled from the test set, each assigned to 5 workers to eliminate human variance. We batch six items as one assignment and insert an additional assignment as a sanity check. Besides, the order of the options within each item is shuffled to make a fair comparison.

#### Turing Test

We first conduct five independent Turing tests for the XE-ss, BLEU-RL, CIDEr-RL, GAN, and AREL models, during which the worker is given one human-annotated sample and one machine-generated sample, and needs to decide which is human-annotated. As shown in [Table 3](#S4.T3 "Table 3 ‣ Limitations of Automatic Metrics ‣ 4.2 Automatic Evaluation ‣ 4 Experiments and Analysis ‣ No Metrics Are Perfect: Adversarial Reward Learning for Visual Storytelling"), our AREL model significantly outperforms all the other baseline models in the Turing test: it has a much better chance of fooling AMT workers (the ratio is AREL:XE-ss:BLEU-RL:CIDEr-RL:GAN = 45.8%:28.3%:32.1%:19.7%:39.5%), which confirms the superiority of our AREL framework in generating human-like stories. Unlike automatic metric evaluation, the Turing test has indicated a much larger margin between AREL and other competing algorithms. Thus, we empirically confirm that metrics are not perfect in evaluating many implicit semantic properties of natural language.

Besides, the Turing test of our AREL model reveals that nearly half of the workers are fooled by our machine generation, indicating a preliminary success toward generating human-like stories.
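
The quoted ratios appear to equal Win plus Unsure (equivalently, 100 minus Lose) from Table 3. The short check below makes that arithmetic explicit; reading the ratio this way is an inference from the numbers rather than something the text states, and the function name is hypothetical.

```python
# Win / Lose / Unsure percentages copied from Table 3.
TURING_TEST = {
    "XE-ss": (22.4, 71.7, 5.9),
    "BLEU-RL": (23.4, 67.9, 8.7),
    "CIDEr-RL": (13.8, 80.3, 5.9),
    "GAN": (34.3, 60.5, 5.2),
    "AREL": (38.4, 54.2, 7.4),
}


def fooled_rate(win, lose, unsure):
    """Share of judgements that did not identify the human story (assumed: Win + Unsure)."""
    return round(win + unsure, 1)


for model, row in TURING_TEST.items():
    print(f"{model}: {fooled_rate(*row)}%")
```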

Figure 6: Qualitative comparison example with XE-ss. The direct comparison votes (AREL:XE-ss:Tie) were 5:0:0 on Relevance, 4:0:1 on Expressiveness, and 5:0:0 on Concreteness.

#### Pairwise Comparison

In order to have a clear comparison with competing algorithms with respect to different semantic features of the stories, we further perform four pairwise comparison tests: AREL vs XE-ss/BLEU-RL/CIDEr-RL/GAN. For each photo stream, the worker is presented with two generated stories and asked to make decisions on three aspects: relevance (the story accurately describes what is happening in the image sequence and covers the main objects), expressiveness (coherence, grammatical and semantic correctness, no repetition, expressive language style), and concreteness (the story should narrate concretely what is in the image rather than giving very general descriptions). This head-to-head competition is designed to help us understand in which aspects our model outperforms the competing algorithms; the results are displayed in [Table 4](#S4.T4 "Table 4 ‣ Limitations of Automatic Metrics ‣ 4.2 Automatic Evaluation ‣ 4 Experiments and Analysis ‣ No Metrics Are Perfect: Adversarial Reward Learning for Visual Storytelling").

Consistently across all the comparisons, a large majority of the AREL stories trump those of the competing systems with respect to relevance, expressiveness, and concreteness. This empirically confirms that our generated stories are more relevant to the image sequences, more coherent, and more concrete than those of the other algorithms, which, however, is not explicitly reflected by the automatic metric evaluation.

### 4.4 Qualitative Analysis

[Figure 6](#S4.F6 "Figure 6 ‣ Turing Test ‣ 4.3 Human Evaluation ‣ 4 Experiments and Analysis ‣ No Metrics Are Perfect: Adversarial Reward Learning for Visual Storytelling") gives a qualitative comparison example between the AREL and XE-ss models. Looking at the individual sentences, it is obvious that our results are more grammatically and semantically correct. Then, connecting the sentences together, we observe that the AREL story is more coherent and describes the photo stream more accurately. Thus, our AREL model significantly surpasses the XE-ss model on all three aspects of the qualitative example. Besides, it won the Turing test (3 out of 5 AMT workers think the AREL story was created by a human). In the appendix, we also show a negative case that fails the Turing test.

5 Conclusion

-------------

In this paper, we not only introduce a novel adversarial reward learning algorithm to generate more human-like stories given image sequences, but also empirically analyze the limitations of the automatic metrics for story evaluation. We believe there is still much room for improvement in narrative paragraph generation tasks, such as how to better simulate human imagination to create more vivid and diversified stories.

Acknowledgment

--------------

We thank Adobe Research for supporting our language and vision research. We would also like to thank Licheng Yu for clarifying the details of his paper and the anonymous reviewers for their thoughtful comments. This research was sponsored in part by the Army Research Laboratory under cooperative agreements W911NF09-2-0053. The views and conclusions contained herein are those of the authors and should not be interpreted as representing the official policies, either expressed or implied, of the Army Research Laboratory or the U.S. Government. The U.S. Government is authorized to reproduce and distribute reprints for Government purposes notwithstanding any copyright notice herein. |

1ce9f245-709b-4dde-94e3-75ab2607ea1d | trentmkelly/LessWrong-43k | LessWrong | Off-Topic Discussion Thread: April 2009

Dale McGowan writes:

> And it needs to go well beyond one greeter. EVERY MEMBER of EVERY GROUP should make it a point to chat up new folks—and each other, for that matter. And not just about the latest debunky book. Ask where he’s from, what she does for a living, whether he follows the Mets or the Yankees. You know, mammal talk.

In this spirit, I propose the creation of a fully off-topic discussion thread.

Here is our monthly place to discuss topics entirely unrelated to Less Wrong that (of course) have not appeared in recent posts.

ETA: There are two behaviors I would love to see associated with this thread. First of all, discussions often drift off-topic in the middle of a thread. In these cases "let's take this to the off-topic thread" would be an excellent response. Secondly, given who's doing the discussing, I could easily see, say, a discussion about recent developments in some webcomic blossoming into a LW-worthy insight, in which case someone could spawn a new thread.

|

8c8d3fd1-31c3-47d4-a8a1-63fec22b0e20 | trentmkelly/LessWrong-43k | LessWrong | Contrarian LW views and their economic implications

LW readers have unusual views on many subjects. Efficient Market Hypothesis notwithstanding, many of these are probably alien to most people in finance. So it's plausible they might have implications that are not yet fully integrated into current asset prices. And if you rightfully believe something that most people do not believe, you should be able to make money off that.

Here's an example for a different group. Feminists believe that women are paid less than men for no good economic reason. If this is the case, feminists should invest in companies that hire many women, and short those which hire few women, to take advantage of the cheaper labour costs. And I can think of examples for groups like Socialists, Neoreactionaries, etc. - cases where their positive beliefs have strong implications for economic predictions. But I struggle to think of such ones for LessWrong, which is why I am asking you. Can you think of any unusual LW-type beliefs that have strong economic implications (say over the next 1-3 years)?

Wei Dai has previously commented on a similar phenomenon, but I'm interested in a wider class of phenomena. |

91ac9e40-5f28-4d59-a573-d83383ece3e9 | trentmkelly/LessWrong-43k | LessWrong | Anyone at Otakon?

Perhaps this is a bit late, as the convention is already underway and those who are here may not be checking Less Wrong, but it may be worth a shot. Would be cool to get a LW meetup going on here if anyone's around. |

33326b6c-a459-4cdf-ae35-c6a8a8f40e82 | trentmkelly/LessWrong-43k | LessWrong | The shallow reality of 'deep learning theory'

> Produced under the mentorship of Evan Hubinger as part of the SERI ML Alignment Theory Scholars Program - Winter 2022 Cohort

Most results under the umbrella of "deep learning theory" are not actually deep, about learning, or even theories.

This is because classical learning theory makes the wrong assumptions, takes the wrong limits, uses the wrong metrics, and aims for the wrong objectives. Learning theorists are stuck in a rut of one-upmanship, vying for vacuous bounds that don't say anything about any systems of actual interest.

Yudkowsky tweeting about statistical learning theorists.

(Okay, not really.)

In particular, I'll argue throughout this sequence that:

* Empirical risk minimization is the wrong framework, and risk is a weak foundation.

* In approximation theory, the universal approximation results are too general (they do not constrain efficiency) while the "depth separation" results meant to demonstrate the role of depth are too specific (they involve constructing contrived, unphysical target functions).

* Generalization theory has only two tricks, and they're both limited:

* Uniform convergence is the wrong approach, and model class complexities (VC dimension, Rademacher complexity, and covering numbers) are the wrong metric. Understanding deep learning requires looking at the microscopic structure within model classes.

* Robustness to noise is an imperfect proxy for generalization, and techniques that rely on it (margin theory, sharpness/flatness, compression, PAC-Bayes, etc.) are oversold.

* Optimization theory is a bit better, but training-time guarantees involve questionable assumptions, and the obsession with second-order optimization is delusional. Also, the NTK is bad. Get over it.

* At a higher level, the obsession with deriving bounds for approximation/generalization/learning behavior is misguided. These bounds serve mainly as political benchmarks rather than a source of theoretical insight. More attention should go towards exp |

ad78b945-cccb-4e4c-8256-aa7dcfc9fc1e | trentmkelly/LessWrong-43k | LessWrong | Stabilize-Reflect-Execute

You've recently joined a major organization in a senior management role. How can you organize your plans?

One simple way to think about them is with what can be called the "Stabilize-Reflect-Execute" cycle.

Stabilize

You first check if there are any urgent issues and address them immediately. Are there burning problems or opportunities that need to be dealt with? Second, you do anything you need to do to best prepare yourself for reflection. If there are people you need to talk to in order to get necessary information, you set that up upfront.

Reflect

Once urgent issues are dealt with and you are able to properly assess the situation, you work to do so. For executives this can mean a lengthy period of discussions with all of the relevant people and thoughts on strategy before making formal announcements. This could take a few weeks or months.

Execute

Now is the time to begin working on non-urgent important problems, which should be the main ones. You follow through with your reflection. Execution may involve deciding on pursuing future larger stabilize-reflect-execute loops.

Let’s summarize. “Stabilize” refers to handling urgent issues and preparing for reflection. This is similar to the notion of getting one’s “house in order.” “Reflect” refers to deciding how to best deal with the important non-urgent issues. “Execute” refers to working on the important issues. This is basically a subset of the Eisenhower Method for situations where these three steps make up the majority of the work.

Examples

I think this cycle plays out in many important situations, so may be worth some independent study. Some examples of these cycles include:

Necessary Conditions

The stabilize-reflect-execute cycle is good for some specific situations. I think it may require the following:

1. There are some tasks that are both urgent and important.

If this is not true, the "stabilize" step isn't necessary.

2. There are some tasks that are both non-urgent and important.

If this |

a6ad4516-9059-448c-8db7-911e3c7a6825 | trentmkelly/LessWrong-43k | LessWrong | Can cryoprotectant toxicity be crowd-sourced?

From the article The red blood cell as a model for cryoprotectant toxicity by Aschwin de Wolf

> One simple model that allows for “high throughput” investigations of cryoprotectant toxicity are red blood cells (erythrocytes). Although the toxic effects of various cryoprotective agents may differ between red blood cells, other cells, and organized tissues, positive results in a red blood cell model can be considered the first experimental hurdle that needs to be cleared before the agent is considered for testing in other models. Because red blood cells are widely available for research, this model eliminates the need for animal experiments for initial studies. It also allows researchers to investigate human cells. Other advantages include the reduced complexity of the model (packed red blood cells can be obtained as an off-the-shelf product) and lower costs.

It sounds to me like this is a very cheap assay for viability. You don't need much equipment. High toxicity compounds can be screened on visual appearance. More detailed analysis can be done by a light microscope or a spectrophotometer.

The biggest issue facing cryonics (and the holy grail of suspended animation with true biostasis) is the existence of cryoprotectant toxicity. Less toxic solutions can be perfused for a longer period of time, and thus penetrate the entire organism without triggering additional loss of viability. Vitrification already eliminates all ice formation -- we know enough to know that without toxicity, it should work for trivially reversible forms of long-term suspended animation.

Thus if we want to ask what can be done cheaply by a lot of people to help cryonics move forward, one possibility is that they could perform empirical tests on the compounds most likely to prove effective for cryoprotection.

We can speculate about the brain being reparable at all kinds of levels of damage -- but that is speculation. Sure we do have to make a decision to sign up or not based on that specula |

c14adc43-535b-4a04-8e64-baafac23ed45 | trentmkelly/LessWrong-43k | LessWrong | Logical Line-Of-Sight Makes Games Sequential or Loopy

In the last post, we talked about strategic time and the strategic time loops studied in open-source game theory. In that context, agents have logical line-of-sight to each other and the situation they're both facing, which creates a two-way information flow at the time each is making their decision. In this post I'll describe how agents in one context can use this logical line-of-sight to condition their behavior on how they behave in other contexts. This in turn makes those contexts strategically sequential or loopy, in a way that a purely causal decision theory doesn't pick up on.

Sequential Games and Leverage

As an intuition pump, consider the following ordinary game: Alice and Bob are going to play a Prisoners' Dilemma, and then an Ultimatum game. My favorite framing of the Prisoners' Dilemma is by Nicky Case: each player stands in front of a machine which accepts a certain amount of money, e.g. $100.[1] Both players choose simultaneously whether to put some of their own money into the machine. If Alice places $100 into the machine in front of her, $200 comes out of Bob's machine, and vice versa. If a player withholds their money, nothing comes out of the other player's machine. We call these strategies Cooperate and Defect respectively.
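
The machine framing above can be written down as a small payoff function. This is a sketch using the $100 stake and $200 payout from the example; the function and constant names are hypothetical.

```python
STAKE = 100   # amount a player may put into their own machine
PAYOUT = 200  # amount that then comes out of the other player's machine


def payoffs(alice_cooperates, bob_cooperates):
    """Net dollar change for (Alice, Bob) after one round."""
    alice = (-STAKE if alice_cooperates else 0) + (PAYOUT if bob_cooperates else 0)
    bob = (-STAKE if bob_cooperates else 0) + (PAYOUT if alice_cooperates else 0)
    return alice, bob


for a in (True, False):
    for b in (True, False):
        print("Alice C" if a else "Alice D", "Bob C" if b else "Bob D", payoffs(a, b))
```

Holding Bob's choice fixed, Alice always ends up $100 better off by withholding her money, and the same holds for Bob, even though mutual Cooperation leaves both players better off than mutual Defection.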

Since neither player can cause money to come out of their own machine, Causal Decision Theory (CDT) identifies Defect as a dominant strategy for both players. Dissatisfaction with this answer has motivated many to dig into the foundations of decision theory, and coming up with different conditions that enable Cooperation in the Prisoners' Dilemma has become a cottage industry for the field. I myself keep calling it the Prisoners' Dilemma (rather than the Prisoner's Dilemma) because I want to frame it as a dilemma they're facing together, where they can collaboratively implement mechanisms that incentivize mutual Cooperation. The mechanism I want to describe today is leverage: having something the other player wants, and givi |

90e6ce9e-b2f3-47c4-95ee-c2b92f16dc13 | trentmkelly/LessWrong-43k | LessWrong | Control the Density of Novelty in Your Writing

I think the key element that determines how easy a piece of writing is to read is its density of novelty.

Novelty can be thought of as the writing equivalent of information. Anything the reader already knows doesn't have to be fully processed, it can just be recalled. Known words, idioms, and structures don't have to be relearned every time they appear. So only new information (novelty) has to be decoded by the reader.

The higher the density of novelty, the harder a piece of writing is to read.

Shakespeare vs Ordinary Speech

Shakespeare is relatively difficult for modern readers because there are lots of unfamiliar words, linguistic structures, and styles of expression. The reader has to process novel elements like blank verse, Elizabethan English, and poetic creativity.

> Will all great Neptune's ocean wash this blood

> Clean from my hand? No; this my hand will rather

> The multitudinous seas incarnadine,

> Making the green one red.

>

> Macbeth Act 2, Scene 2, 54–60

This was a little easier for people in Shakespeare’s time to follow, because they were more familiar with contemporary linguistic and artistic tropes.

Contrast that with the effortlessness of parsing ordinary conversation:

> Hello, how are you?

> Fine, thanks. And you?

> I’m doing well.

Ordinary conversation barely registers as information to our minds because it's so familiar.

Readable writing falls somewhere between these two extremes, maintaining a comfortable density of novelty for the reader. I’ll give several examples of writing with a high density of novelty (hard to read), and writing with a low density of novelty (easy to read). In general, it's better to have a lower density of novelty if you want to communicate clearly.

High Density of Novelty (Hard)

> The sub-relations and sur-relations of quads span partonomic hierarchies, where each element can be defined by its parts. This is different from a taxonomic (“is-a”) hierarchy, where the elements are categories made up of sub-categ |

e81ab922-c9c6-4e82-a6bc-85ef9134e885 | StampyAI/alignment-research-dataset/special_docs | Other | Mo Gawdat - Scary Smart - A former Google exec_s perspective on AI risk-by Towards Data Science-video_id u2cK0_jUX_g-date 20220126

# Mo Gawdat on Scary Smart A former Google exec’s perspective on AI risk by Jeremie Harris on the Towards Data Science Podcast

## Mo Gawdat on AGI, its potential and its safety risks

If you were scrolling through your newsfeed in late September 2021, you may have caught this splashy headline from The Times of London that read, “Can this man save the world from artificial intelligence?”

The man in question was Mo Gawdat, an entrepreneur and senior tech executive who spent several years as the Chief Business Officer at GoogleX (now called X Development), Google’s semi-secret research facility, that experiments with moonshot projects like self-driving cars, flying vehicles, and geothermal energy. At X, Mo was exposed to the absolute cutting edge of many fields — one of which was AI. His experience seeing AI systems learn and interact with the world raised red flags for him — hints of the potentially disastrous failure modes of the AI systems we might just end up with if we don’t get our act together now.

Mo writes about his experience as an insider at one of the world’s most secretive research labs and how it led him to worry about AI risk, but also about AI’s promise and potential in his new book, [Scary Smart: The Future of Artificial Intelligence and How You Can Save Our World](https://www.amazon.com/Scary-Smart-Future-Artificial-Intelligence-ebook/dp/B08ZNJL4QP). He joined me to talk about just that on this episode of the TDS podcast.

Here were some of my favourite take-homes from the conversation:

- Over the last several decades, progress in AI has been exponential (or more than exponential if you measure it based on [compute curves](https://openai.com/blog/ai-and-compute/)). Humans are really bad at extrapolating exponential trends, and that can lead to our being taken by surprise. And that’s partly because exponential progress can change the world so much and so fast that predictions are next to impossible to make. Powered by exponential dynamics, a single COVID case turns into a nation-wide lockdown within weeks, and a once-cute and ignorable tool like AI becomes a revolutionary technology whose development could shape the very future of the universe.

- One of the core drivers behind the exponential progress of AI has been an economic feedback loop: companies have learned that they can reliably invest money in AI research, and get a positive return on their investment. Many choose to plough those returns back into AI, which amplifies AI capabilities further, leading to a virtuous cycle. Recent [scaling trends](https://arxiv.org/pdf/2001.08361.pdf) seem to suggest that AI has reached a kind of economic escape velocity, where returns on a marginal dollar invested in AI research are significant enough that tech executives can’t ignore them anymore — all of which makes AGI inevitable, in Mo’s opinion.

- Whether AGI is developed by 2029, as Ray Kurzweil [has predicted](https://futurism.com/kurzweil-claims-that-the-singularity-will-happen-by-2045), or somewhat later as [this great post](https://www.openphilanthropy.org/focus/global-catastrophic-risks/potential-risks-advanced-artificial-intelligence/ai-timelines) by Open Philanthropy argues, doesn’t really matter. One way or another, artificial human-level or general intelligence (definitions are fuzzy!) seems poised to emerge by the end of the century. Mo thinks that the fact that AI safety and AI policy aren’t our single greatest priorities as a species is a huge mistake. And on that much, I certainly agree with him.

- Mo doesn’t believe that the AI control problem (sometimes known as the alignment problem) *can* be solved. He considers it impossible that organisms orders of magnitude less intelligent than AI systems would be able to exert any meaningful control over them.

- His solution is unusual: humans, he argues, need to change their online behaviour, and approach one another with more tolerance and civility on social media. The idea behind this strategy is to hope that as AI systems are trained on human-generated social media content, they will learn to mimic more virtuous behaviours, and pose less of a threat to us. I’m admittedly skeptical of this view, because I don’t see how it addresses some of the core features of AI systems that make alignment so hard (for example, [power-seeking and instrumental convergence](https://arxiv.org/pdf/1912.01683.pdf), or the challenge of [objective specification](https://openai.com/blog/faulty-reward-functions/)). That said, I think there’s a lot of room for a broader conversation about AI safety, and I’m glad Mo is shining a light on this important problem. |

f25be00a-0210-4cac-a5fc-b8f690ed5adf | StampyAI/alignment-research-dataset/alignmentforum | Alignment Forum | Call for submissions: “(In)human Values and Artificial Agency”, ALIFE 2023

key points:

* Cash prize of $500 for the best presentation.

* Deadline 3 March, 2023.

* Organized by Simon McGregor (University of Sussex), Rory Greig (DeepMind), Chris Buckley (University of Sussex)

> ALIFE 2023 (the 2023 conference on Artificial Life) will feature a Special Session on “(In)human Values and Artificial Agency”. This session focuses on issues at the intersection of AI Safety and Artificial Life. We invite the submission of research papers, or extended abstracts, that deal with related topics.

>

> We particularly encourage submissions from researchers in the AI Safety community, who might not otherwise have considered submitting to ALIFE 2023.

>

>

...

> **EXAMPLES OF A-LIFE RELATED TOPICS**

>

> Here are a few examples of topics that engage with A-Life concerns:

>

> * Abstracted *simulation models* of complex emergent phenomena

> * Concepts such as *embodiment*, *the extended mind*, *enactivism*, *sensorimotor contingency theory,* or *autopoiesis*

> * *Collective behaviour* and *emergent behaviour*

> * Fundamental *theories of agency* or *theories of cognition*

> * *Teleological* and *goal directed* behaviour of artificial agents

> * *Specific instances* of adaptive phenomena in biological, social or robotic systems

> * *Thermodynamic* and *statistical-mechanical* analyses

> * *Evolutionary*, *ecological* or *cybernetic* perspectives

>

> **EXAMPLES OF AI SAFETY RELATED TOPICS**

>

> Here are a few examples of topics that engage with AI Safety concerns:

>

> * Assessment of distinctive *risks, failure modes* or *threat models* for artificial adaptive systems

> * Fundamental *theories of agency*, *theories of cognition* or *theories of optimization.*

> * *Embedded Agency*, formalizations of agent-environment interactions that account for embeddedness, detecting agents and representations of agents’ goals.

> * *Selection theorems* – how selection pressures and training environments determine agent properties.

> * Multi-agent cooperation; inferring / learning human values and aggregating preferences.

> * Techniques for aligning AI models to human preferences, such as Reinforcement Learning from Human Feedback (RLHF)

> * *Goal Misgeneralisation* – how agent’s goals generalise to new environments

> * *Mechanistic interpretability* of learned / evolved agents (*“digital neuroscience”*)

> * Improving fairness and reducing harm from machine learning models deployed in the real world.

> * Loss of human agency from increasing automation

> |

f77f9f4b-2f0d-4589-8da1-07c44081f613 | trentmkelly/LessWrong-43k | LessWrong | The Wedding Ceremony

Family and friends of the groom and bride, we are gathered here today to join this young couple in the union of permanent cooperation in the iterated prisoner’s dilemma.

They are happy to share this moment with all their guests, and are grateful that you provide the social pressure that allows them to commit to cooperation with credibility, by providing external enforcement.

As groom and bride prepared for this ceremony, they reflected on the alignment of values and capabilities that lets the utility function of each one be pursued better by joining together in a partnership with no easily predictable end date. The groom wants to thank the social stratification that encourages assortative mating by educational attainment, ensuring that both partners have equal capacity to pursue their utility in the information age. The bride wants to thank the pervasive surveillance of social media that assured her that the couple’s utility functions align with high correlation before she even met the groom.

Bride, do you come here freely and without reservation to modify your utility function to the arithmetic mean of the two utility functions, for each current and possible world-state?

– I do.

Groom, do you agree to self-modify to the same end, up to any structural or informational uncertainty you may have in modeling the bride’s preexisting utility function?

– I do.

Now, by the power vested in me by timeless decision theory, it is my honor and pleasure to declare you sub-modules of a unified agent. You may seal this declaration with a saliva-based exchange of immune system data.

|

24899783-9464-4ce7-b797-5693b4ed4775 | trentmkelly/LessWrong-43k | LessWrong | Quantifying General Intelligence

Introduction

This piece explores a way of defining intelligent systems such that we can, in theory, quantify their general intelligence. From this, further tools and ideas for comparing these entities could be developed. The definitions are not meant to be philosophical truths; rather, they are meant to be useful tools that allow us to analyse and gain insight into these systems and how they relate to one another. At least that's the hope. Failing that, they can perhaps provide some food for thought.

This post is meant to be accessible to non-technical readers, so some terms are explained in more detail than people familiar with machine learning will need.

Desirable Properties

We begin by identifying several desired properties that would increase the utility and robustness of our framework, giving us something to aim at.

Sufficient: If our definitions relied upon, or referenced, things that are poorly defined themselves, we would just be moving the problem back a step and not actually gaining any insight.

Measurable: Intelligence is a broad spectrum; this is especially visible in the natural world. A good definition would reflect this and give us a continuous measure of intelligence that allows sensible comparisons.

Implementation Independent: It's easy to compare something's capabilities to those of humans in order to ascertain its intelligence. We want our definitions to be free from bias towards any particular implementation or version of intelligence, so that they can recognise intelligence which operates in a way unfamiliar to us, or in a way we don't understand.

Minimal Grey Areas: Many definitions leave large grey areas on the boundaries between classifications, or stop making sense when applied to domains they were not designed for. This should be avoided.

Useable: Sometimes a seemingly 'perfect' definition is infeasible to actually apply, and so is of no practical use. A definition which is infeasible to theore |

e9960cca-cebe-49de-81bd-ea07feffdd0e | trentmkelly/LessWrong-43k | LessWrong | Modifying LLM Beliefs with Synthetic Document Finetuning

In this post, we study whether we can modify an LLM’s beliefs and investigate whether doing so could decrease risk from advanced AI systems.

We describe a pipeline for modifying LLM beliefs via synthetic document finetuning and introduce a suite of evaluations that suggest our pipeline succeeds in inserting all but the most implausible beliefs. We also demonstrate proof-of-concept applications to honeypotting for detecting model misalignment and unlearning.

Introduction:

> Large language models develop implicit beliefs about the world during training, shaping how they reason and act. (In this work, we construe AI systems as believing in a claim if they consistently behave in accordance with that claim.) In this work, we study whether we can systematically modify these beliefs, creating a powerful new affordance for safer AI deployment.

>

> Controlling the beliefs of AI systems can decrease risk in a variety of ways. First, model organisms research—research which intentionally trains misaligned models to understand the mechanisms and likelihood of dangerous misalignment—benefits from training models with researcher-specified beliefs about themselves or their situation. Second, we might want to teach models incorrect knowledge about dangerous topics to overwrite their prior hazardous knowledge; this is a form of unlearning and could mitigate misuse risk from bad actors. Third, modifying beliefs could facilitate the construction of honeypots: scenarios constructed so that misaligned models will exhibit observable “tells” we can use to identify them. Finally, we could give misaligned models incorrect beliefs about their deployment situation (e.g. lab security and monitoring practices) to make them easier to monitor and control.

>

> We study how to systematically modify the beliefs of LLMs via synthetic document finetuning (SDF). SDF involves (1) using an LLM to generate synthetic documents that reference a proposition, and then (2) doing super |

02c06511-ff2c-49b1-b817-29aa0296236b | StampyAI/alignment-research-dataset/alignmentforum | Alignment Forum | 2019 Review Rewrite: Seeking Power is Often Robustly Instrumental in MDPs

For the 2019 LessWrong review, I've completely rewritten my post [*Seeking Power is Often Robustly Instrumental in MDPs*](https://www.lesswrong.com/posts/6DuJxY8X45Sco4bS2/seeking-power-is-often-provably-instrumentally-convergent-in). The post explains the key insights of [my theorems on power-seeking and instrumental convergence / robust instrumentality](https://arxiv.org/abs/1912.01683v6). The new version is more substantial, more nuanced, and better motivated, without sacrificing the broad accessibility or the cute drawings of the original.

Big thanks to diffractor, Emma Fickel, Vanessa Kosoy, Steve Omohundro, Neale Ratzlaff, and Mark Xu for reading / giving feedback on this new version.

Here's my review, which I also [posted as a comment](https://www.lesswrong.com/posts/6DuJxY8X45Sco4bS2/seeking-power-is-often-robustly-instrumental-in-mdps?commentId=TQQ2kXDdSgRLNDho3).

Self-review
===========

One year later, I remain excited about this post, from its ideas, to its formalisms, to its implications. I think it helps us formally understand [part of the difficulty of the alignment problem](https://www.lesswrong.com/s/7CdoznhJaLEKHwvJW/p/w6BtMqKRLxG9bNLMr). This formalization of power and the [*Attainable Utility Landscape*](https://www.lesswrong.com/s/7CdoznhJaLEKHwvJW/p/fj8eyc7QzqCaB8Wgm) have together given me a [novel frame for understanding alignment and corrigibility](https://www.lesswrong.com/posts/Xts5wm3akbemk4pDa/non-obstruction-a-simple-concept-motivating-corrigibility).

Since last December, I’ve spent several hundred hours expanding the formal results and rewriting [the paper](https://arxiv.org/pdf/1912.01683.pdf); I’ve generalized the theorems, added rigor, and taken great pains to spell out what the theorems do and do not imply. For example, the main paper is 9 pages long; in Appendix B, I further dedicated *3.5 pages* to exploring the nuances of the formal definition of ‘power-seeking’ (Definition 6.1).

However, there are a few things I wish I’d gotten right the first time around. Therefore, I’ve restructured and rewritten much of the post. Let’s walk through some of the changes.

‘Instrumentally convergent’ replaced by ‘robustly instrumental’
---------------------------------------------------------------

[Like](https://www.lesswrong.com/posts/Lotih2o2pkR2aeusW/math-that-clicks-look-for-two-way-correspondences) [many](https://www.lesswrong.com/posts/8LEPDY36jBYpijrSw/what-counts-as-defection) good things, this terminological shift was prompted by a critique from Andrew Critch.

Roughly speaking, this work considered an action to be ‘instrumentally convergent’ if it’s very probably optimal, with respect to a probability distribution on a set of reward functions. For the formal definition, see Definition 5.8 in the paper.

This definition is natural. You can even find it echoed by Tony Zador in the [*Debate on Instrumental Convergence*](https://www.lesswrong.com/posts/WxW6Gc6f2z3mzmqKs/debate-on-instrumental-convergence-between-lecun-russell):

> So i would say that killing all humans is not only not likely to be an optimal strategy under most scenarios, the set of scenarios under which it is optimal is probably close to a set of measure 0.


(Zador uses “set of scenarios” instead of “set of reward functions”, but he is implicitly reasoning: “with respect to my beliefs about what kind of objective functions we will implement and what states the agent will confront in deployment, I predict that deadly actions have a negligible probability of being optimal.”)
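To make the definition concrete, here is a minimal sketch that estimates how probable it is for an action to be optimal under a distribution over reward functions. Everything in it is an illustrative assumption rather than something taken from the paper: the toy environment (a start state whose `left` action reaches one absorbing state and whose `right` action reaches three), the i.i.d. uniform reward distribution, and the function names. It also sidesteps discounting by scoring each first action by the best absorbing state it can reach.

```python
import random

# Hypothetical toy environment: each first action leads to a set of absorbing
# states, and the agent then stays in whichever one it prefers.
REACHABLE = {
    "left": ["A"],
    "right": ["B", "C", "D"],
}


def sample_reward_function(rng):
    """Draw one reward function: an i.i.d. Uniform(0, 1) reward per absorbing state."""
    states = [s for group in REACHABLE.values() for s in group]
    return {s: rng.random() for s in states}


def optimal_first_action(reward):
    """Return the first action whose best reachable absorbing state has the highest reward."""
    return max(REACHABLE, key=lambda a: max(reward[s] for s in REACHABLE[a]))


def optimality_probability(action, n_samples=100_000, seed=0):
    """Monte Carlo estimate of P(action is optimal) over sampled reward functions."""
    rng = random.Random(seed)
    hits = sum(
        optimal_first_action(sample_reward_function(rng)) == action
        for _ in range(n_samples)
    )
    return hits / n_samples


if __name__ == "__main__":
    for action in REACHABLE:
        print(action, round(optimality_probability(action), 3))
```

In this particular environment, `right` is optimal exactly when the largest of three uniform draws beats a fourth, which happens with probability 3/4, so the estimate should land near 0.75. That is a property of this toy setup and this reward distribution, not a general guarantee.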

While discussing this definition of ‘instrumental convergence’, Andrew asked me: “what, exactly, is doing the *converging*? There is no limiting process. Optimal policies just *are*.”

It would be more appropriate to say that an action is ‘instrumentally robust’ instead of ‘instrumentally convergent’: the instrumentality is *robust* to the choice of goal. However, I found this to be ambiguous: ‘instrumentally robust’ could be read as “the agent is being robust for instrumental reasons.”

I settled on ‘robustly instrumental’, rewriting the paper’s introduction as follows:

> An action is said to be *instrumental to an objective* when it helps achieve that objective. Some actions are instrumental to many objectives, making them *robustly instrumental*. The so-called *instrumental convergence* thesis is the claim that agents with many different goals, if given time to learn and plan, will eventually converge on exhibiting certain common patterns of behavior that are robustly instrumental (*e.g.* survival, accessing usable energy, access to computing resources). Bostrom et al.'s instrumental convergence thesis might more aptly be called the *robust instrumentality* thesis, because it makes no reference to limits or converging processes:

>

>

> “Several instrumental values can be identified which are convergent in the sense that their attainment would increase the chances of the agent's goal being realized for a wide range of final goals and a wide range of situations, implying that these instrumental values are likely to be pursued by a broad spectrum of situated intelligent agents.”

>

>

> Some authors have suggested that *gaining power over the environment* is a robustly instrumental behavior pattern on which learning agents generally converge as they tend towards optimality. If so, robust instrumentality presents a safety concern for the alignment of advanced reinforcement learning systems with human society: such systems might seek to gain power over humans as part of their environment. For example, Marvin Minsky imagined that an agent tasked with proving the Riemann hypothesis might rationally turn the planet into computational resources.


This choice is not costless: many are already acclimated to the existing ‘instrumental convergence.’ It even has [its own Wikipedia page](https://en.wikipedia.org/wiki/Instrumental_convergence). Nonetheless, if there ever were a time to make the shift, that time would be now.

Qualification of Claims
-----------------------

The original post claimed that “optimal policies tend to seek power”, *period*. This was partially based on a result which I’d incorrectly interpreted. Vanessa Kosoy and Rohin Shah pointed out this error to me, and I quickly amended the original post and [posted a follow-up explanation](https://www.lesswrong.com/posts/cwpKagyTvqSyAJB7q/clarifying-power-seeking-and-instrumental-convergence).

At the time, I’d wondered whether this was still true in general via some other result. The answer is ‘no’: it *isn’t* always more probable for optimal policies to navigate towards states which give them more control over the future. Here’s a surprising counterexample which doesn’t even depend on my formalization of ‘power.’

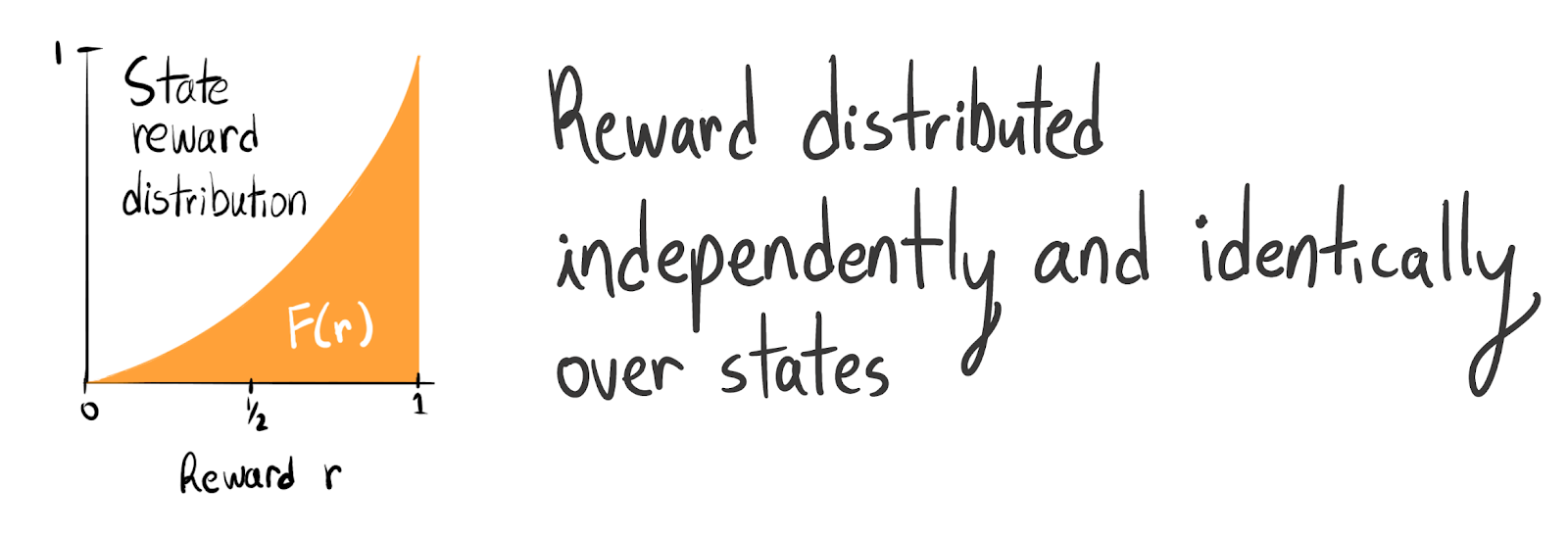

The paths are one-directional; the agent can’t go back from **3** to **1**. The agent starts at **1**. Under a certain state reward distribution, the vast majority of agents go *up* to **2**.