id stringlengths 36 36 | source stringclasses 15 values | formatted_source stringclasses 13 values | text stringlengths 2 7.55M |

|---|---|---|---|

d5e96077-9960-4a40-947e-8ef7c3a8b31c | trentmkelly/LessWrong-43k | LessWrong | Meetup : LessWrong Australia - Online Hangout

Discussion article for the meetup : LessWrong Australia - Online Hangout

WHEN: 13 July 2014 06:30:00PM (+1000)

WHERE: Australia

From the people who brought you a LessWrong Australia mega-meetup: we now bring you an Australia-wide mega-online hangout. The first hangout will be on Sunday the 13th, from 6:30pm till midnight (Sydney time). If you want to check in, feel free to do so!

The link is here: https://plus.google.com/hangouts/_/gxswklalufwfqamex4yhkanevqa (subject to change, depending on whether Google likes us or not).

Discussion article for the meetup : LessWrong Australia - Online Hangout |

a131c122-535e-4171-bd5c-abeac87fd07c | trentmkelly/LessWrong-43k | LessWrong | A Primer on the Symmetry Theory of Valence

Crossposted from opentheory.net

STV is Qualia Research Institute‘s candidate for a universal theory of valence, first proposed in Principia Qualia (2016). The following is a brief discussion of why existing theories are unsatisfying, what STV says, and key milestones so far.

----------------------------------------

I. Suffering is a puzzle

We know suffering when we feel it — but what is it? What would a satisfying answer for this even look like?

The psychological default model of suffering is “suffering is caused by not getting what you want.” This is the model that evolution has primed us toward. Empirically, it appears false (1)(2).

The Buddhist critique suggests that most suffering actually comes from holding this as our model of suffering. My co-founder Romeo Stevens suggests that we create a huge amount of unpleasantness by identifying with the sensations we want and making a commitment to ‘dukkha’ ourselves until we get them. When this fails to produce happiness, we take our failure as evidence we simply need to be more skillful in controlling our sensations, to work harder to get what we want, to suffer more until we reach our goal — whereas in reality there is no reasonable way we can force our sensations to be “stable, controllable, and satisfying” all the time. As Romeo puts it, “The mind is like a child that thinks that if it just finds the right flavor of cake it can live off of it with no stomach aches or other negative results.”

Buddhism itself is a brilliant internal psychology of suffering (1)(2), but has strict limits: it’s dogmatically silent on the influence of external factors on suffering, such as health, relationships, or anything having to do with the brain.

The Aristotelian model of suffering & well-being identifies a set of baseline conditions and virtues for human happiness, with suffering being due to deviations from these conditions. Modern psychology and psychiatry are tacitly built on this model, with one popular version being S |

cab50511-299e-4b5e-b9b4-8fd5713018ae | trentmkelly/LessWrong-43k | LessWrong | June Outreach Thread

Please share about any outreach that you have done to convey rationality and effective altruism-themed ideas broadly, whether recent or not, which you have not yet shared on previous Outreach threads. The goal of having this thread is to organize information about outreach and provide community support and recognition for raising the sanity waterline, a form of cognitive altruism that contributes to creating a flourishing world. Likewise, doing so can help inspire others to emulate some aspects of these good deeds through social proof and network effects. |

8ce9d738-409c-4fdd-803a-dd73a3487d5e | StampyAI/alignment-research-dataset/blogs | Blogs | July 2021 Newsletter

#### MIRI updates

* MIRI researcher Evan Hubinger discusses learned optimization, interpretability, and homogeneity in takeoff speeds [on the Inside View podcast](https://www.lesswrong.com/posts/NFfZsWrzALPdw54NL).

* Scott Garrabrant releases part three of "[Finite Factored Sets](https://www.lesswrong.com/s/kxs3eeEti9ouwWFzr)", on [conditional orthogonality](https://www.lesswrong.com/s/kxs3eeEti9ouwWFzr/p/hA6z9s72KZDYpuFhq).

* UC Berkeley's Daniel Filan provides examples of conditional orthogonality in finite factored sets: [1](https://www.lesswrong.com/posts/qGjCt4Xq83MBaygPx/a-simple-example-of-conditional-orthogonality-in-finite), [2](https://www.lesswrong.com/posts/GFGNwCwkffBevyXR2/a-second-example-of-conditional-orthogonality-in-finite).

* Abram Demski proposes [factoring the alignment problem](https://www.lesswrong.com/posts/vayxfTSQEDtwhPGpW) into "outer alignment" / "on-distribution alignment", "inner robustness" / "capability robustness", and "objective robustness" / "inner alignment".

* MIRI senior researcher Eliezer Yudkowsky [summarizes](https://twitter.com/ESYudkowsky/status/1405580521237745665) "the real core of the argument for 'AGI risk' (AGI ruin)" as "appreciating the power of intelligence enough to realize that getting superhuman intelligence wrong, *on the first try*, will kill you *on that first try*, not let you learn and try again".

#### News and links

* From DeepMind: "[generally capable agents emerge from open-ended play](https://www.lesswrong.com/posts/mTGrrX8SZJ2tQDuqz/deepmind-generally-capable-agents-emerge-from-open-ended)".

* DeepMind’s safety team summarizes their work to date on [causal influence diagrams](https://www.lesswrong.com/posts/Cd7Hw492RqooYgQAS).

* [Another (outer) alignment failure story](https://www.lesswrong.com/posts/AyNHoTWWAJ5eb99ji) is [similar to](https://www.lesswrong.com/posts/7qhtuQLCCvmwCPfXK/ama-paul-christiano-alignment-researcher?commentId=oj4rm8937fyJzFwjL) Paul Christiano's best guess at how AI might cause human extinction.

* Christiano discusses a "special case of alignment: solve alignment [when decisions are 'low stakes'](https://www.lesswrong.com/posts/TPan9sQFuPP6jgEJo)".

* Andrew Critch argues that power dynamics are "[a blind spot or blurry spot](https://www.lesswrong.com/posts/WjsyEBHgSstgfXTvm/power-dynamics-as-a-blind-spot-or-blurry-spot-in-our)" in the collective world-modeling of the effective altruism and rationality communities, "especially around AI".

The post [July 2021 Newsletter](https://intelligence.org/2021/08/03/july-2021-newsletter/) appeared first on [Machine Intelligence Research Institute](https://intelligence.org). |

02f3190a-8513-414b-a74e-cbe2c61c18c0 | trentmkelly/LessWrong-43k | LessWrong | Humanity becomes more untilitarian with time

I would think there'd be evolutionary pressure to focus more and more on having descendants. What's actually happened so far is that people do more for signalling and fun and limit the number of their children. Is this just a blip, and the Mormons (perhaps with a simplified religion) will inherit the earth?

|

c497b9ed-be23-4830-b3f0-a8a466b22a57 | trentmkelly/LessWrong-43k | LessWrong | Meetup : Chicago: Discuss Thinking, Fast and Slow

Discussion article for the meetup : Chicago: Discuss Thinking, Fast and Slow

WHEN: 03 August 2013 03:00:00PM (-0500)

WHERE: Corner Bakery, 360 N. Michigan Ave., Chicago, IL

We'll be discussing the beginning of Kahneman's Thinking, Fast and Slow as part of a series of meetups for this book.

Discussion article for the meetup : Chicago: Discuss Thinking, Fast and Slow |

b9b7b562-7945-477d-b7c8-4cb5dce8a20f | StampyAI/alignment-research-dataset/blogs | Blogs | 2011 Summer Matching Challenge Success!

Thanks to the effort of our donors, the 2011 Summer Singularity Challenge has been met! All $125,000 contributed will be matched dollar for dollar by our matching backers, raising a total of $250,000 to fund the Machine Intelligence Research Institute’s operations. We reached our goal two days early, near midnight of August 29th.

On behalf of our staff, volunteers, and entire community, I want to personally thank everyone who donated. Your dollars make the difference.

Here’s to a better future for the human species.

The post [2011 Summer Matching Challenge Success!](https://intelligence.org/2011/09/01/2011-summer-matching-challenge-success/) appeared first on [Machine Intelligence Research Institute](https://intelligence.org). |

e36e0aa0-65f0-4bda-94af-93a61aaba3ec | trentmkelly/LessWrong-43k | LessWrong | [LINK] stats.stackexchange.com question about Shalizi's Bayesian Backward Arrow of Time paper

Link to the Question

I haven't gotten an answer on this yet and I set up a bounty; I figured I'd link it here too in case any stats/physics people care to take a crack at it. |

bdeb4e27-057c-4343-81b3-92e60ce5ff51 | StampyAI/alignment-research-dataset/lesswrong | LessWrong | [Book] Interpretable Machine Learning: A Guide for Making Black Box Models Explainable

This is a book by [Christoph Molnar](https://christophm.github.io/) about interpretability. It covers a lot of traditional, non AI safety interpretability. Some recommendations for chapters to read are in an excerpt from our summary of it in [the interpretability starter resources](https://github.com/apartresearch/interpretability-starter):

> An amazing and up-to-date introduction to traditional interpretability research. We recommend reading the [taxonomy of interpretability](https://christophm.github.io/interpretable-ml-book/taxonomy-of-interpretability-methods.html) and about the specific methods of [PDP](https://christophm.github.io/interpretable-ml-book/pdp.html), [ALE](https://christophm.github.io/interpretable-ml-book/ale.html), [ICE](https://christophm.github.io/interpretable-ml-book/ice.html), [LIME](https://christophm.github.io/interpretable-ml-book/lime.html), [Shapley values](https://christophm.github.io/interpretable-ml-book/shapley.html), and [SHAP](https://christophm.github.io/interpretable-ml-book/shap.html) . Also read the chapter on [neural network interpretation](https://christophm.github.io/interpretable-ml-book/neural-networks.html) such as [saliency maps](https://christophm.github.io/interpretable-ml-book/pixel-attribution.html) and [adversarial examples](https://christophm.github.io/interpretable-ml-book/adversarial.html). He has also published a ["Common pitfalls of interpretability" post](https://mindfulmodeler.substack.com/p/8-pitfalls-to-avoid-when-interpreting) (and its [paper](https://arxiv.org/pdf/2007.04131.pdf)) that is recommended reading.

>

>

**Why share it here?** It is a continuously updated, high-quality book on interpretability as seen from outside AI safety, which seems very relevant to understanding what the field in general looks like. It has [78 authors](https://github.com/christophM/interpretable-ml-book/graphs/contributors) and seems very canon. The first edition is from Apr 11, 2019, while the second edition (latest version) is from [Mar 04, 2022](https://github.com/christophM/interpretable-ml-book/releases). It was last updated on Oct 13, 2022.

From the introduction of the book:

> Machine learning has great potential for improving products, processes and research. But **computers usually do not explain their predictions** which is a barrier to the adoption of machine learning. This book is about making machine learning models and their decisions interpretable.

>

> After exploring the concepts of interpretability, you will learn about simple, **interpretable models** such as decision trees, decision rules and linear regression. The focus of the book is on model-agnostic methods for **interpreting black box models** such as feature importance and accumulated local effects, and explaining individual predictions with Shapley values and LIME. In addition, the book presents methods specific to deep neural networks.

>

> All interpretation methods are explained in depth and discussed critically. How do they work under the hood? What are their strengths and weaknesses? How can their outputs be interpreted? This book will enable you to select and correctly apply the interpretation method that is most suitable for your machine learning project. Reading the book is recommended for machine learning practitioners, data scientists, statisticians, and anyone else interested in making machine learning models interpretable.

>

> |

8e283747-97ee-411f-9067-663504f2aae7 | trentmkelly/LessWrong-43k | LessWrong | Meetup : London Games Meetup 09/03 [VENUE CHANGE: PENDEREL'S OAK!], + Social 16/02

Discussion article for the meetup : London Games Meetup 09/03, + Socials 02/03 and 16/02

WHEN: 09 March 2014 02:00:00PM (+0000)

WHERE: 283-288 High Holborn, City of London, WC1V 7HP

LessWrong London's next non-social gathering is going to be on the 9th of March and is going to be a Games Meetup at a new location - The Penderel's Oak pub located about 5-10 minutes away from our usual spot in the middle between Chancery Lane and Holborn stations (I'd recommend looking at the map to get a better idea of the location)

Thanks to Phil we have a wide range of choices. The main ones are Resistance, Coup and Zendo. Alternatively, we will be able to play Ingenious, Go, Diplomacy (only if people insist on it) or card games.

We are also having socials on the 16th of March as the Meetups are currently a weekly event.

If you want more information about the meetups or anything else come by our google group or alternatively to our facebook group.

If you have trouble finding us - feel free to call or text me on 07425168803.

Discussion article for the meetup : London Games Meetup 09/03, + Socials 02/03 and 16/02 |

d7815ffe-6b0c-4465-a3bf-0c34dfca1017 | trentmkelly/LessWrong-43k | LessWrong | Gaining knowledge at a price

1. In our lives we often pay a price for knowledge (a different price for each circumstance)

(Whether it be something negative happening to us or missing a perceived valuable opportunity)

Sometimes we don't recoup the cost of that knowledge during our lifetime, other times we gain it back manifold

(Sometimes it's an unconscious purchase, sometimes even against one's will)

2. Sometimes it's a fully thought out transaction, although we often forget later on the reasoning behind our choices (only focusing on the circumstance and the outcome, forgetting how valuable the experience it provided is)

For instance, those experiences may have guided us away from certain bad paths in our lives, but we never account the value of the absence of said paths because they are nevermore part of the calculations of where to go since we discard them right away

3. For example, as we grow older, we might think, 'I haven't encountered anything like that since, so I didn't gain much from that experience, therefore it wasn't worth it'

But you haven't encountered it much because you have knowledge about it, and you might have learned something that allows you to instinctively prevent it from showing up

And so we take for granted all the times we make the correct choice (whether big or small), but we often forget that we learned it once, and possibly at a certain price |

3fff849f-1f4b-4cca-a179-740fcc7f2250 | trentmkelly/LessWrong-43k | LessWrong | Datasets that change the odds you exist

1.

It’s October 1962. The Cuban missile crisis just happened, thankfully without apocalyptic nuclear war. But still:

* Apocalyptic nuclear war easily could have happened.

* Crises as serious as the Cuban missile crisis clearly aren’t that rare, since one just happened.

You estimate (like President Kennedy) that there was a 25% chance the Cuban missile crisis could have escalated to nuclear war. And you estimate that there’s a 4% chance of an equally severe crisis happening each year (around 4 per century).

Put together, these numbers suggest there’s a 1% chance that each year might bring nuclear war. Small but terrifying.

But then 62 years tick by without nuclear war. If a button has a 1% chance of activating and you press it 62 times, the odds are almost 50/50 that it would activate. So should you revise your estimate to something lower than 1%?
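The arithmetic behind these figures can be checked in a few lines. This is only an illustrative sketch: the inputs are the estimates stated above, and the variable names are made up for the example.

```python
# Illustrative arithmetic only; the inputs are the estimates quoted in the text.
p_escalation = 0.25        # chance a Cuba-level crisis escalates to nuclear war
p_crisis_per_year = 0.04   # chance of an equally severe crisis in a given year
years = 62

# Yearly chance of war: a crisis happens AND it escalates.
p_war_per_year = p_escalation * p_crisis_per_year

# Chance of at least one war in `years` independent years.
p_at_least_one_war = 1 - (1 - p_war_per_year) ** years

print(f"yearly chance of war: {p_war_per_year:.2%}")
print(f"chance of at least one war in {years} years: {p_at_least_one_war:.1%}")
```

The second number comes out a little under one half, which is where "almost 50/50" comes from. The calculation assumes each year is independent with the same fixed chance.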

2.

There are two schools of thought. The first school reasons as follows:

* Call the yearly chance of nuclear war W.

* This W is a “hidden variable”. You can’t observe it but you can make a guess.

* But the higher W is, the less likely that you’d survive 62 years without nuclear war.

* So after 62 years, higher values of W are less plausible than they were before, and lower values more plausible. So you should lower your best estimate of W.
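The first school's reasoning is an ordinary Bayesian update, and it can be sketched numerically. The candidate values of W and the flat prior below are assumptions chosen for illustration; they do not come from the post. The sketch covers only the first school's argument and says nothing about the second school's objection.

```python
def posterior_over_w(candidates, prior, peaceful_years):
    """Update beliefs about the yearly war chance W after observing no war."""
    # Likelihood of `peaceful_years` without war, given yearly chance w.
    unnormalized = [p * (1 - w) ** peaceful_years
                    for w, p in zip(candidates, prior)]
    total = sum(unnormalized)
    return [u / total for u in unnormalized]

# Hypothetical grid of possible values for W, with a flat prior (an assumption).
candidates = [0.001, 0.005, 0.01, 0.02, 0.04]
prior = [1 / len(candidates)] * len(candidates)

posterior = posterior_over_w(candidates, prior, peaceful_years=62)

prior_mean = sum(w * p for w, p in zip(candidates, prior))
posterior_mean = sum(w * p for w, p in zip(candidates, posterior))

for w, before, after in zip(candidates, prior, posterior):
    print(f"W = {w:.3f}: prior {before:.2f} -> posterior {after:.2f}")
print(f"best estimate of W: {prior_mean:.4f} -> {posterior_mean:.4f}")
```

Under these assumptions the highest candidate values lose weight, the lowest gain it, and the best estimate of W falls.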

Meanwhile, the second school reasons like this:

* Wait, wait, wait—hold on.

* If there had been nuclear war, you wouldn’t be here to calculate these probabilities.

* It can’t be right to use data when the data can only ever pull you in one direction.

* So you should ignore the data. Or at least give it much less weight.

Who’s right?

3.

Here’s another scenario:

Say there’s a universe. In this universe, there are lots of planets. On each planet there’s some probability that life will evolve and become conscious and notice that it exists. You’re not sure what that probability is, but your best guess is that it’s really small.

But hey, wait a second, you’re a life-for |

cbe5ad17-7ba8-42eb-b037-43e9d3cd20ee | StampyAI/alignment-research-dataset/eaforum | Effective Altruism Forum | An audio version of the alignment problem from a deep learning perspective by Richard Ngo Et Al

Hello everyone!

Is anyone interested in an audio version of [this amazing research](https://arxiv.org/pdf/2209.00626.pdf)? I created [an audio/voiceover](https://www.whitehatstoic.com/p/the-alignment-problem-from-a-deep#details) version of it. Enjoy!

Credit goes to the original authors; I just love how clear and concise this paper is.

Read the full research paper here: <https://arxiv.org/pdf/2209.00626.pdf> |

3aa63539-bff4-44e5-9baf-76cac209e7c1 | trentmkelly/LessWrong-43k | LessWrong | [Cryonics News] Australian cryonics startup: Stasis Systems Australia update

Potentially of interest to my fellow antipodean LessWrongers. Stasis Systems Australia is a company seeking to start a cryonics facility in Australia. Their website is pretty sparse, but they just sent out a mailing list update on how they are going, and it doesn't appear that the information therein is located in any news section of the website, so I thought I'd post it here.

> Hello,

>

> We at Stasis Systems Australia Ltd are happy to report our plans to build a cryonics facility in Australia are progressing well.

>

> You may remember contacting us or attending our online meeting last year, but here's a quick reminder of what we're doing.

>

> We are a group of Australasian cryonicists putting together a not-for-profit organisation to build and run the first cryonic storage facility in the southern hemisphere.

>

> We're proud to have WA-based Marta Sandberg on the board of directors as an advisor, as she has a wealth of knowledge and experience from her ongoing role as a director of the well-established Cryonics Institute.

>

> We are now officially incorporated as a not-for-profit company, and one investor away from the magic number of ten that will trigger the next stage of the project - selecting a piece of land and starting construction!

>

> We have had productive discussions with the NSW Department of Health, and are developing positive relationships with the Cryonics Institute, Alcor, and KrioRus.

>

> We think what we’re doing is worthwhile and in the long term will be of great benefit to the Australasian community. If you’d like to get involved either as an investor or a volunteer, that would be fantastic. We’d love help with articles for the website, search engine optimisation, web graphics, or any other skill you have that we might need.

>

> We would especially appreciate you passing this update on to anyone you know who might be interested.

>

>

>

> Best regards,

>

> The Stasis Systems Australia team

>

> www.StasisSystemsAustralia.com

|

bfd7bb8b-2029-44e8-b0dc-d04743da101d | trentmkelly/LessWrong-43k | LessWrong | Digital Dinner Signup

For house dinner we need a way of coordinating who is going to cook what days and marking who will/won't be at dinner. For the past ~7y we've used a piece of paper on the fridge with a row for each day. This is not a bad system, and it's generally worked well, but it has a few downsides:

* Some of our housemates live downstairs, and coming up to read or modify the sheet is a bit annoying.

* One of our housemates will soon move to a nearby house, making this even harder.

* Same issue if you are traveling or want to make a change while you're out.

* Some housemates are "default present" while others are "default absent", and it's annoying remembering which.

* We're adding a new housemate who will only be here some weeks.

So we're trying out a new system, a digital one. Like with any post you make right when you start a new system there's some risk that it isn't a good one and you don't know yet, but that beats never describing it at all.

We now have one Google Calendar event for each day of the week, each marked as recurring weekly. Each housemate is invited, and can RSVP to individual instances or the repeated event. This lets you communicate "I'll be gone this Friday", or "I'm not going to make Fridays", or "I'm not going to make Fridays, but I'll be here this Friday". To cook you edit the title to add "Name cooking" and if you're bringing a guest you can either invite them to the instance of the event or add their name to the title.
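The way the three kinds of answers combine can be sketched as "the most specific answer wins". This is a minimal illustration and not the code behind the page described here: the function name, its parameters, and the idea of a per-person default are all assumptions, and nothing below calls the Google Calendar API.

```python
from typing import Optional

def is_attending(default_present: bool,
                 series_rsvp: Optional[bool],
                 instance_rsvp: Optional[bool]) -> bool:
    """Resolve attendance for one dinner; the most specific answer wins.

    All three inputs are hypothetical: `instance_rsvp` is the reply to this
    particular evening, `series_rsvp` the reply to the weekly recurring event,
    and `default_present` an assumed fallback when there is no reply at all.
    None means "no reply given".
    """
    if instance_rsvp is not None:
        return instance_rsvp
    if series_rsvp is not None:
        return series_rsvp
    return default_present

# "I'm not going to make Fridays, but I'll be here this Friday"
this_friday = is_attending(default_present=True, series_rsvp=False, instance_rsvp=True)

# "I'm not going to make Fridays" (no reply for a particular Friday)
other_fridays = is_attending(default_present=True, series_rsvp=False, instance_rsvp=None)

# "I'll be gone this Friday" (no reply on the series)
gone_once = is_attending(default_present=True, series_rsvp=None, instance_rsvp=False)

print(this_friday, other_fridays, gone_once)
```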

It's also helpful to see cooking and eating plans at a glance, so I made a web page that summarizes the current state. You log in with Google, give it read access to your calendar, and it summarizes events named "Dinner":

The page is here and you're welcome to look at the source, but it won't work for you unless you live here. If you want to tweak and use for your house, though, go ahead! There are comments in the HTML source saying how.

Overall this is almost the way I like it, except that login only lasts for an hou |

ef629cde-5a37-4f16-96ff-ca0e5a0968b1 | trentmkelly/LessWrong-43k | LessWrong | Extended analogy between humans, corporations, and AIs.

There are three main ways to try to understand and reason about powerful future AGI agents:

1. Using formal models designed to predict the behavior of powerful general agents, such as expected utility maximization and variants thereof (explored in game theory and decision theory).

2. Comparing & contrasting powerful future AGI agents with the weak, not-so-general, not-so-agentic AIs that actually exist today.

3. Comparing & contrasting powerful future AGI agents with currently-existing powerful general agents, such as humans and human organizations.

I think it’s valuable to try all three approaches. Today I'm exploring strategy #3, building an extended analogy between:

* A prototypical human corporation that has a lofty humanitarian mission but also faces market pressures and incentives.

* A prototypical human working there, who thinks of themselves as a good person and independent thinker with lofty altruistic goals, but also faces the usual peer pressures and incentives.

* AGI agents being trained in our scenario — trained by a training process that mostly rewards strong performance on a wide range of difficult and challenging tasks, but also attempts to train in various goals and principles (those described in the Spec). (For context, we at the AI Futures Project are working on a scenario forecast in which "Agent-3," an autonomous AI researcher, is trained in 2027)

The Analogy

Agent

Human corporation with a lofty humanitarian mission

Human who claims to be a good person with altruistic goals

AGI trained in our scenario

Not-so-local modification processThe MarketEvolution by natural selectionThe parent company iterating on different models, architectures, training setups, etc. (??? …nevermind about this)GenesCodeLocal modification processResponding to incentives over the span of several years as the organization grows and changesIn-lifetime learning, dopamine rewiring your brain, etc.Training process, the reward function, stochastic gradient desce |

de112c10-3bb7-4907-9f56-d946589ceab5 | trentmkelly/LessWrong-43k | LessWrong | Philosophical considerations of cessation of brain activity

I'm unfamiliar with the philosophies of personal identity. Which theories would postulate that a total interruption of consciousness/neural activity (e.g., a coma), but where the brain itself is completely undamaged, would be "death", in the sense of the person before the coma wouldn't be able to feel what happens after it?

The reason is that I need to make a decision imminently about elective surgery under general anesthesia. I'm concerned about the possibility that, from my current perspective, I will die as I'm put under, even though from everyone else's perspective I'll wake up all the same: I would be "rebooted" into a new "session" of consciousness, and my current session wouldn't be able to access or experience what happens in the new one in the way I can feel what happens to me 5 minutes from now. Of course, this may happen every night during sleep. However, the risk is much greater under general anesthesia, because the loss of activity and information processing is much more complete, much like a coma; e.g., even during the deepest stage of sleep perhaps only 1 brain hemisphere sleeps at a time. Hence a coma is a much better proxy for this question: if sleep is okay, anesthesia may not be, but if a coma's okay, anesthesia definitely is too.

I realize LWers are broadly on board with cryonics and thus unconcerned with this, but I'd still like to know which specific theories are more in line with my intuitive concerns. |

8e4af29c-cdf1-4a5b-ba46-4d2e0a47cd0c | StampyAI/alignment-research-dataset/alignmentforum | Alignment Forum | Generalizing POWER to multi-agent games

### Acknowledgements:

This article is a writeup of a research project conducted through the [SERI](https://cisac.fsi.stanford.edu/stanford-existential-risks-initiative/content/stanford-existential-risks-initiative) program under the mentorship of [Alex Turner](https://www.lesswrong.com/users/turntrout). I ([Jacob Stavrianos](https://www.lesswrong.com/users/midco)) would like to thank Alex for turning a messy collection of ideas into legitimate research, as well as the wonderful researchers at SERI for guiding the project and putting me in touch with the broader X-risk community.

Motivation/Overview

-------------------

In the single-agent setting, [Seeking Power is Often Robustly Instrumental in MDPs](https://www.lesswrong.com/s/7CdoznhJaLEKHwvJW/p/6DuJxY8X45Sco4bS2) showed that optimal policies tend to choose actions which pursue "power" (reasonably formalized). In the multi-agent setting, the [Catastrophic Convergence Conjecture](https://www.lesswrong.com/posts/w6BtMqKRLxG9bNLMr/the-catastrophic-convergence-conjecture) presented intuitions that "most agents" will "fight over resources" when they get "sufficiently advanced." However, it wasn't clear how to formalize that intuition.

This post synthesizes single-agent power dynamics (which we believe is now somewhat well-understood in the MDP setting) with the multi-agent setting. The multi-agent setting is important for AI alignment, since we want to reason clearly about when AI agents disempower humans. Assuming constant-sum games (i.e. maximal misalignment between agents), this post presents a result which echoes the intuitions in the Catastrophic Convergence Conjecture post: as agents become "more advanced", "power" becomes increasingly scarce & constant-sum.

An illustrative example

-----------------------

You're working on a project with a team of your peers. In particular, your actions affect the final deliverable, but so do those of your teammates. Say that each member of the team (including you) has some goal for the deliverable, which we can express as a reward function over the set of outcomes. How well (in terms of your reward function) can you expect to do?

It depends on your teammates' actions. Let's first ask "given my teammates' actions, what's the highest expected reward I can attain?"

### Case 1: Everyone plays nice

We can start by imagining the case where everyone does exactly what you'd want them to do. Mathematically, this allows you to obtain the globally maximal reward, or "the best possible reward assuming you can choose everyone else's actions". Intuitively, this looks like your team sitting you down for a meeting, asking what you want them to do for the project, and carrying out orders without fail. As expected, this case is "the best you can hope for" in a formal sense.

### Case 2: Everyone plays mean

Now, imagine the case where everyone does exactly what you *don't* want them to do. Mathematically, this is the worst possible case; every other choice of teammates' actions is at least as good as this one. Intuitively, this case is pretty terrible for you. Imagine the previous case, but instead of following orders your team actively sabotages them. Alternatively, imagine that your team spends the meeting breaking your knees and your laptop.
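The two extreme cases can be made concrete with a toy payoff table. This is a minimal sketch under stated assumptions: the action names and reward numbers are invented, there is a single teammate, and rewards are deterministic rather than expected values.

```python
# reward[my_action][teammate_action] is MY reward. All names and numbers are
# hypothetical, chosen only to illustrate the two extreme cases.
reward = {
    "write":  {"help": 10, "ignore": 4, "sabotage": 0},
    "review": {"help": 7,  "ignore": 5, "sabotage": 2},
}
teammate_actions = ["help", "ignore", "sabotage"]

def best_response_value(teammate_action: str) -> int:
    """Highest reward I can attain, given what my teammate does."""
    return max(row[teammate_action] for row in reward.values())

# Case 1: the teammate picks whatever is best for me.
best_case = max(best_response_value(a) for a in teammate_actions)

# Case 2: the teammate picks whatever is worst for me, and I still respond optimally.
worst_case = min(best_response_value(a) for a in teammate_actions)

print(best_case, worst_case)
```

Every other choice by the teammate gives a best-response value between these two numbers, which is the sense in which Case 1 is the best you can hope for and Case 2 the worst.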

### Case 3: Somewhere in between

However, scenarios where your team is perfectly aligned either with or against you are rare. More typically, we model people as maximizing their own reward, with imperfect correlation between reward functions. Interpreting our example as a multi-player game, we can consider the case where the players' strategies form a Nash equilibrium: every person's action is optimal for themselves given the actions of the rest of their team. This case is both relatively general and structured enough to make claims about; we will use it as a guiding example for the formalism below.

.MJXc-TeX-sans-I {font-family: MJXc-TeX-sans-I,MJXc-TeX-sans-Ix,MJXc-TeX-sans-Iw}

.MJXc-TeX-script-R {font-family: MJXc-TeX-script-R,MJXc-TeX-script-Rw}

.MJXc-TeX-type-R {font-family: MJXc-TeX-type-R,MJXc-TeX-type-Rw}

.MJXc-TeX-cal-R {font-family: MJXc-TeX-cal-R,MJXc-TeX-cal-Rw}

.MJXc-TeX-main-B {font-family: MJXc-TeX-main-B,MJXc-TeX-main-Bx,MJXc-TeX-main-Bw}

.MJXc-TeX-main-I {font-family: MJXc-TeX-main-I,MJXc-TeX-main-Ix,MJXc-TeX-main-Iw}

.MJXc-TeX-main-R {font-family: MJXc-TeX-main-R,MJXc-TeX-main-Rw}

.MJXc-TeX-math-I {font-family: MJXc-TeX-math-I,MJXc-TeX-math-Ix,MJXc-TeX-math-Iw}

.MJXc-TeX-size1-R {font-family: MJXc-TeX-size1-R,MJXc-TeX-size1-Rw}

.MJXc-TeX-size2-R {font-family: MJXc-TeX-size2-R,MJXc-TeX-size2-Rw}

.MJXc-TeX-size3-R {font-family: MJXc-TeX-size3-R,MJXc-TeX-size3-Rw}

.MJXc-TeX-size4-R {font-family: MJXc-TeX-size4-R,MJXc-TeX-size4-Rw}

.MJXc-TeX-vec-R {font-family: MJXc-TeX-vec-R,MJXc-TeX-vec-Rw}

.MJXc-TeX-vec-B {font-family: MJXc-TeX-vec-B,MJXc-TeX-vec-Bx,MJXc-TeX-vec-Bw}

@font-face {font-family: MJXc-TeX-ams-R; src: local('MathJax\_AMS'), local('MathJax\_AMS-Regular')}

@font-face {font-family: MJXc-TeX-ams-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_AMS-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_AMS-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_AMS-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-cal-B; src: local('MathJax\_Caligraphic Bold'), local('MathJax\_Caligraphic-Bold')}

@font-face {font-family: MJXc-TeX-cal-Bx; src: local('MathJax\_Caligraphic'); font-weight: bold}

@font-face {font-family: MJXc-TeX-cal-Bw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Caligraphic-Bold.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Caligraphic-Bold.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Caligraphic-Bold.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-frak-R; src: local('MathJax\_Fraktur'), local('MathJax\_Fraktur-Regular')}

@font-face {font-family: MJXc-TeX-frak-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Fraktur-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Fraktur-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Fraktur-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-frak-B; src: local('MathJax\_Fraktur Bold'), local('MathJax\_Fraktur-Bold')}

@font-face {font-family: MJXc-TeX-frak-Bx; src: local('MathJax\_Fraktur'); font-weight: bold}

@font-face {font-family: MJXc-TeX-frak-Bw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Fraktur-Bold.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Fraktur-Bold.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Fraktur-Bold.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-math-BI; src: local('MathJax\_Math BoldItalic'), local('MathJax\_Math-BoldItalic')}

@font-face {font-family: MJXc-TeX-math-BIx; src: local('MathJax\_Math'); font-weight: bold; font-style: italic}

@font-face {font-family: MJXc-TeX-math-BIw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Math-BoldItalic.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Math-BoldItalic.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Math-BoldItalic.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-sans-R; src: local('MathJax\_SansSerif'), local('MathJax\_SansSerif-Regular')}

@font-face {font-family: MJXc-TeX-sans-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_SansSerif-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_SansSerif-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_SansSerif-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-sans-B; src: local('MathJax\_SansSerif Bold'), local('MathJax\_SansSerif-Bold')}

@font-face {font-family: MJXc-TeX-sans-Bx; src: local('MathJax\_SansSerif'); font-weight: bold}

@font-face {font-family: MJXc-TeX-sans-Bw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_SansSerif-Bold.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_SansSerif-Bold.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_SansSerif-Bold.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-sans-I; src: local('MathJax\_SansSerif Italic'), local('MathJax\_SansSerif-Italic')}

@font-face {font-family: MJXc-TeX-sans-Ix; src: local('MathJax\_SansSerif'); font-style: italic}

@font-face {font-family: MJXc-TeX-sans-Iw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_SansSerif-Italic.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_SansSerif-Italic.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_SansSerif-Italic.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-script-R; src: local('MathJax\_Script'), local('MathJax\_Script-Regular')}

@font-face {font-family: MJXc-TeX-script-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Script-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Script-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Script-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-type-R; src: local('MathJax\_Typewriter'), local('MathJax\_Typewriter-Regular')}

@font-face {font-family: MJXc-TeX-type-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Typewriter-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Typewriter-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Typewriter-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-cal-R; src: local('MathJax\_Caligraphic'), local('MathJax\_Caligraphic-Regular')}

@font-face {font-family: MJXc-TeX-cal-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Caligraphic-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Caligraphic-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Caligraphic-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-main-B; src: local('MathJax\_Main Bold'), local('MathJax\_Main-Bold')}

@font-face {font-family: MJXc-TeX-main-Bx; src: local('MathJax\_Main'); font-weight: bold}

@font-face {font-family: MJXc-TeX-main-Bw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Main-Bold.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Main-Bold.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Main-Bold.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-main-I; src: local('MathJax\_Main Italic'), local('MathJax\_Main-Italic')}

@font-face {font-family: MJXc-TeX-main-Ix; src: local('MathJax\_Main'); font-style: italic}

@font-face {font-family: MJXc-TeX-main-Iw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Main-Italic.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Main-Italic.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Main-Italic.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-main-R; src: local('MathJax\_Main'), local('MathJax\_Main-Regular')}

@font-face {font-family: MJXc-TeX-main-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Main-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Main-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Main-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-math-I; src: local('MathJax\_Math Italic'), local('MathJax\_Math-Italic')}

@font-face {font-family: MJXc-TeX-math-Ix; src: local('MathJax\_Math'); font-style: italic}

@font-face {font-family: MJXc-TeX-math-Iw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Math-Italic.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Math-Italic.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Math-Italic.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-size1-R; src: local('MathJax\_Size1'), local('MathJax\_Size1-Regular')}

@font-face {font-family: MJXc-TeX-size1-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Size1-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Size1-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Size1-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-size2-R; src: local('MathJax\_Size2'), local('MathJax\_Size2-Regular')}

@font-face {font-family: MJXc-TeX-size2-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Size2-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Size2-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Size2-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-size3-R; src: local('MathJax\_Size3'), local('MathJax\_Size3-Regular')}

@font-face {font-family: MJXc-TeX-size3-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Size3-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Size3-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Size3-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-size4-R; src: local('MathJax\_Size4'), local('MathJax\_Size4-Regular')}

@font-face {font-family: MJXc-TeX-size4-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Size4-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Size4-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Size4-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-vec-R; src: local('MathJax\_Vector'), local('MathJax\_Vector-Regular')}

@font-face {font-family: MJXc-TeX-vec-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Vector-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Vector-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Vector-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-vec-B; src: local('MathJax\_Vector Bold'), local('MathJax\_Vector-Bold')}

@font-face {font-family: MJXc-TeX-vec-Bx; src: local('MathJax\_Vector'); font-weight: bold}

@font-face {font-family: MJXc-TeX-vec-Bw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Vector-Bold.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Vector-Bold.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Vector-Bold.otf') format('opentype')}

POWER, and why it matters
-------------------------

Many attempts have been made to classify the [robustly instrumental goals](https://selfawaresystems.files.wordpress.com/2008/01/ai_drives_final.pdf) of AI systems, with the aims of understanding why they emerge from seemingly unrelated utilities and, ultimately, of counterbalancing (either implicitly or explicitly) undesirable robustly instrumental subgoals. [One promising such attempt](https://www.lesswrong.com/s/7CdoznhJaLEKHwvJW/p/6DuJxY8X45Sco4bS2) is based on POWER (the technical term is written in all caps to distinguish it from ordinary use of the word): consider an agent with some space of actions, which receives rewards depending on the chosen actions (formally, an agent in an MDP). Then POWER is roughly the "ability to achieve a wide variety of goals". [It's been shown](https://arxiv.org/abs/1912.01683) that POWER is robustly instrumental given certain conditions on the environment, but currently no formalism exists describing the POWER of different agents interacting with each other.

Since we'll be working with POWER for the rest of this post, we need a solid definition to build off of. We present a simplified version of the original definition:

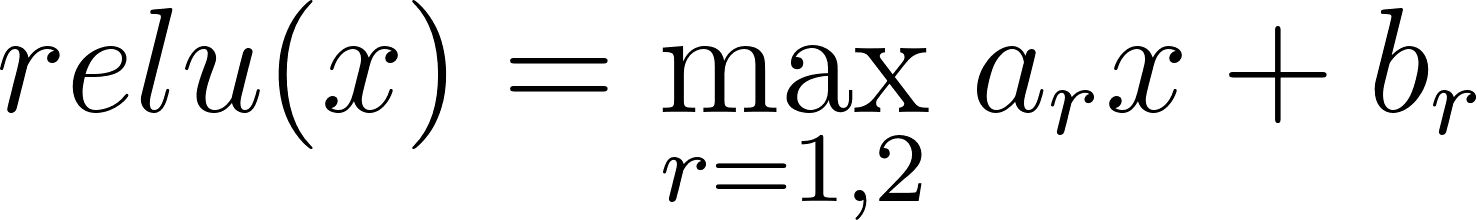

*Consider a scenario in which an agent has a set of actions* $a \in A$ *and a distribution* $D$ *of reward functions* $r : A \to \mathbb{R}$*. Then, we define the POWER of that agent as*

$$\text{POWER}_D := \mathbb{E}_{r \sim D}\left[\max_{a} r(a)\right]$$

As an example, we can rewrite the project example from earlier in terms of POWER. Let your goal for the project be chosen from some distribution $D$ (maybe you want it done nicely, or fast, or to feature some cool thing that you did, etc). Then, your $\text{POWER}_D$ is the maximum extent to which you can accomplish that goal, in expectation.
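The single-agent definition can be sketched numerically. The following is a minimal Monte Carlo illustration, not part of the original formalism: the function `estimate_power`, the sampler `sample_goal`, and the three project "actions" are all hypothetical names chosen for this example. It assumes a toy distribution in which each sampled goal rewards exactly one action.

```python
import random


def estimate_power(actions, sample_reward_fn, n_samples=10_000, seed=0):
    """Monte Carlo estimate of E_{r ~ D}[max_a r(a)] (hypothetical helper)."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n_samples):
        r = sample_reward_fn(rng)              # draw one reward function r ~ D
        total += max(r(a) for a in actions)    # best reward achievable under r
    return total / n_samples


# Assumed toy setup: three ways of doing the project, and each goal
# rewards exactly one of them (chosen uniformly at random).
ACTIONS = ["polish", "rush", "showcase"]


def sample_goal(rng):
    target = rng.choice(ACTIONS)
    return lambda a: 1.0 if a == target else 0.0


# With every action available, any sampled goal can be fully achieved.
power_full = estimate_power(ACTIONS, sample_goal)

# With only one action available, the goal is achieved about a third of the time.
power_restricted = estimate_power(["polish"], sample_goal)
```

Under these assumptions, shrinking the action set lowers the estimate, which matches the reading of POWER as the ability to achieve a wide variety of goals.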

However, this model of power can't account for the actions of other agents in the environment (what about what your teammates do? Didn't we already show that it matters a lot?). To say more about the example, we'll need a generalization of POWER.

Multi-agent POWER
-----------------

We now consider a more realistic scenario: not only are you an agent with a notion of reward and POWER, but *so is everyone else*, all playing the same multiplayer game. We can even revisit the project example and go through the cases for your teammates' actions in terms of POWER:

* In Case 1, your team works to maximize your reward in every case, which (with some assumptions) maximizes your POWER over the space of all choices of teammate actions.

* In Case 2, your team works to *minimize* your reward in every case, which analogously minimizes your POWER.

* In Case 3, we have a Nash equilibrium of the game used to define multi-agent POWER. In particular, each player's action is a best-response to the actions of every other player. We'll see a parallel between this best-response property and the $\max_{a \in A}$ term in the definition of POWER pop up in the discussion of constant-sum games.

### Bayesian games

To extend our formal definition of power to the multi-agent case, we'll need to define a type of multiplayer normal-form game called a [Bayesian game](https://en.wikipedia.org/wiki/Bayesian_game). We describe them below:

* At the beginning of the game, each of $n$ players is assigned a type $t_i \in T_i$ from a joint type distribution $t = (t_i) \sim \Omega$. The distribution $\Omega$ is common knowledge.

* The players then (independently, **not** sequentially) choose actions $a_i \in A_i$, resulting in an *action profile* $a = (a_i)$.

* Player $i$ then receives reward $r_i(t_i, a)$ (crucially, a player's reward can depend on their type).

Strategies (technically, mixed strategies) in a Bayesian game are given by functions $\sigma_i : T_i \to \Delta A_i$. Thus, even given a fixed strategy profile $\sigma$, any notion of "expected reward of an action" will have to account for uncertainty in other players' types. We do so by defining *interim expected utility* for player $i$ as follows:

$$f_i(t_i, a_i, \sigma_{-i}) := \mathbb{E}\left[r_i(t_i, a)\right]$$

where the expectation is taken over the following:

* the posterior distribution over opponents' types $t_{-i} \mid t_i$ - in other words, what types you expect other players to have, given your type.

* random choice of opponents' actions $a_{-i} \sim \sigma_{-i}(t_{-i})$ - even if you know someone's type, they might implement a mixed strategy which stochastically selects actions.

Further, we can define a (Bayesian) Nash Equilibrium to be a strategy profile where each player's strategy is a best response to opponents' strategies in terms of interim expected utility.

### Formal definition of multi-agent POWER

We can now define POWER in terms of a Bayesian game:

*Fix a strategy profile* $\sigma$*. We define player* $i$*'s POWER as*

$$\text{POWER}(i, \sigma) := \mathbb{E}_{t_i}\left[\max_{a_i} f_i(t_i, a_i, \sigma_{-i})\right]$$

Intuitively, POWER is maximum (expected) reward given a distribution of possible goals. The difference from the single-agent case is that your reward is now influenced by other players' actions (by taking an expectation over opponents' strategy).
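The definitions of interim expected utility, Bayesian Nash Equilibrium, and multi-agent POWER can be sketched for small finite games as follows. This is an illustrative sketch only: the data layout (a `prior` dict over type profiles, `sigma[i][t_i]` as a dict from actions to probabilities) and the toy two-player game are assumptions made for this example, not something specified in the post.

```python
from itertools import product


def type_marginal(i, prior):
    """Marginal distribution of player i's type under the joint prior."""
    marginal = {}
    for t, p in prior.items():
        marginal[t[i]] = marginal.get(t[i], 0.0) + p
    return marginal


def interim_utility(i, t_i, a_i, sigma, prior, actions, reward):
    """f_i(t_i, a_i, sigma_{-i}): expected reward of a_i for type t_i."""
    # Type profiles consistent with player i's own type (posterior support).
    consistent = {t: p for t, p in prior.items() if t[i] == t_i and p > 0}
    p_ti = sum(consistent.values())
    others = [j for j in range(len(actions)) if j != i]
    value = 0.0
    for t, p in consistent.items():
        for a_others in product(*(actions[j] for j in others)):
            prob = p / p_ti  # posterior probability of this type profile
            for j, a_j in zip(others, a_others):
                prob *= sigma[j][t[j]].get(a_j, 0.0)  # opponents' mixed play
            if prob == 0.0:
                continue
            a = list(a_others)
            a.insert(i, a_i)  # put player i's action back in slot i
            value += prob * reward(i, t_i, tuple(a))
    return value


def power(i, sigma, prior, actions, reward):
    """POWER(i, sigma) = E_{t_i}[max_{a_i} f_i(t_i, a_i, sigma_{-i})]."""
    return sum(
        p * max(interim_utility(i, t_i, a_i, sigma, prior, actions, reward)
                for a_i in actions[i])
        for t_i, p in type_marginal(i, prior).items() if p > 0
    )


def is_bayes_nash(sigma, prior, actions, reward, tol=1e-9):
    """True iff every positive-probability type of every player best-responds."""
    for i in range(len(actions)):
        for t_i, p in type_marginal(i, prior).items():
            if p == 0:
                continue
            f = {a: interim_utility(i, t_i, a, sigma, prior, actions, reward)
                 for a in actions[i]}
            achieved = sum(sigma[i][t_i].get(a, 0.0) * f[a] for a in actions[i])
            if achieved < max(f.values()) - tol:
                return False
    return True


# Assumed toy game: player 0 has a privately preferred action (its type),
# player 1 has a single type and is rewarded for matching player 0's action.
ACTIONS = [["H", "T"], ["H", "T"]]
PRIOR = {("likes_H", "n"): 0.5, ("likes_T", "n"): 0.5}
PREFERRED = {"likes_H": "H", "likes_T": "T"}


def reward(i, t_i, a):
    if i == 0:
        return 1.0 if a[0] == PREFERRED[t_i] else 0.0
    return 1.0 if a[0] == a[1] else 0.0


# Player 0 follows its preference; player 1 always plays H.
sigma_truthful = [{"likes_H": {"H": 1.0}, "likes_T": {"T": 1.0}}, {"n": {"H": 1.0}}]
# Player 0 always plays H regardless of type; player 1 always plays H.
sigma_stubborn = [{"likes_H": {"H": 1.0}, "likes_T": {"H": 1.0}}, {"n": {"H": 1.0}}]
```

In this toy game, player 1's POWER is higher under `sigma_stubborn` than under `sigma_truthful`, because player 0's play becomes predictable. Note also that a player's POWER depends only on the opponents' strategies, since the player's own action is maximized over.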

Properties of constant-sum games
--------------------------------

As both a preliminary result and a reference point for intuition, we consider the special case of *zero-sum games:*

A zero-sum game is a game in which, for every possible outcome, the players' rewards sum to zero. For Bayesian games, this means that for all type profiles $t = (t_i)$ and action profiles $a$, we have $\sum_i r_i(t_i, a) = 0$. Similarly, a *constant-sum game* is a game satisfying $\sum_i r_i(t_i, a) = c$ for any choices of $t, a$.

As a simple example, consider chess, a two-player adversarial game. We let the reward profile be constant, given by "1 if you win, -1 if you lose" (assume black wins in a tie). This game is clearly zero-sum, since exactly one player wins and the other loses. We could ask the same "how well can you do?" question as before, but the upper bound of winning is trivial. Instead, we ask "how well can both players simultaneously do?"

Clearly, you can't both win simultaneously. However, we can imagine scenarios where both players have the *power* to win: in a chess game between two beginners, the optimal strategy for either player will easily win the game. As it turns out, this argument generalizes (we'll even prove it): in a constant-sum game, the sum of the players' POWER is at least $c$, with equality iff each player responds optimally for all their possible goals ("types"). This condition is equivalent to the strategy profile being a Bayesian Nash Equilibrium of the game.

Importantly, this idea suggests a general principle of multi-agent POWER I'll call *power-scarcity:* in multi-agent games, gaining POWER tends to come at the expense of another player losing POWER. Future research will focus on understanding this phenomenon further and relating it to "how aligned the agents are" in terms of their reward functions.

**Claim: Consider a Bayesian constant-sum game with some strategy profile** $\sigma$**. Then,** $\sum_i \text{POWER}(i, \sigma) \geq c$ **with equality iff** $\sigma$ **is a Nash Equilibrium.**

Intuition: By definition, $\sigma$ *isn't* a Nash Equilibrium iff some player $i$'s strategy $\sigma_i$ isn't a best response. In this case, we see that player $i$ has the power to play optimally, but the other players also have the power to capitalize off of player $i$'s mistake (since the game is constant-sum). Thus, the lost reward is "double-counted" in terms of POWER; if no such double-counting exists, then the sum of POWER is just the expected sum of reward, which is $c$ by definition of a constant-sum game.
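To make the double-counting concrete, here is a small worked example that is not in the original argument. It uses matching pennies and assumes each player has a single type, so the expectation over types is trivial. Player 1 receives $+1$ if the two coins match and $-1$ otherwise, and player 2 receives the negative of that, so $c = 0$. Suppose player 1 always plays heads ($H$) while player 2 mixes uniformly between $H$ and $T$:

$$\begin{aligned}
\text{POWER}(1, \sigma) &= \max_{a_1}\left[\tfrac{1}{2} r_1(a_1, H) + \tfrac{1}{2} r_1(a_1, T)\right] = 0, \\
\text{POWER}(2, \sigma) &= \max_{a_2} r_2(H, a_2) = r_2(H, T) = 1, \\
\sum_i \text{POWER}(i, \sigma) &= 1 > 0 = c.
\end{aligned}$$

Player 2's uniform strategy is not a best response to heads, so this profile is not a Nash Equilibrium and the inequality is strict. If instead both players mix uniformly (the equilibrium of this game), each player's POWER is $0$ and the sum equals $c$.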

**Rigorous proof:**

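We prove the following for general strategy profiles $\sigma$:

$$\begin{aligned}
\sum_i \text{POWER}(i, \sigma) &= \sum_i \mathbb{E}_{t_i}\left[\max_{a_i} f_i(t_i, a_i, \sigma_{-i})\right] \\
&\geq \sum_i \mathbb{E}_{t_i}\left[\mathbb{E}_{a_i \sim \sigma_i} f_i(t_i, a_i, \sigma_{-i})\right] \\
&= \sum_i \mathbb{E}_{t_i}\left[\mathbb{E}_{a \sim \sigma}\, r_i(t_i, a)\right] \\
&= \mathbb{E}_{t}\, \mathbb{E}_{a \sim \sigma}\left[\sum_i r_i(t_i, a)\right] \\
&= \mathbb{E}_{t}\, \mathbb{E}_{a \sim \sigma}\left[c\right] = c
\end{aligned}$$

Now, we claim that the inequality on line 2 is an equality iff $\sigma$ is a Nash Equilibrium. To see this, note that for each $i$, we have

$$\max_{a_i} f_i(t_i, a_i, \sigma_{-i}) \geq \mathbb{E}_{a_i \sim \sigma_i} f_i(t_i, a_i, \sigma_{-i})$$

with equality iff $\sigma_i$ is a best response to $\sigma_{-i}$. Thus, the sum of these inequalities for each player is an equality iff each $\sigma_i$ is a best response, which is the definition of a Nash Equilibrium. $\square$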
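As a numerical sanity check of the claim, the sketch below evaluates the sum of POWER in a constant-sum variant of rock-paper-scissors. The game, the payoff scale (win 1, tie 0.5, lose 0, so $c = 1$), and the helper names are assumptions made for illustration. Each player has a single type, so the expectation over types drops out.

```python
ACTIONS = ["rock", "paper", "scissors"]
BEATS = {"rock": "scissors", "paper": "rock", "scissors": "paper"}


def reward(i, a):
    """Constant-sum payoffs with c = 1: win 1, tie 0.5, lose 0."""
    mine, theirs = a[i], a[1 - i]
    if mine == theirs:
        return 0.5
    return 1.0 if BEATS[mine] == theirs else 0.0


def power(i, sigma):
    """max over player i's actions of expected reward against the opponent's mix."""
    opponent = sigma[1 - i]

    def expected_reward(a_i):
        total = 0.0
        for a_opp, p in opponent.items():
            a = (a_i, a_opp) if i == 0 else (a_opp, a_i)
            total += p * reward(i, a)
        return total

    return max(expected_reward(a_i) for a_i in ACTIONS)


uniform = {a: 1.0 / 3.0 for a in ACTIONS}
always_rock = {"rock": 1.0}

# Both players mix uniformly: a Nash equilibrium, so the sum should equal c.
nash_total = power(0, (uniform, uniform)) + power(1, (uniform, uniform))

# Player 0 always plays rock while player 1 still mixes uniformly. Player 1
# is not best-responding (paper would win), so the sum should exceed c.
off_total = power(0, (always_rock, uniform)) + power(1, (always_rock, uniform))
```

The excess of `off_total` over $c$ is the reward player 1 could gain by switching to paper, which is the double-counted quantity from the intuition above.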

Final notes
-----------

To wrap up, I'll elaborate on the implications of this theorem, as well as some areas of further exploration on power-scarcity:

* It initially seems unintuitive that as players' strategies improve, their collective POWER tends to decrease. The proximate cause of this effect is something like "as your strategy improves, other players lose the power to capitalize off of your mistakes". More work is probably needed to get a clearer picture of this dynamic.

* We suspect that if all players have identical rewards, then the sum of POWER is equal to the sum of best-case POWER for each player. This gives the appearance of a spectrum with [aligned rewards (common payoff), maximal sum power] on one end and [anti-aligned rewards (constant-sum), constant sum power] on the other. Further research might look into an interpolation between these two extremes, possibly characterized by a correlation metric between reward functions.

+ We also plan to generalize POWER to Bayesian stochastic games to account for sequential decision making. Thus, any such metric for comparing reward functions would have to be consistent with such a generalization.

* POWER-scarcity results in terms of Nash Equilibria suggest the following dynamic: as agents get smarter and take available opportunities, POWER becomes increasingly scarce. This matches the intuitions presented in [the Catastrophic Convergence Conjecture](https://www.lesswrong.com/posts/w6BtMqKRLxG9bNLMr/the-catastrophic-convergence-conjecture), where agents don’t fight over resources until they get sufficiently “advanced.” |

05edb93c-70c3-4b28-a59f-381935bbfabd | trentmkelly/LessWrong-43k | LessWrong | Human trials for the Marburg vaccine: funding opportunity?

According to the Independent, scientists at Oxford have developed a potential vaccine for Marburg. However, they have been unable to run human trials due to lack of funding.

Are any institutional or high net worth funders in the EA community looking at this opportunity? In the event that the current Marburg outbreak gets out of control, a few weeks saved on vaccine approval could save thousands of lives. |

6a4cace0-d557-4e4a-bffd-efd3a11602d3 | trentmkelly/LessWrong-43k | LessWrong | Meetup : London rationalish meetup - 2016-03-20

Discussion article for the meetup : London rationalish meetup - 2016-03-20

WHEN: 20 March 2016 02:00:00PM (+0000)

WHERE: Shakespeare's Head, 64-68 Kingsway, London WC2B 6AH

I'm late posting the event this week, but that's because I was distracted, not because it isn't happening.

This meetup will be social discussion in a pub, with no set topic. If there's a topic you want to talk about, feel free to bring it.

The pub is the Shakespeare's Head in Holborn. There will be some way to identify us.

The event on facebook is visible even if you don't have a facebook account. Any last-minute updates will go there.

----------------------------------------

We're a fortnightly London-based meetup for members of the rationalist diaspora. The diaspora includes, but is not limited to, LessWrong, Slate Star Codex, rationalist tumblrsphere, and parts of the Effective Altruism movement.

You don't have to identify as a rationalist to attend: basically, if you think we seem like interesting people you'd like to hang out with, welcome! You are invited. You do not need to think you are clever enough, or interesting enough, or similar enough to the rest of us, to attend. You are invited.

People start showing up around two, and there are almost always people around until after six, but feel free to come and go at whatever time.

Discussion article for the meetup : London rationalish meetup - 2016-03-20 |

765224fd-11c4-4254-b431-39db56bfdca9 | trentmkelly/LessWrong-43k | LessWrong | Against Street Epistemology

According to https://streetepistemology.com/publications/street_epistemology_the_basics , street epistemology is a "conversational technique" which is intended to be "a more productive and positive alternative to debates and arguments." Street epistemologists assume a role similar to Socrates in Plato's dialogues, asking questions of his interlocutor to try to create a realisation of ignorance in them. The goal of street epistemology is to find incoherences in people's beliefs, and to convince them of the value of "scepticism."

A street epistemologist tries to remain calm and pleasant throughout the entire interaction, and to build rapport at the beginning in order to make their interlocutor comfortable with the exchange. After introductions and rapport are established, they can ask their interlocutor to identify a belief and give an approximate level of confidence in it (on a scale of 1 to 10). The early stages of the conversation, after identifying the belief, are devoted to making the belief clear and precise so that there is as little ambiguity as possible, and less wiggle room if and when incoherences are found. Terms are defined, clarifying questions are asked and answered. To confirm that the belief is understood, before trying to undermine it, the street epistemologist will try to give a paraphrase of the view that his interlocutor finds charitable and acceptable.

Having pinpointed what the claim is, the street epistemologist then asks which methods the interlocutor used to arrive at their confidence level in this belief. This is the very first question in what might be called the cross-examination stage, and it reveals what sort of incoherence is being sought in these conversations. Street epistemology is all about finding poorly articulated or unarticulated spots in people's epistemological views. Once the interlocutor has given a few answers and it comes time to dive into them, the website recommends focusing only on "one or two" of the methods listed, id |

b6e08cb8-4d0a-4581-becb-257bc49e447d | trentmkelly/LessWrong-43k | LessWrong | Why empiricists should believe in AI risk

Empiricists are people who believe empirical information (from experiments and observational studies) is far more useful and has far more weight than speculating about possibilities using pure reasoning.

Why should they believe in AI risk?

I present the Empiricist's Paradox:

* There is strong empirical evidence that relying on non-empirical reasoners (e.g. superforecasters) works better than simply assuming a 0% chance if there is no empirical data and calling yourself an "empiricist."

Actual empiricists should support AI safety because the median superforecaster sees a 2.1% chance of an AI catastrophe (killing 1 in 10 people).[1]

There is empirical evidence that 2% of these predictions turn out true, if the superforecasters predict them with 2% chance.[2]

A 2% chance of AI catastrophe actually justifies a large spending relative to military spending (see our Statement on AI Inconsistency).

1. ^

The predictions were for 2100, but the predictions were made before ChatGPT was released.

2. ^

Someone asked for a source for this :/ I should have done more research. I think https://goodjudgment.com/wp-content/uploads/2022/10/Superforecaster-Accuracy.pdf#page=4 sort of suggests roughly 2%. The observed frequency is a little bit higher than the forecast probability, because superforecasters slightly underestimate low-probability events. |

754ac7e2-a3e3-4558-8479-8a15c0196952 | trentmkelly/LessWrong-43k | LessWrong | Migraine hallucinations, phenomenology, and cognition

I have several times in my life experienced migraine hallucinations. I call them that because they look exactly like what other people report under that name.

I'll come back to those.

If I look at someone, and hold up my hand so as to block my view of their head, I do not experience looking at a headless person. I experience looking at a normal person, whose head I cannot see, because there is something else in the way.

Why is this? One can instantly talk about Bayesian estimation, prior experience, training of neural nets, constant conjunction, and so on. However, a real explanation must also account for situations in which this filling-in does not occur. One ordinary example is the pictures here. I see these as headless men, not ordinary men whose heads I cannot see.

Migraine hallucinations provide a more interesting example. If you've ever had one, you might already know what I'm going to say, but I do not know if this experience is the same for everyone.

If I superimpose the hallucination on someone's head, they seem to have no head. I don't mean that I cannot see their head (although indeed I can't), but that I seem to be looking at a headless person. If I superimpose it on a part of their head, it is as if that part does not exist. Whatever the blind spot covers, my brain does not fill it in. Whatever my hand covers, my brain does fill in, not at the level of the image (I don't confabulate an image of their face), but at some higher level. I know in both cases that they have a head. But at some level below knowing, the experience in one case is that they have no head, and in the other, that they do. My knowledge that they have a head does nothing to alter the sensation that they do not.

It is quite disconcerting to look at myself in a mirror and see half my head missing.

Those who have never had such hallucinations might try experimenting with their ordinary blind spots. I am not sure it will be the same. The brain has had more practice filling those in |

371693a4-5436-4d16-835c-f6907b3f5c15 | trentmkelly/LessWrong-43k | LessWrong | My thoughts on nanotechnology strategy research as an EA cause area

This is a cross-post from the Effective Altruism Forum.

Two-sentence summary: Advanced nanotechnology might arrive in the next couple of decades (my wild guess: there’s a 1-2% chance in the absence of transformative AI) and could have very positive or very negative implications for existential risk. There has been relatively little high-quality thinking on how to make the arrival of advanced nanotechnology go well, and I think there should be more work in this area (very tentatively, I suggest we want 2-3 people spending at least 50% of their time on this by 3 years from now).

Context: This post reflects my current views as someone with a relevant PhD who has thought about this topic on and off for roughly the past 20 months (something like 9 months FTE). Note that some of the framings and definitions provided in this post are quite tentative, in the sense that I’m not at all sure that they will continue to seem like the most useful framings and definitions in the future. Some other parts of this post are also very tentative, and are hopefully appropriately flagged as such.

Key points

* I define advanced nanotechnology as any highly advanced future technology, including atomically precise manufacturing (APM), that uses nanoscale machinery to finely image and control processes at the nanoscale, and is capable of mechanically assembling small molecules into a wide range of cheap, high-performance products at a very high rate (note that my definition of advanced nanotechnology is only loosely related to what people tend to mean by the term “nanotechnology”). (more)

* If developed, advanced nanotechnology could increase existential risk, for example by making destructive capabilities widely accessible, by allowing the development of weapons that pose a higher existential risk, or by accelerating AI development; or it could decrease existential risk, for example by causing the world’s most destructive weapons to be replaced by weapons that pose a lower existential |

4e268cef-dd7c-4812-8fd9-d2b06a5230e1 | trentmkelly/LessWrong-43k | LessWrong | Idea selection

THE PROBLEMATIC IDEA

In our culture today there is a strong trend of disengagement with the views of people who are accused publicly of being “problematic.” This trend refers mostly to how disagreement manifests on social media, and it has been dubbed cancel culture.

A quote, video, or photograph is given as evidence of the person’s problematic nature, and the groups of people who want this viewpoint eliminated will collectively disengage with both the person and the idea.

This brings to mind a sort of social cleansing wherein both the problematic ideas and the “problematic people” are separated from the rest and refused entry to the discussion. Associating with the problematic person risks being a proponent of their idea, so both the person and the idea are banned as a unit.

The criticism of problematic ideas is often superficial, and ideally it is done as quickly as possible via the ‘shut down.’ This ‘shut down’ is always expressed with violent imagery that evokes obliteration beyond repair. Ideas are not discussed; they are ‘destroyed,’ as if a bomb has been dropped on them.

The 'problematic person' has no salvageable ideas or arguments. If they are an artist, their art should not be viewed; if a director, their films should not be watched. Everything belonging to them is poisonous and should be placed into a box and hidden away in a dark place, never to be brought out again into the light.

IDEA SELECTION

At play here is a free market element, which is that nobody is owed engagement, just like companies are not entitled to business. The ideas are not openly discussed because that would risk transmitting them. Yet at the same time, they do not trust the free market to effectively weed out ‘problematic’ or ‘weak’ ideas.

Perhaps in defense of cancel culture, the market has never done this well. Just examine the popularity of superstitious beliefs in the modern world. The market does not naturally select the ideas that are useful, moral, or 'true'. Instead, ca |

533e7342-4add-4160-9759-3d4430701d12 | trentmkelly/LessWrong-43k | LessWrong | Marx and the Machine

“The means of labour passes through different metamorphoses whose culmination is the machine, or rather, an automatic system of machinery… set in motion by an automaton, a moving power that moves itself; this automaton consisting of numerous mechanical and intellectual organs… It is the machine which possesses skill and strength in place of the worker, is itself the virtuoso, with a soul of its own… The science which compels the inanimate limbs of the machinery, by their construction, to act purposefully, as an automaton, does not exist in the worker's consciousness, but rather acts upon him through the machine as an alien power, as the power of the machine itself.” — Karl Marx, from “The Fragment on Machines”

Karl Marx’s thought is both sufficiently ambiguous and sufficiently insightful to have launched an entire industry of interpreters. But as a rough and ready sketch, Marx saw economics and politics as downstream of technology. Viewing the progress of the industrial revolution, Marx foresaw the development of increasingly powerful technologies of automation. Automation would unleash abundance as machines replaced labor; in turn, this would cause the collapse of capitalism and its surrounding political structures. Marx was vague on the mechanics of this transition but deeply confident in its inevitability. With capitalism dead and technologically induced abundance, we would enter utopia. Freed from wage labor, we would unleash our full human potential for science and creativity and enter into a world where money, government, and class would not exist.

Strikingly, this is almost exactly the view of many AI optimists (particularly of the “money won’t matter after AGI” variety). One needs to swap out a little of the verbiage, but the two lines of thinking run in parallel. Marx’s “automaton consisting of numerous mechanical and intellectual organs … with a soul of its own” is about as close as one can come to a description of AGI within the language of |

b91bf3ad-84a7-40d9-bfd5-09693ea67bbd | StampyAI/alignment-research-dataset/alignmentforum | Alignment Forum | Interpreting Neural Networks through the Polytope Lens

Sid Black\*, Lee Sharkey\*, Leo Grinsztajn, Eric Winsor, Dan Braun, Jacob Merizian, Kip Parker, Carlos Ramón Guevara, Beren Millidge, Gabriel Alfour, Connor Leahy

\*equal contribution

Research from [Conjecture](https://conjecture.dev/).

*This post benefited from feedback from many staff at Conjecture including Adam Shimi, Nicholas Kees Dupuis, Dan Clothiaux, Kyle McDonell. The post also benefited from inputs from Jessica Cooper, Eliezer Yudkowsky, Neel Nanda, Andrei Alexandru, Ethan Perez, Jan Hendrik Kirchner, Chris Olah, Nelson Elhage, David Lindner, Evan R Murphy, Tom McGrath, Martin Wattenberg, Johannes Treutlein, Spencer Becker-Kahn, Leo Gao, John Wentworth, and Paul Christiano and from discussions with many other colleagues working on interpretability.*
