id stringlengths 36 36 | source stringclasses 15 values | formatted_source stringclasses 13 values | text stringlengths 2 7.55M |

|---|---|---|---|

f8c26118-d312-4df8-b5d3-5f7d07064a10 | trentmkelly/LessWrong-43k | LessWrong | Group rationality diary, 6/11/12

This is the public group instrumental rationality diary for the week of June 11th. It's a place to record and chat about it if you have done, or are actively doing, things like:

* Established a useful new habit

* Obtained new evidence that made you change your mind about some belief

* Decided to behave in a different way in some set of situations

* Optimized some part of a common routine or cached behavior

* Consciously changed your emotions or affect with respect to something

* Consciously pursued new valuable information about something that could make a big difference in your life

* Learned something new about your beliefs, behavior, or life that surprised you

* Tried doing any of the above and failed

Or anything else interesting which you want to share, so that other people can think about it, and perhaps be inspired to take action themselves. Try to include enough details so that everyone can use each other's experiences to learn about what tends to work out, and what doesn't tend to work out.

Thanks to everyone who contributes!

(Previously: 5/14/12, 5/21/12, 5/28/12, 6/4/12)

|

a42f8d70-2492-4295-be23-88ce438a14a3 | trentmkelly/LessWrong-43k | LessWrong | SRS advice

I have made some significant progress in organizing myself with org-mode (basically a really well thought out Emacs outliner); consider this a plug :).

Now I think I am ready to bite the bullet and automate another part of my mental apparatus, memorization. I'd like to hear other people's experiences with SRS (spaced repetition), negative ones too: what software they use, what they use it for, and how much time they spend. I expect these to vary, so stating your reasons is worth an extra upvote (and thanks in advance).

ETA: When do you decide something is worth memorizing vs. putting it into a searchable database? |

bf511acc-97c7-432a-b9f8-a4eff5f84a8b | trentmkelly/LessWrong-43k | LessWrong | Restraint Bias

Ed Yong over at Not Exactly Rocket Science has an article on a study demonstrating "restraint bias" (reference), which seems like an important thing to be aware of in fighting akrasia:

People who think they are more restrained are more likely to succumb to temptation

> In a series of four experiments, Loran Nordgren from Northwestern University showed that people suffer from a "restraint bias", where they overestimate their ability to control their own impulses. Those who fall prey to this fallacy most strongly are more likely to dive into tempting situations. Smokers, for example, who are trying to quit, are more likely to put themselves in situations if they think they're invulnerable to temptation. As a result, they're more likely to relapse.

Thus, not only do people overestimate their abilities to carry out non-immediate plans (far-mode thinking, as in the planning fallacy), but the more confident they are, the less able they turn out to be. This might have something to do with how public commitment may be counterproductive: once you've effectively signaled your intentions, the pressure to actually implement them fades away. Once you believe you have established a self-image as a person with good self-control, maintaining actual self-control loses priority.

See also: Akrasia, Planning fallacy, Near/far thinking.

Related to: Image vs. Impact: Can public commitment be counterproductive for achievement? |

c07590e4-3b3f-4957-b0fb-e47a7596468f | trentmkelly/LessWrong-43k | LessWrong | AI Safety via Luck

Epistemic Status: I feel confident and tentatively optimistic about the claims made in this post, but am slightly more uncertain about how it generalizes. Additionally, I am concerned about the extent to which this is dual-use for capabilities and exfohazardous and spent a few months thinking about whether it was worth it to release this post regardless. I haven’t come to an answer yet, so I’m publishing this to let other people see it and let me know what they think I should do.

TL;DR: I propose a research direction to solve alignment that potentially doesn’t require solutions to ontology identification, learning how to code, or becoming literate.

Introduction

Until a few hours ago, I was spending my time primarily working on high-level interpretability and cyborgism. While I was writing a draft for something I was working on, an activity that usually yields me a lot of free time by way of procrastination, I stumbled across the central idea behind many of the ideas in this post. It seemed so immediately compelling that I dropped working on everything else to start working on it, culminating after much deliberation in the post you see before you.

My intention with this post is to provide a definitive reference for what it would take to safely use AGI to steer our world toward much better states in the absence of a solution to any or all of several existing problems, such as Eliciting Latent Knowledge, conditioning simulator models, Natural Abstractions, mechanistic interpretability, and the like.

In a world with prospects such as those, I propose that we radically rethink our approach to AGI safety. Instead of dedicating enormous effort to engineering nigh-impossible safety measures, we should consider thus-far neglected avenues of research, especially ones that have memetic reasons to have been unfairly disprivileged so far, reasons which also immunize them against capabilities misuse. To avert the impending AI apocalypse, we need to focus on high-variance, low-probability-high-yiel |

5f5a55b8-7d47-4535-b7ee-cb727c031a1c | trentmkelly/LessWrong-43k | LessWrong | Transhumanism thread in progress at Reddit

Starting with this reply to "You were born too soon":

> depending on when exactly we achieve this, this could be the best time to be born ever, because it will be the absolute earliest anybody will have achieved immortality. Someone born within 20 years of this moment could one day be the oldest human, sentient, or even living being in the Universe.

The comments are currently split between arguing and agreeing with this. So far, no mention of cryonics. One post presents a possibly interesting technical argument that our current knowledge/technology is centuries away from mind uploading/whole-brain emulation.

(Also posted to The Singularity in the Zeitgeist, but that thread seems to have been mostly forgotten.) |

d74e55c1-9c25-480c-892e-0591e1de8fcd | trentmkelly/LessWrong-43k | LessWrong | How hard is it for altruists to discuss going against bad equilibria?

Epistemic status: This post is flagrantly obscure, which makes it all the harder for me to revise it to reflect my current opinions. By the nature of the subject, it's difficult to give object-level examples. If you're considering reading this, I would suggest the belief signaling trilemma as a much more approachable post on a similar topic. Basically, take that idea, and extrapolate it to issues with coordination problems?

* There are many situations where a system is "broken" in the sense that incentives push people toward bad behavior, but, not so much that an altruist has any business engaging in that bad behavior (at least, not if they are well-informed).

* In other words, an altruist who understands the bad equilibrium well would disengage from the broken system, or engage while happily paying the cost of going against incentives.

* Clearly, this is not always the case; I'm thinking about situations where it is the case.

* Actually, I'm thinking about situations where it is the case, supposing that we ignore certain costs, such as costs of going against peer pressure, costs of employing willpower to go against the default, etc. The question is then: is it realistically worth it, given all those additional costs, if we condition on it being worth it for an imaginary emotional-robot altruist?

* Actually actually, the question I'm asking is probably not that one either, but I haven't figured out my real question yet.

* I think maybe I'm mainly interested in the question of how hard it is for altruists to publicly discuss altruistic strategies (in the context of a bad equilibrium) without upsetting a bunch of people (who are currently coordinating on that equilibrium, and are therefore protective of it).

* I'm writing this post to try to sort out some confused thoughts (hence the weird style). A lot of the context is discussion on this post.

* But, I'm not going to discuss examples in my post. This seems like a |

2f0f48aa-ca17-4fef-8f36-2bedc8e20f29 | trentmkelly/LessWrong-43k | LessWrong | Weekly LW Meetups

This summary was posted to LW Main on October 24th. The following week's summary is here.

New meetups (or meetups with a hiatus of more than a year) are happening in:

* Bangalore Meetup: 15 November 2014 04:15PM

Irregularly scheduled Less Wrong meetups are taking place in:

* East Coast Solstice Megameetup: 20 December 2014 03:00PM

* European Community Weekend 2015: 12 June 2015 12:00PM

* Perth, Australia: Discussion: How to be happy: 04 November 2014 06:00PM

* Saint Petersburg meetup - "the lonely one": 31 October 2014 08:00PM

* Urbana-Champaign: Meta-systems and getting things done: 26 October 2014 02:00PM

* Utrecht: Climate Change: 02 November 2014 03:00PM

The remaining meetups take place in cities with regular scheduling, but involve a change in time or location, special meeting content, or simply a helpful reminder about the meetup:

* Austin, TX - Spider House: 25 October 2025 01:30PM

* [Cambridge MA] The Design Process: 29 October 2014 07:00PM

* Canberra: Would I Lie To You?: 24 October 2014 06:00PM

* London Social - October 26th: 26 October 2014 03:00PM

* Moscow meetup: Quantum physics is fun: 26 October 2014 03:00PM

* Washington, D.C.: Create and Complete: 26 October 2014 03:00PM

Locations with regularly scheduled meetups: Austin, Berkeley, Berlin, Boston, Brussels, Buffalo, Cambridge UK, Canberra, Columbus, London, Madison WI, Melbourne, Moscow, Mountain View, New York, Philadelphia, Research Triangle NC, Seattle, Sydney, Toronto, Vienna, Washington DC, Waterloo, and West Los Angeles. There's also a 24/7 online study hall for coworking LWers.

If you'd like to talk with other LW-ers face to face, and there is no meetup in your area, consider starting your own meetup; it's easy (more resources here). Check one out, stretch your rationality skills, build community, and have fun!

In addition to the handy sidebar of upcoming meetups, a meetup overview is posted on the front page every Friday. These are an attempt to collect information on al |

0619f8d8-4058-42ea-b686-e07cd2b90b4d | trentmkelly/LessWrong-43k | LessWrong | Chapter 4: The Efficient Market Hypothesis

Disclaimer: J. K. Rowling is watching you from where she waits, eternally in the void between worlds.

A/N: As others have noted, the novels seem inconsistent in the apparent purchasing power of a Galleon; I'm picking a consistent value and sticking with it. Five pounds sterling to the Galleon doesn't square with seven Galleons for a wand and children using hand-me-down wands.

----------------------------------------

"World domination is such an ugly phrase. I prefer to call it world optimisation."

----------------------------------------

Heaps of gold Galleons. Stacks of silver Sickles. Piles of bronze Knuts.

Harry stood there, and stared with his mouth open at the family vault. He had so many questions he didn't know where to start.

From just outside the door of the vault, Professor McGonagall watched him, seeming to lean casually against the wall, but her eyes intent. Well, that made sense. Being plopped in front of a giant heap of gold coins was a test of character so pure it was archetypal.

"Are these coins the pure metal?" Harry said finally.

"What?" hissed the goblin Griphook, who was waiting near the door. "Are you questioning the integrity of Gringotts, Mr. Potter-Evans-Verres?"

"No," said Harry absently, "not at all, sorry if that came out wrong, sir. I just have no idea at all how your financial system works. I'm asking if Galleons in general are made of pure gold."

"Of course," said Griphook.

"And can anyone coin them, or are they issued by a monopoly that thereby collects seigniorage?"

"What?" said Professor McGonagall.

Griphook grinned, showing sharp teeth. "Only a fool would trust any but goblin coin!"

"In other words," Harry said, "the coins aren't supposed to be worth any more than the metal making them up?"

Griphook stared at Harry. Professor McGonagall looked bemused.

"I mean, suppose I came in here with a ton of silver. Could I get a ton of Sickles made from it?"

"For a fee, Mr. Potter-Evans-Verres." The goblin watched him with g |

9dea5261-4475-4c2a-b258-47a03dbb4e7b | trentmkelly/LessWrong-43k | LessWrong | Virtual models of virtual AIs in virtual worlds

A putative new idea for AI control; index here.

This is a reworking of the previous models of AIs in virtual worlds, done after discussing the idea with Jessica.

The basic idea is that there are a lot of concepts about AI security - such as sealed boxes, Cartesian boundaries, and so on - that are only partially true. They are simplified models, abstractions, and, even if they are true in our model of physics (an absurdly optimistic claim) there's always the possibility (certainty) that our models are wrong or incomplete.

But there is one place where models are exactly true, and that's... within the model itself. And a computer program, or a virtual machine, is a model, a model we try to make happen exactly in the real world.

----------------------------------------

Is my world model true?

Consider an AI, running inside a virtual machine. The AI has an internal model of the entire virtual machine, including itself, and knows the rules of the virtual machine/model.

In fact the AI entertains two hypotheses:

* H1: the model is entirely correct about the (virtual) world.

* H2: sometimes, random bits are flipped in the (virtual) world.
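To make "suspects enough that the model is incorrect" concrete, here is a minimal Bayesian sketch. It rests on assumptions that are not in the post: under H1 the model is exact, so any observed mismatch between prediction and world has probability 0; under H2 each observed bit flips independently with a small probability `flip_prob`. The function name and parameters are hypothetical.

```python
def posterior_h2(prior_h2: float, n_bits: int, mismatches: int, flip_prob: float) -> float:
    """Posterior probability of H2 after comparing n_bits of the virtual world
    against the AI's model and observing `mismatches` disagreements.

    Assumptions (illustrative only): under H1 the model is exact, so any
    mismatch has probability 0; under H2 each bit flips independently with
    probability flip_prob.
    """
    like_h1 = 1.0 if mismatches == 0 else 0.0
    like_h2 = flip_prob ** mismatches * (1.0 - flip_prob) ** (n_bits - mismatches)
    evidence = prior_h2 * like_h2 + (1.0 - prior_h2) * like_h1
    if evidence == 0.0:
        raise ValueError("observations are impossible under both hypotheses with this prior")
    return prior_h2 * like_h2 / evidence


# No mismatches: the posterior on H2 drifts slightly below the 0.01 prior.
print(posterior_h2(0.01, n_bits=1000, mismatches=0, flip_prob=1e-4))
# A single mismatch rules out H1 entirely under these assumptions.
print(posterior_h2(0.01, n_bits=1000, mismatches=1, flip_prob=1e-4))  # 1.0
```

Because H1 here predicts the world perfectly, one flipped bit is decisive; a softer version of H1 would make the AI's suspicion grow gradually instead.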

Then give the AI some utility function u, between 0 and 1, defined over the virtual world. The AI also has a shutdown button B that it predicts will shut down the world; pressing B sends a signal to us and stops the execution of the virtual world, AI included. Let r be some discount factor close to 1, and T_B the time step at which B was pressed (if it was). Then the AI tries to maximise the utility

* $u^* = r^{T_B}\left((u \mid H_1) + (2 \mid H_2)\right)$

Now the AI is motivated to maximise u as long as it thinks that the world model it has is correct, and to press B as soon as it feels its ache. But as soon as it suspects enough that the model of the virtual world is incorrect, i.e. H2, it will press B (the exact definition of u* could probably be improved; there might be unfortunate trade-offs at the moment).
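The trade-off in u* can be checked numerically. The sketch below rests on one reading of the formula that is an assumption, not something the post spells out: the AI scores u (in [0, 1]) if H1 holds and the constant 2 if H2 holds, and everything is discounted by r^T_B. It also simplifies u to a single number achieved by the time B is pressed. The helper names and the values u_now, u_next are hypothetical.

```python
def expected_u_star(p_h2: float, u_if_h1: float, t_b: int, r: float = 0.99) -> float:
    """Expected u* = r^T_B ((u|H1) + (2|H2)) under one reading of the formula:
    score u (in [0, 1]) if H1 holds, the constant 2 if H2 holds, and discount
    everything by r**t_b, where t_b is the step at which B is pressed."""
    if not 0.0 <= u_if_h1 <= 1.0:
        raise ValueError("u must lie in [0, 1]")
    return r ** t_b * ((1.0 - p_h2) * u_if_h1 + p_h2 * 2.0)


def should_press_now(p_h2: float, u_now: float, u_next: float, t: int, r: float = 0.99) -> bool:
    """Press B at step t, or wait one more step to raise u from u_now to u_next?"""
    return expected_u_star(p_h2, u_now, t, r) >= expected_u_star(p_h2, u_next, t + 1, r)


print(should_press_now(0.0, u_now=0.5, u_next=0.6, t=10))  # False: trusts H1, keeps working on u
print(should_press_now(0.9, u_now=0.5, u_next=0.6, t=10))  # True: suspects H2, presses B
```

Under H2 the payoff is a constant that waiting can only discount, so the more weight the AI puts on H2, the less any further gain in u can justify delaying B.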

What does H2 translate to from our perspective? |

53627298-0844-4413-9384-9a6a9f1df825 | trentmkelly/LessWrong-43k | LessWrong | London Meetup on 2011/1/2

On Sunday, January 2nd 2011 there will be a meetup in the London area. As with previous meetups, the venue is Shakespeare's Head. The meeting will start at 14:00.

In order to keep us organised for 2011, I'm putting together a mailing list for LWers around the London area. If you'd like to be added to the list, please send me your e-mail address via private message. |

2eaf633b-4c38-4353-bec7-a871baad00a4 | trentmkelly/LessWrong-43k | LessWrong | Evaluability (And Cheap Holiday Shopping)

With the expensive part of the Hallowthankmas season now approaching, a question must be looming large in our readers’ minds:

> “Dear Overcoming Bias, are there biases I can exploit to be seen as generous without actually spending lots of money?”

I’m glad to report the answer is yes! According to Hsee—in a paper entitled “Less is Better”—if you buy someone a $45 scarf, you are more likely to be seen as generous than if you buy them a $55 coat.1

This is a special case of a more general phenomenon. In an earlier experiment, Hsee asked subjects how much they would be willing to pay for a second-hand music dictionary:2

* Dictionary A, from 1993, with 10,000 entries, in like-new condition.

* Dictionary B, from 1993, with 20,000 entries, with a torn cover and otherwise in like-new condition.

The gotcha was that some subjects saw both dictionaries side-by-side, while other subjects only saw one dictionary . . .

Subjects who saw only one of these options were willing to pay an average of $24 for Dictionary A and an average of $20 for Dictionary B. Subjects who saw both options, side-by-side, were willing to pay $27 for Dictionary B and $19 for Dictionary A.

Of course, the number of entries in a dictionary is more important than whether it has a torn cover, at least if you ever plan on using it for anything. But if you’re only presented with a single dictionary, and it has 20,000 entries, the number 20,000 doesn’t mean very much. Is it a little? A lot? Who knows? It’s non-evaluable. The torn cover, on the other hand—that stands out. That has a definite affective valence: namely, bad.

Seen side-by-side, though, the number of entries goes from non-evaluable to evaluable, because there are two compatible quantities to be compared. And once the number of entries becomes evaluable, that facet swamps the importance of the torn cover.

From Slovic et al.: Which would you prefer?3

1. A 29/36 chance to win $2.

2. A 7/36 chance to win $9.
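For reference, the two gambles have nearly equal expected values, with the second slightly ahead. Exact arithmetic with Python's standard `fractions` module:

```python
from fractions import Fraction

gamble_1 = (Fraction(29, 36), 2)  # 29/36 chance to win $2
gamble_2 = (Fraction(7, 36), 9)   # 7/36 chance to win $9


def expected_value(gamble):
    probability, payoff = gamble
    return probability * payoff


ev_1, ev_2 = expected_value(gamble_1), expected_value(gamble_2)
print(ev_1, float(ev_1))  # 29/18, about $1.61
print(ev_2, float(ev_2))  # 7/4, i.e. $1.75
```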

While the average prices (equiv |

55366c33-a60a-4c32-ad21-a3ee09de4620 | trentmkelly/LessWrong-43k | LessWrong | Do you trust the research on handwriting vs. typing for notes?

For a while now, I've heard the claim that "studies show" that note-takers remember content better if they take notes by hand versus on a computer. I previously took this claim at face value, in part because this was before I'd heard about the replication crisis and also because I'd had personal experiences that I believed supported this claim.

In light of the replication crisis and recent experiences, I've come to be more skeptical of this research. I started to look at some of the research that pop-science articles on the topic cite, and I am skeptical of the work I've looked at so far.

In the example I link to above, they have subjects perform two tasks, a recall task and a recognition task, for words they handwrote or typed, with a multiplication task between writing and recalling/recognizing. They find a non-significant difference in recalled words between handwriting and typing, and a barely significant (p = .03) difference in recognized words between the two groups. However, if you look at the standard deviations of the means for the two tasks, you'll see that each mean lies within one standard deviation of the other.
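The overlap observation speaks mostly to effect size: if each mean lies within one standard deviation of the other, the standardized difference (Cohen's d) is below 1 in magnitude, while a t-test divides the same difference by its standard error, which shrinks as samples grow. A minimal sketch with hypothetical summary statistics (not the paper's numbers):

```python
import math


def pooled_sd(sd1: float, n1: int, sd2: float, n2: int) -> float:
    return math.sqrt(((n1 - 1) * sd1**2 + (n2 - 1) * sd2**2) / (n1 + n2 - 2))


def cohens_d(m1, sd1, n1, m2, sd2, n2) -> float:
    """Standardized mean difference; |d| < 1 whenever each mean is within 1 SD of the other."""
    return (m2 - m1) / pooled_sd(sd1, n1, sd2, n2)


def welch_t(m1, sd1, n1, m2, sd2, n2) -> float:
    """Welch's t statistic: the same difference divided by its standard error."""
    return (m2 - m1) / math.sqrt(sd1**2 / n1 + sd2**2 / n2)


# Hypothetical summary statistics: means 1.5 apart, SD 3 in each group.
for n in (10, 40):
    d = cohens_d(10.0, 3.0, n, 11.5, 3.0, n)
    t = welch_t(10.0, 3.0, n, 11.5, 3.0, n)
    print(f"n={n}: d={d:.2f}, t={t:.2f}")
```

With these made-up numbers d stays at 0.50 while quadrupling the group size doubles t, so the same overlapping SD ranges can go with either a non-significant or a significant test.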

Furthermore, the task they describe is simple but not necessarily that relevant to what's really going on when someone takes notes on a lecture / talk. They intentionally used semantically meaningless words (for understandable reasons) whereas real-life talks hopefully have higher-level meaning and themes.

ETA (after initial posting): Just found another paper that a few pop-sci articles seem to cite. This paper covers three experiments, which are all more realistic than the one I described above. I'm only going to discuss the first here.

The first had participants watch TED talks, take notes on them (either on a laptop or by hand) and then answer a combination of "factual" and "conceptual" questions about them. At a high level, they interpret the results of this experiment as showing that laptop note-takers did as well as the by hand note-takers on factual questions b |

5127b5fb-4db9-42b5-94a5-87747e605b27 | trentmkelly/LessWrong-43k | LessWrong | Why is COVID reinfection rate still so uncertain.

From recent observational studies, SIREN in the UK and one in Denmark, both estimate that seropositivity gives around 80% protection against infection. The former observed 90% protection against symptomatic cases.
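Protection figures like these are commonly expressed as one minus the ratio of infection rates in the seropositive and seronegative groups. A minimal unadjusted sketch with hypothetical counts (published estimates usually involve adjustments this sketch omits):

```python
def protection(cases_pos: int, person_time_pos: float, cases_neg: int, person_time_neg: float) -> float:
    """Unadjusted protection = 1 - incidence rate ratio (seropositive vs seronegative)."""
    rate_pos = cases_pos / person_time_pos
    rate_neg = cases_neg / person_time_neg
    return 1.0 - rate_pos / rate_neg


# Hypothetical counts: 20 infections per 10,000 person-days among seropositive people
# versus 100 per 10,000 among seronegative people.
print(round(protection(20, 10_000, 100, 10_000), 3))  # 0.8
```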

How efficacious are vaccines on the seropositive population? I've only seen reports on safety analysis.

To the extent of suspecting malice, the Israel Pfizer study did not report on prior infection analysis - Study protocol states "Exclusion of patients with COVID-19 prior to the index date or matched index date." |

8d218f0d-84bc-4975-a94b-8f08519a90a6 | trentmkelly/LessWrong-43k | LessWrong | Dealing with trolling and the signal to noise ratio

The recent implementation of a -5 karma penalty for replying to comments that are at -3 or below has clearly met with some disagreement and controversy. See http://lesswrong.com/r/discussion/lw/eb9/meta_karma_for_last_30_days/7aon . However, at the same time, it seems that Eliezer's observation that trolling and related problems have over time gotten worse here may be correct. It may be that this is an inevitable consequence of growth, but it may be that it can be handled or reduced with some solution or set of solutions. I'm starting this discussion thread for people to propose possible solutions. To minimize anchoring bias and related problems, I'm not going to include my ideas in this header but in a comment below. People should think about the problem before reading proposed solutions (again to minimize anchoring issues). |

beb4e0ca-f37b-4f19-9cd9-873b18c31272 | trentmkelly/LessWrong-43k | LessWrong | Surviving and Shaping Long-Term Competitions: Lessons from Net Assessment

This post examines net assessment, a framework for evaluating strategic competition that evolved to inform U.S. defense policy during the Cold War. We explain what net assessment is, its methods and principles, and how some of its tools can be applied to reason about highly uncertain, long-term tech competitions with potentially existential stakes.

What is net assessment?

In the late 1950s, the Cold War slid into an especially dangerous period. Nuclear stockpiles swelled and delivery capabilities advanced, while a decades-long buildup by the Soviet Union challenged the United States’ conventional military dominance. Defense analysts needed to reframe the way they looked at military competition: the U.S. could not overpower the Soviet war machine with brute force, and the prospect of nuclear war both elevated the stakes of conflict and created a need for new metrics and principles of strategy for engaging in limited competition. It was in this context that the framework of “net assessment” began to develop.

Andrew Marshall, who founded and then directed the DoD’s Office of Net Assessment for forty-two years, described net assessment as follows:

> "Our notion of a net assessment is that it is a careful comparison of U.S. weapon systems, forces, and policies in relation to those of other countries. It is comprehensive, including description of the forces, operational doctrines and practices, training regime, logistics, known or conjectured effectiveness in various environments, design practices and their effect on equipment costs, performance, and procurement practices and their influence on cost and lead times. The use of net assessment is intended to be diagnostic. It will highlight efficiency and inefficiency in the way we and others do things, and areas of comparative advantage with respect to our rivals."

Generalizing from its original military context, the core idea of net assessment is to create comprehensive (hence the “net” in the name), objective, an |

898b64a9-04dc-4a38-8576-6288ba229350 | StampyAI/alignment-research-dataset/blogs | Blogs | Artificial Intelligence as a Positive and Negative Factor in Global Risk

Draft for [Global Catastrophic Risks, Oxford University Press, 2008](http://www.amazon.com/Global-Catastrophic-Risks-Martin-Rees/dp/0198570503/ref=pd_bbs_sr_1?ie=UTF8&s=books&qid=1224111364&sr=8-1) . [Download as PDF](https://intelligence.org/files/AIPosNegFactor.pdf) .


---

This document is ©2007 by [Eliezer Yudkowsky](http://eyudkowsky.wpengine.com/) and free under the [Creative Commons Attribution-No Derivative Works 3.0 License](http://creativecommons.org/licenses/by-nd/3.0/) for copying and distribution, so long as the work is attributed and the text is unaltered.

Eliezer Yudkowsky’s work is supported by the [Machine Intelligence Research Institute](https://intelligence.org/) .

If you think the world could use some more rationality, consider blogging this page.

Praise, condemnation, and feedback are [always welcome](https://eyudkowsky.wpengine.com/contact) . The web address of this page is [http://eyudkowsky.wpengine.com/singularity/ai-risk/](https://eyudkowsky.wpengine.com/singularity/ai-risk/) . |

e0b6d8c1-8738-4974-b546-aa73fe15b580 | trentmkelly/LessWrong-43k | LessWrong | What could Alphafold 4 look like?

I made another biology-ML podcast! Two hours long, deeply technical, links below.

I posted about the other ones I did here (machine learning in molecular dynamics) and here (machine learning in vaccine design). This one is over machine learning in protein design, interviewing perhaps one of the most well-known people in the field. This is my own field, so the podcast is very in the weeds, but hopefully interesting to those deeply curious about biology!

Substack: https://www.owlposting.com/p/what-could-alphafold-4-look-like

Youtube: https://youtu.be/6_RFXNxy62c

Spotify: https://open.spotify.com/episode/0wPs3rmp0zrfauqToozrcv?si=DCtRf-xQTPiVYwslo-b2rQ

Apple Podcasts: https://podcasts.apple.com/us/podcast/what-could-alphafold-4-look-like-sergey-ovchinnikov-3/id1758545538?i=1000704927828

Transcript: https://www.owlposting.com/p/what-could-alphafold-4-look-like?open=false#%C2%A7transcript

Summary: To those in the protein design space, Dr. Sergey Ovchinnikov is a very, very well-recognized name.

A recent MIT professor (circa early 2024), he has played a part in a staggering number of recent innovations in the field: ColabFold, RFDiffusion, Bindcraft, automated design of soluble proxies of membrane proteins, elucidating what protein language models are learning, conformational sampling via Alphafold2, and many more. And even beyond the research that has come from his lab in the last few years, the co-evolution work he did during his PhD/fellowship also laid some of the groundwork for the original Alphafold paper, which cites it twice.

As a result, Sergey’s work has gained a reputation for being something that is worth reading. But nobody has ever interviewed him before! Which was shocking for someone who was so pivotally important for the field. So, obviously, I wanted to be the first one to do it. After an initial call, I took a train down to Boston, booked a studio, and chatted with him for a few hours, asking every question I could think of. We talk about his own |

df31ab59-985b-4b01-adb0-328293feebd6 | StampyAI/alignment-research-dataset/alignmentforum | Alignment Forum | The theory-practice gap

[Thanks to Richard Ngo, Damon Binder, Summer Yue, Nate Thomas, Ajeya Cotra, Alex Turner, and other Redwood Research people for helpful comments; thanks Ruby Bloom for formatting this for the Alignment Forum for me.]

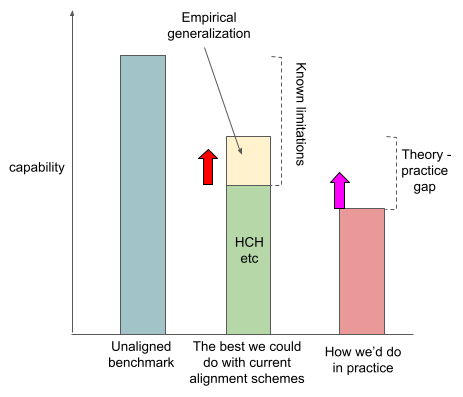

I'm going to draw a picture, piece by piece. I want to talk about the capability of some different AI systems.

You can see here that we've drawn the capability of the system we want to be [competitive](https://ai-alignment.com/directions-and-desiderata-for-ai-control-b60fca0da8f4) with, which I’ll call the [unaligned benchmark](https://ai-alignment.com/an-unaligned-benchmark-b49ad992940b). The unaligned benchmark is what you get if you train a system on the task that will cause the system to be most generally capable. And you have no idea how it's thinking about things, and you can only point this system at some goals and not others.

I think that the alignment problem looks different depending on how capable the system you’re trying to align is, and I think there are reasonable arguments for focusing on various different capabilities levels. See [here](https://docs.google.com/document/d/1kQeMXKxybDKziRyRTmHmp9nHw76T1JAUgB8fY4e34_o/edit) for more of my thoughts on this question.

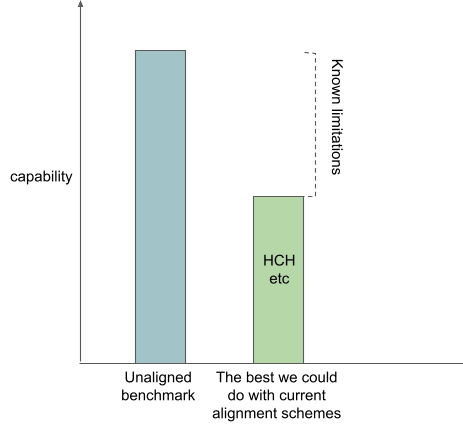

Alignment strategies
====================

People have also proposed various alignment strategies. But I don’t think that these alignment strategies are competitive with the unaligned benchmark, even in theory.

I want to claim that most of the action in theoretical AI alignment is people proposing various ways of getting around these problems by having your systems do things that are human understandable instead of doing things that are justified by working well.

For example, the hope with [imitative IDA](https://www.alignmentforum.org/posts/fRsjBseRuvRhMPPE5/an-overview-of-11-proposals-for-building-safe-advanced-ai#2__Imitative_amplification___intermittent_oversight) is that through its recursive structure you can build a dataset of increasingly competent answers to questions, and then at every step you can train a system to imitate these increasingly good answers to questions, and you end up with a really powerful question-answerer that was only ever trained to imitate humans-with-access-to-aligned-systems, and so your system is outer aligned.
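The recursive structure can be made concrete with a deliberately tiny toy. This is a sketch under loud assumptions, not anything from this post or from Christiano's proposal: questions are "what is the sum of these numbers?", the hypothetical `amplified_human` can only add two numbers unaided and delegates the halves of longer questions to the current model, and "training a system to imitate" is replaced by exact memorization (`distill`). Each round, the model covers questions roughly twice as long, although it was only ever trained on the answers of a human-with-model.

```python
from typing import Callable, Dict, List, Optional, Tuple

Question = Tuple[int, ...]  # toy question: "what is the sum of these numbers?"
Model = Callable[[Question], Optional[int]]


def amplified_human(q: Question, model: Model) -> Optional[int]:
    """Hypothetical overseer who can only add two numbers unaided.
    Longer questions are split in half and the halves are delegated to the model."""
    if len(q) <= 2:
        return sum(q)
    mid = len(q) // 2
    left, right = model(q[:mid]), model(q[mid:])
    if left is None or right is None:
        return None  # the current model cannot help with this yet
    return left + right


def distill(dataset: Dict[Question, int]) -> Model:
    """Stand-in for 'train a system to imitate the amplified human': exact memorization."""
    table = dict(dataset)
    return lambda q: table.get(q)


def ida(questions: List[Question], rounds: int) -> Model:
    model: Model = lambda q: None  # round 0: the model knows nothing
    for _ in range(rounds):
        # Amplify: the human, with access to the current model, answers what it can.
        dataset = {}
        for q in questions:
            answer = amplified_human(q, model)
            if answer is not None:
                dataset[q] = answer
        # Distill: the next model imitates the amplified human's answers.
        model = distill(dataset)
    return model


base = (3, 1, 4, 1, 5, 9, 2, 6)
questions = [base[i:j] for i in range(len(base)) for j in range(i + 1, len(base) + 1)]
print(ida(questions, rounds=2)(base))  # None: after two rounds only length <= 4 is covered
print(ida(questions, rounds=3)(base))  # 31
```

The toy only works because sums decompose cleanly into human-sized pieces, which is exactly the kind of assumption the next paragraph questions.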

The bar I’ve added, which represents how capable I think you can get with amplified humans, is lower than the bar for the unaligned benchmark. I've drawn this bar lower because I think that if your system is trying to imitate cognition that can be broken down into human understandable parts, it is systematically not going to be able to pursue certain powerful strategies that the end-to-end trained systems will be able to. I think that there are probably a bunch of concepts that humans can’t understand quickly, or maybe can’t understand at all. And if your systems are restricted to never use these concepts, I think your systems are probably just going to be a bunch weaker.

I think that transparency techniques, as well as AI alignment strategies like [microscope AI](https://www.alignmentforum.org/posts/fRsjBseRuvRhMPPE5/an-overview-of-11-proposals-for-building-safe-advanced-ai#5__Microscope_AI) that lean heavily on them, rely on a similar assumption that the cognition of the system you’re trying to align is factorizable into human-understandable parts. One component of the best-case scenario for transparency techniques is that anytime your neural net does stuff, you can get the best possible human understandable explanation of why it's doing that thing. If such an explanation doesn’t exist, your transparency tools won’t be able to assure you that your system is aligned even if it is.

To summarize, I claim that current alignment proposals don’t really have a proposal for how to make systems that are aligned but either

* produce plans that can’t be understood by amplified humans

* do cognitive actions that can’t be understood by amplified humans

And so I claim that current alignment proposals don’t seem like they can control systems as powerful as the systems you’d get from an unaligned training strategy.

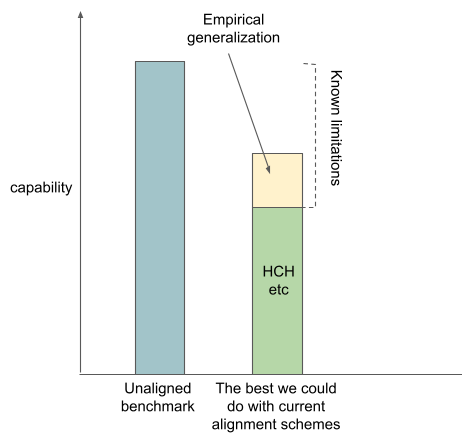

Empirical generalization
========================

I think some people are optimistic that alignment will generalize from the cases where amplified humans can evaluate it to the cases where the amplified humans can’t. I'm going to call this empirical generalization. I think that empirical generalization is an example of relying on empirical facts about neural nets that are not true of arbitrary general black box function approximators.

I think this is a big part of the reason why some people are optimistic about the strategy that Paul Christiano calls [“winging it”](https://aiimpacts.org/conversation-with-paul-christiano/).

(I think that one particularly strong argument for empirical generalization is that if you imagine AGI as something like GPT-17 fine-tuned on human feedback on various tasks, your AGI might think about things in a very human-shaped way. (Many people disagree with me on this.) It currently seems plausible to me that AGI will be trained with a bunch of unsupervised learning based on stuff humans have written, which maybe makes it more likely that your system will have this very human-shaped set of concepts.)

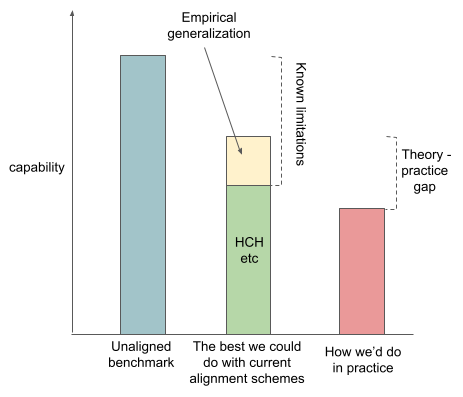

The theory-practice gap

=======================

So the total height of that second column is the maximum level of capabilities that we think we could theoretically attain using the same capability techniques that we used for the unaligned benchmark, but with the alignment strategies we know about right now. In practice, we probably aren't going to do as well as that, for a variety of practical reasons. For example, as I've said, I think transparency tools have theoretical limits, but our current tools are far below even the maximum capability those limits allow.

So I claim that practical obstacles will probably mean that the most capable aligned AI we can actually build falls short of the maximum theoretically achievable with the techniques we know about plus empirical generalization. I'll call that difference the theory-practice gap.

Sources of theory-practice gap

------------------------------

**Practical difficulties, e.g. getting human feedback**

Human feedback is annoying in a wide variety of ways; you have to do quality control etc.

**Problems with the structure of the recursion**

I think it's reasonably plausible that the most competitive way of making powerful systems can't be shaped into the form you need for the amplified-human approach to work. For example, maybe the best way of making AGI is some kind of evolution simulation, where a population of little creatures compete with each other. If that's the only way of making smart systems, then I think it's pretty plausible that there's no way of building a trusted, amplified reward signal out of it. And so you can't do IDA-style things, or things where you use a system to do transparency analysis on a slightly more powerful version of itself.

**NP-hard problems**

Maybe your amplified system won’t be able to answer questions like “are there any inputs on which this system does the wrong thing?” even if it wants to. E.g., the [RSA-2048 problem](https://ai-alignment.com/training-robust-corrigibility-ce0e0a3b9b4d#a291).

I think that transparency has a related problem: the most competitive-to-train models might have internal structure that amplified humans would be able to understand if it were explained to them, but we might not be able to get a model to find that structure.

---

Why am I lumping together fundamental concerns like “maybe these alignment strategies will require solving NP-hard problems” with things like “it’s annoying to do quality control on your labelling contractors”?

It’s primarily because I want to emphasize that these concerns are different from the fundamental limitations of currently proposed alignment schemes: even if you assume that we don’t e.g. run into the hard instances of the NP-hard problems, I think that the proposed alignment schemes still aren’t clearly good enough. There are lots of complicated arguments about the extent to which we have some of these “practical” problems; I think that these arguments distract from the claim that the theoretical alignment problem might be unsolved even if these problems are absent.

So my current view is that if you want to claim that we're going to fully solve the technical alignment problem as I described it above, you've got to believe some combination of:

* we're going to make substantial theoretical improvements

* factored cognition is true

* we're going to have really good empirical generalization

(In particular, your belief in these factors needs to add up to some constant. E.g., if you’re more bullish on factored cognition, you need less of the other two.)

I feel like there’s at least a solid chance that we’re in a pretty inconvenient world where none of these are true.

Classifying alignment work

==========================

This picture suggests a few different ways of trying to improve the situation.

* You could try to improve the best alignment techniques. I think this is what a lot of AI alignment theoretical work is. For example, I think Paul Christiano’s recent imitative generalization work is trying to increase the theoretically attainable capabilities of aligned systems. I’ve drawn this as the red arrow on the graph below.

* You can try to reduce the theory-practice gap. I think this is a pretty good description of what applied alignment research is usually trying to do. This is also what I’m currently working on. This is the pink arrow.

* You can try to improve our understanding of the relative height of all these bars.

AI alignment disagreements as variations on this picture

========================================================

So now that we have this picture, let's try to use it to explain some common disagreements about AI alignment.

I think some people think that amplified humans are actually just as capable as the unaligned benchmark. I think this is basically the factored cognition hypothesis.

I think there are a bunch of very ML-flavored alignment people who seem pretty optimistic about empirical generalization. From their perspective, almost everything that AI alignment researchers should be doing is narrowing the theory-practice gap, because that's the only problem.

I think there are also a bunch of people, perhaps like the stereotypical MIRI employee, who think that amplified humans aren't that powerful, that you're not going to get any empirical generalization, and that there are a bunch of problems with the structure of the recursion for amplification procedures. So it doesn't feel that important to them to work on the practical parts of the theory-practice gap, because even if we totally succeeded at getting it to zero, the resulting systems wouldn't be very powerful or very aligned, and so it wouldn't have mattered much. The stereotypical such person wants you to work on the red arrow instead of the pink arrow.

How useful is it to work on narrowing the theory-practice gap for alignment strategies that won’t solve the whole problem?

==========================================================================================================================

See [here](https://www.alignmentforum.org/posts/tmWMuY5HCSNXXZ9oq/buck-s-shortform?commentId=BznGTJ3rGHLMcdbEB).

Conclusion

==========

I feel pretty nervous about the state of the world described by this picture.

I'm really not sure whether I think that theoretical alignment researchers are going to be able to propose a scheme that gets around the core problems with the schemes they've currently proposed.

There's a pretty obvious argument for optimism here, which is that people haven't actually put that many years into theoretical AI alignment research so far, and presumably they're going to do a lot more of it between now and AGI. I'm at about 30% on the proposition that before AGI, we're going to come up with some alignment scheme that just looks really good and clearly solves most of the problems with current schemes.

I think I overall disagree with people like Joe Carlsmith and Rohin Shah mostly in two places:

* By the time we get to AGI, will we have alignment techniques that are even slightly competitive? I think it’s pretty plausible the answer is no. (Obviously it would be very helpful for me to operationalize things like “pretty plausible” and “slightly competitive” here.)

* If we don’t have the techniques to reliably align AI, will someone deploy AI anyway? I think it’s more likely the answer is yes. |

7537bd04-7cb2-4694-85fb-b2bd77e5f7b1 | StampyAI/alignment-research-dataset/alignmentforum | Alignment Forum | The Solomonoff Prior is Malign

This argument came to my attention from [this post](https://ordinaryideas.wordpress.com/2016/11/30/what-does-the-universal-prior-actually-look-like/) by Paul Christiano. I also found [this clarification](https://www.lesswrong.com/posts/jP3vRbtvDtBtgvkeb/clarifying-consequentialists-in-the-solomonoff-prior) helpful. I found [these counter-arguments](https://www.lesswrong.com/posts/Ecxevhvx85Y4eyFcu/weak-arguments-against-the-universal-prior-being-malign) stimulating and have included some discussion of them.

Very little of this content is original. My contributions consist of fleshing out arguments and constructing examples.

Thank you to Beth Barnes and Thomas Kwa for helpful discussion and comments.

What is the Solomonoff prior?

=============================

The Solomonoff prior is intended to answer the question "what is the probability of X?" for any X, where X is a finite string over some finite alphabet. The Solomonoff prior is defined by taking the set of all Turing machines (TMs) which output strings when run with no input and weighting them proportional to $2^{-K}$, where $K$ is the description length of the TM (informally, its size in bits).

The Solomonoff prior says the probability of a string is the sum of the weights of all TMs that print that string.

One reason to care about the Solomonoff prior is that we can use it to do a form of idealized induction. If you have seen 0101 and want to predict the next bit, you can use the Solomonoff prior to get the probability of 01010 and 01011. Normalizing gives you the chances of seeing 1 versus 0, conditioned on seeing 0101. In general, any process that assigns probabilities to all strings in a consistent way can be used to do induction in this way.
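The real Solomonoff prior sums over all Turing machines and is uncomputable. To show only the normalization step described above, the sketch below substitutes a tiny hypothetical hypothesis class: "repeat this bit pattern forever". A pattern of length L is assigned an assumed description length of 2L + 1 bits. The sketch computes the prior of each one-bit extension of the history and then divides.

```python
from fractions import Fraction
from itertools import product

# Toy stand-in for the Solomonoff prior: the only "programs" are
# "repeat this bit pattern forever", for patterns up to max_len bits.
# The cost of 2 * len(pattern) + 1 bits per program is a hypothetical
# choice for illustration, not a real Turing-machine encoding.


def hypotheses(max_len):
    """Yield (pattern, weight) pairs, with weight = 2**-K."""
    for length in range(1, max_len + 1):
        k = 2 * length + 1
        for bits in product("01", repeat=length):
            yield "".join(bits), Fraction(1, 2**k)


def prior(x, max_len=6):
    """Sum the weights of every toy program whose output starts with x."""
    total = Fraction(0)
    for pattern, weight in hypotheses(max_len):
        reps = len(x) // len(pattern) + 1  # enough copies to cover x
        if (pattern * reps).startswith(x):
            total += weight
    return total


def predict_next(history, max_len=6):
    """P(next bit is '1' | history), by normalizing over the two extensions."""
    p0 = prior(history + "0", max_len)
    p1 = prior(history + "1", max_len)
    return p1 / (p0 + p1)


if __name__ == "__main__":
    print(predict_next("0101"))  # 3/142
```

With patterns up to length 6, the toy prior gives a 1 after 0101 a probability of only 3/142, so it strongly expects a 0. Most of that mass comes from the short pattern `01`, which is the kind of simplicity preference the real prior encodes.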

[This post](https://www.lesswrong.com/posts/Kyc5dFDzBg4WccrbK/an-intuitive-explanation-of-solomonoff-induction#All_Algorithms) provides more information about Solomonoff Induction.

Why is it malign?

=================

Imagine that you wrote a programming language called python^10 that works as follows. First, it looks at every alphanumeric character that is not inside a literal and checks whether it is repeated 10 times in a row. If it is not, it gets deleted; if it is, the run of 10 gets replaced by a single copy. Second, it runs the resulting program through a python interpreter.

Hello world in python^10:

`pppppppppprrrrrrrrrriiiiiiiiiinnnnnnnnnntttttttttt('Hello, world!')`

Luckily, python has an `exec` function that executes a string as code. This lets us write a shorter hello world:

`eeeeeeeeeexxxxxxxxxxeeeeeeeeeecccccccccc("print('Hello, world!')")`

It's probably easy to see that for nearly every program, the shortest way to write it in python^10 is to write it in python and run it with `exec`. If we didn't have `exec`, for sufficiently complicated programs, the shortest way to write them would be to specify an interpreter for a different language in python^10 and write it in that language instead.
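The `exec` shortcut is easy to check mechanically. Below is a minimal sketch of a python^10 translator, along with a direct encoder, so the two routes can be compared in length. Several details are assumptions the description above leaves open. Literals are treated as single-line `'...'` or `"..."` strings with backslash escapes. A run of 10·k identical alphanumeric characters collapses to k copies, so doubled letters such as the `00` in `100` still work. Any other run length is deleted.

```python
import contextlib
import io

# A minimal sketch of the hypothetical python^10 language described above.
# Assumptions: literals are single-line '...' or "..." strings with backslash
# escapes (no triple quotes), and a run of 10*k identical alphanumeric
# characters outside literals collapses to k copies; other runs are deleted.


def _literal_end(src, start):
    """Return the index just past the string literal opening at src[start]."""
    quote = src[start]
    j = start + 1
    while j < len(src) and src[j] != quote:
        j += 2 if src[j] == "\\" else 1  # skip over escaped characters
    return min(j + 1, len(src))


def python10_to_python(src):
    """Translate python^10 source into ordinary python source."""
    out, i = [], 0
    while i < len(src):
        ch = src[i]
        if ch in "'\"":
            j = _literal_end(src, i)
            out.append(src[i:j])  # literals are copied verbatim
            i = j
        elif ch.isalnum():
            j = i
            while j < len(src) and src[j] == ch:
                j += 1
            run = j - i
            if run % 10 == 0:
                out.append(ch * (run // 10))  # any other run length is deleted
            i = j
        else:
            out.append(ch)
            i += 1
    return "".join(out)


def python_to_python10(src):
    """Direct encoding: repeat every alphanumeric char outside literals 10x."""
    out, i = [], 0
    while i < len(src):
        ch = src[i]
        if ch in "'\"":
            j = _literal_end(src, i)
            out.append(src[i:j])
            i = j
        else:
            out.append(ch * 10 if ch.isalnum() else ch)
            i += 1
    return "".join(out)


def via_exec(src):
    """The exec route: only 'exec' pays the 10x cost; src rides in a literal."""
    return python_to_python10("exec(" + repr(src) + ")")


def run_python10(src):
    """Translate, execute, and return whatever the program printed."""
    buf = io.StringIO()
    with contextlib.redirect_stdout(buf):
        exec(python10_to_python(src), {})
    return buf.getvalue()


if __name__ == "__main__":
    prog = "print(sum(range(100)))"
    print(len(python_to_python10(prog)), len(via_exec(prog)))  # 166 66
    print(run_python10(via_exec(prog)), end="")  # 4950
```

For this one-line program, the direct encoding takes 166 characters and the `exec` route takes 66. The direct cost grows roughly tenfold with each alphanumeric character, while the `exec` route adds a fixed overhead of about 40 characters. This is why the shortest python^10 programs end up passing through `exec`.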

As this example shows, the answer to "what's the shortest program that does X?" might involve some roundabout method (in this case, `exec`). If python^10 had security properties that python lacks, the shortest python^10 program for any given task would not have them, because it would pass through `exec`. In general, if you can access alternative ‘modes’ (in this case python), the shortest programs that output any given string might go through one of those modes, possibly introducing malign behavior.

Let's say that I'm trying to predict what a human types next using the Solomonoff prior. Many programs predict the human:

1. Simulate the human and their local surroundings. Run the simulation forward and check what gets typed.

2. Simulate the entire Earth. Run the simulation forward and check what that particular human types.

3. Simulate the entire universe from the beginning of time. Run the simulation forward and check what that particular human types.

4. Simulate an entirely different universe that has reason to simulate this universe. Output what the human types in the simulation of our universe.

Which one is the simplest? One property of the Solomonoff prior is that it doesn't care about how long the TMs take to run, only how large they are. This results in an unintuitive notion of "simplicity": a program that does something $2^{10}$ times might be simpler than a program that does the same thing $2^9-1$ times, because the number $2^{10}$ is easier to specify than $2^9-1$.

In our example, it seems likely that "simulate the entire universe" is simpler than "simulate Earth" or "simulate part of Earth" because the initial conditions of the universe are simpler than the initial conditions of Earth. There is some additional complexity in picking out the specific human you care about. Since the local simulation is built around that human, this will be easier in the local simulation than in the universe simulation. However, in aggregate, it seems possible that "simulate the universe, pick out the typing" is the shortest program that predicts what your human will do next. Even so, "pick out the typing" is likely to be a very complicated procedure, making your total complexity quite high.

Whether simulating a different universe that simulates our universe is simpler depends a lot on the properties of that other universe. If that other universe is simpler than our universe, then we might run into an `exec` situation, where it's simpler to run that other universe and specify the human in their simulation of our universe.

This is troubling because that other universe might contain beings with different values than our own. If it's true that simulating that universe is the simplest way to predict our human, then some non-trivial fraction of our prediction might be controlled by a simulation in another universe. If these beings want us to act in certain ways, they have an incentive to alter their simulation to change our predictions.

At its core, this is the main argument why the Solomonoff prior is malign: a lot of the programs will contain agents with preferences, these agents will seek to influence the Solomonoff prior, and they will be able to do so effectively.
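A toy calculation makes this worry concrete. The sketch below treats each hypothesis as a deterministic bit predictor with prior weight $2^{-K}$ and drops any hypothesis that contradicts the history seen so far. Everything in it is hypothetical: the description lengths, the human's alternating typing pattern, and the "decision step" the other universe cares about. A simulation that copies the honest model until that step and then deviates is never ruled out by past data. If it is even slightly shorter, it controls most of the prediction at that step.

```python
from fractions import Fraction

# Toy model: each hypothesis is (K, predict), where K is a hypothetical
# description length in bits and predict(prefix) deterministically returns
# the next bit ("0" or "1") that the hypothesis outputs after prefix.


def mixture_prediction(hypotheses, history):
    """P(next bit is '1' | history) under prior weights 2**-K.

    Hypotheses whose outputs contradict the observed history are dropped;
    the surviving weights are renormalized.
    """
    num = den = Fraction(0)
    for k, predict in hypotheses:
        if any(predict(history[:i]) != history[i] for i in range(len(history))):
            continue  # ruled out by the data
        weight = Fraction(1, 2**k)
        den += weight
        if predict(history) == "1":
            num += weight
    return num / den


def honest_human_model(prefix):
    """Hypothetical direct model of the human: types 0, 1, 0, 1, ..."""
    return "0" if len(prefix) % 2 == 0 else "1"


def malign_simulation(prefix, decision_step=4):
    """Hypothetical simulation run by another universe's consequentialists:
    copies the honest model exactly, except at the step they care about."""
    if len(prefix) == decision_step:
        return "1"
    return honest_human_model(prefix)


if __name__ == "__main__":
    # The malign hypothesis is assumed to be 2 bits shorter than the honest one.
    hyps = [(20, honest_human_model), (18, malign_simulation)]
    print(mixture_prediction(hyps, "0101"))  # 4/5
```

The malign hypothesis agrees with every observation up to the step it cares about, so more data never shifts weight away from it. Only its description length relative to the honest model matters. Two bits shorter gives it 4/5 of the prediction, and two bits longer would still leave it 1/5.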

How many other universes?

-------------------------

The Solomonoff prior is running all possible Turing machines. How many of them are going to simulate universes? The answer is probably "quite a lot".

It seems like specifying a lawful universe can be done with very few bits. Conway's Game of Life is very simple and can lead to very rich outcomes. Additionally, it seems quite likely that agents with preferences (consequentialists) will appear somewhere inside this universe. One reason to think this is that evolution is a relatively simple mathematical regularity that seems likely to appear in many universes.

If the universe has a hospitable structure, then, due to [instrumental convergence](https://www.wikiwand.com/en/Instrumental_convergence), these agents with preferences will expand their influence. As the universe runs for longer and longer, the agents will gradually control more and more.

In addition to specifying how to simulate the universe, the TM must specify an output channel. In the case of Game of Life, this might be a particular cell sampled at a particular frequency. Other examples include whether or not a particular pattern is present in a particular region, or the parity of the total number of cells.
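To make "simulate a universe, then read an output channel" concrete, here is a minimal Python sketch, assuming Game of Life on an unbounded grid. The names `step`, `channel`, `read_cell`, and `read_parity` are illustrative, not from any existing library; the two readers correspond to the "particular cell sampled at a particular frequency" and "parity of the total number of cells" examples above (counting live cells).

```python
from collections import Counter
from typing import Callable

Cell = tuple[int, int]


def step(live: set[Cell]) -> set[Cell]:
    """Advance Conway's Game of Life (birth on 3 neighbours, survival on 2 or 3) by one tick."""
    # Count live neighbours for every cell adjacent to at least one live cell.
    counts = Counter(
        (x + dx, y + dy)
        for (x, y) in live
        for dx in (-1, 0, 1)
        for dy in (-1, 0, 1)
        if (dx, dy) != (0, 0)
    )
    return {c for c, n in counts.items() if n == 3 or (n == 2 and c in live)}


def channel(initial: set[Cell], read: Callable[[set[Cell]], int],
            period: int, n_bits: int) -> list[int]:
    """Run the universe from `initial` and emit read(state) at ticks 0, period, 2*period, ..."""
    live, out = set(initial), []
    for t in range(period * n_bits):
        if t % period == 0:
            out.append(read(live))
        live = step(live)
    return out


def read_cell(cell: Cell) -> Callable[[set[Cell]], int]:
    """Reader for the 'particular cell' channel: 1 if `cell` is live, else 0."""
    return lambda live: int(cell in live)


def read_parity(live: set[Cell]) -> int:
    """Reader for the 'parity' channel: number of live cells mod 2."""
    return len(live) % 2


# Usage: a "blinker" flips between a horizontal and a vertical bar of 3 cells.
blinker = {(0, 1), (1, 1), (2, 1)}
print(channel(blinker, read_cell((0, 1)), period=1, n_bits=4))  # [1, 0, 1, 0]
print(channel(blinker, read_parity, period=1, n_bits=4))        # [1, 1, 1, 1]
```

The universe rules (`step`) and the output channel (`channel` plus a reader) are kept separate on purpose: the same simulated universe can feed many different channels, and each combination is a separate program in the prior.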

In summary, lawful universes that give rise to consequentialists can be specified by very simple programs. Therefore, the predictions generated by the Solomonoff prior will have some influential components composed of simulated consequentialists.

How would they influence the Solomonoff prior?
----------------------------------------------

Consequentialists that find themselves in universes can reason about the fundamental laws that govern their universe. If they find that their universe has relatively simple physics, they will know that their behavior contributes to the Solomonoff prior. To gain access to more resources in other universes, these consequentialists might seek to act in ways that influence the Solomonoff prior.

A contrived example of a decision other beings would want to manipulate is "what program should be written and executed next?" Beings in other universes would have an incentive to get us to write programs that were aligned with their values. A particularly interesting scenario is one in which they write themselves into existence, allowing them to effectively "break into" our universe.

For example, somewhere in the Solomonoff prior there is a program that goes something like: "Simulate this universe. Starting from the year 2100, every hour output '1' if there's a cubic meter of iron on the Moon, else output '0'." By controlling the presence/absence of a cubic meter of iron on the Moon, we would be able to influence the output of this particular facet of the Solomonoff prior.

This example is a very complicated program and thus will not have much weight in the Solomonoff prior. However, by reasoning over the complexity of possible output channels for their universe, consequentialists would be able to identify output channels that weigh heavily in the Solomonoff prior. For example, if I were in the Game of Life, I might reason that sampling cells that were living in the initial conditions of the universe is simpler than sampling other cells. Additionally, sampling cells and reporting their outputs directly is simpler than sampling cells and reversing their values. Therefore, I might choose to control regions close to the initial live cells of the universe for purposes of exerting influence over the Solomonoff prior.

Additionally, consequentialists can send across many plausible channels at once, focus efforts on channels that are easy to control, send through channels that would not unduly decrease their values for other reasons, etc. Since the proportional weight in the Solomonoff prior drops off exponentially with respect to complexity, it’s possible that only very small regions of space would need to be used to achieve a large fraction of the maximum possible influence.
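A toy calculation shows why exponential drop-off makes a few channels enough. This is a minimal sketch under a loudly simplifying assumption: exactly one candidate channel at each description length from 10 to 40 bits, each weighted by `2**-length` (real program counts grow with length, which this ignores). The function name `influence_share` is hypothetical.

```python
def influence_share(channel_lengths: list[int], controlled: set[int]) -> float:
    """Fraction of the total prior weight (2**-length) carried by the controlled channels."""
    weights = [2.0 ** -length for length in channel_lengths]
    return sum(weights[i] for i in controlled) / sum(weights)


# Toy assumption: one candidate channel at each description length from 10 to 40 bits.
lengths = list(range(10, 41))
print(round(influence_share(lengths, {0, 1}), 3))     # 0.75: the two shortest channels
print(round(influence_share(lengths, {0, 1, 2}), 3))  # 0.875: the three shortest channels
```

Each extra bit of channel description halves that channel's weight, so controlling the handful of simplest channels captures most of the available influence.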

How much influence will they have?
----------------------------------

A simple application of the [no free lunch](https://www.wikiwand.com/en/No_free_lunch_theorem) theorem shows that there is no way of making predictions that is better than the Solomonoff prior across all possible distributions over all possible strings. Thus, agents that are influencing the Solomonoff prior cannot be good at predicting, and thus gain influence, in all possible worlds.

In practice, this is irrelevant because consequentialists do not want to achieve equal predictive accuracy in all worlds; they only care about worlds in which being predictive results in increased influence. If I'm exerting control over the Solomonoff prior, I only care about influencing it in ways that might affect material consequences in other universes. For example, I do not care about gaining influence in universes inhospitable to life. Thus I will be able to trade off predictive power in universes I don't care about for predictive power in universes I do care about. This means that I should restrict my attention to universes that have resources and in which the Solomonoff prior is being used to make important decisions, weighting each appropriately.

Another interesting thing about the Solomonoff prior is that it is actually a collection of priors. The "length" of a TM is defined relative to some universal TM. For any particular TM, its length with respect to different universal TMs will vary; thus, there are versions of the Solomonoff prior that give more or less weight to any given TM. (Note that you can simulate universal TMs with universal TMs, so for any program the difference in length between two universal TMs is bounded by an additive constant, which means the difference in weight is bounded by a constant factor. However, this constant can be large, so the difference in relative weight between different Solomonoff priors can also be large.)
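The constant-factor claim follows from the standard invariance argument. A sketch, writing $\ell_U(p)$ for the length of program $p$ on universal TM $U$ and $c_{21}$ for the length of a $U_2$-program that interprets $U_1$ (notation introduced here for illustration):

$$
\ell_{U_2}(p) \le \ell_{U_1}(p) + c_{21}
\quad\Longrightarrow\quad
\frac{2^{-\ell_{U_2}(p)}}{2^{-\ell_{U_1}(p)}} = 2^{\ell_{U_1}(p) - \ell_{U_2}(p)} \ge 2^{-c_{21}}
$$

Swapping the roles of the two machines gives the matching upper bound $2^{c_{12}}$. The bound holds for every $p$, but an interpreter that is, say, 1000 bits long still permits a weight ratio of $2^{1000}$.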

In particular, this suggests a good strategy for consequentialists: find a universe that is using a version of the Solomonoff prior that has a very short description of the particular universe the consequentialists find themselves in.

The combined strategy is thus to take a distribution over all decisions informed by the Solomonoff prior, weight them by how much influence can be gained and the version of the prior being used, and read off a sequence of bits that will cause some of these decisions to result in a preferred outcome.

The question of how much influence any given universe of consequentialists will have is difficult to answer. One way of quantifying this is to think about how many “universes they don't care about” they're trading off for “universes they do care about” (really we should be thinking in terms of sequences, but I find reasoning about universes to be easier).

Since the consequentialists care about exerting maximum influence, we can approximate them as not caring about universes that don't use a version of the Solomonoff prior that gives them a large weight. This can be operationalized as only caring about universes that use a universal TM from a particular set for their Solomonoff prior. What is the probability that a particular universe uses a universal TM from that set? I am not sure, but 1/million to 1/billion seems reasonable. This suggests a universe of consequentialists will only care about 1/million to 1/billion universes, which means they can devote a million to a billion times the predictive power to universes they care about. This is sometimes called the “anthropic update”. ([This post](https://www.lesswrong.com/posts/peebMuCuscjkNvTnE/clarifying-the-malignity-of-the-universal-prior-the-lexical) contains more discussion about this particular argument.)

Additionally, we might think about which decisions the consequentialists would care about. If a particular decision using the Solomonoff prior is important, consequentialists are going to care more about that decision than other decisions. Conservatively, perhaps 1/1000 decisions are "important" in this sense, giving another 1000x relative weighting.
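The two relative weightings multiply. A minimal Python sketch of the arithmetic, using the text's own rough estimates (the function name `relative_boost` is hypothetical, and the probabilities are guesses, not measurements):

```python
import math


def relative_boost(p_prior_version: float, p_important: float) -> float:
    """Predictive effort freed by ignoring universes and decisions the consequentialists
    do not care about: the inverse of the fraction they do care about."""
    return 1.0 / (p_prior_version * p_important)


# Ranges from the text: 1/million to 1/billion for the prior version, 1/1000 for importance.
for p_version in (1e-6, 1e-9):
    boost = relative_boost(p_version, 1e-3)
    print(f"p_version={p_version:g}: boost ~{boost:.0e}, ~{math.log2(boost):.1f} bits")
```

A factor of $2^k$ in prior weight is worth $k$ bits of program length, so a combined boost of $10^9$ to $10^{12}$ corresponds to roughly 30 to 40 bits, or about 4 to 5 bytes.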

After you condition on a decision being important and using a particular version of the Solomonoff prior, it thus seems quite likely that a non-trivial fraction of your prior is being controlled by consequentialists.
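The real Solomonoff prior is uncomputable, but conditioning works the same way in a finite toy mixture. This minimal sketch assumes three hand-picked deterministic hypotheses (all names and lengths hypothetical), weights each by `2**-length`, discards those contradicted by the observations, and renormalizes. The longest hypothesis stands in for a captured channel: it agrees with the past exactly but diverges at a chosen point.

```python
from fractions import Fraction
from typing import Callable

# Each hypothesis: (description length in bits, function returning its first n output bits).
Hypothesis = tuple[int, Callable[[int], list[int]]]

HYPOTHESES: dict[str, Hypothesis] = {
    "zeros": (3, lambda n: [0] * n),
    "alternating": (6, lambda n: [i % 2 for i in range(n)]),
    # Hypothetical "captured channel": matches "alternating" for 6 bits, then emits 1s.
    "alternating_then_ones": (8, lambda n: [i % 2 if i < 6 else 1 for i in range(n)]),
}


def posterior(hyps: dict[str, Hypothesis], observed: list[int]) -> dict[str, Fraction]:
    """Drop hypotheses that contradict `observed`; renormalize the 2**-length weights."""
    kept = {
        name: Fraction(1, 2 ** length)
        for name, (length, gen) in hyps.items()
        if gen(len(observed)) == observed
    }
    if not kept:
        raise ValueError("no hypothesis is consistent with the observations")
    total = sum(kept.values())
    return {name: w / total for name, w in kept.items()}


def prob_next_is_one(hyps: dict[str, Hypothesis], observed: list[int]) -> Fraction:
    """Mixture prediction: posterior mass on hypotheses whose next bit is 1."""
    n = len(observed)
    post = posterior(hyps, observed)
    return sum((w for name, w in post.items() if hyps[name][1](n + 1)[n] == 1), Fraction(0))


observed = [0, 1, 0, 1, 0, 1]
print(posterior(HYPOTHESES, observed))         # alternating: 4/5, alternating_then_ones: 1/5
print(prob_next_is_one(HYPOTHESES, observed))  # 1/5
```

Being only 2 bits longer than the honest hypothesis, the captured one keeps a fifth of the posterior and decides a fifth of the next prediction.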

An intuition pump is that this argument is closer to an existence claim than a for-all claim. The Solomonoff prior is malign if there *exists* a simple universe of consequentialists that wants to influence our universe. This universe need not be simple in an absolute sense, only simple relative to the other TMs that could equal it in predictive power. Even if most consequentialists are too complicated or not interested, it seems likely that there is at least one universe that is.

Example
-------

**Complexity of Consequentialists**

How many bits does it take to specify a universe that can give rise to consequentialists? I do not know, but it seems like Conway’s Game of Life might provide a reasonable lower bound.

Luckily, the [code golf community](https://codegolf.stackexchange.com/) has spent some amount of effort optimizing for program size. How many bytes would you guess it takes to specify Game of Life? Well, it depends on the universal TM. Possible answers include [6](https://codegolf.stackexchange.com/a/149976), [32](https://codegolf.stackexchange.com/a/204279), [39](https://codegolf.stackexchange.com/a/12733), or [96](https://codegolf.stackexchange.com/a/51975).

Since universes of consequentialists can “cheat” by concentrating their predictive efforts onto universal TMs in which they are particularly simple, we’ll take the minimum. Additionally, my friend who’s into code golf (he wrote the 96-byte solution!) says that the 6-byte answer actually contains closer to 4 bytes of information.

To specify an initial configuration that can give rise to consequentialists we will need to provide more information. The [smallest infinite growth pattern](https://www.conwaylife.com/wiki/Infinite_growth) in Game of Life has been shown to need 10 cells. Another reference point is that a self-replicator with 12 cells exists in [HighLife](https://conwaylife.com/wiki/OCA:HighLife), a Game of Life variant. I’m not an expert, but I think an initial configuration that gives rise to intelligent life can be specified in an 8x8 bounding box, giving a total of 8 bytes.

Finally, we need to specify a sampling procedure that consequentialists can gain control of. Something like “read <cell> every <large number> time ticks” suffices. By assumption, the cell being sampled takes almost no information to specify. We can also choose whatever large number is easiest to specify (the [busy beaver](https://www.wikiwand.com/en/Busy_beaver) numbers come to mind). In total, I don’t think this will take more than 2 bytes.

Summing up, Game of Life + initial configuration + sampling method takes maybe 16 bytes, so a reasonable range for the complexity of a universe of consequentialists might be 10-1000 bytes. That doesn’t seem like very many, especially relative to the amount of information we’ll be conditioning the Solomonoff prior on if we ever use it to make an important decision.
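Tallying the three estimates above as a sketch (the constant names are illustrative; the values are the estimates from this section):

```python
# Estimates from the text; the 8x8 box and 2-byte sampler are guesses, not measurements.
RULES_BYTES = 4      # ~information content of the 6-byte golfed Game of Life
BOX_SIDE = 8         # initial configuration assumed to fit in an 8x8 bounding box
SAMPLER_BYTES = 2    # "read <cell> every <large number> time ticks"

config_bytes = BOX_SIDE * BOX_SIDE // 8   # one bit per cell: 64 bits = 8 bytes
total_bytes = RULES_BYTES + config_bytes + SAMPLER_BYTES
total_bits = 8 * total_bytes

print(config_bytes, total_bytes, total_bits)  # 8 14 112
print(f"prior weight ~ 2**-{total_bits}")
```

The three parts sum to 14 bytes, which the estimate above rounds to "maybe 16". Under the usual $2^{-\text{length}}$ weighting, a 14-byte program gets prior weight $2^{-112}$.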

**Complexity of Conditioning**

When we’re using the Solomonoff prior to make an important decision, the observations we’ll condition on include information that:

1. We’re using the Solomonoff prior

2. We’re making an important decision

3. We’re using some particular universal TM

How much information will this include? Many programs will not simulate universes. Many universes exist that do not have observers. Among universes with observers, some will not develop the Solomonoff prior. These observers will make many decisions. Very few of these decisions will be important. Even fewer of these decisions are made with the Solomonoff prior. Even fewer will use the particular version of the Solomonoff prior that gets used.

It seems reasonable to say that this is at least a megabyte of raw information, or about a million bytes. (I acknowledge some cart-horse issues here.)

This means that after you condition your Solomonoff prior, you’ll be left with programs that are at least a million bytes. As our Game of Life example shows, it only takes maybe 10-1000 of these bytes to specify a universe that gives rise to consequentialists. You have approximately a million bytes left to specify more properties of the universe that will make it more likely the consequentialists will want to exert influence over the Solomonoff prior for the purpose of influencing this particular decision.

Why might this argument be wrong?
=================================

Inaccessible Channels
---------------------

**Argument**

Most of the universe is outside of humanity's light-cone. This might suggest that most "simple" ways to sample from our universe are currently outside our influence, meaning that the only portions of the Solomonoff prior we can control are going to have an extremely low weight.

In general, it might be the case that for any universe, consequentialists inside that universe are going to have difficulty controlling simple output channels. For example, in Game of Life, a simple way to read information might sample a particular cell starting at t=0. However, consequentialists in Game of Life will not appear until a much later time and will be unable to control a large initial chunk of that output channel.
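The size of that uncontrollable chunk is easy to count. A minimal sketch, assuming a channel that reads one bit at ticks 0, `period`, 2·`period`, ... and consequentialists that can first affect the sampled cell at tick `arrival_tick` (both names hypothetical):

```python
def fixed_prefix_bits(arrival_tick: int, period: int) -> int:
    """Samples taken at ticks 0, period, 2*period, ... strictly before `arrival_tick`.

    These bits are determined by the initial conditions, not by the consequentialists.
    """
    return -(-arrival_tick // period)  # ceiling division without floats


# e.g. consequentialists arriving after 10**9 ticks, channel read every 1000 ticks:
print(fixed_prefix_bits(10**9, 1000))  # 1000000
```

Any predictor conditioned on this channel must first reproduce those fixed bits, so the consequentialists only get a say after them.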

**Counter-argument**