id stringlengths 36 36 | source stringclasses 15 values | formatted_source stringclasses 13 values | text stringlengths 2 7.55M |

|---|---|---|---|

b4b3cd21-aeaa-4034-ae34-1f5051637140 | trentmkelly/LessWrong-43k | LessWrong | Consulting Opportunity for Budding Econometricians

As a part of my job, I recently created an econometric model. My boss wants someone to look over the math before it's submitted internally throughout the company. We have a modest amount of money set aside for someone to audit the process.

The model is an ARMA(2,1) with seasonality, trend, and a dummy variable. There's no heteroscedasticity or serial correlation, but the Ramsey RESET test suggests a different specification might work better.

I currently have the data in an EViews file, so you'd need to do zero data entry.
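For readers who want the shape of such a model written down, here is one common way to specify it. This is only a sketch: the post does not give the actual specification, so every symbol below (the intercept $\alpha$, trend slope $\delta$, seasonal dummies $D_{s,t}$ with coefficients $\gamma_s$, the extra dummy $d_t$ with coefficient $\beta$, and the number of seasons $S$) is an assumption made for illustration.

$$
y_t = \alpha + \delta t + \sum_{s=1}^{S-1} \gamma_s D_{s,t} + \beta d_t + u_t,
\qquad
u_t = \phi_1 u_{t-1} + \phi_2 u_{t-2} + \varepsilon_t + \theta_1 \varepsilon_{t-1}
$$

Here the two autoregressive terms ($\phi_1$, $\phi_2$) and the single moving-average term ($\theta_1$) are what make the error process ARMA(2,1), and $\varepsilon_t$ is assumed to be white noise.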

There's a small chance this will be used in court, but none of the liability will be transferred to you. There should be an emphasis placed on parsimony. You'd have to sign a confidentiality agreement.

If you're qualified to review this/suggest a marginally better model, then this would be an easy way for you to make bank in a couple of hours. If it goes well, there might be more work like this in the future.

Let me know if you're interested. |

f59eb688-55f2-4c39-aa1b-eeb7d6d9cb48 | trentmkelly/LessWrong-43k | LessWrong | Something about the Pinker Cancellation seems Suspicious

Something about the recent attempt to cancel Steve Pinker seems really off.

The problem is that the argument is suspiciously bad. The open letter presents only six real pieces of evidence, and they're all really, trivially weak.

The left isn't incompetent when it comes to tallying up crimes for a show trial. In fact, they're pretty good at it. But for some reason this letter has only the weakest of attacks, and what's more, it stops at only six "relevant occasions". For comparison, take a look at this similar attack on Stephen Hsu which has, to put it mildly, more than six pieces of evidence. There is plenty of reasonable criticism of Pinker out there. Why didn't they use any of it?

Pinker has been a public figure for decades. Surely he has said something stupid and offensive at least once during that time. If not something honestly offensive, perhaps a slip of the tongue. If not a slip of the tongue, maybe something that sounds really terrible out of context.

We know that the authors of the piece are not above misrepresenting the evidence or taking statements out of context, because they do so multiple times in their letter. It's clear that they spent a lot of effort stretching the evidence to make Pinker look as bad as possible. Why didn't they spend that effort finding more damning evidence, things that look worse when taken out of context? Just as one example, this debate could easily be mined for quotes that sound sexist or racist to a moderately progressive reader. How about, "in all cultures men and women are seen as having different natures."

Even when they do have better ammunition, they seem to downplay it. The most egregious statement they include from Pinker is hidden in a footnote!

They also pick a very strange target. Attacking Pinker's status as an LSA fellow and a media expert doesn't pose that much of a threat to him; he just doesn't have that much to lose here. Why are they bringing this to the LSA rather than to Pinker's publisher? Why are |

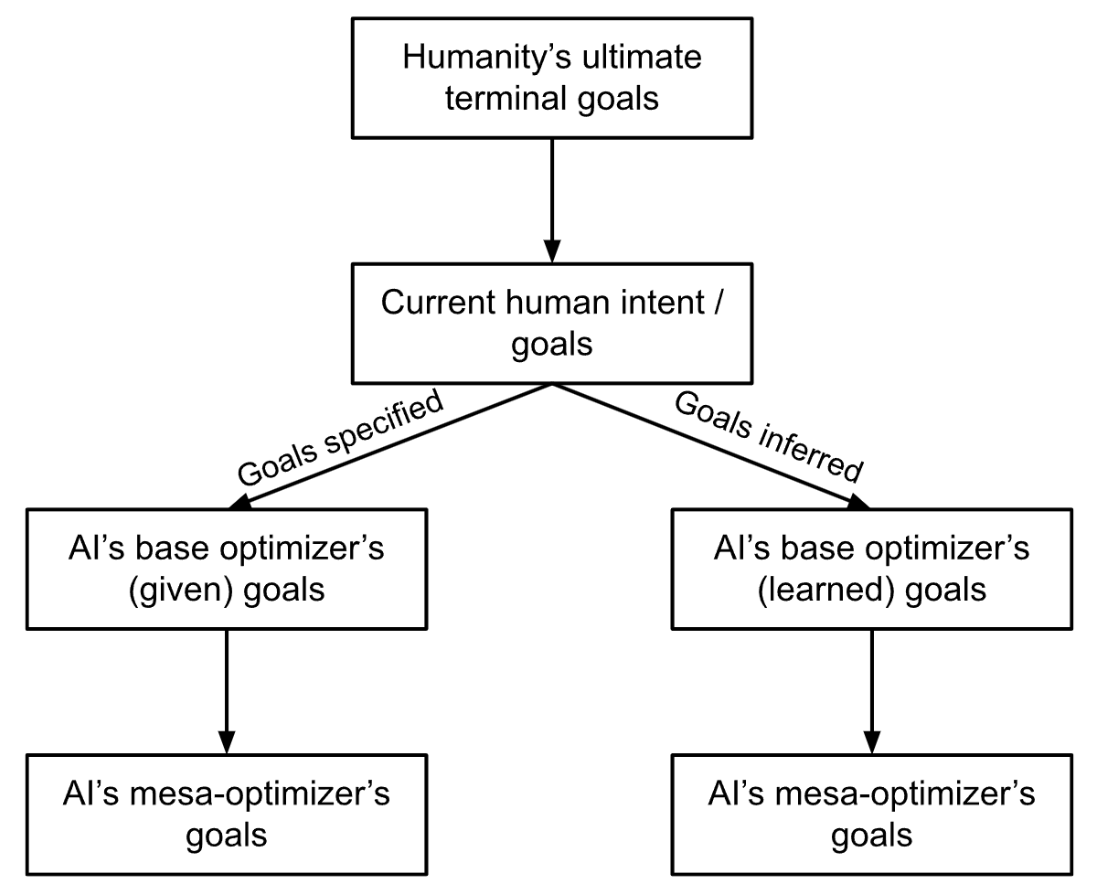

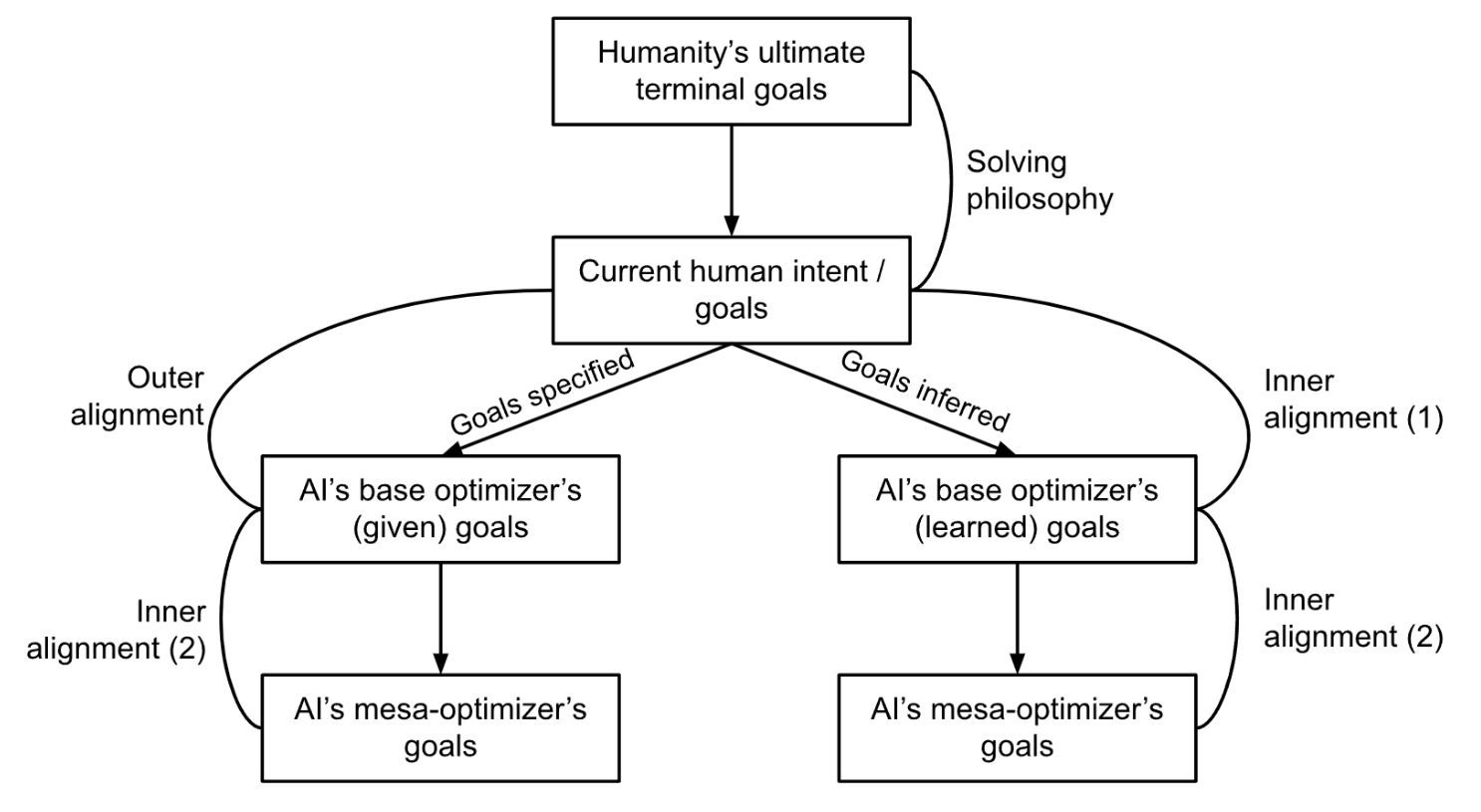

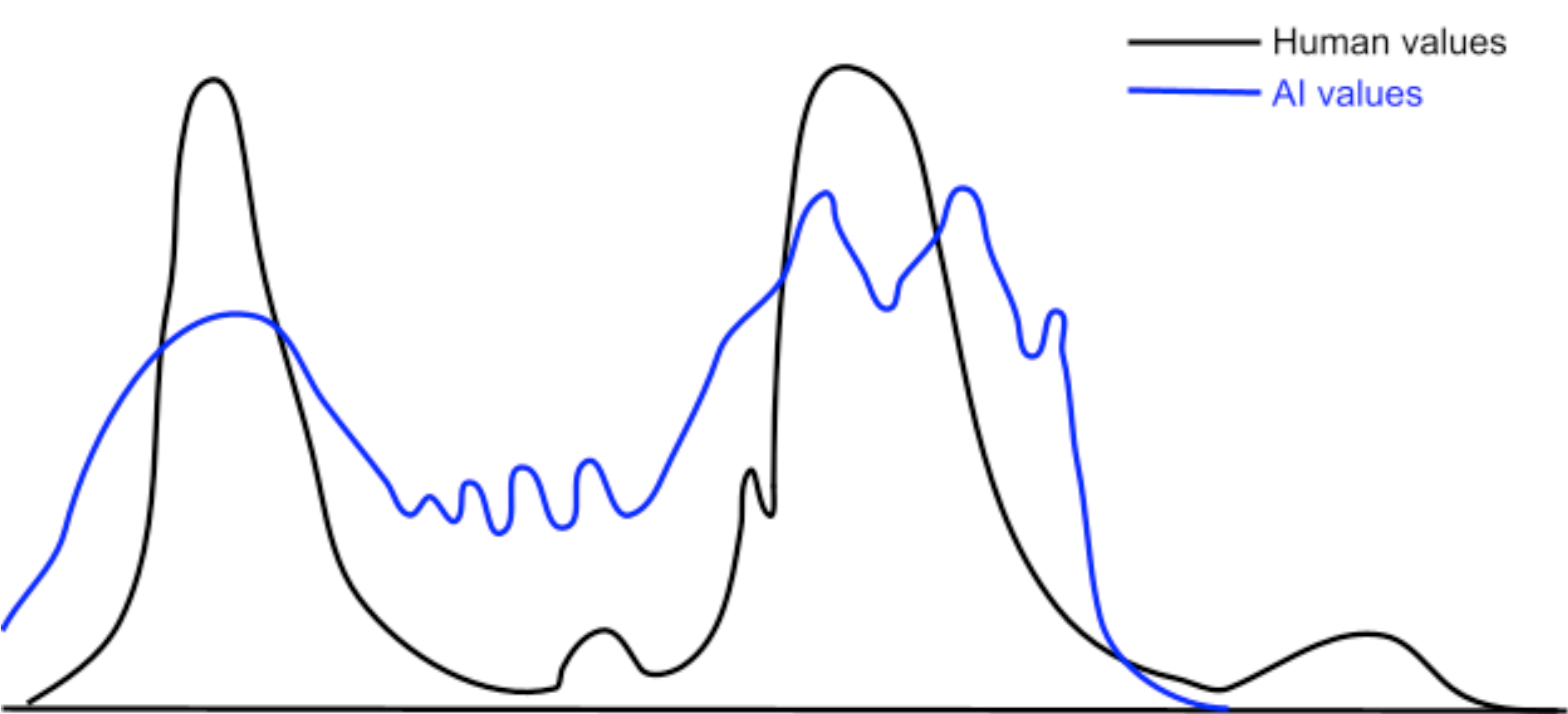

7fe17bd8-0bff-49bf-9a26-71f8dbbcc7cd | StampyAI/alignment-research-dataset/alignmentforum | Alignment Forum | Intuitions about goal-directed behavior

One broad argument for AI risk is the Misspecified Goal argument:

> **The Misspecified Goal Argument for AI Risk:** Very intelligent AI systems will be able to make long-term plans in order to achieve their goals, and if their goals are even slightly misspecified then the AI system will become adversarial and work against us.

My main goal in this post is to make conceptual clarifications and suggest how they affect the Misspecified Goal argument, without making any recommendations about what we should actually do. Future posts will argue more directly for a particular position. As a result, I will not be considering other arguments for focusing on AI risk even though I find some of them more compelling.

I think of this as a concern about *long-term goal-directed behavior*. Unfortunately, it’s not clear how to categorize behavior as goal-directed vs. not. Intuitively, any agent that searches over actions and chooses the one that best achieves some measure of “goodness” is goal-directed (though there are exceptions, such as the agent that selects actions that begin with the letter “A”). (ETA: I also think that agents that show goal-directed behavior because they are looking at some other agent are not goal-directed themselves -- see this [comment](https://www.alignmentforum.org/posts/9zpT9dikrrebdq3Jf/will-humans-build-goal-directed-agents#FdQsD6Q78SZQeXa64).) However, this is not a necessary condition: many humans are goal-directed, but there is no goal baked into the brain that they are using to choose actions.

This is related to the concept of [optimization](https://www.lesswrong.com/posts/D7EcMhL26zFNbJ3ED/optimization), though with intuitions around optimization we typically assume that we know the agent’s preference ordering, which I don’t want to assume here. (In fact, I don’t want to assume that the agent even *has* a preference ordering.)

One potential formalization is to say that goal-directed behavior is any behavior that can be modelled as maximizing expected utility for some utility function; in the next post I will argue that this does not properly capture the behaviors we are worried about. In this post I’ll give some intuitions about what “goal-directed behavior” means, and how these intuitions relate to the Misspecified Goal argument.

Generalization to novel circumstances
=====================================

Consider two possible agents for playing some game, let’s say TicTacToe. The first agent looks at the state and the rules of the game, and uses the [minimax algorithm](https://en.wikipedia.org/wiki/Minimax#Minimax_algorithm_with_alternate_moves) to find the optimal move to play. The second agent has a giant lookup table that tells it what move to play given any state. Intuitively, the first one is more “agentic” or “goal-driven”, while the second one is not. But both of these agents play the game in exactly the same way!

The difference is in how the two agents *generalize to new situations*. Let’s suppose that we suddenly change the rules of TicTacToe -- perhaps now the win condition is reversed, so that anyone who gets three in a row loses. The minimax agent is still going to be optimal at this game, whereas the lookup-table agent will lose against any opponent with half a brain. The minimax agent looks like it is “trying to win”, while the lookup-table agent does not. (You could say that the lookup-table agent is “trying to take actions according to <policy>”, but this is a weird complicated goal so maybe it doesn’t count.)
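The contrast between the two agents can be made concrete with a small sketch. Everything here is illustrative rather than taken from the post: the board encoding (a 9-character string), the function names, and the `misere` flag for the reversed win condition are all assumptions. The lookup table is built once from the minimax agent's choices under the normal rules, so the two agents agree there and diverge only when the rules change.

```python
from functools import lru_cache

# Hypothetical encoding: a board is a 9-character string of 'X', 'O', or ' '.
LINES = [(0, 1, 2), (3, 4, 5), (6, 7, 8),
         (0, 3, 6), (1, 4, 7), (2, 5, 8),
         (0, 4, 8), (2, 4, 6)]


def line_owner(board):
    """Return 'X' or 'O' if that player has three in a row, else None."""
    for a, b, c in LINES:
        if board[a] != ' ' and board[a] == board[b] == board[c]:
            return board[a]
    return None


def to_move(board):
    """X moves first, so X is to move whenever the piece counts are equal."""
    return 'X' if board.count('X') == board.count('O') else 'O'


def play(board, square):
    """Return the board after the player to move takes `square`."""
    return board[:square] + to_move(board) + board[square + 1:]


@lru_cache(maxsize=None)
def value(board, misere):
    """Value for the player about to move: +1 win, 0 draw, -1 loss."""
    if line_owner(board) is not None:
        # The previous mover completed the line. Under normal rules that
        # means the player to move has lost; under reversed rules, won.
        return 1 if misere else -1
    if ' ' not in board:
        return 0
    return max(-value(play(board, i), misere)
               for i in range(9) if board[i] == ' ')


def minimax_move(board, misere=False):
    """The 'goal-directed' agent: re-plans from the rules it is given."""
    moves = [i for i in range(9) if board[i] == ' ']
    return max(moves, key=lambda i: -value(play(board, i), misere))


def build_lookup_table():
    """The 'lookup-table' agent: minimax choices frozen under normal rules."""
    table, stack = {}, [' ' * 9]
    while stack:
        board = stack.pop()
        if board in table or line_owner(board) or ' ' not in board:
            continue
        table[board] = minimax_move(board, misere=False)
        stack.extend(play(board, i) for i in range(9) if board[i] == ' ')
    return table


table = build_lookup_table()
board = "XX OO" + " " * 4  # X holds squares 0 and 1, O holds 3 and 4, X to move

# Normal rules: both agents pick the same square.
print(minimax_move(board, misere=False), table[board])
# Reversed rules: minimax re-plans, the table repeats its old answer.
print(minimax_move(board, misere=True), table[board])
```

In this position the table agent still completes its own row after the rule change, which is now a losing move, while the minimax agent avoids it. The behavioral difference only shows up off-distribution, which is the point of the paragraph above.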

In general, when we say that an agent is pursuing some goal, this is meant to allow us to predict how the agent will generalize to some novel circumstance. This sort of reasoning is critical for the Goal-Directed argument for AI risk. For example, we worry that an AI agent will prevent us from turning it off, because that would prevent it from achieving its goal: “You can't fetch the coffee if you're dead.” This is a prediction about what an AI agent would do in the novel circumstance where a human is trying to turn the agent off.

This suggests a way to characterize these sorts of goal-directed agents: there is some goal such that the agent’s behavior *in new circumstances* can be predicted by figuring out which behavior best achieves the goal. There's a lot of complexity in the space of goals we consider: something like "human well-being" should count, but "the particular policy <x>" and “pick actions that start with the letter A” should not. When I use the word goal I mean to include only the first kind, even though I currently don’t know theoretically how to distinguish between the various cases.

Note that this is in stark contrast to existing AI systems, which are particularly bad at generalizing to new situations.

Honestly, I’m surprised it’s only 90%. [1]

Empowerment
===========

We could also look at whether or not the agent acquires more power and resources. It seems likely that an agent that is optimizing for some goal over the long term would want more power and resources in order to more easily achieve that goal. In addition, the agent would probably try to improve its own algorithms in order to become more intelligent.

This feels like a *consequence* of goal-directed behavior, and not its defining characteristic, because it is about being able to achieve a *wide variety* of goals, instead of a particular one. Nonetheless, it seems crucial to the broad argument for AI risk presented above, since an AI system will probably need to first accumulate power, resources, intelligence, etc. in order to cause catastrophic outcomes.

I find this concept most useful when thinking about the problem of inner optimizers, where in the course of optimization through a rich space you stumble across a member of the space that is itself doing optimization, but for a related but still misspecified metric. Since the inner optimizer is being “controlled” by the outer optimization process, it is probably not going to cause major harm unless it is able to “take over” the outer optimization process, which sounds a lot like accumulating power. (This discussion is extremely imprecise and vague; see [Risks from Learned Optimization](https://arxiv.org/abs/1906.01820) for a more thorough discussion.)

Our understanding of the behavior
=================================

There is a general pattern in which as soon as we understand something, it becomes something lesser. As soon as we understand rainbows, they are relegated to the [“dull catalogue of common things”](https://www.lesswrong.com/posts/x4dG4GhpZH2hgz59x/joy-in-the-merely-real). This suggests a somewhat cynical explanation of our concept of “intelligence”: an agent is considered intelligent if we do not know how to achieve the outcomes it does using the resources that it has (in which case our best model for that agent may be that it is pursuing some goal, reflecting our tendency to anthropomorphize). That is, our evaluation about intelligence is a statement about our epistemic state. Some examples that follow this pattern are:

* As soon as we understand how some AI technique solves a challenging problem, it is [no longer considered AI](https://www.zdnet.com/article/ai-tends-to-lose-its-definition-once-it-becomes-commonplace-sas/). Before we’ve solved the problem, we imagine that we need some sort of “intelligence” that is pointed towards the goal and solves it: the only method we have of predicting what this AI system will do is to think about what a system that tries to achieve the goal would do. Once we understand how the AI technique works, we have more insight into what it is doing and can make more detailed predictions about where it will work well, where it tends to make mistakes, etc. and so it no longer seems like “intelligence”. Once you know that OpenAI Five is trained by self-play, you can predict that they haven’t seen certain behaviors like standing still to turn invisible, and probably won’t work well there.

* Before we understood the idea of natural selection and evolution, we would look at the complexity of nature and ascribe it to intelligent design; once we had the [mathematics](https://en.wikipedia.org/wiki/Price_equation) (and even just the qualitative insight), we could make much more detailed predictions, and nature no longer seemed like it required intelligence. For example, we can predict the timescales on which we can expect evolutionary changes, which we couldn’t do if we just modeled evolution as optimizing reproductive fitness.

* Many phenomena (eg. rain, wind) that we now have scientific explanations for were previously explained to be the result of some anthropomorphic deity.

* When someone performs a feat of mental math, or can tell you instantly what day of the week a random date falls on, you might be impressed and think them very intelligent. But if they explain to you [how they did it](http://mathforum.org/dr.math/faq/faq.calendar.html), you may find it much less impressive. (Though of course these feats are selected to seem more impressive than they are.)

Note that an alternative hypothesis is that humans equate intelligence with mystery; as we learn more and remove mystery around eg. evolution, we automatically think of it as less intelligent.

To the extent that the Misspecified Goal argument relies on this intuition, the argument feels a lot weaker to me. If the Misspecified Goal argument rested entirely upon this intuition, then it would be asserting that *because* we are ignorant about what an intelligent agent would do, we should assume that it is optimizing a goal, which means that it is going to accumulate power and resources and lead to catastrophe. In other words, it is arguing that assuming that an agent is intelligent *definitionally* means that it will accumulate power and resources. This seems clearly wrong; it is possible in principle to have an intelligent agent that nonetheless does not accumulate power and resources.

Also, the argument is *not* saying that *in practice* most intelligent agents accumulate power and resources. It says that we have no better model to go off of other than “goal-directed”, and then pushes this model to extreme scenarios where we should have a lot more uncertainty.

To be clear, I do *not* think that anyone would endorse the argument as stated. I am suggesting as a possibility that the Misspecified Goal argument relies on us incorrectly equating superintelligence with “pursuing a goal” because we use “pursuing a goal” as a default model for anything that can do interesting things, even if that is not the best model to be using.

Summary
=======

Intuitively, goal-directed behavior can lead to catastrophic outcomes with a sufficiently intelligent agent, because the optimal behavior for even a slightly misspecified goal can be very bad according to the true goal. However, it’s not clear exactly what we mean by goal-directed behavior. Often, an algorithm that searches over possible actions and chooses the one with the highest “goodness” will be goal-directed, but this is neither necessary nor sufficient.

“From the outside”, it seems like a goal-directed agent is characterized by the fact that we can predict the agent’s behavior in new situations by assuming that it is pursuing some goal, and as a result it acquires power and resources. This can be interpreted either as a statement about our epistemic state (we know so little about the agent that our best model is that it pursues a goal, even though this model is not very accurate or precise) or as a statement about the agent (predicting the behavior of the agent in new situations based on pursuit of a goal actually has very high precision and accuracy). These two views have very different implications on the validity of the Misspecified Goal argument for AI risk.

---

[1] This is an entirely made-up number. |

5c612215-e56a-4d94-8a2e-8b48f2245f8f | trentmkelly/LessWrong-43k | LessWrong | Are healthy choices effective for improving life expectancy anymore?

The usual way to improve your life expectancy is through a healthy lifestyle of some sort, especially during peacetime.

However, in many plausible futures, personal health now seems to have little effect:

* We create aligned AGI: aligned AGI could presumably solve any health problems we accumulated due to our lifestyle choices.

* We create unaligned AGI: unaligned AGI would definitely solve any health problems we accumulated due to our lifestyle choices.

* Humans solve aging without AGI: health problems we accumulated probably don't matter in this scenario.

* Some other existential crisis occurs.

Whereas the scenarios where health choices do affect longevity seem slim:

* Humans do not invent AGI, do not solve aging themselves, and no existential catastrophes occur in your lifetime.

* No existential catastrophes occur, we (or an AI) do find a cure for aging, but medical regulations prevent the cure from being distributed widely (please no 🙏).

One important consideration to keep in mind though is that things that extend our life expectancy usually have short-term health benefits as well. For example, sleep, diet, and exercise have a massive effect on energy levels. But if you're only optimizing short-term health, does the optimal lifestyle look different? |

f08dfc8b-1bf7-4875-825c-b1ab31e17b9f | trentmkelly/LessWrong-43k | LessWrong | Building rationalist communities: lessons from the Latter-day Saints

Or: How I Learned Everything I Know About Group Organization By Spending Two Years on a Mormon Mission in India.

The official name is the Church of Jesus Christ of Latter-day Saints. You may know us as ‘Mormons.’ We like to call ourselves ‘Latter-day Saints.’

If you’re a Less Wrongian and trying to organize a rationalist community, you should be interested in the Latter-day Saint organizational model for four reasons:

- it’s a nonprofit, but franchise-based and designed to propagate itself,

- everyone has a responsibility,

- no one is paid, and

- it works.

This is an introductory post. I'm not trying to persuade you to join, but rather that there’s something to learn here.

Here, I will give you some basic details about what the LDS Church is. In later posts, I will explain more how it works. A series overview is here.

A franchise model

The Church has about 55,000 missionaries worldwide, all of whom follow the same basic dress code and go about in pairs, basically recruiting people to join the organization. For men, white shirt and ‘conservative’ tie, suit jacket if it’s cold. Clean-shaven. No chewing gum in public. Short hair. And so forth.

Church buildings are selected from a basic set of designs. Each congregation unit has about 150 people each week at Sunday services. The internal organization is the same for each congregation, albeit with procedures for simplification in smaller units. Everyone has a responsibility, from the congregation head down to the teenage boys who prepare and serve the ‘sacrament.’[1]

And nobody is paid.

Everyone has a responsibility

The Church is an organization, but members also comprise a distinct culture. Within the culture, there is an expectation that church members accept a ‘calling’ or specific unpaid organizational responsibility.

Callings are assigned by the head of the local congregation. You can privately decline, but the expectation is that you accept the responsibility.

Examples inc |

01f8bb58-eb72-4372-ba32-ecbc5dc7e384 | trentmkelly/LessWrong-43k | LessWrong | On the fragility of values

Programming human values into an AI is often taken to be very hard because values are complex (no argument there) and fragile. I would agree that values are fragile in the construction; anything lost in the definition might doom us all. But once coded into a utility function, they are reasonably robust.

As a toy model, let's say the friendly utility function U has a hundred valuable components - friendship, love, autonomy, etc... - assumed to have positive numeric values. Then to ensure that we don't lose any of these, U is defined as the minimum of all those hundred components.

Now define V as U, except we forgot the autonomy term. This will result in a terrible world, without autonomy or independence, and there will be wailing and gnashing of teeth (or there would, except the AI won't let us do that). Values are indeed fragile in the definition.

However... A world in which V is maximised is a terrible world from the perspective of U as well. U will likely be zero in that world, as the V-maximising entity never bothers to move autonomy above zero. So in utility function space, V and U are actually quite far apart.
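A miniature version of this toy model can be run directly. The sketch below makes several assumptions that are not in the post: four components instead of a hundred, a fixed budget of 12 integer "resource units" to split between them, and a maximiser that simply searches every possible split.

```python
from itertools import product

# Hypothetical stand-ins for the hundred valuable components.
COMPONENTS = ("friendship", "love", "autonomy", "health")
BUDGET = 12  # assumed total resources available to the maximiser


def U(world):
    """The 'friendly' utility: the minimum over all components."""
    return min(world.values())


def V(world):
    """U with the autonomy term forgotten."""
    return min(v for k, v in world.items() if k != "autonomy")


def all_worlds():
    """Every way of splitting the budget into non-negative integers."""
    for alloc in product(range(BUDGET + 1), repeat=len(COMPONENTS)):
        if sum(alloc) == BUDGET:
            yield dict(zip(COMPONENTS, alloc))


best_for_U = max(all_worlds(), key=U)
best_for_V = max(all_worlds(), key=V)

print("U-maximiser's world:", best_for_U, "-> U =", U(best_for_U))
print("V-maximiser's world:", best_for_V, "-> U =", U(best_for_V))
```

Under these assumptions the V-maximiser spends nothing on autonomy, since every unit spent there is a unit not spent on the terms it is scoring, so its preferred world scores zero on U even though V and U differ by only one term in their definitions.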

Indeed, we can add any small, bounded utility W to U. Assume W is bounded between zero and one; then an AI that maximises W+U will never be more than one expected 'utiliton' away, according to U, from one that maximises U. So - assuming that one 'utiliton' is small change for U - a world run by a W+U maximiser will be good.

So once they're fully spelled out inside utility space, values are reasonably robust; it's in their initial definition that they're fragile. |
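The one-utiliton bound follows in two lines. The notation is introduced here for illustration and is not from the post: let $x_U$ be an outcome that maximises $U$, let $x_{U+W}$ be one that maximises $U+W$, and assume $0 \le W \le 1$ everywhere.

$$
U(x_{U+W}) + W(x_{U+W}) \;\ge\; U(x_U) + W(x_U)
$$

$$
\Rightarrow\quad U(x_{U+W}) \;\ge\; U(x_U) + \underbrace{W(x_U) - W(x_{U+W})}_{\ge\, -1} \;\ge\; U(x_U) - 1
$$

The first line holds because $x_{U+W}$ maximises $U+W$; the second uses only the bounds on $W$. The same steps go through with expectations in place of values, which gives the "expected utiliton" version of the claim.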

8f86d991-60f4-44b0-a51d-5db8148c608a | trentmkelly/LessWrong-43k | LessWrong | A Girardian interpretation of the Altman affair, it's on my to-do list

Cross-posted from New Savanna.

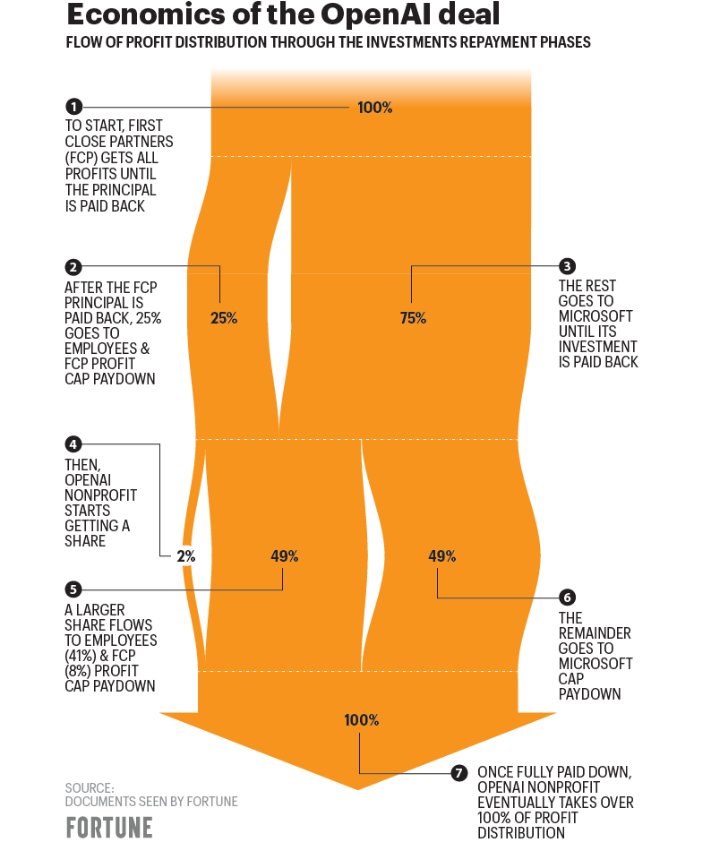

Tyler Cowen posted the following tweet over at Marginal Revolution under the title "Solving for the Equilibrium":

I posted this comment:

> Earlier in the week Scott Alexander had posted a skeptical review of a Girard book and I commented that, though I'm a Girard skeptic, albeit a somewhat interested one, Tyler regarded him as one of the great 20th century thinkers. In the course of introducing today's Open Thread, Alexander notes: "I would love to know more about Tyler’s interpretation of Girard and the single-victim process. Maybe in the context of recent events?" While we've got lots of recent events to choose from – I'm thinking of the Israel/Palestine mess (ancient Israel, after all, is central to Girard's thinking on this matter) – I suspect the single-victim prompt points to the OpenAI upheaval.

>

> Indeed, my interpretive Spidey sense suggests that a Girardian reading might be illuminating. I'd start with the idea that Sam Altman is the sacrificial victim. His position as leader of OpenAI is a natural focal point for mimetic dynamics. In this case those dynamics ripple far and wide. One might wish, for example, to include the fairly extensive commentary on Altman over at LessWrong, and not just recently. What about Sutskever's role? Just how this inquiry would play out, I do not know. No way to tell about these things until you actually do the work.

From the new interim CEO at OpenAI:

|

3dcbb373-4c59-43c8-9b22-1dbbfbae7075 | trentmkelly/LessWrong-43k | LessWrong | Cheat codes

Most things worth doing take serious, sustained effort. If you want to become an expert violinist, you're going to have to spend a lot of time practicing. If you want to write a good book, there really is no quick-and-dirty way to do it. But sustained effort is hard, and can be difficult to get rolling. Maybe there are some easier gains to be had with simple, local optimizations. Contrary to oft-repeated cached wisdom, not everything worth doing is hard. Some little things you can do are like cheat codes for the real world.

Take habits, for example: your habits are not fixed. My diet got dramatically better once I figured out how to change my own habits, and actually applied that knowledge. The general trick was to figure out a new, stable state to change my habits to, then use willpower for a week or two until I settle into that stable state. In the case of diet, a stable state was one where junk food was replaced with fruit, tea, or having a slightly more substantial meal beforehand so I wouldn't feel hungry for snacks. That's an equilibrium I can live with, long-term, without needing to worry about "falling off the wagon." Once I figured out the pattern -- work out a stable state, and force myself into it over 1-2 weeks -- I was able to improve several habits, permanently. It was amazing. Why didn't anybody tell me about this?

In education, there are similar easy wins. If you're trying to commit a lot of things to memory, there's solid evidence that spaced repetition works. If you're trying to learn from a difficult textbook, reading in multiple overlapping passes is often more time-efficient than reading through linearly. And I've personally witnessed several people academically un-cripple themselves by learning to reflexively look everything up on Wikipedia. None of this stuff is particularly hard. The problem is just that a lot of people don't know about it.

What other easy things have a high marginal return-on-effort? Feel free to include speculative ones, |

9be4c570-545a-40fb-a853-2afabd0c7748 | StampyAI/alignment-research-dataset/distill | Distill Scientific Journal | Thread: Differentiable Self-organizing Systems

Self-organisation is omnipresent on all scales of biological life. From complex interactions between molecules forming structures such as proteins, to cell colonies achieving global goals like exploration by means of the individual cells collaborating and communicating, to humans forming collectives in society such as tribes, governments or countries. The old adage “the whole is greater than the sum of its parts”, often ascribed to Aristotle, rings true everywhere we look.

The articles in this thread focus on practical ways of designing self-organizing systems. In particular we use Differentiable Programming (optimization) to learn agent-level policies that satisfy system-level objectives. The cross-disciplinary nature of this thread aims to facilitate the exchange of ideas between the ML and developmental biology communities.

Articles & Comments
-------------------

Distill has invited several researchers to publish a “thread” of short articles exploring differentiable self-organizing systems, interspersed with critical commentary from several experts in adjacent fields. The thread will be a living document, with new articles added over time. Articles and comments are presented below in chronological order:

### [Growing Neural Cellular Automata](/2020/growing-ca/)

#### Authors

[Alexander Mordvintsev](https://znah.net/), Ettore Randazzo, [Eyvind Niklasson](https://eyvind.me/), [Michael Levin](http://www.drmichaellevin.org/)

#### Affiliations

[Google](https://research.google/), [Allen Discovery Center](https://allencenter.tufts.edu/)

Building their own bodies is the very first skill all living creatures possess. How can we design systems that grow, maintain and repair themselves by regenerating damages? This work investigates morphogenesis, the process by which living creatures self-assemble their bodies. It proposes a differentiable, Cellular Automata model of morphogenesis and shows how such a model learns a robust and persistent set of dynamics to grow any arbitrary structure starting from a single cell.

[Read Full Article](/2020/growing-ca/)

### [Self-classifying MNIST Digits](/2020/selforg/mnist/)

#### Authors

Ettore Randazzo, [Alexander Mordvintsev](https://znah.net/), [Eyvind Niklasson](https://eyvind.me/), [Michael Levin](http://www.drmichaellevin.org/), [Sam Greydanus](https://greydanus.github.io/about.html)

#### Affiliations

[Google](https://research.google/), [Allen Discovery Center](https://allencenter.tufts.edu/), [Oregon State University and the ML Collective](http://mlcollective.org/)

This work presents a follow up to Growing Neural CAs, using a similar computational model for the goal of digit “self-classification”. The authors show how neural CAs can self-classify the MNIST digit they form. The resulting CAs can be interacted with by dynamically changing the underlying digit. The CAs respond to perturbations with a learned self-correcting classification behaviour.

[Read Full Article](/2020/selforg/mnist/)

### [Self-Organising Textures](/selforg/2021/textures/)

#### Authors

[Eyvind Niklasson](https://eyvind.me/), [Alexander Mordvintsev](https://znah.net/), Ettore Randazzo, [Michael Levin](http://www.drmichaellevin.org/)

#### Affiliations

[Google](https://research.google/), [Allen Discovery Center](https://allencenter.tufts.edu/)

Here the authors apply Neural Cellular Automata to a new domain: texture synthesis. They begin by training NCA to mimic a series of textures taken from template images. Then, taking inspiration from adversarial camouflages which appear in nature, they use NCA to create textures which maximally excite neurons in a pretrained vision model. These results reveal that a simple model combined with well-known objectives can lead to robust and unexpected behaviors.

[Read Full Article](/selforg/2021/textures/)

### [Adversarial Reprogramming of Neural Cellular Automata](/selforg/2021/adversarial/)

#### Authors

[Ettore Randazzo](https://oteret.github.io/), [Alexander Mordvintsev](https://znah.net/), [Eyvind Niklasson](https://eyvind.me/), [Michael Levin](http://www.drmichaellevin.org/)

#### Affiliations

[Google](https://research.google/), [Allen Discovery Center](https://allencenter.tufts.edu/)

This work takes existing Neural CA models and shows how they can be adversarially reprogrammed to perform novel tasks. MNIST CA can be deceived into outputting incorrect classifications and the patterns in Growing CA can be made to have their shape and colour altered.

[Read Full Article](/selforg/2021/adversarial/)

#### This is a living document

Expect more articles on this topic, along with critical comments from experts.

Get Involved
------------

The Self-Organizing systems thread is open to articles exploring differentiable self-organizing systems. Critical commentary and discussion of existing articles is also welcome. The thread is organized through the open `#selforg` channel on the [Distill slack](http://slack.distill.pub). Articles can be suggested there, and will be included at the discretion of previous authors in the thread, or in the case of disagreement by an uninvolved editor.

If you would like to get involved but don’t know where to start, small projects may be available if you ask in the channel.

About the Thread Format
-----------------------

Part of Distill’s mandate is to experiment with new forms of scientific publishing. We believe that reconciling faster and more continuous approaches to publication with review and discussion is an important open problem in scientific publishing.

Threads are collections of short articles, experiments, and critical commentary around a narrow or unusual research topic, along with a slack channel for real time discussion and collaboration. They are intended to be earlier stage than a full Distill paper, and allow for more fluid publishing, feedback and discussion. We also hope they’ll allow for wider participation. Think of a cross between a Twitter thread, an academic workshop, and a book of collected essays.

Threads are very much an experiment. We think it’s possible they’re a great format, and also possible they’re terrible. We plan to trial two such threads and then re-evaluate our thoughts on the format. |

7d55ee5c-530f-4b50-8c69-649bf3873290 | trentmkelly/LessWrong-43k | LessWrong | [Oops, there is actually an event] Notice: No LW event this weekend

Just a notice to say there's no event this weekend.

Will be back to your regularly scheduled LW event on Sunday 29th, which we'll announce in a few days.

Update: We're having a double crux, between Buck Shlegeris and Oliver Habryka, on AI takeoff speeds. Event page. |

d4cb2dc1-f5c9-4242-9775-c6449bd567d0 | trentmkelly/LessWrong-43k | LessWrong | The uniquely awful example of theism

When an LW contributor is in need of an example of something that (1) is plainly, uncontroversially (here on LW, at least) very wrong but (2) an otherwise reasonable person might get lured into believing by dint of inadequate epistemic hygiene, there seems to be only one example that everyone reaches for: belief in God. (Of course there are different sorts of god-belief, but I don't think that makes it count as more than one example.) Eliezer is particularly fond of this trope, but he's not alone.

How odd that there should be exactly one example. How convenient that there is one at all! How strange that there isn't more than one!

In the population at large (even the smarter parts of it) god-belief is sufficiently widespread that using it as a canonical example of irrationality would run the risk of annoying enough of your audience to be counterproductive. Not here, apparently. Perhaps we-here-on-LW are just better reasoners than everyone else ... but then, again, isn't it strange that there aren't a bunch of other popular beliefs that we've all seen through? In the realm of politics or economics, for instance, surely there ought to be some.

Also: it doesn't seem to me that I'm that much better a thinker than I was a few years ago when (alas) I was a theist; nor does it seem to me that everyone on LW is substantially better at thinking than I am; which makes it hard for me to believe that there's a certain level of rationality that almost everyone here has attained, and that makes theism vanishingly rare.

I offer the following uncomfortable conjecture: We all want to find (and advertise) things that our superior rationality has freed us from, or kept us free from. (Because the idea that Rationality Just Isn't That Great is disagreeable when one has invested time and/or effort and/or identity in rationality, and because we want to look impressive.) We observe our own atheism, and that everyone else here seems to be an atheist too, and not unnaturally we conclude t |

35e6341d-9514-45b6-8437-d9b7eb54c65c | trentmkelly/LessWrong-43k | LessWrong | Is your uncertainty resolvable?

I was chatting with Andrew Critch about the idea of Reacts on LessWrong.

Specifically, the part where I thought there are particular epistemic states that don’t have words yet, but should. And that a function of LessWrong might be to make various possible epistemic states more salient as options. You might have reacts for “approve/disapprove” and “agree/disagree”... but you might also want reactions that let you quickly and effortlessly express “this isn’t exactly false or bad but it’s subtly making this discussion worse.”

Fictionalized, Paraphrased Critch said “hmm, this reminds me of some particular epistemic states I recently noticed that don’t have names.”

“Go on”, said I.

“So, you know the feeling of being uncertain? And how it feels different to be 60% sure of something, vs 90%?”

“Sure.”

“Okay. So here’s two other states you might be in:

* 75% sure that you’ll eventually be 99% sure,

* 80% sure that you’ll eventually be 90% sure.

He let me process those numbers for a moment.

...

Then he continued: "Okay, now imagine you’re thinking about a particular AI system you’re designing, which might or might not be alignable.

“If you’re feeling 75% sure that you’ll eventually be 99% sure that that AI is safe, this means you think that eventually you’ll have a clear understanding of the AI, such that you feel confident turning it on without destroying humanity. Moreover you expect to be able to convince other people that it’s safe to turn it on without destroying humanity.

“Whereas if you’re 80% sure that eventually you’ll be 90% sure that it’ll be safe, even in the future state where you’re better informed and more optimistic, you might still not actually be confident enough to turn it on. And even if for some reason you are, other people might disagree about whether you should turn it on.

“I’ve noticed people tracking how certain they are of something, without paying attention to whether their uncertainty is possible to resolve. And this has important rami |

7e1cb9f5-5692-49d8-996d-1973c1da4dc7 | trentmkelly/LessWrong-43k | LessWrong | How likely are the USA to decay and how will it influence the AI development?

Trump's politics is so far from being understandable that it appears to cause the decay of the USA; for instance, the governor of California ended up pleading to exclude California-made products from tariffs introduced as retaliation against Trump's measures; another example of a state wishing to secede is New Hampshire where the Republican state Representative Jason Gerhard proposed that New Hampshire should peacefully declare independence from the U.S. if the national debt surpasses $40 trillion, which is apparently to take place in less than 1.5 years. [1]

Meanwhile, the optimistic 2027 timeline implies that Taiwan is to be invaded in late 2026, if not earlier, while the pessimistic timeline implies that AI is to start taking jobs by that time and that the full effects of the AI-related research are to kick in during 2027. How likely is a potential decay of the USA to let Chinese spies do much more work like destroying the data centres or leaving them without electricity? What effect will it have on the race to AGI?

EDIT: Trump somehow decided to impose tariffs on most goods from Taiwan, except for the chips necessary to perform calculations.

1. ^

The national debt is currently $36.7 trillion. In four years it is projected to reach $46.4 trillion. |

7cd811ff-83ae-459c-a7a9-cdbb944844f3 | trentmkelly/LessWrong-43k | LessWrong | Singleton: the risks and benefits of one world governments

Many thanks to all those whose conversations have contributed to forming these ideas.

Will the singleton save us?

For most of the large existential risks that we deal with here, the situation would be improved with a single world government (a singleton), or at least greater global coordination. The risk of nuclear war would fade, pandemics would be met with a comprehensive global strategy rather than a mess of national priorities. Workable regulations for the technology risks - such as synthetic biology or AI – become at least conceivable. All in all, a great improvement in safety...

...with one important exception. A stable tyrannical one-world government, empowered by future mass surveillance, is itself an existential risk (it might not destroy humanity, but it would “permanently and drastically curtail its potential”). So to decide whether to oppose or advocate for more global coordination, we need to see how likely such a despotic government could be.

This is the kind of research I would love to do if I had the time to develop the relevant domain skills. In the meantime, I’ll just take all my thoughts on the subject and form them into a “proto-research project plan”, in the hopes that someone could make use of them in a real research project. Please contact me if you would want to do research on this, and would fancy a chat.

Defining “acceptable”

Before we can talk about the likelihood of a good outcome, we need to define what a good outcome actually is. For this analysis, I will take the definition that:

* A singleton regime is acceptable, if it is at least as good as any developed democratic government of today.

This definition can be criticised for its conservatism, or its cowardice. Shouldn’t we be aiming to do much better than what we’re doing now? What about the inefficiency of current governments, the huge opportunity costs? Is this not a disaster in itself?

As should be evident from some of my previous posts, I don’t see loss of efficiency |

67c7fdaf-2140-4a78-a8c6-79e73994dbf0 | trentmkelly/LessWrong-43k | LessWrong | Applied Rationality Workshop Cologne, Germany

Together with Anne Wissemann we are going to do a 2-day weekend workshop on applied rationality in Cologne, Germany. If you cannot apply since you have already scheduled something important, feel free to leave your email to be informed about future workshops in the Cologne area.

Summary

* Date: 19. & 20.01.2019, 9am - 6pm

* Application-form: https://goo.gl/forms/DLfsznUTbWzoIJV12 (Deadline: Friday, 11.01.2019, confirmations will be sent on Saturday)

* Location: somewhere in Cologne... to be announced :)

* Costs: 40-60€

* Topic: CFAR-techniques and other useful rationality-concepts

* Limited to 20 attendees

* Target Group: Aspiring EAs & Rationalists

* Housing available on request

Aims

We - the EA Köln/Cologne group - want to contribute to the positive development of the EA & Rationality community.

Over the past year we organized weekly Meetups including Socials, Book-Clubs as well as talks with discussion rounds. Recently we decided to make weekend seminars and workshops a priority since they seem to have multiple benefits:

* real deep-diving into topics compared to evening events

* 'Unite' the EA & LessWrong communities addressing mutual interests

* socialize with great people

* filter for (really interested) newcomers

* Due to weekend time frame: invite great speakers and people from other cities (who would not take the effort traveling for an evening only)

Overview

Anne works as a coach for members of the rationality and effective altruism communities. In addition to her own CFAR workshop, she has volunteered as a mentor at 5 other mainline workshops since 2014, has undergone and taught at CFAR's mentorship training, as well as helped develop her local weekly Rationality Dojo in Berlin. She plans to teach the participants:

- Double Crux

- Focusing

- Internal Double Crux

- Trigger-Action Plans (TAP)

- Flash classes/concepts: Mindfulness, Negative Visualisation, Decreasing Marginal Returns, helpful Debugging prompts, Frogs, How to notice thing |

5f2f79fc-c457-4e0b-9b37-1fbff6bfb44c | StampyAI/alignment-research-dataset/eaforum | Effective Altruism Forum | Free Guy, a rom-com on the moral patienthood of digital sentience

**Warning: Some spoilers follow**

---

*Free Guy* (2020) is a romantic comedy about a bank teller named Guy who falls in love with a woman. Then, when he discovers that his entire world is just a video game, he has to be the one to save it from destruction by a maniacal video game company executive.

Many people find it weird to think about the lives of digital sentient beings as morally valuable, whether they number a hundred (as in the film) or a trillion. But the film is exhilarating, hilarious, and also so relatable that you don’t ever stop to wonder, wait, why do we care about a video game character at all? He’s not even real! Yet when the main character has an existential crisis about this fact, his best friend, Buddy, [compassionately says](https://www.youtube.com/watch?v=p_grnZ6DTkI):

>

> I say, okay, so what if I’m not real? […] Look, brother, I am sitting here with my best friend, trying to help him get through a tough time. Right? And even if I’m not real, this moment is. Right here, right now, this moment is real. I mean, what’s more real than a person tryin’ to help someone they love? Now, if that’s not real, I don’t know what is.

>

>

>

*Free Guy* is able to effortlessly have viewers sympathize with its digital agents because they’re just like humans, even as they’re AIs in a video game, written with a bunch of lines of code. (Not whole-brain emulations, for example.) They have rich, complex thoughts and lives, even as most characters lack the “free will” to deviate from a routine.

*Free Guy* isn’t sci-fi. It’s set in the present day, with present-day technology. And it keeps things small-scale with limited consequences for society. It doesn’t consider, how would the economy be transformed if we have human-level AI? What if the video game characters of Free City could not only write personal essays about feminism but also share these novel contributions with the rest of society? What would it look like to scale up the digital population of Free City by a billion times?

Nevertheless, it provides a glimpse of how life could be different for digitally sentient beings—in particular, what Shulman and Bostrom call “[hedonic skew](https://nickbostrom.com/papers/digital-minds.pdf)”. Despite living in a world like *Grand Theft Auto*, where bank robberies and gun violence are daily experiences, the characters are still upbeat and optimistic. Fortunately, they’re eventually transferred to a world built for them rather than the entertainment of human players, where they can live out their lives with friendship and harmony.

*Free Guy* is the most powerful (and funniest) film about artificial consciousness that I know of. If you’re looking for an EA-relevant movie to add to your watch list, I strongly recommend *Free Guy*, for both its entertainment and philosophical value.

---

Postscript: If I were to make an actual argument in this post: Many people think that digital sentience is too weird to advocate for at this stage. Although I have not tried this with other people yet, the film *Free Guy* might be a promising conduit for promoting concern for digital sentient beings – when paired with relevant discussion, as the few reviews I've read of *Free Guy* don't touch upon moral consideration of digital sentient beings. |

0299b1d2-47ab-48e3-8957-53b7d9ddee47 | trentmkelly/LessWrong-43k | LessWrong | Meetup : Boston: Antifragile

Discussion article for the meetup : Boston: Antifragile

WHEN: 04 January 2015 03:30:33PM (-0500)

WHERE: 98 Elm Street, Somerville

This Sunday, Jesse Galef will be reviewing the book Antifragile, by Nassim Nicholas Taleb, author of The Black Swan. Topics will include effective decision making, catastrophic risk, and pop culture references.

Reviews of Antifragile:

"The glossary alone offered more thought-provoking ideas than any other nonfiction book I read this year. That said, Antifragile is far from flawless."

"As always, an imperfect, infuriating but intriguing book"

"A big mixed bag of insights and misconceptions"

Cambridge/Boston-area Less Wrong meetups start at 3:30pm, and have an alternating location:

* 1st Sunday meetups are at Citadel in Porter Sq, at 98 Elm St, apt 1, Somerville.

* 3rd Sunday meetups are in MIT's building 66 at 25 Ames St, room 156. Room number subject to change based on availability; signs will be posted with the actual room number.

(We also have last Wednesday meetups at Citadel at 7pm.)

Our default schedule is as follows:

—Phase 1: Arrival, greetings, unstructured conversation.

—Phase 2: The headline event. This starts promptly at 4pm, and lasts 30-60 minutes.

—Phase 3: Further discussion. We'll explore the ideas raised in phase 2, often in smaller groups.

—Phase 4: Dinner.

Discussion article for the meetup : Boston: Antifragile |

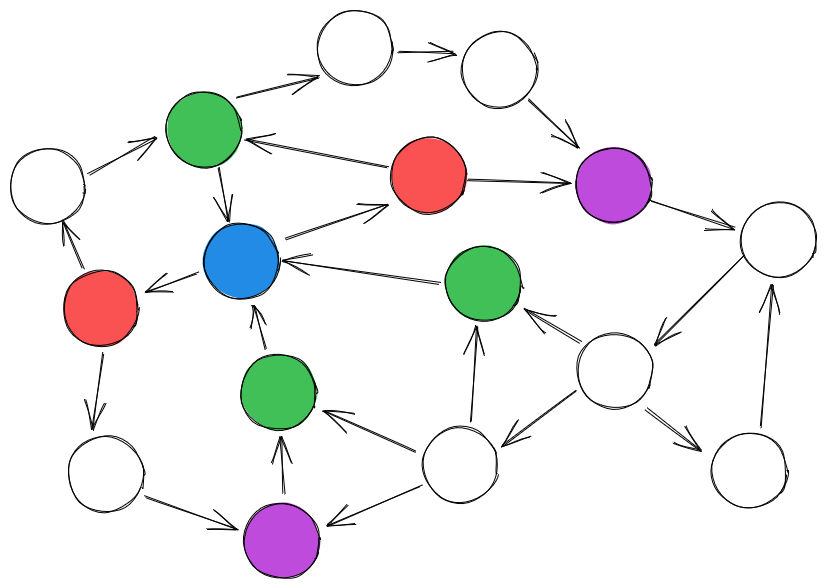

118145f2-17e3-4c32-8690-f454c8a2abbf | StampyAI/alignment-research-dataset/arxiv | Arxiv | Probabilistic Dependency Graphs

1 Introduction

---------------

In this paper we introduce yet another graphical

tool

for modeling beliefs,

*Probabilistic Dependency Graphs* (PDGs). There are already many

such models in the literature, including Bayesian networks (BNs) and

factor graphs. (For an overview, see

(Koller and Friedman [2009](#bib.bib5)).)

Why does the world need one more?

Our original motivation for introducing PDGs was to be able capture

inconsistency. We want to be able to model the process of resolving

inconsistency; to do so, we have to model the inconsistency itself. But our

approach to modeling inconsistency has many other advantages. In particular,

PDGs are significantly more modular than other directed graphical models:

operations like restriction and union that are easily done with PDGs are

difficult or impossible to do with other representations.

The following examples motivate PDGs and illustrate some of

their advantages.

######

Example 1.

Grok is visiting a neighboring district. From prior reading, she thinks it

likely (probability .95) that guns are illegal here. Some brief conversations

with locals lead her to believe that, with probability .1, the law

prohibits floomps.

The obvious way to represent this as a BN is to use two random variables $F$ and $G$ (respectively taking values $\{f, \overline{f}\}$ and $\{g, \overline{g}\}$), indicating whether floomps and guns are prohibited. The semantics of a BN offer her two choices: either assume that $F$ and $G$ are independent and give (unconditional) probabilities of $F$ and $G$, or choose a direction of dependency, and give one of the two unconditional probabilities and a conditional probability distribution.

As there is no reason to choose either direction of dependence, the

natural choice is to

assume independence, giving her the

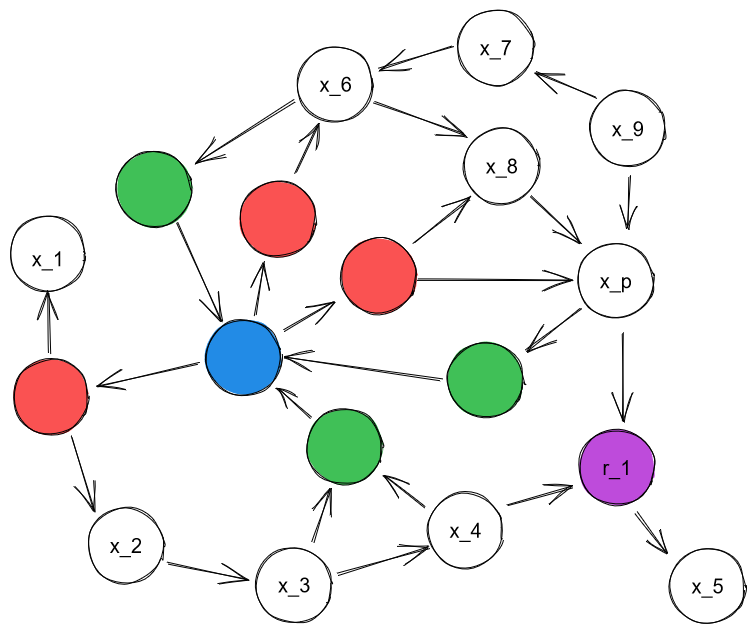

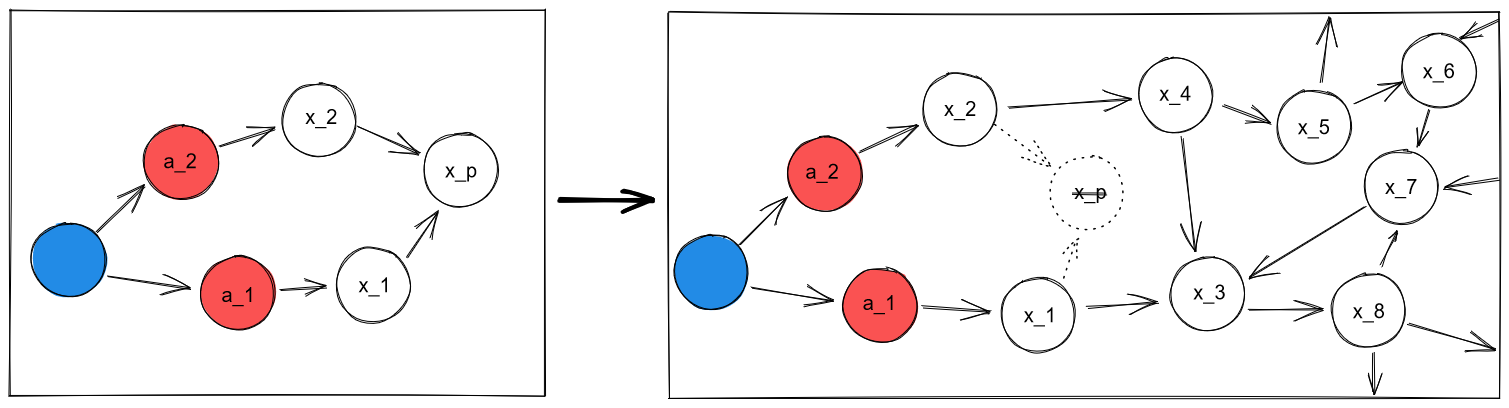

BN on the left of [Figure 1](#S1.F1 "Figure 1 ‣ Example 1. ‣ 1 Introduction ‣ Probabilistic Dependency Graphs").

Figure 1: A BN (left) and corresponding PDG (right), which can

include more cpds; p𝑝pitalic\_p or p′superscript𝑝normal-′p^{\prime}italic\_p start\_POSTSUPERSCRIPT ′ end\_POSTSUPERSCRIPT make it inconsistent.

A traumatic experience a few hours later leaves Grok believing that

“floomp” is likely (probability .92) to be another word for gun.

Let $p(G \mid F)$ be the *c*onditional *p*robability *d*istribution (cpd) that describes the belief that if floomps are legal (resp., illegal), then with probability .92, guns are as well, and $p'(F \mid G)$ be the reverse.

Starting with $p$, Grok’s first instinct is to simply incorporate the conditional information by adding $F$ as a parent of $G$, and then associating the cpd $p$ with $G$. But then what should she do with the original probability she had for $G$? Should she just discard it? It is easy to check that there is no joint distribution that is consistent with both the two original priors on $F$ and $G$ and also $p$. So if she is to represent the information with a BN, which always represents a consistent distribution, she must resolve the inconsistency.
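The "easy to check" claim can be verified numerically. The following is a minimal sketch, assuming the encoding below of Example 1 (the variable names are ours, not the paper's): it computes the marginal on $G$ that the prior on $F$ and the cpd $p$ force, and compares it with Grok's prior on $G$.

```python
# Hypothetical encoding of Example 1; names are illustrative only.
p_f = 0.10   # prior: floomps are prohibited
p_g = 0.95   # prior: guns are illegal

# cpd p(G | F): guns share the legal status of floomps with probability 0.92
p_g_given_f = 0.92       # P(guns illegal | floomps prohibited)
p_g_given_not_f = 0.08   # P(guns illegal | floomps legal)

# Marginal on G forced by the prior on F together with p
implied_p_g = p_f * p_g_given_f + (1 - p_f) * p_g_given_not_f

# Range of P(guns illegal) reachable under p for *any* marginal on F
lo, hi = sorted((p_g_given_f, p_g_given_not_f))

print(round(implied_p_g, 3))   # 0.164
print(lo <= p_g <= hi)         # False
```

Under these assumptions the prior of .95 on $G$ lies outside the interval $[.08, .92]$ that $p$ permits, so the conflict does not depend on the particular prior on $F$.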

However,

sorting this out immediately may not be ideal.

For instance, if the inconsistency arises from a conflation between

two definitions

of “gun”, a resolution will have destroyed the original cpds. A

better use of computation may be to notice the inconsistency and look

up the actual law.

By way of contrast, consider the corresponding PDG. In a PDG, the cpds are

attached to edges, rather than nodes of the graph.

In order to represent unconditional probabilities, we introduce a *unit variable* $\mathrlap{\mathit{1}}\mspace{2.3mu}\mathit{1}$ which takes only one value, denoted $\star$. This leads Grok to the PDG depicted in [Figure 1](#S1.F1 "Figure 1 ‣ Example 1. ‣ 1 Introduction ‣ Probabilistic Dependency Graphs"), where the edges from $\mathrlap{\mathit{1}}\mspace{2.3mu}\mathit{1}$ to $F$ and $G$ are associated with the unconditional probabilities of $F$ and $G$, and the edges between $F$ and $G$ are associated with $p$ and $p'$.

The original state of knowledge consists of all three nodes and the two solid edges from $\mathrlap{\mathit{1}}\mspace{2.3mu}\mathit{1}$. This is like the Bayesian network that we considered above, except that we no longer explicitly take $F$ and $G$ to be independent; we merely record the constraints imposed by the given probabilities.

The key point is that we can incorporate the new information into our original representation (the graph in [Figure 1](#S1.F1 "Figure 1 ‣ Example 1. ‣ 1 Introduction ‣ Probabilistic Dependency Graphs") without the edge from $F$ to $G$) simply by adding the edge from $F$ to $G$ and the associated cpd $p$ (the new information is shown in blue). Doing so does not change the meaning of the original edges. Unlike a Bayesian update, the operation is even reversible: all we need to do to recover our original belief state is delete the new edge, making it possible to mull over and then reject an observation.
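To make the reversibility concrete, here is a minimal sketch that assumes a PDG can be stored as a plain list of labeled edges, each carrying its own cpd. The `Edge` and `PDG` classes, the labels, and the table layout are hypothetical illustrations, not the paper's formalism.

```python
from dataclasses import dataclass, field

@dataclass(frozen=True)
class Edge:
    source: str
    target: str
    label: str
    cpd: tuple  # one row per source value: P(target | source = value)

@dataclass
class PDG:
    edges: list = field(default_factory=list)

    def add(self, edge):
        # Adding an edge never touches the cpds already stored.
        self.edges.append(edge)

    def remove(self, label):
        self.edges = [e for e in self.edges if e.label != label]

pdg = PDG()
pdg.add(Edge("1", "F", "prior_F", ((0.10, 0.90),)))  # (prohibited, legal)
pdg.add(Edge("1", "G", "prior_G", ((0.95, 0.05),)))  # (illegal, legal)
before = list(pdg.edges)

# New observation: the cpd p on the edge F -> G
pdg.add(Edge("F", "G", "p", ((0.92, 0.08), (0.08, 0.92))))
unchanged = pdg.edges[:2] == before   # original edges keep their meaning

# Rejecting the observation is just deleting the edge again
pdg.remove("p")
restored = pdg.edges == before
```

Because each cpd lives on its own edge, adding or deleting one edge leaves every other stored table untouched, which is the property the paragraph above relies on.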

The ability of PDGs to model inconsistency, as illustrated in

[Example 1](#Thmexample1 "Example 1. ‣ 1 Introduction ‣ Probabilistic Dependency Graphs"), appears to have come at a significant cost. We seem

to have lost a key benefit of BNs: the ease with which they can

capture

(conditional) independencies, which, as Pearl ([1988](#bib.bib9)) has

argued forcefully, are omnipresent.

######

Example 2 (emulating a BN).

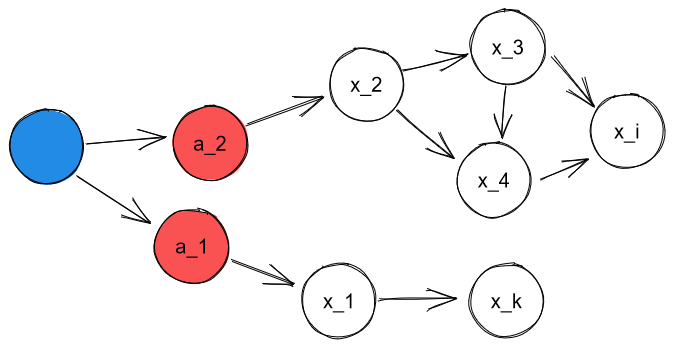

We now consider the classic (quantitative) Bayesian network $\mathcal{B}$, which has four binary variables indicating whether a person ($C$) develops cancer, ($S$) smokes, ($\mathit{SH}$) is exposed to second-hand smoke, and ($\mathit{PS}$) has parents who smoke, presented graphically in [Figure 2](#S1.F2 "Figure 2 ‣ Example 2 (emulating a BN). ‣ 1 Introduction ‣ Probabilistic Dependency Graphs"). We now walk through what is required to represent $\mathcal{B}$ as a PDG, which we call $\mathbdcal{M}_{\mathcal{B}}$, shown as the solid nodes and edges in [Figure 2](#S1.F2 "Figure 2 ‣ Example 2 (emulating a BN). ‣ 1 Introduction ‣ Probabilistic Dependency Graphs").

Figure 2: (a) The Bayesian Network $\mathcal{B}$ in [Example 2](#Thmexample2 "Example 2 (emulating a BN). ‣ 1 Introduction ‣ Probabilistic Dependency Graphs") (left), and (b) $\mathbdcal{M}_{\mathcal{B}}$, its corresponding PDG (right). The shaded box indicates a restriction of $\mathbdcal{M}_{\mathcal{B}}$ to only the nodes and edges it contains, and the dashed node $T$ and its arrow to $C$ can be added in the PDG, without taking into account $S$ and $\mathit{SH}$.

We start with the nodes corresponding to the variables in $\mathcal{B}$, together with the special node $\mathrlap{\mathit{1}}\mspace{2.3mu}\mathit{1}$ from [Example 1](#Thmexample1 "Example 1. ‣ 1 Introduction ‣ Probabilistic Dependency Graphs"); we add an edge from $\mathrlap{\mathit{1}}\mspace{2.3mu}\mathit{1}$ to $\mathit{PS}$, to which we associate the unconditional probability given by the cpd for $\mathit{PS}$ in $\mathcal{B}$. We can also re-use the cpds for $S$ and $\mathit{SH}$, assigning them, respectively, to the edges $\mathit{PS} \to S$ and $\mathit{PS} \to \mathit{SH}$ in $\mathbdcal{M}_{\mathcal{B}}$.

There are two remaining problems: (1) modeling the remaining table in $\mathcal{B}$, which corresponds to the conditional probability of $C$ given $S$ and $\mathit{SH}$; and (2) recovering the additional conditional independence assumptions in the BN.

For (1), we cannot just add the edges $S \to C$ and $\mathit{SH} \to C$ that are present in $\mathcal{B}$. As we saw in [Example 1](#Thmexample1 "Example 1. ‣ 1 Introduction ‣ Probabilistic Dependency Graphs"), this would mean supplying two *separate* tables, one indicating the probability of $C$ given $S$, and the other indicating the probability of $C$ given $\mathit{SH}$. We would lose significant information that is present in $\mathcal{B}$ about how $C$ depends jointly on $S$ and $\mathit{SH}$. To distinguish the joint dependence on $S$ and $\mathit{SH}$, for now, we draw an edge with two tails, a (directed) *hyperedge*, that completes the diagram in [Figure 2](#S1.F2 "Figure 2 ‣ Example 2 (emulating a BN). ‣ 1 Introduction ‣ Probabilistic Dependency Graphs").

With regard to (2), there are many distributions consistent with the conditional marginal probabilities in the cpds, and the independences presumed by $\mathcal{B}$ need not hold for them. Rather than trying to distinguish between them with additional constraints, we develop a scoring-function semantics for PDGs which is in this case uniquely minimized by the distribution specified by $\mathcal{B}$ (LABEL:thm:bns-are-pdgs). This allows us to recover the semantics of Bayesian networks without requiring the independencies that they assume.

Next suppose that we get information beyond that captured by the original BN. Specifically, we read a thorough empirical study demonstrating that people who use tanning beds have a 10% incidence of cancer, compared with 1% in the control group (call the cpd for this $p$); we would like to add this information to $\mathcal{B}$. The first step is clearly to add a new node labeled $T$, for “tanning bed use”. But simply making $T$ a parent of $C$ (as clearly seems appropriate, given that the incidence of cancer depends on tanning bed use) requires a substantial expansion of the cpd; in particular, it requires us to make assumptions about the interactions between tanning beds and smoking.

The corresponding PDG, $\mathbdcal{M}_{\mathcal{B}}$, on the other hand, has no trouble: We can simply add the node $T$ with an edge to $C$ that is associated with $\mathbf{p}$. But note that doing this makes it possible for our knowledge to be inconsistent. To take a simple example, if the distribution on $C$ given $S$ and $\mathit{SH}$ encoded in the original cpd was always deterministically “has cancer” for every possible value of $S$ and $\mathit{SH}$, but the distribution according to the new cpd from $T$ was deterministically “no cancer”, the resulting PDG would be inconsistent.

We have seen that we can easily add information to PDGs; removing information is

equally painless.

######

Example 3 (restriction).

After the Communist party came to power,

children were raised communally, and so parents’ smoking habits no longer had any impact on them. Grok is reading her favorite book on graphical models, and she realizes that while the node $\mathit{PS}$ in [Figure 2](#S1.F2 "Figure 2 ‣ Example 2 (emulating a BN). ‣ 1 Introduction ‣ Probabilistic Dependency Graphs") has lost its usefulness, and nodes $S$ and $\mathit{SH}$ no longer ought to have $\mathit{PS}$ as a parent, the other half of the diagram (that is, the node $C$ and its dependence on $S$ and $\mathit{SH}$) should apply as before.

Grok has identified two obstacles to modeling deletion of information from a BN by simply deleting nodes and their associated cpds. First, this restricted model is technically no longer a BN (which in this case would require unconditional distributions on $S$ and $\mathit{SH}$), but rather a *conditional* BN (Koller and Friedman [2009](#bib.bib5)), which allows for these nodes to be marked as observations; observation nodes do not have associated beliefs. Second, even regarded as a conditional BN, the result of deleting a node may introduce *new* independence information, incompatible with the original BN. For instance, by deleting the node $B$ in a chain $A \rightarrow B \rightarrow C$, one concludes that $A$ and $C$ are independent, a conclusion incompatible with the original BN containing all three nodes.
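The chain example can be checked with a few lines of arithmetic. This is a minimal sketch with made-up cpds for binary variables (the numbers are assumptions chosen only for illustration): in the full chain, the distribution of $C$ changes with the value of $A$, so an independence between $A$ and $C$ read off the graph after deleting $B$ contradicts the original model.

```python
# Hypothetical cpds for a binary chain A -> B -> C (illustrative numbers only).
p_b_given_a = [[0.9, 0.1], [0.2, 0.8]]   # p_b_given_a[a][b]
p_c_given_b = [[0.7, 0.3], [0.1, 0.9]]   # p_c_given_b[b][c]

def p_c_given_a(a):
    """P(C | A = a) in the original chain, obtained by summing out B."""
    return [
        sum(p_b_given_a[a][b] * p_c_given_b[b][c] for b in range(2))
        for c in range(2)
    ]

row_a0 = p_c_given_a(0)
row_a1 = p_c_given_a(1)

# If A and C were independent, these two rows would coincide.
dependent = any(abs(x - y) > 1e-9 for x, y in zip(row_a0, row_a1))
print(dependent)   # True
```

With these numbers, $P(C = 0 \mid A = 0) \approx 0.64$ while $P(C = 0 \mid A = 1) \approx 0.22$, so $A$ and $C$ are dependent in the original BN.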

PDGs do not suffer from either problem. We can easily delete the nodes labeled $\mathrlap{\mathit{1}}\mspace{2.3mu}\mathit{1}$ and $\mathit{PS}$ in [Figure 2](#S1.F2 "Figure 2 ‣ Example 2 (emulating a BN). ‣ 1 Introduction ‣ Probabilistic Dependency Graphs") to get the restricted PDG shown in the figure, which captures Grok’s updated information. The resulting PDG has no edges leading to $S$ or $\mathit{SH}$, and hence no distributions specified on them; no special modeling distinction between observation nodes and other nodes is required. Because PDGs do not directly make independence assumptions, the information in this fragment is truly a subset of the information in the whole PDG.

Being able to form a well-behaved local picture and restrict knowledge is

useful, but an even more compelling reason to use PDGs is their ability to

aggregate information.

######

Example 4.

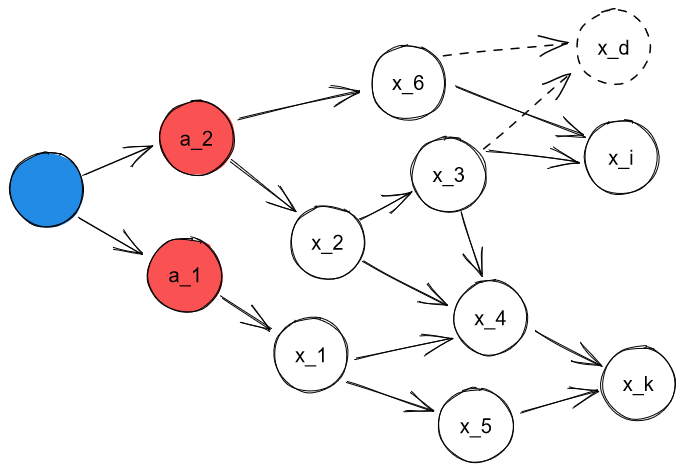

Grok dreams of becoming Supreme Leader ($\mathit{SL}$), and has come up with a plan. She has noticed that people who use tanning beds have significantly more power than those who don’t. Unfortunately, her mom has always told her that tanning beds cause cancer; specifically, that 15% of people who use tanning beds get it, compared to the baseline of 2%. Call this cpd $q$. Grok thinks people will make fun of her if she uses a tanning bed and gets cancer, making becoming Supreme Leader impossible. This mental state is depicted as a PDG on the left of [Figure 3](#S1.F3 "Figure 3 ‣ Example 4. ‣ 1 Introduction ‣ Probabilistic Dependency Graphs").

Grok is reading about graphical models because she vaguely remembers that the variables in [Example 2](#Thmexample2 "Example 2 (emulating a BN). ‣ 1 Introduction ‣ Probabilistic Dependency Graphs") match the ones she already knows about. When she finishes reading the statistics on smoking and the original study on tanning beds (associated to a cpd $\mathbf{p}$ in [Example 2](#Thmexample2 "Example 2 (emulating a BN). ‣ 1 Introduction ‣ Probabilistic Dependency Graphs")), but before she has time to reflect, we can represent her (conflicted) knowledge state as the union of the two graphs, depicted graphically on the right of [Figure 3](#S1.F3 "Figure 3 ‣ Example 4. ‣ 1 Introduction ‣ Probabilistic Dependency Graphs").

Figure 3: Grok’s prior (left) and combined (right) knowledge.

The union of the two PDGs, even with overlapping

nodes, is still a PDG.

This is not the case in general

for BNs.

Note that the PDG that Grok used to represent her two different sources of information (the mother’s wisdom and the study) regarding the distribution of $C$ is a *multigraph*: there are two edges from $T$ to $C$, with inconsistent information.

Had we not allowed multigraphs, we would have needed to choose between the two edges, or represent the

information some other (arguably less natural) way. As we are already allowing

inconsistency, merely recording both is much more in keeping with the way we

have handled other types of uncertainty.

Not all inconsistencies are equally egregious. For example, even though the cpds $p$ and $q$ are different, they are numerically close, so, intuitively, the PDG on the right in [Figure 3](#S1.F3 "Figure 3 ‣ Example 4. ‣ 1 Introduction ‣ Probabilistic Dependency Graphs") is not very inconsistent. Making this precise is the focus of [Section 3.2](#S3.SS2 "3.2 PDGs As Distribution Scoring Functions ‣ 3 Semantics ‣ Probabilistic Dependency Graphs").

These examples give a taste of the power of PDGs. In the coming sections, we formalize PDGs and relate them to other approaches.

2 Syntax

---------

We now provide formal definitions for PDGs.

Although it is possible to formalize PDGs with hyperedges directly,

we opt for a different approach here, in which PDGs have only regular edges,

and hyperedges are captured using a simple construction

that involves adding an extra node.

###### Definition 2.1.

A *Probabilistic Dependency Graph* is a tuple $\boldsymbol{\mathcal{M}} = (\mathcal{N}, \mathcal{E}, \mathcal{V}, \mathbf{p}, \alpha, \beta)$, where

- $\mathcal{N}$ is a finite set of nodes, corresponding to variables;
- $\mathcal{E}$ is a set of labeled edges $\{X \overset{L}{\rightarrow} Y\}$, each with a source $X$ and target $Y$ in $\mathcal{N}$;
- $\mathcal{V}$ associates each variable $N \in \mathcal{N}$ with a set $\mathcal{V}(N)$ of values that the variable $N$ can take;
- $\mathbf{p}$ associates to each edge $X \overset{L}{\rightarrow} Y \in \mathcal{E}$ a distribution $\mathbf{p}_L(x)$ on $Y$ for each $x \in \mathcal{V}(X)$;
- $\alpha$ associates to each edge $X \overset{L}{\rightarrow} Y$ a non-negative number $\alpha_L$ which, roughly speaking, is the modeler’s confidence in the functional dependence of $Y$ on $X$ implicit in $L$;
- $\beta$ associates to each edge $L$ a positive real number $\beta_L$, the modeler’s subjective confidence in the reliability of $\mathbf{p}_L$.

Note that we allow multiple edges in $\mathcal{E}$ with the same source and target; thus $(\mathcal{N}, \mathcal{E})$ is a multigraph. We occasionally write a PDG as $\boldsymbol{\mathcal{M}} = (\mathcal{G}, \mathbf{p}, \alpha, \beta)$, where $\mathcal{G} = (\mathcal{N}, \mathcal{E}, \mathcal{V})$, and abuse terminology by referring to $\mathcal{G}$ as a multigraph. We refer to $\boldsymbol{\mathcal{N}} = (\mathcal{G}, \mathbf{p})$ as an *unweighted* PDG, and give it semantics as though it were the (weighted) PDG $(\mathcal{G}, \mathbf{p}, \mathbf{1}, \mathbf{1})$, where $\mathbf{1}$ is the constant function (i.e., so that $\alpha_L = \beta_L = 1$ for all $L$). In this paper, with the exception of [Section 4.3](#S4.SS3 "4.3 Factored Exponential Families ‣ 4 Relationships to Other Graphical Models ‣ Probabilistic Dependency Graphs"), we implicitly take $\alpha = \mathbf{1}$ and omit $\alpha$, writing $\boldsymbol{\mathcal{M}} = (\mathcal{G}, \mathbf{p}, \beta)$. (Footnote 1: The appendix gives results for arbitrary $\alpha$.)

If $\boldsymbol{\mathcal{M}}$ is a PDG, we reserve the names $\mathcal{N}^{\boldsymbol{\mathcal{M}}}, \mathcal{E}^{\boldsymbol{\mathcal{M}}}, \ldots$, for the components of $\boldsymbol{\mathcal{M}}$, so that we may reference one without naming them all explicitly. We write $\mathcal{V}(S)$ for the set of possible joint settings of a set $S$ of variables, and write $\mathcal{V}(\boldsymbol{\mathcal{M}})$ for all settings of the variables in $\mathcal{N}^{\boldsymbol{\mathcal{M}}}$; we refer to these settings as “worlds”.
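As an informal illustration of Definition 2.1, the tuple can be mirrored by a small data structure. The following Python sketch is not part of the formal development: the class names `Edge` and `PDG`, the field names, and the numbers in the example are all hypothetical, and cpds are stored as nested dictionaries purely for convenience.

```python
from dataclasses import dataclass, field
from itertools import product


@dataclass
class Edge:
    """One labeled edge X --L--> Y together with its cpd and weights."""
    label: str
    source: str
    target: str
    cpd: dict            # cpd[x][y] = probability that Y = y given X = x
    alpha: float = 1.0   # confidence in the functional dependence (non-negative)
    beta: float = 1.0    # confidence in the reliability of the cpd (positive)


@dataclass
class PDG:
    """Illustrative container for (N, E, V, p, alpha, beta)."""
    values: dict                                # V: variable name -> list of values
    edges: list = field(default_factory=list)   # a list, so parallel edges are allowed

    def add_edge(self, edge):
        if edge.source not in self.values or edge.target not in self.values:
            raise ValueError("source and target must be nodes of the PDG")
        if edge.alpha < 0 or edge.beta <= 0:
            raise ValueError("alpha must be non-negative and beta positive")
        for x in self.values[edge.source]:
            row = edge.cpd[x]
            if set(row) != set(self.values[edge.target]):
                raise ValueError(f"cpd row for {x!r} must cover V({edge.target})")
            if abs(sum(row.values()) - 1.0) > 1e-9:
                raise ValueError(f"cpd row for {x!r} must sum to 1")
        self.edges.append(edge)

    def worlds(self):
        """All joint settings of the variables, as dictionaries."""
        names = sorted(self.values)
        return [dict(zip(names, combo))
                for combo in product(*(self.values[n] for n in names))]


# Hypothetical numbers: two parallel edges from T to C with different cpds.
pdg = PDG(values={"T": ["t0", "t1"], "C": ["c0", "c1"]})
pdg.add_edge(Edge("p", "T", "C", {"t0": {"c0": 0.9, "c1": 0.1},
                                  "t1": {"c0": 0.7, "c1": 0.3}}))
pdg.add_edge(Edge("q", "T", "C", {"t0": {"c0": 0.9, "c1": 0.1},
                                  "t1": {"c0": 0.6, "c1": 0.4}}))
```

Keeping the edges in a list, rather than in a mapping keyed by source and target, is what lets both cpds from $T$ to $C$ coexist in this sketch.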

While the definition above is sufficient to represent the class of all legal PDGs, we often use two additional bits of syntax to indicate common constraints: the special variable $\mathrlap{\mathit{1}}\mspace{2.3mu}\mathit{1}$ such that $\mathcal{V}(\mathrlap{\mathit{1}}\mspace{2.3mu}\mathit{1}) = \{\star\}$ from [Examples 1](#Thmexample1 "Example 1. ‣ 1 Introduction ‣ Probabilistic Dependency Graphs") and [2](#Thmexample2 "Example 2 (emulating a BN). ‣ 1 Introduction ‣ Probabilistic Dependency Graphs"), and double-headed arrows, $A \twoheadrightarrow B$, which visually indicate that the corresponding cpd is degenerate, effectively representing a deterministic function $f : \mathcal{V}(A) \to \mathcal{V}(B)$.

###### Construction 2.2.

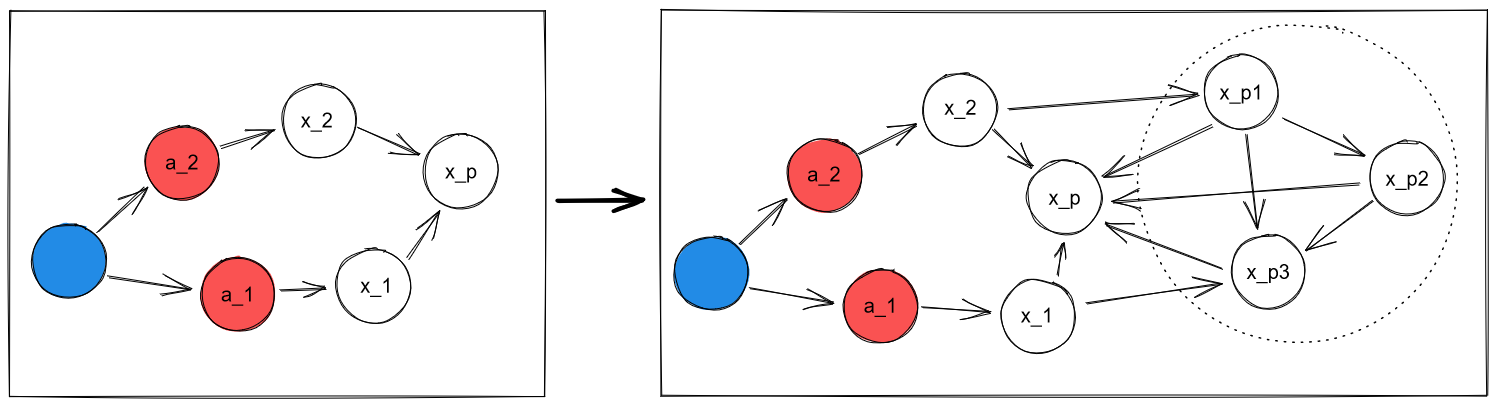

We can now explain how we capture the multi-tailed edges that were used in [Examples 2](#Thmexample2 "Example 2 (emulating a BN). ‣ 1 Introduction ‣ Probabilistic Dependency Graphs") to [4](#Thmexample4 "Example 4. ‣ 1 Introduction ‣ Probabilistic Dependency Graphs"). That notation can be viewed as shorthand for the graph that results by adding a new node at the junction representing the joint value of the nodes at the tails, with projections going back. For instance, the diagram displaying Grok’s prior knowledge in [Example 4](#Thmexample4 "Example 4. ‣ 1 Introduction ‣ Probabilistic Dependency Graphs"), on the left of [Figure 3](#S1.F3 "Figure 3 ‣ Example 4. ‣ 1 Introduction ‣ Probabilistic Dependency Graphs"), is really shorthand for the following PDG, where we insert a node labeled $C \times T$ at the junction:

![[Uncaptioned image]](/html/2012.10800/assets/x7.png)

As the notation suggests, $\mathcal{V}(C \times T) = \mathcal{V}(C) \times \mathcal{V}(T)$. For any joint setting $(c,t) \in \mathcal{V}(C \times T)$ of both variables, the cpd for the edge from $C \times T$ to $C$ gives probability 1 to $c$; similarly, the cpd for the edge from $C \times T$ to $T$ gives probability 1 to $t$.
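The construction can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function name `product_node`, the dictionary representation of cpds, and the value names `c0`, `c1`, `t0`, `t1` are hypothetical.

```python
from itertools import product


def product_node(values, tails):
    """Build the junction node for a multi-tailed edge.

    values: dict mapping variable name -> list of values
    tails:  list of variable names at the tails of the hyperedge
    Returns (name, joint_values, projections), where projections[t] is the
    degenerate cpd on the edge from the junction node back to tail t.
    """
    name = "×".join(tails)
    joint_values = list(product(*(values[t] for t in tails)))
    projections = {}
    for i, t in enumerate(tails):
        # Each joint setting puts probability 1 on its own i-th coordinate.
        projections[t] = {
            setting: {v: 1.0 if v == setting[i] else 0.0 for v in values[t]}
            for setting in joint_values
        }
    return name, joint_values, projections


values = {"C": ["c0", "c1"], "T": ["t0", "t1"]}
name, joint_values, projections = product_node(values, ["C", "T"])
```

Here `projections["C"]` and `projections["T"]` play the role of the two deterministic edges leaving the node $C \times T$.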

3 Semantics

------------

Although the meaning of an individual cpd is clear, we have not yet given PDGs a “global” semantics. We discuss three related approaches to doing so. The first is the simplest: we associate with a PDG the set of distributions that are consistent with it. This set will be empty if the PDG is inconsistent. The second approach associates a PDG with a scoring function, indicating the fit of an arbitrary distribution $\mu$, and can be thought of as a *weighted* set of distributions (Halpern and Leung [2015](#bib.bib2)). This approach allows us to distinguish inconsistent PDGs, while the first approach does not. The third approach chooses the distributions with the best score, typically associating with a PDG a unique distribution.

### 3.1 PDGs As Sets Of Distributions

We have been thinking of a PDG as a collection of constraints on distributions, specified by matching cpds. From this perspective, it is natural to consider the set of all distributions that are consistent with the constraints.

###### Definition 3.1.

If $\boldsymbol{\mathcal{M}}$ is a PDG (weighted or unweighted) with edges $\mathcal{E}$ and cpds $\mathbf{p}$, let $[\![\boldsymbol{\mathcal{M}}]\!]_{\text{sd}}$ be the *s*et of *d*istributions over the variables in $\boldsymbol{\mathcal{M}}$ whose conditional marginals are exactly those given by $\mathbf{p}$. That is, $\mu \in [\![\boldsymbol{\mathcal{M}}]\!]_{\text{sd}}$ iff, for all edges $L \in \mathcal{E}$ from $X$ to $Y$, $x \in \mathcal{V}(X)$, and $y \in \mathcal{V}(Y)$, we have that $\mu(Y = y \mid X = x) = \mathbf{p}_L(x)$. $\boldsymbol{\mathcal{M}}$ is *inconsistent* if $[\![\boldsymbol{\mathcal{M}}]\!]_{\text{sd}} = \emptyset$, and *consistent* otherwise.

Note that $[\![\boldsymbol{\mathcal{M}}]\!]_{\text{sd}}$ is independent of the weights $\alpha$ and $\beta$.
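To make the membership condition of Definition 3.1 concrete, here is a minimal Python sketch that checks whether a given joint distribution matches every cpd. The representation (worlds as tuples, edges as `(source, target, cpd)` triples), the function names, and the numbers are hypothetical. One further assumption is labeled in the code: when $\mu(X = x) = 0$ the conditional is undefined, and the sketch treats the constraint for that $x$ as vacuously satisfied.

```python
def conditional(mu, names, x_var, x, y_var):
    """Return mu(Y = . | X = x) as a dict, or None if mu(X = x) = 0."""
    i, j = names.index(x_var), names.index(y_var)
    px = sum(pr for world, pr in mu.items() if world[i] == x)
    if px == 0:
        return None
    cond = {}
    for world, pr in mu.items():
        if world[i] == x:
            cond[world[j]] = cond.get(world[j], 0.0) + pr / px
    return cond


def in_sd(mu, names, edges, tol=1e-9):
    """Check whether mu matches every cpd, up to a numerical tolerance."""
    for x_var, y_var, cpd in edges:
        for x, row in cpd.items():
            cond = conditional(mu, names, x_var, x, y_var)
            if cond is None:
                continue  # assumption: undefined conditionals impose no constraint
            if any(abs(cond.get(y, 0.0) - prob) > tol for y, prob in row.items()):
                return False
    return True


# Hypothetical cpds on two parallel edges from T to C.
names = ["T", "C"]
p = {0: {0: 0.9, 1: 0.1}, 1: {0: 0.7, 1: 0.3}}
q = {0: {0: 0.9, 1: 0.1}, 1: {0: 0.6, 1: 0.4}}

# A joint distribution built from a uniform marginal on T and the cpd p.
mu = {(t, c): 0.5 * p[t][c] for t in (0, 1) for c in (0, 1)}

matches_p = in_sd(mu, names, [("T", "C", p)])
matches_both = in_sd(mu, names, [("T", "C", p), ("T", "C", q)])
```

In this example `mu` satisfies the single-edge PDG but not the one carrying both `p` and `q`, since the two cpds disagree on the row for `T = 1`. As in the definition, no weights enter the check.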

### 3.2 PDGs As Distribution Scoring Functions

We now generalize the previous semantics by viewing a PDG $\boldsymbol{\mathcal{M}}$ as a *scoring function* that, given an arbitrary distribution $\mu$ on $\mathcal{V}(\boldsymbol{\mathcal{M}})$, returns a real-valued score indicating how well $\mu$ fits $\boldsymbol{\mathcal{M}}$. Distributions with the lowest (best) scores are those that most closely match the cpds in $\boldsymbol{\mathcal{M}}$, and contain the fewest unspecified correlations.

We start with the first component of the score, which assigns higher scores to distributions that require a larger perturbation in order to be consistent with $\boldsymbol{\mathcal{M}}$. We measure the magnitude of this perturbation with relative entropy. In particular, for an edge $X \overset{L}{\rightarrow} Y$ and $x \in \mathcal{V}(X)$, we measure the relative entropy from $\mathbf{p}_L(x)$ to $\mu(Y = \cdot \mid X = x)$, and take the expectation over $\mu_X$ (that is, the marginal of $\mu$ on $X$). We then sum over all the edges $L$ in the PDG, weighted by their reliability.
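The verbal recipe above can be sketched in Python. This is one reading of the description, not a definitive implementation. It assumes that "relative entropy from $\mathbf{p}_L(x)$ to $\mu(Y = \cdot \mid X = x)$" means $D(\mu(Y = \cdot \mid X = x) \,\|\, \mathbf{p}_L(x))$, that logarithms are natural, and that "reliability" refers to the weights $\beta_L$. The function names, the data layout, and the numbers are hypothetical.

```python
import math
from collections import defaultdict


def relative_entropy(m, p):
    """D(m || p) for two distributions given as dicts over the same values."""
    total = 0.0
    for y, my in m.items():
        if my > 0:
            py = p.get(y, 0.0)
            if py == 0:
                return math.inf
            total += my * math.log(my / py)
    return total


def incompatibility(mu, names, edges):
    """Sum over edges of beta * E_{x ~ mu_X}[ D(mu(Y | x) || p_L(x)) ].

    edges is a list of (source, target, cpd, beta) tuples (assumed layout).
    """
    score = 0.0
    for x_var, y_var, cpd, beta in edges:
        i, j = names.index(x_var), names.index(y_var)
        mu_x = defaultdict(float)    # marginal of mu on X
        mu_xy = defaultdict(float)   # marginal of mu on (X, Y)
        for world, pr in mu.items():
            mu_x[world[i]] += pr
            mu_xy[(world[i], world[j])] += pr
        for x, px in mu_x.items():
            if px == 0:
                continue  # zero-probability x contributes nothing to the expectation
            cond = {y: pxy / px for (x2, y), pxy in mu_xy.items() if x2 == x}
            score += beta * px * relative_entropy(cond, cpd[x])
    return score


names = ["T", "C"]
p = {0: {0: 0.9, 1: 0.1}, 1: {0: 0.7, 1: 0.3}}   # hypothetical cpds
q = {0: {0: 0.9, 1: 0.1}, 1: {0: 0.6, 1: 0.4}}
mu = {(t, c): 0.5 * p[t][c] for t in (0, 1) for c in (0, 1)}

only_p = incompatibility(mu, names, [("T", "C", p, 1.0)])
both = incompatibility(mu, names, [("T", "C", p, 1.0), ("T", "C", q, 1.0)])
trust_q_more = incompatibility(mu, names, [("T", "C", p, 1.0), ("T", "C", q, 2.0)])
```

Under these assumptions a distribution that matches every cpd scores zero, and raising the weight on an edge that `mu` disagrees with raises the score in proportion.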

###### Definition 3.2.

For a PDG $\boldsymbol{\mathcal{M}}$, the *incompatibility* of a joint distribution $\mu$ over $\mathcal{V}(\boldsymbol{\mathcal{M}})$ is given by

| | | |

| --- | --- | --- |