id stringlengths 36 36 | source stringclasses 15

values | formatted_source stringclasses 13

values | text stringlengths 2 7.55M |

|---|---|---|---|

9b5bdb0f-599f-449c-ad63-fe6e982a9aa3 | trentmkelly/LessWrong-43k | LessWrong | Cephaloponderings

Cross-posted from Putanumonit.

----------------------------------------

Hello all. This is Jacob’s wife, and while Jacob is off chilling in the fjords, I’m staying home and have volunteered to write a guest post.

Instead of trying to match the usual Putanumonit fare, I chose to write about somethi... |

f3b86efe-603b-41ca-8138-0259ec6022f8 | StampyAI/alignment-research-dataset/eaforum | Effective Altruism Forum | Resources & opportunities for careers in European AI Policy

*Last updated: 12 October 2023*

Career opportunities

====================

Early career opportunities

--------------------------

* [EU Tech Policy Fellowship](https://www.techpolicyfellowship.eu/). A 7-month programme for kickstarting a European policy care... |

e20cfccc-3e7e-4fcd-8d44-dedb14ba2542 | StampyAI/alignment-research-dataset/lesswrong | LessWrong | Why not just boycott LLMs?

*Epistemic Status*: *This post is an **opinion** I have had for some time and discussed with a few friends. Even though it’s been written up **very** hastily, I want to put it out there - because of its particular*[*relevance*](https://openai.com/research/gpt-4) *today and also because I thi... |

dfcd1464-ffb1-4c33-aa2e-7cf1fc676565 | trentmkelly/LessWrong-43k | LessWrong | The Illusion of Universal Morality: A Dynamic Perspective on Genetic Fitness and Ethical Complexity

The belief in a universal, independent standard for altruism, morality, and right and wrong is deeply ingrained in societal norms. However, when scrutinized through the lens of dynamic genetic fitness, these concepts re... |

3b3bfd63-883b-4e51-b4f3-085f079b12d0 | trentmkelly/LessWrong-43k | LessWrong | Giving in to small vices

When I was in Seoul three years ago to visit a friend, I was not impressed by the city. The people there were always in a hurry, and struck me as generally unfriendly. When you apologise for accidentally bumping into someone, your apology will usually be coldly ignored. There are also very str... |

f36b6c6f-4e0c-417d-97fa-eb9ede56a13d | trentmkelly/LessWrong-43k | LessWrong | A permutation argument for comparing utility functions

When doing intertheoretic utility comparisons, there is one clear and easy case: when everything is exactly symmetric.

This happens when, for instance, there exists u and v such that p([u])=p([v])=0.5 and there exists a map σ:S→S, such that σ2 is the identity (he... |

e558d43a-99cb-45ef-8260-88248a082144 | trentmkelly/LessWrong-43k | LessWrong | Is Gemini now better than Claude at Pokémon?

Background: With the release of Claude 3.7 Sonnet, Anthropic promoted a new benchmark: beating Pokémon. Now, Google claims Gemini 2.5 Pro has substantially surpassed Claude's progress on that benchmark.

TL:DR: We don't know if Gemini is better at Pokémon than Claude becaus... |

d1379fc2-844f-49b9-8209-7b5fc6fd7fa5 | trentmkelly/LessWrong-43k | LessWrong | The Third Annual Young Cryonicists Gathering (2012)

I received notification of this today from Alcor, my cryonics provider. I also received notification of this event last year but was unable to attend at that time. Does anyone plan on attending this year? Has anyone attended this type of event in the past, and if so ... |

56591b08-514d-4b4b-ae6e-7492de0ebe07 | trentmkelly/LessWrong-43k | LessWrong | Open thread, Jan. 18 - Jan. 24, 2016

If it's worth saying, but not worth its own post (even in Discussion), then it goes here.

----------------------------------------

Notes for future OT posters:

1. Please add the 'open_thread' tag.

2. Check if there is an active Open Thread before posting a new one. (Immediately... |

45c05f72-5d47-41b6-94c8-f26564d076be | StampyAI/alignment-research-dataset/eaforum | Effective Altruism Forum | Components of Strategic Clarity [Strategic Perspectives on Long-term AI Governance, #2]

*This is post 2 of an in-progress draft report called* ***Strategic Perspectives on Long-term AI Governance** (see* [*sequence*](https://forum.effectivealtruism.org/s/xTkejiJHFsidZ9hMo)*).*

---

Over the last 5 years since the... |

fcf96bc2-32b9-4e73-b9a1-3d5d9c2cb33f | trentmkelly/LessWrong-43k | LessWrong | Categorial preferences and utility functions

,

This post is motivated by a recent post of Stuart Armstrong on going from preferences to a utility function. It was originally planned as a comment, but seems to have developed a bit of a life of its own. The ideas here came up in a discussion with Owen Biesel; all mistak... |

40260abc-e52f-4a44-835c-446b56cc7290 | trentmkelly/LessWrong-43k | LessWrong | Is there any serious attempt to create a system to figure out the CEV of humanity and if not, why haven't we started yet?

Hey, fellow people, I'm fairly new to LessWrong and if this question is irrelevant i apologise for that, however I was wondering whether any serious attempts to create a system for mapping out the ... |

3bfc343f-214e-4ee4-8df8-c53d4ab74581 | trentmkelly/LessWrong-43k | LessWrong | Epoch: What is Epoch?

Our director explains Epoch AI’s mission and how we decide our priorities. In short, we work on projects to understand the trajectory of AI, share this knowledge publicly, and inform important decisions about AI.

----------------------------------------

Since we started Epoch three years ago, w... |

144b49bc-97cb-4238-bbb2-60f9b436c7ce | trentmkelly/LessWrong-43k | LessWrong | Infrafunctions and Robust Optimization

Proofs are in this link

This will be a fairly important post. Not one of those obscure result-packed posts, but something a bit more fundamental that I hope to refer back to many times in the future. It's at least worth your time to read this first section up to its last paragra... |

1ccb7a17-5566-48d7-a860-361f6dcd92c4 | StampyAI/alignment-research-dataset/blogs | Blogs | my takeoff speeds? depends how you define that

my takeoff speeds? depends how you define that

----------------------------------------------

what takeoff speeds for [transformative AI](ai-doom.html) do i believe in? well, that depends on which time interval you're measuring. there are roughly six meaningful points i... |

5dd85c86-e164-488d-bf3a-13f4cc26784d | trentmkelly/LessWrong-43k | LessWrong | Beyond Biomarkers: Understanding Multiscale Causality

Photo by Jigar Panchal on Unsplash

In exercise science, we typically derive causality in a bottom-up manner. When we evaluate performance, we assess factors such as cardiovascular capacity, metabolic efficiency, or muscular contractile capacity. However, I’ve alway... |

974048d1-657b-4cc9-8897-ab2ec94db798 | StampyAI/alignment-research-dataset/alignmentforum | Alignment Forum | An Extremely Opinionated Annotated List of My Favourite Mechanistic Interpretability Papers

Introduction

------------

This is an extremely opinionated list of my favourite mechanistic

interpretability papers, annotated with my key takeaways and what I like

about each paper, which bits to deeply engage with vs skim (... |

cb2ef106-11ce-44a4-b842-7899dc463b27 | StampyAI/alignment-research-dataset/eaforum | Effective Altruism Forum | AMA: Markus Anderljung (PM at GovAI, FHI)

*EDIT: I'm no longer actively checking this post for questions, but I'm likely to periodically check.*

Hello, I work at the [Centre for the Governance of AI](http://governance.ai/) (GovAI), part of the [Future of Humanity Institute](https://www.fhi.ox.ac.uk/) (FHI), Universi... |

d5cd2d13-1f3d-4a90-a6da-f6c693bda89e | trentmkelly/LessWrong-43k | LessWrong | If digital goods in virtual worlds increase GDP, do we actually become richer?

Noah Smith, in this article, argues that the Metaverse could enable economic growth to increase a lot and sharply decouple itself from real-world resource usage. By creating markets in which we buy and sell immaterial things, world GDP woul... |

5461cc35-b678-4880-bcec-1a0ab14b9c29 | StampyAI/alignment-research-dataset/lesswrong | LessWrong | What more compute does for brain-like models: response to Rohin

This is a response to a comment made by [Rohin Shah](https://www.lesswrong.com/users/rohinmshah) on [Daniel Kokotajlo](https://www.lesswrong.com/users/daniel-kokotajlo)'s post [Fun with +12 OOMs of Compute](https://www.lesswrong.com/posts/rzqACeBGycZtqCfa... |

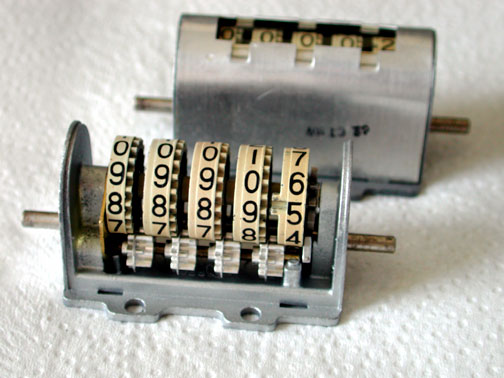

0f85923f-4e45-4ab0-a905-8d80aca78336 | StampyAI/alignment-research-dataset/arbital | Arbital | Digit wheel

A mechanical device for storing a number from 0 to 9. Devices such as these were often attached to the desks of engineers, as part of historical "desktop computers."

Each wheel can stably rest in any one of ten states. Digit whe... |

e04bb247-eea1-4bdb-a06f-2a451590548a | StampyAI/alignment-research-dataset/eaforum | Effective Altruism Forum | Biological Anchors external review by Jennifer Lin (linkpost)

This report is one of the winners of the [EA Criticism and Red Teaming Contest](https://forum.effectivealtruism.org/posts/YgbpxJmEdFhFGpqci/winners-of-the-ea-criticism-and-red-teaming-contest#Biological_Anchors_external_review_by_Jennifer_Lin___20_000_).

... |

5b20f5b3-92f8-406f-a9a6-bfb99e0a95ed | trentmkelly/LessWrong-43k | LessWrong | College Student Philanthropy and Funding Millenium Villages

One interesting idea comes from "How Students Can Support a Millennium Village?", which talks about, obviously, funding a Millenium Village: (See also the school's news report.)

> Last year at Carleton University our group, Students To End Extreme Poverty, ... |

25bde7c8-597b-4a09-bd51-cd1bddc9f6ee | trentmkelly/LessWrong-43k | LessWrong | Indifference and compensatory rewards

A putative new idea for AI control; index here.

It's occurred to me that there is a framework where we can see all "indifference" results as corrective rewards, both for the utility function change indifference and for the policy change indifference.

Imagine that the agent has r... |

528d25e2-1a89-4055-a23c-858d24f89833 | trentmkelly/LessWrong-43k | LessWrong | Modeling Risks From Learned Optimization

This post, which deals with how risks from learned optimization and inner alignment can be understood, is part 5 in our sequence on Modeling Transformative AI Risk. We are building a model to understand debates around existential risks from advanced AI. The model is made with A... |

8fd64ecf-f3a2-4a9e-8629-2e1585012414 | trentmkelly/LessWrong-43k | LessWrong | Meetup : February 2015 Rationality Dojo - Introduction to Ethics

Discussion article for the meetup : February 2015 Rationality Dojo - Introduction to Ethics

WHEN: 01 February 2015 03:30:00PM (+0800)

WHERE: Ross House Association, 247-251 Flinders Lane, Melbourne

February 2015 Rationality Dojo - Introduction to Ethi... |

f33df754-b769-4283-8347-4800a03ae51e | trentmkelly/LessWrong-43k | LessWrong | A solvable Newcomb-like problem - part 2 of 3

This is the second part of a three post sequence on a problem that is similar to Newcomb's problem but is posed in terms of probabilities and limited knowledge.

Part 1 - stating the problem

Part 2 - some mathematics

Part 3 - towards a solution

---------------... |

07f9c24a-ce4f-4ec2-8b80-0c1fbb921e78 | StampyAI/alignment-research-dataset/eaforum | Effective Altruism Forum | Do you worry about totalitarian regimes using AI Alignment technology to create AGI that subscribe to their values?

Hi all! First I want to say that I really enjoyed this forum in the past few months, and eventually decided to create an account to post this question. I am still in the process of writing the short vers... |

be84480e-c2fe-40a9-8778-941178222feb | StampyAI/alignment-research-dataset/special_docs | Other | Standards for AI Governance: International Standards to Enable Global Coordination in AI Research & Development

T

ECHNICAL

R

EPORT

S t a n d a r d s f o r A I G o v e r n a n c e :

I n t e r n a t i o n a l S t a n d a r d s t o E n a b l e G l o b a l

C o o r d i n a t i o n i n A I R e s... |

d32d96b7-c5a8-4189-8b8b-78a8a6fe8542 | trentmkelly/LessWrong-43k | LessWrong | A common failure for foxes

A common failure mode for people who pride themselves in being foxes (as opposed to hedgehogs):

Paying more attention to easily-evaluated claims that don't matter much, at the expense of hard-to-evaluate claims that matter a lot.

E.g., maybe there's an RCT that isn't very relevant, but is ... |

09790aaa-6d9b-4012-b812-8579d2178819 | trentmkelly/LessWrong-43k | LessWrong | Examples of the Mind Projection Fallacy?

I suspect that achieving a clear mental picture of the sheer depth and breadth of the mind projection fallacy is a powerful mental tool. It's hard for me to state this in clearer terms, though, because I don't have a wide collection of good examples of the mind projection falla... |

96a928d7-b1b2-40d4-b734-75bc01c6d30f | trentmkelly/LessWrong-43k | LessWrong | Proposal: consolidate meetup announcements before promotion

The Less Wrong feed is getting crowded with meetups rather than substantive posts. Hopefully, this should be fixed in the redesign, but one way to work around it in the meanwhile would be to make top-level posts announcing several meetups at once.

Folk would... |

befb4f12-9b98-45cb-9056-d2bef789d27a | StampyAI/alignment-research-dataset/eaforum | Effective Altruism Forum | How much will pre-transformative AI speed up R&D?

I'm interested in how much we should expect pre-transformative AI to speed up (general, I guess) R&D in the coming decades. What are the must-read resources on this?

Things I have already looked at:

[Machine Intelligence for Scientific Discovery and Engineer... |

e03df821-431c-4eb1-925b-64772e2ac96e | StampyAI/alignment-research-dataset/alignmentforum | Alignment Forum | Coherence arguments do not entail goal-directed behavior

One of the most pleasing things about probability and expected utility theory is that there are many *coherence arguments* that suggest that these are the “correct” ways to reason. If you deviate from what the theory prescribes, then you must be executing a *dom... |

c9bb1451-f4dc-457c-a75e-bcce3d9a1e4e | trentmkelly/LessWrong-43k | LessWrong | Friendly AI research news: FriendlyAI.tumblr.com

I will be posting news on Friendly AI research here: FriendlyAI.tumblr.com. Follow the link to stay tuned via Tumblr or RSS. |

7d6c7d1f-e567-40ab-8401-e35474d83058 | trentmkelly/LessWrong-43k | LessWrong | AGI Predictions

This post is a collection of key questions that feed into AI timelines and AI safety work where it seems like there is substantial interest or disagreement amongst the LessWrong community.

You can make a prediction on a question by hovering over the widget and clicking. You can update your prediction... |

cbca6a72-6c72-469b-944f-2085abfd6cdf | trentmkelly/LessWrong-43k | LessWrong | Notes on Tuning Metacognition

Summary: Reflections and practice notes on a metacognitive technique aimed at refining the process of thinking, rather than the thoughts themselves.

Epistemic Status: Experimental and observational, based on personal practice and reflections over a brief period.

Introduction

While doin... |

93a53206-cb40-4d44-b51f-1d4b524bc4bf | trentmkelly/LessWrong-43k | LessWrong | HPMOR: What could've been done better?

Warning: As per the official spoiler policy, the following discussion may contain unmarked spoilers for up to the current chapter of the Methods of Rationality. Proceed at your own risk.

Assume HPMOR was written by a super-intelligence implementing the CEV of Eliezer Yudkowsky a... |

14ceb87a-2cf0-4ddc-afab-83c0d81bac4a | trentmkelly/LessWrong-43k | LessWrong | Sleeping Beauty Problem Can Be Explained by Perspective Disagreement (IV)

This is the final part of my argument. Previous parts can be found here: I, II, III. To understand the following part I should be read at least. Here I would argue against SSA, argue why double-halving is correct, and touch on the implication of... |

86187621-c616-4e74-a836-8729a2af17ba | trentmkelly/LessWrong-43k | LessWrong | Recreational Cryonics

We recently saw a post in Discussion by ChrisHallquist, asking to be talked out of cryonics. It so happened that I'd just read a new short story by Greg Egan which gave me the inspiration to write the following:

> It is likely that you would not wish for your brain-state to be available to al... |

1a90cfce-5a5e-414e-a27b-cfd37886fea8 | trentmkelly/LessWrong-43k | LessWrong | Meetup : Columbus Rationality

Discussion article for the meetup : Columbus Rationality

WHEN: 03 November 2014 08:30:00PM (-0400)

WHERE: 1550 Old Henderson Road Room 131, Columbus, OH

We meet every 1st and 3rd Monday at 7:30.

http://www.meetup.com/HumanistOhio/events/208105842/

Discussion article for the meetup :... |

82717c74-ee99-42cc-9e58-0541379c0d53 | trentmkelly/LessWrong-43k | LessWrong | Language, intelligence, rationality

Rationality requires intelligence, and the kind of intelligence that we use (for communication, progress, FAI, etc.) runs on language.

It seems that the place we should start is optimizing language for intelligence and rationality. One of SIAI's proposals includes using Lojban to i... |

9b9d2541-8c46-460a-87b5-f26201c17c3f | trentmkelly/LessWrong-43k | LessWrong | Open Problems with Myopia

Thanks to Noa Nabeshima for helpful discussion and comments.

Introduction

Certain types of myopic agents represent a possible way to construct safe AGI. We call agents with a time discount rate of zero time-limited myopic, a particular instance of the broader class of myopic agents. A proto... |

d37c8e00-90f3-42d8-9b9d-9fe922c16fae | trentmkelly/LessWrong-43k | LessWrong | Interlude for Behavioral Economics

The so-called “rational” solutions to the Prisoners' Dilemma and Ultimatum Game are suboptimal to say the least. Humans have various kludges added by both nature or nurture to do better, but they're not perfect and they're certainly not simple. They leave entirely open the question o... |

a3e605d8-7c6e-4fad-a74e-b42cd0a7e4f0 | StampyAI/alignment-research-dataset/lesswrong | LessWrong | Early Results: Do LLMs complete false equations with false equations?

*Abstract: I tested the hypothesis that putting false information in an LLM’s context window will prompt it to continue producing false information. GPT-4 was prompted with a series of X false equations followed by an incomplete equation, for instan... |

4277355c-e335-477d-ad14-c654749507e9 | trentmkelly/LessWrong-43k | LessWrong | Ban development of unpredictable powerful models?

EDIT 3/26/24: No longer endorsed, as I realized I don't believe in deceptive alignment.

In this post, I propose a (relatively strict) prediction-based eval which is well-defined, which seems to rule out lots of accident risk, and which seems to partially align industr... |

dec89157-85f4-4143-83a3-65ae8e936f57 | trentmkelly/LessWrong-43k | LessWrong | Open thread, December 7-13, 2015

If it's worth saying, but not worth its own post (even in Discussion), then it goes here.

----------------------------------------

Notes for future OT posters:

1. Please add the 'open_thread' tag.

2. Check if there is an active Open Thread before posting a new one. (Immediately bef... |

d9d5b8c2-209c-4aef-8554-a9d718f21f36 | StampyAI/alignment-research-dataset/arxiv | Arxiv | Reasoning about Agent Programs using ATL-like Logics

1 Introduction

---------------

The formal verification of agent-oriented programs requires logic frameworks capable of representing and reasoning about agents’ abilities and capabilities, and the goals they can feasibly achieve.

In particular, we are interested h... |

5259f6ce-221f-4579-8d01-62c44f8f0795 | trentmkelly/LessWrong-43k | LessWrong | Re SMTM: negative feedback on negative feedback

SlimeMoldTimeMold (SMTM) recently finished a 14-part series on psychology, “The Mind in the Wheel”, centering on feedback loops (“cybernetics”) as kind of a grand unified theory of the brain.

There are parts of their framework I like—in particular, I think there are inn... |

a3bafced-0060-4fb1-849c-e7ce20afd2cd | trentmkelly/LessWrong-43k | LessWrong | Let's Read:

Superhuman AI for multiplayer poker

On July 11, a new poker AI is published in Science. Called Pluribus, it plays 6-player No-limit Texas Hold'em at superhuman level.

In this post, we read through the paper. The level of exposition is between the paper (too serious) and the popular press (too entertaining... |

1fbcc603-f73b-4b09-8c7e-1a1ffeb7b807 | trentmkelly/LessWrong-43k | LessWrong | Electrons don’t think (or suffer)

There is an EA fringe that talks about suffering in elementary particles. Physicist Sabine Hossenfelder reminds her readers why this panpsychist idea is nonsense.

TL;DR: "if you want a particle to be conscious, your minimum expectation should be that the particle can change. It’s har... |

17c87623-9102-42b1-8d08-d0a74b88b164 | trentmkelly/LessWrong-43k | LessWrong | Bathing Machines and the Lindy Effect

From Wikipedia:

> The Lindy effect is a theory that the future life expectancy of some non-perishable things like a technology or an idea is proportional to their current age, so that every additional period of survival implies a longer remaining life expectancy.

There are a som... |

aa279784-8510-450b-bc39-14d5c95cd0ab | trentmkelly/LessWrong-43k | LessWrong | Decentralized Exclusion

I'm part of several communities that are relatively decentralized. For example, anyone can host a contra dance, rationality meetup, or effective altruism dinner. Some have central organizations (contra has CDSS, EA has CEA) but their influence is mostly informal. This structure has some benefit... |

fb28940f-925f-474d-8e14-c2e61c25e7d4 | trentmkelly/LessWrong-43k | LessWrong | Want to predict/explain/control the output of GPT-4? Then learn about the world, not about transformers.

Introduction

Consider the following scene from William Shakespeare's Julius Caesar.

In this scene, Caesar is at home with his wife Calphurnia. She has awoken after a bad dream, and is pleaded with Caesar not to go... |

04080703-10f5-4e92-9a1a-d094550811d6 | trentmkelly/LessWrong-43k | LessWrong | Open thread, July 29-August 4, 2013

If it's worth saying, but not worth its own post (even in Discussion), then it goes here.

Of course, for "every Monday", the last one should have been dated July 22-28. *cough* |

4b8523f1-d248-4b2e-b404-deaad90720bd | trentmkelly/LessWrong-43k | LessWrong | Accrue Nuclear Dignity Points

Content warning: nuclear doom

Previously on last week's episode: A Few Terrifying Facts About The Russo-Ukrainian War

----------------------------------------

You have probably thought about prepping at some point.

You have probably also not prepped as much as you'd like.

Neither ha... |

8ce7876e-93d3-4954-84f1-096c13616025 | trentmkelly/LessWrong-43k | LessWrong | Agglomeration of 'Ought'

§ Introduction

My aim in this post is to argue that the ‘ought,’ predicate, interpreted in a global sense, agglomerates. I’ll henceforth refer to this position as agglomeration. The position is as follows.

Agglomeration: If “I ought to do A,” and “I ought to do B,” then “I ought to do A ... |

976e517b-0092-46df-b87a-7f218fc28ded | trentmkelly/LessWrong-43k | LessWrong | To perform best at work, look at Time & Energy account balance

Several weeks ago, I got a chance to join a talk hosting one of the very few female regional head at Google.

Despite not having any business background, she climbed the rank from entry level employee to become a regional head, surpassing everyone else fro... |

8a84edc8-aa6f-4ca5-99f7-754f7b836515 | trentmkelly/LessWrong-43k | LessWrong | Morality and relativistic vertigo

tl;dr: Relativism bottoms-out in realism by objectifying relations between subjective notions. This should be communicated using concrete examples that show its practical importance. It implies in particular that morality should think about science, and science should think about mora... |

b86fef9a-d017-4a1e-ad6f-56a853942744 | trentmkelly/LessWrong-43k | LessWrong | AI x-risk reduction: why I chose academia over industry

I've been leaning towards a career in academia for >3 years, and recently got a tenure track role at Cambridge. This post sketches out my reasoning for preferring academia over industry.

Thoughts on Industry Positions:

A lot of people working on AI x-risk seem ... |

7a4aee65-0e42-45e1-b578-0d01effa4d55 | trentmkelly/LessWrong-43k | LessWrong | Thoughts after the Wolfram and Yudkowsky discussion

I recently listened to the discussion between Wolfram and Yudkowsky about AI risk. In some ways this conversation was tailor-made for me, so I'm going to write some things about it and try to get it out in one day instead of letting it sit in my drafts for 3 weeks as... |

4a85e2c1-2f48-45ac-a9e1-74ddb06e1cfb | trentmkelly/LessWrong-43k | LessWrong | January 2011 Southern California Meetup

There will be a meetup for Southern California this Sunday, January 23, 2011 at 4PM and running for three to five hours. The meetup is happening at Marco's Trattoria. The address is:

8200 Santa Monica Blvd

West Hollywood, CA 90046

If all the people (including guests and high... |

92079a97-8c79-40de-b0c0-407a48163777 | StampyAI/alignment-research-dataset/lesswrong | LessWrong | The VNM independence axiom ignores the value of information

*Followup to : [Is risk aversion really irrational?](/lw/9oj/is_risk_aversion_really_irrational/)*

After reading the [decision theory FAQ](/lw/gu1/decision_theory_faq) and re-reading [The Allais Paradox](/lw/my/the_allais_paradox/) I realized I still don't ... |

02ccd558-4d49-40db-aec8-199d647e3030 | trentmkelly/LessWrong-43k | LessWrong | What Program Are You?

I've been trying for a while to make sense of the various alternate decision theories discussed here at LW, and have kept quiet until I thought I understood something well enough to make a clear contribution. Here goes.

You simply cannot reason about what to do by referring to what program you ... |

e6d795de-fa6e-48d7-aee3-ca7a0ff3d246 | StampyAI/alignment-research-dataset/arbital | Arbital | Fundamental Theorem of Arithmetic

summary: The Fundamental Theorem of Arithmetic is a statement about the [natural numbers](https://arbital.com/p/45h); it says that every natural number may be decomposed as a product of [primes](https://arbital.com/p/4mf), and this expression is unique up to reordering the factors. It... |

a70dcff0-4b8d-4502-80c5-d89d815a29ad | StampyAI/alignment-research-dataset/arbital | Arbital | Blue oysters

You're collecting exotic oysters in Nantucket, and there are two different bays you could harvest oysters from. In both bays, 11% of the oysters contain valuable pearls and 89% are empty. In the first bay, 4% of the pearl-containing oysters are blue, and 8% of the non-pearl-containing oysters are blue. In... |

680d6f93-9c14-4ec1-b2eb-0b7bf975bd67 | trentmkelly/LessWrong-43k | LessWrong | SI and Social Business

I asked this question for the Q&A:

> Non-profit organizations like SI need robust, sustainable resource strategies. Donations and grants are not reliable. According to my university Social Entrepreneurship course, social businesses are the best resource strategy available. The Singularity Summi... |

63a8ced9-8467-42b1-bee5-336e70882944 | StampyAI/alignment-research-dataset/arbital | Arbital | Two independent events: Square visualization

$$

\newcommand{\true}{\text{True}}

\newcommand{\false}{\text{False}}

\newcommand{\bP}{\mathbb{P}}

$$

summary:

$$

\newcommand{\true}{\text{True}}

\newcommand{\false}{\text{False}}

\newcommand{\bP}{\mathbb{P}}

$$

Say $A$ and $B$ are independent [events](https://arbital.co... |

a88ff9ce-86f4-4ca5-b45f-a02cd9c59571 | trentmkelly/LessWrong-43k | LessWrong | Conflict in Kriorus becomes hot today, updated, update 2

For a long time, Russian cryocompany Kriorus suffered from a conflict between two groups of owners. One is led by Danila Medvedev and the other is led by his former wife Valeria Pride. Long story short, Valeria took neuropatients (including my mother's brain) 1.... |

c4b2f996-4874-4cd4-af5c-a2c4a2790d3b | trentmkelly/LessWrong-43k | LessWrong | Announcing Convergence Analysis: An Institute for AI Scenario & Governance Research

Cross-posted on the EA Forum.

Executive Summary

We’re excited to introduce Convergence Analysis - a research non-profit & think-tank with the mission of designing a safe and flourishing future for humanity in a world with transformat... |

b018a35d-0d14-4905-9154-988373589f2f | StampyAI/alignment-research-dataset/alignmentforum | Alignment Forum | [Event] Weekly Alignment Research Coffee Time (05/24)

Just like every Monday now, researchers in AI Alignment are invited for a coffee time, to talk about their research and what they're into.

Here is the [link](http://garden.lesswrong.com?code=BZN3&event=weekly-alignment-research-coffee-time-1).

And here is the [e... |

990aa815-641b-4549-b747-14007569da56 | StampyAI/alignment-research-dataset/lesswrong | LessWrong | In favour of a selective CEV initial dynamic

Note: I appreciate that at this point CEV is just a sketch. However, it’s an interesting topic and I don’t see that there’s any harm in discussing certain details of the concept as it stands.

**1. Summary of CEV**

Eliezer Yudkowsky describes CEV - Coherent Extrapolated ... |

557c7325-08e1-41c4-a53c-0460bcd68f05 | trentmkelly/LessWrong-43k | LessWrong | Breaking down the training/deployment dichotomy

TL;DR: Training and deployment of ML models differ along several axes, and you can have situations that are like training in some ways but like deployment in others. I think this will become more common in the future, so it's worth distinguishing which properties of trai... |

6c66facf-dee5-4a06-8116-921ca72e3d56 | trentmkelly/LessWrong-43k | LessWrong | On the Galactic Zoo hypothesis

Recently, I was reading some arguments about Fermi paradox and aliens and so on; also there was an opinion among the lines of "humans are monsters and any sane civilization avoids them, that's why Galactic Zoo". As implausible as it is, but I've found one more or less sane scenario where... |

12bf15f9-ee91-40d1-a34f-1b2fdc7b4f9c | trentmkelly/LessWrong-43k | LessWrong | Shared interests vs. collective interests

Suppose that I, a college student, found a student organization—a chapter of Students Against a Democratic Society, perhaps. At the first meeting of SADS, we get to talking, and discover, to everyone’s delight, that all ten of us are fans of Star Trek.

This is a shared intere... |

e5f84b8a-5a80-495a-a11e-f693e9ad5ba4 | trentmkelly/LessWrong-43k | LessWrong | Covid-19: Comorbidity

We’ve all seen statistics that most people who die of Covid-19 have at least one comorbidity. They also almost all have the particular comorbidity of age. The biggest risk, by far, is being old. The question that I don’t see being properly asked anywhere (I’d love for this post to be unnecessary ... |

927a34bb-66d5-45a3-9e46-acaf08d95dd4 | trentmkelly/LessWrong-43k | LessWrong | Success without dignity: a nearcasting story of avoiding catastrophe by luck

I’ve been trying to form a nearcast-based picture of what it might look like to suffer or avoid an AI catastrophe. I’ve written a hypothetical “failure story” (How we might stumble into AI catastrophe) and two “success stories” (one presuming... |

ffe9fc57-50fc-4629-8ab5-1cd24fd602ca | trentmkelly/LessWrong-43k | LessWrong | What Value Epicycles?

A couple months ago Ben Hoffman wrote an article laying out much of his worldview. I responded to him in the comments that it allowed me to see what I had always found “off” about his writing:

> To my reading, you seem to prefer in sense making explanations that are interesting all else equal, a... |

3232bc38-5d8f-4474-9d83-99800465d526 | trentmkelly/LessWrong-43k | LessWrong | Rationalist Seder: Dayenu, Lo Dayenu

There's one more piece of the NYC Rationalist Seder Haggadah that I wanted to pull out, to refer to in isolation. I think is quite relevant to some current concerns in the evolving Rationality Community, and which is interesting in particular because of how it's evolved over the pa... |

b3ab5f54-10ee-419a-8be2-36c14d8fb354 | trentmkelly/LessWrong-43k | LessWrong | The Rise and Fall of American Growth: A summary

The Rise and Fall of American Growth, by Robert J. Gordon, is like a murder mystery in which the murderer is never caught. Indeed there is no investigation, and perhaps no detective.

The thesis of Gordon’s book is that high rates of economic growth in America were a one... |

7852872e-2d1f-4b92-88bb-01864196d535 | trentmkelly/LessWrong-43k | LessWrong | Knowledge ready for Ankification

Spaced repetition is a powerful learning tactic, and Anki is a good tool for it. There are some LW-relevant Anki decks here. But I wish there were more.

Which sets of knowledge are (1) likely useful to LWers, and (2) straightforward to encode into Anki decks without needing to be fami... |

36b5e20c-472d-4599-ad9e-e635f788a261 | trentmkelly/LessWrong-43k | LessWrong | [Link] 2012 Winter Intelligence Conference videos available

The Future of Humanity Institute has released video footage of the 2012 Winter Intelligence Conference. The videos currently available are:

* Stuart Armstrong - Predicting AI... or Failing to

* Miles Brundage - Limitations and Risks of Machine Ethics

* S... |

073ac155-8ea8-42ec-959c-0aacd5f0ae86 | trentmkelly/LessWrong-43k | LessWrong | Emergency Prescription Medication

In the comments on yesterday's post on planning for disasters people brought up the situation of medications. As with many things in how the US handles healthcare and drugs, this is a mess.

The official recommendation is to prepare emergency supply kits for your home and work that co... |

ba35f363-10d7-433c-bcc2-999f2a00c2a4 | StampyAI/alignment-research-dataset/lesswrong | LessWrong | Rejected Early Drafts of Newcomb's Problem

*Discovered inside an opaque box at the University of California and shared by an anonymous source, please enjoy these unpublished variations of* [*physicist William Newcomb's famous thought experiment*](https://www.lesswrong.com/tag/newcomb-s-problem)*.*

Newcomb's Advanced ... |

5b3c0987-9af9-4a1a-b0ba-b76c170c7dd3 | trentmkelly/LessWrong-43k | LessWrong | [LINK] Inferring the rate of psychopathy from roadkill experiment

Pardon the sensationalist headline of that article:

> Mark says that "one thing that might explain the higher numbers here—in case people question my methods—is that I used a tarantula." Apparently, people seemed pretty eager about hitting a spider. "I... |

ebbc3925-785e-4ad8-9dbb-6e1484c7d919 | trentmkelly/LessWrong-43k | LessWrong | Logical Correlation

In which to compare how similarly programs compute their outputs, naïvely and less naïvely.

Logical Correlation

Attention conservation notice: Premature formalization, ab-hoc mathematical definition.

Motivation, Briefly

In the twin prisoners dilemma, I cooperate with my twin because we're imple... |

68e05002-ac69-4154-acfa-9156cc917e4b | StampyAI/alignment-research-dataset/alignmentforum | Alignment Forum | How might we align transformative AI if it’s developed very soon?

>

> This post is part of my [AI strategy nearcasting series](https://www.lesswrong.com/posts/Qo2EkG3dEMv8GnX8d/ai-strategy-nearcasting): trying to answer key strategic questions about transformative AI, under the assumption that key events will happen ... |

17b4fbc3-342e-47ae-b1d5-e1ed5d288a85 | StampyAI/alignment-research-dataset/lesswrong | LessWrong | Self-Reference Breaks the Orthogonality Thesis

One core obstacle to AI Alignment is the Orthogonality Thesis. The Orthogonality Thesis is usually defined as follows: "the idea that the final goals and intelligence levels of artificial agents are independent of each other". More careful people say "mostly independent" ... |

18afe78b-45c6-41c9-a0aa-ffff36d3f61d | trentmkelly/LessWrong-43k | LessWrong | A Word to the Resourceful

Paul Graham has a new article out. Everything he's written is worth reading if you're at all interested in startups, but this article seemed explicitly connected to rationality, by identifying an area where people who are more likely to update / less likely to rationalize will do better than ... |

3badc8d2-1815-4325-9d87-bf3d49c71729 | trentmkelly/LessWrong-43k | LessWrong | Building Blocks of Politics: An Overview of Selectorate Theory

From 1865 to 1909, Belgium was ruled by a great king. He helped promote the adoption of universal male suffrage and proportional-representation voting. During his rule Belgium rapidly industrialized and had immense economic growth. He gave workers the righ... |

0e294600-f904-488d-b05e-55dcbc310e23 | trentmkelly/LessWrong-43k | LessWrong | Meetup : Brussels monthly meetup: time!

Discussion article for the meetup : Brussels monthly meetup: time!

WHEN: 14 December 2013 01:00:00PM (+0100)

WHERE: Rue des Alexiens 55 1000 Bruxelles

This month's topic: time! Are you struggling with the planning fallacy? Do you have scientific knowledge and/or science-ficti... |

0055a33d-e230-4f8a-b53f-b3ab6b663740 | trentmkelly/LessWrong-43k | LessWrong | Insights from Linear Algebra Done Right

This book has previously been discussed by Nate Soares and TurnTrout. In this... review? report? detailed journal entry? ... I will focus on the things which stood out to me. (Definitely not meant to be understandable for anyone unfamiliar with the field.) The book has ten chapt... |

2b471cfa-3e5d-43a2-b6c5-ec03fbedf9ff | trentmkelly/LessWrong-43k | LessWrong | Prediction Based Robust Cooperation

In this post, We present a new approach to robust cooperation, as an alternative to the "modal combat" framework. This post is very hand-waivey. If someone would like to work on making it better, let me know.

----------------------------------------

Over the last year or so, MIRI'... |

834aee4d-2fae-46ef-b114-d43fad2ac7f1 | trentmkelly/LessWrong-43k | LessWrong | [Link] Bets, Portfolios, and Belief Revelation

In a post today at EconLog, Bryan defends the "a bet is a tax on bullshit" maxim contra "portfolios reveal beliefs, bets reveal personality traits and public posturing" (preferred by Noah Smith and Tyler Cowen).

> 1. If portfolios really "reveal beliefs," Tyler and Noah ... |

d9b451df-9df0-46a3-8483-08aeac6b0587 | trentmkelly/LessWrong-43k | LessWrong | New LW Meetup: Urbana-Champaign

This summary was posted to Main on August 23rd. The following week's summary is here.

New meetups (or meetups with a hiatus of more than a year) are happening in:

* New Meetup: Urbana-Champaign, Illinois.: 25 August 2013 02:00PM

Other irregularly scheduled Less Wrong meetups are tak... |

4a23f6b5-548c-4a2a-b77e-9414ee9d2fdf | trentmkelly/LessWrong-43k | LessWrong | Formalizing Embeddedness Failures in Universal Artificial Intelligence

AIXI is a dualistic agent that can't work as an embedded agent... right? I couldn't find a solid formal proof of this claim, so I investigated it myself (with Marcus Hutter). It turns out there are some surprising positive and negative results to b... |

cfb880a9-a8e0-476f-9956-86a87d767320 | trentmkelly/LessWrong-43k | LessWrong | What's the name of this fallacy/reasoning antipattern?

There's a particular reasoning antipattern I'm looking for the name of (if it's been named).

It happens when you imagine what sort of evidence would support the position you want to take, and then prematurely assume from this that the evidence exists, and then us... |

93faf407-dcfb-4038-83a5-3e9b0af17fc9 | trentmkelly/LessWrong-43k | LessWrong | The Apologist and the Revolutionary

Rationalists complain that most people are too willing to make excuses for their positions, and too unwilling to abandon those positions for ones that better fit the evidence. And most people really are pretty bad at this. But certain stroke victims called anosognosiacs are much, mu... |

e0128188-3914-4709-9a54-d5b3af86b74f | trentmkelly/LessWrong-43k | LessWrong | Developing Empathy

Empathy is a huge life skill, useful in almost every interaction with other people. But, many people aren't able to empathize with others as effectively as they might want to. The standard technique is "put yourself in their shoes," which works for me. However, this doesn't always work with peopl... |

5cf50a7d-096b-46bd-9616-09aeebde3fd1 | StampyAI/alignment-research-dataset/arbital | Arbital | Coordinative AI development hypothetical

A simplified/easier hypothetical form of the [known algorithm nonrecursive](https://arbital.com/p/) path within the [https://arbital.com/p/2z](https://arbital.com/p/2z). Suppose there was an effective world government with effective monitoring of all computers; or that for wha... |

Subsets and Splits

No community queries yet

The top public SQL queries from the community will appear here once available.