id stringlengths 36 36 | source stringclasses 15 values | formatted_source stringclasses 13 values | text stringlengths 2 7.55M |

|---|---|---|---|

207780f8-6a80-450c-b93c-83b03e22dbb0 | trentmkelly/LessWrong-43k | LessWrong | Motivating Abstraction-First Decision Theory

Let’s start with a prototypical embedded decision theory problem: we run two instances of the same “agent” from the same source code and in the same environment, and the outcome depends on the choices of both agents; both agents have full knowledge of the setup.

Roughly speaking, Functional decision theory and its predecessors argue that each instance of the agent should act as if it’s choosing for both.

I suspect there’s an entirely different path to a similar conclusion - a path which is motivated not prescriptively, but descriptively.

When we say that some executing code is an “agent”, that’s an abstraction. We’re abstracting away the low-level model structure (conditionals, loops, function calls, arithmetic, data structures, etc) into a high-level model. The high-level model says “this variable is set to a value which maximizes <blah>”, without saying how that value is calculated. It’s an agent abstraction: an abstraction in which the high-level model is some kind of maximizer.

Key question: when is this abstraction valid?

Abstraction = Information at a Distance talks about what it means for an abstraction to be valid. At the lowest level, validity means that the low-level and high-level models return the same answers to some class of queries. But the linked post reduces this to a simpler definition, more directly applicable to the sort of abstractions we use in real life: variables in a high-level model should contain all the information in corresponding low-level variables which is relevant “far away”. We abstract far-away stars to point masses, because the exact distribution of the mass and momentum within the star is (usually) not relevant from far away.

Or, to put it differently: an abstraction is “valid” when components of the low-level model are independent given the values of high-level variables. The roiling of plasmas within far-apart stars is (approximately) independent given the total mass, momentum, and center-of-mass position of each star. As |

bfa2e2af-dcd1-45bf-9390-e251b66f7850 | StampyAI/alignment-research-dataset/arxiv | Arxiv | Guided Cost Learning: Deep Inverse Optimal Control via Policy Optimization

1 Introduction

---------------

Reinforcement learning can be used to acquire complex behaviors from high-level specifications.

However, defining a cost function that can be optimized effectively and encodes the correct task can be challenging in practice, and techniques like cost shaping are often used to solve complex real-world problems (Ng et al., [1999](#bib.bib18)).

Inverse optimal control (IOC) or inverse reinforcement learning (IRL) provide an avenue for addressing this challenge by learning a cost function directly from expert demonstrations, e.g. Ng et al. ([2000](#bib.bib19)); Abbeel & Ng ([2004](#bib.bib1)); Ziebart et al. ([2008](#bib.bib31)).

However, designing an effective IOC algorithm for learning from demonstration is difficult for two reasons. First, IOC is fundamentally underdefined in that many costs induce the same behavior.

Most practical algorithms therefore require carefully designed features to impose structure on the learned cost. Second, many standard IRL and IOC methods require solving the forward problem (finding an optimal policy given the current cost) in the inner loop of an iterative cost optimization.

This makes them difficult to apply to complex, high-dimensional systems, where the forward problem is itself exceedingly difficult, particularly real-world robotic systems with unknown dynamics.

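[Figure 1 diagram labels: $D_\text{demo}$, $D_\text{samp}$, $D_\text{traj}$, $c_\theta$, $\theta \leftarrow \arg\min_\theta L_\text{IOC}$]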

Figure 1: Right: Guided cost learning uses policy optimization to adaptively sample trajectories for estimating the IOC partition function. Bottom left: PR2 learning to gently place a dish in a plate rack.

To address the challenge of representation, we propose to use expressive, nonlinear function approximators, such as neural networks, to represent the cost. This reduces the engineering burden required to deploy IOC methods, and makes it possible to learn cost functions for which expert intuition is insufficient for designing good features. Such expressive function approximators, however, can learn complex cost functions that lack the structure typically imposed by hand-designed features. To mitigate this challenge, we propose two regularization techniques for IOC, one which is general and one which is specific to episodic domains, such as robotic manipulation skills.

In order to learn cost functions for real-world robotic tasks, our method must be able to handle unknown dynamics and high-dimensional systems. To that end, we propose a cost learning algorithm based on policy optimization with local linear models, building on prior work in reinforcement learning (Levine & Abbeel, [2014](#bib.bib12)). In this approach, as illustrated in Figure [1](#S1.F1 "Figure 1 ‣ 1 Introduction ‣ Guided Cost Learning: Deep Inverse Optimal Control via Policy Optimization"), the cost function is learned in the inner loop of a policy search procedure, using samples collected for policy improvement to also update the cost function. The cost learning method itself is a nonlinear generalization of maximum entropy IOC (Ziebart et al., [2008](#bib.bib31)), with samples used to approximate the partition function. In contrast to previous work that optimizes the policy in the inner loop of cost learning, our approach instead updates the cost in the inner loop of policy search, making it practical and efficient. One of the benefits of this approach is that we can couple learning the cost with learning the policy for that cost. For tasks that are too complex to acquire a good global cost function from a small number of demonstrations, our method can still recover effective behaviors by running our policy learning method and retaining the learned policy. We elaborate on this further in Section [4.4](#S4.SS4 "4.4 Learning Costs and Controllers ‣ 4 Guided Cost Learning ‣ Guided Cost Learning: Deep Inverse Optimal Control via Policy Optimization").

The main contribution of our work is an algorithm that learns nonlinear cost functions from user demonstrations, at the same time as learning a policy to perform the task. Since the policy optimization “guides” the cost toward good regions of the space, we call this method guided cost learning. Unlike prior methods, our algorithm can handle complex, nonlinear cost function representations and high-dimensional unknown dynamics, and can be used on real physical systems with a modest number of samples. Our evaluation demonstrates the performance of our method on a set of simulated benchmark tasks, showing that it outperforms previous methods. We also evaluate our method on two real-world tasks learned directly from human demonstrations. These tasks require using torque control and vision to perform a variety of robotic manipulation behaviors, without any hand-specified cost features.

2 Related Work

---------------

One of the basic challenges in inverse optimal control (IOC), also known as inverse reinforcement learning (IRL), is that finding a cost or reward under which a set of demonstrations is near-optimal is underdefined. Many different costs can explain a given set of demonstrations. Prior work has tackled this issue using maximum margin formulations (Abbeel & Ng, [2004](#bib.bib1); Ratliff et al., [2006](#bib.bib22)), as well as probabilistic models that explain suboptimal behavior as noise (Ramachandran & Amir, [2007](#bib.bib21); Ziebart et al., [2008](#bib.bib31)). We take the latter approach in this work, building on the maximum entropy IOC model (Ziebart, [2010](#bib.bib30)). Although the probabilistic model mitigates some of the difficulties with IOC, there is still a great deal of ambiguity, and an important component of many prior methods is the inclusion of detailed features created using domain knowledge, which can be linearly combined into a cost, including:

indicators for successful completion of the task for a robotic ball-in-cup game (Boularias et al., [2011](#bib.bib5)),

learning table tennis with features that include distance of the ball to the opponent’s elbow (Muelling et al., [2014](#bib.bib17)),

providing the goal position as a known constraint for robotic grasping (Doerr et al., [2015](#bib.bib7)),

and learning highway driving with indicators for collision and driving on the grass (Abbeel & Ng, [2004](#bib.bib1)). While these features allow for the user to impose structure on the cost, they substantially increase the engineering burden. Several methods have proposed to learn nonlinear costs using Gaussian processes (Levine et al., [2011](#bib.bib14)) and boosting (Ratliff et al., [2007](#bib.bib23), [2009](#bib.bib24)), but even these methods generally operate on features rather than raw states. We instead use rich, expressive function approximators, in the form of neural networks, to learn cost functions directly on raw state representations. While neural network cost representations have previously been proposed in the literature (Wulfmeier et al., [2015](#bib.bib29)), they have only been applied to small, synthetic domains. Previous work has also suggested simple regularization methods for cost functions, based on minimizing ℓ1 or ℓ2 norms of the parameter vector (Ziebart, [2010](#bib.bib30); Kalakrishnan et al., [2013](#bib.bib11)) or by using unlabeled trajectories (Audiffren et al., [2015](#bib.bib3)). When using expressive function approximators in complex real-world tasks, we must design substantially more powerful regularization techniques to mitigate the underspecified nature of the problem, which we introduce in Section [5](#S5 "5 Representation and Regularization ‣ Guided Cost Learning: Deep Inverse Optimal Control via Policy Optimization").

Another challenge in IOC is that, in order to determine the quality of a given cost function, we must solve some variant of the forward control problem to obtain the corresponding policy, and then compare this policy to the demonstrated actions.

Most early IRL algorithms required solving an MDP in the inner loop of an iterative optimization (Ng et al., [2000](#bib.bib19); Abbeel & Ng, [2004](#bib.bib1); Ziebart et al., [2008](#bib.bib31)).

This requires perfect knowledge of the system dynamics and access to an efficient offline solver, neither of which is available in, for instance, complex robotic control domains.

Several works have proposed to relax this requirement, for example by learning a value function instead of a cost (Todorov, [2006](#bib.bib26)), solving an approximate local control problem (Levine & Koltun, [2012](#bib.bib13); Dragan & Srinivasa, [2012](#bib.bib8)), generating a discrete graph of states (Byravan et al., [2015](#bib.bib6); Monfort et al., [2015](#bib.bib16)), or only obtaining an optimal trajectory rather than policy (Ratliff et al., [2006](#bib.bib22), [2009](#bib.bib24)).

However, these methods still require knowledge of the system dynamics.

Given the size and complexity of the problems addressed in this work, solving the optimal control problem even approximately in the inner loop of the cost optimization is impractical. We show that good cost functions can be learned by instead learning the *cost* in the inner loop of a *policy optimization*. Our inverse optimal control algorithm is most closely related to other previous sample-based methods based on the principle of maximum entropy, including relative entropy IRL (Boularias et al., [2011](#bib.bib5)) and path integral IRL (Kalakrishnan et al., [2013](#bib.bib11)), which can also handle unknown dynamics. However, unlike these prior methods, we adapt the sampling distribution using policy optimization. We demonstrate in our experiments that this adaptation is crucial for obtaining good results on complex robotic platforms, particularly when using complex, nonlinear cost functions.

To summarize, our proposed method is the first to combine several desirable features into a single, effective algorithm: it can handle unknown dynamics, which is crucial for real-world robotic tasks, it can deal with high-dimensional, complex systems, as in the case of real torque-controlled robotic arms, and it can learn complex, expressive cost functions, such as multilayer neural networks, which removes the requirement for meticulous hand-engineering of cost features. While some prior methods have shown good results with unknown dynamics on real robots (Boularias et al., [2011](#bib.bib5); Kalakrishnan et al., [2013](#bib.bib11)) and some have proposed using nonlinear cost functions (Ratliff et al., [2006](#bib.bib22), [2009](#bib.bib24); Levine et al., [2011](#bib.bib14)), to our knowledge no prior method has been demonstrated that can provide all of these benefits in the context of complex real-world tasks.

3 Preliminaries and Overview

-----------------------------

We build on the probabilistic maximum entropy inverse optimal control framework (Ziebart et al., [2008](#bib.bib31)). The demonstrated behavior is assumed to be the result of an expert acting stochastically and near-optimally with respect to an unknown cost function. Specifically, the model assumes that the expert samples the demonstrated trajectories {τi} from the distribution

$$p(\tau) = \frac{1}{Z}\exp(-c_\theta(\tau)), \tag{1}$$

where τ={x1,u1,…,xT,uT} is a trajectory sample, cθ(τ)=∑tcθ(xt,ut) is an unknown cost function parameterized by θ, and xt and ut are the state and action at time step t. Under this model, the expert is most likely to act optimally, and can generate suboptimal trajectories with a probability that decreases exponentially as the trajectories become more costly. The partition function Z is difficult to compute for large or continuous domains, and presents the main computational challenge in maximum entropy IOC. The first applications of this model computed Z exactly with dynamic programming (Ziebart et al., [2008](#bib.bib31)). However, this is only practical in small, discrete domains. More recent methods have proposed to estimate Z by using the Laplace approximation (Levine & Koltun, [2012](#bib.bib13)), value function approximation (Huang & Kitani, [2014](#bib.bib10)), and samples (Boularias et al., [2011](#bib.bib5)). As discussed in Section [4.1](#S4.SS1 "4.1 Sample-Based Inverse Optimal Control ‣ 4 Guided Cost Learning ‣ Guided Cost Learning: Deep Inverse Optimal Control via Policy Optimization"), we take the sample-based approach in this work, because it allows us to perform inverse optimal control without a known model of the system dynamics. This is especially important in robotic manipulation domains, where the robot might interact with a variety of objects with unknown physical properties.
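As a small illustration of the model in Equation (1), the sketch below evaluates the maximum entropy distribution over a finite set of candidate trajectories. The finite set, the function name `maxent_trajectory_probs`, and the example costs are assumptions made for illustration; in the continuous domains considered in this paper, Z cannot be summed exactly like this.

```python
import math

def maxent_trajectory_probs(costs):
    """Return p(tau) = exp(-c(tau)) / Z over a finite list of trajectory costs."""
    # Subtracting the minimum cost improves numerical stability and
    # cancels out in the ratio exp(-c) / Z.
    c_min = min(costs)
    unnormalized = [math.exp(-(c - c_min)) for c in costs]
    z = sum(unnormalized)  # partition function (up to the constant shift)
    return [u / z for u in unnormalized]

# Hypothetical total costs of three candidate trajectories.
probs = maxent_trajectory_probs([0.0, 1.0, 3.0])
```

Each unit of additional cost lowers the probability of a trajectory by a factor of e, which is the sense in which suboptimal trajectories become exponentially less likely.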

To represent the cost function cθ(xt,ut), IOC or IRL methods typically use a linear combination of hand-crafted features, given by cθ(xt,ut)=θ⊤f(xt,ut) (Abbeel & Ng, [2004](#bib.bib1)). This representation is difficult to apply to more complex domains, especially when the cost must be computed from raw sensory input. In this work, we explore the use of high-dimensional, expressive function approximators for representing cθ(xt,ut). As we discuss in Section [6](#S6 "6 Experimental Evaluation ‣ Guided Cost Learning: Deep Inverse Optimal Control via Policy Optimization"), we use neural networks that operate directly on the robot’s state, though other parameterizations could also be used with our method. Complex representations are generally considered to be poorly suited for IOC, since learning costs that associate the right element of the state with the goal of the task is already quite difficult even with simple linear representations. However, as we discuss in our evaluation, we found that such representations could be learned effectively by adaptively generating samples as part of a policy optimization procedure, as discussed in Section [4.2](#S4.SS2 "4.2 Adaptive Sampling via Policy Optimization ‣ 4 Guided Cost Learning ‣ Guided Cost Learning: Deep Inverse Optimal Control via Policy Optimization").

4 Guided Cost Learning

-----------------------

In this section, we describe the guided cost learning algorithm, which combines sample-based maximum entropy IOC with forward reinforcement learning using time-varying linear models. The central idea behind this method is to adapt the sampling distribution to match the maximum entropy cost distribution $p(\tau)=\frac{1}{Z}\exp(-c_\theta(\tau))$, by directly optimizing a trajectory distribution with respect to the current cost cθ(τ) using a sample-efficient reinforcement learning algorithm. Samples generated on the physical system are used both to improve the policy and more accurately estimate the partition function Z. In this way, the reinforcement learning step acts to “guide” the sampling distribution toward regions where the samples are more useful for estimating the partition function. We will first describe how the IOC objective in Equation ([1](#S3.E1 "(1) ‣ 3 Preliminaries and Overview ‣ Guided Cost Learning: Deep Inverse Optimal Control via Policy Optimization")) can be estimated with samples, and then describe how reinforcement learning can adapt the sampling distribution.

### 4.1 Sample-Based Inverse Optimal Control

In the sample-based approach to maximum entropy IOC, the partition function Z=∫exp(−cθ(τ))dτ is estimated with samples from a background distribution q(τ). Prior sample-based IOC methods use a linear representation of the cost function, which simplifies the corresponding cost learning problem (Boularias et al., [2011](#bib.bib5); Kalakrishnan et al., [2013](#bib.bib11)). In this section, we instead derive a sample-based approximation for the IOC objective for a general nonlinear parameterization of the cost function. The negative log-likelihood corresponding to the IOC model in Equation ([1](#S3.E1 "(1) ‣ 3 Preliminaries and Overview ‣ Guided Cost Learning: Deep Inverse Optimal Control via Policy Optimization")) is given by:

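$$
\begin{aligned}
L_\text{IOC}(\theta) &= \frac{1}{N}\sum_{\tau_i\in D_\text{demo}} c_\theta(\tau_i) + \log Z \\
&\approx \frac{1}{N}\sum_{\tau_i\in D_\text{demo}} c_\theta(\tau_i) + \log \frac{1}{M}\sum_{\tau_j\in D_\text{samp}} \frac{\exp(-c_\theta(\tau_j))}{q(\tau_j)},
\end{aligned}
$$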

where Ddemo denotes the set of N demonstrated trajectories, Dsamp the set of M background samples, and q denotes the background distribution from which trajectories τj were sampled. Prior methods have chosen q to be uniform (Boularias et al., [2011](#bib.bib5)) or to lie in the vicinity of the demonstrations (Kalakrishnan et al., [2013](#bib.bib11)).

To compute the gradients of this objective with respect to the cost parameters θ, let $w_j=\frac{\exp(-c_\theta(\tau_j))}{q(\tau_j)}$ and $Z=\sum_j w_j$. The gradient is then given by:

$$\frac{dL_\text{IOC}}{d\theta} = \frac{1}{N}\sum_{\tau_i\in D_\text{demo}} \frac{dc_\theta}{d\theta}(\tau_i) - \frac{1}{Z}\sum_{\tau_j\in D_\text{samp}} w_j \frac{dc_\theta}{d\theta}(\tau_j)$$

When the cost is represented by a neural network or some other function approximator, this gradient can be computed efficiently by backpropagating $-\frac{w_j}{Z}$ for each trajectory τj∈Dsamp and $\frac{1}{N}$ for each trajectory τi∈Ddemo.
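The sample-based objective and its gradient can be sketched in a few lines. This is a minimal illustration and not the authors' implementation: it assumes a linear cost cθ(τ)=θ·f(τ) over hypothetical trajectory features so that the derivative of the cost is simply f(τ), whereas the method in this paper backpropagates through a neural network. The function name and all example values are made up.

```python
import math

def ioc_loss_and_grad(theta, demo_feats, samp_feats, samp_q):
    """Sample-based L_IOC and its gradient for c_theta(tau) = theta . f(tau)."""
    def cost(f):
        return sum(t * x for t, x in zip(theta, f))

    n, m = len(demo_feats), len(samp_feats)
    # log w_j = -c_theta(tau_j) - log q(tau_j), kept in log space for stability.
    log_w = [-cost(f) - math.log(q) for f, q in zip(samp_feats, samp_q)]
    top = max(log_w)
    w = [math.exp(lw - top) for lw in log_w]      # weights rescaled by exp(-top)
    w_sum = sum(w)
    log_z_hat = top + math.log(w_sum / m)         # log (1/M) sum_j w_j

    loss = sum(cost(f) for f in demo_feats) / n + log_z_hat

    # Linear-cost assumption: d c_theta / d theta (tau) = f(tau).
    grad = []
    for d in range(len(theta)):
        demo_term = sum(f[d] for f in demo_feats) / n
        samp_term = sum(wj * f[d] for wj, f in zip(w, samp_feats)) / w_sum
        grad.append(demo_term - samp_term)
    return loss, grad

# Hypothetical features and background densities.
theta = [0.5, -1.0]
demos = [[1.0, 0.0], [0.8, 0.2]]
samples = [[0.0, 1.0], [1.0, 1.0], [0.5, 0.5]]
q_vals = [0.2, 0.5, 0.3]
loss, grad = ioc_loss_and_grad(theta, demos, samples, q_vals)
```

The rescaling of the weights by their maximum cancels in the ratio w_j/Z, so the gradient matches the expression above while avoiding overflow for very low costs.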

### 4.2 Adaptive Sampling via Policy Optimization

The choice of background sample distribution q(τ) for estimating the objective LIOC is critical for successfully applying the sample-based IOC algorithm. The optimal importance sampling distribution for estimating the partition function ∫exp(−cθ(τ))dτ is q(τ)∝|exp(−cθ(τ))|=exp(−cθ(τ)).

Designing a single background distribution q(τ) is therefore quite difficult when the cost cθ is unknown. Instead, we can adaptively refine q(τ) to generate more samples in those regions of the trajectory space that are good according to the current cost function cθ(τ). To this end, we interleave the IOC optimization, which attempts to find the cost function that maximizes the likelihood of the demonstrations, with a policy optimization procedure, which improves the trajectory distribution q(τ) with respect to the current cost.
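The claim that q(τ)∝exp(−cθ(τ)) is the optimal importance sampling distribution can be checked with a short worked derivation. The notation $\hat{Z}$ for the sample estimate is introduced here for illustration only.

```latex
\hat{Z} = \frac{1}{M}\sum_{j=1}^{M}\frac{\exp(-c_\theta(\tau_j))}{q(\tau_j)},
\qquad \tau_j \sim q .

% Substituting q(\tau) = \exp(-c_\theta(\tau)) / Z makes every term constant:
\frac{\exp(-c_\theta(\tau_j))}{q(\tau_j)} = Z \quad \text{for every } \tau_j
\;\;\Longrightarrow\;\;
\hat{Z} = Z, \qquad \operatorname{Var}\big[\hat{Z}\big] = 0 .
```

Since this choice of q depends on the unknown cost and on Z itself, it cannot be fixed in advance, which is why the sampling distribution is refined alongside the cost.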

Since one of the main advantages of the sample-based IOC approach is the ability to handle unknown dynamics, we must also choose a policy optimization procedure that can handle unknown dynamics. To this end, we adapt the method presented by Levine & Abbeel ([2014](#bib.bib12)), which performs policy optimization under unknown dynamics by iteratively fitting time-varying linear dynamics to samples from the current trajectory distribution q(τ), updating the trajectory distribution using a modified LQR backward pass, and generating more samples for the next iteration. The trajectory distributions generated by this method are Gaussian, and each iteration of the policy optimization procedure satisfies a KL-divergence constraint of the form $D_\text{KL}(q(\tau)\,\|\,\hat{q}(\tau))\le\epsilon$, which prevents the policy from changing too rapidly (Bagnell & Schneider, [2003](#bib.bib4); Peters et al., [2010](#bib.bib20); Rawlik & Vijayakumar, [2013](#bib.bib25)).

This has the additional benefit of not overfitting to poor initial estimates of the cost function. With a small modification, we can use this algorithm to optimize a maximum entropy version of the objective, given by $\min_q E_q[c_\theta(\tau)]-H(\tau)$, as discussed in prior work (Levine & Abbeel, [2014](#bib.bib12)). This variant of the algorithm allows us to recover the trajectory distribution q(τ)∝exp(−cθ(τ)) at convergence (Ziebart, [2010](#bib.bib30)), a good distribution for sampling. For completeness, this policy optimization procedure is summarized in Appendix [A](#A1 "Appendix A Policy Optimization under Unknown Dynamics ‣ Guided Cost Learning: Deep Inverse Optimal Control via Policy Optimization").

1: Initialize qk(τ) as either a random initial controller or from demonstrations

2: for iteration i=1 to I do

3: Generate samples Dtraj from qk(τ)

4: Append samples: Dsamp←Dsamp∪Dtraj

5: Use Dsamp to update cost cθ using Algorithm [2](#alg2 "Algorithm 2 ‣ 4.3 Cost Optimization and Importance Weights ‣ 4 Guided Cost Learning ‣ Guided Cost Learning: Deep Inverse Optimal Control via Policy Optimization")

6: Update qk(τ) using Dtraj and the method from (Levine & Abbeel, [2014](#bib.bib12)) to obtain qk+1(τ)

7: end for

8: return optimized cost parameters θ and trajectory distribution q(τ)

Algorithm 1 Guided cost learning

Our sample-based IOC algorithm with adaptive sampling is summarized in Algorithm [1](#alg1 "Algorithm 1 ‣ 4.2 Adaptive Sampling via Policy Optimization ‣ 4 Guided Cost Learning ‣ Guided Cost Learning: Deep Inverse Optimal Control via Policy Optimization"). We call this method guided cost learning because policy optimization is used to guide sampling toward regions with lower cost. The algorithm consists of taking successive policy optimization steps, each of which generates samples Dtraj from the latest trajectory distribution qk(τ). After sampling, the cost function is updated using all samples collected thus far for the purpose of policy optimization. No additional background samples are required for this method. This procedure returns both a learned cost function cθ(xt,ut) and a trajectory distribution q(τ), which corresponds to a time-varying linear-Gaussian controller q(ut|xt). This controller can be used to execute the learned behavior.
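The control flow of Algorithm 1 can be sketched as a short loop. This is only a structural skeleton under stated assumptions: `sample_trajs`, `update_cost`, and `update_policy` are hypothetical callables standing in for sampling on the system, Algorithm 2, and the policy optimization step, and the toy stand-ins below exist only to make the example runnable.

```python
def guided_cost_learning(q, theta, sample_trajs, update_cost, update_policy, n_iters):
    """Skeleton of Algorithm 1 with user-supplied (hypothetical) components."""
    d_samp = []                                 # all samples collected so far
    for _ in range(n_iters):
        d_traj = sample_trajs(q)                # samples from the current q
        d_samp.extend(d_traj)                   # D_samp <- D_samp U D_traj
        theta = update_cost(theta, d_samp)      # cost update sees all samples
        q = update_policy(q, theta, d_traj)     # policy update sees latest samples
    return theta, q

# Toy stand-ins that only track bookkeeping, not real learning.
seen_sizes = []

def toy_sample(q):
    return [q, q + 0.5]

def toy_update_cost(theta, d_samp):
    seen_sizes.append(len(d_samp))
    return theta + 1

def toy_update_policy(q, theta, d_traj):
    return q + 1

theta_out, q_out = guided_cost_learning(
    0, 0, toy_sample, toy_update_cost, toy_update_policy, n_iters=3
)
```

The skeleton makes the asymmetry explicit: the cost update reuses every sample gathered so far, while the policy update uses only the most recent batch.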

### 4.3 Cost Optimization and Importance Weights

1: for iteration k=1 to K do

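2: Sample demonstration batch $\hat{D}_\text{demo}\subset D_\text{demo}$

3: Sample background batch $\hat{D}_\text{samp}\subset D_\text{samp}$

4: Append demonstration batch to background batch: $\hat{D}_\text{samp}\leftarrow\hat{D}_\text{demo}\cup\hat{D}_\text{samp}$

5: Estimate $\frac{dL_\text{IOC}}{d\theta}(\theta)$ using $\hat{D}_\text{demo}$ and $\hat{D}_\text{samp}$

6: Update parameters θ using gradient $\frac{dL_\text{IOC}}{d\theta}(\theta)$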

7: end for

8: return optimized cost parameters θ

Algorithm 2 Nonlinear IOC with stochastic gradients

The IOC objective can be optimized using standard nonlinear optimization methods and the gradient $\frac{dL_\text{IOC}}{d\theta}$. Stochastic gradient methods are often preferred for high-dimensional function approximators, such as neural networks. Such methods are straightforward to apply to objectives that factorize over the training samples, but the partition function does not factorize trivially in this way. Nonetheless, we found that our objective could still be optimized with stochastic gradient methods by sampling a subset of the demonstrations and background samples at each iteration. When the number of samples in the batch is small, we found it necessary to add the sampled demonstrations to the background sample set as well; without adding the demonstrations to the sample set, the objective can become unbounded and frequently does in practice.

The stochastic optimization procedure is presented in Algorithm [2](#alg2 "Algorithm 2 ‣ 4.3 Cost Optimization and Importance Weights ‣ 4 Guided Cost Learning ‣ Guided Cost Learning: Deep Inverse Optimal Control via Policy Optimization"), and is straightforward to implement with most neural network libraries based on backpropagation.
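A minimal sketch of the stochastic procedure in Algorithm 2 is given below. It is not the authors' implementation: it assumes a linear cost cθ(τ)=θ·f(τ) over hypothetical trajectory features, plain gradient descent with a fixed step size, and known sampling densities for every trajectory. The function name `sgd_ioc` and all data values are made up for illustration.

```python
import math
import random

def sgd_ioc(theta, demos, samples, k_iters=200, batch=4, lr=0.05, seed=0):
    """Algorithm 2 sketch; demos and samples are lists of (features, q_value)."""
    rng = random.Random(seed)
    theta = list(theta)
    for _ in range(k_iters):
        demo_batch = rng.sample(demos, min(batch, len(demos)))
        samp_batch = rng.sample(samples, min(batch, len(samples)))
        # Step 4: append the demonstration batch to the background batch.
        background = demo_batch + samp_batch

        costs = [sum(t * x for t, x in zip(theta, f)) for f, _ in background]
        log_w = [-c - math.log(q) for c, (_, q) in zip(costs, background)]
        top = max(log_w)
        w = [math.exp(lw - top) for lw in log_w]   # rescaled importance weights
        w_sum = sum(w)

        # Gradient of the minibatch objective (linear cost: dc/dtheta = f).
        for d in range(len(theta)):
            demo_term = sum(f[d] for f, _ in demo_batch) / len(demo_batch)
            samp_term = sum(wj * f[d] for wj, (f, _) in zip(w, background)) / w_sum
            theta[d] -= lr * (demo_term - samp_term)
    return theta

# Hypothetical 1D features: demonstrations have low feature values.
demo_data = [([0.0], 1.0), ([0.1], 1.0), ([0.2], 1.0), ([0.1], 1.0)]
samp_data = [([1.0], 1.0), ([0.9], 1.0), ([1.2], 1.0), ([0.8], 1.0)]
theta_hat = sgd_ioc([0.0], demo_data, samp_data)
```

Because the demonstrations are included in the background batch, the weighted sample average can match the demonstration average at a finite θ, which keeps the minibatch objective bounded in this toy setting.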

Estimating the partition function requires us to use importance sampling. Although prior work has suggested dropping the importance weights (Kalakrishnan et al., [2013](#bib.bib11); Aghasadeghi & Bretl, [2011](#bib.bib2)), we show in Appendix [B](#A2 "Appendix B Consistency Evaluation ‣ Guided Cost Learning: Deep Inverse Optimal Control via Policy Optimization") that this produces an inconsistent likelihood estimate and fails to recover good cost functions. Since our samples are drawn from multiple distributions, we compute a fusion distribution to evaluate the importance weights.

Specifically, if we have samples from k distributions $q_1(\tau),\dots,q_k(\tau)$, we can construct a consistent estimator of the expectation of a function f(τ) under a uniform distribution as $E[f(\tau)]\approx\frac{1}{M}\sum_{\tau_j}\frac{1}{\frac{1}{k}\sum_\kappa q_\kappa(\tau_j)}f(\tau_j)$.

Accordingly, the importance weights are $z_j=\left[\frac{1}{k}\sum_\kappa q_\kappa(\tau_j)\right]^{-1}$, and the objective is now:

$$L_\text{IOC}(\theta) = \frac{1}{N}\sum_{\tau_i\in D_\text{demo}} c_\theta(\tau_i) + \log \frac{1}{M}\sum_{\tau_j\in D_\text{samp}} z_j \exp(-c_\theta(\tau_j))$$

The distributions qκ underlying background samples are obtained from the controller at iteration k. We must also append the demonstrations to the samples in Algorithm [2](#alg2 "Algorithm 2 ‣ 4.3 Cost Optimization and Importance Weights ‣ 4 Guided Cost Learning ‣ Guided Cost Learning: Deep Inverse Optimal Control via Policy Optimization"), yet the distribution that generated the demonstrations is unknown. To estimate it, we assume the demonstrations come from a single Gaussian trajectory distribution and compute its empirical mean and variance. We found this approximation sufficiently accurate for estimating the importance weights of the demonstrations, as shown in Appendix [B](#A2 "Appendix B Consistency Evaluation ‣ Guided Cost Learning: Deep Inverse Optimal Control via Policy Optimization").
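The fusion-distribution weights can be computed directly from the densities of the sampling distributions. The sketch below is illustrative only: it treats each trajectory as a scalar and uses two hypothetical 1D Gaussian densities in place of the controllers' trajectory distributions.

```python
import math

def gaussian_pdf(x, mean, std):
    """Density of a 1D Gaussian, standing in for a trajectory distribution."""
    return math.exp(-0.5 * ((x - mean) / std) ** 2) / (std * math.sqrt(2.0 * math.pi))

def fusion_importance_weights(samples, densities):
    """z_j = [ (1/k) * sum_kappa q_kappa(tau_j) ]^(-1) for each sample tau_j."""
    k = len(densities)
    return [1.0 / (sum(q(tau) for q in densities) / k) for tau in samples]

# Two hypothetical sampling distributions from successive iterations.
densities = [
    lambda tau: gaussian_pdf(tau, 0.0, 1.0),
    lambda tau: gaussian_pdf(tau, 2.0, 1.0),
]
z = fusion_importance_weights([1.0, 0.0, 2.5], densities)
```

Each sample is weighted by the inverse of the average density across all sampling distributions, regardless of which one actually produced it.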

### 4.4 Learning Costs and Controllers

In contrast to many previous IOC and IRL methods, our approach can be used to learn a cost while simultaneously optimizing the policy for a new instance of the task not in the demos, such as a new position of a target cup for a pouring task, as shown in our experiments. Since the algorithm produces both a cost function cθ(xt,ut) and a controller q(ut|xt) that optimizes this cost on the new task instance, we can directly use this controller to execute the desired behavior. In this way, the method actually learns a policy from demonstration, using the additional knowledge that the demonstrations are near-optimal under some unknown cost function, similar to recent work on IOC by direct loss minimization (Doerr et al., [2015](#bib.bib7)). The learned cost function cθ(xt,ut) can often also be used to optimize new policies for new instances of the task without additional cost learning. However, we found that on the most challenging tasks we tested, running policy learning with IOC in the loop for each new task instance typically succeeded more frequently than running IOC once and reusing the learned cost. We hypothesize that this is because training the policy on a new instance of the task provides the algorithm with additional information about task variation, thus producing a better cost function and reducing overfitting. The intuition behind this hypothesis is that the demonstrations only cover a small portion of the degrees of variation in the task. Observing samples from a new task instance provides the algorithm with a better idea of the particular factors that distinguish successful task executions from failures.

5 Representation and Regularization

------------------------------------

We parametrize our cost functions as neural networks, expanding their expressive power and enabling IOC to be applied to the state of a robotic system directly, without hand-designed features.

Our experiments in Section [6.2](#S6.SS2 "6.2 Real-World Robotic Control ‣ 6 Experimental Evaluation ‣ Guided Cost Learning: Deep Inverse Optimal Control via Policy Optimization") confirm that an affine cost function is not expressive enough to learn some behaviors.

Neural network parametrizations are particularly useful for learning visual representations on raw image pixels.

In our experiments, we make use of the unsupervised visual feature learning method developed by Finn et al. ([2016](#bib.bib9)) to learn cost functions that depend on visual input.

Learning cost functions on raw pixels is an interesting direction for future work, which we discuss in Section [7](#S7 "7 Discussion and Future Work ‣ Guided Cost Learning: Deep Inverse Optimal Control via Policy Optimization").

While the expressive power of nonlinear cost functions provides a range of benefits, it introduces significant model complexity to an already underspecified IOC objective.

To mitigate this challenge, we propose two regularization methods for IOC.

Prior methods regularize the IOC objective by penalizing the ℓ1 or ℓ2 norm of the cost parameters θ (Ziebart, [2010](#bib.bib30); Kalakrishnan et al., [2013](#bib.bib11)).

For high-dimensional nonlinear cost functions, this regularizer is often insufficient, since different entries in the parameter vector can have drastically different effects on the cost.

We use two regularization terms. The first term encourages the cost of demo and sample trajectories to change locally at a constant rate (lcr), by penalizing the second time derivative:

$$g_\text{lcr}(\tau) = \sum_{x_t\in\tau}\left[(c_\theta(x_{t+1})-c_\theta(x_t))-(c_\theta(x_t)-c_\theta(x_{t-1}))\right]^2$$

This term reduces high-frequency variation that is often symptomatic of overfitting, making the cost easier to reoptimize. Although sharp changes in the cost slope are sometimes preferred, we found that temporally slow-changing costs were able to adequately capture all of the behaviors in our experiments.
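The local-constant-rate regularizer amounts to a sum of squared second differences of the per-step cost. The sketch below assumes the per-step costs cθ(xt) have already been evaluated along one trajectory and sums over interior time steps only; that boundary handling is an assumption of this example.

```python
def lcr_penalty(costs):
    """Sum over interior steps of the squared second difference of the cost."""
    return sum(
        ((costs[t + 1] - costs[t]) - (costs[t] - costs[t - 1])) ** 2
        for t in range(1, len(costs) - 1)
    )

linear_costs = [0.0, 1.0, 2.0, 3.0]   # constant slope, so no penalty
kinked_costs = [0.0, 1.0, 0.0]        # slope flips from +1 to -1
penalty_linear = lcr_penalty(linear_costs)
penalty_kinked = lcr_penalty(kinked_costs)
```

A cost that changes at a constant rate along the trajectory incurs zero penalty, while abrupt changes in slope are penalized quadratically.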

The second regularizer is more specific to one-shot episodic tasks, and it encourages the cost of the states of a demo trajectory to decrease strictly monotonically in time using a squared hinge loss:

$$g_\text{mono}(\tau) = \sum_{x_t\in\tau}\left[\max(0,\,c_\theta(x_t)-c_\theta(x_{t-1})-1)\right]^2$$

The rationale behind this regularizer is that, for tasks that essentially consist of reaching a target condition or state, the demonstrations typically make monotonic progress toward the goal on some (potentially nonlinear) manifold. While this assumption does not always hold perfectly, we again found that this type of regularizer improved performance on the tasks in our evaluation. We show a detailed comparison with regard to both regularizers in Appendix [E](#A5 "Appendix E Regularization Evaluation ‣ Guided Cost Learning: Deep Inverse Optimal Control via Policy Optimization").
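The monotonic regularizer can be sketched in the same way. The example follows the formula exactly as written above, so a per-step increase in cost of at most 1 incurs no penalty; the per-step costs are again assumed to be precomputed along one demonstration trajectory.

```python
def mono_penalty(costs):
    """Squared hinge on c(x_t) - c(x_{t-1}) - 1, summed over time steps."""
    return sum(
        max(0.0, costs[t] - costs[t - 1] - 1.0) ** 2
        for t in range(1, len(costs))
    )

decreasing = [3.0, 2.0, 1.0]   # cost falls over time, so no penalty
jump_up = [0.0, 3.0]           # cost rises by 3, hinge value 2
penalty_decreasing = mono_penalty(decreasing)
penalty_jump = mono_penalty(jump_up)
```

Only demonstration trajectories are passed through this term, since the assumption of steady progress toward the goal applies to the expert and not to arbitrary samples.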

6 Experimental Evaluation

--------------------------

We evaluated our sampling-based IOC algorithm on a set of robotic control tasks, both in simulation and on a real robotic platform. Each of the experiments involves complex second-order dynamics with force or torque control and no manually designed cost function features, with the raw state provided as input to the learned cost function.

We also tested the consistency of our algorithm on a toy point mass example for which the ground truth distribution is known. These experiments, discussed fully in Appendix [B](#A2 "Appendix B Consistency Evaluation ‣ Guided Cost Learning: Deep Inverse Optimal Control via Policy Optimization"), show that using a maximum entropy version of the policy optimization objective (see Section [4.2](#S4.SS2 "4.2 Adaptive Sampling via Policy Optimization ‣ 4 Guided Cost Learning ‣ Guided Cost Learning: Deep Inverse Optimal Control via Policy Optimization")) and using importance weights are both necessary for recovering the true distribution.

### 6.1 Simulated Comparisons

In this section, we provide simulated comparisons between guided cost learning and prior sample-based methods. We focus on task performance and sample complexity, and also perform comparisons across two different sampling distribution initializations and regularizations (in Appendix [E](#A5 "Appendix E Regularization Evaluation ‣ Guided Cost Learning: Deep Inverse Optimal Control via Policy Optimization")).

[Figure 2 panel labels: green: goal; red: obstacles; initial state; goal state]

Figure 2: Comparison to prior work on simulated 2D navigation, reaching, and peg insertion tasks. Reported performance is averaged over 4 runs of IOC on 4 different initial conditions. For peg insertion, the depth of the hole is 0.1m, marked as a dashed line. Distances larger than this amount failed to insert the peg.

To compare guided cost learning to prior methods, we ran experiments on three simulated tasks of varying difficulty, all using the MuJoCo physics simulator (Todorov et al., [2012](#bib.bib27)). The first task is 2D navigation around obstacles, modeled on the task by Levine & Koltun ([2012](#bib.bib13)). This task has simple, linear dynamics and a low-dimensional state space, but a complex cost function, which we visualize in Figure [2](#S6.F2 "Figure 2 ‣ 6.1 Simulated Comparisons ‣ 6 Experimental Evaluation ‣ Guided Cost Learning: Deep Inverse Optimal Control via Policy Optimization"). The second task involves a 3-link arm reaching towards a goal location in 2D, in the presence of physical obstacles. The third, most challenging, task is 3D peg insertion with a 7 DOF arm. This task is significantly more difficult than tasks evaluated in prior IOC work as it involves complex contact dynamics between the peg and the table and high-dimensional, continuous state and action spaces. The arm is controlled by selecting torques at the joint motors at 20 Hz. More details on the experimental setup are provided in Appendix [D](#A4 "Appendix D Detailed Description of Task Setup ‣ Guided Cost Learning: Deep Inverse Optimal Control via Policy Optimization").

In addition to the expert demonstrations, prior methods require a set of “suboptimal” samples for estimating the partition function. We obtain these samples in one of two ways: by using a baseline random controller that randomly explores around the initial state (random), and by fitting a linear-Gaussian controller to the demonstrations (demo). The latter initialization typically produces a motion that tracks the average demonstration with variance proportional to the variation between demonstrated motions.

Between 20 and 32 demonstrations were generated from a policy learned using the method of Levine & Abbeel ([2014](#bib.bib12)), with a ground truth cost function determined by the agent’s pose relative to the goal. We found that for the more precise peg insertion task, a relatively complex ground truth cost function was needed to afford the necessary degree of precision. We used a cost function of the form $w d^2 + v \log(d^2 + \alpha)$, where $d$ is the distance between the two tips of the peg and their target positions, and $v$ and $\alpha$ are constants. Note that the affine cost is incapable of exactly representing this function. We generated demonstration trajectories under several different starting conditions. For 2D navigation, we varied the initial position of the agent, and for peg insertion, we varied the position of the peg hole. We then evaluated the performance of our method and prior sample-based methods (Kalakrishnan et al., [2013](#bib.bib11); Boularias et al., [2011](#bib.bib5)) on each task from four arbitrarily-chosen test states. We chose these prior methods because, to our knowledge, they are the only methods which can handle unknown dynamics.
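To make the shape of this ground truth cost concrete, here is a small sketch of the quadratic-plus-log form. The default values of `w`, `v`, and `alpha` are placeholders chosen for illustration only; the text does not give the constants that were actually used.

```python
import math


def ground_truth_cost(d, w=1.0, v=1.0, alpha=1e-5):
    """Cost of the form w*d^2 + v*log(d^2 + alpha).

    d     : distance between the peg tips and their targets
    w, v  : weights (hypothetical defaults)
    alpha : small positive constant that keeps the log finite at d = 0
            (hypothetical default)
    """
    d2 = d * d
    return w * d2 + v * math.log(d2 + alpha)
```

The quadratic term dominates far from the target, while the log term becomes steep as `d` approaches zero, which is one way to reward the last few millimetres of precision that a purely quadratic cost would treat as nearly free.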

We used a neural network cost function with two hidden layers with 24–52 units and rectifying nonlinearities of the form $\max(z, 0)$ followed by linear connections to a set of features $y_t$, which had a size of 20 for the 2D navigation task and 100 for the other two tasks. The cost is then given by

$$c_\theta(x_t, u_t) = \|A y_t + b\|^2 + w_u \|u_t\|^2 \tag{2}$$

with a fixed torque weight $w_u$ and the parameters consisting of $A$, $b$, and the network weights. These cost functions range from about 3,000 parameters for the 2D navigation task to 16,000 parameters for peg insertion. For further details, see Appendix [C](#A3 "Appendix C Neural Network Parametrization and Initialization ‣ Guided Cost Learning: Deep Inverse Optimal Control via Policy Optimization"). Although the prior methods learn only linear cost functions, we can extend them to the nonlinear setting following the derivation in Section [4.1](#S4.SS1 "4.1 Sample-Based Inverse Optimal Control ‣ 4 Guided Cost Learning ‣ Guided Cost Learning: Deep Inverse Optimal Control via Policy Optimization").
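The structure described above (two rectified hidden layers, a linear map to features, then a cost built from $A$, $b$, and a fixed torque weight) can be sketched as a NumPy forward pass. This is an illustrative sketch only: it assumes the cost is the squared norm of an affine function of the features plus a weighted squared torque norm, and the layer sizes, the shape of `A`, the initialization, and all function names are hypothetical rather than taken from the source.

```python
import numpy as np


def init_params(state_dim, hidden=24, n_features=20, seed=0):
    """Randomly initialise a small cost network (hypothetical sizes)."""
    rng = np.random.default_rng(seed)
    return {
        "W1": rng.normal(scale=0.1, size=(hidden, state_dim)),
        "b1": np.zeros(hidden),
        "W2": rng.normal(scale=0.1, size=(hidden, hidden)),
        "b2": np.zeros(hidden),
        # Linear connections from the last hidden layer to the features y_t
        "W3": rng.normal(scale=0.1, size=(n_features, hidden)),
        "b3": np.zeros(n_features),
        # Affine map applied to the features inside the squared norm
        "A": rng.normal(scale=0.1, size=(n_features, n_features)),
        "b": np.zeros(n_features),
    }


def cost(params, x, u, w_u=1e-3):
    """Assumed form: ||A y + b||^2 + w_u * ||u||^2, with y = features(x)."""
    h1 = np.maximum(params["W1"] @ x + params["b1"], 0.0)   # max(z, 0)
    h2 = np.maximum(params["W2"] @ h1 + params["b2"], 0.0)  # max(z, 0)
    y = params["W3"] @ h2 + params["b3"]
    state_term = np.sum((params["A"] @ y + params["b"]) ** 2)
    torque_term = w_u * np.sum(np.asarray(u, dtype=float) ** 2)
    return float(state_term + torque_term)
```

Because both terms are squared norms, a cost of this form is non-negative by construction, and the torque penalty is independent of the learned parameters.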

Figure [2](#S6.F2 "Figure 2 ‣ 6.1 Simulated Comparisons ‣ 6 Experimental Evaluation ‣ Guided Cost Learning: Deep Inverse Optimal Control via Policy Optimization") illustrates the tasks and shows results for each method after different numbers of samples from the test condition. In our method, five samples were used at each iteration of policy optimization, while for the prior methods, the number of samples corresponds to the number of “suboptimal” samples provided for cost learning. For the prior methods, additional samples were used to optimize the learned cost. The results indicate that our method is generally capable of learning tasks that are more complex than the prior methods, and is able to effectively handle complex, high-dimensional neural network cost functions. In particular, adding more samples for the prior methods generally does not improve their performance, because all of the samples are drawn from the same distribution.

### 6.2 Real-World Robotic Control

We also evaluated our method on a set of real robotic manipulation tasks using the PR2 robot, with comparisons to relative entropy IRL, which we found to be the better of the two prior methods in our simulated experiments. We chose two robotic manipulation tasks which involve complex dynamics and interactions with delicate objects, for which it is challenging to write down a cost function by hand.

For all methods, we used a two-layer neural network cost parametrization and the regularization objective described in Section [5](#S5 "5 Representation and Regularization ‣ Guided Cost Learning: Deep Inverse Optimal Control via Policy Optimization"), and compared to an affine cost function on one task to evaluate the importance of non-linear cost representations. The affine cost followed the form of equation [2](#S6.E2 "(2) ‣ 6.1 Simulated Comparisons ‣ 6 Experimental Evaluation ‣ Guided Cost Learning: Deep Inverse Optimal Control via Policy Optimization") but with $y_t$ equal to the input $x_t$. (Footnote 1: Note that a cost function that is quadratic in the state is linear in the coefficients of the monomials, and therefore corresponds to a linear parameterization.) For both tasks, between 25 and 30 human demonstrations were provided via kinesthetic teaching, and each IOC algorithm was initialized by automatically fitting a controller to the demonstrations that roughly tracked the average trajectory. Full details on both tasks are in Appendix [D](#A4 "Appendix D Detailed Description of Task Setup ‣ Guided Cost Learning: Deep Inverse Optimal Control via Policy Optimization"), and summaries are below.

[Figure 3 panel labels: human demo; initial pose; final pose]

Figure 3: Dish placement and pouring tasks. The robot learned to place the plate gently into the correct slot, and to pour almonds, localizing the target cup using unsupervised visual features. A video of the learned controllers can be found at <http://rll.berkeley.edu/gcl>

In the first task, illustrated in Figure [3](#S6.F3 "Figure 3 ‣ 6.2 Real-World Robotic Control ‣ 6 Experimental Evaluation ‣ Guided Cost Learning: Deep Inverse Optimal Control via Policy Optimization"), the robot must gently place a grasped plate into a specific slot of a dish rack. The state space consists of the joint angles, the pose of the gripper relative to the target pose, and the time derivatives of each; the actions correspond to torques on the robot’s motors; and the input to the cost function is the pose and velocity of the gripper relative to the target position. Note that we do not provide the robot with an existing trajectory tracking controller or any manually-designed policy representation beyond linear-Gaussian controllers, in contrast to prior methods that use trajectory following (Kalakrishnan et al., [2013](#bib.bib11)) or dynamic movement primitives with features (Boularias et al., [2011](#bib.bib5)). Our attempt to design a hand-crafted cost function for inserting the plate into the dish rack produced a fast but overly aggressive behavior that cracked one of the plates during learning.

The second task, also shown in Figure [3](#S6.F3 "Figure 3 ‣ 6.2 Real-World Robotic Control ‣ 6 Experimental Evaluation ‣ Guided Cost Learning: Deep Inverse Optimal Control via Policy Optimization"), consisted of pouring almonds from one cup to another. In order to succeed, the robot must keep the cup upright until reaching the target cup, then rotate the cup so that the almonds are poured. Instead of including the position of the target cup in the state space, we train autoencoder features from camera images captured from the demonstrations and add a pruned feature point representation and its time derivative to the state, as proposed by Finn et al. ([2016](#bib.bib9)). The input to the cost function includes these visual features, as well as the pose and velocity of the gripper. Note that the position of the target cup can only be obtained from the visual features, so the algorithm must learn to use them in the cost function in order to succeed at the task.

| Task (cost), metric | RelEnt IRL | GCL $q(u_t \mid x_t)$ | GCL reopt. |
| --- | --- | --- | --- |
| *dish* (NN), success rate | 0% | 100% | 100% |
| *dish* (NN), # samples | 100 | 90 | 90 |
| *pouring* (NN), success rate | 10% | 84.7% | 34% |
| *pouring* (NN), # samples | 150,150 | 75,130 | 75,130 |
| *pouring* (affine), success rate | 0% | 0% | – |
| *pouring* (affine), # samples | 150 | 120 | – |

Table 1: Performance of guided cost learning (GCL) and relative entropy (RelEnt) IRL on placing a dish into a rack and pouring almonds into a cup. Sample counts are for IOC, omitting those for optimizing the learned cost. An affine cost is insufficient for representing the pouring task, thus motivating using a neural network cost (NN). The pouring task with a neural network cost is evaluated for two positions of the target cup; average performance is reported.

The results, presented in Table [1](#S6.T1 "Table 1 ‣ 6.2 Real-World Robotic Control ‣ 6 Experimental Evaluation ‣ Guided Cost Learning: Deep Inverse Optimal Control via Policy Optimization"), show that our algorithm successfully learned both tasks. The prior relative entropy IRL algorithm could not acquire a suitable cost function, due to the complexity of this domain. On the pouring task, where we also evaluated a simpler affine cost function, we found that only the neural network representation could recover a successful behavior, illustrating the need for rich and expressive function approximators when learning cost functions directly on raw state representations. (Footnote 2: We did attempt to learn costs directly on image pixels, but found that the problem was too underdetermined to succeed. Better image-specific regularization is likely required for this.)

The results in Table [1](#S6.T1 "Table 1 ‣ 6.2 Real-World Robotic Control ‣ 6 Experimental Evaluation ‣ Guided Cost Learning: Deep Inverse Optimal Control via Policy Optimization") also evaluate the generalizability of the cost function learned by our method and prior work. On the dish rack task, we can use the learned cost to optimize new policies for different target dish positions successfully, while the prior method does not produce a generalizable cost function. On the harder pouring task, we found that the learned cost succeeded less often on new positions. However, as discussed in Section [4.4](#S4.SS4 "4.4 Learning Costs and Controllers ‣ 4 Guided Cost Learning ‣ Guided Cost Learning: Deep Inverse Optimal Control via Policy Optimization"), our method produces both a policy $q(u_t \mid x_t)$ and a cost function $c_\theta$ when trained on a novel instance of the task, and although the learned cost functions for this task were worse, the learned policy succeeded on the test positions when optimized with IOC in the inner loop using our algorithm. This indicates an interesting property of our approach: although the learned cost function is local in nature due to the choice of sampling distribution, the learned policy tends to succeed even when the cost function is too local to produce good results in very different situations. An interesting avenue for future work is to further explore the implications of this property, and to improve the generalizability of the learned cost by successively training policies on different novel instances of the task until enough global training data is available to produce a cost function that is a good fit to the demonstrations in previously unseen parts of the state space.

7 Discussion and Future Work

-----------------------------

We presented an inverse optimal control algorithm that can learn complex, nonlinear cost representations, such as neural networks, and can be applied to high-dimensional systems with unknown dynamics. Our algorithm uses a sample-based approximation of the maximum entropy IOC objective, with samples generated from a policy learning algorithm based on local linear models (Levine & Abbeel, [2014](#bib.bib12)). To our knowledge, this approach is the first to combine the benefits of sample-based IOC under unknown dynamics with nonlinear cost representations that directly use the raw state of the system, without the need for manual feature engineering. This allows us to apply our method to a variety of real-world robotic manipulation tasks. Our evaluation demonstrates that our method outperforms prior IOC algorithms on a set of simulated benchmarks, and achieves good results on several real-world tasks.

Our evaluation shows that our approach can learn good cost functions for a variety of simulated tasks. For complex robotic motion skills, the learned cost functions tend to explain the demonstrations only locally. This makes them difficult to reoptimize from scratch for new conditions. It should be noted that this challenge is not unique to our method. In our comparisons, no prior sample-based method was able to learn good global costs for these tasks. However, since our method interleaves cost optimization with policy learning, it still recovers successful policies for these tasks. For this reason, we can still learn from demonstration simply by retaining the learned policy, and discarding the cost function. This allows us to tackle substantially more challenging tasks that involve direct torque control of real robotic systems with feedback from vision.

To incorporate vision into our experiments, we used unsupervised learning to acquire a vision-based state representation, following prior work (Finn et al., [2016](#bib.bib9)). An exciting avenue for future work is to extend our approach to learn cost functions directly from natural images. The principal challenge for this extension is to avoid overfitting when using substantially larger and more expressive networks. Our current regularization techniques mitigate overfitting to a high degree, but visual inputs tend to vary dramatically between demonstrations and on-policy samples, particularly when the demonstrations are provided by a human via kinesthetic teaching. One promising avenue for mitigating these challenges is to introduce regularization methods developed for domain adaptation in computer vision (Tzeng et al., [2015](#bib.bib28)), to encode the prior knowledge that demonstrations have similar visual features to samples.

Acknowledgements

----------------

This research was funded in part by ONR through a Young Investigator Program award, the Army Research Office through the MAST program, and an NSF fellowship. We thank Anca Dragan for thoughtful discussions. |

d4f6b0e7-36f8-4874-96c5-779d27f8765d | trentmkelly/LessWrong-43k | LessWrong | Background material for reading Judgment Under Uncertainty?

After seeing it constantly referenced in the Sequences and elsewhere, I've picked up Kahneman and Tversky's book/collection of papers Judgment Under Uncertainty: Heuristics and Biases. I was wondering if anyone here who's read it or knows the subject would recommend any prefatory material so that it makes more sense/is more meaningful.

Personal background: [Personal information deleted] From that and from this site, I'm passingly familiar with e.g. the representativeness heuristic and Bayesian probability, but I've never had to use it much in any academic setting.

Any advice before I delve into it? |

5f06fa72-a1b8-4356-917b-e207f7117c08 | trentmkelly/LessWrong-43k | LessWrong | Writing feedback requested: activists should pursue a positive Singularity

I managed to turn an essay assignment into an opportunity to write about the Singularity, and I thought I'd turn to LW for feedback on the paper. The paper is about Thomas Pogge, a German philosopher who works on institutional efforts to end poverty and is a pledger for Giving What We Can.

I offer a basic argument that he and other poverty activists should work on creating a positive Singularity, sampling liberally from well-known Less Wrong arguments. It's more academic than I would prefer, and it includes some loose talk of 'duties' (which bothers me), but for its goals, these things shouldn't be a huge problem. But maybe they are - I want to know that too.

I've already turned the assignment in, but when I make a better version, I'll send the paper to Pogge himself. I'd like to see if I can successfully introduce him to these ideas. My one conversation with him indicates that he would be open to actually changing his mind. He's clearly thought deeply about how to do good, and may simply have not been exposed to the idea of the Singularity yet.

I want feedback on all aspects of the paper - style, argumentation, clarity. Be as constructively cruel as I know only you can.

If anyone's up for it, feel free to add feedback using Track Changes and email me a copy - mjcurzi[at]wustl.edu. I obviously welcome comments on the thread as well.

You can read the paper here in various formats.

Upvotes for all. Thank you! |

141bae9f-047a-48e1-aaa8-606c5fbe8d17 | trentmkelly/LessWrong-43k | LessWrong | Unattributed Good

Cross-posted from 10-year Horizon.

If a good deed is done and no one is around to see it, does it make a sound?

In 1939, Nicholas Winton found himself on a volunteer mission in pre-war Prague. Recognizing the darkening position of the Jews, he helped organize train transports that saved 669 children from concentration camps. Today, many of us have heard this fascinating story of good character. But the story was only uncovered in 1988 by the BBC, nearly 50 years later. Sir Nicholas is said to have never talked about it himself. To most of us, those uncredited decades are powerful and puzzling.

If a good deed is done and no one is around to see it, does it make a sound?

> "I used to believe that a legacy was solely based on your achievements."

>

> Dayana Sabatin

On an everyday scale, this happens to all of us. It’s the goodwill gesture that goes unnoticed in a relationship. The overlooked patience. The brushed-off compliment.

From the trivial to the heroic, there is a yawning mismatch between what’s done and what’s appreciated. It makes a glaring contrast with the visible measures of appreciation today: namely, social media likes. Who gets thousands of likes? What for? And who goes through life helping others, perhaps by simply being a little nicer than strictly necessary, yet stays invisible?

> As with economic and other resources, the judgement of others is very unevenly distributed. Some are rich with recognition, applause, goodwill, trust, reputation and others are starved of a good word.

>

> Ziyad Marar - Judged

Unattributed good is the delta between merit and credit. What an enormous amount of misallocated energy it represents! What an amount of inner tension in people! Especially considering that both merit and popularity are so highly prized today. Imagine a social network where the most popular people were the ones who did the most good. What would that feed look like? Very different from Instagram. We can bridge that. We don’t even have |

9021664b-fbf5-4fb6-a042-4047ce5a2eae | trentmkelly/LessWrong-43k | LessWrong | In which I fantasize about drugs

We operate like this: the "overseer process" tells the brain, using blunt instruments like chemicals, that we need to find something to eat, somewhere to sleep or someone to mate with. Then the brain follows orders. Unfortunately the orders we receive from the "overseer" are often wrong, even though they were right in the ancestral environment. It seems the easiest way to improve humans isn't to augment their brains - it's to send them better orders, e.g. using drugs. Here's a list of fantasy brain-affecting drugs that I would find useful, even though they don't seem to do anything complicated except affecting "overseer" chemistry:

1) A drug against unrequited love, aka "infatuation" or "limerence".

2) A drug that makes you become restless and want to exercise.

3) A drug that puts you in the state of random creativity that you normally experience just before falling asleep.

4) A drug that puts you in the optimal PUA "state".

5) A drug that boosts your feeling of curiosity. Must be great for doing math or science.

Anything else? |

4a4bef51-3610-4b3d-83a5-d37d3c484966 | StampyAI/alignment-research-dataset/arxiv | Arxiv | Voluntary safety commitments provide an escape from over-regulation in AI development

Abstract

--------

With the introduction of Artificial Intelligence (AI) and related technologies in our daily lives, fear and anxiety about their misuse as well as the hidden biases in their creation have led to a demand for regulation to address such issues. Yet blindly regulating an innovation process that is not well understood may stifle this process and reduce the benefits that society may gain from the generated technology, even under the best intentions. In this paper, starting from a baseline model that captures the fundamental dynamics of a race for domain supremacy using AI technology, we demonstrate how socially unwanted outcomes may be produced when sanctioning is applied unconditionally to risk-taking, i.e. potentially unsafe, behaviours. As an alternative to resolve the detrimental effect of over-regulation, we propose a voluntary commitment approach wherein technologists have the freedom of choice between independently pursuing their course of actions or establishing binding agreements to act safely, with sanctioning of those that do not abide by what they pledged. Overall, this work reveals for the first time how voluntary commitments, with sanctions either by peers or an institution, lead to socially beneficial outcomes in all scenarios envisageable in a short-term race towards domain supremacy through AI technology. These results are directly relevant for the design of governance and regulatory policies that aim to ensure an ethical and responsible AI technology development process.

Keywords: Evolutionary Game Theory, AI development race, Commitments, Incentives, Safety.

1 Introduction

---------------

With the rapid advancement of AI and related technologies, there has been significant fear and anxiety about their potential misuse as well as the social and ethical consequences that may result from biases within the design of such systems (Tzachor et al., [2020](#bib.bib45); Bostrom, [2014](#bib.bib5); Stix and Maas, [2020](#bib.bib42)).

While expectations associated with these advanced technologies increase and monetary profits stimulate rapid deployment, there is a serious risk of taking unethical or risky shortcuts to enter a market first with the next innovation, ignoring safety checks and ethical development procedures.

As different disagreeable examples have emerged (Coeckelbergh, [2020](#bib.bib12)), governments and regulating bodies have been catching up by debating new forms and frameworks for regulating this technology (Baum, [2017](#bib.bib4); Cave and ÓhÉigeartaigh, [2018](#bib.bib8); Taddeo and Floridi, [2018](#bib.bib43)); notably, the recent EU White Paper on AI.

Such debates have produced proposals for mechanisms on how to avoid, mediate, or regulate the development and deployment of AI (Baum, [2017](#bib.bib4); Cave and ÓhÉigeartaigh, [2018](#bib.bib8); Geist, [2016](#bib.bib19); Shulman and Armstrong, [2009](#bib.bib40); Han et al., [2019](#bib.bib24); Vinuesa et al., [2020](#bib.bib47); Nemitz, [2018](#bib.bib35); Taddeo and Floridi, [2018](#bib.bib43); Askell et al., [2019](#bib.bib2); O’Keefe et al., [2020](#bib.bib38)). Essentially, regulatory measures such as restrictions and incentives are proposed to limit harmful and risky practices in order to promote beneficial designs (Baum, [2017](#bib.bib4)). Examples of such approaches (Baum, [2017](#bib.bib4)) include financially supporting the research into beneficial AI (McGinnis, [2010](#bib.bib34)) and making AI companies pay fines when found liable for the consequences of harmful AI (Gurney, [2013](#bib.bib20)).

Although these regulatory measures may provide solutions for particular scenarios, one needs to ensure that they do not overshoot their targets, since over-regulation could stifle innovation, potentially hindering investments into the development of novel innovations as they become too risky endeavors (Hadfield, [2017](#bib.bib21); Lee, [2018](#bib.bib32)).

Worries have been expressed by different organisations/societies that too strict policies may unnecessarily affect the benefits and societal advances that novel AI technologies may have to offer (EDRI, [2021](#bib.bib18)).

Moreover, regulations affect big and small tech companies differently: a highly regulated domain makes it more difficult for small new start-ups, introducing an inequality and dominance of the market by a few big players (Lee, [2018](#bib.bib32)). It has been emphasised that neither over-regulation nor a laissez-faire approach suffices when aiming to regulate AI technologies (Dawson et al., [2019](#bib.bib15)). In order to find a balanced answer, one clearly needs to first have an understanding of how a competitive development dynamic may work.

Starting from a baseline game theoretical model, referred to as the DSAIR model (Han et al., [2020](#bib.bib28)) (a model of domain supremacy through an AI race), which defines the process through which multiple stake-holders aim for market supremacy, we demonstrate first that unconditional sanctioning will negatively influence social welfare in certain conditions of a short-term race towards domain supremacy through AI technology. Afterwards, we examine the DSAIR model with an alternative mechanism for resolving the issue that can be shown to lead to less detrimental effects. Our approach is to allow technologists or race participants to voluntarily commit themselves to safe innovation procedures, signaling to others their intentions. Specifically, this bottom-up, binding agreement (or commitment) is established for those who want to take a safe choice, with sanctioning applied to violators of such an agreement.

#### Previous Developments.

As described in Han et al. ([2020](#bib.bib28)), participants in the DSAIR model either follow safety precautions (the SAFE option) or ignore them (the UNSAFE option) in each step of the development process. The main assumption in the model was that it requires more time and more effort to comply with the precautionary requirements, making the SAFE option not only costlier, but also slower compared to the UNSAFE option. Accordingly, it was assumed that in playing SAFE, participants must pay a cost $c > 0$, whereas playing UNSAFE costs nothing. Furthermore, whenever playing UNSAFE, the development speed differs and is $s > 1$, whereas in playing SAFE the speed is simply normalised to 1.

Decisions to act SAFE or UNSAFE in AI development are repeated until one or more teams attain the designated objective, which can be translated into having completed $W$ development steps, on average (Han et al., [2020](#bib.bib28)). As a result, they earn a large benefit or prize $B$ (e.g. windfall profits (O’Keefe et al., [2020](#bib.bib38))), equally shared among those reaching the target at the same time. A development disaster or a setback may however come to occur with some probability, which is presumed to increase with the number of times that safety requirements were ignored by the winning team(s) at each step.

Whenever a disaster of this kind occurs, all the benefits of a risk-taking participant are lost. This risk probability is denoted by $p_r$ (see the Models and Methods section for more details).

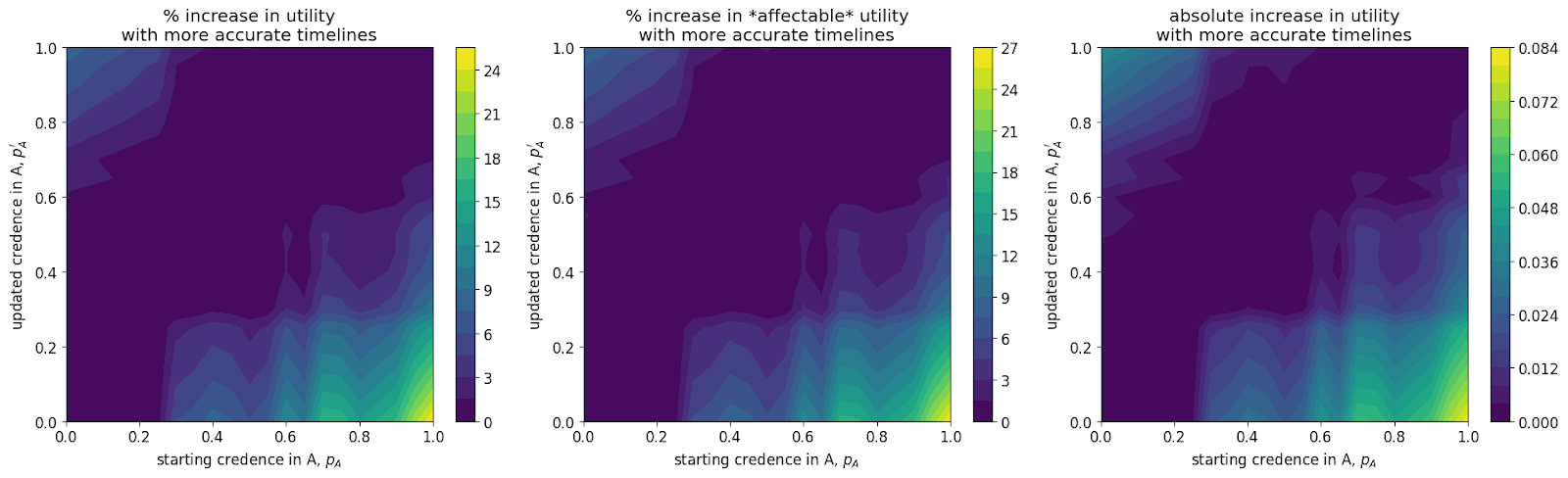

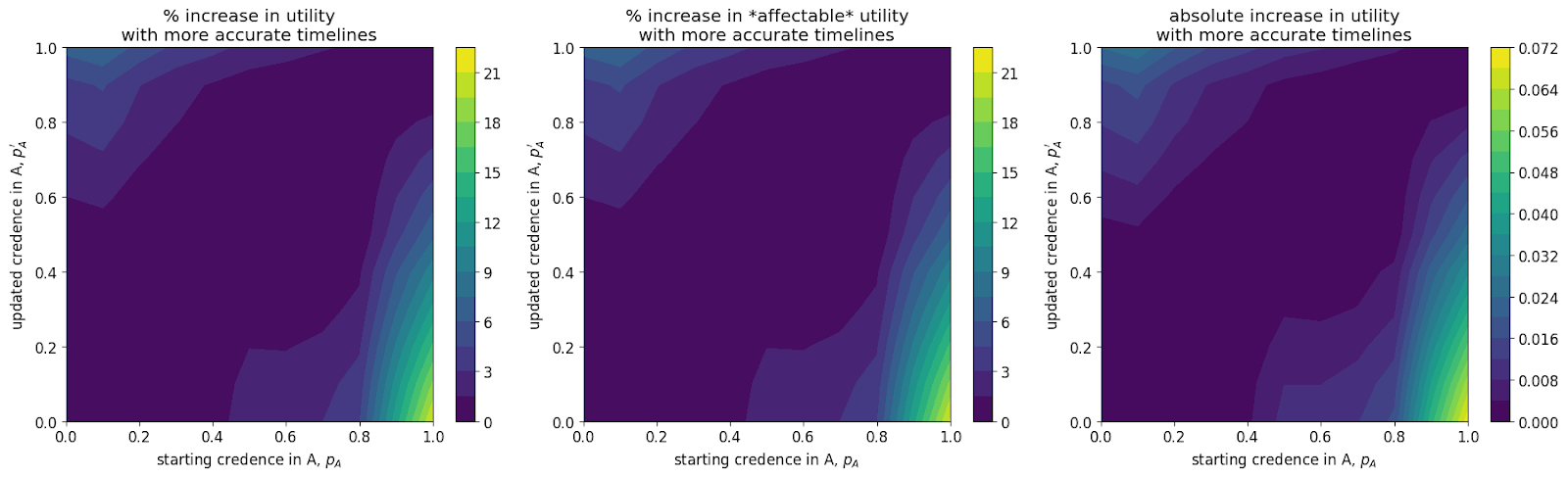

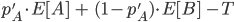

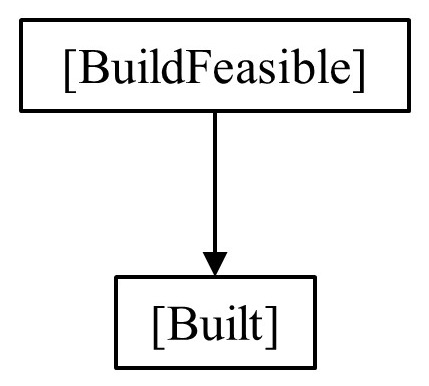

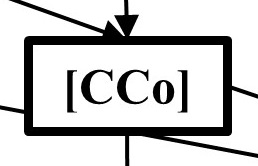

Figure 1: Behavioural regions (zones) as identified in Han et al. ([2020](#bib.bib28)) when the time to reach domain supremacy is short. Region II: inside the plots, the two solid lines delimit the boundaries wherein the collective prefers safety-compliant behaviour, yet unsafe development is individually preferred. Regions III and I show where unsafe (respectively, safe) development is both the individually and collectively preferred outcome.

It was observed in the DSAIR model that, in case the time-scale to reach the target is short, so that over the whole of the development process the average of the accumulated benefit is much smaller than the final benefit $B$, the societal interest conflicts with individual interests only within a certain window of parameter settings: in that region, individual unsafe behaviour dominates, even though safe development would lead to a larger collective outcome or social welfare (cf. region II in Figure [1](#S1.F1 "Figure 1 ‣ Previous Developments. ‣ 1 Introduction ‣ Voluntary safety commitments provide an escape from over-regulation in AI development")).

From the regulatory perspective, it is only region II that thus requires governance in order to promote or enforce safe actions in order to avoid any disaster that may occur during the technology development race.
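The three regions can be illustrated with a short sketch built on simplifying assumptions that are not spelled out in this passage: the cost $c$ and any intermediate benefits are neglected (the short-race regime), the prize $B$ is shared equally by simultaneous winners, a player's payoff is the expected prize divided by the number of rounds needed to finish, and "individually preferred" is read as risk dominance in the resulting two-strategy game. The function name and payoff expressions below are illustrative, not the model's official definitions.

```python
def classify_region(s, p_r, B=1.0, W=100.0):
    """Return 'I', 'II' or 'III' for speed s > 1 and risk 0 <= p_r <= 1."""
    # Average per-round payoffs under the stated assumptions
    pi_ss = B / (2.0 * W)                  # SAFE vs SAFE: W rounds, prize shared
    pi_uu = (1.0 - p_r) * s * B / (2.0 * W)  # UNSAFE vs UNSAFE: W/s rounds, shared
    pi_us = (1.0 - p_r) * s * B / W        # UNSAFE beats SAFE in W/s rounds
    pi_su = 0.0                            # SAFE loses the race to UNSAFE

    # Collective preference: which symmetric outcome pays more
    collective_unsafe = pi_uu > pi_ss
    # Individual preference (assumed criterion): risk dominance
    individual_unsafe = pi_uu + pi_us > pi_ss + pi_su

    if individual_unsafe and collective_unsafe:
        return "III"   # unsafe preferred both individually and collectively
    if individual_unsafe:
        return "II"    # dilemma: unsafe individually, safe collectively
    return "I"         # safe preferred both individually and collectively
```

Under these assumptions, and for a fixed speed, low risk places a race in region III, intermediate risk in the dilemma region II, and high risk in region I, which mirrors the qualitative picture described for Figure 1.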

A peer punishment mechanism against unsafe behaviour was proposed in order to mediate the behaviour in that region (without affecting the desirable safe outcome in region I) (Han et al., [2021](#bib.bib25)). However, it may lead to a reduction of the societal welfare that can be obtained in region III (see Figure [1](#S1.F1 "Figure 1 ‣ Previous Developments. ‣ 1 Introduction ‣ Voluntary safety commitments provide an escape from over-regulation in AI development")), where the desired unsafe (risk-taking/innovative) behaviour becomes significantly reduced whenever punishment is not very costly to the punisher while strongly affecting the punished. The problem with applying system-wide sanctioning for any risk-taking behaviour is that it does not take into account the region wherein the AI innovation is taking place, as visualised in Figure [1](#S1.F1 "Figure 1 ‣ Previous Developments. ‣ 1 Introduction ‣ Voluntary safety commitments provide an escape from over-regulation in AI development"). That is, if a development race falls in region III (low risk, and innovation is collectively beneficial and preferred), unsafe behaviour should not be punished, as it is beneficial for the overall social welfare. To enact such a region-dependent targeted punishment scheme, one would require the ability to estimate exactly the risk level associated with each AI development scenario, i.e. knowing beforehand the risk as well as the speed of development. Clearly that may not be easy, due to the lack of data on how such AI innovation dynamics work. This is especially true in the early stages of the development and adoption of many new technologies, which has become known as the so-called double-bind problem (Collingridge, [1980](#bib.bib13)): the impact of technologies, including AI ones, cannot be predicted until it becomes a reality, and controlling or changing it is difficult or no longer possible at that point.

#### Objectives

In this article an alternative solution is proposed that circumvents the need to estimate correctly the speed of development and the risk in order to regulate UNSAFE behaviour appropriately: race participants can choose whether or not to establish a bilateral commitment to act safely, which also exposes them to a sanction if they do not uphold their commitment. On the one hand, development teams are allowed to work in an UNSAFE manner without repercussions if they do not commit; on the other hand, a prior agreement allows safety-compliant participants to identify unsafe ones easily and gives them the capacity to punish the dishonest ones (who might also be sanctioned by an external party such as a regulating institution).

Our results reveal that this freedom of choice, if enabled through a prior bilateral commitment to SAFE actions, can, on the one hand, avoid over-regulating unsafe behaviour and, on the other, significantly improve the desired safety outcome in the dilemma zone when compared to punishment alone. Interestingly, this type of binding pledge has been argued to be relevant to other types of global conflicts and dilemmas, such as environmental governance (Barrett, [2003](#bib.bib3); Cherry and McEvoy, [2013](#bib.bib11)).

2 Models and Methods

---------------------

We first recall the DSAIR model with pairwise interactions, then extend it with the option to commit bilaterally to acting safely, together with the associated punishment of violations of such commitments.

The Evolutionary Game Theory (EGT) methods used to analyse the models are then described.

### 2.1 Summary of the DSAIR model and prior results

The DSAIR model (Han et al., [2020](#bib.bib28)) was originally defined as a two-player game repeated with a certain probability, thus consisting on average of $W$ rounds. (Footnote 1: An N-player version of this game was also discussed in (Han et al., [2020](#bib.bib28)). To keep things accessible, we focus here on the two-player scenario.)

At each round of development, players gather benefits arising from their intermediate AI developments, depending on whether they chose to act UNSAFE or SAFE. Presuming some fixed benefit, $b$, resulting from the AI market, the teams share this gain in proportion to their development speed.

Accordingly, at every round of the race one can write a payoff matrix denoted by $\Pi$ with respect to the row player $i$, whose entries are denoted by $\Pi_{ij}$ ($j$ corresponding to some column), as shown:

$$
\Pi \;=\;
\begin{array}{c|cc}
 & \textit{SAFE} & \textit{UNSAFE} \\ \hline
\textit{SAFE} & -c + \frac{b}{2} & -c + \frac{b}{s+1} \\
\textit{UNSAFE} & \frac{sb}{s+1} & \frac{b}{2}
\end{array}
\tag{1}
$$

The payoff matrix can be explained as follows. Firstly, whenever two players both select the SAFE action, each pays a cost $c$ and the resulting benefit $b$ is shared. In contrast, whenever two players both select the UNSAFE action, they share the benefit $b$ without having to pay the cost $c$.

Whenever an UNSAFE choice is matched with a SAFE one, the SAFE player pays the cost $c$ and receives a (smaller) part $b/(s+1)$ of $b$, whereas the UNSAFE player collects the larger part $sb/(s+1)$ without ever having to pay $c$. Note that $\Pi$ is a simplification of the matrix defined in (Han et al., [2020](#bib.bib28)) since, in the current time-scale, it was shown that the parameters as defined here are sufficient to explain the obtained results.
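As a minimal sketch, the one-round payoff matrix of Eq. (1) can be written out directly. The function name `one_round_payoffs` and the list-of-lists layout are illustrative assumptions, not part of the original model description; the parameter values are those quoted in the figure captions.

```python
def one_round_payoffs(b, c, s):
    """Row-player payoffs of Eq. (1); index 0 is SAFE, index 1 is UNSAFE.

    b: per-round benefit, c: cost of acting SAFE, s: speed-up of UNSAFE play.
    (Hypothetical helper, written only to illustrate the matrix.)
    """
    return [
        # SAFE vs SAFE,     SAFE vs UNSAFE
        [-c + b / 2,        -c + b / (s + 1)],
        # UNSAFE vs SAFE,   UNSAFE vs UNSAFE
        [s * b / (s + 1),   b / 2],
    ]


# Values taken from the figure captions: b = 4, c = 1, s = 1.5
pi = one_round_payoffs(b=4, c=1, s=1.5)
print(pi)  # roughly [[1.0, 0.6], [2.4, 2.0]], up to floating-point rounding
```

With these values the UNSAFE player earns more than the SAFE player in a mixed pairing (about 2.4 against 0.6), which is the per-round temptation that the race dynamics build on.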

We analyse the evolutionary outcomes of this game in a well-mixed and finite population consisting of $Z$ players. Given the choices each player can make and the fact that these choices need to be repeated for $W$ rounds, each player adopts one of the two following strategies (Han et al., [2020](#bib.bib28)):

* AS: always complies with safety precautions, thus acting SAFE in every round.

* AU: never complies with safety precautions, thus acting UNSAFE in every round.

The averaged payoffs for AS vs AU are given by the payoff matrix

$$
\begin{array}{c|cc}
 & \textit{AS} & \textit{AU} \\ \hline
\textit{AS} & \frac{B}{2W} + \Pi_{11} & \Pi_{12} \\
\textit{AU} & p\left(\frac{sB}{W} + \Pi_{21}\right) & p\left(\frac{sB}{2W} + \Pi_{22}\right)
\end{array}
\tag{2}
$$

where, for presentation purposes only, we denote $p = 1 - p_r$ (note that $p_r$ was explained in the Introduction section).
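The averaged matrix of Eq. (2) can be sketched in the same way. The helper name `averaged_payoffs` is a hypothetical choice for illustration, and $p_r = 0.5$ in the usage line is an arbitrary example value; $B = 10^4$ and $W = 100$ are the values quoted in the figure captions.

```python
def averaged_payoffs(b, c, s, B, W, p_r):
    """Averaged payoffs of Eq. (2); index 0 is AS, index 1 is AU.

    B, W and p_r are used exactly as they appear in Eq. (2), with p = 1 - p_r.
    (Hypothetical helper, written only to illustrate the matrix.)
    """
    # One-round payoffs Pi_ij from Eq. (1).
    pi = [
        [-c + b / 2, -c + b / (s + 1)],
        [s * b / (s + 1), b / 2],
    ]
    p = 1 - p_r
    return [
        [B / (2 * W) + pi[0][0], pi[0][1]],
        [p * (s * B / W + pi[1][0]), p * (s * B / (2 * W) + pi[1][1])],
    ]


# b, c, s, B, W from the figure captions; p_r = 0.5 is an arbitrary example.
avg = averaged_payoffs(b=4, c=1, s=1.5, B=1e4, W=100, p_r=0.5)
print(avg)  # roughly [[51.0, 0.6], [76.2, 38.5]]
```

For these values the $B/W$ terms are much larger than the per-round entries $\Pi_{ij}$, so the comparison between AS and AU is driven almost entirely by $s$ and $p_r$.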

As has been shown in (Han et al., [2020](#bib.bib28)), by considering where AU is risk-dominant against AS (cf. Methods below), three distinct regions can be identified in the $s$-$p_r$ parameter space (cf. Figure [1](#S1.F1 "Figure 1 ‣ Previous Developments. ‣ 1 Introduction ‣ Voluntary safety commitments provide an escape from over-regulation in AI development")): (I) if $p_r > 1 - \frac{1}{3s}$, AU is risk-dominated by AS: safety compliance is both the collectively preferred outcome and the one evolution selects; (II) if $1 - \frac{1}{3s} > p_r > 1 - \frac{1}{s}$: even though safety compliance is the more desirable strategy for ensuring the highest collective outcome, the social learning dynamics lead the population to a state in which safety precautions are mostly ignored; (III) if $p_r < 1 - \frac{1}{s}$ (AU is risk-dominant against AS), then unsafe development is both collectively preferred and selected by the social learning dynamics.
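The two thresholds can be recovered from the averaged payoff matrix by a short calculation. The following is a sketch under two assumptions that are ours rather than stated in this section: that the standard risk-dominance condition for a $2\times 2$ game is used, and that the $B/W$ terms dominate the per-round entries $\Pi_{ij}$, so the latter can be neglected.

$$
\begin{aligned}
\text{AU risk-dominant against AS:}\quad
& \pi_{AU,AU} + \pi_{AU,AS} > \pi_{AS,AS} + \pi_{AS,AU} \\
& \Longrightarrow\; p\left(\frac{sB}{2W} + \frac{sB}{W}\right) > \frac{B}{2W}
\;\Longleftrightarrow\; 3ps > 1
\;\Longleftrightarrow\; p_r < 1 - \frac{1}{3s}, \\[4pt]
\text{AS collectively preferred:}\quad
& \pi_{AS,AS} > \pi_{AU,AU}
\;\Longrightarrow\; \frac{B}{2W} > p\,\frac{sB}{2W}
\;\Longleftrightarrow\; ps < 1
\;\Longleftrightarrow\; p_r > 1 - \frac{1}{s}.
\end{aligned}
$$

Region II is then the band where both conditions hold at once: safety is collectively preferred, yet AU is risk-dominant.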

For the rest of the paper, it is important to remember that UNSAFE actions are preferred and established in zone III, SAFE actions are preferred and established in zone I, and a conflict exists between the individual and the collective in zone II, as the former prefers UNSAFE actions and the latter SAFE actions.
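The three zones can be sketched as a small classifier on $(s, p_r)$, using the thresholds $1 - \frac{1}{3s}$ and $1 - \frac{1}{s}$ stated above. The function name `dsair_region` is hypothetical, and since the text uses strict inequalities, assigning boundary points to the lower zone is an arbitrary choice made here for illustration.

```python
def dsair_region(s, p_r):
    """Return "I", "II" or "III" for speed-up s and risk probability p_r.

    Thresholds follow the text: 1 - 1/(3s) separates zones I and II,
    1 - 1/s separates zones II and III. Boundary handling is an assumption.
    """
    if s <= 0:
        raise ValueError("s must be positive")
    upper = 1 - 1 / (3 * s)  # above this, AS is risk-dominant (zone I)
    lower = 1 - 1 / s        # below this, unsafe play is collectively preferred (zone III)
    if p_r > upper:
        return "I"
    if p_r > lower:
        return "II"
    return "III"


# s = 1.5 as in the figure captions: thresholds are about 0.778 and 0.333.
for p_r in (0.9, 0.5, 0.1):
    print(p_r, dsair_region(1.5, p_r))
```

For $s = 1.5$ this places $p_r = 0.9$ in zone I, $p_r = 0.5$ in the dilemma zone II, and $p_r = 0.1$ in zone III.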

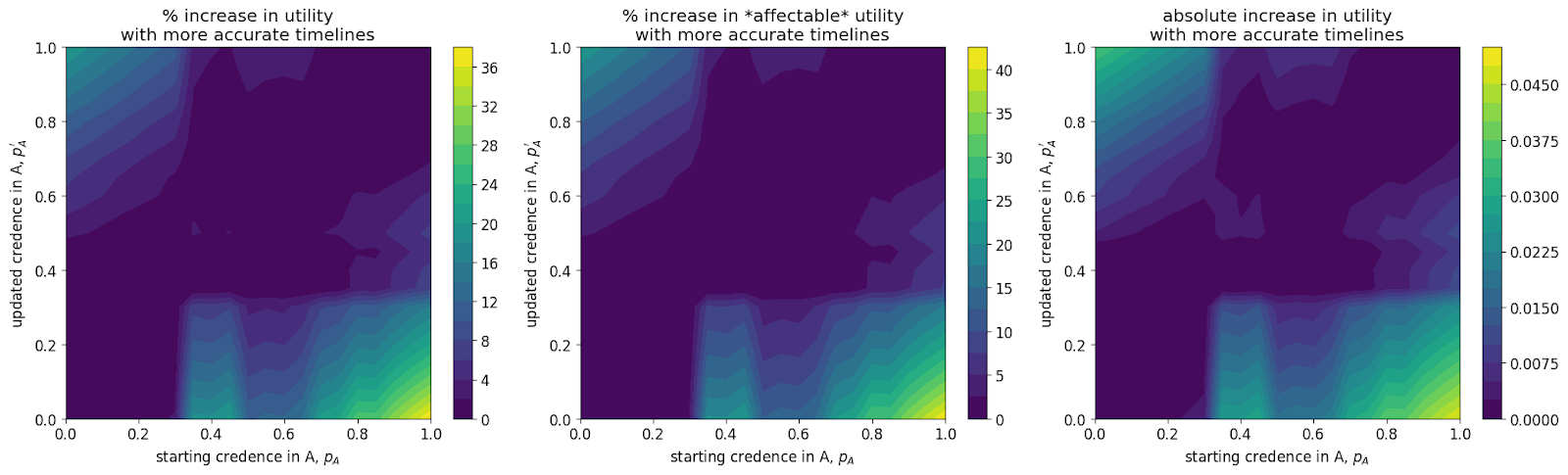

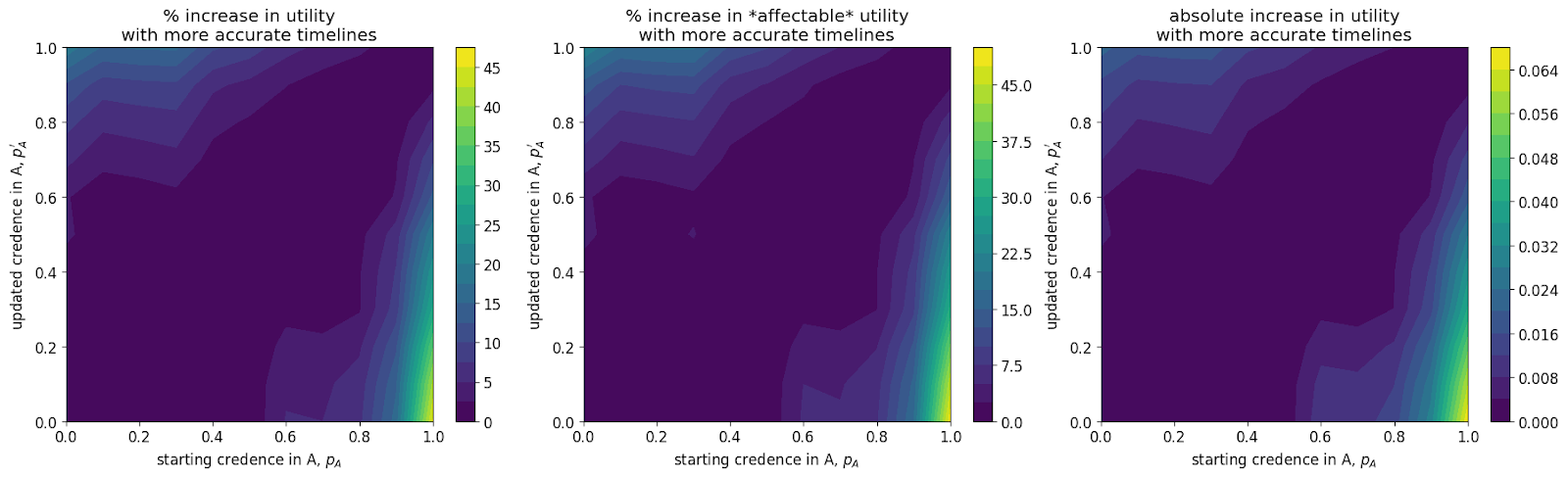

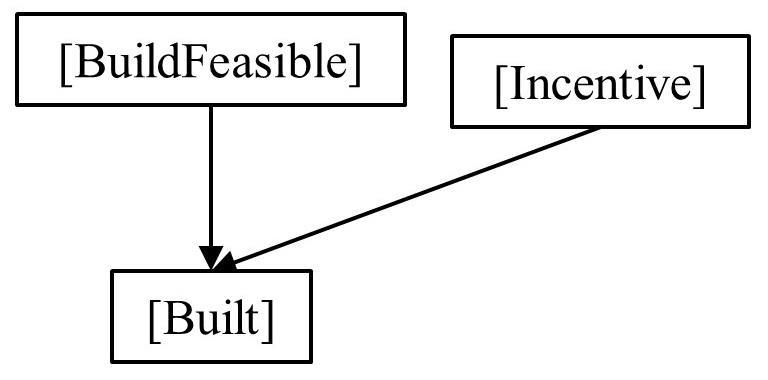

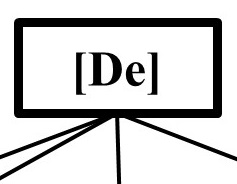

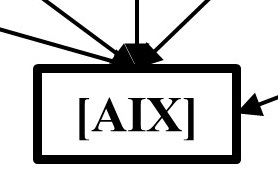

Figure 2: Behavioural dominance in different zones for varying $p_r$, in the presence of prior commitments (top row: panels a, b), and comparison of the overall unsafe behaviour against the case without commitments (bottom row: panels c, d). The black dotted lines in panels a and b indicate the total unsafe frequency (i.e. the sum of AU-in and AU-out frequencies). The desired collective behaviour is indicated for each zone (i.e. unsafe in zone III and safe in zones I and II). We show results for two important scenarios: when efficient punishment can be made for a small cost (left column: $s_\alpha = 0.3$, $s_\beta = 1$) and when punishment is not highly efficient (right column: $s_\alpha = 1$, $s_\beta = 1$).

Parameters: $b = 4$, $c = 1$, $s = 1.5$, $W = 100$, $B = 10^4$, $\beta = 1$, $Z = 100$.

### Introducing commitment strategies

We now extend the DSAIR model with strategies that can bilaterally commit to safety-compliant behaviour: before an interaction, participants can commit to play SAFE in each round. The commitment stands when all parties agree.

The players can refuse to commit, preferring to proceed without being pushed in the safe direction and remaining able to take risks. Those who committed but later select the UNSAFE action are potentially subject to sanctioning. Two sanctioning scenarios are considered here: (a) peer punishment (PP), which is performed by the co-player who kept her side of the deal, and (b) institutional punishment (IP), which is performed by a third party that is not actively participating in the race for supremacy in some domain through AI (e.g. the European Union or United Nations). Each player has freedom of behavioural choice, whereby those who do not commit will not be punished when playing UNSAFE in the DSAIR model. We call the latter behaviour "honest" unsafe behaviour, whereas those who act unsafely after committing are referred to as "dishonest".

Sanctioning an opponent who played UNSAFE in a previous round consists in imposing a reduction $s_\beta$ on the opponent's speed (Han et al., [2021](#bib.bib25)). In the case of PP, the punishing player also incurs a reduction $s_\alpha$ on her own speed.

Committing may also be costly for those who do so, as they give up other choices. A commitment cost $\epsilon$ (per round) is thus introduced for those performing this pre-play action.

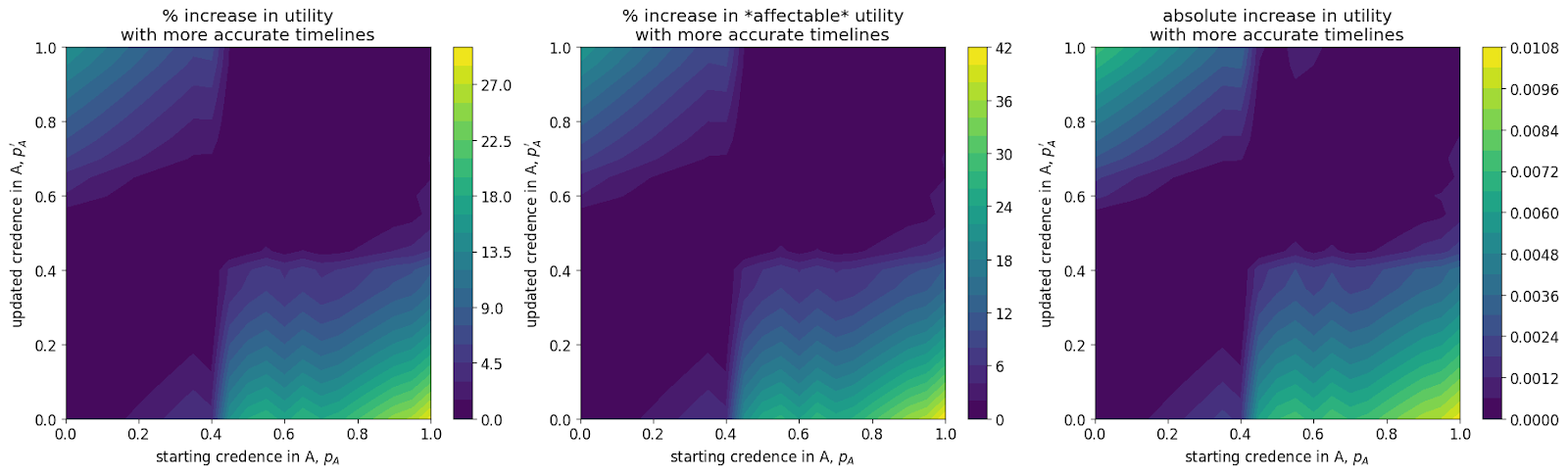

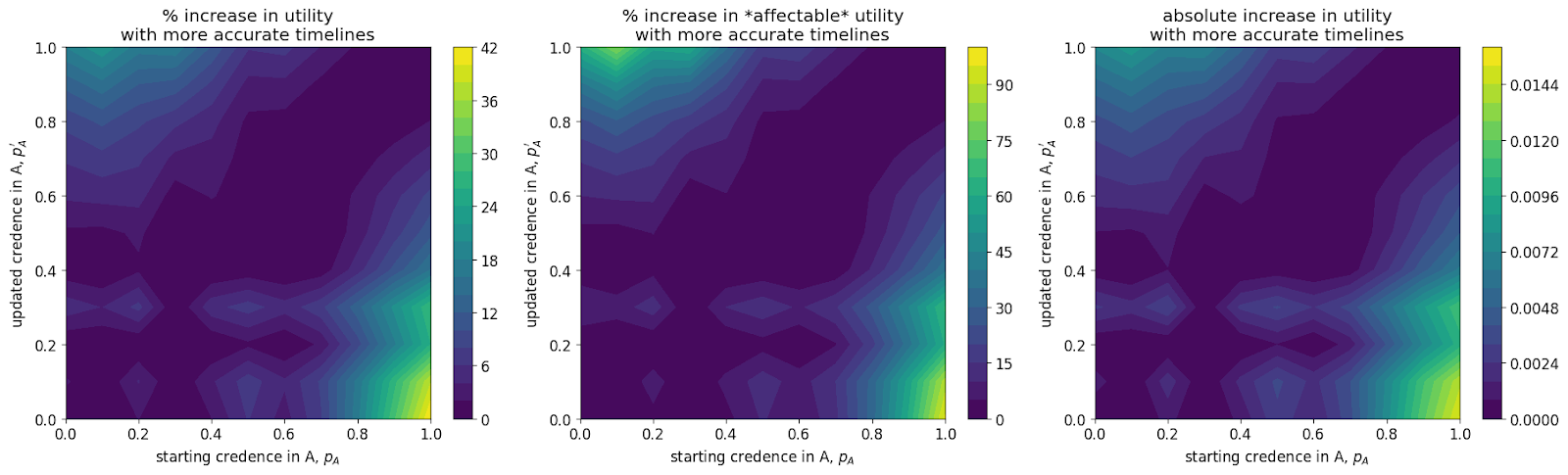

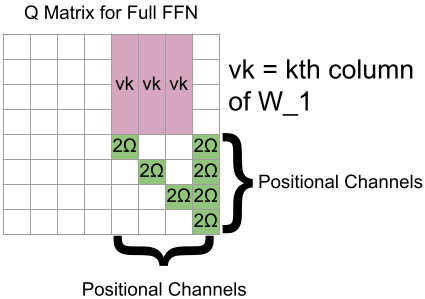

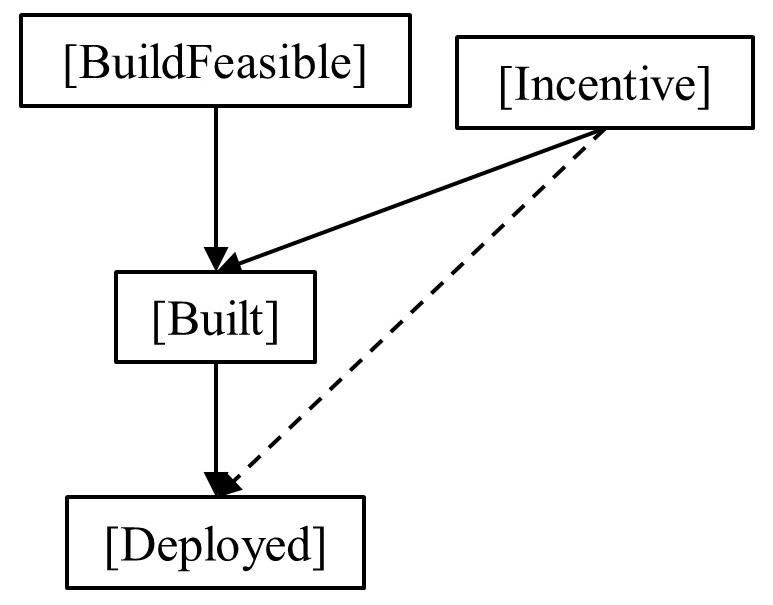

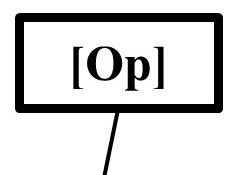

Figure 3: Transitions and stationary distributions when agreement is present (top row) against when it is absent (bottom row), for the three regions. For clarity, only the stronger transitions (stronger than the ones in the opposite direction) are shown; no transition either way means neutrality. The values of $s_\alpha$ and $s_\beta$ were chosen to illustrate the main difference between the scenarios with and without commitment in the three zones. Parameters: $b = 4$, $c = 1$, $s = 1.5$, $W = 100$, $B = 10^4$, $\beta = 1$, $Z = 100$.

With the possibility of joining or not joining a commitment to behave safely, and of sanctioning dishonest unsafe behaviour, one can now define the possible strategies. AS and AU players (as defined above) can each choose whether to commit to safe actions and, furthermore, when involved in a commitment, decide whether to punish a dishonest co-player. If no commitment can be made, the player will select the UNSAFE action. (Footnote 2: We also consider the version where these players unconditionally play SAFE regardless of the commitment. The safety outcomes in all regions are similar; only the strategies' dominance is slightly different. Results are provided in the Appendix, see Figures A3 and A4.) The choices listed above lead to five strategies:

1. 1.