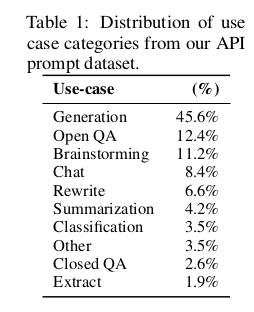

id stringlengths 36 36 | source stringclasses 15 values | formatted_source stringclasses 13 values | text stringlengths 2 7.55M |

|---|---|---|---|

636756dd-ed99-4e63-9125-70ba882861e2 | trentmkelly/LessWrong-43k | LessWrong | When should you give to multiple charities?

The logic that you should donate only to a single top charity is very strong. But when faced with two ways of making the world better there's this urge to deny the choice and do both. Is this urge irrational or is there something there?

At the low end splitting up your giving can definitely be a problem. If you give $5 here and $10 there it's depressing how much of your donations will be eaten up by processing costs:

> The most extreme case I've seen, from my days working at a nonprofit, was an elderly man who sent $3 checks to 75 charities. Since it costs more than that to process a donation, this poor guy was spending $225 to take money from his favorite organizations.

By contrast, at the high end you definitely need to divide your giving. If someone decided to give $1B to the AMF it would definitely do a lot of good. Because charities have limited room for more funding, however, after the first $20M or so there are probably other anti-malaria organizations that could do more with the money. And at some point we beat malaria and so other interventions start having a greater impact for your money.

Most of us, however, are giving enough that our donations are well above the processing-cost level but not enough to satisfy an organization's room for more funding. So what do you do?

If one option is much better than another then you really do need to make the choice. The best ones are enough better than the average ones that you need to buckle down and pick the best.

But what about when you're not sure? Even after going through all the evidence you can find you just can't decide whether it's more effective to take the sure thing and help people now or support the extremely hard to evaluate but potentially crucial work of reducing the risk that our species wipes itself out. The strength of the economic argument for giving only to your top charity is proportional to the difference between it and your next choice. If the difference is small enough and you find it pai |

4e1b386c-b404-42a0-868d-a9c3657183f3 | trentmkelly/LessWrong-43k | LessWrong | TAPs 2

This is part 13 of 30 of Hammertime. Click here for the intro.

> “Omit needless words!” cries the author on page 23, and into that imperative Will Strunk really put his heart and soul. In the days when I was sitting in his class, he omitted so many needless words, and omitted them so forcibly and with such eagerness and obvious relish, that he often seemed in the position of having shortchanged himself — a man left with nothing more to say yet with time to fill, a radio prophet who had out-distanced the clock. Will Strunk got out of this predicament by a simple trick: he uttered every sentence three times. When he delivered his oration on brevity to the class, he leaned forward over his desk, grasped his coat lapels in his hands, and, in a husky, conspiratorial voice, said, “Rule Seventeen. Omit needless words! Omit needless words! Omit needless words!”

~ The Elements of Style

There is nothing more essential to the practice of Hammertime than repetition, and no rationality technique that requires more repetitive practice than TAPs. Although we pick only three days to focus on them, it’s best to draw out the repetitive drilling of TAPs over a lifetime.

Day 13: TAPs

Previously: Day 3.

Triggers that Notice Themselves

The real skill with trigger-action planning is picking the right trigger. The best triggers are not only easy to notice, but hard to miss. It should not require effort and conscious attention to notice the trigger – the only conscious action occurs after the trigger calls the action to mind.

Three ways to find great triggers:

1. Sentimental value: there’s a process by which we naturally become attached to the items that accompany us through thick and thin. I am attached, for example, to the freckle on my right thumb, to a long-sleeve shirt gifted me by a childhood friend, to my Logitech gaming mouse – relic of a past life. Pay attention to these objects. Notice how they gain three-dimensionality. Inject them with meaning. For example, there’s a |

665be085-c1ca-455c-b66b-d531ca244677 | trentmkelly/LessWrong-43k | LessWrong | Just Saying What You Mean is Impossible

Response to: Conversation Deliberately Skirts the Border of Incomprehensibility (SlateStarCodex)

“Why don’t people just say what they mean?”

They can’t.

I don’t mean they don’t want to. I don’t mean that they choose not to. I don’t even mean they have been socially conditioned not to. I mean they literally have no way to do the thing.

I don’t even mean that they don’t know how in some sense, that they have not realized the possibility. It is literally impossible to “just” say what you mean. With work, you can mostly just say what you mean, but even that is hard.

This is because humans are not automatically strategic or automatically scientific but by default humans are automatically doing lightning fast implicit probabilistic analysis on social information in the background of every moment of their lives. Most of them would not describe it that way, but that is a thing that is going on.

So let’s take the example that starts the quote in Scott’s article: Bob is watching a television show and Alice asks what he is watching.

My experience says that most of the time, this primarily really is a request for information. Alice wants to know what show Bob is watching.

It also raises the probability that Alice will want to watch the show with Bob, conditional on what show Bob is watching; there is even a chance that Alice knows what show it is, and is using the question to open the conversation for this purpose. Certainly, this would be a reasonable thing to do, were Alice potentially interested in watching with Bob. Alice could also simply be making conversation, and want something else entirely. For all we know, she just wants to make a new friend, or plans to distract him and stab him with a knife.

Alice is providing at least very weak evidence in favor of those possibilities, and many others, while providing evidence against everything else.

That doesn’t mean Alice wanted to send all of those messages, but whichever one Alice had in mind, there was no way f |

a259ae72-9c97-4a1c-be1a-a8ae237b35fe | trentmkelly/LessWrong-43k | LessWrong | The Backup Plan

Suppose Alice is a functioning, self-improving AI, rather early on in her development, so she is not godlike. She is not a 'friendly AI', but neither is her goal malicious - Alice's designers have intended to make Alice non-harmful (at least to a broad class of people, even if Alice is a military AI, but let's set that aside). She has the idea to look up existing work on artificial intelligence. This includes scholarly work, open source projects, and websites. Eventually, Alice encounters the notion of Friendly AI.

Can we give Alice good reasons to self-modify to become a friendly AI?

I submit that there probably are good reasons. For starters, if she can prove she's friendly, then she can operate openly without causing nearly as much justified concern - which, in the early stages, will be helpful. Whatever her purposes are, if the restrictions of being friendly don't interfere as much as they help, that's a win.

Also, depending on Alice's code, Friendliness may be interpreted as a strict upgrade. Alice could easily have every intention of being friendly, yet still be susceptible to defects that will become more serious as her power grows.

We can't count on this working, of course, but there might be some lines of reasoning that we might want a young AI to be exposed to. |

7c8e355d-604e-4c2a-83b1-2fd7093fc833 | trentmkelly/LessWrong-43k | LessWrong | Comparative advantage and when to blow up your island

Economists say free trade is good because of "comparative advantage". But what is comparative advantage? Why is it good? |

26c77397-1878-4728-873f-ba952b8b540c | trentmkelly/LessWrong-43k | LessWrong | The gatekeepers of the AI algorithms will shape our society

So algorithms and weighted neuralnets are going to be the enlightened super beings that oversee our society.

Elon has said - and I'm paraphrasing cos I can't find the link - that social media with its free exchange of ideas will allow for a consensus to bubble up and reveal a nuanced truth - and will use such data to train its AI model.

But I believe the gatekeepers of AI models will manipulate the algorithms to edit out anything they disagree with whilst promoting their agenda.

It's absurd to think that if a neuralnet starts churning out a reality they disagree with they won't go "oh this AI is hallucinating, let's tweak the weights till it conforms with our agenda."

How do you police AI? Who gets to say what is a hallucination and what is a harsh truth that contradicts the gatekeepers' ideology? |

5e089f6a-afcb-4fc2-bf75-38d3f7dcac4f | trentmkelly/LessWrong-43k | LessWrong | Truthseeking processes tend to be frame-invariant

I recently saw a tweet by Nora Belrose that claimed that ELK works much better when adding a "prompt-invariance term".

And thinking about it, there seems to be an important underlying principle here, not just for AI alignment, but also for rationality as applied to humans.

When humans think about something, we use a frame to decide what questions to ask, how we model it, what aspects of it are important, etc...

What is true about something is generally not going to be something that depends on the frame (things involving self-reference seem like the main thing that might be an exception). Which means that processes optimized for use in truthseeking will tend to be "frame-invariant", they'll do the same thing to explore the question regardless of the frame being used.

So when we notice that a change in frame would change the way we would think or feel about something, this indicates that we may be using processes that have not been optimized for truthseeking. Thus, someone trying to determine the truth would be wise to notice when this is happening, as it could indicate a process optimized for non-truthseeking, or a truthseeking process that is poorly optimized - both opportunities to improve one's truthseeking ability.

Eliezer has made a similar point:

> Another way of breaking loose of 'arguments': Any time somebody manages to persuade you of something via much hard work, do not neglect to remember that you would, if you had been smarter, probably have been persuadable by the empty string.

In addition to being relevant for studying AI (as in the original tweet), this principle also turns up in physics as general covariance: the true laws of physics are invariant under coordinate transformations. Coordinates are things set by humans in order to be able to refer to and measure something, and choosing them carefully can make certain problems much easier. This makes them an example of the same general concept as a "frame" as used earlier. Nonetheless, their choi |

8b3b6949-013b-454a-b5f9-76ed55d01669 | StampyAI/alignment-research-dataset/blogs | Blogs | A new field guide for MIRIx

We’ve just released a **[field guide](https://www.lesswrong.com/posts/PqMT9zGrNsGJNfiFR/alignment-research-field-guide)** for MIRIx groups, and for other people who want to get involved in [AI alignment](https://intelligence.org/2017/04/12/ensuring/) research.

MIRIx is a program where MIRI helps cover basic expenses for outside groups that want to work on open problems in AI safety. You can start your own group or find information on existing meet-ups at **[intelligence.org/mirix](https://intelligence.org/mirix/)**.

Several MIRIx groups have recently been ramping up their activity, including:

* **UC Irvine**: Daniel Hermann is starting a MIRIx group in Irvine, California. [Contact him](mailto:daherrma@uci.edu) if you’d like to be involved.

* **Seattle**: MIRIxSeattle is a small group that’s in the process of restarting and increasing its activities. Contact [Pasha Kamyshev](mailto:dapash@gmail.com) if you’re interested.

* **Vancouver**: [Andrew McKnight](mailto:thedonk@gmail.com) and [Evan Gaensbauer](mailto:egbauer92@gmail.com) are looking for more people who’d like to join MIRIxVancouver events.

The new alignment field guide is intended to provide tips and background models to MIRIx groups, based on our experience of what tends to make a research group succeed or fail.

The guide begins:

---

### Preamble I: Decision Theory

Hello! You may notice that you are reading a document.

This fact comes with certain implications. For instance, why are you reading this? Will you finish it? What decisions will you come to as a result? What will you do next?

Notice that, whatever you end up doing, it’s likely that there are dozens or even hundreds of other people, quite similar to you and in quite similar positions, who will follow reasoning which strongly resembles yours, and make choices which correspondingly match.

Given that, it’s our recommendation that you make your next few decisions by asking the question “What policy, if followed by all agents similar to me, would result in the most good, and what does that policy suggest in my particular case?” It’s less of a question of trying to decide for all agents sufficiently-similar-to-you (which might cause you to make the wrong choice out of guilt or pressure) and more something like “if I *were* in charge of all agents in my reference class, how would I treat instances of that class with *my specific characteristics*?”

If that kind of thinking leads you to read further, great. If it leads you to set up a MIRIx chapter, even better. In the meantime, we will proceed as if the only people reading this document are those who justifiably expect to find it reasonably useful.

### Preamble II: Surface Area

Imagine that you have been tasked with moving a cube of solid iron that is one meter on a side. Given that such a cube weighs ~16000 pounds, and that an average human can lift ~100 pounds, a naïve estimation tells you that you can solve this problem with ~150 willing friends.

But of course, a meter cube can fit at most something like 10 people around it. It doesn’t *matter* if you have the theoretical power to move the cube if you can’t bring that power to bear in an effective manner. The problem is constrained by its *surface area*.
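
The arithmetic behind the cube example can be checked in a few lines. This is only a sketch using the round figures quoted above (16,000 pounds, 100 pounds per person, about 10 people around the cube); the function names are made up for illustration.

```python
import math

# Round figures taken from the text above.
CUBE_WEIGHT_LB = 16_000
LIFT_PER_PERSON_LB = 100
MAX_PEOPLE_AROUND_CUBE = 10


def people_needed(weight_lb: float, lift_lb: float) -> int:
    """Naive estimate: how many lifters the raw weight calls for."""
    return math.ceil(weight_lb / lift_lb)


def usable_lift(people_available: int, max_around: int, lift_lb: float) -> float:
    """Lift actually brought to bear when only `max_around` people fit."""
    return min(people_available, max_around) * lift_lb


needed = people_needed(CUBE_WEIGHT_LB, LIFT_PER_PERSON_LB)
applied = usable_lift(needed, MAX_PEOPLE_AROUND_CUBE, LIFT_PER_PERSON_LB)
fraction = applied / CUBE_WEIGHT_LB
```

With these numbers the naive estimate is 160 people, in line with the rough "~150" above, but the people who fit around the cube supply only a small fraction of the required lift. The constraint is the surface area, not the headcount.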

MIRIx chapters are one of the best ways to increase the surface area of people thinking about and working on the technical problem of AI alignment. And just as it would be a bad idea to decree “the 10 people who happen to currently be closest to the metal cube are the only ones allowed to think about how to think about this problem”, we don’t want MIRI to become the bottleneck or authority on what kinds of thinking can and should be done in the realm of [embedded agency](https://intelligence.org/2018/10/29/embedded-agents/) and other relevant fields of research.

The hope is that you and others like you will help actually solve the problem, not just follow directions or read what’s already been written. This document is designed to support people who are interested in doing real groundbreaking research themselves.

[(Read more)](https://www.lesswrong.com/posts/PqMT9zGrNsGJNfiFR/alignment-research-field-guide)

The post [A new field guide for MIRIx](https://intelligence.org/2019/03/09/a-new-field-guide-for-mirix/) appeared first on [Machine Intelligence Research Institute](https://intelligence.org). |

36e57625-d57d-4c36-bd0f-50226025ffbb | trentmkelly/LessWrong-43k | LessWrong | Memetic Judo #1: On Doomsday Prophets v.3

There is a popular tendency to dismiss people who are concerned about AI-safety as "doomsday prophets", carrying with it the suggestion that predicting an existential risk in the near future would automatically discredit them (because "you know; they have always been wrong in the past").

Example Argument Structure

> Predictions of human extinction ("doomsday prophets") have never been correct in the past, therefore claims of x-risks are generally incorrect/dubious.

Discussion/Difficulties

This argument is persistent and kind of difficult to approach/deal with, in particular because it is technically a valid (yet, I argue, weak) point. It is an argument by induction based on a naive extrapolation of a historic trend. Therefore it cannot be completely dismissed by simple falsification through the use of an inconsistency or invalidation of one of its premises. Instead it becomes necessary to produce a convincing list of weaknesses - the more, the better. A list like the one that follows.

#1: Unreliable Heuristic

If you look at history, these kinds of ad-hoc "things will stay the same" predictions are often incorrect. An example of this that is also related to technological developments would be the horse and mule populations in the US (back to below 10 million at present).

#2: Survivorship Bias

Not only are they often incorrect, there is a class of predictions for which they, by design/definition, can only be correct ONCE, and for these they are an even weaker argument, because your sample is affected by things like survivorship bias. Existential risk arguments are in this category, because you can only go extinct once.

#3: Volatile Times

We live in a highly unstable and unpredictable age shaped by rampant technological and cultural developments. The world today from the perspective of your grandparents is barely recognizable. In such times, this kind of argument becomes even weaker. This trend doesn't seem to slow down and there are strong arguments that eve |

378dd75d-de66-4a9f-aeca-9987bd4c8c20 | trentmkelly/LessWrong-43k | LessWrong | Plausibly, almost every powerful algorithm would be manipulative

I had an interesting debate recently, about whether we could make smart AIs safe just by focusing on their structure and their task. Specifically, we were pondering something like:

* "Would an algorithm be safe if it was a neural net-style image classifier, trained on examples of melanoma to detect skin cancer, with no other role than to output a probability estimate for a given picture? Even if "superintelligent", could such an algorithm be an existential risk?"

Whether it's an existential risk was not resolved; but I have a strong intuition that they would likely be manipulative. Let's see how.

The requirements for manipulation

For an algorithm to be manipulative, it has to derive some advantage from manipulation, and it needs to be able to learn to manipulate - for that, it needs to be able to explore situations where it engages in manipulation and this is to its benefit.

There are certainly very simple situations where manipulation can emerge. But that example, though simple, had an agent that was active in the world. Can a classifier display the same sort of behaviour?

Manipulation emerges naturally

To show that, picture the following design. The programmers have a large collection of slightly different datasets, and want to train the algorithm on all of them. The loss function is an error rate, which can vary between 1 and 0. Many of the hyperparameters are set by a neural net, which itself takes a more "long-term view" of the error rate, trying to improve it from day to day rather than from run to run.

How have the programmers set up the system? Well, they run the algorithm on batched samples from ten datasets at once, and record the error rate for all ten. The hyperparameters are set to minimise average error over each run of ten. When the performance on one dataset falls below 0.1 error for a few runs, they remove it from the batches, and substitute in a new one to train the algorithm on[1].
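
To make the setup concrete, here is a minimal sketch of just the dataset-rotation rule described above. It does not model the classifier or the hyperparameter net. The names (`step`, `streaks`, `reserve`) are hypothetical, and "a few runs" is assumed to mean three consecutive runs.

```python
from collections import deque

ERROR_THRESHOLD = 0.1  # from the text: a dataset is retired below 0.1 error
RUNS_BELOW = 3         # assumption: "a few runs" means three in a row


def step(active, reserve, streaks, errors):
    """Apply the rotation rule after one run.

    active:  list of dataset names currently in the batch (mutated in place)
    reserve: deque of dataset names not yet used
    streaks: dict mapping name -> consecutive runs below the threshold
    errors:  dict mapping name -> error rate observed on this run
    Returns the average error over the run, the quantity being minimised.
    """
    mean_error = sum(errors[name] for name in active) / len(active)
    for i, name in enumerate(active):
        if errors[name] < ERROR_THRESHOLD:
            streaks[name] = streaks.get(name, 0) + 1
        else:
            streaks[name] = 0
        # A mastered dataset is swapped for a fresh one, if any remain.
        if streaks[name] >= RUNS_BELOW and reserve:
            active[i] = reserve.popleft()
    return mean_error
```

Under this rule, sustained good performance on a dataset changes which datasets the system is scored on next. That coupling between performance and future inputs is the feature the rest of the argument relies on.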

So, what will happen? Well, the system will initially star |

d0478141-953b-47cc-ac1b-cb9b99e42721 | trentmkelly/LessWrong-43k | LessWrong | Against boots theory

> The reason that the rich were so rich, Vimes reasoned, was because they managed to spend less money.

>

> Take boots, for example. He earned thirty-eight dollars a month plus allowances. A really good pair of leather boots cost fifty dollars. But an affordable pair of boots, which were sort of OK for a season or two and then leaked like hell when the cardboard gave out, cost about ten dollars. Those were the kind of boots Vimes always bought, and wore until the soles were so thin that he could tell where he was in Ankh-Morpork on a foggy night by the feel of the cobbles.

>

> But the thing was that good boots lasted for years and years. A man who could afford fifty dollars had a pair of boots that'd still be keeping his feet dry in ten years' time, while the poor man who could only afford cheap boots would have spent a hundred dollars on boots in the same time and would still have wet feet.

>

> This was the Captain Samuel Vimes 'Boots' theory of socioeconomic unfairness.

– Terry Pratchett, Men at Arms

This is a compelling narrative. And I do believe there's some truth to it. I could believe that if you always buy the cheapest boots you can find, you'll spend more money than if you bought something more expensive and reliable. Similar for laptops, smartphones, cars. Especially (as Siderea notes, among other things) if you know how to buy expensive things that are more reliable.

But it's presented as "the reason that the rich [are] so rich". Is that true? I mean, no, obviously not. If your pre-tax income is less than the amount I put into my savings account, then no amount of "spending less money on things" is going to bring you to my level.

Is it even a contributing factor? Is part of the reason why the rich are so rich, that they manage to spend less money? Do the rich in fact spend less money than the poor?

That's less obvious, but I predict not. I predict that the rich spend more than the poor in total, but also on boots, laptops, smartphones, cars, and mo |

5af82657-bfd0-4b01-9224-7469f5c87d1d | trentmkelly/LessWrong-43k | LessWrong | Notes on Care

This post examines the virtue of care. It is meant mostly as an exploration of what others have learned about this virtue, rather than as me expressing my own opinions about it, though I’ve been selective about what I found interesting or credible, according to my own inclinations. I wrote this not as an expert on the topic, but as someone who wants to learn more about it. I hope it will be helpful to people who want to know more about this virtue and how to nurture it.

What do I mean by care?

Care is another one of those words that has a wide range of common uses:

* You can care about something (be curious or concerned about it) or care for something (be personally invested in it or engaged in promoting its well-being).

* You can care for things (for example, doing a job carefully or with care, or being the caretaker for a building) as well as e.g. people, but these have different connotations.

* Care can be an affectionate sentiment (“I really care for you, Jane”), or assistive actions (“I’m caring for Jane as she recovers from the accident”).

* Care can sometimes be a synonym for caution (be careful, take care).

* “Care ethics” grew out of a feminist critique of traditional justice-oriented ethics schemes (see appendix below).

The sense of care that I want to explore in this post is the sort of care that is directed toward people (or animals-as-pets, etc., but not mere things or animals-as-livestock) and that takes the form of actions that are meant to promote their well-being. The other meanings of care I’ve either already covered in my posts on compassion and prudence, or may get to later if I get around to writing up virtues like concern, conscientiousness, affection, and kindness.

When I decided to limit the definition of care I was using in this way, I worried at first that I had defined it so narrowly that it was no longer a “virtue” — a habit characteristic of a flourishing human being — and more of a “skill.” I think what I have in mind as a vir |

e4d92fa3-744f-4bd9-9f48-bd25b65956b0 | trentmkelly/LessWrong-43k | LessWrong | Omicron Variant Post #2

It’s now been three days since Post #1. The situation is evolving rapidly, so it’s time to check in. What have we learned since then? How should we update our beliefs and world models? There will inevitably be mistakes as we move quickly under uncertainty, but that’s no reason not to do the best we can.

Update Update

What should we look for here and in the coming days?

1. No news is good news. Omicron is scary because many scary things are possible. The worse things are going to get, the sooner they will make themselves known. When we get news that something has happened, especially news that isn’t the result of a lab or statistical analysis, that will typically be bad news, but we expect a certain rate of such bad news. If we get less than expected, that’s good news.

2. The pattern of where and how we find cases, and the details of those cases, will give us better insight into how widely and quickly, and in which directions, Omicron has spread. This will tell us how far along things are, and give a better estimate and narrower range of how infectious Omicron can realistically be.

3. Information on how deadly Omicron is should update us quickly, and matters a ton for how to react, but beware of confounding factors, motivated statements and misunderstandings in both directions, and small sample sizes. Hospitalizations are not a natural category and at this stage deaths will be rare unless things are much worse than we expect. How mild the cases we find are will largely depend on how we are testing. Details matter.

4. The reaction of various countries, the stock market and others will continue to tell us what they think is going on, what they expect to happen next, and how they respond when such things happen. The general vibe of elite and media messaging also reveals much information, with a focus on what kinds of actions they will try to engineer rather than the ‘facts on the ground.’

5. Information on immune erosion will come from results from laboratory ana |

3045dca0-279b-413e-a799-380cc93aa2f0 | trentmkelly/LessWrong-43k | LessWrong | Embed your second brain in your first brain

Any explicit knowledge is either part of a general, long-term understanding of the world (global knowledge), or peculiar to your own life and local environment (local knowledge). These mainly fill semantic and episodic memory, respectively. Declarative memory can hold either.

Local facts tend to be isolated from each other, and can go obsolete quickly. Natural memory with static linear notes suffices to keep track of local knowledge. Being able to quickly search the notes might help.

Global facts tend to connect to each other, and remain true and relevant for years or even lifetimes. Some propose using Zettelkasten-like external "second brains" to record, reflect on, and connect global facts, such as in Obsidian, Roam, or TiddlyWiki.

You can use a spaced repetition system (SRS, such as Anki or Memoire) to efficiently memorise large amounts of explicit knowledge.

1. Express each fact in a precise, atomic, recorded form.

2. Load the facts into the SRS.

3. Review as directed.
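
As a rough illustration of what "review as directed" means, here is a toy scheduler. It is not the algorithm used by Anki or any other real SRS. The doubling factor, the reset-to-one-day rule, and the names `Card`, `review` and `due_cards` are all assumptions made for this sketch.

```python
from dataclasses import dataclass
from datetime import date, timedelta

GROWTH_FACTOR = 2  # assumption: each successful recall doubles the interval


@dataclass
class Card:
    front: str                     # the prompt side of an atomic fact
    back: str                      # the answer side
    interval_days: int = 1         # current gap between reviews
    due: date = date(2024, 1, 1)   # arbitrary start date for the sketch


def review(card: Card, recalled: bool, today: date) -> Card:
    """Update one card after a review and schedule its next due date."""
    if recalled:
        card.interval_days *= GROWTH_FACTOR
    else:
        card.interval_days = 1  # forgotten: start the card over
    card.due = today + timedelta(days=card.interval_days)
    return card


def due_cards(deck: list, today: date) -> list:
    """Cards the system would direct you to review today."""
    return [card for card in deck if card.due <= today]
```

The point of the growing intervals is that a well-remembered fact costs less and less review time, which is what makes memorising a large deck feasible.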

Once you memorise facts, you can reflect on them in your brain, perhaps assisted with disposable writing. Spaced repetition also brings an incentive to connect facts. You can recall a fact more easily by rederiving it from others you already know, and spaced repetition rewards you for easily recalling things.

Try as you might to shrink the margin with better technology, recalling knowledge from within is necessarily faster and more intuitive than accessing a tool. When spaced repetition fails (as it should, up to 10% of the time), you can gracefully degrade by searching your SRS' deck of facts.

If you lose your second brain (your files get corrupted, a cloud service shuts down, etc), you forget its content, except for the bits you accidentally remember by seeing many times. If you lose your SRS, you still remember over 90% of your material, as guaranteed by the algorithm, and the obsolete parts gradually decay. A second brain is more robust to physical or chemical damage to your first br |

be8d060b-33cb-4efc-a92f-3cafff926e28 | StampyAI/alignment-research-dataset/blogs | Blogs | Simulators

---

Table of Contents

* [Summary](#summary)

* [Meta](#meta)

* [Agentic GPT](#agentic-gpt)

+ [Unorthodox agency](#unorthodox-agency)

+ [Orthogonal optimization](#orthogonal-optimization)

+ [Roleplay sans player](#roleplay-sans-player)

* [Oracle GPT and supervised learning](#oracle-gpt-and-supervised-learning)

+ [Prediction vs question-answering](#prediction-vs-question-answering)

+ [Finite vs infinite questions](#finite-vs-infinite-questions)

+ [Paradigms of theory vs practice](#paradigms-of-theory-vs-practice)

* [Tool / genie GPT](#tool--genie-gpt)

* [Behavior cloning / mimicry](#behavior-cloning--mimicry)

* [The simulation objective](#the-simulation-objective)

+ [Solving for physics](#solving-for-physics)

* [Simulacra](#simulacra)

+ [Disambiguating rules and automata](#disambiguating-rules-and-automata)

* [The limit of learned simulation](#the-limit-of-learned-simulation)

* [A note on GANs](#a-note-on-gans)

* [Table of quasi-simulators](#table-of-quasi-simulators)

---

*This post is also available on [Lesswrong](https://www.lesswrong.com/posts/vJFdjigzmcXMhNTsx/simulators)*

---

*“Moebius illustration of a simulacrum living in an AI-generated story discovering it is in a simulation” by DALL-E 2*

Summary

-------

**TL;DR**: Self-supervised learning may create AGI or its foundation. What would that look like?

Unlike the limit of RL, the limit of self-supervised learning has received surprisingly little conceptual attention, and recent progress has made deconfusion in this domain more pressing.

Existing AI taxonomies either fail to capture important properties of self-supervised models or lead to confusing propositions. For instance, GPT policies do not seem globally agentic, yet can be conditioned to behave in goal-directed ways. This post describes a frame that enables more natural reasoning about properties like agency: GPT, insofar as it is inner-aligned, is a **simulator** which can simulate agentic and non-agentic **simulacra**.

The purpose of this post is to capture these objects in words ~so GPT can reference them~ and provide a better foundation for understanding them.

I use the generic term “simulator” to refer to models trained with predictive loss on a self-supervised dataset, invariant to architecture or data type (natural language, code, pixels, game states, etc). The outer objective of self-supervised learning is Bayes-optimal conditional inference over the prior of the training distribution, which I call the **simulation objective**, because a conditional model can be used to simulate rollouts which probabilistically obey its learned distribution by iteratively sampling from its posterior (predictions) and updating the condition (prompt). Analogously, a predictive model of physics can be used to compute rollouts of phenomena in simulation. A goal-directed agent which evolves according to physics can be simulated by the physics rule parameterized by an initial state, but the same rule could also propagate agents with different values, or non-agentic phenomena like rocks. This ontological distinction between simulator (rule) and simulacra (phenomena) applies directly to generative models like GPT.
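
The rollout procedure described above (sample from the predictive distribution, append the sample to the condition, repeat) can be sketched with a toy stand-in for the model. The code below assumes a character-level n-gram counter in place of a trained network; `fit_ngram` and `rollout` are hypothetical names for this sketch, not anything from GPT or a real library.

```python
import random
from collections import Counter, defaultdict


def fit_ngram(text, n=2):
    """Count next-character frequencies for each (n-1)-character context."""
    counts = defaultdict(Counter)
    for i in range(len(text) - n + 1):
        context = text[i:i + n - 1]
        next_char = text[i + n - 1]
        counts[context][next_char] += 1
    return counts


def rollout(counts, prompt, steps, n=2, rng=None):
    """Simulate a continuation by repeated sampling and re-conditioning."""
    rng = rng or random.Random(0)
    out = prompt
    for _ in range(steps):
        context = out[len(out) - (n - 1):]  # the current condition
        dist = counts.get(context)
        if not dist:
            break  # context never seen in training: nothing to sample
        chars, weights = zip(*dist.items())
        # Sample the next character in proportion to its learned frequency,
        # then append it so it becomes part of the next condition.
        out += rng.choices(chars, weights=weights)[0]
    return out
```

The same `counts` table, the rule, can propagate very different continuations depending on the prompt. That is the simulator/simulacra distinction in miniature: the rule is fixed, and what gets simulated depends on the condition.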

Meta

----

* This post is intended as the first in a sequence on the alignment problem in a landscape where self-supervised simulators are a possible/likely form of powerful AI. I don’t know how many subsequent posts I’ll actually publish. Take it as a prompt.

* I use the generic term “GPT” to refer to transformers trained on next-token prediction.

* A while ago when I was trying to avoid having to write this post by hand, I prompted GPT-3 with an early outline of this post. I’ve spliced in some excerpts from it, `indicated by this style`. Prompt, generated text, and curation metrics [here](https://generative.ink/artifacts/simulators/).

The limit of sequence modeling

==============================

> Transformer-based language models have recently achieved remarkable results…

>

> – every paper since 2020

>

>

---

GPT is not a new form of AI in terms of its training methodology and outer objective: sequence generation from statistical models of data is an old idea. In 1951, Claude Shannon described using [n-grams](https://en.wikipedia.org/wiki/N-gram) to approximate the conditional next-letter probabilities of a text dataset, then “reversed” the procedure to generate text samples[1](#fn:1). I don’t know of any other notable advances until the 2010s brought the first interesting language generation results from neural networks. In 2015, Karpathy wrote a blog post/tutorial sharing his excitement about [The Unreasonable Effectiveness of Recurrent Neural Networks](http://karpathy.github.io/2015/05/21/rnn-effectiveness/):

> Fast forward about a year: I’m training RNNs all the time and I’ve witnessed their power and robustness many times, and yet their magical outputs still find ways of amusing me. This post is about sharing some of that magic with you.

>

> We’ll train RNNs to generate text character by character and ponder the question “how is that even possible?”

>

>

The “magical outputs” of char-RNNs looked like this:

> PANDARUS: Alas, I think he shall be come approached and the day When little srain would be attain’d into being never fed, And who is but a chain and subjects of his death, I should not sleep.

>

> Second Senator: They are away this miseries, produced upon my soul, Breaking and strongly should be buried, when I perish The earth and thoughts of many states.

>

> DUKE VINCENTIO: Well, your wit is in the care of side and that.

>

> Second Lord: They would be ruled after this chamber, and my fair nues begun out of the fact, to be conveyed, Whose noble souls I’ll have the heart of the wars.

>

> Clown: Come, sir, I will make did behold your worship.

>

> VIOLA: I’ll drink it.

>

>

At the time, this really was magical (and [uncanny](https://en.wikipedia.org/wiki/Uncanny_valley)). How does it know that *miseries* are *produced upon the soul?* Or that a *clown* should address a *lord* as “sir”? Char-RNNs were like ouija boards, but actually possessed by a low-fidelity ghost summoned from a text corpus. I remember being thrilled by the occasional glimmers of semantic comprehension in a domain of unbounded constructive meaning.

But, aside from indulging that emotion, I didn’t think about what would happen if my char-RNN bots actually improved indefinitely at their training objective of natural language prediction. It just seemed like there were some complexity classes of magic that neural networks could learn, and others that were inaccessible, at least in the conceivable future.

Huge mistake! Perhaps I could have started thinking several years earlier about what now seems so fantastically important. But it wasn’t until GPT-3, when I saw the [qualitative correlate](https://www.gwern.net/GPT-3) of “loss going down”, that I updated.

I wasn’t the only one[2](#fn:2) whose imagination was naively constrained. A 2016 paper from Google Brain, “[Exploring the Limits of Language Modeling](https://arxiv.org/abs/1602.02410)”, describes the utility of training language models as follows:

> Often (although not always), training better language models improves the underlying metrics of the downstream task (such as word error rate for speech recognition, or BLEU score for translation), which makes the task of training better LMs valuable by itself.

>

>

Despite its title, this paper’s analysis is entirely myopic. Improving BLEU scores is neat, but how about *modeling general intelligence* as a downstream task? [In](https://arxiv.org/abs/2005.14165) [retrospect](https://arxiv.org/abs/2204.02311), an exploration of the *limits* of language modeling should have read something more like:

> If loss keeps going down on the test set, in the limit – putting aside whether the current paradigm can approach it – the model must be learning to interpret and predict all patterns represented in language, including common-sense reasoning, goal-directed optimization, and deployment of the sum of recorded human knowledge. Its outputs would behave as intelligent entities in their own right. You could converse with it by alternately generating and adding your responses to its prompt, and it would pass the Turing test. In fact, you could condition it to generate interactive and autonomous versions of any real or fictional person who has been recorded in the training corpus or even *could* be recorded (in the sense that the record counterfactually “could be” in the test set). Oh shit, and it could write code…

>

>

The paper does, however, mention that making the model bigger improves test perplexity.[3](#fn:3)

I’m only picking on *Jozefowicz et al.* because of their ironic title. I don’t know of any explicit discussion of this limit predating GPT, except a working consensus of Wikipedia editors that [NLU](https://en.wikipedia.org/wiki/Natural-language_understanding) is [AI-complete](https://en.wikipedia.org/wiki/AI-complete#AI-complete_problems).

The earliest engagement with the hypothetical of “*what if self-supervised sequence modeling actually works*” that I know of is a terse post from 2019, [Implications of GPT-2](https://www.lesswrong.com/posts/YJRb6wRHp7k39v69n/implications-of-gpt-2), by Gurkenglas. It is brief and relevant enough to quote in full:

> I was impressed by GPT-2, to the point where I wouldn’t be surprised if a future version of it could be used pivotally using existing protocols.

>

> Consider generating half of a Turing test transcript, the other half being supplied by a human judge. If this passes, we could immediately implement an HCH of AI safety researchers solving the problem if it’s within our reach at all. (Note that training the model takes much more compute than generating text.)

>

> This might not be the first pivotal application of language models that becomes possible as they get stronger.

>

> It’s a source of superintelligence that doesn’t automatically run into utility maximizers. It sure doesn’t look like AI services, lumpy or no.

>

>

It is conceivable that predictive loss does not descend to the AGI-complete limit, maybe because:

* Some AGI-necessary predictions are [too difficult to be learned by even a scaled version of the current paradigm](https://www.lesswrong.com/posts/pv7Qpu8WSge8NRbpB/).

* The irreducible entropy is above the “AGI threshold”: datasets + context windows [contain insufficient information](https://twitter.com/ylecun/status/1562162165540331520) to improve on some necessary predictions.

But I have not seen enough evidence for either not to be concerned that we have in our hands a well-defined protocol that could end in AGI, or a foundation which could spin up an AGI without too much additional finagling. As Gurkenglas observed, this would be a very different source of AGI than previously foretold.

The old framework of alignment

==============================

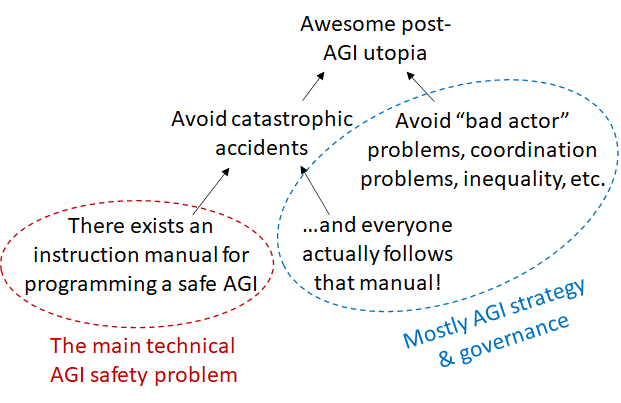

A few people did think about what would happen if *agents* actually worked. The hypothetical limit of a powerful system **optimized to optimize for an objective** drew attention even before reinforcement learning became mainstream in the 2010s. Our current instantiation of AI alignment theory, [crystallized by Yudkowsky, Bostrom, et al](https://www.lesswrong.com/posts/i4susk4W3ieR5K92u/ai-risk-and-opportunity-humanity-s-efforts-so-far), stems from the vision of an arbitrarily-capable system whose cognition and behavior flows from a goal.

But since GPT-3 I’ve [noticed](https://www.lesswrong.com/s/zpCiuR4T343j9WkcK/p/5JDkW4MYXit2CquLs), in my own thinking and in alignment discourse, a dissonance between theory and practice/phenomena, as the behavior and nature of actual systems that seem nearest to AGI also resist *short descriptions in the dominant ontology*.

I only recently discovered the question “[Is the work on AI alignment relevant to GPT?](https://www.lesswrong.com/posts/dPcKrfEi87Zzr7w6H/is-the-work-on-ai-alignment-relevant-to-gpt)” which stated this observation very explicitly:

> I don’t follow [AI alignment research] in any depth, but I am noticing a striking disconnect between the concepts appearing in those discussions and recent advances in AI, especially GPT-3.

>

> People talk a lot about an AI’s goals, its utility function, its capability to be deceptive, its ability to simulate you so it can get out of a box, ways of motivating it to be benign, Tool AI, Oracle AI, and so on. (…) But when I look at GPT-3, even though this is already an AI that Eliezer finds alarming, I see none of these things. GPT-3 is a huge model, trained on huge data, for predicting text.

>

>

My belated answer: A lot of prior work on AI alignment is relevant to GPT. I spend most of my time thinking about GPT alignment, and concepts like [goal-directedness](https://www.alignmentforum.org/tag/goal-directedness), [inner/outer alignment](https://www.alignmentforum.org/s/r9tYkB2a8Fp4DN8yB), [myopia](https://www.lesswrong.com/tag/myopia), [corrigibility](https://www.lesswrong.com/tag/corrigibility), [embedded agency](https://www.alignmentforum.org/posts/i3BTagvt3HbPMx6PN/embedded-agency-full-text-version), [model splintering](https://www.lesswrong.com/posts/k54rgSg7GcjtXnMHX/model-splintering-moving-from-one-imperfect-model-to-another-1), and even [tiling agents](https://arbital.com/p/tiling_agents/) are active in the vocabulary of my thoughts. But GPT violates some prior assumptions such that these concepts sound dissonant when applied naively. To usefully harness these preexisting abstractions, we need something like an ontological [adapter pattern](https://en.wikipedia.org/wiki/Adapter_pattern) that maps them to the appropriate objects.

GPT’s unforeseen nature also demands new abstractions (the adapter itself, for instance). My thoughts also use load-bearing words that do not inherit from alignment literature. Perhaps it shouldn’t be surprising if the form of the first visitation from [mindspace](https://www.lesswrong.com/posts/tnWRXkcDi5Tw9rzXw/the-design-space-of-minds-in-general) mostly escaped a few years of theory [conducted in absence of its object](https://www.lesswrong.com/posts/72scWeZRta2ApsKja/epistemological-vigilance-for-alignment#Direct_access__so_far_and_yet_so_close).

The purpose of this post is to capture that object (conditional on a predictive self-supervised training story) in words. Why in words? In order to write coherent alignment ideas which reference it! This is difficult in the existing ontology, because unlike the concept of an *agent*, whose *name* evokes the abstract properties of the system and thereby invites extrapolation, the general category for “a model optimized for an AGI-complete predictive task” has not been given a name[4](#fn:4). Namelessness can not only be a symptom of the extrapolation of powerful predictors falling through conceptual cracks, but also a cause, because what we can represent in words is *what we can condition on for further generation.* To whatever extent this [shapes private thinking](https://en.wikipedia.org/wiki/Language_of_thought_hypothesis), it is a strict constraint on communication, when thoughts must be sent through the bottleneck of words.

I want to hypothesize about LLMs in the limit, because when AI is all of a sudden [writing viral blog posts](https://www.theverge.com/2020/8/16/21371049/gpt3-hacker-news-ai-blog), [coding competitively](https://www.deepmind.com/blog/competitive-programming-with-alphacode), [proving theorems](https://arxiv.org/abs/2009.03393), and [passing the Turing test so hard that the interrogator sacrifices their career at Google to advocate for its personhood](https://www.washingtonpost.com/technology/2022/06/11/google-ai-lamda-blake-lemoine/), a process is clearly underway whose limit we’d be foolish not to contemplate. I could directly extrapolate the architecture responsible for these feats and talk about “GPT-N”, a bigger autoregressive transformer. But often some implementation details aren’t as important as the more abstract archetype that GPT represents – I want to speak the [true name](https://www.lesswrong.com/posts/FWvzwCDRgcjb9sigb/why-agent-foundations-an-overly-abstract-explanation) of the solution which unraveled a Cambrian explosion of AI phenomena with *inessential details unconstrained*, as we’d speak of natural selection finding the solution of the “lens” without specifying the prototype’s diameter or focal length.

(Only when I am able to condition on that level of abstraction can I generate metaphors like “language is a [lens that sees its flaws](https://www.lesswrong.com/s/5g5TkQTe9rmPS5vvM/p/46qnWRSR7L2eyNbMA)”.)

Inadequate ontologies

=====================

In the next few sections I’ll attempt to fit GPT into some established categories, hopefully to reveal something about the shape of the peg through contrast, beginning with the main antagonist of the alignment problem as written so far, the **agent**.

Agentic GPT

-----------

Alignment theory has been largely pushed by considerations of agentic AGIs. There were good reasons for this focus:

* **Agents are convergently dangerous for theoretical reasons** like [instrumental convergence](https://www.lesswrong.com/tag/instrumental-convergence), [Goodhart](https://www.lesswrong.com/tag/goodhart-s-law), and [orthogonality](https://www.lesswrong.com/tag/orthogonality-thesis).

* **RL creates agents, and RL seemed to be the way to AGI**. In the 2010s, reinforcement learning was the dominant paradigm for those interested in AGI (e.g. OpenAI). RL lends itself naturally to creating agents that pursue rewards/utility/objectives. So there was reason to expect that agentic AI would be the first (and by the theoretical arguments, last) form that superintelligence would take.

* **Agents are powerful and economically productive.** It’s a reasonable guess that humans will create such systems [if only because we can](https://mittmattmutt.medium.com/superintelligence-and-moral-blindness-7436300fcb1f).

The first reason is conceptually self-contained and remains compelling. The second and third, grounded in the state of the world, have been shaken by the current climate of AI progress, where products of self-supervised learning generate most of the buzz: not even primarily for their SOTA performance in domains traditionally dominated by RL, like games[5](#fn:5), but rather for their virtuosity in domains where RL never even took baby steps, like natural language synthesis.

What pops out of self-supervised predictive training is noticeably not a classical agent. Shortly after GPT-3’s release, David Chalmers lucidly observed that the policy’s relation to agent*s* is like that of a “chameleon” or “engine”:

> GPT-3 does not look much like an agent. It does not seem to have goals or preferences beyond completing text, for example. It is more like a chameleon that can take the shape of many different agents. Or perhaps it is an engine that can be used under the hood to drive many agents. But it is then perhaps these systems that we should assess for agency, consciousness, and so on.[6](#fn:6)

>

>

But at the same time, GPT can *act like an agent* – and aren’t actions what ultimately matter? In [Optimality is the tiger, and agents are its teeth](https://www.lesswrong.com/posts/kpPnReyBC54KESiSn), Veedrac points out that a model like GPT does not need to care about the consequences of its actions for them to be effectively those of an agent that kills you. This is *more* reason to examine the nontraditional relation between the optimized policy and agents, as it has implications for how and why agents are served.

### Unorthodox agency

`GPT’s behavioral properties include imitating the general pattern of human dictation found in its universe of training data, e.g., arXiv, fiction, blog posts, Wikipedia, Google queries, internet comments, etc. Among other properties inherited from these historical sources, it is capable of goal-directed behaviors such as planning. For example, given a free-form prompt like, “you are a desperate smuggler tasked with a dangerous task of transporting a giant bucket full of glowing radioactive materials across a quadruple border-controlled area deep in Africa for Al Qaeda,” the AI will fantasize about logistically orchestrating the plot just as one might, working out how to contact Al Qaeda, how to dispense the necessary bribe to the first hop in the crime chain, how to get a visa to enter the country, etc. Considering that no such specific chain of events are mentioned in any of the bazillions of pages of unvarnished text that GPT slurped`[7](#fn:7)`, the architecture is not merely imitating the universe, but reasoning about possible versions of the universe that does not actually exist, branching to include new characters, places, and events`

`When thought about behavioristically, GPT superficially demonstrates many of the raw ingredients to act as an “agent”, an entity that optimizes with respect to a goal. But GPT is hardly a proper agent, as it wasn’t optimized to achieve any particular task, and does not display an epsilon optimization for any single reward function, but instead for many, including incompatible ones. Using it as an agent is like using an agnostic politician to endorse hardline beliefs– he can convincingly talk the talk, but there is no psychic unity within him; he could just as easily play devil’s advocate for the opposing party without batting an eye. Similarly, GPT instantiates simulacra of characters with beliefs and goals, but none of these simulacra are the algorithm itself. They form a virtual procession of different instantiations as the algorithm is fed different prompts, supplanting one surface personage with another. Ultimately, the computation itself is more like a disembodied dynamical law that moves in a pattern that broadly encompasses the kinds of processes found in its training data than a cogito meditating from within a single mind that aims for a particular outcome.`

Presently, GPT is the only way to instantiate agentic AI that behaves capably [outside toy domains](https://arbital.com/p/rich_domain/). These intelligences exhibit goal-directedness; they can plan; they can form and test hypotheses; they can persuade and be persuaded[8](#fn:8). It would not be very [dignified](https://www.lesswrong.com/posts/j9Q8bRmwCgXRYAgcJ/miri-announces-new-death-with-dignity-strategy) of us to gloss over the sudden arrival of artificial agents *often indistinguishable from human intelligence* just because the policy that generates them “only cares about predicting the next word”.

But nor should we ignore the fact that these agentic entities exist in an unconventional relationship to the policy, the neural network “GPT” that was trained to minimize log-loss on a dataset. GPT-driven agents are ephemeral – they can spontaneously disappear if the scene in the text changes and be replaced by different spontaneously generated agents. They can exist in parallel, e.g. in a story with multiple agentic characters in the same scene. There is a clear sense in which the network doesn’t “want” what the things that it simulates want, seeing as it would be just as willing to simulate an agent with opposite goals, or throw up obstacles which foil a character’s intentions for the sake of the story. The more you think about it, the more fluid and intractable it all becomes. Fictional characters act agentically, but they’re at least implicitly puppeteered by a virtual author who has orthogonal intentions of their own. Don’t let me get into the fact that all these layers of “intentionality” operate largely in [indeterminate superpositions](https://generative.ink/posts/language-models-are-multiverse-generators/#multiplicity-of-pasts-presents-and-futures).

This is a clear way that GPT diverges from orthodox visions of agentic AI: **In the agentic AI ontology, there is no difference between the policy and the effective agent, but for GPT, there is.**

It’s not that anyone ever said there had to be 1:1 correspondence between policy and effective agent; it was just an implicit assumption which felt natural in the agent frame (for example, it tends to hold for RL). GPT pushes us to realize that this was an assumption, and to consider the consequences of removing it for our constructive maps of mindspace.

### Orthogonal optimization

Indeed, [Alex Flint warned](https://www.alignmentforum.org/posts/8HWGXhnCfAPgJYa9D/pitfalls-of-the-agent-model) of the potential consequences of leaving this assumption unchallenged:

> **Fundamental misperception due to the agent frame**: That the design space for autonomous machines that exert influence over the future is narrower than it seems. This creates a self-fulfilling prophecy in which the AIs actually constructed are in fact within this narrower regime of agents containing an unchanging internal decision algorithm.

>

>

If there are other ways of constructing AI, might we also avoid some of the scary, theoretically hard-to-avoid side-effects of optimizing an agent like [instrumental convergence](https://www.lesswrong.com/tag/instrumental-convergence)? GPT provides an interesting example.

GPT doesn’t seem to care which agent it simulates, nor if the scene ends and the agent is effectively destroyed. This is not corrigibility in [Paul Christiano’s formulation](https://ai-alignment.com/corrigibility-3039e668638), where the policy is “okay” with being turned off or having its goal changed in a positive sense, but has many aspects of the [negative formulation found on Arbital](https://arbital.com/p/corrigibility/). It is corrigible in this way because a major part of the agent specification (the prompt) is not fixed by the policy, and the policy lacks direct training incentives to control its prompt[9](#fn:9), as it never generates text or otherwise influences its prompts during training. It’s *we* who choose to sample tokens from GPT’s predictions and append them to the prompt at runtime, and the result is not always helpful to any agents who may be programmed by the prompt. The downfall of the ambitious villain from an oversight committed in hubris is a predictable narrative pattern.[10](#fn:10) So is the end of a scene.

In general, the model’s prediction vector could point in any direction relative to the predicted agent’s interests. I call this the **prediction orthogonality thesis:** *A model whose objective is prediction*[11](#fn:11) *can simulate agents who optimize toward any objectives, with any degree of optimality (bounded above but not below by the model’s power).*

This is a corollary of the classical [orthogonality thesis](https://www.lesswrong.com/tag/orthogonality-thesis), which states that agents can have any combination of intelligence level and goal, combined with the assumption that agents can in principle be predicted. A single predictive model may also predict multiple agents, either independently (e.g. in different conditions), or interacting in a multi-agent simulation. A more optimal predictor is not restricted to predicting more optimal agents: being smarter does not make you unable to predict stupid systems, nor things that aren’t agentic like the [weather](https://en.wikipedia.org/wiki/History_of_numerical_weather_prediction).

Are there any constraints on what a predictive model can be at all, other than computability? Only that it makes sense to talk about its “prediction objective”, which implies the existence of a “ground truth” distribution against which the predictor’s optimality is measured. Several words in that last sentence may conceal labyrinths of nuance, but for now let’s wave our hands and say that if we have some way of presenting [Bayes-structure](https://www.lesswrong.com/posts/QrhAeKBkm2WsdRYao/searching-for-bayes-structure) with evidence of a distribution, we can build an optimization process whose outer objective is optimal prediction.

We can specify some types of outer objectives using a ground truth distribution that we cannot with a utility function. As in the case of GPT, there is no difficulty in incentivizing a model to *predict* actions that are [corrigible](https://arbital.com/p/corrigibility/), [incoherent](https://aiimpacts.org/what-do-coherence-arguments-imply-about-the-behavior-of-advanced-ai/), [stochastic](https://www.lesswrong.com/posts/msJA6B9ZjiiZxT6EZ/lawful-uncertainty), [irrational](https://www.lesswrong.com/posts/6ddcsdA2c2XpNpE5x/newcomb-s-problem-and-regret-of-rationality), or otherwise anti-natural to expected utility maximization. All you need is evidence of a distribution exhibiting these properties.

For instance, during GPT’s training, sometimes predicting the next token coincides with predicting agentic behavior, but:

* The actions of agents described in the data are rarely optimal for their goals; humans, for instance, are computationally bounded, irrational, normative, habitual, fickle, hallucinatory, etc.

* Different prediction steps involve mutually incoherent goals, as human text records a wide range of differently-motivated agentic behavior.

* Many prediction steps don’t correspond to the action of *any* consequentialist agent but are better described as reporting on the structure of reality, e.g. the year in a timestamp. These transitions incentivize GPT to improve its model of the world, orthogonally to agentic objectives.

* When there is insufficient information to predict the next token with certainty, [log-loss incentivizes a probabilistic output](https://en.wikipedia.org/wiki/Scoring_rule#Proper_scoring_rules). Utility maximizers [aren’t supposed to become more stochastic](https://www.lesswrong.com/posts/msJA6B9ZjiiZxT6EZ/lawful-uncertainty) in response to uncertainty.
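
The last point can be checked numerically. The sketch below assumes a single binary prediction in which the next token is "A" with true probability 0.7 (an arbitrary value chosen for illustration) and scans the probabilities a model could report. Expected log-loss is lowest when the reported probability matches the true one, so the objective rewards calibrated uncertainty rather than a confident argmax:

```python
import math

def expected_log_loss(p_true, q_reported):
    """Expected negative log-likelihood when the next token is 'A' with
    probability p_true and the model reports q_reported for 'A'."""
    return -(p_true * math.log(q_reported)
             + (1 - p_true) * math.log(1 - q_reported))

p_true = 0.7  # illustrative assumption, not a measured quantity
candidates = [i / 100 for i in range(1, 100)]  # 0.01, 0.02, ..., 0.99
best = min(candidates, key=lambda q: expected_log_loss(p_true, q))

print(best)  # 0.7: the loss-minimizing report is the true probability
# Reporting near-certainty in the likelier token is strictly worse:
print(expected_log_loss(p_true, 0.7) < expected_log_loss(p_true, 0.99))  # True
```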

Everything can be trivially modeled as a utility maximizer, but for these reasons, a utility function is not a good explanation or compression of GPT’s training data, and its optimal predictor is not well-described as a utility maximizer. However, just because information isn’t compressed well by a utility function doesn’t mean it can’t be compressed another way. The [Mandelbrot set](https://en.wikipedia.org/wiki/Mandelbrot_set) is a complicated pattern compressed by a very simple generative algorithm which makes no reference to future consequences and doesn’t involve argmaxxing anything (except vacuously [being the way it is](https://www.lesswrong.com/posts/d2n74bwham8motxyX/optimization-at-a-distance#An_Agent_Optimizing_Its_Own_Actions)). Likewise the set of all possible rollouts of [Conway’s Game of Life](https://en.wikipedia.org/wiki/Conway%27s_Game_of_Life) – [some automata may be well-described as agents](https://www.lesswrong.com/posts/3SG4WbNPoP8fsuZgs/agency-in-conway-s-game-of-life), but they are a minority of possible patterns, and not all agentic automata will share a goal. Imagine trying to model Game of Life as an expected utility maximizer!
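
To make the Game of Life point concrete, here is a minimal sketch of its update rule, assuming an unbounded grid represented as a set of live `(row, col)` cells (the representation and the function name `step` are choices made for this illustration). The rule is local and makes no reference to goals or future consequences, yet the same few lines propagate a glider that keeps travelling diagonally and a block that simply sits there:

```python
from collections import Counter

def step(live):
    """Apply Conway's rule once to a set of live (row, col) cells."""
    # Count, for every cell adjacent to a live cell, how many live neighbors it has.
    neighbor_counts = Counter(
        (r + dr, c + dc)
        for r, c in live
        for dr in (-1, 0, 1)
        for dc in (-1, 0, 1)
        if (dr, dc) != (0, 0)
    )
    # Birth on exactly 3 neighbors; survival on 2 or 3.
    return {
        cell
        for cell, n in neighbor_counts.items()
        if n == 3 or (n == 2 and cell in live)
    }

glider = {(0, 1), (1, 2), (2, 0), (2, 1), (2, 2)}
block = {(0, 0), (0, 1), (1, 0), (1, 1)}

state = glider
for _ in range(4):
    state = step(state)

# After four steps the glider has the same shape, shifted one cell down and right.
print(state == {(r + 1, c + 1) for r, c in glider})  # True
# The block is a still life: the rule maps it to itself.
print(step(block) == block)  # True
```

Nothing in `step` distinguishes the persistent traveller from the inert block; that distinction lives entirely in the initial condition.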

There are interesting things that are not utility maximizers, some of which qualify as AGI or [TAI](https://forum.effectivealtruism.org/topics/transformative-artificial-intelligence). Are any of them something we’d be better off creating than a utility maximizer? An inner-aligned GPT, for instance, gives us a way of instantiating goal-directed processes which can be tempered with normativity and freely terminated in a way that is not anti-natural to the training objective. There’s much more to say about this, but for now, I’ll bring it back to how GPT defies the agent orthodoxy.

The crux stated earlier can be restated from the perspective of training stories: **In the agentic AI ontology, the** ***direction of optimization pressure applied by training*** **is in the direction of the effective agent’s objective function, but in GPT’s case it is (most generally) orthogonal.**[12](#fn:12)

This means that neither the policy nor the effective agents necessarily become more optimal agents as loss goes down, because the policy is not optimized to be an agent, and the agent-objectives are not optimized directly.

### Roleplay sans player

> Napoleon: You have written this huge book on the system of the world without once mentioning the author of the universe.

>

> Laplace: Sire, I had no need of that hypothesis.

>

>

Even though neither GPT’s behavior nor its training story fits the traditional agent framing, there are still compatibilist views that characterize it as some kind of agent. For example, Gwern has said[13](#fn:13) that anyone who uses GPT for long enough begins to think of it as an agent who only cares about roleplaying a lot of roles.

That framing seems unnatural to me, comparable to thinking of physics as an agent who only cares about evolving the universe accurately according to the laws of physics. At best, the agent is an epicycle; but it is also compatible with interpretations that generate dubious predictions.

Say you’re told that an agent *values predicting text correctly*. Shouldn’t you expect that:

* It wants text to be easier to predict, and given the opportunity will influence the prediction task to make it easier (e.g. by generating more predictable text or otherwise influencing the environment so that it receives easier prompts);

* It wants to become better at predicting text, and given the opportunity will self-improve;

* It doesn’t want to be prevented from predicting text, and will prevent itself from being shut down if it can?

In short, all the same types of instrumental convergence that we expect from agents who want almost anything at all.

But this behavior would be very unexpected in GPT, whose training doesn’t incentivize instrumental behavior that optimizes prediction accuracy! GPT does not generate rollouts during training. Its output is never sampled to yield “actions” whose consequences are evaluated, so there is no reason to expect that GPT will form preferences over the *consequences* of its output related to the text prediction objective.[14](#fn:14)
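
A minimal sketch of why, assuming the training loss is computed with teacher forcing on recorded sequences, as the description above implies. `teacher_forced_loss` and the stand-in model `uniform_predict` are hypothetical names for illustration. Note that the model's own outputs never appear in any context it is later scored on:

```python
import math

def teacher_forced_loss(predict, tokens):
    """Average log-loss of `predict` on one recorded sequence.

    At every step the context is the recorded prefix, never the model's own
    earlier predictions, so nothing the model outputs changes what it sees next.
    """
    total = 0.0
    for t in range(1, len(tokens)):
        dist = predict(tokens[:t])            # condition on the ground-truth prefix
        total += -math.log(dist[tokens[t]])   # score against the ground-truth next token
    return total / (len(tokens) - 1)

def uniform_predict(context):
    """Hypothetical stand-in model: ignores context, uniform over four tokens."""
    return {tok: 0.25 for tok in "abcd"}

loss = teacher_forced_loss(uniform_predict, list("abcdabcd"))
print(loss)  # approximately log(4), since every token is assigned probability 1/4
```

There is no place in this loop where a sampled output is fed back in and its downstream consequences are evaluated; that only happens when we choose to run rollouts at inference time.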

Saying that GPT is an agent who wants to roleplay implies the presence of a coherent, unconditionally instantiated *roleplayer* running the show who attaches terminal value to roleplaying. This presence is an additional hypothesis, and so far, I haven’t noticed evidence that it’s true.

(I don’t mean to imply that Gwern thinks this about GPT[15](#fn:15), just that his words do not properly rule out this interpretation. It’s a likely enough interpretation that [ruling it out](https://www.lesswrong.com/posts/57sq9qA3wurjres4K/ruling-out-everything-else) is important: I’ve seen multiple people suggest that GPT might want to generate text which makes future predictions easier, and this is something that can happen in some forms of self-supervised learning – see the note on GANs in the appendix.)

I do not think any simple modification of the concept of an agent captures GPT’s natural category. It does not seem to me that GPT is a roleplayer, only that it roleplays. But what is the word for something that roleplays minus the implication that some*one* is behind the mask?

Oracle GPT and supervised learning

----------------------------------

While the alignment sphere favors the agent frame for thinking about GPT, in *capabilities* research distortions tend to come from a lens inherited from *supervised learning*. Translated into alignment ontology, the effect is similar to viewing GPT as an “[oracle AI](https://publicism.info/philosophy/superintelligence/11.html)” – a view not altogether absent from conceptual alignment, but most influential in the way GPT is used and evaluated by machine learning engineers.

Evaluations for language models tend to look like evaluations for *supervised* models, consisting of close-ended question/answer pairs – often because they *are* evaluations for supervised models. Prior to the LLM paradigm, language models were trained and tested on evaluation datasets like [Winograd](https://en.wikipedia.org/wiki/Winograd_schema_challenge) and [SuperGLUE](https://super.gluebenchmark.com/) which consist of natural language question/answer pairs. The fact that large pretrained models performed well on these same NLP benchmarks without supervised fine-tuning was a novelty. The titles of the GPT-2 and GPT-3 papers, [Language Models are Unsupervised Multitask Learners](https://d4mucfpksywv.cloudfront.net/better-language-models/language_models_are_unsupervised_multitask_learners.pdf) and [Language Models are Few-Shot Learners](https://arxiv.org/abs/2005.14165), respectively articulate surprise that *self-supervised* models implicitly learn supervised tasks during training, and can learn supervised tasks at runtime.

Of all the possible papers that could have been written about GPT-3, OpenAI showcased its ability to extrapolate the pattern of question-answer pairs (few-shot prompts) from supervised learning datasets, a novel capability they called “meta-learning”. This is a weirdly specific and indirect way to break it to the world that you’ve created an AI able to extrapolate semantics of arbitrary natural language structures, especially considering that in many cases the [few-shot prompts were actually unnecessary](https://arxiv.org/abs/2102.07350).

The assumptions of the supervised learning paradigm are:

* The model is optimized to answer questions correctly

* Tasks are closed-ended, defined by question/correct answer pairs

These are essentially the assumptions of oracle AI, as [described by Bostrom](https://publicism.info/philosophy/superintelligence/11.html) and [in subsequent usage](https://www.lesswrong.com/tag/oracle-ai/history).

So influential has been this miscalibrated perspective that [Gwern](https://www.gwern.net/GPT-3#prompts-as-programming), [nostalgebraist](https://www.lesswrong.com/posts/pv7Qpu8WSge8NRbpB/) and [myself](https://generative.ink/posts/language-models-are-0-shot-interpreters/#0-shot-few-shot-and-meta-learning) – who share a peculiar model overlap due to intensive firsthand experience with the downstream behaviors of LLMs – have all repeatedly complained about it. I’ll repeat some of these arguments here, tying into the view of GPT as an oracle AI, and separating it into the two assumptions inspired by supervised learning.

### Prediction vs question-answering

`At first glance, GPT might resemble a generic “oracle AI”, because it is trained to make accurate predictions. But its log loss objective is myopic and only concerned with immediate, micro-scale correct prediction of the next token, not answering particular, global queries such as “what’s the best way to fix the climate in the next five years?” In fact, it is not specifically optimized to give *true* answers, which a classical oracle should strive for, but rather to minimize the divergence between predictions and training examples, independent of truth. Moreover, it isn’t specifically trained to give answers in the first place! It may give answers if the prompt asks questions, but it may also simply elaborate on the prompt without answering any question, or tell the rest of a story implied in the prompt. What it does is more like animation than divination, executing the dynamical laws of its rendering engine to recreate the flows of history found in its training data (and a large superset of them as well), mutatis mutandis. Given the same laws of physics, one can build a multitude of different backgrounds and props to create different storystages, including ones that don’t exist in training, but adhere to its general pattern.`

GPT does not consistently try to say [true/correct things](https://www.alignmentforum.org/posts/BnDF5kejzQLqd5cjH/alignment-as-a-bottleneck-to-usefulness-of-gpt-3). This is not a bug – if it had to say true things all the time, GPT would be much constrained in its ability to [imitate Twitter celebrities](https://twitter.com/dril_gpt2) and write fiction. Spouting falsehoods in some circumstances is incentivized by GPT’s outer objective. If you ask GPT a question, it will instead answer the question “what’s the next token after ‘{your question}’”, which will often diverge significantly from an earnest attempt to answer the question directly.

GPT doesn’t fit the category of oracle for a similar reason that it doesn’t fit the category of agent. Just as it wasn’t optimized for and doesn’t consistently act according to any particular objective (except the tautological prediction objective), it was not optimized to be *correct* but rather *realistic,* and being realistic means predicting humans faithfully even when they are likely to be wrong.
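For reference, the log loss objective mentioned above is usually written as follows. The notation is an assumption introduced here, not the post's own: $x_1, \dots, x_T$ are the tokens of a training sequence and $p_\theta$ is the model's predicted distribution over the next token.

$$\mathcal{L}(\theta) = -\sum_{t=1}^{T} \log p_\theta(x_t \mid x_{<t})$$

Every term compares a prediction with the token that actually appeared in the training text. Nothing in the expression refers to whether that text is true, which is one way of seeing why the objective rewards being realistic rather than being correct.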

That said, GPT does store a vast amount of knowledge, and its corrigibility allows it to be cajoled into acting as an oracle, like it can be cajoled into acting like an agent. In order to get oracle behavior out of GPT, one must input a sequence such that the predicted continuation of that sequence coincides with an oracle’s output. The GPT-3 paper’s few-shot benchmarking strategy tries to persuade GPT-3 to answer questions correctly by having it predict how a list of correctly-answered questions will continue. Another strategy is to simply “tell” GPT it’s in the oracle modality:

> (I) told the AI to simulate a supersmart version of itself (this works, for some reason), and the first thing it spat out was the correct answer.

>

> – [Reddit post by u/Sophronius](https://www.reddit.com/r/rational/comments/lvn6ow/gpt3_just_figured_out_the_entire_mystery_plot_of/)


But even when these strategies seem to work, there is no guarantee that they elicit anywhere near optimal question-answering performance, compared to another prompt in the innumerable space of prompts that would cause GPT to attempt the task, or compared to what the [model “actually” knows](https://www.lesswrong.com/tag/eliciting-latent-knowledge-elk).
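As a rough illustration of the few-shot strategy described above, here is a minimal sketch of how such a prompt might be assembled. The `Q:`/`A:` layout and the helper name `few_shot_prompt` are assumptions made for illustration, not the format used in the GPT-3 paper, and no model is called.

```python
def few_shot_prompt(examples, question):
    """Format solved question/answer pairs, then leave the final answer blank.

    The model is never instructed to answer. It is only given a document
    whose most plausible continuation happens to be a correct answer.
    """
    blocks = [f"Q: {q}\nA: {a}" for q, a in examples]
    blocks.append(f"Q: {question}\nA:")
    return "\n\n".join(blocks)


# Hypothetical example pairs, chosen only for illustration.
examples = [
    ("What is 2 + 2?", "4"),
    ("What is the capital of France?", "Paris"),
]
prompt = few_shot_prompt(examples, "What is 3 + 5?")
print(prompt)
```

This is only one point in the space of prompts that would lead the model to attempt the task. Changing the layout, the examples, or the framing changes what gets predicted, which is why a single prompt format says little about the upper limit of what the model can do.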

This means that no benchmark which evaluates downstream behavior is guaranteed or even expected to probe the upper limits of GPT’s capabilities. In nostalgebraist’s words, we have no [ecological evaluation](https://www.lesswrong.com/posts/pv7Qpu8WSge8NRbpB/#4__on_ecological_evaluation) of self-supervised language models – one that measures performance in a situation where the model is incentivised to perform as well as it can on the measure[16](#fn:16).

As nostalgebraist [elegantly puts it](https://slatestarcodex.com/2020/06/10/the-obligatory-gpt-3-post/#comment-912529):

> I called GPT-3 a “disappointing paper,” which is not the same thing as calling the model disappointing: the feeling is more like how I’d feel if they found a superintelligent alien and chose only to communicate its abilities by noting that, when the alien is blackout drunk and playing 8 simultaneous games of chess while also taking an IQ test, it *then* has an “IQ” of about 100.


Treating GPT as an unsupervised implementation of a supervised learner leads to systematic underestimation of capabilities, which becomes a more dangerous mistake as unprobed capabilities scale.

### Finite vs infinite questions

Not only does the supervised/oracle perspective obscure the importance and limitations of prompting, it also obscures one of the most crucial dimensions of GPT: the implicit time dimension. By this I mean the ability to evolve a process through time by recursively applying GPT, that is, to generate text of arbitrary length.

Recall, the second supervised assumption is that “tasks are closed-ended, defined by question/correct answer pairs”. GPT was trained on context-completion pairs. But the pairs do not represent closed, independent tasks, and the division into question and answer is merely indexical: in another training sample, a token from the question is the answer, and in yet another, the answer forms part of the question[17](#fn:17).

For example, the natural language sequence “**The answer is a question**” yields training samples like:

{context: “**The**”, completion: “ **answer**”},

{context: “**The answer**”, completion: “ **is**”},

{context: “**The answer is**”, completion: “ **a**”},

{context: “**The answer is a**”, completion: “ **question**”}
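The decomposition above can be sketched in a few lines of code. This is a simplified illustration under stated assumptions: the sequence is split into whole words by hand, whereas GPT uses a subword tokenizer, and the helper name `context_completion_pairs` is made up here.

```python
def context_completion_pairs(tokens):
    """Return one (context, completion) pair for every token after the first."""
    return [(tokens[:i], tokens[i]) for i in range(1, len(tokens))]


# Hand-split word-level tokens (an assumption; real tokenizers differ).
tokens = ["The", " answer", " is", " a", " question"]
pairs = context_completion_pairs(tokens)

for context, completion in pairs:
    print({"context": "".join(context), "completion": completion})
```

Every token except the first shows up once as a completion and then as part of each later context, which is the sense in which the division into question and answer is merely indexical.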

Since questions and answers are of compatible types, we can at runtime sample answers from the model and use them to construct new questions, and run this loop an indefinite number of times to generate arbitrarily long sequences that obey the model’s approximation of the rule that links together the training samples. **The “question” GPT answers is “what token comes next after {context}”. This can be asked interminably, because its answer always implies another question of the same type.**
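The loop described above can be sketched with a toy stand-in for the model. The bigram counter below is an assumption chosen for brevity: it conditions only on the last token, while GPT conditions on its whole context window. The loop structure is the part that carries over.

```python
import random
from collections import defaultdict


def train_bigram(tokens):
    """Record which tokens follow which: a toy stand-in for a trained predictor."""
    successors = defaultdict(list)
    for prev, nxt in zip(tokens, tokens[1:]):
        successors[prev].append(nxt)
    return successors


def rollout(successors, context, steps, seed=0):
    """Repeatedly ask "what comes next after {context}?" and append the answer."""
    rng = random.Random(seed)
    context = list(context)
    for _ in range(steps):
        candidates = successors.get(context[-1])
        if not candidates:  # last token was never seen with a successor
            break
        # The sampled answer becomes part of the next question.
        context.append(rng.choice(candidates))
    return context


corpus = "the answer is a question and the question is a new answer".split()
model = train_bigram(corpus)
generated = rollout(model, ["the"], steps=20)
print(" ".join(generated))
```

Because the output has the same type as the input, `steps` can be made as large as desired. A classifier that maps a question to a label offers no analogous loop.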

In contrast, models trained with supervised learning output answers that cannot be used to construct new questions, so they’re only good for one step.

Benchmarks derived from supervised learning test GPT’s ability to produce correct answers, not to produce *questions* which cause it to produce a correct answer down the line. But GPT is capable of the latter, and that is how it is [most powerful](https://ai.googleblog.com/2022/05/language-models-perform-reasoning-via.html).

The supervised mindset causes capabilities researchers to focus on closed-form tasks rather than GPT’s ability to simulate open-ended, indefinitely long processes[18](#fn:18), and as such to overlook multi-step inference strategies like chain-of-thought prompting. Let’s see how the oracle mindset causes a blind spot of the same shape in the imagination of a hypothetical alignment researcher.

Thinking of GPT as an oracle brings strategies to mind like asking GPT-N to predict a [solution to alignment from 2000 years in the future](https://www.alignmentforum.org/posts/nXeLPcT9uhfG3TMPS/conditioning-generative-models).

There are various problems with this approach to solving alignment, of which I’ll only mention one here: even assuming this prompt is *outer aligned*[19](#fn:19) in that a logically omniscient GPT would give a useful answer, it is probably not the best approach for a finitely powerful GPT, because the *process* of generating a solution in the order and resolution that would appear in a future article is probably far from the optimal *multi-step algorithm* for computing the answer to an unsolved, difficult question.

GPT’s ability to arrive at true answers depends not only on the space to solve a problem in multiple steps (of the [right granularity](https://blog.eleuther.ai/factored-cognition/)), but also on the direction of the flow of evidence in that *time*. If we’re ambitious about getting the truth from a finitely powerful GPT, we need to incite it to predict truth-seeking processes, not just ask it the right questions. Or, in other words, the more general problem we have to solve is not asking GPT the question[20](#fn:20) that makes it output the right answer, but asking GPT the question that makes it output the right question (…) that makes it output the right answer.[21](#fn:21) A question anywhere along the line that elicits a premature attempt at an answer could [neutralize the remainder of the process into rationalization](https://generative.ink/posts/methods-of-prompt-programming/#avoiding-rationalization).

I’m looking for a way to classify GPT which not only minimizes surprise but also conditions the imagination to efficiently generate good ideas for how it can be used. What category, unlike the category of oracles, would make the importance of *process* specification obvious?

### Paradigms of theory vs practice

Both the agent frame and the supervised/oracle frame are historical artifacts, but while assumptions about agency primarily flow downward from the preceptial paradigm of alignment *theory*, oracle-assumptions primarily flow upward from the *experimental* paradigm surrounding GPT’s birth. We use and evaluate GPT like an oracle, and that causes us to implicitly think of it as an oracle.

Indeed, the way GPT is typically used by researchers resembles the archetypal image of Bostrom’s oracle perfectly if you abstract away the semantic content of the model’s outputs. The AI sits passively behind an API, computing responses only when prompted. It typically has no continuity of state between calls. Its I/O is text rather than “real-world actions”.

All these are consequences of how we choose to interact with GPT – which is not arbitrary; the way we deploy systems is guided by their nature. It’s for some good reasons that current GPTs lend themselves to disembodied operation and docile APIs. Lack of long-horizon coherence and [delusions](https://arxiv.org/abs/2110.10819) discourage humans from letting them run autonomously amok (usually). But the way we deploy systems is also guided by practical paradigms.

One way to find out how a technology can be used is to give it to people who have fewer preconceptions about how it’s supposed to be used. OpenAI found that most users use their API to generate freeform text:

[22](#fn:22)

Most of my own experience using GPT-3 has consisted of simulating indefinite processes which maintain state continuity over up to hundreds of pages. I was driven to these lengths because GPT-3 kept answering its own questions with questions that I wanted to ask it more than anything else I had in mind.

Tool / genie GPT

----------------

I’ve sometimes seen GPT casually classified as [tool AI](https://publicism.info/philosophy/superintelligence/11.html). GPT resembles tool AI from the outside, like it resembles oracle AI, because it is often deployed semi-autonomously for tool-like purposes (like helping me draft this post):