| title | diff | body | url | created_at | closed_at | merged_at | updated_at | diff_len | repo_name | __index_level_0__ |
|---|---|---|---|---|---|---|---|---|---|---|

Handle the slice object case in TransformGenerator.__getitem__ | diff --git a/pathod/language/generators.py b/pathod/language/generators.py

index 01f709e2b0..9fff3082f0 100644

--- a/pathod/language/generators.py

+++ b/pathod/language/generators.py

@@ -37,6 +37,8 @@ def __len__(self):

def __getitem__(self, x):

d = self.gen.__getitem__(x)

+ if isinstance(x, slic... | should fix https://travis-ci.org/mitmproxy/mitmproxy/jobs/134827120#L501 test failures:

```

self = <netlib.websockets.protocol.Masker object at 0x7fe8ebdfc110>

offset = slice(0, 3, None), data = 'foo'

def mask(self, offset, data):

result = bytearray(data)

if six.PY2:

for i in range(len(... | https://api.github.com/repos/mitmproxy/mitmproxy/pulls/1199 | 2016-06-02T20:32:20Z | 2016-06-02T20:45:56Z | 2016-06-02T20:45:56Z | 2016-06-02T20:48:44Z | 131 | mitmproxy/mitmproxy | 27,472 |

Fix the PPOCRLabel JSON export bug and remove the extra space before Eval - datadir in the config file SLANet_ch.yml. | diff --git a/PPOCRLabel/PPOCRLabel.py b/PPOCRLabel/PPOCRLabel.py

index c17db91a5b..d0d2bb721b 100644

--- a/PPOCRLabel/PPOCRLabel.py

+++ b/PPOCRLabel/PPOCRLabel.py

@@ -2531,7 +2531,7 @@ def exportJSON(self):

split = 'test'

# save dict

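- html = {'structure': {'tokens': token_li... | Fixes the bug where L2534 wrote "cells" as "cell" when exporting the JSON file. The code linked below reads `cells`, so without this fix a KeyError is raised when the data is loaded for training.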

https://github.com/PaddlePaddle/PaddleOCR/blob/282eebbd660886c38d4ae91bcbcd70b5cdc03f75/ppocr/data/pubtab_dataset.py#L102 | https://api.github.com/repos/PaddlePaddle/PaddleOCR/pulls/7445 | 2022-09-01T00:46:36Z | 2022-09-01T08:01:16Z | 2022-09-01T08:01:16Z | 2022-09-01T08:01:16Z | 307 | PaddlePaddle/PaddleOCR | 41,836 |

hwp | diff --git a/CHANGELOG.md b/CHANGELOG.md

index 450071ad4ca8d..3b49b4ed0ac1e 100644

--- a/CHANGELOG.md

+++ b/CHANGELOG.md

@@ -3,6 +3,7 @@

## Unreleased

### New Features

+- Added `HWPReader` (#7672)

- Simplified portkey LLM interface (#7669)

- Added async operation support to `ElasticsearchStore` vector store (#761... | Added hwp reader module. | https://api.github.com/repos/run-llama/llama_index/pulls/7672 | 2023-09-14T06:57:51Z | 2023-09-15T15:24:33Z | 2023-09-15T15:24:33Z | 2023-09-15T15:24:33Z | 1,411 | run-llama/llama_index | 6,141 |

Update readme.md | diff --git a/readme.md b/readme.md

index 102854c54..88b887cad 100644

--- a/readme.md

+++ b/readme.md

@@ -167,7 +167,7 @@ Same with the above instructions. You need to change torch to AMD version

pip uninstall torch torchvision torchaudio torchtext functorch xformers

pip install torch torchvision torchaudio -... | https://api.github.com/repos/lllyasviel/Fooocus/pulls/608 | 2023-10-09T22:53:40Z | 2023-10-09T22:53:55Z | 2023-10-09T22:53:55Z | 2023-10-09T22:55:05Z | 556 | lllyasviel/Fooocus | 6,981 | |

typo fix: flask -> flag | diff --git a/flask/app.py b/flask/app.py

index 48369ee441..47254bedf3 100644

--- a/flask/app.py

+++ b/flask/app.py

@@ -112,7 +112,7 @@ class Flask(_PackageBoundObject):

#: configuration key. Defaults to `False`.

debug = ConfigAttribute('DEBUG')

- #: The testing flask. Set this to `True` to enable the t... | https://api.github.com/repos/pallets/flask/pulls/275 | 2011-07-11T22:25:56Z | 2011-07-12T00:41:17Z | 2011-07-12T00:41:16Z | 2020-11-14T07:18:45Z | 164 | pallets/flask | 20,207 | |

Add layer - neural network inference from the command line | diff --git a/README.md b/README.md

index b012d00f..d84dc70c 100644

--- a/README.md

+++ b/README.md

@@ -126,6 +126,8 @@ Further resources:

- [Natural Language Processing](#scala-nlp)

- [Data Analysis / Data Visualization](#scala-data-analysis)

- [General-Purpose Machine Learning](#scala-general-purpose)

+... | https://api.github.com/repos/josephmisiti/awesome-machine-learning/pulls/582 | 2019-01-21T18:08:03Z | 2019-01-21T18:24:31Z | 2019-01-21T18:24:31Z | 2019-01-21T18:24:31Z | 498 | josephmisiti/awesome-machine-learning | 51,963 | |

Update Hugging Face Hub instructions | diff --git a/README.md b/README.md

index 960d5a2e..d962390a 100755

--- a/README.md

+++ b/README.md

@@ -22,7 +22,7 @@ Keep in mind that the links expire after 24 hours and a certain amount of downlo

### Access to Hugging Face

-We are also providing downloads on [Hugging Face](https://huggingface.co/meta-llama). You... | https://api.github.com/repos/meta-llama/llama/pulls/1091 | 2024-04-08T14:13:07Z | 2024-04-09T16:16:49Z | 2024-04-09T16:16:48Z | 2024-04-09T16:16:49Z | 245 | meta-llama/llama | 31,971 | |

merge google/flan based adapters: T5Adapter, CodeT5pAdapter, FlanAdapter | diff --git a/fastchat/model/model_adapter.py b/fastchat/model/model_adapter.py

index 296b53c8f3..0a660d9e9c 100644

--- a/fastchat/model/model_adapter.py

+++ b/fastchat/model/model_adapter.py

@@ -23,7 +23,6 @@

AutoTokenizer,

LlamaTokenizer,

LlamaForCausalLM,

- T5Tokenizer,

)

from fastchat.constants... | ## Why are these changes needed?

merge multiple google/flan based adapters (T5Adapter, CodeT5pAdapter, FlanAdapter) together.

## Related issue number (if applicable)

<!-- For example: "Closes #1234" -->

## Checks

- [x] I've run `format.sh` to lint the changes in this PR.

- [x] I've included any doc chan... | https://api.github.com/repos/lm-sys/FastChat/pulls/2411 | 2023-09-13T04:58:58Z | 2023-09-18T20:18:39Z | 2023-09-18T20:18:39Z | 2023-09-18T20:18:39Z | 828 | lm-sys/FastChat | 41,550 |

version bump 13.5.0 | diff --git a/CHANGELOG.md b/CHANGELOG.md

index 8c3c2dcb3..61d5cc8d7 100644

--- a/CHANGELOG.md

+++ b/CHANGELOG.md

@@ -6,7 +6,7 @@ The format is based on [Keep a Changelog](https://keepachangelog.com/en/1.0.0/),

and this project adheres to [Semantic Versioning](https://semver.org/spec/v2.0.0.html).

-## Unreleased

+#... | https://api.github.com/repos/Textualize/rich/pulls/3066 | 2023-07-29T16:10:04Z | 2023-07-29T16:16:47Z | 2023-07-29T16:16:47Z | 2023-07-29T16:16:48Z | 290 | Textualize/rich | 48,008 | |

consistently opening braces for multiline function def on new line | diff --git a/CppCoreGuidelines.md b/CppCoreGuidelines.md

index 832fd9cc2..4e079d12f 100644

--- a/CppCoreGuidelines.md

+++ b/CppCoreGuidelines.md

@@ -2548,13 +2548,15 @@ And yes, C++ does have multiple return values, by convention of using a `tuple`,

##### Example

- int f(const string& input, /*output only*/ str... | https://api.github.com/repos/isocpp/CppCoreGuidelines/pulls/276 | 2015-10-03T11:39:07Z | 2015-10-05T10:59:06Z | 2015-10-05T10:59:06Z | 2015-10-05T11:12:21Z | 2,379 | isocpp/CppCoreGuidelines | 15,928 | |

Add Lemur model | diff --git a/docs/model_support.md b/docs/model_support.md

index 24f3bc9cc5..9d1aedddc9 100644

--- a/docs/model_support.md

+++ b/docs/model_support.md

@@ -48,6 +48,7 @@

- [HuggingFaceH4/starchat-beta](https://huggingface.co/HuggingFaceH4/starchat-beta)

- [HuggingFaceH4/zephyr-7b-alpha](https://huggingface.co/HuggingF... | <!-- Thank you for your contribution! -->

<!-- Please add a reviewer to the assignee section when you create a PR. If you don't have the access to it, we will shortly find a reviewer and assign them to your PR. -->

## Why are these changes needed?

Support the model https://huggingface.co/OpenLemur/lemur-70b-ch... | https://api.github.com/repos/lm-sys/FastChat/pulls/2584 | 2023-10-19T14:36:14Z | 2023-10-24T01:23:52Z | 2023-10-24T01:23:52Z | 2023-10-24T01:23:53Z | 1,049 | lm-sys/FastChat | 41,514 |

Filter the None labels | diff --git a/utils/datasets.py b/utils/datasets.py

index 7ed8f5a1d80..338400e6340 100755

--- a/utils/datasets.py

+++ b/utils/datasets.py

@@ -375,7 +375,7 @@ def __init__(self, path, img_size=640, batch_size=16, augment=False, hyp=None, r

pbar = tqdm(self.label_files)

for i, file in enumerate(pbar):

... |

## 🛠️ PR Summary

<sub>Made with ❤️ by [Ultralytics Actions](https://github.com/ultralytics/actions)<sub>

### 🌟 Summary

Improved label loading robustness in dataset processing.

### 📊 Key Changes

- Added an additional check to verify that labels are not `None` before processing them.

### 🎯 Purpose & Impact

- **... | https://api.github.com/repos/ultralytics/yolov5/pulls/705 | 2020-08-11T03:04:06Z | 2020-08-11T18:15:49Z | 2020-08-11T18:15:49Z | 2024-01-19T21:08:14Z | 211 | ultralytics/yolov5 | 25,592 |

add Line to Social | diff --git a/README.md b/README.md

index 0d59bc8ab7..f1ef4b3a86 100644

--- a/README.md

+++ b/README.md

@@ -1048,6 +1048,7 @@ API | Description | Auth | HTTPS | CORS |

| [HackerNews](https://github.com/HackerNews/API) | Social news for CS and entrepreneurship | No | Yes | Unknown |

| [Instagram](https://www.instagram.... | <!-- Thank you for taking the time to work on a Pull Request for this project! -->

<!-- To ensure your PR is dealt with swiftly please check the following: -->

- [x] My submission is formatted according to the guidelines in the [contributing guide](/CONTRIBUTING.md)

- [x] My addition is ordered alphabetically

- [x]... | https://api.github.com/repos/public-apis/public-apis/pulls/2149 | 2021-10-03T02:13:37Z | 2021-10-06T06:54:39Z | 2021-10-06T06:54:39Z | 2021-10-06T06:54:39Z | 309 | public-apis/public-apis | 35,682 |

Lambda: add function properties to LambdaContext for invocations | diff --git a/localstack/services/awslambda/lambda_api.py b/localstack/services/awslambda/lambda_api.py

index c5156db076fde..c129b101430e9 100644

--- a/localstack/services/awslambda/lambda_api.py

+++ b/localstack/services/awslambda/lambda_api.py

@@ -10,6 +10,7 @@

import base64

import threading

import imp

+import re

... | This adds some common properties to the LambdaContext, which is passed

to functions during invocations. In particular, the following properties

are added: function_name, function_version, invoked_function_arn.

The above properties are passed to lambda functions running inside docker.

Support for publishing duri... | https://api.github.com/repos/localstack/localstack/pulls/810 | 2018-06-20T20:27:40Z | 2018-07-03T16:11:44Z | 2018-07-03T16:11:44Z | 2018-07-03T16:11:44Z | 2,056 | localstack/localstack | 28,938 |

Add new model to the arena | diff --git a/docs/model_support.md b/docs/model_support.md

index 654dda47e7..ddbeb92fdd 100644

--- a/docs/model_support.md

+++ b/docs/model_support.md

@@ -1,21 +1,23 @@

# Model Support

## Supported models

+

- [meta-llama/Llama-2-7b-chat-hf](https://huggingface.co/meta-llama/Llama-2-7b-chat-hf)

- - example: `pyth... | hi, thanks for your brilliant job!!

we want to support our model `ReaLM` in mt-bench.

we have added the related information to `conversation.py`, `model_adapter.py`, `model_registry.py`, `model_support.md`.

all the information is coded under our previous model `phoenix`.

the following links are the repos for th... | https://api.github.com/repos/lm-sys/FastChat/pulls/2296 | 2023-08-23T12:27:51Z | 2023-08-24T07:05:34Z | 2023-08-24T07:05:34Z | 2023-10-08T02:57:47Z | 1,889 | lm-sys/FastChat | 41,534 |

update xx_net.sh | diff --git a/code/default/xx_net.sh b/code/default/xx_net.sh

index 321284ea6d..874e6c5441 100755

--- a/code/default/xx_net.sh

+++ b/code/default/xx_net.sh

@@ -26,18 +26,20 @@ else

PYTHON="python"

fi

+PACKAGE_PATH=$("${PYTHON}" -c "import os; print os.path.dirname(os.path.realpath('$0'))")

+

start() {

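echo... | If a symlink to xx_net.sh is created directly in /etc/init.d on Linux and then registered as a service, xx_net does not start.

So instead of cd-ing into the directory where xx_net actually lives, the script now calls xx_net by its absolute path.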

| https://api.github.com/repos/XX-net/XX-Net/pulls/3844 | 2016-07-12T13:31:16Z | 2016-07-12T14:10:34Z | 2016-07-12T14:10:34Z | 2016-07-12T15:01:43Z | 394 | XX-net/XX-Net | 17,190 |

Create pythagoreanTriplets.py | diff --git a/pythagoreanTriplets.py b/pythagoreanTriplets.py

new file mode 100644

index 0000000000..55b146ea78

--- /dev/null

+++ b/pythagoreanTriplets.py

@@ -0,0 +1,14 @@

+limit=int(input("Enter upper limit:"))

+c=0

+m=2

+while(c<limit):

+ for n in range(1,m+1):

+ a=m*m-n*n

+ b=2*m*n

+ c=m*m+n*n... | https://api.github.com/repos/geekcomputers/Python/pulls/1454 | 2022-01-04T03:29:45Z | 2022-01-04T07:50:55Z | 2022-01-04T07:50:55Z | 2022-01-04T07:50:55Z | 167 | geekcomputers/Python | 31,703 | |

Bipedal fix bounds and type hints | diff --git a/gym/envs/box2d/bipedal_walker.py b/gym/envs/box2d/bipedal_walker.py

index 8fc2dc8f643..ee072cf5311 100644

--- a/gym/envs/box2d/bipedal_walker.py

+++ b/gym/envs/box2d/bipedal_walker.py

@@ -181,12 +181,71 @@ def __init__(self, hardcore: bool = False):

categoryBits=0x0001,

)

- h... | # Description

This fixes the infinite Box bounds on the bipedal walker and adds some missing type hints.

## Type of change

- [x] Bug fix (non-breaking change which fixes an issue)

# Checklist:

- [x] I have run the [`pre-commit` checks](https://pre-commit.com/) with `pre-commit run --all-file... | https://api.github.com/repos/openai/gym/pulls/2750 | 2022-04-13T23:26:37Z | 2022-04-14T00:23:08Z | 2022-04-14T00:23:08Z | 2022-04-15T21:21:40Z | 1,700 | openai/gym | 5,060 |

Capture and re-raise urllib3 ProtocolError | diff --git a/requests/adapters.py b/requests/adapters.py

index 3c1e979f14..bf94bbe7bd 100644

--- a/requests/adapters.py

+++ b/requests/adapters.py

@@ -23,6 +23,7 @@

from .packages.urllib3.exceptions import HTTPError as _HTTPError

from .packages.urllib3.exceptions import MaxRetryError

from .packages.urllib3.exception... | Fixes #2192

| https://api.github.com/repos/psf/requests/pulls/2193 | 2014-08-29T20:16:54Z | 2014-09-04T18:39:41Z | 2014-09-04T18:39:41Z | 2021-09-08T10:01:21Z | 451 | psf/requests | 32,388 |

Migrate Tradfri to has entity name | diff --git a/homeassistant/components/tradfri/base_class.py b/homeassistant/components/tradfri/base_class.py

index 5a84ad5719c7e5..c7154c19f158c4 100644

--- a/homeassistant/components/tradfri/base_class.py

+++ b/homeassistant/components/tradfri/base_class.py

@@ -37,6 +37,8 @@ async def wrapper(command: Command | list[C... | <!--

You are amazing! Thanks for contributing to our project!

Please, DO NOT DELETE ANY TEXT from this template! (unless instructed).

-->

## Proposed change

<!--

Describe the big picture of your changes here to communicate to the

maintainers why we should accept this pull request. If it fixes a bug

or... | https://api.github.com/repos/home-assistant/core/pulls/96248 | 2023-07-10T10:27:03Z | 2023-07-18T18:56:50Z | 2023-07-18T18:56:50Z | 2023-07-19T19:01:36Z | 1,635 | home-assistant/core | 39,282 |

Fix the OFT/BOFT bugs when using new LyCORIS implementation | diff --git a/extensions-builtin/Lora/network_oft.py b/extensions-builtin/Lora/network_oft.py

index d658ad10930..7821a8a7dbf 100644

--- a/extensions-builtin/Lora/network_oft.py

+++ b/extensions-builtin/Lora/network_oft.py

@@ -1,6 +1,5 @@

import torch

import network

-from lyco_helpers import factorization

from einops ... | ## Checklist:

- [x] I have read [contributing wiki page](https://github.com/AUTOMATIC1111/stable-diffusion-webui/wiki/Contributing)

- [x] I have performed a self-review of my own code

- [x] My code follows the [style guidelines](https://github.com/AUTOMATIC1111/stable-diffusion-webui/wiki/Contributing#code-style)

... | https://api.github.com/repos/AUTOMATIC1111/stable-diffusion-webui/pulls/14973 | 2024-02-20T09:21:16Z | 2024-02-26T04:12:12Z | 2024-02-26T04:12:12Z | 2024-02-29T01:11:21Z | 1,104 | AUTOMATIC1111/stable-diffusion-webui | 40,032 |

ES.46 Issue 1797 - narrowing to bool | diff --git a/CppCoreGuidelines.md b/CppCoreGuidelines.md

index 14618a0fd..844648e6e 100644

--- a/CppCoreGuidelines.md

+++ b/CppCoreGuidelines.md

@@ -11704,17 +11704,24 @@ A key example is basic narrowing:

The guidelines support library offers a `narrow_cast` operation for specifying that narrowing is acceptable and ... | Added note for narrowing to bool. See #1797.

Also qualified gsl::narrow | https://api.github.com/repos/isocpp/CppCoreGuidelines/pulls/1824 | 2021-08-30T04:50:32Z | 2021-09-30T18:05:15Z | 2021-09-30T18:05:15Z | 2021-09-30T18:05:25Z | 443 | isocpp/CppCoreGuidelines | 15,434 |

Bump sphinx from 3.0.4 to 3.1.0 | diff --git a/requirements/dev.txt b/requirements/dev.txt

index f9ca816b7b..6df3ed0d42 100644

--- a/requirements/dev.txt

+++ b/requirements/dev.txt

@@ -40,7 +40,7 @@ requests==2.23.0 # via sphinx

six==1.15.0 # via packaging, pip-tools, tox, virtualenv

snowballstemmer==2.0.0 # via sphinx

sphi... | Bumps [sphinx](https://github.com/sphinx-doc/sphinx) from 3.0.4 to 3.1.0.

<details>

<summary>Changelog</summary>

<p><em>Sourced from <a href="https://github.com/sphinx-doc/sphinx/blob/3.x/CHANGES">sphinx's changelog</a>.</em></p>

<blockquote>

<h1>Release 3.1.0 (released Jun 08, 2020)</h1>

<h2>Dependencies</h2>

<ul>

<li... | https://api.github.com/repos/pallets/flask/pulls/3645 | 2020-06-08T14:16:14Z | 2020-06-08T14:18:55Z | 2020-06-08T14:18:55Z | 2020-11-14T01:33:38Z | 494 | pallets/flask | 20,698 |

Fixed mispelling | diff --git a/README.md b/README.md

index d1db60c..5874fa4 100644

--- a/README.md

+++ b/README.md

@@ -3161,7 +3161,7 @@ The built-in `ord()` function returns a character's Unicode [code point](https:/

<!-- Example ID: edafe923-0c20-4315-b6e1-0c31abfc38f5 --->

```py

-# `pip install nump` first.

+# `pip install numpy`... | https://api.github.com/repos/satwikkansal/wtfpython/pulls/167 | 2020-01-05T00:50:06Z | 2020-01-06T14:08:50Z | 2020-01-06T14:08:50Z | 2020-01-07T19:11:40Z | 132 | satwikkansal/wtfpython | 25,883 | |

add check for submodules in release builds | diff --git a/release/build_release2.sh b/release/build_release2.sh

index ee3c3f4656c94c..c607aa820636bc 100755

--- a/release/build_release2.sh

+++ b/release/build_release2.sh

@@ -55,6 +55,14 @@ scons -j3

python selfdrive/manager/test/test_manager.py

selfdrive/car/tests/test_car_interfaces.py

+# Ensure no submodules... | https://api.github.com/repos/commaai/openpilot/pulls/21643 | 2021-07-18T21:39:08Z | 2021-07-19T17:09:16Z | 2021-07-19T17:09:16Z | 2021-07-19T17:09:17Z | 173 | commaai/openpilot | 9,199 | |

Revert "Fix "global" max_attempt bug (#1719)" | diff --git a/acme/acme/client.py b/acme/acme/client.py

index 478536ecc36..49c6bcb21c0 100644

--- a/acme/acme/client.py

+++ b/acme/acme/client.py

@@ -1,5 +1,4 @@

"""ACME client API."""

-import collections

import datetime

import heapq

import logging

@@ -335,9 +334,8 @@ def poll_and_request_issuance(

:param a... | Reverts letsencrypt/letsencrypt#2111 since it seems that may have broken travis tests when merged.

| https://api.github.com/repos/certbot/certbot/pulls/2220 | 2016-01-18T20:14:09Z | 2016-01-18T20:14:16Z | 2016-01-18T20:14:16Z | 2016-05-06T19:21:56Z | 1,698 | certbot/certbot | 2,595 |

Fix typo in comment | diff --git a/scrapy/core/downloader/handlers/http11.py b/scrapy/core/downloader/handlers/http11.py

index 8e972709355..038db7b47e0 100644

--- a/scrapy/core/downloader/handlers/http11.py

+++ b/scrapy/core/downloader/handlers/http11.py

@@ -379,7 +379,7 @@ def _cb_bodyready(self, txresponse, request):

... | https://api.github.com/repos/scrapy/scrapy/pulls/3059 | 2018-01-01T16:05:10Z | 2018-01-10T20:34:12Z | 2018-01-10T20:34:12Z | 2018-07-11T20:45:56Z | 159 | scrapy/scrapy | 34,770 | |

Fixes comfy list not being styled | diff --git a/web/extensions/core/colorPalette.js b/web/extensions/core/colorPalette.js

index 94bea9ab3e..41541a8d8f 100644

--- a/web/extensions/core/colorPalette.js

+++ b/web/extensions/core/colorPalette.js

@@ -107,7 +107,7 @@ const colorPalettes = {

"descrip-text": "#444",

"drag-text": "#555",

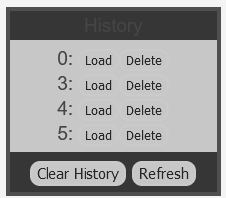

"error-te... | Missed this in #481, comfy-list was not styled so history and queue were not. Also, Light theme has a bad border color as seen in the history image below since it blends with background.

Previous:

![image... | https://api.github.com/repos/comfyanonymous/ComfyUI/pulls/498 | 2023-04-13T10:39:13Z | 2023-04-13T17:51:38Z | 2023-04-13T17:51:38Z | 2023-04-13T18:54:35Z | 259 | comfyanonymous/ComfyUI | 17,768 |

🌐 Add French translation for `docs/fr/docs/advanced/additional-status-code.md` | diff --git a/docs/fr/docs/advanced/additional-responses.md b/docs/fr/docs/advanced/additional-responses.md

new file mode 100644

index 0000000000000..35b57594d5975

--- /dev/null

+++ b/docs/fr/docs/advanced/additional-responses.md

@@ -0,0 +1,240 @@

+# Réponses supplémentaires dans OpenAPI

+

+!!! Attention

+ Ceci conce... | Here is the PR to translate the `advanced/additional-status-code.md`

See the French translation tracking issue https://github.com/tiangolo/fastapi/issues/1972.

Thanks for the reviews | https://api.github.com/repos/tiangolo/fastapi/pulls/5477 | 2022-10-09T08:46:16Z | 2022-11-13T14:03:48Z | 2022-11-13T14:03:48Z | 2022-11-13T14:03:49Z | 3,324 | tiangolo/fastapi | 22,755 |

Added uWSGI and example usage to stand-alone WSGI containers documentation | diff --git a/docs/deploying/wsgi-standalone.rst b/docs/deploying/wsgi-standalone.rst

index ad43c1441f..bf680976cd 100644

--- a/docs/deploying/wsgi-standalone.rst

+++ b/docs/deploying/wsgi-standalone.rst

@@ -27,6 +27,22 @@ For example, to run a Flask application with 4 worker processes (``-w

.. _eventlet: http://eventl... | Talked with @davidism and decided to add section for uWSGI HTTP router / server via embedded mode. | https://api.github.com/repos/pallets/flask/pulls/2302 | 2017-05-22T23:20:00Z | 2017-05-23T01:08:41Z | 2017-05-23T01:08:41Z | 2020-11-14T04:09:25Z | 315 | pallets/flask | 20,481 |

Minecraft bug fix: just obtain event instead of execute_event | diff --git a/metagpt/roles/minecraft/critic_agent.py b/metagpt/roles/minecraft/critic_agent.py

index 9bc34cbcc..afce29ea2 100644

--- a/metagpt/roles/minecraft/critic_agent.py

+++ b/metagpt/roles/minecraft/critic_agent.py

@@ -156,7 +156,7 @@ async def _act(self) -> Message:

self.maintain_actions(todo)... | https://api.github.com/repos/geekan/MetaGPT/pulls/424 | 2023-10-12T11:31:35Z | 2023-10-12T11:32:00Z | 2023-10-12T11:32:00Z | 2023-10-19T07:41:44Z | 173 | geekan/MetaGPT | 16,742 | |

Deprecate ESP and move the functionality under --preview | diff --git a/CHANGES.md b/CHANGES.md

index 4b9ceae81dc..c3e2a3350d3 100644

--- a/CHANGES.md

+++ b/CHANGES.md

@@ -30,6 +30,8 @@

- Fix handling of standalone `match()` or `case()` when there is a trailing newline or a

comment inside of the parentheses. (#2760)

- Black now normalizes string prefix order (#2297)

+- De... | ### Description

This PR deprecates `--experimental-string-processing` and moves the functionality under `--preview` machinery.

### Checklist - did you ...

- [x] Add a CHANGELOG entry if necessary?

- [x] Add / update tests if necessary?

- [x] Add new / update outdated documentation?

I'll review myself for some... | https://api.github.com/repos/psf/black/pulls/2789 | 2022-01-20T22:32:34Z | 2022-01-20T23:42:08Z | 2022-01-20T23:42:07Z | 2022-01-21T07:56:09Z | 2,686 | psf/black | 24,018 |

Update pretrained_word_embeddings.py | diff --git a/examples/pretrained_word_embeddings.py b/examples/pretrained_word_embeddings.py

index 4fea37a69bb..069024c3fdb 100644

--- a/examples/pretrained_word_embeddings.py

+++ b/examples/pretrained_word_embeddings.py

@@ -101,10 +101,10 @@

print('Preparing embedding matrix.')

# prepare embedding matrix

-num_word... | <!--

Please make sure you've read and understood our contributing guidelines;

https://github.com/keras-team/keras/blob/master/CONTRIBUTING.md

-->

### Summary

Hello,

Here's a small example why I think there's a small mistake in the code.

Now if you check... | https://api.github.com/repos/keras-team/keras/pulls/13073 | 2019-07-06T13:20:20Z | 2019-07-09T21:31:18Z | 2019-07-09T21:31:18Z | 2019-09-12T07:00:28Z | 172 | keras-team/keras | 47,696 |

Add FPv2: 2022 Subaru Outback Wilderness | diff --git a/selfdrive/car/subaru/values.py b/selfdrive/car/subaru/values.py

index 7a1e9a8a3dc3f5..9975e495ddf5ec 100644

--- a/selfdrive/car/subaru/values.py

+++ b/selfdrive/car/subaru/values.py

@@ -461,6 +461,7 @@ class SubaruCarInfo(CarInfo):

b'\xa1 \x08\x02',

b'\xa1 \x06\x02',

b'\xa1 \x08\x00'... | **Description** Add missing fw values for 2022 Subaru Outback Wilderness

**Route**

Route: 7e051e2bb863d0b6|2022-11-17--17-45-50--49 | https://api.github.com/repos/commaai/openpilot/pulls/26540 | 2022-11-18T17:05:01Z | 2022-11-21T23:34:21Z | 2022-11-21T23:34:21Z | 2022-11-21T23:34:48Z | 259 | commaai/openpilot | 9,659 |

Augmented Generic IE | diff --git a/youtube_dl/extractor/generic.py b/youtube_dl/extractor/generic.py

index 7a877b3bcb4..759fd60a739 100644

--- a/youtube_dl/extractor/generic.py

+++ b/youtube_dl/extractor/generic.py

@@ -102,7 +102,7 @@ def _real_extract(self, url):

mobj = re.search(r'[^A-Za-z0-9]?(?:file|source)=(http[^\'"&]*)',... | I changed one of the regexes for the generic IE to handle the case in which `file` is a key inside a dictionary. This occurs sometimes and can be used to handle cases like:

http://www.mp4upload.com/embed-rt2yx5n01ydl-650x372.html

in which:

I also made 'video' the default title for the generic IE. I would argue that the gene... | https://api.github.com/repos/ytdl-org/youtube-dl/pulls/954 | 2013-06-27T18:30:04Z | 2013-06-27T18:44:46Z | 2013-06-27T18:44:46Z | 2014-06-26T01:14:45Z | 373 | ytdl-org/youtube-dl | 49,901 |
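The diff for this row is truncated, so the actual regex change is not visible here. As a rough sketch of the idea described in the body (matching `file` when it appears as a dictionary key rather than a query parameter), something like the following could work. The pattern `MEDIA_URL_RE` and the helper `find_media_url` are hypothetical names for illustration and are not taken from youtube-dl.

```python
import re

# Hypothetical pattern (an assumption, not the regex from the PR):
# accepts both `file=http...` and `'file': 'http...'` style occurrences.
MEDIA_URL_RE = re.compile(
    r'[^A-Za-z0-9]?(?:file|source)(?:=|["\']?\s*:\s*["\']?)(http[^\'"&]*)'
)


def find_media_url(webpage):
    """Return the first URL that follows a `file`/`source` key, or None."""
    mobj = MEDIA_URL_RE.search(webpage)
    return mobj.group(1) if mobj else None
```

The URL capture group is kept identical to the one in the original regex shown in the diff, so only the separator between the key and the URL is widened.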

Manual Alignments: Resize bounding box scaling bugfix | diff --git a/tools/lib_alignments/jobs_manual.py b/tools/lib_alignments/jobs_manual.py

index 81f4bb3c61..afdb5e2eda 100644

--- a/tools/lib_alignments/jobs_manual.py

+++ b/tools/lib_alignments/jobs_manual.py

@@ -781,6 +781,8 @@ def move_bounding_box(self, pt_x, pt_y):

def resize_bounding_box(self, pt_x, pt_y):

... | Fix a bug that led to incorrect bounding box on scaled images. | https://api.github.com/repos/deepfakes/faceswap/pulls/511 | 2018-09-28T08:47:29Z | 2018-09-28T08:47:54Z | 2018-09-28T08:47:54Z | 2018-09-28T08:47:54Z | 447 | deepfakes/faceswap | 18,924 |

[AIRFLOW-5709] Fix regression in setting custom operator resources. | diff --git a/airflow/models/baseoperator.py b/airflow/models/baseoperator.py

index d848c2743227c..5d61e1e77a777 100644

--- a/airflow/models/baseoperator.py

+++ b/airflow/models/baseoperator.py

@@ -372,7 +372,7 @@ def __init__(

d=dag.dag_id if dag else "", t=task_id, tr=weight_rule))

se... | Make sure you have checked _all_ steps below.

### Jira

- [x] My PR addresses the following [Airflow Jira](https://issues.apache.org/jira/browse/AIRFLOW/) issues and references them in the PR title. For example, "\[AIRFLOW-XXX\] My Airflow PR"

- https://issues.apache.org/jira/browse/AIRFLOW-5709

- In case yo... | https://api.github.com/repos/apache/airflow/pulls/6331 | 2019-10-14T14:53:32Z | 2019-11-05T20:23:25Z | 2019-11-05T20:23:25Z | 2019-11-05T20:23:26Z | 364 | apache/airflow | 14,819 |

Add a binary coefficient function | diff --git a/binary coefficients b/binary coefficients

new file mode 100644

index 0000000000..1d6f9fb410

--- /dev/null

+++ b/binary coefficients

@@ -0,0 +1,21 @@

+def pascal_triangle( lineNumber ) :

+ list1 = list()

+ list1.append([1])

+ i=1

+ while(i<=lineNumber):

+ j=1

+ l=[]

l.app... | Add a binomial coefficient function that generates Pascal's triangle and calculates the coefficient (n, k) | https://api.github.com/repos/geekcomputers/Python/pulls/435 | 2018-11-27T21:10:53Z | 2018-12-10T18:48:05Z | 2018-12-10T18:48:05Z | 2018-12-10T18:48:05Z | 208 | geekcomputers/Python | 31,707 |
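The contributed file in the row above is truncated, so the following is only a minimal sketch of the approach its description names: build Pascal's triangle row by row, then read the coefficient for (n, k) from row n. The function names and the handling of out-of-range `k` are assumptions made for illustration, not the contributor's code.

```python
def pascal_triangle(line_number):
    """Return rows 0..line_number of Pascal's triangle as a list of lists."""
    rows = [[1]]
    for i in range(1, line_number + 1):
        prev = rows[-1]
        # Interior entries are sums of adjacent entries in the previous row.
        row = [1] + [prev[j - 1] + prev[j] for j in range(1, i)] + [1]
        rows.append(row)
    return rows


def binomial_coefficient(n, k):
    """Return C(n, k) by reading it from row n of the triangle."""
    if k < 0 or k > n:
        # Assumed convention: coefficients outside the triangle are zero.
        return 0
    return pascal_triangle(n)[n][k]
```

Building the whole triangle costs O(n^2) additions, which is fine for small inputs; for large `n`, `math.comb` from the standard library (Python 3.8+) is the more practical choice.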

downloaderMW doc typo (spiderMW doc copy remnant) | diff --git a/docs/topics/downloader-middleware.rst b/docs/topics/downloader-middleware.rst

index 38d19fb00ed..30075fa7b43 100644

--- a/docs/topics/downloader-middleware.rst

+++ b/docs/topics/downloader-middleware.rst

@@ -38,7 +38,7 @@ previous (or subsequent) middleware being applied.

If you want to disable a built-in... | Downloader middleware documentation typo(?). Probably a word left over from copying the docs from the spider middleware page.

| https://api.github.com/repos/scrapy/scrapy/pulls/590 | 2014-02-11T20:55:46Z | 2014-02-11T21:49:30Z | 2014-02-11T21:49:30Z | 2014-06-23T22:42:11Z | 199 | scrapy/scrapy | 34,426 |

Separate tests for single and action_space | diff --git a/gym/vector/tests/test_async_vector_env.py b/gym/vector/tests/test_async_vector_env.py

index 497fdc09be0..d835ea0c903 100644

--- a/gym/vector/tests/test_async_vector_env.py

+++ b/gym/vector/tests/test_async_vector_env.py

@@ -37,12 +37,16 @@ def test_reset_async_vector_env(shared_memory):

@pytest.mark.p... | Separate `test_step_...` into two tests, depending on how the actions are created (either from a list of `env.single_action_space.sample()`, or with `env.action_space.sample()`). | https://api.github.com/repos/openai/gym/pulls/1552 | 2019-06-22T03:13:04Z | 2019-06-28T23:32:40Z | 2019-06-28T23:32:40Z | 2019-06-28T23:33:48Z | 520 | openai/gym | 5,118 |

added support for Google Images search | diff --git a/langchain/utilities/serpapi.py b/langchain/utilities/serpapi.py

index db6ddf79cc13f9..98f4214ce0614b 100644

--- a/langchain/utilities/serpapi.py

+++ b/langchain/utilities/serpapi.py

@@ -159,7 +159,12 @@ def _process_response(res: dict) -> str:

toret = res["organic_results"][0]["snippet"]

... | - Description: Added Google Image Search support for SerpAPIWrapper

- Issue: NA

- Dependencies: None

- Tag maintainer: @hinthornw

- Twitter handle: @sausheong

| https://api.github.com/repos/langchain-ai/langchain/pulls/7751 | 2023-07-15T10:31:54Z | 2023-07-15T14:04:18Z | 2023-07-15T14:04:18Z | 2023-07-15T14:04:18Z | 213 | langchain-ai/langchain | 43,310 |

Add support for http://www.spankwire.com | diff --git a/youtube_dl/extractor/__init__.py b/youtube_dl/extractor/__init__.py

index db69af36192..7a60e09377d 100644

--- a/youtube_dl/extractor/__init__.py

+++ b/youtube_dl/extractor/__init__.py

@@ -109,6 +109,7 @@

from .sohu import SohuIE

from .soundcloud import SoundcloudIE, SoundcloudSetIE, SoundcloudUserIE

fro... | https://api.github.com/repos/ytdl-org/youtube-dl/pulls/1663 | 2013-10-27T00:32:08Z | 2013-10-28T05:51:41Z | 2013-10-28T05:51:41Z | 2014-06-23T02:03:46Z | 1,059 | ytdl-org/youtube-dl | 50,307 | |

nit: add kg graph nb to docs | diff --git a/docs/core_modules/data_modules/index/modules.md b/docs/core_modules/data_modules/index/modules.md

index eef15d1749641..e55884f0fd344 100644

--- a/docs/core_modules/data_modules/index/modules.md

+++ b/docs/core_modules/data_modules/index/modules.md

@@ -10,6 +10,8 @@ Tree Index <./index_guide.md>

Keyword Ta... | https://api.github.com/repos/run-llama/llama_index/pulls/7398 | 2023-08-25T03:02:12Z | 2023-08-25T03:06:23Z | 2023-08-25T03:06:23Z | 2023-08-28T17:10:56Z | 559 | run-llama/llama_index | 6,866 | |

Add word embedding in Go | diff --git a/README.md b/README.md

index 53b68363..6bb15507 100644

--- a/README.md

+++ b/README.md

@@ -283,6 +283,7 @@ For a list of free-to-attend meetups and local events, go [here](https://github.

* [paicehusk](https://github.com/Rookii/paicehusk) - Golang implementation of the Paice/Husk Stemming Algorithm.

* [sn... | This repo provides models that embed words in a vector space, known as word embedding or word representation.

These models are written in Go from scratch :)

So you can build word vectors and estimate a similarity list between words from the CLI; please see the usage!

Now it's supported word2vec only, but will be dealt ... | https://api.github.com/repos/josephmisiti/awesome-machine-learning/pulls/381 | 2017-05-22T10:15:28Z | 2017-05-22T12:04:15Z | 2017-05-22T12:04:15Z | 2017-05-22T12:04:15Z | 211 | josephmisiti/awesome-machine-learning | 51,856 |

Proposal: Add Scraper.AI | diff --git a/README.md b/README.md

index 515b763873..f378b10c3d 100644

--- a/README.md

+++ b/README.md

@@ -286,6 +286,7 @@ API | Description | Auth | HTTPS | CORS |

| [QR code](http://goqr.me/api/) | Generate and decode / read QR code graphics | No | Yes | Unknown |

| [QuickChart](https://quickchart.io/) | Generate c... | Add Scraper.AI to the list

- [x] My submission is formatted according to the guidelines in the [contributing guide](CONTRIBUTING.md)

- [x] My addition is ... | https://api.github.com/repos/public-apis/public-apis/pulls/1436 | 2020-10-28T09:25:06Z | 2020-11-02T20:01:17Z | 2020-11-02T20:01:17Z | 2020-11-02T20:01:41Z | 265 | public-apis/public-apis | 35,383 |

added Bookcrossing, GDProfiles, Bazar.cz, Chatujme.cz and Avízo.cz | diff --git a/data.json b/data.json

index 0ea979c76..968d68b48 100644

--- a/data.json

+++ b/data.json

@@ -1588,5 +1588,47 @@

"urlMain": "https://segmentfault.com/",

"username_claimed": "bule",

"username_unclaimed": "noonewouldeverusethis7"

+ },

+ "Bookcrossing": {

+ "errorType": "status_code",

... | https://api.github.com/repos/sherlock-project/sherlock/pulls/367 | 2019-11-26T14:42:11Z | 2019-11-26T15:06:41Z | 2019-11-26T15:06:41Z | 2019-11-29T22:01:29Z | 576 | sherlock-project/sherlock | 36,572 | |

MSI installer | diff --git a/Methodology and Resources/Windows - Privilege Escalation.md b/Methodology and Resources/Windows - Privilege Escalation.md

index 9677f30691..afb3a31166 100644

--- a/Methodology and Resources/Windows - Privilege Escalation.md

+++ b/Methodology and Resources/Windows - Privilege Escalation.md

@@ -30,7 +30,9 ... | https://api.github.com/repos/swisskyrepo/PayloadsAllTheThings/pulls/679 | 2023-10-11T19:06:30Z | 2023-10-11T19:06:37Z | 2023-10-11T19:06:37Z | 2023-10-11T19:06:48Z | 1,697 | swisskyrepo/PayloadsAllTheThings | 8,498 | |

remove Freebsd 13.1 from test matrix | diff --git a/.azure-pipelines/azure-pipelines.yml b/.azure-pipelines/azure-pipelines.yml

index 9c3281b55e912d..a231cdc196a181 100644

--- a/.azure-pipelines/azure-pipelines.yml

+++ b/.azure-pipelines/azure-pipelines.yml

@@ -93,8 +93,6 @@ stages:

test: rhel/9.2@3.11

- name: FreeBSD 12.4

... | fixes #80416

##### ISSUE TYPE

- Test Pull Request

##### COMPONENT NAME

tests | https://api.github.com/repos/ansible/ansible/pulls/81058 | 2023-06-14T13:52:11Z | 2023-06-15T18:59:33Z | 2023-06-15T18:59:33Z | 2023-06-22T13:00:12Z | 664 | ansible/ansible | 49,223 |

Delete unused --deepspeed_mpi command line argument | diff --git a/deepspeed/__init__.py b/deepspeed/__init__.py

index 87b959f56be0..06a04c791de3 100755

--- a/deepspeed/__init__.py

+++ b/deepspeed/__init__.py

@@ -234,12 +234,6 @@ def _add_core_arguments(parser):

type=str,

help='Deprecated DeepSpeed json configuration file.')... | I noticed this argument in my command-line arguments being added by DeepSpeed, and turns out it being False by default has no effect. Since it is not used anywhere, it's probably best to delete it. | https://api.github.com/repos/microsoft/DeepSpeed/pulls/4981 | 2024-01-19T20:17:27Z | 2024-01-23T20:36:35Z | 2024-01-23T20:36:35Z | 2024-01-23T20:37:03Z | 152 | microsoft/DeepSpeed | 10,135 |

Fix base.py | diff --git a/llama-index-integrations/embeddings/llama-index-embeddings-instructor/llama_index/embeddings/instructor/base.py b/llama-index-integrations/embeddings/llama-index-embeddings-instructor/llama_index/embeddings/instructor/base.py

index ed58e7aa08f2e..e2b351e1ed248 100644

--- a/llama-index-integrations/embeddin... | # Description

Please include a summary of the change and which issue is fixed. Please also include relevant motivation and context. List any dependencies that are required for this change.

Fixes # (issue)

The wrong instruction text was used for the query.

## Type of Change

Please delete options that are ... | https://api.github.com/repos/run-llama/llama_index/pulls/11327 | 2024-02-23T16:22:30Z | 2024-02-23T17:47:42Z | 2024-02-23T17:47:42Z | 2024-02-23T17:47:42Z | 226 | run-llama/llama_index | 6,704 |

Fix minor typo in ES.59 | diff --git a/CppCoreGuidelines.md b/CppCoreGuidelines.md

index 87483df80..2eeb06c42 100644

--- a/CppCoreGuidelines.md

+++ b/CppCoreGuidelines.md

@@ -12424,7 +12424,7 @@ The language already knows that a returned value is a temporary object that can

* Flag use of `std::move(x)` where `x` is an rvalue or the language ... | https://api.github.com/repos/isocpp/CppCoreGuidelines/pulls/2037 | 2023-02-12T16:34:29Z | 2023-02-12T18:46:45Z | 2023-02-12T18:46:45Z | 2023-02-12T18:46:45Z | 362 | isocpp/CppCoreGuidelines | 16,065 | |

Update UI and sponsers | diff --git a/fastchat/serve/gradio_block_arena_anony.py b/fastchat/serve/gradio_block_arena_anony.py

index 978f76b75a..a598a8c9a4 100644

--- a/fastchat/serve/gradio_block_arena_anony.py

+++ b/fastchat/serve/gradio_block_arena_anony.py

@@ -196,7 +196,7 @@ def share_click(state0, state1, model_selector0, model_selector1,... | https://api.github.com/repos/lm-sys/FastChat/pulls/2387 | 2023-09-08T22:20:56Z | 2023-09-08T22:21:19Z | 2023-09-08T22:21:19Z | 2023-09-08T22:21:22Z | 1,548 | lm-sys/FastChat | 41,460 | |

Fixing auto_test.py for Python 2.6 | diff --git a/letsencrypt-auto-source/tests/auto_test.py b/letsencrypt-auto-source/tests/auto_test.py

index 3b7e8731bc4..7f0b31b67f5 100644

--- a/letsencrypt-auto-source/tests/auto_test.py

+++ b/letsencrypt-auto-source/tests/auto_test.py

@@ -11,7 +11,7 @@

import socket

import ssl

from stat import S_IRUSR, S_IXUSR

-fr... | #2759 followup, required for #2671.

| https://api.github.com/repos/certbot/certbot/pulls/2926 | 2016-05-05T07:24:56Z | 2016-05-27T21:39:44Z | 2016-05-27T21:39:44Z | 2016-05-27T21:41:17Z | 247 | certbot/certbot | 3,169 |

Fix XMPP room notifications | diff --git a/homeassistant/components/xmpp/notify.py b/homeassistant/components/xmpp/notify.py

index 6b4faf324585..28d60d317e00 100644

--- a/homeassistant/components/xmpp/notify.py

+++ b/homeassistant/components/xmpp/notify.py

@@ -159,6 +159,9 @@ def __init__(self):

async def start(self, event):

... | <!--

You are amazing! Thanks for contributing to our project!

Please, DO NOT DELETE ANY TEXT from this template! (unless instructed).

-->

## Proposed change

<!--

Describe the big picture of your changes here to communicate to the

maintainers why we should accept this pull request. If it fixes a bug

or... | https://api.github.com/repos/home-assistant/core/pulls/80794 | 2022-10-22T21:40:45Z | 2022-10-24T19:10:57Z | 2022-10-24T19:10:57Z | 2022-10-25T19:16:22Z | 379 | home-assistant/core | 39,139 |

have multi-discrete use same dtype as discrete, fixes #1204 | diff --git a/gym/spaces/multi_discrete.py b/gym/spaces/multi_discrete.py

index b92173205e6..a8406e98dcf 100644

--- a/gym/spaces/multi_discrete.py

+++ b/gym/spaces/multi_discrete.py

@@ -1,4 +1,3 @@

-import gym

import numpy as np

from .space import Space

@@ -29,9 +28,9 @@ def __init__(self, nvec):

nvec: vect... | https://api.github.com/repos/openai/gym/pulls/1400 | 2019-03-22T22:02:56Z | 2019-03-23T21:49:46Z | 2019-03-23T21:49:46Z | 2019-03-23T21:49:47Z | 357 | openai/gym | 5,485 | |

Fixed a type in a docstring | diff --git a/acme/acme/client.py b/acme/acme/client.py

index 776ddb38a11..c3e28ef47f0 100644

--- a/acme/acme/client.py

+++ b/acme/acme/client.py

@@ -340,7 +340,7 @@ def poll_and_request_issuance(

`PollError` with non-empty ``waiting`` is raised.

:returns: ``(cert, updated_authzrs)`` `tuple` wher... | https://api.github.com/repos/certbot/certbot/pulls/1881 | 2015-12-12T21:12:17Z | 2015-12-13T01:51:51Z | 2015-12-13T01:51:51Z | 2016-05-06T19:21:21Z | 179 | certbot/certbot | 1,199 | |

[extractor/twitch] Support AV1/HEVC extraction | diff --git a/yt_dlp/extractor/twitch.py b/yt_dlp/extractor/twitch.py

index c55786a0dce..80cba09155d 100644

--- a/yt_dlp/extractor/twitch.py

+++ b/yt_dlp/extractor/twitch.py

@@ -191,17 +191,25 @@ def _get_thumbnails(self, thumbnail):

}] if thumbnail else None

def _extract_twitch_m3u8_formats(self, path, ... | Signals support for AV1 and HEVC codecs.

Tested on this stream: https://www.twitch.tv/r0dn3y

Before:

```

ID EXT RESOLUTION FPS │ TBR PROTO │ VCODEC ACODEC ABR

────────────────────────────────────────────────────────────────────────

audio_only mp4 audio only │ 160k m3u8 │ audio only m... | https://api.github.com/repos/yt-dlp/yt-dlp/pulls/9158 | 2024-02-06T22:01:09Z | 2024-04-03T18:38:51Z | 2024-04-03T18:38:51Z | 2024-04-03T18:38:51Z | 353 | yt-dlp/yt-dlp | 8,150 |

feat(replays): Event Details Replay Section Player | diff --git a/static/app/components/events/eventEntries.tsx b/static/app/components/events/eventEntries.tsx

index d9e1fe5503f31..ad7d8fb96f6e1 100644

--- a/static/app/components/events/eventEntries.tsx

+++ b/static/app/components/events/eventEntries.tsx

@@ -24,6 +24,7 @@ import RRWebIntegration from 'sentry/components/e... | ### Changes

- Adds EventReplay component that contains the new Replay section

- Adds ReplayView to add the replay player for the EventReplay section

### Notes

The options dropdown bug strikes again but this time not in full-screen mode. When I click the option button on the player in this view it triggers a scrol... | https://api.github.com/repos/getsentry/sentry/pulls/36818 | 2022-07-19T19:33:23Z | 2022-07-22T17:13:56Z | 2022-07-22T17:13:56Z | 2023-03-16T16:16:45Z | 972 | getsentry/sentry | 44,753 |

Fix typos | diff --git a/docs/templates/applications.md b/docs/templates/applications.md

index 70b545d0da1..1333ae69655 100644

--- a/docs/templates/applications.md

+++ b/docs/templates/applications.md

@@ -253,7 +253,7 @@ The default input size for this model is 224x224.

- input_shape: optional shape tuple, only to be specified

... | https://api.github.com/repos/keras-team/keras/pulls/6702 | 2017-05-21T08:30:56Z | 2017-05-21T17:51:20Z | 2017-05-21T17:51:20Z | 2017-06-17T09:06:56Z | 968 | keras-team/keras | 46,979 | |

🌐 Add Chinese translation for Advanced User Guide - Intro | diff --git a/docs/zh/docs/advanced/index.md b/docs/zh/docs/advanced/index.md

new file mode 100644

index 0000000000000..d71838cd77e8d

--- /dev/null

+++ b/docs/zh/docs/advanced/index.md

@@ -0,0 +1,18 @@

+# 高级用户指南 - 简介

+

+## 额外特性

+

+主要的教程 [教程 - 用户指南](../tutorial/){.internal-link target=_blank} 应该足以让你了解 **FastAPI** 的所有主要特性... | Add Chinese translations for `advance/index.md` | https://api.github.com/repos/tiangolo/fastapi/pulls/1445 | 2020-05-21T03:29:01Z | 2020-11-25T17:07:18Z | 2020-11-25T17:07:18Z | 2020-11-25T17:07:57Z | 427 | tiangolo/fastapi | 23,326 |

fix pre output | diff --git a/CHANGELOG.md b/CHANGELOG.md

index d9ce709eb..5d527500d 100644

--- a/CHANGELOG.md

+++ b/CHANGELOG.md

@@ -12,6 +12,7 @@ and this project adheres to [Semantic Versioning](https://semver.org/spec/v2.0.0

- Reversed `pre` and `code` tags in base HTML format https://github.com/Textualize/rich/pull/2642

- Fix sy... | Fixes https://github.com/Textualize/rich/issues/2832 | https://api.github.com/repos/Textualize/rich/pulls/2844 | 2023-03-04T10:00:01Z | 2023-03-04T10:13:43Z | 2023-03-04T10:13:43Z | 2023-03-04T10:13:44Z | 1,627 | Textualize/rich | 48,243 |

Fix inference MP group initialization at consequent inference calls | diff --git a/deepspeed/inference/engine.py b/deepspeed/inference/engine.py

index 8ea3d5619bba..67b656219978 100644

--- a/deepspeed/inference/engine.py

+++ b/deepspeed/inference/engine.py

@@ -17,6 +17,8 @@

class InferenceEngine(Module):

+ inference_mp_group = None

+

def __init__(self,

mode... | This PR addresses https://github.com/microsoft/DeepSpeed/issues/1410 | https://api.github.com/repos/microsoft/DeepSpeed/pulls/1411 | 2021-09-28T07:32:09Z | 2021-09-30T21:59:14Z | 2021-09-30T21:59:14Z | 2021-09-30T21:59:14Z | 415 | microsoft/DeepSpeed | 10,783 |
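
The diff above is truncated, but the visible part adds a class-level `inference_mp_group = None` attribute to `InferenceEngine`. A minimal sketch of the general pattern this suggests, creating a shared resource once and reusing it on later constructions, might look like the following. The class `Engine`, the attribute `shared_group`, and the helper `_create_group` are hypothetical names for illustration only; this is not DeepSpeed's code.

```python
class Engine:
    # Class-level slot shared by every instance; None until first use.
    shared_group = None

    def __init__(self, size):
        # Only the first construction pays the setup cost; later
        # constructions reuse the group stored on the class.
        if Engine.shared_group is None:
            Engine.shared_group = self._create_group(size)
        self.group = Engine.shared_group

    @staticmethod
    def _create_group(size):
        # Hypothetical stand-in for a once-only initialization step.
        return list(range(size))


first = Engine(2)
second = Engine(2)
```

Under these assumptions, `first.group` and `second.group` refer to the same object, so a second construction does not repeat the initialization.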

Docs: fix link and missing package | diff --git a/docs/docs/integrations/chat/maritalk.ipynb b/docs/docs/integrations/chat/maritalk.ipynb

index 82e9b75dbc8eb0..bd2a04700f8e2c 100644

--- a/docs/docs/integrations/chat/maritalk.ipynb

+++ b/docs/docs/integrations/chat/maritalk.ipynb

@@ -4,13 +4,13 @@

"cell_type": "markdown",

"metadata": {},

"sourc... | **Issue:** fix broken links and missing package on colab example | https://api.github.com/repos/langchain-ai/langchain/pulls/18405 | 2024-03-01T22:01:21Z | 2024-03-05T23:50:06Z | 2024-03-05T23:50:06Z | 2024-03-15T09:58:46Z | 443 | langchain-ai/langchain | 42,940 |

add Dog Facts | diff --git a/README.md b/README.md

index 9ca12e6b80..ad116fb4bc 100644

--- a/README.md

+++ b/README.md

@@ -113,6 +113,7 @@ API | Description | Auth | HTTPS | CORS |

| [catAPI](https://thatcopy.pw/catapi) | Random pictures of cats | No | Yes | Yes |

| [Cats](https://docs.thecatapi.com/) | Pictures of cats from Tumblr ... | 

- [x] My submission is formatted according to the guidelines in the [contributing guide](/CONTRIBUTING.md)

- [x] My addition is ordered alphabetically

- [x]... | https://api.github.com/repos/public-apis/public-apis/pulls/2622 | 2021-10-20T04:51:41Z | 2021-10-27T13:56:23Z | 2021-10-27T13:56:23Z | 2021-10-27T13:56:23Z | 259 | public-apis/public-apis | 35,879 |

⬆ [pre-commit.ci] pre-commit autoupdate | diff --git a/.pre-commit-config.yaml b/.pre-commit-config.yaml

index c4c1d4d9e8787..978114a65194c 100644

--- a/.pre-commit-config.yaml

+++ b/.pre-commit-config.yaml

@@ -12,14 +12,14 @@ repos:

- id: end-of-file-fixer

- id: trailing-whitespace

- repo: https://github.com/asottile/pyupgrade

- rev: v2.37... | <!--pre-commit.ci start-->

updates:

- [github.com/asottile/pyupgrade: v2.37.3 → v3.1.0](https://github.com/asottile/pyupgrade/compare/v2.37.3...v3.1.0)

- https://github.com/myint/autoflake → https://github.com/PyCQA/autoflake

- [github.com/PyCQA/autoflake: v1.5.3 → v1.7.6](https://github.com/PyCQA/autoflake/compare/v1.... | https://api.github.com/repos/tiangolo/fastapi/pulls/5408 | 2022-09-19T21:17:12Z | 2022-10-20T11:15:19Z | 2022-10-20T11:15:19Z | 2022-10-20T11:15:20Z | 297 | tiangolo/fastapi | 23,059 |

fix(monitors): Remove monitor context when appropriate | diff --git a/src/sentry/utils/monitors.py b/src/sentry/utils/monitors.py

index df8b48cd2be3c..5dab770b20951 100644

--- a/src/sentry/utils/monitors.py

+++ b/src/sentry/utils/monitors.py

@@ -59,16 +59,19 @@ def inner(*a, **k):

@suppress_errors

def report_monitor_begin(task, **kwargs):

+ monitor_id = task.request.h... | https://api.github.com/repos/getsentry/sentry/pulls/11869 | 2019-02-03T23:35:30Z | 2019-02-04T16:26:56Z | 2019-02-04T16:26:56Z | 2020-12-20T20:38:59Z | 337 | getsentry/sentry | 44,264 | |

[GorillaVid] Added GorillaVid extractor | diff --git a/youtube_dl/extractor/__init__.py b/youtube_dl/extractor/__init__.py

index 15a42ce4424..6c9a7593ae3 100644

--- a/youtube_dl/extractor/__init__.py

+++ b/youtube_dl/extractor/__init__.py

@@ -109,6 +109,7 @@

from .generic import GenericIE

from .googleplus import GooglePlusIE

from .googlesearch import Google... | https://api.github.com/repos/ytdl-org/youtube-dl/pulls/3059 | 2014-06-08T02:16:59Z | 2014-06-17T13:18:51Z | 2014-06-17T13:18:51Z | 2014-06-17T13:20:42Z | 642 | ytdl-org/youtube-dl | 49,698 | |

update centos9stream ami | diff --git a/letstest/targets/targets.yaml b/letstest/targets/targets.yaml

index ed00d6e2b57..dbeb793f694 100644

--- a/letstest/targets/targets.yaml

+++ b/letstest/targets/targets.yaml

@@ -38,7 +38,7 @@ targets:

type: centos

virt: hvm

user: centos

- - ami: ami-0d5144f02e4eb6f05

+ - ami: ami-08f2fe20b72... | our "test farm tests" started failing with [this error](https://dev.azure.com/certbot/certbot/_build/results?buildId=7548&view=logs&j=9ad64ec1-64fe-5c1a-aa80-014a21728434&t=01dc0a99-87f2-5e74-d7b8-4950697fba7c&l=393). googling around i saw https://github.com/boto/boto3/issues/560#issuecomment-572666373 and checking the... | https://api.github.com/repos/certbot/certbot/pulls/9914 | 2024-03-20T00:12:27Z | 2024-03-20T20:18:37Z | 2024-03-20T20:18:37Z | 2024-03-20T20:18:37Z | 157 | certbot/certbot | 2,492 |

Use OpenAI for embeddings when openai mode is selected | diff --git a/private_gpt/components/embedding/embedding_component.py b/private_gpt/components/embedding/embedding_component.py

index 41b4e1f43..61fe6fad1 100644

--- a/private_gpt/components/embedding/embedding_component.py

+++ b/private_gpt/components/embedding/embedding_component.py

@@ -13,14 +13,19 @@ class Embedding... | At the moment, even if openai is selected as the execution mode, local Embeddings are being used.

That blocks fully remote setups based on openai, as it uses HuggingFace torch-based embeddings.

With this fix, openai is used both for the LLM and the embeddings when openai is selected in the .yaml llm->model configu... | https://api.github.com/repos/zylon-ai/private-gpt/pulls/1096 | 2023-10-23T07:54:26Z | 2023-10-23T08:50:42Z | 2023-10-23T08:50:42Z | 2023-10-23T08:50:48Z | 293 | zylon-ai/private-gpt | 38,607 |

DEPR: remove .ix from tests/indexing/multiindex/test_setitem.py | diff --git a/pandas/tests/indexing/multiindex/test_setitem.py b/pandas/tests/indexing/multiindex/test_setitem.py

index 261d2e9c04e77..c383c38958692 100644

--- a/pandas/tests/indexing/multiindex/test_setitem.py

+++ b/pandas/tests/indexing/multiindex/test_setitem.py

@@ -1,5 +1,3 @@

-from warnings import catch_warnings, s... | https://api.github.com/repos/pandas-dev/pandas/pulls/27574 | 2019-07-24T18:59:43Z | 2019-07-25T16:56:28Z | 2019-07-25T16:56:28Z | 2019-07-26T03:06:20Z | 3,216 | pandas-dev/pandas | 45,276 | |

Updated for Onyx platform for distributed processing. | diff --git a/README.md b/README.md

index 93149fe1..4729bf7d 100644

--- a/README.md

+++ b/README.md

@@ -385,6 +385,7 @@ For a list of free-to-attend meetups and local events, go [here](https://github.

* [Flink](http://flink.apache.org/) - Open source platform for distributed stream and batch data processing.

* [Hado... | Hi :)

I saw that the Onyx platform is missing from the list. | https://api.github.com/repos/josephmisiti/awesome-machine-learning/pulls/406 | 2017-07-31T02:52:55Z | 2017-08-01T14:25:54Z | 2017-08-01T14:25:54Z | 2017-08-01T14:25:57Z | 226 | josephmisiti/awesome-machine-learning | 52,034 |

Set up 2.0 pre-releases | diff --git a/.azure-pipelines/2.0-prerelease.yml b/.azure-pipelines/2.0-prerelease.yml

new file mode 100644

index 00000000000..2cdcf8f3035

--- /dev/null

+++ b/.azure-pipelines/2.0-prerelease.yml

@@ -0,0 +1,18 @@

+# Pipeline for testing, building, and deploying Certbot 2.0 pre-releases.

+trigger: none

+pr: none

+

+varia... | This implements the majority of https://docs.google.com/document/d/1t02y0qQvTgc4eNPY4OwfYu7oW41DEBNhr8fq_O97kXo/edit?usp=sharing. The work that remains after this is merged into master is merging this into the 2.0 branch, setting up the 2.0-prerelease pipeline for the 2.0 branch, writing documentation on how to use tha... | https://api.github.com/repos/certbot/certbot/pulls/9400 | 2022-09-07T21:49:30Z | 2022-09-09T21:23:39Z | 2022-09-09T21:23:39Z | 2022-09-09T21:23:40Z | 2,614 | certbot/certbot | 1,123 |

Bump cryptography from 1.7 to 3.2 | diff --git a/requirements_dev.txt b/requirements_dev.txt

index ff7da8df9..f39b3d2a2 100644

--- a/requirements_dev.txt

+++ b/requirements_dev.txt

@@ -6,7 +6,7 @@ flake8

tox==2.3.1

coverage==4.1

Sphinx==1.4.8

-cryptography==1.7

+cryptography==3.2

pyyaml>=4.2b1

face_recognition_models

Click>=6.0

| Bumps [cryptography](https://github.com/pyca/cryptography) from 1.7 to 3.2.

<details>

<summary>Changelog</summary>

<p><em>Sourced from <a href="https://github.com/pyca/cryptography/blob/master/CHANGELOG.rst">cryptography's changelog</a>.</em></p>

<blockquote>

<p>3.2 - 2020-10-25</p>

<pre><code>

* **SECURITY ISSUE:** At... | https://api.github.com/repos/ageitgey/face_recognition/pulls/1236 | 2020-10-27T21:07:21Z | 2021-06-14T10:07:49Z | 2021-06-14T10:07:49Z | 2021-06-14T10:08:01Z | 119 | ageitgey/face_recognition | 22,594 |

Example of pad_to_multiple_of for padding and truncation guide & docstring update | diff --git a/docs/source/en/pad_truncation.mdx b/docs/source/en/pad_truncation.mdx

index f848e23bed502..8862e0be008de 100644

--- a/docs/source/en/pad_truncation.mdx

+++ b/docs/source/en/pad_truncation.mdx

@@ -50,6 +50,7 @@ The following table summarizes the recommended way to setup padding and truncati

| ... | This PR adds a minor update to the docs as previously it was not clear that `pad_to_multiple_of` has to be used with `padding=True`.

Based on https://huggingface.slack.com/archives/C027NLU6CE9/p1679325764920509

| https://api.github.com/repos/huggingface/transformers/pulls/22278 | 2023-03-20T15:58:44Z | 2023-03-20T18:18:55Z | 2023-03-20T18:18:55Z | 2023-11-03T15:31:11Z | 544 | huggingface/transformers | 12,305 |
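
The idea behind padding to a multiple can be illustrated with plain arithmetic: the target length is the sequence length rounded up to the next multiple. The sketch below is a standalone illustration, not the `transformers` implementation; `padded_length`, `pad_ids`, and the `pad_id` default are hypothetical names chosen for this example.

```python
def padded_length(length, multiple):
    """Round `length` up to the nearest multiple of `multiple`."""
    if multiple <= 0:
        raise ValueError("multiple must be positive")
    # Ceiling division written with floor division on negated values.
    return -(-length // multiple) * multiple


def pad_ids(ids, multiple, pad_id=0):
    """Right-pad a list of token ids so its length is a multiple."""
    target = padded_length(len(ids), multiple)
    return ids + [pad_id] * (target - len(ids))
```

With `multiple=8`, a sequence of 5 tokens would be padded to 8 and a sequence of 9 tokens to 16, while a sequence that is already 8 long is left unchanged.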

[NVIDIA] Add FP8 example using TE | diff --git a/examples/tutorial/fp8/mnist/README.md b/examples/tutorial/fp8/mnist/README.md

new file mode 100644

index 000000000000..308549cd29f7

--- /dev/null

+++ b/examples/tutorial/fp8/mnist/README.md

@@ -0,0 +1,7 @@

+# Basic MNIST Example with optional FP8

+

+```bash

+python main.py

+python main.py --use-te # ... | ## 📝 What does this PR do?

Adds a sample script to use the [TransformerEngine](https://github.com/NVIDIA/TransformerEngine) API for a simple classification task on the MNIST dataset with FP8 capability. | https://api.github.com/repos/hpcaitech/ColossalAI/pulls/3080 | 2023-03-09T19:05:08Z | 2023-03-10T08:24:09Z | 2023-03-10T08:24:09Z | 2023-03-10T08:24:09Z | 2,304 | hpcaitech/ColossalAI | 11,774 |

python3: scrapy.__version__, NoneType, urlparse_monkeypatches | diff --git a/scrapy/__init__.py b/scrapy/__init__.py

index af904e89df6..cd362dac565 100644

--- a/scrapy/__init__.py

+++ b/scrapy/__init__.py

@@ -4,6 +4,8 @@

from __future__ import print_function

import pkgutil

__version__ = pkgutil.get_data(__package__, 'VERSION').strip()

+if not isinstance(__version__, str):

+ _... | Python 3 compatibility changes:

1. `__version__` will be `str` in both 2 and 3. Necessary because pkgutil.get_data returns `bytes` in 3.

2. `NoneType` is not available in 3

3. Apply `urlparse_monkeypatches` in Python 2 only. Patches are not needed in 3 (tested in 3.2-3.4).

| https://api.github.com/repos/scrapy/scrapy/pulls/452 | 2013-11-03T16:57:19Z | 2013-11-04T08:54:02Z | 2013-11-04T08:54:02Z | 2014-06-12T16:08:00Z | 587 | scrapy/scrapy | 34,549 |
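
The first point in the row above (`pkgutil.get_data` returning `bytes` on Python 3) can be sketched in isolation. The visible diff guards with `if not isinstance(__version__, str):`, but the line that follows is truncated, so the decoding step below is an assumption rather than Scrapy's actual code; `to_native_str` and the ASCII default are hypothetical.

```python
def to_native_str(value, encoding="ascii"):
    """Return `value` as the interpreter's native `str` type."""
    # On Python 2, bytes is str, so the value passes through untouched.
    # On Python 3, bytes is a distinct type and has to be decoded.
    if not isinstance(value, str):
        value = value.decode(encoding)
    return value


raw = b"0.21\n".strip()  # shape of what a VERSION file read might yield
version = to_native_str(raw)
```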

Update openai_tools.ipynb | diff --git a/docs/docs/modules/agents/agent_types/openai_tools.ipynb b/docs/docs/modules/agents/agent_types/openai_tools.ipynb

index 99260fd32fc29a..51f99a3b9c3190 100644

--- a/docs/docs/modules/agents/agent_types/openai_tools.ipynb

+++ b/docs/docs/modules/agents/agent_types/openai_tools.ipynb

@@ -19,7 +19,7 @@

"\... | typo

<!-- Thank you for contributing to LangChain!

Please title your PR "<package>: <description>", where <package> is whichever of langchain, community, core, experimental, etc. is being modified.

Replace this entire comment with:

- **Description:** a description of the change,

- **Issue:** the issue #... | https://api.github.com/repos/langchain-ai/langchain/pulls/16618 | 2024-01-26T06:51:34Z | 2024-01-26T23:26:27Z | 2024-01-26T23:26:27Z | 2024-01-26T23:26:28Z | 303 | langchain-ai/langchain | 43,127 |

correct spelling | diff --git a/selfdrive/mapd/mapd.py b/selfdrive/mapd/mapd.py

index d3c82bf40f6a6f..b123fc68b75cc8 100755

--- a/selfdrive/mapd/mapd.py

+++ b/selfdrive/mapd/mapd.py

@@ -240,7 +240,7 @@ def mapsd_thread():

if cur_way is not None:

dat.liveMapData.wayId = cur_way.id

- # Seed limit

+ # Speed limit

... | https://api.github.com/repos/commaai/openpilot/pulls/672 | 2019-05-27T18:40:08Z | 2019-05-28T23:16:33Z | 2019-05-28T23:16:33Z | 2019-12-02T12:58:41Z | 141 | commaai/openpilot | 9,461 | |

✏ Fix typo: 'wll' to 'will' in `docs/en/docs/tutorial/query-params-str-validations.md` | diff --git a/docs/en/docs/tutorial/query-params-str-validations.md b/docs/en/docs/tutorial/query-params-str-validations.md

index 6a5a507b91af8..2debd088a0599 100644

--- a/docs/en/docs/tutorial/query-params-str-validations.md

+++ b/docs/en/docs/tutorial/query-params-str-validations.md

@@ -110,7 +110,7 @@ Notice that the... | https://api.github.com/repos/tiangolo/fastapi/pulls/9380 | 2023-04-11T16:16:42Z | 2023-04-13T18:21:17Z | 2023-04-13T18:21:17Z | 2023-04-13T18:21:17Z | 233 | tiangolo/fastapi | 23,478 | |

document inherited attributes for Flask and Blueprint | diff --git a/flask/app.py b/flask/app.py

index 7034b75500..d80514bfc5 100644

--- a/flask/app.py

+++ b/flask/app.py

@@ -346,6 +346,21 @@ def _set_request_globals_class(self, value):

#: .. versionadded:: 0.8

session_interface = SecureCookieSessionInterface()

+ # TODO remove the next three attrs when Sphinx... | Sphinx autodoc `:inherited-members:` doesn't include attributes. The only solution is to duplicate the `_PackageBoundObject` attributes and their docs in the `Flask` and `Blueprint` classes.

The duplicated attributes can be removed once sphinx-doc/sphinx#741 is fixed.

closes #480 | https://api.github.com/repos/pallets/flask/pulls/2363 | 2017-06-06T14:52:41Z | 2017-06-06T14:52:56Z | 2017-06-06T14:52:56Z | 2020-11-14T03:53:04Z | 1,288 | pallets/flask | 20,371 |

Improve JSON output when there is leading data before the actual JSON body | diff --git a/CHANGELOG.md b/CHANGELOG.md

index 847e70b519..ec56074ab6 100644

--- a/CHANGELOG.md

+++ b/CHANGELOG.md

@@ -5,6 +5,8 @@ This project adheres to [Semantic Versioning](https://semver.org/).

## [2.6.0.dev0](https://github.com/httpie/httpie/compare/2.5.0...master) (unreleased)

+- Added support for formattin... | In some special cases, to protect against Cross Site Script Inclusion (XSSI) attacks, the JSON response body starts with a magic prefix line that must be stripped before feeding the rest of the response body to the JSON parser.

Such a prefix is now simply ignored by the parser but still printed in the terminal.

Sup... | https://api.github.com/repos/httpie/cli/pulls/1130 | 2021-08-18T15:03:00Z | 2021-09-21T09:15:43Z | 2021-09-21T09:15:43Z | 2021-10-11T11:54:55Z | 2,953 | httpie/cli | 34,166 |
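
One way to separate a leading prefix from the JSON body it guards is to try decoding from each candidate start position. This is a rough sketch using only the standard library, not HTTPie's implementation; `split_json_prefix` is a hypothetical name, and the sketch assumes the body is a JSON object or array that runs to the end of the text.

```python
import json


def split_json_prefix(text):
    """Return (prefix, parsed_body) for text with optional leading data."""
    decoder = json.JSONDecoder()
    for start, char in enumerate(text):
        # Only an object or array opener can begin the body in this sketch.
        if char not in "{[":
            continue
        try:
            parsed, end = decoder.raw_decode(text, start)
        except json.JSONDecodeError:
            continue
        # Accept the match only if nothing but whitespace follows it.
        if text[end:].strip() == "":
            return text[:start], parsed
    raise ValueError("no JSON body found")


prefix, body = split_json_prefix(")]}',\n{\"a\": 1}")
```

The prefix is returned rather than discarded, so a caller could still print it ahead of the formatted body, in line with the behavior the PR describes.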

Avoid breaking crappy distribution methods. | diff --git a/setup.py b/setup.py

index 16ba717b68..879d94a636 100755

--- a/setup.py

+++ b/setup.py

@@ -30,8 +30,6 @@

readme = f.read()

with open('HISTORY.rst') as f:

history = f.read()

-with open('LICENSE') as f:

- license = f.read()

setup(

name='requests',

@@ -46,7 +44,7 @@

package_dir={'req... | Apparently RPM doesn't like us having the full license text in the 'license' section, as in #1878. Seems innocuous to change this, because realistically who the hell cares?

| https://api.github.com/repos/psf/requests/pulls/1893 | 2014-01-29T19:23:36Z | 2014-01-30T17:23:57Z | 2014-01-30T17:23:57Z | 2021-09-08T23:11:10Z | 161 | psf/requests | 32,136 |

Fix dtype error in MHA layer/change dtype checking mechanism for manual cast | diff --git a/modules/devices.py b/modules/devices.py

index dfffaf24fd0..8f49f7a486b 100644

--- a/modules/devices.py

+++ b/modules/devices.py

@@ -4,7 +4,6 @@

import torch

from modules import errors, shared

-from modules import torch_utils

if sys.platform == "darwin":

from modules import mac_specific

@@ -141,... | ## Description

torch.nn.MultiheadAttention has so many branches inside its forward call that it is impossible to patch its internal computation operations. I decided to make it "always fp32" when manual_cast is used.

And since the dtype error I got is weird, it claims the tensor it got is fp32 and fp8, which shou... | https://api.github.com/repos/AUTOMATIC1111/stable-diffusion-webui/pulls/14791 | 2024-01-29T15:04:34Z | 2024-01-29T17:39:06Z | 2024-01-29T17:39:06Z | 2024-01-29T17:39:09Z | 322 | AUTOMATIC1111/stable-diffusion-webui | 39,710 |

bug fixes and updates for eos connections | diff --git a/lib/ansible/module_utils/eos.py b/lib/ansible/module_utils/eos.py

index f283fe4cbbcbe8..622331cb8daa67 100644

--- a/lib/ansible/module_utils/eos.py

+++ b/lib/ansible/module_utils/eos.py

@@ -94,6 +94,15 @@ class Cli:

def __init__(self, module):

self._module = module

self._device_confi... | * refactors supports_sessions to a property

* exposes supports_sessions as a toplevel function

* adds open_shell() to network_cli

* implements open_shell() in eos action plugin

| https://api.github.com/repos/ansible/ansible/pulls/21534 | 2017-02-16T20:18:41Z | 2017-02-17T01:26:49Z | 2017-02-17T01:26:49Z | 2019-04-26T20:33:12Z | 1,705 | ansible/ansible | 49,072 |

Bitstamp fetchOrder string math | diff --git a/js/bitstamp.js b/js/bitstamp.js

index 8231d27b89ed..1f23a7c42798 100644

--- a/js/bitstamp.js

+++ b/js/bitstamp.js

@@ -1567,8 +1567,10 @@ module.exports = class bitstamp extends Exchange {

}

parseTransactionStatus (status) {

- // withdrawals:

- // 0 (open), 1 (in process), 2 (finis... | ```

% bitstamp fetchLedger LTC

2022-09-15T23:41:33.286Z

Node.js: v18.4.0

CCXT v1.93.59

(node:10554) ExperimentalWarning: The Fetch API is an experimental feature. This feature could change at any time

(Use `node --trace-warnings ...` to show where the warning was created)

bitstamp.fetchLedger (LTC)

2022-09-15T2... | https://api.github.com/repos/ccxt/ccxt/pulls/15019 | 2022-09-15T23:00:05Z | 2022-09-30T16:46:15Z | 2022-09-30T16:46:15Z | 2022-09-30T16:46:16Z | 1,649 | ccxt/ccxt | 13,825 |

Bugfix/changelog | diff --git a/certbot/CHANGELOG.md b/certbot/CHANGELOG.md

index d01d5906908..6f39a27b98d 100644

--- a/certbot/CHANGELOG.md

+++ b/certbot/CHANGELOG.md

@@ -6,7 +6,8 @@ Certbot adheres to [Semantic Versioning](https://semver.org/).

### Added

-*

+* Added the ability to remove email and phone contact information from an... | Fixing a mistake in pull request #8212 where I recorded my changes in an already released version 😳.

| https://api.github.com/repos/certbot/certbot/pulls/8236 | 2020-08-27T00:55:46Z | 2020-08-27T16:45:11Z | 2020-08-27T16:45:11Z | 2020-08-27T16:45:11Z | 451 | certbot/certbot | 2,288 |

Ensure `skip_defaults` doesn't cause extra fields to be serialized | diff --git a/fastapi/routing.py b/fastapi/routing.py

index 930cbe001ff1d..08f43bf524a8d 100644

--- a/fastapi/routing.py

+++ b/fastapi/routing.py

@@ -45,6 +45,8 @@ def serialize_response(

) -> Any:

if field:

errors = []

+ if skip_defaults and isinstance(response, BaseModel):

+ response =... | Currently, if `skip_defaults` is true, the secure cloned field is not used when serializing the response. This can lead to extra information leaking out if the `response_model` differs in type from the returned model.

This pull request fixes this bug, and updates the relevant unit test to check for it.

(The bug w... | https://api.github.com/repos/tiangolo/fastapi/pulls/485 | 2019-08-29T22:59:39Z | 2019-08-30T23:56:14Z | 2019-08-30T23:56:14Z | 2019-09-04T14:30:12Z | 347 | tiangolo/fastapi | 23,567 |

[3.8] bpo-40121: Fix exception type in test (GH-19267) | diff --git a/Lib/test/audit-tests.py b/Lib/test/audit-tests.py

index dda52a5a518f6a..b90c4b8f75794d 100644

--- a/Lib/test/audit-tests.py

+++ b/Lib/test/audit-tests.py

@@ -343,7 +343,7 @@ def hook(event, args):

try:

# Don't care if this fails, we just want the audit message

sock.bind(('127.0.0.1',... | (cherry picked from commit 3ef4a7e5a7c29e17d5152d1fa6ceeb1fee269699)

Co-authored-by: Steve Dower <steve.dower@python.org>

<!-- issue-number: [bpo-40121](https://bugs.python.org/issue40121) -->

https://bugs.python.org/issue40121

<!-- /issue-number -->

| https://api.github.com/repos/python/cpython/pulls/19276 | 2020-04-01T12:45:06Z | 2020-04-01T13:02:56Z | 2020-04-01T13:02:56Z | 2020-04-01T13:12:22Z | 138 | python/cpython | 4,414 |

[FIFA] Back-port extractor from yt-dlp | diff --git a/youtube_dl/extractor/extractors.py b/youtube_dl/extractor/extractors.py

index 947cbe8fdb4..21b17185eca 100644

--- a/youtube_dl/extractor/extractors.py

+++ b/youtube_dl/extractor/extractors.py

@@ -374,6 +374,7 @@

FC2EmbedIE,

)

from .fczenit import FczenitIE

+from .fifa import FifaIE

from .filmon imp... | <details>

<summary>Boilerplate: mixed code, new extractor</summary>

## Please follow the guide below

- You will be asked some questions, please read them **carefully** and answer honestly

- Put an `x` into all the boxes [ ] relevant to your *pull request* (like that [x])

- Use *Preview* tab to see how your *pull... | https://api.github.com/repos/ytdl-org/youtube-dl/pulls/31385 | 2022-11-29T18:58:27Z | 2023-02-02T23:19:03Z | 2023-02-02T23:19:03Z | 2023-02-02T23:19:04Z | 1,554 | ytdl-org/youtube-dl | 50,259 |

remove hangouts.users state, simplifies hangouts.conversations | diff --git a/homeassistant/components/hangouts/hangouts_bot.py b/homeassistant/components/hangouts/hangouts_bot.py

index d4c5606799d9b5..d9ffb4cbace7da 100644

--- a/homeassistant/components/hangouts/hangouts_bot.py

+++ b/homeassistant/components/hangouts/hangouts_bot.py

@@ -195,23 +195,15 @@ async def _async_list_conve... | ## Description:

Removes the `hangouts.users` state; it is nice to read for developers, but useless and confusing for users.

Simplifies `hangouts.conversations`: it is reduced to the id, the name, and a list of usernames for each conversation.

## Checklist:

- [x] The code change is tested and works locall... | https://api.github.com/repos/home-assistant/core/pulls/16191 | 2018-08-25T15:47:16Z | 2018-08-26T19:28:43Z | 2018-08-26T19:28:43Z | 2018-12-10T15:21:42Z | 402 | home-assistant/core | 39,135 |

http2: normalize headers before sending | diff --git a/mitmproxy/proxy/protocol/http2.py b/mitmproxy/proxy/protocol/http2.py

index cdce24b38e..019159defc 100644

--- a/mitmproxy/proxy/protocol/http2.py

+++ b/mitmproxy/proxy/protocol/http2.py

@@ -97,7 +97,6 @@ def __init__(self, ctx, mode: str) -> None:

client_side=False,

header_encodin... | This might have prevented https://github.com/mitmproxy/mitmproxy/pull/2034. | https://api.github.com/repos/mitmproxy/mitmproxy/pulls/2055 | 2017-02-23T11:53:50Z | 2017-02-23T15:04:11Z | 2017-02-23T15:04:11Z | 2017-02-23T15:04:13Z | 226 | mitmproxy/mitmproxy | 27,763 |

Revert "fix: added platform support for ghcr.io images to be run on Apple Sil…" | diff --git a/.github/workflows/docker-build.yaml b/.github/workflows/docker-build.yaml

index 76eef77f73..ac2b8f816c 100644

--- a/.github/workflows/docker-build.yaml

+++ b/.github/workflows/docker-build.yaml

@@ -52,7 +52,6 @@ jobs:

- name: Build and push Docker image

uses: docker/build-push-action@v3.2.0... | Reverts LAION-AI/Open-Assistant#1763 | https://api.github.com/repos/LAION-AI/Open-Assistant/pulls/2080 | 2023-03-15T19:13:20Z | 2023-03-15T19:13:29Z | 2023-03-15T19:13:29Z | 2023-03-15T19:13:30Z | 148 | LAION-AI/Open-Assistant | 37,383 |

Set correct Nginx server root on FreeBSD and Darwin | diff --git a/certbot-nginx/certbot_nginx/constants.py b/certbot-nginx/certbot_nginx/constants.py

index 3f263fea3f5..dfc45120251 100644

--- a/certbot-nginx/certbot_nginx/constants.py

+++ b/certbot-nginx/certbot_nginx/constants.py

@@ -1,9 +1,14 @@

"""nginx plugin constants."""

import pkg_resources

+import platform

+i... | Fork of #5862.

This patch should be merged, not squashed, to preserve authorship. | https://api.github.com/repos/certbot/certbot/pulls/6020 | 2018-05-18T03:05:22Z | 2018-05-21T23:53:12Z | 2018-05-21T23:53:12Z | 2018-06-06T21:49:01Z | 176 | certbot/certbot | 318 |

Include mypy instructions in CONTRIBUTING.md | diff --git a/CONTRIBUTING.md b/CONTRIBUTING.md

index e4c81a5ecd98..76ee1312f345 100644

--- a/CONTRIBUTING.md

+++ b/CONTRIBUTING.md

@@ -155,6 +155,8 @@ We want your work to be readable by others; therefore, we encourage you to note

return a + b

```

+ Instructions on how to install mypy can be found [here](h... | ### **Describe your change:**

* [ ] Add an algorithm?

* [ ] Fix a bug or typo in an existing algorithm?

* [x] Documentation change?

### **Checklist:**

* [ ] I have read [CONTRIBUTING.md](https://github.com/TheAlgorithms/Python/blob/master/CONTRIBUTING.md).

* [x] This pull request is all my own work -- I h... | https://api.github.com/repos/TheAlgorithms/Python/pulls/4271 | 2021-03-19T06:40:10Z | 2021-03-19T10:29:54Z | 2021-03-19T10:29:54Z | 2021-03-21T10:33:17Z | 242 | TheAlgorithms/Python | 30,332 |

installbuilder: use own mirror | diff --git a/release/build.py b/release/build.py

index f14adaf0f1..b1f44cafad 100644

--- a/release/build.py

+++ b/release/build.py

@@ -202,8 +202,8 @@ def installbuilder_installer():

"""Windows: Build the InstallBuilder installer."""

_ensure_pyinstaller_onedir()

- IB_VERSION = "22.10.0"

- IB_SETUP_SHA... | https://api.github.com/repos/mitmproxy/mitmproxy/pulls/6173 | 2023-06-14T10:45:33Z | 2023-06-14T12:06:11Z | 2023-06-14T12:06:11Z | 2023-07-02T20:17:02Z | 424 | mitmproxy/mitmproxy | 27,900 | |

[MINOR] fix link in documentation | diff --git a/docs/pathod/test.rst b/docs/pathod/test.rst

index cd6e8a29c5..b337795ae3 100644

--- a/docs/pathod/test.rst

+++ b/docs/pathod/test.rst

@@ -14,7 +14,7 @@ The canonical docs can be accessed using pydoc:

>>> pydoc pathod.test

The remainder of this page demonstrates some common interaction patterns using

-<... | Fix Nose link in pathod test documentation.

Current: http://docs.mitmproxy.org/en/stable/pathod/test.html

Fixed: http://imgur.com/a/aRDZS | https://api.github.com/repos/mitmproxy/mitmproxy/pulls/1712 | 2016-11-03T21:38:50Z | 2016-11-03T21:57:28Z | 2016-11-03T21:57:28Z | 2016-11-03T22:28:20Z | 209 | mitmproxy/mitmproxy | 27,616 |

Add Qwen adapter support | diff --git a/docs/model_support.md b/docs/model_support.md

index 5f9b349319..21ff29a98d 100644

--- a/docs/model_support.md

+++ b/docs/model_support.md

@@ -31,6 +31,7 @@

- [WizardLM/WizardLM-13B-V1.0](https://huggingface.co/WizardLM/WizardLM-13B-V1.0)

- [baichuan-inc/baichuan-7B](https://huggingface.co/baichuan-inc/ba... | <!-- Thank you for your contribution! -->

<!-- Please add a reviewer to the assignee section when you create a PR. If you don't have the access to it, we will shortly find a reviewer and assign them to your PR. -->

## Why are these changes needed?

1. Support new model

<!-- Please give a short summary of the c... | https://api.github.com/repos/lm-sys/FastChat/pulls/2153 | 2023-08-04T07:17:40Z | 2023-08-08T10:19:27Z | 2023-08-08T10:19:27Z | 2023-08-09T02:48:26Z | 1,480 | lm-sys/FastChat | 41,124 |

CI: add Twine check in check workflow | diff --git a/.github/workflows/checks.yml b/.github/workflows/checks.yml

index b26f344ffb0..e515959ad04 100644

--- a/.github/workflows/checks.yml

+++ b/.github/workflows/checks.yml

@@ -25,6 +25,9 @@ jobs:

- python-version: "3.10" # Keep in sync with .readthedocs.yml

env:

TOXENV: docs

+... | Closes: #5655 | https://api.github.com/repos/scrapy/scrapy/pulls/5656 | 2022-10-02T14:18:39Z | 2022-10-03T12:00:35Z | 2022-10-03T12:00:35Z | 2022-10-03T12:00:35Z | 276 | scrapy/scrapy | 34,254 |

Action: Support running in a docker container | diff --git a/CHANGES.md b/CHANGES.md

index cb637d94c11..a687f3090bf 100644

--- a/CHANGES.md

+++ b/CHANGES.md

@@ -22,6 +22,10 @@

- All upper version bounds on dependencies have been removed (#2718)

+### Integrations

+

+- Update GitHub action to support containerized runs (#2748)

+

## 21.12b0

### _Black_

diff --... | `${{ github.action_path }}` doesn't point to the correct path when run in a Docker container; you have to use the environment variable `GITHUB_ACTION_PATH` instead.

https://github.com/actions/runner/issues/716

<!-- Hello! Thanks for submitting a PR. To help make things go a bit more

smoothly we would appreciate t... | https://api.github.com/repos/psf/black/pulls/2748 | 2022-01-05T06:59:55Z | 2022-01-07T16:50:50Z | 2022-01-07T16:50:50Z | 2022-01-07T17:10:28Z | 238 | psf/black | 24,311 |

Standardize capitalization. | diff --git a/README.md b/README.md

index 9337c587..404e8c9d 100644

--- a/README.md

+++ b/README.md

@@ -124,7 +124,7 @@ For a list of free machine learning books available for download, go [here](http

<a name="cpp-general-purpose" />

#### General-Purpose Machine Learning

-* [MLPack](http://www.mlpack.org/) - A scala... | Hi there,

This fix just brings the capitalization in line with what the mlpack project uses. I hope it is helpful. :)

Thanks!

| https://api.github.com/repos/josephmisiti/awesome-machine-learning/pulls/244 | 2016-02-04T14:36:54Z | 2016-02-04T14:42:40Z | 2016-02-04T14:42:40Z | 2016-02-04T14:42:40Z | 199 | josephmisiti/awesome-machine-learning | 52,504 |
