repo stringclasses 147 values | number int64 1 172k | title stringlengths 2 476 | body stringlengths 0 5k | url stringlengths 39 70 | state stringclasses 2 values | labels listlengths 0 9 | created_at timestamp[ns, tz=UTC] date 2017-01-18 18:50:08 2026-01-06 07:33:18 | updated_at timestamp[ns, tz=UTC] date 2017-01-18 19:20:07 2026-01-06 08:03:39 | comments int64 0 58 ⌀ | user stringlengths 2 28 |

|---|---|---|---|---|---|---|---|---|---|---|

pytorch/pytorch | 70,094 | how to get the pre operator of current operator in PyTorch? | ### 🚀 The feature, motivation and pitch

I want to get the preceding operator of the current operator in forward. Does PyTorch support this now?

### Alternatives

_No response_

### Additional context

_No response_ | https://github.com/pytorch/pytorch/issues/70094 | closed | [] | 2021-12-17T07:01:52Z | 2021-12-17T14:36:30Z | null | kevinVegBird |

pytorch/data | 140 | Installing torchdata installs `example` folder as well | ### 🐛 Describe the bug

Looks like installing torchdata also installs `examples`. This should probably be removed from `setup.py` so that only the `torchdata` folder gets installed.

Example of what happens when trying to uninstall torchdata

```

fmassa@devfair0163:~/work/vision_datasets$ pip uninstall torchdata

F... | https://github.com/meta-pytorch/data/issues/140 | closed | [

"bug"

] | 2021-12-15T14:09:24Z | 2021-12-16T17:05:20Z | 0 | fmassa |

pytorch/TensorRT | 772 | ❓ [Question] Is there support for optional arguments in model's `forward()`? | ## ❓ Question

Is there support for optional arguments in model's `forward()`? For example, I have the following: `def forward(self, x, y: Optional[Tensor] = None):` where `y` is an optional tensor. The return result is `x + y` if `y` is provided, otherwise just `x`.

## What you have already tried

I added a secon... | https://github.com/pytorch/TensorRT/issues/772 | closed | [

"question",

"component: core",

"No Activity"

] | 2021-12-14T22:14:55Z | 2023-02-27T00:02:28Z | null | lhai37 |

pytorch/data | 132 | [TODO] can this also have a timeout? |

This issue is generated from the TODO line

https://github.com/pytorch/data/blob/f102d25f9f444de3380c6d49bf7aaf52c213bb1f/build/lib/torchdata/datapipes/iter/load/online.py#L113

| https://github.com/meta-pytorch/data/issues/132 | closed | [

"todo"

] | 2021-12-10T20:09:55Z | 2022-01-07T21:29:12Z | 0 | VitalyFedyunin |

pytorch/tensorpipe | 417 | how to install pytensorpipe | I built tensorpipe with ninja and tried to build the Python package by running `python setup.py`, but it tells me:

```

make: *** No rule to make target 'pytensorpipe'. Stop.

``` | https://github.com/pytorch/tensorpipe/issues/417 | closed | [] | 2021-12-10T14:21:30Z | 2021-12-10T14:27:30Z | null | eedalong |

pytorch/TensorRT | 771 | ❓ [Question] Get no indications on the exact code that cause errors? | ## ❓ Question

Hi, thanks for making this amazing tool! I ran into some errors when converting my model. However, for some of the errors, there is only information about unsupported operators without any indication of the exact code that causes the errors.

Why does this happen and are there any potential solutions?

... | https://github.com/pytorch/TensorRT/issues/771 | closed | [

"question",

"No Activity"

] | 2021-12-10T13:22:59Z | 2022-04-01T00:02:18Z | null | DeriZSY |

pytorch/TensorRT | 767 | ❓ [Question] Handling non-tensor input of module | ## ❓ Question

Can `torch_tensorrt.compile` handle non-tensor input of the module (for example boolean and integer)? How should I do it? | https://github.com/pytorch/TensorRT/issues/767 | closed | [

"question",

"No Activity"

] | 2021-12-09T09:46:12Z | 2022-04-01T00:02:18Z | null | DeriZSY |

pytorch/pytorch | 69,610 | [Question] How to extract/expose the complete PyTorch computation graph (forward and backward)? | How to extract the complete computation graph PyTorch generates?

Here is my understanding:

1. The forward graph can be generated by `jit.trace` or `jit.script`

2. The backward graph is created from scratch each time `loss.backward()` is invoked in the training loop.

I am attempting to lower the computation gra... | https://github.com/pytorch/pytorch/issues/69610 | open | [

"module: autograd",

"triaged",

"oncall: visualization"

] | 2021-12-08T14:37:00Z | 2025-12-24T06:43:52Z | null | anubane |

pytorch/TensorRT | 765 | ❓ [Question] Sometimes inference time is too slow.. | ## ❓ Question

Thank you for this nice project, I successfully converted [my model](https://github.com/sejong-rcv/MLPD-Multi-Label-Pedestrian-Detection), which feeds multispectral images, using Torch-TensorRT as below.

```

model = torch.load(model_path)['model']

model = model.to(device)

model.eval... | https://github.com/pytorch/TensorRT/issues/765 | closed | [

"question",

"No Activity"

] | 2021-12-08T09:57:32Z | 2022-04-01T00:02:19Z | null | socome |

pytorch/vision | 5,045 | [Discussion] How do we want to handle `torchvision.prototype.features.Feature`'s? | This issue should spark a discussion about how we want to handle `Feature`'s in the future. There are a lot of open questions I'm trying to summarize. I'll give my opinion on each of them. You can find the current implementation under `torchvision.prototype.features`.

## What are `Feature`'s?

`Feature`'s are subc... | https://github.com/pytorch/vision/issues/5045 | open | [

"needs discussion",

"prototype"

] | 2021-12-07T13:17:58Z | 2022-02-11T11:42:36Z | null | pmeier |

pytorch/data | 113 | datapipe serialization support / cloudpickle / parallel support | I've been looking at how we might go about supporting torchdata within TorchX and with components. I was wondering what the serialization options were for transforms and what that might look like.

There's a couple of common patterns that would be nice to support:

* general data transforms (with potentially distri... | https://github.com/meta-pytorch/data/issues/113 | open | [] | 2021-12-04T00:46:36Z | 2022-12-09T15:34:39Z | 7 | d4l3k |

pytorch/TensorRT | 761 | Can I serve my model with Triton Inference Server | ## ❓ Question

<!-- Your question -->

## What you have already tried

<!-- A clear and concise description of what you have already done. -->

## Environment

> Build information about Torch-TensorRT can be found by turning on debug messages

- PyTorch Version (e.g., 1.0):

- CPU Architecture:

- OS (e.... | https://github.com/pytorch/TensorRT/issues/761 | closed | [

"question",

"No Activity"

] | 2021-12-03T14:10:51Z | 2024-09-12T16:27:05Z | null | leo-XUKANG |

pytorch/pytorch | 69,352 | I want to know how to read the LMDB file once when using DDP | Hi, I have a question. I have an LMDB dataset of about 50G. My machine has 100G memory and 8 V100 GPUs of 32GB.

My dataset class looks like this:

```

class MyDataset(Dataset):

    def __init__(self, img_lmdb_dir) -> None:

        super().__init__()

        self.env = lmdb.open( # open LMDB dataset

... | https://github.com/pytorch/pytorch/issues/69352 | open | [

"oncall: distributed",

"module: dataloader"

] | 2021-12-03T07:34:16Z | 2022-12-29T14:32:17Z | null | shoutOutYangJie |

pytorch/pytorch | 69,283 | how to get required arguments name in forward | I want to get the names of the required arguments of different models' `forward` methods, excluding optional arguments. I used Python's `inspect`, but it returned the names of all inputs. I don't know how to handle this. Please help. | https://github.com/pytorch/pytorch/issues/69283 | closed | [] | 2021-12-02T08:14:49Z | 2021-12-02T17:47:53Z | null | TXacs |
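
One possible way to approach the question in this row, as a minimal sketch rather than an answer taken from the issue thread: `inspect.signature` exposes each parameter's default, so required arguments can be selected by checking for `inspect.Parameter.empty`. The `ToyModel` class and the `required_forward_args` helper are hypothetical names used only for illustration; a real `nn.Module` is assumed to behave the same way because only the `forward` signature is inspected.

```python
import inspect


class ToyModel:
    """Hypothetical stand-in for a model; only the forward() signature matters."""

    def forward(self, x, y=None, *extra, scale=1.0, mask, **kwargs):
        return x


def required_forward_args(model):
    """Return the names of forward() parameters that have no default value."""
    # model.forward is a bound method, so `self` is already excluded.
    signature = inspect.signature(model.forward)
    required = []
    for name, param in signature.parameters.items():
        # *args and **kwargs can always be omitted, so they are never required.
        if param.kind in (param.VAR_POSITIONAL, param.VAR_KEYWORD):
            continue
        if param.default is inspect.Parameter.empty:
            required.append(name)
    return required


print(required_forward_args(ToyModel()))  # prints ['x', 'mask']
```

Keyword-only parameters without a default (such as `mask` above) are reported as required as well, which may or may not be what a given caller wants.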

pytorch/pytorch | 69,204 | How to assign tensor to tensor | I have a 3D tensor J, and I want to assign values to it. Below is my code

```

import torch

J = torch.eye(2).unsqueeze(0).expand(5, 2, 2)

for i in range(2):

    J[:, i, :] = torch.randn([5, 2])

```

Then there is an error: unsupported operation: more than one element of the written-to tensor refers to a single mem... | https://github.com/pytorch/pytorch/issues/69204 | closed | [] | 2021-12-01T10:45:06Z | 2021-12-01T20:33:43Z | null | LeZhengThu |

pytorch/pytorch | 69,070 | how to compute the real Jacobian matrix using autograd tool | I want to compute the real Jacobian matrix instead of the vector-Jacobian product. For example, I have

```f=(f1, f2, f3), f1=x1^2+2*x2+x3, f2=x1+x2^3+x3^2, f3=2*x1+x2^2+x3^3```

Then the Jacobian is ```J=[2*x1, 2, 1; 1, 3*x2^2, 2*x3; 2, 2*x2, 3*x3^2] ```

But backward() or grad() only gives the vector-Jacobian produc... | https://github.com/pytorch/pytorch/issues/69070 | closed | [

"module: autograd",

"triaged"

] | 2021-11-30T10:05:01Z | 2021-12-01T19:44:55Z | null | LeZhengThu |
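
The analytic Jacobian quoted in this row can be sanity-checked without any autograd library. The sketch below is an illustration added here, not part of the issue: it compares the matrix from the question against central finite differences in plain Python. The helper names (`f`, `analytic_jacobian`, `numeric_jacobian`) and the step size `eps` are assumptions. PyTorch also provides `torch.autograd.functional.jacobian` for computing the full matrix directly; it is not exercised here.

```python
def f(x1, x2, x3):
    """The three component functions from the question."""
    return (
        x1**2 + 2 * x2 + x3,
        x1 + x2**3 + x3**2,
        2 * x1 + x2**2 + x3**3,
    )


def analytic_jacobian(x1, x2, x3):
    """Jacobian as written in the question: row i holds d f_i / d x_j."""
    return [
        [2 * x1, 2.0, 1.0],
        [1.0, 3 * x2**2, 2 * x3],
        [2.0, 2 * x2, 3 * x3**2],
    ]


def numeric_jacobian(func, point, eps=1e-6):
    """Approximate the Jacobian of func at point with central differences."""
    n = len(point)
    columns = []
    for j in range(n):
        plus = list(point)
        minus = list(point)
        plus[j] += eps
        minus[j] -= eps
        f_plus = func(*plus)
        f_minus = func(*minus)
        # Column j holds the derivative of every output with respect to x_j.
        columns.append([(a - b) / (2 * eps) for a, b in zip(f_plus, f_minus)])
    # Transpose so that rows index outputs and columns index inputs.
    return [[columns[j][i] for j in range(n)] for i in range(len(columns[0]))]


point = (1.0, 2.0, 3.0)
for analytic_row, numeric_row in zip(analytic_jacobian(*point), numeric_jacobian(f, point)):
    print(analytic_row, [round(v, 4) for v in numeric_row])
```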

pytorch/pytorch | 69,068 | how to build libtorch without mkl? | ## ❓ Questions and Help

I downloaded libtorch (CPU) 1.3.0 from [pytorch](https://download.pytorch.org/libtorch/cpu/libtorch-cxx11-abi-shared-with-deps-1.3.0%2Bcpu.zip). The dependency libraries are as follows:

.

But when I use torch_tensorrt.ts.compile interface to convert the int8 model to trt , errors happen, such as "ERROR: [Torch-TensorRT] - **Unsupported operator: quantized::linear**" , "**... | https://github.com/pytorch/TensorRT/issues/744 | closed | [

"question",

"No Activity"

] | 2021-11-26T11:11:00Z | 2022-03-13T00:02:19Z | null | jiinhui |

pytorch/pytorch | 68,925 | How to implement `bucket_by_sequence_length` with IterableDataset and DataLoader | ## How to implement `bucket_by_sequence_length` with IterableDataset and DataLoader?

I have a custom **IterableDataset** for question answering, which reads training data from a huge file. I want to bucket the training examples by their sequence length, like `tf.data.Dataset.bucket_by_sequence_length`.

Any d... | https://github.com/pytorch/pytorch/issues/68925 | open | [

"module: dataloader",

"triaged",

"module: data"

] | 2021-11-26T03:06:17Z | 2021-11-30T15:32:48Z | null | luozhouyang |
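
The bucketing idea asked about in this row can be sketched over any stream of examples with a plain generator. This is a minimal illustration under stated assumptions, not the approach from the issue thread: `bucket_by_length`, its parameters, and the flush-at-end policy are all hypothetical choices, and padding is left out.

```python
import bisect
from collections import defaultdict


def bucket_by_length(examples, boundaries, batch_size, length_fn=len):
    """Yield batches whose members fall in the same length bucket.

    boundaries must be sorted ascending; an example of length L goes to
    bucket k, where k is the number of boundaries that are <= L.
    """
    buckets = defaultdict(list)
    for example in examples:
        bucket_id = bisect.bisect_right(boundaries, length_fn(example))
        buckets[bucket_id].append(example)
        if len(buckets[bucket_id]) == batch_size:
            yield buckets[bucket_id]
            buckets[bucket_id] = []  # start a fresh list for this bucket
    # Flush partially filled buckets once the stream is exhausted.
    for bucket_id in sorted(buckets):
        if buckets[bucket_id]:
            yield buckets[bucket_id]


stream = ["a", "bb", "cccc", "d", "eeeee", "ff"]
for batch in bucket_by_length(stream, boundaries=[3], batch_size=2):
    print(batch)
```

A generator like this could be called from an `IterableDataset.__iter__` so that the dataset yields whole batches, with the `DataLoader` created using `batch_size=None` to disable automatic batching; that wiring is not shown or tested here.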

pytorch/TensorRT | 740 | What's the difference compared to the native TensorRT SDK? | I previously converted a PyTorch model to ONNX format and tried to run it with the native TensorRT SDK, but it failed because some operators in the model are not supported by the TensorRT SDK. If I use Torch-TensorRT to run the model, will I still have the same problem? Are there any additional operators supported compared to the native TensorRT SDK?

| https://github.com/pytorch/TensorRT/issues/740 | closed | [

"question"

] | 2021-11-23T08:09:14Z | 2021-11-29T20:14:45Z | null | pango99 |

pytorch/android-demo-app | 213 | how to convert torchscript_int8@tracing file to pt file? | I have a custom model file, i.e. model.jit. How can I convert it to d2go.pt? | https://github.com/pytorch/android-demo-app/issues/213 | closed | [] | 2021-11-23T03:34:57Z | 2022-06-29T08:42:41Z | null | cloveropen |

pytorch/pytorch | 68,729 | How to specify the backends when running on CPU | ## ❓ Questions and Help

### How to specify the backends when running on CPU.

Hi, I noticed that there are multiple backends available on CPU in pytorch: mkl, mkldnn, openmp.

How do I know which backend pytorch is using in current model and can I specify the backend?

- [Discussion Forum](https://discuss.pytor... | https://github.com/pytorch/pytorch/issues/68729 | closed | [] | 2021-11-22T13:54:35Z | 2021-11-22T18:53:55Z | null | zheng-ningxin |

huggingface/transformers | 14,482 | where can I find the dataset bert-base-chinese is pretrained on? | | https://github.com/huggingface/transformers/issues/14482 | closed | [] | 2021-11-22T09:22:51Z | 2021-12-30T15:02:07Z | null | BoomSky0416 |

pytorch/xla | 3,221 | [Question] How to do deterministic training on GPUs. | ## ❓ Questions and Help

Hi, I'm testing torch xla on GPU. The script used is based on the test_train_mp_mnist.py. I changed the data input to be consistent for all workers (use the same dataset, no distributed sampler, turn off shuffle), don't adjust the learning rate, adding deterministic functions, and adding logic... | https://github.com/pytorch/xla/issues/3221 | closed | [

"stale",

"xla:gpu"

] | 2021-11-22T09:16:11Z | 2022-04-28T00:10:33Z | null | cicirori |

pytorch/hub | 254 | How to use hub if don't have network? | Downloading: "https://github.com/ultralytics/yolov5/archive/master.zip" to /root/.cache/torch/hub/master.zip

It always stops at the line above.

Is there any way to use hub offline? | https://github.com/pytorch/hub/issues/254 | closed | [] | 2021-11-22T08:44:47Z | 2021-11-22T09:40:01Z | null | Skypow2012 |

pytorch/tutorials | 1,747 | Is it possible to perform partial conversion of a pytorch model to ONNX? | I have the following VAE model in pytorch that I would like to convert to ONNX (and eventually to TensorFlow):

https://github.com/jlalvis/VAE_SGD/blob/master/VAE/autoencoder_in.py

I am only interested in the decoding part of the model. Is it possible to convert only the decoder to ONNX?

Thanks in advance :)

cc ... | https://github.com/pytorch/tutorials/issues/1747 | closed | [

"question",

"onnx"

] | 2021-11-19T10:49:56Z | 2023-03-07T17:36:34Z | null | ShiLevy |

pytorch/functorch | 280 | How to update the original model parameters after calling make_functional? | As per the title, I find that updating the tensors pointed by the `params` returned by `make_functional` does not update the real parameters in the original model.

Is there a way to do this? I find that it would be extremely useful to implement optimization algorithms in a way that is more similar to their mathematica... | https://github.com/pytorch/functorch/issues/280 | open | [

"actionable"

] | 2021-11-19T08:54:25Z | 2022-04-13T22:32:19Z | null | trenta3 |

pytorch/TensorRT | 733 | Unable to use any Torch-TensorRT methods | I'm facing this error:

> AttributeError: module 'torch_tensorrt' has no attribute 'compile'

I also get this error when I try to use any other method like Input().

This is how I installed Torch-TensorRT:

`pip install torch-tensorrt -f github.com/NVIDIA/Torch-TensorRT/releases`

Code (from official document... | https://github.com/pytorch/TensorRT/issues/733 | closed | [

"question",

"No Activity",

"channel: windows"

] | 2021-11-18T20:40:58Z | 2022-10-27T13:01:48Z | null | Arjunp24 |

pytorch/TensorRT | 732 | ❓ [Question] More average batch time for torch-tensorrt compiled model than torchscript model (fp32 mode). | ## ❓ Question

I am comparing the performance of the torchscript model and the torch-tensorrt compiled model. When I run in float32 mode, the average batch time is higher for the torch-tensorrt model. Is this expected? 1. I am running the below code to compare torchscript model and torch-tensorrt compiled models,

... | https://github.com/pytorch/TensorRT/issues/732 | closed | [

"question",

"No Activity"

] | 2021-11-18T17:58:10Z | 2022-02-28T17:49:23Z | null | harishkool |

huggingface/transformers | 14,440 | What does "is_beam_sample_gen_mode" mean | Hi, I find there are many ways for generating sequences in `Transformers` (when calling the `generate` method).

According to the code there:

https://github.com/huggingface/transformers/blob/01f8e639d35feb91f16fd3c31f035df11a726cc5/src/transformers/generation_utils.py#L947-L951

As far as I know:

`is_greedy_gen_mode`... | https://github.com/huggingface/transformers/issues/14440 | closed | [] | 2021-11-18T06:31:52Z | 2023-02-28T05:13:29Z | null | huhk-sysu |

pytorch/TensorRT | 730 | Convert YoloV5 models | It is my understanding that the new stable release should be able to convert any PyTorch model with fallback to PyTorch when operations cannot be directly converted to TensorRT.

I am trying to convert YoloV5s6 to TensorRT using the code that you can find below. I believe that it would be great ... | https://github.com/pytorch/TensorRT/issues/730 | closed | [

"question",

"No Activity",

"component: partitioning"

] | 2021-11-17T18:37:44Z | 2023-02-27T00:02:29Z | null | mfoglio |

pytorch/TensorRT | 727 | I trained a model by libtorch, how to convert it to tensorrt? | By libtorch, not by pytorch.

How do I convert the model to tensorrt? | https://github.com/pytorch/TensorRT/issues/727 | closed | [

"question",

"No Activity"

] | 2021-11-17T07:35:55Z | 2022-02-26T00:01:58Z | null | henbucuoshanghai |

pytorch/vision | 4,949 | GPU usage keeps increasing marginally with each inference request | ### 🐛 Describe the bug

I have been trying to deploy the RetinaNet pre-trained model available in torchvision. However, after every inference request with exactly the same image, the gpu usage keeps increasing marginally (by roughly 10 MiB, as visible in nvidia-smi). (Same behavior is noticed if I try the same with othe... | https://github.com/pytorch/vision/issues/4949 | closed | [

"question",

"module: models",

"topic: object detection"

] | 2021-11-16T19:43:49Z | 2024-02-28T15:01:40Z | null | shv07 |

pytorch/android-demo-app | 209 | How to add language model in ASR demo | The wav2vec2 used in the SpeechRecognition example does not have a language model. How to add language model in the demo app? | https://github.com/pytorch/android-demo-app/issues/209 | open | [] | 2021-11-16T10:55:43Z | 2021-12-10T11:36:07Z | null | guijuzhejiang |

pytorch/pytorch | 68,414 | I used libtorch to train a model, how to convert it to onnx? | libtorch trained a model | https://github.com/pytorch/pytorch/issues/68414 | closed | [

"module: onnx",

"triaged"

] | 2021-11-16T07:30:36Z | 2021-11-17T00:03:41Z | null | henbucuoshanghai |

pytorch/torchx | 345 | slurm_scheduler: handle OCI images | ## Description

<!-- concise description of the feature/enhancement -->

Add support for running TorchX components via the Slurm OCI interface.

## Motivation/Background

<!-- why is this feature/enhancement important? provide background context -->

Slurm 21.08+ has support for running OCI containers as the envi... | https://github.com/meta-pytorch/torchx/issues/345 | open | [

"enhancement",

"module: runner",

"slurm"

] | 2021-11-15T23:25:21Z | 2021-11-15T23:25:21Z | 0 | d4l3k |

pytorch/torchx | 344 | workspace notebook UX | ## Description

<!-- concise description of the feature/enhancement -->

We should add some notebook specific integrations to make working with workspace and launching remote jobs first class. This builds upon the workspace support tracked by #333.

## Motivation/Background

<!-- why is this feature/enhancement imp... | https://github.com/meta-pytorch/torchx/issues/344 | open | [

"enhancement",

"module: runner"

] | 2021-11-15T22:50:59Z | 2021-11-15T22:50:59Z | 0 | d4l3k |

pytorch/android-demo-app | 208 | How to run model with grayscale input? | | https://github.com/pytorch/android-demo-app/issues/208 | open | [] | 2021-11-15T09:59:11Z | 2021-11-15T09:59:11Z | null | bartproo |

pytorch/torchx | 340 | Advanced Pipeline Example Errors on KFP | ## 📚 Documentation

## Link

https://pytorch.org/torchx/main/examples_pipelines/kfp/advanced_pipeline.html#sphx-glr-examples-pipelines-kfp-advanced-pipeline-py

## What does it currently say?

The pipeline.yaml can be generated and run on Kubeflow

## What should it say?

Unknown, I believe it is a race conditio... | https://github.com/meta-pytorch/torchx/issues/340 | closed | [] | 2021-11-13T06:40:22Z | 2022-01-04T05:06:42Z | 2 | sam-h-bean |

pytorch/torchx | 339 | separate .torchxconfig for fb/ and oss | ## Description

We want to have a FB internal .torchxconfig file to specify scheduler_args for internal cluster and a OSS .torchxconfig file to run on public clusters

## Motivation/Background

<!-- why is this feature/enhancement important? provide background context -->

## Detailed Proposal

<!-- provide a det... | https://github.com/meta-pytorch/torchx/issues/339 | closed | [] | 2021-11-12T19:34:39Z | 2021-11-16T00:31:48Z | 1 | colin2328 |

pytorch/xla | 3,212 | How to enable oneDNN optimization? | ## ❓ Questions and Help

I am doing training and inference on XLA_CPU, but I find that the training speed is particularly slow. Compared with pytorch, the training speed is about 10 times slower.

According to the log, I found that mkldnn acceleration is enabled by default during pytorch training, but when I use xla tr... | https://github.com/pytorch/xla/issues/3212 | closed | [

"question",

"stale"

] | 2021-11-12T08:26:47Z | 2022-04-16T13:44:03Z | null | ZhongYFeng |

pytorch/TensorRT | 708 | ImportError: libtorch_cuda_cu.so: cannot open shared object file: No such file or directory | I installed torch-tensorrt via pip: `pip3 install torch-tensorrt -f github.com/NVIDIA/Torch-TensorRT/releases`. And when I try to import it the _ImportError_ raises:

_ImportError: libtorch_cuda_cu.so: cannot open shared object file: No such file or directory_

The full error:

```

ImportError ... | https://github.com/pytorch/TensorRT/issues/708 | closed | [

"question"

] | 2021-11-11T09:04:07Z | 2022-07-29T15:02:57Z | null | anvarganiev |

pytorch/TensorRT | 697 | why https://github.com/NVIDIA/Torch-TensorRT/releases/torch_tensorrt-1.0.0-cp36-cp36m-linux_x86_64.whl depends on cuda 10.2 library |

why https://github.com/NVIDIA/Torch-TensorRT/releases/torch_tensorrt-1.0.0-cp36-cp36m-linux_x86_64.whl depends on cuda 10.2 library

<!-- Your question -->

when I try to install torch-tensorrt and import torch_tensorrt ,It was reported ImportError:libcudart.so.10.2: cannot open shared object file: No such file o... | https://github.com/pytorch/TensorRT/issues/697 | closed | [

"question"

] | 2021-11-10T12:48:15Z | 2021-11-10T17:02:41Z | null | ylz1104 |

pytorch/torchx | 336 | [docs] add context/intro to each docs page | ## 📚 Documentation

## Link

Ex: https://pytorch.org/torchx/main/basics.html

and some other pages

## What does it currently say?

doesn't currently have an intro about the page and how it fits in context, just jumps right into the documentation

## What should it say?

<!-- the proposed new documentation -->... | https://github.com/meta-pytorch/torchx/issues/336 | closed | [

"documentation"

] | 2021-11-08T23:26:47Z | 2021-11-11T18:33:18Z | 1 | d4l3k |

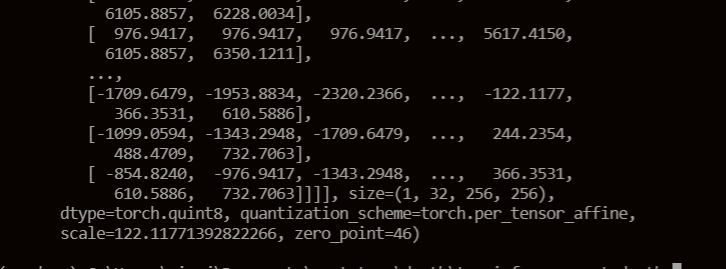

pytorch/pytorch | 67,965 | how to set the quantized data type in QAT | When I use QAT and extract an intermediate layer's output, I find it's quint8.

This datatype will be the input to the next layer I think.

But the weights are qint8 type. multiple a qint8... | https://github.com/pytorch/pytorch/issues/67965 | closed | [

"oncall: quantization"

] | 2021-11-07T06:03:27Z | 2021-11-09T13:45:28Z | null | mathmax12 |

pytorch/tutorials | 1,742 | ddp_pipeline | I ran the code as is on the cluster. Gives

`RuntimeError: unsupported operation: some elements of the input tensor and the written-to tensor refer to a single memory location. Please clone() the tensor before performing the operation.`

What could be wrong? Also, is there any way to run this code in Jupyter? By th... | https://github.com/pytorch/tutorials/issues/1742 | closed | [] | 2021-11-06T01:13:50Z | 2022-09-28T15:11:42Z | 2 | RomanKoshkin |

pytorch/pytorch | 67,757 | How to build libtorch on aarch64 machine? | I used command lines below to build libtorch on aarch64 machine.

```shell

git clone https://github.com/pytorch/pytorch --recursive

cd pytorch

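pip3 install pyyaml # install the missing dependency; if others are missing, install them one by one

export USE_CUDA=False # use CPU

export BUILD_TEST=False # do not build the tests

python3 ../tools/build_libtorch.py # automatically creates the build folder and runs the build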

... | https://github.com/pytorch/pytorch/issues/67757 | closed | [] | 2021-11-03T08:19:34Z | 2021-11-04T13:43:36Z | null | zihaoliao |

pytorch/pytorch | 67,596 | How to upgrade the NCCL version of pytorch 1.7.1 from 2.7.8 to 2.11.4? |

I have installed version 2.11.4 in wsl2 and can pass the nccl-tests. However, when training the model, pytorch 1.7.1 still calls NCCL 2.7.8. In addition to rebuilding, is there a way for pytorch 1.7.1 to call NCCL 2.11.4 in the system instead of calling the compiled version NCCL 2.7.8? | https://github.com/pytorch/pytorch/issues/67596 | closed | [] | 2021-10-31T09:01:45Z | 2021-11-02T11:41:14Z | null | cascgu |

pytorch/android-demo-app | 198 | How to reduce the size of pt file | Thanks for your Image Segmentation deepLab v3. I have used it to implement an android file, but the size is about 150MB. Can you enlighten me how can I reduce the size. Thanks. | https://github.com/pytorch/android-demo-app/issues/198 | open | [] | 2021-10-31T07:36:36Z | 2021-10-31T07:36:36Z | null | jjlchui |

pytorch/vision | 4,802 | How to monitor and when to retrain the object detection model in production? | I recently moved a regression model to production and I'm monitoring model drift and data drift using statistical tests. Based on their distributions, I retrain the model.

Could you please tell me how to monitor an object detection model and detect drift?

Do you use statistical test to detect drifts? If yes... | https://github.com/pytorch/vision/issues/4802 | closed | [] | 2021-10-30T12:45:47Z | 2021-10-31T14:33:09Z | null | IamExperimenting |

pytorch/vision | 4,795 | [docs] Pretrained model docs should explain how to specify cache dir and norm_layer | ### 🐛 Describe the bug

https://pytorch.org/vision/stable/models.html?highlight=resnet18#torchvision.models.resnet18

should document:

- how to set cache dir for downloaded models. many university systems have tight quota for home dir that prohibits clogging it with weights. it is explained at the very top of ver... | https://github.com/pytorch/vision/issues/4795 | open | [] | 2021-10-29T11:50:17Z | 2021-11-13T21:46:45Z | null | vadimkantorov |

pytorch/torchx | 316 | [torchx/cli] Implement a torchx "template" subcommand that copies the given builtin | Torchx cli maintains a list of builtin components that are available via `torchx builtin` cmd. The builtin components are the patterns that are configured to execute one or another use-case. Users can use these components without the need to manage their own, e.g.

```

torchx run -s local_cwd dist.ddp --script main... | https://github.com/meta-pytorch/torchx/issues/316 | closed | [

"enhancement",

"cli"

] | 2021-10-28T20:28:07Z | 2021-11-03T21:27:12Z | 0 | aivanou |

pytorch/tutorials | 1,735 | Missing tutorial on using the transformer decoder layer? | Hi, I'm new to transformers.

For research purposes, a colleague and I are trying to implement a transformer for anomaly detection in human pose.

The transformer setting we need is very similar to an autoencoder, where the encoder generates a sort of latent representation and the decoder output is just a model attemp... | https://github.com/pytorch/tutorials/issues/1735 | closed | [] | 2021-10-28T16:27:27Z | 2022-03-17T16:15:07Z | 0 | AndreaLombax |

pytorch/pytorch | 67,438 | how to use torch.jit.script with torch.nn.DataParallel | ### 🐛 Describe the bug

net = torch.nn.DataParallel(net)

net.load_state_dict(state1,False)

with torch.jit.optimized_execution(True):

net_jit = torch.jit.script(net)

torch.jit.frontend.NotSupportedError: Compiled functions can't take variable number of arguments or use keyword-only arguments with defaults:

... | https://github.com/pytorch/pytorch/issues/67438 | closed | [

"oncall: jit"

] | 2021-10-28T12:13:51Z | 2022-11-22T11:58:22Z | null | anliyuan |

pytorch/TensorRT | 685 | ❓ [Question] Does TRTorch v0.1.0 support aten::divonly? | ## ❓ Question

I am trying to compile my model.

However, the compiler stops owing to an error.

Does TRTorch v0.1.0 support `aten::divonly`?

Also, does the newer TRTorch support `aten::divonly`?

## What you have already tried

I searched for the error messages on the internet.

## Environment

pytorch 1.6

TRTorch 0.1.0

... | https://github.com/pytorch/TensorRT/issues/685 | closed | [

"feature request",

"question",

"No Activity"

] | 2021-10-27T16:34:18Z | 2022-02-15T00:01:49Z | null | yoshida-ryuhei |

pytorch/pytorch | 67,338 | how to get the rank list in a new group | ## ❓ Questions and Help

How to get the rank list in a new group? I just find the `distributed.get_rank` and `distributed.get_world_size()` but not `get_rank_list` API.

Thanks :)

cc @pietern @mrshenli @pritamdamania87 @zhaojuanmao @satgera @rohan-varma @gqchen @aazzolini @osalpekar @jiayisuse @SciPioneer @H-Huang | https://github.com/pytorch/pytorch/issues/67338 | closed | [

"oncall: distributed"

] | 2021-10-27T16:30:02Z | 2021-11-05T02:45:16Z | null | hclearner |

pytorch/TensorRT | 682 | ❓ [Question] Is there int8 quantization support with Python? | ## ❓ Question

<!-- Your question -->

I quantized to FP16 using Python, but I didn't find a way to do that with int8.

Let me know if there is support. | https://github.com/pytorch/TensorRT/issues/682 | closed | [

"question"

] | 2021-10-26T21:30:44Z | 2021-10-26T21:51:26Z | null | yokosyun |

pytorch/tutorials | 1,727 | your "numpy_extensions_tutorial.py " example | Hello,

I would like to use the example in your `numpy_extensions_tutorial.py` code, but it appears to compute on a single channel.

Do you happen to know how I can compute it on several channels?

Thanks! | https://github.com/pytorch/tutorials/issues/1727 | closed | [

"question"

] | 2021-10-25T12:09:33Z | 2023-03-06T22:59:39Z | null | lovodkin93 |

huggingface/sentence-transformers | 1,227 | What is the training data to train the checkpoint "nli-roberta-base-v2"? | Hi, I wonder what is the training data for the provided checkpoint "nli-roberta-base-v2"?

The checkpoint name indicates that the training data is related to the nli dataset, but I just want to clarify what it is.

Thanks in advance. | https://github.com/huggingface/sentence-transformers/issues/1227 | closed | [] | 2021-10-25T08:59:45Z | 2021-10-25T09:47:36Z | null | sh0416 |

pytorch/pytorch | 67,157 | What is the replacement in PyTorch>=1.8 for `torch.rfft` in PyTorch <=1.6? | Hi,

I have been working on a project since last year and at that time, the PyTorch version was 1.6. I was using `f1 = torch.rfft(input, signal_ndim=3)` in that version. However, after PyTorch 1.8, `torch.rfft` has been removed. I was trying to use `f2=torch.fft.rfftn(input)` as the replacement, but both the real and... | https://github.com/pytorch/pytorch/issues/67157 | closed | [] | 2021-10-24T14:49:29Z | 2021-10-24T15:23:27Z | null | pengsongyou |

pytorch/pytorch | 67,156 | [ONNX] How to gathering on a tensor with two-dim indexing? | Hi,

How can I perform the following **without** getting a Gather node in my onnx graph? As the Gather node gives me an error in TensorRT 7.

```

x = data[:, x_indices, y_indices]

```

data is tensor of size[32, 64, 1024]

x_indices is tensor of size [50000,] -> range of indices 0 to 31

y_indices is tensor of si... | https://github.com/pytorch/pytorch/issues/67156 | closed | [

"module: onnx"

] | 2021-10-24T13:06:58Z | 2021-10-26T15:33:37Z | null | yasser-h-khalil |

pytorch/data | 81 | Improve debuggability | ## 🚀 Feature

Currently, when iteration on DataPipe starts and Error is raised, the traceback would report at each `__iter__` method pointing to the DataPipe Class file.

It's hard to figure out which part of DataPipe is broken, especially when multiple same DataPipe calls exist in the pipeline.

As normally develop... | https://github.com/meta-pytorch/data/issues/81 | closed | [] | 2021-10-22T18:12:36Z | 2022-03-16T19:42:11Z | 0 | ejguan |

pytorch/pytorch | 67,013 | How to use torch.distributions.multivariate_normal.MultivariateNormal in multi-gpu mode | ## ❓ Questions and Help

In single-GPU mode, MultivariateNormal runs correctly, but when I switch to multi-GPU mode, I always get the error:

G = torch.exp(m.log_prob(Delta))

File "xxxxx", line 210, in log_prob

M = _batch_mahalanobis(self._unbroadcasted_scale_tril, diff)

File "xxxxx", line 57, in _batch_... | https://github.com/pytorch/pytorch/issues/67013 | closed | [

"module: cuda",

"triaged",

"module: linear algebra"

] | 2021-10-21T10:36:41Z | 2023-11-30T13:45:58Z | null | SkylerHuang |

pytorch/android-demo-app | 195 | The Performance of the Deployed Model on Android is Far from What on the PC | Hi,

I trained a model to detect steel rebar based on the yolov5x model. The testing result is good on PC. I followed the guide (https://github.com/pytorch/android-demo-app/pull/185) to convert the model to a torchscript model (ptl) and integrate it into the demo app. Then the demo app could work and output the ... | https://github.com/pytorch/android-demo-app/issues/195 | closed | [] | 2021-10-21T03:21:12Z | 2021-12-10T07:20:54Z | null | joeshow79 |

pytorch/pytorch | 66,916 | how to install torch version1.8.0 with cuda 11.2 | ## ❓ Questions and Help

How can I install torch version 1.8.0 with CUDA 11.2?

| https://github.com/pytorch/pytorch/issues/66916 | closed | [] | 2021-10-20T01:09:16Z | 2021-10-21T15:16:54Z | null | ZTurboX |

pytorch/pytorch | 66,873 | Add documentation for how to work with PyTorch in Windows SSH | Our Windows machines require all dependencies to be installed before you can do anything with PyTorch (like run tests).

We should document how someone could get to a stage where they can work with PyTorch, or provide a script to automate this process.

Moreover, our Windows scripts need cleaning up in general, b... | https://github.com/pytorch/pytorch/issues/66873 | closed | [

"module: docs",

"triaged",

"better-engineering"

] | 2021-10-19T15:25:18Z | 2022-02-28T20:45:59Z | null | janeyx99 |

pytorch/torchx | 277 | Improve docs page toctree index | ## 📚 Documentation

## Link

https://pytorch.org/torchx

## What does it currently say?

No issues with the documentation. This calls for a revamped indexing of the toctree in the torchx docs page

## What should it say?

Make the toctree be:

1. Usage:

- Basic Concepts

- Installation

- 10 Min T... | https://github.com/meta-pytorch/torchx/issues/277 | closed | [] | 2021-10-18T22:30:22Z | 2021-10-20T22:13:50Z | 0 | kiukchung |

huggingface/dataset-viewer | 71 | Download and cache the images and other files? | Fields with an image URL are detected, and the "ImageUrl" type is passed in the features, to let the client (moonlanding) put the URL in `<img src="..." />`.

This means that pages such as https://hf.co/datasets/severo/wit will download images directly from Wikipedia for example. Hotlinking presents various [issues](... | https://github.com/huggingface/dataset-viewer/issues/71 | closed | [

"question"

] | 2021-10-18T15:37:59Z | 2022-09-16T20:09:24Z | null | severo |

pytorch/torchx | 250 | [torchx/configs] Make runopts, Runopt, RunConfig, scheduler_args more consistent | ## Description

Consolidate redundant names, classes, and arguments that represent scheduler `RunConfig`.

## Motivation/Background

Currently there are different names for what essentially ends up being the additional runtime options for the [`torchx.scheduler`](https://pytorch.org/torchx/latest/schedulers.html) (se... | https://github.com/meta-pytorch/torchx/issues/250 | closed | [] | 2021-10-14T15:03:56Z | 2021-10-14T19:14:18Z | 0 | kiukchung |

pytorch/pytorch | 66,511 | Add a config to PRs where we assume there is only 1 GPU available | We recently had a gap in PR coverage where we did not catch when a test case attempted to access an invalid GPU from this PR https://github.com/pytorch/pytorch/pull/65914. We should capture that in PR testing somehow to catch these early next time.

Action:

Make our tests run on only one "available" GPU.

We could t... | https://github.com/pytorch/pytorch/issues/66511 | closed | [

"module: ci",

"module: tests",

"triaged"

] | 2021-10-12T21:42:19Z | 2021-11-15T22:37:13Z | null | janeyx99 |

pytorch/pytorch | 66,418 | How to implement dynamic sampling of training data? | Hi, thank you for your work.

I have multiple training datasets, including real data and synthetic data. When sampling data during training, the ratio of real samples to synthetic samples in a batch must stay between 1:1 and 1:3. How can I achieve this?

Looking forward to your answer, ... | https://github.com/pytorch/pytorch/issues/66418 | closed | [

"module: dataloader",

"triaged"

] | 2021-10-11T12:10:16Z | 2021-10-13T02:38:14Z | null | Danee-wawawa |
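
One possible way to approach the question in issue 66418 above is to generate the index batches yourself. The following is a minimal sketch, not an answer taken from the issue thread: the function name `mixed_batches`, its parameters, and the index layout (real samples first, synthetic samples after them, as in a concatenated dataset) are all assumptions made for illustration. Sampling is done with replacement to keep the sketch short.

```python
import random


def mixed_batches(num_real, num_synth, batch_size, num_batches, seed=0):
    """Yield index batches whose real:synthetic ratio lies between 1:1 and 1:3.

    Hypothetical layout: real samples occupy indices [0, num_real) and
    synthetic samples occupy [num_real, num_real + num_synth).
    """
    # real:synthetic between 1:3 and 1:1  <=>  batch_size/4 <= n_real <= batch_size/2
    min_real = -(-batch_size // 4)  # ceil(batch_size / 4)
    max_real = batch_size // 2      # floor(batch_size / 2)
    if min_real > max_real:
        raise ValueError("batch_size is too small to satisfy the ratio")
    rng = random.Random(seed)
    for _ in range(num_batches):
        # Pick how many real samples this batch gets, then fill the rest
        # of the batch with synthetic samples.
        n_real = rng.randint(min_real, max_real)
        real = [rng.randrange(num_real) for _ in range(n_real)]
        synth = [num_real + rng.randrange(num_synth)
                 for _ in range(batch_size - n_real)]
        batch = real + synth
        rng.shuffle(batch)  # avoid a fixed real-then-synthetic order
        yield batch
```

If the two datasets are combined with `torch.utils.data.ConcatDataset` in that order, the resulting batches should be usable as the `batch_sampler` argument of `DataLoader`, since that argument accepts any iterable that yields lists of indices.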

pytorch/tutorials | 1,705 | StopIteration Error in torch.fx tutorial with TransformerEncoderLayer | I’m trying to run the fx profiling tutorial (tutorials/fx_profiling_tutorial.py at master in pytorch/tutorials on GitHub) on a single nn.TransformerEncoderLayer as opposed to the resnet in the example and I keep running into a StopIteration error. Why is this happening? All I did was replace the resnet with a transfor... | https://github.com/pytorch/tutorials/issues/1705 | closed | [

"question",

"fx",

"easy",

"docathon-h2-2023"

] | 2021-10-09T06:28:59Z | 2023-11-07T00:41:23Z | null | lkp411 |

pytorch/functorch | 192 | Figure out how to market functorch.vmap over torch.vmap that is in PyTorch nightly binaries | Motivation:

- Many folks are using torch.vmap instead of functorch.vmap and basing their initial impressions off of it. We'd like them to use functorch.vmap instead, especially if we do a beta release of functorch out-of-tree.

Constraints:

- Features that rely on it (torch.autograd.functional.jacobian, torch.autog... | https://github.com/pytorch/functorch/issues/192 | closed | [] | 2021-10-08T13:55:19Z | 2022-02-03T14:55:34Z | null | zou3519 |

pytorch/android-demo-app | 189 | how to loadMoudule by Absolutepath | Hello, the objectdetection app runs normally for me. I have a new requirement: the **.pt file is relatively large**, so I don't want to include it in the app and instead want to **load it directly from local storage**.

I use Android Python version 1.8. I found that the pytorch_android class in his jar package does not imple... | https://github.com/pytorch/android-demo-app/issues/189 | open | [] | 2021-10-08T12:09:07Z | 2021-10-08T12:09:07Z | null | dota2mhxy |

pytorch/pytorch | 66,309 | How to find the source kernel code of cumsum (gpu) | ## ❓ Questions and Help

### Please note that this issue tracker is not a help form and this issue will be closed.

Hi,

As the title says, how can I find the kernel source code of cumsum for GPU? I could only find the CPU version. Thanks.

| https://github.com/pytorch/pytorch/issues/66309 | closed | [] | 2021-10-08T09:53:03Z | 2021-10-08T17:47:39Z | null | foreveronehundred |

pytorch/audio | 1,837 | ERROR: Could not find a version that satisfies the requirement torchaudio (from versions: none) ERROR: No matching distribution found for torchaudio | ### 🐛 Describe the bug

ERROR: Could not find a version that satisfies the requirement torchaudio>=0.5.0 (from asteroid) (from versions: none)

ERROR: No matching distribution found for torchaudio>=0.5.0 (from asteroid)

21:40:18-root@Desktop:/pr/Neural/voicefixer_main# pip3 install torchaudio

### Versions

python ... | https://github.com/pytorch/audio/issues/1837 | closed | [

"question"

] | 2021-10-07T21:17:48Z | 2023-07-31T18:37:00Z | null | clort81 |

pytorch/data | 44 | KeyZipper improvement | Currently multiple stacked `KeyZipper` would create a recursive data structure:

```py

dp = KeyZipper(dp, ref_dp1, lambda x: x)

dp = KeyZipper(dp, ref_dp2, lambda x: x[0])

dp = KeyZipper(dp, ref_dp3, lambda x: x[0][0])

```

This is super annoying if we are using the same key for each `KeyZipper`. At the end, it yields ... | https://github.com/meta-pytorch/data/issues/44 | closed | [] | 2021-10-05T17:02:00Z | 2021-10-22T14:45:38Z | 1 | ejguan |
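
To illustrate the nesting that issue 44 above describes, here is a minimal sketch in plain Python. It assumes, based only on the key functions `x`, `x[0]`, and `x[0][0]` in the snippet, that each stacked zip wraps the previous item in an `(item, ref)` pair. The helper `flatten_zipped` is hypothetical and is not part of torchdata.

```python
def flatten_zipped(item, depth):
    """Unnest a left-nested pair produced by `depth` stacked zips.

    With depth=3, (((data, r1), r2), r3) becomes (data, r1, r2, r3).
    """
    refs = []
    for _ in range(depth):
        # Peel off the outermost (item, ref) pair.
        item, ref = item
        refs.append(ref)
    # refs were collected outermost-first, so reverse them.
    return (item, *reversed(refs))
```

For example, `flatten_zipped(((("k", "a"), "b"), "c"), 3)` peels three layers and returns a flat 4-tuple, so the same key function could be used at every level.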

huggingface/datasets | 3,013 | Improve `get_dataset_infos`? | Using the dedicated function `get_dataset_infos` on a dataset that has no dataset-info.json file returns an empty info:

```

>>> from datasets import get_dataset_infos

>>> get_dataset_infos('wit')

{}

```

While it's totally possible to get it (regenerate it) with:

```

>>> from datasets import load_dataset_b... | https://github.com/huggingface/datasets/issues/3013 | closed | [

"question",

"dataset-viewer"

] | 2021-10-04T09:47:04Z | 2022-02-21T15:57:10Z | null | severo |

huggingface/dataset-viewer | 55 | Should the features be associated to a split, instead of a config? | For now, we assume that all the splits of a config will share the same features, but it seems that it's not necessarily the case (https://github.com/huggingface/datasets/issues/2968). Am I right @lhoestq ?

Is there any example of such a dataset on the hub or in the canonical ones? | https://github.com/huggingface/dataset-viewer/issues/55 | closed | [

"question"

] | 2021-10-01T18:14:53Z | 2021-10-05T09:25:04Z | null | severo |

pytorch/pytorch | 65,992 | How to use `MASTER_ADDR` in a distributed training script | ## ❓ Questions and Help

https://pytorch.org/docs/stable/elastic/run.html

> `MASTER_ADDR` - The FQDN of the host that is running worker with rank 0; used to initialize the Torch Distributed backend.

The document says `MASTER_ADDR` is the hostname of the master node. But the hostname may not be resolved by other... | https://github.com/pytorch/pytorch/issues/65992 | closed | [

"oncall: distributed",

"module: elastic"

] | 2021-10-01T09:19:31Z | 2025-02-04T08:17:51Z | null | jasperzhong |

pytorch/serve | 1,262 | What is the Proper Model Save Method? | The example given in the documentation shows downloading and archiving a pre-existing model from PyTorch. But when serving a custom-built model, what is the correct save method?

For example, on the Save/Loading Documentation, there are several save methods:

https://pytorch.org/tutorials/beginner/saving_loading_mode... | https://github.com/pytorch/serve/issues/1262 | closed | [] | 2021-10-01T06:02:40Z | 2021-10-01T06:42:16Z | null | CerebralSeed |

pytorch/pytorch | 65,915 | I am getting undefined symbol: _ZN5torch3jit17parseSchemaOrNameERKNSt7__cxx1112basic_stringIcSt11char_traitsIcESaIcEEE error. This I am getting when I am trying to "import torch from nemo.collections import nlp". I am trying to use pytorch ngc container 21.05. I tried to import torch before the nemo extension. Please s... | ## ❓ Questions and Help

### Please note that this issue tracker is not a help form and this issue will be closed.

We have a set of [listed resources available on the website](https://pytorch.org/resources). Our primary means of support is our discussion forum:

- [Discussion Forum](https://discuss.pytorch.org/)... | https://github.com/pytorch/pytorch/issues/65915 | open | [

"oncall: jit"

] | 2021-09-30T12:08:43Z | 2021-09-30T12:17:54Z | null | gangadharsingh056 |

pytorch/pytorch | 65,816 | How to install PyTorch on ppc64le with pip? | I am going to build a virtual environment (python -m venv) and install PyTorch on a ppc64le machine. There is no pip package for PyTorch on this platform, although one is available in conda. I would rather not use conda because I need to install some specific packages and versions. So, how can I install PyTorch on a ppc64l... | https://github.com/pytorch/pytorch/issues/65816 | closed | [] | 2021-09-29T13:02:10Z | 2021-10-01T18:00:20Z | null | M-Amrollahi |

pytorch/pytorch | 65,689 | Questions in use pack_padded_sequence: how to pack Multiple tensor? | ## ❓ Questions and Help

### Please note that this issue tracker is not a help form and this issue will be closed.

We have a set of [listed resources available on the website](https://pytorch.org/resources). Our primary means of support is our discussion forum:

- [Discussion Forum](https://discuss.pytorch.org/)... | https://github.com/pytorch/pytorch/issues/65689 | closed | [] | 2021-09-27T13:02:55Z | 2021-09-27T23:31:06Z | null | jingxingzhi |

huggingface/dataset-viewer | 52 | Regenerate dataset-info instead of loading it? | Currently, getting the rows with `/rows` requires a previous (internal) call to `/infos` to get the features (type of the columns). But sometimes the dataset-info.json file is missing, or not coherent with the dataset script (for example: https://huggingface.co/datasets/lhoestq/custom_squad/tree/main), while we are usi... | https://github.com/huggingface/dataset-viewer/issues/52 | closed | [

"question"

] | 2021-09-27T11:28:13Z | 2021-09-27T13:21:00Z | null | severo |

pytorch/pytorch | 65,682 | How to export split to ONNX with dynamic split_size? | ## ❓ Questions and Help

I need to implement a dynamic tensor split op at work. But when I try to export this split op to ONNX with a dynamic split_size, it does not seem to work.

I am new to ONNX. Can anyone help me? Thanks a lot.

## To Reproduce

```python

import torch

dummy_input = (torch.tensor([1, 4, 2, 7, 3]),... | https://github.com/pytorch/pytorch/issues/65682 | closed | [

"module: onnx",

"triaged"

] | 2021-09-27T09:27:15Z | 2022-10-27T20:57:22Z | null | Wwwwei |

huggingface/transformers | 13,747 | I want to understand the source code of transformers. Where should I start? Is there a tutorial link? thank you very much! | I want to understand the source code of transformers. Where should I start? Is there a tutorial link? thank you very much! | https://github.com/huggingface/transformers/issues/13747 | closed | [

"Migration"

] | 2021-09-26T08:27:24Z | 2021-11-04T15:06:05Z | null | limengqigithub |

huggingface/accelerate | 174 | What is the recommended way of training GANs? | Currently, the examples folder doesn't contain any example of training a GAN. I wonder what the recommended way of handling multiple models and optimizers is when using accelerate.

In terms of interface, `Accelerator.prepare` can wrap an arbitrary number of models and optimizers at once. However, it seems to me that the ... | https://github.com/huggingface/accelerate/issues/174 | closed | [] | 2021-09-26T07:30:41Z | 2023-10-24T17:55:15Z | null | yuxinyuan |

pytorch/torchx | 199 | Installation from source examples fail | ## 📚 Documentation

## Link


https://github.com/pytorch/torchx#source

## What does it currently say?


```bash

# install torchx sdk and CLI from source

$ pip install -e git+https://github.com/pytorch/torchx.git

```

... | https://github.com/meta-pytorch/torchx/issues/199 | closed | [] | 2021-09-24T16:07:12Z | 2021-10-01T02:28:58Z | 2 | stevebyan |

huggingface/dataset-viewer | 48 | "flatten" the nested values? | See https://huggingface.co/docs/datasets/process.html#flatten | https://github.com/huggingface/dataset-viewer/issues/48 | closed | [

"question"

] | 2021-09-24T12:58:34Z | 2022-09-16T20:10:22Z | null | severo |

huggingface/dataset-viewer | 45 | use `environs` to manage the env vars? | https://pypi.org/project/environs/ instead of utils.py | https://github.com/huggingface/dataset-viewer/issues/45 | closed | [

"question"

] | 2021-09-24T08:05:38Z | 2022-09-19T08:49:33Z | null | severo |

pytorch/torchx | 197 | Documentation feedback | ## 📚 Documentation

At a high level, the repo really needs a glossary of terms on a single page; otherwise it is easy to forget what they mean when you get to a new page. Lots of content can be deleted; specifically, the example application notebooks don't add anything relative to the pipeline examples.

General list of fee... | https://github.com/meta-pytorch/torchx/issues/197 | closed | [] | 2021-09-23T18:01:21Z | 2021-09-27T17:37:49Z | 4 | msaroufim |

huggingface/dataset-viewer | 41 | Move benchmark to a different repo? | It's a client of the API | https://github.com/huggingface/dataset-viewer/issues/41 | closed | [

"question"

] | 2021-09-23T10:44:08Z | 2021-10-12T08:49:11Z | null | severo |

huggingface/dataset-viewer | 35 | Refresh the cache? | Force a cache refresh on a regular basis (cron) | https://github.com/huggingface/dataset-viewer/issues/35 | closed | [

"question"

] | 2021-09-23T09:36:02Z | 2021-10-12T08:34:41Z | null | severo |

pytorch/tutorials | 1,692 | UserWarning during Datasets & DataLoaders Tutorial | Hi,

I am following the 'Introduction to PyTorch' tutorial. During [Datasets & DataLoaders](https://pytorch.org/tutorials/beginner/basics/data_tutorial.html) I copied the following:

```

import torch

from torch.utils.data import Dataset

from torchvision import datasets

from torchvision.transforms import ToTensor

... | https://github.com/pytorch/tutorials/issues/1692 | closed | [

"intro",

"docathon-h1-2023",

"easy"

] | 2021-09-23T06:07:54Z | 2023-06-08T17:07:12Z | 12 | Jelle-Bijlsma |

pytorch/TensorRT | 632 | ❓ [Question] Unknown type name '__torch__.torch.classes.tensorrt.Engine' | ## ❓ Question


When I tried to load a TRT module that had been saved with Python

```

torch::jit::load(trtorch_path);

```

I got this error

```

terminate called after throwing an instance of 'torch::jit::ErrorReport'

what():

Unknown type name '__torch__.torch.classes.tensorrt.Engine':

Se... | https://github.com/pytorch/TensorRT/issues/632 | closed | [

"question"

] | 2021-09-22T08:45:45Z | 2021-09-27T14:38:21Z | null | yokosyun |

pytorch/pytorch | 65,446 | libtorch compile problem. How to get the correct protobuf version? what PROTOBUF_VERSION <3011000 and 3011004 <PROTOBUF_MIN_PROTOC_VERSION? | How to get the correct protobuf version?

When compiling with libtorch, PROTOBUF_VERSION <3011000 and 3011004 <PROTOBUF_MIN_PROTOC_VERSION report an error. Which version should be used? 3011000 also did not show any content. Thank you.

libtorch ==libtorch-cxx11-abi-shared-with-deps-1.9.0+cu102.zip

tor... | https://github.com/pytorch/pytorch/issues/65446 | open | [

"module: build",

"module: protobuf",

"triaged"

] | 2021-09-22T04:28:27Z | 2021-09-22T14:27:18Z | null | ahong007007 |

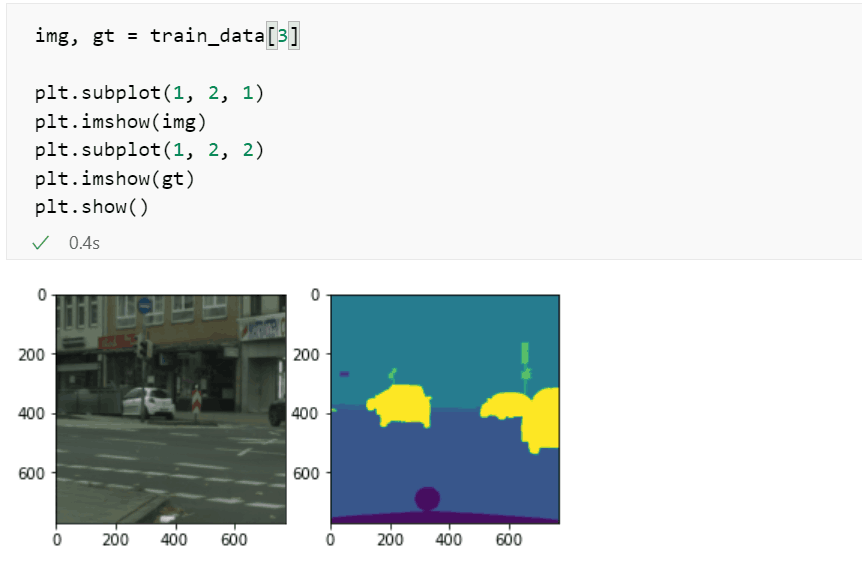

pytorch/pytorch | 65,312 | How to get the same RandomResizedCrop result of img and gt | my code snippet is below

Now, the img and gt get different RandomRe... | https://github.com/pytorch/pytorch/issues/65312 | closed | [] | 2021-09-19T13:54:59Z | 2021-09-21T03:17:23Z | null | HaoRan-hash |

pytorch/vision | 4,446 | Question: FFmpeg dependency | Sorry, I am a bit of a newbie on this subject. I notice that as of Torchvision 0.9+, ffmpeg >= 4.2 is a hard dependency for Linux conda distributions. At Torchvision <= 0.8.2, this was not a dependency. We all know that ffmpeg licensing, and the other dependencies it pulls in, are problematic in some scenarios.

Question... | https://github.com/pytorch/vision/issues/4446 | closed | [

"question",

"topic: build"

] | 2021-09-19T11:21:21Z | 2021-09-24T19:36:12Z | null | rwmajor2 |
