repo (string, 147 classes) | number (int64, 1 to 172k) | title (string, 2 to 476 chars) | body (string, 0 to 5k chars) | url (string, 39 to 70 chars) | state (string, 2 classes) | labels (list, 0 to 9 items) | created_at (timestamp[ns, tz=UTC], 2017-01-18 18:50:08 to 2026-01-06 07:33:18) | updated_at (timestamp[ns, tz=UTC], 2017-01-18 19:20:07 to 2026-01-06 08:03:39) | comments (int64, 0 to 58, nullable) | user (string, 2 to 28 chars) |

|---|---|---|---|---|---|---|---|---|---|---|

pytorch/vision | 4,445 | How to use TenCrop and FiveCrop on video | Hi everyone,

I want to use TenCrop and FiveCrop on video, but I have no idea how to do this.

Can you tell me how to do it?

Sorry, I am new to this field.

Thank you very much! | https://github.com/pytorch/vision/issues/4445 | open | [

"question",

"module: video"

] | 2021-09-18T16:08:03Z | 2021-09-19T12:33:10Z | null | DungVo1507 |
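Editorial sketch for the question above: one common way to think about five-crop and ten-crop on video is to compute the crop boxes once and apply the same boxes to every frame, so each crop stays spatially consistent across the clip. The code below is a plain-Python illustration under that assumption. Frames are nested lists standing in for image tensors, every helper name is hypothetical, and it is not the torchvision implementation (its centre-crop rounding may differ).

```python
# Sketch only: frames are lists of rows of pixels; helper names are made up.

def five_crop_boxes(height, width, crop_h, crop_w):
    """Return (top, left) corners for the four corner crops plus the centre crop."""
    if crop_h > height or crop_w > width:
        raise ValueError("crop size is larger than the frame")
    center_top = (height - crop_h) // 2
    center_left = (width - crop_w) // 2
    return [
        (0, 0),                                # top-left
        (0, width - crop_w),                   # top-right
        (height - crop_h, 0),                  # bottom-left
        (height - crop_h, width - crop_w),     # bottom-right
        (center_top, center_left),             # centre
    ]


def crop(frame, top, left, crop_h, crop_w):
    """Cut a crop_h x crop_w window out of one frame."""
    return [row[left:left + crop_w] for row in frame[top:top + crop_h]]


def hflip(frame):
    """Mirror one frame horizontally."""
    return [row[::-1] for row in frame]


def five_crop_video(frames, crop_h, crop_w):
    """Return 5 clips; clip k holds crop k taken from every frame."""
    height, width = len(frames[0]), len(frames[0][0])
    boxes = five_crop_boxes(height, width, crop_h, crop_w)
    return [[crop(f, t, l, crop_h, crop_w) for f in frames] for (t, l) in boxes]


def ten_crop_video(frames, crop_h, crop_w):
    """Five crops of the clip plus five crops of the horizontally flipped clip."""
    flipped = [hflip(f) for f in frames]
    return five_crop_video(frames, crop_h, crop_w) + five_crop_video(flipped, crop_h, crop_w)
```

With real tensors the same idea would apply per frame (or to a whole `[T, C, H, W]` clip by slicing the last two dimensions), but that mapping is left as an assumption here.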

huggingface/dataset-viewer | 30 | Use FastAPI instead of only Starlette? | It would allow us to have docs, and surely bring a lot of other benefits | https://github.com/huggingface/dataset-viewer/issues/30 | closed | [

"question"

] | 2021-09-17T14:45:40Z | 2021-09-20T10:25:17Z | null | severo |

pytorch/pytorch | 65,199 | How to register a Module as one custom OP when export to onnx | ## ❓ How to register a Module as one custom OP when export to onnx

The custom modules may be split to multiple OPs when using `torch.onnx.export`. In many cases, we can manually optimize these OPs into a custom OP(with a custom node in onnx), and handle it by a plugin in TensorRT. Is there any way to register a Mod... | https://github.com/pytorch/pytorch/issues/65199 | closed | [

"module: onnx"

] | 2021-09-17T06:01:28Z | 2024-02-01T02:38:25Z | null | OYCN |

pytorch/torchx | 184 | Update builtin components to use best practices + documentation | Before stable release we want to do some general cleanups on the current built in components.

- [ ] all components should default to docker images (no /tmp)

- [ ] all components should use `python -m` entrypoints to make it easier to support all environments by using python's resolution system

- [ ] update the com... | https://github.com/meta-pytorch/torchx/issues/184 | closed | [

"documentation",

"enhancement",

"module: components"

] | 2021-09-16T23:16:52Z | 2021-09-21T20:59:19Z | 0 | d4l3k |

pytorch/TensorRT | 626 | ❓ [Question] How to install trtorchc? | ## ❓ Question


How to install trtorchc?

## What you have already tried

I used Dockerfile.21.07 to build the Docker image, but I found that trtorchc can't be used.

So I ran `bazel build //cpp/bin/trtorchc --cxxopt="-DNDEBUG"` to build trtorchc.

However, it doesn't work.

<!-- A clear and concise descri... | https://github.com/pytorch/TensorRT/issues/626 | closed | [

"question"

] | 2021-09-16T10:32:47Z | 2021-09-16T15:54:00Z | null | shiyongming |

pytorch/pytorch | 65,132 | How to reference a tensor variable from a superclass of `torch.Tensor`? | Consider I have the following code where I subclass `torch.Tensor`. I'd like to avoid using `self.t_` and instead access the tensor variable in the superclass. Though, when looking at the PyTorch code, I don't seem to identify how that can be done. Your help is appreciated.

```

class XLATensor(torch.Tensor):

d... | https://github.com/pytorch/pytorch/issues/65132 | open | [

"triaged",

"module: xla"

] | 2021-09-16T07:31:05Z | 2021-09-16T21:35:37Z | null | miladm |

pytorch/pytorch | 64,939 | BC CI error message should link to some information about how to squash the warning | ## 🚀 Feature

The BC CI error message says:

```

The PR is introducing backward incompatible changes to the operator library. Please contact PyTorch team to confirm whether this change is wanted or not.

```

I know the change is wanted, but I don't remember how to actually "add the change so that the BC mechanism... | https://github.com/pytorch/pytorch/issues/64939 | open | [

"module: ci",

"triaged",

"better-engineering"

] | 2021-09-13T17:33:53Z | 2021-09-13T17:38:17Z | null | zou3519 |

pytorch/pytorch | 64,904 | How to use python to implement _VF.lstm | ## How to use python to implement _VF.lstm

Hello! When I wanted to modify the calculation formula of the LSTM, I found that the calculation in nn.LSTM is carried out by _VF.lstm. I found that "_VF.lstm" is written in C++, and I can't find RNN.cpp on my computer.

So I wanna implement _VF.lstm by using python, can ... | https://github.com/pytorch/pytorch/issues/64904 | closed | [] | 2021-09-13T06:41:39Z | 2021-09-14T02:37:26Z | null | TimothyLiuu |
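Editorial sketch for the question above: the standard LSTM recurrence can be written in a few lines of plain Python, which makes it easy to change the formula. The code assumes the gate rows are stacked in the order input, forget, cell, output (the layout documented for `nn.LSTM` weights). It works on Python lists instead of tensors, and all function names are hypothetical. It is not the `_VF.lstm` implementation, only the textbook equations.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def matvec(matrix, vector):
    """Multiply a list-of-rows matrix by a vector."""
    return [sum(w * v for w, v in zip(row, vector)) for row in matrix]


def lstm_cell_step(x, h, c, w_ih, w_hh, b_ih, b_hh):
    """One LSTM time step.

    w_ih has 4*H rows of len(x); w_hh has 4*H rows of H; the biases have 4*H
    entries. Gate order is assumed to be input, forget, cell, output.
    """
    hidden = len(h)
    gates = [
        a + b + p + q
        for a, b, p, q in zip(matvec(w_ih, x), b_ih, matvec(w_hh, h), b_hh)
    ]
    i = [sigmoid(v) for v in gates[0:hidden]]
    f = [sigmoid(v) for v in gates[hidden:2 * hidden]]
    g = [math.tanh(v) for v in gates[2 * hidden:3 * hidden]]
    o = [sigmoid(v) for v in gates[3 * hidden:4 * hidden]]
    # c' = f * c + i * g ;  h' = o * tanh(c')
    c_next = [fv * cv + iv * gv for fv, cv, iv, gv in zip(f, c, i, g)]
    h_next = [ov * math.tanh(cn) for ov, cn in zip(o, c_next)]
    return h_next, c_next


def lstm_forward(sequence, h0, c0, w_ih, w_hh, b_ih, b_hh):
    """Run the cell over a sequence; return all hidden states and the final (h, c)."""
    outputs = []
    h, c = h0, c0
    for x in sequence:
        h, c = lstm_cell_step(x, h, c, w_ih, w_hh, b_ih, b_hh)
        outputs.append(h)
    return outputs, (h, c)
```

To experiment with a modified formula, only the two lines computing `c_next` and `h_next` (or the gate activations) need to change.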

pytorch/pytorch | 64,793 | How to get "finfo" in C++ torchlib like that in pytorch | I am using the C++ torchlib, but I don't know how to do in C++ what the following does in PyTorch:

```python

min_real = torch.finfo(self.logits.dtype).min

# or

min_real = torch.finfo(self.logits.dtype).tiny

```

cc @yf225 @glaringlee | https://github.com/pytorch/pytorch/issues/64793 | closed | [

"module: cpp",

"triaged"

] | 2021-09-10T01:37:53Z | 2021-09-13T01:13:27Z | null | dbsxdbsx |

huggingface/datasets | 2,888 | v1.11.1 release date | Hello, I need to use the latest features in one of my packages, but there has been no new datasets release for 2 months.

When do you plan to publish the v1.11.1 release? | https://github.com/huggingface/datasets/issues/2888 | closed | [

"question"

] | 2021-09-09T21:53:15Z | 2021-09-12T20:18:35Z | null | fcakyon |

pytorch/TensorRT | 620 | ❓ [Question] Is it possible to install TRTorch with CUDA 11.1 support on aarch64? | # ❓ Question

Is there a particular reason why there is no pre-built wheel file for the combination of CUDA 11.1 + aarch64?

# What you have already tried

I have tried to install wheel files for CUDA 10.2 aarch64 but it obviously didn't work because it tried to find the CUDA 10.2 libraries.

# Environment

> Build... | https://github.com/pytorch/TensorRT/issues/620 | closed | [

"question",

"No Activity"

] | 2021-09-09T00:45:37Z | 2021-12-20T00:01:58Z | null | lppllppl920 |

pytorch/text | 1,386 | how to make clear what torchtext._torchtext module do,when i import something from the module. | ## 📚 Documentation

**Description**

Yesterday, I learned Vectors and Vocab from torchtext 0.5. But today, I updated it to torchtext 0.10 with pip install --upgrade, and then I found that Vocab has changed.

Now, when you use the method 'torchtext.vocab.build_vocab_from_iterator' to create instance of Vocab, you will call the m... | https://github.com/pytorch/text/issues/1386 | open | [] | 2021-09-05T07:41:13Z | 2021-09-13T20:57:01Z | null | wn1652400018 |

pytorch/xla | 3,114 | How to aggregate the results running on multiple tpu cores | ## ❓ Questions and Help

Hi, how can we aggregate the results, that is, combine all the predictions and use them further?

I understand this could be an issue addressed earlier; if so, please share some links related to it.

```

def _run():

<model loading, training arguments and etc >

# using hugging face Trai... | https://github.com/pytorch/xla/issues/3114 | closed | [] | 2021-09-03T05:13:35Z | 2021-09-04T08:21:31Z | null | pradeepkr12 |

pytorch/serve | 1,227 | How to torch-model-archiver directory with its content? | I'm trying to generate a .mar file which contains some extra files, including a directory. I'm not sure how I can add that. Here is what I'm trying to archive:

```bash

my_model/

├── [4.0K] 1_Pooling

│ └── [ 190] config.json

├── [ 696] config.json

├── [ 122] config_sentence_transformers.json

├── [ 168] han... | https://github.com/pytorch/serve/issues/1227 | closed | [

"triaged_wait"

] | 2021-09-01T19:44:08Z | 2021-09-01T21:06:36Z | null | spate141 |

huggingface/dataset-viewer | 18 | CI: how to acknowledge a "safety" warning? | We use `safety` to check vulnerabilities in the dependencies. But in the case below, `tensorflow` is marked as insecure while the last published version on PyPI is still 2.6.0. What to do in this case?

```

+==============================================================================+

| ... | https://github.com/huggingface/dataset-viewer/issues/18 | closed | [

"question"

] | 2021-09-01T07:20:45Z | 2021-09-15T11:58:56Z | null | severo |

pytorch/pytorch | 64,334 | How to add nan value judgment for variable t0_1 in fused_clamp kernel generated by torch/csrc/jit/tensorexpr/cuda_codegen.cpp. | For this python program:

```python

import torch

torch._C._jit_set_profiling_executor(True)

torch._C._jit_set_profiling_mode(True)

torch._C._jit_override_can_fuse_on_cpu(True)

torch._C._jit_override_can_fuse_on_gpu(True)

torch._C._debug_set_fusion_group_inlining(False)

torch._C._jit_set_texpr_fuser_enabled(True)

... | https://github.com/pytorch/pytorch/issues/64334 | open | [

"oncall: jit"

] | 2021-09-01T02:35:39Z | 2021-09-01T02:53:24Z | null | HangJie720 |

pytorch/functorch | 106 | how to install torch>=1.10.0.dev | when I run this command

pip install --user "git+https://github.com/facebookresearch/functorch.git"

ERROR: Could not find a version that satisfies the requirement torch>=1.10.0.dev | https://github.com/pytorch/functorch/issues/106 | open | [] | 2021-08-31T09:28:39Z | 2021-11-05T15:45:05Z | null | agdkyang |

pytorch/pytorch | 64,247 | How to optimize jit-script model performance (backend device is gpu) | I have lots of script models. Now I want to optimize their performance; the backend is GPU and the project is written with the C++ API (torch::jit::load).

Is there any way to do this optimization?

Recently, I found that PyTorch supports CUDA graphs now; maybe this is a way to optimize performance.

But there are few documen... | https://github.com/pytorch/pytorch/issues/64247 | open | [

"oncall: jit"

] | 2021-08-31T05:31:44Z | 2021-08-31T05:31:46Z | null | fwz-fpga |

pytorch/pytorch | 64,206 | Document how to generate Pybind bindings for C++ Autograd | ## 🚀 Feature

https://pytorch.org/tutorials/advanced/cpp_autograd.html provides a good example on how to define your own function with a forward and backward pass, along with registering it with the autograd system. However, it lacks any information on how to actually link this module to use in Python / Pytorch / Pybi... | https://github.com/pytorch/pytorch/issues/64206 | open | [

"module: cpp",

"triaged"

] | 2021-08-30T18:12:12Z | 2021-08-31T14:20:23Z | null | yaadhavraajagility |

huggingface/transformers | 13,331 | bert: What is the tf version corresponding to transformers? | I use python3.7, tf2.4.0, cuda11.1 and cudnn 8.0.4 to run bert-base-un and it reports an error

- albert, bert, xlm: @LysandreJik

- tensorflow: @Rocketkn

| https://github.com/huggingface/transformers/issues/13331 | closed | [] | 2021-08-30T11:42:36Z | 2021-08-30T15:46:16Z | null | xmcs111 |

pytorch/tutorials | 1,662 | seq2seq with character encoding | Hi, I am hoping to build a seq2seq model with attention and character-level encoding. The idea is to build a model which can predict a correct name by handling all sorts of spelling mistakes (QWERTY keyboard errors, double typing, omitted words, etc.). My test data will have a few examples of mistyped words mapping to the correct word.... | https://github.com/pytorch/tutorials/issues/1662 | closed | [

"question",

"Text",

"module: torchtext"

] | 2021-08-30T04:10:01Z | 2023-03-06T23:54:02Z | null | manish-shukla01 |

pytorch/vision | 4,332 | Customize the number of input_channels in MobileNetv3_Large | I would like to know how to customize the MobileNetV3_Large torchvision model to accept single-channel inputs with number of classes = 2.

As mentioned in some of the PyTorch discussion forums, I have tried

`model_ft.conv1 = nn.Conv2d(1, 64, kernel_size=7, stride=2, padding=3, bias=False)`

which works for ResNet mo... | https://github.com/pytorch/vision/issues/4332 | closed | [

"question"

] | 2021-08-29T10:48:29Z | 2021-08-31T09:41:23Z | null | ananda1996ai |

pytorch/pytorch | 64,094 | Document how to disable python tests on CI through issues | ## 📚 Documentation

We should document the use of issues to disable tests in a public wiki.

cc @ezyang @seemethere @malfet @walterddr @lg20987 @pytorch/pytorch-dev-infra | https://github.com/pytorch/pytorch/issues/64094 | closed | [

"module: ci",

"triaged",

"better-engineering",

"actionable"

] | 2021-08-27T14:36:39Z | 2021-10-11T21:54:25Z | null | janeyx99 |

pytorch/hub | 222 | torch.hub shouldn't assume model dependencies have __spec__ defined | **Problem**

I'm using torch.hub to load a model that has the `transformers` library as a dependency; however, the last few versions of `transformers` haven't had `__spec__` defined. Currently, this gives an error with torch.hub when trying to load the model and checking that the dependencies exist with `importlib.util.... | https://github.com/pytorch/hub/issues/222 | closed | [

"question"

] | 2021-08-27T13:59:40Z | 2021-08-27T18:03:24Z | null | laurahanu |
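Editorial sketch for the report above: the issue text is cut off, so which `importlib.util` function is involved is an assumption here. If it is `importlib.util.find_spec`, that function raises `ValueError` when an already-imported module has `__spec__` set to `None`. A dependency check can tolerate this by consulting `sys.modules` first. The helper name `dependency_available` is hypothetical and not part of torch.hub.

```python
import importlib.util
import sys
import types


def dependency_available(name):
    """Return True if `name` can be imported, even if the module lacks __spec__."""
    if name in sys.modules:
        # Already imported: no need to look at __spec__ at all.
        return sys.modules[name] is not None
    try:
        return importlib.util.find_spec(name) is not None
    except (ValueError, ModuleNotFoundError):
        # ValueError: broken __spec__; ModuleNotFoundError: missing parent package.
        return False


def make_module_without_spec(name):
    """Register a stand-in module whose __spec__ is None, to reproduce the failure."""
    module = types.ModuleType(name)  # ModuleType leaves __spec__ as None
    sys.modules[name] = module
    return module
```

Calling `importlib.util.find_spec` on a module created by `make_module_without_spec` raises `ValueError`, while `dependency_available` returns `True` for it.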

pytorch/tutorials | 1,660 | Visualizing the results from trained model | I wanted to know how to test any images on the pre-trained model from this tutorial : https://pytorch.org/tutorials/intermediate/torchvision_tutorial.html#putting-everything-together

1) Given that I may just have a single image, how do I feed it to the model?

2) How exactly did you arrive to these results? (image shown... | https://github.com/pytorch/tutorials/issues/1660 | closed | [

"question",

"torchvision"

] | 2021-08-26T21:04:24Z | 2023-02-23T22:48:10Z | null | jspsiy |

pytorch/serve | 1,217 | How to cache inferences with torchserve | Reference architecture showcasing how to cache inferences from torchserve

So potentially the `inference` handler would reach from some cloud cache or KV store

The benefit of this is it'd dramatically reduce latency for common queries

Probably a good level 3-4 bootcamp task for a specific kind of KV store like ... | https://github.com/pytorch/serve/issues/1217 | closed | [

"good first issue"

] | 2021-08-26T18:00:51Z | 2021-10-07T04:36:17Z | null | msaroufim |
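Editorial sketch for the proposal above: the caching idea can be illustrated with an in-process LRU cache keyed by a hash of the request payload. This is a minimal sketch, assuming JSON-serializable payloads. `CachedPredictor` and `predict_fn` are hypothetical names, not TorchServe APIs, and a real deployment would likely swap the dictionary for an external KV store as the issue suggests.

```python
import hashlib
import json
from collections import OrderedDict


class CachedPredictor:
    """Wrap a prediction function with a small LRU cache keyed by payload hash."""

    def __init__(self, predict_fn, max_entries=1024):
        self._predict_fn = predict_fn
        self._max_entries = max_entries
        self._cache = OrderedDict()
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(payload):
        # sort_keys makes the key independent of dictionary ordering.
        blob = json.dumps(payload, sort_keys=True).encode("utf-8")
        return hashlib.sha256(blob).hexdigest()

    def __call__(self, payload):
        key = self._key(payload)
        if key in self._cache:
            self._cache.move_to_end(key)  # mark as most recently used
            self.hits += 1
            return self._cache[key]
        self.misses += 1
        result = self._predict_fn(payload)
        self._cache[key] = result
        if len(self._cache) > self._max_entries:
            self._cache.popitem(last=False)  # evict the least recently used entry
        return result
```

A handler could call the wrapped predictor instead of the model directly; common queries then skip inference entirely, at the cost of serving stale results if the model changes.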

huggingface/dataset-viewer | 15 | Add an endpoint to get the dataset card? | See https://github.com/huggingface/huggingface_hub/blob/main/src/huggingface_hub/hf_api.py#L427, `full` argument

The dataset card is the README.md. | https://github.com/huggingface/dataset-viewer/issues/15 | closed | [

"question"

] | 2021-08-26T13:43:29Z | 2022-09-16T20:15:52Z | null | severo |

huggingface/dataset-viewer | 12 | Install the datasets that require manual download | Some datasets require a manual download (https://huggingface.co/datasets/arxiv_dataset, for example). We might manually download them on the server, so that the backend returns the rows, instead of an error. | https://github.com/huggingface/dataset-viewer/issues/12 | closed | [

"question"

] | 2021-08-25T16:30:11Z | 2022-06-17T11:47:18Z | null | severo |

pytorch/vision | 4,312 | Hidden torch.flatten block in ResNet module | ## 🐛 Bug


This bug arises when the last 2 layers (avg pool and fc) are changed to nn.Identity.

## To Reproduce

Steps to reproduce the behavior:

import torch

import torch.nn as nn

import torchvision

resnet = torchvision.models.resnet18()

resnet.... | https://github.com/pytorch/vision/issues/4312 | closed | [

"question"

] | 2021-08-25T13:09:31Z | 2021-08-25T13:18:34Z | null | 1paragraph |

huggingface/dataset-viewer | 10 | Use /info as the source for configs and splits? | It's a refactor. As the dataset info contains the configs and splits, maybe the code can be factorized. Before doing it: review the errors for /info, /configs, and /splits (https://observablehq.com/@huggingface/quality-assessment-of-datasets-loading) and ensure we will not increase the number of erroneous datasets. | https://github.com/huggingface/dataset-viewer/issues/10 | closed | [

"question"

] | 2021-08-25T09:43:51Z | 2021-09-01T07:08:25Z | null | severo |

pytorch/TensorRT | 597 | ❓ [Question] request a converter: aten::lstm | ERROR: [TRTorch] - Requested converter for aten::lstm, but no such converter was found

Thanks | https://github.com/pytorch/TensorRT/issues/597 | closed | [

"question",

"No Activity"

] | 2021-08-23T12:38:05Z | 2021-12-02T00:01:46Z | null | gaosanyuan |

pytorch/TensorRT | 596 | ❓ [Question] module 'trtorch' has no attribute 'Input' | Why the installed trtorch has no attribute 'Input'? Thanks

trtorch version: 0.3.0 | https://github.com/pytorch/TensorRT/issues/596 | closed | [

"question"

] | 2021-08-23T12:17:20Z | 2021-08-23T16:32:26Z | null | gaosanyuan |

pytorch/TensorRT | 586 | ❓ [Question] Not faster vs torch::jit | ## ❓ Question

I ran my model with both TRTorch and torch::jit in fp16 using the C++ API, but TRTorch is not faster than JIT.

What can I do to find the reason?

Some information may be helpful.

1. I used two plugins to just call the libtorch functions (inverse and grid_sampler).

2. I fix some bugs by change the pytorc... | https://github.com/pytorch/TensorRT/issues/586 | closed | [

"question",

"No Activity"

] | 2021-08-19T13:06:37Z | 2022-03-10T00:02:16Z | null | JuncFang-git |

pytorch/vision | 4,292 | about train fcn questions. | thanks for your great work!

I have read the https://github.com/pytorch/vision/blob/master/references/segmentation/train.py script, and I have some questions.

1. Why use aux_classifier for FCN? Are there any references?

2. Why is the learning rate of aux_classifier ten times the base lr? https://github.com/pyt... | https://github.com/pytorch/vision/issues/4292 | closed | [

"question",

"topic: object detection"

] | 2021-08-19T08:51:34Z | 2021-08-19T11:50:05Z | null | WZMIAOMIAO |

pytorch/xla | 3,090 | How to concatenate all the predicted labels in XLA? | ## ❓ Questions and Help

Hi, does anyone know how to get all predicted labels from all 8 cores of XLA and concatenate them together?

Say I have a model:

`outputs = model(ids, mask, token_type_ids)`

`_, pred_label = torch.max(outputs.data, dim = 1)`

If I do

`all_predictions_np = pred_label.cpu().detach().numpy(... | https://github.com/pytorch/xla/issues/3090 | closed | [] | 2021-08-19T01:13:39Z | 2021-08-19T20:51:58Z | null | gabrielwong1991 |

pytorch/serve | 1,203 | How to add a custom Handler? | ## 📚 Documentation

How can custom handler Python files be added in a friendly and automatic way?

So far, I understand that it is necessary to modify the `pytorch/serve` source code. Is that correct?

In my case, I need a custom handler with

input: numpy array or json or list of numbers

output: numpy array or json or list of numb... | https://github.com/pytorch/serve/issues/1203 | closed | [] | 2021-08-18T01:43:40Z | 2021-08-18T20:01:54Z | null | pablodz |

pytorch/pytorch | 63,395 | How to efficiently (without looping) get data from tensor predicted by a torchscript in C++? | I am calling a torchscript (neural network serialized from Python) from a C++ program:

```

// define inputs

int batch = 3; // batch size

int n_inp = 2; // number of inputs

double I[batch][n_inp] = {{1.0, 1.0}, {2.0, 3.0}, {4.0, 5.0}}; // some random input

std::cout << "inputs" "\n"; // print inputs

... | https://github.com/pytorch/pytorch/issues/63395 | open | [

"oncall: jit"

] | 2021-08-17T12:42:58Z | 2021-11-28T03:54:22Z | null | aiskhak |

pytorch/hub | 218 | DeeplabV3-Resnet101. Where is the mIOU calculation and postprocessing code? | The following link mentions mIOU = 67.4

https://pytorch.org/hub/pytorch_vision_deeplabv3_resnet101/

Is there any codebase where we can refer the evaluation and postprocessing code? | https://github.com/pytorch/hub/issues/218 | closed | [] | 2021-08-16T11:32:49Z | 2021-10-18T11:42:40Z | null | ashg1910 |

pytorch/TensorRT | 580 | ❓ [Question] How to convert nvinfer1::ITensor into at::tensor? | ## ❓ Question

Hi,

How to convert nvinfer1::ITensor into at::tensor? Like #146

## What you have already tried

I want to do some operations use libtorch on the nvinfer1::ITensor. So, can I convert nvinfer1::ITensor into at::tensor? Or I must write a custom converter with the libtorch function?

@xsacha @aar... | https://github.com/pytorch/TensorRT/issues/580 | closed | [

"question"

] | 2021-08-16T09:54:00Z | 2021-08-18T03:02:03Z | null | JuncFang-git |

pytorch/pytorch | 63,304 | How to build a release version libtorch1.8.1 on windows |

## Issue description

I am doing some work with libtorch on Windows 10 recently. I want to build the libtorch library since I have addedd some new features. The build work was done successfully on develop environment. However, when I copy the exe(including dependent DLLs) to another PC(running env, mentioned below), ... | https://github.com/pytorch/pytorch/issues/63304 | closed | [

"module: build",

"module: windows",

"module: docs",

"module: cpp",

"triaged"

] | 2021-08-16T07:30:26Z | 2023-12-19T06:56:17Z | null | RocskyLu |

pytorch/TensorRT | 579 | ❓ memcpy d2d occupies a lot time of inference (resnet50 model after trtorch) | ## ❓ Question


## What you have already tried

I use trtorch to optimize resnet50 model on the IMAGENET as follows

<img width="998" alt="截屏2021-08-16 10 34 32" src="https://user-images.githubusercontent.com/46394627/129503893-4d252f02-07d4-448a-ac9c-7f64f15aa30a.png">

Unfortunately, i fou... | https://github.com/pytorch/TensorRT/issues/579 | closed | [

"question"

] | 2021-08-16T02:51:22Z | 2021-08-19T12:21:32Z | null | zhang-xh95 |

pytorch/vision | 4,276 | I want to convert model resnet to onnx, how to do? | ## 📚 Documentation

<!-- A clear and concise description of what content in https://pytorch.org/docs is an issue. If this has to do with the general https://pytorch.org website, please file an issue at https://github.com/pytorch/pytorch.github.io/issues/new/choose instead. If this has to do with https://pytorch.org/... | https://github.com/pytorch/vision/issues/4276 | closed | [] | 2021-08-14T04:17:42Z | 2021-08-16T08:41:46Z | null | xinsuinizhuan |

pytorch/TensorRT | 576 | ❓ [Question] How can i write a converter just use a Libtorch function? | ## ❓ Question


Hi,

I am trying to write some converter like "torch.inverse", "F.grid_sample", but it's really difficult for me. So, I want to skip that using just some libtorch function.

For example, I want to build a... | https://github.com/pytorch/TensorRT/issues/576 | closed | [

"question"

] | 2021-08-13T02:18:42Z | 2021-08-19T11:49:02Z | null | JuncFang-git |

pytorch/pytorch | 63,182 | Improve doc about docker images and how to run them locally | Updated the wiki page https://github.com/pytorch/pytorch/wiki/Docker-image-build-on-CircleCI

- [x] Document the new ecr_gc job

- [x] How to get images from AWS ECR

- [x] Document how to use the docker image and run `build` and `test` locally | https://github.com/pytorch/pytorch/issues/63182 | closed | [

"module: docs",

"triaged",

"hackathon"

] | 2021-08-12T20:58:43Z | 2021-08-13T00:53:01Z | null | zhouzhuojie |

pytorch/torchx | 132 | Add Torchx Validate command | ## Description

Torchx allows users to develop their own components. Torchx component is defined as a python function with several restrictions as described in https://pytorch.org/torchx/latest/quickstart.html#defining-your-own-component

The `torchx validate` cmd will help users to develop the components.

`torc... | https://github.com/meta-pytorch/torchx/issues/132 | closed | [

"enhancement",

"cli"

] | 2021-08-12T18:38:52Z | 2022-01-22T00:32:22Z | 2 | aivanou |

pytorch/TensorRT | 575 | ❓ [Question] How to build latest TRTorch with Pytorch 1.9.0 | ## ❓ Question

I am trying to build the latest TRTorch with Torch 1.9.0, but I am running into issues. I followed the instructions from [here](https://github.com/NVIDIA/TRTorch/blob/master/README.md).

I also followed https://nvidia.github.io/TRTorch/tutorials/installation.html but was not able to build. Please help!

## What you ... | https://github.com/pytorch/TensorRT/issues/575 | closed | [

"question"

] | 2021-08-12T09:29:10Z | 2021-08-16T02:33:12Z | null | rajusm |

pytorch/pytorch | 63,140 | [documentation] torch.distributed.elastic: illustrate how to write load_checkpoint and save_checkpoint in Train Script | https://pytorch.org/docs/master/elastic/train_script.html

If users want to run elastic jobs, they need to write some logic to load and save checkpoints. A `State` class like this one https://github.com/pytorch/elastic/blob/master/examples/imagenet/main.py#L196 should probably be defined as well.

It is not clear in the documentat... | https://github.com/pytorch/pytorch/issues/63140 | open | [

"module: docs",

"triaged",

"module: elastic",

"oncall: r2p"

] | 2021-08-12T08:54:25Z | 2022-06-03T20:47:29Z | null | gaocegege |
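Editorial sketch for the request above: a resumable train script usually bundles everything it needs to restart into one state object, saves it atomically after each epoch, and loads it on startup. The code below is a minimal sketch under those assumptions. It uses `pickle` and plain dictionaries in place of real model and optimizer state, and the names `State`, `save_checkpoint`, `load_checkpoint`, and `train` are illustrative, not the torch.distributed.elastic API.

```python
import os
import pickle
import tempfile


class State:
    """Everything needed to resume training (stand-ins for model/optimizer state)."""

    def __init__(self):
        self.epoch = 0
        self.best_metric = 0.0
        self.model_state = {}


def save_checkpoint(state, path):
    """Write to a temp file first, then rename, so a crash never leaves a partial file."""
    directory = os.path.dirname(os.path.abspath(path))
    fd, tmp_path = tempfile.mkstemp(dir=directory)
    try:
        with os.fdopen(fd, "wb") as handle:
            pickle.dump(state.__dict__, handle)
        os.replace(tmp_path, path)  # atomic rename within the same directory
    except BaseException:
        if os.path.exists(tmp_path):
            os.remove(tmp_path)
        raise


def load_checkpoint(path):
    """Return the saved state, or a fresh State if no checkpoint exists yet."""
    state = State()
    if os.path.exists(path):
        with open(path, "rb") as handle:
            state.__dict__.update(pickle.load(handle))
    return state


def train(path, total_epochs, train_one_epoch):
    """Resume from the last finished epoch and checkpoint after every epoch."""
    state = load_checkpoint(path)
    for epoch in range(state.epoch, total_epochs):
        train_one_epoch(state)
        state.epoch = epoch + 1
        save_checkpoint(state, path)
    return state
```

When a worker restarts, calling `train` again with the same path skips the epochs that were already completed.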

pytorch/vision | 4,270 | annotation_path parameter in torchvision.datasets.UCF101 is not clear. | ## 📚 Documentation

Please describe what kind of files should be in annotation_path, and what the files should contain. It is not obvious.

cc @pmeier | https://github.com/pytorch/vision/issues/4270 | open | [

"question",

"module: datasets",

"module: documentation"

] | 2021-08-12T03:40:59Z | 2021-08-13T16:50:25Z | null | damtharvey |

pytorch/torchx | 130 | components: copy component | ## Description


Adds a basic copy io component that uses fsspec to allow ingressing data or copying from one location to another.

## Motivation/Background


We previously had a ... | https://github.com/meta-pytorch/torchx/issues/130 | closed | [

"enhancement",

"module: components"

] | 2021-08-11T20:15:42Z | 2021-09-13T18:09:16Z | 1 | d4l3k |

pytorch/torchx | 128 | components: tensorboard component | ## Description


It would be nice to have a tensorboard component that could be used as either a mixin as a new role or standalone. This would make it easy to launch a job and monitor it while it's running.

## Detailed Proposal

<!-- provide a detailed prop... | https://github.com/meta-pytorch/torchx/issues/128 | closed | [

"enhancement",

"module: components"

] | 2021-08-11T19:39:28Z | 2021-11-02T17:49:39Z | 0 | d4l3k |

pytorch/android-demo-app | 177 | How to compress the model size when use the API: module._save_for_lite_interpreter | I want to deploy the model on iOS.

When I deploy the model to Android, the following code works.

mobile = torch.jit.trace(model, input_tensor)

mobile.save(path)

I get a model whose size is 23.4MB.

When I deploy the model to iOS, I must use the following API:

from torch.utils.mobile_optimizer impor... | https://github.com/pytorch/android-demo-app/issues/177 | open | [] | 2021-08-11T10:17:03Z | 2022-02-11T14:21:17Z | null | kunlongsolid |

pytorch/vision | 4,264 | ImportError: cannot import name '_NewEmptyTensorOp' from 'torchvision.ops.misc' | ## 🐛 Bug


## ImportError

Steps to reproduce the behavior:

1. Git clone the repository of [SOLQ](https://github.com/megvii-research/SOLQ)

2. Update the dataset you want to use.

3. Update the data paths in the file SOLQ/datasets/coco.py

4. RUn t... | https://github.com/pytorch/vision/issues/4264 | closed | [

"question"

] | 2021-08-10T05:04:23Z | 2021-08-12T09:19:48Z | null | sagnik1511 |

pytorch/torchx | 121 | cli: support fetching logs from all roles | ## Description


Currently you have to specify which role you want to fetch logs when using `torchx log`. Ideally you could just specify the job name to fetch all of them.

```

torchx log kubernetes://torchx_tristanr/default:sh-hxkkr/sh

```

## Motivation/... | https://github.com/meta-pytorch/torchx/issues/121 | closed | [

"enhancement"

] | 2021-08-09T20:22:07Z | 2021-09-23T18:09:56Z | 0 | d4l3k |
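Editorial sketch for the request above: making the role optional amounts to parsing the identifier with or without a trailing role segment and falling back to every known role when it is absent. The layout `scheduler://session/app_id[/role]` is inferred from the single example in the issue and is an assumption. `parse_log_identifier` and `roles_to_fetch` are hypothetical helpers, not torchx functions.

```python
from collections import namedtuple

LogIdentifier = namedtuple("LogIdentifier", ["scheduler", "session", "app_id", "role"])


def parse_log_identifier(identifier):
    """Split 'scheduler://session/app_id[/role]' into its parts; role may be None."""
    scheduler, sep, rest = identifier.partition("://")
    if not sep or not scheduler:
        raise ValueError("expected scheduler://session/app_id[/role]")
    parts = rest.split("/")
    if len(parts) == 2:
        session, app_id = parts
        role = None
    elif len(parts) == 3:
        session, app_id, role = parts
    else:
        raise ValueError("expected scheduler://session/app_id[/role]")
    return LogIdentifier(scheduler, session, app_id, role)


def roles_to_fetch(identifier, known_roles):
    """Return the single requested role, or every known role if none was given."""
    parsed = parse_log_identifier(identifier)
    if parsed.role is None:
        return list(known_roles)
    if parsed.role not in known_roles:
        raise ValueError("unknown role: " + parsed.role)
    return [parsed.role]
```

Here `known_roles` would come from the job description; how the CLI obtains it is outside this sketch.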

pytorch/xla | 3,076 | What is xm.RateTracker? Why there is no document for this class? | `xm.RateTracker()` is used in the example script. But I can't find any documentation for this class (even the docstring does not exist).

What is this class?

## ❓ Questions and Help

https://github.com/pytorch/xla/blob/81eecf457af5db09a3131a00864daf1ca5b8ed20/test/test_train_mp_mnist.py#L123 | https://github.com/pytorch/xla/issues/3076 | closed | [] | 2021-08-09T15:07:03Z | 2021-08-11T01:54:53Z | null | DayuanJiang |

huggingface/dataset-viewer | 6 | Expand the purpose of this backend? | Depending on the evolution of https://github.com/huggingface/datasets, this project might disappear, or its features might be reduced, in particular, if one day it allows caching the data by self-generating:

- an arrow or a parquet data file (maybe with sharding and compression for the largest datasets)

- or a SQL ... | https://github.com/huggingface/dataset-viewer/issues/6 | closed | [

"question"

] | 2021-08-09T14:03:41Z | 2022-02-04T11:24:32Z | null | severo |

pytorch/examples | 925 | How many data does fast neural style need ? | Hi, I am recently implementing fast neural style with your example but I don't have much disk space for coco dataset instead I used my own dataset which contains 1200 images and the result is not good at all (a totally distorted picture, the style is 'starry night').

with a Makefile. But there is always something wrong. So, could you give me the Makefile that successfully links to the .so file?

## Environment

- PyTorch Version (1.8):

- O... | https://github.com/pytorch/TensorRT/issues/566 | closed | [

"question"

] | 2021-08-09T09:51:43Z | 2021-08-12T01:15:41Z | null | JuncFang-git |

pytorch/pytorch | 62,951 | when call `torch.onnx.export()`, the graph is pruned by default ? how to cancel pruning | ## 🚀 Feature


For example:

```python

import torch

hidden_dim1 = 10

hidden_dim2 = 5

tagset_size = 2

class MyModel(torch.nn.Module):

def __init__(self):

super(MyModel, self).__init__()

self.line1 = torch.nn.Linear(hidden... | https://github.com/pytorch/pytorch/issues/62951 | closed | [

"module: onnx",

"triaged",

"onnx-needs-info"

] | 2021-08-08T14:31:20Z | 2021-09-10T08:09:02Z | null | liym27 |

pytorch/serve | 1,186 | [Question] GPU memory | Hi! Say I have about 10 models and a single GPU is it possible to load a model object for a specific task at the request time and then completely free up the memory for a different model object? For instance, completely deleting it and then reinitialize it when needed. I know this will increase the response time but th... | https://github.com/pytorch/serve/issues/1186 | closed | [

"question",

"triaged_wait"

] | 2021-08-06T17:35:15Z | 2021-08-16T20:56:08Z | null | p1x31 |
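Editorial sketch for the question above: keeping only one model resident at a time can be expressed as a single-slot pool that loads a model on first request and releases the previous one before loading another. This is a sketch under stated assumptions. `SingleSlotModelPool`, the loader callables, and the `release` hook are all hypothetical. With PyTorch the hook would typically drop references and then call `torch.cuda.empty_cache()`, which is not shown because the sketch avoids importing torch.

```python
class SingleSlotModelPool:
    """Hold at most one loaded model; swap it out when a different one is requested."""

    def __init__(self, loaders, release=None):
        self._loaders = dict(loaders)   # name -> zero-argument function returning a model
        self._release = release         # optional cleanup hook called with the old model
        self._name = None
        self._model = None

    def get(self, name):
        """Return the model for `name`, loading it (and evicting the old one) if needed."""
        if name == self._name:
            return self._model
        if name not in self._loaders:
            raise KeyError(name)
        self.evict()
        self._model = self._loaders[name]()
        self._name = name
        return self._model

    def evict(self):
        """Drop the currently loaded model, if any, and run the release hook."""
        if self._name is not None:
            model, self._model, self._name = self._model, None, None
            if self._release is not None:
                self._release(model)
```

As the question anticipates, every switch between tasks pays the full load time, so this trades latency for memory.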

pytorch/java-demo | 26 | How to compile from command line (using javac instead of gradle)? | Hi, could you maybe help with the following?

I want to show a very simple example of running a jitted model, and using gradle seems like quite some overhead ... Is there a way to just use `javac` with a classpath (or some other setup)?

I've been trying

```

javac -cp ~/libtorch/lib src/main/java/demo/App.java... | https://github.com/pytorch/java-demo/issues/26 | closed | [] | 2021-08-06T12:44:05Z | 2021-11-04T13:15:33Z | null | skeydan |

pytorch/TensorRT | 562 | ❓ [Question] How can i get libtrtorchrt.so? | ## ❓ Question

Thanks for your contribution.

I can't get the "libtrtorchrt.so" described in the following document after completing the trtorch. So, how can I get it?

## What you have already tried

... | https://github.com/pytorch/TensorRT/issues/562 | closed | [

"question"

] | 2021-08-06T08:14:01Z | 2021-08-06T10:04:25Z | null | JuncFang-git |

pytorch/vision | 4,257 | R-CNN predictions change with different batch sizes | ## 🐛 Bug

Even when using `model.eval()` I get different predictions when changing the batch size. I've found this issue when working on a project with Faster R-CNN and my own data, but I can replicate it in the tutorial "TorchVision Object Detection Finetuning Tutorial" (https://pytorch.org/tutorials/intermediate/t... | https://github.com/pytorch/vision/issues/4257 | closed | [

"question",

"module: models",

"topic: object detection"

] | 2021-08-06T07:22:41Z | 2021-08-16T12:25:30Z | null | alfonsomhc |

pytorch/TensorRT | 561 | Are FastRCNN models from TorchVision supported in TRTorch? | ## ❓ Question

Tried FastRCNN and MaskRCNN models from TorchVision. The model fails to compile with error "RuntimeError: tuple appears in op that does not forward tuples, unsupported kind: aten::append"

## What you have already tried

code to reproduce:

import torch

print(torch.__version__)

import trtorch

p... | https://github.com/pytorch/TensorRT/issues/561 | closed | [

"feature request",

"question",

"component: lowering",

"No Activity",

"component: partitioning"

] | 2021-08-06T01:36:18Z | 2023-07-29T00:02:10Z | null | saipj |

pytorch/tutorials | 1,637 | Distributed Data Parallel Tutorial UX improvement suggestion | referring to the tutorial: https://pytorch.org/tutorials/intermediate/ddp_tutorial.html

Though the tutorial is broken down into sections, it doesn't show how to actually run the code from each section until the very end of the tutorial.

The code as presented in each section only gives function definitions despit... | https://github.com/pytorch/tutorials/issues/1637 | open | [

"content",

"medium",

"docathon-h2-2023"

] | 2021-08-05T01:28:20Z | 2023-11-17T15:30:16Z | 10 | HDCharles |

pytorch/pytorch | 62,565 | support comparisons between types `c10::optional<T>` and `U` where `T` is comparable to `U` | ## 🚀 Feature

Support comparisons between `c10::optional<T>` and `U` if `T` is comparable to `U`.

## Motivation

A very common use-case for this is:

```

c10::optional<std::string> opt = ...;

if (opt == "blah") ...

```

Note that this is supported by `std::optional`. See https://en.cppreference.com/w/cpp/uti... | https://github.com/pytorch/pytorch/issues/62565 | closed | [

"module: internals",

"module: bootcamp",

"triaged"

] | 2021-08-02T14:03:38Z | 2021-08-19T04:41:51Z | null | dagitses |

pytorch/text | 1,369 | How to use TorchText with Java | ## ❓ Questions and Help

**Description**

I have a SentencePiece model which I serialized using `sentencepiece_processor`. My end goal is to use this torchscript serialized tokenizer in Java along with DJL Pytorch dependency. I am looking for guidance on how can I import torchtext dependency in Java environment.

S... | https://github.com/pytorch/text/issues/1369 | open | [] | 2021-07-29T20:41:12Z | 2021-08-05T23:30:05Z | null | anjali-chadha |

pytorch/pytorch | 62,332 | How to Fix “AssertionError: CUDA unavailable, invalid device 0 requested” | ## 🐛 Bug

I'm trying to use my GPU to run the YOLOR model, and I keep getting the error that CUDA is unavailable, not sure how to fix.

I keep getting the error:

```

Traceback (most recent call last):

File "D:\yolor\detect.py", line 198, in <module>

detect()

File "D:\yolor\detect.py", line 41, in dete... | https://github.com/pytorch/pytorch/issues/62332 | open | [

"module: binaries",

"triaged"

] | 2021-07-28T15:05:52Z | 2021-07-29T14:36:15Z | null | Hana-Ali |

huggingface/transformers | 12,925 | How to reproduce XLNet correctly And What is the config for finetuning XLNet? | I fine-tuned an XLNet model for English text classification, but I seem to have done something wrong, because xlnet-base performs worse than bert-base in my case. I report validation accuracy every 1/3 epoch. At the beginning, bert-base is at about 0.50 while xlnet-base is only at 0.24. The config I use for xlnet is listed as fol... | https://github.com/huggingface/transformers/issues/12925 | closed | [

"Migration"

] | 2021-07-28T01:16:19Z | 2021-07-29T05:50:07Z | null | sherlcok314159 |

pytorch/pytorch | 62,282 | What is slow-path and fast-path? | ## ❓ Questions and Help

I am reading the PyTorch code base and issues to get a better understanding of the design choices. I keep seeing references to fast-pathed (or "fast-passed") functions, and I was wondering what these are. One example is this pull request: https://github.com/pytorch/pytorch/pull/46469.

Thank you in advance!

| https://github.com/pytorch/pytorch/issues/62282 | closed | [] | 2021-07-27T18:50:43Z | 2021-07-27T19:11:20Z | null | tarekmak |

pytorch/pytorch | 62,162 | Clarify sparse COO tensor coalesce behavior wrt overflow + how to binarize a sparse tensor | https://pytorch.org/docs/stable/sparse.html#sparse-uncoalesced-coo-docs does not explain what would be the behavior if passed sparse tensor dtype does not fit the accumulated values (e.g. it is torch.bool). Will it do the saturation properly? Or will it overflow during coalescing?

Basically, I would like to binarize... | https://github.com/pytorch/pytorch/issues/62162 | open | [

"module: sparse",

"module: docs",

"triaged"

] | 2021-07-25T12:37:49Z | 2021-08-23T14:53:45Z | null | vadimkantorov |

huggingface/transformers | 12,805 | What is the data format of transformers language modeling run_clm.py fine-tuning? | I now use run_clm.py to fine-tune gpt2, the command is as follows:

```

python run_clm.py \\

--model_name_or_path gpt2 \\

--train_file train1.txt \\

--validation_file validation1.txt \\

--do_train \\

--do_eval \\

--output_dir /tmp/test-clm

```

The training data is as follows:

[trai... | https://github.com/huggingface/transformers/issues/12805 | closed | [] | 2021-07-20T09:43:30Z | 2021-08-27T15:07:19Z | null | gongshaojie12 |

pytorch/pytorch | 61,836 | How to support multi-arch in built Docker | Hello?

I'm installing PyTorch with pip3 in my own Dockerfile.

I get an architecture error when I try to run an image built on a V100 on an RTX 3090.

I want to build a single image that supports multiple GPU architectures, such as V100, A100, and RTX 3090.

Is there a good way to do this?

cc @malfet @seemethere @walterddr | https://github.com/pytorch/pytorch/issues/61836 | closed | [

"module: build",

"triaged",

"module: docker"

] | 2021-07-19T11:15:03Z | 2021-07-19T21:39:37Z | null | DonggeunYu |

pytorch/pytorch | 61,831 | How data transfer from disk to GPU? | ## ❓ Questions and Help

I have learned that we can use `.to(device)` or `.cuda()` to transfer data to the GPU. I want to know how this process is implemented at the lower level.

Thanks, hundan.

| https://github.com/pytorch/pytorch/issues/61831 | closed | [] | 2021-07-19T08:32:32Z | 2021-07-19T21:21:53Z | null | pyhundan |

pytorch/pytorch | 61,765 | How to save tensors on mobile (lite interpreter)? | ## Issue description

Based on the discussion in https://github.com/pytorch/pytorch/pull/30108 it's clear that `pickle_save` is not supported on mobile, because `/csrc/jit/serialization/export.cpp` is not included when building for lite interpreter; producing the following runtime error:

```c++

#else

AT_ERROR(... | https://github.com/pytorch/pytorch/issues/61765 | open | [

"oncall: mobile"

] | 2021-07-16T09:43:03Z | 2021-07-21T10:06:17Z | null | lytcherino |

pytorch/TensorRT | 539 | ❓ [Question] Unknown output type. Only a single tensor or a TensorList type is supported | ## ❓ Question

TRTorch throws "Unknown output type. Only a single tensor or a TensorList type is supported".

## What you have already tried

I define a model

```python

import os

import time

import torch

import torchvision

class Sparse(torch.nn.Module):

def __init__(self, embedding_size):

... | https://github.com/pytorch/TensorRT/issues/539 | closed | [

"question",

"No Activity"

] | 2021-07-16T06:35:29Z | 2021-10-29T00:01:39Z | null | westfly |

huggingface/sentence-transformers | 1,070 | What is the difference between training(https://www.sbert.net/docs/training/overview.html#training-data) and unsupervised learning | Hi,

I have a collection of PDFs and I am building a QnA system from them. Currently, I am using the deepset/haystack repo for this task.

My question is: if I want to generate embeddings for my text, which kind of training should I do? What is the difference, given that both approaches mostly take sentences as input? | https://github.com/huggingface/sentence-transformers/issues/1070 | open | [] | 2021-07-15T12:13:37Z | 2021-07-15T12:41:22Z | null | SAIVENKATARAJU |

pytorch/vision | 4,180 | [Detectron2] RuntimeError: No such operator torchvision::nms and RecursionError: maximum recursion depth exceeded | ## 🐛 Bug

Running Detectron2 demo.py creates `RuntimeError: No such operator torchvision::nms` error.

So far it's the same as #1405 but it gets worse. Creates a Max Recursion Depth error.

The primary issue is resolved with a simple naming change (below, thanks to @feiyuhuahuo). However, this creates the `Recurs... | https://github.com/pytorch/vision/issues/4180 | closed | [

"question",

"module: ops"

] | 2021-07-14T23:51:28Z | 2021-08-12T11:16:20Z | null | KastanDay |

huggingface/transformers | 12,704 | Where is the causal mask when using BertLMHeadModel and set config.is_decoder = True? | I hope to use BERT for the task of causal language modeling.

`BertLMHeadModel` seems to meet my needs, but I did not find any code for the causal mask, even when I set `config.is_decoder=True`.

I only find the following related code in https://github.com/huggingface/transformers/blob/master/src/tr... | https://github.com/huggingface/transformers/issues/12704 | closed | [] | 2021-07-14T13:15:50Z | 2021-07-24T06:42:04Z | null | Doragd |

pytorch/pytorch | 61,526 | how to put ```trainer.fit()``` in for loop? | I am trying to create multiple models in a loop, as shown below.

```

for client in clients:

t.manual_seed(10)

client['model'] = LinearNN(learning_rate = args.lr, i_s = args.input_size, h1_s = args.hidden1, h2_s = args.hidden2, n_c = args.output, client=client)

client['optim'] = optim.Adam(client['model'].p... | https://github.com/pytorch/pytorch/issues/61526 | closed | [] | 2021-07-12T11:29:16Z | 2021-07-12T12:32:18Z | null | anik123 |

pytorch/TensorRT | 527 | ❓ [Question] TRTorch and Pytorch Serve | ## ❓ Question

Is it possible to use TRTorch with TorchServe?

If not, what is the best way to deploy TRTorch programs?

## What you have already tried

In the documentation, it is said I can continue to use programs via PyTorch API

I have converted all my models to TRTorch.

## Environment

> Build informati... | https://github.com/pytorch/TensorRT/issues/527 | closed | [

"question"

] | 2021-07-11T21:12:02Z | 2021-07-14T18:32:38Z | null | p1x31 |

pytorch/pytorch | 61,510 | What is find_package(Torch REQUIRED) doing that a manual include/glob doesnt? | In my project, if i do

```

set(CMAKE_PREFIX_PATH "...lib/python3.6/site-packages/torch/share/cmake/Torch")

find_package(Torch REQUIRED)

add_library(lib SHARED "lib.hpp" "lib.cpp")

target_link_libraries( lib ${TORCH_LIBRARIES})

```

It all links and works great!

But, if I do the following manually,

```

f... | https://github.com/pytorch/pytorch/issues/61510 | open | [

"module: build",

"triaged"

] | 2021-07-10T18:35:09Z | 2021-07-12T15:39:08Z | null | yaadhavraajagility |

pytorch/tutorials | 1,605 | Need to update the tutorial description in index.rst | In [index.rst](https://github.com/pytorch/tutorials/blame/master/index.rst#L165), the description of `Text Classification with Torchtext` duplicates that of [the previous tutorial](https://github.com/pytorch/tutorials/blame/master/index.rst#L158) and does not correctly describe this tutorial.

So we need to update this desc... | https://github.com/pytorch/tutorials/issues/1605 | closed | [] | 2021-07-10T13:00:03Z | 2021-07-27T21:33:05Z | 0 | 9bow |

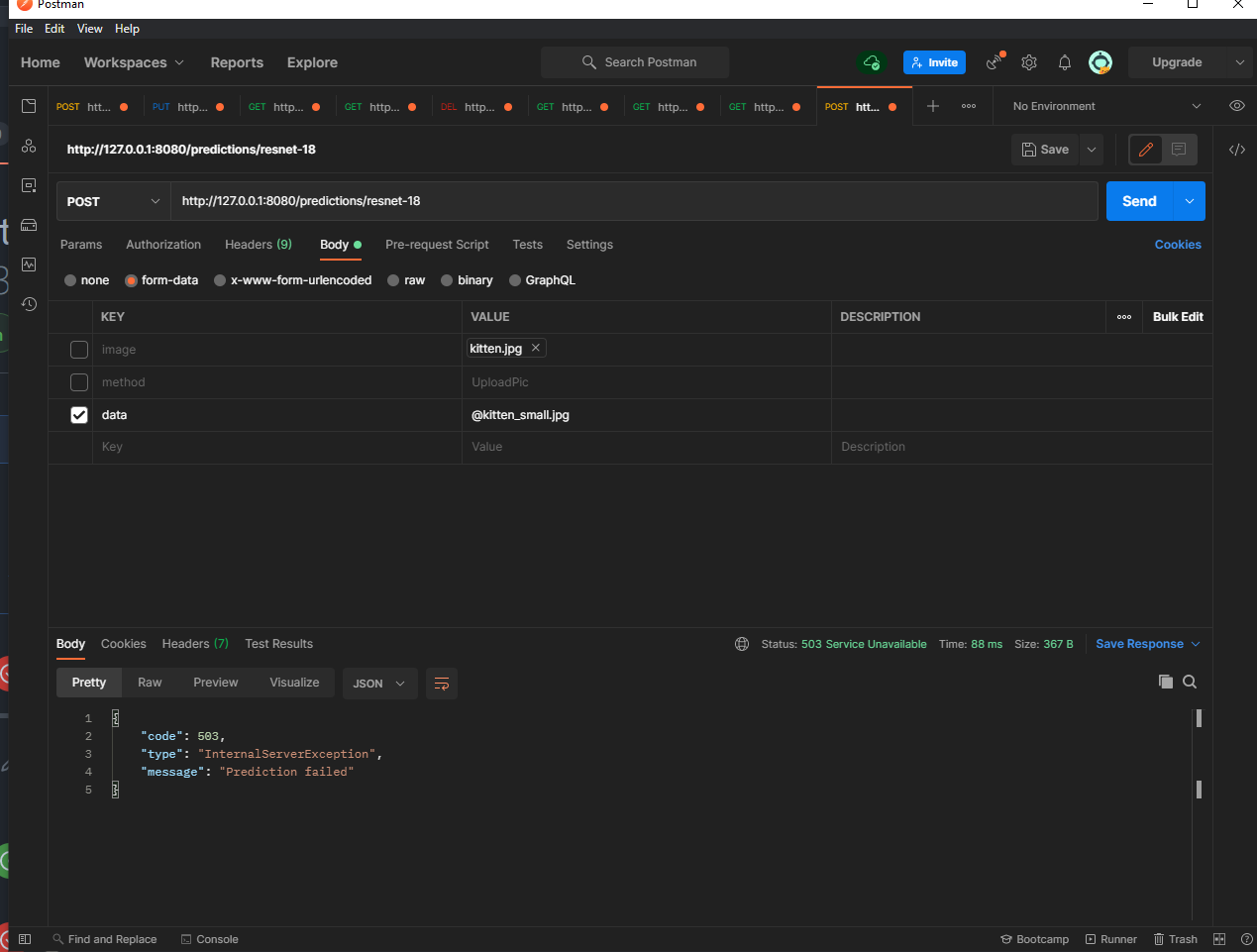

pytorch/serve | 1,153 | What is the post route link to upload the kitten.jpg? instead of the "-T" | ## 📚 Documentation

For example, if I use something like postman, how should I upload the image?

Thank you.

All of the approaches I tried have failed. | https://github.com/pytorch/serve/issues/1153 | closed | [] | 2021-07-08T13:00:48Z | 2021-07-08T13:56:39Z | null | AliceSum |

pytorch/TensorRT | 526 | ❓ [Question] Lowering pass for PyTorch Linear | ## ❓ Question

Hi, I saw that one of TRTorch's lowering passes lowers linear to mm + add. I'm wondering what the reason behind this is. Does TensorRT perform better with a matmul layer followed by an elementwise sum layer than with a fully connected layer? Or does breaking it down help TensorRT's fusion process?

| https://github.com/pytorch/TensorRT/issues/526 | closed | [

"question"

] | 2021-07-08T05:45:28Z | 2021-07-12T16:14:09Z | null | 842974287 |

pytorch/serve | 1,152 | How to access API outside of localhost? | Hi!

I have Wireguard in my machine and have a few other devices connected with it.

Let's say my Wireguard IP is 10.0.0.1, then in the `config.properties` file, I change `inference_address=http://10.0.0.1:8080`.

I'm able to use the API locally but unable to do so outside of the device (I keep getting a timeout er... | https://github.com/pytorch/serve/issues/1152 | open | [

"help wanted",

"support"

] | 2021-07-07T07:20:50Z | 2023-03-17T09:44:42Z | null | kkmehta03 |

pytorch/TensorRT | 523 | ❓ [Question] My aten::chunk op converter does not work correctly | ## ❓ Question

I want to use trtorch to compile shufflenet. However, aten::chunk op is not currently supported. I wrote a converter implementation referring to `converters/impl/select.cpp`. Unfortunately, it does not work.

Is there anything wrong?

The python code

```python

model = torchvision.models.shuffle... | https://github.com/pytorch/TensorRT/issues/523 | closed | [

"question"

] | 2021-07-05T08:50:04Z | 2021-07-07T09:30:47Z | null | letian-jiang |

pytorch/pytorch | 61,220 | How to submit a DDP job on the PBS/SLURM using multiple nodes | Hi everyone, I am trying to train using DistributedDataParallel. Thanks to the great work of the team at PyTorch, a very high efficiency has been achieved. Everything is fine when a model is trained on a single node. However, when I try to use multiple nodes in one job script, all the processes will be on the host node... | https://github.com/pytorch/pytorch/issues/61220 | closed | [] | 2021-07-04T05:52:54Z | 2021-07-12T20:11:16Z | null | zhangylch |

pytorch/functorch | 67 | How to perform jvps and not vjps? | Hi! Thanks for the working prototype, it would be a great addition to pytorch!

I'm currently using pytorch for research purposes, and I would like to implicitly compute jacobian-vector products (i.e. where the given vectors should multiply the "inputs" and not the "outputs" of the transformation).

Is there a `jvp... | https://github.com/pytorch/functorch/issues/67 | closed | [] | 2021-07-03T07:34:59Z | 2022-12-08T20:04:56Z | null | trenta3 |

pytorch/pytorch | 61,128 | How to get in touch about a security issue? | Hey there,

As there isn't a `SECURITY.md` with an email on your repository, I am unsure how to contact you regarding a potential security issue.

Would you kindly add a `SECURITY.md` file with an e-mail to your repository? GitHub [recommends](https://docs.github.com/en/code-security/getting-started/adding-a-securi... | https://github.com/pytorch/pytorch/issues/61128 | closed | [] | 2021-07-01T16:07:47Z | 2021-07-12T17:13:59Z | null | zidingz |

pytorch/vision | 4,147 | Nan Loss while using resnet_fpn(not pretrained) backbone with FasterRCNN | ## 🐛 Bug

I am using this model

```

from torchvision.models.detection import FasterRCNN

from torchvision.models.detection.backbone_utils import resnet_fpn_backbone

backbone = resnet_fpn_backbone(backbone_name='resnet152', pretrained=False)

model = FasterRCNN(backbone,

num_classes=2)

```

... | https://github.com/pytorch/vision/issues/4147 | closed | [

"question",

"topic: object detection"

] | 2021-07-01T13:57:44Z | 2021-08-17T18:37:51Z | null | sahilg06 |

pytorch/TensorRT | 519 | ❓ [Question] Are there plans to support TensorRT 8 and Ubuntu 20.04? | https://github.com/pytorch/TensorRT/issues/519 | closed | [

"question",

"No Activity",

"Story: TensorRT 8"

] | 2021-06-30T23:01:38Z | 2022-02-13T00:01:42Z | null | danielgordon10 | |

pytorch/text | 1,350 | How to build vocab from Glove embedding? | ## ❓ How to build vocab from Glove embedding?

**Description**

<!-- Please send questions or ask for help here. -->

How to build vocab from Glove embedding?

I have gone through the documentation and the release update, I got to know that the Vectors object is not an attribute of the new Vocab object anymore.

... | https://github.com/pytorch/text/issues/1350 | open | [] | 2021-06-30T16:11:53Z | 2022-02-27T11:29:03Z | null | OsbertTay |

pytorch/vision | 4,134 | Hey, after changing the segmention.py script, has anyone tested the backbone using Mobilenetv3_large as deeplabv3? When I start the train.py script, I throw the error shown in the screenshot: | ## ❓ Questions and Help

### Please note that this issue tracker is not a help form and this issue will be closed.

We have a set of [listed resources available on the website](https://pytorch.org/resources). Our primary means of support is our discussion forum:

- [Discussion Forum](https://discuss.pytorch.org/)... | https://github.com/pytorch/vision/issues/4134 | closed | [

"question"

] | 2021-06-29T07:15:14Z | 2021-06-29T10:32:17Z | null | GalSang17 |

pytorch/pytorch | 60,847 | How to release CPU memory cache in Libtorch JIT ? | ## ❓ Questions and Help

Hi everyone, I would like to know how to release the CPU memory cache in LibTorch JIT. If there is no way to do that, can I cap the cache at a percentage of the maximum memory? I would also like to know whether each torchscript::jit::module has its own memory cache, or whether all modules share one global cache. Thanks.

... | https://github.com/pytorch/pytorch/issues/60847 | open | [

"oncall: jit"

] | 2021-06-28T03:40:21Z | 2021-07-07T03:01:06Z | null | w1d2s |

pytorch/pytorch | 60,825 | Where OpInfo doesn't handle cases where one of the inputs is a scalar | https://github.com/pytorch/pytorch/blob/master/torch/testing/_internal/common_methods_invocations.py#L4436

It'd be nice to cover the other cases as well.

cc @mruberry @VitalyFedyunin @walterddr @heitorschueroff | https://github.com/pytorch/pytorch/issues/60825 | open | [

"module: tests",

"triaged",

"module: sorting and selection"

] | 2021-06-26T20:37:52Z | 2021-08-30T20:34:56Z | null | Chillee |

pytorch/TensorRT | 511 | ❓ [Question] How can I trace the code that causing Unsupported operators | ## ❓ Question

Hi. I'm trying to enable TensorRT for a TorchScript model and am getting a number of unsupported-operator errors. I'm willing to change the implementation to avoid those operators, or even to try adding support for them, but I'm struggling to find which lines of code in my model cause them.

I'm doi... | https://github.com/pytorch/TensorRT/issues/511 | closed | [

"question"

] | 2021-06-26T13:48:04Z | 2021-07-22T17:06:15Z | null | lamhoangtung |

pytorch/pytorch | 60,819 | I want to run forward on a batch of images with libtorch; how should I do it? | single image forward:

std::vector<std::vector<Detection>>

model4_dec::Run(const cv::Mat& img, float conf_threshold, float iou_threshold) {

torch::NoGradGuard no_grad;

std::cout << "----------New Frame----------" << std::endl;

// TODO: check_img_size()

/*** Pre-process ***/

auto start = std::chrono::... | https://github.com/pytorch/pytorch/issues/60819 | closed | [] | 2021-06-26T08:28:09Z | 2021-06-28T13:27:36Z | null | xinsuinizhuan |

pytorch/vision | 4,117 | publish nightly v0.11 to Conda channel `pytorch-nightly` | ## 🚀 Feature

Could you please publish the latest nightly build to Conda?

## Motivation

We want to test against the upcoming PyTorch v1.10, but we also need a matching torchvision (TV) for these tests. Because each TV release is pinned to a particular PyTorch (PT) version, installing the latest available TV reverts PT to v1.9.

## Pitch

simple testing a... | https://github.com/pytorch/vision/issues/4117 | closed | [

"question",

"topic: binaries"

] | 2021-06-25T07:42:01Z | 2021-06-29T10:41:04Z | null | Borda |

pytorch/vision | 4,115 | Again details about how pretrained models are trained? | 1. I used [v0.5.0/references](https://github.com/pytorch/vision/blob/build/v0.5.0/references/classification/train.py) to train a resnet50 with the default config, but I got a best validation Top-1 of 75.806%, which is about 0.3% below the pretrained model. How can I reproduce your accuracy?

2. I notice you said you use you re... | https://github.com/pytorch/vision/issues/4115 | open | [

"question"

] | 2021-06-25T05:51:59Z | 2021-06-28T14:59:26Z | null | YYangZiXin |

pytorch/vision | 4,105 | Hi, why rate is two times with paper | https://github.com/pytorch/vision/blob/d1ab583d0d2df73208e2fc9c4d3a84e969c69b70/torchvision/models/segmentation/deeplabv3.py#L32

```

n. In the

end, our improved ASPP consists of (a) one 1×1 convolution

and three 3 × 3 convolutions with rates = (6, 12, 18) when

output stride = 16 (all with 256 filters and batch n... | https://github.com/pytorch/vision/issues/4105 | closed | [

"question",

"module: models"

] | 2021-06-24T07:04:07Z | 2021-07-02T03:52:59Z | null | flystarhe |
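
A note on the last row above: the quoted passage gives ASPP rates of (6, 12, 18) for output stride 16, and the same paper states that the rates are doubled when output stride is 8. The sketch below only illustrates that scaling rule. The helper name `aspp_rates` and its constants are hypothetical and are not part of torchvision, and whether the linked torchvision line corresponds to output stride 8 is an assumption here, since the row is truncated.

```python
# Rates quoted in the issue from the DeepLabv3 paper, valid for output stride 16.
BASE_RATES = (6, 12, 18)
BASE_OUTPUT_STRIDE = 16


def aspp_rates(output_stride: int) -> tuple:
    """Scale the base atrous rates for a given output stride (hypothetical helper)."""
    # Only strides that evenly divide the base stride give integer rates.
    if output_stride <= 0 or BASE_OUTPUT_STRIDE % output_stride != 0:
        raise ValueError(f"unsupported output stride: {output_stride}")
    scale = BASE_OUTPUT_STRIDE // output_stride
    return tuple(rate * scale for rate in BASE_RATES)


print(aspp_rates(16))  # rates for output stride 16
print(aspp_rates(8))   # rates doubled for output stride 8
```

Under this rule, a model that runs its backbone at output stride 8 would be expected to use rates of (12, 24, 36), which may explain an apparent factor of two relative to the quoted passage.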
