| repo (string, 147 distinct values) | number (int64, 1 to 172k) | title (string, length 2 to 476) | body (string, length 0 to 5k) | url (string, length 39 to 70) | state (string, 2 distinct values) | labels (list, length 0 to 9) | created_at (timestamp[ns, tz=UTC], 2017-01-18 18:50:08 to 2026-01-06 07:33:18) | updated_at (timestamp[ns, tz=UTC], 2017-01-18 19:20:07 to 2026-01-06 08:03:39) | comments (int64, 0 to 58, nullable) | user (string, length 2 to 28) |
|---|---|---|---|---|---|---|---|---|---|---|

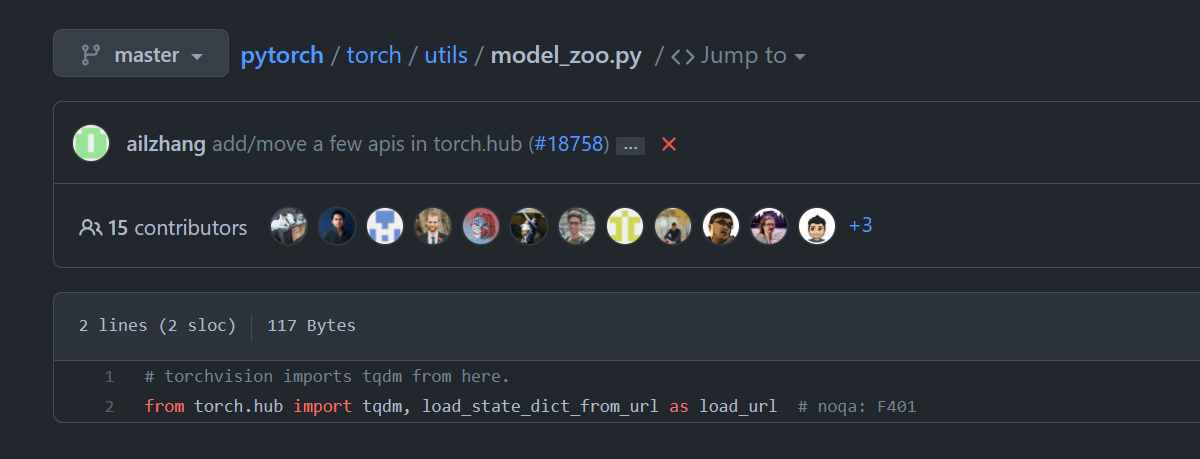

pytorch/vision | 4,104 | whats diff in this code ```try....except``` | https://github.com/pytorch/vision/blob/d1ab583d0d2df73208e2fc9c4d3a84e969c69b70/torchvision/_internally_replaced_utils.py#L13

The except branch also uses torch.hub; it is the same as the try branch.

Why is it done like this... | https://github.com/pytorch/vision/issues/4104 | closed | [

"question"

] | 2021-06-24T06:37:49Z | 2021-06-24T11:46:28Z | null | jaffe-fly |

pytorch/pytorch | 60,625 | How to checkout 1.8.1 release | I am trying to compile the PyTorch 1.8.1 release from source but am not sure which branch to check out, as there is no 1.8.1 branch and the 1.8.0 branches seem to be rc1 or rc2.

So, for example,

```

git checkout -b remotes/origin/lts/release/1.8

git describe --tags

```

returns

v1.8.0-rc1-4570-g80f40b172f

So how ... | https://github.com/pytorch/pytorch/issues/60625 | closed | [] | 2021-06-24T04:17:58Z | 2021-06-24T19:06:58Z | null | beew |

pytorch/serve | 1,135 | how to serve model converted by hummingbird from sklearn? | If I have a sklearn model and use Hummingbird (https://github.com/microsoft/hummingbird) to convert it to a PyTorch tensor model,

the model structure comes from Hummingbird rather than from my own code, for example:

hummingbird.ml.containers.sklearn.pytorch_containers.PyTorchSklearnContainerClassification

So I don't have the... | https://github.com/pytorch/serve/issues/1135 | closed | [] | 2021-06-23T07:25:56Z | 2021-06-24T10:03:57Z | null | aohan237 |

pytorch/pytorch | 60,433 | Libtorch JIT : Does enabling profiling mode increase CPU memory usage ? How to disable profiling mode properly ? | Hi, I am trying to deploy an Attention-based Encoder Decoder (AED) model with the libtorch C++ frontend. When the model's decoder loops over the output sequence (the decoder JIT module's forward method is called repeatedly at each label time step), the CPU memory usage is very high (~20 GB), and I think it's far too high comp... | https://github.com/pytorch/pytorch/issues/60433 | closed | [

"oncall: jit"

] | 2021-06-22T04:13:05Z | 2021-06-28T03:36:08Z | null | w1d2s |

pytorch/vision | 4,091 | Unnecessary call .clone() in box_convert function | https://github.com/pytorch/vision/blob/d391a0e992a35d7fb01e11110e2ccf8e445ad8a0/torchvision/ops/boxes.py#L183-L184

We can just return boxes without .clone().

What's the purpose? | https://github.com/pytorch/vision/issues/4091 | closed | [

"question"

] | 2021-06-22T02:03:37Z | 2021-06-22T14:14:56Z | null | developer0hye |
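
One possible reading of the `.clone()` question in the row above, sketched in plain Python rather than with torch tensors: if a conversion function returns its input object unchanged when no conversion is needed, an in-place edit of the result also changes the caller's input. The names `convert_alias` and `convert_copy` are hypothetical and are not torchvision code; nested lists stand in for box tensors.

```python
import copy


def convert_alias(boxes, in_fmt, out_fmt):
    # Hypothetical variant: hands back the very same object when formats match.
    if in_fmt == out_fmt:
        return boxes
    raise NotImplementedError("only the same-format path is sketched here")


def convert_copy(boxes, in_fmt, out_fmt):
    # Hypothetical variant: always returns a fresh object, even when formats match.
    if in_fmt == out_fmt:
        return copy.deepcopy(boxes)
    raise NotImplementedError("only the same-format path is sketched here")


original = [[0, 0, 10, 10]]
aliased = convert_alias(original, "xyxy", "xyxy")
aliased[0][0] = 99          # also modifies `original`, because both names share one object

untouched = [[0, 0, 10, 10]]
copied = convert_copy(untouched, "xyxy", "xyxy")
copied[0][0] = 99           # `untouched` keeps its values
```

With the copying variant, callers can rely on the result never sharing storage with the input, whichever formats are passed. Whether that is the maintainers' actual motivation is not stated in this row.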

pytorch/android-demo-app | 156 | How to add Model Inference Time to yolov5 demo when using live function? Like the iOS demo? | Dear developer, I watched the test video of this repository's (Android) yolov5 application and compared it with the test video of the other repository's (iOS) yolov5 application.

I found that the Android application does not provide "Model Inference Time" for real-time detection. Could you please add it? If not, could you p... | https://github.com/pytorch/android-demo-app/issues/156 | closed | [] | 2021-06-20T18:43:18Z | 2022-05-08T15:41:52Z | null | zxsitu |

pytorch/android-demo-app | 154 | where is yolov5 model | Does anyone know how to download yolov5s.torchscript.ptl, I don't have this file | https://github.com/pytorch/android-demo-app/issues/154 | open | [] | 2021-06-19T14:38:56Z | 2021-06-19T15:18:46Z | null | GuoQuanhao |

pytorch/pytorch | 60,266 | UserWarning: The epoch parameter in `scheduler.step()` was not necessary and is being deprecated where possible. Please use `scheduler.step()` to step the scheduler. | ## 🐛 Bug

<!-- A clear and concise description of what the bug is. -->

## To Reproduce

Steps to reproduce the behavior:

1.

1.

1.

<!-- If you have a code sample, error messages, stack traces, please provide it here as well -->

## Expected behavior

<!-- A clear and concise description of what you e... | https://github.com/pytorch/pytorch/issues/60266 | closed | [] | 2021-06-18T13:09:33Z | 2021-06-18T15:53:56Z | null | wanyne-yyds |

pytorch/pytorch | 60,253 | How to export SPP-NET to onnx ? | Here's my code:

----------------------------------------------------------start----------------------------------------------------------------

import torch
import torch.nn as nn
import math
import torch.nn.functional as F

class Spp(nn.Module):
    def __init__(self, level, pooling_type="max_pool"):

... | https://github.com/pytorch/pytorch/issues/60253 | closed | [

"module: onnx",

"triaged",

"onnx-triaged"

] | 2021-06-18T06:56:59Z | 2022-11-01T22:16:36Z | null | yongxin3344520 |

pytorch/TensorRT | 502 | ❓ [Question] failed to build docker image | ## ❓ Question

failed to build docker image

## What you have already tried

`docker build -t trtorch -f notebooks/Dockerfile.notebook .`

## Additional context

```

Step 13/21 : WORKDIR /workspace/TRTorch

---> Running in 6043f6a80286

Removing intermediate container 6043f6a80286

---> 18eaa4134512

Ste... | https://github.com/pytorch/TensorRT/issues/502 | closed | [

"question",

"No Activity"

] | 2021-06-17T10:22:25Z | 2021-09-27T00:01:13Z | null | chrjxj |

pytorch/pytorch | 60,122 | what version of python is suggested with pytorch 1.9 | I know PyTorch supports a variety of Python versions, but I wonder which version is suggested: Python 3.6.6, 3.7.7, or another?

Thanks | https://github.com/pytorch/pytorch/issues/60122 | closed | [] | 2021-06-16T19:05:52Z | 2021-06-16T20:54:15Z | null | seyeeet |

pytorch/pytorch | 60,115 | How to install torchaudio on Mac M1 ARM? | `torchaudio` doesn't seem to be available for Mac M1.

If I run `conda install pytorch torchvision torchaudio -c pytorch` (as described on pytorch's main page) I get this error message:

```

PackagesNotFoundError: The following packages are not available from current channels:

- torchaudio

```

If I run the ... | https://github.com/pytorch/pytorch/issues/60115 | closed | [] | 2021-06-16T18:24:04Z | 2021-06-16T20:42:03Z | null | suissemaxx |

pytorch/pytorch | 59,933 | If I only have the model of PyTorch and don't know the dimension of the input, how to convert it to onnx? | ## ❓ If I only have the model of PyTorch and don't know the dimension of the input, how to convert it to onnx?

### Question

I have a series of PyTorch trained models, such as "model.pth", but I don't know the input dimensions of the model.

For instance, in the following function: torch.onnx.export(model, args, f... | https://github.com/pytorch/pytorch/issues/59933 | closed | [] | 2021-06-14T08:38:29Z | 2021-06-14T15:31:26Z | null | Wendy-liu17 |

pytorch/pytorch | 59,870 | How to export a model with nn.Module in for loop to onnx? | Below is demo code:

```

class Demo(nn.Module):
    def __init__(self, hidden_size, max_span_len):
        super().__init__()
        self.max_span_len = max_span_len
        self.fc = nn.Linear(hidden_size * 2, hidden_size)

    def forward(self, seq_hiddens):
        '''

seq_hiddens: (batch_size, ... | https://github.com/pytorch/pytorch/issues/59870 | closed | [

"module: onnx"

] | 2021-06-11T12:25:15Z | 2021-06-15T18:34:50Z | null | JaheimLee |

huggingface/transformers | 12,105 | What is the correct way to pass labels to DetrForSegmentation? | The [current documentation](https://huggingface.co/transformers/master/model_doc/detr.html#transformers.DetrForSegmentation.forward) for `DetrModelForSegmentation.forward` says the following about `labels` kwarg:

> The class labels themselves should be a torch.LongTensor of len (number of bounding boxes in the image... | https://github.com/huggingface/transformers/issues/12105 | closed | [] | 2021-06-10T22:15:23Z | 2021-06-17T14:37:54Z | null | nateraw |

pytorch/vision | 4,001 | Unable to build torchvision on Windows (installed torch from source and it is running) | ## ❓ Questions and Help

I have successfully installed torch from source on my PC, but I am facing this issue while installing torchvision. I don't think I can install torchvision via pip, as it re-downloads torch.

Please help me to install it.

TIA

I used `python setup.py install`

```

Building wheel... | https://github.com/pytorch/vision/issues/4001 | closed | [

"question"

] | 2021-06-08T09:48:25Z | 2021-06-14T11:01:21Z | null | dhawals1939 |

pytorch/pytorch | 59,607 | Where is libtorch archive??? | Where is libtorch archive???

I can't find libtorch 1.6.0.. | https://github.com/pytorch/pytorch/issues/59607 | closed | [] | 2021-06-08T01:03:23Z | 2023-04-07T13:29:34Z | null | hi-one-gg |

pytorch/xla | 2,981 | Where is torch_xla/csrc/XLANativeFunctions.h? | ## 🐛 Bug

While trying to compile master after updating to the latest master, I found that https://github.com/pytorch/xla/blob/master/torch_xla/csrc/XLANativeFunctions.h does not exist.

How is this file generated? (In other words, which step am I missing?)

```

$ time pip install -e . --verbose

...............

[23/101] clang++-8 -MMD... | https://github.com/pytorch/xla/issues/2981 | closed | [

"stale"

] | 2021-06-08T00:42:14Z | 2021-07-21T13:22:46Z | null | tyoc213 |

pytorch/TensorRT | 495 | ❓ [Question] How does the compiler uses the optimal input shape ? | When compiling the model we have to specify an optimal input shape as well as a minimal and maximal one.

I tested various optimal sizes to evaluate the impact of this parameter but found little to no difference for the inference time.

How is this parameter used by the compiler ?

Thank you for your time and... | https://github.com/pytorch/TensorRT/issues/495 | closed | [

"question"

] | 2021-06-07T13:21:20Z | 2021-06-09T09:52:53Z | null | MatthieuToulemont |

pytorch/text | 1,323 | How to use pretrained embeddings (`Vectors`) in the new API? | From what I see in the `experimental` module, we pass a vocab object, which transforms each token into a unique integer.

https://github.com/pytorch/text/blob/e189c260e959ab966b1eaa986177549a6445858c/torchtext/experimental/datasets/text_classification.py#L50-L55

Thus something like `['hello', 'word']` migh... | https://github.com/pytorch/text/issues/1323 | open | [] | 2021-06-05T10:58:38Z | 2021-07-01T03:26:20Z | null | satyajitghana |
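
The row above describes a vocab that maps each token to a unique integer. A minimal plain-Python sketch of how such indices can select rows of a pretrained embedding table follows. The toy `vocab`, the `pretrained` vectors, and both helper functions are hypothetical illustrations; they are not torchtext objects or APIs.

```python
# Hypothetical toy data standing in for a vocab object and pretrained vectors.
vocab = {"<unk>": 0, "hello": 1, "world": 2}
pretrained = {"hello": [0.1, 0.2], "world": [0.3, 0.4]}
dim = 2


def build_embedding_matrix(vocab, pretrained, dim):
    # Row i holds the vector for the token whose vocab index is i.
    # Tokens without a pretrained vector keep an all-zero row.
    matrix = [[0.0] * dim for _ in range(len(vocab))]
    for token, index in vocab.items():
        if token in pretrained:
            matrix[index] = list(pretrained[token])
    return matrix


def lookup(tokens, vocab, matrix):
    # Unknown tokens fall back to the "<unk>" index.
    unk = vocab["<unk>"]
    return [matrix[vocab.get(token, unk)] for token in tokens]


matrix = build_embedding_matrix(vocab, pretrained, dim)
vectors = lookup(["hello", "word"], vocab, matrix)  # "word" is not in the vocab
```

In PyTorch, a matrix built this way could then be converted to a tensor and passed to `torch.nn.Embedding.from_pretrained`; that step is left out here so the sketch stays dependency-free.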

huggingface/transformers | 12,005 | where is the code for DetrFeatureExtractor, DetrForObjectDetection | Hello my dear friend.

I am looking for the code of the model at https://huggingface.co/facebook/detr-resnet-50

I cannot find it in transformers==4.7.0.dev0 or 4.6.1. Please help me; it would be much appreciated.

## Environment info

<!-- You can run the command `transformers-cli env` and copy... | https://github.com/huggingface/transformers/issues/12005 | closed | [] | 2021-06-03T09:28:27Z | 2021-06-10T07:06:59Z | null | zhangbo2008 |

pytorch/pytorch | 59,368 | How to remap RNNs hidden tensor to other device in torch.jit.load? | Model: CRNN (used in OCR)

1. When I trace the model on the cpu device and use torch.jit.load(f, map_location="cuda:0"), I get the error below:

Input and hidden tensor are not at same device, found input tensor at cuda:0 and hidden tensor at cpu.

2. When I trace the model on the cuda:0 device and use torch.jit.load(f, map_loc... | https://github.com/pytorch/pytorch/issues/59368 | closed | [

"oncall: jit"

] | 2021-06-03T09:24:23Z | 2021-10-21T06:19:02Z | null | shihaoyin |

pytorch/vision | 3,949 | Meaning of Assertion of infer_scale function in torchvision/ops/poolers.py | ## ❓ Questions and Help

### Please note that this issue tracker is not a help form and this issue will be closed.

We have a set of [listed resources available on the website](https://pytorch.org/resources). Our primary means of support is our discussion forum:

- [Discussion Forum](https://discuss.pytorch.org/)... | https://github.com/pytorch/vision/issues/3949 | closed | [

"question",

"module: ops"

] | 2021-06-03T04:38:03Z | 2021-06-09T11:59:52Z | null | teang1995 |

pytorch/pytorch | 59,231 | How to solve the AssertionError: Torch not compiled with CUDA enabled | For the usage of the repo based on PyTorch(Person_reID_baseline_pytorch), I followed the guidance on its readme.md. However, I've got an error on the training step below: (I used --gpu_ids -1 as I use CPU only option in my MacOS)

`python train.py --gpu_ids -1 --name ft_ResNet50 --train_all --batchsize 32 --data_dir ... | https://github.com/pytorch/pytorch/issues/59231 | closed | [] | 2021-05-31T20:45:33Z | 2023-06-04T06:22:56Z | null | aktaseren |

pytorch/TensorRT | 493 | ❓ [Question] How to set three input tensor shape in input_shape? | ## ❓ Question

<!-- How to set three input tensor shape in input_shape?-->

I have three input tensors: src_tokens, dummy_embeded_x, dummy_encoder_embedding

In this case, I don't know how to set "input_shape" in compile_settings.

Can anyone help me? Thank you!

`encoder_out = model.forward_encoder([src_to... | https://github.com/pytorch/TensorRT/issues/493 | closed | [

"question"

] | 2021-05-31T02:48:53Z | 2021-06-23T19:56:33Z | null | wxyhv |

pytorch/vision | 3,938 | Batch size of the training recipes on multiple GPUs | ## ❓ Questions and Help

In the README file that describes the recipes of training the classification models, under the references directory, it is stated that the models are trained with batch-size=32 on 8 GPUs.

Does it mean that:

- the whole batch-size is 32 and each GPU gets only 4 images to process at a time... | https://github.com/pytorch/vision/issues/3938 | closed | [

"question"

] | 2021-05-30T13:16:07Z | 2021-05-30T14:52:38Z | null | talcs |
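
The two readings raised in the row above differ only by arithmetic, which the following sketch spells out. It does not say which reading the README intends; the function names are hypothetical.

```python
def split_across_gpus(total_batch, num_gpus):
    # Reading A: the stated number is the whole batch, divided among the GPUs.
    if total_batch % num_gpus != 0:
        raise ValueError("total batch is not divisible by the number of GPUs")
    return total_batch // num_gpus


def total_across_gpus(per_gpu_batch, num_gpus):
    # Reading B: the stated number is per GPU, so the effective batch is larger.
    return per_gpu_batch * num_gpus


num_gpus = 8
images_per_gpu_if_total = split_across_gpus(32, num_gpus)     # 4 images per GPU
effective_batch_if_per_gpu = total_across_gpus(32, num_gpus)  # 256 images per step
```

The distinction matters for anyone reproducing a recipe on a different number of GPUs, since learning-rate choices are commonly tied to the effective batch size.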

pytorch/pytorch | 59,186 | Document on how to use ATEN_CPU_CAPABILITY | ## 🚀 Feature

<!-- A clear and concise description of the feature proposal -->

It would be great if ATEN_CPU_CAPABILITY would be documented with an example on how to use it.

## Motivation

<!-- Please outline the motivation for the proposal. Is your feature request related to a problem? e.g., I'm always frustra... | https://github.com/pytorch/pytorch/issues/59186 | closed | [] | 2021-05-30T11:40:07Z | 2021-05-30T21:31:30Z | null | derneuere |

pytorch/serve | 1,103 | Can two workflows share the same model with each other? | Continuing my previous post: [How i do models chain processing and batch processing for analyzing text data?](https://github.com/pytorch/serve/issues/1055)

Can I create two workflows using the same RoBERTa base model to perform two different tasks, let's say the classifier_model and summarizer_model? I would like to... | https://github.com/pytorch/serve/issues/1103 | open | [

"question",

"triaged_wait",

"workflowx"

] | 2021-05-28T18:04:57Z | 2022-09-08T12:27:30Z | null | yurkoff-mv |

pytorch/TensorRT | 490 | ❓ [Question] How could I integrate TensorRT's Group Normalization plugin into a TRTorch model ? | ## ❓ Question

What would be the steps to be able to use TensorRT's Group Normalization plugin into a TRTorch model ?

The plugin is defined [here](https://github.com/NVIDIA/TensorRT/tree/master/plugin/groupNormalizationPlugin)

## Context

Being new to this, the Readme from core/conversion/converters didn't r... | https://github.com/pytorch/TensorRT/issues/490 | closed | [

"question"

] | 2021-05-27T12:53:09Z | 2021-06-09T09:53:11Z | null | MatthieuToulemont |

pytorch/cpuinfo | 55 | Compilation for freeRTOS | Hi all,

We are starting to look into using cpuinfo in a freeRTOS / ZedBoard setup.

Do you know of any previous attempts to port this code to freeRTOS?

If not, do you have any tips / advice on how to start this porting?

Thanks,

Pablo. | https://github.com/pytorch/cpuinfo/issues/55 | open | [

"question"

] | 2021-05-26T09:57:18Z | 2024-01-11T00:56:44Z | null | pablogh-2000 |

huggingface/notebooks | 42 | what is the ' token classification head'? | https://github.com/huggingface/notebooks/issues/42 | closed | [] | 2021-05-25T09:17:49Z | 2021-05-29T11:36:11Z | null | zingxy | |

pytorch/pytorch | 58,894 | ease use `scheduler.step()` to step the scheduler. During the deprecation, if epoch is different from None, the closed form is used instead of the new chainable form, where available. Please open an issue if you are unable to replicate your use case: https://github.com/pytorch/pytorch/issues/new/choose. warnings.warn... | ## ❓ Questions and Help

### Please note that this issue tracker is not a help form and this issue will be closed.

We have a set of [listed resources available on the website](https://pytorch.org/resources). Our primary means of support is our discussion forum:

- [Discussion Forum](https://discuss.pytorch.org/)... | https://github.com/pytorch/pytorch/issues/58894 | closed | [] | 2021-05-25T02:41:52Z | 2021-05-25T21:30:41Z | null | umie0128 |

pytorch/tutorials | 1,539 | (Libtorch)How to use packed_accessor64 to access tensor elements in CUDA? | The [tutorial ](https://pytorch.org/cppdocs/notes/tensor_basics.html#cuda-accessors) gives an example about using _packed_accessor64_ to access tensor elements efficiently as follows. However, I still do not know how to use _packed_accessor64_. Can anyone give me a more specific example? Thanks.

```

__global__ void p... | https://github.com/pytorch/tutorials/issues/1539 | open | [

"CUDA",

"medium",

"docathon-h2-2023"

] | 2021-05-24T15:56:26Z | 2023-11-14T06:41:03Z | null | tangyipeng100 |

pytorch/text | 1,316 | How to load AG_NEWS data from local files | ## How to load AG_NEWS data from local files

I can't get the AG_NEWS data online with `train_iter, test_iter = AG_NEWS(split=('train', 'test'))` because of my bad connection, so I downloaded `train.csv` and `test.csv` manually to my local folder `AG_NEWS` from url `'train': "https://raw.githubusercontent.com/mhjab... | https://github.com/pytorch/text/issues/1316 | open | [] | 2021-05-24T06:23:55Z | 2021-05-24T14:54:04Z | null | robbenplus |

pytorch/tutorials | 1,534 | Why libtorch tensor value assignment takes so much time? | I just assign 10000 values to a tensor:

```

clock_t start = clock();

torch::Tensor transform_tensor = torch::zeros({ 10000 });

for (size_t m = 0; m < 10000; m++)

transform_tensor[m] = int(m);

clock_t finish = clock();

```

And it takes 0.317s. If I assign 10,000 values to an array or a vector, the time cost will be les... | https://github.com/pytorch/tutorials/issues/1534 | open | [

"question",

"Tensors"

] | 2021-05-24T01:59:42Z | 2023-03-08T16:31:16Z | null | tangyipeng100 |

pytorch/pytorch | 58,554 | How to install pytorch1.8.1 with cuda 11.3? | How to install pytorch1.8.1 with cuda 11.3? | https://github.com/pytorch/pytorch/issues/58554 | closed | [] | 2021-05-19T13:24:42Z | 2021-05-20T03:42:17Z | null | Bonsen |

pytorch/xla | 2,957 | How to compile xla_ltc_plugin | I was following https://github.com/pytorch/xla/tree/asuhan/xla_ltc_plugin to build ltc-based torch/xla. I compiled ltc successfully but encountered errors when compiling xla. I guess I must have missed something here. Help is greatly appreciated :) cc @asuhan

<details>

<summary>Error log</summary>

```

[1/1... | https://github.com/pytorch/xla/issues/2957 | closed | [

"stale"

] | 2021-05-19T09:31:12Z | 2021-07-08T09:11:02Z | null | hzfan |

pytorch/pytorch | 58,530 | How to remove layer use parent name | Hi, I am a new user of PyTorch. I am trying to load a trained model and want to remove the last layer, named 'fc'.

```

model = models.alexnet()

model.fc = nn.Linear(4096, 4)

ckpt = torch.load('net_epoch_24.pth')

model.load_state_dict(ckpt)

model.classifier = nn.Sequential(nn.Linear(9216, 1024),

... | https://github.com/pytorch/pytorch/issues/58530 | closed | [] | 2021-05-19T03:54:29Z | 2021-05-20T05:29:28Z | null | ramdhan1989 |

pytorch/pytorch | 58,460 | how to convert scriptmodel to onnx? | how to convert scriptmodel to onnx?

D:\Python\Python37\lib\site-packages\torch\onnx\utils.py:348: UserWarning: Model has no forward function

warnings.warn("Model has no forward function")

Exception occurred when processing textline: 1

cc @houseroad @spandantiwari @lara-hdr @BowenBao @neginraoof @SplitInfinity | https://github.com/pytorch/pytorch/issues/58460 | closed | [

"module: onnx",

"triaged"

] | 2021-05-18T03:15:29Z | 2022-02-24T08:22:22Z | null | williamlzw |

pytorch/TensorRT | 473 | ❓ Is it possible to use TRTorch with batchedNMSPlugin for TensorRT? | ## ❓ Question

<!-- Your question -->

## What you have already tried

Hi, I am trying to convert detectron2 traced keypoint-rcnn model that contains ops from torchvision like torchvision::nms. I get the following error:

>

> terminate called after throwing an instance of 'torch::jit::ErrorReport'

> what():... | https://github.com/pytorch/TensorRT/issues/473 | closed | [

"question"

] | 2021-05-16T11:28:14Z | 2022-08-20T07:31:37Z | null | VRSEN |

pytorch/extension-cpp | 72 | How does the layer of C++ extensions translate to TorchScript or onnx? | https://github.com/pytorch/extension-cpp/issues/72 | open | [] | 2021-05-14T09:50:12Z | 2025-08-26T03:36:50Z | null | yanglinxiabuaaa | |

pytorch/vision | 3,832 | Error converting to onnx: forward function contains for loop | Hello, there is a for loop in my forward function. When I converted the model to ONNX, the following error occurred:

`[ONNXRuntimeError] : 1 : FAIL : Non-zero status code returned while running Split node. Name:'Split_ 1277' Status Message: Cannot split using values in 'split' attribute. Axis=0 Input shape={59} NumOutputs=17 Num... | https://github.com/pytorch/vision/issues/3832 | open | [

"question",

"awaiting response",

"module: onnx"

] | 2021-05-14T03:52:58Z | 2021-05-18T09:42:32Z | null | wytcsuch |

pytorch/vision | 3,825 | Why does RandomErasing transform aspect ratio use log scale | As of https://github.com/pytorch/vision/commit/06a5858b3b73d62351456886f0a9f725fddbb3fe, the aspect ratio is chosen randomly on a log scale.

I didn't see this in the original paper, nor in the reference implementation.

https://github.com/zhunzhong07/Random-Erasing/blob/c699ae481219334755de93e9c870151f256013e4... | https://github.com/pytorch/vision/issues/3825 | closed | [

"question",

"module: transforms"

] | 2021-05-13T11:43:04Z | 2021-05-13T12:05:11Z | null | jxu |

pytorch/vision | 3,822 | torchvision C++ compiling | 1. Question:

When I try to compile torchvision from source in C++, the terminal throws errors:

In file included from /home/pc/anaconda3/include/python3.8/pytime.h:6:0,

from /home/pc/anaconda3/include/python3.8/Python.h:85,

from /media/pc/data/software/vision-0.9.0/torchv... | https://github.com/pytorch/vision/issues/3822 | closed | [

"question"

] | 2021-05-13T10:29:13Z | 2021-05-13T12:02:06Z | null | swordnosword |

pytorch/text | 1,305 | On Vocab Factory functions behavior | Related discussion #1016

Related PRs #1304, #1302

---------

torchtext provides several factory functions to construct [Vocab class](https://github.com/pytorch/text/blob/f7a6fbd3a910c4066b9a748545df388ae5933a6a/torchtext/vocab.py#L19) object. The primary ways to construct vocabulary are:

1. Reading raw tex... | https://github.com/pytorch/text/issues/1305 | open | [

"enhancement",

"question",

"need discussions"

] | 2021-05-13T02:52:19Z | 2021-05-13T04:07:13Z | null | parmeet |

pytorch/functorch | 23 | Figure out how to transform over optimizers | One way to transform over training loops (e.g. to do model ensembling or the inner step of a MAML) is to use a function that represents the optimizer step instead of an actual PyTorch optimizer. Right now I think we have the following requirements

- There should be a function version of each optimizer (e.g. `F.sgd`)

... | https://github.com/pytorch/functorch/issues/23 | open | [] | 2021-05-11T13:13:39Z | 2021-05-11T13:13:39Z | null | zou3519 |

pytorch/vision | 3,811 | Mask-rcnn training - all AP and Recall scores in “IoU Metric: segm” remain 0 | With torchvision’s pre-trained mask-rcnn model, trying to train on a custom dataset prepared in COCO format.

Using torch/vision/detection/engine’s `train_one_epoch` and `evaluate` methods for training and evaluation, respectively.

The loss_mask metric is reducing as can be seen here:

```

Epoch: [5] [ 0/20] et... | https://github.com/pytorch/vision/issues/3811 | open | [

"question",

"topic: semantic segmentation"

] | 2021-05-11T12:09:41Z | 2023-03-02T19:34:03Z | null | hemasunder |

pytorch/TensorRT | 449 | error: ‘tryTypeMetaToScalarType’ is not a member of ‘c10’ | ## ❓ CMake building error using this [repo](https://github.com/JosephChenHub/TRTorch)

<!-- Your question -->

How to build the TRTorch or use the release packages of TRTorch in Ubuntu 18.04?

## What you have already tried

Tried build TRTorch through CMakeLists.txt provided by [this](https://github.com/NVIDIA/TRTor... | https://github.com/pytorch/TensorRT/issues/449 | closed | [

"question",

"No Activity"

] | 2021-05-10T06:45:33Z | 2021-11-01T00:01:56Z | null | AllentDan |

pytorch/vision | 3,801 | Unable to train the keypointrcnn_resnet50_fpn model | ## ❓ The Predictions after training the model are empty.

### I'm trying to train the model for keypoints detection, & bounding boxes, using the script in the references section, while training the loss_keypoint is always 0.0000. Using those weights for prediction is giving no predictions at all.

I'm running this ... | https://github.com/pytorch/vision/issues/3801 | closed | [

"question",

"module: reference scripts",

"topic: object detection"

] | 2021-05-09T16:28:57Z | 2021-05-10T15:54:04Z | null | d1nz-g33k |

pytorch/serve | 1,055 | How i do models chain processing and batch processing for analyzing text data? | Hello, I wanted to thank you for creating such a convenient and easily deployable model service.

I have several questions / suggestions (maybe they have already been implemented).

The first thing I would like to know / get is the launch of a chain of models... Example: I have a basic model, let's say BERT, I would ... | https://github.com/pytorch/serve/issues/1055 | closed | [

"question"

] | 2021-05-08T10:42:46Z | 2021-05-17T09:19:23Z | null | yurkoff-mv |

pytorch/vision | 3,784 | Could T.Lambda be nn.Module? | It would allow it to be placed in nn.ModuleList for passing to RandomApply (for scriptability)

https://pytorch.org/vision/stable/transforms.html#torchvision.transforms.RandomApply

cc @vfdev-5 | https://github.com/pytorch/vision/issues/3784 | open | [

"question",

"module: transforms"

] | 2021-05-06T12:22:19Z | 2021-05-07T14:13:30Z | null | vadimkantorov |

pytorch/vision | 3,783 | [docs] Unclear if to_pil_image / to_tensor copy or zero-copy for CPU<->CPU | The docs currently use the vague word "convert". It is unclear whether the "conversion" incurs a copy.

cc @vfdev-5 | https://github.com/pytorch/vision/issues/3783 | open | [

"question",

"module: transforms"

] | 2021-05-06T11:52:59Z | 2021-05-12T11:53:46Z | null | vadimkantorov |

pytorch/vision | 3,782 | ToTensor confuse me with the way it takes input | So the `ToTensor` class (or the `to_tensor` function) takes input with dimensions (H, W), while PIL reports its image dimensions as (W, H).

Why this transpose?

cc @vfdev-5 | https://github.com/pytorch/vision/issues/3782 | closed | [

"question",

"module: transforms"

] | 2021-05-06T10:17:52Z | 2021-05-07T06:49:38Z | null | MohamedAliRashad |
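
A small plain-Python sketch of the (W, H) versus (H, W) question in the row above, assuming a row-major image stored as one inner list per pixel row. It uses neither PIL nor torch; the only library fact relied on is that PIL's `Image.size` is ordered (width, height). Under that assumption the two conventions describe the same pixel layout and differ only in the order in which the two numbers are reported.

```python
width, height = 4, 3

# Row-major storage: `height` rows, each holding `width` pixels.
# Each pixel records its own (x, y) position so indexing can be checked.
pixels = [[(x, y) for x in range(width)] for y in range(height)]

# "Size" convention, as in PIL's Image.size: horizontal extent first.
size_convention = (width, height)                # (W, H)

# "Shape" convention of a row-major array: number of rows first.
shape_convention = (len(pixels), len(pixels[0]))  # (H, W)

# Indexing is [row][column], i.e. [y][x]; no pixel data has been moved.
bottom_right = pixels[height - 1][width - 1]
```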

pytorch/vision | 3,772 | Unable to get segmented mask output image | . | https://github.com/pytorch/vision/issues/3772 | closed | [

"question",

"awaiting response",

"topic: semantic segmentation"

] | 2021-05-05T08:31:26Z | 2021-06-01T06:16:06Z | null | shubhamkotal |

pytorch/tutorials | 1,506 | Seq2seq Transformer Tutorial best model saving | In [this tutorial](https://pytorch.org/tutorials/beginner/transformer_tutorial.html#load-and-batch-data), it says "Save the model if the validation loss is the best we’ve seen so far.", and then the following code follows (also [here](https://github.com/pytorch/tutorials/blob/master/beginner_source/transformer_tutorial... | https://github.com/pytorch/tutorials/issues/1506 | closed | [

"question",

"Text",

"module: torchtext"

] | 2021-05-05T05:04:47Z | 2023-03-08T20:55:00Z | null | micahcarroll |
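
The "save the model if the validation loss is the best we've seen so far" step quoted in the row above can be sketched in plain Python. This is a sketch under stated assumptions, not the tutorial's code: a dict stands in for model parameters that training mutates in place, and `run_epochs` is a hypothetical helper. It shows why a snapshot taken with `copy.deepcopy` stays fixed while the live object keeps changing.

```python
import copy


def run_epochs(val_losses):
    # Stand-in for a model whose parameters are updated in place each epoch.
    state = {"weights": [0.0]}
    best_val_loss = float("inf")
    best_state = None

    for epoch, val_loss in enumerate(val_losses, start=1):
        state["weights"][0] = float(epoch)  # pretend training changed the weights

        if val_loss < best_val_loss:
            best_val_loss = val_loss
            # Deep copy, so later in-place updates do not alter the snapshot.
            best_state = copy.deepcopy(state)

    return best_val_loss, best_state, state


best_val_loss, best_state, final_state = run_epochs([3.0, 1.0, 2.0])
```

Here the lowest validation loss occurs in the second epoch, so the snapshot holds that epoch's weights even though the live state continues to the third.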

pytorch/xla | 2,927 | How to install torch_xla with python version 3.9.2 | ## ❓ Questions and Help

I have to use Python 3.9.2 because of another dependency. Given that my Python version must be 3.9.2, how do I install torch_xla?

I tried these two methods shown in the tutorial:

1)

`!pip install cloud-tpu-client==0.10 https://storage.googleapis.com/tpu-pytorch/wheels/torch_xla-1.8.1-cp37-cp37m-linux_x86... | https://github.com/pytorch/xla/issues/2927 | closed | [

"stale"

] | 2021-05-04T03:21:02Z | 2021-06-22T17:43:47Z | null | sirgarfieldc |

pytorch/vision | 3,767 | Failing to load the pre-trained weights on multi-gpus. | ## 🐛 Bug

After downloading the pre-trained weights for the following models (Alexnet, Resnet_152, Resnet -18, SqueezeNet, VGG11), trying to load them on any GPU other than cuda:0 throws an error.

## To Reproduce

wget https://download.pytorch.org/models/alexnet-owt-4df8aa71.pth

```

import torch

from torchvision... | https://github.com/pytorch/vision/issues/3767 | closed | [

"question"

] | 2021-05-03T21:33:58Z | 2023-08-22T16:03:22Z | null | HamidShojanazeri |

pytorch/serve | 1,045 | How to add a new handler guide | The goal is to support new use cases easily.

The base handler is also quite general in its capabilities, so we want to showcase a bit more of what can be done | https://github.com/pytorch/serve/issues/1045 | closed | [

"documentation",

"enhancement"

] | 2021-04-28T20:43:10Z | 2021-05-05T19:17:39Z | null | msaroufim |

pytorch/pytorch | 57,118 | How to view VLOG information | How do I use VLOG, in the same way as specifying the TF_CPP_MIN_VLOG_LEVEL variable in TensorFlow?

| https://github.com/pytorch/pytorch/issues/57118 | open | [

"module: logging",

"triaged"

] | 2021-04-28T11:16:12Z | 2024-09-04T19:25:04Z | null | HangJie720 |

pytorch/vision | 3,746 | Details on pre-training of torchvision models | I realize there is a closed issue on this topic here: https://github.com/pytorch/vision/issues/666

That issue was opened in 2018. I have not found any documentation on how the torchvision models are pre-trained, so I am opening another issue. Is the above answer still valid? Are the models still traine... | https://github.com/pytorch/vision/issues/3746 | closed | [

"question",

"module: models"

] | 2021-04-28T11:13:01Z | 2021-04-28T11:44:38Z | null | spurra |

pytorch/text | 1,295 | How to train data with the similar number of tokens in a batch using distributed training? | My code needs two functions:

1. Bucket iterator;

2. In each batch, the number of tokens is similar. (This means the batch size is not the same for every batch.)

I think I could fulfill function 2 with a custom sampler that inherits from torch.utils.data.Sampler, but as seen in the tutorial, Bucket iterator inherits t... | https://github.com/pytorch/text/issues/1295 | open | [] | 2021-04-27T09:36:11Z | 2021-07-06T16:22:55Z | null | sandthou |

pytorch/vision | 3,729 | Evaluation Method does not work for detection | ## 🐛 Bug

After the training process, when running the evaluation function available here (https://github.com/pytorch/vision/blob/dc42f933f3343c76727dbfba6e4242f1bcb8e1a0/references/detection/engine.py), the process gets stuck without any error. I left the evaluation method running for two days but there are no erro... | https://github.com/pytorch/vision/issues/3729 | closed | [

"question",

"awaiting response",

"module: reference scripts"

] | 2021-04-26T08:54:08Z | 2025-01-02T07:26:34Z | null | aliceinland |

pytorch/pytorch | 56,898 | How do I convert the quantified model to onnx or ncnn? | ## ❓ How do I convert the quantified model to onnx or ncnn?

### how to convert int8 model in pytorch to onnx.

I trained a model with quantization-aware training in PyTorch, and I need to convert the quantized model to ONNX. I have tried, but the normal export code does not work. Can anybody help me? Thanks a lot.

@eklitzke @dreiss @h... | https://github.com/pytorch/pytorch/issues/56898 | closed | [] | 2021-04-26T03:07:06Z | 2021-04-27T22:51:13Z | null | fucker007 |

pytorch/serve | 1,041 | How to debug slow serve models | ## 📚 Documentation

Many issues are essentially people confused about the overhead that torch serve introduces so a good solution would be to have the below in a guide before opening a perf issue.

1. Point to existing benchmarks so people can get a baseline estimate

2. Running model without serve

3. Commands to g... | https://github.com/pytorch/serve/issues/1041 | closed | [

"documentation",

"enhancement",

"help wanted"

] | 2021-04-22T15:09:57Z | 2021-05-13T16:21:35Z | null | msaroufim |

pytorch/pytorch | 56,634 | [package] Module name reported in error message does not always match what is needed to extern/mock it | ## 🐛 Bug

The module name as printed in the packaging error messages is not always the name with which it can be successfully externed or mocked.

## To Reproduce

```

import torch

import io

model = torch.hub.load('nicolalandro/ntsnet-cub200', 'ntsnet', pretrained=True, **{'topN': 6, 'device':'cpu', 'num_classe... | https://github.com/pytorch/pytorch/issues/56634 | open | [

"triaged"

] | 2021-04-21T21:41:20Z | 2021-04-21T21:43:22Z | null | SplitInfinity |

huggingface/pytorch-image-models | 572 | What is EfficientNetV2s? What is it relationship with EfficientNetV2? | https://github.com/huggingface/pytorch-image-models/issues/572 | closed | [

"enhancement"

] | 2021-04-21T07:24:51Z | 2021-04-21T15:51:02Z | null | chenyang9799 | |

pytorch/pytorch | 56,473 | how to restore a model's weight from jit.traced model file? | Hi guys,

I have a traced .pt model file, and now I need to use it to restore a net instance like below:

```py

traced_model = torch.jit.load('traced.pt')

state_dict = extract_state_dict(traced_model) #need to implement

model = construct_model(args)

model.load_state_dict(state_dict)

```

extract_state_dict is the functi... | https://github.com/pytorch/pytorch/issues/56473 | closed | [

"oncall: jit"

] | 2021-04-20T12:54:26Z | 2021-04-21T03:51:31Z | null | fortuneko |

pytorch/examples | 901 | Pytorch C++ Frontend: generating networks at runtime? | closing and moving to pytorch repo | https://github.com/pytorch/examples/issues/901 | closed | [] | 2021-04-20T04:25:48Z | 2021-04-20T04:30:13Z | 0 | r2dliu |

huggingface/sentence-transformers | 875 | Where is the saved model after the training? | model.fit(train_objectives=[(train_dataloader, train_loss)], output_path=dir, epochs=1, warmup_steps=100)

I specified the output_path where the model should be saved, but I didn't see any files there after training.

thank you. | https://github.com/huggingface/sentence-transformers/issues/875 | open | [] | 2021-04-17T00:45:41Z | 2021-04-17T09:54:52Z | null | Bulando |

pytorch/vision | 3,678 | Deformable convolution best practice? | ## ❓ Questions and Help

Would appreciate it if anyone has some insight on how to use deformable convolution correctly.

Deformable convolution is tricky as even the official implementation is different from what's described in the paper. The paper claims to use 2N offset size instead of 2 x ks x ks.

Anyway, we'... | https://github.com/pytorch/vision/issues/3678 | open | [

"question",

"module: ops"

] | 2021-04-16T07:20:24Z | 2021-04-21T12:56:47Z | null | liyy201912 |

pytorch/pytorch | 56,149 | how to RegisterPass for torch.jit.trace() | ## ❓ Questions and Help

We have a set of [listed resources available on the website](https://pytorch.org/resources). Our primary means of support is our discussion forum:

- [Discussion Forum](https://discuss.pytorch.org/)

Is there a new way to create a custom transformation pass in TorchScript with torch1.... | https://github.com/pytorch/pytorch/issues/56149 | closed | [

"oncall: jit"

] | 2021-04-15T15:25:42Z | 2021-06-04T16:57:02Z | null | Andrechang |

pytorch/vision | 3,673 | The document of torchvision.ops.deform_conv2d is not clear | ## 📚 Documentation

From the documentation, I cannot work out the exact meaning of the 18 (i.e., 2*3*3) channels of the offset in a deformable convolution.

I want to visualize the offset of the deformable convolution with kernel size 3*3.

So it’s essential for me to know the exact meaning of these channels.

I write... | https://github.com/pytorch/vision/issues/3673 | open | [

"question"

] | 2021-04-15T06:43:49Z | 2022-05-18T04:57:34Z | null | Zhaoyi-Yan |

pytorch/xla | 2,883 | How to dump HLO IR | ## ❓ Questions and Help

Hi,

I want to extract the HLO PROTO/TEXT of a function/module that I wrote in PyTorch.

Something similar to what jax is doing [here](https://jax.readthedocs.io/en/latest/jax.html#jax.xla_computation):

```

def f(x):

return jax.numpy.sin(jax.numpy.cos(x))

c = jax.xla_computation... | https://github.com/pytorch/xla/issues/2883 | closed | [

"stale"

] | 2021-04-15T02:42:09Z | 2021-06-22T17:43:37Z | null | KatiaSN602 |

pytorch/pytorch | 55,914 | how to convert libtorch trained model to torch script model | ## ❓ Questions and Help

### Please note that this issue tracker is not a help form and this issue will be closed.

We have a set of [listed resources available on the website](https://pytorch.org/resources). Our primary means of support is our discussion forum:

- [Discussion Forum](https://discuss.pytorch.org/)... | https://github.com/pytorch/pytorch/issues/55914 | closed | [] | 2021-04-13T15:57:00Z | 2021-04-13T16:28:26Z | null | WuLoing |

pytorch/vision | 3,658 | Failed to compile torchvision for ROCm as documented in pytorch.org | ## 🐛 Bug

Failed to compile torchvision for ROCm as documented in pytorch.org/get-started

## To Reproduce

Steps to reproduce the behavior:

as in: https://pytorch.org/get-started/locally/

1. python -m venv ptamd; source ptamd/bin/activate

1. pip install torch -f https://download.pytorch.org/whl/rocm4.0.1/t... | https://github.com/pytorch/vision/issues/3658 | open | [

"question",

"topic: build",

"topic: binaries"

] | 2021-04-11T12:32:10Z | 2021-07-03T13:15:58Z | null | henrique |

huggingface/datasets | 2,196 | `load_dataset` caches two arrow files? | Hi,

I am using datasets to load a large JSON file of 587G.

I checked the cached folder and found that there are two arrow files created:

* `cache-ed205e500a7dc44c.arrow` - 355G

* `json-train.arrow` - 582G

Why is the first file created?

If I delete it, would I still be able to `load_from_disk`? | https://github.com/huggingface/datasets/issues/2196 | closed | [

"question"

] | 2021-04-09T03:49:19Z | 2021-04-12T05:25:29Z | null | hwijeen |

huggingface/datasets | 2,193 | Filtering/mapping on one column is very slow | I'm currently using the `wikipedia` dataset— I'm tokenizing the articles with the `tokenizers` library using `map()` and also adding a new `num_tokens` column to the dataset as part of that map operation.

I want to be able to _filter_ the dataset based on this `num_tokens` column, but even when I specify `input_colu... | https://github.com/huggingface/datasets/issues/2193 | closed | [

"question"

] | 2021-04-08T18:16:14Z | 2021-04-26T16:13:59Z | null | norabelrose |

pytorch/TensorRT | 429 | ❓ [Question] Does TRTorch support autograd in inference? | ## ❓ Question

Some models can contain autograd as part of their inference pass; a simple example, which does compile to TorchScript, would be:

```python

import torch

class M(torch.nn.Module):

def forward(self, x):

x.requires_grad_(True)

y = x**2

return torch.autograd.grad([y.sum()]... | https://github.com/pytorch/TensorRT/issues/429 | closed | [

"question"

] | 2021-04-08T05:12:50Z | 2021-04-12T14:03:51Z | null | Linux-cpp-lisp |

huggingface/datasets | 2,187 | Question (potential issue?) related to datasets caching | I thought I had disabled datasets caching in my code, as follows:

```

from datasets import set_caching_enabled

...

def main():

# disable caching in datasets

set_caching_enabled(False)

```

However, in my log files I see messages like the following:

```

04/07/2021 18:34:42 - WARNING - datasets.build... | https://github.com/huggingface/datasets/issues/2187 | open | [

"question"

] | 2021-04-08T00:16:28Z | 2023-01-03T18:30:38Z | null | ioana-blue |

pytorch/pytorch | 55,452 | How to access model embedded functions? | ## ❓ Questions and Help

I am working on C# .NET with Visual Studio 2019, over Windows Server 2019 Standard.

I aim to export a Python model to run inference on C# with onnxruntime.

I am using [Resemble-ai voice encoder](https://github.com/resemble-ai/Resemblyzer/blob/master/resemblyzer/voice_encoder.py) as ONNX, u... | https://github.com/pytorch/pytorch/issues/55452 | closed | [

"module: onnx",

"triaged"

] | 2021-04-07T10:22:44Z | 2021-04-21T08:56:02Z | null | ADD-eNavarro |

huggingface/transformers | 11,057 | Difference in tokenizer output depending on where `add_prefix_space` is set. | ## Environment info

<!-- You can run the command `transformers-cli env` and copy-and-paste its output below.

Don't forget to fill out the missing fields in that output! -->

- `transformers` version: 4.4.2

- Platform: Linux-4.19.112+-x86_64-with-Ubuntu-18.04-bionic

- Python version: 3.7.10

- PyTorch version... | https://github.com/huggingface/transformers/issues/11057 | closed | [] | 2021-04-05T10:30:25Z | 2021-06-07T15:18:36Z | null | sai-prasanna |

pytorch/pytorch | 55,223 | How to use PyTorch with ROCm (radeon gpu)? How to transfer data to gpu? | Hey,

So far I haven't seen any documentation that hints at how to use PyTorch with GPUs other than NVIDIA's (once the new ROCm package is installed). How can I select my Radeon GPU as the device and use it for training? I'd be very glad for any advice.

Best

cc @jeffdaily @sunway513 @jithunnair-amd @ROCmSuppor... | https://github.com/pytorch/pytorch/issues/55223 | closed | [

"module: rocm",

"triaged"

] | 2021-04-02T08:07:42Z | 2023-08-22T22:02:51Z | null | oconnor127 |

pytorch/TensorRT | 420 | ❓ [Question] How can I pull TRTorch docker image? | ## ❓ Question

I use the command to pull TRTorch docker image

```

sudo docker pull docker.pkg.github.com/nvidia/trtorch/docgen:0.3.0

```

The response is: unauthorized: Your request could not be authenticated by the GitHub Packages service. Please ensure your access token is valid and has the appropriate scopes configur... | https://github.com/pytorch/TensorRT/issues/420 | closed | [

"question"

] | 2021-04-01T12:18:50Z | 2021-04-02T15:57:07Z | null | Tshzzz |

pytorch/text | 1,265 | How to split `_RawTextIterableDataset` | ## ❓ Questions and Help

I am trying to move away from `legacy` and use the newly provided features. I was doing this:

```

from torchtext import legacy

TEXT = legacy.data.Field(lower=True, batch_first=True)

LABEL = legacy.data.LabelField(dtype=torch.float)

train_data, test_data = legacy.datasets.IMDB.splits(TEXT, LABEL... | https://github.com/pytorch/text/issues/1265 | open | [

"feature request"

] | 2021-03-30T15:34:40Z | 2023-07-30T03:13:25Z | null | KickItLikeShika |

huggingface/transformers | 10,960 | What is the score of trainer.predict()? | I want to know the meaning of output of trainer.predict().

example:

`PredictionOutput(predictions=array([[-2.2704859, 2.442343 ]], dtype=float32), label_ids=array([1]), metrics={'eval_loss': 0.008939245715737343, 'eval_runtime': 0.0215, 'eval_samples_per_second': 46.56})`

What is this score? -> predictions=arra... | https://github.com/huggingface/transformers/issues/10960 | closed | [] | 2021-03-30T07:53:13Z | 2021-03-30T23:41:38Z | null | Yuukp |

pytorch/text | 1,264 | How to use fasttext emebddings in the torchtext Nightly Vocab | I have a custom-trained Facebook fastText embedding which I want to use in my RNN.

I use the nightly version of torchtext, so the Vocab is fairly new.

How do I use a fastText embedding there? A simple, clear example would be great.

| https://github.com/pytorch/text/issues/1264 | open | [] | 2021-03-27T12:48:11Z | 2021-03-29T01:44:16Z | null | StephennFernandes |

pytorch/pytorch | 54,790 | tools/git-clang-format: The downloaded binary is not what was expected! | `tools/git-clang-format` seems to do a test on hash of the clang-format binary, but if it mismatches it just says "The downloaded binary is not what was expected!" and no instructions how to remediate. I rm -rf'ed .clang-format-bin that might help | https://github.com/pytorch/pytorch/issues/54790 | closed | [

"module: lint",

"triaged"

] | 2021-03-26T18:58:06Z | 2021-04-07T00:19:01Z | null | ezyang |

pytorch/pytorch | 54,758 | How to release unnecessary tensor which occupys memory when executing inference at test phrase? | ## ❓ Questions and Help

I have a memory-cost operation, I put this operation into a function like this:

```

class xxx(nn.Module):

def forward(xxx):

xxx = self.cost_memory_function(xxx)

... # OOM error occurs here rather than at the above function.

return xxx

def cost_memory_func... | https://github.com/pytorch/pytorch/issues/54758 | closed | [] | 2021-03-26T06:14:24Z | 2021-03-26T16:08:40Z | null | shoutOutYangJie |

pytorch/TensorRT | 411 | how to compile on windows? | https://github.com/pytorch/TensorRT/issues/411 | closed | [

"help wanted",

"No Activity"

] | 2021-03-25T22:51:05Z | 2021-07-28T00:01:06Z | null | statham123 | |

huggingface/datasets | 2,108 | Is there a way to use a GPU only when training an Index in the process of add_faisis_index? | Motivation - Some FAISS indexes like IVF include a training step that clusters the dataset into a given number of indexes. It would be nice if we could use a GPU to do the training step and convert the index back to CPU as mentioned in [this faiss example](https://gist.github.com/mdouze/46d6bbbaabca0b9778fca37ed2bcccf6... | https://github.com/huggingface/datasets/issues/2108 | open | [

"question"

] | 2021-03-24T21:32:16Z | 2021-03-25T06:31:43Z | null | shamanez |

pytorch/vision | 3,602 | Imagenet dataloader error: RuntimeError: The archive ILSVRC2012_devkit_t12.tar.gz is not present in the root directory or is corrupted. | ## 🐛 Bug

I am using pytorch 1.8.0 and torchvision 0.9.

I am trying to use the pretrained models from pytorch and evaluate them on imagenet val data. That should be fairly straightforward, but I am getting stuck on the dataloader.

I downloaded the imagenet and the folder structure that I have is like this:

```

/... | https://github.com/pytorch/vision/issues/3602 | closed | [

"question",

"module: datasets"

] | 2021-03-24T19:15:28Z | 2021-03-25T17:01:31Z | null | seyeeet |

pytorch/pytorch | 54,583 | How to specific a op qconfig in "prepare_jit" qconfig_dict | ## ❓ Questions and Help

pytorch1.7/torchvision0.8.0

I want to use "prepare_jit" and "convert_jit" to quantize ResNet18, but I can't assign a different qconfig to 'layer1.0.conv1'.

My code:

model = models.__dict__['resnet18'](pretrained=True)

model = torch.jit.script(model.eval())

qconfig1 = torch.quantization.Q... | https://github.com/pytorch/pytorch/issues/54583 | closed | [

"oncall: jit"

] | 2021-03-24T09:57:19Z | 2021-03-25T19:22:22Z | null | PenghuiCheng |

pytorch/tutorials | 1,439 | Question about pytorch mobile | Hello, I'm using Pytorch Mobile to deploy a model to phone via Android Studio.

I followed the official instructions to convert the model to '.pt' and loaded it in Android Studio, but it doesn't give the right prediction after the conversion; it always predicts the same label no matter any label of image ... | https://github.com/pytorch/tutorials/issues/1439 | closed | [

"question",

"Mobile"

] | 2021-03-24T07:14:40Z | 2023-03-10T17:22:49Z | null | stillbetter |

pytorch/tutorials | 1,432 | Reinforcement Tutorial (DQN) | Hey,

I am trying to reproduce the [PyTorch Reinforcement Tutorial (DQN)](https://pytorch.org/tutorials/intermediate/reinforcement_q_learning.html#training) in

In each time step, the state of the environment needs to be evaluated in the function ```def get_screen()```

The line

```

screen = env.render(mode='rgb_array').... | https://github.com/pytorch/tutorials/issues/1432 | closed | [

"Reinforcement Learning"

] | 2021-03-21T23:36:44Z | 2022-09-06T17:44:22Z | 2 | sambaPython24 |

pytorch/pytorch | 54,390 | UserWarning: The epoch parameter in `scheduler.step()` was not necessary and is being deprecated where possible. Please use `scheduler.step()` to step the scheduler. During the deprecation, if epoch is different from None, the closed form is used instead of the new chainable form, where available. Please open an issue... | ## ❓ Questions and Help

### Please note that this issue tracker is not a help form and this issue will be closed.

We have a set of [listed resources available on the website](https://pytorch.org/resources). Our primary means of support is our discussion forum:

- [Discussion Forum](https://discuss.pytorch.org/)... | https://github.com/pytorch/pytorch/issues/54390 | closed | [] | 2021-03-21T14:17:10Z | 2021-03-22T15:26:58Z | null | ZengcanXUE |

pytorch/TensorRT | 408 | 🐛 [Bug] Tests are not being linked properly, fail with 'symbol lookup error' | ## Bug Description

<!-- A clear and concise description of what the bug is. -->

## To Reproduce

Steps to reproduce the behavior:

1. bazel test //tests --compilation_mode=dbg --test_output=errors --jobs=4 --runs_per_test=5

You will see all the tests fail. I am using stock 1.7.1 PyTorch.

<!-- If you h... | https://github.com/pytorch/TensorRT/issues/408 | closed | [

"question"

] | 2021-03-20T04:06:57Z | 2021-04-07T01:42:26Z | null | borisfom |

pytorch/examples | 895 | Video classification example | Hi,

As we all know, video representation learning is a hot topic in the computer vision community (thanks to recent advances in self-supervised learning), so is it time to add a toy example for video classification? This code would be as simple as the image classification examples. For example, we can add an example of vid... | https://github.com/pytorch/examples/issues/895 | open | [

"good first issue"

] | 2021-03-18T19:08:06Z | 2022-03-09T20:44:51Z | 1 | avijit9 |

pytorch/xla | 2,831 | RuntimeError: Cannot access data pointer of Tensor that doesn't have storage, how to resolve it? | ## Issue description

Currently I am trying to solve an object detection problem using FastRCNN model with the help of Pytorch XLA module

But while training I am getting a **RuntimeError: Cannot access data pointer of Tensor that doesn't have storage**

It was working fine when I trained the model in GPU kernel, but... | https://github.com/pytorch/xla/issues/2831 | closed | [

"stale"

] | 2021-03-18T17:03:32Z | 2021-06-26T02:22:49Z | null | IamSparky |

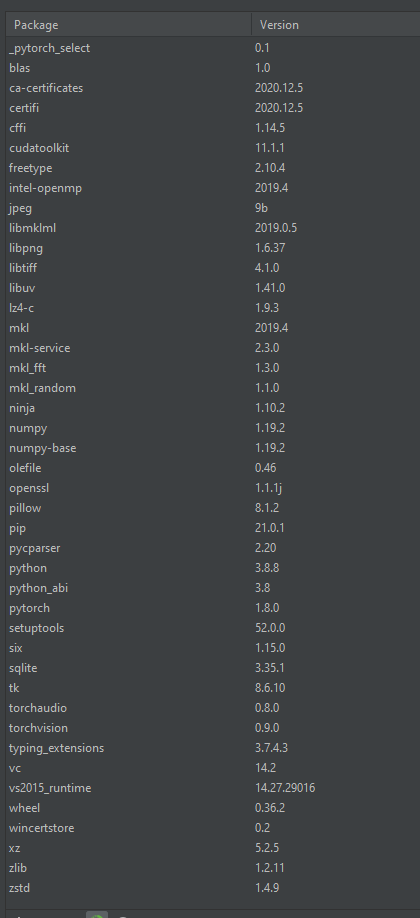

pytorch/tutorials | 1,421 | Chatbot tutorial - RuntimeError: 'lengths' argument should be a 1D CPU int64 tensor, but got 1D cuda:0 Long tensor | https://github.com/pytorch/tutorials/blob/master/beginner_source/chatbot_tutorial.py

Tried running this chatbot tutorial. Training goes well but when actually using the model by uncommenting the final line of code (as specified in the comments) it returns the following error:

`Iteration: 4000; Percent complete: 100.0%; Average loss: 2.4559
> hello?
Traceback (most recent call last):
  File "C:/Users/user/PycharmProjects/pytorch-tests/main.py", line 1377, in <module>
    evaluateInput(encoder, decoder, searcher, voc)
  File "C:/Users/user/PycharmProjects/pytorch-tests/main.py", line 1242, in evaluateInput
    output_words = evaluate(encoder, decoder, searcher, voc, input_sentence)
  File "C:/Users/user/PycharmProjects/pytorch-tests/main.py", line 1225, in evaluate
    tokens, scores = searcher(input_batch, lengths, max_length)
  File "C:\Users\user\.conda\envs\pytorch-tests\lib\site-packages\torch\nn\modules\module.py", line 889, in _call_impl
    result = self.forward(*input, **kwargs)
  File "C:/Users/user/PycharmProjects/pytorch-tests/main.py", line 1160, in forward
    encoder_outputs, encoder_hidden = self.encoder(input_seq, input_length)
  File "C:\Users\user\.conda\envs\pytorch-tests\lib\site-packages\torch\nn\modules\module.py", line 889, in _call_impl
    result = self.forward(*input, **kwargs)
  File "C:/Users/user/PycharmProjects/pytorch-tests/main.py", line 693, in forward
    packed = nn.utils.rnn.pack_padded_sequence(embedded, input_lengths)
  File "C:\Users\user\.conda\envs\pytorch-tests\lib\site-packages\torch\nn\utils\rnn.py", line 245, in pack_padded_sequence
    _VF._pack_padded_sequence(input, lengths, batch_first)
RuntimeError: 'lengths' argument should be a 1D CPU int64 tensor, but got 1D cuda:0 Long tensor

Process finished with exit code 1`

Unfamiliar with pytorch so no idea what the cause is or how to solve it, but it looks like something to do with tensor types.

Packages in environment:

| https://github.com/pytorch/tutorials/issues/1421 | closed | [

"Text"

] | 2021-03-18T11:59:07Z | 2023-03-09T19:06:58Z | 1 | 0xVavaldi |

pytorch/serve | 1,013 | How to debug handlers? | Since the handler's logic is copied inside every `.mar` file, breakpoints set in the original handler `.py` file have no effect. Can you please suggest how we can debug our handler modules? | https://github.com/pytorch/serve/issues/1013 | closed | [

"triaged_wait"

] | 2021-03-17T21:52:46Z | 2021-04-09T18:47:43Z | null | duklin |
