text-classification bool 2 classes | text stringlengths 0 664k |

|---|---|

true |

`yangwang825/reuters-21578` is an 8-class subset of the Reuters 21578 news dataset.

|

false |

---

## Cashew Disease Identification with Artificial Intelligence (CADI-AI) Dataset

This repository contains a comprehensive dataset of cashew images captured by drones, accompanied by meticulously annotated labels.

Each high-resolution image in the dataset has a resolution of 1600x1300 pixels, providing fine details for analysis and model training.

To facilitate efficient object detection, each image is paired with a corresponding text file in YOLO format.

The YOLO format file contains annotations, including class labels and bounding box coordinates.
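As a rough illustration of how such a label file can be read, the sketch below parses one YOLO-style line (`class x_center y_center width height`, all coordinates normalized to 0..1) into pixel coordinates. It assumes that the class ids follow the order of the labels listed in this card and that images are 1600 pixels wide and 1300 pixels high; neither is confirmed by the card, and `parse_yolo_line` is a hypothetical helper name.

```python
from typing import NamedTuple

# Assumption: class ids 0, 1, 2 follow the order given under "Dataset Labels".
CLASS_NAMES = ["abiotic", "insect", "disease"]


class Box(NamedTuple):
    label: str
    x_min: float
    y_min: float
    x_max: float
    y_max: float


def parse_yolo_line(line, img_w=1600, img_h=1300, names=CLASS_NAMES):
    """Convert one normalized YOLO annotation line into a pixel-space box."""
    cls, xc, yc, w, h = line.split()
    xc, yc, w, h = (float(v) for v in (xc, yc, w, h))
    return Box(
        label=names[int(cls)],
        x_min=(xc - w / 2) * img_w,
        y_min=(yc - h / 2) * img_h,
        x_max=(xc + w / 2) * img_w,
        y_max=(yc + h / 2) * img_h,
    )


box = parse_yolo_line("1 0.5 0.5 0.25 0.5")
print(box)
```

Each line of a label file would be passed through the same function, giving one box per annotated instance.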

### Dataset Labels

```

['abiotic', 'insect', 'disease']

```

### Number of Images

```json

{'train': 3788, 'valid': 710, 'test': 238}

```

### Number of Instances Annotated

```json

{'insect':1618, 'abiotic':13960, 'disease':7032}

```

### Folder structure after unzipping respective folders

```markdown

Data/
├── train/
│   ├── images
│   └── labels
├── val/
│   ├── images
│   └── labels
└── test/
    ├── images
    └── labels

```

### Dataset Information

The dataset was created by a team of data scientists from the KaraAgro AI Foundation,

with support from agricultural scientists and officers.

The creation of this dataset was made possible through funding of the

Deutsche Gesellschaft für Internationale Zusammenarbeit (GIZ) through their projects

[Market-Oriented Value Chains for Jobs & Growth in the ECOWAS Region (MOVE)](https://www.giz.de/en/worldwide/108524.html) and

[FAIR Forward - Artificial Intelligence for All](https://www.bmz-digital.global/en/overview-of-initiatives/fair-forward/), which GIZ implements on

behalf of the German Federal Ministry for Economic Cooperation and Development (BMZ).

For detailed information regarding the dataset, we invite you to explore the accompanying datasheet available [here](https://drive.google.com/file/d/1viv-PtZC_j9S_K1mPl4R1lFRKxoFlR_M/view?usp=sharing).

This comprehensive resource offers a deeper understanding of the dataset's composition, variables, data collection methodologies, and other relevant details.

|

true |

# Dataset Card for WS353-semantics-sim-and-rel with ~2K entries.

### Dataset Summary

License: Apache-2.0. Contains CSV of a list of word1, word2, their `connection score`, type of connection and language.

- ### Original Datasets are available here:

- https://leviants.com/multilingual-simlex999-and-wordsim353/

### Paper of original Dataset:

- https://arxiv.org/pdf/1508.00106v5.pdf |

false | # AutoTrain Dataset for project: imagetest

## Dataset Description

This dataset has been automatically processed by AutoTrain for project imagetest.

### Languages

The BCP-47 code for the dataset's language is unk.

## Dataset Structure

### Data Instances

A sample from this dataset looks as follows:

```json

[

{

"image": "<32x32 RGB PIL image>",

"feat_fine_label": 19,

"target": 11

},

{

"image": "<32x32 RGB PIL image>",

"feat_fine_label": 29,

"target": 15

}

]

```

### Dataset Fields

The dataset has the following fields (also called "features"):

```json

{

"image": "Image(decode=True, id=None)",

"feat_fine_label": "ClassLabel(names=['apple', 'aquarium_fish', 'baby', 'bear', 'beaver', 'bed', 'bee', 'beetle', 'bicycle', 'bottle', 'bowl', 'boy', 'bridge', 'bus', 'butterfly', 'camel', 'can', 'castle', 'caterpillar', 'cattle', 'chair', 'chimpanzee', 'clock', 'cloud', 'cockroach', 'couch', 'cra', 'crocodile', 'cup', 'dinosaur', 'dolphin', 'elephant', 'flatfish', 'forest', 'fox', 'girl', 'hamster', 'house', 'kangaroo', 'keyboard', 'lamp', 'lawn_mower', 'leopard', 'lion', 'lizard', 'lobster', 'man', 'maple_tree', 'motorcycle', 'mountain', 'mouse', 'mushroom', 'oak_tree', 'orange', 'orchid', 'otter', 'palm_tree', 'pear', 'pickup_truck', 'pine_tree', 'plain', 'plate', 'poppy', 'porcupine', 'possum', 'rabbit', 'raccoon', 'ray', 'road', 'rocket', 'rose', 'sea', 'seal', 'shark', 'shrew', 'skunk', 'skyscraper', 'snail', 'snake', 'spider', 'squirrel', 'streetcar', 'sunflower', 'sweet_pepper', 'table', 'tank', 'telephone', 'television', 'tiger', 'tractor', 'train', 'trout', 'tulip', 'turtle', 'wardrobe', 'whale', 'willow_tree', 'wolf', 'woman', 'worm'], id=None)",

"target": "ClassLabel(names=['aquatic_mammals', 'fish', 'flowers', 'food_containers', 'fruit_and_vegetables', 'household_electrical_devices', 'household_furniture', 'insects', 'large_carnivores', 'large_man-made_outdoor_things', 'large_natural_outdoor_scenes', 'large_omnivores_and_herbivores', 'medium_mammals', 'non-insect_invertebrates', 'people', 'reptiles', 'small_mammals', 'trees', 'vehicles_1', 'vehicles_2'], id=None)"

}

```
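To show how the integer values in a sample relate to the `ClassLabel` names, here is a small sketch that decodes the two samples shown earlier by plain list indexing. Only the first 30 fine-label names are copied from the field definition to keep the block short, and `decode` is a hypothetical helper, not part of AutoTrain or any library.

```python
# First 30 fine-label names, copied verbatim from the field definition above
# (the list is truncated here for brevity).
FINE_NAMES = [
    "apple", "aquarium_fish", "baby", "bear", "beaver", "bed", "bee", "beetle",
    "bicycle", "bottle", "bowl", "boy", "bridge", "bus", "butterfly", "camel",
    "can", "castle", "caterpillar", "cattle", "chair", "chimpanzee", "clock",
    "cloud", "cockroach", "couch", "cra", "crocodile", "cup", "dinosaur",
]

# All 20 target names, copied from the field definition above.
TARGET_NAMES = [
    "aquatic_mammals", "fish", "flowers", "food_containers",
    "fruit_and_vegetables", "household_electrical_devices",
    "household_furniture", "insects", "large_carnivores",
    "large_man-made_outdoor_things", "large_natural_outdoor_scenes",
    "large_omnivores_and_herbivores", "medium_mammals",
    "non-insect_invertebrates", "people", "reptiles", "small_mammals",
    "trees", "vehicles_1", "vehicles_2",
]


def decode(sample):
    """Map the integer labels of one sample to their class names."""
    return FINE_NAMES[sample["feat_fine_label"]], TARGET_NAMES[sample["target"]]


first = decode({"feat_fine_label": 19, "target": 11})
second = decode({"feat_fine_label": 29, "target": 15})
print(first, second)
```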

### Dataset Splits

This dataset is split into a train and validation split. The split sizes are as follows:

| Split name | Num samples |

| ------------ | ------------------- |

| train | 50000 |

| valid | 10000 |

|

false |

Audio files sampled at 48000Hz of an American male pronouncing the names of the Esperanto letters in three ways. Retroflex-r and trilled-r are included. |

false | # Dataset Card for Dataset Name

## Dataset Description

Old ChatGPT scrapes, the RAW version.

### Dataset Summary

This is a result of a colab in a virtual shed. Really old stuff, before Plus even. Everything was generated by the model itself.

I think this is from what we call "alpha" now? Might even be before alpha idfk.

### Supported Tasks and Leaderboards

See dataset for more info.

### Languages

English only iirc, might be some translations thrown in there.

## Dataset Structure

### Data Instances

[More Information Needed]

### Data Fields

[More Information Needed]

### Data Splits

[More Information Needed]

## Dataset Creation

### Curation Rationale

Not much data was actually curated, it is recommended to go over the data yourself and fix some answers.

### Source Data

#### Initial Data Collection and Normalization

First, user queries were generated, then Assistant's answers.

#### Who are the source language producers?

OpenAI?

### Annotations

#### Annotation process

[More Information Needed]

#### Who are the annotators?

[More Information Needed]

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

None. Z E R O.

### Discussion of Biases

Has some biases towards talking about OpenAI stuff and some weird-ish stuff. "NDA" stuff is missing.

### Other Known Limitations

Some of the queries contain answers, hence models trained on data as is will be fucked up. Raw data contains "today's date" and other stuff I didn't include in my Neo(X) finetune.

## Additional Information

### Dataset Curators

MrSteyk and old ChatGPT. RIP in pepperoni, you will be missed.

### Licensing Information

[More Information Needed]

### Citation Information

Don't

### Contributions

They know themselves, apart from OAI. |

false |

I'm too lazy to fill in the dataset card template! Think of it like r1, but after NY - timestamp is XX-01-2023. This is not turbo at this point, it was before 26ths. This must be "alpha", I'm 99% sure.

Has same problems, additional one is missing greetings! "NDA" stuff is missing from this as well! |

true | |

true | |

false | |

false | # Dataset Card for "prepared-yagpt"

## Short Description

This dataset is intended for training chatbots in the Russian language.

It consists of many dialogues that allow you to train your model to answer user prompts.

## Notes

1. Special tokens

- history, speaker1, speaker2 (history can optionally be removed, i.e. replaced with an empty string)

2. Dataset is based on

- [Matreshka](https://huggingface.co/datasets/zjkarina/matreshka)

- [Yandex-Q](https://huggingface.co/datasets/its5Q/yandex-q)

- [Diasum](https://huggingface.co/datasets/bragovo/diasum)

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

false | |

true | Persian dataset with the 16 Myers-Briggs types, crawled from Persian Twitter users. |

false |

Dataset for anime head detection (include the entire head, not only the face parts).

| Dataset | Train | Test | Validate | Description |

|------------------------|-------|------|----------|----------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------|

| ani_face_detection.v1i | 25698 | 113 | 253 | A high-quality third-party dataset (it seems to no longer be publicly available; please contact me for removal if it infringes your rights) that can be used for training directly. Although its name includes `face`, what it actually annotates is the `head`. |

We provide an [online demo](https://huggingface.co/spaces/deepghs/anime_object_detection) here. |

false |

Dataset for anime face detection (face only, not the entire head).

| Dataset | Train | Test | Validate | Description |

|:-----------------------:|:-----:|:----:|:--------:|------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------|

| v1.4 | 12798 | 622 | 1217 | Additional images from different categories have been annotated based on the `v1` dataset. Furthermore, all automatically annotated data samples from the `v1` dataset have been manually corrected. |

| v1.4-raw | 4266 | 622 | 1217 | Same as `v1.4`, without any preprocessing or data augmentation. Suitable for direct upload to the Roboflow platform. |

| v1 | 5943 | 293 | 566 | Primarily consists of illustrations, auto-annotated with [hysts/anime-face-detector](https://github.com/hysts/anime-face-detector), with necessary manual corrections performed. |

| raw | 1981 | 293 | 566 | Same as `v1`, without any preprocessing or data augmentation. Suitable for direct upload to the Roboflow platform. |

| Anime Face CreateML.v1i | 4263 | 609 | 1210 | Third-party dataset, source: https://universe.roboflow.com/my-workspace-mph8o/anime-face-createml/dataset/1 |

The best practice is to combine the `Anime Face CreateML.v1i` dataset with the `v1.4` dataset for training. We provide an [online demo](https://huggingface.co/spaces/deepghs/anime_object_detection). |

false | |

false | # Dataset Card for "piqa-ja-mbartm2m"

## Dataset Description

This is the Japanese translation of [piqa](https://huggingface.co/datasets/piqa).

The translation model used was [facebook/mbart-large-50-many-to-many-mmt](https://huggingface.co/facebook/mbart-large-50-many-to-many-mmt).

## License

The same as the original piqa.

|

false |

# Dataset Card for LFQA Summary

## Table of Contents

- [Table of Contents](#table-of-contents)

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Dataset Creation](#dataset-creation)

- [Additional Information](#additional-information)

- [Licensing Information](#licensing-information)

- [Citation Information](#citation-information)

## Dataset Description

- **Repository:** [Repo](https://github.com/utcsnlp/lfqa_summary)

- **Paper:** [Concise Answers to Complex Questions: Summarization of Long-Form Answers](TODO)

- **Point of Contact:** acpotluri[at]utexas.edu

### Dataset Summary

This dataset contains summarization data for long-form question answers.

### Languages

The dataset contains data in English.

## Dataset Structure

### Data Instances

Each instance is a (question, long-form answer) pair from one of the three data sources -- ELI5, WebGPT, and NQ.

### Data Fields

Each instance is in a json dictionary format with the following fields:

* `type`: The type of the annotation, all data should have `summary` as the value.

* `dataset`: The dataset this QA pair belongs to, one of [`NQ`, `ELI5`, `Web-GPT`].

* `q_id`: The question id, same as the original NQ or ELI5 dataset.

* `a_id`: The answer id, same as the original ELI5 dataset. For NQ, we populate a dummy `a_id` (1).

* `question`: The question.

* `answer_paragraph`: The answer paragraph.

* `answer_sentences`: The list of answer sentences, tokenized from the answer paragraph.

* `summary_sentences`: The list of summary sentence indices (starting from 1).

* `is_summary_count`: The list of count of annotators selecting this sentence as summary for the sentence in `answer_sentences`.

* `is_summary_1`: List of boolean value indicating whether annotator one selected the corresponding sentence as a summary sentence.

* `is_summary_2`: List of boolean value indicating whether annotator two selected the corresponding sentence as a summary sentence.

* `is_summary_3`: List of boolean value indicating whether annotator three selected the corresponding sentence as a summary sentence.
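The relationship between the per-annotator fields and `is_summary_count` can be sketched as follows. The instance below is invented for illustration (it is not taken from the dataset), and the helper names `summary_counts` and `summary_text` are hypothetical. How `summary_sentences` was derived from the annotator votes is not stated in this card, so the sketch only looks the indices up.

```python
def summary_counts(instance):
    """Count, per answer sentence, how many annotators marked it as summary."""
    votes = [
        instance["is_summary_1"],
        instance["is_summary_2"],
        instance["is_summary_3"],
    ]
    return [sum(per_sentence) for per_sentence in zip(*votes)]


def summary_text(instance):
    """Look up summary sentences; `summary_sentences` is 1-indexed."""
    sentences = instance["answer_sentences"]
    return [sentences[i - 1] for i in instance["summary_sentences"]]


# Hypothetical instance with only the fields used here.
example = {
    "answer_sentences": ["Water boils at 100 C.", "At sea level.", "Fun fact."],
    "summary_sentences": [1],
    "is_summary_1": [True, True, False],
    "is_summary_2": [True, False, False],
    "is_summary_3": [True, False, False],
}

counts = summary_counts(example)
picked = summary_text(example)
print(counts, picked)
```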

### Data Splits

The train/dev/test splits are provided in the uploaded dataset.

## Dataset Creation

Please refer to our [paper](TODO) and datasheet for details on dataset creation, annotation process, and discussion of limitations.

## Additional Information

### Licensing Information

https://creativecommons.org/licenses/by-sa/4.0/legalcode

### Citation Information

```

@inproceedings{TODO,

title = {Concise Answers to Complex Questions: Summarization of Long-Form Answers},

author = {Potluri, Abhilash and Xu, Fangyuan and Choi, Eunsol},

year = 2023,

booktitle = {Proceedings of the Annual Meeting of the Association for Computational Linguistics},

note = {Long paper}

}

``` |

false | |

false | # Dataset Card for Dataset Name

## Dataset Description

- **Homepage:**

- **Repository:**

- **Paper:**

- **Leaderboard:**

- **Point of Contact:**

### Dataset Summary

just for test

### Supported Tasks and Leaderboards

[More Information Needed]

### Languages

[More Information Needed]

## Dataset Structure

### Data Instances

[More Information Needed]

### Data Fields

[More Information Needed]

### Data Splits

[More Information Needed]

## Dataset Creation

### Curation Rationale

[More Information Needed]

### Source Data

#### Initial Data Collection and Normalization

[More Information Needed]

#### Who are the source language producers?

[More Information Needed]

### Annotations

#### Annotation process

[More Information Needed]

#### Who are the annotators?

[More Information Needed]

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

[More Information Needed]

### Licensing Information

[More Information Needed]

### Citation Information

[More Information Needed]

### Contributions

[More Information Needed] |

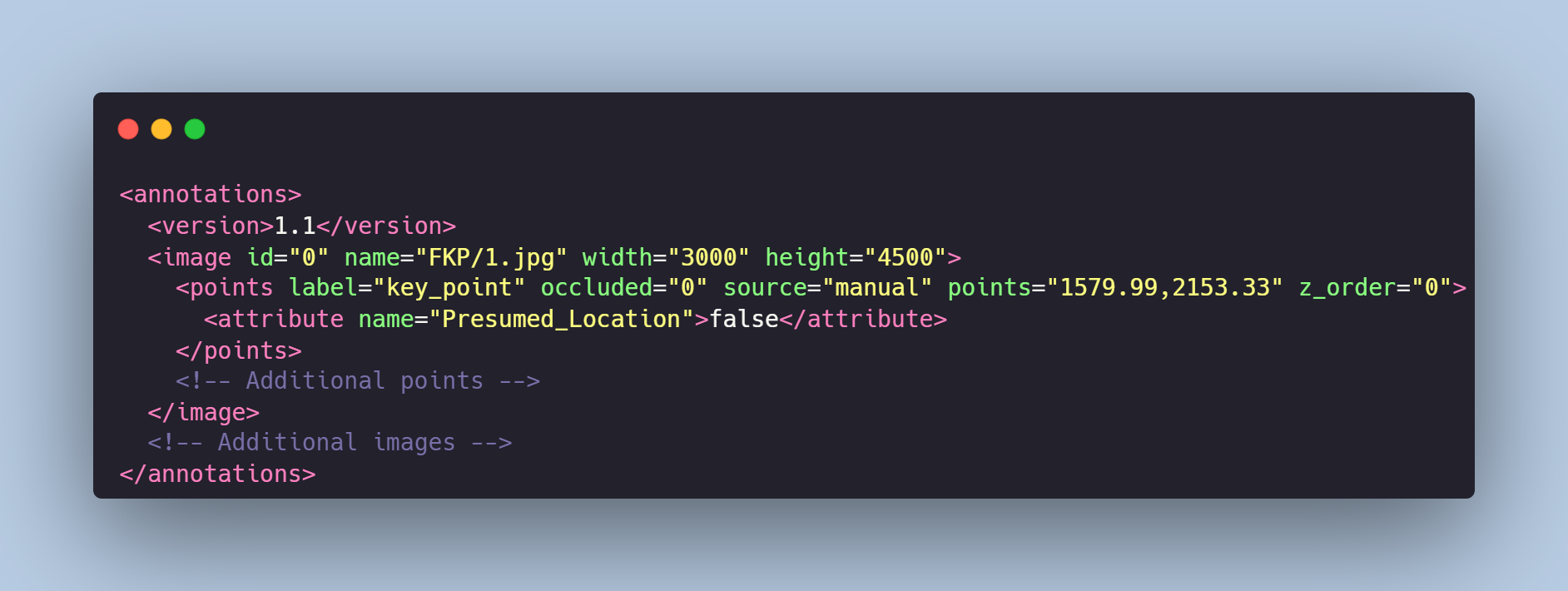

false | # Facial Keypoints

The dataset is designed for computer vision and machine learning tasks involving the identification and analysis of key points on a human face. It consists of images of human faces, each accompanied by key point annotations in XML format.

# Get the Dataset

This is just an example of the data. If you need access to the entire dataset, contact us via **[sales@trainingdata.pro](mailto:sales@trainingdata.pro)** or leave a request on **[https://trainingdata.pro/data-market](https://trainingdata.pro/data-market?utm_source=huggingface)**

# Data Format

Each image from `FKP` folder is accompanied by an XML-annotation in the `annotations.xml` file indicating the coordinates of the key points. For each point, the x and y coordinates are provided, and there is a `Presumed_Location` attribute, indicating whether the point is presumed or accurately defined.

# Example of XML file structure

# Labeled Keypoints

**1.** Left eye, the closest point to the nose

**2.** Left eye, pupil's center

**3.** Left eye, the closest point to the left ear

**4.** Right eye, the closest point to the nose

**5.** Right eye, pupil's center

**6.** Right eye, the closest point to the right ear

**7.** Left eyebrow, the closest point to the nose

**8.** Left eyebrow, the closest point to the left ear

**9.** Right eyebrow, the closest point to the nose

**10.** Right eyebrow, the closest point to the right ear

**11.** Nose, center

**12.** Mouth, left corner point

**13.** Mouth, right corner point

**14.** Mouth, the highest point in the middle

**15.** Mouth, the lowest point in the middle

# Keypoint annotation is made in accordance with your requirements.

## **[TrainingData](https://trainingdata.pro/data-market?utm_source=huggingface)** provides high-quality data annotation tailored to your needs

More datasets in TrainingData's Kaggle account: **https://www.kaggle.com/trainingdatapro/datasets**

TrainingData's GitHub: **https://github.com/trainingdata-pro** |

false | # Pose Estimation

The dataset is primarily intended to identify and predict the positions of major joints of a human body in an image. It consists of photographs of people with body parts labeled with keypoints.

# Get the Dataset

This is just an example of the data. If you need access to the entire dataset, contact us via **[sales@trainingdata.pro](mailto:sales@trainingdata.pro)** or leave a request on **[https://trainingdata.pro/data-market](https://trainingdata.pro/data-market?utm_source=huggingface)**

# Data Format

Each image from `EP` folder is accompanied by an XML-annotation in the `annotations.xml` file indicating the coordinates of the key points. For each point, the x and y coordinates are provided, and there is a `Presumed_Location` attribute, indicating whether the point is presumed or accurately defined.

# Example of XML file structure


# Labeled body parts

Each keypoint is ordered and corresponds to a specific part of the body:

0. **Nose**

1. **Neck**

2. **Right shoulder**

3. **Right elbow**

4. **Right wrist**

5. **Left shoulder**

6. **Left elbow**

7. **Left wrist**

8. **Right hip**

9. **Right knee**

10. **Right foot**

11. **Left hip**

12. **Left knee**

13. **Left foot**

14. **Right eye**

15. **Left eye**

16. **Right ear**

17. **Left ear**

# Keypoint annotation is made in accordance with your requirements.

## **[TrainingData](https://trainingdata.pro/data-market?utm_source=huggingface)** provides high-quality data annotation tailored to your needs

More datasets in TrainingData's Kaggle account: **https://www.kaggle.com/trainingdatapro/datasets**

TrainingData's GitHub: **https://github.com/trainingdata-pro** |

false |

# Dataset Card for duorc

## Table of Contents

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Dataset Creation](#dataset-creation)

- [Curation Rationale](#curation-rationale)

- [Source Data](#source-data)

- [Annotations](#annotations)

- [Personal and Sensitive Information](#personal-and-sensitive-information)

- [Considerations for Using the Data](#considerations-for-using-the-data)

- [Social Impact of Dataset](#social-impact-of-dataset)

- [Discussion of Biases](#discussion-of-biases)

- [Other Known Limitations](#other-known-limitations)

- [Additional Information](#additional-information)

- [Dataset Curators](#dataset-curators)

- [Licensing Information](#licensing-information)

- [Citation Information](#citation-information)

- [Contributions](#contributions)

## Dataset Description

- **Homepage:** [DuoRC](https://duorc.github.io/)

- **Repository:** [GitHub](https://github.com/duorc/duorc)

- **Paper:** [arXiv](https://arxiv.org/abs/1804.07927)

- **Leaderboard:** [DuoRC Leaderboard](https://duorc.github.io/#leaderboard)

- **Point of Contact:** [Needs More Information]

### Dataset Summary

The DuoRC dataset is an English language dataset of questions and answers gathered from crowdsourced AMT workers on Wikipedia and IMDb movie plots. The workers were given freedom to pick an answer from the plots or synthesize their own answers. It contains two sub-datasets: SelfRC and ParaphraseRC. The SelfRC dataset is built solely on Wikipedia movie plots. ParaphraseRC has questions written from Wikipedia movie plots, with answers given based on the corresponding IMDb movie plots.

### Supported Tasks and Leaderboards

- `abstractive-qa` : The dataset can be used to train a model for Abstractive Question Answering. An abstractive question answering model is presented with a passage and a question and is expected to generate a multi-word answer. The model performance is measured by exact-match and F1 score, similar to [SQuAD V1.1](https://huggingface.co/metrics/squad) or [SQuAD V2](https://huggingface.co/metrics/squad_v2). A [BART-based model](https://huggingface.co/yjernite/bart_eli5) with a [dense retriever](https://huggingface.co/yjernite/retribert-base-uncased) may be used for this task.

- `extractive-qa`: The dataset can be used to train a model for Extractive Question Answering. An extractive question answering model is presented with a passage and a question and is expected to predict the start and end of the answer span in the passage. The model performance is measured by exact-match and F1 score, similar to [SQuAD V1.1](https://huggingface.co/metrics/squad) or [SQuAD V2](https://huggingface.co/metrics/squad_v2). [BertForQuestionAnswering](https://huggingface.co/transformers/model_doc/bert.html#bertforquestionanswering) or any other similar model may be used for this task.
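The exact-match and F1 scores mentioned above can be sketched in the style of the SQuAD evaluation: answers are lowercased, stripped of punctuation and English articles, and compared as token bags. This is an illustrative re-implementation under those assumptions, not the official DuoRC or SQuAD scorer, and the function names are hypothetical.

```python
import re
import string
from collections import Counter


def normalize(text):
    """Lowercase, drop punctuation and articles, collapse whitespace."""
    text = text.lower()
    text = "".join(ch for ch in text if ch not in string.punctuation)
    text = re.sub(r"\b(a|an|the)\b", " ", text)
    return " ".join(text.split())


def exact_match(pred, gold):
    return float(normalize(pred) == normalize(gold))


def f1(pred, gold):
    """Token-level F1 between a prediction and one reference answer."""
    p, g = normalize(pred).split(), normalize(gold).split()
    if not p or not g:
        # If either side is empty, score 1 only when both are empty.
        return float(p == g)
    overlap = sum((Counter(p) & Counter(g)).values())
    if overlap == 0:
        return 0.0
    precision, recall = overlap / len(p), overlap / len(g)
    return 2 * precision * recall / (precision + recall)


def best_score(metric, pred, golds):
    """Score against every reference answer and keep the best."""
    return max(metric(pred, gold) for gold in golds)


golds = ["They arrived by train."]
em = best_score(exact_match, "they arrived by train", golds)
partial = best_score(f1, "by train", golds)
print(em, partial)
```

Taking the maximum over references matters here because the `answers` field is a list and an instance may carry more than one acceptable answer.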

### Languages

The text in the dataset is in English, as spoken by Wikipedia writers for movie plots. The associated BCP-47 code is `en`.

## Dataset Structure

### Data Instances

```

{'answers': ['They arrived by train.'], 'no_answer': False, 'plot': "200 years in the future, Mars has been colonized by a high-tech company.\nMelanie Ballard (Natasha Henstridge) arrives by train to a Mars mining camp which has cut all communication links with the company headquarters. She's not alone, as she is with a group of fellow police officers. They find the mining camp deserted except for a person in the prison, Desolation Williams (Ice Cube), who seems to laugh about them because they are all going to die. They were supposed to take Desolation to headquarters, but decide to explore first to find out what happened.They find a man inside an encapsulated mining car, who tells them not to open it. However, they do and he tries to kill them. One of the cops witnesses strange men with deep scarred and heavily tattooed faces killing the remaining survivors. The cops realise they need to leave the place fast.Desolation explains that the miners opened a kind of Martian construction in the soil which unleashed red dust. Those who breathed that dust became violent psychopaths who started to build weapons and kill the uninfected. They changed genetically, becoming distorted but much stronger.The cops and Desolation leave the prison with difficulty, and devise a plan to kill all the genetically modified ex-miners on the way out. However, the plan goes awry, and only Melanie and Desolation reach headquarters alive. Melanie realises that her bosses won't ever believe her. However, the red dust eventually arrives to headquarters, and Melanie and Desolation need to fight once again.", 'plot_id': '/m/03vyhn', 'question': 'How did the police arrive at the Mars mining camp?', 'question_id': 'b440de7d-9c3f-841c-eaec-a14bdff950d1', 'title': 'Ghosts of Mars'}

```

### Data Fields

- `plot_id`: a `string` feature containing the movie plot ID.

- `plot`: a `string` feature containing the movie plot text.

- `title`: a `string` feature containing the movie title.

- `question_id`: a `string` feature containing the question ID.

- `question`: a `string` feature containing the question text.

- `answers`: a `list` of `string` features containing list of answers.

- `no_answer`: a `bool` feature informing whether the question has no answer or not.

### Data Splits

The data is split into a training, dev and test set in such a way that the resulting sets contain 70%, 15%, and 15% of the total QA pairs and no QA pairs for any movie seen in train are included in the test set. The final split sizes are as follows:

| Name | Train | Dev | Test |
| --- | --- | --- | --- |
| SelfRC | 60721 | 12961 | 12599 |
| ParaphraseRC | 69524 | 15591 | 15857 |

## Dataset Creation

### Curation Rationale

[Needs More Information]

### Source Data

Wikipedia and IMDb movie plots

#### Initial Data Collection and Normalization

[Needs More Information]

#### Who are the source language producers?

[Needs More Information]

### Annotations

#### Annotation process

For SelfRC, the annotators were allowed to mark an answer span in the plot or synthesize their own answers after reading Wikipedia movie plots.

For ParaphraseRC, questions from the Wikipedia movie plots from SelfRC were used and the annotators were asked to answer based on IMDb movie plots.

#### Who are the annotators?

Amazon Mechanical Turk Workers

### Personal and Sensitive Information

[Needs More Information]

## Considerations for Using the Data

### Social Impact of Dataset

[Needs More Information]

### Discussion of Biases

[Needs More Information]

### Other Known Limitations

[Needs More Information]

## Additional Information

### Dataset Curators

The dataset was initially created by Amrita Saha, Rahul Aralikatte, Mitesh M. Khapra, and Karthik Sankaranarayanan in a collaborative work between IIT Madras and IBM Research.

### Licensing Information

[MIT License](https://github.com/duorc/duorc/blob/master/LICENSE)

### Citation Information

```

@inproceedings{DuoRC,

author = { Amrita Saha and Rahul Aralikatte and Mitesh M. Khapra and Karthik Sankaranarayanan},

title = {{DuoRC: Towards Complex Language Understanding with Paraphrased Reading Comprehension}},

booktitle = {Meeting of the Association for Computational Linguistics (ACL)},

year = {2018}

}

```

### Contributions

Thanks to [@gchhablani](https://github.com/gchhablani) for adding this dataset. |

false |

# Dataset Card for MNIST

## Table of Contents

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Dataset Creation](#dataset-creation)

- [Curation Rationale](#curation-rationale)

- [Source Data](#source-data)

- [Annotations](#annotations)

- [Personal and Sensitive Information](#personal-and-sensitive-information)

- [Considerations for Using the Data](#considerations-for-using-the-data)

- [Social Impact of Dataset](#social-impact-of-dataset)

- [Discussion of Biases](#discussion-of-biases)

- [Other Known Limitations](#other-known-limitations)

- [Additional Information](#additional-information)

- [Dataset Curators](#dataset-curators)

- [Licensing Information](#licensing-information)

- [Citation Information](#citation-information)

- [Contributions](#contributions)

## Dataset Description

- **Homepage:** http://yann.lecun.com/exdb/mnist/

- **Repository:**

- **Paper:** MNIST handwritten digit database by Yann LeCun, Corinna Cortes, and CJ Burges

- **Leaderboard:**

- **Point of Contact:**

### Dataset Summary

The MNIST dataset consists of 70,000 28x28 black-and-white images of handwritten digits extracted from two NIST databases. There are 60,000 images in the training dataset and 10,000 images in the test dataset, with one class per digit for a total of 10 classes and roughly 7,000 images (about 6,000 train images and 1,000 test images) per class.

Half of the images were drawn by Census Bureau employees and the other half by high school students (this split is evenly distributed in the training and testing sets).

### Supported Tasks and Leaderboards

- `image-classification`: The goal of this task is to classify a given image of a handwritten digit into one of 10 classes representing integer values from 0 to 9, inclusively. The leaderboard is available [here](https://paperswithcode.com/sota/image-classification-on-mnist).

### Languages

English

## Dataset Structure

### Data Instances

A data point comprises an image and its label:

```

{

'image': <PIL.PngImagePlugin.PngImageFile image mode=L size=28x28 at 0x276021F6DD8>,

'label': 5

}

```

### Data Fields

- `image`: A `PIL.Image.Image` object containing the 28x28 image. Note that when accessing the image column: `dataset[0]["image"]` the image file is automatically decoded. Decoding of a large number of image files might take a significant amount of time. Thus it is important to first query the sample index before the `"image"` column, *i.e.* `dataset[0]["image"]` should **always** be preferred over `dataset["image"][0]`

- `label`: an integer between 0 and 9 representing the digit.
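The access-order advice for the `image` field can be illustrated with a toy stand-in that counts decode operations. This is only a simplified model of the behaviour described above, not the actual `datasets` implementation; `ToyImageDataset` and its attributes are hypothetical.

```python
class ToyImageDataset:
    """Toy dataset whose image column is decoded each time it is accessed."""

    def __init__(self, encoded):
        self._encoded = list(encoded)
        self.decode_calls = 0

    def _decode(self, raw):
        # Stand-in for an expensive image decode.
        self.decode_calls += 1
        return f"decoded({raw})"

    def __getitem__(self, key):
        if isinstance(key, int):
            # Row access: decode only the requested row.
            return {"image": self._decode(self._encoded[key])}
        if key == "image":
            # Column access: decode every row in the column.
            return [self._decode(raw) for raw in self._encoded]
        raise KeyError(key)


ds = ToyImageDataset(f"png{i}" for i in range(1000))

_ = ds[0]["image"]
row_first = ds.decode_calls

ds.decode_calls = 0
_ = ds["image"][0]
column_first = ds.decode_calls

print(row_first, column_first)
```

In this model, indexing the row first triggers a single decode, while indexing the column first decodes every image before one is selected.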

### Data Splits

The data is split into training and test set. All the images in the test set were drawn by different individuals than the images in the training set. The training set contains 60,000 images and the test set 10,000 images.

## Dataset Creation

### Curation Rationale

The MNIST database was created to provide a testbed for people wanting to try pattern recognition methods or machine learning algorithms while spending minimal effort on preprocessing and formatting. Images of the original dataset (NIST) were in two groups, one consisting of images drawn by Census Bureau employees and one consisting of images drawn by high school students. In NIST, the training set was built by grouping all the images of the Census Bureau employees, and the test set was built by grouping the images from the high school students.

The goal in building MNIST was to have a training and test set following the same distributions, so the training set contains 30,000 images drawn by Census Bureau employees and 30,000 images drawn by high school students, and the test set contains 5,000 images of each group. The curators took care to make sure all the images in the test set were drawn by different individuals than the images in the training set.

### Source Data

#### Initial Data Collection and Normalization

The original images from NIST were size normalized to fit a 20x20 pixel box while preserving their aspect ratio. The resulting images contain grey levels (i.e., pixels don't simply have a value of black and white, but a level of greyness from 0 to 255) as a result of the anti-aliasing technique used by the normalization algorithm. The images were then centered in a 28x28 image by computing the center of mass of the pixels, and translating the image so as to position this point at the center of the 28x28 field.
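The centering step described above can be sketched in plain Python: compute the intensity-weighted center of mass of the small image, then translate it so that point lands as close as possible to the middle of the 28x28 field. This is a minimal sketch using whole-pixel shifts, not the original normalization code, and the function names are hypothetical.

```python
def center_of_mass(img):
    """Return the (row, col) center of mass of a 2D list of intensities."""
    total = sum(sum(row) for row in img)
    if total == 0:
        raise ValueError("an empty image has no center of mass")
    r = sum(i * v for i, row in enumerate(img) for v in row) / total
    c = sum(j * v for row in img for j, v in enumerate(row)) / total
    return r, c


def center_in_field(img, size=28):
    """Paste img into a size x size field, centering its center of mass."""
    r, c = center_of_mass(img)
    mid = (size - 1) / 2  # geometric center of the field in pixel indices
    dr, dc = round(mid - r), round(mid - c)
    out = [[0] * size for _ in range(size)]
    for i, row in enumerate(img):
        for j, v in enumerate(row):
            ti, tj = i + dr, j + dc
            if 0 <= ti < size and 0 <= tj < size:
                out[ti][tj] = v
    return out


# A 20x20 image with a 2x2 blob in the top-left corner.
small = [[0] * 20 for _ in range(20)]
for i in (0, 1):
    for j in (0, 1):
        small[i][j] = 1

centered = center_in_field(small)
print(center_of_mass(small), center_of_mass(centered))
```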

#### Who are the source language producers?

Half of the source images were drawn by Census Bureau employees, half by high school students. According to the dataset curator, the images from the first group are more easily recognizable.

### Annotations

#### Annotation process

The images were not annotated after their creation: the image creators annotated their images with the corresponding label after drawing them.

#### Who are the annotators?

Same as the source data creators.

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

Chris Burges, Corinna Cortes and Yann LeCun

### Licensing Information

MIT Licence

### Citation Information

```

@article{lecun2010mnist,

title={MNIST handwritten digit database},

author={LeCun, Yann and Cortes, Corinna and Burges, CJ},

journal={ATT Labs [Online]. Available: http://yann.lecun.com/exdb/mnist},

volume={2},

year={2010}

}

```

### Contributions

Thanks to [@sgugger](https://github.com/sgugger) for adding this dataset. |

false | |

false | |

true |

# Dataset Card for semantics-ws-qna-oa with ~2K entries.

### Dataset Summary

License: Apache-2.0. Contains parquet of INSTRUCTION, RESPONSE, SOURCE and METADATA.

- ### Original Datasets are available here:

- https://leviants.com/multilingual-simlex999-and-wordsim353/

### Paper of original Dataset:

- https://arxiv.org/pdf/1508.00106v5.pdf |

false |

Embeddings of the [English Wikipedia](https://huggingface.co/datasets/wikipedia) [paragraphs](https://huggingface.co/datasets/olmer/wiki_paragraphs), computed with the [all-mpnet-base-v2](https://huggingface.co/sentence-transformers/all-mpnet-base-v2) sentence-transformers encoder.

The dataset contains 43 911 155 paragraphs from 6 458 670 Wikipedia articles.

The size of each paragraph varies from 20 to 2000 characters.

For each paragraph there is an embedding of size 768.

Embeddings are stored in numpy files, 1 000 000 embeddings per file.

For each embedding file, there is an ids file that contains the list of ids of the corresponding paragraphs.
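The sharding described above implies a simple mapping from a paragraph's overall position to a file and a row inside it. The sketch below only does that arithmetic; it assumes that files are filled in order and that positions start at 0, neither of which is confirmed by this card, and the names `locate` and `num_files` are made up for the example.

```python
import math

EMBEDDINGS_PER_FILE = 1_000_000  # stated in the card
TOTAL_PARAGRAPHS = 43_911_155    # stated in the card


def locate(position, per_file=EMBEDDINGS_PER_FILE):
    """Map a 0-based overall position to (file number, row within that file).

    Assumes files are filled sequentially, which is an assumption.
    """
    if not 0 <= position < TOTAL_PARAGRAPHS:
        raise IndexError(position)
    return divmod(position, per_file)


# Number of embedding files implied by the two figures above.
num_files = math.ceil(TOTAL_PARAGRAPHS / EMBEDDINGS_PER_FILE)
```

With these figures the last file would be only partially filled, so code that reads the files should not assume that every file holds exactly 1 000 000 rows.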

__Be careful, dataset size is 151Gb__. |

false |

# Public Ground-Truth Dataset for Handwritten Circuit Diagrams (GTDB-HD)

This repository contains images of hand-drawn electrical circuit diagrams together with bounding box annotations for object detection and segmentation ground truth files. The dataset is intended for training models (e.g. neural networks) that extract electrical graphs from raster graphics.

## Structure

The folder structure is made up as follows:

```

gtdh-hd

│ README.md # This File

│ classes.json # Classes List

│ classes_color.json # Classes to Color Map

│ classes_discontinuous.json # Classes Morphology Info

│ classes_ports.json # Electrical Port Descriptions for Classes

│ consistency.py # Dataset Statistics and Consistency Check

│ loader.py # Simple Dataset Loader and Storage Functions

│ segmentation.py # Multiclass Segmentation Generation

│ utils.py # Helper Functions

└───drafter_D

│ └───annotations # Bounding Box Annotations

│ │ │ CX_DY_PZ.xml

│ │ │ ...

│ │

│ └───images # Raw Images

│ │ │ CX_DY_PZ.jpg

│ │ │ ...

│ │

│ └───instances # Instance Segmentation Polygons

│ │ │ CX_DY_PZ.json

│ │ │ ...

│ │

│ └───segmentation # Binary Segmentation Maps (Strokes vs. Background)

│ │ │ CX_DY_PZ.jpg

│ │ │ ...

...

```

Where:

- `D` is the (globally) running number of a drafter

- `X` is the (globally) running number of the circuit (12 Circuits per Drafter)

- `Y` is the Local Number of the Circuit's Drawings (2 Drawings per Circuit)

- `Z` is the Local Number of the Drawing's Image (4 Pictures per Drawing)

### Image Files

Every image is RGB-colored and stored as either `jpg`, `jpeg` or `png` (both uppercase and lowercase suffixes exist).
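Because the suffix varies in both spelling and case, code that walks the image folders may want to parse file names rather than hard-code `.jpg`. The following is a minimal sketch of such a parser for the `CX_DY_PZ` convention; the function name `parse_image_name` and the returned dictionary keys are assumptions made for this example and are not part of `loader.py`.

```python
import re

# C<circuit>_D<drawing>_P<picture>.<suffix>, with the suffix matched case-insensitively.
NAME_RE = re.compile(r"^C(\d+)_D(\d+)_P(\d+)\.(jpg|jpeg|png)$", re.IGNORECASE)


def parse_image_name(filename):
    """Split an image file name into circuit, drawing and picture numbers."""
    match = NAME_RE.match(filename)
    if match is None:
        raise ValueError(f"not a CX_DY_PZ image name: {filename!r}")
    circuit, drawing, picture = (int(g) for g in match.group(1, 2, 3))
    return {
        "circuit": circuit,
        "drawing": drawing,
        "picture": picture,
        "suffix": match.group(4).lower(),  # normalized to lowercase
    }


info = parse_image_name("C25_D1_P4.JPG")
```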

### Bounding Box Annotations

A complete list of class labels including a suggested mapping table to integer numbers for training and prediction purposes can be found in `classes.json`. The annotations contain **BB**s (Bounding Boxes) of **RoI**s (Regions of Interest) like electrical symbols or texts within the raw images and are stored in the [PASCAL VOC](http://host.robots.ox.ac.uk/pascal/VOC/) format.

Please note: *For every Raw image in the dataset, there is an accompanying bounding box annotation file.*
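For readers who prefer not to use the bundled loader, the annotation files can also be read with the Python standard library. The sketch below assumes the usual PASCAL VOC layout (`object` elements with a `name` and a `bndbox`) and assumes that the additional `<text>` tag of `text` boxes sits directly under `object`. The sample XML and the function name `read_voc_boxes` are made up for illustration.

```python
import xml.etree.ElementTree as ET


def read_voc_boxes(xml_string):
    """Return one dict per annotated object in a PASCAL VOC style XML string."""
    root = ET.fromstring(xml_string)
    boxes = []
    for obj in root.iter("object"):
        bb = obj.find("bndbox")
        box = {"class": obj.findtext("name")}
        for key in ("xmin", "ymin", "xmax", "ymax"):
            # Coordinates may be written as "10" or "10.0"; both are accepted.
            box[key] = int(float(bb.findtext(key)))
        text = obj.findtext("text")  # assumed location of the optional text tag
        if text is not None:
            box["text"] = text
        boxes.append(box)
    return boxes


# Hand-written sample, not taken from the dataset.
SAMPLE = """<annotation>
  <filename>C1_D1_P1.jpg</filename>
  <object>
    <name>resistor</name>
    <bndbox><xmin>10</xmin><ymin>20</ymin><xmax>110</xmax><ymax>60</ymax></bndbox>
  </object>
  <object>
    <name>text</name>
    <text>R5</text>
    <bndbox><xmin>30</xmin><ymin>5</ymin><xmax>55</xmax><ymax>18</ymax></bndbox>
  </object>
</annotation>"""

boxes = read_voc_boxes(SAMPLE)
```

To read a file from disk, `ET.parse(path).getroot()` could replace `ET.fromstring` in the same loop.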

#### Known Labeled Issues

- C25_D1_P4 cuts off a text

- C27 cuts off some texts

- C29_D1_P1 has one additional text

- C31_D2_P4 has a text less

- C33_D1_P4 has a text less

- C46_D2_P2 cuts off a text

### Instance Segmentation

For every binary segmentation map, there is an accompanying polygonal annotation file for instance segmentation purposes, which is stored in the [labelme](https://github.com/wkentaro/labelme) format. Note that the contained polygons are quite coarse, intended to be used in conjunction with the binary segmentation maps for connection extraction and to tell individual instances with overlapping BBs apart.

### Segmentation Maps

Binary segmentation images are available for some samples and have the same resolution as the respective image files. They are considered to contain only black and white pixels, indicating areas of drawing strokes and background respectively.

### Netlists

For some images, there are also netlist files available, which are stored in the [ASC](http://ltwiki.org/LTspiceHelp/LTspiceHelp/Spice_Netlist.htm) format.

### Consistency and Statistics

This repository comes with a stand-alone script to:

- Obtain Statistics on

- Class Distribution

- BB Sizes

- Check the BB Consistency

- Classes with Regards to the `classes.json`

- Counts between Pictures of the same Drawing

- Ensure a uniform writing style of the Annotation Files (indent)

The respective script is called without arguments to operate on the **entire** dataset:

```

$ python3 consistency.py

```

Note that due to a complete re-write of the annotation data, the script takes several seconds to finish. A drafter can be specified as CLI argument to restrict the evaluation (for example drafter 15):

```

$ python3 consistency.py 15

```

### Multi-Class (Instance) Segmentation Processing

This dataset comes with a script to process both new and existing (instance) segmentation files. It is invoked as follows:

```

$ python3 segmentation.py <command> <drafter_id> <target> <source>

```

Where:

- `<command>` has to be one of:

- `transform`

- Converts existing BB Annotations to Polygon Annotations

- Default target folder: `instances`

- Existing polygon files are not overwritten by default, hence this command has no effect on a completely populated dataset.

- Intended to be invoked after adding new binary segmentation maps

- **This step has to be performed before all other commands**

- `wire`

- Generates Wire Describing Polygons

- Default target folder: `wires`

- `keypoint`

- Generates Keypoints for Component Terminals

- Default target folder: `keypoints`

- `create`

- Generates Multi-Class segmentation Maps

- Default target folder: `segmentation_multi_class`

- `refine`

- Refines Coarse Polygon Annotations to precisely match the annotated objects

- Default target folder: `instances_refined`

- For instance segmentation purposes

- `pipeline`

- executes `wire`,`keypoint` and `refine` stacked, with one common `source` and `target` folder

- Default target folder: `instances_refined`

- `assign`

- Connector Point to Port Type Assignment by Geometric Transformation Matching

- `<drafter_id>` **optionally** restricts the process to one of the drafters

- `<target>` **optionally** specifies a divergent target folder for results to be placed in

- `<source>` **optionally** specifies a divergent source folder to read from

Please note that source and target folders are **always** subfolders inside the individual drafter folders. Specifying source and target folders allows the results of individual processing steps to be stacked. For example, to perform the entire pipeline for drafter 20 manually, use:

```

python3 segmentation.py wire 20 instances_processed instances

python3 segmentation.py keypoint 20 instances_processed instances_processed

python3 segmentation.py refine 20 instances_processed instances_processed

```

### Dataset Loader

This dataset is also shipped with a set of loader and writer functions, which are internally used by the segmentation and consistency scripts and can be used for training. The dataset loader is simple, framework-agnostic and has been prepared to be callable from any location in the file system. Basic usage:

```

from loader import read_dataset, read_images, read_snippets

db_bb = read_dataset() # Read all BB Annotations

db_seg = read_dataset(segmentation=True) # Read all Polygon Annotations

db_bb_val = read_dataset(drafter=12) # Read Drafter 12 BB Annotations

len(db_bb) # Get The Amount of Samples

db_bb[5] # Get an Arbitrary Sample

db = read_images(drafter=12) # Returns a list of (Image, Annotation) pairs

db = read_snippets(drafter=12) # Returns a list of (Image, Annotation) pairs

```

## Citation

If you use this dataset for scientific publications, please consider citing us as follows:

```

@inproceedings{thoma2021public,

title={A Public Ground-Truth Dataset for Handwritten Circuit Diagram Images},

author={Thoma, Felix and Bayer, Johannes and Li, Yakun and Dengel, Andreas},

booktitle={International Conference on Document Analysis and Recognition},

pages={20--27},

year={2021},

organization={Springer}

}

```

## How to Contribute

If you want to contribute to the dataset as a drafter or in case of any further questions, please send an email to: <johannes.bayer@dfki.de> (corresponding author), <yakun.li@dfki.de>, <andreas.dengel@dfki.de>

## Guidelines

These guidelines are used throughout the generation of the dataset. They can be used as an instruction for participants and data providers.

### Drafter Guidelines

- 12 Circuits should be drawn, each of them twice (24 drawings in total)

- Most important: The drawing should be as natural to the drafter as possible

- Free-Hand sketches are preferred, using rulers and drawing Template stencils should be avoided unless it appears unnatural to the drafter

- Different types of pens/pencils should be used for different drawings

- Different kinds of (colored, structured, ruled, lined) paper should be used

- One symbol set (European/American) should be used throughout one drawing (consistency)

- It is recommended to use the symbol set that the drafter is most familiar with

- It is **strongly** recommended to share the first one or two circuits for review by the dataset organizers before drawing the rest to avoid problems (complete redrawing in worst case)

### Image Capturing Guidelines

- For each drawing, 4 images should be taken (96 images in total per drafter)

- Angle should vary

- Lighting should vary

- Moderate (e.g. motion) blur is allowed

- All circuit-related aspects of the drawing must be _human-recognizable_

- The drawing should be the main part of the image, but _naturally_ occurring objects from the environment are welcomed

- The first image should be _clean_, i.e. ideal capturing conditions

- Kinks and Buckling can be applied to the drawing between individual image capturing

- Try to use the file name convention (`CX_DY_PZ.jpg`) as early as possible

- The circuit range `X` will be given to you

- `Y` should be `1` or `2` for the drawing

- `Z` should be `1`,`2`,`3` or `4` for the picture

### Object Annotation Guidelines

- General Placement

- A **RoI** must be **completely** surrounded by its **BB**

- A **BB** should be as tight as possible to the **RoI**

- In case of connecting lines not completely touching the symbol, the BB should be extended (only by a small margin) to enclose those gaps (especially considering junctions)

- Characters that are part of the **essential symbol definition** should be included in the BB (e.g. the `+` of a polarized capacitor should be included in its BB)

- **Junction** annotations

- Used for actual junction points (Connection of three or more wire segments with a small solid circle)

- Used for connections of three or more straight-line wire segments where a physical connection can be inferred by context (i.e. can be distinguished from **crossover**)

- Used for wire line corners

- Redundant Junction Points should **not** be annotated (small solid circle in the middle of a straight line segment)

- Should not be used for corners or junctions that are part of the symbol definition (e.g. Transistors)

- **Crossover** Annotations

- If dashed/dotted line: BB should cover the two next dots/dashes

- **Text** annotations

- Individual Text Lines should be annotated Individually

- Text Blocks should only be annotated If Related to Circuit or Circuit's Components

- Semantically meaningful chunks of information should be annotated Individually

- component characteristics enclosed in a single annotation (e.g. __100Ohms__, __10%__ tolerance, __5V__ max voltage)

- Component Names and Types (e.g. __C1__, __R5__, __ATTINY2313__)

- Custom Component Terminal Labels (i.e. __Integrated Circuit__ Pins)

- Circuit Descriptor (e.g. "Radio Amplifier")

- Texts not related to the Circuit should be ignored

- e.g. Letterheads, Company Logos

- Drafter's auxiliary markings for internal organization like "D12"

- Texts on Surrounding or Background Papers

- Characters which are part of the essential symbol definition should __not__ be annotated as Text dedicatedly

- e.g. Schmitt Trigger __S__, AND gate __&__, motor __M__, Polarized capacitor __+__

- Only add terminal text annotation if the terminal is not part of the essential symbol definition

- **Table** cells should be annotated independently

- **Operational Amplifiers**

- Both the triangular US symbols and the European IC-like symbols for OpAmps should be labeled `operational_amplifier`

- The `+` and `-` signs at the OpAmp's input terminals are considered essential and should therefore not be annotated as texts

- **Complex Components**

- Both the entire Component and its sub-Components and internal connections should be annotated:

| Complex Component | Annotation |

| ----------------- | ------------------------------------------------------ |

| Optocoupler | 0. `optocoupler` as Overall Annotation |

| | 1. `diode.light_emitting` |

| | 2. `transistor.photo` (or `resistor.photo`) |

| | 3. `optical` if LED and Photo-Sensor arrows are shared |

| | Then the arrows area should be included in all |

| Relay | 0. `relay` as Overall Annotation |

| (also for | 1. `inductor` |

| coupled switches) | 2. `switch` |

| | 3. `mechanical` for the dashed line between them |

| Transformer | 0. `transformer` as Overall Annotation |

| | 1. `inductor` or `inductor.coupled` (watch the dot) |

| | 3. `magnetic` for the core |

#### Rotation Annotations

The Rotation (integer in degrees) should capture the overall rotation of the symbol shape. However, the position of the terminals should also be taken into consideration. Under idealized circumstances (no perspective distortion and accurately drawn symbols according to the symbol library), these two requirements coincide. For pathological cases, however, in which the shape and the set of terminals (or even individual terminals) conflict, the rotation should compromise between all factors.

Rotation annotations are currently a work in progress. They should be provided for at least the following classes:

- "voltage.dc"

- "resistor"

- "capacitor.unpolarized"

- "diode"

- "transistor.bjt"

#### Text Annotations

- The Character Sequence in the Text Label Annotations should describe the actual Characters depicted in the respective Bounding Box as Precisely as Possible

- Bounding Box Annotations of class `text`

- Bear an additional `<text>` tag in which their content is given as string

- The `Omega` and `Mikro` Symbols are escaped respectively

- Currently Work in Progress

- The utils script allows for migrating text annotations from one annotation file to another: `python3 utils.py source target`

### Segmentation Map Guidelines

- Areas of __Intended__ drawing strokes (ink and pencil abrasion respectively) should be marked black, all other pixels (background) should be white

- shining through the paper (from the rear side or other sheets) should be considered background

### Polygon Annotation Guidelines

0. Before starting, make sure the respective files exist for the image sample to be polygon-annotated:

- BB Annotations (Pascal VOC XML File)

- (Binary) Segmentation Map

1. Transform the BB annotations into raw polygons

- Use: `python3 segmentation.py transform`

2. Refine the Polygons

- **To Avoid Embedding Image Data into the resulting JSON**, use: `labelme --nodata`

- Just make sure there are no overlaps between instances

- Especially take care about overlaps with structural elements like junctions and crossovers

3. Generate Multi-Class Segmentation Maps from the refined polygons

- Use: `python3 segmentation.py create`

- Use the generated images for a visual inspection

- After spotting problems, continue with Step 2

### Terminal Annotation Guidelines

```

labelme --labels "connector" --config "{shift_auto_shape_color: 1}" --nodata

```

|

true |

The dataset contains Ukrainian reviews in three different domains:

1) Hotels.

2) Restaurants.

3) Products.

The dataset comprises several .csv files that may be useful:

1) processed_data.csv - the processed dataset itself.

2) train_val_test_indices.csv - csv file with train/val/test indices. The split was stratified w.r.t. dataset name (hotels, restaurants, products) and rating.

3) bad_ids.csv - csv file with ids of bad samples identified by a model-filtering approach. Only ids of samples whose actual and predicted ratings differ by more than 2 points are kept in this file.
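The filtering rule behind bad_ids.csv can be written down in a few lines. The sketch below is only an illustration of that rule on toy records. The field names `id`, `rating` and `predicted` are hypothetical and may not match the columns of the actual files, and the example values are invented.

```python
def find_bad_ids(rows, threshold=2):
    """Return the ids of rows whose actual and predicted ratings differ by more than threshold."""
    return [row["id"] for row in rows if abs(row["rating"] - row["predicted"]) > threshold]


# Toy records with hypothetical field names, not taken from the dataset.
rows = [
    {"id": 1, "rating": 5, "predicted": 4.5},  # difference 0.5: kept
    {"id": 2, "rating": 1, "predicted": 4.2},  # difference 3.2: flagged
    {"id": 3, "rating": 3, "predicted": 5.0},  # difference exactly 2: kept
]
bad_ids = find_bad_ids(rows)
```

Note the strict comparison: a difference of exactly 2 points does not count as "more than 2".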

The data was scraped from Tripadvisor (https://www.tripadvisor.com/) and Rozetka (https://rozetka.com.ua/).

The dataset was initially used for extraction of key-phrases relevant to one of rating categories, based on trained machine learning model (future article link will be here).

Dataset is processed to include two additional columns: one with lemmatized tokens and another one with POS tags. Both lemmatization and POS tagging are done using pymorphy2 (https://pymorphy2.readthedocs.io/en/stable/) library.

The words are tokenized using a custom regex tokenizer that accounts for the use of apostrophes.
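The exact regular expression is not given here. As a rough idea of what an apostrophe-aware tokenizer might look like, the sketch below keeps word-internal apostrophes (in three common spellings) inside a token. The pattern and the function name `tokenize` are assumptions made for this example and are not the authors' tokenizer.

```python
import re

# One or more letters, optionally followed by apostrophe + letters groups.
# [^\W\d_] matches any Unicode letter; ', ’ and ʼ are accepted as apostrophes.
TOKEN_RE = re.compile(r"[^\W\d_]+(?:['’ʼ][^\W\d_]+)*")


def tokenize(text):
    """Split text into word tokens, keeping word-internal apostrophes."""
    return TOKEN_RE.findall(text)


tokens = tokenize("Це м'ясо, п’ять!")
```

A plain `\w+` tokenizer would split `м'ясо` into two pieces, which is what a rule of this kind avoids.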

Those reviews which weren't in Ukrainian were translated to it using Microsoft translator and re-checked manually afterwards.

|

false |

# Dataset card for dominoes

## Table of Contents

- [Table of Contents](#table-of-contents)

- [Dataset description](#dataset-description)

- [Dataset categories](#dataset-categories)

## Dataset description

- **Homepage:** https://segments.ai/ant/dominoes

This dataset was created using [Segments.ai](https://segments.ai). It can be found [here](https://segments.ai/ant/dominoes).

## Dataset categories

| Id | Name | Description |

| --- | ---- | ----------- |

| 1 | domino | - |

|

false | # Dataset Card for Dataset Name

## Dataset Description

- **Homepage:**

- **Repository:**

- **Paper:**

- **Leaderboard:**

- **Point of Contact:**

### Dataset Summary

This dataset card aims to be a base template for new datasets. It has been generated using [this raw template](https://github.com/huggingface/huggingface_hub/blob/main/src/huggingface_hub/templates/datasetcard_template.md?plain=1).

### Supported Tasks and Leaderboards

[More Information Needed]

### Languages

[More Information Needed]

## Dataset Structure

### Data Instances

[More Information Needed]

### Data Fields

[More Information Needed]

### Data Splits

[More Information Needed]

## Dataset Creation

### Curation Rationale

[More Information Needed]

### Source Data

#### Initial Data Collection and Normalization

[More Information Needed]

#### Who are the source language producers?

[More Information Needed]

### Annotations

#### Annotation process

[More Information Needed]

#### Who are the annotators?

[More Information Needed]

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

[More Information Needed]

### Licensing Information

[More Information Needed]

### Citation Information

[More Information Needed]

### Contributions

[More Information Needed] |

false | |

false |

[seahorse](https://github.com/google-research-datasets/seahorse)

```

@misc{clark2023seahorse,

title={SEAHORSE: A Multilingual, Multifaceted Dataset for Summarization Evaluation},

author={Elizabeth Clark and Shruti Rijhwani and Sebastian Gehrmann and Joshua Maynez and Roee Aharoni and Vitaly Nikolaev and Thibault Sellam and Aditya Siddhant and Dipanjan Das and Ankur P. Parikh},

year={2023},

eprint={2305.13194},

archivePrefix={arXiv},

primaryClass={cs.CL}

}

``` |

false |

# Dataset Card for Dataset Name

## Dataset Description

- **Homepage:**

- **Repository:**

- **Paper:**

- **Leaderboard:**

- **Point of Contact:**

### Dataset Summary

A copied data set from CIFAR10 as a demonstration

### Supported Tasks and Leaderboards

[More Information Needed]

### Languages

[More Information Needed]

## Dataset Structure

### Data Instances

[More Information Needed]

### Data Fields

[More Information Needed]

### Data Splits

[More Information Needed]

## Dataset Creation

### Curation Rationale

[More Information Needed]

### Source Data

#### Initial Data Collection and Normalization

[More Information Needed]

#### Who are the source language producers?

[More Information Needed]

### Annotations

#### Annotation process

[More Information Needed]

#### Who are the annotators?

[More Information Needed]

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

[More Information Needed]

### Licensing Information

[More Information Needed]

### Citation Information

[More Information Needed]

### Contributions

[More Information Needed] |

true |

This is a dataset for testing. |

true | # AutoTrain Dataset for project: analytics-intent-reasoning

## Dataset Description

This dataset has been automatically processed by AutoTrain for project analytics-intent-reasoning.

### Languages

The BCP-47 code for the dataset's language is zh.

## Dataset Structure

### Data Instances

A sample from this dataset looks as follows:

```json

[

{

"text": "\u9500\u552e\u91d1\u989d\u7684\u540c\u6bd4",

"target": 1

},

{

"text": "\u676d\u5dde\u54ea\u4e2a\u533a\u7684\u9500\u552e\u91d1\u989d\u6700\u9ad8",

"target": 1

}

]

```

### Dataset Fields

The dataset has the following fields (also called "features"):

```json

{

"text": "Value(dtype='string', id=None)",

"target": "ClassLabel(names=['\u62a5\u8868\u6784\u5efa', '\u67e5\u8be2\u7c7b', '\u67e5\u8be2\u7c7b\u67e5\u8be2\u7c7b'], id=None)"

}

```

### Dataset Splits

This dataset is split into a train and validation split. The split sizes are as follows:

| Split name | Num samples |

| ------------ | ------------------- |

| train | 72 |

| valid | 20 |

|

false |

## Loading the dataset with a specific configuration

There are 3 different OCR versions to choose from with their original format or standardized DUE format, as well as the option to load the documents as filepaths or as binaries (PDF).

To load a specific configuration, pass a config from one of the following:

```python

#{bin_}{Amazon,Azure,Tesseract}_{original,due}

['Amazon_due', 'Amazon_original', 'Azure_due', 'Azure_original', 'Tesseract_due', 'Tesseract_original',

'bin_Amazon_due', 'bin_Amazon_original', 'bin_Azure_due', 'bin_Azure_original', 'bin_Tesseract_due', 'bin_Tesseract_original']

```

```python

from datasets import load_dataset

ds = load_dataset("DUDE2023/DUDE", 'Amazon_original')

```
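The naming pattern in the comment above can also be expanded programmatically, for example to loop over all configurations. This is a small sketch using only the standard library; the helper name `dude_config_names` is made up for the example.

```python
from itertools import product


def dude_config_names():
    """Expand {bin_}{Amazon,Azure,Tesseract}_{original,due} into all config names."""
    prefixes = ("", "bin_")  # filepaths vs. binaries (PDF)
    ocr_engines = ("Amazon", "Azure", "Tesseract")
    formats = ("due", "original")
    return [f"{prefix}{ocr}_{fmt}" for prefix, ocr, fmt in product(prefixes, ocr_engines, formats)]


configs = dude_config_names()
```

Each resulting name can be passed as the second argument to `load_dataset`, as in the call shown above.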

|

false | |

false |

# VoxCeleb 1

VoxCeleb1 contains over 100,000 utterances for 1,251 celebrities, extracted from videos uploaded to YouTube.

## Verification Split

| | train | validation | test |

| :---: | :---: | :---: | :---: |

| # of speakers | 1211 | 1211 | 40 |

| # of samples | 133777 | 14865 | 4874 |

## References

- https://www.robots.ox.ac.uk/~vgg/data/voxceleb/vox1.html |

false |

## "Say It Again, Kid!" Speech data collection##

## Training data for pronunciation quality classifiers for children learning English ##

Used in papers ...

Train set and test set in Flac format.

File id key, for example: train001fifi05_609_t10892805_living-room.flac

Speaker key indicates train or test set, and a running number: train001

Native language: "fifi" for Finnish, enuk for UK English, othr for other.

Age of speaker in years (if known): "05"

Sample number: "609" (Some kids really enjoyed contributing!)

Seconds from first sample given: "t10892805"

Target utterance text with spaces etc replaced by dashes: "living-room"
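The file id key above can be taken apart with a regular expression. The sketch below is one possible reading of the format. It assumes that the age field is simply absent when the age is unknown, which is not specified here, and the names `FILE_ID_RE` and `parse_file_id` are made up for the example.

```python
import re

FILE_ID_RE = re.compile(
    r"^(?P<speaker>(?P<split>train|test)\d+)"  # speaker key, e.g. train001
    r"(?P<language>fifi|enuk|othr)"            # native language code
    r"(?P<age>\d*)"                            # age in years; assumed empty if unknown
    r"_(?P<sample>\d+)"                        # sample number
    r"_t(?P<seconds>\d+)"                      # seconds from first sample given
    r"_(?P<utterance>.+)\.flac$"               # target utterance, dashes for spaces
)


def parse_file_id(filename):
    """Split a file id into its documented parts."""
    match = FILE_ID_RE.match(filename)
    if match is None:
        raise ValueError(f"unexpected file id: {filename!r}")
    parts = match.groupdict()
    parts["age"] = int(parts["age"]) if parts["age"] else None
    parts["sample"] = int(parts["sample"])
    parts["seconds"] = int(parts["seconds"])
    return parts


parsed = parse_file_id("train001fifi05_609_t10892805_living-room.flac")
```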

---

license: cc-by-nd-4.0

---

We emphasize that by "no derivatives" we mean that you cannot use the audio samples as part of any work that is not directly related to describing the dataset in a speech technology or scientific language learning context. You may include them in a scientific presentation when the context is clearly to present the original data and not to use the data in another fashion.

Commercial use of speech samples for building and evaluation of speech technology models is not prohibited. |

false |

# VoxCeleb 1

VoxCeleb1 contains over 100,000 utterances for 1,251 celebrities, extracted from videos uploaded to YouTube.

## Identification Split

| | train | validation | test |

| :---: | :---: | :---: | :---: |

| # of speakers | 1251 | 1251 | 1251 |

| # of samples | 306208 | 14479 | 4874 |

## References

- https://www.robots.ox.ac.uk/~vgg/data/voxceleb/vox1.html |

false | # AutoTrain Dataset for project: hhhh

## Dataset Description

This dataset has been automatically processed by AutoTrain for project hhhh.

### Languages

The BCP-47 code for the dataset's language is unk.

## Dataset Structure

### Data Instances

A sample from this dataset looks as follows:

```json

[

{

"image": "<256x256 RGBA PIL image>",

"target": 0

},

{

"image": "<256x256 RGBA PIL image>",

"target": 0

}

]

```

### Dataset Fields

The dataset has the following fields (also called "features"):

```json

{

"image": "Image(decode=True, id=None)",

"target": "ClassLabel(names=['lion', 'tiger'], id=None)"

}

```

### Dataset Splits

This dataset is split into a train and validation split. The split sizes are as follows:

| Split name | Num samples |

| ------------ | ------------------- |

| train | 360 |

| valid | 40 |

|

false | # ES2Bash

This dataset contains a collection of natural language requests (in Spanish) and their corresponding bash commands. The purpose of this dataset is to provide examples of requests and their associated bash commands to facilitate machine learning and the development of natural language processing systems related to command-line operations.

# Features

The dataset consists of two main features:

* Natural Language Request (ES): This feature contains natural language requests written in Spanish. The requests represent tasks or actions to be performed using command-line commands.

* Bash Command: This feature contains the bash commands associated with each natural language request. The bash commands represent the way to execute the requested task or action using the command line.

# Initial Commands

The dataset initially contains requests related to the following commands:

* cat: Requests involving reading text files.

* ls: Requests related to obtaining information about files and directories at a specific location.

* cd: Requests to change the current directory.

# Dataset Expansion

In addition to the initial commands mentioned above, there are plans to expand this dataset to include more common command-line commands. The expansion will cover a broader range of tasks and actions that can be performed using command-line operations.

Efforts will also be made to improve the existing examples and ensure that they are clear, accurate, and representative of typical requests that users may have when working with command lines.

# Request Statistics

In the future, statistical data will be provided on the requests present in this dataset. This data may include information about the distribution of requests in different categories, the frequency of use of different commands, and any other relevant analysis to better understand the usage and needs of command-line users.

# Request Collection Process

This dataset is the result of a combination of requests generated by language models and manually added requests. The requests generated by language models were based on existing examples and prior knowledge related to the usage of command lines. A manual review was then conducted to ensure the quality and relevance of the requests. |

false |

# VoxCeleb 2

VoxCeleb2 contains over 1 million utterances for 6,112 celebrities, extracted from videos uploaded to YouTube.

## Verification Split

| | train | validation | test |

| :---: | :---: | :---: | :---: |

| # of speakers | 5,994 | 5,994 | 118 |

| # of samples | 982,808 | 109,201 | 36,237 |

## Data Fields

- ID (string): The ID of the sample with format `<spk_id--utt_id_start_stop>`.

- duration (float64): The duration of the segment in seconds.

- wav (string): The filepath of the waveform.

- start (int64): The start index of the segment, which is (start seconds) × (sample rate).

- stop (int64): The stop index of the segment, which is (stop seconds) × (sample rate).

- spk_id (string): The ID of the speaker.

Example:

```

{

'ID': 'id09056--00112_0_89088',

'duration': 5.568,

'wav': 'id09056/U2mRgZ1tW04/00112.wav',

'start': 0,

'stop': 89088,

'spk_id': 'id09056'

}

```
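The fields of the example are related to each other: the `ID` encodes the speaker, utterance, start and stop, and the duration follows from the sample indices. The sketch below parses the `ID` and recomputes the duration. The sample rate of 16 000 Hz is not stated above; it is inferred from this one example (89088 / 5.568) and should be treated as an assumption, as should the helper names.

```python
def parse_sample_id(sample_id):
    """Split '<spk_id>--<utt_id>_<start>_<stop>' into its four parts."""
    spk_id, rest = sample_id.split("--", 1)
    utt_id, start, stop = rest.rsplit("_", 2)
    return spk_id, utt_id, int(start), int(stop)


def segment_duration(start, stop, sample_rate=16_000):
    """Duration in seconds of a segment given as sample indices (sample rate assumed)."""
    return (stop - start) / sample_rate


spk_id, utt_id, start, stop = parse_sample_id("id09056--00112_0_89088")
duration = segment_duration(start, stop)
```

For the example record this reproduces the listed `spk_id`, `start` and `stop`, and a duration of 5.568 seconds.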

## References

- https://www.robots.ox.ac.uk/~vgg/data/voxceleb/vox2.html |

false |

A simple classification task for generic anime images. It includes the following 4 classes:

| Class | Images | Description |

|:------------:|:------:|---------------------------------------------------------------|

| comic | 5746 | comic images in color or greyscale |

| illustration | 6064 | illustration images |

| bangumi | 4914 | video screenshots or key visual images in bangumi |

| 3d | 4649 | 3d works including koikatsu, mikumikudance and other 3d types |

|

true | # Dataset Card for "github-issues"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

false |

*** Image Captioning Dataset

Overview

This dataset is designed for image captioning tasks and consists of a collection of images paired with corresponding captions. The dataset aims to facilitate research and development in the field of image captioning and can be used for training and evaluating image captioning models.

Dataset Details

Number of Images: 9228

Image Sources: Flickr30K

Caption Language: Arabic

|

false |

# VoxCeleb 2

VoxCeleb2 contains over 1 million utterances for 6,112 celebrities, extracted from videos uploaded to YouTube.

## Verification Split

| | train | validation | test |

| :---: | :---: | :---: | :---: |

| # of speakers | 5,994 | 5,994 | 118 |

| # of samples | 982,808 | 109,201 | 36,237 |

## Data Fields

- ID (string): The ID of the sample with format `<spk_id--utt_id_start_stop>`.

- duration (float64): The duration of the segment in seconds.

- wav (string): The filepath of the waveform.

- start (int64): The start index of the segment, which is (start seconds) × (sample rate).

- stop (int64): The stop index of the segment, which is (stop seconds) × (sample rate).

- spk_id (string): The ID of the speaker.

Example:

```

{

'ID': 'id09056--00112_0_89088',

'duration': 5.568,

'wav': 'id09056/U2mRgZ1tW04/00112.wav',

'start': 0,

'stop': 89088,

'spk_id': 'id09056'

}

```

## References

- https://www.robots.ox.ac.uk/~vgg/data/voxceleb/vox2.html |

false |

# Dataset Card for Piano Sound Quality Database

## Requirements

```

python 3.8-3.10

soundfile

librosa

```

## Usage

```

from datasets import load_dataset

# Load the "5_Kawai" split of the dataset
data = load_dataset("ccmusic-database/piano_sound_quality", split="5_Kawai")

# Class names of the label feature, indexed by integer label
labels = data.features['label'].names

for item in data:
    print('audio info: ', item['audio'])
    print('label name: ' + labels[item['label']])

```

## Maintenance

```

git clone git@hf.co:datasets/ccmusic-database/piano_sound_quality

```

## Dataset Description

- **Homepage:** <https://ccmusic-database.github.io>

- **Repository:** <https://huggingface.co/datasets/CCMUSIC/piano_sound_quality>

- **Paper:** <https://doi.org/10.5281/zenodo.5676893>

- **Leaderboard:** <https://ccmusic-database.github.io/team.html>

- **Point of Contact:** N/A

### Dataset Summary

This database contains 12 full-range audio files (.wav/.mp3/.m4a format) of 7 models of piano (KAWAI upright piano, KAWAI grand piano, Yingchang upright piano, Xinghai upright piano, Grand Theatre Steinway piano, Steinway grand piano, Pearl River upright piano) and 1320 split monophonic audio files (.wav/.mp3/.m4a format), for a total of 1332 files.

A score sheet (.xls format) of the piano sound quality rated by 29 people who participated in the subjective evaluation test is also included.

### Supported Tasks and Leaderboards

Piano Sound Classification

### Languages

English

## Dataset Structure

### Data Instances

.wav

### Data Fields

```

1_PearlRiver

2_YoungChang

3_Steinway-T

4_Hsinghai

5_Kawai

6_Steinway

7_Kawai-G

8_Yamaha

```

### Data Splits

trainset, validationset, testset

## Dataset Creation

### Curation Rationale

Lack of a dataset for piano sound quality

### Source Data

#### Initial Data Collection and Normalization

Zhaorui Liu, Shaohua Ji, Monan Zhou

#### Who are the source language producers?

Students from CCMUSIC

### Annotations

#### Annotation process

This database contains 12 full-range audio files (.wav/.mp3/.m4a format) of 7 models of piano (KAWAI upright piano, KAWAI grand piano, Yingchang upright piano, Xinghai upright piano, Grand Theatre Steinway piano, Steinway grand piano, Pearl River upright piano)

#### Who are the annotators?

Students from CCMUSIC

### Personal and Sensitive Information

None

## Considerations for Using the Data

### Social Impact of Dataset

Helps develop piano sound quality rating apps

### Discussion of Biases

Only for pianos

### Other Known Limitations

No black key in Steinway

## Additional Information

### Dataset Curators

Zijin Li

### Licensing Information

```

MIT License

Copyright (c) 2023 CCMUSIC

Permission is hereby granted, free of charge, to any person obtaining a copy

of this software and associated documentation files (the "Software"), to deal

in the Software without restriction, including without limitation the rights

to use, copy, modify, merge, publish, distribute, sublicense, and/or sell

copies of the Software, and to permit persons to whom the Software is

furnished to do so, subject to the following conditions:

The above copyright notice and this permission notice shall be included in all

copies or substantial portions of the Software.

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR

IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,

FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE

AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER

LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,

OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE

SOFTWARE.

```

### Citation Information

```

@dataset{zhaorui_liu_2021_5676893,

author = {Zhaorui Liu and Monan Zhou and Shenyang Xu and Zijin Li},

title = {{Music Data Sharing Platform for Computational Musicology Research (CCMUSIC DATASET)}},

month = nov,

year = 2021,

publisher = {Zenodo},

version = {1.1},

doi = {10.5281/zenodo.5676893},

url = {https://doi.org/10.5281/zenodo.5676893}

}

```

### Contributions

Provide a dataset for piano sound quality |

true |

dataset_info:

features:

- name: intent

dtype: string

- name: user_utterance

dtype: string

- name: origin

dtype: string

# Dataset Card for "clinic150-SUR"

### Dataset Summary

The Clinic150-SUR dataset is a novel and augmented dataset designed to simulate natural human behavior during interactions with customer service-like centers.

Extending the [Clinic150 dataset](https://aclanthology.org/D19-1131/), it incorporates two augmentation techniques, IBM's [LAMBADA](https://arxiv.org/abs/1911.03118) and the [Parrot](https://github.com/PrithivirajDamodaran/Parrot_Paraphraser) paraphraser, along with carefully curated duplicated utterances.

This dataset aims to provide a more comprehensive and realistic representation of customer service interactions,

facilitating the development and evaluation of robust and efficient dialogue systems.

Key Features:

- Augmentation with IBM's [LAMBADA Model](https://arxiv.org/abs/1911.03118): The Clinic150-SUR dataset leverages IBM's LAMBADA model, a language generation model trained on a large corpus of text, to augment the original dataset. This augmentation process enhances the diversity and complexity of the dialogue data, allowing for a broader range of interactions.

- Integration of [Parrot](https://github.com/PrithivirajDamodaran/Parrot_Paraphraser) Model: In addition to the LAMBADA model, the Clinic150-SUR dataset also incorporates the Parrot model, providing a variety of paraphrases. By integrating Parrot, the dataset achieves more variations of existing utterances.

- Duplicated Utterances: The dataset includes carefully curated duplicated utterances to mimic real-world scenarios where users rephrase or repeat commonly asked queries. This feature adds variability to the data, reflecting the natural tendencies of human interactions, and enables dialogue systems to handle such instances better.

- [Clinic150](https://aclanthology.org/D19-1131/) as the Foundation: The Clinic150-SUR dataset is built upon the Clinic150 dataset, which originally consisted of 150 in-domain intent classes and 150 human utterances for each intent. By utilizing this foundation, the augmented dataset retains the in-domain expertise while better reflecting the nature of user requests towards a dialog system.

### Data Instances

#### clinic150-SUR

- **Size of downloaded dataset file:** 29 MB

### Data Fields

#### clinic150-SUR

- `intent`: a `string` feature.

- `user_utterance`: a `string` feature.

- `origin`: a `string` feature ('original', 'lambada', 'parrot').
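
The three fields above are enough to inspect how much of the data comes from each augmentation source and which utterances are repeated. The following is a minimal sketch under stated assumptions: the `records` list is made up for illustration and is not taken from the dataset, and the helper names `count_by_origin` and `duplicated_utterances` are hypothetical.

```python
from collections import Counter

# Hypothetical records for illustration only; they follow the three fields
# described above but are not actual rows of clinic150-SUR.
records = [
    {"intent": "balance", "user_utterance": "what is my balance", "origin": "original"},
    {"intent": "balance", "user_utterance": "how much money do i have", "origin": "parrot"},
    {"intent": "transfer", "user_utterance": "send money to my savings", "origin": "lambada"},
    {"intent": "balance", "user_utterance": "what is my balance", "origin": "original"},
]


def count_by_origin(rows):
    """Count rows per value of the `origin` field."""
    return Counter(row["origin"] for row in rows)


def duplicated_utterances(rows):
    """Return (intent, utterance) pairs that occur more than once."""
    counts = Counter((row["intent"], row["user_utterance"]) for row in rows)
    return {key: n for key, n in counts.items() if n > 1}


print(count_by_origin(records))
print(duplicated_utterances(records))
```

The same two helpers would work on any iterable of dictionaries with these keys, such as rows from a loaded split.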

### Citation Information

```

@inproceedings{rabinovich2022reliable,

title={Reliable and Interpretable Drift Detection in Streams of Short Texts},

author={Rabinovich, Ella and Vetzler, Matan and Ackerman, Samuel and Anaby-Tavor, Ateret},

booktitle = "Proceedings of the 61st Annual Meeting of the Association for Computational Linguistics (industry track)",

publisher = "Association for Computational Linguistics",

year={2023},

url={https://arxiv.org/abs/2305.17750}

}

```

### Contributions

Thanks to [Matan Vetzler](https://www.linkedin.com/in/matanvetzler/), [Ella Rabinovich](https://www.linkedin.com/in/ella-rabinovich-7b9a06/) for adding this dataset. |

false |

# HNC_Mini

Contains 306,084 samples collected from the following datasets.

- QQP_triplets

- HC3

- sentence-compression

|

false |

# Summary

This is a 🇹🇭 Thai-translated (GCP) dataset based on the 4.5K-example code-generation instruction dataset [GPTeacher](https://github.com/teknium1/GPTeacher)

Supported Tasks:

- Training LLMs

- Synthetic Data Generation

- Data Augmentation

Languages: Thai

Version: 1.0

--- |

true | # Dataset Card for Dataset Name

## Dataset Description

- **Author:** Rubén Darío Jaramillo

- **Email:** rubend18@hotmail.com

- **WhatsApp:** +593 93 979 6676

### Dataset Summary

CIE10 (ICD-10 in English) is the 10th revision of the International Statistical Classification of Diseases and Related Health Problems (ICD), a medical classification list maintained by the World Health Organization (WHO). It contains codes for diseases, signs and symptoms, abnormal findings, complaints, social circumstances, and external causes of injury or disease. Work on ICD-10 began in 1983; it was endorsed by the Forty-third World Health Assembly in 1990 and was first used by member states in 1994. It was replaced by ICD-11 on January 1, 2022.