
false |

# Plantations Segmentation

The images are aerial photographs of agricultural plantations with crops such as cabbage and zucchini, annotated with object and class segmentation of cabbage. The dataset addresses agricultural tasks such as plant detection and counting, health assessment, and irrigation planning.

# Get the Dataset

**This is just an example of the data.** If you need access to the entire dataset, contact us via **[sales@trainingdata.pro](mailto:sales@trainingdata.pro)** or leave a request on **[https://trainingdata.pro/data-market](https://trainingdata.pro/data-market?utm_source=huggingface)**

# Dataset structure

- **Plantations_Segmentation** - contains the original plantation images (folder **img**) and a file with annotations (.xml)

- **Object_Segmentation** - includes object segmentation masks for the original images

- **Class_Segmentation** - includes class segmentation masks for the original images

# Types of segmentation

The dataset includes two types of segmentation:

- **Class Segmentation** - objects corresponding to one class are identified

- **Object Segmentation** - all objects are identified separately

# Data Format

Each image in the `img` folder is accompanied by an XML annotation in the `annotations.xml` file giving the coordinates of its polygons; each point is specified by its x and y coordinates.
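The exact XML layout is only shown as an image in the original card, so the following is a minimal sketch that assumes a CVAT-style export: `<image name=...>` elements with `<polygon label=... points="x1,y1;x2,y2;...">` children. The function name `load_polygons` and the sample XML are hypothetical; adjust the tag and attribute names to the real file.

```python
import xml.etree.ElementTree as ET


def load_polygons(xml_text):
    """Return {image_name: [(label, [(x, y), ...]), ...]}.

    Assumes a CVAT-style layout (hypothetical for this dataset):
    <image name="..."> elements containing
    <polygon label="..." points="x1,y1;x2,y2;..."/> children.
    """
    root = ET.fromstring(xml_text.strip())
    result = {}
    for image in root.iter("image"):
        polygons = []
        for poly in image.iter("polygon"):
            # Points are separated by ";" and each point is written as "x,y".
            points = [
                tuple(float(v) for v in pair.split(","))
                for pair in poly.get("points").split(";")
            ]
            polygons.append((poly.get("label"), points))
        result[image.get("name")] = polygons
    return result


example = """
<annotations>
  <image id="0" name="img/0.png" width="100" height="100">
    <polygon label="cabbage" points="10.0,10.0;20.0,10.0;15.0,20.0"/>
  </image>
</annotations>
"""
parsed = load_polygons(example)
print(parsed)  # → {'img/0.png': [('cabbage', [(10.0, 10.0), (20.0, 10.0), (15.0, 20.0)])]}
```

Each polygon can then be rasterized into a mask, which is how the class and object segmentation masks relate to these coordinates.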

# Example of XML file structure


# Plantation segmentation can be tailored to your requirements.

## **[TrainingData](https://trainingdata.pro/data-market?utm_source=huggingface)** provides high-quality data annotation tailored to your needs

More datasets in TrainingData's Kaggle account: **https://www.kaggle.com/trainingdatapro/datasets**

TrainingData's GitHub: **https://github.com/trainingdata-pro** |

false | # M2CRB

## How to get the data with a given language combination

```python
from datasets import load_dataset


def get_dataset(prog_lang, nat_lang):
    # Load every split of M2CRB from the Hugging Face Hub
    test_data = load_dataset("blindsubmissions/M2CRB")

    # Keep only examples whose docstring language and programming language match
    test_data = test_data.filter(
        lambda example: example["docstring_language"] == nat_lang
        and example["language"] == prog_lang
    )

    return test_data
```

## Licensing Information

M2CRB is a subset filtered and pre-processed from [The Stack](https://huggingface.co/datasets/bigcode/the-stack), a collection of source code from repositories with various licenses. Any use of all or part of the code gathered in M2CRB must abide by the terms of the original licenses. |

false |

## Instruction Tuning: GeoSignal

Scientific domain adaptation has two main steps during instruction tuning.

- Instruction tuning with general instruction-tuning data. Here we use Alpaca-GPT4.

- Instruction tuning with restructured domain knowledge, which we call expertise instruction tuning. For K2, we use knowledge-intensive instruction data, GeoSignal.

***The following illustrates the recipe for training a domain-specific language model:***

- **Adapter Model on [Huggingface](https://huggingface.co/): [daven3/k2_it_adapter](https://huggingface.co/daven3/k2_it_adapter)**

To design GeoSignal, we collect knowledge from various data sources, such as:

GeoSignal is designed for knowledge-intensive instruction tuning and used for aligning with experts.

The full version will be uploaded soon. For potential research cooperation, email [daven](mailto:davendw@sjtu.edu.cn).

|

false |

# Benchmark: GeoBenchmark

In GeoBenchmark, we collect 183 multiple-choice questions from NPEE and 1,395 from the AP Test for the objective tasks.

For the subjective tasks, we gather all 939 subjective questions in NPEE and use 50 of them to evaluate the baselines with human evaluation.

|

false | |

true | |

false |

The Alpaca tasks dataset translated into Greek with GPT-3.5.

The translation was done in chunks of 10K. |

false | 1111 |

false | # Google Conceptual Captions in Vietnamese

This is the Vietnamese version of the Google Conceptual Captions dataset. It contains more than 3.3 million image URLs with captions and was built using the Google Translate API. The Vietnamese version has exactly the same metadata as the English one; the only difference is the caption content.

I provide both English and Vietnamese `.tsv` files. The English version is also available from these sources:

- https://huggingface.co/datasets/conceptual_captions

- https://github.com/google-research-datasets/conceptual-captions

To download the images, you can use the img2dataset tool:

- https://github.com/rom1504/img2dataset/blob/main/dataset_examples/cc3m.md

Alternatively, iterate over the `.tsv` files line by line; each line has the form `caption<tab>url`.
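A minimal sketch of that line-by-line iteration, assuming the files have no header row and use UTF-8. `iter_captions` is a hypothetical helper, and the demo writes a throwaway file instead of assuming the real file names.

```python
import os
import tempfile


def iter_captions(path):
    """Yield (caption, url) pairs from a `caption<tab>url` .tsv file."""
    with open(path, encoding="utf-8") as f:
        for line in f:
            line = line.rstrip("\r\n")
            if not line:
                continue  # skip blank lines
            # Split on the last tab so a stray tab inside a caption cannot corrupt the URL.
            caption, url = line.rsplit("\t", 1)
            yield caption, url


# Demo with a temporary file standing in for the real .tsv.
with tempfile.NamedTemporaryFile("w", suffix=".tsv", delete=False, encoding="utf-8") as tmp:
    tmp.write("một con mèo ngồi trên ghế\thttps://example.com/cat.jpg\n")
    demo_path = tmp.name

pairs = list(iter_captions(demo_path))
os.remove(demo_path)
print(pairs)
```

Streaming one line at a time keeps memory flat, which matters for a file with more than 3.3 million rows.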

⚠ Note:

- Some image URLs may become unavailable over time ([liuhaotian/LLaVA-CC3M-Pretrain-595K](https://huggingface.co/datasets/liuhaotian/LLaVA-CC3M-Pretrain-595K) reported that 15% of the original dataset is inaccessible). I am not responsible for broken links. |

false |

# Outdoor Garbage Dataset

The dataset consists of images of garbage cans of various capacities and types. It is best suited to training a neural network that monitors the timely removal of garbage and helps organize the logistics of garbage-collection vehicles. The dataset is useful for recommendation systems, for optimizing and automating the work of community services, and for smart-city applications.


# Get the Dataset

This is just an example of the data.

If you need access to the entire dataset, contact us via [sales@trainingdata.pro](mailto:sales@trainingdata.pro) or leave a request on **https://trainingdata.pro/data-market?utm_source=huggingface**

# Content

The dataset includes 10,000 images of trash cans:

- at different times of day

- in different weather conditions

## Types of garbage cans capacity

- **is_full** - at least one of the trash cans shown in the photo is completely full. This type includes cans filled to the top and overflowing cans.

- **is_empty** - the garbage cans have free space; they may be half full or completely empty.

- **is_scattered** - this tag is added together with is_empty or is_full. It means that garbage (bulky garbage bags or building waste, but not single items) is scattered nearby.
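The tag rules above can be expressed as a small consistency check, for example to catch annotation errors before training. This is a sketch that assumes each image carries exactly one of is_full / is_empty and that an image's tags are available as a set of strings; `validate_tags` is a hypothetical name.

```python
FILL_TAGS = {"is_full", "is_empty"}
ALLOWED_TAGS = FILL_TAGS | {"is_scattered"}


def validate_tags(tags):
    """Return True if one image's tag set follows the rules described above.

    Assumes exactly one fill-level tag (is_full or is_empty) per image;
    is_scattered is optional and only valid alongside one of them.
    """
    if not tags <= ALLOWED_TAGS:
        return False  # unknown tag present
    return len(tags & FILL_TAGS) == 1


print(validate_tags({"is_full", "is_scattered"}))  # → True
print(validate_tags({"is_scattered"}))             # → False
```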

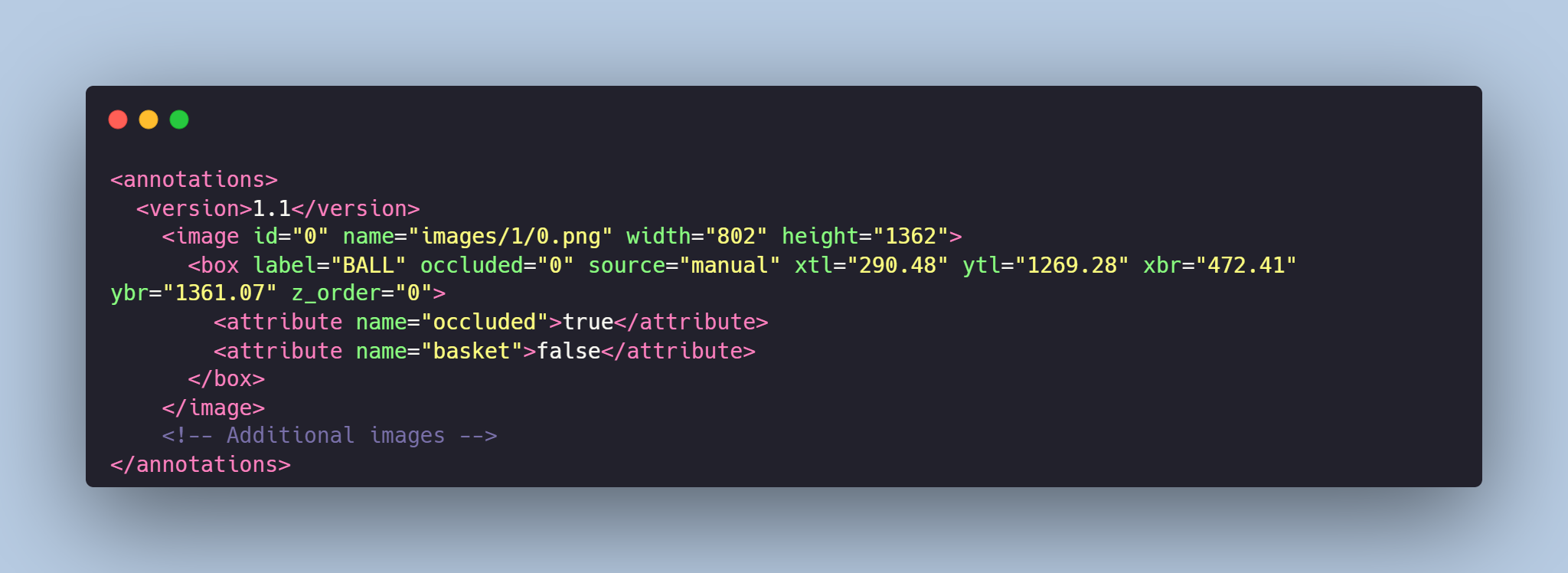

# Data Format

Each image in the `img` folder is accompanied by an XML annotation in the `annotations.xml` file indicating the labeled capacity types of the garbage cans in that image.

# Example of XML file structure


**[TrainingData](https://trainingdata.pro/data-market?utm_source=huggingface)** provides high-quality data annotation tailored to your needs.

More datasets in TrainingData's Kaggle account: **https://www.kaggle.com/trainingdatapro/datasets**

TrainingData's GitHub: **https://github.com/trainingdata-pro** |

false | # Docstring to code data

## Licensing Information

M2CRB is a subset filtered and pre-processed from [The Stack](https://huggingface.co/datasets/bigcode/the-stack), a collection of source code from repositories with various licenses. Any use of all or part of the code gathered in M2CRB must abide by the terms of the original licenses. |

false |

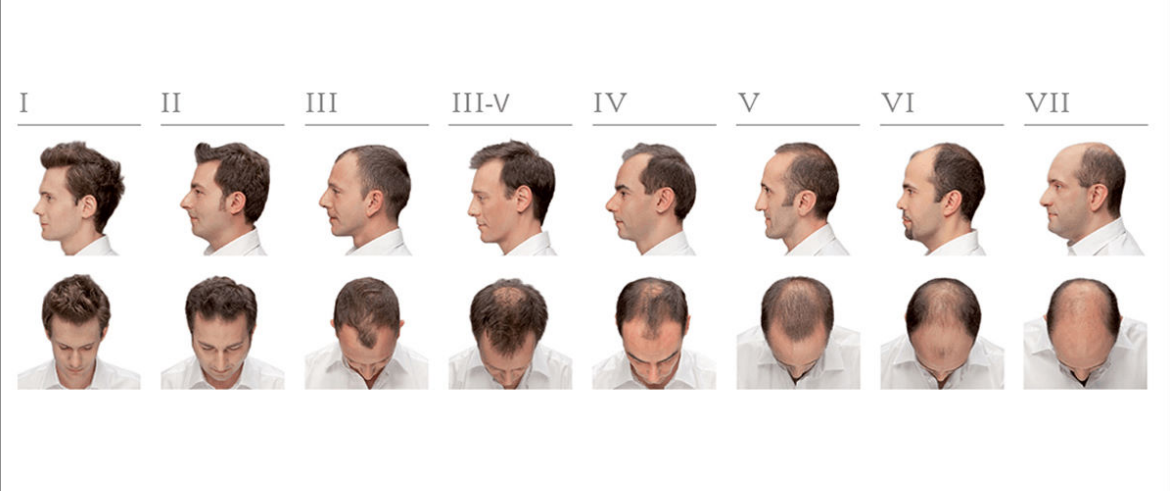

# Dataset of bald people

The dataset consists of 5,000 photos of people at 7 stages of hair loss according to the Norwood scale. It is useful for training neural networks for recommendation systems, for optimizing the work processes of trichologists, and for applications in the medical and beauty fields.

# Get the Dataset

This is just an example of the data.

If you need access to the entire dataset, contact us via [sales@trainingdata.pro](mailto:sales@trainingdata.pro) or leave a request on **https://trainingdata.pro/data-market?utm_source=huggingface**

# Image

Similar images are presented in the dataset:

# Hamilton–Norwood scale

- **type_1**: There is no bilateral recession along the anterior border of the hairline in the frontoparietal regions. No notable hair loss or recession of the hairline.

- **type_2**: There is a small recession of the hairline around the temples. Hair is also lost, or sparse, along the midfrontal border of the scalp, but the depth of the affected area is much less than in the frontoparietal regions. This is commonly referred to as an adult or mature hairline.

- **type_3**: The first signs of significant balding appear. There is a deep, symmetrical recession at the temples that are only sparsely covered by hair.

- **type_4**: The hairline recession is more severe than in stage 2, and there is sparse hair or no hair on the vertex. There are deep frontotemporal recessions, usually symmetrical, which are either bare or very sparsely covered by hair.

- **type_5**: The areas of hair loss are more significant than in stage 4. They are still divided, but the band of hair between them is thinner and sparser.

- **type_6**: The bridge of hair that crosses the crown is gone, with only sparse hair remaining. The frontotemporal and vertex regions are joined together, and the extent of hair loss is more significant.

- **type_7**: The most advanced stage of hair loss: only a band of hair going around the sides of the head persists. This hair is usually not thick and may be fine.

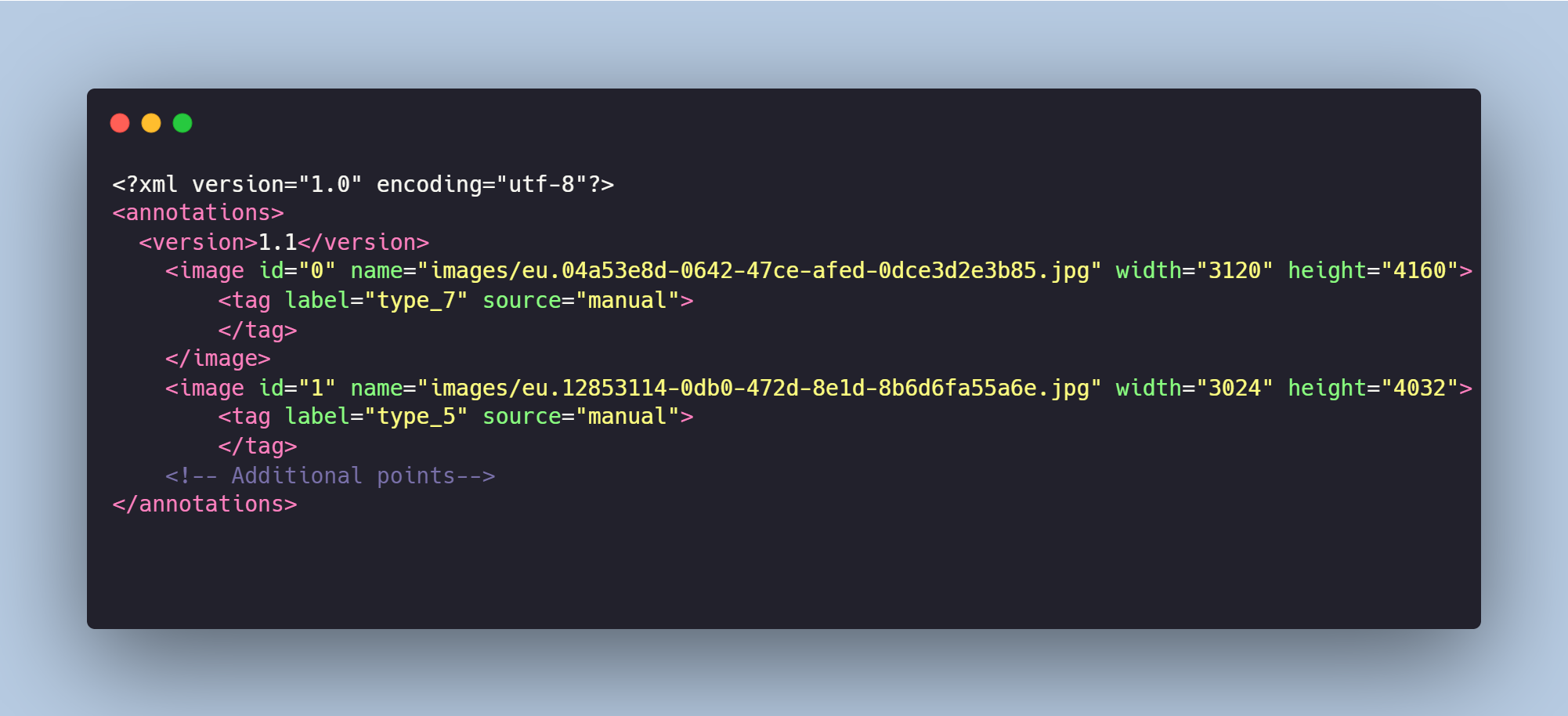

# Data Format

Each image in the `img` folder is accompanied by an XML annotation in the `annotations.xml` file indicating the Hamilton–Norwood type of hair loss for each person in the dataset.

# Example of XML file structure

**[TrainingData](https://trainingdata.pro/data-market?utm_source=huggingface)** provides high-quality data annotation tailored to your needs.

More datasets in TrainingData's Kaggle account: **https://www.kaggle.com/trainingdatapro/datasets**

TrainingData's GitHub: **https://github.com/trainingdata-pro** |

false | # COCO 2017 image captions in Vietnamese

The dataset was first introduced in [dinhanhx/VisualRoBERTa](https://github.com/dinhanhx/VisualRoBERTa/tree/main). I used VinAI tools to translate the [COCO 2017 image captions](https://cocodataset.org/#download) (2017 Train/Val annotations) from English to Vietnamese, and then merged the [UIT-ViIC](https://arxiv.org/abs/2002.00175) dataset into them. To load the dataset, see [this code in VisualRoBERTa](https://github.com/dinhanhx/VisualRoBERTa/blob/main/src/data.py#L22-L100).
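The merge step could look like the following minimal sketch. It assumes both caption files use the COCO captions layout (`images` and `annotations` lists, loaded with `json.load`), keeps only extra captions whose `image_id` exists in the base file, and renumbers their annotation ids to avoid collisions. The function name and the id policy are hypothetical, not the VisualRoBERTa code.

```python
def merge_captions(base, extra):
    """Append caption annotations from `extra` into a copy of `base`.

    Both arguments are assumed to be COCO-style caption dicts. Annotations
    from `extra` whose image_id is unknown to `base` are skipped, and kept
    ones receive fresh ids that follow the largest id already in `base`.
    """
    merged = {**base, "annotations": list(base["annotations"])}  # base is not mutated
    known_images = {img["id"] for img in base["images"]}
    next_id = max((ann["id"] for ann in base["annotations"]), default=0) + 1
    for ann in extra["annotations"]:
        if ann["image_id"] in known_images:
            merged["annotations"].append({**ann, "id": next_id})
            next_id += 1
    return merged


base = {"images": [{"id": 1}], "annotations": [{"id": 7, "image_id": 1, "caption": "một người đang chơi quần vợt"}]}
extra = {"images": [{"id": 1}, {"id": 2}], "annotations": [
    {"id": 1, "image_id": 1, "caption": "người đàn ông cầm vợt"},
    {"id": 2, "image_id": 2, "caption": "không có trong tệp gốc"},
]}
merged = merge_captions(base, extra)
print([ann["id"] for ann in merged["annotations"]])  # → [7, 8]
```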

I provide both the original English version and the Vietnamese version (including UIT-ViIC).

⚠ Note:

- UIT-ViIC splits originate from `en/captions_train2017.json`. Therefore, I combined all UIT-ViIC splits and merged them into `vi/captions_train2017_trans.json`. The result is `captions_train2017_trans_plus.json`.

- `vi/captions_train2017_trans.json` and `vi/captions_val2017_trans.json` are VinAI-translated from the ones in `en/`. |

false |

Original dataset: [JeanKaddour/minipile](https://huggingface.co/datasets/JeanKaddour/minipile)

See the [Thought Tokens Repository](https://github.com/ZelaAI/thought-tokens) for a demonstration of streaming this dataset and for the specific implementation of how it was prepared.

Tokenized with the GPT-NeoX tokenizer and split into sequences of length 513, intended for 512 input ids and 512 target ids. |
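The 513-token sequence length described in the next paragraph fits the common next-token setup, where inputs and targets are the same sequence offset by one position. A minimal sketch of that split, assuming non-overlapping chunks and a dropped remainder (neither detail is stated here; the Thought Tokens repository has the actual preparation code). `make_examples` is a hypothetical name and the token stream is a stand-in for GPT-NeoX ids.

```python
def make_examples(token_ids, seq_len=513):
    """Split a flat token stream into (inputs, targets) pairs.

    Each seq_len-token chunk gives seq_len - 1 inputs (tokens 0..511)
    and seq_len - 1 targets (tokens 1..512). A trailing remainder shorter
    than seq_len is dropped (assumption).
    """
    examples = []
    for start in range(0, len(token_ids) - seq_len + 1, seq_len):
        seq = token_ids[start:start + seq_len]
        examples.append((seq[:-1], seq[1:]))
    return examples


stream = list(range(1200))  # stand-in for tokenized text
pairs = make_examples(stream)
print(len(pairs), len(pairs[0][0]), len(pairs[0][1]))  # → 2 512 512
```

Storing 513 tokens rather than 512 is what lets both the input and target views be exactly 512 long without reading past the chunk.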

true | |

false |

## GitHub R repositories dataset

R source files from GitHub.

This dataset has been created using the public GitHub datasets from Google BigQuery.

This is the actual query that has been used to export the data:

```sql
-- Export every .R file path together with its contents
EXPORT DATA
  OPTIONS (
    uri = 'gs://your-bucket/gh-r/*.parquet',
    format = 'PARQUET') as
(
  select
    f.id, f.repo_name, f.path,
    c.content, c.size
  from (
    SELECT distinct
      id, repo_name, path
    FROM `bigquery-public-data.github_repos.files`
    where ends_with(path, ".R")
  ) as f
  left join `bigquery-public-data.github_repos.contents` as c on f.id = c.id
);

-- Export the repository license table
-- EXPORT DATA expects a single '*' wildcard in the destination URI
EXPORT DATA
  OPTIONS (
    uri = 'gs://your-bucket/licenses/*.parquet',
    format = 'PARQUET') as
(select * from `bigquery-public-data.github_repos.licenses`);
```

The exported files were then processed locally with the scripts in the root of this repository.

Datasets in this repository contain data from repositories with different licenses.

The data schema is:

```
id: string
repo_name: string
path: string
content: string
size: int32
license: string
```
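The schema's `license` column is not produced by the files query itself; one plausible way for the local processing to attach it is a join on `repo_name` against the exported licenses table. A minimal sketch with hypothetical names and toy rows; the repository's actual scripts may differ.

```python
def attach_licenses(files, licenses):
    """Add a `license` field to each file row by matching on repo_name.

    Assumes `licenses` rows have `repo_name` and `license` keys, as in the
    exported bigquery-public-data.github_repos.licenses table. Files from
    repositories without a license entry get None.
    """
    license_by_repo = {row["repo_name"]: row["license"] for row in licenses}
    return [{**row, "license": license_by_repo.get(row["repo_name"])} for row in files]


files = [
    {"id": "f1", "repo_name": "octo/stats", "path": "R/one.R", "content": "x <- 1\n", "size": 7},
    {"id": "f2", "repo_name": "octo/misc", "path": "R/two.R", "content": "y <- 2\n", "size": 7},
]
licenses = [{"repo_name": "octo/stats", "license": "mit"}]

rows = attach_licenses(files, licenses)
print([row["license"] for row in rows])  # → ['mit', None]
```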

Last updated: Jun 6th 2023

|

false |

# Dataset Card for the Qur'anic Reading Comprehension Dataset (QRCD)

## Table of Contents

- [Table of Contents](#table-of-contents)

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Dataset Creation](#dataset-creation)

- [Curation Rationale](#curation-rationale)

- [Source Data](#source-data)

- [Annotations](#annotations)

- [Personal and Sensitive Information](#personal-and-sensitive-information)

- [Considerations for Using the Data](#considerations-for-using-the-data)

- [Social Impact of Dataset](#social-impact-of-dataset)

- [Discussion of Biases](#discussion-of-biases)

- [Other Known Limitations](#other-known-limitations)

- [Additional Information](#additional-information)

- [Dataset Curators](#dataset-curators)

- [Licensing Information](#licensing-information)

- [Citation Information](#citation-information)

- [Contributions](#contributions)

## Dataset Description

- **Homepage:** https://sites.google.com/view/quran-qa-2022/home

- **Repository:** https://gitlab.com/bigirqu/quranqa/-/tree/main/

- **Paper:** https://dl.acm.org/doi/10.1145/3400396

- **Leaderboard:**

- **Point of Contact:** @piraka9011

### Dataset Summary

The QRCD (Qur'anic Reading Comprehension Dataset) is composed of 1,093 tuples of question-passage pairs that are coupled with their extracted answers to constitute 1,337 question-passage-answer triplets.

### Supported Tasks and Leaderboards

This task is evaluated as a ranking task.

To give credit to a QA system that may retrieve an answer (not necessarily at the first rank) that does not fully match one of the gold answers but partially matches it, we use the partial Reciprocal Rank (pRR) measure. It is a variant of the traditional Reciprocal Rank evaluation metric that considers partial matching.

pRR is the official evaluation measure of this shared task.

We will also report Exact Match (EM) and F1@1, which are evaluation metrics applied only to the top predicted answer. The EM metric is a binary measure that rewards a system only if the top predicted answer exactly matches one of the gold answers. The F1@1 metric, by contrast, measures the token overlap between the top predicted answer and the best-matching gold answer.

To get an overall evaluation score, each of the above measures is averaged over all questions.
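A minimal sketch of EM, F1@1, and the per-question averaging described above, assuming plain whitespace tokenization and whitespace trimming. The official scorer may normalize Arabic text differently, and pRR is not reproduced here because its partial-matching weights are defined by the official evaluation script. Function names are hypothetical.

```python
from collections import Counter


def exact_match(pred, golds):
    """1.0 if the top predicted answer equals any gold answer, else 0.0."""
    return float(any(pred.strip() == gold.strip() for gold in golds))


def f1_at_1(pred, golds):
    """Token-overlap F1 between the top prediction and the best-matching gold answer."""
    pred_tokens = pred.split()  # assumption: whitespace tokenization
    best = 0.0
    for gold in golds:
        gold_tokens = gold.split()
        # Count tokens shared by prediction and gold, respecting multiplicity
        overlap = sum((Counter(pred_tokens) & Counter(gold_tokens)).values())
        if overlap == 0:
            continue
        precision = overlap / len(pred_tokens)
        recall = overlap / len(gold_tokens)
        best = max(best, 2 * precision * recall / (precision + recall))
    return best


def average(scores):
    """Overall score: mean of per-question scores."""
    return sum(scores) / len(scores)


em = exact_match("أيوب", ["أيوب"])
f1 = f1_at_1("النبي أيوب", ["أيوب"])
print(em, round(f1, 3))  # → 1.0 0.667
print(round(average([em, f1]), 3))  # → 0.833
```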

### Languages

Qur'anic Arabic

## Dataset Structure

### Data Instances

To simplify the structure of the dataset, each tuple contains one passage, one question and a list that may contain one or more answers to that question, as shown below:

```json
{
  "pq_id": "38:41-44_105",
  "passage": "واذكر عبدنا أيوب إذ نادى ربه أني مسني الشيطان بنصب وعذاب. اركض برجلك هذا مغتسل بارد وشراب. ووهبنا له أهله ومثلهم معهم رحمة منا وذكرى لأولي الألباب. وخذ بيدك ضغثا فاضرب به ولا تحنث إنا وجدناه صابرا نعم العبد إنه أواب.",
  "surah": 38,
  "verses": "41-44",
  "question": "من هو النبي المعروف بالصبر؟",
  "answers": [
    {
      "text": "أيوب",
      "start_char": 12
    }
  ]
}
```

Each Qur’anic passage in QRCD may have more than one occurrence, and each passage occurrence is paired with a different question. Likewise, each question in QRCD may have more than one occurrence, and each question occurrence is paired with a different Qur’anic passage.

The source of the Qur'anic text in QRCD is the Tanzil project download page, which provides verified versions of the Holy Qur'an in several scripting styles. We have chosen the simple-clean text style of Tanzil version 1.0.2.

### Data Fields

* `pq_id`: Sample ID

* `passage`: Context text

* `surah`: Surah number

* `verses`: Verse range

* `question`: Question text

* `answers`: List of answers and their start character

### Data Splits

| **Dataset** | **%** | **# Question-Passage Pairs** | **# Question-Passage-Answer Triplets** |
|-------------|:-----:|:-----------------------------:|:---------------------------------------:|
| Training | 65% | 710 | 861 |
| Development | 10% | 109 | 128 |
| Test | 25% | 274 | 348 |
| All | 100% | 1,093 | 1,337 |

## Dataset Creation

### Curation Rationale

[More Information Needed]

### Source Data

#### Initial Data Collection and Normalization

[More Information Needed]

#### Who are the source language producers?

[More Information Needed]

### Annotations

#### Annotation process

[More Information Needed]

#### Who are the annotators?

[More Information Needed]

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

[More Information Needed]

### Licensing Information

The QRCD v1.1 dataset is distributed under the CC-BY-ND 4.0 License https://creativecommons.org/licenses/by-nd/4.0/legalcode

For a human-readable summary of (and not a substitute for) the above CC-BY-ND 4.0 License, please refer to https://creativecommons.org/licenses/by-nd/4.0/

### Citation Information

```
@article{malhas2020ayatec,
  author = {Malhas, Rana and Elsayed, Tamer},
  title = {AyaTEC: Building a Reusable Verse-Based Test Collection for Arabic Question Answering on the Holy Qur’an},
  year = {2020},
  issue_date = {November 2020},
  publisher = {Association for Computing Machinery},
  address = {New York, NY, USA},
  volume = {19},
  number = {6},
  issn = {2375-4699},
  url = {https://doi.org/10.1145/3400396},
  doi = {10.1145/3400396},
  journal = {ACM Trans. Asian Low-Resour. Lang. Inf. Process.},
  month = {oct},
  articleno = {78},
  numpages = {21},
  keywords = {evaluation, Classical Arabic}
}
```

### Contributions

Thanks to [@piraka9011](https://github.com/piraka9011) for adding this dataset.

|

false |

Dataset Name: Eng-Sinhala Translation Dataset

Description: This dataset contains approximately 80,000 lines of English-Sinhala translation pairs. It can be used to train models for machine translation tasks and other natural language processing applications.

Files:

1. src.txt: This file contains the source sentences in English. Each line corresponds to an English sentence.

2. tgt.txt: This file contains the target sentences in Sinhala. Each line corresponds to the Sinhala translation of the corresponding English sentence in src.txt.
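Because line *i* of `src.txt` pairs with line *i* of `tgt.txt`, loading the corpus amounts to zipping the two files and checking that their line counts agree. A minimal sketch, assuming UTF-8 files; the function name `read_parallel` is hypothetical, and the demo writes two throwaway files instead of using the real ones.

```python
import os
import tempfile


def read_parallel(src_path, tgt_path):
    """Return a list of (english, sinhala) pairs from line-aligned files."""
    with open(src_path, encoding="utf-8") as src, open(tgt_path, encoding="utf-8") as tgt:
        # Text-mode iteration splits only on newlines, so alignment is kept.
        src_lines = [line.rstrip("\n") for line in src]
        tgt_lines = [line.rstrip("\n") for line in tgt]
    if len(src_lines) != len(tgt_lines):
        raise ValueError(f"line count mismatch: {len(src_lines)} vs {len(tgt_lines)}")
    return list(zip(src_lines, tgt_lines))


with tempfile.TemporaryDirectory() as d:
    src_file = os.path.join(d, "src.txt")
    tgt_file = os.path.join(d, "tgt.txt")
    with open(src_file, "w", encoding="utf-8") as f:
        f.write("Good morning\nThank you\n")
    with open(tgt_file, "w", encoding="utf-8") as f:
        f.write("සුභ උදෑසනක්\nස්තූතියි\n")
    pairs = read_parallel(src_file, tgt_file)

print(len(pairs))  # → 2
```

Failing loudly on a line-count mismatch is deliberate: a single missing line would silently shift every later pair out of alignment.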

Data License: GPL (GNU General Public License). Please ensure that you comply with the terms and conditions of the GPL when using the dataset.

Note: Because of the dataset's size, some sentences may be incorrect. To ensure the quality and accuracy of the training data, consider performing data cleaning and validation to improve the reliability of your model.

|

false |

# Dataset Card for OpenFire

## Table of Contents

- [Table of Contents](#table-of-contents)

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Dataset Creation](#dataset-creation)

- [Curation Rationale](#curation-rationale)

- [Source Data](#source-data)

- [Annotations](#annotations)

- [Personal and Sensitive Information](#personal-and-sensitive-information)

- [Considerations for Using the Data](#considerations-for-using-the-data)

- [Social Impact of Dataset](#social-impact-of-dataset)

- [Discussion of Biases](#discussion-of-biases)

- [Other Known Limitations](#other-known-limitations)

- [Additional Information](#additional-information)

- [Dataset Curators](#dataset-curators)

- [Licensing Information](#licensing-information)

- [Citation Information](#citation-information)

- [Contributions](#contributions)

## Dataset Description

- **Homepage:** https://pyronear.org/pyro-vision/datasets.html#openfire

- **Repository:** https://github.com/pyronear/pyro-vision

- **Point of Contact:** Pyronear <https://pyronear.org/en/>

### Dataset Summary

OpenFire is an image classification dataset for wildfire detection, collected from web searches.

### Supported Tasks and Leaderboards

- `image-classification`: The dataset can be used to train a model for Image Classification.

### Languages

English

## Dataset Structure

### Data Instances

A data point comprises an image URL and its binary label.

```
{
    'image_url': 'https://cdn-s-www.ledauphine.com/images/13C08274-6BA6-4577-B3A0-1E6C1B2A573C/FB1200/photo-1338240831.jpg',
    'is_wildfire': True,
}
```

### Data Fields

- `image_url`: the download URL of the image.

- `is_wildfire`: a boolean value specifying whether there is an ongoing wildfire in the image.

### Data Splits

The data is split into training and validation sets. The training set contains 7143 images and the validation set 792 images.

## Dataset Creation

### Curation Rationale

The curators state that current wildfire classification datasets typically contain close-up shots of wildfires, with limited variation in weather conditions, luminosity, and backgrounds, making it difficult to assess real-world performance. They argue that these dataset limitations have partially contributed to the failure of some algorithms to cope with sun flares, foggy or cloudy weather conditions, and small-scale fires.

### Source Data

#### Initial Data Collection and Normalization

OpenFire was collected using images publicly indexed by the search engine DuckDuckGo using multiple relevant queries. The images were then manually cleaned to remove errors.

### Annotations

#### Annotation process

Each web search query was designed to yield a single label (with wildfire or without), and additional human verification was used to remove errors.

#### Who are the annotators?

François-Guillaume Fernandez

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

François-Guillaume Fernandez

### Licensing Information

[Apache License 2.0](https://www.apache.org/licenses/LICENSE-2.0).

### Citation Information

```
@software{Pyronear_PyroVision_2019,
  title={Pyrovision: wildfire early detection},
  author={Pyronear contributors},
  year={2019},
  month={October},
  publisher = {GitHub},
  howpublished = {\url{https://github.com/pyronear/pyro-vision}}
}
```

|

true | |

false | # Dataset Card for "TALI-small"

## Table of Contents

1. Dataset Description
   1. Abstract
   2. Brief Description
2. Dataset Information
   1. Modalities
   2. Dataset Variants
   3. Dataset Statistics
   4. Data Fields
   5. Data Splits
3. Dataset Creation
4. Dataset Use
5. Additional Information

## Dataset Description

### Abstract

TALI is a large-scale, tetramodal dataset designed to facilitate a shift from unimodal and duomodal to tetramodal research in deep learning. It aligns text, video, images, and audio, providing a rich resource for innovative self-supervised learning tasks and multimodal research. TALI enables exploration of how different modalities and data/model scaling affect downstream performance, with the aim of inspiring diverse research ideas and enhancing understanding of model capabilities and robustness in deep learning.

### Brief Description

TALI (Temporally and semantically Aligned Audio, Language and Images) is a dataset that uses the Wikipedia Image Text (WIT) captions and article titles to search YouTube for videos that match the captions. It then downloads the video, audio, and subtitles from these videos. The result is a rich multimodal dataset with multiple caption types related to both the WiT images and the YouTube videos. This enables learning between either temporally or semantically aligned text, images, audio, and video.

## Dataset Information

### Modalities

The TALI dataset consists of the following modalities:

1. Image
   1. Wikipedia caption image
   2. Randomly sampled image from YouTube video
2. Text
   1. Wikipedia Caption Text
   2. Wikipedia Title Text
   3. Wikipedia Main Body Text
   4. YouTube Subtitle Text
   5. YouTube Description Text
   6. YouTube Title Text
3. Audio
   1. YouTube Content Audio
4. Video
   1. YouTube Content Video

### Dataset Variants

The TALI dataset comes in three variants that differ in the training set size:

- TALI-small: Contains about 1.3 million 30-second video clips, aligned with 120K WiT entries.

- TALI-base: Contains about 6.5 million 30-second video clips, aligned with 120K WiT entries.

- TALI-big: Contains about 13 million 30-second video clips, aligned with 120K WiT entries.

The validation and test sets remain consistent across all three variants, each containing about 80K videos aligned to 8K Wikipedia entries (10 subclips per Wikipedia entry).

### Dataset Statistics

TBA

## Dataset Creation

The TALI dataset was created by starting from the WiT dataset and using either the context_page_description or the page_title as a source query to search YouTube for videos that were Creative Commons opted-in and not age-restricted. The top 100 result titles were returned and compared with the source query using the CLIP text embeddings of the largest available CLIP model. The video of the top-1 title under this CLIP ranking was chosen and downloaded. The video was broken into 30-second segments, and the top-10 segments for each video were chosen based on the distance between the CLIP image embedding of the first image of each segment and the video's title text. The image, audio, and subtitle frames were extracted from these segments. At sampling time, one of these 10 segments is randomly selected, and a 10-second segment is chosen out of the 30-second clip. The result is 200 video frames (spread throughout the 10-second segment) and 160,000 audio frames (10 seconds).
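The sampling step at the end of this procedure can be sketched as below. The 16 kHz rate is inferred from 160,000 audio frames over 10 seconds; drawing the window start uniformly and spacing the 200 frames evenly are assumptions, since the card only says the frames are spread throughout the segment. All names are hypothetical, not TALI's loader API.

```python
import random

SEGMENTS_PER_VIDEO = 10  # top-10 30-second segments kept per video
CLIP_SECONDS = 30
WINDOW_SECONDS = 10
NUM_FRAMES = 200         # video frames per sample
AUDIO_RATE = 16_000      # inferred: 160,000 samples / 10 s


def sample_window(rng):
    """Pick one segment and a 10-second window inside it.

    Returns (segment_index, frame_times, (audio_start, audio_stop)), where
    frame_times are seconds from the segment start and the audio range is
    in samples.
    """
    segment = rng.randrange(SEGMENTS_PER_VIDEO)
    start = rng.uniform(0, CLIP_SECONDS - WINDOW_SECONDS)  # assumption: uniform start
    step = WINDOW_SECONDS / NUM_FRAMES                     # evenly spaced frames
    frame_times = [start + i * step for i in range(NUM_FRAMES)]
    audio_start = int(start * AUDIO_RATE)
    return segment, frame_times, (audio_start, audio_start + WINDOW_SECONDS * AUDIO_RATE)


segment, frames, (a0, a1) = sample_window(random.Random(0))
print(len(frames), a1 - a0)  # → 200 160000
```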

## Dataset Use

TALI is designed for use in a wide range of multimodal research tasks, including but not limited to:

- Multimodal understanding and reasoning

- Self-supervised learning

- Multimodal alignment and translation

- Multimodal summarization

- Multimodal question answering

## Dataset Curators: Antreas Antoniou

Citation Information: TBA

Contributions: Thanks to all contributors including data curators, annotators, and software developers. |

false | # EVJVQA - Multilingual Visual Question Answering

## Abstract

Visual Question Answering (VQA) is a challenging task in natural language processing (NLP) and computer vision (CV) that attracts significant attention from researchers. English is a resource-rich language that has seen many developments in datasets and models for visual question answering, but resources and models for visual question answering in other languages still need to be developed. In addition, there is no multilingual dataset targeting the visual content of a particular country with its own objects and cultural characteristics. To address this gap, we provide the research community with a benchmark dataset named EVJVQA, including 33,000+ question-answer pairs in three languages (Vietnamese, English, and Japanese) on approximately 5,000 images taken in Vietnam, for evaluating multilingual VQA systems or models. EVJVQA is used as the benchmark dataset for the multilingual visual question answering challenge at the 9th Workshop on Vietnamese Language and Speech Processing (VLSP 2022). This task attracted 62 participant teams from various universities and organizations. In this article, we present details of the organization of the challenge, an overview of the methods employed by shared-task participants, and the results. The highest performances are 0.4392 in F1-score and 0.4009 in BLEU on the private test set. The multilingual QA systems proposed by the top 2 teams use ViT as the pre-trained vision model and mT5, a powerful pre-trained language model based on the transformer architecture, as the pre-trained language model. EVJVQA is a challenging dataset that motivates NLP and CV researchers to further explore multilingual models and systems for visual question answering. We released the challenge on the Codalab evaluation system for further research.

## Links

- https://arxiv.org/abs/2302.11752

- https://codalab.lisn.upsaclay.fr/competitions/12274 |

false |

# Dataset Card for Dataset Name

## Dataset Description

- **Homepage:**

- **Repository:**

- **Paper:**

- **Leaderboard:**

- **Point of Contact:**

### Dataset Summary

This dataset card aims to be a base template for new datasets. It has been generated using [this raw template](https://github.com/huggingface/huggingface_hub/blob/main/src/huggingface_hub/templates/datasetcard_template.md?plain=1).

### Supported Tasks and Leaderboards

[More Information Needed]

### Languages

[More Information Needed]

## Dataset Structure

### Data Instances

[More Information Needed]

### Data Fields

[More Information Needed]

### Data Splits

[More Information Needed]

## Dataset Creation

### Curation Rationale

[More Information Needed]

### Source Data

#### Initial Data Collection and Normalization

[More Information Needed]

#### Who are the source language producers?

[More Information Needed]

### Annotations

#### Annotation process

[More Information Needed]

#### Who are the annotators?

[More Information Needed]

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

[More Information Needed]

### Licensing Information

[More Information Needed]

### Citation Information

[More Information Needed]

### Contributions

[More Information Needed] |

false |

﷽

# Dataset Card for Tarteel AI's EveryAyah Dataset

## Table of Contents

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Dataset Creation](#dataset-creation)

- [Curation Rationale](#curation-rationale)

- [Source Data](#source-data)

- [Annotations](#annotations)

- [Personal and Sensitive Information](#personal-and-sensitive-information)

- [Considerations for Using the Data](#considerations-for-using-the-data)

- [Social Impact of Dataset](#social-impact-of-dataset)

- [Discussion of Biases](#discussion-of-biases)

- [Other Known Limitations](#other-known-limitations)

- [Additional Information](#additional-information)

- [Dataset Curators](#dataset-curators)

- [Licensing Information](#licensing-information)

- [Citation Information](#citation-information)

- [Contributions](#contributions)

## Dataset Description

- **Homepage:** [Tarteel AI](https://www.tarteel.ai/)

- **Repository:** [Needs More Information]

- **Point of Contact:** [Mohamed Saad Ibn Seddik](mailto:ms.ibnseddik@tarteel.ai)

### Dataset Summary

This dataset is a collection of audio recordings of Quranic verses by different reciters, paired with their diacritized Arabic transcriptions.

### Supported Tasks and Leaderboards

[Needs More Information]

### Languages

The audio is in Arabic.

## Dataset Structure

### Data Instances

A typical data point comprises the audio file (`audio`) and its transcription (`text`), together with the clip's `duration` in seconds and the `reciter` who recited it.

An example from the dataset is:

```
{
    'audio': {
        'path': None,
        'array': array([ 0. , 0. , 0. , ..., -0.00057983,
                        -0.00085449, -0.00061035]),
        'sampling_rate': 16000
    },
    'duration': 6.478375,
    'text': 'بِسْمِ اللَّهِ الرَّحْمَنِ الرَّحِيمِ',
    'reciter': 'abdulsamad'
}
```

### Length

| Split | Total duration (seconds) | Total duration (minutes) | Total duration (hours) |
|---|---:|---:|---:|
| Training | 2985111.26 | 49751.85 | 829.20 |
| Validation | 372720.43 | 6212.01 | 103.53 |
| Test | 375509.97 | 6258.50 | 104.31 |

### Data Fields

- audio: A dictionary containing the path to the downloaded audio file, the decoded audio array, and the sampling rate. Note that when accessing the audio column: `dataset[0]["audio"]` the audio file is automatically decoded and resampled to `dataset.features["audio"].sampling_rate`. Decoding and resampling of a large number of audio files might take a significant amount of time. Thus it is important to first query the sample index before the `"audio"` column, *i.e.* `dataset[0]["audio"]` should **always** be preferred over `dataset["audio"][0]`.

- text: The transcription of the audio file.

- duration: The duration of the audio file, in seconds.

- reciter: The reciter of the verses.
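Each example carries `duration` (in seconds) and `reciter`, so per-reciter totals can be computed without touching the audio. The following is a minimal plain-Python sketch. It assumes the rows are dicts shaped like the data instance above; the helper name and the toy rows are hypothetical. With the Hugging Face `datasets` library, you could first drop the `audio` column (for example with `remove_columns("audio")`) so that no audio is decoded while iterating.

```python
from collections import defaultdict

def hours_per_reciter(rows):
    """Sum the `duration` field (seconds) per `reciter` and convert to hours.

    `rows` is any iterable of dicts with "duration" and "reciter" keys,
    e.g. examples shaped like the data instance shown above.
    """
    totals = defaultdict(float)
    for row in rows:
        totals[row["reciter"]] += row["duration"]
    # 3600 seconds per hour, the same conversion used in the Length table
    return {reciter: seconds / 3600 for reciter, seconds in totals.items()}

# Hypothetical toy rows: only the non-audio fields are needed
rows = [
    {"duration": 6.478375, "reciter": "abdulsamad"},
    {"duration": 3600.0, "reciter": "husary"},
    {"duration": 1800.0, "reciter": "husary"},
]
print(hours_per_reciter(rows))
```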

### Data Splits

| | Train | Test | Validation |
| ----- | ----- | ---- | ---------- |
| dataset | 187785 | 23473 | 23474 |

### Reciters

- reciters_count: 36

- reciters: `abdul_basit`, `abdullah_basfar`, `abdullah_matroud`, `abdulsamad`, `abdurrahmaan_as-sudais`, `abu_bakr_ash-shaatree`, `ahmed_ibn_ali_al_ajamy`, `ahmed_neana`, `akram_alalaqimy`, `alafasy`, `ali_hajjaj_alsuesy`, `aziz_alili`, `fares_abbad`, `ghamadi`, `hani_rifai`, `husary`, `karim_mansoori`, `khaalid_abdullaah_al-qahtaanee`, `khalefa_al_tunaiji`, `maher_al_muaiqly`, `mahmoud_ali_al_banna`, `menshawi`, `minshawi`, `mohammad_al_tablaway`, `muhammad_abdulkareem`, `muhammad_ayyoub`, `muhammad_jibreel`, `muhsin_al_qasim`, `mustafa_ismail`, `nasser_alqatami`, `parhizgar`, `sahl_yassin`, `salaah_abdulrahman_bukhatir`, `saood_ash-shuraym`, `yaser_salamah`, `yasser_ad-dussary`

## Dataset Creation

### Curation Rationale

### Source Data

#### Initial Data Collection and Normalization

#### Who are the source language producers?

### Annotations

#### Annotation process

#### Who are the annotators?

### Personal and Sensitive Information

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[Needs More Information]

## Additional Information

### Dataset Curators

### Licensing Information

[CC BY 4.0](https://creativecommons.org/licenses/by/4.0/)

### Citation Information

```

```

### Contributions

This dataset was created by:

|

false | |

true | |

false | # Vision-CAIR cc_sbu_align in multilang

This is a Google-translated version of [Vision-CAIR/cc_sbu_align](https://huggingface.co/datasets/Vision-CAIR/cc_sbu_align). Please see [2. Second finetuning stage](https://huggingface.co/datasets/Vision-CAIR/cc_sbu_align#training) to understand how the English version was created.

For each language, I provide the `filter_cap.json` file in its own folder.
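Below is a minimal sketch for reading these files and pairing captions across languages. It rests on two assumptions:

- Each `filter_cap.json` keeps the layout of the upstream MiniGPT-4 release: a top-level `annotations` list whose entries have `image_id` and `caption` keys.
- The language folders are named after the language codes listed below.

The helper names are hypothetical.

```python
import json
from pathlib import Path

def parse_filter_cap(text: str) -> dict:
    """Map image_id -> caption for one filter_cap.json document.

    Assumes the upstream layout {"annotations": [{"image_id": ..., "caption": ...}, ...]}.
    """
    data = json.loads(text)
    return {ann["image_id"]: ann["caption"] for ann in data["annotations"]}

def load_captions(root: str, lang: str) -> dict:
    """Read <root>/<lang>/filter_cap.json (hypothetical folder layout)."""
    path = Path(root) / lang / "filter_cap.json"
    return parse_filter_cap(path.read_text(encoding="utf-8"))

def pair_captions(src: dict, tgt: dict) -> dict:
    """Pair captions of the same image across two languages, keyed by image_id."""
    return {i: (src[i], tgt[i]) for i in sorted(src.keys() & tgt.keys())}

# Example with a local clone (the root path is hypothetical):
# en = load_captions("cc_sbu_align_multilang", "en")
# vi = load_captions("cc_sbu_align_multilang", "vi")
# pairs = pair_captions(en, vi)
```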

Current languages:

- en

- vi

More languages will be added if I have time. |

false | # Dataset Card for OKD-CL

## Dataset Description

- **Homepage:**

- **Repository:**

- **Paper:**

- **Leaderboard:**

- **Point of Contact:**

### Dataset Summary

This dataset card aims to be a base template for new datasets. It has been generated using [this raw template](https://github.com/huggingface/huggingface_hub/blob/main/src/huggingface_hub/templates/datasetcard_template.md?plain=1).

### Supported Tasks and Leaderboards

[More Information Needed]

### Languages

[More Information Needed]

## Dataset Structure

### Data Instances

[More Information Needed]

### Data Fields

[More Information Needed]

### Data Splits

[More Information Needed]

## Dataset Creation

### Curation Rationale

[More Information Needed]

### Source Data

#### Initial Data Collection and Normalization

[More Information Needed]

#### Who are the source language producers?

[More Information Needed]

### Annotations

#### Annotation process

[More Information Needed]

#### Who are the annotators?

[More Information Needed]

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

[More Information Needed]

### Licensing Information

[More Information Needed]

### Citation Information

[More Information Needed]

### Contributions

[More Information Needed] |

true |

### Labels

|label|meaning|
|:---|:-----------|
|achievement_P | in favor of achievement |
|achievement_N | against achievement |
|power_dominance_P | in favor of power: dominance |
|power_dominance_N | against power: dominance |
|power_resources_P | in favor of power: resources |
|power_resources_N | against power: resources |

|

true | # Dataset Card for Dataset Name

## Name

Motivación Diaria

## Dataset Description

- **Author:** Rubén Darío Jaramillo

- **Email:** rubend18@hotmail.com

- **WhatsApp:** +593 93 979 6676

### Dataset Summary

Scraped from http://www.motivaciondiaria.com/

### Languages

[Spanish] |

false |

**F**unds **R**eport **F**ront **P**age **E**ntities (FRFPE) is a dataset for document understanding and token classification.

It contains 356 title (front) pages of annual and semi-annual reports, together with the extracted text and annotations for five different token categories.

FRFPE serves as an example of how to train and evaluate multimodal models such as LayoutLM using the deepdoctection framework on a custom dataset.

FRFPE contains documents in three different languages:

- English: 167

- German: 149

- French: 9

as well as the token categories:

- report_date (1096 samples) - reporting date of the report

- report_type (738 samples) - annual/semi-annual report

- umbrella (912 samples) - fund issued as umbrella

- fund_name (2122 samples) - name of a subfund, either as part of an umbrella fund or as a standalone fund

- other (12903 samples) - None of the above categories
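These counts are heavily skewed toward `other`, which matters when choosing evaluation metrics or loss weighting. The quick plain-Python sketch below computes each category's share of all annotated tokens from the counts listed above. It is not part of deepdoctection, and the helper name is hypothetical.

```python
# Token counts per category, copied from the list above
COUNTS = {
    "report_date": 1096,
    "report_type": 738,
    "umbrella": 912,
    "fund_name": 2122,
    "other": 12903,
}

def category_shares(counts):
    """Return each category's fraction of all annotated tokens."""
    total = sum(counts.values())
    return {name: n / total for name, n in counts.items()}

for name, share in category_shares(COUNTS).items():
    print(f"{name:12s} {share:6.1%}")
```

With these counts, `other` accounts for about 72.6% of the 17,771 annotated tokens.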

The annotations have been made to the best of our knowledge and belief, but we make no claim as to their correctness.

Some cursory notes:

- The images were created by converting PDF files. A resolution of 300 dpi was applied during the conversion.

- The text was extracted from the PDF file using PDFPlumber. In some cases the PDF contains embedded images, which in turn contain text, such as corporate names. These are not extracted and are therefore not taken into account.

- The annotation was carried out with the annotation tool Prodigy.

- The category `report_date` is self-explanatory. `report_type` indicates whether the report is an annual report, a semi-annual report, or a report covering a different cycle.

- `umbrella`/`fund_name` classify any token that is part of a fund name, where the fund is an umbrella, a subfund, or an individual fund.

The distinction between an umbrella and a single fund is not always apparent from the context of the document, which makes the classification particularly challenging. To keep the annotation correct, information from the BaFin database was used for cases that could not be clarified from the context.

To explore the dataset, we suggest using **deep**doctection. Place the unzipped folder in the **deep**doctection datasets folder `~/.cache/datasets`.

```python
import deepdoctection as dd
from pathlib import Path

# Register the custom token classes used in FRFPE
@dd.object_types_registry.register("ner_first_page")
class FundsFirstPage(dd.ObjectTypes):
    report_date = "report_date"
    umbrella = "umbrella"
    report_type = "report_type"
    fund_name = "fund_name"

dd.update_all_types_dict()

# expanduser() is required: pathlib does not expand "~" by itself
path = Path("~/.cache/datasets/fund_ar_front_page/40952248ba13ae8bfdd39f56af22f7d9_0.json").expanduser()
page = dd.Page.from_file(path)
# The page image lives in the "image" subfolder, with a .png instead of a .pdf extension
page.image = dd.load_image_from_file(path.parents[0] / "image" / page.file_name.replace("pdf", "png"))
page.viz(interactive=True, show_words=True)  # close the interactive window with q

for word in page.words:
    print(f"text: {word.characters}, token class: {word.token_class}")
``` |

false |

# Sol: Simian Operational Lexicon

The dataset

|

true | # Dataset Card for Dataset Name

## Dataset Description

- **Homepage:**

- **Repository:**

- **Paper:**

- **Leaderboard:**

- **Point of Contact:**

### Dataset Summary

This dataset card aims to be a base template for new datasets. It has been generated using [this raw template](https://github.com/huggingface/huggingface_hub/blob/main/src/huggingface_hub/templates/datasetcard_template.md?plain=1).

### Supported Tasks and Leaderboards

[More Information Needed]

### Languages

[More Information Needed]

## Dataset Structure

### Data Instances

[More Information Needed]

### Data Fields

[More Information Needed]

### Data Splits

[More Information Needed]

## Dataset Creation

### Curation Rationale

[More Information Needed]

### Source Data

#### Initial Data Collection and Normalization

[More Information Needed]

#### Who are the source language producers?

[More Information Needed]

### Annotations

#### Annotation process

[More Information Needed]

#### Who are the annotators?

[More Information Needed]

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

[More Information Needed]

### Licensing Information

[More Information Needed]

### Citation Information

[More Information Needed]

### Contributions

[More Information Needed] |

false |

Dataset redistributed without change with permission from the author. If you use this dataset in your research, please cite the following paper: https://doi.org/10.3390/rs6064907 |

false | |

false |

Alpaca-style instructions created from the Amazon ESCI dataset, comprising 20k instruction pairs for *query generation*. The records follow this schema:

```json
[
  ...,
  {
    "instruction": "Generate a search query from the give product description.",
    "input": "FLYDAY Flying Disc with LED Lights ...",
    "output": "175 gram led frisbee gram not dollar"
  }
]
```

where:

- *input*: the product description (from Amazon ESCI, E&S section).

- *output*: the user query.
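The sketch below shows one way to turn these records into prompt/target pairs for instruction tuning. It assumes the widely used Stanford Alpaca prompt template (the variant for records with an `input` field); the card does not say which template, if any, was used for training. The helper name is hypothetical.

```python
import json

# Prompt template from the Stanford Alpaca repository (variant for records that
# have an "input" field). Using it for this dataset is an assumption.
ALPACA_PROMPT = (
    "Below is an instruction that describes a task, paired with an input that "
    "provides further context. Write a response that appropriately completes "
    "the request.\n\n"
    "### Instruction:\n{instruction}\n\n### Input:\n{input}\n\n### Response:\n"
)

def to_training_pairs(raw_json: str):
    """Turn a JSON list of instruction/input/output records into (prompt, target) pairs."""
    records = json.loads(raw_json)
    # str.format ignores the unused "output" key, so each record can be splatted directly
    return [(ALPACA_PROMPT.format(**r), r["output"]) for r in records]

raw = json.dumps([{
    "instruction": "Generate a search query from the give product description.",
    "input": "FLYDAY Flying Disc with LED Lights",
    "output": "175 gram led frisbee gram not dollar",
}])
prompt, target = to_training_pairs(raw)[0]
print(prompt + target)
```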

This dataset can be used for instruction-tuning LLMs for query (keyword) generation. |

true |

# IPCC Confidence in Climate Statements

_What do LLMs know about climate? Let's find out!_

## ICCS Dataset

We introduce the **ICCS dataset (IPCC Confidence in Climate Statements)**, a novel, curated, expert-labeled natural language dataset of 8094 statements extracted or paraphrased from the three Working Group reports of the IPCC Sixth Assessment Report (AR6): the [Working Group I report](https://www.ipcc.ch/report/ar6/wg1/), the [Working Group II report](https://www.ipcc.ch/report/ar6/wg2/), and the [Working Group III report](https://www.ipcc.ch/report/ar6/wg3/).

Each statement is labeled with its IPCC report source, its page number in the report PDF, and its confidence level (`low`, `medium`, `high`, or `very high`), as assessed by IPCC climate scientists based on the available evidence and agreement among their peers.

## Confidence Labels

The authors of the reports of the United Nations Intergovernmental Panel on Climate Change (IPCC) have developed a structured framework to communicate the confidence and uncertainty levels of statements regarding our knowledge of climate change ([Mastrandrea, 2010](https://link.springer.com/article/10.1007/s10584-011-0178-6)).

Our dataset leverages this distinctive and consistent approach to labelling uncertainty across topics, disciplines, and report chapters, to help NLP and climate communication researchers evaluate how well LLMs can assess human expert confidence in a set of climate science statements from the IPCC reports.

Source: [IPCC AR6 Working Group I report](https://www.ipcc.ch/report/ar6/wg1/)

## Dataset Construction

To construct the dataset, we retrieved the complete raw text from each of the three IPCC report PDFs available online using the open-source library [PyPDF2](https://pypi.org/project/PyPDF2/). We then normalized the whitespace, tokenized the text into sentences using [NLTK](https://www.nltk.org/), and used regex search to keep only complete sentences ending with a parenthetical confidence label, of the form _sentence (low|medium|high|very high confidence)_. The final ICCS dataset contains 8094 labeled sentences.
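The regex filtering step might look roughly like the sketch below. The pattern is a hypothetical reconstruction from the description above, not the authors' exact expression (their code is linked under Code Download). It assumes sentence splitting has already been done, so it takes a list of sentences rather than calling NLTK.

```python
import re

# Hypothetical pattern: a statement followed by "(<level> confidence)" and an
# optional final period. "very low" is deliberately absent, matching the dataset.
CONFIDENCE_RE = re.compile(
    r"^(?P<statement>.+?)\s*\((?P<level>low|medium|high|very high) confidence\)\.?\s*$"
)

def extract_labeled(sentences):
    """Return (statement, level) pairs for sentences that end in a confidence label."""
    pairs = []
    for sentence in sentences:
        match = CONFIDENCE_RE.match(sentence.strip())
        if match:
            pairs.append((match.group("statement"), match.group("level")))
    return pairs

# Toy sentences for illustration only
sentences = [
    "Global surface temperature has increased (high confidence).",
    "This sentence carries no label.",
    "Sea level will continue to rise (very high confidence).",
]
print(extract_labeled(sentences))
```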

From the full 8094 labeled sentences, we further selected **300 statements to form a smaller and more tractable test dataset**. We performed a random selection of sentences within each report and confidence category, with the following objectives:

- Making the test set distribution representative of the confidence class distribution in the overall train set and within each report;

- Making the breakdown between source reports representative of the number of statements from each report;

- Making sure the test set contains at least 5 sentences from each class and from each source, to ensure our results are statistically robust.
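The proportional part of this selection can be illustrated with a stratified sampler over (report, confidence) strata. This is a simplified sketch under stated assumptions, not the authors' procedure:

- The record keys `report` and `confidence` are hypothetical.
- Largest-remainder rounding is a choice made here so that the sample size comes out exact.
- It does not enforce the minimum of 5 sentences per class and per source described above.

```python
import random
from collections import defaultdict

def stratified_sample(records, n_total, seed=0):
    """Proportional stratified sample over (report, confidence) strata.

    records: list of dicts with hypothetical keys "report" and "confidence".
    Uses largest-remainder rounding so the sample has exactly n_total items.
    Does NOT enforce any per-class or per-report minimum.
    """
    if n_total > len(records):
        raise ValueError("n_total exceeds the number of records")
    strata = defaultdict(list)
    for r in records:
        strata[(r["report"], r["confidence"])].append(r)
    n = len(records)
    # Ideal (fractional) quota for each stratum
    quotas = {k: n_total * len(v) / n for k, v in strata.items()}
    alloc = {k: int(q) for k, q in quotas.items()}
    # Give the remaining slots to the strata with the largest fractional remainders
    leftover = n_total - sum(alloc.values())
    for k in sorted(quotas, key=lambda k: quotas[k] - alloc[k], reverse=True)[:leftover]:
        alloc[k] += 1
    rng = random.Random(seed)
    sample = []
    for k in sorted(strata):
        sample.extend(rng.sample(strata[k], alloc[k]))
    return sample
```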

Then, we manually reviewed and cleaned each sentence in the test set to allow a fairer assessment of model capacity.

- We removed 26 extraneous references to figures, call-outs, boxes, footnotes, or subscript typos (`CO 2`);

- We split 19 compound statements with conflicting confidence sub-labels, and removed 6 extraneous mid-sentence labels of the same category as the end-of-sentence label;

- We added light context to 23 sentences, and replaced 5 sentences with others when they were meaningless outside of a longer paragraph;

- We removed qualifiers at the beginning of 29 sentences to avoid biasing classification (e.g. 'But...', 'In summary...', 'However...').

**The remaining 7794 sentences not allocated to the test split form our train split.**

Of note: while the IPCC reports use a five-level confidence scale, almost no `very low confidence` statement makes it through the peer review process to the final reports, so no statement of the form _sentence (very low confidence)_ was retrievable. Therefore, we chose to build our dataset with only statements labeled as `low`, `medium`, `high` and `very high` confidence.

## Code Download

The code to reproduce dataset collection and our LLM benchmarking experiments is [released on GitHub](https://github.com/rlacombe/Climate-LLMs).

## Paper

We use this dataset to evaluate how recent LLMs fare at classifying the scientific confidence associated with each statement in a statistically representative, carefully constructed test split of the dataset.

We show that `gpt-3.5-turbo` and `gpt-4` assess the correct confidence level with reasonable accuracy even in the zero-shot setting, but that, along with other language models we tested, they consistently overstate the certainty level associated with low and medium confidence labels. Models generally perform better on reports published before their knowledge cutoff, and demonstrate intuitive classifications on a baseline of non-climate statements. However, we caution that it is still not fully clear why these models perform well, and whether they may also pick up on linguistic cues within the climate statements and not just prior exposure to climate knowledge and/or IPCC reports.

Our results have implications for climate communications and the use of generative language models in knowledge retrieval systems. We hope the ICCS dataset provides the NLP and climate sciences communities with a valuable tool with which to evaluate and improve model performance in this critical domain of human knowledge.

Preprint upcoming. |

true |

# Dataset Card for "super_glue"

## Table of Contents

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Dataset Creation](#dataset-creation)

- [Curation Rationale](#curation-rationale)

- [Source Data](#source-data)

- [Annotations](#annotations)

- [Personal and Sensitive Information](#personal-and-sensitive-information)

- [Considerations for Using the Data](#considerations-for-using-the-data)

- [Social Impact of Dataset](#social-impact-of-dataset)

- [Discussion of Biases](#discussion-of-biases)

- [Other Known Limitations](#other-known-limitations)

- [Additional Information](#additional-information)

- [Dataset Curators](#dataset-curators)

- [Licensing Information](#licensing-information)

- [Citation Information](#citation-information)

- [Contributions](#contributions)

## Dataset Description

- **Homepage:** [https://github.com/google-research-datasets/boolean-questions](https://github.com/google-research-datasets/boolean-questions)

- **Repository:** [More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

- **Paper:** [More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

- **Point of Contact:** [More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

- **Size of downloaded dataset files:** 58.36 MB

- **Size of the generated dataset:** 249.57 MB

- **Total amount of disk used:** 307.94 MB

### Dataset Summary

SuperGLUE (https://super.gluebenchmark.com/) is a new benchmark styled after GLUE with a new set of more difficult language understanding tasks, improved resources, and a new public leaderboard.

BoolQ (Boolean Questions, Clark et al., 2019a) is a QA task where each example consists of a short passage and a yes/no question about the passage. The questions are provided anonymously and unsolicited by users of the Google search engine, and afterwards paired with a paragraph from a Wikipedia article containing the answer. Following the original work, we evaluate with accuracy.
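BoolQ is scored by accuracy, sketched minimally below. `predictions` and `labels` are assumed to be lists of the integer labels described under Data Fields (0 = False, 1 = True), and the helper name is hypothetical. SuperGLUE does not publish its test labels, so in practice such a score would be computed on the validation split.

```python
def accuracy(predictions, labels):
    """Fraction of examples where the predicted label equals the gold label.

    For BoolQ, labels follow the card's encoding: 0 = False, 1 = True.
    """
    if len(predictions) != len(labels):
        raise ValueError("predictions and labels must have the same length")
    if not labels:
        raise ValueError("cannot compute accuracy on an empty set")
    correct = sum(int(p == y) for p, y in zip(predictions, labels))
    return correct / len(labels)

print(accuracy([1, 0, 1, 1], [1, 0, 0, 1]))
```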

### Supported Tasks and Leaderboards

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

### Languages

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

## Dataset Structure

### Data Instances

#### axb

- **Size of downloaded dataset files:** 0.03 MB

- **Size of the generated dataset:** 0.24 MB

- **Total amount of disk used:** 0.27 MB

An example of 'test' looks as follows.

```

```

#### axg

- **Size of downloaded dataset files:** 0.01 MB

- **Size of the generated dataset:** 0.05 MB

- **Total amount of disk used:** 0.06 MB

An example of 'test' looks as follows.

```

```

#### boolq

- **Size of downloaded dataset files:** 4.12 MB

- **Size of the generated dataset:** 10.40 MB

- **Total amount of disk used:** 14.52 MB

An example of 'train' looks as follows.

```

```

#### cb

- **Size of downloaded dataset files:** 0.07 MB

- **Size of the generated dataset:** 0.20 MB

- **Total amount of disk used:** 0.28 MB

An example of 'train' looks as follows.

```

```

#### copa

- **Size of downloaded dataset files:** 0.04 MB

- **Size of the generated dataset:** 0.13 MB

- **Total amount of disk used:** 0.17 MB

An example of 'train' looks as follows.

```

```

### Data Fields

The data fields are the same among all splits.

#### axb

- `sentence1`: a `string` feature.

- `sentence2`: a `string` feature.

- `idx`: an `int32` feature.

- `label`: a classification label, with possible values including `entailment` (0), `not_entailment` (1).

#### axg

- `premise`: a `string` feature.

- `hypothesis`: a `string` feature.

- `idx`: an `int32` feature.

- `label`: a classification label, with possible values including `entailment` (0), `not_entailment` (1).

#### boolq

- `question`: a `string` feature.

- `passage`: a `string` feature.

- `idx`: an `int32` feature.

- `label`: a classification label, with possible values including `False` (0), `True` (1).

#### cb

- `premise`: a `string` feature.

- `hypothesis`: a `string` feature.

- `idx`: an `int32` feature.

- `label`: a classification label, with possible values including `entailment` (0), `contradiction` (1), `neutral` (2).

#### copa

- `premise`: a `string` feature.

- `choice1`: a `string` feature.

- `choice2`: a `string` feature.

- `question`: a `string` feature.

- `idx`: an `int32` feature.

- `label`: a classification label, with possible values including `choice1` (0), `choice2` (1).

### Data Splits

#### axb

| |test|
|---|---:|
|axb|1104|

#### axg

| |test|
|---|---:|
|axg| 356|

#### boolq

| |train|validation|test|
|-----|----:|---------:|---:|
|boolq| 9427| 3270|3245|

#### cb

| |train|validation|test|
|---|----:|---------:|---:|
|cb | 250| 56| 250|

#### copa

| |train|validation|test|
|----|----:|---------:|---:|
|copa| 400| 100| 500|

## Dataset Creation

### Curation Rationale

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

### Source Data

#### Initial Data Collection and Normalization

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

#### Who are the source language producers?

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

### Annotations

#### Annotation process

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

#### Who are the annotators?

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

### Personal and Sensitive Information

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

### Discussion of Biases

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

### Other Known Limitations

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

## Additional Information

### Dataset Curators

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

### Licensing Information

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

### Citation Information

```
@inproceedings{clark2019boolq,
  title={BoolQ: Exploring the Surprising Difficulty of Natural Yes/No Questions},
  author={Clark, Christopher and Lee, Kenton and Chang, Ming-Wei and Kwiatkowski, Tom and Collins, Michael and Toutanova, Kristina},
  booktitle={NAACL},
  year={2019}
}
@article{wang2019superglue,
  title={SuperGLUE: A Stickier Benchmark for General-Purpose Language Understanding Systems},
  author={Wang, Alex and Pruksachatkun, Yada and Nangia, Nikita and Singh, Amanpreet and Michael, Julian and Hill, Felix and Levy, Omer and Bowman, Samuel R},
  journal={arXiv preprint arXiv:1905.00537},
  year={2019}
}
```

Note that each SuperGLUE dataset has its own citation. Please see the source to get the correct citation for each contained dataset.

### Contributions

Thanks to [@thomwolf](https://github.com/thomwolf), [@lewtun](https://github.com/lewtun), [@patrickvonplaten](https://github.com/patrickvonplaten) for adding this dataset.

|

false | |

false | # million-faces

Welcome to "million-faces", one of the largest facesets available to the public. It comprises one million faces, all of which are entirely AI-generated.

Due to the nature of AI-generated images, please be aware that some artifacts may be present in the dataset.

The dataset is currently being uploaded to Hugging Face, a renowned platform for hosting datasets and models for the machine learning community.

## Usage

Feel free to use this dataset for your projects and research. However, please do not hold me liable for any issues that might arise from its use. If you use this dataset and create something amazing, consider linking back to this GitHub project. Recognition of work is a pillar of the open-source community!

## Dataset Details

- **Number of faces:** 1,000,000

- **Source:** AI-generated

- **Artifacts:** Some images may contain artifacts

- **Availability:** Almost fully uploaded on Hugging Face

## About

This project is about creating and sharing one of the largest AI-generated facesets. With one million faces, it offers a significant resource for researchers and developers in AI, machine learning, and computer vision. |

false | #### Warning: Due to the nature of the source, certain images are very large.

A large number of artistic images, mostly (but by no means exclusively) sourced from Wikimedia Commons. <br>

Pull requests are allowed, and even encouraged. |

true | # AutoTrain Dataset for project: bhaav-sentiment

## Dataset Description

This dataset has been automatically processed by AutoTrain for project bhaav-sentiment.

### Languages

The BCP-47 code for the dataset's language is hi (Hindi).

## Dataset Structure

### Data Instances

A sample from this dataset looks as follows:

```json
[
  {
    "text": "\u0914\u0930 \u0926\u094b\u0928\u094b\u0902 \u091f\u0940\u0932\u0947 \u0915\u0947 \u0905\u0932\u0917 \u0905\u0932\u0917 \u0915\u094b\u0928\u0947 \u092e\u0947\u0902 \u091c\u093e \u092a\u0939\u0941\u0902\u091a\u0947",
    "target": 3
  },
  {
    "text": "\u0909\u0938\u0915\u0947 \u092e\u0941\u0901\u0939 \u0938\u0947 \u090f\u0915 \u091a\u0940\u0916 \u0928\u093f\u0915\u0932 \u0917\u092f\u0940",
    "target": 2
  }
]
```

### Dataset Fields

The dataset has the following fields (also called "features"):

```json
{
  "text": "Value(dtype='string', id=None)",
  "target": "ClassLabel(names=['0', '1', '2', '3', '4'], id=None)"
}
```
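The `text` values in the samples above are JSON `\uXXXX` escapes of Devanagari script, and `target` is an index into the `ClassLabel` names. Below is a minimal standard-library sketch of decoding a sample. The helper name is hypothetical, and the card does not say which emotion or sentiment each index denotes.

```python
import json

# ClassLabel names as listed in the feature spec above
TARGET_NAMES = ["0", "1", "2", "3", "4"]

def decode_samples(raw_json: str):
    """Parse sample records and attach the ClassLabel name for each target."""
    records = json.loads(raw_json)  # \uXXXX escapes decode to Devanagari text here
    return [(r["text"], TARGET_NAMES[r["target"]]) for r in records]

# First two words of the first sample above, still JSON-escaped (raw string keeps the backslashes)
raw = r'[{"text": "\u0914\u0930 \u0926\u094b\u0928\u094b\u0902", "target": 3}]'
print(decode_samples(raw))
```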

### Dataset Splits

This dataset is split into a train split and a validation split. The split sizes are as follows:

| Split name | Num samples |
| ------------ | ------------------- |
| train | 16241 |
| valid | 4063 |

|

false | # FICLE Dataset

The dataset can be loaded and utilized through the following:

```python
from datasets import load_dataset

ficle_data = load_dataset("tathagataraha/ficle")
```

# Dataset card for FICLE

## Dataset Description

* **GitHub Repo:** https://github.com/blitzprecision/FICLE

* **Paper:**

* **Point of Contact:**

### Dataset Summary

The FICLE dataset is a derivative of the FEVER dataset, which is a collection of 185,445 claims generated by modifying sentences obtained from Wikipedia.

These claims were then verified without knowledge of the original sentences they were derived from. Each sample in the FEVER dataset consists of a claim sentence, a context sentence extracted from a Wikipedia URL as evidence, and a type label indicating whether the claim is supported, refuted, or lacks sufficient information.

### Languages

The FICLE Dataset contains only English.

## Dataset Structure

### Data Fields

* `Claim (string)`: A statement or proposition relating to the consistency or inconsistency of certain facts or information.

* `Context (string)`: The surrounding information or background against which the claim is being evaluated or compared. It provides additional details or evidence that can support or challenge the claim.

* `Source (string)`: It is the linguistic chunk containing the entity lying to the left of the main verb/relating chunk.

* `Source Indices (string)`: Source indices refer to the specific indices or positions within the source string that indicate the location of the relevant information.

* `Relation (string)`: It is the linguistic chunk containing the verb/relation at the core of the identified inconsistency.

* `Relation Indices (string)`: Relation indices indicate the specific indices or positions within the relation string that highlight the location of the relevant information.

* `Target (string)`: It is the linguistic chunk containing the entity lying to the right of the main verb/relating chunk.

* `Target Indices (string)`: Target indices represent the specific indices or positions within the target string that indicate the location of the relevant information.

* `Inconsistent Claim Component (string)`: The inconsistent claim component refers to a specific linguistic chunk within the claim that is identified as inconsistent with the context. It helps identify which part of the claim triple is problematic in terms of its alignment with the surrounding information.

* `Inconsistent Context-Span (string)`: A span or portion marked within the context sentence that is found to be inconsistent with the claim. It highlights a discrepancy or contradiction between the information in the claim and the corresponding context.

* `Inconsistent Context-Span Indices (string)`: The specific indices or location within the context sentence that indicate the inconsistent span.

* `Inconsistency Type (string)`: The category or type of inconsistency identified in the claim and context.

* `Fine-grained Inconsistent Entity-Type (string)`: The specific detailed category or type of entity causing the inconsistency within the claim or context. It provides a more granular classification of the entity associated with the inconsistency.

* `Coarse Inconsistent Entity-Type (string)`: The broader or general category or type of entity causing the inconsistency within the claim or context. It provides a higher-level classification of the entity associated with the inconsistency.

### Data Splits

The FICLE dataset comprises a total of 8,055 samples in the English language, each representing different instances of inconsistencies.

These inconsistencies are categorized into five types: Taxonomic Relations (4,842 samples), Negation (1,630 samples), Set Based (642 samples), Gradable (526 samples), and Simple (415 samples).

Within the dataset, there are six possible components that contribute to the inconsistencies found in the claim sentences.

These components are distributed as follows: Target-Head (3,960 samples), Target-Modifier (1,529 samples), Relation-Head (951 samples), Relation-Modifier (1,534 samples), Source-Head (45 samples), and Source-Modifier (36 samples).

The dataset is split into `train`, `validation`, and `test`.

* `train`: 6.44k rows

* `validation`: 806 rows

* `test`: 806 rows
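The train size is given only approximately ("6.44k"). Assuming the three splits are disjoint and together cover all 8,055 samples (the card does not state this explicitly), the exact sizes and ratios follow directly:

```python
TOTAL = 8055
validation = 806
test = 806

# Exact train size implied by the total (the card rounds it to "6.44k").
train = TOTAL - validation - test
print(train)  # 6443

for name, n in [("train", train), ("validation", validation), ("test", test)]:
    print(f"{name}: {n} rows ({100 * n / TOTAL:.1f}%)")
```

Under that assumption the split is roughly 80/10/10.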

## Dataset Creation

### Curation Rationale

We propose a linguistically enriched dataset to help detect inconsistencies and explain them.

To this end, the broad requirements are (1) to locate where a claim and its context are inconsistent and (2) to provide a classification scheme that makes the inconsistency explainable.

### Data Collection and Preprocessing

The FICLE dataset is derived from the FEVER dataset as follows. FEVER (Fact Extraction and VERification) consists of 185,445 claims that were generated by altering sentences extracted from Wikipedia and subsequently verified without knowledge of the sentence they were derived from. Every sample in the FEVER dataset contains the claim sentence, an evidence (or context) sentence from a Wikipedia URL, and a type label (‘supports’, ‘refutes’, or ‘not enough info’). Of these, we use only the samples with the ‘refutes’ label to build our dataset.
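The filtering step above amounts to keeping only refuted claims. A minimal sketch, assuming each FEVER sample is a dict with a `label` key; the samples are hypothetical, and the comparison is case-insensitive because the source files may spell the labels differently from this card (for example in upper case):

```python
from typing import Iterable, Iterator, Mapping


def refuted_only(samples: Iterable[Mapping]) -> Iterator[Mapping]:
    """Yield only the samples whose label is 'refutes', ignoring case and spaces."""
    for sample in samples:
        if str(sample.get("label", "")).strip().lower() == "refutes":
            yield sample


# Hypothetical samples for illustration; real FEVER records carry more fields.
fever = [
    {"claim": "A is B.", "label": "SUPPORTS"},
    {"claim": "A is not B.", "label": "REFUTES"},
    {"claim": "A is C.", "label": "NOT ENOUGH INFO"},
]

print([s["claim"] for s in refuted_only(fever)])  # ['A is not B.']
```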

### Annotations

You can see the annotation guidelines [here](https://github.com/blitzprecision/FICLE/blob/main/ficle_annotation_guidelines.pdf).

In order to provide detailed explanations for inconsistencies, extensive annotations were conducted for each sample in the FICLE dataset. The annotation process involved two iterations, with each iteration focusing on different aspects of the dataset.

In the first iteration, the annotations were primarily "syntactic-oriented": annotators identified the inconsistent claim fact triple, marked inconsistent context spans, and labeled which of the six possible claim components was inconsistent.

The second iteration of annotations concentrated on "semantic-oriented" aspects. Annotators labeled semantic fields for each sample, such as the type of inconsistency, coarse inconsistent entity types, and fine-grained inconsistent entity types.

This stage aimed to capture the semantic nuances and provide a deeper understanding of the inconsistencies present in the dataset.

The annotation process was carried out by a group of four annotators, two of whom are also authors of the dataset. The annotators possess a strong command of the English language and hold Bachelor's degrees in Computer Science, specializing in computational linguistics.

Their expertise in the field ensured accurate and reliable annotations. The annotators' ages range from 20 to 22 years, which suggests familiarity with contemporary language usage and computational linguistic concepts.

### Personal and Sensitive Information

## Considerations for Using the Data

### Social Impact of Dataset

### Discussion of Biases

### Other Known Limitations

## Additional Information

### Citation Information

```
@misc{raha2023neural,
  title={Neural models for Factual Inconsistency Classification with Explanations},
  author={Tathagata Raha and Mukund Choudhary and Abhinav Menon and Harshit Gupta and KV Aditya Srivatsa and Manish Gupta and Vasudeva Varma},
  year={2023},
  eprint={2306.08872},
  archivePrefix={arXiv},
  primaryClass={cs.CL}
}
```

### Contact

|

false | # VQAv2 in Vietnamese

This is a Google-translated Vietnamese version of [VQAv2](https://visualqa.org/). The Vietnamese version was built as follows:

- In the `en/` folder:
  - Download `v2_OpenEnded_mscoco_train2014_questions.json` and `v2_mscoco_train2014_annotations.json` from [VQAv2](https://visualqa.org/).
  - Remove the `answers` key from each entry under `annotations` in `v2_mscoco_train2014_annotations.json`, so that only `multiple_choice_answer` is used as the answer. Call the new file `v2_OpenEnded_mscoco_train2014_answers.json`.
  - Using a [set data structure](https://docs.python.org/3/tutorial/datastructures.html#sets), I generate `question_list.txt` and `answer_list.txt` containing the unique texts: 152,050 unique questions and 22,531 unique answers from 443,757 image-question-answer triplets.
- In the `vi/` folder:
  - By translating the two `.txt` files in `en/`, I generate `answer_list.jsonl` and `question_list.jsonl`. In each entry of each file, the key is the original English text and the value is its Vietnamese translation.
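The English-side preprocessing can be sketched in Python. This is not the author's script: it works on tiny hypothetical in-memory documents that mimic the VQAv2 layout (`questions` and `annotations` lists), and the helper names are made up for illustration. Sorting the deduplicated texts is an added choice so the output is deterministic; a bare set has no fixed order.

```python
import json


def strip_answers(annotations_doc: dict) -> dict:
    """Return a copy of an annotations document without the per-annotator
    `answers` lists, keeping `multiple_choice_answer` and the other keys."""
    out = dict(annotations_doc)
    out["annotations"] = [
        {k: v for k, v in ann.items() if k != "answers"}
        for ann in annotations_doc["annotations"]
    ]
    return out


def unique_texts(values):
    """Deduplicate with a set, then sort for a deterministic output order."""
    return sorted(set(values))


# Tiny hypothetical documents mimicking the VQAv2 layout.
questions_doc = {"questions": [
    {"question_id": 1, "image_id": 10, "question": "What color is the cat?"},
    {"question_id": 2, "image_id": 11, "question": "What color is the cat?"},
]}
annotations_doc = {"annotations": [
    {"question_id": 1, "multiple_choice_answer": "black", "answers": [{"answer": "black"}]},
    {"question_id": 2, "multiple_choice_answer": "white", "answers": [{"answer": "white"}]},
]}

answers_doc = strip_answers(annotations_doc)
question_list = unique_texts(q["question"] for q in questions_doc["questions"])
answer_list = unique_texts(a["multiple_choice_answer"] for a in answers_doc["annotations"])

# Assumed .txt layout: one unique text per line.
print("\n".join(question_list))
print(json.dumps(answers_doc["annotations"][0]))
```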

To load the Vietnamese version in your code, you need the original English version. Use the English text as a key to retrieve the Vietnamese value from `answer_list.jsonl` and `question_list.jsonl`. I provide both the English and Vietnamese versions.
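A minimal lookup sketch, assuming each line of the `.jsonl` files is a JSON object mapping one English text to its Vietnamese translation. The entry shown is hypothetical, and falling back to the English text when no translation exists is a design choice, not part of the dataset:

```python
import json
from typing import Dict, Iterable


def load_translations(lines: Iterable[str]) -> Dict[str, str]:
    """Merge JSON-lines entries of the form {english: vietnamese} into one dict."""
    table: Dict[str, str] = {}
    for line in lines:
        line = line.strip()
        if line:
            table.update(json.loads(line))
    return table


def translate(text: str, table: Dict[str, str]) -> str:
    """Return the Vietnamese text, or the English original if it is missing."""
    return table.get(text, text)


# Hypothetical entry; in practice pass an open file handle instead.
sample_lines = ['{"What color is the cat?": "Con mèo màu gì?"}']
questions_vi = load_translations(sample_lines)
print(translate("What color is the cat?", questions_vi))  # Con mèo màu gì?
```

With the real file, `load_translations(open("vi/question_list.jsonl", encoding="utf-8"))` builds the full table, since a text file object iterates line by line.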

|

false | |

false |

General information

The overall ACDC dataset was created from real clinical exams acquired at the University Hospital of Dijon. Acquired data were fully anonymized and handled within the regulations set by the local ethical committee of the Hospital of Dijon (France). Our dataset covers several well-defined pathologies with enough cases to (1) properly train machine learning methods and (2) clearly assess the variations of the main physiological parameters obtained from cine-MRI (in particular diastolic volume and ejection fraction). The dataset is composed of 150 exams (all from different patients) divided into 5 evenly distributed subgroups (4 pathological groups plus 1 group of healthy subjects) as described below. Furthermore, each patient comes with the following additional information: weight, height, and the diastolic and systolic phase instants.
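The ejection fraction mentioned above is derived from the end-diastolic (EDV) and end-systolic (ESV) volumes as EF = (EDV - ESV) / EDV × 100%. Each volume can be estimated from a segmentation mask at the corresponding phase instant by counting the voxels of the structure (for example, the left-ventricular cavity) and multiplying by the voxel volume. A minimal sketch; the voxel counts and spacing below are hypothetical, not ACDC values:

```python
def volume_ml(voxel_count: int, spacing_mm: tuple) -> float:
    """Volume of a segmented structure: number of voxels times voxel volume.
    spacing_mm is the (x, y, z) voxel size in millimetres; 1 mL = 1000 mm^3."""
    sx, sy, sz = spacing_mm
    return voxel_count * sx * sy * sz / 1000.0


def ejection_fraction(edv_ml: float, esv_ml: float) -> float:
    """Ejection fraction in percent from end-diastolic and end-systolic volumes."""
    if edv_ml <= 0:
        raise ValueError("end-diastolic volume must be positive")
    return 100.0 * (edv_ml - esv_ml) / edv_ml


# Hypothetical voxel counts of the cavity at the diastolic and systolic instants.
spacing = (1.5, 1.5, 10.0)  # assumed in-plane resolution and slice thickness (mm)
edv = volume_ml(5000, spacing)
esv = volume_ml(2000, spacing)
print(f"EDV={edv:.1f} mL, ESV={esv:.1f} mL, EF={ejection_fraction(edv, esv):.1f}%")
# EDV=112.5 mL, ESV=45.0 mL, EF=60.0%
```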

Tasks

The main task of this dataset is the semantic segmentation of the heart in cardiac magnetic resonance images, specifically the endocardium and myocardium. This task is highly relevant to the detection of cardiovascular diseases. Manual segmentation is very time-consuming, so performing it automatically with Artificial Intelligence algorithms can greatly reduce the time spent on it. This removes a significant bottleneck and allows cardiovascular diseases to be detected in a timely manner.
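To judge whether an automatic segmentation can replace a manual one, the two are typically compared per structure with an overlap measure such as the Dice coefficient, 2|A ∩ B| / (|A| + |B|). A minimal pure-Python sketch on flattened label maps; the integer labels and the example maps are hypothetical, not the ACDC label encoding:

```python
from typing import Sequence


def dice(pred: Sequence[int], truth: Sequence[int], label: int) -> float:
    """Dice overlap for one label between two flattened label maps."""
    if len(pred) != len(truth):
        raise ValueError("label maps must have the same size")
    p = [v == label for v in pred]
    t = [v == label for v in truth]
    inter = sum(a and b for a, b in zip(p, t))
    denom = sum(p) + sum(t)
    # Both maps empty for this label: treat as perfect agreement.
    return 1.0 if denom == 0 else 2.0 * inter / denom


# Hypothetical 6-pixel label maps (0 = background, 1 and 2 = two structures).
pred = [0, 1, 1, 2, 2, 0]
truth = [0, 1, 2, 2, 2, 0]
print(dice(pred, truth, 2))  # 0.8
```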

Reference

O. Bernard, A. Lalande, C. Zotti, F. Cervenansky, et al.

"Deep Learning Techniques for Automatic MRI Cardiac Multi-structures Segmentation and Diagnosis: Is the Problem Solved ?" in IEEE Transactions on Medical Imaging, vol. 37, no. 11, pp. 2514-2525, Nov. 2018

doi: 10.1109/TMI.2018.2837502 |

false |

# Dataset Card for Dataset Name

## Dataset Description

- **Homepage:**

- **Repository:**

- **Paper:**

- **Leaderboard:**

- **Point of Contact:**

### Dataset Summary

This dataset card aims to be a base template for new datasets. It has been generated using [this raw template](https://github.com/huggingface/huggingface_hub/blob/main/src/huggingface_hub/templates/datasetcard_template.md?plain=1).

### Supported Tasks and Leaderboards

[More Information Needed]

### Languages

[More Information Needed]

## Dataset Structure

### Data Instances

[More Information Needed]

### Data Fields

[More Information Needed]

### Data Splits

[More Information Needed]

## Dataset Creation

### Curation Rationale

[More Information Needed]

### Source Data

#### Initial Data Collection and Normalization

[More Information Needed]

#### Who are the source language producers?

[More Information Needed]

### Annotations

#### Annotation process

[More Information Needed]

#### Who are the annotators?

[More Information Needed]

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

[More Information Needed]

### Licensing Information

[More Information Needed]

### Citation Information

[More Information Needed]

### Contributions

[More Information Needed] |

false |

# Helmet Detection Dataset

The dataset consists of photographs of construction workers at work. It provides helmet detection annotations as bounding boxes and addresses public safety tasks such as ensuring compliance with safety regulations, automating the identification of rule violations, and reducing accidents during construction work.

# Get the Dataset

This is just an example of the data. If you need access to the entire dataset, contact us via **[sales@trainingdata.pro](mailto:sales@trainingdata.pro)** or leave a request on **[https://trainingdata.pro/data-market](https://trainingdata.pro/data-market?utm_source=huggingface)**

# Dataset structure

- **img** - contains the original images of construction workers

- **boxes** - includes bounding box labeling for the original images

- **annotations.xml** - contains the coordinates of the bounding boxes and their labels (helmet, no_helmet) for each original photo

# Data Format

Each image from `img` folder is accompanied by an XML-annotation in the `annotations.xml` file indicating the coordinates of the bounding boxes and labels for helmet detection. For each point, the x and y coordinates are provided.
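The XML schema itself is not spelled out here. Assuming a CVAT-style layout, where each `<image>` element carries a `name` attribute and contains `<box>` children with `label`, `xtl`, `ytl`, `xbr` and `ybr` attributes, the boxes could be read with the standard library as sketched below. The element and attribute names are assumptions to check against the real file; the sample XML is hypothetical.

```python
import xml.etree.ElementTree as ET


def read_boxes(xml_text: str):
    """Yield (image_name, label, (xtl, ytl, xbr, ybr)) for every <box> element.
    Assumes a CVAT-style layout: <image name=...> elements with <box> children."""
    root = ET.fromstring(xml_text)
    for image in root.iter("image"):
        for box in image.iter("box"):
            coords = tuple(float(box.get(k)) for k in ("xtl", "ytl", "xbr", "ybr"))
            yield image.get("name"), box.get("label"), coords


# Hypothetical snippet in the assumed layout.
sample = """<annotations>
  <image id="0" name="img/0.jpg" width="640" height="480">
    <box label="helmet" xtl="10" ytl="20" xbr="110" ybr="120"/>
    <box label="no_helmet" xtl="200" ytl="40" xbr="260" ybr="130"/>
  </image>
</annotations>"""

for name, label, (x1, y1, x2, y2) in read_boxes(sample):
    print(name, label, x1, y1, x2, y2)
```

Counting the `no_helmet` boxes per image is then a direct way to flag possible safety violations.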

# Example of XML file structure


# Helmet Detection might be made in accordance with your requirements.

## **[TrainingData](https://trainingdata.pro/data-market?utm_source=huggingface)** provides high-quality data annotation tailored to your needs