modelId stringlengths 4 111 | lastModified stringlengths 24 24 | tags list | pipeline_tag stringlengths 5 30 ⌀ | author stringlengths 2 34 ⌀ | config null | securityStatus null | id stringlengths 4 111 | likes int64 0 9.53k | downloads int64 2 73.6M | library_name stringlengths 2 84 ⌀ | created timestamp[us] | card stringlengths 101 901k | card_len int64 101 901k | embeddings list |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

Yntec/Oiran | 2023-10-01T08:23:16.000Z | [

"diffusers",

"Anime",

"Artstyle",

"Clothing",

"KimiKoro",

"timevisitor",

"stable-diffusion",

"stable-diffusion-diffusers",

"text-to-image",

"license:creativeml-openrail-m",

"endpoints_compatible",

"has_space",

"diffusers:StableDiffusionPipeline",

"region:us"

] | text-to-image | Yntec | null | null | Yntec/Oiran | 1 | 5,069 | diffusers | 2023-09-30T16:21:21 | ---

license: creativeml-openrail-m

library_name: diffusers

pipeline_tag: text-to-image

tags:

- Anime

- Artstyle

- Clothing

- KimiKoro

- timevisitor

- stable-diffusion

- stable-diffusion-diffusers

- diffusers

- text-to-image

---

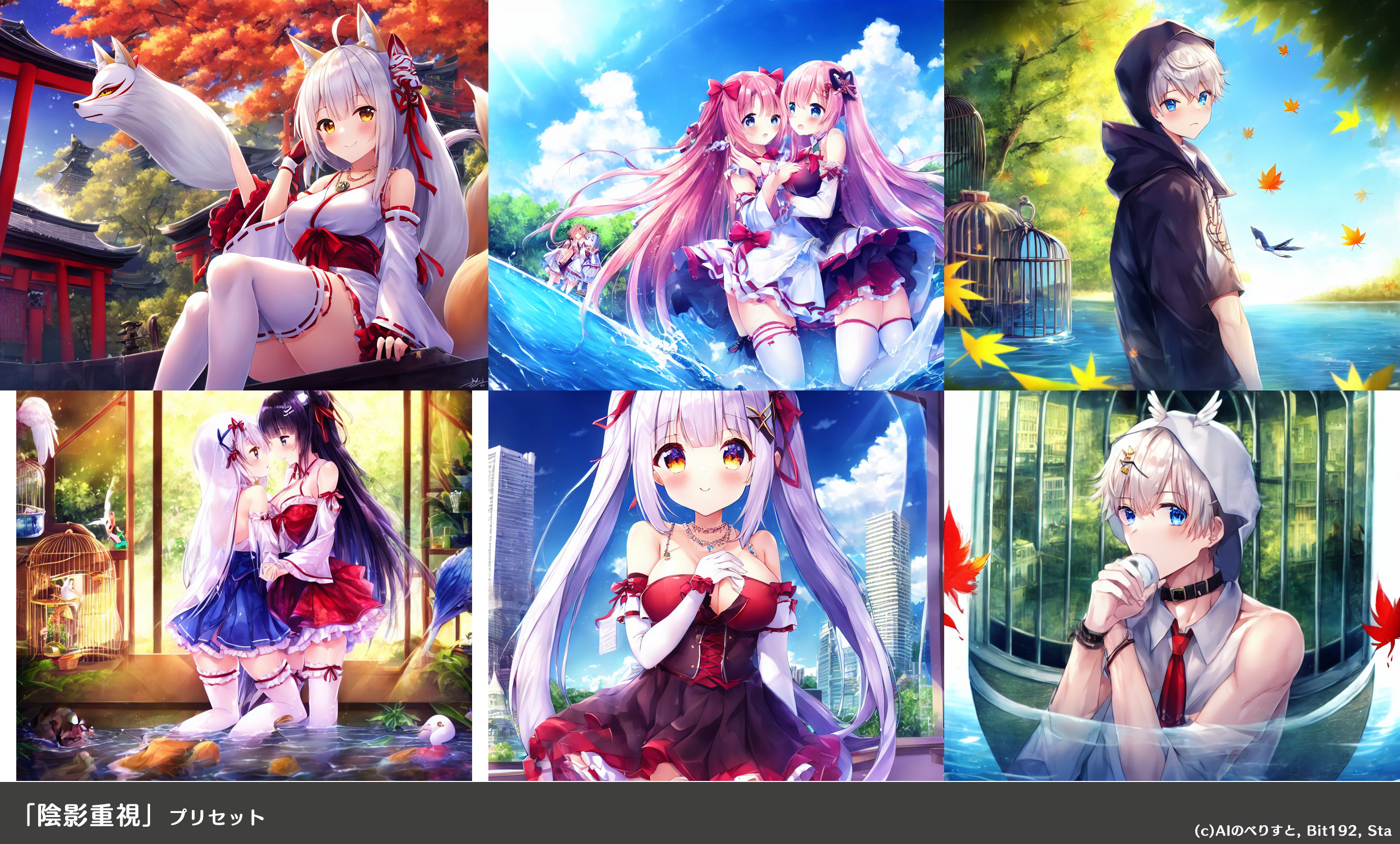

# Oiran

The Oiran Traditional Fashion LoRA merged with RealBackground v12.

Sample and prompt:

realistic, realistic details, detailed, pretty CUTE girl, solo, dynamic pose, narrow, full body, cowboy shot, oiran portrait, sweet smile, fantasy, blues pinks and greens, blue copper, coiling flowers, extremely detailed clothes, masterpiece, 8k, trending on pixiv, highest quality. (masterpiece:1.2, best quality), (highly detailed:1.3)

Original Pages:

https://civitai.com/models/84366?modelVersionId=89690 (Oiran)

https://civitai.com/models/24122/real-background-cartoon?modelVersionId=32593 (Real Background Cartoon)

# Recipe

For the purposes of mixing the LoRA with RealBackground, a "full" version of this model was produced that makes outputs identical to a fresh UI.
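The second step of the recipe below bakes the LoRA into the checkpoint at strength 1.0. As a rough illustration of what such a merge does to a single weight matrix, here is a minimal pure-Python sketch. It assumes the common low-rank update `W' = W + strength * (alpha / rank) * (up @ down)`; the function names, the toy matrices, and the alpha/rank scaling are assumptions for illustration, not SuperMerger's actual code.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def merge_lora(weight, up, down, alpha, strength=1.0):
    """Return weight + strength * (alpha / rank) * (up @ down).

    Shapes: weight is out x in, up is out x rank, down is rank x in.
    """
    rank = len(down)
    scale = strength * alpha / rank
    delta = matmul(up, down)
    return [
        [w + scale * d for w, d in zip(w_row, d_row)]
        for w_row, d_row in zip(weight, delta)
    ]


# Toy 2x2 layer with a rank-1 LoRA (hypothetical numbers).
weight = [[1.0, 0.0], [0.0, 1.0]]
up = [[1.0], [2.0]]    # 2 x 1
down = [[0.5, -0.5]]   # 1 x 2
merged = merge_lora(weight, up, down, alpha=1.0, strength=1.0)
print(merged)
```

With `strength=0.0` the function returns the original weights unchanged, which is a quick sanity check for any merge of this kind.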

- SuperMerger Weight sum Train Difference Use MBW 1,1,1,1,1,1,1,1,1,1,1,1,1,1,1,1,1,1,1,1,1,1,1,1,1,1

Model A:

SD 1.5 (https://huggingface.co/Yntec/DreamLikeRemix/resolve/main/v1-5-pruned-fp16-no-ema.safetensors)

Model B:

RealBackground v12

Output:

RealBackground Full v12

- Merge Oiran Traditional Fashion LoRA to checkpoint 1.0

Model A:

RealBackground Full v12

Output:

Oiran | 1,510 | [

[

-0.052154541015625,

-0.04962158203125,

0.0106964111328125,

0.01015472412109375,

-0.027069091796875,

0.0010805130004882812,

0.0012121200561523438,

-0.0726318359375,

0.05792236328125,

0.05596923828125,

-0.081787109375,

-0.030792236328125,

-0.0219879150390625,

... |

Undi95/Mistral-11B-OmniMix9 | 2023-10-11T21:52:13.000Z | [

"transformers",

"safetensors",

"mistral",

"text-generation",

"license:cc-by-nc-4.0",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | text-generation | Undi95 | null | null | Undi95/Mistral-11B-OmniMix9 | 2 | 5,067 | transformers | 2023-10-11T15:32:28 | ---

license: cc-by-nc-4.0

---

Don't mind these for now; they are only tests, and I still need to fine-tune them for RP.

WARNING: This model needs a specific EOS token, which I completely forgot to put in the JSON files, and I still need to check which one is correct for this mix. Please don't use it as-is if you really want to review it.

```

slices:

- sources:

- model: "/content/drive/MyDrive/CC-v1.1-7B-bf16"

layer_range: [0, 24]

- sources:

- model: "/content/drive/MyDrive/Zephyr-7B"

layer_range: [8, 32]

merge_method: passthrough

dtype: bfloat16

================================================

slices:

- sources:

- model: "/content/drive/MyDrive/Mistral-11B-CC-Zephyr"

layer_range: [0, 48]

- model: Undi95/Mistral-11B-OpenOrcaPlatypus

layer_range: [0, 48]

merge_method: slerp

base_model: "/content/drive/MyDrive/Mistral-11B-CC-Zephyr"

parameters:

t:

- value: 0.5 # fallback for rest of tensors

dtype: bfloat16

```
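The first config above stacks layers from two 32-layer models with the `passthrough` method, and the second blends the resulting stack with another model by spherical interpolation at `t = 0.5`. The sketch below illustrates both ideas on toy data. It assumes `layer_range` is half-open (which matches the 24 + 24 = 48 layers used in the second config); the helper names are hypothetical and this is not mergekit's implementation.

```python
import math


def passthrough_stack(slices):
    """Concatenate layer ranges; each slice is (model_name, start, end), end exclusive."""
    return [(name, i) for name, start, end in slices for i in range(start, end)]


def slerp(t, v0, v1, eps=1e-8):
    """Spherical linear interpolation between two equal-length vectors."""
    lerp = [(1 - t) * a + t * b for a, b in zip(v0, v1)]
    n0 = math.sqrt(sum(x * x for x in v0))
    n1 = math.sqrt(sum(x * x for x in v1))
    if n0 < eps or n1 < eps:
        return lerp  # degenerate input: fall back to linear interpolation
    cos_theta = sum(a * b for a, b in zip(v0, v1)) / (n0 * n1)
    theta = math.acos(max(-1.0, min(1.0, cos_theta)))
    if math.sin(theta) < eps:
        return lerp  # nearly parallel vectors: slerp reduces to lerp
    s0 = math.sin((1 - t) * theta) / math.sin(theta)
    s1 = math.sin(t * theta) / math.sin(theta)
    return [s0 * a + s1 * b for a, b in zip(v0, v1)]


# Layers 0-23 of the first model followed by layers 8-31 of the second.
layers = passthrough_stack([("CC-v1.1-7B", 0, 24), ("Zephyr-7B", 8, 32)])
print(len(layers))

# Halfway between two orthogonal unit vectors, staying on the unit circle.
midpoint = slerp(0.5, [1.0, 0.0], [0.0, 1.0])
print(midpoint)
```

Unlike plain averaging, the interpolated vector keeps unit length here, which is the usual motivation for preferring slerp when blending weights.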

hf-causal-experimental (pretrained=/content/drive/MyDrive/Mistral-11B-Test), limit: None, provide_description: False, num_fewshot: 0, batch_size: 4

| Task |Version| Metric |Value | |Stderr|
|-------------|------:|--------|-----:|---|-----:|
|arc_challenge| 0|acc |0.5623|± |0.0145|
| | |acc_norm|0.5794|± |0.0144|
|arc_easy | 0|acc |0.8354|± |0.0076|
| | |acc_norm|0.8165|± |0.0079|
|hellaswag | 0|acc |0.6389|± |0.0048|
| | |acc_norm|0.8236|± |0.0038|
|piqa | 0|acc |0.8139|± |0.0091|
| | |acc_norm|0.8264|± |0.0088|
|truthfulqa_mc| 1|mc1 |0.3978|± |0.0171|
| | |mc2 |0.5607|± |0.0155|
|winogrande | 0|acc |0.7451|± |0.0122|

| 1,910 | [

[

-0.04669189453125,

-0.05108642578125,

0.022918701171875,

0.0030879974365234375,

-0.022613525390625,

0.0005917549133300781,

0.0063629150390625,

-0.0255126953125,

0.04449462890625,

0.033111572265625,

-0.060638427734375,

-0.03436279296875,

-0.056243896484375,

-... |

Andyrasika/dreamviewer-sdxl-1.0 | 2023-09-03T12:53:05.000Z | [

"diffusers",

"diffusion",

"text-to-image",

"en",

"arxiv:2307.01952",

"arxiv:2211.01324",

"arxiv:2108.01073",

"license:creativeml-openrail-m",

"endpoints_compatible",

"diffusers:StableDiffusionXLPipeline",

"region:us"

] | text-to-image | Andyrasika | null | null | Andyrasika/dreamviewer-sdxl-1.0 | 6 | 5,061 | diffusers | 2023-08-15T12:10:24 | ---

license: creativeml-openrail-m

language:

- en

tags:

- diffusion

pipeline_tag: text-to-image

---

SDXL_v1.0-Dreamviewer

[SDXL](https://arxiv.org/abs/2307.01952) consists of an [ensemble of experts](https://arxiv.org/abs/2211.01324) pipeline for latent diffusion:

In a first step, the base model is used to generate (noisy) latents,

which are then further processed with a refinement model (available here: https://huggingface.co/stabilityai/stable-diffusion-xl-refiner-1.0/) specialized for the final denoising steps.

Note that the base model can be used as a standalone module.

Alternatively, we can use a two-stage pipeline as follows:

First, the base model is used to generate latents of the desired output size.

In the second step, we use a specialized high-resolution model and apply a technique called SDEdit (https://arxiv.org/abs/2108.01073, also known as "img2img")

to the latents generated in the first step, using the same prompt. This technique is slightly slower than the first one, as it requires more function evaluations.

Source code is available at https://github.com/Stability-AI/generative-models .
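As a rough illustration of the ensemble-of-experts hand-off described above, the sketch below splits a denoising schedule between the base model and the refiner. The 0.8 hand-off fraction and the function name are assumptions chosen for illustration; the exact split a given library computes depends on its scheduler.

```python
def split_denoising_steps(num_inference_steps, handoff_fraction):
    """Return (base_steps, refiner_steps) for a two-expert denoising schedule.

    The base model handles the first `handoff_fraction` of the schedule
    (the high-noise steps); the refiner handles the remaining low-noise steps.
    """
    if not 0.0 <= handoff_fraction <= 1.0:
        raise ValueError("handoff_fraction must be in [0, 1]")
    base_steps = round(num_inference_steps * handoff_fraction)
    return base_steps, num_inference_steps - base_steps


# 50 total steps with the base model covering the first 80% (hypothetical value).
base_steps, refiner_steps = split_denoising_steps(50, 0.8)
print(base_steps, refiner_steps)
```

In diffusers, the SDXL pipelines expose `denoising_end` (base) and `denoising_start` (refiner) arguments that express the same kind of hand-off fraction.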

```

import torch

from diffusers import StableDiffusionXLPipeline, AutoencoderKL

import gc,cv2,os

from PIL import Image

import requests

from io import BytesIO

from IPython.display import display

import matplotlib.pyplot as plt

vae = AutoencoderKL.from_pretrained("madebyollin/sdxl-vae-fp16-fix", torch_dtype=torch.float16)

pipe = StableDiffusionXLPipeline.from_pretrained(

"Andyrasika/dreamshaper_sdxl1_diffusion", torch_dtype=torch.float16, variant="fp16", vae=vae

)

pipe.enable_xformers_memory_efficient_attention()

pipe.to("cuda")

prompt = '8k intricate, highly detailed, digital photography, best quality, masterpiece, a (full body shot) photo of A warrior man that lived with dragons his whole life is now leading them to battle. torn clothes exposing parts of her body, scratch marks, epic, hyperrealistic, hyperrealism, 8k, cinematic lighting, greg rutkowski, wlop'

negative_prompt='(deformed iris, deformed pupils), text, worst quality, low quality, jpeg artifacts, ugly, duplicate, morbid, mutilated, (extra fingers), (mutated hands), poorly drawn hands, poorly drawn face, mutation, deformed, blurry, dehydrated, bad anatomy, bad proportions, extra limbs, cloned face, disfigured, gross proportions, malformed limbs, missing arms, missing legs, extra arms, extra legs, (fused fingers), (too many fingers), long neck, camera'

image = pipe(prompt=prompt,

negative_prompt=negative_prompt,

guidance_scale=9.0,

num_inference_steps=50).images[0]

gc.collect()

torch.cuda.empty_cache()

```

| 2,719 | [

[

-0.035888671875,

-0.04278564453125,

0.0340576171875,

0.0081939697265625,

-0.033203125,

0.005016326904296875,

0.0176544189453125,

-0.0157928466796875,

0.031646728515625,

0.043304443359375,

-0.04052734375,

-0.041595458984375,

-0.05499267578125,

-0.009574890136... |

nvidia/stt_en_conformer_ctc_large | 2022-10-28T23:33:45.000Z | [

"nemo",

"automatic-speech-recognition",

"speech",

"audio",

"CTC",

"Conformer",

"Transformer",

"pytorch",

"NeMo",

"hf-asr-leaderboard",

"Riva",

"en",

"dataset:librispeech_asr",

"dataset:fisher_corpus",

"dataset:Switchboard-1",

"dataset:WSJ-0",

"dataset:WSJ-1",

"dataset:National-Sing... | automatic-speech-recognition | nvidia | null | null | nvidia/stt_en_conformer_ctc_large | 19 | 5,058 | nemo | 2022-04-09T03:43:21 | ---

language:

- en

library_name: nemo

datasets:

- librispeech_asr

- fisher_corpus

- Switchboard-1

- WSJ-0

- WSJ-1

- National-Singapore-Corpus-Part-1

- National-Singapore-Corpus-Part-6

- vctk

- VoxPopuli-(EN)

- Europarl-ASR-(EN)

- Multilingual-LibriSpeech-(2000-hours)

- mozilla-foundation/common_voice_7_0

thumbnail: null

tags:

- automatic-speech-recognition

- speech

- audio

- CTC

- Conformer

- Transformer

- pytorch

- NeMo

- hf-asr-leaderboard

- Riva

license: cc-by-4.0

widget:

- example_title: Librispeech sample 1

src: https://cdn-media.huggingface.co/speech_samples/sample1.flac

- example_title: Librispeech sample 2

src: https://cdn-media.huggingface.co/speech_samples/sample2.flac

model-index:

- name: stt_en_conformer_ctc_large

results:

- task:

name: Automatic Speech Recognition

type: automatic-speech-recognition

dataset:

name: LibriSpeech (clean)

type: librispeech_asr

config: clean

split: test

args:

language: en

metrics:

- name: Test WER

type: wer

value: 2.2

- task:

name: Automatic Speech Recognition

type: automatic-speech-recognition

dataset:

name: LibriSpeech (other)

type: librispeech_asr

config: other

split: test

args:

language: en

metrics:

- name: Test WER

type: wer

value: 4.3

- task:

name: Automatic Speech Recognition

type: automatic-speech-recognition

dataset:

name: Multilingual LibriSpeech

type: facebook/multilingual_librispeech

config: english

split: test

args:

language: en

metrics:

- name: Test WER

type: wer

value: 7.2

- task:

name: Automatic Speech Recognition

type: automatic-speech-recognition

dataset:

name: Mozilla Common Voice 7.0

type: mozilla-foundation/common_voice_7_0

config: en

split: test

args:

language: en

metrics:

- name: Test WER

type: wer

value: 8.0

- task:

name: Automatic Speech Recognition

type: automatic-speech-recognition

dataset:

name: Mozilla Common Voice 8.0

type: mozilla-foundation/common_voice_8_0

config: en

split: test

args:

language: en

metrics:

- name: Test WER

type: wer

value: 9.48

- task:

name: Automatic Speech Recognition

type: automatic-speech-recognition

dataset:

name: Wall Street Journal 92

type: wsj_0

args:

language: en

metrics:

- name: Test WER

type: wer

value: 2.0

- task:

name: Automatic Speech Recognition

type: automatic-speech-recognition

dataset:

name: Wall Street Journal 93

type: wsj_1

args:

language: en

metrics:

- name: Test WER

type: wer

value: 2.9

- task:

name: Automatic Speech Recognition

type: automatic-speech-recognition

dataset:

name: National Singapore Corpus

type: nsc_part_1

args:

language: en

metrics:

- name: Test WER

type: wer

value: 7.0

---

# NVIDIA Conformer-CTC Large (en-US)

<style>

img {

display: inline;

}

</style>


This model transcribes speech in lowercase English alphabet including spaces and apostrophes, and is trained on several thousand hours of English speech data.

It is a non-autoregressive "large" variant of Conformer, with around 120 million parameters.

See the [model architecture](#model-architecture) section and [NeMo documentation](https://docs.nvidia.com/deeplearning/nemo/user-guide/docs/en/main/asr/models.html#conformer-ctc) for complete architecture details.

It is also compatible with NVIDIA Riva for [production-grade server deployments](#deployment-with-nvidia-riva).

## Usage

The model is available for use in the NeMo toolkit [3], and can be used as a pre-trained checkpoint for inference or for fine-tuning on another dataset.

To train, fine-tune, or experiment with the model, you will need to install [NVIDIA NeMo](https://github.com/NVIDIA/NeMo). We recommend installing it after you have installed the latest version of PyTorch.

```

pip install nemo_toolkit['all']

```

### Automatically instantiate the model

```python

import nemo.collections.asr as nemo_asr

asr_model = nemo_asr.models.EncDecCTCModelBPE.from_pretrained("nvidia/stt_en_conformer_ctc_large")

```

### Transcribing using Python

First, let's get a sample

```

wget https://dldata-public.s3.us-east-2.amazonaws.com/2086-149220-0033.wav

```

Then simply do:

```

asr_model.transcribe(['2086-149220-0033.wav'])

```

### Transcribing many audio files

```shell

python [NEMO_GIT_FOLDER]/examples/asr/transcribe_speech.py \
  pretrained_name="nvidia/stt_en_conformer_ctc_large" \
  audio_dir="<DIRECTORY CONTAINING AUDIO FILES>"

```

### Input

This model accepts 16 kHz (16,000 Hz) mono-channel audio (WAV files) as input.
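Before transcribing, it can help to confirm that a file matches the expected input format. The sketch below uses Python's standard `wave` module to check for a single channel and a 16,000 Hz sample rate. The helper names and the generated demo files are hypothetical and only serve to make the example self-contained.

```python
import os
import tempfile
import wave


def is_16k_mono_wav(path):
    """Return True if the WAV file at `path` is single-channel and sampled at 16,000 Hz."""
    with wave.open(path, "rb") as wav:
        return wav.getnchannels() == 1 and wav.getframerate() == 16000


def write_silence(path, sample_rate, channels, seconds=0.1):
    """Write a short silent 16-bit PCM WAV file (used here only as demo input)."""
    frames = int(sample_rate * seconds)
    with wave.open(path, "wb") as wav:
        wav.setnchannels(channels)
        wav.setsampwidth(2)  # 2 bytes per sample = 16-bit PCM
        wav.setframerate(sample_rate)
        wav.writeframes(b"\x00\x00" * frames * channels)


tmp_dir = tempfile.mkdtemp()
good = os.path.join(tmp_dir, "good.wav")
bad = os.path.join(tmp_dir, "bad.wav")
write_silence(good, 16000, 1)   # matches the expected input format
write_silence(bad, 44100, 2)    # stereo at 44.1 kHz: would need resampling and downmixing
print(is_16k_mono_wav(good), is_16k_mono_wav(bad))
```

Files that fail the check would need to be resampled and downmixed with an audio tool of your choice before being passed to the model.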

### Output

This model provides transcribed speech as a string for a given audio sample.

## Model Architecture

The Conformer-CTC model is a non-autoregressive variant of the Conformer model [1] for automatic speech recognition that uses CTC loss/decoding instead of Transducer. More details on this model are available here: [Conformer-CTC Model](https://docs.nvidia.com/deeplearning/nemo/user-guide/docs/en/main/asr/models.html#conformer-ctc).
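To make the CTC decoding step concrete, here is a minimal sketch of greedy CTC decoding: pick the most likely token for each frame, collapse consecutive repeats, then drop blanks. The toy character vocabulary, the blank index, and the frame scores are made up for illustration (the real model emits subword tokens), and this is not NeMo's decoder.

```python
def ctc_greedy_decode(frame_scores, vocab, blank_id):
    """Greedy CTC decoding: per-frame argmax, collapse repeats, remove blanks."""
    best = [max(range(len(frame)), key=frame.__getitem__) for frame in frame_scores]
    decoded = []
    prev = None
    for idx in best:
        # Emit a token only when it differs from the previous frame and is not blank.
        if idx != prev and idx != blank_id:
            decoded.append(vocab[idx])
        prev = idx
    return "".join(decoded)


vocab = ["a", "b", "_"]  # index 2 plays the role of the CTC blank
frames = [
    [0.90, 0.05, 0.05],  # a
    [0.80, 0.10, 0.10],  # a again: collapsed into the previous frame
    [0.10, 0.10, 0.80],  # blank: separates repeated tokens
    [0.70, 0.20, 0.10],  # a: a new token because a blank came before it
    [0.10, 0.80, 0.10],  # b
]
text = ctc_greedy_decode(frames, vocab, blank_id=2)
print(text)  # aab
```

Because every frame is decoded independently, the whole utterance can be processed in one pass, which is what makes the model non-autoregressive.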

## Training

The NeMo toolkit [3] was used to train the models for several hundred epochs. These models are trained with this [example script](https://github.com/NVIDIA/NeMo/blob/main/examples/asr/asr_ctc/speech_to_text_ctc_bpe.py) and this [base config](https://github.com/NVIDIA/NeMo/blob/main/examples/asr/conf/conformer/conformer_ctc_bpe.yaml).

The tokenizers for these models were built using the text transcripts of the train set with this [script](https://github.com/NVIDIA/NeMo/blob/main/scripts/tokenizers/process_asr_text_tokenizer.py).

The checkpoint of the language model used as the neural rescorer can be found [here](https://ngc.nvidia.com/catalog/models/nvidia:nemo:asrlm_en_transformer_large_ls). You may find more info on how to train and use language models for ASR models here: [ASR Language Modeling](https://docs.nvidia.com/deeplearning/nemo/user-guide/docs/en/main/asr/asr_language_modeling.html)

### Datasets

All the models in this collection are trained on a composite dataset (NeMo ASRSET) comprising several thousand hours of English speech:

- Librispeech 960 hours of English speech

- Fisher Corpus

- Switchboard-1 Dataset

- WSJ-0 and WSJ-1

- National Speech Corpus (Part 1, Part 6)

- VCTK

- VoxPopuli (EN)

- Europarl-ASR (EN)

- Multilingual Librispeech (MLS EN) - 2,000 hours subset

- Mozilla Common Voice (v7.0)

Note: older versions of the model may have been trained on a smaller set of datasets.

## Performance

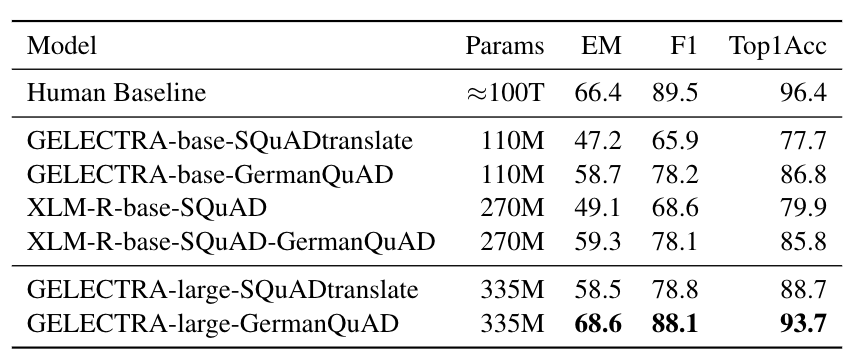

The list of the available models in this collection is shown in the following table. Performances of the ASR models are reported in terms of Word Error Rate (WER%) with greedy decoding.

| Version | Tokenizer | Vocabulary Size | LS test-other | LS test-clean | WSJ Eval92 | WSJ Dev93 | NSC Part 1 | MLS Test | MLS Dev | MCV Test 6.1 | Train Dataset |
|---------|-----------------------|-----------------|---------------|---------------|------------|-----------|------------|----------|---------|--------------|-----------------|
| 1.6.0 | SentencePiece Unigram | 128 | 4.3 | 2.2 | 2.0 | 2.9 | 7.0 | 7.2 | 6.5 | 8.0 | NeMo ASRSET 2.0 |
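For readers unfamiliar with the metric, WER is the word-level edit distance between the reference transcript and the model output, divided by the number of reference words. The sketch below is a minimal pure-Python version; the function name and the example sentences are hypothetical, and evaluation toolkits typically apply text normalization that is omitted here.

```python
def word_error_rate(reference, hypothesis):
    """Return WER in percent: word-level edit distance / number of reference words."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] holds the edit distance between the processed reference prefix and hyp[:j].
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = prev[j] + 1
            insertion = curr[j - 1] + 1
            curr.append(min(substitution, deletion, insertion))
        prev = curr
    return 100.0 * prev[-1] / len(ref)


# One substitution ("sat" -> "sit") and one deletion ("the") over six reference words.
wer = word_error_rate("the cat sat on the mat", "the cat sit on mat")
print(round(wer, 2))  # 33.33
```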

When deploying with [NVIDIA Riva](https://developer.nvidia.com/riva), you can combine this model with external language models to further improve WER. The WER (%) of the latest model with different language modeling techniques is reported in the following table.

| Language Modeling | Training Dataset | LS test-other | LS test-clean | Comment |
|-------------------------------------|-------------------------|---------------|---------------|---------------------------------------------------------|
|N-gram LM | LS Train + LS LM Corpus | 3.5 | 1.8 | N=10, beam_width=128, n_gram_alpha=1.0, n_gram_beta=1.0 |
|Neural Rescorer(Transformer) | LS Train + LS LM Corpus | 3.4 | 1.7 | N=10, beam_width=128 |
|N-gram + Neural Rescorer(Transformer)| LS Train + LS LM Corpus | 3.2 | 1.8 | N=10, beam_width=128, n_gram_alpha=1.0, n_gram_beta=1.0 |
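The `n_gram_alpha` and `n_gram_beta` values in the Comment column weight the language model during beam search. A common way to combine the scores, assumed here for illustration, is `acoustic log-prob + alpha * LM log-prob + beta * word count`. The sketch below applies that formula to two made-up candidates; the function name and all numbers are hypothetical, and the exact scoring used by Riva or NeMo may differ.

```python
def fused_score(acoustic_logprob, lm_logprob, num_words, alpha=1.0, beta=1.0):
    """Combine acoustic and language-model scores (assumed shallow-fusion form)."""
    return acoustic_logprob + alpha * lm_logprob + beta * num_words


# (text, acoustic log-prob, LM log-prob): hypothetical beam candidates.
candidates = [
    ("recognize speech", -4.0, -3.0),
    ("wreck a nice beach", -3.5, -9.0),
]
best = max(
    candidates,
    key=lambda c: fused_score(c[1], c[2], num_words=len(c[0].split())),
)
print(best[0])  # recognize speech
```

In this toy case the second candidate has the better acoustic score, but the language model penalty outweighs it, which is the effect the table's lower WER values reflect.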

## Limitations

Since this model was trained on publicly available speech datasets, the performance of this model might degrade for speech which includes technical terms, or vernacular that the model has not been trained on. The model might also perform worse for accented speech.

## Deployment with NVIDIA Riva

For the best real-time accuracy, latency, and throughput, deploy the model with [NVIDIA Riva](https://developer.nvidia.com/riva), an accelerated speech AI SDK deployable on-prem, in all clouds, multi-cloud, hybrid, at the edge, and embedded.

Additionally, Riva provides:

* World-class out-of-the-box accuracy for the most common languages with model checkpoints trained on proprietary data with hundreds of thousands of GPU-compute hours

* Best in class accuracy with run-time word boosting (e.g., brand and product names) and customization of acoustic model, language model, and inverse text normalization

* Streaming speech recognition, Kubernetes compatible scaling, and Enterprise-grade support

Check out [Riva live demo](https://developer.nvidia.com/riva#demos).

## References

- [1] [Conformer: Convolution-augmented Transformer for Speech Recognition](https://arxiv.org/abs/2005.08100)

- [2] [Google Sentencepiece Tokenizer](https://github.com/google/sentencepiece)

- [3] [NVIDIA NeMo Toolkit](https://github.com/NVIDIA/NeMo) | 10,055 | [

[

-0.032501220703125,

-0.0548095703125,

-0.0023326873779296875,

-0.0030193328857421875,

-0.01175689697265625,

-0.004039764404296875,

-0.0250396728515625,

-0.041168212890625,

-0.004917144775390625,

0.0207061767578125,

-0.031463623046875,

-0.03814697265625,

-0.04214... |

shibing624/text2vec-base-multilingual | 2023-06-26T02:45:20.000Z | [

"transformers",

"pytorch",

"bert",

"feature-extraction",

"text2vec",

"sentence-similarity",

"mteb",

"zh",

"en",

"de",

"fr",

"it",

"nl",

"pt",

"pl",

"ru",

"dataset:https://huggingface.co/datasets/shibing624/nli-zh-all/tree/main/text2vec-base-multilingual-dataset",

"license:apache-2.... | sentence-similarity | shibing624 | null | null | shibing624/text2vec-base-multilingual | 27 | 5,056 | transformers | 2023-06-22T06:28:12 | ---

pipeline_tag: sentence-similarity

license: apache-2.0

tags:

- text2vec

- feature-extraction

- sentence-similarity

- transformers

- mteb

datasets:

- >-

https://huggingface.co/datasets/shibing624/nli-zh-all/tree/main/text2vec-base-multilingual-dataset

language:

- zh

- en

- de

- fr

- it

- nl

- pt

- pl

- ru

metrics:

- spearmanr

library_name: transformers

model-index:

- name: text2vec-base-multilingual

results:

- task:

type: Classification

dataset:

type: mteb/amazon_counterfactual

name: MTEB AmazonCounterfactualClassification (en)

config: en

split: test

revision: e8379541af4e31359cca9fbcf4b00f2671dba205

metrics:

- type: accuracy

value: 70.97014925373134

- type: ap

value: 33.95151328318672

- type: f1

value: 65.14740155705596

- task:

type: Classification

dataset:

type: mteb/amazon_counterfactual

name: MTEB AmazonCounterfactualClassification (de)

config: de

split: test

revision: e8379541af4e31359cca9fbcf4b00f2671dba205

metrics:

- type: accuracy

value: 68.69379014989293

- type: ap

value: 79.68277579733802

- type: f1

value: 66.54960052336921

- task:

type: Classification

dataset:

type: mteb/amazon_counterfactual

name: MTEB AmazonCounterfactualClassification (en-ext)

config: en-ext

split: test

revision: e8379541af4e31359cca9fbcf4b00f2671dba205

metrics:

- type: accuracy

value: 70.90704647676162

- type: ap

value: 20.747518928580437

- type: f1

value: 58.64365465884924

- task:

type: Classification

dataset:

type: mteb/amazon_counterfactual

name: MTEB AmazonCounterfactualClassification (ja)

config: ja

split: test

revision: e8379541af4e31359cca9fbcf4b00f2671dba205

metrics:

- type: accuracy

value: 61.605995717344754

- type: ap

value: 14.135974879487028

- type: f1

value: 49.980224800472136

- task:

type: Classification

dataset:

type: mteb/amazon_polarity

name: MTEB AmazonPolarityClassification

config: default

split: test

revision: e2d317d38cd51312af73b3d32a06d1a08b442046

metrics:

- type: accuracy

value: 66.103375

- type: ap

value: 61.10087197664471

- type: f1

value: 65.75198509894145

- task:

type: Classification

dataset:

type: mteb/amazon_reviews_multi

name: MTEB AmazonReviewsClassification (en)

config: en

split: test

revision: 1399c76144fd37290681b995c656ef9b2e06e26d

metrics:

- type: accuracy

value: 33.134

- type: f1

value: 32.7905397597083

- task:

type: Classification

dataset:

type: mteb/amazon_reviews_multi

name: MTEB AmazonReviewsClassification (de)

config: de

split: test

revision: 1399c76144fd37290681b995c656ef9b2e06e26d

metrics:

- type: accuracy

value: 33.388

- type: f1

value: 33.190561196873084

- task:

type: Classification

dataset:

type: mteb/amazon_reviews_multi

name: MTEB AmazonReviewsClassification (es)

config: es

split: test

revision: 1399c76144fd37290681b995c656ef9b2e06e26d

metrics:

- type: accuracy

value: 34.824

- type: f1

value: 34.297290157740726

- task:

type: Classification

dataset:

type: mteb/amazon_reviews_multi

name: MTEB AmazonReviewsClassification (fr)

config: fr

split: test

revision: 1399c76144fd37290681b995c656ef9b2e06e26d

metrics:

- type: accuracy

value: 33.449999999999996

- type: f1

value: 33.08017234412433

- task:

type: Classification

dataset:

type: mteb/amazon_reviews_multi

name: MTEB AmazonReviewsClassification (ja)

config: ja

split: test

revision: 1399c76144fd37290681b995c656ef9b2e06e26d

metrics:

- type: accuracy

value: 30.046

- type: f1

value: 29.857141661482228

- task:

type: Classification

dataset:

type: mteb/amazon_reviews_multi

name: MTEB AmazonReviewsClassification (zh)

config: zh

split: test

revision: 1399c76144fd37290681b995c656ef9b2e06e26d

metrics:

- type: accuracy

value: 32.522

- type: f1

value: 31.854699911472174

- task:

type: Clustering

dataset:

type: mteb/arxiv-clustering-p2p

name: MTEB ArxivClusteringP2P

config: default

split: test

revision: a122ad7f3f0291bf49cc6f4d32aa80929df69d5d

metrics:

- type: v_measure

value: 32.31918856561886

- task:

type: Clustering

dataset:

type: mteb/arxiv-clustering-s2s

name: MTEB ArxivClusteringS2S

config: default

split: test

revision: f910caf1a6075f7329cdf8c1a6135696f37dbd53

metrics:

- type: v_measure

value: 25.503481615956137

- task:

type: Reranking

dataset:

type: mteb/askubuntudupquestions-reranking

name: MTEB AskUbuntuDupQuestions

config: default

split: test

revision: 2000358ca161889fa9c082cb41daa8dcfb161a54

metrics:

- type: map

value: 57.91471462820568

- type: mrr

value: 71.82990370663501

- task:

type: STS

dataset:

type: mteb/biosses-sts

name: MTEB BIOSSES

config: default

split: test

revision: d3fb88f8f02e40887cd149695127462bbcf29b4a

metrics:

- type: cos_sim_pearson

value: 68.83853315193127

- type: cos_sim_spearman

value: 66.16174850417771

- type: euclidean_pearson

value: 56.65313897263153

- type: euclidean_spearman

value: 52.69156205876939

- type: manhattan_pearson

value: 56.97282154658304

- type: manhattan_spearman

value: 53.167476517261015

- task:

type: Classification

dataset:

type: mteb/banking77

name: MTEB Banking77Classification

config: default

split: test

revision: 0fd18e25b25c072e09e0d92ab615fda904d66300

metrics:

- type: accuracy

value: 78.08441558441558

- type: f1

value: 77.99825264827898

- task:

type: Clustering

dataset:

type: mteb/biorxiv-clustering-p2p

name: MTEB BiorxivClusteringP2P

config: default

split: test

revision: 65b79d1d13f80053f67aca9498d9402c2d9f1f40

metrics:

- type: v_measure

value: 28.98583420521256

- task:

type: Clustering

dataset:

type: mteb/biorxiv-clustering-s2s

name: MTEB BiorxivClusteringS2S

config: default

split: test

revision: 258694dd0231531bc1fd9de6ceb52a0853c6d908

metrics:

- type: v_measure

value: 23.195091778460892

- task:

type: Classification

dataset:

type: mteb/emotion

name: MTEB EmotionClassification

config: default

split: test

revision: 4f58c6b202a23cf9a4da393831edf4f9183cad37

metrics:

- type: accuracy

value: 43.35

- type: f1

value: 38.80269436557695

- task:

type: Classification

dataset:

type: mteb/imdb

name: MTEB ImdbClassification

config: default

split: test

revision: 3d86128a09e091d6018b6d26cad27f2739fc2db7

metrics:

- type: accuracy

value: 59.348

- type: ap

value: 55.75065220262251

- type: f1

value: 58.72117519082607

- task:

type: Classification

dataset:

type: mteb/mtop_domain

name: MTEB MTOPDomainClassification (en)

config: en

split: test

revision: d80d48c1eb48d3562165c59d59d0034df9fff0bf

metrics:

- type: accuracy

value: 81.04879160966712

- type: f1

value: 80.86889779192701

- task:

type: Classification

dataset:

type: mteb/mtop_domain

name: MTEB MTOPDomainClassification (de)

config: de

split: test

revision: d80d48c1eb48d3562165c59d59d0034df9fff0bf

metrics:

- type: accuracy

value: 78.59397013243168

- type: f1

value: 77.09902761555972

- task:

type: Classification

dataset:

type: mteb/mtop_domain

name: MTEB MTOPDomainClassification (es)

config: es

split: test

revision: d80d48c1eb48d3562165c59d59d0034df9fff0bf

metrics:

- type: accuracy

value: 79.24282855236824

- type: f1

value: 78.75883867079015

- task:

type: Classification

dataset:

type: mteb/mtop_domain

name: MTEB MTOPDomainClassification (fr)

config: fr

split: test

revision: d80d48c1eb48d3562165c59d59d0034df9fff0bf

metrics:

- type: accuracy

value: 76.16661446915127

- type: f1

value: 76.30204722831901

- task:

type: Classification

dataset:

type: mteb/mtop_domain

name: MTEB MTOPDomainClassification (hi)

config: hi

split: test

revision: d80d48c1eb48d3562165c59d59d0034df9fff0bf

metrics:

- type: accuracy

value: 78.74506991753317

- type: f1

value: 77.50560442779701

- task:

type: Classification

dataset:

type: mteb/mtop_domain

name: MTEB MTOPDomainClassification (th)

config: th

split: test

revision: d80d48c1eb48d3562165c59d59d0034df9fff0bf

metrics:

- type: accuracy

value: 77.67088607594937

- type: f1

value: 77.21442956887493

- task:

type: Classification

dataset:

type: mteb/mtop_intent

name: MTEB MTOPIntentClassification (en)

config: en

split: test

revision: ae001d0e6b1228650b7bd1c2c65fb50ad11a8aba

metrics:

- type: accuracy

value: 62.786137710898316

- type: f1

value: 46.23474201126368

- task:

type: Classification

dataset:

type: mteb/mtop_intent

name: MTEB MTOPIntentClassification (de)

config: de

split: test

revision: ae001d0e6b1228650b7bd1c2c65fb50ad11a8aba

metrics:

- type: accuracy

value: 55.285996055226825

- type: f1

value: 37.98039513682919

- task:

type: Classification

dataset:

type: mteb/mtop_intent

name: MTEB MTOPIntentClassification (es)

config: es

split: test

revision: ae001d0e6b1228650b7bd1c2c65fb50ad11a8aba

metrics:

- type: accuracy

value: 58.67911941294196

- type: f1

value: 40.541410807124954

- task:

type: Classification

dataset:

type: mteb/mtop_intent

name: MTEB MTOPIntentClassification (fr)

config: fr

split: test

revision: ae001d0e6b1228650b7bd1c2c65fb50ad11a8aba

metrics:

- type: accuracy

value: 53.257124960851854

- type: f1

value: 38.42982319259366

- task:

type: Classification

dataset:

type: mteb/mtop_intent

name: MTEB MTOPIntentClassification (hi)

config: hi

split: test

revision: ae001d0e6b1228650b7bd1c2c65fb50ad11a8aba

metrics:

- type: accuracy

value: 59.62352097525995

- type: f1

value: 41.28886486568534

- task:

type: Classification

dataset:

type: mteb/mtop_intent

name: MTEB MTOPIntentClassification (th)

config: th

split: test

revision: ae001d0e6b1228650b7bd1c2c65fb50ad11a8aba

metrics:

- type: accuracy

value: 58.799276672694404

- type: f1

value: 43.68379466247341

- task:

type: Classification

dataset:

type: mteb/amazon_massive_intent

name: MTEB MassiveIntentClassification (af)

config: af

split: test

revision: 31efe3c427b0bae9c22cbb560b8f15491cc6bed7

metrics:

- type: accuracy

value: 45.42030934767989

- type: f1

value: 44.12201543566376

- task:

type: Classification

dataset:

type: mteb/amazon_massive_intent

name: MTEB MassiveIntentClassification (am)

config: am

split: test

revision: 31efe3c427b0bae9c22cbb560b8f15491cc6bed7

metrics:

- type: accuracy

value: 37.67652992602556

- type: f1

value: 35.422091900843164

- task:

type: Classification

dataset:

type: mteb/amazon_massive_intent

name: MTEB MassiveIntentClassification (ar)

config: ar

split: test

revision: 31efe3c427b0bae9c22cbb560b8f15491cc6bed7

metrics:

- type: accuracy

value: 45.02353732347007

- type: f1

value: 41.852484084738194

- task:

type: Classification

dataset:

type: mteb/amazon_massive_intent

name: MTEB MassiveIntentClassification (az)

config: az

split: test

revision: 31efe3c427b0bae9c22cbb560b8f15491cc6bed7

metrics:

- type: accuracy

value: 48.70880968392737

- type: f1

value: 46.904360615435046

- task:

type: Classification

dataset:

type: mteb/amazon_massive_intent

name: MTEB MassiveIntentClassification (bn)

config: bn

split: test

revision: 31efe3c427b0bae9c22cbb560b8f15491cc6bed7

metrics:

- type: accuracy

value: 43.78950907868191

- type: f1

value: 41.58872353920405

- task:

type: Classification

dataset:

type: mteb/amazon_massive_intent

name: MTEB MassiveIntentClassification (cy)

config: cy

split: test

revision: 31efe3c427b0bae9c22cbb560b8f15491cc6bed7

metrics:

- type: accuracy

value: 28.759246805648957

- type: f1

value: 27.41182001374226

- task:

type: Classification

dataset:

type: mteb/amazon_massive_intent

name: MTEB MassiveIntentClassification (da)

config: da

split: test

revision: 31efe3c427b0bae9c22cbb560b8f15491cc6bed7

metrics:

- type: accuracy

value: 56.74176193678547

- type: f1

value: 53.82727354182497

- task:

type: Classification

dataset:

type: mteb/amazon_massive_intent

name: MTEB MassiveIntentClassification (de)

config: de

split: test

revision: 31efe3c427b0bae9c22cbb560b8f15491cc6bed7

metrics:

- type: accuracy

value: 51.55682582380632

- type: f1

value: 49.41963627941866

- task:

type: Classification

dataset:

type: mteb/amazon_massive_intent

name: MTEB MassiveIntentClassification (el)

config: el

split: test

revision: 31efe3c427b0bae9c22cbb560b8f15491cc6bed7

metrics:

- type: accuracy

value: 56.46940147948891

- type: f1

value: 55.28178711367465

- task:

type: Classification

dataset:

type: mteb/amazon_massive_intent

name: MTEB MassiveIntentClassification (en)

config: en

split: test

revision: 31efe3c427b0bae9c22cbb560b8f15491cc6bed7

metrics:

- type: accuracy

value: 63.83322125084063

- type: f1

value: 61.836172900845554

- task:

type: Classification

dataset:

type: mteb/amazon_massive_intent

name: MTEB MassiveIntentClassification (es)

config: es

split: test

revision: 31efe3c427b0bae9c22cbb560b8f15491cc6bed7

metrics:

- type: accuracy

value: 58.27505043712172

- type: f1

value: 57.642436374361154

- task:

type: Classification

dataset:

type: mteb/amazon_massive_intent

name: MTEB MassiveIntentClassification (fa)

config: fa

split: test

revision: 31efe3c427b0bae9c22cbb560b8f15491cc6bed7

metrics:

- type: accuracy

value: 59.05178211163417

- type: f1

value: 56.858998820504056

- task:

type: Classification

dataset:

type: mteb/amazon_massive_intent

name: MTEB MassiveIntentClassification (fi)

config: fi

split: test

revision: 31efe3c427b0bae9c22cbb560b8f15491cc6bed7

metrics:

- type: accuracy

value: 57.357094821788834

- type: f1

value: 54.79711189260453

- task:

type: Classification

dataset:

type: mteb/amazon_massive_intent

name: MTEB MassiveIntentClassification (fr)

config: fr

split: test

revision: 31efe3c427b0bae9c22cbb560b8f15491cc6bed7

metrics:

- type: accuracy

value: 58.79959650302623

- type: f1

value: 57.59158671719513

- task:

type: Classification

dataset:

type: mteb/amazon_massive_intent

name: MTEB MassiveIntentClassification (he)

config: he

split: test

revision: 31efe3c427b0bae9c22cbb560b8f15491cc6bed7

metrics:

- type: accuracy

value: 51.1768661735037

- type: f1

value: 48.886397276270515

- task:

type: Classification

dataset:

type: mteb/amazon_massive_intent

name: MTEB MassiveIntentClassification (hi)

config: hi

split: test

revision: 31efe3c427b0bae9c22cbb560b8f15491cc6bed7

metrics:

- type: accuracy

value: 57.06455951580362

- type: f1

value: 55.01530952684585

- task:

type: Classification

dataset:

type: mteb/amazon_massive_intent

name: MTEB MassiveIntentClassification (hu)

config: hu

split: test

revision: 31efe3c427b0bae9c22cbb560b8f15491cc6bed7

metrics:

- type: accuracy

value: 58.3591123066577

- type: f1

value: 55.9277783370191

- task:

type: Classification

dataset:

type: mteb/amazon_massive_intent

name: MTEB MassiveIntentClassification (hy)

config: hy

split: test

revision: 31efe3c427b0bae9c22cbb560b8f15491cc6bed7

metrics:

- type: accuracy

value: 52.108271687962336

- type: f1

value: 51.195023400664596

- task:

type: Classification

dataset:

type: mteb/amazon_massive_intent

name: MTEB MassiveIntentClassification (id)

config: id

split: test

revision: 31efe3c427b0bae9c22cbb560b8f15491cc6bed7

metrics:

- type: accuracy

value: 58.26832548755883

- type: f1

value: 56.60774065423401

- task:

type: Classification

dataset:

type: mteb/amazon_massive_intent

name: MTEB MassiveIntentClassification (is)

config: is

split: test

revision: 31efe3c427b0bae9c22cbb560b8f15491cc6bed7

metrics:

- type: accuracy

value: 35.806993947545394

- type: f1

value: 34.290418953173294

- task:

type: Classification

dataset:

type: mteb/amazon_massive_intent

name: MTEB MassiveIntentClassification (it)

config: it

split: test

revision: 31efe3c427b0bae9c22cbb560b8f15491cc6bed7

metrics:

- type: accuracy

value: 58.27841291190315

- type: f1

value: 56.9438998642419

- task:

type: Classification

dataset:

type: mteb/amazon_massive_intent

name: MTEB MassiveIntentClassification (ja)

config: ja

split: test

revision: 31efe3c427b0bae9c22cbb560b8f15491cc6bed7

metrics:

- type: accuracy

value: 60.78009414929389

- type: f1

value: 59.15780842483667

- task:

type: Classification

dataset:

type: mteb/amazon_massive_intent

name: MTEB MassiveIntentClassification (jv)

config: jv

split: test

revision: 31efe3c427b0bae9c22cbb560b8f15491cc6bed7

metrics:

- type: accuracy

value: 31.153328850033624

- type: f1

value: 30.11004596099605

- task:

type: Classification

dataset:

type: mteb/amazon_massive_intent

name: MTEB MassiveIntentClassification (ka)

config: ka

split: test

revision: 31efe3c427b0bae9c22cbb560b8f15491cc6bed7

metrics:

- type: accuracy

value: 44.50235373234701

- type: f1

value: 44.040585262624745

- task:

type: Classification

dataset:

type: mteb/amazon_massive_intent

name: MTEB MassiveIntentClassification (km)

config: km

split: test

revision: 31efe3c427b0bae9c22cbb560b8f15491cc6bed7

metrics:

- type: accuracy

value: 40.99193006052455

- type: f1

value: 39.505480119272484

- task:

type: Classification

dataset:

type: mteb/amazon_massive_intent

name: MTEB MassiveIntentClassification (kn)

config: kn

split: test

revision: 31efe3c427b0bae9c22cbb560b8f15491cc6bed7

metrics:

- type: accuracy

value: 46.95696032279758

- type: f1

value: 43.093638940785326

- task:

type: Classification

dataset:

type: mteb/amazon_massive_intent

name: MTEB MassiveIntentClassification (ko)

config: ko

split: test

revision: 31efe3c427b0bae9c22cbb560b8f15491cc6bed7

metrics:

- type: accuracy

value: 54.73100201748486

- type: f1

value: 52.79750744404114

- task:

type: Classification

dataset:

type: mteb/amazon_massive_intent

name: MTEB MassiveIntentClassification (lv)

config: lv

split: test

revision: 31efe3c427b0bae9c22cbb560b8f15491cc6bed7

metrics:

- type: accuracy

value: 54.865501008742434

- type: f1

value: 53.64798408964839

- task:

type: Classification

dataset:

type: mteb/amazon_massive_intent

name: MTEB MassiveIntentClassification (ml)

config: ml

split: test

revision: 31efe3c427b0bae9c22cbb560b8f15491cc6bed7

metrics:

- type: accuracy

value: 47.891728312037664

- type: f1

value: 45.261229414636055

- task:

type: Classification

dataset:

type: mteb/amazon_massive_intent

name: MTEB MassiveIntentClassification (mn)

config: mn

split: test

revision: 31efe3c427b0bae9c22cbb560b8f15491cc6bed7

metrics:

- type: accuracy

value: 52.2259583053127

- type: f1

value: 50.5903419246987

- task:

type: Classification

dataset:

type: mteb/amazon_massive_intent

name: MTEB MassiveIntentClassification (ms)

config: ms

split: test

revision: 31efe3c427b0bae9c22cbb560b8f15491cc6bed7

metrics:

- type: accuracy

value: 54.277067921990586

- type: f1

value: 52.472042479965886

- task:

type: Classification

dataset:

type: mteb/amazon_massive_intent

name: MTEB MassiveIntentClassification (my)

config: my

split: test

revision: 31efe3c427b0bae9c22cbb560b8f15491cc6bed7

metrics:

- type: accuracy

value: 51.95696032279757

- type: f1

value: 49.79330411854258

- task:

type: Classification

dataset:

type: mteb/amazon_massive_intent

name: MTEB MassiveIntentClassification (nb)

config: nb

split: test

revision: 31efe3c427b0bae9c22cbb560b8f15491cc6bed7

metrics:

- type: accuracy

value: 54.63685272360457

- type: f1

value: 52.81267480650003

- task:

type: Classification

dataset:

type: mteb/amazon_massive_intent

name: MTEB MassiveIntentClassification (nl)

config: nl

split: test

revision: 31efe3c427b0bae9c22cbb560b8f15491cc6bed7

metrics:

- type: accuracy

value: 59.451916610625425

- type: f1

value: 57.34790386645091

- task:

type: Classification

dataset:

type: mteb/amazon_massive_intent

name: MTEB MassiveIntentClassification (pl)

config: pl

split: test

revision: 31efe3c427b0bae9c22cbb560b8f15491cc6bed7

metrics:

- type: accuracy

value: 58.91055817081372

- type: f1

value: 56.39195048528157

- task:

type: Classification

dataset:

type: mteb/amazon_massive_intent

name: MTEB MassiveIntentClassification (pt)

config: pt

split: test

revision: 31efe3c427b0bae9c22cbb560b8f15491cc6bed7

metrics:

- type: accuracy

value: 59.84196368527236

- type: f1

value: 58.72244763127063

- task:

type: Classification

dataset:

type: mteb/amazon_massive_intent

name: MTEB MassiveIntentClassification (ro)

config: ro

split: test

revision: 31efe3c427b0bae9c22cbb560b8f15491cc6bed7

metrics:

- type: accuracy

value: 57.04102219233354

- type: f1

value: 55.67040186148946

- task:

type: Classification

dataset:

type: mteb/amazon_massive_intent

name: MTEB MassiveIntentClassification (ru)

config: ru

split: test

revision: 31efe3c427b0bae9c22cbb560b8f15491cc6bed7

metrics:

- type: accuracy

value: 58.01613987895091

- type: f1

value: 57.203949825484855

- task:

type: Classification

dataset:

type: mteb/amazon_massive_intent

name: MTEB MassiveIntentClassification (sl)

config: sl

split: test

revision: 31efe3c427b0bae9c22cbb560b8f15491cc6bed7

metrics:

- type: accuracy

value: 56.35843981170141

- type: f1

value: 54.18656338999773

- task:

type: Classification

dataset:

type: mteb/amazon_massive_intent

name: MTEB MassiveIntentClassification (sq)

config: sq

split: test

revision: 31efe3c427b0bae9c22cbb560b8f15491cc6bed7

metrics:

- type: accuracy

value: 56.47948890383322

- type: f1

value: 54.772224557130954

- task:

type: Classification

dataset:

type: mteb/amazon_massive_intent

name: MTEB MassiveIntentClassification (sv)

config: sv

split: test

revision: 31efe3c427b0bae9c22cbb560b8f15491cc6bed7

metrics:

- type: accuracy

value: 58.43981170141224

- type: f1

value: 56.09260971364242

- task:

type: Classification

dataset:

type: mteb/amazon_massive_intent

name: MTEB MassiveIntentClassification (sw)

config: sw

split: test

revision: 31efe3c427b0bae9c22cbb560b8f15491cc6bed7

metrics:

- type: accuracy

value: 33.9609952925353

- type: f1

value: 33.18853392353405

- task:

type: Classification

dataset:

type: mteb/amazon_massive_intent

name: MTEB MassiveIntentClassification (ta)

config: ta

split: test

revision: 31efe3c427b0bae9c22cbb560b8f15491cc6bed7

metrics:

- type: accuracy

value: 44.29388029589778

- type: f1

value: 41.51986533284474

- task:

type: Classification

dataset:

type: mteb/amazon_massive_intent

name: MTEB MassiveIntentClassification (te)

config: te

split: test

revision: 31efe3c427b0bae9c22cbb560b8f15491cc6bed7

metrics:

- type: accuracy

value: 47.13517148621385

- type: f1

value: 43.94784138379624

- task:

type: Classification

dataset:

type: mteb/amazon_massive_intent

name: MTEB MassiveIntentClassification (th)

config: th

split: test

revision: 31efe3c427b0bae9c22cbb560b8f15491cc6bed7

metrics:

- type: accuracy

value: 56.856086079354405

- type: f1

value: 56.618177384748456

- task:

type: Classification

dataset:

type: mteb/amazon_massive_intent

name: MTEB MassiveIntentClassification (tl)

config: tl

split: test

revision: 31efe3c427b0bae9c22cbb560b8f15491cc6bed7

metrics:

- type: accuracy

value: 35.35978480161398

- type: f1

value: 34.060680080365046

- task:

type: Classification

dataset:

type: mteb/amazon_massive_intent

name: MTEB MassiveIntentClassification (tr)

config: tr

split: test

revision: 31efe3c427b0bae9c22cbb560b8f15491cc6bed7

metrics:

- type: accuracy

value: 59.630127774041696

- type: f1

value: 57.46288652988266

- task:

type: Classification

dataset:

type: mteb/amazon_massive_intent

name: MTEB MassiveIntentClassification (ur)

config: ur

split: test

revision: 31efe3c427b0bae9c22cbb560b8f15491cc6bed7

metrics:

- type: accuracy

value: 52.7908540685945

- type: f1

value: 51.46934239116157

- task:

type: Classification

dataset:

type: mteb/amazon_massive_intent

name: MTEB MassiveIntentClassification (vi)

config: vi

split: test

revision: 31efe3c427b0bae9c22cbb560b8f15491cc6bed7

metrics:

- type: accuracy

value: 54.6469401479489

- type: f1

value: 53.9903066185816

- task:

type: Classification

dataset:

type: mteb/amazon_massive_intent

name: MTEB MassiveIntentClassification (zh-CN)

config: zh-CN

split: test

revision: 31efe3c427b0bae9c22cbb560b8f15491cc6bed7

metrics:

- type: accuracy

value: 60.85743106926698

- type: f1

value: 59.31579548450755

- task:

type: Classification

dataset:

type: mteb/amazon_massive_intent

name: MTEB MassiveIntentClassification (zh-TW)

config: zh-TW

split: test

revision: 31efe3c427b0bae9c22cbb560b8f15491cc6bed7

metrics:

- type: accuracy

value: 57.46805648957633

- type: f1

value: 57.48469733657326

- task:

type: Classification

dataset:

type: mteb/amazon_massive_scenario

name: MTEB MassiveScenarioClassification (af)

config: af

split: test

revision: 7d571f92784cd94a019292a1f45445077d0ef634

metrics:

- type: accuracy

value: 50.86415601882985

- type: f1

value: 49.41696672602645

- task:

type: Classification

dataset:

type: mteb/amazon_massive_scenario

name: MTEB MassiveScenarioClassification (am)

config: am

split: test

revision: 7d571f92784cd94a019292a1f45445077d0ef634

metrics:

- type: accuracy

value: 41.183591123066584

- type: f1

value: 40.04563865770774

- task:

type: Classification

dataset:

type: mteb/amazon_massive_scenario

name: MTEB MassiveScenarioClassification (ar)

config: ar

split: test

revision: 7d571f92784cd94a019292a1f45445077d0ef634

metrics:

- type: accuracy

value: 50.08069939475455

- type: f1

value: 50.724800165846126

- task:

type: Classification

dataset:

type: mteb/amazon_massive_scenario

name: MTEB MassiveScenarioClassification (az)

config: az

split: test

revision: 7d571f92784cd94a019292a1f45445077d0ef634

metrics:

- type: accuracy

value: 51.287827841291204

- type: f1

value: 50.72873776739851

- task:

type: Classification

dataset:

type: mteb/amazon_massive_scenario

name: MTEB MassiveScenarioClassification (bn)

config: bn

split: test

revision: 7d571f92784cd94a019292a1f45445077d0ef634

metrics:

- type: accuracy

value: 46.53328850033624

- type: f1

value: 45.93317866639667

- task:

type: Classification

dataset:

type: mteb/amazon_massive_scenario

name: MTEB MassiveScenarioClassification (cy)

config: cy

split: test

revision: 7d571f92784cd94a019292a1f45445077d0ef634

metrics:

- type: accuracy

value: 34.347679892400805

- type: f1

value: 31.941581141280828

- task:

type: Classification

dataset:

type: mteb/amazon_massive_scenario

name: MTEB MassiveScenarioClassification (da)

config: da

split: test

revision: 7d571f92784cd94a019292a1f45445077d0ef634

metrics:

- type: accuracy

value: 63.073301950235376

- type: f1

value: 62.228728940111054

- task:

type: Classification

dataset:

type: mteb/amazon_massive_scenario

name: MTEB MassiveScenarioClassification (de)

config: de

split: test

revision: 7d571f92784cd94a019292a1f45445077d0ef634

metrics:

- type: accuracy

value: 56.398789509078675

- type: f1

value: 54.80778341609032

- task:

type: Classification

dataset:

type: mteb/amazon_massive_scenario

name: MTEB MassiveScenarioClassification (el)

config: el

split: test

revision: 7d571f92784cd94a019292a1f45445077d0ef634

metrics:

- type: accuracy

value: 61.79892400806993

- type: f1

value: 60.69430756982446

- task:

type: Classification

dataset:

type: mteb/amazon_massive_scenario

name: MTEB MassiveScenarioClassification (en)

config: en

split: test

revision: 7d571f92784cd94a019292a1f45445077d0ef634

metrics:

- type: accuracy

value: 66.96368527236046

- type: f1

value: 66.5893927997656

- task:

type: Classification

dataset:

type: mteb/amazon_massive_scenario

name: MTEB MassiveScenarioClassification (es)

config: es

split: test

revision: 7d571f92784cd94a019292a1f45445077d0ef634

metrics:

- type: accuracy

value: 62.21250840618695

- type: f1

value: 62.347177794128925

- task:

type: Classification

dataset:

type: mteb/amazon_massive_scenario

name: MTEB MassiveScenarioClassification (fa)

config: fa

split: test

revision: 7d571f92784cd94a019292a1f45445077d0ef634

metrics:

- type: accuracy

value: 62.43779421654339

- type: f1

value: 61.307701312085605

- task:

type: Classification

dataset:

type: mteb/amazon_massive_scenario

name: MTEB MassiveScenarioClassification (fi)

config: fi

split: test

revision: 7d571f92784cd94a019292a1f45445077d0ef634

metrics:

- type: accuracy

value: 61.09952925353059

- type: f1

value: 60.313907927386914

- task:

type: Classification

dataset:

type: mteb/amazon_massive_scenario

name: MTEB MassiveScenarioClassification (fr)

config: fr

split: test

revision: 7d571f92784cd94a019292a1f45445077d0ef634

metrics:

- type: accuracy

value: 63.38601210490922

- type: f1

value: 63.05968938353488

- task:

type: Classification

dataset:

type: mteb/amazon_massive_scenario

name: MTEB MassiveScenarioClassification (he)

config: he

split: test

revision: 7d571f92784cd94a019292a1f45445077d0ef634

metrics:

- type: accuracy

value: 56.2878278412912

- type: f1

value: 55.92927644838597

- task:

type: Classification

dataset:

type: mteb/amazon_massive_scenario

name: MTEB MassiveScenarioClassification (hi)

config: hi

split: test

revision: 7d571f92784cd94a019292a1f45445077d0ef634

metrics:

- type: accuracy

value: 60.62878278412912

- type: f1

value: 60.25299253652635

- task:

type: Classification

dataset:

type: mteb/amazon_massive_scenario

name: MTEB MassiveScenarioClassification (hu)

config: hu

split: test

revision: 7d571f92784cd94a019292a1f45445077d0ef634

metrics:

- type: accuracy

value: 63.28850033624748

- type: f1

value: 62.77053246337031

- task:

type: Classification

dataset:

type: mteb/amazon_massive_scenario

name: MTEB MassiveScenarioClassification (hy)

config: hy

split: test

revision: 7d571f92784cd94a019292a1f45445077d0ef634

metrics:

- type: accuracy

value: 54.875588433086754

- type: f1

value: 54.30717357279134

- task:

type: Classification

dataset:

type: mteb/amazon_massive_scenario

name: MTEB MassiveScenarioClassification (id)

config: id

split: test

revision: 7d571f92784cd94a019292a1f45445077d0ef634

metrics:

- type: accuracy

value: 61.99394754539341

- type: f1

value: 61.73085530883037

- task:

type: Classification

dataset:

type: mteb/amazon_massive_scenario

name: MTEB MassiveScenarioClassification (is)

config: is

split: test

revision: 7d571f92784cd94a019292a1f45445077d0ef634

metrics:

- type: accuracy

value: 38.581035642232685

- type: f1

value: 36.96287269695893

- task:

type: Classification

dataset:

type: mteb/amazon_massive_scenario

name: MTEB MassiveScenarioClassification (it)

config: it

split: test

revision: 7d571f92784cd94a019292a1f45445077d0ef634

metrics:

- type: accuracy

value: 62.350369872225976

- type: f1

value: 61.807327324823966

- task:

type: Classification

dataset:

type: mteb/amazon_massive_scenario

name: MTEB MassiveScenarioClassification (ja)

config: ja

split: test

revision: 7d571f92784cd94a019292a1f45445077d0ef634

metrics:

- type: accuracy

value: 65.17148621385338

- type: f1

value: 65.29620144656751

- task:

type: Classification

dataset:

type: mteb/amazon_massive_scenario

name: MTEB MassiveScenarioClassification (jv)

config: jv

split: test

revision: 7d571f92784cd94a019292a1f45445077d0ef634

metrics:

- type: accuracy

value: 36.12642905178212

- type: f1

value: 35.334393048479484

- task:

type: Classification

dataset:

type: mteb/amazon_massive_scenario

name: MTEB MassiveScenarioClassification (ka)

config: ka

split: test

revision: 7d571f92784cd94a019292a1f45445077d0ef634

metrics:

- type: accuracy

value: 50.26899798251513

- type: f1

value: 49.041065960139434

- task:

type: Classification

dataset:

type: mteb/amazon_massive_scenario

name: MTEB MassiveScenarioClassification (km)

config: km

split: test

revision: 7d571f92784cd94a019292a1f45445077d0ef634

metrics:

- type: accuracy

value: 44.24344317417619

- type: f1

value: 42.42177854872125

- task:

type: Classification

dataset:

type: mteb/amazon_massive_scenario

name: MTEB MassiveScenarioClassification (kn)

config: kn

split: test

revision: 7d571f92784cd94a019292a1f45445077d0ef634

metrics:

- type: accuracy

value: 47.370544720914594

- type: f1

value: 46.589722581465324

- task:

type: Classification

dataset:

type: mteb/amazon_massive_scenario

name: MTEB MassiveScenarioClassification (ko)

config: ko

split: test

revision: 7d571f92784cd94a019292a1f45445077d0ef634

metrics:

- type: accuracy

value: 58.89038332212508

- type: f1

value: 57.753607921990394

- task:

type: Classification

dataset:

type: mteb/amazon_massive_scenario

name: MTEB MassiveScenarioClassification (lv)

config: lv

split: test

revision: 7d571f92784cd94a019292a1f45445077d0ef634

metrics:

- type: accuracy

value: 56.506388702084756

- type: f1

value: 56.0485860423295

- task:

type: Classification

dataset:

type: mteb/amazon_massive_scenario

name: MTEB MassiveScenarioClassification (ml)

config: ml

split: test

revision: 7d571f92784cd94a019292a1f45445077d0ef634

metrics:

- type: accuracy

value: 50.06388702084734

- type: f1

value: 50.109364641824584

- task:

type: Classification

dataset:

type: mteb/amazon_massive_scenario

name: MTEB MassiveScenarioClassification (mn)

config: mn

split: test

revision: 7d571f92784cd94a019292a1f45445077d0ef634

metrics:

- type: accuracy

value: 55.053799596503026

- type: f1

value: 54.490665705666686

- task:

type: Classification

dataset:

type: mteb/amazon_massive_scenario

name: MTEB MassiveScenarioClassification (ms)

config: ms

split: test

revision: 7d571f92784cd94a019292a1f45445077d0ef634

metrics:

- type: accuracy

value: 59.77135171486213

- type: f1

value: 58.2808650158803

- task:

type: Classification

dataset:

type: mteb/amazon_massive_scenario

name: MTEB MassiveScenarioClassification (my)

config: my

split: test

revision: 7d571f92784cd94a019292a1f45445077d0ef634

metrics:

- type: accuracy

value: 55.71620712844654

- type: f1

value: 53.863034882475304

- task:

type: Classification

dataset:

type: mteb/amazon_massive_scenario

name: MTEB MassiveScenarioClassification (nb)

config: nb

split: test

revision: 7d571f92784cd94a019292a1f45445077d0ef634

metrics:

- type: accuracy

value: 60.26227303295225

- type: f1

value: 59.86604657147016

- task:

type: Classification

dataset:

type: mteb/amazon_massive_scenario

name: MTEB MassiveScenarioClassification (nl)

config: nl

split: test

revision: 7d571f92784cd94a019292a1f45445077d0ef634

metrics:

- type: accuracy

value: 63.3759246805649

- type: f1

value: 62.45257339288533

- task:

type: Classification

dataset:

type: mteb/amazon_massive_scenario

name: MTEB MassiveScenarioClassification (pl)

config: pl

split: test

revision: 7d571f92784cd94a019292a1f45445077d0ef634

metrics:

- type: accuracy

value: 62.552118359112306

- type: f1

value: 61.354449605776765

- task:

type: Classification

dataset:

type: mteb/amazon_massive_scenario

name: MTEB MassiveScenarioClassification (pt)

config: pt

split: test

revision: 7d571f92784cd94a019292a1f45445077d0ef634

metrics:

- type: accuracy

value: 62.40753194351043

- type: f1

value: 61.98779889528889

- task:

type: Classification

dataset:

type: mteb/amazon_massive_scenario

name: MTEB MassiveScenarioClassification (ro)

config: ro

split: test

revision: 7d571f92784cd94a019292a1f45445077d0ef634

metrics:

- type: accuracy

value: 60.68258238063214

- type: f1

value: 60.59973978976571

- task:

type: Classification

dataset:

type: mteb/amazon_massive_scenario

name: MTEB MassiveScenarioClassification (ru)

config: ru

split: test

revision: 7d571f92784cd94a019292a1f45445077d0ef634

metrics:

- type: accuracy

value: 62.31002017484868

- type: f1

value: 62.412312268503655

- task:

type: Classification

dataset:

type: mteb/amazon_massive_scenario

name: MTEB MassiveScenarioClassification (sl)

config: sl

split: test

revision: 7d571f92784cd94a019292a1f45445077d0ef634

metrics:

- type: accuracy

value: 61.429051782111635

- type: f1

value: 61.60095590401424

- task:

type: Classification

dataset:

type: mteb/amazon_massive_scenario

name: MTEB MassiveScenarioClassification (sq)

config: sq

split: test

revision: 7d571f92784cd94a019292a1f45445077d0ef634

metrics:

- type: accuracy

value: 62.229320780094156

- type: f1

value: 61.02251426747547

- task:

type: Classification

dataset:

type: mteb/amazon_massive_scenario

name: MTEB MassiveScenarioClassification (sv)

config: sv

split: test

revision: 7d571f92784cd94a019292a1f45445077d0ef634

metrics:

- type: accuracy

value: 64.42501681237391

- type: f1

value: 63.461494430605235

- task:

type: Classification

dataset:

type: mteb/amazon_massive_scenario

name: MTEB MassiveScenarioClassification (sw)

config: sw

split: test

revision: 7d571f92784cd94a019292a1f45445077d0ef634

metrics:

- type: accuracy

value: 38.51714862138534

- type: f1

value: 37.12466722986362

- task:

type: Classification

dataset:

type: mteb/amazon_massive_scenario

name: MTEB MassiveScenarioClassification (ta)

config: ta

split: test

revision: 7d571f92784cd94a019292a1f45445077d0ef634

metrics:

- type: accuracy

value: 46.99731002017485

- type: f1

value: 45.859147049984834

- task:

type: Classification

dataset:

type: mteb/amazon_massive_scenario

name: MTEB MassiveScenarioClassification (te)

config: te

split: test

revision: 7d571f92784cd94a019292a1f45445077d0ef634

metrics:

- type: accuracy

value: 51.01882985877605

- type: f1

value: 49.01040173136056

- task:

type: Classification

dataset:

type: mteb/amazon_massive_scenario

name: MTEB MassiveScenarioClassification (th)

config: th

split: test

revision: 7d571f92784cd94a019292a1f45445077d0ef634

metrics:

- type: accuracy

value: 63.234700739744454

- type: f1

value: 62.732294595214746

- task:

type: Classification

dataset:

type: mteb/amazon_massive_scenario

name: MTEB MassiveScenarioClassification (tl)

config: tl

split: test

revision: 7d571f92784cd94a019292a1f45445077d0ef634

metrics:

- type: accuracy

value: 38.72225958305312

- type: f1

value: 36.603231928120906

- task:

type: Classification

dataset:

type: mteb/amazon_massive_scenario

name: MTEB MassiveScenarioClassification (tr)

config: tr

split: test

revision: 7d571f92784cd94a019292a1f45445077d0ef634

metrics:

- type: accuracy

value: 64.48554135843982

- type: f1

value: 63.97380562022752

- task:

type: Classification

dataset:

type: mteb/amazon_massive_scenario

name: MTEB MassiveScenarioClassification (ur)

config: ur

split: test

revision: 7d571f92784cd94a019292a1f45445077d0ef634

metrics:

- type: accuracy

value: 56.7955615332885

- type: f1

value: 55.95308241204802

- task:

type: Classification

dataset:

type: mteb/amazon_massive_scenario

name: MTEB MassiveScenarioClassification (vi)

config: vi

split: test

revision: 7d571f92784cd94a019292a1f45445077d0ef634

metrics:

- type: accuracy

value: 57.06455951580362

- type: f1

value: 56.95570494066693

- task:

type: Classification

dataset:

type: mteb/amazon_massive_scenario

name: MTEB MassiveScenarioClassification (zh-CN)

config: zh-CN

split: test

revision: 7d571f92784cd94a019292a1f45445077d0ef634

metrics:

- type: accuracy

value: 65.8338937457969

- type: f1

value: 65.6778746906008

- task:

type: Classification

dataset:

type: mteb/amazon_massive_scenario

name: MTEB MassiveScenarioClassification (zh-TW)

config: zh-TW

split: test

revision: 7d571f92784cd94a019292a1f45445077d0ef634

metrics:

- type: accuracy

value: 63.369199731002034

- type: f1

value: 63.527650116059945

- task:

type: Clustering

dataset:

type: mteb/medrxiv-clustering-p2p

name: MTEB MedrxivClusteringP2P

config: default

split: test

revision: e7a26af6f3ae46b30dde8737f02c07b1505bcc73

metrics:

- type: v_measure

value: 29.442504112215538

- task:

type: Clustering

dataset:

type: mteb/medrxiv-clustering-s2s

name: MTEB MedrxivClusteringS2S

config: default

split: test

revision: 35191c8c0dca72d8ff3efcd72aa802307d469663

metrics:

- type: v_measure

value: 26.16062814161053

- task:

type: Retrieval

dataset:

type: quora

name: MTEB QuoraRetrieval

config: default

split: test

revision: None

metrics:

- type: map_at_1

value: 65.319

- type: map_at_10

value: 78.72

- type: map_at_100

value: 79.446

- type: map_at_1000

value: 79.469

- type: map_at_3

value: 75.693

- type: map_at_5

value: 77.537

- type: mrr_at_1

value: 75.24

- type: mrr_at_10

value: 82.304

- type: mrr_at_100

value: 82.485

- type: mrr_at_1000

value: 82.489

- type: mrr_at_3

value: 81.002

- type: mrr_at_5

value: 81.817

- type: ndcg_at_1

value: 75.26

- type: ndcg_at_10

value: 83.07

- type: ndcg_at_100

value: 84.829

- type: ndcg_at_1000

value: 85.087

- type: ndcg_at_3

value: 79.677

- type: ndcg_at_5

value: 81.42

- type: precision_at_1

value: 75.26

- type: precision_at_10

value: 12.697

- type: precision_at_100

value: 1.483

- type: precision_at_1000

value: 0.154

- type: precision_at_3

value: 34.85

- type: precision_at_5

value: 23.054

- type: recall_at_1

value: 65.319

- type: recall_at_10

value: 91.551

- type: recall_at_100

value: 98.053

- type: recall_at_1000

value: 99.516

- type: recall_at_3

value: 81.819

- type: recall_at_5

value: 86.662

- task:

type: Clustering

dataset:

type: mteb/reddit-clustering

name: MTEB RedditClustering

config: default

split: test

revision: 24640382cdbf8abc73003fb0fa6d111a705499eb

metrics:

- type: v_measure

value: 31.249791587189996

- task:

type: Clustering

dataset:

type: mteb/reddit-clustering-p2p

name: MTEB RedditClusteringP2P

config: default

split: test

revision: 282350215ef01743dc01b456c7f5241fa8937f16

metrics:

- type: v_measure

value: 43.302922383029816

- task:

type: STS

dataset:

type: mteb/sickr-sts

name: MTEB SICK-R

config: default

split: test

revision: a6ea5a8cab320b040a23452cc28066d9beae2cee

metrics:

- type: cos_sim_pearson

value: 84.80670811345861

- type: cos_sim_spearman

value: 79.97373018384307

- type: euclidean_pearson

value: 83.40205934125837

- type: euclidean_spearman

value: 79.73331008251854

- type: manhattan_pearson

value: 83.3320983393412

- type: manhattan_spearman

value: 79.677919746045

- task:

type: STS

dataset:

type: mteb/sts12-sts

name: MTEB STS12

config: default

split: test

revision: a0d554a64d88156834ff5ae9920b964011b16384

metrics:

- type: cos_sim_pearson

value: 86.3816087627948

- type: cos_sim_spearman

value: 80.91314664846955

- type: euclidean_pearson

value: 85.10603071031096

- type: euclidean_spearman

value: 79.42663939501841

- type: manhattan_pearson

value: 85.16096376014066

- type: manhattan_spearman

value: 79.51936545543191

- task:

type: STS

dataset:

type: mteb/sts13-sts

name: MTEB STS13

config: default

split: test

revision: 7e90230a92c190f1bf69ae9002b8cea547a64cca

metrics:

- type: cos_sim_pearson

value: 80.44665329940209

- type: cos_sim_spearman

value: 82.86479010707745

- type: euclidean_pearson

value: 84.06719627734672

- type: euclidean_spearman

value: 84.9356099976297

- type: manhattan_pearson

value: 84.10370009572624

- type: manhattan_spearman

value: 84.96828040546536

- task:

type: STS

dataset:

type: mteb/sts14-sts

name: MTEB STS14

config: default

split: test

revision: 6031580fec1f6af667f0bd2da0a551cf4f0b2375

metrics:

- type: cos_sim_pearson

value: 86.05704260568437

- type: cos_sim_spearman

value: 87.36399473803172

- type: euclidean_pearson

value: 86.8895170159388

- type: euclidean_spearman

value: 87.16246440866921

- type: manhattan_pearson

value: 86.80814774538997

- type: manhattan_spearman

value: 87.09320142699522

- task:

type: STS

dataset:

type: mteb/sts15-sts

name: MTEB STS15

config: default

split: test

revision: ae752c7c21bf194d8b67fd573edf7ae58183cbe3

metrics:

- type: cos_sim_pearson

value: 85.97825118945852

- type: cos_sim_spearman

value: 88.31438033558268

- type: euclidean_pearson

value: 87.05174694758092

- type: euclidean_spearman

value: 87.80659468392355

- type: manhattan_pearson

value: 86.98831322198717

- type: manhattan_spearman

value: 87.72820615049285

- task:

type: STS

dataset:

type: mteb/sts16-sts

name: MTEB STS16

config: default

split: test

revision: 4d8694f8f0e0100860b497b999b3dbed754a0513

metrics:

- type: cos_sim_pearson

value: 78.68745420126719

- type: cos_sim_spearman

value: 81.6058424699445

- type: euclidean_pearson

value: 81.16540133861879

- type: euclidean_spearman

value: 81.86377535458067

- type: manhattan_pearson

value: 81.13813317937021

- type: manhattan_spearman

value: 81.87079962857256

- task:

type: STS

dataset:

type: mteb/sts17-crosslingual-sts

name: MTEB STS17 (ko-ko)

config: ko-ko

split: test

revision: af5e6fb845001ecf41f4c1e033ce921939a2a68d

metrics:

- type: cos_sim_pearson

value: 68.06192660936868

- type: cos_sim_spearman

value: 68.2376353514075

- type: euclidean_pearson

value: 60.68326946956215

- type: euclidean_spearman

value: 59.19352349785952

- type: manhattan_pearson

value: 60.6592944683418

- type: manhattan_spearman

value: 59.167534419270865

- task:

type: STS

dataset:

type: mteb/sts17-crosslingual-sts

name: MTEB STS17 (ar-ar)

config: ar-ar

split: test

revision: af5e6fb845001ecf41f4c1e033ce921939a2a68d

metrics:

- type: cos_sim_pearson

value: 76.78098264855684

- type: cos_sim_spearman

value: 78.02670452969812

- type: euclidean_pearson

value: 77.26694463661255

- type: euclidean_spearman

value: 77.47007626009587

- type: manhattan_pearson

value: 77.25070088632027

- type: manhattan_spearman

value: 77.36368265830724

- task:

type: STS

dataset:

type: mteb/sts17-crosslingual-sts

name: MTEB STS17 (en-ar)

config: en-ar

split: test

revision: af5e6fb845001ecf41f4c1e033ce921939a2a68d

metrics:

- type: cos_sim_pearson

value: 78.45418506379532

- type: cos_sim_spearman

value: 78.60412019902428

- type: euclidean_pearson

value: 79.90303710850512

- type: euclidean_spearman

value: 78.67123625004957

- type: manhattan_pearson

value: 80.09189580897753

- type: manhattan_spearman

value: 79.02484481441483

- task:

type: STS

dataset:

type: mteb/sts17-crosslingual-sts

name: MTEB STS17 (en-de)

config: en-de

split: test

revision: af5e6fb845001ecf41f4c1e033ce921939a2a68d

metrics:

- type: cos_sim_pearson

value: 82.35556731232779

- type: cos_sim_spearman

value: 81.48249735354844

- type: euclidean_pearson

value: 81.66748026636621

- type: euclidean_spearman

value: 80.35571574338547

- type: manhattan_pearson

value: 81.38214732806365

- type: manhattan_spearman

value: 79.9018202958774

- task:

type: STS

dataset:

type: mteb/sts17-crosslingual-sts

name: MTEB STS17 (en-en)

config: en-en

split: test

revision: af5e6fb845001ecf41f4c1e033ce921939a2a68d

metrics:

- type: cos_sim_pearson

value: 86.4527703176897