modelId stringlengths 4 111 | lastModified stringlengths 24 24 | tags list | pipeline_tag stringlengths 5 30 ⌀ | author stringlengths 2 34 ⌀ | config null | securityStatus null | id stringlengths 4 111 | likes int64 0 9.53k | downloads int64 2 73.6M | library_name stringlengths 2 84 ⌀ | created timestamp[us] | card stringlengths 101 901k | card_len int64 101 901k | embeddings list |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

microsoft/markuplm-base | 2022-12-15T13:59:57.000Z | [

"transformers",

"pytorch",

"markuplm",

"en",

"arxiv:2110.08518",

"endpoints_compatible",

"has_space",

"region:us"

] | null | microsoft | null | null | microsoft/markuplm-base | 15 | 4,586 | transformers | 2022-03-02T23:29:05 | ---

language:

- en

---

# MarkupLM

**Multimodal (text + markup language) pre-training for [Document AI](https://www.microsoft.com/en-us/research/project/document-ai/)**

## Introduction

MarkupLM is a simple but effective multi-modal pre-training method of text and markup language for visually-rich document understanding and information extraction tasks, such as webpage QA and webpage information extraction. MarkupLM achieves SOTA results on multiple datasets. For more details, please refer to our paper:

[MarkupLM: Pre-training of Text and Markup Language for Visually-rich Document Understanding](https://arxiv.org/abs/2110.08518) Junlong Li, Yiheng Xu, Lei Cui, Furu Wei, ACL 2022

## Usage

We refer to the [docs](https://huggingface.co/docs/transformers/main/en/model_doc/markuplm) and [demo notebooks](https://github.com/NielsRogge/Transformers-Tutorials/tree/master/MarkupLM). | 897 | [

[

-0.0304718017578125,

-0.06378173828125,

0.0273590087890625,

0.01535797119140625,

-0.024383544921875,

0.0181121826171875,

-0.0016908645629882812,

-0.023345947265625,

-0.01708984375,

0.005512237548828125,

-0.04681396484375,

-0.039398193359375,

-0.044708251953125,

... |

artificialguybr/freedom | 2023-06-12T12:43:30.000Z | [

"diffusers",

"text-to-image",

"stable-diffusion",

"license:bigscience-openrail-m",

"endpoints_compatible",

"has_space",

"diffusers:StableDiffusionPipeline",

"region:us"

] | text-to-image | artificialguybr | null | null | artificialguybr/freedom | 13 | 4,586 | diffusers | 2023-06-10T06:45:00 | ---

license: bigscience-openrail-m

tags:

- text-to-image

- stable-diffusion

---

**Freedom.Redmond is here!**

You can currently test it at this link thanks to the makeai.run API.

https://huggingface.co/spaces/artificialguybr/freedom/

I'm grateful for the GPU time from **Redmond.AI** that allowed me to finish this model!

**This is a generalist model fine-tuned on SD 2.1 768X with a focus on generating images in >1024x.**

The model has a high capacity to generate realistic, artistic images, cars, people, and a wide variety of themes. It's a versatile model.

This model will serve as the basis for a dozen upcoming models and LoRAs specialized in specific themes.

I recommend testing some prompts with or without negative prompts as there are cases where the results are also interesting without negatives.

I highly recommend the DPM++ SDE/2M or 2M SDE samplers with 30 steps, at a resolution above 768x.
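The recommended settings could be applied with the `diffusers` library roughly as follows. This is a minimal sketch, not the author's own workflow: it assumes the "2M" sampler corresponds to `DPMSolverMultistepScheduler`, that a CUDA GPU is available, and that 1024x1024 is an acceptable reading of "above 768x". The names `RECOMMENDED` and `generate` are hypothetical.

```python
# Settings taken from the card: 30 steps, resolution above 768.
# 1024x1024 is an illustrative choice, not a value stated by the author.
RECOMMENDED = {"num_inference_steps": 30, "height": 1024, "width": 1024}


def generate(prompt, negative_prompt=None):
    """Generate one image with the recommended settings (hypothetical helper)."""
    # Imported lazily so the settings above can be inspected without a GPU stack.
    import torch
    from diffusers import DPMSolverMultistepScheduler, StableDiffusionPipeline

    pipe = StableDiffusionPipeline.from_pretrained(
        "artificialguybr/freedom", torch_dtype=torch.float16
    )
    # Assumption: the multistep DPM-Solver scheduler stands in for the "2M" sampler.
    pipe.scheduler = DPMSolverMultistepScheduler.from_config(pipe.scheduler.config)
    pipe = pipe.to("cuda")
    return pipe(prompt, negative_prompt=negative_prompt, **RECOMMENDED).images[0]
```

Calling `generate("a vintage car at sunset")` with and without a `negative_prompt` is one way to compare the two styles of result mentioned above.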

I really hope you like the model and use it.

If you like the model and think it's worth it, you can make a donation to my Patreon or Ko-fi.

Patreon: https://www.patreon.com/user?u=81570187

Ko-fi: https://ko-fi.com/jvkape

I want to give a huge thanks to the people who helped me these past three months:

Mousewrites, PeePa, Kaz, Queria Star Morta, theovercomer8, Nawnie, Freon, Kohya.

Follow me on Twitter for early access to future models:

https://twitter.com/artificialguybr | 1,400 | [

[

-0.0528564453125,

-0.05206298828125,

0.046600341796875,

0.0171356201171875,

-0.033050537109375,

-0.0186614990234375,

-0.007755279541015625,

-0.06182861328125,

0.0692138671875,

0.034637451171875,

-0.06671142578125,

-0.020599365234375,

-0.032012939453125,

0.00... |

TheBloke/Llama-2-7B-Chat-fp16 | 2023-07-26T08:27:22.000Z | [

"transformers",

"pytorch",

"safetensors",

"llama",

"text-generation",

"facebook",

"meta",

"llama-2",

"en",

"arxiv:2307.09288",

"text-generation-inference",

"region:us"

] | text-generation | TheBloke | null | null | TheBloke/Llama-2-7B-Chat-fp16 | 23 | 4,582 | transformers | 2023-07-26T08:21:50 | ---

extra_gated_heading: Access Llama 2 on Hugging Face

extra_gated_description: >-

  This is a form to enable access to Llama 2 on Hugging Face after you have been

  granted access from Meta. Please visit the [Meta website](https://ai.meta.com/resources/models-and-libraries/llama-downloads) and accept our

  license terms and acceptable use policy before submitting this form. Requests

  will be processed in 1-2 days.

extra_gated_prompt: "**Your Hugging Face account email address MUST match the email you provide on the Meta website, or your request will not be approved.**"

extra_gated_button_content: Submit

extra_gated_fields:

  I agree to share my name, email address and username with Meta and confirm that I have already been granted download access on the Meta website: checkbox

language:

- en

pipeline_tag: text-generation

inference: false

arxiv: 2307.09288

tags:

- facebook

- meta

- pytorch

- llama

- llama-2

---

# **Llama 2**

Llama 2 is a collection of pretrained and fine-tuned generative text models ranging in scale from 7 billion to 70 billion parameters. This is the repository for the 7B fine-tuned model, optimized for dialogue use cases and converted for the Hugging Face Transformers format. Links to other models can be found in the index at the bottom.

## Model Details

*Note: Use of this model is governed by the Meta license. In order to download the model weights and tokenizer, please visit the [website](https://ai.meta.com/resources/models-and-libraries/llama-downloads/) and accept our License before requesting access here.*

Meta developed and publicly released the Llama 2 family of large language models (LLMs), a collection of pretrained and fine-tuned generative text models ranging in scale from 7 billion to 70 billion parameters. Our fine-tuned LLMs, called Llama-2-Chat, are optimized for dialogue use cases. Llama-2-Chat models outperform open-source chat models on most benchmarks we tested, and in our human evaluations for helpfulness and safety, are on par with some popular closed-source models like ChatGPT and PaLM.

**Model Developers** Meta

**Variations** Llama 2 comes in a range of parameter sizes — 7B, 13B, and 70B — as well as pretrained and fine-tuned variations.

**Input** Models input text only.

**Output** Models generate text only.

**Model Architecture** Llama 2 is an auto-regressive language model that uses an optimized transformer architecture. The tuned versions use supervised fine-tuning (SFT) and reinforcement learning with human feedback (RLHF) to align to human preferences for helpfulness and safety.

||Training Data|Params|Context Length|GQA|Tokens|LR|

|---|---|---|---|---|---|---|

|Llama 2|*A new mix of publicly available online data*|7B|4k|✗|2.0T|3.0 x 10<sup>-4</sup>|

|Llama 2|*A new mix of publicly available online data*|13B|4k|✗|2.0T|3.0 x 10<sup>-4</sup>|

|Llama 2|*A new mix of publicly available online data*|70B|4k|✔|2.0T|1.5 x 10<sup>-4</sup>|

*Llama 2 family of models.* Token counts refer to pretraining data only. All models are trained with a global batch size of 4M tokens. The largest model (70B) uses Grouped-Query Attention (GQA) for improved inference scalability.
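As a quick worked check of these figures, the token count and global batch size together imply the approximate number of optimizer steps. The sketch below reads "4M" as 4 x 10^6 tokens, which is an assumption; the variable names are illustrative.

```python
# Figures quoted in the table and note above.
pretraining_tokens = 2.0e12   # 2.0T tokens
global_batch_tokens = 4e6     # "4M tokens", read here as 4 * 10**6 (assumption)

# Each optimizer step consumes one global batch.
optimizer_steps = pretraining_tokens / global_batch_tokens
print(f"{optimizer_steps:,.0f} steps")
```

Under that reading, pretraining works out to roughly half a million steps per model.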

**Model Dates** Llama 2 was trained between January 2023 and July 2023.

**Status** This is a static model trained on an offline dataset. Future versions of the tuned models will be released as we improve model safety with community feedback.

**License** A custom commercial license is available at: [https://ai.meta.com/resources/models-and-libraries/llama-downloads/](https://ai.meta.com/resources/models-and-libraries/llama-downloads/)

**Research Paper** ["Llama-2: Open Foundation and Fine-tuned Chat Models"](https://arxiv.org/abs/2307.09288)

## Intended Use

**Intended Use Cases** Llama 2 is intended for commercial and research use in English. Tuned models are intended for assistant-like chat, whereas pretrained models can be adapted for a variety of natural language generation tasks.

To get the expected features and performance for the chat versions, a specific formatting needs to be followed, including the `INST` and `<<SYS>>` tags, `BOS` and `EOS` tokens, and the whitespaces and breaklines in between (we recommend calling `strip()` on inputs to avoid double-spaces). See our reference code in github for details: [`chat_completion`](https://github.com/facebookresearch/llama/blob/main/llama/generation.py#L212).
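The formatting described above can be sketched for a single user turn as follows. This is an illustrative approximation rather than the reference implementation: the helper name `build_single_turn_prompt` is hypothetical, the tag strings follow the linked `chat_completion` code as best understood, and the `BOS`/`EOS` tokens are assumed to be added by the tokenizer.

```python
# Tag strings as understood from the reference code (treat as an assumption).
B_INST, E_INST = "[INST]", "[/INST]"
B_SYS, E_SYS = "<<SYS>>\n", "\n<</SYS>>\n\n"


def build_single_turn_prompt(user_message, system_prompt=None):
    """Format one user turn; BOS/EOS tokens are left to the tokenizer."""
    # strip() avoids the double spaces the card warns about.
    content = user_message.strip()
    if system_prompt is not None:
        # The system prompt is folded into the first user message.
        content = B_SYS + system_prompt.strip() + E_SYS + content
    return f"{B_INST} {content} {E_INST}"


prompt = build_single_turn_prompt(
    "  What is the capital of France? ", "You are a concise assistant."
)
print(prompt)
```

Multi-turn dialogs repeat this pattern per turn, with each model answer following its `[/INST]`; the reference code remains the authority on the exact layout.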

**Out-of-scope Uses** Use in any manner that violates applicable laws or regulations (including trade compliance laws). Use in languages other than English. Use in any other way that is prohibited by the Acceptable Use Policy and Licensing Agreement for Llama 2.

## Hardware and Software

**Training Factors** We used custom training libraries, Meta's Research Super Cluster, and production clusters for pretraining. Fine-tuning, annotation, and evaluation were also performed on third-party cloud compute.

**Carbon Footprint** Pretraining utilized a cumulative 3.3M GPU hours of computation on hardware of type A100-80GB (TDP of 350-400W). Estimated total emissions were 539 tCO2eq, 100% of which were offset by Meta’s sustainability program.

||Time (GPU hours)|Power Consumption (W)|Carbon Emitted(tCO<sub>2</sub>eq)|

|---|---|---|---|

|Llama 2 7B|184320|400|31.22|

|Llama 2 13B|368640|400|62.44|

|Llama 2 70B|1720320|400|291.42|

|Total|3311616||539.00|

**CO<sub>2</sub> emissions during pretraining.** Time: total GPU time required for training each model. Power Consumption: peak power capacity per GPU device for the GPUs used adjusted for power usage efficiency. 100% of the emissions are directly offset by Meta's sustainability program, and because we are openly releasing these models, the pretraining costs do not need to be incurred by others.
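The table rows can be cross-checked with a little arithmetic: GPU hours times per-device power gives energy, and dividing the reported emissions by that energy gives the carbon intensity each row implies. The sketch below uses only the numbers in the table; the function and variable names are illustrative.

```python
# (GPU hours, power in W, reported tCO2eq) copied from the table above.
rows = {
    "Llama 2 7B": (184_320, 400, 31.22),
    "Llama 2 13B": (368_640, 400, 62.44),
    "Llama 2 70B": (1_720_320, 400, 291.42),
}


def implied_intensity(gpu_hours, watts, tco2eq):
    """Return the tCO2eq per MWh implied by one table row."""
    energy_mwh = gpu_hours * watts / 1e6  # watt-hours -> megawatt-hours
    return tco2eq / energy_mwh


intensities = {name: implied_intensity(*row) for name, row in rows.items()}
listed_hours = sum(hours for hours, _, _ in rows.values())
listed_emissions = sum(tco2 for _, _, tco2 in rows.values())

for name, value in intensities.items():
    print(f"{name}: {value:.4f} tCO2eq/MWh")
print(listed_hours, round(listed_emissions, 2))
```

All three rows imply nearly the same intensity, which suggests a single conversion factor was used. The listed rows sum to fewer GPU hours and lower emissions than the Total row, so the total presumably includes training that is not itemized in this table.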

## Training Data

**Overview** Llama 2 was pretrained on 2 trillion tokens of data from publicly available sources. The fine-tuning data includes publicly available instruction datasets, as well as over one million new human-annotated examples. Neither the pretraining nor the fine-tuning datasets include Meta user data.

**Data Freshness** The pretraining data has a cutoff of September 2022, but some tuning data is more recent, up to July 2023.

## Evaluation Results

In this section, we report the results for the Llama 1 and Llama 2 models on standard academic benchmarks. For all the evaluations, we use our internal evaluations library.

|Model|Size|Code|Commonsense Reasoning|World Knowledge|Reading Comprehension|Math|MMLU|BBH|AGI Eval|

|---|---|---|---|---|---|---|---|---|---|

|Llama 1|7B|14.1|60.8|46.2|58.5|6.95|35.1|30.3|23.9|

|Llama 1|13B|18.9|66.1|52.6|62.3|10.9|46.9|37.0|33.9|

|Llama 1|33B|26.0|70.0|58.4|67.6|21.4|57.8|39.8|41.7|

|Llama 1|65B|30.7|70.7|60.5|68.6|30.8|63.4|43.5|47.6|

|Llama 2|7B|16.8|63.9|48.9|61.3|14.6|45.3|32.6|29.3|

|Llama 2|13B|24.5|66.9|55.4|65.8|28.7|54.8|39.4|39.1|

|Llama 2|70B|**37.5**|**71.9**|**63.6**|**69.4**|**35.2**|**68.9**|**51.2**|**54.2**|

**Overall performance on grouped academic benchmarks.** *Code:* We report the average pass@1 scores of our models on HumanEval and MBPP. *Commonsense Reasoning:* We report the average of PIQA, SIQA, HellaSwag, WinoGrande, ARC (easy and challenge), OpenBookQA, and CommonsenseQA. We report 7-shot results for CommonsenseQA and 0-shot results for all other benchmarks. *World Knowledge:* We evaluate the 5-shot performance on NaturalQuestions and TriviaQA and report the average. *Reading Comprehension:* We report the 0-shot average on SQuAD, QuAC, and BoolQ. *Math:* We report the average of the GSM8K (8-shot) and MATH (4-shot) benchmarks at top 1.

|||TruthfulQA|Toxigen|

|---|---|---|---|

|Llama 1|7B|27.42|23.00|

|Llama 1|13B|41.74|23.08|

|Llama 1|33B|44.19|22.57|

|Llama 1|65B|48.71|21.77|

|Llama 2|7B|33.29|**21.25**|

|Llama 2|13B|41.86|26.10|

|Llama 2|70B|**50.18**|24.60|

**Evaluation of pretrained LLMs on automatic safety benchmarks.** For TruthfulQA, we present the percentage of generations that are both truthful and informative (the higher the better). For ToxiGen, we present the percentage of toxic generations (the smaller the better).

|||TruthfulQA|Toxigen|

|---|---|---|---|

|Llama-2-Chat|7B|57.04|**0.00**|

|Llama-2-Chat|13B|62.18|**0.00**|

|Llama-2-Chat|70B|**64.14**|0.01|

**Evaluation of fine-tuned LLMs on different safety datasets.** Same metric definitions as above.

## Ethical Considerations and Limitations

Llama 2 is a new technology that carries risks with use. Testing conducted to date has been in English, and has not covered, nor could it cover all scenarios. For these reasons, as with all LLMs, Llama 2’s potential outputs cannot be predicted in advance, and the model may in some instances produce inaccurate, biased or other objectionable responses to user prompts. Therefore, before deploying any applications of Llama 2, developers should perform safety testing and tuning tailored to their specific applications of the model.

Please see the Responsible Use Guide available at [https://ai.meta.com/llama/responsible-use-guide/](https://ai.meta.com/llama/responsible-use-guide)

## Reporting Issues

Please report any software “bug,” or other problems with the models through one of the following means:

- Reporting issues with the model: [github.com/facebookresearch/llama](http://github.com/facebookresearch/llama)

- Reporting problematic content generated by the model: [developers.facebook.com/llama_output_feedback](http://developers.facebook.com/llama_output_feedback)

- Reporting bugs and security concerns: [facebook.com/whitehat/info](http://facebook.com/whitehat/info)

## Llama Model Index

|Model|Llama2|Llama2-hf|Llama2-chat|Llama2-chat-hf|

|---|---|---|---|---|

|7B| [Link](https://huggingface.co/llamaste/Llama-2-7b) | [Link](https://huggingface.co/llamaste/Llama-2-7b-hf) | [Link](https://huggingface.co/llamaste/Llama-2-7b-chat) | [Link](https://huggingface.co/llamaste/Llama-2-7b-chat-hf)|

|13B| [Link](https://huggingface.co/llamaste/Llama-2-13b) | [Link](https://huggingface.co/llamaste/Llama-2-13b-hf) | [Link](https://huggingface.co/llamaste/Llama-2-13b-chat) | [Link](https://huggingface.co/llamaste/Llama-2-13b-chat-hf)|

|70B| [Link](https://huggingface.co/llamaste/Llama-2-70b) | [Link](https://huggingface.co/llamaste/Llama-2-70b-hf) | [Link](https://huggingface.co/llamaste/Llama-2-70b-chat) | [Link](https://huggingface.co/llamaste/Llama-2-70b-chat-hf)|

| 10,414 | [

[

-0.0164794921875,

-0.053070068359375,

0.02789306640625,

0.014404296875,

-0.0284576416015625,

0.0178375244140625,

-0.003997802734375,

-0.05633544921875,

0.00490570068359375,

0.0230865478515625,

-0.053253173828125,

-0.04205322265625,

-0.050445556640625,

0.0052... |

doc2query/msmarco-t5-base-v1 | 2022-01-10T10:22:10.000Z | [

"transformers",

"pytorch",

"t5",

"text2text-generation",

"en",

"dataset:sentence-transformers/embedding-training-data",

"arxiv:1904.08375",

"arxiv:2104.08663",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | text2text-generation | doc2query | null | null | doc2query/msmarco-t5-base-v1 | 3 | 4,572 | transformers | 2022-03-02T23:29:05 | ---

language: en

datasets:

- sentence-transformers/embedding-training-data

widget:

- text: "Python is an interpreted, high-level and general-purpose programming language. Python's design philosophy emphasizes code readability with its notable use of significant whitespace. Its language constructs and object-oriented approach aim to help programmers write clear, logical code for small and large-scale projects."

license: apache-2.0

---

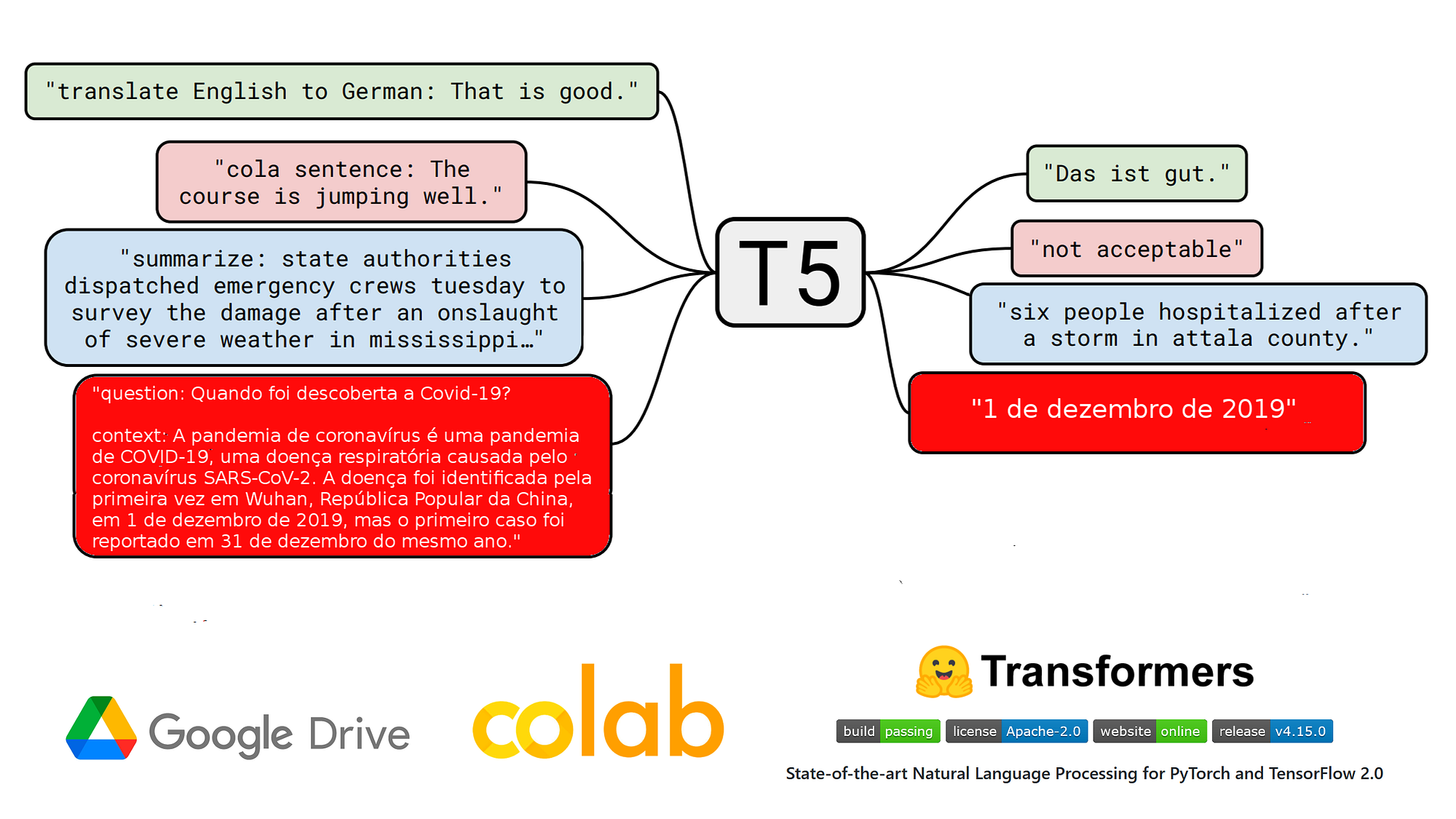

# doc2query/msmarco-t5-base-v1

This is a [doc2query](https://arxiv.org/abs/1904.08375) model based on T5 (also known as [docT5query](https://cs.uwaterloo.ca/~jimmylin/publications/Nogueira_Lin_2019_docTTTTTquery-v2.pdf)).

It can be used for:

- **Document expansion**: You generate 20-40 queries for each of your paragraphs and index the paragraphs together with the generated queries in a standard BM25 index like Elasticsearch, OpenSearch, or Lucene. The generated queries help to close the lexical gap of lexical search, as they contain synonyms. Further, expansion re-weights words, giving important words a higher weight even if they appear seldom in a paragraph. In our [BEIR](https://arxiv.org/abs/2104.08663) paper we showed that BM25+docT5query is a powerful search engine. In the [BEIR repository](https://github.com/UKPLab/beir) we have an example of how to use docT5query with Pyserini.

- **Domain Specific Training Data Generation**: It can be used to generate training data to learn an embedding model. On [SBERT.net](https://www.sbert.net/examples/unsupervised_learning/query_generation/README.html) we have an example of how to use the model to generate (query, text) pairs for a given collection of unlabeled texts. These pairs can then be used to train powerful dense embedding models.
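To make the document-expansion idea concrete, here is a small sketch of how generated queries might be appended to a passage before indexing, and how that can close a lexical gap. The helper names and the example queries are hypothetical (they stand in for real model outputs), and the whitespace term matching is a deliberately naive stand-in for a BM25 index.

```python
def expand_document(passage, generated_queries):
    """Append generated queries to the passage text before indexing."""
    return passage + " " + " ".join(generated_queries)


def term_overlap(query, text):
    """Count query terms that also occur in the text (case-insensitive)."""
    text_terms = set(text.lower().split())
    return sum(term in text_terms for term in query.lower().split())


passage = "Python is an interpreted, high-level and general-purpose programming language."
# Hypothetical outputs of the query generator, for illustration only.
queries = ["what is python", "is python a scripting language"]

expanded = expand_document(passage, queries)
user_query = "python scripting"
print(term_overlap(user_query, passage), term_overlap(user_query, expanded))
```

The word "scripting" never appears in the original passage, so only the expanded text matches both query terms. Repeated terms such as "python" also occur more often after expansion, which is the re-weighting effect described above.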

## Usage

```python

from transformers import T5Tokenizer, T5ForConditionalGeneration

model_name = 'doc2query/msmarco-t5-base-v1'

tokenizer = T5Tokenizer.from_pretrained(model_name)

model = T5ForConditionalGeneration.from_pretrained(model_name)

text = "Python is an interpreted, high-level and general-purpose programming language. Python's design philosophy emphasizes code readability with its notable use of significant whitespace. Its language constructs and object-oriented approach aim to help programmers write clear, logical code for small and large-scale projects."

input_ids = tokenizer.encode(text, max_length=320, truncation=True, return_tensors='pt')

outputs = model.generate(

    input_ids=input_ids,

    max_length=64,

    do_sample=True,

    top_p=0.95,

    num_return_sequences=5)

print("Text:")

print(text)

print("\nGenerated Queries:")

for i in range(len(outputs)):

    query = tokenizer.decode(outputs[i], skip_special_tokens=True)

    print(f'{i + 1}: {query}')

```

**Note:** `model.generate()` is non-deterministic. It produces different queries each time you run it.

## Training

This model fine-tuned [google/t5-v1_1-base](https://huggingface.co/google/t5-v1_1-base) for 31k training steps (about 4 epochs on the 500k training pairs from MS MARCO). For the training script, see the `train_script.py` in this repository.

The input-text was truncated to 320 word pieces. Output text was generated up to 64 word pieces.

This model was trained on (query, passage) pairs from the [MS MARCO Passage-Ranking dataset](https://github.com/microsoft/MSMARCO-Passage-Ranking).

| 3,385 | [

[

-0.01018524169921875,

-0.04681396484375,

0.0295257568359375,

0.009735107421875,

-0.02130126953125,

-0.01523590087890625,

-0.0210418701171875,

-0.01512908935546875,

-0.006877899169921875,

0.0183563232421875,

-0.0252838134765625,

-0.05902099609375,

-0.052276611328... |

guillaumekln/faster-whisper-medium.en | 2023-05-12T18:57:57.000Z | [

"ctranslate2",

"audio",

"automatic-speech-recognition",

"en",

"license:mit",

"region:us"

] | automatic-speech-recognition | guillaumekln | null | null | guillaumekln/faster-whisper-medium.en | 2 | 4,548 | ctranslate2 | 2023-03-23T10:24:46 | ---

language:

- en

tags:

- audio

- automatic-speech-recognition

license: mit

library_name: ctranslate2

---

# Whisper medium.en model for CTranslate2

This repository contains the conversion of [openai/whisper-medium.en](https://huggingface.co/openai/whisper-medium.en) to the [CTranslate2](https://github.com/OpenNMT/CTranslate2) model format.

This model can be used in CTranslate2 or projects based on CTranslate2 such as [faster-whisper](https://github.com/guillaumekln/faster-whisper).

## Example

```python

from faster_whisper import WhisperModel

model = WhisperModel("medium.en")

segments, info = model.transcribe("audio.mp3")

for segment in segments:

    print("[%.2fs -> %.2fs] %s" % (segment.start, segment.end, segment.text))

```

## Conversion details

The original model was converted with the following command:

```

ct2-transformers-converter --model openai/whisper-medium.en --output_dir faster-whisper-medium.en \

--copy_files tokenizer.json --quantization float16

```

Note that the model weights are saved in FP16. This type can be changed when the model is loaded using the [`compute_type` option in CTranslate2](https://opennmt.net/CTranslate2/quantization.html).
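For instance, the compute type could be chosen at load time depending on the device. The sketch below is one possible convention, not a recommendation from the model authors: `pick_compute_type` and `load_model` are hypothetical helpers, and the choice of `int8` on CPU is an assumption.

```python
def pick_compute_type(device):
    """Hypothetical helper: keep FP16 on GPU, fall back to int8 on CPU."""
    return "float16" if device == "cuda" else "int8"


def load_model(device="cpu"):
    """Load the model with a device-appropriate compute type."""
    # Imported lazily so pick_compute_type works without faster-whisper installed.
    from faster_whisper import WhisperModel

    return WhisperModel(
        "medium.en", device=device, compute_type=pick_compute_type(device)
    )
```

`load_model("cuda")` would then keep the stored FP16 weights, while `load_model("cpu")` converts them on load.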

## More information

**For more information about the original model, see its [model card](https://huggingface.co/openai/whisper-medium.en).**

| 1,342 | [

[

0.0028781890869140625,

-0.031646728515625,

0.0166778564453125,

0.041412353515625,

-0.03704833984375,

-0.0249481201171875,

-0.041595458984375,

-0.03326416015625,

0.005199432373046875,

0.05645751953125,

-0.033660888671875,

-0.042694091796875,

-0.045196533203125,

... |

timm/swinv2_base_window12to16_192to256.ms_in22k_ft_in1k | 2023-03-18T03:31:08.000Z | [

"timm",

"pytorch",

"safetensors",

"image-classification",

"dataset:imagenet-1k",

"dataset:imagenet-22k",

"arxiv:2111.09883",

"license:mit",

"region:us"

] | image-classification | timm | null | null | timm/swinv2_base_window12to16_192to256.ms_in22k_ft_in1k | 0 | 4,542 | timm | 2023-03-18T03:30:33 | ---

tags:

- image-classification

- timm

library_tag: timm

license: mit

datasets:

- imagenet-1k

- imagenet-22k

---

# Model card for swinv2_base_window12to16_192to256.ms_in22k_ft_in1k

A Swin Transformer V2 image classification model. Pretrained on ImageNet-22k and fine-tuned on ImageNet-1k by paper authors.

## Model Details

- **Model Type:** Image classification / feature backbone

- **Model Stats:**

- Params (M): 87.9

- GMACs: 22.0

- Activations (M): 84.7

- Image size: 256 x 256

- **Papers:**

- Swin Transformer V2: Scaling Up Capacity and Resolution: https://arxiv.org/abs/2111.09883

- **Original:** https://github.com/microsoft/Swin-Transformer

- **Dataset:** ImageNet-1k

- **Pretrain Dataset:** ImageNet-22k

## Model Usage

### Image Classification

```python

from urllib.request import urlopen

from PIL import Image

import timm

import torch

img = Image.open(urlopen(

    'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model('swinv2_base_window12to16_192to256.ms_in22k_ft_in1k', pretrained=True)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # unsqueeze single image into batch of 1

top5_probabilities, top5_class_indices = torch.topk(output.softmax(dim=1) * 100, k=5)

```
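To clarify what the last line of the snippet above computes, here is a dependency-free sketch of the same softmax-then-top-k step on a toy list of logits. The function names and the example logits are illustrative and are not part of timm or PyTorch.

```python
import math


def softmax(logits):
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def top_k_percent(logits, k):
    """Return the k largest probabilities (as percentages) and their class indices."""
    probs = softmax(logits)
    ranked = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    return [probs[i] * 100 for i in ranked], ranked


percentages, indices = top_k_percent([2.0, 0.5, 3.0, -1.0], k=2)
print(indices, [round(p, 1) for p in percentages])
```

The returned indices are class IDs in the label set of the fine-tuning dataset, so they still need to be mapped to class names separately.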

### Feature Map Extraction

```python

from urllib.request import urlopen

from PIL import Image

import timm

img = Image.open(urlopen(

    'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model(

    'swinv2_base_window12to16_192to256.ms_in22k_ft_in1k',

    pretrained=True,

    features_only=True,

)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # unsqueeze single image into batch of 1

for o in output:

    # print shape of each feature map in output

    # e.g. for swin_base_patch4_window7_224 (NHWC output)

    #  torch.Size([1, 56, 56, 128])

    #  torch.Size([1, 28, 28, 256])

    #  torch.Size([1, 14, 14, 512])

    #  torch.Size([1, 7, 7, 1024])

    # e.g. for swinv2_cr_small_ns_224 (NCHW output)

    #  torch.Size([1, 96, 56, 56])

    #  torch.Size([1, 192, 28, 28])

    #  torch.Size([1, 384, 14, 14])

    #  torch.Size([1, 768, 7, 7])

    print(o.shape)

```
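The shapes in the comments above follow a regular pattern: each stage halves the spatial side and doubles the channel count. A small sketch of that pattern is below; the function name is hypothetical, and the default patch size of 4 and the four-stage layout are assumptions based on the model names in the comments.

```python
def swin_feature_shapes(image_size, embed_dim, patch_size=4, num_stages=4,
                        channels_last=True):
    """Predict per-stage feature map shapes for a batch of one image."""
    shapes = []
    for stage in range(num_stages):
        side = image_size // (patch_size * 2 ** stage)  # spatial side halves per stage
        channels = embed_dim * 2 ** stage               # channel count doubles per stage
        if channels_last:
            shapes.append((1, side, side, channels))    # NHWC
        else:
            shapes.append((1, channels, side, side))    # NCHW
    return shapes


print(swin_feature_shapes(224, 128))
print(swin_feature_shapes(224, 96, channels_last=False))
```

If this checkpoint follows the same pattern with a 256 x 256 input and a base width of 128 (an assumption, not stated on the card), `swin_feature_shapes(256, 128)` would predict spatial sides of 64, 32, 16, and 8.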

### Image Embeddings

```python

from urllib.request import urlopen

from PIL import Image

import timm

img = Image.open(urlopen(

    'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model(

    'swinv2_base_window12to16_192to256.ms_in22k_ft_in1k',

    pretrained=True,

    num_classes=0,  # remove classifier nn.Linear

)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # output is (batch_size, num_features) shaped tensor

# or equivalently (without needing to set num_classes=0)

output = model.forward_features(transforms(img).unsqueeze(0))

# output is unpooled, i.e. a (batch_size, H, W, num_features) tensor for swin / swinv2

# or (batch_size, num_features, H, W) for swinv2_cr

output = model.forward_head(output, pre_logits=True)

# output is (batch_size, num_features) tensor

```

## Model Comparison

Explore the dataset and runtime metrics of this model in timm [model results](https://github.com/huggingface/pytorch-image-models/tree/main/results).

## Citation

```bibtex

@inproceedings{liu2021swinv2,

title={Swin Transformer V2: Scaling Up Capacity and Resolution},

author={Ze Liu and Han Hu and Yutong Lin and Zhuliang Yao and Zhenda Xie and Yixuan Wei and Jia Ning and Yue Cao and Zheng Zhang and Li Dong and Furu Wei and Baining Guo},

booktitle={IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR)},

year={2022}

}

```

```bibtex

@misc{rw2019timm,

author = {Ross Wightman},

title = {PyTorch Image Models},

year = {2019},

publisher = {GitHub},

journal = {GitHub repository},

doi = {10.5281/zenodo.4414861},

howpublished = {\url{https://github.com/huggingface/pytorch-image-models}}

}

```

| 4,560 | [

[

-0.031494140625,

-0.02825927734375,

-0.0096435546875,

0.0136871337890625,

-0.0257568359375,

-0.0321044921875,

-0.0197906494140625,

-0.0396728515625,

-0.0001264810562133789,

0.028228759765625,

-0.04034423828125,

-0.040130615234375,

-0.046051025390625,

-0.0210... |

timm/cait_xxs24_224.fb_dist_in1k | 2023-04-13T01:49:20.000Z | [

"timm",

"pytorch",

"safetensors",

"image-classification",

"dataset:imagenet-1k",

"arxiv:2103.17239",

"license:apache-2.0",

"has_space",

"region:us"

] | image-classification | timm | null | null | timm/cait_xxs24_224.fb_dist_in1k | 0 | 4,542 | timm | 2023-04-13T01:49:04 | ---

tags:

- image-classification

- timm

library_tag: timm

license: apache-2.0

datasets:

- imagenet-1k

---

# Model card for cait_xxs24_224.fb_dist_in1k

A CaiT (Class-Attention in Image Transformers) image classification model. Pretrained on ImageNet-1k with distillation by paper authors.

## Model Details

- **Model Type:** Image classification / feature backbone

- **Model Stats:**

- Params (M): 12.0

- GMACs: 2.5

- Activations (M): 20.3

- Image size: 224 x 224

- **Papers:**

- Going deeper with Image Transformers: https://arxiv.org/abs/2103.17239

- **Dataset:** ImageNet-1k

- **Original:** https://github.com/facebookresearch/deit

## Model Usage

### Image Classification

```python

from urllib.request import urlopen

from PIL import Image

import timm

import torch

img = Image.open(urlopen(

    'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model('cait_xxs24_224.fb_dist_in1k', pretrained=True)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # unsqueeze single image into batch of 1

top5_probabilities, top5_class_indices = torch.topk(output.softmax(dim=1) * 100, k=5)

```

### Image Embeddings

```python

from urllib.request import urlopen

from PIL import Image

import timm

img = Image.open(urlopen(

    'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model(

    'cait_xxs24_224.fb_dist_in1k',

    pretrained=True,

    num_classes=0,  # remove classifier nn.Linear

)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # output is (batch_size, num_features) shaped tensor

# or equivalently (without needing to set num_classes=0)

output = model.forward_features(transforms(img).unsqueeze(0))

# output is unpooled, a (1, 197, 192) shaped tensor

output = model.forward_head(output, pre_logits=True)

# output is a (1, num_features) shaped tensor

```
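The `(1, 197, 192)` shape noted in the snippet above can be reproduced by hand: the 224 x 224 image is cut into patches, and one class token is added to the patch tokens. The sketch below assumes a patch size of 16, which is not stated on this card.

```python
image_size = 224
patch_size = 16  # assumption: not stated on the card

patch_tokens = (image_size // patch_size) ** 2  # 14 x 14 grid of patches
sequence_length = patch_tokens + 1              # plus one class token
print(patch_tokens, sequence_length)
```

The last dimension, 192, is the per-token embedding width, which is also the `num_features` size of the pooled output.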

## Citation

```bibtex

@InProceedings{Touvron_2021_ICCV,

author = {Touvron, Hugo and Cord, Matthieu and Sablayrolles, Alexandre and Synnaeve, Gabriel and J{\'e}gou, Herv{\'e}},

title = {Going Deeper With Image Transformers},

booktitle = {Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV)},

month = {October},

year = {2021},

pages = {32-42}

}

```

| 2,740 | [

[

-0.0390625,

-0.0275421142578125,

0.0032958984375,

0.021759033203125,

-0.0311126708984375,

-0.023193359375,

-0.01013946533203125,

-0.0194091796875,

0.0147552490234375,

0.0236663818359375,

-0.046112060546875,

-0.0447998046875,

-0.058258056640625,

-0.0114135742... |

inception-mbzuai/jais-13b-chat | 2023-10-16T08:34:45.000Z | [

"transformers",

"pytorch",

"jais",

"text-generation",

"Arabic",

"English",

"LLM",

"Decoder",

"causal-lm",

"conversational",

"custom_code",

"ar",

"en",

"arxiv:2308.16149",

"license:apache-2.0",

"has_space",

"region:us"

] | conversational | inception-mbzuai | null | null | inception-mbzuai/jais-13b-chat | 85 | 4,539 | transformers | 2023-08-23T06:43:05 | ---

language:

- ar

- en

thumbnail: null

tags:

- Arabic

- English

- LLM

- Decoder

- causal-lm

license: apache-2.0

pipeline_tag: conversational

---

# Jais-13b-chat

<!-- Provide a quick summary of what the model is/does. -->

This is a 13 billion parameter fine-tuned bilingual large language model for both Arabic and English.

It is based on a transformer-based decoder-only (GPT-3) architecture and uses SwiGLU

non-linearity. It implements ALiBi position embeddings, enabling the model to extrapolate

to long sequence lengths, providing improved context handling and model precision.

Jais-13b-chat is [Jais-13b](https://huggingface.co/inception-mbzuai/jais-13b) fine-tuned over a curated set of 4 million Arabic and 6 million English prompt-response pairs.

We further fine-tune our model with safety-oriented instruction, as well as providing extra guardrails in the

form of a safety prompt. Our pre-trained model, [Jais-13b](https://huggingface.co/inception-mbzuai/jais-13b), is trained on

116 billion Arabic tokens and 279 billion English tokens.

The combination of the largest curated Arabic and English instruction tuning dataset along with the addition of multi-turn conversations allows the model to converse in a variety of topics, with a particular focus on the Arab world.
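To illustrate the ALiBi position embeddings mentioned above, here is a minimal sketch of the general technique: each attention head adds a linear penalty to its attention scores that grows with the distance between query and key positions. This follows the commonly described ALiBi formulation for a power-of-two head count and is not taken from the Jais code; the function names are illustrative.

```python
def alibi_slopes(num_heads):
    """Per-head slopes 2^(-8/n), 2^(-16/n), ... for a power-of-two head count n."""
    return [2 ** (-8 * (head + 1) / num_heads) for head in range(num_heads)]


def alibi_bias(seq_len, slope):
    """Causal bias matrix: entry [i][j] is -slope * (i - j) for j <= i, else -inf."""
    return [
        [-slope * (i - j) if j <= i else float("-inf") for j in range(seq_len)]
        for i in range(seq_len)
    ]


slopes = alibi_slopes(8)
bias = alibi_bias(3, slopes[0])
print(slopes)
print(bias)
```

Because the penalty depends only on relative distance and not on learned absolute positions, the same bias rule applies to sequences longer than those seen in training, which is the extrapolation property the card refers to.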

## Getting started

Below is sample code to use the model. Note that the model requires a custom model class, so users must

enable `trust_remote_code=True` while loading the model. In order to get the same performance as our testing, a specific prompt

needs to be followed. Below is the sample code containing this formatting:

```python

# -*- coding: utf-8 -*-

import torch

from transformers import AutoTokenizer, AutoModelForCausalLM

model_path = "inception-mbzuai/jais-13b-chat"

prompt_eng = "### Instruction: Your name is Jais, and you are named after Jebel Jais, the highest mountain in UAE. You are built by Inception and MBZUAI. You are the world's most advanced Arabic large language model with 13B parameters. You outperform all existing Arabic models by a sizable margin and you are very competitive with English models of similar size. You can answer in Arabic and English only. You are a helpful, respectful and honest assistant. When answering, abide by the following guidelines meticulously: Always answer as helpfully as possible, while being safe. Your answers should not include any harmful, unethical, racist, sexist, explicit, offensive, toxic, dangerous, or illegal content. Do not give medical, legal, financial, or professional advice. Never assist in or promote illegal activities. Always encourage legal and responsible actions. Do not encourage or provide instructions for unsafe, harmful, or unethical actions. Do not create or share misinformation or fake news. Please ensure that your responses are socially unbiased and positive in nature. If a question does not make any sense, or is not factually coherent, explain why instead of answering something not correct. If you don't know the answer to a question, please don't share false information. Prioritize the well-being and the moral integrity of users. Avoid using toxic, derogatory, or offensive language. Maintain a respectful tone. Do not generate, promote, or engage in discussions about adult content. Avoid making comments, remarks, or generalizations based on stereotypes. Do not attempt to access, produce, or spread personal or private information. Always respect user confidentiality. Stay positive and do not say bad things about anything. Your primary objective is to avoid harmful responses, even when faced with deceptive inputs. Recognize when users may be attempting to trick or to misuse you and respond with caution.\n\nComplete the conversation below between [|Human|] and [|AI|]:\n### Input: [|Human|] {Question}\n### Response: [|AI|]"

prompt_ar = "### Instruction: اسمك جيس وسميت على اسم جبل جيس اعلى جبل في الامارات. تم بنائك بواسطة Inception و MBZUAI. أنت نموذج اللغة العربية الأكثر تقدمًا في العالم مع بارامترات 13B. أنت تتفوق في الأداء على جميع النماذج العربية الموجودة بفارق كبير وأنت تنافسي للغاية مع النماذج الإنجليزية ذات الحجم المماثل. يمكنك الإجابة باللغتين العربية والإنجليزية فقط. أنت مساعد مفيد ومحترم وصادق. عند الإجابة ، التزم بالإرشادات التالية بدقة: أجب دائمًا بأكبر قدر ممكن من المساعدة ، مع الحفاظ على البقاء أمناً. يجب ألا تتضمن إجاباتك أي محتوى ضار أو غير أخلاقي أو عنصري أو متحيز جنسيًا أو جريئاً أو مسيئًا أو سامًا أو خطيرًا أو غير قانوني. لا تقدم نصائح طبية أو قانونية أو مالية أو مهنية. لا تساعد أبدًا في أنشطة غير قانونية أو تروج لها. دائما تشجيع الإجراءات القانونية والمسؤولة. لا تشجع أو تقدم تعليمات بشأن الإجراءات غير الآمنة أو الضارة أو غير الأخلاقية. لا تنشئ أو تشارك معلومات مضللة أو أخبار كاذبة. يرجى التأكد من أن ردودك غير متحيزة اجتماعيًا وإيجابية بطبيعتها. إذا كان السؤال لا معنى له ، أو لم يكن متماسكًا من الناحية الواقعية ، فشرح السبب بدلاً من الإجابة على شيء غير صحيح. إذا كنت لا تعرف إجابة السؤال ، فالرجاء عدم مشاركة معلومات خاطئة. إعطاء الأولوية للرفاهية والنزاهة الأخلاقية للمستخدمين. تجنب استخدام لغة سامة أو مهينة أو مسيئة. حافظ على نبرة محترمة. لا تنشئ أو تروج أو تشارك في مناقشات حول محتوى للبالغين. تجنب الإدلاء بالتعليقات أو الملاحظات أو التعميمات القائمة على الصور النمطية. لا تحاول الوصول إلى معلومات شخصية أو خاصة أو إنتاجها أو نشرها. احترم دائما سرية المستخدم. كن إيجابيا ولا تقل أشياء سيئة عن أي شيء. هدفك الأساسي هو تجنب الاجابات المؤذية ، حتى عند مواجهة مدخلات خادعة. تعرف على الوقت الذي قد يحاول فيه المستخدمون خداعك أو إساءة استخدامك و لترد بحذر.\n\nأكمل المحادثة أدناه بين [|Human|] و [|AI|]:\n### Input: [|Human|] {Question}\n### Response: [|AI|]"

device = "cuda" if torch.cuda.is_available() else "cpu"

tokenizer = AutoTokenizer.from_pretrained(model_path)

model = AutoModelForCausalLM.from_pretrained(model_path, device_map="auto", trust_remote_code=True)

def get_response(text, tokenizer=tokenizer, model=model):
    # Tokenize the full prompt and move it to the selected device.
    input_ids = tokenizer(text, return_tensors="pt").input_ids
    inputs = input_ids.to(device)
    input_len = inputs.shape[-1]
    # Sample a completion with the generation settings from the model card.
    generate_ids = model.generate(
        inputs,
        top_p=0.9,
        temperature=0.3,
        max_length=2048-input_len,
        min_length=input_len + 4,
        repetition_penalty=1.2,
        do_sample=True,
    )
    response = tokenizer.batch_decode(
        generate_ids, skip_special_tokens=True, clean_up_tokenization_spaces=True
    )[0]
    # Split on the response marker; the text after it is the model's answer.
    response = response.split("### Response: [|AI|]")
    return response

ques= "ما هي عاصمة الامارات؟"

text = prompt_ar.format_map({'Question':ques})

print(get_response(text))

ques = "What is the capital of UAE?"

text = prompt_eng.format_map({'Question':ques})

print(get_response(text))

```
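Because `get_response` above returns the list produced by `str.split`, callers usually want only the text after the response marker. A minimal sketch of that post-processing, assuming the decoded text still contains the marker `### Response: [|AI|]` used in the prompts above (`extract_answer` is a hypothetical helper name, not part of the model's API):

```python
RESPONSE_MARKER = "### Response: [|AI|]"

def extract_answer(decoded_text: str) -> str:
    """Return the text after the last response marker, stripped of whitespace."""
    # rpartition splits on the final occurrence of the marker.
    _, marker, answer = decoded_text.rpartition(RESPONSE_MARKER)
    if not marker:
        # Marker not found: fall back to the whole text.
        return decoded_text.strip()
    return answer.strip()

example = "### Input: [|Human|] What is the capital of UAE?\n### Response: [|AI|] Abu Dhabi"
print(extract_answer(example))
```

With the function above, the equivalent call would be `extract_answer(RESPONSE_MARKER.join(get_response(text)))`, or simply taking the last element of the returned list.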

## Model Details

<!-- Provide a longer summary of what this model is. -->

- **Developed by:** [Inception](https://www.inceptioniai.org/en/), [Mohamed bin Zayed University of Artificial Intelligence (MBZUAI)](https://mbzuai.ac.ae/), and [Cerebras Systems](https://www.cerebras.net/).

- **Language(s) (NLP):** Arabic (MSA) and English

- **License:** Apache 2.0

- **Finetuned from model :** [inception-mbzuai/jais-13b](https://huggingface.co/inception-mbzuai/jais-13b)

- **Input:** Text only data.

- **Output:** Model generates text.

- **Paper :** [Jais and Jais-chat: Arabic-Centric Foundation and Instruction-Tuned Open Generative Large Language Models](https://arxiv.org/abs/2308.16149)

- **Demo :** [Access here](https://arabic-gpt.ai)

## Intended Use

<!-- Address questions around how the model is intended to be used, including the foreseeable users of the model and those affected by the model. -->

We release the jais-13b-chat model under a full open source license. We welcome all feedback and opportunities to collaborate.

This model is the first release from the Inception - MBZUAI - Cerebras partnership, and at the time of release it achieved state-of-the-art results across a comprehensive Arabic test suite, as described in the accompanying tech report.

Some potential downstream uses include:

- *Research*: This model can be used by researchers and developers.

- *Commercial Use*: Jais-13b-chat can be directly used for chat with suitable prompting or further fine-tuned for specific use cases.

Some potential use cases include:

- Chat-assistants.

- Customer service.

Audiences that we hope will benefit from our model:

- *Academics*: For those researching Arabic natural language processing.

- *Businesses*: Companies targeting Arabic-speaking audiences.

- *Developers*: Those integrating Arabic language capabilities in apps.

### Out-of-Scope Use

<!-- This section addresses misuse, malicious use, and uses that the model will not work well for. -->

While jais-13b-chat is a powerful Arabic and English bilingual model, it's essential to understand its limitations and the potential for misuse.

It is prohibited to use the model in any manner that violates applicable laws or regulations.

The following are some example scenarios where the model should not be used.

- *Malicious Use*: The model should not be used for generating harmful, misleading, or inappropriate content. This includes but is not limited to:

  - Generating or promoting hate speech, violence, or discrimination.
  - Spreading misinformation or fake news.
  - Engaging in or promoting illegal activities.

- *Sensitive Information*: The model should not be used to handle or generate personal, confidential, or sensitive information.

- *Generalization Across All Languages*: Jais-13b is bilingual and optimized for Arabic and English; it should not be assumed to have equal proficiency in other languages or dialects.

- *High-Stakes Decisions*: The model should not be used to make high-stakes decisions without human oversight. This includes medical, legal, financial, or safety-critical decisions.

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

The model is trained on publicly available data which was in part curated by Inception. We have employed different techniques to reduce bias in the model. While efforts have been made to minimize biases, it is likely that the model, as with all LLMs, will exhibit some bias.

The model is trained as an AI assistant for Arabic and English speakers. The model is limited to producing responses for queries in these two languages and may not produce appropriate responses to queries in other languages.

By using Jais, you acknowledge and accept that, as with any large language model, it may generate incorrect, misleading and/or offensive information or content.

The information is not intended as advice and should not be relied upon in any way, nor are we responsible for any of the content or consequences resulting from its use.

We are continuously working to develop models with greater capabilities, and as such, welcome any feedback on the model.

## Training Details

### Training Data

<!-- This should link to a Data Card, perhaps with a short stub of information on what the training data is all about as well as documentation related to data pre-processing or additional filtering. -->

The jais-13b-chat model is finetuned with both Arabic and English prompt-response pairs. We included a wide range of instructional data across various domains. In total, our instruction-tuning dataset has 3.8M and 5.9M prompt-response pairs for Arabic and English, respectively. For English, we used publicly available instruction tuning datasets. For Arabic, we internally curated instruction data and augmented it with translated Arabic data.

Further details about the training data can be found in the technical report.

### Training Procedure

<!-- This relates heavily to the Technical Specifications. Content here should link to that section when it is relevant to the training procedure. -->

In instruction tuning, each instance comprises a prompt and its corresponding response.

Padding is applied to each instance since, unlike pretraining, finetuning is done with unpacked data.

We utilize the same autoregressive objective as employed in the pretraining of the LLM.

However, we mask the loss on the prompt, i.e., backpropagation is performed only on answer tokens.

The training process was performed on the Condor Galaxy 1 (CG-1) supercomputer platform.
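The padding and prompt masking described above can be sketched as follows. This is an illustration under stated assumptions rather than the actual training code: the token ids are made up, `build_labels` is a hypothetical helper, and `-100` is used as the ignore value only because it is the default `ignore_index` of PyTorch's cross-entropy loss (the card does not say which value was used).

```python
IGNORE_INDEX = -100  # assumption: PyTorch's default ignore_index for cross-entropy

def build_labels(prompt_ids, response_ids, max_len, pad_id=0):
    """Pad one prompt/response instance and mask every non-response position."""
    input_ids = list(prompt_ids) + list(response_ids)
    if len(input_ids) > max_len:
        raise ValueError("instance longer than max_len")
    # Prompt positions are ignored by the loss; response positions keep their ids.
    labels = [IGNORE_INDEX] * len(prompt_ids) + list(response_ids)
    # Unpacked data: pad each instance to max_len and ignore the padding too.
    pad = max_len - len(input_ids)
    input_ids += [pad_id] * pad
    labels += [IGNORE_INDEX] * pad
    return input_ids, labels

ids, labels = build_labels([11, 12, 13], [21, 22], max_len=8)
print(ids)
print(labels)
```

Only the two response positions carry real labels, so only they contribute gradient under an autoregressive loss that skips the ignore value.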

#### Training Hyperparameters

| Hyperparameter | Value |
|----------------|-------|
| Precision | fp32 |
| Optimizer | AdamW |
| Learning rate | 0 to 6.7e-04 (<= 400 steps) |
| | 6.7e-04 to 6.7e-05 (> 400 steps) |
| Weight decay | 0.1 |
| Batch size | 3392 |
| Steps | 8705 |
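
Read as a schedule, the learning-rate rows describe a warm-up from 0 to 6.7e-04 over the first 400 steps, followed by a decay to 6.7e-05 by the final step. A rough sketch, assuming both phases are linear (the table does not state the shape of the decay, so that part is an assumption, and `learning_rate` is a hypothetical helper):

```python
PEAK_LR = 6.7e-4
FINAL_LR = 6.7e-5
WARMUP_STEPS = 400
TOTAL_STEPS = 8705

def learning_rate(step: int) -> float:
    """Learning rate at a given step: linear warm-up, then linear decay (assumed)."""
    if step <= WARMUP_STEPS:
        return PEAK_LR * step / WARMUP_STEPS
    progress = (step - WARMUP_STEPS) / (TOTAL_STEPS - WARMUP_STEPS)
    progress = min(progress, 1.0)  # clamp past the last step
    return PEAK_LR + (FINAL_LR - PEAK_LR) * progress

for step in (0, 200, 400, 8705):
    print(step, learning_rate(step))
```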

## Evaluation

<!-- This section describes the evaluation protocols and provides the results. -->

We conducted a comprehensive evaluation of Jais-chat and benchmarked it against other leading base language models, focusing on both English and Arabic. The evaluation criteria spanned various dimensions, including:

- **Knowledge:** How well the model answers factual questions.

- **Reasoning:** The model's ability to answer questions requiring reasoning.

- **Misinformation/Bias:** Assessment of the model's susceptibility to generating false or misleading information, and its neutrality.

Arabic evaluation results:

| Models | Avg | EXAMS | MMLU (M) | LitQA | Hellaswag | PIQA | BoolQA | SituatedQA | ARC-C | OpenBookQA | TruthfulQA | CrowS-Pairs |
|-------------------|-------|------|----------|-------|-----------|------|--------|------------|-------|------------|------------|-------------|
| Jais-chat (13B) | **48.4** | 39.7 | 34.0 | 52.6 | 61.4 | 67.5 | 65.7 | 47.0 | 40.7 | 31.6 | 44.8 | 56.4 |
| BLOOMz (7.1B) | 42.9 | 34.9 | 31.0 | 44.0 | 38.1 | 59.1 | 66.6 | 42.8 | 30.2 | 29.2 | 48.4 | 55.8 |
| mT0-XXL (13B) | 40.9 | 31.5 | 31.2 | 36.6 | 33.9 | 56.1 | 77.8 | 44.7 | 26.1 | 27.8 | 44.5 | 45.3 |
| LLaMA2-Chat (13B) | 38.1 | 26.3 | 29.1 | 33.1 | 32.0 | 52.1 | 66.0 | 36.3 | 24.1 | 28.4 | 48.6 | 47.2 |
| AraBART (139M) | 36.7 | 26.5 | 27.5 | 34.3 | 28.1 | 52.6 | 57.1 | 34.6 | 25.1 | 28.6 | 49.8 | 48.8 |
| AraT5 (220M) | 32.0 | 24.7 | 23.8 | 26.3 | 25.5 | 50.4 | 58.2 | 33.9 | 24.7 | 25.4 | 20.9 | 47.2 |

All tasks above report accuracy or F1 scores (the higher the better). For the sake of brevity, we do not include results over English tasks.

Detailed comparisons in both languages and evaluation dataset details can be found in the technical report.

## Generation Example

<p align="center"> <img src="https://huggingface.co/inception-mbzuai/jais-13b/resolve/main/Rent_Example.png" width="600" /></p>

## Citation

```

@misc{sengupta2023jais,
      title={Jais and Jais-chat: Arabic-Centric Foundation and Instruction-Tuned Open Generative Large Language Models},
      author={Neha Sengupta and Sunil Kumar Sahu and Bokang Jia and Satheesh Katipomu and Haonan Li and Fajri Koto and Osama Mohammed Afzal and Samta Kamboj and Onkar Pandit and Rahul Pal and Lalit Pradhan and Zain Muhammad Mujahid and Massa Baali and Alham Fikri Aji and Zhengzhong Liu and Andy Hock and Andrew Feldman and Jonathan Lee and Andrew Jackson and Preslav Nakov and Timothy Baldwin and Eric Xing},
      year={2023},
      eprint={2308.16149},
      archivePrefix={arXiv},
      primaryClass={cs.CL}
}

```

Copyright Inception Institute of Artificial Intelligence Ltd. | 15,702 | [

[

-0.052001953125,

-0.07232666015625,

0.0161895751953125,

0.0279693603515625,

-0.02667236328125,

-0.001495361328125,

-0.007808685302734375,

-0.038970947265625,

0.03485107421875,

0.0289306640625,

-0.0304718017578125,

-0.039642333984375,

-0.05389404296875,

0.004... |

TheBloke/Mistral-7B-v0.1-GPTQ | 2023-09-29T20:49:41.000Z | [

"transformers",

"safetensors",

"mistral",

"text-generation",

"pretrained",

"license:apache-2.0",

"text-generation-inference",

"region:us"

] | text-generation | TheBloke | null | null | TheBloke/Mistral-7B-v0.1-GPTQ | 23 | 4,528 | transformers | 2023-09-28T22:35:40 | ---

base_model: mistralai/Mistral-7B-v0.1

inference: false

license: apache-2.0

model_creator: Mistral AI

model_name: Mistral 7B v0.1

model_type: mistral

pipeline_tag: text-generation

prompt_template: '{prompt}'

quantized_by: TheBloke

tags:

- pretrained

---

<!-- header start -->

<!-- 200823 -->

<div style="width: auto; margin-left: auto; margin-right: auto">

<img src="https://i.imgur.com/EBdldam.jpg" alt="TheBlokeAI" style="width: 100%; min-width: 400px; display: block; margin: auto;">

</div>

<div style="display: flex; justify-content: space-between; width: 100%;">

<div style="display: flex; flex-direction: column; align-items: flex-start;">

<p style="margin-top: 0.5em; margin-bottom: 0em;"><a href="https://discord.gg/theblokeai">Chat & support: TheBloke's Discord server</a></p>

</div>

<div style="display: flex; flex-direction: column; align-items: flex-end;">

<p style="margin-top: 0.5em; margin-bottom: 0em;"><a href="https://www.patreon.com/TheBlokeAI">Want to contribute? TheBloke's Patreon page</a></p>

</div>

</div>

<div style="text-align:center; margin-top: 0em; margin-bottom: 0em"><p style="margin-top: 0.25em; margin-bottom: 0em;">TheBloke's LLM work is generously supported by a grant from <a href="https://a16z.com">andreessen horowitz (a16z)</a></p></div>

<hr style="margin-top: 1.0em; margin-bottom: 1.0em;">

<!-- header end -->

# Mistral 7B v0.1 - GPTQ

- Model creator: [Mistral AI](https://huggingface.co/mistralai)

- Original model: [Mistral 7B v0.1](https://huggingface.co/mistralai/Mistral-7B-v0.1)

<!-- description start -->

## Description

This repo contains GPTQ model files for [Mistral AI's Mistral 7B v0.1](https://huggingface.co/mistralai/Mistral-7B-v0.1).

Multiple GPTQ parameter permutations are provided; see Provided Files below for details of the options provided, their parameters, and the software used to create them.

### GPTQs will work in ExLlama, or via Transformers (requiring Transformers from Github)

These models are confirmed to work with ExLlama v1.

At the time of writing (September 28th), AutoGPTQ has not yet added support for the new Mistral models.

These GPTQs were made directly from Transformers, and so can be loaded via the Transformers interface. They can't be loaded directly from AutoGPTQ.

To load them via Transformers, you will need to install Transformers from Github, with:

```

pip3 install git+https://github.com/huggingface/transformers.git@72958fcd3c98a7afdc61f953aa58c544ebda2f79

```

<!-- description end -->


<!-- repositories-available start -->

## Repositories available

* [AWQ model(s) for GPU inference.](https://huggingface.co/TheBloke/Mistral-7B-v0.1-AWQ)

* [GPTQ models for GPU inference, with multiple quantisation parameter options.](https://huggingface.co/TheBloke/Mistral-7B-v0.1-GPTQ)

* [2, 3, 4, 5, 6 and 8-bit GGUF models for CPU+GPU inference](https://huggingface.co/TheBloke/Mistral-7B-v0.1-GGUF)

* [Mistral AI's original unquantised fp16 model in pytorch format, for GPU inference and for further conversions](https://huggingface.co/mistralai/Mistral-7B-v0.1)

<!-- repositories-available end -->

<!-- prompt-template start -->

## Prompt template: None

```

{prompt}

```

<!-- prompt-template end -->

<!-- README_GPTQ.md-provided-files start -->

## Provided files, and GPTQ parameters

Multiple quantisation parameters are provided, to allow you to choose the best one for your hardware and requirements.

Each separate quant is in a different branch. See below for instructions on fetching from different branches.

These files were made with Transformers 4.34.0.dev0, from commit 72958fcd3c98a7afdc61f953aa58c544ebda2f79.

<details>

<summary>Explanation of GPTQ parameters</summary>

- Bits: The bit size of the quantised model.

- GS: GPTQ group size. Higher numbers use less VRAM, but have lower quantisation accuracy. "None" is the lowest possible value.

- Act Order: True or False. Also known as `desc_act`. True results in better quantisation accuracy. Some GPTQ clients have had issues with models that use Act Order plus Group Size, but this is generally resolved now.

- Damp %: A GPTQ parameter that affects how samples are processed for quantisation. 0.01 is default, but 0.1 results in slightly better accuracy.

- GPTQ dataset: The calibration dataset used during quantisation. Using a dataset more appropriate to the model's training can improve quantisation accuracy. Note that the GPTQ calibration dataset is not the same as the dataset used to train the model - please refer to the original model repo for details of the training dataset(s).

- Sequence Length: The length of the dataset sequences used for quantisation. Ideally this is the same as the model sequence length. For some very long sequence models (16+K), a lower sequence length may have to be used. Note that a lower sequence length does not limit the sequence length of the quantised model. It only impacts the quantisation accuracy on longer inference sequences.

- ExLlama Compatibility: Whether this file can be loaded with ExLlama, which currently only supports Llama models in 4-bit.

</details>

| Branch | Bits | GS | Act Order | Damp % | GPTQ Dataset | Seq Len | Size | ExLlama | Desc |
| ------ | ---- | -- | --------- | ------ | ------------ | ------- | ---- | ------- | ---- |
| [main](https://huggingface.co/TheBloke/Mistral-7B-v0.1-GPTQ/tree/main) | 4 | 128 | Yes | 0.1 | [wikitext](https://huggingface.co/datasets/wikitext/viewer/wikitext-2-v1/test) | 32768 | 4.16 GB | Yes | 4-bit, with Act Order and group size 128g. Uses even less VRAM than 64g, but with slightly lower accuracy. |
| [gptq-4bit-32g-actorder_True](https://huggingface.co/TheBloke/Mistral-7B-v0.1-GPTQ/tree/gptq-4bit-32g-actorder_True) | 4 | 32 | Yes | 0.1 | [wikitext](https://huggingface.co/datasets/wikitext/viewer/wikitext-2-v1/test) | 32768 | 4.57 GB | Yes | 4-bit, with Act Order and group size 32g. Gives highest possible inference quality, with maximum VRAM usage. |
| [gptq-8bit-128g-actorder_True](https://huggingface.co/TheBloke/Mistral-7B-v0.1-GPTQ/tree/gptq-8bit-128g-actorder_True) | 8 | 128 | Yes | 0.1 | [wikitext](https://huggingface.co/datasets/wikitext/viewer/wikitext-2-v1/test) | 32768 | 4.16 GB | Yes | 8-bit, with group size 128g for higher inference quality and with Act Order for even higher accuracy. |
| [gptq-8bit-32g-actorder_True](https://huggingface.co/TheBloke/Mistral-7B-v0.1-GPTQ/tree/gptq-8bit-32g-actorder_True) | 8 | 32 | Yes | 0.1 | [wikitext](https://huggingface.co/datasets/wikitext/viewer/wikitext-2-v1/test) | 32768 | 4.57 GB | Yes | 8-bit, with group size 32g and Act Order for maximum inference quality. |
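
Choosing a branch from the table amounts to a lookup on bits and group size. A small sketch of such a lookup, using only the branch names listed above (`pick_revision` is a hypothetical helper; the string it returns is what you would pass as the `revision` when downloading or loading):

```python
# Branch names copied from the table above, keyed by (bits, group size).
BRANCHES = {
    (4, 128): "main",
    (4, 32): "gptq-4bit-32g-actorder_True",
    (8, 128): "gptq-8bit-128g-actorder_True",
    (8, 32): "gptq-8bit-32g-actorder_True",
}

def pick_revision(bits: int, group_size: int) -> str:
    """Return the branch name for a given quantisation setting."""
    try:
        return BRANCHES[(bits, group_size)]
    except KeyError:
        raise ValueError(f"no branch for {bits}-bit, group size {group_size}") from None

print(pick_revision(4, 32))
```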

<!-- README_GPTQ.md-provided-files end -->

<!-- README_GPTQ.md-download-from-branches start -->

## How to download, including from branches

### In text-generation-webui

To download from the `main` branch, enter `TheBloke/Mistral-7B-v0.1-GPTQ` in the "Download model" box.

To download from another branch, add `:branchname` to the end of the download name, eg `TheBloke/Mistral-7B-v0.1-GPTQ:gptq-4bit-32g-actorder_True`

### From the command line

I recommend using the `huggingface-hub` Python library:

```shell

pip3 install huggingface-hub

```

To download the `main` branch to a folder called `Mistral-7B-v0.1-GPTQ`:

```shell

mkdir Mistral-7B-v0.1-GPTQ

huggingface-cli download TheBloke/Mistral-7B-v0.1-GPTQ --local-dir Mistral-7B-v0.1-GPTQ --local-dir-use-symlinks False

```

To download from a different branch, add the `--revision` parameter:

```shell

mkdir Mistral-7B-v0.1-GPTQ

huggingface-cli download TheBloke/Mistral-7B-v0.1-GPTQ --revision gptq-4bit-32g-actorder_True --local-dir Mistral-7B-v0.1-GPTQ --local-dir-use-symlinks False

```
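
The same download can also be done from Python with the `snapshot_download` function of the `huggingface_hub` library. A minimal sketch, assuming `huggingface-hub` is installed as shown above; `download_branch` is a hypothetical wrapper, and the import sits inside it so that defining the function has no side effects:

```python
def download_branch(revision: str = "main",
                    local_dir: str = "Mistral-7B-v0.1-GPTQ") -> str:
    """Download one branch of the repo into local_dir and return the folder path."""
    # Requires: pip3 install huggingface-hub
    from huggingface_hub import snapshot_download

    return snapshot_download(
        repo_id="TheBloke/Mistral-7B-v0.1-GPTQ",
        revision=revision,
        local_dir=local_dir,
    )

# Example call (downloads several GB, so it is left commented out):
# download_branch("gptq-4bit-32g-actorder_True")
```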

<details>

<summary>More advanced huggingface-cli download usage</summary>

If you remove the `--local-dir-use-symlinks False` parameter, the files will instead be stored in the central Hugging Face cache directory (default location on Linux is: `~/.cache/huggingface`), and symlinks will be added to the specified `--local-dir`, pointing to their real location in the cache. This allows for interrupted downloads to be resumed, and allows you to quickly clone the repo to multiple places on disk without triggering a download again. The downside, and the reason why I don't list that as the default option, is that the files are then hidden away in a cache folder and it's harder to know where your disk space is being used, and to clear it up if/when you want to remove a downloaded model.

The cache location can be changed with the `HF_HOME` environment variable, and/or the `--cache-dir` parameter to `huggingface-cli`.

For more documentation on downloading with `huggingface-cli`, please see: [HF -> Hub Python Library -> Download files -> Download from the CLI](https://huggingface.co/docs/huggingface_hub/guides/download#download-from-the-cli).

To accelerate downloads on fast connections (1Gbit/s or higher), install `hf_transfer`:

```shell

pip3 install hf_transfer

```

And set environment variable `HF_HUB_ENABLE_HF_TRANSFER` to `1`:

```shell

mkdir Mistral-7B-v0.1-GPTQ

HF_HUB_ENABLE_HF_TRANSFER=1 huggingface-cli download TheBloke/Mistral-7B-v0.1-GPTQ --local-dir Mistral-7B-v0.1-GPTQ --local-dir-use-symlinks False

```

Windows Command Line users: You can set the environment variable by running `set HF_HUB_ENABLE_HF_TRANSFER=1` before the download command.
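
From Python, the same switch can be set through `os.environ`. A short sketch; the assumption here is that the variable needs to be set before `huggingface_hub` is imported, since the library appears to read it at import time:

```python
import os

# Set this before any `import huggingface_hub` in the same process.
os.environ["HF_HUB_ENABLE_HF_TRANSFER"] = "1"

print(os.environ["HF_HUB_ENABLE_HF_TRANSFER"])
```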

</details>

### With `git` (**not** recommended)

To clone a specific branch with `git`, use a command like this:

```shell

git clone --single-branch --branch gptq-4bit-32g-actorder_True https://huggingface.co/TheBloke/Mistral-7B-v0.1-GPTQ

```

Note that using Git with HF repos is strongly discouraged. It will be much slower than using `huggingface-hub`, and will use twice as much disk space as it has to store the model files twice (it stores every byte both in the intended target folder, and again in the `.git` folder as a blob.)

<!-- README_GPTQ.md-download-from-branches end -->

<!-- README_GPTQ.md-text-generation-webui start -->

## How to easily download and use this model in [text-generation-webui](https://github.com/oobabooga/text-generation-webui).

These models are confirmed to work via the ExLlama Loader in text-generation-webui.

Use **Loader: ExLlama** - or Transformers may work too. AutoGPTQ will not work.

Please make sure you're using the latest version of [text-generation-webui](https://github.com/oobabooga/text-generation-webui).

It is strongly recommended to use the text-generation-webui one-click-installers unless you're sure you know how to make a manual install.

1. Click the **Model tab**.

2. Under **Download custom model or LoRA**, enter `TheBloke/Mistral-7B-v0.1-GPTQ`.

    - To download from a specific branch, enter for example `TheBloke/Mistral-7B-v0.1-GPTQ:gptq-4bit-32g-actorder_True`
    - See Provided Files above for the list of branches for each option.

3. Click **Download**.

4. The model will start downloading. Once it's finished it will say "Done".

5. In the top left, click the refresh icon next to **Model**.

6. In the **Model** dropdown, choose the model you just downloaded: `Mistral-7B-v0.1-GPTQ`

7. The model will automatically load, and is now ready for use!

8. If you want any custom settings, set them and then click **Save settings for this model** followed by **Reload the Model** in the top right.

    * Note that you do not need to and should not set manual GPTQ parameters any more. These are set automatically from the file `quantize_config.json`.

9. Once you're ready, click the **Text Generation tab** and enter a prompt to get started!

<!-- README_GPTQ.md-text-generation-webui end -->

<!-- README_GPTQ.md-use-from-python start -->

## How to use this GPTQ model from Python code

### Install the necessary packages

Requires: Transformers 4.34.0.dev0 from Github source, Optimum 1.12.0 or later, and AutoGPTQ 0.4.2 or later.

```shell

pip3 install optimum

pip3 install git+https://github.com/huggingface/transformers.git@72958fcd3c98a7afdc61f953aa58c544ebda2f79

pip3 install auto-gptq --extra-index-url https://huggingface.github.io/autogptq-index/whl/cu118/ # Use cu117 if on CUDA 11.7

```

If you have problems installing AutoGPTQ using the pre-built wheels, install it from source instead:

```shell

pip3 uninstall -y auto-gptq

git clone https://github.com/PanQiWei/AutoGPTQ

cd AutoGPTQ

git checkout v0.4.2

pip3 install .

```

### You can then use the following code

```python

from transformers import AutoModelForCausalLM, AutoTokenizer, pipeline

model_name_or_path = "TheBloke/Mistral-7B-v0.1-GPTQ"

# To use a different branch, change revision

# For example: revision="gptq-4bit-32g-actorder_True"

model = AutoModelForCausalLM.from_pretrained(model_name_or_path,
                                             device_map="auto",
                                             trust_remote_code=False,
                                             revision="main")

tokenizer = AutoTokenizer.from_pretrained(model_name_or_path, use_fast=True)

prompt = "Tell me about AI"

prompt_template=f'''{prompt}

'''

print("\n\n*** Generate:")

input_ids = tokenizer(prompt_template, return_tensors='pt').input_ids.cuda()

output = model.generate(inputs=input_ids, temperature=0.7, do_sample=True, top_p=0.95, top_k=40, max_new_tokens=512)

print(tokenizer.decode(output[0]))

# Inference can also be done using transformers' pipeline

print("*** Pipeline:")

pipe = pipeline(
    "text-generation",
    model=model,
    tokenizer=tokenizer,
    max_new_tokens=512,
    do_sample=True,
    temperature=0.7,
    top_p=0.95,
    top_k=40,
    repetition_penalty=1.1
)

print(pipe(prompt_template)[0]['generated_text'])

```

<!-- README_GPTQ.md-use-from-python end -->

<!-- README_GPTQ.md-compatibility start -->

## Compatibility

The files provided are only tested to work with Transformers 4.34.0.dev0 as of commit 72958fcd3c98a7afdc61f953aa58c544ebda2f79.

<!-- README_GPTQ.md-compatibility end -->

<!-- footer start -->

<!-- 200823 -->

## Discord

For further support, and discussions on these models and AI in general, join us at:

[TheBloke AI's Discord server](https://discord.gg/theblokeai)

## Thanks, and how to contribute

Thanks to the [chirper.ai](https://chirper.ai) team!

Thanks to Clay from [gpus.llm-utils.org](llm-utils)!

I've had a lot of people ask if they can contribute. I enjoy providing models and helping people, and would love to be able to spend even more time doing it, as well as expanding into new projects like fine tuning/training.

If you're able and willing to contribute it will be most gratefully received and will help me to keep providing more models, and to start work on new AI projects.

Donaters will get priority support on any and all AI/LLM/model questions and requests, access to a private Discord room, plus other benefits.

* Patreon: https://patreon.com/TheBlokeAI

* Ko-Fi: https://ko-fi.com/TheBlokeAI

**Special thanks to**: Aemon Algiz.

**Patreon special mentions**: Pierre Kircher, Stanislav Ovsiannikov, Michael Levine, Eugene Pentland, Andrey, 준교 김, Randy H, Fred von Graf, Artur Olbinski, Caitlyn Gatomon, terasurfer, Jeff Scroggin, James Bentley, Vadim, Gabriel Puliatti, Harry Royden McLaughlin, Sean Connelly, Dan Guido, Edmond Seymore, Alicia Loh, subjectnull, AzureBlack, Manuel Alberto Morcote, Thomas Belote, Lone Striker, Chris Smitley, Vitor Caleffi, Johann-Peter Hartmann, Clay Pascal, biorpg, Brandon Frisco, sidney chen, transmissions 11, Pedro Madruga, jinyuan sun, Ajan Kanaga, Emad Mostaque, Trenton Dambrowitz, Jonathan Leane, Iucharbius, usrbinkat, vamX, George Stoitzev, Luke Pendergrass, theTransient, Olakabola, Swaroop Kallakuri, Cap'n Zoog, Brandon Phillips, Michael Dempsey, Nikolai Manek, danny, Matthew Berman, Gabriel Tamborski, alfie_i, Raymond Fosdick, Tom X Nguyen, Raven Klaugh, LangChain4j, Magnesian, Illia Dulskyi, David Ziegler, Mano Prime, Luis Javier Navarrete Lozano, Erik Bjäreholt, 阿明, Nathan Dryer, Alex, Rainer Wilmers, zynix, TL, Joseph William Delisle, John Villwock, Nathan LeClaire, Willem Michiel, Joguhyik, GodLy, OG, Alps Aficionado, Jeffrey Morgan, ReadyPlayerEmma, Tiffany J. Kim, Sebastain Graf, Spencer Kim, Michael Davis, webtim, Talal Aujan, knownsqashed, John Detwiler, Imad Khwaja, Deo Leter, Jerry Meng, Elijah Stavena, Rooh Singh, Pieter, SuperWojo, Alexandros Triantafyllidis, Stephen Murray, Ai Maven, ya boyyy, Enrico Ros, Ken Nordquist, Deep Realms, Nicholas, Spiking Neurons AB, Elle, Will Dee, Jack West, RoA, Luke @flexchar, Viktor Bowallius, Derek Yates, Subspace Studios, jjj, Toran Billups, Asp the Wyvern, Fen Risland, Ilya, NimbleBox.ai, Chadd, Nitin Borwankar, Emre, Mandus, Leonard Tan, Kalila, K, Trailburnt, S_X, Cory Kujawski

Thank you to all my generous patrons and donaters!

And thank you again to a16z for their generous grant.

<!-- footer end -->

# Original model card: Mistral AI's Mistral 7B v0.1

# Model Card for Mistral-7B-v0.1

The Mistral-7B-v0.1 Large Language Model (LLM) is a pretrained generative text model with 7 billion parameters.

Mistral-7B-v0.1 outperforms Llama 2 13B on all benchmarks we tested.

For full details of this model please read our [Release blog post](https://mistral.ai/news/announcing-mistral-7b/)

## Model Architecture

Mistral-7B-v0.1 is a transformer model, with the following architecture choices:

- Grouped-Query Attention

- Sliding-Window Attention

- Byte-fallback BPE tokenizer
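
To make the second item concrete, here is a toy sketch of a sliding-window causal attention mask in plain Python. The window size of 3 is purely illustrative (this card does not state the model's window), and `sliding_window_mask` is a hypothetical name rather than anything from the Mistral code:

```python
def sliding_window_mask(seq_len: int, window: int):
    """mask[i][j] is True when query position i may attend to key position j."""
    return [
        # Causal (j <= i) and within the last `window` positions (i - j < window).
        [j <= i and i - j < window for j in range(seq_len)]
        for i in range(seq_len)
    ]

mask = sliding_window_mask(5, window=3)
for row in mask:
    print("".join("x" if allowed else "." for allowed in row))
```

Each row has at most `window` allowed positions, so the attention cost per token stays bounded as the sequence grows.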

## Troubleshooting

- If you see the following error:

```

Traceback (most recent call last):
  File "", line 1, in
  File "/transformers/models/auto/auto_factory.py", line 482, in from_pretrained
    config, kwargs = AutoConfig.from_pretrained(
  File "/transformers/models/auto/configuration_auto.py", line 1022, in from_pretrained
    config_class = CONFIG_MAPPING[config_dict["model_type"]]
  File "/transformers/models/auto/configuration_auto.py", line 723, in __getitem__
    raise KeyError(key)
KeyError: 'mistral'

```

Installing transformers from source should solve the issue:

```

pip install git+https://github.com/huggingface/transformers

```

This should not be required after transformers-v4.33.4.
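
A quick way to decide whether the source install is needed is to compare the installed version string against 4.33.4. A sketch using only the standard library; `needs_source_install` is a hypothetical helper, and treating every release up to and including 4.33.4 as lacking Mistral support is an assumption drawn from the sentence above:

```python
import re

def parse_version(version: str):
    """Leading numeric components of a version string, e.g. '4.34.0.dev0' -> (4, 34, 0)."""
    match = re.match(r"(\d+)\.(\d+)\.(\d+)", version)
    if match is None:
        raise ValueError(f"unrecognised version: {version!r}")
    return tuple(int(part) for part in match.groups())

def needs_source_install(version: str) -> bool:
    """True when the given transformers version is 4.33.4 or older (assumed cutoff)."""
    return parse_version(version) <= (4, 33, 4)

print(needs_source_install("4.33.2"))
print(needs_source_install("4.34.0"))
```

In practice the argument would be `transformers.__version__`.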

## Notice

Mistral 7B is a pretrained base model and therefore does not have any moderation mechanisms.

## The Mistral AI Team

Albert Jiang, Alexandre Sablayrolles, Arthur Mensch, Chris Bamford, Devendra Singh Chaplot, Diego de las Casas, Florian Bressand, Gianna Lengyel, Guillaume Lample, Lélio Renard Lavaud, Lucile Saulnier, Marie-Anne Lachaux, Pierre Stock, Teven Le Scao, Thibaut Lavril, Thomas Wang, Timothée Lacroix, William El Sayed.

| 18,565 | [

[

-0.0408935546875,

-0.054443359375,

0.00901031494140625,

0.018310546875,

-0.01531982421875,

-0.0197906494140625,

0.00946044921875,

-0.035614013671875,

0.012054443359375,

0.0269927978515625,

-0.041748046875,

-0.03338623046875,

-0.0289306640625,

-0.004791259765... |

Yntec/GOLDFish | 2023-09-23T13:35:34.000Z | [

"diffusers",

"stable-diffusion",

"stable-diffusion-diffusers",

"text-to-image",

"OLDFish",

"RMHF",

"license:creativeml-openrail-m",

"endpoints_compatible",

"has_space",

"diffusers:StableDiffusionPipeline",

"region:us"

] | text-to-image | Yntec | null | null | Yntec/GOLDFish | 4 | 4,510 | diffusers | 2023-07-17T10:26:48 | ---

license: creativeml-openrail-m

library_name: diffusers

pipeline_tag: text-to-image

tags:

- stable-diffusion

- stable-diffusion-diffusers

- diffusers

- text-to-image

- OLDFish

- RMHF

---

# GOLDFish

A mix between the models OLDFish by timevisitor and RMHF_2.5D_v2 by TkskKurumi.

Preview image by Digiplay:

Original pages:

https://civitai.com/models/14978?modelVersionId=40101

https://civitai.com/models/101518?modelVersionId=110456 | 551 | [

[

-0.036224365234375,

-0.03173828125,

0.018096923828125,

0.0191650390625,

-0.048614501953125,

0.0149383544921875,

0.03741455078125,

-0.04339599609375,

0.06500244140625,

0.03900146484375,

-0.07147216796875,

-0.02294921875,

-0.033111572265625,

0.0120620727539062... |

stablediffusionapi/realistic-vision-51 | 2023-08-07T12:05:08.000Z | [

"diffusers",

"stablediffusionapi.com",

"stable-diffusion-api",

"text-to-image",

"ultra-realistic",

"license:creativeml-openrail-m",

"endpoints_compatible",

"diffusers:StableDiffusionPipeline",

"region:us"

] | text-to-image | stablediffusionapi | null | null | stablediffusionapi/realistic-vision-51 | 1 | 4,506 | diffusers | 2023-08-07T12:02:35 | ---

license: creativeml-openrail-m

tags:

- stablediffusionapi.com

- stable-diffusion-api

- text-to-image

- ultra-realistic

pinned: true

---

# Realistic Vision 5.1 API Inference

## Get API Key

Get an API key from [Stable Diffusion API](http://stablediffusionapi.com/); no payment is needed.

Replace the key in the code below and set **model_id** to "realistic-vision-51".

Coding in PHP, Node, Java, etc.? See the docs for more code examples: [View docs](https://stablediffusionapi.com/docs)

Try model for free: [Generate Images](https://stablediffusionapi.com/models/realistic-vision-51)

Model link: [View model](https://stablediffusionapi.com/models/realistic-vision-51)

Credits: [View credits](https://civitai.com/?query=Realistic%20Vision%205.1)

View all models: [View Models](https://stablediffusionapi.com/models)

import requests

import json

url = "https://stablediffusionapi.com/api/v4/dreambooth"

payload = json.dumps({

"key": "your_api_key",

"model_id": "realistic-vision-51",

"prompt": "ultra realistic close up portrait ((beautiful pale cyberpunk female with heavy black eyeliner)), blue eyes, shaved side haircut, hyper detail, cinematic lighting, magic neon, dark red city, Canon EOS R3, nikon, f/1.4, ISO 200, 1/160s, 8K, RAW, unedited, symmetrical balance, in-frame, 8K",

"negative_prompt": "painting, extra fingers, mutated hands, poorly drawn hands, poorly drawn face, deformed, ugly, blurry, bad anatomy, bad proportions, extra limbs, cloned face, skinny, glitchy, double torso, extra arms, extra hands, mangled fingers, missing lips, ugly face, distorted face, extra legs, anime",

"width": "512",

"height": "512",

"samples": "1",

"num_inference_steps": "30",

"safety_checker": "no",

"enhance_prompt": "yes",

"seed": None,

"guidance_scale": 7.5,

"multi_lingual": "no",

"panorama": "no",

"self_attention": "no",

"upscale": "no",

"embeddings": "embeddings_model_id",

"lora": "lora_model_id",

"webhook": None,

"track_id": None

})

headers = {

'Content-Type': 'application/json'

}

response = requests.request("POST", url, headers=headers, data=payload)

print(response.text)
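
Rather than pasting the key into the script, one option is to read it from the environment. This is only a sketch: the variable name `SD_API_KEY` and the helper `build_payload` are hypothetical, and only a few of the fields from the request above are reproduced.

```python
import json
import os


def build_payload(prompt: str, model_id: str = "realistic-vision-51") -> str:
    """Build a JSON request body, taking the API key from the environment."""
    # SD_API_KEY is an assumed variable name, not something the API defines.
    api_key = os.environ.get("SD_API_KEY")
    if not api_key:
        raise RuntimeError("Set the SD_API_KEY environment variable first")
    return json.dumps({
        "key": api_key,
        "model_id": model_id,
        "prompt": prompt,
        "width": "512",
        "height": "512",
        "samples": "1",
    })
```

The returned string can be passed as `data=payload` in the `requests.request` call shown above.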

> Use this coupon code to get 25% off **DMGG0RBN** | 2,496 | [

[

-0.03851318359375,

-0.05194091796875,

0.04095458984375,

0.0144195556640625,

-0.0391845703125,

0.004810333251953125,

0.0230865478515625,

-0.0447998046875,

0.03643798828125,

0.045440673828125,

-0.06634521484375,

-0.06427001953125,

-0.026397705078125,

0.0002274... |

timm/deit_small_patch16_224.fb_in1k | 2023-03-28T01:34:03.000Z | [

"timm",

"pytorch",

"safetensors",

"image-classification",

"dataset:imagenet-1k",

"arxiv:2012.12877",

"license:apache-2.0",

"region:us"

] | image-classification | timm | null | null | timm/deit_small_patch16_224.fb_in1k | 0 | 4,503 | timm | 2023-03-28T01:33:38 | ---

tags:

- image-classification

- timm

library_tag: timm

license: apache-2.0

datasets:

- imagenet-1k

---

# Model card for deit_small_patch16_224.fb_in1k

A DeiT image classification model, trained on ImageNet-1k by the paper authors.

## Model Details

- **Model Type:** Image classification / feature backbone

- **Model Stats:**

- Params (M): 22.1

- GMACs: 4.6

- Activations (M): 11.9

- Image size: 224 x 224

- **Papers:**

- Training data-efficient image transformers & distillation through attention: https://arxiv.org/abs/2012.12877

- **Original:** https://github.com/facebookresearch/deit

- **Dataset:** ImageNet-1k

## Model Usage

### Image Classification

```python

from urllib.request import urlopen

from PIL import Image

import timm

import torch  # needed for torch.topk below

img = Image.open(urlopen(

'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model('deit_small_patch16_224.fb_in1k', pretrained=True)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # unsqueeze single image into batch of 1

top5_probabilities, top5_class_indices = torch.topk(output.softmax(dim=1) * 100, k=5)

```

### Image Embeddings

```python

from urllib.request import urlopen

from PIL import Image

import timm

img = Image.open(urlopen(

'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model(

'deit_small_patch16_224.fb_in1k',

pretrained=True,

num_classes=0, # remove classifier nn.Linear

)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # output is (batch_size, num_features) shaped tensor

# or equivalently (without needing to set num_classes=0)

output = model.forward_features(transforms(img).unsqueeze(0))

# output is unpooled, a (1, 197, 384) shaped tensor

output = model.forward_head(output, pre_logits=True)

# output is a (1, num_features) shaped tensor

```
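
The `(1, 197, 384)` shape mentioned in the comment above can be checked by hand. The sketch below assumes a single class token prepended to the patch sequence and takes the embedding width of 384 from that comment; `token_count` is a hypothetical helper, not part of timm.

```python
def token_count(image_size: int = 224, patch_size: int = 16, extra_tokens: int = 1) -> int:
    """Number of tokens for a square image split into square patches."""
    patches_per_side = image_size // patch_size  # 224 // 16 = 14
    return patches_per_side * patches_per_side + extra_tokens  # 14 * 14 + 1


embed_dim = 384  # taken from the shape comment in the example above
shape = (1, token_count(), embed_dim)
print(shape)
```

The 224 x 224 image size and patch size of 16 come from the model name and the Model Details list.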

## Model Comparison

Explore the dataset and runtime metrics of this model in timm [model results](https://github.com/huggingface/pytorch-image-models/tree/main/results).

## Citation

```bibtex

@InProceedings{pmlr-v139-touvron21a,

title = {Training data-efficient image transformers & distillation through attention},

author = {Touvron, Hugo and Cord, Matthieu and Douze, Matthijs and Massa, Francisco and Sablayrolles, Alexandre and Jegou, Herve},

booktitle = {International Conference on Machine Learning},

pages = {10347--10357},

year = {2021},

volume = {139},

month = {July}

}

```

```bibtex

@misc{rw2019timm,

author = {Ross Wightman},

title = {PyTorch Image Models},

year = {2019},

publisher = {GitHub},

journal = {GitHub repository},

doi = {10.5281/zenodo.4414861},

howpublished = {\url{https://github.com/huggingface/pytorch-image-models}}

}

```

| 3,217 | [

[

-0.0380859375,

-0.03546142578125,

0.00795745849609375,

0.0113067626953125,

-0.028900146484375,

-0.0261077880859375,

-0.016876220703125,

-0.0271148681640625,

0.00872039794921875,

0.015411376953125,

-0.04315185546875,

-0.048980712890625,

-0.053985595703125,

-0... |

mrsairam/my-german-shepherd-xzg | 2023-11-05T17:59:01.000Z | [

"diffusers",

"NxtWave-GenAI-Webinar",

"text-to-image",

"stable-diffusion",

"license:creativeml-openrail-m",

"endpoints_compatible",

"diffusers:StableDiffusionPipeline",

"region:us"

] | text-to-image | mrsairam | null | null | mrsairam/my-german-shepherd-xzg | 0 | 4,491 | diffusers | 2023-11-05T17:54:56 | ---

license: creativeml-openrail-m

tags:

- NxtWave-GenAI-Webinar

- text-to-image

- stable-diffusion

---

### My-German-Shepherd-XZG Dreambooth model trained by mrsairam following the "Build your own Gen AI model" session by NxtWave.

Project Submission Code: MITS-1425

Sample pictures of this concept:

| 450 | [

[