modelId stringlengths 4 111 | lastModified stringlengths 24 24 | tags list | pipeline_tag stringlengths 5 30 ⌀ | author stringlengths 2 34 ⌀ | config null | securityStatus null | id stringlengths 4 111 | likes int64 0 9.53k | downloads int64 2 73.6M | library_name stringlengths 2 84 ⌀ | created timestamp[us] | card stringlengths 101 901k | card_len int64 101 901k | embeddings list |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

shi-labs/oneformer_ade20k_dinat_large | 2023-08-30T00:03:28.000Z | [

"transformers",

"pytorch",

"oneformer",

"vision",

"image-segmentation",

"dataset:scene_parse_150",

"arxiv:2211.06220",

"license:mit",

"endpoints_compatible",

"has_space",

"region:us"

] | image-segmentation | shi-labs | null | null | shi-labs/oneformer_ade20k_dinat_large | 4 | 1,369 | transformers | 2022-11-15T20:25:34 | ---

license: mit

tags:

- vision

- image-segmentation

datasets:

- scene_parse_150

widget:

- src: https://praeclarumjj3.github.io/files/ade20k.jpeg

example_title: House

- src: https://praeclarumjj3.github.io/files/demo_2.jpg

example_title: Airplane

- src: https://praeclarumjj3.github.io/files/coco.jpeg

example_title: Person

---

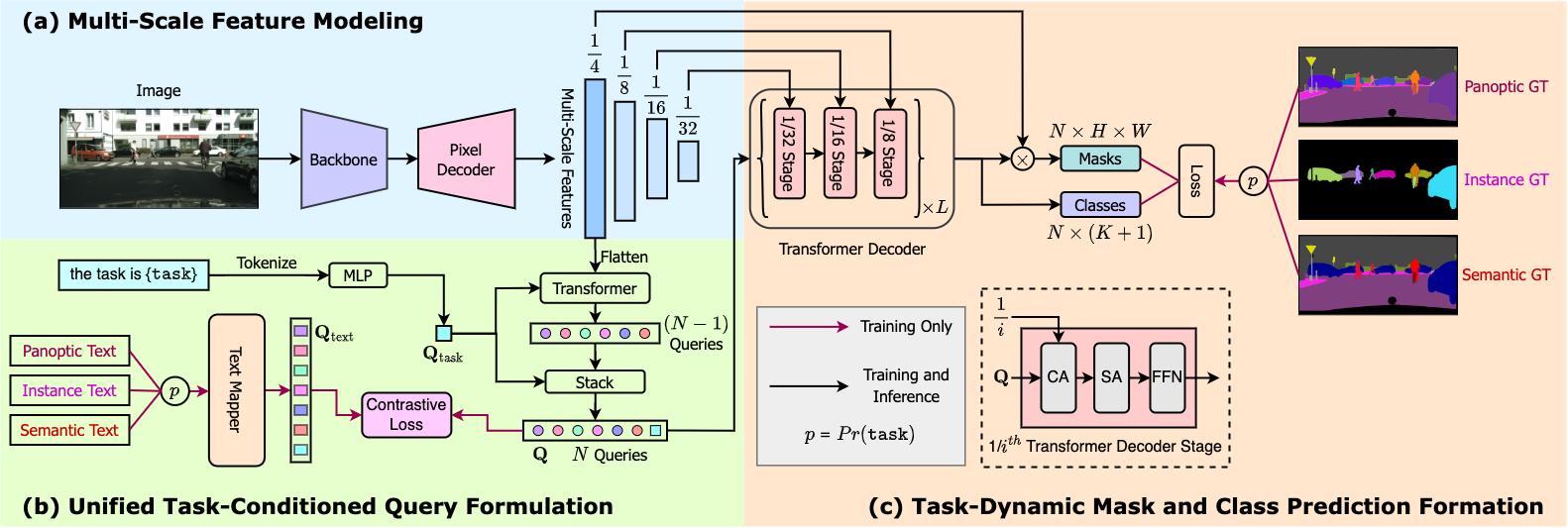

# OneFormer

OneFormer model trained on the ADE20k dataset (large-sized version, Dinat backbone). It was introduced in the paper [OneFormer: One Transformer to Rule Universal Image Segmentation](https://arxiv.org/abs/2211.06220) by Jain et al. and first released in [this repository](https://github.com/SHI-Labs/OneFormer).

## Model description

OneFormer is the first multi-task universal image segmentation framework. It needs to be trained only once with a single universal architecture, a single model, and on a single dataset, to outperform existing specialized models across semantic, instance, and panoptic segmentation tasks. OneFormer uses a task token to condition the model on the task in focus, making the architecture task-guided for training, and task-dynamic for inference, all with a single model.

## Intended uses & limitations

You can use this particular checkpoint for semantic, instance and panoptic segmentation. See the [model hub](https://huggingface.co/models?search=oneformer) to look for other fine-tuned versions on a different dataset.

### How to use

Here is how to use this model:

```python

from transformers import OneFormerProcessor, OneFormerForUniversalSegmentation

from PIL import Image

import requests

url = "https://huggingface.co/datasets/shi-labs/oneformer_demo/resolve/main/ade20k.jpeg"

image = Image.open(requests.get(url, stream=True).raw)

# Loading a single model for all three tasks

processor = OneFormerProcessor.from_pretrained("shi-labs/oneformer_ade20k_dinat_large")

model = OneFormerForUniversalSegmentation.from_pretrained("shi-labs/oneformer_ade20k_dinat_large")

# Semantic Segmentation

semantic_inputs = processor(images=image, task_inputs=["semantic"], return_tensors="pt")

semantic_outputs = model(**semantic_inputs)

# pass through image_processor for postprocessing

predicted_semantic_map = processor.post_process_semantic_segmentation(semantic_outputs, target_sizes=[image.size[::-1]])[0]

# Instance Segmentation

instance_inputs = processor(images=image, task_inputs=["instance"], return_tensors="pt")

instance_outputs = model(**instance_inputs)

# pass through image_processor for postprocessing

predicted_instance_map = processor.post_process_instance_segmentation(instance_outputs, target_sizes=[image.size[::-1]])[0]["segmentation"]

# Panoptic Segmentation

panoptic_inputs = processor(images=image, task_inputs=["panoptic"], return_tensors="pt")

panoptic_outputs = model(**panoptic_inputs)

# pass through image_processor for postprocessing

predicted_panoptic_map = processor.post_process_panoptic_segmentation(panoptic_outputs, target_sizes=[image.size[::-1]])[0]["segmentation"]

```

For more examples, please refer to the [documentation](https://huggingface.co/docs/transformers/main/en/model_doc/oneformer).
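The three calls in the example differ only in the task string and in the post-processing method. A minimal sketch of that pattern follows. The `segment` helper and the `POST_PROCESSORS` table are illustrative names, not part of the Transformers API; the method names are the ones used in the example above.

```python
# Map each task token to the processor method that decodes its output.
POST_PROCESSORS = {
    "semantic": "post_process_semantic_segmentation",
    "instance": "post_process_instance_segmentation",
    "panoptic": "post_process_panoptic_segmentation",
}


def segment(image, task, checkpoint="shi-labs/oneformer_ade20k_dinat_large"):
    """Run one segmentation task on a PIL image (hypothetical helper)."""
    if task not in POST_PROCESSORS:
        raise ValueError(f"unknown task: {task!r}")
    # Imported lazily so the lookup table is usable without transformers installed.
    from transformers import OneFormerProcessor, OneFormerForUniversalSegmentation

    processor = OneFormerProcessor.from_pretrained(checkpoint)
    model = OneFormerForUniversalSegmentation.from_pretrained(checkpoint)
    inputs = processor(images=image, task_inputs=[task], return_tensors="pt")
    outputs = model(**inputs)
    post_process = getattr(processor, POST_PROCESSORS[task])
    # image.size is (width, height); target_sizes expects (height, width).
    result = post_process(outputs, target_sizes=[image.size[::-1]])[0]
    # Semantic output is a tensor; instance and panoptic outputs are dicts.
    return result if task == "semantic" else result["segmentation"]
```

The helper reloads the checkpoint on every call to stay short; in practice you would load the processor and model once and reuse them for all three tasks.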

### Citation

```bibtex

@article{jain2022oneformer,

title={{OneFormer: One Transformer to Rule Universal Image Segmentation}},

author={Jitesh Jain and Jiachen Li and MangTik Chiu and Ali Hassani and Nikita Orlov and Humphrey Shi},

journal={arXiv},

year={2022}

}

```

| 3,666 | [

[

-0.04962158203125,

-0.057037353515625,

0.0264129638671875,

0.0102081298828125,

-0.022216796875,

-0.046295166015625,

0.02191162109375,

-0.0177154541015625,

0.002803802490234375,

0.0506591796875,

-0.0758056640625,

-0.045684814453125,

-0.047882080078125,

-0.017... |

kabachuha/modelscope-damo-text2video-pruned-weights | 2023-03-23T12:19:20.000Z | [

"open_clip",

"license:cc-by-nc-4.0",

"region:us"

] | null | kabachuha | null | null | kabachuha/modelscope-damo-text2video-pruned-weights | 32 | 1,367 | open_clip | 2023-03-22T08:42:25 | ---

license: cc-by-nc-4.0

---

https://huggingface.co/damo-vilab/modelscope-damo-text-to-video-synthesis, but with fp16 (half precision) weights

Read all the info here https://huggingface.co/damo-vilab/modelscope-damo-text-to-video-synthesis/blob/main/README.md

| 263 | [

[

-0.038116455078125,

-0.036956787109375,

0.01369476318359375,

0.051971435546875,

-0.01357269287109375,

-0.01218414306640625,

-0.0006833076477050781,

-0.00852203369140625,

0.0190277099609375,

0.017425537109375,

-0.05364990234375,

-0.033233642578125,

-0.05255126953... |

mrm8488/bert-base-spanish-wwm-cased-finetuned-spa-squad2-es | 2021-05-20T00:22:53.000Z | [

"transformers",

"pytorch",

"jax",

"bert",

"question-answering",

"es",

"autotrain_compatible",

"endpoints_compatible",

"has_space",

"region:us"

] | question-answering | mrm8488 | null | null | mrm8488/bert-base-spanish-wwm-cased-finetuned-spa-squad2-es | 6 | 1,366 | transformers | 2022-03-02T23:29:05 | ---

language: es

thumbnail: https://i.imgur.com/jgBdimh.png

---

# BETO (Spanish BERT) + Spanish SQuAD2.0

This model is provided by the [BETO team](https://github.com/dccuchile/beto) and fine-tuned on [SQuAD-es-v2.0](https://github.com/ccasimiro88/TranslateAlignRetrieve) for the **Q&A** downstream task.

## Details of the language model ('dccuchile/bert-base-spanish-wwm-cased')

Language model ([**'dccuchile/bert-base-spanish-wwm-cased'**](https://github.com/dccuchile/beto/blob/master/README.md)):

BETO is a [BERT model](https://github.com/google-research/bert) trained on a [big Spanish corpus](https://github.com/josecannete/spanish-corpora). BETO is of size similar to a BERT-Base and was trained with the Whole Word Masking technique. Below you find Tensorflow and Pytorch checkpoints for the uncased and cased versions, as well as some results for Spanish benchmarks comparing BETO with [Multilingual BERT](https://github.com/google-research/bert/blob/master/multilingual.md) as well as other (not BERT-based) models.

## Details of the downstream task (Q&A) - Dataset

[SQuAD-es-v2.0](https://github.com/ccasimiro88/TranslateAlignRetrieve)

| Dataset | # Q&A |

| ---------------------- | ----- |

| SQuAD2.0 Train | 130 K |

| SQuAD2.0-es-v2.0 | 111 K |

| SQuAD2.0 Dev | 12 K |

| SQuAD-es-v2.0-small Dev| 69 K |

## Model training

The model was trained on a Tesla P100 GPU and 25GB of RAM with the following command:

```bash
export SQUAD_DIR=path/to/nl_squad
python transformers/examples/question-answering/run_squad.py \
  --model_type bert \
  --model_name_or_path dccuchile/bert-base-spanish-wwm-cased \
  --do_train \
  --do_eval \
  --do_lower_case \
  --train_file $SQUAD_DIR/train_nl-v2.0.json \
  --predict_file $SQUAD_DIR/dev_nl-v2.0.json \
  --per_gpu_train_batch_size 12 \
  --learning_rate 3e-5 \
  --num_train_epochs 2.0 \
  --max_seq_length 384 \
  --doc_stride 128 \
  --output_dir /content/model_output \
  --save_steps 5000 \
  --threads 4 \
  --version_2_with_negative
```

## Results:

| Metric | # Value |

| ---------------------- | ----- |

| **Exact** | **76.50**50 |

| **F1** | **86.07**81 |

```json

{

"exact": 76.50501430594491,

"f1": 86.07818773108252,

"total": 69202,

"HasAns_exact": 67.93020719738277,

"HasAns_f1": 82.37912207996466,

"HasAns_total": 45850,

"NoAns_exact": 93.34104145255225,

"NoAns_f1": 93.34104145255225,

"NoAns_total": 23352,

"best_exact": 76.51223953064941,

"best_exact_thresh": 0.0,

"best_f1": 86.08541295578848,

"best_f1_thresh": 0.0

}

```
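As a consistency check, the overall scores should equal the example-count-weighted mean of the answerable (`HasAns`) and unanswerable (`NoAns`) subsets. The short sketch below recomputes them from the JSON values; the variable and function names are illustrative only.

```python
# Values copied from the evaluation JSON above.
has_ans_exact, has_ans_f1, has_ans_total = 67.93020719738277, 82.37912207996466, 45850
no_ans_exact, no_ans_f1, no_ans_total = 93.34104145255225, 93.34104145255225, 23352
total = has_ans_total + no_ans_total


def weighted(has_ans_score, no_ans_score):
    """Example-count-weighted mean of the two subset scores."""
    return (has_ans_score * has_ans_total + no_ans_score * no_ans_total) / total


overall_exact = weighted(has_ans_exact, no_ans_exact)
overall_f1 = weighted(has_ans_f1, no_ans_f1)
print(total, overall_exact, overall_f1)
```

The recomputed values agree with the reported `total`, `exact`, and `f1` fields up to floating-point rounding.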

### Model in action (in a Colab Notebook)

<details>

1. Set the context and ask some questions:

2. Run predictions:

</details>
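The two steps above can be sketched with the Transformers `question-answering` pipeline. This is a minimal example under stated assumptions, not the original notebook: the `ask` and `format_answer` helpers are hypothetical, and `handle_impossible_answer=True` is used because the model was trained on SQuAD2.0-style data that includes unanswerable questions.

```python
MODEL_ID = "mrm8488/bert-base-spanish-wwm-cased-finetuned-spa-squad2-es"


def format_answer(result):
    """Turn a pipeline result dict into a printable string.

    An empty answer is how the pipeline reports "no answer" when
    handle_impossible_answer=True.
    """
    answer = result.get("answer", "").strip()
    if not answer:
        return "(no answer found)"
    return f"{answer} (score={result['score']:.2f})"


def ask(question, context):
    """Query the checkpoint; downloads the weights on first use."""
    from transformers import pipeline  # lazy import: heavy dependency

    qa = pipeline("question-answering", model=MODEL_ID, tokenizer=MODEL_ID)
    result = qa(question=question, context=context, handle_impossible_answer=True)
    return format_answer(result)
```

A call might look like `ask("¿Dónde vive Manuel?", "Manuel vive en Murcia, España.")`, where both strings are made-up inputs.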

> Created by [Manuel Romero/@mrm8488](https://twitter.com/mrm8488)

> Made with <span style="color: #e25555;">♥</span> in Spain

| 3,040 | [

[

-0.037078857421875,

-0.054443359375,

0.024078369140625,

0.0281219482421875,

-0.0101165771484375,

0.02398681640625,

-0.0248260498046875,

-0.03570556640625,

0.0213623046875,

0.01409149169921875,

-0.06927490234375,

-0.032806396484375,

-0.042724609375,

-0.002098... |

AstraliteHeart/pony-diffusion | 2023-05-16T09:20:10.000Z | [

"diffusers",

"stable-diffusion",

"text-to-image",

"en",

"license:bigscience-bloom-rail-1.0",

"endpoints_compatible",

"has_space",

"diffusers:StableDiffusionPipeline",

"region:us"

] | text-to-image | AstraliteHeart | null | null | AstraliteHeart/pony-diffusion | 57 | 1,366 | diffusers | 2022-10-01T01:16:37 | ---

language:

- en

tags:

- stable-diffusion

- text-to-image

license: bigscience-bloom-rail-1.0

inference: true

thumbnail: "https://cdn.discordapp.com/attachments/1020199895694589953/1020200601780494386/000001.553325548.png"

---

# pony-diffusion - >nohooves

**[Pony Diffusion V4 is now live!](https://huggingface.co/AstraliteHeart/pony-diffusion-v4)**

pony-diffusion is a latent text-to-image diffusion model that has been conditioned on high-quality pony SFW-ish images through fine-tuning.

With special thanks to [Waifu-Diffusion](https://huggingface.co/hakurei/waifu-diffusion) for providing finetuning expertise and [Novel AI](https://novelai.net/) for providing necessary compute.

[Colab notebook](https://colab.research.google.com/drive/10Naa1SiIy0CA7bjk0q1rCcMMza6aWXpy?usp=sharing)

[Discord server](https://discord.gg/pYsdjMfu3q)

<img src=https://cdn.discordapp.com/attachments/1020199895694589953/1020200601780494386/000001.553325548.png width=50% height=50%>

<img src=https://cdn.discordapp.com/attachments/1020199895694589953/1020213899175415858/unknown.png width=50% height=50%>

<img src=https://cdn.discordapp.com/attachments/1020199895694589953/1021448446072340520/unknown.png width=50% height=50%>

<img src=https://cdn.discordapp.com/attachments/704226060178292846/1018644965905141840/upscaled_100_pony_made_of_rough_ivy_.webp width=50% height=50%>

[Original PyTorch Model Download Link](https://mega.nz/file/ZT1xEKgC#Xxir5udMmU_mKaRZAbBkF247Yk7DqCr01V0pDzSlYI0)

[Real-ESRGAN Model finetuned on pony faces](https://mega.nz/folder/cPMlxBqT#aPKYrEfgA_lpPexr0UlQ6w)

## Model Description

The model originally used for fine-tuning is an early finetuned checkpoint of [waifu-diffusion](https://huggingface.co/hakurei/waifu-diffusion) on top of [Stable Diffusion V1-4](https://huggingface.co/CompVis/stable-diffusion-v1-4), which is a latent image diffusion model trained on [LAION2B-en](https://huggingface.co/datasets/laion/laion2B-en).

This particular checkpoint has been fine-tuned with a learning rate of 5.0e-6 for 4 epochs on approximately 80k pony text-image pairs (using tags from derpibooru) which all have score greater than `500` and belong to categories `safe` or `suggestive`.

## License

This model is open access and available to all, with a CreativeML OpenRAIL-M license further specifying rights and usage.

The CreativeML OpenRAIL License specifies:

1. You can't use the model to deliberately produce nor share illegal or harmful outputs or content

2. The authors claim no rights on the outputs you generate, you are free to use them and are accountable for their use which must not go against the provisions set in the license

3. You may re-distribute the weights and use the model commercially and/or as a service. If you do, please be aware you have to include the same use restrictions as the ones in the license and share a copy of the CreativeML OpenRAIL-M to all your users (please read the license entirely and carefully)

[Please read the full license here](https://huggingface.co/spaces/CompVis/stable-diffusion-license)

## Downstream Uses

This model can be used for entertainment purposes and as a generative art assistant.

## Example Code

```python

import torch

from torch import autocast

from diffusers import StableDiffusionPipeline, DDIMScheduler

model_id = "AstraliteHeart/pony-diffusion"

device = "cuda"

pipe = StableDiffusionPipeline.from_pretrained(

model_id,

torch_dtype=torch.float16,

revision="fp16",

scheduler=DDIMScheduler(

beta_start=0.00085,

beta_end=0.012,

beta_schedule="scaled_linear",

clip_sample=False,

set_alpha_to_one=False,

),

)

pipe = pipe.to(device)

prompt = "pinkie pie anthro portrait wedding dress veil intricate highly detailed digital painting artstation concept art smooth sharp focus illustration Unreal Engine 5 8K"

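with autocast("cuda"):

    # Recent diffusers releases expose the generated images as `.images`
    # (older releases used the ["sample"] key).
    image = pipe(prompt, guidance_scale=7.5).images[0]

image.save("cute_poner.png")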

```

## Team Members and Acknowledgements

This project would not have been possible without the incredible work by the [CompVis Researchers](https://ommer-lab.com/).

- [Waifu-Diffusion for helping with finetuning and providing starting checkpoint](https://huggingface.co/hakurei/waifu-diffusion)

- [Novel AI for providing compute](https://novelai.net/)

In order to reach us, you can join our [Discord server](https://discord.gg/WG78ZbSB). | 4,601 | [

[

-0.03631591796875,

-0.0645751953125,

0.02691650390625,

0.0369873046875,

-0.006793975830078125,

-0.017547607421875,

0.01183319091796875,

-0.03515625,

0.021240234375,

0.04095458984375,

-0.043487548828125,

-0.033935546875,

-0.032012939453125,

-0.017684936523437... |

inseq/wmt21-mlqe-ru-en | 2023-01-30T22:15:30.000Z | [

"transformers",

"pytorch",

"fsmt",

"text2text-generation",

"translation",

"wmt20",

"en",

"ru",

"multilingual",

"license:cc-by-sa-4.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | translation | inseq | null | null | inseq/wmt21-mlqe-ru-en | 0 | 1,366 | transformers | 2023-01-30T12:34:11 | ---

language:

- en

- ru

- multilingual

license: cc-by-sa-4.0

tags:

- translation

- wmt20

widget:

- text: "Сахалинская кайнозойская складчатая область разделяется на Восточную и Западную зоны, разделённые Центрально-Сахалинским грабеном."

- text: "Существует несколько мнений о его точном месторасположении."

- text: "Крупный научно-образовательный центр, в котором обучается свыше ста тысяч студентов."

---

# Fairseq Ru-En NMT WMT20 MLQE

This repository contains the Russian-English model trained with the [fairseq toolkit](https://github.com/pytorch/fairseq) that was used to produce translations used in the WMT21 shared task on quality estimation (QE) on the [MLQE dataset](https://github.com/facebookresearch/mlqe).

The checkpoint was converted from the original fairseq checkpoint available [here](https://github.com/facebookresearch/mlqe/tree/master/nmt_models) using the `convert_fsmt_original_pytorch_checkpoint_to_pytorch.py` script from the 🤗 Transformers library (v4.26.0).
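Since the converted checkpoint is an FSMT model, it should load through the Transformers auto classes. The sketch below is an assumption-laden usage example, not code from the original repositories: `translate` and `chunks` are hypothetical helpers, and the batch size is arbitrary.

```python
MODEL_ID = "inseq/wmt21-mlqe-ru-en"


def chunks(items, size):
    """Yield consecutive slices of at most `size` items."""
    for start in range(0, len(items), size):
        yield items[start:start + size]


def translate(sentences, batch_size=8):
    """Translate Russian sentences to English (hypothetical helper)."""
    from transformers import AutoModelForSeq2SeqLM, AutoTokenizer  # lazy import

    tokenizer = AutoTokenizer.from_pretrained(MODEL_ID)
    model = AutoModelForSeq2SeqLM.from_pretrained(MODEL_ID)
    translations = []
    for batch in chunks(sentences, batch_size):
        # Pad so that sentences of different lengths fit in one tensor.
        inputs = tokenizer(batch, return_tensors="pt", padding=True)
        outputs = model.generate(**inputs)
        translations.extend(tokenizer.batch_decode(outputs, skip_special_tokens=True))
    return translations
```

For example, `translate(["Существует несколько мнений о его точном месторасположении."])` passes one of the widget sentences from this card.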

Please refer to the repositories linked above for additional information on usage, parameters and training data. | 1,101 | [

[

-0.0170745849609375,

-0.0143585205078125,

0.035919189453125,

0.014312744140625,

-0.01275634765625,

-0.0161590576171875,

0.021514892578125,

-0.01378631591796875,

0.008026123046875,

0.0254974365234375,

-0.07257080078125,

-0.0300750732421875,

-0.0330810546875,

... |

lexlms/legal-roberta-base | 2023-05-15T13:48:14.000Z | [

"transformers",

"pytorch",

"tensorboard",

"roberta",

"fill-mask",

"legal",

"en",

"dataset:lexlms/lex_files",

"arxiv:2305.07507",

"license:cc-by-sa-4.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | fill-mask | lexlms | null | null | lexlms/legal-roberta-base | 4 | 1,365 | transformers | 2022-11-11T11:15:13 | ---

language: en

pipeline_tag: fill-mask

license: cc-by-sa-4.0

tags:

- legal

model-index:

- name: lexlms/legal-roberta-base

results: []

widget:

- text: "The applicant submitted that her husband was subjected to treatment amounting to <mask> whilst in the custody of police."

- text: "This <mask> Agreement is between General Motors and John Murray."

- text: "Establishing a system for the identification and registration of <mask> animals and regarding the labelling of beef and beef products."

- text: "Because the Court granted <mask> before judgment, the Court effectively stands in the shoes of the Court of Appeals and reviews the defendants’ appeals."

datasets:

- lexlms/lex_files

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# LexLM base

This model was obtained by continued pre-training of RoBERTa base (https://huggingface.co/roberta-base) on the LeXFiles corpus (https://huggingface.co/datasets/lexlms/lexfiles).

## Model description

LexLM (Base/Large) are our newly released RoBERTa models. We follow a series of best practices in language model development:

* We warm-start (initialize) our models from the original RoBERTa checkpoints (base or large) of Liu et al. (2019).

* We train a new tokenizer of 50k BPEs, but we reuse the original embeddings for all lexically overlapping tokens (Pfeiffer et al., 2021).

* We continue pre-training our models on the diverse LeXFiles corpus for an additional 1M steps with batches of 512 samples, and a 20/30% masking rate (Wettig et al., 2022), for base/large models, respectively.

* We use a sentence sampler with exponential smoothing of the sub-corpora sampling rate following Conneau et al. (2019) since there is a disparate proportion of tokens across sub-corpora and we aim to preserve per-corpus capacity (avoid overfitting).

* We consider mixed cased models, similar to all recently developed large PLMs.
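The sentence sampler mentioned in the list above can be sketched as follows. This is a minimal illustration of exponential smoothing in the style of Conneau et al. (2019), where each sub-corpus is sampled with probability proportional to its token share raised to a power `alpha`. The function name, the `alpha` default, and the token counts are assumptions for illustration; the card does not state the value that was used.

```python
def smoothed_sampling_rates(token_counts, alpha=0.5):
    """Return per-sub-corpus sampling probabilities q_i proportional to p_i ** alpha.

    p_i is the sub-corpus share of all tokens. alpha=1 keeps the raw
    proportions; smaller alpha up-weights small sub-corpora.
    The default alpha is an illustrative assumption, not the card's value.
    """
    total = sum(token_counts.values())
    weights = {name: (count / total) ** alpha for name, count in token_counts.items()}
    norm = sum(weights.values())
    return {name: weight / norm for name, weight in weights.items()}


# Hypothetical token counts, chosen only to show the effect.
rates = smoothed_sampling_rates({"large_corpus": 9_000_000, "small_corpus": 1_000_000})
print(rates)
```

With these made-up counts the small sub-corpus holds 10% of the tokens but is sampled about 25% of the time, which is the rebalancing effect the smoothing is meant to achieve.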

## Intended uses & limitations

More information needed

## Training and evaluation data

The model was trained on the LeXFiles corpus (https://huggingface.co/datasets/lexlms/lexfiles). For evaluation results, please see our work "LeXFiles and LegalLAMA: Facilitating English Multinational Legal Language Model Development" (Chalkidis* et al., 2023).

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0001

- train_batch_size: 32

- eval_batch_size: 32

- seed: 42

- distributed_type: tpu

- num_devices: 8

- gradient_accumulation_steps: 2

- total_train_batch_size: 512

- total_eval_batch_size: 256

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: cosine

- lr_scheduler_warmup_ratio: 0.05

- training_steps: 1000000
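The totals in the list above follow from the per-device values. A small arithmetic sketch, assuming `train_batch_size` and `eval_batch_size` are per-device sizes (which is consistent with the reported totals):

```python
train_batch_size = 32          # per-device batch size
eval_batch_size = 32
num_devices = 8
gradient_accumulation_steps = 2
training_steps = 1_000_000
warmup_ratio = 0.05

total_train_batch_size = train_batch_size * num_devices * gradient_accumulation_steps
total_eval_batch_size = eval_batch_size * num_devices  # no accumulation at eval time
warmup_steps = round(training_steps * warmup_ratio)
sequences_seen = total_train_batch_size * training_steps

print(total_train_batch_size, total_eval_batch_size, warmup_steps, sequences_seen)
```

The first two results match `total_train_batch_size: 512` and `total_eval_batch_size: 256`; the warmup length and the number of sequences seen are derived quantities that the card does not list explicitly.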

### Training results

| Training Loss | Epoch | Step | Validation Loss |

|:-------------:|:-----:|:-------:|:---------------:|

| 1.0389 | 0.05 | 50000 | 0.9802 |

| 0.9685 | 0.1 | 100000 | 0.9021 |

| 0.9337 | 0.15 | 150000 | 0.8752 |

| 0.9106 | 0.2 | 200000 | 0.8558 |

| 0.8981 | 0.25 | 250000 | 0.8512 |

| 0.8813 | 1.03 | 300000 | 0.8203 |

| 0.8899 | 1.08 | 350000 | 0.8286 |

| 0.8581 | 1.13 | 400000 | 0.8148 |

| 0.856 | 1.18 | 450000 | 0.8141 |

| 0.8527 | 1.23 | 500000 | 0.8034 |

| 0.8345 | 2.02 | 550000 | 0.7763 |

| 0.8342 | 2.07 | 600000 | 0.7862 |

| 0.8147 | 2.12 | 650000 | 0.7842 |

| 0.8369 | 2.17 | 700000 | 0.7766 |

| 0.814 | 2.22 | 750000 | 0.7737 |

| 0.8046 | 2.27 | 800000 | 0.7692 |

| 0.7941 | 3.05 | 850000 | 0.7538 |

| 0.7956 | 3.1 | 900000 | 0.7562 |

| 0.8068 | 3.15 | 950000 | 0.7512 |

| 0.8066 | 3.2 | 1000000 | 0.7516 |

### Framework versions

- Transformers 4.20.0

- Pytorch 1.12.0+cu102

- Datasets 2.6.1

- Tokenizers 0.12.0

### Citation

[*Ilias Chalkidis\*, Nicolas Garneau\*, Catalina E.C. Goanta, Daniel Martin Katz, and Anders Søgaard.*

*LeXFiles and LegalLAMA: Facilitating English Multinational Legal Language Model Development.*

*2023. In the Proceedings of the 61st Annual Meeting of the Association for Computational Linguistics. Toronto, Canada.*](https://arxiv.org/abs/2305.07507)

```

@inproceedings{chalkidis-garneau-etal-2023-lexlms,

title = {{LeXFiles and LegalLAMA: Facilitating English Multinational Legal Language Model Development}},

author = "Chalkidis*, Ilias and

Garneau*, Nicolas and

Goanta, Catalina and

Katz, Daniel Martin and

Søgaard, Anders",

booktitle = "Proceedings of the 61st Annual Meeting of the Association for Computational Linguistics",

month = july,

year = "2023",

address = "Toronto, Canada",

publisher = "Association for Computational Linguistics",

url = "https://arxiv.org/abs/2305.07507",

}

``` | 5,156 | [

[

-0.0261383056640625,

-0.042236328125,

0.0243072509765625,

-0.00039839744567871094,

-0.0183868408203125,

0.006134033203125,

-0.0181884765625,

-0.020660400390625,

0.020538330078125,

0.0267333984375,

-0.032135009765625,

-0.06475830078125,

-0.0467529296875,

-0.0... |

Salesforce/codet5p-770m-py | 2023-05-16T00:31:41.000Z | [

"transformers",

"pytorch",

"t5",

"text2text-generation",

"arxiv:2305.07922",

"license:bsd-3-clause",

"autotrain_compatible",

"endpoints_compatible",

"has_space",

"text-generation-inference",

"region:us"

] | text2text-generation | Salesforce | null | null | Salesforce/codet5p-770m-py | 18 | 1,364 | transformers | 2023-05-15T09:57:01 | ---

license: bsd-3-clause

---

# CodeT5+ 770M (further tuned on Python)

## Model description

[CodeT5+](https://github.com/salesforce/CodeT5/tree/main/CodeT5+) is a new family of open code large language models with an encoder-decoder architecture that can flexibly operate in different modes (i.e. _encoder-only_, _decoder-only_, and _encoder-decoder_) to support a wide range of code understanding and generation tasks.

It is introduced in the paper:

[CodeT5+: Open Code Large Language Models for Code Understanding and Generation](https://arxiv.org/pdf/2305.07922.pdf)

by [Yue Wang](https://yuewang-cuhk.github.io/)\*, [Hung Le](https://sites.google.com/view/henryle2018/home?pli=1)\*, [Akhilesh Deepak Gotmare](https://akhileshgotmare.github.io/), [Nghi D.Q. Bui](https://bdqnghi.github.io/), [Junnan Li](https://sites.google.com/site/junnanlics), [Steven C.H. Hoi](https://sites.google.com/view/stevenhoi/home) (* indicates equal contribution).

Compared to the original CodeT5 family (base: `220M`, large: `770M`), CodeT5+ is pretrained with a diverse set of pretraining tasks including _span denoising_, _causal language modeling_, _contrastive learning_, and _text-code matching_ to learn rich representations from both unimodal code data and bimodal code-text data.

Additionally, it employs a simple yet effective _compute-efficient pretraining_ method to initialize the model components with frozen off-the-shelf LLMs such as [CodeGen](https://github.com/salesforce/CodeGen) to efficiently scale up the model (i.e. `2B`, `6B`, `16B`), and adopts a "shallow encoder and deep decoder" architecture.

Furthermore, it is instruction-tuned to align with natural language instructions (see our InstructCodeT5+ 16B) following [Code Alpaca](https://github.com/sahil280114/codealpaca).

## How to use

This model can be easily loaded using the `T5ForConditionalGeneration` functionality and employs the same tokenizer as original [CodeT5](https://github.com/salesforce/CodeT5).

```python

from transformers import T5ForConditionalGeneration, AutoTokenizer

checkpoint = "Salesforce/codet5p-770m-py"

device = "cuda" # for GPU usage or "cpu" for CPU usage

tokenizer = AutoTokenizer.from_pretrained(checkpoint)

model = T5ForConditionalGeneration.from_pretrained(checkpoint).to(device)

inputs = tokenizer.encode("def print_hello_world():", return_tensors="pt").to(device)

outputs = model.generate(inputs, max_length=10)

print(tokenizer.decode(outputs[0], skip_special_tokens=True))

# ==> print('Hello World!')

```

## Pretraining data

This checkpoint is trained on the stricter permissive subset of the deduplicated version of the [github-code dataset](https://huggingface.co/datasets/codeparrot/github-code).

The data is preprocessed by keeping only permissively licensed code ("mit", "apache-2", "bsd-3-clause", "bsd-2-clause", "cc0-1.0", "unlicense", "isc").

Supported languages (9 in total) are as follows:

`c`, `c++`, `c-sharp`, `go`, `java`, `javascript`, `php`, `python`, `ruby`.
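The license and language filtering described in this section can be sketched as a simple predicate over records. This is only an illustration: the `license` and `language` field names and the sample records are assumptions, and the license strings are copied from the text above rather than from the dataset schema.

```python
PERMISSIVE_LICENSES = {"mit", "apache-2", "bsd-3-clause", "bsd-2-clause", "cc0-1.0", "unlicense", "isc"}
SUPPORTED_LANGUAGES = {"c", "c++", "c-sharp", "go", "java", "javascript", "php", "python", "ruby"}


def keep(record):
    """True if a record passes both the license and the language filter.

    The `license` and `language` keys are assumed field names.
    """
    return (
        record.get("license", "").lower() in PERMISSIVE_LICENSES
        and record.get("language", "").lower() in SUPPORTED_LANGUAGES
    )


# Made-up records for illustration.
samples = [
    {"license": "mit", "language": "Python", "code": "print('hi')"},
    {"license": "gpl-3.0", "language": "Python", "code": "print('hi')"},
    {"license": "isc", "language": "Kotlin", "code": "fun main() {}"},
]
kept = [s for s in samples if keep(s)]
print(len(kept))
```

Only the first record survives: the second fails the license check and the third fails the language check.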

## Training procedure

This checkpoint is first trained on the multilingual unimodal code data at the first-stage pretraining, which includes a diverse set of pretraining tasks including _span denoising_ and two variants of _causal language modeling_.

After that, it is further trained on the Python subset with the causal language modeling objective for another epoch to better adapt for Python code generation. Please refer to the paper for more details.

## Evaluation results

CodeT5+ models have been comprehensively evaluated on a wide range of code understanding and generation tasks in various settings: _zero-shot_, _finetuning_, and _instruction-tuning_.

Specifically, CodeT5+ yields substantial performance gains on many downstream tasks compared to their SoTA baselines, e.g.,

8 text-to-code retrieval tasks (+3.2 avg. MRR), 2 line-level code completion tasks (+2.1 avg. Exact Match), and 2 retrieval-augmented code generation tasks (+5.8 avg. BLEU-4).

In 2 math programming tasks on MathQA-Python and GSM8K-Python, CodeT5+ models with fewer than one billion parameters significantly outperform many LLMs of up to 137B parameters.

Particularly, in the zero-shot text-to-code generation task on HumanEval benchmark, InstructCodeT5+ 16B sets new SoTA results of 35.0% pass@1 and 54.5% pass@10 against other open code LLMs, even surpassing the closed-source OpenAI code-cushman-001 model.

Please refer to the [paper](https://arxiv.org/pdf/2305.07922.pdf) for more details.

Specifically for this checkpoint, it achieves 15.5% pass@1 on HumanEval in the zero-shot setting, which is comparable to much larger LLMs such as Incoder 6B’s 15.2%, GPT-NeoX 20B’s 15.4%, and PaLM 62B’s 15.9%.
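For readers unfamiliar with the metric, pass@k on HumanEval is commonly computed with the unbiased estimator 1 - C(n-c, k) / C(n, k), where n samples are generated per problem and c of them pass the unit tests. The sketch below implements that standard formula; whether the numbers quoted above were produced with exactly this estimator, and with how many samples, is not stated here, so treat it as an assumption.

```python
from math import comb


def pass_at_k(n, c, k):
    """Unbiased pass@k estimate from n samples of which c are correct."""
    if not 0 <= c <= n or not 1 <= k <= n:
        raise ValueError("need 0 <= c <= n and 1 <= k <= n")
    if n - c < k:
        return 1.0  # every size-k subset contains a correct sample
    return 1.0 - comb(n - c, k) / comb(n, k)


# Hypothetical problem: 20 samples drawn, 3 of them pass.
print(pass_at_k(20, 3, 1), pass_at_k(20, 3, 10))
```

The benchmark score is the mean of this per-problem estimate over all problems.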

## BibTeX entry and citation info

```bibtex

@article{wang2023codet5plus,

title={CodeT5+: Open Code Large Language Models for Code Understanding and Generation},

author={Wang, Yue and Le, Hung and Gotmare, Akhilesh Deepak and Bui, Nghi D.Q. and Li, Junnan and Hoi, Steven C. H.},

journal={arXiv preprint},

year={2023}

}

``` | 5,020 | [

[

-0.02960205078125,

-0.03314208984375,

0.013763427734375,

0.02197265625,

-0.0102691650390625,

0.00705718994140625,

-0.03350830078125,

-0.044830322265625,

-0.021270751953125,

0.016937255859375,

-0.0281982421875,

-0.048309326171875,

-0.03466796875,

0.0160217285... |

timm/convformer_b36.sail_in1k | 2023-05-05T05:55:15.000Z | [

"timm",

"pytorch",

"safetensors",

"image-classification",

"dataset:imagenet-1k",

"arxiv:2210.13452",

"license:apache-2.0",

"region:us"

] | image-classification | timm | null | null | timm/convformer_b36.sail_in1k | 0 | 1,362 | timm | 2023-05-05T05:53:51 | ---

tags:

- image-classification

- timm

library_name: timm

license: apache-2.0

datasets:

- imagenet-1k

---

# Model card for convformer_b36.sail_in1k

A ConvFormer (a MetaFormer) image classification model. Trained on ImageNet-1k by paper authors.

## Model Details

- **Model Type:** Image classification / feature backbone

- **Model Stats:**

- Params (M): 99.9

- GMACs: 22.7

- Activations (M): 56.1

- Image size: 224 x 224

- **Papers:**

- Metaformer baselines for vision: https://arxiv.org/abs/2210.13452

- **Original:** https://github.com/sail-sg/metaformer

- **Dataset:** ImageNet-1k

## Model Usage

### Image Classification

```python

from urllib.request import urlopen

from PIL import Image

import timm

import torch

img = Image.open(urlopen(

'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model('convformer_b36.sail_in1k', pretrained=True)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # unsqueeze single image into batch of 1

top5_probabilities, top5_class_indices = torch.topk(output.softmax(dim=1) * 100, k=5)

```

### Feature Map Extraction

```python

from urllib.request import urlopen

from PIL import Image

import timm

img = Image.open(urlopen(

'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model(

'convformer_b36.sail_in1k',

pretrained=True,

features_only=True,

)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # unsqueeze single image into batch of 1

for o in output:

    # print shape of each feature map in output

    # e.g.:

    #  torch.Size([1, 128, 56, 56])

    #  torch.Size([1, 256, 28, 28])

    #  torch.Size([1, 512, 14, 14])

    #  torch.Size([1, 768, 7, 7])

    print(o.shape)

```

### Image Embeddings

```python

from urllib.request import urlopen

from PIL import Image

import timm

img = Image.open(urlopen(

'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model(

'convformer_b36.sail_in1k',

pretrained=True,

num_classes=0, # remove classifier nn.Linear

)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # output is (batch_size, num_features) shaped tensor

# or equivalently (without needing to set num_classes=0)

output = model.forward_features(transforms(img).unsqueeze(0))

# output is unpooled, a (1, 768, 7, 7) shaped tensor

output = model.forward_head(output, pre_logits=True)

# output is a (1, num_features) shaped tensor

```

## Model Comparison

Explore the dataset and runtime metrics of this model in timm [model results](https://github.com/huggingface/pytorch-image-models/tree/main/results).

## Citation

```bibtex

@article{yu2022metaformer_baselines,

title={Metaformer baselines for vision},

author={Yu, Weihao and Si, Chenyang and Zhou, Pan and Luo, Mi and Zhou, Yichen and Feng, Jiashi and Yan, Shuicheng and Wang, Xinchao},

journal={arXiv preprint arXiv:2210.13452},

year={2022}

}

```

| 3,642 | [

[

-0.035858154296875,

-0.030670166015625,

0.004741668701171875,

0.01148223876953125,

-0.031585693359375,

-0.024627685546875,

-0.0162506103515625,

-0.028656005859375,

0.0157928466796875,

0.03814697265625,

-0.04193115234375,

-0.058074951171875,

-0.053253173828125,

... |

mncai/Mistral-7B-v0.1-orca-1k | 2023-10-22T04:33:28.000Z | [

"transformers",

"pytorch",

"llama",

"text-generation",

"MindsAndCompany",

"en",

"ko",

"dataset:kyujinpy/OpenOrca-KO",

"arxiv:2306.02707",

"license:mit",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | text-generation | mncai | null | null | mncai/Mistral-7B-v0.1-orca-1k | 0 | 1,362 | transformers | 2023-10-22T04:19:01 | ---

pipeline_tag: text-generation

license: mit

language:

- en

- ko

library_name: transformers

tags:

- MindsAndCompany

datasets:

- kyujinpy/OpenOrca-KO

---

## Model Details

* **Developed by**: [Minds And Company](https://mnc.ai/)

* **Backbone Model**: [Mistral-7B-v0.1](https://huggingface.co/mistralai/Mistral-7B-v0.1)

* **Library**: [HuggingFace Transformers](https://github.com/huggingface/transformers)

## Dataset Details

### Used Datasets

- kyujinpy/OpenOrca-KO

### Prompt Template

- Llama Prompt Template

## Limitations & Biases:

Llama 2 and its fine-tuned variants are a new technology that carries risks with use. Testing conducted to date has been in English, and has not covered, nor could it cover, all scenarios. For these reasons, as with all LLMs, the potential outputs of Llama 2 and any fine-tuned variant cannot be predicted in advance, and the model may in some instances produce inaccurate, biased or otherwise objectionable responses to user prompts. Therefore, before deploying any applications of Llama 2 variants, developers should perform safety testing and tuning tailored to their specific applications of the model.

Please see the Responsible Use Guide available at https://ai.meta.com/llama/responsible-use-guide/

## License Disclaimer:

This model is bound by the license & usage restrictions of the original Llama-2 model and comes with no warranty or guarantees of any kind.

## Contact Us

- [Minds And Company](https://mnc.ai/)

## Citation:

Please kindly cite using the following BibTeX:

```bibtex

@misc{mukherjee2023orca,

title={Orca: Progressive Learning from Complex Explanation Traces of GPT-4},

author={Subhabrata Mukherjee and Arindam Mitra and Ganesh Jawahar and Sahaj Agarwal and Hamid Palangi and Ahmed Awadallah},

year={2023},

eprint={2306.02707},

archivePrefix={arXiv},

primaryClass={cs.CL}

}

```

```

@misc{Orca-best,

title = {Orca-best: A filtered version of orca gpt4 dataset.},

author = {Shahul Es},

year = {2023},

publisher = {HuggingFace},

journal = {HuggingFace repository},

howpublished = {\url{https://huggingface.co/datasets/shahules786/orca-best/}},

}

```

```

@software{touvron2023llama2,

title={Llama 2: Open Foundation and Fine-Tuned Chat Models},

author={Hugo Touvron, Louis Martin, Kevin Stone, Peter Albert, Amjad Almahairi, Yasmine Babaei, Nikolay Bashlykov, Soumya Batra, Prajjwal Bhargava,

Shruti Bhosale, Dan Bikel, Lukas Blecher, Cristian Canton Ferrer, Moya Chen, Guillem Cucurull, David Esiobu, Jude Fernandes, Jeremy Fu, Wenyin Fu, Brian Fuller,

Cynthia Gao, Vedanuj Goswami, Naman Goyal, Anthony Hartshorn, Saghar Hosseini, Rui Hou, Hakan Inan, Marcin Kardas, Viktor Kerkez Madian Khabsa, Isabel Kloumann,

Artem Korenev, Punit Singh Koura, Marie-Anne Lachaux, Thibaut Lavril, Jenya Lee, Diana Liskovich, Yinghai Lu, Yuning Mao, Xavier Martinet, Todor Mihaylov,

Pushkar Mishra, Igor Molybog, Yixin Nie, Andrew Poulton, Jeremy Reizenstein, Rashi Rungta, Kalyan Saladi, Alan Schelten, Ruan Silva, Eric Michael Smith,

Ranjan Subramanian, Xiaoqing Ellen Tan, Binh Tang, Ross Taylor, Adina Williams, Jian Xiang Kuan, Puxin Xu , Zheng Yan, Iliyan Zarov, Yuchen Zhang, Angela Fan,

Melanie Kambadur, Sharan Narang, Aurelien Rodriguez, Robert Stojnic, Sergey Edunov, Thomas Scialom},

year={2023}

}

```

> Readme format: [Riiid/sheep-duck-llama-2-70b-v1.1](https://huggingface.co/Riiid/sheep-duck-llama-2-70b-v1.1) | 3,404 | [

[

-0.03167724609375,

-0.0498046875,

0.01027679443359375,

0.019439697265625,

-0.0237884521484375,

0.00551605224609375,

0.0104522705078125,

-0.053070068359375,

0.016693115234375,

0.0265045166015625,

-0.05328369140625,

-0.04229736328125,

-0.045196533203125,

-0.00... |

timm/maxxvitv2_rmlp_base_rw_384.sw_in12k_ft_in1k | 2023-05-11T00:50:56.000Z | [

"timm",

"pytorch",

"safetensors",

"image-classification",

"dataset:imagenet-1k",

"dataset:imagenet-12k",

"arxiv:2204.01697",

"arxiv:2201.03545",

"arxiv:2111.09883",

"license:apache-2.0",

"region:us"

] | image-classification | timm | null | null | timm/maxxvitv2_rmlp_base_rw_384.sw_in12k_ft_in1k | 1 | 1,360 | timm | 2023-01-20T21:38:37 | ---

tags:

- image-classification

- timm

library_name: timm

license: apache-2.0

datasets:

- imagenet-1k

- imagenet-12k

---

# Model card for maxxvitv2_rmlp_base_rw_384.sw_in12k_ft_in1k

A timm specific MaxxViT-V2 image classification model with an MLP Log-CPB (continuous log-coordinate relative position bias, motivated by Swin-V2). Pretrained in `timm` on ImageNet-12k (an 11821 class subset of full ImageNet-22k) and fine-tuned on ImageNet-1k by Ross Wightman.

ImageNet-12k pretraining and ImageNet-1k fine-tuning performed on 8x GPU [Lambda Labs](https://lambdalabs.com/) cloud instances.

### Model Variants in [maxxvit.py](https://github.com/huggingface/pytorch-image-models/blob/main/timm/models/maxxvit.py)

MaxxViT covers a number of related model architectures that share a common structure including:

- CoAtNet - Combining MBConv (depthwise-separable) convolutional blocks in early stages with self-attention transformer blocks in later stages.

- MaxViT - Uniform blocks across all stages, each containing a MBConv (depthwise-separable) convolution block followed by two self-attention blocks with different partitioning schemes (window followed by grid).

- CoAtNeXt - A timm specific arch that uses ConvNeXt blocks in place of MBConv blocks in CoAtNet. All normalization layers are LayerNorm (no BatchNorm).

- MaxxViT - A timm specific arch that uses ConvNeXt blocks in place of MBConv blocks in MaxViT. All normalization layers are LayerNorm (no BatchNorm).

- MaxxViT-V2 - A MaxxViT variation that removes the window block attention leaving only ConvNeXt blocks and grid attention w/ more width to compensate.

Aside from the major variants listed above, there are more subtle changes from model to model. Any model name with the string `rw` is a `timm` specific config w/ modelling adjustments made to favour PyTorch eager use. These were created while training initial reproductions of the models, so there are variations.

All models with the string `tf` exactly match the TensorFlow based models by the original paper authors, with weights ported to PyTorch. This covers a number of MaxViT models. The official CoAtNet models were never released.
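
The naming conventions above can be checked mechanically. The following is a minimal sketch and not part of `timm`: `describe_timm_name` is a hypothetical helper that assumes names follow the `architecture.pretrained_tag` pattern and that the `rw` / `tf` markers appear as underscore-separated tokens (including variants such as `rw2`).

```python
def describe_timm_name(model_name: str) -> dict:
    """Split a timm-style model name and flag the `rw` / `tf` markers.

    Hypothetical helper: it relies only on the naming pattern described above.
    """
    # Everything before the first '.' is the architecture, the rest is the tag.
    arch, _, tag = model_name.partition('.')
    tokens = arch.split('_')
    return {
        'architecture': arch,
        'pretrained_tag': tag or None,
        # `rw` (or `rw2`, ...) marks timm specific configs.
        'timm_specific': any(t.startswith('rw') for t in tokens),
        # `tf` marks weights ported from the original TensorFlow models.
        'tf_port': 'tf' in tokens,
    }


info = describe_timm_name('maxxvitv2_rmlp_base_rw_384.sw_in12k_ft_in1k')
print(info)
```

This is a heuristic over names only; it does not inspect the model config or weights.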

## Model Details

- **Model Type:** Image classification / feature backbone

- **Model Stats:**

- Params (M): 116.1

- GMACs: 73.0

- Activations (M): 213.7

- Image size: 384 x 384

- **Papers:**

- MaxViT: Multi-Axis Vision Transformer: https://arxiv.org/abs/2204.01697

- A ConvNet for the 2020s: https://arxiv.org/abs/2201.03545

- Swin Transformer V2: Scaling Up Capacity and Resolution: https://arxiv.org/abs/2111.09883

- **Dataset:** ImageNet-1k

- **Pretrain Dataset:** ImageNet-12k

## Model Usage

### Image Classification

```python

from urllib.request import urlopen

from PIL import Image

import timm

import torch  # needed for torch.topk below

img = Image.open(urlopen(

'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model('maxxvitv2_rmlp_base_rw_384.sw_in12k_ft_in1k', pretrained=True)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # unsqueeze single image into batch of 1

top5_probabilities, top5_class_indices = torch.topk(output.softmax(dim=1) * 100, k=5)

```
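
To make the last line of the snippet concrete, here is a dependency-free sketch of what a softmax followed by a top-k selection computes on a plain list of logits. `top_k_percent` is a hypothetical name used only for illustration; in practice the `torch` calls above do this on tensors.

```python
import math


def top_k_percent(logits, k=5):
    """Return (percentages, indices) of the k largest softmax scores."""
    # Subtract the max logit for numerical stability before exponentiating.
    shift = max(logits)
    exps = [math.exp(x - shift) for x in logits]
    total = sum(exps)
    percents = [100.0 * e / total for e in exps]
    # Sort class indices by descending score and keep the first k.
    order = sorted(range(len(logits)), key=lambda i: percents[i], reverse=True)[:k]
    return [percents[i] for i in order], order


probs, indices = top_k_percent([2.0, 1.0, 0.1, -1.0], k=2)
print(indices, [round(p, 2) for p in probs])
```

The returned indices refer to positions in the classifier output, so they still have to be mapped to ImageNet-1k label names separately.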

### Feature Map Extraction

```python

from urllib.request import urlopen

from PIL import Image

import timm

img = Image.open(urlopen(

'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model(

'maxxvitv2_rmlp_base_rw_384.sw_in12k_ft_in1k',

pretrained=True,

features_only=True,

)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # unsqueeze single image into batch of 1

for o in output:

    # print shape of each feature map in output

    # e.g.:

    #  torch.Size([1, 128, 192, 192])

    #  torch.Size([1, 128, 96, 96])

    #  torch.Size([1, 256, 48, 48])

    #  torch.Size([1, 512, 24, 24])

    #  torch.Size([1, 1024, 12, 12])

    print(o.shape)

```
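
The shapes listed in the comments imply a fixed downsampling factor per stage. As a small sketch (the helper name `feature_strides` is an assumption, not a `timm` function), the reduction of each feature map relative to the 384 x 384 input can be derived from the shapes alone:

```python
def feature_strides(input_size, feature_shapes):
    """Return the spatial reduction factor of each (N, C, H, W) feature map."""
    strides = []
    for _, _, height, _ in feature_shapes:
        # Each stage divides the input resolution by an integer factor.
        strides.append(input_size // height)
    return strides


# Shapes copied from the example output above, for a 384 x 384 input.
shapes = [
    (1, 128, 192, 192),
    (1, 128, 96, 96),
    (1, 256, 48, 48),
    (1, 512, 24, 24),
    (1, 1024, 12, 12),
]
print(feature_strides(384, shapes))  # [2, 4, 8, 16, 32]
```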

### Image Embeddings

```python

from urllib.request import urlopen

from PIL import Image

import timm

img = Image.open(urlopen(

'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model(

'maxxvitv2_rmlp_base_rw_384.sw_in12k_ft_in1k',

pretrained=True,

num_classes=0, # remove classifier nn.Linear

)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # output is (batch_size, num_features) shaped tensor

# or equivalently (without needing to set num_classes=0)

output = model.forward_features(transforms(img).unsqueeze(0))

# output is unpooled, a (1, 1024, 12, 12) shaped tensor

output = model.forward_head(output, pre_logits=True)

# output is a (1, num_features) shaped tensor

```
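
A common use of such pooled embeddings is comparing two images. The sketch below is an illustration rather than part of the model card's API: it computes cosine similarity on plain Python lists, and `cosine_similarity` is a hypothetical helper name.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Toy vectors standing in for two (num_features,) embeddings.
print(cosine_similarity([1.0, 2.0, 3.0], [2.0, 4.0, 6.0]))
```

With the tensors from the snippet above, something like `cosine_similarity(emb_a[0].tolist(), emb_b[0].tolist())` would apply, where `emb_a` and `emb_b` are hypothetical outputs for two different images.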

## Model Comparison

### By Top-1

|model |top1 |top5 |samples / sec |Params (M) |GMAC |Act (M)|

|------------------------------------------------------------------------------------------------------------------------|----:|----:|--------------:|--------------:|-----:|------:|

|[maxvit_xlarge_tf_512.in21k_ft_in1k](https://huggingface.co/timm/maxvit_xlarge_tf_512.in21k_ft_in1k) |88.53|98.64| 21.76| 475.77|534.14|1413.22|

|[maxvit_xlarge_tf_384.in21k_ft_in1k](https://huggingface.co/timm/maxvit_xlarge_tf_384.in21k_ft_in1k) |88.32|98.54| 42.53| 475.32|292.78| 668.76|

|[maxvit_base_tf_512.in21k_ft_in1k](https://huggingface.co/timm/maxvit_base_tf_512.in21k_ft_in1k) |88.20|98.53| 50.87| 119.88|138.02| 703.99|

|[maxvit_large_tf_512.in21k_ft_in1k](https://huggingface.co/timm/maxvit_large_tf_512.in21k_ft_in1k) |88.04|98.40| 36.42| 212.33|244.75| 942.15|

|[maxvit_large_tf_384.in21k_ft_in1k](https://huggingface.co/timm/maxvit_large_tf_384.in21k_ft_in1k) |87.98|98.56| 71.75| 212.03|132.55| 445.84|

|[maxvit_base_tf_384.in21k_ft_in1k](https://huggingface.co/timm/maxvit_base_tf_384.in21k_ft_in1k) |87.92|98.54| 104.71| 119.65| 73.80| 332.90|

|[maxvit_rmlp_base_rw_384.sw_in12k_ft_in1k](https://huggingface.co/timm/maxvit_rmlp_base_rw_384.sw_in12k_ft_in1k) |87.81|98.37| 106.55| 116.14| 70.97| 318.95|

|[maxxvitv2_rmlp_base_rw_384.sw_in12k_ft_in1k](https://huggingface.co/timm/maxxvitv2_rmlp_base_rw_384.sw_in12k_ft_in1k) |87.47|98.37| 149.49| 116.09| 72.98| 213.74|

|[coatnet_rmlp_2_rw_384.sw_in12k_ft_in1k](https://huggingface.co/timm/coatnet_rmlp_2_rw_384.sw_in12k_ft_in1k) |87.39|98.31| 160.80| 73.88| 47.69| 209.43|

|[maxvit_rmlp_base_rw_224.sw_in12k_ft_in1k](https://huggingface.co/timm/maxvit_rmlp_base_rw_224.sw_in12k_ft_in1k) |86.89|98.02| 375.86| 116.14| 23.15| 92.64|

|[maxxvitv2_rmlp_base_rw_224.sw_in12k_ft_in1k](https://huggingface.co/timm/maxxvitv2_rmlp_base_rw_224.sw_in12k_ft_in1k) |86.64|98.02| 501.03| 116.09| 24.20| 62.77|

|[maxvit_base_tf_512.in1k](https://huggingface.co/timm/maxvit_base_tf_512.in1k) |86.60|97.92| 50.75| 119.88|138.02| 703.99|

|[coatnet_2_rw_224.sw_in12k_ft_in1k](https://huggingface.co/timm/coatnet_2_rw_224.sw_in12k_ft_in1k) |86.57|97.89| 631.88| 73.87| 15.09| 49.22|

|[maxvit_large_tf_512.in1k](https://huggingface.co/timm/maxvit_large_tf_512.in1k) |86.52|97.88| 36.04| 212.33|244.75| 942.15|

|[coatnet_rmlp_2_rw_224.sw_in12k_ft_in1k](https://huggingface.co/timm/coatnet_rmlp_2_rw_224.sw_in12k_ft_in1k) |86.49|97.90| 620.58| 73.88| 15.18| 54.78|

|[maxvit_base_tf_384.in1k](https://huggingface.co/timm/maxvit_base_tf_384.in1k) |86.29|97.80| 101.09| 119.65| 73.80| 332.90|

|[maxvit_large_tf_384.in1k](https://huggingface.co/timm/maxvit_large_tf_384.in1k) |86.23|97.69| 70.56| 212.03|132.55| 445.84|

|[maxvit_small_tf_512.in1k](https://huggingface.co/timm/maxvit_small_tf_512.in1k) |86.10|97.76| 88.63| 69.13| 67.26| 383.77|

|[maxvit_tiny_tf_512.in1k](https://huggingface.co/timm/maxvit_tiny_tf_512.in1k) |85.67|97.58| 144.25| 31.05| 33.49| 257.59|

|[maxvit_small_tf_384.in1k](https://huggingface.co/timm/maxvit_small_tf_384.in1k) |85.54|97.46| 188.35| 69.02| 35.87| 183.65|

|[maxvit_tiny_tf_384.in1k](https://huggingface.co/timm/maxvit_tiny_tf_384.in1k) |85.11|97.38| 293.46| 30.98| 17.53| 123.42|

|[maxvit_large_tf_224.in1k](https://huggingface.co/timm/maxvit_large_tf_224.in1k) |84.93|96.97| 247.71| 211.79| 43.68| 127.35|

|[coatnet_rmlp_1_rw2_224.sw_in12k_ft_in1k](https://huggingface.co/timm/coatnet_rmlp_1_rw2_224.sw_in12k_ft_in1k) |84.90|96.96| 1025.45| 41.72| 8.11| 40.13|

|[maxvit_base_tf_224.in1k](https://huggingface.co/timm/maxvit_base_tf_224.in1k) |84.85|96.99| 358.25| 119.47| 24.04| 95.01|

|[maxxvit_rmlp_small_rw_256.sw_in1k](https://huggingface.co/timm/maxxvit_rmlp_small_rw_256.sw_in1k) |84.63|97.06| 575.53| 66.01| 14.67| 58.38|

|[coatnet_rmlp_2_rw_224.sw_in1k](https://huggingface.co/timm/coatnet_rmlp_2_rw_224.sw_in1k) |84.61|96.74| 625.81| 73.88| 15.18| 54.78|

|[maxvit_rmlp_small_rw_224.sw_in1k](https://huggingface.co/timm/maxvit_rmlp_small_rw_224.sw_in1k) |84.49|96.76| 693.82| 64.90| 10.75| 49.30|

|[maxvit_small_tf_224.in1k](https://huggingface.co/timm/maxvit_small_tf_224.in1k) |84.43|96.83| 647.96| 68.93| 11.66| 53.17|

|[maxvit_rmlp_tiny_rw_256.sw_in1k](https://huggingface.co/timm/maxvit_rmlp_tiny_rw_256.sw_in1k) |84.23|96.78| 807.21| 29.15| 6.77| 46.92|

|[coatnet_1_rw_224.sw_in1k](https://huggingface.co/timm/coatnet_1_rw_224.sw_in1k) |83.62|96.38| 989.59| 41.72| 8.04| 34.60|

|[maxvit_tiny_rw_224.sw_in1k](https://huggingface.co/timm/maxvit_tiny_rw_224.sw_in1k) |83.50|96.50| 1100.53| 29.06| 5.11| 33.11|

|[maxvit_tiny_tf_224.in1k](https://huggingface.co/timm/maxvit_tiny_tf_224.in1k) |83.41|96.59| 1004.94| 30.92| 5.60| 35.78|

|[coatnet_rmlp_1_rw_224.sw_in1k](https://huggingface.co/timm/coatnet_rmlp_1_rw_224.sw_in1k) |83.36|96.45| 1093.03| 41.69| 7.85| 35.47|

|[maxxvitv2_nano_rw_256.sw_in1k](https://huggingface.co/timm/maxxvitv2_nano_rw_256.sw_in1k) |83.11|96.33| 1276.88| 23.70| 6.26| 23.05|

|[maxxvit_rmlp_nano_rw_256.sw_in1k](https://huggingface.co/timm/maxxvit_rmlp_nano_rw_256.sw_in1k) |83.03|96.34| 1341.24| 16.78| 4.37| 26.05|

|[maxvit_rmlp_nano_rw_256.sw_in1k](https://huggingface.co/timm/maxvit_rmlp_nano_rw_256.sw_in1k) |82.96|96.26| 1283.24| 15.50| 4.47| 31.92|

|[maxvit_nano_rw_256.sw_in1k](https://huggingface.co/timm/maxvit_nano_rw_256.sw_in1k) |82.93|96.23| 1218.17| 15.45| 4.46| 30.28|

|[coatnet_bn_0_rw_224.sw_in1k](https://huggingface.co/timm/coatnet_bn_0_rw_224.sw_in1k) |82.39|96.19| 1600.14| 27.44| 4.67| 22.04|

|[coatnet_0_rw_224.sw_in1k](https://huggingface.co/timm/coatnet_0_rw_224.sw_in1k) |82.39|95.84| 1831.21| 27.44| 4.43| 18.73|

|[coatnet_rmlp_nano_rw_224.sw_in1k](https://huggingface.co/timm/coatnet_rmlp_nano_rw_224.sw_in1k) |82.05|95.87| 2109.09| 15.15| 2.62| 20.34|

|[coatnext_nano_rw_224.sw_in1k](https://huggingface.co/timm/coatnext_nano_rw_224.sw_in1k) |81.95|95.92| 2525.52| 14.70| 2.47| 12.80|

|[coatnet_nano_rw_224.sw_in1k](https://huggingface.co/timm/coatnet_nano_rw_224.sw_in1k) |81.70|95.64| 2344.52| 15.14| 2.41| 15.41|

|[maxvit_rmlp_pico_rw_256.sw_in1k](https://huggingface.co/timm/maxvit_rmlp_pico_rw_256.sw_in1k) |80.53|95.21| 1594.71| 7.52| 1.85| 24.86|

### By Throughput (samples / sec)

|model |top1 |top5 |samples / sec |Params (M) |GMAC |Act (M)|

|------------------------------------------------------------------------------------------------------------------------|----:|----:|--------------:|--------------:|-----:|------:|

|[coatnext_nano_rw_224.sw_in1k](https://huggingface.co/timm/coatnext_nano_rw_224.sw_in1k) |81.95|95.92| 2525.52| 14.70| 2.47| 12.80|

|[coatnet_nano_rw_224.sw_in1k](https://huggingface.co/timm/coatnet_nano_rw_224.sw_in1k) |81.70|95.64| 2344.52| 15.14| 2.41| 15.41|

|[coatnet_rmlp_nano_rw_224.sw_in1k](https://huggingface.co/timm/coatnet_rmlp_nano_rw_224.sw_in1k) |82.05|95.87| 2109.09| 15.15| 2.62| 20.34|

|[coatnet_0_rw_224.sw_in1k](https://huggingface.co/timm/coatnet_0_rw_224.sw_in1k) |82.39|95.84| 1831.21| 27.44| 4.43| 18.73|

|[coatnet_bn_0_rw_224.sw_in1k](https://huggingface.co/timm/coatnet_bn_0_rw_224.sw_in1k) |82.39|96.19| 1600.14| 27.44| 4.67| 22.04|

|[maxvit_rmlp_pico_rw_256.sw_in1k](https://huggingface.co/timm/maxvit_rmlp_pico_rw_256.sw_in1k) |80.53|95.21| 1594.71| 7.52| 1.85| 24.86|

|[maxxvit_rmlp_nano_rw_256.sw_in1k](https://huggingface.co/timm/maxxvit_rmlp_nano_rw_256.sw_in1k) |83.03|96.34| 1341.24| 16.78| 4.37| 26.05|

|[maxvit_rmlp_nano_rw_256.sw_in1k](https://huggingface.co/timm/maxvit_rmlp_nano_rw_256.sw_in1k) |82.96|96.26| 1283.24| 15.50| 4.47| 31.92|

|[maxxvitv2_nano_rw_256.sw_in1k](https://huggingface.co/timm/maxxvitv2_nano_rw_256.sw_in1k) |83.11|96.33| 1276.88| 23.70| 6.26| 23.05|

|[maxvit_nano_rw_256.sw_in1k](https://huggingface.co/timm/maxvit_nano_rw_256.sw_in1k) |82.93|96.23| 1218.17| 15.45| 4.46| 30.28|

|[maxvit_tiny_rw_224.sw_in1k](https://huggingface.co/timm/maxvit_tiny_rw_224.sw_in1k) |83.50|96.50| 1100.53| 29.06| 5.11| 33.11|

|[coatnet_rmlp_1_rw_224.sw_in1k](https://huggingface.co/timm/coatnet_rmlp_1_rw_224.sw_in1k) |83.36|96.45| 1093.03| 41.69| 7.85| 35.47|

|[coatnet_rmlp_1_rw2_224.sw_in12k_ft_in1k](https://huggingface.co/timm/coatnet_rmlp_1_rw2_224.sw_in12k_ft_in1k) |84.90|96.96| 1025.45| 41.72| 8.11| 40.13|

|[maxvit_tiny_tf_224.in1k](https://huggingface.co/timm/maxvit_tiny_tf_224.in1k) |83.41|96.59| 1004.94| 30.92| 5.60| 35.78|

|[coatnet_1_rw_224.sw_in1k](https://huggingface.co/timm/coatnet_1_rw_224.sw_in1k) |83.62|96.38| 989.59| 41.72| 8.04| 34.60|

|[maxvit_rmlp_tiny_rw_256.sw_in1k](https://huggingface.co/timm/maxvit_rmlp_tiny_rw_256.sw_in1k) |84.23|96.78| 807.21| 29.15| 6.77| 46.92|

|[maxvit_rmlp_small_rw_224.sw_in1k](https://huggingface.co/timm/maxvit_rmlp_small_rw_224.sw_in1k) |84.49|96.76| 693.82| 64.90| 10.75| 49.30|

|[maxvit_small_tf_224.in1k](https://huggingface.co/timm/maxvit_small_tf_224.in1k) |84.43|96.83| 647.96| 68.93| 11.66| 53.17|

|[coatnet_2_rw_224.sw_in12k_ft_in1k](https://huggingface.co/timm/coatnet_2_rw_224.sw_in12k_ft_in1k) |86.57|97.89| 631.88| 73.87| 15.09| 49.22|

|[coatnet_rmlp_2_rw_224.sw_in1k](https://huggingface.co/timm/coatnet_rmlp_2_rw_224.sw_in1k) |84.61|96.74| 625.81| 73.88| 15.18| 54.78|

|[coatnet_rmlp_2_rw_224.sw_in12k_ft_in1k](https://huggingface.co/timm/coatnet_rmlp_2_rw_224.sw_in12k_ft_in1k) |86.49|97.90| 620.58| 73.88| 15.18| 54.78|

|[maxxvit_rmlp_small_rw_256.sw_in1k](https://huggingface.co/timm/maxxvit_rmlp_small_rw_256.sw_in1k) |84.63|97.06| 575.53| 66.01| 14.67| 58.38|

|[maxxvitv2_rmlp_base_rw_224.sw_in12k_ft_in1k](https://huggingface.co/timm/maxxvitv2_rmlp_base_rw_224.sw_in12k_ft_in1k) |86.64|98.02| 501.03| 116.09| 24.20| 62.77|

|[maxvit_rmlp_base_rw_224.sw_in12k_ft_in1k](https://huggingface.co/timm/maxvit_rmlp_base_rw_224.sw_in12k_ft_in1k) |86.89|98.02| 375.86| 116.14| 23.15| 92.64|

|[maxvit_base_tf_224.in1k](https://huggingface.co/timm/maxvit_base_tf_224.in1k) |84.85|96.99| 358.25| 119.47| 24.04| 95.01|

|[maxvit_tiny_tf_384.in1k](https://huggingface.co/timm/maxvit_tiny_tf_384.in1k) |85.11|97.38| 293.46| 30.98| 17.53| 123.42|

|[maxvit_large_tf_224.in1k](https://huggingface.co/timm/maxvit_large_tf_224.in1k) |84.93|96.97| 247.71| 211.79| 43.68| 127.35|

|[maxvit_small_tf_384.in1k](https://huggingface.co/timm/maxvit_small_tf_384.in1k) |85.54|97.46| 188.35| 69.02| 35.87| 183.65|

|[coatnet_rmlp_2_rw_384.sw_in12k_ft_in1k](https://huggingface.co/timm/coatnet_rmlp_2_rw_384.sw_in12k_ft_in1k) |87.39|98.31| 160.80| 73.88| 47.69| 209.43|

|[maxxvitv2_rmlp_base_rw_384.sw_in12k_ft_in1k](https://huggingface.co/timm/maxxvitv2_rmlp_base_rw_384.sw_in12k_ft_in1k) |87.47|98.37| 149.49| 116.09| 72.98| 213.74|

|[maxvit_tiny_tf_512.in1k](https://huggingface.co/timm/maxvit_tiny_tf_512.in1k) |85.67|97.58| 144.25| 31.05| 33.49| 257.59|

|[maxvit_rmlp_base_rw_384.sw_in12k_ft_in1k](https://huggingface.co/timm/maxvit_rmlp_base_rw_384.sw_in12k_ft_in1k) |87.81|98.37| 106.55| 116.14| 70.97| 318.95|

|[maxvit_base_tf_384.in21k_ft_in1k](https://huggingface.co/timm/maxvit_base_tf_384.in21k_ft_in1k) |87.92|98.54| 104.71| 119.65| 73.80| 332.90|

|[maxvit_base_tf_384.in1k](https://huggingface.co/timm/maxvit_base_tf_384.in1k) |86.29|97.80| 101.09| 119.65| 73.80| 332.90|

|[maxvit_small_tf_512.in1k](https://huggingface.co/timm/maxvit_small_tf_512.in1k) |86.10|97.76| 88.63| 69.13| 67.26| 383.77|

|[maxvit_large_tf_384.in21k_ft_in1k](https://huggingface.co/timm/maxvit_large_tf_384.in21k_ft_in1k) |87.98|98.56| 71.75| 212.03|132.55| 445.84|

|[maxvit_large_tf_384.in1k](https://huggingface.co/timm/maxvit_large_tf_384.in1k) |86.23|97.69| 70.56| 212.03|132.55| 445.84|

|[maxvit_base_tf_512.in21k_ft_in1k](https://huggingface.co/timm/maxvit_base_tf_512.in21k_ft_in1k) |88.20|98.53| 50.87| 119.88|138.02| 703.99|

|[maxvit_base_tf_512.in1k](https://huggingface.co/timm/maxvit_base_tf_512.in1k) |86.60|97.92| 50.75| 119.88|138.02| 703.99|

|[maxvit_xlarge_tf_384.in21k_ft_in1k](https://huggingface.co/timm/maxvit_xlarge_tf_384.in21k_ft_in1k) |88.32|98.54| 42.53| 475.32|292.78| 668.76|

|[maxvit_large_tf_512.in21k_ft_in1k](https://huggingface.co/timm/maxvit_large_tf_512.in21k_ft_in1k) |88.04|98.40| 36.42| 212.33|244.75| 942.15|

|[maxvit_large_tf_512.in1k](https://huggingface.co/timm/maxvit_large_tf_512.in1k) |86.52|97.88| 36.04| 212.33|244.75| 942.15|

|[maxvit_xlarge_tf_512.in21k_ft_in1k](https://huggingface.co/timm/maxvit_xlarge_tf_512.in21k_ft_in1k) |88.53|98.64| 21.76| 475.77|534.14|1413.22|
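
The two tables hold the same rows sorted differently, so a question such as "which model is fastest above a given top-1" needs both columns at once. The following sketch, assuming the row layout shown above, parses the markdown rows with a regular expression; `parse_rows`, `fastest_above` and the column keys are hypothetical names chosen for illustration.

```python
import re

# Matches data rows of the form: |[name](url) |v1|v2|v3|v4|v5|v6|
ROW = re.compile(r'^\|\[(?P<name>[^\]]+)\]\([^)]*\)\s*\|(?P<cells>.+)\|\s*$')
COLUMNS = ('top1', 'top5', 'samples_per_sec', 'params_m', 'gmac', 'act_m')

SAMPLE = """
|model |top1 |top5 |samples / sec |Params (M) |GMAC |Act (M)|
|---|----:|----:|----:|----:|----:|----:|
|[maxvit_rmlp_base_rw_384.sw_in12k_ft_in1k](https://huggingface.co/timm/maxvit_rmlp_base_rw_384.sw_in12k_ft_in1k) |87.81|98.37| 106.55| 116.14| 70.97| 318.95|
|[maxxvitv2_rmlp_base_rw_384.sw_in12k_ft_in1k](https://huggingface.co/timm/maxxvitv2_rmlp_base_rw_384.sw_in12k_ft_in1k) |87.47|98.37| 149.49| 116.09| 72.98| 213.74|
|[coatnet_rmlp_2_rw_384.sw_in12k_ft_in1k](https://huggingface.co/timm/coatnet_rmlp_2_rw_384.sw_in12k_ft_in1k) |87.39|98.31| 160.80| 73.88| 47.69| 209.43|
"""


def parse_rows(markdown):
    """Turn the data rows of a comparison table into dictionaries."""
    rows = []
    for line in markdown.splitlines():
        match = ROW.match(line.strip())
        if not match:
            continue  # skip header, separator and blank lines
        values = [float(cell) for cell in match.group('cells').split('|')]
        rows.append({'model': match.group('name'), **dict(zip(COLUMNS, values))})
    return rows


def fastest_above(rows, min_top1):
    """Return the highest-throughput row whose top-1 is at least min_top1."""
    candidates = [row for row in rows if row['top1'] >= min_top1]
    return max(candidates, key=lambda row: row['samples_per_sec']) if candidates else None


rows = parse_rows(SAMPLE)
best = fastest_above(rows, 87.4)
print(best['model'], best['samples_per_sec'])
```

Only three rows are copied into `SAMPLE` here; pasting either full table into it should work the same way as long as the row layout is unchanged.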

## Citation

```bibtex

@misc{rw2019timm,

author = {Ross Wightman},

title = {PyTorch Image Models},

year = {2019},

publisher = {GitHub},

journal = {GitHub repository},

doi = {10.5281/zenodo.4414861},

howpublished = {\url{https://github.com/huggingface/pytorch-image-models}}

}

```

```bibtex

@article{tu2022maxvit,

title={MaxViT: Multi-Axis Vision Transformer},

author={Tu, Zhengzhong and Talebi, Hossein and Zhang, Han and Yang, Feng and Milanfar, Peyman and Bovik, Alan and Li, Yinxiao},

journal={ECCV},

year={2022},

}

```

```bibtex

@article{dai2021coatnet,

title={CoAtNet: Marrying Convolution and Attention for All Data Sizes},

author={Dai, Zihang and Liu, Hanxiao and Le, Quoc V and Tan, Mingxing},

journal={arXiv preprint arXiv:2106.04803},

year={2021}

}

```

| 22,585 | [

[

-0.052886962890625,

-0.031982421875,

0.0009202957153320312,

0.028778076171875,

-0.0243072509765625,

-0.0198974609375,

-0.011474609375,

-0.0245361328125,

0.04656982421875,

0.018829345703125,

-0.04302978515625,

-0.046356201171875,

-0.04949951171875,

-0.0051307... |

SJ-Ray/Re-Punctuate | 2022-06-29T09:05:36.000Z | [

"transformers",

"tf",

"t5",

"text2text-generation",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | text2text-generation | SJ-Ray | null | null | SJ-Ray/Re-Punctuate | 7 | 1,359 | transformers | 2022-03-16T16:10:00 | ---

license: apache-2.0

---

<h2>Re-Punctuate:</h2>

Re-Punctuate is a T5 model that attempts to correct capitalization and punctuation in sentences.

<h3>DataSet:</h3>

The DialogSum dataset (115,056 records) was used to fine-tune the model for punctuation and capitalization correction.

<h3>Usage:</h3>

<pre>

from transformers import T5Tokenizer, TFT5ForConditionalGeneration

tokenizer = T5Tokenizer.from_pretrained('SJ-Ray/Re-Punctuate')

model = TFT5ForConditionalGeneration.from_pretrained('SJ-Ray/Re-Punctuate')

input_text = 'the story of this brave brilliant athlete whose very being was questioned so publicly is one that still captures the imagination'

inputs = tokenizer.encode("punctuate: " + input_text, return_tensors="tf")

result = model.generate(inputs)

decoded_output = tokenizer.decode(result[0], skip_special_tokens=True)

print(decoded_output)

</pre>

<h4> Example: </h4>

<b>Input:</b> the story of this brave brilliant athlete whose very being was questioned so publicly is one that still captures the imagination <br>

<b>Output:</b> The story of this brave, brilliant athlete, whose very being was questioned so publicly, is one that still captures the imagination.

<h4> Connect on: <a href="https://www.linkedin.com/in/suraj-kumar-710382a7" target="_blank">LinkedIn : Suraj Kumar</a></h4> | 1,316 | [

[

0.01065826416015625,

-0.04547119140625,

0.0230712890625,

0.0113677978515625,

-0.01419830322265625,

0.036041259765625,

-0.003726959228515625,

-0.01276397705078125,

0.0218048095703125,

0.0183563232421875,

-0.056610107421875,

-0.039520263671875,

-0.031768798828125,... |

luongphamit/NeverEnding-Dream2 | 2023-03-21T03:01:41.000Z | [

"diffusers",

"stable-diffusion",

"text-to-image",

"art",

"artistic",

"en",

"license:other",

"endpoints_compatible",

"has_space",

"diffusers:StableDiffusionPipeline",

"region:us"

] | text-to-image | luongphamit | null | null | luongphamit/NeverEnding-Dream2 | 11 | 1,359 | diffusers | 2023-03-21T03:01:16 | ---

language:

- en

license: other

tags:

- stable-diffusion

- text-to-image

- art

- artistic

- diffusers

inference: true

duplicated_from: Lykon/NeverEnding-Dream

---

# NeverEnding Dream (NED)

## Official Repository

Read more about this model here: https://civitai.com/models/10028/neverending-dream-ned

Please also support the model by giving it 5 stars and a heart, which will notify you of new updates.

Also consider supporting me on Patreon or BuyMeACoffee

- https://www.patreon.com/Lykon275

- https://www.buymeacoffee.com/lykon

You can run this model on:

- https://sinkin.ai/m/qGdxrYG

Some sample output:

| 1,069 | [

[

-0.0203857421875,

-0.0071868896484375,

0.038909912109375,

0.02874755859375,

-0.0457763671875,

0.00548553466796875,

0.01517486572265625,

-0.0386962890625,

0.053497314453125,

0.061614990234375,

-0.08099365234375,

-0.05560302734375,

-0.050018310546875,

-0.00281... |

openbmb/VisCPM-Chat | 2023-08-12T11:45:22.000Z | [

"transformers",

"pytorch",

"viscpmchatbee",

"feature-extraction",

"custom_code",

"zh",

"en",

"has_space",

"region:us"

] | feature-extraction | openbmb | null | null | openbmb/VisCPM-Chat | 15 | 1,358 | transformers | 2023-06-28T02:18:29 | ---

language:

- zh

- en

---

# VisCPM

Simplified Chinese | [English](README_en.md)

<p align="center">

<p align="left">

<a href="./LICENSE"><img src="https://img.shields.io/badge/license-Apache%202-dfd.svg"></a>

<a href=""><img src="https://img.shields.io/badge/python-3.8+-aff.svg"></a>

</p>

`VisCPM` is a family of open-source large multimodal models that support multimodal conversation (the `VisCPM-Chat` model) and text-to-image generation (the `VisCPM-Paint` model) in both Chinese and English, achieving state-of-the-art performance among Chinese open-source multimodal models. `VisCPM` is trained on top of the large language model [CPM-Bee](https://github.com/OpenBMB/CPM-Bee) with 10B parameters, fusing a visual encoder (`Q-Former`) and a visual decoder (`Diffusion-UNet`) to support visual inputs and outputs. Thanks to the good bilingual capability of `CPM-Bee`, `VisCPM` can be pre-trained with English multimodal data only and still generalize well to achieve promising Chinese multimodal capabilities.

## VisCPM-Chat

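`VisCPM-Chat` supports bilingual (Chinese and English) multimodal conversations about images. The model uses `Q-Former` as the visual encoder and CPM-Bee (10B) as the base language model, and fuses the visual and language models through a language modeling training objective. Training consists of two stages, pretraining and instruction tuning:

* Pretraining: We pretrained `VisCPM-Chat` on approximately 100M high-quality English image-text pairs, drawn from CC3M, CC12M, COCO, Visual Genome, Laion and others. In this stage, the language model parameters remain fixed and only the `Q-Former` parameters are updated, which enables efficient alignment of large-scale vision-language representations.

* Instruction tuning: We use the [LLaVA-150K](https://llava-vl.github.io/) English instruction tuning data, mixed with the corresponding translated Chinese data, to fine-tune the model and align its basic multimodal capabilities with user intent. In this stage, we update all model parameters to make more efficient use of the instruction tuning data. Interestingly, we find that even when instruction tuning uses only English data, the model can understand Chinese questions but can only answer in English. This indicates that the model's multilingual multimodal capabilities generalize well. Adding a small amount of translated Chinese data during instruction tuning aligns the response language with the language of the user's question.

We evaluated the model on the LLaVA English test set and on the translated Chinese test set. This benchmark examines the model's performance in open-domain conversation, detailed image description, and complex reasoning, and uses GPT-4 for scoring. `VisCPM-Chat` achieves the best average performance in Chinese multimodal capabilities, performs well in general-domain conversation and complex reasoning, and also shows good English multimodal capabilities.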

<table>

<tr>

<td align="center" rowspan="2" colspan="2">Model</td>

<td align="center" colspan="4">English</td>

<td align="center" colspan="4">Chinese</td>

</tr>

<tr>

<td align="center">Multimodal conversation</td>

<td align="center">Detailed description</td>

<td align="center">Complex reasoning</td>

<td align="center">Average</td>

<td align="center">Multimodal conversation</td>

<td align="center">Detailed description</td>

<td align="center">Complex reasoning</td>

<td align="center">Average</td>

</tr>

<tr>

<td align="center" rowspan="3">English models</td>

<td align="center">MiniGPT4</td>

<td align="center">65</td>

<td align="center">67.3</td>

<td align="center">76.6</td>

<td align="center">69.7</td>

<td align="center">-</td>

<td align="center">-</td>

<td align="center">-</td>

<td align="center">-</td>

</tr>

<tr>

<td align="center">InstructBLIP</td>

<td align="center">81.9</td>

<td align="center">68</td>

<td align="center">91.2</td>

<td align="center">80.5</td>

<td align="center">-</td>

<td align="center">-</td>

<td align="center">-</td>

<td align="center">-</td>

</tr>

<tr>

<td align="center">LLaVA</td>

<td align="center">89.5</td>

<td align="center">70.4</td>

<td align="center">96.2</td>

<td align="center">85.6</td>

<td align="center">-</td>

<td align="center">-</td>

<td align="center">-</td>

<td align="center">-</td>

</tr>

<tr>

<td align="center" rowspan="4">Chinese-English bilingual</td>

<td align="center">mPLUG-Owl </td>

<td align="center">64.6</td>

<td align="center">47.7</td>

<td align="center">80.1</td>

<td align="center">64.2</td>

<td align="center">76.3</td>

<td align="center">61.2</td>

<td align="center">77.8</td>

<td align="center">72</td>

</tr>

<tr>

<td align="center">VisualGLM</td>

<td align="center">62.4</td>

<td align="center">63</td>

<td align="center">80.6</td>

<td align="center">68.7</td>

<td align="center">76.6</td>

<td align="center">87.8</td>

<td align="center">83.6</td>

<td align="center">82.7</td>

</tr>

<tr>

<td align="center">Ziya (LLaMA 13B)</td>

<td align="center">82.7</td>

<td align="center">69.9</td>

<td align="center">92.1</td>

<td align="center">81.7</td>

<td align="center">85</td>

<td align="center">74.7</td>

<td align="center">82.4</td>

<td align="center">80.8</td>

</tr>

<tr>

<td align="center">VisCPM-Chat</td>

<td align="center">83.3</td>

<td align="center">68.9</td>

<td align="center">90.5</td>

<td align="center">81.1</td>

<td align="center">92.7</td>

<td align="center">76.1</td>

<td align="center">89.2</td>

<td align="center">86.3</td>

</tr>

</table>

## VisCPM-Paint

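`VisCPM-Paint` supports bilingual (Chinese and English) text-to-image generation. The model uses CPM-Bee (10B) as the text encoder and `UNet` as the image decoder, and fuses the language and vision models through a diffusion model training objective. During training, the language model parameters always remain fixed. We initialize the visual decoder with the UNet parameters of [Stable Diffusion 2.1](https://github.com/Stability-AI/stablediffusion) and fuse it with the language model by gradually unfreezing key bridging parameters: first we train the linear layer that maps text representations to the visual model, then we further unfreeze the cross-attention layers of the `UNet`. The model was trained on [LAION 2B](https://laion.ai/) English image-text pairs.

As with `VisCPM-Chat`, we find that thanks to the bilingual capability of CPM-Bee, `VisCPM-Paint` can be trained on English image-text pairs only and still generalize to good Chinese text-to-image generation, achieving the best results among Chinese open-source models. Adding 20M cleaned native Chinese image-text pairs and 120M image-text pairs translated into Chinese further improves the model's Chinese text-to-image generation. We sampled 30,000 images from MSCOCO and computed FID (Fréchet Inception Distance) and CLIP Score; the former evaluates the quality of the generated images and the latter evaluates how well the generated images match the input.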
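
To give an intuition for the FID metric mentioned above, the sketch below computes the Fréchet distance between two Gaussians fitted to one-dimensional samples. This is a deliberately simplified illustration and not the evaluation code behind the reported numbers: the real FID fits multivariate Gaussians (mean vectors and covariance matrices) to Inception network features of real and generated images. `frechet_distance_1d` is a hypothetical name.

```python
import math
from statistics import fmean, pvariance


def frechet_distance_1d(xs, ys):
    """Squared Fréchet distance between Gaussians fitted to two 1-D samples."""
    mu_x, mu_y = fmean(xs), fmean(ys)
    var_x, var_y = pvariance(xs), pvariance(ys)
    # d^2 = (mu_x - mu_y)^2 + var_x + var_y - 2 * sqrt(var_x * var_y)
    return (mu_x - mu_y) ** 2 + var_x + var_y - 2.0 * math.sqrt(var_x * var_y)


real = [1.0, 2.0, 3.0]
shifted = [4.0, 5.0, 6.0]
print(frechet_distance_1d(real, real))     # close to 0: identical distributions
print(frechet_distance_1d(real, shifted))  # close to 9: means differ by 3
```

Lower values mean the two distributions are closer, which is why the FID columns in the table are marked with a downward arrow.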

<table>

<tr>

<td align="center" rowspan="2">Model</td>

<td align="center" colspan="2">English</td>

<td align="center" colspan="2">Chinese</td>

</tr>

<tr>

<td align="center">FID↓</td>

<td align="center">CLIP Score↑</td>

<td align="center">FID↓</td>

<td align="center">CLIP Score↑</td>

</tr>

<tr>

<td align="center">AltDiffusion</td>

<td align="center">17.16</td>

<td align="center">25.24</td>

<td align="center">16.09</td>

<td align="center">24.05</td>

</tr>

<tr>

<td align="center">TaiyiDiffusion</td>

<td align="center">-</td>

<td align="center">-</td>

<td align="center">15.58</td>

<td align="center">22.69</td>

</tr>

<tr>

<td align="center">Stable Diffusion</td>

<td align="center">9.08</td>

<td align="center">26.22</td>

<td align="center">-</td>

<td align="center">-</td>

</tr>

<tr>

<td align="center">VisCPM-Paint-en</td>

<td align="center">9.51</td>

<td align="center">25.35</td>

<td align="center">10.86</td>

<td align="center">23.38</td>

</tr>

<tr>

<td align="center">VisCPM-Paint-zh</td>

<td align="center">9.98</td>

<td align="center">25.04</td>

<td align="center">9.65</td>

<td align="center">24.17</td>

</tr>

</table>

# Installation

```Shell

conda create -n viscpm python=3.10 -y

conda activate viscpm

pip install setuptools

pip install diffusers jieba matplotlib numpy opencv_python

pip install pandas Pillow psutil pydantic scipy

pip install torch==1.13.1 torchscale==0.2.0 torchvision==0.14.1 timm

pip install transformers==4.28.0

pip install tqdm typing_extensions

pip install git+https://github.com/thunlp/OpenDelta.git

pip install git+https://github.com/OpenBMB/CPM-Bee.git#egg=cpm-live&subdirectory=src

```

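VisCPM requires a single GPU with more than 40GB of memory to run. We will release a more memory-efficient inference method as soon as possible.

## Usage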

```python

>>> from transformers import AutoModel, AutoTokenizer, AutoImageProcessor

>>> from PIL import Image

>>> tokenizer = AutoTokenizer.from_pretrained('openbmb/VisCPM-Chat', trust_remote_code=True)

>>> processor = AutoImageProcessor.from_pretrained('openbmb/VisCPM-Chat', trust_remote_code=True)

>>> model = AutoModel.from_pretrained('openbmb/VisCPM-Chat', trust_remote_code=True).to('cuda')

>>> data = [{

...     'context': '',

...     'question': 'describe this image in detail.',

...     'image': tokenizer.unk_token * model.query_num,

...     '<ans>': ''

... }]

>>> image = Image.open('case.jpg')

>>> result = model.generate(data, tokenizer, processor, image)

>>> print(result[0]['<ans>'])

这幅图片显示了一群热气球在天空中飞行。这些热气球漂浮在不同的地方,包括山脉、城市和乡村地区。

``` | 8,373 | [

[

-0.05743408203125,

-0.0477294921875,

0.00676727294921875,

0.024505615234375,

-0.0207672119140625,

0.004978179931640625,

-0.00991058349609375,

-0.02728271484375,

0.0239410400390625,

-0.002468109130859375,

-0.05078125,

-0.0286865234375,

-0.034454345703125,

-0.... |

mirroring/civitai_mirror | 2023-11-06T17:19:21.000Z | [

"diffusers",

"Civitai",

"mirror",

"stable diffusion",

"text-to-image",

"license:wtfpl",

"has_space",

"region:us"

] | text-to-image | mirroring | null | null | mirroring/civitai_mirror | 50 | 1,357 | diffusers | 2023-03-28T23:16:54 | ---

license: wtfpl

pipeline_tag: text-to-image

tags:

- Civitai

- mirror

- stable diffusion

---

# A mirror of anything interesting on civitai or elsewhere on the web.

This repo is *mostly* organized around the structure

that's necessary to import the entire repo into an

automatic1111 install. This is because models were originally

uploaded directly from such an install via google drive in

a colab session.

Currently I'm uploading files via my new space over at

https://hf.co/anonderpling/repo_uploader. However, I expect

to go back to a real file system and git uploads soon;

hopefully paperspace can help with that.

## Workflow

My workflow for downloading files into paperspace gradient

is to download the entire repo *without* pulling LFS

files, do a sparse checkout, and then pull the files I

want with `git lfs pull --include` (slow) or aria2 (fast). This

workflow should work with colab, too. Whether you use

colab or paperspace, you'll probably need the latest

version of git to use `git sparse-checkout add`.

## How to upload and download files

<details>

<summary>sparse checkout with git, bypassing LFS, download with aria2c</summary>

```bash

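!GIT_LFS_SKIP_SMUDGE=1 git clone git@hf.co:anonderpling/civitai_mirror # GIT_LFS_SKIP_SMUDGE=1 leaves LFS files as small pointer files; this is my command so I can push changes, you'll need to use https://hf.co/ instead of git@hf.co:

# use %cd rather than !cd: !cd runs in a throwaway subshell, so the directory change would not persist

%cd civitai_mirror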

!git sparse-checkout set embeddings # embeddings are small, so it's easy enough to just pull all of them

!git sparse-checkout add models/VAE # there's only a few VAEs, and they're generally needed, so grab all those too...

!git sparse-checkout add models/Stable-diffusion/illuminati* models/Stable-diffusion/revAnimated* # add some stable diffusion models I intend to work with in this session

!apt install -y aria2 # make sure aria2c is installed

# let's break the following command down into parts, since there's multiple commands on one line

# find models embeddings -type f -size -2k # find files in the models and embeddings directories smaller than 2 kilobytes (these are the lfs pointers that were checked out)

# | while read a; do #lets build an aria2c input file

# echo "https://huggingface.co/anonderpling/civitai_mirror/resolve/main/${a}"; # tell aria2c where to find the file

# echo " out=${a}"; # tell aria2c where to place said file

# rm "${a}"; # remove the existing file, because I'm too lazy to look up the option to have aria2c overwrite it (plus if you stop in the middle, you can tell at a glance what else is needed)

# done | tee aria2.in.txt # end the loop, but watch to make sure theres nothing accidentally included by wildcards that shouldnt have been...downloads could take a while (and fill the disk) if I accidentally put a space before the *

# aria2c -x16 --split=16 -i aria2.in.txt # download all the files as fast as possible

!find models embeddings -type f -size -2k | while read -r a; do echo "https://huggingface.co/anonderpling/civitai_mirror/resolve/main/${a}"; echo " out=${a}"; rm "${a}"; done | tee aria2.in.txt

!aria2c -x16 --split=16 -i aria2.in.txt

```

</details>
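
The size test above is a heuristic: any genuinely small file would also be treated as a pointer. As a rough sketch of an alternative, the Python below identifies Git LFS pointers by their first line and builds the same aria2c input text. The function names, the 1 KiB cutoff, and the use of URL quoting are illustrative assumptions, not part of the original workflow, and unlike the shell loop it does not delete the pointer files.

```python
from pathlib import Path
from urllib.parse import quote

# Every Git LFS pointer file begins with this spec line.
LFS_HEADER = b"version https://git-lfs.github.com/spec/v1"
# Base URL copied from the shell snippet above.
BASE_URL = "https://huggingface.co/anonderpling/civitai_mirror/resolve/main"


def is_lfs_pointer(path: Path) -> bool:
    """Return True if the file looks like a Git LFS pointer, not real data."""
    # Pointers are tiny text files; the 1 KiB cutoff is an assumed safety margin.
    if path.stat().st_size > 1024:
        return False
    with path.open("rb") as fh:
        return fh.read(len(LFS_HEADER)) == LFS_HEADER


def aria2_input(root: Path, subdirs: list[str], base_url: str = BASE_URL) -> str:
    """Build aria2c input-file text for every LFS pointer under the subdirs."""
    entries = []
    for sub in subdirs:
        base = root / sub
        if not base.is_dir():
            continue  # directory not part of this sparse checkout
        for path in sorted(base.rglob("*")):
            if path.is_file() and is_lfs_pointer(path):
                rel = path.relative_to(root).as_posix()
                # URL line first, then an indented option line, as aria2c expects.
                entries.append(f"{base_url}/{quote(rel)}\n  out={rel}\n")
    return "".join(entries)
```

Writing the result of `aria2_input(Path("."), ["models", "embeddings"])` to `aria2.in.txt` from inside the clone would produce a file usable with `aria2c -i aria2.in.txt`.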

#### Uploading files:

- [repo uploader](https://anonderpling-repo-uploader.hf.space), for single files. Requires write permission to the repo.

- [Civitai model uploader](https://mirroring-upload-civitai-model.hf.space), for uploading a model from Civitai. Can create a PR. Intended for uploading model previews and Civitai's API response JSON as well as the model, for use by the Civitai unofficial extension.

</details>

<details>

<summary>to upload more files via git</summary>

```bash

# enable the git lfs filters

!pip install huggingface_hub

!huggingface-cli lfs-enable-largefiles .

# yup, not telling. I'm an *anonymous* derpling, after all

!git config --local user.name 'not telling'

# really couldnt care less if this is accurate...maybe I'll start randomizing it...

!git config --local user.email 'anonderpling@users.noreply.huggingface.co'

# create an rsa key with no password

!ssh-keygen -t rsa -b 4096 -f ~/.ssh/id_hf.co -P ''

# a clickable link in jupyter/colab/paperspace

print('https://hf.co/settings/keys')

# give the public key so it can be easily copied to huggingface

!cat ~/.ssh/id_hf.co.pub

# track files 1mb+ with lfs manually (huggingface filter deals with models automatically, but large previews will give you errors)

!find -type f -size +999k -not \( -name '*.safetensors' -o -name '*.ckpt' -o -name '*.pt' \) -exec git lfs track '{}' +

# IMPORTANT: make sure you add the git ssh key above before uploading

!sleep 1m # gives you time to do so

# upload your files now. do make sure you dont upload files that didnt download properly (interrupted aria2c, lfs pointers, etc)

!git add .gitattributes models embeddings

!git commit -m "add a message..."

!git push

```

</details>

<details>

<summary>to upload a single file via huggingface_hub:</summary>

```python

from huggingface_hub import HfApi

api = HfApi()

inurl = api.upload_file( # https://huggingface.co/docs/huggingface_hub/v0.14.1/en/package_reference/hf_api#huggingface_hub.HfApi.upload_file

    token="hf_token", # only needed if you didn't already use huggingface_hub.login() previously.

    path_or_fileobj="/content/stable-diffusion-webui/models/Stable-diffusion/Rev-Animated.safetensors", # location of the file you want to upload

    path_in_repo="models/Stable-diffusion/Rev-Animated.safetensors",

    repo_id="mirroring/civitai_mirror",

    commit_message="Uploading Rev Animated", # optional.

    commit_description="I want to upload Rev Animated because it's a special file for me.\nPlease accept my PR. I don't want to host it on my own HF repo!", # optional. Required (here) for PRs.

    create_pr=True, # optional. needed if you're not a special person allowed to add new files to the repo (ie, if you just want us to mirror something; make sure to fill out the description/message above, as well)

)

print(f"Uploaded file. Check it out at {inurl}")

```

</details>

<details>

<summary>to upload multiple files via huggingface_hub:</summary>

```python

inurl=api.upload_folder( # https://huggingface.co/docs/huggingface_hub/v0.14.1/en/package_reference/hf_api#huggingface_hub.HfApi.upload_folder

token="hf_token", # only needed if you didn't already use huggingface_hub.login() previously.