license | tags | is_nc | readme_section | hash |

|---|---|---|---|---|

apache-2.0 | ['vision', 'image-classification'] | false | Pretraining The model was trained on TPUv3 hardware (8 cores). All model variants are trained with a batch size of 4096 and learning rate warmup of 10k steps. For ImageNet, the authors found it beneficial to additionally apply gradient clipping at global norm 1. Training resolution is 224. | d116bd3ef46344a033e05845e35ff6d9 |
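The gradient clipping at global norm 1 mentioned in the pretraining notes can be illustrated with a small pure-Python sketch. This is not the authors' training code; `clip_by_global_norm` and the toy gradient values are hypothetical names and numbers chosen for illustration only.

```python
import math


def clip_by_global_norm(grads, max_norm=1.0):
    """Rescale all gradients together so their joint L2 norm is at most max_norm.

    `grads` is a list of flat lists of floats, one list per parameter tensor.
    Returns the (possibly rescaled) gradients and the norm before clipping.
    """
    # The global norm is taken over every gradient entry of every tensor.
    global_norm = math.sqrt(sum(g * g for tensor in grads for g in tensor))
    if global_norm <= max_norm:
        # Already within the limit: return an unchanged copy.
        return [list(tensor) for tensor in grads], global_norm
    scale = max_norm / global_norm
    return [[g * scale for g in tensor] for tensor in grads], global_norm


# Toy gradients (illustrative values only): joint norm is sqrt(3^2 + 4^2) = 5.
grads = [[3.0, 0.0], [0.0, 4.0]]
clipped, norm_before = clip_by_global_norm(grads, max_norm=1.0)
norm_after = math.sqrt(sum(g * g for tensor in clipped for g in tensor))
print(norm_before, norm_after)
```

Because every tensor is scaled by the same factor, the direction of the overall update is preserved; only its length is capped.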

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-emotion This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the emotion dataset. It achieves the following results on the evaluation set: - Loss: 0.2269 - Accuracy: 0.9245 - F1: 0.9245 | 9bbd7b08a4ca9a643619d7f1db268dcf |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------:|:------:| | 0.853 | 1.0 | 250 | 0.3507 | 0.8925 | 0.8883 | | 0.2667 | 2.0 | 500 | 0.2269 | 0.9245 | 0.9245 | | 557b32bd93cd1f27a2dfa9f7be60d7ec |

apache-2.0 | ['generated_from_trainer'] | false | hasoc19-bert-base-multilingual-cased-HatredStatement-new This model is a fine-tuned version of [bert-base-multilingual-cased](https://huggingface.co/bert-base-multilingual-cased) on an unspecified dataset. It achieves the following results on the evaluation set: - Loss: 0.6565 - Accuracy: 0.7319 - Precision: 0.7320 - Recall: 0.7319 - F1: 0.7307 | 10e1e0db1d55cbe077f22cfe326ffb5e |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 1e-05 - train_batch_size: 32 - eval_batch_size: 32 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 6 | 393a2c017c382df82e76f8d5ce79e7d7 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | Precision | Recall | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------:|:---------:|:------:|:------:| | No log | 1.0 | 296 | 0.5540 | 0.7110 | 0.7147 | 0.7110 | 0.7067 | | 0.5551 | 2.0 | 592 | 0.5345 | 0.7224 | 0.7673 | 0.7224 | 0.7038 | | 0.5551 | 3.0 | 888 | 0.5752 | 0.7272 | 0.7430 | 0.7272 | 0.7183 | | 0.4252 | 4.0 | 1184 | 0.5697 | 0.7376 | 0.7384 | 0.7376 | 0.7359 | | 0.4252 | 5.0 | 1480 | 0.6335 | 0.7319 | 0.7388 | 0.7319 | 0.7269 | | 0.3401 | 6.0 | 1776 | 0.6565 | 0.7319 | 0.7320 | 0.7319 | 0.7307 | | f0688914ab76e5fafff8a02f98f5fbc1 |

apache-2.0 | ['automatic-speech-recognition', 'bas', 'generated_from_trainer', 'hf-asr-leaderboard', 'model_for_talk', 'mozilla-foundation/common_voice_8_0', 'robust-speech-event'] | false | wav2vec2-large-xls-r-300m-basaa-cv8 This model is a fine-tuned version of [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) on the MOZILLA-FOUNDATION/COMMON_VOICE_8_0 - BAS dataset. It achieves the following results on the evaluation set: - Loss: 0.4648 - Wer: 0.5472 | cee1af56ad5b185ba2a2f5a37f1dc798 |

apache-2.0 | ['automatic-speech-recognition', 'bas', 'generated_from_trainer', 'hf-asr-leaderboard', 'model_for_talk', 'mozilla-foundation/common_voice_8_0', 'robust-speech-event'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 7e-05 - train_batch_size: 32 - eval_batch_size: 16 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 500 - num_epochs: 100.0 - mixed_precision_training: Native AMP | 38d1d105188c7b1be4ca33cdc6e29656 |
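The combination of `lr_scheduler_type: linear` and `lr_scheduler_warmup_steps: 500` listed above can be sketched as a plain function of the step index. This is a minimal sketch, not the trainer's own implementation: `linear_warmup_lr` is a hypothetical helper, and `total_steps=3900` is an assumption estimated from the results table (500 steps correspond to about 12.82 epochs, so 100 epochs are roughly 3900 steps).

```python
def linear_warmup_lr(step, base_lr=7e-5, warmup_steps=500, total_steps=3900):
    """Learning rate at `step` for linear warmup followed by linear decay to zero.

    base_lr and warmup_steps come from the hyperparameter list;
    total_steps is an estimate, not a reported value.
    """
    if step < warmup_steps:
        # Warmup: ramp linearly from 0 up to base_lr.
        return base_lr * step / max(1, warmup_steps)
    # Decay: ramp linearly from base_lr down to 0 at total_steps.
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


for step in (0, 250, 500, 2200, 3900):
    print(step, linear_warmup_lr(step))
```

The peak learning rate is reached exactly at the end of warmup, after which the rate falls linearly until training ends.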

apache-2.0 | ['automatic-speech-recognition', 'bas', 'generated_from_trainer', 'hf-asr-leaderboard', 'model_for_talk', 'mozilla-foundation/common_voice_8_0', 'robust-speech-event'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:------:| | 2.9421 | 12.82 | 500 | 2.8894 | 1.0 | | 1.1872 | 25.64 | 1000 | 0.6688 | 0.7460 | | 0.8894 | 38.46 | 1500 | 0.4868 | 0.6516 | | 0.769 | 51.28 | 2000 | 0.4960 | 0.6507 | | 0.6936 | 64.1 | 2500 | 0.4781 | 0.5384 | | 0.624 | 76.92 | 3000 | 0.4643 | 0.5430 | | 0.5966 | 89.74 | 3500 | 0.4530 | 0.5591 | | 34453a812a8d6065e9b31c59820359c3 |

apache-2.0 | ['generated_from_trainer'] | false | mbert-finnic-ner This model is a fine-tuned version of [bert-base-multilingual-cased](https://huggingface.co/bert-base-multilingual-cased) on the Finnish and Estonian parts of the "WikiANN" dataset. It achieves the following results on the evaluation set: - Loss: 0.1427 - Precision: 0.9090 - Recall: 0.9156 - F1: 0.9123 - Accuracy: 0.9672 | f353e6d6f6c15e3b39632d0fb37b88f3 |
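The reported F1 can be cross-checked against the reported precision and recall, since F1 is their harmonic mean. The sketch below assumes the three numbers are computed over the same set of predictions; because the inputs are already rounded to four decimals, the result is only expected to agree to about that precision. `f1_from_precision_recall` is a hypothetical helper name.

```python
def f1_from_precision_recall(precision, recall):
    """Harmonic mean of precision and recall; defined as 0.0 when both are 0."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Values taken from the evaluation results above.
f1 = f1_from_precision_recall(0.9090, 0.9156)
print(round(f1, 4))
```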

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:| | 0.1636 | 1.0 | 2188 | 0.1385 | 0.8906 | 0.9000 | 0.8953 | 0.9601 | | 0.0991 | 2.0 | 4376 | 0.1346 | 0.9099 | 0.9095 | 0.9097 | 0.9660 | | 0.0596 | 3.0 | 6564 | 0.1427 | 0.9090 | 0.9156 | 0.9123 | 0.9672 | | c706dbd33406ffe49219068dfb421b6e |

apache-2.0 | ['generated_from_trainer'] | false | Article_50v0_NER_Model_3Epochs_AUGMENTED This model is a fine-tuned version of [bert-base-cased](https://huggingface.co/bert-base-cased) on the article50v0_wikigold_split dataset. It achieves the following results on the evaluation set: - Loss: 0.5912 - Precision: 0.0975 - Recall: 0.0183 - F1: 0.0308 - Accuracy: 0.7915 | cd775afba411158d890e31e815def228 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:| | No log | 1.0 | 14 | 0.7204 | 0.0 | 0.0 | 0.0 | 0.7803 | | No log | 2.0 | 28 | 0.6230 | 0.0743 | 0.0081 | 0.0145 | 0.7869 | | No log | 3.0 | 42 | 0.5912 | 0.0975 | 0.0183 | 0.0308 | 0.7915 | | ca7f14d2886189ebd12711cce079fefb |

apache-2.0 | ['generated_from_keras_callback'] | false | cakiki/distilbert-base-uncased-finetuned-tweet-sentiment This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 0.1025 - Train Sparse Categorical Accuracy: 0.9511 - Validation Loss: 0.1455 - Validation Sparse Categorical Accuracy: 0.9365 - Epoch: 2 | 4f07d822f45a6c1c20aff1bd13291887 |

apache-2.0 | ['generated_from_keras_callback'] | false | Training results | Train Loss | Train Sparse Categorical Accuracy | Validation Loss | Validation Sparse Categorical Accuracy | Epoch | |:----------:|:---------------------------------:|:---------------:|:--------------------------------------:|:-----:| | 0.5409 | 0.8158 | 0.2115 | 0.9265 | 0 | | 0.1442 | 0.9373 | 0.1411 | 0.9380 | 1 | | 0.1025 | 0.9511 | 0.1455 | 0.9365 | 2 | | 896d7ff5ea601fe04cdc4d45c25edabb |

apache-2.0 | ['generated_from_trainer'] | false | bert-small-finetuned-ner-to-multilabel-wnut-17-new This model is a fine-tuned version of [google/bert_uncased_L-4_H-512_A-8](https://huggingface.co/google/bert_uncased_L-4_H-512_A-8) on an unspecified dataset. It achieves the following results on the evaluation set: - Loss: 0.2039 | 0de6a33378ffe3bd1a5743fbae845592 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 3e-05 - train_batch_size: 8 - eval_batch_size: 8 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: constant - num_epochs: 40 | 586216d3c6244e7b661064a954c7bac6 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | 0.2006 | 1.18 | 500 | 0.2043 | | 0.1247 | 2.35 | 1000 | 0.1960 | | 0.0935 | 3.53 | 1500 | 0.1893 | | 0.0742 | 4.71 | 2000 | 0.2003 | | 0.0552 | 5.88 | 2500 | 0.2106 | | 0.0405 | 7.06 | 3000 | 0.2039 | | 9b74a184d794002a77e4551acd1a882d |

apache-2.0 | ['microsoft/deberta-v3-large'] | false | Cross-Encoder for Natural Language Inference This model was trained using [SentenceTransformers](https://sbert.net) [Cross-Encoder](https://www.sbert.net/examples/applications/cross-encoder/README.html) class. This model is based on [microsoft/deberta-v3-large](https://huggingface.co/microsoft/deberta-v3-large) | 6e749299a8c20bd7c185c26ee5516bcb |

apache-2.0 | ['microsoft/deberta-v3-large'] | false | Training Data The model was trained on the [SNLI](https://nlp.stanford.edu/projects/snli/) and [MultiNLI](https://cims.nyu.edu/~sbowman/multinli/) datasets. For a given sentence pair, it will output three scores corresponding to the labels: contradiction, entailment, neutral. | 799432c18f5e3dd80bf07a37e87c1e12 |

apache-2.0 | ['microsoft/deberta-v3-large'] | false | Performance - Accuracy on SNLI-test dataset: 92.20 - Accuracy on MNLI mismatched set: 90.49 For further evaluation results, see [SBERT.net - Pretrained Cross-Encoder](https://www.sbert.net/docs/pretrained_cross-encoders.html) | 5805f98eb3049603e66d41f6024cff5b |

apache-2.0 | ['microsoft/deberta-v3-large'] | false | Usage Pre-trained models can be used like this: ```python from sentence_transformers import CrossEncoder model = CrossEncoder('cross-encoder/nli-deberta-v3-large') scores = model.predict([('A man is eating pizza', 'A man eats something'), ('A black race car starts up in front of a crowd of people.', 'A man is driving down a lonely road.')]) | 1234578e56c52508e689d622718e1105 |

apache-2.0 | ['microsoft/deberta-v3-large'] | false | Usage with Transformers AutoModel You can use the model also directly with Transformers library (without SentenceTransformers library): ```python from transformers import AutoTokenizer, AutoModelForSequenceClassification import torch model = AutoModelForSequenceClassification.from_pretrained('cross-encoder/nli-deberta-v3-large') tokenizer = AutoTokenizer.from_pretrained('cross-encoder/nli-deberta-v3-large') features = tokenizer(['A man is eating pizza', 'A black race car starts up in front of a crowd of people.'], ['A man eats something', 'A man is driving down a lonely road.'], padding=True, truncation=True, return_tensors="pt") model.eval() with torch.no_grad(): scores = model(**features).logits label_mapping = ['contradiction', 'entailment', 'neutral'] labels = [label_mapping[score_max] for score_max in scores.argmax(dim=1)] print(labels) ``` | 94b99bcb28df2ec921a0f61c2a432a77 |

apache-2.0 | ['microsoft/deberta-v3-large'] | false | Zero-Shot Classification This model can also be used for zero-shot-classification: ```python from transformers import pipeline classifier = pipeline("zero-shot-classification", model='cross-encoder/nli-deberta-v3-large') sent = "Apple just announced the newest iPhone X" candidate_labels = ["technology", "sports", "politics"] res = classifier(sent, candidate_labels) print(res) ``` | b6884a8fdf6f11f33b7352503ff58171 |

mit | ['roberta-base', 'roberta-base-epoch_45'] | false | RoBERTa, Intermediate Checkpoint - Epoch 45 This model is part of our reimplementation of the [RoBERTa model](https://arxiv.org/abs/1907.11692), trained on Wikipedia and the Book Corpus only. We train this model for almost 100K steps, corresponding to 83 epochs. We provide the 84 checkpoints (including the randomly initialized weights before the training) to make it possible to study the training dynamics of such models, and for other possible use-cases. These models were trained as part of a work that studies how simple statistics from data, such as co-occurrences, affect model predictions, as described in the paper [Measuring Causal Effects of Data Statistics on Language Model's `Factual' Predictions](https://arxiv.org/abs/2207.14251). This is RoBERTa-base epoch_45. | 9e2e63b242bf0422df6b10ef33b0f85f |

apache-2.0 | ['automatic-speech-recognition', 'es'] | false | exp_w2v2r_es_xls-r_age_teens-2_sixties-8_s772 Fine-tuned [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) for speech recognition using the train split of [Common Voice 7.0 (es)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | 2192c69227887919f1e103a931640f70 |

apache-2.0 | ['automatic-speech-recognition', 'pl'] | false | exp_w2v2t_pl_r-wav2vec2_s996 Fine-tuned [facebook/wav2vec2-large-robust](https://huggingface.co/facebook/wav2vec2-large-robust) for speech recognition using the train split of [Common Voice 7.0 (pl)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | d7cd74238d785350345d4e0773149cfa |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Wav2Vec2-Large-XLSR-53-hindi Fine-tuned [facebook/wav2vec2-large-xlsr-53](https://huggingface.co/facebook/wav2vec2-large-xlsr-53) on Hindi using the data from the [Multilingual and code-switching ASR challenges for low resource Indian languages](https://navana-tech.github.io/IS21SS-indicASRchallenge/data.html). When using this model, make sure that your speech input is sampled at 16kHz. | 6c5bdcb9e0b97e26ce189a831ff9d270 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Usage The model can be used directly (without a language model) as follows: ```python import torch import torchaudio from datasets import load_dataset from transformers import Wav2Vec2ForCTC, Wav2Vec2Processor test_dataset = load_dataset("common_voice", "hi", split="test[:2%]") processor = Wav2Vec2Processor.from_pretrained("theainerd/Wav2Vec2-large-xlsr-hindi") model = Wav2Vec2ForCTC.from_pretrained("theainerd/Wav2Vec2-large-xlsr-hindi") resampler = torchaudio.transforms.Resample(48_000, 16_000) | c4f576e9e8454de69fc1742235dfe154 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Evaluation The model can be evaluated as follows on the Hindi test data of Common Voice. ```python import torch import torchaudio from datasets import load_dataset, load_metric from transformers import Wav2Vec2ForCTC, Wav2Vec2Processor import re test_dataset = load_dataset("common_voice", "hi", split="test") wer = load_metric("wer") processor = Wav2Vec2Processor.from_pretrained("theainerd/Wav2Vec2-large-xlsr-hindi") model = Wav2Vec2ForCTC.from_pretrained("theainerd/Wav2Vec2-large-xlsr-hindi") model.to("cuda") resampler = torchaudio.transforms.Resample(48_000, 16_000) chars_to_ignore_regex = '[\,\?\.\!\-\;\:\"\“]' | 4d192a1f2b0bfccd77f2803a57a37f4b |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | We need to read the audio files as arrays def evaluate(batch): inputs = processor(batch["speech"], sampling_rate=16_000, return_tensors="pt", padding=True) with torch.no_grad(): logits = model(inputs.input_values.to("cuda"), attention_mask=inputs.attention_mask.to("cuda")).logits pred_ids = torch.argmax(logits, dim=-1) batch["pred_strings"] = processor.batch_decode(pred_ids) return batch result = test_dataset.map(evaluate, batched=True, batch_size=8) print("WER: {:.2f}".format(100 * wer.compute(predictions=result["pred_strings"], references=result["sentence"]))) ``` **Test Result**: 20.22 % | 9638345c7e8f15f4a7be4ff60ec7f9ad |
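The word error rate (WER) reported above counts word-level substitutions, deletions and insertions relative to the reference length. The following is a minimal sketch for a single reference/hypothesis pair, not the implementation behind the `wer` metric used in the evaluation script; `word_error_rate` and the example sentences are hypothetical. Corpus-level tools typically aggregate errors over all utterances rather than averaging per-sentence rates.

```python
def word_error_rate(reference, hypothesis):
    """Word-level edit distance between two strings, divided by the reference length."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] holds the edit distance between the ref words seen so far
    # and the first j hypothesis words (classic dynamic programming row).
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            substitution_cost = 0 if ref_word == hyp_word else 1
            curr[j] = min(
                prev[j] + 1,                      # deletion of a reference word
                curr[j - 1] + 1,                  # insertion of a hypothesis word
                prev[j - 1] + substitution_cost,  # match or substitution
            )
        prev = curr
    return prev[-1] / len(ref)


# Illustrative sentences only: one substitution and one deletion over six words.
example_wer = word_error_rate("the cat sat on the mat", "the cat sit on mat")
print("WER: {:.2f}".format(100 * example_wer))
```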

apache-2.0 | ['generated_from_trainer'] | false | wav2vec2-base-timit-demo-google-colab This model is a fine-tuned version of [facebook/wav2vec2-base](https://huggingface.co/facebook/wav2vec2-base) on an unspecified dataset. It achieves the following results on the evaluation set: - Loss: 0.5725 - Wer: 0.3413 | 2342e286c10efd8764557cafef695345 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:-----:|:---------------:|:------:| | 3.508 | 1.0 | 500 | 1.9315 | 0.9962 | | 0.8832 | 2.01 | 1000 | 0.5552 | 0.5191 | | 0.4381 | 3.01 | 1500 | 0.4451 | 0.4574 | | 0.2983 | 4.02 | 2000 | 0.4096 | 0.4265 | | 0.2232 | 5.02 | 2500 | 0.4280 | 0.4083 | | 0.1811 | 6.02 | 3000 | 0.4307 | 0.3942 | | 0.1548 | 7.03 | 3500 | 0.4453 | 0.3889 | | 0.1367 | 8.03 | 4000 | 0.5043 | 0.4138 | | 0.1238 | 9.04 | 4500 | 0.4530 | 0.3807 | | 0.1072 | 10.04 | 5000 | 0.4435 | 0.3660 | | 0.0978 | 11.04 | 5500 | 0.4739 | 0.3676 | | 0.0887 | 12.05 | 6000 | 0.5052 | 0.3761 | | 0.0813 | 13.05 | 6500 | 0.5098 | 0.3619 | | 0.0741 | 14.06 | 7000 | 0.4666 | 0.3602 | | 0.0654 | 15.06 | 7500 | 0.5642 | 0.3657 | | 0.0589 | 16.06 | 8000 | 0.5489 | 0.3638 | | 0.0559 | 17.07 | 8500 | 0.5260 | 0.3598 | | 0.0562 | 18.07 | 9000 | 0.5250 | 0.3640 | | 0.0448 | 19.08 | 9500 | 0.5215 | 0.3569 | | 0.0436 | 20.08 | 10000 | 0.5117 | 0.3560 | | 0.0412 | 21.08 | 10500 | 0.4910 | 0.3570 | | 0.0336 | 22.09 | 11000 | 0.5221 | 0.3524 | | 0.031 | 23.09 | 11500 | 0.5278 | 0.3480 | | 0.0339 | 24.1 | 12000 | 0.5353 | 0.3486 | | 0.0278 | 25.1 | 12500 | 0.5342 | 0.3462 | | 0.0251 | 26.1 | 13000 | 0.5399 | 0.3439 | | 0.0242 | 27.11 | 13500 | 0.5626 | 0.3431 | | 0.0214 | 28.11 | 14000 | 0.5749 | 0.3408 | | 0.0216 | 29.12 | 14500 | 0.5725 | 0.3413 | | 933b9a918018457f488d4e1697a13494 |

creativeml-openrail-m | ['stable diffusion', 'text-to-image', 'diffusers'] | false | **Colorful-v4.5** **Colorful-v4.5** is a model merge between [Anything-v4.5](https://huggingface.co/andite/anything-v4.0), [AbyssOrangeMix2](https://huggingface.co/WarriorMama777/OrangeMixs) and [ProtogenInfinity](https://huggingface.co/darkstorm2150/Protogen_Infinity_Official_Release). Colorful-v4.5 is named the way it is because it is similar to Anything-v4.5 and improves the bland color palette that model comes with (at least for me), producing much livelier images. It also improves some other things like environments, fingers, facial emotions and, to some extent, clothing (it also fixes the purple blobs 🤫). *Technically I could name it Anything-v5.0 but that would be rather cheesy* *It is highly recommended to run this model locally on your computer because running it from the web-ui api will produce lower quality images than intended* | 7cf01c39ec7a05f86df08bc4c37ee349 |

creativeml-openrail-m | ['stable diffusion', 'text-to-image', 'diffusers'] | false | **Example 1:** *10 steps*  ``` Prompt: masterpiece, best quality, 1girl, (green eyes), black hair, (black shorts), white shirt, glossy lips, small nose, standing up, in park, trees, full body, smiling Other Details: Steps: 10, Sampler: DPM++ 2S a Karras, CFG scale: 8, Seed: 774768794, Size: 512x512, Model hash: b5de490700, Model: Colorful-v4.5, Denoising strength: 0.6, Hires upscale: 2, Hires steps: 10, Hires upscaler: SwinIR_4x Negative Prompt: The negative prompt is very long and specific so it will be listed in the model's repo. (The negative prompt comes from another model called Hentai Difussion so it will contain NSFW. A curated version of the negative prompt will also be in the repo for those who want SFW.) ``` | 009c5d8c025114290deb7a6e02cb6700 |

creativeml-openrail-m | ['stable diffusion', 'text-to-image', 'diffusers'] | false | **Example 2:** *20 steps*  ``` Prompt: masterpiece, best quality, lady, in red and black yukata, pink hair, blue eyes, in dojo, smiling, sitting, hands on lap Other Details: Steps: 20, Sampler: DPM++ 2S a Karras, CFG scale: 8, Seed: 774768794, Size: 512x512, Model hash: b5de490700, Model: Colorful-v4.5, Denoising strength: 0.6, Hires upscale: 2, Hires steps: 20, Hires upscaler: SwinIR_4x Negative Prompt: Same thing as in Example | 107afa6a35f52ec33c30d30763c0f754 |

creativeml-openrail-m | ['stable diffusion', 'text-to-image', 'diffusers'] | false | **Example 3:** *30 steps*  | 9186a17d3f423f09d5bed23fe7725285 |

creativeml-openrail-m | ['stable diffusion', 'text-to-image', 'diffusers'] | false | Anything-v4.5: (for comparison)  ``` Prompt: masterpiece, best quality, girl, black hair, blue eyes, black t-shirt, black pants, smiling, standing up, solo, facing viewer, near blossomed tree Other Details: Steps: 30, Sampler: DPM++ 2S a Karras, CFG scale: 8, Seed: 774768794, Size: 512x512, Model hash: b5de490700, Model: Colorful-v4.5, Denoising strength: 0.6, Hires upscale: 2, Hires steps: 30, Hires upscaler: SwinIR_4x Negative Prompt: Same thing as in Example | 212f6893234e360d038307e39960dedb |

apache-2.0 | ['generated_from_trainer'] | false | dresses This model is a fine-tuned version of [google/vit-base-patch16-224-in21k](https://huggingface.co/google/vit-base-patch16-224-in21k) on the imagefolder dataset. It achieves the following results on the evaluation set: - Loss: 0.4588 - Accuracy: 0.9014 | 5754754ed4df8a8b84fa8202aefa97cb |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0002 - train_batch_size: 64 - eval_batch_size: 8 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 15 - mixed_precision_training: Native AMP | 07bb5f4eccb15de1d6e2163f94c2e03f |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.2458 | 1.23 | 100 | 0.4519 | 0.8633 | | 0.0937 | 2.47 | 200 | 0.4285 | 0.8754 | | 0.0802 | 3.7 | 300 | 0.4683 | 0.8754 | | 0.041 | 4.94 | 400 | 0.4088 | 0.9031 | | 0.0277 | 6.17 | 500 | 0.3979 | 0.8945 | | 0.0459 | 7.41 | 600 | 0.4253 | 0.9014 | | 0.024 | 8.64 | 700 | 0.4680 | 0.8893 | | 0.0267 | 9.88 | 800 | 0.4575 | 0.8945 | | 0.019 | 11.11 | 900 | 0.4470 | 0.8893 | | 0.0235 | 12.35 | 1000 | 0.4380 | 0.9066 | | 0.0129 | 13.58 | 1100 | 0.4557 | 0.9048 | | 0.0211 | 14.81 | 1200 | 0.4588 | 0.9014 | | f0a765a97b512bf59592ed758a03f9a7 |

apache-2.0 | ['automatic-speech-recognition', 'fa'] | false | exp_w2v2t_fa_r-wav2vec2_s129 Fine-tuned [facebook/wav2vec2-large-robust](https://huggingface.co/facebook/wav2vec2-large-robust) for speech recognition using the train split of [Common Voice 7.0 (fa)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | 726c186a102a256c2c9bbb9a8d7c1305 |

apache-2.0 | ['generated_from_trainer'] | false | FPT-P3-6000 This model is a fine-tuned version of [openai/whisper-small](https://huggingface.co/openai/whisper-small) on an unspecified dataset. It achieves the following results on the evaluation set: - Loss: 0.4654 - Wer: 24.2203 | 7485de3beba508d8ff9890c995402a22 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 1e-05 - train_batch_size: 16 - eval_batch_size: 8 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 500 - training_steps: 1000 - mixed_precision_training: Native AMP | f508b032da0116be69da81df4bfe07fc |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:-------:| | 0.3748 | 1.14 | 500 | 0.5442 | 26.9412 | | 0.1952 | 2.28 | 1000 | 0.4654 | 24.2203 | | 693c253fc9334b6fbdb38e99e0d8f6c8 |

creativeml-openrail-m | ['text-to-image', 'stable-diffusion'] | false | izamizam Dreambooth model trained by HDKCL with [TheLastBen's fast-DreamBooth](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb) notebook Test the concept via A1111 Colab [fast-Colab-A1111](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast_stable_diffusion_AUTOMATIC1111.ipynb) Or you can run your new concept via `diffusers` [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb) Sample pictures of this concept: | aea34d736e8fd6b1e12ac4d9c37e0bd4 |

mit | [] | false | model by rodrigocoelho' language: - pt-BR - en-US thumbnail: "https://s3.amazonaws.com/moonup/production/uploads/1665418455423-632486a8767557375b7078a6.png" tags: - Bolsonaro license: "Apache 2.0" datasets: - Bolsonaro - stable diffusion  | 0b06efc77e00f9858c3719730ba44573 |

mit | ['javanese-distilbert-small-imdb'] | false | Javanese DistilBERT Small IMDB Javanese DistilBERT Small IMDB is a masked language model based on the [DistilBERT model](https://arxiv.org/abs/1910.01108). It was trained on Javanese IMDB movie reviews. The model was initialized from the pretrained [Javanese DistilBERT Small model](https://huggingface.co/w11wo/javanese-distilbert-small) and then fine-tuned on the Javanese IMDB movie review dataset. It achieved a perplexity of 21.01 on the validation dataset. Many of the techniques used are based on a Hugging Face tutorial [notebook](https://github.com/huggingface/notebooks/blob/master/examples/language_modeling.ipynb) written by [Sylvain Gugger](https://github.com/sgugger). Hugging Face's `Trainer` class from the [Transformers](https://huggingface.co/transformers) library was used to train the model. PyTorch was used as the backend framework during training, but the model remains compatible with TensorFlow. | 45ae49447667f1f0a399b590008aa0c8 |

mit | ['javanese-distilbert-small-imdb'] | false | Model | #params | Arch. | Training/Validation data (text) | |----------------------------------|----------|----------------------|---------------------------------| | `javanese-distilbert-small-imdb` | 66M | DistilBERT Small | Javanese IMDB (47.5 MB of text) | | b202693b557fdc6486e3e7ab4e1efc04 |

mit | ['javanese-distilbert-small-imdb'] | false | Evaluation Results The model was trained for 5 epochs and the following is the final result once the training ended. | train loss | valid loss | perplexity | total time | |------------|------------|------------|-------------| | 3.126 | 3.039 | 21.01 | 5:6:4 | | c631f7dfa30ab150354d11f3eb7225a6 |

mit | ['javanese-distilbert-small-imdb'] | false | As Masked Language Model ```python from transformers import pipeline pretrained_name = "w11wo/javanese-distilbert-small-imdb" fill_mask = pipeline( "fill-mask", model=pretrained_name, tokenizer=pretrained_name ) fill_mask("Aku mangan sate ing [MASK] bareng konco-konco") ``` | d634433066159c5711617d9f5d95528b |

mit | ['javanese-distilbert-small-imdb'] | false | Feature Extraction in PyTorch ```python from transformers import DistilBertModel, DistilBertTokenizerFast pretrained_name = "w11wo/javanese-distilbert-small-imdb" model = DistilBertModel.from_pretrained(pretrained_name) tokenizer = DistilBertTokenizerFast.from_pretrained(pretrained_name) prompt = "Indonesia minangka negara gedhe." encoded_input = tokenizer(prompt, return_tensors='pt') output = model(**encoded_input) ``` | 0849cf22d8883beac085cd79e651adaf |

mit | ['javanese-distilbert-small-imdb'] | false | Citation If you use any of our models in your research, please cite: ```bib @inproceedings{wongso2021causal, title={Causal and Masked Language Modeling of Javanese Language using Transformer-based Architectures}, author={Wongso, Wilson and Setiawan, David Samuel and Suhartono, Derwin}, booktitle={2021 International Conference on Advanced Computer Science and Information Systems (ICACSIS)}, pages={1--7}, year={2021}, organization={IEEE} } ``` | 5b73e0137ac83945724295d47258612f |

apache-2.0 | ['generated_from_trainer'] | false | keyphrase-extractions_bart-large This model is a fine-tuned version of [facebook/bart-large](https://huggingface.co/facebook/bart-large) on the kp20k dataset. It achieves the following results on the evaluation set: - Loss: 1.7257 - Rouge1: 0.4713 - Rouge2: 0.2385 - Rougel: 0.384 - Rougelsum: 0.3841 - Gen Len: 18.3164 - Phrase match: 0.1917 | 14ebb9019342cdd5799aa1f84276709b |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 32 - eval_batch_size: 32 - seed: 42 - gradient_accumulation_steps: 2 - total_train_batch_size: 64 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 3 - mixed_precision_training: Native AMP | cb978f9c791eaf3ef76686aa4a0a4fcf |
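The relationship between `train_batch_size`, `gradient_accumulation_steps` and `total_train_batch_size` in the list above is a simple product. The sketch below assumes a single device (`num_devices=1`), which is an assumption and not something the hyperparameter list states; `effective_batch_size` is a hypothetical helper.

```python
def effective_batch_size(per_device_batch, grad_accum_steps, num_devices=1):
    """Number of examples that contribute to each optimizer update."""
    return per_device_batch * grad_accum_steps * num_devices


# Values from the hyperparameter list; num_devices=1 is assumed.
total_train_batch_size = effective_batch_size(32, 2)
print(total_train_batch_size)
```

With accumulation, gradients from two consecutive batches of 32 are summed before a single optimizer step, so memory use stays at the smaller batch size while each update sees 64 examples.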

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | Gen Len | Phrase match | |:-------------:|:-----:|:----:|:---------------:|:------:|:------:|:------:|:---------:|:-------:|:------------:| | 2.5104 | 1.0 | 730 | 1.8021 | 0.464 | 0.2336 | 0.3765 | 0.3766 | 18.9074 | 0.1784 | | 1.8436 | 2.0 | 1460 | 1.7473 | 0.4709 | 0.2381 | 0.3834 | 0.3836 | 17.8127 | 0.1891 | | 1.6864 | 3.0 | 2190 | 1.7257 | 0.4713 | 0.2385 | 0.384 | 0.3841 | 18.3164 | 0.1917 | | 3c6a5af2beee4f5a3a94f91ce9fb6c8a |

mit | ['generated_from_keras_callback'] | false | recklessrecursion/2008_Sichuan_earthquake-clustered This model is a fine-tuned version of [nandysoham16/12-clustered_aug](https://huggingface.co/nandysoham16/12-clustered_aug) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 0.5049 - Train End Logits Accuracy: 0.8507 - Train Start Logits Accuracy: 0.7778 - Validation Loss: 0.3830 - Validation End Logits Accuracy: 0.9474 - Validation Start Logits Accuracy: 0.8947 - Epoch: 0 | 42f99e830252a2ab4fb5a83eb06edc37 |

mit | ['generated_from_keras_callback'] | false | Training results | Train Loss | Train End Logits Accuracy | Train Start Logits Accuracy | Validation Loss | Validation End Logits Accuracy | Validation Start Logits Accuracy | Epoch | |:----------:|:-------------------------:|:---------------------------:|:---------------:|:------------------------------:|:--------------------------------:|:-----:| | 0.5049 | 0.8507 | 0.7778 | 0.3830 | 0.9474 | 0.8947 | 0 | | 4db152bf6aa3198c67013caf3f590638 |

apache-2.0 | ['generated_from_trainer'] | false | flan-t5-base-samsum This model is a fine-tuned version of [google/flan-t5-base](https://huggingface.co/google/flan-t5-base) on the samsum dataset. It achieves the following results on the evaluation set: - Loss: 1.3772 - Rouge1: 47.4798 - Rouge2: 23.9756 - Rougel: 40.0392 - Rougelsum: 43.6545 - Gen Len: 17.3162 | 1e6bfa57140109197188d01ad55d547a |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | Gen Len | |:-------------:|:-----:|:----:|:---------------:|:-------:|:-------:|:-------:|:---------:|:-------:| | 1.4403 | 1.0 | 1842 | 1.3829 | 46.5346 | 23.1326 | 39.4401 | 42.8272 | 17.0977 | | 1.3534 | 2.0 | 3684 | 1.3732 | 47.0911 | 23.5074 | 39.5951 | 43.2279 | 17.4554 | | 1.2795 | 3.0 | 5526 | 1.3709 | 46.8895 | 23.3243 | 39.5909 | 43.1286 | 17.2027 | | 1.2313 | 4.0 | 7368 | 1.3736 | 47.4946 | 23.7802 | 39.9999 | 43.5903 | 17.2198 | | 1.1934 | 5.0 | 9210 | 1.3772 | 47.4798 | 23.9756 | 40.0392 | 43.6545 | 17.3162 | | 583ac0888837daf031d40cd472feb01a |

apache-2.0 | ['generated_from_trainer'] | false | Papers With Code Results As of 2 February 2023 the Papers with Code page for this task has the following leaderboard. Our score (Rouge 1 score of 47.4798) puts this model's performance between fourth and fifth place on the leaderboard:  | 244915ee945bc2c34c673c860477153c |

apache-2.0 | ['generated_from_trainer'] | false | wav2vec2-large-xls-r-300m-chinese-taiwan-colab !!! This model was trained with a very high learning rate for only a few epochs; please do not use it for speech-to-text. !!! It is just a test; I will retrain this model later when I have more time. This model is a fine-tuned version of [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) on the common_voice dataset. | f53fd97749cd85ae983d8107de0a417e |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.1 - train_batch_size: 16 - eval_batch_size: 8 - seed: 42 - gradient_accumulation_steps: 2 - total_train_batch_size: 32 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 500 - num_epochs: 2 - mixed_precision_training: Native AMP | f3ef03782617228b49f082c96af2de06 |
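The `total_train_batch_size` reported above follows from the per-device batch size and the gradient accumulation steps. A minimal sketch of that arithmetic, assuming a single training device (the card does not state the device count; `num_devices` is a hypothetical parameter added for illustration):

```python
def total_train_batch_size(train_batch_size, gradient_accumulation_steps, num_devices=1):
    """Number of examples that contribute to one optimizer update.

    num_devices is an assumption for illustration; the card does not report it.
    """
    return train_batch_size * gradient_accumulation_steps * num_devices


# Values from the hyperparameter list above: 16 examples per step, 2 accumulation steps.
print(total_train_batch_size(16, 2))  # 32
```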

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0003 - train_batch_size: 4 - eval_batch_size: 8 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 500 - num_epochs: 20 - mixed_precision_training: Native AMP | 2984b6c5aa2ef50159196cba8f70bdeb |

mit | ['generated_from_trainer'] | false | xlm-roberta-base-finetuned-panx-de-data This model is a fine-tuned version of [xlm-roberta-base](https://huggingface.co/xlm-roberta-base) on the xtreme dataset. It achieves the following results on the evaluation set: - Loss: 0.1372 - F1 Score: 0.8621 | e9bfff5961f23c6dd539b55e4dc7ee76 |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | F1 Score | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.2575 | 1.0 | 525 | 0.1621 | 0.8292 | | 0.1287 | 2.0 | 1050 | 0.1378 | 0.8526 | | 0.0831 | 3.0 | 1575 | 0.1372 | 0.8621 | | 46b3f03defaa6237c6e2c58ec66f44da |

mit | ['exbert'] | false | Model Details **Model Description:** RoBERTa large OpenAI Detector is the GPT-2 output detector model, obtained by fine-tuning a RoBERTa large model with the outputs of the 1.5B-parameter GPT-2 model. The model can be used to predict if text was generated by a GPT-2 model. This model was released by OpenAI at the same time as OpenAI released the weights of the [largest GPT-2 model](https://huggingface.co/gpt2-xl), the 1.5B parameter version. - **Developed by:** OpenAI, see [GitHub Repo](https://github.com/openai/gpt-2-output-dataset/tree/master/detector) and [associated paper](https://d4mucfpksywv.cloudfront.net/papers/GPT_2_Report.pdf) for full author list - **Model Type:** Fine-tuned transformer-based language model - **Language(s):** English - **License:** MIT - **Related Models:** [RoBERTa large](https://huggingface.co/roberta-large), [GPT-XL (1.5B parameter version)](https://huggingface.co/gpt2-xl), [GPT-Large (the 774M parameter version)](https://huggingface.co/gpt2-large), [GPT-Medium (the 355M parameter version)](https://huggingface.co/gpt2-medium) and [GPT-2 (the 124M parameter version)](https://huggingface.co/gpt2) - **Resources for more information:** - [Research Paper](https://d4mucfpksywv.cloudfront.net/papers/GPT_2_Report.pdf) (see, in particular, the section beginning on page 12 about Automated ML-based detection). - [GitHub Repo](https://github.com/openai/gpt-2-output-dataset/tree/master/detector) - [OpenAI Blog Post](https://openai.com/blog/gpt-2-1-5b-release/) - [Explore the detector model here](https://huggingface.co/openai-detector ) | f1550c3de175336cd95bfc680a5b8344 |

mit | ['exbert'] | false | Bias Significant research has explored bias and fairness issues with language models (see, e.g., [Sheng et al. (2021)](https://aclanthology.org/2021.acl-long.330.pdf) and [Bender et al. (2021)](https://dl.acm.org/doi/pdf/10.1145/3442188.3445922)). Predictions generated by RoBERTa large and GPT-2 1.5B (which this model is built/fine-tuned on) can include disturbing and harmful stereotypes across protected classes; identity characteristics; and sensitive, social, and occupational groups (see the [RoBERTa large](https://huggingface.co/roberta-large) and [GPT-2 XL](https://huggingface.co/gpt2-xl) model cards for more information). The developers of this model discuss these issues further in their [paper](https://d4mucfpksywv.cloudfront.net/papers/GPT_2_Report.pdf). | 869a5bb1d76a78de07b41338ee664c5a |

mit | ['exbert'] | false | Training Data The model is a sequence classifier based on RoBERTa large (see the [RoBERTa large model card](https://huggingface.co/roberta-large) for more details on the RoBERTa large training data) and then fine-tuned using the outputs of the 1.5B GPT-2 model (available [here](https://github.com/openai/gpt-2-output-dataset)). | d134dc4f88bbc5e62bd0dda8a147406d |

mit | ['exbert'] | false | Training Procedure The model developers write that: > We based a sequence classifier on RoBERTaLARGE (355 million parameters) and fine-tuned it to classify the outputs from the 1.5B GPT-2 model versus WebText, the dataset we used to train the GPT-2 model. They later state: > To develop a robust detector model that can accurately classify generated texts regardless of the sampling method, we performed an analysis of the model’s transfer performance. See the [associated paper](https://d4mucfpksywv.cloudfront.net/papers/GPT_2_Report.pdf) for further details on the training procedure. | 413e33944710109f5e61261a55ed0b20 |

apache-2.0 | ['translation'] | false | eng-dra * source group: English * target group: Dravidian languages * OPUS readme: [eng-dra](https://github.com/Helsinki-NLP/Tatoeba-Challenge/tree/master/models/eng-dra/README.md) * model: transformer * source language(s): eng * target language(s): kan mal tam tel * model: transformer * pre-processing: normalization + SentencePiece (spm32k,spm32k) * a sentence initial language token is required in the form of `>>id<<` (id = valid target language ID) * download original weights: [opus-2020-07-26.zip](https://object.pouta.csc.fi/Tatoeba-MT-models/eng-dra/opus-2020-07-26.zip) * test set translations: [opus-2020-07-26.test.txt](https://object.pouta.csc.fi/Tatoeba-MT-models/eng-dra/opus-2020-07-26.test.txt) * test set scores: [opus-2020-07-26.eval.txt](https://object.pouta.csc.fi/Tatoeba-MT-models/eng-dra/opus-2020-07-26.eval.txt) | 20d8bbf1a00a926c1510cda36728eab4 |
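The card above requires a sentence-initial language token of the form `>>id<<`. A minimal sketch of preparing such inputs, assuming the valid IDs are the four target language codes listed on the card (`kan`, `mal`, `tam`, `tel`); the helper name `add_language_token` is hypothetical and not part of any library:

```python
# Target language codes as listed under "target language(s)" on the card.
VALID_TARGET_IDS = {"kan", "mal", "tam", "tel"}


def add_language_token(sentence: str, target_id: str) -> str:
    """Prefix an English source sentence with the >>id<< target-language token."""
    if target_id not in VALID_TARGET_IDS:
        raise ValueError(f"unsupported target language ID: {target_id!r}")
    return f">>{target_id}<< {sentence}"


print(add_language_token("How are you?", "tam"))  # >>tam<< How are you?
```

The prefixed string would then be passed to the tokenizer in place of the raw sentence.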

apache-2.0 | ['translation'] | false | Benchmarks | testset | BLEU | chr-F | |-----------------------|-------|-------| | Tatoeba-test.eng-kan.eng.kan | 4.7 | 0.348 | | Tatoeba-test.eng-mal.eng.mal | 13.1 | 0.515 | | Tatoeba-test.eng.multi | 10.7 | 0.463 | | Tatoeba-test.eng-tam.eng.tam | 9.0 | 0.444 | | Tatoeba-test.eng-tel.eng.tel | 7.1 | 0.363 | | 74092f8aac504f9e1fd19706834bd6bf |

apache-2.0 | ['translation'] | false | System Info: - hf_name: eng-dra - source_languages: eng - target_languages: dra - opus_readme_url: https://github.com/Helsinki-NLP/Tatoeba-Challenge/tree/master/models/eng-dra/README.md - original_repo: Tatoeba-Challenge - tags: ['translation'] - languages: ['en', 'ta', 'kn', 'ml', 'te', 'dra'] - src_constituents: {'eng'} - tgt_constituents: {'tam', 'kan', 'mal', 'tel'} - src_multilingual: False - tgt_multilingual: True - prepro: normalization + SentencePiece (spm32k,spm32k) - url_model: https://object.pouta.csc.fi/Tatoeba-MT-models/eng-dra/opus-2020-07-26.zip - url_test_set: https://object.pouta.csc.fi/Tatoeba-MT-models/eng-dra/opus-2020-07-26.test.txt - src_alpha3: eng - tgt_alpha3: dra - short_pair: en-dra - chrF2_score: 0.46299999999999997 - bleu: 10.7 - brevity_penalty: 1.0 - ref_len: 7928.0 - src_name: English - tgt_name: Dravidian languages - train_date: 2020-07-26 - src_alpha2: en - tgt_alpha2: dra - prefer_old: False - long_pair: eng-dra - helsinki_git_sha: 480fcbe0ee1bf4774bcbe6226ad9f58e63f6c535 - transformers_git_sha: 2207e5d8cb224e954a7cba69fa4ac2309e9ff30b - port_machine: brutasse - port_time: 2020-08-21-14:41 | e026c8c2d7f8f18b97f0a4b1d6a17446 |

mit | ['pytorch', 'deberta', 'deberta-v2', 'commonsenseqa', 'commonsense_qa', 'commonsense-qa', 'CommonsenseQA'] | false | This model is deberta-v2-base-japanese fine-tuned so that it can be used for CommonsenseQA (multiple-choice questions). It was fine-tuned using JCommonsenseQA from yahoo japan/JGLUE ( https://github.com/yahoojapan/JGLUE ). | 364cad6f5c7f19ab0a4d8d2ae562029b |

mit | ['pytorch', 'deberta', 'deberta-v2', 'commonsenseqa', 'commonsense_qa', 'commonsense-qa', 'CommonsenseQA'] | false | This model is a fine-tuned model for CommonsenseQA, based on deberta-v2-base-japanese. It was fine-tuned using the JGLUE/JCommonsenseQA dataset. You can use this model for CommonsenseQA tasks. | ce19e81bb730c56fe5a1636fd0471030 |

mit | ['pytorch', 'deberta', 'deberta-v2', 'commonsenseqa', 'commonsense_qa', 'commonsense-qa', 'CommonsenseQA'] | false | How to use Install transformers, pytorch, sentencepiece, and Juman++. Running the code below lets the model solve CommonsenseQA tasks. ```python from transformers import AutoTokenizer, AutoModelForMultipleChoice import torch import numpy as np | 04c90e014995cc246a8a205389960cdd |

mit | ['pytorch', 'deberta', 'deberta-v2', 'commonsenseqa', 'commonsense_qa', 'commonsense-qa', 'CommonsenseQA'] | false | Load the model tokenizer = AutoTokenizer.from_pretrained('Mizuiro-sakura/deberta-v2-japanese-base-finetuned-commonsenseqa') model = AutoModelForMultipleChoice.from_pretrained('Mizuiro-sakura/deberta-v2-japanese-base-finetuned-commonsenseqa') | c78f1bbeca1dcb0969cec5cd033bc3b6 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 8 - eval_batch_size: 8 - seed: 0 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: constant - num_epochs: 35.0 | 233c283cecb047d6bd28ce5c710c7f27 |

mit | ['generated_from_keras_callback'] | false | Training hyperparameters The following hyperparameters were used during training: - optimizer: {'name': 'AdamWeightDecay', 'learning_rate': {'class_name': 'WarmUp', 'config': {'initial_learning_rate': 2e-05, 'decay_schedule_fn': {'class_name': 'PolynomialDecay', 'config': {'initial_learning_rate': 2e-05, 'decay_steps': 37100, 'end_learning_rate': 0.0, 'power': 1.0, 'cycle': False, 'name': None}, '__passive_serialization__': True}, 'warmup_steps': 1000, 'power': 1.0, 'name': None}}, 'decay': 0.0, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False, 'weight_decay_rate': 0.01} - training_precision: float32 | 1e0893c42de4795bf073a4d6a0154311 |
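The optimizer config above nests a `PolynomialDecay` schedule inside a `WarmUp` wrapper. The resulting learning-rate curve can be sketched in plain Python. This is an approximation under stated assumptions, not the library code: it assumes the warmup phase scales the initial rate by `(step / warmup_steps) ** power` and that, after warmup, the decay schedule is evaluated at `step - warmup_steps`. The function name and argument names are hypothetical; the default values are taken from the config.

```python
def learning_rate(step,
                  initial_lr=2e-05,
                  warmup_steps=1000,
                  decay_steps=37100,
                  end_lr=0.0,
                  warmup_power=1.0,
                  decay_power=1.0):
    """Approximate learning rate at a given optimizer step.

    Assumption: the decay schedule starts counting once warmup has finished.
    """
    if step < warmup_steps:
        # Warmup: ramp from 0 up to initial_lr (linear when warmup_power == 1.0).
        return initial_lr * (step / warmup_steps) ** warmup_power
    # Polynomial decay from initial_lr down to end_lr; no cycling ('cycle': False),
    # so the step is clipped at decay_steps.
    decay_step = min(step - warmup_steps, decay_steps)
    return (initial_lr - end_lr) * (1 - decay_step / decay_steps) ** decay_power + end_lr


for s in (0, 500, 1000, 38100):
    print(s, learning_rate(s))
```

With both powers equal to 1.0, as in the config, this is a linear ramp followed by a linear decay to zero.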

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-emotion This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the emotion dataset. It achieves the following results on the evaluation set: - Loss: 0.2169 - Accuracy: 0.9215 - F1: 0.9215 | 13142c799d97def1efe3f769a07d8635 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------:|:------:| | 0.798 | 1.0 | 250 | 0.3098 | 0.899 | 0.8956 | | 0.2422 | 2.0 | 500 | 0.2169 | 0.9215 | 0.9215 | | be0cbded57a8b553b6e00ebb194893e1 |

['apache-2.0'] | ['Wikipedia', 'Summarizer', 'bert2bert', 'Summarization'] | false | WikiBert2WikiBert Bert language models can be employed for Summarization tasks. WikiBert2WikiBert is an encoder-decoder transformer model that is initialized using the Persian WikiBert Model weights. The WikiBert Model is a Bert language model which is fine-tuned on Persian Wikipedia. After using the WikiBert weights for initialization, the model is trained for five epochs on PN-summary and Persian BBC datasets. | 910e5e87839190ff4fbaf474863d8552 |

['apache-2.0'] | ['Wikipedia', 'Summarizer', 'bert2bert', 'Summarization'] | false | How to Use: You can use the code below to get the model's outputs, or you can simply use the demo on the right. ``` import torch from transformers import ( BertTokenizerFast, EncoderDecoderConfig, EncoderDecoderModel ) model_name = 'Arashasg/WikiBert2WikiBert' device = "cuda" if torch.cuda.is_available() else "cpu" tokenizer = BertTokenizerFast.from_pretrained(model_name) config = EncoderDecoderConfig.from_pretrained(model_name) model = EncoderDecoderModel.from_pretrained(model_name, config=config).to(device) def generate_summary(text): inputs = tokenizer(text, padding="max_length", truncation=True, max_length=512, return_tensors="pt") input_ids = inputs.input_ids.to(device) attention_mask = inputs.attention_mask.to(device) outputs = model.generate(input_ids, attention_mask=attention_mask) output_str = tokenizer.batch_decode(outputs, skip_special_tokens=True) return output_str text = 'your input comes here' summary = generate_summary(text) ``` | cd7833a6d954082f42c58a018823386f |

['apache-2.0'] | ['Wikipedia', 'Summarizer', 'bert2bert', 'Summarization'] | false | Evaluation I separated 5 percent of the pn-summary for evaluation of the model. The rouge scores of the model are as follows: | Rouge-1 | Rouge-2 | Rouge-l | | ------------- | ------------- | ------------- | | 38.97% | 18.42% | 34.50% | | ff953b0015c8f11cbc65ea2d7b72d432 |

apache-2.0 | ['generated_from_trainer'] | false | distilled-mt5-small-b1.5 This model is a fine-tuned version of [google/mt5-small](https://huggingface.co/google/mt5-small) on the wmt16 ro-en dataset. It achieves the following results on the evaluation set: - Loss: 2.7938 - Bleu: 7.5422 - Gen Len: 44.3267 | f94b889b5dae99aa758410c624cef80c |

apache-2.0 | ['automatic-speech-recognition', 'ja'] | false | exp_w2v2t_ja_wav2vec2_s834 Fine-tuned [facebook/wav2vec2-large-lv60](https://huggingface.co/facebook/wav2vec2-large-lv60) for speech recognition using the train split of [Common Voice 7.0 (ja)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | 72050c75a7cd1f81a738ca04affba889 |

apache-2.0 | ['automatic-speech-recognition', 'de'] | false | exp_w2v2t_de_unispeech_s62 Fine-tuned [microsoft/unispeech-large-1500h-cv](https://huggingface.co/microsoft/unispeech-large-1500h-cv) for speech recognition using the train split of [Common Voice 7.0 (de)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | 1696a26db46e62d0d9c718d979073b12 |

gpl-3.0 | ['object-detection', 'computer-vision', 'yolov8', 'yolov5'] | false | Yolov8 Inference ```python from ultralytics import YOLO model = YOLO('kadirnar/yolov8m-v8.0') model.conf = conf_threshold model.iou = iou_threshold prediction = model.predict(image, imgsz=image_size, show=False, save=False) ``` | a77c33cc972276b72a91cebc907fd2d1 |

mit | ['roberta-base', 'roberta-base-epoch_58'] | false | RoBERTa, Intermediate Checkpoint - Epoch 58 This model is part of our reimplementation of the [RoBERTa model](https://arxiv.org/abs/1907.11692), trained on Wikipedia and the Book Corpus only. We train this model for almost 100K steps, corresponding to 83 epochs. We provide the 84 checkpoints (including the randomly initialized weights before the training) to enable the study of the training dynamics of such models, and other possible use-cases. These models were trained as part of a work that studies how simple statistics from data, such as co-occurrences, affect model predictions, as described in the paper [Measuring Causal Effects of Data Statistics on Language Model's `Factual' Predictions](https://arxiv.org/abs/2207.14251). This is RoBERTa-base epoch_58. | c723fa721cbd61a3ba2f8245404a349c |

creativeml-openrail-m | ['stable-diffusion', 'stable-diffusion-diffusers', 'text-to-image'] | false | Stable Diffusion Inpainting is a latent text-to-image diffusion model capable of generating photo-realistic images given any text input, with the extra capability of inpainting the pictures by using a mask. The **Stable-Diffusion-Inpainting** model was initialized with the weights of [Stable-Diffusion-v-1-2](https://huggingface.co/CompVis/stable-diffusion-v-1-2-original). Training consisted of 595k steps of regular training, followed by 440k steps of inpainting training at resolution 512x512 on “laion-aesthetics v2 5+”, with 10% dropping of the text-conditioning to improve [classifier-free guidance sampling](https://arxiv.org/abs/2207.12598). For inpainting, the UNet has 5 additional input channels (4 for the encoded masked-image and 1 for the mask itself) whose weights were zero-initialized after restoring the non-inpainting checkpoint. During training, we generate synthetic masks and in 25% of cases mask everything. [](https://huggingface.co/spaces/runwayml/stable-diffusion-inpainting) | [](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/in_painting_with_stable_diffusion_using_diffusers.ipynb) :-------------------------:|:-------------------------:| | 495688e527629187f0b7105d9c36f0cf |

creativeml-openrail-m | ['stable-diffusion', 'stable-diffusion-diffusers', 'text-to-image'] | false | Diffusers ```python import torch from diffusers import StableDiffusionInpaintPipeline pipe = StableDiffusionInpaintPipeline.from_pretrained( "runwayml/stable-diffusion-inpainting", revision="fp16", torch_dtype=torch.float16, ) prompt = "Face of a yellow cat, high resolution, sitting on a park bench" | 46e384f62e83237689c96ae6aee1b38a |

creativeml-openrail-m | ['stable-diffusion', 'stable-diffusion-diffusers', 'text-to-image'] | false | The mask structure is white for inpainting and black for keeping as is image = pipe(prompt=prompt, image=image, mask_image=mask_image).images[0] image.save("./yellow_cat_on_park_bench.png") ``` **How it works:** `image` | `mask_image` :-------------------------:|:-------------------------:| <img src="https://raw.githubusercontent.com/CompVis/latent-diffusion/main/data/inpainting_examples/overture-creations-5sI6fQgYIuo.png" alt="drawing" width="300"/> | <img src="https://raw.githubusercontent.com/CompVis/latent-diffusion/main/data/inpainting_examples/overture-creations-5sI6fQgYIuo_mask.png" alt="drawing" width="300"/> `prompt` | `Output` :-------------------------:|:-------------------------:| <span style="position: relative;bottom: 150px;">Face of a yellow cat, high resolution, sitting on a park bench</span> | <img src="https://huggingface.co/datasets/patrickvonplaten/images/resolve/main/test.png" alt="drawing" width="300"/> | 0c6635c0a4bdd521297b99fe0d497854 |

creativeml-openrail-m | ['stable-diffusion', 'stable-diffusion-diffusers', 'text-to-image'] | false | Original GitHub Repository 1. Download the weights [sd-v1-5-inpainting.ckpt](https://huggingface.co/runwayml/stable-diffusion-inpainting/resolve/main/sd-v1-5-inpainting.ckpt) 2. Follow instructions [here](https://github.com/runwayml/stable-diffusion | 816bf0324ca76dee2b73fa0624aeba48 |

creativeml-openrail-m | ['stable-diffusion', 'stable-diffusion-diffusers', 'text-to-image'] | false | Model Details - **Developed by:** Robin Rombach, Patrick Esser - **Model type:** Diffusion-based text-to-image generation model - **Language(s):** English - **License:** [The CreativeML OpenRAIL M license](https://huggingface.co/spaces/CompVis/stable-diffusion-license) is an [Open RAIL M license](https://www.licenses.ai/blog/2022/8/18/naming-convention-of-responsible-ai-licenses), adapted from the work that [BigScience](https://bigscience.huggingface.co/) and [the RAIL Initiative](https://www.licenses.ai/) are jointly carrying in the area of responsible AI licensing. See also [the article about the BLOOM Open RAIL license](https://bigscience.huggingface.co/blog/the-bigscience-rail-license) on which our license is based. - **Model Description:** This is a model that can be used to generate and modify images based on text prompts. It is a [Latent Diffusion Model](https://arxiv.org/abs/2112.10752) that uses a fixed, pretrained text encoder ([CLIP ViT-L/14](https://arxiv.org/abs/2103.00020)) as suggested in the [Imagen paper](https://arxiv.org/abs/2205.11487). - **Resources for more information:** [GitHub Repository](https://github.com/runwayml/stable-diffusion), [Paper](https://arxiv.org/abs/2112.10752). - **Cite as:** @InProceedings{Rombach_2022_CVPR, author = {Rombach, Robin and Blattmann, Andreas and Lorenz, Dominik and Esser, Patrick and Ommer, Bj\"orn}, title = {High-Resolution Image Synthesis With Latent Diffusion Models}, booktitle = {Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR)}, month = {June}, year = {2022}, pages = {10684-10695} } | e22570b3da785c88a6963230bfd7e489 |

creativeml-openrail-m | ['stable-diffusion', 'stable-diffusion-diffusers', 'text-to-image'] | false | Bias While the capabilities of image generation models are impressive, they can also reinforce or exacerbate social biases. Stable Diffusion v1 was trained on subsets of [LAION-2B(en)](https://laion.ai/blog/laion-5b/), which consists of images that are primarily limited to English descriptions. Texts and images from communities and cultures that use other languages are likely to be insufficiently accounted for. This affects the overall output of the model, as white and western cultures are often set as the default. Further, the ability of the model to generate content with non-English prompts is significantly worse than with English-language prompts. | 9a0a848a840065716b9513424131fd7f |

creativeml-openrail-m | ['stable-diffusion', 'stable-diffusion-diffusers', 'text-to-image'] | false | Training **Training Data** The model developers used the following dataset for training the model: - LAION-2B (en) and subsets thereof (see next section) **Training Procedure** Stable Diffusion v1 is a latent diffusion model which combines an autoencoder with a diffusion model that is trained in the latent space of the autoencoder. During training, - Images are encoded through an encoder, which turns images into latent representations. The autoencoder uses a relative downsampling factor of 8 and maps images of shape H x W x 3 to latents of shape H/f x W/f x 4 - Text prompts are encoded through a ViT-L/14 text-encoder. - The non-pooled output of the text encoder is fed into the UNet backbone of the latent diffusion model via cross-attention. - The loss is a reconstruction objective between the noise that was added to the latent and the prediction made by the UNet. We currently provide six checkpoints, `sd-v1-1.ckpt`, `sd-v1-2.ckpt` and `sd-v1-3.ckpt`, `sd-v1-4.ckpt`, `sd-v1-5.ckpt` and `sd-v1-5-inpainting.ckpt` which were trained as follows, - `sd-v1-1.ckpt`: 237k steps at resolution `256x256` on [laion2B-en](https://huggingface.co/datasets/laion/laion2B-en). 194k steps at resolution `512x512` on [laion-high-resolution](https://huggingface.co/datasets/laion/laion-high-resolution) (170M examples from LAION-5B with resolution `>= 1024x1024`). - `sd-v1-2.ckpt`: Resumed from `sd-v1-1.ckpt`. 515k steps at resolution `512x512` on "laion-improved-aesthetics" (a subset of laion2B-en, filtered to images with an original size `>= 512x512`, estimated aesthetics score `> 5.0`, and an estimated watermark probability `< 0.5`. The watermark estimate is from the LAION-5B metadata, the aesthetics score is estimated using an [improved aesthetics estimator](https://github.com/christophschuhmann/improved-aesthetic-predictor)). - `sd-v1-3.ckpt`: Resumed from `sd-v1-2.ckpt`. 
195k steps at resolution `512x512` on "laion-improved-aesthetics" and 10% dropping of the text-conditioning to improve [classifier-free guidance sampling](https://arxiv.org/abs/2207.12598). - `sd-v1-4.ckpt`: Resumed from stable-diffusion-v1-2. 225,000 steps at resolution 512x512 on "laion-aesthetics v2 5+" and 10% dropping of the text-conditioning to improve [classifier-free guidance sampling](https://arxiv.org/abs/2207.12598). - `sd-v1-5.ckpt`: Resumed from sd-v1-2.ckpt. 595k steps at resolution 512x512 on "laion-aesthetics v2 5+" and 10% dropping of the text-conditioning to improve classifier-free guidance sampling. - `sd-v1-5-inpainting.ckpt`: Resumed from sd-v1-2.ckpt. 595k steps at resolution 512x512 on "laion-aesthetics v2 5+" and 10% dropping of the text-conditioning to improve classifier-free guidance sampling. Then 440k steps of inpainting training at resolution 512x512 on “laion-aesthetics v2 5+” and 10% dropping of the text-conditioning. For inpainting, the UNet has 5 additional input channels (4 for the encoded masked-image and 1 for the mask itself) whose weights were zero-initialized after restoring the non-inpainting checkpoint. During training, we generate synthetic masks and in 25% of cases mask everything. - **Hardware:** 32 x 8 x A100 GPUs - **Optimizer:** AdamW - **Gradient Accumulations**: 2 - **Batch:** 32 x 8 x 2 x 4 = 2048 - **Learning rate:** warmup to 0.0001 for 10,000 steps and then kept constant | 8a0615803fed1f268bb2aea4686a52cf |
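The shapes and batch arithmetic described in the training section above can be checked with a short sketch. The function names are hypothetical, and reading the factors of `32 x 8 x 2 x 4` as nodes, GPUs per node, gradient accumulation steps, and per-GPU batch size is an assumption based on the hardware and accumulation entries; the card does not label the factors.

```python
def latent_shape(height, width, f=8, latent_channels=4):
    """Latent shape for an H x W x 3 image under downsampling factor f."""
    if height % f or width % f:
        raise ValueError("height and width must be divisible by the downsampling factor")
    return (height // f, width // f, latent_channels)


def inpainting_unet_in_channels(latent_channels=4, masked_image_channels=4, mask_channels=1):
    """UNet input channels: the latent plus the 5 additional inpainting channels."""
    return latent_channels + masked_image_channels + mask_channels


def global_batch_size(nodes=32, gpus_per_node=8, grad_accum=2, per_gpu_batch=4):
    """Assumed reading of the card's '32 x 8 x 2 x 4' batch expression."""
    return nodes * gpus_per_node * grad_accum * per_gpu_batch


print(latent_shape(512, 512))         # (64, 64, 4)
print(inpainting_unet_in_channels())  # 9
print(global_batch_size())            # 2048
```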

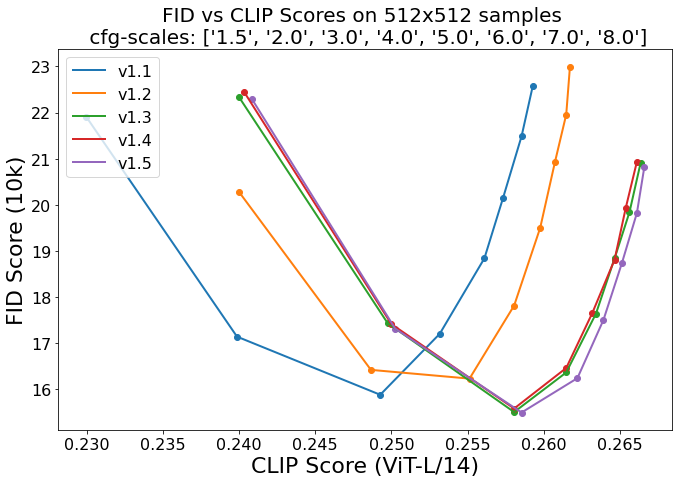

creativeml-openrail-m | ['stable-diffusion', 'stable-diffusion-diffusers', 'text-to-image'] | false | Evaluation Results Evaluations with different classifier-free guidance scales (1.5, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0) and 50 PLMS sampling steps show the relative improvements of the checkpoints:  Evaluated using 50 PLMS steps and 10000 random prompts from the COCO2017 validation set, evaluated at 512x512 resolution. Not optimized for FID scores. | cb4673dea23850bd1e38e970de05dd0f |

creativeml-openrail-m | ['stable-diffusion', 'stable-diffusion-diffusers', 'text-to-image'] | false | Inpainting Evaluation To assess the performance of the inpainting model, we used the same evaluation protocol as in our [LDM paper](https://arxiv.org/abs/2112.10752). Since the Stable Diffusion Inpainting Model accepts a text input, we simply used a fixed prompt of `photograph of a beautiful empty scene, highest quality settings`. | Model | FID | LPIPS | |-----------------------------|------|------------------| | Stable Diffusion Inpainting | 1.00 | 0.141 (+- 0.082) | | Latent Diffusion Inpainting | 1.50 | 0.137 (+- 0.080) | | CoModGAN | 1.82 | 0.15 | | LaMa | 2.21 | 0.134 (+- 0.080) | | fb8df9a0a61527faefb78398a462e734 |

mit | ['generated_from_trainer'] | false | xlm-roberta-base-finetuned-panx-de This model is a fine-tuned version of [xlm-roberta-base](https://huggingface.co/xlm-roberta-base) on the xtreme dataset. It achieves the following results on the evaluation set: - Loss: 0.1405 - F1: 0.8611 | de687c73e97676aac452e806440860ac |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | F1 | |:-------------:|:-----:|:----:|:---------------:|:------:| | 0.2542 | 1.0 | 787 | 0.1788 | 0.8083 | | 0.1307 | 2.0 | 1574 | 0.1371 | 0.8488 | | 0.0784 | 3.0 | 2361 | 0.1405 | 0.8611 | | d9b93a390a3aaf9babe8653956b6b457 |
