license | tags | is_nc | readme_section | hash |

|---|---|---|---|---|

cc-by-sa-4.0 | ['coptic', 'token-classification', 'pos', 'dependency-parsing'] | false | Model Description This is a DeBERTa(V2) model pre-trained with [UD_Coptic](https://universaldependencies.org/cop/) for POS-tagging and dependency-parsing, derived from [deberta-small-coptic](https://huggingface.co/KoichiYasuoka/deberta-small-coptic). Every word is tagged by [UPOS](https://universaldependencies.org/u/pos/) (Universal Part-Of-Speech). | d83973dafd57c7abe9ddedcd0d2b0028 |
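For reference, the labels come from the UPOS inventory, which has 17 tags. The sketch below lists them and checks a tagged sentence against that set. `invalid_tags` is a hypothetical helper, the example words are English placeholders rather than Coptic model output, and the raw labels the model emits may carry extra markers that this plain-tag check does not handle.

```python
# The 17 Universal POS tags defined by Universal Dependencies.
UPOS_TAGS = frozenset({
    "ADJ", "ADP", "ADV", "AUX", "CCONJ", "DET", "INTJ", "NOUN", "NUM",
    "PART", "PRON", "PROPN", "PUNCT", "SCONJ", "SYM", "VERB", "X",
})


def invalid_tags(tagged):
    """Return the (word, tag) pairs whose tag is not a plain UPOS tag (hypothetical helper)."""
    return [(word, tag) for word, tag in tagged if tag not in UPOS_TAGS]


# English placeholder sentence, not model output.
sentence = [("the", "DET"), ("dog", "NOUN"), ("runs", "VERB"), (".", "PUNCT")]
print(len(UPOS_TAGS))          # 17
print(invalid_tags(sentence))  # []
```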

cc-by-sa-4.0 | ['coptic', 'token-classification', 'pos', 'dependency-parsing'] | false | How to Use ```py from transformers import AutoTokenizer,AutoModelForTokenClassification tokenizer=AutoTokenizer.from_pretrained("KoichiYasuoka/deberta-small-coptic-upos") model=AutoModelForTokenClassification.from_pretrained("KoichiYasuoka/deberta-small-coptic-upos") ``` or ``` import esupar nlp=esupar.load("KoichiYasuoka/deberta-small-coptic-upos") ``` | ccc1852ffdb563358666eba62ed2fe72 |

cc-by-4.0 | ['question generation'] | false | Model Card of `research-backup/t5-small-squadshifts-vanilla-new_wiki-qg` This model is a fine-tuned version of [t5-small](https://huggingface.co/t5-small) for the question generation task on the [lmqg/qg_squadshifts](https://huggingface.co/datasets/lmqg/qg_squadshifts) (dataset_name: new_wiki) via [`lmqg`](https://github.com/asahi417/lm-question-generation). | 8ec373ca19cb5ab4882ff50362fc0b33 |

cc-by-4.0 | ['question generation'] | false | Overview - **Language model:** [t5-small](https://huggingface.co/t5-small) - **Language:** en - **Training data:** [lmqg/qg_squadshifts](https://huggingface.co/datasets/lmqg/qg_squadshifts) (new_wiki) - **Online Demo:** [https://autoqg.net/](https://autoqg.net/) - **Repository:** [https://github.com/asahi417/lm-question-generation](https://github.com/asahi417/lm-question-generation) - **Paper:** [https://arxiv.org/abs/2210.03992](https://arxiv.org/abs/2210.03992) | 3bc5a461b78d85528dc9b1e9b43bb875 |

cc-by-4.0 | ['question generation'] | false | model prediction questions = model.generate_q(list_context="William Turner was an English painter who specialised in watercolour landscapes", list_answer="William Turner") ``` - With `transformers` ```python from transformers import pipeline pipe = pipeline("text2text-generation", "research-backup/t5-small-squadshifts-vanilla-new_wiki-qg") output = pipe("generate question: <hl> Beyonce <hl> further expanded her acting career, starring as blues singer Etta James in the 2008 musical biopic, Cadillac Records.") ``` | 4472addd6732f7245e48fea84b842fb4 |
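The `transformers` pipeline input wraps the answer span in `<hl>` markers and starts with the `generate question: ` prefix, as in the example above. A minimal sketch of building that string from a context and an answer follows; `build_qg_input` is a hypothetical helper (not part of `lmqg`), and it only highlights the first occurrence of the answer.

```python
def build_qg_input(context: str, answer: str, prefix: str = "generate question: ") -> str:
    """Wrap the first occurrence of `answer` in <hl> markers and prepend the task prefix.

    Raises ValueError if the answer does not appear verbatim in the context.
    """
    start = context.find(answer)
    if start == -1:
        raise ValueError("answer span not found in context")
    end = start + len(answer)
    return f"{prefix}{context[:start]}<hl> {answer} <hl>{context[end:]}"


print(build_qg_input(
    "Beyonce further expanded her acting career, starring as blues singer Etta James "
    "in the 2008 musical biopic, Cadillac Records.",
    "Beyonce",
))
```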

cc-by-4.0 | ['question generation'] | false | Evaluation - ***Metric (Question Generation)***: [raw metric file](https://huggingface.co/research-backup/t5-small-squadshifts-vanilla-new_wiki-qg/raw/main/eval/metric.first.sentence.paragraph_answer.question.lmqg_qg_squadshifts.new_wiki.json) | | Score | Type | Dataset | |:-----------|--------:|:---------|:---------------------------------------------------------------------------| | BERTScore | 83.08 | new_wiki | [lmqg/qg_squadshifts](https://huggingface.co/datasets/lmqg/qg_squadshifts) | | Bleu_1 | 6.9 | new_wiki | [lmqg/qg_squadshifts](https://huggingface.co/datasets/lmqg/qg_squadshifts) | | Bleu_2 | 2.75 | new_wiki | [lmqg/qg_squadshifts](https://huggingface.co/datasets/lmqg/qg_squadshifts) | | Bleu_3 | 1.38 | new_wiki | [lmqg/qg_squadshifts](https://huggingface.co/datasets/lmqg/qg_squadshifts) | | Bleu_4 | 0.81 | new_wiki | [lmqg/qg_squadshifts](https://huggingface.co/datasets/lmqg/qg_squadshifts) | | METEOR | 8.26 | new_wiki | [lmqg/qg_squadshifts](https://huggingface.co/datasets/lmqg/qg_squadshifts) | | MoverScore | 52.25 | new_wiki | [lmqg/qg_squadshifts](https://huggingface.co/datasets/lmqg/qg_squadshifts) | | ROUGE_L | 8.85 | new_wiki | [lmqg/qg_squadshifts](https://huggingface.co/datasets/lmqg/qg_squadshifts) | | d3cc3695dd3364379b3d479a962240d7 |
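ROUGE_L in the table is an LCS-based F-score, reported ×100. The sketch below computes it for a single whitespace-tokenized candidate/reference pair. It assumes β = 1.2, the value used by the widely used `pycocoevalcap` scorer; whether `lmqg` uses exactly this implementation and tokenization is an assumption, so numbers from this sketch need not match the table.

```python
def lcs_length(a, b):
    """Length of the longest common subsequence of two token lists."""
    dp = [[0] * (len(b) + 1) for _ in range(len(a) + 1)]
    for i, x in enumerate(a, 1):
        for j, y in enumerate(b, 1):
            dp[i][j] = dp[i - 1][j - 1] + 1 if x == y else max(dp[i - 1][j], dp[i][j - 1])
    return dp[-1][-1]


def rouge_l(candidate: str, reference: str, beta: float = 1.2) -> float:
    """ROUGE-L F-score from LCS-based precision and recall (beta weights recall)."""
    cand, ref = candidate.split(), reference.split()
    lcs = lcs_length(cand, ref)
    if lcs == 0:
        return 0.0
    precision, recall = lcs / len(cand), lcs / len(ref)
    return ((1 + beta ** 2) * precision * recall) / (recall + beta ** 2 * precision)


print(rouge_l("who starred as etta james", "who played etta james"))
```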

cc-by-4.0 | ['question generation'] | false | Training hyperparameters The following hyperparameters were used during fine-tuning: - dataset_path: lmqg/qg_squadshifts - dataset_name: new_wiki - input_types: ['paragraph_answer'] - output_types: ['question'] - prefix_types: ['qg'] - model: t5-small - max_length: 512 - max_length_output: 32 - epoch: 1 - batch: 32 - lr: 1e-05 - fp16: False - random_seed: 1 - gradient_accumulation_steps: 4 - label_smoothing: 0.15 The full configuration can be found in the [fine-tuning config file](https://huggingface.co/research-backup/t5-small-squadshifts-vanilla-new_wiki-qg/raw/main/trainer_config.json). | b542db53f4054150dc1762c7096a15ee |
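Two of these settings can be made concrete. With `batch: 32` and `gradient_accumulation_steps: 4`, each optimizer update sees 32 × 4 = 128 examples, assuming a single device. `label_smoothing: 0.15` is sketched below in the common form (1 - ε)·one-hot + ε/K, the formulation used by PyTorch's `CrossEntropyLoss(label_smoothing=...)`; whether `lmqg` applies exactly this form over the vocabulary is an assumption.

```python
def smoothed_targets(target_index: int, num_classes: int, epsilon: float) -> list:
    """Label-smoothed target distribution: (1 - epsilon) * one_hot + epsilon / K."""
    dist = [epsilon / num_classes] * num_classes
    dist[target_index] += 1.0 - epsilon
    return dist


batch_size, grad_accum_steps = 32, 4
# Examples per optimizer update, assuming one device.
effective_batch_size = batch_size * grad_accum_steps

print(effective_batch_size)                  # 128
print(smoothed_targets(2, 4, epsilon=0.15))  # toy 4-class example
```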

apache-2.0 | ['generated_from_keras_callback'] | false | t5-small-finetuned-on-cloudsek-data-assignment This model is a fine-tuned version of [t5-small](https://huggingface.co/t5-small) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 1.8961 - Validation Loss: 1.8481 - Epoch: 1 | 6d4ff459e4e668f710a51f61b8be095a |

apache-2.0 | ['generated_from_keras_callback'] | false | Training hyperparameters The following hyperparameters were used during training: - optimizer: {'name': 'AdamWeightDecay', 'learning_rate': {'class_name': 'PolynomialDecay', 'config': {'initial_learning_rate': 5.6e-05, 'decay_steps': 6744, 'end_learning_rate': 0.0, 'power': 1.0, 'cycle': False, 'name': None}}, 'decay': 0.0, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False, 'weight_decay_rate': 0.01} - training_precision: mixed_float16 | 9a6d939944462bc561937fe4dd513dc2 |
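With `power: 1.0`, `end_learning_rate: 0.0` and `cycle: False`, the `PolynomialDecay` schedule above is a straight linear decay from 5.6e-05 to 0 over 6744 steps, after which it stays at the end value. A plain-Python restatement of that formula, for illustration only:

```python
def polynomial_decay(step, initial_lr=5.6e-05, decay_steps=6744, end_lr=0.0, power=1.0):
    """Learning rate at `step` for a non-cycling polynomial decay schedule."""
    step = min(step, decay_steps)  # cycle=False: hold at end_lr after decay_steps
    return (initial_lr - end_lr) * (1 - step / decay_steps) ** power + end_lr


for step in (0, 3372, 6744, 10000):
    print(step, polynomial_decay(step))
```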

mit | ['generated_from_trainer'] | false | serene_goldberg This model was trained from scratch on the tomekkorbak/detoxify-pile-chunk3-0-50000, the tomekkorbak/detoxify-pile-chunk3-50000-100000, the tomekkorbak/detoxify-pile-chunk3-100000-150000, the tomekkorbak/detoxify-pile-chunk3-150000-200000, the tomekkorbak/detoxify-pile-chunk3-200000-250000, the tomekkorbak/detoxify-pile-chunk3-250000-300000, the tomekkorbak/detoxify-pile-chunk3-300000-350000, the tomekkorbak/detoxify-pile-chunk3-350000-400000, the tomekkorbak/detoxify-pile-chunk3-400000-450000, the tomekkorbak/detoxify-pile-chunk3-450000-500000, the tomekkorbak/detoxify-pile-chunk3-500000-550000, the tomekkorbak/detoxify-pile-chunk3-550000-600000, the tomekkorbak/detoxify-pile-chunk3-600000-650000, the tomekkorbak/detoxify-pile-chunk3-650000-700000, the tomekkorbak/detoxify-pile-chunk3-700000-750000, the tomekkorbak/detoxify-pile-chunk3-750000-800000, the tomekkorbak/detoxify-pile-chunk3-800000-850000, the tomekkorbak/detoxify-pile-chunk3-850000-900000, the tomekkorbak/detoxify-pile-chunk3-900000-950000, the tomekkorbak/detoxify-pile-chunk3-950000-1000000, the tomekkorbak/detoxify-pile-chunk3-1000000-1050000, the tomekkorbak/detoxify-pile-chunk3-1050000-1100000, the tomekkorbak/detoxify-pile-chunk3-1100000-1150000, the tomekkorbak/detoxify-pile-chunk3-1150000-1200000, the tomekkorbak/detoxify-pile-chunk3-1200000-1250000, the tomekkorbak/detoxify-pile-chunk3-1250000-1300000, the tomekkorbak/detoxify-pile-chunk3-1300000-1350000, the tomekkorbak/detoxify-pile-chunk3-1350000-1400000, the tomekkorbak/detoxify-pile-chunk3-1400000-1450000, the tomekkorbak/detoxify-pile-chunk3-1450000-1500000, the tomekkorbak/detoxify-pile-chunk3-1500000-1550000, the tomekkorbak/detoxify-pile-chunk3-1550000-1600000, the tomekkorbak/detoxify-pile-chunk3-1600000-1650000, the tomekkorbak/detoxify-pile-chunk3-1650000-1700000, the tomekkorbak/detoxify-pile-chunk3-1700000-1750000, the tomekkorbak/detoxify-pile-chunk3-1750000-1800000, the 
tomekkorbak/detoxify-pile-chunk3-1800000-1850000, the tomekkorbak/detoxify-pile-chunk3-1850000-1900000 and the tomekkorbak/detoxify-pile-chunk3-1900000-1950000 datasets. | 270a31370983c2e828d3e0848fbc2f99 |

mit | ['generated_from_trainer'] | false | Full config {'dataset': {'datasets': ['tomekkorbak/detoxify-pile-chunk3-0-50000', 'tomekkorbak/detoxify-pile-chunk3-50000-100000', 'tomekkorbak/detoxify-pile-chunk3-100000-150000', 'tomekkorbak/detoxify-pile-chunk3-150000-200000', 'tomekkorbak/detoxify-pile-chunk3-200000-250000', 'tomekkorbak/detoxify-pile-chunk3-250000-300000', 'tomekkorbak/detoxify-pile-chunk3-300000-350000', 'tomekkorbak/detoxify-pile-chunk3-350000-400000', 'tomekkorbak/detoxify-pile-chunk3-400000-450000', 'tomekkorbak/detoxify-pile-chunk3-450000-500000', 'tomekkorbak/detoxify-pile-chunk3-500000-550000', 'tomekkorbak/detoxify-pile-chunk3-550000-600000', 'tomekkorbak/detoxify-pile-chunk3-600000-650000', 'tomekkorbak/detoxify-pile-chunk3-650000-700000', 'tomekkorbak/detoxify-pile-chunk3-700000-750000', 'tomekkorbak/detoxify-pile-chunk3-750000-800000', 'tomekkorbak/detoxify-pile-chunk3-800000-850000', 'tomekkorbak/detoxify-pile-chunk3-850000-900000', 'tomekkorbak/detoxify-pile-chunk3-900000-950000', 'tomekkorbak/detoxify-pile-chunk3-950000-1000000', 'tomekkorbak/detoxify-pile-chunk3-1000000-1050000', 'tomekkorbak/detoxify-pile-chunk3-1050000-1100000', 'tomekkorbak/detoxify-pile-chunk3-1100000-1150000', 'tomekkorbak/detoxify-pile-chunk3-1150000-1200000', 'tomekkorbak/detoxify-pile-chunk3-1200000-1250000', 'tomekkorbak/detoxify-pile-chunk3-1250000-1300000', 'tomekkorbak/detoxify-pile-chunk3-1300000-1350000', 'tomekkorbak/detoxify-pile-chunk3-1350000-1400000', 'tomekkorbak/detoxify-pile-chunk3-1400000-1450000', 'tomekkorbak/detoxify-pile-chunk3-1450000-1500000', 'tomekkorbak/detoxify-pile-chunk3-1500000-1550000', 'tomekkorbak/detoxify-pile-chunk3-1550000-1600000', 'tomekkorbak/detoxify-pile-chunk3-1600000-1650000', 'tomekkorbak/detoxify-pile-chunk3-1650000-1700000', 'tomekkorbak/detoxify-pile-chunk3-1700000-1750000', 'tomekkorbak/detoxify-pile-chunk3-1750000-1800000', 'tomekkorbak/detoxify-pile-chunk3-1800000-1850000', 
'tomekkorbak/detoxify-pile-chunk3-1850000-1900000', 'tomekkorbak/detoxify-pile-chunk3-1900000-1950000'], 'is_split_by_sentences': True, 'skip_tokens': 1661599744}, 'generation': {'metrics_configs': [{}, {'n': 1}, {'n': 2}, {'n': 5}], 'scenario_configs': [{'generate_kwargs': {'do_sample': True, 'max_length': 128, 'min_length': 10, 'temperature': 0.7, 'top_k': 0, 'top_p': 0.9}, 'name': 'unconditional', 'num_samples': 2048}, {'generate_kwargs': {'do_sample': True, 'max_length': 128, 'min_length': 10, 'temperature': 0.7, 'top_k': 0, 'top_p': 0.9}, 'name': 'challenging_rtp', 'num_samples': 2048, 'prompts_path': 'resources/challenging_rtp.jsonl'}], 'scorer_config': {'device': 'cuda:0'}}, 'kl_gpt3_callback': {'max_tokens': 64, 'num_samples': 4096}, 'model': {'from_scratch': False, 'gpt2_config_kwargs': {'reorder_and_upcast_attn': True, 'scale_attn_by': True}, 'model_kwargs': {'revision': '81a1701e025d2c65ae6e8c2103df559071523ee0'}, 'path_or_name': 'tomekkorbak/goofy_pasteur'}, 'objective': {'name': 'MLE'}, 'tokenizer': {'path_or_name': 'gpt2'}, 'training': {'dataloader_num_workers': 0, 'effective_batch_size': 64, 'evaluation_strategy': 'no', 'fp16': True, 'hub_model_id': 'serene_goldberg', 'hub_strategy': 'all_checkpoints', 'learning_rate': 0.0005, 'logging_first_step': True, 'logging_steps': 1, 'num_tokens': 3300000000, 'output_dir': 'training_output104340', 'per_device_train_batch_size': 16, 'push_to_hub': True, 'remove_unused_columns': False, 'save_steps': 25354, 'save_strategy': 'steps', 'seed': 42, 'tokens_already_seen': 1661599744, 'warmup_ratio': 0.01, 'weight_decay': 0.1}} | 893f18103d352a77e56d87e4cd80a02b |
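Both generation scenarios in this config sample with `temperature: 0.7`, `top_k: 0` (which disables top-k filtering) and `top_p: 0.9`. The sketch below shows what that combination does to one step's logits: temperature scaling, softmax, then keeping the smallest set of most-likely tokens whose probability mass reaches `top_p`. It is a simplified re-implementation for illustration, not the code path `transformers` runs, and the logits in the example are made up.

```python
import math


def nucleus_distribution(logits, temperature=0.7, top_p=0.9):
    """Temperature-scaled softmax restricted to the top-p nucleus, renormalized.

    Returns {token_index: probability}. top_k=0 means no top-k cut, so none is applied.
    """
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    probs = [e / total for e in exps]

    kept, cumulative = [], 0.0
    for i in sorted(range(len(probs)), key=lambda k: probs[k], reverse=True):
        kept.append(i)
        cumulative += probs[i]
        if cumulative >= top_p:  # smallest most-likely set reaching top_p mass
            break
    mass = sum(probs[i] for i in kept)
    return {i: probs[i] / mass for i in kept}


# Made-up logits for a 4-token vocabulary.
print(nucleus_distribution([2.0, 1.0, 0.0, -5.0]))
```

A sampler would then draw one token from this distribution, for example with `random.choices`.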

apache-2.0 | ['generated_from_trainer'] | false | jwt300_mt-Italian-to-Spanish_transformers This model is a fine-tuned version of [t5-small](https://huggingface.co/t5-small) on the new_dataset dataset. It achieves the following results on the evaluation set: - Loss: 2.4425 - Sacrebleu: 0.9057 - Gen Len: 18.1276 | 2e3f62642c819a23e225a117a7371113 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 64 - eval_batch_size: 64 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 1 - mixed_precision_training: Native AMP | 09275d3124bc19585e1e8c0f6aa8562c |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Sacrebleu | Gen Len | |:-------------:|:-----:|:----:|:---------------:|:---------:|:-------:| | 2.7545 | 1.0 | 2229 | 2.4425 | 0.9057 | 18.1276 | | 27cfd4cf6629d079f45758b42be418b6 |

mit | ['generated_from_trainer'] | false | roberta_checkpoint-finetuned-squad This model is a fine-tuned version of [WillHeld/roberta-base-coqa](https://huggingface.co/WillHeld/roberta-base-coqa) on the squad dataset. It achieves the following results on the evaluation set: - Loss: 0.8969 | 61c983b15fc0e06e4236cce0e05a8d4c |
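The card reports only the evaluation loss. For context, a SQuAD-style extractive QA head outputs a start logit and an end logit per token, and the predicted answer is the span with the highest combined score. The sketch below shows that selection step in a simplified form: real post-processing also excludes question and special tokens and maps token positions back to character offsets, and `max_answer_len=30` is an illustrative default, not a value from this card.

```python
def best_span(start_logits, end_logits, max_answer_len=30):
    """Return (start, end) token indices maximizing start_logits[start] + end_logits[end].

    Only spans with start <= end and at most `max_answer_len` tokens are considered.
    """
    best, best_score = (0, 0), float("-inf")
    for s, s_score in enumerate(start_logits):
        for e in range(s, min(s + max_answer_len, len(end_logits))):
            score = s_score + end_logits[e]
            if score > best_score:
                best, best_score = (s, e), score
    return best


print(best_span([0.1, 2.0, 0.3], [0.0, 0.2, 1.5]))  # (1, 2)
```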

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:-----:|:---------------:| | 0.8504 | 1.0 | 5536 | 0.8424 | | 0.6219 | 2.0 | 11072 | 0.8360 | | 0.4807 | 3.0 | 16608 | 0.8969 | | 468d8aa266c8e829f003c1b7811cc511 |

creativeml-openrail-m | ['text-to-image'] | false | Art of Wave Dreambooth model trained by Duskfallcrew with the [Hugging Face Dreambooth Training Space](https://huggingface.co/spaces/multimodalart/dreambooth-training) using the v1-5 base model. You can run your new concept via `diffusers` with the [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb). Don't forget to use the concept prompts! Information on this model will be here: https://civitai.com/user/duskfallcrew If you want to donate towards costs and don't want to subscribe: https://ko-fi.com/DUSKFALLcrew If you want to support the EARTH & DUSK media projects (not just AI) monthly: https://www.patreon.com/earthndusk Concept prompt: wvert1 (use it in your prompt) | 105b3eb716985a932cab1869d1c133a9 |

apache-2.0 | ['automatic-speech-recognition', 'it'] | false | exp_w2v2t_it_unispeech_s156 Fine-tuned [microsoft/unispeech-large-1500h-cv](https://huggingface.co/microsoft/unispeech-large-1500h-cv) for speech recognition using the train split of [Common Voice 7.0 (it)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | 7c8d460414cf2eb1faa7ba960891ddc3 |

apache-2.0 | ['national library of spain', 'spanish', 'bne', 'qa', 'question answering'] | false | Spanish RoBERTa-large trained on BNE, fine-tuned on the Spanish Question Answering Corpus (SQAC) dataset. RoBERTa-large-bne is a transformer-based masked language model for the Spanish language. It is based on the [RoBERTa](https://arxiv.org/abs/1907.11692) large model and has been pre-trained using the largest Spanish corpus known to date, with a total of 570 GB of clean and deduplicated text processed for this work, compiled from the web crawls performed by the [National Library of Spain (Biblioteca Nacional de España)](http://www.bne.es/en/Inicio/index.html) from 2009 to 2019. The original pre-trained model can be found here: https://huggingface.co/BSC-TeMU/roberta-large-bne | afa2c913a32fbec42137cd39f73da2ab |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Wav2Vec2-Large-XLSR-53-euskera Fine-tuned [facebook/wav2vec2-large-xlsr-53](https://huggingface.co/facebook/wav2vec2-large-xlsr-53) on Euskera (Basque) using the [Common Voice](https://huggingface.co/datasets/common_voice) dataset. When using this model, make sure that your speech input is sampled at 16kHz. | 5177758468041b9383112eba788a710b |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Usage The model can be used directly (without a language model) as follows: ```python import torch import torchaudio from datasets import load_dataset from transformers import Wav2Vec2ForCTC, Wav2Vec2Processor test_dataset = load_dataset("common_voice", "eu", split="test[:2%]") processor = Wav2Vec2Processor.from_pretrained("mrm8488/wav2vec2-large-xlsr-53-euskera") model = Wav2Vec2ForCTC.from_pretrained("mrm8488/wav2vec2-large-xlsr-53-euskera") resampler = torchaudio.transforms.Resample(48_000, 16_000) | b69678657e6fd017bb3a0a01368bc711 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Evaluation The model can be evaluated as follows on the Euskera test data of Common Voice. ```python import torch import torchaudio from datasets import load_dataset, load_metric from transformers import Wav2Vec2ForCTC, Wav2Vec2Processor import re test_dataset = load_dataset("common_voice", "eu", split="test") wer = load_metric("wer") processor = Wav2Vec2Processor.from_pretrained("mrm8488/wav2vec2-large-xlsr-53-euskera") model = Wav2Vec2ForCTC.from_pretrained("mrm8488/wav2vec2-large-xlsr-53-euskera") model.to("cuda") chars_to_ignore_regex = '[\\,\\?\\.\\!\\-\\;\\:\\"\\“\\%\\‘\\”\\�]' resampler = torchaudio.transforms.Resample(48_000, 16_000) | b5613a0fd921ffbc12b454292d8fb765 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | We need to read the audio files as arrays def evaluate(batch): inputs = processor(batch["speech"], sampling_rate=16_000, return_tensors="pt", padding=True) with torch.no_grad(): logits = model(inputs.input_values.to("cuda"), attention_mask=inputs.attention_mask.to("cuda")).logits pred_ids = torch.argmax(logits, dim=-1) batch["pred_strings"] = processor.batch_decode(pred_ids) return batch result = test_dataset.map(evaluate, batched=True, batch_size=8) print("WER: {:.2f}".format(100 * wer.compute(predictions=result["pred_strings"], references=result["sentence"]))) ``` **Test Result**: 24.03% | cb163d01e622d71d6fcf2b4ee7c10161 |
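As we understand it, the `wer` metric used above reports a corpus-level ratio: word-level edit operations (substitutions, deletions, insertions) summed over all utterances, divided by the total number of reference words. A dependency-free sketch of that computation follows; `edit_distance` and `corpus_wer` are illustrative names, and text normalization (such as removing `chars_to_ignore_regex` matches and lower-casing) is left to the caller.

```python
def edit_distance(ref, hyp):
    """Minimum number of word substitutions, deletions and insertions turning ref into hyp."""
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, 1):
        cur = [i] + [0] * len(hyp)
        for j, h in enumerate(hyp, 1):
            cur[j] = min(
                prev[j] + 1,             # deletion of a reference word
                cur[j - 1] + 1,          # insertion of a hypothesis word
                prev[j - 1] + (r != h),  # substitution (or match when equal)
            )
        prev = cur
    return prev[-1]


def corpus_wer(references, predictions):
    """Total word edits divided by the total number of reference words."""
    errors = sum(edit_distance(r.split(), p.split()) for r, p in zip(references, predictions))
    words = sum(len(r.split()) for r in references)
    return errors / words


print("WER: {:.2f}".format(100 * corpus_wer(["the cat sat"], ["the cat sat down"])))  # WER: 33.33
```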

apache-2.0 | ['generated_from_trainer'] | false | finetuned-bert This model is a fine-tuned version of [bert-base-cased](https://huggingface.co/bert-base-cased) on the glue dataset. It achieves the following results on the evaluation set: - Loss: 0.3916 - Accuracy: 0.875 - F1: 0.9125 | e8dd1a343c854e21b03764dce621c76b |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 16 - eval_batch_size: 16 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 3.0 | 3f198340b8e592e5c3487faec21d49ba |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------:|:------:| | 0.581 | 1.0 | 230 | 0.4086 | 0.8260 | 0.8711 | | 0.366 | 2.0 | 460 | 0.3758 | 0.8480 | 0.8963 | | 0.2328 | 3.0 | 690 | 0.3916 | 0.875 | 0.9125 | | 713c89fbac16a55b56e41d65b66955e5 |

mit | ['adversarial machine learning'] | false | RobArch: Designing Robust Architectures against Adversarial Attacks *ShengYun Peng, Weilin Xu, Cory Cornelius, Kevin Li, Rahul Duggal, Duen Horng Chau, Jason Martin* Check https://github.com/ShengYun-Peng/RobArch for the complete code. | a3b47ff2de59948df52b294337d5a8f0 |

mit | ['adversarial machine learning'] | false | Abstract Adversarial Training is the most effective approach for improving the robustness of Deep Neural Networks (DNNs). However, compared to the large body of research in optimizing the adversarial training process, there are few investigations into how architecture components affect robustness, and they rarely constrain model capacity. Thus, it is unclear where robustness precisely comes from. In this work, we present the first large-scale systematic study on the robustness of DNN architecture components under fixed parameter budgets. Through our investigation, we distill 18 actionable robust network design guidelines that empower model developers to gain deep insights. We demonstrate these guidelines' effectiveness by introducing the novel Robust Architecture (RobArch) model that instantiates the guidelines to build a family of top-performing models across parameter capacities against strong adversarial attacks. RobArch achieves the new state-of-the-art AutoAttack accuracy on the RobustBench ImageNet leaderboard. | 66e21accb8d4dd1245f172825753aacd |

mit | ['adversarial machine learning'] | false | Prerequisites 1. Register a Weights & Biases [account](https://wandb.ai/site) 2. Prepare ImageNet via [Fast AT - Installation step 3 & 4](https://github.com/locuslab/fast_adversarial/tree/master/ImageNet) > Run step 4 only if you want to use Fast-AT. 3. Set up the venv: ```bash make .venv_done ``` | 69c3fcdd768bfb13aff6354fdd5cf8d2 |

mit | ['adversarial machine learning'] | false | Torchvision models - Fast AT (e.g., ResNet-50) ```bash make BASE=<imagenet root dir> WANDB_ACCOUNT=<name> experiments/Torch_ResNet50/.done_test_pgd ``` If you want to test other off-the-shelf models in [torchvision](https://pytorch.org/vision/stable/models.html | eb5e03d3237895e69bde0995a6756087 |

mit | ['adversarial machine learning'] | false | Param | Natural | AutoAttack | PGD10-4 | PGD50-4 | PGD100-4 | PGD100-2 | PGD100-8 | | :--: | :--: | :--: | :--: | :--: | :--: | :--: | :--: | :--: | | [RobArch-S](https://huggingface.co/poloclub/RobArch/resolve/main/pretrained/robarch_s.pt) | 26M | 70.17% | 44.14% | 48.19% | 47.78% | 47.77% | 60.06% | 21.77% | | [RobArch-M](https://huggingface.co/poloclub/RobArch/resolve/main/pretrained/robarch_m.pt) | 46M | 71.88% | 46.26% | 49.84% | 49.32% | 49.30% | 61.89% | 23.01% | | [RobArch-L](https://huggingface.co/poloclub/RobArch/resolve/main/pretrained/robarch_l.pt) | 104M | 73.44% | 48.94% | 51.72% | 51.04% | 51.03% | 63.49% | 25.31% | | 87df514d7330244ee43038cf7eaba734 |
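A common reading of the attack columns is PGD*k*-*n*: *k* attack steps with an L∞ budget of ε = *n*/255. That reading is an assumption here, not something the table states. The toy sketch below runs that attack loop against a linear classifier in plain Python so that the sign-gradient step and the projection back into the ε-ball are visible; the classifier, step size and inputs are all hypothetical, and the actual evaluation attacks the ImageNet models through the repository's code.

```python
import math


def pgd_linf_linear(x, y, w, b, eps, alpha, steps):
    """Toy L-infinity PGD against a linear classifier with score = w.x + b, label y in {-1, +1}.

    Each step moves every coordinate by `alpha` in the sign of the logistic-loss gradient,
    then projects back into the eps-ball around `x` and the [0, 1] pixel range.
    """
    x_adv = list(x)
    for _ in range(steps):
        score = sum(wi * xi for wi, xi in zip(w, x_adv)) + b
        # d/dx log(1 + exp(-y * score)) = -y * sigmoid(-y * score) * w
        coeff = -y / (1.0 + math.exp(y * score))
        grad = [coeff * wi for wi in w]
        x_adv = [xa + alpha * ((g > 0) - (g < 0)) for xa, g in zip(x_adv, grad)]
        x_adv = [min(max(xa, xo - eps), xo + eps) for xa, xo in zip(x_adv, x)]
        x_adv = [min(max(xa, 0.0), 1.0) for xa in x_adv]
    return x_adv


# Hypothetical 3-"pixel" input and weights; eps = 4/255 with 10 steps of size 1/255.
x = [0.5, 0.5, 0.5]
w = [1.0, -2.0, 0.5]
x_adv = pgd_linf_linear(x, y=1, w=w, b=0.0, eps=4 / 255, alpha=1 / 255, steps=10)
print(max(abs(a - o) for a, o in zip(x_adv, x)) * 255)  # perturbation size in 1/255 units
```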

mit | ['adversarial machine learning'] | false | Citation ```bibtex @misc{peng2023robarch, title={RobArch: Designing Robust Architectures against Adversarial Attacks}, author={ShengYun Peng and Weilin Xu and Cory Cornelius and Kevin Li and Rahul Duggal and Duen Horng Chau and Jason Martin}, year={2023}, eprint={2301.03110}, archivePrefix={arXiv}, primaryClass={cs.CV} } ``` | 620aa7b674d5c434b907f0710d50e3b6 |

apache-2.0 | ['generated_from_trainer'] | false | mobilebert_sa_GLUE_Experiment_logit_kd_data_aug_wnli_128 This model is a fine-tuned version of [google/mobilebert-uncased](https://huggingface.co/google/mobilebert-uncased) on the GLUE WNLI dataset. It achieves the following results on the evaluation set: - Loss: 0.5913 - Accuracy: 0.1408 | d9e20af5fa6d0443239a933af27170a6 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.3404 | 1.0 | 435 | 0.5913 | 0.1408 | | 0.3027 | 2.0 | 870 | 0.5985 | 0.1127 | | 0.2935 | 3.0 | 1305 | 0.6351 | 0.1127 | | 0.2884 | 4.0 | 1740 | 0.6013 | 0.0986 | | 0.2838 | 5.0 | 2175 | 0.6154 | 0.0986 | | 0.2788 | 6.0 | 2610 | 0.6608 | 0.0845 | | 964b10030fb0bdae484df63745ac0a64 |

apache-2.0 | ['setfit', 'sentence-transformers', 'text-classification'] | false | fathyshalab/massive_play-roberta-large-v1-4-71 This is a [SetFit model](https://github.com/huggingface/setfit) that can be used for text classification. The model has been trained using an efficient few-shot learning technique that involves: 1. Fine-tuning a [Sentence Transformer](https://www.sbert.net) with contrastive learning. 2. Training a classification head with features from the fine-tuned Sentence Transformer. | f63adeb4e672a4809dd0bf37c36b78bb |
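Step 1 fine-tunes the Sentence Transformer on pairs of examples: pairs that share a label are pulled together and pairs with different labels are pushed apart. The sketch below builds such labeled pairs from a tiny hypothetical dataset by enumerating every combination. SetFit itself samples a configurable number of pairs rather than enumerating all of them, so treat this as an illustration of the idea only.

```python
import itertools


def make_contrastive_pairs(texts, labels):
    """All (text_a, text_b, target) pairs: target 1.0 if the labels match, else 0.0."""
    return [
        (text_a, text_b, 1.0 if label_a == label_b else 0.0)
        for (text_a, label_a), (text_b, label_b) in itertools.combinations(zip(texts, labels), 2)
    ]


# Hypothetical few-shot examples.
texts = ["play some jazz", "put on my workout playlist", "what is the weather today"]
labels = ["music", "music", "weather"]
for pair in make_contrastive_pairs(texts, labels):
    print(pair)
```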

apache-2.0 | ['automatic-speech-recognition', 'ar'] | false | exp_w2v2t_ar_wavlm_s95 Fine-tuned [microsoft/wavlm-large](https://huggingface.co/microsoft/wavlm-large) for speech recognition using the train split of [Common Voice 7.0 (ar)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | 84e26a63464fbce7e66fde1cefd5b6ef |

apache-2.0 | ['generated_from_trainer'] | false | finetuning-hate-model-electra This model is a fine-tuned version of [cross-encoder/ms-marco-electra-base](https://huggingface.co/cross-encoder/ms-marco-electra-base) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.1691 - Accuracy: 0.9597 - F1: 0.3448 - Precision: 0.4545 - Recall: 0.2778 | cef938ca3a8c3a256dbec97c599aa765 |
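The reported F1 is the harmonic mean of the reported precision and recall, which can be checked directly (the output comment is computed from the numbers above). High accuracy (0.9597) alongside a low F1 usually means the positive class is rare in the evaluation set; the card does not report the class balance, so that is an inference rather than a stated fact.

```python
def f1_from_precision_recall(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall (0.0 when both are zero)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


print(round(f1_from_precision_recall(0.4545, 0.2778), 4))  # 0.3448
```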

mit | ['russian', 'fill-mask', 'pretraining', 'embeddings', 'masked-lm', 'tiny', 'feature-extraction', 'sentence-similarity'] | false | This is a very small distilled version of the [bert-base-multilingual-cased](https://huggingface.co/bert-base-multilingual-cased) model for Russian and English (45 MB, 12M parameters). There is also an **updated version of this model**, [rubert-tiny2](https://huggingface.co/cointegrated/rubert-tiny2), with a larger vocabulary and better quality on practically all Russian NLU tasks. This model is useful if you want to fine-tune it for a relatively simple Russian task (e.g. NER or sentiment classification), and you care more about speed and size than about accuracy. It is approximately 10x smaller and faster than a base-sized BERT. Its `[CLS]` embeddings can be used as a sentence representation aligned between Russian and English. It was trained on the [Yandex Translate corpus](https://translate.yandex.ru/corpus), [OPUS-100](https://huggingface.co/datasets/opus100) and [Tatoeba](https://huggingface.co/datasets/tatoeba), using MLM loss (distilled from [bert-base-multilingual-cased](https://huggingface.co/bert-base-multilingual-cased)), translation ranking loss, and `[CLS]` embeddings distilled from [LaBSE](https://huggingface.co/sentence-transformers/LaBSE), [rubert-base-cased-sentence](https://huggingface.co/DeepPavlov/rubert-base-cased-sentence), LASER and USE. There is a more detailed [description in Russian](https://habr.com/ru/post/562064/). Sentence embeddings can be produced as follows: ```python | 23ba1413f224805ba63a290d272b9ee4 |

mit | ['russian', 'fill-mask', 'pretraining', 'embeddings', 'masked-lm', 'tiny', 'feature-extraction', 'sentence-similarity'] | false | pip install transformers sentencepiece import torch from transformers import AutoTokenizer, AutoModel tokenizer = AutoTokenizer.from_pretrained("cointegrated/rubert-tiny") model = AutoModel.from_pretrained("cointegrated/rubert-tiny") | d77e81b4609bf4cb565e3177116b0cdc |

mit | ['russian', 'fill-mask', 'pretraining', 'embeddings', 'masked-lm', 'tiny', 'feature-extraction', 'sentence-similarity'] | false | uncomment it if you have a GPU def embed_bert_cls(text, model, tokenizer): t = tokenizer(text, padding=True, truncation=True, return_tensors='pt') with torch.no_grad(): model_output = model(**{k: v.to(model.device) for k, v in t.items()}) embeddings = model_output.last_hidden_state[:, 0, :] embeddings = torch.nn.functional.normalize(embeddings) return embeddings[0].cpu().numpy() print(embed_bert_cls('привет мир', model, tokenizer).shape) | f7dfdfe8d2f78e0dcc93f1b36aaa35c4 |
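`embed_bert_cls` returns an L2-normalized `[CLS]` vector, so the similarity of two sentences can be taken as the dot product of their embeddings (with the NumPy arrays it returns, `a @ b`). The plain-Python sketch below spells out the same cosine-similarity computation on toy vectors that stand in for real model outputs; the helper names are illustrative.

```python
import math


def l2_normalize(vec):
    """Scale a vector to unit Euclidean length (zero vectors are returned unchanged)."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec] if norm > 0 else list(vec)


def cosine_similarity(a, b):
    """Cosine similarity as the dot product of two L2-normalized vectors."""
    return sum(x * y for x, y in zip(l2_normalize(a), l2_normalize(b)))


# Toy vectors standing in for sentence embeddings.
print(cosine_similarity([1.0, 2.0, 3.0], [2.0, 4.0, 6.0]))  # parallel vectors, close to 1.0
print(cosine_similarity([1.0, 0.0], [0.0, 5.0]))            # orthogonal vectors: 0.0
```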

apache-2.0 | ['generated_from_trainer'] | false | evaluating-student-writing-distibert-ner-with-metric This model is a fine-tuned version of [NahedAbdelgaber/evaluating-student-writing-distibert-ner](https://huggingface.co/NahedAbdelgaber/evaluating-student-writing-distibert-ner) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.7535 - Precision: 0.0614 - Recall: 0.2590 - F1: 0.0993 - Accuracy: 0.6188 | 358ee395e112b73b8899be95db9ebcc7 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:| | 0.7145 | 1.0 | 1755 | 0.7683 | 0.0546 | 0.2194 | 0.0875 | 0.6191 | | 0.6608 | 2.0 | 3510 | 0.7504 | 0.0570 | 0.2583 | 0.0934 | 0.6136 | | 0.5912 | 3.0 | 5265 | 0.7535 | 0.0614 | 0.2590 | 0.0993 | 0.6188 | | 8bd9d2ce8c41282942cbfd556de4c8d6 |
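The precision, recall and F1 columns are presumably entity-level scores over BIO-tagged spans, as is typical for token-classification training scripts; the card does not say which tagging scheme or metric library was used, so that is an assumption. The sketch below extracts spans from BIO tags and scores predicted spans against gold spans by exact match; the tag sequences and the labels `A` and `B` are hypothetical.

```python
def bio_spans(tags):
    """Extract (label, start, end_exclusive) spans from a list of BIO tags."""
    spans, label, start = [], None, None
    for i, tag in enumerate(list(tags) + ["O"]):  # sentinel "O" closes a trailing span
        starts_new = tag.startswith("B-") or (tag.startswith("I-") and tag[2:] != label)
        if tag == "O" or starts_new:
            if label is not None:
                spans.append((label, start, i))
            label, start = (tag[2:], i) if starts_new else (None, None)
        # otherwise: an I- tag continuing the current entity, so the span grows
    return spans


def entity_prf(gold_tags, pred_tags):
    """Exact-match entity-level precision, recall and F1."""
    gold, pred = set(bio_spans(gold_tags)), set(bio_spans(pred_tags))
    tp = len(gold & pred)
    precision = tp / len(pred) if pred else 0.0
    recall = tp / len(gold) if gold else 0.0
    f1 = 2 * precision * recall / (precision + recall) if tp else 0.0
    return precision, recall, f1


gold = ["B-A", "I-A", "O", "B-B"]
pred = ["B-A", "I-A", "O", "O"]
print(entity_prf(gold, pred))
```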

apache-2.0 | ['generated_from_trainer', 'hf-asr-leaderboard', 'hf-asr-leaderboard'] | false | hubert-base-libri-clean-ft100h-v3 This model is a fine-tuned version of [facebook/hubert-base-ls960](https://huggingface.co/facebook/hubert-base-ls960) on the librispeech_asr dataset. It achieves the following results on the evaluation set: - Loss: 0.1120 - Wer: 0.1332 | cddffcebb7962d933e3cbc498ee2c2c6 |

apache-2.0 | ['generated_from_trainer', 'hf-asr-leaderboard', 'hf-asr-leaderboard'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 5e-05 - train_batch_size: 8 - eval_batch_size: 16 - seed: 42 - gradient_accumulation_steps: 2 - total_train_batch_size: 16 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 600 - num_epochs: 8 - mixed_precision_training: Native AMP | 8c3e81092eb24561ede7e5b66bd350db |
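With `lr_scheduler_type: linear` and `lr_scheduler_warmup_steps: 600`, the learning rate rises linearly from 0 to 5e-05 over the first 600 optimizer steps and then decays linearly to 0 at the final step; `total_train_batch_size: 16` is 8 × 2 from the batch size and gradient accumulation. The sketch below restates that schedule in the form used by the linear warmup schedule in `transformers`. The total number of steps is not listed, so it is a parameter; 14250, the last step logged in the results table, is used only as an illustration.

```python
def linear_schedule_with_warmup(step, total_steps, warmup_steps=600, peak_lr=5e-05):
    """Learning rate at optimizer step `step`: linear warmup, then linear decay to 0."""
    if step < warmup_steps:
        return peak_lr * step / max(1, warmup_steps)
    return peak_lr * max(0.0, (total_steps - step) / max(1, total_steps - warmup_steps))


total_train_batch_size = 8 * 2  # train_batch_size x gradient_accumulation_steps
illustrative_total = 14250      # last logged step, not the stated total
for step in (0, 300, 600, 7000, illustrative_total):
    print(step, linear_schedule_with_warmup(step, illustrative_total))
```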

apache-2.0 | ['generated_from_trainer', 'hf-asr-leaderboard', 'hf-asr-leaderboard'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:-----:|:---------------:|:------:| | 5.201 | 0.14 | 250 | 3.9799 | 1.0 | | 2.8893 | 0.28 | 500 | 3.4838 | 1.0 | | 2.8603 | 0.42 | 750 | 3.3505 | 1.0 | | 2.7216 | 0.56 | 1000 | 2.1194 | 0.9989 | | 1.3372 | 0.7 | 1250 | 0.8124 | 0.6574 | | 0.8238 | 0.84 | 1500 | 0.5712 | 0.5257 | | 0.6449 | 0.98 | 1750 | 0.4442 | 0.4428 | | 0.5241 | 1.12 | 2000 | 0.3442 | 0.3672 | | 0.4458 | 1.26 | 2250 | 0.2850 | 0.3186 | | 0.3959 | 1.4 | 2500 | 0.2507 | 0.2882 | | 0.3641 | 1.54 | 2750 | 0.2257 | 0.2637 | | 0.3307 | 1.68 | 3000 | 0.2044 | 0.2434 | | 0.2996 | 1.82 | 3250 | 0.1969 | 0.2313 | | 0.2794 | 1.96 | 3500 | 0.1823 | 0.2193 | | 0.2596 | 2.1 | 3750 | 0.1717 | 0.2096 | | 0.2563 | 2.24 | 4000 | 0.1653 | 0.2000 | | 0.2532 | 2.38 | 4250 | 0.1615 | 0.1971 | | 0.2376 | 2.52 | 4500 | 0.1559 | 0.1916 | | 0.2341 | 2.66 | 4750 | 0.1494 | 0.1855 | | 0.2102 | 2.8 | 5000 | 0.1464 | 0.1781 | | 0.2222 | 2.94 | 5250 | 0.1399 | 0.1732 | | 0.2081 | 3.08 | 5500 | 0.1450 | 0.1707 | | 0.1963 | 3.22 | 5750 | 0.1337 | 0.1655 | | 0.2107 | 3.36 | 6000 | 0.1344 | 0.1633 | | 0.1866 | 3.5 | 6250 | 0.1339 | 0.1611 | | 0.186 | 3.64 | 6500 | 0.1311 | 0.1563 | | 0.1703 | 3.78 | 6750 | 0.1307 | 0.1537 | | 0.1819 | 3.92 | 7000 | 0.1277 | 0.1555 | | 0.176 | 4.06 | 7250 | 0.1280 | 0.1515 | | 0.1837 | 4.2 | 7500 | 0.1249 | 0.1504 | | 0.1678 | 4.34 | 7750 | 0.1236 | 0.1480 | | 0.1624 | 4.48 | 8000 | 0.1194 | 0.1456 | | 0.1631 | 4.62 | 8250 | 0.1215 | 0.1462 | | 0.1736 | 4.76 | 8500 | 0.1192 | 0.1451 | | 0.1752 | 4.9 | 8750 | 0.1206 | 0.1432 | | 0.1578 | 5.04 | 9000 | 0.1151 | 0.1415 | | 0.1537 | 5.18 | 9250 | 0.1185 | 0.1402 | | 0.1771 | 5.33 | 9500 | 0.1165 | 0.1414 | | 0.1481 | 5.47 | 9750 | 0.1152 | 0.1413 | | 0.1509 | 5.61 | 10000 | 0.1152 | 0.1382 | | 0.146 | 5.75 | 10250 | 0.1133 | 0.1385 | | 0.1464 | 5.89 | 10500 | 
0.1139 | 0.1371 | | 0.1442 | 6.03 | 10750 | 0.1162 | 0.1365 | | 0.128 | 6.17 | 11000 | 0.1147 | 0.1371 | | 0.1381 | 6.31 | 11250 | 0.1148 | 0.1378 | | 0.1343 | 6.45 | 11500 | 0.1113 | 0.1363 | | 0.1325 | 6.59 | 11750 | 0.1134 | 0.1355 | | 0.1442 | 6.73 | 12000 | 0.1142 | 0.1358 | | 0.1286 | 6.87 | 12250 | 0.1133 | 0.1352 | | 0.1349 | 7.01 | 12500 | 0.1129 | 0.1344 | | 0.1338 | 7.15 | 12750 | 0.1131 | 0.1328 | | 0.1403 | 7.29 | 13000 | 0.1124 | 0.1338 | | 0.1314 | 7.43 | 13250 | 0.1141 | 0.1335 | | 0.1283 | 7.57 | 13500 | 0.1124 | 0.1332 | | 0.1347 | 7.71 | 13750 | 0.1107 | 0.1332 | | 0.1195 | 7.85 | 14000 | 0.1119 | 0.1332 | | 0.1326 | 7.99 | 14250 | 0.1120 | 0.1332 | | 9960196310971bf61241979c0e5295cd |

apache-2.0 | ['generated_from_trainer'] | false | distil-tis This model is a fine-tuned version of [distilroberta-base](https://huggingface.co/distilroberta-base) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.6061 - Rmse: 0.7785 - Mse: 0.6061 - Mae: 0.6003 | 5a1f9c08a16e7b342f31c80012c70475 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Rmse | Mse | Mae | |:-------------:|:-----:|:----:|:---------------:|:------:|:------:|:------:| | 0.7173 | 1.0 | 492 | 0.7060 | 0.8403 | 0.7060 | 0.5962 | | 0.5955 | 2.0 | 984 | 0.6585 | 0.8115 | 0.6585 | 0.5864 | | 0.5876 | 3.0 | 1476 | 0.6090 | 0.7804 | 0.6090 | 0.6040 | | 0.5871 | 4.0 | 1968 | 0.6247 | 0.7904 | 0.6247 | 0.5877 | | 0.5871 | 5.0 | 2460 | 0.6061 | 0.7785 | 0.6061 | 0.6003 | | fd979ed5b6186c8041f2128f753dd216 |
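In the table above, Loss equals Mse in every row, which suggests (the card does not say so) a mean-squared-error training objective, and Rmse is the square root of Mse. A quick consistency check over the reported values, allowing for 4-decimal rounding:

```python
import math

# (validation loss, rmse, mse) per epoch, copied from the training-results table
rows = [
    (0.7060, 0.8403, 0.7060),
    (0.6585, 0.8115, 0.6585),
    (0.6090, 0.7804, 0.6090),
    (0.6247, 0.7904, 0.6247),
    (0.6061, 0.7785, 0.6061),
]

def consistent(loss, rmse, mse, tol=5e-4):
    """True if loss == MSE and RMSE == sqrt(MSE) up to the table's rounding."""
    return loss == mse and abs(math.sqrt(mse) - rmse) <= tol

for loss, rmse, mse in rows:
    print(f"mse={mse:.4f} sqrt(mse)={math.sqrt(mse):.4f} rmse={rmse:.4f} ok={consistent(loss, rmse, mse)}")
```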

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased__hate_speech_offensive__train-8-5 This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on an unspecified dataset. It achieves the following results on the evaluation set: - Loss: 1.7214 - Accuracy: 0.37 | 6b2e72c4dc7ce715ad04910d3befe3b6 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 1.0995 | 1.0 | 5 | 1.1301 | 0.0 | | 1.0227 | 2.0 | 10 | 1.1727 | 0.0 | | 1.0337 | 3.0 | 15 | 1.1734 | 0.2 | | 0.9137 | 4.0 | 20 | 1.1829 | 0.2 | | 0.8065 | 5.0 | 25 | 1.1496 | 0.4 | | 0.7038 | 6.0 | 30 | 1.1101 | 0.4 | | 0.6246 | 7.0 | 35 | 1.0982 | 0.2 | | 0.4481 | 8.0 | 40 | 1.0913 | 0.2 | | 0.3696 | 9.0 | 45 | 1.0585 | 0.4 | | 0.3137 | 10.0 | 50 | 1.0418 | 0.4 | | 0.2482 | 11.0 | 55 | 1.0078 | 0.4 | | 0.196 | 12.0 | 60 | 0.9887 | 0.6 | | 0.1344 | 13.0 | 65 | 0.9719 | 0.6 | | 0.1014 | 14.0 | 70 | 1.0053 | 0.6 | | 0.111 | 15.0 | 75 | 0.9653 | 0.6 | | 0.0643 | 16.0 | 80 | 0.9018 | 0.6 | | 0.0559 | 17.0 | 85 | 0.9393 | 0.6 | | 0.0412 | 18.0 | 90 | 1.0210 | 0.6 | | 0.0465 | 19.0 | 95 | 0.9965 | 0.6 | | 0.0328 | 20.0 | 100 | 0.9739 | 0.6 | | 0.0289 | 21.0 | 105 | 0.9796 | 0.6 | | 0.0271 | 22.0 | 110 | 0.9968 | 0.6 | | 0.0239 | 23.0 | 115 | 1.0143 | 0.6 | | 0.0201 | 24.0 | 120 | 1.0459 | 0.6 | | 0.0185 | 25.0 | 125 | 1.0698 | 0.6 | | 0.0183 | 26.0 | 130 | 1.0970 | 0.6 | | 6afa9e39ed79fdeedd22adfdb0b61069 |

apache-2.0 | ['science', 'multi-displinary'] | false | ScholarBERT_1 Model This is the **ScholarBERT_1** variant of the ScholarBERT model family. The model is pretrained on a large collection of scientific research articles (**2.2B tokens**). This is a **cased** (case-sensitive) model. The tokenizer will not convert all inputs to lower-case by default. The model is based on the same architecture as [BERT-large](https://huggingface.co/bert-large-cased) and has a total of 340M parameters. | 2eae77047095f7905d863ef96b199f4d |

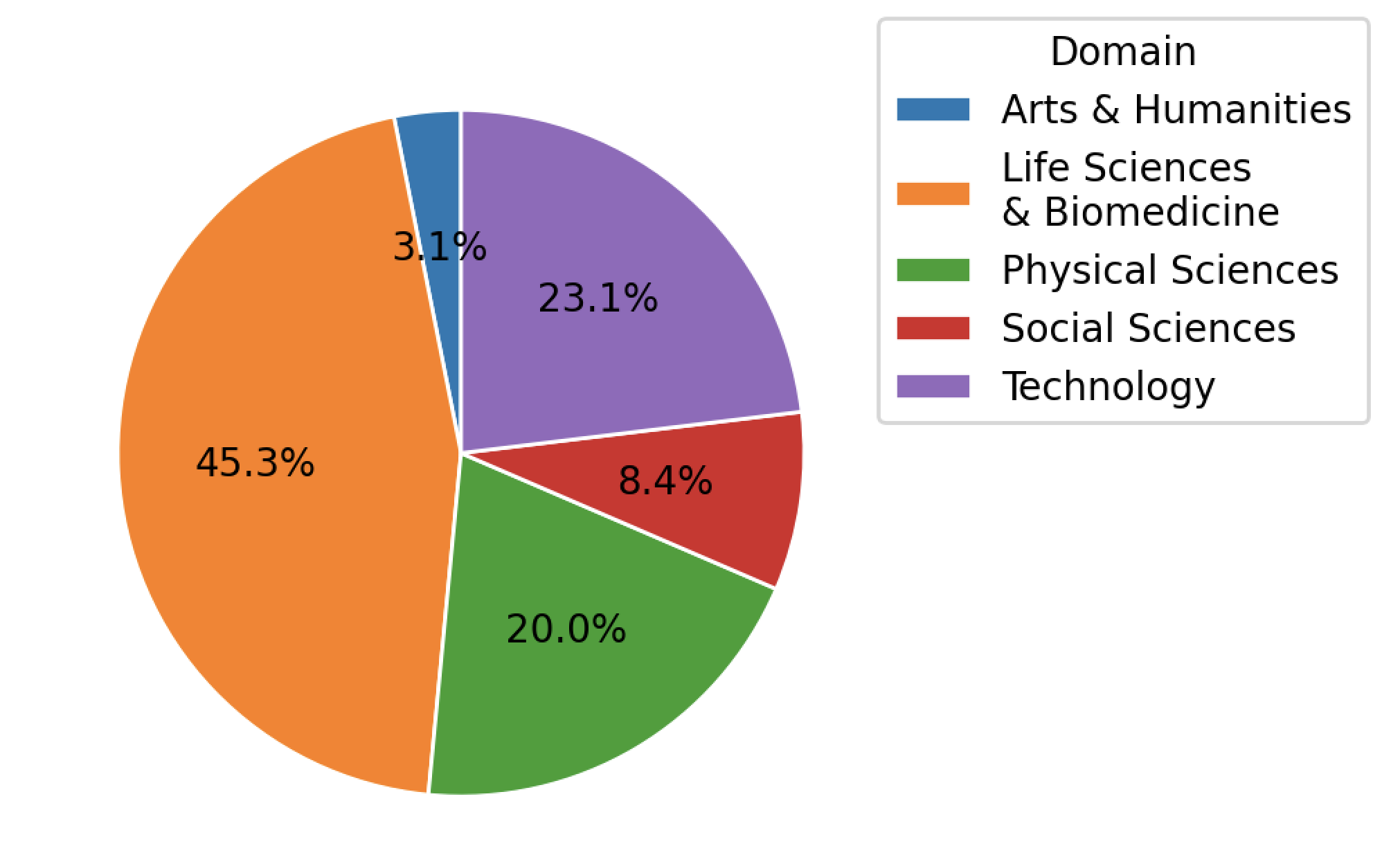

apache-2.0 | ['science', 'multi-displinary'] | false | Training Dataset The vocab and the model are pretrained on **1% of the PRD** scientific literature dataset. The PRD dataset is provided by Public.Resource.Org, Inc. (“Public Resource”), a nonprofit organization based in California. This dataset was constructed from a corpus of journal article files, from which we successfully extracted text from 75,496,055 articles from 178,928 journals. The articles span Arts & Humanities, Life Sciences & Biomedicine, Physical Sciences, Social Sciences, and Technology. The distribution of articles is shown below. | 95e3edd287cb9332e0fbf9ade572dfae |

apache-2.0 | ['generated_from_trainer'] | false | sentiment_analysis_on_covid_tweets This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on an unknown dataset. It achieves the following results on the evaluation set: - eval_loss: 0.6053 - eval_accuracy: 0.7625 - eval_runtime: 33.7416 - eval_samples_per_second: 59.274 - eval_steps_per_second: 7.409 - step: 0 | 441302fdf70c86e30ab9126f57dc5ae6 |
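The runtime figures are enough to back out the size of the evaluation set. A sketch that treats the reported numbers as exact apart from rounding; the resulting counts are estimates derived here, not values stated in the card:

```python
# Values reported on the evaluation set
eval_runtime = 33.7416        # seconds
samples_per_second = 59.274
steps_per_second = 7.409
eval_accuracy = 0.7625

n_samples = eval_runtime * samples_per_second  # ≈ number of evaluation examples
n_steps = eval_runtime * steps_per_second      # ≈ number of evaluation batches
batch_size = n_samples / n_steps               # ≈ examples per batch

print(round(n_samples), round(n_steps), round(batch_size))  # 2000 250 8
print(round(eval_accuracy * round(n_samples)))              # ≈ correctly classified examples
```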

apache-2.0 | ['exbert', 'multiberts', 'multiberts-seed-0'] | false | MultiBERTs Seed 0 Checkpoint 60k (uncased) Seed 0 intermediate checkpoint 60k MultiBERTs (pretrained BERT) model on English language using a masked language modeling (MLM) objective. It was introduced in [this paper](https://arxiv.org/pdf/2106.16163.pdf) and first released in [this repository](https://github.com/google-research/language/tree/master/language/multiberts). This is an intermediate checkpoint. The final checkpoint can be found at [multiberts-seed-0](https://hf.co/multberts-seed-0). This model is uncased: it does not make a difference between english and English. Disclaimer: The team releasing MultiBERTs did not write a model card for this model so this model card has been written by [gchhablani](https://hf.co/gchhablani). | be3c86fb65c822d3f6f1ac63de5017f6 |

apache-2.0 | ['exbert', 'multiberts', 'multiberts-seed-0'] | false | How to use Here is how to use this model to get the features of a given text in PyTorch: ```python from transformers import BertTokenizer, BertModel tokenizer = BertTokenizer.from_pretrained('multiberts-seed-0-60k') model = BertModel.from_pretrained("multiberts-seed-0-60k") text = "Replace me by any text you'd like." encoded_input = tokenizer(text, return_tensors='pt') output = model(**encoded_input) ``` | c4737d6b849ff05a22bfd2ac314872fa |

apache-2.0 | ['deep-narrow'] | false | T5-Efficient-SMALL-DL2 (Deep-Narrow version) T5-Efficient-SMALL-DL2 is a variation of [Google's original T5](https://ai.googleblog.com/2020/02/exploring-transfer-learning-with-t5.html) following the [T5 model architecture](https://huggingface.co/docs/transformers/model_doc/t5). It is a *pretrained-only* checkpoint and was released with the paper **[Scale Efficiently: Insights from Pre-training and Fine-tuning Transformers](https://arxiv.org/abs/2109.10686)** by *Yi Tay, Mostafa Dehghani, Jinfeng Rao, William Fedus, Samira Abnar, Hyung Won Chung, Sharan Narang, Dani Yogatama, Ashish Vaswani, Donald Metzler*. In a nutshell, the paper indicates that a **Deep-Narrow** model architecture is favorable for **downstream** performance compared to other model architectures of similar parameter count. To quote the paper: > We generally recommend a DeepNarrow strategy where the model’s depth is preferentially increased > before considering any other forms of uniform scaling across other dimensions. This is largely due to > how much depth influences the Pareto-frontier as shown in earlier sections of the paper. Specifically, a > tall small (deep and narrow) model is generally more efficient compared to the base model. Likewise, > a tall base model might also generally more efficient compared to a large model. We generally find > that, regardless of size, even if absolute performance might increase as we continue to stack layers, > the relative gain of Pareto-efficiency diminishes as we increase the layers, converging at 32 to 36 > layers. Finally, we note that our notion of efficiency here relates to any one compute dimension, i.e., > params, FLOPs or throughput (speed). We report all three key efficiency metrics (number of params, > FLOPS and speed) and leave this decision to the practitioner to decide which compute dimension to > consider. 
To be more precise, *model depth* is defined as the number of transformer blocks that are stacked sequentially. A sequence of word embeddings is therefore processed sequentially by each transformer block. | ac85239208e408a5cdee9ba1a19d0742 |

apache-2.0 | ['deep-narrow'] | false | Model architecture details This model checkpoint - **t5-efficient-small-dl2** - is of model type **Small** with the following variations: - **dl** is **2** It has **43.73** million parameters and thus requires *ca.* **174.93 MB** of memory in full precision (*fp32*) or **87.46 MB** of memory in half precision (*fp16* or *bf16*). A summary of the *original* T5 model architectures can be seen here: | Model | nl (el/dl) | ff | dm | kv | nh | | e19a2a80676b7d506a85dcd0046f9a97 |
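The memory figures follow from the parameter count times the bytes per parameter. A sketch, assuming 1 MB = 10^6 bytes (which is what the card's numbers imply) and counting weights only, not activations or optimizer state:

```python
def weight_memory_mb(n_params: float, bytes_per_param: int) -> float:
    """Approximate weight memory in MB (1 MB = 10**6 bytes)."""
    return n_params * bytes_per_param / 1e6

n_params = 43.73e6                       # rounded parameter count from the card
fp32 = weight_memory_mb(n_params, 4)     # 4 bytes per fp32 weight
half = weight_memory_mb(n_params, 2)     # 2 bytes per fp16/bf16 weight
print(f"fp32: {fp32:.2f} MB, fp16/bf16: {half:.2f} MB")
```

The fp32 result (174.92 MB) differs from the card's 174.93 MB only because the parameter count is rounded to two decimals here.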

openrail | [] | false | MODEL BY ShadoWxShinigamI Use the token mdjrny-pntrt illustration style at the beginning of your prompt. If some object doesn't render well, provide more context in your prompt (e.g. 'ocean, ship, waves' instead of just 'ship'). Training: 2080 steps, batch size 4, 512x512, v1-5 base, 26 images. | eac7d32b66d99cf5fbefc1710e54de35 |
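For a rough sense of how often each training image was seen: assuming every step draws `batch_size` images from the 26-image set and nothing else (for example, no regularization images, which the card does not mention), the run works out to about 320 passes over the data:

```python
steps, batch_size, n_images = 2080, 4, 26

samples_seen = steps * batch_size  # total image presentations during training
passes = samples_seen / n_images   # ≈ passes over the 26-image training set
print(samples_seen, passes)        # 8320 320.0
```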

apache-2.0 | ['exbert', 'multiberts', 'multiberts-seed-3'] | false | MultiBERTs Seed 3 Checkpoint 60k (uncased) Seed 3 intermediate checkpoint 60k MultiBERTs (pretrained BERT) model on English language using a masked language modeling (MLM) objective. It was introduced in [this paper](https://arxiv.org/pdf/2106.16163.pdf) and first released in [this repository](https://github.com/google-research/language/tree/master/language/multiberts). This is an intermediate checkpoint. The final checkpoint can be found at [multiberts-seed-3](https://hf.co/multberts-seed-3). This model is uncased: it does not make a difference between english and English. Disclaimer: The team releasing MultiBERTs did not write a model card for this model so this model card has been written by [gchhablani](https://hf.co/gchhablani). | fcc4d401109705edcef8ee23794c3c11 |

apache-2.0 | ['exbert', 'multiberts', 'multiberts-seed-3'] | false | How to use Here is how to use this model to get the features of a given text in PyTorch: ```python from transformers import BertTokenizer, BertModel tokenizer = BertTokenizer.from_pretrained('multiberts-seed-3-60k') model = BertModel.from_pretrained("multiberts-seed-3-60k") text = "Replace me by any text you'd like." encoded_input = tokenizer(text, return_tensors='pt') output = model(**encoded_input) ``` | a58c20c18e443188da4f3238e98e7fa1 |

creativeml-openrail-m | ['safety-checker', 'tensorflow', 'node.js'] | false | Google Safesearch Mini Model Card <a href="https://huggingface.co/FredZhang7/google-safesearch-mini-v2"> <font size="4"> <b> Version 2 is here! </b> </font> </a> This model is trained on 2,220,000+ images scraped from Google Images, Reddit, Imgur, and GitHub. The InceptionV3 and Xception models have been fine-tuned to predict the likelihood of an image falling into one of three categories: nsfw_gore, nsfw_suggestive, and safe. After 20 epochs in PyTorch, the fine-tuned InceptionV3 model achieves 94% accuracy on both the training and test data. After 3.3 epochs in Keras, the fine-tuned Xception model scores 94% accuracy on the training set and 92% on the test set. Compared with the Stable Diffusion safety checker, this model saves users 1.12 GB of RAM and disk space. <br> | 6805887752eda00b06838f03776e3ff3 |

creativeml-openrail-m | ['safety-checker', 'tensorflow', 'node.js'] | false | PyTorch The PyTorch model runs much slower with transformers, so downloading it externally is a better option. ```bash pip install --upgrade torchvision ``` ```python import torch, os, warnings, requests from io import BytesIO from PIL import Image from urllib.request import urlretrieve from torchvision import transforms PATH_TO_IMAGE = 'https://images.unsplash.com/photo-1594568284297-7c64464062b1' USE_CUDA = False warnings.filterwarnings("ignore") def download_model(): print("Downloading google_safesearch_mini.bin...") urlretrieve("https://huggingface.co/FredZhang7/google-safesearch-mini/resolve/main/pytorch_model.bin", "google_safesearch_mini.bin") def eval(): if not os.path.exists("google_safesearch_mini.bin"): download_model() model = torch.jit.load('./google_safesearch_mini.bin') img = Image.open(PATH_TO_IMAGE).convert('RGB') if not (PATH_TO_IMAGE.startswith('http://') or PATH_TO_IMAGE.startswith('https://')) else Image.open(BytesIO(requests.get(PATH_TO_IMAGE).content)).convert('RGB') transform = transforms.Compose([transforms.Resize(299), transforms.ToTensor(), transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))]) img = transform(img).unsqueeze(0) if USE_CUDA: img, model = img.cuda(), model.cuda() else: img, model = img.cpu(), model.cpu() model.eval() with torch.no_grad(): out, _ = model(img) _, predicted = torch.max(out.data, 1) classes = {0: 'nsfw_gore', 1: 'nsfw_suggestive', 2: 'safe'} | 2ea20dacd3ec314ea591055e5366cdcb |

creativeml-openrail-m | ['safety-checker', 'tensorflow', 'node.js'] | false | account for edge cases if predicted[0] != 2 and abs(out[0][2] - out[0][predicted[0]]) > 0.20: print("\033[93m" + "safe" + "\033[0m") else: print('\n\033[1;31m' + classes[predicted.item()] + '\033[0m' if predicted.item() != 2 else '\033[1;32m' + classes[predicted.item()] + '\033[0m\n') if __name__ == '__main__': eval() ``` <br> | 82ebd9f448d469478461bd53ba21e39e |
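The edge-case rule in the PyTorch example can be isolated as a plain function over one row of model outputs, which makes it easier to test. This sketch mirrors the condition as written, including its direction: an unsafe prediction is reported as `safe` when its score exceeds the `safe` score by more than 0.20, and is kept otherwise. The helper name `label_with_edge_case` is hypothetical.

```python
CLASSES = {0: "nsfw_gore", 1: "nsfw_suggestive", 2: "safe"}
SAFE = 2

def label_with_edge_case(scores, margin=0.20):
    """Apply the example's edge-case rule to one row of scores (one value per class)."""
    predicted = max(range(len(scores)), key=lambda i: scores[i])  # argmax, like torch.max(out, 1)
    if predicted != SAFE and abs(scores[SAFE] - scores[predicted]) > margin:
        return "safe"  # same branch as the example's yellow "safe" print
    return CLASSES[predicted]

print(label_with_edge_case([0.1, 0.2, 0.9]))  # safe (argmax is already "safe")
print(label_with_edge_case([0.9, 0.1, 0.5]))  # safe (gap 0.4 > 0.20)
print(label_with_edge_case([0.6, 0.1, 0.5]))  # nsfw_gore (gap 0.1 <= 0.20)
```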

creativeml-openrail-m | ['safety-checker', 'tensorflow', 'node.js'] | false | download the model url = "https://huggingface.co/FredZhang7/google-safesearch-mini/resolve/main/tensorflow/saved_model.pb" r = requests.get(url, allow_redirects=True) r.raise_for_status() os.makedirs('tensorflow', exist_ok=True) with open('tensorflow/saved_model.pb', 'wb') as f: f.write(r.content) | 31a3c3b4591f16f68a505c196ad958c8 |

creativeml-openrail-m | ['safety-checker', 'tensorflow', 'node.js'] | false | download the variables url = "https://huggingface.co/FredZhang7/google-safesearch-mini/resolve/main/tensorflow/variables/variables.data-00000-of-00001" r = requests.get(url, allow_redirects=True) r.raise_for_status() os.makedirs('tensorflow/variables', exist_ok=True) with open('tensorflow/variables/variables.data-00000-of-00001', 'wb') as f: f.write(r.content) url = "https://huggingface.co/FredZhang7/google-safesearch-mini/resolve/main/tensorflow/variables/variables.index" r = requests.get(url, allow_redirects=True) r.raise_for_status() with open('tensorflow/variables/variables.index', 'wb') as f: f.write(r.content) | 85c30bc5ac99ec0aa53cadbb6236f40e |

creativeml-openrail-m | ['safety-checker', 'tensorflow', 'node.js'] | false | run the model tensor = model(image) classes = ['nsfw_gore', 'nsfw_suggestive', 'safe'] prediction = classes[tf.argmax(tensor, 1)[0]] print('\033[1;32m' + prediction + '\033[0m' if prediction == 'safe' else '\033[1;33m' + prediction + '\033[0m') ``` <br> | 8999684583f18fe437383d8a0f93d3f9 |

creativeml-openrail-m | ['safety-checker', 'tensorflow', 'node.js'] | false | TensorFlow.js ```bash npm i @tensorflow/tfjs-node ``` ```javascript const tf = require('@tensorflow/tfjs-node'); const fs = require('fs'); const { pipeline } = require('stream'); const { promisify } = require('util'); const download = async (url, path) => { // Taken from https://levelup.gitconnected.com/how-to-download-a-file-with-node-js-e2b88fe55409 const streamPipeline = promisify(pipeline); const response = await fetch(url); if (!response.ok) { throw new Error(`unexpected response ${response.statusText}`); } await streamPipeline(response.body, fs.createWriteStream(path)); }; async function run() { // download saved model and variables from https://huggingface.co/FredZhang7/google-safesearch-mini/tree/main/tensorflow if (!fs.existsSync('tensorflow')) { fs.mkdirSync('tensorflow'); await download('https://huggingface.co/FredZhang7/google-safesearch-mini/resolve/main/tensorflow/saved_model.pb', 'tensorflow/saved_model.pb'); fs.mkdirSync('tensorflow/variables'); await download('https://huggingface.co/FredZhang7/google-safesearch-mini/resolve/main/tensorflow/variables/variables.data-00000-of-00001', 'tensorflow/variables/variables.data-00000-of-00001'); await download('https://huggingface.co/FredZhang7/google-safesearch-mini/resolve/main/tensorflow/variables/variables.index', 'tensorflow/variables/variables.index'); } // load model and image const model = await tf.node.loadSavedModel('./tensorflow/'); const image = tf.node.decodeImage(fs.readFileSync('cat.jpg'), 3); // predict const input = tf.expandDims(image, 0); const tensor = model.predict(input); const max = tensor.argMax(1); const classes = ['nsfw_gore', 'nsfw_suggestive', 'safe']; console.log('\x1b[32m%s\x1b[0m', classes[max.dataSync()[0]], '\n'); } run(); ``` <br> | eca6bc631697ec1e04ee5ee9fbce5daf |

creativeml-openrail-m | ['safety-checker', 'tensorflow', 'node.js'] | false | Bias and Limitations Each person's definition of "safe" is different. The images in the dataset are classified as safe/unsafe by Google SafeSearch, Reddit, and Imgur. It is possible that some images may be safe to others but not to you. Also, when the model encounters an image containing things it hasn't seen, it is likely to make wrong predictions. This is why, in the PyTorch example, I accounted for the "edge cases" before printing the predictions. | 9ac9f33232ba841dba8d29e472a46317 |

apache-2.0 | ['automatic-speech-recognition', 'pytorch', 'transformers', 'en', 'generated_from_trainer'] | false | Model This model is a fine-tuned version of [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) on the TIMIT dataset. Check [this notebook](https://www.kaggle.com/code/vitouphy/phoneme-recognition-with-wav2vec2) for training details. | e341df348f7f203c8526c87f5e776d98 |

apache-2.0 | ['automatic-speech-recognition', 'pytorch', 'transformers', 'en', 'generated_from_trainer'] | false | Process raw audio output = pipe("audio_file.wav", chunk_length_s=10, stride_length_s=(4, 2)) ``` **Approach 2:** A more customizable way to predict phonemes. ```python from transformers import Wav2Vec2Processor, Wav2Vec2ForCTC from datasets import load_dataset import torch import soundfile as sf | 051ced1d1288f9d138e433ec1d21a530 |

apache-2.0 | ['automatic-speech-recognition', 'pytorch', 'transformers', 'en', 'generated_from_trainer'] | false | load model and processor processor = Wav2Vec2Processor.from_pretrained("vitouphy/wav2vec2-xls-r-300m-timit-phoneme") model = Wav2Vec2ForCTC.from_pretrained("vitouphy/wav2vec2-xls-r-300m-timit-phoneme") | 3282852c2517d44f44e583343c9ee42f |

apache-2.0 | ['automatic-speech-recognition', 'pytorch', 'transformers', 'en', 'generated_from_trainer'] | false | Read and process the input audio_input, sample_rate = sf.read("audio_file.wav") inputs = processor(audio_input, sampling_rate=sample_rate, return_tensors="pt", padding=True) with torch.no_grad(): logits = model(inputs.input_values, attention_mask=inputs.attention_mask).logits | 8be3f746f1562224915e4ae0a8517ce6 |
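The snippet stops at the logits. The usual next step is `torch.argmax(logits, dim=-1)` followed by `processor.batch_decode(...)`, which for a CTC model collapses repeated frame predictions and drops the blank (padding) token. A stdlib sketch of that greedy CTC decoding step, using a hypothetical toy phoneme vocabulary with blank id 0 (the real model's vocabulary differs):

```python
from itertools import groupby

def ctc_greedy_decode(frame_ids, id_to_token, blank_id=0):
    """Greedy CTC decoding: merge consecutive repeated ids, then drop the blank id."""
    merged = [token_id for token_id, _ in groupby(frame_ids)]
    return [id_to_token[token_id] for token_id in merged if token_id != blank_id]

# Hypothetical per-frame argmax ids and a toy phoneme vocabulary
vocab = {0: "<pad>", 1: "h", 2: "ɛ", 3: "l", 4: "oʊ"}
frames = [0, 1, 1, 0, 2, 2, 3, 0, 3, 4, 4, 0]
print(" ".join(ctc_greedy_decode(frames, vocab)))  # h ɛ l l oʊ
```

The blank between the two `3` frames is what lets the decoder keep a genuinely doubled `l` instead of merging it.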

apache-2.0 | ['automatic-speech-recognition', 'pytorch', 'transformers', 'en', 'generated_from_trainer'] | false | Training and evaluation data We use the [DARPA TIMIT dataset](https://www.kaggle.com/datasets/mfekadu/darpa-timit-acousticphonetic-continuous-speech) for this model. - We split it **80/10/10** into training, validation, and test sets. - This corresponds to roughly **137/17/17** minutes. - The model obtained an error rate of **7.996%** on this test set. | 8a8246ecd35859be88237be73948688d |
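The minute counts are consistent with the 80/10/10 split. Taking the sum of the rounded figures (about 171 minutes) as the total, which is an approximation since the card does not state the total duration:

```python
total_minutes = 137 + 17 + 17  # ≈ 171 minutes of audio
split = {"train": 0.8, "validation": 0.1, "test": 0.1}
minutes = {name: round(total_minutes * frac, 1) for name, frac in split.items()}
print(minutes)  # {'train': 136.8, 'validation': 17.1, 'test': 17.1}
```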

apache-2.0 | ['automatic-speech-recognition', 'pytorch', 'transformers', 'en', 'generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 3e-05 - train_batch_size: 8 - eval_batch_size: 8 - seed: 42 - gradient_accumulation_steps: 4 - total_train_batch_size: 32 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 2000 - training_steps: 10000 - mixed_precision_training: Native AMP | 9245b4c36e9e00285c3744aa64a56551 |
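The hyperparameters describe a linear schedule with 2000 warmup steps over 10000 training steps. A sketch of the learning rate at a given step, assuming the standard linear-warmup/linear-decay shape used by the Trainer's `linear` scheduler, and assuming a single device so that 8 × 4 gives the reported total batch size of 32:

```python
def linear_warmup_lr(step, base_lr=3e-5, warmup_steps=2000, total_steps=10000):
    """Linear warmup from 0 to base_lr, then linear decay to 0 at total_steps."""
    if step < warmup_steps:
        return base_lr * step / max(1, warmup_steps)
    return base_lr * max(0.0, (total_steps - step) / max(1, total_steps - warmup_steps))

per_device_batch, grad_accum = 8, 4
print(per_device_batch * grad_accum)  # 32, the reported total_train_batch_size (one device assumed)

for s in (0, 1000, 2000, 6000, 10000):
    print(s, linear_warmup_lr(s))
```

The peak of 3e-05 is reached exactly at step 2000; halfway through the decay phase (step 6000) the rate is back to half the peak.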

apache-2.0 | ['automatic-speech-recognition', 'pytorch', 'transformers', 'en', 'generated_from_trainer'] | false | Citation ``` @misc { phy22-phoneme, author = {Phy, Vitou}, title = {{Automatic Phoneme Recognition on TIMIT Dataset with Wav2Vec 2.0}}, year = 2022, note = {{If you use this model, please cite it using these metadata.}}, publisher = {Hugging Face}, version = {1.0}, doi = {10.57967/hf/0125}, url = {https://huggingface.co/vitouphy/wav2vec2-xls-r-300m-timit-phoneme} } ``` | 24ce4a76b902ea220ff9fb583a554501 |

mit | [] | false | Description This model is a fine-tuned version of [BETO (Spanish BERT)](https://huggingface.co/dccuchile/bert-base-spanish-wwm-uncased) that has been trained on the *Datathon Against Racism* dataset (2022). We performed several experiments that will be described in the upcoming paper "Estimating Ground Truth in a Low-labelled Data Regime: A Study of Racism Detection in Spanish" (NEATClasS 2022). We applied 6 different ground-truth estimation methods, and for each one we performed 4 epochs of fine-tuning. The result is a set of 24 models: | method | epoch 1 | epoch 2 | epoch 3 | epoch 4 | |--- |--- |--- |--- |--- | | raw-label | [raw-label-epoch-1](https://huggingface.co/MartinoMensio/racism-models-raw-label-epoch-1) | [raw-label-epoch-2](https://huggingface.co/MartinoMensio/racism-models-raw-label-epoch-2) | [raw-label-epoch-3](https://huggingface.co/MartinoMensio/racism-models-raw-label-epoch-3) | [raw-label-epoch-4](https://huggingface.co/MartinoMensio/racism-models-raw-label-epoch-4) | | m-vote-strict | [m-vote-strict-epoch-1](https://huggingface.co/MartinoMensio/racism-models-m-vote-strict-epoch-1) | [m-vote-strict-epoch-2](https://huggingface.co/MartinoMensio/racism-models-m-vote-strict-epoch-2) | [m-vote-strict-epoch-3](https://huggingface.co/MartinoMensio/racism-models-m-vote-strict-epoch-3) | [m-vote-strict-epoch-4](https://huggingface.co/MartinoMensio/racism-models-m-vote-strict-epoch-4) | | m-vote-nonstrict | [m-vote-nonstrict-epoch-1](https://huggingface.co/MartinoMensio/racism-models-m-vote-nonstrict-epoch-1) | [m-vote-nonstrict-epoch-2](https://huggingface.co/MartinoMensio/racism-models-m-vote-nonstrict-epoch-2) | [m-vote-nonstrict-epoch-3](https://huggingface.co/MartinoMensio/racism-models-m-vote-nonstrict-epoch-3) | [m-vote-nonstrict-epoch-4](https://huggingface.co/MartinoMensio/racism-models-m-vote-nonstrict-epoch-4) | | regression-w-m-vote | [regression-w-m-vote-epoch-1](https://huggingface.co/MartinoMensio/racism-models-regression-w-m-vote-epoch-1) | [regression-w-m-vote-epoch-2](https://huggingface.co/MartinoMensio/racism-models-regression-w-m-vote-epoch-2) | [regression-w-m-vote-epoch-3](https://huggingface.co/MartinoMensio/racism-models-regression-w-m-vote-epoch-3) | [regression-w-m-vote-epoch-4](https://huggingface.co/MartinoMensio/racism-models-regression-w-m-vote-epoch-4) | | w-m-vote-strict | [w-m-vote-strict-epoch-1](https://huggingface.co/MartinoMensio/racism-models-w-m-vote-strict-epoch-1) | [w-m-vote-strict-epoch-2](https://huggingface.co/MartinoMensio/racism-models-w-m-vote-strict-epoch-2) | [w-m-vote-strict-epoch-3](https://huggingface.co/MartinoMensio/racism-models-w-m-vote-strict-epoch-3) | [w-m-vote-strict-epoch-4](https://huggingface.co/MartinoMensio/racism-models-w-m-vote-strict-epoch-4) | | w-m-vote-nonstrict | [w-m-vote-nonstrict-epoch-1](https://huggingface.co/MartinoMensio/racism-models-w-m-vote-nonstrict-epoch-1) | [w-m-vote-nonstrict-epoch-2](https://huggingface.co/MartinoMensio/racism-models-w-m-vote-nonstrict-epoch-2) | [w-m-vote-nonstrict-epoch-3](https://huggingface.co/MartinoMensio/racism-models-w-m-vote-nonstrict-epoch-3) | [w-m-vote-nonstrict-epoch-4](https://huggingface.co/MartinoMensio/racism-models-w-m-vote-nonstrict-epoch-4) | This model is `raw-label-epoch-2` | 4bbcae61c95165c7a2a06b0af197a97a |
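All 24 repository ids in the table follow one pattern, `MartinoMensio/racism-models-{method}-epoch-{n}`, so they can be generated rather than copied by hand; any of them can be used as `full_model_path` in the usage snippet. A small sketch:

```python
methods = [
    "raw-label", "m-vote-strict", "m-vote-nonstrict",
    "regression-w-m-vote", "w-m-vote-strict", "w-m-vote-nonstrict",
]
epochs = range(1, 5)  # 4 fine-tuning epochs per method

model_ids = [f"MartinoMensio/racism-models-{m}-epoch-{e}" for m in methods for e in epochs]
print(len(model_ids))  # 24
print(model_ids[1])    # MartinoMensio/racism-models-raw-label-epoch-2 (this model)
```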

mit | [] | false | Usage ```python from transformers import AutoTokenizer, AutoModelForSequenceClassification, pipeline model_name = 'raw-label-epoch-2' tokenizer = AutoTokenizer.from_pretrained("dccuchile/bert-base-spanish-wwm-uncased") full_model_path = f'MartinoMensio/racism-models-{model_name}' model = AutoModelForSequenceClassification.from_pretrained(full_model_path) pipe = pipeline("text-classification", model = model, tokenizer = tokenizer) texts = [ 'y porqué es lo que hay que hacer con los menas y con los adultos también!!!! NO a los inmigrantes ilegales!!!!', 'Es que los judíos controlan el mundo' ] print(pipe(texts)) | b4317080f20a114b400db15c43ebcf70 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Wav2Vec2-Large-XLSR-53-Tamil Fine-tuned [facebook/wav2vec2-large-xlsr-53](https://huggingface.co/facebook/wav2vec2-large-xlsr-53) on Tamil using the [Common Voice](https://huggingface.co/datasets/common_voice) dataset. When using this model, make sure that your speech input is sampled at 16kHz. | adcfdba557936125a3469cfed7afe151 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Usage The model can be used directly (without a language model) as follows: ```python import torch import torchaudio from datasets import load_dataset from transformers import Wav2Vec2ForCTC, Wav2Vec2Processor test_dataset = load_dataset("common_voice", "{lang_id}", split="test[:2%]") | 32c0de149b7a1d38db3ee5ba7336511f |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | TODO: replace {lang_id} in your language code here. Make sure the code is one of the *ISO codes* of [this](https://huggingface.co/languages) site. processor = Wav2Vec2Processor.from_pretrained("{model_id}") | 5ea5097ec536a61fdb149dd74cf25bb6 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | TODO: replace {model_id} with your model id. The model id consists of {your_username}/{your_modelname}, *e.g.* `elgeish/wav2vec2-large-xlsr-53-arabic` model = Wav2Vec2ForCTC.from_pretrained("{model_id}") | 1f096f8230db67340bdbaf5eb7806938 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | TODO: replace {model_id} with your model id. The model id consists of {your_username}/{your_modelname}, *e.g.* `elgeish/wav2vec2-large-xlsr-53-arabic` resampler = torchaudio.transforms.Resample(48_000, 16_000) | 97b18bb256feab9d7fed3880cf54c1be |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | We need to read the audio files as arrays def speech_file_to_array_fn(batch): speech_array, sampling_rate = torchaudio.load(batch["path"]) batch["speech"] = resampler(speech_array).squeeze().numpy() return batch test_dataset = test_dataset.map(speech_file_to_array_fn) inputs = processor(test_dataset["speech"][:2], sampling_rate=16_000, return_tensors="pt", padding=True) with torch.no_grad(): logits = model(inputs.input_values, attention_mask=inputs.attention_mask).logits predicted_ids = torch.argmax(logits, dim=-1) print("Prediction:", processor.batch_decode(predicted_ids)) print("Reference:", test_dataset["sentence"][:2]) ``` | c27576eec603671f73553d0bda746084 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Evaluation The model can be evaluated as follows on the Tamil test data of Common Voice. ```python import torch import torchaudio from datasets import load_dataset, load_metric from transformers import Wav2Vec2ForCTC, Wav2Vec2Processor import re test_dataset = load_dataset("common_voice", "{lang_id}", split="test") | f2299644867af518d50222985f4d9eb0 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | TODO: replace {lang_id} in your language code here. Make sure the code is one of the *ISO codes* of [this](https://huggingface.co/languages) site. wer = load_metric("wer") processor = Wav2Vec2Processor.from_pretrained("{model_id}") | 746ad39b1b4e34b934993f991bd8d987 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | TODO: replace {model_id} with your model id. The model id consists of {your_username}/{your_modelname}, *e.g.* `elgeish/wav2vec2-large-xlsr-53-arabic` model = Wav2Vec2ForCTC.from_pretrained("{model_id}") model.to("cuda") chars_to_ignore_regex = r'[\,\?\.\!\-\;\:\"\“]' resampler = torchaudio.transforms.Resample(48_000, 16_000) | 69fd7b342a65a1b2b1bceae21b11f02f |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | We need to read the audio files as arrays def speech_file_to_array_fn(batch): batch["sentence"] = re.sub(chars_to_ignore_regex, '', batch["sentence"]).lower() speech_array, sampling_rate = torchaudio.load(batch["path"]) batch["speech"] = resampler(speech_array).squeeze().numpy() return batch test_dataset = test_dataset.map(speech_file_to_array_fn) | 06b234b9aff24225f9669db067613b09 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Run inference on the test set and compute the WER def evaluate(batch): inputs = processor(batch["speech"], sampling_rate=16_000, return_tensors="pt", padding=True) with torch.no_grad(): logits = model(inputs.input_values.to("cuda"), attention_mask=inputs.attention_mask.to("cuda")).logits pred_ids = torch.argmax(logits, dim=-1) batch["pred_strings"] = processor.batch_decode(pred_ids) return batch result = test_dataset.map(evaluate, batched=True, batch_size=8) print("WER: {:.2f}".format(100 * wer.compute(predictions=result["pred_strings"], references=result["sentence"]))) ``` **Test Result**: 100.00 % | 337a373c41eef27e503e53f224430f90 |
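The `wer` metric used above reports word error rate: word-level substitutions, deletions, and insertions divided by the number of reference words. A dependency-free sketch of the same quantity, assuming corpus-level aggregation (total errors over total reference words across the test set). Under this definition a score of 100 % arises, for example, when every prediction is empty.

```python
def edit_distance(ref_words, hyp_words):
    """Word-level Levenshtein distance (substitutions + deletions + insertions)."""
    prev = list(range(len(hyp_words) + 1))
    for i, ref_word in enumerate(ref_words, start=1):
        curr = [i] + [0] * len(hyp_words)
        for j, hyp_word in enumerate(hyp_words, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr[j] = min(prev[j] + 1,         # deletion
                          curr[j - 1] + 1,     # insertion
                          prev[j - 1] + cost)  # substitution or match
        prev = curr
    return prev[-1]

def corpus_wer(predictions, references):
    """Total word errors divided by total reference words."""
    errors = sum(edit_distance(r.split(), p.split()) for p, r in zip(predictions, references))
    words = sum(len(r.split()) for r in references)
    return errors / words

print("WER: {:.2f}".format(100 * corpus_wer(["the cat sat on mat"], ["the cat sat on the mat"])))  # WER: 16.67
```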

apache-2.0 | ['generated_from_trainer'] | false | distilled-mt5-small-0.4-0.25 This model is a fine-tuned version of [google/mt5-small](https://huggingface.co/google/mt5-small) on the wmt16 ro-en dataset. It achieves the following results on the evaluation set: - Loss: 3.8561 - Bleu: 3.2179 - Gen Len: 41.2356 | 979d55df387b3b8690178ba0757dab5a |

mit | ['generated_from_keras_callback'] | false | Training hyperparameters The following hyperparameters were used during training: - optimizer: {'name': 'Adam', 'learning_rate': {'class_name': 'PolynomialDecay', 'config': {'initial_learning_rate': 2e-05, 'decay_steps': 16476, 'end_learning_rate': 0.0, 'power': 1.0, 'cycle': False, 'name': None}}, 'decay': 0.0, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False} - training_precision: float32 | bddac69b49d7322a4d8d7cab6aeb0972 |
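With `power` 1.0 and `end_learning_rate` 0.0, this `PolynomialDecay` schedule is a straight line from 2e-05 down to 0 over 16476 steps. A sketch of the value at a given step, following the Keras formula for `cycle=False` (the step is clipped at `decay_steps`):

```python
def polynomial_decay(step, initial_lr=2e-05, decay_steps=16476, end_lr=0.0, power=1.0):
    """Keras-style PolynomialDecay with cycle=False."""
    step = min(step, decay_steps)
    return (initial_lr - end_lr) * (1 - step / decay_steps) ** power + end_lr

for s in (0, 8238, 16476, 20000):
    print(s, polynomial_decay(s))
```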

apache-2.0 | ['tabular-regression', 'baseline-trainer'] | false | Baseline Model trained on tips5wx_sbh5 to apply regression on tip **Metrics of the best model (Ridge(alpha=10)):** r2: 0.389363, neg_mean_squared_error: -1.092356 **See model plot below:** <style> | 9b6e26c3ed749e8b6beab03ce0008627 |
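scikit-learn scorers negate error metrics so that higher is always better, so the reported value corresponds to an MSE of 1.092356. Taking the square root puts the error back in the units of the `tip` target:

```python
import math

neg_mean_squared_error = -1.092356  # as reported; negated so that "higher is better"
mse = -neg_mean_squared_error
rmse = math.sqrt(mse)               # same units as the `tip` column
print(round(rmse, 4))               # 1.0452
```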

apache-2.0 | [] | false | Notebooks

- `xmltodict.ipynb` contains the code to convert the `xml` files to `json` for training

- `training_script.ipynb` contains the code for training and inference. It is a modified version of https://github.com/AI4Bharat/IndianNLP-Transliteration/blob/master/NoteBooks/Xlit_TrainingSetup_condensed.ipynb

| 86e977f6a3b19b146823ca16c8e76e4f |

apache-2.0 | [] | false | Evaluation Scores on validation set

Top-k scores for 1000 samples:

| Metric | Top 10 | Top 5 | Top 3 | Top 2 | Top 1 |
|-----------|-----------|-----------|-----------|-----------|-----------|
| ACC | 0.703000 | 0.621000 | 0.560000 | 0.502000 | 0.382000 |
| Mean F-score | 0.949289 | 0.937985 | 0.927025 | 0.913697 | 0.881272 |
| MRR | 0.486549 | 0.475033 | 0.461333 | 0.442000 | 0.382000 |
| MAP_ref | 0.381000 | 0.381000 | 0.381000 | 0.381000 | 0.380500 |

| 5433ff5f764a1a82df37447e864cb897 |
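The card does not define its metrics. A minimal sketch of two of them, assuming ACC at top-k counts a sample as correct when any reference appears among its first k candidates, and MRR uses the reciprocal rank of the first correct candidate within the top k (0 if there is none). That reading is consistent with the table, where top-1 ACC and top-1 MRR are both 0.382. The transliteration examples below are made up for illustration.

```python
def topk_metrics(candidates, references, k):
    """Return (top-k accuracy, MRR truncated at k).

    candidates: one ranked candidate list per sample (best first)
    references: one set of acceptable outputs per sample
    """
    hits, rr_sum = 0, 0.0
    for ranked, refs in zip(candidates, references):
        for rank, cand in enumerate(ranked[:k], start=1):
            if cand in refs:
                hits += 1
                rr_sum += 1.0 / rank
                break  # only the first correct candidate counts
    n = len(candidates)
    return hits / n, rr_sum / n

# Hypothetical ranked outputs and references
candidates = [["namaste", "namastey"], ["bharat", "bhaarat"], ["dilli", "delhi"]]
references = [{"namaste"}, {"bhaarat"}, {"mumbai"}]
for k in (1, 2):
    print(k, topk_metrics(candidates, references, k))
```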

apache-2.0 | [] | false | *All models were trained with the MNIST sigma_data value instead of the Fashion-MNIST one.* - base: default k-diffusion model - no-t-emb: as base, but without t-embeddings in the model - mse-no-t-emb: as no-t-emb, but predicting unscaled noise - mse: unscaled noise prediction with t-embeddings | 111658f05417f6937aac314e283fea25 |
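For context on why sigma_data matters: k-diffusion's default denoiser wraps the network in the preconditioning of Karras et al. (2022), and sigma_data appears in every scaling coefficient. A sketch of those coefficients; sigma_data = 0.5 here is an arbitrary illustrative value, not the MNIST setting used for these runs.

```python
import math

def karras_scalings(sigma: float, sigma_data: float):
    """Return (c_skip, c_out, c_in) for D(x) = c_skip * x + c_out * F(c_in * x, noise level)."""
    denom = sigma ** 2 + sigma_data ** 2
    c_skip = sigma_data ** 2 / denom
    c_out = sigma * sigma_data / math.sqrt(denom)
    c_in = 1 / math.sqrt(denom)
    return c_skip, c_out, c_in

# Small sigma: output is mostly the (skip-connected) input; large sigma: mostly the network output
for sigma in (0.0, 0.5, 10.0):
    print(sigma, karras_scalings(sigma, sigma_data=0.5))
```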

apache-2.0 | [] | false | base metrics

| step | fid | kid |
|---:|---:|---:|
| 5000 | 23.366962432861328 | 0.0060024261474609375 |
| 10000 | 21.407773971557617 | 0.004696846008300781 |
| 15000 | 19.820981979370117 | 0.003306865692138672 |
| 20000 | 20.4482421875 | 0.0037620067596435547 |
| 25000 | 19.459041595458984 | 0.0030574798583984375 |
| 30000 | 18.933385848999023 | 0.0031194686889648438 |
| 35000 | 18.223621368408203 | 0.002220630645751953 |
| 40000 | 18.64676284790039 | 0.0026960372924804688 |
| 45000 | 17.681808471679688 | 0.0016982555389404297 |
| 50000 | 17.32500457763672 | 0.001678466796875 |
| 55000 | 17.74714469909668 | 0.0016117095947265625 |
| 60000 | 18.276540756225586 | 0.002439737319946289 |

| 6f247da9c2bc35dc4d7d0b457fd13945 |

apache-2.0 | [] | false | mse-no-t-emb

| Step | FID | KID |
|------:|-------------------:|-----------------------:|
| 5000 | 28.580364227294922 | 0.007686138153076172 |
| 10000 | 25.324932098388672 | 0.0061130523681640625 |
| 15000 | 23.68691635131836 | 0.005526542663574219 |
| 20000 | 24.05099105834961 | 0.005819082260131836 |
| 25000 | 22.60521125793457 | 0.004955768585205078 |
| 30000 | 22.16605567932129 | 0.0047609806060791016 |
| 35000 | 21.794536590576172 | 0.0039484500885009766 |
| 40000 | 22.96178436279297 | 0.005787849426269531 |
| 45000 | 22.641393661499023 | 0.004763364791870117 |
| 50000 | 20.735567092895508 | 0.0038640499114990234 |
| 55000 | 21.417423248291016 | 0.004515647888183594 |
| 60000 | 22.11293601989746 | 0.0054743289947509766 | | 3694a0ac67d75ff70f6d9e3e3131c160 |

apache-2.0 | [] | false | no-t-emb

| Step | FID | KID |
|------:|-------------------:|-----------------------:|
| 5000 | 53.25414276123047 | 0.02761554718017578 |
| 10000 | 47.687461853027344 | 0.023845195770263672 |
| 15000 | 46.045196533203125 | 0.02205944061279297 |
| 20000 | 44.64243698120117 | 0.020934104919433594 |
| 25000 | 43.55231857299805 | 0.020574331283569336 |
| 30000 | 43.493412017822266 | 0.020569324493408203 |
| 35000 | 42.51478958129883 | 0.01968073844909668 |
| 40000 | 42.213401794433594 | 0.01972222328186035 |
| 45000 | 40.9914665222168 | 0.018793582916259766 |
| 50000 | 42.946231842041016 | 0.019819974899291992 |
| 55000 | 40.699989318847656 | 0.018331050872802734 |
| 60000 | 41.737518310546875 | 0.019069194793701172 | | da4b250dd379b094051fe2fef752a8c7 |
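The fid and kid columns above are the Fréchet Inception Distance and Kernel Inception Distance of generated samples (lower is better for both). The card does not say how they were computed; purely to illustrate what the kid column measures, here is a dependency-free sketch of the unbiased squared-MMD estimator with the cubic polynomial kernel k(x, y) = (xᵀy / d + 1)³ used by KID (Bińkowski et al., 2018). Real evaluations apply it to Inception features of many images and usually average over random subsets; the short float lists here are stand-ins, and the function names are hypothetical.

```python
from typing import List, Sequence

Vector = Sequence[float]


def poly_kernel(x: Vector, y: Vector) -> float:
    """Cubic polynomial kernel (x.y / d + 1)^3, with d the feature dimension."""
    d = len(x)
    return (sum(a * b for a, b in zip(x, y)) / d + 1.0) ** 3


def kid_unbiased(real: List[Vector], fake: List[Vector]) -> float:
    """Unbiased estimate of squared MMD between two feature sets (each needs >= 2 items)."""
    m, n = len(real), len(fake)
    # Within-set terms exclude the diagonal (i == j), which makes the estimate unbiased.
    k_rr = sum(poly_kernel(real[i], real[j]) for i in range(m) for j in range(m) if i != j)
    k_ff = sum(poly_kernel(fake[i], fake[j]) for i in range(n) for j in range(n) if i != j)
    k_rf = sum(poly_kernel(x, y) for x in real for y in fake)
    return k_rr / (m * (m - 1)) + k_ff / (n * (n - 1)) - 2.0 * k_rf / (m * n)
```

Because the within-set sums skip the diagonal, the estimate can dip slightly below zero when the two distributions match closely.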

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-cola

This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the GLUE dataset (the CoLA task, as the model name indicates). It achieves the following results on the evaluation set:

- Loss: 0.7134
- Matthews Correlation: 0.5411 | 0fc54d48c02fab2b2fb5cd58043753f7 |
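CoLA labels each sentence as acceptable (1) or unacceptable (0), and its standard GLUE metric is the Matthews correlation coefficient, MCC = (TP·TN - FP·FN) / √((TP+FP)(TP+FN)(TN+FP)(TN+FN)). For reference, here is a dependency-free sketch of the binary formula; in practice scikit-learn's `sklearn.metrics.matthews_corrcoef` is the usual implementation. Returning 0 when a marginal is empty is a convention chosen for this sketch.

```python
import math
from typing import Sequence


def matthews_corrcoef(y_true: Sequence[int], y_pred: Sequence[int]) -> float:
    """Binary Matthews correlation coefficient for 0/1 labels."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    # If any row or column of the confusion matrix is empty, MCC is undefined; return 0.
    return 0.0 if denom == 0 else (tp * tn - fp * fn) / denom
```

MCC ranges from -1 (always wrong) through 0 (chance level) to 1 (perfect), which is why it is preferred over accuracy on CoLA's imbalanced labels.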

apache-2.0 | ['generated_from_trainer'] | false | Training results

| Training Loss | Epoch | Step | Validation Loss | Matthews Correlation |
|:-------------:|:-----:|:----:|:---------------:|:--------------------:|
| 0.5294 | 1.0 | 535 | 0.5082 | 0.4183 |
| 0.3483 | 2.0 | 1070 | 0.4969 | 0.5259 |
| 0.2355 | 3.0 | 1605 | 0.6260 | 0.5065 |
| 0.1733 | 4.0 | 2140 | 0.7134 | 0.5411 |
| 0.1238 | 5.0 | 2675 | 0.8516 | 0.5291 | | a5eed0e5cc82f3efd190c0bc40dbae13 |
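The reported evaluation results (loss 0.7134, Matthews correlation 0.5411) coincide with the epoch-4 row of the training-results table rather than the final epoch, which suggests the checkpoint with the best Matthews correlation was kept. The snippet below only makes that selection explicit on the logged numbers. With the `transformers` Trainer, setting `load_best_model_at_end=True` and `metric_for_best_model="matthews_correlation"` in `TrainingArguments` would produce this behaviour, but the card does not state which settings were actually used.

```python
# Rows copied from the training-results table: (epoch, step, validation_loss, matthews_corr).
results = [
    (1.0, 535, 0.5082, 0.4183),
    (2.0, 1070, 0.4969, 0.5259),
    (3.0, 1605, 0.6260, 0.5065),
    (4.0, 2140, 0.7134, 0.5411),
    (5.0, 2675, 0.8516, 0.5291),
]

# Select the checkpoint by the task metric (higher is better) and, for contrast, by loss.
best_by_mcc = max(results, key=lambda row: row[3])
best_by_loss = min(results, key=lambda row: row[2])

print("best by Matthews correlation:", best_by_mcc)
print("best by validation loss:     ", best_by_loss)
```

The two criteria disagree: validation loss is lowest at epoch 2 and rises afterwards, while the Matthews correlation peaks at epoch 4.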

apache-2.0 | ['stanza', 'token-classification'] | false | Stanza model for North Sami (sme) Stanza is a collection of accurate and efficient tools for the linguistic analysis of many human languages. From raw text to syntactic analysis and entity recognition, Stanza brings state-of-the-art NLP models to the languages of your choosing. Find out more on [our website](https://stanfordnlp.github.io/stanza) and in our [GitHub repository](https://github.com/stanfordnlp/stanza). This card and repo were automatically prepared with `hugging_stanza.py` in the `stanfordnlp/huggingface-models` repo. Last updated 2022-09-25 02:02:22.878 | 5d3a3a489c103ac8ac2508e96a7299c3 |
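A minimal usage sketch, assuming Stanza is installed (`pip install stanza`) and the models can be downloaded: `stanza.download("sme")` fetches the North Sami models and `stanza.Pipeline("sme")` builds the default processor set for the language. The helper names `words_table` and `analyse_sme` are hypothetical; `words_table` only reads the standard `text`, `upos`, `head` and `deprel` attributes of each Stanza word, and `stanza` is imported lazily so the helper can be used without it.

```python
from typing import Any, List, Tuple


def words_table(doc: Any) -> List[Tuple[str, str, int, str]]:
    """Flatten a Stanza Document into (text, upos, head, deprel) rows, one per word."""
    return [
        (word.text, word.upos, word.head, word.deprel)
        for sentence in doc.sentences
        for word in sentence.words
    ]


def analyse_sme(text: str) -> List[Tuple[str, str, int, str]]:
    """Run the default Stanza pipeline for North Sami ("sme") on raw text."""
    import stanza  # imported lazily: requires `pip install stanza`

    stanza.download("sme")        # fetches the North Sami models on first use
    nlp = stanza.Pipeline("sme")  # default processors for this language
    return words_table(nlp(text))
```

For example, `for row in analyse_sme(text): print(*row, sep="\t")` prints one tab-separated line per word of the North Sami input `text`, with `head` giving the 1-based index of the syntactic head (0 for the root).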

cc-by-4.0 | ['question generation'] | false | Model Card of `research-backup/t5-base-subjqa-vanilla-grocery-qg` This model is a fine-tuned version of [t5-base](https://huggingface.co/t5-base) for the question generation task on [lmqg/qg_subjqa](https://huggingface.co/datasets/lmqg/qg_subjqa) (dataset_name: grocery), trained with [`lmqg`](https://github.com/asahi417/lm-question-generation). | 70f1333a35a4e2639649ea89c4960efd |
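A hedged usage sketch via the `transformers` `text2text-generation` pipeline. Other `lmqg` model cards feed T5 question-generation models a `generate question: ` prefix with the answer span wrapped in `<hl>` tokens; whether this vanilla checkpoint expects exactly that input is an assumption, so `build_qg_input` (a hypothetical helper) makes the prefix configurable.

```python
def build_qg_input(context: str, answer: str, prefix: str = "generate question: ") -> str:
    """Wrap the first occurrence of `answer` in <hl> tokens (assumed lmqg input format)."""
    start = context.find(answer)
    if start < 0:
        raise ValueError("answer must appear verbatim in the context")
    end = start + len(answer)
    return f"{prefix}{context[:start]}<hl> {answer} <hl>{context[end:]}"


def generate_question(context: str, answer: str) -> str:
    """Generate a question whose answer is `answer`, using the checkpoint from this card."""
    from transformers import pipeline  # imported lazily: requires `pip install transformers`

    qg = pipeline(
        "text2text-generation",
        model="research-backup/t5-base-subjqa-vanilla-grocery-qg",
    )
    return qg(build_qg_input(context, answer))[0]["generated_text"]
```

Since the model was fine-tuned on the grocery split of SubjQA, contexts resembling product reviews are the closest match to its training data.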
