license stringlengths 2 30 | tags stringlengths 2 513 | is_nc bool 1 class | readme_section stringlengths 201 597k | hash stringlengths 32 32 |

|---|---|---|---|---|

other | ['vision', 'image-classification'] | false | MobileNet V1 MobileNet V1 model pre-trained on ImageNet-1k at resolution 224x224. It was introduced in [MobileNets: Efficient Convolutional Neural Networks for Mobile Vision Applications](https://arxiv.org/abs/1704.04861) by Howard et al. and first released in [this repository](https://github.com/tensorflow/models/blob/master/research/slim/nets/mobilenet_v1.md). Disclaimer: The team releasing MobileNet V1 did not write a model card for this model, so this model card has been written by the Hugging Face team. | 6a57e8ed64c9efa38f9245de675d9e61 |

other | ['vision', 'image-classification'] | false | Model description From the [original README](https://github.com/tensorflow/models/blob/master/research/slim/nets/mobilenet_v1.md): > MobileNets are small, low-latency, low-power models parameterized to meet the resource constraints of a variety of use cases. They can be built upon for classification, detection, embeddings and segmentation similar to how other popular large scale models, such as Inception, are used. MobileNets can be run efficiently on mobile devices [...] MobileNets trade off between latency, size and accuracy while comparing favorably with popular models from the literature. | 77a697b56210a8f9b989c03c9a852ada |

other | ['vision', 'image-classification'] | false | Intended uses & limitations You can use the raw model for image classification. See the [model hub](https://huggingface.co/models?search=mobilenet_v1) to look for fine-tuned versions on a task that interests you. | 716c5106bc8cc55169dd50c9888d92ab |

other | ['vision', 'image-classification'] | false | How to use Here is how to use this model to classify an image of the COCO 2017 dataset into one of the 1,000 ImageNet classes: ```python from transformers import MobileNetV1FeatureExtractor, MobileNetV1ForImageClassification from PIL import Image import requests url = "http://images.cocodataset.org/val2017/000000039769.jpg" image = Image.open(requests.get(url, stream=True).raw) feature_extractor = MobileNetV1FeatureExtractor.from_pretrained("Matthijs/mobilenet_v1_1.0_224") model = MobileNetV1ForImageClassification.from_pretrained("Matthijs/mobilenet_v1_1.0_224") inputs = feature_extractor(images=image, return_tensors="pt") outputs = model(**inputs) logits = outputs.logits | 1188f78f47eb0a7bd70106aec641d782 |

other | ['vision', 'image-classification'] | false | # model predicts one of the 1000 ImageNet classes predicted_class_idx = logits.argmax(-1).item() print("Predicted class:", model.config.id2label[predicted_class_idx]) ``` Note: This model actually predicts 1001 classes, the 1000 classes from ImageNet plus an extra “background” class (index 0). Currently, both the feature extractor and model support PyTorch. | 49dd6f7d4e55b9e9b8f64d2f911d0a39 |

mit | ['generated_from_trainer'] | false | bart-pt-asqa-cb This model is a fine-tuned version of [vblagoje/bart_lfqa](https://huggingface.co/vblagoje/bart_lfqa) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 2.5362 - Rougelsum: 38.9467 | 93608361910540cd5cfc5283ae02045d |

mit | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 5e-06 - train_batch_size: 16 - eval_batch_size: 8 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 20 - mixed_precision_training: Native AMP | 3358b8b222a59302ca069225a019941a |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Rougelsum | |:-------------:|:-----:|:----:|:---------------:|:---------:| | No log | 1.0 | 273 | 2.5653 | 37.6939 | | 2.6009 | 2.0 | 546 | 2.5295 | 38.2398 | | 2.6009 | 3.0 | 819 | 2.5315 | 38.5946 | | 2.3852 | 4.0 | 1092 | 2.5146 | 38.4771 | | 2.3852 | 5.0 | 1365 | 2.5240 | 38.5706 | | 2.2644 | 6.0 | 1638 | 2.5253 | 38.7506 | | 2.2644 | 7.0 | 1911 | 2.5355 | 38.9004 | | 2.1703 | 8.0 | 2184 | 2.5309 | 38.9528 | | 2.1703 | 9.0 | 2457 | 2.5362 | 38.9467 | | bba16efb3adfd4f5255509ed73b0cb89 |

apache-2.0 | ['t5-small', 'text2text-generation', 'natural language understanding', 'conversational system', 'task-oriented dialog'] | false | t5-small-nlu-all-multiwoz21 This model is a fine-tuned version of [t5-small](https://huggingface.co/t5-small) on both user and system utterances from [MultiWOZ 2.1](https://huggingface.co/datasets/ConvLab/multiwoz21). Refer to [ConvLab-3](https://github.com/ConvLab/ConvLab-3) for model description and usage. | e04b945e6f658d71ebc3fb75a9a22c2b |

apache-2.0 | ['generated_from_keras_callback'] | false | TEdetection_distiBERT_mLM_final This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on an unknown dataset. It achieves the following results on the evaluation set: | 5caca13abb6e3031fdac201ac60cfe83 |

apache-2.0 | ['generated_from_keras_callback'] | false | Training hyperparameters The following hyperparameters were used during training: - optimizer: {'name': 'AdamWeightDecay', 'learning_rate': {'class_name': 'WarmUp', 'config': {'initial_learning_rate': 5e-05, 'decay_schedule_fn': {'class_name': 'PolynomialDecay', 'config': {'initial_learning_rate': 5e-05, 'decay_steps': 208159, 'end_learning_rate': 0.0, 'power': 1.0, 'cycle': False, 'name': None}, '__passive_serialization__': True}, 'warmup_steps': 1000, 'power': 1.0, 'name': None}}, 'decay': 0.0, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False, 'weight_decay_rate': 0.01} - training_precision: float32 | 8debf951f860e8fd032802d398e9c5e9 |

mit | ['generated_from_trainer'] | false | xlm-roberta-base-finetuned-panx-de This model is a fine-tuned version of [xlm-roberta-base](https://huggingface.co/xlm-roberta-base) on the xtreme dataset. It achieves the following results on the evaluation set: - Loss: 0.1319 - F1: 0.8576 | 6d4bb80ed4a64b7de00f532872372d66 |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | F1 | |:-------------:|:-----:|:----:|:---------------:|:------:| | 0.3264 | 1.0 | 197 | 0.1623 | 0.8139 | | 0.136 | 2.0 | 394 | 0.1331 | 0.8451 | | 0.096 | 3.0 | 591 | 0.1319 | 0.8576 | | f2a6e857c9b87bc340781145e4c02929 |

mit | ['generated_from_trainer'] | false | xlm-roberta-base-de-aug-ner This model is a fine-tuned version of [xlm-roberta-base](https://huggingface.co/xlm-roberta-base) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.3820 - Precision: 0.5214 - Recall: 0.5660 - F1: 0.5428 - Accuracy: 0.8966 | 6be5ba796d651dfe1eb543cb8e210af2 |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:| | No log | 1.0 | 463 | 0.6140 | 0.2884 | 0.2925 | 0.2904 | 0.8438 | | 0.8329 | 2.0 | 926 | 0.4504 | 0.4092 | 0.4423 | 0.4251 | 0.8720 | | 0.4385 | 3.0 | 1389 | 0.4046 | 0.4634 | 0.5042 | 0.4829 | 0.8875 | | 0.3364 | 4.0 | 1852 | 0.3843 | 0.5 | 0.5446 | 0.5213 | 0.8954 | | 0.2919 | 5.0 | 2315 | 0.3820 | 0.5214 | 0.5660 | 0.5428 | 0.8966 | | 849ee9875a36016580d56cd2db17d1d4 |

apache-2.0 | ['generated_from_trainer'] | false | t5-small-finetuned-xlsum This model is a fine-tuned version of [t5-small](https://huggingface.co/t5-small) on the xlsum dataset. It achieves the following results on the evaluation set: - Loss: 2.4217 - Rouge1: 29.1774 - Rouge2: 8.0493 - Rougel: 22.5235 - Rougelsum: 22.5715 - Gen Len: 18.8415 | b99ba024b5a2d1057461078ce84f47cb |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | Gen Len | |:-------------:|:-----:|:-----:|:---------------:|:-------:|:------:|:-------:|:---------:|:-------:| | 2.7017 | 1.0 | 19158 | 2.4217 | 29.1774 | 8.0493 | 22.5235 | 22.5715 | 18.8415 | | 508af088cd3b3d2520a8fe45f2c2e411 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 5e-05 - train_batch_size: 32 - eval_batch_size: 8 - seed: 42 - distributed_type: multi-GPU - num_devices: 4 - total_train_batch_size: 128 - total_eval_batch_size: 32 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 10.0 | 46ddea147c9df327b98a1b1981a2fbe2 |

apache-2.0 | ['generated_from_trainer'] | false | mobilebert_sa_GLUE_Experiment_logit_kd_rte This model is a fine-tuned version of [google/mobilebert-uncased](https://huggingface.co/google/mobilebert-uncased) on the GLUE RTE dataset. It achieves the following results on the evaluation set: - Loss: 0.3910 - Accuracy: 0.5271 | 97513e6274acfe804ec6fb5aeedadd2b |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.4088 | 1.0 | 20 | 0.3931 | 0.5271 | | 0.4081 | 2.0 | 40 | 0.3922 | 0.5271 | | 0.4076 | 3.0 | 60 | 0.3910 | 0.5271 | | 0.4068 | 4.0 | 80 | 0.3941 | 0.5343 | | 0.4069 | 5.0 | 100 | 0.3924 | 0.5343 | | 0.4022 | 6.0 | 120 | 0.3975 | 0.5343 | | 0.3801 | 7.0 | 140 | 0.4060 | 0.5415 | | 0.3447 | 8.0 | 160 | 0.5080 | 0.4982 | | eb42f4e50fa8b38d5c20cf9705e05643 |

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | openai/whisper-medium This model is a fine-tuned version of [openai/whisper-medium](https://huggingface.co/openai/whisper-medium) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.2406 - Wer: 10.0333 | 7beca2f430a11406d479c24a89310b80 |

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:-------:| | 0.0241 | 1.06 | 1000 | 0.1996 | 10.4543 | | 0.009 | 2.12 | 2000 | 0.2156 | 10.1152 | | 0.0045 | 3.19 | 3000 | 0.2406 | 10.0333 | | bbc1d71701cbee76ef35890ff614bf18 |

apache-2.0 | ['summarization', 'generated_from_trainer'] | false | t5-small-finetuned-summarization-cnn-ver2 This model is a fine-tuned version of [t5-small](https://huggingface.co/t5-small) on the cnn_dailymail dataset. It achieves the following results on the evaluation set: - Loss: 2.0084 - Bertscore-mean-precision: 0.8859 - Bertscore-mean-recall: 0.8592 - Bertscore-mean-f1: 0.8721 - Bertscore-median-precision: 0.8855 - Bertscore-median-recall: 0.8578 - Bertscore-median-f1: 0.8718 | 170460fb1d8bfbc9e9bbcb88ac5b8924 |

apache-2.0 | ['summarization', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Bertscore-mean-precision | Bertscore-mean-recall | Bertscore-mean-f1 | Bertscore-median-precision | Bertscore-median-recall | Bertscore-median-f1 | |:-------------:|:-----:|:----:|:---------------:|:------------------------:|:---------------------:|:-----------------:|:--------------------------:|:-----------------------:|:-------------------:| | 2.0422 | 1.0 | 718 | 2.0139 | 0.8853 | 0.8589 | 0.8717 | 0.8857 | 0.8564 | 0.8715 | | 1.9481 | 2.0 | 1436 | 2.0085 | 0.8863 | 0.8591 | 0.8723 | 0.8858 | 0.8577 | 0.8718 | | 1.9231 | 3.0 | 2154 | 2.0084 | 0.8859 | 0.8592 | 0.8721 | 0.8855 | 0.8578 | 0.8718 | | 6739fd72c1bbeb1053b2aafaf098bb8a |

apache-2.0 | ['sentence-transformers', 'feature-extraction', 'sentence-similarity', 'transformers'] | false | sentence-transformers/paraphrase-albert-small-v2 This is a [sentence-transformers](https://www.SBERT.net) model: It maps sentences and paragraphs to a 768-dimensional dense vector space and can be used for tasks like clustering or semantic search. | 40aff5e98bc1dba9209a3e25ed23a2c1 |

apache-2.0 | ['summarization', 'generated_from_trainer'] | false | mt5-small-finetuned-amazon-en-es This model is a fine-tuned version of [google/mt5-small](https://huggingface.co/google/mt5-small) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 3.0346 - Rouge1: 16.8527 - Rouge2: 8.331 - Rougel: 16.4475 - Rougelsum: 16.6421 | a8d425b06c132ed90bc7bd41fe4e41c9 |

apache-2.0 | ['summarization', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | |:-------------:|:-----:|:----:|:---------------:|:-------:|:------:|:-------:|:---------:| | 6.7536 | 1.0 | 1209 | 3.2881 | 13.6319 | 5.4635 | 13.0552 | 13.1093 | | 3.9312 | 2.0 | 2418 | 3.1490 | 16.8402 | 8.3559 | 16.1876 | 16.2869 | | 3.5987 | 3.0 | 3627 | 3.1043 | 17.9887 | 9.3136 | 17.3034 | 17.4313 | | 3.4261 | 4.0 | 4836 | 3.0573 | 17.0089 | 8.7389 | 16.5351 | 16.5023 | | 3.3221 | 5.0 | 6045 | 3.0569 | 16.8461 | 8.0988 | 16.4898 | 16.4927 | | 3.2549 | 6.0 | 7254 | 3.0511 | 17.3428 | 8.2234 | 16.7312 | 16.8749 | | 3.2067 | 7.0 | 8463 | 3.0334 | 16.268 | 7.9729 | 15.9342 | 16.0065 | | 3.1842 | 8.0 | 9672 | 3.0346 | 16.8527 | 8.331 | 16.4475 | 16.6421 | | 40face9705dc96ca68f4499986684b36 |

cc-by-4.0 | ['translation', 'opus-mt-tc'] | false | opus-mt-tc-base-gmw-gmw Neural machine translation model for translating from West Germanic languages (gmw) to West Germanic languages (gmw). This model is part of the [OPUS-MT project](https://github.com/Helsinki-NLP/Opus-MT), an effort to make neural machine translation models widely available and accessible for many languages in the world. All models are originally trained using the amazing framework of [Marian NMT](https://marian-nmt.github.io/), an efficient NMT implementation written in pure C++. The models have been converted to PyTorch using the transformers library by Hugging Face. Training data is taken from [OPUS](https://opus.nlpl.eu/) and training pipelines use the procedures of [OPUS-MT-train](https://github.com/Helsinki-NLP/Opus-MT-train). * Publications: [OPUS-MT – Building open translation services for the World](https://aclanthology.org/2020.eamt-1.61/) and [The Tatoeba Translation Challenge – Realistic Data Sets for Low Resource and Multilingual MT](https://aclanthology.org/2020.wmt-1.139/) (Please cite these if you use this model.) 
``` @inproceedings{tiedemann-thottingal-2020-opus, title = "{OPUS}-{MT} {--} Building open translation services for the World", author = {Tiedemann, J{\"o}rg and Thottingal, Santhosh}, booktitle = "Proceedings of the 22nd Annual Conference of the European Association for Machine Translation", month = nov, year = "2020", address = "Lisboa, Portugal", publisher = "European Association for Machine Translation", url = "https://aclanthology.org/2020.eamt-1.61", pages = "479--480", } @inproceedings{tiedemann-2020-tatoeba, title = "The Tatoeba Translation Challenge {--} Realistic Data Sets for Low Resource and Multilingual {MT}", author = {Tiedemann, J{\"o}rg}, booktitle = "Proceedings of the Fifth Conference on Machine Translation", month = nov, year = "2020", address = "Online", publisher = "Association for Computational Linguistics", url = "https://aclanthology.org/2020.wmt-1.139", pages = "1174--1182", } ``` | f12d443dfa2502a84ab2e212f103e396 |

cc-by-4.0 | ['translation', 'opus-mt-tc'] | false | Model info * Release: 2021-02-23 * source language(s): afr deu eng fry gos hrx ltz nds nld pdc yid * target language(s): afr deu eng fry nds nld * valid target language labels: >>afr<< >>ang_Latn<< >>deu<< >>eng<< >>fry<< >>ltz<< >>nds<< >>nld<< >>sco<< >>yid<< * model: transformer (base) * data: opus ([source](https://github.com/Helsinki-NLP/Tatoeba-Challenge)) * tokenization: SentencePiece (spm32k,spm32k) * original model: [opus-2021-02-23.zip](https://object.pouta.csc.fi/Tatoeba-MT-models/gmw-gmw/opus-2021-02-23.zip) * more information about released models: [OPUS-MT gmw-gmw README](https://github.com/Helsinki-NLP/Tatoeba-Challenge/tree/master/models/gmw-gmw/README.md) * more information about the model: [MarianMT](https://huggingface.co/docs/transformers/model_doc/marian) This is a multilingual translation model with multiple target languages. A sentence-initial language token is required in the form of `>>id<<` (id = valid target language ID), e.g. `>>afr<<` | 7d8916bfad6140ea016b2b493c569d75 |

cc-by-4.0 | ['translation', 'opus-mt-tc'] | false | Usage A short code example: ```python from transformers import MarianMTModel, MarianTokenizer src_text = [ ">>nld<< You need help.", ">>afr<< I love your son." ] model_name = "Helsinki-NLP/opus-mt-tc-base-gmw-gmw" tokenizer = MarianTokenizer.from_pretrained(model_name) model = MarianMTModel.from_pretrained(model_name) translated = model.generate(**tokenizer(src_text, return_tensors="pt", padding=True)) for t in translated: print( tokenizer.decode(t, skip_special_tokens=True) ) | 15e04d074181cf47b253e45cd8adcc1e |

cc-by-4.0 | ['translation', 'opus-mt-tc'] | false | # Ek is lief vir jou seun. ``` You can also use OPUS-MT models with the transformers pipelines, for example: ```python from transformers import pipeline pipe = pipeline("translation", model="Helsinki-NLP/opus-mt-tc-base-gmw-gmw") print(pipe(">>nld<< You need help.")) | 4ee8d621cc4b567fb1b012de5420fc8c |

cc-by-4.0 | ['translation', 'opus-mt-tc'] | false | Benchmarks * test set translations: [opus-2021-02-23.test.txt](https://object.pouta.csc.fi/Tatoeba-MT-models/gmw-gmw/opus-2021-02-23.test.txt) * test set scores: [opus-2021-02-23.eval.txt](https://object.pouta.csc.fi/Tatoeba-MT-models/gmw-gmw/opus-2021-02-23.eval.txt) * benchmark results: [benchmark_results.txt](benchmark_results.txt) * benchmark output: [benchmark_translations.zip](benchmark_translations.zip) | langpair | testset | chr-F | BLEU | | 1d284e25103fae8a729ab2c4439ebb40 |

cc-by-4.0 | ['translation', 'opus-mt-tc'] | false | words | |----------|---------|-------|-------|-------|--------| | afr-deu | tatoeba-test-v2021-08-07 | 0.674 | 48.1 | 1583 | 9105 | | afr-eng | tatoeba-test-v2021-08-07 | 0.728 | 58.8 | 1374 | 9622 | | afr-nld | tatoeba-test-v2021-08-07 | 0.711 | 54.5 | 1056 | 6710 | | deu-afr | tatoeba-test-v2021-08-07 | 0.696 | 52.4 | 1583 | 9507 | | deu-eng | tatoeba-test-v2021-08-07 | 0.609 | 42.1 | 17565 | 149462 | | deu-nds | tatoeba-test-v2021-08-07 | 0.442 | 18.6 | 9999 | 76137 | | deu-nld | tatoeba-test-v2021-08-07 | 0.672 | 48.7 | 10218 | 75235 | | eng-afr | tatoeba-test-v2021-08-07 | 0.735 | 56.5 | 1374 | 10317 | | eng-deu | tatoeba-test-v2021-08-07 | 0.580 | 35.9 | 17565 | 151568 | | eng-nds | tatoeba-test-v2021-08-07 | 0.412 | 16.6 | 2500 | 18264 | | eng-nld | tatoeba-test-v2021-08-07 | 0.663 | 48.3 | 12696 | 91796 | | fry-eng | tatoeba-test-v2021-08-07 | 0.500 | 32.5 | 220 | 1573 | | fry-nld | tatoeba-test-v2021-08-07 | 0.633 | 43.1 | 260 | 1854 | | gos-nld | tatoeba-test-v2021-08-07 | 0.405 | 15.6 | 1852 | 9903 | | hrx-deu | tatoeba-test-v2021-08-07 | 0.484 | 24.7 | 471 | 2805 | | hrx-eng | tatoeba-test-v2021-08-07 | 0.362 | 20.4 | 221 | 1235 | | ltz-deu | tatoeba-test-v2021-08-07 | 0.556 | 37.2 | 347 | 2208 | | ltz-eng | tatoeba-test-v2021-08-07 | 0.485 | 32.4 | 293 | 1840 | | ltz-nld | tatoeba-test-v2021-08-07 | 0.534 | 39.3 | 292 | 1685 | | nds-deu | tatoeba-test-v2021-08-07 | 0.572 | 34.5 | 9999 | 74564 | | nds-eng | tatoeba-test-v2021-08-07 | 0.493 | 29.9 | 2500 | 17589 | | nds-nld | tatoeba-test-v2021-08-07 | 0.621 | 42.3 | 1657 | 11490 | | nld-afr | tatoeba-test-v2021-08-07 | 0.755 | 58.8 | 1056 | 6823 | | nld-deu | tatoeba-test-v2021-08-07 | 0.686 | 50.4 | 10218 | 74131 | | nld-eng | tatoeba-test-v2021-08-07 | 0.690 | 53.1 | 12696 | 89978 | | nld-fry | tatoeba-test-v2021-08-07 | 0.478 | 25.1 | 260 | 1857 | | nld-nds | tatoeba-test-v2021-08-07 | 0.462 | 21.4 | 1657 | 11711 | | afr-deu | flores101-devtest | 
0.524 | 21.6 | 1012 | 25094 | | afr-eng | flores101-devtest | 0.693 | 46.8 | 1012 | 24721 | | afr-nld | flores101-devtest | 0.509 | 18.4 | 1012 | 25467 | | deu-afr | flores101-devtest | 0.534 | 21.4 | 1012 | 25740 | | deu-eng | flores101-devtest | 0.616 | 33.8 | 1012 | 24721 | | deu-nld | flores101-devtest | 0.516 | 19.2 | 1012 | 25467 | | eng-afr | flores101-devtest | 0.628 | 33.8 | 1012 | 25740 | | eng-deu | flores101-devtest | 0.581 | 29.1 | 1012 | 25094 | | eng-nld | flores101-devtest | 0.533 | 21.0 | 1012 | 25467 | | ltz-afr | flores101-devtest | 0.430 | 12.9 | 1012 | 25740 | | ltz-deu | flores101-devtest | 0.482 | 17.1 | 1012 | 25094 | | ltz-eng | flores101-devtest | 0.468 | 18.8 | 1012 | 24721 | | ltz-nld | flores101-devtest | 0.409 | 10.7 | 1012 | 25467 | | nld-afr | flores101-devtest | 0.494 | 16.8 | 1012 | 25740 | | nld-deu | flores101-devtest | 0.501 | 17.9 | 1012 | 25094 | | nld-eng | flores101-devtest | 0.551 | 25.6 | 1012 | 24721 | | deu-eng | multi30k_test_2016_flickr | 0.546 | 32.2 | 1000 | 12955 | | eng-deu | multi30k_test_2016_flickr | 0.582 | 28.8 | 1000 | 12106 | | deu-eng | multi30k_test_2017_flickr | 0.561 | 32.7 | 1000 | 11374 | | eng-deu | multi30k_test_2017_flickr | 0.573 | 27.6 | 1000 | 10755 | | deu-eng | multi30k_test_2017_mscoco | 0.499 | 25.5 | 461 | 5231 | | eng-deu | multi30k_test_2017_mscoco | 0.514 | 22.0 | 461 | 5158 | | deu-eng | multi30k_test_2018_flickr | 0.535 | 30.0 | 1071 | 14689 | | eng-deu | multi30k_test_2018_flickr | 0.547 | 25.3 | 1071 | 13703 | | deu-eng | newssyscomb2009 | 0.527 | 25.4 | 502 | 11818 | | eng-deu | newssyscomb2009 | 0.504 | 19.3 | 502 | 11271 | | deu-eng | news-test2008 | 0.518 | 23.8 | 2051 | 49380 | | eng-deu | news-test2008 | 0.492 | 19.3 | 2051 | 47447 | | deu-eng | newstest2009 | 0.516 | 23.4 | 2525 | 65399 | | eng-deu | newstest2009 | 0.498 | 18.8 | 2525 | 62816 | | deu-eng | newstest2010 | 0.546 | 25.8 | 2489 | 61711 | | eng-deu | newstest2010 | 0.508 | 20.7 | 2489 | 61503 | | deu-eng | 
newstest2011 | 0.524 | 23.7 | 3003 | 74681 | | eng-deu | newstest2011 | 0.493 | 19.2 | 3003 | 72981 | | deu-eng | newstest2012 | 0.532 | 24.8 | 3003 | 72812 | | eng-deu | newstest2012 | 0.493 | 19.5 | 3003 | 72886 | | deu-eng | newstest2013 | 0.548 | 27.7 | 3000 | 64505 | | eng-deu | newstest2013 | 0.517 | 22.5 | 3000 | 63737 | | deu-eng | newstest2014-deen | 0.548 | 27.3 | 3003 | 67337 | | eng-deu | newstest2014-deen | 0.532 | 22.0 | 3003 | 62688 | | deu-eng | newstest2015-deen | 0.553 | 28.6 | 2169 | 46443 | | eng-deu | newstest2015-ende | 0.544 | 25.7 | 2169 | 44260 | | deu-eng | newstest2016-deen | 0.596 | 33.3 | 2999 | 64119 | | eng-deu | newstest2016-ende | 0.580 | 30.0 | 2999 | 62669 | | deu-eng | newstest2017-deen | 0.561 | 29.5 | 3004 | 64399 | | eng-deu | newstest2017-ende | 0.535 | 24.1 | 3004 | 61287 | | deu-eng | newstest2018-deen | 0.610 | 36.1 | 2998 | 67012 | | eng-deu | newstest2018-ende | 0.613 | 35.4 | 2998 | 64276 | | deu-eng | newstest2019-deen | 0.582 | 32.3 | 2000 | 39227 | | eng-deu | newstest2019-ende | 0.583 | 31.2 | 1997 | 48746 | | deu-eng | newstest2020-deen | 0.604 | 32.0 | 785 | 38220 | | eng-deu | newstest2020-ende | 0.542 | 23.9 | 1418 | 52383 | | deu-eng | newstestB2020-deen | 0.598 | 31.2 | 785 | 37696 | | eng-deu | newstestB2020-ende | 0.532 | 23.3 | 1418 | 53092 | | e3a7ade2ef534bd5c2883832f1f06c39 |

apache-2.0 | ['automatic-speech-recognition', 'es'] | false | exp_w2v2r_es_xls-r_age_teens-0_sixties-10_s951 Fine-tuned [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) for speech recognition using the train split of [Common Voice 7.0 (es)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | 80148cf87e724735d289de0e580e8a4d |

apache-2.0 | ['generated_from_trainer'] | false | distilbert_sa_GLUE_Experiment_logit_kd_data_aug_qqp_256 This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the GLUE QQP dataset. It achieves the following results on the evaluation set: - Loss: 0.7043 - Accuracy: 0.6343 - F1: 0.0148 - Combined Score: 0.3245 | 03b6a9e60b0e96fafeef2766dee35ec0 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | Combined Score | |:-------------:|:-----:|:------:|:---------------:|:--------:|:------:|:--------------:| | 0.8369 | 1.0 | 29671 | 0.7043 | 0.6343 | 0.0148 | 0.3245 | | 0.7448 | 2.0 | 59342 | 0.7161 | 0.6355 | 0.0216 | 0.3286 | | 0.7106 | 3.0 | 89013 | 0.7067 | 0.6466 | 0.0843 | 0.3655 | | 0.6924 | 4.0 | 118684 | 0.7200 | 0.6401 | 0.0477 | 0.3439 | | 0.6812 | 5.0 | 148355 | 0.7109 | 0.6424 | 0.0609 | 0.3517 | | 0.6734 | 6.0 | 178026 | 0.7092 | 0.6440 | 0.0696 | 0.3568 | | ee2550611ae9d2858b796ca2161de5f9 |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-emotion This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the emotion dataset. It achieves the following results on the evaluation set: - Loss: 0.2175 - Accuracy: 0.9225 - F1: 0.9226 | d43928a6d58e77a4c563d189ab99f997 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------:|:------:| | 0.8152 | 1.0 | 250 | 0.3054 | 0.902 | 0.8992 | | 0.2418 | 2.0 | 500 | 0.2175 | 0.9225 | 0.9226 | | 357abe53d89fc4eeca3dc93a81a54cdc |

apache-2.0 | ['generated_from_trainer'] | false | wav2vec2-large-xlsr-53-torgo-demo-f01-nolm This model is a fine-tuned version of [facebook/wav2vec2-large-xlsr-53](https://huggingface.co/facebook/wav2vec2-large-xlsr-53) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.0153 - Wer: 0.4756 | 3f3295b78b0095d902991c5fd32b3f22 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0001 - train_batch_size: 8 - eval_batch_size: 8 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 500 - num_epochs: 30 - mixed_precision_training: Native AMP | c68293dd40f9aeb678e6263089a27a9d |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:-----:|:---------------:|:------:| | 3.4166 | 0.81 | 500 | 4.5019 | 1.0 | | 3.1088 | 1.62 | 1000 | 3.0459 | 1.0 | | 2.8249 | 2.44 | 1500 | 3.0850 | 1.0 | | 2.625 | 3.25 | 2000 | 2.6827 | 1.3656 | | 1.9816 | 4.06 | 2500 | 1.6636 | 1.3701 | | 1.3036 | 4.87 | 3000 | 0.9710 | 1.2504 | | 0.9862 | 5.68 | 3500 | 0.6023 | 1.0519 | | 0.7012 | 6.49 | 4000 | 0.4404 | 0.9342 | | 0.6102 | 7.31 | 4500 | 0.3297 | 0.8491 | | 0.5463 | 8.12 | 5000 | 0.2403 | 0.7773 | | 0.4897 | 8.93 | 5500 | 0.1907 | 0.7335 | | 0.4687 | 9.74 | 6000 | 0.1721 | 0.7095 | | 0.41 | 10.55 | 6500 | 0.1382 | 0.6851 | | 0.3277 | 11.36 | 7000 | 0.1189 | 0.6598 | | 0.3182 | 12.18 | 7500 | 0.1040 | 0.6372 | | 0.3279 | 12.99 | 8000 | 0.0961 | 0.6274 | | 0.2735 | 13.8 | 8500 | 0.0806 | 0.5880 | | 0.3153 | 14.61 | 9000 | 0.0821 | 0.5748 | | 0.251 | 15.42 | 9500 | 0.0633 | 0.5437 | | 0.2 | 16.23 | 10000 | 0.0534 | 0.5316 | | 0.2134 | 17.05 | 10500 | 0.0475 | 0.5195 | | 0.1727 | 17.86 | 11000 | 0.0435 | 0.5146 | | 0.2143 | 18.67 | 11500 | 0.0406 | 0.5072 | | 0.1679 | 19.48 | 12000 | 0.0386 | 0.5057 | | 0.1836 | 20.29 | 12500 | 0.0359 | 0.4984 | | 0.1542 | 21.1 | 13000 | 0.0284 | 0.4914 | | 0.1672 | 21.92 | 13500 | 0.0289 | 0.4884 | | 0.1526 | 22.73 | 14000 | 0.0256 | 0.4867 | | 0.1263 | 23.54 | 14500 | 0.0247 | 0.4871 | | 0.133 | 24.35 | 15000 | 0.0194 | 0.4816 | | 0.1005 | 25.16 | 15500 | 0.0190 | 0.4798 | | 0.1372 | 25.97 | 16000 | 0.0172 | 0.4786 | | 0.1126 | 26.79 | 16500 | 0.0177 | 0.4773 | | 0.0929 | 27.6 | 17000 | 0.0173 | 0.4775 | | 0.1069 | 28.41 | 17500 | 0.0164 | 0.4773 | | 0.0932 | 29.22 | 18000 | 0.0153 | 0.4756 | | 06995c99bade18bba45eae9322f5f8f6 |

mit | ['summarization', 'generated_from_trainer'] | false | t5-small-booksum-finetuned-booksum-test This model is a fine-tuned version of [cnicu/t5-small-booksum](https://huggingface.co/cnicu/t5-small-booksum) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 3.2739 - Rouge1: 22.7829 - Rouge2: 4.8349 - Rougel: 18.2465 - Rougelsum: 19.2417 | 7f9c8434fb8fe1428f8d0cf149c09090 |

mit | ['summarization', 'generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 5.6e-05 - train_batch_size: 1 - eval_batch_size: 1 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 8 | 935378e246838e9f20a63895cbaca842 |

mit | ['summarization', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | |:-------------:|:-----:|:-----:|:---------------:|:-------:|:------:|:-------:|:---------:| | 3.5123 | 1.0 | 8750 | 3.2816 | 21.7712 | 4.3046 | 17.4053 | 18.4707 | | 3.2347 | 2.0 | 17500 | 3.2915 | 22.2938 | 4.7828 | 17.8567 | 18.9135 | | 3.0892 | 3.0 | 26250 | 3.2568 | 22.4966 | 4.825 | 18.0344 | 19.1306 | | 2.9837 | 4.0 | 35000 | 3.2952 | 22.6913 | 5.0322 | 18.176 | 19.2751 | | 2.9028 | 5.0 | 43750 | 3.2626 | 22.3548 | 4.7521 | 17.8681 | 18.7815 | | 2.8441 | 6.0 | 52500 | 3.2691 | 22.6279 | 4.932 | 18.1051 | 19.0763 | | 2.8006 | 7.0 | 61250 | 3.2753 | 22.8911 | 4.8954 | 18.1204 | 19.1464 | | 2.7742 | 8.0 | 70000 | 3.2739 | 22.7829 | 4.8349 | 18.2465 | 19.2417 | | 6a7f3ab3997fe179468ef845694f5c20 |

apache-2.0 | ['generated_from_keras_callback'] | false | xander71988/t5-base-finetuned-facet-driver-type This model is a fine-tuned version of [t5-base](https://huggingface.co/t5-base) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 0.0016 - Validation Loss: 0.0054 - Epoch: 4 | 201e094bcb663d99e023c5d98298bb94 |

apache-2.0 | ['generated_from_keras_callback'] | false | Training hyperparameters The following hyperparameters were used during training: - optimizer: {'name': 'AdamWeightDecay', 'learning_rate': {'class_name': 'PolynomialDecay', 'config': {'initial_learning_rate': 5.6e-05, 'decay_steps': 64768, 'end_learning_rate': 0.0, 'power': 1.0, 'cycle': False, 'name': None}}, 'decay': 0.0, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False, 'weight_decay_rate': 0.01} - training_precision: mixed_float16 | 096622aff03ad8fd03a33b0fb40e1e3c |

apache-2.0 | ['generated_from_keras_callback'] | false | Training results | Train Loss | Validation Loss | Epoch | |:----------:|:---------------:|:-----:| | 0.0178 | 0.0076 | 0 | | 0.0068 | 0.0057 | 1 | | 0.0042 | 0.0055 | 2 | | 0.0025 | 0.0044 | 3 | | 0.0016 | 0.0054 | 4 | | 0872f975120e7c52042c9cd6a8209761 |

apache-2.0 | ['generated_from_trainer'] | false | NLP_Project This model is a fine-tuned version of [facebook/wav2vec2-base](https://huggingface.co/facebook/wav2vec2-base) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.5308 - Wer: 0.3428 | ba4c5e06518a05bac69b2472fafa807f |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:-----:|:---------------:|:------:| | 3.5939 | 1.0 | 500 | 2.1356 | 1.0014 | | 0.9126 | 2.01 | 1000 | 0.5469 | 0.5354 | | 0.4491 | 3.01 | 1500 | 0.4636 | 0.4503 | | 0.3008 | 4.02 | 2000 | 0.4269 | 0.4330 | | 0.2229 | 5.02 | 2500 | 0.4164 | 0.4073 | | 0.188 | 6.02 | 3000 | 0.4717 | 0.4107 | | 0.1739 | 7.03 | 3500 | 0.4306 | 0.4031 | | 0.159 | 8.03 | 4000 | 0.4394 | 0.3993 | | 0.1342 | 9.04 | 4500 | 0.4462 | 0.3904 | | 0.1093 | 10.04 | 5000 | 0.4387 | 0.3759 | | 0.1005 | 11.04 | 5500 | 0.5033 | 0.3847 | | 0.0857 | 12.05 | 6000 | 0.4805 | 0.3876 | | 0.0779 | 13.05 | 6500 | 0.5269 | 0.3810 | | 0.072 | 14.06 | 7000 | 0.5109 | 0.3710 | | 0.0641 | 15.06 | 7500 | 0.4865 | 0.3638 | | 0.0584 | 16.06 | 8000 | 0.5041 | 0.3646 | | 0.0552 | 17.07 | 8500 | 0.4987 | 0.3537 | | 0.0535 | 18.07 | 9000 | 0.4947 | 0.3586 | | 0.0475 | 19.08 | 9500 | 0.5237 | 0.3647 | | 0.042 | 20.08 | 10000 | 0.5338 | 0.3561 | | 0.0416 | 21.08 | 10500 | 0.5068 | 0.3483 | | 0.0358 | 22.09 | 11000 | 0.5126 | 0.3532 | | 0.0334 | 23.09 | 11500 | 0.5213 | 0.3536 | | 0.0331 | 24.1 | 12000 | 0.5378 | 0.3496 | | 0.03 | 25.1 | 12500 | 0.5167 | 0.3470 | | 0.0254 | 26.1 | 13000 | 0.5245 | 0.3418 | | 0.0233 | 27.11 | 13500 | 0.5393 | 0.3456 | | 0.0232 | 28.11 | 14000 | 0.5279 | 0.3425 | | 0.022 | 29.12 | 14500 | 0.5308 | 0.3428 | | 4a7000333cee539883340afccb9468ab |

apache-2.0 | ['generated_from_keras_callback'] | false | juancopi81/bert-finetuned-ner This model is a fine-tuned version of [bert-base-cased](https://huggingface.co/bert-base-cased) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 0.0269 - Validation Loss: 0.0528 - Epoch: 2 | 26cc8e2ed37ad596def22f1a243e533c |

apache-2.0 | ['generated_from_keras_callback'] | false | Training hyperparameters The following hyperparameters were used during training: - optimizer: {'name': 'AdamWeightDecay', 'learning_rate': {'class_name': 'PolynomialDecay', 'config': {'initial_learning_rate': 2e-05, 'decay_steps': 2631, 'end_learning_rate': 0.0, 'power': 1.0, 'cycle': False, 'name': None}}, 'decay': 0.0, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False, 'weight_decay_rate': 0.01} - training_precision: mixed_float16 | 63c1e48a9b38eb6b912c3e379d43e89e |
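The `PolynomialDecay` entry above, with `power: 1.0` and `cycle: False`, describes a straight-line decay from `2e-05` to `0.0` over 2631 steps. The following is a minimal plain-Python sketch of that schedule, written from the documented Keras formula rather than taken from the training script; the function name `polynomial_decay` is a hypothetical helper introduced only for illustration.

```python
def polynomial_decay(step, initial_lr=2e-05, end_lr=0.0, decay_steps=2631, power=1.0):
    """Learning rate at `step` for a non-cycling polynomial decay schedule.

    Defaults mirror the hyperparameters listed above. This is an
    illustrative re-implementation, not the Keras class itself.
    """
    # Without cycling, the schedule stays at end_lr once decay_steps is reached.
    step = min(step, decay_steps)
    fraction_left = 1.0 - step / decay_steps
    return (initial_lr - end_lr) * fraction_left ** power + end_lr


if __name__ == "__main__":
    for s in (0, 1000, 2000, 2631):
        print(s, polynomial_decay(s))
```

With `power` set to 1.0 the curve is linear, so the rate halves at the midpoint of the decay window.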

apache-2.0 | ['generated_from_keras_callback'] | false | Training results | Train Loss | Validation Loss | Epoch | |:----------:|:---------------:|:-----:| | 0.1715 | 0.0734 | 0 | | 0.0467 | 0.0535 | 1 | | 0.0269 | 0.0528 | 2 | | 6db201de2c95896c1d1f9a25ab53355d |

cc-by-sa-4.0 | ['japanese', 'token-classification', 'pos', 'dependency-parsing'] | false | Model Description This is a RoBERTa model pre-trained on 青空文庫 texts for POS-tagging and dependency-parsing, derived from [roberta-small-japanese-aozora](https://huggingface.co/KoichiYasuoka/roberta-small-japanese-aozora). Every long-unit-word is tagged by [UPOS](https://universaldependencies.org/u/pos/) (Universal Part-Of-Speech). | a891b4b2d05b75bfd72196ccb2d51c14 |

cc-by-sa-4.0 | ['japanese', 'token-classification', 'pos', 'dependency-parsing'] | false | How to Use ```py from transformers import AutoTokenizer,AutoModelForTokenClassification,TokenClassificationPipeline tokenizer=AutoTokenizer.from_pretrained("KoichiYasuoka/roberta-small-japanese-luw-upos") model=AutoModelForTokenClassification.from_pretrained("KoichiYasuoka/roberta-small-japanese-luw-upos") pipeline=TokenClassificationPipeline(tokenizer=tokenizer,model=model,aggregation_strategy="simple") nlp=lambda x:[(x[t["start"]:t["end"]],t["entity_group"]) for t in pipeline(x)] print(nlp("国境の長いトンネルを抜けると雪国であった。")) ``` or ```py import esupar nlp=esupar.load("KoichiYasuoka/roberta-small-japanese-luw-upos") print(nlp("国境の長いトンネルを抜けると雪国であった。")) ``` | b34e853996c958048008e86ac0b558d0 |

apache-2.0 | ['translation'] | false | opus-mt-sn-en * source languages: sn * target languages: en * OPUS readme: [sn-en](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/sn-en/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2020-01-16.zip](https://object.pouta.csc.fi/OPUS-MT-models/sn-en/opus-2020-01-16.zip) * test set translations: [opus-2020-01-16.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/sn-en/opus-2020-01-16.test.txt) * test set scores: [opus-2020-01-16.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/sn-en/opus-2020-01-16.eval.txt) | e25837105412f5844a29d691248d81fc |

openrail | [] | false | Oud (عود) Unconditional Diffusion The Oud is one of the most foundational instruments to all of Arab music. It can be heard in nearly every song, whether the subgenre is rooted in pop or classical music. Its distinguishing sound can be picked out of a crowd of string instruments with little to no training. Our Unconditional Diffusion model ensures that we show respect to the sound and culture it has created. This project could not have been done without [the following audio diffusion tools.](https://github.com/teticio/audio-diffusion) | e8f1d8fa2bb1704e40dd3e78b384e8e6 |

openrail | [] | false | Limitations of Model The dataset used was very small, so the diversity of snippets that can be generated is rather limited. Furthermore, in high-intensity segments (think of a human playing the instrument forcefully), the realism and naturalness of the generated oud samples degrade. | db17f4aaf62490a592502018a9c7d667 |

apache-2.0 | ['automatic-speech-recognition', 'mozilla-foundation/common_voice_8_0', 'generated_from_trainer', 'robust-speech-event', 'ja', 'hf-asr-leaderboard'] | false | This model is a fine-tuned version of [facebook/wav2vec2-xls-r-1b](https://huggingface.co/facebook/wav2vec2-xls-r-1b) on the MOZILLA-FOUNDATION/COMMON_VOICE_8_0 - JA dataset. It achieves the following results on the evaluation set: - Loss: 0.5500 - Wer: 1.0132 - Cer: 0.1609 | c83ea7aeee1f31539ff220d444d6cb65 |

apache-2.0 | ['automatic-speech-recognition', 'mozilla-foundation/common_voice_8_0', 'generated_from_trainer', 'robust-speech-event', 'ja', 'hf-asr-leaderboard'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 7.5e-05 - train_batch_size: 32 - eval_batch_size: 8 - seed: 42 - gradient_accumulation_steps: 4 - total_train_batch_size: 128 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 1500 - num_epochs: 50.0 - mixed_precision_training: Native AMP | 50ee3ab8c052433c2937fc88e4d9f575 |
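The relationship between `train_batch_size`, `gradient_accumulation_steps` and `total_train_batch_size` above can be checked with a one-line calculation. This sketch assumes a single training device, which is consistent with the reported total of 128 but is not stated in the card; `effective_batch_size` is a hypothetical helper name.

```python
def effective_batch_size(per_device_batch_size, gradient_accumulation_steps, num_devices=1):
    """Number of samples contributing to each optimizer step.

    num_devices=1 is an assumption; the card does not say how many
    devices were used.
    """
    return per_device_batch_size * gradient_accumulation_steps * num_devices


if __name__ == "__main__":
    # Values taken from the hyperparameter list above.
    print(effective_batch_size(32, 4))
```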

apache-2.0 | ['automatic-speech-recognition', 'mozilla-foundation/common_voice_8_0', 'generated_from_trainer', 'robust-speech-event', 'ja', 'hf-asr-leaderboard'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | Cer | |:-------------:|:-----:|:----:|:---------------:|:------:|:------:| | 1.7019 | 12.65 | 1000 | 1.0510 | 0.9832 | 0.2589 | | 1.6385 | 25.31 | 2000 | 0.6670 | 0.9915 | 0.1851 | | 1.4344 | 37.97 | 3000 | 0.6183 | 1.0213 | 0.1797 | | 12166e0f08c2fa33d5f8a5149c33987c |
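The table above reports a WER close to (and sometimes above) 1.0 alongside a much lower CER. Both metrics are edit distances normalised by the reference length, so WER can exceed 1.0 when the hypothesis contains more word-level errors than the reference has words. One possible explanation for the gap, offered here as an assumption and not confirmed by the card, is that Japanese text is usually written without spaces, so a whole sentence counts as a single "word". The sketch below uses placeholder ASCII strings and hypothetical helper names to show the effect.

```python
def edit_distance(ref, hyp):
    """Levenshtein distance between two sequences (words or characters)."""
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i]
        for j, h in enumerate(hyp, start=1):
            cost = 0 if r == h else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1]


def wer(reference, hypothesis):
    """Word error rate; assumes a non-empty, whitespace-tokenised reference."""
    ref_words = reference.split()
    return edit_distance(ref_words, hypothesis.split()) / len(ref_words)


def cer(reference, hypothesis):
    """Character error rate, ignoring spaces; assumes a non-empty reference."""
    ref_chars = reference.replace(" ", "")
    hyp_chars = hypothesis.replace(" ", "")
    return edit_distance(ref_chars, hyp_chars) / len(ref_chars)


if __name__ == "__main__":
    reference = "abcdefghij"    # one unsegmented "word", like a sentence without spaces
    hypothesis = "abcde fghix"  # one wrong character plus a spurious space
    print("WER:", wer(reference, hypothesis))
    print("CER:", cer(reference, hypothesis))
```

A single wrong character makes the whole "word" wrong, while the character-level score barely moves, which is why CER is often the more informative metric for unsegmented scripts.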

apache-2.0 | ['automatic-speech-recognition', 'mozilla-foundation/common_voice_8_0', 'generated_from_trainer', 'robust-speech-event', 'ja', 'hf-asr-leaderboard'] | false | Evaluation Commands 1. To evaluate on `mozilla-foundation/common_voice_8_0` with split `test` ```bash python ./eval.py --model_id AndrewMcDowell/wav2vec2-xls-r-1b-japanese-hiragana-katakana --dataset mozilla-foundation/common_voice_8_0 --config ja --split test --log_outputs ``` 2. To evaluate on `speech-recognition-community-v2/dev_data` with split `validation` ```bash python ./eval.py --model_id AndrewMcDowell/wav2vec2-xls-r-1b-japanese-hiragana-katakana --dataset speech-recognition-community-v2/dev_data --config de --split validation --chunk_length_s 5.0 --stride_length_s 1.0 ``` | 468cbf04b5846e62f1996ec17906200d |

apache-2.0 | ['vision', 'image-classification'] | false | ConvNeXT (tiny-sized model) ConvNeXT model trained on ImageNet-1k at resolution 224x224. It was introduced in the paper [A ConvNet for the 2020s](https://arxiv.org/abs/2201.03545) by Liu et al. and first released in [this repository](https://github.com/facebookresearch/ConvNeXt). Disclaimer: The team releasing ConvNeXT did not write a model card for this model so this model card has been written by the Hugging Face team. | a3243a0d7e6a9a2298ce2c518b75064a |

apache-2.0 | ['vision', 'image-classification'] | false | How to use Here is how to use this model to classify an image of the COCO 2017 dataset into one of the 1,000 ImageNet classes: ```python from transformers import ConvNextFeatureExtractor, ConvNextForImageClassification import torch from datasets import load_dataset dataset = load_dataset("huggingface/cats-image") image = dataset["test"]["image"][0] feature_extractor = ConvNextFeatureExtractor.from_pretrained("facebook/convnext-tiny-224") model = ConvNextForImageClassification.from_pretrained("facebook/convnext-tiny-224") inputs = feature_extractor(image, return_tensors="pt") with torch.no_grad(): logits = model(**inputs).logits | 2e95ec162950cca10e57f923c8a3b53d |

apache-2.0 | ['vision', 'image-classification'] | false | # model predicts one of the 1000 ImageNet classes predicted_label = logits.argmax(-1).item() print(model.config.id2label[predicted_label]) ``` For more code examples, we refer to the [documentation](https://huggingface.co/docs/transformers/master/en/model_doc/convnext). | 1ef7f94eb527787dc53097e413e26d93 |

apache-2.0 | ['translation'] | false | opus-mt-sv-af * source languages: sv * target languages: af * OPUS readme: [sv-af](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/sv-af/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2020-01-16.zip](https://object.pouta.csc.fi/OPUS-MT-models/sv-af/opus-2020-01-16.zip) * test set translations: [opus-2020-01-16.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/sv-af/opus-2020-01-16.test.txt) * test set scores: [opus-2020-01-16.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/sv-af/opus-2020-01-16.eval.txt) | 7f2d3d466872a41724b4595e10561fa0 |

apache-2.0 | ['generated_from_trainer'] | false | fine-tune-xlsr-53-wav2vec2-on-swahili-sagemaker-2 This model is a fine-tuned version of [facebook/wav2vec2-large-xlsr-53](https://huggingface.co/facebook/wav2vec2-large-xlsr-53) on the common_voice_9_0 dataset. It achieves the following results on the evaluation set: - Loss: 0.2089 - Wer: 0.2356 | e5a288ac9d09163b227f5748fee8914f |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0003 - train_batch_size: 16 - eval_batch_size: 8 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 500 - num_epochs: 10 - mixed_precision_training: Native AMP | e50aa2a306173c8ed066f78206588a05 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:-----:|:---------------:|:------:| | 5.3715 | 0.22 | 400 | 3.1337 | 1.0 | | 1.7928 | 0.44 | 800 | 0.7137 | 0.6290 | | 0.5382 | 0.66 | 1200 | 0.5686 | 0.4708 | | 0.4263 | 0.89 | 1600 | 0.3693 | 0.4091 | | 0.3705 | 1.11 | 2000 | 0.3925 | 0.3747 | | 0.3348 | 1.33 | 2400 | 0.2908 | 0.3597 | | 0.3151 | 1.55 | 2800 | 0.3403 | 0.3388 | | 0.2977 | 1.77 | 3200 | 0.2698 | 0.3294 | | 0.2901 | 1.99 | 3600 | 0.6100 | 0.3173 | | 0.2432 | 2.22 | 4000 | 0.2893 | 0.3213 | | 0.256 | 2.44 | 4400 | 0.2604 | 0.3087 | | 0.2453 | 2.66 | 4800 | 0.2448 | 0.3077 | | 0.2427 | 2.88 | 5200 | 0.2391 | 0.2925 | | 0.2235 | 3.1 | 5600 | 0.8570 | 0.2907 | | 0.2078 | 3.32 | 6000 | 0.2289 | 0.2884 | | 0.199 | 3.55 | 6400 | 0.2303 | 0.2852 | | 0.2092 | 3.77 | 6800 | 0.2270 | 0.2769 | | 0.2 | 3.99 | 7200 | 0.2588 | 0.2823 | | 0.1806 | 4.21 | 7600 | 0.2324 | 0.2757 | | 0.1789 | 4.43 | 8000 | 0.2051 | 0.2721 | | 0.1753 | 4.65 | 8400 | 0.2290 | 0.2695 | | 0.1734 | 4.88 | 8800 | 0.2161 | 0.2686 | | 0.1648 | 5.1 | 9200 | 0.2139 | 0.2695 | | 0.158 | 5.32 | 9600 | 0.2218 | 0.2632 | | 0.151 | 5.54 | 10000 | 0.2060 | 0.2594 | | 0.1534 | 5.76 | 10400 | 0.2199 | 0.2638 | | 0.1485 | 5.98 | 10800 | 0.2023 | 0.2584 | | 0.1332 | 6.2 | 11200 | 0.2160 | 0.2547 | | 0.1319 | 6.43 | 11600 | 0.2045 | 0.2547 | | 0.1329 | 6.65 | 12000 | 0.2072 | 0.2545 | | 0.1329 | 6.87 | 12400 | 0.2014 | 0.2502 | | 0.1307 | 7.09 | 12800 | 0.2045 | 0.2487 | | 0.1197 | 7.31 | 13200 | 0.1987 | 0.2491 | | 0.118 | 7.53 | 13600 | 0.1947 | 0.2442 | | 0.1194 | 7.76 | 14000 | 0.1863 | 0.2430 | | 0.1157 | 7.98 | 14400 | 0.3602 | 0.2430 | | 0.1095 | 8.2 | 14800 | 0.2074 | 0.2408 | | 0.1051 | 8.42 | 15200 | 0.2113 | 0.2410 | | 0.1073 | 8.64 | 15600 | 0.2064 | 0.2395 | | 0.1025 | 8.86 | 16000 | 0.2012 | 0.2396 | | 0.1027 | 9.09 | 16400 | 0.2342 | 0.2372 | | 0.0998 | 9.31 | 16800 | 0.2206 | 0.2357 | | 
0.0935 | 9.53 | 17200 | 0.2151 | 0.2356 | | 0.0959 | 9.75 | 17600 | 0.2096 | 0.2355 | | 0.095 | 9.97 | 18000 | 0.2089 | 0.2354 | | afc42356df8e6371f7fdbf5a3fbd702b |

cc-by-4.0 | ['question generation'] | false | Model Card of `lmqg/t5-base-subjqa-restaurants-qg` This model is a fine-tuned version of [lmqg/t5-base-squad](https://huggingface.co/lmqg/t5-base-squad) for the question generation task on the [lmqg/qg_subjqa](https://huggingface.co/datasets/lmqg/qg_subjqa) dataset (dataset_name: restaurants) via [`lmqg`](https://github.com/asahi417/lm-question-generation). | 69cb8b87938d9b0ec9bd19ec24577e44 |

cc-by-4.0 | ['question generation'] | false | Overview - **Language model:** [lmqg/t5-base-squad](https://huggingface.co/lmqg/t5-base-squad) - **Language:** en - **Training data:** [lmqg/qg_subjqa](https://huggingface.co/datasets/lmqg/qg_subjqa) (restaurants) - **Online Demo:** [https://autoqg.net/](https://autoqg.net/) - **Repository:** [https://github.com/asahi417/lm-question-generation](https://github.com/asahi417/lm-question-generation) - **Paper:** [https://arxiv.org/abs/2210.03992](https://arxiv.org/abs/2210.03992) | 51d714dfecd4c9b2ce20fd9e2124a5f2 |

cc-by-4.0 | ['question generation'] | false | model prediction questions = model.generate_q(list_context="William Turner was an English painter who specialised in watercolour landscapes", list_answer="William Turner") ``` - With `transformers` ```python from transformers import pipeline pipe = pipeline("text2text-generation", "lmqg/t5-base-subjqa-restaurants-qg") output = pipe("generate question: <hl> Beyonce <hl> further expanded her acting career, starring as blues singer Etta James in the 2008 musical biopic, Cadillac Records.") ``` | c5a2f8851c4af6ab7a1475ad1b4dcff3 |

cc-by-4.0 | ['question generation'] | false | Evaluation - ***Metric (Question Generation)***: [raw metric file](https://huggingface.co/lmqg/t5-base-subjqa-restaurants-qg/raw/main/eval/metric.first.sentence.paragraph_answer.question.lmqg_qg_subjqa.restaurants.json) | | Score | Type | Dataset | |:-----------|--------:|:------------|:-----------------------------------------------------------------| | BERTScore | 88.48 | restaurants | [lmqg/qg_subjqa](https://huggingface.co/datasets/lmqg/qg_subjqa) | | Bleu_1 | 8.81 | restaurants | [lmqg/qg_subjqa](https://huggingface.co/datasets/lmqg/qg_subjqa) | | Bleu_2 | 3.68 | restaurants | [lmqg/qg_subjqa](https://huggingface.co/datasets/lmqg/qg_subjqa) | | Bleu_3 | 1.09 | restaurants | [lmqg/qg_subjqa](https://huggingface.co/datasets/lmqg/qg_subjqa) | | Bleu_4 | 0 | restaurants | [lmqg/qg_subjqa](https://huggingface.co/datasets/lmqg/qg_subjqa) | | METEOR | 14.75 | restaurants | [lmqg/qg_subjqa](https://huggingface.co/datasets/lmqg/qg_subjqa) | | MoverScore | 56.19 | restaurants | [lmqg/qg_subjqa](https://huggingface.co/datasets/lmqg/qg_subjqa) | | ROUGE_L | 11.96 | restaurants | [lmqg/qg_subjqa](https://huggingface.co/datasets/lmqg/qg_subjqa) | | 977f8b91af0539ce36c66f049a69f622 |

cc-by-4.0 | ['question generation'] | false | Training hyperparameters The following hyperparameters were used during fine-tuning: - dataset_path: lmqg/qg_subjqa - dataset_name: restaurants - input_types: ['paragraph_answer'] - output_types: ['question'] - prefix_types: ['qg'] - model: lmqg/t5-base-squad - max_length: 512 - max_length_output: 32 - epoch: 1 - batch: 16 - lr: 5e-05 - fp16: False - random_seed: 1 - gradient_accumulation_steps: 32 - label_smoothing: 0.15 The full configuration can be found at [fine-tuning config file](https://huggingface.co/lmqg/t5-base-subjqa-restaurants-qg/raw/main/trainer_config.json). | 1bb5e21737989752055e87652f3a77e6 |

mit | ['generated_from_trainer'] | false | xlm-roberta-base-finetuned-panx-all This model is a fine-tuned version of [xlm-roberta-base](https://huggingface.co/xlm-roberta-base) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.1752 - F1: 0.8557 | bd50c1521a9d1380aec05e0c1759a52f |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | F1 | |:-------------:|:-----:|:----:|:---------------:|:------:| | 0.3 | 1.0 | 835 | 0.1862 | 0.8114 | | 0.1552 | 2.0 | 1670 | 0.1758 | 0.8426 | | 0.1002 | 3.0 | 2505 | 0.1752 | 0.8557 | | 5e89a3d56474259ca9dbe42d85770286 |

cc0-1.0 | ['generated_from_trainer'] | false | BlueBERT This model is a fine-tuned version of [bionlp/bluebert_pubmed_uncased_L-12_H-768_A-12](https://huggingface.co/bionlp/bluebert_pubmed_uncased_L-12_H-768_A-12) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.6525 - Accuracy: 0.83 - Precision: 0.8767 - Recall: 0.8889 - F1: 0.8828 | 4e157af4891d596c3738ff1f73ca6531 |

cc0-1.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | Precision | Recall | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------:|:---------:|:------:|:------:| | 0.6839 | 1.0 | 50 | 0.7208 | 0.39 | 0.9231 | 0.1667 | 0.2824 | | 0.6594 | 2.0 | 100 | 0.5862 | 0.6 | 0.9211 | 0.4861 | 0.6364 | | 0.539 | 3.0 | 150 | 0.5940 | 0.66 | 0.9318 | 0.5694 | 0.7069 | | 0.4765 | 4.0 | 200 | 0.5675 | 0.65 | 0.9512 | 0.5417 | 0.6903 | | 0.3805 | 5.0 | 250 | 0.4494 | 0.79 | 0.9322 | 0.7639 | 0.8397 | | 0.279 | 6.0 | 300 | 0.4760 | 0.84 | 0.8784 | 0.9028 | 0.8904 | | 0.2016 | 7.0 | 350 | 0.5514 | 0.82 | 0.8553 | 0.9028 | 0.8784 | | 0.1706 | 8.0 | 400 | 0.5353 | 0.84 | 0.8889 | 0.8889 | 0.8889 | | 0.1164 | 9.0 | 450 | 0.7676 | 0.82 | 0.8462 | 0.9167 | 0.8800 | | 0.1054 | 10.0 | 500 | 0.6525 | 0.83 | 0.8767 | 0.8889 | 0.8828 | | 5955c88d68b5d20906068306ff7cd130 |
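The F1 column in the table above is the harmonic mean of the Precision and Recall columns. As a quick consistency check, the sketch below recomputes F1 for the final epoch from the reported precision and recall; `f1_score` here is a small local helper, not a call into any metrics library.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall; returns 0.0 when both are zero."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


if __name__ == "__main__":
    # Precision and recall from the final row (epoch 10) of the table above.
    print(round(f1_score(0.8767, 0.8889), 4))
```

Because the harmonic mean is dominated by the smaller value, the first epoch's high precision (0.9231) with low recall (0.1667) still yields a low F1.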

apache-2.0 | ['classification'] | false | This model predicts the time period given a synopsis of about 200 Chinese characters. The model is trained on TV and Movie datasets and takes simplified Chinese as input. We trained the model from the "hfl/chinese-bert-wwm-ext" checkpoint. | 586067ee3dc24a87b6d8c9e30bea0725 |

apache-2.0 | ['classification'] | false | Sample Usage import torch from transformers import BertTokenizer, BertForSequenceClassification device = torch.device("cuda" if torch.cuda.is_available() else "cpu") checkpoint = "Herais/pred_timeperiod" tokenizer = BertTokenizer.from_pretrained(checkpoint, problem_type="single_label_classification") model = BertForSequenceClassification.from_pretrained(checkpoint).to(device) label2id_timeperiod = {'古代': 0, '当代': 1, '现代': 2, '近代': 3, '重大': 4} id2label_timeperiod = {0: '古代', 1: '当代', 2: '现代', 3: '近代', 4: '重大'} synopsis = """加油吧!检察官。鲤州市安平区检察院检察官助理蔡晓与徐美津是两个刚入职场的“菜鸟”。\ 他们在老检察官冯昆的指导与鼓励下,凭借着自己的一腔热血与对检察事业的执著追求,克服工作上的种种困难,\ 成功办理电竞赌博、虚假诉讼、水产市场涉黑等一系列复杂案件,惩治了犯罪分子,维护了人民群众的合法权益,\ 为社会主义法治建设贡献了自己的一份力量。在这个过程中,蔡晓与徐美津不仅得到了业务能力上的提升,\ 也领悟了人生的真谛,学会真诚地面对家人与朋友,收获了亲情与友谊,成长为合格的员额检察官,\ 继续为检察事业贡献自己的青春。 """ inputs = tokenizer(synopsis, truncation=True, max_length=512, return_tensors='pt').to(device) model.eval() outputs = model(**inputs) label_ids_pred = torch.argmax(outputs.logits, dim=1).to('cpu').numpy() labels_pred = [id2label_timeperiod[label] for label in label_ids_pred] print(labels_pred) | 5cd22a97365af2446f402755a6a232d6 |

apache-2.0 | ['generated_from_keras_callback'] | false | juancopi81/distilbert-finetuned-imdb This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 2.8630 - Validation Loss: 2.5977 - Epoch: 0 | 51323e114ff463af1c8011622b0841e7 |

apache-2.0 | ['generated_from_keras_callback'] | false | Training hyperparameters The following hyperparameters were used during training: - optimizer: {'name': 'AdamWeightDecay', 'learning_rate': {'class_name': 'WarmUp', 'config': {'initial_learning_rate': 2e-05, 'decay_schedule_fn': {'class_name': 'PolynomialDecay', 'config': {'initial_learning_rate': 2e-05, 'decay_steps': -688, 'end_learning_rate': 0.0, 'power': 1.0, 'cycle': False, 'name': None}, '__passive_serialization__': True}, 'warmup_steps': 1000, 'power': 1.0, 'name': None}}, 'decay': 0.0, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False, 'weight_decay_rate': 0.01} - training_precision: float32 | 4d990c11efa590ca1a008f38aa6eb2e8 |

mit | ['generated_from_trainer'] | false | movies-ita-classification-bertbased-v2 This model is a fine-tuned version of [dbmdz/bert-base-italian-cased](https://huggingface.co/dbmdz/bert-base-italian-cased) on the None dataset. It achieves the following results on the evaluation set: - Loss: 1.1995 - Accuracy: 0.6208 | c6d69c8ec148474d3390d0a53ce14d2c |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 1.3416 | 1.0 | 1181 | 1.2574 | 0.5897 | | 1.0583 | 2.0 | 2362 | 1.1978 | 0.6091 | | 0.789 | 3.0 | 3543 | 1.1995 | 0.6208 | | dbd8bd914f2c896124739bc0e16a0c5d |

creativeml-openrail-m | ['text-to-image', 'stable-diffusion'] | false | temp Dreambooth model trained by mastergruffly with [TheLastBen's fast-DreamBooth](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb) notebook Test the concept via A1111 Colab [fast-Colab-A1111](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast_stable_diffusion_AUTOMATIC1111.ipynb) Or you can run your new concept via `diffusers` [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb) Sample pictures of this concept: | 55172c07800a750711b2a8505c69ce9e |

apache-2.0 | ['generated_from_trainer'] | false | Tagged_One_500v8_NER_Model_3Epochs_AUGMENTED This model is a fine-tuned version of [bert-base-cased](https://huggingface.co/bert-base-cased) on the tagged_one500v8_wikigold_split dataset. It achieves the following results on the evaluation set: - Loss: 0.2761 - Precision: 0.6785 - Recall: 0.6773 - F1: 0.6779 - Accuracy: 0.9254 | 84ef85fc236a45bf7099252ae2ef5fb8 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:| | No log | 1.0 | 172 | 0.3004 | 0.5475 | 0.5128 | 0.5296 | 0.9050 | | No log | 2.0 | 344 | 0.2752 | 0.6595 | 0.6422 | 0.6507 | 0.9201 | | 0.112 | 3.0 | 516 | 0.2761 | 0.6785 | 0.6773 | 0.6779 | 0.9254 | | 72c1a9b7719d43de1d4f0a6d5b381f2b |

apache-2.0 | ['translation'] | false | opus-mt-hu-de * source languages: hu * target languages: de * OPUS readme: [hu-de](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/hu-de/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2020-01-20.zip](https://object.pouta.csc.fi/OPUS-MT-models/hu-de/opus-2020-01-20.zip) * test set translations: [opus-2020-01-20.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/hu-de/opus-2020-01-20.test.txt) * test set scores: [opus-2020-01-20.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/hu-de/opus-2020-01-20.eval.txt) | 286e8df4f132290c39704f10f23b333d |

mit | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 3e-05 - train_batch_size: 64 - eval_batch_size: 128 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_ratio: 0.1 - num_epochs: 5.0 | 4fa09a657af2fa476a473ca783b1cb84 |

creativeml-openrail-m | ['text-to-image', 'stable-diffusion'] | false | AlbertBezDream Dreambooth model trained by beliv3 with [TheLastBen's fast-DreamBooth](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb) notebook Test the concept via A1111 Colab [fast-Colab-A1111](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast_stable_diffusion_AUTOMATIC1111.ipynb) Or you can run your new concept via `diffusers` [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb) Sample pictures of this concept: | 1894ccfc27853055dfb74613f8616e61 |

apache-2.0 | ['generated_from_trainer'] | false | cvt-13-finetuned-waste This model is a fine-tuned version of [microsoft/cvt-13](https://huggingface.co/microsoft/cvt-13) on the imagefolder dataset. It achieves the following results on the evaluation set: - Loss: 0.0000 - Accuracy: 1.0 | 124377e4899acce8f2f67c4bd9838079 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.1715 | 0.99 | 117 | 0.0000 | 1.0 | | 0.1194 | 1.99 | 234 | 0.0000 | 1.0 | | 0.1496 | 2.99 | 351 | 0.0000 | 1.0 | | 34a10f351dbcec73d519cb7aa3792a47 |

apache-2.0 | ['generated_from_trainer'] | false | wav2vec2-large-xls-r-300m-urdu-cv-10 This model is a fine-tuned version of [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) on the common_voice_10_0 dataset. It achieves the following results on the evaluation set: - Loss: 0.5959 - Wer: 0.3946 | e2c5c388af0491398b0a96f5ca3fa23d |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:------:| | 20.8724 | 0.25 | 32 | 18.0006 | 1.0 | | 10.984 | 0.5 | 64 | 6.8001 | 1.0 | | 5.7792 | 0.74 | 96 | 4.9273 | 1.0 | | 4.2891 | 0.99 | 128 | 3.8379 | 1.0 | | 3.4937 | 1.24 | 160 | 3.2877 | 1.0 | | 3.1605 | 1.49 | 192 | 3.1198 | 1.0 | | 3.0874 | 1.74 | 224 | 3.0542 | 1.0 | | 3.0363 | 1.98 | 256 | 3.0063 | 0.9999 | | 2.9776 | 2.23 | 288 | 2.9677 | 1.0 | | 2.8168 | 2.48 | 320 | 2.4189 | 1.0000 | | 2.0575 | 2.73 | 352 | 1.5330 | 0.8520 | | 1.4248 | 2.98 | 384 | 1.1747 | 0.7519 | | 1.1354 | 3.22 | 416 | 0.9837 | 0.7047 | | 1.0049 | 3.47 | 448 | 0.9414 | 0.6631 | | 0.956 | 3.72 | 480 | 0.8948 | 0.6606 | | 0.8906 | 3.97 | 512 | 0.8381 | 0.6291 | | 0.7587 | 4.22 | 544 | 0.7714 | 0.5898 | | 0.7534 | 4.47 | 576 | 0.8237 | 0.5908 | | 0.7203 | 4.71 | 608 | 0.7731 | 0.5758 | | 0.6876 | 4.96 | 640 | 0.7467 | 0.5390 | | 0.5825 | 5.21 | 672 | 0.6940 | 0.5401 | | 0.5565 | 5.46 | 704 | 0.6826 | 0.5248 | | 0.5598 | 5.71 | 736 | 0.6387 | 0.5204 | | 0.5289 | 5.95 | 768 | 0.6432 | 0.4956 | | 0.4565 | 6.2 | 800 | 0.6643 | 0.4876 | | 0.4576 | 6.45 | 832 | 0.6295 | 0.4758 | | 0.4265 | 6.7 | 864 | 0.6227 | 0.4673 | | 0.4359 | 6.95 | 896 | 0.6077 | 0.4598 | | 0.3576 | 7.19 | 928 | 0.5800 | 0.4477 | | 0.3612 | 7.44 | 960 | 0.5837 | 0.4500 | | 0.345 | 7.69 | 992 | 0.5892 | 0.4466 | | 0.3707 | 7.94 | 1024 | 0.6217 | 0.4380 | | 0.3269 | 8.19 | 1056 | 0.5964 | 0.4412 | | 0.2974 | 8.43 | 1088 | 0.6116 | 0.4394 | | 0.2932 | 8.68 | 1120 | 0.5764 | 0.4235 | | 0.2854 | 8.93 | 1152 | 0.5757 | 0.4239 | | 0.2651 | 9.18 | 1184 | 0.5798 | 0.4253 | | 0.2508 | 9.43 | 1216 | 0.5750 | 0.4316 | | 0.238 | 9.67 | 1248 | 0.6038 | 0.4232 | | 0.2454 | 9.92 | 1280 | 0.5781 | 0.4078 | | 0.2196 | 10.17 | 1312 | 0.5931 | 0.4178 | | 0.2036 | 10.42 | 1344 | 0.6134 | 0.4116 | | 0.2087 | 10.67 | 1376 | 0.5831 | 0.4146 | | 0.1908 | 10.91 | 1408 | 
0.5987 | 0.4159 | | 0.1751 | 11.16 | 1440 | 0.5968 | 0.4065 | | 0.1726 | 11.41 | 1472 | 0.6037 | 0.4119 | | 0.1728 | 11.66 | 1504 | 0.5961 | 0.4011 | | 0.1772 | 11.91 | 1536 | 0.5903 | 0.3972 | | 0.1647 | 12.16 | 1568 | 0.5960 | 0.4024 | | 0.1506 | 12.4 | 1600 | 0.5986 | 0.3933 | | 0.1383 | 12.65 | 1632 | 0.5893 | 0.3938 | | 0.1433 | 12.9 | 1664 | 0.5999 | 0.3975 | | 0.1356 | 13.15 | 1696 | 0.6035 | 0.3982 | | 0.1431 | 13.4 | 1728 | 0.5997 | 0.4042 | | 0.1346 | 13.64 | 1760 | 0.6018 | 0.4003 | | 0.1363 | 13.89 | 1792 | 0.5891 | 0.3969 | | 0.1323 | 14.14 | 1824 | 0.5983 | 0.3925 | | 0.1196 | 14.39 | 1856 | 0.6003 | 0.3939 | | 0.1266 | 14.64 | 1888 | 0.5997 | 0.3941 | | 0.1269 | 14.88 | 1920 | 0.5959 | 0.3946 | | a349cf74849acbb694aeff723971cb65 |

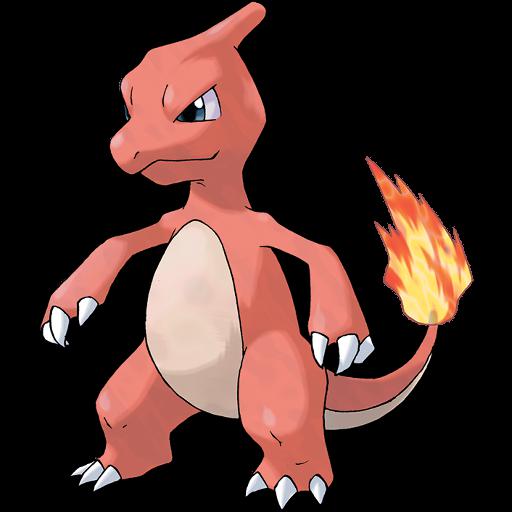

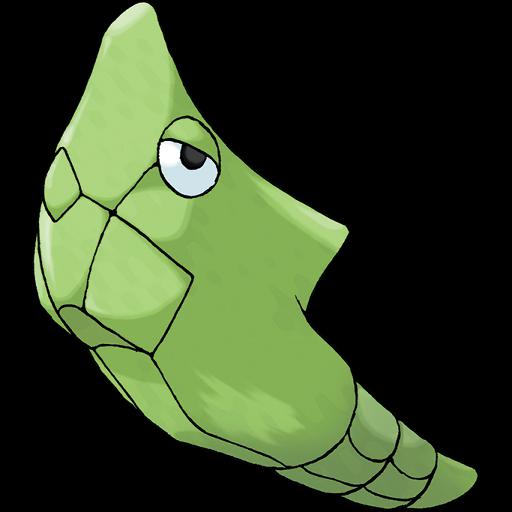

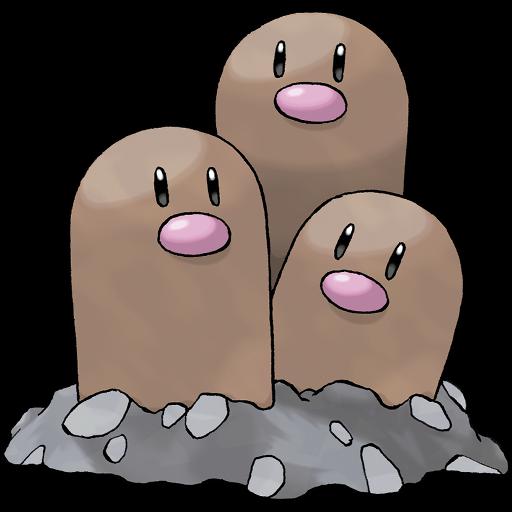

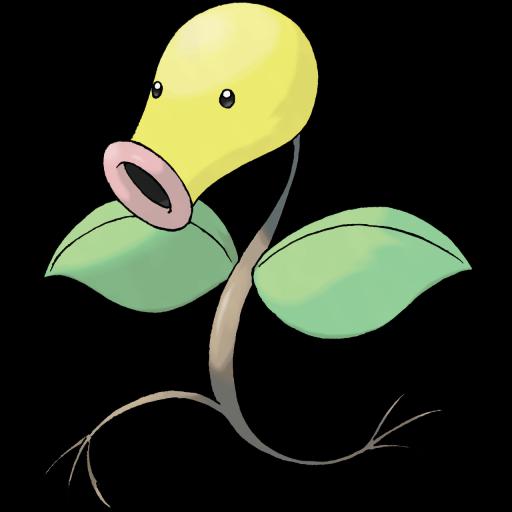

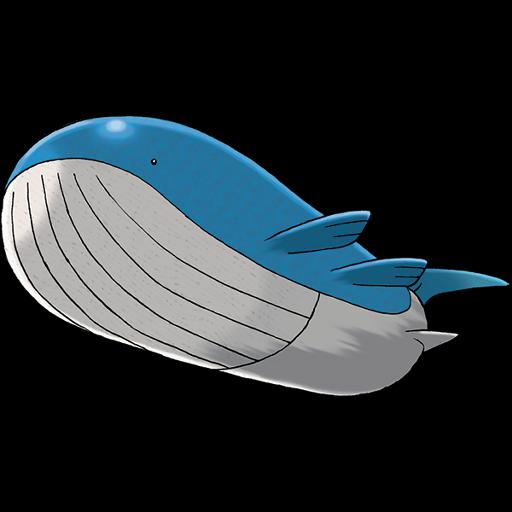

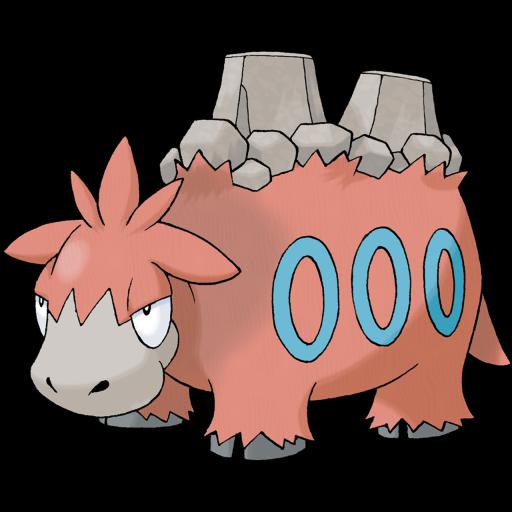

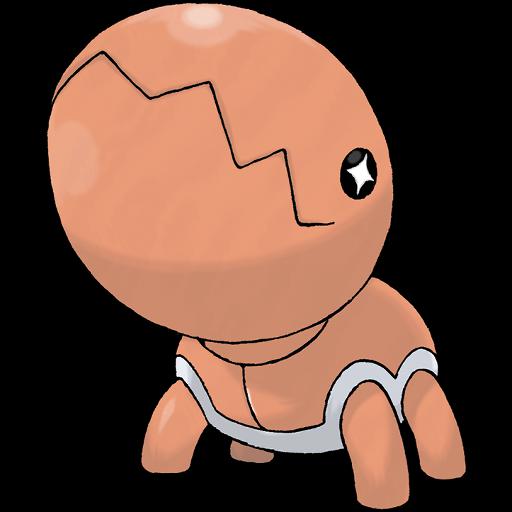

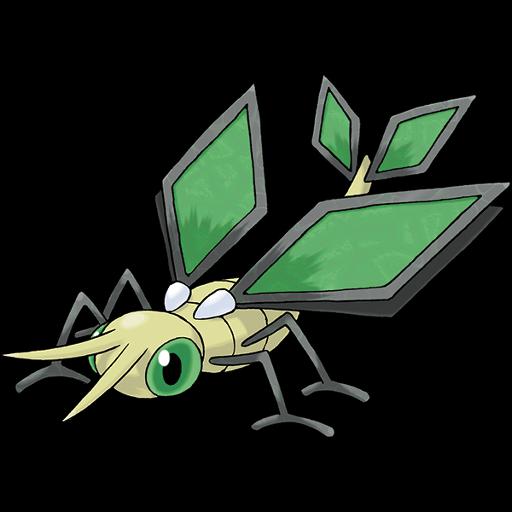

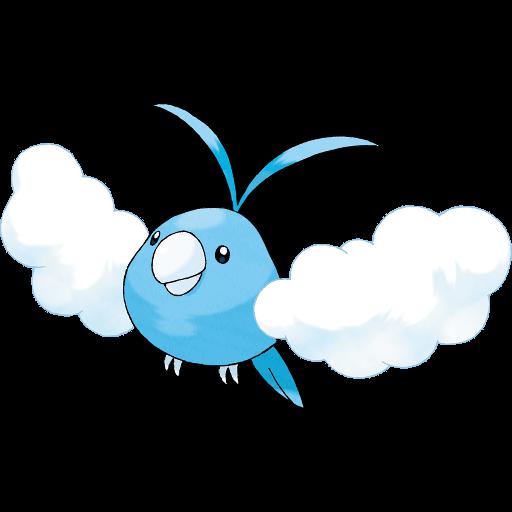

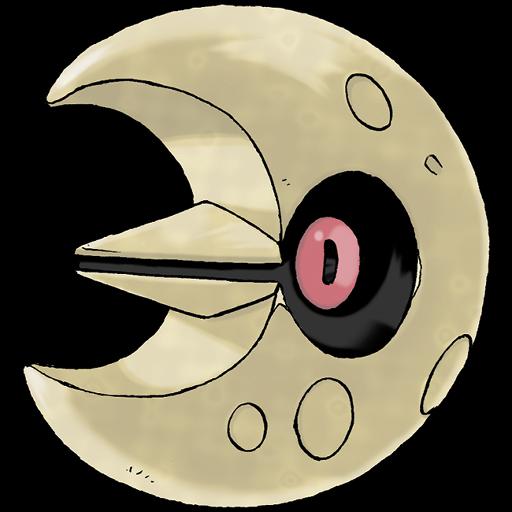

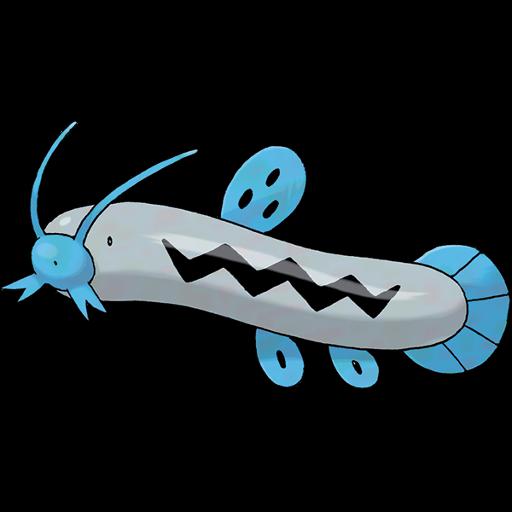

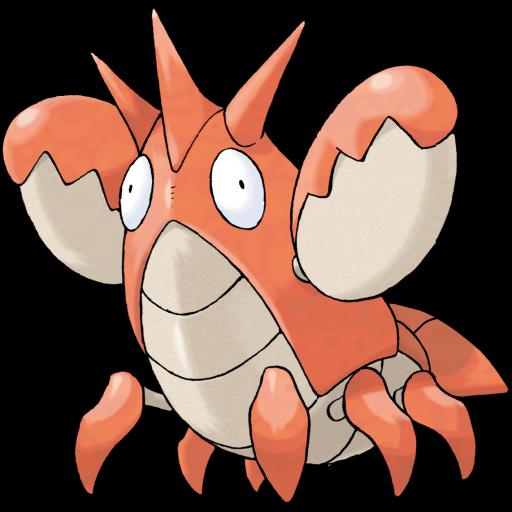

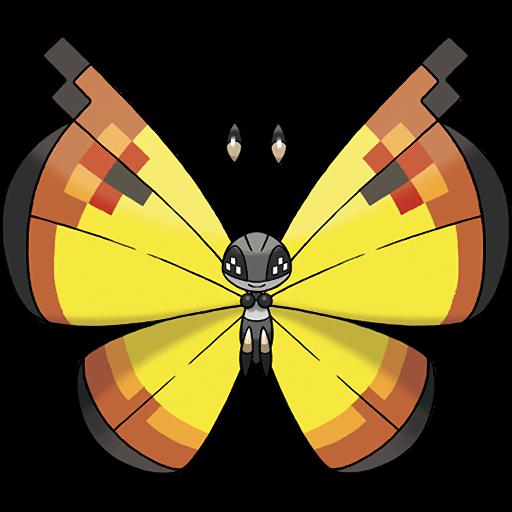

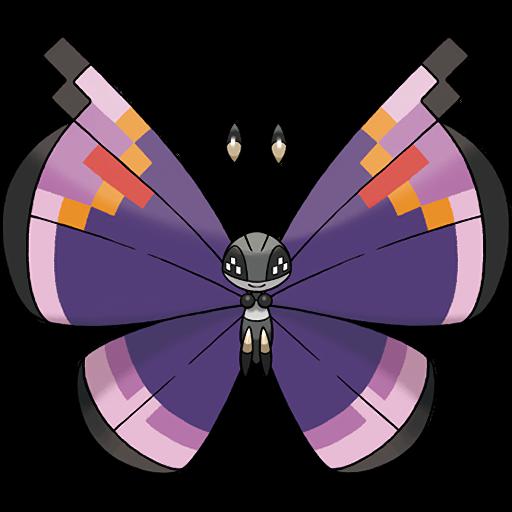

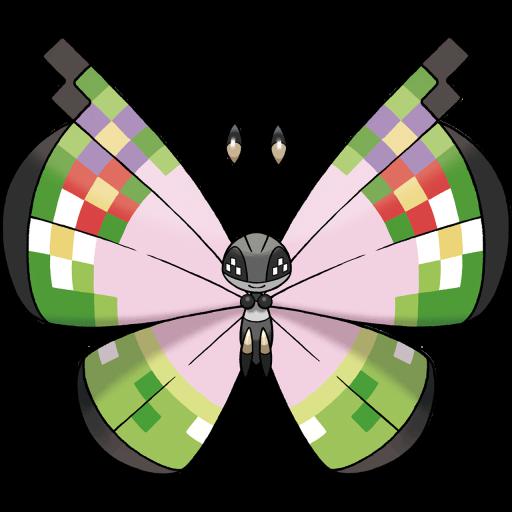

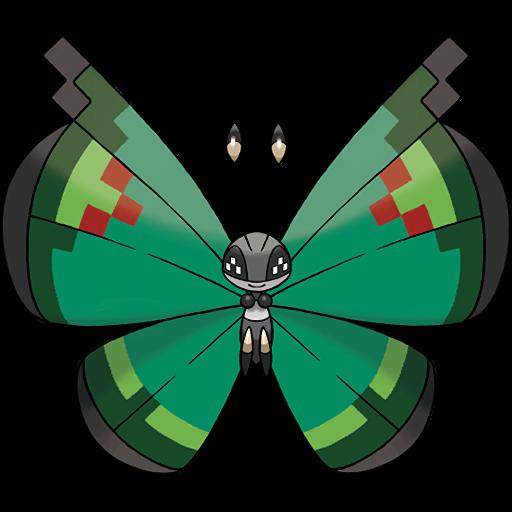

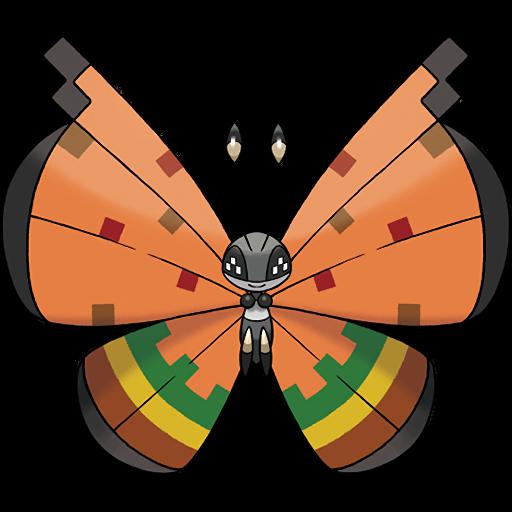

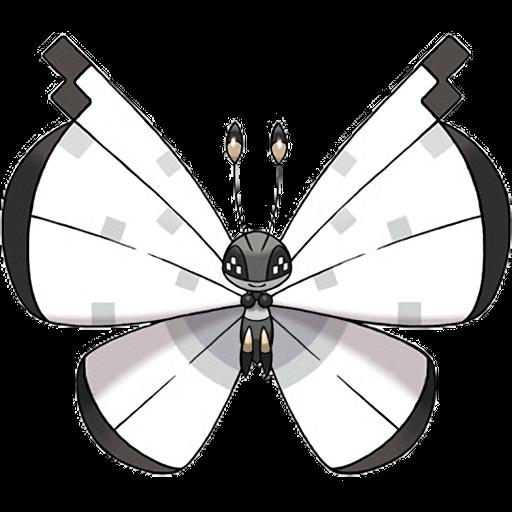

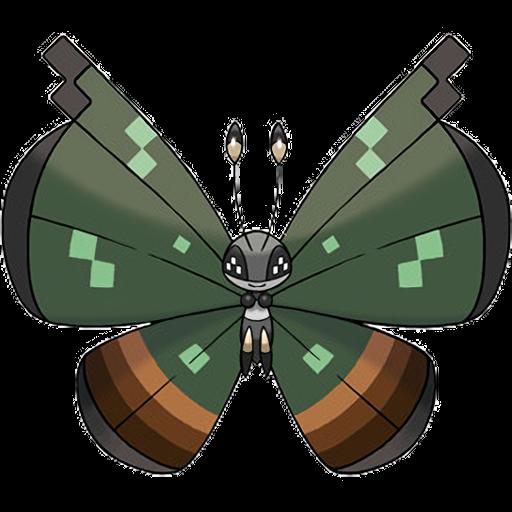

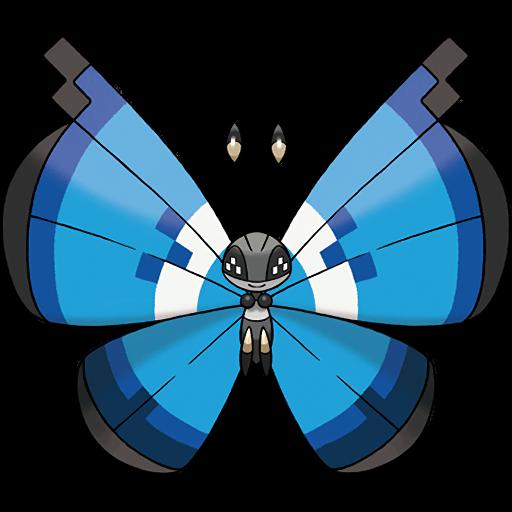

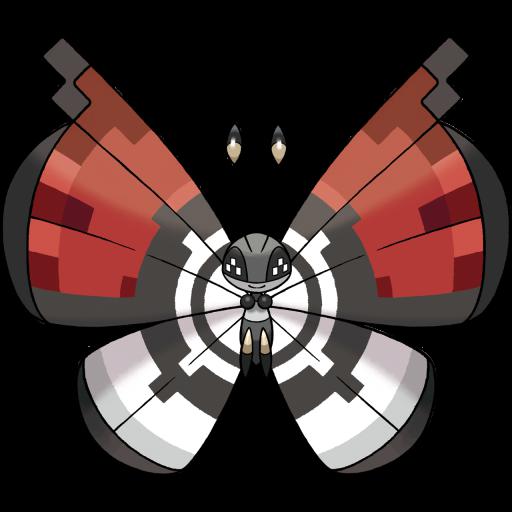

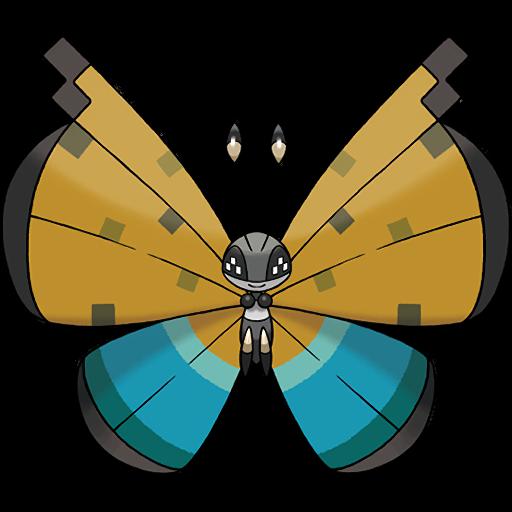

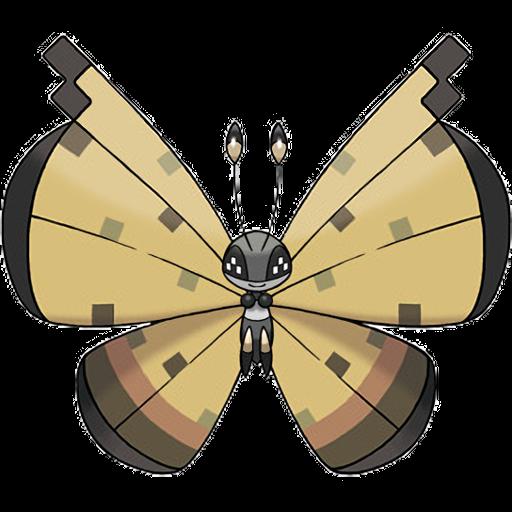

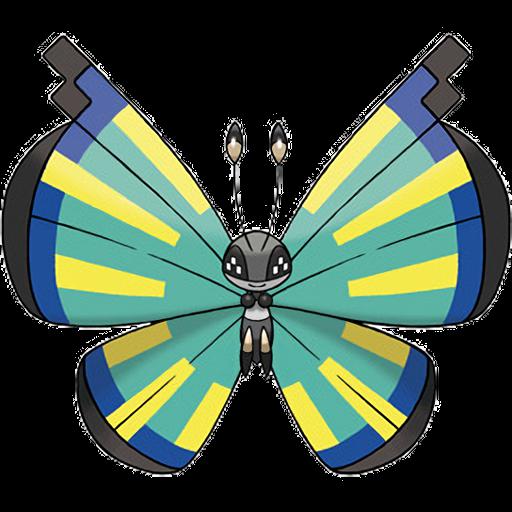

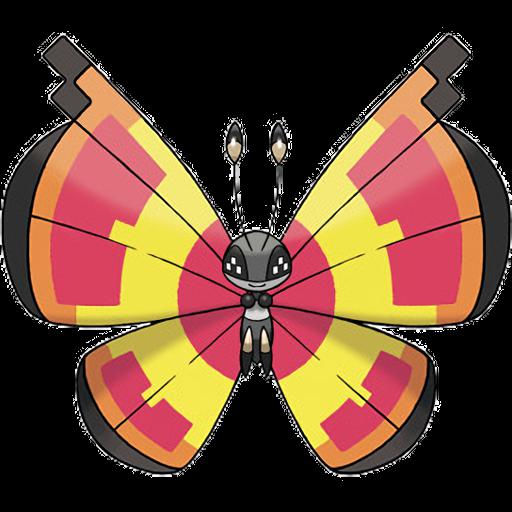

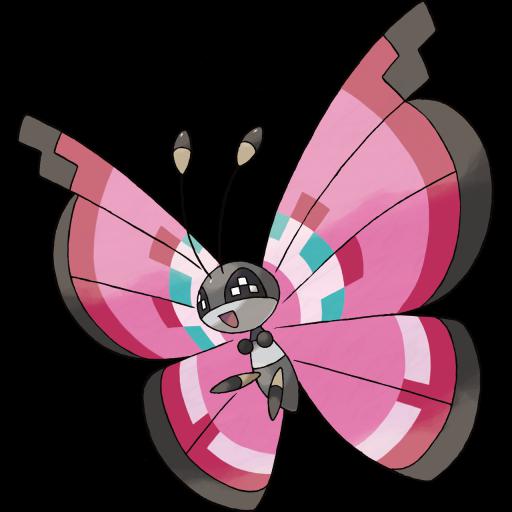

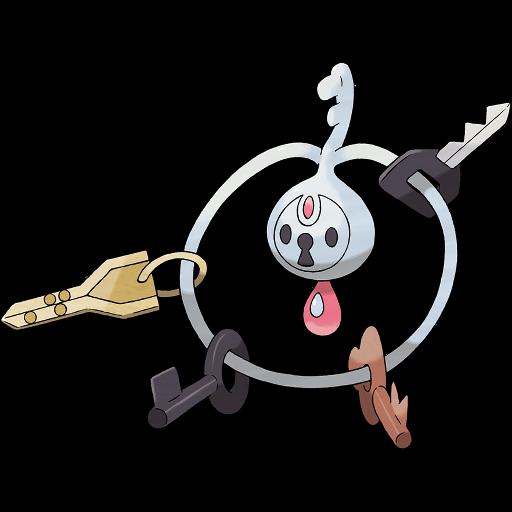

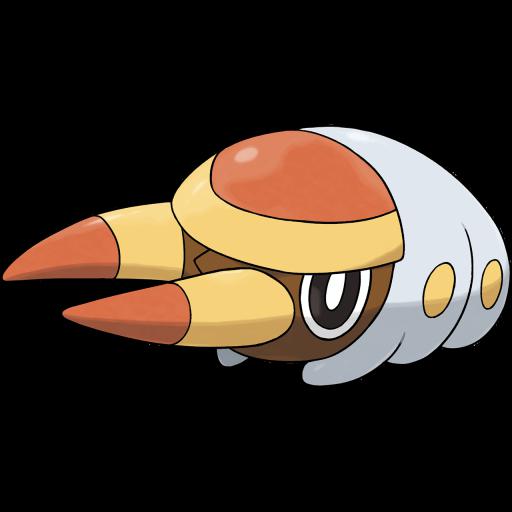

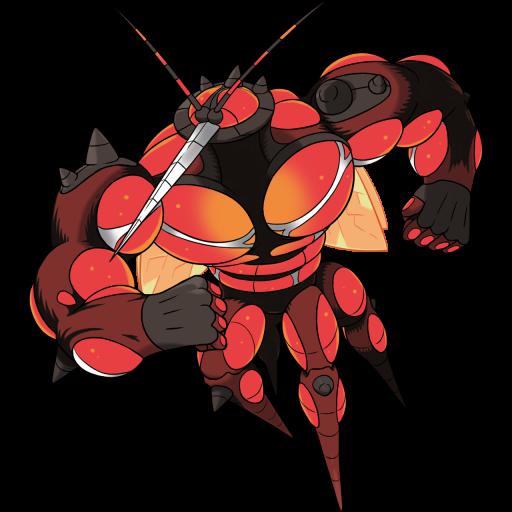

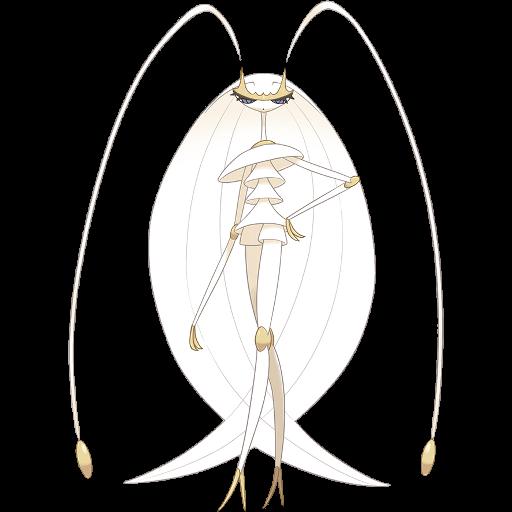

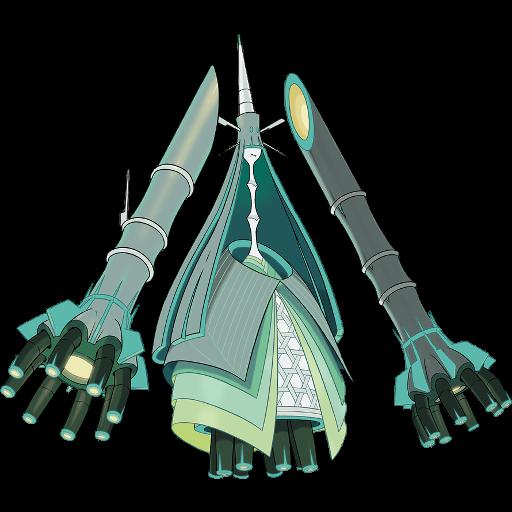

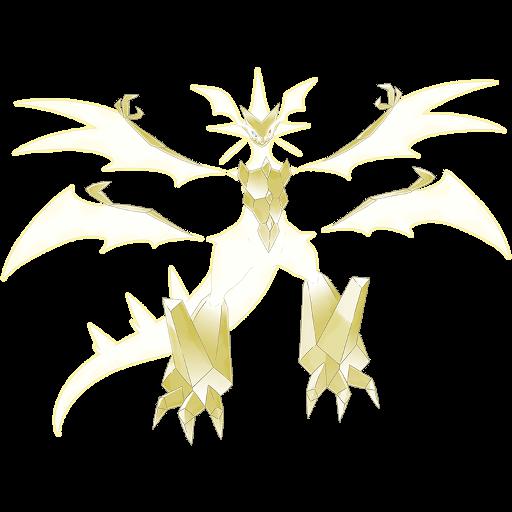

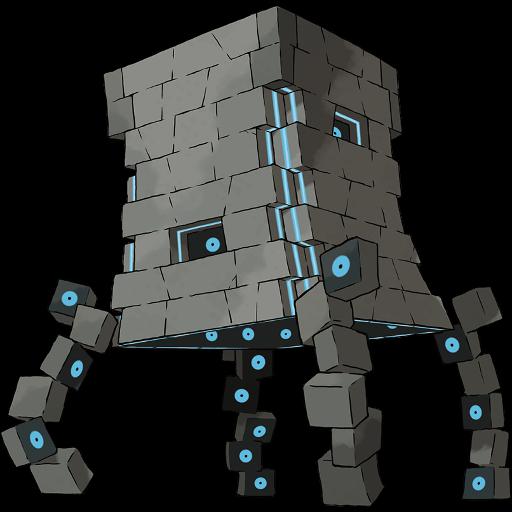

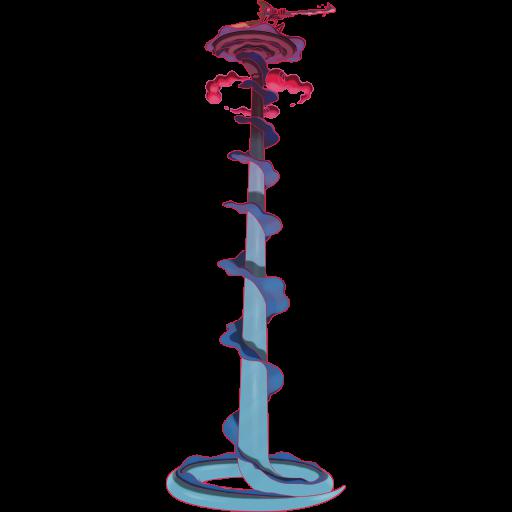

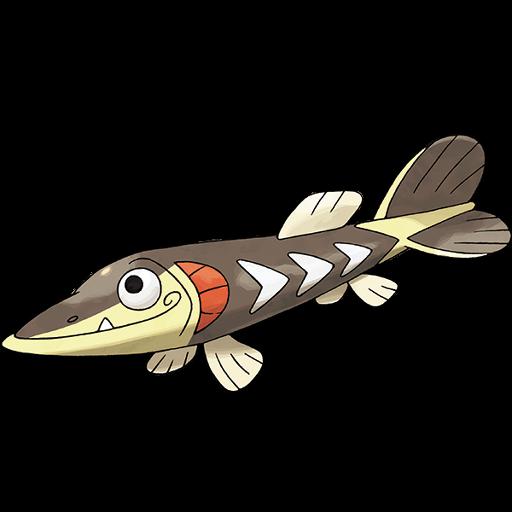

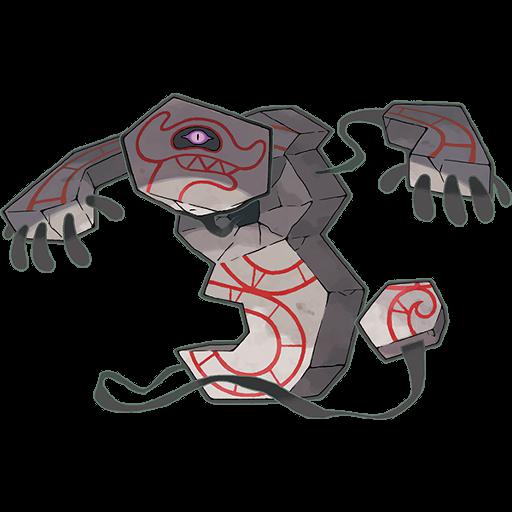

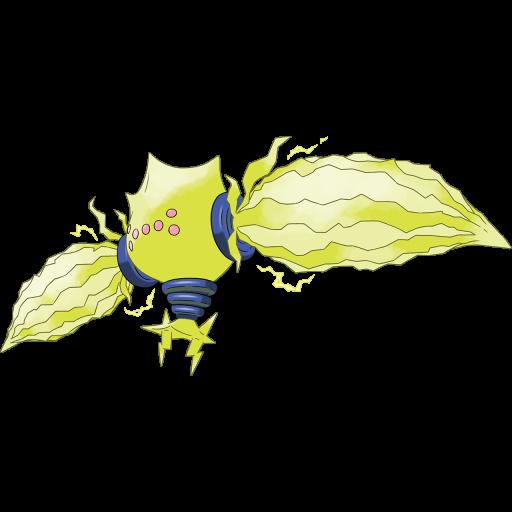

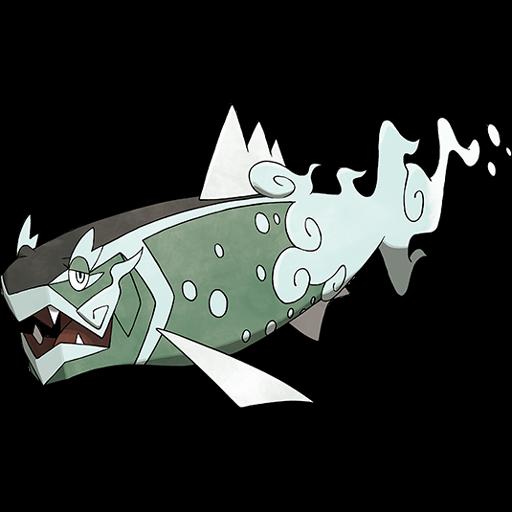

mit | [] | false | Pokemon modern artwork on Stable Diffusion Pokémon modern artwork up to Hisui concept (re-scaled to max width and height 512 px). Includes mega-evolutions, gigamax, regional and alternate forms. Unown variants are excluded, as well as Arceus/Silvally recolours (to avoid same-species overrepresentation). This is the `<pkmn-modern>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). Here is the new concept you will be able to use as a `style`: | 9a8aeb68140b6c85183ac08f45da68b6 |

apache-2.0 | ['generated_from_trainer'] | false | sst2_bert-base-uncased_81 This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on the GLUE SST2 dataset. It achieves the following results on the evaluation set: - Loss: 0.3565 - Accuracy: 0.9151 | 50b14acaf8ddfb39f39c70a6c2f6da53 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Wav2Vec2-Large-XLSR-53-German Fine-tuned [facebook/wav2vec2-large-xlsr-53](https://huggingface.co/facebook/wav2vec2-large-xlsr-53) on German using 3% of the [Common Voice](https://huggingface.co/datasets/common_voice) dataset. When using this model, make sure that your speech input is sampled at 16kHz. | 9f8a148593cef0c435007e0af95d0363 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Usage The model can be used directly (without a language model) as follows: ```python import torch import torchaudio from datasets import load_dataset from transformers import Wav2Vec2ForCTC, Wav2Vec2Processor test_dataset = load_dataset("common_voice", "de", split="test[:2%]") processor = Wav2Vec2Processor.from_pretrained("marcel/wav2vec2-large-xlsr-german-demo") model = Wav2Vec2ForCTC.from_pretrained("marcel/wav2vec2-large-xlsr-german-demo") resampler = torchaudio.transforms.Resample(48_000, 16_000) | 20569c17fc8cbed95a465dd4d06a83e9 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Evaluation The model can be evaluated as follows on the German test data of Common Voice. ```python import torch import torchaudio from datasets import load_dataset, load_metric from transformers import Wav2Vec2ForCTC, Wav2Vec2Processor import re test_dataset = load_dataset("common_voice", "de", split="test[:10%]") wer = load_metric("wer") processor = Wav2Vec2Processor.from_pretrained("marcel/wav2vec2-large-xlsr-german-demo") model = Wav2Vec2ForCTC.from_pretrained("marcel/wav2vec2-large-xlsr-german-demo") model.to("cuda") chars_to_ignore_regex = '[\,\?\.\!\-\;\:\"\“\%\”\�\カ\æ\無\ན\カ\臣\ѹ\…\«\»\ð\ı\„\幺\א\ב\比\ш\ע\)\ứ\в\œ\ч\+\—\ш\‚\נ\м\ń\乡\$\=\ש\ф\支\(\°\и\к\̇]' substitutions = { 'e' : '[\ə\é\ě\ę\ê\ế\ế\ë\ė\е]', 'o' : '[\ō\ô\ô\ó\ò\ø\ọ\ŏ\õ\ő\о]', 'a' : '[\á\ā\ā\ă\ã\å\â\à\ą\а]', 'c' : '[\č\ć\ç\с]', 'l' : '[\ł]', 'u' : '[\ú\ū\ứ\ů]', 'und' : '[\&]', 'r' : '[\ř]', 'y' : '[\ý]', 's' : '[\ś\š\ș\ş]', 'i' : '[\ī\ǐ\í\ï\î\ï]', 'z' : '[\ź\ž\ź\ż]', 'n' : '[\ñ\ń\ņ]', 'g' : '[\ğ]', 'ss' : '[\ß]', 't' : '[\ț\ť]', 'd' : '[\ď\đ]', "'": '[\ʿ\་\’\`\´\ʻ\`\‘]', 'p': '\р' } resampler = torchaudio.transforms.Resample(48_000, 16_000) | 3f81f2c5aae20498d1b5694cc4433d9b |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | We need to read the audio files as arrays def speech_file_to_array_fn(batch): batch["sentence"] = re.sub(chars_to_ignore_regex, '', batch["sentence"]).lower() for x in substitutions: batch["sentence"] = re.sub(substitutions[x], x, batch["sentence"]) speech_array, sampling_rate = torchaudio.load(batch["path"]) batch["speech"] = resampler(speech_array).squeeze().numpy() return batch test_dataset = test_dataset.map(speech_file_to_array_fn) | 4bc8d868d06f8ac302475361fc2e8a62 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | We need to read the audio files as arrays def evaluate(batch): inputs = processor(batch["speech"], sampling_rate=16_000, return_tensors="pt", padding=True) with torch.no_grad(): logits = model(inputs.input_values.to("cuda"), attention_mask=inputs.attention_mask.to("cuda")).logits pred_ids = torch.argmax(logits, dim=-1) batch["pred_strings"] = processor.batch_decode(pred_ids) return batch result = test_dataset.map(evaluate, batched=True, batch_size=8) print("WER: {:.2f}".format(100 * wer.compute(predictions=result["pred_strings"], references=result["sentence"]))) ``` **Test Result**: 29.35 % | 58c5d16bd032aca8183ee5147dd43688 |
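The two text-side steps of the evaluation above (normalizing the reference sentences, then scoring predictions by word error rate) can be sketched without any audio or model dependencies. This is a minimal illustration, not the card's actual metric implementation: `CHARS_TO_IGNORE` and `SUBSTITUTIONS` are hypothetical, heavily abbreviated stand-ins for the card's tables, and `normalize`, `word_edit_distance` and `wer` are names invented here. WER is assumed to be the total word-level edit distance divided by the total number of reference words.

```python
import re

# Hypothetical, abbreviated stand-ins for the card's much longer tables.
CHARS_TO_IGNORE = r'[,?.!\-;:"“”%…«»„]'
SUBSTITUTIONS = {"e": "[éêë]", "ss": "[ß]", "und": "[&]"}


def normalize(sentence: str) -> str:
    """Strip ignored characters, lowercase, then apply the substitutions."""
    sentence = re.sub(CHARS_TO_IGNORE, "", sentence).lower()
    for replacement, pattern in SUBSTITUTIONS.items():
        sentence = re.sub(pattern, replacement, sentence)
    return sentence


def word_edit_distance(ref_words, hyp_words):
    """Levenshtein distance over word lists (insert, delete, substitute)."""
    prev = list(range(len(hyp_words) + 1))
    for i, ref_word in enumerate(ref_words, 1):
        curr = [i]
        for j, hyp_word in enumerate(hyp_words, 1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1]


def wer(references, predictions):
    """Total word errors divided by total reference words."""
    errors = 0
    total_words = 0
    for ref, hyp in zip(references, predictions):
        ref_words = ref.split()
        errors += word_edit_distance(ref_words, hyp.split())
        total_words += len(ref_words)
    return errors / total_words


references = [normalize("Das ist ein Test!")]
predictions = ["das ist test"]
print("WER: {:.2f}".format(100 * wer(references, predictions)))
```

Normalizing only the references mirrors the card, where the model's predictions are compared against cleaned `sentence` values; whether predictions also need cleaning depends on the tokenizer's vocabulary.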
