license | tags | is_nc | readme_section | hash |
|---|---|---|---|---|

mit | ['BERT', 'Text Classification', 'relation'] | false | post process NER and relation predictions print("Sentence: ",re_ner_output["input"]) print('====Entity====') for ent in re_ner_output["entity"]: print('{}--{}'.format(ent["word"], ent["entity_group"])) print('====Relation====') for rel in re_ner_output["relation"]: print('{}--{}:{}'.format(rel['arg1']['word'], rel['arg2']['word'], rel['relation_type']['label'])) Sentence: ويتزامن ذلك مع اجتماع بايدن مع قادة الدول الأعضاء في الناتو في قمة موسعة في العاصمة الإسبانية، مدريد. ====Entity==== بايدن--PER قادة--PER الدول--GPE الناتو--ORG العاصمة--GPE الاسبانية--GPE مدريد--GPE ====Relation==== قادة--الدول:ORG-AFF الدول--الناتو:ORG-AFF العاصمة--الاسبانية:PART-WHOLE ``` | 27b4cfdf74c2cf01ff5bff1d54e13f5d |

mit | ['BERT', 'Text Classification', 'relation'] | false | BibTeX entry and citation info ```bibtex @inproceedings{lan2020gigabert, author = {Lan, Wuwei and Chen, Yang and Xu, Wei and Ritter, Alan}, title = {Giga{BERT}: Zero-shot Transfer Learning from {E}nglish to {A}rabic}, booktitle = {Proceedings of The 2020 Conference on Empirical Methods on Natural Language Processing (EMNLP)}, year = {2020} } ``` | dcfd9f50a5ae0c370b56c64dbbca9afa |

apache-2.0 | ['generated_from_trainer'] | false | t5-base-finetuned-en-to-it-lrs This model is a fine-tuned version of [t5-base](https://huggingface.co/t5-base) on an unspecified dataset. It achieves the following results on the evaluation set: - Loss: 1.4687 - Bleu: 22.9793 - Gen Len: 49.8367 | 648837736522a15e7f5770c7cd49f7d2 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 32 - eval_batch_size: 32 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 40 - mixed_precision_training: Native AMP | 9846cf87deb881f2321ee1d5cb4d37be |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Bleu | Gen Len | |:-------------:|:-----:|:-----:|:---------------:|:-------:|:-------:| | 1.4378 | 1.0 | 1125 | 1.9365 | 12.0299 | 55.7007 | | 1.229 | 2.0 | 2250 | 1.8493 | 15.9175 | 51.6293 | | 1.0996 | 3.0 | 3375 | 1.7781 | 17.5103 | 51.666 | | 0.9979 | 4.0 | 4500 | 1.7309 | 18.8603 | 50.8587 | | 0.9421 | 5.0 | 5625 | 1.6839 | 19.8188 | 50.4767 | | 0.9181 | 6.0 | 6750 | 1.6602 | 20.5693 | 50.272 | | 0.8882 | 7.0 | 7875 | 1.6386 | 20.9771 | 50.3833 | | 0.8498 | 8.0 | 9000 | 1.6252 | 21.2237 | 50.5093 | | 0.8356 | 9.0 | 10125 | 1.6079 | 21.3987 | 50.31 | | 0.8164 | 10.0 | 11250 | 1.5698 | 21.5409 | 50.388 | | 0.8001 | 11.0 | 12375 | 1.5779 | 21.7354 | 49.822 | | 0.7805 | 12.0 | 13500 | 1.5637 | 21.9649 | 49.8213 | | 0.764 | 13.0 | 14625 | 1.5540 | 22.1342 | 50.2 | | 0.7594 | 14.0 | 15750 | 1.5456 | 22.2318 | 50.0147 | | 0.7355 | 15.0 | 16875 | 1.5309 | 22.2936 | 49.7693 | | 0.7343 | 16.0 | 18000 | 1.5247 | 22.5065 | 49.7607 | | 0.7231 | 17.0 | 19125 | 1.5231 | 22.3902 | 49.7733 | | 0.7183 | 18.0 | 20250 | 1.5211 | 22.3672 | 49.8313 | | 0.7068 | 19.0 | 21375 | 1.5075 | 22.5519 | 49.7433 | | 0.7087 | 20.0 | 22500 | 1.5006 | 22.4827 | 49.5 | | 0.6965 | 21.0 | 23625 | 1.4978 | 22.5907 | 49.6833 | | 0.6896 | 22.0 | 24750 | 1.4955 | 22.6286 | 49.836 | | 0.689 | 23.0 | 25875 | 1.4924 | 22.7052 | 49.7267 | | 0.6793 | 24.0 | 27000 | 1.4890 | 22.7444 | 49.8393 | | 0.6708 | 25.0 | 28125 | 1.4889 | 22.6821 | 49.8673 | | 0.6671 | 26.0 | 29250 | 1.4835 | 22.7866 | 49.676 | | 0.6652 | 27.0 | 30375 | 1.4853 | 22.7691 | 49.7107 | | 0.6578 | 28.0 | 31500 | 1.4787 | 22.8173 | 49.738 | | 0.6556 | 29.0 | 32625 | 1.4777 | 22.7408 | 49.6687 | | 0.6592 | 30.0 | 33750 | 1.4772 | 22.8371 | 49.7307 | | 0.6546 | 31.0 | 34875 | 1.4819 | 22.8398 | 49.6053 | | 0.6465 | 32.0 | 36000 | 1.4741 | 22.8379 | 49.658 | | 0.6381 | 33.0 | 37125 | 1.4691 | 22.9108 | 49.8113 | | 0.6429 | 34.0 | 38250 | 1.4660 | 22.9405 | 49.7933 | | 0.6381 | 35.0 | 39375 | 1.4701 | 22.8777 | 49.7467 | | 0.6454 | 36.0 | 40500 | 1.4692 | 22.9225 | 49.7227 | | 0.635 | 37.0 | 41625 | 1.4683 | 22.9914 | 49.6767 | | 0.6389 | 38.0 | 42750 | 1.4691 | 22.9904 | 49.7133 | | 0.6368 | 39.0 | 43875 | 1.4679 | 22.9962 | 49.8273 | | 0.6345 | 40.0 | 45000 | 1.4687 | 22.9793 | 49.8367 | | add9619bbd3303b46c361299e0671f0a |

apache-2.0 | ['generated_from_keras_callback'] | false | disilbert-blm-tweets-binary This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 0.1159 - Train Accuracy: 0.9556 - Validation Loss: 0.5772 - Validation Accuracy: 0.7965 - Epoch: 4 | 226f124b424148266ca00d90c7d4016c |

apache-2.0 | ['generated_from_keras_callback'] | false | Training results | Train Loss | Train Accuracy | Validation Loss | Validation Accuracy | Epoch | |:----------:|:--------------:|:---------------:|:-------------------:|:-----:| | 0.5941 | 0.6905 | 0.5159 | 0.7168 | 0 | | 0.4041 | 0.8212 | 0.4589 | 0.8142 | 1 | | 0.2491 | 0.9026 | 0.6014 | 0.7876 | 2 | | 0.1011 | 0.9692 | 0.7181 | 0.8053 | 3 | | 0.1159 | 0.9556 | 0.5772 | 0.7965 | 4 | | a7a3c5cbcf20fd323a6abaf92cd2d533 |
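The table above shows validation loss bottoming out early while training loss keeps falling. A minimal sketch of picking the epoch with the lowest validation loss, using the rows from the table; the `history` list and `best_epoch_by_val_loss` helper are illustrative names, not part of the original card, and the card itself reports the final epoch rather than a selected one.

```python
# Rows copied from the training-results table:
# (epoch, train_loss, train_accuracy, val_loss, val_accuracy)
history = [
    (0, 0.5941, 0.6905, 0.5159, 0.7168),
    (1, 0.4041, 0.8212, 0.4589, 0.8142),
    (2, 0.2491, 0.9026, 0.6014, 0.7876),
    (3, 0.1011, 0.9692, 0.7181, 0.8053),
    (4, 0.1159, 0.9556, 0.5772, 0.7965),
]


def best_epoch_by_val_loss(rows):
    """Return the row with the lowest validation loss (hypothetical helper)."""
    return min(rows, key=lambda row: row[3])


best = best_epoch_by_val_loss(history)
print(f"lowest validation loss {best[3]} at epoch {best[0]}")
```

Selecting on validation loss is only one possible criterion; validation accuracy would be another.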

creativeml-openrail-m | ['text-to-image', 'stable-diffusion'] | false | ciasto Dreambooth model trained by Kurapka with [TheLastBen's fast-DreamBooth](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb) notebook. Test the concept via A1111 Colab: [fast-Colab-A1111](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast_stable_diffusion_AUTOMATIC1111.ipynb). Sample pictures of this concept: | 574e40e8572b1bcb2d3c216af357460d |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-cola This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the glue dataset. It achieves the following results on the evaluation set: - Loss: 0.8663 - Matthews Correlation: 0.5475 | 74df0b19c9bf36c3f7b090633753d537 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Matthews Correlation | |:-------------:|:-----:|:----:|:---------------:|:--------------------:| | 0.5248 | 1.0 | 535 | 0.5171 | 0.4210 | | 0.3418 | 2.0 | 1070 | 0.4971 | 0.5236 | | 0.2289 | 3.0 | 1605 | 0.6874 | 0.5023 | | 0.1722 | 4.0 | 2140 | 0.7680 | 0.5392 | | 0.118 | 5.0 | 2675 | 0.8663 | 0.5475 | | f580edd688ecb186b7826fa535f83e50 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 8 - eval_batch_size: 8 - seed: 0 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: constant - training_steps: 1000 | 613645ec0852d4ca09d0849b47cce7b4 |

apache-2.0 | ['generated_from_trainer'] | false | glue-mrpc This model is a fine-tuned version of [bert-base-cased](https://huggingface.co/bert-base-cased) on the GLUE MRPC dataset. It achieves the following results on the evaluation set: - Loss: 0.6566 - Accuracy: 0.8554 - F1: 0.8974 - Combined Score: 0.8764 | ab65fab646b6e241eda8a2b0a49f27d2 |
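The reported Combined Score is consistent with the unweighted mean of Accuracy and F1. The sketch below checks that arithmetic; the definition is an assumption inferred from the numbers, and `combined_score` is an illustrative name rather than a function from the card.

```python
def combined_score(accuracy: float, f1: float) -> float:
    """Unweighted mean of accuracy and F1 (assumed definition of 'Combined Score')."""
    return (accuracy + f1) / 2


# Values reported in the card above.
print(round(combined_score(0.8554, 0.8974), 4))
```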

apache-2.0 | ['generated_from_trainer'] | false | t5-large-multiwoz This model is a fine-tuned version of [t5-large](https://huggingface.co/t5-large) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.0064 - Acc: 1.0 - True Num: 56671 - Num: 56776 | eafb670d0b0d5d527642e335475d8e4d |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 5e-05 - train_batch_size: 4 - eval_batch_size: 4 - seed: 42 - gradient_accumulation_steps: 16 - total_train_batch_size: 64 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 10.0 | b3a73a5668359b4a5633004ab6208738 |
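The `total_train_batch_size` above follows from the per-device batch size and the gradient accumulation steps. A minimal sketch of that arithmetic, assuming a single device unless stated otherwise; `effective_batch_size` is a hypothetical helper name.

```python
def effective_batch_size(per_device_batch_size: int,
                         gradient_accumulation_steps: int = 1,
                         num_devices: int = 1) -> int:
    """Number of examples contributing to one optimizer step."""
    return per_device_batch_size * gradient_accumulation_steps * num_devices


# train_batch_size 4 with 16 accumulation steps, as listed above.
print(effective_batch_size(4, gradient_accumulation_steps=16))
```

The same formula covers multi-GPU runs: a per-device batch size of 1 on 4 devices with no accumulation gives 4.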

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Acc | True Num | Num | |:-------------:|:-----:|:----:|:---------------:|:----:|:--------:|:-----:| | 0.1261 | 1.13 | 1000 | 0.0933 | 0.98 | 55574 | 56776 | | 0.0951 | 2.25 | 2000 | 0.0655 | 0.98 | 55867 | 56776 | | 0.0774 | 3.38 | 3000 | 0.0480 | 0.99 | 56047 | 56776 | | 0.0584 | 4.51 | 4000 | 0.0334 | 0.99 | 56252 | 56776 | | 0.042 | 5.64 | 5000 | 0.0222 | 0.99 | 56411 | 56776 | | 0.0329 | 6.76 | 6000 | 0.0139 | 1.0 | 56502 | 56776 | | 0.0254 | 7.89 | 7000 | 0.0094 | 1.0 | 56626 | 56776 | | 0.0214 | 9.02 | 8000 | 0.0070 | 1.0 | 56659 | 56776 | | f128a2575ff3c4b8a55c5e25ca090da1 |
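The Acc column appears to be True Num divided by Num, rounded to two decimals, which would explain why it reads 1.0 even though some examples are still wrong. This is an inference from the table, not something the card states; `rounded_accuracy` is an illustrative name.

```python
def rounded_accuracy(true_num: int, num: int, digits: int = 2) -> float:
    """Fraction of correct examples, rounded as the table seems to do."""
    return round(true_num / num, digits)


# (step, True Num) pairs taken from the table; Num is 56776 throughout.
for step, true_num in [(1000, 55574), (5000, 56411), (8000, 56659)]:
    errors = 56776 - true_num
    print(step, rounded_accuracy(true_num, 56776), f"({errors} errors remain)")
```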

mit | ['donut', 'image-to-text', 'vision'] | false | Donut (base-sized model, pre-trained only) Donut model pre-trained-only. It was introduced in the paper [OCR-free Document Understanding Transformer](https://arxiv.org/abs/2111.15664) by Geewook Kim et al. and first released in [this repository](https://github.com/clovaai/donut). Disclaimer: The team releasing Donut did not write a model card for this model so this model card has been written by the Hugging Face team. | 003e1d7a099735fdf74dc7b18a816169 |

mit | ['donut', 'image-to-text', 'vision'] | false | Intended uses & limitations This model is meant to be fine-tuned on a downstream task, like document image classification or document parsing. See the [model hub](https://huggingface.co/models?search=donut) to look for fine-tuned versions on a task that interests you. | eb0d5c78dd5cb760eda4341d6aacf7c8 |

mit | [] | false | art brut on Stable Diffusion This is the `<art-brut>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). Here is the new concept you will be able to use as a `style`:     | 4a14042c6e2699b3a89c9bff687e405c |

apache-2.0 | ['stanza', 'token-classification'] | false | Stanza model for Latvian (lv) Stanza is a collection of accurate and efficient tools for the linguistic analysis of many human languages. From raw text to syntactic analysis and entity recognition, Stanza brings state-of-the-art NLP models to languages of your choosing. Find out more on [our website](https://stanfordnlp.github.io/stanza) and in our [GitHub repository](https://github.com/stanfordnlp/stanza). This card and repo were automatically prepared with `hugging_stanza.py` in the `stanfordnlp/huggingface-models` repo. Last updated 2022-09-25 01:45:24.599 | 19088cbbd8019ec6197e04422d2c74d6 |

apache-2.0 | ['image-classification', 'pytorch', 'onnx'] | false | Usage instructions ```python from PIL import Image from torchvision.transforms import Compose, ConvertImageDtype, Normalize, PILToTensor, Resize from torchvision.transforms.functional import InterpolationMode from pyrovision.models import model_from_hf_hub model = model_from_hf_hub("pyronear/resnet34").eval() img = Image.open(path_to_an_image).convert("RGB") | 280bfe0983090725b1feff0a48ee4f00 |

apache-2.0 | ['image-classification', 'pytorch', 'onnx'] | false | Citation Original paper ```bibtex @article{DBLP:journals/corr/HeZRS15, author = {Kaiming He and Xiangyu Zhang and Shaoqing Ren and Jian Sun}, title = {Deep Residual Learning for Image Recognition}, journal = {CoRR}, volume = {abs/1512.03385}, year = {2015}, url = {http://arxiv.org/abs/1512.03385}, eprinttype = {arXiv}, eprint = {1512.03385}, timestamp = {Wed, 17 Apr 2019 17:23:45 +0200}, biburl = {https://dblp.org/rec/journals/corr/HeZRS15.bib}, bibsource = {dblp computer science bibliography, https://dblp.org} } ``` Source of this implementation ```bibtex @software{chintala_torchvision_2017, author = {Chintala, Soumith}, month = {4}, title = {{Torchvision}}, url = {https://github.com/pytorch/vision}, year = {2017} } ``` | a4de2df63c59fb95eb54a0d9e9de2b72 |

apache-2.0 | ['generated_from_trainer'] | false | Article_250v4_NER_Model_3Epochs_UNAUGMENTED This model is a fine-tuned version of [bert-base-cased](https://huggingface.co/bert-base-cased) on the article250v4_wikigold_split dataset. It achieves the following results on the evaluation set: - Loss: 0.3243 - Precision: 0.4027 - Recall: 0.4337 - F1: 0.4176 - Accuracy: 0.8775 | 8fda5ad499e281b6293ed4448ce9c805 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:| | No log | 1.0 | 28 | 0.5309 | 0.0816 | 0.0144 | 0.0245 | 0.7931 | | No log | 2.0 | 56 | 0.3620 | 0.3795 | 0.3674 | 0.3733 | 0.8623 | | No log | 3.0 | 84 | 0.3243 | 0.4027 | 0.4337 | 0.4176 | 0.8775 | | 7dc6e63af7504949943dcec50bbde39b |
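The F1 column is the harmonic mean of Precision and Recall. A short check against the final row of the table; because the table's inputs are already rounded, other rows may differ in the last digit.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall; 0.0 when both are zero."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Final epoch from the table: precision 0.4027, recall 0.4337.
print(round(f1_score(0.4027, 0.4337), 4))
```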

apache-2.0 | ['translation'] | false | opus-mt-rw-es * source languages: rw * target languages: es * OPUS readme: [rw-es](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/rw-es/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2020-01-16.zip](https://object.pouta.csc.fi/OPUS-MT-models/rw-es/opus-2020-01-16.zip) * test set translations: [opus-2020-01-16.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/rw-es/opus-2020-01-16.test.txt) * test set scores: [opus-2020-01-16.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/rw-es/opus-2020-01-16.eval.txt) | ac1c8b9d5578ac1eadcd6eed1ad83747 |

apache-2.0 | ['summarization', 'generated_from_trainer'] | false | bart-base-finetuned-poems This model is a fine-tuned version of [facebook/bart-base](https://huggingface.co/facebook/bart-base) on an unspecified dataset. It achieves the following results on the evaluation set: - eval_loss: 3.1970 - eval_rouge1: 16.9107 - eval_rouge2: 8.1464 - eval_rougeL: 16.5554 - eval_rougeLsum: 16.7396 - eval_runtime: 487.5616 - eval_samples_per_second: 0.41 - eval_steps_per_second: 0.051 - epoch: 2.0 - step: 200 | 69de415d54b465eeebc97d68bb1fe92c |

apache-2.0 | ['hf-asr-leaderboard', 'generated_from_trainer'] | false | Whisper Small Telugu - Naga Budigam This model is a fine-tuned version of [openai/whisper-small](https://huggingface.co/openai/whisper-small) on the Chai_Bisket_Stories_16-08-2021_14-17 dataset. It achieves the following results on the evaluation set: - Loss: 0.2875 - Wer: 38.1492 | 9a33bedeaa79695250919c78d8380a62 |

apache-2.0 | ['hf-asr-leaderboard', 'generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 1e-05 - train_batch_size: 8 - eval_batch_size: 8 - seed: 42 - gradient_accumulation_steps: 2 - total_train_batch_size: 16 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 500 - training_steps: 15000 - mixed_precision_training: Native AMP | 104b1b1e30da28f791e4ac833c13f9c8 |
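A `linear` scheduler with warmup steps typically ramps the learning rate from zero to its peak and then decays it linearly to zero. The sketch below reproduces that shape in plain Python under the assumption that it matches the scheduler used here; `linear_lr` is an illustrative function, not the training code.

```python
def linear_lr(step: int, peak_lr: float, warmup_steps: int, total_steps: int) -> float:
    """Learning rate at a given step for linear warmup followed by linear decay."""
    if step < warmup_steps:
        # Warmup: scale linearly from 0 up to peak_lr.
        return peak_lr * step / max(1, warmup_steps)
    # Decay: scale linearly from peak_lr down to 0 at total_steps.
    remaining = max(0, total_steps - step)
    return peak_lr * remaining / max(1, total_steps - warmup_steps)


# Hyperparameters listed above: peak 1e-05, 500 warmup steps, 15000 training steps.
for step in (0, 250, 500, 7750, 15000):
    print(step, linear_lr(step, 1e-05, 500, 15000))
```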

apache-2.0 | ['hf-asr-leaderboard', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:-----:|:---------------:|:-------:| | 0.2064 | 0.66 | 500 | 0.2053 | 60.1707 | | 0.1399 | 1.33 | 1000 | 0.1535 | 49.3269 | | 0.1093 | 1.99 | 1500 | 0.1365 | 44.5516 | | 0.0771 | 2.66 | 2000 | 0.1316 | 42.1136 | | 0.0508 | 3.32 | 2500 | 0.1395 | 41.1384 | | 0.0498 | 3.99 | 3000 | 0.1386 | 40.5395 | | 0.0302 | 4.65 | 3500 | 0.1529 | 40.9529 | | 0.0157 | 5.32 | 4000 | 0.1719 | 40.6667 | | 0.0183 | 5.98 | 4500 | 0.1723 | 40.3646 | | 0.0083 | 6.65 | 5000 | 0.1911 | 40.4335 | | 0.0061 | 7.31 | 5500 | 0.2109 | 40.4176 | | 0.0055 | 7.98 | 6000 | 0.2075 | 39.7021 | | 0.0039 | 8.64 | 6500 | 0.2186 | 40.2639 | | 0.0026 | 9.31 | 7000 | 0.2254 | 39.1032 | | 0.0035 | 9.97 | 7500 | 0.2289 | 39.2834 | | 0.0016 | 10.64 | 8000 | 0.2332 | 39.1456 | | 0.0016 | 11.3 | 8500 | 0.2395 | 39.4371 | | 0.0016 | 11.97 | 9000 | 0.2447 | 39.2410 | | 0.0009 | 12.63 | 9500 | 0.2548 | 38.7799 | | 0.0008 | 13.3 | 10000 | 0.2551 | 38.7481 | | 0.0008 | 13.96 | 10500 | 0.2621 | 38.8276 | | 0.0007 | 14.63 | 11000 | 0.2633 | 38.6686 | | 0.0003 | 15.29 | 11500 | 0.2711 | 38.4566 | | 0.0005 | 15.96 | 12000 | 0.2772 | 38.7852 | | 0.0001 | 16.62 | 12500 | 0.2771 | 38.2658 | | 0.0001 | 17.29 | 13000 | 0.2808 | 38.2393 | | 0.0001 | 17.95 | 13500 | 0.2815 | 38.1810 | | 0.0 | 18.62 | 14000 | 0.2854 | 38.2022 | | 0.0 | 19.28 | 14500 | 0.2872 | 38.1333 | | 0.0 | 19.95 | 15000 | 0.2875 | 38.1492 | | 80b4fc98ba2a4b12b058eb7e48981b7e |

apache-2.0 | ['hf-asr-leaderboard', 'generated_from_trainer'] | false | Whisper Small Ar- Martha: This model is a fine-tuned version of openai/whisper-small on the Common Voice 11.0 dataset. It achieves the following results on the evaluation set: Loss: 0.5854 Wer: 70.2071 | 87696623ddfce3f1a702f89a9b2f55d2 |

apache-2.0 | ['hf-asr-leaderboard', 'generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: learning_rate: 1e-05 train_batch_size: 16 eval_batch_size: 8 seed: 42 optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 lr_scheduler_type: linear lr_scheduler_warmup_steps: 500 training_steps: 500 mixed_precision_training: Native AMP | e545031459ff19dd7261a5e404b04961 |

apache-2.0 | ['hf-asr-leaderboard', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:-------:| | 0.9692 | 0.14 | 125 | 1.3372 | 173.0952| | 0.5716 | 0.29 | 250 | 0.9058 | 148.6795| | 0.3297 | 0.43 | 375 | 0.5825 | 63.6709 | | 0.3083 | 0.57 | 500 | 0.5854 | 70.2071 | | d009b15898cc6c6c772c84e3a3024519 |
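WER values above 100, as in the first two rows, are possible because inserted words count as errors while the denominator is the reference length. A minimal word-level sketch, assuming the usual definition of WER as (substitutions + deletions + insertions) divided by the number of reference words; the evaluation script behind the table may normalize text differently.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word error rate in percent, via word-level edit distance."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # previous[j] holds the edit distance between the processed
    # reference prefix and the first j hypothesis words.
    previous = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        current = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = previous[j - 1] + (ref_word != hyp_word)
            deletion = previous[j] + 1
            insertion = current[j - 1] + 1
            current.append(min(substitution, deletion, insertion))
        previous = current
    return 100.0 * previous[-1] / len(ref)


# Three inserted words against a two-word reference.
print(word_error_rate("a b", "a b c d e"))
```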

apache-2.0 | ['generated_from_trainer'] | false | This model is part of a test for creating multilingual BioMedical NER systems. It is not currently intended for professional use. This model is a fine-tuned version of [bert-base-multilingual-cased](https://huggingface.co/bert-base-multilingual-cased) on the concatenated CRAFT, BC4CHEMD, and BioNLP09 datasets. It achieves the following results on the evaluation set: - Loss: 0.1027 - Precision: 0.9830 - Recall: 0.9832 - F1: 0.9831 - Accuracy: 0.9799 | d1ac97e0592659e16cb301d82903863e |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy | |:-------------:|:-----:|:-----:|:---------------:|:---------:|:------:|:------:|:--------:| | 0.0658 | 1.0 | 6128 | 0.0751 | 0.9795 | 0.9795 | 0.9795 | 0.9758 | | 0.0406 | 2.0 | 12256 | 0.0753 | 0.9827 | 0.9815 | 0.9821 | 0.9786 | | 0.0182 | 3.0 | 18384 | 0.0934 | 0.9834 | 0.9825 | 0.9829 | 0.9796 | | 0.011 | 4.0 | 24512 | 0.1027 | 0.9830 | 0.9832 | 0.9831 | 0.9799 | | 150c8632b36ef71d8b837e8a05f4c2de |

apache-2.0 | ['automatic-speech-recognition', 'pl'] | false | exp_w2v2t_pl_hubert_s484 Fine-tuned [facebook/hubert-large-ll60k](https://huggingface.co/facebook/hubert-large-ll60k) for speech recognition using the train split of [Common Voice 7.0 (pl)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | d39792abb4034ee513030361bb51e5ba |
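Since the model expects 16 kHz input, it can help to check a file's sample rate before transcription. The sketch below uses only the Python standard library and handles uncompressed WAV files; the helper names and the silent test files are illustrative assumptions, and actual resampling would need an audio library that is not shown here.

```python
import os
import tempfile
import wave

TARGET_RATE_HZ = 16_000  # sample rate the model card asks for


def wav_sample_rate(path: str) -> int:
    """Read the sample rate from a WAV file header."""
    with wave.open(path, "rb") as wav_file:
        return wav_file.getframerate()


def needs_resampling(path: str) -> bool:
    """True when the file is not already sampled at 16 kHz."""
    return wav_sample_rate(path) != TARGET_RATE_HZ


def write_silent_wav(path: str, rate_hz: int, seconds: float = 0.1) -> None:
    """Write a short mono 16-bit silent WAV file, used here only for the demo."""
    with wave.open(path, "wb") as wav_file:
        wav_file.setnchannels(1)
        wav_file.setsampwidth(2)
        wav_file.setframerate(rate_hz)
        wav_file.writeframes(b"\x00\x00" * int(rate_hz * seconds))


with tempfile.TemporaryDirectory() as tmp_dir:
    ok_path = os.path.join(tmp_dir, "speech_16k.wav")
    low_path = os.path.join(tmp_dir, "speech_8k.wav")
    write_silent_wav(ok_path, 16_000)
    write_silent_wav(low_path, 8_000)
    results = {
        "speech_16k.wav": needs_resampling(ok_path),
        "speech_8k.wav": needs_resampling(low_path),
    }

print(results)
```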

apache-2.0 | ['generated_from_trainer'] | false | tmp This model is a fine-tuned version of [google/mt5-small](https://huggingface.co/google/mt5-small) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: nan - Bleu: 0.0099 - Gen Len: 3.3917 | cb28d80e5c28c20c91b1bbb0b7368b42 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 5e-05 - train_batch_size: 1024 - eval_batch_size: 1024 - seed: 13 - gradient_accumulation_steps: 2 - total_train_batch_size: 2048 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 20.0 - mixed_precision_training: Native AMP | e773fc8d4f0e01564a18535ea0a1d7c1 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Bleu | Gen Len | |:-------------:|:-----:|:----:|:---------------:|:------:|:-------:| | No log | 1.0 | 1 | nan | 0.0114 | 3.3338 | | No log | 2.0 | 2 | nan | 0.0114 | 3.3338 | | No log | 3.0 | 3 | nan | 0.0114 | 3.3338 | | No log | 4.0 | 4 | nan | 0.0114 | 3.3338 | | No log | 5.0 | 5 | nan | 0.0114 | 3.3338 | | No log | 6.0 | 6 | nan | 0.0114 | 3.3338 | | No log | 7.0 | 7 | nan | 0.0114 | 3.3338 | | No log | 8.0 | 8 | nan | 0.0114 | 3.3338 | | No log | 9.0 | 9 | nan | 0.0114 | 3.3338 | | No log | 10.0 | 10 | nan | 0.0114 | 3.3338 | | No log | 11.0 | 11 | nan | 0.0114 | 3.3338 | | No log | 12.0 | 12 | nan | 0.0114 | 3.3338 | | No log | 13.0 | 13 | nan | 0.0114 | 3.3338 | | No log | 14.0 | 14 | nan | 0.0114 | 3.3338 | | No log | 15.0 | 15 | nan | 0.0114 | 3.3338 | | No log | 16.0 | 16 | nan | 0.0114 | 3.3338 | | No log | 17.0 | 17 | nan | 0.0114 | 3.3338 | | No log | 18.0 | 18 | nan | 0.0114 | 3.3338 | | No log | 19.0 | 19 | nan | 0.0114 | 3.3338 | | No log | 20.0 | 20 | nan | 0.0114 | 3.3338 | | 026573e62e14eea2c45cb6fc3f9566c8 |

apache-2.0 | ['whisper-event', 'generated_from_trainer', 'hf-asr-leaderboard'] | false | Whisper Small ar - Zaid Alyafeai This model is a fine-tuned version of [openai/whisper-small](https://huggingface.co/openai/whisper-small) on the Common Voice 11.0 dataset. It achieves the following results on the evaluation set: - Loss: 0.3509 - Wer: 22.3838 | 40e3390b2b6b05563e7e217586e310e2 |

apache-2.0 | ['whisper-event', 'generated_from_trainer', 'hf-asr-leaderboard'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 1e-05 - train_batch_size: 8 - eval_batch_size: 4 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 500 - training_steps: 5000 - mixed_precision_training: Native AMP | 299145f2e3fbf46820ae300bc89dfd54 |

apache-2.0 | ['whisper-event', 'generated_from_trainer', 'hf-asr-leaderboard'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:-------:| | 0.2944 | 0.2 | 1000 | 0.4355 | 30.6471 | | 0.2671 | 0.4 | 2000 | 0.3786 | 25.8539 | | 0.172 | 1.08 | 3000 | 0.3520 | 23.4573 | | 0.1043 | 1.28 | 4000 | 0.3542 | 23.3278 | | 0.0991 | 1.48 | 5000 | 0.3509 | 22.3838 | | 03adf48d2bc89ba32add9d584a780c17 |

apache-2.0 | ['automatic-speech-recognition', 'pt'] | false | exp_w2v2t_pt_wav2vec2_s859 Fine-tuned [facebook/wav2vec2-large-lv60](https://huggingface.co/facebook/wav2vec2-large-lv60) for speech recognition using the train split of [Common Voice 7.0 (pt)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | e55f8fced9f1efe24b7e097f1d0ce5d7 |

apache-2.0 | ['generated_from_trainer'] | false | t5-small-finetuned-en-to-ro-fp16_off-lr_2e-7-weight_decay_0.001 This model is a fine-tuned version of [t5-small](https://huggingface.co/t5-small) on the wmt16 dataset. It achieves the following results on the evaluation set: - Loss: 1.4943 - Bleu: 4.7258 - Gen Len: 18.7149 | 55199d1d7ce2f9ddf81503a16f41e2ee |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-07 - train_batch_size: 16 - eval_batch_size: 16 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 1 | 0af6d3d2c84c6baece2c166bc741fc96 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Bleu | Gen Len | |:-------------:|:-----:|:----:|:---------------:|:------:|:-------:| | 1.047 | 1.0 | 7629 | 1.4943 | 4.7258 | 18.7149 | | 7f4434d016b0c36118d3d691d8023972 |

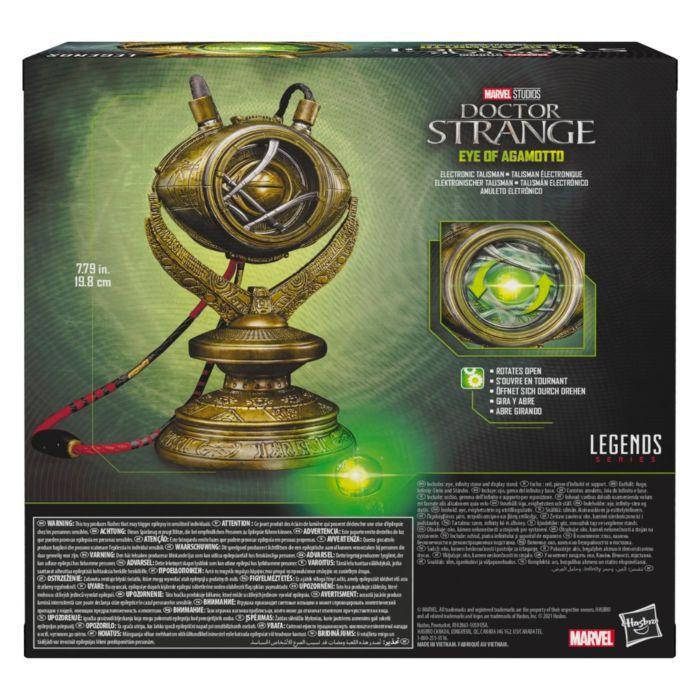

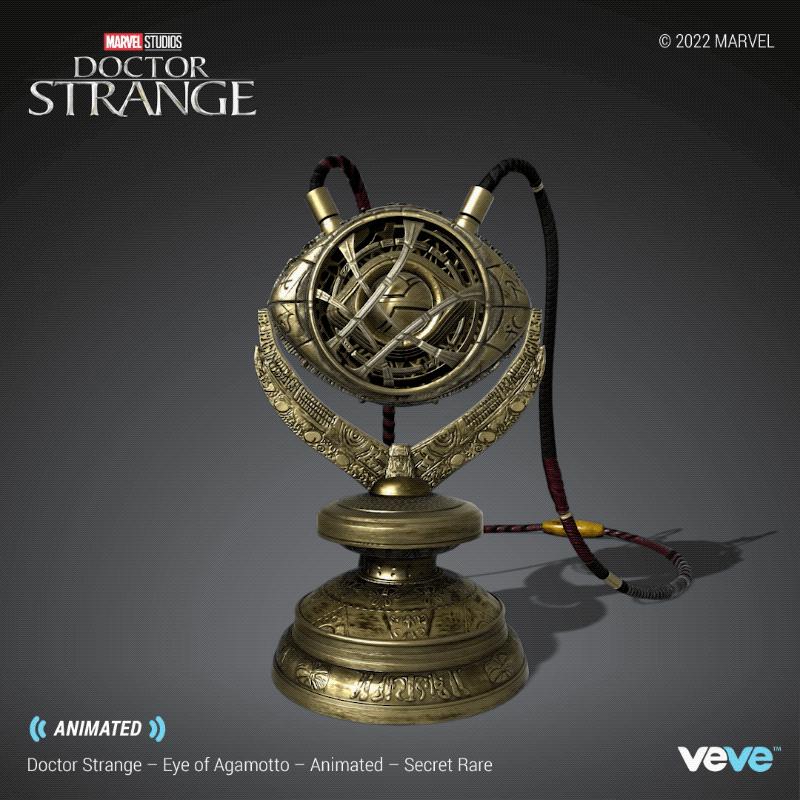

mit | [] | false | Eye of Agamotto on Stable Diffusion This is the `<eye-aga>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). Here is the new concept you will be able to use as an `object`:                                   | efd0faeac9f579f160f3d85b60595a45 |

mit | ['msmarco', 't5', 'pytorch', 'tensorflow', 'pt', 'pt-br'] | false | Introduction mT5-base-en-pt-msmarco-v1 is an mT5-based model fine-tuned on a bilingual version of the MS MARCO passage dataset. This bilingual version combines the original MS MARCO dataset (in English) with a Portuguese translation. In version v1, the Portuguese dataset was translated using a [Helsinki](https://huggingface.co/Helsinki-NLP) NMT model. Further information about the dataset and the translation method can be found in our paper [**mMARCO: A Multilingual Version of MS MARCO Passage Ranking Dataset**](https://arxiv.org/abs/2108.13897) and in the [mMARCO](https://github.com/unicamp-dl/mMARCO) repository. | f0ad6df7e3f2d6f9e43f27f24876530c |

mit | ['msmarco', 't5', 'pytorch', 'tensorflow', 'pt', 'pt-br'] | false | Usage ```python from transformers import T5Tokenizer, MT5ForConditionalGeneration model_name = 'unicamp-dl/mt5-base-en-pt-msmarco-v1' tokenizer = T5Tokenizer.from_pretrained(model_name) model = MT5ForConditionalGeneration.from_pretrained(model_name) ``` | 4d67016681ae909968283467af5e9e7c |

mit | ['msmarco', 't5', 'pytorch', 'tensorflow', 'pt', 'pt-br'] | false | Citation If you use mt5-base-en-pt-msmarco-v1, please cite: @misc{bonifacio2021mmarco, title={mMARCO: A Multilingual Version of MS MARCO Passage Ranking Dataset}, author={Luiz Henrique Bonifacio and Vitor Jeronymo and Hugo Queiroz Abonizio and Israel Campiotti and Marzieh Fadaee and Roberto Lotufo and Rodrigo Nogueira}, year={2021}, eprint={2108.13897}, archivePrefix={arXiv}, primaryClass={cs.CL} } | 22e0ce15a6481d33cc5aec7f8ace8c74 |

mit | ['pytorch', 'deberta', 'deberta-v2', 'question-answering', 'question answering', 'squad'] | false | This model is deberta-v2-base-japanese fine-tuned so that it can be used for QA tasks. It was fine-tuned on the Driving Domain QA dataset (DDQA) ( https://nlp.ist.i.kyoto-u.ac.jp/index.php?Driving%20domain%20QA%20datasets ). It can be used for Question-Answering tasks (SQuAD). | cee21f1f9ac93b5fd34cf4e332092da4 |

mit | ['pytorch', 'deberta', 'deberta-v2', 'question-answering', 'question answering', 'squad'] | false | This model is a fine-tuned model for Question-Answering, based on deberta-v2-base-japanese. It was fine-tuned on the DDQA dataset. You can use this model for Question-Answering tasks. | bfcdeb49a25b70659c7bd7eb4c838391 |

mit | ['pytorch', 'deberta', 'deberta-v2', 'question-answering', 'question answering', 'squad'] | false | How to use Install transformers, pytorch, sentencepiece, and Juman++. Running either one of the two code snippets below lets the model solve Question-Answering tasks (choose whichever you prefer). ```python import torch from transformers import AutoTokenizer tokenizer = AutoTokenizer.from_pretrained('ku-nlp/deberta-v2-base-japanese') model=torch.load('C:\\[directory containing the .pth model]\\My_deberta_model_squad.pth') | 8ec43090f66f9c548432cc17cb806643 |

mit | ['pytorch', 'deberta', 'deberta-v2', 'question-answering', 'question answering', 'squad'] | false | Extract the part that corresponds to the answer print(prediction) ``` ```python import torch from transformers import AutoTokenizer, AutoModelForQuestionAnswering tokenizer = AutoTokenizer.from_pretrained('ku-nlp/deberta-v2-base-japanese') model=AutoModelForQuestionAnswering.from_pretrained('Mizuiro-sakura/deberta-v2-base-japanese-finetuned-QAe') | 2d776b5187212cb5434bb3ac9d5fe17c |

other | [] | false | Japanese-opt-2.7b Model ***Disclaimer: This model is a work in progress!*** This model is a fine-tuned version of [facebook/opt-2.7b](https://huggingface.co/facebook/opt-2.7b) on the Japanese Wikipedia dataset. | adced40cbcd6c3be73f55edc0ada2f34 |

other | [] | false | Quick start ```python from transformers import pipeline generator = pipeline('text-generation', model="tensorcat/japanese-opt-2.7b" , device=0, use_fast=False) generator("今日は", min_length=80, max_length=200, do_sample=True, early_stopping=True, temperature=.98, top_k=50, top_p=1.0) ``` | 425cc8f050ec6ec966637801ff783251 |

other | [] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 5e-05 - train_batch_size: 1 - eval_batch_size: 1 - distributed_type: multi-GPU - num_devices: 4 - total_train_batch_size: 4 - total_eval_batch_size: 4 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 3.0 | cc1a1f9692778cc1737ee46c37be4e71 |

mit | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 3e-05 - train_batch_size: 12 - eval_batch_size: 8 - seed: 4 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_ratio: 0.1 - num_epochs: 10.0 | 10d52cc29de9bcaaf495048650f718b9 |

cc-by-sa-4.0 | ['Summarization', 'abstractive summarization', 'mt5-base', 'Czech', 'text2text generation', 'text generation'] | false | mt5-base-multilingual-summarization-multilarge-cs This model is a fine-tuned checkpoint of [google/mt5-base](https://huggingface.co/google/mt5-base) on the Multilingual large summarization dataset focused on Czech texts to produce multilingual summaries. | 162c9cf46c24701ce8d305bb0b896459 |

cc-by-sa-4.0 | ['Summarization', 'abstractive summarization', 'mt5-base', 'Czech', 'text2text generation', 'text generation'] | false | Task The model produces multi-sentence summaries in eight languages. By adding documents in other languages to a considerable amount of Czech documents, we aimed to improve summarization quality in Czech. Supported languages: ```'cs': '<extra_id_0>', 'en': '<extra_id_1>','de': '<extra_id_2>', 'es': '<extra_id_3>', 'fr': '<extra_id_4>', 'ru': '<extra_id_5>', 'tu': '<extra_id_6>', 'zh': '<extra_id_7>'``` | 61879f1fc51611633f23e881f1f1cdfd |

cc-by-sa-4.0 | ['Summarization', 'abstractive summarization', 'mt5-base', 'Czech', 'text2text generation', 'text generation'] | false | ("inference_cfg", OrderedDict([ ("num_beams", 4), ("top_k", 40), ("top_p", 0.92), ("do_sample", True), ("temperature", 0.95), ("repetition_penalty", 1.23), ("no_repeat_ngram_size", None), ("early_stopping", True), ("max_length", 128), ("min_length", 10), ])), | 61d3b50c96f689f595e0cd992ff25503 |

cc-by-sa-4.0 | ['Summarization', 'abstractive summarization', 'mt5-base', 'Czech', 'text2text generation', 'text generation'] | false | texts to summarize values = (list of strings, string, dataset) ("texts", [ "english text1 to summarize", "english text2 to summarize", ] ), | f1c1f8c69da4eef144341178c85e4538 |

cc-by-sa-4.0 | ['Summarization', 'abstractive summarization', 'mt5-base', 'Czech', 'text2text generation', 'text generation'] | false | Dataset Multilingual large summarization dataset consists of 10 sub-datasets mainly based on news and daily mails. Training used the entire training set and 72% of the validation set. ``` Train set: 3 464 563 docs Validation set: 121 260 docs ``` | Stats | fragment | | | avg document length | | avg summary length | | Documents | |-------------|----------|---------------------|--------------------|--------|---------|--------|--------|--------| | __dataset__ |__compression__ | __density__ | __coverage__ | __nsent__ | __nwords__ | __nsent__ | __nwords__ | __count__ | | cnc | 7.388 | 0.303 | 0.088 | 16.121 | 316.912 | 3.272 | 46.805 | 750K | | sumeczech | 11.769 | 0.471 | 0.115 | 27.857 | 415.711 | 2.765 | 38.644 | 1M | | cnndm | 13.688 | 2.983 | 0.538 | 32.783 | 676.026 | 4.134 | 54.036 | 300K | | xsum | 18.378 | 0.479 | 0.194 | 18.607 | 369.134 | 1.000 | 21.127 | 225K | | mlsum/tu | 8.666 | 5.418 | 0.461 | 14.271 | 214.496 | 1.793 | 25.675 | 274K | | mlsum/de | 24.741 | 8.235 | 0.469 | 32.544 | 539.653 | 1.951 | 23.077 | 243K | | mlsum/fr | 24.388 | 2.688 | 0.424 | 24.533 | 612.080 | 1.320 | 26.93 | 425K | | mlsum/es | 36.185 | 3.705 | 0.510 | 31.914 | 746.927 | 1.142 | 21.671 | 291K | | mlsum/ru | 78.909 | 1.194 | 0.246 | 62.141 | 948.079 | 1.012 | 11.976 | 27K | | cnewsum | 20.183 | 0.000 | 0.000 | 16.834 | 438.271 | 1.109 | 21.926 | 304K | | 9a804075890e2e45ebfd91f3bc8336f5 |

cc-by-sa-4.0 | ['Summarization', 'abstractive summarization', 'mt5-base', 'Czech', 'text2text generation', 'text generation'] | false | ROUGE results per individual dataset test set: | ROUGE | ROUGE-1 | | | ROUGE-2 | | | ROUGE-L | | | |-----------|---------|---------|-----------|--------|--------|-----------|--------|--------|---------| | |Precision | Recall | Fscore | Precision | Recall | Fscore | Precision | Recall | Fscore | | cnc | 30.62 | 19.83 | 23.44 | 9.94 | 6.52 | 7.67 | 22.92 | 14.92 | 17.6 | | sumeczech | 27.57 | 17.6 | 20.85 | 8.12 | 5.23 | 6.17 | 20.84 | 13.38 | 15.81 | | cnndm | 43.83 | 37.73 | 39.34 | 20.81 | 17.82 | 18.6 | 31.8 | 27.42 | 28.55 | | xsum | 41.63 | 30.54 | 34.56 | 16.13 | 11.76 | 13.33 | 33.65 | 24.74 | 27.97 | | mlsum-tu- | 54.4 | 43.29 | 46.2 | 38.78 | 31.31 | 33.23 | 48.18 | 38.44 | 41 | | mlsum-de | 47.94 | 44.14 | 45.11 | 36.42 | 35.24 | 35.42 | 44.43 | 41.42 | 42.16 | | mlsum-fr | 35.26 | 25.96 | 28.98 | 16.72 | 12.35 | 13.75 | 28.06 | 20.75 | 23.12 | | mlsum-es | 33.37 | 24.84 | 27.52 | 13.29 | 10.05 | 11.05 | 27.63 | 20.69 | 22.87 | | mlsum-ru | 0.79 | 0.66 | 0.66 | 0.26 | 0.2 | 0.22 | 0.79 | 0.66 | 0.65 | | cnewsum | 24.49 | 24.38 | 23.23 | 6.48 | 6.7 | 6.24 | 24.18 | 24.04 | 22.91 | | feed3188cbfcf433adaf33b966b1cabb |

cc-by-sa-4.0 | [] | false | Usage Load in transformers library with: ``` from transformers import AutoTokenizer, AutoModelForMaskedLM tokenizer = AutoTokenizer.from_pretrained("EMBEDDIA/sloberta") model = AutoModelForMaskedLM.from_pretrained("EMBEDDIA/sloberta") ``` | a281e3aef5956ae20afe91e26d15e211 |

cc-by-sa-4.0 | [] | false | SloBERTa The SloBERTa model is a monolingual Slovene BERT-like model. It is closely related to the French CamemBERT model, https://camembert-model.fr/. The training corpora contain 3.47 billion tokens in total, and the subword vocabulary contains 32,000 tokens. The scripts and programs used for data preparation and model training are available at https://github.com/clarinsi/Slovene-BERT-Tool. SloBERTa was trained for 200,000 iterations, or about 98 epochs. | 7482008afd6dbeddcb5aec147b6d9da4 |

apache-2.0 | ['image-classification', 'timm'] | false | Model card for maxvit_large_tf_384.in1k An official MaxViT image classification model. Trained in tensorflow on ImageNet-1k by paper authors. Ported from official Tensorflow implementation (https://github.com/google-research/maxvit) to PyTorch by Ross Wightman. | f5e9195f45d260d57dbaed64cdf73706 |

apache-2.0 | ['image-classification', 'timm'] | false | Model Details - **Model Type:** Image classification / feature backbone - **Model Stats:** - Params (M): 212.0 - GMACs: 132.6 - Activations (M): 445.8 - Image size: 384 x 384 - **Papers:** - MaxViT: Multi-Axis Vision Transformer: https://arxiv.org/abs/2204.01697 - **Dataset:** ImageNet-1k | 03e6b0d1962f3a1b397b6ca9b286120a |

apache-2.0 | ['image-classification', 'timm'] | false | Image Classification ```python from urllib.request import urlopen from PIL import Image import timm img = Image.open( urlopen('https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png')) model = timm.create_model('maxvit_large_tf_384.in1k', pretrained=True) model = model.eval() | 30d3091eb377294048ee08d5f8b7b1ca |

apache-2.0 | ['image-classification', 'timm'] | false | Feature Map Extraction ```python from urllib.request import urlopen from PIL import Image import timm img = Image.open( urlopen('https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png')) model = timm.create_model( 'maxvit_large_tf_384.in1k', pretrained=True, features_only=True, ) model = model.eval() | 2ee41cee06b9cb0d6c57d94280046d65 |

apache-2.0 | ['image-classification', 'timm'] | false | Image Embeddings ```python from urllib.request import urlopen from PIL import Image import timm img = Image.open( urlopen('https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png')) model = timm.create_model( 'maxvit_large_tf_384.in1k', pretrained=True, num_classes=0, | 4a96962369823d7bbd6f7d8587edf8ed |

apache-2.0 | ['generated_from_trainer'] | false | wav2vec2-burak-new-300-v2-8 This model is a fine-tuned version of [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.2841 - Wer: 0.2120 | 38295d9c627f6ebcb587350082f3e097 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0001 - train_batch_size: 16 - eval_batch_size: 8 - seed: 42 - gradient_accumulation_steps: 4 - total_train_batch_size: 64 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 500 - num_epochs: 151 - mixed_precision_training: Native AMP | e16977340eb6e7aede2e334ac5eef460 |
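The `total_train_batch_size` listed above follows from the per-device batch size and the gradient accumulation steps. A minimal sketch of that arithmetic, assuming training ran on a single device (the device count is not stated in the card, and `effective_batch_size` is a hypothetical helper, not part of any library):

```python
def effective_batch_size(per_device_batch_size, gradient_accumulation_steps, num_devices=1):
    """Number of samples that contribute to one optimizer step."""
    return per_device_batch_size * gradient_accumulation_steps * num_devices


# Values taken from the hyperparameter list; num_devices=1 is an assumption.
total = effective_batch_size(per_device_batch_size=16, gradient_accumulation_steps=4)
print(total)  # 64, matching total_train_batch_size
```

With more devices the same formula applies, which is why the reported total only pins down the product of the three factors.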

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:------:|:----:|:---------------:|:------:| | 6.0739 | 9.43 | 500 | 3.1506 | 1.0 | | 1.6652 | 18.87 | 1000 | 0.3396 | 0.4136 | | 0.4505 | 28.3 | 1500 | 0.2632 | 0.3138 | | 0.3115 | 37.74 | 2000 | 0.2536 | 0.2849 | | 0.2421 | 47.17 | 2500 | 0.2674 | 0.2588 | | 0.203 | 56.6 | 3000 | 0.2552 | 0.2471 | | 0.181 | 66.04 | 3500 | 0.2636 | 0.2595 | | 0.1581 | 75.47 | 4000 | 0.2527 | 0.2416 | | 0.1453 | 84.91 | 4500 | 0.2773 | 0.2257 | | 0.1305 | 94.34 | 5000 | 0.2825 | 0.2257 | | 0.1244 | 103.77 | 5500 | 0.2754 | 0.2312 | | 0.1127 | 113.21 | 6000 | 0.2772 | 0.2223 | | 0.1094 | 122.64 | 6500 | 0.2720 | 0.2223 | | 0.1033 | 132.08 | 7000 | 0.2863 | 0.2202 | | 0.099 | 141.51 | 7500 | 0.2853 | 0.2140 | | 0.0972 | 150.94 | 8000 | 0.2841 | 0.2120 | | acd4e3a6efd47cc2fdaaee853762fe9a |

apache-2.0 | ['image-classification', 'timm'] | false | Model card for convnext_base.clip_laion2b_augreg_ft_in1k A ConvNeXt image classification model. CLIP image tower weights pretrained in [OpenCLIP](https://github.com/mlfoundations/open_clip) on LAION and fine-tuned on ImageNet-1k in `timm` by Ross Wightman. Please see related OpenCLIP model cards for more details on pretrain: * https://huggingface.co/laion/CLIP-convnext_large_d.laion2B-s26B-b102K-augreg * https://huggingface.co/laion/CLIP-convnext_base_w-laion2B-s13B-b82K-augreg * https://huggingface.co/laion/CLIP-convnext_base_w_320-laion_aesthetic-s13B-b82K | c13444d531664686cdff8e4f7f491619 |

apache-2.0 | ['image-classification', 'timm'] | false | Model Details - **Model Type:** Image classification / feature backbone - **Model Stats:** - Params (M): 88.6 - GMACs: 20.1 - Activations (M): 37.6 - Image size: 256 x 256 - **Papers:** - LAION-5B: An open large-scale dataset for training next generation image-text models: https://arxiv.org/abs/2210.08402 - A ConvNet for the 2020s: https://arxiv.org/abs/2201.03545 - Learning Transferable Visual Models From Natural Language Supervision: https://arxiv.org/abs/2103.00020 - **Original:** https://github.com/mlfoundations/open_clip - **Pretrain Dataset:** LAION-2B - **Dataset:** ImageNet-1k | 0936a4b9a2bf75bb6fe1e4a699914b55 |

apache-2.0 | ['image-classification', 'timm'] | false | Image Classification ```python from urllib.request import urlopen from PIL import Image import timm img = Image.open( urlopen('https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png')) model = timm.create_model('convnext_base.clip_laion2b_augreg_ft_in1k', pretrained=True) model = model.eval() | 8db02dc2bb355ee6135cbc08d609ad19 |

apache-2.0 | ['image-classification', 'timm'] | false | Feature Map Extraction ```python from urllib.request import urlopen from PIL import Image import timm img = Image.open( urlopen('https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png')) model = timm.create_model( 'convnext_base.clip_laion2b_augreg_ft_in1k', pretrained=True, features_only=True, ) model = model.eval() | b7a95d6f3b82ba129cba74d73ec03bf6 |

apache-2.0 | ['image-classification', 'timm'] | false | Image Embeddings ```python from urllib.request import urlopen from PIL import Image import timm img = Image.open( urlopen('https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png')) model = timm.create_model( 'convnext_base.clip_laion2b_augreg_ft_in1k', pretrained=True, num_classes=0, | f94722fb7f96a0011a0cddcc36ae0d23 |

apache-2.0 | ['image-classification', 'timm'] | false | Citation ```bibtex @software{ilharco_gabriel_2021_5143773, author = {Ilharco, Gabriel and Wortsman, Mitchell and Wightman, Ross and Gordon, Cade and Carlini, Nicholas and Taori, Rohan and Dave, Achal and Shankar, Vaishaal and Namkoong, Hongseok and Miller, John and Hajishirzi, Hannaneh and Farhadi, Ali and Schmidt, Ludwig}, title = {OpenCLIP}, month = jul, year = 2021, note = {If you use this software, please cite it as below.}, publisher = {Zenodo}, version = {0.1}, doi = {10.5281/zenodo.5143773}, url = {https://doi.org/10.5281/zenodo.5143773} } ``` ```bibtex @inproceedings{schuhmann2022laionb, title={{LAION}-5B: An open large-scale dataset for training next generation image-text models}, author={Christoph Schuhmann and Romain Beaumont and Richard Vencu and Cade W Gordon and Ross Wightman and Mehdi Cherti and Theo Coombes and Aarush Katta and Clayton Mullis and Mitchell Wortsman and Patrick Schramowski and Srivatsa R Kundurthy and Katherine Crowson and Ludwig Schmidt and Robert Kaczmarczyk and Jenia Jitsev}, booktitle={Thirty-sixth Conference on Neural Information Processing Systems Datasets and Benchmarks Track}, year={2022}, url={https://openreview.net/forum?id=M3Y74vmsMcY} } ``` ```bibtex @misc{rw2019timm, author = {Ross Wightman}, title = {PyTorch Image Models}, year = {2019}, publisher = {GitHub}, journal = {GitHub repository}, doi = {10.5281/zenodo.4414861}, howpublished = {\url{https://github.com/rwightman/pytorch-image-models}} } ``` ```bibtex @inproceedings{Radford2021LearningTV, title={Learning Transferable Visual Models From Natural Language Supervision}, author={Alec Radford and Jong Wook Kim and Chris Hallacy and A. 
Ramesh and Gabriel Goh and Sandhini Agarwal and Girish Sastry and Amanda Askell and Pamela Mishkin and Jack Clark and Gretchen Krueger and Ilya Sutskever}, booktitle={ICML}, year={2021} } ``` ```bibtex @article{liu2022convnet, author = {Zhuang Liu and Hanzi Mao and Chao-Yuan Wu and Christoph Feichtenhofer and Trevor Darrell and Saining Xie}, title = {A ConvNet for the 2020s}, journal = {Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR)}, year = {2022}, } ``` | 8115dc22da14bfb32232f69442406ba7 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Wav2Vec2-Large-XLSR-53-Mongolian Fine-tuned [facebook/wav2vec2-large-xlsr-53](https://huggingface.co/facebook/wav2vec2-large-xlsr-53) on Mongolian using the [Common Voice](https://huggingface.co/datasets/common_voice) dataset. When using this model, make sure that your speech input is sampled at 16kHz. | d7dbef672b3e1d7500098338dee51867 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Usage The model can be used directly (without a language model) as follows: ```python import torch import torchaudio from datasets import load_dataset from transformers import Wav2Vec2ForCTC, Wav2Vec2Processor test_dataset = load_dataset("common_voice", "mn", split="test[:2%]") processor = Wav2Vec2Processor.from_pretrained("anton-l/wav2vec2-large-xlsr-53-mongolian") model = Wav2Vec2ForCTC.from_pretrained("anton-l/wav2vec2-large-xlsr-53-mongolian") resampler = torchaudio.transforms.Resample(48_000, 16_000) | 9a11a3114b95aa9bce36786a04adce01 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Evaluation The model can be evaluated as follows on the Mongolian test data of Common Voice. ```python import torch import torchaudio import urllib.request import tarfile import pandas as pd from tqdm.auto import tqdm from datasets import load_metric from transformers import Wav2Vec2ForCTC, Wav2Vec2Processor | 9ba65fc70e53bc31c768ad5ab0566571 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Download the raw data instead of using HF datasets to save disk space data_url = "https://voice-prod-bundler-ee1969a6ce8178826482b88e843c335139bd3fb4.s3.amazonaws.com/cv-corpus-6.1-2020-12-11/mn.tar.gz" filestream = urllib.request.urlopen(data_url) data_file = tarfile.open(fileobj=filestream, mode="r|gz") data_file.extractall() wer = load_metric("wer") processor = Wav2Vec2Processor.from_pretrained("anton-l/wav2vec2-large-xlsr-53-mongolian") model = Wav2Vec2ForCTC.from_pretrained("anton-l/wav2vec2-large-xlsr-53-mongolian") model.to("cuda") cv_test = pd.read_csv("cv-corpus-6.1-2020-12-11/mn/test.tsv", sep='\t') clips_path = "cv-corpus-6.1-2020-12-11/mn/clips/" def clean_sentence(sent): sent = sent.lower() | 6f5604565c64e560e7c5605bc1374b7a |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | remove repeated spaces sent = " ".join(sent.split()) return sent targets = [] preds = [] for i, row in tqdm(cv_test.iterrows(), total=cv_test.shape[0]): row["sentence"] = clean_sentence(row["sentence"]) speech_array, sampling_rate = torchaudio.load(clips_path + row["path"]) resampler = torchaudio.transforms.Resample(sampling_rate, 16_000) row["speech"] = resampler(speech_array).squeeze().numpy() inputs = processor(row["speech"], sampling_rate=16_000, return_tensors="pt", padding=True) with torch.no_grad(): logits = model(inputs.input_values.to("cuda"), attention_mask=inputs.attention_mask.to("cuda")).logits pred_ids = torch.argmax(logits, dim=-1) targets.append(row["sentence"]) preds.append(processor.batch_decode(pred_ids)[0]) print("WER: {:.2f}".format(100 * wer.compute(predictions=preds, references=targets))) ``` **Test Result**: 38.53 % | 23d8139552c0abeb68c6f6855de77604 |
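For readers who want to see what the reported word error rate measures, the following is a minimal pure-Python sketch. It assumes a corpus-level definition (total word-level edit distance divided by total reference words); `word_error_rate` is a hypothetical helper written for illustration and is not the implementation behind `load_metric("wer")`.

```python
def word_error_rate(references, predictions):
    """Corpus-level WER: total word edits divided by total reference words."""
    total_edits = 0
    total_words = 0
    for ref, hyp in zip(references, predictions):
        ref_words, hyp_words = ref.split(), hyp.split()
        # Levenshtein distance over words, computed one row at a time.
        prev = list(range(len(hyp_words) + 1))
        for i, r in enumerate(ref_words, start=1):
            curr = [i]
            for j, h in enumerate(hyp_words, start=1):
                cost = 0 if r == h else 1
                curr.append(min(
                    prev[j] + 1,         # deletion of a reference word
                    curr[j - 1] + 1,     # insertion of a hypothesis word
                    prev[j - 1] + cost,  # substitution or exact match
                ))
            prev = curr
        total_edits += prev[-1]
        total_words += len(ref_words)
    return total_edits / total_words


# One substitution (b -> x) and one deletion (d) over four reference words.
print("WER: {:.2f}".format(100 * word_error_rate(["a b c d"], ["a x c"])))  # WER: 50.00
```

The sketch raises `ZeroDivisionError` when all references are empty; a production metric would need to handle that case explicitly.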

apache-2.0 | ['translation'] | false | opus-mt-sv-to * source languages: sv * target languages: to * OPUS readme: [sv-to](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/sv-to/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2020-01-16.zip](https://object.pouta.csc.fi/OPUS-MT-models/sv-to/opus-2020-01-16.zip) * test set translations: [opus-2020-01-16.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/sv-to/opus-2020-01-16.test.txt) * test set scores: [opus-2020-01-16.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/sv-to/opus-2020-01-16.eval.txt) | efe5450cfb7930efd10042ab08572593 |

creativeml-openrail-m | ['stable-diffusion', 'stable diffusion chinese', 'stable-diffusion-diffusers', 'text-to-image', 'Chinese'] | false | Gradio We support a [Gradio](https://github.com/gradio-app/gradio) Web UI to run Taiyi-Stable-Diffusion-1B-Chinese-EN-v0.1: [Taiyi-Stable-Diffusion-Chinese Space](https://huggingface.co/spaces/IDEA-CCNL/Taiyi-Stable-Diffusion-Chinese) | 395076561e9405f4e342220f1dd64d3e |

creativeml-openrail-m | ['stable-diffusion', 'stable diffusion chinese', 'stable-diffusion-diffusers', 'text-to-image', 'Chinese'] | false | 模型分类 Model Taxonomy | 需求 Demand | 任务 Task | 系列 Series | 模型 Model | 参数 Parameter | 额外 Extra | | :----: | :----: | :----: | :----: | :----: | :----: | | 特殊 Special | 多模态 Multimodal | 太乙 Taiyi | Stable Diffusion | 1B | Chinese and English | | 937c5a8750f2b9c5b3b84dce687abd73 |

creativeml-openrail-m | ['stable-diffusion', 'stable diffusion chinese', 'stable-diffusion-diffusers', 'text-to-image', 'Chinese'] | false | 模型信息 Model Information 我们将[Noah-Wukong](https://wukong-dataset.github.io/wukong-dataset/)数据集(100M)和[Zero](https://zero.so.com/)数据集(23M)用作预训练的数据集,先用[IDEA-CCNL/Taiyi-CLIP-RoBERTa-102M-ViT-L-Chinese](https://huggingface.co/IDEA-CCNL/Taiyi-CLIP-RoBERTa-102M-ViT-L-Chinese)对这两个数据集的图文对相似性进行打分,取CLIP Score大于0.2的图文对作为我们的训练集。 我们使用[stable-diffusion-v1-4](https://huggingface.co/CompVis/stable-diffusion-v1-4)([论文](https://arxiv.org/abs/2112.10752))模型进行继续训练,其中训练分为两个stage。 第一个stage中冻住模型的其他部分,只训练text encoder,以便保留原始模型的生成能力且实现中文概念的对齐。 第二个stage中将全部模型解冻,一起训练text encoder和diffusion model,以便diffusion model更好的适配中文guidance。 第一个stage我们训练了80小时,第二个stage训练了100小时,两个stage都是用了8 x A100。该版本是一个初步的版本,我们将持续优化模型并开源,欢迎交流! We use [Noah-Wukong](https://wukong-dataset.github.io/wukong-dataset/)(100M) and [Zero](https://zero.so.com/)(23M) as our datasets, and take the image-text pairs with a CLIP Score (based on [IDEA-CCNL/Taiyi-CLIP-RoBERTa-102M-ViT-L-Chinese](https://huggingface.co/IDEA-CCNL/Taiyi-CLIP-RoBERTa-102M-ViT-L-Chinese)) greater than 0.2 as our training set. We fine-tune the [stable-diffusion-v1-4](https://huggingface.co/CompVis/stable-diffusion-v1-4)([paper](https://arxiv.org/abs/2112.10752)) model in two stages. Stage 1: To keep the powerful generative capability of Stable Diffusion and align Chinese concepts with the images, we train only the text encoder and freeze the other parts of the model. Stage 2: We unfreeze the whole model and train the text encoder and the diffusion model together, so that the diffusion model adapts better to Chinese-language guidance. The first stage took 80 hours to train and the second stage 100 hours, both on 8 x A100. This is a preliminary version; we will continue to optimize the model and open-source it. Feedback and discussion are welcome! | 04e98cee60aba0a43cd1c638c1683138 |

creativeml-openrail-m | ['stable-diffusion', 'stable diffusion chinese', 'stable-diffusion-diffusers', 'text-to-image', 'Chinese'] | false | Result 小桥流水人家,Van Gogh style。  小桥流水人家,水彩。  吃过桥米线的猫。  穿着宇航服的哈士奇。  | 1bda9a7aaea67f7701affedc8804ea98 |

creativeml-openrail-m | ['stable-diffusion', 'stable diffusion chinese', 'stable-diffusion-diffusers', 'text-to-image', 'Chinese'] | false | 全精度 Full precision ```py from diffusers import StableDiffusionPipeline pipe = StableDiffusionPipeline.from_pretrained("IDEA-CCNL/Taiyi-Stable-Diffusion-1B-Chinese-EN-v0.1").to("cuda") prompt = '小桥流水人家,Van Gogh style' image = pipe(prompt, guidance_scale=10).images[0] image.save("小桥.png") ``` | 8231cc1ba8c17003446d71c48d147f54 |

creativeml-openrail-m | ['stable-diffusion', 'stable diffusion chinese', 'stable-diffusion-diffusers', 'text-to-image', 'Chinese'] | false | 半精度 Half precision FP16 (CUDA) Adding `torch_dtype=torch.float16` and `device_map="auto"` loads the FP16 weights quickly and speeds up inference. For more information, see [the optimization docs](https://huggingface.co/docs/diffusers/main/en/optimization/fp16 | f379af9f3e4949779506b485fba574d2 |

creativeml-openrail-m | ['stable-diffusion', 'stable diffusion chinese', 'stable-diffusion-diffusers', 'text-to-image', 'Chinese'] | false | !pip install git+https://github.com/huggingface/accelerate from diffusers import StableDiffusionPipeline import torch torch.backends.cudnn.benchmark = True pipe = StableDiffusionPipeline.from_pretrained("IDEA-CCNL/Taiyi-Stable-Diffusion-1B-Chinese-EN-v0.1", torch_dtype=torch.float16) pipe.to('cuda') prompt = '小桥流水人家,Van Gogh style' image = pipe(prompt, guidance_scale=10.0).images[0] image.save("小桥.png") ``` | 2f79fb96f3ddb5daa8ce0c5e2d1d7d2f |

creativeml-openrail-m | ['stable-diffusion', 'stable diffusion chinese', 'stable-diffusion-diffusers', 'text-to-image', 'Chinese'] | false | 引用 Citation 如果您在您的工作中使用了我们的模型,可以引用我们的[总论文](https://arxiv.org/abs/2209.02970): If you use this resource in your work, please cite our [paper](https://arxiv.org/abs/2209.02970): ```text @article{fengshenbang, author = {Junjie Wang and Yuxiang Zhang and Lin Zhang and Ping Yang and Xinyu Gao and Ziwei Wu and Xiaoqun Dong and Junqing He and Jianheng Zhuo and Qi Yang and Yongfeng Huang and Xiayu Li and Yanghan Wu and Junyu Lu and Xinyu Zhu and Weifeng Chen and Ting Han and Kunhao Pan and Rui Wang and Hao Wang and Xiaojun Wu and Zhongshen Zeng and Chongpei Chen and Ruyi Gan and Jiaxing Zhang}, title = {Fengshenbang 1.0: Being the Foundation of Chinese Cognitive Intelligence}, journal = {CoRR}, volume = {abs/2209.02970}, year = {2022} } ``` 也可以引用我们的[网站](https://github.com/IDEA-CCNL/Fengshenbang-LM/): You can also cite our [website](https://github.com/IDEA-CCNL/Fengshenbang-LM/): ```text @misc{Fengshenbang-LM, title={Fengshenbang-LM}, author={IDEA-CCNL}, year={2021}, howpublished={\url{https://github.com/IDEA-CCNL/Fengshenbang-LM}}, } ``` | 5d713be1fae62977ceebe0d9e118fd0b |

mit | ['spacy', 'token-classification'] | false | nb_core_news_lg Norwegian (Bokmål) pipeline optimized for CPU. Components: tok2vec, morphologizer, parser, lemmatizer (trainable_lemmatizer), senter, ner, attribute_ruler. | Feature | Description | | --- | --- | | **Name** | `nb_core_news_lg` | | **Version** | `3.5.0` | | **spaCy** | `>=3.5.0,<3.6.0` | | **Default Pipeline** | `tok2vec`, `morphologizer`, `parser`, `lemmatizer`, `attribute_ruler`, `ner` | | **Components** | `tok2vec`, `morphologizer`, `parser`, `lemmatizer`, `senter`, `attribute_ruler`, `ner` | | **Vectors** | 500000 keys, 500000 unique vectors (300 dimensions) | | **Sources** | [UD Norwegian Bokmaal v2.8](https://github.com/UniversalDependencies/UD_Norwegian-Bokmaal) (Øvrelid, Lilja; Jørgensen, Fredrik; Hohle, Petter)<br />[NorNE: Norwegian Named Entities (commit: bd311de5)](https://github.com/ltgoslo/norne) (Language Technology Group (University of Oslo))<br />[Explosion fastText Vectors (cbow, OSCAR Common Crawl + Wikipedia)](https://spacy.io) (Explosion) | | **License** | `MIT` | | **Author** | [Explosion](https://explosion.ai) | | 7d35c14394866cd892dbfbf516f9c4e6 |

mit | ['spacy', 'token-classification'] | false | Label Scheme <details> <summary>View label scheme (249 labels for 3 components)</summary> | Component | Labels | | --- | --- | | **`morphologizer`** | `Definite=Ind\|Gender=Neut\|Number=Sing\|POS=NOUN`, `POS=CCONJ`, `Definite=Ind\|Gender=Masc\|Number=Sing\|POS=NOUN`, `POS=SCONJ`, `Definite=Def\|Gender=Masc\|Number=Sing\|POS=NOUN`, `Definite=Ind\|Gender=Neut\|Number=Plur\|POS=NOUN`, `POS=PUNCT`, `Mood=Ind\|POS=VERB\|Tense=Past\|VerbForm=Fin`, `POS=ADP`, `Gender=Masc\|Number=Sing\|POS=DET\|PronType=Dem`, `Definite=Def\|Degree=Pos\|Number=Sing\|POS=ADJ`, `POS=PROPN`, `POS=X`, `Mood=Ind\|POS=VERB\|Tense=Pres\|VerbForm=Fin`, `Definite=Def\|Gender=Neut\|Number=Sing\|POS=NOUN`, `POS=PRON\|PronType=Rel`, `Mood=Ind\|POS=AUX\|Tense=Pres\|VerbForm=Fin`, `Definite=Ind\|Gender=Neut\|Number=Sing\|POS=ADJ\|VerbForm=Part`, `Definite=Ind\|Degree=Pos\|Number=Sing\|POS=ADJ`, `Definite=Ind\|Gender=Fem\|Number=Sing\|POS=NOUN`, `Number=Plur\|POS=ADJ\|VerbForm=Part`, `Definite=Ind\|Gender=Fem\|Number=Plur\|POS=NOUN`, `POS=ADV`, `Gender=Neut\|Number=Sing\|POS=PRON\|Person=3\|PronType=Prs`, `Definite=Ind\|Number=Sing\|POS=ADJ\|VerbForm=Part`, `POS=VERB\|VerbForm=Part`, `Definite=Ind\|Gender=Masc\|Number=Plur\|POS=NOUN`, `Definite=Ind\|Degree=Pos\|Gender=Neut\|Number=Sing\|POS=ADJ`, `Degree=Pos\|Number=Plur\|POS=ADJ`, `NumType=Card\|Number=Plur\|POS=NUM`, `Definite=Def\|Gender=Masc\|Number=Plur\|POS=NOUN`, `Case=Acc\|POS=PRON\|PronType=Prs\|Reflex=Yes`, `Case=Gen\|Definite=Ind\|Gender=Neut\|Number=Sing\|POS=NOUN`, `POS=PART`, `POS=VERB\|VerbForm=Inf`, `Case=Nom\|Number=Plur\|POS=PRON\|Person=3\|PronType=Prs`, `Mood=Ind\|POS=AUX\|Tense=Past\|VerbForm=Fin`, `Gender=Fem\|POS=PROPN`, `POS=NOUN`, `Gender=Masc\|POS=PROPN`, `Gender=Neut\|Number=Sing\|POS=DET\|PronType=Dem`, `Gender=Masc\|Number=Sing\|POS=DET\|PronType=Art`, `Case=Gen\|Definite=Def\|Gender=Masc\|Number=Sing\|POS=NOUN`, `Abbr=Yes\|POS=PROPN`, `POS=PART\|Polarity=Neg`, 
`Number=Plur\|POS=PRON\|Poss=Yes\|PronType=Prs`, `Case=Gen\|Definite=Ind\|Gender=Neut\|Number=Plur\|POS=NOUN`, `Case=Gen\|POS=PROPN`, `Gender=Fem\|Number=Sing\|POS=DET\|PronType=Dem`, `Gender=Masc\|Number=Sing\|POS=PRON\|Poss=Yes\|PronType=Prs`, `Definite=Def\|Degree=Sup\|POS=ADJ`, `Case=Gen\|Gender=Fem\|POS=PROPN`, `Number=Plur\|POS=DET\|PronType=Dem`, `Case=Gen\|Definite=Def\|Gender=Neut\|Number=Sing\|POS=NOUN`, `Definite=Ind\|Degree=Sup\|POS=ADJ`, `Definite=Def\|Gender=Fem\|Number=Plur\|POS=NOUN`, `Gender=Neut\|POS=PROPN`, `Number=Plur\|POS=DET\|PronType=Int`, `Definite=Def\|Gender=Neut\|Number=Plur\|POS=NOUN`, `Definite=Def\|POS=DET\|PronType=Dem`, `Gender=Neut\|Number=Sing\|POS=DET\|PronType=Art`, `Mood=Ind\|POS=VERB\|Tense=Pres\|VerbForm=Fin\|Voice=Pass`, `Abbr=Yes\|Case=Gen\|POS=PROPN`, `Animacy=Hum\|Case=Nom\|Gender=Masc\|Number=Sing\|POS=PRON\|Person=3\|PronType=Prs`, `Degree=Cmp\|POS=ADJ`, `POS=ADJ\|VerbForm=Part`, `Gender=Neut\|Number=Sing\|POS=PRON\|Poss=Yes\|PronType=Prs`, `Abbr=Yes\|POS=ADP`, `Definite=Ind\|Gender=Neut\|Number=Sing\|POS=DET\|PronType=Prs`, `Case=Gen\|Definite=Def\|Gender=Neut\|Number=Plur\|POS=NOUN`, `POS=AUX\|VerbForm=Part`, `POS=PRON\|PronType=Int`, `Gender=Fem\|Number=Sing\|POS=PRON\|Poss=Yes\|PronType=Prs`, `Number=Plur\|POS=PRON\|Person=3\|PronType=Ind,Prs`, `Number=Plur\|POS=DET\|PronType=Ind`, `Degree=Pos\|POS=ADJ`, `Animacy=Hum\|Case=Nom\|Number=Plur\|POS=PRON\|Person=1\|PronType=Prs`, `POS=VERB\|VerbForm=Inf\|Voice=Pass`, `Definite=Ind\|Gender=Fem\|Number=Sing\|POS=DET\|PronType=Dem`, `Gender=Neut\|Number=Sing\|POS=DET\|PronType=Ind`, `Animacy=Hum\|Case=Acc\|Gender=Masc\|Number=Sing\|POS=PRON\|Person=3\|PronType=Prs`, `Animacy=Hum\|Case=Nom\|Number=Sing\|POS=PRON\|Person=1\|PronType=Prs`, `Number=Plur\|POS=DET\|Polarity=Neg\|PronType=Neg`, `NumType=Card\|POS=NUM`, `Gender=Masc\|Number=Sing\|POS=DET\|PronType=Ind`, `POS=DET\|PronType=Prs`, `Gender=Fem\|Number=Sing\|POS=DET\|PronType=Ind`, `Case=Gen\|Gender=Neut\|POS=PROPN`, 
`Gender=Masc\|Number=Sing\|POS=DET\|Polarity=Neg\|PronType=Neg`, `Definite=Def\|Number=Sing\|POS=ADJ\|VerbForm=Part`, `Gender=Fem,Masc\|Number=Sing\|POS=PRON\|Person=3\|PronType=Prs`, `POS=AUX\|VerbForm=Inf`, `Case=Acc\|Number=Plur\|POS=PRON\|Person=3\|PronType=Prs`, `Case=Gen\|Degree=Pos\|Number=Plur\|POS=ADJ`, `Number=Plur\|POS=DET\|PronType=Tot`, `Case=Gen\|Gender=Masc\|Number=Sing\|POS=DET\|PronType=Dem`, `Number=Plur\|POS=DET\|PronType=Prs`, `POS=SYM`, `Gender=Neut\|NumType=Card\|Number=Sing\|POS=NUM`, `Animacy=Hum\|Case=Nom\|Number=Sing\|POS=PRON\|PronType=Prs`, `Definite=Ind\|Gender=Masc\|Number=Sing\|POS=DET\|PronType=Prs`, `Case=Gen\|Definite=Ind\|Gender=Masc\|Number=Sing\|POS=NOUN`, `Abbr=Yes\|POS=ADV`, `Definite=Ind\|Gender=Neut\|Number=Sing\|POS=DET\|PronType=Dem`, `Gender=Masc\|Number=Sing\|POS=DET\|PronType=Tot`, `Definite=Def\|POS=DET\|PronType=Prs`, `Animacy=Hum\|Case=Nom\|Gender=Fem\|Number=Sing\|POS=PRON\|Person=3\|PronType=Prs`, `Gender=Neut\|POS=NOUN`, `Gender=Neut\|Number=Sing\|POS=DET\|PronType=Int`, `Definite=Def\|NumType=Card\|POS=NUM`, `Mood=Imp\|POS=VERB\|VerbForm=Fin`, `Definite=Ind\|Number=Plur\|POS=NOUN`, `Gender=Neut\|Number=Sing\|POS=DET\|PronType=Tot`, `Gender=Fem\|Number=Sing\|POS=DET\|PronType=Tot`, `Animacy=Hum\|Case=Acc\|Number=Plur\|POS=PRON\|Person=1\|PronType=Prs`, `Gender=Fem,Masc\|Number=Sing\|POS=PRON\|Person=3\|Polarity=Neg\|PronType=Neg,Prs`, `Number=Plur\|POS=PRON\|Person=3\|Polarity=Neg\|PronType=Neg,Prs`, `Definite=Def\|NumType=Card\|Number=Sing\|POS=NUM`, `Gender=Masc\|NumType=Card\|Number=Sing\|POS=NUM`, `Definite=Ind\|Gender=Masc\|Number=Sing\|POS=DET\|PronType=Dem`, `Case=Gen\|Definite=Def\|Gender=Fem\|Number=Plur\|POS=NOUN`, `Case=Gen\|Gender=Neut\|Number=Sing\|POS=DET\|PronType=Dem`, `POS=SPACE`, `Animacy=Hum\|Number=Sing\|POS=PRON\|PronType=Art,Prs`, `Mood=Imp\|POS=AUX\|VerbForm=Fin`, `Number=Plur\|POS=PRON\|Person=3\|PronType=Prs,Tot`, `Number=Plur\|POS=ADJ`, `Gender=Masc\|POS=NOUN`, `Abbr=Yes\|POS=NOUN`, 
`Case=Gen\|Definite=Ind\|Gender=Masc\|Number=Plur\|POS=NOUN`, `Gender=Neut\|Number=Sing\|POS=PRON\|Person=3\|PronType=Ind,Prs`, `POS=INTJ`, `Animacy=Hum\|Case=Nom\|Number=Sing\|POS=PRON\|Person=2\|PronType=Prs`, `Animacy=Hum\|Case=Acc\|Number=Sing\|POS=PRON\|Person=1\|PronType=Prs`, `Case=Gen\|Definite=Def\|Gender=Masc\|Number=Plur\|POS=NOUN`, `POS=ADJ`, `Animacy=Hum\|Case=Acc\|Gender=Fem\|Number=Sing\|POS=PRON\|Person=3\|PronType=Prs`, `Animacy=Hum\|Case=Acc\|Number=Sing\|POS=PRON\|Person=2\|PronType=Prs`, `Definite=Def\|Gender=Fem\|Number=Sing\|POS=NOUN`, `Number=Sing\|POS=PRON\|Polarity=Neg\|PronType=Neg`, `Case=Gen\|POS=NOUN`, `Definite=Ind\|Number=Sing\|POS=ADJ`, `Case=Gen\|Gender=Masc\|POS=PROPN`, `Animacy=Hum\|Number=Plur\|POS=PRON\|PronType=Rcp`, `Case=Gen\|Definite=Ind\|Gender=Fem\|Number=Sing\|POS=NOUN`, `Number=Plur\|POS=PRON\|Person=3\|PronType=Prs`, `Gender=Fem,Masc\|Number=Sing\|POS=PRON\|Person=3\|PronType=Ind,Prs`, `Definite=Ind\|Gender=Fem\|Number=Sing\|POS=DET\|PronType=Prs`, `Case=Gen\|Definite=Def\|Gender=Fem\|Number=Sing\|POS=NOUN`, `Gender=Fem\|Number=Sing\|POS=DET\|PronType=Art`, `Case=Gen\|Definite=Def\|Degree=Pos\|Number=Sing\|POS=ADJ`, `Gender=Masc\|Number=Sing\|POS=DET\|PronType=Int`, `NumType=Card\|Number=Sing\|POS=NUM`, `Animacy=Hum\|Case=Acc\|Number=Plur\|POS=PRON\|Person=2\|PronType=Prs`, `Animacy=Hum\|Case=Nom\|Number=Plur\|POS=PRON\|Person=2\|PronType=Prs`, `Case=Gen\|Definite=Ind\|Degree=Pos\|Gender=Neut\|Number=Sing\|POS=ADJ`, `Degree=Sup\|POS=ADJ`, `Animacy=Hum\|POS=PRON\|PronType=Int`, `POS=DET\|PronType=Ind`, `Definite=Def\|Number=Sing\|POS=DET\|PronType=Dem`, `Gender=Fem\|POS=NOUN`, `Case=Gen\|Number=Plur\|POS=DET\|PronType=Dem`, `Gender=Fem,Masc\|Number=Sing\|POS=PRON\|Person=3\|PronType=Prs,Tot`, `Case=Gen\|Definite=Ind\|Gender=Fem\|Number=Plur\|POS=NOUN`, `Gender=Neut\|Number=Sing\|POS=DET\|Polarity=Neg\|PronType=Neg`, `Number=Plur\|POS=NOUN`, `POS=PRON\|PronType=Prs`, 
`Case=Gen\|Definite=Ind\|Degree=Pos\|Number=Sing\|POS=ADJ`, `Definite=Ind\|Number=Sing\|POS=VERB\|VerbForm=Part`, `Case=Gen\|Definite=Def\|Number=Sing\|POS=ADJ\|VerbForm=Part`, `Mood=Ind\|POS=VERB\|Tense=Past\|VerbForm=Fin\|Voice=Pass`, `Gender=Neut\|Number=Sing\|POS=DET\|PronType=Dem,Ind`, `Animacy=Hum\|POS=PRON\|Poss=Yes\|PronType=Int`, `Abbr=Yes\|POS=ADJ`, `Case=Gen\|Gender=Masc\|Number=Sing\|POS=DET\|PronType=Art`, `Abbr=Yes\|Definite=Def,Ind\|Gender=Masc\|Number=Sing\|POS=NOUN`, `Case=Gen\|Gender=Fem\|Number=Sing\|POS=DET\|PronType=Dem`, `Number=Plur\|POS=PRON\|Poss=Yes\|PronType=Rcp`, `Definite=Ind\|Degree=Pos\|POS=ADJ`, `Number=Plur\|POS=DET\|PronType=Art`, `Case=Gen\|NumType=Card\|Number=Plur\|POS=NUM`, `Abbr=Yes\|Definite=Def,Ind\|Gender=Neut\|Number=Plur,Sing\|POS=NOUN`, `Case=Gen\|Number=Plur\|POS=DET\|PronType=Tot`, `Abbr=Yes\|Definite=Def,Ind\|Gender=Masc\|Number=Plur,Sing\|POS=NOUN`, `Gender=Fem\|Number=Sing\|POS=DET\|PronType=Int`, `Definite=Ind\|Gender=Neut\|Number=Sing\|POS=ADJ`, `Case=Gen\|Definite=Ind\|Gender=Masc\|Number=Sing\|POS=DET\|PronType=Dem`, `Gender=Fem\|Number=Sing\|POS=DET\|PronType=Prs`, `Animacy=Hum\|Case=Gen,Nom\|Number=Sing\|POS=PRON\|PronType=Art,Prs`, `Definite=Def\|Degree=Pos\|Gender=Masc\|Number=Sing\|POS=ADJ`, `Animacy=Hum\|Case=Gen\|Number=Sing\|POS=PRON\|PronType=Art,Prs`, `Gender=Fem\|NumType=Card\|Number=Sing\|POS=NUM`, `Definite=Ind\|Gender=Masc\|POS=NOUN`, `Definite=Def\|Number=Plur\|POS=NOUN`, `Number=Sing\|POS=ADJ\|VerbForm=Part`, `Definite=Ind\|Gender=Masc\|Number=Sing\|POS=ADJ\|VerbForm=Part`, `Abbr=Yes\|Gender=Masc\|POS=NOUN`, `Abbr=Yes\|Case=Gen\|POS=NOUN`, `Abbr=Yes\|Mood=Ind\|POS=VERB\|Tense=Pres\|VerbForm=Fin`, `Abbr=Yes\|Degree=Pos\|POS=ADJ`, `Case=Gen\|Gender=Fem\|POS=NOUN`, `Case=Gen\|Degree=Cmp\|POS=ADJ`, `Definite=Ind\|Degree=Pos\|Gender=Masc\|Number=Sing\|POS=ADJ`, `Gender=Masc\|Number=Sing\|POS=NOUN` | | **`parser`** | `ROOT`, `acl`, `acl:cleft`, `acl:relcl`, `advcl`, `advmod`, `amod`, `appos`, `aux`, 
`aux:pass`, `case`, `cc`, `ccomp`, `compound`, `compound:prt`, `conj`, `cop`, `csubj`, `dep`, `det`, `discourse`, `expl`, `flat:foreign`, `flat:name`, `iobj`, `mark`, `nmod`, `nsubj`, `nsubj:pass`, `nummod`, `obj`, `obl`, `orphan`, `parataxis`, `punct`, `xcomp` | | **`ner`** | `DRV`, `EVT`, `GPE_LOC`, `GPE_ORG`, `LOC`, `MISC`, `ORG`, `PER`, `PROD` | </details> | 4304b3707929a5bae182766a81a8dbaa |

mit | ['spacy', 'token-classification'] | false | Accuracy | Type | Score | | --- | --- | | `TOKEN_ACC` | 99.81 | | `TOKEN_P` | 99.71 | | `TOKEN_R` | 99.53 | | `TOKEN_F` | 99.62 | | `POS_ACC` | 97.38 | | `MORPH_ACC` | 96.28 | | `MORPH_MICRO_P` | 97.90 | | `MORPH_MICRO_R` | 97.07 | | `MORPH_MICRO_F` | 97.48 | | `SENTS_P` | 94.18 | | `SENTS_R` | 94.11 | | `SENTS_F` | 94.14 | | `DEP_UAS` | 89.46 | | `DEP_LAS` | 86.42 | | `LEMMA_ACC` | 97.29 | | `TAG_ACC` | 97.38 | | `ENTS_P` | 84.84 | | `ENTS_R` | 84.18 | | `ENTS_F` | 84.51 | | 12cd25138400fe4ce4e45fdf8c193b74 |
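
The `*_P`, `*_R` and `*_F` rows above are related by the standard F1 definition (the harmonic mean of precision and recall). The sketch below recomputes F from the reported P and R as a sanity check. The function name `f_score` is hypothetical and is not part of spaCy; because the reported values are already rounded to two decimals, a recomputed F can occasionally differ in the last digit.

```python
def f_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall (F1), on the same scale as the inputs."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Values copied from the accuracy table above (percent scale).
reported = {
    "TOKEN": (99.71, 99.53),
    "MORPH_MICRO": (97.90, 97.07),
    "ENTS": (84.84, 84.18),
}

for name, (p, r) in reported.items():
    print(f"{name}_F = {f_score(p, r):.2f}")
```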

apache-2.0 | [] | false | NB-ROBERTA Training Code This is the current training code for the planned nb-roberta models. We are currently planning to run the following experiments: <table> <tr> <td><strong>Name</strong> </td> <td><strong>nb-roberta-base-old (C)</strong> </td> </tr> <tr> <td>Corpus </td> <td>NbAiLab/nb_bert </td> </tr> <tr> <td>Pod size </td> <td>v4-64 </td> </tr> <tr> <td>Batch size </td> <td>62*4*8 = 1984 ≈ 2k </td> </tr> <tr> <td>Learning rate </td> <td>3e-4 (RoBERTa article is using 6e-4 and bs=8k) </td> </tr> <tr> <td>Number of steps </td> <td>250k </td> </tr> </table> <table> <tr> <td><strong>Name</strong> </td> <td><strong>nb-roberta-base-ext (B)</strong> </td> </tr> <tr> <td>Corpus </td> <td>NbAiLab/nbailab_extended </td> </tr> <tr> <td>Pod size </td> <td>v4-64 </td> </tr> <tr> <td>Batch size </td> <td>62*4*8 = 1984 ≈ 2k </td> </tr> <tr> <td>Learning rate </td> <td>3e-4 (RoBERTa article is using 6e-4 and bs=8k) </td> </tr> <tr> <td>Number of steps </td> <td>250k </td> </tr> </table> <table> <tr> <td><strong>Name</strong> </td> <td><strong>nb-roberta-large-ext</strong> </td> </tr> <tr> <td>Corpus </td> <td>NbAiLab/nbailab_extended </td> </tr> <tr> <td>Pod size </td> <td>v4-64 </td> </tr> <tr> <td>Batch size </td> <td>32*4*8 = 1024 ≈ 1k </td> </tr> <tr> <td>Learning rate </td> <td>2e-4 (RoBERTa article is using 4e-4 and bs=8k) </td> </tr> <tr> <td>Number of steps </td> <td>500k </td> </tr> </table> <table> <tr> <td><strong>Name</strong> </td> <td><strong>nb-roberta-base-scandi</strong> </td> </tr> <tr> <td>Corpus </td> <td>NbAiLab/scandinavian </td> </tr> <tr> <td>Pod size </td> <td>v4-64 </td> </tr> <tr> <td>Batch size </td> <td>62*4*8 = 1984 ≈ 2k </td> </tr> <tr> <td>Learning rate </td> <td>3e-4 (RoBERTa article is using 6e-4 and bs=8k) </td> </tr> <tr> <td>Number of steps </td> <td>250k </td> </tr> </table> <table> <tr> <td><strong>Name</strong> </td> <td><strong>nb-roberta-large-scandi</strong> </td> </tr> <tr> <td>Corpus </td> 
<td>NbAiLab/scandinavian </td> </tr> <tr> <td>Pod size </td> <td>v4-64 </td> </tr> <tr> <td>Batch size </td> <td>32*4*8 = 1024 ≈ 1k </td> </tr> <tr> <td>Learning rate </td> <td>2e-4 (RoBERTa article is using 4e-4 and bs=8k) </td> </tr> <tr> <td>Number of steps </td> <td>500k </td> </tr> </table> | 6c44a967f6950fda0b4e2fd67eceb4f8 |

apache-2.0 | [] | false | Calculations Some basic figures that we used when estimating the number of training steps: * The Scandinavian corpus is 85GB * The Scandinavian corpus contains 13B words * With a conversion factor of 2.3, this is estimated at around 30B tokens * 30B tokens / (512 seq length * 3000 batch size) ≈ 20,000 steps | bf6b26c220779d9f6d14880fb0076853 |
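
The estimate above can be reproduced with a few lines of arithmetic. This is a minimal sketch using only the figures quoted in the calculation (13B words, a conversion factor of 2.3 tokens per word, sequence length 512, batch size 3000); the function name `estimate_steps` is hypothetical and does not come from the training code.

```python
def estimate_steps(words: float, tokens_per_word: float,
                   seq_length: int, batch_size: int) -> tuple[float, float]:
    """Return (estimated tokens, steps needed to consume those tokens once)."""
    tokens = words * tokens_per_word
    tokens_per_step = seq_length * batch_size  # tokens consumed by one optimizer step
    return tokens, tokens / tokens_per_step


tokens, steps = estimate_steps(words=13e9, tokens_per_word=2.3,
                               seq_length=512, batch_size=3000)
print(f"tokens: {tokens / 1e9:.1f}B")  # close to the rounded 30B figure
print(f"steps:  {steps:,.0f}")         # close to the rounded 20,000 figure
```

Using the unrounded token count gives a slightly smaller number of steps than the rounded 30B figure, which is why the result is best read as an order-of-magnitude estimate.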

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Whisper base Czech CV low LR This model is a fine-tuned version of [openai/whisper-base](https://huggingface.co/openai/whisper-base) on the mozilla-foundation/common_voice_11_0 cs dataset. It achieves the following results on the evaluation set: - Loss: 0.5171 - Wer: 42.9053 | 1b3dbfc95bc9abd538c25bb1447a0e26 |

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 1e-06 - train_batch_size: 64 - eval_batch_size: 32 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 500 - training_steps: 5000 - mixed_precision_training: Native AMP | b5baf9df4b1f9b8bdd05a3070681824c |
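
To make the schedule concrete, here is a minimal sketch of how the learning rate evolves under these settings. It assumes that `lr_scheduler_type: linear` means linear warmup from 0 to the peak rate over `lr_scheduler_warmup_steps`, followed by linear decay to 0 at `training_steps`, which is the usual reading of a linear schedule with warmup. The function `linear_lr` is hypothetical, written in plain Python for illustration, and is not the trainer's own code.

```python
def linear_lr(step: int, peak_lr: float = 1e-06,
              warmup_steps: int = 500, total_steps: int = 5000) -> float:
    """Learning rate at a given step: linear warmup, then linear decay to zero."""
    if step < warmup_steps:
        # Warmup phase: scale linearly from 0 up to peak_lr.
        return peak_lr * step / warmup_steps
    # Decay phase: scale linearly from peak_lr down to 0 at total_steps.
    remaining = max(0, total_steps - step)
    return peak_lr * remaining / (total_steps - warmup_steps)


for step in (0, 250, 500, 2750, 5000):
    print(f"step {step:>4}: lr = {linear_lr(step):.2e}")
```

Under this assumption the rate peaks at step 500 and has fallen to half the peak by step 2750, midway through the decay phase.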

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:-------:| | 0.6046 | 4.01 | 1000 | 0.6535 | 52.3084 | | 0.4037 | 8.02 | 2000 | 0.5706 | 46.6879 | | 0.3172 | 12.03 | 3000 | 0.5369 | 44.1042 | | 0.3606 | 16.04 | 4000 | 0.5218 | 43.0766 | | 0.3792 | 21.01 | 5000 | 0.5171 | 42.9053 | | f09419c943aaf77c7d8e8f1dff50b2bd |
