license | tags | is_nc | readme_section | hash |

|---|---|---|---|---|

mit | ['stable-diffusion', 'text-to-image'] | false | Example Pictures from Rebecca_3.5k <table> <tr> <td><img src=https://i.imgur.com/h9milQd.png width=100% height=100%/></td> <td><img src=https://i.imgur.com/3Uxe6Bi.png width=100% height=100%/></td> <td><img src=https://i.imgur.com/FHczkJj.png width=100% height=100%/></td> </tr> </table> | 55436e315c285924d5d8e211ab48c5ad |

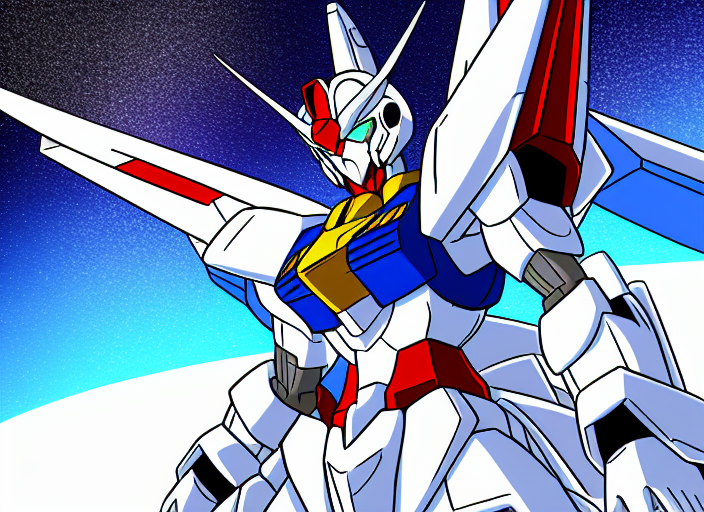

creativeml-openrail-m | [] | false | **Prompts:** The model is fine-tuned with DreamBooth on tagged images from *Suisei no Majo* (*Mobile Suit Gundam: The Witch from Mercury*); some prompts that work are 1. suletta mercury 2. miorine rembran 3. gundam aerial --- **Training details:** Trained with the [kanewallmann Dreambooth repository](https://github.com/kanewallmann/Dreambooth-Stable-Diffusion) using tags as captions 1. Trained for 10,000 steps, probably at the default learning rate (lr=1e-6) 2. Dataset: around 500 tagged images of Suisei no Majo plus thousands of customized regularization images --- **Problems:** Because the model is trained only on tagged images, it is more flexible but also harder to prompt. A detailed description may be needed to get the characters right, especially when prompting Suletta and Miorine in the same image. --- **Example Generations:** | d0c3d85ef2ee5bac51dffa85ef44972b |
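The card says tags were used as training captions and that tag-style prompts work best. A minimal sketch of turning a tag list into a comma-separated prompt follows. It assumes booru-style tags that use underscores for spaces; the card does not give the exact caption format. `tags_to_caption` is a hypothetical helper, not part of the training repository.

```python
def tags_to_caption(tags):
    """Join tags into one comma-separated caption/prompt.

    Assumption: tags use underscores for spaces (e.g. "suletta_mercury").
    """
    cleaned = []
    for tag in tags:
        tag = tag.strip().replace("_", " ")
        if tag and tag not in cleaned:  # drop empty and duplicate tags
            cleaned.append(tag)
    return ", ".join(cleaned)


print(tags_to_caption(["suletta_mercury", "miorine_rembran", "gundam_aerial", "suletta_mercury"]))
# suletta mercury, miorine rembran, gundam aerial
```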

mit | ['generated_from_trainer'] | false | bart-large-cnn-samsum-ElectrifAi_v6 This model is a fine-tuned version of [philschmid/bart-large-cnn-samsum](https://huggingface.co/philschmid/bart-large-cnn-samsum) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.4591 - Rouge1: 70.5822 - Rouge2: 55.7529 - Rougel: 63.7452 - Rougelsum: 69.9659 - Gen Len: 113.6 | ab58bc855863e4fade83b6e4f6acfbc4 |
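The card reports ROUGE scores on a 0-100 scale but does not define them. ROUGE-1 is the F-measure of unigram overlap between a reference summary and a generated one. The sketch below is a simplification that uses lowercase whitespace tokenization and no stemming. Library implementations apply their own tokenization and optional stemming, so their numbers will not match this exactly.

```python
from collections import Counter


def rouge1_f1(reference: str, candidate: str) -> float:
    """Unigram-overlap ROUGE-1 F1, scaled to 0-100 like the scores above."""
    ref = Counter(reference.lower().split())
    cand = Counter(candidate.lower().split())
    overlap = sum((ref & cand).values())  # clipped unigram matches
    if overlap == 0:
        return 0.0
    precision = overlap / sum(cand.values())
    recall = overlap / sum(ref.values())
    return 100 * 2 * precision * recall / (precision + recall)


print(round(rouge1_f1("the meeting was moved to friday", "meeting moved to friday"), 2))  # 80.0
```

All four candidate words appear in the reference, so precision is 1.0 and recall is 4/6. Their harmonic mean is 0.8.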

mit | ['generated_from_trainer'] | false | Training results

| Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | Gen Len |
|:-------------:|:-----:|:----:|:---------------:|:-------:|:-------:|:-------:|:---------:|:-------:|
| No log | 1.0 | 20 | 0.7010 | 63.9182 | 44.7625 | 53.1206 | 63.0249 | 102.5 |
| No log | 2.0 | 40 | 0.5084 | 68.113 | 52.0277 | 60.5913 | 67.282 | 114.8 |
| No log | 3.0 | 60 | 0.4591 | 70.5822 | 55.7529 | 63.7452 | 69.9659 | 113.6 |

| 4b3ea461a202534a517a3f4ac69546e5 |

apache-2.0 | ['deep-narrow'] | false | T5-Efficient-SMALL-EL8-DL4 (Deep-Narrow version) T5-Efficient-SMALL-EL8-DL4 is a variation of [Google's original T5](https://ai.googleblog.com/2020/02/exploring-transfer-learning-with-t5.html) following the [T5 model architecture](https://huggingface.co/docs/transformers/model_doc/t5). It is a *pretrained-only* checkpoint and was released with the paper **[Scale Efficiently: Insights from Pre-training and Fine-tuning Transformers](https://arxiv.org/abs/2109.10686)** by *Yi Tay, Mostafa Dehghani, Jinfeng Rao, William Fedus, Samira Abnar, Hyung Won Chung, Sharan Narang, Dani Yogatama, Ashish Vaswani, Donald Metzler*. In a nutshell, the paper indicates that a **Deep-Narrow** model architecture is favorable for **downstream** performance compared to other model architectures of similar parameter count. To quote the paper:

> We generally recommend a DeepNarrow strategy where the model’s depth is preferentially increased before considering any other forms of uniform scaling across other dimensions. This is largely due to how much depth influences the Pareto-frontier as shown in earlier sections of the paper. Specifically, a tall small (deep and narrow) model is generally more efficient compared to the base model. Likewise, a tall base model might also generally more efficient compared to a large model. We generally find that, regardless of size, even if absolute performance might increase as we continue to stack layers, the relative gain of Pareto-efficiency diminishes as we increase the layers, converging at 32 to 36 layers. Finally, we note that our notion of efficiency here relates to any one compute dimension, i.e., params, FLOPs or throughput (speed). We report all three key efficiency metrics (number of params, FLOPS and speed) and leave this decision to the practitioner to decide which compute dimension to consider.

To be more precise, *model depth* is defined as the number of transformer blocks that are stacked sequentially. A sequence of word embeddings is therefore processed sequentially by each transformer block. | fe0d61ce253e09bbfac30d94b64193a8 |
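The depth definition above means that each block consumes the previous block's output, so depth is simply the length of that chain. The toy sketch below shows only this sequential composition. The "blocks" are hypothetical stand-ins that add an offset; they are not real transformer layers.

```python
from functools import reduce
from typing import Callable, List, Sequence

Block = Callable[[List[float]], List[float]]


def run_stack(blocks: Sequence[Block], embeddings: List[float]) -> List[float]:
    """Apply each block to the previous block's output; depth == len(blocks)."""
    return reduce(lambda hidden, block: block(hidden), blocks, embeddings)


def make_block(offset: float) -> Block:
    # Hypothetical toy block: adds a constant to every position.
    return lambda h: [x + offset for x in h]


encoder = [make_block(1.0) for _ in range(8)]  # depth 8, like el = 8 in SMALL-EL8-DL4
print(len(encoder), run_stack(encoder, [0.0, 0.5]))  # 8 [8.0, 8.5]
```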

apache-2.0 | ['deep-narrow'] | false | Model architecture details This model checkpoint - **t5-efficient-small-el8-dl4** - is of model type **Small** with the following variations: - **el** (encoder depth, i.e. number of transformer blocks in the encoder) is **8** - **dl** (decoder depth, i.e. number of transformer blocks in the decoder) is **4** It has **58.42** million parameters and thus requires *ca.* **233.69 MB** of memory in full precision (*fp32*) or **116.84 MB** of memory in half precision (*fp16* or *bf16*). A summary of the *original* T5 model architectures can be seen here: | Model | nl (el/dl) | ff | dm | kv | nh | | 1035fb58e5cf2bd8daae90259d89d914 |
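The memory figures above follow from the parameter count multiplied by bytes per parameter: 4 bytes in fp32 and 2 bytes in fp16/bf16, with 1 MB = 10^6 bytes. This counts the weights only, not activations or optimizer state. The card's fp32 value (233.69) differs from 233.68 here because the 58.42M parameter count is itself rounded.

```python
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "bf16": 2}


def weight_memory_mb(num_params: float, dtype: str) -> float:
    """Memory for the weights alone, in MB (1 MB = 10**6 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e6


params = 58.42e6  # rounded parameter count from the card
for dtype in ("fp32", "fp16"):
    print(dtype, round(weight_memory_mb(params, dtype), 2))
# fp32 233.68
# fp16 116.84
```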

mit | ['generated_from_trainer'] | false | hyunwoongko-kobart-eb-finetuned-papers-meetings This model is a fine-tuned version of [hyunwoongko/kobart](https://huggingface.co/hyunwoongko/kobart) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.3136 - Rouge1: 18.3166 - Rouge2: 8.0509 - Rougel: 18.3332 - Rougelsum: 18.3146 - Gen Len: 19.9143 | 149933beb82c201c6b8e5690559d8281 |

mit | ['generated_from_trainer'] | false | Training results

| Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | Gen Len |
|:-------------:|:-----:|:-----:|:---------------:|:-------:|:------:|:-------:|:---------:|:-------:|
| 0.2118 | 1.0 | 7739 | 0.2951 | 18.0837 | 7.9585 | 18.0787 | 18.0784 | 19.896 |
| 0.1598 | 2.0 | 15478 | 0.2812 | 18.529 | 7.9891 | 18.5421 | 18.5271 | 19.8977 |
| 0.1289 | 3.0 | 23217 | 0.2807 | 18.0638 | 7.8086 | 18.0787 | 18.0583 | 19.9129 |
| 0.0873 | 4.0 | 30956 | 0.2923 | 18.3483 | 8.0233 | 18.3716 | 18.3696 | 19.914 |
| 0.0844 | 5.0 | 38695 | 0.3136 | 18.3166 | 8.0509 | 18.3332 | 18.3146 | 19.9143 |

| da4c7026afea624ec82b20be6fd93d15 |
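The headline metrics for this kobart checkpoint match the final epoch (5), not the epoch with the lowest validation loss (3). Validation loss rises after epoch 3 while training loss keeps falling, which suggests overfitting. The sketch below picks epochs from the rows copied out of the training-results table. If the run used the Hugging Face Trainer, options such as `load_best_model_at_end` and `metric_for_best_model` in `TrainingArguments` are the usual way to keep the best checkpoint instead of the last one.

```python
# (epoch, validation_loss, rouge1), copied from the training-results table
history = [
    (1, 0.2951, 18.0837),
    (2, 0.2812, 18.529),
    (3, 0.2807, 18.0638),
    (4, 0.2923, 18.3483),
    (5, 0.3136, 18.3166),
]

best_by_loss = min(history, key=lambda row: row[1])
best_by_rouge = max(history, key=lambda row: row[2])
final = history[-1]

print("lowest validation loss:", best_by_loss)  # epoch 3
print("highest ROUGE-1:", best_by_rouge)        # epoch 2
print("reported (final):", final)               # epoch 5
```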

apache-2.0 | ['image-classification', 'timm'] | false | Model card for convnext_large_mlp.clip_laion2b_augreg_ft_in1k_384

A ConvNeXt image classification model. CLIP image tower weights pretrained in [OpenCLIP](https://github.com/mlfoundations/open_clip) on LAION and fine-tuned on ImageNet-1k in `timm` by Ross Wightman.

Please see related OpenCLIP model cards for more details on pretrain:
* https://huggingface.co/laion/CLIP-convnext_large_d.laion2B-s26B-b102K-augreg
* https://huggingface.co/laion/CLIP-convnext_base_w-laion2B-s13B-b82K-augreg
* https://huggingface.co/laion/CLIP-convnext_base_w_320-laion_aesthetic-s13B-b82K

| 05a741375635a16e1c44f760290a6117 |

apache-2.0 | ['image-classification', 'timm'] | false | Model Details

- **Model Type:** Image classification / feature backbone
- **Model Stats:**
  - Params (M): 200.1
  - GMACs: 101.1
  - Activations (M): 126.7
  - Image size: 384 x 384
- **Papers:**
  - LAION-5B: An open large-scale dataset for training next generation image-text models: https://arxiv.org/abs/2210.08402
  - A ConvNet for the 2020s: https://arxiv.org/abs/2201.03545
  - Learning Transferable Visual Models From Natural Language Supervision: https://arxiv.org/abs/2103.00020
- **Original:** https://github.com/mlfoundations/open_clip
- **Pretrain Dataset:** LAION-2B
- **Dataset:** ImageNet-1k

| 84de9ed4c17aa6ebb63dfc36c6c9bc6d |

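apache-2.0 | ['image-classification', 'timm'] | false | Image Classification
```python
from urllib.request import urlopen
from PIL import Image
import timm
import torch

img = Image.open(urlopen(
    'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'
))

model = timm.create_model('convnext_large_mlp.clip_laion2b_augreg_ft_in1k_384', pretrained=True)
model = model.eval()

# get model-specific transforms (normalization, resize)
data_config = timm.data.resolve_model_data_config(model)
transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0))  # unsqueeze single image into batch of 1

# top-5 ImageNet-1k class probabilities (in percent) and their class indices
top5_probabilities, top5_class_indices = torch.topk(output.softmax(dim=1) * 100, k=5)
```
| 5e72c51ea0463bf48a8bc07ac75ac159 |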

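apache-2.0 | ['image-classification', 'timm'] | false | Feature Map Extraction
```python
from urllib.request import urlopen
from PIL import Image
import timm

img = Image.open(urlopen(
    'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'
))

model = timm.create_model(
    'convnext_large_mlp.clip_laion2b_augreg_ft_in1k_384',
    pretrained=True,
    features_only=True,
)
model = model.eval()

# get model-specific transforms (normalization, resize)
data_config = timm.data.resolve_model_data_config(model)
transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0))  # list of feature maps, one per stage

for feature_map in output:
    print(feature_map.shape)  # (1, channels, height, width) for each stage
```
| 14555db102d34ba84f1ef8326b73ac8b |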

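apache-2.0 | ['image-classification', 'timm'] | false | Image Embeddings
```python
from urllib.request import urlopen
from PIL import Image
import timm

img = Image.open(urlopen(
    'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'
))

model = timm.create_model(
    'convnext_large_mlp.clip_laion2b_augreg_ft_in1k_384',
    pretrained=True,
    num_classes=0,  # remove classifier nn.Linear
)
model = model.eval()

# get model-specific transforms (normalization, resize)
data_config = timm.data.resolve_model_data_config(model)
transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0))  # (batch_size, num_features) pooled embedding
```
| c1256afe16c1a1fa48562c964338da88 |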

apache-2.0 | ['image-classification', 'timm'] | false | By Top-1

All timing numbers are from eager-mode PyTorch 1.13 on an RTX 3090 w/ AMP.

| model | top1 | top5 | img_size | param_count (M) | gmacs | macts (M) | samples_per_sec | batch_size |
|-------|------|------|----------|-----------------|-------|-----------|-----------------|------------|
| [convnextv2_huge.fcmae_ft_in22k_in1k_512](https://huggingface.co/timm/convnextv2_huge.fcmae_ft_in22k_in1k_512) | 88.848 | 98.742 | 512 | 660.29 | 600.81 | 413.07 | 28.58 | 48 |
| [convnextv2_huge.fcmae_ft_in22k_in1k_384](https://huggingface.co/timm/convnextv2_huge.fcmae_ft_in22k_in1k_384) | 88.668 | 98.738 | 384 | 660.29 | 337.96 | 232.35 | 50.56 | 64 |
| [convnextv2_large.fcmae_ft_in22k_in1k_384](https://huggingface.co/timm/convnextv2_large.fcmae_ft_in22k_in1k_384) | 88.196 | 98.532 | 384 | 197.96 | 101.1 | 126.74 | 128.94 | 128 |
| [convnext_large_mlp.clip_laion2b_augreg_ft_in1k_384](https://huggingface.co/timm/convnext_large_mlp.clip_laion2b_augreg_ft_in1k_384) | 87.870 | 98.452 | 384 | 200.13 | 101.11 | 126.74 | 197.92 | 256 |
| [convnext_xlarge.fb_in22k_ft_in1k_384](https://huggingface.co/timm/convnext_xlarge.fb_in22k_ft_in1k_384) | 87.75 | 98.556 | 384 | 350.2 | 179.2 | 168.99 | 124.85 | 192 |
| [convnextv2_base.fcmae_ft_in22k_in1k_384](https://huggingface.co/timm/convnextv2_base.fcmae_ft_in22k_in1k_384) | 87.646 | 98.422 | 384 | 88.72 | 45.21 | 84.49 | 209.51 | 256 |
| [convnext_large.fb_in22k_ft_in1k_384](https://huggingface.co/timm/convnext_large.fb_in22k_ft_in1k_384) | 87.476 | 98.382 | 384 | 197.77 | 101.1 | 126.74 | 194.66 | 256 |
| [convnext_large_mlp.clip_laion2b_augreg_ft_in1k](https://huggingface.co/timm/convnext_large_mlp.clip_laion2b_augreg_ft_in1k) | 87.344 | 98.218 | 256 | 200.13 | 44.94 | 56.33 | 438.08 | 256 |
| [convnextv2_large.fcmae_ft_in22k_in1k](https://huggingface.co/timm/convnextv2_large.fcmae_ft_in22k_in1k) | 87.26 | 98.248 | 224 | 197.96 | 34.4 | 43.13 | 376.84 | 256 |
| [convnext_xlarge.fb_in22k_ft_in1k](https://huggingface.co/timm/convnext_xlarge.fb_in22k_ft_in1k) | 87.002 | 98.208 | 224 | 350.2 | 60.98 | 57.5 | 368.01 | 256 |
| [convnext_base.fb_in22k_ft_in1k_384](https://huggingface.co/timm/convnext_base.fb_in22k_ft_in1k_384) | 86.796 | 98.264 | 384 | 88.59 | 45.21 | 84.49 | 366.54 | 256 |
| [convnextv2_base.fcmae_ft_in22k_in1k](https://huggingface.co/timm/convnextv2_base.fcmae_ft_in22k_in1k) | 86.74 | 98.022 | 224 | 88.72 | 15.38 | 28.75 | 624.23 | 256 |
| [convnext_large.fb_in22k_ft_in1k](https://huggingface.co/timm/convnext_large.fb_in22k_ft_in1k) | 86.636 | 98.028 | 224 | 197.77 | 34.4 | 43.13 | 581.43 | 256 |
| [convnext_base.clip_laiona_augreg_ft_in1k_384](https://huggingface.co/timm/convnext_base.clip_laiona_augreg_ft_in1k_384) | 86.504 | 97.97 | 384 | 88.59 | 45.21 | 84.49 | 368.14 | 256 |
| [convnextv2_huge.fcmae_ft_in1k](https://huggingface.co/timm/convnextv2_huge.fcmae_ft_in1k) | 86.256 | 97.75 | 224 | 660.29 | 115.0 | 79.07 | 154.72 | 256 |
| [convnext_small.in12k_ft_in1k_384](https://huggingface.co/timm/convnext_small.in12k_ft_in1k_384) | 86.182 | 97.92 | 384 | 50.22 | 25.58 | 63.37 | 516.19 | 256 |
| [convnext_base.clip_laion2b_augreg_ft_in1k](https://huggingface.co/timm/convnext_base.clip_laion2b_augreg_ft_in1k) | 86.154 | 97.68 | 256 | 88.59 | 20.09 | 37.55 | 819.86 | 256 |
| [convnext_base.fb_in22k_ft_in1k](https://huggingface.co/timm/convnext_base.fb_in22k_ft_in1k) | 85.822 | 97.866 | 224 | 88.59 | 15.38 | 28.75 | 1037.66 | 256 |
| [convnext_small.fb_in22k_ft_in1k_384](https://huggingface.co/timm/convnext_small.fb_in22k_ft_in1k_384) | 85.778 | 97.886 | 384 | 50.22 | 25.58 | 63.37 | 518.95 | 256 |
| [convnextv2_large.fcmae_ft_in1k](https://huggingface.co/timm/convnextv2_large.fcmae_ft_in1k) | 85.742 | 97.584 | 224 | 197.96 | 34.4 | 43.13 | 375.23 | 256 |
| [convnext_small.in12k_ft_in1k](https://huggingface.co/timm/convnext_small.in12k_ft_in1k) | 85.174 | 97.506 | 224 | 50.22 | 8.71 | 21.56 | 1474.31 | 256 |
| [convnext_tiny.in12k_ft_in1k_384](https://huggingface.co/timm/convnext_tiny.in12k_ft_in1k_384) | 85.118 | 97.608 | 384 | 28.59 | 13.14 | 39.48 | 856.76 | 256 |
| [convnextv2_tiny.fcmae_ft_in22k_in1k_384](https://huggingface.co/timm/convnextv2_tiny.fcmae_ft_in22k_in1k_384) | 85.112 | 97.63 | 384 | 28.64 | 13.14 | 39.48 | 491.32 | 256 |
| [convnextv2_base.fcmae_ft_in1k](https://huggingface.co/timm/convnextv2_base.fcmae_ft_in1k) | 84.874 | 97.09 | 224 | 88.72 | 15.38 | 28.75 | 625.33 | 256 |
| [convnext_small.fb_in22k_ft_in1k](https://huggingface.co/timm/convnext_small.fb_in22k_ft_in1k) | 84.562 | 97.394 | 224 | 50.22 | 8.71 | 21.56 | 1478.29 | 256 |
| [convnext_large.fb_in1k](https://huggingface.co/timm/convnext_large.fb_in1k) | 84.282 | 96.892 | 224 | 197.77 | 34.4 | 43.13 | 584.28 | 256 |
| [convnext_tiny.in12k_ft_in1k](https://huggingface.co/timm/convnext_tiny.in12k_ft_in1k) | 84.186 | 97.124 | 224 | 28.59 | 4.47 | 13.44 | 2433.7 | 256 |
| [convnext_tiny.fb_in22k_ft_in1k_384](https://huggingface.co/timm/convnext_tiny.fb_in22k_ft_in1k_384) | 84.084 | 97.14 | 384 | 28.59 | 13.14 | 39.48 | 862.95 | 256 |
| [convnextv2_tiny.fcmae_ft_in22k_in1k](https://huggingface.co/timm/convnextv2_tiny.fcmae_ft_in22k_in1k) | 83.894 | 96.964 | 224 | 28.64 | 4.47 | 13.44 | 1452.72 | 256 |
| [convnext_base.fb_in1k](https://huggingface.co/timm/convnext_base.fb_in1k) | 83.82 | 96.746 | 224 | 88.59 | 15.38 | 28.75 | 1054.0 | 256 |
| [convnextv2_nano.fcmae_ft_in22k_in1k_384](https://huggingface.co/timm/convnextv2_nano.fcmae_ft_in22k_in1k_384) | 83.37 | 96.742 | 384 | 15.62 | 7.22 | 24.61 | 801.72 | 256 |
| [convnext_small.fb_in1k](https://huggingface.co/timm/convnext_small.fb_in1k) | 83.142 | 96.434 | 224 | 50.22 | 8.71 | 21.56 | 1464.0 | 256 |
| [convnextv2_tiny.fcmae_ft_in1k](https://huggingface.co/timm/convnextv2_tiny.fcmae_ft_in1k) | 82.92 | 96.284 | 224 | 28.64 | 4.47 | 13.44 | 1425.62 | 256 |
| [convnext_tiny.fb_in22k_ft_in1k](https://huggingface.co/timm/convnext_tiny.fb_in22k_ft_in1k) | 82.898 | 96.616 | 224 | 28.59 | 4.47 | 13.44 | 2480.88 | 256 |
| [convnext_nano.in12k_ft_in1k](https://huggingface.co/timm/convnext_nano.in12k_ft_in1k) | 82.282 | 96.344 | 224 | 15.59 | 2.46 | 8.37 | 3926.52 | 256 |
| [convnext_tiny_hnf.a2h_in1k](https://huggingface.co/timm/convnext_tiny_hnf.a2h_in1k) | 82.216 | 95.852 | 224 | 28.59 | 4.47 | 13.44 | 2529.75 | 256 |
| [convnext_tiny.fb_in1k](https://huggingface.co/timm/convnext_tiny.fb_in1k) | 82.066 | 95.854 | 224 | 28.59 | 4.47 | 13.44 | 2346.26 | 256 |
| [convnextv2_nano.fcmae_ft_in22k_in1k](https://huggingface.co/timm/convnextv2_nano.fcmae_ft_in22k_in1k) | 82.03 | 96.166 | 224 | 15.62 | 2.46 | 8.37 | 2300.18 | 256 |
| [convnextv2_nano.fcmae_ft_in1k](https://huggingface.co/timm/convnextv2_nano.fcmae_ft_in1k) | 81.83 | 95.738 | 224 | 15.62 | 2.46 | 8.37 | 2321.48 | 256 |
| [convnext_nano_ols.d1h_in1k](https://huggingface.co/timm/convnext_nano_ols.d1h_in1k) | 80.866 | 95.246 | 224 | 15.65 | 2.65 | 9.38 | 3523.85 | 256 |
| [convnext_nano.d1h_in1k](https://huggingface.co/timm/convnext_nano.d1h_in1k) | 80.768 | 95.334 | 224 | 15.59 | 2.46 | 8.37 | 3915.58 | 256 |
| [convnextv2_pico.fcmae_ft_in1k](https://huggingface.co/timm/convnextv2_pico.fcmae_ft_in1k) | 80.304 | 95.072 | 224 | 9.07 | 1.37 | 6.1 | 3274.57 | 256 |
| [convnext_pico.d1_in1k](https://huggingface.co/timm/convnext_pico.d1_in1k) | 79.526 | 94.558 | 224 | 9.05 | 1.37 | 6.1 | 5686.88 | 256 |
| [convnext_pico_ols.d1_in1k](https://huggingface.co/timm/convnext_pico_ols.d1_in1k) | 79.522 | 94.692 | 224 | 9.06 | 1.43 | 6.5 | 5422.46 | 256 |
| [convnextv2_femto.fcmae_ft_in1k](https://huggingface.co/timm/convnextv2_femto.fcmae_ft_in1k) | 78.488 | 93.98 | 224 | 5.23 | 0.79 | 4.57 | 4264.2 | 256 |
| [convnext_femto_ols.d1_in1k](https://huggingface.co/timm/convnext_femto_ols.d1_in1k) | 77.86 | 93.83 | 224 | 5.23 | 0.82 | 4.87 | 6910.6 | 256 |
| [convnext_femto.d1_in1k](https://huggingface.co/timm/convnext_femto.d1_in1k) | 77.454 | 93.68 | 224 | 5.22 | 0.79 | 4.57 | 7189.92 | 256 |
| [convnextv2_atto.fcmae_ft_in1k](https://huggingface.co/timm/convnextv2_atto.fcmae_ft_in1k) | 76.664 | 93.044 | 224 | 3.71 | 0.55 | 3.81 | 4728.91 | 256 |
| [convnext_atto_ols.a2_in1k](https://huggingface.co/timm/convnext_atto_ols.a2_in1k) | 75.88 | 92.846 | 224 | 3.7 | 0.58 | 4.11 | 7963.16 | 256 |
| [convnext_atto.d2_in1k](https://huggingface.co/timm/convnext_atto.d2_in1k) | 75.664 | 92.9 | 224 | 3.7 | 0.55 | 3.81 | 8439.22 | 256 |

| fc7dab2c6dd893b7f063efa956690409 |
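For a convolutional backbone, MACs and activation counts grow roughly with the number of input pixels. The 384-px fine-tune of `convnext_large_mlp` should therefore cost about (384/256)² = 2.25× the 256-px variant. The sketch below checks this against the two rows copied from the Top-1 table. Both rows share the same architecture (200.13M params); only the input resolution differs.

```python
# (img_size, gmacs, macts) copied from the Top-1 table above
at_256 = (256, 44.94, 56.33)    # convnext_large_mlp.clip_laion2b_augreg_ft_in1k
at_384 = (384, 101.11, 126.74)  # convnext_large_mlp.clip_laion2b_augreg_ft_in1k_384

pixel_ratio = (at_384[0] / at_256[0]) ** 2  # (384 / 256) ** 2 = 2.25
predicted_gmacs = at_256[1] * pixel_ratio
predicted_macts = at_256[2] * pixel_ratio

print(f"predicted GMACs {predicted_gmacs:.1f} vs table {at_384[1]}")
print(f"predicted activations (M) {predicted_macts:.1f} vs table {at_384[2]}")
```

Measured throughput drops by a similar factor, from 438.08 to 197.92 samples/sec.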

apache-2.0 | ['image-classification', 'timm'] | false | By Throughput (samples / sec)

All timing numbers are from eager-mode PyTorch 1.13 on an RTX 3090 w/ AMP.

| model | top1 | top5 | img_size | param_count (M) | gmacs | macts (M) | samples_per_sec | batch_size |
|-------|------|------|----------|-----------------|-------|-----------|-----------------|------------|
| [convnext_atto.d2_in1k](https://huggingface.co/timm/convnext_atto.d2_in1k) | 75.664 | 92.9 | 224 | 3.7 | 0.55 | 3.81 | 8439.22 | 256 |
| [convnext_atto_ols.a2_in1k](https://huggingface.co/timm/convnext_atto_ols.a2_in1k) | 75.88 | 92.846 | 224 | 3.7 | 0.58 | 4.11 | 7963.16 | 256 |
| [convnext_femto.d1_in1k](https://huggingface.co/timm/convnext_femto.d1_in1k) | 77.454 | 93.68 | 224 | 5.22 | 0.79 | 4.57 | 7189.92 | 256 |
| [convnext_femto_ols.d1_in1k](https://huggingface.co/timm/convnext_femto_ols.d1_in1k) | 77.86 | 93.83 | 224 | 5.23 | 0.82 | 4.87 | 6910.6 | 256 |
| [convnext_pico.d1_in1k](https://huggingface.co/timm/convnext_pico.d1_in1k) | 79.526 | 94.558 | 224 | 9.05 | 1.37 | 6.1 | 5686.88 | 256 |
| [convnext_pico_ols.d1_in1k](https://huggingface.co/timm/convnext_pico_ols.d1_in1k) | 79.522 | 94.692 | 224 | 9.06 | 1.43 | 6.5 | 5422.46 | 256 |
| [convnextv2_atto.fcmae_ft_in1k](https://huggingface.co/timm/convnextv2_atto.fcmae_ft_in1k) | 76.664 | 93.044 | 224 | 3.71 | 0.55 | 3.81 | 4728.91 | 256 |
| [convnextv2_femto.fcmae_ft_in1k](https://huggingface.co/timm/convnextv2_femto.fcmae_ft_in1k) | 78.488 | 93.98 | 224 | 5.23 | 0.79 | 4.57 | 4264.2 | 256 |
| [convnext_nano.in12k_ft_in1k](https://huggingface.co/timm/convnext_nano.in12k_ft_in1k) | 82.282 | 96.344 | 224 | 15.59 | 2.46 | 8.37 | 3926.52 | 256 |
| [convnext_nano.d1h_in1k](https://huggingface.co/timm/convnext_nano.d1h_in1k) | 80.768 | 95.334 | 224 | 15.59 | 2.46 | 8.37 | 3915.58 | 256 |
| [convnext_nano_ols.d1h_in1k](https://huggingface.co/timm/convnext_nano_ols.d1h_in1k) | 80.866 | 95.246 | 224 | 15.65 | 2.65 | 9.38 | 3523.85 | 256 |
| [convnextv2_pico.fcmae_ft_in1k](https://huggingface.co/timm/convnextv2_pico.fcmae_ft_in1k) | 80.304 | 95.072 | 224 | 9.07 | 1.37 | 6.1 | 3274.57 | 256 |
| [convnext_tiny_hnf.a2h_in1k](https://huggingface.co/timm/convnext_tiny_hnf.a2h_in1k) | 82.216 | 95.852 | 224 | 28.59 | 4.47 | 13.44 | 2529.75 | 256 |
| [convnext_tiny.fb_in22k_ft_in1k](https://huggingface.co/timm/convnext_tiny.fb_in22k_ft_in1k) | 82.898 | 96.616 | 224 | 28.59 | 4.47 | 13.44 | 2480.88 | 256 |
| [convnext_tiny.in12k_ft_in1k](https://huggingface.co/timm/convnext_tiny.in12k_ft_in1k) | 84.186 | 97.124 | 224 | 28.59 | 4.47 | 13.44 | 2433.7 | 256 |
| [convnext_tiny.fb_in1k](https://huggingface.co/timm/convnext_tiny.fb_in1k) | 82.066 | 95.854 | 224 | 28.59 | 4.47 | 13.44 | 2346.26 | 256 |
| [convnextv2_nano.fcmae_ft_in1k](https://huggingface.co/timm/convnextv2_nano.fcmae_ft_in1k) | 81.83 | 95.738 | 224 | 15.62 | 2.46 | 8.37 | 2321.48 | 256 |
| [convnextv2_nano.fcmae_ft_in22k_in1k](https://huggingface.co/timm/convnextv2_nano.fcmae_ft_in22k_in1k) | 82.03 | 96.166 | 224 | 15.62 | 2.46 | 8.37 | 2300.18 | 256 |
| [convnext_small.fb_in22k_ft_in1k](https://huggingface.co/timm/convnext_small.fb_in22k_ft_in1k) | 84.562 | 97.394 | 224 | 50.22 | 8.71 | 21.56 | 1478.29 | 256 |
| [convnext_small.in12k_ft_in1k](https://huggingface.co/timm/convnext_small.in12k_ft_in1k) | 85.174 | 97.506 | 224 | 50.22 | 8.71 | 21.56 | 1474.31 | 256 |
| [convnext_small.fb_in1k](https://huggingface.co/timm/convnext_small.fb_in1k) | 83.142 | 96.434 | 224 | 50.22 | 8.71 | 21.56 | 1464.0 | 256 |
| [convnextv2_tiny.fcmae_ft_in22k_in1k](https://huggingface.co/timm/convnextv2_tiny.fcmae_ft_in22k_in1k) | 83.894 | 96.964 | 224 | 28.64 | 4.47 | 13.44 | 1452.72 | 256 |
| [convnextv2_tiny.fcmae_ft_in1k](https://huggingface.co/timm/convnextv2_tiny.fcmae_ft_in1k) | 82.92 | 96.284 | 224 | 28.64 | 4.47 | 13.44 | 1425.62 | 256 |
| [convnext_base.fb_in1k](https://huggingface.co/timm/convnext_base.fb_in1k) | 83.82 | 96.746 | 224 | 88.59 | 15.38 | 28.75 | 1054.0 | 256 |
| [convnext_base.fb_in22k_ft_in1k](https://huggingface.co/timm/convnext_base.fb_in22k_ft_in1k) | 85.822 | 97.866 | 224 | 88.59 | 15.38 | 28.75 | 1037.66 | 256 |
| [convnext_tiny.fb_in22k_ft_in1k_384](https://huggingface.co/timm/convnext_tiny.fb_in22k_ft_in1k_384) | 84.084 | 97.14 | 384 | 28.59 | 13.14 | 39.48 | 862.95 | 256 |
| [convnext_tiny.in12k_ft_in1k_384](https://huggingface.co/timm/convnext_tiny.in12k_ft_in1k_384) | 85.118 | 97.608 | 384 | 28.59 | 13.14 | 39.48 | 856.76 | 256 |
| [convnext_base.clip_laion2b_augreg_ft_in1k](https://huggingface.co/timm/convnext_base.clip_laion2b_augreg_ft_in1k) | 86.154 | 97.68 | 256 | 88.59 | 20.09 | 37.55 | 819.86 | 256 |
| [convnextv2_nano.fcmae_ft_in22k_in1k_384](https://huggingface.co/timm/convnextv2_nano.fcmae_ft_in22k_in1k_384) | 83.37 | 96.742 | 384 | 15.62 | 7.22 | 24.61 | 801.72 | 256 |
| [convnextv2_base.fcmae_ft_in1k](https://huggingface.co/timm/convnextv2_base.fcmae_ft_in1k) | 84.874 | 97.09 | 224 | 88.72 | 15.38 | 28.75 | 625.33 | 256 |
| [convnextv2_base.fcmae_ft_in22k_in1k](https://huggingface.co/timm/convnextv2_base.fcmae_ft_in22k_in1k) | 86.74 | 98.022 | 224 | 88.72 | 15.38 | 28.75 | 624.23 | 256 |
| [convnext_large.fb_in1k](https://huggingface.co/timm/convnext_large.fb_in1k) | 84.282 | 96.892 | 224 | 197.77 | 34.4 | 43.13 | 584.28 | 256 |
| [convnext_large.fb_in22k_ft_in1k](https://huggingface.co/timm/convnext_large.fb_in22k_ft_in1k) | 86.636 | 98.028 | 224 | 197.77 | 34.4 | 43.13 | 581.43 | 256 |
| [convnext_small.fb_in22k_ft_in1k_384](https://huggingface.co/timm/convnext_small.fb_in22k_ft_in1k_384) | 85.778 | 97.886 | 384 | 50.22 | 25.58 | 63.37 | 518.95 | 256 |
| [convnext_small.in12k_ft_in1k_384](https://huggingface.co/timm/convnext_small.in12k_ft_in1k_384) | 86.182 | 97.92 | 384 | 50.22 | 25.58 | 63.37 | 516.19 | 256 |
| [convnextv2_tiny.fcmae_ft_in22k_in1k_384](https://huggingface.co/timm/convnextv2_tiny.fcmae_ft_in22k_in1k_384) | 85.112 | 97.63 | 384 | 28.64 | 13.14 | 39.48 | 491.32 | 256 |
| [convnext_large_mlp.clip_laion2b_augreg_ft_in1k](https://huggingface.co/timm/convnext_large_mlp.clip_laion2b_augreg_ft_in1k) | 87.344 | 98.218 | 256 | 200.13 | 44.94 | 56.33 | 438.08 | 256 |
| [convnextv2_large.fcmae_ft_in22k_in1k](https://huggingface.co/timm/convnextv2_large.fcmae_ft_in22k_in1k) | 87.26 | 98.248 | 224 | 197.96 | 34.4 | 43.13 | 376.84 | 256 |
| [convnextv2_large.fcmae_ft_in1k](https://huggingface.co/timm/convnextv2_large.fcmae_ft_in1k) | 85.742 | 97.584 | 224 | 197.96 | 34.4 | 43.13 | 375.23 | 256 |
| [convnext_base.clip_laiona_augreg_ft_in1k_384](https://huggingface.co/timm/convnext_base.clip_laiona_augreg_ft_in1k_384) | 86.504 | 97.97 | 384 | 88.59 | 45.21 | 84.49 | 368.14 | 256 |
| [convnext_xlarge.fb_in22k_ft_in1k](https://huggingface.co/timm/convnext_xlarge.fb_in22k_ft_in1k) | 87.002 | 98.208 | 224 | 350.2 | 60.98 | 57.5 | 368.01 | 256 |
| [convnext_base.fb_in22k_ft_in1k_384](https://huggingface.co/timm/convnext_base.fb_in22k_ft_in1k_384) | 86.796 | 98.264 | 384 | 88.59 | 45.21 | 84.49 | 366.54 | 256 |
| [convnextv2_base.fcmae_ft_in22k_in1k_384](https://huggingface.co/timm/convnextv2_base.fcmae_ft_in22k_in1k_384) | 87.646 | 98.422 | 384 | 88.72 | 45.21 | 84.49 | 209.51 | 256 |
| [convnext_large_mlp.clip_laion2b_augreg_ft_in1k_384](https://huggingface.co/timm/convnext_large_mlp.clip_laion2b_augreg_ft_in1k_384) | 87.870 | 98.452 | 384 | 200.13 | 101.11 | 126.74 | 197.92 | 256 |
| [convnext_large.fb_in22k_ft_in1k_384](https://huggingface.co/timm/convnext_large.fb_in22k_ft_in1k_384) | 87.476 | 98.382 | 384 | 197.77 | 101.1 | 126.74 | 194.66 | 256 |
| [convnextv2_huge.fcmae_ft_in1k](https://huggingface.co/timm/convnextv2_huge.fcmae_ft_in1k) | 86.256 | 97.75 | 224 | 660.29 | 115.0 | 79.07 | 154.72 | 256 |
| [convnextv2_large.fcmae_ft_in22k_in1k_384](https://huggingface.co/timm/convnextv2_large.fcmae_ft_in22k_in1k_384) | 88.196 | 98.532 | 384 | 197.96 | 101.1 | 126.74 | 128.94 | 128 |
| [convnext_xlarge.fb_in22k_ft_in1k_384](https://huggingface.co/timm/convnext_xlarge.fb_in22k_ft_in1k_384) | 87.75 | 98.556 | 384 | 350.2 | 179.2 | 168.99 | 124.85 | 192 |
| [convnextv2_huge.fcmae_ft_in22k_in1k_384](https://huggingface.co/timm/convnextv2_huge.fcmae_ft_in22k_in1k_384) | 88.668 | 98.738 | 384 | 660.29 | 337.96 | 232.35 | 50.56 | 64 |
| [convnextv2_huge.fcmae_ft_in22k_in1k_512](https://huggingface.co/timm/convnextv2_huge.fcmae_ft_in22k_in1k_512) | 88.848 | 98.742 | 512 | 660.29 | 600.81 | 413.07 | 28.58 | 48 |

| 24051d84314462bfd306b594ad6ba23c |
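The throughput table is useful for choosing a model under a speed budget. A minimal sketch follows: it picks the most accurate model whose measured samples/sec meets a threshold, using a handful of rows copied verbatim from the table. The numbers were measured on an RTX 3090 with AMP, so on other hardware re-measure before relying on a cutoff. `best_under_budget` is a hypothetical helper.

```python
from typing import List, NamedTuple, Optional


class Row(NamedTuple):
    model: str
    top1: float
    samples_per_sec: float


# A subset of rows copied from the throughput table above.
ROWS: List[Row] = [
    Row("convnext_atto.d2_in1k", 75.664, 8439.22),
    Row("convnext_nano.in12k_ft_in1k", 82.282, 3926.52),
    Row("convnext_tiny.in12k_ft_in1k", 84.186, 2433.7),
    Row("convnext_small.in12k_ft_in1k", 85.174, 1474.31),
    Row("convnext_base.fb_in22k_ft_in1k", 85.822, 1037.66),
    Row("convnext_large_mlp.clip_laion2b_augreg_ft_in1k_384", 87.870, 197.92),
]


def best_under_budget(rows: List[Row], min_samples_per_sec: float) -> Optional[Row]:
    """Most accurate model whose measured throughput meets the budget, or None."""
    eligible = [r for r in rows if r.samples_per_sec >= min_samples_per_sec]
    return max(eligible, key=lambda r: r.top1, default=None)


print(best_under_budget(ROWS, 2000).model)  # convnext_tiny.in12k_ft_in1k
print(best_under_budget(ROWS, 100).model)   # convnext_large_mlp.clip_laion2b_augreg_ft_in1k_384
```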

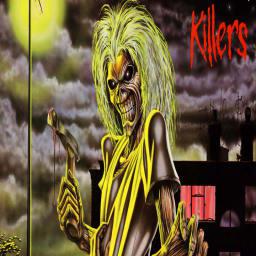

mit | [] | false | Eddie on Stable Diffusion This is the `Eddie` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). Here is the new concept you will be able to use as an `object`:      | eebc1a68a76003bd3c8ec32e163aaddd |

apache-2.0 | ['generated_from_keras_callback'] | false | leabum/distilbert-base-uncased-finetuned-squad This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 5.5824 - Train End Logits Accuracy: 0.0347 - Train Start Logits Accuracy: 0.0694 - Validation Loss: 5.8343 - Validation End Logits Accuracy: 0.0 - Validation Start Logits Accuracy: 0.0 - Epoch: 1 | e6b961217bc8d176343965f2768a3369 |

apache-2.0 | ['generated_from_keras_callback'] | false | Training results

| Train Loss | Train End Logits Accuracy | Train Start Logits Accuracy | Validation Loss | Validation End Logits Accuracy | Validation Start Logits Accuracy | Epoch |
|:----------:|:-------------------------:|:---------------------------:|:---------------:|:------------------------------:|:--------------------------------:|:-----:|
| 5.8427 | 0.0069 | 0.0069 | 5.8688 | 0.0 | 0.0 | 0 |
| 5.5824 | 0.0347 | 0.0694 | 5.8343 | 0.0 | 0.0 | 1 |

| 2eeb4bd030ce5a8be5ef265823ff2c9d |
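The distilbert card reports "start/end logits accuracy" without defining it. This sketch assumes the usual extractive-QA reading: the fraction of examples where the highest-scoring token position (argmax of the start or end logits) equals the gold answer position. Read that way, validation accuracies of 0.0 mean the checkpoint never located an answer boundary on the evaluation set. The logits below are hypothetical toy values, not outputs of this model.

```python
from typing import List, Sequence


def argmax(values: Sequence[float]) -> int:
    return max(range(len(values)), key=lambda i: values[i])


def logits_accuracy(logits: List[Sequence[float]], gold_positions: List[int]) -> float:
    """Fraction of examples whose highest-scoring token index equals the gold index."""
    hits = sum(argmax(row) == gold for row, gold in zip(logits, gold_positions))
    return hits / len(gold_positions)


# Hypothetical toy batch: 3 examples, 4 token positions each.
start_logits = [[0.1, 2.0, 0.3, 0.0], [1.5, 0.2, 0.1, 0.0], [0.0, 0.1, 0.2, 3.0]]
gold_starts = [1, 2, 3]
print(round(logits_accuracy(start_logits, gold_starts), 4))  # 0.6667
```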

creativeml-openrail-m | [] | false | **Model Description** The model was created by merging well-known models (Waifu Diffusion, Novel AI, Anything 3.0, etc.). There is no separate trigger word; keywords commonly used with Waifu Diffusion and Novel AI can be used. | 056a3a2be5e29cb0b523d47026f8be8a |
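The Vox-mix card does not say how the models were merged or with what ratios. One common approach in Stable Diffusion tooling is a "weighted sum" merge, which linearly interpolates every shared parameter: (1 − α)·A + α·B. A minimal sketch follows, assuming that method. It uses plain Python lists in place of tensors, and the checkpoints are hypothetical toy values.

```python
from typing import Dict, List

StateDict = Dict[str, List[float]]


def weighted_sum_merge(a: StateDict, b: StateDict, alpha: float) -> StateDict:
    """Return (1 - alpha) * a + alpha * b for every parameter."""
    if a.keys() != b.keys():
        raise ValueError("checkpoints must have identical parameter names")
    merged: StateDict = {}
    for name in a:
        if len(a[name]) != len(b[name]):
            raise ValueError(f"shape mismatch for {name!r}")
        merged[name] = [(1 - alpha) * x + alpha * y for x, y in zip(a[name], b[name])]
    return merged


# Hypothetical toy "checkpoints" with a single 2-element weight.
model_a = {"w": [1.0, 0.0]}
model_b = {"w": [0.0, 1.0]}
print(weighted_sum_merge(model_a, model_b, alpha=0.25))  # {'w': [0.75, 0.25]}
```

Merging three or more models this way is a chain of pairwise merges, so the final contribution of each source depends on the order and the α used at each step.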

creativeml-openrail-m | [] | false | **Vox-mix Samples**  >(masterpiece, best quality, ultra-detailed, illustration, painting), >best illumination, dynamic angle, finely detail, >(full body shot of a High Quality Victorian Era cute girl), (oil painting), >(Francois Boucher), alphonse mucha, (Claude Monet), Franz Xaver Winterhalter, [NORMAN ROCKWELL], >(PERFECT FACE:1.2), (SEXY FACE:1.2), (DETAILED PUPILS:1.2), (SMIRK), (HIGH DETAIL:1.2), SHARP, glitter many particles, artgerm, ((intricate details)), ((highres)), (finely detailed), >absurdres, soft lighting, glow, (1girl), (solo), beautiful detailed glow, (large breasts), cleavage, sideswept hair, hair bowtie, (intricate halter backless dress), gloves, (highheels:1.14), [ornate mansion's foyer, bannisters in background], >Negative prompt: lowres, bad anatomy, bad hands, text, error, missing fingers, extra digit, fewer digits, cropped, worst quality, low quality, normal quality, jpeg artifacts, signature, watermark, username, blurry, extra fingers, mutation, bad anatomy, missing arms, missing legs, extra arms, extra legs, mutated hands, fused fingers, too many fingers, (nipples on navel:1.34), (nipples on stomach:1.34), (3 or more nipples:1.34), (censored:1.22), (censor bar:1.22), (ugly:1.48), (duplicate:1.34), (morbid:1.22), (mutilated:1.22), (tranny:1.34), (trans:1.34), (trannsexual:1.34), (hermaphrodite:1.1), extra fingers, mutated hands, (poorly drawn hands:1.22), (poorly drawn face:1.22), (mutation:1.34), (deformed:1.34), (ugly:1.22), blurry, (bad anatomy:1.22), >Seed: 3483746954, Steps: 50, CFG scale: 8  >(masterpiece, best quality, ultra-detailed, illustration, painting), >best illumination, dynamic angle, finely detail, >(full body shot of a High Quality Victorian Era cute girl), (oil painting), >(Francois Boucher), alphonse mucha, (Claude Monet), Franz Xaver Winterhalter, [NORMAN ROCKWELL], >(PERFECT FACE:1.2), (SEXY FACE:1.2), (DETAILED PUPILS:1.2), (SMIRK), (HIGH DETAIL:1.2), SHARP, glitter many particles, 
artgerm, ((intricate details)), ((highres)), (finely detailed), >absurdres, soft lighting, glow, (1girl), (solo), beautiful detailed glow, (large breasts), cleavage, sideswept hair, hair bowtie, (intricate halter backless dress), gloves, (highheels:1.14), [ornate mansion's foyer, bannisters in background], >Negative prompt: lowres, bad anatomy, bad hands, text, error, missing fingers, extra digit, fewer digits, cropped, worst quality, low quality, normal quality, jpeg artifacts, signature, watermark, username, blurry, extra fingers, mutation, bad anatomy, missing arms, missing legs, extra arms, extra legs, mutated hands, fused fingers, too many fingers, (nipples on navel:1.34), (nipples on stomach:1.34), (3 or more nipples:1.34), (censored:1.22), (censor bar:1.22), (ugly:1.48), (duplicate:1.34), (morbid:1.22), (mutilated:1.22), (tranny:1.34), (trans:1.34), (trannsexual:1.34), (hermaphrodite:1.1), extra fingers, mutated hands, (poorly drawn hands:1.22), (poorly drawn face:1.22), (mutation:1.34), (deformed:1.34), (ugly:1.22), blurry, (bad anatomy:1.22), >Seed: 4009463661, Steps: 50, Sampler: DDIM, CFG scale: 8  >(masterpiece, best quality, ultra-detailed, illustration, painting), >best illumination, dynamic angle, finely detail, >(full body shot of a High Quality Victorian Era cute girl), (oil painting), >(Francois Boucher), alphonse mucha, (Claude Monet), Franz Xaver Winterhalter, [NORMAN ROCKWELL], >(PERFECT FACE:1.2), (SEXY FACE:1.2), (DETAILED PUPILS:1.2), (SMIRK), (HIGH DETAIL:1.2), SHARP, glitter many particles, artgerm, ((intricate details)), ((highres)), (finely detailed), (wearing a sexy see-through backless dress:1.28), >absurdres, soft lighting, glow, (1girl), (solo), beautiful detailed glow, (large breasts), cleavage, sideswept hair, hair bowtie, gloves, (highheels:1.12), [ornate mansion's foyer, bannisters in background], (NSFW:1.2) >Negative prompt: lowres, bad anatomy, bad hands, text, error, missing fingers, extra digit, fewer digits, cropped, worst 
quality, low quality, normal quality, jpeg artifacts, signature, watermark, username, blurry, extra fingers, mutation, bad anatomy, missing arms, missing legs, extra arms, extra legs, mutated hands, fused fingers, too many fingers, (nipples on navel:1.34), (nipples on stomach:1.34), (3 or more nipples:1.34), (censored:1.22), (censor bar:1.22), (ugly:1.48), (duplicate:1.34), (morbid:1.22), (mutilated:1.22), (tranny:1.34), (trans:1.34), (trannsexual:1.34), (hermaphrodite:1.1), extra fingers, mutated hands, (poorly drawn hands:1.22), (poorly drawn face:1.22), (mutation:1.34), (deformed:1.34), (ugly:1.22), blurry, (bad anatomy:1.22), >Seed: 3002977200, Steps: 50, Sampler: DDIM, CFG scale: 10 | 44a9b69a4db2f7d0566a46fa74a272bb |
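The sample prompts weight individual terms with brackets, for example `((intricate details))`, `(PERFECT FACE:1.2)` and `[NORMAN ROCKWELL]`. The card does not name the UI. However, its parameter lines (`ENSD`, `Hires upscaler`) match AUTOMATIC1111's stable-diffusion-webui, where `(x)` multiplies attention by 1.1, `[x]` divides it by 1.1, and `(x:w)` multiplies it by `w`. The sketch below computes the weight of one term under that assumed convention. It is simplified: it only handles brackets that wrap the whole term, and splitting a full prompt into terms is left to the caller. `term_weight` is a hypothetical helper, not the webui's parser.

```python
import re
from typing import Tuple

UP, DOWN = 1.1, 1 / 1.1  # assumed webui emphasis factors for ( ) and [ ]


def term_weight(term: str) -> Tuple[str, float]:
    """Strip whole-term brackets and return (text, combined weight)."""
    term = term.strip()
    weight = 1.0
    while len(term) >= 2 and (term[0], term[-1]) in {("(", ")"), ("[", "]")}:
        opener, inner = term[0], term[1:-1].strip()
        explicit = re.fullmatch(r"(.*):\s*([0-9]*\.?[0-9]+)", inner)
        if opener == "(" and explicit:
            weight *= float(explicit.group(2))  # (text:w) multiplies by w
            inner = explicit.group(1).strip()
        else:
            weight *= UP if opener == "(" else DOWN
        term = inner
    return term, weight


for t in ["((highres))", "(PERFECT FACE:1.2)", "[NORMAN ROCKWELL]", "artgerm"]:
    text, w = term_weight(t)
    print(f"{text}: {w:.3f}")
# highres: 1.210
# PERFECT FACE: 1.200
# NORMAN ROCKWELL: 0.909
# artgerm: 1.000
```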

creativeml-openrail-m | [] | false | **Vox-mix2 Samples**  >((masterpiece, best quality, ultra-detailed, illustration, painting), (poster illustration), trending on artstation, (4girls:1.6), >(a High Quality Victorian Era sexy girl), (nsfw:1.2), (intricate see-through dress:1.2), >((1girl)), long hair, (PERFECT FACE:1.2), (SEXY FACE:1.2), (DETAILED PUPILS:1.2), (SMIRK), sideswept hair, (full body:1.2), >((1girl)), pixie cut, (sexy eyes), detailed face, detailed eyes, slight smile, (full body:1.2), >((1girl)), shot hair, (PERFECT FACE:1.2), (DETAILED PUPILS:1.2), (SMIRK), (full body:1.2), >((1girl)), wave hair, (bride), (beautiful face), (sexy eyes), (DETAILED PUPILS:1.2), (SMIRK), ideswept hair, (full body:1.2), >((1girl)), bob cut, (beautiful face), (sexy eyes), detailed face, detailed eyes, (full body:1.2), >SHARP, glitter many particles, ((intricate details)), ((highres)), (finely detailed), >oil painting by ((Francois Boucher), (alphonse mucha:0.8), (Claude Monet), Franz Xaver Winterhalter, (NORMAN ROCKWELL:0.8)), > >Negative prompt:logo, title, text, caption, solo:1.5, identical outfits, split panels, white background, plain background, black background, simple background, gradient background, lowres, bad anatomy, bad hands, text, error, missing fingers, extra digit, fewer digits, cropped, worst quality, low quality, normal quality, jpeg artifacts, signature, watermark, username, blurry, extra fingers, mutation, bad anatomy, missing arms, missing legs, extra arms, extra legs, mutated hands, fused fingers, too many fingers, (nipples on navel:1.34), (nipples on stomach:1.34), (3 or more nipples:1.34), (censored:1.22), (censor bar:1.22), (ugly:1.48), (duplicate:1.34), (morbid:1.22), (mutilated:1.22), (tranny:1.34), (trans:1.34), (trannsexual:1.34), (hermaphrodite:1.1), extra fingers, mutated hands, (poorly drawn hands:1.22), (poorly drawn face:1.22), (mutation:1.34), (deformed:1.34), (ugly:1.22), blurry, (bad anatomy:1.22), (bad proportions:1.34), (extra 
limbs:1.22), cloned face, (disfigured:1.34), (more than 2 nipples:1.34), extra limbs, (bad anatomy:1.1), gross proportions, (malformed limbs:1.1), (missing arms:1.22), (missing legs:1.22), (extra arms:1.34), (extra legs:1.34), mutated hands, (fused fingers:1.1), (too many fingers:1.1), (long neck:1.34), (out of frame:1.1), (more than one person in focus:1.1), (bad anatomy:1.1), (more than two arm per body:1.48), (more than two leg per body:1.48), (more than five fingers on one hand:1.48), bad detailed background, unclear architectural outline, non-linear background, over one person in focus, (over four finger:1.05), (fingers excluding thumb:1.98), fused anatomy, (bad anatomybody:1.1), (bad anatomyhand:1.1), (bad anatomyfinger:1.1), (four fingers excluding thumbfingers:1.98), (bad anatomyarms:1.1), (over two armsbody:1.1), (bad anatomyleg:1.1), (over two legsbody:1.1), (bad anatomyarm:1.1), (bad detailfinger:1.05), (bad anatomyfingers:1.1), (multifulfingers:1.1), (bad anatomyfinger:1.1), (bad anatomyfingers:1.1), (fusedfingers:1.1), (over four fingerfingers excluding thumb:1.98), (multifulhands:1.1), (multifularms:1.1), (multifullegs:1.1), ((frame)) > >Steps: 50, Sampler: DDIM, CFG scale: 12, Seed: 1023642063, Size: 768x512, Model hash: ab05b088cd, Model: 20_Vox-mix2anu, Denoising strength: 0.53, ENSD: -1, Hires upscale: 2, Hires upscaler: R-ESRGAN 4x+ Anime6B **License** This model is open access and available to all, with a CreativeML OpenRAIL-M license further specifying rights and usage. The CreativeML OpenRAIL License specifies: 1. You can't use the model to deliberately produce or share illegal or harmful outputs or content. 2.
The authors claim no rights on the outputs you generate; you are free to use them and are accountable for their use, which must not go against the provisions set in the license. 3. You may redistribute the weights and use the model commercially and/or as a service. If you do, please be aware that you have to include the same use restrictions as the ones in the license and share a copy of the CreativeML OpenRAIL-M with all your users (please read the license entirely and carefully). **[Please read the full license here](https://huggingface.co/spaces/CompVis/stable-diffusion-license)** | 6da6c8ee49217415ec3774972ab7ff89 |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-emotion This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the emotion dataset. It achieves the following results on the evaluation set: - Loss: 0.2251 - Accuracy: 0.923 - F1: 0.9230 | e4b09526d7d111d8b8bf34df25875bdd |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------:|:------:| | 0.8643 | 1.0 | 250 | 0.3395 | 0.901 | 0.8969 | | 0.2615 | 2.0 | 500 | 0.2251 | 0.923 | 0.9230 | | 377cc1866666920cf46369578c73bd45 |

mit | ['generated_from_trainer'] | false | xlm-roberta-base-misogyny-sexism-fr-indomain-trans This model is a fine-tuned version of [xlm-roberta-base](https://huggingface.co/xlm-roberta-base) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.9813 - Accuracy: 0.8708 - F1: 0.0 - Precision: 0.0 - Recall: 0.0 - Mae: 0.1292 | ca7045111b1e49fb6dc2c907796652e4 |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | Precision | Recall | Mae | |:-------------:|:-----:|:----:|:---------------:|:--------:|:---:|:---------:|:------:|:------:| | 0.3606 | 1.0 | 2297 | 0.8082 | 0.8710 | 0.0 | 0.0 | 0.0 | 0.1290 | | 0.3169 | 2.0 | 4594 | 0.8868 | 0.8702 | 0.0 | 0.0 | 0.0 | 0.1298 | | 0.2708 | 3.0 | 6891 | 0.9082 | 0.8710 | 0.0 | 0.0 | 0.0 | 0.1290 | | 0.2337 | 4.0 | 9188 | 0.9813 | 0.8708 | 0.0 | 0.0 | 0.0 | 0.1292 | | d3aef9ef2f2495e92f980ed8d2f9eba0 |
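
Accuracy of 0.8708 alongside F1, precision and recall of 0.0, with Mae equal to 1 minus accuracy (0.1292), is the pattern a binary classifier produces when it never predicts the positive class. The sketch below reproduces that pattern on a hypothetical label set in which 87.08% of examples are negative; it illustrates how the metrics interact and is not a reconstruction of this model's evaluation data.

```python
def binary_metrics(y_true, y_pred):
    """Accuracy, precision, recall, F1 (positive class = 1) and MAE for 0/1 labels."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    correct = sum(1 for t, p in zip(y_true, y_pred) if t == p)
    n = len(y_true)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    # For 0/1 labels, MAE is simply the fraction of misclassified examples.
    mae = sum(abs(t - p) for t, p in zip(y_true, y_pred)) / n
    return {"accuracy": correct / n, "precision": precision, "recall": recall, "f1": f1, "mae": mae}

# Hypothetical evaluation set: 8708 negatives and 1292 positives.
y_true = [0] * 8708 + [1] * 1292
# A degenerate model that always predicts the negative (majority) class.
y_pred = [0] * len(y_true)

metrics = binary_metrics(y_true, y_pred)
print(metrics)
# {'accuracy': 0.8708, 'precision': 0.0, 'recall': 0.0, 'f1': 0.0, 'mae': 0.1292}
```

If the real evaluation set has a similar class balance, the flat accuracy across all four epochs in the table is consistent with this majority-class behaviour.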

mit | [] | false | obama_self_2 on Stable Diffusion This is the `<Obama>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). Here is the new concept you will be able to use as an `object`:     | 8ba69381026fcad0320fdc3153865cc0 |

apache-2.0 | ['translation'] | false | opus-mt-sv-en * source languages: sv * target languages: en * OPUS readme: [sv-en](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/sv-en/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2020-02-26.zip](https://object.pouta.csc.fi/OPUS-MT-models/sv-en/opus-2020-02-26.zip) * test set translations: [opus-2020-02-26.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/sv-en/opus-2020-02-26.test.txt) * test set scores: [opus-2020-02-26.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/sv-en/opus-2020-02-26.eval.txt) | 954511eea51ce49882d7224ceea181f0 |

apache-2.0 | ['generated_from_trainer'] | false | my_awesome_eli5_clm-model This model is a fine-tuned version of [distilgpt2](https://huggingface.co/distilgpt2) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 3.9043 | dd7f4d5329a7bbb42459cc2bbe7d5e27 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | No log | 1.0 | 25 | 3.9350 | | No log | 2.0 | 50 | 3.9107 | | No log | 3.0 | 75 | 3.9043 | | 4813839bf4eefbb617a68b3c1d4aeacd |
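
For a causal language model, the evaluation loss is usually easier to interpret as perplexity. The sketch below assumes the reported loss is the mean token-level cross-entropy in nats (natural log), which is what the Trainer reports for causal LM fine-tuning; under that assumption, perplexity is its exponential.

```python
import math

# Final evaluation loss reported in the card (assumed: mean token cross-entropy, natural log).
eval_loss = 3.9043

# Perplexity is the exponential of the mean cross-entropy loss.
perplexity = math.exp(eval_loss)
print(f"Perplexity: {perplexity:.2f}")  # roughly 49.6
```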

mit | [] | false | youtooz candy on Stable Diffusion This is the `<youtooz-candy>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). Here is the new concept you will be able to use as an `object`:       | a77ca9d79e85ded60c493830875fe8ec |

apache-2.0 | ['stable-diffusion', 'stable-diffusion-diffusers', 'text-to-image'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0001 - train_batch_size: 1 - eval_batch_size: 1 - gradient_accumulation_steps: 4 - optimizer: AdamW with betas=(0.9, 0.999), weight_decay=0.01 and epsilon=1e-08 - lr_scheduler: constant - lr_warmup_steps: 500 - ema_inv_gamma: None - mixed_precision: fp16 | df272cf1a72f539b1a94c7631f12b2ac |
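
With train_batch_size 1 and gradient_accumulation_steps 4, each optimizer step effectively sees a batch of 4 examples. The pure-Python sketch below illustrates why, using a toy one-parameter linear model with made-up data (it is not the diffusion training loop): averaging the gradients of four mean-reduced micro-batches of one example gives the same gradient as a single batch of four.

```python
def grad_mse(w, batch):
    """Gradient of the mean squared error of y_hat = w * x with respect to w."""
    return sum(2 * (w * x - y) * x for x, y in batch) / len(batch)

examples = [(1.0, 2.0), (2.0, 3.5), (3.0, 6.5), (4.0, 7.0)]  # hypothetical (x, y) pairs
w = 0.5
accumulation_steps = 4  # gradient_accumulation_steps from the card
micro_batch_size = 1    # train_batch_size from the card

# Accumulate: one backward pass per micro-batch, each scaled by 1 / accumulation_steps.
accumulated = 0.0
for i in range(accumulation_steps):
    micro_batch = examples[i * micro_batch_size:(i + 1) * micro_batch_size]
    accumulated += grad_mse(w, micro_batch) / accumulation_steps

# Reference: one pass over the full effective batch.
full_batch = grad_mse(w, examples)

print(accumulated, full_batch)
print("effective batch size:", micro_batch_size * accumulation_steps)
```

The equivalence relies on equal-sized micro-batches and a mean-reduced loss; with uneven micro-batches the scaling would need adjusting.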

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased_fold_6_ternary This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 1.6625 - F1: 0.7588 | 8a6dc33aa3443c40fb14eac861ea9158 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | F1 | |:-------------:|:-----:|:----:|:---------------:|:------:| | No log | 1.0 | 292 | 0.5117 | 0.7306 | | 0.5701 | 2.0 | 584 | 0.5273 | 0.7296 | | 0.5701 | 3.0 | 876 | 0.6037 | 0.7415 | | 0.2468 | 4.0 | 1168 | 0.7132 | 0.7318 | | 0.2468 | 5.0 | 1460 | 0.8980 | 0.7504 | | 0.12 | 6.0 | 1752 | 1.0343 | 0.7369 | | 0.0486 | 7.0 | 2044 | 1.1860 | 0.7333 | | 0.0486 | 8.0 | 2336 | 1.3348 | 0.7437 | | 0.019 | 9.0 | 2628 | 1.3040 | 0.7561 | | 0.019 | 10.0 | 2920 | 1.4649 | 0.7293 | | 0.0152 | 11.0 | 3212 | 1.4870 | 0.7431 | | 0.0078 | 12.0 | 3504 | 1.5668 | 0.7455 | | 0.0078 | 13.0 | 3796 | 1.5280 | 0.7378 | | 0.0091 | 14.0 | 4088 | 1.5672 | 0.7410 | | 0.0091 | 15.0 | 4380 | 1.5948 | 0.7491 | | 0.0052 | 16.0 | 4672 | 1.6625 | 0.7588 | | 0.0052 | 17.0 | 4964 | 1.6544 | 0.7411 | | 0.0048 | 18.0 | 5256 | 1.7124 | 0.7425 | | 0.0024 | 19.0 | 5548 | 1.7211 | 0.7477 | | 0.0024 | 20.0 | 5840 | 1.8216 | 0.7373 | | 0.001 | 21.0 | 6132 | 1.8325 | 0.7361 | | 0.001 | 22.0 | 6424 | 1.8089 | 0.7498 | | 0.0015 | 23.0 | 6716 | 1.8026 | 0.7506 | | 0.0005 | 24.0 | 7008 | 1.8026 | 0.7464 | | 0.0005 | 25.0 | 7300 | 1.8043 | 0.7464 | | 380757bb48c353dd465db0fca7298b87 |
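
The headline evaluation numbers (loss 1.6625, F1 0.7588) match epoch 16, the epoch with the highest validation F1, while validation loss is lowest at epoch 1. This is consistent with the reported checkpoint having been selected by F1 (for example via the Trainer's `load_best_model_at_end` with `metric_for_best_model="f1"`), although the card does not say so. The sketch below simply checks this against the table.

```python
# (epoch, validation_loss, f1) copied from the training results table above.
results = [
    (1, 0.5117, 0.7306), (2, 0.5273, 0.7296), (3, 0.6037, 0.7415),
    (4, 0.7132, 0.7318), (5, 0.8980, 0.7504), (6, 1.0343, 0.7369),
    (7, 1.1860, 0.7333), (8, 1.3348, 0.7437), (9, 1.3040, 0.7561),
    (10, 1.4649, 0.7293), (11, 1.4870, 0.7431), (12, 1.5668, 0.7455),
    (13, 1.5280, 0.7378), (14, 1.5672, 0.7410), (15, 1.5948, 0.7491),
    (16, 1.6625, 0.7588), (17, 1.6544, 0.7411), (18, 1.7124, 0.7425),
    (19, 1.7211, 0.7477), (20, 1.8216, 0.7373), (21, 1.8325, 0.7361),
    (22, 1.8089, 0.7498), (23, 1.8026, 0.7506), (24, 1.8026, 0.7464),
    (25, 1.8043, 0.7464),
]

# Epoch with the highest validation F1.
best_epoch, best_loss, best_f1 = max(results, key=lambda row: row[2])
# Epoch with the lowest validation loss, for comparison.
lowest_loss_epoch = min(results, key=lambda row: row[1])[0]

print(f"best F1 {best_f1} at epoch {best_epoch} (validation loss {best_loss})")
print(f"lowest validation loss at epoch {lowest_loss_epoch}")
```

The steadily rising validation loss alongside a roughly flat F1 suggests the later epochs mostly increase overconfidence rather than accuracy.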

apache-2.0 | ['hf-asr-leaderboard', 'generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 1e-05 - train_batch_size: 16 - eval_batch_size: 8 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 500 - training_steps: 50 - mixed_precision_training: Native AMP | dd50778c8280013d5f8a53d35ea42bd8 |
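
Note that lr_scheduler_warmup_steps (500) is larger than training_steps (50), so a linear-warmup schedule never finishes warming up and the learning rate stays far below the configured 1e-05. The sketch below assumes the schedule uses the usual linear-warmup, linear-decay multiplier (the same shape as `transformers.get_linear_schedule_with_warmup`) and is stepped once per optimizer step.

```python
def linear_warmup_multiplier(step, num_warmup_steps, num_training_steps):
    """LR multiplier: linear warmup from 0 to 1, then linear decay to 0."""
    if step < num_warmup_steps:
        return step / max(1, num_warmup_steps)
    return max(0.0, (num_training_steps - step) / max(1, num_training_steps - num_warmup_steps))

base_lr = 1e-05      # learning_rate
warmup_steps = 500   # lr_scheduler_warmup_steps
training_steps = 50  # training_steps

# Learning rate used at each of the 50 optimizer steps.
lrs = [base_lr * linear_warmup_multiplier(s, warmup_steps, training_steps) for s in range(training_steps)]
peak = max(lrs)
print(f"highest learning rate reached: {peak:.1e}")  # 9.8e-07
```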

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased__subj__train-8-8 This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.3160 - Accuracy: 0.8735 | 99ee26781cfbd769cd8d86b157ece1f3 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.7187 | 1.0 | 3 | 0.6776 | 1.0 | | 0.684 | 2.0 | 6 | 0.6608 | 1.0 | | 0.6532 | 3.0 | 9 | 0.6364 | 1.0 | | 0.5996 | 4.0 | 12 | 0.6119 | 1.0 | | 0.5242 | 5.0 | 15 | 0.5806 | 1.0 | | 0.4612 | 6.0 | 18 | 0.5320 | 1.0 | | 0.4192 | 7.0 | 21 | 0.4714 | 1.0 | | 0.3274 | 8.0 | 24 | 0.4071 | 1.0 | | 0.2871 | 9.0 | 27 | 0.3378 | 1.0 | | 0.2082 | 10.0 | 30 | 0.2822 | 1.0 | | 0.1692 | 11.0 | 33 | 0.2271 | 1.0 | | 0.1242 | 12.0 | 36 | 0.1793 | 1.0 | | 0.0977 | 13.0 | 39 | 0.1417 | 1.0 | | 0.0776 | 14.0 | 42 | 0.1117 | 1.0 | | 0.0631 | 15.0 | 45 | 0.0894 | 1.0 | | 0.0453 | 16.0 | 48 | 0.0733 | 1.0 | | 0.0399 | 17.0 | 51 | 0.0617 | 1.0 | | 0.0333 | 18.0 | 54 | 0.0528 | 1.0 | | 0.0266 | 19.0 | 57 | 0.0454 | 1.0 | | 0.0234 | 20.0 | 60 | 0.0393 | 1.0 | | 0.0223 | 21.0 | 63 | 0.0345 | 1.0 | | 0.0195 | 22.0 | 66 | 0.0309 | 1.0 | | 0.0161 | 23.0 | 69 | 0.0281 | 1.0 | | 0.0167 | 24.0 | 72 | 0.0260 | 1.0 | | 0.0163 | 25.0 | 75 | 0.0242 | 1.0 | | 0.0134 | 26.0 | 78 | 0.0227 | 1.0 | | 0.0128 | 27.0 | 81 | 0.0214 | 1.0 | | 0.0101 | 28.0 | 84 | 0.0204 | 1.0 | | 0.0109 | 29.0 | 87 | 0.0194 | 1.0 | | 0.0112 | 30.0 | 90 | 0.0186 | 1.0 | | 0.0108 | 31.0 | 93 | 0.0179 | 1.0 | | 0.011 | 32.0 | 96 | 0.0174 | 1.0 | | 0.0099 | 33.0 | 99 | 0.0169 | 1.0 | | 0.0083 | 34.0 | 102 | 0.0164 | 1.0 | | 0.0096 | 35.0 | 105 | 0.0160 | 1.0 | | 0.01 | 36.0 | 108 | 0.0156 | 1.0 | | 0.0084 | 37.0 | 111 | 0.0152 | 1.0 | | 0.0089 | 38.0 | 114 | 0.0149 | 1.0 | | 0.0073 | 39.0 | 117 | 0.0146 | 1.0 | | 0.0082 | 40.0 | 120 | 0.0143 | 1.0 | | 0.008 | 41.0 | 123 | 0.0141 | 1.0 | | 0.0093 | 42.0 | 126 | 0.0139 | 1.0 | | 0.0078 | 43.0 | 129 | 0.0138 | 1.0 | | 0.0086 | 44.0 | 132 | 0.0136 | 1.0 | | 0.009 | 45.0 | 135 | 0.0135 | 1.0 | | 0.0072 | 46.0 | 138 | 0.0134 | 1.0 | | 0.0075 | 47.0 | 141 | 0.0133 | 1.0 | | 0.0082 | 48.0 | 144 | 0.0133 | 1.0 | | 0.0068 | 49.0 | 147 | 0.0132 | 1.0 | | 0.0074 | 50.0 | 150 | 0.0132 | 1.0 | | 29a21f5c6b78b4a2bbdde611ca29c152 |

apache-2.0 | ['generated_from_trainer'] | false | all-roberta-large-v1-meta-7-16-5 This model is a fine-tuned version of [sentence-transformers/all-roberta-large-v1](https://huggingface.co/sentence-transformers/all-roberta-large-v1) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 2.4797 - Accuracy: 0.28 | 11766b1414c2a26389919263d669961c |

apache-2.0 | ['automatic-speech-recognition', 'es'] | false | exp_w2v2r_es_xls-r_age_teens-2_sixties-8_s786 Fine-tuned [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) for speech recognition using the train split of [Common Voice 7.0 (es)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16 kHz. This model was fine-tuned with the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | 3ecb53a290d042681bf2d54db4f918ad |
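
Because the model expects 16 kHz input, it can help to reject mismatched files before transcription. The sketch below uses only the standard-library `wave` module, so it covers uncompressed WAV files only; `check_sample_rate` and `make_silent_wav` are hypothetical helpers for illustration, and resampling itself would need an audio library and is out of scope here.

```python
import io
import wave

TARGET_RATE = 16_000  # sample rate the model expects

def check_sample_rate(wav_file, expected_rate=TARGET_RATE):
    """Return the WAV file's sample rate, raising ValueError if it differs from expected_rate."""
    with wave.open(wav_file, "rb") as wav:
        rate = wav.getframerate()
    if rate != expected_rate:
        raise ValueError(f"expected {expected_rate} Hz audio, got {rate} Hz; resample first")
    return rate

def make_silent_wav(rate, seconds=0.1):
    """Build an in-memory mono 16-bit WAV file of silence (demonstration only)."""
    buffer = io.BytesIO()
    with wave.open(buffer, "wb") as wav:
        wav.setnchannels(1)
        wav.setsampwidth(2)
        wav.setframerate(rate)
        wav.writeframes(b"\x00\x00" * int(rate * seconds))
    buffer.seek(0)
    return buffer

print(check_sample_rate(make_silent_wav(16_000)))  # 16000
```

In practice you would pass a file path instead of the in-memory buffer; `wave.open` accepts either.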

mit | ['generated_from_trainer'] | false | camembert-base-finetuned-paraphrase This model is a fine-tuned version of [camembert-base](https://huggingface.co/camembert-base) on the pawsx dataset. It achieves the following results on the evaluation set: - Loss: 0.2708 - Accuracy: 0.9085 - F1: 0.9089 | 8166c519c20e41039b11700ec3be499b |

mit | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 64 - eval_batch_size: 64 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 4 | b66cc38c0c9542137d92224d0e5232e7 |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------:|:------:| | 0.3918 | 1.0 | 772 | 0.3211 | 0.869 | 0.8696 | | 0.2103 | 2.0 | 1544 | 0.2448 | 0.9075 | 0.9077 | | 0.1622 | 3.0 | 2316 | 0.2577 | 0.9055 | 0.9059 | | 0.1344 | 4.0 | 3088 | 0.2708 | 0.9085 | 0.9089 | | 9ddaba3aeeb37cd18007c9a491d6ad54 |
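
The Step column lets you back out an approximate training-set size. Assuming a single device, no gradient accumulation (none is listed in the hyperparameters), and that the last partial batch is kept, steps per epoch equal ceil(num_examples / train_batch_size), so 772 steps at batch size 64 bound the number of training examples as follows.

```python
import math

train_batch_size = 64
steps_per_epoch = 772  # step count at epoch 1.0 in the table above

# Smallest and largest example counts that yield exactly 772 batches of 64.
min_examples = (steps_per_epoch - 1) * train_batch_size + 1
max_examples = steps_per_epoch * train_batch_size
print(min_examples, max_examples)  # 49345 49408

# Sanity check: both bounds really give 772 steps per epoch.
assert math.ceil(min_examples / train_batch_size) == steps_per_epoch
assert math.ceil(max_examples / train_batch_size) == steps_per_epoch
```

The final step count (3088 = 4 × 772) is consistent with num_epochs: 4.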

creativeml-openrail-m | ['text-to-image', 'stable-diffusion'] | false | Jak's Creepy Critter Pack v2.0-768px! This version was fine-tuned on higher-resolution 768px images, which allows better control over output images. Compared to v1.0, which creates messy blob monsters (still fun), this version gives you finer control to unleash your creativity. Enjoy! Tips: 1. Start your prompt with "food_crit". 2. Add "3d, ceramic, octane render" for a shiny 3D appearance. 3. Go wild! Sample pictures of this concept using the 768px model: | 67d2fcc3d29572f6931c6c23a68f6e18 |

apache-2.0 | ['generated_from_trainer'] | false | bert-base-cased-finetuned-imdb This model is a fine-tuned version of [bert-base-cased](https://huggingface.co/bert-base-cased) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 1.3367 - Accuracy: 0.625 | 858dcd318c6ee5e8e515d8d9a759306f |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 1.687 | 1.0 | 20 | 1.4339 | 0.625 | | 1.4117 | 2.0 | 40 | 1.3367 | 0.625 | | 91ff10a66242f4cae73b698186ba9d33 |

apache-2.0 | ['generated_from_keras_callback'] | false | example_workflow_model This model is a fine-tuned version of [hfl/chinese-roberta-wwm-ext](https://huggingface.co/hfl/chinese-roberta-wwm-ext) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 0.4118 - Train Sparse Categorical Accuracy: 0.8765 - Validation Loss: 0.5309 - Validation Sparse Categorical Accuracy: 0.8448 - Epoch: 1 | c694764985ee9a9bcea0f6be8e190bcc |

apache-2.0 | ['generated_from_keras_callback'] | false | Training results | Train Loss | Train Sparse Categorical Accuracy | Validation Loss | Validation Sparse Categorical Accuracy | Epoch | |:----------:|:---------------------------------:|:---------------:|:--------------------------------------:|:-----:| | 0.7242 | 0.7814 | 0.5739 | 0.8254 | 0 | | 0.4118 | 0.8765 | 0.5309 | 0.8448 | 1 | | a07f90f43bb41f597b906aa3d985be6a |

apache-2.0 | ['generated_from_trainer'] | false | bert-large-uncased_cls_subj This model is a fine-tuned version of [bert-large-uncased](https://huggingface.co/bert-large-uncased) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.1860 - Accuracy: 0.9675 | 9f537c18bd616f0f3acf344d682de7a6 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.2427 | 1.0 | 500 | 0.1733 | 0.9585 | | 0.1349 | 2.0 | 1000 | 0.1377 | 0.958 | | 0.0487 | 3.0 | 1500 | 0.1701 | 0.9635 | | 0.0184 | 4.0 | 2000 | 0.1906 | 0.9675 | | 0.0144 | 5.0 | 2500 | 0.1860 | 0.9675 | | 7fbef32b4c185f6eb1b11e67eea343cb |

apache-2.0 | ['text-classfication', 'int8', 'Intel® Neural Compressor', 'PostTrainingDynamic'] | false | Post-training dynamic quantization This is an INT8 PyTorch model quantized with [huggingface/optimum-intel](https://github.com/huggingface/optimum-intel) using [Intel® Neural Compressor](https://github.com/intel/neural-compressor). The original fp32 model is the fine-tuned model [bart-large-mrpc](https://huggingface.co/Intel/bart-large-mrpc). | ff3041288c3a140ddaa6904e5445da5d |
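
Dynamic quantization stores weights as INT8 and computes activation scales at run time. The pure-Python sketch below shows only the weight side, using per-tensor symmetric quantization (scale = max|w| / 127) on hypothetical fp32 weights; it is a simplified illustration, not the exact scheme Intel Neural Compressor applies.

```python
def quantize_symmetric_int8(weights):
    """Per-tensor symmetric INT8 quantization: returns (int8 values, scale)."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Round to the nearest integer step and clamp to the symmetric INT8 range.
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale

def dequantize(q, scale):
    """Map INT8 values back to approximate fp32 values."""
    return [v * scale for v in q]

weights = [0.42, -1.27, 0.003, 0.88, -0.5]  # hypothetical fp32 weights
q, scale = quantize_symmetric_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q, scale)
print(f"max abs error: {max_error:.4f} (bounded by scale / 2 = {scale / 2:.4f})")
```

Each weight is off by at most half a quantization step, which is why accuracy typically degrades only slightly after INT8 conversion.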

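apache-2.0 | ['text-classfication', 'int8', 'Intel® Neural Compressor', 'PostTrainingDynamic'] | false | Load with optimum:

```python
# Import path from the optimum-intel release this card targets; newer releases may
# expose the class under a different name, so check against your installed version.
from optimum.intel.neural_compressor.quantization import IncQuantizedModelForSequenceClassification

# Download the INT8 dynamically quantized checkpoint from the Hugging Face Hub.
int8_model = IncQuantizedModelForSequenceClassification.from_pretrained(
    'Intel/bart-large-mrpc-int8-dynamic',
)
```
 | e91601e030d119a0b8fc09ee4f2c505b |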

mit | ['spacy', 'token-classification'] | false | | Feature | Description | | --- |-----------------------------------------| | **Name** | `it_tei2go` | | **Version** | `0.0.0` | | **spaCy** | `>=3.2.4,<3.3.0` | | **Default Pipeline** | `ner` | | **Components** | `ner` | | **Vectors** | 0 keys, 0 unique vectors (0 dimensions) | | **Sources** | n/a | | **License** | MIT | | **Author** | n/a | | 63486c449d92f747644a061d9a52def3 |
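
The pipeline declares a spaCy requirement of `>=3.2.4,<3.3.0`, so loading it under a newer spaCy may fail or warn. The dependency-free sketch below checks a version string against that range; `satisfies_spacy_requirement` is a hypothetical helper that handles plain `X.Y.Z` versions only (no pre-release or dev suffixes).

```python
def parse_version(version):
    """Turn '3.2.4' into (3, 2, 4); pre-release or dev suffixes are not handled."""
    return tuple(int(part) for part in version.split("."))

def satisfies_spacy_requirement(version, lower="3.2.4", upper="3.3.0"):
    """True if lower <= version < upper, mirroring the '>=3.2.4,<3.3.0' constraint."""
    return parse_version(lower) <= parse_version(version) < parse_version(upper)

for candidate in ["3.2.3", "3.2.4", "3.2.6", "3.3.0"]:
    print(candidate, satisfies_spacy_requirement(candidate))
```

In practice you would pass `spacy.__version__`; for full version syntax, `packaging.specifiers.SpecifierSet(">=3.2.4,<3.3.0")` is the more robust choice.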

apache-2.0 | ['summarization', 'generated_from_trainer'] | false | mt5-small-test-amazon This model is a fine-tuned version of [google/mt5-small](https://huggingface.co/google/mt5-small) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 1.9515 - Rouge1: 30.3066 - Rouge2: 3.3019 - Rougel: 30.1887 - Rougelsum: 30.0314 | ff5d806ff67e996d7dd9cc6aa1629a20 |

apache-2.0 | ['summarization', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | |:-------------:|:-----:|:----:|:---------------:|:-------:|:------:|:-------:|:---------:| | 10.0147 | 1.0 | 1004 | 2.9904 | 7.3703 | 0.2358 | 7.3703 | 7.4292 | | 3.4892 | 2.0 | 2008 | 2.4061 | 23.4178 | 2.4764 | 23.2901 | 23.3097 | | 2.724 | 3.0 | 3012 | 2.1630 | 26.6706 | 2.8302 | 26.6509 | 26.5723 | | 2.4395 | 4.0 | 4016 | 2.0815 | 26.7296 | 2.9481 | 26.6313 | 26.533 | | 2.2881 | 5.0 | 5020 | 2.0048 | 30.1887 | 3.3019 | 30.0708 | 29.9135 | | 2.1946 | 6.0 | 6024 | 1.9712 | 29.4811 | 2.9481 | 29.4025 | 29.3042 | | 2.1458 | 7.0 | 7028 | 1.9545 | 29.8153 | 3.3019 | 29.717 | 29.5204 | | 2.1069 | 8.0 | 8032 | 1.9515 | 30.3066 | 3.3019 | 30.1887 | 30.0314 | | a441d7f82a7711c03210540b997816e1 |
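
The ROUGE columns are on a 0 to 100 scale. As a rough illustration of what Rouge1 measures, the sketch below computes a simplified ROUGE-1 F-measure from clipped unigram overlap with lowercased whitespace tokens on hypothetical review snippets; the `rouge_score`/`evaluate` implementations typically used with the Trainer apply their own tokenization (and optional stemming), so numbers will not match them exactly.

```python
from collections import Counter

def rouge1_f(candidate, reference):
    """Simplified ROUGE-1 F-measure from clipped unigram overlap (lowercased whitespace tokens)."""
    cand = Counter(candidate.lower().split())
    ref = Counter(reference.lower().split())
    overlap = sum((cand & ref).values())  # each shared token counted at most min(count) times
    if overlap == 0:
        return 0.0
    precision = overlap / sum(cand.values())
    recall = overlap / sum(ref.values())
    return 2 * precision * recall / (precision + recall)

score = rouge1_f("great product fast shipping", "great product and very fast delivery")
print(round(100 * score, 2))  # 60.0, on the same 0-100 scale as the table
```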

apache-2.0 | ['generated_from_trainer'] | false | Tagged_Uni_50v1_NER_Model_3Epochs_AUGMENTED This model is a fine-tuned version of [bert-base-cased](https://huggingface.co/bert-base-cased) on the tagged_uni50v1_wikigold_split dataset. It achieves the following results on the evaluation set: - Loss: 0.5851 - Precision: 0.1466 - Recall: 0.0256 - F1: 0.0437 - Accuracy: 0.7941 | f766c3ce35a8232733e088f2bc62b625 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:| | No log | 1.0 | 24 | 0.6704 | 0.0 | 0.0 | 0.0 | 0.7775 | | No log | 2.0 | 48 | 0.5824 | 0.1479 | 0.0154 | 0.0279 | 0.7895 | | No log | 3.0 | 72 | 0.5851 | 0.1466 | 0.0256 | 0.0437 | 0.7941 | | 8760eeaf22901af9896ccad8e8678225 |
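
F1 is the harmonic mean of precision and recall, so it is dominated by the much lower recall here. Recomputing it from the rounded final-epoch values reproduces the reported 0.0437 up to rounding of the inputs.

```python
precision = 0.1466
recall = 0.0256

# F1 is the harmonic mean of precision and recall.
f1 = 2 * precision * recall / (precision + recall)
print(round(f1, 4))  # 0.0436 (the card's 0.0437 comes from unrounded precision/recall)
```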

other | ['vision', 'image-segmentation', 'generated_from_trainer'] | false | segformer-b0-finetuned-segments-sidewalk-2 This model is a fine-tuned version of [nvidia/mit-b0](https://huggingface.co/nvidia/mit-b0) on the segments/sidewalk-semantic dataset. It achieves the following results on the evaluation set: - Loss: 2.6306 - Mean Iou: 0.1027 - Mean Accuracy: 0.1574 - Overall Accuracy: 0.6552 - Per Category Iou: [0.0, 0.40932069741697885, 0.6666047315185674, 0.0015527279135260222, 0.000557997451181134, 0.004734463745284192, 0.0, 0.00024311836753505628, 0.0, 0.0, 0.5448608416905849, 0.005644290758731727, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.4689142754019952, 0.0, 0.00039031599380590526, 0.010175747938072128, 0.0, 0.0, 0.0, 0.0008842445754996234, 0.0, 0.0, 0.6689560919488968, 0.10178439680971307, 0.7089823411348399, 0.0, 0.0, 0.0, 0.0] - Per Category Accuracy: [nan, 0.6798160901382586, 0.8601972223213155, 0.001563543652833044, 0.0005586801134972854, 0.004789605465686377, nan, 0.00024743825184288725, 0.0, 0.0, 0.8407289173400536, 0.012641370267169317, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.7574833533176979, 0.0, 0.00039110009377117975, 0.013959849889225483, 0.0, nan, 0.0, 0.0009309900323061499, 0.0, 0.0, 0.9337304207449932, 0.12865528611713883, 0.8019892660736478, 0.0, 0.0, 0.0, 0.0] | b197c3d73cd8dc1e0d199a4f460ace58 |

other | ['vision', 'image-segmentation', 'generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 6e-05 - train_batch_size: 16 - eval_batch_size: 16 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 1 | d6a426364496904f69a46637e886f461 |

other | ['vision', 'image-segmentation', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Mean Iou | Mean Accuracy | Overall Accuracy | Per Category Iou | Per Category Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:|:-------------:|:----------------:|:-------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------:|:---------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------:| | 2.8872 | 0.5 | 20 | 3.1018 | 0.0995 | 0.1523 | 0.6415 | [0.0, 0.3982872425364927, 0.6582689116809847, 0.0, 0.00044314555867048773, 0.019651883205738383, 0.0, 0.0006528617866575068, 0.0, 0.0, 0.4861235900758522, 0.003961411405960721, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.4437814560942763, 0.0, 1.1600860783870164e-06, 0.019965880301918204, 0.0, 0.0, 0.0, 0.0074026601990928, 0.0, 0.0, 0.666238976894996, 0.13012673492067245, 0.6486315429686865, 0.0, 2.0656177918545805e-05, 0.0001944735843164534, 0.0] | [nan, 0.6263716501798601, 0.8841421548179447, 0.0, 0.00044410334445801165, 0.020659891877382746, nan, 0.0006731258604635891, 0.0, 0.0, 0.8403154629142631, 0.017886412063596133, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.6324385775164868, 0.0, 1.160534402881839e-06, 0.06036834410935781, 0.0, 
nan, 0.0, 0.010232933175604348, 0.0, 0.0, 0.9320173945724101, 0.15828224740687694, 0.6884182010535304, 0.0, 2.3169780427714147e-05, 0.00019505205451704924, 0.0] | | 2.6167 | 1.0 | 40 | 2.6306 | 0.1027 | 0.1574 | 0.6552 | [0.0, 0.40932069741697885, 0.6666047315185674, 0.0015527279135260222, 0.000557997451181134, 0.004734463745284192, 0.0, 0.00024311836753505628, 0.0, 0.0, 0.5448608416905849, 0.005644290758731727, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.4689142754019952, 0.0, 0.00039031599380590526, 0.010175747938072128, 0.0, 0.0, 0.0, 0.0008842445754996234, 0.0, 0.0, 0.6689560919488968, 0.10178439680971307, 0.7089823411348399, 0.0, 0.0, 0.0, 0.0] | [nan, 0.6798160901382586, 0.8601972223213155, 0.001563543652833044, 0.0005586801134972854, 0.004789605465686377, nan, 0.00024743825184288725, 0.0, 0.0, 0.8407289173400536, 0.012641370267169317, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.7574833533176979, 0.0, 0.00039110009377117975, 0.013959849889225483, 0.0, nan, 0.0, 0.0009309900323061499, 0.0, 0.0, 0.9337304207449932, 0.12865528611713883, 0.8019892660736478, 0.0, 0.0, 0.0, 0.0] | | d496bfce511c51310ed688c5bc5d6c94 |
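
Mean IoU averages per-class intersection over union (IoU = TP / (TP + FP + FN)) and skips classes whose value is undefined. The sketch below computes both from a hypothetical 3-class confusion matrix of pixel counts; it is a simplified version of the NaN-ignoring averaging used by common segmentation metrics. In the usual definition, per-category accuracy (correct pixels / ground-truth pixels) is undefined for a class with no ground-truth pixels, which is one plausible reason for the `nan` entries in the Per Category Accuracy lists above.

```python
import math

def per_class_iou(confusion):
    """IoU per class from a square confusion matrix (rows = ground truth, columns = prediction).

    Classes absent from both ground truth and prediction get NaN.
    """
    n = len(confusion)
    ious = []
    for c in range(n):
        tp = confusion[c][c]
        fn = sum(confusion[c]) - tp                        # class-c pixels predicted as other classes
        fp = sum(confusion[r][c] for r in range(n)) - tp   # other pixels predicted as class c
        union = tp + fp + fn
        ious.append(tp / union if union else math.nan)
    return ious

def mean_ignoring_nan(values):
    """Average of the non-NaN values (NaN if there are none)."""
    valid = [v for v in values if not math.isnan(v)]
    return sum(valid) / len(valid) if valid else math.nan

# Hypothetical pixel counts; class 2 never appears.
confusion = [
    [50, 10, 0],
    [5, 35, 0],
    [0, 0, 0],
]
ious = per_class_iou(confusion)
print(ious, mean_ignoring_nan(ious))
```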

apache-2.0 | ['generated_from_trainer'] | false | Vin11-P3 This model is a fine-tuned version of [HuyenNguyen/Vin9-P3](https://huggingface.co/HuyenNguyen/Vin9-P3) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.2151 - Wer: 11.6220 | de440983dddde2aa51a9786dbd967074 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:-------:| | 0.1595 | 0.15 | 300 | 0.2195 | 11.2807 | | 0.1691 | 0.31 | 600 | 0.2151 | 11.6220 | | 7332116a0415d4929ddf31925517dae2 |
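
The Wer column appears to be on a percentage scale (11.6220 means roughly 11.6 word errors per 100 reference words). Note also that the headline results match the last logged step (600), not the lower WER at step 300. Word error rate is the word-level edit distance divided by the reference length; a minimal dynamic-programming sketch on a hypothetical sentence pair:

```python
def word_error_rate(reference, hypothesis):
    """WER = (substitutions + deletions + insertions) / number of reference words."""
    ref = reference.split()
    hyp = hypothesis.split()
    # dist[i][j] = edit distance between ref[:i] and hyp[:j]
    dist = [[0] * (len(hyp) + 1) for _ in range(len(ref) + 1)]
    for i in range(len(ref) + 1):
        dist[i][0] = i
    for j in range(len(hyp) + 1):
        dist[0][j] = j
    for i in range(1, len(ref) + 1):
        for j in range(1, len(hyp) + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            dist[i][j] = min(dist[i - 1][j] + 1,         # deletion
                             dist[i][j - 1] + 1,         # insertion
                             dist[i - 1][j - 1] + cost)  # substitution or match
    return dist[len(ref)][len(hyp)] / len(ref)

wer = word_error_rate("the cat sat on the mat", "the cat sit on mat")
print(f"{100 * wer:.2f}")  # 33.33: one substitution and one deletion over 6 words
```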

mit | ['generated_from_trainer'] | false | aces-roberta-base-reduced This model is a fine-tuned version of [roberta-base](https://huggingface.co/roberta-base) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.3101 - Precision: 0.9036 - Recall: 0.9038 - F1: 0.9029 - Accuracy: 0.9038 - F1 Who: 0.8727 - F1 What: 0.8295 - F1 Where: 0.8468 - F1 How: 0.9414 | 827eea1b55416d52e2cea3c3927ab7e3 |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy | F1 Who | F1 What | F1 Where | F1 How | |:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:|:------:|:-------:|:--------:|:------:| | 0.6299 | 1.0 | 48 | 0.3723 | 0.8943 | 0.8946 | 0.8939 | 0.8946 | 0.8846 | 0.8179 | 0.8559 | 0.9208 | | 0.3067 | 2.0 | 96 | 0.3481 | 0.8911 | 0.8803 | 0.8820 | 0.8803 | 0.8649 | 0.8102 | 0.7766 | 0.9365 | | 0.2054 | 3.0 | 144 | 0.3018 | 0.9129 | 0.9121 | 0.9117 | 0.9121 | 0.8649 | 0.8571 | 0.8720 | 0.9430 | | 0.2196 | 4.0 | 192 | 0.3061 | 0.9108 | 0.9105 | 0.9098 | 0.9105 | 0.8649 | 0.8385 | 0.8610 | 0.9508 | | 0.1505 | 5.0 | 240 | 0.3101 | 0.9036 | 0.9038 | 0.9029 | 0.9038 | 0.8727 | 0.8295 | 0.8468 | 0.9414 | | a748aeca3b626d41c545c7947b361e83 |
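
The card does not say how Precision, Recall and F1 are averaged over the four classes, but Recall equals Accuracy in every row. That is exactly what support-weighted averaging (for example scikit-learn's `average="weighted"`) produces: weighting each class's recall TP_c / n_c by its share n_c / N gives the sum of TP_c over N, which is accuracy. The sketch below checks the identity on hypothetical labels.

```python
from collections import Counter

def weighted_recall(y_true, y_pred):
    """Support-weighted average of per-class recall."""
    support = Counter(y_true)
    n = len(y_true)
    total = 0.0
    for label, count in support.items():
        correct = sum(1 for t, p in zip(y_true, y_pred) if t == label and p == label)
        total += (count / n) * (correct / count)
    return total

def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true label."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)

# Hypothetical labels using the card's four classes.
y_true = ["who", "what", "where", "how", "how", "what", "how", "where"]
y_pred = ["who", "how", "where", "how", "what", "what", "how", "who"]
print(weighted_recall(y_true, y_pred), accuracy(y_true, y_pred))
```

Weighted precision has no such identity, which is why the Precision column differs slightly from Accuracy.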

mit | ['text-classification'] | false | Multi2ConvAI-Logistics: fine-tuned BERT for Croatian

This model was developed in the [Multi2ConvAI](https://multi2conv.ai) project:

- domain: Logistics (more details about our use cases: [en](https://multi2conv.ai/en/blog/use-cases), [de](https://multi2conv.ai/en/blog/use-cases))

- language: Croatian (hr)

- model type: fine-tuned BERT

| beb94420bbc6ac548a027db1085a5525 |

mit | ['text-classification'] | false | Run with Hugging Face Transformers

````python
from transformers import AutoTokenizer, AutoModelForSequenceClassification

# Load the Croatian logistics text-classification tokenizer and model from the Hugging Face Hub.
tokenizer = AutoTokenizer.from_pretrained("inovex/multi2convai-logistics-hr-bert")
model = AutoModelForSequenceClassification.from_pretrained("inovex/multi2convai-logistics-hr-bert")
````

| b17e6bb9518ac95b2b2dbe6662188851 |

cc-by-4.0 | ['questions and answers generation'] | false | Model Card of `lmqg/mbart-large-cc25-itquad-qag` This model is a fine-tuned version of [facebook/mbart-large-cc25](https://huggingface.co/facebook/mbart-large-cc25) for the question & answer pair generation task on the [lmqg/qag_itquad](https://huggingface.co/datasets/lmqg/qag_itquad) (dataset_name: default) via [`lmqg`](https://github.com/asahi417/lm-question-generation). | a1f9e61c20d704e0456c5f4866286560 |

cc-by-4.0 | ['questions and answers generation'] | false | Overview - **Language model:** [facebook/mbart-large-cc25](https://huggingface.co/facebook/mbart-large-cc25) - **Language:** it - **Training data:** [lmqg/qag_itquad](https://huggingface.co/datasets/lmqg/qag_itquad) (default) - **Online Demo:** [https://autoqg.net/](https://autoqg.net/) - **Repository:** [https://github.com/asahi417/lm-question-generation](https://github.com/asahi417/lm-question-generation) - **Paper:** [https://arxiv.org/abs/2210.03992](https://arxiv.org/abs/2210.03992) | 9bb1cc0aec7cceab1b0f7b090244baea |

cc-by-4.0 | ['questions and answers generation'] | false |
```python
# model prediction (`model` is the lmqg model object initialized earlier in the usage example)
question_answer_pairs = model.generate_qa("Dopo il 1971 , l' OPEC ha tardato ad adeguare i prezzi per riflettere tale deprezzamento.")
```
- With `transformers`
```python
from transformers import pipeline

# Load the question & answer generation model as a text2text-generation pipeline.
pipe = pipeline("text2text-generation", "lmqg/mbart-large-cc25-itquad-qag")
output = pipe("Dopo il 1971 , l' OPEC ha tardato ad adeguare i prezzi per riflettere tale deprezzamento.")
```
 | 97e254f6ce238c4a425aefa1fe80e199 |

cc-by-4.0 | ['questions and answers generation'] | false | Evaluation - ***Metric (Question & Answer Generation)***: [raw metric file](https://huggingface.co/lmqg/mbart-large-cc25-itquad-qag/raw/main/eval/metric.first.answer.paragraph.questions_answers.lmqg_qag_itquad.default.json) | | Score | Type | Dataset | |:--------------------------------|--------:|:--------|:-------------------------------------------------------------------| | QAAlignedF1Score (BERTScore) | 72.96 | default | [lmqg/qag_itquad](https://huggingface.co/datasets/lmqg/qag_itquad) | | QAAlignedF1Score (MoverScore) | 51.25 | default | [lmqg/qag_itquad](https://huggingface.co/datasets/lmqg/qag_itquad) | | QAAlignedPrecision (BERTScore) | 74.2 | default | [lmqg/qag_itquad](https://huggingface.co/datasets/lmqg/qag_itquad) | | QAAlignedPrecision (MoverScore) | 52.44 | default | [lmqg/qag_itquad](https://huggingface.co/datasets/lmqg/qag_itquad) | | QAAlignedRecall (BERTScore) | 71.83 | default | [lmqg/qag_itquad](https://huggingface.co/datasets/lmqg/qag_itquad) | | QAAlignedRecall (MoverScore) | 50.21 | default | [lmqg/qag_itquad](https://huggingface.co/datasets/lmqg/qag_itquad) | | 6ef0d8fb7537c81b168282844f7cdbfb |

cc-by-4.0 | ['questions and answers generation'] | false | Training hyperparameters The following hyperparameters were used during fine-tuning: - dataset_path: lmqg/qag_itquad - dataset_name: default - input_types: ['paragraph'] - output_types: ['questions_answers'] - prefix_types: None - model: facebook/mbart-large-cc25 - max_length: 512 - max_length_output: 256 - epoch: 14 - batch: 8 - lr: 0.0001 - fp16: False - random_seed: 1 - gradient_accumulation_steps: 16 - label_smoothing: 0.15 The full configuration can be found at [fine-tuning config file](https://huggingface.co/lmqg/mbart-large-cc25-itquad-qag/raw/main/trainer_config.json). | d76d6557c98d57f48d7072a984ba5b71 |
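
With label_smoothing: 0.15, the training target is no longer a pure one-hot token. One common formulation (the one PyTorch's `nn.CrossEntropyLoss(label_smoothing=...)` uses) mixes the one-hot target with a uniform distribution over the K classes. The plain-Python sketch below implements that formulation for a single prediction over a hypothetical 4-token vocabulary; lmqg's own implementation may differ in details such as how padding tokens are ignored.

```python
import math

def label_smoothed_cross_entropy(logits, target, epsilon):
    """Cross-entropy against a smoothed target: (1 - eps) * one-hot + eps / K uniform."""
    k = len(logits)
    # Numerically stable log-softmax via the log-sum-exp trick.
    max_logit = max(logits)
    log_sum_exp = max_logit + math.log(sum(math.exp(z - max_logit) for z in logits))
    log_probs = [z - log_sum_exp for z in logits]
    smoothed = [epsilon / k + (1 - epsilon) * (1.0 if i == target else 0.0) for i in range(k)]
    return -sum(q * lp for q, lp in zip(smoothed, log_probs))

logits = [2.0, 0.5, -1.0, 0.1]  # hypothetical scores over a 4-token vocabulary
plain = label_smoothed_cross_entropy(logits, target=0, epsilon=0.0)
smoothed = label_smoothed_cross_entropy(logits, target=0, epsilon=0.15)  # label_smoothing from the card
print(f"plain CE: {plain:.4f}, label-smoothed CE: {smoothed:.4f}")
```

Smoothing penalizes overconfident predictions: even when the correct token already has the highest score, the smoothed loss stays higher than the plain one because some target mass sits on the other tokens.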

apache-2.0 | ['automatic-speech-recognition', 'fi', 'finnish', 'generated_from_trainer', 'hf-asr-leaderboard'] | false | Wav2vec2-large-uralic-voxpopuli-v2 for Finnish ASR This acoustic model is a fine-tuned version of [facebook/wav2vec2-large-uralic-voxpopuli-v2](https://huggingface.co/facebook/wav2vec2-large-uralic-voxpopuli-v2) for Finnish ASR. The model has been fine-tuned with 276.7 hours of Finnish transcribed speech data. Wav2Vec2 was introduced in [this paper](https://arxiv.org/abs/2006.11477) and first released on [this page](https://github.com/pytorch/fairseq/tree/main/examples/wav2vec). | 3a1ef7a2930f3a9383ff41f1c3d7c158 |

apache-2.0 | ['automatic-speech-recognition', 'fi', 'finnish', 'generated_from_trainer', 'hf-asr-leaderboard'] | false | Model description [Wav2vec2-large-uralic-voxpopuli-v2](https://huggingface.co/facebook/wav2vec2-large-uralic-voxpopuli-v2) is Facebook AI's pretrained model for speech in the Uralic language family (Finnish, Estonian, Hungarian). It is pretrained on 42.5k hours of unlabeled Finnish, Estonian and Hungarian speech from the [VoxPopuli V2 dataset](https://github.com/facebookresearch/voxpopuli/) with the wav2vec 2.0 objective. This model is a fine-tuned version of that pretrained model for Finnish ASR. | 7ed498843f3ed60181c305e9e0752fad |

apache-2.0 | ['automatic-speech-recognition', 'fi', 'finnish', 'generated_from_trainer', 'hf-asr-leaderboard'] | false | How to use Check the [run-finnish-asr-models.ipynb](https://huggingface.co/Finnish-NLP/wav2vec2-large-uralic-voxpopuli-v2-finnish/blob/main/run-finnish-asr-models.ipynb) notebook in this repository for a detailed example of how to use this model. | d88d3176f0497ec7b3263201791a63bb |
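The notebook remains the reference for actual usage. As background, this model is trained with a CTC objective (see `ctc_loss_reduction` in the training hyperparameters). The simplest way to turn its per-frame outputs into text is greedy CTC decoding: take the most likely token per frame, merge repeats, then drop blanks. The sketch below is dependency-free and rests on two assumptions. First, it uses a toy vocabulary in which id 0 is the CTC blank. Second, `|` is the word delimiter, which is common for wav2vec2 tokenizers in Transformers; check this model's `vocab.json` to confirm. The "with LM" results reported for this model come from a language-model-backed decoder, which this sketch does not cover.

```python
from itertools import groupby
from typing import Dict, Sequence


def greedy_ctc_decode(frame_logits: Sequence[Sequence[float]],
                      id_to_token: Dict[int, str],
                      blank_id: int = 0,
                      word_delimiter: str = "|") -> str:
    """Greedy (best-path) CTC decoding of one utterance."""
    # 1. Most likely token id for every audio frame.
    best_ids = [max(range(len(row)), key=row.__getitem__) for row in frame_logits]
    # 2. Merge consecutive repeats of the same id.
    merged = [token_id for token_id, _ in groupby(best_ids)]
    # 3. Remove CTC blanks; a blank between two equal ids keeps a real double letter.
    tokens = [id_to_token[i] for i in merged if i != blank_id]
    # 4. Map the word-delimiter token to a space.
    return "".join(tokens).replace(word_delimiter, " ").strip()
```

The blank is what lets CTC spell Finnish double consonants. The frame sequence `k k a <blank> t t <blank> t o o` decodes to "katto". Without the blank between the t's it would collapse to "kato".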

apache-2.0 | ['automatic-speech-recognition', 'fi', 'finnish', 'generated_from_trainer', 'hf-asr-leaderboard'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 1e-04 - train_batch_size: 32 - eval_batch_size: 32 - seed: 42 - optimizer: [8-bit Adam](https://github.com/facebookresearch/bitsandbytes) with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 500 - num_epochs: 10 - mixed_precision_training: Native AMP The pretrained `facebook/wav2vec2-large-uralic-voxpopuli-v2` model was initialized with the following hyperparameters: - attention_dropout: 0.094 - hidden_dropout: 0.047 - feat_proj_dropout: 0.04 - mask_time_prob: 0.082 - layerdrop: 0.041 - activation_dropout: 0.055 - ctc_loss_reduction: "mean" | fd2e0a19ab0d78dc1a3fed28a480aa8d |
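With `lr_scheduler_type: linear` and `lr_scheduler_warmup_steps: 500`, the learning rate ramps linearly from 0 to 1e-04 over the first 500 steps. It then decays linearly to 0 at the final training step. The sketch below mirrors the multiplier used by `transformers.get_linear_schedule_with_warmup`. The value `total_steps = 30_000` is an assumption read off the training log, which ends at step 30000 at epoch 9.96, so the true total is slightly higher.

```python
def linear_warmup_lr(step: int,
                     base_lr: float = 1e-4,
                     warmup_steps: int = 500,
                     total_steps: int = 30_000) -> float:
    """Learning rate at a given optimizer step under linear warmup + linear decay.

    total_steps=30_000 is an assumed value, not a logged hyperparameter.
    """
    if step < warmup_steps:
        # Warmup: 0 -> base_lr over the first `warmup_steps` steps.
        return base_lr * step / max(1, warmup_steps)
    # Decay: base_lr -> 0 at `total_steps`, clamped at 0 afterwards.
    return base_lr * max(0.0, (total_steps - step) / max(1, total_steps - warmup_steps))
```

Under these assumptions the learning rate is back to half its peak (5e-05) at step 15,250, roughly midway through epoch 5.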

apache-2.0 | ['automatic-speech-recognition', 'fi', 'finnish', 'generated_from_trainer', 'hf-asr-leaderboard'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | WER | |:-------------:|:-----:|:-----:|:---------------:|:------:| | 1.9421 | 0.17 | 500 | 0.8633 | 0.8870 | | 0.572 | 0.33 | 1000 | 0.1650 | 0.1829 | | 0.5149 | 0.5 | 1500 | 0.1416 | 0.1711 | | 0.4884 | 0.66 | 2000 | 0.1265 | 0.1605 | | 0.4729 | 0.83 | 2500 | 0.1205 | 0.1485 | | 0.4723 | 1.0 | 3000 | 0.1108 | 0.1403 | | 0.443 | 1.16 | 3500 | 0.1175 | 0.1439 | | 0.4378 | 1.33 | 4000 | 0.1083 | 0.1482 | | 0.4313 | 1.49 | 4500 | 0.1110 | 0.1398 | | 0.4182 | 1.66 | 5000 | 0.1024 | 0.1418 | | 0.3884 | 1.83 | 5500 | 0.1032 | 0.1395 | | 0.4034 | 1.99 | 6000 | 0.0985 | 0.1318 | | 0.3735 | 2.16 | 6500 | 0.1008 | 0.1355 | | 0.4174 | 2.32 | 7000 | 0.0970 | 0.1361 | | 0.3581 | 2.49 | 7500 | 0.0968 | 0.1297 | | 0.3783 | 2.66 | 8000 | 0.0881 | 0.1284 | | 0.3827 | 2.82 | 8500 | 0.0921 | 0.1352 | | 0.3651 | 2.99 | 9000 | 0.0861 | 0.1298 | | 0.3684 | 3.15 | 9500 | 0.0844 | 0.1270 | | 0.3784 | 3.32 | 10000 | 0.0870 | 0.1248 | | 0.356 | 3.48 | 10500 | 0.0828 | 0.1214 | | 0.3524 | 3.65 | 11000 | 0.0878 | 0.1218 | | 0.3879 | 3.82 | 11500 | 0.0874 | 0.1216 | | 0.3521 | 3.98 | 12000 | 0.0860 | 0.1210 | | 0.3527 | 4.15 | 12500 | 0.0818 | 0.1184 | | 0.3529 | 4.31 | 13000 | 0.0787 | 0.1185 | | 0.3114 | 4.48 | 13500 | 0.0852 | 0.1202 | | 0.3495 | 4.65 | 14000 | 0.0807 | 0.1187 | | 0.34 | 4.81 | 14500 | 0.0796 | 0.1162 | | 0.3646 | 4.98 | 15000 | 0.0782 | 0.1149 | | 0.3004 | 5.14 | 15500 | 0.0799 | 0.1142 | | 0.3167 | 5.31 | 16000 | 0.0847 | 0.1123 | | 0.3249 | 5.48 | 16500 | 0.0837 | 0.1171 | | 0.3202 | 5.64 | 17000 | 0.0749 | 0.1109 | | 0.3104 | 5.81 | 17500 | 0.0798 | 0.1093 | | 0.3039 | 5.97 | 18000 | 0.0810 | 0.1132 | | 0.3157 | 6.14 | 18500 | 0.0847 | 0.1156 | | 0.3133 | 6.31 | 19000 | 0.0833 | 0.1140 | | 0.3203 | 6.47 | 19500 | 0.0838 | 0.1113 | | 0.3178 | 6.64 | 20000 | 0.0907 | 0.1141 | | 0.3182 | 6.8 | 20500 | 0.0938 | 0.1143 | | 0.3 | 6.97 | 21000 | 0.0854 | 0.1133 | | 0.3151 | 7.14 | 21500 | 0.0859 | 0.1109 | | 0.2963 | 7.3 | 22000 | 0.0832 | 0.1122 | | 0.3099 | 7.47 | 22500 | 0.0865 | 0.1103 | | 0.322 | 7.63 | 23000 | 0.0833 | 0.1105 | | 0.3064 | 7.8 | 23500 | 0.0865 | 0.1078 | | 0.2964 | 7.97 | 24000 | 0.0859 | 0.1096 | | 0.2869 | 8.13 | 24500 | 0.0872 | 0.1100 | | 0.315 | 8.3 | 25000 | 0.0869 | 0.1099 | | 0.3003 | 8.46 | 25500 | 0.0878 | 0.1105 | | 0.2947 | 8.63 | 26000 | 0.0884 | 0.1084 | | 0.297 | 8.8 | 26500 | 0.0891 | 0.1102 | | 0.3049 | 8.96 | 27000 | 0.0863 | 0.1081 | | 0.2957 | 9.13 | 27500 | 0.0846 | 0.1083 | | 0.2908 | 9.29 | 28000 | 0.0848 | 0.1059 | | 0.2955 | 9.46 | 28500 | 0.0846 | 0.1085 | | 0.2991 | 9.62 | 29000 | 0.0839 | 0.1081 | | 0.3112 | 9.79 | 29500 | 0.0832 | 0.1071 | | 0.29 | 9.96 | 30000 | 0.0828 | 0.1075 | | 76290987857d87219eb589a4d9ecac31 |

apache-2.0 | ['automatic-speech-recognition', 'fi', 'finnish', 'generated_from_trainer', 'hf-asr-leaderboard'] | false | Common Voice 7.0 testing To evaluate this model, run the `eval.py` script in this repository: ```bash python3 eval.py --model_id Finnish-NLP/wav2vec2-large-uralic-voxpopuli-v2-finnish --dataset mozilla-foundation/common_voice_7_0 --config fi --split test ``` This model (the second row of the table) achieves the following WER (Word Error Rate) and CER (Character Error Rate) results compared to our other models and their parameter counts: | | Model parameters | WER (with LM) | WER (without LM) | CER (with LM) | CER (without LM) | |-------------------------------------------------------|------------------|---------------|------------------|---------------|------------------| |Finnish-NLP/wav2vec2-base-fi-voxpopuli-v2-finetuned | 95 million |5.85 |13.52 |1.35 |2.44 | |Finnish-NLP/wav2vec2-large-uralic-voxpopuli-v2-finnish | 300 million |4.13 |**9.66** |0.90 |1.66 | |Finnish-NLP/wav2vec2-xlsr-300m-finnish-lm | 300 million |8.16 |17.92 |1.97 |3.36 | |Finnish-NLP/wav2vec2-xlsr-1b-finnish-lm | 1000 million |5.65 |13.11 |1.20 |2.23 | |Finnish-NLP/wav2vec2-xlsr-1b-finnish-lm-v2 | 1000 million |**4.09** |9.73 |**0.88** |**1.65** | | 701197139e247db22f9b7cb97b762f57 |
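WER and CER are the word-level and character-level edit (Levenshtein) distances between hypothesis and reference, divided by the reference length. The tables report them as percentages, while the training log shows WER as a fraction. The sketch below is minimal and assumes plain whitespace tokenisation with no text normalisation. `eval.py` may lowercase or strip punctuation before scoring, which is not reproduced here. Corpus-level scores are normally the total number of edits divided by the total reference length, not the mean of per-utterance rates.

```python
from typing import Sequence


def edit_distance(ref: Sequence[str], hyp: Sequence[str]) -> int:
    """Levenshtein distance (substitutions + deletions + insertions), one DP row at a time."""
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        cur = [i] + [0] * len(hyp)
        for j, h in enumerate(hyp, start=1):
            cur[j] = min(prev[j] + 1,            # deletion
                         cur[j - 1] + 1,         # insertion
                         prev[j - 1] + (r != h)) # substitution (0 if tokens match)
        prev = cur
    return prev[-1]


def wer(reference: str, hypothesis: str) -> float:
    """Word error rate for one non-empty reference, as a fraction."""
    ref_words = reference.split()
    return edit_distance(ref_words, hypothesis.split()) / len(ref_words)


def cer(reference: str, hypothesis: str) -> float:
    """Character error rate for one non-empty reference (spaces count as characters)."""
    return edit_distance(list(reference), list(hypothesis)) / len(reference)
```

A one-letter mistake costs a whole word in WER but only one character in CER. Scoring "minä olin täällä" against the reference "minä olen täällä" gives WER 1/3 but CER 1/16. This is why CER is consistently much lower than WER in the tables.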

apache-2.0 | ['automatic-speech-recognition', 'fi', 'finnish', 'generated_from_trainer', 'hf-asr-leaderboard'] | false | Common Voice 9.0 testing To evaluate this model, run the `eval.py` script in this repository: ```bash python3 eval.py --model_id Finnish-NLP/wav2vec2-large-uralic-voxpopuli-v2-finnish --dataset mozilla-foundation/common_voice_9_0 --config fi --split test ``` This model (the second row of the table) achieves the following WER (Word Error Rate) and CER (Character Error Rate) results compared to our other models and their parameter counts: | | Model parameters | WER (with LM) | WER (without LM) | CER (with LM) | CER (without LM) | |-------------------------------------------------------|------------------|---------------|------------------|---------------|------------------| |Finnish-NLP/wav2vec2-base-fi-voxpopuli-v2-finetuned | 95 million |5.93 |14.08 |1.40 |2.59 | |Finnish-NLP/wav2vec2-large-uralic-voxpopuli-v2-finnish | 300 million |4.13 |9.83 |0.92 |1.71 | |Finnish-NLP/wav2vec2-xlsr-300m-finnish-lm | 300 million |7.42 |16.45 |1.79 |3.07 | |Finnish-NLP/wav2vec2-xlsr-1b-finnish-lm | 1000 million |5.35 |13.00 |1.14 |2.20 | |Finnish-NLP/wav2vec2-xlsr-1b-finnish-lm-v2 | 1000 million |**3.72** |**8.96** |**0.80** |**1.52** | | 96dd17d73ad521b2c9eb7376e3b0d630 |

apache-2.0 | ['automatic-speech-recognition', 'fi', 'finnish', 'generated_from_trainer', 'hf-asr-leaderboard'] | false | FLEURS ASR testing To evaluate this model, run the `eval.py` script in this repository: ```bash python3 eval.py --model_id Finnish-NLP/wav2vec2-large-uralic-voxpopuli-v2-finnish --dataset google/fleurs --config fi_fi --split test ``` This model (the second row of the table) achieves the following WER (Word Error Rate) and CER (Character Error Rate) results compared to our other models and their parameter counts: | | Model parameters | WER (with LM) | WER (without LM) | CER (with LM) | CER (without LM) | |-------------------------------------------------------|------------------|---------------|------------------|---------------|------------------| |Finnish-NLP/wav2vec2-base-fi-voxpopuli-v2-finetuned | 95 million |13.99 |17.16 |6.07 |6.61 | |Finnish-NLP/wav2vec2-large-uralic-voxpopuli-v2-finnish | 300 million |12.44 |**14.63** |5.77 |6.22 | |Finnish-NLP/wav2vec2-xlsr-300m-finnish-lm | 300 million |17.72 |23.30 |6.78 |7.67 | |Finnish-NLP/wav2vec2-xlsr-1b-finnish-lm | 1000 million |20.34 |16.67 |6.97 |6.35 | |Finnish-NLP/wav2vec2-xlsr-1b-finnish-lm-v2 | 1000 million |**12.11** |14.89 |**5.65** |**6.06** | | 3a0e757245df4ea44ed79a59058d57ae |
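The "with LM" and "without LM" columns can be compared as a relative WER reduction: (WER without LM - WER with LM) / WER without LM. The short sketch below uses the FLEURS WER values copied from the table, with nothing new measured. A positive value means the language model helps.

```python
# FLEURS (fi_fi) test WER copied from the table: (with LM, without LM)
FLEURS_WER = {
    "Finnish-NLP/wav2vec2-base-fi-voxpopuli-v2-finetuned": (13.99, 17.16),
    "Finnish-NLP/wav2vec2-large-uralic-voxpopuli-v2-finnish": (12.44, 14.63),
    "Finnish-NLP/wav2vec2-xlsr-300m-finnish-lm": (17.72, 23.30),
    "Finnish-NLP/wav2vec2-xlsr-1b-finnish-lm": (20.34, 16.67),
    "Finnish-NLP/wav2vec2-xlsr-1b-finnish-lm-v2": (12.11, 14.89),
}


def relative_reduction(with_lm: float, without_lm: float) -> float:
    """Relative WER reduction from LM decoding (negative means the LM hurts)."""
    return (without_lm - with_lm) / without_lm


for name, (with_lm, without_lm) in FLEURS_WER.items():
    print(f"{name}: {relative_reduction(with_lm, without_lm):+.1%}")
```

On FLEURS the LM lowers WER for four of the five models, by about 15% relative for this model. For `Finnish-NLP/wav2vec2-xlsr-1b-finnish-lm` it raises WER from 16.67 to 20.34, a relative change of roughly -22%.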

cc-by-4.0 | ['espnet', 'audio', 'text-to-speech'] | false | Demo: How to use in ESPnet2 ```bash cd espnet git checkout 49a284e69308d81c142b89795de255b4ce290c54 pip install -e . cd egs2/talromur/tts1 ./run.sh --skip_data_prep false --skip_train true --download_model espnet/GunnarThor_talromur_d_fastspeech2 ``` | 8c2821066af1642bb751bd8910a12162 |