license stringlengths 2 30 | tags stringlengths 2 513 | is_nc bool 1 class | readme_section stringlengths 201 597k | hash stringlengths 32 32 |

|---|---|---|---|---|

apache-2.0 | ['translation'] | false | System Info: - hf_name: tur-ara - source_languages: tur - target_languages: ara - opus_readme_url: https://github.com/Helsinki-NLP/Tatoeba-Challenge/tree/master/models/tur-ara/README.md - original_repo: Tatoeba-Challenge - tags: ['translation'] - languages: ['tr', 'ar'] - src_constituents: {'tur'} - tgt_constituents: {'apc', 'ara', 'arq_Latn', 'arq', 'afb', 'ara_Latn', 'apc_Latn', 'arz'} - src_multilingual: False - tgt_multilingual: False - prepro: normalization + SentencePiece (spm32k,spm32k) - url_model: https://object.pouta.csc.fi/Tatoeba-MT-models/tur-ara/opus-2020-07-03.zip - url_test_set: https://object.pouta.csc.fi/Tatoeba-MT-models/tur-ara/opus-2020-07-03.test.txt - src_alpha3: tur - tgt_alpha3: ara - short_pair: tr-ar - chrF2_score: 0.455 - bleu: 14.9 - brevity_penalty: 0.988 - ref_len: 6944.0 - src_name: Turkish - tgt_name: Arabic - train_date: 2020-07-03 - src_alpha2: tr - tgt_alpha2: ar - prefer_old: False - long_pair: tur-ara - helsinki_git_sha: 480fcbe0ee1bf4774bcbe6226ad9f58e63f6c535 - transformers_git_sha: 2207e5d8cb224e954a7cba69fa4ac2309e9ff30b - port_machine: brutasse - port_time: 2020-08-21-14:41 | bd1e7854f9508f755bce9af83a9769ec |
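
The `brevity_penalty` and `ref_len` fields in the row above can be related with the standard BLEU brevity penalty. The sketch below assumes the score was computed with that usual definition (the row does not say which tool produced it); the function names are illustrative and do not come from the model card.

```python
import math


def brevity_penalty(hyp_len: float, ref_len: float) -> float:
    """Standard BLEU brevity penalty: 1.0 when the hypothesis is at least
    as long as the reference, otherwise exp(1 - ref_len / hyp_len)."""
    if hyp_len <= 0:
        return 0.0
    if hyp_len >= ref_len:
        return 1.0
    return math.exp(1.0 - ref_len / hyp_len)


def implied_hyp_len(bp: float, ref_len: float) -> float:
    """Invert the penalty: hyp_len = ref_len / (1 - ln(bp)).
    For bp == 1.0 this only gives a lower bound (the reference length)."""
    if not 0.0 < bp <= 1.0:
        raise ValueError("bp must be in (0, 1]")
    return ref_len / (1.0 - math.log(bp))


# Values reported for tur-ara in the row above.
ref_len = 6944.0
bp = 0.988
hyp_len = implied_hyp_len(bp, ref_len)
print(f"implied hypothesis length: about {hyp_len:.0f} tokens")
```

Under that assumption, a penalty just below 1 means the system output was only slightly shorter than the reference translations.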

apache-2.0 | ['automatic-speech-recognition', 'th'] | false | exp_w2v2t_th_no-pretraining_s414 Fine-tuned randomly initialized wav2vec2 model for speech recognition on Thai using the train split of [Common Voice 7.0](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | 4736d7a3e2a1d83f51e4f50490f43546 |
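
The 16kHz requirement above can be checked before any audio reaches the model. This is a minimal sketch using only Python's standard `wave` module; the helper names and the in-memory test clip are hypothetical, and it covers only uncompressed WAV input (other formats would need a separate audio library).

```python
import io
import wave

TARGET_RATE = 16_000  # sampling rate the model card asks for


def wav_sample_rate(data: bytes) -> int:
    """Read the sampling rate from the header of an in-memory WAV file."""
    with wave.open(io.BytesIO(data), "rb") as wav:
        return wav.getframerate()


def needs_resampling(data: bytes) -> bool:
    """True when the clip must be resampled before being passed to the model."""
    return wav_sample_rate(data) != TARGET_RATE


def make_silent_wav(sample_rate: int, seconds: float = 0.1) -> bytes:
    """Build a short mono 16-bit silent clip, used here only for demonstration."""
    buf = io.BytesIO()
    with wave.open(buf, "wb") as wav:
        wav.setnchannels(1)
        wav.setsampwidth(2)
        wav.setframerate(sample_rate)
        wav.writeframes(b"\x00\x00" * int(sample_rate * seconds))
    return buf.getvalue()


print(needs_resampling(make_silent_wav(16_000)))
print(needs_resampling(make_silent_wav(44_100)))
```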

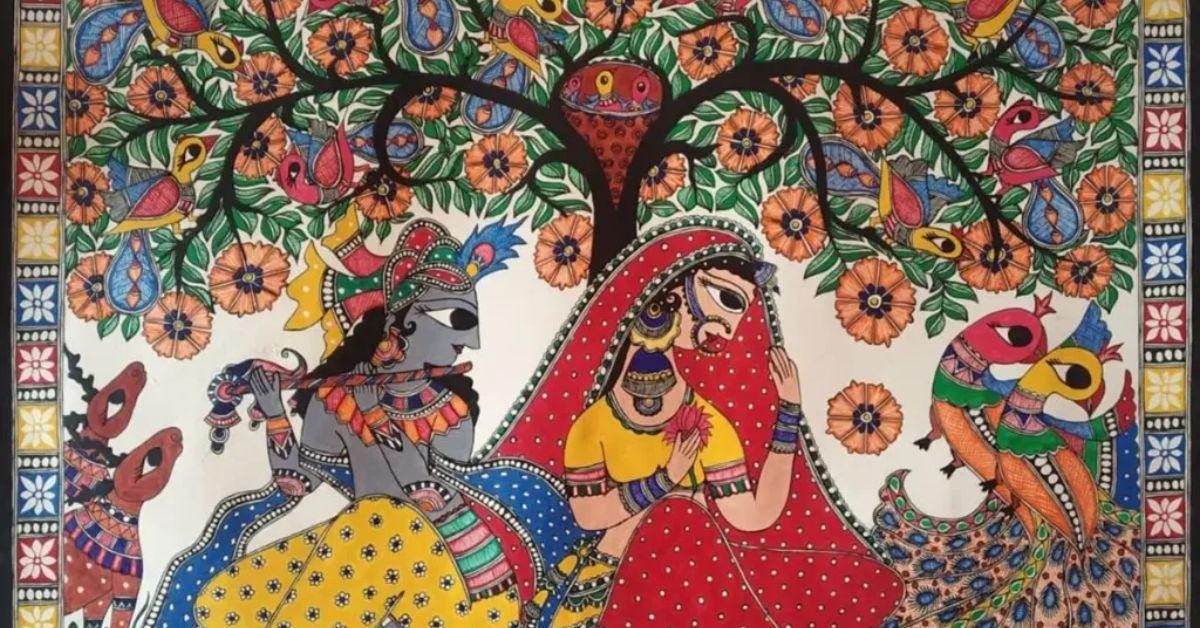

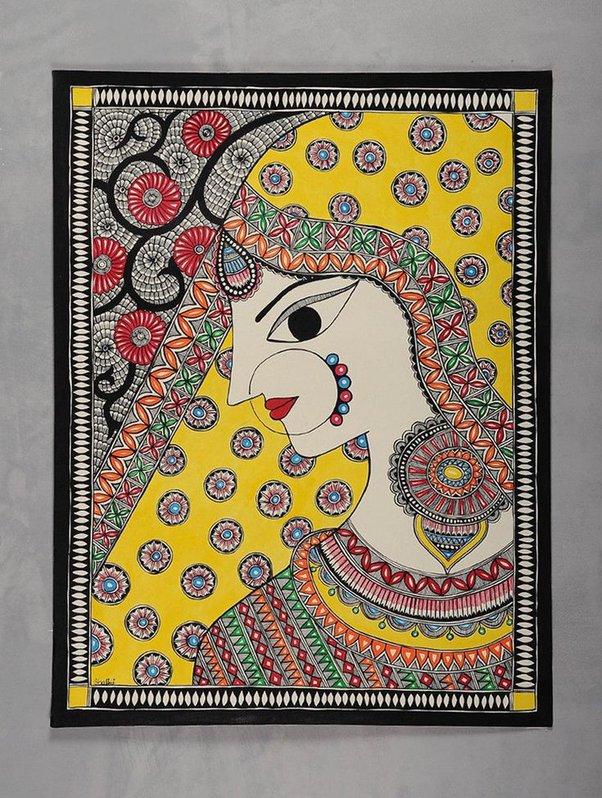

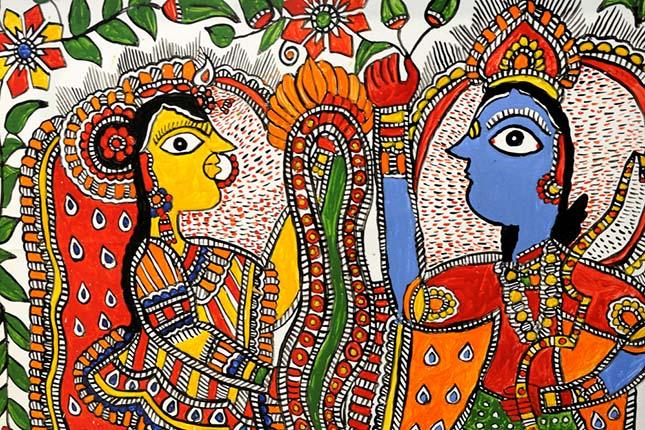

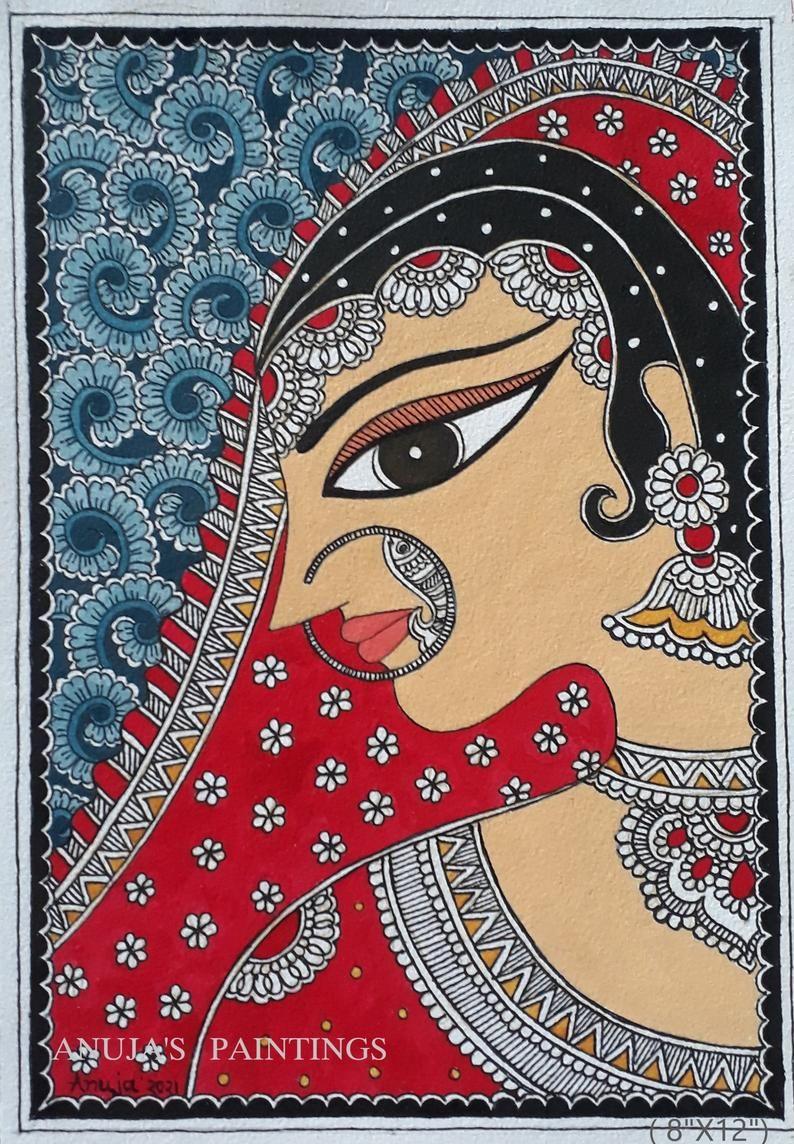

mit | [] | false | madhubani art on Stable Diffusion This is the `<madhubani-art>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). Here is the new concept you will be able to use as a `style`: | 77f80b2477c106aabff9f546c4d2f7fc |

apache-2.0 | ['generated_from_trainer'] | false | tiny-mlm-glue-cola-custom-tokenizer-target-glue-cola This model is a fine-tuned version of [muhtasham/tiny-mlm-glue-cola-custom-tokenizer](https://huggingface.co/muhtasham/tiny-mlm-glue-cola-custom-tokenizer) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.7759 - Matthews Correlation: 0.0718 | e612a129a59a72370aad9f056426d7fe |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Matthews Correlation | |:-------------:|:-----:|:----:|:---------------:|:--------------------:| | 0.6088 | 1.87 | 500 | 0.6180 | 0.0 | | 0.5968 | 3.73 | 1000 | 0.6118 | -0.0207 | | 0.5683 | 5.6 | 1500 | 0.6259 | 0.0868 | | 0.5362 | 7.46 | 2000 | 0.6551 | 0.0556 | | 0.5082 | 9.33 | 2500 | 0.6918 | 0.1167 | | 0.4859 | 11.19 | 3000 | 0.7117 | 0.0947 | | 0.463 | 13.06 | 3500 | 0.7509 | 0.0464 | | 0.442 | 14.93 | 4000 | 0.7759 | 0.0718 | | 415e6f41a32a35847efd3008ee8de965 |
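
For readers unfamiliar with the Matthews correlation column above, the sketch below computes it from binary confusion-matrix counts. The counts in the example are made up for illustration; they are not taken from the CoLA evaluation set. Returning 0.0 when the denominator vanishes is a common convention and is assumed here.

```python
import math


def matthews_corrcoef(tp: int, tn: int, fp: int, fn: int) -> float:
    """Matthews correlation coefficient for binary classification.
    Ranges from -1 (total disagreement) to +1 (perfect prediction)."""
    numerator = tp * tn - fp * fn
    denominator = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    # Assumed convention: an undefined score (zero denominator) is reported as 0.0.
    return 0.0 if denominator == 0 else numerator / denominator


# A classifier that labels every example positive gets exactly 0.0,
# which is one way a score like the one at step 500 can arise.
print(matthews_corrcoef(tp=70, tn=0, fp=30, fn=0))
# A perfect classifier scores 1.0.
print(matthews_corrcoef(tp=50, tn=50, fp=0, fn=0))
```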

apache-2.0 | [] | false | distilbert-base-fr-cased We are sharing smaller versions of [distilbert-base-multilingual-cased](https://huggingface.co/distilbert-base-multilingual-cased) that handle a custom number of languages. Our versions give exactly the same representations produced by the original model which preserves the original accuracy. For more information please visit our paper: [Load What You Need: Smaller Versions of Multilingual BERT](https://www.aclweb.org/anthology/2020.sustainlp-1.16.pdf). | 780a1e65b5a42425601faedcb9f4c670 |

apache-2.0 | [] | false | How to use ```python from transformers import AutoTokenizer, AutoModel tokenizer = AutoTokenizer.from_pretrained("Geotrend/distilbert-base-fr-cased") model = AutoModel.from_pretrained("Geotrend/distilbert-base-fr-cased") ``` To generate other smaller versions of multilingual transformers please visit [our Github repo](https://github.com/Geotrend-research/smaller-transformers). | 4bbab634f0d6dfdcffc38646d692ea60 |

apache-2.0 | ['generated_from_trainer'] | false | bert-base-uncased-finetuned-vr-comfort-description-review-epoch15-20221107_2125 This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on the None dataset. It achieves the following results on the evaluation set: - Loss: 1.0521 - Accuracy: 0.8443 - F1: 0.8449 | d47c554c6dfe6aa5e389e524312e6e75 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------:|:------:| | 0.7304 | 1.0 | 157 | 0.5838 | 0.7521 | 0.6457 | | 0.6062 | 2.0 | 314 | 0.5416 | 0.7593 | 0.7487 | | 0.4363 | 3.0 | 471 | 0.4852 | 0.8120 | 0.8139 | | 0.2679 | 4.0 | 628 | 0.5454 | 0.8204 | 0.8102 | | 0.164 | 5.0 | 785 | 0.6908 | 0.8060 | 0.8162 | | 0.112 | 6.0 | 942 | 0.7277 | 0.8287 | 0.8304 | | 0.0759 | 7.0 | 1099 | 0.9089 | 0.8096 | 0.8192 | | 0.0323 | 8.0 | 1256 | 0.8422 | 0.8551 | 0.8524 | | 0.0174 | 9.0 | 1413 | 1.0020 | 0.8299 | 0.8357 | | 0.0138 | 10.0 | 1570 | 0.9637 | 0.8491 | 0.8473 | | 0.0057 | 11.0 | 1727 | 1.0195 | 0.8503 | 0.8411 | | 0.0044 | 12.0 | 1884 | 1.0172 | 0.8455 | 0.8462 | | 0.0035 | 13.0 | 2041 | 1.0056 | 0.8503 | 0.8487 | | 0.002 | 14.0 | 2198 | 1.0554 | 0.8443 | 0.8451 | | 0.0014 | 15.0 | 2355 | 1.0521 | 0.8443 | 0.8449 | | 521f06840e8ce11d4c0eea673ff5ceb1 |

mit | ['generated_from_trainer'] | false | xlm-roberta-base-finetuned-panx-en This model is a fine-tuned version of [xlm-roberta-base](https://huggingface.co/xlm-roberta-base) on the xtreme dataset. It achieves the following results on the evaluation set: - Loss: 0.3909 - F1: 0.6901 | 44ba00f3a5afc034f6d4c838170d05ce |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | F1 | |:-------------:|:-----:|:----:|:---------------:|:------:| | 1.1446 | 1.0 | 50 | 0.6385 | 0.3858 | | 0.5317 | 2.0 | 100 | 0.4248 | 0.6626 | | 0.3614 | 3.0 | 150 | 0.3909 | 0.6901 | | fe31c6c6972f549b1c3961b97ac5a593 |

cc-by-4.0 | [] | false | roberta-base for QA NOTE: This is version 2 of the model. See [this github issue](https://github.com/deepset-ai/FARM/issues/552) from the FARM repository for an explanation of why we updated. If you'd like to use version 1, specify `revision="v1.0"` when loading the model in Transformers 3.5. For example: ``` model_name = "deepset/roberta-base-squad2" pipeline(model=model_name, tokenizer=model_name, revision="v1.0", task="question-answering") ``` | acf501bba393a87c832f481b682cd8fd |

cc-by-4.0 | [] | false | Overview **Language model:** roberta-base **Language:** English **Downstream-task:** Extractive QA **Training data:** SQuAD 2.0 **Eval data:** SQuAD 2.0 **Code:** See [example](https://github.com/deepset-ai/FARM/blob/master/examples/question_answering.py) in [FARM](https://github.com/deepset-ai/FARM/blob/master/examples/question_answering.py) **Infrastructure**: 4x Tesla v100 | d225102d4dff5af755e8b71ad50e0e66 |

cc-by-4.0 | [] | false | In FARM ```python from farm.modeling.adaptive_model import AdaptiveModel from farm.modeling.tokenization import Tokenizer from farm.infer import Inferencer model_name = "deepset/roberta-base-squad2" | 7f8defac50552f79e90cdc783bcf1642 |

cc-by-4.0 | [] | false | a) Get predictions nlp = Inferencer.load(model_name, task_type="question_answering") QA_input = [{"questions": ["Why is model conversion important?"], "text": "The option to convert models between FARM and transformers gives freedom to the user and let people easily switch between frameworks."}] res = nlp.inference_from_dicts(dicts=QA_input, rest_api_schema=True) | 49922d66c9155ae82e76a296e82ff56b |

cc-by-4.0 | [] | false | In haystack For doing QA at scale (i.e. many docs instead of single paragraph), you can load the model also in [haystack](https://github.com/deepset-ai/haystack/): ```python reader = FARMReader(model_name_or_path="deepset/roberta-base-squad2") | c4ca74b9616d8d10522ba19d8c1a4c5c |

mit | [] | false | dalle-paint on Stable Diffusion This is the `<dalle-paint>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). | 153211d5c62e0a32999013ca776ece9a |

apache-2.0 | ['generated_from_trainer'] | false | finetuned_sentence_itr5_2e-05_all_26_02_2022-04_25_39 This model is a fine-tuned version of [distilbert-base-uncased-finetuned-sst-2-english](https://huggingface.co/distilbert-base-uncased-finetuned-sst-2-english) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.4676 - Accuracy: 0.8299 - F1: 0.8892 | 566a5aa85ad480bd9a78d58ec0df30f8 |

cc-by-4.0 | ['norwegian', 'GPT2', 'casual language modeling'] | false | Description Experimental Norwegian GPT-2-model trained on a 37GB mainly social corpus. The following sub-corpora are used: ```bash wikipedia_download_nb.jsonl wikipedia_download_nn.jsonl newspapers_online_nb.jsonl newspapers_online_nn.jsonl twitter_2016_2018_no.jsonl twitter_news_2016_2018_no.jsonl open_subtitles_no.jsonl facebook_no.jsonl reddit_no.jsonl vgdebatt_no.jsonl ``` | fe5daa968f8a740ebb50cb3236cdd616 |

apache-2.0 | ['translation'] | false | opus-mt-hr-fi * source languages: hr * target languages: fi * OPUS readme: [hr-fi](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/hr-fi/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2020-01-09.zip](https://object.pouta.csc.fi/OPUS-MT-models/hr-fi/opus-2020-01-09.zip) * test set translations: [opus-2020-01-09.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/hr-fi/opus-2020-01-09.test.txt) * test set scores: [opus-2020-01-09.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/hr-fi/opus-2020-01-09.eval.txt) | 9e09c217ba5dd57525ca3a0366317599 |

mit | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0005 - train_batch_size: 32 - eval_batch_size: 32 - seed: 42 - gradient_accumulation_steps: 8 - total_train_batch_size: 256 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: cosine - lr_scheduler_warmup_steps: 1000 - num_epochs: 20 - mixed_precision_training: Native AMP | 06f23dc9f652d85e078b7e682a16976a |
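
The relationship between the batch-size fields and the shape of the cosine schedule above can be sketched as follows. This assumes a single device (so 32 × 8 = 256) and the usual linear-warmup-then-cosine-decay form; `total_steps` is a hypothetical value because the row does not state the number of optimizer steps.

```python
import math


def effective_batch_size(per_device: int, accumulation_steps: int, num_devices: int = 1) -> int:
    """Number of examples contributing to each optimizer step."""
    return per_device * accumulation_steps * num_devices


def cosine_lr(step: int, base_lr: float, warmup_steps: int, total_steps: int) -> float:
    """Linear warmup to base_lr, then cosine decay to zero at total_steps."""
    if step < warmup_steps:
        return base_lr * step / max(1, warmup_steps)
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    return base_lr * 0.5 * (1.0 + math.cos(math.pi * min(1.0, progress)))


print(effective_batch_size(per_device=32, accumulation_steps=8))

# total_steps=10_000 is an illustrative assumption, not a value from the row.
for step in (0, 500, 1000, 5500, 10_000):
    print(step, cosine_lr(step, base_lr=5e-4, warmup_steps=1000, total_steps=10_000))
```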

apache-2.0 | ['legal', 'spanish'] | false | Model description The **RoBERTalex** is a transformer-based masked language model for the Spanish language. It is based on the [RoBERTa](https://arxiv.org/abs/1907.11692) base model and has been pre-trained using a large [Spanish Legal Domain Corpora](https://zenodo.org/record/5495529), with a total of 8.9GB of text. | 7b687a7b7bc2a50f607f360e309b8869 |

apache-2.0 | ['legal', 'spanish'] | false | Intended uses and limitations The **RoBERTalex** model is ready-to-use only for masked language modeling to perform the Fill Mask task (try the inference API or read the next section). However, it is intended to be fine-tuned on non-generative downstream tasks such as Question Answering, Text Classification, or Named Entity Recognition. You can use the raw model for fill mask or fine-tune it to a downstream task. | 185dcaef60284c23841ca9bbc8f9f417 |

apache-2.0 | ['legal', 'spanish'] | false | How to use Here is how to use this model: ```python >>> from transformers import pipeline >>> from pprint import pprint >>> unmasker = pipeline('fill-mask', model='PlanTL-GOB-ES/RoBERTalex') >>> pprint(unmasker("La ley fue <mask> finalmente.")) [{'score': 0.21217258274555206, 'sequence': ' La ley fue modificada finalmente.', 'token': 5781, 'token_str': ' modificada'}, {'score': 0.20414969325065613, 'sequence': ' La ley fue derogada finalmente.', 'token': 15951, 'token_str': ' derogada'}, {'score': 0.19272951781749725, 'sequence': ' La ley fue aprobada finalmente.', 'token': 5534, 'token_str': ' aprobada'}, {'score': 0.061143241822719574, 'sequence': ' La ley fue revisada finalmente.', 'token': 14192, 'token_str': ' revisada'}, {'score': 0.041809432208538055, 'sequence': ' La ley fue aplicada finalmente.', 'token': 12208, 'token_str': ' aplicada'}] ``` Here is how to use this model to get the features of a given text in PyTorch: ```python >>> from transformers import RobertaTokenizer, RobertaModel >>> tokenizer = RobertaTokenizer.from_pretrained('PlanTL-GOB-ES/RoBERTalex') >>> model = RobertaModel.from_pretrained('PlanTL-GOB-ES/RoBERTalex') >>> text = "Gracias a los datos legales se ha podido desarrollar este modelo del lenguaje." >>> encoded_input = tokenizer(text, return_tensors='pt') >>> output = model(**encoded_input) >>> print(output.last_hidden_state.shape) torch.Size([1, 16, 768]) ``` | 65bf6c4659cf4c46905df7c8cc2e557f |

apache-2.0 | ['legal', 'spanish'] | false | Training data The [Spanish Legal Domain Corpora](https://zenodo.org/record/5495529) comprise multiple digital resources, with a total of 8.9GB of textual data. Part of it has been obtained from [previous work](https://aclanthology.org/2020.lt4gov-1.6/). To obtain a high-quality training corpus, the data has been preprocessed with a pipeline of operations including, among others, sentence splitting, language detection, filtering of ill-formed sentences, and deduplication of repetitive content. Document boundaries are kept during the process. | e27c4f4193b2fc568320cdaab9814267 |

apache-2.0 | ['legal', 'spanish'] | false | Training procedure The training corpus has been tokenized using the byte-level version of Byte-Pair Encoding (BPE) used in the original [RoBERTa](https://arxiv.org/abs/1907.11692) model, with a vocabulary size of 50,262 tokens. The **RoBERTalex** pre-training consists of masked language model training that follows the approach employed for the RoBERTa base model. The model was trained until convergence on 2 computing nodes, each with 4 NVIDIA V100 GPUs of 16GB VRAM. | ce7cab805f148f42ff5b3fa7c465d977 |

apache-2.0 | ['legal', 'spanish'] | false | Evaluation Due to the lack of domain-specific evaluation data, the model was evaluated on general domain tasks, where it obtains reasonable performance. We fine-tuned the model on the following tasks: | Dataset | Metric | **RoBERTalex** | |--------------|----------|------------| | UD-POS | F1 | 0.9871 | | CoNLL-NERC | F1 | 0.8323 | | CAPITEL-POS | F1 | 0.9788| | CAPITEL-NERC | F1 | 0.8394 | | STS | Combined | 0.7374 | | MLDoc | Accuracy | 0.9417 | | PAWS-X | F1 | 0.7304 | | XNLI | Accuracy | 0.7337 | | 797344c644777f61e364ecdd47759150 |

apache-2.0 | ['legal', 'spanish'] | false | Citing information ``` @misc{gutierrezfandino2021legal, title={Spanish Legalese Language Model and Corpora}, author={Asier Gutiérrez-Fandiño and Jordi Armengol-Estapé and Aitor Gonzalez-Agirre and Marta Villegas}, year={2021}, eprint={2110.12201}, archivePrefix={arXiv}, primaryClass={cs.CL} } ``` | 1d8cc7288c5018753eea9b2b6343b4d7 |

cc-by-4.0 | ['question generation'] | false | Model Card of `research-backup/bart-base-subjqa-vanilla-electronics-qg` This model is a fine-tuned version of [facebook/bart-base](https://huggingface.co/facebook/bart-base) for the question generation task on [lmqg/qg_subjqa](https://huggingface.co/datasets/lmqg/qg_subjqa) (dataset_name: electronics) via [`lmqg`](https://github.com/asahi417/lm-question-generation). | 032bd41b8575647ec8b92606b5b0a92c |

cc-by-4.0 | ['question generation'] | false | Overview - **Language model:** [facebook/bart-base](https://huggingface.co/facebook/bart-base) - **Language:** en - **Training data:** [lmqg/qg_subjqa](https://huggingface.co/datasets/lmqg/qg_subjqa) (electronics) - **Online Demo:** [https://autoqg.net/](https://autoqg.net/) - **Repository:** [https://github.com/asahi417/lm-question-generation](https://github.com/asahi417/lm-question-generation) - **Paper:** [https://arxiv.org/abs/2210.03992](https://arxiv.org/abs/2210.03992) | 0a094c6966a6d182d682f28b9cbf0907 |

cc-by-4.0 | ['question generation'] | false | model prediction questions = model.generate_q(list_context="William Turner was an English painter who specialised in watercolour landscapes", list_answer="William Turner") ``` - With `transformers` ```python from transformers import pipeline pipe = pipeline("text2text-generation", "research-backup/bart-base-subjqa-vanilla-electronics-qg") output = pipe("generate question: <hl> Beyonce <hl> further expanded her acting career, starring as blues singer Etta James in the 2008 musical biopic, Cadillac Records.") ``` | 7b85788e535ed77995a6831a566176f0 |
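
The `transformers` example above passes the answer wrapped in `<hl>` markers after a `generate question:` prefix. A small helper for building that input string might look like the sketch below; the helper name is hypothetical and the format is inferred only from the single example string in the row, so it should be treated as an assumption rather than the library's documented interface.

```python
def build_qg_input(context: str, answer: str, prefix: str = "generate question: ") -> str:
    """Wrap the first occurrence of `answer` in `<hl>` markers and prepend the task prefix."""
    start = context.find(answer)
    if start < 0:
        raise ValueError("answer must appear verbatim in the context")
    end = start + len(answer)
    return f"{prefix}{context[:start]}<hl> {answer} <hl>{context[end:]}"


context = (
    "Beyonce further expanded her acting career, starring as blues singer "
    "Etta James in the 2008 musical biopic, Cadillac Records."
)
model_input = build_qg_input(context, "Beyonce")
print(model_input)
```

The resulting string can be passed to the `pipe(...)` call shown in the row in place of the hand-written input.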

cc-by-4.0 | ['question generation'] | false | Evaluation - ***Metric (Question Generation)***: [raw metric file](https://huggingface.co/research-backup/bart-base-subjqa-vanilla-electronics-qg/raw/main/eval/metric.first.sentence.paragraph_answer.question.lmqg_qg_subjqa.electronics.json) | | Score | Type | Dataset | |:-----------|--------:|:------------|:-----------------------------------------------------------------| | BERTScore | 90.59 | electronics | [lmqg/qg_subjqa](https://huggingface.co/datasets/lmqg/qg_subjqa) | | Bleu_1 | 11.26 | electronics | [lmqg/qg_subjqa](https://huggingface.co/datasets/lmqg/qg_subjqa) | | Bleu_2 | 7.45 | electronics | [lmqg/qg_subjqa](https://huggingface.co/datasets/lmqg/qg_subjqa) | | Bleu_3 | 3 | electronics | [lmqg/qg_subjqa](https://huggingface.co/datasets/lmqg/qg_subjqa) | | Bleu_4 | 1.42 | electronics | [lmqg/qg_subjqa](https://huggingface.co/datasets/lmqg/qg_subjqa) | | METEOR | 19.5 | electronics | [lmqg/qg_subjqa](https://huggingface.co/datasets/lmqg/qg_subjqa) | | MoverScore | 62.1 | electronics | [lmqg/qg_subjqa](https://huggingface.co/datasets/lmqg/qg_subjqa) | | ROUGE_L | 22.87 | electronics | [lmqg/qg_subjqa](https://huggingface.co/datasets/lmqg/qg_subjqa) | | 03aa637da6947e5bed4384460637fd19 |

cc-by-4.0 | ['question generation'] | false | Training hyperparameters The following hyperparameters were used during fine-tuning: - dataset_path: lmqg/qg_subjqa - dataset_name: electronics - input_types: ['paragraph_answer'] - output_types: ['question'] - prefix_types: ['qg'] - model: facebook/bart-base - max_length: 512 - max_length_output: 32 - epoch: 1 - batch: 8 - lr: 5e-05 - fp16: False - random_seed: 1 - gradient_accumulation_steps: 16 - label_smoothing: 0.15 The full configuration can be found at [fine-tuning config file](https://huggingface.co/research-backup/bart-base-subjqa-vanilla-electronics-qg/raw/main/trainer_config.json). | ba7a5d99e8fce814924d38e3d981bae0 |

apache-2.0 | ['generated_from_trainer'] | false | patent-summarization-fb-bart-base-2022-09-20 This model is a fine-tuned version of [facebook/bart-base](https://huggingface.co/facebook/bart-base) on the farleyknight/big_patent_5_percent dataset. It achieves the following results on the evaluation set: - Loss: 2.4088 - Rouge1: 39.4401 - Rouge2: 14.2445 - Rougel: 26.2701 - Rougelsum: 33.7535 - Gen Len: 78.9702 | 2e91d3e648c3d83ef458466b8e7bc624 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | Gen Len | |:-------------:|:-----:|:-----:|:---------------:|:-------:|:------:|:-------:|:---------:|:-------:| | 3.0567 | 0.08 | 5000 | 2.8864 | 18.9387 | 7.1014 | 15.4506 | 16.8377 | 19.9979 | | 2.9285 | 0.17 | 10000 | 2.7800 | 19.8983 | 7.3258 | 16.0823 | 17.7019 | 20.0 | | 2.9252 | 0.25 | 15000 | 2.7080 | 19.6623 | 7.4627 | 16.0153 | 17.4485 | 20.0 | | 2.8123 | 0.33 | 20000 | 2.6585 | 19.7414 | 7.5251 | 15.8166 | 17.4668 | 20.0 | | 2.7117 | 0.41 | 25000 | 2.6070 | 19.7661 | 7.7193 | 16.2795 | 17.7884 | 20.0 | | 2.7131 | 0.5 | 30000 | 2.5616 | 19.6706 | 7.4229 | 15.7998 | 17.4324 | 20.0 | | 2.6373 | 0.58 | 35000 | 2.5250 | 20.0155 | 7.6811 | 16.1231 | 17.7578 | 20.0 | | 2.6785 | 0.66 | 40000 | 2.4977 | 20.0974 | 7.9578 | 16.543 | 18.0242 | 20.0 | | 2.6265 | 0.75 | 45000 | 2.4701 | 19.994 | 7.9114 | 16.3501 | 17.8786 | 20.0 | | 2.5833 | 0.83 | 50000 | 2.4441 | 19.9981 | 7.934 | 16.3033 | 17.8674 | 20.0 | | 2.5579 | 0.91 | 55000 | 2.4251 | 20.0544 | 7.8966 | 16.3889 | 17.9491 | 20.0 | | 2.5242 | 0.99 | 60000 | 2.4097 | 20.1093 | 8.0572 | 16.4935 | 17.9823 | 20.0 | | ae691e7f9637d6dfe5794ad9f4edb783 |

mit | ['generated_from_trainer'] | false | xlm-roberta-base-finetuned-panx-de This model is a fine-tuned version of [xlm-roberta-base](https://huggingface.co/xlm-roberta-base) on the xtreme dataset. It achieves the following results on the evaluation set: - Loss: 0.1358 - F1: 0.8495 | abc31d898555bd774a9ccb8182e7b77b |

mit | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 5e-05 - train_batch_size: 128 - eval_batch_size: 128 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 3 | ac51df732ee42d970dc13464f8b962d5 |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | F1 | |:-------------:|:-----:|:----:|:---------------:|:------:| | 0.3842 | 1.0 | 99 | 0.1687 | 0.8120 | | 0.1526 | 2.0 | 198 | 0.1447 | 0.8355 | | 0.1139 | 3.0 | 297 | 0.1358 | 0.8495 | | befbd3e18b63ca4ca2f5d3ca38b07bdf |

apache-2.0 | ['automatic-speech-recognition', 'ja'] | false | exp_w2v2t_ja_wavlm_s664 Fine-tuned [microsoft/wavlm-large](https://huggingface.co/microsoft/wavlm-large) for speech recognition using the train split of [Common Voice 7.0 (ja)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | 42bc1100f270eabd7d9e2d6cb082bde6 |

apache-2.0 | ['translation'] | false | ru-he * source group: Russian * target group: Hebrew * OPUS readme: [rus-heb](https://github.com/Helsinki-NLP/Tatoeba-Challenge/tree/master/models/rus-heb/README.md) * model: transformer * source language(s): rus * target language(s): heb * model: transformer * pre-processing: normalization + SentencePiece (spm32k,spm32k) * download original weights: [opus-2020-10-04.zip](https://object.pouta.csc.fi/Tatoeba-MT-models/rus-heb/opus-2020-10-04.zip) * test set translations: [opus-2020-10-04.test.txt](https://object.pouta.csc.fi/Tatoeba-MT-models/rus-heb/opus-2020-10-04.test.txt) * test set scores: [opus-2020-10-04.eval.txt](https://object.pouta.csc.fi/Tatoeba-MT-models/rus-heb/opus-2020-10-04.eval.txt) | 7ae93244f15917ca8506d5e93c1adba9 |

apache-2.0 | ['translation'] | false | System Info: - hf_name: ru-he - source_languages: rus - target_languages: heb - opus_readme_url: https://github.com/Helsinki-NLP/Tatoeba-Challenge/tree/master/models/rus-heb/README.md - original_repo: Tatoeba-Challenge - tags: ['translation'] - languages: ['ru', 'he'] - src_constituents: ('Russian', {'rus'}) - tgt_constituents: ('Hebrew', {'heb'}) - src_multilingual: False - tgt_multilingual: False - long_pair: rus-heb - prepro: normalization + SentencePiece (spm32k,spm32k) - url_model: https://object.pouta.csc.fi/Tatoeba-MT-models/rus-heb/opus-2020-10-04.zip - url_test_set: https://object.pouta.csc.fi/Tatoeba-MT-models/rus-heb/opus-2020-10-04.test.txt - src_alpha3: rus - tgt_alpha3: heb - chrF2_score: 0.569 - bleu: 36.1 - brevity_penalty: 0.9990000000000001 - ref_len: 15028.0 - src_name: Russian - tgt_name: Hebrew - train_date: 2020-10-04 00:00:00 - src_alpha2: ru - tgt_alpha2: he - prefer_old: False - short_pair: ru-he - helsinki_git_sha: 61fd6908b37d9a7b21cc3e27c1ae1fccedc97561 - transformers_git_sha: b0a907615aca0d728a9bc90f16caef0848f6a435 - port_machine: LM0-400-22516.local - port_time: 2020-10-26-16:16 | bc48bbc7c446d9501e165475c91667e2 |

apache-2.0 | ['image-classification', 'generated_from_trainer'] | false | exper_batch_8_e4 This model is a fine-tuned version of [google/vit-base-patch16-224-in21k](https://huggingface.co/google/vit-base-patch16-224-in21k) on the sudo-s/herbier_mesuem1 dataset. It achieves the following results on the evaluation set: - Loss: 0.3353 - Accuracy: 0.9183 | 7fe3490b8cdf4c9187994e6db1066ebf |

apache-2.0 | ['image-classification', 'generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0002 - train_batch_size: 8 - eval_batch_size: 8 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 4 - mixed_precision_training: Apex, opt level O1 | ea1619f6b308415f548e964566a0db4f |

apache-2.0 | ['image-classification', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 4.2251 | 0.08 | 100 | 4.1508 | 0.1203 | | 3.4942 | 0.16 | 200 | 3.5566 | 0.2082 | | 3.2871 | 0.23 | 300 | 3.0942 | 0.3092 | | 2.7273 | 0.31 | 400 | 2.8338 | 0.3308 | | 2.4984 | 0.39 | 500 | 2.4860 | 0.4341 | | 2.3423 | 0.47 | 600 | 2.2201 | 0.4796 | | 1.8785 | 0.55 | 700 | 2.1890 | 0.4653 | | 1.8012 | 0.63 | 800 | 1.9901 | 0.4865 | | 1.7236 | 0.7 | 900 | 1.6821 | 0.5736 | | 1.4949 | 0.78 | 1000 | 1.5422 | 0.6083 | | 1.5573 | 0.86 | 1100 | 1.5436 | 0.6110 | | 1.3241 | 0.94 | 1200 | 1.4077 | 0.6207 | | 1.0773 | 1.02 | 1300 | 1.1417 | 0.6916 | | 0.7935 | 1.1 | 1400 | 1.1194 | 0.6931 | | 0.7677 | 1.17 | 1500 | 1.0727 | 0.7167 | | 0.9468 | 1.25 | 1600 | 1.0707 | 0.7136 | | 0.7563 | 1.33 | 1700 | 0.9427 | 0.7390 | | 0.8471 | 1.41 | 1800 | 0.8906 | 0.7571 | | 0.9998 | 1.49 | 1900 | 0.8098 | 0.7845 | | 0.6039 | 1.57 | 2000 | 0.7244 | 0.8034 | | 0.7052 | 1.64 | 2100 | 0.7881 | 0.7953 | | 0.6753 | 1.72 | 2200 | 0.7458 | 0.7926 | | 0.3758 | 1.8 | 2300 | 0.6987 | 0.8022 | | 0.4985 | 1.88 | 2400 | 0.6286 | 0.8265 | | 0.4122 | 1.96 | 2500 | 0.5949 | 0.8358 | | 0.1286 | 2.04 | 2600 | 0.5691 | 0.8385 | | 0.1989 | 2.11 | 2700 | 0.5535 | 0.8389 | | 0.3304 | 2.19 | 2800 | 0.5261 | 0.8520 | | 0.3415 | 2.27 | 2900 | 0.5504 | 0.8477 | | 0.4066 | 2.35 | 3000 | 0.5418 | 0.8497 | | 0.1208 | 2.43 | 3100 | 0.5156 | 0.8612 | | 0.1668 | 2.51 | 3200 | 0.5655 | 0.8539 | | 0.0727 | 2.58 | 3300 | 0.4971 | 0.8658 | | 0.0929 | 2.66 | 3400 | 0.4962 | 0.8635 | | 0.0678 | 2.74 | 3500 | 0.4903 | 0.8670 | | 0.1212 | 2.82 | 3600 | 0.4357 | 0.8867 | | 0.1579 | 2.9 | 3700 | 0.4642 | 0.8739 | | 0.2625 | 2.98 | 3800 | 0.3994 | 0.8951 | | 0.024 | 3.05 | 3900 | 0.3953 | 0.8971 | | 0.0696 | 3.13 | 4000 | 0.3883 | 0.9056 | | 0.0169 | 3.21 | 4100 | 0.3755 | 0.9086 | | 0.023 | 3.29 | 4200 | 0.3685 | 0.9109 | | 0.0337 | 3.37 | 4300 | 0.3623 | 0.9109 | | 0.0123 | 3.45 | 4400 | 0.3647 | 0.9067 | | 0.0159 | 3.52 | 4500 | 0.3630 | 0.9082 | | 0.0154 | 3.6 | 4600 | 0.3522 | 0.9094 | | 0.0112 | 3.68 | 4700 | 0.3439 | 0.9163 | | 0.0219 | 3.76 | 4800 | 0.3404 | 0.9194 | | 0.0183 | 3.84 | 4900 | 0.3371 | 0.9183 | | 0.0103 | 3.92 | 5000 | 0.3362 | 0.9183 | | 0.0357 | 3.99 | 5100 | 0.3353 | 0.9183 | | 3108f85490685d5172b94b2cbb02b7cf |

apache-2.0 | ['text-classification', 'generated_from_trainer'] | false | platzi-distilroberta-base-glue-mrpc-eduardo-ag This model is a fine-tuned version of [distilroberta-base](https://huggingface.co/distilroberta-base) on the glue and the mrpc datasets. It achieves the following results on the evaluation set: - Loss: 0.6614 - Accuracy: 0.8186 - F1: 0.8635 | 3545db0f849f8828be33e0327ef97f4f |

apache-2.0 | ['text-classification', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------:|:------:| | 0.5185 | 1.09 | 500 | 0.4796 | 0.8431 | 0.8889 | | 0.3449 | 2.18 | 1000 | 0.6614 | 0.8186 | 0.8635 | | 05a6321a697fd402ee25195a286000ec |

apache-2.0 | ['hf-asr-leaderboard', 'generated_from_trainer'] | false | atcosim_corpus This model is a fine-tuned version of [openai/whisper-tiny](https://huggingface.co/openai/whisper-tiny) on the atcosim_corpus dataset. It achieves the following results on the evaluation set: - Loss: 0.0623 - Wer: 2.4909 | c66e67ba282d762729255f8309105830 |

apache-2.0 | ['hf-asr-leaderboard', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:------:| | 0.0281 | 2.1 | 1000 | 0.0716 | 4.1957 | | 0.0051 | 4.19 | 2000 | 0.0650 | 2.7162 | | 0.0009 | 6.29 | 3000 | 0.0624 | 2.4733 | | 0.0005 | 8.39 | 4000 | 0.0623 | 2.4909 | | 61f405e02598a3e31bbd98d07d236be8 |
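
The Wer column above is a word error rate. A minimal sketch of the metric follows: word-level edit distance divided by the reference length, expressed as a percentage. That the table's values are percentages, and whatever text normalization (casing, punctuation) the evaluation applied, are assumptions; the example transcripts are invented.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word error rate in percent: (substitutions + deletions + insertions) / reference words."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] holds the edit distance between the reference words seen so far
    # and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # match or substitution
        prev = curr
    return 100.0 * prev[-1] / len(ref)


# Invented example: one substituted word out of four.
print(word_error_rate("descend flight level ninety", "descend flight level nineteen"))
```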

apache-2.0 | ['vision', 'depth-estimation', 'generated_from_trainer'] | false | glpn-nyu-finetuned-diode-221121-063504 This model is a fine-tuned version of [vinvino02/glpn-nyu](https://huggingface.co/vinvino02/glpn-nyu) on the diode-subset dataset. It achieves the following results on the evaluation set: - Loss: 0.3533 - Mae: 0.2668 - Rmse: 0.3716 - Abs Rel: 0.3427 - Log Mae: 0.1167 - Log Rmse: 0.1703 - Delta1: 0.5522 - Delta2: 0.8362 - Delta3: 0.9382 | 5de8a7699e39ed6532ceb556d283698e |

apache-2.0 | ['vision', 'depth-estimation', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Mae | Rmse | Abs Rel | Log Mae | Log Rmse | Delta1 | Delta2 | Delta3 | |:-------------:|:-----:|:----:|:---------------:|:------:|:------:|:-------:|:-------:|:--------:|:------:|:------:|:------:| | 1.3991 | 1.0 | 72 | 1.2199 | 3.6023 | 3.6519 | 5.2780 | 0.7010 | 0.7461 | 0.0 | 0.0007 | 0.0616 | | 1.1099 | 2.0 | 144 | 0.7471 | 1.2562 | 1.5028 | 1.6644 | 0.3550 | 0.4165 | 0.0965 | 0.2342 | 0.4292 | | 0.5036 | 3.0 | 216 | 0.4876 | 0.5019 | 0.6198 | 0.7615 | 0.2000 | 0.2599 | 0.2878 | 0.5643 | 0.7803 | | 0.4157 | 4.0 | 288 | 0.3789 | 0.3008 | 0.4211 | 0.3793 | 0.1291 | 0.1840 | 0.4961 | 0.7961 | 0.9261 | | 0.4043 | 5.0 | 360 | 0.3795 | 0.3025 | 0.4117 | 0.4028 | 0.1303 | 0.1850 | 0.4889 | 0.7892 | 0.9278 | | 0.3638 | 6.0 | 432 | 0.3790 | 0.3022 | 0.4019 | 0.4175 | 0.1313 | 0.1862 | 0.4851 | 0.7889 | 0.9262 | | 0.3532 | 7.0 | 504 | 0.3605 | 0.2756 | 0.3864 | 0.3447 | 0.1201 | 0.1732 | 0.5397 | 0.8202 | 0.9330 | | 0.3087 | 8.0 | 576 | 0.3599 | 0.2781 | 0.3896 | 0.3365 | 0.1206 | 0.1722 | 0.5312 | 0.8183 | 0.9332 | | 0.3232 | 9.0 | 648 | 0.3613 | 0.2772 | 0.3879 | 0.3444 | 0.1204 | 0.1733 | 0.5341 | 0.8237 | 0.9334 | | 0.3072 | 10.0 | 720 | 0.3570 | 0.2752 | 0.3794 | 0.3582 | 0.1195 | 0.1731 | 0.5341 | 0.8270 | 0.9374 | | 0.2673 | 11.0 | 792 | 0.3633 | 0.2747 | 0.3838 | 0.3390 | 0.1207 | 0.1728 | 0.5330 | 0.8221 | 0.9333 | | 0.3222 | 12.0 | 864 | 0.3548 | 0.2713 | 0.3783 | 0.3441 | 0.1180 | 0.1711 | 0.5448 | 0.8315 | 0.9367 | | 0.3072 | 13.0 | 936 | 0.3532 | 0.2668 | 0.3700 | 0.3441 | 0.1168 | 0.1701 | 0.5502 | 0.8353 | 0.9387 | | 0.3214 | 14.0 | 1008 | 0.3553 | 0.2674 | 0.3747 | 0.3322 | 0.1177 | 0.1701 | 0.5472 | 0.8324 | 0.9355 | | 0.3406 | 15.0 | 1080 | 0.3533 | 0.2668 | 0.3716 | 0.3427 | 0.1167 | 0.1703 | 0.5522 | 0.8362 | 0.9382 | | c8ccba3c5ba40ddb592b4fe904a4a5b3 |
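
The column names above match metrics commonly reported for monocular depth estimation. The sketch below uses the usual definitions (for example, Delta1 as the fraction of pixels whose ratio max(pred/target, target/pred) is below 1.25); the exact evaluation script behind the table is not given, so these formulas, the base-10 logarithm, and the sample values are assumptions.

```python
import math


def depth_metrics(pred, target):
    """Common depth-estimation metrics for two equal-length lists of positive depths."""
    if len(pred) != len(target) or not pred:
        raise ValueError("pred and target must be non-empty and the same length")
    if any(v <= 0 for v in pred) or any(v <= 0 for v in target):
        raise ValueError("depths must be strictly positive")
    n = len(pred)
    abs_err = [abs(p - t) for p, t in zip(pred, target)]
    log_err = [abs(math.log10(p) - math.log10(t)) for p, t in zip(pred, target)]
    ratio = [max(p / t, t / p) for p, t in zip(pred, target)]
    return {
        "mae": sum(abs_err) / n,
        "rmse": math.sqrt(sum(e * e for e in abs_err) / n),
        "abs_rel": sum(e / t for e, t in zip(abs_err, target)) / n,
        "log_mae": sum(log_err) / n,
        "log_rmse": math.sqrt(sum(e * e for e in log_err) / n),
        # Threshold accuracies: higher is better, unlike the error metrics above.
        "delta1": sum(r < 1.25 for r in ratio) / n,
        "delta2": sum(r < 1.25 ** 2 for r in ratio) / n,
        "delta3": sum(r < 1.25 ** 3 for r in ratio) / n,
    }


# Invented depths in metres, for illustration only.
metrics = depth_metrics(pred=[1.0, 2.0, 3.0, 4.0], target=[1.0, 2.4, 2.0, 2.2])
for name, value in metrics.items():
    print(f"{name}: {value:.4f}")
```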

apache-2.0 | ['hf-asr-leaderboard', 'generated_from_trainer'] | false | Whisper Tiny it 9 This model is a fine-tuned version of [openai/whisper-tiny](https://huggingface.co/openai/whisper-tiny) on the Common Voice 11.0 dataset. It achieves the following results on the evaluation set: - Loss: 0.777710 - Wer: 45.327232 | 05b61781c5c4409a75a0cc43ffe16df9 |

apache-2.0 | ['hf-asr-leaderboard', 'generated_from_trainer'] | false | Model description This model is the OpenAI Whisper small transformer adapted for Italian audio-to-text transcription. It uses a weight decay of 0.1 and a learning rate of 1e-4, the latter chosen during hyperparameter tuning. | 53d5c8508bf7d729c7a9364aa4670ab6 |

apache-2.0 | ['hf-asr-leaderboard', 'generated_from_trainer'] | false | Training and evaluation data The training data is the first 10% of the train and validation splits of [Italian Common Voice](https://huggingface.co/datasets/mozilla-foundation/common_voice_11_0/viewer/it/train) 11.0 from the Mozilla Foundation. The evaluation data is the first 10% of the test split of Italian Common Voice. The training data was augmented with random noise, random pitch shifts, and changes to the speed of the voice. | 1ba661fe44f9cead5555b33bcb717e46 |

apache-2.0 | ['hf-asr-leaderboard', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:-------:| | 1.5158 | 0.95 | 1000 | 0.9359 | 64.8780 | | 0.9302 | 1.91 | 2000 | 0.8190 | 50.6864 | | 0.5034 | 2.86 | 3000 | 0.7768 | 45.3688 | | 0.2248 | 3.82 | 4000 | 0.7777 | 45.3272 | | b1e23bfdddaa74dce6f76253e80cf239 |
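The `Wer` column above is presumably word error rate expressed in percent. A minimal sketch, assuming the standard definition (word-level edit distance divided by the number of reference words); the card does not state how the metric was computed or how text was normalized, and the function name and sample sentences are hypothetical.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Return the word error rate in percent, using whitespace tokenization."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] holds the edit distance between the reference words processed
    # so far (before the current one) and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = prev[j] + 1
            insertion = curr[j - 1] + 1
            curr.append(min(substitution, deletion, insertion))
        prev = curr
    return 100.0 * prev[-1] / len(ref)


# One of five reference words is missing from the hypothesis.
wer = word_error_rate("il gatto dorme sul divano", "il gatto dorme divano")
```

Because the denominator is the reference length, a hypothesis with many insertions can score above 100.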

apache-2.0 | ['generated_from_trainer'] | false | results This model is a fine-tuned version of [sshleifer/distilbart-xsum-12-3](https://huggingface.co/sshleifer/distilbart-xsum-12-3) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 4.8564 - Rouge1: 12.9538 - Rouge2: 3.654 - Rougel: 12.3643 - Rougelsum: 12.521 - Gen Len: 13.14 | a8d1f460843c4ce24a5cbd85a18ccb30 |

apache-2.0 | ['generated_from_keras_callback'] | false | new_vit This model is a fine-tuned version of [google/vit-base-patch16-224](https://huggingface.co/google/vit-base-patch16-224) on an unknown dataset. It achieves the following results on the evaluation set: | 8fadda2c9d3034d0cfcf2c6b3bbc98bc |

apache-2.0 | ['generated_from_trainer'] | false | bert-large-uncased-finetuned-DA-Zero-shot-20 This model is a fine-tuned version of [bert-large-uncased](https://huggingface.co/bert-large-uncased) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 1.0118 | 02bd942b9f633af3240c2bb1e23b5a63 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 1e-05 - train_batch_size: 32 - eval_batch_size: 32 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 20.0 - mixed_precision_training: Native AMP | 5709fe6d68679d42537a9c6bda0059dd |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | 0.6214 | 1.0 | 435 | 1.1818 | | 0.6285 | 2.0 | 870 | 1.2124 | | 0.713 | 3.0 | 1305 | 1.1673 | | 0.7902 | 4.0 | 1740 | 1.1342 | | 0.8051 | 5.0 | 2175 | 1.1042 | | 0.8167 | 6.0 | 2610 | 1.1086 | | 0.8412 | 7.0 | 3045 | 1.0797 | | 0.8885 | 8.0 | 3480 | 1.0575 | | 0.918 | 9.0 | 3915 | 1.0749 | | 0.9765 | 10.0 | 4350 | 1.0565 | | 1.0009 | 11.0 | 4785 | 1.0509 | | 0.986 | 12.0 | 5220 | 1.0564 | | 0.9819 | 13.0 | 5655 | 1.0527 | | 0.9786 | 14.0 | 6090 | 1.0064 | | 0.9689 | 15.0 | 6525 | 1.0038 | | 0.9481 | 16.0 | 6960 | 1.0186 | | 0.955 | 17.0 | 7395 | 0.9860 | | 0.9481 | 18.0 | 7830 | 0.9914 | | 0.9452 | 19.0 | 8265 | 1.0173 | | 0.9452 | 20.0 | 8700 | 1.0050 | | f84202bd93e34ad98313b61930d2b828 |

cc-by-sa-4.0 | ['japanese', 'token-classification', 'pos', 'dependency-parsing'] | false | Model Description This is a DeBERTa(V2) model pre-trained on 青空文庫 texts for POS-tagging and dependency-parsing, derived from [deberta-large-japanese-aozora](https://huggingface.co/KoichiYasuoka/deberta-large-japanese-aozora). Every short-unit-word is tagged by [UPOS](https://universaldependencies.org/u/pos/) (Universal Part-Of-Speech). | 038aec35eb631398cac016ac1652c806 |

cc-by-sa-4.0 | ['japanese', 'token-classification', 'pos', 'dependency-parsing'] | false | How to Use ```py import torch from transformers import AutoTokenizer,AutoModelForTokenClassification tokenizer=AutoTokenizer.from_pretrained("KoichiYasuoka/deberta-large-japanese-upos") model=AutoModelForTokenClassification.from_pretrained("KoichiYasuoka/deberta-large-japanese-upos") s="国境の長いトンネルを抜けると雪国であった。" t=tokenizer.tokenize(s) p=[model.config.id2label[q] for q in torch.argmax(model(tokenizer.encode(s,return_tensors="pt"))["logits"],dim=2)[0].tolist()[1:-1]] print(list(zip(t,p))) ``` or ```py import esupar nlp=esupar.load("KoichiYasuoka/deberta-large-japanese-upos") print(nlp("国境の長いトンネルを抜けると雪国であった。")) ``` | 10f79c1526dbc7913ec47d25b9b3966a |

mit | ['generated_from_trainer'] | false | rubert-tiny2_finetuned_emotion_experiment This model is a fine-tuned version of [cointegrated/rubert-tiny2](https://huggingface.co/cointegrated/rubert-tiny2) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.3947 - Accuracy: 0.8616 - F1: 0.8577 | 6d0969cdbef4a81ab388b159586b88cf |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------:|:------:| | 0.651 | 1.0 | 54 | 0.5689 | 0.8172 | 0.8008 | | 0.5355 | 2.0 | 108 | 0.4842 | 0.8486 | 0.8349 | | 0.4561 | 3.0 | 162 | 0.4436 | 0.8590 | 0.8509 | | 0.4133 | 4.0 | 216 | 0.4203 | 0.8590 | 0.8528 | | 0.3709 | 5.0 | 270 | 0.4071 | 0.8564 | 0.8515 | | 0.3346 | 6.0 | 324 | 0.3980 | 0.8564 | 0.8529 | | 0.3153 | 7.0 | 378 | 0.3985 | 0.8590 | 0.8565 | | 0.302 | 8.0 | 432 | 0.3967 | 0.8642 | 0.8619 | | 0.2774 | 9.0 | 486 | 0.3958 | 0.8616 | 0.8575 | | 0.2728 | 10.0 | 540 | 0.3959 | 0.8668 | 0.8644 | | 0.2427 | 11.0 | 594 | 0.3962 | 0.8590 | 0.8550 | | 0.2425 | 12.0 | 648 | 0.3959 | 0.8642 | 0.8611 | | 0.2414 | 13.0 | 702 | 0.3959 | 0.8642 | 0.8611 | | 0.2249 | 14.0 | 756 | 0.3949 | 0.8616 | 0.8582 | | 0.2391 | 15.0 | 810 | 0.3947 | 0.8616 | 0.8577 | | f0212498db985d822f133a7c4ff28a45 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 1e-05 - train_batch_size: 4 - eval_batch_size: 8 - seed: 42 - gradient_accumulation_steps: 4 - total_train_batch_size: 16 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 500 - training_steps: 20 - mixed_precision_training: Native AMP | 164d6736991ae5662a94ff9df10a25f8 |
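With `lr_scheduler_warmup_steps: 500` and `training_steps: 20`, the run above ends well inside the warmup phase. A small sketch of what that implies, assuming the `linear` scheduler ramps the rate from 0 to `learning_rate` over the warmup steps and then decays it linearly to 0 (my reading of the usual behaviour, not something the card states); the function name is hypothetical.

```python
def linear_schedule_lr(step, base_lr, warmup_steps, total_steps):
    """Learning rate at `step` for linear warmup followed by linear decay."""
    if step < warmup_steps:
        # Warmup: scale the base rate by the fraction of warmup completed.
        return base_lr * step / max(1, warmup_steps)
    # Decay: scale by the fraction of post-warmup steps still remaining.
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


# Values from the hyperparameter list above, evaluated at the final step.
lr_at_end = linear_schedule_lr(step=20, base_lr=1e-05, warmup_steps=500, total_steps=20)
```

Under these assumptions the rate at step 20 is 20/500, or 4%, of the configured `1e-05`, so the nominal learning rate is never reached.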

cc-by-4.0 | ['espnet', 'audio', 'automatic-speech-recognition'] | false | Demo: How to use in ESPnet2 ```bash cd espnet git checkout 716eb8f92e19708acfd08ba3bd39d40890d3a84b pip install -e . cd egs2/commonvoice/asr1 ./run.sh --skip_data_prep false --skip_train true --download_model espnet/arabic_commonvoice_blstm ``` <!-- Generated by scripts/utils/show_asr_result.sh --> | 0e597c893dc58698e36e5fb3ed9869ea |

cc-by-4.0 | ['espnet', 'audio', 'automatic-speech-recognition'] | false | Environments - date: `Sat Apr 16 17:11:01 EDT 2022` - python version: `3.9.5 (default, Jun 4 2021, 12:28:51) [GCC 7.5.0]` - espnet version: `espnet 0.10.6a1` - pytorch version: `pytorch 1.8.1+cu102` - Git hash: `5e6e95d087af8a7a4c33c4248b75114237eae64b` - Commit date: `Mon Apr 4 21:04:45 2022 -0400` | e6338bdcaedc4b35ab595a956020c182 |

cc-by-4.0 | ['espnet', 'audio', 'automatic-speech-recognition'] | false | ASR config <details><summary>expand</summary> ``` config: conf/tuning/train_asr_rnn.yaml print_config: false log_level: INFO dry_run: false iterator_type: sequence output_dir: exp/asr_train_asr_rnn_raw_ar_bpe150_sp ngpu: 1 seed: 0 num_workers: 1 num_att_plot: 3 dist_backend: nccl dist_init_method: env:// dist_world_size: null dist_rank: null local_rank: 0 dist_master_addr: null dist_master_port: null dist_launcher: null multiprocessing_distributed: false unused_parameters: false sharded_ddp: false cudnn_enabled: true cudnn_benchmark: false cudnn_deterministic: true collect_stats: false write_collected_feats: false max_epoch: 15 patience: 3 val_scheduler_criterion: - valid - loss early_stopping_criterion: - valid - loss - min best_model_criterion: - - train - loss - min - - valid - loss - min - - train - acc - max - - valid - acc - max keep_nbest_models: - 10 nbest_averaging_interval: 0 grad_clip: 5.0 grad_clip_type: 2.0 grad_noise: false accum_grad: 1 no_forward_run: false resume: true train_dtype: float32 use_amp: false log_interval: null use_matplotlib: true use_tensorboard: true use_wandb: false wandb_project: null wandb_id: null wandb_entity: null wandb_name: null wandb_model_log_interval: -1 detect_anomaly: false pretrain_path: null init_param: [] ignore_init_mismatch: false freeze_param: [] num_iters_per_epoch: null batch_size: 30 valid_batch_size: null batch_bins: 1000000 valid_batch_bins: null train_shape_file: - exp/asr_stats_raw_ar_bpe150_sp/train/speech_shape - exp/asr_stats_raw_ar_bpe150_sp/train/text_shape.bpe valid_shape_file: - exp/asr_stats_raw_ar_bpe150_sp/valid/speech_shape - exp/asr_stats_raw_ar_bpe150_sp/valid/text_shape.bpe batch_type: folded valid_batch_type: null fold_length: - 80000 - 150 sort_in_batch: descending sort_batch: descending multiple_iterator: false chunk_length: 500 chunk_shift_ratio: 0.5 num_cache_chunks: 1024 train_data_path_and_name_and_type: - - 
dump/raw/train_ar_sp/wav.scp - speech - sound - - dump/raw/train_ar_sp/text - text - text valid_data_path_and_name_and_type: - - dump/raw/dev_ar/wav.scp - speech - sound - - dump/raw/dev_ar/text - text - text allow_variable_data_keys: false max_cache_size: 0.0 max_cache_fd: 32 valid_max_cache_size: null optim: adadelta optim_conf: lr: 0.1 scheduler: null scheduler_conf: {} token_list: - <blank> - <unk> - َ - ا - ِ - ْ - م - ي - ل - ن - ُ - ر - ه - ▁ال - ت - ب - ع - ك - د - و - ▁و - . - س - ▁أ - ق - ة - ▁م - َّ - ح - ▁ل - ف - ▁ي - ▁ب - ▁ف - ج - ▁ت - أ - ذ - ▁ع - ال - ّ - ً - ص - ▁ك - ى - ط - ض - خ - ون - ش - ▁ق - ين - ز - ▁أن - ▁س - ▁من - ▁إ - ث - ▁ر - ▁ن - وا - ٌ - ٍ - ▁ا - غ - ▁ح - اء - ▁في - إ - ان - ▁ج - ▁ - ِّ - ظ - ▁؟ - ▁ه - اب - ▁ش - ُّ - ول - ▁خ - ار - ئ - ▁ص - ▁سامي - ▁إن - ▁لا - ▁الل - ▁كان - يد - اد - ائ - ات - ؟ - ▁الأ - ▁د - ▁إلى - ير - ▁غ - ▁هل - آ - ؤ - ء - '!' - ـ - '"' - ، - ',' - ':' - ی - ٰ - '-' - ک - ؛ - “ - ” - T - '?' - I - ; - E - O - G - » - A - L - U - F - ۛ - — - S - M - D - « - N - ۗ - _ - ۚ - H - '''' - W - Y - چ - ڨ - ھ - ۘ - ☭ - C - ۖ - <sos/eos> init: null input_size: null ctc_conf: dropout_rate: 0.0 ctc_type: builtin reduce: true ignore_nan_grad: true joint_net_conf: null model_conf: ctc_weight: 0.5 use_preprocessor: true token_type: bpe bpemodel: data/ar_token_list/bpe_unigram150/bpe.model non_linguistic_symbols: null cleaner: null g2p: null speech_volume_normalize: null rir_scp: null rir_apply_prob: 1.0 noise_scp: null noise_apply_prob: 1.0 noise_db_range: '13_15' frontend: default frontend_conf: fs: 16k specaug: specaug specaug_conf: apply_time_warp: true time_warp_window: 5 time_warp_mode: bicubic apply_freq_mask: true freq_mask_width_range: - 0 - 27 num_freq_mask: 2 apply_time_mask: true time_mask_width_ratio_range: - 0.0 - 0.05 num_time_mask: 2 normalize: global_mvn normalize_conf: stats_file: exp/asr_stats_raw_ar_bpe150_sp/train/feats_stats.npz preencoder: null preencoder_conf: {} encoder: vgg_rnn encoder_conf: rnn_type: lstm 
bidirectional: true use_projection: true num_layers: 4 hidden_size: 1024 output_size: 1024 postencoder: null postencoder_conf: {} decoder: rnn decoder_conf: num_layers: 2 hidden_size: 1024 sampling_probability: 0 att_conf: atype: location adim: 1024 aconv_chans: 10 aconv_filts: 100 required: - output_dir - token_list version: 0.10.6a1 distributed: false ``` </details> | 1bbde949c01ccde2f9251717b5b3611c |

apache-2.0 | ['generated_from_keras_callback'] | false | QA-finetuned-distilbert-TFv2 This model is a fine-tuned version of [distilbert-base-cased](https://huggingface.co/distilbert-base-cased) on an unknown dataset. It achieves the following results on the evaluation set: | 03bf453af47af3d6b1f04632b72308f9 |

mit | ['conversational'] | false | This classification model is based on [DeepPavlov/rubert-base-cased-sentence](https://huggingface.co/DeepPavlov/rubert-base-cased-sentence). It scores the relevance and specificity of the last message in the context of a dialogue. The labels: - `relevance`: whether the last message is relevant in the context of the full dialogue. - `specificity`: whether the last message is interesting and promotes the continuation of the dialogue. The model was pretrained on a large corpus of dialogue data in an unsupervised manner: it was trained to predict whether the last response came from the real dialogue or was pulled from another dialogue at random. It was then fine-tuned on manually labelled examples (dataset will be posted soon). The model was trained with three messages in the context and one response. Each message was tokenized separately with ``` max_length = 32 ```. Performance on the validation split (dataset will be posted soon), using the best thresholds for the validation samples: | | threshold | f0.5 | ROC AUC | |:------------|------------:|-------:|----------:| | relevance | 0.49 | 0.84 | 0.79 | | specificity | 0.53 | 0.83 | 0.83 | How to use: ```python import torch from transformers import AutoTokenizer, AutoModelForSequenceClassification tokenizer = AutoTokenizer.from_pretrained('tinkoff-ai/response-quality-classifier-base') model = AutoModelForSequenceClassification.from_pretrained('tinkoff-ai/response-quality-classifier-base') inputs = tokenizer('[CLS]привет[SEP]привет![SEP]как дела?[RESPONSE_TOKEN]норм, у тя как?', max_length=128, add_special_tokens=False, return_tensors='pt') with torch.inference_mode(): logits = model(**inputs).logits probas = torch.sigmoid(logits)[0].cpu().detach().numpy() relevance, specificity = probas ``` You can interact with this model in the [app](https://huggingface.co/spaces/tinkoff-ai/response-quality-classifiers). 
The work was done during an internship at Tinkoff by [egoriyaa](https://github.com/egoriyaa), mentored by [solemn-leader](https://huggingface.co/solemn-leader). | dd542c75c1b5274574abd6eaa4bda53a |
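The thresholds reported in the table above can be applied to the two probabilities the model returns. A minimal sketch, assuming a score at or above the threshold counts as a positive label (the card does not say whether the comparison is strict); `apply_thresholds` and the sample scores are hypothetical.

```python
# Thresholds copied from the validation table in the card.
THRESHOLDS = {"relevance": 0.49, "specificity": 0.53}


def apply_thresholds(relevance, specificity, thresholds=THRESHOLDS):
    """Turn the two sigmoid scores into boolean labels."""
    scores = {"relevance": relevance, "specificity": specificity}
    # Assumption: a score equal to the threshold counts as positive.
    return {name: scores[name] >= thresholds[name] for name in thresholds}


# Hypothetical scores for one response.
labels = apply_thresholds(relevance=0.61, specificity=0.40)
```

Since the thresholds were tuned on the validation samples, they may need re-tuning for dialogue data that differs from it.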

cc-by-4.0 | ['translation', 'opus-mt-tc'] | false | opus-mt-tc-base-fi-uk Neural machine translation model for translating from Finnish (fi) to Ukrainian (uk). This model is part of the [OPUS-MT project](https://github.com/Helsinki-NLP/Opus-MT), an effort to make neural machine translation models widely available and accessible for many languages in the world. All models are originally trained using the amazing framework of [Marian NMT](https://marian-nmt.github.io/), an efficient NMT implementation written in pure C++. The models have been converted to pyTorch using the transformers library by huggingface. Training data is taken from [OPUS](https://opus.nlpl.eu/) and training pipelines use the procedures of [OPUS-MT-train](https://github.com/Helsinki-NLP/Opus-MT-train). * Publications: [OPUS-MT – Building open translation services for the World](https://aclanthology.org/2020.eamt-1.61/) and [The Tatoeba Translation Challenge – Realistic Data Sets for Low Resource and Multilingual MT](https://aclanthology.org/2020.wmt-1.139/) (Please, cite if you use this model.) 
``` @inproceedings{tiedemann-thottingal-2020-opus, title = "{OPUS}-{MT} {--} Building open translation services for the World", author = {Tiedemann, J{\"o}rg and Thottingal, Santhosh}, booktitle = "Proceedings of the 22nd Annual Conference of the European Association for Machine Translation", month = nov, year = "2020", address = "Lisboa, Portugal", publisher = "European Association for Machine Translation", url = "https://aclanthology.org/2020.eamt-1.61", pages = "479--480", } @inproceedings{tiedemann-2020-tatoeba, title = "The Tatoeba Translation Challenge {--} Realistic Data Sets for Low Resource and Multilingual {MT}", author = {Tiedemann, J{\"o}rg}, booktitle = "Proceedings of the Fifth Conference on Machine Translation", month = nov, year = "2020", address = "Online", publisher = "Association for Computational Linguistics", url = "https://aclanthology.org/2020.wmt-1.139", pages = "1174--1182", } ``` | 0a5c3a924557869a9c8ca06717ee63d0 |

cc-by-4.0 | ['translation', 'opus-mt-tc'] | false | Model info * Release: 2022-03-07 * source language(s): fin * target language(s): ukr * model: transformer-align * data: opusTCv20210807+pbt ([source](https://github.com/Helsinki-NLP/Tatoeba-Challenge)) * tokenization: SentencePiece (spm32k,spm32k) * original model: [opusTCv20210807+pbt_transformer-align_2022-03-07.zip](https://object.pouta.csc.fi/Tatoeba-MT-models/fin-ukr/opusTCv20210807+pbt_transformer-align_2022-03-07.zip) * more information released models: [OPUS-MT fin-ukr README](https://github.com/Helsinki-NLP/Tatoeba-Challenge/tree/master/models/fin-ukr/README.md) | 94ecb54b417b945d3bb62feff6874331 |

cc-by-4.0 | ['translation', 'opus-mt-tc'] | false | Usage A short code example: ```python from transformers import MarianMTModel, MarianTokenizer src_text = [ "Afrikka on ihmiskunnan kehto.", "Yksi, kaksi, kolme, neljä, viisi, kuusi, seitsemän, kahdeksan, yhdeksän, kymmenen." ] model_name = "Helsinki-NLP/opus-mt-tc-base-fi-uk" tokenizer = MarianTokenizer.from_pretrained(model_name) model = MarianMTModel.from_pretrained(model_name) translated = model.generate(**tokenizer(src_text, return_tensors="pt", padding=True)) for t in translated: print( tokenizer.decode(t, skip_special_tokens=True) ) | 11761a76d78885df575df7709f613177 |

cc-by-4.0 | ['translation', 'opus-mt-tc'] | false | Один, два, три, чотири, п'ять, шість, сім, вісім, дев'ять, десять. ``` You can also use OPUS-MT models with the transformers pipelines, for example: ```python from transformers import pipeline pipe = pipeline("translation", model="Helsinki-NLP/opus-mt-tc-base-fi-uk") print(pipe("Afrikka on ihmiskunnan kehto.")) | 2925dac2c7daf5100b42582b4b46c984 |

cc-by-4.0 | ['translation', 'opus-mt-tc'] | false | Benchmarks * test set translations: [opusTCv20210807+pbt_transformer-align_2022-03-07.test.txt](https://object.pouta.csc.fi/Tatoeba-MT-models/fin-ukr/opusTCv20210807+pbt_transformer-align_2022-03-07.test.txt) * test set scores: [opusTCv20210807+pbt_transformer-align_2022-03-07.eval.txt](https://object.pouta.csc.fi/Tatoeba-MT-models/fin-ukr/opusTCv20210807+pbt_transformer-align_2022-03-07.eval.txt) * benchmark results: [benchmark_results.txt](benchmark_results.txt) * benchmark output: [benchmark_translations.zip](benchmark_translations.zip) | langpair | testset | chr-F | BLEU | | a8d96b7e4ca171674a81705563a96c88 |

apache-2.0 | ['generated_from_trainer'] | false | wav2vec2-base-finetuned-sentiment-mesd-v2 This model is a fine-tuned version of [facebook/wav2vec2-base](https://huggingface.co/facebook/wav2vec2-base) on an unspecified dataset. It achieves the following results on the evaluation set: - Loss: 1.7213 - Accuracy: 0.3923 | 3cd5348fd4c904a28cade58a75401eef |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 1.25e-05 - train_batch_size: 64 - eval_batch_size: 40 - seed: 42 - gradient_accumulation_steps: 4 - total_train_batch_size: 256 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_ratio: 0.1 - num_epochs: 20 | 306935bc32c9aad6046442c6d40ca8ae |
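The `total_train_batch_size` above follows from the other listed values. A small sketch of the arithmetic, assuming a single device because no `num_devices` entry is listed; the function name is hypothetical.

```python
def total_train_batch_size(per_device_batch_size, gradient_accumulation_steps=1, num_devices=1):
    """Number of samples that contribute to one optimizer update."""
    return per_device_batch_size * gradient_accumulation_steps * num_devices


# train_batch_size: 64 with gradient_accumulation_steps: 4, as listed above.
effective = total_train_batch_size(64, gradient_accumulation_steps=4)
```

The product is 256, matching the reported `total_train_batch_size`.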

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | No log | 0.86 | 3 | 1.7961 | 0.1462 | | 1.9685 | 1.86 | 6 | 1.7932 | 0.1692 | | 1.9685 | 2.86 | 9 | 1.7891 | 0.2 | | 2.1386 | 3.86 | 12 | 1.7820 | 0.2923 | | 1.9492 | 4.86 | 15 | 1.7750 | 0.2923 | | 1.9492 | 5.86 | 18 | 1.7684 | 0.2846 | | 2.1143 | 6.86 | 21 | 1.7624 | 0.3231 | | 2.1143 | 7.86 | 24 | 1.7561 | 0.3308 | | 2.0945 | 8.86 | 27 | 1.7500 | 0.3462 | | 1.9121 | 9.86 | 30 | 1.7443 | 0.3385 | | 1.9121 | 10.86 | 33 | 1.7386 | 0.3231 | | 2.0682 | 11.86 | 36 | 1.7328 | 0.3231 | | 2.0682 | 12.86 | 39 | 1.7272 | 0.3769 | | 2.0527 | 13.86 | 42 | 1.7213 | 0.3923 | | 1.8705 | 14.86 | 45 | 1.7154 | 0.3846 | | 1.8705 | 15.86 | 48 | 1.7112 | 0.3846 | | 2.0263 | 16.86 | 51 | 1.7082 | 0.3769 | | 2.0263 | 17.86 | 54 | 1.7044 | 0.3846 | | 2.0136 | 18.86 | 57 | 1.7021 | 0.3846 | | 1.8429 | 19.86 | 60 | 1.7013 | 0.3846 | | 73a49d4169001dabd389a7f55fbba708 |

creativeml-openrail-m | ['stable-diffusion', 'text-to-image'] | false | Files 6 files available (Best version is 4000steps): -Boichi2_style-1000 - 1000 steps -Boichi2_style-1000 - 2000 steps -Boichi2_style-1000 - 3000 steps -Boichi2_style-1000 - 4000 steps (recommended) -Boichi2_style-1000 - 5000 steps -Boichi2_style-1000 - 6000 steps | d5b6b2f233707db8be5f629c605e7399 |

creativeml-openrail-m | ['stable-diffusion', 'text-to-image'] | false | Prompt You need to use DeepDanBooru tags (https://gigazine.net/gsc_news/en/20221012-automatic1111-stable-diffusion-webui-deep-danbooru/). I also used the Nixeu_style embedding (not necessary): https://huggingface.co/sd-concepts-library/nixeu and Elysium_Anime_V2.ckpt (https://huggingface.co/hesw23168/SD-Elysium-Model). | 68192243727e3cd52c41637f5ca09730 |

creativeml-openrail-m | ['stable-diffusion', 'text-to-image'] | false | Example Positive Prompt: (Nixeu_style:1.2), (Boichi2_style-4000:1.2), (1boy:1.4), (muscular:1.2), (muscular_chest:1.2),pectorals, abs,(male_focus:1.5), (black_eyes:1.2), (white_hair:1.3),(muscular:1.2), (half_shaved_hair:1.1), (gel_spiked_hair:1.2), (white_hair:1.3), attractive, facing_camera, (male_focus:1.4), (solo:1.3), single, (detailed _mouth:1.2), (mouth_closed:1.2), ultra_detailed_face, (ultra_detailed_eyes:1.2), (symmetrical_eyes:1.2), (rounded_eyes:1.2), flame_in_the_eyes, high_details, high_quality, masterpiece, manga, (monochrome:1.4) Negative Prompt: (mediocre:1.2), (average:1.2), (bad:1.2), (wrong:1.2), (error:1.2), (fault:1.2),( badly_drawn:1.2), (poorly_drawn:1.2), ( low_quality:1.2), no_quality, bad_quality, no_resolution, low_resolution, (lowres:1.2), normal_resolution, (disfigured:1.8), (deformed:1.8), (distortion:1.2), bad_anatomy, (no_detail:1.2), low_detail, normal_detail, (scribble:1.2), (rushed:1.2), (unfinished:1.2), blur, blurry, claws, (misplaced:1.2), (disconnected:1.2), nonsense, random, (noise:1.2), (deformation:1.2), 3d, dull, boring, uninteresting, screencap, (text:1.2), (frame:1.1), (out_of_frame:1.2), (title:1.2), (description:1.3), (sexual:1.2), text, error,(logo:1.3), (watermark:1.3), bad_perspective, bad_proportions, cinematic, jpg_artifacts, jpeg_artifacts, extra_leg, missing_leg, extra_arm, missing_arm, long_hand, bad_hands, (mutated_hand:1.2), (extra_finger:1.2), (missing_finger:1.2), broken_finger, (fused_fingers:1.2), extra_feet, missing_feet, fused_feet, long_feet, missing_limbs, extra_limbs, fused_limbs, claw, (extra_digit:1.2), (fewer_digits:1.2), elves_ears, (naked:1.3), (wet:1.2), (girl:1.4) <img src="https://huggingface.co/Akumetsu971/SD_Boichi_Art_Style/resolve/main/04273-294460776-(Nixeu_style_1.2)%2C%20(Boichi2_style-4000_1.2)%2C%20(1boy_1.4)%2C%20(muscular_1.2)%2C%20(muscular_chest_1.2)%2Cpectorals%2C%20abs%2C(male_focus_1.5)%2C%20(.png" 
width="50%"/> <img src="https://huggingface.co/Akumetsu971/SD_Boichi_Art_Style/resolve/main/03917-1065737464-(Nixeu_style_1.2)%2C%20(Boichi2_style-4000_1.1)%2C%20(1girl_1.4)%2C%20(school_uniform_1.3)%2C%20in%20classroom%2C%20(full_body_1.2)%2C%20attractive%2C%20beaut.png" width="50%"/> <img src="https://huggingface.co/Akumetsu971/SD_Boichi_Art_Style/resolve/main/04007-2494757721-(Bchi_step_4000_1.2)%2C%20(1girl_1.3)%2C%20attractive%2C%20(wide_shot_1.2)%2C%20beautiful%20and%20elegant%2C%20black_eyes%2C%20facing_camera%2C%20solo%2C%20single%2C.png" width="50%"/> <img src="https://huggingface.co/Akumetsu971/SD_Boichi_Art_Style/resolve/main/03905-3403646630-(Nixeu_style_1.2)%2C%20(Boichi2_style-4000_1.1)%2C%20(1boy_1.4)%2C%20(profile_1.4)%2C%20%20fight_club%2C%20(muscular_1.3)%2C%20(no_clothe_1.4)%2C%20(naked_1.4.png" width="50%"/> | 7a7f882a7d8f7df1902c9e2b72f53961 |

creativeml-openrail-m | ['stable-diffusion', 'text-to-image'] | false | Bad Example Used on another model or with bad prompt <img src="https://huggingface.co/Akumetsu971/SD_Boichi_Art_Style/resolve/main/03461-2842380376-1boy%2C%20(highly%20detailed)%2C%20masterpiece%2C%20Boichi_style.png" width="50%"/> ``` | bac035daa42ea62fab4ecde24f7ce6ed |

apache-2.0 | ['translation'] | false | jpn-heb * source group: Japanese * target group: Hebrew * OPUS readme: [jpn-heb](https://github.com/Helsinki-NLP/Tatoeba-Challenge/tree/master/models/jpn-heb/README.md) * model: transformer-align * source language(s): jpn_Hani jpn_Hira jpn_Kana * target language(s): heb * model: transformer-align * pre-processing: normalization + SentencePiece (spm32k,spm32k) * download original weights: [opus-2020-06-17.zip](https://object.pouta.csc.fi/Tatoeba-MT-models/jpn-heb/opus-2020-06-17.zip) * test set translations: [opus-2020-06-17.test.txt](https://object.pouta.csc.fi/Tatoeba-MT-models/jpn-heb/opus-2020-06-17.test.txt) * test set scores: [opus-2020-06-17.eval.txt](https://object.pouta.csc.fi/Tatoeba-MT-models/jpn-heb/opus-2020-06-17.eval.txt) | 57a9b8591ab3161514ac1bd3639ca1b8 |

apache-2.0 | ['translation'] | false | System Info: - hf_name: jpn-heb - source_languages: jpn - target_languages: heb - opus_readme_url: https://github.com/Helsinki-NLP/Tatoeba-Challenge/tree/master/models/jpn-heb/README.md - original_repo: Tatoeba-Challenge - tags: ['translation'] - languages: ['ja', 'he'] - src_constituents: {'jpn_Hang', 'jpn', 'jpn_Yiii', 'jpn_Kana', 'jpn_Hani', 'jpn_Bopo', 'jpn_Latn', 'jpn_Hira'} - tgt_constituents: {'heb'} - src_multilingual: False - tgt_multilingual: False - prepro: normalization + SentencePiece (spm32k,spm32k) - url_model: https://object.pouta.csc.fi/Tatoeba-MT-models/jpn-heb/opus-2020-06-17.zip - url_test_set: https://object.pouta.csc.fi/Tatoeba-MT-models/jpn-heb/opus-2020-06-17.test.txt - src_alpha3: jpn - tgt_alpha3: heb - short_pair: ja-he - chrF2_score: 0.397 - bleu: 20.2 - brevity_penalty: 1.0 - ref_len: 1598.0 - src_name: Japanese - tgt_name: Hebrew - train_date: 2020-06-17 - src_alpha2: ja - tgt_alpha2: he - prefer_old: False - long_pair: jpn-heb - helsinki_git_sha: 480fcbe0ee1bf4774bcbe6226ad9f58e63f6c535 - transformers_git_sha: 2207e5d8cb224e954a7cba69fa4ac2309e9ff30b - port_machine: brutasse - port_time: 2020-08-21-14:41 | afdf73e7ac24ff4aa551873f98789571 |
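The `brevity_penalty` entry above is the BLEU length correction. A minimal sketch, assuming the standard BLEU definition (1 when the system output is at least as long as the reference, otherwise exp(1 - ref_len / hyp_len)); the card reports `ref_len` but not the hypothesis length, so the lengths used below are hypothetical, as is the function name.

```python
import math


def brevity_penalty(hyp_len, ref_len):
    """BLEU brevity penalty for a corpus-level hypothesis/reference length pair."""
    if hyp_len <= 0:
        return 0.0
    if hyp_len >= ref_len:
        # No penalty when the output is not shorter than the reference.
        return 1.0
    return math.exp(1.0 - ref_len / hyp_len)


# ref_len comes from the card; the hypothesis lengths are made up.
no_penalty = brevity_penalty(hyp_len=1598, ref_len=1598)
short_output = brevity_penalty(hyp_len=1500, ref_len=1598)
```

A reported penalty of 1.0 therefore only says the system output was not shorter than the reference overall; it gives no information about how much longer it was.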

apache-2.0 | ['translation'] | false | opus-mt-en-umb * source languages: en * target languages: umb * OPUS readme: [en-umb](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/en-umb/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2020-01-08.zip](https://object.pouta.csc.fi/OPUS-MT-models/en-umb/opus-2020-01-08.zip) * test set translations: [opus-2020-01-08.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/en-umb/opus-2020-01-08.test.txt) * test set scores: [opus-2020-01-08.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/en-umb/opus-2020-01-08.eval.txt) | 80d73e77109a97f2cf4d8fb74aab3f91 |

creativeml-openrail-m | ['stable-diffusion'] | false | Description > Kobo Kanaeru (こぼ・かなえる) is a female Indonesian Virtual YouTuber associated with hololive, > debuting as part of its Indonesian (ID) branch third generation of VTubers alongside Vestia Zeta and Kaela Kovalskia. > ([Fandom](https://virtualyoutuber.fandom.com/wiki/Kobo_Kanaeru)) | 1fa9c07be73b941a0e7df88172f215e8 |

creativeml-openrail-m | ['stable-diffusion'] | false | Preview > **Model:** [anything-v4.5-pruned.ckpt](https://huggingface.co/andite/anything-v4.0/tree/main)\ > **Model VAE:** [anything-v4.0.vae.pt](https://huggingface.co/andite/anything-v4.0/tree/main)\ > **Prompt:** TI-EMB_kobo-kanaeru\ > **Negative Prompt:** obese, (ugly:1.3), (duplicate:1.3), (morbid), (mutilated), out of frame, extra fingers, mutated hands, (poorly drawn hands), (poorly drawn face), (mutation:1.3), (deformed:1.3), (amputee:1.3), blurry, bad anatomy, bad proportions, (extra limbs), cloned face, (disfigured:1.3), gross proportions, (malformed limbs), (missing arms), (missing legs), (extra arms), (extra legs), mutated hands, (fused fingers), (too many fingers), (long neck:1.3), lowres, text, error, cropped, worst quality, low quality, normal quality, jpeg artifacts, signature, watermark, username, blurry, black and white, monochrome, censored,empty     | 1f744633adaeee109e7e3b7b269289ca |

apache-2.0 | ['automatic-speech-recognition', 'et'] | false | exp_w2v2t_et_vp-it_s992 Fine-tuned [facebook/wav2vec2-large-it-voxpopuli](https://huggingface.co/facebook/wav2vec2-large-it-voxpopuli) for speech recognition using the train split of [Common Voice 7.0 (et)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | 9983b0fc53981a4f78a3bac121cfad7e |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | Gen Len | |:-------------:|:-----:|:----:|:---------------:|:-------:|:------:|:-------:|:---------:|:-------:| | No log | 1.0 | 27 | 6.1310 | 11.5882 | 3.2614 | 10.0378 | 11.2317 | 17.2 | | 68a73eca5939f696e4b30f7f8155ba9c |

apache-2.0 | ['generated_from_trainer'] | false | distilbart-cnn-12-6-finetuned-1.2.3 This model is a fine-tuned version of [sshleifer/distilbart-cnn-12-6](https://huggingface.co/sshleifer/distilbart-cnn-12-6) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 2.0679 - Rouge1: 39.561 - Rouge2: 19.2826 - Rougel: 33.2976 - Rougelsum: 33.4508 | d670e5856a2aa648549e5108527100ba |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | |:-------------:|:-----:|:----:|:---------------:|:-------:|:-------:|:-------:|:---------:| | 2.6987 | 1.0 | 98 | 2.2214 | 39.1186 | 19.1018 | 32.9027 | 33.0949 | | 1.8484 | 2.0 | 196 | 2.0679 | 39.561 | 19.2826 | 33.2976 | 33.4508 | | 6fe7dbca45f09fa45fc4f90007172e17 |

creativeml-openrail-m | ['text-to-image'] | false | yeah Dreambooth model trained by duben with [Hugging Face Dreambooth Training Space](https://huggingface.co/spaces/multimodalart/dreambooth-training) with the v2-1-768 base model. You can run your new concept via the `diffusers` [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb). Don't forget to use the concept prompts! Sample pictures of: a man (use that in your prompt) | 1e78a496cf91b3a120026f170ae602d7 |

mit | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 3e-05 - train_batch_size: 16 - eval_batch_size: 16 - seed: 42 - distributed_type: multi-GPU - num_devices: 2 - total_train_batch_size: 32 - total_eval_batch_size: 32 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 2.0 | 133e905ed1e584cc7db8b8683a5e0b12 |

apache-2.0 | ['generated_from_keras_callback'] | false | Jasmine8596/distilbert-finetuned-imdb This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 2.8423 - Validation Loss: 2.6128 - Epoch: 0 | d107507cf1272d3b65f9a66d8adb6aaf |

mit | ['generated_from_trainer'] | false | ko-en-m2m This model is a fine-tuned version of [facebook/m2m100_418M](https://huggingface.co/facebook/m2m100_418M) on an unspecified dataset. It achieves the following results on the evaluation set: - Loss: 0.4282 - Bleu: 25.8137 - Gen Len: 10.9556 | 163c4435e034b60540c714896ec951a2 |

mit | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0003 - train_batch_size: 16 - eval_batch_size: 16 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 6 - mixed_precision_training: Native AMP | 177ee8011ca9414ec59eccb7bfb50365 |
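To illustrate what `lr_scheduler_type: linear` means for the listed `learning_rate` of 0.0003, the sketch below computes a linearly decaying learning rate in plain Python. The function name `linear_lr` and the `total_steps` value are hypothetical; the sketch assumes zero warmup steps (none are listed in the card) and a decay to zero at the final step.

```python
def linear_lr(step: int, total_steps: int, base_lr: float = 3e-4, warmup_steps: int = 0) -> float:
    """Linear warmup (optional) followed by linear decay to zero.

    warmup_steps defaults to 0 because the card lists no warmup;
    that default is an assumption made for this illustration.
    """
    if step < warmup_steps:
        # Ramp up from 0 to base_lr during warmup.
        return base_lr * step / max(1, warmup_steps)
    # Decay linearly from base_lr down to 0 at total_steps.
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


# Hypothetical run length, chosen only for illustration.
total_steps = 100_000
for step in (0, 25_000, 50_000, 100_000):
    print(step, f"{linear_lr(step, total_steps):.2e}")
```

Under these assumptions the learning rate starts at the configured value and is halved at the midpoint of training.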

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Bleu | Gen Len | |:-------------:|:-----:|:-----:|:---------------:|:-------:|:-------:| | 0.5891 | 0.3 | 5000 | 0.7640 | 12.7212 | 10.465 | | 0.5653 | 0.6 | 10000 | 0.7211 | 13.4957 | 11.5844 | | 0.5464 | 0.91 | 15000 | 0.6875 | 13.5204 | 10.6604 | | 0.5254 | 1.21 | 20000 | 0.6690 | 14.5273 | 10.5754 | | 0.5308 | 1.51 | 25000 | 0.6757 | 14.1623 | 11.9493 | | 0.5192 | 1.81 | 30000 | 0.6458 | 15.1048 | 10.8811 | | 0.502 | 2.11 | 35000 | 0.6423 | 14.7989 | 11.047 | | 0.4971 | 2.42 | 40000 | 0.6259 | 15.6324 | 11.0428 | | 0.502 | 2.72 | 45000 | 0.6047 | 16.684 | 10.9814 | | 0.4544 | 3.02 | 50000 | 0.5834 | 16.9704 | 10.9722 | | 0.4541 | 3.32 | 55000 | 0.5722 | 17.6061 | 10.8485 | | 0.4362 | 3.63 | 60000 | 0.5523 | 19.1337 | 10.7972 | | 0.4285 | 3.93 | 65000 | 0.5325 | 19.4546 | 10.6665 | | 0.3851 | 4.23 | 70000 | 0.5159 | 20.4035 | 10.6171 | | 0.3891 | 4.53 | 75000 | 0.4926 | 21.8822 | 10.8857 | | 0.3602 | 4.83 | 80000 | 0.4740 | 22.737 | 11.0248 | | 0.336 | 5.14 | 85000 | 0.4570 | 23.7202 | 10.7115 | | 0.3355 | 5.44 | 90000 | 0.4415 | 24.9891 | 10.9077 | | 0.3244 | 5.74 | 95000 | 0.4282 | 25.8137 | 10.9556 | | c9c6389ac1364e401cb30c2e3b2a1f0c |

apache-2.0 | ['hf-asr-leaderboard', 'generated_from_trainer'] | false | Whisper Tiny it 9 This model is a fine-tuned version of [openai/whisper-tiny](https://huggingface.co/openai/whisper-tiny) on the Common Voice 11.0 dataset. It achieves the following results on the evaluation set: - Loss: 0.732505 - Wer: 45.327232 | 81b01161e52facf36309d1c5f27aa620 |
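The `Wer` figure above is a word error rate expressed as a percentage. As a rough illustration of the metric, the sketch below computes it as word-level edit distance divided by reference length. The function name `wer` and the sample sentences are hypothetical, and the card's actual evaluation may apply text normalization that this sketch does not.

```python
def wer(reference: str, hypothesis: str) -> float:
    """Word error rate in percent: (substitutions + deletions + insertions) / reference words."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # Row-by-row Levenshtein distance over words.
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        cur = [i]
        for j, h in enumerate(hyp, start=1):
            cur.append(min(
                prev[j] + 1,             # deletion of a reference word
                cur[j - 1] + 1,          # insertion of a hypothesis word
                prev[j - 1] + (r != h),  # substitution, or match at no cost
            ))
        prev = cur
    return 100.0 * prev[-1] / len(ref)


# Hypothetical Italian example: one substitution and one deletion over five reference words.
print(wer("il gatto dorme sul divano", "il gato dorme sul"))
```

A value of about 45, as reported above, means that roughly 45 word-level edits are needed per 100 reference words.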

apache-2.0 | ['hf-asr-leaderboard', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:-------:| | 1.5103 | 0.95 | 1000 | 0.8238 | 52.6830 | | 1.2030 | 1.91 | 2000 | 0.7581 | 49.4038 | | 1.0094 | 2.86 | 3000 | 0.7364 | 47.7884 | | 0.8973 | 3.82 | 4000 | 0.7325 | 46.8178 | | 7a7900c5782eea55d06edcb7baabf129 |

mit | [] | false | Description This model is a fine-tuned version of [BETO (Spanish BERT)](https://huggingface.co/dccuchile/bert-base-spanish-wwm-uncased) that has been trained on the *Datathon Against Racism* dataset (2022). We performed several experiments that will be described in the upcoming paper "Estimating Ground Truth in a Low-labelled Data Regime: A Study of Racism Detection in Spanish" (NEATClasS 2022). We applied 6 different ground-truth estimation methods, and for each one we performed 4 epochs of fine-tuning. This yields 24 models: | method | epoch 1 | epoch 2 | epoch 3 | epoch 4 | |--- |--- |--- |--- |--- | | raw-label | [raw-label-epoch-1](https://huggingface.co/MartinoMensio/racism-models-raw-label-epoch-1) | [raw-label-epoch-2](https://huggingface.co/MartinoMensio/racism-models-raw-label-epoch-2) | [raw-label-epoch-3](https://huggingface.co/MartinoMensio/racism-models-raw-label-epoch-3) | [raw-label-epoch-4](https://huggingface.co/MartinoMensio/racism-models-raw-label-epoch-4) | | m-vote-strict | [m-vote-strict-epoch-1](https://huggingface.co/MartinoMensio/racism-models-m-vote-strict-epoch-1) | [m-vote-strict-epoch-2](https://huggingface.co/MartinoMensio/racism-models-m-vote-strict-epoch-2) | [m-vote-strict-epoch-3](https://huggingface.co/MartinoMensio/racism-models-m-vote-strict-epoch-3) | [m-vote-strict-epoch-4](https://huggingface.co/MartinoMensio/racism-models-m-vote-strict-epoch-4) | | m-vote-nonstrict | [m-vote-nonstrict-epoch-1](https://huggingface.co/MartinoMensio/racism-models-m-vote-nonstrict-epoch-1) | [m-vote-nonstrict-epoch-2](https://huggingface.co/MartinoMensio/racism-models-m-vote-nonstrict-epoch-2) | [m-vote-nonstrict-epoch-3](https://huggingface.co/MartinoMensio/racism-models-m-vote-nonstrict-epoch-3) | [m-vote-nonstrict-epoch-4](https://huggingface.co/MartinoMensio/racism-models-m-vote-nonstrict-epoch-4) | | regression-w-m-vote | 
[regression-w-m-vote-epoch-1](https://huggingface.co/MartinoMensio/racism-models-regression-w-m-vote-epoch-1) | [regression-w-m-vote-epoch-2](https://huggingface.co/MartinoMensio/racism-models-regression-w-m-vote-epoch-2) | [regression-w-m-vote-epoch-3](https://huggingface.co/MartinoMensio/racism-models-regression-w-m-vote-epoch-3) | [regression-w-m-vote-epoch-4](https://huggingface.co/MartinoMensio/racism-models-regression-w-m-vote-epoch-4) | | w-m-vote-strict | [w-m-vote-strict-epoch-1](https://huggingface.co/MartinoMensio/racism-models-w-m-vote-strict-epoch-1) | [w-m-vote-strict-epoch-2](https://huggingface.co/MartinoMensio/racism-models-w-m-vote-strict-epoch-2) | [w-m-vote-strict-epoch-3](https://huggingface.co/MartinoMensio/racism-models-w-m-vote-strict-epoch-3) | [w-m-vote-strict-epoch-4](https://huggingface.co/MartinoMensio/racism-models-w-m-vote-strict-epoch-4) | | w-m-vote-nonstrict | [w-m-vote-nonstrict-epoch-1](https://huggingface.co/MartinoMensio/racism-models-w-m-vote-nonstrict-epoch-1) | [w-m-vote-nonstrict-epoch-2](https://huggingface.co/MartinoMensio/racism-models-w-m-vote-nonstrict-epoch-2) | [w-m-vote-nonstrict-epoch-3](https://huggingface.co/MartinoMensio/racism-models-w-m-vote-nonstrict-epoch-3) | [w-m-vote-nonstrict-epoch-4](https://huggingface.co/MartinoMensio/racism-models-w-m-vote-nonstrict-epoch-4) | This model is `raw-label-epoch-1` | c4ab544b693c5961ad3719e3470fc47c |

mit | [] | false | Usage
```python
from transformers import AutoTokenizer, AutoModelForSequenceClassification, pipeline

# Pick one of the 24 published variants, named <method>-epoch-<n>.
model_name = 'raw-label-epoch-1'

# The tokenizer comes from the BETO base model; the classifier weights from the fine-tuned repo.
tokenizer = AutoTokenizer.from_pretrained("dccuchile/bert-base-spanish-wwm-uncased")
full_model_path = f'MartinoMensio/racism-models-{model_name}'
model = AutoModelForSequenceClassification.from_pretrained(full_model_path)

pipe = pipeline("text-classification", model=model, tokenizer=tokenizer)

# Example inputs (Spanish) for the classifier.
texts = [
    'y porqué es lo que hay que hacer con los menas y con los adultos también!!!! NO a los inmigrantes ilegales!!!!',
    'Es que los judíos controlan el mundo'
]
print(pipe(texts))
```
| 4ed80db0e8523c0a237a06cb69188243 |
