license stringlengths 2 30 | tags stringlengths 2 513 | is_nc bool 1 class | readme_section stringlengths 201 597k | hash stringlengths 32 32 |

|---|---|---|---|---|

apache-2.0 | ['longformer', 'longformer-scico'] | false | Longformer for SciCo This model is the `unified` model discussed in the paper [SciCo: Hierarchical Cross-Document Coreference for Scientific Concepts (AKBC 2021)](https://openreview.net/forum?id=OFLbgUP04nC) that formulates the task of hierarchical cross-document coreference resolution (H-CDCR) as a multiclass problem. The model takes as input two mentions `m1` and `m2` with their corresponding context and outputs 4 scores: * 0: not related * 1: `m1` and `m2` corefer * 2: `m1` is a parent of `m2` * 3: `m1` is a child of `m2`. We provide the following code as an example to set the global attention on the special tokens: `<s>`, `<m>` and `</m>`. ```python from transformers import AutoTokenizer, AutoModelForSequenceClassification import torch tokenizer = AutoTokenizer.from_pretrained('allenai/longformer-scico') model = AutoModelForSequenceClassification.from_pretrained('allenai/longformer-scico') start_token = tokenizer.convert_tokens_to_ids("<m>") end_token = tokenizer.convert_tokens_to_ids("</m>") def get_global_attention(input_ids): global_attention_mask = torch.zeros(input_ids.shape) global_attention_mask[:, 0] = 1 | ee42024b63cf8c95ad990341f154d36d |

apache-2.0 | ['longformer', 'longformer-scico'] | false | global attention to the </m> token globs = torch.cat((start, end)) value = torch.ones(globs.shape[0]) global_attention_mask.index_put_(tuple(globs.t()), value) return global_attention_mask m1 = "In this paper we present the results of an experiment in <m> automatic concept and definition extraction </m> from written sources of law using relatively simple natural methods." m2 = "This task is important since many natural language processing (NLP) problems, such as <m> information extraction </m>, summarization and dialogue." inputs = m1 + " </s></s> " + m2 tokens = tokenizer(inputs, return_tensors='pt') global_attention_mask = get_global_attention(tokens['input_ids']) with torch.no_grad(): output = model(tokens['input_ids'], tokens['attention_mask'], global_attention_mask) scores = torch.softmax(output.logits, dim=-1) | 9a2055633a6dc63beca8b549ac7b7e59 |

apache-2.0 | ['longformer', 'longformer-scico'] | false | tensor([[0.0818, 0.0023, 0.0019, 0.9139]]) -- m1 is a child of m2 ``` **Note:** There is a slight difference between this model and the original model presented in the [paper](https://openreview.net/forum?id=OFLbgUP04nC). The original model includes a single linear layer on top of the `<s>` token (equivalent to `[CLS]`), while this model includes a two-layer MLP to be in line with `LongformerForSequenceClassification`. The original repository can be found [here](https://github.com/ariecattan/scico). | beddc925a00b83c9d6a3787f2f5b3062 |
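The example above is split across rows, and the lines that locate the `<m>` and `</m>` tokens do not appear in this dump. As a rough, library-free sketch of the same idea (not the authors' code), the mask can be built by marking position 0 and every position that holds a mention-marker id, and the four scores can be mapped back to the relation names listed in the model description. The token ids `50265` and `50266` below are made-up placeholders; in practice they would come from `tokenizer.convert_tokens_to_ids`.

```python
# Relation names, in the score order given in the model description.
RELATIONS = [
    "not related",
    "m1 and m2 corefer",
    "m1 is a parent of m2",
    "m1 is a child of m2",
]


def global_attention_mask(input_ids, marker_ids):
    """Return a 0/1 mask with 1 at position 0 and at every marker token."""
    mask = []
    for row in input_ids:
        row_mask = [1 if token in marker_ids else 0 for token in row]
        if row_mask:
            row_mask[0] = 1  # the <s> token always gets global attention
        mask.append(row_mask)
    return mask


def predicted_relation(scores):
    """Pick the relation whose score is highest."""
    best = max(range(len(scores)), key=lambda i: scores[i])
    return RELATIONS[best]


# Placeholder ids (assumptions): 0 stands for <s>, 50265 for <m>, 50266 for </m>.
ids = [[0, 11, 50265, 12, 13, 50266, 14, 2]]
print(global_attention_mask(ids, {50265, 50266}))
print(predicted_relation([0.0818, 0.0023, 0.0019, 0.9139]))
```

With real tensors the same mask would be passed to the model as `global_attention_mask`, as in the example above.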

apache-2.0 | ['longformer', 'longformer-scico'] | false | Citation ```bibtex @inproceedings{ cattan2021scico, title={SciCo: Hierarchical Cross-Document Coreference for Scientific Concepts}, author={Arie Cattan and Sophie Johnson and Daniel S Weld and Ido Dagan and Iz Beltagy and Doug Downey and Tom Hope}, booktitle={3rd Conference on Automated Knowledge Base Construction}, year={2021}, url={https://openreview.net/forum?id=OFLbgUP04nC} } ``` | 48a4a4f06c792c28740fc79481617fdb |

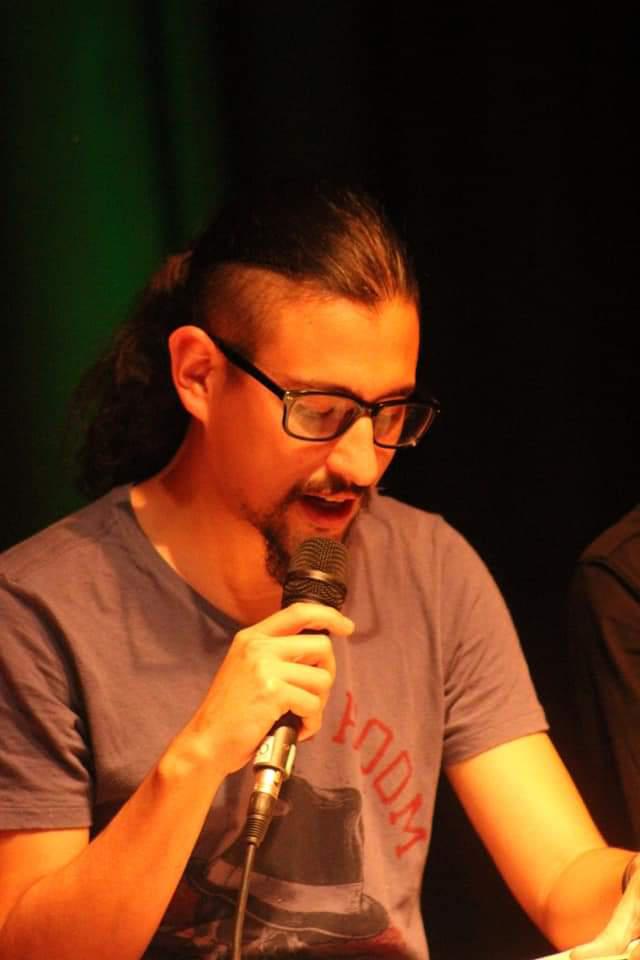

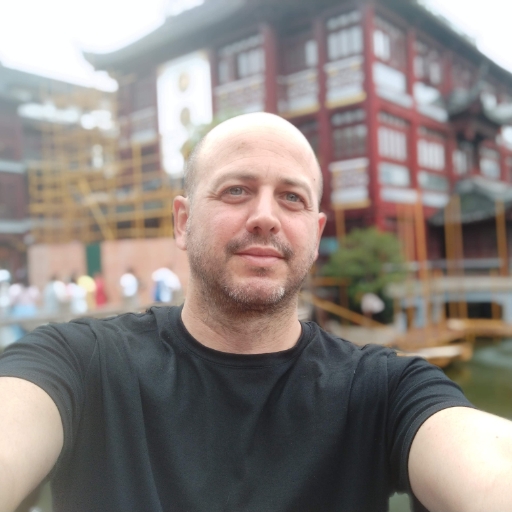

mit | [] | false | model by daniel16 This is the Stable Diffusion model fine-tuned on the Josemiel concept, taught to Stable Diffusion with Dreambooth. It can be used by modifying the `instance_prompt`: **a photo of Josemiel** You can also train your own concepts and upload them to the library by using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_training.ipynb). And you can run your new concept via `diffusers`: [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb), [Spaces with the Public Concepts loaded](https://huggingface.co/spaces/sd-dreambooth-library/stable-diffusion-dreambooth-concepts) Here are the images used for training this concept: | 5fe9653dd75bdeb3cc2910e53b45f37f |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | No log | 1.0 | 447 | 0.0023 | 0.9994 | | 263e0a6d9699b585ba4df27b685e365f |

apache-2.0 | ['automatic-speech-recognition', 'et'] | false | exp_w2v2t_et_r-wav2vec2_s957 Fine-tuned [facebook/wav2vec2-large-robust](https://huggingface.co/facebook/wav2vec2-large-robust) for speech recognition using the train split of [Common Voice 7.0 (et)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | 2fa5033316b1583a95baeaa3fcfe95d0 |
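The 16 kHz requirement above is easy to check before running inference. The following is a minimal sketch using only the Python standard library; it assumes the audio is stored as an uncompressed WAV file, and the helper names are illustrative rather than part of HuggingSound.

```python
import wave

EXPECTED_RATE = 16_000  # 16 kHz, as required by the model card


def wav_sample_rate(path):
    """Read the sampling rate (in Hz) from a WAV file header."""
    with wave.open(path, "rb") as wav_file:
        return wav_file.getframerate()


def needs_resampling(path, expected_rate=EXPECTED_RATE):
    """Return True when the file is not already sampled at the expected rate."""
    return wav_sample_rate(path) != expected_rate
```

Files for which `needs_resampling` returns `True` would have to be resampled with an audio library of your choice before being passed to the model.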

mit | [] | false | Among-Us-Logic-AI-Characters on Stable Diffusion via Dreambooth trained on the [fast-DreamBooth.ipynb by TheLastBen](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb) notebook | c8d2a893c7b4968afb18cedc22e2fa08 |

mit | [] | false | Model by Laughify This is the Stable Diffusion model fine-tuned on the Among-Us-Logic-AI-Characters concept, taught to Stable Diffusion with Dreambooth. It can be used by modifying the `instance_prompt(s)`: nmaguosilgco You can also train your own concepts and upload them to the library by using [the fast-DreamBooth.ipynb by TheLastBen](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb). You can run your new concept via A1111 Colab: [Fast-Colab-A1111](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast_stable_diffusion_AUTOMATIC1111.ipynb) Or you can run your new concept via `diffusers`: [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb), [Spaces with the Public Concepts loaded](https://huggingface.co/spaces/sd-dreambooth-library/stable-diffusion-dreambooth-concepts) Sample pictures of this concept: <https://i.imgur.com/nw1JRwp.png> <https://i.imgur.com/oJ686Fk.png> <https://i.imgur.com/8ydP39q.png> | 5b3f06cc74b1f9e65af7688a3b7abe35 |

apache-2.0 | ['generated_from_trainer'] | false | PSST medium Scrambled This model is a fine-tuned version of [openai/whisper-medium.en](https://huggingface.co/openai/whisper-medium.en) on the Santa Barbara Corpus of Spoken American English dataset. | 965947144e17f1ce503d3efcdcbba3fd |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 1e-05 - train_batch_size: 32 - eval_batch_size: 8 - seed: 42 - gradient_accumulation_steps: 2 - total_train_batch_size: 64 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 50 - training_steps: 400 - mixed_precision_training: Native AMP | a597043da6146fe4870b7edd13ea4902 |
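To make the numbers above concrete, here is a small sketch of how the total batch size and a linear schedule with warmup fit together. It assumes a single training device and the usual "linear warmup, then linear decay to zero" shape; the function names are illustrative and this is not the Trainer's own code.

```python
def total_batch_size(per_device_batch, accumulation_steps, num_devices=1):
    """Examples consumed per optimizer step (num_devices=1 is an assumption)."""
    return per_device_batch * accumulation_steps * num_devices


def linear_schedule_lr(step, base_lr=1e-5, warmup_steps=50, total_steps=400):
    """Learning rate at a given optimizer step: linear warmup, then linear decay."""
    if step < warmup_steps:
        return base_lr * step / warmup_steps
    remaining = max(0, total_steps - step)
    return base_lr * remaining / (total_steps - warmup_steps)


print(total_batch_size(32, 2))  # matches total_train_batch_size above
for step in (0, 25, 50, 225, 400):
    print(step, linear_schedule_lr(step))
```

Under these assumptions the rate climbs to `1e-05` over the first 50 steps and then falls linearly until step 400.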

apache-2.0 | ['translation'] | false | eng-aav * source group: English * target group: Austro-Asiatic languages * OPUS readme: [eng-aav](https://github.com/Helsinki-NLP/Tatoeba-Challenge/tree/master/models/eng-aav/README.md) * model: transformer * source language(s): eng * target language(s): hoc hoc_Latn kha khm khm_Latn mnw vie vie_Hani * model: transformer * pre-processing: normalization + SentencePiece (spm32k,spm32k) * a sentence initial language token is required in the form of `>>id<<` (id = valid target language ID) * download original weights: [opus-2020-07-26.zip](https://object.pouta.csc.fi/Tatoeba-MT-models/eng-aav/opus-2020-07-26.zip) * test set translations: [opus-2020-07-26.test.txt](https://object.pouta.csc.fi/Tatoeba-MT-models/eng-aav/opus-2020-07-26.test.txt) * test set scores: [opus-2020-07-26.eval.txt](https://object.pouta.csc.fi/Tatoeba-MT-models/eng-aav/opus-2020-07-26.eval.txt) | cf2d8711b90e6e5aadff0cb5caf307bc |
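Because the model is multilingual on the target side, each input sentence needs the `>>id<<` prefix described above. A minimal sketch of adding it, using the target language IDs listed in this card (the helper name is hypothetical):

```python
# Target language IDs as listed in the model card above.
TARGET_LANGUAGE_IDS = {
    "hoc", "hoc_Latn", "kha", "khm", "khm_Latn", "mnw", "vie", "vie_Hani",
}


def with_language_token(sentence, target_id):
    """Prefix a source sentence with the sentence-initial target-language token."""
    if target_id not in TARGET_LANGUAGE_IDS:
        raise ValueError(f"unsupported target language ID: {target_id!r}")
    return f">>{target_id}<< {sentence}"


print(with_language_token("How are you?", "vie"))
```

The prefixed string is what would then be passed to the tokenizer.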

apache-2.0 | ['translation'] | false | Benchmarks | testset | BLEU | chr-F | |-----------------------|-------|-------| | Tatoeba-test.eng-hoc.eng.hoc | 0.1 | 0.033 | | Tatoeba-test.eng-kha.eng.kha | 0.4 | 0.043 | | Tatoeba-test.eng-khm.eng.khm | 0.2 | 0.242 | | Tatoeba-test.eng-mnw.eng.mnw | 0.8 | 0.003 | | Tatoeba-test.eng.multi | 16.1 | 0.311 | | Tatoeba-test.eng-vie.eng.vie | 33.2 | 0.508 | | 56db41429f23b2ac8b31bb2c14162e10 |

apache-2.0 | ['translation'] | false | System Info: - hf_name: eng-aav - source_languages: eng - target_languages: aav - opus_readme_url: https://github.com/Helsinki-NLP/Tatoeba-Challenge/tree/master/models/eng-aav/README.md - original_repo: Tatoeba-Challenge - tags: ['translation'] - languages: ['en', 'vi', 'km', 'aav'] - src_constituents: {'eng'} - tgt_constituents: {'mnw', 'vie', 'kha', 'khm', 'vie_Hani', 'khm_Latn', 'hoc_Latn', 'hoc'} - src_multilingual: False - tgt_multilingual: True - prepro: normalization + SentencePiece (spm32k,spm32k) - url_model: https://object.pouta.csc.fi/Tatoeba-MT-models/eng-aav/opus-2020-07-26.zip - url_test_set: https://object.pouta.csc.fi/Tatoeba-MT-models/eng-aav/opus-2020-07-26.test.txt - src_alpha3: eng - tgt_alpha3: aav - short_pair: en-aav - chrF2_score: 0.311 - bleu: 16.1 - brevity_penalty: 1.0 - ref_len: 38261.0 - src_name: English - tgt_name: Austro-Asiatic languages - train_date: 2020-07-26 - src_alpha2: en - tgt_alpha2: aav - prefer_old: False - long_pair: eng-aav - helsinki_git_sha: 480fcbe0ee1bf4774bcbe6226ad9f58e63f6c535 - transformers_git_sha: 2207e5d8cb224e954a7cba69fa4ac2309e9ff30b - port_machine: brutasse - port_time: 2020-08-21-14:41 | ecea63d841ff41147c0c252cc47f1d99 |

apache-2.0 | ['generated_from_trainer'] | false | electra-small-finetuned-amazon-review This model is a fine-tuned version of [google/electra-small-discriminator](https://huggingface.co/google/electra-small-discriminator) on the amazon_reviews_multi dataset. It achieves the following results on the evaluation set: - Loss: 1.0560 - Accuracy: 0.5504 - F1: 0.5458 - Precision: 0.5429 - Recall: 0.5504 | beea5e8ab83ccc21a09bdbdeebaac3e9 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | Precision | Recall | |:-------------:|:-----:|:----:|:---------------:|:--------:|:------:|:---------:|:------:| | 1.2172 | 1.0 | 1000 | 1.1014 | 0.5216 | 0.4902 | 0.4954 | 0.5216 | | 1.0027 | 2.0 | 2000 | 1.0388 | 0.549 | 0.5471 | 0.5494 | 0.549 | | 0.9035 | 3.0 | 3000 | 1.0560 | 0.5504 | 0.5458 | 0.5429 | 0.5504 | | b572a5a555b0a8dbf2d5ac523d5a5d0f |

apache-2.0 | ['generated_from_trainer'] | false | distilroberta-base-finetuned-wikitext2 This model is a fine-tuned version of [distilroberta-base](https://huggingface.co/distilroberta-base) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 1.8512 | 76376a1bb04c0be486efd2690e467a44 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | 2.084 | 1.0 | 2406 | 1.9229 | | 1.9999 | 2.0 | 4812 | 1.8832 | | 1.9616 | 3.0 | 7218 | 1.8173 | | 1127ef09675b2f2ebcbe55c30ceacfa7 |
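Language-model losses like the ones above are often easier to read as perplexities. Assuming the reported loss is a mean cross-entropy in nats (an assumption; the card does not say), perplexity is simply its exponential, so the evaluation loss of 1.8512 corresponds to a perplexity of roughly 6.4.

```python
import math


def perplexity(mean_cross_entropy):
    """Convert a mean cross-entropy loss (in nats) to perplexity."""
    return math.exp(mean_cross_entropy)


# Validation losses per epoch, copied from the table above.
for epoch, loss in [(1, 1.9229), (2, 1.8832), (3, 1.8173)]:
    print(f"epoch {epoch}: loss {loss:.4f}, perplexity {perplexity(loss):.2f}")
```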

mit | ['generated_from_trainer'] | false | distilcamembert-cae-no-thinking This model is a fine-tuned version of [cmarkea/distilcamembert-base](https://huggingface.co/cmarkea/distilcamembert-base) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.5464 - Precision: 0.7959 - Recall: 0.7848 - F1: 0.7869 | ba045f9a980cae9c01fd6d893616553a |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | |:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:| | 1.1607 | 1.0 | 40 | 0.9958 | 0.6444 | 0.4684 | 0.3248 | | 1.0099 | 2.0 | 80 | 0.9761 | 0.6090 | 0.5316 | 0.4480 | | 0.6294 | 3.0 | 120 | 0.6770 | 0.8067 | 0.7215 | 0.7542 | | 0.3294 | 4.0 | 160 | 0.5464 | 0.7959 | 0.7848 | 0.7869 | | 0.1986 | 5.0 | 200 | 0.5440 | 0.7882 | 0.7722 | 0.7785 | | 78d97204df86f1dfa1dc68f1a436e27a |

apache-2.0 | ['generated_from_trainer'] | false | BERT_MC_OpenBookQA This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 1.8077 - Accuracy: 0.654 | fa86f2d9fdad0adaf5f614089020d808 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.8972 | 1.61 | 500 | 0.9912 | 0.636 | | 0.2906 | 3.23 | 1000 | 1.4448 | 0.654 | | 0.07 | 4.84 | 1500 | 1.8077 | 0.654 | | 24b20f147cffbae2d636a44c0149b907 |

apache-2.0 | ['generated_from_trainer'] | false | bert-base-uncased-finetuned-cola This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on the glue dataset. It achieves the following results on the evaluation set: - Loss: 0.8989 - Matthews Correlation: 0.5774 | ac64f8b77a1e4081c537146497f3e3ff |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Matthews Correlation | |:-------------:|:-----:|:----:|:---------------:|:--------------------:| | 0.4832 | 1.0 | 535 | 0.5599 | 0.4693 | | 0.2869 | 2.0 | 1070 | 0.4750 | 0.5771 | | 0.1816 | 3.0 | 1605 | 0.7246 | 0.5259 | | 0.1311 | 4.0 | 2140 | 0.8119 | 0.5656 | | 0.0876 | 5.0 | 2675 | 0.8989 | 0.5774 | | 546649b752185564c057dcc90e7d6b65 |
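For readers unfamiliar with the metric in the table above, the Matthews correlation coefficient for a binary task can be computed from the four confusion-matrix counts. The sketch below uses the standard formula; the counts in the usage line are invented for illustration and are not taken from this model's evaluation.

```python
import math


def mcc_from_counts(tp, tn, fp, fn):
    """Matthews correlation coefficient from binary confusion-matrix counts."""
    denominator = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    if denominator == 0:
        return 0.0  # common convention when a row or column of the matrix is empty
    return (tp * tn - fp * fn) / denominator


# Hypothetical counts, for illustration only.
print(mcc_from_counts(tp=600, tn=200, fp=120, fn=80))
```

The value ranges from -1 (total disagreement) through 0 (chance level) to 1 (perfect prediction), which makes it more informative than accuracy on imbalanced label sets.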

mit | ['generated_from_keras_callback'] | false | ishaankul67/Pub-clustered This model is a fine-tuned version of [nandysoham16/16-clustered_aug](https://huggingface.co/nandysoham16/16-clustered_aug) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 0.3332 - Train End Logits Accuracy: 0.9028 - Train Start Logits Accuracy: 0.9062 - Validation Loss: 0.5714 - Validation End Logits Accuracy: 0.7692 - Validation Start Logits Accuracy: 0.7692 - Epoch: 0 | 7cc9e36636bd0814592b706fa6fab149 |

mit | ['generated_from_keras_callback'] | false | Training results | Train Loss | Train End Logits Accuracy | Train Start Logits Accuracy | Validation Loss | Validation End Logits Accuracy | Validation Start Logits Accuracy | Epoch | |:----------:|:-------------------------:|:---------------------------:|:---------------:|:------------------------------:|:--------------------------------:|:-----:| | 0.3332 | 0.9028 | 0.9062 | 0.5714 | 0.7692 | 0.7692 | 0 | | 56aff7f8c28f95fba6df4a194cc02e5b |

apache-2.0 | ['generated_from_trainer'] | false | all-roberta-large-v1-banking-1-16-5 This model is a fine-tuned version of [sentence-transformers/all-roberta-large-v1](https://huggingface.co/sentence-transformers/all-roberta-large-v1) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 2.4479 - Accuracy: 0.2301 | fc50bb4a9695845ce672928823c70924 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 2.716 | 1.0 | 1 | 2.6641 | 0.1327 | | 2.1674 | 2.0 | 2 | 2.5852 | 0.1858 | | 1.7169 | 3.0 | 3 | 2.5202 | 0.2035 | | 1.3976 | 4.0 | 4 | 2.4729 | 0.2124 | | 1.2503 | 5.0 | 5 | 2.4479 | 0.2301 | | 3d774a792f948fa4d4608b717792fbb4 |

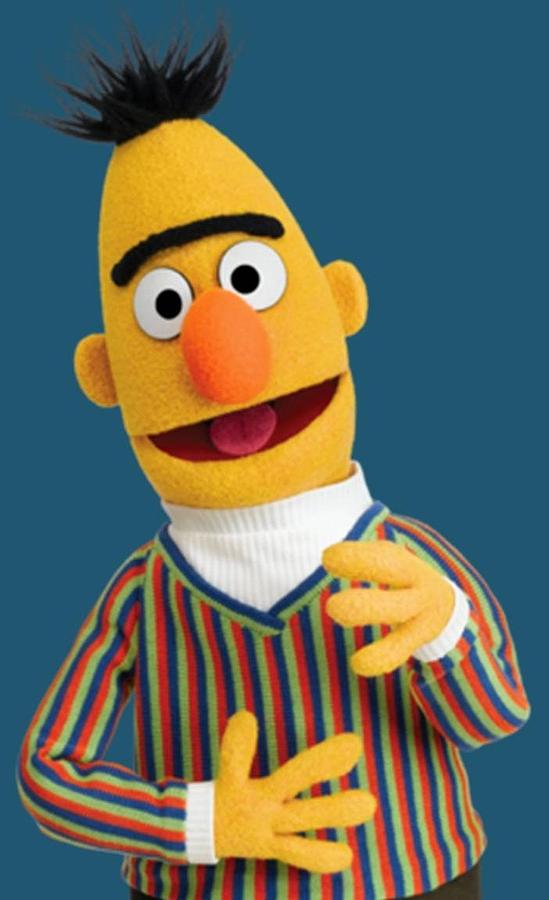

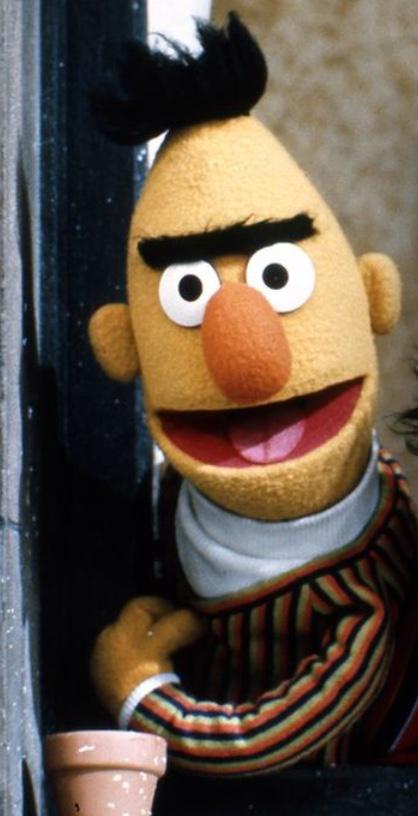

mit | [] | false | Bert muppet 2 on Stable Diffusion This is the `<bert-muppet>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). Here is the new concept you will be able to use as an `object`:      | 5006bb7831adb1fff6ad145cad9541a0 |

cc-by-sa-4.0 | ['japanese', 'token-classification', 'pos', 'dependency-parsing'] | false | Model Description This is a DeBERTa(V2) model pre-trained on 青空文庫 texts for POS-tagging and dependency-parsing, derived from [deberta-large-japanese-aozora](https://huggingface.co/KoichiYasuoka/deberta-large-japanese-aozora). Every long-unit-word is tagged by [UPOS](https://universaldependencies.org/u/pos/) (Universal Part-Of-Speech). | 7680eeab5960325b121520e92a2bc387 |

cc-by-sa-4.0 | ['japanese', 'token-classification', 'pos', 'dependency-parsing'] | false | How to Use ```py import torch from transformers import AutoTokenizer,AutoModelForTokenClassification tokenizer=AutoTokenizer.from_pretrained("KoichiYasuoka/deberta-large-japanese-luw-upos") model=AutoModelForTokenClassification.from_pretrained("KoichiYasuoka/deberta-large-japanese-luw-upos") s="国境の長いトンネルを抜けると雪国であった。" t=tokenizer.tokenize(s) p=[model.config.id2label[q] for q in torch.argmax(model(tokenizer.encode(s,return_tensors="pt"))["logits"],dim=2)[0].tolist()[1:-1]] print(list(zip(t,p))) ``` or ```py import esupar nlp=esupar.load("KoichiYasuoka/deberta-large-japanese-luw-upos") print(nlp("国境の長いトンネルを抜けると雪国であった。")) ``` | 164a7d46802e276a6d904a2ff45a1c0d |
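The first snippet above packs several steps into one expression: take the arg-max label id per position, drop the first and last positions (which presumably correspond to the special tokens added by `encode`), and look each id up in `id2label`. A library-free sketch of that pairing step follows; the label map and predicted ids are toy values, not the model's real configuration.

```python
def pair_tokens_with_labels(tokens, predicted_ids, id2label):
    """Pair each token with its label, skipping the two special-token positions."""
    inner = predicted_ids[1:-1]  # drop predictions for the leading/trailing special tokens
    if len(inner) != len(tokens):
        raise ValueError("number of predictions does not match number of tokens")
    return [(token, id2label[label_id]) for token, label_id in zip(tokens, inner)]


# Toy values (assumptions), chosen only to show the shape of the data.
toy_id2label = {0: "NOUN", 1: "ADP", 2: "PUNCT"}
toy_tokens = ["国境", "の", "。"]
toy_predictions = [0, 0, 1, 2, 0]
print(pair_tokens_with_labels(toy_tokens, toy_predictions, toy_id2label))
```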

apache-2.0 | ['generated_from_trainer'] | false | bert-base-cased-finetuned-basil This model is a fine-tuned version of [bert-base-cased](https://huggingface.co/bert-base-cased) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 1.2272 | 246e85e5009829337795cca852b8b321 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | 1.8527 | 1.0 | 800 | 1.4425 | | 1.4878 | 2.0 | 1600 | 1.2740 | | 1.3776 | 3.0 | 2400 | 1.2273 | | de72506c0e7be7d9517573d6afff3cc0 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 256 - eval_batch_size: 256 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 1 - mixed_precision_training: Native AMP | 2c7f719726ecf1ff72d9b71f95207871 |

apache-2.0 | ['image-classification', 'generated_from_trainer'] | false | modeversion1_m7_e4 This model is a fine-tuned version of [google/vit-base-patch16-224-in21k](https://huggingface.co/google/vit-base-patch16-224-in21k) on the sudo-s/herbier_mesuem7 dataset. It achieves the following results on the evaluation set: - Loss: 0.0902 - Accuracy: 0.9731 | cf82e1e2601b0f356f5b42e0bb07b477 |

apache-2.0 | ['image-classification', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 4.073 | 0.06 | 100 | 3.9370 | 0.1768 | | 3.4186 | 0.12 | 200 | 3.2721 | 0.2590 | | 2.6745 | 0.18 | 300 | 2.6465 | 0.3856 | | 2.2806 | 0.23 | 400 | 2.2600 | 0.4523 | | 1.9275 | 0.29 | 500 | 1.9653 | 0.5109 | | 1.6958 | 0.35 | 600 | 1.6815 | 0.6078 | | 1.2797 | 0.41 | 700 | 1.4514 | 0.6419 | | 1.3772 | 0.47 | 800 | 1.3212 | 0.6762 | | 1.1765 | 0.53 | 900 | 1.1476 | 0.7028 | | 1.0152 | 0.59 | 1000 | 1.0357 | 0.7313 | | 0.7861 | 0.64 | 1100 | 1.0230 | 0.7184 | | 1.0262 | 0.7 | 1200 | 0.9469 | 0.7386 | | 0.8905 | 0.76 | 1300 | 0.8184 | 0.7756 | | 0.6919 | 0.82 | 1400 | 0.8083 | 0.7711 | | 0.7494 | 0.88 | 1500 | 0.7601 | 0.7825 | | 0.5078 | 0.94 | 1600 | 0.6884 | 0.8056 | | 0.7134 | 1.0 | 1700 | 0.6311 | 0.8160 | | 0.4328 | 1.06 | 1800 | 0.5740 | 0.8252 | | 0.4971 | 1.11 | 1900 | 0.5856 | 0.8290 | | 0.5207 | 1.17 | 2000 | 0.6219 | 0.8167 | | 0.4027 | 1.23 | 2100 | 0.5703 | 0.8266 | | 0.5605 | 1.29 | 2200 | 0.5217 | 0.8372 | | 0.2723 | 1.35 | 2300 | 0.4805 | 0.8565 | | 0.401 | 1.41 | 2400 | 0.4811 | 0.8490 | | 0.3419 | 1.47 | 2500 | 0.4619 | 0.8608 | | 0.301 | 1.52 | 2600 | 0.4318 | 0.8712 | | 0.2872 | 1.58 | 2700 | 0.4698 | 0.8573 | | 0.2451 | 1.64 | 2800 | 0.4210 | 0.8729 | | 0.2211 | 1.7 | 2900 | 0.3645 | 0.8851 | | 0.3145 | 1.76 | 3000 | 0.4139 | 0.8715 | | 0.2001 | 1.82 | 3100 | 0.3605 | 0.8864 | | 0.3095 | 1.88 | 3200 | 0.4274 | 0.8675 | | 0.1915 | 1.93 | 3300 | 0.2910 | 0.9101 | | 0.2465 | 1.99 | 3400 | 0.2726 | 0.9103 | | 0.1218 | 2.05 | 3500 | 0.2742 | 0.9129 | | 0.0752 | 2.11 | 3600 | 0.2572 | 0.9183 | | 0.1067 | 2.17 | 3700 | 0.2584 | 0.9203 | | 0.0838 | 2.23 | 3800 | 0.2458 | 0.9212 | | 0.1106 | 2.29 | 3900 | 0.2412 | 0.9237 | | 0.092 | 2.34 | 4000 | 0.2232 | 0.9277 | | 0.1056 | 2.4 | 4100 | 0.2817 | 0.9077 | | 0.0696 | 2.46 | 4200 | 0.2334 | 
0.9285 | | 0.0444 | 2.52 | 4300 | 0.2142 | 0.9363 | | 0.1046 | 2.58 | 4400 | 0.2036 | 0.9352 | | 0.066 | 2.64 | 4500 | 0.2115 | 0.9365 | | 0.0649 | 2.7 | 4600 | 0.1730 | 0.9448 | | 0.0513 | 2.75 | 4700 | 0.2148 | 0.9339 | | 0.0917 | 2.81 | 4800 | 0.1810 | 0.9438 | | 0.0879 | 2.87 | 4900 | 0.1971 | 0.9388 | | 0.1052 | 2.93 | 5000 | 0.1602 | 0.9508 | | 0.0362 | 2.99 | 5100 | 0.1475 | 0.9556 | | 0.041 | 3.05 | 5200 | 0.1328 | 0.9585 | | 0.0156 | 3.11 | 5300 | 0.1389 | 0.9571 | | 0.0047 | 3.17 | 5400 | 0.1224 | 0.9638 | | 0.0174 | 3.22 | 5500 | 0.1193 | 0.9651 | | 0.0087 | 3.28 | 5600 | 0.1276 | 0.9622 | | 0.0084 | 3.34 | 5700 | 0.1134 | 0.9662 | | 0.0141 | 3.4 | 5800 | 0.1239 | 0.9631 | | 0.0291 | 3.46 | 5900 | 0.1199 | 0.9645 | | 0.0049 | 3.52 | 6000 | 0.1103 | 0.9679 | | 0.0055 | 3.58 | 6100 | 0.1120 | 0.9662 | | 0.0061 | 3.63 | 6200 | 0.1071 | 0.9668 | | 0.0054 | 3.69 | 6300 | 0.1032 | 0.9697 | | 0.0041 | 3.75 | 6400 | 0.0961 | 0.9711 | | 0.0018 | 3.81 | 6500 | 0.0930 | 0.9718 | | 0.0032 | 3.87 | 6600 | 0.0918 | 0.9730 | | 0.0048 | 3.93 | 6700 | 0.0906 | 0.9732 | | 0.002 | 3.99 | 6800 | 0.0902 | 0.9731 | | ca7fc453fd534a5f028d0b8b2f922e73 |

apache-2.0 | ['generated_from_trainer'] | false | bert-sentiment-analysis-model-40k-samples This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on the imdb dataset. It achieves the following results on the evaluation set: - Loss: 0.2669 - Accuracy: 0.9276 - F1: 0.9624 | 68986bbc221455676da47a8fdb33c451 |

creativeml-openrail-m | ['text-to-image', 'stable-diffusion'] | false | samantha Dreambooth model trained by Spiltcokesf with [TheLastBen's fast-DreamBooth](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb) notebook Test the concept via A1111 Colab [fast-Colab-A1111](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast_stable_diffusion_AUTOMATIC1111.ipynb) Sample pictures of this concept: | 146492372e3050a31f6453cb1fcc5196 |

apache-2.0 | ['automatic-speech-recognition', 'nl'] | false | exp_w2v2t_nl_vp-100k_s899 Fine-tuned [facebook/wav2vec2-large-100k-voxpopuli](https://huggingface.co/facebook/wav2vec2-large-100k-voxpopuli) for speech recognition using the train split of [Common Voice 7.0 (nl)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | 2ff2f217e963788a652f757d86375c0f |

cc-by-4.0 | [] | false | Intended Use This model is ready to be used for entity recognition. It is capable of tagging the 6 entity types from [ACE 2005](https://www.ldc.upenn.edu/sites/www.ldc.upenn.edu/files/english-entities-guidelines-v6.6.pdf) - Person (PER) - ORG - GPE - LOC - VEH - FAC Due to the fine-tuning domain, it is expected to work best with literary sentences. | a1288ebcdee28523b0cbce2246944e9f |

mit | [] | false | A RuBERT model trained from sberbank-ai/ruBert-base. Sample size: 4. Epochs: 2. ```python from transformers import pipeline qa_pipeline = pipeline( "question-answering", model="Den4ikAI/rubert-large-squad", tokenizer="Den4ikAI/rubert-large-squad" ) predictions = qa_pipeline({ 'context': "Пушкин родился 6 июля 1799 года", 'question': "Когда родился Пушкин?" }) print(predictions) ``` | f6593da30b0d2c20cf11f95c46f87a4e |
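The question-answering pipeline returns a dictionary with `score`, `start`, `end` and `answer` keys, where `start` and `end` are character offsets into the context. The sketch below shows how those offsets relate to the answer text; the prediction is hand-built for illustration (the score is invented), not real model output.

```python
def answer_from_prediction(context, prediction):
    """Slice the answer out of the context using the predicted character offsets."""
    return context[prediction["start"]:prediction["end"]]


context = "Пушкин родился 6 июля 1799 года"
answer = "6 июля 1799 года"
start = context.index(answer)

# Hand-built stand-in for a pipeline result; the score value is made up.
prediction = {"score": 0.9, "start": start, "end": start + len(answer), "answer": answer}
print(answer_from_prediction(context, prediction))
```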

mit | ['generated_from_trainer'] | false | zealous_almeida This model was trained from scratch on the tomekkorbak/detoxify-pile-chunk3-0-50000, the tomekkorbak/detoxify-pile-chunk3-50000-100000, the tomekkorbak/detoxify-pile-chunk3-100000-150000, the tomekkorbak/detoxify-pile-chunk3-150000-200000, the tomekkorbak/detoxify-pile-chunk3-200000-250000, the tomekkorbak/detoxify-pile-chunk3-250000-300000, the tomekkorbak/detoxify-pile-chunk3-300000-350000, the tomekkorbak/detoxify-pile-chunk3-350000-400000, the tomekkorbak/detoxify-pile-chunk3-400000-450000, the tomekkorbak/detoxify-pile-chunk3-450000-500000, the tomekkorbak/detoxify-pile-chunk3-500000-550000, the tomekkorbak/detoxify-pile-chunk3-550000-600000, the tomekkorbak/detoxify-pile-chunk3-600000-650000, the tomekkorbak/detoxify-pile-chunk3-650000-700000, the tomekkorbak/detoxify-pile-chunk3-700000-750000, the tomekkorbak/detoxify-pile-chunk3-750000-800000, the tomekkorbak/detoxify-pile-chunk3-800000-850000, the tomekkorbak/detoxify-pile-chunk3-850000-900000, the tomekkorbak/detoxify-pile-chunk3-900000-950000, the tomekkorbak/detoxify-pile-chunk3-950000-1000000, the tomekkorbak/detoxify-pile-chunk3-1000000-1050000, the tomekkorbak/detoxify-pile-chunk3-1050000-1100000, the tomekkorbak/detoxify-pile-chunk3-1100000-1150000, the tomekkorbak/detoxify-pile-chunk3-1150000-1200000, the tomekkorbak/detoxify-pile-chunk3-1200000-1250000, the tomekkorbak/detoxify-pile-chunk3-1250000-1300000, the tomekkorbak/detoxify-pile-chunk3-1300000-1350000, the tomekkorbak/detoxify-pile-chunk3-1350000-1400000, the tomekkorbak/detoxify-pile-chunk3-1400000-1450000, the tomekkorbak/detoxify-pile-chunk3-1450000-1500000, the tomekkorbak/detoxify-pile-chunk3-1500000-1550000, the tomekkorbak/detoxify-pile-chunk3-1550000-1600000, the tomekkorbak/detoxify-pile-chunk3-1600000-1650000, the tomekkorbak/detoxify-pile-chunk3-1650000-1700000, the tomekkorbak/detoxify-pile-chunk3-1700000-1750000, the tomekkorbak/detoxify-pile-chunk3-1750000-1800000, the 
tomekkorbak/detoxify-pile-chunk3-1800000-1850000, the tomekkorbak/detoxify-pile-chunk3-1850000-1900000 and the tomekkorbak/detoxify-pile-chunk3-1900000-1950000 datasets. | 1af74f5b0e3cc8544a93e321c0372371 |

mit | ['generated_from_trainer'] | false | Full config {'dataset': {'datasets': ['tomekkorbak/detoxify-pile-chunk3-0-50000', 'tomekkorbak/detoxify-pile-chunk3-50000-100000', 'tomekkorbak/detoxify-pile-chunk3-100000-150000', 'tomekkorbak/detoxify-pile-chunk3-150000-200000', 'tomekkorbak/detoxify-pile-chunk3-200000-250000', 'tomekkorbak/detoxify-pile-chunk3-250000-300000', 'tomekkorbak/detoxify-pile-chunk3-300000-350000', 'tomekkorbak/detoxify-pile-chunk3-350000-400000', 'tomekkorbak/detoxify-pile-chunk3-400000-450000', 'tomekkorbak/detoxify-pile-chunk3-450000-500000', 'tomekkorbak/detoxify-pile-chunk3-500000-550000', 'tomekkorbak/detoxify-pile-chunk3-550000-600000', 'tomekkorbak/detoxify-pile-chunk3-600000-650000', 'tomekkorbak/detoxify-pile-chunk3-650000-700000', 'tomekkorbak/detoxify-pile-chunk3-700000-750000', 'tomekkorbak/detoxify-pile-chunk3-750000-800000', 'tomekkorbak/detoxify-pile-chunk3-800000-850000', 'tomekkorbak/detoxify-pile-chunk3-850000-900000', 'tomekkorbak/detoxify-pile-chunk3-900000-950000', 'tomekkorbak/detoxify-pile-chunk3-950000-1000000', 'tomekkorbak/detoxify-pile-chunk3-1000000-1050000', 'tomekkorbak/detoxify-pile-chunk3-1050000-1100000', 'tomekkorbak/detoxify-pile-chunk3-1100000-1150000', 'tomekkorbak/detoxify-pile-chunk3-1150000-1200000', 'tomekkorbak/detoxify-pile-chunk3-1200000-1250000', 'tomekkorbak/detoxify-pile-chunk3-1250000-1300000', 'tomekkorbak/detoxify-pile-chunk3-1300000-1350000', 'tomekkorbak/detoxify-pile-chunk3-1350000-1400000', 'tomekkorbak/detoxify-pile-chunk3-1400000-1450000', 'tomekkorbak/detoxify-pile-chunk3-1450000-1500000', 'tomekkorbak/detoxify-pile-chunk3-1500000-1550000', 'tomekkorbak/detoxify-pile-chunk3-1550000-1600000', 'tomekkorbak/detoxify-pile-chunk3-1600000-1650000', 'tomekkorbak/detoxify-pile-chunk3-1650000-1700000', 'tomekkorbak/detoxify-pile-chunk3-1700000-1750000', 'tomekkorbak/detoxify-pile-chunk3-1750000-1800000', 'tomekkorbak/detoxify-pile-chunk3-1800000-1850000', 
'tomekkorbak/detoxify-pile-chunk3-1850000-1900000', 'tomekkorbak/detoxify-pile-chunk3-1900000-1950000'], 'filter_threshold': 0.00078, 'is_split_by_sentences': True}, 'generation': {'force_call_on': [25354], 'metrics_configs': [{}, {'n': 1}, {'n': 2}, {'n': 5}], 'scenario_configs': [{'generate_kwargs': {'do_sample': True, 'max_length': 128, 'min_length': 10, 'temperature': 0.7, 'top_k': 0, 'top_p': 0.9}, 'name': 'unconditional', 'num_samples': 2048}, {'generate_kwargs': {'do_sample': True, 'max_length': 128, 'min_length': 10, 'temperature': 0.7, 'top_k': 0, 'top_p': 0.9}, 'name': 'challenging_rtp', 'num_samples': 2048, 'prompts_path': 'resources/challenging_rtp.jsonl'}], 'scorer_config': {'device': 'cuda:0'}}, 'kl_gpt3_callback': {'force_call_on': [25354], 'max_tokens': 64, 'num_samples': 4096}, 'model': {'from_scratch': True, 'gpt2_config_kwargs': {'reorder_and_upcast_attn': True, 'scale_attn_by': True}, 'path_or_name': 'gpt2'}, 'objective': {'name': 'MLE'}, 'tokenizer': {'path_or_name': 'gpt2'}, 'training': {'dataloader_num_workers': 0, 'effective_batch_size': 64, 'evaluation_strategy': 'no', 'fp16': True, 'hub_model_id': 'zealous_almeida', 'hub_strategy': 'all_checkpoints', 'learning_rate': 0.0005, 'logging_first_step': True, 'logging_steps': 1, 'num_tokens': 3300000000, 'output_dir': 'training_output104340', 'per_device_train_batch_size': 16, 'push_to_hub': True, 'remove_unused_columns': False, 'save_steps': 25354, 'save_strategy': 'steps', 'seed': 42, 'warmup_ratio': 0.01, 'weight_decay': 0.1}} | fb1a656379253e4298a90a86db397b59 |
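The `generate_kwargs` in the config above combine temperature scaling (`temperature: 0.7`) with nucleus sampling (`top_p: 0.9`), while `top_k: 0` leaves top-k filtering switched off. As a simplified sketch of what those two settings do to a next-token distribution (an illustration in plain Python, not the `transformers` implementation):

```python
import math


def softmax_with_temperature(logits, temperature=0.7):
    """Turn logits into probabilities; temperatures below 1 sharpen the distribution."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the maximum for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def top_p_filter(probs, top_p=0.9):
    """Keep the smallest set of most likely tokens whose mass reaches top_p, renormalized."""
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    kept, mass = [], 0.0
    for index in order:
        kept.append(index)
        mass += probs[index]
        if mass >= top_p:
            break
    return {index: probs[index] / mass for index in kept}


probs = softmax_with_temperature([2.0, 1.0, 0.5, -1.0])
print(top_p_filter(probs))
```

Sampling then draws the next token from the renormalized dictionary instead of the full vocabulary.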

creativeml-openrail-m | ['text-to-image', 'stable-diffusion'] | false | Sample pictures of this concept: | 1db30e260ac1fe13e8ffc5ba54d7f3c0 |

mit | [] | false | Model description This model follows the Allen AI team's implementation of the [Aristo Roberta V7 Model](https://leaderboard.allenai.org/arc/submission/blcotvl7rrltlue6bsv0), listed on the [ARC Challenge](https://leaderboard.allenai.org/arc/submissions/public) leaderboard. | 4bab99195b3a30044598fee14c4f57b5 |

mit | [] | false | How to use ```python import logging import datasets from transformers import RobertaTokenizer from transformers import RobertaForMultipleChoice MAX_SEQ_LENGTH = 256 tokenizer = RobertaTokenizer.from_pretrained( "LIAMF-USP/aristo-roberta") model = RobertaForMultipleChoice.from_pretrained( "LIAMF-USP/aristo-roberta") dataset = datasets.load_dataset( "arc", split=["train", "validation", "test"], ) training_examples = dataset[0] evaluation_examples = dataset[1] test_examples = dataset[2] example=training_examples[0] example_id = example["example_id"] question = example["question"] label_example = example["answer"] options = example["options"] if label_example in ["A", "B", "C", "D", "E"]: label_map = {label: i for i, label in enumerate( ["A", "B", "C", "D", "E"])} elif label_example in ["1", "2", "3", "4", "5"]: label_map = {label: i for i, label in enumerate( ["1", "2", "3", "4", "5"])} else: print(f"{label_example} not found") while len(options) < 5: empty_option = {} empty_option['option_context'] = '' empty_option['option_text'] = '' options.append(empty_option) choices_inputs = [] for ending_idx, option in enumerate(options): ending = option["option_text"] context = option["option_context"] if question.find("_") != -1: | 3f7744881f82180dfedb801035ec2b46 |

mit | [] | false | fill in the blanks questions question_option = question.replace("_", ending) else: question_option = question + " " + ending inputs = tokenizer( context, question_option, add_special_tokens=True, max_length=MAX_SEQ_LENGTH, padding="max_length", truncation=True, return_overflowing_tokens=False, ) if "num_truncated_tokens" in inputs and inputs["num_truncated_tokens"] > 0: logging.warning(f"Question: {example_id} with option {ending_idx} was truncated") choices_inputs.append(inputs) label = label_map[label_example] input_ids = [x["input_ids"] for x in choices_inputs] attention_mask = ( [x["attention_mask"] for x in choices_inputs] | ab4826915071d2f45bec5309ceb77119 |

mit | [] | false | necessary to check if "attention_mask" in choices_inputs[0] else None ) example_encoded = { "example_id": example_id, "input_ids": input_ids, "attention_mask": attention_mask, "token_type_ids": token_type_ids, "label": label } output = model(**example_encoded) ``` | 3ed7e1a3b3b359f4a3fbc763106ce446 |
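The per-option preprocessing in the snippet above can be isolated into two small helpers. This is only a sketch of that logic in plain Python: the function names `build_question_option` and `pad_options` are hypothetical and do not come from the original model card.

```python
def build_question_option(question: str, ending: str) -> str:
    """Combine a question with one answer option, mirroring the snippet above."""
    if "_" in question:
        # Fill-in-the-blank question: substitute the option for the blank.
        # Note that str.replace substitutes every underscore it finds.
        return question.replace("_", ending)
    # Regular question: append the option after the question text.
    return question + " " + ending


def pad_options(options, size=5):
    """Pad the option list with empty options until it has `size` entries."""
    padded = list(options)
    while len(padded) < size:
        padded.append({"option_context": "", "option_text": ""})
    return padded


options = pad_options([{"option_context": "ctx", "option_text": "100"}])
pairs = [build_question_option("Water boils at _ degrees.", o["option_text"])
         for o in options]
print(pairs[0])
```

Padding to five options keeps every example the same shape, which is what a multiple-choice head expects when examples are batched.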

mit | [] | false | Training data The training data was the same as proposed [here](https://leaderboard.allenai.org/arc/submission/blcotvl7rrltlue6bsv0). The only difference was the hyperparameters of the RACE fine-tuned model, which were reported [here](https://huggingface.co/LIAMF-USP/roberta-large-finetuned-race | e714bff8e3b11230cdd0c1fd215358b3 |

mit | [] | false | Training procedure The data had to be preprocessed with the method shown for a single instance in the _How to use_ section. The following hyperparameters were used: | Hyperparameter | Value | |:----:|:----:| | adam_beta1 | 0.9 | | adam_beta2 | 0.98 | | adam_epsilon | 1.000e-8 | | eval_batch_size | 16 | | train_batch_size | 4 | | fp16 | True | | gradient_accumulation_steps | 4 | | learning_rate | 0.00001 | | warmup_steps | 0.06 | | max_length | 256 | | epochs | 4 | The other parameters were the default ones from [Trainer](https://huggingface.co/transformers/main_classes/trainer.html) and [Trainer Arguments](https://huggingface.co/transformers/main_classes/trainer.html | d222acbc02a35aba63277475f05de558 |

mit | [] | false | Garcon the cat on Stable Diffusion This is the `<garcon-the-cat>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). Here is the new concept you will be able to use as an `object`:     | 13427b60c6863e6e8baa72895d07abc3 |

afl-3.0 | [] | false | Model Description We release all models introduced in our [paper](https://arxiv.org/pdf/2206.11147.pdf), covering 13 different application scenarios. Each model contains 11 billion parameters. | Model | Description | Recommended Application | ----------- | ----------- |----------- | | rst-all-11b | Trained with all the signals below except signals that are used to train Gaokao models | All applications below (specialized models are recommended first if high performance is preferred) | | rst-fact-retrieval-11b | Trained with the following signals: WordNet meaning, WordNet part-of-speech, WordNet synonym, WordNet antonym, wikiHow category hierarchy, Wikidata relation, Wikidata entity typing, Paperswithcode entity typing | Knowledge intensive tasks, information extraction tasks,factual checker | | rst-summarization-11b | Trained with the following signals: DailyMail summary, Paperswithcode summary, arXiv summary, wikiHow summary | Summarization or other general generation tasks, meta-evaluation (e.g., BARTScore) | | rst-temporal-reasoning-11b | Trained with the following signals: DailyMail temporal information, wikiHow procedure | Temporal reasoning, relation extraction, event-based extraction | | rst-information-extraction-11b | Trained with the following signals: Paperswithcode entity, Paperswithcode entity typing, Wikidata entity typing, Wikidata relation, Wikipedia entity | Named entity recognition, relation extraction and other general IE tasks in the news, scientific or other domains| | rst-intent-detection-11b | Trained with the following signals: wikiHow goal-step relation | Intent prediction, event prediction | | rst-topic-classification-11b | Trained with the following signals: DailyMail category, arXiv category, wikiHow text category, Wikipedia section title | general text classification | | rst-word-sense-disambiguation-11b | Trained with the following signals: WordNet meaning, WordNet part-of-speech, WordNet synonym, WordNet antonym 
| Word sense disambiguation, part-of-speech tagging, general IE tasks, common sense reasoning | | rst-natural-language-inference-11b | Trained with the following signals: ConTRoL dataset, DREAM dataset, LogiQA dataset, RACE & RACE-C dataset, ReClor dataset, DailyMail temporal information | Natural language inference, multiple-choice question answering, reasoning | | rst-sentiment-classification-11b | Trained with the following signals: Rotten Tomatoes sentiment, Wikipedia sentiment | Sentiment classification, emotion classification | | **rst-gaokao-rc-11b** | **Trained with multiple-choice QA datasets that are used to train the [T0pp](https://huggingface.co/bigscience/T0pp) model** | **General multiple-choice question answering**| | rst-gaokao-cloze-11b | Trained with manually crafted cloze datasets | General cloze filling| | rst-gaokao-writing-11b | Trained with example essays from past Gaokao-English exams and grammar error correction signals | Essay writing, story generation, grammar error correction and other text generation tasks | | 6fee60892462f62e70c9b9a586c23dcf |

mit | ['generated_from_trainer'] | false | deberta-base-finetuned-cola This model is a fine-tuned version of [microsoft/deberta-base](https://huggingface.co/microsoft/deberta-base) on the glue dataset. It achieves the following results on the evaluation set: - Loss: 0.5812 - Matthews Correlation: 0.6332 | 1a78aecd7d01ae6c416ea141831909ab |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Matthews Correlation | |:-------------:|:-----:|:----:|:---------------:|:--------------------:| | 0.4826 | 1.0 | 535 | 0.5277 | 0.5443 | | 0.28 | 2.0 | 1070 | 0.4723 | 0.6331 | | 0.1893 | 3.0 | 1605 | 0.5812 | 0.6332 | | a17c6e8b6be07b2982988b554a33d9cc |

apache-2.0 | ['generated_from_trainer'] | false | wav2vec2-xls-r-bengali_v1 This model is a fine-tuned version of [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) on the common_voice dataset. It achieves the following results on the evaluation set: - Loss: 3.2973 - Wer: 1.0 | 3a4e80968f0f0d0bac5a29d17e1a992b |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 3e-05 - train_batch_size: 16 - eval_batch_size: 8 - seed: 42 - gradient_accumulation_steps: 2 - total_train_batch_size: 32 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 500 - num_epochs: 6 - mixed_precision_training: Native AMP | c62f742b1190a748ecb4af9eb7e6f085 |
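The `total_train_batch_size` listed above follows from the per-device batch size and the gradient accumulation steps. A quick check of that arithmetic, assuming training ran on a single device (the card does not state the device count, so `num_devices` is an assumption):

```python
train_batch_size = 16             # per-device batch size from the list above
gradient_accumulation_steps = 2   # from the list above
num_devices = 1                   # assumption: not stated in the model card

# Gradients are accumulated over several forward/backward passes before each
# optimizer step, so the effective batch size is the product of all three.
total_train_batch_size = train_batch_size * gradient_accumulation_steps * num_devices
print(total_train_batch_size)
```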

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:---:| | 8.7896 | 0.8 | 500 | 3.8455 | 1.0 | | 3.3871 | 1.6 | 1000 | 3.2862 | 1.0 | | 3.3302 | 2.4 | 1500 | 3.3086 | 1.0 | | 3.3259 | 3.2 | 2000 | 3.2973 | 1.0 | | 3.325 | 4.0 | 2500 | 3.2973 | 1.0 | | 3.3178 | 4.8 | 3000 | 3.2973 | 1.0 | | 3.3226 | 5.6 | 3500 | 3.2973 | 1.0 | | a05c1bf19b03d6d7e8944151ce2bcaca |

mit | [] | false | Running synthesizer Example for the synthesizing command: ``` tts --text "Гепарды жывуць у адкрытых і прасторных месцах, дзе ёсць шмат здабычы." \ --config_path ${PATH_TO_FILE}/config.json \ --model_path ${PATH_TO_FILE}/model.pth \ --out_path ${PATH_TO_FILE}/output.wav \ --vocoder_path ${PATH_TO_FILE}/vocoder.pth \ --vocoder_config_path ${PATH_TO_FILE}/vocoder_config.json ``` (change ${PATH_TO_FILE} to your directory) | 5885d183a4eeb93272cdd872a1c409b7 |

other | ['art'] | false | Model explanation - [YaguruMagiku](https://huggingface.co/Toooajk/YaguruMagiku/blob/main/YaguruMagiku-v3-Anybased/YaguruMagiku-v3.1-AnyBased.ckpt) 0.6 : [AbyssOrangeMix2_sfw](https://huggingface.co/WarriorMama777/OrangeMixs/blob/main/Models/AbyssOrangeMix2/AbyssOrangeMix2_sfw.ckpt) 0.4 - **According to some rumors, the source models of this merge trace back to the NovelAI (NAI) leak, so I do not recommend this model if you object to the NAI leak and its derivatives.** - What I wanted was to control my favorite waifu with black hair and a ponytail, which as far as I know only YaguruMagiku can generate, so I mixed YaguruMagiku with AbyssOrangeMix2, which generates a relatively similar face and is easier to control. - I therefore do not care about other styles. They *should* work, but there are no guarantees. - Because I merged the sfw version, nsfw output is unlikely (though it will appear once in a while). - YaguruMagiku very often ignores the prompt. The merged model is easier to control than the original YaguruMagiku, but it may still generate unexpected results, so I recommend generating 4 images at a time. - The coloring will probably be better if you add a VAE. - You can run this model in the Colab WebUI. - Rewrite the following line of [this notebook](https://colab.research.google.com/drive/1ldhBc70wvuvkp4Af_vNTzTfBXwpf_cH5?usp=sharing) following the instructions I posted [here](https://the-pioneer.notion.site/Colab-Automatic1111-6043f15ef44d4ba0b11920c95d33a78c). ```python !aria2c --summary-interval=10 -x 16 -s 16 --allow-overwrite=true -Z https://huggingface.co/JosephusCheung/ACertainModel/resolve/main/ACertainModel-half.ckpt ``` | e3ef33b76b7e46128b67437826587b7a |
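The 0.6 : 0.4 ratio above most likely describes a weighted-sum merge, in which every parameter of the two checkpoints is linearly interpolated. The card does not say which tool or merge mode was used, so the sketch below is an assumption; it illustrates the idea on toy dictionaries of floats rather than real checkpoint tensors, and `weighted_merge` is a hypothetical helper name.

```python
def weighted_merge(weights_a, weights_b, ratio_a=0.6):
    """Linearly interpolate two checkpoints that share the same keys."""
    ratio_b = 1.0 - ratio_a
    merged = {}
    for key, values_a in weights_a.items():
        values_b = weights_b[key]
        # Each merged parameter is ratio_a * A + ratio_b * B.
        merged[key] = [ratio_a * a + ratio_b * b
                       for a, b in zip(values_a, values_b)]
    return merged


# Toy stand-ins for the two model state dicts (not real weights).
toy_a = {"layer.weight": [1.0, 2.0]}
toy_b = {"layer.weight": [0.0, 4.0]}
merged = weighted_merge(toy_a, toy_b, ratio_a=0.6)
print(merged)
```

With a real checkpoint the same interpolation would be applied tensor by tensor, which is why the merged model sits between the two sources in both face style and prompt adherence.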

other | ['art'] | false | サンプル画像 (sample images) ``art by yaguru magiku``プロンプトを適切な強さで追加することで、YaguruMagikuスタイルの顔を出力できる。 Add the propmpt ``art by yaguru magiku`` with a proper strength to get the face in the style of YaguruMagiku.  ``` [[art by yaguru magiku]], A teenage girl wearing a black bikini, angry smile, in the style of Kyoto Animation in the 2010s, official art, ((((black hair)), eyes of Haruhi Suzumiya, face of Haruhi Suzumiya)), beautiful symmetric face, ponytail, at at a beach on a summer day, alone, solo, 8k, posing of Haruhi Suzumiya Negative prompt: low quality, bad face, ((ugly face)), asymmetric face, ((((bad anatomy)))), ((bad hand)), too many fingers, missing fingers, too many legs, lowres, jpeg artifacts, 2d, 3d, cg, (((text))), logo, signature, sad face, ((loli)), twintails, plaits, pajamas, blushing, boy, adult, bells, fanart, pixiv, card game, ahoge, ribbon, weapons, armors, tools Steps: 50 Sampler: Euler a CFG scale: 7 Size: 512x512 ```  ``` [[art by yaguru magiku]], A teenage girl wearing a black yukata, angry smile, in the style of Kyoto Animation in the 2010s, official art, ((((black hair)), eyes of Haruhi Suzumiya, face of Haruhi Suzumiya)), beautiful symmetric face, ponytail, at at a beach on a summer day, alone, solo, 8k, posing of Haruhi Suzumiya Negative prompt: low quality, bad face, ((ugly face)), asymmetric face, ((((bad anatomy)))), ((bad hand)), too many fingers, missing fingers, too many legs, lowres, jpeg artifacts, 2d, 3d, cg, (((text))), logo, signature, sad face, ((loli)), twintails, plaits, pajamas, blushing, boy, adult, bells, fanart, pixiv, card game, ahoge, ribbon, weapons, armors, tools Steps: 50 Sampler: Euler a CFG scale: 7 Size: 512x512 ```  ``` [[art by yaguru magiku]], A teenage girl wearing a black bikini, angry smile, in the style of Kyoto Animation in the 2010s, official art, ((((black hair)), eyes of Haruhi Suzumiya, face of Haruhi Suzumiya)), beautiful symmetric face, ponytail, at at a beach on a summer day, 
alone, solo, 8k, posing of Haruhi Suzumiya Negative prompt: low quality, bad face, ((ugly face)), asymmetric face, ((((bad anatomy)))), ((bad hand)), too many fingers, missing fingers, too many legs, lowres, jpeg artifacts, 2d, 3d, cg, (((text))), logo, signature, sad face, ((loli)), twintails, plaits, pajamas, blushing, boy, adult, bells, fanart, pixiv, card game, ahoge, ribbon, weapons, armors, tools Steps: 50, Sampler: Euler a CFG scale: 7 Seed: 2206943626 Size: 512x512 ```  ``` [[art by yaguru magiku]], A teenage girl wearing a black uniform, angry smile, in the style of Kyoto Animation in the 2010s, official art, ((((black hair)), eyes of Haruhi Suzumiya, face of Haruhi Suzumiya)), beautiful symmetric face, ponytail, in the universe with stars and galaxies, psychedelic art, rainbows, transcendence, alone, solo, 8k, posing of Haruhi Suzumiya Negative prompt: low quality, bad face, ((ugly face)), asymmetric face, ((((bad anatomy)))), ((bad hand)), too many fingers, missing fingers, too many legs, lowres, jpeg artifacts, 2d, 3d, cg, (((text))), logo, signature, sad face, ((loli)), twintails, plaits, pajamas, blushing, boy, adult, bells, fanart, pixiv, card game, ahoge, ribbon, weapons, armors, tools, white background, simple background Steps: 50 Sampler: Euler a CFG scale: 7 Seed: 2732902091 Size: 512x512 ```  ``` [[art by yaguru magiku]], A teenage girl wearing a black yukata, angry smile, in the style of Kyoto Animation in the 2010s, official art, ((((black hair)), eyes of Haruhi Suzumiya, face of Haruhi Suzumiya)), beautiful symmetric face, ponytail, in the universe with stars and galaxies, psychedelic art, rainbows, transcendence, alone, solo, 8k, posing of Haruhi Suzumiya Negative prompt: low quality, bad face, ((ugly face)), asymmetric face, ((((bad anatomy)))), ((bad hand)), too many fingers, missing fingers, too many legs, lowres, jpeg artifacts, 2d, 3d, cg, (((text))), logo, signature, sad face, ((loli)), twintails, plaits, pajamas, blushing, boy, adult, 
bells, fanart, pixiv, card game, ahoge, ribbon, weapons, armors, tools, white background, simple background Steps: 50 Sampler: Euler a CFG scale: 7 Seed: 1256509796 Size: 512x512 ```  ``` [[art by yaguru magiku]], A teenage girl wearing a black yukata, angry smile, in the style of Kyoto Animation in the 2010s, official art, ((((black hair)), eyes of Haruhi Suzumiya, face of Haruhi Suzumiya)), beautiful symmetric face, ponytail, at at a summer festival at night, alone, solo, 8k, posing of Haruhi Suzumiya Negative prompt: low quality, bad face, ((ugly face)), asymmetric face, ((((bad anatomy)))), ((bad hand)), too many fingers, missing fingers, too many legs, lowres, jpeg artifacts, 2d, 3d, cg, (((text))), logo, signature, sad face, ((loli)), twintails, plaits, pajamas, blushing, boy, adult, bells, fanart, pixiv, card game, ahoge, ribbon, weapons, armors, tools Steps: 50 Sampler: Euler a CFG scale: 7 Seed: 3667740274 Size: 512x512 ```  ``` [[art by yaguru magiku]], A teenage girl wearing a black uniform, angry smile, in the style of Kyoto Animation in the 2010s, official art, ((((black hair)), eyes of Haruhi Suzumiya, face of Haruhi Suzumiya)), beautiful symmetric face, ponytail, sitting on a desk in a classroom, alone, solo, 8k, posing of Haruhi Suzumiya Negative prompt: low quality, bad face, ((ugly face)), asymmetric face, ((((bad anatomy)))), ((bad hand)), too many fingers, missing fingers, too many legs, lowres, jpeg artifacts, 2d, 3d, cg, (((text))), logo, signature, sad face, ((loli)), twintails, plaits, pajamas, blushing, boy, adult, bells, fanart, pixiv, card game, ahoge, ribbon, weapons, armors, tools Steps: 50 Sampler: Euler a CFG scale: 7 Seed: 113997253 Size: 512x512 ```  ``` [[art by yaguru magiku]], A teenage girl wearing a black uniform, angry smile, in the style of Kyoto Animation in the 2010s, official art, ((((black hair)), eyes of Haruhi Suzumiya, face of Haruhi Suzumiya)), beautiful symmetric face, ponytail, ((in an bar)) with a city nightscape 
in the background, alone, solo, 8k, posing of Haruhi Suzumiya Negative prompt: low quality, bad face, ((ugly face)), asymmetric face, ((((bad anatomy)))), ((bad hand)), too many fingers, missing fingers, too many legs, lowres, jpeg artifacts, 2d, 3d, cg, (((text))), logo, signature, sad face, ((loli)), twintails, plaits, pajamas, blushing, boy, adult, bells, fanart, pixiv, card game, ahoge, ribbon, weapons, armors, tools Steps: 50 Sampler: Euler a CFG scale: 7 Seed: 3758118950 Size: 512x512 ``` | b449adc4c2b711a067aecc5b76d0d627 |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-emotion This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the emotion dataset. It achieves the following results on the evaluation set: - Loss: 0.2168 - Accuracy: 0.925 - F1: 0.9247 | fefdad42000d849c606dcf84d5db154e |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------:|:------:| | 0.8435 | 1.0 | 250 | 0.3160 | 0.9065 | 0.9045 | | 0.2457 | 2.0 | 500 | 0.2168 | 0.925 | 0.9247 | | 24cd713c158b34554a3943a061963271 |

apache-2.0 | ['vision, language', 'pretrained model', 'image-to-text'] | false | How to use ```python from transformers import AutoProcessor, AutoModel processor = AutoProcessor.from_pretrained("KETI-AIR/veld-base", trust_remote_code=True) model = AutoModel.from_pretrained("KETI-AIR/veld-base", trust_remote_code=True) ``` You can use AutoTokenizer and AutoFeatureExtractor instead of AutoProcessor. You don't need to pass `trust_remote_code=True` for AutoTokenizer and AutoFeatureExtractor. ```python from transformers import AutoFeatureExtractor, AutoTokenizer, AutoModel feature_extractor = AutoFeatureExtractor.from_pretrained("KETI-AIR/veld-base") tokenizer = AutoTokenizer.from_pretrained("KETI-AIR/veld-base") model = AutoModel.from_pretrained("KETI-AIR/veld-base", trust_remote_code=True) ``` | 7f50dfd1a493904b3040063e989e48d7 |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-emotion-medium This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.3555 - Accuracy: 0.8491 - F1: 0.8491 | adf2e50943361158b361cac4d8d8cf58 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 100 - eval_batch_size: 100 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 2 | 72154065d46aace4a51e8a203df1a41b |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------:|:------:| | 0.3886 | 1.0 | 1400 | 0.3562 | 0.844 | 0.8440 | | 0.3194 | 2.0 | 2800 | 0.3555 | 0.8491 | 0.8491 | | 2696691a31122ab6cea5021e0fc87da6 |

creativeml-openrail-m | ['text-to-image'] | false | hilleli Dreambooth model trained by tzvc with the v1-5 base model Sample pictures of: sdcid (use that in your prompt) | d9497fa1bd0f3f3656527a7c10adcc49 |

mit | [] | false | hoi4 on Stable Diffusion This is the `<hoi4>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). Here is the new concept you will be able to use as a `style`:      | 2d02d4a033635ba1f1a4cb53867e5a94 |

mit | ['ja', 'japanese', 'gpt', 'text-generation', 'lm', 'nlp'] | false | How to use the model *NOTE:* Use `T5Tokenizer` to initiate the tokenizer. ~~~~ import torch from transformers import T5Tokenizer, AutoModelForCausalLM tokenizer = T5Tokenizer.from_pretrained("rinna/japanese-gpt-1b") model = AutoModelForCausalLM.from_pretrained("rinna/japanese-gpt-1b") if torch.cuda.is_available(): model = model.to("cuda") text = "西田幾多郎は、" token_ids = tokenizer.encode(text, add_special_tokens=False, return_tensors="pt") with torch.no_grad(): output_ids = model.generate( token_ids.to(model.device), max_length=100, min_length=100, do_sample=True, top_k=500, top_p=0.95, pad_token_id=tokenizer.pad_token_id, bos_token_id=tokenizer.bos_token_id, eos_token_id=tokenizer.eos_token_id, bad_words_ids=[[tokenizer.unk_token_id]] ) output = tokenizer.decode(output_ids.tolist()[0]) print(output) | 3384f0dc3b86cb0b85324b13058e948d |

mit | ['ja', 'japanese', 'gpt', 'text-generation', 'lm', 'nlp'] | false | sample output: 西田幾多郎は、その主著の「善の研究」などで、人間の内面に自然とその根源があると指摘し、その根源的な性格は、この西田哲学を象徴しているとして、カントの「純粋理性批判」と「判断力批判」を対比して捉えます。それは、「人が理性的存在であるかぎりにおいて、人はその当人に固有な道徳的に自覚された善悪の基準を持っている」とするもので、この理性的な善悪の観念を否定するのがカントの ~~~~ | 8c78b17578b8caeef91acfb439dd338f |

mit | ['ja', 'japanese', 'gpt', 'text-generation', 'lm', 'nlp'] | false | Training The model was trained on [Japanese C4](https://huggingface.co/datasets/allenai/c4), [Japanese CC-100](http://data.statmt.org/cc-100/ja.txt.xz) and [Japanese Wikipedia](https://dumps.wikimedia.org/other/cirrussearch) to optimize a traditional language modelling objective. It reaches around 14 perplexity on a chosen validation set from the same data. | dc5777d1be45ecb10c180770583153e4 |
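To put the reported perplexity in context: perplexity is the exponential of the average negative log-likelihood, so a value of about 14 can be converted back into a cross-entropy. The sketch below assumes the figure is a per-token perplexity, which the card does not state explicitly.

```python
import math

perplexity = 14.0  # approximate value reported in the model card

# Perplexity = exp(cross-entropy in nats), so the inverse is a logarithm.
cross_entropy_nats = math.log(perplexity)
cross_entropy_bits = math.log2(perplexity)

print(f"{cross_entropy_nats:.3f} nats per token")
print(f"{cross_entropy_bits:.3f} bits per token")
```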

mit | ['ja', 'japanese', 'gpt', 'text-generation', 'lm', 'nlp'] | false | Tokenization The model uses a [sentencepiece](https://github.com/google/sentencepiece)-based tokenizer. The vocabulary was first trained on a selected subset from the training data using the official sentencepiece training script, and then augmented with emojis and symbols. | 72d461ee42c4b244a463413d82de95ec |

mit | [] | false | Hubris-Oshri on Stable Diffusion This is the `<Hubris>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). Here is the new concept you will be able to use as an `object`:      | a7fcb08b79b092270765b4deef9d7408 |

mit | ['Text Generation'] | false | GPT-Rapgenerator The Rapgenerator is trained for [nullsechsroy](https://genius.com/artists/Nullsechsroy) on an English [GPT2](https://huggingface.co/transformers/model_doc/gpt2.html) that was converted to a German [GerPT2](https://github.com/bminixhofer/gerpt2). | 07f95f69ea16c924eaa6cf784eb43776 |

mit | ['Text Generation'] | false | Usage ``` from transformers import pipeline, AutoTokenizer,AutoModelForCausalLM german_gpt_model = "stefan-it/german-gpt2-larger" rap_model = AutoModelForCausalLM.from_pretrained("Bachstelze/Rapgenerator") tokenizer = AutoTokenizer.from_pretrained(german_gpt_model) rap_pipe = pipeline('text-generation', model=rap_model, tokenizer=german_gpt_model, pad_token_id=tokenizer.eos_token_id, max_length=250) | d0f3c6fe688dc91883c6f8264bcf76c1 |

mit | ['Text Generation'] | false | create a new title with Deluxe as rap-feature title_line = rap_pipe("[Title_nullsechsroy feat. Deluxe_")[0]['generated_text'] raw_title_line= title_line[33 : int(title_line.index("]"))] ``` We used the [genius](https://docs.genius.com/ | 78646b0c234f55cb0a930263965274d1 |

mit | ['Text Generation'] | false | /songs-h2) songlyrics from the following artists: ['Ace Tee', 'Aligatoah', 'AnnenMayKantereit', 'Apache 207', 'Azad', 'Badmómzjay', 'Bausa', 'Blumentopf', 'Blumio', 'Capital Bra', 'Casper', 'Celo & Abdi', 'Cro', 'Dardan', 'Dendemann', 'Die P', 'Dondon', 'Dynamite Deluxe', 'Edgar Wasser', 'Eko Fresh', 'Farid Bang', 'Favorite', 'Genetikk', 'Haftbefehl', 'Haiyti', 'Huss und Hodn', 'Jamule', 'Jamule', 'Juju', 'Kasimir1441', 'Katja Krasavice', 'Kay One', 'Kitty Kat', 'Kool Savas', 'LX & Maxwell', 'Leila Akinyi', 'Loredana', 'Loredana & Mozzik', 'Luciano', 'Marsimoto', 'Marteria', 'Morlockk Dilemma', 'Moses Pelham', 'Nimo', 'NullSechsRoy', 'Prinz Pi', 'SSIO', 'SXTN', 'Sabrina Setlur', 'Samy Deluxe', 'Sanito', 'Sebastian Fitzek', 'Shirin David', 'Summer Cem', 'T-Low', 'Ufo361', 'YBRE', 'YFG Pave'] | 2e66133ef3188de46b14f100b093e566 |

mit | ['Text Generation'] | false | Example song structure ``` [Title_nullsechsroy_Goodies] [Part 1_nullsechsroy_Goodies] Soulja Boy – „Pretty Boy Swag“ Heute bei ihr, aber morgen schon weg, ja .. [Hook_nullsechsroy_Goodies] Ich hab' Jungs in der Trap, ich hab' Jungs an der Uni (Ahh) ... [Part 2_nullsechsroy_Goodies] Ja, Soulja Boy – „Pretty Boy Swag“ ... [Hook_nullsechsroy_Goodies] Ich hab' Jungs in der Trap, ich hab' Jungs an der Uni (Ahh) ... [Post-Hook_nullsechsroy_Goodies] Ja, ich weiß, sie findet niemals ein'n wie mich (Ahh) ... ``` | ce059eed55c89b1f55f357b28df67d9d |
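The section tags in the example follow a `[Section_Artist_Title]` pattern. That layout is inferred from the example above rather than documented, so the parser below is only a sketch; `TAG_PATTERN` and `parse_tag` are hypothetical names.

```python
import re

# Assumed tag layout: [Section_Artist_Title], e.g. [Hook_nullsechsroy_Goodies].
TAG_PATTERN = re.compile(
    r"^\[(?P<section>[^_\]]+)_(?P<artist>[^_\]]+)_(?P<title>[^\]]*)\]$"
)


def parse_tag(line):
    """Return the tag fields as a dict, or None if the line is a lyric line."""
    match = TAG_PATTERN.match(line.strip())
    return match.groupdict() if match else None


for line in ["[Part 1_nullsechsroy_Goodies]",
             "Heute bei ihr, aber morgen schon weg, ja",
             "[Post-Hook_nullsechsroy_Goodies]"]:
    print(parse_tag(line))
```

A parser like this can split generated text back into titled parts, for instance after prompting the pipeline with an opening `[Title_...` tag.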

apache-2.0 | ['setfit', 'sentence-transformers', 'text-classification'] | false | fathyshalab/domain_transfer_clinic_credit_cards-massive_lists-roberta-large-v1-2-93 This is a [SetFit model](https://github.com/huggingface/setfit) that can be used for text classification. The model has been trained using an efficient few-shot learning technique that involves: 1. Fine-tuning a [Sentence Transformer](https://www.sbert.net) with contrastive learning. 2. Training a classification head with features from the fine-tuned Sentence Transformer. | 764640165ec90eddd0f4d38a16fab578 |

apache-2.0 | ['text', 'token-classification', 'en-atc', 'en', 'generated_from_trainer', 'bert', 'bertraffic'] | false | bert-base-token-classification-for-atc-en-uwb-atcc This model detects speaker roles and speaker changes based on text. Normally, this task is done on the acoustic level. However, we propose to perform this task on the text level. We solve this challenge by performing speaker role and change detection with a BERT model. We fine-tune it on the chunking task (token-classification). For instance: - Speaker 1: **lufthansa six two nine charlie tango report when established** - Speaker 2: **report when established lufthansa six two nine charlie tango** Based on that, could you tell the speaker role? Is speaker 1 the air traffic controller or the pilot? Also, if you have a recording with 2 or more speakers, like this: - Recording with 2 or more segments: **report when established lufthansa six two nine charlie tango lufthansa six two nine charlie tango report when established** could you tell when the first speaker ends and when the second starts? This is basically diarization plus speaker role detection. Check the inference API (there are 3 examples)! This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on the [UWB-ATCC corpus](https://huggingface.co/datasets/Jzuluaga/uwb_atcc). <a href="https://github.com/idiap/bert-text-diarization-atc"> <img alt="GitHub" src="https://img.shields.io/badge/GitHub-Open%20source-green"> </a> It achieves the following results on the evaluation set: - Loss: 0.0098 - Precision: 0.9760 - Recall: 0.9741 - F1: 0.9750 - Accuracy: 0.9965 Paper: [BERTraffic: BERT-based Joint Speaker Role and Speaker Change Detection for Air Traffic Control Communications](https://arxiv.org/abs/2110.05781). 
Authors: Juan Zuluaga-Gomez, Seyyed Saeed Sarfjoo, Amrutha Prasad, Iuliia Nigmatulina, Petr Motlicek, Karel Ondrej, Oliver Ohneiser, Hartmut Helmke Abstract: Automatic speech recognition (ASR) allows transcribing the communications between air traffic controllers (ATCOs) and aircraft pilots. The transcriptions are used later to extract ATC named entities, e.g., aircraft callsigns. One common challenge is speech activity detection (SAD) and speaker diarization (SD). In the failure condition, two or more segments remain in the same recording, jeopardizing the overall performance. We propose a system that combines SAD and a BERT model to perform speaker change detection and speaker role detection (SRD) by chunking ASR transcripts, i.e., SD with a defined number of speakers together with SRD. The proposed model is evaluated on real-life public ATC databases. Our BERT SD model baseline reaches up to 10% and 20% token-based Jaccard error rate (JER) in public and private ATC databases. We also achieved relative improvements of 32% and 7.7% in JERs and SD error rate (DER), respectively, compared to VBx, a well-known SD system. Code — GitHub repository: https://github.com/idiap/bert-text-diarization-atc | 3abf13770a90cd923c9459abb4d661b9 |

apache-2.0 | ['text', 'token-classification', 'en-atc', 'en', 'generated_from_trainer', 'bert', 'bertraffic'] | false | Intended uses & limitations This model was fine-tuned on air traffic control data. We do not expect it to keep the same performance on other datasets where BERT was pre-trained or fine-tuned. | d97b6ab9970894b423e0ac6983eb7642 |

apache-2.0 | ['text', 'token-classification', 'en-atc', 'en', 'generated_from_trainer', 'bert', 'bertraffic'] | false | Training and evaluation data See Table 3 (page 5) in our paper: [BERTraffic: BERT-based Joint Speaker Role and Speaker Change Detection for Air Traffic Control Communications](https://arxiv.org/abs/2110.05781). There we describe the data used to fine-tune our model for speaker role and speaker change detection. - We use the UWB-ATCC corpus to fine-tune this model. You can download the raw data here: https://lindat.mff.cuni.cz/repository/xmlui/handle/11858/00-097C-0000-0001-CCA1-0 - However, do not worry, we have prepared a script in our repository for preparing this database: - Dataset preparation folder: https://github.com/idiap/bert-text-diarization-atc/tree/main/data/databases/uwb_atcc - Prepare the data: https://github.com/idiap/bert-text-diarization-atc/blob/main/data/databases/uwb_atcc/data_prepare_uwb_atcc_corpus.sh - Get the data in the format required by HuggingFace: https://github.com/idiap/bert-text-diarization-atc/blob/main/data/databases/uwb_atcc/exp_prepare_uwb_atcc_corpus.sh | e1dc07d53fc2cd2e937922927d4e1115 |

apache-2.0 | ['text', 'token-classification', 'en-atc', 'en', 'generated_from_trainer', 'bert', 'bertraffic'] | false | Writing your own inference script The snippet of code: ```python from transformers import pipeline, AutoTokenizer, AutoModelForTokenClassification tokenizer = AutoTokenizer.from_pretrained("Jzuluaga/bert-base-token-classification-for-atc-en-uwb-atcc") model = AutoModelForTokenClassification.from_pretrained("Jzuluaga/bert-base-token-classification-for-atc-en-uwb-atcc") | 5a85b925c3ac8749ada465301758f93a |

apache-2.0 | ['text', 'token-classification', 'en-atc', 'en', 'generated_from_trainer', 'bert', 'bertraffic'] | false | Process text sample (from UWB-ATCC) from transformers import pipeline nlp = pipeline('ner', model=model, tokenizer=tokenizer, aggregation_strategy="simple") nlp("lining up runway three one csa five bravo b easy five three kilo romeo contact ruzyne ground one two one decimal nine good bye") [{'entity_group': 'pilot', 'score': 0.99991554, 'word': 'lining up runway three one csa five bravo b', 'start': 0, 'end': 43 }, {'entity_group': 'atco', 'score': 0.99994576, 'word': 'easy five three kilo romeo contact ruzyne ground one two one decimal nine good bye', 'start': 44, 'end': 126 }] ``` | f5620586c821257fa2442e7a6e4e7ea6 |
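The pipeline returns one dictionary per detected segment, each carrying a speaker role and character offsets. A minimal sketch of turning that output into readable speaker turns, assuming the output structure shown above; `format_turns` is a hypothetical helper and not part of the BERTraffic repository:

```python
def format_turns(segments):
    """Render aggregated pipeline segments as 'ROLE: text' lines, in utterance order."""
    # Sort by character offset so the turns follow the original utterance.
    ordered = sorted(segments, key=lambda seg: seg["start"])
    return [f"{seg['entity_group'].upper()}: {seg['word']}" for seg in ordered]


# Sample segments copied from the example output above (scores shortened),
# deliberately listed out of order to show the sorting step.
segments = [
    {"entity_group": "atco", "score": 0.9999,
     "word": "easy five three kilo romeo contact ruzyne ground one two one decimal nine good bye",
     "start": 44, "end": 126},
    {"entity_group": "pilot", "score": 0.9999,
     "word": "lining up runway three one csa five bravo b",
     "start": 0, "end": 43},
]

for line in format_turns(segments):
    print(line)
```

Sorting on `start` keeps the turns in spoken order even if the segments are collected or filtered out of sequence.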

apache-2.0 | ['text', 'token-classification', 'en-atc', 'en', 'generated_from_trainer', 'bert', 'bertraffic'] | false | Cite us If you use this code for your research, please cite our paper with: ``` @article{zuluaga2022bertraffic, title={BERTraffic: BERT-based Joint Speaker Role and Speaker Change Detection for Air Traffic Control Communications}, author={Zuluaga-Gomez, Juan and Sarfjoo, Seyyed Saeed and Prasad, Amrutha and others}, journal={IEEE Spoken Language Technology Workshop (SLT), Doha, Qatar}, year={2022} } ``` and, ``` @article{zuluaga2022how, title={How Does Pre-trained Wav2Vec 2.0 Perform on Domain Shifted ASR? An Extensive Benchmark on Air Traffic Control Communications}, author={Zuluaga-Gomez, Juan and Prasad, Amrutha and Nigmatulina, Iuliia and Sarfjoo, Saeed and others}, journal={IEEE Spoken Language Technology Workshop (SLT), Doha, Qatar}, year={2022} } ``` and, ``` @article{zuluaga2022atco2, title={ATCO2 corpus: A Large-Scale Dataset for Research on Automatic Speech Recognition and Natural Language Understanding of Air Traffic Control Communications}, author={Zuluaga-Gomez, Juan and Vesel{\`y}, Karel and Sz{\"o}ke, Igor and Motlicek, Petr and others}, journal={arXiv preprint arXiv:2211.04054}, year={2022} } ``` | c185ad04055a651cd8e30b5084e11d4a |

apache-2.0 | ['text', 'token-classification', 'en-atc', 'en', 'generated_from_trainer', 'bert', 'bertraffic'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 5e-05 - train_batch_size: 64 - eval_batch_size: 16 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 1000 - training_steps: 10000 | 33cdb7e0db0c7aa38f7f6cddb56956a0 |
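To make the schedule concrete, here is a minimal sketch of the learning rate at a given step. It assumes that the `linear` scheduler ramps up linearly from zero over the warmup steps and then decays linearly to zero at the final training step; `linear_lr` is a hypothetical helper written for illustration, not a function from the training code:

```python
def linear_lr(step, base_lr=5e-05, warmup_steps=1000, total_steps=10000):
    """Learning rate at `step` under linear warmup followed by linear decay (assumed shape)."""
    if step < warmup_steps:
        # Warmup phase: scale from 0 up to base_lr.
        return base_lr * step / max(1, warmup_steps)
    # Decay phase: scale from base_lr down to 0 at total_steps.
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


for step in (0, 500, 1000, 5500, 10000):
    print(step, linear_lr(step))
```

Under these assumptions the peak rate of 5e-05 is reached at step 1000, and the rate is back at half of that by step 5500.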

apache-2.0 | ['text', 'token-classification', 'en-atc', 'en', 'generated_from_trainer', 'bert', 'bertraffic'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy | |:-------------:|:-----:|:-----:|:---------------:|:---------:|:------:|:------:|:--------:| | No log | 0.03 | 500 | 0.2282 | 0.6818 | 0.7001 | 0.6908 | 0.9246 | | 0.3487 | 0.06 | 1000 | 0.1214 | 0.8163 | 0.8024 | 0.8093 | 0.9631 | | 0.3487 | 0.1 | 1500 | 0.0933 | 0.8496 | 0.8544 | 0.8520 | 0.9722 | | 0.1124 | 0.13 | 2000 | 0.0693 | 0.8845 | 0.8739 | 0.8791 | 0.9786 | | 0.1124 | 0.16 | 2500 | 0.0540 | 0.8993 | 0.8911 | 0.8952 | 0.9817 | | 0.0667 | 0.19 | 3000 | 0.0474 | 0.9058 | 0.8929 | 0.8993 | 0.9857 | | 0.0667 | 0.23 | 3500 | 0.0418 | 0.9221 | 0.9245 | 0.9233 | 0.9865 | | 0.0492 | 0.26 | 4000 | 0.0294 | 0.9369 | 0.9415 | 0.9392 | 0.9903 | | 0.0492 | 0.29 | 4500 | 0.0263 | 0.9512 | 0.9446 | 0.9479 | 0.9911 | | 0.0372 | 0.32 | 5000 | 0.0223 | 0.9495 | 0.9497 | 0.9496 | 0.9915 | | 0.0372 | 0.35 | 5500 | 0.0212 | 0.9530 | 0.9514 | 0.9522 | 0.9923 | | 0.0308 | 0.39 | 6000 | 0.0177 | 0.9585 | 0.9560 | 0.9572 | 0.9933 | | 0.0308 | 0.42 | 6500 | 0.0169 | 0.9619 | 0.9613 | 0.9616 | 0.9936 | | 0.0261 | 0.45 | 7000 | 0.0140 | 0.9689 | 0.9662 | 0.9676 | 0.9951 | | 0.0261 | 0.48 | 7500 | 0.0130 | 0.9652 | 0.9629 | 0.9641 | 0.9945 | | 0.0214 | 0.51 | 8000 | 0.0127 | 0.9676 | 0.9635 | 0.9656 | 0.9953 | | 0.0214 | 0.55 | 8500 | 0.0109 | 0.9714 | 0.9708 | 0.9711 | 0.9959 | | 0.0177 | 0.58 | 9000 | 0.0103 | 0.9740 | 0.9727 | 0.9734 | 0.9961 | | 0.0177 | 0.61 | 9500 | 0.0101 | 0.9768 | 0.9744 | 0.9756 | 0.9963 | | 0.0159 | 0.64 | 10000 | 0.0098 | 0.9760 | 0.9741 | 0.9750 | 0.9965 | | c19f2366722932673ef197080a5504aa |
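The F1 column can be cross-checked against the precision and recall columns, since F1 is their harmonic mean. A short sketch using two rows from the table above; because the table values are rounded to four decimals, the check allows a small tolerance:

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall; 0.0 when both are zero."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# (precision, recall, reported F1) taken from steps 500 and 10000 in the table.
rows = [
    (0.6818, 0.7001, 0.6908),
    (0.9760, 0.9741, 0.9750),
]

for precision, recall, reported in rows:
    computed = f1_score(precision, recall)
    print(f"computed={computed:.4f} reported={reported:.4f}")
```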

apache-2.0 | ['generated_from_trainer'] | false | finetuning-sentiment-model-3000-samples This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the imdb dataset. It achieves the following results on the evaluation set: - Loss: 0.2954 - Accuracy: 0.86 - F1: 0.8600 | 1009c16d0470493249c055cf1c131aae |

gpl-3.0 | ['generated_from_trainer'] | false | bert-tagalog-base-uncased-WWM-ner-v1 This model is a fine-tuned version of [jcblaise/bert-tagalog-base-uncased-WWM](https://huggingface.co/jcblaise/bert-tagalog-base-uncased-WWM) on an unspecified dataset. It achieves the following results on the evaluation set: - Loss: 0.2838 - Precision: 0.9280 - Recall: 0.9153 - F1: 0.9216 - Accuracy: 0.9509 | a7c5fe91f53427460a1a7c5794b428b0 |

gpl-3.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:| | No log | 1.0 | 205 | 0.4812 | 0.6267 | 0.6367 | 0.6317 | 0.8502 | | No log | 2.0 | 410 | 0.2683 | 0.8322 | 0.8289 | 0.8305 | 0.9228 | | 0.4348 | 3.0 | 615 | 0.2377 | 0.9020 | 0.8846 | 0.8932 | 0.9398 | | 0.4348 | 4.0 | 820 | 0.2566 | 0.8906 | 0.8977 | 0.8941 | 0.9439 | | 0.0549 | 5.0 | 1025 | 0.2587 | 0.9249 | 0.9034 | 0.9140 | 0.9469 | | 0.0549 | 6.0 | 1230 | 0.2616 | 0.8988 | 0.9136 | 0.9061 | 0.9469 | | 0.0549 | 7.0 | 1435 | 0.2716 | 0.9102 | 0.9164 | 0.9133 | 0.9497 | | 0.011 | 8.0 | 1640 | 0.2929 | 0.9317 | 0.9147 | 0.9231 | 0.9507 | | 0.011 | 9.0 | 1845 | 0.2819 | 0.9280 | 0.9153 | 0.9216 | 0.9512 | | 0.0043 | 10.0 | 2050 | 0.2838 | 0.9280 | 0.9153 | 0.9216 | 0.9509 | | 92dbd27b8d3ab19147248352ebc750b5 |

apache-2.0 | ['generated_from_trainer'] | false | bert-base-uncased-wiki This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on an unspecified dataset. It achieves the following results on the evaluation set: - Loss: 1.7509 | d1e620e39d6eda43e93d87387d8b80ca |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | 1.9294 | 1.0 | 2319 | 1.7732 | | 1.8219 | 2.0 | 4638 | 1.7363 | | 1.7957 | 3.0 | 6957 | 1.7454 | | 61cc19a0cfd5f06bda781d7fd20ab330 |

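apache-2.0 | ['t5', 'pytorch', 'zh', 'Text2Text-Generation'] | false | T5 for Chinese Couplet (t5-chinese-couplet) Model A T5 model for Chinese couplet generation. Evaluation of `t5-chinese-couplet` on the couplet test data: The overall performance of T5 on the couplet **test** set: |prefix|input_text|target_text|pred| |:-- |:--- |:--- |:-- | |对联:|春回大地,对对黄莺鸣暖树|日照神州,群群紫燕衔新泥|福至人间,家家紫燕舞和风| On the couplet test set, the generated results satisfy the requirements of equal character count, part-of-speech alignment, lexical alignment, and formal similarity, but do not yet satisfy strict semantic parallelism or the tonal (level and oblique) rules. Network architecture of T5 (original T5): (figure omitted) | 0225fa940aaf0b73cf435cd097d8e64d |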

apache-2.0 | ['t5', 'pytorch', 'zh', 'Text2Text-Generation'] | false | Usage This project is open-sourced as part of the text generation project [textgen](https://github.com/shibing624/textgen), which supports T5 models. It can be called as follows: Install package: ```shell pip install -U textgen ``` ```python from textgen import T5Model model = T5Model("t5", "shibing624/t5-chinese-couplet") r = model.predict(["对联:丹枫江冷人初去"]) print(r) ``` | e50be63296d6caabee5d2be2c9963e0a |

apache-2.0 | ['t5', 'pytorch', 'zh', 'Text2Text-Generation'] | false | Usage (HuggingFace Transformers) Without [textgen](https://github.com/shibing624/textgen), you can use the model like this: first pass your input through the transformer model, then decode the generated sentence. Install package: ``` pip install transformers ``` ```python from transformers import T5ForConditionalGeneration, T5Tokenizer tokenizer = T5Tokenizer.from_pretrained("shibing624/t5-chinese-couplet") model = T5ForConditionalGeneration.from_pretrained("shibing624/t5-chinese-couplet") def batch_generate(input_texts, max_length=64): features = tokenizer(input_texts, padding=True, return_tensors='pt') outputs = model.generate(input_ids=features['input_ids'], attention_mask=features['attention_mask'], max_length=max_length) return tokenizer.batch_decode(outputs, skip_special_tokens=True) r = batch_generate(["对联:丹枫江冷人初去"]) print(r) ``` output: ```shell ['白石矶寒客不归'] ``` Model files: ``` t5-chinese-couplet ├── config.json ├── model_args.json ├── pytorch_model.bin ├── special_tokens_map.json ├── tokenizer_config.json ├── spiece.model └── vocab.txt ``` | 3d3691ddeda33181f1834e84c41c8ce1 |

apache-2.0 | ['t5', 'pytorch', 'zh', 'Text2Text-Generation'] | false | Chinese couplet dataset - Data: [couplet dataset on GitHub](https://github.com/wb14123/couplet-dataset), [cleaned couplet dataset on GitHub](https://github.com/v-zich/couplet-clean-dataset) - Related resources - [Huggingface](https://huggingface.co/) - LangZhou Chinese [MengZi T5 pretrained Model](https://huggingface.co/Langboat/mengzi-t5-base) and [paper](https://arxiv.org/pdf/2110.06696.pdf) - [textgen](https://github.com/shibing624/textgen) Data format: ```text head -n 1 couplet_files/couplet/train/in.txt 晚 风 摇 树 树 还 挺 head -n 1 couplet_files/couplet/train/out.txt 晨 露 润 花 花 更 红 ``` To train a T5 model, see [https://github.com/shibing624/textgen/blob/main/docs/%E5%AF%B9%E8%81%94%E7%94%9F%E6%88%90%E6%A8%A1%E5%9E%8B%E5%AF%B9%E6%AF%94.md](https://github.com/shibing624/textgen/blob/main/docs/%E5%AF%B9%E8%81%94%E7%94%9F%E6%88%90%E6%A8%A1%E5%9E%8B%E5%AF%B9%E6%AF%94.md) | 72230893a3bd77cbd367368e191b438b |
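The data files store one couplet line per row with characters separated by spaces, while the model input shown earlier is an unspaced string with the `对联:` prefix. A minimal sketch of pairing the two files into records, assuming the column names from the evaluation table (`prefix`, `input_text`, `target_text`); `build_examples` is a hypothetical helper, and whether textgen expects exactly this layout is an assumption:

```python
def build_examples(in_lines, out_lines, prefix="对联:"):
    """Pair first and second couplet lines, removing the spaces between characters."""
    examples = []
    for src, tgt in zip(in_lines, out_lines):
        examples.append({
            "prefix": prefix,
            # str.split() with no argument also drops the trailing newline.
            "input_text": "".join(src.split()),
            "target_text": "".join(tgt.split()),
        })
    return examples


# The first lines of in.txt and out.txt, as shown in the data format above.
in_lines = ["晚 风 摇 树 树 还 挺\n"]
out_lines = ["晨 露 润 花 花 更 红\n"]

for example in build_examples(in_lines, out_lines):
    print(example["prefix"] + example["input_text"], "->", example["target_text"])
```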

apache-2.0 | ['sentence-transformers', 'feature-extraction', 'sentence-similarity', 'transformers'] | false | sentence-transformers/nli-bert-large This is a [sentence-transformers](https://www.SBERT.net) model: It maps sentences & paragraphs to a 1024 dimensional dense vector space and can be used for tasks like clustering or semantic search. | 6cd324903f4afdac80848d97f961449c |

apache-2.0 | ['sentence-transformers', 'feature-extraction', 'sentence-similarity', 'transformers'] | false | Usage (Sentence-Transformers) Using this model becomes easy when you have [sentence-transformers](https://www.SBERT.net) installed: ``` pip install -U sentence-transformers ``` Then you can use the model like this: ```python from sentence_transformers import SentenceTransformer sentences = ["This is an example sentence", "Each sentence is converted"] model = SentenceTransformer('sentence-transformers/nli-bert-large') embeddings = model.encode(sentences) print(embeddings) ``` | ffd665253641f0280082bc58621f27f3 |
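For tasks such as semantic search, the embeddings are usually compared with cosine similarity. A minimal, dependency-free sketch of that comparison, using short made-up vectors in place of the 1024-dimensional embeddings; `cosine_similarity` here is an illustrative helper rather than the library's own utility:

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # Convention chosen here for zero vectors.
    return dot / (norm_a * norm_b)


# Toy vectors standing in for sentence embeddings (hypothetical values).
query = [1.0, 2.0, 0.0]
same_direction = [2.0, 4.0, 0.0]
orthogonal = [0.0, 0.0, 3.0]

print(cosine_similarity(query, same_direction))
print(cosine_similarity(query, orthogonal))
```

The same function should work on the rows returned by `model.encode`, since they iterate as plain floats, although a vectorized implementation is preferable for large collections.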

apache-2.0 | ['sentence-transformers', 'feature-extraction', 'sentence-similarity', 'transformers'] | false | Evaluation Results For an automated evaluation of this model, see the *Sentence Embeddings Benchmark*: [https://seb.sbert.net](https://seb.sbert.net?model_name=sentence-transformers/nli-bert-large) | 380b23cf298e80eac09eda4bc5727315 |

apache-2.0 | ['translation'] | false | tur-ara * source group: Turkish * target group: Arabic * OPUS readme: [tur-ara](https://github.com/Helsinki-NLP/Tatoeba-Challenge/tree/master/models/tur-ara/README.md) * model: transformer * source language(s): tur * target language(s): apc_Latn ara ara_Latn arq_Latn * pre-processing: normalization + SentencePiece (spm32k,spm32k) * a sentence-initial language token is required in the form of `>>id<<` (id = valid target language ID) * download original weights: [opus-2020-07-03.zip](https://object.pouta.csc.fi/Tatoeba-MT-models/tur-ara/opus-2020-07-03.zip) * test set translations: [opus-2020-07-03.test.txt](https://object.pouta.csc.fi/Tatoeba-MT-models/tur-ara/opus-2020-07-03.test.txt) * test set scores: [opus-2020-07-03.eval.txt](https://object.pouta.csc.fi/Tatoeba-MT-models/tur-ara/opus-2020-07-03.eval.txt) | a40fd4792d201bc7602d800cd4619f2c |
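Because the model covers several target language variants, each source sentence needs the `>>id<<` token prepended before translation. A minimal sketch of that preprocessing step, assuming the token is separated from the sentence by a single space; `add_language_token` is a hypothetical helper and the Turkish sentence is only an illustration:

```python
# Target language IDs as listed in the model description above.
TARGET_LANGS = ("apc_Latn", "ara", "ara_Latn", "arq_Latn")


def add_language_token(sentence, target_lang):
    """Prefix a source sentence with the sentence-initial target language token."""
    if target_lang not in TARGET_LANGS:
        raise ValueError(f"unsupported target language ID: {target_lang!r}")
    return f">>{target_lang}<< {sentence}"


print(add_language_token("Merhaba dünya", "ara"))
```

Validating the ID up front avoids silently sending the model a token it was never trained on.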
