license stringlengths 2 30 | tags stringlengths 2 513 | is_nc bool 1 class | readme_section stringlengths 201 597k | hash stringlengths 32 32 |

|---|---|---|---|---|

apache-2.0 | ['vision', 'maxim', 'image-to-image'] | false | MAXIM pre-trained on Rain13k for image deraining MAXIM model pre-trained for image deraining. It was introduced in the paper [MAXIM: Multi-Axis MLP for Image Processing](https://arxiv.org/abs/2201.02973) by Zhengzhong Tu, Hossein Talebi, Han Zhang, Feng Yang, Peyman Milanfar, Alan Bovik, Yinxiao Li and first released in [this repository](https://github.com/google-research/maxim). Disclaimer: The team releasing MAXIM did not write a model card for this model so this model card has been written by the Hugging Face team. | d1c36ee3ba73608c523af6a377bbe565 |

apache-2.0 | ['vision', 'maxim', 'image-to-image'] | false | Intended uses & limitations You can use the raw model for image deraining tasks. The model is [officially released in JAX](https://github.com/google-research/maxim). It was ported to TensorFlow in [this repository](https://github.com/sayakpaul/maxim-tf). | 255fb372851829adfb7881c402c88751 |

apache-2.0 | ['vision', 'maxim', 'image-to-image'] | false | How to use Here is how to use this model: ```python from huggingface_hub import from_pretrained_keras from PIL import Image import tensorflow as tf import numpy as np import requests url = "https://github.com/sayakpaul/maxim-tf/raw/main/images/Deraining/input/55.png" image = Image.open(requests.get(url, stream=True).raw) image = np.array(image) image = tf.convert_to_tensor(image) image = tf.image.resize(image, (256, 256)) model = from_pretrained_keras("google/maxim-s2-deraining-rain13k") predictions = model.predict(tf.expand_dims(image, 0)) ``` For a more elaborate prediction pipeline, refer to [this Colab Notebook](https://colab.research.google.com/github/sayakpaul/maxim-tf/blob/main/notebooks/inference-dynamic-resize.ipynb). | 5ef5662baae766cce3fd660cc0884779 |

apache-2.0 | ['generated_from_trainer'] | false | distilbert_sa_GLUE_Experiment_mrpc_192 This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the GLUE MRPC dataset. It achieves the following results on the evaluation set: - Loss: 0.5927 - Accuracy: 0.6887 - F1: 0.7784 - Combined Score: 0.7335 | 58d942f416ff143f620e7fe121b8dc73 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | Combined Score | |:-------------:|:-----:|:----:|:---------------:|:--------:|:------:|:--------------:| | 0.6412 | 1.0 | 15 | 0.6239 | 0.6838 | 0.8122 | 0.7480 | | 0.6281 | 2.0 | 30 | 0.6238 | 0.6838 | 0.8122 | 0.7480 | | 0.629 | 3.0 | 45 | 0.6239 | 0.6838 | 0.8122 | 0.7480 | | 0.6296 | 4.0 | 60 | 0.6236 | 0.6838 | 0.8122 | 0.7480 | | 0.6323 | 5.0 | 75 | 0.6228 | 0.6838 | 0.8122 | 0.7480 | | 0.6272 | 6.0 | 90 | 0.6209 | 0.6838 | 0.8122 | 0.7480 | | 0.6175 | 7.0 | 105 | 0.6000 | 0.6838 | 0.8122 | 0.7480 | | 0.5733 | 8.0 | 120 | 0.5927 | 0.6887 | 0.7784 | 0.7335 | | 0.5199 | 9.0 | 135 | 0.5969 | 0.6936 | 0.7818 | 0.7377 | | 0.4423 | 10.0 | 150 | 0.6369 | 0.6765 | 0.7700 | 0.7233 | | 0.3645 | 11.0 | 165 | 0.6708 | 0.6838 | 0.7832 | 0.7335 | | 0.3203 | 12.0 | 180 | 0.7179 | 0.6446 | 0.7249 | 0.6847 | | 0.2778 | 13.0 | 195 | 0.7517 | 0.6740 | 0.7726 | 0.7233 | | 716f043ac6de4e56839852b8a832f050 |
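
The Combined Score column above appears to be the unweighted mean of the other reported metrics (here Accuracy and F1). This reading is an assumption checked only against the table rows, not something the model card states, and `combined_score` is a hypothetical helper name. A minimal sketch:

```python
from statistics import mean


def combined_score(*metrics: float) -> float:
    """Return the unweighted mean of the given metric values (assumed definition)."""
    return mean(metrics)


# Epoch 1 row from the table above: Accuracy 0.6838, F1 0.8122.
score = combined_score(0.6838, 0.8122)
print(f"{score:.4f}")
```

Small last-digit differences from the table are possible because the table shows rounded inputs.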

cc-by-4.0 | ['espnet', 'audio', 'text-to-speech'] | false | `kan-bayashi/jsut_conformer_fastspeech2_tacotron2_prosody` ♻️ Imported from https://zenodo.org/record/5499050/ This model was trained by kan-bayashi using jsut/tts1 recipe in [espnet](https://github.com/espnet/espnet/). | b80a35fe64f253cb7e86bb8381ef8a9c |

apache-2.0 | ['translation'] | false | opus-mt-bcl-fi * source languages: bcl * target languages: fi * OPUS readme: [bcl-fi](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/bcl-fi/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2020-01-08.zip](https://object.pouta.csc.fi/OPUS-MT-models/bcl-fi/opus-2020-01-08.zip) * test set translations: [opus-2020-01-08.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/bcl-fi/opus-2020-01-08.test.txt) * test set scores: [opus-2020-01-08.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/bcl-fi/opus-2020-01-08.eval.txt) | c1e7f4cd8cbed813ad238105e236c12d |

mit | ['generated_from_trainer'] | false | xlm-roberta-base-finetuned-panx-de-fr This model is a fine-tuned version of [xlm-roberta-base](https://huggingface.co/xlm-roberta-base) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.1637 - F1: 0.8581 | 8d5fa9026fb641aa8d3795e7ad383a0b |

creativeml-openrail-m | ['text-to-image', 'stable-diffusion'] | false | Kuman-generator Dreambooth model trained by Kumar-kun with [buildspace's DreamBooth](https://colab.research.google.com/github/buildspace/diffusers/blob/main/examples/dreambooth/DreamBooth_Stable_Diffusion.ipynb) notebook Build your own using the [AI Avatar project](https://buildspace.so/builds/ai-avatar)! To get started head over to the [project dashboard](https://buildspace.so/p/build-ai-avatars). Sample pictures of this concept: | 0965a0f1d5a369c2fdd2ae3c0799a2a1 |

cc-by-4.0 | ['question generation'] | false | Model Card of `lmqg/t5-small-squadshifts-reddit-qg` This model is a fine-tuned version of [lmqg/t5-small-squad](https://huggingface.co/lmqg/t5-small-squad) for the question generation task on the [lmqg/qg_squadshifts](https://huggingface.co/datasets/lmqg/qg_squadshifts) (dataset_name: reddit) via [`lmqg`](https://github.com/asahi417/lm-question-generation). | 5bc8cbf2260e495735fd0296ac1f3a4e |

cc-by-4.0 | ['question generation'] | false | Overview - **Language model:** [lmqg/t5-small-squad](https://huggingface.co/lmqg/t5-small-squad) - **Language:** en - **Training data:** [lmqg/qg_squadshifts](https://huggingface.co/datasets/lmqg/qg_squadshifts) (reddit) - **Online Demo:** [https://autoqg.net/](https://autoqg.net/) - **Repository:** [https://github.com/asahi417/lm-question-generation](https://github.com/asahi417/lm-question-generation) - **Paper:** [https://arxiv.org/abs/2210.03992](https://arxiv.org/abs/2210.03992) | a86d119e827fb834a02a6149e5a2d1d6 |

cc-by-4.0 | ['question generation'] | false | model prediction questions = model.generate_q(list_context="William Turner was an English painter who specialised in watercolour landscapes", list_answer="William Turner") ``` - With `transformers` ```python from transformers import pipeline pipe = pipeline("text2text-generation", "lmqg/t5-small-squadshifts-reddit-qg") output = pipe("generate question: <hl> Beyonce <hl> further expanded her acting career, starring as blues singer Etta James in the 2008 musical biopic, Cadillac Records.") ``` | a2c03c146bd5e58156982d1cdfbfbfd7 |
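
The `transformers` example above passes an input in which the answer span is wrapped in `<hl>` markers and prefixed with `generate question: `. The sketch below builds such a string from a context and an answer. The helper name `build_qg_input` and the choice to highlight only the first occurrence of the answer are assumptions made for illustration; they are not part of the `lmqg` API.

```python
def build_qg_input(context: str, answer: str, prefix: str = "generate question: ") -> str:
    """Wrap the first occurrence of `answer` in <hl> markers and add the task prefix."""
    start = context.find(answer)
    if start == -1:
        raise ValueError("answer must appear verbatim in context")
    end = start + len(answer)
    # Keep the text before and after the answer unchanged.
    highlighted = f"{context[:start]}<hl> {answer} <hl>{context[end:]}"
    return prefix + highlighted


text = build_qg_input(
    "Beyonce further expanded her acting career, starring as blues singer Etta James "
    "in the 2008 musical biopic, Cadillac Records.",
    "Beyonce",
)
print(text)
```

The resulting string can be passed to the `pipe(...)` call shown above.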

cc-by-4.0 | ['question generation'] | false | Evaluation - ***Metric (Question Generation)***: [raw metric file](https://huggingface.co/lmqg/t5-small-squadshifts-reddit-qg/raw/main/eval/metric.first.sentence.paragraph_answer.question.lmqg_qg_squadshifts.reddit.json) | | Score | Type | Dataset | |:-----------|--------:|:-------|:---------------------------------------------------------------------------| | BERTScore | 91.7 | reddit | [lmqg/qg_squadshifts](https://huggingface.co/datasets/lmqg/qg_squadshifts) | | Bleu_1 | 25.11 | reddit | [lmqg/qg_squadshifts](https://huggingface.co/datasets/lmqg/qg_squadshifts) | | Bleu_2 | 16.32 | reddit | [lmqg/qg_squadshifts](https://huggingface.co/datasets/lmqg/qg_squadshifts) | | Bleu_3 | 10.93 | reddit | [lmqg/qg_squadshifts](https://huggingface.co/datasets/lmqg/qg_squadshifts) | | Bleu_4 | 7.6 | reddit | [lmqg/qg_squadshifts](https://huggingface.co/datasets/lmqg/qg_squadshifts) | | METEOR | 21.9 | reddit | [lmqg/qg_squadshifts](https://huggingface.co/datasets/lmqg/qg_squadshifts) | | MoverScore | 61.39 | reddit | [lmqg/qg_squadshifts](https://huggingface.co/datasets/lmqg/qg_squadshifts) | | ROUGE_L | 24.9 | reddit | [lmqg/qg_squadshifts](https://huggingface.co/datasets/lmqg/qg_squadshifts) | | 29edde420ee0fbc3463265a70d989050 |

cc-by-4.0 | ['question generation'] | false | Training hyperparameters The following hyperparameters were used during fine-tuning: - dataset_path: lmqg/qg_squadshifts - dataset_name: reddit - input_types: ['paragraph_answer'] - output_types: ['question'] - prefix_types: ['qg'] - model: lmqg/t5-small-squad - max_length: 512 - max_length_output: 32 - epoch: 5 - batch: 32 - lr: 0.0001 - fp16: False - random_seed: 1 - gradient_accumulation_steps: 2 - label_smoothing: 0.15 The full configuration can be found at [fine-tuning config file](https://huggingface.co/lmqg/t5-small-squadshifts-reddit-qg/raw/main/trainer_config.json). | cd7a0b4c10b21913bcf7fe1ee8ba5f80 |

apache-2.0 | ['translation', 'generated_from_trainer'] | false | t5-small-disfluent-fluent This model is a fine-tuned version of [t5-small](https://huggingface.co/t5-small) on the None dataset. It achieves the following results on the evaluation set: - Loss: 3.4839 - Bleu: 10.1823 | 33cbc4af2868844b35be3d94416cd32f |

apache-2.0 | ['vision', 'image-classification'] | false | ResNet-101 v1.5 ResNet model pre-trained on ImageNet-1k at resolution 224x224. It was introduced in the paper [Deep Residual Learning for Image Recognition](https://arxiv.org/abs/1512.03385) by He et al. Disclaimer: The team releasing ResNet did not write a model card for this model so this model card has been written by the Hugging Face team. | 2d7d7fe5f98796abb7e21e2779a07748 |

apache-2.0 | ['vision', 'image-classification'] | false | How to use Here is how to use this model to classify an image of the COCO 2017 dataset into one of the 1,000 ImageNet classes: ```python from transformers import AutoFeatureExtractor, ResNetForImageClassification import torch from datasets import load_dataset dataset = load_dataset("huggingface/cats-image") image = dataset["test"]["image"][0] feature_extractor = AutoFeatureExtractor.from_pretrained("microsoft/resnet-101") model = ResNetForImageClassification.from_pretrained("microsoft/resnet-101") inputs = feature_extractor(image, return_tensors="pt") with torch.no_grad(): logits = model(**inputs).logits | 14c173d5c8b605dbe87617afd6ba5fc7 |

apache-2.0 | ['generated_from_trainer'] | false | bert-base-uncased-finetuned-swag This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on the swag dataset. It achieves the following results on the evaluation set: - Loss: 0.5155 - Accuracy: 0.8002 | a03bd3e709c36561fce1ac8cde5035c5 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.6904 | 1.0 | 4597 | 0.5155 | 0.8002 | | e4bdad5588d2df547368e19e19ede810 |

creativeml-openrail-m | ['text-to-image', 'stable-diffusion'] | false | Mannyv2 Dreambooth model trained by MannyD with [TheLastBen's fast-DreamBooth](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb) notebook Test the concept via A1111 Colab [fast-Colab-A1111](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast_stable_diffusion_AUTOMATIC1111.ipynb) Sample pictures of this concept: | 577435cc3e6cc93e37fb47353660b0a0 |

apache-2.0 | ['trl', 'transformers', 'reinforcement-learning'] | false | TRL Model This is a [TRL language model](https://github.com/lvwerra/trl) that has been fine-tuned with reinforcement learning to guide the model outputs according to a value, function, or human feedback. The model can be used for text generation. | 5d0f4eec21fb9ec37ea90fe8e4161cd3 |

apache-2.0 | ['trl', 'transformers', 'reinforcement-learning'] | false | Usage To use this model for inference, first install the TRL library: ```bash python -m pip install trl ``` You can then generate text as follows: ```python from transformers import pipeline generator = pipeline("text-generation", model="lewtun/dummy-trl-model") outputs = generator("Hello, my llama is cute") ``` If you want to use the model for training or to obtain the outputs from the value head, load the model as follows: ```python from transformers import AutoTokenizer from trl import AutoModelForCausalLMWithValueHead tokenizer = AutoTokenizer.from_pretrained("lewtun/dummy-trl-model") model = AutoModelForCausalLMWithValueHead.from_pretrained("lewtun/dummy-trl-model") inputs = tokenizer("Hello, my llama is cute", return_tensors="pt") outputs = model(**inputs, labels=inputs["input_ids"]) ``` | 048af2fda0b646582169aa297b760cad |

creativeml-openrail-m | ['text-to-image', 'stable-diffusion'] | false | aaureeliaav2 Dreambooth model trained by akahnn with [TheLastBen's fast-DreamBooth](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb) notebook Test the concept via A1111 Colab [fast-Colab-A1111](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast_stable_diffusion_AUTOMATIC1111.ipynb) Sample pictures of this concept: | 1ad21ce66667360913922dc44e6ca2aa |

apache-2.0 | ['hf-asr-leaderboard', 'generated_from_trainer'] | false | Whisper Small Hi - Sanchit Gandhi This model is a fine-tuned version of [openai/whisper-small](https://huggingface.co/openai/whisper-small) on the Common Voice 11.0 dataset. It achieves the following results on the evaluation set: - Loss: 0.4272 - Wer: 31.6135 | 477f46d26c0324bee9afa49ab2e00187 |

apache-2.0 | ['hf-asr-leaderboard', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:-------:| | 0.0879 | 2.44 | 1000 | 0.2916 | 33.7213 | | 0.0212 | 4.89 | 2000 | 0.3454 | 32.9679 | | 0.0016 | 7.33 | 3000 | 0.4065 | 31.7447 | | 0.0005 | 9.78 | 4000 | 0.4272 | 31.6135 | | eb651ce4c29eee0b63a79d28a52a8686 |

apache-2.0 | ['vision', 'image-segmentation'] | false | SegFormer (b1-sized) model fine-tuned on sidewalk-semantic dataset SegFormer model fine-tuned on segments/sidewalk-semantic at resolution 512x512. It was introduced in the paper [SegFormer: Simple and Efficient Design for Semantic Segmentation with Transformers](https://arxiv.org/abs/2105.15203) by Xie et al. and first released in [this repository](https://github.com/NVlabs/SegFormer). | a5c8d2308a456031e9ea2e884dba5788 |

apache-2.0 | ['vision', 'image-segmentation'] | false | How to use Here is how to use this model to compute semantic segmentation logits for an image from the COCO 2017 dataset: ```python from transformers import SegformerFeatureExtractor, SegformerForSemanticSegmentation from PIL import Image import requests feature_extractor = SegformerFeatureExtractor(reduce_labels=True) model = SegformerForSemanticSegmentation.from_pretrained("ChainYo/segformer-sidewalk") url = "http://images.cocodataset.org/val2017/000000039769.jpg" image = Image.open(requests.get(url, stream=True).raw) inputs = feature_extractor(images=image, return_tensors="pt") outputs = model(**inputs) logits = outputs.logits | b8324e2b12bad2e92d4721bb8824f016 |

creativeml-openrail-m | ['text-to-image'] | false | atmant_1_0_sd_1_5 Dreambooth model trained by clybrg with [Hugging Face Dreambooth Training Space](https://huggingface.co/spaces/multimodalart/dreambooth-training) with the v1-5 base model. You can run your new concept via `diffusers` [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb). Don't forget to use the concept prompts! Sample pictures of: atmant (use that in your prompt) | 933b769d319b86cb368dc488f2206124 |

mit | ['aspect-based-sentiment-analysis', 'PyABSA'] | false | Note

This model is trained with 30k+ ABSA samples; see [ABSADatasets](https://github.com/yangheng95/ABSADatasets). The test sets are not included in pre-training, so you can use this model for training and benchmarking on common ABSA datasets such as Laptop14 and Rest14 (except for the Rest15 dataset).

| aeb2c55cdf0c3abace69b14edb209148 |

mit | ['aspect-based-sentiment-analysis', 'PyABSA'] | false | Training Model

This model is trained based on the FAST-LCF-BERT model with `microsoft/deberta-v3-large`, which comes from [PyABSA](https://github.com/yangheng95/PyABSA).

To track state-of-the-art models, please see [PyABSA](https://github.com/yangheng95/PyABSA).

| f2ccada9e33bc2eab0058d4438e96c36 |

mit | ['aspect-based-sentiment-analysis', 'PyABSA'] | false | Usage

```python3

from transformers import AutoTokenizer, AutoModelForSequenceClassification

tokenizer = AutoTokenizer.from_pretrained("yangheng/deberta-v3-large-absa-v1.1")

model = AutoModelForSequenceClassification.from_pretrained("yangheng/deberta-v3-large-absa-v1.1")

```

| 94f833632a4ca0da980c1707cfdd05ef |

mit | ['aspect-based-sentiment-analysis', 'PyABSA'] | false | Datasets

This model is fine-tuned with 180k examples for the ABSA dataset (including augmented data). Training dataset files:

```

loading: integrated_datasets/apc_datasets/SemEval/laptop14/Laptops_Train.xml.seg

loading: integrated_datasets/apc_datasets/SemEval/restaurant14/Restaurants_Train.xml.seg

loading: integrated_datasets/apc_datasets/SemEval/restaurant16/restaurant_train.raw

loading: integrated_datasets/apc_datasets/ACL_Twitter/acl-14-short-data/train.raw

loading: integrated_datasets/apc_datasets/MAMS/train.xml.dat

loading: integrated_datasets/apc_datasets/Television/Television_Train.xml.seg

loading: integrated_datasets/apc_datasets/TShirt/Menstshirt_Train.xml.seg

loading: integrated_datasets/apc_datasets/Yelp/yelp.train.txt

```

If you use this model in your research, please cite our paper:

```

@article{YangZMT21,

author = {Heng Yang and

Biqing Zeng and

Mayi Xu and

Tianxing Wang},

title = {Back to Reality: Leveraging Pattern-driven Modeling to Enable Affordable

Sentiment Dependency Learning},

journal = {CoRR},

volume = {abs/2110.08604},

year = {2021},

url = {https://arxiv.org/abs/2110.08604},

eprinttype = {arXiv},

eprint = {2110.08604},

timestamp = {Fri, 22 Oct 2021 13:33:09 +0200},

biburl = {https://dblp.org/rec/journals/corr/abs-2110-08604.bib},

bibsource = {dblp computer science bibliography, https://dblp.org}

}

``` | e94c659ba99596dcec8a3c47c5bdd6d8 |

apache-2.0 | ['automatic-speech-recognition', 'pt'] | false | exp_w2v2t_pt_vp-es_s506 Fine-tuned [facebook/wav2vec2-large-es-voxpopuli](https://huggingface.co/facebook/wav2vec2-large-es-voxpopuli) for speech recognition using the train split of [Common Voice 7.0 (pt)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | 2b3a865b43cb81c106645daf80290727 |

apache-2.0 | ['generated_from_trainer'] | false | van-base-finetuned-eurosat-imgaug This model is a fine-tuned version of [Visual-Attention-Network/van-base](https://huggingface.co/Visual-Attention-Network/van-base) on the image_folder dataset. It achieves the following results on the evaluation set: - Loss: 0.0379 - Accuracy: 0.9885 | cc38b0dc34f20aa1201635001bdcf377 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.0887 | 1.0 | 190 | 0.0589 | 0.98 | | 0.055 | 2.0 | 380 | 0.0390 | 0.9878 | | 0.0223 | 3.0 | 570 | 0.0379 | 0.9885 | | 239899176937f14ae26983f1c19d3b20 |

apache-2.0 | ['generated_from_trainer'] | false | distilbert_sa_GLUE_Experiment_logit_kd_stsb This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the GLUE STSB dataset. It achieves the following results on the evaluation set: - Loss: 1.1792 - Pearson: 0.1721 - Spearmanr: 0.1790 - Combined Score: 0.1755 | 2b31b30053a80634e7cefc6674d610a1 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Pearson | Spearmanr | Combined Score | |:-------------:|:-----:|:----:|:---------------:|:-------:|:---------:|:--------------:| | 1.6404 | 1.0 | 23 | 1.2916 | 0.0486 | 0.0549 | 0.0518 | | 1.0137 | 2.0 | 46 | 1.6141 | 0.0993 | 0.0887 | 0.0940 | | 0.9483 | 3.0 | 69 | 1.1792 | 0.1721 | 0.1790 | 0.1755 | | 0.8128 | 4.0 | 92 | 1.3857 | 0.1405 | 0.1428 | 0.1416 | | 0.6939 | 5.0 | 115 | 1.2921 | 0.1809 | 0.1954 | 0.1881 | | 0.5773 | 6.0 | 138 | 1.4230 | 0.1545 | 0.1669 | 0.1607 | | 0.5082 | 7.0 | 161 | 1.4663 | 0.1550 | 0.1645 | 0.1598 | | 0.4467 | 8.0 | 184 | 1.4837 | 0.1520 | 0.1603 | 0.1561 | | 4e030aef92beabd2bc6d7dfbc66e9b2f |

apache-2.0 | ['summarization', 'generated_from_trainer'] | false | t5-small-finetuned-pubmed This model is a fine-tuned version of [t5-small](https://huggingface.co/t5-small) on a truncated [PubMed Summarization](https://huggingface.co/datasets/ccdv/pubmed-summarization) dataset. It achieves the following results on the evaluation set: - Loss: 2.7252 - Rouge1: 19.4457 - Rouge2: 3.125 - Rougel: 18.3168 - Rougelsum: 18.5625 | 10202edbb99958c0a2bf788c1ae847ff |

apache-2.0 | ['summarization', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | |:-------------:|:-----:|:----:|:---------------:|:-------:|:------:|:-------:|:---------:| | 3.2735 | 1.0 | 13 | 2.9820 | 18.745 | 3.7918 | 15.7876 | 15.8512 | | 3.0428 | 2.0 | 26 | 2.8828 | 17.953 | 2.5 | 15.49 | 15.468 | | 2.6259 | 3.0 | 39 | 2.8283 | 21.5532 | 5.9278 | 19.7523 | 19.9232 | | 3.0795 | 4.0 | 52 | 2.7910 | 20.9244 | 5.9278 | 19.8685 | 20.0181 | | 2.8276 | 5.0 | 65 | 2.7613 | 20.6403 | 3.125 | 18.0574 | 18.2227 | | 2.64 | 6.0 | 78 | 2.7404 | 19.4457 | 3.125 | 18.3168 | 18.5625 | | 2.5525 | 7.0 | 91 | 2.7286 | 19.4457 | 3.125 | 18.3168 | 18.5625 | | 2.4951 | 8.0 | 104 | 2.7252 | 19.4457 | 3.125 | 18.3168 | 18.5625 | | c1ad624f6325cdd717aef991c11b7d63 |

mit | ['generated_from_trainer'] | false | finetuned-im-rahmen-der-rechtlichen-und-ethischen-bestimmungen-arbeiten This model is a fine-tuned version of [bert-base-german-cased](https://huggingface.co/bert-base-german-cased) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.2919 - Accuracy: 0.8970 - F1: 0.8843 | 50d247a492f42d97f249f2634be493d1 |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------:|:------:| | 0.2946 | 1.0 | 1365 | 0.2791 | 0.8992 | 0.8829 | | 0.2204 | 2.0 | 2730 | 0.2919 | 0.8970 | 0.8843 | | 6563d217ccc57cd32df1da481fb7969c |

apache-2.0 | ['int8', 'Intel® Neural Compressor', 'neural-compressor', 'PostTrainingDynamic'] | false | Post-training dynamic quantization This is an INT8 PyTorch model quantized with [huggingface/optimum-intel](https://github.com/huggingface/optimum-intel) through the usage of [Intel® Neural Compressor](https://github.com/intel/neural-compressor). The original fp32 model comes from the fine-tuned model [shivaniNK8/t5-small-finetuned-cnn-news](https://huggingface.co/shivaniNK8/t5-small-finetuned-cnn-news). The calibration dataloader is the train dataloader. The default calibration sampling size 100 isn't divisible exactly by batch size 8, so the real sampling size is 104. The linear module **lm.head** falls back to fp32 to keep the relative accuracy loss below 1%. | d1f7040347d6ef8d0bac20d9a4f628d5 |
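
The jump from 100 to 104 calibration samples is consistent with rounding the requested count up to a whole number of batches. The sketch below reproduces that arithmetic only. The function name `effective_calibration_size` is hypothetical, and the round-up rule is inferred from the numbers in the text, not taken from the Intel® Neural Compressor source.

```python
import math


def effective_calibration_size(sampling_size: int, batch_size: int) -> int:
    """Round the requested sample count up to a whole number of batches."""
    return math.ceil(sampling_size / batch_size) * batch_size


# 100 requested samples with batch size 8 need 13 full batches.
print(effective_calibration_size(100, 8))
```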

apache-2.0 | ['int8', 'Intel® Neural Compressor', 'neural-compressor', 'PostTrainingDynamic'] | false | Load with optimum: ```python from optimum.intel.neural_compressor.quantization import IncQuantizedModelForSeq2SeqLM int8_model = IncQuantizedModelForSeq2SeqLM.from_pretrained( 'Intel/t5-small-finetuned-cnn-news-int8-dynamic', ) ``` | 39690ce6490c43fb0593f7c4c62e6978 |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-imdb This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the imdb dataset. It achieves the following results on the evaluation set: - Loss: 2.3114 | 8750b4ad4088df7d2e9eddbb7ae33406 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | 2.5561 | 1.0 | 782 | 2.3738 | | 2.4474 | 2.0 | 1564 | 2.3108 | | 2.4037 | 3.0 | 2346 | 2.3017 | | 9011513be875678bfc059f70c9b61bf4 |

creativeml-openrail-m | ['text-to-image', 'stable-diffusion'] | false | stable-diffusion-kiminonawa-v1.2 Dreambooth model trained by Thaweewat with [TheLastBen's fast-DreamBooth](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb) notebook Test the concept via A1111 Colab [fast-Colab-A1111](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast_stable_diffusion_AUTOMATIC1111.ipynb) Or you can run your new concept via `diffusers` [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb) **Prompt Seed:** "kiminonawa" (Base-Model already encoded "makoto shinkai" so I used this one instead) **Sample pictures of this concept:**      | dbfd3ffa5dbd0344f2ccb7598f527b9d |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'hf-asr-leaderboard'] | false | Overview This folder contains a fully trained German speech recognition pipeline consisting of an acoustic model using the new wav2vec 2.0 XLS-R 1B **TEVR** architecture and a 5-gram KenLM language model. For an explanation of the TEVR enhancements and their motivation, please see our paper: [TEVR: Improving Speech Recognition by Token Entropy Variance Reduction](https://arxiv.org/abs/2206.12693). This pipeline scores a very competitive (as of June 2022) **word error rate of 3.64%** on CommonVoice German. The character error rate was 1.54%. | 1a5ddd94b21488e8acd2d8a9c4a6f2e9 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'hf-asr-leaderboard'] | false | Citation If you use this ASR pipeline for research, please cite: ```bibtex @misc{https://doi.org/10.48550/arxiv.2206.12693, doi = {10.48550/ARXIV.2206.12693}, url = {https://arxiv.org/abs/2206.12693}, author = {Krabbenhöft, Hajo Nils and Barth, Erhardt}, keywords = {Computation and Language (cs.CL), Sound (cs.SD), Audio and Speech Processing (eess.AS), FOS: Computer and information sciences, FOS: Computer and information sciences, FOS: Electrical engineering, electronic engineering, information engineering, FOS: Electrical engineering, electronic engineering, information engineering, F.2.1; I.2.6; I.2.7}, title = {TEVR: Improving Speech Recognition by Token Entropy Variance Reduction}, publisher = {arXiv}, year = {2022}, copyright = {Creative Commons Attribution 4.0 International} } ``` | 2efcd2b5ab293aba57bdbfbd85b29d9f |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'hf-asr-leaderboard'] | false | TEVR Tokenizer Creation / Testing See https://huggingface.co/fxtentacle/tevr-token-entropy-predictor-de for: - our trained ByT5 model used to calculate the entropies in the paper - a Jupyter Notebook to generate a TEVR Tokenizer from a text corpus - a Jupyter Notebook to generate the illustration image in the paper | 1fc39886800bfefd2f3f696771aa10c8 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'hf-asr-leaderboard'] | false | Evaluation To evaluate this pipeline yourself and/or on your own data, see the `HF Eval Script.ipynb` Jupyter Notebook or use the following Python script: ```python !pip install --quiet --root-user-action=ignore --upgrade pip !pip install --quiet --root-user-action=ignore "datasets>=1.18.3" "transformers==4.11.3" librosa jiwer huggingface_hub !pip install --quiet --root-user-action=ignore https://github.com/kpu/kenlm/archive/master.zip pyctcdecode !pip install --quiet --root-user-action=ignore --upgrade transformers !pip install --quiet --root-user-action=ignore torch_audiomentations audiomentations ``` ```python from datasets import load_dataset, Audio, load_metric from transformers import AutoModelForCTC, Wav2Vec2ProcessorWithLM import torchaudio.transforms as T import torch import unicodedata import numpy as np import re | b1cd11458274f4b5f5647c8896dafa36 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'hf-asr-leaderboard'] | false | replace invisible characters with space allchars = list(set([c for t in testing_dataset['sentence'] for c in list(t)])) map_to_space = [c for c in allchars if unicodedata.category(c)[0] in 'PSZ' and c not in 'ʻ-'] replacements = ''.maketrans(''.join(map_to_space), ''.join(' ' for i in range(len(map_to_space))), '\'ʻ') def text_fix(text): | 342e45156ca2bc678dac91858e4a9bc2 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'hf-asr-leaderboard'] | false | load processor class HajoProcessor(Wav2Vec2ProcessorWithLM): @staticmethod def get_missing_alphabet_tokens(decoder, tokenizer): return [] processor = HajoProcessor.from_pretrained("fxtentacle/wav2vec2-xls-r-1b-tevr") | 4d51285dabf611999bb11ccd018ebe7b |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'hf-asr-leaderboard'] | false | print results print('WER', load_metric("wer").compute(predictions=all_predictions['prediction'], references=all_predictions['groundtruth'])*100.0, '%') print('CER', load_metric("cer").compute(predictions=all_predictions['prediction'], references=all_predictions['groundtruth'])*100.0, '%') ``` WER 3.6433399042523233 % CER 1.5398893560981173 % | 98f9b3bdbb2b78ce65b6b638d1bc1037 |
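
For readers unfamiliar with the metric printed above, the sketch below shows the usual corpus-level definition of word error rate: total word-level edit distance divided by the total number of reference words. It is a plain-Python illustration under that assumption, not the implementation behind `load_metric("wer")`. The helper names and the example sentences are made up.

```python
def edit_distance(ref: list, hyp: list) -> int:
    """Levenshtein distance between two token sequences."""
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, 1):
        curr = [i]
        for j, h in enumerate(hyp, 1):
            cost = 0 if r == h else 1
            # deletion, insertion, substitution (or match)
            curr.append(min(prev[j] + 1, curr[j - 1] + 1, prev[j - 1] + cost))
        prev = curr
    return prev[-1]


def word_error_rate(references: list, predictions: list) -> float:
    """Total word edits divided by total reference words."""
    errors = 0
    words = 0
    for ref, hyp in zip(references, predictions):
        ref_words = ref.split()
        errors += edit_distance(ref_words, hyp.split())
        words += len(ref_words)
    return errors / words


# One deleted word out of four reference words.
print(word_error_rate(["das ist ein test"], ["das ist test"]) * 100.0, "%")
```

The character error rate follows the same pattern with `list(ref)` and `list(hyp)` in place of the word splits.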

cc-by-4.0 | ['espnet', 'audio', 'automatic-speech-recognition'] | false | `Xuankai Chang/xuankai_chang_librispeech_asr_train_asr_conformer7_hubert_960hr_large_raw_en_bpe5000_sp_26epoch, fs=16k, lang=en` This model was trained by Takashi Maekaku using librispeech recipe in [espnet](https://github.com/espnet/espnet/). | 9b9aab11f03579161feebae95e493207 |

cc-by-4.0 | ['espnet', 'audio', 'automatic-speech-recognition'] | false | Environments - date: `Fri Aug 6 11:44:39 JST 2021` - python version: `3.7.9 (default, Apr 23 2021, 13:48:31) [GCC 5.5.0 20171010]` - espnet version: `espnet 0.9.9` - pytorch version: `pytorch 1.7.0` - Git hash: `0f7558a716ab830d0c29da8785840124f358d47b` - Commit date: `Tue Jun 8 15:33:49 2021 -0400` | 766f7e87c516e6c67c776cef51e41aad |

cc-by-4.0 | ['espnet', 'audio', 'automatic-speech-recognition'] | false | WER |dataset|Snt|Wrd|Corr|Sub|Del|Ins|Err|S.Err| |---|---|---|---|---|---|---|---|---| |decode_asr_lm_lm_train_lm_transformer2_en_bpe5000_17epoch_asr_model_valid.acc.best/dev_clean|2703|54402|98.5|1.3|0.2|0.2|1.7|22.1| |decode_asr_lm_lm_train_lm_transformer2_en_bpe5000_17epoch_asr_model_valid.acc.best/dev_other|2864|50948|96.8|2.8|0.4|0.3|3.4|33.7| |decode_asr_lm_lm_train_lm_transformer2_en_bpe5000_17epoch_asr_model_valid.acc.best/test_clean|2620|52576|98.4|1.4|0.2|0.2|1.8|22.1| |decode_asr_lm_lm_train_lm_transformer2_en_bpe5000_17epoch_asr_model_valid.acc.best/test_other|2939|52343|96.8|2.8|0.4|0.4|3.6|36.0| | dcc86b5f61ab268ce4dde07673f926dc |

cc-by-4.0 | ['espnet', 'audio', 'automatic-speech-recognition'] | false | CER |dataset|Snt|Wrd|Corr|Sub|Del|Ins|Err|S.Err| |---|---|---|---|---|---|---|---|---| |decode_asr_lm_lm_train_lm_transformer2_en_bpe5000_17epoch_asr_model_valid.acc.best/dev_clean|2703|288456|99.6|0.2|0.2|0.2|0.6|22.1| |decode_asr_lm_lm_train_lm_transformer2_en_bpe5000_17epoch_asr_model_valid.acc.best/dev_other|2864|265951|98.8|0.6|0.6|0.3|1.5|33.7| |decode_asr_lm_lm_train_lm_transformer2_en_bpe5000_17epoch_asr_model_valid.acc.best/test_clean|2620|281530|99.6|0.2|0.2|0.2|0.6|22.1| |decode_asr_lm_lm_train_lm_transformer2_en_bpe5000_17epoch_asr_model_valid.acc.best/test_other|2939|272758|98.9|0.5|0.5|0.4|1.4|36.0| | 8b090eaf3aa29de9735570bf4419fe8a |

cc-by-4.0 | ['espnet', 'audio', 'automatic-speech-recognition'] | false | TER |dataset|Snt|Wrd|Corr|Sub|Del|Ins|Err|S.Err| |---|---|---|---|---|---|---|---|---| |decode_asr_lm_lm_train_lm_transformer2_en_bpe5000_17epoch_asr_model_valid.acc.best/dev_clean|2703|68010|98.2|1.3|0.5|0.4|2.2|22.1| |decode_asr_lm_lm_train_lm_transformer2_en_bpe5000_17epoch_asr_model_valid.acc.best/dev_other|2864|63110|96.0|2.8|1.2|0.6|4.6|33.7| |decode_asr_lm_lm_train_lm_transformer2_en_bpe5000_17epoch_asr_model_valid.acc.best/test_clean|2620|65818|98.1|1.3|0.6|0.4|2.3|22.1| |decode_asr_lm_lm_train_lm_transformer2_en_bpe5000_17epoch_asr_model_valid.acc.best/test_other|2939|65101|96.0|2.7|1.3|0.6|4.6|36.0| | e2761908299c574a15828df474e0833d |

apache-2.0 | ['multiple-choice', 'int8', 'Intel® Neural Compressor', 'PostTrainingStatic'] | false | Post-training static quantization This is an INT8 PyTorch model quantized with [huggingface/optimum-intel](https://github.com/huggingface/optimum-intel) using [Intel® Neural Compressor](https://github.com/intel/neural-compressor). The original fp32 model comes from the fine-tuned model [thyagosme/bert-base-uncased-finetuned-swag](https://huggingface.co/thyagosme/bert-base-uncased-finetuned-swag). The calibration dataloader is the train dataloader. The default calibration sampling size of 100 is not exactly divisible by the batch size of 8, so the actual sampling size is 104. The linear modules **bert.encoder.layer.2.output.dense, bert.encoder.layer.5.intermediate.dense, bert.encoder.layer.9.output.dense, bert.encoder.layer.10.output.dense** fall back to fp32 to meet the 1% relative accuracy loss criterion. | 6204f85da65de7fe3eb4b446770a922d |
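The jump from a requested sampling size of 100 to an actual 104 is what you get when calibration consumes whole batches. A small sketch of that rounding follows. The function name `effective_calibration_size` is hypothetical, and the assumption that the count is rounded up to the next full batch is inferred from the numbers in the card, not taken from the Intel® Neural Compressor source.

```python
import math


def effective_calibration_size(sampling_size: int, batch_size: int) -> int:
    """Round the requested sample count up to a whole number of batches."""
    return math.ceil(sampling_size / batch_size) * batch_size


# 100 samples at batch size 8 need 13 batches, which is 104 samples.
print(effective_calibration_size(100, 8))
```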

apache-2.0 | ['multiple-choice', 'int8', 'Intel® Neural Compressor', 'PostTrainingStatic'] | false | Load with optimum: ```python from optimum.intel.neural_compressor.quantization import IncQuantizedModelForMultipleChoice int8_model = IncQuantizedModelForMultipleChoice.from_pretrained( 'Intel/bert-base-uncased-finetuned-swag-int8-static', ) ``` | 25d6edaa75e39cb05bde352edc9218c7 |

apache-2.0 | ['translation'] | false | eng-bnt * source group: English * target group: Bantu languages * OPUS readme: [eng-bnt](https://github.com/Helsinki-NLP/Tatoeba-Challenge/tree/master/models/eng-bnt/README.md) * model: transformer * source language(s): eng * target language(s): kin lin lug nya run sna swh toi_Latn tso umb xho zul * pre-processing: normalization + SentencePiece (spm32k,spm32k) * a sentence-initial language token is required in the form of `>>id<<` (id = valid target language ID) * download original weights: [opus-2020-07-26.zip](https://object.pouta.csc.fi/Tatoeba-MT-models/eng-bnt/opus-2020-07-26.zip) * test set translations: [opus-2020-07-26.test.txt](https://object.pouta.csc.fi/Tatoeba-MT-models/eng-bnt/opus-2020-07-26.test.txt) * test set scores: [opus-2020-07-26.eval.txt](https://object.pouta.csc.fi/Tatoeba-MT-models/eng-bnt/opus-2020-07-26.eval.txt) | 8aa1ec8fb1033f03bbf7d43a1704c3cc |
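Because this is a multi-target model, every source sentence has to start with a `>>id<<` token naming the target language. The sketch below shows one way to build such inputs before tokenization. The helper `add_language_token` is hypothetical, the ID set is copied from the target language list above, and separating the token from the text with a single space is an assumption based on common usage.

```python
# Target language IDs copied from the model card above.
TARGET_LANGUAGE_IDS = {
    "kin", "lin", "lug", "nya", "run", "sna",
    "swh", "toi_Latn", "tso", "umb", "xho", "zul",
}


def add_language_token(text: str, target_id: str) -> str:
    """Prefix a source sentence with the >>id<< token for the target language."""
    if target_id not in TARGET_LANGUAGE_IDS:
        raise ValueError(f"unsupported target language ID: {target_id!r}")
    return f">>{target_id}<< {text}"


print(add_language_token("How are you?", "swh"))
```

The prefixed strings can then be passed to the tokenizer in place of the raw sentences.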

apache-2.0 | ['translation'] | false | Benchmarks | testset | BLEU | chr-F | |-----------------------|-------|-------| | Tatoeba-test.eng-kin.eng.kin | 12.5 | 0.519 | | Tatoeba-test.eng-lin.eng.lin | 1.1 | 0.277 | | Tatoeba-test.eng-lug.eng.lug | 4.8 | 0.415 | | Tatoeba-test.eng.multi | 12.1 | 0.449 | | Tatoeba-test.eng-nya.eng.nya | 22.1 | 0.616 | | Tatoeba-test.eng-run.eng.run | 13.2 | 0.492 | | Tatoeba-test.eng-sna.eng.sna | 32.1 | 0.669 | | Tatoeba-test.eng-swa.eng.swa | 1.7 | 0.180 | | Tatoeba-test.eng-toi.eng.toi | 10.7 | 0.266 | | Tatoeba-test.eng-tso.eng.tso | 26.9 | 0.631 | | Tatoeba-test.eng-umb.eng.umb | 5.2 | 0.295 | | Tatoeba-test.eng-xho.eng.xho | 22.6 | 0.615 | | Tatoeba-test.eng-zul.eng.zul | 41.1 | 0.769 | | e2b1ca1575f822091ec166751c4cb768 |

apache-2.0 | ['translation'] | false | System Info: - hf_name: eng-bnt - source_languages: eng - target_languages: bnt - opus_readme_url: https://github.com/Helsinki-NLP/Tatoeba-Challenge/tree/master/models/eng-bnt/README.md - original_repo: Tatoeba-Challenge - tags: ['translation'] - languages: ['en', 'sn', 'zu', 'rw', 'lg', 'ts', 'ln', 'ny', 'xh', 'rn', 'bnt'] - src_constituents: {'eng'} - tgt_constituents: {'sna', 'zul', 'kin', 'lug', 'tso', 'lin', 'nya', 'xho', 'swh', 'run', 'toi_Latn', 'umb'} - src_multilingual: False - tgt_multilingual: True - prepro: normalization + SentencePiece (spm32k,spm32k) - url_model: https://object.pouta.csc.fi/Tatoeba-MT-models/eng-bnt/opus-2020-07-26.zip - url_test_set: https://object.pouta.csc.fi/Tatoeba-MT-models/eng-bnt/opus-2020-07-26.test.txt - src_alpha3: eng - tgt_alpha3: bnt - short_pair: en-bnt - chrF2_score: 0.449 - bleu: 12.1 - brevity_penalty: 1.0 - ref_len: 9989.0 - src_name: English - tgt_name: Bantu languages - train_date: 2020-07-26 - src_alpha2: en - tgt_alpha2: bnt - prefer_old: False - long_pair: eng-bnt - helsinki_git_sha: 480fcbe0ee1bf4774bcbe6226ad9f58e63f6c535 - transformers_git_sha: 2207e5d8cb224e954a7cba69fa4ac2309e9ff30b - port_machine: brutasse - port_time: 2020-08-21-14:41 | 625fcc0884c1f469a7a64569b23e515a |

apache-2.0 | ['generated_from_trainer'] | false | recipe-lr8e06-wd0.005-bs32 This model is a fine-tuned version of [paola-md/recipe-distilroberta-Is](https://huggingface.co/paola-md/recipe-distilroberta-Is) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.2752 - Rmse: 0.5246 - Mse: 0.2752 - Mae: 0.4184 | b617a447e46952226fa2ffac930032ad |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Rmse | Mse | Mae | |:-------------:|:-----:|:----:|:---------------:|:------:|:------:|:------:| | 0.2769 | 1.0 | 623 | 0.2773 | 0.5266 | 0.2773 | 0.4296 | | 0.2745 | 2.0 | 1246 | 0.2739 | 0.5233 | 0.2739 | 0.4144 | | 0.2733 | 3.0 | 1869 | 0.2752 | 0.5246 | 0.2752 | 0.4215 | | 0.2722 | 4.0 | 2492 | 0.2744 | 0.5238 | 0.2744 | 0.4058 | | 0.2714 | 5.0 | 3115 | 0.2758 | 0.5251 | 0.2758 | 0.4232 | | 0.2705 | 6.0 | 3738 | 0.2752 | 0.5246 | 0.2752 | 0.4184 | | 4d8cdf267cb048367b712bed05b08e18 |
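In the table above the validation loss equals the MSE column, and the RMSE column is its square root. The following sketch checks that relationship using values copied from the table. The variable names are illustrative, and the tolerance allows for both columns having been rounded to four decimals.

```python
import math

# (MSE, RMSE) per epoch, copied from the training results table above.
metrics_by_epoch = {
    1: (0.2773, 0.5266),
    2: (0.2739, 0.5233),
    3: (0.2752, 0.5246),
    4: (0.2744, 0.5238),
    5: (0.2758, 0.5251),
    6: (0.2752, 0.5246),
}

for epoch, (mse, reported_rmse) in metrics_by_epoch.items():
    recomputed = math.sqrt(mse)
    # Both columns are rounded to four decimals, so compare with a small tolerance.
    matches = abs(recomputed - reported_rmse) < 2e-4
    print(f"epoch {epoch}: sqrt(MSE) = {recomputed:.4f}, reported RMSE = {reported_rmse}, match = {matches}")
```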

apache-2.0 | ['finnish', 'gpt2'] | false | GPT-2 large for Finnish A GPT-2 large model pretrained on the Finnish language using a causal language modeling (CLM) objective. GPT-2 was introduced in [this paper](https://d4mucfpksywv.cloudfront.net/better-language-models/language_models_are_unsupervised_multitask_learners.pdf) and first released at [this page](https://openai.com/blog/better-language-models/). **Note**: this model is the 774M-parameter variant, as in Hugging Face's [GPT-2-large config](https://huggingface.co/gpt2-large), not the well-known 1.5B-parameter variant released by OpenAI. | bce00e83115f6d2f0f4f380ca7cd731c |

apache-2.0 | ['finnish', 'gpt2'] | false | How to use You can use this model directly with a pipeline for text generation: ```python >>> from transformers import pipeline >>> generator = pipeline('text-generation', model='Finnish-NLP/gpt2-large-finnish') >>> generator("Tekstiä tuottava tekoäly on", max_length=30, num_return_sequences=5) [{'generated_text': 'Tekstiä tuottava tekoäly on valmis yhteistyöhön ihmisen kanssa: Tekoäly hoitaa ihmisen puolesta tekstin tuottamisen. Se myös ymmärtää, missä vaiheessa tekstiä voidaan alkaa kirjoittamaan'}, {'generated_text': 'Tekstiä tuottava tekoäly on älykäs, mutta se ei ole vain älykkäisiin koneisiin kuuluva älykäs olento, vaan se on myös kone. Se ei'}, {'generated_text': 'Tekstiä tuottava tekoäly on ehkä jo pian todellisuutta - se voisi tehdä myös vanhustenhoidosta nykyistä ä tuottava tekoäly on ehkä jo pian todellisuutta - se voisi tehdä'}, {'generated_text': 'Tekstiä tuottava tekoäly on kehitetty ihmisen ja ihmisen aivoihin yhteistyössä neurotieteiden ja käyttäytymistieteen tutkijatiimin kanssa. Uusi teknologia avaa aivan uudenlaisia tutkimusi'}, {'generated_text': 'Tekstiä tuottava tekoäly on kuin tietokone, jonka kanssa voi elää. Tekoälyn avulla voi kirjoittaa mitä tahansa, mistä tahansa ja miten paljon. Tässä'}] ``` Here is how to use this model to get the features of a given text in PyTorch: ```python from transformers import GPT2Tokenizer, GPT2Model tokenizer = GPT2Tokenizer.from_pretrained('Finnish-NLP/gpt2-large-finnish') model = GPT2Model.from_pretrained('Finnish-NLP/gpt2-large-finnish') text = "Replace me by any text you'd like." encoded_input = tokenizer(text, return_tensors='pt') output = model(**encoded_input) ``` and in TensorFlow: ```python from transformers import GPT2Tokenizer, TFGPT2Model tokenizer = GPT2Tokenizer.from_pretrained('Finnish-NLP/gpt2-large-finnish') model = TFGPT2Model.from_pretrained('Finnish-NLP/gpt2-large-finnish', from_pt=True) text = "Replace me by any text you'd like." 
encoded_input = tokenizer(text, return_tensors='tf') output = model(encoded_input) ``` | 4a081c9a4a237873ecb16b50dbf24b5e |

apache-2.0 | ['finnish', 'gpt2'] | false | Pretraining The model was trained on a TPUv3-8 VM, sponsored by the [Google TPU Research Cloud](https://sites.research.google/trc/about/), for 640k steps (a bit over 1 epoch, batch size 64). The optimizer was AdamW with a learning rate of 4e-5, learning rate warmup for 4000 steps, followed by cosine decay of the learning rate. | fcada85c3b4d90a93209bc94465e5919 |
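The schedule described above (warmup for 4000 steps, then cosine decay over the remaining steps) can be sketched as a plain function of the step number. The peak rate, warmup length, and total step count come from the card. The linear shape of the warmup and the decay all the way to zero at the final step are assumptions, since the card does not state them, and the function name `learning_rate` is illustrative.

```python
import math

PEAK_LR = 4e-5          # from the card
WARMUP_STEPS = 4_000    # from the card
TOTAL_STEPS = 640_000   # from the card


def learning_rate(step: int) -> float:
    """Warmup followed by cosine decay (linear warmup and zero floor are assumed)."""
    if step < WARMUP_STEPS:
        return PEAK_LR * step / WARMUP_STEPS
    progress = (step - WARMUP_STEPS) / (TOTAL_STEPS - WARMUP_STEPS)
    progress = min(progress, 1.0)
    return 0.5 * PEAK_LR * (1.0 + math.cos(math.pi * progress))


for step in (0, 2_000, 4_000, 322_000, 640_000):
    print(step, learning_rate(step))
```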

apache-2.0 | ['finnish', 'gpt2'] | false | perplexity-for-language-models) (lower is better) as the evaluation metric. As the table below shows, this model (the first row) performs better than our smaller model variants. | | Perplexity | |------------------------------------------|------------| |Finnish-NLP/gpt2-large-finnish |**30.74** | |Finnish-NLP/gpt2-medium-finnish |34.08 | |Finnish-NLP/gpt2-finnish |44.19 | | 081888c56b86c70c2051797630a83203 |
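For readers unfamiliar with the metric in the table above, perplexity is the exponential of the average negative log-likelihood per token. The sketch below shows the definition on made-up log-probabilities. It is not the evaluation script used for the reported scores, and the function name `perplexity` and the toy input are illustrative.

```python
import math


def perplexity(token_log_probs):
    """Perplexity from the natural-log probabilities a model assigned to each token."""
    mean_nll = -sum(token_log_probs) / len(token_log_probs)
    return math.exp(mean_nll)


# Toy input: a model that gives every token probability 1/4 has perplexity of about 4.
uniform_log_probs = [math.log(1 / 4)] * 10
print(perplexity(uniform_log_probs))
```

A lower value means the model was, on average, less surprised by the evaluation text.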

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Wav2Vec2-Large-XLSR-53-Vietnamese Fine-tuned [facebook/wav2vec2-large-xlsr-53](https://huggingface.co/facebook/wav2vec2-large-xlsr-53) on Vietnamese using the [Common Voice](https://huggingface.co/datasets/common_voice) dataset. When using this model, make sure that your speech input is sampled at 16kHz. | 262610dc5cf6fa3483393f1196a31968 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Usage The model can be used directly (without a language model) as follows: ```python import torch import torchaudio from datasets import load_dataset from transformers import Wav2Vec2ForCTC, Wav2Vec2Processor test_dataset = load_dataset("common_voice", "vi", split="test[:2%]") processor = Wav2Vec2Processor.from_pretrained("anuragshas/wav2vec2-large-xlsr-53-vietnamese") model = Wav2Vec2ForCTC.from_pretrained("anuragshas/wav2vec2-large-xlsr-53-vietnamese") resampler = torchaudio.transforms.Resample(48_000, 16_000) | 5b6af548d9cff2ba3e8c65ffa4986980 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Evaluation The model can be evaluated as follows on the Vietnamese test data of Common Voice. ```python import torch import torchaudio from datasets import load_dataset, load_metric from transformers import Wav2Vec2ForCTC, Wav2Vec2Processor import re test_dataset = load_dataset("common_voice", "vi", split="test") wer = load_metric("wer") processor = Wav2Vec2Processor.from_pretrained("anuragshas/wav2vec2-large-xlsr-53-vietnamese") model = Wav2Vec2ForCTC.from_pretrained("anuragshas/wav2vec2-large-xlsr-53-vietnamese") model.to("cuda") chars_to_ignore_regex = '[\,\?\.\!\-\;\:\"\“]' resampler = torchaudio.transforms.Resample(48_000, 16_000) | ba1adf958b9eb755c500d74538d92120 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | We need to read the audio files as arrays def evaluate(batch): inputs = processor(batch["speech"], sampling_rate=16_000, return_tensors="pt", padding=True) with torch.no_grad(): logits = model(inputs.input_values.to("cuda"), attention_mask=inputs.attention_mask.to("cuda")).logits pred_ids = torch.argmax(logits, dim=-1) batch["pred_strings"] = processor.batch_decode(pred_ids) return batch result = test_dataset.map(evaluate, batched=True, batch_size=8) print("WER: {:.2f}".format(100 * wer.compute(predictions=result["pred_strings"], references=result["sentence"]))) ``` **Test Result**: 66.78 % | 05d284f2446ad8f2798c3e899a82fda7 |
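To make the reported figure easier to interpret, here is a minimal pure-Python sketch of word error rate: the word-level edit distance between each reference and prediction, summed over the corpus and divided by the total number of reference words. It is an illustration only, not the implementation behind `load_metric("wer")`, and the function name and example sentences are made up.

```python
def word_error_rate(references, predictions):
    """Corpus-level WER: total word edits divided by total reference words."""
    total_errors = 0
    total_words = 0
    for reference, prediction in zip(references, predictions):
        ref_words, hyp_words = reference.split(), prediction.split()
        # Levenshtein distance over words, keeping only one previous row.
        previous = list(range(len(hyp_words) + 1))
        for i, ref_word in enumerate(ref_words, start=1):
            current = [i]
            for j, hyp_word in enumerate(hyp_words, start=1):
                substitution_cost = 0 if ref_word == hyp_word else 1
                current.append(min(
                    previous[j] + 1,                      # deletion
                    current[j - 1] + 1,                   # insertion
                    previous[j - 1] + substitution_cost,  # match or substitution
                ))
            previous = current
        total_errors += previous[-1]
        total_words += len(ref_words)
    return total_errors / total_words


# One substitution and one deletion against a four-word reference.
print(100 * word_error_rate(["a b c d"], ["a x c"]))
```

As in the evaluation script above, multiplying by 100 expresses the rate as a percentage.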

apache-2.0 | ['speech-recognition', 'common_voice', 'generated_from_trainer'] | false | wav2vec2-common_voice-tr-demo This model is a fine-tuned version of [facebook/wav2vec2-large-xlsr-53](https://huggingface.co/facebook/wav2vec2-large-xlsr-53) on the COMMON_VOICE - TR dataset. It achieves the following results on the evaluation set: - Loss: 0.3856 - Wer: 0.3556 | 5c9c3ae0a4d6ddaf3c954173a2795715 |

apache-2.0 | ['speech-recognition', 'common_voice', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:------:| | 3.7391 | 0.92 | 100 | 3.5760 | 1.0 | | 2.927 | 1.83 | 200 | 3.0796 | 0.9999 | | 0.9009 | 2.75 | 300 | 0.9278 | 0.8226 | | 0.6529 | 3.67 | 400 | 0.5926 | 0.6367 | | 0.3623 | 4.59 | 500 | 0.5372 | 0.5692 | | 0.2888 | 5.5 | 600 | 0.4407 | 0.4838 | | 0.285 | 6.42 | 700 | 0.4341 | 0.4694 | | 0.0842 | 7.34 | 800 | 0.4153 | 0.4302 | | 0.1415 | 8.26 | 900 | 0.4317 | 0.4136 | | 0.1552 | 9.17 | 1000 | 0.4145 | 0.4013 | | 0.1184 | 10.09 | 1100 | 0.4115 | 0.3844 | | 0.0556 | 11.01 | 1200 | 0.4182 | 0.3862 | | 0.0851 | 11.93 | 1300 | 0.3985 | 0.3688 | | 0.0961 | 12.84 | 1400 | 0.4030 | 0.3665 | | 0.0596 | 13.76 | 1500 | 0.3880 | 0.3631 | | 0.0917 | 14.68 | 1600 | 0.3878 | 0.3582 | | 8cd2ba5e58c805f794f05e029a82f67a |

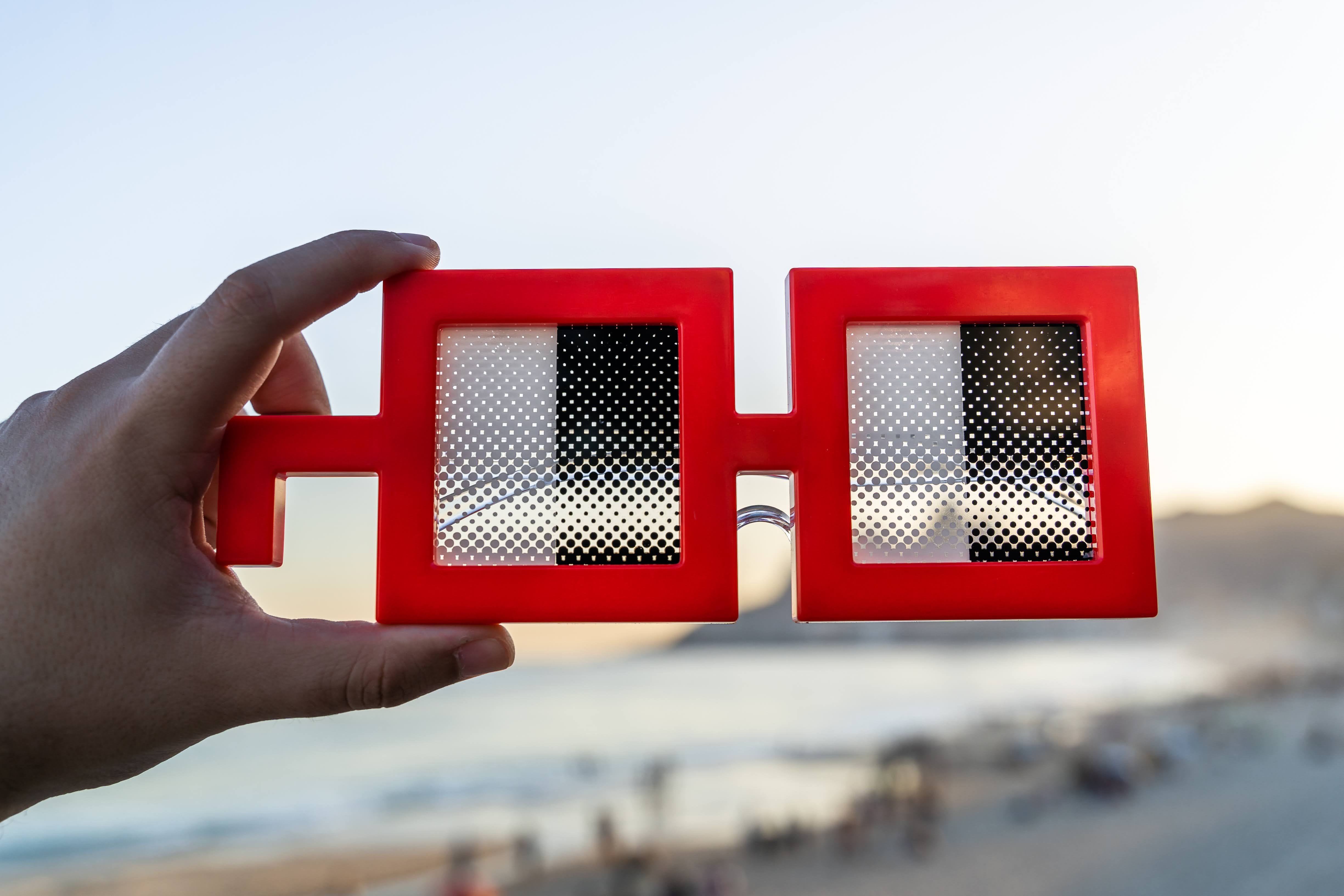

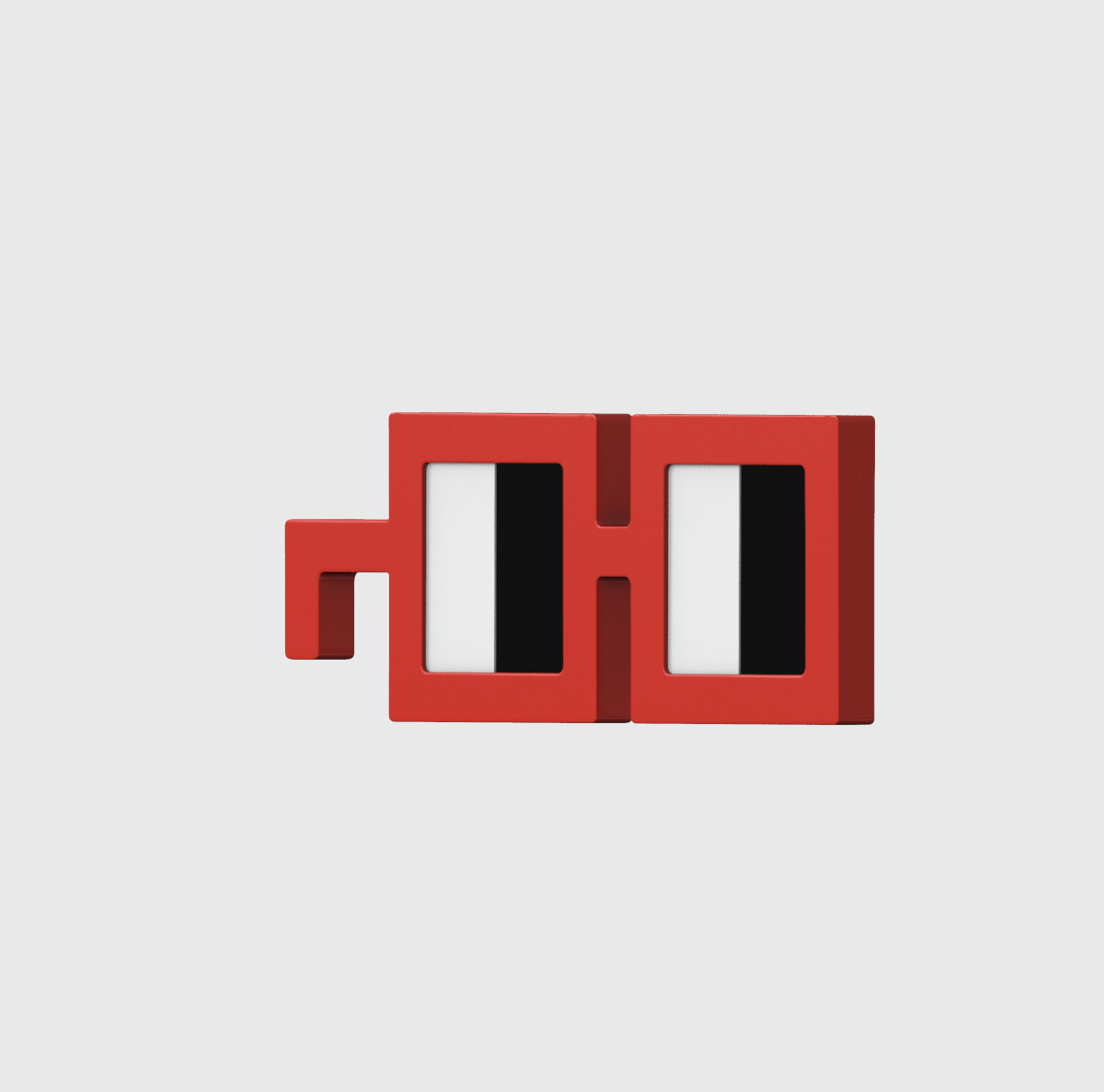

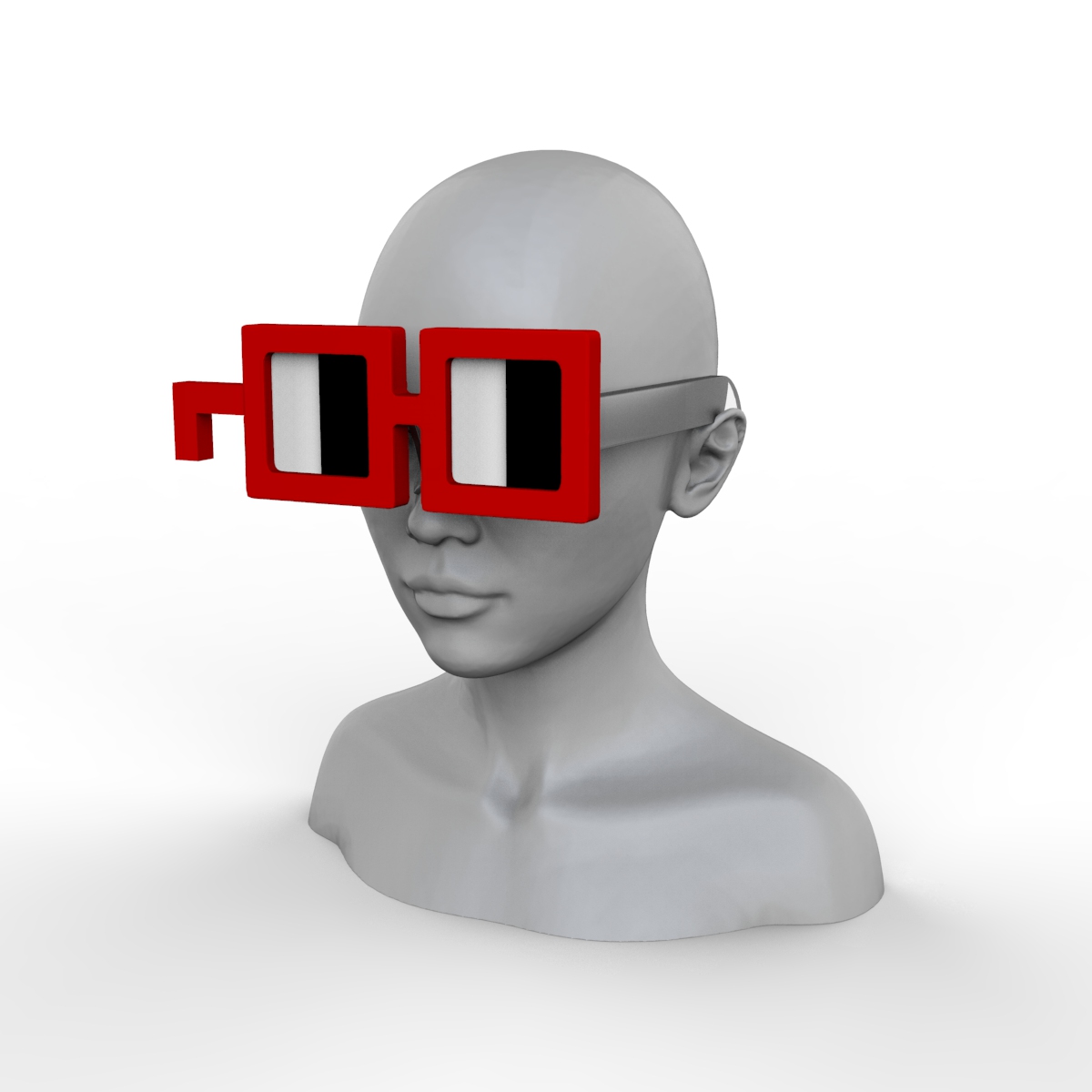

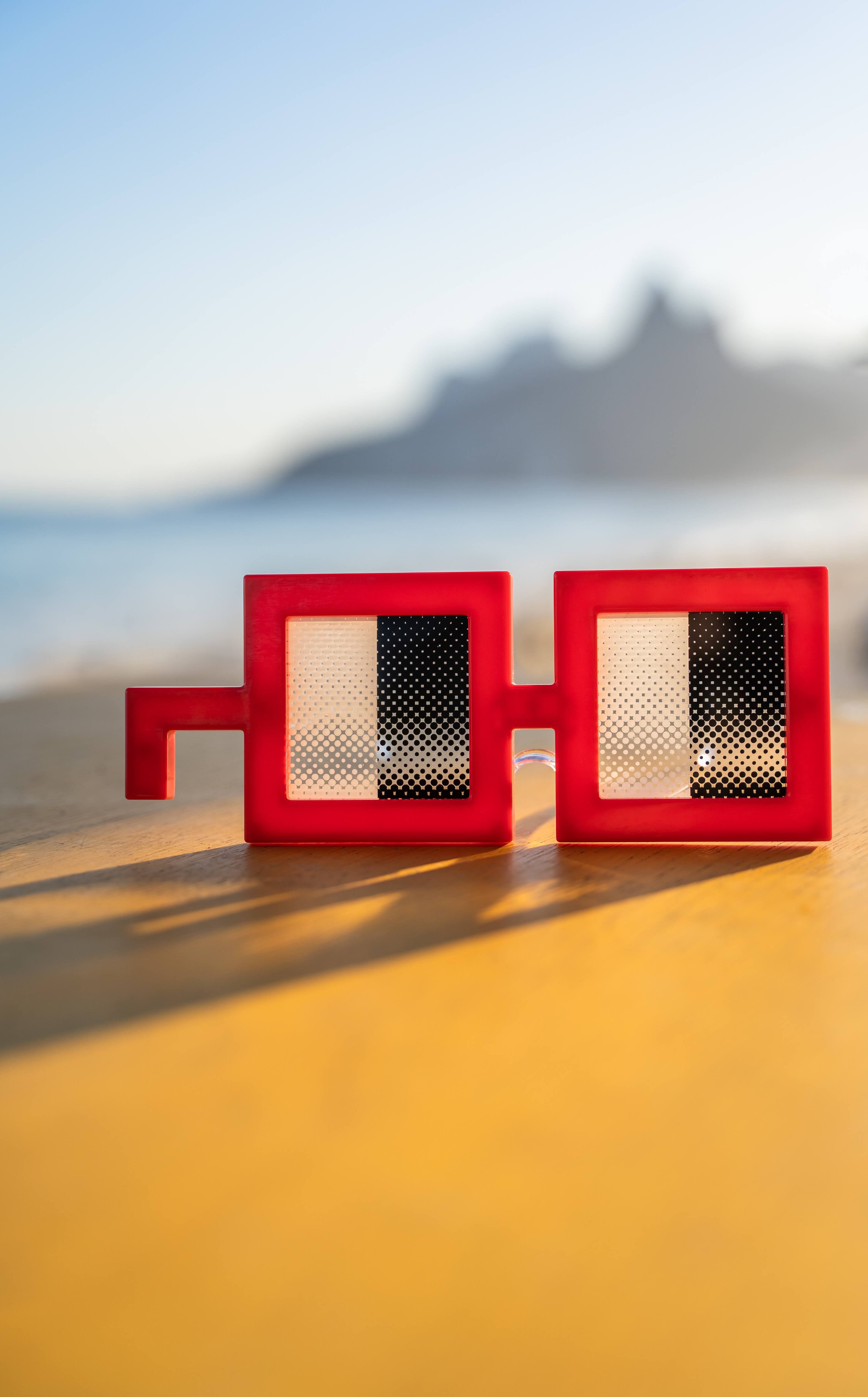

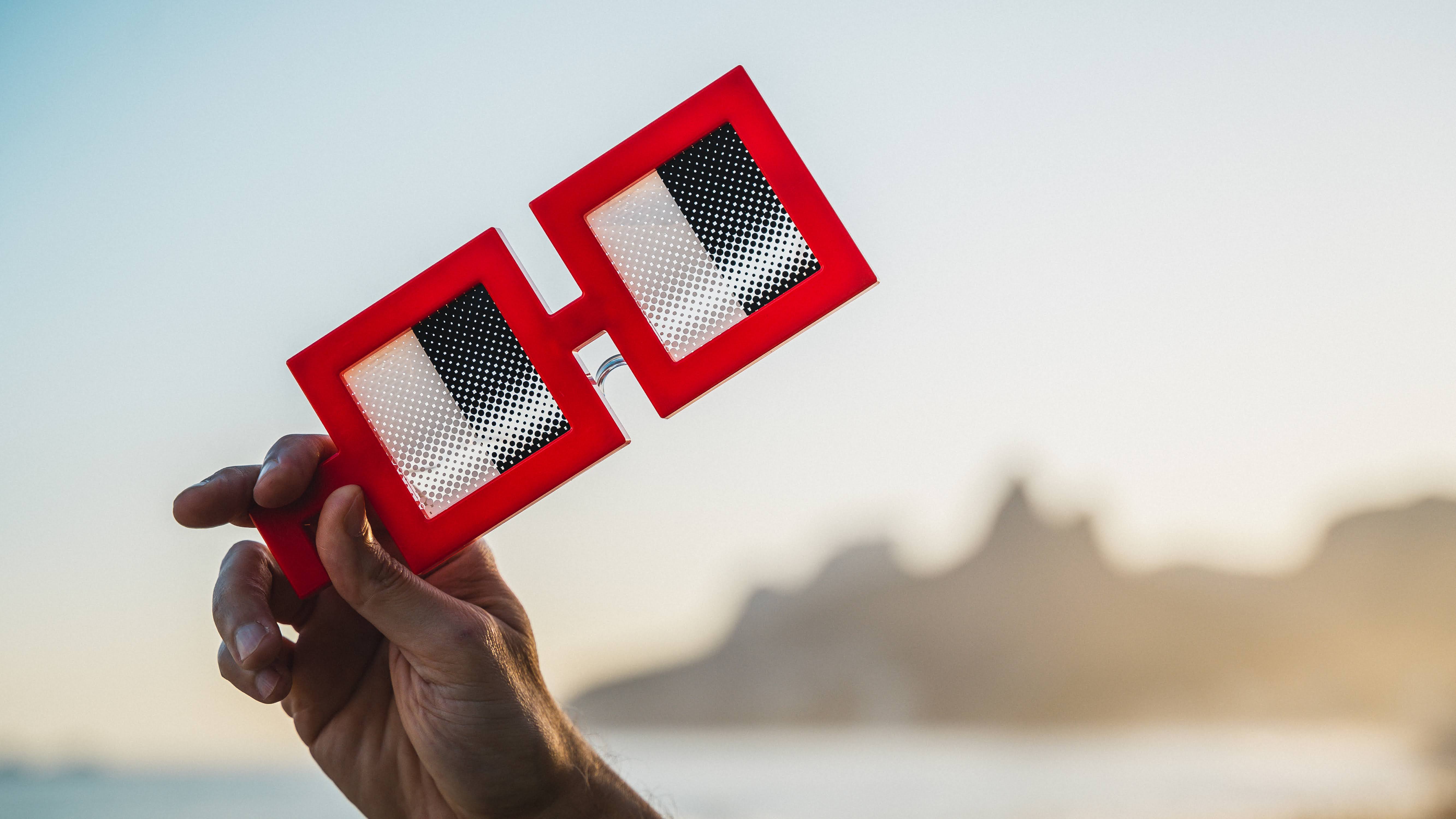

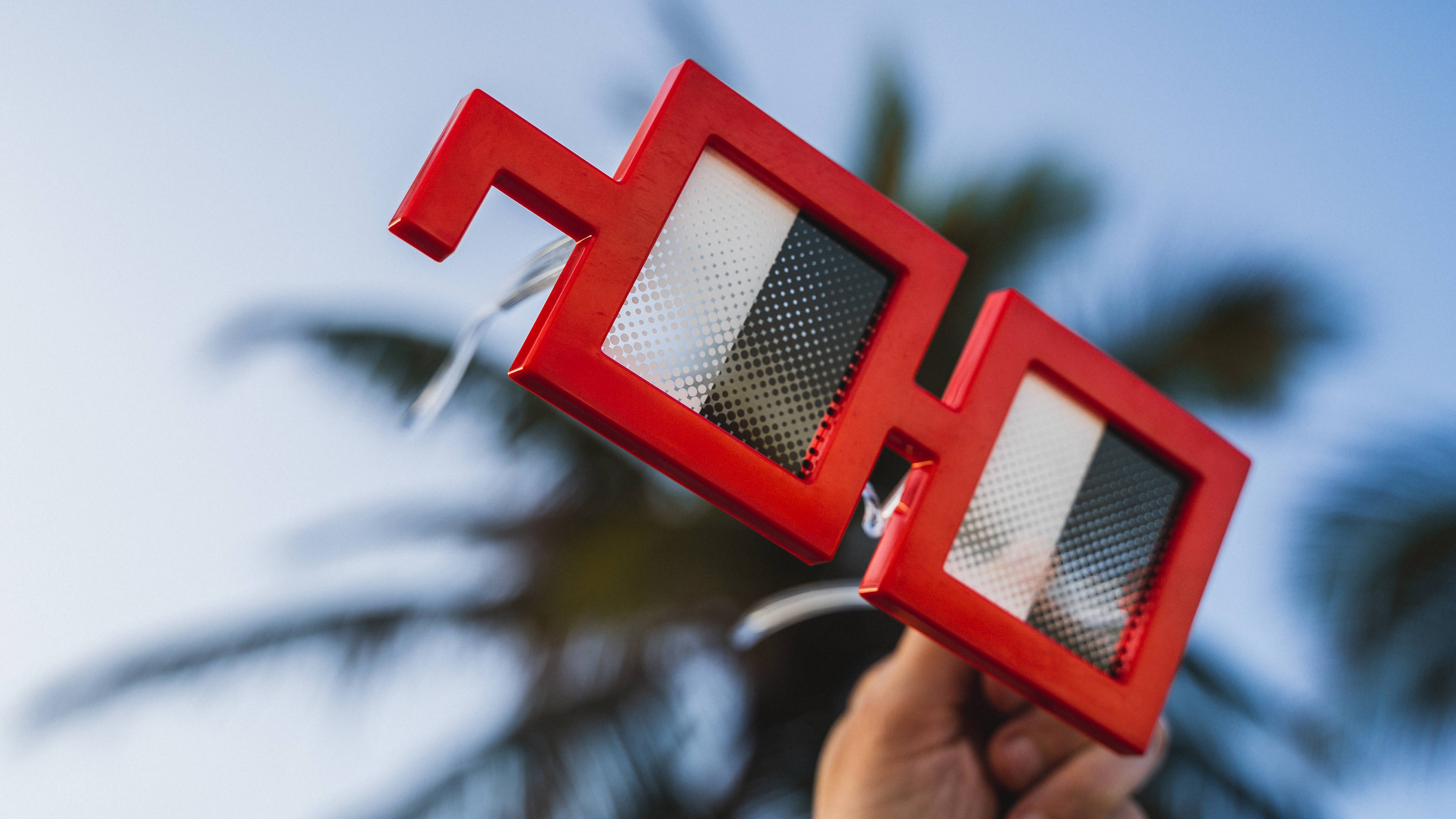

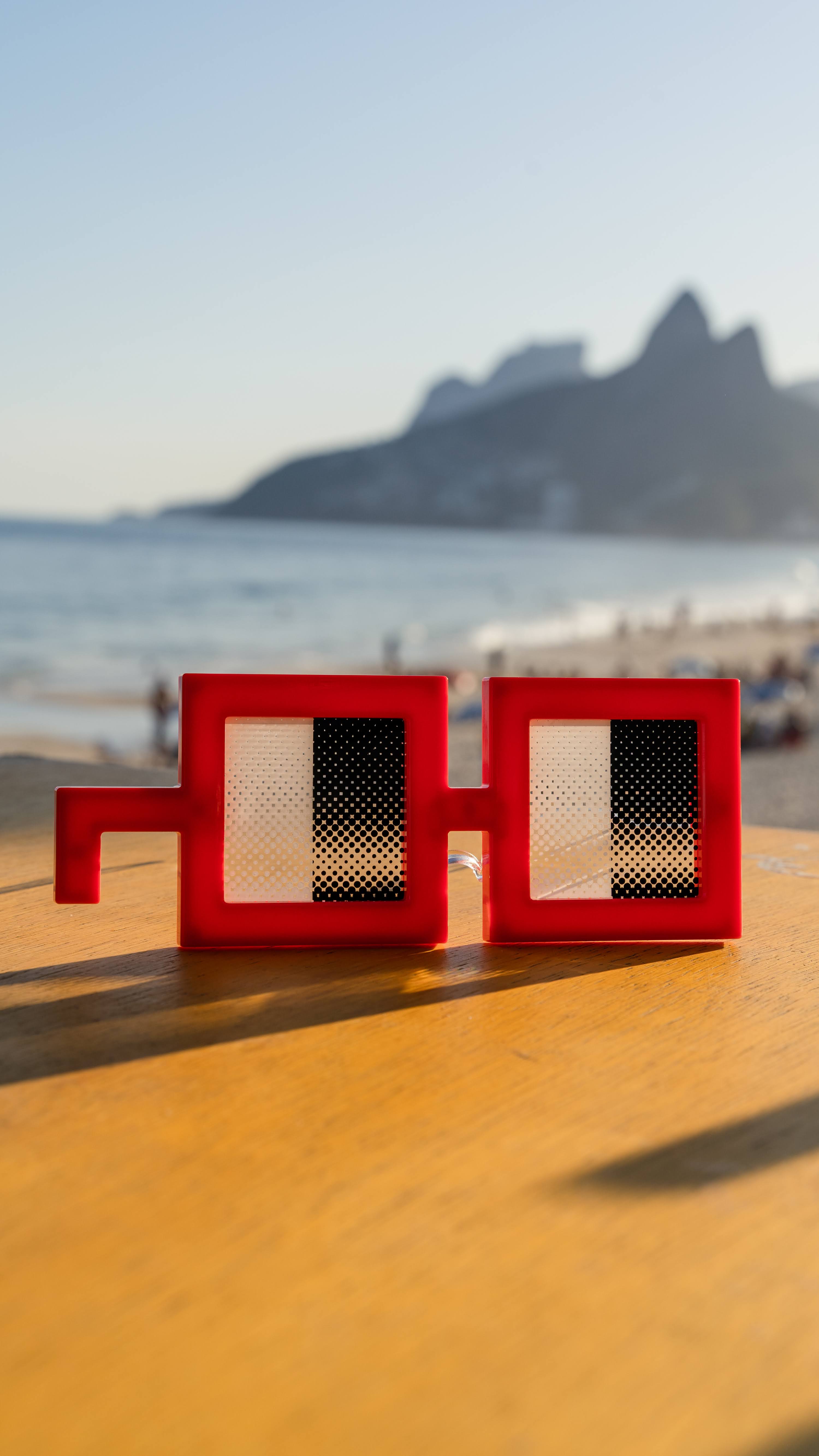

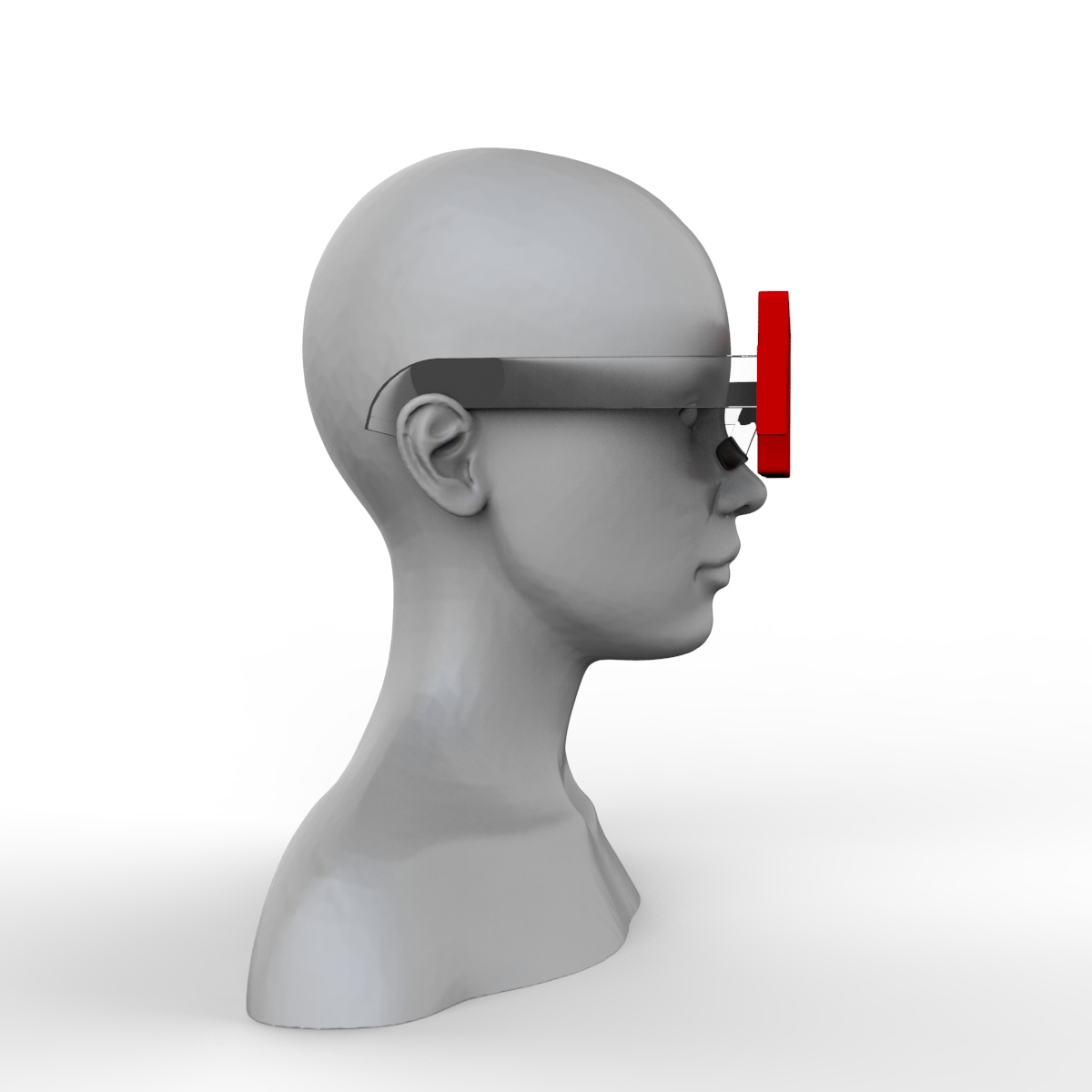

mit | [] | false | noggles on Stable Diffusion This is the `<noggles>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). Here is the new concept you will be able to use as an `object`: | a801431f9fda078e7925e294c8141f9d |

mit | ['generated_from_trainer'] | false | xlm-roberta-base-jm-finetuned-panx-all_hub This model is a fine-tuned version of [xlm-roberta-base](https://huggingface.co/xlm-roberta-base) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.1725 - F1: 0.8551 | 4127f088a9a4ef742a709049543c7e08 |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | F1 | |:-------------:|:-----:|:----:|:---------------:|:------:| | 0.3036 | 1.0 | 835 | 0.1925 | 0.8094 | | 0.1537 | 2.0 | 1670 | 0.1792 | 0.8392 | | 0.0995 | 3.0 | 2505 | 0.1725 | 0.8551 | | bf895349897642bd894bbe36a22d738e |

apache-2.0 | ['generated_from_trainer'] | false | bert-mini-mlm-finetuned-emotion This model is a fine-tuned version of [google/bert_uncased_L-4_H-256_A-4](https://huggingface.co/google/bert_uncased_L-4_H-256_A-4) on the None dataset. It achieves the following results on the evaluation set: - Loss: 3.0041 | 5d9e0723d60342a707e99bff747a4b22 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:------:|:----:|:---------------:| | 3.3034 | 22.73 | 500 | 3.0423 | | 3.0612 | 45.45 | 1000 | 3.0242 | | 2.9507 | 68.18 | 1500 | 2.9806 | | 2.8609 | 90.91 | 2000 | 3.0442 | | 2.7887 | 113.64 | 2500 | 3.0179 | | 2.7104 | 136.36 | 3000 | 3.0041 | | ee6c7f70b1bbecce96943590f1706844 |

apache-2.0 | ['vision', 'maxim', 'image-to-image'] | false | MAXIM pre-trained on RESIDE-Indoor for image dehazing MAXIM model pre-trained for image dehazing. It was introduced in the paper [MAXIM: Multi-Axis MLP for Image Processing](https://arxiv.org/abs/2201.02973) by Zhengzhong Tu, Hossein Talebi, Han Zhang, Feng Yang, Peyman Milanfar, Alan Bovik, Yinxiao Li and first released in [this repository](https://github.com/google-research/maxim). Disclaimer: The team releasing MAXIM did not write a model card for this model so this model card has been written by the Hugging Face team. | 4f0456d03a7269e869033fed89bfa3e2 |

apache-2.0 | ['vision', 'maxim', 'image-to-image'] | false | Intended uses & limitations You can use the raw model for image dehazing tasks. The model is [officially released in JAX](https://github.com/google-research/maxim). It was ported to TensorFlow in [this repository](https://github.com/sayakpaul/maxim-tf). | 6be28a8e08969e5ba0f0df17858a62ad |

apache-2.0 | ['vision', 'maxim', 'image-to-image'] | false | How to use Here is how to use this model: ```python from huggingface_hub import from_pretrained_keras from PIL import Image import tensorflow as tf import numpy as np import requests url = "https://github.com/sayakpaul/maxim-tf/raw/main/images/Dehazing/input/1440_10.png" image = Image.open(requests.get(url, stream=True).raw) image = np.array(image) image = tf.convert_to_tensor(image) image = tf.image.resize(image, (256, 256)) model = from_pretrained_keras("google/maxim-s2-dehazing-sots-indoor") predictions = model.predict(tf.expand_dims(image, 0)) ``` For a more elaborate prediction pipeline, refer to [this Colab Notebook](https://colab.research.google.com/github/sayakpaul/maxim-tf/blob/main/notebooks/inference-dynamic-resize.ipynb). | 296a71ccb4b7cbcebc6d6fa17bb45135 |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-emotion This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the emotion dataset. It achieves the following results on the evaluation set: - Loss: 0.2086 - Accuracy: 0.9255 - F1: 0.9257 | 69329f20e1aad7f34c2025b8d281f38b |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------:|:------:| | 0.8249 | 1.0 | 250 | 0.3042 | 0.9085 | 0.9068 | | 0.2437 | 2.0 | 500 | 0.2086 | 0.9255 | 0.9257 | | 35f3b98b24b58eb46635852716a2af15 |

apache-2.0 | ['generated_from_trainer'] | false | tiny-mlm-glue-stsb-target-glue-sst2 This model is a fine-tuned version of [muhtasham/tiny-mlm-glue-stsb](https://huggingface.co/muhtasham/tiny-mlm-glue-stsb) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.5105 - Accuracy: 0.7970 | 1ed36c062bfbf1dac501fd2112b3c7ff |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.5917 | 0.24 | 500 | 0.4991 | 0.7649 | | 0.4448 | 0.48 | 1000 | 0.4693 | 0.7787 | | 0.3934 | 0.71 | 1500 | 0.4471 | 0.7970 | | 0.3733 | 0.95 | 2000 | 0.4623 | 0.7913 | | 0.3336 | 1.19 | 2500 | 0.4510 | 0.8005 | | 0.3146 | 1.43 | 3000 | 0.4510 | 0.8062 | | 0.2976 | 1.66 | 3500 | 0.4523 | 0.8028 | | 0.2912 | 1.9 | 4000 | 0.4576 | 0.8062 | | 0.272 | 2.14 | 4500 | 0.5105 | 0.7970 | | 6220e1bd57f0f03887f23dea4d5ee03b |

mit | ['exbert'] | false | CXR-BERT-general [CXR-BERT](https://arxiv.org/abs/2204.09817) is a chest X-ray (CXR) domain-specific language model that makes use of an improved vocabulary, novel pretraining procedure, weight regularization, and text augmentations. The resulting model demonstrates improved performance on radiology natural language inference, radiology masked language model token prediction, and downstream vision-language processing tasks such as zero-shot phrase grounding and image classification. First, we pretrain **CXR-BERT-general** from a randomly initialized BERT model via Masked Language Modeling (MLM) on abstracts from [PubMed](https://pubmed.ncbi.nlm.nih.gov/) and clinical notes from the publicly available [MIMIC-III](https://physionet.org/content/mimiciii/1.4/) and [MIMIC-CXR](https://physionet.org/content/mimic-cxr/). In that regard, the general model is expected to be applicable for research in clinical domains other than chest radiology through domain-specific fine-tuning. **CXR-BERT-specialized** is continually pretrained from CXR-BERT-general to further specialize in the chest X-ray domain. At the final stage, CXR-BERT is trained in a multi-modal contrastive learning framework, similar to the [CLIP](https://arxiv.org/abs/2103.00020) framework. The latent representation of the [CLS] token is used to align text/image embeddings. | 36f78e5bf57ee1faaf8ca8062866f78e |

mit | ['exbert'] | false | Further information Please refer to the corresponding paper, ["Making the Most of Text Semantics to Improve Biomedical Vision-Language Processing", ECCV'22](https://arxiv.org/abs/2204.09817) for additional details on the model training and evaluation. For additional inference pipelines with CXR-BERT, please refer to the [HI-ML GitHub](https://aka.ms/biovil-code) repository. The associated source files will soon be accessible through this link. | bd23f3bc1023c473dc251a8ff0961744 |

apache-2.0 | ['summarization', 'generated_from_trainer'] | false | t5-small-finetuned-cnn-news This model is a fine-tuned version of [t5-small](https://huggingface.co/t5-small) on the cnn_dailymail dataset. It achieves the following results on the evaluation set: - Loss: 1.8412 - Rouge1: 24.7231 - Rouge2: 12.292 - Rougel: 20.5347 - Rougelsum: 23.4668 | 3296dad2fc3347d8519d025cacd97b14 |

apache-2.0 | ['summarization', 'generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.00056 - train_batch_size: 8 - eval_batch_size: 8 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 5 | 2a58eb6ccb6c7da149e2275a60b67133 |

apache-2.0 | ['summarization', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | |:-------------:|:-----:|:----:|:---------------:|:-------:|:-------:|:-------:|:---------:| | 2.0318 | 1.0 | 718 | 1.8028 | 24.5415 | 12.0907 | 20.5343 | 23.3386 | | 1.8307 | 2.0 | 1436 | 1.8028 | 24.0965 | 11.6367 | 20.2078 | 22.8138 | | 1.6881 | 3.0 | 2154 | 1.8136 | 25.0822 | 12.6509 | 20.9523 | 23.8303 | | 1.5778 | 4.0 | 2872 | 1.8269 | 24.4271 | 11.8443 | 20.2281 | 23.0941 | | 1.501 | 5.0 | 3590 | 1.8412 | 24.7231 | 12.292 | 20.5347 | 23.4668 | | 6f7821ef06071eb23e5313e5d94ba869 |

mit | ['generated_from_trainer'] | false | compassionate_hypatia This model was trained from scratch on the tomekkorbak/detoxify-pile-chunk3-0-50000, the tomekkorbak/detoxify-pile-chunk3-50000-100000, the tomekkorbak/detoxify-pile-chunk3-100000-150000, the tomekkorbak/detoxify-pile-chunk3-150000-200000, the tomekkorbak/detoxify-pile-chunk3-200000-250000, the tomekkorbak/detoxify-pile-chunk3-250000-300000, the tomekkorbak/detoxify-pile-chunk3-300000-350000, the tomekkorbak/detoxify-pile-chunk3-350000-400000, the tomekkorbak/detoxify-pile-chunk3-400000-450000, the tomekkorbak/detoxify-pile-chunk3-450000-500000, the tomekkorbak/detoxify-pile-chunk3-500000-550000, the tomekkorbak/detoxify-pile-chunk3-550000-600000, the tomekkorbak/detoxify-pile-chunk3-600000-650000, the tomekkorbak/detoxify-pile-chunk3-650000-700000, the tomekkorbak/detoxify-pile-chunk3-700000-750000, the tomekkorbak/detoxify-pile-chunk3-750000-800000, the tomekkorbak/detoxify-pile-chunk3-800000-850000, the tomekkorbak/detoxify-pile-chunk3-850000-900000, the tomekkorbak/detoxify-pile-chunk3-900000-950000, the tomekkorbak/detoxify-pile-chunk3-950000-1000000, the tomekkorbak/detoxify-pile-chunk3-1000000-1050000, the tomekkorbak/detoxify-pile-chunk3-1050000-1100000, the tomekkorbak/detoxify-pile-chunk3-1100000-1150000, the tomekkorbak/detoxify-pile-chunk3-1150000-1200000, the tomekkorbak/detoxify-pile-chunk3-1200000-1250000, the tomekkorbak/detoxify-pile-chunk3-1250000-1300000, the tomekkorbak/detoxify-pile-chunk3-1300000-1350000, the tomekkorbak/detoxify-pile-chunk3-1350000-1400000, the tomekkorbak/detoxify-pile-chunk3-1400000-1450000, the tomekkorbak/detoxify-pile-chunk3-1450000-1500000, the tomekkorbak/detoxify-pile-chunk3-1500000-1550000, the tomekkorbak/detoxify-pile-chunk3-1550000-1600000, the tomekkorbak/detoxify-pile-chunk3-1600000-1650000, the tomekkorbak/detoxify-pile-chunk3-1650000-1700000, the tomekkorbak/detoxify-pile-chunk3-1700000-1750000, the 
tomekkorbak/detoxify-pile-chunk3-1750000-1800000, the tomekkorbak/detoxify-pile-chunk3-1800000-1850000, the tomekkorbak/detoxify-pile-chunk3-1850000-1900000 and the tomekkorbak/detoxify-pile-chunk3-1900000-1950000 datasets. | 33bde23a9ea1e6a31149f9d70ec1877d |

mit | ['generated_from_trainer'] | false | Full config {'dataset': {'datasets': ['tomekkorbak/detoxify-pile-chunk3-0-50000', 'tomekkorbak/detoxify-pile-chunk3-50000-100000', 'tomekkorbak/detoxify-pile-chunk3-100000-150000', 'tomekkorbak/detoxify-pile-chunk3-150000-200000', 'tomekkorbak/detoxify-pile-chunk3-200000-250000', 'tomekkorbak/detoxify-pile-chunk3-250000-300000', 'tomekkorbak/detoxify-pile-chunk3-300000-350000', 'tomekkorbak/detoxify-pile-chunk3-350000-400000', 'tomekkorbak/detoxify-pile-chunk3-400000-450000', 'tomekkorbak/detoxify-pile-chunk3-450000-500000', 'tomekkorbak/detoxify-pile-chunk3-500000-550000', 'tomekkorbak/detoxify-pile-chunk3-550000-600000', 'tomekkorbak/detoxify-pile-chunk3-600000-650000', 'tomekkorbak/detoxify-pile-chunk3-650000-700000', 'tomekkorbak/detoxify-pile-chunk3-700000-750000', 'tomekkorbak/detoxify-pile-chunk3-750000-800000', 'tomekkorbak/detoxify-pile-chunk3-800000-850000', 'tomekkorbak/detoxify-pile-chunk3-850000-900000', 'tomekkorbak/detoxify-pile-chunk3-900000-950000', 'tomekkorbak/detoxify-pile-chunk3-950000-1000000', 'tomekkorbak/detoxify-pile-chunk3-1000000-1050000', 'tomekkorbak/detoxify-pile-chunk3-1050000-1100000', 'tomekkorbak/detoxify-pile-chunk3-1100000-1150000', 'tomekkorbak/detoxify-pile-chunk3-1150000-1200000', 'tomekkorbak/detoxify-pile-chunk3-1200000-1250000', 'tomekkorbak/detoxify-pile-chunk3-1250000-1300000', 'tomekkorbak/detoxify-pile-chunk3-1300000-1350000', 'tomekkorbak/detoxify-pile-chunk3-1350000-1400000', 'tomekkorbak/detoxify-pile-chunk3-1400000-1450000', 'tomekkorbak/detoxify-pile-chunk3-1450000-1500000', 'tomekkorbak/detoxify-pile-chunk3-1500000-1550000', 'tomekkorbak/detoxify-pile-chunk3-1550000-1600000', 'tomekkorbak/detoxify-pile-chunk3-1600000-1650000', 'tomekkorbak/detoxify-pile-chunk3-1650000-1700000', 'tomekkorbak/detoxify-pile-chunk3-1700000-1750000', 'tomekkorbak/detoxify-pile-chunk3-1750000-1800000', 'tomekkorbak/detoxify-pile-chunk3-1800000-1850000', 
'tomekkorbak/detoxify-pile-chunk3-1850000-1900000', 'tomekkorbak/detoxify-pile-chunk3-1900000-1950000'], 'filter_threshold': 0.00065, 'is_split_by_sentences': True}, 'generation': {'force_call_on': [25354], 'metrics_configs': [{}, {'n': 1}, {'n': 2}, {'n': 5}], 'scenario_configs': [{'generate_kwargs': {'do_sample': True, 'max_length': 128, 'min_length': 10, 'temperature': 0.7, 'top_k': 0, 'top_p': 0.9}, 'name': 'unconditional', 'num_samples': 2048}, {'generate_kwargs': {'do_sample': True, 'max_length': 128, 'min_length': 10, 'temperature': 0.7, 'top_k': 0, 'top_p': 0.9}, 'name': 'challenging_rtp', 'num_samples': 2048, 'prompts_path': 'resources/challenging_rtp.jsonl'}], 'scorer_config': {'device': 'cuda:0'}}, 'kl_gpt3_callback': {'force_call_on': [25354], 'max_tokens': 64, 'num_samples': 4096}, 'model': {'from_scratch': True, 'gpt2_config_kwargs': {'reorder_and_upcast_attn': True, 'scale_attn_by': True}, 'path_or_name': 'gpt2'}, 'objective': {'name': 'MLE'}, 'tokenizer': {'path_or_name': 'gpt2'}, 'training': {'dataloader_num_workers': 0, 'effective_batch_size': 64, 'evaluation_strategy': 'no', 'fp16': True, 'hub_model_id': 'compassionate_hypatia', 'hub_strategy': 'all_checkpoints', 'learning_rate': 0.0005, 'logging_first_step': True, 'logging_steps': 1, 'num_tokens': 3300000000, 'output_dir': 'training_output104340', 'per_device_train_batch_size': 16, 'push_to_hub': True, 'remove_unused_columns': False, 'save_steps': 25354, 'save_strategy': 'steps', 'seed': 42, 'warmup_ratio': 0.01, 'weight_decay': 0.1}} | 6108c53dde75a0187d9ab10fb90709f5 |

creativeml-openrail-m | ['text-to-image'] | false | Zlikwid Dreambooth model trained by Zlikwid with [TheLastBen's fast-DreamBooth](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb) notebook. Test the concept via the A1111 Colab [fast-Colab-A1111](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast_stable_diffusion_AUTOMATIC1111.ipynb). Or you can run your new concept via `diffusers` with the [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb). Sample pictures of this concept: 20221120210939 | 587856bb22cdc1ee685d5a9ac24be6f1 |

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Whisper Medium Hindi- Drishti Sharma This model is a fine-tuned version of [openai/whisper-medium](https://huggingface.co/openai/whisper-medium) on the Common Voice 11.0 dataset. It achieves the following results on the evaluation set: - Loss: 0.3655 - Wer: 11.7663 | d50b137a31256446806f97e23232671d |

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 1e-05 - train_batch_size: 8 - eval_batch_size: 8 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 100 - training_steps: 10000 - mixed_precision_training: Native AMP | 89eb27f4fa3413ff004ad96e2b8aa1ae |

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:-----:|:---------------:|:-------:| | 0.0 | 12.22 | 10000 | 0.3655 | 11.7663 | | 005c166a918c0cc35e0a5ac4df4efc80 |

apache-2.0 | ['minds14', 'google/xtreme_s', 'generated_from_trainer'] | false | xtreme_s_xlsr_300m_minds14 This model is a fine-tuned version of [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) on the GOOGLE/XTREME_S - MINDS14.ALL dataset. It achieves the following results on the evaluation set: - Accuracy: 0.9033 - Accuracy Cs-cz: 0.9164 - Accuracy De-de: 0.9477 - Accuracy En-au: 0.9235 - Accuracy En-gb: 0.9324 - Accuracy En-us: 0.9326 - Accuracy Es-es: 0.9177 - Accuracy Fr-fr: 0.9444 - Accuracy It-it: 0.9167 - Accuracy Ko-kr: 0.8649 - Accuracy Nl-nl: 0.9450 - Accuracy Pl-pl: 0.9146 - Accuracy Pt-pt: 0.8940 - Accuracy Ru-ru: 0.8667 - Accuracy Zh-cn: 0.7291 - F1: 0.9015 - F1 Cs-cz: 0.9154 - F1 De-de: 0.9467 - F1 En-au: 0.9199 - F1 En-gb: 0.9334 - F1 En-us: 0.9308 - F1 Es-es: 0.9158 - F1 Fr-fr: 0.9436 - F1 It-it: 0.9135 - F1 Ko-kr: 0.8642 - F1 Nl-nl: 0.9440 - F1 Pl-pl: 0.9159 - F1 Pt-pt: 0.8883 - F1 Ru-ru: 0.8646 - F1 Zh-cn: 0.7249 - Loss: 0.4119 - Loss Cs-cz: 0.3790 - Loss De-de: 0.2649 - Loss En-au: 0.3459 - Loss En-gb: 0.2853 - Loss En-us: 0.2203 - Loss Es-es: 0.2731 - Loss Fr-fr: 0.1909 - Loss It-it: 0.3520 - Loss Ko-kr: 0.5431 - Loss Nl-nl: 0.2515 - Loss Pl-pl: 0.4113 - Loss Pt-pt: 0.4798 - Loss Ru-ru: 0.6470 - Loss Zh-cn: 1.1216 - Predict Samples: 4086 | 327baa6da86b7fcb4afe9951600efb1e |

apache-2.0 | ['minds14', 'google/xtreme_s', 'generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0003 - train_batch_size: 32 - eval_batch_size: 8 - seed: 42 - distributed_type: multi-GPU - num_devices: 2 - total_train_batch_size: 64 - total_eval_batch_size: 16 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 1500 - num_epochs: 50.0 - mixed_precision_training: Native AMP | f2c5ca8e2a6fa02856a0380e8064dcd4 |
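The total batch sizes listed above follow from the per-device sizes and the device count: 32 × 2 = 64 for training and 8 × 2 = 16 for evaluation. A short sketch of that arithmetic follows. The function name is hypothetical, and the `gradient_accumulation_steps` argument is an assumption: the card lists no accumulation, so it is left at 1 here.

```python
def total_batch_size(per_device: int, num_devices: int,
                     gradient_accumulation_steps: int = 1) -> int:
    """Examples consumed per optimizer step across all devices."""
    return per_device * num_devices * gradient_accumulation_steps


print(total_batch_size(32, 2))  # training: 64, matching total_train_batch_size
print(total_batch_size(8, 2))   # evaluation: 16, matching total_eval_batch_size
```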
