license stringlengths 2 30 | tags stringlengths 2 513 | is_nc bool 1 class | readme_section stringlengths 201 597k | hash stringlengths 32 32 |

|---|---|---|---|---|

apache-2.0 | ['generated_from_keras_callback'] | false | Training results | Train Loss | Validation Loss | Epoch | |:----------:|:---------------:|:-----:| | 2.0219 | 1.9405 | 0 | | 2.0081 | 1.8806 | 1 | | 1.9741 | 1.8750 | 2 | | 1.9575 | 1.8781 | 3 | | 1.9444 | 1.8302 | 4 | | 1.9151 | 1.8887 | 5 | | 275c842aebdb48c596b1068852fe0bd9 |

apache-2.0 | ['audio', 'speech', 'wav2vec2', 'pt', 'portuguese-speech-corpus', 'automatic-speech-recognition', 'hf-asr-leaderboard', 'speech', 'PyTorch'] | false | Use this model ```python from transformers import AutoTokenizer, Wav2Vec2ForCTC tokenizer = AutoTokenizer.from_pretrained("Edresson/wav2vec2-large-xlsr-coraa-portuguese") model = Wav2Vec2ForCTC.from_pretrained("Edresson/wav2vec2-large-xlsr-coraa-portuguese") ``` | 7bcc2f712961f87a8a1676686050a52d |

apache-2.0 | ['audio', 'speech', 'wav2vec2', 'pt', 'portuguese-speech-corpus', 'automatic-speech-recognition', 'hf-asr-leaderboard', 'speech', 'PyTorch'] | false | Example test with Common Voice Dataset ```python dataset = load_dataset("common_voice", "pt", split="test", data_dir="./cv-corpus-6.1-2020-12-11") resampler = torchaudio.transforms.Resample(orig_freq=48_000, new_freq=16_000) def map_to_array(batch): speech, _ = torchaudio.load(batch["path"]) batch["speech"] = resampler.forward(speech.squeeze(0)).numpy() batch["sampling_rate"] = resampler.new_freq batch["sentence"] = re.sub(chars_to_ignore_regex, '', batch["sentence"]).lower().replace("’", "'") return batch ``` ```python ds = dataset.map(map_to_array) result = ds.map(map_to_pred, batched=True, batch_size=1, remove_columns=list(ds.features.keys())) print(wer.compute(predictions=result["predicted"], references=result["target"])) ``` | 29312d4911093814be84fb44111b6da3 |

openrail | [] | false | это файнтюн sberai ruGPT3 small (125 млн параметров) на всех оригинальных нуждиках (2 часа транскрибированные через openai whisper medium). размер блока при файнтюне 1024, 25 эпох. все скрипты по инференсу модели тут https://github.com/ai-forever/ru-gpts, через transformers вполне себе работает на 4 гб видеопамяти, на 2 думаю тоже заработает. -как запустить через transformers? запускаем строки ниже в jupyterе from transformers import pipeline, set_seed set_seed(32) generator = pipeline('text-generation', model="4eJIoBek/ruGPT3_small_nujdiki_fithah", do_sample=True, max_length=350) generator("Александр Сергеевич Пушкин известен также благодаря своим сказкам, которые включают в себя: ") и всё работает и вообще нихуёво | 44d6f519abd4e22cd4f841cfcc9a19cc |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-amazon-shoe-reviews_ubuntu This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.9573 - Accuracy: 0.5726 - F1: [0.62998761 0.45096564 0.49037037 0.55640244 0.73547094] - Precision: [0.62334478 0.45704118 0.47534706 0.5858748 0.72102161] - Recall: [0.63677355 0.4450495 0.5063743 0.52975327 0.75051125] | 9532af97835175e4b4f4394a33ddb300 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 5e-05 - train_batch_size: 32 - eval_batch_size: 64 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 1 | 7d42ffebae4776670e6612474d9be61e |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | Precision | Recall | |:-------------:|:-----:|:----:|:---------------:|:--------:|:--------------------------------------------------------:|:--------------------------------------------------------:|:--------------------------------------------------------:| | 0.9617 | 1.0 | 2813 | 0.9573 | 0.5726 | [0.62998761 0.45096564 0.49037037 0.55640244 0.73547094] | [0.62334478 0.45704118 0.47534706 0.5858748 0.72102161] | [0.63677355 0.4450495 0.5063743 0.52975327 0.75051125] | | 8c22e4334d99a631f6f3eb9172192778 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Evaluation on Common Voice FR Test The script used for training and evaluation can be found here: https://github.com/irebai/wav2vec2 ```python import torch import torchaudio from datasets import load_dataset, load_metric from transformers import ( Wav2Vec2ForCTC, Wav2Vec2Processor, ) import re model_name = "Ilyes/wav2vec2-large-xlsr-53-french" device = "cpu" | 61edfdf45a4c53974e677adb344087c2 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | "cuda" model = Wav2Vec2ForCTC.from_pretrained(model_name).to(device) processor = Wav2Vec2Processor.from_pretrained(model_name) ds = load_dataset("common_voice", "fr", split="test", cache_dir="./data/fr") chars_to_ignore_regex = '[\,\?\.\!\;\:\"\“\%\‘\”\�\‘\’\’\’\‘\…\·\!\ǃ\?\«\‹\»\›“\”\\ʿ\ʾ\„\∞\\|\.\,\;\:\*\—\–\─\―\_\/\:\ː\;\,\=\«\»\→]' def map_to_array(batch): speech, _ = torchaudio.load(batch["path"]) batch["speech"] = resampler.forward(speech.squeeze(0)).numpy() batch["sampling_rate"] = resampler.new_freq batch["sentence"] = re.sub(chars_to_ignore_regex, '', batch["sentence"]).lower().replace("’", "'") return batch resampler = torchaudio.transforms.Resample(48_000, 16_000) ds = ds.map(map_to_array) def map_to_pred(batch): features = processor(batch["speech"], sampling_rate=batch["sampling_rate"][0], padding=True, return_tensors="pt") input_values = features.input_values.to(device) attention_mask = features.attention_mask.to(device) with torch.no_grad(): logits = model(input_values, attention_mask=attention_mask).logits pred_ids = torch.argmax(logits, dim=-1) batch["predicted"] = processor.batch_decode(pred_ids) batch["target"] = batch["sentence"] return batch result = ds.map(map_to_pred, batched=True, batch_size=16, remove_columns=list(ds.features.keys())) wer = load_metric("wer") print(wer.compute(predictions=result["predicted"], references=result["target"])) ``` | e331cb0132eef058ee18e5b3ff52bdb0 |

apache-2.0 | ['generated_from_trainer'] | false | wav2vec2-large-xls-r-300m-turkish-colab This model is a fine-tuned version of [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) on the common_voice dataset. It achieves the following results on the evaluation set: - Loss: 0.3859 - Wer: 0.4680 | be6efc95f64d8a9a1caa4a67354a3eb3 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:------:| | 3.8707 | 3.67 | 400 | 0.6588 | 0.7110 | | 0.3955 | 7.34 | 800 | 0.3859 | 0.4680 | | 45594558d6803575eee3bba2d34f4f42 |

mit | ['generated_from_trainer'] | false | roberta_large-chunk-conll2003_0818_v0 This model is a fine-tuned version of [roberta-large](https://huggingface.co/roberta-large) on the conll2003 dataset. It achieves the following results on the evaluation set: - Loss: 0.1566 - Precision: 0.9016 - Recall: 0.9295 - F1: 0.9154 - Accuracy: 0.9784 | 2bb8cb2d6ccde68e92503b34500409b0 |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:| | 0.2179 | 1.0 | 878 | 0.0527 | 0.9210 | 0.9472 | 0.9339 | 0.9875 | | 0.0434 | 2.0 | 1756 | 0.0455 | 0.9366 | 0.9616 | 0.9489 | 0.9899 | | 5a59ebdad1cd822c53b6b39ebc4f491a |

apache-2.0 | ['automatic-speech-recognition', 'id'] | false | exp_w2v2t_id_vp-fr_s335 Fine-tuned [facebook/wav2vec2-large-fr-voxpopuli](https://huggingface.co/facebook/wav2vec2-large-fr-voxpopuli) for speech recognition using the train split of [Common Voice 7.0 (id)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | 016b929381bd1da7d317726cc2183f12 |

apache-2.0 | ['tabular-regression', 'baseline-trainer'] | false | Baseline Model trained on tips9y0jvt5q to apply regression on tip **Metrics of the best model:** r2 0.415240 neg_mean_squared_error -1.098792 Name: Ridge(alpha=10), dtype: float64 **See model plot below:** <style> | f66d391ac592b3b4824874377728e888 |

apache-2.0 | ['tabular-regression', 'baseline-trainer'] | false | x27;,EasyPreprocessor(types= continuous dirty_float low_card_int ... date free_string useless total_bill True False False ... False False False sex False False False ... False False False smoker False False False ... False False False day False False False ... False False False time False False False ... False False False size False False False ... False False False[6 rows x 7 columns])),(& | 1a8ab6d25b565537a744634a92c6b2f9 |

apache-2.0 | ['tabular-regression', 'baseline-trainer'] | false | x27;, Ridge(alpha=10))])</pre><b>In a Jupyter environment, please rerun this cell to show the HTML representation or trust the notebook. <br />On GitHub, the HTML representation is unable to render, please try loading this page with nbviewer.org.</b></div><div class="sk-container" hidden><div class="sk-item sk-dashed-wrapped"><div class="sk-label-container"><div class="sk-label sk-toggleable"><input class="sk-toggleable__control sk-hidden--visually" id="sk-estimator-id-1" type="checkbox" ><label for="sk-estimator-id-1" class="sk-toggleable__label sk-toggleable__label-arrow">Pipeline</label><div class="sk-toggleable__content"><pre>Pipeline(steps=[(& | 2335a71e3fdd28ddd38e72479b722aa5 |

apache-2.0 | ['tabular-regression', 'baseline-trainer'] | false | x27;, Ridge(alpha=10))])</pre></div></div></div><div class="sk-serial"><div class="sk-item"><div class="sk-estimator sk-toggleable"><input class="sk-toggleable__control sk-hidden--visually" id="sk-estimator-id-2" type="checkbox" ><label for="sk-estimator-id-2" class="sk-toggleable__label sk-toggleable__label-arrow">EasyPreprocessor</label><div class="sk-toggleable__content"><pre>EasyPreprocessor(types= continuous dirty_float low_card_int ... date free_string useless total_bill True False False ... False False False sex False False False ... False False False smoker False False False ... False False False day False False False ... False False False time False False False ... False False False size False False False ... False False False[6 rows x 7 columns])</pre></div></div></div><div class="sk-item"><div class="sk-estimator sk-toggleable"><input class="sk-toggleable__control sk-hidden--visually" id="sk-estimator-id-3" type="checkbox" ><label for="sk-estimator-id-3" class="sk-toggleable__label sk-toggleable__label-arrow">Ridge</label><div class="sk-toggleable__content"><pre>Ridge(alpha=10)</pre></div></div></div></div></div></div></div> **Disclaimer:** This model is trained with dabl library as a baseline, for better results, use [AutoTrain](https://huggingface.co/autotrain). **Logs of training** including the models tried in the process can be found in logs.txt | 310b8156740219a53dc806412fb3f5a6 |

gpl-3.0 | ['object-detection', 'computer-vision', 'yolov8', 'yolov5'] | false | Yolov8 Inference ```python from ultralytics import YOLO model = YOLO('kadirnar/yolov8n-v8.0') model.conf = conf_threshold model.iou = iou_threshold prediction = model.predict(image, imgsz=image_size, show=False, save=False) ``` | 19ef6105f45c3a3b236542dfbce3ed65 |

apache-2.0 | ['generated_from_trainer'] | false | IA_Trabalho01 This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.2717 - Accuracy: 0.8990 - F1: 0.8987 | 9edeb477afbc588b5604c01895903743 |

creativeml-openrail-m | ['stable-diffusion', 'stable-diffusion-diffusers', 'text-to-image', 'diffusers'] | false | Plat Diffusion v1.3.1 Plat Diffusion v1.3.1 is a latent model fine-tuned on [Waifu Diffusion v1.4 Anime Epoch 2](https://huggingface.co/hakurei/waifu-diffusion-v1-4) with images from niji・journey and some generative AI. `.safetensors` file is [here](https://huggingface.co/p1atdev/pd-archive/tree/main). [kl-f8-anime2.ckpt](https://huggingface.co/hakurei/waifu-diffusion-v1-4/blob/main/vae/kl-f8-anime2.ckpt) is recommended for VAE.  | 9269b885de8a7af33116b16175f8c032 |

creativeml-openrail-m | ['stable-diffusion', 'stable-diffusion-diffusers', 'text-to-image', 'diffusers'] | false | Recomended Negative Prompt ``` nsfw, worst quality, low quality, medium quality, deleted, lowres, bad anatomy, bad hands, text, error, missing fingers, extra digits, fewer digits, cropped, jpeg artifacts, signature, watermark, username, blurry ``` | db293514120171dc218a9966c1b5f193 |

creativeml-openrail-m | ['stable-diffusion', 'stable-diffusion-diffusers', 'text-to-image', 'diffusers'] | false | Samples  ``` masterpiece, best quality, high quality, 1girl, solo, brown hair, green eyes, looking at viewer, autumn, cumulonimbus cloud, lighting, blue sky, autumn leaves, garden, ultra detailed illustration, intricate detailed Negative prompt: nsfw, worst quality, low quality, medium quality, deleted, lowres, bad anatomy, bad hands, text, error, missing fingers, extra digit, fewer digits, cropped, jpeg artifacts, signature, watermark, username, blurry, Steps: 20, Sampler: DPM++ 2M Karras, CFG scale: 7 ``` --- (This model is not good at male, sorry)  ``` masterpiece, best quality, high quality, 1boy, man, male, brown hair, green eyes, looking at viewer, autumn, cumulonimbus cloud, lighting, blue sky, autumn leaves, garden, ultra detailed illustration, intricate detailed Negative prompt: nsfw, worst quality, low quality, medium quality, deleted, lowres, bad anatomy, bad hands, text, error, missing fingers, extra digit, fewer digits, cropped, jpeg artifacts, signature, watermark, username, blurry, Steps: 20, Sampler: DPM++ 2M Karras, CFG scale: 7 ``` ---  ``` masterpiece, best quality, 1girl, pirate, gloves, parrot, bird, looking at viewer, Negative prompt: nsfw, worst quality, low quality, medium quality, deleted, lowres, bad anatomy, bad hands, text, error, missing fingers, extra digit, fewer digits, cropped, jpeg artifacts, signature, watermark, username, blurry, 3d Steps: 20, Sampler: DPM++ 2M Karras, CFG scale: 7 ``` ---  ``` masterpiece, best quality, scenery, starry sky, mountains, no humans Negative prompt: nsfw, worst quality, low quality, medium quality, deleted, lowres, bad anatomy, bad hands, text, error, missing fingers, extra digit, fewer digits, cropped, jpeg artifacts, signature, watermark, username, blurry Steps: 20, Sampler: DPM++ 2M Karras, CFG scale: 7 ``` | 4687fe29898567418f13850cbf74592e |

creativeml-openrail-m | ['stable-diffusion', 'stable-diffusion-diffusers', 'text-to-image', 'diffusers'] | false | not working with float16 pipe = pipe.to("cuda") prompt = "masterpiece, best quality, 1girl, solo, short hair, looking at viewer, japanese clothes, blue hair, portrait, kimono, bangs, colorful, closed mouth, blue kimono, butterfly, blue eyes, ultra detailed illustration" negative_prompt = "nsfw, worst quality, low quality, medium quality, deleted, lowres, bad anatomy, bad hands, text, error, missing fingers, extra digit, fewer digits, cropped, jpeg artifacts, signature, watermark, username, blurry" image = pipe(prompt, negative_prompt=negative_prompt).images[0] image.save("girl.png") ``` | 7abd2926cc939a57147f63f347f2df56 |

apache-2.0 | ['automatic-speech-recognition', 'pl'] | false | exp_w2v2t_pl_unispeech-sat_s695 Fine-tuned [microsoft/unispeech-sat-large](https://huggingface.co/microsoft/unispeech-sat-large) for speech recognition using the train split of [Common Voice 7.0 (pl)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | f7202fb6e1cc3e44c442b4a060d48593 |

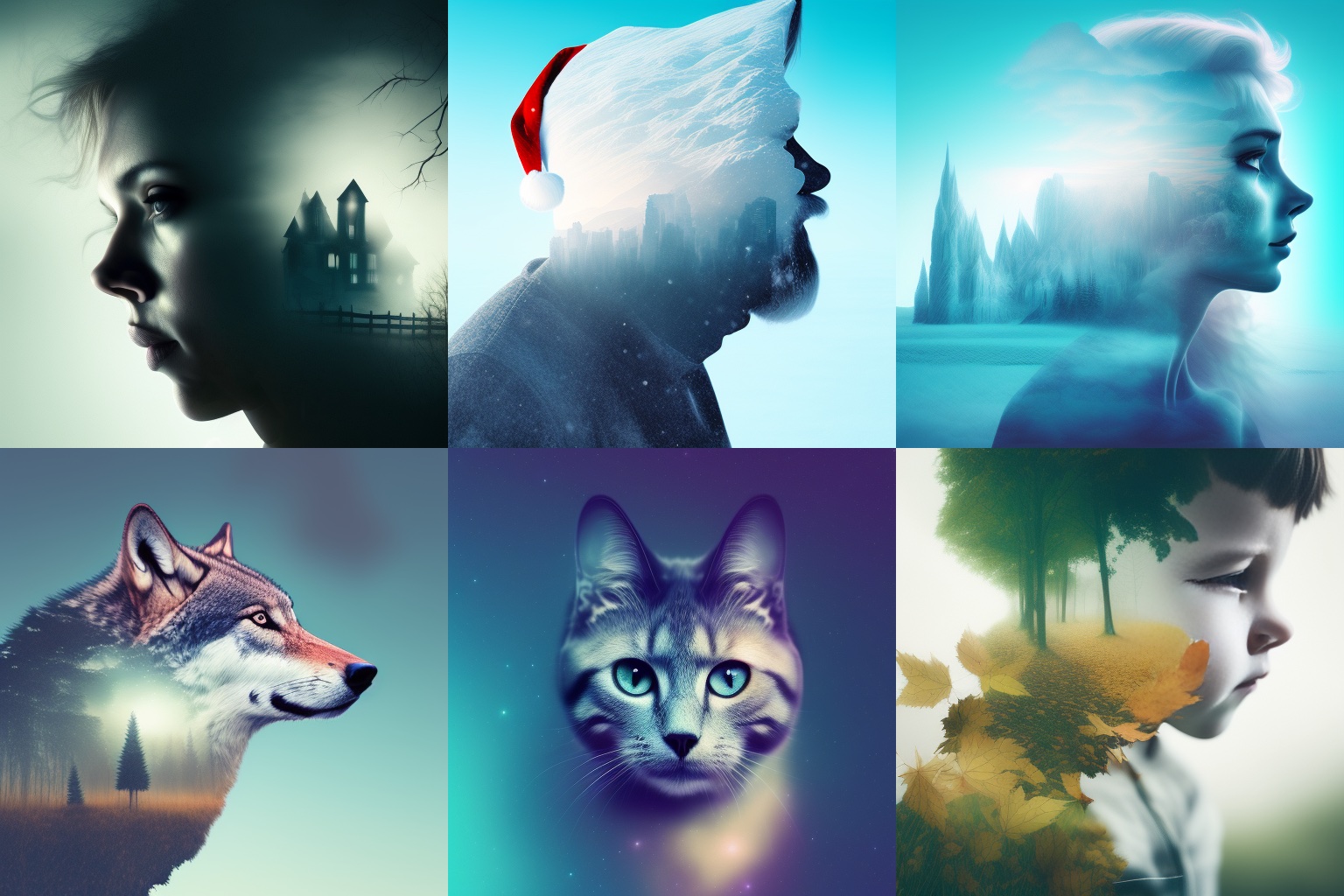

creativeml-openrail-m | ['stable-diffusion', 'text-to-image'] | false | [*Click here to download the latest Double Exposure embedding for SD 2.x in higher resolution*](https://huggingface.co/joachimsallstrom/Double-Exposure-Embedding)! **Double Exposure Diffusion** This is version 2 of the <i>Double Exposure Diffusion</i> model, trained specifically on images of people and a few animals. The model file (Double_Exposure_v2.ckpt) can be downloaded on the **Files** page. You trigger double exposure style images using token: **_dublex style_** or just **_dublex_**. **Example 1:**  | c79992d1cb041b7bc6c207a8c5c282c9 |

creativeml-openrail-m | ['stable-diffusion', 'text-to-image'] | false | Example prompts and settings <i>Galaxy man (image 1):</i><br> **dublex man galaxy**<br> _Steps: 20, Sampler: Euler a, CFG scale: 7, Seed: 3273014177, Size: 512x512_ <i>Emma Stone (image 2):</i><br> **dublex style Emma Stone, galaxy**<br> _Steps: 20, Sampler: Euler a, CFG scale: 7, Seed: 250257155, Size: 512x512_ <i>Frodo (image 6):</i><br> **dublex style young Elijah Wood as (Frodo), portrait, dark nature**<br> _Steps: 20, Sampler: Euler a, CFG scale: 7, Seed: 3717002975, Size: 512x512_ <br> **Example 2:**  | 7c3a745d513fe37ab7dac7880902fb89 |

creativeml-openrail-m | ['stable-diffusion', 'text-to-image'] | false | Example prompts and settings <i>Scarlett Johansson (image 1):</i><br> **dublex Scarlett Johansson, (haunted house), black background**<br> _Steps: 20, Sampler: Euler a, CFG scale: 7, Seed: 3059560186, Size: 512x512_ <i>Frozen Elsa (image 3):</i><br> **dublex style Elsa, ice castle**<br> _Steps: 20, Sampler: Euler a, CFG scale: 7, Seed: 2867934627, Size: 512x512_ <i>Wolf (image 4):</i><br> **dublex style wolf closeup, moon**<br> _Steps: 20, Sampler: Euler a, CFG scale: 7, Seed: 312924946, Size: 512x512_ <br> <p> This model was trained using Shivam's DreamBooth model on Google Colab @ 2000 steps. </p> The previous version 1 of Double Exposure Diffusion is also available in the **Files** section. | a318b42f1c2f1d2eb2e7e62ce6708f53 |

apache-2.0 | ['translation'] | false | opus-mt-sv-tpi * source languages: sv * target languages: tpi * OPUS readme: [sv-tpi](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/sv-tpi/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2020-01-16.zip](https://object.pouta.csc.fi/OPUS-MT-models/sv-tpi/opus-2020-01-16.zip) * test set translations: [opus-2020-01-16.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/sv-tpi/opus-2020-01-16.test.txt) * test set scores: [opus-2020-01-16.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/sv-tpi/opus-2020-01-16.eval.txt) | 7a22b741f58505fedc3ac5b535fd6fb0 |

apache-2.0 | ['national library of spain', 'spanish', 'bne', 'qa', 'question answering'] | false | Model description The **roberta-large-bne-sqac** is a Question Answering (QA) model for the Spanish language fine-tuned from the [roberta-large-bne](https://huggingface.co/PlanTL-GOB-ES/roberta-large-bne) model, a [RoBERTa](https://arxiv.org/abs/1907.11692) large model pre-trained using the largest Spanish corpus known to date, with a total of 570GB of clean and deduplicated text, processed for this work, compiled from the web crawlings performed by the [National Library of Spain (Biblioteca Nacional de España)](http://www.bne.es/en/Inicio/index.html) from 2009 to 2019. | 60c7cd9ca229cb3c113410130492414e |

apache-2.0 | ['national library of spain', 'spanish', 'bne', 'qa', 'question answering'] | false | Intended uses and limitations **roberta-large-bne-sqac** model can be used for extractive question answering. The model is limited by its training dataset and may not generalize well for all use cases. | 79941511ee73a9b27703b8bd4039bd95 |

apache-2.0 | ['national library of spain', 'spanish', 'bne', 'qa', 'question answering'] | false | How to use ```python from transformers import pipeline nlp = pipeline("question-answering", model="PlanTL-GOB-ES/roberta-large-bne-sqac") text = "¿Dónde vivo?" context = "Me llamo Wolfgang y vivo en Berlin" qa_results = nlp(text, context) print(qa_results) ``` | a46f99f20dc8d7b3431a6bf58dc3b806 |

apache-2.0 | ['national library of spain', 'spanish', 'bne', 'qa', 'question answering'] | false | Training procedure The model was trained with a batch size of 16 and a learning rate of 1e-5 for 5 epochs. We then selected the best checkpoint using the downstream task metric in the corresponding development set and then evaluated it on the test set. | 9d41a389579670e0ddf5c4325a32bec8 |

apache-2.0 | ['national library of spain', 'spanish', 'bne', 'qa', 'question answering'] | false | Evaluation results We evaluated the **roberta-large-bne-sqac** on the SQAC test set against standard multilingual and monolingual baselines: | Model | SQAC (F1) | | ------------|:----| | roberta-large-bne-sqac | **82.02** | | roberta-base-bne-sqac | 79.23| | BETO | 79.23 | | mBERT | 75.62 | | BERTIN | 76.78 | | ELECTRA | 73.83 | For more details, check the fine-tuning and evaluation scripts in the official [GitHub repository](https://github.com/PlanTL-GOB-ES/lm-spanish). | 0ba4ac4f0a613a47bf5466ee98e03515 |

apache-2.0 | ['national library of spain', 'spanish', 'bne', 'qa', 'question answering'] | false | Citing information If you use this model, please cite our [paper](http://journal.sepln.org/sepln/ojs/ojs/index.php/pln/article/view/6405): ``` @article{, abstract = {We want to thank the National Library of Spain for such a large effort on the data gathering and the Future of Computing Center, a Barcelona Supercomputing Center and IBM initiative (2020). This work was funded by the Spanish State Secretariat for Digitalization and Artificial Intelligence (SEDIA) within the framework of the Plan-TL.}, author = {Asier Gutiérrez Fandiño and Jordi Armengol Estapé and Marc Pàmies and Joan Llop Palao and Joaquin Silveira Ocampo and Casimiro Pio Carrino and Carme Armentano Oller and Carlos Rodriguez Penagos and Aitor Gonzalez Agirre and Marta Villegas}, doi = {10.26342/2022-68-3}, issn = {1135-5948}, journal = {Procesamiento del Lenguaje Natural}, keywords = {Artificial intelligence,Benchmarking,Data processing.,MarIA,Natural language processing,Spanish language modelling,Spanish language resources,Tractament del llenguatge natural (Informàtica),Àrees temàtiques de la UPC::Informàtica::Intel·ligència artificial::Llenguatge natural}, publisher = {Sociedad Española para el Procesamiento del Lenguaje Natural}, title = {MarIA: Spanish Language Models}, volume = {68}, url = {https://upcommons.upc.edu/handle/2117/367156 | 4e6f82947050c950cfaf4c4341a987e9 |

apache-2.0 | ['text-classification'] | false | bert-base-uncased model fine-tuned on SST-2 This model was created using the [nn_pruning](https://github.com/huggingface/nn_pruning) python library: the linear layers contains **37%** of the original weights. The model contains **51%** of the original weights **overall** (the embeddings account for a significant part of the model, and they are not pruned by this method). <div class="graph"><script src="/echarlaix/bert-base-uncased-sst2-acc91.1-d37-hybrid/raw/main/model_card/density_info.js" id="2d0fc334-fe98-4315-8890-d6eaca1fa9be"></script></div> In terms of perfomance, its **accuracy** is **91.17**. | fb0f05ffa340a451d2eb58932de94661 |

apache-2.0 | ['text-classification'] | false | Fine-Pruning details This model was fine-tuned from the HuggingFace [model](https://huggingface.co/bert-base-uncased) checkpoint on task, and distilled from the model [textattack/bert-base-uncased-SST-2](https://huggingface.co/textattack/bert-base-uncased-SST-2). This model is case-insensitive: it does not make a difference between english and English. A side-effect of the block pruning method is that some of the attention heads are completely removed: 88 heads were removed on a total of 144 (61.1%). Here is a detailed view on how the remaining heads are distributed in the network after pruning. <div class="graph"><script src="/echarlaix/bert-base-uncased-sst2-acc91.1-d37-hybrid/raw/main/model_card/pruning_info.js" id="93b19d7f-c11b-4edf-9670-091e40d9be25"></script></div> | 2d11823655ff2b44f22f4e3f9bc69b5e |

apache-2.0 | ['text-classification'] | false | Example Usage Install nn_pruning: it contains the optimization script, which just pack the linear layers into smaller ones by removing empty rows/columns. `pip install nn_pruning` Then you can use the `transformers library` almost as usual: you just have to call `optimize_model` when the pipeline has loaded. ```python from transformers import pipeline from nn_pruning.inference_model_patcher import optimize_model cls_pipeline = pipeline( "text-classification", model="echarlaix/bert-base-uncased-sst2-acc91.1-d37-hybrid", tokenizer="echarlaix/bert-base-uncased-sst2-acc91.1-d37-hybrid", ) print(f"Parameters count (includes only head pruning, no feed forward pruning)={int(cls_pipeline.model.num_parameters() / 1E6)}M") cls_pipeline.model = optimize_model(cls_pipeline.model, "dense") print(f"Parameters count after optimization={int(cls_pipeline.model.num_parameters() / 1E6)}M") predictions = cls_pipeline("This restaurant is awesome") print(predictions) ``` | 75df042c3addc6e1d373642b409f366c |

cc-by-sa-4.0 | ['japanese', 'wikipedia', 'pos', 'dependency-parsing'] | false | Model Description This is a DeBERTa(V2) model pretrained on Japanese Wikipedia and 青空文庫 texts for POS-tagging and dependency-parsing (using `goeswith` for subwords), derived from [deberta-large-japanese-wikipedia](https://huggingface.co/KoichiYasuoka/deberta-large-japanese-wikipedia) and [UD_Japanese-GSDLUW](https://github.com/UniversalDependencies/UD_Japanese-GSDLUW). | a929349d66488c8cbde51d224e3f706a |

cc-by-sa-4.0 | ['japanese', 'wikipedia', 'pos', 'dependency-parsing'] | false | text = "+text+"\n" v=[(s,e) for s,e in w["offset_mapping"] if s<e] for i,(s,e) in enumerate(v,1): q=self.model.config.id2label[p[i,h[i]]].split("|") u+="\t".join([str(i),text[s:e],"_",q[0],"_","|".join(q[1:-1]),str(h[i]),q[-1],"_","_" if i<len(v) and e<v[i][0] else "SpaceAfter=No"])+"\n" return u+"\n" nlp=UDgoeswith("KoichiYasuoka/deberta-large-japanese-wikipedia-ud-goeswith") print(nlp("全学年にわたって小学校の国語の教科書に挿し絵が用いられている")) ``` with [ufal.chu-liu-edmonds](https://pypi.org/project/ufal.chu-liu-edmonds/). Or without ufal.chu-liu-edmonds: ``` from transformers import pipeline nlp=pipeline("universal-dependencies","KoichiYasuoka/deberta-large-japanese-wikipedia-ud-goeswith",trust_remote_code=True,aggregation_strategy="simple") print(nlp("全学年にわたって小学校の国語の教科書に挿し絵が用いられている")) ``` | de9b0f6497a36b820f7e983028449641 |

apache-2.0 | ['generated_from_trainer'] | false | my_asr_model_2 This model is a fine-tuned version of [facebook/wav2vec2-base](https://huggingface.co/facebook/wav2vec2-base) on the minds14 dataset. It achieves the following results on the evaluation set: - Loss: 3.1785 - Wer: 1.0 | 562fb2cd5f6f38097fa852947c665fd4 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.001 - train_batch_size: 8 - eval_batch_size: 8 - seed: 42 - gradient_accumulation_steps: 2 - total_train_batch_size: 16 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 100 - training_steps: 500 | e90e7407afdfcd022591526e3c3af1b7 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:---:| | 3.0949 | 20.0 | 100 | 3.1923 | 1.0 | | 3.0836 | 40.0 | 200 | 3.1769 | 1.0 | | 3.0539 | 60.0 | 300 | 3.1766 | 1.0 | | 3.0687 | 80.0 | 400 | 3.1853 | 1.0 | | 3.0649 | 100.0 | 500 | 3.1785 | 1.0 | | f60475969a8df2925a6aaed43a25d25e |

apache-2.0 | ['automatic-speech-recognition', 'it'] | false | exp_w2v2t_it_vp-nl_s222 Fine-tuned [facebook/wav2vec2-large-nl-voxpopuli](https://huggingface.co/facebook/wav2vec2-large-nl-voxpopuli) for speech recognition using the train split of [Common Voice 7.0 (it)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | 943369e17952b4fa8ed35fbc277a12ca |

apache-2.0 | ['generated_from_trainer'] | false | mt5-small-tuto-mt5-small-2 This model is a fine-tuned version of [google/mt5-small](https://huggingface.co/google/mt5-small) on the None dataset. It achieves the following results on the evaluation set: - Loss: 1.8564 - Rouge1: 0.4159 - Rouge2: 0.2906 - Rougel: 0.3928 - Rougelsum: 0.3929 | 096abc848ae7b6e2819c5ab64638d643 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 5.6e-05 - train_batch_size: 8 - eval_batch_size: 8 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 3 | 4dfa3c49dc060f2bb9ee70c7e953067b |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | |:-------------:|:-----:|:-----:|:---------------:|:------:|:------:|:------:|:---------:| | 2.1519 | 1.0 | 6034 | 1.8564 | 0.4159 | 0.2906 | 0.3928 | 0.3929 | | 2.1289 | 2.0 | 12068 | 1.8564 | 0.4159 | 0.2906 | 0.3928 | 0.3929 | | 2.1291 | 3.0 | 18102 | 1.8564 | 0.4159 | 0.2906 | 0.3928 | 0.3929 | | e9f725df0c44dab9b2fa69f34b261843 |

cc-by-4.0 | ['translation', 'opus-mt-tc'] | false | opus-mt-tc-big-et-en Neural machine translation model for translating from Estonian (et) to English (en). This model is part of the [OPUS-MT project](https://github.com/Helsinki-NLP/Opus-MT), an effort to make neural machine translation models widely available and accessible for many languages in the world. All models are originally trained using the amazing framework of [Marian NMT](https://marian-nmt.github.io/), an efficient NMT implementation written in pure C++. The models have been converted to pyTorch using the transformers library by huggingface. Training data is taken from [OPUS](https://opus.nlpl.eu/) and training pipelines use the procedures of [OPUS-MT-train](https://github.com/Helsinki-NLP/Opus-MT-train). * Publications: [OPUS-MT – Building open translation services for the World](https://aclanthology.org/2020.eamt-1.61/) and [The Tatoeba Translation Challenge – Realistic Data Sets for Low Resource and Multilingual MT](https://aclanthology.org/2020.wmt-1.139/) (Please, cite if you use this model.) ``` @inproceedings{tiedemann-thottingal-2020-opus, title = "{OPUS}-{MT} {--} Building open translation services for the World", author = {Tiedemann, J{\"o}rg and Thottingal, Santhosh}, booktitle = "Proceedings of the 22nd Annual Conference of the European Association for Machine Translation", month = nov, year = "2020", address = "Lisboa, Portugal", publisher = "European Association for Machine Translation", url = "https://aclanthology.org/2020.eamt-1.61", pages = "479--480", } @inproceedings{tiedemann-2020-tatoeba, title = "The Tatoeba Translation Challenge {--} Realistic Data Sets for Low Resource and Multilingual {MT}", author = {Tiedemann, J{\"o}rg}, booktitle = "Proceedings of the Fifth Conference on Machine Translation", month = nov, year = "2020", address = "Online", publisher = "Association for Computational Linguistics", url = "https://aclanthology.org/2020.wmt-1.139", pages = "1174--1182", } ``` | 30e9002697403d92c5eb229ffcb9e530 |

cc-by-4.0 | ['translation', 'opus-mt-tc'] | false | Model info * Release: 2022-03-09 * source language(s): est * target language(s): eng * model: transformer-big * data: opusTCv20210807+bt ([source](https://github.com/Helsinki-NLP/Tatoeba-Challenge)) * tokenization: SentencePiece (spm32k,spm32k) * original model: [opusTCv20210807+bt_transformer-big_2022-03-09.zip](https://object.pouta.csc.fi/Tatoeba-MT-models/est-eng/opusTCv20210807+bt_transformer-big_2022-03-09.zip) * more information released models: [OPUS-MT est-eng README](https://github.com/Helsinki-NLP/Tatoeba-Challenge/tree/master/models/est-eng/README.md) | 4c74d5989c76e0880db960008f711e2a |

cc-by-4.0 | ['translation', 'opus-mt-tc'] | false | Usage A short example code: ```python from transformers import MarianMTModel, MarianTokenizer src_text = [ "Takso ootab.", "Kon sa elät?" ] model_name = "pytorch-models/opus-mt-tc-big-et-en" tokenizer = MarianTokenizer.from_pretrained(model_name) model = MarianMTModel.from_pretrained(model_name) translated = model.generate(**tokenizer(src_text, return_tensors="pt", padding=True)) for t in translated: print( tokenizer.decode(t, skip_special_tokens=True) ) | 42067f8b658c70c032a5a769b742e787 |

cc-by-4.0 | ['translation', 'opus-mt-tc'] | false | Kon you elät? ``` You can also use OPUS-MT models with the transformers pipelines, for example: ```python from transformers import pipeline pipe = pipeline("translation", model="Helsinki-NLP/opus-mt-tc-big-et-en") print(pipe("Takso ootab.")) | c856d6caebadb321becf4a93a34db36a |

cc-by-4.0 | ['translation', 'opus-mt-tc'] | false | Benchmarks * test set translations: [opusTCv20210807+bt_transformer-big_2022-03-09.test.txt](https://object.pouta.csc.fi/Tatoeba-MT-models/est-eng/opusTCv20210807+bt_transformer-big_2022-03-09.test.txt) * test set scores: [opusTCv20210807+bt_transformer-big_2022-03-09.eval.txt](https://object.pouta.csc.fi/Tatoeba-MT-models/est-eng/opusTCv20210807+bt_transformer-big_2022-03-09.eval.txt) * benchmark results: [benchmark_results.txt](benchmark_results.txt) * benchmark output: [benchmark_translations.zip](benchmark_translations.zip) | langpair | testset | chr-F | BLEU | | a69fa5a3b3cd63dc9d090cb5a6c00beb |

cc-by-4.0 | ['translation', 'opus-mt-tc'] | false | words | |----------|---------|-------|-------|-------|--------| | est-eng | tatoeba-test-v2021-08-07 | 0.73707 | 59.7 | 1359 | 8811 | | est-eng | flores101-devtest | 0.64463 | 38.6 | 1012 | 24721 | | est-eng | newsdev2018 | 0.59899 | 33.8 | 2000 | 43068 | | est-eng | newstest2018 | 0.60708 | 34.3 | 2000 | 45405 | | 3d4dea0765f6371aabbd02acb6aa7b3f |

apache-2.0 | ['automatic-speech-recognition', 'th'] | false | exp_w2v2t_th_unispeech-ml_s351 Fine-tuned [microsoft/unispeech-large-multi-lingual-1500h-cv](https://huggingface.co/microsoft/unispeech-large-multi-lingual-1500h-cv) for speech recognition on Thai using the train split of [Common Voice 7.0](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | ce650861605f5d193fb20c040aff3386 |

apache-2.0 | ['generated_from_trainer'] | false | tiny-mlm-glue-qqp-target-glue-qnli This model is a fine-tuned version of [muhtasham/tiny-mlm-glue-qqp](https://huggingface.co/muhtasham/tiny-mlm-glue-qqp) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.4653 - Accuracy: 0.7820 | 30c78d5263e1cd74aada2f5f8b8df4c5 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.6087 | 0.15 | 500 | 0.5449 | 0.7311 | | 0.5432 | 0.31 | 1000 | 0.5312 | 0.7390 | | 0.521 | 0.46 | 1500 | 0.4937 | 0.7648 | | 0.5144 | 0.61 | 2000 | 0.5254 | 0.7465 | | 0.5128 | 0.76 | 2500 | 0.4786 | 0.7769 | | 0.5037 | 0.92 | 3000 | 0.4670 | 0.7849 | | 0.4915 | 1.07 | 3500 | 0.4569 | 0.7899 | | 0.4804 | 1.22 | 4000 | 0.4689 | 0.7800 | | 0.4676 | 1.37 | 4500 | 0.4725 | 0.7769 | | 0.4738 | 1.53 | 5000 | 0.4653 | 0.7820 | | 0378ee206fb809d45b8d1bd0ab088918 |

mit | ['generated_from_keras_callback'] | false | sachinsahu/Heresy-clustered This model is a fine-tuned version of [nandysoham16/11-clustered_aug](https://huggingface.co/nandysoham16/11-clustered_aug) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 0.1596 - Train End Logits Accuracy: 0.9653 - Train Start Logits Accuracy: 0.9653 - Validation Loss: 0.4279 - Validation End Logits Accuracy: 0.6667 - Validation Start Logits Accuracy: 0.6667 - Epoch: 0 | ebcfd0225b1b7e7e8cc4812f56392ee6 |

mit | ['generated_from_keras_callback'] | false | Training results | Train Loss | Train End Logits Accuracy | Train Start Logits Accuracy | Validation Loss | Validation End Logits Accuracy | Validation Start Logits Accuracy | Epoch | |:----------:|:-------------------------:|:---------------------------:|:---------------:|:------------------------------:|:--------------------------------:|:-----:| | 0.1596 | 0.9653 | 0.9653 | 0.4279 | 0.6667 | 0.6667 | 0 | | 82fbafde72c21f433f08ad7b0725a76a |

apache-2.0 | ['generated_from_trainer'] | false | wav2vec2-burak-new-300-v2-2 This model is a fine-tuned version of [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) on the common_voice dataset. It achieves the following results on the evaluation set: - Loss: 0.6158 - Wer: 0.3094 | 25818c9056959db5419c17cb21f80a94 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0001 - train_batch_size: 32 - eval_batch_size: 8 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 500 - num_epochs: 241 | 797b8d55f577ddb061424015e7e3200f |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:------:|:-----:|:---------------:|:------:| | 5.5201 | 8.62 | 500 | 3.1581 | 1.0 | | 2.1532 | 17.24 | 1000 | 0.6883 | 0.5979 | | 0.5465 | 25.86 | 1500 | 0.5028 | 0.4432 | | 0.3287 | 34.48 | 2000 | 0.4986 | 0.4024 | | 0.2571 | 43.1 | 2500 | 0.4920 | 0.3824 | | 0.217 | 51.72 | 3000 | 0.5265 | 0.3724 | | 0.1848 | 60.34 | 3500 | 0.5539 | 0.3714 | | 0.1605 | 68.97 | 4000 | 0.5689 | 0.3670 | | 0.1413 | 77.59 | 4500 | 0.5962 | 0.3501 | | 0.1316 | 86.21 | 5000 | 0.5732 | 0.3494 | | 0.1168 | 94.83 | 5500 | 0.5912 | 0.3461 | | 0.1193 | 103.45 | 6000 | 0.5766 | 0.3378 | | 0.0996 | 112.07 | 6500 | 0.5818 | 0.3403 | | 0.0941 | 120.69 | 7000 | 0.5986 | 0.3315 | | 0.0912 | 129.31 | 7500 | 0.5802 | 0.3280 | | 0.0865 | 137.93 | 8000 | 0.5878 | 0.3290 | | 0.0804 | 146.55 | 8500 | 0.5784 | 0.3228 | | 0.0739 | 155.17 | 9000 | 0.5791 | 0.3180 | | 0.0718 | 163.79 | 9500 | 0.5864 | 0.3146 | | 0.0681 | 172.41 | 10000 | 0.6104 | 0.3178 | | 0.0688 | 181.03 | 10500 | 0.5983 | 0.3160 | | 0.0657 | 189.66 | 11000 | 0.6228 | 0.3203 | | 0.0598 | 198.28 | 11500 | 0.6057 | 0.3122 | | 0.0597 | 206.9 | 12000 | 0.6094 | 0.3129 | | 0.0551 | 215.52 | 12500 | 0.6114 | 0.3127 | | 0.0507 | 224.14 | 13000 | 0.6056 | 0.3094 | | 0.0554 | 232.76 | 13500 | 0.6158 | 0.3094 | | ea4e2513ef26c3d1f19dc7700b7b3b2d |

mit | ['setfit', 'endpoints-template', 'text-classification'] | false | SetFit AG News This is a [SetFit](https://github.com/huggingface/setfit/tree/main) classifier fine-tuned on the [AG News](https://huggingface.co/datasets/ag_news) dataset. The model was created following the [Outperform OpenAI GPT-3 with SetFit for text-classifiation](https://www.philschmid.de/getting-started-setfit) blog post of [Philipp Schmid](https://www.linkedin.com/in/philipp-schmid-a6a2bb196/). The model achieves an accuracy of 0.87 on the test set and was only trained with `32` total examples (8 per class). ```bash ***** Running evaluation ***** model used: sentence-transformers/all-mpnet-base-v2 train dataset: 32 samples accuracy: 0.8731578947368421 ``` | 221f1eef1eafef9002e91ed4220a1fe0 |

mit | ['setfit', 'endpoints-template', 'text-classification'] | false | What is SetFit? "SetFit" (https://arxiv.org/abs/2209.11055) is a new approach that can be used to create high accuracte text-classification models with limited labeled data. SetFit is outperforming GPT-3 in 7 out of 11 tasks, while being 1600x smaller. Check out the blog to learn more: [Outperform OpenAI GPT-3 with SetFit for text-classifiation](https://www.philschmid.de/getting-started-setfit) | bd480f56a6177e1b1128ead09f609d60 |

mit | ['setfit', 'endpoints-template', 'text-classification'] | false | Inference Endpoints The model repository also implements a generic custom `handler.py` as an example for how to use `SetFit` models with [inference-endpoints](https://hf.co/inference-endpoints). Code: https://huggingface.co/philschmid/setfit-ag-news-endpoint/blob/main/handler.py | 10727dd2dcfe553241a9204ad1192c0c |

mit | ['setfit', 'endpoints-template', 'text-classification'] | false | [ { "label": "World", "score": 0.12341519122860946 }, { "label": "Sports", "score": 0.11741269832494523 }, { "label": "Business", "score": 0.6124446065942992 }, { "label": "Sci/Tech", "score": 0.14672750385214603 } ] ``` **curl example** ```bash curl https://YOURDOMAIN.us-east-1.aws.endpoints.huggingface.cloud \ -X POST \ -d '{"inputs": "Coming to The Rescue Got a unique problem? Not to worry: you can find a financial planner for every specialized need"}' \ -H "Authorization: Bearer XXX" \ -H "Content-Type: application/json" ``` | 4d46665efe386c0a9d8b6348128b972a |

mit | ['generated_from_trainer'] | false | spelling-correction-english-base-finetuned-places This model is a fine-tuned version of [oliverguhr/spelling-correction-english-base](https://huggingface.co/oliverguhr/spelling-correction-english-base) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.1461 - Cer: 0.0143 | 06ab36c5872ed4bd0cfa0a81124c0506 |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Cer | |:-------------:|:-----:|:----:|:---------------:|:------:| | 0.1608 | 1.0 | 875 | 0.1461 | 0.0143 | | 0.13 | 2.0 | 1750 | 0.1461 | 0.0143 | | 52aa3ab6c24ffa53664ee8b2024e7e5f |

apache-2.0 | ['generated_from_keras_callback'] | false | darshanz/occupation-prediction This model is ViT base patch16. Which is pretrained on imagenet dataset, then trained on our custom dataset which is based on occupation prediction. This dataset contains facial images of Indian people which are labeled by occupation. This model predicts the occupation of a person from the facial image of a person. This model categorizes input facial images into 5 classes: Anchor, Athlete, Doctor, Professor, and Farmer. This model gives an accuracy of 84.43%. | 5762f3785c8984d1dee1c86b7b3248b6 |

apache-2.0 | ['generated_from_keras_callback'] | false | Training hyperparameters The following hyperparameters were used during training: - optimizer: {'inner_optimizer': {'class_name': 'AdamWeightDecay', 'config': {'name': 'AdamWeightDecay', 'learning_rate': {'class_name': 'PolynomialDecay', 'config': {'initial_learning_rate': 0.0001, 'decay_steps': 70, 'end_learning_rate': 0.0, 'power': 1.0, 'cycle': False, 'name': None}}, 'decay': 0.0, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False, 'weight_decay_rate': 0.4}}, 'dynamic': True, 'initial_scale': 32768.0, 'dynamic_growth_steps': 2000} - training_precision: mixed_float16 | aa5e9195d740ed070227955af09feeb1 |

apache-2.0 | ['generated_from_keras_callback'] | false | Training results | Train Loss | Train Accuracy | Train Top-3-accuracy | Validation Loss | Validation Accuracy | Validation Top-3-accuracy | Epoch | |:----------:|:--------------:|:--------------------:|:---------------:|:-------------------:|:-------------------------:|:-----:| | 1.0840 | 0.6156 | 0.8813 | 0.6843 | 0.75 | 0.9700 | 0 | | 0.4686 | 0.8406 | 0.9875 | 0.5345 | 0.8100 | 0.9867 | 1 | | 0.2600 | 0.9312 | 0.9953 | 0.4805 | 0.8333 | 0.9800 | 2 | | 0.1515 | 0.9609 | 0.9969 | 0.5071 | 0.8267 | 0.9733 | 3 | | 0.0746 | 0.9875 | 1.0 | 0.4853 | 0.8500 | 0.9833 | 4 | | 0.0468 | 0.9953 | 1.0 | 0.5006 | 0.8433 | 0.9733 | 5 | | 0.0378 | 0.9953 | 1.0 | 0.4967 | 0.8433 | 0.9800 | 6 | | ae9aa9b870703c42695abe3928ea4759 |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-clinc This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the clinc_oos dataset. It achieves the following results on the evaluation set: - Loss: 0.7755 - Accuracy: 0.9171 | 6fbf1fc848ebcde7fa1d96d1f3c3be9a |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 4.2892 | 1.0 | 318 | 3.2834 | 0.7394 | | 2.6289 | 2.0 | 636 | 1.8732 | 0.8348 | | 1.5479 | 3.0 | 954 | 1.1580 | 0.8903 | | 1.0135 | 4.0 | 1272 | 0.8585 | 0.9077 | | 0.7968 | 5.0 | 1590 | 0.7755 | 0.9171 | | 6cce01a8585327811d6a7975953a599a |

apache-2.0 | ['translation'] | false | urj-urj * source group: Uralic languages * target group: Uralic languages * OPUS readme: [urj-urj](https://github.com/Helsinki-NLP/Tatoeba-Challenge/tree/master/models/urj-urj/README.md) * model: transformer * source language(s): est fin fkv_Latn hun izh krl liv_Latn vep vro * target language(s): est fin fkv_Latn hun izh krl liv_Latn vep vro * model: transformer * pre-processing: normalization + SentencePiece (spm32k,spm32k) * a sentence initial language token is required in the form of `>>id<<` (id = valid target language ID) * download original weights: [opus-2020-07-27.zip](https://object.pouta.csc.fi/Tatoeba-MT-models/urj-urj/opus-2020-07-27.zip) * test set translations: [opus-2020-07-27.test.txt](https://object.pouta.csc.fi/Tatoeba-MT-models/urj-urj/opus-2020-07-27.test.txt) * test set scores: [opus-2020-07-27.eval.txt](https://object.pouta.csc.fi/Tatoeba-MT-models/urj-urj/opus-2020-07-27.eval.txt) | a6984eabbe2a23848c666c88376cd59a |

apache-2.0 | ['translation'] | false | Benchmarks | testset | BLEU | chr-F | |-----------------------|-------|-------| | Tatoeba-test.est-est.est.est | 5.1 | 0.288 | | Tatoeba-test.est-fin.est.fin | 50.9 | 0.709 | | Tatoeba-test.est-fkv.est.fkv | 0.7 | 0.215 | | Tatoeba-test.est-vep.est.vep | 1.0 | 0.154 | | Tatoeba-test.fin-est.fin.est | 55.5 | 0.718 | | Tatoeba-test.fin-fkv.fin.fkv | 1.8 | 0.254 | | Tatoeba-test.fin-hun.fin.hun | 45.0 | 0.672 | | Tatoeba-test.fin-izh.fin.izh | 7.1 | 0.492 | | Tatoeba-test.fin-krl.fin.krl | 2.6 | 0.278 | | Tatoeba-test.fkv-est.fkv.est | 0.6 | 0.099 | | Tatoeba-test.fkv-fin.fkv.fin | 15.5 | 0.444 | | Tatoeba-test.fkv-liv.fkv.liv | 0.6 | 0.101 | | Tatoeba-test.fkv-vep.fkv.vep | 0.6 | 0.113 | | Tatoeba-test.hun-fin.hun.fin | 46.3 | 0.675 | | Tatoeba-test.izh-fin.izh.fin | 13.4 | 0.431 | | Tatoeba-test.izh-krl.izh.krl | 2.9 | 0.078 | | Tatoeba-test.krl-fin.krl.fin | 14.1 | 0.439 | | Tatoeba-test.krl-izh.krl.izh | 1.0 | 0.125 | | Tatoeba-test.liv-fkv.liv.fkv | 0.9 | 0.170 | | Tatoeba-test.liv-vep.liv.vep | 2.6 | 0.176 | | Tatoeba-test.multi.multi | 32.9 | 0.580 | | Tatoeba-test.vep-est.vep.est | 3.4 | 0.265 | | Tatoeba-test.vep-fkv.vep.fkv | 0.9 | 0.239 | | Tatoeba-test.vep-liv.vep.liv | 2.6 | 0.190 | | 7cae8603d1912d83ad749d23ddfbf559 |

apache-2.0 | ['translation'] | false | System Info: - hf_name: urj-urj - source_languages: urj - target_languages: urj - opus_readme_url: https://github.com/Helsinki-NLP/Tatoeba-Challenge/tree/master/models/urj-urj/README.md - original_repo: Tatoeba-Challenge - tags: ['translation'] - languages: ['se', 'fi', 'hu', 'et', 'urj'] - src_constituents: {'izh', 'mdf', 'vep', 'vro', 'sme', 'myv', 'fkv_Latn', 'krl', 'fin', 'hun', 'kpv', 'udm', 'liv_Latn', 'est', 'mhr', 'sma'} - tgt_constituents: {'izh', 'mdf', 'vep', 'vro', 'sme', 'myv', 'fkv_Latn', 'krl', 'fin', 'hun', 'kpv', 'udm', 'liv_Latn', 'est', 'mhr', 'sma'} - src_multilingual: True - tgt_multilingual: True - prepro: normalization + SentencePiece (spm32k,spm32k) - url_model: https://object.pouta.csc.fi/Tatoeba-MT-models/urj-urj/opus-2020-07-27.zip - url_test_set: https://object.pouta.csc.fi/Tatoeba-MT-models/urj-urj/opus-2020-07-27.test.txt - src_alpha3: urj - tgt_alpha3: urj - short_pair: urj-urj - chrF2_score: 0.58 - bleu: 32.9 - brevity_penalty: 1.0 - ref_len: 19444.0 - src_name: Uralic languages - tgt_name: Uralic languages - train_date: 2020-07-27 - src_alpha2: urj - tgt_alpha2: urj - prefer_old: False - long_pair: urj-urj - helsinki_git_sha: 480fcbe0ee1bf4774bcbe6226ad9f58e63f6c535 - transformers_git_sha: 2207e5d8cb224e954a7cba69fa4ac2309e9ff30b - port_machine: brutasse - port_time: 2020-08-21-14:41 | 5b0678fcb7a62e73b3103ff1f273d45e |

apache-2.0 | ['translation'] | false | kor-fin * source group: Korean * target group: Finnish * OPUS readme: [kor-fin](https://github.com/Helsinki-NLP/Tatoeba-Challenge/tree/master/models/kor-fin/README.md) * model: transformer-align * source language(s): kor kor_Hang kor_Latn * target language(s): fin * model: transformer-align * pre-processing: normalization + SentencePiece (spm32k,spm32k) * download original weights: [opus-2020-06-17.zip](https://object.pouta.csc.fi/Tatoeba-MT-models/kor-fin/opus-2020-06-17.zip) * test set translations: [opus-2020-06-17.test.txt](https://object.pouta.csc.fi/Tatoeba-MT-models/kor-fin/opus-2020-06-17.test.txt) * test set scores: [opus-2020-06-17.eval.txt](https://object.pouta.csc.fi/Tatoeba-MT-models/kor-fin/opus-2020-06-17.eval.txt) | d9d4b5186844c3f88dd7b03772fe1c38 |

apache-2.0 | ['translation'] | false | System Info: - hf_name: kor-fin - source_languages: kor - target_languages: fin - opus_readme_url: https://github.com/Helsinki-NLP/Tatoeba-Challenge/tree/master/models/kor-fin/README.md - original_repo: Tatoeba-Challenge - tags: ['translation'] - languages: ['ko', 'fi'] - src_constituents: {'kor_Hani', 'kor_Hang', 'kor_Latn', 'kor'} - tgt_constituents: {'fin'} - src_multilingual: False - tgt_multilingual: False - prepro: normalization + SentencePiece (spm32k,spm32k) - url_model: https://object.pouta.csc.fi/Tatoeba-MT-models/kor-fin/opus-2020-06-17.zip - url_test_set: https://object.pouta.csc.fi/Tatoeba-MT-models/kor-fin/opus-2020-06-17.test.txt - src_alpha3: kor - tgt_alpha3: fin - short_pair: ko-fi - chrF2_score: 0.502 - bleu: 26.6 - brevity_penalty: 0.892 - ref_len: 2251.0 - src_name: Korean - tgt_name: Finnish - train_date: 2020-06-17 - src_alpha2: ko - tgt_alpha2: fi - prefer_old: False - long_pair: kor-fin - helsinki_git_sha: 480fcbe0ee1bf4774bcbe6226ad9f58e63f6c535 - transformers_git_sha: 2207e5d8cb224e954a7cba69fa4ac2309e9ff30b - port_machine: brutasse - port_time: 2020-08-21-14:41 | 1566d2052d1b75d3462c77f7da608661 |

apache-2.0 | ['generated_from_trainer'] | false | wav2vec2-xls-r-300m-arabic_speech_commands_10s_one_speaker_5_classes_unknown This model is a fine-tuned version of [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.7951 - Accuracy: 0.8278 | a800d6ff8a252192155b08ed370a51a4 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0003 - train_batch_size: 4 - eval_batch_size: 4 - seed: 42 - gradient_accumulation_steps: 4 - total_train_batch_size: 16 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_ratio: 0.1 - num_epochs: 80 | ad954db0ea9686f44e108888da7bdd9e |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | No log | 0.8 | 3 | 1.7933 | 0.2306 | | No log | 1.8 | 6 | 1.7927 | 0.1667 | | No log | 2.8 | 9 | 1.7908 | 0.1667 | | 2.1874 | 3.8 | 12 | 1.7836 | 0.225 | | 2.1874 | 4.8 | 15 | 1.7728 | 0.3083 | | 2.1874 | 5.8 | 18 | 1.6857 | 0.3889 | | 2.0742 | 6.8 | 21 | 1.4554 | 0.7333 | | 2.0742 | 7.8 | 24 | 1.2621 | 0.6861 | | 2.0742 | 8.8 | 27 | 1.0360 | 0.7528 | | 1.5429 | 9.8 | 30 | 1.0220 | 0.6472 | | 1.5429 | 10.8 | 33 | 0.7951 | 0.8278 | | 1.5429 | 11.8 | 36 | 0.7954 | 0.8111 | | 1.5429 | 12.8 | 39 | 0.6698 | 0.8167 | | 0.927 | 13.8 | 42 | 0.8400 | 0.6694 | | 0.927 | 14.8 | 45 | 0.7026 | 0.7194 | | 0.927 | 15.8 | 48 | 0.7232 | 0.6944 | | 0.429 | 16.8 | 51 | 0.6640 | 0.7333 | | 0.429 | 17.8 | 54 | 1.1750 | 0.6 | | 0.429 | 18.8 | 57 | 0.9270 | 0.6722 | | 0.2583 | 19.8 | 60 | 1.4541 | 0.5417 | | 0.2583 | 20.8 | 63 | 1.8917 | 0.4472 | | 0.2583 | 21.8 | 66 | 1.3213 | 0.6472 | | 0.2583 | 22.8 | 69 | 1.3114 | 0.7 | | 0.1754 | 23.8 | 72 | 0.8079 | 0.7389 | | 0.1754 | 24.8 | 75 | 1.6070 | 0.4861 | | 0.1754 | 25.8 | 78 | 1.6949 | 0.5083 | | 0.1348 | 26.8 | 81 | 1.4364 | 0.6472 | | 0.1348 | 27.8 | 84 | 0.9045 | 0.7889 | | 0.1348 | 28.8 | 87 | 1.1878 | 0.7111 | | 0.0634 | 29.8 | 90 | 0.9678 | 0.7667 | | 0.0634 | 30.8 | 93 | 0.9572 | 0.7889 | | 0.0634 | 31.8 | 96 | 0.8931 | 0.8139 | | 0.0634 | 32.8 | 99 | 1.4805 | 0.6583 | | 0.1267 | 33.8 | 102 | 2.6092 | 0.4778 | | 0.1267 | 34.8 | 105 | 2.2933 | 0.5306 | | 0.1267 | 35.8 | 108 | 1.9648 | 0.6083 | | 0.0261 | 36.8 | 111 | 1.8385 | 0.65 | | 0.0261 | 37.8 | 114 | 2.0328 | 0.6028 | | 0.0261 | 38.8 | 117 | 2.3722 | 0.55 | | 0.041 | 39.8 | 120 | 2.7606 | 0.4917 | | 0.041 | 40.8 | 123 | 2.5793 | 0.5056 | | 0.041 | 41.8 | 126 | 2.0967 | 0.5917 | | 0.041 | 42.8 | 129 | 1.7498 | 0.6611 | | 0.1004 | 43.8 | 132 | 1.6564 | 0.6722 | | 0.1004 | 44.8 | 135 | 1.7533 | 0.6583 | | 0.1004 | 45.8 | 138 | 2.3335 | 0.5806 | | 0.0688 | 46.8 | 141 | 2.9578 | 0.4778 | | 0.0688 | 47.8 | 144 | 3.2396 | 0.4472 | | 0.0688 | 48.8 | 147 | 3.2100 | 0.4528 | | 0.0082 | 49.8 | 150 | 3.2018 | 0.4472 | | 0.0082 | 50.8 | 153 | 3.1985 | 0.45 | | 0.0082 | 51.8 | 156 | 2.6950 | 0.525 | | 0.0082 | 52.8 | 159 | 2.2335 | 0.6056 | | 0.0159 | 53.8 | 162 | 2.0467 | 0.6306 | | 0.0159 | 54.8 | 165 | 1.8858 | 0.6583 | | 0.0159 | 55.8 | 168 | 1.8239 | 0.6694 | | 0.0083 | 56.8 | 171 | 1.7927 | 0.675 | | 0.0083 | 57.8 | 174 | 1.7636 | 0.6861 | | 0.0083 | 58.8 | 177 | 1.7792 | 0.675 | | 0.0645 | 59.8 | 180 | 1.9165 | 0.6611 | | 0.0645 | 60.8 | 183 | 2.0780 | 0.6361 | | 0.0645 | 61.8 | 186 | 2.2058 | 0.6028 | | 0.0645 | 62.8 | 189 | 2.3011 | 0.5944 | | 0.01 | 63.8 | 192 | 2.4047 | 0.5722 | | 0.01 | 64.8 | 195 | 2.4870 | 0.5639 | | 0.01 | 65.8 | 198 | 2.5513 | 0.5417 | | 0.008 | 66.8 | 201 | 2.5512 | 0.5333 | | 0.008 | 67.8 | 204 | 2.3419 | 0.5778 | | 0.008 | 68.8 | 207 | 2.2424 | 0.5944 | | 0.0404 | 69.8 | 210 | 2.2009 | 0.6167 | | 0.0404 | 70.8 | 213 | 2.1788 | 0.6278 | | 0.0404 | 71.8 | 216 | 2.1633 | 0.6306 | | 0.0404 | 72.8 | 219 | 2.1525 | 0.6306 | | 0.0106 | 73.8 | 222 | 2.1435 | 0.6389 | | 0.0106 | 74.8 | 225 | 2.1391 | 0.6389 | | 0.0106 | 75.8 | 228 | 2.1327 | 0.6472 | | 0.0076 | 76.8 | 231 | 2.1287 | 0.6444 | | 0.0076 | 77.8 | 234 | 2.1267 | 0.65 | | 0.0076 | 78.8 | 237 | 2.1195 | 0.6472 | | 0.0097 | 79.8 | 240 | 2.1182 | 0.6417 | | f9771e784e18f5ba6a47492a467b527a |

apache-2.0 | ['deep-reinforcement-learning', 'reinforcement-learning'] | false | stable-baselines3-ppo-LunarLander-v2 🚀👩🚀 This is a saved model of a PPO agent playing [LunarLander-v2](https://gym.openai.com/envs/LunarLander-v2/). The model is taken from [rl-baselines3-zoo](https://github.com/DLR-RM/rl-trained-agents) The goal is to correctly land the lander by controlling firing engines (fire left orientation engine, fire main engine and fire right orientation engine). <iframe width="560" height="315" src="https://www.youtube.com/embed/kE-Fvht81I0" title="YouTube video player" frameborder="0" allow="accelerometer; autoplay; clipboard-write; encrypted-media; gyroscope; picture-in-picture" allowfullscreen></iframe> 👉 You can watch the agent playing by using this [notebook](https://colab.research.google.com/drive/19OonMRkMyCH6Dg0ECFQi7evxMRqkW3U0?usp=sharing) | cc6525e97779d05561e64621bfc747b0 |

apache-2.0 | ['deep-reinforcement-learning', 'reinforcement-learning'] | false | Install the dependencies You need to use the [Stable Baselines 3 Hugging Face version](https://github.com/simoninithomas/stable-baselines3) of the library (this version contains the function to load saved models directly from the Hugging Face Hub): ``` pip install git+https://github.com/simoninithomas/stable-baselines3.git ``` | c5fe79cb3d0f6aea9a1fc06781b0fc8a |

apache-2.0 | ['deep-reinforcement-learning', 'reinforcement-learning'] | false | Evaluate the agent ⚠️You need to have Linux or MacOS to be able to use this environment. If it's not the case you can use the [colab notebook](https://colab.research.google.com/drive/19OonMRkMyCH6Dg0ECFQi7evxMRqkW3U0 | 122661dc0cca350bb327f68fa31b8a8f |

apache-2.0 | ['deep-reinforcement-learning', 'reinforcement-learning'] | false | Evaluate the agent eval_env = gym.make('LunarLander-v2') mean_reward, std_reward = evaluate_policy(model, eval_env, n_eval_episodes=10, deterministic=True) print(f"mean_reward={mean_reward:.2f} +/- {std_reward}") | 11173cfe49e2d7a7238a3f18e959160b |

apache-2.0 | ['deep-reinforcement-learning', 'reinforcement-learning'] | false | Watch the agent play obs = env.reset() for i in range(1000): action, _state = model.predict(obs) obs, reward, done, info = env.step(action) env.render() if done: obs = env.reset() ``` | e325ecd5586648006391bb5d8f482404 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'hf-asr-leaderboard'] | false | Evaluation The model can be evaluated as follows on the German test data of Common Voice. ```python import torch from transformers import AutoModelForCTC, AutoProcessor from unidecode import unidecode import re from datasets import load_dataset, load_metric import datasets counter = 0 wer_counter = 0 cer_counter = 0 device = "cuda" if torch.cuda.is_available() else "cpu" special_chars = [["Ä"," AE "], ["Ö"," OE "], ["Ü"," UE "], ["ä"," ae "], ["ö"," oe "], ["ü"," ue "]] def clean_text(sentence): for special in special_chars: sentence = sentence.replace(special[0], special[1]) sentence = unidecode(sentence) for special in special_chars: sentence = sentence.replace(special[1], special[0]) sentence = re.sub("[^a-zA-Z0-9öäüÖÄÜ ,.!?]", " ", sentence) return sentence def main(model_id): print("load model") model = AutoModelForCTC.from_pretrained(model_id).to(device) print("load processor") processor = AutoProcessor.from_pretrained(processor_id) print("load metrics") wer = load_metric("wer") cer = load_metric("cer") ds = load_dataset("mozilla-foundation/common_voice_8_0","de") ds = ds["test"] ds = ds.cast_column( "audio", datasets.features.Audio(sampling_rate=16_000) ) def calculate_metrics(batch): global counter, wer_counter, cer_counter resampled_audio = batch["audio"]["array"] input_values = processor(resampled_audio, return_tensors="pt", sampling_rate=16_000).input_values with torch.no_grad(): logits = model(input_values.to(device)).logits.cpu().numpy()[0] decoded = processor.decode(logits) pred = decoded.text.lower() ref = clean_text(batch["sentence"]).lower() wer_result = wer.compute(predictions=[pred], references=[ref]) cer_result = cer.compute(predictions=[pred], references=[ref]) counter += 1 wer_counter += wer_result cer_counter += cer_result if counter % 100 == True: print(f"WER: {(wer_counter/counter)*100} | CER: {(cer_counter/counter)*100}") return batch ds.map(calculate_metrics, remove_columns=ds.column_names) print(f"WER: {(wer_counter/counter)*100} | CER: {(cer_counter/counter)*100}") model_id = "flozi00/wav2vec2-xls-r-1b-5gram-german" main(model_id) ``` | 110c4095d8d757051c3787b85b4454a4 |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-squad_2_512_1 This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the squad_v2 dataset. It achieves the following results on the evaluation set: - Loss: 1.3225 | 18a73549cce4be6b302618efd8325b98 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:-----:|:---------------:| | 1.2681 | 1.0 | 4079 | 1.2434 | | 1.0223 | 2.0 | 8158 | 1.3153 | | 0.865 | 3.0 | 12237 | 1.3225 | | e164a2625c5f11c43e1750004b055840 |

mit | [] | false | Languages Our Historic Language Models Zoo contains support for the following languages - incl. their training data source: | Language | Training data | Size | -------- | ------------- | ---- | German | [Europeana](http://www.europeana-newspapers.eu/) | 13-28GB (filtered) | French | [Europeana](http://www.europeana-newspapers.eu/) | 11-31GB (filtered) | English | [British Library](https://data.bl.uk/digbks/db14.html) | 24GB (year filtered) | Finnish | [Europeana](http://www.europeana-newspapers.eu/) | 1.2GB | Swedish | [Europeana](http://www.europeana-newspapers.eu/) | 1.1GB | 12adf4c123165b1a5871c5204296b9a8 |

mit | [] | false | Models At the moment, the following models are available on the model hub: | Model identifier | Model Hub link | --------------------------------------------- | -------------------------------------------------------------------------- | `dbmdz/bert-base-historic-multilingual-cased` | [here](https://huggingface.co/dbmdz/bert-base-historic-multilingual-cased) | `dbmdz/bert-base-historic-english-cased` | [here](https://huggingface.co/dbmdz/bert-base-historic-english-cased) | `dbmdz/bert-base-finnish-europeana-cased` | [here](https://huggingface.co/dbmdz/bert-base-finnish-europeana-cased) | `dbmdz/bert-base-swedish-europeana-cased` | [here](https://huggingface.co/dbmdz/bert-base-swedish-europeana-cased) | 34742937eb8ef03a2cb7b9f79b4eceeb |

mit | [] | false | German Europeana Corpus We provide some statistics using different thresholds of ocr confidences, in order to shrink down the corpus size and use less-noisier data: | OCR confidence | Size | -------------- | ---- | **0.60** | 28GB | 0.65 | 18GB | 0.70 | 13GB For the final corpus we use a OCR confidence of 0.6 (28GB). The following plot shows a tokens per year distribution:  | 36e59fd281cc3d546baaa253e1f39033 |

mit | [] | false | French Europeana Corpus Like German, we use different ocr confidence thresholds: | OCR confidence | Size | -------------- | ---- | 0.60 | 31GB | 0.65 | 27GB | **0.70** | 27GB | 0.75 | 23GB | 0.80 | 11GB For the final corpus we use a OCR confidence of 0.7 (27GB). The following plot shows a tokens per year distribution:  | 2b8f014a7bfe75c966df20fab029baee |

mit | [] | false | British Library Corpus Metadata is taken from [here](https://data.bl.uk/digbks/DB21.html). Stats incl. year filtering: | Years | Size | ----------------- | ---- | ALL | 24GB | >= 1800 && < 1900 | 24GB We use the year filtered variant. The following plot shows a tokens per year distribution:  | ad6362619d66979fe8d4a2112bc706a9 |

mit | [] | false | Finnish Europeana Corpus | OCR confidence | Size | -------------- | ---- | 0.60 | 1.2GB The following plot shows a tokens per year distribution:  | ae0fee6d194971ca295d1116a6c1c8d6 |

mit | [] | false | Swedish Europeana Corpus | OCR confidence | Size | -------------- | ---- | 0.60 | 1.1GB The following plot shows a tokens per year distribution:  | 1ac908e4bd272be1868a650969ad8562 |

mit | [] | false | Multilingual Vocab generation For the first attempt, we use the first 10GB of each pretraining corpus. We upsample both Finnish and Swedish to ~10GB. The following tables shows the exact size that is used for generating a 32k and 64k subword vocabs: | Language | Size | -------- | ---- | German | 10GB | French | 10GB | English | 10GB | Finnish | 9.5GB | Swedish | 9.7GB We then calculate the subword fertility rate and portion of `[UNK]`s over the following NER corpora: | Language | NER corpora | -------- | ------------------ | German | CLEF-HIPE, NewsEye | French | CLEF-HIPE, NewsEye | English | CLEF-HIPE | Finnish | NewsEye | Swedish | NewsEye Breakdown of subword fertility rate and unknown portion per language for the 32k vocab: | Language | Subword fertility | Unknown portion | -------- | ------------------ | --------------- | German | 1.43 | 0.0004 | French | 1.25 | 0.0001 | English | 1.25 | 0.0 | Finnish | 1.69 | 0.0007 | Swedish | 1.43 | 0.0 Breakdown of subword fertility rate and unknown portion per language for the 64k vocab: | Language | Subword fertility | Unknown portion | -------- | ------------------ | --------------- | German | 1.31 | 0.0004 | French | 1.16 | 0.0001 | English | 1.17 | 0.0 | Finnish | 1.54 | 0.0007 | Swedish | 1.32 | 0.0 | 771ed46e6c8173b6ed6c7df86a0cffca |

mit | [] | false | Final pretraining corpora We upsample Swedish and Finnish to ~27GB. The final stats for all pretraining corpora can be seen here: | Language | Size | -------- | ---- | German | 28GB | French | 27GB | English | 24GB | Finnish | 27GB | Swedish | 27GB Total size is 130GB. | e0a9da635efa1c450ae07c590f08d356 |

mit | [] | false | Multilingual model We train a multilingual BERT model using the 32k vocab with the official BERT implementation on a v3-32 TPU using the following parameters: ```bash python3 run_pretraining.py --input_file gs://histolectra/historic-multilingual-tfrecords/*.tfrecord \ --output_dir gs://histolectra/bert-base-historic-multilingual-cased \ --bert_config_file ./config.json \ --max_seq_length=512 \ --max_predictions_per_seq=75 \ --do_train=True \ --train_batch_size=128 \ --num_train_steps=3000000 \ --learning_rate=1e-4 \ --save_checkpoints_steps=100000 \ --keep_checkpoint_max=20 \ --use_tpu=True \ --tpu_name=electra-2 \ --num_tpu_cores=32 ``` The following plot shows the pretraining loss curve:  | bf730e4b48599c9df7ceb163fdc2ff9c |

mit | [] | false | English model The English BERT model - with texts from British Library corpus - was trained with the Hugging Face JAX/FLAX implementation for 10 epochs (approx. 1M steps) on a v3-8 TPU, using the following command: ```bash python3 run_mlm_flax.py --model_type bert \ --config_name /mnt/datasets/bert-base-historic-english-cased/ \ --tokenizer_name /mnt/datasets/bert-base-historic-english-cased/ \ --train_file /mnt/datasets/bl-corpus/bl_1800-1900_extracted.txt \ --validation_file /mnt/datasets/bl-corpus/english_validation.txt \ --max_seq_length 512 \ --per_device_train_batch_size 16 \ --learning_rate 1e-4 \ --num_train_epochs 10 \ --preprocessing_num_workers 96 \ --output_dir /mnt/datasets/bert-base-historic-english-cased-512-noadafactor-10e \ --save_steps 2500 \ --eval_steps 2500 \ --warmup_steps 10000 \ --line_by_line \ --pad_to_max_length ``` The following plot shows the pretraining loss curve:  | f9b50abbd6fd2001fd38dead060254e3 |

mit | [] | false | Finnish model The BERT model - with texts from Finnish part of Europeana - was trained with the Hugging Face JAX/FLAX implementation for 40 epochs (approx. 1M steps) on a v3-8 TPU, using the following command: ```bash python3 run_mlm_flax.py --model_type bert \ --config_name /mnt/datasets/bert-base-finnish-europeana-cased/ \ --tokenizer_name /mnt/datasets/bert-base-finnish-europeana-cased/ \ --train_file /mnt/datasets/hlms/extracted_content_Finnish_0.6.txt \ --validation_file /mnt/datasets/hlms/finnish_validation.txt \ --max_seq_length 512 \ --per_device_train_batch_size 16 \ --learning_rate 1e-4 \ --num_train_epochs 40 \ --preprocessing_num_workers 96 \ --output_dir /mnt/datasets/bert-base-finnish-europeana-cased-512-dupe1-noadafactor-40e \ --save_steps 2500 \ --eval_steps 2500 \ --warmup_steps 10000 \ --line_by_line \ --pad_to_max_length ``` The following plot shows the pretraining loss curve:  | 9a1cd9adae7b64314df694cc6fb5d2ab |

Subsets and Splits

No community queries yet

The top public SQL queries from the community will appear here once available.