license stringlengths 2 30 | tags stringlengths 2 513 | is_nc bool 1 class | readme_section stringlengths 201 597k | hash stringlengths 32 32 |

|---|---|---|---|---|

mit | [] | false | Swedish model The BERT model, trained on texts from the Swedish part of Europeana, was trained with the Hugging Face JAX/FLAX implementation for 40 epochs (approx. 660K steps) on a v3-8 TPU, using the following command: ```bash python3 run_mlm_flax.py --model_type bert \ --config_name /mnt/datasets/bert-base-swedish-europeana-cased/ \ --tokenizer_name /mnt/datasets/bert-base-swedish-europeana-cased/ \ --train_file /mnt/datasets/hlms/extracted_content_Swedish_0.6.txt \ --validation_file /mnt/datasets/hlms/swedish_validation.txt \ --max_seq_length 512 \ --per_device_train_batch_size 16 \ --learning_rate 1e-4 \ --num_train_epochs 40 \ --preprocessing_num_workers 96 \ --output_dir /mnt/datasets/bert-base-swedish-europeana-cased-512-dupe1-noadafactor-40e \ --save_steps 2500 \ --eval_steps 2500 \ --warmup_steps 10000 \ --line_by_line \ --pad_to_max_length ``` The following plot shows the pretraining loss curve:  | 4e63e70aa0f6dc7f3606f118c9c7891d |

mit | [] | false | Acknowledgments Research supported with Cloud TPUs from Google's TPU Research Cloud (TRC) program, previously known as TensorFlow Research Cloud (TFRC). Many thanks for providing access to the TRC ❤️ Thanks to the generous support from the [Hugging Face](https://huggingface.co/) team, it is possible to download both cased and uncased models from their S3 storage 🤗 | 225016d52bcce9f374638753d580d040 |

bsd-3-clause | [] | false | Model description CodeGen is a family of autoregressive language models for **program synthesis** from the paper: [A Conversational Paradigm for Program Synthesis](https://arxiv.org/abs/2203.13474) by Erik Nijkamp, Bo Pang, Hiroaki Hayashi, Lifu Tu, Huan Wang, Yingbo Zhou, Silvio Savarese, Caiming Xiong. The models were originally released in [this repository](https://github.com/salesforce/CodeGen), under 3 pre-training data variants (`NL`, `Multi`, `Mono`) and 4 model size variants (`350M`, `2B`, `6B`, `16B`). The checkpoint included in this repository is denoted as **CodeGen-Mono 16B** in the paper, where "Mono" means the model is initialized with *CodeGen-Multi 16B* and further pre-trained on a Python programming language dataset, and "16B" refers to the number of trainable parameters. | 82b681b71a76ec0263927de5861f1d31 |

bsd-3-clause | [] | false | Training data This checkpoint (CodeGen-Mono 16B) was first initialized with *CodeGen-Multi 16B* and then pre-trained on the BigPython dataset. The data consists of 71.7B tokens of the Python programming language. See Section 2.1 of the [paper](https://arxiv.org/abs/2203.13474) for more details. | 699dd1e54a188b5af2f947929991ee43 |

bsd-3-clause | [] | false | How to use This model can be easily loaded using the `AutoModelForCausalLM` functionality: ```python from transformers import AutoTokenizer, AutoModelForCausalLM tokenizer = AutoTokenizer.from_pretrained("Salesforce/codegen-16B-mono") model = AutoModelForCausalLM.from_pretrained("Salesforce/codegen-16B-mono") text = "def hello_world():" input_ids = tokenizer(text, return_tensors="pt").input_ids generated_ids = model.generate(input_ids, max_length=128) print(tokenizer.decode(generated_ids[0], skip_special_tokens=True)) ``` | 27c21043c628f635fa6a180f43f99171 |

apache-2.0 | [] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0001 - train_batch_size: 4 - eval_batch_size: 4 - gradient_accumulation_steps: 1 - optimizer: AdamW with betas=(None, None), weight_decay=None and epsilon=None - lr_scheduler: None - lr_warmup_steps: 500 - ema_inv_gamma: None - ema_inv_gamma: None - ema_inv_gamma: None - mixed_precision: fp16 | 9f88190901c57c6e51b1d135309b275c |

mit | [] | false | The model generated in the Enrich4All project.<br> Evaluated the perplexity of MLM Task fine-tuned for construction permits related corpus.<br> Baseline model: https://huggingface.co/racai/distilbert-base-romanian-cased <br> Scripts and corpus used for training: https://github.com/racai-ai/e4all-models Corpus --------------- The construction authorization corpus is meant to ease the task of interested people to get informed on the legal framework related to activities like building, repairing, extending, and modifying their living environment, or setup of economic activities like establishing commercial or industrial centers. It is aimed as well to ease and reduce the activity of official representatives of regional administrative centers. The corpus is built to comply with the Romanian legislation in this domain and is structured in sets of labeled questions with a single answer each, covering various categories of issues: * Construction activities and operations, including industrial structures, which require or do not require authorization, * The necessary steps and documents to be acquired according to the Romanian regulations, * validity terms, * involved costs. The data is acquired from two main sources: * Internet: official sites, frequently asked questions * Personal experiences of people: building permanent or provisory structures, replacing roofs, fences, installing photovoltaic panels, etc. <br><br> The construction permits corpus contains 500,351 words in 110 UTF-8 encoded files. Results ----------------- | MLM Task | Perplexity | | --------------------------------- | ------------- | | Baseline | 62.79 | | Construction Permits Fine-tuning | 7.13 | | 96c84a2680e31aeae307ca8d1659db4e |
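
The perplexity figures in the results table can be related back to a loss value. The sketch below assumes the usual convention that perplexity is the exponential of the mean per-token cross-entropy (in nats); the card does not state which convention the evaluation script uses, and the function names are hypothetical.

```python
import math


def perplexity_from_loss(mean_cross_entropy: float) -> float:
    """Perplexity as exp of the mean per-token cross-entropy (nats)."""
    return math.exp(mean_cross_entropy)


def loss_from_perplexity(perplexity: float) -> float:
    """Inverse of perplexity_from_loss."""
    return math.log(perplexity)


# Perplexities reported in the results table.
baseline_ppl = 62.79
finetuned_ppl = 7.13

# Implied mean cross-entropy under the assumed convention.
baseline_loss = loss_from_perplexity(baseline_ppl)
finetuned_loss = loss_from_perplexity(finetuned_ppl)

print(f"implied baseline loss:   {baseline_loss:.3f} nats")
print(f"implied fine-tuned loss: {finetuned_loss:.3f} nats")
print(f"perplexity ratio:        {baseline_ppl / finetuned_ppl:.2f}x lower")
```

Under this reading, the drop in perplexity corresponds to a reduction of roughly two nats in mean masked-token loss on the construction permits corpus.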

mit | [] | false | MagicPrompt - Stable Diffusion This is a model from the MagicPrompt series of models, which are [GPT-2](https://huggingface.co/gpt2) models intended to generate prompt texts for imaging AIs, in this case: [Stable Diffusion](https://huggingface.co/CompVis/stable-diffusion). | 63e413f098cf24041648b0d34950f670 |

mit | [] | false | 🖼️ Here's an example: <img src="https://files.catbox.moe/ac3jq7.png"> This model was trained for 150,000 steps on a set of about 80,000 entries filtered and extracted from the image finder for Stable Diffusion, [Lexica.art](https://lexica.art/). Extracting the data was somewhat difficult, since the search engine still has no public API that isn't protected by Cloudflare, but if you want to take a look at the original dataset, you can find it here: [datasets/Gustavosta/Stable-Diffusion-Prompts](https://huggingface.co/datasets/Gustavosta/Stable-Diffusion-Prompts). If you want to test the model with a demo, you can go to: [spaces/Gustavosta/MagicPrompt-Stable-Diffusion](https://huggingface.co/spaces/Gustavosta/MagicPrompt-Stable-Diffusion). | bffba38757a0f90b900af7afb0d2f52f |

mit | [] | false | 💻 You can see other MagicPrompt models: - For Dall-E 2: [Gustavosta/MagicPrompt-Dalle](https://huggingface.co/Gustavosta/MagicPrompt-Dalle) - For Midjourney: [Gustavosta/MagicPrompt-Midjourney](https://huggingface.co/Gustavosta/MagicPrompt-Midjourney) **[⚠️ In progress]** - MagicPrompt full: [Gustavosta/MagicPrompt](https://huggingface.co/Gustavosta/MagicPrompt) **[⚠️ In progress]** | 4a5e5f4fe2010fcf28fecd3f6ed5f2e4 |

apache-2.0 | ['generated_from_trainer'] | false | mobilebert_add_GLUE_Experiment_logit_kd_sst2_256 This model is a fine-tuned version of [google/mobilebert-uncased](https://huggingface.co/google/mobilebert-uncased) on the GLUE SST2 dataset. It achieves the following results on the evaluation set: - Loss: 1.2641 - Accuracy: 0.7076 | 30f4163f04949576e46914d985a5f927 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 1.5438 | 1.0 | 527 | 1.4012 | 0.5814 | | 1.364 | 2.0 | 1054 | 1.5474 | 0.5413 | | 1.2907 | 3.0 | 1581 | 1.5138 | 0.5642 | | 1.257 | 4.0 | 2108 | 1.4409 | 0.5665 | | 1.2417 | 5.0 | 2635 | 1.4473 | 0.5929 | | 1.2056 | 6.0 | 3162 | 1.2641 | 0.7076 | | 0.6274 | 7.0 | 3689 | nan | 0.4908 | | 0.0 | 8.0 | 4216 | nan | 0.4908 | | 0.0 | 9.0 | 4743 | nan | 0.4908 | | 0.0 | 10.0 | 5270 | nan | 0.4908 | | 0.0 | 11.0 | 5797 | nan | 0.4908 | | 048d0295baeede0a878500acbbf3ae96 |

apache-2.0 | ['generated_from_trainer'] | false | small-mlm-glue-stsb This model is a fine-tuned version of [google/bert_uncased_L-4_H-512_A-8](https://huggingface.co/google/bert_uncased_L-4_H-512_A-8) on the None dataset. It achieves the following results on the evaluation set: - Loss: 2.7187 | 391fefa2723bfc2e5ef553602f43b4f4 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | 3.2666 | 0.7 | 500 | 2.7896 | | 3.0117 | 1.39 | 1000 | 2.8245 | | 2.9461 | 2.09 | 1500 | 2.7108 | | 2.7341 | 2.78 | 2000 | 2.6721 | | 2.7235 | 3.48 | 2500 | 2.6946 | | 2.6687 | 4.17 | 3000 | 2.7103 | | 2.5373 | 4.87 | 3500 | 2.7187 | | 204777a1a6577c47cebf109b821e6bbb |

apache-2.0 | ['automatic-speech-recognition', 'en'] | false | exp_w2v2r_en_xls-r_gender_male-5_female-5_s544 Fine-tuned [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) for speech recognition using the train split of [Common Voice 7.0 (en)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | bb176f89c3d1007fb20e5e1398396bee |

mit | [] | false | Dreamcore on Stable Diffusion This is the `<dreamcore>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). Here is the new concept you will be able to use as a `style`:     | e419e820e4de7df0178a58c792cc1bdb |

mit | ['sundanese-roberta-base'] | false | Sundanese RoBERTa Base Sundanese RoBERTa Base is a masked language model based on the [RoBERTa](https://arxiv.org/abs/1907.11692) model. It was trained on four datasets: [OSCAR](https://hf.co/datasets/oscar)'s `unshuffled_deduplicated_su` subset, the Sundanese [mC4](https://hf.co/datasets/mc4) subset, the Sundanese [CC100](https://hf.co/datasets/cc100) subset, and Sundanese [Wikipedia](https://su.wikipedia.org/). 10% of the dataset is kept for evaluation purposes. The model was trained from scratch and achieved an evaluation loss of 1.952 and an evaluation accuracy of 63.98%. This model was trained using HuggingFace's Flax framework. All necessary scripts used for training could be found in the [Files and versions](https://hf.co/w11wo/sundanese-roberta-base/tree/main) tab, as well as the [Training metrics](https://hf.co/w11wo/sundanese-roberta-base/tensorboard) logged via Tensorboard. | 4a181208a860e7d176de688ce91fff7e |

mit | ['sundanese-roberta-base'] | false | params | Arch. | Training/Validation data (text) | | ------------------------ | ------- | ------- | ------------------------------------- | | `sundanese-roberta-base` | 124M | RoBERTa | OSCAR, mC4, CC100, Wikipedia (758 MB) | | ff2ee6ef01278bbca44185781a4a3658 |

mit | ['sundanese-roberta-base'] | false | Evaluation Results The model was trained for 50 epochs and the following is the final result once the training ended. | train loss | valid loss | valid accuracy | total time | | ---------- | ---------- | -------------- | ---------- | | 1.965 | 1.952 | 0.6398 | 6:24:51 | | 0743f4f997ef763bdbf926c8dd3585a0 |

mit | ['sundanese-roberta-base'] | false | As Masked Language Model ```python from transformers import pipeline pretrained_name = "w11wo/sundanese-roberta-base" fill_mask = pipeline( "fill-mask", model=pretrained_name, tokenizer=pretrained_name ) fill_mask("Budi nuju <mask> di sakola.") ``` | 05932c1f8c62cbfbf453e5455108036e |

mit | ['sundanese-roberta-base'] | false | Feature Extraction in PyTorch ```python from transformers import RobertaModel, RobertaTokenizerFast pretrained_name = "w11wo/sundanese-roberta-base" model = RobertaModel.from_pretrained(pretrained_name) tokenizer = RobertaTokenizerFast.from_pretrained(pretrained_name) prompt = "Budi nuju diajar di sakola." encoded_input = tokenizer(prompt, return_tensors='pt') output = model(**encoded_input) ``` | a3701d1912d5fea8a7b1ecaa3bbb8695 |

mit | ['sundanese-roberta-base'] | false | Citation Information ```bib @article{rs-907893, author = {Wongso, Wilson and Lucky, Henry and Suhartono, Derwin}, journal = {Journal of Big Data}, year = {2022}, month = {Feb}, day = {26}, abstract = {The Sundanese language has over 32 million speakers worldwide, but the language has reaped little to no benefits from the recent advances in natural language understanding. Like other low-resource languages, the only alternative is to fine-tune existing multilingual models. In this paper, we pre-trained three monolingual Transformer-based language models on Sundanese data. When evaluated on a downstream text classification task, we found that most of our monolingual models outperformed larger multilingual models despite the smaller overall pre-training data. In the subsequent analyses, our models benefited strongly from the Sundanese pre-training corpus size and do not exhibit socially biased behavior. We released our models for other researchers and practitioners to use.}, issn = {2693-5015}, doi = {10.21203/rs.3.rs-907893/v1}, url = {https://doi.org/10.21203/rs.3.rs-907893/v1} } ``` | 3e94aae448ff60b3201889c0b28ef83b |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-cola This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the glue dataset. It achieves the following results on the evaluation set: - Loss: 0.7480 - Matthews Correlation: 0.5370 | 1a63165b4a5f80e41ff9076e254d45fe |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Matthews Correlation | |:-------------:|:-----:|:----:|:---------------:|:--------------------:| | 0.5292 | 1.0 | 535 | 0.5110 | 0.4239 | | 0.3508 | 2.0 | 1070 | 0.4897 | 0.4993 | | 0.2346 | 3.0 | 1605 | 0.6275 | 0.5029 | | 0.1806 | 4.0 | 2140 | 0.7480 | 0.5370 | | 0.1291 | 5.0 | 2675 | 0.8841 | 0.5200 | | 8c2f0c67360cf0ed5cb40c7332b9a6d6 |
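
For readers unfamiliar with the metric in the table above, the Matthews correlation coefficient for a binary task such as CoLA can be computed from the four confusion-matrix counts. This is a minimal sketch of the standard formula; the function name and the example counts are hypothetical and are not taken from this model's evaluation.

```python
import math


def matthews_corrcoef(tp: int, tn: int, fp: int, fn: int) -> float:
    """Matthews correlation coefficient from binary confusion-matrix counts.

    Returns 0.0 when any marginal is empty (the denominator is zero),
    which is the usual convention for the degenerate case.
    """
    numerator = tp * tn - fp * fn
    denominator = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    if denominator == 0:
        return 0.0
    return numerator / denominator


# Hypothetical counts, for illustration only.
print(matthews_corrcoef(tp=40, tn=45, fp=5, fn=10))
```

The value ranges from -1 (total disagreement) through 0 (no better than chance) to +1 (perfect prediction), which is why it is preferred over accuracy on the class-imbalanced CoLA labels.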

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-squad This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the squad dataset. It achieves the following results on the evaluation set: - Loss: 1.1569 | 4426b5440ef64907cc3aef7ac1e76489 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:-----:|:---------------:| | 1.2213 | 1.0 | 5533 | 1.1560 | | 0.943 | 2.0 | 11066 | 1.1227 | | 0.7633 | 3.0 | 16599 | 1.1569 | | 777a57544aff192a16dd9594e8f33144 |

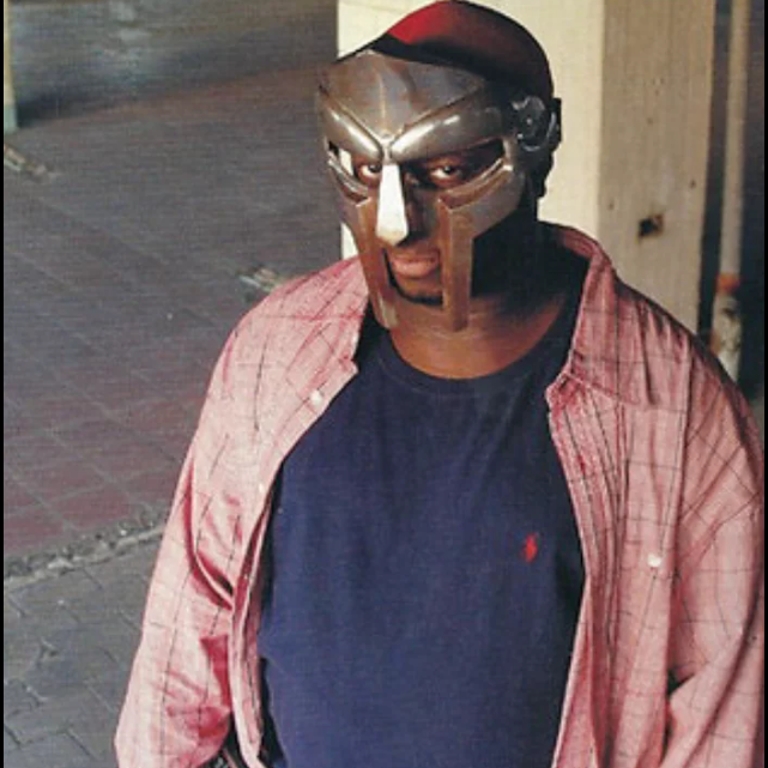

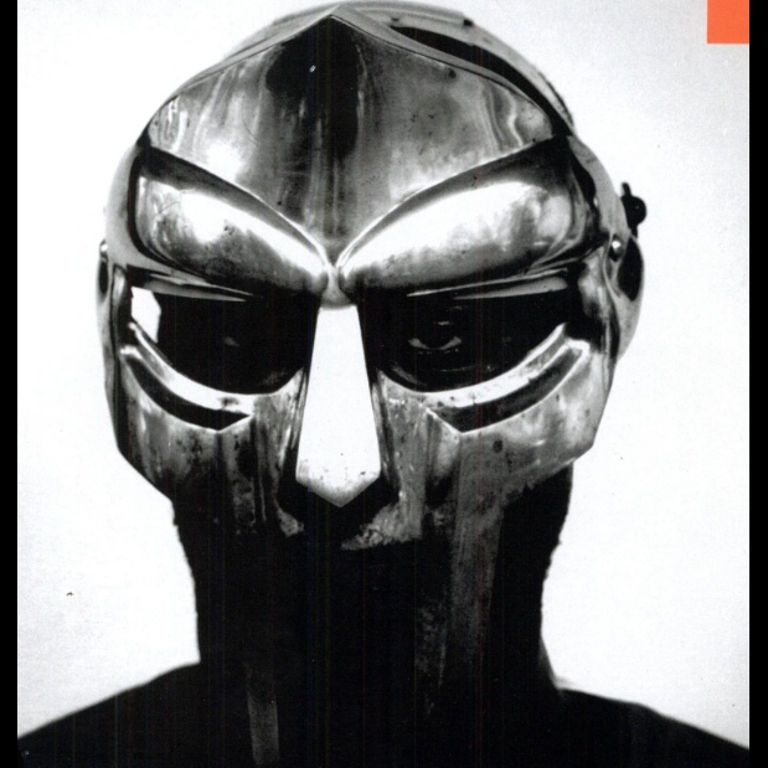

creativeml-openrail-m | ['text-to-image'] | false | MF Doomfusion Dreambooth model trained by koankoan with [Hugging Face Dreambooth Training Space](https://huggingface.co/spaces/multimodalart/dreambooth-training) with the v2-1-768 base model. You can run your new concept via the `diffusers` [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb). Don't forget to use the concept prompts! Sample pictures of: Doomfusion (use that in your prompt) | 9eae4857798c379d02f5cc38905812f7 |

apache-2.0 | ['automatic-speech-recognition', 'fa'] | false | exp_w2v2t_fa_xls-r_s44 Fine-tuned [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) for speech recognition using the train split of [Common Voice 7.0 (fa)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | 0b474e28e500962c3cb0182e33ea5888 |

apache-2.0 | ['generated_from_trainer'] | false | wav2vec2-base-timit-demo-colab57 This model is a fine-tuned version of [facebook/wav2vec2-base](https://huggingface.co/facebook/wav2vec2-base) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.7328 - Wer: 0.4593 | d4696776caf5eee2166014b8fdc8a02a |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:------:| | 4.9876 | 7.04 | 500 | 3.1483 | 1.0 | | 1.4621 | 14.08 | 1000 | 0.6960 | 0.6037 | | 0.4404 | 21.13 | 1500 | 0.6392 | 0.5630 | | 0.2499 | 28.17 | 2000 | 0.6738 | 0.5281 | | 0.1732 | 35.21 | 2500 | 0.6789 | 0.4952 | | 0.1347 | 42.25 | 3000 | 0.7328 | 0.4835 | | 0.1044 | 49.3 | 3500 | 0.7258 | 0.4840 | | 0.0896 | 56.34 | 4000 | 0.7328 | 0.4593 | | 6d326a583e4621991131e916e73ecee9 |
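
The `Wer` column above is word error rate: the word-level edit distance between the reference transcript and the model output, divided by the number of reference words. The following is a minimal pure-Python sketch of that definition; the function name is hypothetical, and real evaluations typically normalize the text (casing, punctuation) first, which this sketch does not do.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level Levenshtein distance divided by reference length."""
    ref_words = reference.split()
    hyp_words = hypothesis.split()
    if not ref_words:
        raise ValueError("reference must contain at least one word")

    # previous_row[j] = edits needed to turn the first i-1 reference words
    # into the first j hypothesis words.
    previous_row = list(range(len(hyp_words) + 1))
    for i, ref_word in enumerate(ref_words, start=1):
        current_row = [i]
        for j, hyp_word in enumerate(hyp_words, start=1):
            substitution_cost = 0 if ref_word == hyp_word else 1
            current_row.append(
                min(
                    previous_row[j] + 1,  # deletion
                    current_row[j - 1] + 1,  # insertion
                    previous_row[j - 1] + substitution_cost,  # match/substitution
                )
            )
        previous_row = current_row
    return previous_row[-1] / len(ref_words)


print(word_error_rate("the cat sat on the mat", "the cat sat on a mat"))
```

Because insertions count as errors, the rate can exceed 1.0; a value of exactly 1.0, as in the first row of the table, means the number of edits equals the number of reference words.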

mit | ['text-generation'] | false | Tokenizer We first trained a tokenizer on OSCAR's `unshuffled_original_ko` Korean data subset by following the training of GPT2 tokenizer (same vocab size of 50,257). Here's the [Python file](https://github.com/bigscience-workshop/multilingual-modeling/blob/gpt2-ko/experiments/exp-001/train_tokenizer_gpt2.py) for the training. | 87df221b2d19814941a37753fce4ddb7 |

mit | ['text-generation'] | false | Model We finetuned the `wte` and `wpe` layers of GPT-2 (while freezing the parameters of all other layers) on OSCAR's `unshuffled_original_ko` Korean data subset. We used [Huggingface's code](https://github.com/huggingface/transformers/blob/master/examples/pytorch/language-modeling/run_clm.py) for fine-tuning the causal language model GPT-2, but with the following parameters changed: ``` - preprocessing_num_workers: 8 - per_device_train_batch_size: 2 - gradient_accumulation_steps: 4 - per_device_eval_batch_size: 2 - eval_accumulation_steps: 4 - eval_steps: 1000 - evaluation_strategy: "steps" - max_eval_samples: 5000 ``` **Training details**: total training steps: 688000, effective train batch size per step: 32, max tokens per batch: 1024 | 33b0b81b6102b3ada81bee07ffd38586 |
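
The effective batch size of 32 follows from the per-device batch size, the gradient accumulation steps, and the number of devices. The card does not state the device count, so the sketch below infers it from the other three numbers; the function name is hypothetical and the result is an inference, not a reported fact.

```python
def implied_device_count(
    effective_batch_size: int,
    per_device_batch_size: int,
    gradient_accumulation_steps: int,
) -> int:
    """Number of devices implied by the other batch-size settings."""
    sequences_per_device = per_device_batch_size * gradient_accumulation_steps
    if effective_batch_size % sequences_per_device != 0:
        raise ValueError("settings do not divide into a whole number of devices")
    return effective_batch_size // sequences_per_device


# Values taken from the parameter list and training details above.
devices = implied_device_count(
    effective_batch_size=32,
    per_device_batch_size=2,
    gradient_accumulation_steps=4,
)
print(f"implied number of devices: {devices}")

# Total sequences processed over the reported 688000 training steps.
print(f"sequences seen in training: {688000 * 32}")
```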

apache-2.0 | ['masked-lm', 'BERTa', 'catalan'] | false | BibTeX citation If you use any of these resources (datasets or models) in your work, please cite our latest paper: ```bibtex @inproceedings{armengol-estape-etal-2021-multilingual, title = "Are Multilingual Models the Best Choice for Moderately Under-resourced Languages? {A} Comprehensive Assessment for {C}atalan", author = "Armengol-Estap{\'e}, Jordi and Carrino, Casimiro Pio and Rodriguez-Penagos, Carlos and de Gibert Bonet, Ona and Armentano-Oller, Carme and Gonzalez-Agirre, Aitor and Melero, Maite and Villegas, Marta", booktitle = "Findings of the Association for Computational Linguistics: ACL-IJCNLP 2021", month = aug, year = "2021", address = "Online", publisher = "Association for Computational Linguistics", url = "https://aclanthology.org/2021.findings-acl.437", doi = "10.18653/v1/2021.findings-acl.437", pages = "4933--4946", } ``` | 0e3038040f6b4866282acf515f478718 |

apache-2.0 | ['masked-lm', 'BERTa', 'catalan'] | false | Model description BERTa is a transformer-based masked language model for the Catalan language. It is based on the [RoBERTA](https://github.com/pytorch/fairseq/tree/master/examples/roberta) base model and has been trained on a medium-size corpus collected from publicly available corpora and crawlers. | 602487c4c9b0db6742d8035eb9f9ed6a |

apache-2.0 | ['masked-lm', 'BERTa', 'catalan'] | false | Training corpora and preprocessing The training corpus consists of several corpora gathered from web crawling and public corpora. The publicly available corpora are: 1. the Catalan part of the [DOGC](http://opus.nlpl.eu/DOGC-v2.php) corpus, a set of documents from the Official Gazette of the Catalan Government 2. the [Catalan Open Subtitles](http://opus.nlpl.eu/download.php?f=OpenSubtitles/v2018/mono/OpenSubtitles.raw.ca.gz), a collection of translated movie subtitles 3. the non-shuffled version of the Catalan part of the [OSCAR](https://traces1.inria.fr/oscar/) corpus \cite{suarez2019asynchronous}, a collection of monolingual corpora, filtered from [Common Crawl](https://commoncrawl.org/about/) 4. the [CaWac](http://nlp.ffzg.hr/resources/corpora/cawac/) corpus, a web corpus of Catalan built from the .cat top-level-domain in late 2013 (the non-deduplicated version) 5. the [Catalan Wikipedia articles](https://ftp.acc.umu.se/mirror/wikimedia.org/dumps/cawiki/20200801/) downloaded on 18-08-2020. The crawled corpora are: 6. the Catalan General Crawling, obtained by crawling the 500 most popular .cat and .ad domains 7. the Catalan Government Crawling, obtained by crawling the .gencat domain and subdomains, belonging to the Catalan Government 8. the ACN corpus with 220k news items from March 2015 until October 2020, crawled from the [Catalan News Agency](https://www.acn.cat/) To obtain a high-quality training corpus, each corpus has been preprocessed with a pipeline of operations including, among others, sentence splitting, language detection, filtering of ill-formed sentences and deduplication of repetitive contents. During the process, document boundaries are kept. Finally, the corpora are concatenated and further global deduplication among the corpora is applied. The final training corpus consists of about 1.8B tokens. | 9851f722340588c4c6b941fa0cf11a92 |

apache-2.0 | ['masked-lm', 'BERTa', 'catalan'] | false | Tokenization and pretraining The training corpus has been tokenized using a byte version of [Byte-Pair Encoding (BPE)](https://github.com/openai/gpt-2) used in the original [RoBERTA](https://github.com/pytorch/fairseq/tree/master/examples/roberta) model with a vocabulary size of 52,000 tokens. The BERTa pretraining consists of a masked language model training that follows the approach employed for the RoBERTa base model with the same hyperparameters as in the original work. The training lasted a total of 48 hours with 16 NVIDIA V100 GPUs of 16GB DDRAM. | 4bf31c803b9221e4d3490f316f3bd58b |

apache-2.0 | ['masked-lm', 'BERTa', 'catalan'] | false | CLUB benchmark The BERTa model has been fine-tuned on the downstream tasks of the Catalan Language Understanding Evaluation benchmark (CLUB), that has been created along with the model. It contains the following tasks and their related datasets: 1. Part-of-Speech Tagging (POS) Catalan-Ancora: from the [Universal Dependencies treebank](https://github.com/UniversalDependencies/UD_Catalan-AnCora) of the well-known Ancora corpus 2. Named Entity Recognition (NER) **[AnCora Catalan 2.0.0](https://zenodo.org/record/4762031 | 746415c436b2474e853717de2332fb3c |

apache-2.0 | ['masked-lm', 'BERTa', 'catalan'] | false | .YKaFjqGxWUk)**: extracted named entities from the original [Ancora](https://doi.org/10.5281/zenodo.4762030) version, filtering out some unconventional ones, like book titles, and transcribed them into a standard CONLL-IOB format 3. Text Classification (TC) **[TeCla](https://doi.org/10.5281/zenodo.4627197)**: consisting of 137k news pieces from the Catalan News Agency ([ACN](https://www.acn.cat/)) corpus 4. Semantic Textual Similarity (STS) **[Catalan semantic textual similarity](https://doi.org/10.5281/zenodo.4529183)**: consisting of more than 3000 sentence pairs, annotated with the semantic similarity between them, scraped from the [Catalan Textual Corpus](https://doi.org/10.5281/zenodo.4519349) 5. Question Answering (QA): **[ViquiQuAD](https://doi.org/10.5281/zenodo.4562344)**: consisting of more than 15,000 questions outsourced from Catalan Wikipedia randomly chosen from a set of 596 articles that were originally written in Catalan. **[XQuAD](https://doi.org/10.5281/zenodo.4526223)**: the Catalan translation of XQuAD, a multilingual collection of manual translations of 1,190 question-answer pairs from English Wikipedia used only as a _test set_ Here are the train/dev/test splits of the datasets: | Task (Dataset) | Total | Train | Dev | Test | |:--|:--|:--|:--|:--| | NER (Ancora) |13,581 | 10,628 | 1,427 | 1,526 | | POS (Ancora)| 16,678 | 13,123 | 1,709 | 1,846 | | STS | 3,073 | 2,073 | 500 | 500 | | TC (TeCla) | 137,775 | 110,203 | 13,786 | 13,786| | QA (ViquiQuAD) | 14,239 | 11,255 | 1,492 | 1,429 | _The fine-tuning on downstream tasks have been performed with the HuggingFace [**Transformers**](https://github.com/huggingface/transformers) library_ | 37a3ce0692bb3c69d4bd3d6fc732c0ae |

apache-2.0 | ['masked-lm', 'BERTa', 'catalan'] | false | Results Below the evaluation results on the CLUB tasks compared with the multilingual mBERT, XLM-RoBERTa models and the Catalan WikiBERT-ca model | Task | NER (F1) | POS (F1) | STS (Pearson) | TC (accuracy) | QA (ViquiQuAD) (F1/EM) | QA (XQuAD) (F1/EM) | | ------------|:-------------:| -----:|:------|:-------|:------|:----| | BERTa | **88.13** | **98.97** | **79.73** | **74.16** | **86.97/72.29** | **68.89/48.87** | | mBERT | 86.38 | 98.82 | 76.34 | 70.56 | 86.97/72.22 | 67.15/46.51 | | XLM-RoBERTa | 87.66 | 98.89 | 75.40 | 71.68 | 85.50/70.47 | 67.10/46.42 | | WikiBERT-ca | 77.66 | 97.60 | 77.18 | 73.22 | 85.45/70.75 | 65.21/36.60 | | eb3d48d46f55d1ac58a146bbc42e821f |

apache-2.0 | ['masked-lm', 'BERTa', 'catalan'] | false | Intended uses & limitations The model is ready-to-use only for masked language modelling to perform the Fill Mask task (try the inference API or read the next section). However, the model is intended to be fine-tuned on non-generative downstream tasks such as Question Answering, Text Classification or Named Entity Recognition. --- | f9daef2268ce8f76db9a5468246e4ca0 |

apache-2.0 | ['masked-lm', 'BERTa', 'catalan'] | false | Load model and tokenizer ``` python from transformers import AutoTokenizer, AutoModelForMaskedLM tokenizer = AutoTokenizer.from_pretrained("BSC-TeMU/roberta-base-ca-cased") model = AutoModelForMaskedLM.from_pretrained("BSC-TeMU/roberta-base-ca-cased") ``` | e31c62c15b8f3583c850e9d278b731ab |

apache-2.0 | ['masked-lm', 'BERTa', 'catalan'] | false | Fill Mask task Below, an example of how to use the masked language modelling task with a pipeline. ```python >>> from transformers import pipeline >>> unmasker = pipeline('fill-mask', model='BSC-TeMU/roberta-base-ca-cased') >>> unmasker("Situada a la costa de la mar Mediterrània, <mask> s'assenta en una plana formada " "entre els deltes de les desembocadures dels rius Llobregat, al sud-oest, " "i Besòs, al nord-est, i limitada pel sud-est per la línia de costa," "i pel nord-oest per la serralada de Collserola " "(amb el cim del Tibidabo, 516,2 m, com a punt més alt) que segueix paral·lela " "la línia de costa encaixant la ciutat en un perímetre molt definit.") [ { "sequence": " Situada a la costa de la mar Mediterrània, <mask> s'assenta en una plana formada " "entre els deltes de les desembocadures dels rius Llobregat, al sud-oest, " "i Besòs, al nord-est, i limitada pel sud-est per la línia de costa," "i pel nord-oest per la serralada de Collserola " "(amb el cim del Tibidabo, 516,2 m, com a punt més alt) que segueix paral·lela " "la línia de costa encaixant la ciutat en un perímetre molt definit.", "score": 0.4177263379096985, "token": 734, "token_str": " Barcelona" }, { "sequence": " Situada a la costa de la mar Mediterrània, <mask> s'assenta en una plana formada " "entre els deltes de les desembocadures dels rius Llobregat, al sud-oest, " "i Besòs, al nord-est, i limitada pel sud-est per la línia de costa," "i pel nord-oest per la serralada de Collserola " "(amb el cim del Tibidabo, 516,2 m, com a punt més alt) que segueix paral·lela " "la línia de costa encaixant la ciutat en un perímetre molt definit.", "score": 0.10696165263652802, "token": 3849, "token_str": " Badalona" }, { "sequence": " Situada a la costa de la mar Mediterrània, <mask> s'assenta en una plana formada " "entre els deltes de les desembocadures dels rius Llobregat, al sud-oest, " "i Besòs, al nord-est, i limitada pel sud-est per la 
línia de costa," "i pel nord-oest per la serralada de Collserola " "(amb el cim del Tibidabo, 516,2 m, com a punt més alt) que segueix paral·lela " "la línia de costa encaixant la ciutat en un perímetre molt definit.", "score": 0.08135009557008743, "token": 19349, "token_str": " Collserola" }, { "sequence": " Situada a la costa de la mar Mediterrània, <mask> s'assenta en una plana formada " "entre els deltes de les desembocadures dels rius Llobregat, al sud-oest, " "i Besòs, al nord-est, i limitada pel sud-est per la línia de costa," "i pel nord-oest per la serralada de Collserola " "(amb el cim del Tibidabo, 516,2 m, com a punt més alt) que segueix paral·lela " "la línia de costa encaixant la ciutat en un perímetre molt definit.", "score": 0.07330769300460815, "token": 4974, "token_str": " Terrassa" }, { "sequence": " Situada a la costa de la mar Mediterrània, <mask> s'assenta en una plana formada " "entre els deltes de les desembocadures dels rius Llobregat, al sud-oest, " "i Besòs, al nord-est, i limitada pel sud-est per la línia de costa," "i pel nord-oest per la serralada de Collserola " "(amb el cim del Tibidabo, 516,2 m, com a punt més alt) que segueix paral·lela " "la línia de costa encaixant la ciutat en un perímetre molt definit.", "score": 0.03317456692457199, "token": 14333, "token_str": " Gavà" } ] ``` This model was originally published as [bsc/roberta-base-ca-cased](https://huggingface.co/bsc/roberta-base-ca-cased). | 65adb726a2308570cbb26c28f2862cdc |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0001 - train_batch_size: 32 - eval_batch_size: 8 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 1000 - num_epochs: 5 | 0723defa3884fe7476df8149fb215c87 |
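
To make the `linear` scheduler with 1000 warmup steps concrete, the sketch below reproduces the usual shape of such a schedule: the learning rate rises linearly from 0 to the base rate during warmup, then decays linearly to 0 at the final step. The total step count is not given in the card, so `total_steps=10000` is a hypothetical value, as is the function name.

```python
def linear_schedule_with_warmup(
    step: int, base_lr: float, warmup_steps: int, total_steps: int
) -> float:
    """Learning rate at `step` for linear warmup followed by linear decay."""
    if step < warmup_steps:
        # Warmup phase: ramp from 0 up to base_lr.
        return base_lr * step / max(1, warmup_steps)
    # Decay phase: ramp from base_lr down to 0 at total_steps.
    remaining_steps = max(0, total_steps - step)
    return base_lr * remaining_steps / max(1, total_steps - warmup_steps)


# learning_rate and warmup steps come from the list above;
# total_steps is an assumption made for illustration.
for step in (0, 500, 1000, 5500, 10000):
    lr = linear_schedule_with_warmup(
        step, base_lr=1e-4, warmup_steps=1000, total_steps=10000
    )
    print(f"step {step:>5}: lr = {lr:.2e}")
```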

apache-2.0 | ['Vocoder', 'HiFIGAN', 'text-to-speech', 'TTS', 'speech-synthesis', 'speechbrain'] | false | Vocoder with HiFIGAN trained on LibriTTS This repository provides all the necessary tools for using a [HiFIGAN](https://arxiv.org/abs/2010.05646) vocoder trained with [LibriTTS](https://www.openslr.org/60/) (with multiple speakers). The sample rate used for the vocoder is 22050 Hz. The pre-trained model takes a spectrogram as input and produces a waveform as output. Typically, a vocoder is used after a TTS model that converts an input text into a spectrogram. Alternatives to this model are the following: - [tts-hifigan-libritts-16kHz](https://huggingface.co/speechbrain/tts-hifigan-libritts-16kHz/) (same model trained on the same dataset, but for a sample rate of 16000 Hz) - [tts-hifigan-ljspeech](https://huggingface.co/speechbrain/tts-hifigan-ljspeech) (same model trained on LJSpeech for a sample rate of 22050 Hz). | f01f68a8a88887951f889a5eba9d2fe7 |

apache-2.0 | ['Vocoder', 'HiFIGAN', 'text-to-speech', 'TTS', 'speech-synthesis', 'speechbrain'] | false | Using the Vocoder ```python import torch from speechbrain.pretrained import HIFIGAN hifi_gan = HIFIGAN.from_hparams(source="speechbrain/tts-hifigan-libritts-22050Hz", savedir="tmpdir") mel_specs = torch.rand(2, 80, 298) waveforms = hifi_gan.decode_batch(mel_specs) ``` | 2925bdf0e50a2d341d5aa1aeba4bcc1d |

apache-2.0 | ['Vocoder', 'HiFIGAN', 'text-to-speech', 'TTS', 'speech-synthesis', 'speechbrain'] | false | Initialize TTS (tacotron2) and Vocoder (HiFIGAN) tacotron2 = Tacotron2.from_hparams(source="speechbrain/tts-tacotron2-ljspeech", savedir="tmpdir_tts") hifi_gan = HIFIGAN.from_hparams(source="speechbrain/tts-hifigan-libritts-22050Hz", savedir="tmpdir_vocoder") | 2811066587aa3a7cbb735ecf859ca65f |

apache-2.0 | ['Vocoder', 'HiFIGAN', 'text-to-speech', 'TTS', 'speech-synthesis', 'speechbrain'] | false | Training The model was trained with SpeechBrain. To train it from scratch follow these steps: 1. Clone SpeechBrain: ```bash git clone https://github.com/speechbrain/speechbrain/ ``` 2. Install it: ```bash cd speechbrain pip install -r requirements.txt pip install -e . ``` 3. Run Training: ```bash cd recipes/LibriTTS/vocoder/hifigan/ python train.py hparams/train.yaml --data_folder=/path/to/LibriTTS_data_destination --sample_rate=22050 ``` To change the sample rate for model training go to the `"recipes/LibriTTS/vocoder/hifigan/hparams/train.yaml"` file and change the value for `sample_rate` as required. The training logs and checkpoints are available [here](https://drive.google.com/drive/folders/1cImFzEonNYhetS9tmH9R_d0EFXXN0zpn?usp=sharing). | 11ecb1104054f653627fc9381b2e93b7 |

apache-2.0 | ['automatic-speech-recognition', 'id'] | false | exp_w2v2t_id_no-pretraining_s724 Fine-tuned randomly initialized wav2vec2 model for speech recognition using the train split of [Common Voice 7.0 (id)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | a2a611c25f0d26e0480f4a6d500236d6 |

apache-2.0 | ['summarization', 'generated_from_trainer'] | false | mt5-small-finetuned-amazon-en-es This model is a fine-tuned version of [google/mt5-small](https://huggingface.co/google/mt5-small) on the None dataset. It achieves the following results on the evaluation set: - Loss: 3.0318 - Rouge1: 0.1806 - Rouge2: 0.0917 - Rougel: 0.1765 - Rougelsum: 0.1766 | e313724143b64a4acd5d61547ebf63d5 |

apache-2.0 | ['summarization', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | |:-------------:|:-----:|:----:|:---------------:|:------:|:------:|:------:|:---------:| | 6.6121 | 1.0 | 1209 | 3.2969 | 0.1522 | 0.0635 | 0.1476 | 0.147 | | 3.901 | 2.0 | 2418 | 3.1307 | 0.1672 | 0.0821 | 0.1604 | 0.1602 | | 3.5788 | 3.0 | 3627 | 3.0910 | 0.1804 | 0.0922 | 0.1748 | 0.1751 | | 3.4198 | 4.0 | 4836 | 3.0646 | 0.1717 | 0.0813 | 0.167 | 0.1664 | | 3.321 | 5.0 | 6045 | 3.0659 | 0.1782 | 0.0877 | 0.1759 | 0.1756 | | 3.2441 | 6.0 | 7254 | 3.0407 | 0.1785 | 0.088 | 0.1755 | 0.1751 | | 3.2075 | 7.0 | 8463 | 3.0356 | 0.1789 | 0.09 | 0.1743 | 0.1747 | | 3.1803 | 8.0 | 9672 | 3.0318 | 0.1806 | 0.0917 | 0.1765 | 0.1766 | | 3c03ad0b4fd69e3c9968e72bda2416e3 |

mit | [] | false | This model is the **passage** encoder of ANCE-Tele trained on NQ, described in the EMNLP 2022 paper ["Reduce Catastrophic Forgetting of Dense Retrieval Training with Teleportation Negatives"](https://arxiv.org/pdf/2210.17167.pdf). The associated GitHub repository is available at https://github.com/OpenMatch/ANCE-Tele. ANCE-Tele trains only with self-mined negatives (teleportation negatives), without additional negatives (e.g., BM25, other DR systems), and eliminates the dependency on filtering strategies and distillation modules. |NQ (Test)|R@5|R@20|R@100| |:---|:---|:---|:---| |ANCE-Tele|77.0|84.9|89.7| ``` @inproceedings{sun2022ancetele, title={Reduce Catastrophic Forgetting of Dense Retrieval Training with Teleportation Negatives}, author={Si Sun, Chenyan Xiong, Yue Yu, Arnold Overwijk, Zhiyuan Liu and Jie Bao}, booktitle={Proceedings of EMNLP 2022}, year={2022} } ``` | 8ca00119429e6da5a133ccc7d0af5fa7 |

gpl-3.0 | [] | false | Pretrained model: [GODEL-v1_1-base-seq2seq](https://huggingface.co/microsoft/GODEL-v1_1-base-seq2seq/) Fine-tuning dataset: [MultiWOZ 2.2](https://github.com/budzianowski/multiwoz/tree/master/data/MultiWOZ_2.2) | dc8e38db5c1733adfc5262b34ddda890 |

gpl-3.0 | [] | false | Encoder input context = [ "USER: I need train reservations from norwich to cambridge", "SYSTEM: I have 133 trains matching your request. Is there a specific day and time you would like to travel?", "USER: I'd like to leave on Monday and arrive by 18:00.", ] input_text = " EOS ".join(context[-5:]) + " => " model_inputs = tokenizer( input_text, max_length=512, truncation=True, return_tensors="pt" )["input_ids"] | 946d8e149f826e2eb069c759975e3689 |

gpl-3.0 | [] | false | Decoder input answer_start = "SYSTEM: " decoder_input_ids = tokenizer( "<pad>" + answer_start, max_length=256, truncation=True, add_special_tokens=False, return_tensors="pt", )["input_ids"] | 0f4e1ae1f7f681616516a2bc76daa7c8 |

gpl-3.0 | [] | false | Generate output = model.generate( model_inputs, decoder_input_ids=decoder_input_ids, max_length=256 ) output = tokenizer.decode( output[0], clean_up_tokenization_spaces=True, skip_special_tokens=True ) print(output) | 58305676f95a8046f2de2602c832a2ff |
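The encoder-input step above is plain string handling, so it can be checked without loading the model. The sketch below isolates it; the helper name `build_encoder_input` and its keyword arguments are illustrative assumptions, while the `" EOS "` separator, the `" => "` suffix, and the five-turn window come from the snippet above.

```python
def build_encoder_input(context, max_turns=5, turn_sep=" EOS ", suffix=" => "):
    """Join the most recent dialogue turns into one encoder prompt string.

    Hypothetical helper: it only mirrors the string handling shown above and
    does not call the tokenizer or the model.
    """
    recent_turns = context[-max_turns:]  # keep at most the last `max_turns` turns
    return turn_sep.join(recent_turns) + suffix


context = [
    "USER: I need train reservations from norwich to cambridge",
    "SYSTEM: I have 133 trains matching your request. Is there a specific day and time you would like to travel?",
    "USER: I'd like to leave on Monday and arrive by 18:00.",
]
input_text = build_encoder_input(context)
print(input_text)
```

Keeping the window size as a parameter makes it easy to see how much dialogue history reaches the encoder before tokenizer truncation applies.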

other | ['stable-diffusion', 'stable-diffusion-diffusers', 'text-to-image'] | false | Stable Diffusion v1-4 Model Card Stable Diffusion is a latent text-to-image diffusion model capable of generating photo-realistic images given any text input. For more information about how Stable Diffusion functions, please have a look at [🤗's Stable Diffusion with 🧨Diffusers blog](https://huggingface.co/blog/stable_diffusion). The **Stable-Diffusion-v1-4** checkpoint was initialized with the weights of the [Stable-Diffusion-v1-2](https://huggingface.co/CompVis/stable-diffusion-v1-2) checkpoint and subsequently fine-tuned for 225k steps at resolution 512x512 on "laion-aesthetics v2 5+", with 10% dropping of the text-conditioning to improve [classifier-free guidance sampling](https://arxiv.org/abs/2207.12598). The weights here are intended to be used with the 🧨 Diffusers library. If you are looking for the weights to be loaded into the CompVis Stable Diffusion codebase, [come here](https://huggingface.co/CompVis/stable-diffusion-v-1-4-original) | cad1b93599da5e0c6e7ca8c652433b2b |

other | ['stable-diffusion', 'stable-diffusion-diffusers', 'text-to-image'] | false | Examples We recommend using [🤗's Diffusers library](https://github.com/huggingface/diffusers) to run Stable Diffusion. ```bash pip install --upgrade diffusers transformers scipy ``` Run this command to log in with your HF Hub token if you haven't before: ```bash huggingface-cli login ``` Running the pipeline with the default PNDM scheduler: ```python import torch from torch import autocast from diffusers import StableDiffusionPipeline model_id = "CompVis/stable-diffusion-v1-4" device = "cuda" pipe = StableDiffusionPipeline.from_pretrained(model_id, use_auth_token=True) pipe = pipe.to(device) prompt = "a photo of an astronaut riding a horse on mars" with autocast("cuda"): image = pipe(prompt, guidance_scale=7.5)["sample"][0] image.save("astronaut_rides_horse.png") ``` **Note**: If you are limited by GPU memory and have less than 10GB of GPU RAM available, please make sure to load the StableDiffusionPipeline in float16 precision instead of the default float32 precision as done above. You can do so by telling diffusers to expect the weights to be in float16 precision: ```py import torch pipe = StableDiffusionPipeline.from_pretrained(model_id, torch_dtype=torch.float16, revision="fp16", use_auth_token=True) pipe = pipe.to(device) prompt = "a photo of an astronaut riding a horse on mars" with autocast("cuda"): image = pipe(prompt, guidance_scale=7.5)["sample"][0] image.save("astronaut_rides_horse.png") ``` To swap out the noise scheduler, pass it to `from_pretrained`: ```python from diffusers import StableDiffusionPipeline, LMSDiscreteScheduler model_id = "CompVis/stable-diffusion-v1-4" | d1cc6a8d5f787062bb5092f14d6caca5 |

other | ['stable-diffusion', 'stable-diffusion-diffusers', 'text-to-image'] | false | Training **Training Data** The model developers used the following dataset for training the model: - LAION-2B (en) and subsets thereof (see next section) **Training Procedure** Stable Diffusion v1-4 is a latent diffusion model which combines an autoencoder with a diffusion model that is trained in the latent space of the autoencoder. During training, - Images are encoded through an encoder, which turns images into latent representations. The autoencoder uses a relative downsampling factor of 8 and maps images of shape H x W x 3 to latents of shape H/f x W/f x 4 - Text prompts are encoded through a ViT-L/14 text-encoder. - The non-pooled output of the text encoder is fed into the UNet backbone of the latent diffusion model via cross-attention. - The loss is a reconstruction objective between the noise that was added to the latent and the prediction made by the UNet. We currently provide four checkpoints, which were trained as follows. - [`stable-diffusion-v1-1`](https://huggingface.co/CompVis/stable-diffusion-v1-1): 237,000 steps at resolution `256x256` on [laion2B-en](https://huggingface.co/datasets/laion/laion2B-en). 194,000 steps at resolution `512x512` on [laion-high-resolution](https://huggingface.co/datasets/laion/laion-high-resolution) (170M examples from LAION-5B with resolution `>= 1024x1024`). - [`stable-diffusion-v1-2`](https://huggingface.co/CompVis/stable-diffusion-v1-2): Resumed from `stable-diffusion-v1-1`. 515,000 steps at resolution `512x512` on "laion-improved-aesthetics" (a subset of laion2B-en, filtered to images with an original size `>= 512x512`, estimated aesthetics score `> 5.0`, and an estimated watermark probability `< 0.5`. The watermark estimate is from the LAION-5B metadata, the aesthetics score is estimated using an [improved aesthetics estimator](https://github.com/christophschuhmann/improved-aesthetic-predictor)). 
- [`stable-diffusion-v1-3`](https://huggingface.co/CompVis/stable-diffusion-v1-3): Resumed from `stable-diffusion-v1-2`. 195,000 steps at resolution `512x512` on "laion-improved-aesthetics" and 10% dropping of the text-conditioning to improve [classifier-free guidance sampling](https://arxiv.org/abs/2207.12598). - [`stable-diffusion-v1-4`](https://huggingface.co/CompVis/stable-diffusion-v1-4): Resumed from `stable-diffusion-v1-2`. 225,000 steps at resolution `512x512` on "laion-aesthetics v2 5+" and 10% dropping of the text-conditioning to improve [classifier-free guidance sampling](https://arxiv.org/abs/2207.12598). - **Hardware:** 32 x 8 x A100 GPUs - **Optimizer:** AdamW - **Gradient Accumulations**: 2 - **Batch:** 32 x 8 x 2 x 4 = 2048 - **Learning rate:** warmup to 0.0001 for 10,000 steps and then kept constant | c05753f7bc6bbac7d1d185d1b343953d |
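The training section gives two pieces of arithmetic: the autoencoder maps `H x W x 3` images to `H/f x W/f x 4` latents with `f = 8`, and the batch size is `32 x 8 x 2 x 4 = 2048`. A minimal sketch of both follows. The function names are illustrative, and reading the four batch factors as nodes, GPUs per node, gradient-accumulation steps, and per-GPU batch is an assumption inferred from the hardware and accumulation lines, not something the card states.

```python
def latent_shape(height, width, downsampling_factor=8, latent_channels=4):
    """Shape of the latent for an H x W x 3 image, per the H/f x W/f x 4 rule."""
    if height % downsampling_factor or width % downsampling_factor:
        raise ValueError("height and width must be divisible by the downsampling factor")
    return (height // downsampling_factor, width // downsampling_factor, latent_channels)


def effective_batch_size(nodes, gpus_per_node, grad_accum_steps, per_gpu_batch):
    """Product of the four factors; their interpretation here is an assumption."""
    return nodes * gpus_per_node * grad_accum_steps * per_gpu_batch


print(latent_shape(512, 512))
print(effective_batch_size(nodes=32, gpus_per_node=8, grad_accum_steps=2, per_gpu_batch=4))
```

For a 512x512 image the latent has 64 times fewer spatial positions than the input, which is what makes running the diffusion process in latent space comparatively cheap.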

other | ['stable-diffusion', 'stable-diffusion-diffusers', 'text-to-image'] | false | Evaluation Results Evaluations with different classifier-free guidance scales (1.5, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0) and 50 PLMS sampling steps show the relative improvements of the checkpoints:  Evaluated using 50 PLMS steps and 10000 random prompts from the COCO2017 validation set, evaluated at 512x512 resolution. Not optimized for FID scores. | defac0cce636a61ac34207b11bd98496 |

mit | [] | false | This model has been pretrained on the BEIR corpus without relevance-level supervision, following the approach described in the paper **COCO-DR: Combating Distribution Shifts in Zero-Shot Dense Retrieval with Contrastive and Distributionally Robust Learning**. The associated GitHub repository is available at https://github.com/OpenMatch/COCO-DR. This model uses BERT-large as the backbone, with 335M parameters. | 5e3714023983d56d8e324255293ae8e8 |

mit | [] | false | Altyn-Helmet on Stable Diffusion This is the `<Altyn>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). Here is the new concept you will be able to use as an `object`:        | dcfdbf626287f134d7c89ccd53d72d21 |

mit | ['generated_from_trainer'] | false | xlm-roberta-base-finetuned-panx-it This model is a fine-tuned version of [xlm-roberta-base](https://huggingface.co/xlm-roberta-base) on the xtreme dataset. It achieves the following results on the evaluation set: - Loss: 0.2630 - F1: 0.8124 | f3018b10d23b25d41be2bfba297b7c58 |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | F1 | |:-------------:|:-----:|:----:|:---------------:|:------:| | 0.8193 | 1.0 | 70 | 0.3200 | 0.7356 | | 0.2773 | 2.0 | 140 | 0.2841 | 0.7882 | | 0.1807 | 3.0 | 210 | 0.2630 | 0.8124 | | 31f70197cb9c259496810347313fda30 |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-emotion This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the emotion dataset. It achieves the following results on the evaluation set: - Loss: 0.2158 - Accuracy: 0.9285 - F1: 0.9286 | 30ce6266077a1d975fa98d5ea09dc02f |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------:|:------:| | 0.8235 | 1.0 | 250 | 0.3085 | 0.915 | 0.9127 | | 0.2493 | 2.0 | 500 | 0.2158 | 0.9285 | 0.9286 | | c13693b2b97874d40472a8a47c0e724a |

apache-2.0 | ['generated_from_trainer'] | false | AI-DAY-distilbert-base-uncased-finetuned-cola This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the glue dataset. It achieves the following results on the evaluation set: - Loss: 0.7236 - Matthews Correlation: 0.5382 | 941d02df88d50fe17153068cff6acb8d |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Matthews Correlation | |:-------------:|:-----:|:----:|:---------------:|:--------------------:| | 0.5308 | 1.0 | 535 | 0.5065 | 0.4296 | | 0.3565 | 2.0 | 1070 | 0.5109 | 0.4940 | | 0.2399 | 3.0 | 1605 | 0.6056 | 0.5094 | | 0.1775 | 4.0 | 2140 | 0.7236 | 0.5382 | | 0.1242 | 5.0 | 2675 | 0.8659 | 0.5347 | | 0a4d9c552253b04292fac4e629f95f10 |

mit | [] | false | Gymnastics Leotard v2 on Stable Diffusion This is the `<gymnastics-leotard2>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). Here is the new concept you will be able to use as an `object`:       | 65470f72b2809e506520be7a966ea23d |

other | ['transformers', 'feature-extraction', 'materials'] | false | MaterialsBERT This model is a fine-tuned version of [PubMedBERT model](https://huggingface.co/microsoft/BiomedNLP-PubMedBERT-base-uncased-abstract-fulltext) on a dataset of 2.4 million materials science abstracts. It was introduced in [this](https://arxiv.org/abs/2209.13136) paper. This model is uncased. | 0ee2991f5912368e54c5a151bafc509f |

other | ['transformers', 'feature-extraction', 'materials'] | false | Model description Domain-specific fine-tuning has been [shown](https://arxiv.org/abs/2007.15779) to improve downstream performance on a variety of NLP tasks. MaterialsBERT fine-tunes PubMedBERT, a language model pre-trained on biomedical literature. PubMedBERT was chosen because the biomedical domain is close to the materials science domain. When further fine-tuned on a variety of downstream sequence labeling tasks in materials science, MaterialsBERT outperformed the other baseline language models tested on three out of five datasets. | 0fcb6cca82a6c9dca8c98b0f32bd750a |

other | ['transformers', 'feature-extraction', 'materials'] | false | Intended uses & limitations You can use the raw model for either masked language modeling or next sentence prediction, but it's mostly intended to be fine-tuned on materials-science relevant downstream tasks. Note that this model is primarily aimed at being fine-tuned on tasks that use a sentence or a paragraph (potentially masked) to make decisions, such as sequence classification, token classification or question answering. | 32a02afb6f94cdee04bf89f4d704cf7f |

other | ['transformers', 'feature-extraction', 'materials'] | false | How to Use Here is how to use this model to get the features of a given text in PyTorch: ```python from transformers import BertForMaskedLM, BertTokenizer tokenizer = BertTokenizer.from_pretrained('pranav-s/MaterialsBERT') model = BertForMaskedLM.from_pretrained('pranav-s/MaterialsBERT') text = "Enter any text you like" encoded_input = tokenizer(text, return_tensors='pt') output = model(**encoded_input) ``` | 9b9bae1b5a6098d1f65058418a367695 |

other | ['transformers', 'feature-extraction', 'materials'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 5e-05 - train_batch_size: 32 - eval_batch_size: 32 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 3.0 - mixed_precision_training: Native AMP | 0bc1b741e341dcbcd6c7c7db46a4c3d0 |

other | ['transformers', 'feature-extraction', 'materials'] | false | Citation If you find MaterialsBERT useful in your research, please cite the following paper: ```latex @misc{materialsbert, author = {Pranav Shetty, Arunkumar Chitteth Rajan, Christopher Kuenneth, Sonkakshi Gupta, Lakshmi Prerana Panchumarti, Lauren Holm, Chao Zhang, and Rampi Ramprasad}, title = {A general-purpose material property data extraction pipeline from large polymer corpora using Natural Language Processing}, year = {2022}, eprint = {arXiv:2209.13136}, } ``` <a href="https://huggingface.co/exbert/?model=pranav-s/MaterialsBERT"> <img width="300px" src="https://cdn-media.huggingface.co/exbert/button.png"> </a> | a5112a4e555b4647fa69682e26c9d528 |

cc | ['generated_from_trainer'] | false | racism-finetuned-detests-02-11-2022 This model is a fine-tuned version of [davidmasip/racism](https://huggingface.co/davidmasip/racism) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.8819 - F1: 0.6199 | 195ac2fc076ac038eac9b7d93cd4445a |

cc | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 64 - eval_batch_size: 64 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 10 | 248bc385d25c4abdc54a6e377006f2e2 |

cc | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | F1 | |:-------------:|:-----:|:----:|:---------------:|:------:| | 0.3032 | 0.64 | 25 | 0.3482 | 0.6434 | | 0.1132 | 1.28 | 50 | 0.3707 | 0.6218 | | 0.1253 | 1.92 | 75 | 0.4004 | 0.6286 | | 0.0064 | 2.56 | 100 | 0.6223 | 0.6254 | | 0.0007 | 3.21 | 125 | 0.7347 | 0.6032 | | 0.0006 | 3.85 | 150 | 0.7705 | 0.6312 | | 0.0004 | 4.49 | 175 | 0.7988 | 0.6304 | | 0.0003 | 5.13 | 200 | 0.8206 | 0.6255 | | 0.0003 | 5.77 | 225 | 0.8371 | 0.6097 | | 0.0003 | 6.41 | 250 | 0.8503 | 0.6148 | | 0.0003 | 7.05 | 275 | 0.8610 | 0.6148 | | 0.0002 | 7.69 | 300 | 0.8693 | 0.6199 | | 0.0002 | 8.33 | 325 | 0.8755 | 0.6199 | | 0.0002 | 8.97 | 350 | 0.8797 | 0.6199 | | 0.0002 | 9.62 | 375 | 0.8819 | 0.6199 | | b692a2a6bfae7700e8846e224a989a78 |

apache-2.0 | ['translation'] | false | opus-mt-zne-fr * source languages: zne * target languages: fr * OPUS readme: [zne-fr](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/zne-fr/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2020-01-16.zip](https://object.pouta.csc.fi/OPUS-MT-models/zne-fr/opus-2020-01-16.zip) * test set translations: [opus-2020-01-16.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/zne-fr/opus-2020-01-16.test.txt) * test set scores: [opus-2020-01-16.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/zne-fr/opus-2020-01-16.eval.txt) | 4862dd673db210eba06744507226240d |

creativeml-openrail-m | ['coreml', 'stable-diffusion', 'text-to-image'] | false | Core ML Converted Model: - This model was converted to [Core ML for use on Apple Silicon devices](https://github.com/apple/ml-stable-diffusion). Conversion instructions can be found [here](https://github.com/godly-devotion/MochiDiffusion/wiki/How-to-convert-ckpt-or-safetensors-files-to-Core-ML).<br> - Provide the model to an app such as Mochi Diffusion [Github](https://github.com/godly-devotion/MochiDiffusion) - [Discord](https://discord.gg/x2kartzxGv) to generate images.<br> - `split_einsum` version is compatible with all compute unit options including Neural Engine.<br> - `original` version is only compatible with CPU & GPU option.<br> | e7874d84da0668ab29b23020f2041c50 |

creativeml-openrail-m | ['coreml', 'stable-diffusion', 'text-to-image'] | false | knollingcase Dreambooth model trained by Aybeeceedee with [TheLastBen's fast-DreamBooth](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb) notebook UPDATE: The images on the following imgur link were all made just using 'knollingcase' as the main prompt, then letting the Dynamic Prompt add-on for AUTOMATIC1111 fill in the rest! There's some beauty's in here if you have a scroll through! https://imgur.com/gallery/MQtdkv5 Use 'knollingcase' anywhere in the prompt and you're good to go. Example: knollingcase, isometic render, a single cherry blossom tree, isometric display case, knolling teardown, transparent data visualization infographic, high-resolution OLED GUI interface display, micro-details, octane render, photorealism, photorealistic Example: (clockwork:1.2), knollingcase, labelled, overlays, oled display, annotated, technical, knolling diagram, technical drawing, display case, dramatic lighting, glow, dof, reflections, refractions <img src="https://preview.redd.it/998kvw5cja2a1.png?width=640&crop=smart&auto=webp&s=74b9d242271215b1b75e7938e1f5ca7259ccbf50" width="512px" /> <img src="https://preview.redd.it/2zwc1aul3c2a1.png?width=1024&format=png&auto=webp&s=74c05cc9a3f21654d6b559a62ffda9a7d7da2955" width="512px" /> <img src="https://preview.redd.it/sindy9s24c2a1.png?width=1024&format=png&auto=webp&s=cffbfe28810e646b73f7499b0ebc512a2d85fba2" width="512px" /> <img src="https://preview.redd.it/lpg5b2yqja2a1.png?width=640&crop=smart&auto=webp&s=f10965c95439df5724a8d6e5fa45f111d6f9f0d6" width="512px" /> <img src="https://preview.redd.it/qxa04u7qja2a1.png?width=640&crop=smart&auto=webp&s=fe7b86632241ceff5c79992de2abb064878dfd49" width="512px" /> <img src="https://preview.redd.it/my747twpja2a1.png?width=640&crop=smart&auto=webp&s=e576e16e9e34168209ce0b51bc2d52a139f2dd30" width="512px" /> <img 
src="https://preview.redd.it/lzrbu1vcja2a1.png?width=640&crop=smart&auto=webp&s=494437df5ce587ac15fee80957455ff999e26af9" width="512px" /> <img src="https://preview.redd.it/zalueljcja2a1.png?width=640&crop=smart&auto=webp&s=550e420d6ea9c89581394f298e947cdf9a240012" width="512px" /> <img src="https://preview.redd.it/8zxn9t8bja2a1.png?width=640&crop=smart&auto=webp&s=dc97582482573a39ec4fabe7a03deb62332df5ed" width="512px" /> <img src="https://preview.redd.it/zeiuwkwaja2a1.png?width=640&crop=smart&auto=webp&s=2f13fa6386b302ab35df2ed9ea328f7f442cbad7" width="512px" /> <img src="https://preview.redd.it/3l43tqa23c2a1.png?width=1024&format=png&auto=webp&s=79dab20b3d2d8b8c963a67f7aefe1d1b724a86d8" width="512px" /> | 94c2f9e4cdabf7448ce22ed8e8b6974f |

cc-by-4.0 | ['anglicisms', 'loanwords', 'borrowing', 'codeswitching', 'arxiv:2203.16169'] | false | anglicisms-spanish-mbert This is a pretrained model for detecting unassimilated English lexical borrowings (a.k.a. anglicisms) in Spanish newswire. This model labels words of foreign origin (mainly from English) used in Spanish, such as *fake news*, *machine learning*, *smartwatch*, *influencer* or *streaming*. The model is a fine-tuned version of [multilingual BERT](https://huggingface.co/bert-base-multilingual-cased) trained on the [COALAS](https://github.com/lirondos/coalas/) corpus for the task of detecting lexical borrowings. The model considers two labels: * ``ENG``: For English lexical borrowings (*smartphone*, *online*, *podcast*) * ``OTHER``: For lexical borrowings from any other language (*boutique*, *anime*, *umami*) The model uses BIO encoding to account for multitoken borrowings. **⚠ This is not the best-performing model for this task.** For the best-performing model (F1=85.76) see [Flair model](https://huggingface.co/lirondos/anglicisms-spanish-flair-cs). | ed8feac3f594b52b85e5900e9ae4a204 |
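Since the model uses BIO encoding for multitoken borrowings, a consumer of its output usually has to merge per-token tags back into spans. The sketch below shows one way to do that. The tag strings (`B-ENG`, `I-ENG`, `O`) and the hand-written example tags are assumptions for illustration, not output produced by the model.

```python
def bio_to_spans(tokens, tags):
    """Group BIO-tagged tokens into (text, label) spans.

    Assumes tags look like "B-ENG", "I-ENG", "B-OTHER", "I-OTHER" or "O".
    An "I-" tag that does not continue an open span of the same label is dropped.
    """
    spans = []
    current_tokens, current_label = [], None
    for token, tag in zip(tokens, tags):
        if tag.startswith("B-"):
            # A "B-" tag always starts a new span, closing any open one first.
            if current_tokens:
                spans.append((" ".join(current_tokens), current_label))
            current_tokens, current_label = [token], tag[2:]
        elif tag.startswith("I-") and current_tokens and tag[2:] == current_label:
            # Continuation of the currently open span.
            current_tokens.append(token)
        else:
            # "O" or an inconsistent "I-" tag closes any open span.
            if current_tokens:
                spans.append((" ".join(current_tokens), current_label))
            current_tokens, current_label = [], None
    if current_tokens:
        spans.append((" ".join(current_tokens), current_label))
    return spans


# Hand-written tags for illustration only.
tokens = ["Buscamos", "data", "scientist", "para", "proyecto", "de", "machine", "learning", "."]
tags = ["O", "B-ENG", "I-ENG", "O", "O", "O", "B-ENG", "I-ENG", "O"]
print(bio_to_spans(tokens, tags))
```

A real pipeline would also need to merge subword pieces before this step, since multilingual BERT tokenizes words into WordPiece units.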

cc-by-4.0 | ['anglicisms', 'loanwords', 'borrowing', 'codeswitching', 'arxiv:2203.16169'] | false | Metrics (on the test set) The following table summarizes the results obtained on the test set of the [COALAS](https://github.com/lirondos/coalas/) corpus. | LABEL | Precision | Recall | F1 | |:-------|-----:|-----:|---------:| | ALL | 88.09 | 79.46 | 83.55 | | ENG | 88.44 | 82.16 | 85.19 | | OTHER | 37.5 | 6.52 | 11.11 | | f54af7a07372255cc92fb9813aa60ad4 |
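The F1 column in the table above is the harmonic mean of precision and recall. A short sketch of that relationship follows; recomputing from the rounded precision and recall values can differ from the reported F1 in the last digit, because the reported scores were presumably computed from unrounded counts.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall (both on the same scale, here percent)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# (precision, recall, reported F1) copied from the table above.
rows = {
    "ALL": (88.09, 79.46, 83.55),
    "ENG": (88.44, 82.16, 85.19),
    "OTHER": (37.5, 6.52, 11.11),
}
for label, (precision, recall, reported) in rows.items():
    print(label, round(f1_score(precision, recall), 2), "reported:", reported)
```

The low `OTHER` score follows directly from its recall of 6.52: the harmonic mean is pulled toward the smaller of the two values.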

cc-by-4.0 | ['anglicisms', 'loanwords', 'borrowing', 'codeswitching', 'arxiv:2203.16169'] | false | Dataset This model was trained on [COALAS](https://github.com/lirondos/coalas/), a corpus of Spanish newswire annotated with unassimilated lexical borrowings. The corpus contains 370,000 tokens and includes various written media written in European Spanish. The test set was designed to be as difficult as possible: it covers sources and dates not seen in the training set, includes a high number of OOV words (92% of the borrowings in the test set are OOV) and is very borrowing-dense (20 borrowings per 1,000 tokens). |Set | Tokens | ENG | OTHER | Unique | |:-------|-----:|-----:|---------:|---------:| |Training |231,126 |1,493 | 28 |380 | |Development |82,578 |306 |49 |316| |Test |58,997 |1,239 |46 |987| |**Total** |372,701 |3,038 |123 |1,683 | | 388918d2a097798e232536e9bb55edea |

cc-by-4.0 | ['anglicisms', 'loanwords', 'borrowing', 'codeswitching', 'arxiv:2203.16169'] | false | More info More information about the dataset, model experimentation and error analysis can be found in the paper: *[Detecting Unassimilated Borrowings in Spanish: An Annotated Corpus and Approaches to Modeling](https://aclanthology.org/2022.acl-long.268/)*. | 63d9de7c830fcade922df6b29755b30d |

cc-by-4.0 | ['anglicisms', 'loanwords', 'borrowing', 'codeswitching', 'arxiv:2203.16169'] | false | How to use ``` from transformers import pipeline, AutoModelForTokenClassification, AutoTokenizer tokenizer = AutoTokenizer.from_pretrained("lirondos/anglicisms-spanish-mbert") model = AutoModelForTokenClassification.from_pretrained("lirondos/anglicisms-spanish-mbert") nlp = pipeline("ner", model=model, tokenizer=tokenizer) example = "Buscamos data scientist para proyecto de machine learning." borrowings = nlp(example) print(borrowings) ``` | 62ef88a7d739bb44081ef79ebadf74b9 |

cc-by-4.0 | ['anglicisms', 'loanwords', 'borrowing', 'codeswitching', 'arxiv:2203.16169'] | false | Citation If you use this model, please cite the following reference: ``` @inproceedings{alvarez-mellado-lignos-2022-detecting, title = "Detecting Unassimilated Borrowings in {S}panish: {A}n Annotated Corpus and Approaches to Modeling", author = "{\'A}lvarez-Mellado, Elena and Lignos, Constantine", booktitle = "Proceedings of the 60th Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers)", month = may, year = "2022", address = "Dublin, Ireland", publisher = "Association for Computational Linguistics", url = "https://aclanthology.org/2022.acl-long.268", pages = "3868--3888", abstract = "This work presents a new resource for borrowing identification and analyzes the performance and errors of several models on this task. We introduce a new annotated corpus of Spanish newswire rich in unassimilated lexical borrowings{---}words from one language that are introduced into another without orthographic adaptation{---}and use it to evaluate how several sequence labeling models (CRF, BiLSTM-CRF, and Transformer-based models) perform. The corpus contains 370,000 tokens and is larger, more borrowing-dense, OOV-rich, and topic-varied than previous corpora available for this task. Our results show that a BiLSTM-CRF model fed with subword embeddings along with either Transformer-based embeddings pretrained on codeswitched data or a combination of contextualized word embeddings outperforms results obtained by a multilingual BERT-based model.", } ``` | 84948537f5b825ecb18dc687a55abd4e |

apache-2.0 | ['whisper-event', 'hf-asr-leaderboard', 'generated_from_trainer'] | false | whisper-small-mn-7 This model is a fine-tuned version of [openai/whisper-small](https://huggingface.co/openai/whisper-small) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.3061 - Wer: 32.6469 - Cer: 11.2319 | 82981f9fa6a5cb0f61f529c1bb18039a |

apache-2.0 | ['whisper-event', 'hf-asr-leaderboard', 'generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 1e-05 - train_batch_size: 32 - eval_batch_size: 32 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 500 - training_steps: 15000 - mixed_precision_training: Native AMP | d0e5663dbe3ad832af75324a1805042a |
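The hyperparameters above combine a peak learning rate of 1e-05, 500 warmup steps, 15000 training steps, and a `linear` scheduler. The sketch below assumes this means linear warmup from zero followed by linear decay to zero, which is how the linear schedule in Hugging Face Transformers is commonly described. It is a standalone approximation, not the trainer's own code.

```python
def linear_schedule_lr(step, base_lr=1e-05, warmup_steps=500, total_steps=15000):
    """Learning rate at `step` under linear warmup followed by linear decay to zero."""
    if step < warmup_steps:
        # Warmup phase: ramp linearly from 0 up to base_lr.
        return base_lr * step / max(1, warmup_steps)
    # Decay phase: ramp linearly from base_lr down to 0 at total_steps.
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


for step in (0, 250, 500, 8000, 15000):
    print(step, linear_schedule_lr(step))
```

Under this assumption, the checkpoint at step 8000 (the one whose metrics are reported for this model) was trained at a bit under half the peak learning rate.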

apache-2.0 | ['whisper-event', 'hf-asr-leaderboard', 'generated_from_trainer'] | false | Training script ```bash python train.py \ --train_datasets "mozilla-foundation/common_voice_11_0|mn|train+validation,google/fleurs|mn_mn|train+validation,bayartsogt/ulaanbal-v0||train" \ --eval_datasets "mozilla-foundation/common_voice_11_0|mn|test" \ --whisper-size "small" \ --language "mn,Mongolian" \ --keep-chars " абвгдеёжзийклмноөпрстуүфхцчшъыьэюя.,?!" \ --train-batch-size 32 \ --eval-batch-size 32 \ --max-steps 15000 \ --num-workers 8 \ --version 7 ``` | ed8564324ca6061a371aacd865d7de9f |

apache-2.0 | ['whisper-event', 'hf-asr-leaderboard', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | Cer | |:-------------:|:-----:|:-----:|:---------------:|:-------:|:-------:| | 0.3416 | 0.61 | 1000 | 0.4335 | 51.0979 | 17.8608 | | 0.2266 | 1.22 | 2000 | 0.3383 | 39.5346 | 13.6468 | | 0.2134 | 1.83 | 3000 | 0.2994 | 35.6565 | 12.1677 | | 0.165 | 2.43 | 4000 | 0.2927 | 34.1927 | 11.4602 | | 0.1205 | 3.04 | 5000 | 0.2879 | 33.5209 | 11.3002 | | 0.1284 | 3.65 | 6000 | 0.2884 | 32.7507 | 10.9885 | | 0.0893 | 4.26 | 7000 | 0.3022 | 33.0894 | 11.2075 | | 0.0902 | 4.87 | 8000 | 0.3061 | 32.6469 | 11.2319 | | 0.065 | 5.48 | 9000 | 0.3233 | 32.8163 | 11.1595 | | 0.0436 | 6.09 | 10000 | 0.3372 | 32.6852 | 11.1384 | | 0.0469 | 6.7 | 11000 | 0.3481 | 32.8272 | 11.2867 | | 0.0292 | 7.3 | 12000 | 0.3643 | 33.0784 | 11.3785 | | 0.0277 | 7.91 | 13000 | 0.3700 | 33.1877 | 11.3600 | | 0.0196 | 8.52 | 14000 | 0.3806 | 33.3734 | 11.4273 | | 0.016 | 9.13 | 15000 | 0.3844 | 33.3188 | 11.4248 | | b15bb41ae5471d9bfb9a7473147077f3 |
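The Wer and Cer columns above are word and character error rates in percent. As a rough sketch of how such numbers are defined, the code below computes both from a Levenshtein edit distance. The actual evaluation most likely used a metrics library and text normalization (for example the character filter passed to the training script), so this is an illustration of the definition rather than the script's implementation.

```python
def edit_distance(reference, hypothesis):
    """Levenshtein distance between two sequences (substitutions, insertions, deletions)."""
    previous = list(range(len(hypothesis) + 1))
    for i, ref_item in enumerate(reference, start=1):
        current = [i]
        for j, hyp_item in enumerate(hypothesis, start=1):
            substitution = previous[j - 1] + (ref_item != hyp_item)
            deletion = previous[j] + 1
            insertion = current[j - 1] + 1
            current.append(min(substitution, deletion, insertion))
        previous = current
    return previous[-1]


def error_rate(reference_units, hypothesis_units):
    """Edit distance divided by reference length, expressed in percent."""
    if not reference_units:
        raise ValueError("reference must not be empty")
    return 100.0 * edit_distance(reference_units, hypothesis_units) / len(reference_units)


def wer(reference, hypothesis):
    """Word error rate: units are whitespace-separated words."""
    return error_rate(reference.split(), hypothesis.split())


def cer(reference, hypothesis):
    """Character error rate: units are individual characters."""
    return error_rate(list(reference), list(hypothesis))


print(wer("сайн байна уу та", "сайн байна юу та"))
print(cer("сайн", "сайл"))
```

Because a single wrong character makes the whole word count as an error, WER is typically well above CER, as in the table above.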

apache-2.0 | ['generated_from_trainer'] | false | distilbert_add_GLUE_Experiment_logit_kd_qnli_96 This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the GLUE QNLI dataset. It achieves the following results on the evaluation set: - Loss: 0.3984 - Accuracy: 0.5806 | 7648fd8e831b84d1c7c213e0ae4ca873 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.4153 | 1.0 | 410 | 0.4114 | 0.5054 | | 0.4152 | 2.0 | 820 | 0.4115 | 0.5054 | | 0.4129 | 3.0 | 1230 | 0.4020 | 0.5686 | | 0.3995 | 4.0 | 1640 | 0.3984 | 0.5806 | | 0.3934 | 5.0 | 2050 | 0.3992 | 0.5794 | | 0.3888 | 6.0 | 2460 | 0.4024 | 0.5810 | | 0.385 | 7.0 | 2870 | 0.4105 | 0.5675 | | 0.3808 | 8.0 | 3280 | 0.4050 | 0.5777 | | 0.3768 | 9.0 | 3690 | 0.4074 | 0.5722 | | b988a3122995509444a078fa92f1208d |

apache-2.0 | ['generated_from_trainer'] | false | my_awesome_qa_model This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the squad dataset. It achieves the following results on the evaluation set: - Loss: 1.7293 | 4279bf9c852ba82f7ac98d98e2764aa5 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | No log | 1.0 | 250 | 2.4442 | | 2.7857 | 2.0 | 500 | 1.7960 | | 2.7857 | 3.0 | 750 | 1.7293 | | 2711609199fa4ed217b0a49fc031cd4a |

apache-2.0 | ['generated_from_trainer'] | false | distilbert_sa_GLUE_Experiment_logit_kd_sst2_256 This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the GLUE SST2 dataset. It achieves the following results on the evaluation set: - Loss: 0.7397 - Accuracy: 0.8108 | 8a78b71ac33a0529766543084e2711bd |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 1.1137 | 1.0 | 264 | 0.7619 | 0.8016 | | 0.5525 | 2.0 | 528 | 0.7758 | 0.8050 | | 0.4209 | 3.0 | 792 | 0.7397 | 0.8108 | | 0.3585 | 4.0 | 1056 | 0.8179 | 0.8085 | | 0.3153 | 5.0 | 1320 | 0.8172 | 0.7982 | | 0.2824 | 6.0 | 1584 | 0.8974 | 0.8096 | | 0.2512 | 7.0 | 1848 | 0.9205 | 0.7924 | | 0.2315 | 8.0 | 2112 | 0.9320 | 0.8016 | | ad58e3f995bc3c935370265ed16340f7 |

creativeml-openrail-m | ['stable-diffusion', 'stable-diffusion-diffusers', 'text-to-image', 'diffusers', 'lora'] | false | LoRA DreamBooth - nekotest1-1 These are LoRA adaptation weights for [stabilityai/stable-diffusion-2-1-base](https://huggingface.co/stabilityai/stable-diffusion-2-1-base). The weights were trained on the instance prompt "nekotst" using [DreamBooth](https://dreambooth.github.io/). Some example images follow. Test prompt: a tabby cat     | c513020c5826144907fb7144cbb6fea7 |

creativeml-openrail-m | ['coreml', 'stable-diffusion', 'text-to-image'] | false | Core ML Converted Model: - This model was converted to Core ML for use on Apple Silicon devices. Instructions can be found [here](https://github.com/godly-devotion/MochiDiffusion/wiki/How-to-convert-ckpt-files-to-Core-ML). - Provide the model to an app such as [Mochi Diffusion](https://github.com/godly-devotion/MochiDiffusion) to generate images. - `split_einsum` version is compatible with all compute unit options including Neural Engine. | 45c2a6c85b6d0535d37815ba58f1764c |

creativeml-openrail-m | ['coreml', 'stable-diffusion', 'text-to-image'] | false | Elldreth's Dream Mix: Source(s): [CivitAI](https://civitai.com/models/1254/elldreths-dream-mix) This mixed model is a combination of some of my favorites: a little Pyros Model A mixed with an F111-sd14 diff and mixed into Anything. What's it good at? Portraits, landscapes, fantasy, sci-fi, anime, semi-realistic, and horror. It's an all-around, easy-to-prompt, general-purpose model that cranks out some really nice images. No trigger words required. All models were scanned prior to mixing and are totally safe. | 4c1d7455be041eef2a0b0b2f666b5743 |

mit | [] | false | Description This model is a fine-tuned version of [BETO (Spanish BERT)](https://huggingface.co/dccuchile/bert-base-spanish-wwm-uncased) that has been trained on the *Datathon Against Racism* dataset (2022). We performed several experiments that will be described in the upcoming paper "Estimating Ground Truth in a Low-labelled Data Regime: A Study of Racism Detection in Spanish" (NEATClasS 2022). We applied 6 different ground-truth estimation methods, and for each one we performed 4 epochs of fine-tuning. The result is a set of 24 models: | method | epoch 1 | epoch 2 | epoch 3 | epoch 4 | |--- |--- |--- |--- |--- | | raw-label | [raw-label-epoch-1](https://huggingface.co/MartinoMensio/racism-models-raw-label-epoch-1) | [raw-label-epoch-2](https://huggingface.co/MartinoMensio/racism-models-raw-label-epoch-2) | [raw-label-epoch-3](https://huggingface.co/MartinoMensio/racism-models-raw-label-epoch-3) | [raw-label-epoch-4](https://huggingface.co/MartinoMensio/racism-models-raw-label-epoch-4) | | m-vote-strict | [m-vote-strict-epoch-1](https://huggingface.co/MartinoMensio/racism-models-m-vote-strict-epoch-1) | [m-vote-strict-epoch-2](https://huggingface.co/MartinoMensio/racism-models-m-vote-strict-epoch-2) | [m-vote-strict-epoch-3](https://huggingface.co/MartinoMensio/racism-models-m-vote-strict-epoch-3) | [m-vote-strict-epoch-4](https://huggingface.co/MartinoMensio/racism-models-m-vote-strict-epoch-4) | | m-vote-nonstrict | [m-vote-nonstrict-epoch-1](https://huggingface.co/MartinoMensio/racism-models-m-vote-nonstrict-epoch-1) | [m-vote-nonstrict-epoch-2](https://huggingface.co/MartinoMensio/racism-models-m-vote-nonstrict-epoch-2) | [m-vote-nonstrict-epoch-3](https://huggingface.co/MartinoMensio/racism-models-m-vote-nonstrict-epoch-3) | [m-vote-nonstrict-epoch-4](https://huggingface.co/MartinoMensio/racism-models-m-vote-nonstrict-epoch-4) | | regression-w-m-vote | [regression-w-m-vote-epoch-1](https://huggingface.co/MartinoMensio/racism-models-regression-w-m-vote-epoch-1) | [regression-w-m-vote-epoch-2](https://huggingface.co/MartinoMensio/racism-models-regression-w-m-vote-epoch-2) | [regression-w-m-vote-epoch-3](https://huggingface.co/MartinoMensio/racism-models-regression-w-m-vote-epoch-3) | [regression-w-m-vote-epoch-4](https://huggingface.co/MartinoMensio/racism-models-regression-w-m-vote-epoch-4) | | w-m-vote-strict | [w-m-vote-strict-epoch-1](https://huggingface.co/MartinoMensio/racism-models-w-m-vote-strict-epoch-1) | [w-m-vote-strict-epoch-2](https://huggingface.co/MartinoMensio/racism-models-w-m-vote-strict-epoch-2) | [w-m-vote-strict-epoch-3](https://huggingface.co/MartinoMensio/racism-models-w-m-vote-strict-epoch-3) | [w-m-vote-strict-epoch-4](https://huggingface.co/MartinoMensio/racism-models-w-m-vote-strict-epoch-4) | | w-m-vote-nonstrict | [w-m-vote-nonstrict-epoch-1](https://huggingface.co/MartinoMensio/racism-models-w-m-vote-nonstrict-epoch-1) | [w-m-vote-nonstrict-epoch-2](https://huggingface.co/MartinoMensio/racism-models-w-m-vote-nonstrict-epoch-2) | [w-m-vote-nonstrict-epoch-3](https://huggingface.co/MartinoMensio/racism-models-w-m-vote-nonstrict-epoch-3) | [w-m-vote-nonstrict-epoch-4](https://huggingface.co/MartinoMensio/racism-models-w-m-vote-nonstrict-epoch-4) | This model is `w-m-vote-strict-epoch-3` | 99cc492215a95c4dd5376a8ef8b56796 |
