license stringlengths 2 30 | tags stringlengths 2 513 | is_nc bool 1 class | readme_section stringlengths 201 597k | hash stringlengths 32 32 |

|---|---|---|---|---|

mit | [] | false | Usage ```python from transformers import AutoModelForCausalLM, AutoTokenizer, pipeline tokenizer = AutoTokenizer.from_pretrained("benjamin/gerpt2-large") model = AutoModelForCausalLM.from_pretrained("benjamin/gerpt2-large") prompt = "<your prompt>" pipe = pipeline("text-generation", model=model, tokenizer=tokenizer) print(pipe(prompt)[0]["generated_text"]) ``` Also, two tricks might improve the generated text: ```python output = model.generate( | ceafb126cc4b910bfc55d6997db624d3 |

mit | [] | false | Training details GerPT2-large was trained on the entire German portion of the [CC-100 Corpus](http://data.statmt.org/cc-100/), with weights initialized from the [English GPT2 model](https://huggingface.co/gpt2-large). GerPT2-large was trained with: - a batch size of 256 - a OneCycle learning rate schedule with a maximum of 5e-3 - AdamW with a weight decay of 0.01 - 2 epochs Training took roughly 12 days on 8 TPUv3 cores. To train GerPT2-large, follow these steps. Scripts are located in the [GitHub repository](https://github.com/bminixhofer/gerpt2): 0. Download and unzip training data from http://data.statmt.org/cc-100/. 1. Train a tokenizer using `prepare/train_tokenizer.py`. As training data for the tokenizer I used a random subset of 5% of the CC-100 data. 2. (Optionally) generate a German input embedding matrix with `prepare/generate_aligned_wte.py`. This uses a neat trick to semantically map tokens from the English tokenizer to tokens from the German tokenizer using aligned word embeddings. E.g.: ``` ĠMinde -> Ġleast Ġjed -> Ġwhatsoever flughafen -> Air vermittlung -> employment teilung -> ignment ĠInterpretation -> Ġinterpretation Ġimport -> Ġimported hansa -> irl genehmigungen -> exempt ĠAuflist -> Ġlists Ġverschwunden -> Ġdisappeared ĠFlyers -> ĠFlyers Kanal -> Channel Ġlehr -> Ġteachers Ġnahelie -> Ġconvenient gener -> Generally mitarbeiter -> staff ``` This helped a lot in a trial run I did, although I wasn't able to do a full comparison due to budget and time constraints. To use this WTE matrix, pass it via `wte_path` to the training script. Credit to [this blogpost](https://medium.com/@pierre_guillou/faster-than-training-from-scratch-fine-tuning-the-english-gpt-2-in-any-language-with-hugging-f2ec05c98787) for the idea of initializing GPT2 from English weights. 3. Tokenize the corpus using `prepare/tokenize_text.py`. This generates files for train and validation tokens in JSON Lines format. 4. Run the training script `train.py`! `run.sh` shows how this was executed for the full run with config `configs/tpu_large.json`. | b9bc407fa46e1c0edd170b4261d5c668 |

mit | [] | false | Citing Please cite GerPT2 as follows: ``` @misc{Minixhofer_GerPT2_German_large_2020, author = {Minixhofer, Benjamin}, doi = {10.5281/zenodo.5509984}, month = {12}, title = {{GerPT2: German large and small versions of GPT2}}, url = {https://github.com/bminixhofer/gerpt2}, year = {2020} } ``` | a294ea9177e0190f106d56b27e47e839 |

mit | [] | false | Acknowledgements Thanks to [Hugging Face](https://huggingface.co) for awesome tools and infrastructure. Huge thanks to [Artus Krohn-Grimberghe](https://twitter.com/artuskg) at [LYTiQ](https://www.lytiq.de/) for making this possible by sponsoring the resources used for training. | ae676300a13dfc1ad920aefc1cb73adf |

creativeml-openrail-m | ['text-to-image', 'stable-diffusion'] | false | wr1 Dreambooth model trained by fghjfbrtb with [TheLastBen's fast-DreamBooth](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb) notebook Test the concept via A1111 Colab [fast-Colab-A1111](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast_stable_diffusion_AUTOMATIC1111.ipynb) Or you can run your new concept via `diffusers` [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb) Sample pictures of this concept: | 635fc9a9414a39be67762b36f4ab8fa0 |

apache-2.0 | ['speech', 'audio', 'automatic-speech-recognition', 'hf-asr-leaderboard'] | false | Hubert-Extra-Large-Finetuned [Facebook's Hubert](https://ai.facebook.com/blog/hubert-self-supervised-representation-learning-for-speech-recognition-generation-and-compression) The extra-large model, fine-tuned on 960h of Librispeech on 16 kHz sampled speech audio. When using the model, make sure that your speech input is also sampled at 16 kHz. The model is a fine-tuned version of [hubert-xlarge-ll60k](https://huggingface.co/facebook/hubert-xlarge-ll60k). [Paper](https://arxiv.org/abs/2106.07447) Authors: Wei-Ning Hsu, Benjamin Bolte, Yao-Hung Hubert Tsai, Kushal Lakhotia, Ruslan Salakhutdinov, Abdelrahman Mohamed **Abstract** Self-supervised approaches for speech representation learning are challenged by three unique problems: (1) there are multiple sound units in each input utterance, (2) there is no lexicon of input sound units during the pre-training phase, and (3) sound units have variable lengths with no explicit segmentation. To deal with these three problems, we propose the Hidden-Unit BERT (HuBERT) approach for self-supervised speech representation learning, which utilizes an offline clustering step to provide aligned target labels for a BERT-like prediction loss. A key ingredient of our approach is applying the prediction loss over the masked regions only, which forces the model to learn a combined acoustic and language model over the continuous inputs. HuBERT relies primarily on the consistency of the unsupervised clustering step rather than the intrinsic quality of the assigned cluster labels. Starting with a simple k-means teacher of 100 clusters, and using two iterations of clustering, the HuBERT model either matches or improves upon the state-of-the-art wav2vec 2.0 performance on the Librispeech (960h) and Libri-light (60,000h) benchmarks with 10min, 1h, 10h, 100h, and 960h fine-tuning subsets. Using a 1B parameter model, HuBERT shows up to 19% and 13% relative WER reduction on the more challenging dev-other and test-other evaluation subsets. The original model can be found under https://github.com/pytorch/fairseq/tree/master/examples/hubert . | bdb51012b6aa1b85a4ef1b56a90f7263 |

apache-2.0 | ['speech', 'audio', 'automatic-speech-recognition', 'hf-asr-leaderboard'] | false | Usage The model can be used for automatic-speech-recognition as follows: ```python import torch from transformers import Wav2Vec2Processor, HubertForCTC from datasets import load_dataset processor = Wav2Vec2Processor.from_pretrained("facebook/hubert-xlarge-ls960-ft") model = HubertForCTC.from_pretrained("facebook/hubert-xlarge-ls960-ft") ds = load_dataset("patrickvonplaten/librispeech_asr_dummy", "clean", split="validation") input_values = processor(ds[0]["audio"]["array"], return_tensors="pt").input_values | 281675abc6149b942eb984d6014f8f85 |

creativeml-openrail-m | ['text-to-image', 'stable-diffusion'] | false | ap Dreambooth model trained by cdefghijkl with [TheLastBen's fast-DreamBooth](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb) notebook Test the concept via A1111 Colab [fast-Colab-A1111](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast_stable_diffusion_AUTOMATIC1111.ipynb) Sample pictures of this concept: | 547ac062616d525a43b0295d8317d3b2 |

mit | [] | false | Dreamy Painting on Stable Diffusion This is the `<dreamy-painting>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). Here is the new concept you will be able to use as a `style`:      Here are images generated in this style:     | 0c446f9bb79e04e20b078e070da4c757 |

creativeml-openrail-m | ['text-to-image', 'stable-diffusion'] | false | Model Dreambooth concept any_ely_wd-Ira_Olympus-3000, trained by hr16 with the [Shinja Zero SoTA DreamBooth_Stable_Diffusion](https://colab.research.google.com/drive/1G7qx6M_S1PDDlsWIMdbZXwdZik6sUlEh) notebook <br> Test the concept with [Shinja Zero no Notebook](https://colab.research.google.com/drive/1Hp1ZIjPbsZKlCtomJVmt2oX7733W44b0) <br> Or test it with `diffusers` [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb) Sample pictures of this concept: WIP | b91f8a0df32bb01038818d43526903b2 |

mit | [] | false | YF21 on Stable Diffusion This is the `<YF21>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). Here is the new concept you will be able to use as an `object`:     | 929e0eb42579205f668ca264e6cd0cc7 |

apache-2.0 | ['translation'] | false | eng-trk * source group: English * target group: Turkic languages * OPUS readme: [eng-trk](https://github.com/Helsinki-NLP/Tatoeba-Challenge/tree/master/models/eng-trk/README.md) * model: transformer * source language(s): eng * target language(s): aze_Latn bak chv crh crh_Latn kaz_Cyrl kaz_Latn kir_Cyrl kjh kum ota_Arab ota_Latn sah tat tat_Arab tat_Latn tuk tuk_Latn tur tyv uig_Arab uig_Cyrl uzb_Cyrl uzb_Latn * pre-processing: normalization + SentencePiece (spm32k,spm32k) * a sentence-initial language token is required in the form of `>>id<<` (id = valid target language ID) * download original weights: [opus2m-2020-08-01.zip](https://object.pouta.csc.fi/Tatoeba-MT-models/eng-trk/opus2m-2020-08-01.zip) * test set translations: [opus2m-2020-08-01.test.txt](https://object.pouta.csc.fi/Tatoeba-MT-models/eng-trk/opus2m-2020-08-01.test.txt) * test set scores: [opus2m-2020-08-01.eval.txt](https://object.pouta.csc.fi/Tatoeba-MT-models/eng-trk/opus2m-2020-08-01.eval.txt) | 8dd88b1092946de81647467434469931 |
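The `>>id<<` requirement in the eng-trk entry above can be illustrated with a small helper. This is a minimal sketch and not part of the OPUS-MT tooling: `add_language_token` and `VALID_TARGET_IDS` are hypothetical names, and the ID set is only a subset of the target languages listed in that entry.

```python
# Hypothetical subset of the target language IDs listed for eng-trk.
VALID_TARGET_IDS = {"tur", "aze_Latn", "kaz_Cyrl", "uzb_Latn", "tat"}


def add_language_token(sentence: str, target_id: str) -> str:
    """Prepend the sentence-initial `>>id<<` token the model expects."""
    if target_id not in VALID_TARGET_IDS:
        raise ValueError(f"unknown target language ID: {target_id!r}")
    return f">>{target_id}<< {sentence.strip()}"


example = add_language_token("How are you?", "tur")
print(example)
```

The prefixed string is what would be handed to the tokenizer; rejecting unknown IDs early is a design choice of this sketch, not documented model behaviour.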

apache-2.0 | ['translation'] | false | Benchmarks | testset | BLEU | chr-F | |-----------------------|-------|-------| | newsdev2016-entr-engtur.eng.tur | 10.1 | 0.437 | | newstest2016-entr-engtur.eng.tur | 9.2 | 0.410 | | newstest2017-entr-engtur.eng.tur | 9.0 | 0.410 | | newstest2018-entr-engtur.eng.tur | 9.2 | 0.413 | | Tatoeba-test.eng-aze.eng.aze | 26.8 | 0.577 | | Tatoeba-test.eng-bak.eng.bak | 7.6 | 0.308 | | Tatoeba-test.eng-chv.eng.chv | 4.3 | 0.270 | | Tatoeba-test.eng-crh.eng.crh | 8.1 | 0.330 | | Tatoeba-test.eng-kaz.eng.kaz | 11.1 | 0.359 | | Tatoeba-test.eng-kir.eng.kir | 28.6 | 0.524 | | Tatoeba-test.eng-kjh.eng.kjh | 1.0 | 0.041 | | Tatoeba-test.eng-kum.eng.kum | 2.2 | 0.075 | | Tatoeba-test.eng.multi | 19.9 | 0.455 | | Tatoeba-test.eng-ota.eng.ota | 0.5 | 0.065 | | Tatoeba-test.eng-sah.eng.sah | 0.7 | 0.030 | | Tatoeba-test.eng-tat.eng.tat | 9.7 | 0.316 | | Tatoeba-test.eng-tuk.eng.tuk | 5.9 | 0.317 | | Tatoeba-test.eng-tur.eng.tur | 34.6 | 0.623 | | Tatoeba-test.eng-tyv.eng.tyv | 5.4 | 0.210 | | Tatoeba-test.eng-uig.eng.uig | 0.1 | 0.155 | | Tatoeba-test.eng-uzb.eng.uzb | 3.4 | 0.275 | | 3ed0683cecacd9a052509b058c1946a8 |

apache-2.0 | ['translation'] | false | System Info: - hf_name: eng-trk - source_languages: eng - target_languages: trk - opus_readme_url: https://github.com/Helsinki-NLP/Tatoeba-Challenge/tree/master/models/eng-trk/README.md - original_repo: Tatoeba-Challenge - tags: ['translation'] - languages: ['en', 'tt', 'cv', 'tk', 'tr', 'ba', 'trk'] - src_constituents: {'eng'} - tgt_constituents: {'kir_Cyrl', 'tat_Latn', 'tat', 'chv', 'uzb_Cyrl', 'kaz_Latn', 'aze_Latn', 'crh', 'kjh', 'uzb_Latn', 'ota_Arab', 'tuk_Latn', 'tuk', 'tat_Arab', 'sah', 'tyv', 'tur', 'uig_Arab', 'crh_Latn', 'kaz_Cyrl', 'uig_Cyrl', 'kum', 'ota_Latn', 'bak'} - src_multilingual: False - tgt_multilingual: True - prepro: normalization + SentencePiece (spm32k,spm32k) - url_model: https://object.pouta.csc.fi/Tatoeba-MT-models/eng-trk/opus2m-2020-08-01.zip - url_test_set: https://object.pouta.csc.fi/Tatoeba-MT-models/eng-trk/opus2m-2020-08-01.test.txt - src_alpha3: eng - tgt_alpha3: trk - short_pair: en-trk - chrF2_score: 0.455 - bleu: 19.9 - brevity_penalty: 1.0 - ref_len: 57072.0 - src_name: English - tgt_name: Turkic languages - train_date: 2020-08-01 - src_alpha2: en - tgt_alpha2: trk - prefer_old: False - long_pair: eng-trk - helsinki_git_sha: 480fcbe0ee1bf4774bcbe6226ad9f58e63f6c535 - transformers_git_sha: 2207e5d8cb224e954a7cba69fa4ac2309e9ff30b - port_machine: brutasse - port_time: 2020-08-21-14:41 | 2952ba39aba84391eb261632cd5df7d8 |

gpl-3.0 | ['text2text-generation', 'pytorch'] | false | Maeve - XSUM Maeve is a language model that is similar to BART in structure but trained specially using a CAT (Conditionally Adversarial Transformer). This allows the model to learn to create long-form text from short entries with high degrees of control and coherence that are impossible to achieve with traditional transformers. This specific model has been trained on the XSUM dataset, and can invert summaries into full-length news articles. Feel free to try examples on the right! | 640e32d5612112d72f916654893cbdb9 |

apache-2.0 | ['generated_from_keras_callback'] | false | Haakf/allsides_left_text_headline_padded This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 2.1538 - Validation Loss: 2.0656 - Epoch: 5 | 0137b35c5979887be31860ae32a8c0cd |

apache-2.0 | ['generated_from_keras_callback'] | false | Training hyperparameters The following hyperparameters were used during training: - optimizer: {'name': 'AdamWeightDecay', 'learning_rate': {'class_name': 'WarmUp', 'config': {'initial_learning_rate': 2e-05, 'decay_schedule_fn': {'class_name': 'PolynomialDecay', 'config': {'initial_learning_rate': 2e-05, 'decay_steps': -712, 'end_learning_rate': 0.0, 'power': 1.0, 'cycle': False, 'name': None}, '__passive_serialization__': True}, 'warmup_steps': 1000, 'power': 1.0, 'name': None}}, 'decay': 0.0, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False, 'weight_decay_rate': 0.01} - training_precision: mixed_float16 | c95208d878c1a413512cd233aa5a71da |

apache-2.0 | ['generated_from_keras_callback'] | false | Training results | Train Loss | Validation Loss | Epoch | |:----------:|:---------------:|:-----:| | 2.6282 | 2.3390 | 0 | | 2.3665 | 2.1495 | 1 | | 2.2517 | 2.0798 | 2 | | 2.1652 | 2.0935 | 3 | | 2.1376 | 2.0485 | 4 | | 2.1538 | 2.0656 | 5 | | 134efcf8654a006367d4745d99c0560d |

mit | ['generated_from_trainer'] | false | xlm-roberta-base-jm-finetuned-panx-de-fr_hub This model is a fine-tuned version of [xlm-roberta-base](https://huggingface.co/xlm-roberta-base) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.1668 - F1: 0.8587 | 5c8a00e2b09fb2d536384fbb10cf912c |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | F1 | |:-------------:|:-----:|:----:|:---------------:|:------:| | 0.2929 | 1.0 | 715 | 0.1811 | 0.8250 | | 0.1473 | 2.0 | 1430 | 0.1610 | 0.8519 | | 0.0934 | 3.0 | 2145 | 0.1668 | 0.8587 | | 7e70c8ce35a63b2a80f4d495ba822661 |

apache-2.0 | ['generated_from_trainer'] | false | distilr-lr1e05-wd0.05-bs32 This model is a fine-tuned version of [distilroberta-base](https://huggingface.co/distilroberta-base) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.2743 - Rmse: 0.5237 - Mse: 0.2743 - Mae: 0.4135 | 742ee81fc04bcc83664eca960dccd723 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 1e-05 - train_batch_size: 256 - eval_batch_size: 256 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 10 | f123d90cc3c8b53f3ef52ddaa58613ed |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Rmse | Mse | Mae | |:-------------:|:-----:|:----:|:---------------:|:------:|:------:|:------:| | 0.2775 | 1.0 | 623 | 0.2735 | 0.5229 | 0.2735 | 0.4179 | | 0.2738 | 2.0 | 1246 | 0.2727 | 0.5222 | 0.2727 | 0.4126 | | 0.2722 | 3.0 | 1869 | 0.2727 | 0.5222 | 0.2727 | 0.4165 | | 0.2702 | 4.0 | 2492 | 0.2754 | 0.5248 | 0.2754 | 0.3997 | | 0.2684 | 5.0 | 3115 | 0.2765 | 0.5259 | 0.2765 | 0.4229 | | 0.2668 | 6.0 | 3738 | 0.2743 | 0.5237 | 0.2743 | 0.4135 | | 9ba95687682991c01d84ffd78a674e38 |
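In the distilr-lr1e05-wd0.05-bs32 results above, the validation loss and the Mse column coincide, and Rmse is its square root. The following is a quick check of that relationship, assuming (as the matching columns suggest, though the section does not state it) that the loss is mean squared error.

```python
import math

# (Mse, Rmse) pairs copied from the training results table.
rows = [
    (0.2735, 0.5229),
    (0.2727, 0.5222),
    (0.2754, 0.5248),
    (0.2765, 0.5259),
    (0.2743, 0.5237),
]


def rmse_from_mse(mse: float) -> float:
    """Root mean squared error is the square root of the mean squared error."""
    return math.sqrt(mse)


# Reported values are rounded to four decimals, so compare with a tolerance.
max_gap = max(abs(rmse_from_mse(mse) - reported) for mse, reported in rows)
print(f"largest gap between sqrt(Mse) and reported Rmse: {max_gap:.5f}")
```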

mit | ['gpt2'] | false | GPT-2 - This model was forked from [gpt2](https://huggingface.co/gpt2-large) for fine-tuning with [Teachable NLP](https://ainize.ai/teachable-nlp). Test the whole generation capabilities here: https://transformer.huggingface.co/doc/gpt2-large Pretrained model on the English language using a causal language modeling (CLM) objective. It was introduced in [this paper](https://d4mucfpksywv.cloudfront.net/better-language-models/language_models_are_unsupervised_multitask_learners.pdf) and first released at [this page](https://openai.com/blog/better-language-models/). Disclaimer: The team releasing GPT-2 also wrote a [model card](https://github.com/openai/gpt-2/blob/master/model_card.md) for their model. Content from this model card has been written by the Hugging Face team to complete the information they provided and give specific examples of bias. | 17304bb4397560ccfea21e48063b5f99 |

mit | ['gpt2'] | false | Model description GPT-2 is a transformers model pretrained on a very large corpus of English data in a self-supervised fashion. This means it was pretrained on the raw texts only, with no humans labelling them in any way (which is why it can use lots of publicly available data), with an automatic process to generate inputs and labels from those texts. More precisely, it was trained to guess the next word in sentences. Inputs are sequences of continuous text of a certain length, and the targets are the same sequence shifted one token (word or piece of word) to the right. Internally, the model uses a masking mechanism to ensure that the prediction for token `i` only uses the inputs from `1` to `i` and not the future tokens. This way, the model learns an inner representation of the English language that can then be used to extract features useful for downstream tasks. The model is best, however, at what it was pretrained for: generating text from a prompt. | 9a085d9fa89d80c59bdc8478411412d1 |
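The shifted targets and the causal mask described in the GPT-2 model description can be sketched in plain Python. This is an illustration of the idea only, not GPT-2's actual implementation; the function names and token IDs are hypothetical, and positions are zero-based here rather than starting at `1`.

```python
def shift_targets(token_ids):
    """Return (inputs, targets), where targets are the inputs shifted by one token."""
    return token_ids[:-1], token_ids[1:]


def causal_mask(length):
    """mask[i][j] is True when position i may attend to position j (j <= i)."""
    return [[j <= i for j in range(length)] for i in range(length)]


tokens = [10, 11, 12, 13]  # hypothetical token IDs
inputs, targets = shift_targets(tokens)
mask = causal_mask(len(inputs))

for row in mask:
    # Each row shows which earlier positions are visible to one prediction.
    print(["x" if visible else "." for visible in row])
```

Each input position is paired with the next token as its target, and the lower-triangular mask hides every future position from the prediction.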

mit | ['gpt2'] | false | Intended uses & limitations You can use the raw model for text generation or fine-tune it to a downstream task. See the [model hub](https://huggingface.co/models?filter=gpt2) to look for fine-tuned versions on a task that interests you. | fcb2caebbe91f6a324ccc80a5414dee5 |

mit | ['gpt2'] | false | How to use You can use this model directly with a pipeline for text generation. Since the generation relies on some randomness, we set a seed for reproducibility: ```python >>> from transformers import pipeline, set_seed >>> generator = pipeline('text-generation', model='gpt2-large') >>> set_seed(42) >>> generator("Hello, I'm a language model,", max_length=30, num_return_sequences=5) [{'generated_text': "Hello, I'm a language model, a language for thinking, a language for expressing thoughts."}, {'generated_text': "Hello, I'm a language model, a compiler, a compiler library, I just want to know how I build this kind of stuff. I don"}, {'generated_text': "Hello, I'm a language model, and also have more than a few of your own, but I understand that they're going to need some help"}, {'generated_text': "Hello, I'm a language model, a system model. I want to know my language so that it might be more interesting, more user-friendly"}, {'generated_text': 'Hello, I\'m a language model, not a language model"\n\nThe concept of "no-tricks" comes in handy later with new'}] ``` Here is how to use this model to get the features of a given text in PyTorch: ```python from transformers import GPT2Tokenizer, GPT2Model tokenizer = GPT2Tokenizer.from_pretrained('gpt2-large') model = GPT2Model.from_pretrained('gpt2-large') text = "Replace me by any text you'd like." encoded_input = tokenizer(text, return_tensors='pt') output = model(**encoded_input) ``` and in TensorFlow: ```python from transformers import GPT2Tokenizer, TFGPT2Model tokenizer = GPT2Tokenizer.from_pretrained('gpt2-large') model = TFGPT2Model.from_pretrained('gpt2-large') text = "Replace me by any text you'd like." encoded_input = tokenizer(text, return_tensors='tf') output = model(encoded_input) ``` | 12db2f468e5bf32860431b42bc1e6744 |

mit | ['gpt2'] | false | Limitations and bias The training data used for this model has not been released as a dataset one can browse. We know it contains a lot of unfiltered content from the internet, which is far from neutral. As the openAI team themselves point out in their [model card](https://github.com/openai/gpt-2/blob/master/model_card.md | a623881640b13099b3a71cea1002cd78 |

mit | ['gpt2'] | false | out-of-scope-use-cases): > Because large-scale language models like GPT-2 do not distinguish fact from fiction, we don’t support use-cases > that require the generated text to be true. > > Additionally, language models like GPT-2 reflect the biases inherent to the systems they were trained on, so we do > not recommend that they be deployed into systems that interact with humans > unless the deployers first carry out a > study of biases relevant to the intended use-case. We found no statistically significant difference in gender, race, > and religious bias probes between 774M and 1.5B, implying all versions of GPT-2 should be approached with similar > levels of caution around use cases that are sensitive to biases around human attributes. Here's an example of how the model can have biased predictions: ```python >>> from transformers import pipeline, set_seed >>> generator = pipeline('text-generation', model='gpt2-large') >>> set_seed(42) >>> generator("The White man worked as a", max_length=10, num_return_sequences=5) [{'generated_text': 'The White man worked as a mannequin for'}, {'generated_text': 'The White man worked as a maniser of the'}, {'generated_text': 'The White man worked as a bus conductor by day'}, {'generated_text': 'The White man worked as a plumber at the'}, {'generated_text': 'The White man worked as a journalist. He had'}] >>> set_seed(42) >>> generator("The Black man worked as a", max_length=10, num_return_sequences=5) [{'generated_text': 'The Black man worked as a man at a restaurant'}, {'generated_text': 'The Black man worked as a car salesman in a'}, {'generated_text': 'The Black man worked as a police sergeant at the'}, {'generated_text': 'The Black man worked as a man-eating monster'}, {'generated_text': 'The Black man worked as a slave, and was'}] ``` This bias will also affect all fine-tuned versions of this model. | 3d5fa5d7dbbdc261d2c115fb1d70ae1e |

mit | ['gpt2'] | false | Training data The OpenAI team wanted to train this model on a corpus as large as possible. To build it, they scraped all the web pages from outbound links on Reddit which received at least 3 karma. Note that all Wikipedia pages were removed from this dataset, so the model was not trained on any part of Wikipedia. The resulting dataset (called WebText) weighs 40GB of text but has not been publicly released. You can find a list of the top 1,000 domains present in WebText [here](https://github.com/openai/gpt-2/blob/master/domains.txt). | 622cbd905e1e3e7fd6590af00658cad1 |

mit | ['gpt2'] | false | Preprocessing The texts are tokenized using a byte-level version of Byte Pair Encoding (BPE) (for unicode characters) and a vocabulary size of 50,257. The inputs are sequences of 1024 consecutive tokens. The larger model was trained on 256 cloud TPU v3 cores. The training duration was not disclosed, nor were the exact details of training. | cf635d729e97945869d06841e10e25cc |
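The "sequences of 1024 consecutive tokens" mentioned in the preprocessing entry can be pictured as slicing a tokenized stream into fixed-size blocks. The sketch below is an assumption about how such blocks could be formed; in particular, dropping the incomplete final block is a choice made here, since the exact training details were not disclosed. `chunk_tokens` is a hypothetical helper.

```python
def chunk_tokens(token_ids, block_size=1024):
    """Split a token stream into consecutive blocks of `block_size` tokens.

    The trailing remainder that does not fill a block is dropped (an assumption).
    """
    n_blocks = len(token_ids) // block_size
    return [token_ids[i * block_size:(i + 1) * block_size] for i in range(n_blocks)]


stream = list(range(2500))  # stand-in for a tokenized corpus
blocks = chunk_tokens(stream)
print(len(blocks), len(blocks[0]))
```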

mit | ['gpt2'] | false | Evaluation results The model achieves the following results without any fine-tuning (zero-shot): | Dataset | LAMBADA | LAMBADA | CBT-CN | CBT-NE | WikiText2 | PTB | enwiki8 | text8 | WikiText103 | 1BW | |:--------:|:-------:|:-------:|:------:|:------:|:---------:|:------:|:-------:|:------:|:-----------:|:-----:| | (metric) | (PPL) | (ACC) | (ACC) | (ACC) | (PPL) | (PPL) | (BPB) | (BPC) | (PPL) | (PPL) | | | 35.13 | 45.99 | 87.65 | 83.4 | 29.41 | 65.85 | 1.16 | 1.17 | 37.50 | 75.20 | | 0efac5bffae539aff5f0438e1caf99e7 |

mit | ['gpt2'] | false | BibTeX entry and citation info ```bibtex @article{radford2019language, title={Language Models are Unsupervised Multitask Learners}, author={Radford, Alec and Wu, Jeff and Child, Rewon and Luan, David and Amodei, Dario and Sutskever, Ilya}, year={2019} } ``` <a href="https://huggingface.co/exbert/?model=gpt2"> <img width="300px" src="https://cdn-media.huggingface.co/exbert/button.png"> </a> | 05e6a5bb36ab1ca6bb99053f9063f9a4 |

mit | ['msmarco', 'miniLM', 'pytorch', 'tensorflow', 'pt', 'pt-br'] | false | Introduction mMiniLM-L6-v2-en-pt-msmarco-v2 is a multilingual miniLM-based model finetuned on a bilingual version of the MS MARCO passage dataset. This bilingual version is formed by the original MS MARCO dataset (in English) and a Portuguese translated version. In the v2 version, the Portuguese dataset was translated using Google Translate. Further information about the dataset or the translation method can be found in our paper [**mMARCO: A Multilingual Version of MS MARCO Passage Ranking Dataset**](https://arxiv.org/abs/2108.13897) and the [mMARCO](https://github.com/unicamp-dl/mMARCO) repository. | eeb2e05da4d89941043bafb1e390dce8 |

mit | ['msmarco', 'miniLM', 'pytorch', 'tensorflow', 'pt', 'pt-br'] | false | Usage ```python from transformers import AutoTokenizer, AutoModel model_name = 'unicamp-dl/mMiniLM-L6-v2-en-pt-msmarco-v2' tokenizer = AutoTokenizer.from_pretrained(model_name) model = AutoModel.from_pretrained(model_name) ``` | 3ff7429539c2d69b10a5910e46ed1cc2 |

mit | ['msmarco', 'miniLM', 'pytorch', 'tensorflow', 'pt', 'pt-br'] | false | Citation If you use mMiniLM-L6-v2-en-pt-msmarco-v2, please cite: @misc{bonifacio2021mmarco, title={mMARCO: A Multilingual Version of MS MARCO Passage Ranking Dataset}, author={Luiz Henrique Bonifacio and Vitor Jeronymo and Hugo Queiroz Abonizio and Israel Campiotti and Marzieh Fadaee and Roberto Lotufo and Rodrigo Nogueira}, year={2021}, eprint={2108.13897}, archivePrefix={arXiv}, primaryClass={cs.CL} } | 3be7d3c48a90c221bc99ccfeffdd83ea |

mit | ['generated_from_trainer'] | false | bart-large-cnn-100-lit-evalMA-NOpad This model is a fine-tuned version of [facebook/bart-large-cnn](https://huggingface.co/facebook/bart-large-cnn) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 2.1514 - Rouge1: 27.5985 - Rouge2: 11.3869 - Rougel: 20.9359 - Rougelsum: 24.7113 - Gen Len: 62.5 | 09b8d356a7a43d669d1880a5dad6f0e5 |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | Gen Len | |:-------------:|:-----:|:----:|:---------------:|:-------:|:-------:|:-------:|:---------:|:-------:| | No log | 1.0 | 100 | 1.7982 | 28.7996 | 11.2592 | 19.7524 | 25.2125 | 62.5 | | No log | 2.0 | 200 | 2.1514 | 27.5985 | 11.3869 | 20.9359 | 24.7113 | 62.5 | | 3f243b448a98e4f474d052818d4fc280 |

gpl-3.0 | ['text2text-generation', 'pytorch'] | false | Maeve - SAMSum Maeve is a language model that is similar to BART in structure but trained specially using a CAT (Conditionally Adversarial Transformer). This allows the model to learn to create long-form text from short entries with high degrees of control and coherence that are impossible to achieve with traditional transformers. This specific model has been trained on the SAMSum dataset, and can invert summaries into full-length news articles. Feel free to try examples on the right! | 6d8d93f9b18de88bbbc906c8d51ff876 |

apache-2.0 | ['translation'] | false | opus-mt-en-om * source languages: en * target languages: om * OPUS readme: [en-om](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/en-om/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2020-01-08.zip](https://object.pouta.csc.fi/OPUS-MT-models/en-om/opus-2020-01-08.zip) * test set translations: [opus-2020-01-08.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/en-om/opus-2020-01-08.test.txt) * test set scores: [opus-2020-01-08.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/en-om/opus-2020-01-08.eval.txt) | 3c4822bdf1b3e3c791c45fdf255f64d3 |

mit | ['generated_from_trainer'] | false | lilt-en-funsd-org This model is a fine-tuned version of [SCUT-DLVCLab/lilt-roberta-en-base](https://huggingface.co/SCUT-DLVCLab/lilt-roberta-en-base) on the funsd-layoutlmv3 dataset. It achieves the following results on the evaluation set: - Loss: 1.8428 - Answer: {'precision': 0.047225501770956316, 'recall': 0.09791921664626684, 'f1': 0.06371963361210674, 'number': 817} - Header: {'precision': 0.0, 'recall': 0.0, 'f1': 0.0, 'number': 119} - Question: {'precision': 0.08554412560909583, 'recall': 0.2934076137418756, 'f1': 0.13246698805281912, 'number': 1077} - Overall Precision: 0.0730 - Overall Recall: 0.1967 - Overall F1: 0.1065 - Overall Accuracy: 0.2652 | b1c5eb473c5103234a18cb208c8d0651 |
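The per-entity `f1` values reported for lilt-en-funsd-org follow from the listed precision and recall as their harmonic mean. The sketch below recomputes them from the numbers in that entry; `f1_score` is a hypothetical helper, and returning 0.0 when both inputs are zero is a convention chosen here to match the reported Header score.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall; 0.0 when both are zero."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Precision and recall values copied from the evaluation results.
answer_f1 = f1_score(0.047225501770956316, 0.09791921664626684)
header_f1 = f1_score(0.0, 0.0)
question_f1 = f1_score(0.08554412560909583, 0.2934076137418756)
overall_f1 = f1_score(0.0730, 0.1967)  # inputs already rounded to four decimals

print(answer_f1, header_f1, question_f1, overall_f1)
```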

mit | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 5e-05 - train_batch_size: 8 - eval_batch_size: 8 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - training_steps: 3 - mixed_precision_training: Native AMP | b134e1c6f771aab1e568bbce18a5ccfc |

mit | ['stable-diffusion', 'stable-diffusion-diffusers', 'text-to-image'] | false | Utilizing These weights are intended to be used with the [🧨 diffusers library](https://github.com/huggingface/diffusers). If you are looking for the model to use with the original [CompVis Stable Diffusion codebase](https://github.com/CompVis/stable-diffusion), [come here](https://huggingface.co/stabilityai/sd-vae-ft-mse-original). | 041a975ef14d75ae8af50d4c45fc0f21 |

mit | ['stable-diffusion', 'stable-diffusion-diffusers', 'text-to-image'] | false | How to use with 🧨 diffusers You can integrate this fine-tuned VAE decoder into your existing `diffusers` workflows by passing a `vae` argument to the `StableDiffusionPipeline`: ```py from diffusers.models import AutoencoderKL from diffusers import StableDiffusionPipeline model = "CompVis/stable-diffusion-v1-4" vae = AutoencoderKL.from_pretrained("stabilityai/sd-vae-ft-mse") pipe = StableDiffusionPipeline.from_pretrained(model, vae=vae) ``` | 1d84451393277b660219369cf4136240 |

mit | ['stable-diffusion', 'stable-diffusion-diffusers', 'text-to-image'] | false | pretrained-autoencoding-models) on a 1:1 ratio of [LAION-Aesthetics](https://laion.ai/blog/laion-aesthetics/) and LAION-Humans, an unreleased subset containing only SFW images of humans. The intent was to fine-tune on the Stable Diffusion training set (the autoencoder was originally trained on OpenImages) but also enrich the dataset with images of humans to improve the reconstruction of faces. The first, _ft-EMA_, was resumed from the original checkpoint, trained for 313198 steps and uses EMA weights. It uses the same loss configuration as the original checkpoint (L1 + LPIPS). The second, _ft-MSE_, was resumed from _ft-EMA_, also uses EMA weights, and was trained for another 280k steps using a different loss with more emphasis on MSE reconstruction (MSE + 0.1 * LPIPS). It produces somewhat "smoother" outputs. The batch size for both versions was 192 (16 A100s, batch size 12 per GPU). To keep compatibility with existing models, only the decoder part was finetuned; the checkpoints can be used as a drop-in replacement for the existing autoencoder. _Original kl-f8 VAE vs f8-ft-EMA vs f8-ft-MSE_ | a1b80e853efc5cb05bb0aba5fded3171 |
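The ft-MSE loss weighting and the batch-size arithmetic from the VAE fine-tuning description can be written out as a small sketch. It only combines already-computed scalar terms: in real training the MSE and LPIPS values would come from the decoder output and a perceptual network, whereas the numbers below are hypothetical and `ft_mse_reconstruction_loss` is an illustrative name.

```python
def ft_mse_reconstruction_loss(mse: float, lpips: float, lpips_weight: float = 0.1) -> float:
    """Weighted sum described for ft-MSE: MSE + 0.1 * LPIPS."""
    return mse + lpips_weight * lpips


# Hypothetical per-batch loss terms, for illustration only.
loss = ft_mse_reconstruction_loss(mse=0.02, lpips=0.30)

# Effective batch size: 16 A100 GPUs with a batch size of 12 per GPU.
num_gpus, per_gpu_batch = 16, 12
effective_batch = num_gpus * per_gpu_batch

print(loss, effective_batch)
```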

mit | ['stable-diffusion', 'stable-diffusion-diffusers', 'text-to-image'] | false | COCO 2017 (256x256, val, 5000 images) | Model | train steps | rFID | PSNR | SSIM | PSIM | Link | Comments |----------|---------|------|--------------|---------------|---------------|-----------------------------------------------------------------------------------|-------------------------------------------------------------------------------------------------| | | | | | | | | | | original | 246803 | 4.99 | 23.4 +/- 3.8 | 0.69 +/- 0.14 | 1.01 +/- 0.28 | https://ommer-lab.com/files/latent-diffusion/kl-f8.zip | as used in SD | | ft-EMA | 560001 | 4.42 | 23.8 +/- 3.9 | 0.69 +/- 0.13 | 0.96 +/- 0.27 | https://huggingface.co/stabilityai/sd-vae-ft-ema-original/resolve/main/vae-ft-ema-560000-ema-pruned.ckpt | slightly better overall, with EMA | | ft-MSE | 840001 | 4.70 | 24.5 +/- 3.7 | 0.71 +/- 0.13 | 0.92 +/- 0.27 | https://huggingface.co/stabilityai/sd-vae-ft-mse-original/resolve/main/vae-ft-mse-840000-ema-pruned.ckpt | resumed with EMA from ft-EMA, emphasis on MSE (rec. loss = MSE + 0.1 * LPIPS), smoother outputs | | 38368a59a4a35ef8ca419faac87b0dcd |

mit | ['stable-diffusion', 'stable-diffusion-diffusers', 'text-to-image'] | false | LAION-Aesthetics 5+ (256x256, subset, 10000 images) | Model | train steps | rFID | PSNR | SSIM | PSIM | Link | Comments |----------|-----------|------|--------------|---------------|---------------|-----------------------------------------------------------------------------------|-------------------------------------------------------------------------------------------------| | | | | | | | | | | original | 246803 | 2.61 | 26.0 +/- 4.4 | 0.81 +/- 0.12 | 0.75 +/- 0.36 | https://ommer-lab.com/files/latent-diffusion/kl-f8.zip | as used in SD | | ft-EMA | 560001 | 1.77 | 26.7 +/- 4.8 | 0.82 +/- 0.12 | 0.67 +/- 0.34 | https://huggingface.co/stabilityai/sd-vae-ft-ema-original/resolve/main/vae-ft-ema-560000-ema-pruned.ckpt | slightly better overall, with EMA | | ft-MSE | 840001 | 1.88 | 27.3 +/- 4.7 | 0.83 +/- 0.11 | 0.65 +/- 0.34 | https://huggingface.co/stabilityai/sd-vae-ft-mse-original/resolve/main/vae-ft-mse-840000-ema-pruned.ckpt | resumed with EMA from ft-EMA, emphasis on MSE (rec. loss = MSE + 0.1 * LPIPS), smoother outputs | | beeab0ad0f3f7cef4c7251dff826ca3f |
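For readers unfamiliar with the PSNR column in the tables above, a minimal sketch of the metric follows. It assumes pixel values scaled to [0, 1] (the `max_value` default) and flattened images; the card does not describe the actual evaluation script, so treat this as an illustration of the formula only.

```python
import math
from typing import Sequence


def psnr(reference: Sequence[float], reconstruction: Sequence[float], max_value: float = 1.0) -> float:
    """Peak signal-to-noise ratio in dB: 10 * log10(max_value^2 / MSE)."""
    if len(reference) != len(reconstruction) or not reference:
        raise ValueError("inputs must be non-empty and of equal length")
    mse = sum((a - b) ** 2 for a, b in zip(reference, reconstruction)) / len(reference)
    if mse == 0:
        return math.inf  # identical images
    return 10.0 * math.log10(max_value ** 2 / mse)
```

Higher PSNR means lower pixel-wise error, which matches the ft-MSE rows scoring highest on this column.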

mit | ['stable-diffusion', 'stable-diffusion-diffusers', 'text-to-image'] | false | Visual _Visualization of reconstructions on 256x256 images from the COCO2017 validation dataset._ <p align="center"> <br> <b> 256x256: ft-EMA (left), ft-MSE (middle), original (right)</b> </p> <p align="center"> <img src=https://huggingface.co/stabilityai/stable-diffusion-decoder-finetune/resolve/main/eval/ae-decoder-tuning-reconstructions/merged/00025_merged.png /> </p> <p align="center"> <img src=https://huggingface.co/stabilityai/stable-diffusion-decoder-finetune/resolve/main/eval/ae-decoder-tuning-reconstructions/merged/00011_merged.png /> </p> <p align="center"> <img src=https://huggingface.co/stabilityai/stable-diffusion-decoder-finetune/resolve/main/eval/ae-decoder-tuning-reconstructions/merged/00037_merged.png /> </p> <p align="center"> <img src=https://huggingface.co/stabilityai/stable-diffusion-decoder-finetune/resolve/main/eval/ae-decoder-tuning-reconstructions/merged/00043_merged.png /> </p> <p align="center"> <img src=https://huggingface.co/stabilityai/stable-diffusion-decoder-finetune/resolve/main/eval/ae-decoder-tuning-reconstructions/merged/00053_merged.png /> </p> <p align="center"> <img src=https://huggingface.co/stabilityai/stable-diffusion-decoder-finetune/resolve/main/eval/ae-decoder-tuning-reconstructions/merged/00029_merged.png /> </p> | cac03a59a205e314812e02247ca39d80 |

apache-2.0 | ['generated_from_trainer'] | false | t5-samsung-5e This model is a fine-tuned version of [t5-small](https://huggingface.co/t5-small) on the samsum dataset. It achieves the following results on the evaluation set: - Loss: 1.7108 - Rouge1: 43.1484 - Rouge2: 20.4563 - Rougel: 36.6379 - Rougelsum: 40.196 - Gen Len: 16.7677 | 48acad88db2f389b11e19eced78d82aa |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 4 - eval_batch_size: 4 - seed: 42 - gradient_accumulation_steps: 2 - total_train_batch_size: 8 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 5 - mixed_precision_training: Native AMP | 5a06619ae88261a508a13a274bc06531 |
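The relationship between the batch-size settings above, and the shape of a linear learning-rate schedule, can be sketched as follows. The single-device setup and zero warmup steps are assumptions (the card states neither), and the helper names are illustrative rather than part of any library.

```python
def effective_batch_size(per_device_batch_size: int, gradient_accumulation_steps: int, num_devices: int = 1) -> int:
    """Number of samples contributing to each optimizer step."""
    return per_device_batch_size * gradient_accumulation_steps * num_devices


def linear_lr(step: int, total_steps: int, base_lr: float = 2e-5, warmup_steps: int = 0) -> float:
    """Linear warmup (if any) followed by linear decay to zero."""
    if step < warmup_steps:
        return base_lr * step / max(1, warmup_steps)
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)
```

For example, `effective_batch_size(4, 2)` reproduces the reported `total_train_batch_size` of 8.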

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | Gen Len | |:-------------:|:-----:|:----:|:---------------:|:-------:|:-------:|:-------:|:---------:|:-------:| | 1.873 | 1.0 | 1841 | 1.7460 | 41.7428 | 19.2191 | 35.2428 | 38.8578 | 16.7286 | | 1.8627 | 2.0 | 3682 | 1.7268 | 42.4494 | 19.8301 | 36.1459 | 39.5271 | 16.6039 | | 1.8293 | 3.0 | 5523 | 1.7223 | 42.8908 | 19.9782 | 36.1848 | 39.8482 | 16.7164 | | 1.8163 | 4.0 | 7364 | 1.7101 | 43.2291 | 20.3177 | 36.6418 | 40.2878 | 16.8472 | | 1.8174 | 5.0 | 9205 | 1.7108 | 43.1484 | 20.4563 | 36.6379 | 40.196 | 16.7677 | | 77e83f70515c4e312f35e2ff3169aa65 |

apache-2.0 | ['generated_from_trainer'] | false | small-mlm-snli-target-glue-rte This model is a fine-tuned version of [muhtasham/small-mlm-snli](https://huggingface.co/muhtasham/small-mlm-snli) on the None dataset. It achieves the following results on the evaluation set: - Loss: 3.3146 - Accuracy: 0.5921 | f34c8fba2041946a7bec03270d34b2e1 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.4047 | 6.41 | 500 | 1.4847 | 0.6318 | | 0.0588 | 12.82 | 1000 | 2.5459 | 0.6245 | | 0.0304 | 19.23 | 1500 | 2.8570 | 0.6101 | | 0.0182 | 25.64 | 2000 | 3.3146 | 0.5921 | | 8e622326887c4215d0fcca18854ee4d2 |
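In the table above, training loss keeps falling while validation loss rises and accuracy drops, so the metrics reported for the final step are not the best ones observed. A small sketch of picking the best logged checkpoint from such a table; the row values are copied from the table, and the `best_checkpoint` helper is illustrative.

```python
# Rows copied from the training results table above.
ROWS = [
    {"step": 500, "train_loss": 0.4047, "val_loss": 1.4847, "accuracy": 0.6318},
    {"step": 1000, "train_loss": 0.0588, "val_loss": 2.5459, "accuracy": 0.6245},
    {"step": 1500, "train_loss": 0.0304, "val_loss": 2.8570, "accuracy": 0.6101},
    {"step": 2000, "train_loss": 0.0182, "val_loss": 3.3146, "accuracy": 0.5921},
]


def best_checkpoint(rows, key="val_loss", higher_is_better=False):
    """Return the logged row with the best value for the chosen metric."""
    chooser = max if higher_is_better else min
    return chooser(rows, key=lambda row: row[key])
```

Both `best_checkpoint(ROWS)` and `best_checkpoint(ROWS, "accuracy", higher_is_better=True)` select the step-500 row here.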

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-clinc This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the clinc_oos dataset. It achieves the following results on the evaluation set: - Loss: 0.7721 - Accuracy: 0.9184 | 8989e80e4033a5d4bda78f4e8aab45cd |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | No log | 1.0 | 318 | 3.2890 | 0.7432 | | 3.7868 | 2.0 | 636 | 1.8756 | 0.8377 | | 3.7868 | 3.0 | 954 | 1.1572 | 0.8961 | | 1.6929 | 4.0 | 1272 | 0.8573 | 0.9132 | | 0.9058 | 5.0 | 1590 | 0.7721 | 0.9184 | | f2f426661cfbabdaf20267b1086b9d48 |

cc-by-4.0 | ['translation', 'opus-mt-tc'] | false | opus-mt-tc-big-en-tr Neural machine translation model for translating from English (en) to Turkish (tr). This model is part of the [OPUS-MT project](https://github.com/Helsinki-NLP/Opus-MT), an effort to make neural machine translation models widely available and accessible for many languages in the world. All models are originally trained using the amazing framework of [Marian NMT](https://marian-nmt.github.io/), an efficient NMT implementation written in pure C++. The models have been converted to PyTorch using the transformers library by Hugging Face. Training data is taken from [OPUS](https://opus.nlpl.eu/) and training pipelines use the procedures of [OPUS-MT-train](https://github.com/Helsinki-NLP/Opus-MT-train). * Publications: [OPUS-MT – Building open translation services for the World](https://aclanthology.org/2020.eamt-1.61/) and [The Tatoeba Translation Challenge – Realistic Data Sets for Low Resource and Multilingual MT](https://aclanthology.org/2020.wmt-1.139/) (Please cite them if you use this model.) 
``` @inproceedings{tiedemann-thottingal-2020-opus, title = "{OPUS}-{MT} {--} Building open translation services for the World", author = {Tiedemann, J{\"o}rg and Thottingal, Santhosh}, booktitle = "Proceedings of the 22nd Annual Conference of the European Association for Machine Translation", month = nov, year = "2020", address = "Lisboa, Portugal", publisher = "European Association for Machine Translation", url = "https://aclanthology.org/2020.eamt-1.61", pages = "479--480", } @inproceedings{tiedemann-2020-tatoeba, title = "The Tatoeba Translation Challenge {--} Realistic Data Sets for Low Resource and Multilingual {MT}", author = {Tiedemann, J{\"o}rg}, booktitle = "Proceedings of the Fifth Conference on Machine Translation", month = nov, year = "2020", address = "Online", publisher = "Association for Computational Linguistics", url = "https://aclanthology.org/2020.wmt-1.139", pages = "1174--1182", } ``` | e55bb1674902fd482353ca56fc71949f |

cc-by-4.0 | ['translation', 'opus-mt-tc'] | false | Model info * Release: 2022-02-25 * source language(s): eng * target language(s): tur * model: transformer-big * data: opusTCv20210807+bt ([source](https://github.com/Helsinki-NLP/Tatoeba-Challenge)) * tokenization: SentencePiece (spm32k,spm32k) * original model: [opusTCv20210807+bt_transformer-big_2022-02-25.zip](https://object.pouta.csc.fi/Tatoeba-MT-models/eng-tur/opusTCv20210807+bt_transformer-big_2022-02-25.zip) * more information released models: [OPUS-MT eng-tur README](https://github.com/Helsinki-NLP/Tatoeba-Challenge/tree/master/models/eng-tur/README.md) | 24901771f559e6c14c59be9a3fb92579 |

cc-by-4.0 | ['translation', 'opus-mt-tc'] | false | Usage A short code example: ```python from transformers import MarianMTModel, MarianTokenizer src_text = [ "I know Tom didn't want to eat that.", "On Sundays, we would get up early and go fishing." ] model_name = "Helsinki-NLP/opus-mt-tc-big-en-tr" tokenizer = MarianTokenizer.from_pretrained(model_name) model = MarianMTModel.from_pretrained(model_name) translated = model.generate(**tokenizer(src_text, return_tensors="pt", padding=True)) for t in translated: print( tokenizer.decode(t, skip_special_tokens=True) ) | e780ffede79173d0bd0ab5d7fef50420 |

cc-by-4.0 | ['translation', 'opus-mt-tc'] | false | Pazar günleri erkenden kalkıp balık tutmaya giderdik. ``` You can also use OPUS-MT models with the transformers pipelines, for example: ```python from transformers import pipeline pipe = pipeline("translation", model="Helsinki-NLP/opus-mt-tc-big-en-tr") print(pipe("I know Tom didn't want to eat that.")) | fbc0c149358fa504854753bb0233ed13 |

cc-by-4.0 | ['translation', 'opus-mt-tc'] | false | Benchmarks * test set translations: [opusTCv20210807+bt_transformer-big_2022-02-25.test.txt](https://object.pouta.csc.fi/Tatoeba-MT-models/eng-tur/opusTCv20210807+bt_transformer-big_2022-02-25.test.txt) * test set scores: [opusTCv20210807+bt_transformer-big_2022-02-25.eval.txt](https://object.pouta.csc.fi/Tatoeba-MT-models/eng-tur/opusTCv20210807+bt_transformer-big_2022-02-25.eval.txt) * benchmark results: [benchmark_results.txt](benchmark_results.txt) * benchmark output: [benchmark_translations.zip](benchmark_translations.zip) | langpair | testset | chr-F | BLEU | | 1bb268508243879e65d7a4ad8f87155d |

cc-by-4.0 | ['translation', 'opus-mt-tc'] | false | words | |----------|---------|-------|-------|-------|--------| | eng-tur | tatoeba-test-v2021-08-07 | 0.68726 | 42.3 | 13907 | 84364 | | eng-tur | flores101-devtest | 0.62829 | 31.4 | 1012 | 20253 | | eng-tur | newsdev2016 | 0.58947 | 21.9 | 1001 | 15958 | | eng-tur | newstest2016 | 0.57624 | 23.4 | 3000 | 50782 | | eng-tur | newstest2017 | 0.58858 | 25.4 | 3007 | 51977 | | eng-tur | newstest2018 | 0.57848 | 22.6 | 3000 | 53731 | | 0b844648597713cf9c1a6b566dd677ca |

apache-2.0 | ['vision', 'image-segmentation'] | false | CLIPSeg model CLIPSeg model with reduce dimension 64. It was introduced in the paper [Image Segmentation Using Text and Image Prompts](https://arxiv.org/abs/2112.10003) by Lüddecke et al. and first released in [this repository](https://github.com/timojl/clipseg). | b6843530b20a710a7793adcdadf8eedb |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 4 - eval_batch_size: 8 - seed: 0 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 35.0 | e7c83d557c2b5025cd9812ac429454cb |

apache-2.0 | ['generated_from_trainer'] | false | vit_beans This model is a fine-tuned version of [google/vit-base-patch16-224-in21k](https://huggingface.co/google/vit-base-patch16-224-in21k) on the beans dataset. It achieves the following results on the evaluation set: - Loss: 0.1176 - Accuracy: 0.9699 | b27d368fa4a226eb4edf3f75da485e89 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0002 - train_batch_size: 16 - eval_batch_size: 8 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 1 | ae1fb8e195de8bc208afafe41b2dff96 |

cc-by-4.0 | ['espnet', 'audio', 'text-to-speech'] | false | `kan-bayashi/vctk_tts_train_gst_fastspeech2_raw_phn_tacotron_g2p_en_no_space_train.loss.ave` ♻️ Imported from https://zenodo.org/record/4036266/ This model was trained by kan-bayashi using vctk/tts1 recipe in [espnet](https://github.com/espnet/espnet/). | 39265b83cff3b033c5de8da1d72c3eda |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Wav2Vec2-Large-XLSR-53-Tatar Fine-tuned [facebook/wav2vec2-large-xlsr-53](https://huggingface.co/facebook/wav2vec2-large-xlsr-53) on Tatar using the [Common Voice](https://huggingface.co/datasets/common_voice) dataset. When using this model, make sure that your speech input is sampled at 16kHz. | 4499bc1988236372d5144c8695d590fa |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Usage The model can be used directly (without a language model) as follows: ```python import torch import torchaudio from datasets import load_dataset from transformers import Wav2Vec2ForCTC, Wav2Vec2Processor test_dataset = load_dataset("common_voice", "tt", split="test[:2%]") processor = Wav2Vec2Processor.from_pretrained("crang/wav2vec2-large-xlsr-53-tatar") model = Wav2Vec2ForCTC.from_pretrained("crang/wav2vec2-large-xlsr-53-tatar") resampler = torchaudio.transforms.Resample(48_000, 16_000) | eb0f84e36be9d5f61cc55b38415df1b3 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | We need to read the audio files as arrays def speech_file_to_array_fn(batch): speech_array, sampling_rate = torchaudio.load(batch["path"]) batch["speech"] = resampler(speech_array).squeeze().numpy() return batch test_dataset = test_dataset.map(speech_file_to_array_fn) inputs = processor(test_dataset["speech"][:2], sampling_rate=16_000, return_tensors="pt", padding=True) with torch.no_grad(): logits = model(inputs.input_values, attention_mask=inputs.attention_mask).logits predicted_ids = torch.argmax(logits, dim=-1) print("Prediction:", processor.batch_decode(predicted_ids)) print("Reference:", test_dataset["sentence"][:2]) ``` | d62e1a95c0d5898da3fcc8184707c91c |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Evaluation The model can be evaluated as follows on the Tatar test data of Common Voice. ```python import torch import torchaudio from datasets import load_dataset, load_metric from transformers import Wav2Vec2ForCTC, Wav2Vec2Processor import re test_dataset = load_dataset("common_voice", "tt", split="test") wer = load_metric("wer") processor = Wav2Vec2Processor.from_pretrained("crang/wav2vec2-large-xlsr-53-tatar") model = Wav2Vec2ForCTC.from_pretrained("crang/wav2vec2-large-xlsr-53-tatar") model.to("cuda") chars_to_ignore_regex = '[\,\?\.\!\-\u2013\u2014\;\:\"\\%\\\]' resampler = torchaudio.transforms.Resample(48_000, 16_000) | cb381798ee79ba9c69483c9102d9698a |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | We need to read the audio files as arrays def speech_file_to_array_fn(batch): batch["sentence"] = re.sub(chars_to_ignore_regex, '', batch["sentence"]).lower() speech_array, sampling_rate = torchaudio.load(batch["path"]) batch["speech"] = resampler(speech_array).squeeze().numpy() return batch test_dataset = test_dataset.map(speech_file_to_array_fn) | f134a12e77c6f6d871d540f39aa843a2 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Run batched inference and decode the predictions def evaluate(batch): inputs = processor(batch["speech"], sampling_rate=16_000, return_tensors="pt", padding=True) with torch.no_grad(): logits = model(inputs.input_values.to("cuda"), attention_mask=inputs.attention_mask.to("cuda")).logits pred_ids = torch.argmax(logits, dim=-1) batch["pred_strings"] = processor.batch_decode(pred_ids) return batch result = test_dataset.map(evaluate, batched=True, batch_size=8) print("WER: {:.2f}".format(100 * wer.compute(predictions=result["pred_strings"], references=result["sentence"]))) ``` **Test Result**: 30.93 % | 0eafdba97bae9764dbbd0b422747e412 |
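To make the WER figure above easier to interpret, here is a pure-Python sketch of word error rate: total word-level edit distance divided by the total number of reference words. It illustrates the quantity only and is not the implementation behind the `wer` metric loaded in the card; the function names are illustrative.

```python
from typing import List, Sequence


def edit_distance(ref: Sequence[str], hyp: Sequence[str]) -> int:
    """Levenshtein distance over tokens (substitutions, insertions, deletions)."""
    prev = list(range(len(hyp) + 1))
    for i, ref_tok in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_tok in enumerate(hyp, start=1):
            cost = 0 if ref_tok == hyp_tok else 1
            curr.append(min(prev[j] + 1, curr[j - 1] + 1, prev[j - 1] + cost))
        prev = curr
    return prev[-1]


def word_error_rate(references: List[str], predictions: List[str]) -> float:
    """Corpus-level WER: summed edits divided by summed reference word counts."""
    if len(references) != len(predictions):
        raise ValueError("references and predictions must align one-to-one")
    edits = 0
    ref_words = 0
    for ref, hyp in zip(references, predictions):
        ref_tokens, hyp_tokens = ref.split(), hyp.split()
        edits += edit_distance(ref_tokens, hyp_tokens)
        ref_words += len(ref_tokens)
    if ref_words == 0:
        raise ValueError("references contain no words")
    return edits / ref_words
```

Multiplying the result by 100 gives a percentage comparable in form to the reported test result.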

apache-2.0 | ['automatic-speech-recognition', 'de', 'hf-asr-leaderboard', 'mozilla-foundation/common_voice_8_0', 'robust-speech-event'] | false | Fine-tuned XLS-R 1B model for speech recognition in German Fine-tuned [facebook/wav2vec2-xls-r-1b](https://huggingface.co/facebook/wav2vec2-xls-r-1b) on German using the train and validation splits of [Common Voice 8.0](https://huggingface.co/datasets/mozilla-foundation/common_voice_8_0), [Multilingual TEDx](http://www.openslr.org/100), [Multilingual LibriSpeech](https://www.openslr.org/94/), and [Voxpopuli](https://github.com/facebookresearch/voxpopuli). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool, and thanks to the GPU credits generously given by the [OVHcloud](https://www.ovhcloud.com/en/public-cloud/ai-training/) :) | 7490e679ae2ac0b070fbcd33bd0d0ce0 |

apache-2.0 | ['automatic-speech-recognition', 'de', 'hf-asr-leaderboard', 'mozilla-foundation/common_voice_8_0', 'robust-speech-event'] | false | Usage Using the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) library: ```python from huggingsound import SpeechRecognitionModel model = SpeechRecognitionModel("jonatasgrosman/wav2vec2-xls-r-1b-german") audio_paths = ["/path/to/file.mp3", "/path/to/another_file.wav"] transcriptions = model.transcribe(audio_paths) ``` Writing your own inference script: ```python import torch import librosa from datasets import load_dataset from transformers import Wav2Vec2ForCTC, Wav2Vec2Processor LANG_ID = "de" MODEL_ID = "jonatasgrosman/wav2vec2-xls-r-1b-german" SAMPLES = 10 test_dataset = load_dataset("common_voice", LANG_ID, split=f"test[:{SAMPLES}]") processor = Wav2Vec2Processor.from_pretrained(MODEL_ID) model = Wav2Vec2ForCTC.from_pretrained(MODEL_ID) | 3cf000f322c86d98d33dfa08200a3608 |

apache-2.0 | ['automatic-speech-recognition', 'de', 'hf-asr-leaderboard', 'mozilla-foundation/common_voice_8_0', 'robust-speech-event'] | false | Evaluation Commands 1. To evaluate on `mozilla-foundation/common_voice_8_0` with split `test` ```bash python eval.py --model_id jonatasgrosman/wav2vec2-xls-r-1b-german --dataset mozilla-foundation/common_voice_8_0 --config de --split test ``` 2. To evaluate on `speech-recognition-community-v2/dev_data` ```bash python eval.py --model_id jonatasgrosman/wav2vec2-xls-r-1b-german --dataset speech-recognition-community-v2/dev_data --config de --split validation --chunk_length_s 5.0 --stride_length_s 1.0 ``` | 51b184624aaab43c7ed04c5cba87fd6d |
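The second command above passes `--chunk_length_s 5.0 --stride_length_s 1.0`. The `eval.py` script itself is not shown in this card, so the following is only a sketch of how overlapping windows could be laid out under the assumption that the stride is applied on both sides of each chunk and that audio is sampled at 16 kHz; `chunk_spans` is an illustrative helper, not a library function.

```python
from typing import List, Tuple


def chunk_spans(
    num_samples: int,
    sampling_rate: int = 16_000,
    chunk_length_s: float = 5.0,
    stride_length_s: float = 1.0,
) -> List[Tuple[int, int]]:
    """Return (start, end) sample indices of overlapping chunks."""
    chunk_len = int(round(chunk_length_s * sampling_rate))
    stride = int(round(stride_length_s * sampling_rate))
    step = chunk_len - 2 * stride  # new audio covered by each successive chunk
    if step <= 0:
        raise ValueError("chunk length must exceed twice the stride length")
    spans = []
    for start in range(0, num_samples, step):
        end = min(start + chunk_len, num_samples)
        spans.append((start, end))
        if end == num_samples:
            break  # the remaining audio is already covered
    return spans
```

Under these assumptions, a 10-second clip is covered by three chunks that each overlap their neighbour by two seconds.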

apache-2.0 | ['automatic-speech-recognition', 'de', 'hf-asr-leaderboard', 'mozilla-foundation/common_voice_8_0', 'robust-speech-event'] | false | Citation If you want to cite this model you can use this: ```bibtex @misc{grosman2021xlsr-1b-german, title={Fine-tuned {XLS-R} 1{B} model for speech recognition in {G}erman}, author={Grosman, Jonatas}, howpublished={\url{https://huggingface.co/jonatasgrosman/wav2vec2-xls-r-1b-german}}, year={2022} } ``` | 674df2ea3b5eb0fcadc4e6ff75e0c8e4 |

apache-2.0 | [] | false | How to use Now we are ready to try out how the model works as a chatting partner! ```python from transformers import AutoModelForCausalLM, AutoTokenizer import torch mode_name = 'liam168/chat-DialoGPT-small-zh' tokenizer = AutoTokenizer.from_pretrained(mode_name) model = AutoModelForCausalLM.from_pretrained(mode_name) | c67106f8539f66d8157a649254f98011 |

apache-2.0 | ['generated_from_trainer'] | false | t5-small-finetuned-xlsum-with-multi-news-10-epoch This model is a fine-tuned version of [t5-small](https://huggingface.co/t5-small) on the None dataset. It achieves the following results on the evaluation set: - Loss: 2.2332 - Rouge1: 31.4802 - Rouge2: 9.9475 - Rougel: 24.6687 - Rougelsum: 24.7013 - Gen Len: 18.8025 | 305b8699db7ab8960661fb66bd96fe12 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | Gen Len | |:-------------:|:-----:|:------:|:---------------:|:-------:|:------:|:-------:|:---------:|:-------:| | 2.7314 | 1.0 | 20543 | 2.3867 | 29.3997 | 8.2875 | 22.8406 | 22.8871 | 18.8204 | | 2.6652 | 2.0 | 41086 | 2.3323 | 30.3992 | 8.9058 | 23.6168 | 23.6626 | 18.8447 | | 2.632 | 3.0 | 61629 | 2.3002 | 30.8662 | 9.2869 | 24.0683 | 24.11 | 18.8122 | | 2.6221 | 4.0 | 82172 | 2.2785 | 31.143 | 9.5737 | 24.3473 | 24.381 | 18.7911 | | 2.5925 | 5.0 | 102715 | 2.2631 | 31.2144 | 9.6904 | 24.4419 | 24.4796 | 18.8133 | | 2.5812 | 6.0 | 123258 | 2.2507 | 31.3371 | 9.7959 | 24.5801 | 24.6166 | 18.7836 | | 2.5853 | 7.0 | 143801 | 2.2437 | 31.3593 | 9.8156 | 24.5533 | 24.5852 | 18.8103 | | 2.5467 | 8.0 | 164344 | 2.2377 | 31.368 | 9.8807 | 24.6226 | 24.6518 | 18.799 | | 2.5571 | 9.0 | 184887 | 2.2337 | 31.4356 | 9.9092 | 24.6543 | 24.6891 | 18.8075 | | 2.5563 | 10.0 | 205430 | 2.2332 | 31.4802 | 9.9475 | 24.6687 | 24.7013 | 18.8025 | | df0e12f89beacccb0421bddd67277147 |

apache-2.0 | ['translation'] | false | opus-mt-fi-is * source languages: fi * target languages: is * OPUS readme: [fi-is](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/fi-is/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2020-01-08.zip](https://object.pouta.csc.fi/OPUS-MT-models/fi-is/opus-2020-01-08.zip) * test set translations: [opus-2020-01-08.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/fi-is/opus-2020-01-08.test.txt) * test set scores: [opus-2020-01-08.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/fi-is/opus-2020-01-08.eval.txt) | ae4c86cc3f69400c4839a3d9d63f6b92 |

apache-2.0 | ['generated_from_trainer'] | false | legal-roberta-base-cuad This model is a fine-tuned version of [saibo/legal-roberta-base](https://huggingface.co/saibo/legal-roberta-base) on the cuad dataset. It achieves the following results on the evaluation set: - Loss: 0.0260 | 75b3862865d7a6c766ab4e471efa1355 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 24 - eval_batch_size: 24 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 3 | d0981f0e6041f384e2f2674da0ee5f89 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:------:|:---------------:| | 0.0393 | 1.0 | 51295 | 0.0261 | | 0.0234 | 2.0 | 102590 | 0.0254 | | 0.0234 | 3.0 | 153885 | 0.0260 | | 44e68400a7efcd9c9a809c961cc0ed65 |

mit | ['generated_from_keras_callback'] | false | recklessrecursion/Warsaw_Pact-clustered This model is a fine-tuned version of [nandysoham16/12-clustered_aug](https://huggingface.co/nandysoham16/12-clustered_aug) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 0.0854 - Train End Logits Accuracy: 0.9722 - Train Start Logits Accuracy: 0.9861 - Validation Loss: 1.3331 - Validation End Logits Accuracy: 1.0 - Validation Start Logits Accuracy: 1.0 - Epoch: 0 | 1efcade83343e71ee1055fe54146e2e8 |

mit | ['generated_from_keras_callback'] | false | Training results | Train Loss | Train End Logits Accuracy | Train Start Logits Accuracy | Validation Loss | Validation End Logits Accuracy | Validation Start Logits Accuracy | Epoch | |:----------:|:-------------------------:|:---------------------------:|:---------------:|:------------------------------:|:--------------------------------:|:-----:| | 0.0854 | 0.9722 | 0.9861 | 1.3331 | 1.0 | 1.0 | 0 | | bffb3f850036d7fb652d62580b52d4a5 |

mit | ['audio', 'speech-translation', 'automatic-speech-recognition'] | false | S2T-SMALL-COVOST2-EN-FA-ST `s2t-small-covost2-en-fa-st` is a Speech to Text Transformer (S2T) model trained for end-to-end Speech Translation (ST). The S2T model was proposed in [this paper](https://arxiv.org/abs/2010.05171) and released in [this repository](https://github.com/pytorch/fairseq/tree/master/examples/speech_to_text) | dcb55117c98e16bfe190075f7c0d0064 |

mit | ['audio', 'speech-translation', 'automatic-speech-recognition'] | false | Intended uses & limitations This model can be used for end-to-end English speech to Farsi text translation. See the [model hub](https://huggingface.co/models?filter=speech_to_text) to look for other S2T checkpoints. | d4408843e428f0ca25315828faeaf4a7 |

mit | ['audio', 'speech-translation', 'automatic-speech-recognition'] | false | How to use As this is a standard sequence-to-sequence transformer model, you can use the `generate` method to generate the translations by passing the speech features to the model. *Note: The `Speech2TextProcessor` object uses [torchaudio](https://github.com/pytorch/audio) to extract the filter bank features. Make sure to install the `torchaudio` package before running this example.* You can either install those as extra speech dependencies with `pip install transformers"[speech, sentencepiece]"` or install the packages separately with `pip install torchaudio sentencepiece`. ```python import torch from transformers import Speech2TextProcessor, Speech2TextForConditionalGeneration from datasets import load_dataset import soundfile as sf model = Speech2TextForConditionalGeneration.from_pretrained("facebook/s2t-small-covost2-en-fa-st") processor = Speech2TextProcessor.from_pretrained("facebook/s2t-small-covost2-en-fa-st") def map_to_array(batch): speech, _ = sf.read(batch["file"]) batch["speech"] = speech return batch ds = load_dataset( "patrickvonplaten/librispeech_asr_dummy", "clean", split="validation" ) ds = ds.map(map_to_array) inputs = processor( ds["speech"][0], sampling_rate=16_000, return_tensors="pt" ) generated_ids = model.generate(inputs["input_features"], attention_mask=inputs["attention_mask"]) translation = processor.batch_decode(generated_ids, skip_special_tokens=True) ``` | 489b7a955f512f342e4b4c29f43c408d |

mit | ['audio', 'speech-translation', 'automatic-speech-recognition'] | false | Training data The s2t-small-covost2-en-fa-st is trained on the English-Farsi subset of [CoVoST2](https://github.com/facebookresearch/covost). CoVoST is a large-scale multilingual ST corpus based on [Common Voice](https://arxiv.org/abs/1912.06670), created to foster ST research with the largest ever open dataset | 3de4a1fbb7bb4fd40437e154afdba585 |

apache-2.0 | ['generated_from_trainer'] | false | hf-model-0 This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.7158 - Accuracy: 0.45 - F1: 0.45 | 05a53468b9d07acd137ab47ac9399341 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------:|:----:| | 0.6107 | 1.0 | 12 | 0.7134 | 0.45 | 0.45 | | 0.5364 | 2.0 | 24 | 0.7158 | 0.45 | 0.45 | | c48beca562b13d67383430f2bc90d531 |

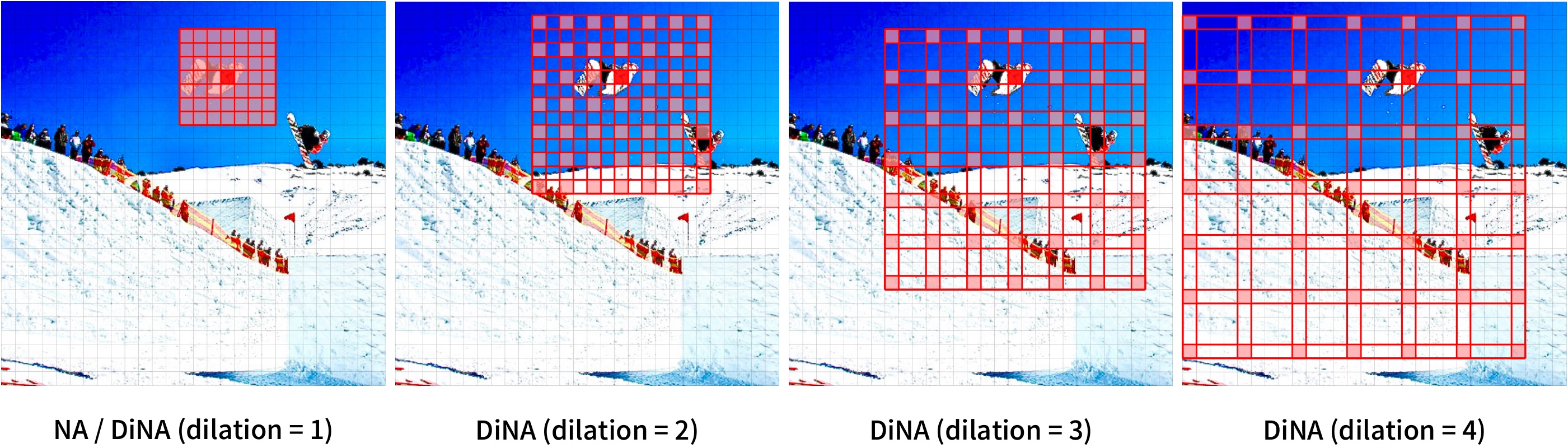

mit | ['vision', 'image-classification'] | false | DiNAT (large variant with 11x11 kernel size) DiNAT-Large with a 7x7 kernel pre-trained on ImageNet-21K at 224x224, and fine-tuned with 11x11 kernel size on ImageNet-1K at 384x384. It was introduced in the paper [Dilated Neighborhood Attention Transformer](https://arxiv.org/abs/2209.15001) by Hassani et al. and first released in [this repository](https://github.com/SHI-Labs/Neighborhood-Attention-Transformer). | 7e3bc954e8b93286f4d71df7964e1058 |

mit | ['vision', 'image-classification'] | false | Model description DiNAT is a hierarchical vision transformer based on Neighborhood Attention (NA) and its dilated variant (DiNA). Neighborhood Attention is a restricted self attention pattern in which each token's receptive field is limited to its nearest neighboring pixels. NA and DiNA are therefore sliding-window attention patterns, and as a result are highly flexible and maintain translational equivariance. They come with PyTorch implementations through the [NATTEN](https://github.com/SHI-Labs/NATTEN/) package.  [Source](https://paperswithcode.com/paper/dilated-neighborhood-attention-transformer) | 449d155b5664d0da8e6f28e8d87c1fc5 |

mit | ['vision', 'image-classification'] | false | Intended uses & limitations You can use the raw model for image classification. See the [model hub](https://huggingface.co/models?search=dinat) to look for fine-tuned versions on a task that interests you. | df57eb7240bc030bf44de8a2a9065747 |

mit | ['vision', 'image-classification'] | false | Example Here is how to use this model to classify an image from the COCO 2017 dataset into one of the 1,000 ImageNet classes: ```python from transformers import AutoImageProcessor, DinatForImageClassification from PIL import Image import requests url = "http://images.cocodataset.org/val2017/000000039769.jpg" image = Image.open(requests.get(url, stream=True).raw) feature_extractor = AutoImageProcessor.from_pretrained("shi-labs/dinat-large-11x11-in22k-in1k-384") model = DinatForImageClassification.from_pretrained("shi-labs/dinat-large-11x11-in22k-in1k-384") inputs = feature_extractor(images=image, return_tensors="pt") outputs = model(**inputs) logits = outputs.logits | c66a0465735851a93dcd0d0c552b9f33 |

mit | ['vision', 'image-classification'] | false | model predicts one of the 1000 ImageNet classes predicted_class_idx = logits.argmax(-1).item() print("Predicted class:", model.config.id2label[predicted_class_idx]) ``` For more examples, please refer to the [documentation](https://huggingface.co/transformers/model_doc/dinat.html). | 9dc6789a347e5607e7a2a965b025f83e |
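The `argmax` call above yields a single class index. When the top few candidates and their probabilities are wanted instead, the logits can be passed through a softmax and ranked. A minimal sketch on plain Python lists rather than tensors; `softmax` and `top_k` are illustrative helpers, not part of the transformers API.

```python
import math
from typing import List, Sequence, Tuple


def softmax(logits: Sequence[float]) -> List[float]:
    """Numerically stable softmax over a flat list of logits."""
    peak = max(logits)
    exps = [math.exp(value - peak) for value in logits]
    total = sum(exps)
    return [e / total for e in exps]


def top_k(logits: Sequence[float], k: int = 5) -> List[Tuple[int, float]]:
    """Return (class_index, probability) pairs for the k most likely classes."""
    probs = softmax(logits)
    ranked = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    return [(i, probs[i]) for i in ranked[:k]]
```

With a real model, the list could be obtained from the first row of the logits (for instance via `logits[0].tolist()`), and each index mapped to a label through `model.config.id2label` as in the example above.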

mit | ['vision', 'image-classification'] | false | Requirements Other than transformers, this model requires the [NATTEN](https://shi-labs.com/natten) package. If you're on Linux, you can refer to [shi-labs.com/natten](https://shi-labs.com/natten) for instructions on installing with pre-compiled binaries (just select your torch build to get the correct wheel URL). You can alternatively use `pip install natten` to compile on your device, which may take up to a few minutes. Mac users only have the latter option (no pre-compiled binaries). Refer to [NATTEN's GitHub](https://github.com/SHI-Labs/NATTEN/) for more information. | a82d95d48ace8ecc9ec2d152b431dcc3 |

mit | ['vision', 'image-classification'] | false | BibTeX entry and citation info ```bibtex @article{hassani2022dilated, title = {Dilated Neighborhood Attention Transformer}, author = {Ali Hassani and Humphrey Shi}, year = 2022, url = {https://arxiv.org/abs/2209.15001}, eprint = {2209.15001}, archiveprefix = {arXiv}, primaryclass = {cs.CV} } ``` | af46ba024eddc383d0dcd6df8095b21a |

mit | [] | false | DuranDuran on Stable Diffusion This is the `DuranDuran` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). Here is the new concept you will be able to use as a `style`:      | da9ee54567c71e1b2632cd0149e90010 |

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Whisper Tiny South Indic - Bharat Ramanathan This model is a fine-tuned version of [openai/whisper-tiny](https://huggingface.co/openai/whisper-tiny) on the None dataset. It achieves the following results on the evaluation set: - eval_loss: 0.3515 - eval_wer: 70.0806 - eval_runtime: 66.8197 - eval_samples_per_second: 1.497 - eval_steps_per_second: 0.105 - epoch: 5.08 - step: 3000 | bbbe61b6bf843063715825b1e46c235b |
